Integrating Network Pharmacology and Chemogenomic Libraries: A Systems Approach to Accelerating Multi-Target Drug Discovery


Abstract

This article explores the integration of network pharmacology with chemogenomic libraries, a powerful synergy that is reshaping modern drug discovery. Aimed at researchers and drug development professionals, it covers the foundational shift from the 'one-drug-one-target' paradigm to a systems-level, multi-target approach. The content provides a methodological guide for constructing and applying chemogenomic libraries within network pharmacology frameworks, supported by real-world case studies in oncology and complex diseases. It also addresses key challenges in data reproducibility, library design, and analytical validation, offering practical troubleshooting and optimization strategies. Finally, the article evaluates advanced computational platforms, AI-driven validation techniques, and comparative analyses of leading tools, presenting a comprehensive resource for developing more effective, multi-targeted therapeutic strategies.

From Single Targets to Complex Networks: The Conceptual Foundation of Integrated Discovery

The traditional 'one-drug-one-target' paradigm, which has dominated drug discovery for decades, is increasingly proving inadequate for addressing complex diseases [1] [2]. This reductionist model, based on developing a single compound to modulate a single, specific target, often fails due to the inherent multifactorial nature of conditions like cancer, neurodegenerative disorders, and metabolic syndromes [1]. The pathogenesis of these diseases involves abnormalities across multiple biological processes, signalling pathways, and genetic networks, characterized by significant heterogeneity and adaptive resistance mechanisms [1]. Consequently, drugs developed under the single-target model have faced high failure rates in clinical trials, estimated at 60–70%, and often demonstrate limited efficacy or unforeseen side effects in real-world applications [2]. This has catalyzed a fundamental shift towards a more holistic, systems-level approach that embraces the complexity of biological systems, leading to the emergence of network pharmacology and chemogenomics as transformative disciplines in modern pharmacology [3] [4] [2].

Foundational Concepts: Network Pharmacology and Chemogenomics

This new paradigm is underpinned by two complementary fields:

- Network Pharmacology: This is a systems biology-based approach that analyzes the complex, multi-layered interactions between drugs, their targets, and associated diseases within biological networks [1] [2]. Instead of viewing a drug's action in isolation, it examines how a compound (or combination of compounds) modulates an entire network of targets and pathways to produce a therapeutic effect [1]. This is particularly suited for understanding the "multicomponent, multitarget, and multilevel" action of therapeutic agents, such as those found in Traditional Chinese Medicine (TCM), and for designing multi-target drugs or drug combinations [1] [5].

- Chemogenomics: This approach leverages large-scale, annotated chemical libraries to systematically probe the function of entire gene families (e.g., kinases, GPCRs) or the human proteome [4]. By screening diverse small molecules against a wide array of biological targets, chemogenomics aims to build comprehensive maps of chemical-to-biological activity space [4] [6]. These maps are invaluable for identifying starting points for drug discovery, understanding polypharmacology, and deconvoluting the mechanisms of action behind phenotypic screening hits [4].

The integration of network pharmacology with chemogenomic libraries creates a powerful framework for rational, multi-target drug discovery and development.

Quantitative Comparison of Pharmacological Paradigms

The table below summarizes the core differences between the traditional and modern pharmacological paradigms.

Table 1: Key Features of Traditional Pharmacology vs. Network Pharmacology

| Feature | Traditional Pharmacology | Network Pharmacology |
|---|---|---|
| Targeting Approach | Single-target | Multi-target / network-level [2] |
| Disease Suitability | Monogenic or infectious diseases | Complex, multifactorial disorders (e.g., cancer, neurodegeneration) [1] [2] |
| Model of Action | Linear (receptor–ligand) | Systems/network-based [2] |
| Risk of Side Effects | Higher (due to off-target effects) | Lower (enables network-aware prediction) [2] |
| Failure in Clinical Trials | Higher (~60-70%) | Lower (due to pre-network analysis) [2] |
| Technological Tools | Molecular biology, pharmacokinetics | Omics data, bioinformatics, graph theory, AI [2] |
| Personalized Therapy | Limited | High potential for precision medicine [2] |

Application Notes & Protocols

This section provides a detailed, actionable methodology for implementing a network pharmacology analysis integrated with a chemogenomic library, as applied to a specific disease context.

Protocol: A Workflow for Target Identification and Mechanism Deconvolution

Table 2: Research Reagent Solutions for Network Pharmacology

| Category | Tool/Database | Functionality |
|---|---|---|
| Drug & Compound Information | DrugBank, PubChem, ChEMBL [4] [2] | Provides drug structures, known targets, and pharmacokinetic data. |
| Gene-Disease Associations | DisGeNET, OMIM, GeneCards [5] [7] [8] | Sources for disease-linked genes, mutations, and functional annotations. |
| Target Prediction | SwissTargetPrediction, PharmMapper [5] [2] | Predicts protein targets for a compound based on its chemical structure. |
| Protein-Protein Interactions (PPI) | STRING, BioGRID [9] [2] | Databases of known and predicted protein-protein functional associations. |
| Pathway Analysis | KEGG, Reactome [4] [5] | Manually curated databases of biological pathways and processes. |
| Network Visualization & Analysis | Cytoscape [5] [7] | Open-source software platform for visualizing and analyzing complex networks. |

Objective: To identify the potential multi-target mechanisms of a natural product, Epimedium, in the treatment of Mild Cognitive Impairment (MCI) and Alzheimer's Disease (AD) [5].

Experimental Workflow:

Step-by-Step Methodology:

Identification of Active Ingredients:

- Retrieve all chemical compounds of the herb "Epimedium" from the TCMSP database (http://lsp.nwu.edu.cn/tcmsp.php) [5].

- Screening Criteria: Apply Absorption, Distribution, Metabolism, and Excretion (ADME) parameters to filter for bioactive compounds. Use an Oral Bioavailability (OB) ≥ 30% and Drug-likeness (DL) ≥ 0.18 as standard screening thresholds [5].

- Output: A finalized list of bioactive ingredients (e.g., Icariin) with their canonical SMILES or SDF structural formats downloaded from PubChem [5].
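
The screening step above reduces to a two-condition filter. The following is a minimal sketch, assuming each compound record carries an OB percentage and a DL index; the `Compound` class, the function name, and all records are hypothetical placeholders rather than real TCMSP data.

```python
from dataclasses import dataclass

OB_MIN = 30.0  # oral bioavailability threshold in percent (from the protocol)
DL_MIN = 0.18  # drug-likeness threshold (from the protocol)


@dataclass(frozen=True)
class Compound:
    name: str
    ob: float  # oral bioavailability, %
    dl: float  # drug-likeness index


def filter_bioactive(compounds):
    """Keep compounds that meet both ADME thresholds (inclusive)."""
    return [c for c in compounds if c.ob >= OB_MIN and c.dl >= DL_MIN]


# Placeholder records with made-up OB/DL values, NOT real TCMSP entries.
candidates = [
    Compound("compound_A", ob=45.2, dl=0.31),
    Compound("compound_B", ob=12.0, dl=0.40),  # fails the OB threshold
    Compound("compound_C", ob=30.0, dl=0.18),  # passes: thresholds are inclusive
    Compound("compound_D", ob=61.5, dl=0.05),  # fails the DL threshold
]

selected = filter_bioactive(candidates)
print([c.name for c in selected])
```

Keeping the thresholds as named constants makes it easy to report them alongside the results, which helps when comparing studies that use different cutoffs.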

Target Prediction for Active Ingredients:

- Submit the structures of the active ingredients to target prediction platforms such as SwissTargetPrediction or PharmMapper (see Table 2) [5] [2].

- Data Curation: Standardize all predicted target protein names to their official gene symbols using the UniProt database [5].

Acquisition of Disease-Associated Targets:

- Search for genes associated with "mild cognitive impairment" and "Alzheimer's disease" using disease databases [5]:

- GeneCards: A comprehensive database of human genes.

- DisGeNET: A platform integrating data on gene-disease associations.

- OMIM: A catalog of human genes and genetic disorders.

- Combine the results and remove duplicates to create a unified list of MCI/AD-related targets.
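
Merging the database exports is a set union after light normalization. The sketch below assumes each export has already been reduced to a plain list of gene symbols; the lists are illustrative placeholders, not actual query results, and upper-casing symbols is a simplification that may not suit every identifier scheme.

```python
def normalize(symbol: str) -> str:
    """Trim whitespace and upper-case a gene symbol so duplicates compare equal."""
    return symbol.strip().upper()


def merge_gene_lists(*gene_lists):
    """Union several gene lists, dropping duplicates and empty entries."""
    merged = set()
    for genes in gene_lists:
        merged.update(normalize(g) for g in genes if g.strip())
    return sorted(merged)


# Illustrative placeholder exports (hypothetical content).
genecards = ["APP", "MAPT", "akt1 ", "TNF"]
disgenet = ["APP", "IL6", "TNF"]
omim = ["PSEN1", "APP", ""]

disease_targets = merge_gene_lists(genecards, disgenet, omim)
print(disease_targets)
```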


Identification of Common Targets and PPI Network Construction:

- Use a Venn analysis tool (e.g., Jvenn) to identify the overlapping targets between the Epimedium compound targets and the MCI/AD disease targets. These are the potential therapeutic targets [5].

- Input the list of common targets into the STRING database (https://string-db.org/) to generate a Protein-Protein Interaction (PPI) network. Set a high confidence score (e.g., >0.900) to ensure high-quality interactions [7].

- Import the PPI network into Cytoscape for visualization and further analysis [5] [7].
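
The overlap and confidence-filtering steps can be sketched in a few lines. This is an illustration under stated assumptions: the target sets and interaction scores are invented, and scores are assumed to be on a 0 to 1 scale (rescale the cutoff if an export uses a different scale).

```python
def common_targets(compound_targets, disease_targets):
    """Venn-style overlap between compound targets and disease targets."""
    return set(compound_targets) & set(disease_targets)


def high_confidence_edges(edges, nodes, min_score=0.9):
    """Keep interactions above the cutoff whose endpoints are both in `nodes`."""
    return [
        (a, b, score)
        for a, b, score in edges
        if score > min_score and a in nodes and b in nodes
    ]


# Placeholder inputs for illustration only.
compound_targets = {"AKT1", "MAPK1", "TP53", "ESR1"}
disease_targets = {"AKT1", "MAPK1", "TP53", "APP", "TNF"}

# (protein_a, protein_b, combined_score); all scores are invented.
ppi_edges = [
    ("AKT1", "TP53", 0.99),
    ("AKT1", "MAPK1", 0.95),
    ("MAPK1", "TP53", 0.72),  # dropped: below the cutoff
    ("AKT1", "ESR1", 0.97),   # dropped: ESR1 is not a common target
]

shared = common_targets(compound_targets, disease_targets)
network = high_confidence_edges(ppi_edges, shared)
print(sorted(shared))
print(network)
```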

Topological Analysis and Hub Target Identification:

- Within Cytoscape, use built-in tools or plugins (e.g., CytoNCA) to perform network topology analysis [7].

- Calculate centrality measures such as Degree Centrality (number of connections), Betweenness Centrality (influence in information flow), and Closeness Centrality [2].

- Output: A ranked list of hub targets (e.g., AKT1, MAPK1, TP53, IL-6, TNF). These are the most influential nodes in the network and are prioritized for further investigation [5] [7] [8].
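
To make the three centrality measures concrete, here is a self-contained sketch for an unweighted, undirected network. It is not the CytoNCA implementation, and plugins may normalize the values differently; the edge list is hypothetical and only borrows gene names from the example above.

```python
from collections import deque


def build_adjacency(edges):
    adj = {}
    for a, b in edges:
        adj.setdefault(a, set()).add(b)
        adj.setdefault(b, set()).add(a)
    return adj


def degree_centrality(adj):
    """Number of neighbours divided by the maximum possible (n - 1)."""
    n = len(adj)
    return {v: (len(nb) / (n - 1) if n > 1 else 0.0) for v, nb in adj.items()}


def closeness_centrality(adj):
    """Reachable nodes divided by the sum of shortest-path lengths to them."""
    result = {}
    for source in adj:
        dist = {source: 0}
        queue = deque([source])
        while queue:
            v = queue.popleft()
            for w in adj[v]:
                if w not in dist:
                    dist[w] = dist[v] + 1
                    queue.append(w)
        total = sum(dist.values())
        result[source] = (len(dist) - 1) / total if total else 0.0
    return result


def betweenness_centrality(adj):
    """Unnormalized betweenness via Brandes' algorithm (unweighted graphs)."""
    bc = dict.fromkeys(adj, 0.0)
    for s in adj:
        order = []
        preds = {v: [] for v in adj}
        sigma = dict.fromkeys(adj, 0)  # number of shortest paths from s
        sigma[s] = 1
        dist = {s: 0}
        queue = deque([s])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in adj[v]:
                if w not in dist:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = dict.fromkeys(adj, 0.0)
        for w in reversed(order):  # accumulate dependencies, farthest first
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                bc[w] += delta[w]
    return {v: b / 2 for v, b in bc.items()}  # each undirected pair counted twice


# Hypothetical edges for illustration; not a real PPI network.
edges = [("AKT1", "TP53"), ("AKT1", "MAPK1"), ("AKT1", "TNF"), ("TNF", "IL6")]
adj = build_adjacency(edges)
degree = degree_centrality(adj)
closeness = closeness_centrality(adj)
betweenness = betweenness_centrality(adj)

for node in sorted(adj, key=degree.get, reverse=True):
    print(node, degree[node], round(closeness[node], 3), betweenness[node])
```

Ranking by several measures at once, rather than degree alone, helps distinguish highly connected nodes from nodes that bridge otherwise separate parts of the network.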

Gene Ontology (GO) and Kyoto Encyclopedia of Genes and Genomes (KEGG) Enrichment Analysis:

- Submit the list of common targets to functional enrichment tools like the DAVID bioinformatics database or the R package clusterProfiler [4] [5].

- GO Analysis: Categorizes gene functions into Biological Process (BP), Cellular Component (CC), and Molecular Function (MF). For MCI/AD, expect enrichment in processes like "apoptosis," "inflammatory response," and "response to oxidants" [5] [7].

- KEGG Pathway Analysis: Identifies key signaling pathways that the targets are involved in. Expected pathways in neurodegeneration include the PI3K-Akt, MAPK, HIF-1, FoxO, and TNF signaling pathways [5] [10].

- Visualization: Generate bar plots or bubble charts to visualize the significantly enriched terms and pathways.
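
Over-representation analysis of this kind is commonly based on a hypergeometric (one-sided Fisher-type) test. The sketch below shows only that statistic, under the assumption of a fixed gene background; it is not the exact procedure of DAVID or clusterProfiler, and the large-scale numbers in the second call are hypothetical.

```python
from math import comb


def hypergeom_pvalue(k, n, K, N):
    """P(X >= k): chance of seeing at least k annotated genes among n targets.

    N = genes in the background, K = background genes annotated to the term,
    n = genes in the target list, k = target-list genes annotated to the term.
    """
    upper = min(n, K)
    favourable = sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1))
    return favourable / comb(N, n)


# Small case that can be checked by hand: (C(4,2)*C(6,1) + C(4,3)*C(6,0)) / C(10,3)
p_small = hypergeom_pvalue(k=2, n=3, K=4, N=10)
print(p_small)

# Hypothetical genome-scale case: 8 of 50 targets fall in a 300-gene pathway.
p_large = hypergeom_pvalue(k=8, n=50, K=300, N=20000)
print(p_large)
```

Because one test is run per term or pathway, the resulting p-values still need a multiple-testing correction before interpretation.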


Molecular Docking Validation:

- Objective: To validate the predicted interactions between the top active ingredients (e.g., Quercetin) and the hub target proteins (e.g., AKT1) [5] [7].

- Protocol:

  a. Retrieve the 3D crystal structure of the target protein from the Protein Data Bank (PDB).

  b. Prepare the protein and ligand files (e.g., adding hydrogen atoms, assigning charges) using tools like AutoDock Tools.

  c. Perform molecular docking using software such as AutoDock Vina to predict the binding affinity (reported in kcal/mol) and the binding pose [5] [7].

  d. Analyze the results, focusing on compounds with strong binding affinities (e.g., < -5 kcal/mol) and key hydrogen bond or hydrophobic interactions within the protein's active site [7].

Case Study: Mechanism of Scar Healing Ointment (SHO)

A study on Scar Healing Ointment (SHO) exemplifies this protocol's output. Network pharmacology and molecular docking revealed key active ingredients (Quercetin, Beta-sitosterol) and hub targets (AKT1, MAPK1, TP53) in treating hypertrophic scars. The KEGG analysis indicated involvement in apoptosis and pathways like MAPK signaling. Molecular docking showed strong binding affinities, for example, between stigmasterol and MAPK1 (-5.31 kcal/mol) and alloimperatorin and ESR1 (-6.09 kcal/mol), forming multiple hydrogen bonds and supporting the predicted multi-target mechanism [7].
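
As a rough illustration of how the -5 kcal/mol cutoff is applied, the sketch below filters and ranks docking results. The first two entries are the SHO values quoted above [7]; the third is a hypothetical weak binder added for contrast. The Kd conversion uses the standard relation ΔG = RT ln(Kd) and treats the docking score as a binding free energy, which is an assumption: docking scores are estimates, so the resulting values are order-of-magnitude guides at best.

```python
import math

R_KCAL = 0.0019872  # gas constant in kcal/(mol*K)


def passes_threshold(affinity_kcal, cutoff=-5.0):
    """More negative docking scores indicate stronger predicted binding."""
    return affinity_kcal < cutoff


def approx_kd_molar(delta_g_kcal, temp_k=298.15):
    """Rough Kd from dG = RT ln(Kd), treating the score as a free energy."""
    return math.exp(delta_g_kcal / (R_KCAL * temp_k))


results = [
    ("stigmasterol", "MAPK1", -5.31),    # quoted in the text [7]
    ("alloimperatorin", "ESR1", -6.09),  # quoted in the text [7]
    ("ligand_X", "AKT1", -4.20),         # hypothetical, for contrast
]

# Keep pairs below the cutoff and rank from strongest to weakest.
kept = sorted((r for r in results if passes_threshold(r[2])), key=lambda r: r[2])
for ligand, target, score in kept:
    kd_um = approx_kd_molar(score) * 1e6
    print(f"{ligand}-{target}: {score} kcal/mol, approx. Kd {kd_um:.0f} uM")
```

Under this approximation, a score near the cutoff corresponds to a Kd in the high micromolar range, which is one reason docking results are usually treated as supporting evidence rather than proof of binding.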

Visualizing the Multi-Target Mechanism of Action

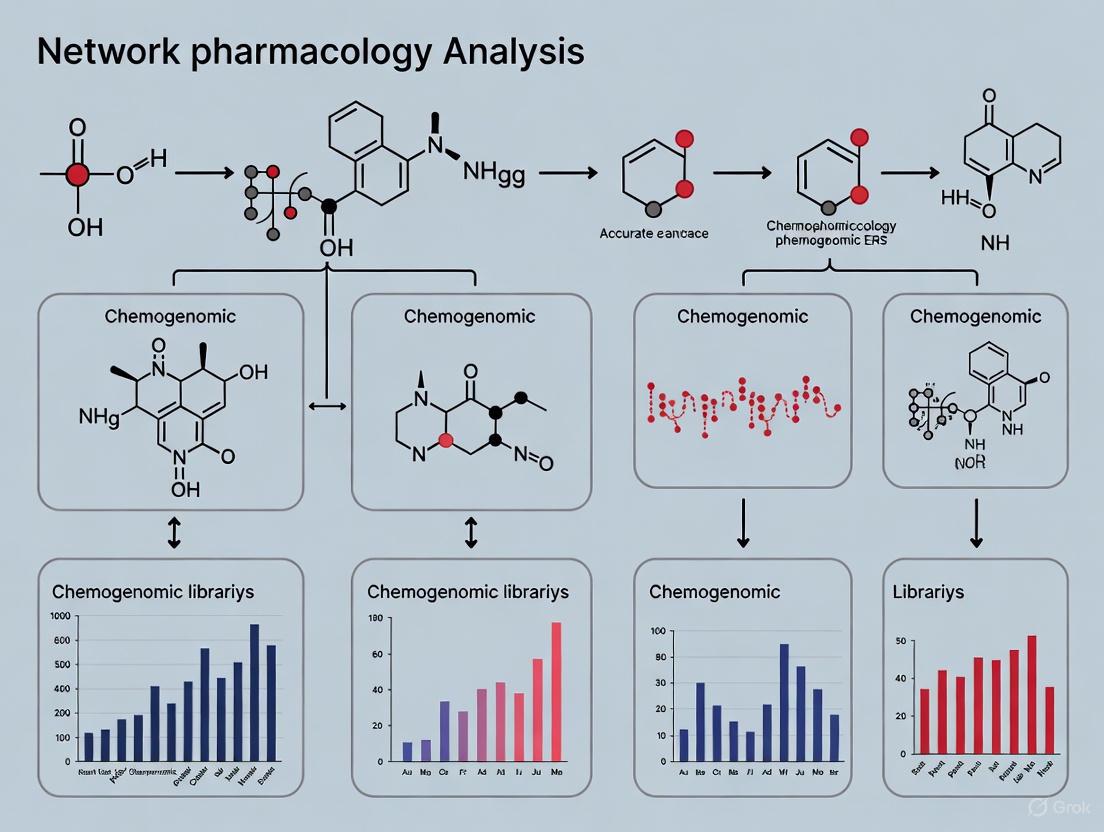

The following diagram synthesizes the findings from network pharmacology studies on natural products like Epimedium and SHO, illustrating how multiple components interact with a network of targets to modulate core signaling pathways.

The paradigm shift from 'one-drug-one-target' to a network-based model represents a fundamental evolution in pharmacology, aligning drug discovery with the complex, interconnected reality of biological systems [2]. The integration of chemogenomic libraries provides the experimental data to populate these networks, while network pharmacology offers the computational framework to interpret them and generate testable hypotheses [4]. This synergistic approach enables the rational design of multi-target therapies and the repurposing of existing drugs, offering a more effective strategy for treating complex diseases with higher success rates and fewer side effects [1] [2].

While challenges remain, including the need for high-quality data and sophisticated computational tools, drug discovery is becoming increasingly systems-oriented. The continued development of chemogenomic resources, coupled with advances in artificial intelligence and multi-omics data integration, is likely to further establish network pharmacology as a core component of modern precision medicine [1] [2].

Chemogenomic libraries are systematic collections of well-characterized, target-annotated small molecules designed for probing biological systems. Their primary purpose is to bridge the gap between phenotypic screening and target-based drug discovery by providing a set of chemical probes with defined mechanisms of action. In the context of network pharmacology, which studies drug actions within complex biological networks, these libraries serve as essential tools for deconvoluting complex phenotypic responses and understanding polypharmacology [4] [11]. The fundamental principle of chemogenomics is the systematic screening of targeted chemical libraries against families of functionally related proteins—such as GPCRs, kinases, and proteases—with the dual goal of identifying novel drugs and elucidating the functions of novel drug targets [12].

The strategic value of these libraries lies in their target-focused design. Unlike diverse compound libraries for initial screening, chemogenomic libraries contain molecules where at least one primary target is known. When a compound from such a library produces a phenotypic change in a screening assay, it suggests that its annotated target or targets are involved in the observed biological effect [13]. This approach has gained prominence with the recognition that complex diseases often involve multiple molecular abnormalities, necessitating a systems-level understanding of drug action beyond the traditional "one target—one drug" paradigm [4].

Design Principles and Curation Strategies

Fundamental Design Objectives

The construction of a high-quality chemogenomic library requires balancing multiple, often competing, design objectives. The primary goal is to achieve comprehensive target coverage across biologically relevant protein families while maintaining compound quality and experimental practicality [14]. Key considerations include:

Target Space Definition: Library designers must first define a comprehensive list of proteins associated with biological processes or disease states. For example, in anticancer library development, this involves collating proteins implicated in hallmarks of cancer from resources like The Human Protein Atlas and PharmacoDB [14].

Cellular Potency: Compounds must possess adequate biological activity in cellular environments, not just in biochemical assays, to ensure relevance in phenotypic screening.

Target Selectivity: While perfect specificity is rare, compounds are selected and optimized for narrow target profiles to facilitate cleaner target deconvolution.

Chemical Diversity: Libraries should encompass diverse chemical scaffolds to mitigate structure-specific biases and enable structure-activity relationship analysis [4] [14].

Practical Curation Workflows

The curation of chemogenomic libraries follows rigorous, multi-stage processes to balance target coverage with practical screening constraints:

Table 1: Compound Set Definitions in Library Curation

| Compound Set Type | Definition | Typical Size | Target Coverage |
|---|---|---|---|
| Theoretical Set | In silico collection of all established target-compound pairs | ~300,000 compounds | 100% of defined target space |
| Large-Scale Set | Filtered collection retaining activity and diversity | ~2,200 compounds | ~100% of target space |
| Screening Set | Purchasable, experimentally practical collection | ~1,200 compounds | ~84% of target space |

The process typically begins with a theoretical set encompassing all known compound-target interactions for the defined target space. This initial collection undergoes sequential filtering: first, removing compounds lacking demonstrated cellular activity; second, selecting the most potent representatives for each target; and finally, filtering based on commercial availability and synthetic tractability [14]. Through this process, library size can be reduced by two orders of magnitude or more (a 150-fold reduction is reported in [14]) while maintaining coverage of most of the target space.
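
The sequential filtering described above can be sketched as a small pipeline that also reports target coverage after each stage. All names and records here are hypothetical, and the filters are simplified (for example, synthetic tractability is folded into a single `purchasable` flag).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    compound: str
    target: str
    potency_nm: float  # lower value = more potent
    cell_active: bool
    purchasable: bool


def curate(records, per_target=1):
    """Return (large_scale, screening) sets following the three filters in order."""
    active = [r for r in records if r.cell_active]  # 1. cellular activity
    by_target = {}
    for r in sorted(active, key=lambda rec: rec.potency_nm):  # 2. most potent per target
        picks = by_target.setdefault(r.target, [])
        if len(picks) < per_target:
            picks.append(r)
    large_scale = [r for picks in by_target.values() for r in picks]
    screening = [r for r in large_scale if r.purchasable]  # 3. availability
    return large_scale, screening


def coverage(records, target_space):
    """Fraction of the defined target space hit by at least one record."""
    return len({r.target for r in records} & target_space) / len(target_space)


# Made-up theoretical set for illustration.
theoretical = [
    Record("c1", "T1", 5, True, True),
    Record("c2", "T1", 50, True, True),
    Record("c3", "T2", 10, True, True),
    Record("c4", "T2", 200, False, True),
    Record("c5", "T3", 30, True, True),
    Record("c6", "T4", 8, True, False),
]
target_space = {"T1", "T2", "T3", "T4"}

large_scale, screening = curate(theoretical)
print(len(theoretical), len(large_scale), len(screening))
print(coverage(large_scale, target_space), coverage(screening, target_space))
```

In this toy example the availability filter is what costs coverage, mirroring the drop between the large-scale and screening sets in the table above.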

A critical challenge in library design is managing the inherent polypharmacology of small molecules. Most compounds interact with multiple molecular targets, with drug molecules interacting with an average of six known targets [15]. This reality complicates target deconvolution from phenotypic screens. Libraries can be characterized by their Polypharmacology Index (PPindex), which quantifies overall target specificity, with steeper slopes indicating more target-specific collections [15].

Applications in Phenotypic Screening and Network Pharmacology

Phenotypic Screening and Target Deconvolution

Chemogenomic libraries are particularly valuable in phenotypic drug discovery (PDD), where compounds are screened in complex biological systems without prior knowledge of specific molecular targets. A primary application is target identification for hits discovered in phenotypic screens [4] [15]. When a compound from a chemogenomic library produces a phenotypic effect, researchers can immediately generate hypotheses about which molecular targets may be mediating the observed effect based on the compound's annotation [13].
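
A simple way to turn annotated hits into target hypotheses is to ask, for each annotated target, what fraction of its library compounds scored as hits. The sketch below assumes a compound-to-targets annotation map; all compound and target names are hypothetical, and a real analysis would also need to account for polypharmacology and library bias.

```python
from collections import Counter


def target_hypotheses(annotations, hits):
    """Rank targets by the fraction of their annotated compounds that are hits.

    Returns tuples of (target, hit_count, library_count, hit_fraction).
    """
    in_library = Counter(t for targets in annotations.values() for t in targets)
    among_hits = Counter(t for c in hits for t in annotations.get(c, ()))
    ranked = [
        (t, among_hits[t], in_library[t], among_hits[t] / in_library[t])
        for t in among_hits
    ]
    return sorted(ranked, key=lambda row: (-row[3], -row[1], row[0]))


# Hypothetical annotation table: compound -> annotated targets.
annotations = {
    "cmpd_1": ["KINASE_A"],
    "cmpd_2": ["KINASE_A", "KINASE_B"],  # a compound with two annotated targets
    "cmpd_3": ["KINASE_B"],
    "cmpd_4": ["GPCR_C"],
    "cmpd_5": ["KINASE_A"],
}
hits = {"cmpd_1", "cmpd_2", "cmpd_5"}

ranked = target_hypotheses(annotations, hits)
for row in ranked:
    print(row)
```

A target supported by several structurally distinct hits is a stronger hypothesis than one supported by a single compound, since shared off-targets become less likely.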

The integration of chemogenomic libraries with high-content imaging technologies has proven particularly powerful. For example, the Cell Painting assay provides a high-dimensional morphological profile by staining multiple cellular components and extracting thousands of quantitative features [4]. When combined with chemogenomic library screening, this approach can connect specific morphological changes to modulation of particular targets or pathways [4] [16].

Table 2: Chemogenomic Library Applications in Drug Discovery

| Application Area | Specific Use Case | Research Example |
|---|---|---|
| Target Identification | Mode of action determination for traditional medicines | Identifying targets for traditional Chinese medicine and Ayurvedic formulations [12] |
| Pathway Elucidation | Gene discovery in biological pathways | Discovering YLR143W as diphthamide synthetase in yeast [12] |
| Network Pharmacology | Mapping drug-target-pathway-disease relationships | Building system pharmacology networks integrating multiple data sources [4] |
| Drug Repurposing | Identifying new therapeutic uses for existing compounds | Applying approved and investigational compounds to new disease contexts [14] |

Integration with Network Pharmacology

In network pharmacology research, chemogenomic libraries provide the critical experimental link between chemical perturbations and systems-level responses. By testing compounds with known targets in complex assays, researchers can:

- Construct drug-target-pathway-disease networks that reveal how modulating specific nodes affects broader biological systems [4] [11]

- Validate multi-target mechanisms of action, particularly relevant for traditional medicine formulations where multiple compounds act synergistically [12] [11]

- Identify network vulnerabilities in disease states, such as patient-specific cancer vulnerabilities revealed through screening in glioblastoma stem cells [14]

This approach effectively bridges traditional and modern drug discovery by providing a systems-level understanding of complex diseases and treatment mechanisms [11].

Experimental Protocols for Library Implementation

High-Content Phenotypic Profiling Protocol

The following protocol details a live-cell multiplexed screening approach for annotating chemogenomic libraries based on nuclear morphology and cellular health parameters [16]:

1. Cell Preparation and Plating

- Culture adherent cell lines (e.g., U2OS, HEK293T, MRC9) under standard conditions

- Seed cells in collagen-I coated 96-well or 384-well microplates at optimized densities (e.g., 1,500-4,000 cells/well for 96-well format)

- Allow cells to adhere for 12-24 hours under normal growth conditions

2. Compound Treatment

- Prepare compound stocks in DMSO and dilute in cell culture medium

- Apply compounds to cells across desired concentration range (typically 0.1 nM - 10 µM)

- Include DMSO vehicle controls and reference compounds (e.g., camptothecin, staurosporine, digitonin) as system controls

- Perform treatments in technical triplicates for statistical robustness

3. Staining and Live-Cell Imaging

- Prepare staining solution containing:

- 50 nM Hoechst33342 (nuclear stain)

- 20-50 nM MitoTracker Red/DeepRed (mitochondrial content)

- BioTracker 488 Green Microtubule Cytoskeleton Dye at the recommended concentration (tubulin network)

- Add staining solution directly to culture medium without washing

- Incubate for 30-60 minutes at 37°C, 5% CO₂

- Acquire images at multiple time points (e.g., 0, 24, 48, 72 hours) using high-content imaging system

4. Image Analysis and Phenotype Classification

- Segment cells and extract morphological features using appropriate software

- Apply machine learning classifier to categorize cells into phenotypic classes:

- Healthy

- Early apoptotic

- Late apoptotic

- Necrotic

- Lysed

- Quantify population distributions and calculate IC₅₀ values for cytotoxicity

5. Data Integration and Annotation

- Correlate nuclear morphology features with overall cellular phenotype

- Generate time-dependent cytotoxicity profiles

- Annotate library compounds with phenotypic profiles and cellular health effects
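
Steps 4 and 5 end in a population distribution per well and a cytotoxicity IC₅₀. The sketch below computes the healthy-cell fraction from classifier counts and estimates the IC₅₀ by linear interpolation on a log-dose axis. This is a deliberate simplification (a four-parameter logistic fit is the more usual choice), and the cell counts are invented for illustration.

```python
import math


def healthy_fraction(counts):
    """Fraction of cells classified as 'healthy' in one well."""
    total = sum(counts.values())
    return counts.get("healthy", 0) / total if total else 0.0


def ic50_log_interpolation(doses, responses, level=0.5):
    """Dose at which the response crosses `level`, interpolated on a log10 axis.

    `doses` must be ascending; returns None if the curve never crosses `level`.
    """
    points = list(zip(doses, responses))
    for (d0, r0), (d1, r1) in zip(points, points[1:]):
        if r0 >= level >= r1 and r0 != r1:
            frac = (r0 - level) / (r0 - r1)
            log_dose = math.log10(d0) + frac * (math.log10(d1) - math.log10(d0))
            return 10 ** log_dose
    return None


# Invented classifier output: dose in nM -> cell counts per phenotypic class.
wells = {
    1: {"healthy": 95, "early_apoptotic": 3, "late_apoptotic": 1, "necrotic": 1, "lysed": 0},
    10: {"healthy": 90, "early_apoptotic": 5, "late_apoptotic": 3, "necrotic": 1, "lysed": 1},
    100: {"healthy": 70, "early_apoptotic": 15, "late_apoptotic": 10, "necrotic": 3, "lysed": 2},
    1000: {"healthy": 30, "early_apoptotic": 25, "late_apoptotic": 30, "necrotic": 10, "lysed": 5},
    10000: {"healthy": 5, "early_apoptotic": 10, "late_apoptotic": 40, "necrotic": 25, "lysed": 20},
}

doses = sorted(wells)
fractions = [healthy_fraction(wells[d]) for d in doses]
ic50 = ic50_log_interpolation(doses, fractions)
print(fractions)
print(ic50)
```

Repeating the calculation per time point gives the time-dependent cytotoxicity profile mentioned in step 5.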

Figure 1: Experimental workflow for high-content phenotypic profiling of chemogenomic libraries

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Reagents for Chemogenomic Library Implementation

| Reagent Category | Specific Examples | Function & Application |
|---|---|---|
| Live-Cell Dyes | Hoechst33342 (50 nM), MitoTracker Red, BioTracker 488 Microtubule Dye | Multiplex staining of cellular compartments for phenotypic profiling [16] |
| Reference Compounds | Camptothecin, Staurosporine, JQ1, Torin, Paclitaxel | Assay controls representing diverse mechanisms of action and cytotoxicity kinetics [16] |
| Cell Lines | U2OS, HEK293T, MRC9, patient-derived stem cells | Physiologically relevant screening models for phenotypic assessment [14] [16] |
| Data Resources | ChEMBL, KEGG, Gene Ontology, Disease Ontology | Target annotation, pathway analysis, and biological context [4] |
| Analysis Tools | CellProfiler, ScaffoldHunter, Neo4j, clusterProfiler | Image analysis, chemoinformatics, and network visualization [4] |

Chemogenomic libraries represent a powerful infrastructure at the intersection of chemical biology and systems pharmacology. By providing systematically annotated collections of biologically active compounds, they enable researchers to connect phenotypic observations to molecular targets within complex biological networks. The continued refinement of library design principles—balancing target coverage, compound selectivity, and practical screening considerations—will further enhance their utility in deconvoluting complex biological mechanisms and accelerating the discovery of novel therapeutic strategies.

Network pharmacology represents a paradigm shift in drug discovery, moving away from the traditional "one drug–one target–one disease" model toward a more comprehensive "network-target, multiple-component therapeutics" approach [17]. This emerging discipline is based on the understanding that complex diseases like cancers, neurological disorders, and diabetes are often caused by multiple molecular abnormalities rather than single defects, necessitating therapeutic strategies that modulate multiple targets simultaneously [4]. The core principle of network pharmacology involves evaluating how drugs interact with therapeutic targets, their associated signaling pathways, and the biological functions linked to diseases to achieve beneficial therapeutic effects [17].

The development of network pharmacology is closely tied to advances in systems biology and omics technologies. Historically, drug discovery strategies assumed that a single-target mechanism was the best approach for obtaining target-specific therapeutics. However, both drugs and natural compounds frequently interact with multiple receptors, resulting in polyvalent pharmacological and pleiotropic therapeutic activities through multitarget interactions [17]. This understanding has fundamentally shifted the drug discovery paradigm and created new opportunities for understanding complex therapeutic interventions, including traditional Chinese medicine (TCM) and other natural product-based treatments [18] [19].

Fundamental Principles of Network Pharmacology

Polypharmacology and Network-Based Drug Action

Polypharmacology refers to the ability of drug molecules to modulate multiple targets simultaneously, creating network-wide effects that can produce superior therapeutic outcomes for complex diseases compared to single-target approaches [17]. This principle challenges the traditional expectation that selective ligands act on a single target and recognizes that drug promiscuity can be an intentional strategy rather than a source of unwanted effects [4] [17].

The network perspective reveals that disease phenotypes and drugs act on interconnected biological networks, where complementary mechanisms of action provide more therapeutic benefit with less toxicity and resistance [19]. This approach is particularly valuable for understanding the action of complex mixtures, such as botanical hybrid preparations and traditional Chinese medicine formulations, which inherently function through multi-target mechanisms [17].

The "Network Target" Concept

The "network target" concept forms the theoretical foundation of network pharmacology, proposing that disease phenotypes and drugs act on the same biological networks, pathways, or targets [19]. This framework allows researchers to understand how pharmacological interventions can affect the balance of network targets and subsequently influence disease phenotypes at multiple biological levels.

This concept is implemented through the construction of "drug–target–pathway–disease" relationship networks that integrate multiple data sources, including chemical biology data, pathway information, disease ontologies, and high-content screening data [4]. These networks enable the systematic analysis of how compounds modulate protein targets that may relate to morphological perturbations, phenotypes, and disease outcomes.

Table 1: Core Conceptual Frameworks in Network Pharmacology

| Concept | Definition | Research Application |
|---|---|---|
| Polypharmacology | The ability of a drug to interact with multiple molecular targets | Explains therapeutic effects of multi-target drugs and natural products |
| Network Target | Biological network that serves as the interface between drug action and disease phenotype | Provides framework for analyzing system-wide drug effects |
| Network Medicine | Understanding disease pathophysiology at the systems level | Basis for developing novel drugs that target disease networks rather than individual proteins |
| Multicomponent Therapeutics | Use of multiple active compounds to target network vulnerabilities | Rational design of combination therapies and complex herbal formulations |

Key Methodologies and Experimental Protocols

Construction of Network Pharmacology Databases

The foundation of network pharmacology research lies in the integration of heterogeneous data sources into a unified network database. The following protocol outlines the key steps for constructing a comprehensive network pharmacology database:

Protocol 1: Database Construction for Network Pharmacology Analysis

Compound Data Collection: Extract bioactivity data, molecular structures, and target information from databases such as ChEMBL, which contains standardized bioactivity data for millions of molecules and thousands of targets [4].

Pathway Information Integration: Incorporate pathway databases such as the Kyoto Encyclopedia of Genes and Genomes (KEGG) to map molecular interactions, reactions, and relation networks across various pathway categories including metabolism, cellular processes, and human diseases [4].

Ontology Annotation: Integrate Gene Ontology (GO) resources for functional annotation of proteins, including biological processes, molecular functions, and cellular components. Include Disease Ontology (DO) resources for disease classification and annotation [4].

Morphological Profiling Data: Incorporate high-content screening data such as morphological profiling from Cell Painting assays, which measure hundreds of morphological features across different cellular components to produce detailed cell profiles [4].

Graph Database Implementation: Utilize graph database systems like Neo4j to integrate these diverse data sources, creating nodes for molecules, scaffolds, proteins, pathways, and diseases, with edges representing relationships between them [4].

The resulting database enables complex queries across the integrated biological and chemical space, facilitating the identification of potential therapeutic targets and mechanisms of action.
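
To illustrate the node-and-edge model described in the protocol, the following sketch builds a tiny in-memory labelled graph and runs one cross-domain query. It is a stand-in for a graph database, not Neo4j's API, and every node name and relation type in it is hypothetical.

```python
from collections import defaultdict, deque


class KnowledgeGraph:
    """Minimal labelled, directed graph used to mimic a graph-database query."""

    def __init__(self):
        self.labels = {}                # node id -> node type
        self.edges = defaultdict(list)  # node id -> [(relation, neighbour)]

    def add_node(self, node, label):
        self.labels[node] = label

    def add_edge(self, source, relation, target):
        self.edges[source].append((relation, target))

    def reachable(self, start, label):
        """All nodes of type `label` reachable from `start` along directed edges."""
        seen, queue, found = {start}, deque([start]), set()
        while queue:
            node = queue.popleft()
            for _, nxt in self.edges.get(node, ()):
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append(nxt)
                    if self.labels.get(nxt) == label:
                        found.add(nxt)
        return found


graph = KnowledgeGraph()
for node, label in [
    ("molecule_1", "Molecule"),
    ("scaffold_1", "Scaffold"),
    ("protein_P", "Protein"),
    ("pathway_W", "Pathway"),
    ("disease_D", "Disease"),
]:
    graph.add_node(node, label)

# Relation names are illustrative, not a published schema.
graph.add_edge("molecule_1", "HAS_SCAFFOLD", "scaffold_1")
graph.add_edge("molecule_1", "TARGETS", "protein_P")
graph.add_edge("protein_P", "PARTICIPATES_IN", "pathway_W")
graph.add_edge("pathway_W", "ASSOCIATED_WITH", "disease_D")

print(graph.reachable("molecule_1", "Disease"))
print(graph.reachable("molecule_1", "Protein"))
```

A dedicated graph database adds indexing, persistence, and a query language on top of this basic idea, which matters once the network holds millions of bioactivity records.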

Development and Application of Chemogenomic Libraries

Chemogenomic libraries represent curated collections of small molecules designed to modulate a diverse panel of drug targets involved in various biological effects and diseases. The following protocol describes the development and application of such libraries:

Protocol 2: Development of a Chemogenomic Library for Phenotypic Screening

Library Design and Curation: Select approximately 5,000 small molecules representing a large and diverse panel of drug targets, ensuring coverage of the druggable genome [4]. This selection should be based on comprehensive system pharmacology networks that integrate drug-target-pathway-disease relationships.

Scaffold Analysis and Diversity Optimization: Use software such as ScaffoldHunter to decompose each molecule into representative scaffolds and fragments through stepwise removal of terminal side chains and rings while preserving characteristic core structures [4]. This ensures structural diversity and appropriate coverage of chemical space.

Target Annotation and Validation: Annotate each compound with its known protein targets using databases such as ChEMBL, and validate these interactions through literature mining and experimental data where available [4].

Phenotypic Screening Application: Apply the chemogenomic library to cell-based phenotypic screening systems, such as those utilizing Cell Painting assays, to identify compounds that induce specific morphological profiles [4].

Target Deconvolution and Mechanism Analysis: Use the network pharmacology platform to identify proteins modulated by hit compounds that correlate with observed morphological perturbations and phenotypic outcomes [4].

Table 2: Essential Research Reagents and Databases for Network Pharmacology

| Resource Category | Specific Resources | Function and Application |
|---|---|---|
| Compound Databases | ChEMBL, TCMSP, HERB, TCMBank | Provide chemical structures, bioactivity data, and target annotations for small molecules and natural products |
| Target and Pathway Databases | KEGG, Gene Ontology, Disease Ontology | Offer pathway maps, functional annotations, and disease classification systems |
| Analysis Tools | ScaffoldHunter, clusterProfiler (R package) | Enable scaffold analysis, GO enrichment, KEGG enrichment, and DO enrichment calculations |
| Network Visualization & Database | Neo4j, Cytoscape | Facilitate network construction, visualization, and complex querying of biological relationships |
| Experimental Data | Broad Bioimage Benchmark Collection (BBBC) | Provide morphological profiling data from high-content screening experiments |

Network Analysis and Target Identification

The core analytical process in network pharmacology involves the construction and analysis of biological networks to identify key targets and mechanisms:

Protocol 3: Network Analysis for Target Identification and Mechanism Deconvolution

Network Construction: Map disease phenotypic targets and drug targets together in a biomolecular network, establishing association mechanisms between diseases and drugs [19].

Enrichment Analysis: Perform GO enrichment, KEGG enrichment, and DO enrichment analyses using tools like the R package clusterProfiler with appropriate adjustment methods (e.g., Bonferroni) and p-value cutoffs (e.g., 0.1) [4].

Network Target Identification: Analyze the network to identify key nodes and interaction patterns, focusing on network targets where disease phenotypes and drugs converge on the same networks, pathways, or targets [19].

Multi-omics Integration: Incorporate data from genomics, transcriptomics, proteomics, and metabolomics to validate network predictions and provide multi-layer evidence for proposed mechanisms [17] [20].

Experimental Validation: Design in vitro and in vivo experiments to validate predictions, using technologies such as molecular interaction assays (biolayer interferometry, surface plasmon resonance, nano-liquid chromatography-mass spectrometry) and high-throughput screening approaches [18] [20].
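
The enrichment step in Protocol 3 can be illustrated with a minimal over-representation sketch. It assumes a one-sided hypergeometric test followed by Bonferroni correction and the 0.1 cutoff mentioned above; the gene sets, hit list, and background size are hypothetical toy values, and the function names are illustrative rather than part of clusterProfiler or any other package.

```python
from math import comb

def hypergeom_sf(k, M, n, N):
    """P(X >= k) when N genes are drawn from a background of M, n of which are annotated."""
    upper = min(n, N)
    return sum(comb(n, i) * comb(M - n, N - i) for i in range(k, upper + 1)) / comb(M, N)

def enrich(hits, gene_sets, background_size, cutoff=0.1):
    """Return (term, raw p, Bonferroni-adjusted p) for terms passing the cutoff."""
    hits = set(hits)
    results = []
    for term, members in gene_sets.items():
        overlap = len(hits & set(members))
        p = hypergeom_sf(overlap, background_size, len(members), len(hits))
        p_adj = min(1.0, p * len(gene_sets))  # Bonferroni: multiply by the number of tests
        if p_adj <= cutoff:
            results.append((term, p, p_adj))
    return sorted(results, key=lambda r: r[2])

# Hypothetical toy data, not taken from the cited studies.
gene_sets = {
    "PI3K-Akt signaling": ["AKT1", "PIK3CA", "MTOR", "PTEN", "GSK3B"],
    "IL-17 signaling": ["IL17A", "TRAF6", "NFKB1", "MAPK8", "JUN"],
}
hits = ["AKT1", "PIK3CA", "MTOR", "TP53"]
enriched = enrich(hits, gene_sets, background_size=200)
for term, p, p_adj in enriched:
    print(f"{term}: p={p:.2e}, adjusted p={p_adj:.2e}")
```

The sketch assumes every hit and every annotated gene belongs to the background set; the choice of background strongly affects the resulting p-values, so it should match the set of genes that could have been detected in the experiment.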

Visualization of Network Pharmacology Workflows

The following diagrams illustrate key workflows and relationships in network pharmacology research.

Chemogenomic Library Screening Workflow

Drug-Target-Pathway-Disease Network Relationships

Applications in Drug Discovery and Development

Network pharmacology has transformed multiple areas of drug discovery and development, particularly in the study of complex therapeutic interventions:

Understanding Traditional Medicine Mechanisms

Network pharmacology has become an essential tool for understanding the mechanisms of traditional medicine systems, particularly traditional Chinese medicine (TCM). The holistic, multi-target nature of TCM aligns perfectly with the network pharmacology approach [18] [19]. Through network analysis, researchers can identify key active ingredients in complex herbal formulations, predict their targets, and elucidate their mechanisms of action across multiple biological pathways [19] [20].

This approach has been successfully applied to study TCM interventions for various conditions, including COVID-19, where network pharmacology analyses predicted that the therapeutic effects of Chinese herbs are related to hypoxia response, immune/inflammation reactions, and viral infection regulation [18]. Similar approaches have illuminated the mechanisms of TCM formulations for ulcerative colitis, revealing multi-component, multi-target, and multi-pathway action mechanisms [20].

Drug Repurposing and Combination Therapy Design

Network pharmacology enables systematic drug repurposing by identifying new therapeutic applications for existing drugs based on their network properties [17]. By analyzing the position of drug targets within disease networks, researchers can identify unexpected connections between drugs and diseases, leading to new therapeutic indications.

Additionally, network pharmacology provides a rational framework for designing combination therapies that target multiple network vulnerabilities simultaneously [19]. This approach is particularly valuable for complex multifactorial diseases whose pathogenesis is modulated by diverse biological processes and molecular functions, where single-target therapies have shown limited efficacy [19].

Challenges and Future Perspectives

Despite significant advances, network pharmacology faces several challenges that must be addressed to fully realize its potential:

Technical and Methodological Challenges

The reproducibility of chemical composition and its influence on pharmacological activity remains a significant challenge, particularly for natural products and complex herbal mixtures [17]. Issues related to quality control, standardization, and optimal dosing also present obstacles in determining reproducible quality, safety, and efficacy [17].

Methodological challenges include selection of appropriate databases and algorithms, potential biases in data collection methods, and the need for standardized research protocols [19] [20]. The rapid evolution of databases and analysis tools also creates issues with version control and comparability across studies conducted at different times.

Integration with Emerging Technologies

The future development of network pharmacology is closely tied to integration with emerging technologies, particularly artificial intelligence and multi-omics approaches [17]. Integrative omics network pharmacology and AI-assisted analysis of natural products are opening new avenues for:

- Elucidation of the mechanisms of action of medicinal plants [17]

- Understanding synergistic therapeutic actions of complex bioactive components [17]

- Enhancing the quality and efficiency of natural product drug research [17]

- Predicting drug-herb interactions, adverse events, and potential toxic effects [17]

As these technologies mature, network pharmacology is poised to become an increasingly powerful paradigm for drug discovery, potentially transforming how we develop therapeutics for complex diseases.

The integration of network pharmacology, chemogenomic libraries, and machine learning is revolutionizing the discovery of therapeutic agents. This paradigm synergistically combines the holistic, multi-target perspective of network pharmacology with the comprehensive compound profiling of chemogenomics and the predictive power of computational intelligence. This application note details how this integrated framework accelerates the identification of novel drug candidates, validates the mechanisms of complex multi-component therapies, and provides detailed protocols for implementing this powerful discovery engine in modern drug development research.

Traditional "one drug–one target–one disease" paradigms have demonstrated limited efficacy for complex multifactorial diseases whose pathogenesis is modulated by diverse biological processes and various molecular functions [21]. Network pharmacology (NP) addresses this limitation by providing a systems-level understanding of drug actions through the lens of biological networks [11]. When combined with the structured compound libraries of chemogenomics and the pattern recognition capabilities of machine learning (ML), researchers gain an unprecedented capacity to identify and validate multi-target therapeutic strategies.

This synergistic integration is particularly valuable for elucidating the mechanisms of complex therapeutic interventions, such as Traditional Chinese Medicine (TCM), which are characterized by multi-component, multi-targeted, and integrative efficacy [22] [21]. The following sections present quantitative evidence of this synergy, detailed experimental protocols, and visualization of the integrated workflow that constitutes this powerful discovery engine.

Quantitative Evidence of Synergistic Value

Table 1: Performance Metrics of Machine Learning Models in Senotherapeutic Discovery

| Machine Learning Model | Accuracy | Specificity | Precision | Recall | F1-Score | Kappa |

|---|---|---|---|---|---|---|

| Random Forest (RF) | 0.88 | 0.92 | 0.90 | 0.92 | 0.89 | 0.76 |

| Support Vector Machine (SVM) | 0.76 | 0.71 | 0.71 | 0.83 | 0.76 | 0.54 |

| K-Nearest Neighbors (KNN) | 0.76 | 0.88 | 0.88 | 0.67 | 0.76 | 0.53 |

Data adapted from a study screening 65,339 compounds for senotherapeutic activity, where the Random Forest model demonstrated superior performance [23].
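
For readers who want to reproduce metrics of the kind reported in Table 1 on their own models, the following sketch computes them from a binary confusion matrix. The counts are hypothetical and are not the data behind the table; `classification_metrics` is an illustrative helper name.

```python
def classification_metrics(tp, fp, tn, fn):
    """Compute common binary-classification metrics from confusion-matrix counts."""
    total = tp + fp + tn + fn
    accuracy = (tp + tn) / total
    specificity = tn / (tn + fp)
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)
    # Cohen's kappa: observed agreement corrected for agreement expected by chance.
    p_yes = ((tp + fp) / total) * ((tp + fn) / total)
    p_no = ((tn + fn) / total) * ((tn + fp) / total)
    p_chance = p_yes + p_no
    kappa = (accuracy - p_chance) / (1 - p_chance)
    return {
        "accuracy": accuracy,
        "specificity": specificity,
        "precision": precision,
        "recall": recall,
        "f1": f1,
        "kappa": kappa,
    }

# Hypothetical counts for illustration only.
metrics = classification_metrics(tp=40, fp=10, tn=40, fn=10)
for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

Kappa is useful alongside accuracy because it discounts the agreement a classifier would reach by chance, which matters when active and inactive classes are imbalanced.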

Table 2: Network Pharmacology Output in Disease Mechanism Studies

| Disease Model | Active Compounds Identified | Potential Targets | Key Signaling Pathways Identified |

|---|---|---|---|

| Immune Thrombocytopenia (ITP) [24] | 60 | 85 | PI3K-Akt signaling pathway |

| Rheumatoid Arthritis (RA) [22] | 16 | 52 | IL-17/NF-κB signaling |

| Radiation Pneumonitis (RP) [25] | 18 | 65 | AGE-RAGE, IL-17, HIF-1, NF-κB |

| Alzheimer's Disease (AD) [26] | 6 | 42 | IL-17, NF-κB, Neuroinflammatory pathways |

Experimental Protocols

Protocol 1: Integrated Network Pharmacology and Machine Learning Workflow

Purpose: To systematically identify potential therapeutic compounds from large chemogenomic libraries using network pharmacology and machine learning.

Materials:

- Compound libraries (e.g., TCMSP, DrugBank, PubChem)

- Disease target databases (e.g., GeneCards, DisGeNET, OMIM)

- Protein-protein interaction databases (e.g., STRING)

- Computational tools: R, Python with scikit-learn, Cytoscape

Procedure:

Disease Target Identification:

Active Compound Screening:

- Screen chemogenomic libraries for bioactive compounds using ADME criteria:

- For specialized applications (e.g., senotherapeutics), apply Lipinski's Rule of Five to filter compounds with desirable medicinal chemistry properties [23].
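
As a minimal sketch of the Rule of Five filter, the example below counts violations from precomputed descriptors. It assumes the standard thresholds (molecular weight ≤ 500 Da, logP ≤ 5, at most 5 hydrogen-bond donors, at most 10 hydrogen-bond acceptors) and the common convention of tolerating one violation; the compound names and descriptor values are hypothetical, and in practice the descriptors would come from a cheminformatics toolkit.

```python
def lipinski_violations(mw, logp, hbd, hba):
    """Count how many of Lipinski's four criteria a compound violates."""
    return sum([mw > 500, logp > 5, hbd > 5, hba > 10])

# Hypothetical compounds with precomputed descriptors.
compounds = [
    {"name": "cmpd_A", "mw": 320.4, "logp": 2.1, "hbd": 2, "hba": 5},
    {"name": "cmpd_B", "mw": 712.9, "logp": 6.3, "hbd": 4, "hba": 9},
    {"name": "cmpd_C", "mw": 480.0, "logp": 5.4, "hbd": 1, "hba": 7},
]

MAX_VIOLATIONS = 1  # assumption: one violation is tolerated

passed = [
    c["name"]
    for c in compounds
    if lipinski_violations(c["mw"], c["logp"], c["hbd"], c["hba"]) <= MAX_VIOLATIONS
]
print(passed)
```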

Target Prediction and Network Construction:

- Predict compound targets using SwissTargetPrediction and TargetNet with probability thresholds (≥0.4 for SwissTargetPrediction, ≥0.8 for TargetNet) [22].

- Identify overlapping targets between compounds and disease.

- Construct Protein-Protein Interaction (PPI) networks using STRING database with confidence score >0.4 [25].

- Visualize and analyze networks using Cytoscape, identifying hub targets with CytoHubba plugin [24] [26].
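
The thresholding, overlap, and hub-ranking steps above can be sketched on toy data as follows. The prediction scores, disease targets, and interaction scores are hypothetical; interaction scores are assumed to be on a 0 to 1 scale; and plain node degree stands in for the richer ranking methods that CytoHubba provides.

```python
from collections import Counter

def confident_targets(predictions, threshold):
    """Keep predicted targets whose probability meets the tool-specific threshold."""
    return {target for target, prob in predictions if prob >= threshold}

# Hypothetical prediction outputs as (target, probability) pairs.
swiss = [("AKT1", 0.72), ("TNF", 0.45), ("EGFR", 0.21)]
targetnet = [("AKT1", 0.91), ("IL6", 0.85), ("JUN", 0.60)]

compound_targets = confident_targets(swiss, 0.4) | confident_targets(targetnet, 0.8)
disease_targets = {"AKT1", "TNF", "IL6", "TP53"}  # hypothetical disease gene list
shared = compound_targets & disease_targets

# Hypothetical PPI edges as (protein_a, protein_b, confidence).
ppi_edges = [
    ("AKT1", "TNF", 0.92),
    ("AKT1", "IL6", 0.81),
    ("TNF", "IL6", 0.35),
    ("AKT1", "TP53", 0.95),
]

# Keep confident edges whose endpoints are both shared targets.
kept = [(a, b) for a, b, score in ppi_edges if score > 0.4 and a in shared and b in shared]

degree = Counter()
for a, b in kept:
    degree[a] += 1
    degree[b] += 1

hubs = degree.most_common()  # highest-degree proteins first
print(sorted(shared), hubs[0])
```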

Machine Learning Classification:

- Calculate molecular descriptors for all compounds (e.g., the 39 descriptors used in the senotherapeutic study) [23].

- Train multiple ML models (Random Forest, SVM, KNN) using known active and inactive compounds as training data.

- Evaluate models using accuracy, specificity, precision, recall, F1-score, and Kappa value.

- Select compounds classified as active by multiple models to enhance robustness [23].
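
The final selection step can be sketched as a simple vote across models. The predictions below are hypothetical, and the minimum number of agreeing models (`min_votes`) is an assumption to be tuned per project rather than a value taken from the cited study.

```python
from collections import Counter

def consensus_actives(predictions, min_votes):
    """Return compounds predicted active (label 1) by at least min_votes models."""
    votes = Counter()
    for calls in predictions.values():
        for compound_id, label in calls.items():
            votes[compound_id] += label
    return sorted(cid for cid, n in votes.items() if n >= min_votes)

# Hypothetical per-model predictions: 1 = active, 0 = inactive.
predictions = {
    "RF": {"c1": 1, "c2": 1, "c3": 0, "c4": 1},
    "SVM": {"c1": 1, "c2": 0, "c3": 0, "c4": 1},
    "KNN": {"c1": 1, "c2": 0, "c3": 1, "c4": 0},
}

print(consensus_actives(predictions, min_votes=2))  # majority vote
print(consensus_actives(predictions, min_votes=3))  # unanimous vote
```

Requiring unanimity reduces false positives at the cost of recall, while a majority vote keeps more candidates for experimental follow-up.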

Experimental Validation:

Protocol 2: Mechanism Validation for Multi-Target Therapies

Purpose: To experimentally validate the mechanisms of action identified through network pharmacology analysis.

Materials:

- Animal model of disease (e.g., ITP mouse model, collagen-induced arthritis)

- Test compounds or herbal extracts

- Western blot equipment and reagents

- ELISA kits for cytokine detection

- Immunohistochemistry supplies

Procedure:

In Vivo Therapeutic Efficacy Assessment:

- Establish disease model (e.g., ITP model induced by anti-platelet serum injection) [24].

- Administer test compounds (e.g., YQZY decoction at 1.325 g/kg) for a predetermined duration [24].

- Collect blood samples for hematological analysis (e.g., platelet counts) [24].

- Harvest tissue samples (spleen, joints) for histomorphological analysis (HE staining) [24] [22].

Molecular Mechanism Validation:

Pathway Confirmation:

- Validate key signaling pathways (e.g., PI3K-Akt, IL-17/NF-κB) through protein expression analysis of multiple pathway components.

- Compare pathway activation in treatment groups versus disease controls.

Visualization of the Integrated Workflow

Integrated Discovery Engine Workflow

Table 3: Essential Research Reagent Solutions for Integrated Pharmacology Research

| Resource Category | Specific Tools & Databases | Primary Function | Key Features |

|---|---|---|---|

| Compound Databases | TCMSP, TCMID, HERB, TCMBank, PubChem | Bioactive compound identification & ADME screening | OB, DL parameters; compound-structure relationships |

| Target Databases | SwissTargetPrediction, TargetNet, DrugBank | Prediction of compound-protein interactions | Probability scores; species-specific targeting |

| Disease Genetics | GeneCards, DisGeNET, OMIM, CTD | Disease-associated target identification | Relevance scores; gene-disease relationships |

| Network Analysis | STRING, Cytoscape, CytoHubba | PPI network construction & hub target identification | Confidence scores; topological analysis |

| Pathway Analysis | KEGG, GO, DAVID | Functional enrichment analysis | Pathway mapping; biological process annotation |

| Computational Tools | R (TCMNP package), Python ML libraries | Data processing, visualization & machine learning | Integrated workflows; customized analytics |

| Validation Tools | AutoDock, GCNConv-based deep learning | Molecular docking & binding affinity prediction | Binding energy calculation; interaction visualization |

The integration of network pharmacology with chemogenomic libraries and machine learning represents a paradigm shift in therapeutic discovery. This synergistic approach provides a powerful framework for addressing the complexity of human diseases, particularly for understanding multi-target interventions like traditional medicines. The protocols and resources detailed in this application note provide researchers with a structured methodology to leverage this integrated discovery engine, accelerating the identification and validation of novel therapeutic strategies with enhanced efficiency and predictive power.

Protein-protein interaction (PPI) networks are fundamental maps of the physical interactions between proteins within a cell, forming the backbone of cellular signaling, metabolic pathways, and structural complexes [27]. These networks provide a systems-level framework for understanding how biological processes are organized and controlled. In the context of disease, perturbations in PPI networks—caused by mutations affecting binding interfaces or causing dysfunctional allosteric changes—can trigger the onset and progression of complex multi-genic diseases [27] [28]. The study of PPI networks has therefore become indispensable for deciphering the molecular mechanisms underlying healthy and diseased states, facilitating the development of effective diagnostic and therapeutic strategies [27].

PPI networks are characterized by their scale-free topology, meaning most proteins have few connections, while a small subset of highly connected "hub" proteins play critical roles in network stability and function [27]. The structure and dynamics of these networks are frequently disturbed in complex diseases such as cancer, autoimmune disorders, and neurodegenerative conditions, suggesting that the networks themselves, rather than individual molecules, represent promising therapeutic targets [27] [28].

Analytical Framework: Network Topology and Disease Modules

The analysis of PPI network structure (topology) provides crucial insights into cellular evolution, molecular function, and network stability [27]. Key topological features help identify functionally relevant regions and disease-associated modules.

Table 1: Key Topological Indices for PPI Network Analysis

| Term | Definition | Biological Significance |

|---|---|---|

| Node (Vertices) | Each protein in the network [27] | Represents a functional entity in the cell. |

| Edge (Link) | Physical or functional interaction between proteins [27] | Represents a functional relationship or complex formation. |

| Hub | A "high-degree" node with many connections [27] | Often essential proteins; their disruption can have severe consequences [27]. |

| Modules | Groups of sub-networks with high internal connectivity [27] | Often correspond to functional units (e.g., protein complexes, pathways). |

| Degree (k) | The number of connections a node has [27] | Measures how connected a protein is within the network. |

| Betweenness Centrality | Measures how often a node occurs on shortest paths between others [27] | Identifies proteins that connect different modules. |

| Clustering Coefficient (C) | Measures the tendency of a node's neighbors to connect to each other [27] | Indicates the presence of tightly-knit groups or complexes. |
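
To make the indices in the table concrete, the sketch below computes degree, clustering coefficient, and unnormalized betweenness centrality on a five-node toy network using only the Python standard library. The network is hypothetical, and betweenness is computed with Brandes' algorithm for unweighted, undirected graphs; in practice a dedicated network library or Cytoscape would be used.

```python
from collections import deque
from itertools import combinations

def build_graph(edges):
    """Build an undirected adjacency map from a list of (a, b) pairs."""
    adj = {}
    for a, b in edges:
        adj.setdefault(a, set()).add(b)
        adj.setdefault(b, set()).add(a)
    return adj

def clustering(adj, node):
    """Fraction of a node's neighbor pairs that are themselves connected."""
    nbrs = adj[node]
    k = len(nbrs)
    if k < 2:
        return 0.0
    links = sum(1 for u, v in combinations(nbrs, 2) if v in adj[u])
    return 2 * links / (k * (k - 1))

def betweenness(adj):
    """Unnormalized betweenness centrality (Brandes, unweighted and undirected)."""
    bc = dict.fromkeys(adj, 0.0)
    for s in adj:
        stack, preds = [], {v: [] for v in adj}
        sigma = dict.fromkeys(adj, 0)  # number of shortest paths from s
        sigma[s] = 1
        dist = {s: 0}
        queue = deque([s])
        while queue:
            v = queue.popleft()
            stack.append(v)
            for w in adj[v]:
                if w not in dist:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = dict.fromkeys(adj, 0.0)
        while stack:  # accumulate dependencies in reverse BFS order
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                bc[w] += delta[w]
    return {v: b / 2 for v, b in bc.items()}  # each undirected pair was counted twice

# Hypothetical toy network: a triangle (A, B, C) attached to a chain (C-D-E).
adj = build_graph([("A", "B"), ("A", "C"), ("B", "C"), ("C", "D"), ("D", "E")])
bc = betweenness(adj)
for node in sorted(adj):
    print(node, len(adj[node]), round(clustering(adj, node), 2), bc[node])
```

In this toy network, node C has the highest degree and betweenness because it joins the triangle to the chain, which is the pattern that hub and bottleneck analyses look for in real PPI networks.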

Disease modules are localized regions within the broader PPI network that are enriched for proteins associated with a specific pathological condition [27]. The dynamic modular structure of PPI networks means that these modules can change activity across different biological states, such as during disease progression or in response to treatment [27]. Identifying these modules is a primary goal of network pharmacology, as it allows for the understanding of complex disease mechanisms and the identification of multi-target intervention strategies.

Established Protocols for Mapping PPI Networks

Tandem Affinity Purification Coupled with Mass Spectrometry (TAP/MS)

The following protocol, modified for an SFB-tag system, is designed for high-confidence identification of protein interactors in mammalian cells [29].

Principle: This method uses a two-step purification process with a triple tag (S-, 2×FLAG-, and Streptavidin-Binding Peptide (SBP)) to isolate protein complexes with high specificity, significantly reducing nonspecific binding compared to one-step affinity purification [29].

Table 2: Research Reagent Solutions for SFB-TAP/MS

| Reagent / Material | Function in the Protocol |

|---|---|

| cSFB-tagged Plasmid | Plasmid construct encoding the bait protein with a C-terminal S-2×FLAG-SBP tag for expression in cells [29]. |

| HEK293T Cells | A commonly used human cell line with high transfection efficiency for expressing the SFB-tagged bait protein [29]. |

| Streptavidin Beads | Binding matrix for the first purification step, capturing the SBP-tagged bait protein and its complexes [29]. |

| S Protein Beads | Binding matrix for the second purification step, capturing the S-tagged bait protein, enabling tandem purification [29]. |

| Biotin Elution Buffer | Mild elution condition for releasing the protein complex from Streptavidin beads without denaturing proteins [29]. |

| Mass Spectrometer | Instrument for identifying the individual proteins ("preys") within the purified complex [29]. |

Step-by-Step Protocol:

Plasmid Preparation (Timing: ~1 week)

- Construct a plasmid encoding your protein of interest (bait) fused to a C-terminal SFB tag.

- Amplify the gene from cDNA using Phusion DNA polymerase with primers containing attB1 and attB2 sequences for Gateway cloning [29].

- The choice of N- or C-terminal tagging should be validated to ensure correct subcellular localization of the bait protein, as tags can interfere with signal peptides [29].

Stable Cell Line Generation (Timing: ~2-3 weeks)

- Transfect HEK293T cells (or other suitable cell lines like HepG2, SH-SY5Y) with the constructed plasmid.

- Select and expand stably expressing clones using appropriate antibiotics [29].

Tandem Affinity Purification (Timing: ~1 day)

- Cell Lysis: Lyse the stable cells under non-denaturing conditions to preserve protein complexes.

- First Purification: Incubate the cell lysate with Streptavidin beads. Wash the beads under non-denaturing conditions to remove weakly bound, nonspecific proteins while keeping the complexes intact.

- Elution: Elute the bound complexes using a biotin-containing buffer.

- Second Purification: Incubate the eluate from the first step with S protein beads. Perform washes to further increase specificity.

- Final Elution: Elute the purified protein complexes from the S beads for downstream analysis [29].

Mass Spectrometry and Data Analysis (Timing: ~1 week)

- Subject the purified protein sample to tryptic digestion and LC-MS/MS analysis.

- Identify interacting proteins ("preys") by sequencing the resulting peptides and searching protein databases.

- Perform at least two biological replicates for each bait protein to ensure high-confidence identification of bona fide interactors [29].
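
The replicate requirement can be applied with a simple reproducibility filter, sketched below. The prey lists are hypothetical, and subtracting proteins found in a control purification is an added assumption rather than a step stated in the protocol; dedicated scoring tools are normally used for this purpose in real analyses.

```python
from collections import Counter

def high_confidence_preys(replicates, control, min_replicates=2):
    """Keep preys seen in at least min_replicates runs and absent from the control."""
    counts = Counter(prey for rep in replicates for prey in set(rep))
    return sorted(
        prey for prey, n in counts.items()
        if n >= min_replicates and prey not in control
    )

# Hypothetical prey identifications from two biological replicates.
replicate_1 = ["HSP90AA1", "XRCC5", "KRT1", "TUBB"]
replicate_2 = ["XRCC5", "TUBB", "KRT1", "ACTB"]
# Hypothetical proteins recovered from an empty-vector control purification.
control = {"KRT1", "TUBB"}

interactors = high_confidence_preys([replicate_1, replicate_2], control)
print(interactors)
```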

Workflow for SFB-TAP/MS PPI Mapping

Computational Analysis of PPI Data

After identifying potential interactors, computational tools are used to build and analyze the PPI network.

Network Construction:

Topological Analysis:

Module and Pathway Enrichment:

- Use functional enrichment tools (e.g., FunRich, Reactome Pathway) to identify biological pathways and processes that are statistically over-represented in your network module [30].

- This step translates the list of proteins into biologically meaningful insights, highlighting potential disease-relevant modules.

Integration with Network Pharmacology and Drug Discovery

The true power of PPI networks is realized when they are integrated into a network pharmacology framework. This approach moves beyond the "one target, one drug" model to a "network targets, multicomponent" paradigm, which is particularly suited for treating complex diseases [11] [30]. A key application is understanding the mechanism of traditional medicines, like Compound Fuling Granule (CFG) used for ovarian cancer, which inherently function through multi-target mechanisms [30].

Application Workflow in Network Pharmacology:

- Target Identification: Establish a PPI network related to a specific disease from databases (e.g., DisGeNET, TTD) and experimental data (e.g., TAP/MS) [30].

- Network Analysis: Isolate a disease module from the broader PPI network and identify its key hub and bottleneck proteins.

- Molecular Docking: Screen chemogenomic libraries by computationally docking small molecules into the three-dimensional structures of key targets within the disease module to evaluate binding affinity and potential efficacy [30]. Tools like PLIP can further analyze and visualize these interactions, including how drugs might mimic native protein-protein interactions [31].

- Multi-Target Strategy: Select a set of compounds that collectively modulate multiple key nodes in the disease module to disrupt the pathological network state effectively and robustly [11].
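
One possible way to formalize the multi-target selection step is as a coverage problem. The sketch below uses a greedy heuristic that repeatedly picks the compound covering the most still-uncovered key nodes; this is an illustrative assumption, not the method of the cited studies, and the compound-target annotations are hypothetical.

```python
def greedy_multi_target(compound_targets, key_nodes, max_compounds):
    """Greedily choose compounds that together cover as many key nodes as possible."""
    uncovered = set(key_nodes)
    chosen = []
    while uncovered and len(chosen) < max_compounds:
        # Sorting the names makes tie-breaking deterministic.
        best = max(sorted(compound_targets), key=lambda c: len(compound_targets[c] & uncovered))
        gain = compound_targets[best] & uncovered
        if not gain:
            break  # no remaining compound hits an uncovered node
        chosen.append(best)
        uncovered -= gain
    return chosen, uncovered

# Hypothetical disease-module hubs and compound-target annotations.
key_nodes = {"AKT1", "TP53", "EGFR", "STAT3"}
compound_targets = {
    "cmpd_1": {"AKT1", "EGFR"},
    "cmpd_2": {"TP53"},
    "cmpd_3": {"EGFR", "STAT3", "TP53"},
    "cmpd_4": {"AKT1"},
}

chosen, uncovered = greedy_multi_target(compound_targets, key_nodes, max_compounds=3)
print(chosen, uncovered)
```

A coverage count ignores binding affinity, direction of modulation, and compound interactions, so a ranking of this kind is only a starting point for docking and experimental follow-up.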

Table 3: Key Tools and Databases for Network Pharmacology

| Tool/Database | Type | Primary Function in Analysis |

|---|---|---|

| STRING | Database | Repository of known and predicted PPIs for network construction [11] [30]. |

| Cytoscape | Software Platform | Visualization and topological analysis of PPI networks [11] [30]. |

| DrugBank | Database | Information on drug targets and drug-like compounds for repurposing [11]. |

| PharmMapper | Computational Tool | Target prediction for active small molecules [30]. |

| PLIP (Protein-Ligand Interaction Profiler) | Computational Tool | Analyzes non-covalent interactions at molecular interfaces, useful for understanding how drugs mimic native PPIs [31]. |

| TCMSP | Database | Traditional Chinese Medicine systems pharmacology database for herbal compounds [30]. |

| Reactome Pathway | Database | Pathway enrichment analysis for functional interpretation [30]. |

Protein-protein interaction networks provide a foundational framework for understanding the molecular architecture of complex diseases. By mapping these networks experimentally with techniques like TAP/MS and analyzing them with computational tools, researchers can delineate critical disease modules. Integrating this knowledge with network pharmacology creates a powerful paradigm for drug discovery, enabling the rational design of multi-target therapies that can be sourced from chemogenomic libraries. This systems-level approach moves therapeutic intervention from single targets to network-wide rebalancing, offering a promising strategy for tackling complex, multi-genic diseases.

Building and Applying Integrated Workflows: A Step-by-Step Methodology

Within the paradigm of network pharmacology, understanding the complex polypharmacology of small molecules is paramount. A chemogenomic library is an indispensable resource for this, consisting of annotated chemical compounds designed to modulate a wide range of protein targets. When integrated with biological pathway and network data, such a library enables the systematic investigation of chemical effects across the proteome, facilitating target deconvolution, drug repurposing, and mechanism-of-action analysis [4] [32]. This application note provides a detailed protocol for the construction of a high-quality chemogenomic library, with a specific focus on source selection, rigorous data curation, and comprehensive scaffold analysis to ensure chemical diversity and biological relevance.

Source Selection and Data Acquisition

The first critical step involves aggregating chemical and biological data from robust, publicly available repositories. The selection of appropriate sources dictates the breadth and quality of the resulting library. The following table summarizes the recommended primary data sources.

Table 1: Key Data Sources for Chemogenomic Library Construction

| Data Type | Source | Key Information Provided | Utility in Library Construction |

|---|---|---|---|

| Bioactivity Data | ChEMBL [4] [33] [32] | Standardized bioactivity data (e.g., IC50, Ki), molecular structures, target information. | Primary source for compound-target interactions and building blocks for the library. |

| Pathway Information | Kyoto Encyclopedia of Genes and Genomes (KEGG) [4] | Manually drawn pathway maps representing molecular interactions, reactions, and relation networks. | Contextualizes targets within biological pathways and disease mechanisms. |

| Protein-Protein Interactions | SIGNOR [32] | Causal relationships between proteins, including activation, inhibition, and post-translational modifications. | Enables the construction of network pharmacology models around compound targets. |

| Morphological Profiles | Cell Painting (e.g., BBBC022 dataset) [4] | High-content imaging data quantifying cellular morphological features after chemical perturbation. | Provides phenotypic annotation for compounds, linking chemistry to phenotypic outcomes. |

| Gene-Disease Associations | Human Disease Ontology (DO) [4] | A structured, controlled vocabulary for human disease terms. | Annotates targets and compounds with their relevance to specific human diseases. |

The ChEMBL database serves as the foundational source for compounds and their bioactivities. It is critical to filter for records with defined bioassay data and, for initial simplicity, focus on human targets. The integration of pathway and protein-protein interaction (PPI) data from KEGG and SIGNOR, respectively, transforms a simple compound-target list into a rich network pharmacology platform [4] [32]. Furthermore, incorporating phenotypic profiling data from sources like the Cell Painting assay provides an independent layer of functional annotation, which is invaluable for phenotypic screening campaigns [4].

Data Curation and Standardization Workflow

The accuracy of a chemogenomic library is heavily dependent on rigorous data curation. Errors in chemical structures or bioactivities propagate through to flawed network pharmacology models and predictions. The following workflow outlines an integrated chemical and biological data curation protocol, adapted from best practices in the field [33].

Diagram 1: Integrated chemical and biological data curation workflow. The process ensures both structural integrity and biological data consistency.

Chemical Structure Curation

- Removal of Problematic Records: Filter out inorganic, organometallic compounds, mixtures, and large biologics, as standard molecular descriptors are not designed to handle them [33].

- Structural Cleaning: Use software like RDKit or ChemAxon JChem to detect and correct valence violations, normalize chemotypes, and standardize tautomeric forms using consistent rules [33]. Inconsistent tautomer representation is a common source of error in chemical databases.

- Stereochemistry Verification: Manually inspect compounds with multiple stereocenters, as errors are frequent. Cross-reference with databases like PubChem or ChemSpider, which offer crowd-curated structure verification [33].

Biological Data Curation

- Processing of Chemical Duplicates: Identify and merge records for the same compound tested in the same assay, which may have different internal IDs. Calculate a median bioactivity value (e.g., pIC50) for each unique compound-target pair to create a single, robust data point [33] [32].

- Flagging Suspicious Entries: Apply cheminformatics analyses to identify and flag outliers, such as compounds with highly similar structures but vastly different bioactivities, which may indicate an erroneous measurement [33].
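
The duplicate-merging step can be sketched as follows, assuming IC50 values reported in nM and converted with pIC50 = 9 − log10(IC50 in nM). The records are hypothetical, and the flag used here (replicate measurements spanning more than one log unit) is a simpler stand-in for the structure-based outlier analysis described above.

```python
from collections import defaultdict
from math import log10
from statistics import median

def pic50(ic50_nm):
    """Convert an IC50 in nM to pIC50 (negative log10 of the molar value)."""
    return 9 - log10(ic50_nm)

def aggregate(records):
    """Collapse repeated measurements into one median pIC50 per compound-target pair."""
    grouped = defaultdict(list)
    for compound, target, ic50_nm in records:
        grouped[(compound, target)].append(pic50(ic50_nm))
    return {
        pair: {"pIC50": median(values), "n": len(values), "spread": max(values) - min(values)}
        for pair, values in grouped.items()
    }

# Hypothetical records: (compound ID, target ID, IC50 in nM).
records = [
    ("CMPD_A", "TARGET_X", 10.0),
    ("CMPD_A", "TARGET_X", 100.0),
    ("CMPD_A", "TARGET_X", 1000.0),
    ("CMPD_B", "TARGET_X", 50.0),
]

summary = aggregate(records)
MAX_SPREAD = 1.0  # assumption: flag pairs whose measurements differ by more than 1 log unit
flagged = [pair for pair, stats in summary.items() if stats["spread"] > MAX_SPREAD]
print(summary[("CMPD_A", "TARGET_X")], flagged)
```

The median is preferred over the mean here because a single erroneous measurement shifts it far less.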

Scaffold and Chemotype Analysis

Scaffold analysis decomposes complex molecular structures into core frameworks, enabling the assessment and enforcement of chemical diversity within the library. It also helps identify chemotypes—common chemical patterns recognized by target families—which can be used to predict novel drug-target interactions [32].

Scaffold Generation and Classification

Two complementary methodologies are recommended for scaffold analysis:

- HierS Algorithm: This algorithm, implemented in tools like ScaffoldGraph, systematically decomposes molecules. It removes all side chains and linkers to generate "basis scaffolds" (core ring systems) and then recursively removes individual ring systems to create a hierarchical tree of "superscaffolds" that retain linker connectivity [34]. This is particularly useful for scaffold hopping, as it generates a wide range of structurally related cores.

- Bemis-Murcko Scaffolds: A widely used method to extract a molecular framework by removing all terminal side chains and retaining only the ring systems and the linkers that connect them [32]. This provides a consistent way to group compounds by their central core.

Diagram 2: Hierarchical scaffold generation process using the HierS algorithm, producing both basis scaffolds and superscaffolds.

Application in Library Enumeration and Scaffold Hopping

Scaffold analysis is not only a classification aid; it is also a practical tool for library design.

- Diversity Filtering: After generating scaffolds for all candidate compounds, filter the library to ensure broad coverage of different scaffold types. This avoids over-representation of common chemotypes and ensures the library probes diverse chemical space [4].

- Scaffold Hopping: Tools like ChemBounce can be used to generate novel compounds for the library via scaffold hopping. Given a known active molecule, it identifies its core scaffold and replaces it with a candidate from a large, synthesis-validated fragment library (e.g., derived from ChEMBL). The resulting molecules are then filtered for similarity to the original via Tanimoto and electron shape similarity to preserve pharmacophores and potential biological activity [34]. This is a practical method to expand the library with patentable, novel chemotypes with high synthetic accessibility.
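
Two of the ideas above, capping the number of compounds per scaffold and comparing molecules by Tanimoto similarity, can be sketched without a cheminformatics dependency. The example assumes scaffolds have already been computed as SMILES strings and fingerprints are given as sets of on-bit indices; all values are hypothetical, and this is not ChemBounce's implementation.

```python
from collections import Counter

def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient of two fingerprints given as sets of on-bit indices."""
    a, b = set(fp_a), set(fp_b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def cap_per_scaffold(compounds, max_per_scaffold):
    """Keep at most max_per_scaffold compounds for each scaffold, in input order."""
    kept, seen = [], Counter()
    for compound_id, scaffold in compounds:
        if seen[scaffold] < max_per_scaffold:
            kept.append(compound_id)
            seen[scaffold] += 1
    return kept

# Hypothetical compounds with precomputed scaffold SMILES.
compounds = [
    ("c1", "c1ccccc1"),
    ("c2", "c1ccccc1"),
    ("c3", "c1ccccc1"),
    ("c4", "c1ccncc1"),
]
diverse = cap_per_scaffold(compounds, max_per_scaffold=2)

# Hypothetical fingerprints for a query molecule and a scaffold-hopped candidate.
similarity = tanimoto({1, 2, 3, 4}, {3, 4, 5, 6})
print(diverse, round(similarity, 3))
```

Because the cap keeps compounds in input order, sorting candidates by potency or data quality beforehand determines which representatives of an over-populated scaffold survive.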

Integration and Platform Implementation

To be functionally useful for network pharmacology analysis, the curated compounds, scaffolds, targets, and pathways must be integrated into an investigational platform. A graph database is the ideal structure for this purpose, as it natively represents the complex network of relationships between these entities [4] [32].

Platforms like SmartGraph utilize Neo4j to integrate this data, creating nodes for compounds, patterns (scaffolds), proteins, pathways, and diseases. Edges represent relationships such as "compound-haspattern," "compound-targets-protein," and "protein-participatesin-pathway" [32]. This allows for powerful queries, such as finding all shortest paths in the network between a set of compound hits from a phenotypic screen and a disease-associated protein, thereby generating testable hypotheses for their mechanism-of-action [32].
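
The shortest-path query described above can be illustrated outside a graph database with a breadth-first search that returns every shortest path between a compound hit and a disease-associated protein. The node names and edges are hypothetical, and the sketch is not SmartGraph's or Neo4j's implementation.

```python
from collections import deque

def all_shortest_paths(adj, source, target):
    """Return every shortest path from source to target in a directed graph."""
    dist = {source: 0}
    preds = {source: []}
    queue = deque([source])
    while queue:
        v = queue.popleft()
        for w in adj.get(v, ()):
            if w not in dist:
                dist[w] = dist[v] + 1
                preds[w] = [v]
                queue.append(w)
            elif dist[w] == dist[v] + 1:
                preds[w].append(v)  # another equally short route into w
    if target not in dist:
        return []

    def walk(node):
        # Rebuild paths by following predecessor links back to the source.
        if node == source:
            return [[source]]
        return [path + [node] for p in preds[node] for path in walk(p)]

    return walk(target)

# Hypothetical knowledge graph: compound -> targets -> downstream proteins.
graph = {
    "cmpd_1": ["KINASE_A", "KINASE_B"],
    "KINASE_A": ["TF_X"],
    "KINASE_B": ["TF_X"],
    "TF_X": ["DISEASE_PROTEIN"],
}

paths = all_shortest_paths(graph, "cmpd_1", "DISEASE_PROTEIN")
for path in paths:
    print(" -> ".join(path))
```

Each returned path is a candidate mechanistic hypothesis: here the compound could reach the disease protein through either kinase, and both routes converge on the same downstream node.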

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions and Software Tools

| Item / Software | Function / Application | Key Features / Notes |
|---|---|---|
| ChEMBL Database | Primary source of bioactivity data and molecular structures. | Manually curated, standardized bioactivities; foundational for library building [4] [33]. |
| RDKit | Open-source cheminformatics toolkit. | Used for structural cleaning, descriptor calculation, fingerprint generation, and scaffold analysis [33]. |
| ScaffoldHunter | Software for interactive exploration of scaffold hierarchies. | Generates a hierarchical tree of scaffolds from a compound set, visualizing chemical space [4]. |
| ScaffoldGraph / HierS | Framework for scaffold analysis and decomposition. | Implements the HierS algorithm to generate basis scaffolds and superscaffolds systematically [34]. |
| Neo4j | Graph database management system. | Platform for integrating and querying the chemogenomic library as a network pharmacology knowledge base [4] [32]. |
| ChemBounce | Open-source scaffold hopping tool. | Generates novel compounds by replacing core scaffolds while preserving pharmacophores via shape similarity [34]. |
| CellProfiler | Open-source software for high-content image analysis. | Processes Cell Painting data to extract morphological profiles for phenotypic annotation of compounds [4]. |

Network pharmacology represents a paradigm shift in drug discovery, moving from a "one target–one drug" model to a systems-level "one drug–multiple targets" approach that more accurately reflects the complexity of biological systems and the polypharmacology of effective therapeutics [4]. This transition is particularly relevant for chemogenomic library research, where defining the relationship between chemical structures, their protein targets, and the resulting phenotypic outcomes is paramount. The fundamental challenge in modern drug discovery lies in effectively integrating heterogeneous data sources (including omics data, pathway information, and phenotypic profiles) to build comprehensive networks that predict drug behavior and therapeutic potential [11].

The integration of these diverse data types enables researchers to bridge the gap between phenotypic screening, which identifies observable biological effects without requiring prior knowledge of molecular targets, and target-based approaches, which focus on specific protein interactions [4]. This protocol details established methodologies for constructing unified network pharmacology frameworks that combine these disparate data sources, with particular emphasis on applications within chemogenomic library research and validation.

Key Concepts and Definitions

Table 1: Core Concepts in Heterogeneous Data Integration for Network Pharmacology

| Concept | Definition | Application in Network Pharmacology |
|---|---|---|
| Network Pharmacology | Interdisciplinary approach integrating systems biology, omics technologies, and computational methods to analyze multi-target drug interactions [11] | Provides framework for understanding complex drug-target-disease relationships |
| Chemogenomic Library | Collections of selective small molecules modulating protein targets across the human proteome, involved in phenotype perturbation [4] | Enables systematic screening against protein families; bridges chemical and biological spaces |
| Phenotypic Screening | Drug discovery approach observing compound effects on cells or tissues without requiring prior knowledge of molecular targets [4] | Identifies biologically active compounds; requires subsequent target deconvolution |
| Pathway Enrichment Analysis | Statistical technique identifying biological pathways over-represented in a gene list more than expected by chance [35] | Reveals mechanistic insights from OMICS data; connects targets to biological processes |
| Patient Similarity Networks (PSN) | Graph structures where patients are nodes and edges represent similarity based on clinical or biomolecular features [36] | Enables patient stratification and predictive modeling from heterogeneous health data |
| Heterogeneous Data Integration | Methodologies combining diverse data sources (multi-omics, clinical, imaging) into unified analytical frameworks [37] | Leverages complementary information from multiple data types for comprehensive analysis |

Experimental Protocols

Protocol 1: Building a System Pharmacology Network for Phenotypic Screening

This protocol outlines the construction of a comprehensive network integrating drug-target-pathway-disease relationships with morphological profiling data for target identification and mechanism deconvolution in phenotypic screening campaigns [4].

Materials and Reagents

Table 2: Essential Research Reagents and Computational Tools

| Item | Function/Application | Example Sources |
|---|---|---|
| ChEMBL Database | Provides bioactivity, molecule, target, and drug data from literature [4] | https://www.ebi.ac.uk/chembl/ |
| Cell Painting Assay | High-content imaging-based phenotypic profiling using fluorescent dyes [4] | Broad Bioimage Benchmark Collection (BBBC022) |
| KEGG Pathway Database | Manually drawn pathway maps for metabolism, cellular processes, human diseases [4] | https://www.kegg.jp/ |
| Gene Ontology (GO) | Computational models of biological systems with standardized terms [4] | http://geneontology.org/ |
| Disease Ontology (DO) | Machine-interpretable classification of human disease terms [4] | http://www.disease-ontology.org/ |
| Neo4j | NoSQL graph database for integrating heterogeneous data sources [4] | https://neo4j.com/ |
| ScaffoldHunter | Software for molecular scaffold analysis and decomposition [4] | Open-source tool |
| Cytoscape | Network visualization and analysis software [38] | http://cytoscape.org/ |
| R package clusterProfiler | Calculates GO and KEGG enrichment statistics [4] | Bioconductor package |
| STRING Database | Protein-protein interaction network construction [39] | https://string-db.org/ |

Step-by-Step Methodology

Step 1: Data Collection and Curation

- Obtain bioactivity data from ChEMBL database (version 22 or newer), including compounds with defined bioactivities (Ki, IC50, EC50) and their protein targets [4]

- Acquire morphological profiling data from public repositories such as the Broad Bioimage Benchmark Collection (BBBC022), which provides 1,779 morphological features from Cell Painting assays [4]

- Retrieve pathway information from KEGG (Release 94.1 or newer) and gene functional annotations from Gene Ontology (latest release) [4]

- Download disease-gene associations from Disease Ontology (release 45 or newer) [4]

Step 2: Data Preprocessing

- For morphological profiling data, calculate average values for each feature across technical replicates (typically 1-8 replicates per compound) [4]

- Filter morphological features to retain only those with non-zero standard deviation and inter-feature correlation less than 95% to reduce dimensionality [4]

- For compound data, extract molecular scaffolds using ScaffoldHunter software with deterministic rules: (i) remove terminal side chains while preserving double bonds attached to rings; (ii) iteratively remove one ring at a time until single ring remains [4]

- Standardize target protein identifiers to official gene symbols using UniProt database, limiting species to "Homo sapiens" where appropriate [39]
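The replicate averaging and feature filtering in this step can be sketched in plain Python. The sketch assumes that profiles arrive as (compound, feature vector) rows, that "correlation" means the absolute Pearson correlation, and that a feature is dropped when it correlates at 0.95 or above with a feature already kept; the greedy keep-first order, the feature names, and the toy values are illustrative assumptions rather than the published pipeline.

```python
from statistics import mean, pstdev
from typing import Dict, List, Sequence, Tuple


def average_replicates(rows: List[Tuple[str, List[float]]]) -> Dict[str, List[float]]:
    """Average each feature across the replicate rows of every compound."""
    grouped: Dict[str, List[List[float]]] = {}
    for compound, vector in rows:
        grouped.setdefault(compound, []).append(vector)
    return {c: [mean(col) for col in zip(*vecs)] for c, vecs in grouped.items()}


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation; callers must pass columns with non-zero variance."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)


def select_features(
    profiles: Dict[str, List[float]],
    names: List[str],
    max_corr: float = 0.95,
) -> List[str]:
    """Drop constant features, then features highly correlated with a kept one."""
    columns = list(zip(*profiles.values()))  # one tuple per feature
    kept: List[int] = []
    for idx, column in enumerate(columns):
        if pstdev(column) == 0:
            continue  # zero standard deviation carries no information
        if any(abs(pearson(column, columns[k])) >= max_corr for k in kept):
            continue  # redundant with a feature that is already retained
        kept.append(idx)
    return [names[i] for i in kept]


# Hypothetical toy data: cpd1 has two replicates, the others one each
feature_names = ["area", "constant", "area_x2", "granularity"]
raw_rows = [
    ("cpd1", [1.0, 5.0, 2.0, 0.0]),
    ("cpd1", [3.0, 5.0, 6.0, 1.0]),
    ("cpd2", [4.0, 5.0, 8.0, 0.0]),
    ("cpd3", [6.0, 5.0, 12.0, 2.0]),
]
averaged = average_replicates(raw_rows)
retained = select_features(averaged, feature_names)
```

Here `constant` is removed for zero variance and `area_x2` for being perfectly correlated with `area`, leaving two features.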

Step 3: Network Construction and Integration

- Implement Neo4j graph database with nodes representing molecules, scaffolds, proteins, pathways, and diseases [4]

- Establish relationships between nodes including "scaffold part of molecule," "molecule targets protein," "protein participates in pathway," and "pathway associated with disease" [4]

- Integrate morphological profiles by connecting compounds to their corresponding phenotypic fingerprints

- Apply appropriate similarity measures for patient similarity networks, which may include cosine similarity, Euclidean distance, or specialized kernel functions tailored to specific data types [36]
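One way to load the four relationship types listed in this step is to generate parameterized Cypher `MERGE` statements from Python. The sketch below only builds the statement text and parameter dictionaries; the node labels, relationship type names, the `id` property, and the sample identifiers are assumptions chosen for illustration, not the schema used in [4].

```python
from typing import Dict, List, Tuple

# Assumed mapping: relationship phrase -> (source label, type, target label)
SCHEMA: Dict[str, Tuple[str, str, str]] = {
    "scaffold part of molecule": ("Scaffold", "PART_OF", "Molecule"),
    "molecule targets protein": ("Molecule", "TARGETS", "Protein"),
    "protein participates in pathway": ("Protein", "PARTICIPATES_IN", "Pathway"),
    "pathway associated with disease": ("Pathway", "ASSOCIATED_WITH", "Disease"),
}


def merge_statement(relationship: str) -> str:
    """Build an idempotent Cypher statement for one relationship type.

    Labels and relationship types cannot be passed as Cypher parameters,
    so they are formatted into the text; identifiers stay parameterized.
    """
    src, rel, dst = SCHEMA[relationship]
    return (
        f"MERGE (a:{src} {{id: $source_id}}) "
        f"MERGE (b:{dst} {{id: $target_id}}) "
        f"MERGE (a)-[:{rel}]->(b)"
    )


def build_batch(records: List[Tuple[str, str, str]]) -> List[Tuple[str, Dict[str, str]]]:
    """Pair each (relationship, source id, target id) record with its statement."""
    return [
        (merge_statement(rel), {"source_id": source, "target_id": target})
        for rel, source, target in records
    ]


# Hypothetical records; the identifiers are placeholders
batch = build_batch([
    ("molecule targets protein", "MOL_0001", "EGFR"),
    ("protein participates in pathway", "EGFR", "PATHWAY_0001"),
])
```

Each (statement, parameters) pair could then be sent to the database through a Neo4j client; using `MERGE` rather than `CREATE` keeps repeated loads from duplicating nodes or edges.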

Step 4: Chemogenomic Library Design

- Select approximately 5,000 small molecules representing diverse drug targets across biological processes and disease areas [4]

- Apply scaffold-based filtering to ensure structural diversity while maintaining coverage of the druggable genome

- Curate final library to balance target coverage, chemical diversity, and suitability for phenotypic screening applications
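The trade-off between target coverage and scaffold diversity can be illustrated with a greedy selection sketch. It assumes each compound already carries a scaffold identifier and a set of annotated targets. The greedy strategy, the per-scaffold cap, and the toy compounds are illustrative assumptions and not the selection procedure reported in [4].

```python
from collections import Counter
from typing import FrozenSet, List, NamedTuple, Set, Tuple


class Compound(NamedTuple):
    name: str
    scaffold: str            # precomputed scaffold identifier
    targets: FrozenSet[str]  # annotated protein targets


def select_library(
    compounds: List[Compound],
    max_size: int,
    max_per_scaffold: int = 1,
) -> Tuple[List[str], Set[str]]:
    """Greedily pick compounds that add the most uncovered targets,
    while capping how many compounds may share a scaffold."""
    selected: List[str] = []
    covered: Set[str] = set()
    per_scaffold: Counter = Counter()
    remaining = list(compounds)
    while remaining and len(selected) < max_size:
        eligible = [c for c in remaining if per_scaffold[c.scaffold] < max_per_scaffold]
        if not eligible:
            break
        # Ties are broken by input order, because max() returns the first maximum
        best = max(eligible, key=lambda c: len(c.targets - covered))
        if not best.targets - covered:
            break  # nothing left that extends target coverage
        selected.append(best.name)
        covered |= best.targets
        per_scaffold[best.scaffold] += 1
        remaining.remove(best)
    return selected, covered


# Hypothetical candidates (scaffold names and annotations are toy values)
candidates = [
    Compound("cpd_1", "indole", frozenset({"EGFR", "ERBB2"})),
    Compound("cpd_2", "indole", frozenset({"EGFR", "KDR"})),
    Compound("cpd_3", "quinazoline", frozenset({"KDR"})),
    Compound("cpd_4", "purine", frozenset({"CDK2", "CDK4"})),
    Compound("cpd_5", "purine", frozenset({"CDK2"})),
]
library, covered_targets = select_library(candidates, max_size=3)
```

With a cap of one compound per scaffold, the toy run covers all five targets with three compounds from three different scaffolds.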

Step 5: Validation and Application

- Employ the integrated network for target identification of phenotypic screening hits by connecting compounds with similar morphological profiles to known targets and pathways

- Use network proximity measures to prioritize potential mechanisms of action

- Validate predictions through orthogonal approaches, such as computational molecular docking or experimental biological assays [39]
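The idea of transferring target annotations from compounds with similar morphological profiles can be sketched as a nearest-neighbour vote. The sketch assumes cosine similarity on preprocessed profile vectors and scores each target by the summed similarity of the top-k annotated neighbours. The scoring rule, the value of k, and all profiles and annotations shown are hypothetical.

```python
import math
from collections import defaultdict
from typing import Dict, List, Sequence, Set, Tuple


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity between two profile vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)


def rank_targets(
    hit_profile: Sequence[float],
    reference_profiles: Dict[str, Sequence[float]],
    reference_targets: Dict[str, Set[str]],
    k: int = 2,
) -> List[Tuple[str, float]]:
    """Score candidate targets by the similarity of the k nearest annotated compounds."""
    neighbours = sorted(
        ((cosine(hit_profile, profile), name) for name, profile in reference_profiles.items()),
        reverse=True,
    )[:k]
    scores: Dict[str, float] = defaultdict(float)
    for similarity, name in neighbours:
        if similarity <= 0:
            continue  # ignore unrelated or anti-correlated profiles
        for target in reference_targets.get(name, ()):
            scores[target] += similarity
    # Highest score first; alphabetical order breaks ties
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical three-feature profiles and target annotations
hit = [1.0, 0.0, 1.0]
references = {
    "ref_A": [2.0, 0.0, 2.0],
    "ref_B": [1.0, 1.0, 0.0],
    "ref_C": [0.0, 1.0, 0.0],
}
annotations = {
    "ref_A": {"HDAC1"},
    "ref_B": {"HDAC1", "TUBB"},
    "ref_C": {"EGFR"},
}
target_hypotheses = rank_targets(hit, references, annotations, k=2)
```

The ranked list is a set of hypotheses only; each candidate target still needs the orthogonal validation described in this step.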

Protocol 2: Pathway Enrichment Analysis for Multi-Omics Data Integration

This protocol describes comprehensive pathway enrichment analysis of OMICS data to extract mechanistic insights from gene lists derived from genome-scale experiments, facilitating biological interpretation within network pharmacology frameworks [35].

Materials and Reagents

- g:Profiler: Web-based thresholded pathway enrichment tool (http://biit.cs.ut.ee/gprofiler/) [38]

- Gene Set Enrichment Analysis (GSEA): Desktop application for analyzing ranked gene lists using permutation-based tests [38]

- Cytoscape: Network visualization platform with EnrichmentMap app (version 3.6.0 or higher) [38]

- EnrichmentMap Pipeline Collection: Cytoscape apps including EnrichmentMap, clusterMaker2, WordCloud, AutoAnnotate [38]

- Pathway Databases: MSigDB, Reactome, Panther, NetPath, HumanCyc, WikiPathways [35]

Step-by-Step Methodology

Step 1: Gene List Definition from Omics Data

For RNA-seq or gene expression microarray data:

- Process raw data through standard normalization and quality control procedures

- Generate differentially expressed genes using appropriate statistical tests (e.g., t-test, limma)

- Create either:

  - Flat gene list: Filter by statistical thresholds (e.g., FDR-adjusted p-value <0.05, fold-change >2)

  - Ranked gene list: Sort all genes by differential expression score (e.g., t-statistic, fold-change) without filtering [35]

For genomic mutation data:

- Identify somatically mutated genes from exome or genome sequencing

- Rank genes by mutation significance (e.g., FDR q-value) and frequency [38]
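The difference between a flat and a ranked gene list can be made concrete with a short sketch. It assumes differential expression results are already available as records with a log2 fold-change, a t-statistic, and an FDR-adjusted p-value, and it interprets "fold-change >2" as an absolute log2 fold-change above 1 in either direction. The record layout, the helper names, and the example values are hypothetical.

```python
import math
from typing import List, NamedTuple, Tuple


class DEResult(NamedTuple):
    gene: str
    log2_fc: float  # log2 fold-change
    t_stat: float   # signed test statistic
    fdr: float      # FDR-adjusted p-value


def flat_gene_list(
    results: List[DEResult], max_fdr: float = 0.05, min_fold_change: float = 2.0
) -> List[str]:
    """Thresholded list: significant genes with a large change in either direction."""
    min_log2 = math.log2(min_fold_change)
    hits = [r for r in results if r.fdr < max_fdr and abs(r.log2_fc) > min_log2]
    return [r.gene for r in sorted(hits, key=lambda r: r.fdr)]


def ranked_gene_list(results: List[DEResult]) -> List[Tuple[str, float]]:
    """Unfiltered list: every gene, sorted from most up- to most down-regulated."""
    ordered = sorted(results, key=lambda r: r.t_stat, reverse=True)
    return [(r.gene, r.t_stat) for r in ordered]


def to_rnk(ranked: List[Tuple[str, float]]) -> str:
    """Serialize as two tab-separated columns (gene, score), one gene per line."""
    return "\n".join(f"{gene}\t{score}" for gene, score in ranked)


# Hypothetical differential expression results
de_results = [
    DEResult("TP53", 1.8, 6.2, 0.001),
    DEResult("MYC", 2.5, 8.1, 0.0002),
    DEResult("GAPDH", 0.1, 0.4, 0.80),
    DEResult("CDKN1A", -1.6, -5.0, 0.004),
    DEResult("EGFR", 0.7, 2.9, 0.03),
]
flat = flat_gene_list(de_results)
ranked = ranked_gene_list(de_results)
rnk_text = to_rnk(ranked)
```

The flat list suits thresholded tools, while the ranked list keeps weakly changing genes such as `EGFR` and `GAPDH` in play for rank-based methods.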

Step 2: Pathway Enrichment Analysis

Option A: g:Profiler for flat gene lists

- Access g:Profiler web interface at http://biit.cs.ut.ee/gprofiler/

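- Paste gene list into Query field; select the "Ordered query" option only if the list is sorted by decreasing significance, and leave it unchecked for an unordered flat list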

- Check "No electronic GO annotations" to increase annotation quality

- Set statistical parameters:

  - Functional category size: min=5, max=350 genes

  - Query/term intersection: min=3 genes

  - Significance threshold: adjusted p-value (q-value) <0.05 [38]

- Select output as "Generic Enrichment Map (TAB)" format for Cytoscape compatibility

- Download results and corresponding GMT gene set file
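The statistics behind a thresholded enrichment test can be sketched as a generic over-representation analysis. The sketch uses a one-sided hypergeometric test with Benjamini-Hochberg adjustment and applies the size and intersection limits listed above. It is a textbook stand-in, not g:Profiler's own implementation or multiple-testing procedure, and the gene identifiers and pathways are synthetic.

```python
from math import comb
from typing import Dict, Iterable, List, Set, Tuple


def hypergeom_upper_tail(k: int, K: int, n: int, N: int) -> float:
    """P(X >= k) when n genes are drawn from N, of which K belong to the pathway."""
    favourable = sum(comb(K, i) * comb(N - K, n - i) for i in range(k, min(K, n) + 1))
    return favourable / comb(N, n)


def benjamini_hochberg(pvalues: List[float]) -> List[float]:
    """Return BH-adjusted p-values in the original order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):  # walk from the largest p-value down
        idx = order[rank - 1]
        running_min = min(running_min, pvalues[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted


def enrich(
    query: Iterable[str],
    gene_sets: Dict[str, Set[str]],
    background: Set[str],
    min_size: int = 5,
    max_size: int = 350,
    min_overlap: int = 3,
    alpha: float = 0.05,
) -> List[Tuple[str, int, float]]:
    """Return (pathway, overlap, adjusted p) for pathways passing all filters."""
    query_set = set(query) & background
    tested: List[Tuple[str, int, float]] = []
    for name, genes in gene_sets.items():
        members = set(genes) & background
        overlap = len(query_set & members)
        if not (min_size <= len(members) <= max_size) or overlap < min_overlap:
            continue
        p = hypergeom_upper_tail(overlap, len(members), len(query_set), len(background))
        tested.append((name, overlap, p))
    adjusted = benjamini_hochberg([p for _, _, p in tested])
    kept = [(n, o, q) for (n, o, _), q in zip(tested, adjusted) if q < alpha]
    return sorted(kept, key=lambda row: row[2])


# Synthetic example: 100 background genes, three pathways, eight query genes
universe = {f"g{i}" for i in range(100)}
pathways = {
    "pathway_A": {f"g{i}" for i in range(10)},
    "pathway_B": {f"g{i}" for i in range(50, 60)},
    "tiny_set": {"g0", "g1", "g2"},  # below the minimum category size
}
query_genes = [f"g{i}" for i in range(6)] + ["g50", "g90"]
enriched = enrich(query_genes, pathways, universe)
```

Only `pathway_A` survives: `tiny_set` fails the size filter and `pathway_B` shares a single gene with the query.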

Option B: GSEA for ranked gene lists

- Launch GSEA desktop application (requires Java installation)

- Load ranked gene list file (RNK format) and pathway gene set (GMT format)

- Run GSEA Preranked analysis with default parameters:

  - Number of permutations: 1000

  - Enrichment statistic: weighted

  - Ranking metric: taken directly from the RNK file; the Signal2Noise and t-test metrics apply only to standard GSEA runs on expression data [38]

- Export enrichment results for visualization
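The weighted enrichment statistic used for ranked lists can be illustrated with a minimal running-sum sketch. It follows the commonly described weighted Kolmogorov-Smirnov-like score (hits weighted by the absolute ranking score, misses weighted equally) and omits permutation testing and normalization. The function name and the toy ranked list are assumptions, and the code is not the GSEA application's implementation.

```python
from typing import List, Set, Tuple


def enrichment_score(
    ranked: List[Tuple[str, float]], gene_set: Set[str], weight: float = 1.0
) -> float:
    """Running-sum enrichment score for a list sorted by descending score."""
    hit_weights = [abs(score) ** weight if gene in gene_set else 0.0 for gene, score in ranked]
    hit_total = sum(hit_weights)
    n_miss = sum(1 for gene, _ in ranked if gene not in gene_set)
    if hit_total == 0 or n_miss == 0:
        return 0.0  # the score is undefined without both hits and misses
    running = 0.0
    best = 0.0
    for (gene, _), hit in zip(ranked, hit_weights):
        if gene in gene_set:
            running += hit / hit_total   # step up at a gene-set member
        else:
            running -= 1.0 / n_miss      # step down otherwise
        if abs(running) > abs(best):
            best = running               # keep the largest deviation from zero
    return best


# Hypothetical ranked list (gene, score), sorted from high to low
toy_ranking = [("A", 4.0), ("B", 3.0), ("C", -1.0), ("D", -2.0)]
es_top = enrichment_score(toy_ranking, {"A", "B"})
es_bottom = enrichment_score(toy_ranking, {"C", "D"})
```

A gene set concentrated at the top of the list yields a positive score and one concentrated at the bottom a negative score; significance would still have to be estimated by permutation.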

Step 3: Visualization and Interpretation with EnrichmentMap

- Open Cytoscape and install EnrichmentMap Pipeline Collection (Apps → App Store)

- Import g:Profiler or GSEA results file

- Load corresponding pathway gene set database (GMT file)

- Build enrichment map with the following parameters:

  - FDR q-value cutoff: <0.05

  - Similarity cutoff: overlap coefficient ≥0.375

- Apply automatic clustering using clusterMaker2

- Use AutoAnnotate to label clusters with representative terms [38]

- Interpret results by identifying major biological themes within clustered pathways
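The similarity cutoff that determines which pathways are connected in the map can be sketched as follows. The code computes the overlap coefficient (shared genes divided by the size of the smaller set) for every pair of enriched gene sets and keeps pairs at or above 0.375. It illustrates the edge rule only, not the EnrichmentMap app itself, and the gene sets are hypothetical examples.

```python
from itertools import combinations
from typing import Dict, List, Set, Tuple


def overlap_coefficient(a: Set[str], b: Set[str]) -> float:
    """Shared genes divided by the size of the smaller gene set."""
    if not a or not b:
        return 0.0
    return len(a & b) / min(len(a), len(b))


def enrichment_map_edges(
    gene_sets: Dict[str, Set[str]], cutoff: float = 0.375
) -> List[Tuple[str, str, float]]:
    """Connect every pair of gene sets whose overlap meets the cutoff."""
    edges: List[Tuple[str, str, float]] = []
    for first, second in combinations(sorted(gene_sets), 2):
        score = overlap_coefficient(gene_sets[first], gene_sets[second])
        if score >= cutoff:
            edges.append((first, second, score))
    return edges


# Hypothetical enriched gene sets
enriched_sets = {
    "DNA repair": {"BRCA1", "BRCA2", "RAD51", "ATM"},
    "Homologous recombination": {"BRCA1", "BRCA2", "RAD51"},
    "Glycolysis": {"HK1", "PFKM", "PKM"},
}
map_edges = enrichment_map_edges(enriched_sets)
```

Connected pathways such as the two DNA-damage sets here form the clusters that are later labelled as biological themes, while `Glycolysis` remains an isolated node.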

Protocol 3: Network Pharmacology Analysis for Traditional Medicine

This protocol adapts network pharmacology approaches for studying traditional medicines, exemplified by the analysis of Zuojinwan (ZJW) for gastric cancer treatment, providing a framework for identifying active compounds, targets, and mechanisms of action from complex mixtures [39].

Materials and Reagents

- TCMSP Database: Traditional Chinese Medicine Systems Pharmacology database (http://lsp.nwu.edu.cn/tcmsp.php) [39]

- BATMAN-TCM: Bioinformatics Analysis Tool for Molecular mechANism of TCM (http://bionet.ncpsb.org/batman-tcm/) [39]

- SwissTargetPrediction: Compound target prediction tool (http://www.swisstargetprediction.ch/) [40]

- GeneCards: Human gene database (http://www.genecards.org) [39]

- DisGeNET: Database of gene-disease associations (https://www.disgenet.org/) [40]

- Metascape: Platform for GO enrichment and PPI analysis (http://metascape.org) [40]

- Molecular Operating Environment (MOE): Molecular docking software [39]

Step-by-Step Methodology

Step 1: Active Compound Screening

- Retrieve compound information for herbal constituents from TCMSP, BATMAN-TCM, and literature sources

- Apply pharmacokinetic filtering criteria: