Integrating KEGG and ChEMBL for Advanced Chemogenomic Analysis: A Comprehensive Guide for Drug Discovery


Abstract

This article provides a comprehensive framework for integrating KEGG and ChEMBL databases to power modern chemogenomic analysis. Aimed at researchers and drug development professionals, it covers the foundational principles of these complementary resources, practical methodologies for data integration and network pharmacology, common troubleshooting strategies for data harmonization, and validation techniques through comparative analysis with other data sources. By synthesizing chemical, bioactivity, and pathway information, this guide enables more effective prediction of drug-target interactions, elucidation of mechanisms of action in phenotypic screening, and acceleration of multi-target drug discovery, ultimately facilitating the transition from a single-target to a systems pharmacology perspective in therapeutic development.

Understanding KEGG and ChEMBL: Complementary Foundations for Chemogenomics

ChEMBL is a manually curated, open-access database of bioactive molecules with drug-like properties, serving as a foundational resource for drug discovery and chemogenomics research [1] [2]. Maintained by the European Bioinformatics Institute (EMBL-EBI), its primary mission is to bridge genomic information and effective drug development by integrating chemical, bioactivity, and genomic data [2]. This makes it particularly valuable for researchers employing systems pharmacology approaches, which require understanding complex interactions between compounds and multiple biological targets rather than single-target effects [3].

The scale of the database is substantial: ChEMBL release 33 contains information extracted from over 88,000 publications and patents and from 420 deposited datasets, covering more than 20.3 million bioactivity measurements for 2.4 million unique compounds [4]. The data spans from 1974 to the present, enabling time-series analyses and trend assessments in drug discovery [4]. As a recognized Global Core Biodata Resource, ChEMBL provides the critical data infrastructure necessary for modern computational drug discovery, including target prediction, polypharmacology modeling, and machine learning applications [4].

Integration of ChEMBL with KEGG for Chemogenomic Analysis

Theoretical Foundation for Data Integration

Integrating ChEMBL with the Kyoto Encyclopedia of Genes and Genomes (KEGG) creates a powerful framework for chemogenomic analysis that connects chemical perturbations to systems-level biological responses [3]. This integration addresses a fundamental challenge in phenotypic drug discovery: deconvoluting the mechanisms of action induced by bioactive compounds by placing their protein targets within the context of broader biological pathways and disease networks [3].

The KEGG pathway database provides manually drawn pathway maps representing known molecular interactions, reactions, and relation networks across various categories including metabolism, cellular processes, genetic information processing, human diseases, and drug development [3]. When combined with ChEMBL's comprehensive repository of drug-target interactions, researchers can construct systems pharmacology networks that reveal how chemical modulation of specific targets influences broader biological processes and potentially produces observable phenotypes [3].

Practical Applications of the Integrated Data

This integrated approach enables several key applications in drug discovery. For target identification and validation, researchers can map compounds with similar phenotypic profiles from ChEMBL to their protein targets and then determine if these targets cluster within specific KEGG pathways, suggesting critical nodes for therapeutic intervention [3] [5]. For drug repurposing, known drug-target interactions from ChEMBL can be connected to disease pathways in KEGG, identifying new therapeutic indications for existing drugs [5]. In mechanism of action deconvolution, morphological profiling data from phenotypic screens can be linked to both chemical structures in ChEMBL and biological pathways in KEGG to generate testable hypotheses about how compounds produce observed phenotypic effects [3]. Additionally, for side-effect prediction, understanding the network neighborhood of a drug's primary targets in KEGG pathways can help anticipate potential adverse outcomes by identifying biologically related proteins that might be inadvertently modulated [3].

Table 1: Key Data Sources for Integrated Chemogenomic Analysis

| Resource | Data Type | Role in Chemogenomic Analysis | Source |

|---|---|---|---|

| ChEMBL | Bioactive compounds, target interactions, drug-like properties | Provides chemical starting points and known biological activities | [1] [2] |

| KEGG | Pathways, diseases, functional annotations | Contextualizes targets within biological systems | [3] |

| Gene Ontology (GO) | Biological processes, molecular functions, cellular components | Adds functional annotation to protein targets | [3] |

| Disease Ontology (DO) | Human disease terms and relationships | Connects targets and pathways to human pathology | [3] |

Experimental Protocols for Chemogenomic Analysis

Protocol 1: Building an Integrated Drug-Target-Pathway Network

This protocol describes the construction of a systems pharmacology network integrating drug-target interactions from ChEMBL with pathway information from KEGG, following methodologies established in recent chemogenomics studies [3].

Materials and Reagents

- ChEMBL Database (version 33 or later): Source of compound structures, bioactivities, and target associations [4]

- KEGG API: Programmatic access to pathway information [3]

- Neo4j Graph Database: Platform for network integration and querying [3]

- R packages: clusterProfiler (v3.14.3), DOSE (v3.12.0), org.Hs.eg.db (v3.10.0) for enrichment analysis [3]

Procedure

- Data Extraction from ChEMBL: Query the ChEMBL web services using specific filters to retrieve compounds with confirmed bioactivity data (e.g., Ki, IC50, EC50 ≤ 10 μM). Include associated protein targets and standardize activity measurements [6].

- Pathway Data Retrieval: Using the KEGG REST API, download pathway information for organisms of interest (e.g., human, mouse). Parse the data to extract gene-pathway associations [3].

- Identifier Mapping: Map ChEMBL target identifiers to standardized gene identifiers (e.g., Entrez ID, UniProt ID) using the cross-reference tables available in ChEMBL and the org.Hs.eg.db R package [3].

- Network Construction in Neo4j:

- Create nodes for: Compounds (with properties: ChEMBL ID, name, SMILES, molecular weight), Proteins (with properties: target ID, name, organism), Pathways (with properties: KEGG ID, name, category)

- Create relationships: (Compound)-[AFFECTS]->(Protein), (Protein)-[PARTICIPATES_IN]->(Pathway)

- Enrichment Analysis: For a set of compounds with similar phenotypic profiles, identify their shared targets and perform KEGG pathway enrichment analysis using the clusterProfiler R package with Bonferroni correction (p-value cutoff = 0.1) [3].
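The extraction, mapping, and network-construction steps above can be sketched in memory before anything is loaded into a graph database. The following is a minimal illustration, not the published workflow: the record layout, the placeholder identifiers (`CHEMBL_A`, `TARGET_1`, `GENE_1`, `pathway_X`), and the function name `build_edges` are all hypothetical, and real inputs would come from ChEMBL and KEGG extracts.

```python
# Hypothetical, hand-made records standing in for real ChEMBL/KEGG extracts.
activities = [
    {"compound": "CHEMBL_A", "target": "TARGET_1", "type": "IC50", "value_nM": 50.0},
    {"compound": "CHEMBL_A", "target": "TARGET_2", "type": "Ki", "value_nM": 25000.0},
    {"compound": "CHEMBL_B", "target": "TARGET_2", "type": "EC50", "value_nM": 900.0},
]
target_to_gene = {"TARGET_1": "GENE_1", "TARGET_2": "GENE_2"}
gene_to_pathways = {"GENE_1": ["pathway_X"], "GENE_2": ["pathway_X", "pathway_Y"]}

ACTIVITY_TYPES = {"Ki", "IC50", "EC50"}


def build_edges(activities, target_to_gene, gene_to_pathways, cutoff_nM=10_000.0):
    """Return (AFFECTS, PARTICIPATES_IN) edge lists for the network.

    A compound-protein edge is kept only when the measurement type is one of
    Ki/IC50/EC50 and the value is at or below the cutoff (10 uM = 10,000 nM).
    """
    affects = set()
    participates = set()
    for rec in activities:
        if rec["type"] not in ACTIVITY_TYPES or rec["value_nM"] > cutoff_nM:
            continue
        gene = target_to_gene.get(rec["target"])
        if gene is None:  # target could not be mapped to a gene identifier
            continue
        affects.add((rec["compound"], gene))
        for pathway in gene_to_pathways.get(gene, []):
            participates.add((gene, pathway))
    return sorted(affects), sorted(participates)


affects_edges, participates_edges = build_edges(
    activities, target_to_gene, gene_to_pathways
)
```

Keeping the two edge lists as plain tuples makes it straightforward to inspect them, or to hand them to whichever graph store is used later.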

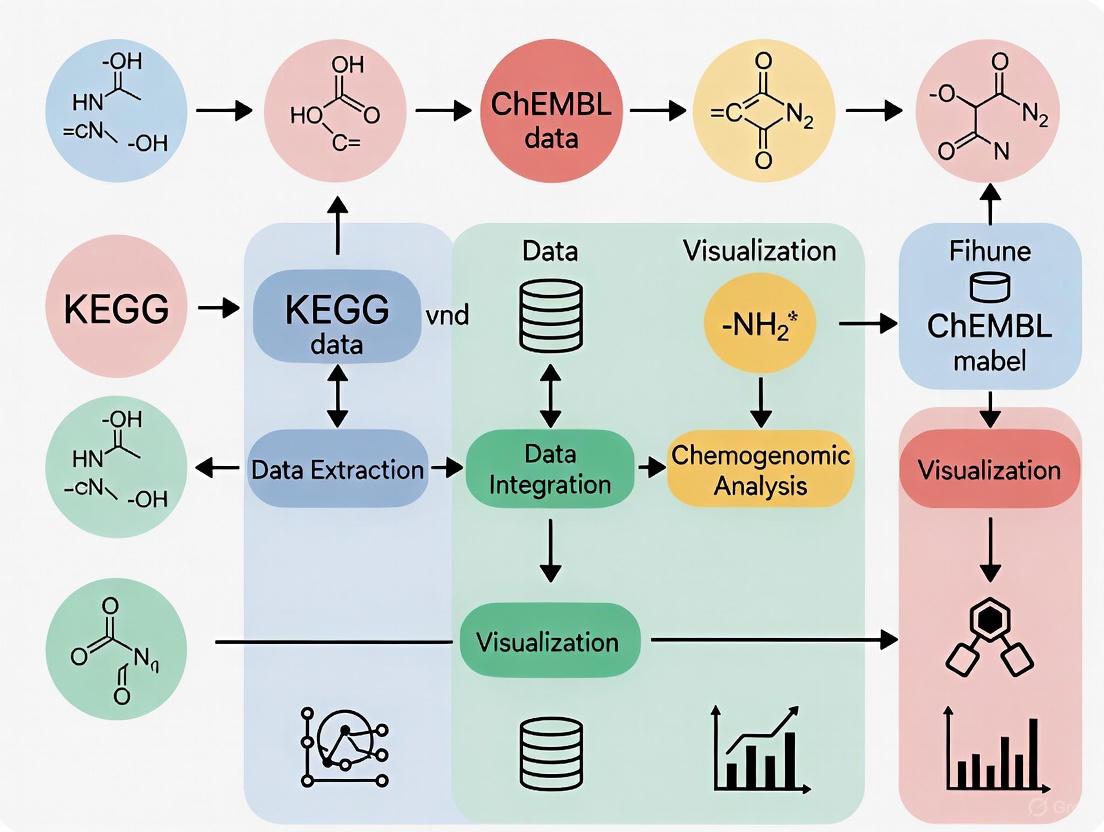

Diagram 1: Drug-Target-Pathway Network Construction Workflow

Protocol 2: Target Deconvolution for Phenotypic Screening Hits

This protocol utilizes integrated ChEMBL-KEGG data to identify potential mechanisms of action for compounds identified in phenotypic screens, adapting approaches used in pharmaceutical discovery pipelines [3] [7].

Materials and Reagents

- ChEMBL Bioactivity Data: Filtered for high-quality interactions (e.g., binding constants, pharmacology data) [2]

- Cell Painting Dataset: Morphological profiling data (e.g., BBBC022 from Broad Bioimage Benchmark Collection) [3]

- Scaffold Hunter Software: For structural analysis of bioactive compounds [3]

- CHEMGENIE or Similar Platform: Integrated chemogenomics database for polypharmacology prediction [7]

Procedure

- Phenotypic Screening: Conduct a Cell Painting or other phenotypic assay with a diverse compound library. Generate morphological profiles for each treatment condition [3].

- Similarity Searching: For each phenotypic hit, perform chemical similarity searches against ChEMBL using the web services API with a Tanimoto similarity cutoff of 80% [6].

- Target Enrichment Analysis:

- Compile all known targets for structurally similar compounds identified in step 2

- Perform statistical enrichment to identify targets over-represented among the phenotypic hits compared to the background library

- Calculate p-values using Fisher's exact test with multiple testing correction [7]

- Pathway Contextualization: Map enriched targets to KEGG pathways to identify biological processes potentially modulated by the phenotypic hits. Use the DOSE R package for disease ontology enrichment to suggest therapeutic applications [3].

- Scaffold Analysis: Using Scaffold Hunter, extract core chemical scaffolds from the active compounds and query ChEMBL for other compounds sharing these scaffolds but potentially targeting different proteins, exploring structure-activity relationships [3].
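The target enrichment step can be illustrated with a one-sided Fisher's exact test, which for over-representation reduces to the upper tail of the hypergeometric distribution. The sketch below uses only the standard library; the function names, the toy annotation sets, and the choice of Bonferroni as the multiple testing correction are assumptions made for illustration, since the protocol does not prescribe a specific correction here.

```python
from math import comb


def fisher_right_tail(k, n, K, N):
    """P(X >= k) for X ~ Hypergeometric(N, K, n).

    N: compounds in the background library
    K: library compounds annotated with the target
    n: phenotypic hits
    k: hits annotated with the target
    """
    upper = min(n, K)
    favourable = sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1))
    return favourable / comb(N, n)


def target_enrichment(annotations, hits, library):
    """Return {target: (p_value, bonferroni_adjusted_p)}.

    `annotations` maps each target to the set of compounds annotated with it;
    `hits` is assumed to be a subset of `library`.
    """
    n_tests = len(annotations)
    results = {}
    for target, compounds in annotations.items():
        K = len(compounds & library)
        k = len(compounds & hits)
        p = fisher_right_tail(k, len(hits), K, len(library))
        results[target] = (p, min(1.0, p * n_tests))
    return results


# Toy example: 20 library compounds, 4 hits, two hypothetical targets.
library = set(range(20))
hits = {0, 1, 2, 3}
annotations = {"T1": {0, 1, 2, 10, 11}, "T2": {15, 16}}
enrichment = target_enrichment(annotations, hits, library)
```

In this toy case, `T1` covers three of the four hits and receives a small p-value, while `T2` covers none and gets a p-value of 1.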

Table 2: Key Research Reagents and Tools for Chemogenomic Analysis

| Tool/Resource | Type | Function in Analysis | Source/Availability |

|---|---|---|---|

| ChEMBL Web Services | Programming interface | Programmatic access to bioactivity data | Public REST API [6] |

| Scaffold Hunter | Software | Structural decomposition and scaffold analysis | Open source [3] |

| Neo4j | Database | Graph-based data integration and querying | Commercial with free tier [3] |

| clusterProfiler | R package | Functional enrichment analysis | Bioconductor [3] |

| CellProfiler | Software | Image analysis for morphological profiling | Open source [3] |

Accessing and Utilizing ChEMBL Data

Programmatic Access and Data Retrieval

ChEMBL provides comprehensive web services that enable programmatic access to its data, facilitating integration into automated chemogenomic analysis pipelines [6]. The RESTful API supports multiple data formats including JSON, XML, and YAML, with pagination capabilities for handling large datasets [6].

Key API Endpoints for Chemogenomic Analysis:

- Molecule: Retrieve compound structures, properties, and synonyms

- Target: Access protein target information and classification

- Activity: Obtain bioactivity measurements (Ki, IC50, etc.)

- Mechanism: Explore drug mechanisms of action

- Target Component: Get sequence information for targets

Example API Queries:

- Retrieve compounds matching the aspirin structure (flexmatch filter): https://www.ebi.ac.uk/chembl/api/data/molecule?molecule_structures__canonical_smiles__flexmatch=CC(=O)Oc1ccccc1C(=O)O [6]

- Find kinase targets: https://www.ebi.ac.uk/chembl/api/data/target?pref_name__contains=kinase [6]

- Get bioactivities for a specific compound (CHEMBL25, aspirin): https://www.ebi.ac.uk/chembl/api/data/activity?molecule_chembl_id=CHEMBL25 [6]
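Query URLs of this kind are easier to maintain when they are assembled programmatically. The sketch below builds such URLs with the standard library only and performs no network access. The helper names `chembl_url` and `similarity_url` are hypothetical; the `format`, `limit`, and `offset` query parameters and the `similarity/<SMILES>/<threshold>` resource reflect the author's understanding of the ChEMBL web services and should be checked against the current documentation.

```python
from urllib.parse import quote, urlencode

BASE = "https://www.ebi.ac.uk/chembl/api/data"


def chembl_url(resource, **filters):
    """Build a filtered query URL for a ChEMBL resource, requesting JSON.

    Keyword arguments become query parameters in the order given, for example
    molecule_chembl_id="CHEMBL25" or pagination controls such as limit/offset.
    """
    query = urlencode({"format": "json", **filters})
    return f"{BASE}/{resource}?{query}"


def similarity_url(smiles, threshold=80):
    """Build a similarity-search URL; the SMILES is percent-encoded for the path."""
    return f"{BASE}/similarity/{quote(smiles, safe='')}/{threshold}?format=json"


activity_query = chembl_url(
    "activity", molecule_chembl_id="CHEMBL25", standard_type="IC50", limit=100
)
kinase_query = chembl_url("target", pref_name__contains="kinase")
aspirin_similarity = similarity_url("CC(=O)Oc1ccccc1C(=O)O", 80)
```

The resulting strings can be passed to any HTTP client; large result sets would then be paged by increasing `offset` in steps of `limit`.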

For large-scale analyses, the entire ChEMBL dataset can be downloaded via FTP in various formats including Oracle database dumps, PostgreSQL, and MySQL [8].

Data Filtering and Quality Considerations

When using ChEMBL data for chemogenomic analysis, several filtering strategies enhance data quality and relevance. Restrict bioactivities to specific measurement types (Ki, Kd, IC50, EC50) and apply confidence thresholds based on data provenance [4]. Consider target confidence scores provided in ChEMBL to prioritize well-annotated protein targets. Utilize the new flags for chemical probes and natural products introduced in recent releases to identify high-quality tool compounds [4]. For integration with KEGG, focus on human targets or apply orthology mapping for cross-species analyses.
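A filtering pass along these lines might look like the following sketch. It assumes each record is a dictionary carrying ChEMBL-style fields (`standard_type`, `standard_relation`, `standard_value`, `standard_units`) together with a `confidence_score` that has already been joined from the assay annotation. The threshold of 8 and the function names are illustrative choices rather than recommendations from the cited sources.

```python
from math import log10

ALLOWED_TYPES = {"Ki", "Kd", "IC50", "EC50"}


def to_p_activity(value_nM):
    """Convert a concentration in nM to -log10(molar); 1 nM maps to 9."""
    return 9.0 - log10(value_nM)


def filter_activities(records, min_confidence=8):
    """Keep exact nM measurements of allowed types on well-annotated targets."""
    kept = []
    for rec in records:
        if rec.get("standard_type") not in ALLOWED_TYPES:
            continue
        if rec.get("standard_units") != "nM" or rec.get("standard_relation") != "=":
            continue
        value = rec.get("standard_value")
        if value is None or float(value) <= 0:
            continue
        if int(rec.get("confidence_score", 0)) < min_confidence:
            continue
        kept.append({**rec, "p_activity": to_p_activity(float(value))})
    return kept


# Hypothetical records illustrating each rejection reason.
records = [
    {"standard_type": "IC50", "standard_relation": "=", "standard_value": "100",
     "standard_units": "nM", "confidence_score": 9},
    {"standard_type": "Inhibition", "standard_relation": "=", "standard_value": "45",
     "standard_units": "%", "confidence_score": 9},
    {"standard_type": "Ki", "standard_relation": ">", "standard_value": "10000",
     "standard_units": "nM", "confidence_score": 9},
    {"standard_type": "Kd", "standard_relation": "=", "standard_value": "5",
     "standard_units": "nM", "confidence_score": 4},
]
clean = filter_activities(records)
```

Converting to a negative-log scale at this stage puts Ki, Kd, IC50, and EC50 values on a comparable axis for downstream thresholds.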

Diagram 2: ChEMBL Data Analysis and Validation Workflow

The integration of ChEMBL with pathway resources like KEGG represents a powerful approach to modern chemogenomic analysis, enabling the transition from a reductionist "one target—one drug" paradigm to a systems-level understanding of polypharmacology [3]. The manually curated, high-quality data in ChEMBL provides the chemical foundation for building predictive models of drug-target interactions, while KEGG offers the biological context necessary for interpreting these interactions in disease-relevant pathways [3] [5].

As ChEMBL continues to grow—with deposited datasets now surpassing literature-extracted data in recent releases—its utility for chemogenomic applications expands accordingly [4]. Future developments will likely enhance the integration of chemical biology data with other -omics datasets, further empowering network pharmacology approaches to drug discovery and repurposing. The protocols outlined here provide a foundation for researchers to leverage these integrated resources for their own chemogenomic investigations, from target deconvolution in phenotypic screening to rational drug design based on systems-level understanding.

The Kyoto Encyclopedia of Genes and Genomes (KEGG) is a comprehensive database resource established in 1995 for understanding high-level functions and utilities of biological systems from genomic and molecular data [9]. It represents a foundational knowledge base that integrates systems, genomic, chemical, and health information into a unified framework. A primary objective of KEGG is to assign functional meanings to genes and genomes through the concept of functional orthologs, implemented via the KEGG Orthology (KO) system, enabling the reconstruction of molecular networks across diverse species [9]. This capability makes KEGG an indispensable tool for chemogenomic analysis, which seeks to understand the complex relationships between chemical compounds and their biological targets on a genome-wide scale. By integrating KEGG with bioactive molecule databases like ChEMBL, researchers can effectively bridge the gap between genomic information, pathway-level perturbations, and phenotypic effects induced by small molecules, thereby accelerating the translation of genomic data into effective new drugs [3] [1].

KEGG Database Architecture and Components

KEGG is organized as an integrated knowledge base comprising multiple interlinked databases. These can be broadly categorized into systems information, genomic information, chemical information, and health information, each playing a distinct role in biological interpretation.

Core Databases and Their Relationships

Table 1: Core Databases within the KEGG Resource

| Database Category | Database Name | Primary Content | Key Identifiers |

|---|---|---|---|

| Systems Information | KEGG PATHWAY | Molecular interaction and reaction networks | mapXXXXX |

| Systems Information | KEGG BRITE | Functional hierarchies | brXXXXX |

| Systems Information | KEGG MODULE | Functional units | MXXXXX |

| Genomic Information | KEGG ORTHOLOGY | Functional ortholog groups | KXXXXX |

| Genomic Information | KEGG GENES | Gene catalogs | org:XXXXX |

| Genomic Information | KEGG GENOME | Organism information | TXXXXX |

| Chemical Information | KEGG COMPOUND | Metabolites and small molecules | CXXXXX |

| Chemical Information | KEGG GLYCAN | Glycans | GXXXXX |

| Chemical Information | KEGG REACTION | Biochemical reactions | RXXXXX |

| Chemical Information | KEGG ENZYME | Enzyme nomenclature | ECX.X.X.X |

| Health Information | KEGG DRUG | Drug compounds | DXXXXX |

| Health Information | KEGG DISEASE | Human diseases | HXXXXX |

| Health Information | KEGG NETWORK | Disease network variants | ntXXXXX |

The KEGG PATHWAY database forms the central organizing principle, containing manually drawn pathway maps representing molecular interaction, reaction, and relation networks [10]. These maps are categorized into seven broad areas: Metabolism, Genetic Information Processing, Environmental Information Processing, Cellular Processes, Organismal Systems, Human Diseases, and Drug Development [10]. Each pathway map is identified by a combination of a 2-4 letter prefix code and a 5-digit number, with prefixes indicating the type of pathway (e.g., 'map' for reference pathway, 'ko' for KO-based pathway, organism codes for species-specific pathways) [10].
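The identifier structure described above (a 2-4 letter prefix followed by a 5-digit number) lends itself to a simple validation step when pathway identifiers arrive from mixed sources. The sketch below is one possible parser. The function name and the returned labels are hypothetical, the optional `path:` prefix is handled because it appears in some KEGG outputs, and the `ec` and `rn` prefixes are included as further reference pathway types beyond the examples given in the text.

```python
import re

_PATHWAY_ID = re.compile(r"^(?:path:)?([a-z]{2,4})(\d{5})$")

# Prefixes with a fixed meaning; any other prefix is treated as an organism code.
_REFERENCE_PREFIXES = {
    "map": "reference",
    "ko": "KO-based reference",
    "ec": "EC-based reference",
    "rn": "reaction-based reference",
}


def parse_pathway_id(identifier):
    """Split a KEGG pathway identifier into (prefix, number, kind).

    Returns None when the identifier does not follow the prefix + 5-digit pattern.
    """
    match = _PATHWAY_ID.match(identifier)
    if match is None:
        return None
    prefix, number = match.groups()
    kind = _REFERENCE_PREFIXES.get(prefix, "organism-specific")
    return prefix, number, kind


examples = [parse_pathway_id(x) for x in ("map00010", "path:ko00010", "hsa04110", "hsa4110")]
```

Because the numeric part is shared across prefixes, the parsed number can be used to relate an organism-specific map such as `hsa04110` to its reference map `map04110`.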

The KEGG ORTHOLOGY (KO) system provides the critical linkage between genomic information and pathway knowledge. Each KO entry represents a conserved functional ortholog that serves as a node in KEGG pathway maps, BRITE hierarchies, and KEGG modules [9]. This architecture enables KEGG pathway mapping to uncover systemic features from KO-annotated genomes and metagenomes.

The chemical aspect of KEGG is represented by the KEGG LIGAND databases, which include KEGG COMPOUND for metabolic intermediates and other small molecules, KEGG GLYCAN for complex carbohydrates, KEGG REACTION for biochemical reactions, and KEGG DRUG for approved pharmaceutical compounds [11]. As of 2025, KEGG COMPOUND contained 19,541 entries, while KEGG DRUG contained 12,733 entries, with substantial cross-linking between these resources [11].

KEGG Database Architecture: This diagram illustrates the four main categories of KEGG databases and their constituent components, showing the integrated nature of the resource.

Protocols for KEGG in Chemogenomic Analysis

Protocol 1: Building an Integrated Drug-Target-Pathway Network

This protocol describes the construction of a systems pharmacology network integrating drug-target-pathway-disease relationships for chemogenomic analysis, adapted from methodologies successfully implemented in recent literature [3].

Materials and Reagents

Table 2: Research Reagent Solutions for Network Pharmacology

| Item | Specification | Function in Protocol |

|---|---|---|

| ChEMBL Database | Version 22 or later | Source of bioactive molecule and target information |

| KEGG REST API | https://www.kegg.jp/kegg/rest/ | Programmatic access to KEGG data |

| Neo4j Graph Database | Community or Enterprise Edition | Storage and querying of network relationships |

| R Statistical Environment | Version 4.0 or higher | Data processing and analysis |

| clusterProfiler R package | Version 3.14.3 or higher | Functional enrichment analysis |

| ScaffoldHunter Software | Latest available version | Chemical scaffold analysis |

Procedure

Data Acquisition from ChEMBL

- Download the latest ChEMBL database release (version 22 or higher) containing standardized bioactivity data (Ki, IC50, EC50), molecular structures, and target information [3].

- Extract compounds with confirmed bioactivity measurements and their corresponding protein targets, focusing on human targets where possible.

- Filter compounds to include only those with drug-like properties based on Lipinski's Rule of Five and Veber's criteria.
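The drug-likeness filter in the last step can be expressed compactly once molecular descriptors are available. The sketch below assumes the descriptors have already been computed elsewhere (for example by a cheminformatics toolkit) and uses the commonly quoted thresholds: molecular weight ≤ 500, logP ≤ 5, at most 5 hydrogen-bond donors and 10 acceptors for Lipinski, and at most 10 rotatable bonds with polar surface area ≤ 140 Å² for Veber. Tolerating one Lipinski violation is a common convention adopted here as an assumption; the descriptor values shown are illustrative only.

```python
def ro5_violations(mw, logp, hbd, hba):
    """Count violations of Lipinski's Rule of Five."""
    return sum([mw > 500, logp > 5, hbd > 5, hba > 10])


def passes_veber(rotatable_bonds, tpsa):
    """Veber's criteria: limited flexibility and polar surface area."""
    return rotatable_bonds <= 10 and tpsa <= 140


def is_drug_like(desc, max_ro5_violations=1):
    """Apply both filters to a dict of precomputed descriptors."""
    violations = ro5_violations(desc["mw"], desc["logp"], desc["hbd"], desc["hba"])
    return violations <= max_ro5_violations and passes_veber(
        desc["rotatable_bonds"], desc["tpsa"]
    )


# Illustrative descriptor sets (values are approximate placeholders).
small_molecule = {"mw": 180.2, "logp": 1.2, "hbd": 1, "hba": 4,
                  "rotatable_bonds": 3, "tpsa": 63.6}
large_molecule = {"mw": 720.0, "logp": 6.1, "hbd": 6, "hba": 12,
                  "rotatable_bonds": 14, "tpsa": 190.0}
drug_like_flags = [is_drug_like(small_molecule), is_drug_like(large_molecule)]
```

Setting `max_ro5_violations=0` gives the strict reading of the rule if that is preferred.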

KEGG Pathway and Disease Data Retrieval

- Access the KEGG PATHWAY database via the KEGG REST API to obtain pathway information, including gene-protein relationships and pathway hierarchies [10] [9].

- Retrieve KEGG DISEASE data to establish disease-gene relationships.

- Download KEGG DRUG information to identify approved pharmaceuticals and their targets.

- Use KEGG ORTHOLOGY to establish orthology relationships across species for comparative analysis.

Chemical Structure Processing

- Process chemical structures using ScaffoldHunter to decompose each molecule into representative scaffolds and fragments [3].

- Apply the following decomposition rules:

- Remove all terminal side chains preserving double bonds directly attached to a ring.

- Remove one ring at a time using deterministic rules in a stepwise fashion to retain characteristic core structures.

- Organize scaffolds into different levels based on their relationship distance from the original molecule node.

Graph Database Construction

- Implement a Neo4j graph database with the following node types: Molecule, Scaffold, Protein, Pathway, Disease, and Biological Process [3].

- Establish the following relationship types:

- (Molecule)-[HAS_SCAFFOLD]->(Scaffold)

- (Molecule)-[TARGETS]->(Protein)

- (Protein)-[PART_OF_PATHWAY]->(Pathway)

- (Protein)-[ASSOCIATED_WITH_DISEASE]->(Disease)

- (Pathway)-[ENRICHED_FOR]->(Biological Process)

- Import processed data from ChEMBL and KEGG into the appropriate nodes and relationships.
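One way to keep the import step consistent with the schema is to generate the Cypher statements from a single schema table. The sketch below only builds parameterized query strings and does not connect to a database. The property name `id`, the parameter names `$src_id` and `$dst_id`, the label `BiologicalProcess` (written without a space so that it is a valid unquoted label), and the underscore spelling of the relationship types are all assumptions of this illustration.

```python
# Relationship type -> (source label, destination label)
SCHEMA = {
    "HAS_SCAFFOLD": ("Molecule", "Scaffold"),
    "TARGETS": ("Molecule", "Protein"),
    "PART_OF_PATHWAY": ("Protein", "Pathway"),
    "ASSOCIATED_WITH_DISEASE": ("Protein", "Disease"),
    "ENRICHED_FOR": ("Pathway", "BiologicalProcess"),
}


def edge_statement(rel_type):
    """Return a parameterized Cypher statement that upserts one edge.

    MERGE is used for both nodes and the relationship so that re-running the
    import does not create duplicates. Raises KeyError for unknown types.
    """
    src_label, dst_label = SCHEMA[rel_type]
    return (
        f"MERGE (a:{src_label} {{id: $src_id}}) "
        f"MERGE (b:{dst_label} {{id: $dst_id}}) "
        f"MERGE (a)-[:{rel_type}]->(b)"
    )


targets_query = edge_statement("TARGETS")
```

Each statement would then be executed once per edge, or in batches, with `src_id` and `dst_id` supplied as query parameters by the chosen Neo4j client. Creating a uniqueness constraint on `id` for each label beforehand typically speeds up `MERGE` considerably.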

Network Validation and Enrichment Analysis

- Validate the integrated network by confirming known drug-target-pathway relationships from literature.

- Perform functional enrichment analysis using the clusterProfiler R package to identify overrepresented biological processes, molecular functions, and KEGG pathways [3].

- Conduct disease ontology enrichment using the DOSE R package with adjustment for multiple testing (Bonferroni method) and a p-value cutoff of 0.1 [3].

Expected Results

Successful implementation will yield a comprehensive network typically comprising 5,000-10,000 small molecules, 1,000-2,000 protein targets, and 200-300 KEGG pathways, enabling systematic analysis of polypharmacology and drug repurposing opportunities.

Protocol 2: KEGG Mapper for Pathway-Based Transcriptomics and Chemogenomics Integration

This protocol utilizes KEGG Mapper tools to visualize and interpret transcriptomic data in the context of biological pathways, with integration of chemical perturbations.

Procedure

Data Preparation

- Generate a two-column dataset (space or tab separated) with KEGG identifiers in the first column and color specification in the second column [12].

- For gene expression data, use KEGG orthology (KO) identifiers (K numbers) or organism-specific gene identifiers.

- For chemical data, use KEGG COMPOUND (C numbers) or KEGG DRUG (D numbers) identifiers.

- Format color specification as "bgcolor,fgcolor" without spacing (e.g., "red,white" or "#ff0000,#ffffff").
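The two-column input described above can be generated directly from analysis results. The sketch below converts log2 fold changes into a tab-separated color table. The red/blue convention for up- and down-regulation, the threshold of 1.0, the function name, and the example identifiers are illustrative assumptions, and entries that do not pass the threshold are simply omitted so that they receive the tool's default color.

```python
UP_COLOR = "#ff0000,#ffffff"    # bgcolor,fgcolor for increased entries
DOWN_COLOR = "#0000ff,#ffffff"  # bgcolor,fgcolor for decreased entries


def build_color_table(log2_fold_changes, threshold=1.0):
    """Return tab-separated 'identifier<TAB>bgcolor,fgcolor' lines.

    `log2_fold_changes` maps KEGG identifiers (K, C, or D numbers, or
    organism-specific gene identifiers) to log2 fold-change values.
    """
    lines = []
    for identifier, value in log2_fold_changes.items():
        if value >= threshold:
            lines.append(f"{identifier}\t{UP_COLOR}")
        elif value <= -threshold:
            lines.append(f"{identifier}\t{DOWN_COLOR}")
    return "\n".join(lines)


# Hypothetical measurements keyed by KO and COMPOUND identifiers.
color_table = build_color_table({"K00001": 2.3, "K00002": -1.5, "C00022": 0.2})
```

The resulting text can be saved to a file for upload or pasted into the tool's input field.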

Pathway Mapping with Color Tool

- Access the KEGG Mapper Color tool at https://www.kegg.jp/kegg/mapper/color.html [12].

- Select the appropriate search mode:

- Reference mode: For mapping against reference pathways using KO identifiers, EC numbers, or C/D numbers.

- Organism-specific mode: For mapping against species-specific pathways using native gene identifiers.

- Upload the prepared data file or paste directly into the input field.

- Set default background color for unmapped elements and choose whether to include aliases.

- Execute the search and review the colored pathway maps.

Interpretation and Analysis

- Identify pathways with significant enrichment of differentially expressed genes or chemical targets.

- Use the KEGG Mapper Search tool to find pathways containing specific genes or compounds of interest.

- Analyze pathway topology to identify key regulatory nodes and potential bottlenecks.

- Compare multiple conditions using different color schemes to visualize differential responses.

Integration with Chemogenomic Data

- Overlay chemical target information on transcriptomic pathway maps to identify direct and indirect effects of chemical perturbations.

- Use the KEGG NETWORK database to examine disease-associated perturbed networks and identify potential therapeutic targets [9].

- Correlate pathway enrichment patterns with chemical structure similarities identified through scaffold analysis.

KEGG Mapper Workflow: This diagram outlines the sequential steps for utilizing KEGG Mapper tools to visualize and interpret omics data in the context of biological pathways.

Application Notes and Case Studies

Case Study: Development of a Chemogenomic Library for Phenotypic Screening

Recent research demonstrates the powerful integration of KEGG with chemical biology resources for phenotypic drug discovery. A 2021 study developed a chemogenomic library of 5,000 small molecules representing a diverse panel of drug targets involved in various biological effects and diseases [3]. This library was constructed by:

Systems Pharmacology Network Construction: Integrating ChEMBL bioactivity data, KEGG pathways, Gene Ontology, Disease Ontology, and morphological profiling data from the Cell Painting assay into a Neo4j graph database [3].

Target Coverage Optimization: Ensuring representation of targets across all major KEGG pathway categories, including:

- Metabolism (Global and overview maps, Carbohydrate metabolism, Lipid metabolism)

- Environmental Information Processing (Membrane transport, Signal transduction)

- Cellular Processes (Transport and catabolism)

- Organismal Systems (Immune system, Endocrine system)

Scaffold-Based Diversity: Applying ScaffoldHunter to decompose molecules into representative scaffolds, ensuring structural diversity while maintaining coverage of the druggable genome [3].

This chemogenomic library enables target identification and mechanism deconvolution for phenotypic screening campaigns, effectively bridging target-based and phenotypic drug discovery paradigms.

Application Note: KEGG NETWORK for Analyzing Disease Perturbations

The KEGG NETWORK database, introduced more recently, provides a novel approach for representing disease-associated perturbed molecular networks [9]. This resource incorporates:

- Network Variants: Representations of perturbed molecular networks caused by human gene variants, viruses, pathogens, environmental factors, and drugs.

- Aligned Network Sets: Comparisons of how different viruses inhibit or activate specific cellular signaling pathways.

- Integration with Pathway Maps: Unified representation of reference pathways and network variation maps.

For chemogenomic analysis, KEGG NETWORK enables researchers to:

- Identify network-based biomarkers for disease stratification

- Predict drug efficacy based on network perturbation patterns

- Identify drug combination opportunities targeting complementary network nodes

- Understand resistance mechanisms through adaptive network changes

Table 3: KEGG NETWORK Cancer Type Color Codes

| Color Code | Cancer Type | Representative Subtypes |

|---|---|---|

| #ff0000 | Acute Myeloid Leukemia | H00003 |

| #ff1493 | Breast Cancer | H00031 |

| #00ffff | Prostate Cancer | H00024 |

| #ffff00 | Glioma, Neuroblastoma | H00042, H00043 |

| #0000ff | Colorectal Cancer | H00020 |

| #ffc0cb | Endometrial Cancer | H00026 |

| #00ff00 | Non-Small Cell Lung Cancer | H00014 |

| #993333 | Various Sarcomas | Multiple subtypes |

Practical Implementation

Accessing KEGG Programmatically

For large-scale chemogenomic analyses, programmatic access to KEGG is essential. The KEGG REST API provides access to all KEGG databases using simple HTTP requests:

Best Practices for Data Visualization

Effective visualization of KEGG-based analyses requires adherence to established practices:

Pathway Mapping Color Standards: Use KEGG's established color codes for consistent interpretation [13]. For example:

- Organism-specific pathways: #bfffbf to #66cc66

- Reference pathways: #bfbfff to #6666cc

- Disease genes: #ffcfff to #ff99ff

Multi-omics Integration: Use KEGG's split color mode to visualize data from multiple organisms or conditions simultaneously [13].

Network Visualization: When visualizing large networks, employ hierarchical layouts that emphasize key pathway modules and their interconnections.

Uncertainty Representation: Clearly distinguish between experimentally confirmed and predicted interactions in integrated networks, particularly when combining KEGG with predicted chemical-target interactions [14].

Quality Control and Validation

- Regularly update KEGG data extracts, as KEGG is continuously updated with new pathways and annotations [10].

- Validate KEGG-based predictions with orthogonal data sources and experimental confirmation.

- Use the manually curated portions of KEGG (pathway maps, KO definitions) as gold standards for evaluating automated predictions.

- Use KEGG Mapper's Reconstruct tool, which reports KEGG module completeness, as an internal consistency check on reconstructed pathways.

The paradigm of drug discovery has progressively shifted from a traditional "one drug–one target" approach toward a more holistic systems pharmacology perspective that acknowledges complex diseases are often caused by multiple molecular abnormalities rather than a single defect [15] [16]. Multi-target drug discovery has emerged as an essential strategy for treating complex diseases involving multiple molecular pathways, such as cancer, neurodegenerative disorders, and metabolic syndromes [15]. This transformation creates a critical need for methodologies that can effectively integrate chemical bioactivity data with pathway and disease context to enable rational polypharmacology – the deliberate design of drugs to interact with a pre-defined set of molecular targets for synergistic therapeutic effects [15].

This Application Note provides a structured framework and practical protocols for integrating chemical bioactivity data from sources like ChEMBL with pathway information from resources like KEGG to advance chemogenomic analysis in drug discovery. We present standardized workflows, data processing techniques, and visualization strategies to help researchers leverage these integrated datasets for identifying multi-target therapeutic strategies, repurposing existing drugs, and deconvoluting mechanisms of action in phenotypic screening.

Successful integration of chemical bioactivity with pathway context begins with a thorough understanding of available data resources, their coverage, and appropriate application scenarios. The following table summarizes key databases and their primary characteristics.

Table 1: Key Databases for Chemogenomic Analysis

| Database | Primary Focus | Data Content | Key Applications |

|---|---|---|---|

| ChEMBL [1] [17] | Bioactive molecules & drug-like compounds | >21 million bioactivity measurements; >2.4 million ligands; >16,000 targets [17] | Multi-target activity profiling; lead optimization; drug repurposing |

| KEGG PATHWAY [18] | Molecular interaction & reaction networks | Manually drawn pathway maps for metabolism, cellular processes, human diseases, and drug development [18] | Pathway enrichment analysis; network pharmacology; mechanistic studies |

| BindingDB [17] | Measured binding affinities | ~2.4 million binding measurements; ~1.3 million unique ligands; ~9,000 targets [17] | Binding affinity prediction; selectivity analysis; machine learning model training |

| GtoPdb [17] | Pharmacological targets & ligands | Curated data on 3,039 targets and 12,163 ligands with emphasis on drug targets [17] | Target prioritization; safety assessment; polypharmacology prediction |

The quantitative coverage and relationships between these resources reveal important patterns for research planning. Comparative analysis shows that ChEMBL, BindingDB, and GtoPdb collectively provide a robust foundation for computational pharmacology, with ChEMBL offering the most extensive compound coverage and BindingDB providing specialized binding affinity data [17]. Journal coverage analysis indicates that 2,360 articles are common to all three databases, while 38,912 are shared between ChEMBL and BindingDB, highlighting the complementary yet overlapping nature of these resources [17].

Integrated Protocol for Chemogenomic Analysis

Protocol: Building a Network Pharmacology Database

Purpose: To construct an integrated network pharmacology database that connects compounds, targets, pathways, and diseases for multi-target therapeutic discovery.

Materials and Reagents:

- Data Sources: ChEMBL database (version 22 or newer) [16], KEGG PATHWAY database [18], Disease Ontology [16], Gene Ontology [16]

- Software Tools: Neo4j graph database [16], R packages (clusterProfiler, DOSE, org.Hs.eg.db) [16], ScaffoldHunter [16]

Procedure:

Compound and Target Collection:

- Download ChEMBL data and filter for compounds with at least one bioassay result

- Extract molecular structures (SMILES, InChIKey), bioactivity values (IC₅₀, Kᵢ, EC₅₀), and target information

- Retain only human targets or include ortholog mapping for other species

Pathway and Disease Annotation:

- Download KEGG pathway maps and create mappings between targets and pathways

- Annotate targets with Disease Ontology terms and Gene Ontology functional categories

- Integrate morphological profiling data from sources like Cell Painting if available [16]

Graph Database Construction:

- Define node types: Molecule, CompoundName, Target, Pathway, Disease, AssayResult

- Establish relationships: "TARGETS", "PART_OF_PATHWAY", "ASSOCIATED_WITH_DISEASE"

- Implement cross-references between entities using standardized identifiers

Scaffold Analysis:

- Process compounds using ScaffoldHunter to identify core chemical structures [16]

- Generate hierarchical scaffold trees by progressively removing terminal side chains and rings

- Incorporate scaffold nodes into the graph database with "HAS_SCAFFOLD" relationships

Enrichment Analysis Capability:

- Implement connectivity for GO, KEGG, and Disease Ontology enrichment analysis using clusterProfiler and DOSE R packages [16]

- Configure statistical parameters (Bonferroni adjustment, p-value cutoff of 0.1)
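The over-representation statistic behind this kind of enrichment analysis can be sketched in a few lines. The block below is a minimal pure-Python illustration of a one-sided hypergeometric test followed by Bonferroni adjustment with the 0.1 cutoff mentioned above; it is not clusterProfiler's implementation, and the function names `hypergeom_pvalue` and `enrich` are hypothetical.

```python
from math import comb


def hypergeom_pvalue(k, n, K, N):
    """P(X >= k) when n targets are drawn from N background genes,
    K of which belong to the pathway (one-sided over-representation)."""
    upper = min(n, K)
    hits = sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1))
    return hits / comb(N, n)


def enrich(target_genes, pathways, background_size, cutoff=0.1):
    """Return (pathway, raw p, Bonferroni-adjusted p) for pathways whose
    adjusted p-value is at or below the cutoff, most significant first."""
    targets = set(target_genes)
    raw = []
    for name, members in pathways.items():
        overlap = len(targets & set(members))
        p = hypergeom_pvalue(overlap, len(targets), len(members), background_size)
        raw.append((name, p))
    n_tests = len(raw)  # Bonferroni: multiply by the number of pathways tested
    adjusted = [(name, p, min(1.0, p * n_tests)) for name, p in raw]
    return sorted((r for r in adjusted if r[2] <= cutoff), key=lambda r: r[2])
```

The sketch assumes every target gene is part of the background set; in practice the background is usually the set of all annotated genes for the organism.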

Troubleshooting Tips:

- Resolve identifier discrepancies between databases using bridge resources like UniProt

- Implement data quality filters based on confidence metrics from each source

- For large datasets, employ batch processing and index optimization in Neo4j

Protocol: Chemogenomic Library Design for Phenotypic Screening

Purpose: To design a targeted chemogenomic library for phenotypic screening that covers diverse biological pathways and enables mechanism deconvolution.

Materials and Reagents:

- Starting Compound Sets: Commercially available screening collections (e.g., NCATS MIPE, Pfizer chemogenomic library, GSK BDCS) [16]

- Annotation Resources: ChEMBL target annotations, KEGG pathway maps, GO biological processes

- Analysis Tools: Custom scripts for diversity analysis, clustering algorithms, and target coverage assessment

Procedure:

Target Space Definition:

- Identify proteins and pathways relevant to the disease biology or phenotypic assay

- Extract compounds from ChEMBL with activity against these targets (considering potency and selectivity)

- Include compounds with known mechanisms to serve as reference tools

Diversity and Selectivity Optimization:

- Apply scaffold analysis to ensure structural diversity and reduce chemical redundancy

- Prioritize compounds with balanced polypharmacology profiles over highly selective agents for multi-target applications [19]

- Apply filters for drug-like properties (e.g., Lipinski's Rule of Five) when appropriate
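As an illustration of the drug-likeness filter, the following sketch counts Lipinski Rule of Five violations from four precomputed properties. The dictionary keys (`mw`, `alogp`, `hbd`, `hba`) and the function names are assumptions made for this example; they are not ChEMBL field names.

```python
def ro5_violations(mw, alogp, hbd, hba):
    """Count Rule of Five violations: MW > 500, logP > 5, HBD > 5, HBA > 10."""
    return sum([mw > 500, alogp > 5, hbd > 5, hba > 10])


def drug_like(compounds, max_violations=0):
    """Keep compounds (dicts with mw, alogp, hbd, hba) within the violation limit."""
    return [
        c for c in compounds
        if ro5_violations(c["mw"], c["alogp"], c["hbd"], c["hba"]) <= max_violations
    ]
```

Raising `max_violations` to 1 gives a more permissive filter, which may suit libraries that deliberately include larger tool compounds.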

Library Assembly and Annotation:

Validation and Profiling:

- Screen the library in the phenotypic assay of interest

- Use profiling data to build connectivity maps between chemical structures, targets, and observed phenotypes

- Apply enrichment analysis to identify overrepresented target classes and pathways among active compounds

Applications: This protocol has been successfully applied in precision oncology for identifying patient-specific vulnerabilities in glioblastoma and can be adapted to other disease areas [19].

Visualization and Data Integration Strategies

Effective visualization is crucial for interpreting complex chemogenomic data. The following diagram illustrates the core workflow for integrating chemical bioactivity with pathway and disease context:

Integrated Chemogenomic Analysis Workflow

For multi-target drug discovery applications, understanding the relationship between compound structures, their protein targets, and the pathways they modulate is essential. The following diagram illustrates this multi-scale relationship:

Multi-Scale Pharmacology Relationships

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Reagents and Resources for Chemogenomic Analysis

| Resource | Type | Primary Function | Application Notes |

|---|---|---|---|

| ChEMBL Database [1] [17] | Bioactivity Database | Provides curated bioactivity data for drug-like molecules | Essential for building compound-target networks; use for polypharmacology profiling |

| KEGG PATHWAY [18] | Pathway Database | Manually drawn molecular interaction and reaction networks | Critical for contextualizing targets in biological systems; use for enrichment analysis |

| Neo4j [16] | Graph Database Platform | Enables integration of heterogeneous data sources as connected networks | Ideal for representing complex drug-target-pathway-disease relationships |

| ScaffoldHunter [16] | Chemical Informatics Tool | Identifies and organizes molecular scaffolds from compound collections | Enables scaffold-based diversity analysis and chemotype-phenotype correlation |

| Cell Painting Assay [16] | Phenotypic Profiling Method | Provides high-content morphological profiles for compounds | Bridges chemical and phenotypic spaces for mechanism deconvolution |

| BindingDB [17] | Binding Affinity Database | Focuses on measured drug-target binding affinities (Kd, Ki, IC₅₀) | Superior for building quantitative structure-activity relationship models |

| clusterProfiler R Package [16] | Bioinformatics Tool | Performs GO and KEGG enrichment analysis | Statistical identification of overrepresented biological terms |

Advanced Applications and Case Studies

Machine Learning for Multi-Target Prediction

Background: Machine learning (ML) has emerged as a powerful toolkit for modeling the complex, nonlinear relationships inherent in biological systems and predicting multi-target activities [15]. ML approaches can prioritize promising drug-target pairs, predict off-target effects, and propose novel compounds with desirable polypharmacological profiles by learning from diverse data sources [15].

Methodology Overview:

- Feature Representation: Drugs can be encoded as molecular fingerprints, SMILES strings, or graph-based encodings; targets can be represented by sequences, structures, or network embeddings [15]

- Model Architectures: Approaches range from classical methods (Random Forests, SVMs) to advanced deep learning architectures (Graph Neural Networks, Transformers) [15]

- Multi-Task Learning: Frameworks that simultaneously predict activities against multiple targets to capture inherent relationships between targets [15]

Case Study: DMFF-DTA Model for Affinity Prediction

The Dual Modality Feature Fused neural network for Drug-Target Affinity (DMFF-DTA) prediction exemplifies advanced ML applications [20]. This model integrates both sequence and structural information from drugs and proteins while addressing the size discrepancy between drug molecules and protein targets [20].

Key Innovations:

- Binding site-focused graph construction using AlphaFold2-predicted structures

- Dual-modality architecture that fuses sequence and graph features

- Feature balancing to handle the size imbalance between drug and protein graphs

Performance: DMFF-DTA demonstrates excellent generalization capabilities on unseen drugs and targets, achieving improvements of over 8% compared to existing methods [20]. The model has shown practical utility in pancreatic cancer drug repurposing through its accurate binding affinity predictions [20].

Drug Repurposing Through Integrated Analysis

Background: Drug repurposing offers a cost-effective and expedited alternative to traditional drug development pipelines, with the potential to address unmet clinical needs more rapidly [17]. Integrated analysis of chemical bioactivity and pathway context enables systematic identification of new therapeutic indications for existing drugs.

Methodology:

- Cross-Indication Analysis: Examine patterns of drug approvals across 28 therapeutic indication groups to identify areas with high repurposing potential [17]

- Pathway-Based Screening: Implement computational pipelines to predict repositioning opportunities based on pathway activity across disease contexts [17]

- Physicochemical Profiling: Analyze relationships between therapeutic indication groups and the physicochemical properties of their corresponding approved drugs to guide design of novel therapeutics [17]

Implementation Considerations:

- Leverage structured frameworks that map clinically approved drug indications into broader therapeutic groups (e.g., 28 groups for systematic analysis) [17]

- Manually classify targets into high-level biological families (e.g., 12 families) to facilitate therapeutic interpretation [17]

- Prioritize compounds with favorable ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) properties based on indication-specific profiling [17]

The integration of chemical bioactivity data from resources like ChEMBL with pathway and disease context from sources like KEGG represents a powerful approach for advancing drug discovery, particularly in the realm of multi-target therapeutics and drug repurposing. The protocols and strategies outlined in this Application Note provide researchers with practical methodologies for building integrated chemogenomic databases, designing targeted screening libraries, and applying advanced machine learning and visualization techniques.

As the field continues to evolve, promising directions include the increased incorporation of structural biology information (e.g., from AlphaFold2), application of more sophisticated deep learning architectures, and development of improved visualization tools for complex multi-scale data. By adopting these integrated approaches, researchers can more effectively navigate the complexity of biological systems and accelerate the development of safer, more effective therapeutics for complex diseases.

Modern drug discovery has shifted from the traditional "one drug, one target" paradigm toward a more holistic systems pharmacology strategy that acknowledges most complex diseases involve dysregulation of multiple molecular pathways [15]. This shift necessitates the integration of diverse, large-scale biological data to understand and exploit polypharmacology—the design of compounds to intentionally interact with multiple specific targets [15] [16]. Chemogenomics addresses this need by systematically investigating the biological effects of small molecules on a wide range of macromolecular targets [5]. The effectiveness of this approach is critically dependent on accessing and integrating high-quality, structured data describing compounds, their protein targets, the bioactivities between them, and the biological pathways in which these targets operate. Two indispensable resources for this integration are the ChEMBL database, a manually curated repository of bioactive molecules with drug-like properties, and the KEGG (Kyoto Encyclopedia of Genes and Genomes) database, a comprehensive resource representing molecular interaction and reaction networks [1] [21] [22]. This application note details the key data types and structures from these resources and provides a practical protocol for their integrated use in chemogenomic analysis.

Data Types and Structures

Compound Data (ChEMBL)

In ChEMBL, "compounds" refer to preclinical molecules with associated experimental bioactivity data, whereas "drugs" or "clinical candidate drugs" include marketed drugs and those progressing through clinical development pipelines, which may not necessarily have associated bioactivity data in ChEMBL [23]. A single molecule can exist in multiple categories; for example, an approved drug that was also extensively studied in the literature will be both an "Approved Drug" and a "Preclinical Compound" [23].

Table 1: Key Compound Data Attributes in ChEMBL

| Attribute | Description | Example Source/Calculation |

|---|---|---|

| Molecular Structure | Structural representation (e.g., SMILES, InChIKey) | Extracted from literature or deposited datasets [23] |

| Molecular Weight | Weight of the parent form of the molecule | Calculated using RDKit [23] |

| AlogP | Calculated lipophilicity (octanol/water partition coefficient) | Atomic contribution method [23] |

| PSA | Polar Surface Area | Sum of fragment-based contributions [23] |

| HBA/HBD | Hydrogen Bond Acceptor/Donor counts | SMARTS pattern matching [23] |

| RO5 Violations | Number of Lipinski's Rule of Five violations | Based on MW, AlogP, HBD, HBA [23] |

| Max Phase | Maximum clinical development phase | From sources like FDA, USAN, ClinicalTrials.gov [23] |

| Chirality Flag | Indicates if dosed as racemate, single isomer, or achiral | Curated for drugs and clinical candidates [23] |

The clinical development stage of a compound is summarized by its max_phase attribute, which ranges from 0.5 (Early Phase 1) to 4 (Approved Drug) [23]. Preclinical compounds with only bioactivity data have a null value for this field [23].

Target Data (ChEMBL and KEGG)

A "target" in ChEMBL is the entity with which a compound interacts to exert its effect. The database uses a sophisticated target model to distinguish between several target types [22]:

- SINGLE PROTEIN: For compounds interacting specifically with a monomeric protein.

- PROTEIN FAMILY: For compounds acting non-specifically on a family of proteins or when the assay cannot distinguish the specific family member.

- PROTEIN COMPLEX: For compounds interacting with a defined complex of proteins [22].

The KEGG database provides complementary target information by placing proteins within the context of broader biological systems. The KEGG Orthology (KO) system uses generic identifiers (K numbers) to represent functional orthologs, which serve as nodes in KEGG pathway maps [21]. This allows for the reconstruction of organism-specific molecular networks from genomic information [21].

Bioactivity Data (ChEMBL)

Bioactivity data in ChEMBL is extracted from the medicinal chemistry literature and other deposited data sources. It quantitatively describes the interaction between a compound and a target under specific assay conditions.

Table 2: Core Bioactivity Data in ChEMBL

| Data Type | Description | Significance |

|---|---|---|

| IC₅₀ | Half-maximal inhibitory concentration | Measures compound's potency to inhibit a target's function. |

| Kᵢ | Inhibition constant | Quantifies binding affinity for an inhibitor. |

| EC₅₀ | Half-maximal effective concentration | Measures potency for an agonist or activator. |

| Assay Type | Classification (e.g., binding, functional, ADMET) | Provides context for interpreting the activity value. |

| Target Mapping | Link to the specific protein, family, or complex | Defines the pharmacological context of the activity [22]. |

As of a recent update, ChEMBL contained over 1.3 million distinct compound structures and 12 million bioactivity data points mapped to more than 9,000 targets [22]. This data is essential for building predictive models for drug-target interactions (DTIs) and for investigating the selectivity and off-target effects of drugs [22] [5].
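Because IC₅₀, Kᵢ, and EC₅₀ values span many orders of magnitude, they are often compared on a negative logarithmic scale (pIC₅₀ = -log₁₀ of the molar concentration). The sketch below shows that conversion; the unit table and the function name `to_p_value` are assumptions for illustration, and values reported in other units would need their own handling.

```python
import math

# Conversion factors from common concentration units to molar (assumed set).
UNIT_TO_MOLAR = {"M": 1.0, "mM": 1e-3, "uM": 1e-6, "nM": 1e-9, "pM": 1e-12}


def to_p_value(standard_value, standard_units="nM"):
    """Return -log10(concentration in mol/L), or None if it cannot be computed."""
    factor = UNIT_TO_MOLAR.get(standard_units)
    if factor is None or standard_value is None or standard_value <= 0:
        return None
    return -math.log10(standard_value * factor)
```

For example, an IC₅₀ of 1000 nM (1 µM) corresponds to a pIC₅₀ of 6, and each tenfold gain in potency adds one unit.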

Pathway Data (KEGG)

KEGG PATHWAY is a collection of manually drawn pathway maps representing knowledge on molecular interaction, reaction, and relation networks [18] [21]. These maps are systematically categorized, providing a hierarchical organization of biological knowledge.

Table 3: KEGG PATHWAY Database Categories

| Category | Description | Example Pathways |

|---|---|---|

| Metabolism | Global and overview maps, carbohydrate, energy, lipid metabolism, etc. | map01100: Metabolic pathways; map00010: Glycolysis / Gluconeogenesis |

| Genetic Information Processing | Transcription, translation, replication, repair | map03010: Ribosome |

| Environmental Information Processing | Membrane transport, signal transduction | map04010: MAPK signaling pathway; map04020: Calcium signaling pathway; map04150: mTOR signaling pathway; map04630: JAK-STAT signaling pathway |

| Cellular Processes | Transport, catabolism, cell growth, death | map04110: Cell cycle; map04210: Apoptosis |

| Organismal Systems | Immune, endocrine, nervous, circulatory systems | map04910: Insulin signaling pathway |

| Human Diseases | Cancers, infectious, substance dependence | map05200: Pathways in cancer |

| Drug Development | Chronology of anti-infectives, chemical structure maps | map07010: Chronology: Antiinfectives |

Each pathway map is identified by a unique identifier combining a 2-4 letter prefix and a 5-digit number (e.g., map05200 for a reference pathway, hsa05200 for the human-specific version) [18] [24]. In these maps, rectangular boxes typically represent genes or enzymes, while circles represent metabolites [24]. This structured visualization helps researchers interpret complex biological processes and place drug targets within their functional context.
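The identifier convention lends itself to a small parser. The following sketch splits a pathway identifier into its prefix and number and derives the corresponding reference map ID; the set of non-organism prefixes and the optional `path:` prefix handling are assumptions made for this example.

```python
import re

# 2-4 lowercase letters followed by exactly five digits; "path:" is optional.
_PATHWAY_ID = re.compile(r"^(?:path:)?([a-z]{2,4})(\d{5})$")

# Prefixes assumed here to denote reference (non-organism) pathway maps.
_REFERENCE_PREFIXES = {"map", "ko", "ec", "rn"}


def parse_pathway_id(pathway_id):
    """Split e.g. 'hsa05200' into prefix and number and derive 'map05200'."""
    match = _PATHWAY_ID.match(pathway_id)
    if match is None:
        raise ValueError(f"not a KEGG pathway identifier: {pathway_id!r}")
    prefix, number = match.groups()
    return {
        "prefix": prefix,
        "number": number,
        "reference_id": "map" + number,
        "is_reference": prefix in _REFERENCE_PREFIXES,
    }
```

Normalizing organism-specific IDs to their reference map in this way makes it easier to compare pathway annotations across species.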

Successful chemogenomic analysis relies on a suite of public databases and software tools.

Table 4: Essential Research Reagents and Resources for Chemogenomic Analysis

| Resource Name | Type | Function in Chemogenomic Analysis |

|---|---|---|

| ChEMBL | Database | Provides curated chemical structures, bioactivities (IC₅₀, Kᵢ, EC₅₀), and drug-target linkage data [1] [22]. |

| KEGG PATHWAY | Database | Supplies manually drawn pathway maps for contextualizing targets within biological systems [18] [21]. |

| KEGG ORTHOLOGY (KO) | Database | Provides a system of functional ortholog identifiers for linking genes/proteins to pathways and networks [21]. |

| Neo4j | Software Tool | A graph database platform ideal for integrating and querying heterogeneous network pharmacology data [16]. |

| RDKit | Software Tool | Cheminformatics library used for calculating compound properties like molecular weight, AlogP, and PSA [23]. |

| Cell Painting | Assay/Method | A high-content imaging assay that generates morphological profiles for connecting chemical perturbations to phenotypes [16]. |

| ScaffoldHunter | Software Tool | Used for analyzing and organizing chemical libraries based on their molecular scaffold hierarchies [16]. |

Integrated Data Schema and Relationship Diagram

The power of chemogenomics emerges from the integration of these discrete data types. The following diagram illustrates the logical relationships and workflow for integrating compound, target, bioactivity, and pathway data into a unified chemogenomic network.

Application Protocol: Building an Integrated Chemogenomic Network

This protocol outlines the steps for constructing a chemogenomic network by integrating ChEMBL and KEGG data, adapted from published research [16]. The goal is to create a system that links drugs, targets, pathways, and diseases, which can be used for target identification and mechanism of action deconvolution in phenotypic screening.

- ChEMBL Database: Download the latest version of the ChEMBL database (e.g., via FTP or web interface) [16] [22].

- KEGG PATHWAY & KO Data: Access the KEGG database via its REST API or dedicated FTP server [18] [21].

- Gene Ontology (GO) & Disease Ontology (DO): Obtain these resources from their official websites for functional and disease annotation [16].

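- Software Tools: Neo4j, ScaffoldHunter, and the R packages clusterProfiler and DOSE, as used in the steps below [16].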

Step-by-Step Procedure

Step 1: Data Acquisition and Preprocessing

- From ChEMBL, extract molecules with associated bioassay data. Retain key information including InChIKey, SMILES, standard type (e.g., IC₅₀, Kᵢ), standard value, and target information [16] [23].

- From KEGG, download pathway information and the KO to gene identifier mappings for your organism of interest (e.g., human) [16] [21].

Step 2: Building the Graph Database with Neo4j

- Create nodes in Neo4j for the following entities [16]:

- Molecule: With properties like inchi_key, smiles.

- Target: With properties like target_id, name, type (e.g., SINGLE PROTEIN).

- Pathway: With properties like pathway_id (e.g., hsa05200), name.

- Assay: With properties like assay_type, standard_type, standard_value.

- Disease: From the Disease Ontology.

- Scaffold: Generated using ScaffoldHunter to represent core molecular structures [16].

- Create relationships between these nodes to capture biological and chemical logic [16]:

- (Molecule)-[HAS_ACTIVITY {value: 5.2, type: "pIC50"}]->(Assay)

- (Assay)-[TARGETS]->(Target)

- (Target)-[PART_OF_PATHWAY]->(Pathway)

- (Molecule)-[HAS_SCAFFOLD]->(Scaffold)

- (Target)-[ASSOCIATED_WITH_DISEASE]->(Disease)
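One way to load such nodes and relationships is a parameterized Cypher `MERGE` statement driven from Python. The sketch below only prepares the statement and its parameters. The `assay_id` property, the record field names, and the helper `activity_params` are assumptions for illustration and are not part of the published schema.

```python
# Parameterized Cypher: MERGE avoids creating duplicate nodes on re-import.
LOAD_ACTIVITY = """
MERGE (m:Molecule {inchi_key: $inchi_key})
  ON CREATE SET m.smiles = $smiles
MERGE (t:Target {target_id: $target_id})
MERGE (a:Assay {assay_id: $assay_id})
  ON CREATE SET a.standard_type = $standard_type
MERGE (m)-[r:HAS_ACTIVITY]->(a)
  SET r.value = $value, r.type = $value_type
MERGE (a)-[:TARGETS]->(t)
"""

FIELDS = ("inchi_key", "smiles", "target_id", "assay_id",
          "standard_type", "value", "value_type")


def activity_params(record):
    """Select exactly the parameters the statement expects from one record."""
    missing = [name for name in FIELDS if name not in record]
    if missing:
        raise KeyError(f"record is missing fields: {missing}")
    return {name: record[name] for name in FIELDS}

# With the official neo4j Python driver installed, a record could then be
# loaded roughly as follows (connection details are placeholders):
#   with driver.session() as session:
#       session.run(LOAD_ACTIVITY, **activity_params(record))
```

Passing values as parameters rather than formatting them into the query string keeps SMILES strings with special characters from breaking the statement.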

Step 3: Library Design and Scaffold Analysis

- To create a targeted screening library, filter compounds based on criteria such as cellular activity, chemical diversity, and target selectivity [19].

- Use ScaffoldHunter to organize the selected compounds by their hierarchical scaffold trees. This helps ensure coverage of diverse chemotypes and identifies representative core structures for the library [16].

Step 4: Functional Enrichment Analysis

- For a set of targets identified from a phenotypic screen (e.g., via Cell Painting), use the R package clusterProfiler to perform KEGG pathway enrichment analysis. This identifies biological pathways that are statistically over-represented in your target list [16] [24].

- Similarly, use the DOSE package to perform Disease Ontology enrichment analysis to uncover potential disease associations [16].

Step 5: Querying and Visualization

- Use Cypher (Neo4j's query language) to extract sub-networks of interest, for example all compounds active against targets in a specific pathway.

- Visualize the resulting networks directly in Neo4j Browser or export for further analysis to identify key network nodes and relationships.
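A query for compounds active against a given pathway might look like the sketch below, written against the node labels and relationship types listed in Step 2. The potency threshold, the returned columns, and the helper `pathway_query` are assumptions for illustration.

```python
# Traverse Molecule -> Assay -> Target -> Pathway and filter on potency.
COMPOUNDS_FOR_PATHWAY = """
MATCH (m:Molecule)-[r:HAS_ACTIVITY]->(:Assay)-[:TARGETS]->(t:Target)
      -[:PART_OF_PATHWAY]->(p:Pathway {pathway_id: $pathway_id})
WHERE r.type = $value_type AND r.value >= $min_value
RETURN DISTINCT m.inchi_key AS inchi_key, t.name AS target, r.value AS value
ORDER BY value DESC
"""


def pathway_query(pathway_id, min_value=6.0, value_type="pIC50"):
    """Return the Cypher text and its parameters for one pathway."""
    params = {
        "pathway_id": pathway_id,
        "value_type": value_type,
        "min_value": min_value,
    }
    return COMPOUNDS_FOR_PATHWAY, params
```

The same text can be pasted into Neo4j Browser after defining the three parameters there, which is convenient for visual inspection of the resulting sub-network.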

Expected Results and Interpretation

Upon completion, you will have a unified graph database that allows for complex queries across chemical and biological spaces. For instance, you can:

- Identify Multi-Target Compounds: Find molecules that are annotated to hit multiple proteins within a disease-relevant pathway, suggesting potential as multi-target agents [15].

- Deconvolute Phenotypic Screens: Input a list of "hit" compounds from a phenotypic screen (like Cell Painting) into the network to identify which of their known protein targets are clustered in specific pathways, thereby proposing a mechanism of action [16].

- Propose Drug Repurposing: Discover existing drugs (with high max_phase) that hit targets associated with a new disease pathway, suggesting new therapeutic indications [5].
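The multi-target case can also be checked outside the graph database once compound-target pairs have been exported. The sketch below groups pairs by compound and keeps those hitting at least two targets in a pathway; the input layout (an iterable of `(molecule, target)` tuples) and the function name are assumptions.

```python
from collections import defaultdict


def multi_target_compounds(activities, pathway_targets, min_targets=2):
    """Map each molecule to the pathway targets it hits, keeping only
    molecules annotated against at least `min_targets` of them."""
    in_pathway = set(pathway_targets)
    hits = defaultdict(set)
    for molecule, target in activities:
        if target in in_pathway:
            hits[molecule].add(target)
    return {
        molecule: sorted(targets)
        for molecule, targets in hits.items()
        if len(targets) >= min_targets
    }
```

In practice the activity pairs would first be filtered by potency so that weak or inactive measurements do not count as hits.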

The following diagram visualizes the multi-step workflow of this protocol, from data collection to application.

The integration of chemogenomics data is a cornerstone of modern drug discovery and chemical biology research, enabling the systematic study of interactions between small molecules and biological targets. Two of the most critical public resources in this domain are the Kyoto Encyclopedia of Genes and Genomes (KEGG) and the ChEMBL database. KEGG is an integrated database resource that incorporates genomic, chemical, and systemic functional information, particularly through its pathway maps, BRITE functional hierarchies, and KEGG modules [25]. ChEMBL is a manually curated database of bioactive molecules with drug-like properties, bringing together chemical, bioactivity, and genomic data to aid the translation of genomic information into effective new drugs [1]. Together, these resources provide complementary data types that, when integrated, offer a powerful platform for understanding complex chemical-biological interactions and facilitating drug discovery efforts.

For researchers in chemogenomics, understanding how to programmatically access and combine data from these resources is essential for building comprehensive datasets that link compound structures with their biological activities, molecular targets, and pathway contexts. This application note provides detailed protocols for accessing KEGG and ChEMBL data through their public APIs and download options, with a specific focus on integration methodologies for chemogenomic analysis.

KEGG Database Structure

KEGG is organized as a set of interconnected databases that can be broadly categorized into four main areas [25] [24]:

- Systems Information: Includes PATHWAY, MODULE, and BRITE databases

- Genomic Information: Includes GENES, GENOME, and ORTHOLOGY databases

- Chemical Information: Includes COMPOUND, GLYCAN, REACTION, and ENZYME databases

- Health Information: Includes DISEASE and DRUG databases

The two core databases are KEGG PATHWAY and KEGG ORTHOLOGY (KO). KEGG PATHWAY contains manually drawn pathway maps representing molecular interaction and reaction networks, while KO provides a classification of orthologous gene groups that serve as functional units in pathway maps [24]. Each pathway identifier combines a 2-4 letter prefix with a 5-digit number (e.g., map00010 for the glycolysis/gluconeogenesis reference pathway, hsa04110 for the human cell cycle) [24].

ChEMBL Database Structure

ChEMBL is a bioactivity database focused on drug-like small molecules, containing 2D structures, calculated properties, and abstracted bioactivities (e.g., binding constants, pharmacology, and ADMET data) [26]. The data is curated from selected articles in more than 200 journals and patents, with releases occurring approximately 2-3 times per year [26]. Each entity in ChEMBL (compounds, targets, assays, documents) is assigned a unique ChEMBL ID, while an internal compound identifier (molregno) is also maintained [26].

Comparative Database Analysis

Table 1: Key Characteristics of KEGG and ChEMBL Databases

| Characteristic | KEGG | ChEMBL |

|---|---|---|

| Primary Focus | Biological pathways and systemic functions | Bioactive molecules and drug discovery data |

| Core Content | Pathway maps, ortholog groups, compounds, diseases | Compound structures, bioactivities, target annotations |

| Data Curation | Manually created reference datasets with computationally generated organism-specific datasets | Manually curated from literature and deposited datasets |

| Update Frequency | Regular updates | 2-3 times per year [26] |

| Licensing | Custom license | Creative Commons Attribution-Share Alike 3.0 Unported [26] |

| Unique Identifiers | K numbers (KO), C numbers (compounds), D numbers (drugs) | ChEMBL IDs, molregno [26] |

KEGG Data Access Protocols

KEGG REST API

KEGG provides a REST-style API that offers direct programmatic access to its databases. The general form of the API URL is https://rest.kegg.jp/<operation>/<argument>, where <operation> specifies the action to be performed and <argument> provides the specific parameters for that operation [27].

Core API Operations

The KEGG API supports several key operations [27]:

- info: Retrieves database release information and statistics

- list: Obtains a list of entry identifiers and associated names

- find: Searches for entries matching query keywords

- get: Retrieves full database entries in flat file format

- conv: Converts between KEGG and external identifiers

- link: Finds related entries across databases

Practical API Usage Examples

Table 2: Key KEGG API Operations and Examples

| Operation | URL Format | Example | Output |

|---|---|---|---|

| info | /info/<database> | /info/kegg | Database statistics |

| list | /list/<database> | /list/pathway/hsa | List of human pathways |

| find | /find/<database>/<query> | /find/compound/glucose | Compounds related to glucose |

| get | /get/<entry> | /get/hsa:7535 | Full entry for human gene ZAP70 |

| conv | /conv/<target_db>/<source_db> | /conv/uniprot/hsa:7535 | UniProt IDs for KEGG gene |

Code Implementation: Accessing KEGG Data

The following Python code demonstrates how to use the KEGG API to retrieve and parse pathway information:
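A minimal sketch of such a retrieval, using only the Python standard library, is given here. It assumes the `https://rest.kegg.jp` base URL and the two-column, tab-delimited output of the `list` operation; the helper names are illustrative and not part of any KEGG client library.

```python
from urllib.request import urlopen

KEGG_BASE = "https://rest.kegg.jp"  # assumed base URL of the REST service


def kegg_url(operation, *arguments):
    """Build a URL of the form <base>/<operation>/<argument>/..."""
    return "/".join([KEGG_BASE, operation, *arguments])


def kegg_get_text(operation, *arguments, timeout=30):
    """Perform the request and return the plain-text response body."""
    with urlopen(kegg_url(operation, *arguments), timeout=timeout) as response:
        return response.read().decode("utf-8")


def parse_tab_table(text):
    """Split tab-delimited output into one tuple of fields per non-empty line."""
    return [tuple(line.split("\t")) for line in text.splitlines() if line.strip()]


def pathway_names(text):
    """Turn `list/pathway/<org>` output into {pathway_id: description}."""
    return {row[0]: row[1] for row in parse_tab_table(text) if len(row) >= 2}

# Example call (requires network access):
#   human_pathways = pathway_names(kegg_get_text("list", "pathway", "hsa"))
```

Keeping URL construction, transport, and parsing in separate functions makes the parsing logic testable against saved responses without contacting the server.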

KEGG Flat File Downloads

For large-scale analyses, KEGG offers complete database downloads in flat file format through its FTP service (see https://www.kegg.jp/kegg/download/), which requires a subscription. These downloads are particularly useful for building local databases or performing comprehensive analyses that would be inefficient via API calls.

ChEMBL Data Access Protocols

ChEMBL Web Services

ChEMBL provides comprehensive web services that allow programmatic access to its data. The endpoints listed below are served under https://www.ebi.ac.uk/chembl/api/data. Whereas the KEGG API returns plain-text output (tab-delimited lists or flat-file entries), ChEMBL's web services can return data in JSON format, making it easily parseable in various programming environments [26].

Key ChEMBL Web Service Endpoints

- Compound Data: /chembl/api/data/molecule?molecule_chembl_id__in=[CHEMBL_ID]

- Bioactivity Data: /chembl/api/data/activity?molecule_chembl_id__in=[CHEMBL_ID]

- Target Information: /chembl/api/data/target?target_chembl_id__in=[CHEMBL_ID]

- Assay Details: /chembl/api/data/assay?assay_chembl_id__in=[CHEMBL_ID]

Code Implementation: Accessing ChEMBL Data
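The following is a minimal standard-library sketch of querying the activity endpoint and extracting potency records. It assumes the `.json` suffix for JSON output and a response with an `activities` list whose entries carry `standard_type`, `standard_value`, and `standard_units`; the helper names are illustrative, and `CHEMBL203` in the usage comment is only an example identifier.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

CHEMBL_BASE = "https://www.ebi.ac.uk/chembl/api/data"


def chembl_url(resource, **filters):
    """Build e.g. <base>/activity.json?target_chembl_id=...&limit=..."""
    return f"{CHEMBL_BASE}/{resource}.json?{urlencode(filters)}"


def fetch_json(url, timeout=30):
    """Request a URL and decode the JSON response body."""
    with urlopen(url, timeout=timeout) as response:
        return json.load(response)


def extract_activities(payload, keep_types=("IC50", "Ki", "EC50")):
    """Keep potency-type records that have a numeric standard value."""
    rows = []
    for act in payload.get("activities", []):
        value = act.get("standard_value")
        if act.get("standard_type") in keep_types and value is not None:
            rows.append({
                "molecule": act.get("molecule_chembl_id"),
                "target": act.get("target_chembl_id"),
                "type": act["standard_type"],
                "value": float(value),  # values may arrive as strings
                "units": act.get("standard_units"),
            })
    return rows

# Example call (requires network access):
#   url = chembl_url("activity", target_chembl_id="CHEMBL203", limit=100)
#   rows = extract_activities(fetch_json(url))
```

Only the first page of results is returned by a single call, so larger queries need to follow the pagination metadata in the response.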

ChEMBL Database Downloads

For large-scale analyses, the complete ChEMBL database is available for download via MySQL dumps from the ChEMBL FTP site [26]. This is the recommended approach for applications requiring extensive data mining or integration with local databases. The database follows a relational model with multiple tables connecting compounds, assays, targets, and activities.

Integrated Data Access Workflow for Chemogenomic Analysis

Protocol: KEGG and ChEMBL Data Integration

This protocol describes a systematic approach for integrating KEGG and ChEMBL data to enable comprehensive chemogenomic analysis.

Materials and Software Requirements

Table 3: Research Reagent Solutions for Data Integration

| Item | Function | Example/Note |

|---|---|---|

| KEGG API Access | Programmatic retrieval of pathway and compound data | REST-style interface [27] |

| ChEMBL Web Services | Programmatic retrieval of bioactivity data | JSON-based web services [26] |

| Python requests library | HTTP requests for API calls | Alternative: urllib |

| Python pandas library | Data manipulation and analysis | For structuring integrated data |

| Identifier mapping table | Cross-referencing between databases | UniProt IDs common bridge |

| Local database (optional) | Storing integrated dataset | SQLite, MySQL, or PostgreSQL |

Step-by-Step Procedure

Define Research Question and Scope

- Identify specific biological pathways, target classes, or compound families of interest

- Determine the required data types from each database

Retrieve Pathway Information from KEGG

- Use the KEGG list operation to identify relevant pathways (/list/pathway/<org>)

- Use the KEGG get operation to retrieve detailed pathway information

- Extract gene/protein identifiers and compound information from pathway data

Retrieve Compound and Bioactivity Data from ChEMBL

- Use ChEMBL web services to find compounds targeting pathway components

- Retrieve bioactivity data (IC50, Ki, EC50) for these compounds

- Obtain compound structures and properties

Cross-Reference Identifiers

- Map KEGG gene identifiers to UniProt IDs using the KEGG conv operation

- Use UniProt IDs as a bridge to ChEMBL target information

- Map KEGG compound IDs to ChEMBL IDs using structure or identifier mapping
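The gene-to-UniProt step can be sketched as a parser for the two-column output of the `conv` operation. The block assumes each line holds a KEGG gene ID and a prefixed UniProt accession separated by a tab; the function names are hypothetical.

```python
def parse_conv(text, strip_prefix=True):
    """Parse `conv` output into {source_id: [target_id, ...]}.
    One gene may map to several accessions, so values are lists."""
    mapping = {}
    for line in text.splitlines():
        parts = line.split("\t")
        if len(parts) != 2:
            continue  # skip blank or malformed lines
        source, target = parts
        if strip_prefix:
            target = target.split(":", 1)[-1]  # drop a database prefix such as "up:"
        mapping.setdefault(source, []).append(target)
    return mapping


def invert(mapping):
    """Reverse the mapping so accessions can be looked up directly."""
    reverse = {}
    for source, targets in mapping.items():
        for target in targets:
            reverse.setdefault(target, []).append(source)
    return reverse
```

The inverted mapping is useful on the ChEMBL side, where target components are identified by UniProt accession rather than by KEGG gene ID.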

Integrate Datasets

- Create unified data structure linking compounds, targets, activities, and pathways

- Resolve any identifier inconsistencies or missing data

- Apply quality filters based on data confidence levels

Validate and Curate Integrated Dataset

- Check for consistency between sources

- Resolve conflicts based on data quality metrics

- Annotate data with source information for traceability

Workflow Visualization

Workflow for KEGG-ChEMBL Data Integration

Example Application: Kinase Inhibitor Profiling

To illustrate the integrated data access approach, consider a research scenario focused on kinase inhibitors and their pathways:
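The joining logic for such a scenario can be sketched independently of the network calls. The function below links pathway genes to bioactivity rows through UniProt accessions; all input layouts and names are assumptions, and the mappings would come from the KEGG and ChEMBL retrieval steps described in the procedure.

```python
def integrate(pathway_genes, gene_to_uniprot, uniprot_to_target, activities):
    """Join pathway genes to activity rows via UniProt accessions.

    pathway_genes:     {pathway_id: [kegg_gene_id, ...]}
    gene_to_uniprot:   {kegg_gene_id: [uniprot_accession, ...]}
    uniprot_to_target: {uniprot_accession: chembl_target_id}
    activities:        {chembl_target_id: [activity_dict, ...]}
    """
    rows = []
    for pathway_id, genes in pathway_genes.items():
        for gene in genes:
            for accession in gene_to_uniprot.get(gene, []):
                target = uniprot_to_target.get(accession)
                if target is None:
                    continue  # pathway gene without a matching ChEMBL target
                for activity in activities.get(target, []):
                    rows.append({"pathway": pathway_id, "gene": gene,
                                 "uniprot": accession, "target": target,
                                 **activity})
    return rows


def gene_coverage(rows, pathway_genes):
    """Fraction of each pathway's genes that have at least one activity row."""
    covered = {(r["pathway"], r["gene"]) for r in rows}
    return {
        pathway: sum((pathway, g) in covered for g in genes) / len(genes)
        for pathway, genes in pathway_genes.items() if genes
    }
```

The coverage figure gives a quick sense of how much of a kinase pathway is represented by measured bioactivity, which helps decide whether orthologs or related targets need to be included.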

Troubleshooting and Best Practices

Common Data Access Issues

Table 4: Troubleshooting Common Data Access Problems

| Problem | Possible Cause | Solution |
|---|---|---|
| KEGG API returns 400 error | Invalid database name or syntax error | Check database names in KEGG API documentation [27] |
| ChEMBL web service timeout | Large query or server issues | Implement pagination and retry logic |
| Identifier mapping failures | Different identifier systems | Use bridge databases like UniProt for cross-referencing |
| Missing bioactivity data | Limited compound coverage in ChEMBL | Expand search to related compounds or targets |
| Pathway gene missing in ChEMBL | Species-specific data limitations | Check orthologous targets or expand species scope |

Performance Optimization

- Batch Operations: Combine multiple requests when possible to reduce API calls

- Local Caching: Store frequently accessed data locally to minimize repeated queries

- Selective Retrieval: Request only needed data fields to improve response times

- Error Handling: Implement robust error handling for network issues or API limits
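The caching and error-handling points can be combined in a small helper, sketched here with the standard library only. The retry count, the delays, and the names `with_retries` and `fetch_text` are illustrative assumptions; production code may prefer a dedicated HTTP client and an on-disk cache.

```python
import time
from functools import lru_cache
from urllib.request import urlopen


def with_retries(func, attempts=3, base_delay=1.0, sleep=time.sleep,
                 retry_on=(OSError,)):
    """Call func(); on a retryable error wait base_delay * 2**attempt and retry."""
    for attempt in range(attempts):
        try:
            return func()
        except retry_on:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the last error
            sleep(base_delay * 2 ** attempt)


@lru_cache(maxsize=256)
def fetch_text(url: str, timeout: float = 30.0) -> str:
    """Fetch a URL as text, retrying network errors and caching by URL in memory."""
    def request():
        with urlopen(url, timeout=timeout) as response:
            return response.read().decode("utf-8")
    return with_retries(request)


# Offline demonstration with a function that fails twice before succeeding.
calls = {"n": 0}
delays = []


def flaky():
    calls["n"] += 1
    if calls["n"] < 3:
        raise OSError("transient network error")
    return "ok"


result = with_retries(flaky, attempts=3, base_delay=0.5, sleep=delays.append)
```

`urllib.error.URLError` and socket timeouts are subclasses of `OSError`, which is why that exception type is used as the default retry trigger.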

Data Quality Considerations

- Source Prioritization: Resolve conflicts between data sources based on reliability metrics

- Confidence Scoring: Implement scoring systems for integrated data quality

- Provenance Tracking: Maintain records of data sources and processing steps

- Regular Updates: Establish processes to refresh integrated datasets with new releases

The integration of KEGG and ChEMBL data through their public APIs and download options provides a powerful foundation for chemogenomic research. By following the protocols outlined in this application note, researchers can efficiently access, integrate, and analyze diverse data types spanning biological pathways, compound structures, and bioactivity profiles. The workflow described enables the construction of comprehensive datasets that support target identification, mechanism of action studies, and chemical biology exploration. As both resources continue to evolve, maintaining flexible data access strategies and staying informed about API updates will be essential for maximizing the value of these rich public resources in drug discovery and chemical biology research.

Practical Integration Strategies and Network Pharmacology Applications

In modern chemogenomic research, the integration of disparate biological and chemical data sources is paramount for uncovering new therapeutic insights. Data harmonization—the practice of combining data from different sources and transforming it into a compatible and comparable format—is essential for overcoming the challenges posed by heterogeneous datasets [28]. Within the context of integrating KEGG (Kyoto Encyclopedia of Genes and Genomes) and ChEMBL, harmonization primarily addresses syntactic (format), structural (schema), and semantic (meaning) inconsistencies [28]. This process enables researchers to create a unified view of compound-target-pathway relationships, facilitating large-scale analysis for drug discovery and target identification.

The integration of KEGG's rich pathway and disease information with ChEMBL's comprehensive bioactivity data for drug-like molecules creates a powerful resource for understanding complex biological systems [29] [30]. However, this integration presents significant challenges, particularly in reconciling different identifier systems and standardizing bioactivity measurements. This protocol provides detailed methodologies for mapping identifiers and standardizing bioactivity data, framed within a broader thesis on chemogenomic analysis.

Successful data harmonization between KEGG and ChEMBL relies on a collection of essential data resources and computational tools that constitute the researcher's toolkit. The table below catalogues these core components with their specific functions in the harmonization workflow.

Table 1: Essential Research Reagents and Resources for KEGG-ChEMBL Integration

| Resource Name | Type | Primary Function in Harmonization |
|---|---|---|
| ChEMBL Database [30] | Bioactivity Database | Provides curated bioactivity data (e.g., IC₅₀, Ki) for drug-like molecules and their targets, including approved drugs and clinical candidates. |
| KEGG DISEASE [31] | Pathway/Disease Database | Offers disease entries with associated genes, pathogens, and pathway maps, representing diseases as perturbed molecular network states. |
| KEGG PATHWAY [29] | Pathway Database | Contains molecular interaction and reaction networks for systemic cellular functions, used for pathway mapping and enrichment analysis. |
| SMILES (Simplified Molecular Input Line Entry System) [32] | Chemical Notation | A linear string notation describing chemical structure, used as a canonical identifier for cross-database chemical mapping. |
| RDKit [32] | Cheminformatics Toolkit | Converts SMILES to canonical form and generates molecular fingerprints for chemical similarity calculations and structure validation. |
| Tanimoto Coefficient [32] | Similarity Metric | Quantifies the chemical similarity between molecular fingerprints (e.g., Morgan fingerprints), enabling analog search and structure-based mapping. |
| LOINC (Logical Observation Identifiers Names and Codes) [33] | Terminology Standard | Provides standardized codes for identifying laboratory and clinical observations, supporting semantic harmonization. |
| SNOMED CT (Systematized Nomenclature of Medicine Clinical Terms) [33] | Clinical Terminology | Offers a comprehensive clinical vocabulary for accurate documentation and communication, facilitating semantic alignment. |

Protocol 1: Mapping Compound Identifiers Across KEGG and ChEMBL

Background and Principle

The first critical step in data harmonization is establishing reliable cross-references between compound identifiers in KEGG DRUG (D numbers) and ChEMBL (CHEMBL IDs). This is challenging due to differing database-specific identifiers, multiple chemical name synonyms, and varied structural representations [32] [30]. This protocol uses structural standardization and chemical similarity searching to create a robust mapping table.

Materials and Reagents

- Data Sources: KEGG DRUG database, ChEMBL database (via REST API)

- Software: RDKit (v.2024.03.1 or higher) for cheminformatics operations

- Programming Environment: Python or R scripting environment with necessary chemical informatics libraries

Experimental Procedure

Data Acquisition and Initial Processing

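- Download KEGG DRUG Compounds: Use the KEGG API to retrieve all drug entries, including their D numbers, common names, and chemical structures. The KEGG API serves structures as MOL files rather than SMILES, so convert each MOL file to SMILES with RDKit before standardization.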

- Extract ChEMBL Compounds: Query the ChEMBL database via its REST API to obtain CHEMBL IDs, preferred names, synonyms, and canonical SMILES for all approved drugs and clinical candidate drugs [30].

Chemical Structure Standardization

- Convert to Canonical SMILES: For each compound from both sources, use RDKit to parse the initial SMILES and generate a canonical, standardized SMILES string. This process ensures a uniform representation of the same chemical structure, resolving notational differences (e.g., 'CCO' vs. 'OCC' for ethanol) [32].

- Validate Chemical Structures: Apply RDKit's chemical validation functions to identify and flag any structures with chemical correctness issues.
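A minimal sketch of the standardization and validation steps, assuming RDKit is installed. `Chem.MolFromSmiles` returns `None` when a structure cannot be parsed or fails sanitization, which serves here as the validity flag; the wrapper name `canonical_smiles` is hypothetical.

```python
from rdkit import Chem
from rdkit import RDLogger

RDLogger.DisableLog("rdApp.*")  # silence parse warnings for invalid input


def canonical_smiles(smiles: str):
    """Return RDKit's canonical SMILES, or None if the structure is invalid."""
    mol = Chem.MolFromSmiles(smiles)  # parses and sanitizes by default
    if mol is None:
        return None
    return Chem.MolToSmiles(mol)  # canonical output by default


# Two notations for ethanol collapse to one canonical string.
ethanol_a = canonical_smiles("CCO")
ethanol_b = canonical_smiles("OCC")

# A carbon with five bonds fails sanitization and is flagged as invalid.
invalid = canonical_smiles("C(C)(C)(C)(C)C")
```

Canonical SMILES are only comparable when produced by the same toolkit and version, so both the KEGG and ChEMBL structures should pass through the same function rather than reusing the strings supplied by either database.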

Identifier Mapping Through Exact and Similarity Matching

- Exact Structure Match: Perform an exact match on the canonical SMILES between KEGG DRUG and ChEMBL compounds. Pairs with identical canonical SMILES receive the highest confidence mapping.

- Similarity-Based Mapping: For unmapped compounds, calculate chemical similarity using the following sub-steps:

- Generate Molecular Fingerprints: Using RDKit, create Morgan fingerprints (circular fingerprints with radius 2) for each compound.

- Calculate Tanimoto Similarity: Compute the Tanimoto coefficient (T) between fingerprint pairs using the formula $T = N_{AB} / (N_A + N_B - N_{AB})$, where $N_A$ and $N_B$ are the number of bits set in fingerprints A and B, respectively, and $N_{AB}$ is the number of common bits set in both [32].

- Establish Similarity Threshold: Define a Tanimoto coefficient threshold of ≥0.85 to identify high-confidence structural analogs [32].

- Synonym-Based Cross-Reference: Augment structural mapping by comparing name synonyms from both databases. Filter synonyms by removing numerical identifiers without source context and standardizing case and punctuation.
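The tiered matching logic can be sketched in plain Python, with fingerprints represented as sets of on-bit indices so that the Tanimoto coefficient is the number of shared bits divided by the number of bits set in either fingerprint. In practice the bit sets would come from RDKit Morgan fingerprints; the compound dictionaries, IDs, and function names below are hypothetical.

```python
import re


def tanimoto(bits_a: set, bits_b: set) -> float:
    """Shared on-bits divided by the on-bits present in either fingerprint."""
    n_ab = len(bits_a & bits_b)
    denominator = len(bits_a) + len(bits_b) - n_ab
    return n_ab / denominator if denominator else 0.0


def normalize_name(name: str) -> str:
    """Standardize case and strip punctuation/whitespace from a synonym."""
    return re.sub(r"[^a-z0-9]+", "", name.lower())


def map_compound(query: dict, candidates: list, threshold: float = 0.85):
    """Try exact, then similarity, then synonym matching; return a tuple or None."""
    for cand in candidates:
        if cand["smiles"] == query["smiles"]:
            return cand["id"], "exact", 1.0
    best = max(candidates, key=lambda c: tanimoto(query["bits"], c["bits"]),
               default=None)
    if best is not None:
        score = tanimoto(query["bits"], best["bits"])
        if score >= threshold:
            return best["id"], "similarity", score
    query_names = {normalize_name(n) for n in query["names"]}
    for cand in candidates:
        if query_names & {normalize_name(n) for n in cand["names"]}:
            return cand["id"], "synonym", None
    return None


# Hypothetical candidate with 19 of the query's 20 bits: T = 19 / 20 = 0.95.
candidates = [{"id": "CHEMBL_X", "smiles": "SMILES_X",
               "bits": set(range(19)), "names": ["Example drug"]}]
query = {"id": "D_QUERY", "smiles": "SMILES_Q",
         "bits": set(range(20)), "names": ["other name"]}
match = map_compound(query, candidates)
```

Recording the matching method alongside the score keeps the confidence tier available for later quality control.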

Data Analysis and Quality Control

The results of the mapping procedure should be compiled into a comprehensive compound mapping table, with metrics to evaluate mapping quality.

Table 2: Compound Identifier Mapping Results Between KEGG and ChEMBL

| Mapping Method | Principle | Pairs Mapped | Confidence Level | Common Use Case |
|---|---|---|---|---|
| Exact Structure Match | Identical canonical SMILES | ~5,200 | Very High | Primary mapping for standardized compounds |
| Similarity Match | Tanimoto coefficient ≥0.85 | ~1,450 | High | Mapping salts, formulations, and close analogs |
| Synonym Match | Name-based alignment | ~850 | Medium | Resolving discrepancies in structural representation |
| Manual Curation | Expert review | ~300 | Variable | Complex natural products and biologics |

All mappings should be validated through manual inspection of a random sample (e.g., 5% of each mapping category). The final output is a harmonized compound table linking KEGG D numbers, ChEMBL IDs, canonical SMILES, and standard names.
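Drawing the review sample reproducibly is straightforward; the sketch below picks 5% of each mapping category (rounded up, with at least one pair per non-empty category) using a fixed seed. The function name and the input layout are assumptions for illustration.

```python
import random


def sample_for_review(mappings_by_method: dict, percent: int = 5, seed: int = 0) -> dict:
    """Pick ceil(percent %) of each category's pairs for manual inspection."""
    rng = random.Random(seed)  # fixed seed so the audit sample is reproducible
    selected = {}
    for method, pairs in mappings_by_method.items():
        if not pairs:
            selected[method] = []
            continue
        count = max(1, -(-len(pairs) * percent // 100))  # integer ceiling
        selected[method] = rng.sample(pairs, count)
    return selected


# Hypothetical mapping pairs (KEGG D number placeholder, ChEMBL ID placeholder).
mappings = {
    "exact": [(f"D{i:05d}", f"CHEMBL_{i}") for i in range(100)],
    "synonym": [(f"D{i:05d}", f"CHEMBL_{i}") for i in range(100, 130)],
    "manual": [],
}
review = sample_for_review(mappings)
```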

Protocol 2: Standardizing Bioactivity Data for Pathway Analysis

Background and Principle

Bioactivity measurements in ChEMBL (e.g., IC₅₀, Ki, Kd) exhibit significant methodological variability, making direct comparison challenging [30] [34]. This protocol establishes a standardized framework for transforming heterogeneous bioactivity data into a uniform format compatible with KEGG pathway analysis, enabling meaningful cross-study comparisons and network-based modeling.

Materials and Reagents

- Data Source: ChEMBL bioactivity data (ACTIVITIES table, MOLECULE_DICTIONARY table, ASSAYS table)

- Analytical Tools: Python/R with statistical packages (pandas, numpy, scipy)

- Reference Standards: pChEMBL value definition, BioAssay Ontology (BAO)

Experimental Procedure

Data Extraction and Categorization

Query ChEMBL Database: Extract bioactivity records linking ChEMBL compounds to specific protein targets, including:

Categorize by Assay Type: Classify assays according to the BioAssay Ontology (BAO) including:

- Binding assays (e.g., Ki, Kd)

- Functional assays (e.g., IC₅₀, EC₅₀)

- ADMET assays (Absorption, Distribution, Metabolism, Excretion, Toxicity)

Bioactivity Standardization and Transformation

- Unit Normalization: Convert all activity values to a single molar unit (nM) using the appropriate conversion factors (e.g., 1 µM = 1,000 nM).

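- pChEMBL Transformation: Apply a negative logarithmic transformation to create pChEMBL values for comparable potency/affinity measures using the formula $\mathrm{pChEMBL} = -\log_{10}(\text{activity in M})$, which for values expressed in nM is $9 - \log_{10}(\text{activity in nM})$. This transformation creates a consistent scale where higher values indicate greater potency [34].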

- Measurement Type Harmonization:

- For IC₅₀ and EC₅₀ values, apply direct pChEMBL transformation.

- For Ki values (binding affinity), note the inherent relationship with IC₅₀ but maintain as distinct categories.

- For percentage inhibition/activation data at fixed concentrations, apply categorization (e.g., active: >50% inhibition, inactive: <25% inhibition).
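The standardization steps can be sketched as three small functions: unit conversion to nM, the negative log transformation, and the categorization of single-concentration data. The unit table covers only a few common molar units, and the "inconclusive" label for values between the two thresholds is an assumption added for illustration.

```python
import math

# Conversion factors from common molar units to nM.
TO_NANOMOLAR = {"pM": 1e-3, "nM": 1.0, "uM": 1e3, "mM": 1e6, "M": 1e9}


def to_nanomolar(value: float, units: str) -> float:
    """Convert a concentration to nM; raises KeyError for unsupported units."""
    return value * TO_NANOMOLAR[units]


def pchembl(value_nm: float) -> float:
    """Negative log10 of the molar concentration, computed from a value in nM."""
    if value_nm <= 0:
        raise ValueError("concentration must be positive")
    return 9.0 - math.log10(value_nm)


def classify_percent_inhibition(percent: float) -> str:
    """Categorize fixed-concentration data using the >50% / <25% thresholds."""
    if percent > 50:
        return "active"
    if percent < 25:
        return "inactive"
    return "inconclusive"  # assumed label for the 25-50% range


# A 10 nM IC50 corresponds to a pChEMBL value of 8; 1 uM corresponds to 6.
potent = pchembl(to_nanomolar(10.0, "nM"))
moderate = pchembl(to_nanomolar(1.0, "uM"))
```

Non-molar units (for example µg/mL) are deliberately rejected here, since converting them requires the molecular weight of each compound.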

Data Quality Filtering

Implement stringent quality controls to ensure data reliability:

- Validity Flags: Exclude records that carry a ChEMBL data validity comment (e.g., 'Outside typical range'); records without such a comment have no flagged validity issue.

- Value Ranges: Filter out extreme outliers (e.g., standard_value < 0.001 or > 100,000,000)

- Specificity Filters: Prefer data from assays with known specific activity against intended targets.
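A hedged sketch of the validity and range filters follows. It assumes activity records shaped like ChEMBL web service output, in which the `data_validity_comment` field is empty when no issue has been flagged and `standard_value` may arrive as a string; the function name and the sample records are hypothetical.

```python
def passes_quality_filters(record: dict,
                           min_value: float = 0.001,
                           max_value: float = 100_000_000) -> bool:
    """Keep records with no validity flag and a value inside the allowed range."""
    if record.get("data_validity_comment"):
        return False  # flagged by ChEMBL curation, e.g. outside typical range
    raw = record.get("standard_value")
    if raw is None:
        return False  # nothing to standardize
    try:
        value = float(raw)
    except (TypeError, ValueError):
        return False  # non-numeric entry
    return min_value <= value <= max_value


# Hypothetical records (values are made up).
records = [
    {"id": 1, "standard_value": "12.5", "data_validity_comment": None},
    {"id": 2, "standard_value": "12.5", "data_validity_comment": "Outside typical range"},
    {"id": 3, "standard_value": "0.00001", "data_validity_comment": None},
    {"id": 4, "standard_value": None, "data_validity_comment": None},
]
kept = [rec["id"] for rec in records if passes_quality_filters(rec)]
```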

Data Analysis and Integration with KEGG

The standardized bioactivity data can now be integrated with KEGG pathways for comprehensive chemogenomic analysis.

Table 3: Standardized Bioactivity Data Profile for Pathway Mapping

| Standardized Metric | Data Type | Value Range | KEGG Integration Purpose |
|---|---|---|---|
| pChEMBL Value | Continuous | 4-12 (typical) | Quantitative potency assessment for network perturbation modeling |
| Bioactivity Type | Categorical | Ki, IC₅₀, Kd, etc. | Mechanism of action classification in pathway contexts |
| Target UniProt ID | Identifier | N/A | Direct mapping to KEGG ORTHOLOGY (KO) system and pathway nodes |
| Activity Threshold | Binary | Active/Inactive | Discrete pathway perturbation analysis |

The integrated dataset enables the creation of compound-target-pathway networks where bioactivity potency (pChEMBL values) can be visualized as edge weights in KEGG pathway maps, highlighting key interactions and potential therapeutic targets.
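As an illustration of such a network, the sketch below aggregates repeated measurements into one weighted compound-target edge and attaches each target to its pathways. Using the median pChEMBL value as the edge weight is an assumption made here, not a rule from either database, and the compound IDs and values are made up.

```python
from collections import defaultdict
from statistics import median


def build_network(activities, target_pathways):
    """Return (compound-target edge weights, set of target-pathway edges)."""
    grouped = defaultdict(list)
    for compound, target, pchembl_value in activities:
        grouped[(compound, target)].append(pchembl_value)
    # One weighted edge per compound-target pair: median of its measurements.
    compound_target = {pair: median(values) for pair, values in grouped.items()}
    # Unweighted edges from each active target to its annotated pathways.
    target_pathway = {
        (target, pathway)
        for (_, target) in compound_target
        for pathway in target_pathways.get(target, ())
    }
    return compound_target, target_pathway


# Hypothetical activities: (compound ID, target UniProt ID, pChEMBL value).
activities = [
    ("CHEMBL_A", "P00533", 7.0),
    ("CHEMBL_A", "P00533", 9.0),
    ("CHEMBL_B", "P04626", 6.5),
]
target_pathways = {"P00533": ["hsa04010", "hsa04012"], "P04626": ["hsa04012"]}
ct_edges, tp_edges = build_network(activities, target_pathways)
```

The two edge collections can be loaded into any graph library or exported as edge lists for visualization on pathway maps.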

Protocol 3: Integrated Chemogenomic Analysis

Background and Principle

This protocol combines the outputs of Protocol 1 (mapped compound identifiers) and Protocol 2 (standardized bioactivity data) to enable chemogenomic analysis within the KEGG framework. The approach treats diseases as perturbed states of molecular systems and uses drug-target interactions to understand network perturbations [31] [29].

Materials and Reagents

- Input Data: Harmonized compound mapping table (from Protocol 1), Standardized bioactivity data (from Protocol 2)