Improving Chemogenomic Signature Analysis: Strategies for Robustness, AI Integration, and Clinical Translation

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on advancing chemogenomic signature analysis. It explores the foundational principles of systematically linking small molecules to genome-wide cellular responses for target identification and mechanism of action studies. The content covers cutting-edge methodological applications, from machine learning integration to phenotypic screening, and addresses critical challenges in data reproducibility, computational optimization, and experimental design. Through comparative analysis of validation frameworks and emerging technologies, we present a strategic roadmap for enhancing the predictive power and clinical relevance of chemogenomic signatures in accelerating therapeutic discovery.

Understanding Chemogenomic Signatures: From Basic Concepts to Systems-Level Responses

Core Concepts and FAQs

What is Chemogenomics?

Chemogenomics is a systematic research strategy that screens targeted chemical libraries of small molecules against families of drug targets (such as GPCRs, kinases, or proteases) with the dual goal of identifying novel drugs and elucidating the function of novel drug targets [1]. It integrates target and drug discovery by using active compounds (ligands) as probes to characterize proteome functions [1]. The interaction between a small compound and a protein induces a phenotype, allowing researchers to associate a protein with a molecular event [1].

What are the main experimental strategies in chemogenomics?

There are two primary experimental approaches, often described as "forward" and "reverse" chemogenomics [1] [2].

- Forward (Classical) Chemogenomics: This is a phenotype-based screening approach. The molecular basis of a desired phenotype (e.g., arrest of tumor growth) is unknown. Researchers screen for small molecules that induce this phenotype and then use the identified modulators as tools to discover the responsible protein target [1] [2].

- Reverse Chemogenomics: This is a target-based screening approach. It begins with a known, purified target protein (e.g., an enzyme). Researchers identify small molecules that perturb the target's function in an in vitro assay. The modulators are then analyzed in cellular or whole-organism tests to determine the biological phenotype resulting from target modulation [1] [2].

A persistent challenge in our lab is the poor reproducibility of chemogenomic signatures. How can this be addressed?

The reproducibility of chemogenomic fitness signatures is a recognized concern, but studies show that core biological responses are robust. A 2022 large-scale comparison of two independent yeast chemogenomic datasets (comprising over 35 million gene-drug interactions) found that despite different experimental protocols, the majority (66.7%) of the 45 major cellular response signatures identified in one dataset were also present in the other [3] [4]. To improve reproducibility in your experiments, consider the following:

- Adhere to Data Curation Best Practices: Implement a rigorous data curation workflow to flag or correct erroneous chemical structures and bioactivity measurements before analysis [5].

- Adopt a Meta-Analysis Approach: For drug repurposing studies, using an ensemble of multiple disease gene signatures, rather than a single signature, can significantly increase the reproducibility of top drug hits, as demonstrated in a lung cancer study where reproducibility improved from 44% to 78% [6].

- Validate with Orthogonal Methods: Confirm key findings using alternative techniques, such as CRISPR-based screens in mammalian cells, to constrain false positives [3].
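
The ensemble idea in the meta-analysis point above can be sketched as a simple rank aggregation. This is a minimal illustration, not the method of the cited lung cancer study: the drug names, scores, and the convention that a more negative score means stronger predicted reversal of the disease signature are all assumptions made for the example.

```python
from statistics import mean

def rank_drugs(scores):
    """Map drug -> rank, where rank 1 is the most negative (assumed best) score."""
    ordered = sorted(scores, key=scores.get)
    return {drug: position + 1 for position, drug in enumerate(ordered)}

def ensemble_ranking(score_sets):
    """Order drugs by their mean rank across several disease signatures."""
    per_signature = [rank_drugs(scores) for scores in score_sets]
    # Only drugs scored against every signature can be compared fairly.
    shared = set.intersection(*(set(ranks) for ranks in per_signature))
    mean_rank = {d: mean(ranks[d] for ranks in per_signature) for d in shared}
    return sorted(shared, key=lambda d: (mean_rank[d], d))

# Hypothetical scores of four drugs against three disease signatures.
signature_scores = [
    {"drugA": -0.9, "drugB": -0.2, "drugC": 0.4, "drugD": -0.7},
    {"drugA": -0.8, "drugB": -0.6, "drugC": 0.1, "drugD": -0.1},
    {"drugA": -0.5, "drugB": 0.3, "drugC": -0.1, "drugD": -0.6},
]
ensemble = ensemble_ranking(signature_scores)
print(ensemble)
```

A drug that ranks near the top for only one signature is pulled down by the averaging, which is the intuition behind preferring an ensemble over a single signature.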

How do I determine the Mode of Action (MoA) of a compound from a phenotypic screen?

Determining the MoA is a central application of chemogenomics [1]. The HaploInsufficiency Profiling and HOmozygous Profiling (HIPHOP) platform is a powerful method for this [3] [4].

- HIP (HaploInsufficiency Profiling): Screens a pool of heterozygous deletion strains for essential genes. A strain showing hypersensitivity to a drug (a fitness defect) often indicates that the drug's protein target is the product of the haploinsufficient gene [3] [4].

- HOP (Homozygous Profiling): Screens a pool of homozygous deletion strains for non-essential genes. It identifies genes involved in the drug's target biological pathway or those required for drug resistance [3] [4].

The combined HIPHOP profile provides a genome-wide view of the cellular response, directly identifying drug-target candidates and genes involved in resistance mechanisms [3].

Experimental Protocols & Workflows

Detailed Protocol: HIPHOP Chemogenomic Fitness Profiling in Yeast

The following protocol is synthesized from large-scale studies comparing methodologies [3] [4].

Principle: Competitive growth of a pooled collection of barcoded yeast deletion strains in the presence of a compound. Drug-sensitive strains are depleted from the pool, and their identity is revealed by sequencing the unique DNA barcodes.

Key Reagents and Materials

Table: Essential Research Reagent Solutions for HIPHOP Profiling

| Reagent/Material | Function/Description |
|---|---|
| Barcoded Yeast Deletion Collections | Pooled strains; ~1,100 heterozygous (HIP) and ~4,800 homozygous (HOP) deletion mutants [3]. |
| Chemical Library | A collection of annotated small molecules for screening [7]. |
| Growth Medium (e.g., YPD) | Standard medium for culturing yeast strains [3]. |
| 48-well or 24-well Assay Plates | Platform for high-throughput culturing of yeast pools under different drug conditions [3]. |
| Robotic Liquid Handling System | For accurate and reproducible dispensing of cells and compounds [3]. |
| Plate Spectrophotometer or Cytomat Incubator | For monitoring cellular growth (Optical Density) over time [3]. |
| PCR Reagents & Primers | For amplification of barcode regions from genomic DNA for sequencing. |
| High-Throughput Sequencer | For quantifying the abundance of each strain via barcode sequencing [3]. |

Step-by-Step Procedure:

- Pool Preparation: Combine the entire collection of heterozygous (HIP) and homozygous (HOP) deletion strains into two separate, representative pools. Grow to mid-log phase.

- Compound Dispensing: Dispense the chemical library compounds into assay plates using a robotic system. Include negative control wells (e.g., with DMSO vehicle only) [3].

- Inoculation and Growth: Dilute the yeast pools to a standardized optical density (e.g., OD₆₀₀ of 0.02) and inoculate them into the compound-containing plates. Grow the cultures for a defined number of cell generations (e.g., ~20 for HIP, ~5 for HOP) [3] [4].

- Sample Collection: Collect cells during log-phase growth or at a fixed time point. The method of collection (by doubling time vs. fixed time) can affect which slow-growing strains are detectable [4].

- Genomic DNA Extraction & Barcode Amplification: Isolate genomic DNA from the harvested cells. Use PCR to amplify the unique 20 bp "UPTAG" and "DNTAG" barcodes from each strain.

- Sequencing and Raw Data Generation: Sequence the amplified barcodes using high-throughput sequencing to determine the relative abundance of each strain in the pool.
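
The generation counts in the growth step translate into simple arithmetic. The sketch below assumes ideal exponential growth and an optical density that stays proportional to cell number; the function names and the OD readings are illustrative and not taken from the cited protocols.

```python
import math

def generations(od_start, od_end):
    """Number of population doublings between two optical density readings."""
    return math.log2(od_end / od_start)

def fold_expansion(n_generations):
    """Fold increase in cell number after n doublings."""
    return 2 ** n_generations

# About five doublings take a culture from OD600 0.02 to 0.64.
hop_generations = generations(0.02, 0.64)

# Twenty doublings correspond to roughly a million-fold expansion.
hip_fold = fold_expansion(20)
implied_od = 0.02 * hip_fold  # far beyond what a single batch culture can reach

print(f"{hop_generations:.2f} generations, {hip_fold:,}-fold, implied OD {implied_od:,.0f}")
```

Because 20 doublings from an OD₆₀₀ of 0.02 would imply an impossible final density, a run of that length presumably requires diluting the culture back into fresh medium with compound at intervals. Treat that as an inference from the arithmetic rather than a documented step.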

Data Analysis Pipeline:

- Strain Intensity Normalization: Normalize the raw barcode sequencing counts. Different pipelines use different methods (e.g., "best tag" selection based on control variability or averaging uptag/downtag signals) [3] [4].

- Fitness Defect (FD) Score Calculation: For each strain in each drug screen, calculate a fitness defect score. This is typically a robust z-score based on the log₂ ratio of the strain's abundance in the control condition versus the drug-treated condition [3] [4].

$$\mathrm{FD}_{ij} = \frac{\log_2 \mathrm{Ratio}_{ij} - \mathrm{Median}(\log_2 \mathrm{Ratio}_{j})}{\mathrm{MAD}(\log_2 \mathrm{Ratio}_{j})}$$

- Identification of Significant Interactions: Apply statistical thresholds (e.g., z-score < -5 or p ≤ 0.001) to identify strains with significant sensitivity to the drug [3].

- Signature Analysis: Cluster the chemogenomic profiles to identify common response signatures and link them to biological processes and mechanisms of action [3].
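
The scoring step of this pipeline can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the HIPLAB or NIBR implementation: the strain names and read counts are invented, a pseudocount of 1 is added to avoid division by zero, and the ratio is taken as control over treated, so sensitive strains receive positive scores. A pipeline that takes treated over control would flag the same strains with a negative cutoff such as z < -5.

```python
import math
from statistics import median

def log2_ratios(control, treated, pseudocount=1.0):
    """log2(control / treated) per strain; positive values mean depletion under drug."""
    return {
        strain: math.log2((control[strain] + pseudocount) / (treated[strain] + pseudocount))
        for strain in control
    }

def fitness_defect_scores(ratios):
    """Robust z-score per strain: (x - median) / MAD across all strains in one screen."""
    values = list(ratios.values())
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        raise ValueError("MAD is zero; the robust z-score is undefined for this screen")
    return {strain: (v - med) / mad for strain, v in ratios.items()}

# Hypothetical barcode counts for six strains in one screen.
control = {"geneA": 1000, "geneB": 980, "geneC": 1020, "geneD": 1010, "geneE": 990, "geneF": 1005}
treated = {"geneA": 60, "geneB": 970, "geneC": 1030, "geneD": 1000, "geneE": 1001, "geneF": 995}

fd = fitness_defect_scores(log2_ratios(control, treated))
hits = sorted(strain for strain, score in fd.items() if score > 5)
print(hits)
```

Some pipelines multiply the MAD by 1.4826 so that it estimates the standard deviation of normally distributed data. The formula above does not include that factor, so the sketch omits it as well.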

Workflow: An Integrated Data Curation Pipeline

Before analyzing any chemogenomics data, rigorous curation is essential to ensure data quality and the reliability of subsequent models [5].

Data Presentation and Analysis

Comparison of Large-Scale Yeast Chemogenomic Datasets

The following table summarizes the key methodological differences between two major independent studies, which is critical for understanding sources of variability in results [3] [4].

Table: Quantitative Comparison of HIPHOP Screening Methodologies

| Parameter | HIPLAB (Academic) Dataset | NIBR (Novartis) Dataset |
|---|---|---|
| Total Screens | 3,356 | 2,725 |
| Unique Compounds | 3,250 | 1,776 |
| HET Strains | ~1,095 (Essential genes) | ~5,796 (Essential + Nonessential) |
| HOM Strains | ~4,810 | ~4,520 |
| Bioassay Concentration | IC₂₀ | IC₃₀ |
| Final Fitness Score | Robust z-score (MADL) | Normalized z-score (aMADL/Strain SD) |
| Significance Threshold | Standard normal distribution P ≤ 0.001 | z-score < -5 |
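
To get a feel for how far apart the two significance thresholds in the table are, both can be placed on the same standard normal scale. The sketch assumes a one-sided test and treats both scores as standard normal, which is a simplification: the two datasets compute their fitness scores differently, so the cutoffs are not strictly interchangeable.

```python
from statistics import NormalDist

std_normal = NormalDist()  # mean 0, standard deviation 1

# Lower-tail probability corresponding to the z-score cutoff of -5.
p_at_z_minus_5 = std_normal.cdf(-5.0)

# z-score whose lower-tail probability equals the P-value cutoff of 0.001.
z_at_p_001 = std_normal.inv_cdf(0.001)

print(f"P(Z <= -5)     = {p_at_z_minus_5:.3g}")
print(f"z at P = 0.001 = {z_at_p_001:.3f}")
```

Under these assumptions the z < -5 cutoff corresponds to a tail probability of roughly 3 × 10⁻⁷, while P ≤ 0.001 corresponds to z of about -3.09, so the former is the far more stringent criterion.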

Key Public Consortia and Databases for Mammalian Chemogenomics

As the field moves toward mammalian systems, several key resources provide essential data [3].

Table: Key Public Resources for Mammalian Chemogenomic Data

| Consortium/Resource | Primary Focus | URL |
|---|---|---|
| BioGRID ORCS | Open Repository of CRISPR Screens | https://orcs.thebiogrid.org/ |
| PRISM | Multiplexed viability screening in cell lines | https://www.theprismlab.org/ |
| LINCS | Transcriptomic responses to chemical and genetic perturbations | https://lincsproject.org/LINCS/ |
| DepMap | Dependency mapping and drug sensitivity in cancer cell lines | https://depmap.org/portal/ |

Frequently Asked Questions (FAQs)

1. What is the fundamental difference between forward and reverse chemogenomics?

The core difference lies in the starting point of the investigation.

- Forward chemogenomics begins with an observed phenotype or cellular response and works to identify the small molecules that induce it and their protein targets [1] [8].

- Reverse chemogenomics starts with a specific, known protein target and screens for small molecules that modulate its activity, then analyzes the resulting phenotype [1] [9].

2. When should I choose a forward approach over a reverse approach?

- Use a forward approach when investigating a complex biological process or disease phenotype without a pre-defined molecular hypothesis. It is ideal for discovering novel therapeutic targets and mechanisms of action [8].

- Use a reverse approach when you have a well-validated, suspected therapeutic target (e.g., a specific kinase or receptor) and aim to discover drug candidates that engage with it [8] [9].

3. A common problem in forward chemogenomics is the difficulty of target identification after a phenotypic hit. How can this be addressed?

Integrate chemogenomic profiling early. Using competitive fitness-based assays, such as HaploInsufficiency Profiling (HIP), can directly identify drug target candidates by revealing which heterozygous deletion strains are most sensitive to the compound [4] [10]. This provides a shortlist of likely targets for secondary validation.

4. Why might my reverse chemogenomics screen identify hits that fail to produce the expected phenotype in cellular or organismal models?

This often occurs because cell-free biochemical assays used in reverse screens lack the full cellular context. The compound's activity may be affected by factors like cell permeability, metabolism, or off-target effects that neutralize the intended outcome [4]. Always follow up in vitro hits with cell-based or organismal phenotypic assays.

5. How reproducible are chemogenomic fitness signatures, and what factors affect this?

Large-scale comparative studies have shown that chemogenomic response signatures are robust. Despite differences in experimental protocols and analytical pipelines between research groups, the majority of biological signatures (e.g., mechanisms of action, enriched biological processes) are conserved. Key factors affecting reproducibility include the method of strain pool cultivation (fixed time vs. doubling-based) and data normalization strategies [4].

Troubleshooting Experimental Issues

Table 1: Troubleshooting Forward Chemogenomics Screens

| Problem | Potential Cause | Recommended Solution |
|---|---|---|
| High false-positive hit rate | Non-specific compound toxicity or promiscuous binders. | Counter-screen hits in orthogonal assays; use structure-activity relationship (SAR) analysis to prioritize specific leads [9]. |
| Unable to identify compound's molecular target | The reference dataset for "guilt-by-association" is not comprehensive enough [10]. | Use direct target identification methods like HIPHOP profiling [4] [10] or chemoproteomics [9]. |
| Weak or noisy phenotypic readout | Assay not optimized for the biological system or compound concentration is sub-optimal. | Perform dose-response curves; use high-content imaging (e.g., Cell Painting) to extract richer, multivariate phenotypic data [11]. |

Table 2: Troubleshooting Reverse Chemogenomics Screens

| Problem | Potential Cause | Recommended Solution |
|---|---|---|
| Hit compounds are inactive in cellular models | Poor cell permeability, efflux, or compound instability in cell culture [4]. | Assess compound stability and cellular uptake; use chemical probes to confirm target engagement in cells [9]. |
| Uninterpretable phenotype despite target engagement | The target protein functions in a redundant pathway, or its inhibition requires specific conditions. | Combine with genetic knockdown (e.g., CRISPR-Cas9) to see if it phenocopies the drug effect; test in a panel of relevant cell lines [9]. |
| Off-target effects confounding the phenotype | The compound library contains molecules with limited selectivity [9]. | Screen against focused libraries with well-annotated selectivity profiles; use chemoproteomics to identify all binding partners in a cellular context [9] [11]. |

Core Principles and Workflows

Table 3: Comparison of Forward and Reverse Chemogenomics

| Feature | Forward Chemogenomics | Reverse Chemogenomics |
|---|---|---|
| Starting Point | Observable phenotype (e.g., arrest of tumor growth) [1] [8]. | Known, isolated protein target or gene family [1] [8]. |
| Primary Screening Method | Phenotypic assays on cells or whole organisms [8]. | Target-based high-throughput screening (HTS), often cell-free [8]. |
| Key Outcome | Identification of bioactive compounds and their associated molecular targets [1]. | Identification of ligands (hits) for a predefined target [1]. |
| Typical Follow-up | Target deconvolution using chemogenomic profiles or other genomic methods [10]. | Biological validation of the phenotype induced by target modulation [8]. |
| Main Challenge | Designing assays that facilitate subsequent target identification [1] [8]. | Translating in vitro activity to a relevant cellular or in vivo phenotype [4]. |

Experimental Workflow Diagrams

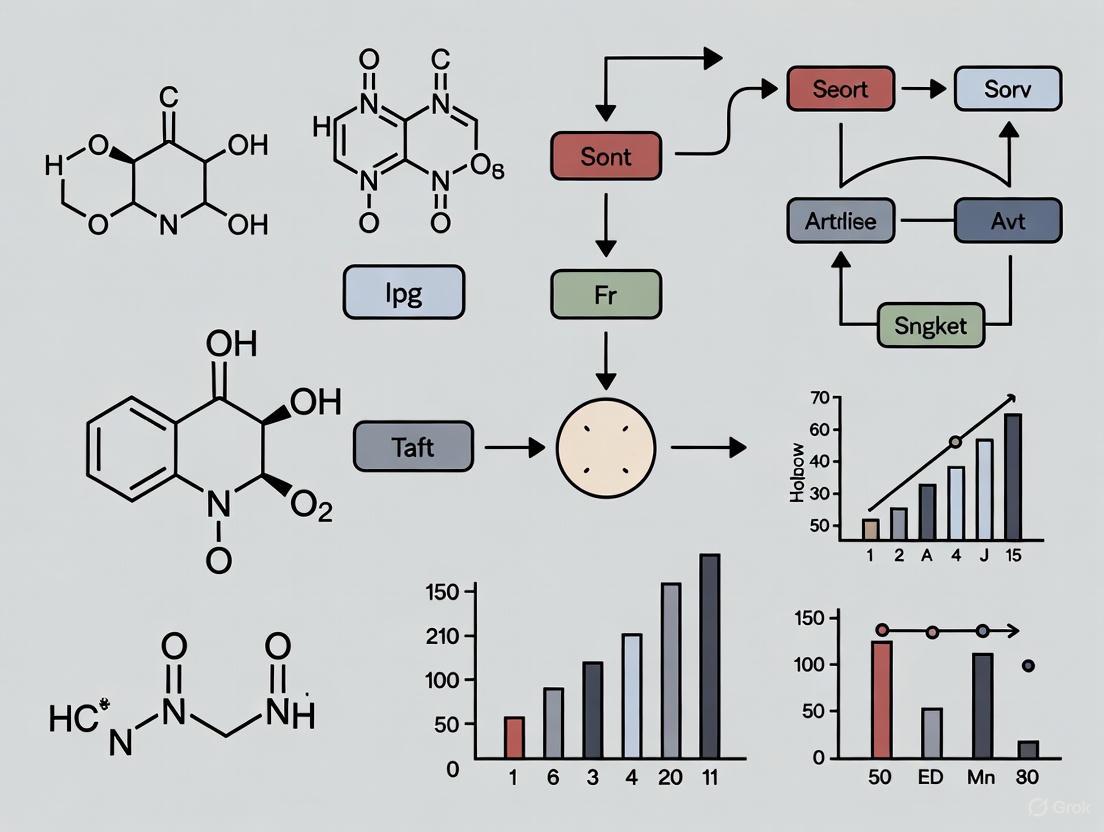

The following diagrams illustrate the core decision-making and experimental workflows for the two chemogenomic approaches.

Diagram 1: Choosing Between Forward and Reverse Chemogenomics. This flowchart guides the initial experimental design based on the research objective.

Diagram 2: Forward Chemogenomics Workflow. This workflow shows the process from phenotype observation to target identification, highlighting the key role of chemogenomic profiling.

The Scientist's Toolkit: Key Research Reagents & Platforms

Table 4: Essential Tools for Chemogenomic Signature Analysis

| Reagent / Platform | Function in Experiment | Key Consideration |
|---|---|---|
| Barcoded Yeast Knockout (YKO) Collections (Heterozygous & Homozygous) [4] [10] | Enables competitive fitness profiling (HIPHOP). HIP identifies candidate drug targets; HOP identifies pathway genes and genes required for drug resistance. | Ensure pool diversity; slow-growing strains may be lost in prolonged cultures [4]. |
| Focused Chemical Libraries (e.g., kinase-focused, GPCR-focused) [1] [11] | Provides a biased set of compounds to screen against a specific target family, increasing hit rates. | Library design should be informed by the structure-activity relationship (SAR) homology concept [1] [8]. |
| Annotated Compound Libraries (e.g., Prestwick, NCATS MIPE) [11] | Contains compounds with known bioactivity, enabling "guilt-by-association" analysis to predict Mechanism of Action (MoA). | Annotation quality and breadth are critical for accurate predictions [9]. |
| Cell Painting Assay / High-Content Imaging [11] | Provides a high-dimensional morphological profile for a compound, serving as a rich phenotypic fingerprint. | Generates large, complex data sets requiring specialized bioinformatic analysis [11]. |
| CRISPR-Cas9 or RNAi Libraries [9] | Used for functional genomic screens to validate targets identified in chemogenomic screens or to probe specific pathways. | Provides orthogonal evidence to strengthen target-phenotype linkages [9]. |

The Limited Cellular Response Hypothesis proposes that a cell's reaction to chemical perturbation is not infinite but is instead funneled through a finite set of core biological systems. First robustly demonstrated in Saccharomyces cerevisiae, this principle suggests that the genome-wide fitness signatures of thousands of distinct small molecules can be described by a limited network of conserved chemogenomic profiles [4] [12]. This hypothesis has profound implications for drug discovery, as it implies that mechanisms of action (MoA) can be systematically classified and that the cellular machinery responding to chemical stress is modular and predictable. For the researcher, this framework transforms the challenge of MoA deconvolution from an open-ended search into a structured mapping exercise against known response signatures. The following guide and FAQs are designed to help you navigate the technical and analytical challenges of generating and interpreting these fitness signatures within this conceptual framework.

Core Concept Visualization: The Limited Cellular Response Network

The diagram below illustrates the core principle of the hypothesis: diverse chemical perturbations converge on a limited set of cellular response signatures.

Key Experimental Evidence and Supporting Data

The foundational evidence for the Limited Cellular Response Hypothesis comes from large-scale comparative studies. The table below summarizes key quantitative findings from a major reproducibility study that compared two independent, large-scale yeast chemogenomic datasets [4] [13].

Table 1: Core Evidence from Comparative Analysis of Yeast Chemogenomic Datasets

| Metric | HIPLAB Dataset | NIBR Dataset | Combined Analysis Finding |
|---|---|---|---|
| Total Screens | 3,356 | 2,725 | Over 6,000 unique chemogenomic profiles analyzed |
| Unique Compounds | 3,250 | 1,776 | More than 35 million gene-drug interactions |
| Heterozygous (HIP) Strains | ~1,100 (Essential genes) | ~5,800 (Essential + Nonessential) | Different strain coverage, yet convergent signatures |
| Homozygous (HOP) Strains | ~4,800 | ~4,500 | ~300 fewer slow-growing strains in NIBR pool |
| Previously Identified Signatures | 45 Major Signatures | Not Applicable | 66.7% (30/45) conserved in the NIBR dataset |
| Biological Process Enrichment | Not Specified | Not Specified | 81% of robust signatures enriched for Gene Ontology (GO) terms |

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful chemogenomic screening relies on specific biological and computational tools. This table outlines key reagents and their critical functions in fitness profiling experiments.

Table 2: Essential Research Reagents and Resources for Fitness Profiling

| Reagent / Resource | Function in Experiment | Example & Notes |
|---|---|---|
| Barcoded Knockout Collections | Enables pooled growth of thousands of strains; strain identity tracked via unique DNA barcodes. | Yeast Heterozygous Deletion Pool (e.g., ~1,100 essential genes); Yeast Homozygous Deletion Pool (e.g., ~4,800 non-essential genes) [4]. |
| HIP/HOP Chemogenomic Platform | Genome-wide assay identifying drug targets (HIP) and resistance genes (HOP) via fitness defects [4] [13]. | HIP: Haploinsufficiency Profiling targets essential genes. HOP: Homozygous Profiling targets non-essential genes. |
| CRISPR Knockout Libraries | Enables genome-wide chemogenomic screens in human cell lines; equivalent to yeast knockout collections. | Genome-wide pooled CRISPR KO screens in human cells (e.g., NALM6 pre-B cell line) [14]. |
| Reference Drug Compounds | Compounds with known MoA; their chemogenomic profiles form the reference for classifying unknowns. | A diverse set of well-characterized inhibitors (e.g., antimalarials, metabolic inhibitors) [15]. |
| Public Data Repositories | Sources for comparing new chemogenomic profiles against existing datasets to infer MoA. | BioGRID, PRISM, LINCS, DepMap [4] [13]. |

Experimental Protocol Visualization: Core Chemogenomic Workflow

The following diagram outlines the standard workflow for generating a chemogenomic fitness signature, from pool creation to signature analysis.

Troubleshooting Guide & Frequently Asked Questions (FAQs)

FAQ 1: Despite the "limited response" theory, my novel compound's signature doesn't closely match any known profiles. What could explain this, and what are my next steps?

Answer: A novel signature is a significant finding, not necessarily a failure of the hypothesis. Consider these possibilities and actions:

- True Novel Mechanism: Your compound may act on a biological pathway not heavily represented in your reference database. The hypothesis posits a limited number of signatures, but the set catalogued so far is not necessarily complete. Your discovery could help define a new signature cluster.

- Technical Divergence: Differences in experimental protocol (e.g., dose, growth media, strain background) can cause profile shifts. Re-check your assay conditions against those used in public datasets (e.g., HIPLAB used IC₂₀, NIBR used IC₃₀) [4] [13].

- Actionable Steps:

- Profile at Multiple Concentrations: A true signature should be dose-dependent and become more robust at higher, yet still relevant, concentrations.

- Analyze Sub-networks: Instead of the whole profile, check if subsets of genes (e.g., those in a specific GO biological process) show coherence with known signatures.

- Validate with Genetics: Use your profile to identify candidate target genes and validate them through orthogonal assays (e.g., overexpression, targeted mutagenesis).

FAQ 2: I am struggling with reproducibility between replicates or when comparing to published studies. What are the key factors to control for?

Answer: Reproducibility is a known challenge, even between large-scale studies. Focus on these critical factors, which were key differentiators in the HIPLAB vs. NIBR comparison [4] [13]:

- Pool Composition and Growth: Ensure your mutant pool is healthy and all strains are represented. The NIBR dataset lost ~300 slow-growing homozygous mutants compared to HIPLAB, which can alter profiles [4]. Carefully control the number of cell doublings during the assay.

- Data Normalization and Scoring: The method for calculating Fitness Defect (FD) scores is critical.

- Recommendation: Adopt a standardized pipeline and apply it consistently. When comparing to public data, reprocess the raw data through your own pipeline if possible, or carefully note the analytical differences.
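
One quick check on pool composition is to look for strains that are nearly absent from the no-drug control, since a strain that has dropped out cannot report a fitness defect. The sketch below is only an illustration: the strain names and counts are invented, and the cutoff of 10% of the median control count is an arbitrary assumption that should be tuned to your sequencing depth.

```python
from statistics import median

def flag_underrepresented(control_counts, min_fraction=0.1):
    """Return strains whose control read count falls below min_fraction of the median."""
    typical = median(control_counts.values())
    cutoff = min_fraction * typical
    return sorted(strain for strain, n in control_counts.items() if n < cutoff)

# Hypothetical barcode counts from a vehicle-only control sample.
control_counts = {
    "strain01": 950,
    "strain02": 1020,
    "strain03": 12,
    "strain04": 0,
    "strain05": 1100,
    "strain06": 870,
}
dropouts = flag_underrepresented(control_counts)
print(dropouts)
```

Tracking how this list changes between batches or between collection schemes (fixed time versus a set number of doublings) gives an early warning before the affected strains distort downstream profiles.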

FAQ 3: How can I effectively transition my chemogenomic screening from yeast to mammalian cells while still leveraging this hypothesis?

Answer: The Limited Cellular Response Hypothesis is a conserved principle. The workflow is conceptually similar, but the tools differ.

- Key Technological Shift: Replace the yeast knockout collection with a genome-wide CRISPR/Cas9 knockout library in your chosen human cell line [4] [14]. Services like ChemoGenix offer streamlined screening in pre-B lymphocytic human cell lines (e.g., NALM6) as a starting point [14].

- Considerations for Complexity:

- Cell Line Choice: The response signature can be cell-type specific. Choose a model relevant to your biological question.

- Genetic Redundancy: Mammalian genomes have more redundancy, which can dilute fitness signals. Using sensitized genetic backgrounds (e.g., p53 knockout) can help.

- Data Resources: Leverage mammalian-specific consortia data from DepMap, PRISM, and LINCS for comparison [4]. These resources perform the same function for human cells as yeast chemogenomic databases.

FAQ 4: My chemogenomic profile for a compound is clean, but how do I move from a signature to a validated molecular target?

Answer: A chemogenomic signature is a starting point for validation, not the end. Follow this logical pathway:

- Prioritize Candidate Hits: From your profile, list the strains with the strongest fitness defects. In HIP, genes whose heterozygotes are most sensitive are strong candidates for the direct drug target; in HOP, the most sensitive homozygous deletions point to the target pathway or to genes required for drug resistance [4] [13].

- Perform Bioinformatics Enrichment: Analyze your gene list for enrichment in Gene Ontology (GO) terms, biological pathways (KEGG, Reactome), and protein-protein interaction networks. This tells you which process is being affected, often more reliably than a single gene.

- Employ Orthogonal Validation:

- Biochemical Assays: Test for direct binding if a candidate target is proposed.

- Genetic Validation: If deleting or knocking down a gene confers resistance, this is strong evidence for a specific interaction.

- Rescue Experiments: Show that expressing the wild-type gene resensitizes the resistant mutant.

- Multi-Species Comparison: If possible, see if the signature is conserved in other model organisms, which greatly strengthens the evidence.
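
The enrichment step in this pathway is commonly framed as a hypergeometric (one-sided Fisher) test: given how many genes in the screened universe carry an annotation, how surprising is the overlap with your hit list? The sketch below assumes that framing and uses invented numbers. It tests a single term, so a real analysis would also need multiple-testing correction across all terms examined.

```python
from math import comb

def hypergeom_enrichment_p(n_universe, n_annotated, n_hits, n_overlap):
    """P(overlap >= n_overlap) when n_hits genes are drawn without replacement."""
    upper = min(n_annotated, n_hits)
    total = comb(n_universe, n_hits)
    tail = sum(
        comb(n_annotated, k) * comb(n_universe - n_annotated, n_hits - k)
        for k in range(n_overlap, upper + 1)
    )
    return tail / total

# Hypothetical example: 4,800 screened genes, 40 annotated to one GO term,
# 50 sensitive strains in the profile, 6 of which carry the annotation.
p_value = hypergeom_enrichment_p(n_universe=4800, n_annotated=40, n_hits=50, n_overlap=6)
print(f"enrichment p-value: {p_value:.2e}")
```

The universe should be the set of strains actually present in the pool rather than the whole genome, otherwise dropped-out strains inflate the apparent enrichment.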

Troubleshooting Guides and FAQs

HIPHOP Chemogenomic Profiling

Q1: Our HIPHOP chemogenomic profiles show poor reproducibility between replicates. What could be the cause and how can we improve this?

A: Poor reproducibility in HIPHOP screens often stems from variations in pool growth conditions or data normalization methods. Key considerations include:

- Growth Measurement: Collect samples after a consistent number of cell doublings rather than at fixed time points. The Novartis Institutes for BioMedical Research (NIBR) protocol used fixed time points, while the HIPLAB protocol collected samples based on actual doubling time, which can affect the detection of slow-growing strains [4].

- Data Processing: Implement robust batch effect correction. The HIPLAB dataset normalized logged raw average intensities across all arrays using a variation of median polish that incorporated batch effect correction, whereas the NIBR dataset normalized by "study id" without specific batch effect correction [4].

- Strain Pool Integrity: Be aware that overnight growth (~16 hours) can lead to the loss of approximately 300 slow-growing homozygous deletion strains from the pool. Adjust growth conditions to maintain library complexity [4].
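
For readers unfamiliar with median polish, the sketch below shows the plain Tukey procedure on a small strains-by-arrays table of log intensities. It is not the HIPLAB variant, which additionally incorporated batch effect correction [4]. The data are invented: three strains, three arrays with different offsets, and one genuine fitness defect of -4 in the first strain on the second array.

```python
from statistics import median

def median_polish(table, n_iter=10, tol=1e-9):
    """Decompose table[i][j] into overall + row_eff[i] + col_eff[j] + resid[i][j]."""
    n_rows, n_cols = len(table), len(table[0])
    resid = [list(row) for row in table]
    overall, row_eff, col_eff = 0.0, [0.0] * n_rows, [0.0] * n_cols
    for _ in range(n_iter):
        change = 0.0
        for i in range(n_rows):  # sweep out row medians
            m = median(resid[i])
            row_eff[i] += m
            resid[i] = [v - m for v in resid[i]]
            change += abs(m)
        m = median(col_eff)  # re-centre column effects
        overall += m
        col_eff = [c - m for c in col_eff]
        for j in range(n_cols):  # sweep out column medians
            m = median(resid[i][j] for i in range(n_rows))
            col_eff[j] += m
            for i in range(n_rows):
                resid[i][j] -= m
            change += abs(m)
        m = median(row_eff)  # re-centre row effects
        overall += m
        row_eff = [r - m for r in row_eff]
        if change < tol:
            break
    return overall, row_eff, col_eff, resid

strain_base = [0.0, 1.0, 2.0]     # hypothetical per-strain baseline
array_offset = [0.0, 0.5, -0.3]   # hypothetical per-array shift
table = [[10.0 + b + o for o in array_offset] for b in strain_base]
table[0][1] -= 4.0                # one genuine fitness defect

overall, row_eff, col_eff, resid = median_polish(table)
print(round(resid[0][1], 3), [round(c, 3) for c in col_eff])
```

Because medians are used instead of means, the single strong defect ends up in the residuals rather than being smeared into the strain or array effects, which is why the approach suits chemogenomic data where a few strains respond strongly.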

Q2: How can we validate that a chemogenomic signature from a HIPHOP screen is biologically relevant?

A: To validate chemogenomic signatures, leverage the fact that the cellular response to small molecules is limited and can be described by a network of conserved signatures. Cross-reference your signatures with large-scale datasets. For example, a comparison of two large-scale yeast chemogenomic datasets (HIPLAB and NIBR) revealed that the majority (66.7%) of 45 major cellular response signatures identified in one dataset were also present in the other, providing strong evidence for their biological relevance [4].
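
Cross-referencing a profile against reference signatures can be as simple as ranking the references by correlation over shared genes. The sketch below uses Pearson correlation as one reasonable similarity measure; the cited comparison may have used a different metric, and all gene names, signature names, and scores are invented.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length numeric sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant profile")
    return sxy / sqrt(sxx * syy)

def rank_reference_signatures(query, references, min_shared=3):
    """Return (signature name, correlation) pairs sorted from best to worst match."""
    results = []
    for name, reference in references.items():
        shared = sorted(set(query) & set(reference))
        if len(shared) < min_shared:
            continue  # too few genes in common to say anything
        r = pearson([query[g] for g in shared], [reference[g] for g in shared])
        results.append((name, r))
    return sorted(results, key=lambda item: item[1], reverse=True)

# Hypothetical fitness defect scores for five genes.
query = {"g1": 8.0, "g2": 0.5, "g3": -0.2, "g4": 6.5, "g5": 0.1}
references = {
    "signature_X": {"g1": 7.0, "g2": 0.2, "g3": 0.1, "g4": 7.5, "g5": -0.3},
    "signature_Y": {"g1": 0.1, "g2": 6.0, "g3": 5.5, "g4": -0.2, "g5": 0.4},
}
ranked = rank_reference_signatures(query, references)
print(ranked[0][0])
```

A high correlation with a reference signature supports biological relevance only if both profiles were scored with the same sign convention and comparable normalization, so check those before interpreting the ranking.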

CRISPR Genome Editing

Q3: We are getting low prime-editing efficiency in hard-to-transfect cells like hiPSCs. How can we improve this?

A: Low editing efficiency in such cells is common with transient transfection. Implement the piggyBac prime-editing (PB-PE) system for sustained expression [16].

- Methodology: Clone your prime-editor and pegRNA expression cassettes into a piggyBac transposon vector. Co-transfect this with a plasmid expressing the piggyBac transposase. The transposase integrates the prime-editing cargo from the plasmid into the host genome, leading to stable, long-term expression. This allows extended time for the prime-editor to act, overcoming limitations of transient transfection.

- Evidence: This approach has been shown to achieve prime-editing in more than 50% of hiPSC cells after antibiotic selection, even with non-optimized transfection protocols [16].

Q4: After successful CRISPR/Cas9 mutagenesis in a vegetatively propagated plant, how can we cleanly remove the transgene cassette?

A: Use a piggyBac-mediated transgenesis system for temporary CRISPR/Cas9 expression [17].

- Workflow:

- Integration: Design a construct where the CRISPR/Cas9 cassette is flanked by piggyBac inverted terminal repeats (ITRs) and introduce it into the plant genome.

- Mutation Induction: Allow time for CRISPR/Cas9 to induce targeted mutations in the endogenous gene.

- Excision: Express the piggyBac transposase (PBase) to catalyze the precise excision of the transgene cassette from the genome. The piggyBac system is "footprint-free," meaning it leaves no unwanted sequences behind [17] [18].

- Proof of Concept: This method has been successfully demonstrated in rice, where precise excision of the piggyBac transposon was achieved after mutation induction, leaving behind only the desired targeted mutation [17].

PiggyBac Mutagenesis

Q5: Our piggyBac mutagenesis screen has identified a candidate driver gene. How can we functionally validate its cooperation with a known oncogene in vivo?

A: A powerful approach is to combine piggyBac mutagenesis with genetically engineered mouse models (GEMMs) in a conditional manner.

- Protocol:

- Mouse Model: Generate mice that conditionally express an initiating driver (e.g., EGFRvIII in neural tissues) and a conditional piggyBac transposon.

- Mutagenesis: Cross these with mice carrying a conditional piggyBac transposase that is activated by Cre recombinase. Upon Cre activation, the transposase mobilizes the piggyBac transposon throughout the genome, creating new insertional mutations in somatic cells.

- Analysis: Analyze the resulting tumors for piggyBac insertions to identify genes that cooperate with the initial driver [19].

- Application: This method was used to identify 281 known and novel drivers that cooperate with mutant EGFR in glioma formation, which were then validated for their clinical relevance in human glioma datasets [19].

Q6: When using piggyBac for gene editing with a selection marker, how do we remove the marker cleanly after selection?

A: Use an excision-only piggyBac transposase (PBx).

- Procedure: After selection and isolation of edited cells, transfect with a plasmid expressing PBx. This mutant transposase is competent for excision but is defective for re-integration, preventing the transposon from re-inserting elsewhere in the genome [18].

- Enrichment: To enrich for cells that have excised the cassette, include a negative selection marker (e.g., thymidine kinase) within the piggyBac transposon. After excision, apply a negative selection drug (e.g., fialuridine) to kill any cells that still retain the transposon [18] [16].

Table 1: Key Performance Metrics for Featured Platforms

| Platform | Metric | Reported Value | Experimental Context |

|---|---|---|---|

| piggyBac Transgenesis | Successful Transposition Rate [17] | ~1% to 3.6% of transgenic callus lines | Rice callus transformation from extrachromosomal T-DNA |

| PB-Prime Editing (PB-PE) | Editing Efficiency [16] | >50% of hiPSCs | After antibiotic selection in a traffic light reporter system |

| HIPHOP Profiling | Signature Conservation [4] | 66.7% (30 of 45 signatures) | Overlap between two independent large-scale yeast chemogenomic datasets |

| piggyBac Mutagenesis | Candidate Cooperating Drivers Identified [19] | 281 genes | In vivo screen for EGFR-mutant glioma drivers in mice |

Experimental Protocols

This protocol is designed to achieve CRISPR/Cas9 mutagenesis followed by complete removal of the transgene.

- Vector Construction: Clone the CRISPR/Cas9 and a positive selection marker (e.g., hygromycin phosphotransferase, hpt) expression cassettes inside the piggyBac inverted terminal repeats (ITRs). Place a hyperactive piggyBac transposase (e.g., hyPBase) and a negative selection marker (e.g., diphtheria toxin A subunit, DT-A) outside the ITRs on the same T-DNA.

- Transformation: Transform rice calli via Agrobacterium-mediated transformation using the constructed vector.

- Selection & Screening: Select transformed calli on hygromycin-containing media. Use PCR screening with primers inside and across the piggyBac ITRs to identify lines where transposition occurred from the T-DNA into the genome, rather than random T-DNA integration.

- Mutation Induction: Regenerate plants from positive callus lines. The stable integration of piggyBac allows for continuous expression of CRISPR/Cas9, inducing mutations at the target locus.

- Transgene Excision: Cross the regenerated plants with a stable transposase (PBase) expresser, or re-introduce PBase transiently. The PBase will catalyze the precise excision of the piggyBac transposon (carrying CRISPR/Cas9 and the marker) from the genome.

- Validation: Use sequencing to confirm the presence of the desired targeted mutation at the endogenous gene and the absence of the piggyBac transposon.

This protocol uses a library of piggyBac mutants to deduce drug mechanisms of action.

- Library Preparation: Create a library of P. falciparum clones, each with a single piggyBac transposon insertion, in a uniform genetic background (e.g., NF54).

- Drug Treatment: Treat the wild-type and each mutant clone in the library with a panel of antimalarial drugs and metabolic inhibitors. Perform quantitative dose-response assays to determine the half-maximal inhibitory concentration (IC50) for each drug in each clone.

- Data Normalization: Normalize the IC50 of each mutant for a given drug to the IC50 of the wild-type parasite for that same drug. This generates a fitness value for each mutant under drug pressure.

- Profile Generation & Clustering: Create a matrix of normalized fitness values (mutants x drugs). Use hierarchical clustering and correlation analysis (e.g., Spearman correlation) to group drugs with similar chemogenomic profiles and mutants with similar fitness responses.

- Mechanism Inference: Identify clusters where drugs with known, shared mechanisms of action group together. Novel compounds clustering with these can be inferred to have similar mechanisms. Mutants that show strong hypersensitivity or resistance can point to genes involved in the drug's pathway.
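The normalization and clustering steps above can be sketched as follows. This is a minimal illustration under stated assumptions, not the published pipeline: the IC50 values, clone labels, and drug names are invented, the log2 transform of the mutant/wild-type ratio is one possible choice of fitness scale, and average-linkage clustering on a 1 - Spearman distance is one of several reasonable options.

```python
import numpy as np
from scipy.cluster.hierarchy import fcluster, linkage
from scipy.spatial.distance import squareform
from scipy.stats import spearmanr

# Hypothetical IC50 values (arbitrary units); rows = mutant clones, columns = drugs.
drugs = ["drug_A", "drug_B", "drug_C", "drug_D"]
wild_type_ic50 = np.array([10.0, 20.0, 5.0, 8.0])
mutant_ic50 = np.array([
    [20.0, 40.0, 5.0, 8.0],    # clone 1: less sensitive to A and B
    [5.0, 10.0, 5.0, 8.0],     # clone 2: hypersensitive to A and B
    [10.0, 20.0, 10.0, 16.0],  # clone 3: less sensitive to C and D
    [10.0, 20.0, 2.5, 4.0],    # clone 4: hypersensitive to C and D
])

# Normalize each mutant IC50 to the wild-type IC50 for the same drug.
# log2 makes a 2-fold shift in either direction symmetric around zero.
fitness = np.log2(mutant_ic50 / wild_type_ic50)

# Spearman correlation between drug profiles (columns of the matrix).
rho, _ = spearmanr(fitness)

# Convert correlation to a distance and cluster the drugs hierarchically.
distance = np.clip(1.0 - rho, 0.0, None)
np.fill_diagonal(distance, 0.0)
tree = linkage(squareform(distance, checks=False), method="average")
drug_clusters = fcluster(tree, t=2, criterion="maxclust")

for name, label in zip(drugs, drug_clusters):
    print(name, "-> cluster", label)
```

In this toy matrix, drugs A and B share a profile and drugs C and D share another, so they fall into two separate clusters. Transposing the matrix clusters the mutants instead of the drugs.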

Workflow and Pathway Visualizations

PiggyBac temporary CRISPR workflow for plants [17]

Chemogenomic profiling with PiggyBac mutants [15]

Comparison of CRISPR editing techniques [16]

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents for Featured Experimental Platforms

| Reagent / Tool | Function / Description | Key Feature / Application |

|---|---|---|

| piggyBac Transposon Vector | A plasmid containing DNA cargo flanked by piggyBac Inverted Terminal Repeats (ITRs). | Enables genomic integration and precise, footprint-free excision of the cargo. Cargo capacity >200 kb [18]. |

| piggyBac Transposase (PBase) | An enzyme that catalyzes the cut-and-paste transposition of the piggyBac transposon. | Required for initial integration. Often provided on a separate helper plasmid [17] [18]. |

| Excision-only Transposase (PBx) | A mutant piggyBac transposase competent for excision but defective for re-integration. | Prevents re-integration of the transposon after excision, enabling clean removal of selection cassettes [18]. |

| Hyperactive PBase (hyPBase) | A codon-optimized and mutated version of PBase with higher activity. | Increases transposition efficiency. Can be optimized for specific organisms (e.g., rice, OshyPBase) [17]. |

| Prime Editor (PE) Construct | A fusion protein of Cas9 nickase and reverse transcriptase, used with a pegRNA. | Mediates all 12 possible base-to-base conversions, as well as small insertions and deletions, without double-strand breaks [16]. |

| pegRNA | Extended guide RNA containing a primer binding site (PBS) and a reverse transcriptase template (RTt). | Directs the prime editor to the target locus and templates the desired edit [16]. |

| Traffic Light Reporter (TLR) | A lentiviral reporter construct with two out-of-frame fluorescent proteins. | Enables simultaneous estimation of precise gene correction (one color) and error-prone indel formation (another color) [16]. |

| HIP/HOP Yeast Knockout Collection | A barcoded collection of ~1100 heterozygous (HIP) and ~4800 homozygous (HOP) yeast deletion strains. | Allows for pooled, competitive growth assays under drug pressure to identify drug targets (HIP) and resistance genes (HOP) [4]. |

Frequently Asked Questions (FAQs)

Q1: What are conserved chemogenomic signatures, and why are they important in drug discovery?

Conserved chemogenomic signatures are patterns of gene expression or fitness response to chemical compounds that are shared across different species, from microorganisms to human cells. These signatures represent fundamental, evolutionarily maintained biological pathways that cells use to respond to stress, including drug treatments. Their importance in drug discovery is twofold: they can reveal the primary mechanism of action of uncharacterized compounds, and they help identify critical resistance pathways that may cause treatment failure in the clinic. By studying these conserved responses, researchers can prioritize drug targets that are fundamental to cell survival and understand resistance mechanisms that may emerge across diverse patient populations [20] [4].

Q2: My chemogenomic profiles show poor reproducibility between technical replicates. What could be causing this?

Several technical factors can affect reproducibility in chemogenomic assays. Based on large-scale comparisons of yeast chemogenomic datasets, the most common issues include:

- Growth measurement inconsistencies: Differences in how cell growth is measured (fixed time points vs. actual doubling times) can introduce significant variation [4].

- Strain pool composition: Differences in the representation of slow-growing deletion strains between pool preparations affect results. Studies have shown that pools can differ by ~300 strains due to overnight growth conditions [4].

- Normalization methods: The use of different data processing pipelines, particularly in how control samples are handled and batch effects are corrected, dramatically impacts replicate consistency [4].

- Barcode performance: Variation in uptag and downtag performance for individual strains without proper quality control filtering [4].

Q3: How can I determine if a signature I've identified is truly conserved across species?

To validate signature conservation, employ this multi-step approach:

- Start with computational comparison: Use tools like CACTI to mine chemogenomic databases and identify analogous responses in model organisms [21].

- Cross-species profiling: Test your compound or condition in multiple systems (e.g., yeast, bacteria, mammalian cells) and look for overlapping gene sets or pathways. Research has demonstrated that early resistance signatures in cancer cells show remarkable similarity to responses in bacteria and fungi [20].

- Functional validation: Use genome-wide CRISPR screens in mammalian cells or deletion collections in model organisms to confirm that the same genes confer resistance/sensitivity across species [20].

- Pathway enrichment analysis: Tools like clusterProfiler can identify if the same biological processes (e.g., oxidative phosphorylation, EMT, hypoxia signaling) are enriched across species [20].

Q4: What are the best practices for identifying a compound's mechanism of action using chemogenomic approaches?

For reliable mechanism of action (MOA) determination:

- Use complementary assays: Combine HIP (haploinsufficiency profiling) and HOP (homozygous profiling) assays to directly identify drug targets and resistance pathways [10].

- Leverage multiple reference datasets: Query your profiles against established chemogenomic compendia like CACTI, which integrates ChEMBL, PubChem, and BindingDB [21].

- Apply guilt-by-association: Compare unknown compound profiles to those of compounds with known MOA, but validate with secondary assays [10].

- Implement competitive fitness assays: Use barcoded libraries grown in pools rather than individual strain assays for more quantitative results [10].

- Integrate morphological profiling: Combine with Cell Painting assays to connect molecular changes to phenotypic outcomes [11].

Troubleshooting Guides

Problem 1: Inconsistent Chemical-Genetic Interactions Across Platforms

Issue: Significant differences in fitness defect scores when the same compound is screened using different chemogenomic platforms.

Solution:

- Standardize normalization: Implement robust z-score normalization that accounts for plate-to-plate variation and batch effects [4].

- Harmonize strain sets: Ensure consistent representation of slow-growing strains by controlling pool growth conditions and number of doublings [4].

- Apply cross-platform thresholds: Use the AUCell method with background distributions from control gene sets to establish consistent activity thresholds [20].

- Validate with orthogonal methods: Confirm key hits using individual strain growth assays or complementary CRISPR screens [20] [10].

Table: Key Differences Between Major Chemogenomic Screening Platforms

| Parameter | HIPLAB Protocol | NIBR Protocol | Impact on Results |

|---|---|---|---|

| Collection time | Based on actual doubling time | Fixed time points | Affects slow-growing strain representation |

| Strain detection | ~4800 homozygous strains | ~300 fewer detectable strains | Missing data for slow-growers |

| Data normalization | Batch effect correction + median polish | Normalized by "study id" only | Different variance structure |

| Control samples | Median signal of controls | Average intensity of controls | Affects ratio calculations |

Problem 2: Failure to Detect Evolutionarily Conserved Responses

Issue: Inability to identify transcriptional signatures that are shared between model organisms and human systems.

Solution:

- Apply non-parametric statistics: Use permutation-based methods (1000+ permutations) to define conserved differentially methylated positions or expressed genes, as demonstrated in pan-cancer methylation studies [22].

- Implement ensemble meta-analysis: Combine multiple disease signatures (e.g., 21 lung cancer signatures) to improve detection of conserved drug responses, increasing reproducibility from 44% to 78% [23].

- Use cross-species gene set enrichment: Tools like fgsea with evolutionarily informed gene sets can reveal conserved pathways despite poor individual gene overlap [20].

- Leverage integrated databases: Query resources like ImmuneSigDB, which contains manually annotated conserved immune signatures across humans and mice [24].
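As an illustration of the permutation-based approach mentioned above, the following minimal sketch computes a two-sided permutation p-value for a difference in group means for a single gene or probe. The function name, the example values, and the choice of test statistic are assumptions made for illustration; the cited studies may use different statistics and correction procedures.

```python
import numpy as np

def permutation_p_value(group_a, group_b, n_permutations=1000, seed=0):
    """Two-sided permutation p-value for a difference in group means."""
    rng = np.random.default_rng(seed)
    group_a = np.asarray(group_a, dtype=float)
    group_b = np.asarray(group_b, dtype=float)
    observed = abs(group_a.mean() - group_b.mean())
    pooled = np.concatenate([group_a, group_b])
    n_a = group_a.size
    exceed = 0
    for _ in range(n_permutations):
        # Shuffle the group labels by permuting the pooled values.
        shuffled = rng.permutation(pooled)
        diff = abs(shuffled[:n_a].mean() - shuffled[n_a:].mean())
        if diff >= observed:
            exceed += 1
    # Add-one correction keeps the estimate strictly above zero.
    return (exceed + 1) / (n_permutations + 1)

# Hypothetical expression values for one gene in two conditions.
resistant = [5.1, 5.4, 4.9, 5.6, 5.2, 5.3]
naive = [3.0, 3.2, 2.9, 3.1, 3.3, 2.8]
p_separated = permutation_p_value(resistant, naive)

# Identical groups: no permutation can be less extreme than the observed zero.
p_identical = permutation_p_value([1, 2, 3, 4], [1, 2, 3, 4])
print(p_separated, p_identical)
```

When this is applied across many genes or positions, the resulting p-values still need multiple-testing correction before a conserved set is defined.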

Table: Quantitative Evidence for Conserved Resistance Signatures Across Species [20]

| Experimental System | Conserved Pathways Identified | Validation Method | Key Finding |

|---|---|---|---|

| Ovarian cancer cells | Oxidative phosphorylation, EMT, Hypoxia, MYC signaling | CRISPR knockout of signature genes | Knockout sensitized cells to Prexasertib |

| E. coli drug response | Shared transcriptional states with cancer resistance | Comparative transcriptomics | Evolutionarily conserved stress responses |

| C. albicans drug response | Overlapping gene expression with mammalian resistance | Cross-species GSEA | Conserved epigenetic mechanisms |

| Clinical datasets | 72-gene resistance signature | Analysis of premalignant lesions | Signature distinguished progressing vs. benign lesions |

Problem 3: High False Positive Rates in Target Identification

Issue: Chemogenomic screens suggest implausible or unverifiable drug targets.

Solution:

- Apply multi-omic confirmation: Integrate fitness profiles with transcriptional data and protein-binding information to triangulate true targets [22].

- Use directed chemogenomic libraries: Employ target-family focused libraries (kinases, GPCRs) with known target annotations to improve identification accuracy [11].

- Implement structural validation: Perform molecular docking studies with identified targets, as demonstrated in mur ligase inhibitor discovery [1].

- Leverage cofitness networks: Analyze genes with similar fitness profiles across conditions to identify functional modules and reduce false assignments [1].

Experimental Workflow for Conservation Analysis:

Problem 4: Challenges in Translating Signatures to Clinical Relevance

Issue: Conserved signatures identified in model systems fail to predict patient outcomes or therapy response.

Solution:

- Incorporate tumor microenvironment: Analyze how conserved signatures interact with immune cell populations, as demonstrated in methylation studies linking Hypo-MS4 to CD4+ T cell regulation [22].

- Validate in clinical trial datasets: Test signatures in multiple independent clinical cohorts (e.g., 72-gene resistance signature distinguished responders from non-responders across trials) [20].

- Account for tissue context: Use tissue-specific regulatory networks and factor binding data (e.g., FOXA1 in cancer subtypes) to improve clinical prediction [22].

- Implement ensemble methods: Combine multiple conserved signatures rather than relying on single markers to improve prognostic value [23].

Experimental Protocols

Protocol 1: Identifying Conserved Resistance Signatures Using Integrated Transcriptomics

Purpose: To define evolutionarily conserved transcriptional signatures of drug resistance across cancer types and species.

Materials:

- Treatment-naive and drug-resistant cell populations (minimum 3 biological replicates)

- RNA extraction kit (quality threshold: RIN > 8.5)

- scRNA-seq platform (10X Genomics recommended)

- CRISPR-Cas9 knockout library (Brunello genome-wide or focused)

- Reference datasets: E. coli and C. auris drug response transcriptomes [20]

Methods:

Generate resistance signature:

- Treat cells with IC50 drug concentration for 10 days

- Extract RNA from surviving cells at day 0 and day 10

- Identify differentially expressed genes using Seurat's FindAllMarkers() (log2FC > 0, adjusted p < 0.05) [20]

- Aggregate ranked gene lists using Robust Rank Aggregation (RRA) retaining genes with adjusted rank p < 0.05

Validate signature conservation:

- Process single-cell RNA-seq data from early timepoints (days 0, 3, 7)

- Calculate fold changes relative to all other timepoints

- Evaluate enrichment using Gene Set Enrichment Analysis (fgsea package)

- Compare to bacterial/fungal profiles using Spearman correlation

Functional validation:

- Perform genome-wide CRISPR knockout screen in presence of drug

- Transduce OVCAR8 cells at MOI ~0.3, select with puromycin

- Culture treated (30 nM Prexasertib) and control populations for 10 generations

- Extract gDNA and sequence barcodes to identify sensitizing knockouts [20]

Analysis:

- Use AUCell to estimate signature activity thresholds against background distributions

- Calculate odds ratios for signature activity in cell clusters using Fisher's exact test

- Define resistance-activated clusters (RACs) as those with OR > 1 and adjusted p < 0.05
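The odds-ratio step of this analysis can be sketched as follows. This is a minimal sketch under stated assumptions: the per-cluster cell counts are invented, each cluster is tested against all remaining cells, and p-values are adjusted with a hand-written Benjamini-Hochberg procedure (the source does not state which adjustment was used). The AUCell thresholding that decides which cells count as "signature-active" is assumed to have been done upstream.

```python
import numpy as np
from scipy.stats import fisher_exact

def benjamini_hochberg(p_values):
    """Benjamini-Hochberg adjusted p-values, returned in the input order."""
    p = np.asarray(p_values, dtype=float)
    n = p.size
    order = np.argsort(p)
    scaled = p[order] * n / np.arange(1, n + 1)
    # Enforce monotonicity, working down from the largest p-value.
    scaled = np.minimum.accumulate(scaled[::-1])[::-1]
    adjusted = np.empty(n)
    adjusted[order] = np.clip(scaled, 0.0, 1.0)
    return adjusted

# Hypothetical counts: cluster -> (signature-active cells, total cells).
clusters = {"c0": (80, 100), "c1": (10, 100), "c2": (30, 100)}
total_active = sum(active for active, _ in clusters.values())
total_cells = sum(n for _, n in clusters.values())

names, odds_ratios, p_values = [], [], []
for name, (active, n) in clusters.items():
    other_active = total_active - active
    other_inactive = (total_cells - n) - other_active
    # 2x2 table: rows = in cluster / not in cluster, columns = active / inactive.
    table = [[active, n - active], [other_active, other_inactive]]
    odds, p = fisher_exact(table, alternative="two-sided")
    names.append(name)
    odds_ratios.append(odds)
    p_values.append(p)

adjusted = benjamini_hochberg(p_values)
racs = [name for name, odds, q in zip(names, odds_ratios, adjusted)
        if odds > 1 and q < 0.05]
print(racs)
```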

Protocol 2: Chemogenomic Profiling for Mechanism of Action Studies

Purpose: To determine compound mechanism of action through comparative chemogenomic profiling.

Materials:

- Barcoded yeast deletion collection (YKO)

- Compound library of interest

- HPLC-grade DMSO for vehicle controls

- Robotic liquid handling system

- Barcode sequencing platform

Methods:

Pool preparation:

- Combine heterozygous and homozygous deletion strains in rich medium

- Grow to mid-log phase, aliquot for pre-treatment reference sample

- Add compound at multiple concentrations (typically 0.5x, 1x, 2x IC50)

- Incubate with shaking for 12-16 generations

Sample processing:

- Collect cells by centrifugation, extract genomic DNA

- Amplify barcodes with indexing primers for multiplexing

- Sequence on Illumina platform (minimum 500x coverage)

Data analysis:

- Count barcode reads, normalize to pre-treatment sample

- Calculate fitness defects as log2(compound/control) ratios

- Convert to robust z-scores using median and MAD across all strains

- Compare to reference compound profiles using Pearson correlation [4]
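The data analysis steps above can be sketched as follows. This is a minimal sketch, assuming barcode counts have already been tallied per strain; the counts, the pseudocount, the 1.4826 scaling of the MAD, and the two reference profiles are illustrative assumptions rather than values from the cited protocol. With the log2(compound/control) convention used here, depleted strains receive negative scores.

```python
import numpy as np

def fitness_defect(treated_counts, control_counts, pseudocount=1.0):
    """log2(compound/control) of depth-normalized barcode counts."""
    treated = np.asarray(treated_counts, dtype=float) + pseudocount
    control = np.asarray(control_counts, dtype=float) + pseudocount
    # Relative abundance removes differences in sequencing depth.
    treated = treated / treated.sum()
    control = control / control.sum()
    return np.log2(treated / control)

def robust_z(values):
    """Robust z-score using the median and the scaled MAD across strains."""
    values = np.asarray(values, dtype=float)
    median = np.median(values)
    mad = np.median(np.abs(values - median))
    # Assumes mad > 0; a guard is needed if most strains have identical scores.
    return (values - median) / (1.4826 * mad)

# Hypothetical counts for 8 strains; the last strain is strongly depleted.
control = [1000] * 8
treated = [1000, 950, 1050, 1000, 900, 1100, 1000, 50]
z_scores = robust_z(fitness_defect(treated, control))

# Hypothetical reference profiles for two compounds with known mechanisms.
reference_a = np.array([0, 0, 0, 0, 0, 0, 0, -10.0])
reference_b = np.array([-10.0, 0, 0, 0, 0, 0, 0, 0])
corr_a = np.corrcoef(z_scores, reference_a)[0, 1]
corr_b = np.corrcoef(z_scores, reference_b)[0, 1]
print(round(corr_a, 3), round(corr_b, 3))
```

The query profile correlates strongly with `reference_a`, which targets the same strain, and not with `reference_b`. Any such guilt-by-association call remains a hypothesis until confirmed with individual strain assays.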

Troubleshooting Notes:

- Include quality control strains with known sensitivity patterns

- Monitor pool complexity by tracking unique barcodes detected

- Use batch correction when screening large compound libraries

- Validate key hits with individual strain growth assays

Research Reagent Solutions

Table: Essential Resources for Conserved Signature Research

| Reagent/Resource | Function/Application | Key Features | Example Sources |

|---|---|---|---|

| Barcoded deletion collections | Genome-wide fitness profiling | Strain-specific molecular barcodes for competitive growth assays | YKO (yeast), Brunello CRISPR (human) [20] [4] |

| Chemogenomic databases | Target prediction and MOA analysis | Integrated bioactivity data from multiple sources | CACTI, ChEMBL, PubChem, BindingDB [21] |

| Pathway analysis tools | Biological interpretation of signatures | Gene set enrichment, ontology mapping | clusterProfiler, fgsea, GSEA [20] |

| Reference transcriptional profiles | Conservation analysis | Cross-species drug response data | ImmuneSigDB, DrugMatrix, LINCS [24] |

| Morphological profiling platforms | Phenotypic screening integration | High-content image analysis of cell painting assays | Cell Painting, BBBC022 dataset [11] |

Data Analysis Pathways

Computational Framework for Signature Conservation:

Advanced Methodologies and Real-World Applications in Modern Drug Discovery

Frequently Asked Questions & Troubleshooting Guides

This section addresses common challenges in drug-target interaction (DTI) prediction, providing targeted solutions for researchers.

FAQ 1: My deep learning model performs poorly on a small, imbalanced dataset. Should I abandon deep learning?

- Challenge: Deep learning models typically require large amounts of data to learn effective feature representations and avoid overfitting. Performance can be disappointing on small, imbalanced datasets commonly found in chemogenomics [25].

- Solution: You do not necessarily need to abandon deep learning. Consider these strategies:

- Start with Shallow Methods: For small datasets, state-of-the-art shallow methods like Random Forest (RF) with expert-based descriptors can outperform deep learning [25]. Use them as a strong baseline.

- Data Augmentation: Employ techniques like multi-view learning (combining expert-based and learnt features) or transfer learning. Pre-training molecular and protein encoders on larger, auxiliary datasets can significantly boost performance on your primary, smaller dataset [25].

- Advanced Sampling: Integrate down-sampling methods like NearMiss (NM) to balance the number of positive and negative interaction samples in your training data, which has been shown to improve model performance [26].

FAQ 2: How can I make my DTI predictions more reliable and avoid overconfident false positives?

- Challenge: Traditional deep learning models lack inherent probability calibration and can produce high-probability predictions even for out-of-distribution samples, leading to overconfidence in incorrect results [27].

- Solution: Integrate Uncertainty Quantification (UQ) into your pipeline.

- Evidential Deep Learning (EDL): Frameworks like EviDTI use EDL to provide confidence estimates alongside predictions. This allows you to prioritize drug-target pairs with high prediction probabilities and high confidence for experimental validation, thereby reducing the risk and cost associated with false positives [27].

- Model Calibration: Use EDL to calibrate prediction errors, ensuring that the predicted probabilities more accurately reflect the true likelihood of interaction [27].

FAQ 3: What is the best deep learning framework for prototyping and deploying DTI models?

- Challenge: Choosing between PyTorch and TensorFlow involves trade-offs between prototyping flexibility and production deployment efficiency.

- Solution: The choice depends on your project's primary focus.

- For Rapid Prototyping and Research: PyTorch is often preferred due to its intuitive, Pythonic syntax and dynamic computation graph, which allows for immediate evaluation of operations and easier debugging [28].

- For Large-Scale Production Deployment: TensorFlow has historically offered robust tools for deploying models in production environments (e.g., using TensorFlow Serving) and strong support for distributed training and Google's TPUs [28].

- Note: The gap between the two frameworks is narrowing. TensorFlow 2.x adopted eager execution for more dynamic development, and PyTorch has enhanced its production readiness with TorchScript and TorchServe [28] [29].

FAQ 4: How can I effectively represent drugs and targets for DTI prediction models?

- Challenge: The performance of both shallow and deep learning models is heavily dependent on the input representations of drugs and target proteins [27].

- Solution: Utilize comprehensive and multi-dimensional feature descriptors.

- Drug Representations:

- 2D Topological Graphs: Use Graph Neural Networks (GNNs) to process molecular graphs, learning from atom and bond properties [25] [27].

- 3D Spatial Structures: Employ geometric deep learning (e.g., GeoGNN) to encode the spatial conformations of molecules [27].

- Molecular Fingerprints: Extract a variety of expert-based fingerprint descriptors and their counting vectors using tools like PaDEL-Descriptor [26].

- Target Representations:


Performance Comparison: Deep vs. Shallow Learning

The table below summarizes the performance of various methods on gold-standard DTI datasets, providing a quantitative basis for method selection. AUROC (Area Under the Receiver Operating Characteristic Curve) values are used for comparison.

Table 1: Performance Comparison (AUROC %) on Gold-Standard Datasets

| Method Category | Method Name | Enzymes | Ion Channels | GPCRs | Nuclear Receptors |

|---|---|---|---|---|---|

| Shallow Learning | Random Forest (RF) + NearMiss [26] | 99.33 | 98.21 | 97.65 | 92.26 |

| Shallow Learning | kronSVM [25] | Information Missing | Information Missing | Information Missing | Information Missing |

| Shallow Learning | Matrix Factorization (NRLMF) [25] | Information Missing | Information Missing | Information Missing | Information Missing |

| Deep Learning | EviDTI (on DrugBank dataset) [27] | 82.02 (Accuracy) | - | - | - |

| Deep Learning | Chemogenomic Neural Network (CN) [25] | Competitive on large datasets | Competitive on large datasets | Competitive on large datasets | Competitive on large datasets |

Experimental Protocols

This section provides detailed methodologies for key experiments cited in this guide.

This protocol outlines the steps for implementing a high-performing shallow learning approach for DTI prediction on imbalanced datasets.

- Feature Extraction:

- Drug Features: Use the PaDEL-Descriptor software to extract 12 types of drug feature descriptors, including 10 molecular fingerprints and their counting vectors.

- Target Features: Extract 6 types of protein sequence descriptors based on the amino acid sequence of the target protein.

- Feature Concatenation & Dimensionality Reduction:

- Concatenate the drug and target feature vectors for each drug-target pair.

- Apply the Random Projection method to reduce the dimensionality of the combined feature vector, simplifying model computation.

- Dataset Balancing:

- To address class imbalance, apply the NearMiss (NM) down-sampling algorithm to the majority class (non-interacting pairs) to control its sample size relative to the minority class (interacting pairs).

- Model Training and Prediction:

- Train a Random Forest (RF) classifier on the balanced, dimensionality-reduced dataset.

- Use the trained model to predict novel drug-target interactions.
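The shallow-learning protocol above can be sketched end to end on synthetic data. This is a minimal sketch under several assumptions: the feature matrices are random stand-ins for PaDEL and protein-sequence descriptors, `nearmiss_1` is a simplified hand-written variant of NearMiss-1 rather than the implementation used in the cited work, and the projection size and forest settings are arbitrary.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.random_projection import GaussianRandomProjection

def nearmiss_1(X, y, n_neighbors=3):
    """Simplified NearMiss-1: keep the majority samples (label 0) whose mean
    distance to their nearest minority samples (label 1) is smallest."""
    X_min, X_maj = X[y == 1], X[y == 0]
    # Pairwise Euclidean distances, shape (n_majority, n_minority).
    dist = np.linalg.norm(X_maj[:, None, :] - X_min[None, :, :], axis=2)
    k = min(n_neighbors, X_min.shape[0])
    mean_dist = np.sort(dist, axis=1)[:, :k].mean(axis=1)
    keep = np.argsort(mean_dist)[: X_min.shape[0]]
    X_bal = np.vstack([X_min, X_maj[keep]])
    y_bal = np.concatenate([np.ones(len(X_min), dtype=int),
                            np.zeros(len(keep), dtype=int)])
    return X_bal, y_bal

rng = np.random.default_rng(0)
n_pos, n_neg = 20, 200  # imbalanced: few interacting pairs

# Synthetic stand-ins for drug and target descriptors of each pair.
drug_features = rng.normal(size=(n_pos + n_neg, 40))
target_features = rng.normal(size=(n_pos + n_neg, 30))
labels = np.array([1] * n_pos + [0] * n_neg)

# Concatenate per pair, then reduce dimensionality by random projection.
pairs = np.hstack([drug_features, target_features])
projected = GaussianRandomProjection(n_components=16, random_state=0).fit_transform(pairs)

# Balance the classes, then train the classifier.
X_balanced, y_balanced = nearmiss_1(projected, labels)
model = RandomForestClassifier(n_estimators=100, random_state=0)
model.fit(X_balanced, y_balanced)
scores = model.predict_proba(X_balanced)[:, 1]
print(X_balanced.shape, scores[:3])
```

Because the features here are random noise, the scores carry no meaning; the sketch only shows how the steps connect. In practice, balancing should be applied to the training folds only so that evaluation data keep their natural class ratio.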

This protocol describes the setup for a deep learning approach that learns representations directly from molecular graphs and protein sequences.

- Input Representation:

- Drug Input: Represent a molecule by its 2D molecular graph G = (V, E), where nodes V are atoms (with attributes like atom type) and edges E are bonds (with attributes like bond type).

- Target Input: Represent a protein by its amino acid sequence.

- Encoder Architecture:

- Molecular Graph Encoder: Implement a Graph Neural Network (GNN). At each layer l, the representation h_i^(l) of a node i is updated by aggregating representations from its neighboring nodes N(i). A global molecular representation m^(l) is obtained by summing all node representations at that layer.

- Protein Sequence Encoder: Use a recurrent neural network (RNN) or transformer to process the amino acid sequence and generate a fixed-size protein representation.

- Interaction Prediction:

- Combination: Combine the final drug and protein representations (e.g., via concatenation).

- MLP on Pairs: Feed the combined representation into a feed-forward neural network (Multi-Layer Perceptron) to perform the final binary classification (interaction vs. non-interaction).
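The molecular graph encoder described above can be sketched in plain NumPy. This is a minimal sketch, assuming a simple sum-aggregation update with separate weight matrices for a node and its neighbors and a ReLU non-linearity; the exact update rule, the weights, and the toy three-atom molecule are illustrative assumptions, and bond attributes are ignored. The protein encoder and the MLP on pairs are not shown.

```python
import numpy as np

def gnn_layer(h, adjacency, w_self, w_neigh):
    """One message-passing layer: each node combines its own representation
    with the sum of its neighbors' representations, followed by ReLU."""
    neighbor_sum = adjacency @ h  # row i = sum of h_j over j in N(i)
    return np.maximum(0.0, h @ w_self + neighbor_sum @ w_neigh)

def encode_molecule(node_features, adjacency, weights):
    """Apply the layers in turn, then sum node representations to get m."""
    h = node_features
    for w_self, w_neigh in weights:
        h = gnn_layer(h, adjacency, w_self, w_neigh)
    return h.sum(axis=0)

# Toy graph for the heavy atoms of ethanol (C-C-O); features are one-hot [C, O].
node_features = np.array([[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]])
adjacency = np.array([[0.0, 1.0, 0.0],
                      [1.0, 0.0, 1.0],
                      [0.0, 1.0, 0.0]])

rng = np.random.default_rng(0)
hidden = 4
weights = [
    (rng.normal(size=(2, hidden)), rng.normal(size=(2, hidden))),
    (rng.normal(size=(hidden, hidden)), rng.normal(size=(hidden, hidden))),
]
m = encode_molecule(node_features, adjacency, weights)

# Sum pooling makes the result independent of the atom ordering.
perm = [2, 0, 1]
m_permuted = encode_molecule(node_features[perm],
                             adjacency[np.ix_(perm, perm)], weights)
print(m, np.allclose(m, m_permuted))
```

Summing over nodes is what makes the molecular representation invariant to how the atoms are numbered, which is why the same molecule with reordered atoms yields the same vector.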

This protocol details the steps for implementing an evidential deep learning model to obtain reliable predictions with confidence estimates.

- Feature Encoding with Pre-trained Models:

- Protein Feature Encoder:

- Use the pre-trained protein language model ProtTrans to generate an initial feature representation from the protein sequence.

- Process this representation through a Light Attention (LA) module to capture local residue-level interactions.

- Drug Feature Encoder:

- For 2D topology: Use the pre-trained model MG-BERT to get an initial drug representation, followed by a 1DCNN for further feature extraction.

- For 3D structure: Convert the drug's 3D structure into an atom-bond graph and a bond-angle graph. Encode these graphs using a GeoGNN module.


- Evidence Collection and Uncertainty Estimation:

- Concatenate the final drug and target representations.

- Feed the combined vector into an evidential layer. The output of this layer is the parameter α, which defines a Dirichlet distribution.

- Prediction and Uncertainty Calculation:

- Calculate the predicted probability of interaction from the parameters α.

- Simultaneously, calculate the predictive uncertainty (e.g., as the inverse of the total evidence). Use this uncertainty to filter and prioritize predictions for experimental validation.
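The prediction and uncertainty step can be sketched as follows. This is a minimal sketch assuming the common evidential formulation in which non-negative evidence e gives α = e + 1, the class probability is α divided by the Dirichlet strength S = Σα, and the uncertainty is K / S for K classes. Whether EviDTI uses exactly these expressions is an assumption here, and the logits are invented.

```python
import numpy as np

def evidential_output(logits):
    """Map raw network outputs to class probabilities and an uncertainty score."""
    logits = np.asarray(logits, dtype=float)
    # Softplus keeps the evidence non-negative (numerically stable form).
    evidence = np.logaddexp(0.0, logits)
    alpha = evidence + 1.0  # Dirichlet parameters
    strength = alpha.sum(axis=-1, keepdims=True)
    probabilities = alpha / strength
    # K / S: close to 1 with no evidence, shrinking as evidence accumulates.
    uncertainty = alpha.shape[-1] / strength.squeeze(-1)
    return probabilities, uncertainty

# Hypothetical outputs for two drug-target pairs; columns = [no interaction, interaction].
logits = [[-10.0, -10.0],  # almost no evidence for either class
          [-5.0, 8.0]]     # strong evidence for an interaction
probabilities, uncertainty = evidential_output(logits)
print(probabilities.round(3), uncertainty.round(3))
```

The first pair receives a probability near 0.5 with uncertainty near 1, so it would be deprioritized. The second pair combines a high interaction probability with low uncertainty, which makes it a better candidate for experimental follow-up.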

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for DTI Prediction Experiments

| Item Name | Function / Explanation |

|---|---|

| Gold Standard Dataset [26] | A benchmark dataset curated by Yamanishi et al., containing known DTIs for Enzymes, Ion Channels, GPCRs, and Nuclear Receptors. Used for model training and comparative performance evaluation. |

| PaDEL-Descriptor [26] | Software used to calculate a comprehensive set of molecular descriptors and fingerprints from drug structures, which serve as expert-based features for machine learning models. |

| ProtTrans [27] | A pre-trained protein language model. Used to generate powerful, contextual numerical representations directly from protein amino acid sequences, capturing evolutionary and structural information. |

| MG-BERT [27] | A pre-trained model for molecular graphs. Used to generate informed initial representations of drugs based on their 2D topological structure, which can be fine-tuned for the DTI task. |

| NearMiss (NM) [26] | An under-sampling algorithm used to balance imbalanced datasets by reducing the number of majority class samples (non-interacting pairs), thus mitigating model bias. |

| Evidential Deep Learning (EDL) [27] | A framework that allows neural networks to not only make predictions but also quantify the uncertainty associated with each prediction, improving decision-making reliability. |

Experimental Workflow and Model Architectures

DTI Prediction Workflow

EviDTI Model Architecture

Technical Support: Frequently Asked Questions (FAQs)

FAQ 1: What are the most critical steps for preparing chemical and protein descriptors to avoid model failure?

The most critical step is using multi-scale descriptors to create a comprehensive representation of both compounds and protein targets. Relying on a single type of descriptor can miss key interaction information and leaves models poorly equipped to handle "activity cliffs," where highly similar compounds have unexpectedly large differences in activity [30] [31]. The recommended descriptors are:

- For Chemical Structures: Use a combination of at least two descriptor types.

- For Protein Targets: Integrate sequence and functional information.

FAQ 2: Our model performance is poor for targets with limited bioactivity data. How can we address this?

This is a common challenge, often termed the "cold start" problem [32]. Chemogenomic models are specifically designed to mitigate this by leveraging information from similar proteins.

- Leverage Protein Similarity: Chemogenomic methods can "share ligands" across targets with similar protein sequences. This allows the model to extrapolate and make predictions for under-characterized targets based on data from well-characterized, similar ones [30] [31].

- Use Feature-Based Methods: Models that use fundamental features of drugs and targets (e.g., molecular descriptors, protein sequences) can handle new drugs and targets better than methods relying solely on known interaction networks, as features can always be extracted for a new entity [32].

FAQ 3: How do I validate a chemogenomic model and interpret its predictive performance?

Robust validation is essential. Do not rely solely on internal cross-validation.

- Use External Datasets: Always test the final model on a completely held-out external dataset not used during training. For example, one study validated its model using external datasets containing natural products, with >45% of known targets enriched in the top-10 predictions [31].

- Employ Top-k Analysis: A key metric for target prediction is the "fraction of known targets identified in the top-k list." For instance, a validated model showed 26.78% of known targets in the top-1 prediction and 57.96% in the top-10, representing enrichments of approximately 230-fold and 50-fold, respectively [31].

- Compare Against State-of-the-Art: Benchmark your model's top-k prediction performance against other established methods to confirm equivalent or superior ability [31].
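The top-k metric and its fold enrichment can be sketched as follows. This is a minimal sketch: the ranked predictions and known targets are invented, and the enrichment is computed against a random ranking over 859 candidate targets, which is an assumption taken from the target-set size mentioned in the dataset curation step rather than a baseline stated in the cited study.

```python
def top_k_hit_fraction(ranked_predictions, known_targets, k):
    """Fraction of known compound-target pairs recovered in the top-k list."""
    hits, total = 0, 0
    for compound, targets in known_targets.items():
        top_k = set(ranked_predictions[compound][:k])
        hits += len(targets & top_k)
        total += len(targets)
    return hits / total

def fold_enrichment(hit_fraction, k, n_targets):
    """Observed hit fraction relative to a random ranking, where a given
    known target lands in the top-k with probability k / n_targets."""
    return hit_fraction / (k / n_targets)

# Hypothetical ranked target lists (best first) and known targets.
ranked = {"cpd1": ["T3", "T1", "T7"], "cpd2": ["T2", "T9", "T4"]}
known = {"cpd1": {"T1"}, "cpd2": {"T4", "T8"}}
fractions = [top_k_hit_fraction(ranked, known, k) for k in (1, 2, 3)]

# Reproducing the arithmetic of the figures quoted above, assuming 859 targets.
enrichment_top1 = fold_enrichment(0.2678, 1, 859)
enrichment_top10 = fold_enrichment(0.5796, 10, 859)
print(fractions, round(enrichment_top1), round(enrichment_top10))
```

Under the 859-target assumption, the quoted hit fractions work out to roughly 230-fold and 50-fold over random, which agrees with the reported enrichments.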

FAQ 4: What is the difference between a ligand-based method and a chemogenomic method?

The core difference lies in the information used for prediction.

- Ligand-Based Methods: Rely solely on the similarity between a query compound and known active compounds for a specific target. They do not use any information about the protein target itself, which can be a major limitation [30] [31].

- Chemogenomic Methods: Integrate information from both the ligand (compound) space and the target space (e.g., protein sequences). This provides a more comprehensive view of the interaction landscape and can improve predictions, especially for novel targets [30] [31] [32].
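The difference in inputs can be illustrated with a small sketch. It assumes fingerprints are represented as sets of on-bit indices and that a chemogenomic model consumes a concatenated compound-protein feature vector; both are common conventions rather than details confirmed by the cited studies, and all function names are hypothetical.

```python
from typing import Iterable, List, Sequence, Set


def tanimoto(a: Set[int], b: Set[int]) -> float:
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def ligand_based_score(query_fp: Set[int], active_fps: Iterable[Set[int]]) -> float:
    """Ligand-based view: score a target by the query's maximum similarity
    to that target's known actives. No protein information is used."""
    return max((tanimoto(query_fp, fp) for fp in active_fps), default=0.0)


def pair_features(compound_desc: Sequence[float],
                  protein_desc: Sequence[float]) -> List[float]:
    """Chemogenomic view: one feature vector per compound-target pair,
    built from both the ligand space and the target space."""
    return list(compound_desc) + list(protein_desc)


query = {1, 2, 3}
actives_for_target = [{2, 3, 4}, {7, 8}]
print(ligand_based_score(query, actives_for_target))  # 0.5
print(pair_features([1.0, 0.0], [0.5]))               # [1.0, 0.0, 0.5]
```

Note that the ligand-based score is undefined in practice for a target with no known actives (it falls back to 0.0 here), whereas a pair feature vector can be built for any target whose descriptors can be computed.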

Experimental Protocol: Building an Ensemble Chemogenomic Model

The following workflow details the key steps for constructing a robust ensemble chemogenomic model for target prediction, based on established methodologies [30] [31].

Step 1: Dataset Curation

- Source Bioactivity Data: Extract compound-target interaction data from public chemogenomic databases such as ChEMBL and the BindingDB [30] [31].

- Define Targets: Focus on a specific set of proteins (e.g., 859 human targets from ChEMBL). Include associated protein information like sequences and Gene Ontology (GO) terms from the UniProt database [30] [31].

- Outcome: A dataset consisting of thousands of compound-target pairs, each with a bioactivity value (e.g., Ki, IC50).

Step 2: Data Pre-processing

- Handle Replicates: For compound-target pairs with multiple bioactivity values, use the median value if the differences are within one order of magnitude. Exclude pairs with larger discrepancies [30] [31].

- Define Activity Threshold: Convert continuous bioactivity data into a binary classification problem. A common threshold is:
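The replicate-handling rule in this step can be sketched as follows. The sketch interprets "within one order of magnitude" as a max/min ratio of at most 10, which is an assumption, and the function names are hypothetical. The binarization cutoff is left as a caller-supplied parameter, since the appropriate value depends on the dataset and assay type.

```python
import math
from statistics import median
from typing import Iterable, Optional


def aggregate_replicates(values_nm: Iterable[float]) -> Optional[float]:
    """Return the median of replicate Ki/IC50 values (in nM), or None when the
    replicates disagree by more than one order of magnitude (pair excluded)."""
    vals = [v for v in values_nm if v > 0]
    if not vals:
        return None
    if math.log10(max(vals) / min(vals)) > 1.0:
        return None
    return median(vals)


def binarize(value_nm: float, threshold_nm: float) -> int:
    """Label a pair active (1) when its Ki/IC50 is at or below the cutoff.
    Lower values mean higher potency; the cutoff must be supplied by the caller."""
    return 1 if value_nm <= threshold_nm else 0


print(aggregate_replicates([10.0, 20.0, 50.0]))  # 20.0
print(aggregate_replicates([10.0, 500.0]))       # None (50-fold spread)
```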

Step 3: Descriptor Calculation

Calculate multiple descriptors for both compounds and proteins to create a multi-scale representation for each compound-target pair.

Table: Essential Research Reagents & Datasets

| Resource Name | Type/Function | Key Utility in Model Building |
|---|---|---|
| ChEMBL Database | Bioactivity Database | Source of validated compound-target interactions and bioactivity data [30] [31]. |
| UniProt Database | Protein Information Database | Source of protein sequences and Gene Ontology (GO) terms for target representation [30] [31]. |
| Mol2D Descriptors | Molecular Descriptor Set | Provides 2D chemical information (constitutional, topological, charge) [30] [31]. |
| ECFP4 Fingerprints | Molecular Fingerprint | Captures circular substructures of a molecule for similarity searching [31]. |
| Gene Ontology (GO) | Functional Annotation | Provides context on biological process, molecular function, and cellular component for protein targets [30] [31]. |

Step 4: Model Construction & Ensemble Learning

- Train Base Models: Construct multiple individual chemogenomic models using different descriptor combinations and machine learning algorithms (e.g., XGBoost) [30].

- Build the Ensemble: Combine the predictions of the base models into a final ensemble model. The ensemble model with the best performance on validation metrics is selected as the final prediction tool [30] [31].
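One simple way to combine base models and pick the best ensemble is sketched below. It assumes plain averaging of predicted probabilities and accuracy on a validation set as the selection metric; the cited studies may combine and score models differently, and every name here is hypothetical.

```python
from itertools import combinations
from statistics import mean
from typing import Dict, List, Sequence, Tuple


def ensemble_predict(base_probs: Sequence[Sequence[float]]) -> List[float]:
    """Average the predicted probabilities of several base models, pair by pair."""
    return [mean(column) for column in zip(*base_probs)]


def accuracy(probs: Sequence[float], labels: Sequence[int], cutoff: float = 0.5) -> float:
    """Fraction of pairs whose thresholded prediction matches the label."""
    return mean(1.0 if (p >= cutoff) == bool(y) else 0.0 for p, y in zip(probs, labels))


def select_best_ensemble(model_probs: Dict[str, List[float]],
                         labels: Sequence[int],
                         min_size: int = 2) -> Tuple[Tuple[str, ...], float]:
    """Try every subset of base models and keep the best-scoring ensemble."""
    best_names: Tuple[str, ...] = ()
    best_score = -1.0
    names = sorted(model_probs)
    for size in range(min_size, len(names) + 1):
        for combo in combinations(names, size):
            probs = ensemble_predict([model_probs[n] for n in combo])
            score = accuracy(probs, labels)
            if score > best_score:
                best_names, best_score = combo, score
    return best_names, best_score


# Toy validation-set predictions from three hypothetical base models.
validation_probs = {
    "a": [0.9, 0.2, 0.6],
    "b": [0.8, 0.4, 0.5],
    "c": [0.1, 0.9, 0.2],
}
validation_labels = [1, 0, 1]
print(select_best_ensemble(validation_probs, validation_labels))  # (('a', 'b'), 1.0)
```

Exhaustive subset search is only practical for a handful of base models; with many models, a greedy forward selection would be a more reasonable design.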

Step 5: Model Validation

- Cross-Validation: Perform stratified 10-fold cross-validation to assess model stability and avoid overfitting [31].

- External Validation: Test the model on a completely independent external dataset not seen during training or cross-validation [31].

- Performance Benchmarking: Compare the model's top-k prediction performance against other state-of-the-art target prediction methods [31].
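Stratified fold assignment, as used in the cross-validation step, can be sketched with the standard library alone. This is an illustrative implementation with hypothetical names, not the one used in the cited work; in practice a library routine such as scikit-learn's `StratifiedKFold` would normally be used.

```python
import random
from collections import defaultdict
from typing import Dict, List, Sequence


def stratified_folds(labels: Sequence[int], n_folds: int = 10, seed: int = 0) -> List[List[int]]:
    """Assign sample indices to folds so each fold keeps the overall class ratio."""
    rng = random.Random(seed)
    by_class: Dict[int, List[int]] = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    folds: List[List[int]] = [[] for _ in range(n_folds)]
    for indices in by_class.values():
        rng.shuffle(indices)
        # Deal the shuffled indices of each class round-robin across folds.
        for position, idx in enumerate(indices):
            folds[position % n_folds].append(idx)
    return folds


# 20 active and 80 inactive pairs: each of 10 folds receives 2 actives and 8 inactives.
labels = [1] * 20 + [0] * 80
folds = stratified_folds(labels, n_folds=10)
print([sum(labels[i] for i in fold) for fold in folds])
```

Each fold serves once as the test set while the remaining nine are used for training, and the external dataset stays outside this loop entirely.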

Performance Metrics and Data

The following table summarizes quantitative performance data from a validated ensemble chemogenomic model, providing benchmarks for expected outcomes [31].

Table: Ensemble Model Target Prediction Performance

| Validation Strategy | Top-1 Hit Rate (%) | Top-5 Hit Rate (%) | Top-10 Hit Rate (%) | Enrichment Fold (vs. Random) |
|---|---|---|---|---|
| Stratified 10-Fold Cross-Validation | 26.78 | - | 57.96 | ~230 (Top-1); ~50 (Top-10) |
| External Validation (Natural Products) | - | - | >45.00 | - |

Advanced Troubleshooting: Addressing Complex Workflow Issues

Problem: Inability to predict targets for compounds with novel scaffolds (the "Cold Start" problem for drugs).

Solution: Implement a feature-based machine learning or deep learning approach.

- Root Cause: Network-based inference methods often fail for new drugs because they rely on existing similarity relationships in the interaction network [32].

- Solution Strategy: Use models that learn from the fundamental features of the compounds and proteins. Since features (e.g., molecular descriptors, protein sequences) can always be generated for a new compound or target, these models can make predictions even in the absence of similar known interactions [32].

- Consideration: The reliability of automatically learned feature representations in deep learning models can be an issue, and their interpretability is often lower than that of models using manually defined features [32].

Technical Support Center: Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: Why do different gene signatures for the same disease show poor overlap and how can this be addressed?

Different gene signatures for the same disease often show poor overlap due to both biological variability (different patient populations, disease subtypes) and technical variability (different platforms, experimental protocols) across studies [23]. This heterogeneity directly affects the quality and reproducibility of computational drug predictions. To address this challenge, implement meta-analysis frameworks that use an ensemble of disease signatures rather than individual signatures as input [23]. This approach leverages all available transcriptional knowledge on a disease, significantly increasing the reproducibility of top drug hits from 44% to 78% according to one lung cancer study [23].
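The idea of scoring drugs against an ensemble of signatures rather than a single one can be sketched as follows. This is a deliberately simplified stand-in, not the framework from [23]: the reversal score (mean drug-induced change over disease-up genes minus the mean over disease-down genes), the sign convention (negative means reversal), the 75% consistency cutoff, and all names and data are assumptions made for illustration.

```python
from statistics import mean
from typing import Dict, List, Set


def reversal_score(drug_profile: Dict[str, float],
                   up_genes: Set[str],
                   down_genes: Set[str]) -> float:
    """Negative when the drug lowers disease-up genes and raises disease-down genes."""
    up = [drug_profile[g] for g in up_genes if g in drug_profile]
    down = [drug_profile[g] for g in down_genes if g in drug_profile]
    if not up or not down:
        return 0.0  # signature not covered by the drug profile
    return mean(up) - mean(down)


def consistent_reversers(drug_profiles: Dict[str, Dict[str, float]],
                         signatures: List[Dict[str, Set[str]]],
                         min_fraction: float = 0.75) -> Dict[str, float]:
    """Keep drugs that reverse at least `min_fraction` of the disease signatures."""
    selected = {}
    for drug, profile in drug_profiles.items():
        scores = [reversal_score(profile, s["up"], s["down"]) for s in signatures]
        fraction = sum(score < 0 for score in scores) / len(scores)
        if fraction >= min_fraction:
            selected[drug] = fraction
    return selected


# Made-up signatures and drug-induced log fold changes.
signatures = [
    {"up": {"A", "B"}, "down": {"C"}},
    {"up": {"A"}, "down": {"C", "D"}},
]
drug_profiles = {
    "drug_x": {"A": -1.0, "B": -0.5, "C": 1.0, "D": 0.8},
    "drug_y": {"A": 1.0, "B": 0.2, "C": -1.0, "D": 0.5},
}
print(consistent_reversers(drug_profiles, signatures))  # {'drug_x': 1.0}
```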

Q2: What are the key considerations when designing a signature-driven drug repurposing pipeline?

When designing your repurposing pipeline, focus on these critical elements:

- Signature Quality: Ensure your disease signature has strong association with the disease phenotype and genotype. For example, in lung cancer research, the interspecies KRAS gene signature (iKRASsig) was tightly associated with KRAS genotype and patient survival outcomes [33].

- Reference Database: Utilize comprehensive chemogenomic databases like Connectivity Map (CMap) to find drugs that reverse your disease signature [33].

- Validation Strategy: Include both computational validation (assessing statistical significance of repurposing scores) and experimental validation (in vitro and in vivo models) to confirm predictions [33].

Q3: How can I determine if my chemogenomic fitness profiling data is reliable?

To assess the reliability of your chemogenomic fitness data:

- Compare your dataset with independent datasets generated by different laboratories using different experimental and analytical pipelines [4].

- Check for robust chemogenomic response signatures characterized by consistent gene signatures and enrichment for biological processes across datasets [4].

- Evaluate the conservation of signatures between datasets; one study found 66% of chemogenomic signatures were conserved across independent screens [4].

Q4: What experimental approaches can I use to validate predicted drug combinations?

For validating predicted drug combinations:

- Conduct pairwise pharmacological screens in relevant cell lines using concentrations equal to or lower than IC50 values [33].

- Calculate combination indices (CI) using software such as CompuSyn to identify synergistic combinations (CI < 1 indicates synergy in the Chou-Talalay framework; a stricter cutoff such as CI < 0.8 is often applied) [33].

- Test combinations across multiple model systems including 2D cultures, 3D organoids, and in vivo models to confirm genotype-specific effects [33].
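The combination index reported by tools such as CompuSyn follows the Chou-Talalay method. A minimal sketch of the underlying arithmetic is shown below, assuming the median-effect parameters of each single drug (Dm, the median-effect dose, and m, the slope) have already been fitted; the function names and the numeric inputs are illustrative only.

```python
def dose_for_effect(fa: float, dm: float, m: float) -> float:
    """Median-effect equation: single-drug dose that produces fraction affected `fa`."""
    if not 0.0 < fa < 1.0:
        raise ValueError("fa must lie strictly between 0 and 1")
    return dm * (fa / (1.0 - fa)) ** (1.0 / m)


def combination_index(d1: float, d2: float, fa: float,
                      dm1: float, m1: float,
                      dm2: float, m2: float) -> float:
    """CI = d1/Dx1 + d2/Dx2, where d1 and d2 are the doses used in combination
    to reach effect `fa`, and Dx1, Dx2 are the single-drug doses for that effect."""
    return d1 / dose_for_effect(fa, dm1, m1) + d2 / dose_for_effect(fa, dm2, m2)


# Illustrative numbers: the combination reaches 50% effect with 2 units of drug 1
# and 5 units of drug 2, whose single-agent median-effect doses are 10 and 20.
ci = combination_index(d1=2.0, d2=5.0, fa=0.5, dm1=10.0, m1=1.0, dm2=20.0, m2=1.0)
print(round(ci, 2))  # 0.45
```

A CI below 1 means the combination needs less drug than additivity predicts, which is why using concentrations at or below each drug's IC50 makes synergy easier to detect.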

Troubleshooting Common Experimental Issues

Problem: Poor reproducibility of drug predictions across different disease signatures

Solution: Implement an established meta-analysis framework that takes a collection of disease signatures as input and outputs drugs that consistently reverse pathological gene changes across multiple signatures [23]. This approach significantly increases reproducibility by leveraging the large number of disease signatures in the public domain rather than relying on individual signatures.

Problem: Difficulty interpreting mechanisms of action for repurposed drugs

Solution:

- Perform RNA sequencing on cells treated with single drugs to identify distinct clustering patterns in principal component analysis [33].

- Analyze how different drugs reverse expression of distinct gene clusters within your disease signature [33].

- Use proteome profiling to link drug effects to specific protein expression changes, such as MYC dysregulation in response to PKC inhibitor-based combinations [33].

Problem: Uncertain translation of predicted drug combinations to clinical relevance

Solution:

- Prioritize FDA-approved drugs or compounds in advanced clinical stages for repurposing [33].

- Search for structurally related analogs of predicted drugs that are already clinically approved [33].

- Test whether newer targeted therapies can substitute for drugs in your initial predictions (e.g., KRAS G12C inhibitors replacing MEK inhibitors in combinations) [33].

Quantitative Data Tables

Table 1: Performance Comparison of Signature-Based Drug Repurposing Approaches

| Method | Signature Input | Reproducibility of Top Hits | Key Advantages |
|---|---|---|---|
| Individual Signature Analysis | Single disease signature | 44% | Simple implementation |
| Meta-Analysis Framework | Ensemble of 21 signatures | 78% | Increased reproducibility, leverages public data |

Table 2: Synergistic Drug Combinations for Mutant KRAS Lung Adenocarcinoma (LUAD)

| Drug Combination | Combination Index | Antiproliferative Effect | Genotype Specificity |
|---|---|---|---|
| Trametinib + Lestaurtinib | CI < 0.8 | Significant growth inhibition | Mutant KRAS specific |
| Trametinib + Midostaurin | CI < 0.8 | Cytotoxic response | Mutant KRAS specific |
| Sotorasib + Midostaurin | CI < 0.8 | Strong antitumor effect | KRAS G12C specific |

Experimental Protocols

Protocol 1: Signature-Driven Drug Repurposing Workflow

Signature Development:

- Curate gene signatures from public databases or generate your own using transcriptomic data from diseased vs. normal tissues

- Validate signature association with clinical outcomes (e.g., survival analysis)

Connectivity Map Query:

- Submit your signature to the Connectivity Map database

- Identify compounds with negative repurposing scores (indicating signature reversal)

- Filter for drugs with repurposing scores < -0.3 for further investigation [33]
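The filtering step above can be sketched as below, assuming the query results have already been exported as a mapping from compound name to repurposing score. The function name, the compound names, and the scores are made up for illustration; only the -0.3 cutoff comes from the protocol.

```python
from typing import Dict, List, Tuple


def select_reversal_candidates(scores: Dict[str, float],
                               cutoff: float = -0.3) -> List[Tuple[str, float]]:
    """Keep compounds scoring below the cutoff, strongest reversal first."""
    hits = [(drug, score) for drug, score in scores.items() if score < cutoff]
    return sorted(hits, key=lambda item: item[1])


# Hypothetical exported scores.
query_scores = {"compound_a": -0.62, "compound_b": -0.31, "compound_c": -0.10, "compound_d": 0.45}
print(select_reversal_candidates(query_scores))
# [('compound_a', -0.62), ('compound_b', -0.31)]
```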

Experimental Validation:

- Screen predicted drugs in relevant cell lines

- Test synergistic combinations in pairwise format

- Validate in 3D cultures and in vivo models

Protocol 2: Chemogenomic Fitness Profiling

Strain Pool Construction:

- Create barcoded heterozygous and homozygous knockout collections

- Grow strains competitively in a single pool [4]

Drug Exposure and Sequencing:

- Expose pooled strains to compounds of interest

- Quantify fitness by barcode sequencing

- Calculate fitness defect (FD) scores as robust z-scores [4]
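A robust z-score replaces the mean and standard deviation with the median and the median absolute deviation (MAD), so a few strongly affected strains do not distort the scale. The sketch below assumes the input is a per-strain log2(control/treated) barcode ratio, so larger values mean a greater fitness defect; this sign convention, the function name, and the data are assumptions, and the published pipelines in [4] include normalization steps not shown here.

```python
from statistics import median
from typing import Dict


def robust_z_scores(log_ratios: Dict[str, float]) -> Dict[str, float]:
    """Robust z-score per strain: (x - median) / (1.4826 * MAD).
    The factor 1.4826 makes the MAD comparable to a standard deviation
    for normally distributed data."""
    values = list(log_ratios.values())
    center = median(values)
    mad = median(abs(v - center) for v in values)
    if mad == 0:
        raise ValueError("MAD is zero; robust z-scores are undefined")
    scale = 1.4826 * mad
    return {strain: (v - center) / scale for strain, v in log_ratios.items()}


# Made-up log2(control/treated) ratios for five strains in one compound screen.
ratios = {"s1": 0.0, "s2": 0.1, "s3": -0.1, "s4": 0.2, "s5": 3.0}
fd_scores = robust_z_scores(ratios)
top_strain = max(fd_scores, key=fd_scores.get)
print(top_strain)  # s5
```

In a heterozygous pool, the strain with the largest FD score (here the hypothetical `s5`) would be the first candidate for the compound's target.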

Data Analysis:

- Identify heterozygous strains with greatest FD scores as likely drug targets

- Analyze homozygous deletion strains for genes involved in drug resistance pathways

Pathway Diagrams

Figure: Signature-Driven Drug Repurposing in KRAS-Mutant Cancer

Figure: Meta-Analysis Framework for Improved Reproducibility

Research Reagent Solutions

Table 3: Essential Research Materials for Signature-Based Drug Repurposing

| Reagent/Resource | Function | Example Sources/References |
|---|---|---|
| Connectivity Map (CMap) | Database linking gene expression signatures to small molecules | Broad Institute [33] |
| iKRASsig | Interspecies KRAS gene signature for lung cancer research | Nature Communications [33] |
| HIPHOP chemogenomic platform | Genome-wide chemical-genetic interaction profiling | BMC Genomics [4] |
| Mutant KRAS LUAD cell lines | In vitro models for validation (e.g., H1792, H2009) | Nature Communications [33] |
| Trametinib | MEK1/2 inhibitor for combination studies | FDA-approved, Nature Communications [33] |
| Midostaurin (PKC412) | Multi-kinase inhibitor (including PKC) | FDA-approved for AML, Nature Communications [33] |
| Sotorasib | KRAS G12C inhibitor for targeted therapy | FDA-approved, Nature Communications [33] |
| Chemogenomic profiling mutants | Library of strains for mechanism of action studies | Scientific Reports [15] |