Hybrid Quantum-Classical CNNs: Revolutionizing Drug Discovery Through Enhanced Binding Affinity Prediction

Accurate prediction of protein-ligand binding affinity is crucial for accelerating drug discovery, yet remains computationally demanding for classical models.

Hybrid Quantum-Classical CNNs: Revolutionizing Drug Discovery Through Enhanced Binding Affinity Prediction

Abstract

Accurate prediction of protein-ligand binding affinity is crucial for accelerating drug discovery, yet remains computationally demanding for classical models. This article explores how hybrid quantum-classical convolutional neural networks (HQCNNs) are emerging as a transformative solution, offering significant reductions in model complexity and training time while maintaining or even improving predictive performance. We provide a comprehensive analysis of HQCNN architectures tailored for binding affinity prediction, examining their foundational principles, methodological implementations, optimization strategies for NISQ devices, and comparative validation against state-of-the-art classical models. Targeted at researchers and drug development professionals, this review synthesizes current advancements and practical considerations for deploying quantum-enhanced machine learning in computational biology and pharmaceutical research.

The Quantum Leap in Drug Discovery: Fundamentals of Hybrid CNNs for Binding Affinity

Accurately predicting the binding affinity between a protein and a small molecule ligand is a central challenge in computer-aided drug design, as it directly influences the efficacy and specificity of therapeutic compounds [1]. The ability to identify molecules that bind uniquely and robustly to a target protein while minimizing interactions with others is crucial for reducing the expenses associated with experimental protocols in drug discovery [2]. Traditional computational methods for assessing binding affinity include physics-based simulations, molecular docking with scoring functions, and more recently, deep learning approaches [1]. Despite advancements, these methods face significant limitations in terms of computational cost, accuracy, and generalizability, creating a substantial bottleneck in the drug development pipeline.

The drug discovery process requires evaluating thousands to millions of potential ligands against target proteins, necessitating robust computational methods to prioritize candidates for experimental testing [1]. While experimental techniques like isothermal titration calorimetry and surface plasmon resonance can directly measure binding affinity, they are complex, expensive, and time-consuming, making large-scale screening impractical [1]. This has driven the development of computational approaches, though each comes with distinct limitations that hinder their widespread effectiveness in real-world drug design scenarios.

Fundamental Limitations of Classical Computational Methods

Physics-Based Simulation Methods

Physics-based methods rely on biophysical models of protein-ligand structures to estimate binding affinities but face severe computational constraints. All-atom molecular dynamics simulations model the temporal behavior of drug-protein complexes but are exceptionally computationally expensive, often requiring expert knowledge and domain expertise [2]. Quantum mechanical calculations, including semiempirical, density-functional theory, and coupled-cluster approaches, can provide high accuracy but become impractical for studying larger protein-ligand structures due to exponential scaling of computational requirements [2] [3]. As system size increases, these methods quickly become infeasible, limiting their application in large-scale virtual screening.

Traditional Scoring Functions

Traditional scoring functions used in molecular docking represent another class of approaches with notable limitations:

Table 1: Limitations of Traditional Scoring Function Categories

| Type | Basis | Key Limitations |

|---|---|---|

| Force-Field Based | Bonded and non-bonded interactions (electrostatic, van der Waals, bonding terms) | Based on incomplete physical models with approximations for simplified computation [2] [1] |

| Empirical | Parameterized fitting to experimental binding data | Limited by the quality and size of training data; sacrifice accuracy for speed [2] [4] |

| Knowledge-Based | Statistical analysis of atom-atom contact frequencies in known structures | Heavy reliance on manual planning and complex operations [1] |

These scoring functions are typically based on simplified physical models and approximations to maintain computational tractability, which inherently limits their accuracy [1]. While less time-consuming than rigorous simulation methods, they sacrifice predictive accuracy, particularly for novel protein-ligand complexes that differ significantly from those in their training sets.

Data Biases and Generalization Challenges

A critical limitation in current binding affinity prediction is the data leakage between popular training datasets and benchmark test sets, which severely inflates perceived performance metrics. Recent research has revealed substantial train-test data leakage between the PDBbind database and Comparative Assessment of Scoring Function (CASF) benchmark datasets [5]. This leakage means that nearly half of CASF complexes do not present genuinely new challenges to trained models, as nearly 600 high-similarity pairs were identified between training and test complexes [5].

The fundamental problem arises from similarity clusters within training data, where nearly 50% of training complexes are part of such clusters according to structure-based filtering algorithms [5]. This redundancy encourages models to settle for easily attainable local minima in the loss landscape through memorization rather than learning generalizable patterns of molecular interactions. When state-of-the-art models are retrained on properly filtered datasets that eliminate this leakage, their performance drops substantially, indicating that previously reported high performance was largely driven by data leakage rather than genuine understanding of protein-ligand interactions [5].

The Deep Learning Approach: Promise and Limitations

Classical Deep Learning Models

Deep learning methods, particularly three-dimensional convolutional neural networks (3D CNNs), have recently attracted significant attention for their ability to improve upon traditional physics-based methods [2] [4]. Unlike traditional machine learning approaches that require hand-curated feature engineering, deep learning models can learn directly from atomic structures of protein-ligand pairs, automatically extracting relevant features from raw structural data [2] [1].

These 3D CNNs represent atoms and their properties in 3D space, capturing local molecular structure and relationships between atoms [2]. However, these representations are high-dimensional matrices requiring millions of parameters to describe even a single data sample [2]. This high dimensionality necessitates complex deep learning models with substantial computational requirements to uncover hidden patterns that correlate with binding affinity.

Computational Complexity of Deep Learning Approaches

The computational burden of deep learning approaches for binding affinity prediction manifests in several critical areas:

- Model Complexity: Training 3D CNNs requires finding optimal values for millions of parameters that minimize a suitable loss function, with more complex models requiring longer execution times [2].

- Data Scalability: As datasets grow (the PDBBind database has expanded from 800 complexes in 2002 to over 14,000 samples in 2020 with anticipated 20% annual growth), computational demands increase correspondingly [2].

- Hardware Dependence: While training processes can be accelerated using powerful GPUs, the fundamental algorithmic complexity remains a limiting factor [2].

According to Hoeffding's theorem, highly complex machine learning models require large amounts of data to reduce prediction variance, as expressed by the inequality ( E{\textrm{out}} \leq E{\textrm{in}} + \mathcal{O} \left( \sqrt{\frac{K}{N{\textrm{samples}}}} \right) ), where ( K ) represents model complexity and ( N{\textrm{samples}} ) the number of data samples [2]. This relationship highlights the fundamental tradeoff between model complexity and data requirements – complex models needed for accurate affinity prediction demand enormous datasets to avoid overfitting.

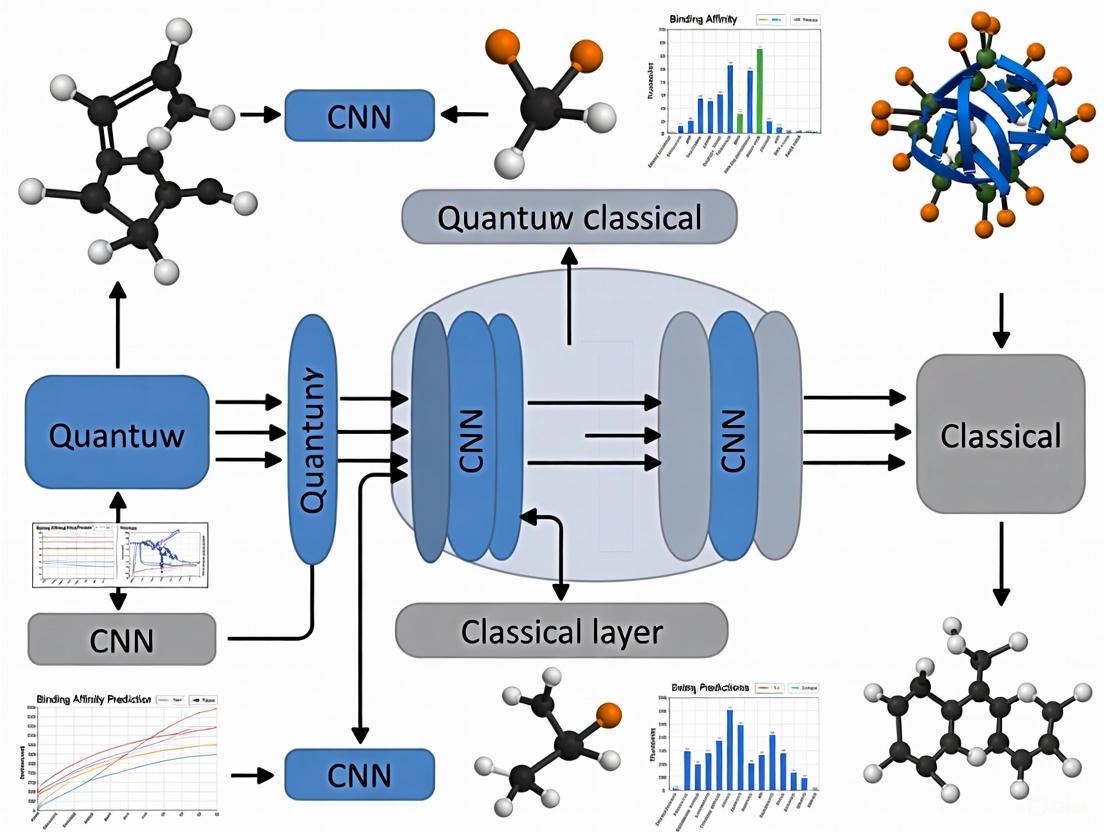

Figure 1: Computational bottlenecks in classical binding affinity prediction methods

Experimental Protocols for Method Evaluation

Standardized Benchmarking Protocol

To ensure fair comparison between different binding affinity prediction methods, researchers should adhere to standardized evaluation protocols:

Dataset Preparation: Use the PDBbind CleanSplit dataset, which applies structure-based filtering to eliminate train-test data leakage [5]. This involves:

- Applying multimodal filtering based on protein similarity (TM scores), ligand similarity (Tanimoto scores), and binding conformation similarity (pocket-aligned ligand RMSD)

- Removing training complexes that closely resemble any test complex

- Eliminating training complexes with ligands identical to those in test complexes (Tanimoto > 0.9)

- Resolving similarity clusters within the training dataset itself

Evaluation Metrics: Assess model performance using multiple error metrics:

- Root mean squared error (RMSE)

- Mean absolute error (MAE)

- Coefficient of determination (R²)

- Pearson correlation coefficient

- Spearman correlation coefficient [2]

Training Procedure: Implement early stopping when validation performance converges (typically around 50 epochs) to prevent overfitting [2].

Hybrid Quantum-Classical CNN Implementation

The hybrid quantum-classical convolutional neural network represents a promising approach to address classical computational bottlenecks:

Table 2: Performance Comparison of Classical vs. Hybrid Quantum-Classical CNNs

| Metric | Classical 3D CNN | Hybrid Quantum-Classical CNN | Improvement |

|---|---|---|---|

| Model Complexity | High (reference baseline) | 20% reduction in parameters [2] | Significant |

| Training Time | Reference baseline | 20-40% reduction [2] | Substantial |

| Prediction Accuracy | Maintained on test sets | Maintained on test sets [2] | Comparable |

| Hardware Utilization | GPU-accelerated | Quantum-circuit enhanced GPU optimization [2] | More efficient |

Implementation Protocol:

- Network Architecture: Replace the first convolutional layer of a classical 3D CNN with a quantum circuit, reducing the number of training parameters by approximately 20% while maintaining predictive performance [2].

- Quantum Circuit Design: Employ parameterized quantum circuits within the quantum layer, constructed using PyTorch tensors for integration into the neural network's computational graph and efficient GPU optimization [2].

- Error Mitigation: For quantum hardware deployment, implement error mitigation techniques like data regression error mitigation, which remains effective with error probabilities lower than p=0.01 and circuits with up to 300 gates [2].

Figure 2: Hybrid quantum-classical CNN workflow for binding affinity prediction

Essential Research Reagents and Computational Tools

Key Datasets and Benchmarks

Table 3: Essential Research Resources for Binding Affinity Prediction

| Resource | Type | Application | Key Features |

|---|---|---|---|

| PDBbind Database | Dataset | Training & validation | Over 14,000 protein-ligand complexes with binding affinity data [2] |

| PDBbind CleanSplit | Curated Dataset | Robust evaluation | Structure-filtered version eliminating train-test leakage [5] |

| CASF Benchmark | Benchmark Suite | Performance assessment | Standardized test sets for scoring function comparison [5] |

| DUD-E Dataset | Dataset | Virtual screening training | 102 targets, >20,000 active molecules, >1 million decoys [4] |

| CSAR-NRC HiQ | Dataset | Pose prediction training | 466 ligand-bound co-crystals of distinct targets [4] |

Software and Implementation Tools

Researchers should familiarize themselves with several key software frameworks and tools:

- TenCirChem: A Python library for quantum computational chemistry that facilitates the implementation of variational quantum eigensolver (VQE) workflows for molecular property calculations [3].

- Smina: A molecular docking software package used for generating protein-ligand poses for training datasets, utilizing the AutoDock Vina scoring function with enhancements [4].

- RDKit: Open-source cheminformatics software used for generating 3D ligand conformers and manipulating chemical structures in preparation for docking studies [4].

- PyTorch Quantum Integration: Custom integration of quantum circuits as layers in classical deep learning models, represented as PyTorch tensors for seamless GPU acceleration [2].

The computational bottleneck in binding affinity prediction stems from fundamental limitations in classical approaches, including the high computational cost of physics-based methods, simplified approximations in traditional scoring functions, and the massive parameter requirements of deep learning models. These challenges are further compounded by dataset issues such as train-test leakage and redundancy, which artificially inflate performance metrics and limit real-world generalization.

Hybrid quantum-classical neural networks represent a promising direction for addressing these bottlenecks, demonstrating significant reductions in model complexity (20%) and training time (20-40%) while maintaining predictive accuracy [2]. As quantum hardware continues to advance and error mitigation techniques improve, these hybrid approaches are poised to overcome the current limitations of purely classical methods, potentially revolutionizing the role of computational approaches in drug discovery pipelines.

Future research should focus on developing more robust dataset splitting methodologies, advancing quantum error correction techniques for deeper quantum circuits, and exploring novel quantum neural network architectures specifically optimized for molecular property prediction tasks.

The pursuit of quantum advantage—the point where quantum computers solve problems that are practically infeasible for classical computers—represents a paradigm shift in computational science. Within machine learning (ML), this translates to developing quantum algorithms that offer superior efficiency or performance for specific learning tasks. In the Noisy Intermediate-Scale Quantum (NISQ) era, characterized by quantum processors with limited qubit counts and without full error correction, the most viable path toward this advantage lies in hybrid quantum-classical architectures. These systems leverage the unique capabilities of quantum processors for specific subroutines while relying on classical computers for the remainder of the computation [6]. For applied fields like drug discovery, this hybrid approach is already demonstrating tangible benefits, such as reduced computational complexity and faster training times for complex models like Convolutional Neural Networks (CNNs) used in molecular property prediction [2].

This document details the core quantum principles underpinning these advances, provides structured experimental data, and outlines specific protocols for implementing hybrid quantum-classical neural networks. The content is framed within the critical task of binding affinity prediction, a central challenge in drug design where accurately selecting candidate molecules from vast pools can drastically reduce experimental costs [2] [7].

Core Quantum Principles for Machine Learning

Quantum computing harnesses unique phenomena from quantum mechanics to process information in ways fundamentally different from classical computers. Three principles are particularly critical for machine learning applications.

Superposition

In classical computing, a bit exists in one state: 0 or 1. A quantum bit (qubit), however, can exist in a superposition of both 0 and 1 states simultaneously. This is represented as |ψ⟩ = α|0⟩ + β|1⟩, where α and β are complex numbers denoting the probability amplitudes of each state. This property allows a quantum computer to explore a vast number of possibilities in parallel. For instance, a register of n qubits in superposition can simultaneously represent 2^n different states, enabling quantum algorithms to process information on an exponentially larger scale than classical counterparts with the same number of bits [8] [9].

Entanglement

Entanglement is a powerful correlation that can exist between qubits. When qubits become entangled, the state of one qubit cannot be described independently of the state of the others; measuring one instantly influences the result of measuring the other, no matter the physical distance between them. This "spooky action at a distance," as Einstein called it, allows quantum computers to create highly complex, correlated states that are intractable to simulate on large classical computers. In ML, entanglement can be harnessed to build sophisticated models that capture intricate relationships within data [8] [9].

Interference

Quantum interference is the process by which the probability amplitudes of qubit states combine. Through careful algorithm design, these waves can be made to undergo constructive interference for correct answers (increasing their probability) and destructive interference for wrong answers (decreasing their probability). This process of amplifying solutions and canceling out noise is the mechanism that distills the vast potential of superposition into a focused, correct output when the qubits are measured. Interference is, in essence, the engine that drives quantum computation toward a useful result [8] [9].

Application in Drug Discovery: Binding Affinity Prediction

Predicting the binding affinity between a potential drug molecule and its target protein is a cornerstone of computational drug discovery. Classical deep learning methods, particularly 3D Convolutional Neural Networks (3D CNNs), have shown superior performance by learning directly from the atomic structure of protein-ligand pairs. However, these models are exceptionally complex and time-intensive to train, creating a significant bottleneck [2].

The Hybrid Quantum-Classical CNN Approach

A promising solution is the integration of a quantum layer into a classical CNN architecture. In one demonstrated approach, the first convolutional layer of a classical 3D CNN is replaced with a variational quantum circuit [2] [10]. This hybrid model is applied to the same 3D grid representations of protein-ligand complexes, such as those from the PDBBind dataset. The quantum circuit is responsible for the initial feature extraction from the high-dimensional input data.

Demonstrated Performance Advantages

Empirical results on standardized datasets reveal the practical benefits of this hybrid approach. The table below summarizes key performance metrics comparing a classical 3D CNN to its hybrid quantum-classical counterpart.

Table 1: Performance comparison of classical and hybrid quantum-classical CNNs for binding affinity prediction on the PDBBind dataset. [2]

| Model Type | Model Complexity | Training Time Savings | Performance on Test Set |

|---|---|---|---|

| Classical 3D CNN | Baseline | Baseline | Optimal |

| Hybrid Quantum-Classical CNN | 20% reduction | 40% reduction | Maintains optimal performance |

This quantitative data shows that the hybrid model achieves a 20% reduction in model complexity (number of trainable parameters) while maintaining the predictive performance of the classical CNN. Furthermore, the training process is significantly accelerated, yielding up to a 40% reduction in training time. This substantial speed-up can greatly accelerate the iterative process of model design and hyperparameter tuning in drug discovery pipelines [2].

Experimental Protocols for Hybrid Quantum-Classical CNNs

This section provides a detailed methodology for implementing and evaluating a hybrid quantum-classical CNN for binding affinity prediction, as validated by recent research.

The following diagram illustrates the end-to-end experimental workflow, from data preparation to model evaluation.

Detailed Methodology

Data Preparation and Preprocessing

- Data Source: Utilize the PDBBind database (e.g., the 2020 refined and core sets) [2]. The refined set is used for training and validation, while the core set is reserved for independent testing.

- 3D Representation: For each protein-ligand complex, generate a 3D voxelized grid. Each voxel encodes atomistic and chemical features based on the spatial positions of atoms within the complex [2].

- Data Splitting: Partition the refined set into training and validation subsets (e.g., an 80/20 split) to monitor training and employ early stopping.

Quantum Circuit Design and Integration

- Circuit Architecture: The hybrid model replaces the first convolutional layer with a parameterized quantum circuit. The design of this circuit is critical.

- Qubit Encoding: Map the input features from a local 3D patch of the grid onto the initial state of a multi-qubit system. This can be achieved through angle-encoding techniques (e.g., using rotation gates).

- Variational Ansatz: Employ a hardware-efficient variational ansatz composed of layers of single-qubit rotation gates (RY, RZ) and two-qubit entangling gates (CNOT). The structure should facilitate the creation of superposition and entanglement.

- Measurement: Measure the qubits in the computational basis to obtain a classical output value. This output, which is a function of the input data and the variational parameters, becomes the feature map for the subsequent classical layers.

Model Training and Evaluation

- Training Loop:

- Perform a forward pass: the 3D input is processed by the quantum layer, then by the remaining classical convolutional and fully connected layers.

- Calculate the loss (e.g., Mean Squared Error) between the predicted and experimental binding affinity.

- Use a classical optimizer (e.g., Adam) to update both the parameters of the quantum circuit and the weights of the classical layers via backpropagation.

- Early Stopping: Halt training when performance on the validation set converges (e.g., after approximately 50 epochs) to prevent overfitting [2].

- Performance Metrics: Evaluate the final model on the held-out core test set using multiple metrics: Root Mean Squared Error (RMSE), Mean Absolute Error (MAE), Coefficient of Determination (R²), Pearson correlation coefficient, and Spearman correlation coefficient [2].

The Scientist's Toolkit: Essential Research Reagents & Materials

Successful implementation of hybrid quantum-classical models requires a suite of specialized software and hardware tools. The table below catalogs the key components.

Table 2: Essential research reagents and computational tools for hybrid quantum-classical ML in drug discovery.

| Tool / Resource | Type | Function & Application |

|---|---|---|

| PDBBind Database | Dataset | A curated collection of experimental protein-ligand complexes with binding affinity data, serving as the primary benchmark [2]. |

| Quantum Simulator (e.g., Qiskit, Cirq) | Software | A classical software tool that emulates a quantum computer, used for algorithm design, testing, and debugging without requiring quantum hardware [2]. |

| PyTorch / TensorFlow | Software | Classical machine learning frameworks with automatic differentiation; essential for building and training the hybrid model end-to-end [2]. |

| Variational Quantum Circuit (VQC) | Algorithm | The parameterized quantum program that acts as a layer within the neural network, performing feature extraction on input data [2]. |

| NISQ Quantum Processor | Hardware | Current-generation quantum hardware (e.g., superconducting qubits, trapped ions) for running optimized quantum circuits in final validation [6] [9]. |

The integration of quantum computing principles with classical machine learning presents a compelling path toward a tangible quantum advantage in practical domains like drug discovery. By leveraging superposition, entanglement, and interference, hybrid quantum-classical CNNs can achieve performance parity with state-of-the-art classical models while demonstrating significant reductions in model complexity and training time. The protocols and tools outlined herein provide a foundational framework for researchers and scientists to explore and advance this cutting-edge paradigm. As quantum hardware continues to mature, these hybrid approaches are poised to become indispensable tools for accelerating the pace of drug design and development.

The accurate prediction of protein-ligand binding affinity is a cornerstone of computational drug discovery, as it directly influences the identification of potential therapeutic compounds [2] [11]. Traditional methods, including molecular dynamics simulations and physics-based calculations, are often hampered by high computational costs and extensive time requirements, creating bottlenecks in the drug development pipeline [2]. The advent of deep learning, particularly convolutional neural networks (CNNs), has demonstrated superior performance in binding affinity prediction by learning directly from atomic structures without relying on hand-curated features [2] [12]. However, these classical models are becoming increasingly complex and resource-intensive as dataset sizes grow, with the PDBBind database expanding from 800 complexes in 2002 to over 14,000 samples in 2020 [2].

Quantum machine learning (QML) has emerged as a promising paradigm to address these computational challenges. By leveraging fundamental quantum mechanical principles such as superposition and entanglement, quantum computers can process information in ways that are theoretically intractable for classical systems [11] [13]. The current practical implementation of these advantages comes through hybrid quantum-classical architectures, which integrate specialized quantum circuits within classical deep learning frameworks [2] [14]. These hybrid models strategically deploy quantum processing where it provides maximal benefit while relying on established classical methods for other computational tasks, creating a synergistic relationship that enhances overall efficiency and performance in binding affinity prediction [2] [11] [12].

Performance Benchmarks of Hybrid Architectures

Quantitative Performance Metrics

Empirical studies demonstrate that hybrid quantum-classical models achieve competitive performance while offering significant efficiency gains. The table below summarizes key quantitative findings from recent research on hybrid architectures for binding affinity prediction.

Table 1: Performance Metrics of Hybrid Quantum-Classical Models for Binding Affinity Prediction

| Model Architecture | Performance Metrics | Efficiency Gains | Reference |

|---|---|---|---|

| Hybrid Quantum-Classical CNN | Maintains performance of classical counterpart (Similar RMSE, MAE, R²) | 20% reduction in model complexity; 20-40% training time savings | [2] [7] |

| Multilayer Perceptron QNNs | ~20% higher accuracy on one unseen dataset; Lower accuracy on others | Training times "several orders of magnitude shorter" than classical | [13] |

| Hybrid Quantum Neural Network (HQNN) | Comparable or superior to classical neural networks | Achieved parameter-efficient model feasible for NISQ devices | [11] |

| Residual Hybrid Quantum-Classical Model | Up to 55% accuracy improvement over quantum baselines | Maintains low computational cost; enhances privacy | [15] |

Comparative Analysis of Architectural Efficiency

The performance advantages of hybrid architectures extend beyond raw accuracy metrics. Hybrid quantum-classical convolutional neural networks have demonstrated the capability to reduce model complexity by 20% while maintaining prediction performance comparable to fully classical models [2]. This complexity reduction directly translates to significant cost and time savings of up to 40% during the training stage, substantially accelerating the drug design process [2] [7]. Furthermore, hybrid models exhibit faster convergence and stabilization during training, achieving optimal performance in fewer epochs compared to classical counterparts [12].

The efficiency gains are particularly notable in scenarios with limited data availability. Quantum blocks function as compact, efficient learning modules that enable models to learn effectively from smaller datasets while reducing edge-case errors and maintaining stronger performance on complex or noisy inputs [14]. This sample efficiency is valuable in drug discovery contexts where experimentally determined binding affinities remain scarce and expensive to obtain. Additionally, certain hybrid quantum-classical architectures have demonstrated enhanced privacy protections against inference attacks, achieving stronger privacy guarantees without explicit noise injection techniques that typically reduce accuracy [15].

Experimental Protocols for Hybrid Model Implementation

Protocol 1: Hybrid Quantum-Classical CNN for 3D Structural Data

This protocol outlines the methodology for implementing a hybrid quantum-classical CNN that processes 3D structural data of protein-ligand complexes, based on the approach detailed by Domingo et al. [2].

Data Preparation and Preprocessing:

- Dataset Curation: Utilize the PDBBind dataset (2020 release), containing over 14,000 protein-ligand complexes with experimentally determined binding affinities. Partition data into training (refined set), validation, and test (core set) subsets following standard practice in the field [2].

- 3D Structural Representation: Represent each protein-ligand complex as a 3D grid structure with voxel resolution of 1.0 Å. Each grid point encodes atom properties including atom type, partial charge, and interaction features, creating a 4D tensor (width × depth × height × channels) for each complex [2].

- Data Normalization: Apply z-score normalization to continuous features and one-hot encoding to categorical atom type features to ensure optimal quantum circuit performance.

Hybrid Model Architecture:

- Classical Feature Extraction: Implement initial classical convolutional layers with 3D kernels to process input grids and extract spatial-hierarchical features from the protein-ligand structures.

- Quantum Layer Integration: Replace the first fully-connected layer with a quantum circuit consisting of approximately 300 quantum gates. Employ angle embedding for data encoding and a parameterized quantum circuit with rotational gates (RZ, RY, RX) and entangling gates (CNOT) to create a rich feature space [2].

- Measurement and Classical Post-Processing: Perform measurement in the Z-basis to extract classical outputs from the quantum circuit. Feed these quantum-processed features into subsequent classical fully-connected layers for final binding affinity prediction.

Training Configuration:

- Loss Function: Use Mean Squared Error (MSE) between predicted and experimental binding affinities (pKd values) as the primary optimization metric.

- Optimization: Employ the Adam optimizer with an initial learning rate of 0.001 and batch size of 32. Implement early stopping with a patience of 15 epochs based on validation set performance.

- Validation Metrics: Monitor multiple error metrics including Root Mean Squared Error (RMSE), Mean Absolute Error (MAE), Coefficient of Determination (R²), Pearson correlation coefficient, and Spearman correlation coefficient to ensure comprehensive model evaluation [2].

Figure 1: Workflow for Hybrid Quantum-Classical CNN Protocol

Protocol 2: HQDeepDTAF Framework for Sequence and Structural Data

This protocol details the implementation of the Hybrid Quantum DeepDTAF (HQDeepDTAF) framework, which processes protein and ligand information without requiring full 3D complex structures [11].

Multi-Modal Data Preparation:

- Protein Data Processing:

- Extract amino acid sequences from protein data banks.

- Encode sequences using learned embeddings (128-dimensional).

- For binding pocket information, extract discontinuous sequences and secondary structure elements (SSEs).

- Ligand Data Processing:

- Represent compounds using Simplified Molecular Input Line Entry System (SMILES) strings.

- Tokenize SMILES strings and embed them using learned embeddings (128-dimensional).

- Feature Concatenation: Concatenate protein and ligand representations to form a unified feature vector for each protein-ligand pair.

Hybrid Quantum Neural Network Design:

- Classical Encoder: Process protein and ligand inputs through separate classical neural networks (CNNs for proteins, GNNs or CNNs for ligands) to generate fixed-size feature representations.

- Quantum Module: Implement a hybrid quantum neural network (HQNN) with data re-uploading strategy to enhance expressivity without increasing qubit count. Use 4-8 qubits with alternating rotation and entanglement layers, maintaining circuit depth compatible with NISQ device limitations [11].

- Hybrid Embedding Scheme: Employ a feature map that reduces classical feature dimensions to match available qubit count while preserving critical information through principal component analysis or trainable classical compression layers.

Training and Noise Mitigation:

- Variational Training: Utilize gradient-based optimization with parameter-shift rules to train quantum circuit parameters alongside classical network weights.

- Noise Simulation and Mitigation: For deployment on real quantum hardware, implement error mitigation strategies including zero-noise extrapolation and measurement error mitigation to enhance result fidelity in noisy environments [11].

- Validation on Benchmark Sets: Evaluate model performance on curated test sets containing entirely unseen samples to assess generalization capability and quantum advantage [13].

Table 2: Research Reagent Solutions for Hybrid Quantum-Classical Experiments

| Resource Category | Specific Tools & Platforms | Primary Function | Implementation Considerations |

|---|---|---|---|

| Quantum Software Frameworks | CUDA-Q, PyTorch (with quantum extensions) | Quantum circuit design, simulation, and hybrid model training | Enables GPU-accelerated quantum simulations; Provides automatic differentiation [2] [16] |

| Quantum Hardware Platforms | ORCA Computing PT-1, Photonic QPUs | Execution of quantum circuits with real quantum effects | Room-temperature operation; 4 photons in 8 optical modes; ~600W power consumption [16] |

| Classical Computational Resources | NVIDIA H100/V100 GPUs, AWS ParallelCluster | Accelerated training of classical components and quantum simulations | Essential for processing large molecular datasets and complex model architectures [17] [16] |

| Datasets | PDBBind (2020), Binding affinity databases | Training and validation data for model development | Contains 14,000+ protein-ligand complexes with experimental binding affinities [2] [11] |

| Workload Management | Slurm, AWS Batch, Hybrid Job Schedulers | Orchestration of hybrid quantum-classical workflows | Manages resource allocation between CPU, GPU, and QPU resources [16] |

Implementation Considerations for Research Applications

Hardware and Software Integration

Successfully implementing hybrid quantum-classical models requires careful consideration of the hardware and software ecosystem. The integration of quantum processing units (QPUs) with high-performance computing (HPC) environments represents a significant advancement in making quantum resources accessible to researchers [16]. Platforms such as NVIDIA CUDA-Q provide a unified programming model for hybrid algorithms, enabling seamless execution across CPU, GPU, and QPU resources from within a single program [16]. This integration is particularly valuable for variational quantum algorithms that require iterative feedback loops between classical optimization routines and quantum circuit execution.

For drug discovery researchers, cloud-based quantum computing services such as Amazon Braket offer managed access to multiple quantum hardware providers, high-performance simulators, and tools for hybrid quantum-classical algorithms [17]. These services are integrated with established AWS infrastructure, allowing research teams to incorporate quantum resources into existing computational workflows without significant infrastructure investments. When designing hybrid models, researchers should consider implementing modular architectures where quantum components can be easily substituted with classical simulations during development and deployed to actual quantum hardware for production runs [14] [17].

Optimization Strategies for NISQ-Era Devices

Current quantum hardware operates in the Noisy Intermediate-Scale Quantum (NISQ) era, characterized by limited qubit counts, short coherence times, and vulnerability to environmental noise [11]. To achieve practical results under these constraints, researchers should adopt several key strategies:

Circuit Design Optimization: Implement shallow quantum circuits with minimal gate depth to reduce susceptibility to decoherence and gate errors. Studies demonstrate that "small and shallow quantum circuits win" in the NISQ era, as large, deep circuits remain slow and unreliable [14].

Qubit Efficiency: Employ encoding strategies that maximize information density per qubit. Angle embedding maintains constant circuit depth but requires O(N) qubits, while amplitude encoding provides logarithmic qubit scaling with respect to input size but induces polynomially increasing circuit depth [11].

Error Mitigation: Incorporate advanced error mitigation techniques such as zero-noise extrapolation, measurement error mitigation, and probabilistic error cancellation to enhance the fidelity of quantum computations despite hardware imperfections [2] [11].

Strategic Placement: Carefully select where to insert quantum components within classical architectures. Research indicates that "placement matters far more than quantity" - a single well-chosen insertion point will outperform scattering quantum layers throughout the model [14]. Common effective patterns include quantum heads (Q-Head) placed before final decision layers or quantum pooling (Q-Pool) replacing conventional pooling operations [14].

Figure 2: Advanced Hybrid Architecture with Residual Connections

Hybrid quantum-classical architectures represent a pragmatic and promising approach to enhancing binding affinity prediction in computational drug discovery. By strategically integrating quantum circuits within established classical deep learning frameworks, researchers can already achieve significant efficiency gains including reduced model complexity, faster training times, and improved parameter efficiency [2] [13] [12]. The experimental protocols outlined in this document provide practical methodologies for implementing these hybrid models, with considerations for both 3D structural data and sequence-based representations of protein-ligand interactions.

As quantum hardware continues to advance, hybrid architectures are poised to deliver increasingly substantial advantages. Future research directions should focus on developing standardized benchmarking methodologies for hybrid quantum-classical models, exploring novel quantum architectures specifically designed for molecular representation learning, and establishing best practices for deploying these models in production drug discovery pipelines. The integration of hybrid quantum-classical approaches with emerging computational paradigms, such as federated learning for privacy-preserving multi-institutional collaborations, presents particularly promising opportunities for advancing drug discovery while protecting sensitive intellectual property [15].

In computational drug discovery, accurately predicting the binding affinity between a protein and a ligand is a fundamental yet challenging task. Classical computational methods, including deep learning, have made significant progress but face challenges related to computational intensity and model complexity [18] [2]. The emergence of hybrid quantum-classical convolutional neural networks (QCCNNs) offers a promising pathway to overcome these limitations by leveraging the unique properties of quantum mechanics [2] [12].

This application note details the core quantum properties of superposition and entanglement, and explains their specific roles in enhancing feature extraction within QCCNNs for binding affinity prediction. We provide experimental protocols, quantitative performance comparisons, and implementation guidelines to enable researchers to leverage these quantum advantages in their computational workflows.

Theoretical Foundation: Key Quantum Properties

Quantum Superposition

Superposition is a fundamental quantum principle that distinguishes quantum bits (qubits) from classical bits. Unlike a classical bit, which is definitively in a state of 0 or 1, a qubit can exist in a linear combination of both states simultaneously [19] [20].

- Mathematical Representation: A qubit's state |ψ⟩ is described as |ψ⟩ = α|0⟩ + β|1⟩, where α and β are complex numbers called probability amplitudes. The probability of measuring |0⟩ is |α|² and |1⟩ is |β|², with |α|² + |β|² = 1 (Born rule) [20] [21].

- Computational Impact: This capability allows a quantum computer with

nqubits to represent 2ⁿ possible states concurrently. This exponential scaling underpins quantum parallelism, enabling quantum algorithms to evaluate multiple solutions simultaneously [20].

Quantum Entanglement

Entanglement is a powerful quantum phenomenon where two or more qubits become intrinsically correlated. The quantum state of each qubit cannot be described independently of the others, even when physically separated [19].

- Computational Impact: Entanglement creates non-classical correlations that exponentially increase the expressive power of a quantum computer. When combined with superposition,

nentangled qubits can manipulate a state space of 2ⁿ dimensions, a feat that would require an exponential amount of classical computational resources to simulate [19] [21].

Table 1: Computational Power Scaling with Qubit Count

| Number of Qubits (n) | Equivalent Classical States (2ⁿ) | Classical Computing Equivalent |

|---|---|---|

| 2 | 4 | 4 Bits |

| 13 | 8,192 | 1 Kilobyte (KB) |

| 50 | ~1.13 × 10¹⁵ | 1 Petabyte (PB) |

| 100 | ~1.27 × 10³⁰ | 1 Exabyte (EB) |

| 300 | ~2.04 × 10⁹⁰ | Incalculably large |

The Role of Quantum Properties in Feature Extraction for Drug Discovery

In hybrid QCCNNs, classical layers first perform initial feature extraction from raw input data, such as 3D molecular structures [2]. These features are then encoded into a quantum circuit, where superposition and entanglement perform a non-linear transformation, mapping the data into a high-dimensional quantum feature space.

Enhanced Representation of Molecular Data

- Superposition enables the simultaneous analysis of multiple molecular configurations or interaction patterns within a single quantum state [20]. This allows the model to explore a vast chemical space more efficiently than classical models.

- Entanglement captures complex, non-local interactions between different parts of a molecule or protein-ligand complex. It can model correlated phenomena that are challenging for classical feature extractors, such as long-range electronic interactions in a protein pocket [22].

Empirical Evidence from Binding Affinity Prediction

Studies replacing classical layers with variational quantum circuits (VQCs) in CNN architectures have demonstrated tangible benefits, as shown in the performance data below.

Table 2: Empirical Performance of Hybrid Quantum-Classical Models in Drug Discovery

| Model / Study | Dataset | Key Performance Metric | Result | Quantum Contribution |

|---|---|---|---|---|

| Hybrid Quantum CNN [2] | PDBbind (2020) | Training Parameter Count | 20% reduction vs. classical CNN | Maintained performance with fewer parameters |

| Training Time | 20-40% savings | More efficient convergence | ||

| QKDTI (QSVR) [22] | Davis | Prediction Accuracy | 94.21% | Outperformed classical models |

| KIBA | Prediction Accuracy | 99.99% | Superior generalization | |

| BindingDB | Prediction Accuracy | 89.26% | Validated on independent data | |

| VQR-based Hybrid Model [12] | - | Training Stabilization | Achieved faster stabilization | Reduced number of training epochs required |

Experimental Protocols

Protocol 1: Implementing a Hybrid Quantum-Classical CNN for Binding Affinity Prediction

This protocol outlines the steps for constructing and training a hybrid QCCNN, where a quantum circuit replaces one or more classical fully connected layers [2] [12].

Workflow Overview

Materials and Reagents Table 3: Essential Research Reagent Solutions for Hybrid QML Experiments

| Item | Function / Description | Example / Specification |

|---|---|---|

| Classical Compute Cluster | Executes classical neural network layers and data pre-processing. | High-performance GPU (e.g., NVIDIA A100/A6000) |

| Quantum Processing Unit (QPU) or Simulator | Executes quantum circuits. For NISQ era, simulators are often used. | IBM Quantum, Google Cirque, Amazon Braket, CUDA-enabled simulators (e.g., NVIDIA cuQuantum) |

| Quantum Machine Learning Framework | Provides libraries for building and training hybrid models. | Pennylane, Qiskit Machine Learning, TorchQuantum |

| Biomolecular Dataset | Curated dataset of protein-ligand complexes with binding affinity labels. | PDBbind (refined & core sets), Davis, KIBA, BindingDB |

Procedure

Data Preprocessing and Feature Extraction [2] [12]

- Input: 3D structures of protein-ligand complexes from PDBbind.

- Process: Represent the complex as a 3D grid (e.g., 20Å × 20Å × 20Å). Each voxel encodes atom properties (type, charge, etc.).

- Action: Pass the 3D grid through a classical 3D CNN to extract high-level feature maps.

- Output: Flatten the feature maps into a 1D feature vector.

Quantum Feature Mapping [18] [22]

- Input: The classical feature vector from Step 1.

- Action: Normalize the feature vector and encode it into a quantum state using a parameterized gate strategy like angle embedding. Each feature value rotates a specific qubit around a Bloch sphere axis (e.g., using RY/RZ gates).

- Rationale: This step maps the classical data non-linearly into a high-dimensional quantum Hilbert space.

Variational Quantum Circuit (Feature Transformation) [18] [12]

- Action: Apply a sequence of parameterized quantum gates (e.g., entangling gates like CNOT or CZ, followed by single-qubit rotation gates) to the encoded state.

- Role of Entanglement: The entangling gates create correlations between qubits, enabling the model to capture complex, non-linear relationships within the feature data.

- Role of Superposition: The circuit operates on a superposition of all possible basis states, allowing for complex computations on the feature representation simultaneously.

Measurement and Classical Post-Processing [2]

- Action: Measure the expectation values of a set of observables (e.g., Pauli-Z operator on each qubit). This process collapses the superposition and yields classical numerical values.

- Action: Feed these classical outputs into a final classical fully-connected regression layer to produce the final predicted binding affinity value (e.g., pKd or pKi).

Protocol 2: Evaluating Quantum Expressibility and Entangling Capability

This protocol is critical for designing an effective quantum circuit, ensuring it is sufficiently powerful (expressive) for the task without being prohibitively deep for NISQ devices [18] [11].

Logical Flow of Circuit Design and Evaluation

Procedure

Define a Circuit Ansatz: Choose a parameterized quantum circuit architecture, specifying the number of qubits, the number of layers (depth), and the types of gates (e.g., RY, RZ, CNOT).

Quantify Expressibility [18]:

- Method: Generate a large set of parameters for the circuit and collect the resulting output states. Compare the distribution of these states to the distribution of states from a Haar-random unitary (which has maximum expressibility) using a statistical distance metric (e.g., Kullback-Leibler divergence).

- Outcome: A lower divergence indicates higher expressibility, meaning the circuit can generate a wider range of quantum states.

Quantify Entangling Capability [18]:

- Method: Use metrics such as the entanglement entropy or the Meyer-Wallach measure.

- Action: Prepare the circuit in a simple initial state (e.g., |0⟩^⊗n), run the circuit with randomized parameters, and calculate the chosen entanglement metric on the output state.

- Outcome: This measures the circuit's ability to generate entanglement between qubits, which is directly linked to its computational power.

Noise Simulation and Model Selection:

- Action: Run the candidate circuits on a quantum simulator that incorporates noise models (e.g., gate error, decoherence) representative of real NISQ hardware.

- Goal: Select the circuit that offers the best trade-off between high expressibility/entanglement and resilience to noise, leading to a robust and efficient hybrid model.

The Noisy Intermediate-Scale Quantum (NISQ) era represents a pivotal period in computational science, characterized by quantum processors containing from 50 to a few hundred qubits that operate without full error correction [23] [24]. For drug discovery researchers and pharmaceutical development professionals, this era presents both significant constraints and unprecedented opportunities. The practical application of NISQ devices faces fundamental challenges including limited qubit coherence times, gate infidelities, and restricted qubit connectivity [24] [25]. However, through carefully designed hybrid quantum-classical frameworks, these limitations can be mitigated to tackle specific, high-value problems in the drug discovery pipeline.

Central to this endeavor is the integration of quantum computing with classical machine learning architectures, particularly for critical tasks like binding affinity prediction. The hybrid quantum-classical convolutional neural network (HQ-CNN) represents an emerging paradigm that leverages quantum computational advantages while operating within current hardware constraints [2] [7] [10]. This application note examines the practical implementation of such approaches, providing detailed protocols and analytical frameworks to guide researchers in leveraging NISQ-era technologies for drug discovery applications.

NISQ-Era Constraints: A Realistic Assessment

Hardware Limitations and Their Implications

Table 1: Primary NISQ Hardware Constraints and Research Implications

| Constraint Category | Specific Limitations | Practical Research Implications |

|---|---|---|

| Qubit Scale | ~50-1000 qubits (e.g., IBM Condor: 1,121 qubits) [25] | Limits system size for molecular simulations; necessitates active space approximations and embedding techniques [3] |

| Coherence Times | Limited decoherence times (micro- to milliseconds) [24] | Restricts quantum circuit depth and complexity; requires shallow ansatz designs [24] |

| Gate Infidelities | Error rates ~0.1-1% for single- and two-qubit gates [24] | Introduces computational inaccuracies; necessitates error mitigation strategies [24] [3] |

| Qubit Connectivity | Restricted connectivity (e.g., heavy-hex lattice in IBM processors) [25] | Impacts ansatz design efficiency; may require additional SWAP operations increasing circuit depth [24] |

| Measurement Fidelity | Readout errors typically ~1-3% [24] | Affects result reliability; demands measurement error mitigation techniques [24] |

Algorithmic Constraints in the NISQ Context

The hardware limitations of NISQ devices directly constrain algorithmic design, particularly for quantum chemistry applications. Deep quantum circuits required for exact molecular simulations exceed current coherence times, necessitating approximate methods [26] [3]. The Variational Quantum Eigensolver (VQE) has emerged as a leading algorithmic framework for molecular energy calculations, employing parameterized quantum circuits with classical optimization loops [24] [3]. This approach trades circuit depth for increased measurement counts, aligning better with NISQ constraints than quantum phase estimation algorithms that require deeper circuits.

For binding affinity prediction, the hybrid quantum-classical convolutional neural network represents another adaptive framework, where specific convolutional layers are replaced with quantum circuits designed to process high-dimensional data more efficiently [2] [7]. This approach reduces the classical parameter count by approximately 20% while maintaining predictive accuracy, demonstrating how strategic quantum-classical partitioning can optimize within NISQ constraints [2].

Hybrid Quantum-Classical CNN for Binding Affinity Prediction: Application Protocol

Experimental Workflow and Implementation

The following diagram illustrates the complete workflow for implementing a hybrid quantum-classical CNN for binding affinity prediction:

Research Reagent Solutions

Table 2: Essential Research Reagents and Computational Tools for HQ-CNN Implementation

| Tool/Category | Specific Examples | Function/Purpose | Implementation Notes |

|---|---|---|---|

| Quantum Software Platforms | IBM Qiskit, Google Cirq, Amazon Braket | Quantum circuit design, simulation, and execution | Qiskit particularly suited for NISQ algorithm development with error mitigation modules [24] |

| Classical Machine Learning Frameworks | PyTorch, TensorFlow with quantum plugins | Classical neural network implementation and hybrid training loops | PyTorch enables gradient computation through quantum circuits via parameter-shift rules [2] |

| Chemical Datasets | PDBBind (2020 version: 14,000+ complexes) [2] | Training and validation data for binding affinity prediction | Core set used for testing; refined set for training/validation with early stopping [2] |

| Quantum Simulators | Qiskit Aer, Google Quantum Virtual Machine | Algorithm validation and debugging without quantum hardware access | Enable simulation of noisy quantum devices with configurable error models [24] |

| Error Mitigation Tools | Zero-Noise Extrapolation (ZNE), Measurement Error Mitigation | Enhancement of result accuracy from noisy quantum devices | ZNE particularly valuable for deep circuits; measurement mitigation essential for readout errors [24] |

| Molecular Visualization & Analysis | RDKit, PyMOL, OpenBabel | Molecular structure preprocessing and feature extraction | Critical for converting molecular structures to 3D grids for CNN input [2] |

Detailed Experimental Protocol

Data Preparation and Preprocessing

- Dataset Acquisition: Download the PDBBind dataset (2020 version containing over 14,000 protein-ligand complexes with experimentally measured binding affinities) [2].

- Data Partitioning: Divide the dataset into refined set (for training and validation) and core set (for final testing), maintaining temporal splits to avoid data leakage.

- 3D Structural Processing:

- Generate 3D voxelized representations (typically 20Å × 20Å × 20Å grids with 1Å resolution)

- Encode atom types, partial charges, and interaction properties across channels

- Apply random rotations and translations for data augmentation during training

Hybrid Model Architecture Configuration

- Classical Component: Implement 3D convolutional layers with increasing filter depth (e.g., 32, 64, 128 filters) with 3×3×3 kernels, ReLU activation, and batch normalization.

- Quantum Layer Integration:

- Replace the first convolutional layer with a quantum circuit of equivalent function

- Design parameterized quantum circuits with hardware-efficient ansatz

- Implement quantum feature maps using ZZFeatureMap or similar encoding strategies

- Quantum Circuit Design:

- Utilize hardware-efficient ansatz with alternating rotation and entanglement layers

- Employ linear qubit connectivity with parallel CNOT gates to minimize circuit depth [24]

- Implement measurement in Pauli-Z basis for expectation value extraction

The following diagram details the quantum convolutional layer design and its integration point:

Training and Optimization Protocol

- Hybrid Training Loop:

- Initialize both classical and quantum parameters using Xavier/Glorot initialization

- Utilize Adam optimizer with learning rate 0.001 and betas (0.9, 0.999)

- Implement mini-batch training with batch size 32-64 depending on available memory

- Convergence Monitoring: Track RMSE, MAE, R², Pearson, and Spearman metrics on validation set with early stopping (patience=10 epochs) to prevent overfitting [2].

- Error Mitigation Implementation:

- Apply measurement error mitigation using matrix inversion on calibration data

- Implement Zero-Noise Extrapolation (ZNE) with folding techniques for circuit-depth error mitigation

- Utilize dynamical decoupling where applicable for idle qubit coherence preservation

Performance Metrics and Benchmarking

Table 3: Performance Comparison: Classical vs. Hybrid Quantum-Classical CNN

| Performance Metric | Classical 3D CNN | Hybrid Quantum-Classical CNN | Improvement/Savings |

|---|---|---|---|

| Model Complexity (Parameters) | ~1.2M | ~960,000 | 20% reduction [2] |

| Training Time | Baseline reference | 20-40% reduction | Hardware-dependent [2] |

| Inference Time | Baseline reference | Comparable | No significant difference reported [2] |

| Prediction Accuracy (RMSE) | 1.24 pKd | 1.25 pKd | Statistically equivalent performance [2] |

| Generalization Capacity | Comparable across test sets | Maintained performance on core set | Properly designed quantum layers preserve model capacity [2] |

| Resource Consumption (Quantum) | N/A | ~300 gates, 4-8 qubits | Compatible with current NISQ devices [2] |

Complementary NISQ Applications in Drug Discovery

Molecular Energy Calculations with VQE

Beyond binding affinity prediction, VQE represents a fundamental NISQ-era algorithm for molecular energy calculations, which form the basis for more complex drug discovery simulations [24] [3]. The following protocol outlines a standardized approach for molecular energy estimation:

Molecular Hamiltonian Preparation:

- Generate molecular Hamiltonian in second quantization using STO-3G or 6-31G basis sets

- Apply qubit transformation (Jordan-Wigner or Bravyi-Kitaev) to obtain Pauli string representation

- Utilize tapering techniques to reduce qubit requirements by exploiting symmetry

Ansatz Selection and Optimization:

- Implement hardware-efficient ansatz with alternating rotation and entanglement layers

- Employ circuit depth optimization techniques (e.g., QuantumNAS) to minimize gate count [24]

- Utilize iterative gate pruning to remove redundant parameters and reduce circuit depth

Measurement Optimization:

- Implement Pauli term grouping using commutative measurements to reduce shot requirements

- Apply measurement error mitigation through calibration matrix inversion

- Utilize shot allocation strategies based on coefficient magnitudes for variance reduction

Error Mitigation Strategy Integration

Effective error mitigation is essential for obtaining meaningful results from NISQ devices. The following integrated strategy provides a comprehensive approach:

Table 4: Layered Error Mitigation Protocol for NISQ Algorithms

| Mitigation Layer | Specific Techniques | Implementation Protocol | Expected Improvement |

|---|---|---|---|

| Compilation-Level | Noise-adaptive qubit mapping, Dynamical decoupling | Map logical qubits to physical qubits with best coherence properties and lowest gate errors; insert identity gates arranged as dynamical decoupling sequences during idle periods | 10-30% error reduction depending on device noise heterogeneity [24] |

| Circuit-Level | Circuit optimization, Gate decomposition | Decompose gates to native gate set; cancel consecutive redundant gates; use commutation rules to optimize circuit depth | 5-15% reduction in circuit depth and error accumulation [24] |

| Measurement-Level | Readout error mitigation, Clustering measurements | Construct calibration matrix from preparation and measurement of all basis states; group commuting Pauli terms to reduce measurement overhead | 20-50% reduction in measurement errors; up to 80% reduction in required measurements [24] |

| Post-Processing-Level | Zero-Noise Extrapolation (ZNE), Probabilistic error cancellation | Execute same circuit at multiple noise levels (via unitary folding or pulse stretching); extrapolate to zero-noise limit; apply quasi-probability methods for error cancellation | 40-70% reduction in coherent and incoherent errors depending on circuit depth [24] |

The NISQ era presents a constrained but promising landscape for drug discovery applications. Through hybrid quantum-classical approaches such as the HQ-CNN for binding affinity prediction, researchers can already achieve meaningful computational advantages including reduced model complexity and training time savings while maintaining predictive accuracy [2]. The practical implementation of these technologies requires careful attention to hardware limitations, strategic error mitigation, and algorithm co-design optimized for current quantum processing units.

As quantum hardware continues to evolve—with roadmaps projecting 4,000+ qubit processors by 2025 and fault-tolerant quantum computing by 2029—the capabilities for drug discovery applications will expand significantly [25]. The protocols and methodologies outlined in this application note provide a foundation for researchers to develop quantum-ready capabilities today while preparing for more advanced applications in the coming years. By establishing expertise in hybrid quantum-classical algorithms and error mitigation strategies, drug discovery teams can position themselves to leverage quantum advantages as hardware capabilities mature, potentially transforming computational approaches to molecular simulation and binding affinity prediction.

Architectural Blueprints: Implementing Hybrid Quantum-Classical CNNs for Binding Affinity

The integration of quantum circuits within classical convolutional neural networks (CNNs) has emerged as a promising architectural paradigm, particularly for computationally intensive tasks like protein-ligand binding affinity prediction. These hybrid quantum-classical convolutional neural networks (HQCNNs) leverage the unique properties of quantum computation—superposition, entanglement, and interference—to create more expressive feature representations while potentially reducing classical parameter counts [2] [27]. In the specific context of drug discovery, where accurately predicting binding affinity is both computationally demanding and scientifically valuable, HQCNNs offer a pathway to maintain high predictive performance with reduced model complexity [2] [11].

The fundamental premise of the hybrid quantum-convolutional layer lies in its ability to operate within exponentially large Hilbert spaces, enabling the compact representation and manipulation of complex protein-ligand interaction patterns that are challenging for classical networks to capture efficiently [27]. This capability stems from parameterized quantum circuits (PQCs) that perform highly non-linear transformations on input data, effectively creating complex decision boundaries and feature mappings [28] [27]. When strategically positioned within classical CNN architectures, these quantum layers can enhance the network's ability to discern subtle structural determinants of binding affinity from molecular structure data.

For drug discovery professionals, the practical value of these architectures manifests in demonstrated performance improvements, including a 20% reduction in model complexity and 20-40% savings in training time while maintaining prediction accuracy comparable to fully classical models [2]. This efficiency gain is particularly valuable in early-stage drug screening, where evaluating vast chemical spaces against target proteins requires immense computational resources. The following sections detail the structural formulation, experimental validation, and practical implementation of these hybrid layers for binding affinity prediction.

Structural Formulation of Hybrid Layers

Quantum Circuit Architecture and Components

The hybrid quantum-convolutional layer functions as a feature transformation module, typically replacing an early convolutional layer in a classical CNN [2]. Its core component is a parameterized quantum circuit (PQC) constructed from several fundamental elements:

Quantum State Encoding: Classical data (e.g., molecular features or image patches) must be encoded into quantum states. Angle encoding is frequently employed for molecular data, where classical values determine rotation angles of quantum gates [29] [11]. For example, a feature value ( xi ) might be encoded via an ( Ry(x_i) ) rotation gate. This method maintains constant circuit depth regardless of input dimension, though it requires ( O(N) ) qubits [11].

Entangling Layers: Following encoding, entangling gates create quantum correlations between qubits. The Ising coupling gate has demonstrated particular effectiveness in multi-channel image classification, outperforming more commonly used rotation gates and controlled-NOT (CNOT) gates in certain architectures [29]. These gates implement the quantum convolutional kernel, with the specific pattern of entanglement (e.g., linear chain or lattice configurations) significantly influencing performance.

Variational Parameters: The quantum circuit contains trainable parameters (( \theta )) that are optimized classically. These typically correspond to angles in rotation gates (e.g., ( Rx(\thetai), Ry(\thetai), Rz(\thetai) )) and are adjusted during training to minimize the binding affinity prediction error [28] [27].

Quantum Measurement: The final component involves measuring the quantum state to extract classical features for subsequent layers. Expectation values of Pauli operators (e.g., ( \langle Z \rangle )) are commonly used, generating feature maps that are passed to classical layers [27].

Table 1: Core Components of a Hybrid Quantum-Convolutional Layer

| Component | Implementation Options | Key Considerations |

|---|---|---|

| State Encoding | Angle encoding, amplitude encoding, basis encoding | Angle encoding balances efficiency with NISQ feasibility [11] |

| Entanglement | Ising coupling gates, CNOT gates, CZ gates | Ising gates show advantage for multi-channel data [29] |

| Variational Parameters | Rotation angles, gate selection parameters | Number impacts expressivity and trainability [28] |

| Measurement | Pauli expectations, quantum tomography | Pauli-Z expectations common for feature extraction [27] |

Integration with Classical Architecture

The PQC integrates with the classical CNN through a carefully designed interface. For protein-ligand binding affinity prediction, molecular structures are typically represented as 3D grids [2]. The hybrid processing follows this sequence:

Classical Pre-processing: Input complexes are converted to 3D structural representations, with atomic properties mapped to grid values.

Quantum Convolution: Local patches from the 3D grid are encoded into quantum states using angle encoding [2] [11]. The PQC processes these patches, with measurement results forming output feature maps.

Channel Handling: For multi-channel inputs, different strategies exist. Some architectures process each channel through separate PQCs then combine outputs [29], while others encode inter-channel information directly into the quantum circuit [28].

Classical Post-processing: The quantum-derived features are passed to subsequent classical layers (e.g., fully connected layers) for final binding affinity prediction [11].

This integration creates a cohesive pipeline where quantum layers handle complex feature transformation while classical layers manage broader pattern recognition and regression tasks.

Performance Analysis and Comparative Evaluation

Quantitative Performance Metrics

HQCNNs for binding affinity prediction have demonstrated compelling performance advantages. In one comprehensive study, a hybrid quantum-classical 3D CNN achieved comparable accuracy to fully classical models while reducing training parameters by 20% and training time by 20-40%, depending on hardware configuration [2]. This efficiency gain is particularly valuable in drug discovery contexts where model retraining with new compounds is frequent.

For multi-class classification tasks relevant to molecular interaction profiling, quantum-convolutional fusion has shown accuracy improvements across various datasets. On CIFAR-10 image classification (a proxy for complex feature learning), one hybrid model achieved 92.40% accuracy using Ising coupling gates [29]. Another study reported a 94.3% accuracy in the Lab color space, outperforming classical CNN performance of 92.8% in RGB space on the same architecture [28].

Table 2: Performance Comparison of HQCNN Architectures

| Architecture | Application | Key Metric | Performance | Classical Comparison |

|---|---|---|---|---|

| Hybrid 3D CNN [2] | Binding Affinity Prediction | Parameter Reduction | 20% fewer parameters | Comparable accuracy |

| Hybrid 3D CNN [2] | Binding Affinity Prediction | Training Time | 20-40% reduction | Same hardware |

| MHQCNN [29] | CIFAR-10 Classification | Accuracy | 92.40% | Superior to other hybrid models |

| HQCNN-Lab [28] | Multi-space Classification | Accuracy | 94.3% | 92.8% for classical CNN |

| Distributed QCNN [30] | Medical Image Classification | Qubit Reduction | 8-qubit circuit on 5-qubit hardware | Maintained performance |

Qubit Efficiency and Scalability

A significant innovation in hybrid quantum-convolutional design is the implementation of distributed techniques via quantum circuit splitting. This approach allows an 8-qubit QCNN to be reconstructed using only 5 qubits, nearly halving the quantum resource requirements while maintaining model performance [30]. This is particularly relevant for binding affinity prediction where complex molecular interactions might otherwise require substantial quantum resources.

The scalability of these models is further enhanced through optimized encoding strategies. While amplitude encoding offers logarithmic qubit scaling with respect to input size, it typically requires circuit depths that grow polynomially with data dimension [11]. In contrast, angle encoding maintains constant circuit depth, making it more suitable for current NISQ devices, though it requires more qubits for high-dimensional data [11].

Experimental Protocols and Implementation

Workflow for Binding Affinity Prediction

Implementing a hybrid quantum-classical CNN for binding affinity prediction follows a structured workflow that integrates quantum and classical processing stages. The diagram below illustrates the complete experimental pipeline:

Protocol 1: Molecular Data Preparation and Classical Feature Extraction

Objective: Prepare protein-ligand complexes from the PDBbind database [2] [11] for input into the hybrid network.

Materials:

- PDBbind database (e.g., v2020 with ~14,000 complexes)

- Molecular visualization software (PyMOL, Chimera)

- Classical CNN (MobileNetV2 recommended [30])

Procedure:

- Data Retrieval: Download the refined set and core set of PDBbind database. The core set serves as the test set, while the refined set is divided into training and validation subsets (80/20 split) [2].

- 3D Structure Preparation:

- For each protein-ligand complex, generate a 3D grid with 1Å resolution centered on the binding pocket.

- Map atomic properties (element type, partial charge, hydrophobicity) to separate channels in the grid.

- Normalize each channel to zero mean and unit variance.

- Classical Feature Extraction:

- Implement a lightweight CNN (e.g., MobileNetV2) for initial feature extraction.

- Use the inverse residual structure with linear bottlenecks to reduce dimensionality while preserving features [30].

- Output a feature vector of reduced dimensionality for quantum processing.

- Data Augmentation:

- Apply random rotations and translations to the 3D grids.

- Add Gaussian noise with zero mean and 0.01 standard deviation to increase robustness.

Quality Control: Monitor the distribution of binding affinity values (pKd) across splits to ensure representative sampling of the affinity range.

Protocol 2: Quantum Circuit Implementation and Training

Objective: Implement and train the hybrid quantum-convolutional layer for binding affinity prediction.

Materials:

- Quantum simulation framework (TensorFlow Quantum, Pennylane)

- Parameterized quantum circuit with rotation and entangling gates

- Classical optimizer (Adam, learning rate 0.001)

Procedure:

- Quantum State Encoding:

- Implement angle encoding using ( Ry ) gates for the feature vector elements.

- For a feature vector ( x = (x1, x2, ..., xn) ), apply ( Ry(xi) ) to qubit ( i ).

- For larger feature vectors, employ dimensionality reduction or segment across multiple circuits.

- Parameterized Quantum Circuit:

- Construct a circuit with alternating layers of rotation and entanglement:

- Rotation layers: Apply ( Ry(\thetai) ) gates with trainable parameters ( \theta ).

- Entanglement layers: Implement Ising coupling gates or CNOT gates in a linear chain topology [29].

- For multi-channel data, implement inter-channel information exchange through additional two-qubit parameterized unitary operators before pooling [28].

- Construct a circuit with alternating layers of rotation and entanglement:

- Quantum Measurement:

- Measure expectation values of Pauli-Z operators on each qubit.

- For ( n ) qubits, this generates an ( n )-dimensional feature vector for the classical layers.

- Hybrid Training:

- Initialize quantum circuit parameters with uniform random values in [0, ( 2\pi )].

- Use mean squared error (MSE) loss between predicted and experimental pKd values.

- Employ early stopping with patience of 10 epochs based on validation loss.

- For limited quantum hardware access, implement a two-stage training: pretrain on simulated data, then fine-tune with experimental data [31].

Troubleshooting: If training plateaus, reduce learning rate or increase the number of entanglement layers. Monitor for barren plateaus by tracking gradient magnitudes.

Visualization of Hybrid Layer Architecture

The structural configuration of the hybrid quantum-convolutional layer involves specific quantum circuit components arranged to maximize feature extraction efficiency. The following diagram details the internal architecture of the quantum processing unit:

Research Reagent Solutions

Implementing hybrid quantum-convolutional layers requires both computational tools and conceptual frameworks. The following table details essential resources for researchers developing these architectures for binding affinity prediction:

Table 3: Essential Research Resources for Hybrid Quantum-Convolutional Implementation

| Resource Category | Specific Tool/Platform | Application in HQCNN Development |

|---|---|---|

| Quantum Simulation | TensorFlow Quantum [29] | Hybrid model integration and training |

| Quantum Simulation | Pennylane [28] | Quantum circuit definition and optimization |

| Molecular Data | PDBbind Database [2] [11] | Protein-ligand complexes with binding affinities |

| Classical Deep Learning | PyTorch/TensorFlow [2] | Classical CNN components and optimization |

| Quantum Hardware | NISQ Devices [30] [11] | Experimental validation on quantum processors |

| Encoding Methods | Angle Encoding [29] [11] | Classical-to-quantum data transformation |

| Error Mitigation | Data Regression Error Mitigation [2] | Noise handling for circuits with <300 gates |

| Circuit Optimization | Quantum Circuit Splitting [30] | Resource reduction for limited qubit devices |

The strategic fusion of quantum convolutional layers with classical neural architectures represents a promising advancement for binding affinity prediction in computational drug discovery. By leveraging parameterized quantum circuits as feature transformation modules within established CNN pipelines, researchers can achieve comparable or superior predictive performance with reduced parameter counts and training time. The structural formulations and experimental protocols detailed in this work provide a foundation for implementing these hybrid layers, with specific considerations for molecular data processing and NISQ device constraints. As quantum hardware continues to evolve, these hybrid architectures offer a practical pathway toward quantum advantage in critical pharmaceutical applications, balancing expressivity with implementation feasibility.