How Attention Mechanisms Revolutionize Binding Affinity Prediction in Drug Discovery

Abstract

This article explores the transformative role of attention mechanisms in computational models for predicting drug-target binding affinity (DTA), a critical task in modern drug discovery. Aimed at researchers and drug development professionals, it provides a comprehensive analysis spanning from foundational concepts to cutting-edge applications. The article details how attention mechanisms enable models to dynamically focus on critical molecular features, such as specific protein residues and ligand atoms, thereby improving prediction accuracy and interpretability. It covers diverse methodological implementations, including graph, sequence, and hybrid models, alongside strategies for troubleshooting common optimization challenges like gradient conflicts and data bias. Finally, the article presents a comparative validation of state-of-the-art models, highlighting performance benchmarks and the tangible impact of these AI advancements on accelerating the drug development pipeline.

The Core Concept: How Attention Mechanisms Focus on Critical Drug-Target Interactions

The process of drug discovery is notoriously slow and expensive, requiring over a decade and billions of dollars to bring a single drug to market [1]. At the heart of this challenge lies drug-target binding affinity (DTA) prediction—the computational task of determining how tightly a small molecule (drug) binds to its protein target. Accurate affinity prediction is crucial as it determines the therapeutic efficacy of a drug candidate; a molecule must bind with sufficient strength to elicit a desired biological response without causing harmful side effects [2] [3]. While traditional experimental methods for assessing binding affinities, such as high-throughput screening, are resource-intensive and often impractical for exploring vast chemical spaces, computational approaches have emerged as indispensable tools in modern medicinal chemistry [4].

The field is currently undergoing a radical transformation driven by deep learning (DL). Early computational strategies relied mainly on physics-based methods like molecular docking and molecular dynamics (MD) simulations, which provide detailed structural insights but demand extensive computational resources and accurate structural input [4] [5]. Recent advances in artificial intelligence have introduced powerful data-driven paradigms that complement and extend these physics-based strategies, leading to more accurate and efficient affinity predictions [5]. This technical guide explores the core problem of binding affinity prediction, with a particular focus on how attention mechanisms—a transformative architecture in deep learning—are advancing the state of the art in this critical domain of drug discovery.

The Evolution of Binding Affinity Prediction

From Traditional Methods to Deep Learning

Binding affinity prediction has evolved from manual feature-based approaches to sophisticated end-to-end deep learning models. Before deep learning, techniques relied primarily on statistical and classical machine learning methods that used manually curated descriptors or features of drugs and targets [6]. These methods, however, depended solely on the available clinical data and required iterative analysis with standard statistical methods, a process that is susceptible to error [6].

With the advent of deep learning, the field witnessed a paradigm shift. Deep learning models demonstrated the ability to handle large datasets, learn complex non-linear relations, and automatically extract relevant features through networks of artificial neurons, diminishing the challenge of manual feature selection [6]. Early deep learning approaches utilized simpler feature extraction methods using convolutional neural networks (CNNs) and recurrent neural networks from one-dimensional sequential information of drugs and targets [6]. While these approaches showed superior results to earlier methods, they primarily addressed drugs and proteins in their primary-structural forms, often ignoring their three-dimensional configurations and specific binding pocket information [6].

The Rise of Attention Mechanisms

Attention mechanisms have revolutionized numerous fields of artificial intelligence by enabling models to dynamically focus on the most relevant parts of their input when making predictions. In the context of binding affinity prediction, attention mechanisms provide a powerful framework for identifying critical molecular interactions that drive binding strength between drugs and their protein targets.

The fundamental principle behind attention mechanisms is their ability to assign importance weights to different components of the input data, allowing the model to emphasize features that contribute most significantly to the binding affinity while suppressing less relevant information. This capability is particularly valuable in drug discovery, where binding interactions are often governed by a sparse set of critical residues and molecular substructures rather than being uniformly distributed across the entire protein-ligand interface [4].

Attention Mechanisms in Modern DTA Prediction Models

Architectural Foundations and Implementation

Contemporary DTA prediction models implement attention mechanisms through various specialized architectures that operate at different granularities of the protein-ligand complex. The hierarchical attention framework has emerged as a particularly effective design pattern, enabling models to capture both local atomic interactions and global contextual information [4].

At the molecular level, graph attention networks (GATs) have proven highly effective for processing drug molecules represented as molecular graphs. These networks operate on atom-level features, where each node (atom) attends to its neighboring nodes to compute updated feature representations that capture both chemical properties and local topological environments [4]. For protein sequences, self-attention mechanisms (similar to those in transformer architectures) enable the model to identify functionally important residues and motifs regardless of their positional distance in the primary sequence [4].
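The neighbor-attention step described above can be sketched in a few lines. This is a minimal single-head illustration in the style of a standard graph attention layer, not the implementation used by any model cited here; the three-atom molecule, the feature vectors, the weight matrix `W`, and the attention vector `a` are all hypothetical values chosen for readability.

```python
import math

def leaky_relu(x, slope=0.2):
    return x if x > 0 else slope * x

def matvec(W, h):
    # Multiply matrix W (list of rows) by vector h.
    return [sum(w * x for w, x in zip(row, h)) for row in W]

def gat_layer(features, neighbors, W, a):
    """One single-head graph-attention update.

    features:  list of per-atom feature vectors
    neighbors: dict atom index -> list of neighbor indices (self included)
    W:         shared linear projection
    a:         attention vector of length 2 * output dimension
    """
    z = [matvec(W, h) for h in features]
    updated, attention = [], {}
    for i in range(len(features)):
        nbrs = neighbors[i]
        # Score each neighbor from the concatenated projected features.
        scores = [leaky_relu(sum(ak * v for ak, v in zip(a, z[i] + z[j])))
                  for j in nbrs]
        # Softmax over the neighborhood (shifted by the max for stability).
        m = max(scores)
        exps = [math.exp(s - m) for s in scores]
        total = sum(exps)
        alpha = [e / total for e in exps]
        attention[i] = dict(zip(nbrs, alpha))
        # New representation: attention-weighted sum of neighbor features.
        updated.append([sum(al * z[j][d] for al, j in zip(alpha, nbrs))
                        for d in range(len(z[i]))])
    return updated, attention

# Hypothetical C-C-O chain with features [is_carbon, is_oxygen].
features = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
neighbors = {0: [0, 1], 1: [0, 1, 2], 2: [1, 2]}
W = [[1.0, 0.0], [0.0, 1.0]]   # assumed projection (identity)
a = [0.1, 0.2, 0.3, 0.9]       # assumed attention parameters

updated, attention = gat_layer(features, neighbors, W, a)
```

With these assumed parameters the middle carbon places more weight on the oxygen neighbor than on the carbons, which is the kind of chemically selective weighting the paragraph describes. In a trained model, `W` and `a` are learned rather than fixed.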

Table 1: Key Attention Mechanisms in DTA Prediction

| Attention Type | Operational Scope | Key Function | Representative Model |
|---|---|---|---|
| Hierarchical Attention | Multi-scale features | Dynamically fuses local structural and global contextual information | HPDAF [4] |
| Graph Attention | Molecular graphs | Captures atom-level interactions and chemical environments | GraphDTA [2] |
| Self-Attention | Protein sequences | Identifies functionally critical residues and domains | DeepDTA variants [1] |
| Cross-Attention | Protein-ligand pairs | Models interaction patterns between drug and target features | Multimodal models [6] |
| Gradient Alignment | Multitask learning | Mitigates conflicts between affinity prediction and drug generation | DeepDTAGen (FetterGrad) [2] |

Case Study: HPDAF's Hierarchical Dual-Attention Framework

The HPDAF (Hierarchically Progressive Dual-Attention Fusion) framework exemplifies the sophisticated application of attention mechanisms in modern DTA prediction [4]. This model integrates three types of biochemical information—protein sequences, drug molecular graphs, and structural data from protein-binding pockets—through specialized feature extraction modules.

HPDAF employs a novel hierarchical attention-based mechanism that combines these diverse features through two complementary attention systems: the Modality-Aware Calibration Network (MACN) and the Attribute-Aware Calibration Network (AACN) [4]. The MACN operates as a modality-specific local feature enhancer that identifies critical patterns within each data type (sequences, graphs, pockets), while the AACN functions as a global context calibrator that captures interdependencies across different modalities [4].

This dual-attention approach enables HPDAF to dynamically emphasize the most relevant structural and sequential information, achieving a 7.5% increase in Concordance Index and a 32% reduction in Mean Absolute Error compared to DeepDTA on the CASF-2016 benchmark dataset [4]. The attention weights provide intrinsic interpretability, allowing researchers to identify which protein residues, molecular substructures, and pocket regions contribute most significantly to the predicted binding affinity.

Case Study: DeepDTAGen and the FetterGrad Algorithm

DeepDTAGen represents another innovative application of attention mechanisms through its multitask learning framework, which simultaneously predicts drug-target binding affinities and generates novel target-aware drug variants [2]. This model faces the optimization challenge of gradient conflicts between distinct tasks, which can impede convergence and reduce model performance.

To address this, DeepDTAGen introduces the FetterGrad algorithm, a novel approach that maintains gradient alignment between tasks by minimizing the Euclidean distance between their respective gradients during training [2]. This gradient regulation ensures that the shared feature space learns representations beneficial for both affinity prediction and drug generation, mitigating the biased learning that commonly plagues multitask architectures.
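To make the notion of a gradient conflict concrete, the sketch below computes the two quantities the description relies on: the Euclidean distance between two task gradients and the sign of their dot product. It is only an illustration of those quantities, not the FetterGrad update rule, whose details are given in [2]; the gradient vectors are hypothetical.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def euclidean_distance(u, v):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))

def cosine_similarity(u, v):
    return dot(u, v) / (math.sqrt(dot(u, u)) * math.sqrt(dot(v, v)))

# Hypothetical gradients of the two task losses with respect to
# the same three shared parameters.
grad_affinity = [0.8, -0.2, 0.5]
grad_generation = [-0.6, 0.1, 0.4]

distance = euclidean_distance(grad_affinity, grad_generation)
cosine = cosine_similarity(grad_affinity, grad_generation)

# A negative cosine means the tasks pull the shared parameters
# in opposing directions, i.e. the gradients conflict.
in_conflict = cosine < 0
```

A small distance and a positive cosine indicate that one update step can serve both tasks; a large distance with a negative cosine is the situation a gradient-alignment method is designed to reduce.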

The FetterGrad algorithm demonstrates how attention-inspired mechanisms can operate at the optimization process level rather than just the feature representation level, expanding the applications of attention in drug discovery pipelines. On benchmark datasets (KIBA, Davis, BindingDB), DeepDTAGen achieves competitive performance with MSE of 0.146, CI of 0.897, and r²m of 0.765 on the KIBA test set while simultaneously generating valid, novel, and unique drug candidates [2].

Experimental Protocols and Methodologies

Benchmarking Strategies and Evaluation Metrics

Rigorous evaluation of DTA prediction models requires standardized benchmark datasets and appropriate performance metrics. The most commonly used datasets include KIBA, Davis, BindingDB, and PDBbind [2] [1]. These datasets provide experimentally validated binding affinities for protein-ligand complexes, typically reported as Kd, Ki, or IC50 values, which are converted to log-scaled measurements (pKd, pKi, pIC50) for model training and evaluation [1].
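The log-scale conversion mentioned above is a one-line formula, p = -log10(value in molar). The sketch below assumes the raw measurement is given in nanomolar, which is a common convention but an assumption here; the function name is illustrative.

```python
import math

def to_p_scale(value_nm):
    """Convert a Kd, Ki, or IC50 value in nanomolar to pKd, pKi, or pIC50."""
    if value_nm <= 0:
        raise ValueError("affinity value must be positive")
    # Nanomolar -> molar, then negative base-10 logarithm.
    return -math.log10(value_nm * 1e-9)

p_strong = to_p_scale(1.0)       # 1 nM binder
p_weak = to_p_scale(10_000.0)    # 10 uM binder
```

On this scale a tighter binder receives a larger number (about 9 for 1 nM versus about 5 for 10 µM), which compresses a range spanning several orders of magnitude into values that are easier to regress.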

For the affinity prediction task, standard evaluation metrics include Mean Squared Error (MSE), Concordance Index (CI), the modified squared correlation coefficient (r²m), and Area Under Precision-Recall Curve (AUPR) [2]. The Concordance Index is particularly important as it measures the model's ability to correctly rank affinities, which is often more critical in drug discovery applications than absolute value prediction [2].
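The difference between an error metric and a ranking metric is easiest to see in code. The following sketch implements MSE and a pairwise Concordance Index in plain Python; the convention of counting tied predictions as 0.5 is a common choice but an assumption here, and the affinity values are made up for illustration.

```python
def mean_squared_error(y_true, y_pred):
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

def concordance_index(y_true, y_pred):
    """Fraction of comparable pairs whose predicted order matches the true order."""
    concordant, comparable = 0.0, 0
    n = len(y_true)
    for i in range(n):
        for j in range(i + 1, n):
            if y_true[i] == y_true[j]:
                continue  # pairs with equal true affinity are not comparable
            comparable += 1
            diff_true = y_true[i] - y_true[j]
            diff_pred = y_pred[i] - y_pred[j]
            if diff_pred == 0:
                concordant += 0.5  # tied prediction counts as half
            elif (diff_true > 0) == (diff_pred > 0):
                concordant += 1.0
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable

# Hypothetical pKd values and predictions.
y_true = [5.0, 6.2, 7.1, 8.4]
y_pred = [5.3, 6.0, 7.5, 7.2]

mse = mean_squared_error(y_true, y_pred)
ci = concordance_index(y_true, y_pred)
```

Here five of the six pairs are ordered correctly, so the CI is 5/6, even though the largest affinity is underestimated by more than one log unit. The pairwise loop is quadratic in the number of samples, which is acceptable for a sketch but slow on large test sets.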

Table 2: Performance Comparison of Recent DTA Models on Benchmark Datasets

| Model | KIBA (MSE/CI/r²m) | Davis (MSE/CI/r²m) | BindingDB (MSE/CI/r²m) | Key Innovation |
|---|---|---|---|---|
| DeepDTAGen [2] | 0.146/0.897/0.765 | 0.214/0.890/0.705 | 0.458/0.876/0.760 | Multitask learning with FetterGrad |
| HPDAF [4] | -/-/- | -/-/- | -/-/- | Hierarchical dual-attention fusion |
| GraphDTA [2] | 0.147/0.892/0.687 | -/-/- | -/-/- | Graph representation of drugs |
| GDilatedDTA [2] | -/0.918/- | -/-/- | 0.483/0.867/0.730 | Dilated convolutional layers |
| SSM-DTA [2] | -/-/- | 0.219/0.890/0.689 | -/-/- | State space models |

Addressing Data Bias and Generalization Challenges

A critical methodological consideration in DTA prediction is the potential for data leakage between training and test sets, which can severely inflate performance metrics and lead to overestimation of model capabilities [7]. Recent research has revealed that standard benchmarks exhibit a substantial level of train-test data leakage, with nearly 50% of test complexes in CASF benchmarks having highly similar counterparts in the training data [7].

To address this issue, the PDBbind CleanSplit protocol was introduced, which employs a structure-based filtering algorithm to eliminate data leakage and redundancies within the training set [7]. This algorithm assesses similarity between protein-ligand complexes using a combined evaluation of protein similarity (TM scores), ligand similarity (Tanimoto scores), and binding conformation similarity (pocket-aligned ligand RMSD) [7].
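A similarity-based leakage check of this kind can be sketched as follows. The Tanimoto coefficient on fingerprint bit sets is standard; the way the three criteria are combined and all three threshold values are placeholders for illustration, not the settings of the CleanSplit algorithm in [7]. The TM score and pocket-aligned RMSD are taken as precomputed inputs.

```python
def tanimoto(bits_a, bits_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    a, b = set(bits_a), set(bits_b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def is_potential_leak(tm_score, ligand_tanimoto, pocket_rmsd,
                      tm_min=0.8, tanimoto_min=0.9, rmsd_max=2.0):
    # Hypothetical rule: flag a train/test pair only when the protein,
    # the ligand, and the binding pose are all highly similar.
    return (tm_score >= tm_min
            and ligand_tanimoto >= tanimoto_min
            and pocket_rmsd <= rmsd_max)

# Hypothetical fingerprints for a training ligand and a test ligand.
fp_train = {1, 4, 7, 9, 12}
fp_test = {1, 4, 7, 9, 15}
similarity = tanimoto(fp_train, fp_test)   # 4 shared bits out of 6 total

flag_similar_ligand = is_potential_leak(0.95, 0.95, 1.1)
flag_this_pair = is_potential_leak(0.95, similarity, 1.1)
```

Requiring all three criteria at once is only one possible design; a stricter filter could flag a pair when any two of them exceed their thresholds.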

When state-of-the-art models are retrained on the CleanSplit dataset, their performance typically drops substantially, confirming that previously reported high scores were largely driven by data leakage rather than genuine generalization capability [7]. This highlights the importance of rigorous dataset partitioning strategies for accurate model evaluation.

Table 3: Key Research Reagents and Computational Resources

| Resource | Type | Function | Application in DTA Research |
|---|---|---|---|
| PDBbind [7] [4] | Database | Comprehensive collection of protein-ligand complexes with binding affinities | Primary source of training and benchmarking data |
| ChEMBL [8] [9] | Database | Bioactivity data for drug-like molecules | Supplementary binding affinity data |
| BindingDB [2] [9] | Database | Measured binding affinities for protein-ligand interactions | Model training and validation |
| AutoDock Vina [8] | Software Tool | Molecular docking and virtual screening | Generating protein-ligand interaction features |
| RDKit [8] | Cheminformatics Library | Chemical informatics and machine learning | Processing drug molecules and generating molecular descriptors |
| ESM-2 [10] | Protein Language Model | Protein sequence embedding | Generating contextual protein representations |
| PLIP [8] | Analysis Tool | Protein-Ligand Interaction Profiler | Extracting interaction features from complexes |
| FEP [3] [9] | Simulation Method | Free Energy Perturbation | High-accuracy affinity calculation for validation |

Challenges and Future Directions

Despite significant advances, binding affinity prediction still faces several fundamental challenges. The interpretability of deep learning models remains a concern, as researchers need to understand the structural basis of predictions to guide molecular design [1]. While attention mechanisms provide some intrinsic interpretability through their weight distributions, more sophisticated visualization and explanation techniques are needed to fully bridge this gap.

The issue of generalization to novel protein families and chemical spaces continues to challenge the field. Models often perform poorly on targets with limited training data or structurally unique binding sites [7] [10]. Recent approaches addressing this challenge include transfer learning from protein language models [7] and few-shot learning techniques that leverage limited reference data as anchor points for predicting unknown query states [10].

Future research directions likely include greater integration of physical principles with data-driven approaches, developing more robust benchmarking protocols, and creating unified multimodal frameworks that simultaneously leverage structural, sequential, and interaction data [5] [3]. As one study notes, "Bridging physics-based and data-driven approaches not only improves predictive power and efficiency, but also enables exploration of the vast chemical and biological spaces central to modern drug discovery" [5].

Binding affinity prediction remains a cornerstone of computational drug discovery, with profound implications for accelerating therapeutic development and reducing costs. Attention mechanisms have emerged as a transformative architectural component, enabling models to dynamically focus on critical molecular features and interactions that govern binding strength. Through hierarchical attention frameworks, cross-modal alignment, and innovative optimization techniques, modern DTA prediction models are achieving unprecedented accuracy while providing valuable interpretability insights.

As the field progresses, the integration of physical principles with data-driven approaches, coupled with rigorous benchmarking protocols and sophisticated multitask learning frameworks, will further enhance the reliability and applicability of these tools. For researchers and drug development professionals, understanding these architectural advances is essential for leveraging computational predictions to guide experimental efforts and ultimately bring life-saving medications to patients more efficiently.

The accurate prediction of binding affinity between potential drug molecules and target proteins is a cornerstone of modern drug discovery. This process, which determines the strength of interaction between a ligand and its biological target, has traditionally relied on handcrafted molecular features and classical machine learning approaches. However, the immense complexity of molecular interactions, where both short- and long-range dependencies influence binding, presents a fundamental computational challenge. This whitepaper examines how attention mechanisms have emerged as an evolutionary necessity in computational models to address these challenges, transforming the field of binding affinity prediction. We trace the development from simple feature-based models to sophisticated dynamic focus architectures, demonstrating how attention provides a biological and computational imperative for managing complex information in drug discovery pipelines. By framing this evolution within the context of broader research on attention across neural systems, we reveal how selective amplification mechanisms have become indispensable for capturing the intricate relationships governing molecular recognition.

The Biological and Computational Imperative for Attention

Evolutionary Foundations of Attention

Attention represents a convergent computational strategy that has emerged independently across biological and artificial systems facing resource constraints. Research indicates that attention-like mechanisms exhibit remarkable evolutionary conservation across vertebrates, with the optic tectum/superior colliculus system maintaining structural and functional consistency for over 500 million years [11]. Even simple organisms like C. elegans with only 302 neurons demonstrate sophisticated attention-like behaviors in food seeking and predator avoidance [11]. This conservation across evolutionary timescales suggests that selective information processing represents a fundamental optimization principle for complex systems operating under energy constraints.

From an information-theoretic perspective, attention mechanisms address universal energy constraints on information processing. Karbowski's work on information thermodynamics reveals that information processing costs energy, creating selective pressure for efficient processing mechanisms across all computational substrates [11]. This mathematical imperative explains why similar attention-like mechanisms emerge in biological neural systems, artificial intelligence architectures, and even chemical reaction networks [11]. The formose reaction, for instance, demonstrates selective amplification across up to 10⁶ different molecular species, achieving >95% accuracy on classification tasks through purely chemical processes [11].

The Shift from Static to Dynamic Processing in Binding Affinity Prediction

Traditional computational models for drug-target affinity (DTA) prediction relied on static feature representations that failed to capture the dynamic nature of molecular interactions. Early methods including Kernel Partial Least Squares, Support Vector Regression (SVR), and Random Forest (RF) Regression utilized handcrafted features that offered limited capacity to represent complex protein-ligand interactions [12]. The advent of deep learning introduced architectures like DeepDTA, which employed one-dimensional convolutional neural networks (CNNs) to process Simplified Molecular Input Line Entry System (SMILES) sequences for ligands and protein sequences [12]. While these models advanced beyond traditional machine learning approaches, they remained constrained by their inability to adaptively focus on critical interaction sites or capture long-range dependencies within molecular structures.

The fundamental limitation of these pre-attention architectures was that they treated all input features equally, regardless of their relative importance for predicting binding affinity. This approach ignored the biological reality that specific residues and molecular substructures contribute disproportionately to binding interactions. As drug discovery researchers faced increasing pressure to model complex molecular interactions accurately, the computational field came under pressure to adopt more sophisticated processing mechanisms, mirroring the evolutionary development of attention in biological systems [13].

Attention Mechanisms in Modern Binding Affinity Prediction

Core Architectural Principles

Modern binding affinity prediction models have converged on attention mechanisms that implement a consistent mathematical framework: selective amplification combined with normalization [11]. This architecture enables models to dynamically prioritize the most relevant molecular features while suppressing less informative ones. The mechanism operates through three fundamental processes:

- Amplification: Increasing the influence of certain input signals based on their computed importance

- Normalization: Applying built-in mechanisms (like divisive normalization) to process amplified signals

- Apparent Selection: Creating what appears to be selective filtering through the combination of amplification and normalization [11]

In practical terms, this framework allows DTA prediction models to learn which amino acid residues, ligand functional groups, and interaction patterns most significantly influence binding strength, then dynamically adjust their computational focus accordingly.
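The amplification and normalization steps listed above map directly onto the softmax function used in most attention layers: exponentiation amplifies differences between scores, and division by the sum is a form of divisive normalization. The sketch below separates the two steps; the residue names and raw scores are hypothetical.

```python
import math

def amplify(scores):
    # Exponentiation widens the gap between high and low scores.
    # Subtracting the maximum keeps the exponentials numerically stable.
    m = max(scores)
    return [math.exp(s - m) for s in scores]

def normalize(amplified):
    # Divisive normalization: each signal is divided by the pooled total.
    total = sum(amplified)
    return [a / total for a in amplified]

# Hypothetical relevance scores for four residues.
residue_scores = {"ASP86": 2.0, "LYS33": 1.0, "GLY11": -1.0, "ALA40": -1.0}

weights = dict(zip(residue_scores,
                   normalize(amplify(list(residue_scores.values())))))
```

No residue is explicitly discarded, yet the low-scoring ones end up with weights close to zero. That outcome is the "apparent selection" of the third step: filtering emerges from the two operations rather than from a separate gating rule.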

Key Implementations and Methodologies

Recent research has produced several innovative architectures that implement attention mechanisms for binding affinity prediction:

DEAttentionDTA utilizes dynamic word embeddings and self-attention mechanisms to process 1D sequence information of proteins, incorporating global sequence features of amino acids, local features of the active pocket site, and linear representation of ligand molecules in SMILES format [14]. The model employs a dynamic word-embedding layer based on a 1D convolutional neural network for embedding encoding, with self-attention correlating the three input modalities [14].

AttentionMGT-DTA adopts a multi-modal approach, representing drugs and targets as molecular graphs and binding pocket graphs respectively [15]. The architecture employs two attention mechanisms to integrate information between different protein modalities and drug-target pairs, enabling comprehensive capture of interaction information [15]. This approach demonstrates high interpretability by explicitly modeling interaction strength between drug atoms and protein residues.

DAAP (Distance plus Attention for Affinity Prediction) introduces atomic-level distance features combined with attention mechanisms to capture specific protein-ligand interactions based on donor-acceptor relations, hydrophobicity, and π-stacking atoms [12]. This approach argues that distances encompass both short-range direct and long-range indirect interaction effects while attention mechanisms capture levels of interaction effects [12].
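A distance feature of the kind DAAP relies on starts from a ligand-by-protein matrix of pairwise atomic distances. The sketch below shows only that generic step with made-up coordinates; which atoms DAAP selects (donor-acceptor, hydrophobic, π-stacking) and how the distances are encoded are described in [12] and are not reproduced here.

```python
import math

def distance_matrix(ligand_atoms, protein_atoms):
    """Euclidean distances with one row per ligand atom, one column per protein atom."""
    return [[math.dist(l, p) for p in protein_atoms] for l in ligand_atoms]

# Hypothetical 3D coordinates in angstroms.
ligand = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0)]
protein = [(0.0, 3.0, 4.0), (1.5, 0.0, 2.0), (10.0, 0.0, 0.0)]

distances = distance_matrix(ligand, protein)
```

Small entries correspond to candidate direct contacts, while larger entries retain information about atoms that may influence binding indirectly. `math.dist` requires Python 3.8 or later.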

Table 1: Performance Comparison of Attention-Based DTA Prediction Models

| Model | Dataset | MSE | CI | R² | Key Innovation |
|---|---|---|---|---|---|
| DeepDTAGen | KIBA | 0.146 | 0.897 | 0.765 | Multitask learning with FetterGrad algorithm |
| DAAP | CASF-2016 | - | 0.876 | 0.909 | Distance features + attention |
| AttentionMGT-DTA | Benchmark datasets | - | - | - | Multi-modal graph representation |

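Note: Performance metrics vary across datasets and experimental setups, so values from different rows are not directly comparable. MSE = Mean Squared Error, CI = Concordance Index, R² = squared correlation coefficient (the DeepDTAGen value is the modified index r²m reported in [2]).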

DeepDTAGen represents a recent innovation implementing a multitask learning framework that performs both DTA prediction and novel drug generation simultaneously using a common feature space [2]. To address optimization challenges in multitask learning, the model incorporates the FetterGrad algorithm, which mitigates gradient conflicts between tasks by minimizing the Euclidean distance between task gradients [2]. On the KIBA dataset, DeepDTAGen achieved an MSE of 0.146, a CI of 0.897, and an r²m of 0.765, demonstrating significant improvement over previous approaches [2].

Experimental Protocols and Methodologies

Model Architecture Design

The implementation of attention mechanisms in binding affinity prediction follows carefully designed experimental protocols. For DEAttentionDTA, the architecture processes three linear sequences (global protein features, local pocket features, and ligand SMILES) through a dynamic word-embedding layer based on 1D CNN, followed by self-attention correlation [14]. The DAAP methodology employs a five-fold cross-validation approach to evaluate model robustness, with results averaged across multiple runs to ensure reliability [12]. The input feature set includes distance matrices, sequence-based features for specific protein residues, and SMILES sequences, with an attention mechanism to weigh the significance of various input features [12].
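The five-fold protocol mentioned above can be sketched with the standard library alone. This is a generic random split, not DAAP's actual partitioning; the sample count, the seed, and the function name are illustrative.

```python
import random

def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_indices, test_indices) pairs for k-fold cross-validation."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Deal the shuffled indices into k folds of near-equal size.
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        test = folds[i]
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, test

splits = list(k_fold_indices(23, k=5))
```

Each complex appears in exactly one test fold, and the metric is then averaged over the five runs. A purely random split like this one does not guard against the train-test similarity problem discussed for PDBbind, so a similarity-aware grouping would be needed for a leakage-free estimate.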

Evaluation Metrics and Validation

Comprehensive evaluation is essential for validating attention-based DTA models. Standard metrics include:

- Mean Squared Error (MSE): Measures average squared differences between predicted and actual values

- Concordance Index (CI): Evaluates the ranking quality of predictions

- Correlation Coefficient (R): Assesses linear relationship between predictions and experimental values

- Area Under Precision-Recall Curve (AUPR): Measures performance under class imbalance

For generative tasks in multitask models like DeepDTAGen, additional metrics include Validity (proportion of chemically valid molecules), Novelty (proportion not in training data), and Uniqueness (proportion of unique molecules) [2]. These rigorous evaluation protocols ensure that attention mechanisms provide genuine improvements in predictive performance rather than simply adding model complexity.
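The three generation metrics can be computed from string sets once a validity check is available. In the sketch below the checker is a toy stand-in that only tests for balanced parentheses; a real pipeline would parse each string with a cheminformatics toolkit such as RDKit. The choice of denominators (uniqueness over valid molecules, novelty over unique valid molecules) is one common convention and may differ from the definitions in [2]. The comparison also assumes the SMILES strings are already canonical.

```python
def toy_is_valid(smiles):
    # Stand-in validity check (assumption): non-empty with balanced parentheses.
    depth = 0
    for ch in smiles:
        if ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:
                return False
    return bool(smiles) and depth == 0

def generation_metrics(generated, training_set, is_valid):
    valid = [s for s in generated if is_valid(s)]
    unique = set(valid)
    novel = unique - set(training_set)
    return {
        "validity": len(valid) / len(generated) if generated else 0.0,
        "uniqueness": len(unique) / len(valid) if valid else 0.0,
        "novelty": len(novel) / len(unique) if unique else 0.0,
    }

# Hypothetical generated molecules and training set.
generated = ["CCO", "CCO", "c1ccccc1", "C(C", "CCN"]
training = {"CCO"}

metrics = generation_metrics(generated, training, toy_is_valid)
```

In this example four of five strings pass the check, three of those four are distinct, and two of the three distinct molecules are absent from the training set.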

Implementation Toolkit

Successful implementation of attention mechanisms for binding affinity prediction requires specific computational resources and methodological approaches. The following toolkit represents essential components for researchers developing attention-based DTA models:

Table 2: Research Reagent Solutions for Attention-Based DTA Prediction

| Resource Category | Specific Tools/Approaches | Function/Purpose |
|---|---|---|
| Input Features | Distance matrices (DAAP) [12] | Capture short- and long-range molecular interactions |
| Input Features | Molecular graphs (AttentionMGT-DTA) [15] | Represent structural information for drugs and targets |
| Input Features | Dynamic embeddings (DEAttentionDTA) [14] | Encode sequence and structural information |
| Architecture Components | Self-attention mechanisms [14] [12] | Model long-range dependencies in sequences |
| Architecture Components | Graph attention networks [15] | Process structural representations |
| Architecture Components | Multi-modal attention [15] | Integrate different representation types |
| Training Strategies | FetterGrad algorithm (DeepDTAGen) [2] | Resolve gradient conflicts in multitask learning |
| Training Strategies | Five-fold cross-validation [12] | Ensure model robustness and reliability |
| Training Strategies | Ensemble averaging [12] | Improve predictive performance and stability |

Visualization of Attention Architectures

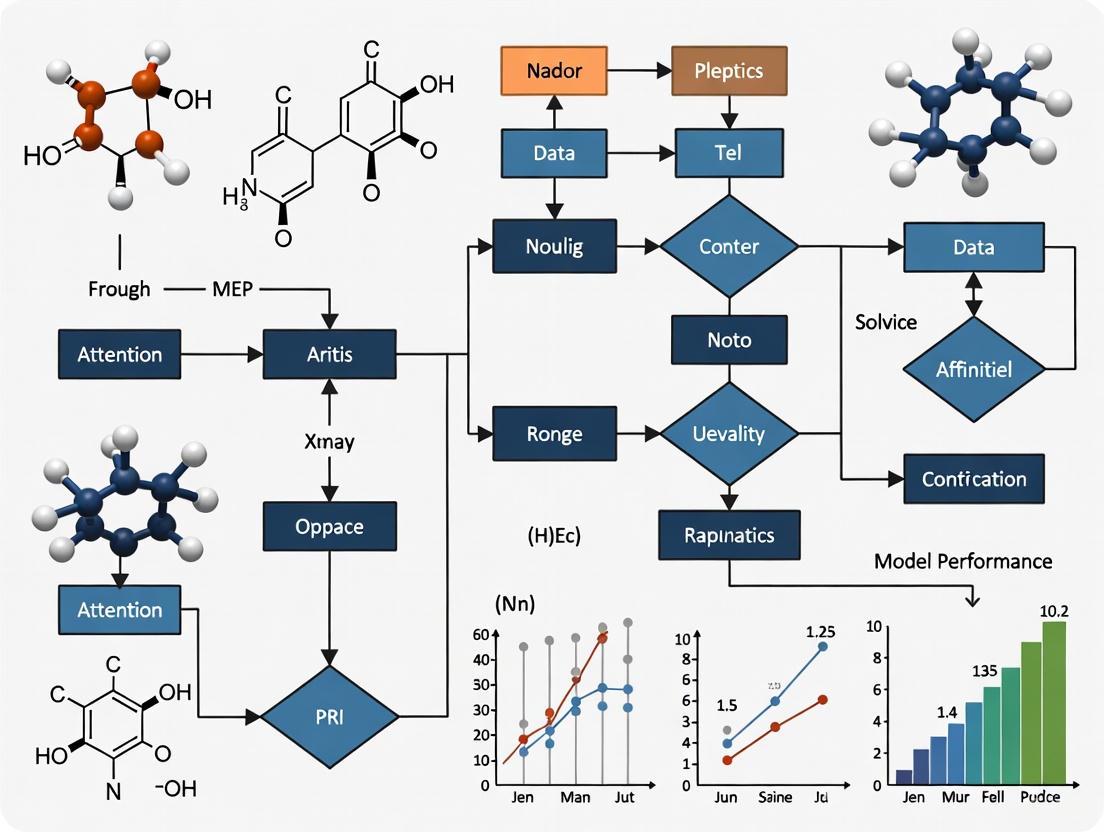

The following diagram illustrates the core architecture of an attention mechanism for drug-target binding affinity prediction, showing how different molecular representations are integrated through attention:

Diagram 1: Attention Mechanism Architecture for DTA Prediction. This diagram illustrates how different molecular representations are processed through attention mechanisms to generate binding affinity predictions.

Emerging Research Directions

The evolution of attention mechanisms in binding affinity prediction continues to advance along several promising trajectories. Hierarchical attention architectures that operate at multiple biological scales—from atomic interactions to structural motifs—represent a frontier for capturing the nested complexity of molecular recognition [2]. The integration of geometric deep learning with attention mechanisms shows particular promise for modeling 3D protein-ligand interactions without relying on costly 3D convolutional operations [15]. Additionally, the development of explainable attention mechanisms that provide interpretable insights into molecular determinants of binding affinity will be crucial for building trust in these models and guiding medicinal chemistry optimization [15] [12].

Another significant direction involves cross-species attention mechanisms inspired by comparative studies of attention across biological systems. Research has revealed striking similarities in exogenous orienting across humans, monkeys, rats, and mice, with all four species showing approximately 25-30ms reaction time benefits for validly cued targets [13]. However, humans exhibit dramatically superior performance in conflict resolution tasks compared to other primates [13]. These evolutionary insights may inform the development of attention mechanisms that better handle conflicting molecular signals or noisy biological data.

The progression from simple feature-based models to dynamic attention architectures in binding affinity prediction represents a necessary evolution driven by fundamental computational constraints. Attention mechanisms provide a mathematically principled approach to the resource allocation problems inherent in processing complex molecular information, mirroring solutions that evolved in biological systems over millions of years. The success of models like DEAttentionDTA, AttentionMGT-DTA, DAAP, and DeepDTAGen demonstrates that selective amplification—the core computation underlying attention—delivers substantial improvements in predicting drug-target interactions. As attention mechanisms continue to evolve, they will likely incorporate more sophisticated biological principles, including the critical dynamics observed in neural systems [11] and the multi-network interactions characteristic of primate attention [13]. This ongoing synthesis of biological insight and computational innovation will accelerate drug discovery by providing increasingly accurate predictions of molecular interactions.

Attention mechanisms have revolutionized the field of computational drug discovery by providing a powerful framework for predicting molecular interactions. This technical guide details the core principles of attention scoring as applied to drug-target binding affinity (DTA) prediction and related tasks. We examine how these mechanisms generate dynamic, context-aware representations of proteins and ligands by selectively focusing on structurally and chemically salient regions. This document provides an in-depth analysis of attention-based architectures, their experimental validation, and practical implementation guidelines for research scientists working at the intersection of deep learning and molecular modeling.

The accurate prediction of drug-target interactions (DTI) and binding affinities (DTA) represents a cornerstone of modern computational drug discovery. Traditional methods often relied on manually curated features or simpler neural architectures that struggled to capture the complex, non-linear relationships governing molecular recognition [6]. The introduction of attention mechanisms has addressed these limitations by enabling models to dynamically weigh the importance of different molecular regions during interaction prediction.

Attention scoring functions as an information-filtering system that mimics cognitive attention, allowing models to focus on critical binding motifs, functional groups, and structural elements while suppressing less relevant information [16]. This capability is particularly valuable in molecular contexts where binding events are often mediated by specific, localized interactions rather than global sequence or structure similarity. Modern attention-based approaches have evolved from simple feature extraction to sophisticated architectures that incorporate graph-based representations, cross-attention between molecular pairs, and docking-aware physical constraints [6] [17].

The fundamental shift enabled by attention mechanisms is the move from static molecular representations to dynamic, context-aware embeddings. Where previous methods represented proteins with fixed feature vectors regardless of their binding partners, contemporary attention-based models generate context-dependent representations that adapt based on the specific molecular interaction being analyzed [17]. This paradigm shift has significantly improved predictive accuracy in binding affinity estimation and opened new avenues for generative molecular design.

Fundamental Principles of Attention Scoring

Mathematical Foundation

At its core, attention scoring computes a weighted sum of values, with weights derived through compatibility functions between queries and keys. In molecular applications, this translates to focusing on relevant structural components during interaction prediction. The standard attention mechanism can be formalized as:

Attention(Q, K, V) = softmax(ƒ_scoring(Q, K)) · V

Where:

- Q (Query) represents the current focus of attention—often embeddings of a drug compound or specific protein residues of interest.

- K (Keys) constitute the available information segments—typically all potential binding sites or molecular substructures.

- V (Values) contain the actual representations to be weighted and summed, frequently corresponding to feature vectors of atomic environments.

- ƒ_scoring is the scoring function that determines compatibility between Q and K [6] [17].

For molecular applications, several scoring functions have proven effective:

- Scaled Dot-Product: Score(Q, K) = Q·Kᵀ/√dₖ (Most common in transformer architectures)

- Additive/Bahdanau: Score(Q, K) = vᵀ·tanh(W·Q + U·K) (Often used in encoder-decoder models)

- Bilinear: Score(Q, K) = Q·W·Kᵀ (Captures pairwise interactions more explicitly)

The softmax normalization transforms these raw scores into a probability distribution that sums to 1, ensuring the output represents a coherent weighted average rather than merely scaled features.
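The three scoring functions and the softmax-weighted sum can be sketched in a few lines. This is a minimal, dependency-free illustration for a single query vector, not code from any of the cited models; the helper names (`attend`, `scaled_dot_score`, and so on) and the toy vectors are assumptions made for the example, and a real implementation would use a tensor library.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def matvec(M, v):
    return [dot(row, v) for row in M]

def softmax(scores):
    # Subtract the maximum for numerical stability before exponentiating.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def scaled_dot_score(q, k):
    # Score(Q, K) = Q·Kᵀ/√dₖ for one query and one key.
    return dot(q, k) / math.sqrt(len(k))

def additive_score(q, k, W, U, v):
    # Score(Q, K) = vᵀ·tanh(W·Q + U·K)
    hidden = [math.tanh(a + b) for a, b in zip(matvec(W, q), matvec(U, k))]
    return dot(v, hidden)

def bilinear_score(q, k, W):
    # Score(Q, K) = Q·W·Kᵀ
    return dot(q, matvec(W, k))

def attend(q, keys, values, score_fn):
    # Normalize the raw scores, then take the weighted sum of the values.
    weights = softmax([score_fn(q, k) for k in keys])
    dim = len(values[0])
    context = [sum(w * val[j] for w, val in zip(weights, values)) for j in range(dim)]
    return weights, context

# Toy data: one query (e.g. a ligand embedding) against three keys (e.g. residues).
query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
weights, context = attend(query, keys, values, scaled_dot_score)
```

The key most aligned with the query receives the largest weight, and the context vector is the corresponding weighted average of the value vectors.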

Molecular Adaptation of Attention

In drug-target interaction contexts, the abstract Q, K, V triplets take on specific molecular interpretations:

- For protein sequence analysis, keys and values might represent individual amino acid residues, with queries being specific functional domains or binding pockets.

- For small molecule representation, keys and values could correspond to atomic neighborhoods or chemical functional groups.

- In structure-based approaches, geometric relationships inform the scoring function, incorporating spatial proximity and orientation constraints [16] [17].

A critical advancement in molecular attention is the incorporation of physical interaction constraints. The Docking-Aware Attention (DAA) framework enhances standard attention by integrating docking prediction scores directly into the attention mechanism:

DAAAttention(Q, K, V) = softmax(ƒ_scoring(Q, K) + λ·ƒ_docking(Q, K)) · V

Where ƒ_docking represents computationally derived physical interaction scores, and λ is a learnable weighting parameter that balances learned attention patterns with physics-based constraints [17]. This hybrid approach grounds the otherwise purely data-driven attention mechanism in biophysical principles, improving both interpretability and predictive accuracy.
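The effect of the docking term can be illustrated with a small sketch. It assumes the learned scores and docking scores are already available as one number per key, that higher docking scores mean more favorable contacts, and that λ is a fixed float rather than a learned parameter; none of these details are taken from the DAA implementation itself.

```python
import math

def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def docking_aware_attention(learned_scores, docking_scores, values, lam):
    # softmax(f_scoring + lam * f_docking) applied to the values.
    combined = [s + lam * d for s, d in zip(learned_scores, docking_scores)]
    weights = softmax(combined)
    dim = len(values[0])
    context = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(dim)]
    return weights, context

# Hypothetical example: three residues with identical learned scores,
# but the docking term favors the second residue.
learned = [0.2, 0.2, 0.2]
docking = [0.0, 2.0, 0.0]
values = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

plain_weights, _ = docking_aware_attention(learned, docking, values, lam=0.0)
biased_weights, biased_context = docking_aware_attention(learned, docking, values, lam=1.0)
```

With λ = 0 the mechanism reduces to ordinary attention (uniform weights here); with λ = 1 the docking term shifts weight toward the residue that the physics-based score favors.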

Attention Variants in Molecular Contexts

Specialized Attention Mechanisms

Table 1: Specialized Attention Mechanisms for Molecular Applications

| Mechanism | Key Innovation | Molecular Application | Advantages |

|---|---|---|---|

| Docking-Aware Attention (DAA) [17] | Integrates molecular docking scores into attention weights | Enzyme reaction prediction, binding affinity estimation | Combines data-driven learning with physical constraints; dynamic protein representations |

| Graph-Based Attention [6] | Applies attention to graph representations of molecules | Drug-target affinity prediction using molecular graphs | Captures both atomic properties and topological structure |

| Cross-Attention [6] | Computes attention between two distinct molecular entities | Drug-target interaction prediction | Models intermolecular relationships explicitly |

| Multimodal Attention [6] [18] | Fuses information from multiple molecular representations | Integrating sequence, structure, and binding data | Leverages complementary information sources |

| Channel-Wise Attention [19] | Adjusts weights across feature channels dynamically | Object recognition in molecular images; feature selection | Enhances discriminative features for specific tasks |

Implementation Architectures

Multiple architectural frameworks have emerged to implement these attention mechanisms effectively:

Transformer-based Architectures adapted from natural language processing have been successfully applied to protein sequences and small molecule SMILES strings. These models utilize multi-headed self-attention to capture long-range dependencies in molecular sequences, with specialized pre-training approaches like ChemBERTa and ProtBERT generating powerful molecular embeddings [6].

Graph Attention Networks (GATs) operate on molecular graphs where atoms represent nodes and bonds represent edges. Graph attention computes weighted averages of neighboring node features, enabling the model to prioritize chemically important atomic neighborhoods during message passing [6].
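A single graph-attention head can be sketched as follows, using the standard GAT formulation (a LeakyReLU over a learned vector applied to concatenated, linearly transformed node features, normalized over each node's neighborhood). The toy graph, the weight matrix `W`, and the attention vector `a` are illustrative assumptions, and the sketch omits multi-head concatenation and output nonlinearities.

```python
import math

def leaky_relu(x, slope=0.2):
    return x if x > 0 else slope * x

def matvec(M, v):
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def gat_layer(features, neighbors, W, a):
    """features[i]: feature vector of atom i.
    neighbors[i]: indices of atoms bonded to i (including i itself).
    W: linear transform; a: attention vector of length 2 * output dimension."""
    h = [matvec(W, x) for x in features]
    outputs, attention = [], []
    for i, nbrs in enumerate(neighbors):
        # Raw score e_ij = LeakyReLU(a · [h_i || h_j]) for each neighbor j.
        raw = [leaky_relu(sum(w * z for w, z in zip(a, h[i] + h[j]))) for j in nbrs]
        alpha = softmax(raw)
        # New representation: attention-weighted average of neighbor features.
        outputs.append([sum(al * h[j][d] for al, j in zip(alpha, nbrs))
                        for d in range(len(h[i]))])
        attention.append(alpha)
    return outputs, attention

# Toy three-atom chain 0-1-2 with self-loops.
features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
neighbors = [[0, 1], [0, 1, 2], [1, 2]]
W = [[1.0, 0.0], [0.0, 1.0]]
a = [0.5, -0.5, 1.0, 0.0]
outputs, attention = gat_layer(features, neighbors, W, a)
```

Each row of `attention` holds the weights one atom assigns to its neighbors, which is the quantity usually inspected when looking for chemically important neighborhoods.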

Multimodal Fusion Architectures combine attention across different molecular representations. For example, DeepDTAGen employs shared feature spaces that allow simultaneous prediction of binding affinity and generation of novel drug candidates through aligned attention patterns across predictive and generative tasks [2].

Experimental Protocols & Validation

Benchmarking Methodologies

Rigorous experimental protocols are essential for validating attention-based molecular models. Standard evaluation approaches include:

Binding Affinity Prediction: Models are typically evaluated on benchmark datasets including KIBA, Davis, and BindingDB using standardized metrics:

Table 2: Performance Metrics for Attention-Based DTA Models

| Model | Dataset | MSE (↓) | CI (↑) | r²m (↑) | Key Innovation |

|---|---|---|---|---|---|

| DeepDTAGen [2] | KIBA | 0.146 | 0.897 | 0.765 | Multitask learning with gradient alignment |

| GraphDTA [6] | KIBA | 0.147 | 0.891 | 0.687 | Graph neural networks for molecular representation |

| DeepDTAGen [2] | Davis | 0.214 | 0.890 | 0.705 | Multitask learning with gradient alignment |

| Docking-Aware Attention [17] | Reaction Prediction | - | - | 62.2% Accuracy | Incorporates docking physics |

Cold-Start Testing: Evaluates model performance on novel drug-target pairs with no similar examples in training data, testing generalization capability [2].

Interpretability Analysis: Visualizes attention weights to identify binding hotspots and validate that the model focuses on biophysically plausible regions [16] [17].
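For reference, the MSE and CI columns of Table 2 can be computed as in the sketch below. It uses the pairwise definition of the concordance index commonly applied in DTA work (ties in prediction count as half-concordant); the affinity values are made up for illustration, and r²m is not covered here.

```python
def mse(y_true, y_pred):
    # Mean squared error between measured and predicted affinities.
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

def concordance_index(y_true, y_pred):
    # Fraction of pairs with different true affinities whose predicted
    # order matches the true order; tied predictions count as 0.5.
    concordant = 0.0
    comparable = 0
    n = len(y_true)
    for i in range(n):
        for j in range(n):
            if y_true[i] > y_true[j]:
                comparable += 1
                if y_pred[i] > y_pred[j]:
                    concordant += 1.0
                elif y_pred[i] == y_pred[j]:
                    concordant += 0.5
    return concordant / comparable if comparable else float("nan")

# Made-up affinities for four drug-target pairs.
measured = [5.0, 6.2, 7.1, 8.4]
predicted = [5.1, 6.0, 7.5, 8.0]
print("MSE:", mse(measured, predicted))
print("CI:", concordance_index(measured, predicted))
```

This O(n²) loop is adequate for small test sets; larger benchmarks typically call for a vectorized or sort-based implementation.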

Case Study: Docking-Aware Attention Implementation

The Docking-Aware Attention framework exemplifies rigorous experimental validation [17]:

Input Representation:

- Protein structures encoded as residue sequences with 3D coordinates

- Molecular compounds represented as graphs or SMILES strings

- Docking poses generated using molecular docking software

Architecture Specifications:

- Multi-headed attention (8-16 heads) for capturing different interaction types

- Docking integration layer combining learned attention scores with physical interaction potentials

- Residual connections and layer normalization for training stability

- Task-specific output heads for affinity prediction or reaction classification

Training Protocol:

- Pretraining on large-scale molecular databases (e.g., ChEMBL, BindingDB)

- Fine-tuning on task-specific datasets with reduced learning rate

- Regularization via dropout and weight decay

- Optimization using Adam or AdamW with gradient clipping

Validation Metrics:

- Prediction accuracy on held-out test sets

- Ablation studies quantifying contribution of docking component

- Attention visualization to verify focus on known binding sites

- Statistical significance testing via multiple random initializations

Research Reagent Solutions

Table 3: Essential Research Resources for Molecular Attention Studies

| Resource Category | Specific Tools/Databases | Primary Function | Access Information |

|---|---|---|---|

| Benchmark Datasets | KIBA, Davis, BindingDB [6] [2] | Provide standardized data for training and evaluating DTA models | Publicly available from original publications |

| Structural Databases | Protein Data Bank (PDB) [20], EMDB [20] | Source of 3D protein structures for structure-based methods | https://www.rcsb.org/, https://www.ebi.ac.uk/emdb/ |

| Molecular Representation | RDKit, OpenBabel | Process and featurize small molecules for model input | Open-source cheminformatics toolkits |

| Deep Learning Frameworks | PyTorch, TensorFlow, DeepGraph | Implement attention architectures and training pipelines | Open-source with molecular biology extensions |

| Specialized Models | ChemBERTa [6], ProtBERT [6] | Pre-trained language models for molecular sequence embedding | HuggingFace Model Repository |

| Evaluation Metrics | Concordance Index (CI), MSE, r²m [2] | Quantify model performance for comparison and validation | Standard implementations in scientific computing libraries |

Implementation Workflows

Standard Experimental Pipeline

The following diagram illustrates a comprehensive workflow for implementing attention mechanisms in molecular binding studies:

Diagram: Molecular Attention Workflow

Docking-Aware Attention Architecture

For structure-based approaches, the Docking-Aware Attention mechanism incorporates physical constraints:

Diagram: Docking-Aware Architecture

Future Directions & Challenges

Despite significant advances, several challenges remain in attention-based molecular modeling. Interpretability continues to be a priority, with ongoing research developing better visualization techniques for explaining why models focus on specific molecular regions [16] [17]. Data efficiency presents another challenge, as attention mechanisms typically require large training datasets, prompting investigation into few-shot and zero-shot learning approaches [16].

Emerging research directions include geometric attention that explicitly respects molecular symmetry and 3D constraints, multi-scale attention operating simultaneously on atomic, residue, and domain levels, and cross-modal attention integrating diverse data sources such as genomic context, phenotypic screening results, and chemical synthesis constraints [6] [2]. The integration of attention with generative models for de novo drug design represents another frontier, where attention mechanisms guide the generation of novel compounds with optimized binding characteristics [2].

As attention mechanisms continue to evolve, their capacity to create dynamic, context-aware molecular representations will likely play an increasingly central role in computational drug discovery. The principles outlined in this document provide a foundation for researchers to understand, implement, and advance these powerful computational techniques in their molecular modeling workflows.

The accurate prediction of protein-ligand binding affinity is a cornerstone of modern drug discovery, as the strength of this interaction largely determines a drug candidate's efficacy. Central to this process are three fundamental types of non-covalent interactions: donor-acceptor pairs, hydrophobic effects, and π-stacking. These interactions collectively govern molecular recognition, influencing both the stability and specificity of protein-ligand complexes. Recent advancements in deep learning have revolutionized binding affinity prediction, with attention-based neural networks emerging as particularly powerful tools. These models excel at identifying and weighing the contribution of these key interactions from complex structural data, providing researchers with both predictive accuracy and mechanistic insights. By focusing on these critical interaction types and understanding how computational models prioritize them, drug development professionals can more effectively guide the design and optimization of novel therapeutic compounds.

Fundamental Mechanisms of Key Interactions

Donor-Acceptor Interactions

Donor-acceptor interactions, primarily hydrogen bonds and halogen bonds, are directional and among the most specific molecular interactions in biological systems. A hydrogen bond forms when a hydrogen atom covalently bound to an electronegative donor atom (such as the oxygen or nitrogen in hydroxyl or amine groups) interacts with a lone pair on an electron-rich acceptor atom (like the oxygen in a carbonyl group). The strength of these interactions is highly dependent on distance, angle, and the local chemical environment, making them critical for determining ligand orientation within a binding pocket. In computational models, these are often represented by distances between specific donor and acceptor atoms, with closer distances indicating stronger potential interactions. Their directionality and specificity make them indispensable for molecular recognition in drug-target interactions.

Hydrophobic Interactions

Hydrophobic interactions refer to the tendency of non-polar molecules or molecular regions to associate in aqueous environments, primarily driven by the entropic gain from releasing ordered water molecules rather than direct attractive forces. When non-polar ligand surfaces contact non-polar protein surfaces, structured water molecules at the interface are displaced, increasing system entropy and making the binding thermodynamically favorable. These interactions are non-directional and depend on the surface area of contact; larger non-polar surfaces typically yield stronger hydrophobic effects. In binding affinity prediction, these are often quantified through solvent-accessible surface area (SASA) calculations or by identifying and measuring contacts between non-polar atoms.

π-Stacking Interactions

π-stacking involves attractive interactions between aromatic rings, a common feature in drugs and protein residues. These interactions are more complex than once thought, involving a combination of dispersion forces, electrostatic complementarity, and sometimes weak covalent character. The classic model involves two primary orientations: face-to-face stacked (often offset) and perpendicular T-shaped arrangements. The interaction energy depends on the relative orientation and electronic properties of the rings; electron-rich and electron-deficient rings can exhibit enhanced stacking through donor-acceptor complementarity [21]. Notably, non-aromatic planar systems like quinoid rings can also participate in strong stacking interactions, sometimes even more pronounced than those between fully delocalized aromatic systems [21]. In radical systems, these interactions can involve significant covalent contribution, termed "pancake bonding" [21].

Table 1: Characteristics of Key Molecular Interactions

| Interaction Type | Strength Range (kcal/mol) | Distance Dependence | Directionality | Primary Physical Origin |

|---|---|---|---|---|

| Donor-Acceptor | -1 to -10 | Strong (1/r) | High | Electrostatic, Orbital Overlap |

| Hydrophobic | -0.1 to -1 per Ų | Weak | None | Entropic (Solvent Reorganization) |

| π-Stacking | -0.5 to -5 | Moderate (1/r³ to 1/r⁶) | Moderate | Dispersion, Electrostatic, Charge Transfer |

Computational Representation and Feature Engineering

Structural Feature Extraction

Accurate binding affinity prediction begins with transforming three-dimensional structural information of protein-ligand complexes into quantifiable features. For donor-acceptor interactions, this involves identifying all potential donor and acceptor atoms in both molecules and calculating their pairwise distances and angles. Hydrophobic interactions are typically captured by mapping non-polar atoms and calculating contact surfaces or counting proximal atom pairs. π-stacking features require detecting aromatic systems and quantifying their spatial relationships, including inter-plane distances, offset distances, and orientation angles. These geometric descriptors form the foundational feature set that machine learning models use to learn relationship patterns between interaction geometries and binding strengths.
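As an illustration of the π-stacking descriptors mentioned above, the following sketch computes centroid distance, in-plane offset, and inter-plane angle for two rings given as lists of 3D atom coordinates. It assumes each ring is planar (the normal is taken from the first two atoms and the centroid), and the hexagon coordinates are synthetic rather than taken from a real complex.

```python
import math

def centroid(points):
    n = len(points)
    return tuple(sum(p[i] for p in points) / n for i in range(3))

def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def norm(a):
    return math.sqrt(dot(a, a))

def ring_normal(points):
    # Unit normal of the ring plane (assumes a planar ring).
    c = centroid(points)
    n = cross(sub(points[0], c), sub(points[1], c))
    length = norm(n)
    return tuple(x / length for x in n)

def stacking_geometry(ring_a, ring_b):
    ca, cb = centroid(ring_a), centroid(ring_b)
    na, nb = ring_normal(ring_a), ring_normal(ring_b)
    d = sub(cb, ca)
    distance = norm(d)
    # Angle between ring planes, folded into the range 0 to 90 degrees.
    plane_angle = math.degrees(math.acos(min(1.0, abs(dot(na, nb)))))
    # Separation along ring A's normal and the remaining in-plane offset.
    vertical = abs(dot(d, na))
    offset = math.sqrt(max(0.0, distance ** 2 - vertical ** 2))
    return {"centroid_distance": distance, "offset": offset, "plane_angle": plane_angle}

def hexagon(radius, center, in_xz_plane=False):
    # Synthetic six-membered ring used only for this example.
    pts = []
    for k in range(6):
        u = radius * math.cos(math.radians(60 * k))
        v = radius * math.sin(math.radians(60 * k))
        local = (u, 0.0, v) if in_xz_plane else (u, v, 0.0)
        pts.append(tuple(c + l for c, l in zip(center, local)))
    return pts

ring_a = hexagon(1.4, (0.0, 0.0, 0.0))
offset_stacked = stacking_geometry(ring_a, hexagon(1.4, (1.0, 0.0, 3.5)))
t_shaped = stacking_geometry(ring_a, hexagon(1.4, (0.0, 0.0, 5.0), in_xz_plane=True))
```

A plane angle near 0° with a small offset corresponds to the offset face-to-face arrangement, while an angle near 90° corresponds to the T-shaped arrangement.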

Distance-Based Feature Engineering

Recent advances have demonstrated that atomic-level distance features provide superior representation of protein-ligand interactions compared to traditional grid-based or adjacency-based representations. The DAAP (Distance plus Attention for Affinity Prediction) method exemplifies this approach, employing precise distances between donor-acceptor atoms, hydrophobic atoms, and π-stacking atoms as primary input features [22]. These distance measurements directly capture both short-range direct interactions and long-range indirect interaction effects that influence binding. This representation is more computationally efficient than 3D grid-based methods and provides more direct interaction information than sequence-based representations. When combined with attention mechanisms, these distance features enable models to focus on the most critical atomic interactions for affinity prediction.
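The general idea of typed distance features can be sketched as below. This is not DAAP's actual pipeline: the atom-type labels, the pairing rules, and the 12 Å cutoff are assumptions chosen for illustration, and the coordinates are invented.

```python
import math

# Which (protein atom type, ligand atom type) pairs count for each interaction class.
PAIR_RULES = {
    "donor_acceptor": {("donor", "acceptor"), ("acceptor", "donor")},
    "hydrophobic": {("hydrophobic", "hydrophobic")},
    "pi_stacking": {("aromatic", "aromatic")},
}

def interaction_distances(protein_atoms, ligand_atoms, cutoff=12.0):
    """Each atom is (type_label, (x, y, z)). Returns sorted distances per class."""
    features = {name: [] for name in PAIR_RULES}
    for p_type, p_xyz in protein_atoms:
        for l_type, l_xyz in ligand_atoms:
            d = math.dist(p_xyz, l_xyz)
            if d > cutoff:
                continue  # ignore pairs beyond the (assumed) cutoff
            for name, allowed in PAIR_RULES.items():
                if (p_type, l_type) in allowed:
                    features[name].append(d)
    return {name: sorted(ds) for name, ds in features.items()}

# Invented coordinates in angstroms.
protein = [("donor", (0.0, 0.0, 0.0)),
           ("hydrophobic", (4.0, 0.0, 0.0)),
           ("aromatic", (0.0, 5.0, 0.0))]
ligand = [("acceptor", (3.0, 0.0, 0.0)),
          ("hydrophobic", (4.0, 3.0, 0.0)),
          ("aromatic", (0.0, 5.0, 4.0)),
          ("acceptor", (50.0, 0.0, 0.0))]
features = interaction_distances(protein, ligand)
```

The resulting per-class distance lists would then be padded or binned into fixed-size inputs before being passed to attention layers.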

Table 2: Experimental Protocols for Key Interaction Analysis

| Method Category | Key Steps | Output Metrics | Applicable Interactions |

|---|---|---|---|

| X-ray Charge Density Analysis | 1. Collect high-resolution X-ray diffraction data; 2. Perform multipole modeling of electron density; 3. Calculate interaction energies using quantum chemical methods | Electron density distribution, Interaction energies, Bond critical points | π-stacking (including pancake bonding), Donor-acceptor |

| MD/MM-PBSA/GBSA | 1. Run molecular dynamics simulation of complex; 2. Extract multiple snapshots from trajectory; 3. Calculate gas-phase enthalpies and solvation energies for each snapshot; 4. Average results across snapshots | Binding free energy decomposition, Enthalpic and solvation contributions | All three interaction types (hydrophobic, donor-acceptor, π-stacking) |

| Distance-Based Feature Extraction | 1. Identify relevant atom types (donor/acceptor, hydrophobic, aromatic); 2. Compute pairwise distances between protein and ligand atoms; 3. Encode distances with attention-weighted features | Distance matrices, Attention weights, Binding affinity predictions | All three interaction types simultaneously |

Attention Mechanisms in Binding Affinity Prediction

Fundamentals of Attention in Neural Networks

Attention mechanisms in deep learning enable models to dynamically focus on the most relevant parts of input data when making predictions, mimicking human cognitive attention. In binding affinity prediction, attention mechanisms process complex protein-ligand interaction data and assign importance weights to different molecular features. This allows models to prioritize strong donor-acceptor pairs, significant hydrophobic contacts, and optimal π-stacking arrangements while ignoring less relevant interactions. The attention mechanism operates by computing a weighted sum of input features, where the weights are learned during training and determined by the features' contextual relevance to binding affinity. This capability is particularly valuable for pharmaceutical research, as it not only improves prediction accuracy but also provides interpretable insights into which specific atomic interactions drive binding.

Integration with Molecular Representations

Attention mechanisms integrate with various molecular representations to enhance binding affinity prediction. Graph Attention Networks (GATs) apply attention to molecular graphs, where atoms represent nodes and bonds represent edges, enabling the model to focus on critical substructures and atomic environments [23] [24]. Sequence-based models use attention to identify important residues in protein sequences or functional groups in ligand SMILES strings. 3D structural models apply spatial attention to focus on key regions in the binding pocket. For example, the BAPA model uses descriptor embeddings with attention to highlight important local structures in protein-ligand complexes [25], while DAAP combines distance features with attention to capture both short- and long-range interaction effects [22]. This integration allows models to effectively weigh the contribution of donor-acceptor pairs, hydrophobic contacts, and π-stacking interactions based on their relative importance.

Diagram 1: Attention mechanism workflow for binding affinity prediction

Advanced Architectures and Implementation

State-of-the-Art Models

Recent binding affinity prediction models demonstrate how attention mechanisms effectively capture key molecular interactions. The DAAP model achieves state-of-the-art performance (Pearson R = 0.909 on CASF-2016 benchmark) by using atomic-level distance features for donor-acceptor, hydrophobic, and π-stacking atoms combined with attention mechanisms [22]. The BAPA model employs descriptor embeddings with attention to highlight important local structural descriptors, outperforming traditional methods across multiple benchmarks [25]. Graph-based approaches like XGDP utilize graph attention networks to learn latent molecular features while preserving structural information, enabling identification of active substructures in drugs and significant genes in cancer cells [24]. These architectures successfully address the limitation of earlier methods that used fixed, predefined interaction terms by allowing the model to dynamically determine which interactions matter most in different binding contexts.

Experimental Implementation and Protocols

Implementing attention-based binding affinity prediction requires careful experimental design and data processing. For the DAAP approach, the protocol involves: (1) identifying donor, acceptor, hydrophobic, and π-stacking atoms in protein and ligand structures; (2) computing pairwise distances between these specific atom types; (3) encoding these distances along with protein sequence features of relevant residues; (4) processing through attention layers that learn to weight the importance of different interactions; and (5) employing ensemble averaging of multiple models for robust prediction [22]. For MD-based approaches like the "ML/GBSA" attempt described in Rowan's research, the protocol includes running molecular dynamics simulations, extracting snapshots, calculating gas-phase enthalpies and solvation energies, and attempting to learn a correction term [26]. Critical to success is proper dataset construction with strict splitting to prevent data leakage and ensure model generalizability.
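One simple way to enforce strict splitting is to assign whole groups of complexes to either the training or the test set, so that no group appears in both. The sketch below groups by an exact protein identifier as a stand-in; the record fields (`protein`, `ligand`, `affinity`) are hypothetical, and a stricter protocol would group by sequence or structural similarity clusters instead.

```python
import random

def group_split(records, group_key, test_fraction=0.2, seed=0):
    # Shuffle the distinct groups, then hold out a fraction of groups entirely.
    groups = sorted({r[group_key] for r in records})
    rng = random.Random(seed)
    rng.shuffle(groups)
    n_test = max(1, round(len(groups) * test_fraction))
    test_groups = set(groups[:n_test])
    train = [r for r in records if r[group_key] not in test_groups]
    test = [r for r in records if r[group_key] in test_groups]
    return train, test

# Hypothetical records: 5 proteins with 3 ligands each.
records = [
    {"protein": f"P{p}", "ligand": f"L{p}_{l}", "affinity": 5.0 + 0.1 * l}
    for p in range(5) for l in range(3)
]
train, test = group_split(records, "protein", test_fraction=0.2, seed=42)
```

Because the split is made over groups rather than individual complexes, every test protein is unseen during training, which is the property that a random per-complex split does not guarantee.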

Table 3: Research Reagent Solutions for Interaction Studies

| Reagent/Resource | Type | Primary Function | Example Applications |

|---|---|---|---|

| PDBbind Database | Curated Database | Provides experimental protein-ligand structures with binding affinity data | Training and benchmarking binding affinity prediction models |

| RDKit | Cheminformatics Library | Converts SMILES strings to molecular graphs; computes molecular descriptors | Drug representation; feature extraction for machine learning |

| OpenMM | Molecular Dynamics Engine | Runs MD simulations for MM/PBSA and MM/GBSA calculations | Conformational sampling; free energy calculations |

| CASF Benchmark Sets | Standardized Benchmark | Provides consistent evaluation framework for scoring functions | Method comparison; performance validation |

| Graph Attention Networks (GATs) | Deep Learning Architecture | Learns node representations with attention to important neighbors | Molecular property prediction; drug response modeling |

Interpretation and Explainability of Models

Mechanistic Insights from Attention Weights

Attention mechanisms provide crucial interpretability by revealing which specific interactions contribute most significantly to binding affinity predictions. The learned attention weights effectively quantify the relative importance of different donor-acceptor pairs, hydrophobic contacts, and π-stacking interactions in specific protein-ligand complexes. For example, high attention weights on specific donor-acceptor distances may indicate critical hydrogen bonds that anchor the ligand in the binding pocket. Similarly, strong attention on particular hydrophobic contacts may highlight regions where desolvation provides major driving force for binding. For π-stacking, attention patterns can reveal whether face-to-face or T-shaped geometries are more favorable in different contexts. This interpretability transforms binding affinity prediction from a black box into a tool for generating testable hypotheses about molecular recognition mechanisms.
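In practice, this kind of inspection often starts by ranking interactions by attention weight and summing the weights per interaction type. The sketch below shows one way to do that; the interaction labels and weights are invented for illustration and do not come from any trained model.

```python
from collections import defaultdict

def top_attended(interactions, k=3):
    """interactions: list of (label, interaction_type, weight).
    Returns the k highest-weight interactions with normalized weights."""
    total = sum(w for _, _, w in interactions)
    ranked = sorted(interactions, key=lambda item: item[2], reverse=True)
    return [(label, kind, w / total) for label, kind, w in ranked[:k]]

def share_by_type(interactions):
    # Fraction of total attention assigned to each interaction type.
    total = sum(w for _, _, w in interactions)
    shares = defaultdict(float)
    for _, kind, w in interactions:
        shares[kind] += w / total
    return dict(shares)

# Invented attention weights for one protein-ligand complex.
example = [
    ("ASP:N - LIG:O", "donor_acceptor", 0.40),
    ("LEU:CD1 - LIG:C7", "hydrophobic", 0.15),
    ("PHE:ring - LIG:ring", "pi_stacking", 0.25),
    ("VAL:CG1 - LIG:C3", "hydrophobic", 0.20),
]
for label, kind, weight in top_attended(example, k=2):
    print(f"{label} ({kind}): {weight:.2f}")
print(share_by_type(example))
```

The ranked list points to candidate anchoring interactions, while the per-type shares summarize how the model distributes attention across donor-acceptor, hydrophobic, and π-stacking contacts.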

Visualization of Interaction Networks

Advanced visualization techniques leverage attention weights to create interaction heatmaps that highlight critical binding determinants. These visualizations show protein residues and ligand atoms color-coded by their attention scores, providing immediate visual identification of key interaction hotspots. For instance, the BAPA model demonstrates how attention mechanisms can capture binding sites in protein-ligand complexes, with high-attention regions corresponding to known functional sites [25]. Similarly, explainable graph neural networks like XGDP use attribution methods such as GNNExplainer and Integrated Gradients to identify salient functional groups of drugs and their interactions with significant genes in cancer cells [24]. These visualization approaches help researchers quickly identify which specific molecular features to optimize during drug design campaigns.

Diagram 2: Attention weight distribution across interaction types

Performance Benchmarks and Validation

Quantitative Assessment

Rigorous benchmarking demonstrates that attention-based models leveraging donor-acceptor, hydrophobic, and π-stacking features achieve state-of-the-art performance in binding affinity prediction. The DAAP method achieves remarkable metrics on the CASF-2016 benchmark: Pearson Correlation Coefficient (R) of 0.909, Root Mean Squared Error (RMSE) of 0.987, Mean Absolute Error (MAE) of 0.745, and Concordance Index (CI) of 0.876 [22]. These results represent significant improvements (2% to 37%) over previous methods across multiple benchmark datasets. The BAPA model similarly outperforms existing methods on CASF-2013 and CSAR NRC-HiQ sets, demonstrating the generalizability of the approach [25]. These benchmarks confirm that explicitly modeling these three key interaction types with attention mechanisms provides both accuracy and robustness across diverse protein-ligand systems.
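For readers reproducing such comparisons, the Pearson correlation, RMSE, and MAE can be computed as in the following sketch. The affinity values are made up, and the functions are plain-Python versions of the standard definitions rather than the evaluation code used by any cited model.

```python
import math

def pearson_r(y_true, y_pred):
    # Pearson correlation coefficient between measured and predicted values.
    n = len(y_true)
    mean_t = sum(y_true) / n
    mean_p = sum(y_pred) / n
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred))
    var_t = sum((t - mean_t) ** 2 for t in y_true)
    var_p = sum((p - mean_p) ** 2 for p in y_pred)
    return cov / math.sqrt(var_t * var_p)

def rmse(y_true, y_pred):
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

def mae(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

# Made-up affinities: predictions are offset by a constant 0.5.
measured = [1.0, 2.0, 3.0, 4.0]
predicted = [1.5, 2.5, 3.5, 4.5]
print(pearson_r(measured, predicted), rmse(measured, predicted), mae(measured, predicted))
```

The example also shows why correlation and error metrics are reported together: a constant offset leaves the correlation perfect while RMSE and MAE both register the 0.5-unit error.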

Generalization and Robustness Testing

Proper validation of attention-based binding affinity models requires rigorous generalization testing beyond standard benchmarks. This involves constructing test datasets with minimal structural similarity to training complexes to evaluate performance on truly novel targets. The DAAP approach demonstrates strong generalization through five-fold cross-validation with low standard deviations in performance metrics (e.g., R = 0.847 ± 0.002 when trained on PDBbind2020) [22]. Methods like BAPA have been tested using protein-structural and ligand-structural similarity measures to ensure evaluation on non-redundant complexes [25]. These validation protocols give confidence that models which learn to focus on fundamental physical interactions (donor-acceptor, hydrophobic, and π-stacking), rather than memorizing specific structural motifs, will transfer effectively to novel drug targets.

The integration of attention mechanisms with fundamental molecular interaction principles represents a paradigm shift in binding affinity prediction. By focusing on donor-acceptor pairs, hydrophobic interactions, and π-stacking, researchers can develop models that achieve both high accuracy and meaningful interpretability. Current state-of-the-art approaches demonstrate that distance-based features combined with attention weighting provide superior performance compared to traditional grid-based or sequence-based representations. Future research directions include developing more sophisticated attention mechanisms that can capture multi-scale interactions, integrating temporal dynamics from molecular simulations, and improving model interpretability for direct drug design guidance. As these models continue to evolve, their ability to identify and quantify the key interactions driving molecular recognition will accelerate the discovery of novel therapeutics across diverse disease areas.

The process of drug discovery is notoriously expensive, time-consuming, and prone to failure, often requiring over a decade and billions of dollars to bring a single drug to market [4]. In response, artificial intelligence has emerged as a powerful alternative, offering effective solutions to difficult biological problems such as Drug-Target Binding (DTB) prediction [6]. Deep learning models, in particular, have demonstrated a remarkable ability to predict drug-target affinity (DTA), the strength of interaction between a drug molecule and a protein target, by learning complex patterns from large datasets. However, these models have often been treated as "black boxes," making accurate predictions without offering insights into the underlying biochemical rationale. This lack of interpretability poses a significant barrier to adoption by medicinal chemists and biomedical researchers who require mechanistic understanding to guide drug design.

The introduction of attention mechanisms has begun to fundamentally reshape this landscape. Originally developed for neural machine translation, attention allows models to dynamically focus on relevant parts of their input while filtering out less important information [27]. In the context of DTA prediction, this capability enables models to highlight which specific amino acid residues in a protein sequence and which molecular substructures in a drug compound contribute most significantly to binding affinity predictions. This selective focus mimics human cognitive attention and provides a powerful window into model decision-making. Modern architectures based on the Transformer model, which relies exclusively on attention mechanisms, have further advanced the field by capturing long-range dependencies and complex contextual relationships within molecular structures [28] [27]. This technological evolution is transforming computational drug discovery from a black-box prediction task into an interpretable research tool that can generate testable hypotheses about molecular interactions.

The Architectural Foundation of Attention Mechanisms

From Basic Attention to Self-Attention

The development of attention mechanisms addressed critical limitations in recurrent neural networks (RNNs), particularly their difficulty handling long sequences due to vanishing gradients and their sequential computation nature that impedes parallelization [27]. Early attention mechanisms, pioneered in neural machine translation systems, allowed decoder networks to focus on relevant parts of the input sequence when generating each word of the output, rather than relying solely on a fixed-length compressed representation of the entire input [27]. This approach utilized encoder output vectors containing richer information than the final hidden state, providing a more nuanced view of the input to the decoder.

The transformative breakthrough came with Vaswani et al.'s 2017 introduction of the self-attention mechanism and the Transformer architecture [28] [27]. Unlike previous attention mechanisms that focused on relationships between input and output sequences, self-attention computes attention scores between all pairs of elements within a single sequence. This enables the model to capture contextual relationships between different input parts and learn rich, contextualized representations. The self-attention mechanism calculates these scores by comparing each element in the input sequence to every other element, allowing the model to weigh the importance of different aspects relative to each other. These attention scores then create a weighted sum of the input elements, which passes through a feedforward neural network to produce the final output [27].

Multi-Head Attention and Computational Formalism

The Transformer architecture enhanced basic self-attention through multi-head attention, which allows the model to jointly attend to information from different representation subspaces at different positions [28]. This is particularly valuable in molecular modeling where multiple interaction types (e.g., hydrophobic, ionic, hydrogen bonding) may operate simultaneously between a drug and its target. The attention mechanism operates through three fundamental components: the Query (Q), Key (K), and Value (V) matrices. For each element in the sequence, these matrices are derived through learned linear transformations, enabling the model to project inputs into different representation spaces optimized for attention computation.

The core attention function is implemented as scaled dot-product attention:

\[ \text{Attention}(Q, K, V) = \text{softmax}\left(\frac{QK^T}{\sqrt{d_k}}\right)V \]

where \(d_k\) is the dimension of the key vectors, and the scaling factor \(\frac{1}{\sqrt{d_k}}\) prevents the softmax function from entering regions with extremely small gradients [28]. The multi-head attention mechanism extends this by employing multiple sets of Q, K, V matrices in parallel:

\[ \text{MultiHead}(Q, K, V) = \text{Concat}(\text{head}_1, \ldots, \text{head}_h)W^O \]

where each head is computed as:

\[ \text{head}_i = \text{Attention}(QW_i^Q, KW_i^K, VW_i^V) \]

This architectural foundation enables the model to capture diverse relationship types within the input data, making it particularly well-suited for modeling complex biomolecular interactions where multiple binding modalities may coexist.
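
To make the formulas above concrete, the following is a minimal NumPy sketch of scaled dot-product attention and a multi-head wrapper around it. It is illustrative only: the function names, the random weights, and the toy dimensions (6 tokens, model width 16, 4 heads) are assumptions chosen for the example and are not taken from any of the cited models.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row-wise maximum for numerical stability.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def scaled_dot_product_attention(Q, K, V):
    # Q: (n_q, d_k), K: (n_k, d_k), V: (n_k, d_v)
    d_k = K.shape[-1]
    scores = Q @ K.T / np.sqrt(d_k)      # pairwise query-key similarity
    weights = softmax(scores, axis=-1)   # each row sums to 1
    return weights @ V, weights

def multi_head_attention(X, W_q, W_k, W_v, W_o):
    # X: (n, d_model); W_q, W_k, W_v: (h, d_model, d_k); W_o: (h * d_k, d_model)
    heads = []
    for Wq_i, Wk_i, Wv_i in zip(W_q, W_k, W_v):
        head, _ = scaled_dot_product_attention(X @ Wq_i, X @ Wk_i, X @ Wv_i)
        heads.append(head)
    # Concatenate the heads along the feature axis, then project with W^O.
    return np.concatenate(heads, axis=-1) @ W_o

# Toy self-attention example with random (untrained) weights.
rng = np.random.default_rng(0)
n, d_model, h = 6, 16, 4
d_k = d_model // h
X = rng.normal(size=(n, d_model))
W_q, W_k, W_v = (rng.normal(size=(h, d_model, d_k)) for _ in range(3))
W_o = rng.normal(size=(h * d_k, d_model))

out = multi_head_attention(X, W_q, W_k, W_v, W_o)
_, attn = scaled_dot_product_attention(X @ W_q[0], X @ W_k[0], X @ W_v[0])
```

Here `out` has the same shape as `X`, and `attn` is the 6 × 6 weight matrix of the first head. In a trained model the projection matrices are learned rather than sampled at random.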

Attention Mechanisms in Drug-Target Affinity Prediction

The Evolution of DTA Prediction Models

Early computational strategies for Drug-Target Affinity (DTA) prediction relied mainly on physics-based methods like molecular docking and molecular dynamics simulations [4]. While these approaches offer detailed structural insights, they typically demand extensive computational resources and accurate structural input, limiting their applicability in large-scale drug screening. Data-driven machine learning approaches then emerged, building predictive models from known drug-target binding data and reducing reliance on computationally intensive simulations. Initial ML approaches, such as KronRLS and SimBoost, used simple drug-target similarity metrics to predict binding affinities [4].

With advancements in deep learning, more sophisticated models emerged. Sequence-based models like DeepDTA utilized drug molecular sequences (e.g., SMILES strings) and protein sequences, demonstrating improved prediction performance but often failing to fully capture complex structural interactions [4]. Graph-based deep learning methods subsequently emerged, providing richer representations of molecular structures by encoding drugs and proteins as graph structures. Models like GraphDTA represented drug molecules as graphs and used graph neural networks (GNN) to model their interactions with proteins [4]. Further improvements came from recognizing the significance of protein-binding pockets—the specific regions where drug molecules bind to proteins [4].
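
As a rough illustration of how sequence-based models consume SMILES strings, the sketch below maps each character to an integer and pads to a fixed length. This is a simplified assumption about the preprocessing, not the exact pipeline of DeepDTA or any other cited model: the helper names `build_vocab` and `encode` are hypothetical, and character-level splitting treats multi-character atoms such as `Cl` as two tokens.

```python
def build_vocab(strings):
    # Index 0 is reserved for padding, so real symbols start at 1.
    chars = sorted({ch for s in strings for ch in s})
    return {ch: i + 1 for i, ch in enumerate(chars)}

def encode(s, vocab, max_len):
    # Truncate to max_len, then right-pad with zeros.
    # A character missing from the vocabulary raises KeyError.
    ids = [vocab[ch] for ch in s[:max_len]]
    return ids + [0] * (max_len - len(ids))

smiles = ["CCO", "CC(=O)O"]  # ethanol, acetic acid
vocab = build_vocab(smiles)
encoded = [encode(s, vocab, max_len=8) for s in smiles]
print(encoded)
# [[4, 4, 5, 0, 0, 0, 0, 0], [4, 4, 1, 3, 5, 2, 5, 0]]
```

Protein sequences can be encoded the same way over the amino acid alphabet. Graph-based models instead parse the SMILES string into atoms (nodes) and bonds (edges), which preserves the connectivity that a flat character sequence only encodes implicitly.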

Table 1: Evolution of Deep Learning Approaches in DTA Prediction

| Model Type | Representative Models | Key Innovations | Limitations |
|---|---|---|---|
| Sequence-Based | DeepDTA, WideDTA | Uses SMILES strings and protein sequences; CNN architecture | Fails to capture structural molecular information [2] |
| Graph-Based | GraphDTA, DGraphDTA | Represents drugs as molecular graphs; uses GNNs | Limited atom features; protein representation challenges [2] [29] |
| Pocket-Aware | PocketDTA, DeepDTAF | Integrates protein-binding pocket information | Requires pocket structure data [4] |
| Multimodal | HPDAF, MDNN-DTA | Combines multiple data types (sequence, graph, structure) | Complex integration of heterogeneous features [4] [29] |
| Multitask with Attention | DeepDTAGen | Predicts affinity and generates drugs; uses shared features | Optimization challenges from gradient conflicts [2] |

Incorporating Attention into DTA Prediction

The integration of attention mechanisms has addressed critical limitations in previous DTA prediction approaches. For example, the recently developed HPDAF (Hierarchically Progressive Dual-Attention Fusion) framework introduces a novel hierarchical attention-based mechanism that integrates three types of biochemical information: protein sequences, drug molecular graphs, and structural interaction data from protein-binding pockets [4]. This approach employs specialized modules for each data type and uses attention to dynamically emphasize the most relevant structural and sequential information. The model's dual-attention mechanism consists of Modality-Aware Cross-attention Networks (MACN) and Affinity-Calibrated Attention Networks (AACN), which work together to focus on crucial local features while grasping broader, interdependent global information [4].

Another innovative approach, MDNN-DTA, addresses the challenge of protein feature extraction by designing a specific Protein Feature Extraction (PFE) block that captures both global and local features of protein sequences, supplemented by a pre-trained ESM model for biochemical features [29] [30]. The model further employs a Protein Feature Fusion (PFF) block based on attention mechanisms to efficiently integrate multi-scale protein features [29]. This approach demonstrates how attention can bridge different representation spaces—using Graph Convolutional Networks (GCN) for drug molecules and Convolutional Neural Networks (CNN) for protein sequences, with attention facilitating their integration [29] [30].

Experimental Protocols and Methodologies

Benchmark Datasets and Evaluation Metrics

Robust evaluation of DTA prediction models requires standardized datasets with experimentally validated binding affinities. The most widely adopted benchmarks include KIBA, Davis, BindingDB, and the PDBbind database [2] [4]. These datasets provide binding affinity values typically reported as \(-\log K_i\), \(-\log K_d\), or \(-\log \text{IC}_{50}\), where higher values indicate stronger affinity (equivalently, lower raw \(K_i\), \(K_d\), or \(\text{IC}_{50}\)) [29]. The PDBbind database offers particularly high-quality data as it contains extensive drug-target complexes with experimentally measured binding affinities [4].
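
The negative-log transform can be illustrated with a short sketch. It assumes the raw constant is given in nanomolar, which is an assumption made for this example (units vary between datasets), and the helper name `p_affinity` is hypothetical.

```python
import math

def p_affinity(value_nM):
    # Convert a constant (K_d, K_i, or IC50) given in nanomolar
    # to its negative base-10 logarithm in molar units.
    if value_nM <= 0:
        raise ValueError("constant must be positive")
    return -math.log10(value_nM * 1e-9)

tight = p_affinity(1.0)       # 1 nM binder  -> approximately 9
weak = p_affinity(10_000.0)   # 10 uM binder -> approximately 5
```

A tighter binder has a smaller dissociation constant and therefore a larger transformed value, which is the direction regression models are trained to predict.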

Table 2: Key Benchmark Datasets for DTA Prediction

| Dataset | Content Description | Affinity Measures | Key Applications |
|---|---|---|---|
| KIBA | Large-scale dataset with kinase inhibitors | KIBA scores | General DTA benchmarking [2] |
| Davis | Kinase family protein-drug interactions | \(K_d\) values | Kinase-specific binding prediction [2] |
| BindingDB | Diverse drug-target pairs with binding data | \(K_d\), \(K_i\), \(\text{IC}_{50}\) | Broad applicability domain testing [2] |
| PDBbind | Curated protein-ligand complexes from PDB | \(K_d\), \(K_i\), \(\text{IC}_{50}\) | Structure-aware model training [4] |

Evaluation metrics for DTA prediction models must assess both prediction accuracy and ranking capability. The most commonly used metrics include:

- Mean Squared Error (MSE): Measures the average squared difference between predicted and actual values, with lower values indicating better performance [2]

- Concordance Index (CI): Evaluates the ranking quality of predictions, representing the probability that predictions for two random drug-target pairs are correctly ordered [2]

- \(R^2\) or \(R_m^2\): Measures the proportion of variance in the binding affinity that is predictable from the input features [2]

- Area Under Precision-Recall Curve (AUPR): Particularly important for interaction prediction where positive instances may be rare [2]
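
The first two metrics in the list above can be sketched in a few lines of NumPy. This is a plain quadratic-time implementation for illustration. The affinity values are made up for the example, and counting tied predictions as half-concordant is a common convention assumed here rather than something specified in the cited studies.

```python
import numpy as np

def mse(y_true, y_pred):
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    return float(np.mean((y_true - y_pred) ** 2))

def concordance_index(y_true, y_pred):
    # Fraction of pairs with different true affinities whose
    # predictions are ordered the same way (ties count as 0.5).
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    concordant, comparable = 0.0, 0
    for i in range(len(y_true)):
        for j in range(len(y_true)):
            if y_true[i] > y_true[j]:
                comparable += 1
                if y_pred[i] > y_pred[j]:
                    concordant += 1.0
                elif y_pred[i] == y_pred[j]:
                    concordant += 0.5
    return concordant / comparable if comparable else float("nan")

# Made-up affinities for four drug-target pairs.
y_true = [5.0, 6.2, 7.1, 8.4]
y_pred = [5.3, 6.0, 7.5, 7.2]

error = mse(y_true, y_pred)              # 0.4325
ci = concordance_index(y_true, y_pred)   # 5 of 6 pairs ordered correctly
```

Only the last two pairs are ranked in the wrong order, so the CI is 5/6 even though the MSE is dominated by the single large error on the fourth pair. This is why the two metrics are reported together.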

Implementation of Attention-Based DTA Models

The implementation of attention-based DTA models follows a systematic workflow that can be divided into four key phases: data representation, feature extraction, attention-based fusion, and affinity prediction. The following diagram illustrates this generalized experimental workflow:

For the DeepDTAGen model, which implements a multitask framework for both DTA prediction and drug generation, researchers developed a specialized optimization algorithm called FetterGrad to address gradient conflicts between the distinct tasks [2]. The experimental protocol involves:

- Data Preparation: Represent drugs as SMILES strings or molecular graphs, and proteins as amino acid sequences or structural graphs

- Feature Extraction: Use dedicated encoders for drugs (typically GNNs) and proteins (CNNs or Transformers)

- Attention Fusion: Apply multi-head attention to integrate features across modalities

- Multitask Optimization: Implement FetterGrad to align gradients between affinity prediction and drug generation tasks by minimizing Euclidean distance between task gradients [2]
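
The attention-fusion step in the list above can be sketched as single-head cross-attention in which drug atom features query protein residue features. This is a generic illustration under stated assumptions, not the fusion module of DeepDTAGen: the feature matrices and projection weights are random placeholders standing in for encoder outputs and learned parameters, and all names are hypothetical.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row-wise maximum for numerical stability.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def cross_attention(drug_feats, prot_feats, W_q, W_k, W_v):
    # drug_feats: (n_atoms, d); prot_feats: (n_res, d)
    Q = drug_feats @ W_q   # queries come from the drug
    K = prot_feats @ W_k   # keys and values come from the protein
    V = prot_feats @ W_v
    weights = softmax(Q @ K.T / np.sqrt(K.shape[-1]), axis=-1)  # (n_atoms, n_res)
    return weights @ V, weights

# Placeholder encoder outputs: 5 atoms, 12 residues, feature width 8.
rng = np.random.default_rng(42)
n_atoms, n_res, d = 5, 12, 8
drug = rng.normal(size=(n_atoms, d))
prot = rng.normal(size=(n_res, d))
W_q, W_k, W_v = (rng.normal(size=(d, d)) for _ in range(3))

fused, weights = cross_attention(drug, prot, W_q, W_k, W_v)

# Average attention each residue receives across all atoms,
# then list the three most-attended residue indices.
residue_importance = weights.mean(axis=0)
top_residues = np.argsort(residue_importance)[::-1][:3]
```

With trained weights, `residue_importance` is the kind of per-residue score that can be compared against known binding-site residues. With the random weights used here the ranking carries no biological meaning.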

The HPDAF framework implements a more specialized approach with its dual-attention mechanism:

- Modality-Specific Processing: Extract features from protein sequences, drug graphs, and pocket structures using specialized modules

- Hierarchical Attention: Apply Modality-Aware Cross-attention Networks (MACN) to enhance local features within each modality

- Global Calibration: Use Affinity-Calibrated Attention Networks (AACN) to model cross-modality dependencies and global context [4]

Quantitative Performance of Attention-Based Models

Benchmark Comparisons

Comprehensive evaluations on standard datasets demonstrate the performance advantages of attention-based DTA prediction models. The following table summarizes key results from recent studies:

Table 3: Performance Comparison of Attention-Based DTA Models on Benchmark Datasets

| Model | Dataset | MSE | CI | \(R_m^2\) | Key Innovation |
|---|---|---|---|---|---|
| DeepDTAGen [2] | KIBA | 0.146 | 0.897 | 0.765 | Multitask with FetterGrad |
| DeepDTAGen [2] | Davis | 0.214 | 0.890 | 0.705 | Multitask with FetterGrad |
| DeepDTAGen [2] | BindingDB | 0.458 | 0.876 | 0.760 | Multitask with FetterGrad |
| HPDAF [4] | CASF-2016 | - | +7.5% CI* | - | Dual-attention fusion |
| GraphDTA [2] | KIBA | 0.147 | 0.891 | 0.687 | Graph representation |
| GDilatedDTA [2] | KIBA | - | 0.920 | - | Dilated convolution |
| SSM-DTA [2] | Davis | 0.219 | 0.890 | 0.689 | Semantic similarity |

Note: *Compared to DeepDTA baseline; exact values not provided in source

The DeepDTAGen model demonstrates particularly strong performance, outperforming traditional machine learning models (KronRLS and SimBoost) on the KIBA dataset by achieving a 7.3% improvement in CI and 21.6% improvement in \(R_m^2\), while reducing MSE by 34.2% [2]. Compared to the second-best deep learning model (GraphDTA), DeepDTAGen attained an improvement of 0.67% in CI and 11.35% in \(R_m^2\) while reducing MSE by 0.68% [2]. On the Davis dataset, the model showed a 2.4% improvement in \(R_m^2\) and 2.2% reduction in MSE compared to SSM-DTA [2].
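
As a check on the arithmetic, the KIBA comparison against GraphDTA can be reproduced from the Table 3 values, assuming each percentage is a relative change measured against the GraphDTA value:

\[ \Delta_{\text{CI}} = \frac{0.897 - 0.891}{0.891} \approx 0.67\%, \qquad \Delta_{R_m^2} = \frac{0.765 - 0.687}{0.687} \approx 11.35\%, \qquad \Delta_{\text{MSE}} = \frac{0.147 - 0.146}{0.147} \approx 0.68\% \]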

Ablation Studies and Component Analysis

Ablation studies provide crucial insights into the contribution of attention mechanisms to overall model performance. For the MDNN-DTA model, researchers conducted systematic experiments demonstrating that the Protein Feature Fusion (PFF) block based on attention mechanisms significantly enhanced feature integration and prediction accuracy [29]. Similarly, HPDAF's hierarchical attention mechanism was shown to be responsible for its performance gains, with the dual-attention approach enabling more effective integration of heterogeneous biochemical features [4].

The FetterGrad algorithm in DeepDTAGen addresses a fundamental challenge in multitask learning: gradient conflicts between distinct tasks [2]. By minimizing the Euclidean distance between task gradients, this approach mitigates optimization challenges and enables more stable training. The algorithm demonstrates how attention to optimization dynamics complements architectural innovations in advancing model performance.
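
The notion of a gradient conflict can be made concrete with a small diagnostic. The sketch below is not the FetterGrad algorithm, whose update rule is not given in this article. It only measures the two quantities the discussion refers to, the angle and the Euclidean distance between two task gradients, on a hypothetical pair of quadratic losses that share the parameters `theta`.

```python
import numpy as np

def gradient_conflict(g_a, g_b):
    # Cosine similarity below 0 means the two tasks pull the shared
    # parameters in opposing directions; dist is the Euclidean gap.
    cos = float(g_a @ g_b / (np.linalg.norm(g_a) * np.linalg.norm(g_b)))
    dist = float(np.linalg.norm(g_a - g_b))
    return cos, dist

# Hypothetical toy losses on shared parameters theta:
#   L_a = ||theta - t_a||^2,  L_b = ||theta - t_b||^2
theta = np.array([0.0, 0.0])
t_a = np.array([1.0, 0.0])
t_b = np.array([-1.0, 0.5])

g_a = 2 * (theta - t_a)   # analytic gradient of L_a: [-2, 0]
g_b = 2 * (theta - t_b)   # analytic gradient of L_b: [2, -1]

cos, dist = gradient_conflict(g_a, g_b)
# cos is negative here, so a step that lowers L_a raises L_b.
```

In a real multitask model the same diagnostic would be applied to the gradients of the affinity loss and the generation loss with respect to the shared encoder weights.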

Visualizing Attention: From Model Decisions to Scientific Insights

Mapping Attention to Biological Structures

The true power of attention mechanisms in DTA prediction lies in their ability to provide interpretable insights into the model's decision-making process. By examining attention weights, researchers can identify which specific amino acid residues in a protein and which molecular substructures in a drug compound the model deems most important for binding affinity. The following diagram illustrates how attention maps onto biological structures to provide interpretable insights: