High-Throughput Screening Protocols for Chemogenomic Libraries: A Modern Guide from Foundation to Validation

Abstract

This article provides a comprehensive guide to high-throughput screening (HTS) protocols specifically for chemogenomic libraries, tailored for researchers and drug development professionals. It covers the foundational principles of designing and acquiring high-quality small molecule libraries, detailed methodological workflows for biochemical and cell-based assays, strategies for troubleshooting common issues and optimizing screen performance, and finally, rigorous approaches for assay validation and data interpretation. By synthesizing current best practices and emerging technologies, this resource aims to equip scientists with the knowledge to efficiently design, execute, and interpret robust chemogenomic screens, thereby accelerating the discovery of novel bioactive compounds.

Building Your Foundation: Principles of Chemogenomic Library Design and Curation

Chemogenomics represents a systematic strategy in drug discovery that investigates the interaction between targeted chemical libraries and families of functionally related proteins [1] [2]. In principle, it aims to identify all possible drug-like molecules that can interact with all potential biological targets, though in practice, it focuses on the systematic analysis of chemical-biological interactions against specific protein families such as G-protein-coupled receptors (GPCRs), kinases, phosphodiesterases, ion channels, and serine proteases [1]. This approach has evolved over the past two decades into a more formally applied strategy for discovering target- and subtype-specific ligands, moving beyond the traditional one-target-at-a-time paradigm [1] [3].

The fundamental premise of chemogenomics lies in its integrative nature, bridging target discovery and drug development by using active compounds as probes to characterize proteome functions [2]. The completion of the human genome project provided an abundance of potential targets for therapeutic intervention, with estimates suggesting 2,000-5,000 potential drug targets, yet current pharmaceuticals target only approximately 500 of these proteins [2] [3]. Chemogenomics addresses this gap by leveraging the structural and functional relationships within protein families to accelerate the identification of novel drugs and drug targets [2].

Design Principles and Strategic Approaches

Core Design Strategies

The construction of targeted chemical libraries typically includes known ligands for at least one, and preferably several, members of a target family [2]. This approach capitalizes on the observation that ligands designed for one family member will often bind to additional family members, enabling the collective compounds in a targeted chemical library to bind to a high percentage of the target family [2]. A key concept in this design is the identification of "privileged structures" - scaffolds such as benzodiazepines that frequently produce biologically active analogs within a target family, particularly in GPCRs [1].

Another significant approach is the Selective Optimization of Side Activities (SOSA) strategy, which involves modifying the selectivity of biologically active compounds to generate new drug candidates from the side activities of therapeutically used drugs [1]. This approach leverages existing safety profiles and known biological activities as starting points for new drug development.

Experimental Frameworks

Chemogenomics employs two primary experimental approaches, each with distinct methodologies and applications:

Forward Chemogenomics (Classical Approach): This method begins with a particular phenotype of interest, often with unknown molecular basis, and identifies small molecules that interact with this function [2]. Once modulators are identified, they serve as tools to identify the proteins responsible for the phenotype. For example, a loss-of-function phenotype such as arrested tumor growth would first identify compounds inducing this effect, followed by target identification [2].

Reverse Chemogenomics: This approach first identifies small compounds that perturb the function of a specific enzyme in vitro, then analyzes the phenotype induced by the molecule in cellular tests or whole organisms [2]. This method confirms the role of the enzyme in the biological response and has been enhanced by parallel screening capabilities and lead optimization across multiple targets within a family [2].

Library Specificity and Polypharmacology Considerations

A critical consideration in chemogenomic library design is the balance between target specificity and polypharmacology. Research has quantified this balance through a "polypharmacology index" (PPindex), which measures the overall target specificity of compound libraries [4]. Libraries can be compared using this index, with larger values (slopes closer to a vertical line) indicating more target-specific libraries, and smaller values (slopes closer to a horizontal line) indicating more polypharmacologic libraries [4].

Table 1: Polypharmacology Index (PPindex) Comparison of Selected Chemogenomic Libraries

| Library Name | PPindex (All Data) | PPindex (Without 0-Target Bin) | PPindex (Without 0- and 1-Target Bins) |

|---|---|---|---|

| DrugBank | 0.9594 | 0.7669 | 0.4721 |

| LSP-MoA | 0.9751 | 0.3458 | 0.3154 |

| MIPE 4.0 | 0.7102 | 0.4508 | 0.3847 |

| Microsource Spectrum | 0.4325 | 0.3512 | 0.2586 |

| DrugBank Approved | 0.6807 | 0.3492 | 0.3079 |

Polypharmacology presents both challenges and opportunities. Excessive polypharmacology can complicate target deconvolution in phenotypic screens, but appropriate polypharmacology can enhance therapeutic efficacy: drug molecules interact with six known molecular targets on average, even after optimization [4].

Practical Applications in Drug Discovery

Target Identification and Validation

Chemogenomics has proven particularly valuable in identifying novel therapeutic targets. For example, in antibacterial development, researchers have capitalized on existing ligand libraries for the murD enzyme in the peptidoglycan synthesis pathway [2]. Using the chemogenomics similarity principle, they mapped the murD ligand library to other members of the mur ligase family (murC, murE, murF, murA, and murG) to identify new targets for known ligands [2]. Structural and molecular docking studies revealed candidate ligands for murC and murE ligases that would be expected to function as broad-spectrum Gram-negative inhibitors, since peptidoglycan synthesis is exclusive to bacteria [2].

Mechanism of Action Elucidation

Chemogenomic approaches have been successfully applied to determine the mode of action (MOA) for traditional medicines, including Traditional Chinese Medicine (TCM) and Ayurveda [2]. Compounds from traditional medicines often possess "privileged structures" and have comprehensively known safety profiles, making them attractive as lead structures for developing new molecular entities [2]. Databases containing the chemical structures of traditional medicine compounds along with their phenotypic effects enable in silico analysis to predict ligand targets relevant to known phenotypes [2].

In a case study evaluating TCM "toning and replenishing medicine," researchers identified sodium-glucose transport proteins and PTP1B (an insulin signaling regulator) as targets linking to the hypoglycemic phenotype [2]. Similarly, for Ayurvedic anti-cancer formulations, target prediction programs enriched for targets directly connected to cancer progression such as steroid-5-alpha-reductase and synergistic targets like the efflux pump P-gp [2].

Pathway Elucidation

Chemogenomics has enabled the identification of previously unknown genes in biological pathways. A notable example emerged thirty years after the posttranslationally modified histidine derivative diphthamide was first identified, when chemogenomics approaches helped discover the enzyme responsible for the final step in its synthesis [2]. Researchers utilized Saccharomyces cerevisiae cofitness data (representing similarity of growth fitness under various conditions between different deletion strains) to identify the YLR143W gene as having the highest cofitness with strains lacking known diphthamide biosynthesis genes [2]. Subsequent experimental assays confirmed YLR143W as the missing diphthamide synthetase, resolving a three-decade mystery [2].

Protocol: Application of Chemogenomic Libraries in Phenotypic Screening for Glioblastoma

Background and Principle

This protocol describes the application of targeted chemogenomic libraries for identifying patient-specific vulnerabilities in glioblastoma (GBM) stem cells, based on recently published methodologies [5]. The approach utilizes a strategically designed library of 1,211 compounds targeting 1,386 anticancer proteins, optimized for library size, cellular activity, chemical diversity, availability, and target selectivity [5]. The library covers a wide range of protein targets and biological pathways implicated in various cancers, making it applicable to precision oncology initiatives.

Experimental Workflow

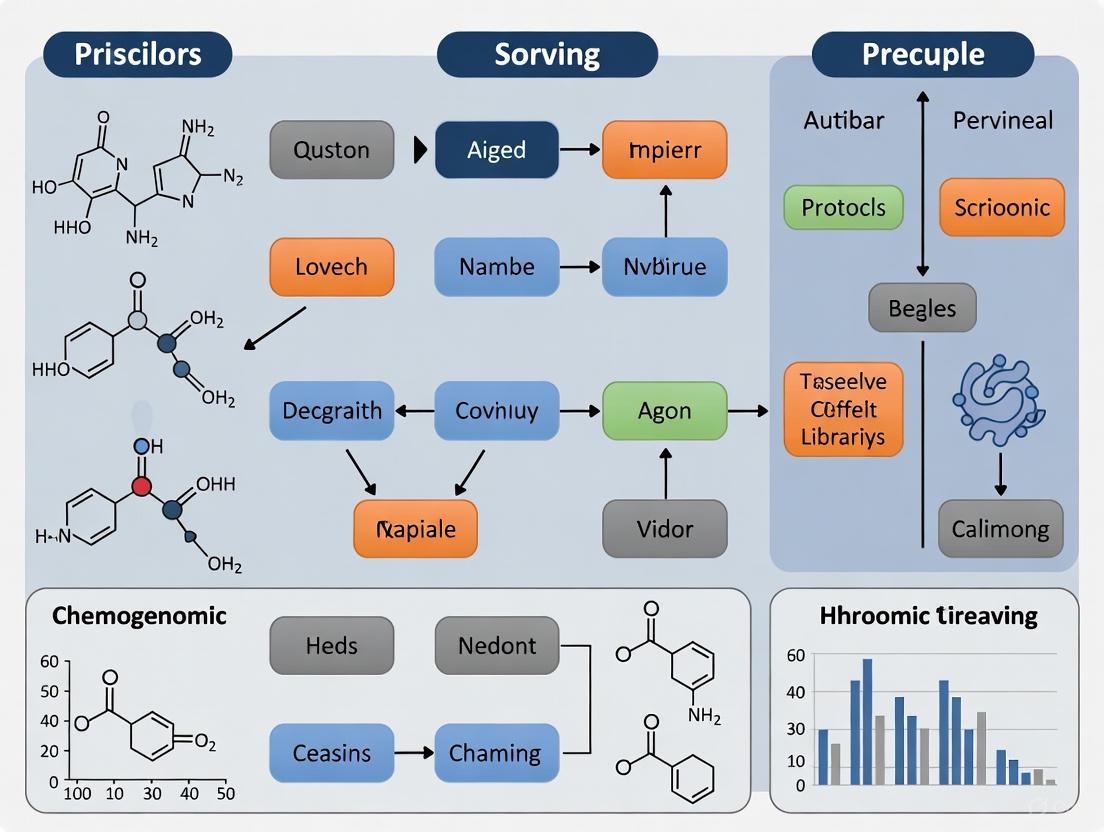

The following diagram illustrates the complete experimental workflow from library design through hit identification:

Materials and Reagents

Table 2: Essential Research Reagents and Solutions

| Reagent/Solution | Function/Purpose | Specifications |

|---|---|---|

| C3L Chemical Library | Targeted screening compounds | 1,211 compounds targeting 1,386 anticancer proteins [5] |

| Glioblastoma Stem Cells | Primary screening system | Patient-derived, maintain subtype characteristics |

| Cell Culture Media | Cell maintenance and expansion | Serum-free, neural stem cell optimized |

| High-Content Imaging System | Phenotypic quantification | Automated confocal microscope with live-cell capability |

| Viability Assay Reagents | Cell survival measurement | Multiparametric (metabolic activity, apoptosis, necrosis) |

| Data Analysis Platform | Data processing and visualization | Custom web platform (C3L Explorer) |

Step-by-Step Procedure

Library Preparation and Plate Formatting

- Compound Stock Solutions: Prepare 10 mM DMSO stock solutions of all 1,211 compounds. Store at -20°C in sealed, polypropylene plates.

- Intermediate Dilutions: Create intermediate 100 µM working stocks in complete cell culture medium immediately before screening.

- Assay Plate Formatting: Dispense compounds into 384-well imaging plates using acoustic dispensing technology (e.g., Beckman Echo 655) to achieve final screening concentration of 1 µM in 50 µL total volume.

- Control Wells: Include the following controls in each plate:

  - Negative controls: DMSO only (0.1% final concentration)

  - Positive controls: Staurosporine (1 µM final concentration) for maximal cell death
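The concentrations in the plate-formatting steps above imply specific transfer volumes. The following is a minimal sketch of that arithmetic using C1·V1 = C2·V2; the function and variable names are illustrative, and it assumes the 10 mM stock is in neat DMSO and that the transferred volume counts toward the 50 µL final volume.

```python
def transfer_volume_nl(source_um: float, final_um: float, well_volume_ul: float) -> float:
    """Source volume (nL) that brings a well of well_volume_ul to final_um."""
    return final_um * well_volume_ul / source_um * 1000.0


def dmso_percent_after(dilution_factors: list[float], start_percent: float = 100.0) -> float:
    """DMSO percentage (v/v) remaining after successive dilutions of a neat-DMSO stock."""
    percent = start_percent
    for factor in dilution_factors:
        percent /= factor
    return percent


STOCK_UM = 10_000.0       # 10 mM DMSO stock
INTERMEDIATE_UM = 100.0   # 100 µM working stock in medium
FINAL_UM = 1.0            # 1 µM screening concentration
WELL_UL = 50.0            # final assay volume per well

from_intermediate_nl = transfer_volume_nl(INTERMEDIATE_UM, FINAL_UM, WELL_UL)
direct_from_stock_nl = transfer_volume_nl(STOCK_UM, FINAL_UM, WELL_UL)
dmso_in_well = dmso_percent_after(
    [STOCK_UM / INTERMEDIATE_UM, INTERMEDIATE_UM / FINAL_UM]
)

print(f"Transfer from 100 µM intermediate: {from_intermediate_nl:.0f} nL")
print(f"Transfer directly from 10 mM stock: {direct_from_stock_nl:.0f} nL")
print(f"DMSO in compound wells: {dmso_in_well:.2f}% v/v")
```

Under these assumptions the compound wells end up at 0.01% DMSO, which is lower than the 0.1% listed for the negative control, so the vehicle concentration may need to be matched between control and compound wells.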

Cell Preparation and Compound Treatment

- Cell Culture: Maintain patient-derived glioma stem cells in serum-free neural stem cell media with appropriate growth factors. Use cells between passages 3-10 for screening.

- Cell Plating: Harvest cells at 70-80% confluence and seed at 2,000 cells/well in 384-well plates containing pre-dispensed compounds.

- Incubation: Incubate cells with compounds for 96 hours at 37°C, 5% CO₂ with >95% humidity.

High-Content Imaging and Analysis

- Staining: After the 96-hour incubation, add a multiparametric viability staining cocktail and incubate for 45 minutes at 37°C. The cocktail includes:

  - Hoechst 33342 (nuclear staining, 1 µg/mL)

  - Annexin V-Alexa Fluor 488 (apoptosis marker)

  - Propidium iodide (necrosis marker, 1 µM)

- Image Acquisition: Use automated confocal high-content imager (e.g., Molecular Devices ImageXpress Micro Confocal) to acquire 9 fields per well at 20× magnification across all fluorescence channels.

- Image Analysis: Quantify cell survival parameters using automated image analysis software:

  - Total cell count (Hoechst channel)

  - Apoptotic index (Annexin V positive cells)

  - Necrotic index (Propidium iodide positive cells)

  - Morphological parameters (cell size, shape, nuclear morphology)

Data Processing and Hit Identification

- Data Normalization: Normalize cell survival data to plate-based controls:

  - 0% survival = average of staurosporine-treated wells

  - 100% survival = average of DMSO-treated wells

- Quality Control: Calculate the Z'-factor for each plate from its control wells and accept only plates with Z' > 0.5.

- Hit Selection: Define hits as compounds reducing cell viability to <30% of control in a patient-specific manner.

- Pathway Analysis: Cluster hits by target annotation and pathway mapping to identify patient-specific vulnerabilities associated with GBM molecular subtypes.
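The normalization, quality-control, and hit-selection steps above can be sketched in a few lines. This is a minimal illustration rather than the published pipeline: the well values and compound names are made up, the Z'-factor uses the standard definition 1 − 3(σ_pos + σ_neg)/|μ_pos − μ_neg|, and the patient-specific comparison across cell lines is not modeled.

```python
from statistics import mean, stdev


def z_prime(pos_wells: list[float], neg_wells: list[float]) -> float:
    """Z'-factor from positive (staurosporine) and negative (DMSO) control wells."""
    separation = abs(mean(pos_wells) - mean(neg_wells))
    return 1.0 - 3.0 * (stdev(pos_wells) + stdev(neg_wells)) / separation


def percent_survival(raw: float, pos_wells: list[float], neg_wells: list[float]) -> float:
    """Scale a raw readout so staurosporine wells = 0% and DMSO wells = 100%."""
    floor, ceiling = mean(pos_wells), mean(neg_wells)
    return 100.0 * (raw - floor) / (ceiling - floor)


# Hypothetical cell counts from one plate (illustrative values only).
dmso_wells = [1980.0, 2050.0, 2010.0, 1960.0, 2000.0]
staurosporine_wells = [110.0, 90.0, 100.0, 95.0, 105.0]
compound_wells = {"cmpd_A": 480.0, "cmpd_B": 1900.0, "cmpd_C": 1200.0}

plate_z = z_prime(staurosporine_wells, dmso_wells)
plate_ok = plate_z > 0.5  # acceptance threshold from the protocol

survival = {
    name: percent_survival(raw, staurosporine_wells, dmso_wells)
    for name, raw in compound_wells.items()
}
hits = [name for name, pct in survival.items() if pct < 30.0] if plate_ok else []

print(f"Z' = {plate_z:.2f}, plate accepted: {plate_ok}")
print("Hits (<30% survival):", hits)
```

In a real screen the same calculation would be repeated per plate and per patient-derived line before comparing hit lists across patients.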

Expected Results and Interpretation

The screening of glioma stem cells from multiple GBM patients is expected to reveal highly heterogeneous phenotypic responses across patients and molecular subtypes [5]. Patient-specific vulnerabilities should emerge, with different compounds showing efficacy in different patient-derived lines based on their molecular profiles. Compounds targeting specific pathways (e.g., kinase inhibitors, epigenetic regulators) should show differential activity based on the genetic background of each GBM subtype.

Integration with High-Throughput Screening Technologies

Screening Platform Requirements

Modern implementation of chemogenomic libraries requires sophisticated high-throughput screening (HTS) infrastructure. Core components include:

- Automated Robotic Systems: Fully automated integrated robotic screening systems capable of processing 384- and 1536-well plates [6]

- Liquid Handling: Automated liquid handlers (e.g., Agilent Bravo) with acoustic dispensers (e.g., Beckman Echo 655) for non-contact nanoliter compound transfers [6]

- Detection Systems: Multimode microplate readers (fluorescence, luminescence, absorbance) and high-content imaging systems with live-cell capabilities [6]

- Library Management: Compound management systems for storage, retrieval, and quality control of chemical libraries [7]

Advanced Screening Methodologies

The application of chemogenomic libraries has been enhanced through integration with advanced screening technologies:

- Ultra-High-Throughput Screening (uHTS): uHTS can process >300,000 compounds per day using 1536-well plate formats with typical volumes of 1-2 µL, though fluid handling remains a technical challenge [7]

- High-Content Screening: Automated confocal imaging systems (e.g., Molecular Devices ImageXpress Micro Confocal) enable multiparametric phenotypic analysis in cell-based systems [6]

- CRISPR-Chemogenomic Integration: Genome-scale chemogenomic CRISPR screens using libraries such as TKOv3 (containing 70,948 sgRNAs targeting 18,053 genes) enable genetic and chemical perturbation in parallel [8]

Several publicly available chemogenomic libraries provide starting points for researchers:

Table 3: Selected Accessible Chemogenomic Libraries

| Library Name | Size | Key Features | Access Information |

|---|---|---|---|

| C3L Library | 1,211 compounds | Targets 1,386 anticancer proteins; optimized for precision oncology [5] | Available through published protocols |

| MIPE 4.0 | 1,912 compounds | Small molecule probes with known mechanism of action [4] | NIH Mechanism Interrogation PlatE |

| LSP-MoA | Not specified | Optimized chemical library targeting the liganded kinome [4] | Laboratory of Systems Pharmacology |

| Stanford HTS Collection | 225,000+ small molecules | Diverse screening collection with 15,000 cDNAs and genome-wide siRNA libraries [6] | Available through Stanford HTS @ The Nucleus core facility |

Chemogenomic libraries represent a powerful resource for modern drug discovery, enabling systematic exploration of chemical-biological interactions across target families. The strategic design of these libraries - balancing target coverage, polypharmacology, and chemical diversity - enhances their utility in both target-based and phenotypic screening approaches. As illustrated in the glioblastoma screening protocol, carefully designed chemogenomic libraries can reveal patient-specific vulnerabilities that may inform personalized therapeutic strategies. The continued refinement of library design principles, coupled with advances in screening technologies and data analysis methods, promises to further accelerate the identification of novel therapeutic agents across a broad range of diseases.

The discovery of bioactive compounds is a cornerstone of modern medicinal chemistry and chemical biology, underpinning efforts in drug discovery and fundamental biomedical research [9]. The design and sourcing of high-quality compound collections are critical first steps in high-throughput screening (HTS) protocols for chemogenomic research. These collections must balance diversity with biological relevance to efficiently identify novel chemical starting points against therapeutic targets [10]. Contemporary strategies increasingly draw inspiration from natural products and privileged scaffolds to enhance the probability of discovering compounds with meaningful bioactivity [9]. This application note details the key components, strategic considerations, and practical methodologies for assembling and utilizing diverse, targeted, and bioactive compound collections within an integrated HTS framework, providing researchers with actionable protocols for building effective screening libraries.

Strategic Design of Compound Collections

Foundational Principles for Library Design

The fitness of a screening collection relies on upfront filtering to eliminate problematic compounds while optimizing physicochemical properties, structural uniqueness, and molecular complexity [10]. Several strategic considerations inform library design:

Biological Relevance: Modern library design emphasizes the biological relevance of compounds, moving beyond purely structural diversity to include functional and phenotypic considerations [9]. This involves selecting compounds that occupy biologically relevant chemical space, often inspired by natural products or known bioactive scaffolds.

Lead-Likeness: Collections should prioritize compounds with "lead-like" qualities, possessing favorable physicochemical properties that increase the likelihood of successful optimization into drug candidates [10]. Early combinatorial libraries often failed due to poor property profiles, leading to increased emphasis on smaller, more focused libraries with better optimization potential.

Application-Specific Design: Library composition should align with the intended screening goals. Organizations with specific research programs targeting limited target classes (e.g., kinases or GPCRs) benefit from focused libraries containing privileged scaffolds for those target families, while organizations screening diverse targets require broader structural diversity [10] [11].

Cheminformatics Filtering Strategies

Robust cheminformatics filtering is essential for crafting high-quality libraries. A multi-step filtering approach ensures the removal of problematic compounds while selecting for desirable characteristics [10]:

Table 1: Key Cheminformatics Filters for Library Design

| Filter Type | Purpose | Examples/Parameters |

|---|---|---|

| Problematic Functionality | Remove compounds with known assay interference potential | PAINS (Pan-Assay Interference Compounds), REOS (Rapid Elimination of Swill), redox cyclers, reactive functional groups [10] |

| Physicochemical Properties | Ensure favorable drug-like or lead-like properties | Molecular weight, lipophilicity (cLogP), hydrogen bond donors/acceptors, polar surface area [10] [7] |

| Structural Diversity | Maximize coverage of chemical space | Murcko scaffolds and frameworks, structural fingerprints, clustering algorithms [11] |

| Complexity & Three-Dimensionality | Enhance ability to target challenging interactions | Molecular complexity indices, fraction of sp3 carbons, chiral centers [10] |

The following workflow outlines the strategic process for designing and sourcing a bioactive compound collection:

Figure 1: Strategic Workflow for Compound Collection Design and Sourcing

Composition of Specialized Libraries

Diversity Libraries

Diversity libraries aim to maximize structural variety within drug-like chemical space, providing broad coverage for target-agnostic screening campaigns. These collections are characterized by high scaffold diversity and balanced physicochemical properties. For example, the BioAscent Diversity Set, originally part of MSD's screening collection, contains approximately 86,000 compounds selected by medicinal chemists for diversity and good medicinal chemistry starting points [11]. The set exemplifies key diversity library characteristics with approximately 57,000 different Murcko Scaffolds and 26,500 Murcko Frameworks, demonstrating extensive structural variety [11].

Smaller, strategically designed diversity subsets (e.g., 3,000-12,000 compounds) can effectively represent larger collections while conserving screening resources. These subsets balance structural fingerprint and physicochemical descriptor diversity, with some enriched in bioactive chemotypes and pharmacologically active compounds identified using Bayesian models [11].

Targeted and Focused Libraries

Focused libraries contain compounds biased toward specific target classes or biological processes, offering enhanced hit rates for known target families. These libraries leverage "privileged scaffolds" with proven activity against particular protein families (e.g., kinases, GPCRs, nuclear receptors) [10]. Common categories include:

- Kinase-focused libraries: Featuring hinge-binding motifs and ATP-competitive scaffolds

- GPCR-targeted libraries: Containing known pharmacophores for G-protein coupled receptors

- Epigenetic libraries: Focused on targets like histone deacetylases, methyltransferases, and bromodomains

- Protein-protein interaction inhibitors: Designed to target challenging interfacial surfaces

Bioactive and Chemogenomic Libraries

Bioactive collections consist of compounds with known biological activities and well-annotated mechanisms of action, making them particularly valuable for phenotypic screening and target deconvolution [12] [11]. These libraries facilitate the rapid connection of observed phenotypes to potential molecular targets through known bioactivity profiles.

The BioAscent chemogenomic library exemplifies this approach, comprising over 1,600 diverse, highly selective, and well-annotated pharmacological probe molecules [11]. Similarly, researchers have developed comprehensive chemogenomic libraries of 5,000 small molecules representing a large and diverse panel of drug targets involved in diverse biological effects and diseases [12]. These libraries are powerful tools for phenotypic screening and mechanism of action studies, as compounds with known mechanisms can help illuminate the biological pathways underlying observed phenotypes.

Table 2: Comparison of Major Compound Library Types

| Library Type | Size Range | Primary Applications | Key Characteristics | Examples |

|---|---|---|---|---|

| Diversity Library | 50,000-500,000+ compounds | Novel target identification, broad screening | Maximum structural diversity, drug-like properties | BioAscent Diversity Set (86,000 compounds) [11] |

| Focused/Targeted Library | 1,000-50,000 compounds | Specific target families (kinases, GPCRs, etc.) | Privileged scaffolds, target-class biased | Kinase-focused, GPCR-focused libraries [10] |

| Bioactive/Chemogenomic Library | 1,000-10,000 compounds | Phenotypic screening, target ID, MoA studies | Annotated activities, known mechanisms | BioAscent Chemogenomic (1,600 probes) [11] |

| Fragment Library | 500-10,000 compounds | Fragment-based drug discovery | Low MW (<300), high ligand efficiency | BioAscent Fragment Library (>10,000 compounds) [11] |

Experimental Protocols for Library Screening and Profiling

Quantitative High-Throughput Screening (qHTS)

Quantitative HTS has emerged as a powerful approach for profiling compound libraries with concentration-response curves across multiple doses, providing rich datasets for hit identification and prioritization [13]. The following protocol outlines a standardized qHTS approach for biochemical assays:

Protocol 1: Biochemical qHTS for Enzyme Inhibitors

Assay Miniaturization: Transfer biochemical assays to 1,536-well plate formats with 4-5 µL final assay volumes to maximize throughput and conserve reagents [13].

Compound Dispensing: Utilize automated liquid-handling robots for nanoliter-scale compound dispensing. Prepare compound plates in DMSO with standardized concentrations (e.g., 2 mM or 10 mM stocks) [7] [11].

Concentration-Response Formatting: Implement serial dilutions (typically 1:5 or 1:3) across multiple concentrations (e.g., 0.5 nM-50 µM) to generate full concentration-response curves for each compound [13].

Assay Conditions Optimization:

- Use substrates and cofactors at or near their Km values; concentrations far above Km reduce sensitivity to competitive inhibitors

- Maintain reactions at <20% conversion to ensure linear kinetics

- Include appropriate positive and negative controls on each plate

- Implement robust statistical validation with Z' factors >0.5 [13]

Detection Method Selection: Employ a detection method suited to the assay requirements (e.g., fluorescence, luminescence, or absorbance readouts).

Data Analysis: Process raw data to generate concentration-response curves, classifying compounds based on curve class, potency (IC50/EC50), and efficacy (% inhibition/activation) [13].

Phenotypic Screening with Morphological Profiling

Image-based high-content screening combined with morphological profiling provides powerful phenotypic characterization of compound effects. The Cell Painting assay represents a particularly comprehensive approach for generating rich morphological data [12]:

Protocol 2: Cell Painting Assay for Phenotypic Profiling

Cell Culture and Plating:

- Culture appropriate cell lines (e.g., U2OS osteosarcoma cells) under standard conditions

- Plate cells in multiwell plates (96-, 384-, or 1536-well format) at optimized densities

- Perturb cells with test compounds at relevant concentrations and timepoints [12]

Staining and Fixation:

- Stain cells with a multiplexed dye cocktail targeting multiple cellular compartments:

  - Mitochondria (e.g., MitoTracker)

  - Endoplasmic reticulum

  - Nucleus

  - Golgi apparatus

  - F-actin cytoskeleton

- Fix cells at appropriate timepoints post-treatment [12]

High-Throughput Microscopy:

- Acquire images using automated high-throughput microscopes

- Capture multiple fields per well across relevant fluorescence channels

- Ensure consistent imaging parameters across plates and batches [12]

Image Analysis and Feature Extraction:

- Utilize automated image analysis software (e.g., CellProfiler) to identify individual cells and cellular compartments

- Extract morphological features (size, shape, texture, intensity, granularity, correlation, etc.) for each cell and compartment

- Generate cell profiles representing the morphological state under each treatment condition [12]

Data Processing and Analysis:

- Aggregate single-cell data to well-level profiles

- Perform quality control to remove poor-quality wells

- Normalize data and perform batch correction if necessary

- Use dimensionality reduction and clustering to identify compounds with similar morphological profiles [12]
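The data-processing steps above can be illustrated with a small, dependency-free sketch. It assumes features have already been extracted per cell, uses the median for well-level aggregation, scales each feature against DMSO control wells with a robust z-score (median and MAD), and compares compounds by cosine similarity. These are common choices but not necessarily those of the cited work, and all feature values and compound names below are invented.

```python
import math
from statistics import median


def well_profile(cells: list[list[float]]) -> list[float]:
    """Collapse per-cell feature vectors into one per-well vector (feature-wise median)."""
    return [median(column) for column in zip(*cells)]


def robust_z(profile: list[float], control_profiles: list[list[float]]) -> list[float]:
    """Scale each feature by the median and MAD of the control wells."""
    scaled = []
    for value, control_column in zip(profile, zip(*control_profiles)):
        center = median(control_column)
        mad = 1.4826 * median([abs(x - center) for x in control_column])
        scaled.append((value - center) / mad if mad > 0 else 0.0)
    return scaled


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two normalized profiles."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Hypothetical features per cell: [nuclear area, mean intensity, texture].
cells_in_well_x = [[118.0, 49.0, 5.0], [120.0, 50.0, 6.0], [125.0, 52.0, 4.0]]
dmso_profiles = [[100.0, 50.0, 10.0], [104.0, 52.0, 11.0], [96.0, 48.0, 9.0]]

profile_x = robust_z(well_profile(cells_in_well_x), dmso_profiles)
profile_y = robust_z([118.0, 51.0, 6.0], dmso_profiles)   # similar phenotype
profile_z = robust_z([90.0, 60.0, 10.0], dmso_profiles)   # different phenotype

sim_xy = cosine(profile_x, profile_y)
sim_xz = cosine(profile_x, profile_z)
print(f"similarity X-Y: {sim_xy:.2f}, X-Z: {sim_xz:.2f}")
```

With real Cell Painting data the profiles contain hundreds to thousands of features, so feature selection and dimensionality reduction would normally precede the similarity calculation.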

The integration of morphological profiling with chemogenomic libraries creates powerful systems pharmacology networks that connect compound structure to target, pathway, and phenotypic outcome, facilitating mechanism of action studies [12].

Computational and Bioinformatics Integration

Machine Learning and QSAR Modeling

Modern compound discovery increasingly integrates experimental HTS data with computational prediction to expand chemical diversity and optimize resource utilization [13]. The following workflow illustrates this integrated approach:

Figure 2: Integrated Experimental-Computational Screening Workflow

Protocol 3: Integrated ML-Experimental Screening Pipeline

Initial Experimental Screening:

- Conduct qHTS of a structurally diverse, annotated compound library (10,000-20,000 compounds) against relevant biological assays [13]

- Generate high-quality concentration-response data for model training

Descriptor Calculation and Feature Engineering:

- Calculate molecular descriptors (e.g., fingerprints, physicochemical properties, 3D descriptors) for all screened compounds

- Perform feature selection to identify descriptors most relevant to biological activity

Model Training and Validation:

- Implement multiple machine learning algorithms (e.g., random forest, support vector machines, neural networks)

- Train models to predict compound activity using molecular descriptors as input and HTS results as output

- Validate model performance using cross-validation and hold-out test sets

- Apply models to virtually screen larger chemical libraries (>100,000 compounds) [13]
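As a rough illustration of the train-and-validate loop, the sketch below uses a Tanimoto nearest-neighbor classifier with leave-one-out cross-validation. This is a deliberately simple baseline swapped in for the random forest, support vector machine, and neural network models named above, so that the example needs no external libraries. The fingerprints are toy sets of on-bit indices and the activity labels are invented.

```python
Fingerprint = frozenset[int]
Dataset = list[tuple[Fingerprint, bool]]


def tanimoto(a: Fingerprint, b: Fingerprint) -> float:
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def predict_active(query: Fingerprint, training: Dataset, k: int = 3) -> bool:
    """Majority vote among the k training compounds most similar to the query."""
    ranked = sorted(training, key=lambda item: tanimoto(query, item[0]), reverse=True)
    votes = [label for _, label in ranked[:k]]
    return sum(votes) * 2 > len(votes)


def leave_one_out_accuracy(dataset: Dataset, k: int = 3) -> float:
    """Hold out each compound in turn and predict it from the rest."""
    correct = 0
    for i, (fingerprint, label) in enumerate(dataset):
        training = dataset[:i] + dataset[i + 1:]
        correct += predict_active(fingerprint, training, k) == label
    return correct / len(dataset)


# Toy screening results: (fingerprint on-bits, active in HTS?)
screened: Dataset = [
    (frozenset({1, 2, 3, 4}), True),
    (frozenset({1, 2, 3, 5}), True),
    (frozenset({1, 2, 4, 5}), True),
    (frozenset({2, 3, 4, 5}), True),
    (frozenset({10, 11, 12, 13}), False),
    (frozenset({10, 11, 12, 14}), False),
    (frozenset({10, 11, 13, 14}), False),
    (frozenset({11, 12, 13, 14}), False),
]

accuracy = leave_one_out_accuracy(screened)
virtual_hit = predict_active(frozenset({1, 2, 3, 6}), screened)  # unscreened compound
print(f"leave-one-out accuracy: {accuracy:.2f}, virtual compound predicted active: {virtual_hit}")
```

On real HTS data, where actives are usually a small minority, accuracy is a poor metric; measures that account for class imbalance and a scaffold-aware hold-out split would give a more honest estimate of how the model performs on new chemotypes.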

Hit Expansion and Validation:

- Select top predicted compounds based on model scores, structural novelty, and commercial availability

- Procure and experimentally test selected compounds in confirmatory assays

- Iteratively refine models based on new experimental results

This integrated approach was successfully implemented for discovering aldehyde dehydrogenase (ALDH) inhibitors, where screening of ~13,000 compounds informed models that virtually screened 174,000 compounds, leading to the identification of novel, selective ALDH probe candidates [13].

Public data repositories provide invaluable resources for compound selection and bioactivity profiling. Key resources include:

PubChem: The largest public chemical database containing over 60 million unique chemical structures and 1 million biological assays from more than 350 contributors [14]. PubChem provides programmatic access through PUG-REST interfaces for large-scale data retrieval.
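As a brief illustration of PUG-REST access, the sketch below builds a property-lookup URL and defines an optional fetch helper. The helper names are hypothetical, and the properties requested are just one plausible selection for library filtering. The fetch function is not called here because it needs network access.

```python
import json
from urllib.parse import quote
from urllib.request import urlopen

PUG_REST_BASE = "https://pubchem.ncbi.nlm.nih.gov/rest/pug"


def property_url(compound_name: str, properties: list[str]) -> str:
    """Build a PUG-REST URL requesting computed properties for a named compound."""
    return (
        f"{PUG_REST_BASE}/compound/name/{quote(compound_name)}"
        f"/property/{','.join(properties)}/JSON"
    )


def fetch_properties(compound_name: str, properties: list[str]) -> list[dict]:
    """Download and parse the property table (requires network access)."""
    with urlopen(property_url(compound_name, properties), timeout=30) as response:
        payload = json.load(response)
    return payload["PropertyTable"]["Properties"]


url = property_url(
    "aspirin",
    ["MolecularWeight", "XLogP", "HBondDonorCount", "HBondAcceptorCount"],
)
print(url)
```

For large-scale retrieval, requests should be batched and throttled according to PubChem's usage policy rather than issued one compound at a time.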

ChEMBL: A manually curated database of bioactive molecules with drug-like properties containing bioactivity data (IC50, Ki, EC50), molecular information, and target annotations [12].

Commercial Compound Vendors: Numerous vendors offer pre-plated screening collections with diverse chemical libraries, fragment libraries, and targeted sets.

Essential cheminformatics tools for library design and analysis include software from ACD/Labs, OpenEye, Tripos, Accelrys, MOE, Pipeline Pilot, and Schrödinger for performing structural, physicochemical, ADME, complexity, and diversity filtering [10].

Research Reagent Solutions

Table 3: Essential Research Reagents and Resources for Compound Screening

| Resource Category | Specific Examples | Key Function | Application Notes |

|---|---|---|---|

| Diversity Libraries | BioAscent Diversity Set (86,000 compounds) [11] | Broad screening for novel target identification | Originally from MSD collection; selected for medicinal chemistry starting points |

| Chemogenomic Libraries | BioAscent Chemogenomic Library (1,600 probes) [11] | Phenotypic screening, mechanism of action studies | Highly selective, well-annotated pharmacological probes |

| Fragment Libraries | BioAscent Fragment Library (>10,000 compounds) [11] | Fragment-based drug discovery | Balanced library with bespoke fragments; suitable for SPR-based screening |

| Specialized Compound Sets | LOPAC1280, NPACT, NCATS Medicinal Chemistry collections [13] | Annotated compounds for assay development and model training | Contain approved, bioactive, and structurally diverse compounds |

| PAINS/Interference Sets | BioAscent PAINS Set [11] | Assay validation and interference compound identification | Used during assay development to identify and mitigate false positives |

| Public Data Resources | PubChem, ChEMBL, BindingDB [14] [12] | Bioactivity data mining and compound selection | Provide extensive bioactivity data for informed library design |

| Cheminformatics Tools | Pipeline Pilot, MOE, OpenEye, RDKit [10] | Library design, filtering, and analysis | Enable physicochemical property calculation, diversity analysis, and scaffold mining |

The strategic sourcing and design of diverse, targeted, and bioactive compound collections form the foundation of successful high-throughput screening campaigns in chemogenomic research. By integrating thoughtful library design with robust experimental protocols and computational approaches, researchers can significantly enhance the efficiency and output of their drug discovery pipelines. The protocols and strategies outlined in this application note provide a framework for assembling high-quality compound collections, implementing effective screening methodologies, and leveraging public data resources to advance chemical biology and therapeutic development. As the field continues to evolve, the integration of phenotypic screening with chemogenomic libraries and machine learning approaches promises to further accelerate the discovery of novel bioactive compounds with meaningful therapeutic potential.

The success of high-throughput screening (HTS) campaigns in drug discovery is fundamentally dependent on the quality of the chemical libraries screened [10]. Curating a library with desirable physicochemical properties and without problematic functionalities dramatically increases the probability of identifying genuine, optimizable hit compounds. Among the various cheminformatic tools available for library curation, Lipinski's Rule of Five (Ro5) and the Rapid Elimination of Swill (REOS) filters have established themselves as critical, foundational components of a robust screening library design [15] [10].

Framed within a broader thesis on high-throughput screening protocols for chemogenomic libraries, this application note details the practical methodologies for implementing these filters. The Ro5 provides a rule-of-thumb to prioritize compounds with a higher likelihood of oral bioavailability, while REOS systematically removes compounds containing reactive or promiscuous functional groups that are likely to generate assay interference or false-positive results [15] [16] [10]. Their combined application ensures a library enriched with "drug-like," high-quality agents suitable for probing diverse biological targets.

Background and Principles

Lipinski's Rule of Five (Ro5)

Lipinski's Rule of Five predicts that a chemical compound with pharmacological activity is likely to have poor oral absorption or permeability if it violates more than one of the following criteria [16] [17]:

- Molecular weight (MW) < 500 Daltons

- Octanol-water partition coefficient (Log P) < 5

- Number of hydrogen bond donors (HBD) ≤ 5

- Number of hydrogen bond acceptors (HBA) ≤ 10

The "Rule of Five" name originates from the fact that all cutoffs are multiples of five. It is crucial to note that the Ro5 is a guideline for oral bioavailability and not a predictor of pharmacological activity [16]. Furthermore, it primarily applies to compounds that are not substrates for active transporters, and numerous important drug classes, such as natural products, antibiotics, and some newer modalities, fall outside this rule [16] [18].

Rapid Elimination of Swill (REOS)

REOS is a computational filter designed to remove compounds with undesirable properties or substructures from screening libraries [15] [10]. It typically eliminates molecules based on two criteria:

- Undesirable physicochemical properties (e.g., molecular weight or log P outside a desired range).

- Problematic functional groups that are associated with chemical reactivity, assay interference, or toxicity. These include, but are not limited to, alkyl halides, aldehydes, Michael acceptors, and anhydrides [10].

The goal of REOS is to create a "clean" library, reducing the time and resources wasted on following up false positives generated by promiscuous or reactive compounds, a group that includes the Pan-Assay Interference Compounds (PAINS) [10].

The Synergy in Practice

The practical synergy of these filters is exemplified by the library curation workflow at Stanford Medicine's HTS facility. Their process involves standardizing molecular structures, applying a modified Lipinski filter, and then passing the molecules through a REOS filter to eliminate reactive functionalities, resulting in a final, diverse screening collection [15].

Application Protocols

This section provides detailed, step-by-step protocols for applying the Ro5 and REOS filters to a chemical library prior to a screening campaign.

Protocol 1: Applying Lipinski's Rule of Five Filter

Objective: To filter a compound library and select molecules that comply with Lipinski's Rule of Five, thereby having a higher probability of oral bioavailability.

Materials & Reagents:

- Input Library: A digital library of compounds in SDF (Structure-Data File) or SMILES (Simplified Molecular Input Line Entry Specification) format.

- Cheminformatics Software: A software package capable of calculating molecular descriptors (e.g., OpenEye Toolkits, Schrödinger's Canvas, RDKit, ChemAxon).

- Computing Environment: A standard desktop computer or server with sufficient processing power for the library size.

Procedure:

- Data Standardization: Load the input library into your chosen cheminformatics software. Initiate a standardization procedure to ensure consistency. This should include:

- Clearing and setting formal charges.

- Stripping salts and counterions.

- Generating a canonical tautomer for each molecule [15].

- Descriptor Calculation: For each standardized molecule in the library, calculate the following four physicochemical descriptors:

- Molecular Weight (MW)

- Calculated Log P (e.g., XLogP, CLogP)

- Number of Hydrogen Bond Donors (HBD)

- Number of Hydrogen Bond Acceptors (HBA)

- Rule Application: Apply the following logical filter to each compound. A compound is considered compliant if it has no more than one violation of the criteria listed in Table 1.

- Output: Generate a new chemical library file (e.g., SDF) containing only the compounds that pass the filter.

Table 1: Lipinski's Rule of Five Criteria for Filtering

| Physicochemical Property | Threshold Value | Calculation Method |

|---|---|---|

| Molecular Weight (MW) | < 500 Daltons | Sum of atomic masses |

| Partition Coefficient (Log P) | < 5 | Calculated octanol-water coefficient (e.g., CLogP) |

| Hydrogen Bond Donors (HBD) | ≤ 5 | Count of OH and NH groups |

| Hydrogen Bond Acceptors (HBA) | ≤ 10 | Count of O and N atoms |
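
The rule-application step of Protocol 1 can be sketched as a violation count over precomputed descriptors. This is an illustrative sketch, not the facility's implementation: the `Descriptors` record, the compound names, and the descriptor values are hypothetical, and in practice the values would come from the cheminformatics package used for descriptor calculation. The thresholds follow Table 1 as written.

```python
from dataclasses import dataclass


@dataclass
class Descriptors:
    """Precomputed physicochemical descriptors for one compound (hypothetical record)."""
    name: str
    mw: float    # molecular weight in Daltons
    logp: float  # calculated octanol-water partition coefficient
    hbd: int     # hydrogen bond donors
    hba: int     # hydrogen bond acceptors


def ro5_violations(d):
    """Count violations of the Table 1 thresholds."""
    return sum([
        d.mw >= 500,   # Table 1: MW < 500
        d.logp >= 5,   # Table 1: Log P < 5
        d.hbd > 5,     # Table 1: HBD <= 5
        d.hba > 10,    # Table 1: HBA <= 10
    ])


# Made-up descriptor values, for illustration only.
library = [
    Descriptors("cmpd_A", 350.4, 2.1, 2, 5),    # no violations
    Descriptors("cmpd_B", 612.7, 4.2, 3, 9),    # one violation (MW)
    Descriptors("cmpd_C", 720.9, 6.3, 6, 12),   # four violations
]

# A compound is compliant if it has no more than one violation.
passed = [d.name for d in library if ro5_violations(d) <= 1]
print(passed)
```

Keeping the violation count separate from the pass/fail decision makes it easy to tighten the filter (zero violations) or to report which compounds sit just outside the rule.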

Protocol 2: Applying the REOS Filter

Objective: To remove compounds with reactive functional groups, undesirable physicochemical properties, or structural features known to cause assay interference.

Materials & Reagents:

- Input Library: The Lipinski-filtered library from Protocol 1 (or a raw library for standalone use).

- Cheminformatics Software: As in Protocol 1, with substructure search capabilities.

- Functional Group List: A predefined set of SMARTS patterns or structural queries for undesirable groups.

Procedure:

- Property-Based Filtering: Apply initial filters based on broad physicochemical properties to remove "obviously" undesirable compounds. The example from Stanford's protocol uses:

- Number of Atoms > 0

- (Number of N and O atoms <= 10)

- (100 <= MW <= 500)

- Number of H-Bond Donors <= 5

- (-5 <= AlogP <= 5) [15]

- Reactive Group Filtering: Using the substructure search function, screen the library against a comprehensive list of problematic functional groups. Table 2 provides a list of key functional groups to flag and remove.

- Curation and Output: Remove all compounds that match the undesirable substructure queries. The resulting library is now filtered through both REOS and Lipinski's Rule of Five.

Table 2: Key Functional Groups for REOS-Based Filtering

| Functional Group Category | Example Functional Groups | Rationale for Exclusion |

|---|---|---|

| Electrophiles / Reactive | Alkyl halides, Aldehydes, Epoxides, Michael acceptors, Anhydrides | Potential covalent, non-specific binding to proteins [10] |

| Potential Assay Interferers | Acyl hydrazides, Dihydroxyarenes, Trihydroxyarenes, Aminothiazoles | Redox cycling, fluorescence quenching, spectroscopic interference [10] |

| Toxicophores | Aziridines, Peroxides, Isocyanates | General reactivity associated with toxicity |
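
A minimal sketch of the two REOS stages follows, assuming the molecular-weight condition is read as a 100 to 500 window and that each compound has already been annotated with the functional groups it contains (for example by SMARTS matching in a cheminformatics toolkit). The record layout, the group labels, and the function names are hypothetical.

```python
# Functional group labels taken from Table 2; the annotation step that assigns
# them to compounds (e.g., SMARTS substructure matching) is assumed upstream.
EXCLUDED_GROUPS = {
    "alkyl halide", "aldehyde", "epoxide", "michael acceptor", "anhydride",
    "acyl hydrazide", "dihydroxyarene", "trihydroxyarene", "aminothiazole",
    "aziridine", "peroxide", "isocyanate",
}


def passes_property_window(rec):
    """Property-based stage, following the example thresholds in the procedure."""
    return (
        rec["n_atoms"] > 0
        and rec["n_n_o"] <= 10
        and 100 <= rec["mw"] <= 500
        and rec["hbd"] <= 5
        and -5 <= rec["alogp"] <= 5
    )


def passes_reos(rec):
    """A compound passes if it is inside the window and carries no excluded group."""
    return passes_property_window(rec) and not (set(rec["groups"]) & EXCLUDED_GROUPS)


# Made-up records for illustration.
records = [
    {"id": "cmpd_A", "n_atoms": 25, "n_n_o": 5, "mw": 342.0, "hbd": 2, "alogp": 2.5, "groups": []},
    {"id": "cmpd_B", "n_atoms": 6, "n_n_o": 2, "mw": 88.0, "hbd": 1, "alogp": 0.3, "groups": []},
    {"id": "cmpd_C", "n_atoms": 22, "n_n_o": 4, "mw": 310.0, "hbd": 1, "alogp": 3.1, "groups": ["aldehyde"]},
]

kept = [r["id"] for r in records if passes_reos(r)]
print(kept)
```

Running the cheap property window first keeps the more expensive substructure search restricted to compounds that could still pass.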

The following workflow diagram illustrates the sequential integration of both protocols for comprehensive library curation.

Successful implementation of the aforementioned protocols requires a suite of software tools and databases. The following table details key resources for researchers curating chemogenomic libraries.

Table 3: Essential Research Reagent Solutions for Library Curation

| Tool / Resource Name | Function / Application | Example Use Case in Protocol |

|---|---|---|

| Pipeline Pilot (SciTegic) | Data pipelining and informatics platform | Automating the multi-step workflow of standardization, descriptor calculation, and filtering [15] |

| RDKit | Open-source cheminformatics toolkit | Calculating molecular descriptors (MW, HBD, HBA, LogP) and performing substructure searches [10] |

| SMARTS Patterns | Language for specifying molecular substructures | Defining reactive functional groups (e.g., aldehydes, Michael acceptors) for the REOS filter [10] |

| Rule of 5/BDDCS | Extended classification system | Predicting drug disposition for compounds both meeting and violating Ro5 [18] |

| PAINS Filters | Set of structural alerts for assay interferents | Supplementing the REOS filter to remove promiscuous compounds [10] |

Concluding Remarks

The rigorous application of Lipinski's Rule of Five and REOS filters is a critical, non-negotiable step in the curation of high-quality chemogenomic libraries for high-throughput screening. These protocols provide a robust defense against the inclusion of compounds with poor developmental potential or a high propensity for generating false-positive results. By systematically applying these filters, researchers can construct screening collections that are significantly enriched for lead-like, drug-like compounds, thereby increasing the efficiency and success rate of downstream drug discovery and chemical biology efforts. As the field evolves with new therapeutic modalities, these principles remain foundational, even as they are adaptively extended for "beyond Rule of 5" chemical space.

Pan-Assay Interference Compounds (PAINS) are chemical compounds that produce false-positive readouts in high-throughput screening (HTS) assays through non-specific interference mechanisms rather than through targeted biological activity [19]. These nuisance compounds represent a significant challenge in early drug discovery, as they can misdirect research efforts and consume substantial resources. It is estimated that a typical academic screening library contains approximately 5-12% PAINS, with over 400 structural classes identified, more than half of which fall under 16 easily recognizable groups [19]. The insidious nature of PAINS lies in their ability to masquerade as promising hits, leading researchers to pursue dead-end compounds that cannot be developed into viable therapeutics.

The impact of PAINS on the drug discovery process is both profound and costly. A case study from Dr. Michael Walters' lab at the University of Minnesota illustrates the problem starkly. In a screen of over 225,000 compounds targeting the histone acetyltransferase Rtt109, initial results identified 1,500 apparent hits [20] [19]. However, after rigorous triage and counter-screening, only three compounds proved to be genuine inhibitors [20] [19]. In other words, about 99.8% of the initial hits (1,497 of 1,500) were false positives, demonstrating how PAINS and other artifacts can overwhelm a screening campaign. Without proper identification and filtering, these compounds can skew the scientific literature as they are published and re-validated as promising hits, creating a cycle of misdirected research [19].
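
The arithmetic behind the Rtt109 figures can be checked in a few lines. The variable names are illustrative; the counts are the ones quoted above.

```python
initial_hits = 1500      # apparent hits from the primary screen
confirmed_hits = 3       # genuine inhibitors after triage and counter-screening

# Fraction of primary hits that did not survive triage.
false_positive_fraction = (initial_hits - confirmed_hits) / initial_hits
# Fraction of primary hits that were confirmed.
confirmation_rate = confirmed_hits / initial_hits

print(f"False positives among primary hits: {false_positive_fraction:.1%}")
print(f"Confirmed among primary hits: {confirmation_rate:.1%}")
```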

Mechanisms of Assay Interference

Understanding the chemical mechanisms by which PAINS interfere with assays is fundamental to developing effective countermeasures. These compounds employ diverse strategies to generate false signals across various assay technologies, making them particularly challenging to identify through single-method screening.

Key Interference Mechanisms

Thiol Reactivity: Many PAINS chemotypes act as electrophiles that covalently modify cysteine residues in protein targets. This non-specific reactivity can lead to apparent inhibition across multiple unrelated targets. Studies using techniques like protein mass spectrometry and ALARM NMR have confirmed that these compounds form covalent adducts with cysteines on multiple proteins [20]. For example, in a CPM-based assay that detects free thiols, numerous PAINS were found to react with the CoA byproduct or the fluorescent probe itself, mimicking genuine enzymatic inhibition [20].

Chemical Aggregation: Some PAINS form colloidal aggregates in aqueous assay buffers that non-specifically sequester proteins, leading to apparent inhibition. These aggregates can range in size from 30 nm to 1,000 nm and have been shown to inhibit a wide variety of enzymes [20]. The addition of detergents like Triton X-100 can sometimes mitigate this interference, but not all aggregate-based inhibition is reversed by such measures [20].

Chelation: Compounds with specific metal-chelating motifs can interfere with assays that require metal cofactors. By sequestering essential metal ions, these PAINS disrupt enzymatic activity without truly engaging the target's active site [19]. Common chelating motifs include catechols, hydroxyphenyl hydrazones, and certain nitrogen-containing heterocycles [19].

Redox Activity: Some PAINS are redox-active and can generate reactive oxygen species under assay conditions, leading to oxidation of assay components or protein targets. This mechanism is particularly problematic in cell-based assays where oxidative stress can produce confounding biological effects [20] [19].

Fluorescence and Signal Interference: Compounds with intrinsic fluorescence or those that quench fluorescence can directly interfere with optical readouts, especially in fluorescence-based assays. Other PAINS may absorb light at critical wavelengths or produce reaction products that generate signals indistinguishable from genuine activity [20] [19].

Table 1: Common PAINS Chemotypes and Their Mechanisms of Interference

| Chemotype | Primary Interference Mechanism | Assay Technologies Affected |

|---|---|---|

| Ene Rhodanines | Thiol reactivity, Covalent modification | CPM-based assays, Thiol-detection assays |

| Isothiazolones | Electrophilicity, Cysteine oxidation | Multiple assay types |

| Curcuminoids | Redox activity, Metal chelation | Antioxidant assays, Metal-dependent enzymes |

| Toxoflavins | Redox cycling, Reactive oxygen species generation | Cell-based assays, Oxidative stress readouts |

| Catechols | Metal chelation, Oxidative degradation | Metal-dependent enzymes, Kinase assays |

| Hydroxyphenyl Hydrazones | Metal chelation, Aggregate formation | Multiple assay types |

| Quinones | Redox activity, Thiol reactivity | Multiple assay types |

Experimental Protocols for PAINS Identification

Implementing robust experimental protocols for PAINS identification is essential for any high-throughput screening campaign. The following section provides detailed methodologies for detecting and eliminating these problematic compounds.

Orthogonal Assay Counter-Screening Protocol

Purpose: To distinguish true target engagement from assay interference through the use of alternative detection technologies.

Materials:

- Test compounds dissolved in DMSO at 10 mM stock concentration

- Target protein in appropriate assay buffer

- Primary assay reagents (e.g., CPM-based detection system)

- Orthogonal assay reagents (e.g., antibody-based detection, radiometric assay)

- 384-well assay plates

- Plate reader capable of multiple detection modes

Procedure:

- Prepare compound dilutions in assay buffer containing 0.01% Triton X-100 to mitigate aggregate formation. Include positive and negative controls on each plate.

- Perform primary screening using your standard assay protocol (e.g., CPM-based thiol detection for HAT activity) [20].

- In parallel, run an orthogonal assay that uses fundamentally different detection chemistry. For histone acetyltransferase activity, this might include:

- Antibody-based detection of acetylated histone products [20]

- Radiometric assays using ³H-acetyl-CoA if facilities permit

- Mass spectrometry-based direct detection of reaction products

- Compare dose-response curves between primary and orthogonal assays.

- Flag compounds that show significant discrepancies in IC50 values (>10-fold difference) or demonstrate steep Hill slopes (typically >2), which may indicate cooperative binding or aggregation behavior [20].

- Confirm findings with additional counter-screens as described below.

Expected Results: True inhibitors will demonstrate consistent activity across both primary and orthogonal assays, while PAINS will typically show significant variation in potency between different detection methods.
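
The comparison and flagging steps can be sketched as a small rule applied to fitted curve parameters. This assumes IC50 values and Hill slopes have already been obtained from dose-response fitting; the function name, the default cutoffs as parameters, and the example values are illustrative.

```python
def flag_discrepant(ic50_primary_um, ic50_orthogonal_um, hill_slope,
                    fold_cutoff=10.0, hill_cutoff=2.0):
    """Return the reasons a compound should be flagged (empty list if none).

    Cutoffs mirror the protocol text: >10-fold IC50 difference, Hill slope >2.
    """
    reasons = []
    # Fold difference is symmetric: it does not matter which assay is more potent.
    fold = max(ic50_primary_um, ic50_orthogonal_um) / min(ic50_primary_um, ic50_orthogonal_um)
    if fold > fold_cutoff:
        reasons.append("ic50_discrepancy")
    if hill_slope > hill_cutoff:
        reasons.append("steep_hill_slope")
    return reasons


# Made-up fitted values: (primary IC50 in uM, orthogonal IC50 in uM, Hill slope).
fits = {
    "cmpd_A": (1.0, 1.5, 1.0),    # consistent across assays
    "cmpd_B": (0.5, 25.0, 1.1),   # 50-fold potency shift
    "cmpd_C": (2.0, 3.0, 3.2),    # steep curve
}

flags = {name: flag_discrepant(*values) for name, values in fits.items()}
print(flags)
```

Compounds with no measurable activity in the orthogonal assay have no IC50 to compare and would need to be handled separately, for example by flagging them outright.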

Thiol-Reactivity Counter-Screen Using ALARM NMR

Purpose: To identify compounds that covalently modify cysteine residues in proteins, a common mechanism of PAINS interference.

Materials:

- La antigen protein (or other cysteine-rich protein)

- NMR buffer: 20 mM sodium phosphate, 50 mM NaCl, 10% D₂O, pH 7.0

- Test compounds at 10 mM in DMSO

- DTT solution (1M)

- NMR spectrometer

Procedure:

- Express and purify La antigen or obtain commercially.

- Prepare NMR samples containing 50-100 μM La antigen in NMR buffer.

- Add test compounds to a final concentration of 50-100 μM (1:1 molar ratio with protein).

- Incubate samples for 2-24 hours at room temperature.

- Acquire ¹H-¹⁵N HSQC spectra of La antigen before and after compound addition.

- Monitor chemical shift changes specifically in cysteine-containing regions of the spectrum.

- Include reduced and oxidized controls (with and without DTT) to distinguish redox activity from direct covalent modification.

Interpretation: Compounds that cause significant chemical shift perturbations in cysteine-containing regions indicate thiol reactivity. These compounds should be deprioritized unless covalent inhibition is a desired mechanism [20].

Aggregation Detection Protocol Using Dynamic Light Scattering

Purpose: To identify compounds that form colloidal aggregates in assay buffers.

Materials:

- Test compounds at 10 mM in DMSO

- Assay buffer (identical to screening conditions)

- Dynamic Light Scattering instrument

- 0.22 μm filters

Procedure:

- Prepare compound solutions in assay buffer at 10-50 μM final concentration.

- Incubate solutions for 1 hour at room temperature.

- Filter a portion of each sample through 0.22 μm filters.

- Measure particle size distribution in both filtered and unfiltered samples using DLS.

- Compare scattering signals before and after filtration.

Interpretation: Compounds that show significant light scattering signals (>50 nm particles) in unfiltered samples that decrease after filtration indicate aggregation behavior. The addition of non-ionic detergents (0.01% Triton X-100) can sometimes resolve this issue, but aggregated compounds should generally be considered suspect [20].
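
One way to encode the interpretation rule is shown below. The 50 nm size cutoff comes from the text; the required fractional drop in scattering after filtration (`min_drop`) is an assumed parameter that would need to be set from instrument-specific controls, and the function name and readings are hypothetical.

```python
def is_likely_aggregator(diameter_nm, unfiltered_counts, filtered_counts,
                         size_cutoff_nm=50.0, min_drop=0.5):
    """Flag a compound whose unfiltered sample shows large particles and whose
    scattering intensity drops substantially after 0.22 um filtration.

    min_drop is an assumption: the minimum fractional decrease in scattering
    counts that is treated as a meaningful reduction.
    """
    has_large_particles = diameter_nm > size_cutoff_nm
    drop = (unfiltered_counts - filtered_counts) / unfiltered_counts
    return has_large_particles and drop >= min_drop


# Made-up DLS readings: (mean diameter in nm, unfiltered counts, filtered counts).
readings = {
    "cmpd_A": (250.0, 1.2e6, 1.0e5),   # large particles removed by filtration
    "cmpd_B": (5.0, 2.0e4, 1.9e4),     # no large particles
    "cmpd_C": (300.0, 1.0e6, 9.5e5),   # large particles, but little change on filtration
}

aggregators = [name for name, r in readings.items() if is_likely_aggregator(*r)]
print(aggregators)
```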

Computational Filtering and Triage Strategies

Computational methods provide the first line of defense against PAINS in high-throughput screening campaigns. When implemented properly, these filters can significantly reduce the number of nuisance compounds that progress to expensive experimental follow-up.

PAINS Filter Implementation Protocol

Purpose: To computationally identify and flag potential pan-assay interference compounds before they enter screening campaigns or during hit triage.

Materials:

- Compound library in SMILES or SDF format

- Cheminformatics software (e.g., RDKit, KNIME, Pipeline Pilot)

- PAINS substructure filters (available from published sources)

- Access to compound management database

Procedure:

- Obtain current PAINS substructure definitions from reputable sources in SMARTS pattern format.

- Load compound structures into your cheminformatics platform.

- Perform substructure searching using the PAINS SMARTS patterns.

- Flag compounds containing any PAINS motifs.

- Apply additional context-dependent filters:

- Exclude compounds with reactive functional groups (e.g., alkyl halides, Michael acceptors)

- Flag compounds with extreme physicochemical properties (e.g., logP > 5, molecular weight > 500)

- Identify compounds with similarity to known frequent hitters

- Implement decision rules for flagged compounds:

- Automatic exclusion from primary screens

- Flagging for additional counter-screening

- Segregation into lower-priority screening tiers

Considerations: While computational filtering is essential, it should not be applied dogmatically. Some PAINS filters may generate false positives, potentially eliminating valuable chemical matter. Filters should be regularly updated as new interference mechanisms are characterized [19].
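
The decision rules can be sketched as a simple triage function over annotated compound records. The mapping from each flag to a specific outcome is a hypothetical policy chosen for illustration, since the protocol lists the possible outcomes without prescribing which flag leads to which; the record fields are likewise assumed to have been filled in by upstream substructure and similarity searches.

```python
def triage(compound):
    """Return a screening decision for one annotated compound record.

    Illustrative policy only:
      reactive functional group          -> exclude from primary screens
      PAINS alert or frequent-hitter hit -> keep, but require counter-screening
      extreme logP or molecular weight   -> move to a lower-priority tier
    """
    if compound["reactive_group"]:
        return "exclude"
    if compound["pains_alert"] or compound["frequent_hitter_like"]:
        return "counter_screen"
    if compound["logp"] > 5 or compound["mw"] > 500:
        return "lower_priority"
    return "screen"


# Made-up annotated records.
compounds = {
    "cmpd_A": {"pains_alert": False, "reactive_group": False, "frequent_hitter_like": False, "logp": 2.3, "mw": 320.0},
    "cmpd_B": {"pains_alert": True, "reactive_group": False, "frequent_hitter_like": False, "logp": 3.0, "mw": 410.0},
    "cmpd_C": {"pains_alert": False, "reactive_group": True, "frequent_hitter_like": False, "logp": 1.1, "mw": 250.0},
    "cmpd_D": {"pains_alert": False, "reactive_group": False, "frequent_hitter_like": False, "logp": 6.2, "mw": 540.0},
}

decisions = {name: triage(rec) for name, rec in compounds.items()}
print(decisions)
```

Returning a label rather than dropping compounds keeps the flagged set available for later review, which fits the caution against applying filters dogmatically.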

Table 2: Computational Tools for PAINS Identification

| Tool/Method | Key Features | Limitations |

|---|---|---|

| SMARTS Pattern Matching | Identifies known PAINS substructures | May miss novel interference motifs |

| Frequent Hitter Analysis | Flags compounds active in multiple unrelated assays | Requires extensive screening history |

| Physicochemical Property Filtering | Identifies compounds with poor drug-like properties | May eliminate valid chemical matter |

| Machine Learning Classifiers | Can identify novel PAINS-like compounds | Requires large training datasets |
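
Frequent hitter analysis from Table 2 can be illustrated with a hit-rate calculation over historical screening results. The minimum number of assays and the hit-rate cutoff are assumed values, not thresholds from the source, and the activity matrix is made up.

```python
def frequent_hitters(activity, min_assays=5, hit_rate_cutoff=0.3):
    """Return {compound: hit_rate} for compounds active in an unusually large
    share of unrelated assays.

    activity maps compound -> {assay_id: True/False}. Compounds tested in fewer
    than min_assays assays are skipped because their hit rate is unreliable.
    """
    flagged = {}
    for compound, results in activity.items():
        if len(results) < min_assays:
            continue
        hit_rate = sum(results.values()) / len(results)
        if hit_rate >= hit_rate_cutoff:
            flagged[compound] = hit_rate
    return flagged


# Made-up screening history.
activity = {
    "cmpd_A": {"a1": True, "a2": True, "a3": True, "a4": False, "a5": True, "a6": True},
    "cmpd_B": {"a1": False, "a2": False, "a3": True, "a4": False, "a5": False, "a6": False},
    "cmpd_C": {"a1": True, "a2": True},  # too few assays to judge
}

flagged = frequent_hitters(activity)
print(flagged)
```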

Successful identification and mitigation of PAINS requires a combination of specialized reagents, computational tools, and experimental approaches. The following table details key resources for establishing a robust PAINS triage workflow.

Table 3: Research Reagent Solutions for PAINS Identification

| Reagent/Resource | Function | Application Notes |

|---|---|---|

| CPM (N-[4-(7-diethylamino-4-methylcoumarin-3-yl)phenyl]maleimide) | Thiol-reactive fluorescent probe | Used in counter-screens for thiol-reactive compounds; emits fluorescence upon reaction with free thiols [20] |

| La Antigen | Cysteine-rich protein for ALARM NMR | Contains multiple cysteine residues that serve as sensors for electrophilic compounds; used to detect thiol reactivity [20] |

| Triton X-100 | Non-ionic detergent | Disrupts compound aggregates; include at 0.01% in assay buffers to mitigate aggregation-based interference [20] |

| Glutathione (GSH) | Biological thiol for reactivity assessment | Used to assess compound reactivity with biological thiols; reactive compounds form GSH adducts detectable by LC-MS [20] |

| DTT (Dithiothreitol) | Reducing agent | Distinguishes redox-active compounds from direct covalent binders; used in ALARM NMR and other counter-screens [20] |

| Orthogonal Assay Kits | Alternative detection methods | Antibody-based, radiometric, or mass spectrometry-based detection to confirm activity across different platforms [20] |

Workflow Visualization and Decision Trees

Implementing a systematic workflow for PAINS triage is critical for efficient hit identification in high-throughput screening. The following diagrams visualize key processes for identifying and mitigating assay interference.

PAINS Triage Workflow

PAINS Interference Mechanisms

Addressing the challenge of PAINS requires a multifaceted approach combining computational filtering, experimental counter-screening, and careful data interpretation. The protocols and strategies outlined in this application note provide a framework for implementing a robust PAINS triage workflow in high-throughput screening campaigns. By integrating these practices into standard screening protocols, researchers can significantly reduce the time and resources wasted on pursuing artifactual compounds.

Successful PAINS mitigation ultimately depends on maintaining a balance between appropriate caution and scientific opportunity. While problematic compounds should be identified and eliminated early, it is equally important to avoid overzealous filtering that might discard valuable chemical matter. Context matters—some PAINS motifs may be acceptable in certain therapeutic contexts, particularly if the interference mechanism is understood and controlled for. Regular review and updating of PAINS filters as new information emerges will ensure that screening campaigns remain both efficient and effective in identifying genuine therapeutic starting points.

The escalating crisis of antimicrobial resistance necessitates innovative strategies in antibacterial drug discovery [21] [22]. High-throughput screening (HTS) of chemogenomic libraries remains a cornerstone of this effort; however, the limited chemical diversity of traditional synthetic libraries and the frequent rediscovery of known scaffolds from conventional natural product libraries have constrained progress [21] [22]. This application note details emerging protocols designed to overcome these hurdles by systematically integrating complex natural products and complexity-oriented synthetic libraries into HTS campaigns. We focus on practical methodologies that leverage mechanism-informed phenotypic screening and advanced chemical biology to explore underexplored regions of the biologically relevant chemical space (BioReCS), thereby enhancing the probability of identifying novel antibacterial agents [23] [22].

Library Design and Curation

Strategic Library Composition for Expanded BioReCS Coverage

The concept of the Biologically Relevant Chemical Space (BioReCS) provides a framework for understanding the relationship between molecular structures and their biological activities [23]. Effective library design aims to sample both heavily explored and underexplored regions of this space. Key domains include drug-like small molecules, natural products, peptides, macrocycles, and metallodrugs [23].

Table 1: Key Public Compound Databases for Library Curation

| Database Name | Primary Focus | Key Features | Utility in HTS |

|---|---|---|---|

| ChEMBL [23] [24] | Bioactive drug-like molecules | Manually curated bioactivity data from literature; >1.6M molecules; >11,000 targets [24]. | Target annotation, polypharmacology prediction, library design. |

| PubChem [23] | Small molecules and bioassays | Massive repository of chemical information and biological activity screening data. | Access to a large bioactivity dataset for preliminary virtual screening. |

| Dark Chemical Matter [23] | Inactive Compounds | Collection of compounds consistently inactive across numerous HTS campaigns. | Defining non-bioactive chemical space; filtering out likely inert structures. |

| InertDB [23] | Curated Inactive & AI-Generated Molecules | Database of 3,205 experimentally confirmed and 64,368 AI-generated putative inactive molecules. | Training machine learning models to distinguish bioactive from inactive compounds. |

Protocol: Constructing an Integrated Natural Product and Chemogenomic Library

Objective: To assemble a screening library that maximizes chemical diversity and biological relevance by integrating natural products with a targeted chemogenomic set.

Materials:

- Source Compounds: Natural product extracts or pure compounds, commercial synthetic molecules, compounds from in-house collections.

- Software: Chemoinformatics toolkit (e.g., RDKit, KNIME), database management system (e.g., Neo4j for network pharmacology [24]).

- Key Reagents: Solvents (DMSO, ethanol), cell culture media for pre-screening viability assays.

Procedure:

- Define Library Scope and Filter: Establish criteria based on the screening goal (e.g., targeting Gram-negative bacteria may require compounds beyond traditional "rule of 5" space [23]). Apply calculated properties (e.g., logP, molecular weight) and structural filters to remove compounds with undesirable functional groups (PAINS) [22].

- Acquire and Curate Natural Products:

- Source Selection: Prioritize under-explored ecological niches (marine sediments, endophytes) to increase novelty [21] [22].

- Standardization: If using extracts, employ prefractionation to reduce complexity and mitigate antagonistic/synergistic effects [22]. For pure natural product libraries, ensure structures are verified and sourced from reputable databases.

- Integrate Chemogenomic Compounds: Select 3,000-5,000 synthetic molecules representing a diverse panel of pharmacological targets, as exemplified in prior network pharmacology approaches [24]. This set should cover a wide range of protein families and biological pathways.

- Assess and Enrich Diversity:

- Scaffold Analysis: Use software like ScaffoldHunter [24] to categorize compounds by their core structures. Aim for a high ratio of unique scaffolds to total compounds.

- Descriptor Analysis: Calculate molecular descriptors (e.g., topological, physicochemical) and use dimensionality reduction techniques (e.g., t-SNE, PCA) to visualize library coverage of chemical space. Actively incorporate molecules from underexplored subspaces like macrocycles or metallodrugs [23].

- Library Formatting and Storage: Dissolve compounds in DMSO to a standardized concentration (e.g., 10 mM). Store in barcoded plates at -20°C or -80°C to ensure stability.
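
The scaffold analysis step can be summarized with a unique-scaffold ratio. The sketch below assumes scaffold identifiers (here SMILES-like strings) have already been generated by a scaffold tool; the function name and the example list are hypothetical.

```python
from collections import Counter


def scaffold_diversity(scaffolds):
    """Return (unique-scaffold ratio, most populated scaffold and its count).

    scaffolds holds one precomputed scaffold identifier per compound.
    """
    counts = Counter(scaffolds)
    ratio = len(counts) / len(scaffolds)
    return ratio, counts.most_common(1)[0]


# Made-up scaffold assignments for a six-compound library.
scaffolds = [
    "c1ccccc1", "c1ccccc1", "c1ccncc1",
    "c1ccc2[nH]ccc2c1", "c1ccccc1", "C1CCNCC1",
]

ratio, top = scaffold_diversity(scaffolds)
print(f"unique scaffolds / compounds = {ratio:.2f}; most populated: {top}")
```

A ratio close to 1 indicates that nearly every compound brings a new core structure, while a low ratio with one dominant scaffold suggests that the over-represented series could be thinned.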

High-Throughput Screening Assay Protocols

Comparative Analysis of HTS Assay Modalities

The choice of assay modality is critical and should be aligned with the library's composition and the discovery objectives.

Table 2: Key HTS Assay Modalities for Antibacterial Discovery

| Assay Type | Principle | Advantages | Disadvantages | Suitable Library Types |

|---|---|---|---|---|

| Cellular Target-Based (CT-HTS) [22] | Measures compound effect on bacterial cell viability or growth. | Identifies intrinsically active agents with cell permeability; uncovers novel mechanisms. | Target deconvolution can be challenging; may identify non-specific cytotoxins. | Ideal for first-pass screening of complex natural product extracts and diverse synthetic libraries. |

| Molecular Target-Based (MT-HTS) [22] | Measures compound interaction with a purified protein or enzymatic target. | High mechanistic specificity; amenable to ultra-HTS. | Hits may lack cell permeability or activity in physiological contexts. | Best for targeted synthetic and chemogenomic libraries where the mechanism is predefined. |

| Mechanism-Informed Phenotypic (Reporter-Based) HTS [22] | Uses engineered bacteria with reporters (e.g., GFP, luciferase) linked to a specific pathway. | Provides mechanistic clues within a phenotypic context; high sensitivity. | Requires prior knowledge of the target pathway; reporter construction can be complex. | Effective for both natural products and synthetic libraries when a specific pathway is targeted. |

| Virulence/Quorum Sensing Targeting HTS [22] | Screens for inhibitors of virulence factors or quorum-sensing without killing bacteria. | Potential for slower resistance development; targets pathogenicity. | Does not directly kill bacteria; may be ineffective in immunocompromised hosts. | Suitable for all library types, especially for anti-virulence therapeutic development. |

Protocol: Mechanism-Informed Phenotypic Screening Using a Reporter Assay

Objective: To identify compounds that inhibit a specific bacterial virulence pathway (e.g., quorum-sensing) using a reporter-gene assay in a high-throughput format.

Materials:

- Bacterial Strain: Reporter strain with a key promoter of interest (e.g., lasI in P. aeruginosa) fused to a readily detectable reporter gene (e.g., gfp, luciferase).

- Assay Plates: 384-well black-walled, clear-bottom microplates.

- Key Reagents: Growth medium (e.g., LB), positive control inhibitor (e.g., known quorum-sensing inhibitor), negative control (DMSO), detection reagent (if needed).

- Instrumentation: Automated liquid handler, multi-mode microplate reader (for fluorescence/luminescence), or high-content imaging system.

Procedure:

- Assay Development and Validation:

  - Culture the reporter strain to mid-log phase.

  - Optimize cell density, induction conditions, and signal-to-background ratio using positive and negative controls.

  - Establish the Z'-factor to confirm assay robustness for HTS (Z' > 0.5 is generally considered acceptable).

- Compound Screening:

  - Using an automated liquid handler, transfer 10-50 nL of library compounds (from a 10 mM stock) to assay plates. Include control wells on each plate.

  - Add 40 μL of the diluted reporter bacterial culture to each well. With the transfer volumes above, this gives final compound concentrations of roughly 2.5-12.5 μM.

  - Incubate plates under optimal growth conditions for the predetermined period (e.g., 16-18 hours at 37°C).

- Signal Detection and Hit Identification:

  - Measure reporter signal (fluorescence/luminescence) using a plate reader.

  - Normalize raw data to plate-based positive and negative controls.

  - Define hit compounds as those that reduce reporter activity beyond a predefined statistical threshold (e.g., more than 3 standard deviations below the mean of the negative controls) without significantly inhibiting growth. Growth can be assessed in a parallel viability assay.

- Counter-Screening and Hit Confirmation:

  - Counter-screen primary hits against a constitutive-promoter reporter strain to rule out general transcription/translation inhibitors and compounds that interfere with the fluorescence signal (e.g., quenchers or autofluorescent compounds).

  - Re-test confirmed hits in dose-response to determine IC₅₀ values.
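The normalization and hit-calling steps above can be sketched as follows. This is a minimal illustration under stated assumptions: the function names, the 0.8 relative-growth cutoff, and the example well values are hypothetical, and a real campaign would typically add plate-level corrections that are not shown here.

```python
from statistics import mean, stdev


def percent_inhibition(signal, neg_mean, pos_mean):
    """Scale a raw reporter signal so the DMSO mean maps to 0% and the
    positive-control (inhibitor) mean maps to 100% inhibition."""
    return 100.0 * (neg_mean - signal) / (neg_mean - pos_mean)


def call_hits(samples, neg_controls, pos_controls, growth,
              n_sd=3.0, min_growth=0.8):
    """Return {compound_id: % inhibition} for wells whose reporter signal is
    more than n_sd standard deviations below the negative-control mean and
    whose relative growth (from a parallel viability assay) is acceptable.

    min_growth is an assumed cutoff, not a value taken from the protocol.
    """
    neg_mean = mean(neg_controls)
    pos_mean = mean(pos_controls)
    cutoff = neg_mean - n_sd * stdev(neg_controls)
    hits = {}
    for compound_id, signal in samples.items():
        if signal < cutoff and growth[compound_id] >= min_growth:
            hits[compound_id] = round(
                percent_inhibition(signal, neg_mean, pos_mean), 1)
    return hits


# Hypothetical single-plate data (arbitrary fluorescence units)
neg = [1000, 1040, 960, 1010, 990]      # DMSO wells
pos = [110, 90, 100, 95, 105]           # known quorum-sensing inhibitor
samples = {"cpd_A": 400, "cpd_B": 980, "cpd_C": 300}
growth = {"cpd_A": 0.95, "cpd_B": 1.0, "cpd_C": 0.4}

hits = call_hits(samples, neg, pos, growth)
```

In this toy plate only cpd_A is reported: cpd_B does not cross the signal threshold, and cpd_C lowers the signal but also suppresses growth, so it is treated as a likely growth inhibitor and excluded.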

The Scientist's Toolkit: Essential Research Reagents and Materials

A successful screening campaign relies on a carefully selected set of reagents and tools.

Table 3: Key Research Reagent Solutions for Integrated HTS

| Reagent/Material | Function | Application Notes |
|---|---|---|
| Prefractionated Natural Product Libraries [22] | Reduces complexity of crude extracts, simplifying hit deconvolution. | Prefractionate extracts (e.g., by HPLC) into simpler fractions before screening to isolate active components. |
| Chemogenomic Library (e.g., 5000 compounds) [24] | Provides a diverse set of molecules with annotated or predicted activities across a wide range of human targets. | Used for phenotypic screening to probe complex biology; target annotation aids in mechanism of action studies. |
| Cell Painting Assay Kits [24] | Enables high-content morphological profiling using fluorescent dyes. | Detects subtle phenotypic changes; generates rich data for comparing compound effects and predicting MoA. |
| Reporter Bacterial Strains [22] | Engineered strains with fluorescent or luminescent reporters for specific pathways (e.g., virulence, stress). | Enables mechanism-informed phenotypic screening; critical for targeting specific bacterial behaviors. |
| Network Pharmacology Databases (e.g., ChEMBL, KEGG) [24] | Integrated databases linking compounds, targets, pathways, and diseases in a graph format (e.g., Neo4j). | Essential for in-silico target prediction, polypharmacology assessment, and mechanistic deconvolution of hits. |

Advanced Data Analysis and Hit Triage

Workflow for Integrated Screening Data Analysis

Post-screening data analysis is a multi-stage process designed to prioritize the most promising hits for further development.

Protocol: Hit Triage and Mechanism of Action Prediction

Objective: To filter and prioritize primary hits based on chemical properties, novelty, and potential mechanism of action using computational and network pharmacology tools.

Materials:

- Software: Chemoinformatics suite, Neo4j database with integrated pharmacology network [24], statistical analysis software (e.g., R, Python).

- Data: Primary hit list with potency data (e.g., % inhibition, IC₅₀), chemical structures (SMILES), and assay data.

Procedure:

- Remove Problematic Compounds and Pan-Assay Interference Compounds (PAINS): Filter hits against PAINS substructure filters and other undesirable chemical motifs to eliminate promiscuous or artifactual binders [22].

- Assess Chemical Novelty and Scaffold Analysis:

- Leverage Morphological and Network Pharmacology for MoA Prediction:

  - If Cell Painting or other high-content data is available, compare the morphological profiles of hits to reference compounds with known mechanisms [24].

  - Query a pre-built network pharmacology graph database [24]. Input the list of hits to find connections to known targets, pathways (KEGG), and biological processes (Gene Ontology). This helps generate testable hypotheses about the MoA.

- Final Prioritization: Rank compounds based on a weighted score incorporating potency, selectivity, chemical tractability, scaffold novelty, and confidence in the predicted MoA.
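The final prioritization step can be illustrated with a simple weighted sum. The sketch below assumes each criterion has already been scaled to the range 0-1; the weights, the criterion keys, and the example compounds are hypothetical choices made for illustration, and the source does not specify a particular weighting scheme.

```python
# Assumed weights (must sum to 1); adjust to the priorities of the project.
WEIGHTS = {
    "potency": 0.30,
    "selectivity": 0.25,
    "tractability": 0.15,
    "novelty": 0.15,
    "moa_confidence": 0.15,
}


def priority_score(scores, weights=WEIGHTS):
    """Weighted sum of per-criterion scores, each pre-scaled to 0-1."""
    return sum(weights[name] * scores[name] for name in weights)


def rank_hits(hit_scores, weights=WEIGHTS):
    """Return compound IDs sorted from highest to lowest priority score."""
    return sorted(hit_scores,
                  key=lambda cid: priority_score(hit_scores[cid], weights),
                  reverse=True)


# Hypothetical, pre-scaled scores for three hits
hit_scores = {
    "cpd_A": {"potency": 0.90, "selectivity": 0.40, "tractability": 0.80,
              "novelty": 0.30, "moa_confidence": 0.50},
    "cpd_B": {"potency": 0.60, "selectivity": 0.90, "tractability": 0.70,
              "novelty": 0.80, "moa_confidence": 0.70},
    "cpd_C": {"potency": 0.95, "selectivity": 0.20, "tractability": 0.30,
              "novelty": 0.20, "moa_confidence": 0.20},
}

ranking = rank_hits(hit_scores)
```

Here cpd_B ranks first despite being the least potent, because it scores well on selectivity, novelty, and MoA confidence. A linear score like this is easy to audit, but it lets a strong criterion offset a weak one, so hard filters (such as the PAINS step) are better applied before ranking.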

Visualization of the Biologically Relevant Chemical Space

Understanding the positioning of your library and hits within the broader chemical universe is crucial for strategic discovery.

Executing Your Screen: A Step-by-Step Guide to HTS Assay Protocols and Automation

High-Throughput Screening (HTS) is a fundamental approach in modern drug discovery, enabling the rapid testing of thousands to millions of chemical compounds to identify novel drug leads [7]. The selection of an appropriate assay format—biochemical or cell-based—represents one of the most critical decisions in designing a successful HTS campaign for chemogenomic library research. Biochemical assays utilize purified target proteins to measure binding affinity or enzymatic inhibition in a controlled environment, while cell-based assays employ living cells to evaluate compound effects within a more physiologically relevant context [25]. Each approach offers distinct advantages and limitations, with the optimal choice being dictated by the biological target, the desired information about compound mechanism of action, and the specific research objectives within the chemogenomic screening paradigm.

The growing emphasis on physiologically relevant data has driven increased adoption of cell-based HTS approaches, particularly those employing advanced models such as 3D cell cultures and organoids that better mimic human tissue environments [26]. However, biochemical assays remain indispensable for target-focused screening strategies, especially when detailed mechanistic information about compound-target interactions is required. This application note provides a structured framework for selecting between biochemical and cell-based assay formats, with specific protocols and decision guidelines optimized for screening chemogenomic libraries.

Comparative Analysis of Assay Formats

Fundamental Principles and Applications

Biochemical assays directly measure molecular interactions between compounds and purified biological targets, typically enzymes, receptors, or protein-protein complexes. These assays are conducted in controlled buffer systems that optimize target stability and function, but often lack the complexity of the intracellular environment [27]. The primary readouts for biochemical assays include binding affinity (Kd), enzymatic inhibition (IC50, Ki), and kinetic parameters. Common detection technologies include fluorescence polarization (FP), fluorescence resonance energy transfer (FRET), time-resolved FRET (TR-FRET), surface plasmon resonance (SPR), and mass spectrometry [25].

Cell-based assays evaluate compound effects in the context of living cellular systems, providing information about cellular permeability, toxicity, and functional activity within complex biological pathways. These assays can be further categorized into phenotypic assays (measuring downstream cellular responses without pre-specified molecular targets) and target-based cellular assays (measuring modulation of specific targets in their cellular context) [25]. Advanced cell-based approaches include high-content screening (HCS) with multiparametric imaging, reporter gene assays, and pathway-specific biosensors that provide spatial and temporal information about compound effects [28].

Quantitative Comparison of Performance Characteristics

Table 1: Comparative Performance of Biochemical and Cell-Based Assays in HTS

| Parameter | Biochemical Assays | Cell-Based Assays |
|---|---|---|
| Throughput | Very high (up to 100,000 compounds/day) [7] | Moderate to high (dependent on complexity) [25] |
| Cost per Compound | Lower (miniaturized formats, simpler reagents) | Higher (cell culture expenses, complex detection) |
| Biological Relevance | Lower (isolated system) | Higher (cellular context, pathway integration) [29] |
| False Positive Rate | Variable (assay interference common) [7] | Generally lower (biological filters apply) |
| Z' Factor | Typically >0.7 (robust) | Typically 0.4-0.7 (more variable) [30] |
| Information Content | Target engagement only | Includes permeability, toxicity, functional activity [29] |
| Automation Compatibility | Excellent (homogeneous formats available) | Good (requires sterile conditions, variable incubation times) |
| Primary Applications | Enzyme inhibitors, receptor antagonists, binding studies | Functional modulators, phenotypic screening, toxicology [30] |

Target-Specific Format Recommendations

Table 2: Optimal Assay Format Selection for Different Target Classes

| Target Class | Recommended Format | Rationale | Example Methods |
|---|---|---|---|
| Kinases | Biochemical for primary screening | Direct measurement of enzymatic inhibition; well-established robust assays | TR-FRET, FP, radiometric [28] |
| GPCRs | Cell-based for functional screening | Assessment of signaling in physiological context; detection of allosteric modulators | Reporter gene, second messenger (cAMP, Ca²⁺), biosensors [25] |
| Ion Channels | Cell-based for functional effects | Measurement of channel activity and electrophysiological consequences | FLIPR, electrophysiology, thallium flux [28] |
| Protein-Protein Interactions | Combination approach | Biochemical for direct binders; cell-based for functional consequences | FRET, SPR (biochemical); two-hybrid, split-luciferase (cellular) [25] |
| Epigenetic Targets | Biochemical for primary screening | Direct assessment of enzymatic activity on substrates | TR-FRET, fluorescence-based, AlphaScreen [7] |
| Undefined Targets | Phenotypic cell-based | Target-agnostic approach focusing on functional outcomes | High-content imaging, reporter genes, viability [31] |

Experimental Protocols

Protocol 1: Biochemical HDAC Inhibitor Screening Assay

Principle: This fluorescence-based assay detects histone deacetylase (HDAC) inhibition using a fluorogenic substrate that becomes fluorescent upon deacetylation and developer treatment [29]. The protocol utilizes the FLUOR DE LYS platform for high-throughput compatibility.

Workflow Diagram:

Step-by-Step Procedure:

- Plate Preparation: Dispense 10 μL of test compounds (from the chemogenomic library) or controls into black 384-well microplates using automated liquid handling. Include positive controls (known HDAC inhibitors) and negative controls (DMSO only).

- Enzyme Addition: Add 10 μL of purified HDAC enzyme (diluted in assay buffer to the appropriate concentration) to all wells except background controls.

- Substrate Addition: Add 5 μL of FLUOR DE LYS substrate solution (prepared according to the manufacturer's specifications) to all wells using the dispenser's multidispense mode.

- Enzymatic Reaction: Incubate plates for 60-90 minutes at 25°C to allow the deacetylation reaction to proceed. Deacetylation sensitizes the substrate to the developer.

- Developer Addition: Add 10 μL of FLUOR DE LYS developer solution containing trichostatin A to stop the enzymatic reaction and initiate fluorescence development.

- Signal Development: Incubate plates for 30 minutes at 25°C to allow full fluorescence development.

- Detection: Measure fluorescence intensity using a plate reader with excitation at 355 nm and emission at 460 nm.

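- Data Analysis: Calculate percentage inhibition relative to the positive and negative controls. For compounds tested across a concentration series, determine IC50 values by fitting a dose-response model (e.g., a four-parameter logistic curve).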
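The data analysis step can be sketched in a few lines. The example below is a simplified stand-in for a full curve-fitting routine: it assumes a two-parameter Hill model with the top fixed at 100% and the bottom at 0%, and it estimates IC50 by a coarse least-squares grid search rather than a proper nonlinear optimizer. All names and the synthetic concentration series are illustrative.

```python
import math


def percent_inhibition(raw, uninhibited_mean, inhibited_mean):
    """Map raw fluorescence onto 0% (DMSO wells) to 100% (fully inhibited)."""
    return 100.0 * (uninhibited_mean - raw) / (uninhibited_mean - inhibited_mean)


def hill(conc, ic50, slope):
    """Two-parameter Hill model: % inhibition with top=100 and bottom=0."""
    return 100.0 / (1.0 + (ic50 / conc) ** slope)


def fit_ic50(concs, inhibitions):
    """Least-squares grid search over log10(IC50) and Hill slope.

    The IC50 grid spans the tested concentration range in 200 log steps;
    slopes from 0.5 to 3.0 are tried in steps of 0.1.
    """
    lo, hi = math.log10(min(concs)), math.log10(max(concs))
    best = None
    for i in range(201):
        ic50 = 10 ** (lo + (hi - lo) * i / 200)
        for j in range(5, 31):
            slope = j / 10
            sse = sum((hill(c, ic50, slope) - y) ** 2
                      for c, y in zip(concs, inhibitions))
            if best is None or sse < best[0]:
                best = (sse, ic50, slope)
    return best[1], best[2]


# Synthetic, noise-free dose-response data generated with IC50 = 0.5 uM
concs_um = [0.01, 0.03, 0.1, 0.3, 1.0, 3.0, 10.0]
observed = [hill(c, 0.5, 1.0) for c in concs_um]

ic50_um, slope = fit_ic50(concs_um, observed)
```

On this synthetic series the search recovers an IC50 close to the 0.5 μM used to generate the data. For real screening data, a four-parameter fit with free top and bottom asymptotes is usually preferable, since incomplete curves are common.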

Validation Parameters:

- Z' factor >0.6 using control inhibitors

- Signal-to-background ratio >5:1

- Coefficient of variation <10% for control wells
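The three validation parameters above can be computed directly from control wells. The sketch below uses the standard Z'-factor definition, Z' = 1 - 3(σ_high + σ_low) / |μ_high - μ_low|, with "high" denoting uninhibited (DMSO) wells and "low" denoting wells with the control inhibitor. The function names and the example readings are assumptions made for illustration.

```python
from statistics import mean, stdev


def z_prime(high, low):
    """Z'-factor from high-signal and low-signal control wells."""
    return 1.0 - 3.0 * (stdev(high) + stdev(low)) / abs(mean(high) - mean(low))


def signal_to_background(high, low):
    """Ratio of mean high-control signal to mean low-control signal."""
    return mean(high) / mean(low)


def cv_percent(wells):
    """Coefficient of variation of a set of wells, in percent."""
    return 100.0 * stdev(wells) / mean(wells)


def plate_passes(high, low, min_z=0.6, min_sb=5.0, max_cv=10.0):
    """Apply the acceptance criteria listed above to one plate."""
    return (z_prime(high, low) > min_z
            and signal_to_background(high, low) > min_sb
            and cv_percent(high) < max_cv
            and cv_percent(low) < max_cv)


# Hypothetical control readings (arbitrary fluorescence units)
dmso_wells = [1000, 1040, 960, 1010, 990]
inhibitor_wells = [110, 90, 100, 95, 105]
noisy_dmso_wells = [1000, 1300, 700, 1100, 900]

good_plate = plate_passes(dmso_wells, inhibitor_wells)
bad_plate = plate_passes(noisy_dmso_wells, inhibitor_wells)
```

The first plate passes all three criteria, while the plate with scattered DMSO wells fails on Z' and CV even though its mean signal window is unchanged. This is why Z' is preferred over signal-to-background alone: it penalizes variability as well as a narrow window.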

Protocol 2: Cell-Based GPCR Activation Assay

Principle: This protocol uses a biosensor approach to monitor G-protein-coupled receptor (GPCR) activation by measuring intracellular second messenger accumulation or reporter gene expression [25]. The example describes a cAMP accumulation assay for Gαs-coupled receptors.

Workflow Diagram:

Step-by-Step Procedure: