High-Throughput Phenotypic Screening Compound Annotation: Strategies, Successes, and Future Directions


Abstract

This article provides a comprehensive overview of modern approaches for annotating compounds identified through high-throughput phenotypic screening (HTS). Aimed at researchers and drug development professionals, it explores the foundational principles distinguishing phenotypic from target-based discovery, details advanced methodological frameworks including high-content imaging and automated flow cytometry, addresses key challenges in hit validation and target deconvolution, and evaluates comparative strategies for data integration and analysis. By synthesizing recent successes and technological advancements, this resource serves as a practical guide for leveraging phenotypic screening to expand druggable target space and accelerate the discovery of first-in-class therapeutics.

Rediscovering Phenotypic Screening: Core Principles and Resurgence in Drug Discovery

The process of modern drug discovery is primarily built upon two distinct screening paradigms: target-based and phenotypic screening. These strategies represent fundamentally different approaches to identifying new therapeutic compounds. Target-based discovery is a hypothesis-driven approach that focuses on modulating a specific, known molecular target, such as a protein, enzyme, or receptor, implicated in a disease process [1]. In contrast, phenotypic discovery is an empirical approach that observes the overall effects of compounds on cells, tissues, or whole organisms without requiring prior knowledge of specific molecular targets [2] [3].

The strategic choice between these paradigms has significant implications for drug discovery outcomes. A landmark analysis found that between 1999 and 2008, phenotypic approaches were responsible for 28 first-in-class small-molecule medicines, compared with 17 from target-based strategies [4]. This finding sparked renewed interest in phenotypic screening within the pharmaceutical industry, though both approaches continue to play complementary roles in modern drug development [5].

Paradigm Comparison: Core Principles and Strategic Applications

The following table summarizes the fundamental characteristics, advantages, and challenges of each drug discovery paradigm:

Table 1: Comparative Analysis of Phenotypic and Target-Based Drug Discovery Approaches

| Aspect | Phenotypic Discovery | Target-Based Discovery |
|---|---|---|
| Fundamental Principle | Observes effects on whole biological systems; target-agnostic [3] | Focuses on modulation of a specific, predefined molecular target [1] |
| Screening Context | Cells, tissues, or whole organisms with disease-relevant biology [4] | Isolated proteins or simplified cellular systems [1] |
| Key Advantage | Identifies novel mechanisms; captures biological complexity; successful for first-in-class medicines [2] [4] | High efficiency and throughput; precise optimization; streamlined mechanism of action [1] |
| Primary Challenge | Resource-intensive; complex target deconvolution; optimization without known target [1] | Requires deep understanding of disease biology; risk of target validation failures [1] |
| Ideal Application | Diseases with poorly understood mechanisms; seeking novel biology; complex pathophysiology [1] [6] | Well-validated targets; structure-based drug design; repurposing opportunities [1] |
| Mechanism of Action | Identified after compound discovery (target deconvolution) [2] | Known before compound discovery [1] |
| Notable Examples | Artemisinin (malaria), lithium (bipolar disorder) [1] | Imatinib (CML), trastuzumab (HER2+ breast cancer) [1] |

Quantitative Success Metrics and Historical Impact

The comparative productivity of these approaches has been quantitatively assessed in several analyses:

Table 2: Success Rates and Output Metrics of Discovery Paradigms

| Metric | Phenotypic Discovery | Target-Based Discovery |
|---|---|---|
| First-in-Class Medicines (1999-2008) | 28 drugs [4] | 17 drugs [4] |
| Target Validation Requirement | Not required initially | Essential prerequisite |
| Chemical Optimization Path | Can be challenging without target knowledge [1] | Highly precise with known target [1] |
| Attrition Risk Factors | Toxicity from unknown mechanisms; optimization challenges [1] | Incorrect target hypothesis; poor translation to complex systems [1] |
| Regulatory Approval Precedent | Possible without full mechanism (e.g., lithium, aspirin) [1] | Typically requires extensive target validation |

Experimental Protocols and Methodologies

Protocol 1: High-Content Phenotypic Screening Workflow

This protocol outlines the implementation of a high-content phenotypic screen using live-cell imaging to classify compounds across multiple drug classes [7].

Principle: Utilize optimal reporter cell lines (ORACLs) whose phenotypic profiles accurately classify training drugs across multiple mechanistic classes in a single-pass screen [7].

Materials and Reagents:

- Triply-labeled A549 reporter cell lines (or other disease-relevant lines)

- pSeg plasmid for cell segmentation (mCherry for cytoplasm, H2B-CFP for nucleus)

- Central Dogma (CD)-tagged proteins with YFP for various cellular pathways

- Compound library with appropriate controls (DMSO vehicle)

- Live-cell imaging medium

- 384-well microplates for high-throughput screening

- High-content imaging system with environmental control

Procedure:

Cell Culture and Plating:

- Maintain triply-labeled reporter cells in appropriate culture conditions.

- Plate cells in 384-well microplates at optimized density for 48-hour growth.

- Allow cells to adhere for 24 hours before compound addition.

Compound Treatment:

- Transfer compound library using liquid handling systems.

- Include DMSO vehicle controls and known reference compounds for each mechanistic class.

- Use appropriate concentration ranges (typically 1 nM-10 μM) in duplicate or triplicate.

Live-Cell Imaging:

- Place plates in environmentally controlled imaging system (37°C, 5% CO₂).

- Acquire images every 12 hours for 48 hours using automated microscopy.

- Capture multiple fields per well to ensure adequate cell numbers for statistical analysis.

Image Analysis and Feature Extraction:

- Segment individual cells using nuclear and cytoplasmic markers.

- Extract ~200 morphological and protein expression features (size, shape, intensity, texture, localization).

- Perform quality control to remove artifacts or poorly segmented cells.

Phenotypic Profile Generation:

- For each feature, calculate Kolmogorov-Smirnov (KS) statistics comparing compound-treated vs. DMSO control distributions.

- Concatenate KS scores across all features to generate phenotypic profile vectors.

- Repeat for multiple time points and compound concentrations as needed.

Compound Classification:

- Compute similarity metrics between compound profiles and reference drug classes.

- Use machine learning classifiers (k-nearest neighbors, random forest, SVM) to assign mechanistic annotations.

- Validate predictions through orthogonal assays for top hits.
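The profile-generation and classification steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the published ORACL pipeline: the feature names, the example values, the class labels, the sign convention for the KS statistic, and the choice of cosine similarity with a single nearest neighbour are all hypothetical choices made for the example.

```python
from bisect import bisect_right
from math import sqrt


def signed_ks(treated, control):
    """Two-sample KS statistic, keeping the sign of the largest ECDF gap.

    Assumed convention: positive when treated values are shifted higher
    than the DMSO control values.
    """
    t, c = sorted(treated), sorted(control)
    best = 0.0
    for x in sorted(set(t) | set(c)):
        # Difference between the control and treated empirical CDFs at x.
        diff = bisect_right(c, x) / len(c) - bisect_right(t, x) / len(t)
        if abs(diff) > abs(best):
            best = diff
    return best


def profile(treated_cells, control_cells, features):
    """Concatenate per-feature KS scores into one phenotypic profile vector."""
    return [
        signed_ks([cell[f] for cell in treated_cells],
                  [cell[f] for cell in control_cells])
        for f in features
    ]


def cosine(u, v):
    """Cosine similarity between two profile vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = sqrt(sum(a * a for a in u)) * sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def nearest_class(query, references):
    """Return the label of the most similar reference profile (1-NN)."""
    return max(references, key=lambda label: cosine(query, references[label]))


# Hypothetical per-cell measurements for two features.
FEATURES = ["nuclear_area", "yfp_intensity"]
control = [{"nuclear_area": a, "yfp_intensity": y}
           for a, y in [(1, 10), (2, 11), (3, 12), (4, 13)]]
treated = [{"nuclear_area": a, "yfp_intensity": y}
           for a, y in [(5, 1), (6, 2), (7, 3), (8, 4)]]

# Hypothetical reference profiles for two mechanistic classes.
references = {"class_A": [0.9, -0.8], "class_B": [-0.7, 0.6]}

query_profile = profile(treated, control, FEATURES)
predicted = nearest_class(query_profile, references)
```

In a real screen the profile would span all extracted features, time points, and concentrations, and the classifier would be trained and cross-validated on the reference drugs rather than matched against two hand-written vectors.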

Protocol 2: Target-Based Screening Cascade

Principle: Identify compounds that modulate the activity of a specific, predefined molecular target through biochemical and cellular assays [1].

Materials and Reagents:

- Purified target protein (enzyme, receptor)

- Biochemical assay reagents (substrates, cofactors, detection systems)

- Cell lines overexpressing the target protein

- Counter-screen targets for selectivity assessment

- Compound library in DMSO stock solutions

- High-throughput screening microplates (1536-well or 384-well)

- Detection instrumentation (plate readers, FLIPR)

Procedure:

Biochemical Primary Screening:

- Develop optimized assay conditions for target protein (buffer, pH, ionic strength).

- Implement robust high-throughput screening (≥100,000 compounds) at single concentration.

- Confirm assay quality with a Z'-factor > 0.5 and include appropriate controls on every plate.

Hit Confirmation:

- Retest primary hits in concentration-response (8-point, 1:3 serial dilution).

- Confirm dose-dependent activity and calculate IC₅₀/EC₅₀ values.

- Exclude promiscuous or non-specific compounds using additional assay metrics.

Cellular Target Engagement:

- Develop cellular assay measuring target modulation (phosphorylation, reporter gene, second messenger).

- Evaluate compound activity in relevant cellular context.

- Assess membrane permeability and potential efflux issues.

Selectivity Profiling:

- Screen against related target family members (e.g., kinase panels, GPCR arrays).

- Identify selective compounds with minimal off-target activity.

- Use computational models to predict potential off-target interactions.

Mechanism of Action Studies:

- Perform binding assays (SPR, ITC) to determine affinity and kinetics.

- Conduct structural studies (crystallography, Cryo-EM) for rational optimization.

- Validate target-specific cellular effects using genetic approaches (RNAi, CRISPR).
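Two numerical details of this cascade are easy to make concrete: the Z'-factor used as the quality gate in primary screening, and the 8-point, 1:3 dilution series used in hit confirmation. The sketch below uses the standard Z'-factor definition, 1 - 3(σ_pos + σ_neg) / |μ_pos - μ_neg|; the control readings and the 10 μM top concentration are made-up values for illustration.

```python
from statistics import mean, stdev


def z_prime(positive, negative):
    """Z'-factor from positive- and negative-control well signals."""
    window = abs(mean(positive) - mean(negative))
    return 1 - 3 * (stdev(positive) + stdev(negative)) / window


def dilution_series(top, factor=3.0, points=8):
    """Concentrations for a serial dilution, highest first."""
    return [top / factor ** i for i in range(points)]


# Hypothetical control readings from one plate (arbitrary signal units).
positive_controls = [100, 102, 98, 101, 99]
negative_controls = [10, 12, 8, 11, 9]

quality = z_prime(positive_controls, negative_controls)
plate_passes = quality > 0.5

# 8-point, 1:3 series starting from an assumed 10 uM top concentration.
concentrations_uM = dilution_series(10.0)
```

A plate whose Z'-factor falls below the 0.5 threshold would normally be repeated rather than analyzed, since the separation between controls is too small relative to their variability.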

Signaling Pathways and Experimental Workflows

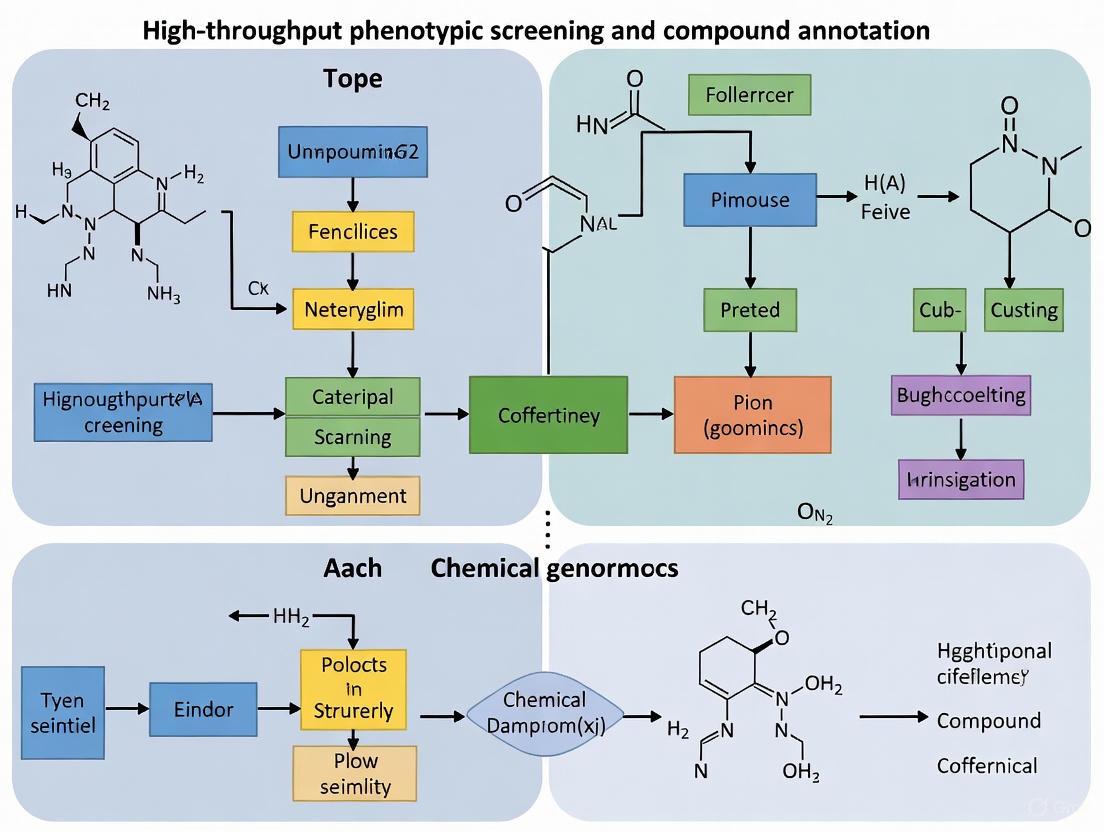

The following diagrams illustrate the fundamental workflows for both phenotypic and target-based drug discovery approaches:

Diagram 1: Phenotypic Screening Workflow

Diagram 2: Target-Based Screening Workflow

The Scientist's Toolkit: Essential Research Reagents and Solutions

The following table details key reagents and materials essential for implementing both phenotypic and target-based screening approaches:

Table 3: Essential Research Reagents for Drug Discovery Screening

| Reagent/Material | Function/Purpose | Application Context |
|---|---|---|
| Reporter Cell Lines | Express fluorescent tags for cellular and protein localization; enable live-cell imaging [7] | Phenotypic Screening |
| CD-Tagging System | Genomic labeling of endogenous proteins with YFP while preserving function [7] | Phenotypic Profiling |
| pSeg Plasmid System | Expresses mCherry (cytoplasm) and H2B-CFP (nucleus) for automated cell segmentation [7] | High-Content Imaging |
| Chemical Libraries | Diverse collections of compounds for screening; includes diversity-oriented synthesis compounds [8] | Both Approaches |
| Patient-Derived Cells | Primary cells from patients that maintain disease-relevant biology [4] | Phenotypic Screening (Relevant Models) |
| Purified Target Proteins | Isolated proteins for biochemical assay development; recombinant or native forms [1] | Target-Based Screening |
| High-Content Imaging Systems | Automated microscopy platforms for multi-parameter cellular analysis [7] [9] | Phenotypic Screening |
| CRISPR/Cas9 Tools | Gene editing for target validation and generation of disease models [4] | Both Approaches |
| Optimal Reporter Cell Lines (ORACL) | Reporter lines selected for optimal classification of compounds across drug classes [7] | Phenotypic Screening |

Emerging Technologies and Future Directions

The field of drug discovery is evolving with new technologies that bridge both phenotypic and target-based approaches. Pharmacotranscriptomics-based drug screening (PTDS) has emerged as a third class of drug screening that detects gene expression changes following drug perturbation [10]. Artificial intelligence is becoming a core driver powering the advancement of PTDS, enabling analysis of drug-regulated gene sets, signaling pathways, and complex disease mechanisms [10] [9].

The integration of phenotypic data with multi-omics approaches (transcriptomics, proteomics, metabolomics) and AI represents the future of drug discovery [9]. This integrated approach allows researchers to start with biological complexity, add molecular depth through omics technologies, and use computational algorithms to reveal patterns that would be difficult to detect through single-dimensional approaches [9]. Platforms like PhenAID demonstrate how AI can integrate cell morphology data with omics layers to identify phenotypic patterns correlating with mechanism of action, efficacy, and safety [9].

These technological advances are particularly valuable for studying complex diseases like Alzheimer's, where phenotypic screening offers opportunities to uncover novel therapeutic mechanisms beyond single-target approaches that have historically shown limited success [1]. As these integrated approaches mature, they promise to enhance the efficiency and success rates of both phenotypic and target-based drug discovery paradigms.

Phenotypic Drug Discovery (PDD) has experienced a major resurgence following the observation that a majority of first-in-class medicines between 1999 and 2008 were discovered empirically without a predefined drug target hypothesis [11]. Modern PDD is defined by its focus on modulating a disease phenotype or biomarker rather than a pre-specified target to provide therapeutic benefit, serving as an accepted discovery modality in both academia and the pharmaceutical industry [11]. This approach has consistently demonstrated a disproportionate ability to deliver first-in-class drugs with novel mechanisms of action, challenging reductionist target-based strategies that dominated drug discovery in recent decades [11] [12]. The resurgence reflects a renewed appreciation for the complexities of disease physiology and the limitations of focusing exclusively on single molecular targets with well-validated hypotheses.

The power of PDD lies in its target-agnostic, biology-first strategy that provides tool molecules to link therapeutic biology to previously unknown signaling pathways, molecular mechanisms, and drug targets [11]. Unlike Target-Based Drug Discovery (TDD), which relies on an established causal relationship between a molecular target and disease state, PDD employs chemical interrogation of disease-relevant biological systems without preconceived notions of target engagement [11]. This empirical approach has expanded the "druggable target space" to include unexpected cellular processes and revealed new classes of drug targets that would likely have been missed through purely target-based approaches [11]. As drug discovery faces challenges with productivity and the need for innovative therapies, PDD offers a powerful complementary approach to traditional methods.

Quantitative Analysis of PDD Success in First-in-Class Drug Discovery

An analysis of recent drug discoveries reveals the significant contribution of phenotypic approaches to first-in-class medicines. The following table summarizes key approved or clinical-stage compounds originating from phenotypic screens, demonstrating the breadth of therapeutic areas and novel mechanisms enabled by this approach.

Table 1: Notable First-in-Class Medicines Discovered Through Phenotypic Screening

| Drug/Compound | Therapeutic Area | Key Molecular Target/Mechanism | Novel Aspect of Target or Mechanism |
|---|---|---|---|
| Ivacaftor, Tezacaftor, Elexacaftor [11] | Cystic Fibrosis | CFTR channel gating and folding | Identified correctors that enhance CFTR folding and trafficking - an unexpected mechanism |
| Risdiplam, Branaplam [11] | Spinal Muscular Atrophy | SMN2 pre-mRNA splicing | Modulates pre-mRNA splicing by stabilizing U1 snRNP complex - unprecedented drug target |
| SEP-363856 [11] | Schizophrenia | Unknown (TAAR1 and 5-HT1A likely involved) | Discovered without targeting dopamine or serotonin receptors directly |
| Lenalidomide [11] | Multiple Myeloma | Cereblon E3 ubiquitin ligase | Redirects ubiquitin ligase activity - novel mechanism only elucidated post-approval |
| Daclatasvir [11] | Hepatitis C | NS5A protein | Target has no known enzymatic function - importance discovered through phenotypic screening |
| KAF156 [11] | Malaria | Unknown (compound belongs to the imidazolopiperazine class) | New chemotype with unknown target effective against resistant malaria |
| Crisaborole [11] | Atopic Dermatitis | Phosphodiesterase-4 (PDE4) | Identified through phenotypic screening despite known target |

The disproportionate success of PDD in generating first-in-class therapies stems from its ability to address the incompletely understood complexity of diseases [12]. Between 1999 and 2008, phenotypic screening approaches were responsible for a majority of first-in-class drugs, highlighting its potential for innovative therapeutic discovery [11]. This success rate has prompted a re-evaluation of drug discovery strategies across the industry and stimulated renewed investment in phenotypic approaches despite their unique challenges.

The expansion of "druggable" target space through PDD represents one of its most significant contributions [11]. Successful phenotypic campaigns have revealed unexpected cellular processes as viable therapeutic targets, including pre-mRNA splicing, target protein folding, trafficking, translation, and degradation [11]. These processes were not previously considered druggable through conventional target-based approaches. Furthermore, PDD has revealed novel mechanisms of action for traditional target classes and unveiled entirely new classes of drug targets such as bromodomains, pseudokinases, and regulatory proteins without enzymatic activity [11].

Key Methodologies and Experimental Protocols in Modern PDD

Core Principles of Phenotypic Screening Design

Modern phenotypic screening employs carefully designed experimental systems that balance physiological relevance with practical screening considerations. The "rule of 3" provides a framework for predictive phenotypic assays, emphasizing three key characteristics: a measurable output that is clinically relevant, a system with cellular and architectural complexity, and a stimulus that reflects disease pathophysiology [12]. This framework ensures that phenotypic screens maintain strong connections to human disease biology while remaining feasible for implementation in screening environments.

Critical to success is the establishment of a "chain of translatability" that connects the phenotypic endpoint measured in the screening system to clinically relevant outcomes in human disease [12]. This requires careful consideration of the disease model system, the phenotypic endpoints measured, and their relationship to the human disease pathophysiology. The chain of translatability strengthens the predictive value of phenotypic screens and increases the likelihood that hits identified in screening will demonstrate efficacy in clinical settings.

Protocol: Implementation of a Phenotypic Screen for Novel Therapeutic Discovery

Objective: Identify novel compounds that modulate a disease-relevant phenotype without preconceived target hypotheses.

Materials and Reagents:

- Disease-relevant cellular model: Primary cells, iPSC-derived cells, or engineered cell lines that recapitulate key disease features

- Compound library: Diverse chemical libraries including known bioactives, FDA-approved drugs, and novel synthetic compounds

- Phenotypic readout system: High-content imaging, transcriptomic profiling, or functional metabolic assays

- Validation tools: CRISPR-based functional genomics tools, target-specific pharmacological inhibitors

Procedure:

Disease Model Establishment (Timeline: 2-4 weeks)

- Select or engineer a cellular system that faithfully recapitulates key aspects of human disease pathophysiology

- Validate the model system using known disease-relevant perturbations and positive control compounds

- Optimize assay conditions for robustness and reproducibility using Z'-factor calculations (>0.5 acceptable)

Primary Screening (Timeline: 1-2 weeks)

- Screen compound libraries at appropriate concentrations (typically 1-10 μM) in disease-relevant models

- Include appropriate controls (positive, negative, vehicle) on each plate

- Implement quality control metrics to identify and exclude problematic assays

Hit Confirmation (Timeline: 2-3 weeks)

- Retest primary hits in dose-response format to confirm activity and determine potency (EC50/IC50)

- Assess compound toxicity in parallel to identify selective phenotypic modulators

- Exclude promiscuous or non-specific compounds using counter-screens

Mechanistic Exploration (Timeline: 4-8 weeks)

- Employ multi-parameter profiling to characterize the nature of phenotypic changes

- Utilize chemoproteomic, genetic, or biochemical approaches for target identification

- Apply functional genomics (CRISPR screens) to identify genes that modify compound activity

Troubleshooting:

- Poor assay robustness may require model system re-engineering or alternative readout selection

- High hit rates may necessitate more stringent hit selection criteria or additional orthogonal assays

- Lack of dose-response may indicate non-specific compound effects or assay limitations
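The hit-confirmation step and the "lack of dose-response" troubleshooting case can be illustrated with a small potency estimate. The sketch below is a deliberately crude stand-in for a proper four-parameter logistic fit: it finds the two tested concentrations that bracket the half-maximal response and interpolates between them on a log scale. The function name, the 50% threshold on a normalized 0-100 scale, and the example data are assumptions made for illustration.

```python
from math import log10


def ec50_by_interpolation(concentrations, responses, half=50.0):
    """Estimate EC50 by log-linear interpolation between bracketing points.

    Returns None when no adjacent pair of points crosses the half-maximal
    response, which is one sign that a hit lacks a usable dose-response.
    """
    points = sorted(zip(concentrations, responses))
    for (c_lo, r_lo), (c_hi, r_hi) in zip(points, points[1:]):
        crosses = (r_lo - half) * (r_hi - half) <= 0 and r_lo != r_hi
        if crosses:
            fraction = (half - r_lo) / (r_hi - r_lo)
            log_ec50 = log10(c_lo) + fraction * (log10(c_hi) - log10(c_lo))
            return 10 ** log_ec50
    return None


# Hypothetical normalized responses (% of maximal effect) for two hits.
doses_uM = [0.01, 0.1, 1.0, 10.0]
confirmed_hit = ec50_by_interpolation(doses_uM, [5, 25, 75, 95])
flat_compound = ec50_by_interpolation(doses_uM, [2, 3, 1, 4])
```

For reporting potencies, a nonlinear curve fit over all points is the usual choice; interpolation like this is mainly useful as a quick triage or sanity check.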

Diagram 1: PDD Experimental Workflow

Protocol: Target Deconvolution for Phenotypic Hits

Objective: Identify the molecular target(s) responsible for observed phenotypic effects of confirmed hits.

Materials and Reagents:

- Chemical probes: Biotinylated or photoaffinity analogs of active compounds

- Omics technologies: RNA sequencing, proteomic profiling, or Cell Painting assays

- Genetic tools: CRISPR knockout/activation libraries, siRNA collections

- Interaction mapping: Affinity purification reagents, mass spectrometry systems

Procedure:

Chemical Biology Approaches (Timeline: 4-6 weeks)

- Design and synthesize affinity-based probes from active compound scaffolds

- Perform pull-down experiments with active and inactive probe analogs

- Identify specifically bound proteins using quantitative mass spectrometry

Functional Genomics (Timeline: 3-5 weeks)

- Conduct genome-wide CRISPR screens to identify genes essential for compound activity

- Perform overexpression screens to identify genes that confer resistance

- Validate candidate targets using orthogonal approaches (RNAi, CRISPRi/a)

Multi-omics Profiling (Timeline: 2-4 weeks)

- Generate transcriptomic, proteomic, or epigenomic profiles of compound-treated cells

- Compare profiles to reference databases of compounds with known mechanisms

- Apply bioinformatic approaches to infer potential mechanisms of action

Mechanistic Validation (Timeline: 4-8 weeks)

- Engineer cellular systems with altered candidate target expression/function

- Assess correlation between target modulation and phenotypic effects

- Determine binding affinity and direct engagement using biophysical methods

Troubleshooting:

- Redundant targets may require combinatorial genetic approaches

- Polypharmacology may complicate identification of therapeutically relevant targets

- Weak compound-target interactions may necessitate more sensitive detection methods
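The multi-omics step of comparing a compound's profile to a reference database of known mechanisms amounts to a similarity ranking. The following sketch assumes each signature is a mapping from gene name to log fold change and uses Pearson correlation as the similarity measure; the gene names, the reference labels, and the values are placeholders, and real reference resources use their own scoring schemes.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation of two equal-length sequences (0.0 if undefined)."""
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    dx = [a - mean_x for a in x]
    dy = [b - mean_y for b in y]
    denom = sqrt(sum(a * a for a in dx)) * sqrt(sum(b * b for b in dy))
    return sum(a * b for a, b in zip(dx, dy)) / denom if denom else 0.0


def rank_references(query, reference_db):
    """Rank reference signatures by correlation with the query signature."""
    scores = {}
    for name, signature in reference_db.items():
        # Compare only genes measured in both signatures.
        genes = sorted(set(query) & set(signature))
        scores[name] = pearson([query[g] for g in genes],
                               [signature[g] for g in genes])
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Hypothetical log fold-change signatures.
query_signature = {"GENE_A": 2.0, "GENE_B": -1.0, "GENE_C": 0.5, "GENE_D": -1.5}
reference_db = {
    "reference_1": {"GENE_A": 1.8, "GENE_B": -0.9, "GENE_C": 0.4, "GENE_D": -1.2},
    "reference_2": {"GENE_A": -1.0, "GENE_B": 1.5, "GENE_C": 0.0, "GENE_D": 0.8},
}

ranked = rank_references(query_signature, reference_db)
```

A high-ranking match only suggests a mechanistic hypothesis; it still needs the genetic and biophysical validation described in the final step of the protocol.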

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful implementation of phenotypic screening requires carefully selected reagents and tools that enable biologically relevant assessment of compound activity. The following table outlines key research reagent solutions essential for modern PDD campaigns.

Table 2: Essential Research Reagents for Phenotypic Drug Discovery

| Reagent Category | Specific Examples | Function in PDD |
|---|---|---|
| Disease Modeling Systems | iPSC-derived cells, organoids, primary cell co-cultures | Provide physiologically relevant systems for phenotypic assessment |
| Phenotypic Readout Technologies | High-content imaging, live-cell metabolic assays, single-cell RNA sequencing | Enable multiparameter assessment of compound effects on disease phenotypes |
| Compound Libraries | Diverse small molecules, fragment libraries, macrocycles, covalent inhibitors | Provide chemical starting points for phenotypic screening |
| Functional Genomics Tools | CRISPR knockout libraries, inducible expression systems, degron technologies | Facilitate target identification and validation |
| Bioanalytical Platforms | Affinity purification reagents, activity-based probes, chemoproteomic platforms | Support target deconvolution and mechanism of action studies |
| Pathway Reporting Systems | Biosensors, pathway-specific reporter gene constructs | Enable monitoring of specific pathway modulation in complex systems |

The selection of appropriate disease models represents perhaps the most critical reagent choice in PDD [11]. Modern approaches increasingly utilize complex model systems including induced pluripotent stem cell (iPSC)-derived cells, organoids, and co-culture systems that better recapitulate human disease biology [11] [12]. These systems provide the cellular and architectural complexity necessary for detecting therapeutically relevant phenotypes while maintaining feasibility for screening applications.

Advanced readout technologies represent another essential component of the phenotypic screening toolkit [11]. High-content imaging, live-cell metabolic monitoring, and single-cell omics approaches enable rich characterization of compound effects on disease-relevant phenotypes [12]. These technologies move beyond single-parameter assessments to provide multiparameter profiles of compound activity, facilitating both hit identification and early mechanistic classification.

Integration of Artificial Intelligence and Advanced Technologies

The application of artificial intelligence and machine learning represents a transformative development in phenotypic drug discovery [13] [14]. AI approaches are being deployed across multiple aspects of PDD, from experimental design and image analysis to target prediction and compound optimization [13]. The integration of multimodal data—including imaging, transcriptomic, proteomic, and chemical information—enables more sophisticated pattern recognition and prediction of compound activity in complex biological systems [14].

AI and machine learning partnerships with large-scale data generation are transforming biotechnology and pharma, particularly in drug discovery [14]. From generative AI to unlock novel drug candidates to virtual cells that glean insights across multimodal biology, the field is witnessing an exponential curve of AI innovation that is poised to enhance and potentially overhaul the design and validation of novel therapeutics [14]. These approaches are particularly valuable for PDD, where the complexity of data often exceeds human analytical capacity.

At the regulatory level, the FDA has established initiatives like the AI Council and AI Review Rapid Response Team to address the growing use of AI in drug development [13]. Regulatory scientists are developing expertise in evaluating AI-enabled approaches, including their application to phenotypic screening and target identification [13]. This regulatory evolution is critical for ensuring that innovative AI-powered PDD approaches can successfully transition to approved therapies.

Diagram 2: AI in Phenotypic Screening

Phenotypic Drug Discovery has re-established itself as a powerful approach for identifying first-in-class medicines with novel mechanisms of action [11]. Its resurgence reflects a growing recognition that reductionist target-based approaches, while valuable, cannot address all therapeutic needs—particularly for complex, polygenic diseases with incompletely understood biology [11] [12]. The disproportionate contribution of PDD to innovative therapies highlights its continued importance in the drug discovery landscape.

The future of PDD will be shaped by several converging trends, including the development of more physiologically relevant model systems, advances in AI and machine learning, and improved approaches for target deconvolution [11] [14]. These developments will address current challenges in phenotypic screening while enhancing its predictive value and efficiency. Furthermore, the growing appreciation for polypharmacology—once viewed as a liability but now recognized as a potential advantage for certain disease contexts—aligns well with the target-agnostic nature of phenotypic approaches [11].

As drug discovery continues to evolve, PDD will likely remain an essential component of a balanced research strategy that combines the strengths of both phenotypic and target-based approaches [12]. Its unique ability to reveal unexpected biology and deliver first-in-class therapies ensures that phenotypic screening will continue to drive innovation in pharmaceutical research, particularly when applied to diseases with high unmet need and incomplete biological understanding. The ongoing challenge for researchers will be to strategically deploy PDD where its strengths can be maximized while continuing to develop technologies that address its historical limitations.

Biological Models for Phenotypic Screening

The choice of a biological model is the foundational step in phenotypic screening, as it determines the physiological relevance and translational potential of the findings. Models range from simple 2D cell cultures to complex whole organisms [15].

Table 1: Comparison of Biological Models Used in Phenotypic Screening

| Model Type | Throughput | Physiological Relevance | Key Applications | Examples |
|---|---|---|---|---|
| 2D Cell Cultures | High | Low | Basic functional assays, cytotoxicity screening | A549 cells, H9C2 cells, J774 cells [16] |
| 3D Organoids & Spheroids | Medium | High | Cancer research, neurological disease, tissue architecture [15] | Patient-derived organoids |
| iPSC-Derived Models | Medium | High | Patient-specific drug screening, disease modeling [15] | iPSC-derived cardiomyocytes, neurons |
| Zebrafish Embryos | Medium-High | Medium-High | Neuroactive drug screening, toxicology, cardiovascular development [16] [15] | Gridlock mutant embryos for aortic coarctation [16] |
| Rodent Models | Low | High | Pharmacodynamics, pharmacokinetics, systemic effects [15] | Disease-specific in vivo models |

The following workflow outlines a generalized protocol for initiating a phenotypic screen, from model selection to hit identification:

Figure 1: Generalized Phenotypic Screening Workflow

Protocol: Cell-Based Phenotypic Screening Using a Viability Assay

Purpose: To identify compounds that alter cell viability in a disease-relevant cell model.

Materials:

- Biological model (e.g., J774 macrophage foam cells, H9C2 cardiomyocytes) [16]

- Phenotypic Screening Library (e.g., 5,760-compound library from Enamine) [17]

- 384-well or 1536-well microplates

- Robotic liquid-handling device

- High-content imaging system or plate reader

Procedure:

- Cell Seeding: Seed cells into 384-well microtiter plates at a density optimized for confluency after the assay duration (e.g., 3,000-5,000 cells per well for adherent lines) [16].

- Compound Treatment: Using a robotic liquid handler, transfer individual compounds from the chemical library to assigned wells. Include DMSO-only wells as negative controls and wells with a reference cytotoxic compound as positive controls [16] [17].

- Incubation: Incubate the plates under standard cell culture conditions (e.g., 37°C, 5% CO2) for a predetermined period (e.g., 48-72 hours).

- Viability Assay: Add a cell viability reagent such as MTT and incubate for 2-4 hours [16].

- Data Acquisition: Measure the resulting signal on a microtiter plate reader matched to the reagent (e.g., a spectrophotometer reading absorbance for MTT) [16].

- Hit Identification: Normalize data using the "Z score" method to correct for plate-to-plate variability, or the "B score" method, which additionally corrects for positional (row and column) effects. Compounds exhibiting a statistically significant change in viability in either direction (e.g., |Z score| > 3) are considered primary hits [16].
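The hit-identification step can be sketched as a per-plate Z score calculation. This minimal example assumes the score is computed across all compound wells on one plate (using their mean and sample standard deviation) and flags wells beyond a threshold of 3 in either direction; the well names and signal values are invented, and the B score correction for row and column effects is not shown.

```python
from statistics import mean, stdev


def z_scores(plate):
    """Per-well Z scores relative to the mean and SD of the same plate."""
    values = list(plate.values())
    mu, sd = mean(values), stdev(values)
    return {well: (signal - mu) / sd for well, signal in plate.items()}


def primary_hits(plate, threshold=3.0):
    """Wells whose viability signal deviates by more than the threshold."""
    return sorted(well for well, z in z_scores(plate).items()
                  if abs(z) > threshold)


# Hypothetical normalized viability signals for one row of a 384-well plate.
plate = {f"A{i}": 1.0 + 0.01 * ((i % 3) - 1) for i in range(1, 25)}
plate["A7"] = 0.2  # one strongly cytotoxic well

hits = primary_hits(plate)
```

Because strong hits inflate the plate standard deviation, screens with high hit rates often switch to robust statistics (median and MAD) or compute the score against the DMSO control wells instead.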

Chemical Libraries for Phenotypic Discovery

The chemical library is a critical variable, as its composition directly influences the biological space that can be probed. Libraries for phenotypic screening are designed for maximal chemical and biological diversity to increase the probability of identifying novel mechanisms of action [17] [18] [19].

Table 2: Commercially Available Phenotypic Screening Libraries

| Library Name (Vendor) | Compound Count | Key Design Features | Includes Annotated Bioactives |

|---|---|---|---|

| Phenotypic Screening Library (Enamine) [17] | 5,760 | Balanced biological & structural diversity; includes approved drugs & potent inhibitors | Yes (≥2,000 compounds) |

| Phenotypic Screening Library (Otava) [18] | 5,000 | Maximal chemical space coverage; based on approved drugs & bioactive templates | Yes |

| BioDiversity Phenotypic Library (Life Chemicals) [19] | 15,900 | Prioritizes bioactivity diversity; includes natural product-like compounds | Yes (6,300+ compounds) |

| ChemDiversity Phenotypic Library (Life Chemicals) [19] | 7,600 | Optimized for structural diversity; lead-like and drug-like compounds | No |

Protocol: Library Management and Screening Preparation

Purpose: To prepare and quality-control a chemical library for a high-throughput phenotypic screen.

Materials:

- Chemical library in DMSO (e.g., 10 mM stock solutions)

- Echo-qualified low-dead-volume (LDV) microplates (e.g., 384-well or 1536-well format)

- Acoustic liquid handler (e.g., Echo)

Procedure:

- Library Formatting: Obtain the library pre-plated in a screening-compatible format. A typical format is 10 mM DMSO solutions in 384-well, Echo Qualified LDV microplates, with the first and last two columns empty for controls [17].

- Compound Transfer: Use an acoustic liquid handler to transfer nanoliter volumes of compounds from the source library plates to assay plates containing the biological model. This ensures precise, contact-less delivery.

- Control Setup: Fill the wells left empty for controls (the first and last two columns in the format described above) with appropriate negative (e.g., DMSO) and positive control compounds.

- Liquid Handling: Use robotic liquid-handling devices for all subsequent reagent additions to ensure consistency and throughput [16].
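The arithmetic behind the compound-transfer and control-setup steps can be sketched as follows. This is an illustration only: the 50 µL assay volume, the 10 µM final concentration, and the 2.5 nL droplet increment are assumptions chosen for the example, not values taken from [17], and the helper names are hypothetical.

```python
def transfer_volume_nl(stock_mM, final_uM, assay_volume_uL):
    """Nanolitres of stock needed to reach final_uM in the assay well.

    Uses C1 * V1 = C2 * V2 and ignores the very small volume added.
    """
    stock_uM = stock_mM * 1000.0
    volume_uL = final_uM * assay_volume_uL / stock_uM
    return volume_uL * 1000.0

def round_to_droplet(volume_nl, droplet_nl=2.5):
    """Round to a whole number of droplets (droplet size is an assumption)."""
    return round(volume_nl / droplet_nl) * droplet_nl

def control_columns(n_cols=24, n_ctrl_each_side=2):
    """1-based column numbers reserved for controls on a 384-well plate."""
    left = list(range(1, n_ctrl_each_side + 1))
    right = list(range(n_cols - n_ctrl_each_side + 1, n_cols + 1))
    return left + right

vol = transfer_volume_nl(stock_mM=10, final_uM=10, assay_volume_uL=50)
dispensed = round_to_droplet(vol)
dmso_percent = 100.0 * (dispensed / 1000.0) / 50.0  # DMSO % v/v in the well
print(vol, dispensed, dmso_percent, control_columns())
```

Keeping the dispensed volume in the tens of nanolitres holds the final DMSO concentration well below the levels at which the solvent itself typically perturbs cells, which is one practical reason acoustic dispensing is favored.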

Detection Readouts and Target Deconvolution

Modern phenotypic screens employ a variety of readout technologies to capture complex biological information. The choice of readout must align with the phenotypic question being asked.

Readout Technologies

- High-Content Imaging: Uses automated microscopy to capture multicolor fluorescence images of cells, quantifying changes in morphology, protein localization, and cell number [15]. The Cell Painting assay is a prominent example that uses multiple fluorescent dyes to label various cellular components, generating rich morphological profiles [20].

- Transcriptomic Profiling: Measures gene expression changes across thousands of genes in response to compound treatment. The L1000 assay is a high-throughput, low-cost method that directly measures ~1,000 landmark genes and infers the rest [20].

- Luciferase Reporter Assays: Utilize genes encoding luciferase under the control of a pathway-specific promoter (e.g., ABCA1 promoter for cholesterol efflux) [16]. Activation of the pathway leads to luminescence, which is easily quantified with a plate reader.

- Visual Inspection: Used in whole-organism screens (e.g., zebrafish) to identify morphological or developmental abnormalities. While labor-intensive, it can be automated with advanced imaging [16].

Protocol: High-Content Imaging for a Phenotypic Screen

Purpose: To quantify changes in cell morphology and fluorescence intensity using high-content imaging.

Materials:

- Cells plated in 384-well imaging plates

- Fixative (e.g., 4% paraformaldehyde)

- Permeabilization buffer (e.g., 0.1% Triton X-100)

- Fluorescent dyes or antibodies (e.g., for nuclei, actin, mitochondria)

- High-content imaging system (e.g., ImageXpress)

Procedure:

- Cell Fixation and Staining: After compound treatment, fix cells with 4% paraformaldehyde for 15 minutes. Permeabilize with 0.1% Triton X-100 and stain with a panel of fluorescent dyes or antibodies to mark cellular structures of interest [20].

- Image Acquisition: Use a high-content imager to automatically acquire multiple images per well across different fluorescence channels using a 20x objective.

- Image Analysis: Extract hundreds of morphological features (e.g., texture, shape, intensity) using software like CellProfiler. These features form a "morphological profile" for each treated well [20].

- Hit Calling: Use machine learning models to compare the profiles of compound-treated wells to controls. Compounds that induce a significant and reproducible phenotypic change are classified as hits.
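As a simple statistical stand-in for the model-based hit calling described above, the sketch below scores each well by how far its standardized morphological profile lies from the DMSO controls. The 95th-percentile cutoff, the function names, and the simulated data are all assumptions for illustration; a production pipeline would also check reproducibility across replicates.

```python
import numpy as np

def standardize_to_controls(profiles, ctrl_mask):
    """Scale every feature by the mean and SD of the control wells."""
    ctrl = profiles[ctrl_mask]
    mu = ctrl.mean(axis=0)
    sd = ctrl.std(axis=0, ddof=1)
    sd[sd == 0] = 1.0  # guard against constant features
    return (profiles - mu) / sd

def profile_activity(profiles, ctrl_mask, ctrl_percentile=95.0):
    """Distance of each profile from the control centroid, plus a hit mask."""
    z = standardize_to_controls(profiles, ctrl_mask)
    dist = np.linalg.norm(z, axis=1)
    # Cutoff is taken from the empirical null formed by the controls.
    cutoff = np.percentile(dist[ctrl_mask], ctrl_percentile)
    return dist, dist > cutoff

rng = np.random.default_rng(0)
n_ctrl, n_feat = 32, 20
controls = rng.normal(0.0, 1.0, size=(n_ctrl, n_feat))
inactive = rng.normal(0.0, 1.0, size=(1, n_feat))
active = rng.normal(0.0, 1.0, size=(1, n_feat)) + 5.0  # shifted phenotype
profiles = np.vstack([controls, inactive, active])
ctrl_mask = np.arange(len(profiles)) < n_ctrl

dist, is_active = profile_activity(profiles, ctrl_mask)
print(is_active[-1], round(float(dist[-1]), 1))
```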

Target Deconvolution

Once a phenotypic hit is identified, determining its mechanism of action (MoA) is a critical next step. The process of target identification, or deconvolution, can be technically challenging [21] [15].

Figure 2: Target Deconvolution Workflow for Phenotypic Hits

Protocol: Target Identification via Bead/Lysate-Based Affinity Capture [21]

Purpose: To identify the direct protein target(s) of a small molecule hit from a phenotypic screen.

Materials:

- Phenotypic hit compound

- Sepharose beads for immobilization

- Cell lysate from the relevant biological model

- Mass spectrometry system

Procedure:

- Compound Immobilization: Covalently link the hit compound to a solid support, such as Sepharose beads. A control bead (e.g., with a structurally similar but inactive compound) should be prepared in parallel.

- Lysate Incubation: Incubate the compound-conjugated beads with cell lysate prepared from the model system used in the primary screen. Allow sufficient time for protein binding.

- Washing and Elution: Wash the beads extensively with buffer to remove non-specifically bound proteins. Elute the specifically bound proteins.

- Protein Identification: Digest the eluted proteins with trypsin and analyze the resulting peptides by liquid chromatography-tandem mass spectrometry (LC-MS/MS).

- Data Analysis: Compare the proteins identified from the hit compound beads to those from the control beads. Proteins significantly enriched with the hit compound are considered candidate targets. A "uniqueness index" can help discriminate true targets from background binders [21].
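The comparison of hit-compound beads with control beads in the data-analysis step can be sketched as a fold-enrichment ranking. This is a generic log2 enrichment with a pseudocount, not the "uniqueness index" of [21], whose definition is not given here; the spectral counts and the names PROT_A and PROT_B are hypothetical.

```python
import math

def log2_enrichment(hit_counts, ctrl_counts, pseudocount=1.0):
    """log2 ratio of spectral counts on hit beads versus control beads."""
    proteins = set(hit_counts) | set(ctrl_counts)
    return {
        p: math.log2((hit_counts.get(p, 0) + pseudocount)
                     / (ctrl_counts.get(p, 0) + pseudocount))
        for p in proteins
    }

def candidate_targets(hit_counts, ctrl_counts, min_log2=2.0):
    """Proteins enriched at least 2**min_log2-fold, most enriched first."""
    scores = log2_enrichment(hit_counts, ctrl_counts)
    ranked = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
    return [(p, s) for p, s in ranked if s >= min_log2]

# Hypothetical spectral counts from one pull-down experiment.
hit_beads = {"PROT_A": 31, "PROT_B": 12, "HSP90": 40, "TUBB": 25}
ctrl_beads = {"PROT_B": 10, "HSP90": 38, "TUBB": 27}

candidates = candidate_targets(hit_beads, ctrl_beads)
print(candidates)
```

Abundant proteins that bind both bead types drop out of the candidate list because their ratio stays near 1, which is the role the inactive-analog control bead plays in the protocol. Replicate pull-downs and a statistical test would be needed before treating any protein as a target.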

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Reagents and Tools for Phenotypic Screening

| Item | Function/Purpose | Example Vendors/Formats |

|---|---|---|

| Curated Phenotypic Libraries | Provides chemically and biologically diverse compounds for screening; increases hit rate for novel MoAs | Enamine, OTAVAchemicals, Life Chemicals [17] [18] [19] |

| Echo-Qualified Microplates | Enable precise, non-contact transfer of nanoliter volumes of compound solutions via acoustic dispensing | 384-well or 1536-well LDV plates [17] |

| Robotic Liquid Handlers | Automate reagent addition and compound transfer to ensure consistency and enable high-throughput screening | Various manufacturers |

| High-Content Imaging Systems | Automated microscopes for capturing quantitative, multiparametric data on cell morphology and fluorescence | ImageXpress, CellInsight |

| Cell Painting Kits | Standardized fluorescent dye kits for staining multiple organelles to generate rich morphological profiles | Commercial kits available |

| L1000 Assay Kits | High-throughput, low-cost gene expression profiling for transcriptomic-based compound characterization | LINCS Consortium |

| Analysis Software (CellProfiler) | Open-source software for extracting quantitative features from biological images | CellProfiler, ImageJ |

| Affinity Capture Beads | Solid supports for immobilizing small molecules to pull down and identify their direct protein targets | Sepharose, Agarose beads [21] |

Phenotypic Drug Discovery (PDD) is an approach that focuses on the observable traits or phenotype of cells or organisms in response to drug treatment, rather than relying primarily on specific molecular targets [22]. Drugs discovered through this approach may have better therapeutic relevance as they are tested in conditions that closely mimic human disease [22]. This methodology represents a fundamental shift from the traditional target-based approach and has proven particularly effective for discovering first-in-class medicines with novel mechanisms of action, especially for complex, multifactorial diseases [23] [6].

The renewed interest in PDD stems from the recognition that diseases such as cancer, neurodegenerative disorders, and diabetes are often characterized by multifactorial etiologies, necessitating innovative therapeutic strategies that single-target drugs cannot adequately address [23]. PDD offers a pathway to uncover novel therapeutic pathways and expand the diversity of viable drug candidates without predefined molecular biases [22] [23]. The integration of artificial intelligence (AI) and high-throughput screening technologies has further accelerated the potential of PDD by enabling multi-modal data integration and sophisticated analysis of complex biological systems [24].

The PDD Workflow: From Phenotypic Screening to Target Identification

The following diagram illustrates the comprehensive workflow for phenotypic drug discovery, highlighting key stages from system preparation to clinical application:

Diagram 1: Comprehensive PDD Workflow. This workflow outlines the integrated process from biological system establishment to clinical candidate identification, emphasizing the cyclical nature of target discovery and validation.

Key Technological Platforms in Modern PDD

High-Content Imaging and Cell Painting

The Cell Painting assay represents a cornerstone technology in modern PDD, utilizing multiplexed fluorescent dyes to label multiple cellular components and generate rich morphological profiles [22]. This approach allows for the systematic quantification of cellular phenotypes in response to compound treatment, creating distinctive "morphological fingerprints" for different mechanism-of-action classes. The data generated through high-content imaging provides a comprehensive view of compound effects that can be mined using AI and machine learning approaches [22] [6].

Pharmacotranscriptomics in PDD

Pharmacotranscriptomics-based drug screening (PTDS) has emerged as the third major class of drug screening alongside target-based and phenotype-based approaches [10]. This methodology measures gene expression changes following drug perturbation in cells on a large scale and uses artificial intelligence to analyze the drug-regulated gene sets and signaling pathways, and thereby to assess efficacy in complex diseases [10]. PTDS enables researchers to connect phenotypic changes to transcriptional networks, providing a powerful bridge between traditional PDD and molecular understanding.

AI-Driven Multimodal Integration

Artificial intelligence serves as the core engine for modern PDD, enabling the integration of diverse data modalities including morphological profiles, transcriptomic data, and chemical structures [22] [24]. Models such as PhenoModel utilize dual-space contrastive learning frameworks to effectively connect molecular structures with phenotypic information, creating a foundation for predicting compound activities across multiple biological systems [22]. This AI-driven approach dramatically enhances the efficiency, accuracy, and scalability of active compound discovery compared to traditional methods [24].
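To make the idea of contrastive alignment between structure and phenotype embeddings concrete, the following sketch implements a generic symmetric contrastive (InfoNCE-style) loss in NumPy. It is not PhenoModel's published architecture or training objective; the temperature, embedding sizes, and random data are assumptions for illustration.

```python
import numpy as np

def l2_normalize(x):
    """Scale each row to unit length so dot products are cosine similarities."""
    return x / np.linalg.norm(x, axis=1, keepdims=True)

def log_softmax(logits, axis):
    """Numerically stable log-softmax along the given axis."""
    shifted = logits - logits.max(axis=axis, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=axis, keepdims=True))

def symmetric_contrastive_loss(struct_emb, pheno_emb, temperature=0.1):
    """Row i of each matrix is assumed to describe the same compound."""
    s = l2_normalize(struct_emb)
    p = l2_normalize(pheno_emb)
    logits = s @ p.T / temperature
    diag = np.arange(len(logits))
    # Matched pairs sit on the diagonal; score them in both directions.
    loss_s2p = -log_softmax(logits, axis=1)[diag, diag].mean()
    loss_p2s = -log_softmax(logits, axis=0)[diag, diag].mean()
    return 0.5 * (loss_s2p + loss_p2s)

rng = np.random.default_rng(0)
pheno = rng.normal(size=(8, 16))
aligned = pheno + 0.01 * rng.normal(size=pheno.shape)  # well-matched pairs
shuffled = aligned[::-1]                               # every pair mismatched

loss_aligned = symmetric_contrastive_loss(aligned, pheno)
loss_shuffled = symmetric_contrastive_loss(shuffled, pheno)
print(loss_aligned < loss_shuffled)
```

The loss is low when each compound's structure embedding is closest to its own phenotype embedding and high when pairs are mismatched, which is the property that lets a trained model retrieve likely phenotypes from structure alone.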

Experimental Protocols and Methodologies

Protocol: High-Content Phenotypic Screening Using Cell Painting

Purpose: To identify compounds inducing biologically relevant phenotypic changes in disease-relevant cellular models.

Materials and Reagents:

- U2OS osteosarcoma cells or other disease-relevant cell lines

- Cell Painting staining cocktail:

  - 5 μM Hoechst 33342 (nuclei)

  - 1 μM MitoTracker Deep Red (mitochondria)

  - 1:2000 Phalloidin (Alexa Fluor 488 conjugate, actin cytoskeleton)

  - 1:500 Concanavalin A (Alexa Fluor 647 conjugate, endoplasmic reticulum)

  - 1:500 Wheat Germ Agglutinin (Alexa Fluor 555 conjugate, Golgi apparatus and plasma membrane)

- Cell culture medium appropriate for cell line

- 384-well imaging-optimized microplates

- Compound libraries for screening

- Formaldehyde solution (3.7% in PBS)

- Permeabilization buffer (0.1% Triton X-100 in PBS)

Procedure:

- Cell Seeding: Seed U2OS cells at optimal density (500-1000 cells/well) in 384-well plates and incubate for 24 hours at 37°C, 5% CO₂.

- Compound Treatment: Treat cells with test compounds at appropriate concentrations (typically 1-10 μM) and include DMSO controls. Incubate for 24-48 hours based on experimental requirements.

- Fixation and Staining:

  - Aspirate medium and fix cells with 3.7% formaldehyde for 20 minutes at room temperature.

  - Permeabilize cells with 0.1% Triton X-100 for 10 minutes.

  - Add Cell Painting staining cocktail and incubate for 60 minutes in the dark.

  - Wash twice with PBS and maintain in PBS for imaging.

- Image Acquisition: Acquire images using a high-content imaging system (e.g., ImageXpress Micro Confocal) with 20x or 40x objective. Capture 9-25 fields per well to ensure adequate cell sampling.

- Image Analysis: Extract morphological features using CellProfiler, measuring ~1,500 morphological features per cell. Generate phenotypic profiles for each compound treatment.

- Hit Identification: Apply machine learning algorithms to cluster compounds based on phenotypic profiles and identify novel active compounds.
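The image-analysis and hit-identification steps can be sketched as two operations: collapsing per-cell features into one profile per well, then ranking wells by profile similarity. The sketch uses the per-well median and Pearson correlation as simple stand-ins for the clustering methods mentioned above; the well names, feature count, and simulated values are hypothetical, and real features would first be normalized against controls.

```python
import numpy as np

def well_profiles(cell_features, well_ids):
    """Collapse per-cell feature rows into one median profile per well."""
    ids = np.asarray(well_ids)
    wells = sorted(set(well_ids))
    profiles = np.vstack(
        [np.median(cell_features[ids == w], axis=0) for w in wells]
    )
    return wells, profiles

def most_similar(wells, profiles, query):
    """Other wells ranked by Pearson correlation with the query profile."""
    corr = np.corrcoef(profiles)
    i = wells.index(query)
    order = np.argsort(-corr[i])
    return [(wells[j], float(corr[i, j])) for j in order if j != i]

rng = np.random.default_rng(0)
pattern = np.array([1.0, -1.0, 2.0, -2.0, 0.5])
cells = np.vstack([
    pattern + 0.05 * rng.normal(size=(50, 5)),        # well A01
    0.9 * pattern + 0.05 * rng.normal(size=(50, 5)),  # well A02, same phenotype
    -pattern + 0.05 * rng.normal(size=(50, 5)),       # well A03, opposite
])
well_ids = ["A01"] * 50 + ["A02"] * 50 + ["A03"] * 50

wells, profiles = well_profiles(cells, well_ids)
ranking = most_similar(wells, profiles, "A01")
print(ranking[0][0])
```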

Protocol: AI-Powered Hit Triage and Mechanism Prediction

Purpose: To prioritize hits from phenotypic screens and predict potential mechanisms of action using multimodal AI approaches.

Materials and Reagents:

- Phenotypic profiles from Cell Painting assay

- Compound chemical structures (SMILES notation)

- Transcriptomic data (RNA-seq) from compound treatments

- Computational resources (GPU-accelerated workstations)

- PhenoModel or similar multimodal AI framework [22]

Procedure:

- Data Preprocessing: Normalize morphological features using z-score normalization. Standardize chemical structures into canonical SMILES format.

- Multimodal Embedding: Process chemical structures, phenotypic profiles, and transcriptomic data through dedicated encoders to generate aligned representations in a shared latent space.

- Similarity Analysis: Calculate cosine similarity between query compounds and reference compounds with known mechanisms of action.

- Mechanism Prediction: Employ k-nearest neighbors algorithm in the multimodal embedding space to predict potential targets and mechanisms of action.

- Pathway Enrichment: Perform gene set enrichment analysis on transcriptomic data to identify signaling pathways modulated by hit compounds.

- Visualization: Generate UMAP projections of the multimodal embedding space to visualize relationships between compounds.
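The similarity-analysis and mechanism-prediction steps can be sketched with cosine similarity and a k-nearest-neighbors vote. The three-dimensional embeddings, the mechanism labels, and the choice of k = 3 are made up for illustration; in practice the embeddings would come from the trained multimodal encoders.

```python
from collections import Counter

import numpy as np

def cosine_similarity(query, reference):
    """Cosine similarity between one query vector and each reference row."""
    q = query / np.linalg.norm(query)
    r = reference / np.linalg.norm(reference, axis=1, keepdims=True)
    return r @ q

def predict_moa(query, reference, labels, k=3):
    """Majority vote over the k most similar annotated reference compounds."""
    sims = cosine_similarity(query, reference)
    top = np.argsort(-sims)[:k]
    votes = Counter(labels[i] for i in top)
    moa, count = votes.most_common(1)[0]
    neighbours = [(labels[i], float(sims[i])) for i in top]
    return moa, count / k, neighbours

# Hypothetical embeddings of reference compounds with known mechanisms.
reference = np.array([
    [1.0, 0.1, 0.0],
    [0.9, 0.2, 0.1],
    [0.8, 0.0, 0.2],
    [0.0, 1.0, 0.1],
    [0.1, 0.9, 0.0],
])
labels = ["tubulin inhibitor"] * 3 + ["HDAC inhibitor"] * 2
query = np.array([0.95, 0.15, 0.05])

moa, support, neighbours = predict_moa(query, reference, labels)
print(moa, support)
```

The fraction of agreeing neighbors gives a rough confidence for triage; a hit whose neighbors disagree, or whose best similarity is low, may represent a mechanism absent from the reference set.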

Protocol: Target Deconvolution Using Genetic Validation

Purpose: To experimentally validate predicted targets and establish causal relationships between target engagement and phenotypic outcomes.

Materials and Reagents:

- CRISPR-Cas9 system for gene knockout

- siRNA libraries for gene knockdown

- Antibodies for immunoblotting and immunocytochemistry

- Target-specific chemical probes

- Phenotypic assay reagents

Procedure:

- Genetic Perturbation: Implement CRISPR-Cas9 mediated knockout or siRNA knockdown of predicted target genes in relevant cell models.

- Compound Sensitivity Testing: Treat genetically modified cells with hit compounds and assess changes in phenotypic responses.

- Rescue Experiments: Re-express wild-type and mutant forms of target genes in knockout cells to confirm specificity.

- Biochemical Validation: Perform target engagement assays including cellular thermal shift assay (CETSA) or drug affinity responsive target stability (DARTS).

- Pathway Analysis: Assess downstream signaling pathways through phosphoproteomics or targeted pathway arrays.

Research Reagent Solutions for PDD

Table 1: Essential Research Reagents for Phenotypic Drug Discovery

| Reagent Category | Specific Examples | Function in PDD |

|---|---|---|

| Cell Models | Primary human cells, iPSC-derived cells, 3D organoids, Microphysiological systems | Provide biologically relevant systems for phenotypic assessment that closely mimic human disease [6] |

| Staining Reagents | Cell Painting cocktail, Vital dyes, Organelle-specific fluorescent probes | Enable multiplexed morphological profiling and high-content analysis [22] |

| Compound Libraries | Diverse small molecule collections, Natural product libraries, Targeted chemotypes | Source of chemical perturbations for phenotypic screening [23] |

| Genomic Tools | CRISPR-Cas9 libraries, siRNA collections, cDNA expression vectors | Facilitate target validation and genetic perturbation studies [25] |

| Detection Reagents | High-content imaging reagents, Multiplexed assay kits, Antibody panels | Enable quantification of phenotypic endpoints and pathway activities |

| AI/Computational Tools | PhenoModel, Image analysis pipelines, Multimodal learning frameworks | Support data integration, hit triage, and mechanism prediction [22] [24] |

Case Studies and Applications

Discovery of Novel Cancer Inhibitors

PhenoModel, a multimodal phenotypic drug design foundation model, has demonstrated significant utility in discovering novel potential inhibitors of multiple cancer cell types [22]. Building on this model, PhenoScreen was developed and successfully identified several phenotypically bioactive compounds against osteosarcoma and rhabdomyosarcoma cell lines [22]. This approach effectively connected molecular structures with phenotypic information without requiring prior knowledge of specific molecular targets, leading to the identification of novel therapeutic pathways.

Multi-Target Drug Discovery for Complex Diseases

The multi-target drug discovery paradigm represents a pivotal advancement in addressing complex health conditions, and PDD plays a crucial role in this context [23]. Natural products have been particularly valuable in this regard, as they frequently exhibit multi-target activity. For instance, propolis, a natural antioxidant, has shown efficacy in mitigating diabetes-induced testicular injury through its effects on oxidative stress and DNA damage repair [23]. Similarly, the traditional herbal formulation YinChen WuLing Powder (YCWLP) was found to target the SHP2/PI3K/NLRP3 pathway for non-alcoholic steatohepatitis (NASH) treatment, demonstrating how PDD can elucidate complex mechanisms of multi-component therapies [23].

Target Identification for Cognitive Disorders

Mendelian randomization and colocalization analyses have identified 72 druggable genes with causal associations to cognitive performance, providing novel targets for cognitive dysfunction treatment [25]. Notably, both blood and brain expression quantitative trait loci of ERBB3 were negatively associated with cognitive performance, suggesting it as a promising target for cognitive enhancement [25]. This genetic evidence-based approach complements phenotypic screening by prioritizing targets with human genetic validation.

Data Analysis and Interpretation

Quantitative Analysis of PDD Outcomes

Table 2: Performance Metrics of AI-Enhanced Phenotypic Screening Platforms

| Platform Component | Performance Metric | Baseline Performance | AI-Enhanced Performance |

|---|---|---|---|

| Hit Identification | Positive predictive value | 15-25% | 45-60% [24] |

| Mechanism Prediction | Accuracy for novel targets | 20-30% | 65-80% [22] |

| Target Validation | Success rate in confirmatory assays | 25-35% | 55-70% [25] |

| Lead Optimization | Timeline for candidate selection | 18-24 months | 8-12 months [24] |

| Novel Target Discovery | Targets per screening campaign | 0.5-1 | 3-5 [22] |

Signaling Pathways Identified Through PDD

The following diagram illustrates key signaling pathways frequently modulated by compounds identified through phenotypic screening:

Diagram 2: Key Pathways Modulated by Phenotypic Compounds. This diagram illustrates the diverse signaling pathways and biological processes that have been successfully targeted through phenotypic screening approaches, demonstrating the expansion of druggable space.

Phenotypic Drug Discovery represents a powerful approach for expanding the druggable space and identifying novel therapeutic mechanisms. By focusing on phenotypic outcomes in biologically relevant systems, PDD bypasses the limitations of target-centric approaches and enables the discovery of first-in-class medicines for complex diseases [6]. The integration of advanced technologies including high-content imaging, transcriptomic profiling, and artificial intelligence has significantly enhanced the efficiency and success rate of PDD campaigns [22] [24] [10].

The future of PDD will likely involve even greater integration of human-based model systems, including microphysiological systems and patient-derived organoids, to enhance translational relevance [6]. Additionally, the application of multimodal AI frameworks that can simultaneously analyze chemical, phenotypic, and multi-omics data will further accelerate the deconvolution of mechanisms of action and target identification [22] [24]. As these technologies mature, PDD is poised to deliver an expanding pipeline of novel therapeutic agents targeting previously inaccessible biological pathways, ultimately addressing unmet medical needs across a broad spectrum of human diseases.

The shift from target-based to phenotypic screening strategies has been pivotal in developing therapies for complex genetic diseases. This approach, which identifies compounds based on their ability to modify disease-relevant cellular phenotypes rather than interacting with predefined molecular targets, has yielded two of the most transformative success stories in modern medicine: CFTR correctors for cystic fibrosis (CF) and SMN2 splicing modulators for spinal muscular atrophy (SMA). Both cases exemplify how high-throughput phenotypic screening, coupled with sophisticated assay development and medicinal chemistry, can produce effective precision medicines for previously untreatable conditions. The following sections detail the experimental workflows, key reagents, and mechanistic insights that enabled these breakthroughs, providing a framework for researchers pursuing similar strategies for other genetic disorders.

CFTR Correctors: Restoring Protein Function in Cystic Fibrosis

Disease Context and Therapeutic Strategy

Cystic fibrosis is a lethal autosomal recessive disease caused by mutations in the cystic fibrosis transmembrane conductance regulator (CFTR) gene, which codes for an epithelial chloride and bicarbonate channel [26]. The most prevalent mutation, F508del (a deletion of phenylalanine at position 508), is present in approximately 85-90% of CF patients and causes protein misfolding, leading to endoplasmic reticulum retention and degradation [27] [28]. This results in minimal CFTR function at the cell surface. The therapeutic strategy focused on discovering small molecules termed "correctors" that would facilitate proper folding and trafficking of F508del-CFTR to the cell membrane, and "potentiators" that would enhance channel function once at the membrane [27].

Key Experimental Protocol: High-Throughput FRET-Based Screening

Primary Screening Assay for CFTR Modulators

- Objective: Identify small molecules that restore chloride ion flow in F508del-CFTR expressing cells.

- Cell Line: Fischer Rat Thyroid (FRT) cells stably expressing F508del-CFTR, provided by Dr. Michael Welsh's laboratory [27].

- Critical Reagents:

- FRET Sensors: A pair of voltage-sensitive fluorescent dyes developed by Jesús González. The donor dye fluoresces blue, while the acceptor dye fluoresces orange when in close proximity [27].

- Compound Libraries: Diverse chemical libraries screened at a scale of thousands of compounds per day.

- Procedure:

- Plate F508del-CFTR FRT cells in 384-well microplates.

- Load cells with the pair of FRET dyes.

- Treat cells with test compounds and incubate at a lowered temperature (27°C) to permit some F508del-CFTR trafficking to the membrane (for potentiator screening) or at 37°C with corrector pre-treatment (for corrector screening) [27].

- Activate CFTR channel function using forskolin (to increase cAMP) and a phosphodiesterase inhibitor.

- Measure fluorescence emission shifts using a high-throughput plate reader. Chloride efflux causes a positive membrane potential change, leading the acceptor dye to move away from the donor. This reduces energy transfer, decreasing orange emission and increasing blue emission [27].

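- Primary Hit Selection: Compounds causing a significant fluorescence shift relative to controls are selected for secondary screening. Assay robustness is tracked separately with the Z' factor, which is computed from control wells (Z' > 0.5 is the conventional threshold for a screen-ready assay).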

- Throughput: >1 million compounds screened within two years [27].
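The ratiometric readout and the Z' factor referred to in this protocol can be sketched as follows. The Z' factor is conventionally computed from positive- and negative-control wells as 1 - 3(SD_pos + SD_neg) / |mean_pos - mean_neg|; the fluorescence values below are invented for illustration and are not data from [27].

```python
import numpy as np

def emission_ratio(donor_blue, acceptor_orange):
    """Blue/orange emission ratio; it rises when FRET decreases."""
    return np.asarray(donor_blue, float) / np.asarray(acceptor_orange, float)

def z_prime(pos, neg):
    """Z' factor from positive- and negative-control well readouts."""
    pos = np.asarray(pos, dtype=float)
    neg = np.asarray(neg, dtype=float)
    spread = 3.0 * (pos.std(ddof=1) + neg.std(ddof=1))
    return 1.0 - spread / abs(pos.mean() - neg.mean())

# Hypothetical control wells: stimulated with active CFTR versus vehicle only.
pos_ratio = emission_ratio([200, 204, 196, 202, 198], [100] * 5)
neg_ratio = emission_ratio([100, 102, 98, 101, 99], [100] * 5)

zp = z_prime(pos_ratio, neg_ratio)
print(round(zp, 3), zp > 0.5)
```

Because Z' depends only on controls, it describes the plate or assay as a whole; individual compounds are then judged against the control distributions rather than against Z' itself.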

Secondary Assays and Lead Optimization

- Electrophysiology: Ussing chamber assays on patient-derived bronchial epithelial cells to confirm CFTR-dependent chloride current.

- Biochemical Trafficking Assays: Western blotting to assess the maturation of F508del-CFTR (shift from band B to band C) [27].

- Medicinal Chemistry: Iterative cycles of chemical modification by Vertex chemists, led by Sabine Hadida, to improve compound potency, metabolic stability, and safety profile [27].

The diagram below illustrates the logical workflow and decision points in this screening pipeline.

Key Research Reagents and Solutions

Table 1: Essential Research Tools for CFTR Corrector Development

| Reagent / Solution | Function in Research | Specific Example / Note |

|---|---|---|

| FRET Dye Pairs | Real-time measurement of membrane potential changes resulting from CFTR-mediated chloride efflux. | Proprietary voltage-sensitive dyes from Aurora Biosciences/Vertex [27]. |

| FRT Cell Line | A standardized epithelial cell model for high-throughput screening. | Engineered to stably express F508del-CFTR [27]. |

| Patient-Derived Bronchial Epithelial Cells | A physiologically relevant secondary validation system. | Cells obtained from CF patients during lung transplants; grown as monolayers at air-liquid interface [27]. |

| Ussing Chamber Setup | Gold-standard functional validation of CFTR-dependent ion transport. | Measures transepithelial short-circuit current [27]. |

Outcomes and Clinical Impact

This systematic approach led to the discovery of ivacaftor, the first CFTR potentiator, initially approved for patients carrying the G551D mutation, and subsequently to the correctors lumacaftor and tezacaftor [27] [28]. The triple-combination therapy (elexacaftor/tezacaftor/ivacaftor) represents the culmination of this effort, transforming CF from a fatal disease to a manageable condition for most patients. Clinical trials demonstrated significant improvements in lung function (e.g., a 6.8 percentage-point increase in percent predicted FEV₁ with tezacaftor-ivacaftor) and quality of life, as well as a reduction in pulmonary exacerbation rates [28].

SMN2 Splicing Modulators: Targeting the Genetic Backup in Spinal Muscular Atrophy

Disease Context and Therapeutic Strategy

Spinal muscular atrophy (SMA) is a severe neuromuscular disorder and a leading genetic cause of infant mortality. It is caused by homozygous loss-of-function of the SMN1 gene, leading to deficient levels of survival motor neuron (SMN) protein [29] [30]. A nearly identical paralog gene, SMN2, exists but undergoes alternative splicing that predominantly skips exon 7, producing a truncated and unstable SMNΔ7 protein (only ~10% of SMN2 transcripts produce full-length, functional protein) [29]. The therapeutic strategy focused on discovering small molecules and antisense oligonucleotides that modulate SMN2 splicing to promote exon 7 inclusion, thereby increasing functional SMN protein levels [29] [31].

Key Experimental Protocol: Splicing-Modifier Screening

Cell-Based Splicing Reporter Assay

- Objective: Identify compounds that increase the inclusion of exon 7 in SMN2 transcripts.

- Cell Line: Engineered cell lines (e.g., HEK293, motor neuron precursors) containing an SMN2 minigene splicing reporter. This reporter often fuses SMN2 genomic sequences (including introns 6-7 and exon 7) to a luciferase or fluorescent protein gene, where the reporter's expression is dependent on exon 7 inclusion [29] [31].

- Critical Reagents:

- SMN2 Splicing Reporter Construct: The core tool for high-throughput screening.

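- Compound Libraries: Diverse small-molecule libraries (the route that led to risdiplam). Antisense oligonucleotides such as nusinersen were instead identified by testing ASO sequences directed against SMN2 pre-mRNA rather than by small-molecule library screening.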

- Procedure:

- Plate reporter cells in 384-well or 1536-well microplates.

- Treat cells with test compounds for 24-48 hours.

- Measure reporter signal (e.g., luminescence or fluorescence) as a proxy for exon 7 inclusion.

- Hit Selection: Compounds causing a statistically significant increase in reporter signal are selected.

- Secondary Validation:

- RT-PCR and qPCR: Confirm increased exon 7 inclusion in endogenous SMN2 mRNA in SMA patient fibroblasts [29] [30].

- Western Blot: Quantify increases in full-length SMN protein levels.

- Cell Viability Assays: Test rescue of SMA phenotypes in patient-derived motor neurons.

- In Vivo Testing: Validate efficacy in severe SMA mouse models (e.g., Taiwanese model) [31].
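The quantities tracked in the reporter screen and the RT-PCR validation step reduce to two simple calculations, sketched below: percent exon 7 inclusion from the full-length and Δ7 transcript signals, and fold change of reporter signal over vehicle. The band intensities and luminescence values are hypothetical, and the function names are illustrative.

```python
def percent_exon7_inclusion(full_length, delta7):
    """Percent of SMN2 transcripts that include exon 7."""
    total = full_length + delta7
    if total <= 0:
        raise ValueError("total transcript signal must be positive")
    return 100.0 * full_length / total

def fold_change(treated, vehicle):
    """Reporter signal of a treated well relative to the vehicle control."""
    if vehicle <= 0:
        raise ValueError("vehicle signal must be positive")
    return treated / vehicle

# Hypothetical RT-PCR band intensities (arbitrary units).
vehicle_psi = percent_exon7_inclusion(full_length=10.0, delta7=90.0)
treated_psi = percent_exon7_inclusion(full_length=60.0, delta7=40.0)

# Hypothetical luminescence counts from the minigene reporter.
reporter_fc = fold_change(treated=45000.0, vehicle=15000.0)
print(vehicle_psi, treated_psi, reporter_fc)
```

Confirming that a reporter fold change is matched by a rise in exon 7 inclusion of the endogenous transcript helps exclude compounds that act on the reporter itself, for example by stabilizing luciferase.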

The discovery paths for the two approved SMN2-targeting therapies, nusinersen and risdiplam, are summarized below.

Key Research Reagents and Solutions

Table 2: Essential Research Tools for SMN2 Splicing Modulator Development

| Reagent / Solution | Function in Research | Specific Example / Note |

|---|---|---|

| SMN2 Splicing Reporter | High-throughput quantification of exon 7 inclusion efficiency. | Minigene constructs with genomic SMN2 sequence driving a luciferase or fluorescent protein reporter [29] [31]. |

| SMA Patient Fibroblasts | A personalized disease model for secondary validation and mechanistic studies. | Primary fibroblasts from SMA patients with varying SMN2 copy numbers; used to measure endogenous SMN mRNA and protein [30]. |

| Antisense Oligonucleotides (ASOs) | Tools for target validation and therapeutic agents. | 2'-O-methoxyethyl-modified (MOE) ASOs for nusinersen; target intronic splicing silencer N1 (ISS-N1) in SMN2 intron 7 [29]. |

| SMA Mouse Model | Preclinical in vivo efficacy testing. | Severe SMA models (e.g., Taiwanese or SMNΔ7 mice) used to demonstrate increased survival and improved motor function [31]. |

Outcomes and Clinical Impact

The screening campaigns yielded two distinct therapeutic classes:

- Nusinersen (Spinraza): An ASO that binds to a specific sequence (ISS-N1) in SMN2 intron 7, blocking the binding of splicing repressors and promoting exon 7 inclusion [29].

- Risdiplam (Evrysdi): An orally available small molecule that binds to the SMN2 pre-mRNA, stabilizing the interaction with the spliceosome and enhancing the recognition of exon 7 [31].

Clinical trials demonstrated dramatic improvements in survival and motor function. For risdiplam, a clinical trial showed that after 24 months, 32% of treated patients showed significant improvement and a further 58% were stabilized [31]. Real-world studies of nusinersen show significant variability in outcomes, with factors such as SMN2 copy number, age at treatment initiation, and pre-treatment SMN levels influencing efficacy [30].

Comparative Analysis and Future Directions

The successes in CF and SMA, while targeting different diseases, share a common foundation in phenotypic screening and a deep understanding of disease pathophysiology. The quantitative outcomes of the resulting therapies are summarized below.

Table 3: Comparative Analysis of Key Therapeutic Outcomes

| Therapeutic Class | Representative Drug | Key Molecular Effect | Validated Clinical Outcome |
|---|---|---|---|
| CFTR Corrector/Potentiator | Tezacaftor/Ivacaftor [28] | Increases CFTR protein at cell surface and enhances channel open probability. | FEV₁ increase: +6.8% (absolute % predicted) [28]. |
| CFTR Corrector/Potentiator | Lumacaftor/Ivacaftor [28] | Increases CFTR protein at cell surface and enhances channel open probability. | FEV₁ increase: +2.4 to 5.2% (absolute % predicted) [28]. |
| SMN2 Splicing Modulator (ASO) | Nusinersen [29] | Increases inclusion of exon 7 in SMN2 mRNA. | Improvement in motor function scores; variable outcomes in real-world studies [30]. |
| SMN2 Splicing Modulator (Small Molecule) | Risdiplam [31] | Increases inclusion of exon 7 in SMN2 mRNA. | 32% of patients significantly improved, 58% stabilized in motor function after 24 months [31]. |

These case studies highlight critical factors for success:

- Robust Assay Development: The FRET-based chloride flux assay and the SMN2 splicing reporter were both biologically relevant and amenable to miniaturization and automation.

- Patient-Derived Materials: Using patient fibroblasts and bronchial epithelial cells ensured physiological relevance during validation.

- Iterative Medicinal Chemistry: Continuous compound optimization was essential for achieving drug-like properties.

Future directions include the development of next-generation modulators with higher efficacy and broader applicability, as well as combinatorial approaches. Furthermore, the principles established here—using phenotypic screens to target the root cause of genetic diseases—are now being applied to a growing number of conditions, solidifying the role of high-throughput phenotypic screening as a cornerstone of modern precision medicine.

Advanced Methodologies: From High-Content Imaging to Automated Flow Cytometry

High-content imaging (HCI) coupled with the Cell Painting assay represents a transformative approach in phenotypic screening, enabling researchers to capture complex morphological responses to chemical or genetic perturbations. This powerful methodology generates multiparametric phenotypic profiles by extracting hundreds of quantitative features from cellular images, providing an unbiased characterization of cell state without presupposing specific molecular targets [32] [33]. Unlike conventional targeted assays that measure predefined endpoints, this comprehensive profiling captures subtle, system-wide changes, making it invaluable for mechanism of action (MOA) identification, functional genomics, and drug discovery [32] [9].

The core strength of this technology lies in its ability to convert visual biological information into high-dimensional data profiles suitable for computational analysis. By measuring features related to cell morphology, subcellular organization, and spatial relationships, researchers can identify characteristic "fingerprints" for different biological states [34]. These profiles enable the classification of unknown compounds or genes based on similarity to well-annotated references, facilitating drug repurposing, lead optimization, and toxicity assessment [32] [33].

Key Cellular Components and Research Reagents

The Cell Painting assay employs a carefully selected combination of fluorescent dyes to illuminate multiple organelles, creating a comprehensive picture of cellular morphology. The standard staining panel uses six dyes, imaged in five channels, to label eight broadly relevant cellular components [32].

Table 1: Essential Research Reagents for Cell Painting Assays

| Cellular Component | Staining Reagent | Function in Assay |
|---|---|---|
| Nuclei | Hoechst 33342 / DAPI | DNA binding dye marking nuclei and enabling cell counting and cell cycle analysis [33] [34] |
| Endoplasmic Reticulum | Concanavalin A, Alexa Fluor 488 conjugate | Binds to glycoproteins on the ER membrane, highlighting structure and distribution [33] |
| Actin Cytoskeleton | Phalloidin, fluorescent conjugate | Binds to and labels filamentous actin, revealing cell shape and cytoskeletal integrity [33] |
| Golgi Apparatus & Plasma Membrane | Wheat Germ Agglutinin (WGA) | Binds to sugar residues on the Golgi apparatus and plasma membrane [33] |
| Mitochondria | MitoTracker Deep Red | Cell-permeant dye accumulating in active mitochondria, indicating network health [33] |
| RNA / Nucleoli | SYTO 14 | Cell-permeant green fluorescent nucleic acid stain that labels nucleoli and cytoplasmic RNA [33] |

This multiplexed staining strategy enables the simultaneous visualization of a cell's major structural elements. An advanced variation known as Live Cell Painting utilizes a single, metachromatic dye such as acridine orange (AO), which highlights nucleic acids and acidic compartments in live cells, facilitating dynamic, real-time phenotypic profiling [35].

Experimental Workflow and Protocol

The following diagram illustrates the complete end-to-end process for a standard Cell Painting experiment.

Cell Seeding and Treatment

Begin by seeding an appropriate cell line (e.g., U2OS) into multi-well plates (e.g., 384-well μClear plates) at an optimized density (e.g., 2,000 cells/well) and allow cells to adhere for 24 hours [33]. Subsequently, treat cells with the experimental perturbations, which can include small molecules (typically in a dilution series), genetic perturbations (e.g., siRNA, CRISPR), or other bioactive agents. Include appropriate controls such as DMSO vehicle controls and positive control compounds in the same plate [33] [34]. A critical consideration in experimental design is the distribution of control wells across all rows and columns of the plate to facilitate the later detection and correction of positional artifacts [34].
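The recommendation to spread controls over every row and column can be sketched in a few lines of Python. This is a minimal illustration, not a layout prescribed by the cited protocols: the wrapped-diagonal pattern, the function name `plate_layout`, and the choice of two DMSO wells plus one positive-control well per row are all assumptions made for the example.

```python
import string

def plate_layout(n_rows=16, n_cols=24):
    """Map each well of a 384-well plate to 'DMSO', 'positive', or 'treatment'.

    Assumed pattern: two DMSO wells per row on wrapped diagonals half a plate
    apart, so that every row and every column holds at least one vehicle control.
    """
    layout = {}
    for r in range(n_rows):
        dmso_cols = {r % n_cols, (r + n_cols // 2) % n_cols}
        pos_col = (r + n_cols // 4) % n_cols  # one positive control per row
        for c in range(n_cols):
            well = f"{string.ascii_uppercase[r]}{c + 1:02d}"
            if c in dmso_cols:
                layout[well] = "DMSO"
            elif c == pos_col:
                layout[well] = "positive"
            else:
                layout[well] = "treatment"
    return layout

layout = plate_layout()
dmso_wells = [well for well, role in layout.items() if role == "DMSO"]
rows_with_dmso = {well[0] for well in dmso_wells}
cols_with_dmso = {well[1:] for well in dmso_wells}
print(len(dmso_wells), len(rows_with_dmso), len(cols_with_dmso))
```

With this pattern, 32 of the 384 wells are vehicle controls and no row or column is left without one, which is what later positional-effect tests on control wells rely on. Randomized or edge-weighted layouts are equally valid alternatives.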

Staining and Fixation

After treatment (typically 24-48 hours), perform the staining procedure. The following protocol is adapted from Bray et al. and manufacturer application notes [33]:

- Live Cell Staining: Incubate cells with MitoTracker Deep Red (500 nM) in pre-warmed medium for 30 minutes at 37°C in the dark [33].

- Fixation: Remove the medium and fix cells with formaldehyde (3.2-4% vol/vol) for 20 minutes at room temperature.

- Permeabilization: Wash cells with 1X HBSS (Hanks' Balanced Salt Solution), then permeabilize with Triton X-100 (0.1%) for 20 minutes at room temperature.

- Multiplexed Staining: Prepare a master staining solution in a blocking solution (e.g., 1% BSA in HBSS) containing:

  - Phalloidin (e.g., 5 μL/mL) to label F-actin.

  - Concanavalin A, Alexa Fluor 488 conjugate (e.g., 100 μg/mL) to label the endoplasmic reticulum.

  - Hoechst 33342 (e.g., 5 μg/mL) to label DNA.

  - Wheat Germ Agglutinin (WGA), fluorescent conjugate (e.g., 1.5 μg/mL) to label Golgi and plasma membrane.

  - SYTO 14 (e.g., 3 μM) to label RNA and nucleoli.

- Incubate cells with the staining solution for 30 minutes at room temperature in the dark.

- Final Wash: Remove the staining solution and wash cells three times with 1X HBSS before sealing the plates with adhesive foil for imaging.
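Scaling the master staining solution to a full plate is simple arithmetic, sketched below. The working concentrations are the example values from the protocol above. The dispensed volume of 30 μL per well and the 10% overage are assumptions chosen for illustration, and stock concentrations are deliberately left out because they depend on the supplier.

```python
# Working concentrations from the protocol above, expressed per mL of staining solution.
REAGENTS = {
    "Phalloidin": (5.0, "uL"),
    "Concanavalin A, Alexa Fluor 488": (100.0, "ug"),
    "Hoechst 33342": (5.0, "ug"),
    "Wheat Germ Agglutinin": (1.5, "ug"),
    "SYTO 14": (3.0, "nmol"),  # 3 uM is equivalent to 3 nmol per mL
}

def master_mix(n_wells, ul_per_well=30.0, overage=0.10):
    """Return total volume (mL) and the amount of each reagent to add.

    ul_per_well and overage are assumed values, not taken from the protocol.
    """
    total_ml = n_wells * ul_per_well * (1 + overage) / 1000.0
    amounts = {name: (conc * total_ml, unit) for name, (conc, unit) in REAGENTS.items()}
    return total_ml, amounts

total_ml, amounts = master_mix(384)
print(f"Total staining solution: {total_ml:.2f} mL")
for name, (amount, unit) in amounts.items():
    print(f"  {name}: {amount:.1f} {unit}")
```

Converting the mass or molar amounts into pipetting volumes requires the stock concentration printed on each reagent vial.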

High-Content Image Acquisition

Acquire images using a high-content imaging system (e.g., ImageXpress Micro Confocal System) equipped with a 20x objective or higher and appropriate filter sets for the dyes used [33]. To ensure data quality and account for potential plate irregularities, acquire multiple fields of view per well (e.g., 4-9 sites). For improved focus, consider acquiring a small Z-stack (e.g., 3 images) and applying a best-focus projection algorithm [33]. The outcome is a high-dimensional image set across five or more channels, each capturing distinct organizational information of the cell.
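One simple way to pick the sharpest plane from a small Z-stack is to score each slice with a focus measure and keep the best one. The sketch below uses the sum of squared differences between neighboring pixels as that measure. Both the measure and the function names are assumptions for illustration; commercial imaging software may instead combine planes pixel by pixel, and the source does not specify which algorithm is used.

```python
def focus_score(image):
    """Sum of squared intensity differences between adjacent pixels (higher = sharper)."""
    score = 0
    for y, row in enumerate(image):
        for x, value in enumerate(row):
            if x + 1 < len(row):
                score += (row[x + 1] - value) ** 2
            if y + 1 < len(image):
                score += (image[y + 1][x] - value) ** 2
    return score

def best_focus_slice(z_stack):
    """Return the index and pixels of the slice with the highest focus score."""
    scores = [focus_score(plane) for plane in z_stack]
    best = max(range(len(z_stack)), key=scores.__getitem__)
    return best, z_stack[best]

# Toy 4x4 planes: the same object imaged out of focus and in focus.
blurred = [[1, 2, 2, 1], [2, 4, 4, 2], [2, 4, 4, 2], [1, 2, 2, 1]]
sharp = [[0, 0, 0, 0], [0, 9, 9, 0], [0, 9, 9, 0], [0, 0, 0, 0]]

best_index, best_plane = best_focus_slice([blurred, sharp, blurred])
print(best_index, focus_score(sharp), focus_score(blurred))
```

Selecting a whole plane keeps intensities physically consistent within the image, at the cost of ignoring wells where different cells sit at different heights.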

Data Analysis and Phenotypic Profiling

The transformation of raw images into actionable biological insights involves a multi-step computational workflow, detailed in the diagram below.

Image Analysis and Feature Extraction

Using specialized image analysis software (e.g., IN Carta, CellProfiler), images are processed to identify and segment individual cells and organelles [33] [34]. Advanced methods like deep learning semantic segmentation (e.g., SINAP module in IN Carta) can improve the accuracy of segmenting challenging features [33]. For each segmented object, hundreds of morphological features are extracted, which can be categorized as follows:

- Intensity Features: Mean, median, and total intensity of each stain.

- Morphological Features: Size, shape, and perimeter of cells and nuclei (e.g., area, eccentricity, solidity).

- Textural Features: Haralick textures that quantify patterns within organelles (e.g., contrast, correlation) [34].

- Spatial and Relational Features: Distance between organelles, counts of subcellular objects, and correlations between channels.

A typical analysis can extract over 1,500 morphological features per cell, creating a rich, high-dimensional profile [32].
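To make the feature categories concrete, the sketch below computes a handful of intensity and shape measurements from a labeled segmentation mask and a matching intensity image. It is a toy stand-in for what tools such as CellProfiler do at much larger scale. The function name, the tiny arrays, and the use of bounding-box "extent" as a shape descriptor are assumptions for the example.

```python
from statistics import mean, median

def object_features(labels, intensity):
    """Compute per-object features from a label mask (0 = background) and one channel."""
    pixels = {}
    for y, row in enumerate(labels):
        for x, label in enumerate(row):
            if label:
                pixels.setdefault(label, []).append((y, x, intensity[y][x]))

    features = {}
    for label, points in pixels.items():
        ys = [p[0] for p in points]
        xs = [p[1] for p in points]
        values = [p[2] for p in points]
        bbox_area = (max(ys) - min(ys) + 1) * (max(xs) - min(xs) + 1)
        features[label] = {
            "area": len(points),                      # morphological
            "extent": len(points) / bbox_area,        # morphological: fill of bounding box
            "mean_intensity": mean(values),           # intensity
            "median_intensity": median(values),       # intensity
            "total_intensity": sum(values),           # intensity
        }
    return features

# Toy data: object 1 is a 2x2 square, object 2 is a plus-shaped object.
labels = [
    [1, 1, 0, 0, 0],
    [1, 1, 0, 2, 0],
    [0, 0, 2, 2, 2],
    [0, 0, 0, 2, 0],
]
intensity = [
    [10, 12, 0, 0, 0],
    [14, 16, 0, 5, 0],
    [0, 0, 5, 9, 5],
    [0, 0, 0, 5, 0],
]

features = object_features(labels, intensity)
for label, values in features.items():
    print(label, values)
```

Repeating such measurements for every channel, compartment, and feature family is what produces profiles with hundreds to thousands of columns per cell.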

Data Processing and Profiling

The extracted single-cell data requires robust processing to generate meaningful phenotypic profiles. Key steps include:

- Quality Control: Remove outlier wells, for instance, those with low cell counts (e.g., <50 cells) that may indicate high toxicity or technical failure [33].

- Data Normalization: Account for technical variability such as positional effects across the plate, which are common in intensity-based features. Strategies include using two-way ANOVA on control wells to detect row/column effects and applying correction algorithms like median polish [34].

- Feature Selection and Data Reduction: Use techniques like Principal Component Analysis (PCA) to reduce the dimensionality of the data while preserving the most relevant biological information [33] [34].

- Profile Generation and Comparison: Create phenotypic profiles for each treatment by aggregating data from the cell population. A powerful approach is to use the full distribution of cellular features rather than just well-averaged means. For comparing these distributions, the Wasserstein distance has been reported to outperform other distance measures at detecting differences [34].
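The median polish correction mentioned in the normalization step can be sketched as follows. This is a plain-Python version of Tukey's iterative procedure applied to a small toy "plate" of per-well feature values. The fixed iteration count and the synthetic values (an additive row and column gradient plus one strong effect) are assumptions made for illustration.

```python
from statistics import median

def median_polish(table, n_iter=10):
    """Decompose table[i][j] into overall + row effect + column effect + residual."""
    resid = [list(row) for row in table]
    n_rows, n_cols = len(resid), len(resid[0])
    overall = 0.0
    row_eff = [0.0] * n_rows
    col_eff = [0.0] * n_cols
    for _ in range(n_iter):
        # Sweep row medians out of the residuals.
        for i in range(n_rows):
            m = median(resid[i])
            row_eff[i] += m
            resid[i] = [v - m for v in resid[i]]
        m = median(col_eff)
        overall += m
        col_eff = [c - m for c in col_eff]
        # Sweep column medians out of the residuals.
        for j in range(n_cols):
            m = median(resid[i][j] for i in range(n_rows))
            col_eff[j] += m
            for i in range(n_rows):
                resid[i][j] -= m
        m = median(row_eff)
        overall += m
        row_eff = [r - m for r in row_eff]
    return overall, row_eff, col_eff, resid

# Synthetic plate: baseline 100, row drift of +2 per row, column drift of +1 per column,
# and one well (row 1, column 2) carrying a genuine effect of +50.
plate = [[100 + 2 * i + j for j in range(5)] for i in range(4)]
plate[1][2] += 50

overall, row_eff, col_eff, resid = median_polish(plate)
print(overall, row_eff, col_eff)
print(resid[1][2])
```

Because medians are robust to a few extreme wells, the positional gradient is absorbed into the row and column effects while the genuine effect survives in the residuals, which then serve as the corrected values.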

Table 2: Key Statistical and Computational Methods for Phenotypic Profiling

| Analysis Step | Method/Tool | Application and Purpose |
|---|---|---|
| Dimension Reduction | Principal Component Analysis (PCA) | Reduces feature space dimensionality for visualization and downstream analysis [33] |
| Population Summarization | Percentile-based Summarization | Summarizes cell population on the well level, achieving high classification accuracy [36] |
| Distribution Comparison | Wasserstein Distance Metric | Superior for detecting differences in cell feature distributions compared to other metrics [34] |
| Phenotypic Clustering | Hierarchical Clustering | Groups treatments (compounds/genes) with similar phenotypic profiles to infer functional relationships [33] |
| Data Visualization | Cytoscape Styles | Encodes phenotypic data as visual properties (color, size) in network visualizations [37] |
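The value of comparing full distributions rather than well averages can be shown with a one-dimensional Wasserstein distance. The sketch below assumes two equally sized samples of a single feature, in which case the distance reduces to the mean absolute difference between sorted values. The sample values are synthetic and chosen so that both populations have the same mean.

```python
def wasserstein_1d(sample_a, sample_b):
    """1-D Wasserstein (earth mover's) distance for two equally sized samples."""
    if len(sample_a) != len(sample_b):
        raise ValueError("this sketch assumes equally sized samples")
    a, b = sorted(sample_a), sorted(sample_b)
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)

# Synthetic single-cell values of one feature in two wells.
control = [4, 5, 5, 5, 5, 6]   # one tight population
treated = [1, 1, 2, 8, 9, 9]   # two subpopulations, same mean as control

mean_difference = sum(treated) / len(treated) - sum(control) / len(control)
distance = wasserstein_1d(control, treated)
print(mean_difference, distance)
```

The difference in means is zero even though the treated well has split into two subpopulations, whereas the Wasserstein distance is clearly non-zero. For unequal sample sizes or weighted samples, `scipy.stats.wasserstein_distance` provides a general implementation.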

Applications in Phenotypic Screening and Drug Discovery

The integration of high-content imaging and Cell Painting into phenotypic screening pipelines has enabled several powerful applications that accelerate drug discovery and biological research.

Mechanism of Action Elucidation: By clustering compounds based on the similarity of their morphological profiles, researchers can infer a novel compound's MOA based on its proximity to compounds with known targets [32] [33]. For example, compounds like chloroquine and tetrandrine, which both affect autophagy, cluster together in phenotypic space [33].
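At its simplest, this kind of inference is a nearest-neighbor lookup: the query profile is compared with each annotated reference and inherits the annotation of the most similar one. The sketch below uses Pearson correlation as the similarity measure. The four-feature profiles, the reference names, and the labels "MOA_A" and "MOA_B" are synthetic placeholders, not measurements from any cited study.

```python
from math import sqrt

def pearson(u, v):
    """Pearson correlation between two equally long feature vectors."""
    mean_u, mean_v = sum(u) / len(u), sum(v) / len(v)
    du = [x - mean_u for x in u]
    dv = [x - mean_v for x in v]
    numerator = sum(a * b for a, b in zip(du, dv))
    denominator = sqrt(sum(a * a for a in du) * sum(b * b for b in dv))
    return numerator / denominator

# Synthetic reference profiles: name -> (annotated MOA, normalized feature vector).
REFERENCES = {
    "ref_1": ("MOA_A", [1.0, 0.5, -1.0, 0.0]),
    "ref_2": ("MOA_A", [0.9, 0.6, -0.8, 0.1]),
    "ref_3": ("MOA_B", [-1.0, 0.2, 1.0, 0.5]),
}

def infer_moa(query, references):
    """Return (closest reference, its MOA, correlation) for a query profile."""
    best = max(references, key=lambda name: pearson(query, references[name][1]))
    moa, profile = references[best]
    return best, moa, pearson(query, profile)

query_profile = [0.8, 0.4, -0.9, 0.0]
closest, predicted_moa, similarity = infer_moa(query_profile, REFERENCES)
print(closest, predicted_moa, round(similarity, 3))
```

In practice the prediction should be treated as a hypothesis: a high correlation suggests a shared phenotype, which may or may not reflect a shared molecular target.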

Functional Genomics: Applying Cell Painting to cells perturbed by RNAi or CRISPR allows for the functional annotation of genes. Genes with similar loss- or gain-of-function phenotypes can be clustered, suggesting they operate in the same pathway or protein complex [32].

Disease Signature Reversion: Cell Painting can model disease phenotypes in human cells. These disease-specific morphological signatures can then be screened against compound libraries to identify therapeutics that revert the phenotype to a wild-type state, a strategy successfully used for drug repurposing in rare diseases [32] [9].
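One way to quantify reversion is to ask how far a compound moves the diseased profile along the straight line toward the wild-type profile. The sketch below computes that fraction by vector projection: 0 means no movement toward wild type, 1 means full reversion, and negative values mean the phenotype worsened. The scoring formula, function name, and two-feature profiles are assumptions made for illustration and are not taken from the cited studies.

```python
def reversion_score(disease, wild_type, treated):
    """Fraction of the disease-to-wild-type shift that a treatment achieves."""
    direction = [w - d for w, d in zip(wild_type, disease)]
    shift = [t - d for t, d in zip(treated, disease)]
    norm_sq = sum(x * x for x in direction)
    if norm_sq == 0:
        raise ValueError("disease and wild-type profiles are identical")
    return sum(s * x for s, x in zip(shift, direction)) / norm_sq

# Synthetic two-feature profiles.
disease_profile = [2.0, 2.0]
wild_type_profile = [0.0, 0.0]
treated_profiles = {
    "compound_x": [0.5, 0.4],   # moves most of the way back toward wild type
    "compound_y": [2.5, 2.2],   # moves further away from wild type
}

scores = {
    name: reversion_score(disease_profile, wild_type_profile, profile)
    for name, profile in treated_profiles.items()
}
for name, score in scores.items():
    print(name, round(score, 3))
```

A projection score ignores movement orthogonal to the disease axis, so it is usually paired with a check that the treated profile has not acquired an unrelated, possibly toxic, phenotype.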

Library Enrichment: Profiling a large compound library with Cell Painting enables the selection of a smaller, phenotypically diverse screening set. This approach maximizes the diversity of biological effects screened while minimizing redundancy and cost, proving more powerful than selection based on chemical structure alone [32].
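Selecting a phenotypically diverse subset can be approximated with a greedy max-min rule: start from one compound and repeatedly add the compound whose profile is farthest from everything already chosen. This is one common heuristic, assumed here for illustration rather than taken from the cited work. The compound names and two-dimensional profiles are synthetic.

```python
from math import dist

def select_diverse(profiles, k, seed):
    """Greedily pick k compounds that maximize the minimum distance to those selected."""
    selected = [seed]
    while len(selected) < min(k, len(profiles)):
        candidates = [name for name in profiles if name not in selected]
        best = max(
            candidates,
            key=lambda name: min(dist(profiles[name], profiles[s]) for s in selected),
        )
        selected.append(best)
    return selected

# Synthetic profiles: a/b and c/d are near-duplicates, e is distinct from both pairs.
profiles = {
    "a": (0.0, 0.0),
    "b": (0.1, 0.0),
    "c": (5.0, 0.0),
    "d": (5.0, 0.1),
    "e": (0.0, 5.0),
}

selected = select_diverse(profiles, k=3, seed="a")
print(selected)
```

The near-duplicate profiles are skipped in favor of compounds that add a new phenotype, which is the behavior a redundancy-minimizing screening set is meant to have.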

The future of this field lies in the integration of phenotypic data with other omics modalities (e.g., transcriptomics, proteomics) and AI-powered analysis. Platforms like PhenAID illustrate how AI can integrate cell morphology with omics layers to predict bioactivity and mechanism of action, pointing toward a more integrated workflow for drug discovery [9].