High-Content Multiparametric Analysis of Cellular Events: A 2025 Guide for Drug Discovery


Abstract

This article provides a comprehensive guide to high-content multiparametric analysis, a powerful technique combining automated microscopy with advanced computational methods to extract quantitative data from complex cellular systems. Written for researchers, scientists, and drug development professionals, it covers the foundational principles of technologies such as flow and mass cytometry, examines methodological applications in phenotypic screening and toxicity studies, and addresses key challenges in data analysis and the integration of 3D cell cultures. It also explores validation strategies and compares computational approaches such as FlowSOM and t-SNE, offering actionable insights for using this technology to accelerate therapeutic discovery and development.

What is High-Content Multiparametric Analysis? Unpacking the Core Concepts

Defining High-Content Screening (HCS) and Multiparametric Analysis in Modern Biology

High-Content Screening (HCS) is an advanced imaging-based approach that combines automated microscopy, image processing, and quantitative data analysis to investigate complex cellular processes and phenotypes [1] [2]. Unlike traditional assays that focus on a single endpoint, HCS captures multiple quantitative parameters simultaneously from biological samples, typically cells or whole organisms, providing deeper insights into toxicity, efficacy, and disease mechanisms [3]. A key differentiator from High-Throughput Screening (HTS) is HCS's capacity for multiparametric analysis, enabling the extraction of numerous spatial and temporal measurements from a single experiment [3] [1]. This technology has become indispensable in pharmaceutical research, drug discovery, and basic biological research, with the global HCS market projected to grow from $3.1 billion in 2023 to $5.1 billion by 2029 [2].

Key Principles and Methodological Framework

Core Principles of HCS

HCS operates on several fundamental principles that distinguish it from other screening approaches. It provides spatially and temporally resolved information on cellular events, allowing researchers to observe phenomena within specific cellular compartments or organelles over time [1]. Through automated image analysis, HCS enables the unbiased quantification of complex cellular phenotypes, moving beyond investigator-selected measurements to comprehensive population-wide analysis [1] [4]. The integration of multiplexed assays allows researchers to measure multiple biological markers within a single experiment, significantly enhancing data efficiency and biological insight [2]. Furthermore, HCS bridges the critical gap between high information content and high throughput in biological experiments, making it possible to conduct large-scale screening without sacrificing biological complexity [4].

Standard HCS Workflow

The HCS process follows a structured, multi-stage workflow that ensures reproducibility and robust data generation [3]:

Sample Preparation: Cells or model organisms (e.g., zebrafish embryos) are treated with test compounds at defined concentrations and placed in multi-well plates suitable for automated imaging.

Automated Imaging: High-resolution fluorescence or brightfield microscopy captures cellular or whole-organism responses. This step is performed using automated microscopes that can image hundreds to thousands of samples per day.

Quantitative Data Extraction: Advanced image analysis software measures key morphological, functional, and intensity-based parameters from the acquired images.

AI-Based Pattern Recognition: Machine learning models identify significant phenotypic changes across complex datasets, enabling the detection of subtle patterns that might escape human observation.

Data Interpretation and Decision-Making: The extracted multiparametric data are analyzed statistically and used to rank compounds, assess toxicity, and identify lead candidates for further development.
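The final ranking step can be illustrated with a minimal sketch. It assumes each well has already been reduced to a few averaged image features, z-scores every feature against the vehicle-control wells, and ranks compounds by the Euclidean length of the resulting z-score vector. The feature names, compound names, and numbers are hypothetical, and real pipelines typically use more robust statistics and many more features.

```python
import math
import statistics


def zscore_profile(well, control_wells):
    """Z-score every feature of one well against the vehicle-control wells."""
    profile = {}
    for feature, value in well.items():
        control_values = [c[feature] for c in control_wells]
        mu = statistics.mean(control_values)
        sd = statistics.stdev(control_values)  # sample standard deviation
        # A feature with no spread in the controls carries no usable scale.
        profile[feature] = (value - mu) / sd if sd > 0 else 0.0
    return profile


def phenotype_score(profile):
    """Euclidean length of the z-score vector; larger means further from control."""
    return math.sqrt(sum(z * z for z in profile.values()))


def rank_compounds(treated_wells, control_wells):
    """Return (compound, score) pairs, strongest phenotype first."""
    scores = {
        name: phenotype_score(zscore_profile(well, control_wells))
        for name, well in treated_wells.items()
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Hypothetical per-well feature averages (illustrative numbers only).
controls = [
    {"nuclear_area": 100.0, "cell_count": 500.0},
    {"nuclear_area": 110.0, "cell_count": 520.0},
    {"nuclear_area": 90.0, "cell_count": 480.0},
]
treated = {
    "cmpd_A": {"nuclear_area": 101.0, "cell_count": 495.0},
    "cmpd_B": {"nuclear_area": 150.0, "cell_count": 300.0},
}
ranking = rank_compounds(treated, controls)
print(ranking)
```

Scoring against on-plate controls keeps the ranking comparable across plates, which is one reason vehicle wells are included on every plate.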

Table 1: Key Technologies Enabling Modern HCS

| Technology | Key Function | Representative Examples |
|---|---|---|
| High-Resolution Fluorescence Microscopy | Visualizes cellular structures and protein interactions with high clarity | ImageXpress Micro Confocal System [2] |
| Live-Cell Imaging | Enables continuous observation of cell behavior over time | Incucyte Live-Cell Analysis System [2] |
| 3D Cell Culture & Organoid Screening | Provides physiologically relevant tissue models for more predictive screening | Nunclon Sphera Plates for 3D spheroid formation [2] |
| Automated Image Analysis Software | Extracts quantitative data from complex cellular images | Harmony Software, CellProfiler [2] [5] |
| Cloud-Based Data Storage & Analysis | Manages large volumes of image data and enables collaborative analysis | ZEN Data Storage system [2] |

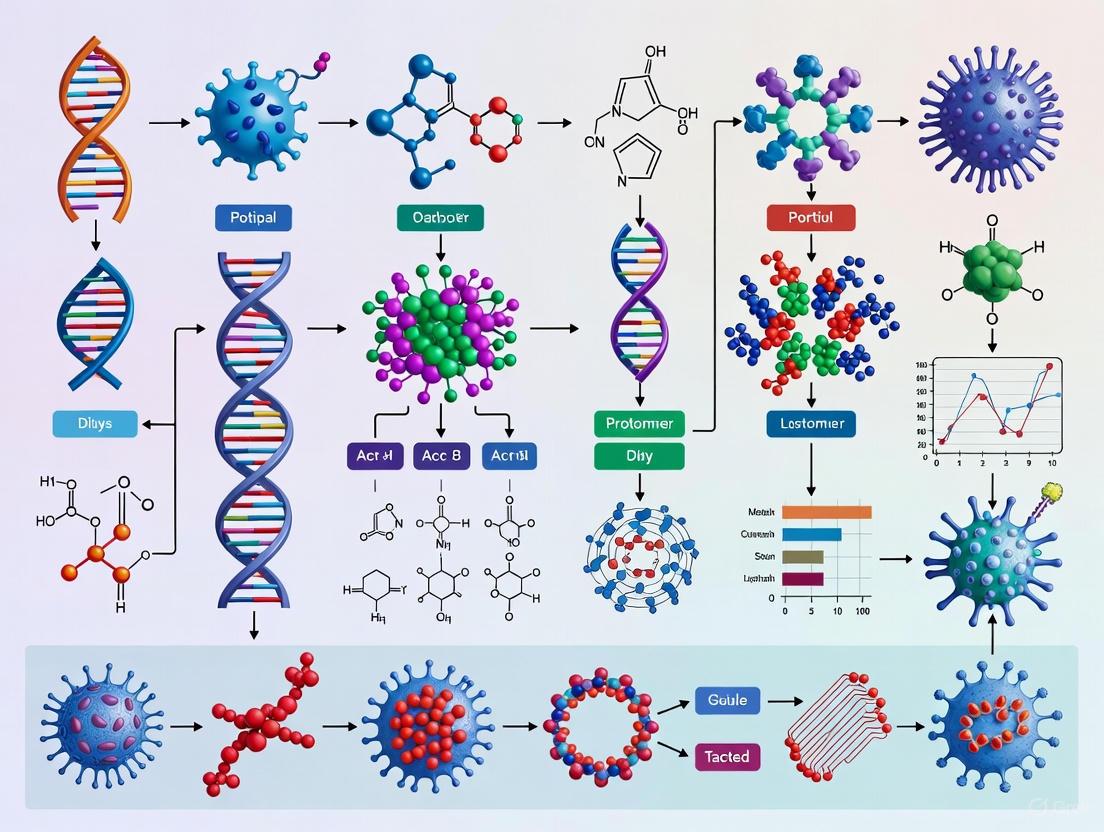

Diagram 1: Standard HCS Workflow

Applications in Modern Biological Research

Drug Discovery and Development

HCS has become a cornerstone technology in pharmaceutical research, with applications spanning all stages of drug discovery. In primary compound screening, HCS enables the evaluation of thousands to hundreds of thousands of compounds for their effects on complex cellular phenotypes, going beyond single-target approaches to identify substances that alter cellular states in desired manners [1] [4]. For toxicology assessment, HCS provides detailed profiles of compound effects on cellular morphology and function. For example, zebrafish HCS allows for developmental toxicity screening through large-scale phenotypic analysis of live embryos, detecting teratogenic effects by scoring multiple morphological and physiological parameters [3]. In cardiotoxicity screening, automated, imaging-based multiparametric analysis evaluates key cardiac endpoints including heart rate, contractility, and rhythm abnormalities in real-time, identifying potential cardiac risks before advancing to mammalian studies [3]. HCS also plays a crucial role in evaluating ADME properties (absorption, distribution, metabolism, and excretion), providing critical information about drug candidate behavior in biological systems [4].

Disease Mechanism Elucidation

The multiparametric capabilities of HCS make it particularly valuable for unraveling complex disease mechanisms. In cancer research, HCS enables the characterization of tumor cell behavior, drug responses, and spatial relationships within the tumor microenvironment. For example, the MARQO pipeline has been used to analyze multiplexed tissue images from cancer patients, identifying CD8+ T cell enrichment in hepatocellular carcinoma responders to neoadjuvant immunotherapy [6]. For neurological disorders, HCS facilitates the study of neuronal morphology, synapse formation, and protein aggregation in models of Alzheimer's disease, Parkinson's disease, and other neurodegenerative conditions [2]. In infectious disease research, HCS platforms have been deployed to discover antimalarial compounds by monitoring parasite growth and host cell interactions, identifying promising candidates like bromophycolide A from marine natural product libraries [4]. Furthermore, chemical genetics approaches using HCS aim to functionally annotate the genome by identifying small molecules that act on specific gene products, creating chemical tools to probe protein function even when genetic knockouts are lethal [1].

Advanced Cellular Model Characterization

The advent of more physiologically relevant cellular models has increased the importance of HCS for their comprehensive characterization. 3D cell culture and organoid screening provide more accurate representations of human tissues, and HCS enables the quantitative assessment of complex structures that cannot be adequately evaluated with traditional methods [2]. Stem cell research utilizes HCS to monitor differentiation processes, identify distinct cellular subpopulations, and quantify changes in pluripotency markers over time [2]. Microfluidic organ-on-chip models, such as blood-brain barrier systems, benefit from HCS analysis to evaluate barrier integrity, cellular organization, and functional responses to compounds in controlled microenvironments [7].

Table 2: Quantitative Parameters in Representative HCS Applications

| Application Area | Key Measurable Parameters | Biological Significance |
|---|---|---|
| Developmental Toxicology (Zebrafish) | Body length, tail curvature, heart rate, organ morphology, spontaneous movement | Identifies teratogenic effects and developmental delays [3] [5] |
| Cardiotoxicity Screening | Heart rate, contractility, rhythm abnormalities, cardiomyocyte apoptosis | Predicts clinical cardiotoxicity risks [3] |
| Cancer Immunotherapy Response | CD8+ T cell density, spatial distribution, tumor infiltration, immune cell co-localization | Correlates with treatment efficacy and patient outcomes [6] |
| Nuclear Phenotype Analysis | Nuclear size, shape, lamin protein expression, telomere organization | Classifies lymphoma subtypes and predicts deformability [8] |

Detailed Experimental Protocols

Protocol 1: Multiplexed Tissue Analysis Using MARQO Pipeline

The MARQO (Multiplex-imaging Analysis, Registration, Quantification, and Overlaying) pipeline enables start-to-finish, single-cell resolution analysis of whole-slide tissue samples, particularly valuable for cancer immunotherapy studies [6].

Materials and Reagents:

- Tissue sections (4-5 μm thickness) on charged slides

- Multiplex immunohistochemistry/immunofluorescence staining reagents

- Primary antibodies validated for multiplexing

- Nuclear counterstain (DAPI or haematoxylin)

- Antigen retrieval solutions

- Automated staining platform (optional but recommended)

- MARQO software platform

Procedure:

Sample Preparation and Staining:

- Perform multiplex staining using validated antibodies according to established protocols (MICSSS, CyCIF, CODEX, or other multiplex methods)

- Include appropriate nuclear counterstaining in each cycle for iterative segmentation

- Scan slides using high-resolution whole-slide scanner

Image Preprocessing and Registration:

- Import whole-slide images into MARQO pipeline

- Perform elastic image registration to align multiple staining cycles

- Apply tissue masking to focus analysis on relevant regions

- Split images into evenly sized tiles for parallel processing

Nuclear Segmentation:

- Perform iterative nuclear segmentation across all stains using StarDist algorithm

- Apply composite segmentation mask by retaining nuclear objects detected in ≥60% of iterations within 3 μm centroid distance

- Exclude presumed red blood cells and artifacts based on morphological features

Cell Phenotyping and Quantification:

- Apply unsupervised clustering with mini-batch k-means to identify distinct cell populations

- Perform user-guided classification through graphical interface to binarize positivity for each marker

- Quantify marker co-expression patterns and cellular densities

Spatial Analysis:

- Determine spatial relationships between identified cell populations

- Analyze cellular proximities and organizational patterns within defined tissue regions

- Export data for statistical analysis and visualization
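The composite-mask rule from the nuclear segmentation step (keep objects detected in ≥60% of iterations within 3 μm centroid distance) can be sketched as follows. This is an illustration of the rule as stated, not MARQO's actual implementation: it assumes nuclei are represented only by centroid coordinates in micrometres and that the first staining cycle serves as the reference set. The function name and data are hypothetical.

```python
import math


def consensus_nuclei(iterations, min_fraction=0.6, max_dist_um=3.0):
    """Keep reference nuclei that are re-detected in enough staining cycles.

    iterations: list of per-cycle centroid lists [(x_um, y_um), ...].
    The first cycle is used as the reference set (an assumption of this sketch).
    """
    reference = iterations[0]
    n_iter = len(iterations)
    kept = []
    for rx, ry in reference:
        # Count the cycles containing at least one centroid close enough.
        hits = sum(
            1
            for centroids in iterations
            if any(math.hypot(rx - x, ry - y) <= max_dist_um for x, y in centroids)
        )
        if hits / n_iter >= min_fraction:
            kept.append((rx, ry))
    return kept


# Hypothetical centroids (micrometres) from five staining cycles.
cycles = [
    [(10.0, 10.0), (50.0, 50.0), (80.0, 20.0)],
    [(10.5, 9.8), (50.2, 50.1), (80.4, 20.3)],
    [(9.7, 10.4), (81.0, 19.5)],
    [(10.2, 10.1)],
    [(11.0, 9.0)],
]
kept = consensus_nuclei(cycles)
print(kept)
```

In this toy data the nucleus near (50, 50) appears in only two of five cycles and is dropped, while the other two meet the 60% threshold.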

Validation: Compare MARQO's segmentation performance with manual pathologist curation using metrics including Dice coefficient and cell detection accuracy. Validate cell classification against known marker expression patterns and establish reproducibility across technical replicates [6].
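For reference, the Dice coefficient mentioned above is defined as 2|A ∩ B| / (|A| + |B|) for two masks A and B. The sketch below computes it for masks represented as sets of pixel coordinates; the toy masks are hypothetical and stand in for a manual annotation and an automated segmentation of the same nucleus.

```python
def dice_coefficient(mask_a, mask_b):
    """Dice = 2|A ∩ B| / (|A| + |B|) for masks given as sets of (row, col) pixels."""
    a, b = set(mask_a), set(mask_b)
    if not a and not b:
        return 1.0  # two empty masks agree perfectly by convention
    return 2 * len(a & b) / (len(a) + len(b))


# Hypothetical 4x4 pixel masks; the automated mask is shifted down by one row.
manual = {(r, c) for r in range(0, 4) for c in range(0, 4)}
automated = {(r, c) for r in range(1, 5) for c in range(0, 4)}
score = dice_coefficient(manual, automated)
print(score)
```

A value of 1 indicates identical masks and 0 indicates no overlap.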

Protocol 2: Zebrafish Developmental Toxicology Screening

Zebrafish provide a whole-organism model for developmental toxicity screening, combining physiological relevance with high-throughput capability [3] [5].

Materials and Reagents:

- Wild-type or transgenic zebrafish embryos

- Egg water (60 μg/mL sea salt in reverse osmosis water)

- Test compounds dissolved in appropriate vehicle (typically DMSO ≤0.1%)

- 96-well or 384-well plates with transparent bottoms

- Methylcellulose or low-melting point agarose for immobilization

- Automated imaging system (e.g., VAST BioImager)

- Image analysis software (e.g., FishInspector)

Procedure:

Embryo Collection and Compound Exposure:

- Collect zebrafish embryos 0-4 hours post-fertilization (hpf)

- Array individual embryos into wells containing 100-200 μL egg water

- Add test compounds at desired concentrations using liquid handling system

- Incubate at 28.5°C until desired developmental stage (typically 24-120 hpf)

Sample Preparation for Imaging:

- At appropriate timepoints, transfer embryos to imaging plates

- Immobilize embryos using 1.2% low-melting point agarose or 3% methylcellulose

- Orient embryos consistently for standardized imaging

Automated Image Acquisition:

- Acquire brightfield and/or fluorescence images using automated microscopy

- Use Vertebrate Automated Screening Technology (VAST) or similar platform for consistent positioning

- Capture multiple focal planes and magnifications as required for phenotypic assessment

Multiparametric Phenotype Analysis:

- Use FishInspector software to annotate and quantify morphological structures

- Calculate specimen features including length, tail curvature, eye size, and body shape

- Score developmental abnormalities using standardized toxicity scales

- Export Regions of Interest (ROIs) as JSON and CSV files for further analysis

Data Management and Analysis:

- Upload images and metadata to OMERO system for centralized management

- Apply statistical analysis to identify significant phenotypic changes

- Establish dose-response relationships for toxic effects

- Compare with positive and negative controls for assay validation
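As a rough illustration of the dose-response step, the sketch below estimates the concentration at which half of the embryos show a scored abnormality by interpolating linearly on a log10 concentration axis. It is a simplification: it assumes concentrations are positive and sorted ascending and that the response rises monotonically, and it is not a substitute for fitting a full sigmoidal model. The concentrations and fractions are hypothetical.

```python
import math


def interpolate_ec50(concentrations, fraction_affected):
    """Estimate the concentration giving a 50% effect.

    Interpolates linearly between the two doses that bracket 0.5, using
    log10(concentration) as the x-axis. Returns None if 0.5 is not bracketed.
    """
    points = list(zip(concentrations, fraction_affected))
    for (c_lo, f_lo), (c_hi, f_hi) in zip(points, points[1:]):
        if f_lo <= 0.5 <= f_hi and f_hi > f_lo:
            t = (0.5 - f_lo) / (f_hi - f_lo)
            log_ec50 = math.log10(c_lo) + t * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ec50
    return None


# Hypothetical screen: fraction of malformed embryos at each concentration (uM).
conc_um = [0.1, 1.0, 10.0, 100.0]
malformed = [0.0, 0.2, 0.8, 1.0]
ec50 = interpolate_ec50(conc_um, malformed)
print(ec50)
```

Interpolating on the log axis matches the usual practice of spacing test concentrations logarithmically.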

Quality Control: Include a negative control (vehicle only) and a positive control (known teratogen) on each plate. Monitor embryo viability throughout the exposure period. Calculate the Z-factor to confirm assay robustness. Ensure consistent imaging parameters across all experimental groups [3] [5].
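The robustness statistic can be computed directly from the control wells. The sketch below uses the controls-only form, usually written Z′ = 1 − 3(σ_pos + σ_neg) / |μ_pos − μ_neg|; values above roughly 0.5 are conventionally taken to indicate a well-separated assay. The readout values are hypothetical.

```python
import statistics


def z_prime(positive, negative):
    """Z'-factor from positive- and negative-control readouts."""
    mu_p, mu_n = statistics.mean(positive), statistics.mean(negative)
    sd_p, sd_n = statistics.stdev(positive), statistics.stdev(negative)
    if mu_p == mu_n:
        raise ValueError("control means are identical; Z' is undefined")
    return 1 - 3 * (sd_p + sd_n) / abs(mu_p - mu_n)


# Hypothetical per-well readouts (e.g. a malformation score) for one plate.
positive_controls = [90.0, 100.0, 110.0]   # known teratogen
negative_controls = [8.0, 10.0, 12.0]      # vehicle only
quality = z_prime(positive_controls, negative_controls)
print(quality)
```

Because the statistic depends on both the separation of the means and the spread of each control, a plate with noisy vehicle wells can fail even when the positive control responds strongly.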

Diagram 2: FAIR Data Management Workflow for HCS

Essential Research Reagents and Materials

Table 3: Research Reagent Solutions for HCS

| Reagent/Material | Function | Application Examples |
|---|---|---|
| Fluorescent Antibodies | Specific detection of cellular proteins and modifications | Immunofluorescence staining for protein localization and expression levels [6] [4] |
| CRISPR Libraries | Gene editing and functional genomics screening | Identification of gene functions in oncology and genetic disorders [2] |
| 3D Cell Culture Systems | Physiologically relevant tissue models | Nunclon Sphera Plates for spheroid and organoid formation [2] |
| Bio-Plex Multiplex Immunoassays | Simultaneous analysis of multiple proteins | Cancer biology and immunology research [2] |
| Live-Cell Dyes and Reporters | Dynamic monitoring of cellular processes | Fluorescent probes for second messengers, viability, and organelle function [1] [4] |
| Microfluidic Platforms | Controlled microenvironments for single-cell analysis | C1 Single-Cell Auto Prep System for stem cell research and oncology [2] |

Data Management and Analysis Frameworks

The massive datasets generated by HCS experiments present unique data management challenges, with single experiments often producing hundreds of thousands of images and associated metadata [5]. Effective HCS data management requires specialized frameworks that ensure data integrity, accessibility, and reproducibility.

OMERO-Based Data Management

The OMERO (Open Microscopy Environment Remote Objects) platform provides a flexible open-source solution for managing HCS datasets and metadata [5]. OMERO connects a PostgreSQL relational database with a filesystem-based image repository and HDF-based tabular data store, supporting a wide range of microscopy formats and integrating with analytical tools. Implementation typically involves:

- Centralized Repository: Storing images and metadata in a structured database accessible to authorized researchers

- Metadata Annotation: Associating experimental conditions, assay settings, and sample details with corresponding images

- Workflow Integration: Using tools such as the ezomero Python library to connect analysis workflows with data management tasks

- Collaborative Sharing: Enabling controlled access for collaborators and public dissemination through platforms such as the BioImage Archive
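The metadata annotation step can be prepared independently of any particular server. The sketch below builds one key-value record per well of a 96-well plate by merging plate-level defaults with a per-well layout; records of this shape could then be attached to the corresponding images as key-value annotations. The upload itself is deliberately omitted, and all keys, values, and the compound name are hypothetical.

```python
import string


def plate_wells(rows=8, cols=12):
    """Well IDs in row-major order, e.g. A1..H12 for a 96-well plate."""
    return [
        f"{string.ascii_uppercase[r]}{c + 1}"
        for r in range(rows)
        for c in range(cols)
    ]


def annotate_wells(layout, defaults):
    """Merge plate-level defaults with per-well conditions into key-value records."""
    records = {}
    for well in plate_wells():
        record = dict(defaults)
        # Wells missing from the layout are marked explicitly as empty.
        record.update(layout.get(well, {"treatment": "empty"}))
        record["well"] = well
        records[well] = record
    return records


# Hypothetical plate description.
defaults = {"organism": "Danio rerio", "vehicle": "DMSO 0.1%"}
layout = {
    "A1": {"treatment": "vehicle"},
    "A2": {"treatment": "compound_X", "concentration_uM": "10"},
}
records = annotate_wells(layout, defaults)
print(records["A2"])
```

Keeping all values as strings mirrors how key-value annotations are commonly stored and avoids type surprises when records are exported or searched later.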

Workflow Management Systems

Workflow Management Systems (WMS) such as Galaxy and KNIME provide crucial infrastructure for creating reproducible, semi-automated workflows for HCS bioimaging data management [5]:

Galaxy Platform: Offers a user-friendly interface for processing extensive datasets, along with tools for versioning and sharing workflows. The OMERO-suite within Galaxy simplifies data transfer and metadata management with OMERO instances.

KNIME Analytics Platform: Enables the creation of modular pipelines supporting over 140 image formats, with capabilities for preprocessing, segmentation, feature extraction, and classification. KNIME integrates with OMERO through Python scripts and ezomero code blocks.

These WMS platforms facilitate the transition from local file-based storage to automated, agile image data management frameworks, reducing human error and enhancing data consistency and reproducibility across international research institutions [5].

The field of High-Content Screening continues to evolve with emerging technologies that enhance its capabilities and applications. Artificial intelligence and machine learning are increasingly integrated into image analysis pipelines, improving pattern recognition and enabling the identification of subtle phenotypic changes that escape conventional analysis [3] [6]. The development of more sophisticated 3D models and organ-on-chip systems provides increasingly physiologically relevant contexts for screening, while advanced multiplexing technologies now enable the simultaneous assessment of 20 or more markers in single cells within tissue contexts [6] [2]. Microfluidic platforms continue to advance single-cell analysis capabilities, allowing high-content screening with minimal sample usage [2] [8].

The integration of HCS with multiparametric analysis represents a paradigm shift in biological research, enabling systems-level understanding of cellular responses to genetic, chemical, and environmental perturbations. As these technologies become more accessible and sophisticated, they will continue to drive innovations in drug discovery, functional genomics, and personalized medicine. The ongoing development of standardized workflows, data management frameworks, and analytical tools will further enhance the reproducibility and impact of HCS research across the biological sciences [5].

For researchers implementing HCS approaches, success depends on careful experimental design, robust validation of imaging and analysis pipelines, and adoption of FAIR (Findable, Accessible, Interoperable, Reusable) data management principles. By leveraging the full potential of high-content multiparametric analysis, scientists can uncover novel biological insights and accelerate the development of new therapeutic strategies for human diseases.

The transition from two-dimensional (2D) to three-dimensional (3D) cell culture represents a fundamental shift in high-content screening (HCS) and drug discovery. It addresses a critical limitation of traditional methods: their poor predictive power for clinical outcomes. One example illustrates the point: a promising cancer therapy cleared preclinical hurdles in 2D models, where cells spread unnaturally on plastic, isolated from the complexity of real tissue. In Phase I human trials, however, the therapy failed. The failure was attributed to the model system, because tumors in patients are not flat but exist as dense, three-dimensional ecosystems. When models do not mimic human biology, results do not translate to clinical success, and this realization catalyzed the move toward 3D cell culture systems that provide tissue-like realism [9].

Modern 3D cultures, including spheroids and organoids, self-assemble into structures that restore morphological and functional features of human tissues. They facilitate complex extracellular matrix (ECM) interactions and create natural gradients of oxygen, nutrients, and pH. This realistic microenvironment is crucial for accurate disease modeling, leading to more physiologically relevant gene expression profiles, drug resistance behavior, and toxicological predictions [9]. The implementation of 3D cell cultures, alongside advanced cell models like stem cells and primary cells, is poised to significantly improve the predictability of drug efficacy and toxicity in humans before compounds enter clinical trials, thereby reducing the high attrition rates in pharmaceutical development [10].

Comparative Analysis: 2D vs. 3D Cell Culture Platforms

The choice between 2D and 3D cell culture is strategic, with each platform offering distinct advantages and limitations. The table below provides a structured comparison of their key characteristics.

Table 1: Quantitative Comparison of 2D vs. 3D Cell Culture Systems

| Feature | 2D Cell Culture | 3D Cell Culture |
|---|---|---|
| Growth Pattern | Monolayer; flat, uniform expansion [9] | Three-dimensional; expands in all directions [9] |
| Cell-Cell & Cell-ECM Interactions | Limited; forced polarity, unnatural contact [9] [10] | Dynamic and physiologically relevant; realistic spatial organization [9] [10] |
| Spatial Organization | None [9] | High; mimics tissue architecture (e.g., spheroids, organoids) [9] |
| Tissue Mimicry | Poor [9] | High; recapitulates in vivo physiology [9] [10] |
| Gene Expression Profiles | Altered due to unnatural growth surface [9] | More in vivo-like fidelity [9] |
| Drug Response | Often overestimates efficacy; lacks resistance mechanisms [9] | More predictive; accurately models drug penetration and resistance [9] [10] |
| Gradient Formation (O₂, nutrients, pH) | Absent; uniform exposure [9] | Present; creates heterogeneous cellular microenvironments [9] [10] |
| Cost & Infrastructure | Inexpensive; simple protocols, standard equipment [9] | Higher cost; requires specialized materials and protocols [9] |
| Throughput & Scalability | High; compatible with High-Throughput Screening (HTS) [9] | Moderate to high; newer technologies are improving HTS compatibility [9] [10] |
| Primary Applications | High-throughput compound screening, basic cytotoxicity, genetic manipulation [9] | Disease modeling (e.g., cancer), toxicology, personalized therapy, stem cell research [9] |

Key 3D Technologies and Their Applications in HCS

A suite of technologies has been developed to facilitate 3D cell culture, each with unique advantages for high-content multiparametric analysis.

Table 2: Key 3D Cell Culture Technologies and Their Characteristics in HCS

| Technology | Key Principle | Advantages for HCS | Disadvantages / Challenges |
|---|---|---|---|
| Multicellular Spheroids | Self-aggregation of cells into 3D clusters [10] | Easy protocols, scalable to different plate formats, compliant with HTS/HCS, high reproducibility [10] | Simplified architecture, ensuring uniform size can be challenging [10] |
| Organoids | Stem cells or organ progenitors self-organize into tissue-specific structures [10] | Patient-specific, in vivo-like complexity and architecture, ideal for personalized medicine [10] | Can be variable, less amenable to HTS, hard to reach in vivo maturity, may lack key cell types like vasculature [10] |
| Scaffold-Based Systems (Hydrogels) | Cells embedded in a supportive biomaterial (e.g., collagen, Matrigel, synthetic polymers) that mimics the ECM [9] [10] [11] | Applicable to microplates, amenable to HTS/HCS, high reproducibility, co-culture ability [10] | Simplified architecture, potential for variability across material lots [10] |
| Microfluidics (Organs-on-Chips) | Cells cultured in microfluidic channels to simulate vascular flow and mechanical forces [10] [11] | In vivo-like architecture and microenvironment, precise control of chemical and physical gradients [10] | Generally difficult to adapt to HTS, often lack fully functional vasculature [10] |
| 3D Bioprinting | Layer-by-layer deposition of cell-laden bioinks to create custom 3D structures [10] [11] | Custom-made architecture, control over chemical and physical gradients, high-throughput production potential [10] | Challenges with cells and materials, issues with tissue maturation, lack of vasculature in most current models [10] |

The 3D cell culture industry reflects this technological diversity. The market, valued at $1,040.75 million in 2022 and projected to grow at a CAGR of 15% through 2030, is segmented into scaffold-based, scaffold-free, microfluidics, and bioreactor products. Scaffold-based systems dominated revenue in 2024, while scaffold-free systems are growing at the fastest rate. Cancer research accounts for 34% of applications, leveraging 3D models to study tumor microenvironments and personalized oncology. The regenerative medicine segment is also expanding rapidly, driven by the potential of organoid development to address the global organ shortage [11].

Experimental Protocols for High-Content Analysis of 3D Models

Protocol: Generation and Drug Screening of Tumor Spheroids Using Low-Adhesion Plates

This protocol is optimized for high-throughput drug screening and multiparametric analysis of cancer cell lines.

Research Reagent Solutions:

- Ultra-Low Attachment (ULA) Microplates: Surface-coated plates to minimize cell adhesion and promote self-aggregation into a single spheroid per well [10].

- Appropriate Cell Culture Medium: Formulated to support the specific cell line under investigation (e.g., SW-480, HCT-116 colon cancer cells) [9] [10].

- Matrigel or Synthetic Hydrogels: Optional, to provide an extracellular matrix (ECM) scaffold for more complex models [10] [11].

- CellTiter 96 AQueous Non-Radioactive Cell Proliferation Assay (MTS) Kit: For assessing cell viability and compound cytotoxicity in 3D cultures [9].

- Test Compounds: Chemotherapeutic agents such as doxorubicin, fluorouracil, or oxaliplatin [9] [10].

- Paraformaldehyde (4% in PBS): For spheroid fixation.

- Permeabilization Buffer (e.g., 0.1% Triton X-100 in PBS): For intracellular antibody staining.

- Immunofluorescence Staining Reagents: Primary and secondary antibodies, phalloidin (for F-actin), and DAPI (for nuclei) [9].

- Mounting Medium for 3D Imaging: To preserve spheroid structure during microscopy.

Methodology:

Cell Seeding:

- Harvest and count cells. Prepare a single-cell suspension in complete medium.

- Seed cells into the ULA microplate at an optimized density (e.g., 1,000 - 10,000 cells/well in a 96-well format). The optimal density must be determined empirically for each cell line to form a single, well-defined spheroid.

- Centrifuge the plate at a low speed (e.g., 300-500 x g for 3-5 minutes) to gently aggregate cells at the bottom of the well.

- Incubate the plate at 37°C, 5% CO₂ for 72-96 hours to allow for spheroid formation.
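The seeding step involves a small dilution calculation that is easy to get wrong at the bench. The sketch below works out how much counted stock suspension and medium to combine for a plate. The 100 µL well volume and 10% overage are assumptions for illustration and are not specified in the protocol above; the stock concentration is hypothetical.

```python
def seeding_plan(stock_cells_per_ml, cells_per_well, volume_per_well_ul,
                 n_wells, overage=1.1):
    """Volumes of stock suspension and medium needed to seed a plate.

    overage: multiplier covering pipetting dead volume (assumed 10% here).
    """
    volume_per_well_ml = volume_per_well_ul / 1000
    target_cells_per_ml = cells_per_well / volume_per_well_ml
    if target_cells_per_ml > stock_cells_per_ml:
        raise ValueError("stock suspension is too dilute for this seeding density")
    total_volume_ml = n_wells * volume_per_well_ml * overage
    stock_volume_ml = total_volume_ml * target_cells_per_ml / stock_cells_per_ml
    return {
        "target_cells_per_ml": target_cells_per_ml,
        "total_volume_ml": total_volume_ml,
        "stock_volume_ml": stock_volume_ml,
        "medium_volume_ml": total_volume_ml - stock_volume_ml,
    }


# Hypothetical example: 5,000 cells/well in 100 uL across a 96-well ULA plate,
# starting from a stock counted at 1e6 cells/mL.
plan = seeding_plan(1_000_000, 5_000, 100, 96)
print(plan)
```

Repeating the calculation across several densities in the 1,000 to 10,000 cells/well range is a convenient way to set up the empirical optimization the protocol calls for.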

Compound Treatment & Viability Assessment:

- After spheroid formation, carefully aspirate a portion of the medium and replace it with fresh medium containing the test compounds at the desired concentrations. Include vehicle controls.

- Incubate for the desired treatment period (e.g., 72-120 hours).

- For viability analysis, add the MTS reagent directly to the wells according to the manufacturer's instructions. Incubate for 1-4 hours, monitoring for color development.

- Record the absorbance at 490 nm using a plate reader. Note that 3D cultures often show higher resistance to chemotherapeutic agents than 2D cultures, consistent with the drug resistance observed in vivo [9] [10].
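Raw absorbance readings are usually converted to percent viability before comparison. The sketch below subtracts a medium-only blank and normalizes to the vehicle control; the use of medium-only blank wells is an assumption of this sketch rather than a step stated in the protocol, and the readings are hypothetical.

```python
import statistics


def percent_viability(sample_abs, vehicle_abs, blank_abs):
    """Blank-subtract absorbance readings and scale to the vehicle control (= 100%)."""
    blank = statistics.mean(blank_abs)
    vehicle_signal = statistics.mean(vehicle_abs) - blank
    if vehicle_signal <= 0:
        raise ValueError("vehicle control signal does not exceed the blank")
    return [100 * (a - blank) / vehicle_signal for a in sample_abs]


# Hypothetical absorbance readings for one compound at two concentrations.
blank_wells = [0.10, 0.10]      # medium + MTS reagent, no cells
vehicle_wells = [1.10, 1.10]    # spheroids + vehicle
treated_wells = [0.60, 0.35]
viability = percent_viability(treated_wells, vehicle_wells, blank_wells)
print(viability)
```

Normalizing each plate to its own vehicle wells makes results comparable across plates and across 2D and 3D formats.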

Multiparametric High-Content Imaging and Analysis:

- For immunofluorescence, carefully aspirate the medium and wash spheroids with PBS.

- Fix spheroids with 4% PFA for 30-60 minutes at room temperature.

- Permeabilize and block with an appropriate buffer (e.g., 1% BSA, 0.1% Triton X-100 in PBS) for 1-2 hours.

- Incubate with primary antibodies (e.g., against Cleaved Caspase-3 for apoptosis, Ki-67 for proliferation) diluted in blocking buffer overnight at 4°C.

- Wash thoroughly and incubate with fluorescently conjugated secondary antibodies and DAPI for 2-4 hours at room temperature.

- Image the entire spheroid using a confocal microscope or a high-content imaging system with Z-stacking capability to capture 3D data.

- Analyze images using HCS software to extract multiparametric data, including:

- Spheroid volume and morphology.

- Total cell count (DAPI+).

- Proliferation index (Ki-67+).

- Apoptotic index (Caspase-3+).

- Drug penetration depth (if using a fluorescent drug analog).
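Several of the readouts listed above reduce to simple ratios once cells have been counted. The sketch below derives a volume estimate and the proliferation and apoptotic indices for one spheroid. It assumes the spheroid is approximately spherical, so that V = (π/6)·d³, and that marker-positive cells have already been counted by the image analysis software; the measurements are hypothetical.

```python
import math


def spheroid_volume_um3(diameter_um):
    """Volume of a sphere from its diameter: V = (pi / 6) * d^3."""
    return math.pi / 6 * diameter_um ** 3


def marker_index(positive_cells, total_cells):
    """Fraction of DAPI-counted cells that are positive for a marker."""
    if total_cells <= 0:
        raise ValueError("total cell count must be positive")
    return positive_cells / total_cells


def summarize_spheroid(diameter_um, dapi_count, ki67_count, casp3_count):
    """Collect the per-spheroid readouts into one record."""
    return {
        "volume_um3": spheroid_volume_um3(diameter_um),
        "total_cells": dapi_count,
        "proliferation_index": marker_index(ki67_count, dapi_count),
        "apoptotic_index": marker_index(casp3_count, dapi_count),
    }


# Hypothetical measurements for a single spheroid.
summary = summarize_spheroid(diameter_um=400, dapi_count=2000,
                             ki67_count=600, casp3_count=100)
print(summary)
```

For irregular spheroids, a volume computed from the segmented Z-stack will be more accurate than the spherical approximation used here.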

Protocol: Establishing Patient-Derived Organoids for Personalized Therapy Screening

This protocol outlines the creation of organoids from patient tissue samples, enabling functional precision medicine and the assessment of therapy response in a clinically relevant model.

Research Reagent Solutions:

- Patient Tissue Sample: Surgically resected tumor or biopsy material.

- Digestion Enzyme Mix: e.g., Collagenase/Dispase in PBS, to dissociate tissue into single cells or small clusters.

- Basement Membrane Extract (BME): A commercially available product like Matrigel, which provides a complex ECM scaffold essential for organoid growth [10].

- Advanced Organoid Culture Medium: A defined medium, often containing specific growth factors (e.g., EGF, Noggin, R-spondin) to support the growth and differentiation of stem/progenitor cells from the tissue of origin [10].

- Cryopreservation Medium: FBS with 10% DMSO, for biobanking organoid lines.

Methodology:

Tissue Processing and Initiation of Organoid Culture:

- Mince the patient tissue sample into small fragments (~1-2 mm³) using sterile scalpels.

- Digest the tissue fragments with an enzyme mix for 30-60 minutes at 37°C with gentle agitation. Triturate periodically to aid dissociation.

- Pass the cell suspension through a cell strainer (70-100 µm) to remove undigested fragments and obtain a single-cell/small-cluster suspension.

- Centrifuge the filtrate and resuspend the cell pellet in cold BME. Plate the BME-cell mixture as small droplets in pre-warmed culture plates.

- Polymerize the BME droplets at 37°C for 20-30 minutes, then carefully overlay with organoid culture medium.

- Culture at 37°C, 5% CO₂, replacing the medium every 2-3 days.

Expansion, Passaging, and Biobanking:

- Organoids are typically passaged every 1-2 weeks. For passaging, mechanically disrupt and enzymatically digest the BME dome and the organoids within.

- Re-embed the dissociated organoid fragments in fresh BME to initiate new cultures for expansion or screening.

- For biobanking, dissociate organoids, resuspend in cryopreservation medium, and freeze slowly before transferring to liquid nitrogen for long-term storage.

High-Content Drug Screening and Phenotypic Analysis:

- For screening, seed a defined number of dissociated organoid cells in BME into 96-well plates suitable for imaging.

- Once organoids have re-formed, treat with a panel of clinically relevant therapeutics.

- After treatment, fix and stain for relevant markers (e.g., live/dead stains, apoptosis, differentiation markers).

- Image using high-content confocal microscopy and perform 3D image analysis to quantify organoid viability, size, morphology, and marker expression in response to each drug. This data can be used to identify the most effective therapy for the patient [9] [10].

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for 3D Cell Culture and HCS

| Item | Function/Principle | Example Applications |

|---|---|---|

| Ultra-Low Attachment (ULA) Plates | Surface coating minimizes cell adhesion, forcing cells to self-aggregate into spheroids. Well geometry ensures single spheroid formation per well [10]. | High-throughput spheroid formation for drug screening; scalable across microplate formats [9] [10]. |

| Basement Membrane Extracts (BME/Matrigel) | A complex, reconstituted hydrogel derived from animal tumors that provides a biologically active scaffold mimicking the native extracellular matrix (ECM) [10]. | Essential for culturing organoids and other sensitive cell types that require ECM support for survival and differentiation [10]. |

| Synthetic Hydrogels (PeptiGels) | Chemically defined, tunable polymers that offer a reproducible and animal-free alternative to BME. Properties like stiffness and degradability can be engineered [11]. | Tissue engineering, creating more controlled and reproducible microenvironments for mechanistic studies [11]. |

| Hanging Drop Plates | Platforms where cells are suspended in a droplet of media from the top of a well, promoting aggregation into a spheroid by gravity without surface contact [10]. | Spheroid formation, particularly for co-culture studies where different cell types can be combined in the droplet [10]. |

| Microfluidic Chips (Organs-on-Chips) | Devices with micro-channels that allow for continuous perfusion, application of mechanical forces (e.g., shear stress), and creation of complex, multi-cellular tissue interfaces [10] [11]. | Modeling physiological organ functions and diseases; preclinical testing of drug efficacy and safety in a more dynamic system [10]. |

| 3D-Bioprinting Bioinks | Cell-laden hydrogels (often combined with synthetic polymers) that are used as "inks" in 3D printers to create custom, architecturally complex tissue constructs layer-by-layer [10] [11]. | Fabrication of patient-specific tissue models for transplantation, disease modeling, and advanced drug testing platforms [11]. |

Future Outlook: Integrated Workflows and Intelligent Design

The future of 3D cell culture in HCS lies not in simply replacing 2D models but in hybrid workflows and AI integration. Leading laboratories are adopting a tiered approach: 2D models for initial high-throughput screening because of their speed and cost-effectiveness, followed by 3D models for predictive secondary screening, and finally patient-derived organoids for personalized therapy selection [9]. This multi-model strategy optimizes resources while maximizing biological relevance.

The integration of Artificial Intelligence (AI) and Machine Learning (ML) is set to revolutionize the field. These tools enable predictive analytics based on complex 3D imaging data, enhancing the accuracy of gene expression analysis and phenotypic screening. AI can optimize culture conditions, improve reproducibility, and reduce research timelines by rapidly identifying patterns in high-content, multiparametric datasets that are beyond human discernment [11]. Furthermore, regulatory bodies like the FDA and EMA are increasingly considering 3D data in submissions, signaling a broader acceptance of these advanced models in the drug development pipeline [9]. By 2028, most pharmaceutical R&D pipelines are expected to adopt these integrated, intelligent workflows, combining the speed of flat models, the realism of 3D systems, and the personalization of organoids to deliver more effective therapies to patients faster [9].

High-content analysis (HCA), also known as high-content screening (HCS), is a powerful approach that combines automated microscopy, high-throughput imaging, and multiparametric data analysis to investigate complex biological processes in cellular samples and 3D organoids [12]. This technology has become a cornerstone in biomedical research and drug discovery, enabling scientists to quantitatively analyze large sets of visual data at single-cell resolution [12] [13]. By leveraging automated imaging systems and sophisticated software algorithms, HCA facilitates the investigation of multiple parameters simultaneously to characterize cellular phenotypes on a large scale, making it particularly valuable for drug discovery, toxicology studies, and basic research applications [12].

The fundamental strength of HCA lies in its ability to extract rich, quantitative data from complex biological systems. Modern HCA platforms can rapidly analyze millions of cells, revealing the heterogeneity of responses that exist within cell populations across various manipulations, from genome-wide screens to small-molecule library analyses [14]. The integration of artificial intelligence and machine learning has further enhanced these systems, improving phenotypic profiling capabilities and accelerating scientific discovery through robust quantitative analysis of complex biological images and datasets [12].

Core Technological Components

Automated Imaging Systems

Automated microscopy systems form the hardware foundation of HCA, transforming traditional fluorescence microscopy into a high-throughput, quantitative tool [14]. These systems incorporate several critical components that work in concert to enable rapid, high-quality image acquisition.

Imaging Modalities: HCA systems primarily utilize two imaging approaches: widefield and confocal microscopy. Widefield imaging is the most commonly used technique (72% of users), followed by confocal imaging (64% of users) [15]. Confocal imaging is particularly valuable for 3D cell culture applications, tissue slice imaging, and visualization of small intracellular organelles, as it eliminates out-of-focus light, resulting in clearer images [15]. Recent advancements include laser-based line scanning confocal systems with adjustable apertures that maximize flexibility while maintaining exceptional image quality [15].

Key Hardware Components: Modern HCA systems feature sCMOS cameras for enhanced sensitivity, oil immersion objectives for high-resolution imaging, and automated components for scanning microtiter plates and integrating with robotic plate-handling systems [15]. Throughput capabilities have significantly improved, with some systems achieving acquisition rates up to 125 frames per second, enabling new applications such as analysis of calcium flux in beating cardiomyocytes [15]. These systems are also equipped with environmental control capabilities (temperature, CO₂, O₂) to maintain cell viability during live-cell imaging experiments [15].

Image Acquisition Software

Image acquisition software serves as the control center for HCA systems, coordinating hardware components and managing imaging parameters. Platforms like MetaXpress Acquire Software provide intuitive interfaces and guided workflows that streamline even complex imaging assays, enabling researchers to start generating data quickly [12].

These software solutions offer features such as automated focus maintenance, multi-site acquisition, and time-lapse experiment coordination [15]. Recent advancements include robust autofocus algorithms, enhanced tools for quickly reviewing scan data, and significant improvements in overall system flexibility and throughput [15]. The software also handles the massive data volumes generated by HCA systems, often incorporating compatibility with open standards like OME-TIFF to facilitate interoperability across platforms and integration with Laboratory Information Management Systems (LIMS) and electronic lab notebooks [13].

Image Analysis and Data Extraction

The analytical component of HCA transforms raw images into quantitative data through sophisticated image processing algorithms. Segmentation—the identification of specific cellular elements—serves as the cornerstone of high-content analysis [14]. This process typically begins with fluorescent dyes that label cellular compartments such as nuclei (e.g., Hoechst 33342, HCS NuclearMask stains) or entire cells (e.g., HCS CellMask stains) [14].

Once segmentation is achieved, the software can quantify additional fluorescent reporters for various cellular processes, extracting multiple parameters per cell [14]. Surveyed users report evaluating a median of 6-10 parameters per assay, which gives a comprehensive view of cellular responses [15]. The integration of AI and machine learning has significantly enhanced these capabilities, allowing for more accurate phenotypic classification and automated decision-making [12] [13]. These systems can analyze complex biological images to quantify features such as protein expression, organelle morphology, and subcellular localization across large cell populations.
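
The segment-then-measure idea described above can be sketched in a few lines. This is a minimal illustration only, assuming a toy nuclear-channel image stored as nested lists and a fixed intensity threshold; the function names `label_objects` and `measure_objects` are hypothetical, and commercial HCA software uses considerably more robust segmentation.

```python
from collections import deque

def label_objects(image, threshold):
    """Label 4-connected regions of pixels brighter than the threshold."""
    rows, cols = len(image), len(image[0])
    labels = [[0] * cols for _ in range(rows)]
    current = 0
    for r in range(rows):
        for c in range(cols):
            if image[r][c] > threshold and labels[r][c] == 0:
                current += 1  # start a new object and flood-fill it
                labels[r][c] = current
                queue = deque([(r, c)])
                while queue:
                    y, x = queue.popleft()
                    for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                        if (0 <= ny < rows and 0 <= nx < cols
                                and image[ny][nx] > threshold
                                and labels[ny][nx] == 0):
                            labels[ny][nx] = current
                            queue.append((ny, nx))
    return labels, current

def measure_objects(image, labels, n_objects):
    """Return area (pixels) and mean intensity for each labelled object."""
    stats = {i: {"area": 0, "total": 0.0} for i in range(1, n_objects + 1)}
    for img_row, lab_row in zip(image, labels):
        for value, lab in zip(img_row, lab_row):
            if lab:
                stats[lab]["area"] += 1
                stats[lab]["total"] += value
    return {i: {"area": s["area"], "mean_intensity": s["total"] / s["area"]}
            for i, s in stats.items()}

# Toy nuclear-channel image (hypothetical values): two bright "nuclei".
nuclear_channel = [
    [0, 0, 0, 0, 0, 0],
    [0, 9, 9, 0, 0, 0],
    [0, 9, 9, 0, 7, 0],
    [0, 0, 0, 0, 7, 0],
]
labels, n = label_objects(nuclear_channel, threshold=5)
features = measure_objects(nuclear_channel, labels, n)
```

The same label image could then be reused to measure other channels, which is how a single segmentation step supports several per-cell parameters.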

Table 1: Common Segmentation and Labeling Tools for High-Content Analysis

| Segmentation Tool | Ex/Em (nm) | Cellular Target | Primary Function |

|---|---|---|---|

| HCS NuclearMask Blue stain | 350/461 | Nucleus | Nuclear segmentation and cell identification |

| Hoechst 33342 dye | 350/461 | Nucleus | DNA content analysis and cell cycle assessment |

| HCS CellMask Green stain | 493/516 | Whole cell | Cytoplasmic segmentation and cell shape analysis |

| CellMask Green plasma membrane stain | 522/535 | Plasma membrane | Delineation of cell boundaries and membrane studies |

| CellTracker Deep Red stain | 630/660 | Whole cell | Live cell tracking and proliferation studies |

Performance Specifications and Comparison

The performance of HCA systems is characterized by several key specifications that determine their suitability for different research applications. Understanding these parameters is essential for selecting the appropriate instrumentation for specific experimental needs.

Table 2: High-Content Screening System Performance and Application Metrics

| Performance Parameter | Typical Range/Specification | Application Context |

|---|---|---|

| Throughput | Up to 125 frames per second (fps) | High-speed applications like calcium flux in cardiomyocytes |

| Multiplexing Capacity | Median of 3 dyes per assay | Simultaneous analysis of multiple cellular targets |

| Parameters Evaluated | 6-10 parameters per assay | Comprehensive cellular profiling |

| Spatial Resolution | Up to 60x magnification with oil immersion objectives | Subcellular detail and organelle visualization |

| 3D Culture Compatibility | <25% of current assays (increasing) | Biologically relevant disease modeling |

| Cell Types Used | Tumor cell lines (29%), Primary cells (22%) | Various biological contexts from simplified to complex models |

Key Performance Features: Sensitivity and resolution are ranked as the most important features when purchasing an HCS system, followed by image analysis software capabilities and throughput [15]. Modern systems address these needs through advancements such as variable aperture technology that maximizes flexibility while maintaining image quality, and automated image analysis that balances powerful capabilities with user-friendly interfaces to shorten learning curves [15].

The market for HCA systems is evolving toward greater accessibility, with some platforms now available at a fraction of the cost of traditional HCS systems while still providing broad imaging and detection capabilities [15]. This trend is expanding the adoption of HCA technology beyond large pharmaceutical companies to include academic institutions and smaller research laboratories.

Experimental Protocols and Applications

Cell Health and Cytotoxicity Assessment

Multiparametric HCA assays provide comprehensive readouts of cell health and compound cytotoxicity, making them valuable tools for drug discovery and safety assessment.

Protocol: Multiparametric Cell Health and Mitochondrial Toxicity Assay

Cell Preparation: Plate cells (e.g., HeLa or U2OS) on a 96-well plate at a density of 5,000 cells/well and allow to adhere overnight [14].

Compound Treatment: Treat cells with test compounds across an appropriate dose range (e.g., 0.375 μM to 50 μM for cytochalasin D) for a specified duration (e.g., 4 hours) [14].

Cell Staining:

- Fix and permeabilize cells using appropriate buffers

- Stain with primary antibody against target protein (e.g., anti-tubulin) followed by fluorescent secondary antibody (e.g., Alexa Fluor 594)

- Counterstain with organelle-specific probes (e.g., Alexa Fluor 488 phalloidin for actin)

- Add whole-cell stain (e.g., HCS CellMask Near-IR stain)

- Include nuclear counterstain (e.g., Hoechst 33342) [14]

Image Acquisition: Acquire images using a high-content analysis platform (e.g., Thermo Scientific CellInsight CX7 LZR) with a 20x objective [14].

Analysis: Quantify parameters such as mean fiber area (actin), cell number, mitochondrial membrane potential, and cell viability using HCA software algorithms [14].

This approach enables simultaneous assessment of multiple toxicity parameters, including prelethal indicators such as loss of mitochondrial membrane potential, which often precedes cell death and provides valuable early indicators of compound cytotoxicity [14].

Apoptosis and Autophagy Analysis

HCA enables detailed mechanistic studies of cell death pathways through multiplexed assays that capture spatial and temporal information.

Protocol: Caspase Activation and Apoptosis Detection

Cell Treatment: Treat cells (e.g., U2OS) with apoptosis inducers across a concentration range (e.g., staurosporine from 0 to 1 μM) for a defined period (e.g., 4 hours) [14].

Staining Procedure:

- Incubate cells with fluorogenic CellEvent Caspase-3/7 Green Detection Reagent

- Add nuclear counterstain (Hoechst 33342) without washing steps to preserve fragile apoptotic cells [14]

Image Acquisition and Analysis: Acquire images using an HCS platform and quantify the percentage of cells showing caspase activation based on green fluorescence localized to the nucleus [14].

The fluorogenic nature of the CellEvent reagent provides significant advantages for dynamic studies. Since the reagent is nonfluorescent until cleaved by activated caspases, no washing steps are required, preserving the entire apoptotic population including fragile cells and facilitating time-lapse imaging studies [14].
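
The "percentage of cells showing caspase activation" readout reduces to counting cells whose nuclear green signal exceeds a cutoff. The sketch below assumes per-cell mean nuclear intensities have already been extracted; the intensity values, the threshold of 500, and the function name `percent_positive` are illustrative assumptions, not values from the cited protocol.

```python
def percent_positive(nuclear_green_intensities, threshold):
    """Percentage of cells whose nuclear green signal exceeds the threshold."""
    if not nuclear_green_intensities:
        return 0.0  # no cells detected in the well
    positives = sum(1 for v in nuclear_green_intensities if v > threshold)
    return 100.0 * positives / len(nuclear_green_intensities)

# Hypothetical per-cell mean nuclear intensities for two staurosporine doses.
wells = {
    "0 uM": [110, 95, 130, 120],
    "1 uM": [900, 150, 1200, 1100],
}
threshold = 500  # assumed cutoff; in practice set from untreated control wells
result = {dose: percent_positive(cells, threshold) for dose, cells in wells.items()}
```

In practice the threshold would normally be derived from the untreated control population rather than fixed by hand.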

Diagram 1: Apoptosis detection workflow using fluorogenic caspase substrate.

3D Cell Culture and Complex Models

The application of HCA to 3D cell models represents a significant advancement in biological relevance and predictive capability.

Protocol: 3D Spheroid Analysis

Spheroid Generation: Form spheroids using appropriate methods (hanging drop, ultra-low attachment plates, or bioreactors) [15].

Compound Treatment: Apply test compounds across desired concentration ranges, ensuring adequate penetration into 3D structures.

Staining Optimization: Use validated staining protocols for 3D cultures, considering extended incubation times for adequate probe penetration [15].

Image Acquisition: Employ confocal imaging systems with Z-stacking capabilities to capture full spheroid architecture [15].

Image Analysis: Apply specialized 3D analysis algorithms to quantify parameters such as spheroid volume, viability, and morphology through the entire structure.
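
As a rough sketch of how spheroid volume can be derived from a confocal Z-stack, the example below counts foreground voxels in a stack of binary masks and scales by the voxel volume. The mask values, pixel size, and Z-step are hypothetical, and the function names are illustrative; dedicated 3D analysis software applies more sophisticated corrections.

```python
import math

def spheroid_volume_um3(mask_stack, pixel_size_um, z_step_um):
    """Sum foreground voxels across all z-slices and scale by voxel volume."""
    voxel_volume = pixel_size_um * pixel_size_um * z_step_um
    n_voxels = sum(sum(row) for plane in mask_stack for row in plane)
    return n_voxels * voxel_volume

def equivalent_diameter_um(volume_um3):
    """Diameter of a sphere with the same volume as the segmented object."""
    return (6.0 * volume_um3 / math.pi) ** (1.0 / 3.0)

# Toy stack of three 2x2 binary masks (1 = spheroid, 0 = background).
stack = [
    [[0, 1], [0, 0]],
    [[1, 1], [1, 1]],
    [[1, 0], [0, 0]],
]
volume = spheroid_volume_um3(stack, pixel_size_um=2.0, z_step_um=5.0)
diameter = equivalent_diameter_um(volume)
```

Because the Z-step is usually coarser than the lateral pixel size, the voxel is treated as anisotropic rather than cubic.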

The transition from 2D to 3D cell-based models is accelerating, driven by the need for more biologically relevant and predictive assay systems [16]. 3D cell culture was rated as the HCS task that most requires confocal imaging, highlighting the technical considerations for these complex models [15].

Research Reagent Solutions

Successful HCA experiments depend on well-validated reagents specifically optimized for high-content applications. The following table details essential materials and their functions in HCA workflows.

Table 3: Essential Research Reagents for High-Content Analysis

| Reagent Category | Specific Examples | Function in HCA |

|---|---|---|

| Nuclear Stains | HCS NuclearMask stains, Hoechst 33342 | Nuclear segmentation, cell identification, and DNA content analysis |

| Cytoplasmic Stains | HCS CellMask stains, CellTracker dyes | Whole-cell segmentation, cell shape analysis, and live-cell tracking |

| Viability Indicators | LIVE/DEAD reagents, viability dyes | Discrimination of live/dead cells, exclusion of non-viable cells from analysis |

| Apoptosis Detectors | CellEvent Caspase-3/7 reagents | Fluorogenic detection of caspase activation as early apoptosis indicator |

| Mitochondrial Probes | HCS Mitochondrial Health Kit | Simultaneous measurement of mitochondrial membrane potential and cell health |

| Metabolic Stress Indicators | CellROX reagents, HCS LipidTox stains | Measurement of reactive oxygen species and phospholipidosis/steatosis |

| Immunofluorescence Reagents | Alexa Fluor-conjugated antibodies | Specific target detection with high photostability for multiplexing |

| Cell Proliferation Markers | 5-ethynyl-2'-deoxyuridine (EdU) | Click chemistry-based detection of newly synthesized DNA |

Integrated Workflow and Future Directions

The complete HCA workflow integrates each technological component into a seamless pipeline from sample preparation to data visualization. Modern systems are increasingly focusing on interoperability, with support for open data standards and API integrations that facilitate connection with laboratory information management systems (LIMS) and electronic lab notebooks [13].

Diagram 2: Integrated high-content analysis workflow from sample to data.

Emerging Trends and Future Outlook: The HCA landscape is evolving rapidly, with several key trends shaping future developments. AI and machine learning integration is enhancing automated phenotypic classification and analysis capabilities [12] [16]. The transition from 2D to 3D cell-based models is accelerating, providing more biologically relevant systems for drug discovery [16]. There is also increasing automation of cell-based assays to improve reproducibility and throughput, and growing integration with CRISPR screening platforms for real-time genome-wide functional analysis [16].

The global HCA market is projected to expand from USD 1.9 billion in 2025 to USD 3.1 billion by 2035, reflecting the growing adoption and importance of this technology in biomedical research [16]. This growth is driven by increased adoption of image-based drug discovery, phenotypic screening, and precision oncology platforms in early-stage translational research and preclinical trials [16]. As these trends continue, HCA systems will become increasingly accessible, powerful, and integrated into the digital research ecosystem, further solidifying their role as essential tools for modern cell biology research and drug development.

High-content analysis (HCA), also known as high-content screening, is a powerful approach that uses automated, high-throughput imaging systems to investigate large sets of visual data obtained from biological samples [12]. This methodology enables the simultaneous extraction of multiple parameters from individual cells in their physiologic context, providing both quantitative and qualitative data on features such as intensity, size, distance, and spatial distribution of fluorescent markers [17]. The multiplexed functional screening allows researchers to characterize cellular and 3D organoid phenotypes and study complex biological processes on a large scale, making it particularly valuable for drug discovery, toxicology, and basic research applications [12].

The transition from conventional single-parameter assays to multiparametric analysis represents a fundamental shift in biological research. Where traditional approaches might measure a single endpoint such as cell viability, multiparametric HCA can simultaneously capture diverse parameters including nuclear morphology, mitochondrial membrane potential, reactive oxygen species production, glutathione levels, and vacuolar density from the same sample [18]. This comprehensive profiling enables researchers to identify complex patterns and subtle phenotypic changes that would be invisible in simpler assays, providing unprecedented insight into cellular events and their alteration by chemical or genetic perturbations [17].

Key Parameters in Cell Health Assessment

Multiparametric assays simultaneously quantify numerous cellular characteristics to provide a comprehensive view of cell health and function. The table below summarizes critical parameters measured in typical HCA experiments for toxicity assessment and their biological significance.

Table 1: Key Multiparametric Readouts for Cell Health Assessment

| Readout | Detection Method | Biological Significance | Expected Change in Toxicity |

|---|---|---|---|

| Cellular ATP Levels | Luciferase-based luminescence [18] | Indicator of metabolic activity and cell viability [18] | Decrease [18] |

| Nuclear Count | Hoechst 33342 staining [18] | Terminal cell health parameter for detecting acute toxicity [18] | Decrease [18] |

| Nuclear Size | Hoechst 33342 staining [18] | Subtle marker of cell health; can increase or decrease [18] | Variable [18] |

| Reactive Oxygen Species (ROS) | CellROX Green staining [18] | Main determinant of intracellular redox state; activates cell death pathways [18] | Increase [18] |

| Mitochondrial Membrane Potential (MMP) | MitoTracker Red CMXRos [18] | Direct indicator of mitochondrial health [18] | Increase or decrease [18] |

| Mitochondrial Structure | MitoTracker Deep Red FM [18] | Changes in morphology indicate toxic exposure [18] | Increased fragmentation or swelling [18] |

| Glutathione (GSH) Levels | ThiolTracker Violet [18] | Cellular antioxidant stabilizing redox state [18] | Increase or decrease [18] |

| Vacuolar Density | ThiolTracker Violet [18] | Cellular response to osmotic pressure changes [18] | Increase [18] |

| Chromatin Condensation | HCS NuclearMask Deep Red [18] | Early apoptotic marker [18] | Increase [18] |

Experimental Protocols for Multiparametric Analysis

Protocol 1: Measurement of Cellular ATP Content Using Luminescence

Principle: Metabolically active cells maintain high intracellular ATP levels, which can be quantified using a luciferase enzyme that converts luciferin to oxyluciferin in the presence of Mg²⁺, O₂, and ATP. This reaction produces luminescence proportional to ATP concentration [18].

Materials:

- CellTiter-Glo 2.0 Cell Viability Assay (Promega, cat. no. G9242) [18]

- Complete medium with antibiotics (DMEM or EMEM) [18]

- HepG2 cells (MilliporeSigma, cat. no. 85011430) [18]

- Greiner Bio-One 384-well Polystyrene Cell Culture Microplates [18]

- Plate reader capable of measuring luminescence (e.g., EnVision 2105) [18]

Procedure:

- Thaw CellTiter-Glo 2.0 reagent overnight at 4°C and equilibrate to room temperature before use [18].

- Plate HepG2 cells (passage number 8-20) at a density of 4.0 × 10³ cells per 50 μl of complete medium in 384-well plates. Include control wells with medium only (positive control) and cells with DMSO only (negative control) [18].

- Pin transfer 300 nl of each test compound (10 mM stock in DMSO) to experimental wells, giving a working concentration of approximately 60 μM and a final DMSO concentration of approximately 0.6%. Transfer DMSO alone to control wells so that they also contain 0.6% DMSO [18].

- Incubate plates for 6 or 24 hours in a humidified 37°C, 5% CO₂ incubator [18].

- Add CellTiter-Glo 2.0 reagent to each well, mix thoroughly, and incubate at room temperature for 10 minutes to stabilize luminescence signal [18].

- Measure luminescence using a plate reader [18].
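
The dilution arithmetic in this procedure, and one common way of normalizing the luminescence readout, can be checked with a short calculation. The compound volumes and stock concentration come from the protocol above; the normalization to DMSO and medium-only controls is an assumed convention rather than a step stated in the source, and the luminescence values are hypothetical.

```python
def final_concentration_uM(stock_mM, transfer_nl, well_volume_ul):
    """Compound concentration after pin transfer into a filled well."""
    transfer_ul = transfer_nl / 1000.0
    total_ul = well_volume_ul + transfer_ul
    return stock_mM * 1000.0 * transfer_ul / total_ul

def dmso_percent(transfer_nl, well_volume_ul):
    """Final DMSO concentration (v/v, percent) after pin transfer."""
    transfer_ul = transfer_nl / 1000.0
    return 100.0 * transfer_ul / (well_volume_ul + transfer_ul)

def percent_viability(signal, dmso_mean, medium_only_mean):
    """Scale luminescence so DMSO wells read 100% and medium-only wells 0%.

    Assumed normalization; the source does not specify one.
    """
    return 100.0 * (signal - medium_only_mean) / (dmso_mean - medium_only_mean)

conc = final_concentration_uM(stock_mM=10, transfer_nl=300, well_volume_ul=50)
dmso = dmso_percent(transfer_nl=300, well_volume_ul=50)
# Hypothetical raw luminescence values (arbitrary units).
viability = percent_viability(signal=5500, dmso_mean=10000, medium_only_mean=1000)
```

The exact values come out slightly below 60 μM and 0.6% because the transferred 300 nl adds to the 50 μl well volume; the protocol's figures are rounded.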

Protocol 2: Multiparametric High-Content Analysis of Mitochondrial Function and ROS

Principle: This multiplexed assay simultaneously measures cell count, nuclear morphology, mitochondrial membrane potential, mitochondrial structure, and reactive oxygen species using automated microscopy and fluorescence-based dyes [18].

Materials:

- HepG2 cells (passage 8-20) [18]

- Complete DMEM or EMEM with antibiotics [18]

- Hoechst 33342 (nuclear staining) [18]

- MitoTracker Red CMXRos (mitochondrial membrane potential) [18]

- MitoTracker Deep Red FM (mitochondrial structure) [18]

- CellROX Green (reactive oxygen species) [18]

- IN Cell Analyzer imager (Cytiva) or similar high-content imaging system [18]

- Image analysis software (e.g., IN Cell Developer/INCarta) [18]

Procedure:

- Plate HepG2 cells at appropriate density (e.g., 4.0 × 10³ cells/well in 384-well plates) and incubate overnight [18].

- Treat cells with test compounds for 6 or 24 hours as described in Protocol 1 [18].

- Prepare staining solution containing all fluorescent dyes at optimized concentrations in pre-warmed medium [18].

- Remove treatment medium and add staining solution to cells. Incubate for 30-45 minutes at 37°C, 5% CO₂ [18].

- Replace staining solution with fresh pre-warmed medium or PBS [18].

- Image plates using high-content imaging system with appropriate filters and exposure settings [18].

- Analyze images using automated algorithms: identify and mask nuclei first, then determine cell boundaries or areas around nuclei for subsequent measurements [18].
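
The final analysis step, masking nuclei first and then measuring in an area around each nucleus, is often implemented as a ring region. The sketch below is one simple way to do this, assuming a binary nuclear mask and a mitochondrial-channel image as nested lists; the image values and function names are hypothetical, and the cited software may define cell regions differently.

```python
def dilate(mask, iterations=1):
    """Grow a binary mask by one pixel (4-neighbourhood) per iteration."""
    rows, cols = len(mask), len(mask[0])
    current = [row[:] for row in mask]
    for _ in range(iterations):
        grown = [row[:] for row in current]
        for r in range(rows):
            for c in range(cols):
                if current[r][c]:
                    for nr, nc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)):
                        if 0 <= nr < rows and 0 <= nc < cols:
                            grown[nr][nc] = 1
        current = grown
    return current

def ring_mask(nucleus_mask, width=1):
    """Pixels within `width` of the nucleus, excluding the nucleus itself."""
    grown = dilate(nucleus_mask, width)
    return [[1 if g and not n else 0 for g, n in zip(grown_row, nucleus_row)]
            for grown_row, nucleus_row in zip(grown, nucleus_mask)]

def mean_in_mask(image, mask):
    """Mean image intensity over the pixels selected by the mask."""
    values = [v for img_row, m_row in zip(image, mask)
              for v, m in zip(img_row, m_row) if m]
    return sum(values) / len(values)

# Toy data: a one-pixel nucleus and a hypothetical mitochondrial channel.
nucleus = [[0] * 5 for _ in range(5)]
nucleus[2][2] = 1
mito_channel = [
    [0, 0, 0, 0, 0],
    [0, 0, 40, 0, 0],
    [0, 60, 5, 20, 0],
    [0, 0, 80, 0, 0],
    [0, 0, 0, 0, 0],
]
ring = ring_mask(nucleus, width=1)
ring_mean = mean_in_mask(mito_channel, ring)
```

Measuring in a perinuclear ring avoids the need for a separate whole-cell stain, at the cost of sampling only part of the cytoplasm.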

Protocol 3: Analysis of Glutathione Levels and Vacuolar Density

Principle: This protocol measures glutathione (GSH) levels as a key cellular antioxidant and evaluates vacuolar density as an indicator of cellular stress responses using ThiolTracker Violet staining [18].

Materials:

- ThiolTracker Violet staining solution (GSH and vacuolar density) [18]

- HCS NuclearMask Deep Red (nuclear masking) [18]

- Pre-warmed Hanks' Balanced Salt Solution (HBSS) or phenol-free medium [18]

Procedure:

- Culture and treat cells as described in previous protocols [18].

- Prepare a stock solution of ThiolTracker Violet in DMSO and dilute it in pre-warmed HBSS or phenol-free medium to a final working concentration of 10-20 μM [18].

- Remove culture medium and wash cells gently with PBS [18].

- Add ThiolTracker Violet staining solution and incubate for 30 minutes at 37°C [18].

- Remove staining solution and wash twice with PBS [18].

- Add NuclearMask Deep Red stain diluted in PBS and incubate for 10-15 minutes at room temperature [18].

- Image cells using high-content imager with appropriate laser lines and filter sets [18].

- Quantify GSH levels based on ThiolTracker Violet intensity normalized to cell number, and assess vacuolar density through morphological analysis [18].

Data Analysis Strategies for Multiparametric Datasets

Dimension Reduction Techniques

The analysis of multiparametric HCS data presents significant computational challenges: an experiment with n siRNA oligonucleotides, each represented by m image descriptors, yields an n × m data matrix that cannot be easily visualized or interpreted [17]. Dimension reduction serves as an essential first step in processing these complex datasets, with several established approaches available:

Multidimensional Scaling (MDS): A non-linear mapping approach that rearranges objects in an efficient manner to arrive at a configuration that best approximates the observed distances [17]. MDS uses minimization algorithms that evaluate different configurations with the goal of maximizing goodness-of-fit [17].

Self-Organizing Maps (SOM): An artificial neural network method that projects data from input space to a lower-dimensional output space [17]. Effectively, SOM functions as a vector quantization algorithm that creates reference vectors in a high-dimensional input space (with each dimension representing one image descriptor) [17].

Principal Component Analysis (PCA): A statistical technique that transforms the original variables into a new set of uncorrelated variables called principal components, which are ordered by the amount of variance they explain from the original dataset [19].
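
To make the PCA description concrete, the sketch below extracts the first principal component of a small n × m matrix (rows as perturbations, columns as image descriptors) using power iteration on the covariance matrix. Power iteration is chosen here only to keep the example dependency-free; the data values and function names are hypothetical, and a statistics library would normally be used instead.

```python
import math

def center_columns(data):
    """Subtract each column's mean so every descriptor has zero mean."""
    n = len(data)
    means = [sum(col) / n for col in zip(*data)]
    return [[v - m for v, m in zip(row, means)] for row in data]

def covariance_matrix(centered):
    """Sample covariance between descriptors (columns) of centered data."""
    n, m = len(centered), len(centered[0])
    return [[sum(row[i] * row[j] for row in centered) / (n - 1)
             for j in range(m)] for i in range(m)]

def first_principal_component(cov, iterations=200):
    """Power iteration: converges to the eigenvector of largest eigenvalue.

    Assumes the start vector is not orthogonal to that eigenvector and
    that the covariance matrix is not all zeros.
    """
    m = len(cov)
    vec = [1.0 / math.sqrt(m)] * m
    for _ in range(iterations):
        new = [sum(cov[i][j] * vec[j] for j in range(m)) for i in range(m)]
        norm = math.sqrt(sum(v * v for v in new))
        vec = [v / norm for v in new]
    return vec

# Hypothetical matrix: 4 perturbations x 3 image descriptors.
data = [
    [1.0, 2.0, 0.5],
    [2.0, 4.1, 0.4],
    [3.0, 6.0, 0.6],
    [4.0, 8.2, 0.5],
]
centered = center_columns(data)
pc1 = first_principal_component(covariance_matrix(centered))
# Project each perturbation onto the first component (one score per row).
scores = [sum(v * w for v, w in zip(row, pc1)) for row in centered]
```

Here the first two descriptors vary together, so the first component is dominated by them and the third descriptor contributes almost nothing to the scores.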

Table 2: Software Tools for Multiparametric Data Analysis

| Software Name | Type | Key Features | Source |

|---|---|---|---|

| CellMine | Commercial | Integrates screening data with images and links to compound information [17] | BioImagene [17] |

| AcuityXpress | Commercial | Integrates image acquisition, analysis, and informatics [17] | Molecular Devices [17] |

| Genedata | Commercial | Supports quality control and analysis of large-volume screening datasets [17] | Genedata [17] |

| R-project | Open Source | Statistical computing and graphics; highly customizable [17] | R Foundation [17] |

| CellHTS2 | Open Source | Analyzes cell-based high-throughput RNAi screens [17] | Bioconductor [17] |

| Weka | Open Source | Collection of machine learning algorithms for data mining [17] | University of Waikato [17] |

Classification and Hit Identification Strategies

When analyzing HCS data to identify whether a particular siRNA is similar to controls, four key characteristics must be considered in multiparametric analysis: absolute image descriptor value (whether the signal is at high or low level), subtractive degree of change between groups (difference in descriptor across samples), fold change between groups (ratio of descriptor across samples), and reproducibility of the measurement [17].

Current methodologies for analyzing large-scale RNAi data sets typically rely on ranking data based on single image descriptors or significance values [17]. However, identifying patterns of image descriptors and grouping genes into classes based on multiparametric analysis provides much greater insight into biological function and relevance [17]. Classification techniques essentially evaluate these four characteristics for each siRNA in various ways to rank those most similar to controls [17].
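
The four characteristics listed above can be computed for a single image descriptor as follows. This is a sketch under stated assumptions: replicate values are hypothetical, and the coefficient of variation is used as one possible measure of reproducibility, which the source does not prescribe.

```python
from statistics import mean, stdev

def descriptor_summary(sample_replicates, control_replicates):
    """Summarize one image descriptor for one siRNA against controls."""
    sample_mean = mean(sample_replicates)
    control_mean = mean(control_replicates)
    return {
        "absolute_value": sample_mean,                 # high or low signal level
        "difference": sample_mean - control_mean,      # subtractive change
        "fold_change": sample_mean / control_mean,     # ratio to control
        # Assumed reproducibility metric: coefficient of variation (percent).
        "cv_percent": 100.0 * stdev(sample_replicates) / sample_mean,
    }

# Hypothetical replicate measurements of one descriptor.
summary = descriptor_summary(
    sample_replicates=[120.0, 130.0, 110.0],
    control_replicates=[58.0, 60.0, 62.0],
)
```

Repeating this across all m descriptors gives a per-siRNA profile that classification methods can then compare with control profiles.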

Comparative studies have evaluated different strategies for summarizing cell populations on the well level, with percentile values demonstrating high classification accuracy [19]. As expected, dimension reduction typically leads to a lower degree of discrimination between control samples, but enables more manageable data exploration [19].
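
Summarizing a cell population at the well level with percentile values can be sketched with the standard library. The choice of the 10th, 50th, and 90th percentiles and the toy per-cell values are assumptions for illustration; the cited study may have used different percentiles.

```python
from statistics import quantiles

def well_percentiles(cell_values, percentiles=(10, 50, 90)):
    """Summarize one descriptor over all cells in a well by percentiles."""
    # quantiles(..., n=100) returns 99 cut points; cuts[k - 1] is the
    # k-th percentile. Requires at least two cells in the well.
    cuts = quantiles(cell_values, n=100, method="inclusive")
    return {p: cuts[p - 1] for p in percentiles}

# Toy per-cell intensities for one well (hypothetical values 0..100).
cell_intensities = list(range(101))
well_summary = well_percentiles(cell_intensities)
```

Unlike a single mean, several percentiles retain some information about the shape of the distribution, such as a responding subpopulation in the upper tail.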

Research Reagent Solutions

Table 3: Essential Reagents for Multiparametric Cell Health Assays

| Reagent/Catalog Number | Function | Application in HCA |

|---|---|---|

| CellTiter-Glo 2.0 (G9242) [18] | Measures cellular ATP via luciferase reaction [18] | Viability and metabolic activity assessment [18] |

| Hoechst 33342 [18] | Nuclear staining dye [18] | Cell counting and nuclear morphology analysis [18] |

| MitoTracker Red CMXRos [18] | Mitochondrial membrane potential sensor [18] | Assessment of mitochondrial function [18] |

| MitoTracker Deep Red FM [18] | Mitochondrial structure marker [18] | Analysis of mitochondrial morphology and network [18] |

| CellROX Green [18] | Reactive oxygen species detection [18] | Quantification of oxidative stress [18] |

| ThiolTracker Violet [18] | Glutathione levels and vacuolar density [18] | Redox state and stress response evaluation [18] |

| HCS NuclearMask Deep Red [18] | Nuclear counterstain for fixed cells [18] | Chromatin condensation and nuclear morphology [18] |

Workflow and Data Analysis Visualization

Diagram: HCA informatics data pipeline.

Diagram: Multiparametric data analysis flow.

From Data to Discovery: Methodologies and Real-World Applications in Drug Development

In the field of high-content multiparametric analysis of cellular events, the transition to fully automated workflows is not merely a convenience but a necessity for robust, reproducible, and scalable research. These integrated systems streamline the entire experimental process, from initial sample preparation to final automated imaging and data analysis, thereby minimizing manual intervention, reducing human error, and enabling the acquisition of large, statistically powerful datasets [20]. This application note provides a detailed protocol and framework for establishing such an automated workflow, specifically designed for researchers, scientists, and drug development professionals engaged in complex cellular screening. The integration of advanced instrumentation with sophisticated data management is critical for unlocking the full potential of high-content screening (HCS) in drug discovery and basic research [21].

Key Research Reagent Solutions

The following table catalogues essential materials and reagents crucial for successful automated high-content screening experiments.

Table 1: Essential Research Reagents and Materials for Automated HCS Workflows

| Item Name | Function/Application |
|---|---|
| Cell Lines/3D Organoid Models | Primary biological models used for phenotypic and multiparametric analysis; the choice dictates the relevant cellular events studied [20]. |
| Assay-Ready Cells | Pre-plated, often engineered cells (e.g., reporter lines) ready for compound treatment, reducing preparation steps in automated workcells. |
| Liquid Reagents | Includes cell culture media, buffers, fixatives, permeabilization agents, fluorescent dyes, and antibodies for immunolabeling [22]. |
| Chemical Compounds/Biotherapeutics | The library of small molecules, siRNAs, or biologics (e.g., antibodies) screened for their effect on cellular phenotypes [23]. |
| Microtiter Plates | Standardized plates (e.g., 96-well, 384-well) compatible with automated liquid handlers and imagers, ensuring consistent experimental format [20]. |

Quantitative Comparison of Automated HCS System Performance

Selecting an appropriate automated imaging system is a cornerstone of workflow design. The following table summarizes key performance metrics for a benchmark high-content screening system, providing a basis for comparison and planning.

Table 2: Performance Metrics of the ImageXpress HCS.ai High-Content Screening System [20]

| Performance Parameter | Specification / Metric |
|---|---|
| Throughput (96-well plates) | 40 plates in ~2 hours; 80 plates in ~4 hours (hands-off operation) |
| Imaging Mode | Label-free imaging for assay readiness assessment over time |
| Analysis Software | Integrated IN Carta Image Analysis Software with AI modules (e.g., SINAP, Phenoglyphs) |
| Automation Level | Full walkaway automation for plate handling, imaging, and analysis |
| System Scalability | Modular design, scalable from benchtop systems to fully integrated custom workcells |
| Data Output | Multiparametric phenotypic data from 2D cells or 3D organoid models |
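For planning purposes, the throughput figure in Table 2 can be converted into per-plate and per-well time budgets. The short calculation below is a back-of-envelope sketch: it treats the approximate "~2 hours" as exact and assumes the time is spread evenly over all wells, which ignores plate handling overhead and the number of imaging sites per well.

```python
# Back-of-envelope time budget derived from the Table 2 throughput figure.
# Assumption: "~2 hours" is treated as exactly 2 hours and spread evenly.
plates = 40
hours = 2.0
wells_per_plate = 96

minutes_per_plate = hours * 60 / plates
seconds_per_well = minutes_per_plate * 60 / wells_per_plate
wells_per_hour = plates * wells_per_plate / hours

print(f"{minutes_per_plate:.1f} min per plate")
print(f"{seconds_per_well:.2f} s per well")
print(f"{wells_per_hour:.0f} wells per hour")
```

The same arithmetic applied to the 80-plate figure gives the same per-plate budget, which suggests the quoted throughput scales linearly over that range.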

Experimental Protocol: An Automated Workflow for High-Content Screening

This protocol outlines a generalized, automated workflow for high-content screening of cellular events, integrating the instrumentation and data management components described above.

The following diagram illustrates the logical flow and integration points of the automated HCS workflow.

Detailed Methodological Steps

Phase 1: Automated Sample Preparation and Treatment

Automated Cell Culture and Seeding:

- Utilize an integrated automated system, such as one featuring a collaborative robot (e.g., PreciseFlex 400) and an automated CO2 incubator (e.g., LiCONiC Wave STX44), to maintain consistent culture conditions [20].

- Program the liquid handler (e.g., Beckman Coulter Biomek i7) to seed cells into microtiter plates at a predetermined density. This ensures uniformity across all wells and plates, a critical factor for reproducible results.

Compound Treatment and Manipulation:

- Following an appropriate incubation period for cell attachment, use the automated liquid handler to perform media exchanges, add chemical compounds or biologics from compound libraries, and introduce fluorescent dyes or antibodies for labeling [20].

- Integrated plate washers (e.g., AquaMax) and centrifuges (e.g., Bionex Solutions HiG4) can be incorporated into the workcell for more complex assay steps requiring washing and centrifugation.

Phase 2: Automated Imaging and AI-Powered Analysis

Automated Image Acquisition:

- The robotic system transfers assay plates from the incubator to the high-content imager (e.g., ImageXpress HCS.ai system). Intuitive scheduling software (e.g., Biosero Green Button Go) manages this entire process, enabling walkaway operation [20].

- Configure the acquisition software (e.g., MetaXpress Acquire) to capture multiple images per well across different channels (e.g., brightfield and fluorescence) at specified time points for kinetic assays.

AI-Driven Image Analysis and Hit Identification:

- Upon completion of imaging, automatically transfer data to integrated analysis software (e.g., IN Carta Image Analysis Software) [21].

- Utilize built-in AI analysis modules (e.g., SINAP for synapse analysis or Phenoglyphs for complex phenotype classification) to perform batch analysis without the need for manual pipeline development [21]. The software will extract multiparametric data (e.g., cell count, morphology, fluorescence intensity, texture) from the images.

- Implement hit identification criteria within the software (e.g., Genedata Screener) to automatically rank and flag samples based on the analyzed phenotypic features [24].
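The hit identification step can be illustrated with a simple scoring rule. The sketch below is not the method used by any of the software packages named above; it assumes a single extracted feature per well, scores each sample well against negative-control wells with a robust z-score (median and median absolute deviation), and flags wells beyond a threshold. The well names, feature values, and the threshold of 3 are hypothetical.

```python
from statistics import median

def robust_z_scores(values, controls):
    """Score values against the control median, scaled by the control MAD."""
    center = median(controls)
    mad = median([abs(c - center) for c in controls])
    scale = 1.4826 * mad  # makes the MAD comparable to a standard deviation
    if scale == 0:
        raise ValueError("control wells show no spread; cannot scale scores")
    return [(v - center) / scale for v in values]

def rank_hits(sample_wells, control_values, threshold=3.0):
    """Return (well, score) pairs with |score| >= threshold, strongest first."""
    wells = list(sample_wells)
    scores = robust_z_scores([sample_wells[w] for w in wells], control_values)
    hits = [(w, z) for w, z in zip(wells, scores) if abs(z) >= threshold]
    return sorted(hits, key=lambda item: abs(item[1]), reverse=True)

# Hypothetical per-well feature (e.g., mean fluorescence intensity).
control_values = [100.0, 102.0, 98.0, 101.0, 99.0]
samples = {"B02": 100.5, "B03": 130.0, "B04": 97.0, "B05": 60.0}

hits = rank_hits(samples, control_values)
for well, score in hits:
    print(f"{well}: robust z = {score:.2f}")
```

Median-based scaling is chosen here because a few strongly active wells would inflate a standard deviation and mask weaker hits. In a multiparametric screen, the same scoring would typically be applied per feature before features are combined.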

Phase 3: Data Management for FAIR Compliance

Structured Data Management and Storage:

- To manage the massive amounts of images and metadata generated, transfer all data to a dedicated image data management platform like OMERO (Open Microscopy Environment Remote Objects) [5].

- Use Workflow Management Systems (WMS) such as Galaxy or KNIME to create semi-automated scripts that facilitate the structured and reproducible transfer of images and associated metadata from local storage to the OMERO instance, ensuring data consistency and provenance tracking [5].

- This centralized repository links images with all relevant experimental metadata (reagents, protocols, analytic outputs), making data Findable, Accessible, Interoperable, and Reusable (FAIR) for users, collaborators, and the broader community [5].
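The idea of linking each image to its experimental metadata can be sketched as a simple manifest record. The example below uses only the Python standard library and does not call the OMERO, Galaxy, or KNIME APIs; the field names, file name, and protocol identifier are hypothetical and would need to be mapped to whatever schema the chosen platform expects.

```python
import hashlib
import json

def build_record(image_name, image_bytes, plate, well, reagents, protocol_id):
    """Bundle one image with the metadata needed to find and reuse it."""
    return {
        "image": image_name,
        # A checksum lets a later transfer step verify the file is unchanged.
        "sha256": hashlib.sha256(image_bytes).hexdigest(),
        "plate": plate,
        "well": well,
        "reagents": sorted(reagents),
        "protocol_id": protocol_id,
    }

record = build_record(
    image_name="plate01_B03_site1.tif",      # hypothetical file name
    image_bytes=b"placeholder pixel data",   # stands in for the real file
    plate="plate01",
    well="B03",
    reagents=["Hoechst 33342", "MitoTracker Red CMXRos"],
    protocol_id="HCS-PROTO-001",             # hypothetical identifier
)

manifest = json.dumps([record], indent=2, sort_keys=True)
print(manifest)
```

Writing such a manifest alongside the images gives a workflow management system a single, machine-readable source for the metadata it attaches during upload.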

Integrated Data Management Workflow Diagram

The management of the vast and complex data generated by HCS is an integral part of the automated workflow, as depicted below.

High-content multiparametric analysis of cellular events has become a cornerstone of modern biological research and drug development. Technologies such as mass cytometry (CyTOF) and high-parametric flow cytometry enable the simultaneous measurement of dozens of cellular parameters at single-cell resolution, generating complex, high-dimensional datasets. To extract meaningful biological insights from these data, researchers are increasingly turning to computational approaches. This application note provides detailed protocols and frameworks for implementing two classes of machine learning techniques, clustering via FlowSOM and dimensionality reduction via t-SNE and UMAP, within the context of multiparametric cellular analysis. These methods enable unbiased identification of cell populations and visualization of high-dimensional relationships, supporting a deeper understanding of cellular heterogeneity in applications ranging from immunology to oncology research.

Theoretical Foundations

Dimensionality Reduction: t-SNE and UMAP

Dimensionality reduction techniques are essential for visualizing and interpreting high-dimensional data by projecting it into a lower-dimensional space while preserving meaningful relationships.

t-Distributed Stochastic Neighbor Embedding (t-SNE) is a non-linear dimensionality reduction technique that excels at visualizing high-dimensional data in 2D or 3D space. The algorithm works by preserving local relationships, ensuring that data points close in high-dimensional space remain close in the low-dimensional projection [25]. t-SNE operates through several key steps:

- Computing High-Dimensional Similarities: For each data point, t-SNE constructs a probability distribution over all other points where similar points have high probability of being picked as neighbors [25].

- Perplexity Parameter: This parameter influences the number of nearest neighbors considered, balancing attention between local and global data structure; typical values range from 5 to 50 [25].

- Low-Dimensional Embedding: The algorithm initializes points randomly in the low-dimensional space and uses gradient descent to minimize the Kullback-Leibler divergence between the high-dimensional and low-dimensional probability distributions [25].

A critical advancement in t-SNE was the introduction of the Student-t distribution in the low-dimensional space, which addresses the "crowding problem" by allowing moderately distant points in high-dimensional space to be more accurately represented [25].
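The role of the perplexity parameter can be made concrete with a small calculation. The sketch below covers only the first step described above, for a single data point: it converts squared distances to neighbors into Gaussian conditional probabilities and binary-searches the kernel precision until the distribution reaches a target perplexity. It omits the symmetrization, the Student-t low-dimensional kernel, and the gradient descent of the full algorithm; the distances and function names are hypothetical.

```python
import math

def conditional_probabilities(sq_dists, beta):
    """Neighbor probabilities for one point; beta = 1 / (2 * sigma**2)."""
    d_min = min(sq_dists)  # shift distances so the largest weight is exactly 1
    weights = [math.exp(-beta * (d - d_min)) for d in sq_dists]
    total = sum(weights)
    return [w / total for w in weights]

def perplexity(probs):
    """2 raised to the Shannon entropy (in bits) of the distribution."""
    entropy = -sum(p * math.log2(p) for p in probs if p > 0)
    return 2 ** entropy

def fit_beta(sq_dists, target_perplexity, tol=1e-5, max_iter=100):
    """Binary-search beta so the neighbor distribution hits the target."""
    lo, hi, beta = 0.0, None, 1.0
    for _ in range(max_iter):
        perp = perplexity(conditional_probabilities(sq_dists, beta))
        if abs(perp - target_perplexity) < tol:
            break
        if perp > target_perplexity:  # too flat: increase beta to sharpen
            lo = beta
            beta = beta * 2 if hi is None else (lo + hi) / 2
        else:                         # too peaked: decrease beta to flatten
            hi = beta
            beta = (lo + hi) / 2
    return beta

# Hypothetical squared distances from one cell to six other cells.
sq_dists = [1.0, 1.5, 2.0, 9.0, 16.0, 25.0]
beta = fit_beta(sq_dists, target_perplexity=3.0)
probs = conditional_probabilities(sq_dists, beta)
print(f"beta = {beta:.4f}, perplexity = {perplexity(probs):.4f}")
```

A perplexity of 3 here means the point behaves as if it had roughly three effective neighbors, so most of the probability mass falls on the three closest cells. Raising the target spreads that mass over more distant cells.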

Uniform Manifold Approximation and Projection (UMAP) is a more recent dimensionality reduction technique that often provides superior runtime performance and better preservation of global data structure compared to t-SNE [26]. UMAP constructs a topological representation of the data and then optimizes a low-dimensional equivalent. Key advantages include:

- Preservation of more global data structure than t-SNE

- Faster computation times, especially for large datasets

- Ability to handle very high-dimensional data efficiently [27]

Clustering: FlowSOM

FlowSOM is an unsupervised clustering algorithm that utilizes Self-Organizing Maps (SOM) for analyzing high-dimensional cytometry data. The method combines the efficiency of SOM with the visualization capabilities of Minimal Spanning Trees (MST) to provide an automated clustering solution that outperforms many traditional algorithms in speed and accuracy [28] [26].

The algorithm operates through three main stages:

- Grid Node Initialization: Nodes are randomly distributed across the feature space

- Training: For each cell, the algorithm calculates the distance to every node, then moves the nearest node and its grid neighbors toward that cell ("attraction")

- Termination: Processing stops after nodes have updated for a predetermined number of iterations [28]
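The three stages can be sketched as a small self-organizing map in plain Python. This is an illustration of the general SOM technique, not the FlowSOM implementation: it initializes nodes on randomly chosen cells as a simplification, uses a linearly decaying learning rate and neighborhood radius, and omits the metaclustering and minimal spanning tree steps. The parameter names mirror those listed for FlowSOM (xdim, ydim, rlen, alpha), but the default values and the synthetic data are assumptions.

```python
import random

def nearest_node(cell, nodes):
    """Index of the node with the smallest squared distance to the cell."""
    return min(
        range(len(nodes)),
        key=lambda k: sum((c - w) ** 2 for c, w in zip(cell, nodes[k])),
    )

def train_som(cells, xdim=3, ydim=3, rlen=10, alpha=(0.05, 0.01), seed=0):
    rng = random.Random(seed)
    grid = [(x, y) for x in range(xdim) for y in range(ydim)]
    # Stage 1, initialization: place every grid node on a random cell.
    nodes = [list(rng.choice(cells)) for _ in grid]
    total_steps = rlen * len(cells)
    start_radius = max(xdim, ydim) / 2
    step = 0
    # Stage 3, termination: stop after rlen passes over the data.
    for _ in range(rlen):
        order = list(range(len(cells)))
        rng.shuffle(order)
        for idx in order:
            cell = cells[idx]
            progress = step / total_steps
            rate = alpha[0] + (alpha[1] - alpha[0]) * progress
            radius = start_radius * (1 - progress)
            # Stage 2, training: pull the best-matching node and its
            # grid neighbors toward the current cell.
            bx, by = grid[nearest_node(cell, nodes)]
            for k, (gx, gy) in enumerate(grid):
                if max(abs(gx - bx), abs(gy - by)) <= radius:
                    nodes[k] = [w + rate * (c - w)
                                for w, c in zip(nodes[k], cell)]
            step += 1
    return nodes

# Hypothetical two-marker data with two synthetic populations.
data_rng = random.Random(1)
cells = [[data_rng.gauss(0, 0.5), data_rng.gauss(0, 0.5)] for _ in range(50)]
cells += [[data_rng.gauss(10, 0.5), data_rng.gauss(10, 0.5)] for _ in range(50)]

nodes = train_som(cells)
assignments = [nearest_node(cell, nodes) for cell in cells]
print(len(nodes), "nodes;", len(set(assignments)), "have cells assigned")
```

Because each update moves a node part of the way toward a cell, nodes never leave the range spanned by the data. Fixing the seed makes a run repeatable, which matters when comparing parameter settings.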

FlowSOM excels at identifying unique cellular subsets and visualizing relationships through a two-level clustering approach and star charts that show marker expression patterns across all cells [26].

Comparative Analysis of Methods

Table 1: Characteristics of High-Dimensional Data Analysis Methods

| Method | Primary Function | Key Parameters | Strengths | Limitations |
|---|---|---|---|---|
| t-SNE | Dimensionality reduction, visualization | Perplexity (5-50), Learning rate (10-1000), Iterations (≥1000) [25] [29] | Excellent local structure preservation, produces well-separated clusters [25] [30] | Computationally intensive, stochastic results, does not preserve global structure well [30] |
| UMAP | Dimensionality reduction, visualization | Number of neighbors, Minimum distance, Metric [26] | Faster computation, better global structure preservation [26] | Can oversimplify complex relationships, parameter sensitivity |
| FlowSOM | Clustering, population identification | rlen (iterations), grid dimensions (xdim, ydim), Learning rate (alpha) [28] | Fast clustering, handles large datasets, standardized reproducible analysis [28] [26] | Requires parameter optimization, results vary with parameters [28] |

Table 2: Performance Comparison of Dimension Reduction Methods for CyTOF Data (Based on Comprehensive Benchmarking) [31]

| Method | Global Structure Preservation | Local Structure Preservation | Downstream Analysis Performance | Overall Ranking |
|---|---|---|---|---|
| SAUCIE | High | High | High | Top performer |
| SQuaD-MDS | Excellent | Moderate | Moderate | Top performer |
| scvis | High | High | Moderate | Top performer |
| UMAP | Moderate | Moderate | Excellent | High performer |
| t-SNE | Low | Excellent | Moderate | Medium performer |

Application Protocols

FlowSOM Clustering Protocol for High-Dimensional Cytometry Data

Materials and Reagents:

- High-dimensional cytometry data (CyTOF or spectral flow cytometry) in FCS format

- R statistical environment (version 4.2.3 or higher)

- FlowSOM R package (version 2.6.0 or higher)

- flowCore package for data handling

Procedure:

Data Preprocessing

Parameter Optimization

- Systematically vary key parameters to identify optimal settings:

- For large datasets (>10^6 cells), increase rlen values to ensure stable clustering [28]
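A systematic parameter sweep can be organized as a grid of candidate settings. The sketch below only enumerates combinations of the parameters named in this note (rlen, xdim, ydim, alpha); the candidate values are hypothetical placeholders, not recommended settings, and each combination would still need to be passed to the clustering step and scored.

```python
from itertools import product

# Hypothetical candidate values; adjust to the dataset and compute budget.
rlen_values = [10, 50, 100]
grid_sizes = [(7, 7), (10, 10), (14, 14)]
alpha_starts = [0.05, 0.1]
alpha_end = 0.01  # assumed final learning rate, shared by all settings

settings = [
    {"rlen": rlen, "xdim": xdim, "ydim": ydim, "alpha": (start, alpha_end)}
    for rlen, (xdim, ydim), start in product(rlen_values, grid_sizes,
                                             alpha_starts)
]

print(len(settings), "parameter combinations")
print(settings[0])
```

Keeping the sweep in a single list makes it straightforward to log which setting produced which clustering, and to rerun only the combinations of interest.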

SOM Construction and Clustering

- Build Self-Organizing Map using the BuildSOM function with optimized parameters

- Perform metaclustering to group similar SOM nodes

- Visualize results using Minimum Spanning Trees (MST) and star charts for marker expression patterns

Validation

- Compare FlowSOM clusters with manual gating results where applicable

- Verify biological relevance of identified populations through known marker expression

- Assess clustering stability by running multiple iterations with different random seeds
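Agreement between two labelings of the same cells, such as clusters versus manual gates or two runs with different seeds, can be summarized with the adjusted Rand index (ARI). The ARI is one common choice rather than a metric prescribed by this protocol. The sketch below computes it in plain Python; the labels are hypothetical.

```python
from collections import Counter
from math import comb

def adjusted_rand_index(labels_a, labels_b):
    """Chance-corrected agreement between two labelings of the same cells."""
    if len(labels_a) != len(labels_b):
        raise ValueError("both labelings must cover the same cells")
    n = len(labels_a)
    if n < 2:
        raise ValueError("need at least two cells")
    # Count pairs of cells that share a label in both, in A only, in B only.
    both = sum(comb(c, 2) for c in Counter(zip(labels_a, labels_b)).values())
    in_a = sum(comb(c, 2) for c in Counter(labels_a).values())
    in_b = sum(comb(c, 2) for c in Counter(labels_b).values())
    expected = in_a * in_b / comb(n, 2)
    maximum = (in_a + in_b) / 2
    if maximum == expected:  # both labelings are trivial partitions
        return 1.0
    return (both - expected) / (maximum - expected)

# Hypothetical labels for six cells.
manual_gates = ["T", "T", "T", "B", "B", "B"]
run_seed_1 = [1, 1, 1, 2, 2, 2]   # same partition, different label names
run_seed_2 = [1, 1, 2, 2, 2, 2]   # one T cell assigned to the other cluster

ari_same = adjusted_rand_index(manual_gates, run_seed_1)
ari_shifted = adjusted_rand_index(manual_gates, run_seed_2)
print(f"seed 1 vs manual: {ari_same:.3f}")
print(f"seed 2 vs manual: {ari_shifted:.3f}")
```

An ARI of 1 indicates identical partitions regardless of label names, and values near 0 indicate agreement no better than chance. Computing it across several seeds gives a simple stability summary.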

Troubleshooting Notes:

- Address bugs in the publicly available FlowSOM package by implementing a debugged version [28]

- For unstable clustering, increase rlen values and ensure adequate learning rate [28]

- Larger grid dimensions capture finer details but increase computational complexity [28]

t-SNE and UMAP Protocol for Data Visualization

Materials and Reagents:

- Processed single-cell data (cytometry or single-cell RNA sequencing)

- Python environment with scikit-learn, umap-learn, and matplotlib packages