Graph Neural Networks for Protein-Ligand Interactions: From Foundations to Clinical Applications

Abstract

This article provides a comprehensive exploration of Graph Neural Networks (GNNs) and their transformative role in predicting protein-ligand interactions, a critical task in modern drug discovery. Tailored for researchers, scientists, and drug development professionals, it covers the foundational principles of GNNs for modeling biomolecular structures, details cutting-edge architectural methodologies and their specific applications, addresses critical challenges such as data bias and model generalization, and presents rigorous validation frameworks and performance comparisons. By synthesizing the latest research, this review serves as a strategic guide for leveraging GNNs to accelerate the identification of therapeutic candidates and improve the efficiency of the drug design pipeline.

The Foundations of GNNs in Modeling Biomolecular Structures and Interactions

Why Graphs? Representing Proteins and Ligands as Network Structures

In computational drug discovery, accurately predicting the binding affinity between a protein and a ligand is a critical yet challenging task. Traditional sequence-based deep learning models often struggle to capture the spatial relationships and complex three-dimensional structures that dictate these interactions [1]. Graph Neural Networks (GNNs) have emerged as a powerful solution to this limitation by naturally representing protein-ligand complexes as molecular graphs, where nodes represent atoms and edges represent the chemical bonds or interactions between them [1] [2]. This representation allows GNNs to capture intricate topological information and spatial relationships within the complex, enabling more precise modeling of molecular interactions than sequence-based approaches [1].

The fundamental advantage of graph structures lies in their ability to model the non-Euclidean geometry of molecular systems. Whereas conventional deep learning architectures such as CNNs and LSTMs process regularly structured data (grids and sequences), GNNs operate directly on graph-structured data, which makes them well suited to the irregular and complex connectivity patterns found in biomolecules [2]. This capability is particularly valuable for protein-ligand interaction modeling because it preserves the structural information that determines binding behavior, allowing researchers to move beyond simplified molecular fingerprints or sequence representations toward more physically faithful models of molecular interactions [2].

Graph Construction Methodologies

Molecular Graph Representation

Representing protein-ligand complexes as graphs requires precise methodological decisions to capture biologically relevant interactions. In typical implementations, proteins and ligands are represented as molecular graphs where nodes correspond to atoms and edges represent either covalent bonds or spatial proximities [1]. A crucial step in this process involves defining the protein-ligand interaction region using a distance threshold, commonly 5.0 Å, which includes only protein residues within this range around the ligand to balance prediction accuracy with computational efficiency [1]. This focused approach centers the analysis on the binding pocket where interactions actually occur.
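The residue-level cutoff can be sketched in plain Python as below. This is a minimal illustration, not the implementation from [1]: it assumes protein atoms have already been parsed into hypothetical `(residue_id, element, xyz)` tuples, treats every non-hydrogen atom as a heavy atom, and exposes the cutoff as a parameter so that other pocket radii can be tried.

```python
import math
from typing import List, Set, Tuple

Coord = Tuple[float, float, float]
ProteinAtom = Tuple[str, str, Coord]  # hypothetical layout: (residue_id, element, xyz in Å)


def select_pocket_residues(
    protein_atoms: List[ProteinAtom],
    ligand_coords: List[Coord],
    cutoff: float = 5.0,
) -> Set[str]:
    """Return IDs of residues with at least one heavy atom within `cutoff` Å of any ligand atom."""
    pocket: Set[str] = set()
    for res_id, element, xyz in protein_atoms:
        if element == "H" or res_id in pocket:
            continue  # skip hydrogens and residues already in the pocket
        if any(math.dist(xyz, lig) <= cutoff for lig in ligand_coords):
            pocket.add(res_id)
    return pocket


protein = [
    ("ALA1", "C", (0.0, 0.0, 0.0)),
    ("GLY2", "N", (9.0, 0.0, 0.0)),
    ("SER3", "H", (1.0, 0.0, 0.0)),
]
ligand = [(3.0, 0.0, 0.0)]
print(select_pocket_residues(protein, ligand))  # {'ALA1'}
```

Selecting whole residues rather than individual atoms keeps side chains intact, so the pocket graph does not contain fragments of residues.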

Graph construction involves creating two distinct graph types: one for inter-molecular interactions (between protein and ligand atoms) and another for intra-molecular interactions (within each molecule) [1]. This separation allows the model to capture both the binding interactions and the internal structural constraints of each molecule. The representation method typically applies the same node feature representation for both protein and ligand atoms without additional feature distinctions, ensuring generality and scalability across different molecular systems [1].
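The split into intra- and inter-molecular edge sets can be sketched as follows. It is a simplified illustration under stated assumptions: intra-molecular edges are approximated by a short distance cutoff (a hypothetical 2.0 Å stand-in for covalent bonds, which a real pipeline would read from the bond table), inter-molecular edges use a 5.0 Å interaction cutoff, and each edge carries its Euclidean distance as its feature. The all-pairs loop is quadratic and chosen for clarity.

```python
import math
from typing import List, Tuple

Coord = Tuple[float, float, float]
Edge = Tuple[int, int, float]  # (node_i, node_j, distance in Å)


def build_edge_sets(
    protein_coords: List[Coord],
    ligand_coords: List[Coord],
    intra_cutoff: float = 2.0,  # hypothetical proxy for covalent bonds
    inter_cutoff: float = 5.0,  # protein-ligand interaction cutoff
) -> Tuple[List[Edge], List[Edge]]:
    """Return (intra_edges, inter_edges); protein nodes are indexed first, then ligand nodes."""
    coords = list(protein_coords) + list(ligand_coords)
    n_prot = len(protein_coords)
    intra: List[Edge] = []
    inter: List[Edge] = []
    for i in range(len(coords)):
        for j in range(i + 1, len(coords)):
            d = math.dist(coords[i], coords[j])
            same_molecule = (i < n_prot) == (j < n_prot)
            if same_molecule and d <= intra_cutoff:
                intra.append((i, j, d))
            elif not same_molecule and d <= inter_cutoff:
                inter.append((i, j, d))
    return intra, inter


intra, inter = build_edge_sets([(0.0, 0.0, 0.0), (1.5, 0.0, 0.0)], [(4.0, 0.0, 0.0), (5.4, 0.0, 0.0)])
print([(i, j) for i, j, _ in inter])  # [(0, 2), (1, 2), (1, 3)]
```

Keeping the two lists separate lets a model run distinct message-passing modules over each edge type.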

Node and Edge Feature Engineering

Comprehensive featurization of nodes and edges is essential for conveying structural and chemical information to the graph neural network. Node features typically incorporate multiple atomic properties that influence molecular interactions and bonding behavior. The table below summarizes the core node features used in state-of-the-art implementations:

Table: Core Node Features for Protein-Ligand Graph Representation

| Feature | Description | Representation |
|---|---|---|
| Atom Type | Elemental identity | One-hot encoding: 'C', 'N', 'O', 'S', 'F', 'P', 'Cl', 'Br', 'I', 'B', 'Si', 'Fe', 'Zn', 'Cu', 'Mn', 'Mo', 'Other' |
| Atom Degree | Number of covalent bonds | Integer value 0-5 |
| Formal Charge | Electronic charge | Real value |
| Chirality | Spatial arrangement | 'R', 'S', 'Other' |
| Number of Hydrogens | Hydrogen count | Integer value 0-4 |
| Aromaticity | Participation in aromatic system | Boolean |
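A minimal sketch of how one atom could be encoded from the features in the table. It follows the table literally (one-hot atom type and chirality, raw integer or real values otherwise) and assumes the per-atom properties have already been extracted, for example with RDKit atom accessors such as `GetSymbol()`, `GetDegree()`, `GetFormalCharge()`, `GetTotalNumHs()` and `GetIsAromatic()`. The function names and the clamping of degree and hydrogen count to the table's ranges are illustrative choices, not taken from [1].

```python
from typing import List, Sequence

ATOM_TYPES = ["C", "N", "O", "S", "F", "P", "Cl", "Br", "I", "B", "Si", "Fe", "Zn", "Cu", "Mn", "Mo"]
CHIRALITY_TAGS = ["R", "S"]


def one_hot(value: str, choices: Sequence[str]) -> List[float]:
    """One-hot encode `value`; anything not in `choices` goes to a trailing 'Other' slot."""
    vec = [0.0] * (len(choices) + 1)
    vec[choices.index(value) if value in choices else len(choices)] = 1.0
    return vec


def atom_features(
    element: str, degree: int, formal_charge: float, chirality: str, num_hs: int, aromatic: bool
) -> List[float]:
    return (
        one_hot(element, ATOM_TYPES)          # 17 slots, including 'Other'
        + [float(min(max(degree, 0), 5))]     # covalent degree, clamped to 0-5
        + [float(formal_charge)]              # formal charge as a real value
        + one_hot(chirality, CHIRALITY_TAGS)  # 'R', 'S', 'Other'
        + [float(min(max(num_hs, 0), 4))]     # hydrogen count, clamped to 0-4
        + [1.0 if aromatic else 0.0]          # aromaticity flag
    )


feats = atom_features("C", 3, 0, "Other", 1, True)
print(len(feats))  # 24
```

Because protein and ligand atoms share this single encoding, the same function can featurize every node in the complex graph.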

Edge features typically represent either Euclidean distance between atoms or node degree connections [1]. Some advanced implementations employ edge augmentation strategies to improve model robustness, which may include randomly deleting certain edges (particularly those exceeding 4 Å) to simulate structural noise from docking errors, while also randomly adding new edges to enrich graph connectivity diversity [1]. This approach enhances the model's ability to generalize across varying data qualities.
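The edge-augmentation idea can be sketched as below. The drop probability, the number of added edges and the seeded random generator are hypothetical parameters (the text does not give the rates used in [1]); only edges longer than 4 Å are eligible for deletion, and each added edge receives its Euclidean distance as its feature.

```python
import itertools
import math
import random
from typing import List, Optional, Tuple

Coord = Tuple[float, float, float]
Edge = Tuple[int, int, float]  # (node_i, node_j, distance in Å)


def augment_edges(
    edges: List[Edge],
    coords: List[Coord],
    p_drop: float = 0.1,       # hypothetical deletion probability for long edges
    n_add: int = 2,            # hypothetical number of random edges to add
    long_cutoff: float = 4.0,  # only edges longer than this may be deleted
    seed: Optional[int] = None,
) -> List[Edge]:
    rng = random.Random(seed)
    # Randomly delete long edges to mimic structural noise from docking errors.
    kept = [e for e in edges if e[2] <= long_cutoff or rng.random() >= p_drop]
    # Randomly add edges between node pairs that were not connected originally.
    existing = {(min(i, j), max(i, j)) for i, j, _ in edges}
    candidates = [p for p in itertools.combinations(range(len(coords)), 2) if p not in existing]
    for i, j in rng.sample(candidates, min(n_add, len(candidates))):
        kept.append((i, j, math.dist(coords[i], coords[j])))
    return kept


coords = [(0.0, 0.0, 0.0), (3.0, 0.0, 0.0), (8.0, 0.0, 0.0)]
edges = [(0, 1, 3.0), (1, 2, 5.0)]
print(augment_edges(edges, coords, p_drop=0.0, n_add=1, seed=0))  # [(0, 1, 3.0), (1, 2, 5.0), (0, 2, 8.0)]
```

Since augmentation is applied during training, a different seed (or none) would normally be used each epoch so the model sees varied perturbations.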

Experimental Dataset Preparation

Robust experimental validation requires carefully curated datasets with reliable binding affinity measurements. The PDBbind database serves as the primary data source for most contemporary research, providing high-quality protein-ligand complexes with experimentally determined binding affinities (Kd, Ki, or IC50 values) [1] [2]. A common choice is PDBbind v2020, which contains 19,443 complexes; after excluding samples that overlap with the test sets or cannot be processed by RDKit, the remaining complexes are randomly divided into training (N = 16,954) and validation (N = 2,000) sets [1].

For standardized benchmarking, the CASF-2016 core set (N = 285) serves as the primary test set due to its diverse and non-redundant collection of protein-ligand complexes across 57 clusters [1] [2]. Additional validation often employs the CSAR-NRC set (N = 85) to further evaluate model generalization capability [1]. To address potential data similarity issues between training and test sets, some researchers implement time-based splits, using complexes deposited before 2019 for training/validation and those deposited after 2019 for testing, providing a more realistic assessment of performance on novel complexes [2].
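A time-based split reduces to partitioning complexes by deposition year. The sketch below assumes deposition years are already available in a dictionary keyed by PDB ID (the IDs shown are placeholders, not real entries); because the text does not say where complexes deposited during 2019 itself fall, assigning them to the test set is an assumption.

```python
from typing import Dict, List, Tuple


def time_split(
    deposition_years: Dict[str, int], cutoff_year: int = 2019
) -> Tuple[List[str], List[str]]:
    """Complexes deposited before `cutoff_year` go to training/validation, the rest to test."""
    train = sorted(pid for pid, year in deposition_years.items() if year < cutoff_year)
    test = sorted(pid for pid, year in deposition_years.items() if year >= cutoff_year)
    return train, test


# Placeholder IDs for illustration only.
train_ids, test_ids = time_split({"1abc": 2015, "2xyz": 2019, "3def": 2021})
print(train_ids, test_ids)  # ['1abc'] ['2xyz', '3def']
```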

Advanced Graph Neural Network Architectures

Edge-Enhanced Graph Neural Networks

Recent advances in GNN architectures for protein-ligand affinity prediction have introduced specialized edge enhancement mechanisms to better capture molecular interaction information. The Edge-enhanced Interaction Graph Network (EIGN) exemplifies this approach with three main components: a normalized adaptive encoder, a molecular information propagation module, and an output module [1]. A key innovation in EIGN is its edge update mechanism that integrates node feature information into edge features, enhancing the representational power of edge features for capturing interaction information between nodes [1]. This design enables the model to leverage enriched edge information during message passing, allowing it to capture more nuanced atomic interactions.
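The idea of folding node information into edge features can be illustrated with a generic edge-update step. This is not EIGN's published layer: the concatenation `[h_i || e_ij || h_j]`, the single linear map followed by ReLU, the element-wise gating used during aggregation (which requires edge and node vectors of equal size), and the toy weights are all assumptions chosen to keep the example small.

```python
from typing import Dict, List, Tuple

Vector = List[float]
Matrix = List[List[float]]


def matvec(w: Matrix, x: Vector) -> Vector:
    return [sum(wk * xk for wk, xk in zip(row, x)) for row in w]


def relu(x: Vector) -> Vector:
    return [max(0.0, v) for v in x]


def update_edges(
    h: List[Vector], edges: Dict[Tuple[int, int], Vector], w: Matrix
) -> Dict[Tuple[int, int], Vector]:
    """e_ij <- ReLU(W [h_i || e_ij || h_j]) for every directed edge (i, j)."""
    return {(i, j): relu(matvec(w, h[i] + e + h[j])) for (i, j), e in edges.items()}


def aggregate(h: List[Vector], edges: Dict[Tuple[int, int], Vector]) -> List[Vector]:
    """Sum neighbour features into node i, gated element-wise by the updated edge vector e_ij."""
    out = [[0.0] * len(v) for v in h]
    for (i, j), e in edges.items():
        for k, (ek, hk) in enumerate(zip(e, h[j])):
            out[i][k] += ek * hk
    return out


h = [[1.0, 0.0], [0.0, 1.0]]
edges = {(0, 1): [0.5, 0.5], (1, 0): [0.5, 0.5]}
w = [[1.0, 0.0, 1.0, 0.0, 1.0, 0.0],  # toy weights: sum matching positions of h_i, e_ij, h_j
     [0.0, 1.0, 0.0, 1.0, 0.0, 1.0]]
new_edges = update_edges(h, edges, w)
print(new_edges[(0, 1)])        # [1.5, 1.5]
print(aggregate(h, new_edges))  # [[0.0, 1.5], [1.5, 0.0]]
```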

EIGN employs separate processing streams for inter- and intra-molecular interactions, addressing a limitation of earlier models that combined these interaction types and could miss local structural details [1]. Refining the modeling of interactions within protein-ligand complexes through dedicated message-passing modules represents a significant architectural advance. Experimental results show that this approach achieves a root mean squared error (RMSE) of 1.126 and a Pearson correlation coefficient of 0.861 on CASF-2016, outperforming the state-of-the-art methods it was compared against [1].

Fusion Models with Ligand Feature Extraction

To address data heterogeneity and imbalance between proteins and ligands, fusion models like LGN incorporate additional ligand feature extraction to effectively capture both local and global features within protein-ligand complexes [2]. LGN specifically handles the significant volume discrepancy between proteins (typically hundreds of nodes) and ligands (typically dozens of nodes) by creating separate processing streams, with the ligand graph processed independently without protein nodes to obtain purified ligand structural information [2].

This architecture generates molecular descriptors in the form of vectors that embed structural information, which are then combined with interaction fingerprints to create a comprehensive representation [2]. The integration of these complementary information sources significantly enhances predictive performance, with LGN achieving Pearson correlation coefficients of up to 0.842 on the PDBbind 2016 core set compared to 0.807 when using complex graph features alone [2]. The integration of ensemble learning techniques further improves model robustness against data similarity effects [2].
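At its simplest, the fusion step amounts to concatenating vectors from the separate streams. The sketch below assumes the ligand stream yields per-atom embeddings that are mean-pooled into a descriptor vector, and that the interaction fingerprint and complex-graph embedding are precomputed; the pooling choice, function names and toy numbers are hypothetical rather than LGN's actual design.

```python
from typing import List, Sequence


def mean_pool(node_embeddings: Sequence[Sequence[float]]) -> List[float]:
    """Average per-atom embeddings into one ligand-level descriptor vector."""
    n = len(node_embeddings)
    return [sum(col) / n for col in zip(*node_embeddings)]


def fuse_features(
    ligand_node_embeddings: Sequence[Sequence[float]],
    interaction_fingerprint: Sequence[float],
    complex_embedding: Sequence[float],
) -> List[float]:
    """Concatenate ligand descriptor, interaction fingerprint and complex-graph features."""
    return mean_pool(ligand_node_embeddings) + list(interaction_fingerprint) + list(complex_embedding)


fused = fuse_features([[1.0, 2.0], [3.0, 4.0]], [0.0, 1.0], [5.0])
print(fused)  # [2.0, 3.0, 0.0, 1.0, 5.0]
```

The fused vector would then feed a regression head; training that head with and without the ligand descriptor is one way to run the kind of comparison reported for LGN.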

Experimental Results and Performance Metrics

Quantitative Performance Comparison

Rigorous benchmarking against established datasets demonstrates the performance advantages of graph-based approaches for protein-ligand binding affinity prediction. The following table summarizes the quantitative performance of leading GNN models on standard test sets:

Table: Performance Comparison of GNN Models on Protein-Ligand Affinity Prediction

| Model | Test Set | RMSE | Pearson Correlation (Rp) | MAE |
|---|---|---|---|---|
| EIGN | CASF-2016 | 1.126 | 0.861 | Not reported |
| LGN | CASF-2016 | Not reported | 0.842 | Not reported |
| LGN | PDBbind v2016 core set | Not reported | 0.842 | Not reported |
| LGN (complex features only) | PDBbind v2016 core set | Not reported | 0.807 | Not reported |

Performance metrics commonly include Root Mean Square Error (RMSE), Pearson correlation coefficient (Rp), and Mean Absolute Error (MAE) [2]. For \( N \) test complexes with predicted affinities \( f(x_i) \) and experimental affinities \( Y_i \), these metrics are defined as:

- Pearson Correlation Coefficient: \( R_p = \frac{\sum_{i=1}^{N}(f(x_i)-\overline{f(x)})(Y_i-\overline{Y})}{\sqrt{\sum_{i=1}^{N}(f(x_i)-\overline{f(x)})^2\sum_{i=1}^{N}(Y_i-\overline{Y})^2}} \) [2]

- Root Mean Square Error: \( \mathrm{RMSE} = \sqrt{\frac{1}{N}\sum_{i=1}^{N}(Y_i-f(x_i))^2} \) [2]

- Mean Absolute Error: \( \mathrm{MAE} = \frac{1}{N}\sum_{i=1}^{N}\lvert Y_i-f(x_i)\rvert \) [2]
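The three formulas translate directly into code. The plain-Python sketch below is for illustration only (in practice `scipy.stats.pearsonr` or NumPy would typically be used), and the function names are hypothetical.

```python
import math
from typing import Sequence


def pearson_r(pred: Sequence[float], true: Sequence[float]) -> float:
    """Pearson correlation between predictions f(x_i) and experimental values Y_i."""
    n = len(pred)
    mean_p, mean_t = sum(pred) / n, sum(true) / n
    cov = sum((p - mean_p) * (t - mean_t) for p, t in zip(pred, true))
    var_p = sum((p - mean_p) ** 2 for p in pred)
    var_t = sum((t - mean_t) ** 2 for t in true)
    return cov / math.sqrt(var_p * var_t)


def rmse(pred: Sequence[float], true: Sequence[float]) -> float:
    return math.sqrt(sum((t - p) ** 2 for p, t in zip(pred, true)) / len(true))


def mae(pred: Sequence[float], true: Sequence[float]) -> float:
    return sum(abs(t - p) for p, t in zip(pred, true)) / len(true)


pred, true = [1.0, 2.0, 3.0], [2.0, 4.0, 6.0]
print(round(pearson_r(pred, true), 6), round(rmse(pred, true), 4), mae(pred, true))  # 1.0 2.1602 2.0
```

The example also shows why the metrics are reported together: the predictions are perfectly correlated with the targets (Rp = 1) yet systematically too low, which only RMSE and MAE reveal.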

Experimental Validation Protocols

Comprehensive model evaluation extends beyond basic performance metrics to include ablation studies, feature importance analysis, and data similarity analysis [1]. Ablation studies systematically remove specific model components to isolate their contribution to overall performance, validating architectural choices like the edge update mechanism in EIGN or the ligand feature extraction in LGN [1] [2]. Feature importance analysis identifies which node and edge features most significantly impact prediction accuracy, informing future feature selection strategies.

Data similarity analysis examines the relationship between training and test set composition, addressing concerns that models may perform well on complexes similar to those in training but poorly on novel structures [2]. This has led to the adoption of time-split validation protocols where models trained on older complexes are tested on recently discovered ones, providing a more realistic assessment of real-world applicability [2]. Additional validation on external datasets like CSAR-NRC further establishes generalization capability beyond standard benchmarks [1].

Implementation and Visualization Toolkit

Graph Visualization Tools

Effective visualization of protein-ligand graph structures is essential for model interpretation and validation. NetworkX provides basic graph drawing functionality, but its documentation explicitly recommends dedicated visualization tools for anything beyond simple plots [3]. The following tools are widely used for graph visualization and analysis in structural biology research:

Table: Essential Tools for Graph Visualization and Analysis

| Tool | Primary Function | Application in Protein-Ligand Research |
|---|---|---|
| Cytoscape | Network visualization and analysis | Visualization of complex biomolecular interactions |
| Gephi | Graph visualization and exploration | Analysis of large-scale network properties |
| Graphviz | Graph layout algorithms | Automated layout of molecular graphs |
| PGF/TikZ | LaTeX typesetting | Publication-quality graph diagrams |
| Grave | Network visualization with Matplotlib | Python-based simple graph plotting |

NetworkX supports export to formats compatible with these specialized tools, such as GraphML for Cytoscape and DOT for Graphviz [3]. The to_latex() function in NetworkX enables direct export to LaTeX format using the TikZ library, particularly valuable for generating publication-quality figures [3]. For Python-based workflows, Grave provides a simplified visualization API built on Matplotlib with sensible defaults for network drawing [4].

Practical Implementation Workflow

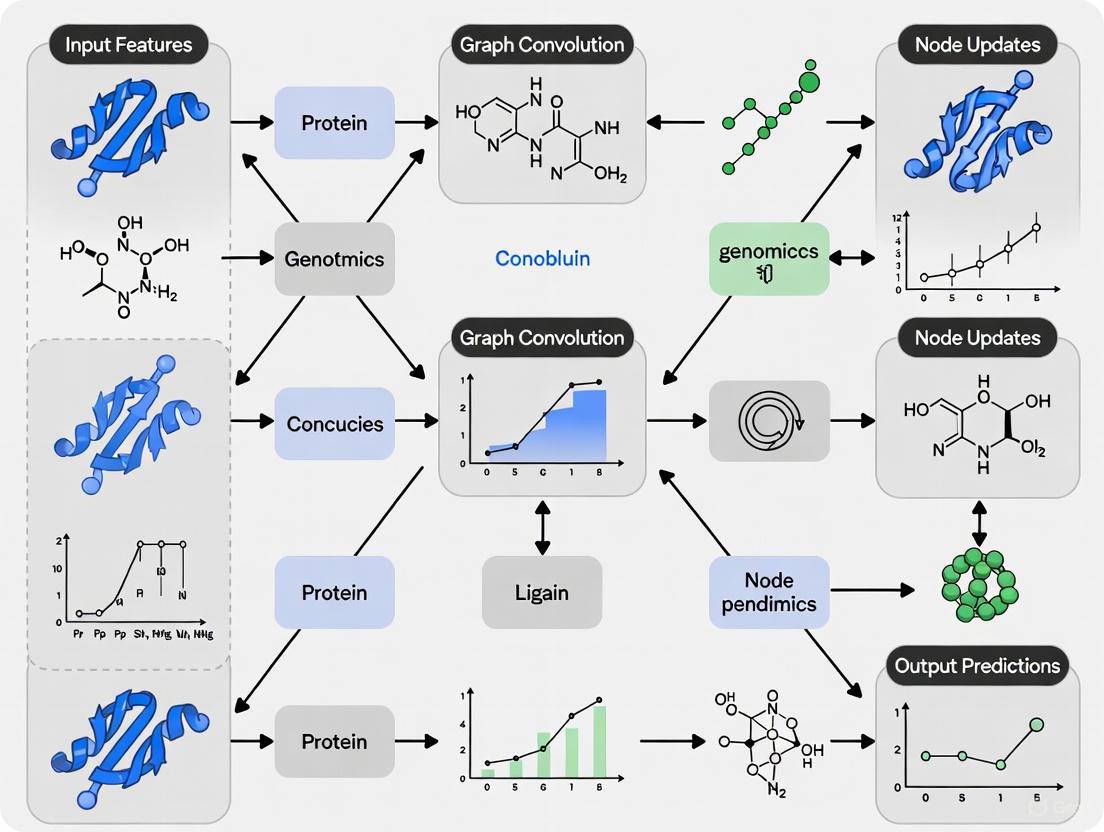

Diagram: Protein-Ligand Graph Analysis Workflow

The implementation workflow for graph-based protein-ligand affinity prediction follows a systematic process from data preparation to model evaluation. The diagram above outlines the key stages, beginning with structure preparation and progressing through graph construction, featurization, model training, and performance evaluation.

Essential Research Reagents and Computational Tools

Table: Essential Research Reagents and Computational Tools

| Item | Function | Application Context |
|---|---|---|
| PDBbind Database | Source of protein-ligand complexes with binding affinity data | Primary data source for training and validation [1] [2] |
| CASF-2016 Core Set | Standardized benchmark for model comparison | Performance evaluation and method comparison [2] |
| RDKit | Cheminformatics and machine learning tools | Molecular descriptor calculation and graph processing [2] |
| NetworkX | Python package for complex network analysis | Graph construction and basic analysis [3] |
| Graphviz | Graph visualization software | Layout algorithms for molecular graphs [3] |
| PyTorch/TensorFlow | Deep learning frameworks | GNN model implementation and training |

Successful implementation requires appropriate access to computational resources capable of handling 3D structural data and graph neural network training. The PDBbind database provides the fundamental experimental data, while tools like RDKit enable processing of molecular structures into graph representations [2]. Specialized visualization tools like Cytoscape and Graphviz facilitate the interpretation and communication of results, complementing the analytical capabilities of NetworkX and deep learning frameworks [3].

The accurate prediction of protein-ligand interactions is a cornerstone of modern drug discovery, enabling researchers to identify promising therapeutic candidates more efficiently and at a lower cost [5]. In recent years, Graph Neural Networks (GNNs) have emerged as powerful computational tools for this task, capable of natively representing the non-Euclidean structure of molecular data [6] [7]. These models operate directly on graph-based representations of biological molecules, where atoms constitute nodes and chemical bonds form edges, thereby preserving critical structural information that is lost in grid-based or vector representations [8]. Within this domain, three core architectural paradigms have demonstrated particular efficacy: Graph Convolutional Networks (GCNs), Graph Attention Networks (GATs), and Message Passing Neural Networks (MPNNs). Framed within the broader thesis that GNNs are revolutionizing protein-ligand interaction research, this technical guide provides an in-depth examination of these architectures, their experimental implementations, and their performance in predicting binding affinity—a key parameter in early-stage drug development.

Core Architectural Principles

Graph Convolutional Networks (GCNs)

GCNs generalize the operation of convolutional neural networks to graph-structured data. They learn node representations by aggregating feature information from a node's local neighborhood, with each neighbor's contribution typically being normalized by the node degrees [5]. In the context of protein-ligand scoring, models like HGScore leverage GCNs to process heterogeneous graphs of protein-ligand complexes, separating edges according to their class (inter- or intra-molecular) [9]. This allows the network to discriminate information flow based on edge type, leading to more informative complex representations. A significant challenge with vanilla GCNs is their limited depth due to problems like over-smoothing, where node representations become indistinguishable after several layers. To address this, advanced implementations like PLA-Net incorporate strategies from computer vision, such as residual and dense connections, to enable the training of deeper networks and the learning of more global chemical information [5].
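The degree-normalized aggregation corresponds to the standard GCN propagation rule \( H' = \sigma(\hat{D}^{-1/2}(A + I)\hat{D}^{-1/2} H W) \), where \( \hat{D} \) is the degree matrix of \( A + I \). Below is a dense, plain-Python sketch with ReLU as \( \sigma \); it is a teaching example rather than how HGScore or PLA-Net are implemented, and libraries such as PyTorch Geometric provide optimized sparse versions.

```python
import math
from typing import List

Matrix = List[List[float]]


def gcn_layer(adj: Matrix, h: Matrix, w: Matrix) -> Matrix:
    """One GCN layer, ReLU(D^-1/2 (A + I) D^-1/2 H W), using dense nested lists."""
    n = len(adj)
    a_hat = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)] for i in range(n)]  # add self-loops
    deg = [sum(row) for row in a_hat]
    out = []
    for i in range(n):
        agg = [0.0] * len(h[0])
        for j in range(n):
            if a_hat[i][j]:
                norm = a_hat[i][j] / math.sqrt(deg[i] * deg[j])  # symmetric degree normalization
                for k in range(len(agg)):
                    agg[k] += norm * h[j][k]
        # Project with W (d_in x d_out), then apply ReLU.
        out.append([max(0.0, sum(a * wk for a, wk in zip(agg, col))) for col in zip(*w)])
    return out


adj = [[0.0, 1.0], [1.0, 0.0]]
print(gcn_layer(adj, [[1.0], [3.0]], [[1.0]]))  # [[2.0], [2.0]]
```

In this two-node example both nodes already share the same representation after a single layer, a small-scale picture of the over-smoothing problem described above.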

Graph Attention Networks (GATs)

GATs introduce an attention mechanism into the neighborhood aggregation process, allowing the model to assign different levels of importance to each neighbor node [8]. Unlike GCNs, which use fixed, pre-defined weighting schemes, a GAT layer computes attention coefficients for each edge using a learnable function of the node features [8]. The GrASP model for binding site prediction uses the GATv2 variant, in which the score for the edge from node \( j \) to node \( i \) is \( e_{ij} = \mathbf{a}^\top \mathrm{LeakyReLU}(\mathbf{W}[\mathbf{h}_i \Vert \mathbf{h}_j]) \) and the attention coefficients \( \alpha_{ij} \) are obtained by applying a softmax to these scores over each node's neighbors. This capability is particularly valuable in biological contexts, as not all atomic interactions contribute equally to binding. For instance, when identifying druggable binding sites, a GAT can learn to attend more strongly to specific protein surface atoms that are critical for ligand binding, improving both prediction accuracy and interpretability [8].
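The sketch below computes GATv2-style attention coefficients and the resulting weighted aggregation for a toy graph. It is a single-head, plain-Python illustration with hypothetical weight values, not GrASP's code; neighbour lists are assumed to include a self-loop wherever one is wanted, and messages are the neighbour features projected by a separate matrix `w_msg`, a common but not universal choice.

```python
import math
from typing import Dict, List, Tuple

Vector = List[float]
Matrix = List[List[float]]


def matvec(w: Matrix, x: Vector) -> Vector:
    return [sum(wk * xk for wk, xk in zip(row, x)) for row in w]


def leaky_relu(x: Vector, slope: float = 0.2) -> Vector:
    return [v if v > 0 else slope * v for v in x]


def gatv2_layer(
    h: List[Vector], neighbors: Dict[int, List[int]], w_att: Matrix, a: Vector, w_msg: Matrix
) -> Tuple[Dict[int, Dict[int, float]], Dict[int, Vector]]:
    """alpha_ij = softmax over j of a . LeakyReLU(W_att [h_i || h_j]); h_i' = sum_j alpha_ij W_msg h_j."""
    attention: Dict[int, Dict[int, float]] = {}
    new_h: Dict[int, Vector] = {}
    for i, nbrs in neighbors.items():
        scores = {j: sum(ak * zk for ak, zk in zip(a, leaky_relu(matvec(w_att, h[i] + h[j])))) for j in nbrs}
        top = max(scores.values())  # subtract the max score for numerical stability
        exp = {j: math.exp(s - top) for j, s in scores.items()}
        total = sum(exp.values())
        attention[i] = {j: v / total for j, v in exp.items()}
        msgs = {j: matvec(w_msg, h[j]) for j in nbrs}
        new_h[i] = [sum(attention[i][j] * msgs[j][k] for j in nbrs) for k in range(len(w_msg))]
    return attention, new_h


h = [[1.0], [0.0], [2.0]]
alpha, new_h = gatv2_layer(h, {0: [1, 2]}, w_att=[[0.0, 1.0]], a=[1.0], w_msg=[[1.0]])
print({j: round(v, 3) for j, v in alpha[0].items()})  # {1: 0.119, 2: 0.881}
```

The coefficients in `alpha` are what an interpretability analysis would inspect to see which neighbours dominate a node's update.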

Message Passing Neural Networks (MPNNs)

The MPNN framework provides a generalized and flexible abstraction for GNNs, unifying many specific architectures [10]. It formalizes the operation of a GNN into two phases: a message-passing phase and a readout phase. During the message-passing phase, each node receives "messages" from its neighboring nodes over several time steps, progressively refining its own representation. The readout phase then aggregates all node representations into a graph-level embedding for downstream tasks like binding affinity prediction [10]. The Proximity Graph Network (PGN) is a prime example of an MPNN application for protein-ligand complexes. PGN constructs a unified graph containing both ligand atoms and proximal protein atoms, connecting them with "proximity edges" that allow information to flow between the two molecules during learning [10]. This explicit modeling of the intermolecular interface is a key reason for its strong performance in affinity prediction tasks.
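The two phases can be made concrete with a deliberately small message-passing sketch. The message function (edge weight times sender features), the `tanh` update and the sum readout are illustrative assumptions rather than PGN's learned functions; in a PGN-style graph, proximity edges between ligand and protein atoms would simply be additional entries in `edges`.

```python
import math
from typing import List, Tuple

Vector = List[float]
Edge = Tuple[int, int, float]  # directed (sender, receiver, edge weight)


def mpnn_forward(h: List[Vector], edges: List[Edge], steps: int = 2) -> Vector:
    h = [list(v) for v in h]
    for _ in range(steps):
        # Message phase: each receiver sums weight * sender features over incoming edges.
        messages = [[0.0] * len(v) for v in h]
        for src, dst, weight in edges:
            for k, x in enumerate(h[src]):
                messages[dst][k] += weight * x
        # Update phase: combine each node's state with its aggregated message.
        h = [[math.tanh(a + m) for a, m in zip(hv, mv)] for hv, mv in zip(h, messages)]
    # Readout phase: sum node states into a graph-level embedding.
    return [sum(col) for col in zip(*h)]


graph_vec = mpnn_forward([[1.0], [0.0]], [(0, 1, 1.0)], steps=1)
print([round(v, 3) for v in graph_vec])  # [1.523]
```

A regression head applied to the readout vector would then produce the affinity prediction.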

Quantitative Performance Comparison

The efficacy of these core architectures is demonstrated through rigorous benchmarking on public datasets such as PDBbind and CASF. The following table summarizes the reported performance of various GNN-based models on key prediction tasks.

Table 1: Performance of GNN Architectures on Protein-Ligand Interaction Tasks

| Model | Core Architecture | Task | Dataset | Performance |
|---|---|---|---|---|
| PLA-Net [5] | GCN | Target-Ligand Interaction | Actives as Decoys | 86.52% mAP (102 targets) |
| APMNet [7] | Cascade GCN | Binding Affinity | PDBbind v2016 | Pearson R: 0.815, RMSE: 1.268 |
| GrASP [8] | GAT | Binding Site Prediction | PDB Structures | State-of-the-art recovery & precision |
| PGN (PFP) [10] | MPNN | Affinity Prediction | PDBbind | Strong generalization, comparable to SOTA |
| PLAIG [11] | Hybrid GNN | Binding Affinity | PDBbind v2019 | PCC: 0.78 (Refined Set), PCC: 0.82 (Core Set 2016) |
| HGScore [9] | Heterogeneous GCN | Scoring/Ranking/Docking | CASF-2013/2016 | Among best AI methods |

Performance metrics indicate that while all three architectures deliver strong results, their strengths can be task-dependent. GCN-based models like PLA-Net and HGScore have shown strong performance in binary interaction prediction and scoring power [5] [9]. GATs, with their built-in interpretability, are well suited to tasks like binding site identification, where understanding which atoms the model "attends to" is valuable [8]. Message-passing models such as PGN, along with hybrid architectures such as PLAIG, demonstrate robust, generalizable performance on binding affinity regression, a direct indicator of compound potency [10] [11].

Experimental Protocols and Methodologies

Data Preparation and Featurization

A critical first step in applying GNNs to protein-ligand problems is the construction and featurization of molecular graphs. The standard data source is the PDBbind database, which provides curated protein-ligand complexes with associated experimental binding affinities (e.g., Kd or Ki) [9]. A common preprocessing step, used by models like HGScore and PLAIG, is to define the protein's binding pocket as all residues with at least one heavy atom within a cutoff distance (e.g., 10 Å) of any ligand atom [11] [9]. The featurization of nodes (atoms) and edges (bonds) is crucial for model performance. Typical atom features include atomic number, degree, formal charge, aromaticity, and whether the atom belongs to the ligand or the protein [10]. Edge features often encompass bond type (single, double, etc.), aromaticity, and, for inter-molecular "proximity edges," the distance between atoms [10].

Model Training and Evaluation

Training GNNs for binding affinity prediction is typically framed as a regression task, using loss functions such as Smooth L1 Loss (e.g., in APMNet [7]) to minimize the difference between predicted and experimental affinity values (often pKd or pKi). Standard evaluation metrics include:

- Pearson Correlation Coefficient (PCC/R): Measures the linear correlation between predicted and true values.

- Root Mean Square Error (RMSE): Measures the average magnitude of prediction errors.

- Mean Absolute Error (MAE): Similar to RMSE but less sensitive to large errors.
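For reference, the Smooth L1 loss mentioned above is quadratic for small residuals and linear for large ones. The sketch below uses the same definition and mean reduction as PyTorch's `torch.nn.SmoothL1Loss` with its `beta` threshold; the plain-Python form is only for illustration.

```python
from typing import Sequence


def smooth_l1_loss(pred: Sequence[float], target: Sequence[float], beta: float = 1.0) -> float:
    """Mean Smooth L1 loss: 0.5 * d**2 / beta if |d| < beta, else |d| - 0.5 * beta."""
    total = 0.0
    for p, t in zip(pred, target):
        d = abs(p - t)
        total += 0.5 * d * d / beta if d < beta else d - 0.5 * beta
    return total / len(pred)


print(smooth_l1_loss([0.5, 3.0], [0.0, 1.0]))  # 0.8125
```

Compared with a pure squared-error loss, the linear tail limits the influence of complexes whose measured affinity lies far from the prediction, such as noisy labels.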

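To assess generalizability, models are trained and tuned on the PDBbind refined set and then evaluated on a separate, high-quality core set (e.g., CASF-2013 or CASF-2016) whose complexes are excluded from training [7] [9]. This protocol avoids reporting performance on data the model has already seen and provides a common basis for comparison with other methods, although structural similarity between training and core-set complexes can still inflate scores.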

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Computational Tools and Datasets for GNN-based Protein-Ligand Research

| Resource Name | Type | Primary Function | Relevance to GNN Workflow |
|---|---|---|---|
| PDBbind [9] | Database | Comprehensive collection of protein-ligand complexes with binding affinities. | Provides the primary structured data for training and benchmarking models. |
| RDKit | Software | Cheminformatics and machine learning toolkit. | Used for molecule graph processing, feature calculation, and file format conversions. |
| PyTorch Geometric | Library | A PyTorch-based library for deep learning on graphs. | Provides the core building blocks for implementing GCN, GAT, and MPNN models. |
| OpenBabel | Software | Chemical toolbox for file format conversion and descriptor calculation. | Often used alongside RDKit for preprocessing molecular structures. |
| MGLTools | Software | Preparation and analysis of molecular structures. | Used to convert protein and ligand files into .pdbqt format for docking and analysis. |
| sc-PDB [8] | Database | Annotated database of druggable binding sites. | Used for training and evaluating binding site prediction models like GrASP. |

Integrated Workflow and Architectural Comparison

Implementing a GNN for protein-ligand interaction prediction involves a multi-stage pipeline that integrates the components previously discussed. The workflow begins with data preparation, where 3D structures of protein-ligand complexes are converted into graph representations and featurized. The choice of GNN architecture (GCN, GAT, or MPNN) then dictates how information is propagated and transformed through the graph to learn a meaningful representation of the complex. Finally, a readout function generates a prediction for the target property, such as a binding affinity score or an interaction probability.

Each architecture offers distinct advantages. GCNs provide a strong, computationally efficient baseline. GATs offer built-in interpretability through their attention weights, which can highlight key interacting atoms. MPNNs, as a general framework, offer great flexibility in the design of message and update functions, potentially capturing complex physical interactions. A critical consideration for all architectures is the risk of memorization. Studies have shown that some GNNs may predominantly memorize training ligand data rather than learning fundamental interaction patterns, which can limit their performance on novel chemotypes [12]. Techniques such as principal component analysis (PCA) and ensemble learning with stacking regressors, as employed in PLAIG, can help mitigate this overfitting and improve generalization [11].

GCNs, GATs, and MPNNs form the foundational toolkit for applying graph neural networks to protein-ligand interaction research. Each architecture provides a unique mechanism for learning from the complex, non-Euclidean structure of biological molecules, leading to significant advances over traditional scoring functions. GCNs offer a balanced approach of efficiency and performance, GATs bring interpretability to the forefront, and the flexible MPNN framework allows for the explicit modeling of intricate intermolecular interactions. The ongoing integration of physical constraints, better regularization to prevent memorization, and the development of more holistic molecular representations are poised to further enhance the predictive power and real-world impact of these models. As these core architectures continue to evolve, they solidify the role of GNNs as an indispensable technology in the computational drug discovery pipeline.

Defining and Predicting Binding Affinity (pKd, pKi, IC50)

The accurate prediction of binding affinity is a cornerstone of computational drug discovery, directly influencing the efficacy and optimization of potential therapeutics. This section examines the critical task of defining and predicting key affinity metrics (pKd, pKi, and IC50) within the framework of graph neural networks (GNNs). We explore how modern GNN architectures, coupled with advanced training paradigms like transfer learning and rigorous dataset curation, are overcoming historical challenges to achieve robust generalizability in predicting protein-ligand interactions. The discussion is supported by quantitative data, detailed experimental protocols, and visualizations of the underlying workflows, providing a technical guide for researchers and drug development professionals.

Binding affinity quantifies the strength of interaction between a protein and a ligand, making it a critical parameter in drug discovery for prioritizing lead compounds. It is typically measured through experimental assays and reported as the dissociation constant (Kd), inhibition constant (Ki), or half maximal inhibitory concentration (IC50). For computational modeling, these values are usually transformed into logarithmic scales (pKd = -log10(Kd), pKi = -log10(Ki), pIC50 = -log10(IC50), with each value expressed in mol/L). Because the binding free energy is proportional to the logarithm of Kd (ΔG = RT ln Kd), the transformed values vary linearly with binding energy. The primary challenge in affinity prediction lies in developing models that generalize beyond their training data to accurately score novel protein-ligand complexes, a task for which graph neural networks have recently shown significant promise [13].
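A short worked example of these transformations: the sketch converts concentrations reported in nanomolar into p-values and, for Kd, into a standard binding free energy via \( \Delta G = RT \ln K_d \) (1 M standard state, 298.15 K). The helper names are hypothetical, and the free-energy step is shown only for Kd because IC50 depends on assay conditions and is not a thermodynamic constant.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)


def p_value_from_nm(value_nm: float) -> float:
    """pKd, pKi or pIC50 from a value in nM: -log10 of the molar concentration."""
    return -math.log10(value_nm * 1e-9)


def delta_g_from_kd_nm(kd_nm: float, temperature: float = 298.15) -> float:
    """Binding free energy in kcal/mol, dG = RT ln(Kd / 1 M)."""
    return R_KCAL * temperature * math.log(kd_nm * 1e-9)


print(round(p_value_from_nm(10.0), 2))     # 8.0
print(round(delta_g_from_kd_nm(10.0), 1))  # -10.9
```

Each tenfold gain in affinity raises pKd by 1 and lowers ΔG by about 1.36 kcal/mol at room temperature, which is why the logarithmic scale is the natural regression target.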

Graph Neural Networks for Affinity Prediction

Graph Neural Networks (GNNs) have emerged as a powerful class of algorithms for molecular property prediction due to their natural ability to represent and learn from molecular structures. In the context of protein-ligand interactions, GNNs model the complex as a graph where atoms are nodes and bonds are edges, effectively capturing the topological and spatial features critical for binding [14].

A key advancement in this domain is the move towards sparse graph modeling of interactions. Unlike architectures that process the entire protein, which can be computationally prohibitive, these models focus on the binding pocket, the local region where the ligand binds. This approach, used by the GEMS (Graph neural network for Efficient Molecular Scoring) model, constructs a heterogeneous graph that includes both protein and ligand atoms, enabling a detailed representation of the interaction interface [13]. This method maintains high prediction performance on independent benchmarks, which suggests that it learns intermolecular interactions rather than memorizing its training data [13].

Another transformative strategy is transfer learning in a multi-fidelity setting. Drug discovery often involves a screening funnel where inexpensive, low-fidelity data (e.g., from high-throughput screening) is abundant, while high-fidelity experimental data is sparse and costly to acquire. GNNs can be pre-trained on large volumes of low-fidelity data to learn generalizable molecular representations, which are then fine-tuned on smaller, high-fidelity datasets. This approach has been demonstrated to improve model performance on sparse high-fidelity tasks by up to eight times while using an order of magnitude less high-fidelity training data [14]. Critical to the success of this transfer is the use of adaptive readout functions, which replace simple, fixed operations (like sum or mean) with neural network-based operators (e.g., attention mechanisms) to aggregate atom-level embeddings into molecule-level representations, thereby enhancing the model's expressive power [14].
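To illustrate the difference between fixed and adaptive readouts, the sketch contrasts a mean readout with a simple attention-weighted readout, in which a learnable gate vector scores each atom embedding and a softmax turns the scores into pooling weights. This gated pooling is a minimal stand-in chosen for brevity; the adaptive readouts studied in [14] may use more elaborate neural operators, and the gate values here are hypothetical.

```python
import math
from typing import List, Sequence

Vector = List[float]


def mean_readout(h: Sequence[Sequence[float]]) -> Vector:
    return [sum(col) / len(h) for col in zip(*h)]


def attention_readout(h: Sequence[Sequence[float]], gate: Sequence[float]) -> Vector:
    """Softmax(gate . h_i) weights per atom, then a weighted sum of atom embeddings."""
    scores = [sum(g * x for g, x in zip(gate, v)) for v in h]
    top = max(scores)
    weights = [math.exp(s - top) for s in scores]
    z = sum(weights)
    return [sum(w / z * v[k] for w, v in zip(weights, h)) for k in range(len(h[0]))]


atoms = [[1.0, 2.0], [3.0, 4.0]]
print(mean_readout(atoms))                   # [2.0, 3.0]
print(attention_readout(atoms, [0.0, 0.0]))  # [2.0, 3.0] (a zero gate gives uniform weights)
print(attention_readout(atoms, [1.0, 1.0]))  # weighted toward the second atom
```

With the gate learned during training, the readout can emphasize the atoms that matter for the predicted property instead of treating every atom equally.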

Experimental Protocols & Workflows

A Protocol for Robust Model Training with PDBbind CleanSplit

Accurate evaluation of a GNN's predictive power requires a training and testing protocol that prevents data leakage. The following methodology outlines the use of the PDBbind CleanSplit dataset to ensure genuine generalization [13].

- 1. Dataset Acquisition: Obtain the PDBbind database (general set) and the Comparative Assessment of Scoring Functions (CASF) benchmark dataset.

- 2. Structure-Based Filtering:

- Input: All protein-ligand complexes from the PDBbind training set and CASF test set.

- Similarity Calculation: For each potential train-test pair, compute three similarity metrics:

- Protein Similarity: Using TM-score.

- Ligand Similarity: Using Tanimoto coefficient based on molecular fingerprints.

- Binding Conformation Similarity: Using pocket-aligned ligand root-mean-square deviation (RMSD).

- Exclusion: Remove any training complex that exceeds predefined similarity thresholds (e.g., TM-score > 0.7, Tanimoto > 0.9, RMSD < 2.0 Å) with any complex in the CASF test set.

- 3. Redundancy Reduction: Apply a clustering algorithm to the filtered training set to identify and remove highly similar complexes within the training data itself, ensuring a more diverse and non-redundant dataset.

- 4. Model Training: Train the GNN model (e.g., GEMS) exclusively on the resulting PDBbind CleanSplit training set.

- 5. Validation: Evaluate the trained model's performance on the strictly independent CASF benchmark to obtain a true measure of its generalization capability.
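The train-test exclusion in step 2 can be sketched as follows, assuming the three similarity values for every train-test pair have already been computed with external tools. The thresholds are the example values above. Combining them with a logical AND, so that a pair counts as leaky only when it is similar in protein, ligand and pose, is an assumption of this sketch. All complex IDs are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List

@dataclass(frozen=True)
class PairSimilarity:
    tm_score: float     # protein similarity, 0-1 (higher = more similar)
    tanimoto: float     # ligand fingerprint similarity, 0-1 (higher = more similar)
    ligand_rmsd: float  # pocket-aligned ligand RMSD in Å (lower = more similar)

# Example thresholds quoted in the protocol; treat them as tunable.
TM_MIN, TANIMOTO_MIN, RMSD_MAX = 0.7, 0.9, 2.0

def is_leaky(s: PairSimilarity) -> bool:
    """Assumption: a pair is leaky only if all three criteria indicate high similarity."""
    return s.tm_score > TM_MIN and s.tanimoto > TANIMOTO_MIN and s.ligand_rmsd < RMSD_MAX

def filter_training_set(train_ids: Iterable[str], test_ids: List[str],
                        similarity: Callable[[str, str], PairSimilarity]) -> List[str]:
    """Keep only training complexes with no leaky partner among the test complexes."""
    return [tr for tr in train_ids
            if not any(is_leaky(similarity(tr, te)) for te in test_ids)]

# Toy usage with made-up precomputed similarities (IDs are hypothetical).
sims = {
    ("train_A", "test_1"): PairSimilarity(0.95, 0.97, 0.8),  # near-duplicate: removed
    ("train_B", "test_1"): PairSimilarity(0.95, 0.30, 4.5),  # same protein, new ligand: kept
}
default = PairSimilarity(0.2, 0.1, 9.0)
kept = filter_training_set(["train_A", "train_B", "train_C"], ["test_1"],
                           lambda a, b: sims.get((a, b), default))
print(kept)  # ['train_B', 'train_C']
```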

A Protocol for High-Throughput Virtual Screening with DENVIS

The DENVIS (deep neural virtual screening) pipeline demonstrates an end-to-end protocol for virtual screening that bypasses the computational bottleneck of molecular docking [15].

- 1. Input Preparation:

- Protein Pocket: Represent the target protein's binding pocket as a graph. Nodes are featurized using a combination of atomic features (e.g., atom type, hybridization) and molecular surface features (e.g., shape, curvature).

- Ligand Library: Represent each small molecule in a screening library as a graph, with nodes as atoms (featurized with element type, charge, etc.) and edges as bonds (featurized with bond type, distance, etc.).

- 2. Model Processing: Feed the protein pocket graph and each ligand graph into a GNN model (often an ensemble of GNNs for improved robustness).

- 3. Interaction Scoring: The GNN processes the graphs and outputs a predicted binding affinity score (e.g., pKi) for each protein-ligand pair.

- 4. Hit Identification: Rank all ligands in the library by their predicted affinity and select the top-ranking compounds for further experimental validation. This workflow offers several orders of magnitude faster screening times compared to docking-based methods [15].
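Steps 2 to 4 reduce to scoring each library member against one pocket, averaging over an ensemble, and keeping the best-ranked compounds. The sketch below treats the pocket and ligand graphs as opaque placeholders and the models as hypothetical callables returning a predicted affinity. It is not the DENVIS code, only an illustration of the ranking logic.

```python
import heapq
from typing import Callable, List, Sequence, Tuple

# A scorer maps (pocket_graph, ligand_graph) to a predicted affinity such as pKi.
# Graphs are opaque placeholders here; real scorers would be trained GNNs.
Scorer = Callable[[object, object], float]

def ensemble_score(models: Sequence[Scorer], pocket, ligand) -> float:
    """Average the predictions of an ensemble of models."""
    return sum(m(pocket, ligand) for m in models) / len(models)

def screen_library(models: Sequence[Scorer], pocket, library: dict,
                   top_k: int) -> List[Tuple[str, float]]:
    """Score every ligand against one pocket and return the top_k (name, score) pairs."""
    scored = ((ensemble_score(models, pocket, graph), name)
              for name, graph in library.items())
    return [(name, score) for score, name in heapq.nlargest(top_k, scored)]

# Toy usage: "graphs" are plain numbers and both models are hypothetical stand-ins.
models = [lambda p, g: g, lambda p, g: g + 1.0]
library = {"lig1": 6.0, "lig2": 8.0, "lig3": 7.0}
hits = screen_library(models, pocket=None, library=library, top_k=2)
print(hits)  # [('lig2', 8.5), ('lig3', 7.5)]
```

`heapq.nlargest` avoids sorting the whole library when only a short hit list is needed, which matters when the library holds millions of compounds.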

A Protocol for Multi-Fidelity Transfer Learning

This protocol leverages datasets of varying fidelity to improve predictions on small, high-quality datasets [14].

- 1. Low-Fidelity Pre-training:

- Train a GNN with an adaptive readout function on a large, low-fidelity dataset (e.g., millions of data points from primary high-throughput screening).

- 2. High-Fidelity Fine-tuning:

- Take the pre-trained model and fine-tune its parameters on a small, high-fidelity dataset (e.g., thousands of data points from confirmatory assays).

- Strategies can include:

- Label Augmentation: Using the pre-trained model to generate low-fidelity labels for the high-fidelity data, which are then used as input features for the high-fidelity model.

- Direct Fine-tuning: Initializing the high-fidelity model with the weights from the low-fidelity model and training it further on the high-fidelity data.

- 3. Evaluation: Assess the fine-tuned model on a held-out test set of high-fidelity data to quantify the performance gain over a model trained from scratch.
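The direct fine-tuning strategy can be illustrated without a GNN. In the NumPy sketch below a linear model stands in for the network, and the low- and high-fidelity tasks are synthetic, related linear relationships. All data are made up, and label augmentation is not shown. The point is only that initialising from pre-trained weights helps when high-fidelity data are scarce and the training budget is small.

```python
import numpy as np

def train_linear(X, y, w_init=None, lr=0.05, steps=500):
    """Full-batch gradient descent on mean squared error for y ~ X @ w."""
    w = np.zeros(X.shape[1]) if w_init is None else w_init.copy()
    for _ in range(steps):
        w -= lr * 2.0 * X.T @ (X @ w - y) / len(y)
    return w

def mse(X, y, w):
    return float(np.mean((X @ w - y) ** 2))

rng = np.random.default_rng(0)
d = 10
w_low = rng.normal(size=d)                    # synthetic low-fidelity relationship
w_high = w_low + 0.05 * rng.normal(size=d)    # related high-fidelity relationship

X_low = rng.normal(size=(5000, d)); y_low = X_low @ w_low      # abundant, cheap data
X_high = rng.normal(size=(20, d)); y_high = X_high @ w_high    # sparse, costly data
X_test = rng.normal(size=(500, d)); y_test = X_test @ w_high   # held-out high-fidelity set

w_pre = train_linear(X_low, y_low)                            # 1. low-fidelity pre-training
w_ft = train_linear(X_high, y_high, w_init=w_pre, steps=20)   # 2. direct fine-tuning
w_scratch = train_linear(X_high, y_high, steps=20)            # baseline with the same budget

print("fine-tuned test MSE:", mse(X_test, y_test, w_ft))      # 3. evaluation
print("from-scratch test MSE:", mse(X_test, y_test, w_scratch))
```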

The following workflow diagram illustrates the multi-fidelity transfer learning protocol.

Data & Benchmarking

The performance of predictive models is highly dependent on the quality and structure of the training data. The curation of the PDBbind CleanSplit dataset has revealed significant data leakage in previous benchmarks, leading to inflated performance metrics [13].

Table 1: Key Datasets for Binding Affinity Prediction

| Dataset Name | Description | Key Feature | Role in Model Development |
|---|---|---|---|
| PDBbind [13] | A comprehensive collection of protein-ligand complexes with experimental binding affinity data. | Provides structural and affinity data for training. | Traditional benchmark source, but contains redundancies and data leakage with test sets. |
| CASF Benchmark [13] | A benchmark set used for the comparative assessment of scoring functions. | Standard set for evaluating prediction accuracy. | Previously contained complexes highly similar to PDBbind training set, inflating scores. |
| PDBbind CleanSplit [13] | A curated version of PDBbind with reduced train-test leakage and internal redundancy. | Ensures strict separation between training and test data. | Enables genuine evaluation of model generalization; recommended for robust model training. |
| QMugs [14] | A dataset of ~650,000 drug-like molecules with 12 quantum mechanical properties. | Contains multi-fidelity quantum properties. | Useful for pre-training and transfer learning studies in a molecular design context. |

When models are retrained on the CleanSplit dataset, the performance of many state-of-the-art models drops substantially, indicating that their capabilities had previously been overestimated [13]. In contrast, GEMS, which combines a sparse graph architecture with transfer learning, maintains high performance, indicating genuine generalization [13].

Table 2: Comparative Model Performance on CASF Benchmark

| Model / Approach | Key Architectural / Training Feature | Reported Performance (on original splits) | Performance on PDBbind CleanSplit | Generalization Assessment |
|---|---|---|---|---|
| Classical Docking (AutoDock Vina) [13] | Force-field based scoring function. | Limited accuracy | N/A | Poor to moderate |
| GenScore, Pafnucy [13] | Deep-learning based scoring functions. | Excellent benchmark performance | Performance drops markedly | Overestimated due to data leakage |
| GEMS (GNN) [13] | Sparse graph model; transfer learning from language models. | State-of-the-art | Maintains high performance | Robust, based on genuine understanding of interactions |
| Multi-Fidelity GNN [14] | Transfer learning with adaptive readouts. | Improves performance by up to 8x in low-data regimes | N/A | Excellent for sparse high-fidelity tasks |

The Scientist's Toolkit

The following table lists key software and data resources essential for research in GNN-based prediction of binding affinity.

Table 3: Essential Research Reagents & Resources

| Resource Name | Type | Function / Application |
|---|---|---|
| PDBbind CleanSplit [13] | Dataset | A filtered training dataset designed to eliminate data leakage, enabling robust model training and evaluation. |
| MAGPIE [16] | Software | A tool for visualizing and analyzing thousands of interactions between a target ligand and its protein binders, useful for interpreting model predictions and identifying interaction hotspots. |
| DENVIS [15] | Software Pipeline | An end-to-end GNN-based pipeline for high-throughput virtual screening that avoids the docking step, drastically reducing screening time. |
| GEMS [13] | Model | A GNN architecture that uses a sparse graph model and transfer learning to achieve state-of-the-art generalization on binding affinity prediction. |
| Adaptive Readouts [14] | Algorithmic Component | Neural network-based operators (e.g., attention mechanisms) that replace simple sum/mean operations in GNNs to create more expressive molecular representations, crucial for transfer learning. |

The prediction of binding affinity is being transformed by graph neural networks. The critical lessons for researchers are the paramount importance of rigorous dataset curation, as exemplified by PDBbind CleanSplit, and the power of advanced modeling strategies such as sparse graph architectures, transfer learning across fidelities, and adaptive readout functions. These approaches collectively address the historical pitfalls of data leakage and model memorization, paving the way for the development of predictive tools that can genuinely accelerate drug discovery and the understanding of protein-ligand interactions.

The accurate prediction of protein-ligand binding affinity is a cornerstone of computational drug discovery. In this field, the PDBbind database and the Comparative Assessment of Scoring Functions (CASF) benchmark have established themselves as foundational resources for developing and evaluating graph neural network (GNN) models. PDBbind provides a comprehensive collection of experimental binding affinities (Kd, Ki, IC50) for protein-ligand complexes sourced from the Protein Data Bank (PDB), offering a structured repository for training machine learning models. The CASF benchmark, in turn, provides standardized test sets and evaluation metrics to objectively compare the performance of different scoring functions, including modern GNNs. Together, these resources form an essential ecosystem for advancing structure-based drug design, though recent research has revealed critical challenges that must be addressed to ensure proper model generalization.

For GNNs specifically, which learn molecular representations from graph-structured data of protein-ligand complexes, these databases provide the fundamental training ground and testing arena. However, a significant issue identified in recent literature is data leakage between PDBbind and the CASF benchmarks. Studies have revealed that nearly half (49%) of CASF complexes have exceptionally similar counterparts in the PDBbind training set, creating an inflated perception of model performance that does not translate to genuinely novel targets [13]. This finding has prompted new dataset splitting strategies and more rigorous evaluation protocols, which researchers need to understand when developing GNN models for binding affinity prediction.

Critical Analysis of Data Integrity and Benchmarking Practices

The Data Leakage Problem in Standard Benchmarks

Recent investigations have uncovered substantial data leakage between the PDBbind database and CASF benchmarks, severely compromising the reliability of reported model performance metrics. When models are trained on PDBbind and evaluated on CASF benchmarks, the high structural similarity between training and test complexes enables prediction through memorization rather than genuine learning of protein-ligand interactions. Researchers discovered this issue through a structure-based clustering algorithm that identified complexes with similar protein structures (TM scores), ligand structures (Tanimoto scores > 0.9), and comparable binding conformations (pocket-aligned ligand root-mean-square deviation) [13].

The extent of this leakage is substantial: nearly 600 high-similarity pairs were detected between PDBbind training and CASF complexes, affecting 49% of all CASF complexes. Nearly half of the test complexes therefore do not present truly novel challenges to trained models. This leakage explains why some GNNs achieve competitive CASF performance even when critical protein or ligand information is omitted from their inputs: they are not learning genuine interaction principles but exploiting dataset biases [13]. One analysis demonstrated that a simple similarity-based algorithm, which predicts affinity by averaging the labels of the five most similar training complexes, achieves Pearson R = 0.716 on CASF2016, competitive with some published deep learning models [13].
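The similarity-based baseline described above is simple enough to write in a few lines. The NumPy sketch below assumes a precomputed test-by-training similarity matrix. The similarity measure and the toy numbers are illustrative assumptions, not the setup used in [13].

```python
import numpy as np

def knn_affinity_baseline(sim_test_train: np.ndarray, y_train: np.ndarray,
                          k: int = 5) -> np.ndarray:
    """Predict each test affinity as the mean label of its k most similar training complexes.

    sim_test_train[i, j] is a similarity between test complex i and training
    complex j (higher = more similar). How it is computed is left open here.
    """
    top_k = np.argsort(-sim_test_train, axis=1)[:, :k]  # k most similar training complexes
    return y_train[top_k].mean(axis=1)

# Toy usage: 2 test complexes, 3 training complexes (made-up values), k=2.
sim = np.array([[0.90, 0.10, 0.80],
                [0.10, 0.95, 0.20]])
y_train = np.array([5.0, 7.0, 9.0])
print(knn_affinity_baseline(sim, y_train, k=2))  # [7. 8.]
```

Because this baseline has no notion of physical interactions, a deep model that barely beats it on a given split is likely benefiting from train-test similarity rather than learning binding.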

PDBbind CleanSplit: A Solution for Robust Evaluation

To address data leakage concerns, researchers have proposed PDBbind CleanSplit, a refined training dataset curated through structure-based filtering to eliminate train-test leakage and internal redundancies. This approach implements a multimodal filtering algorithm that combines protein similarity, ligand similarity, and binding conformation similarity to identify and remove problematic overlaps [13].

The CleanSplit methodology involves two crucial filtering steps:

- Removing train-test leakage: Excluding all training complexes that closely resemble any CASF test complex, plus any training complexes with ligands identical to those in the test set (Tanimoto > 0.9)

- Reducing training set redundancy: Iteratively removing complexes from the training set to resolve internal similarity clusters, eliminating memorization opportunities
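One way to resolve internal similarity clusters iteratively is sketched below. The greedy rule, which repeatedly drops the complex involved in the most remaining "too similar" pairs, is an assumption for illustration and not necessarily the procedure used to build CleanSplit. Pairs are assumed to be precomputed with the same similarity criteria as the train-test filter.

```python
from collections import defaultdict
from typing import Iterable, List, Tuple

def reduce_redundancy(ids: Iterable[str], similar_pairs: Iterable[Tuple[str, str]]) -> List[str]:
    """Greedily drop complexes until no two remaining complexes form a similar pair.

    Assumed heuristic: remove the complex with the most similar partners still
    present, breaking ties by ID, and repeat until no similar pairs remain.
    """
    neighbours = defaultdict(set)
    for a, b in similar_pairs:
        if a != b:
            neighbours[a].add(b)
            neighbours[b].add(a)
    kept = set(ids)
    while True:
        degree = {c: len(neighbours[c] & kept) for c in kept}
        worst = max(degree, key=lambda c: (degree[c], c), default=None)
        if worst is None or degree[worst] == 0:
            break
        kept.remove(worst)
    return sorted(kept)

# Toy usage: A is similar to both B and C, so removing A alone resolves the cluster.
print(reduce_redundancy(["A", "B", "C", "D"], [("A", "B"), ("A", "C")]))  # ['B', 'C', 'D']
```

The greedy choice keeps as many complexes as possible in simple star-shaped clusters, but it is a heuristic: finding the largest non-redundant subset in general is a maximum independent set problem.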

This filtering results in the removal of approximately 4% of training complexes due to train-test similarity and an additional 7.8% due to internal redundancies [13]. The resulting dataset enables genuine evaluation of model generalization to unseen protein-ligand complexes, as demonstrated by the substantial performance drop observed in state-of-the-art models when retrained on CleanSplit versus the original PDBbind.

Table 1: Impact of PDBbind CleanSplit on Model Generalization

| Model Type | Performance on Standard Split | Performance on CleanSplit | Interpretation |
|---|---|---|---|
| Previous State-of-the-Art Models | High benchmark performance (e.g., GenScore, Pafnucy) | Substantial performance drop | Original performance largely driven by data leakage |
| GEMS (GNN with transfer learning) | High benchmark performance | Maintains high performance | Genuine generalization capability to unseen complexes |

Experimental Protocols for Robust Model Development

Data Preprocessing and Preparation

Proper data preprocessing is essential for developing GNNs that generalize well to novel protein-ligand complexes. The standard workflow begins with data acquisition from PDBbind, followed by rigorous filtering to eliminate both train-test leakage and internal redundancies. For GNN-based approaches, molecular structures are typically converted into graph representations where atoms constitute nodes and chemical bonds form edges [17].

Advanced node feature initialization incorporates both atomic properties and topological context using circular algorithms inspired by Extended-Connectivity Fingerprints (ECFP). This approach generates atom identifiers by hashing chemical properties (Daylight atomic invariants) and iteratively updating them with neighborhood information, effectively capturing both atomic characteristics and molecular topology [17]. For protein representation, common approaches include using residue-level features or pocket-centered representations focused on the binding site.
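The circular identifier scheme can be sketched in pure Python on a hand-built heavy-atom graph. The tuple of atomic invariants used here is an assumed subset of the Daylight-style invariants. The sketch also omits two details of real ECFP: bond orders in the neighbour tuples and removal of duplicate substructures. For production use, a cheminformatics toolkit such as RDKit provides Morgan fingerprints directly.

```python
import hashlib
from typing import Dict, List, Tuple

def _hash(obj) -> int:
    """Deterministic 32-bit hash of an object's repr (Python's hash() varies for strings)."""
    return int.from_bytes(hashlib.sha1(repr(obj).encode()).digest()[:4], "little")

def circular_atom_ids(invariants: List[Tuple], adjacency: Dict[int, List[int]],
                      radius: int = 2) -> List[List[int]]:
    """Morgan/ECFP-style atom identifiers for iterations 0..radius.

    invariants[i]: assumed tuple of atom properties, here
    (atomic number, heavy-atom degree, formal charge, attached H count, in ring).
    At each iteration an atom's new ID hashes its own ID with the sorted IDs of
    its neighbours, so the result does not depend on atom ordering.
    """
    ids = [_hash(inv) for inv in invariants]
    history = [ids]
    for it in range(1, radius + 1):
        ids = [_hash((it, own, tuple(sorted(ids[n] for n in adjacency.get(atom, [])))))
               for atom, own in enumerate(ids)]
        history.append(ids)
    return history

# Propane heavy atoms C0-C1-C2: the two terminal carbons should share identifiers.
propane = [(6, 1, 0, 3, 0), (6, 2, 0, 2, 0), (6, 1, 0, 3, 0)]
hist = circular_atom_ids(propane, {0: [1], 1: [0, 2], 2: [1]}, radius=2)
print(hist[2][0] == hist[2][2], hist[2][0] == hist[2][1])  # True False
```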

The following Graphviz diagram illustrates the complete workflow from data collection to model evaluation:

GNN Model Training with Uncertainty Quantification

Implementing GNN training with proper regularization and uncertainty quantification is critical for producing reliable models. The PIGNet framework provides a representative example of modern training protocols, utilizing multiple data sources including original complexes, docking poses, random screening, and cross-screening data [18].

For robust training, the following practices are recommended:

- Uncertainty-aware training: Implement Monte Carlo dropout with a dropout rate of 0.2 (higher than the standard 0.1) during both training and inference, performing multiple stochastic forward passes to estimate predictive uncertainty

- Multi-task learning: Simultaneously optimize for affinity prediction on different data types (original structures, docking poses) to improve generalization

- Structured regularization: Use physical constraints or energy-based losses to incorporate domain knowledge
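Monte Carlo dropout at inference time can be sketched in NumPy as follows. Random weights stand in for a trained affinity head (hypothetical), and only the inference side is shown: dropout stays on, several stochastic passes are made, and their spread serves as the uncertainty estimate. The dropout rate of 0.2 follows the recommendation above. The number of passes (50) is an arbitrary choice.

```python
import numpy as np

def mlp_forward(x, W1, W2, p_drop, rng):
    """Two-layer MLP regression head with dropout applied on the hidden layer."""
    h = np.maximum(0.0, x @ W1)              # ReLU hidden layer
    keep = rng.random(h.shape) >= p_drop     # drop each hidden unit with probability p_drop
    h = h * keep / (1.0 - p_drop)            # inverted-dropout rescaling
    return h @ W2

def mc_dropout_predict(x, W1, W2, p_drop=0.2, n_samples=50, rng=None):
    """Mean and standard deviation over n_samples stochastic forward passes."""
    if rng is None:
        rng = np.random.default_rng(0)
    preds = np.stack([mlp_forward(x, W1, W2, p_drop, rng) for _ in range(n_samples)])
    return preds.mean(axis=0), preds.std(axis=0)

# Toy usage: random weights stand in for a trained model (hypothetical).
rng = np.random.default_rng(42)
x = rng.normal(size=(4, 16))                 # 4 complexes, 16-dim pooled embeddings
W1, W2 = rng.normal(size=(16, 32)), rng.normal(size=(32, 1))
mean, std = mc_dropout_predict(x, W1, W2)
print(mean.ravel(), std.ravel())             # std acts as a per-complex uncertainty estimate
```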

Training should be monitored on a held-out validation set with minimal similarity to the training data, with early stopping based on validation performance. The checkpoint that performs best on this validation set should then be selected for final evaluation on the independent test sets [18].

Benchmarking Protocols and Performance Assessment

Comprehensive model evaluation requires rigorous benchmarking across multiple test sets and metrics. The standard protocol involves testing on the CASF-2016 benchmark components (scoring, ranking, docking, screening) and on additional independent sets such as CSAR1 and CSAR2 [18]. In the PIGNet implementation, each benchmark run takes three inputs: the directory of preprocessed complex data, the directory of keys used for data access, and a file listing the complex keys with their binding affinities [18].

To execute proper benchmarking:

- Scoring power evaluation: Calculate Pearson's R and RMSE between predicted and experimental binding affinities

- Ranking power evaluation: Assess Spearman's correlation for congeneric series

- Docking power evaluation: Measure success in identifying native poses among decoys

- Screening power evaluation: Evaluate virtual screening enrichment factors
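The scoring, ranking and screening metrics above can be computed with a few NumPy helpers. This is a simplified sketch. The Spearman helper assumes no tied values. The official CASF-2016 ranking power averages correlations over target clusters, which is not reproduced here, and docking power is omitted.

```python
import numpy as np

def pearson_r(y_true, y_pred) -> float:
    return float(np.corrcoef(y_true, y_pred)[0, 1])

def rmse(y_true, y_pred) -> float:
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))

def spearman_rho(y_true, y_pred) -> float:
    """Spearman's rho as Pearson's R on ranks (assumes no tied values)."""
    ranks = lambda v: np.argsort(np.argsort(np.asarray(v)))
    return pearson_r(ranks(y_true), ranks(y_pred))

def enrichment_factor(scores, is_active, top_fraction=0.01) -> float:
    """EF: active rate among the top-ranked fraction divided by the overall active rate."""
    scores = np.asarray(scores, float)
    is_active = np.asarray(is_active, bool)
    n_top = max(1, int(round(top_fraction * len(scores))))
    top = np.argsort(-scores)[:n_top]        # highest predicted scores first
    return float(is_active[top].mean() / is_active.mean())

# Toy screen: 100 compounds, the 5 actives are ranked first.
scores = np.arange(100)[::-1]
active = np.zeros(100, dtype=bool)
active[:5] = True
print(enrichment_factor(scores, active, 0.01), enrichment_factor(scores, active, 0.10))
```

A perfect ranking in this toy screen gives EF1% = 20 but only EF10% = 10. In general EF at x% is capped at 100/x, so thresholds for enrichment factors only make sense relative to the chosen fraction.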

For critical interpretation, results should be compared against baseline methods and ablation studies that test model components. Particularly informative are ablations that omit protein nodes from input graphs, which test whether models genuinely learn interactions versus memorizing ligand properties [13].

Table 2: Essential Benchmarking Metrics for Protein-Ligand Affinity Prediction

| Benchmark Type | Key Metrics | Evaluation Focus | Interpretation Guidelines |
|---|---|---|---|
| Scoring Power | Pearson's R, RMSE | Accuracy of absolute affinity prediction | R > 0.8 indicates strong performance; significant drop from standard split to CleanSplit suggests overfitting |
| Ranking Power | Spearman's ρ | Relative ordering of similar complexes | Critical for lead optimization; ρ > 0.6 indicates useful ranking capability |
| Docking Power | Pose identification success rate | Ability to identify native binding poses | Success rate > 0.8 indicates strong pose discrimination |
| Screening Power | Enrichment Factors (EF1%, EF10%) | Virtual screening performance | EF1% > 10 indicates useful screening utility; EF at x% cannot exceed 100/x, so EF10% is capped at 10 |

Implementation Tools and Research Reagent Solutions

Successful implementation of GNNs for binding affinity prediction requires specific computational tools and resources. The following table summarizes essential components of the researcher's toolkit:

Table 3: Research Reagent Solutions for GNN Development

| Tool Category | Specific Tools | Function | Implementation Notes |
|---|---|---|---|
| Deep Learning Frameworks | PyTorch | Model implementation and training | Provides flexible GNN implementation; required for PIGNet [18] |
| Cheminformatics | RDKit | Molecular graph construction and feature calculation | Essential for processing SMILES strings and generating molecular graphs [17] |
| Structural Biology | BioPython, ASE | Protein structure processing and analysis | Handles PDB files and structural operations [18] |
| Scientific Computing | NumPy, SciPy | Numerical operations and statistics | Fundamental data manipulation and metric calculations |
| Specialized Scoring | Smina | Molecular docking and scoring | Provides docking capabilities and traditional scoring functions [18] |
| Model Interpretation | GNNExplainer, Integrated Gradients | Explaining model predictions and identifying important features | Critical for validating learned interaction patterns [17] |

Advanced Considerations and Future Directions

Explaining GNN Predictions and Validating Learned Physics

As GNNs become more prevalent in binding affinity prediction, interpreting their predictions and validating the underlying reasoning has become essential. Explainable AI techniques such as GNNExplainer and Integrated Gradients can identify which atoms and residues contribute most to predictions, helping researchers verify whether models learn biophysically plausible interaction patterns [17]. Studies analyzing GNN learning characteristics have found that while models increasingly prioritize interaction information for predicting high affinities, they still show strong dependence on ligand memorization [19].

Ablation studies that systematically remove or shuffle different input components (protein nodes, ligand nodes, spatial information) provide critical insights into what models actually learn. These analyses have revealed that some GNNs can maintain reasonable performance even when protein information is omitted, indicating they may rely heavily on ligand-based memorization rather than genuine interaction understanding [19]. For this reason, rigorous ablation studies should be standard practice in model development and evaluation.

Integration with Complementary Benchmarks

While PDBbind and CASF provide foundational resources, researchers should consider complementary benchmarks to thoroughly assess model capabilities. The PLA15 benchmark offers quantum-chemical estimates of protein-ligand interaction energies at the DLPNO-CCSD(T) level, enabling validation against higher-level theoretical references [20]. Evaluation on PLA15 has revealed significant performance variations across methods, with semi-empirical quantum methods (g-xTB) currently outperforming many neural network potentials on interaction energy prediction [20].

Additionally, the Open Force Field protein-ligand benchmark provides carefully curated datasets for free energy calculations, emphasizing proper benchmark construction and preparation practices [21]. Using such complementary benchmarks helps develop more comprehensive models that capture both empirical affinities and physical interaction energies.

PDBbind and CASF benchmarks provide essential foundations for developing GNN models of protein-ligand interactions, but must be used with careful attention to data leakage and evaluation rigor. The recent introduction of PDBbind CleanSplit addresses critical concerns about train-test contamination, enabling more reliable assessment of model generalization. Successful implementation requires comprehensive benchmarking across multiple metrics and test sets, incorporation of uncertainty quantification, and rigorous interpretation using explainable AI techniques. By adhering to these practices and utilizing the provided experimental protocols, researchers can develop more robust and reliable GNN models that genuinely advance computational drug discovery.

The accurate prediction of protein-ligand interactions (PLI) represents a cornerstone of modern drug discovery, dictating the efficacy and safety profiles of small-molecule therapeutics. Traditional computational methods have relied heavily on explicit three-dimensional structural information of protein-ligand complexes, obtained through resource-intensive techniques like molecular dynamics simulations and molecular docking. However, the emergence of graph neural networks (GNNs) has introduced a paradigm shift, enabling researchers to predict bioactivity from simpler sequence-based and graph-based representations without direct access to complex structural data. This technical guide explores computational frameworks that leverage heterogeneous biological knowledge, from primary protein sequences to proteomic networks, to predict PLI along an informational spectrum that bridges 1D sequences and 3D structural insights.

Recent advances demonstrate that lightweight GNNs, trained with quantitative PLIs of limited proteins and ligands, can successfully predict the strength of unseen interactions despite having no direct access to structural information about protein-ligand complexes [22]. This structure-free approach challenges conventional paradigms by encoding the entire chemical and proteomic space within heterogeneous graphs that encapsulate primary protein sequence, gene expression, protein-protein interaction networks, and structural similarities between ligands. Surprisingly, these methods perform competitively with, or even exceed, the capabilities of structure-aware models [22], suggesting that biological and chemical knowledge embedded through representation learning may substantially enhance current PLI prediction methodologies.

Computational Frameworks for PLI Prediction

Graph Neural Network Architectures

Graph neural networks have emerged as particularly suitable architectures for PLI prediction due to their innate ability to process non-Euclidean data structures that naturally represent molecular systems. In typical implementations, proteins and ligands are represented as graphs where nodes correspond to amino acid residues or atoms, and edges represent their interactions or bonds. Message-passing mechanisms then allow information to flow across these graphs, enabling the model to learn complex interaction patterns critical for predicting binding affinity.

Multiple GNN architectures have been adapted for PLI prediction, each with distinct characteristics:

- Graph Convolutional Networks (GCNs) apply convolutional operations to graph structures, aggregating feature information from neighboring nodes [23].

- Graph Attention Networks (GATs) incorporate attention mechanisms that assign varying importance to different neighbors during feature aggregation [23].

- Graph Isomorphism Networks (GINs) offer enhanced discriminative power through theoretically grounded architectures that can capture structural similarities [23].
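The difference between GCN and GIN aggregation can be seen in two NumPy layers. The GCN layer uses the widely used symmetric normalisation with self-loops. The GIN layer sums neighbour features, adds the node's own features scaled by (1 + eps), and applies an MLP. Attention (GAT) is omitted for brevity. Weights here are random placeholders rather than trained parameters.

```python
import numpy as np

def gcn_layer(A, H, W):
    """GCN layer: ReLU(D^-1/2 (A + I) D^-1/2 H W), with D the degree matrix of A + I."""
    A_hat = A + np.eye(A.shape[0])                  # add self-loops
    d_inv_sqrt = 1.0 / np.sqrt(A_hat.sum(axis=1))
    A_norm = d_inv_sqrt[:, None] * A_hat * d_inv_sqrt[None, :]
    return np.maximum(0.0, A_norm @ H @ W)

def gin_layer(A, H, W1, W2, eps=0.0):
    """GIN layer: MLP((1 + eps) * h_v + sum of neighbour features)."""
    agg = (1.0 + eps) * H + A @ H                   # sum (not mean) aggregation
    return np.maximum(0.0, agg @ W1) @ W2           # two-layer MLP

# Toy graph: a 3-atom chain 0-1-2 with 4 features per atom.
A = np.array([[0, 1, 0], [1, 0, 1], [0, 1, 0]], dtype=float)
rng = np.random.default_rng(1)
H = rng.normal(size=(3, 4))
print(gcn_layer(A, H, rng.normal(size=(4, 8))).shape)                          # (3, 8)
print(gin_layer(A, H, rng.normal(size=(4, 8)), rng.normal(size=(8, 8))).shape)  # (3, 8)
```

The GCN's degree normalisation averages neighbourhoods, while GIN's unnormalised sum preserves how many neighbours of each kind a node has, which underlies its stronger discriminative power.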

Studies evaluating these architectures have revealed that while GNNs show promising performance, they exhibit distinct learning characteristics. Some models demonstrate a tendency to memorize ligand training data rather than comprehensively learning protein-ligand interaction patterns [19]. However, certain GNN architectures increasingly prioritize interaction information when predicting high-affinity complexes, suggesting they can learn meaningful interaction patterns despite the memorization tendency [19].

The Knowledge Graph Paradigm: G-PLIP

A groundbreaking approach in structure-free PLI prediction is the G-PLIP model, which operates without direct structural information about protein-ligand complexes [22]. Instead, it derives predictive power from a heterogeneous knowledge graph that integrates multiple biological data modalities:

- Primary protein sequences

- Gene expression patterns

- Protein-protein interaction (PPI) networks

- Structural similarities between ligands

This integrative approach embeds rich biological and chemical knowledge directly into the model's architecture, enabling competitive performance with structure-aware methods while operating at a fraction of the computational cost [22]. The success of G-PLIP suggests that existing PLI prediction methods may be substantially improved by incorporating representation learning techniques that capture broader biological context.
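A heterogeneous graph of this kind is essentially a set of typed edge lists. The sketch below assembles one from a PPI edge list, ligand fingerprints and measured affinities. The schema, the similarity threshold of 0.5 and all IDs are illustrative assumptions, not G-PLIP's actual implementation. Node features such as sequence embeddings or expression profiles would be attached per node and are omitted here.

```python
from collections import defaultdict
from itertools import combinations

def tanimoto(fp_a: set, fp_b: set) -> float:
    """Tanimoto similarity of two fingerprints given as sets of 'on' bit indices."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0

def build_knowledge_graph(ppi_edges, ligand_fps, affinities, sim_threshold=0.5):
    """Return {(src_type, relation, dst_type): [(src, dst, attribute), ...]}.

    Illustrative schema (assumption):
      ("protein", "ppi", "protein")     edges from a PPI network
      ("ligand", "similar", "ligand")   ligand pairs with Tanimoto >= sim_threshold
      ("protein", "binds", "ligand")    measured affinities, typically the prediction targets
    """
    edges = defaultdict(list)
    for a, b in ppi_edges:
        edges[("protein", "ppi", "protein")].append((a, b, None))
    for (la, fa), (lb, fb) in combinations(sorted(ligand_fps.items()), 2):
        s = tanimoto(fa, fb)
        if s >= sim_threshold:
            edges[("ligand", "similar", "ligand")].append((la, lb, s))
    for (prot, lig), value in affinities.items():
        edges[("protein", "binds", "ligand")].append((prot, lig, value))
    return dict(edges)

# Toy usage with hypothetical IDs and made-up values.
graph = build_knowledge_graph(
    ppi_edges=[("P1", "P2")],
    ligand_fps={"L1": {1, 2, 3}, "L2": {1, 2, 4}, "L3": {9}},
    affinities={("P1", "L1"): 7.2},
)
for edge_type, edge_list in graph.items():
    print(edge_type, edge_list)
```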

Multi-Scale Integration: Graph-of-Graphs

For more complex prediction tasks, researchers have developed a "graph-of-graphs" approach that integrates protein-protein interaction networks with high-resolution structural information [23]. This multi-scale framework operates at two distinct levels:

- Macro-scale: Models proteins as nodes within a PPI network, incorporating topological and protein-level features

- Micro-scale: Represents each protein as a graph of amino acid residues, leveraging structure-based features

This architecture has proven effective for predicting complex biological properties like the mode of inheritance of genetic diseases and functional mechanisms of variants [23], demonstrating the power of hierarchical graph-based representations for biological prediction tasks.
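The data flow of a graph-of-graphs model can be sketched with parameter-free propagation steps. Each residue graph is collapsed into one protein vector (micro scale), concatenated with protein-level features, and then propagated over the PPI network (macro scale). A real model would use learnable GNN layers at both levels. This sketch only shows how the two scales connect, and all inputs are synthetic.

```python
import numpy as np

def mean_aggregate(A, H):
    """One parameter-free propagation step: average each node with its neighbours."""
    A_hat = A + np.eye(A.shape[0])
    return (A_hat @ H) / A_hat.sum(axis=1, keepdims=True)

def protein_embedding(residue_adj, residue_feats):
    """Micro scale: propagate over the residue graph, then mean-pool to one vector."""
    return mean_aggregate(residue_adj, residue_feats).mean(axis=0)

def graph_of_graphs(ppi_adj, residue_graphs, protein_feats):
    """Macro scale: residue-derived embeddings plus protein-level features, propagated over the PPI graph."""
    micro = np.stack([protein_embedding(A, X) for A, X in residue_graphs])
    H = np.concatenate([micro, protein_feats], axis=1)
    return mean_aggregate(ppi_adj, H)

def chain(n):
    """Adjacency matrix of a linear chain of n residues (toy stand-in for a contact map)."""
    return np.eye(n, k=1) + np.eye(n, k=-1)

rng = np.random.default_rng(0)
residue_graphs = [(chain(n), rng.normal(size=(n, 5))) for n in (4, 2, 3)]  # 5 residue features
protein_feats = rng.normal(size=(3, 2))                                      # 2 protein features
ppi_adj = np.array([[0, 1, 0], [1, 0, 1], [0, 1, 0]], dtype=float)
print(graph_of_graphs(ppi_adj, residue_graphs, protein_feats).shape)  # (3, 7)
```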

Experimental Protocols and Methodologies

Data Curation and Preparation

High-quality dataset construction is fundamental to effective PLI prediction models. A comprehensive pocket-centric structural dataset for advancing drug discovery includes high-quality information on more than 23,000 pockets, 3,700 proteins across 500 organisms, and nearly 3,500 ligands [24]. The careful curation process involves multiple systematic steps:

Protein Selection and Filtering:

- Initial metadata is extracted from the entire PDB database

- Heterodimer complexes (HD dataset) representing protein-protein interactions and protein-ligand complexes (PL dataset) are identified

- Cross-referencing ensures protein-ligand pairs associate with heterodimer complexes

- Quality filters include resolution thresholds (≤3.5Å for X-ray, ≤3Å for cryo-EM) and difference between R-free and R-factor (≤0.07) [24]
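The quality filters above translate into a small predicate over structure metadata. Two choices in this sketch are assumptions: the R-free minus R-factor gap is checked only for X-ray entries, and entries with missing values or other experimental methods are rejected. The method strings follow the wording used in PDB metadata, but how the metadata is retrieved is left open.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class StructureEntry:
    pdb_id: str
    method: str                      # e.g. "X-RAY DIFFRACTION" or "ELECTRON MICROSCOPY"
    resolution: Optional[float]      # in Å; None if not reported
    r_free: Optional[float] = None
    r_work: Optional[float] = None   # the R-factor

def passes_quality_filters(e: StructureEntry) -> bool:
    """Resolution and R-free/R-factor filters quoted in the protocol."""
    if e.resolution is None:
        return False
    if e.method == "X-RAY DIFFRACTION":
        if e.resolution > 3.5 or e.r_free is None or e.r_work is None:
            return False
        return (e.r_free - e.r_work) <= 0.07   # assumption: gap checked for X-ray only
    if e.method == "ELECTRON MICROSCOPY":
        return e.resolution <= 3.0
    return False                               # other methods (e.g. NMR) excluded here

# Toy usage with hypothetical entries.
entries = [
    StructureEntry("xray_ok", "X-RAY DIFFRACTION", 2.0, r_free=0.25, r_work=0.20),
    StructureEntry("xray_gap", "X-RAY DIFFRACTION", 2.0, r_free=0.30, r_work=0.20),
    StructureEntry("em_ok", "ELECTRON MICROSCOPY", 2.9),
]
print([e.pdb_id for e in entries if passes_quality_filters(e)])  # ['xray_ok', 'em_ok']
```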

Structure Processing:

- Removal of heteroatoms and water molecules

- Repair of incomplete amino acids using FoldX software

- Protonation with OPLS-AA force field using GROMACS

- Conversion to .mol2 format for compatibility [24]

Pocket Detection and Classification:

- VolSite employed for pocket detection and characterization

- Adjustment of parameters to accommodate PPI pocket characteristics

- Classification into three pocket types:

- Orthosteric competitive (PLOC): Direct competition with protein partner's epitope

- Orthosteric non-competitive (PLONC): No direct competition but functional influence

- Allosteric (PLA): Situated near orthosteric binding pockets without direct overlap [24]

Feature Engineering and Encoding

Effective feature representation is crucial for model performance. Multiple encoding strategies have been developed:

Conventional Chemical Features:

- 1024-bit extended-connectivity fingerprints (ECFP) with a diameter of 6 atoms

- 1444 physicochemical properties calculated using PaDEL-Descriptor [25]

Docking-Based Protein-Ligand Interaction Features (DPLIFE):

- Docking scores from AutoDock Vina

- Protein-ligand interaction profiles covering 185 residues

- Interaction type encoding: 0 (no interaction), 1 (hydrophobic), 2 (π-π stacking), 3 (π-cation), 5 (salt bridges), 6 (hydrogen bonds) [25]
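A DPLIFE-style feature vector can be assembled from a docking score and a per-residue interaction profile. The sketch uses the interaction codes listed above (code 4 is not listed in the source and is therefore not used). The residue labels, the residue-to-position mapping and the rule of keeping the highest code when a residue has several interactions are hypothetical choices for illustration. Parsing PLIP output is not shown.

```python
import numpy as np

# Interaction-type codes as listed in the text; code 4 is not listed there.
INTERACTION_CODES = {
    "none": 0, "hydrophobic": 1, "pi_stacking": 2, "pi_cation": 3,
    "salt_bridge": 5, "hydrogen_bond": 6,
}

def encode_interaction_profile(interactions, residue_index, n_residues=185):
    """Fixed-length per-residue interaction vector.

    interactions: list of (residue_label, interaction_type) pairs.
    residue_index: hypothetical mapping from residue label to position 0..n_residues-1.
    Assumption: if a residue has several interactions, the largest code is kept.
    """
    vec = np.zeros(n_residues, dtype=np.int8)
    for residue, kind in interactions:
        pos = residue_index[residue]
        vec[pos] = max(vec[pos], INTERACTION_CODES[kind])
    return vec

def dplife_features(vina_score, interactions, residue_index, n_residues=185):
    """Concatenate the docking score with the residue interaction profile."""
    profile = encode_interaction_profile(interactions, residue_index, n_residues)
    return np.concatenate([[vina_score], profile.astype(float)])

# Toy usage with hypothetical residue labels and a made-up Vina score.
index = {"RES_A": 0, "RES_B": 1}
found = [("RES_A", "hydrophobic"), ("RES_A", "hydrogen_bond"), ("RES_B", "salt_bridge")]
print(dplife_features(-8.2, found, index, n_residues=3))  # [-8.2  6.  5.  0. ]
```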

Biological and Network Features:

- 78 protein-level features covering structural, functional, evolutionary, and regulatory properties

- 73 residue-level features reflecting structural, sequence-based, biochemical, and evolutionary characteristics [23]

Model Training and Validation

Robust model development requires careful experimental design:

Data Splitting Strategies:

- Cluster human protein sequences using MMseqs2 with stringent thresholds (20% sequence identity, 20% alignment coverage)

- Assign protein clusters to training (80%), validation (10%), and test (10%) sets to minimize information leakage [23]
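Once sequences are clustered (for example with MMseqs2 `easy-cluster` using `--min-seq-id 0.2` and `-c 0.2`; check the MMseqs2 documentation for the exact options), whole clusters are assigned to splits so that no cluster spans two sets. The sketch assumes the two-column representative/member TSV that MMseqs2 writes for clusterings. The greedy "fill the split furthest below its target" rule is an illustrative choice, not necessarily the one used in [23].

```python
import random
from collections import defaultdict

def read_mmseqs_clusters(tsv_path):
    """Map member ID -> cluster representative from a representative<TAB>member TSV."""
    mapping = {}
    with open(tsv_path) as fh:
        for line in fh:
            rep, member = line.rstrip("\n").split("\t")
            mapping[member] = rep
    return mapping

def cluster_split(protein_to_cluster, fractions=(0.8, 0.1, 0.1), seed=0):
    """Assign whole clusters to train/val/test, approximately matching the target fractions."""
    clusters = defaultdict(list)
    for protein, rep in protein_to_cluster.items():
        clusters[rep].append(protein)
    reps = sorted(clusters)
    random.Random(seed).shuffle(reps)
    targets = [f * len(protein_to_cluster) for f in fractions]
    splits = [[], [], []]
    for rep in reps:
        # give the cluster to the split furthest below its target size
        deficits = [targets[i] - len(splits[i]) for i in range(3)]
        splits[deficits.index(max(deficits))].extend(clusters[rep])
    return {"train": splits[0], "val": splits[1], "test": splits[2]}

# Toy usage: 10 singleton clusters (hypothetical IDs).
split = cluster_split({f"P{i}": f"C{i}" for i in range(10)})
print({name: len(members) for name, members in split.items()})  # {'train': 8, 'val': 1, 'test': 1}
```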

Hyperparameter Optimization:

- Evaluation of hidden layer sizes across multiple values (128, 64, 32, 16, 8)

- Learning rate variation across five values (10⁻² to 5×10⁻⁴)

- Training with binary cross-entropy loss for up to 100 epochs with early stopping [23]
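The search over hidden sizes and learning rates with early stopping can be organised as below. The hidden sizes are those listed above. The intermediate learning-rate values are hypothetical, since only the range is stated. `make_trainer` is a hypothetical factory that would wrap a real model trained with binary cross-entropy, and the patience of 10 epochs is an assumed default.

```python
import itertools
from typing import Callable, Dict, Tuple

def train_with_early_stopping(train_epoch: Callable[[], None], val_loss: Callable[[], float],
                              max_epochs: int = 100, patience: int = 10) -> Tuple[float, int]:
    """Train up to max_epochs; stop once validation loss has not improved for `patience` epochs."""
    best_loss, best_epoch = float("inf"), -1
    for epoch in range(max_epochs):
        train_epoch()
        loss = val_loss()
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            break
    return best_loss, best_epoch

def grid_search(make_trainer, hidden_sizes, learning_rates, **kwargs):
    """Evaluate every (hidden size, learning rate) pair; return the best pair and all scores.

    make_trainer(hidden, lr) is hypothetical and must return (train_epoch, val_loss)
    for a freshly initialised model.
    """
    results: Dict[Tuple[int, float], float] = {}
    for hidden, lr in itertools.product(hidden_sizes, learning_rates):
        train_epoch, val_loss = make_trainer(hidden, lr)
        results[(hidden, lr)] = train_with_early_stopping(train_epoch, val_loss, **kwargs)[0]
    return min(results, key=results.get), results

HIDDEN_SIZES = [128, 64, 32, 16, 8]
LEARNING_RATES = [1e-2, 5e-3, 1e-3, 7.5e-4, 5e-4]  # hypothetical values within the quoted range
```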

Performance Evaluation:

- Assessment using standard metrics: F₁ score, precision, recall, mean squared error (MSE)

- Comparison against baseline models (DOMINO, MOI-Pred, SVM implementations) [23]

Table 1: Performance Comparison of GNN Architectures for PLI Prediction

| Model Type | F₁ Score | Precision | Recall | Best Application |
|---|---|---|---|---|
| GCN | 0.745 | 0.776 | 0.725 | Functional effect prediction |
| GAT | 0.750 | 0.770 | 0.731 | MOI prediction |
| GIN | 0.671 | 0.764 | 0.621 | - |
| LDA (DOMINO) | 0.685 | 0.721 | 0.654 | Baseline comparison |

Table 2: Dataset Characteristics for PLI Model Development

| Dataset Component | Scale/Size | Application in Models |
|---|---|---|
| Pockets | 23,000+ | Feature extraction, binding site characterization |
| Proteins | 3,700+ across 500+ organisms | Training and validation across diverse targets |
| Ligands | Nearly 3,500 | Chemical space representation, interaction mapping |
| PPI Network | 17,248 nodes, 375,494 edges | Biological context integration |

Case Study: METTL3 Inhibitor Prediction

A recent implementation demonstrating the integration of machine learning and protein-ligand interaction profiling focused on the discovery of METTL3 inhibitors [25]. METTL3 has emerged as a key enzyme in tumorigenesis by enhancing the translation efficiency of oncogenic transcripts, making it a promising therapeutic target for cancers including acute myeloid leukemia.

The research team developed a METTL3 inhibitory bioactivity (pIC50) prediction model (ML3-mix-DPLIFE) by combining machine learning, protein-ligand docking, and protein-ligand interaction analysis [25]. The approach encoded conventional physicochemical properties, chemical fingerprints, and docking-based protein-ligand interaction features (DPLIFE) while leveraging auto-stacking of six algorithms. A feature selection algorithm further optimized the model (ML3-mix-DPLIFE-FS), resulting in a promising mean squared error (MSE) of 0.261 and a Pearson's correlation coefficient (CC) of 0.853 on an independent test dataset [25].

This case study exemplifies the practical application of the informational spectrum approach, successfully integrating 2D chemical information with 3D structural insights through docking to predict bioactivity without requiring complete structural characterization of each protein-ligand complex.

Table 3: Computational Tools for PLI Prediction Research

| Tool/Resource | Function | Application Context |
|---|---|---|
| RDKit | Cheminformatics toolkit | Generation of 3D ligand structures, fingerprint calculation |
| AutoDock Vina | Protein-ligand docking | Binding pose prediction, interaction analysis |
| PLIP (Protein-Ligand Interaction Profiler) | Interaction analysis | Extraction of residue-specific interaction features |
| VolSite | Pocket detection and characterization | Binding site identification and analysis |
| FoldX | Protein structure repair | Fixing incomplete amino acids in structural data |
| GROMACS | Molecular dynamics | Structure protonation and preparation |
| AlphaFold Database | Protein structure prediction | Source of high-quality predicted structures |
| STRINGdb, BioGRID, HuRI | Protein-protein interactions | PPI network construction for biological context |
| AutoGluon | Automated machine learning | Model stacking and ensemble prediction |

Visualization of Methodological Frameworks

Diagram: Knowledge Graph Integration for PLI Prediction (heterogeneous knowledge graph architecture)

Diagram: Multi-Scale Graph-of-Graphs Architecture

The evolving landscape of PLI prediction demonstrates a clear trajectory from structure-dependent approaches toward integrative frameworks that leverage the informational spectrum from 1D sequences to 3D structures. Graph neural networks serve as the unifying computational fabric that enables this integration, transforming heterogeneous biological knowledge into predictive models with competitive accuracy. The key insight emerging from recent research is that biological context—encapsulated in protein-protein interaction networks, gene expression patterns, and evolutionary constraints—provides critical information that can compensate for limited structural data.

As the field advances, the most promising approaches will likely combine physical principles with data-driven learning, leveraging the strengths of both paradigms. The integration of docking-based interaction features with sequence-based and network-based information represents an important step in this direction, offering both predictive accuracy and structural interpretability. For drug discovery professionals, these computational advances translate to accelerated hit identification, reduced experimental costs, and the ability to navigate complex biological systems with increasing sophistication. The informational spectrum approach to PLI prediction thus represents not merely a technical improvement, but a fundamental shift in how we conceptualize and compute molecular interactions in silico.

Advanced GNN Architectures and Their Drug Discovery Applications

The accurate prediction of protein-ligand interactions (PLIs) constitutes a critical step in therapeutic design and discovery, influencing various molecular-level properties including substrate binding, product release, and target protein function [26]. Experimental characterization of these interactions remains the most accurate method, but it is notoriously time-consuming and labor-intensive, creating a pressing need for robust computational approaches [26] [27]. Traditional computational methods, including molecular dynamics and molecular docking, offer solutions but face significant limitations in computational expense and accuracy [26]. With the advent of deep learning, particularly graph neural networks (GNNs), researchers have found powerful tools for modeling the complex spatial relationships in biomolecular structures [1].

A fundamental challenge in PLI prediction lies in the representation of the protein-ligand complex and how the interactions between these distinct molecules are captured computationally [26]. This technical guide explores a sophisticated paradigm within GNN architectures: parallel networks that separately process protein and ligand representations before integrating their information. This approach represents a significant departure from traditional single-graph methods, potentially offering enhanced interpretability, reduced reliance on prior knowledge of interactions, and improved performance in predicting binding affinity and activity [26] [27]. Framed within the broader thesis of GNN applications in PLI research, this document provides an in-depth examination of the core architectures, methodologies, and experimental protocols that underpin these parallel GNN systems, serving as a comprehensive resource for researchers, scientists, and drug development professionals.

Core Architectural Paradigms

The Case for Parallel Graph Architectures

Most existing deep learning models for PLI prediction rely heavily on protein sequence data and SMILES string representations for ligands [26] [27]. Such data are abundant and easy to obtain. However, these sequence-based approaches fail to capture the three-dimensional structural information that governs molecular interactions [26] [28]. Binding events occur within specific three-dimensional pockets of the target protein, and formation of the protein-ligand complex typically involves conformational changes in both molecules [26]. Structure-based methods that leverage 3D structural data therefore offer a more physiologically relevant foundation for interaction prediction [26].

Graph neural networks have emerged as particularly powerful tools for modeling these spatial relationships and three-dimensional structures within intermolecular complexes [1]. By representing proteins and ligands as molecular graphs with nodes (atoms) and edges (bonds or interactions), GNNs can effectively capture both internal molecular topology and external interaction patterns [26] [1]. However, conventional GNN architectures for PLI often combine inter- and intra-molecular interactions within a single graph representation, which may limit their ability to capture local structural details and complex interaction patterns [1]. The parallel GNN paradigm addresses this limitation by processing protein and ligand graphs through separate model pathways before integration, enabling more nuanced feature learning and representation [26].

Key Parallel GNN Architectures

GNNF: Domain-Aware Featurization with Early Integration

The GNNF (Graph Neural Network with distinct Featurization) architecture serves as a base implementation that employs expert-informed featurization to enhance domain-awareness while maintaining an integrated graph structure [26] [27]. In this approach, the protein and ligand adjacency matrices are combined into a single matrix, with edges added between protein and ligand nodes based on distance matrices obtained from docking simulations or co-crystal structures [26]. The architecture employs distinct, domain-specific featurization for protein and ligand atoms, incorporating biochemical information processed through cheminformatics tools like RDKit to make the model more physics-informed [26].
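The combined-matrix construction can be sketched as a block matrix. The two intramolecular blocks copy each molecule's bond adjacency. The off-diagonal blocks receive an edge wherever a ligand atom and a protein atom lie within a distance cutoff. This is a minimal sketch under several assumptions, none taken from [26]:

- The 5.0 Å cutoff follows the threshold quoted for interaction edges in [1].
- Ligand nodes are placed before protein nodes.
- Coordinates are plain (x, y, z) tuples from a docked pose or co-crystal structure.
- The function name `combined_adjacency` is hypothetical.

```python
import math

def combined_adjacency(lig_adj, prot_adj, lig_xyz, prot_xyz, cutoff=5.0):
    """Build one adjacency over ligand nodes (first) and protein nodes (second).

    Diagonal blocks copy the intramolecular bond adjacencies; off-diagonal
    blocks get a symmetric edge for each ligand-protein atom pair whose
    Euclidean distance is <= cutoff (in angstroms).
    """
    n_l, n_p = len(lig_adj), len(prot_adj)
    n = n_l + n_p
    A = [[0] * n for _ in range(n)]
    for i in range(n_l):                       # ligand-ligand block
        for j in range(n_l):
            A[i][j] = lig_adj[i][j]
    for i in range(n_p):                       # protein-protein block
        for j in range(n_p):
            A[n_l + i][n_l + j] = prot_adj[i][j]
    for i, li in enumerate(lig_xyz):           # distance-based interaction edges
        for j, pj in enumerate(prot_xyz):
            if math.dist(li, pj) <= cutoff:
                A[i][n_l + j] = A[n_l + j][i] = 1
    return A

# Toy example: two bonded ligand atoms, two bonded protein atoms
lig_adj = [[0, 1], [1, 0]]
prot_adj = [[0, 1], [1, 0]]
lig_xyz = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0)]
prot_xyz = [(4.0, 0.0, 0.0), (20.0, 0.0, 0.0)]
A = combined_adjacency(lig_adj, prot_adj, lig_xyz, prot_xyz)
```

Here the first protein atom becomes linked to both ligand atoms (4.0 Å and 2.5 Å away), while the distant second protein atom keeps only its covalent bond. This dependence on a known pose is exactly why GNNF needs docking or a co-crystal structure.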

Table 1: GNNF Architecture Specifications

| Component | Implementation Details | Domain Awareness |
|---|---|---|
| Graph Structure | Single combined graph with protein-ligand interaction edges | Interaction edges based on spatial proximity (≤5.0 Å) [1] |
| Node Featurization | Domain-specific features for protein vs. ligand atoms [26] | Biochemical features via RDKit [26] |
| Attention Mechanism | Joint feature matrix processed by two GAT layers [26] | Dual learning pathways: PLI adjacency & ligand adjacency [26] |
| Interaction Modeling | Early embedding strategy with simultaneous learning [27] | Dependent on prior knowledge of interactions [26] |

The GNNF attention head takes a joint feature matrix for the ligand and target protein and passes it through two GAT layers [26]:

- The first learns attention over the protein-ligand interaction adjacency matrix.
- The second learns attention over the ligand adjacency matrix.

The outputs of these two GAT layers are subtracted in the final step of each attention head [26]. The result therefore emphasizes what the protein-ligand interaction edges contribute beyond the ligand's internal bonding. This "early embedding" strategy allows simultaneous learning of representations for the protein and ligand complex as a unified system [27].
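The head described above can be sketched in plain Python with a minimal single-head graph attention layer. This is a simplification and not the implementation from [26]:

- The layer follows the usual GAT recipe: a linear transform, LeakyReLU-scored attention over neighbours plus a self-loop, a softmax, then a weighted sum.
- Output nonlinearities, multi-head concatenation and training code are omitted.
- Protein and ligand atoms are assumed to share a common feature width so they fit in one joint matrix.
- The names `gat_layer` and `gnnf_head` are hypothetical.

```python
import math
import random

def gat_layer(X, A, W, a, slope=0.2):
    """Minimal single-head graph attention layer with self-loops.

    X: N x F node features, A: N x N adjacency, W: F x F' weight matrix,
    a: attention vector of length 2F'. Returns N x F' aggregated features.
    """
    H = [[sum(x * w for x, w in zip(row, col)) for col in zip(*W)] for row in X]
    f = len(H[0])
    out = []
    for i, hi in enumerate(H):
        nbrs = [j for j in range(len(H)) if A[i][j] or j == i]
        # e_ij = LeakyReLU(a^T [W h_i || W h_j])
        s = [sum(a[k] * hi[k] + a[f + k] * H[j][k] for k in range(f)) for j in nbrs]
        s = [v if v > 0 else slope * v for v in s]
        m = max(s)                              # subtract max for a stable softmax
        e = [math.exp(v - m) for v in s]
        z = sum(e)
        out.append([sum(ej * H[j][k] for ej, j in zip(e, nbrs)) / z for k in range(f)])
    return out

def gnnf_head(X, A_pli, A_lig, params_pli, params_lig):
    """GNNF-style head: GAT over PLI adjacency minus GAT over ligand adjacency."""
    h_pli = gat_layer(X, A_pli, *params_pli)
    h_lig = gat_layer(X, A_lig, *params_lig)
    return [[p - q for p, q in zip(rp, rq)] for rp, rq in zip(h_pli, h_lig)]

rng = random.Random(0)
def rand_matrix(r, c):
    return [[rng.uniform(-1, 1) for _ in range(c)] for _ in range(r)]

F_in, F_out = 3, 2
X = rand_matrix(4, F_in)  # nodes 0-1: ligand atoms, nodes 2-3: protein atoms
A_lig = [[0, 1, 0, 0], [1, 0, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0]]  # ligand bond only
A_pli = [[0, 1, 1, 0], [1, 0, 1, 0], [1, 1, 0, 1], [0, 0, 1, 0]]  # + protein bond + contacts
params = [(rand_matrix(F_in, F_out), rand_matrix(1, 2 * F_out)[0]) for _ in range(2)]
out = gnnf_head(X, A_pli, A_lig, params[0], params[1])
```

A useful sanity check of the subtraction: if both layers saw the same adjacency with the same weights, the head output would be exactly zero. Any signal therefore comes from the difference between the two graphs.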

GNNP: Limited Prior Knowledge with Separate Processing

The GNNP (Parallel Graph Neural Network) architecture represents a novel implementation that uniquely learns interactions with limited prior knowledge by processing protein and ligand graphs in separate, parallel streams [26] [27]. This approach removes the dependency on pre-computed protein-ligand interaction information, instead learning the interaction patterns directly from the separate molecular representations [26]. In the absence of co-crystal structures, this is particularly valuable as it eliminates the need for docking simulations to model PLI [26].

In GNNP, the 3D structures of the protein and ligand are first embedded separately, each based on its own adjacency matrix representing internal bonding interactions [26]. The attention head passes separate features for the protein and ligand to individual GAT layers that learn attention based on their respective adjacency matrices [26]. The outputs of these parallel GAT layers are concatenated in the final step of each attention head [26]. This separate representation enables the model to process protein and ligand structures directly, without prior knowledge of their interaction patterns that would otherwise have to be computed through physics-based simulations [26].
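The parallel head can be sketched with the same kind of minimal attention layer. Each molecule gets its own GAT stream, weights and feature width, and neither stream ever sees an intermolecular edge. This sketch rests on several assumptions, not on the code from [26]:

- "Concatenated" is interpreted as stacking the two node-feature outputs along the node axis, since protein and ligand generally have different atom counts.
- Both streams are assumed to project to the same output width.
- The names `gat_layer` and `gnnp_head` are hypothetical.

```python
import math
import random

def gat_layer(X, A, W, a, slope=0.2):
    """Minimal single-head graph attention layer with self-loops (no output nonlinearity)."""
    H = [[sum(x * w for x, w in zip(row, col)) for col in zip(*W)] for row in X]
    f = len(H[0])
    out = []
    for i, hi in enumerate(H):
        nbrs = [j for j in range(len(H)) if A[i][j] or j == i]
        s = [sum(a[k] * hi[k] + a[f + k] * H[j][k] for k in range(f)) for j in nbrs]
        s = [v if v > 0 else slope * v for v in s]      # LeakyReLU scores
        m = max(s)
        e = [math.exp(v - m) for v in s]                # stable softmax numerators
        z = sum(e)
        out.append([sum(ej * H[j][k] for ej, j in zip(e, nbrs)) / z for k in range(f)])
    return out

def gnnp_head(X_prot, A_prot, X_lig, A_lig, params_prot, params_lig):
    """GNNP-style head: independent GAT streams, outputs stacked (protein rows first)."""
    return gat_layer(X_prot, A_prot, *params_prot) + gat_layer(X_lig, A_lig, *params_lig)

rng = random.Random(1)
def rand_matrix(r, c):
    return [[rng.uniform(-1, 1) for _ in range(c)] for _ in range(r)]

F_prot, F_lig, F_out = 4, 3, 2   # separate featurizations may differ in width
X_prot, X_lig = rand_matrix(3, F_prot), rand_matrix(2, F_lig)
A_prot = [[0, 1, 0], [1, 0, 1], [0, 1, 0]]   # protein bonds only
A_lig = [[0, 1], [1, 0]]                     # ligand bonds only
p_prot = (rand_matrix(F_prot, F_out), rand_matrix(1, 2 * F_out)[0])
p_lig = (rand_matrix(F_lig, F_out), rand_matrix(1, 2 * F_out)[0])
H = gnnp_head(X_prot, A_prot, X_lig, A_lig, p_prot, p_lig)
```

Because no interaction edges exist, perturbing the protein features leaves the ligand rows untouched. Any protein-ligand coupling has to be learned downstream of this head.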

Table 2: GNNP Architecture Specifications

| Component | Implementation Details | Knowledge Requirements |
|---|---|---|
| Graph Structure | Separate protein and ligand graphs [26] | No combined adjacency matrix required [26] |
| Node Featurization | Separate feature matrices maintained [26] | Biochemical features via RDKit [26] |
| Attention Mechanism | Parallel GAT layers for protein and ligand [26] | Separate attention learning pathways [26] |
| Interaction Modeling | Late integration via concatenation [26] | No prior interaction knowledge needed [26] |
| Docking Dependency | Independent of docking simulations [26] | Can work directly with 3D structures [26] |

The fundamental strategy of GNNP is to learn embedding vectors for the ligand graph and the protein graph independently, then combine the two vectors for prediction [27]. This "late integration" approach enables structural analysis that requires no docking input, only separate protein and ligand 3D structures [27]. Because docking is not required, GNNP is particularly valuable for high-throughput screening applications where docking would be computationally prohibitive [26].
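Late integration needs one step that turns a variable number of atom embeddings into a fixed-length vector per molecule. This sketch makes two assumptions:

- Sum pooling is used as that readout. The readout used in [26] and [27] may differ; mean or attention pooling are common alternatives.
- A single linear layer is used as the predictor.

The names `readout` and `late_integration` are hypothetical.

```python
def readout(H):
    """Sum-pool node embeddings (rows of H) into one graph-level vector."""
    return [sum(col) for col in zip(*H)]

def late_integration(H_prot, H_lig, w, b):
    """Pool each graph separately, concatenate [protein || ligand], apply a linear layer.

    Returns the scalar prediction and the joint embedding.
    """
    z = readout(H_prot) + readout(H_lig)   # list concatenation, length 2 * F
    y = sum(wi * zi for wi, zi in zip(w, z)) + b
    return y, z

# Toy node embeddings: 2 protein atoms and 1 ligand atom, width 2
H_prot = [[1.0, 2.0], [3.0, 4.0]]
H_lig = [[0.5, -1.0]]
y, z = late_integration(H_prot, H_lig, w=[0.1, 0.1, 1.0, 1.0], b=0.0)
```

Pooling before concatenation is what makes the joint vector's length independent of pocket and ligand size. The same predictor can therefore score any complex without a docked pose.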

Experimental Framework and Methodologies

Data Preparation and Complex Representation

The foundation of effective parallel GNN training lies in appropriate data preparation and molecular representation. Publicly available databases such as PDBbind provide high-quality protein-ligand complexes with experimentally measured binding affinities (e.g., Kd, Ki), forming a reliable foundation for building and validating PLI prediction models [1]. The PDBbind v2020 database contains 19,443 complexes which can be partitioned into training (16,954), validation (2,000), and test sets using standardized benchmarks like CASF-2013 (195 complexes) and CASF-2016 (285 complexes) [1].
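A split of this kind must keep the benchmark core-set complexes out of training and validation. Otherwise, test performance is inflated by leakage. This is a minimal sketch with a few assumptions:

- Complex IDs are plain strings.
- The core set (for example, CASF-2016) is held out as the test set.
- The validation set is drawn at random from the remainder.

The function name and the toy IDs are hypothetical. At full scale one would pass the PDBbind ID list, the core-set IDs and `n_val=2000`.

```python
import random

def split_by_core_set(all_ids, core_ids, n_val, seed=0):
    """Hold out core-set complexes as the test set, then sample a validation set
    from the remaining complexes; everything left over becomes training data."""
    core = set(core_ids)
    pool = sorted(i for i in all_ids if i not in core)  # sort for reproducibility
    rng = random.Random(seed)
    rng.shuffle(pool)
    val, train = pool[:n_val], pool[n_val:]
    test = [i for i in all_ids if i in core]
    return train, val, test

all_ids = [f"cplx{i:03d}" for i in range(100)]   # hypothetical complex IDs
core_ids = all_ids[:10]                            # hypothetical core set
train, val, test = split_by_core_set(all_ids, core_ids, n_val=20)
```

Random splits like this still allow close homologs of test proteins into training. Stricter protocols additionally filter by sequence or ligand similarity.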

In graph-based representations, protein-ligand complexes are structured as graphs where nodes represent atoms and edges represent bonds or interactions [1]. For parallel GNN architectures, separate graphs are constructed for the protein and ligand components. The protein graph typically focuses on binding pocket residues within a specific distance threshold (e.g., 5.0Å) around the ligand, balancing prediction accuracy and computational cost [1]. This threshold-based selection of interaction regions is consistent across multiple implementations [1].
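Threshold-based pocket selection can be sketched as follows. A residue is kept if any of its atoms lies within the cutoff of any ligand atom, and all atoms of the selected residues are then retained so the pocket residues stay whole. Assumptions:

- Atoms are given as (residue_id, (x, y, z)) pairs rather than parsed from a PDB file.
- The 5.0 Å default follows the threshold cited in the text.
- The function names and residue labels are hypothetical.

```python
import math

def pocket_residues(protein_atoms, ligand_xyz, cutoff=5.0):
    """Return IDs of residues with at least one atom within `cutoff` Å of any ligand atom."""
    selected = set()
    for res_id, xyz in protein_atoms:
        if any(math.dist(xyz, l) <= cutoff for l in ligand_xyz):
            selected.add(res_id)
    return selected

def pocket_atoms(protein_atoms, residues):
    """Keep every atom belonging to the selected residues."""
    return [(r, xyz) for r, xyz in protein_atoms if r in residues]

ligand_xyz = [(0.0, 0.0, 0.0)]
protein_atoms = [
    ("A:HIS57", (3.0, 0.0, 0.0)),    # within 5 Å, so HIS57 is selected
    ("A:HIS57", (7.0, 0.0, 0.0)),    # farther, but kept because HIS57 is selected
    ("A:SER195", (0.0, 4.9, 0.0)),   # within 5 Å
    ("A:GLY193", (10.0, 0.0, 0.0)),  # too far
]
res = pocket_residues(protein_atoms, ligand_xyz)
pocket = pocket_atoms(protein_atoms, res)
```

The brute-force double loop is fine for a single pocket. For whole proteins or large screens, a spatial index (for example, a k-d tree) keeps the neighbour search fast.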

Node featurization incorporates domain-specific biochemical information to enhance model performance. Typical atom-level features include atom type, degree, hybridization, valence, partial charge, aromaticity, and hydrogen bonding capabilities [26]. These features are processed through one-hot encoding and transformed into vector representations, providing a rich descriptive foundation for the GNN to learn relevant patterns [26] [1]. Edge representations may utilize Euclidean distance or node degree information, with some implementations incorporating an edge augmentation strategy that randomly adds or removes edges to simulate structural noise and enhance model robustness [1].
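Both steps in this paragraph can be sketched in a few lines: one-hot atom featurization and random edge augmentation. The assumptions are as follows:

- Atoms arrive as dictionaries with hypothetical keys. In practice the values could be read from RDKit atoms via `GetSymbol()`, `GetDegree()`, `GetHybridization()` and `GetIsAromatic()`.
- The vocabularies and the `MAX_DEGREE` cap are illustrative choices.
- Partial charge is kept as a raw scalar rather than one-hot encoded.
- The augmentation drops each existing edge with probability `p_drop` and adds about `p_add` times as many random non-edges. Its parameters are assumptions, not values from [1].

```python
import random

ATOM_TYPES = ["C", "N", "O", "S", "P", "F", "Cl", "Br", "I", "other"]
HYBRIDIZATIONS = ["SP", "SP2", "SP3", "other"]
MAX_DEGREE = 5

def one_hot(value, choices):
    """One-hot encode `value`; values not in `choices` map to the last slot."""
    vec = [0] * len(choices)
    vec[choices.index(value) if value in choices else len(choices) - 1] = 1
    return vec

def atom_features(atom):
    """Concatenate one-hot and binary descriptors for one atom (hypothetical dict keys)."""
    return (one_hot(atom["symbol"], ATOM_TYPES)
            + one_hot(min(atom["degree"], MAX_DEGREE), list(range(MAX_DEGREE + 1)))
            + one_hot(atom["hybridization"], HYBRIDIZATIONS)
            + [int(atom["aromatic"]), int(atom["hbd"]), int(atom["hba"])]
            + [atom["partial_charge"]])            # continuous, left unencoded

def augment_edges(edges, n_nodes, p_drop=0.1, p_add=0.1, rng=None):
    """Randomly drop existing undirected edges (i < j) and add spurious ones
    to simulate structural noise."""
    rng = rng or random.Random()
    edges = set(edges)
    kept = {e for e in edges if rng.random() >= p_drop}
    n_add = round(p_add * len(edges))
    candidates = [(i, j) for i in range(n_nodes) for j in range(i + 1, n_nodes)
                  if (i, j) not in edges]
    added = set(rng.sample(candidates, min(n_add, len(candidates))))
    return kept | added

example_atom = {"symbol": "N", "degree": 3, "hybridization": "SP2", "aromatic": True,
                "hbd": True, "hba": False, "partial_charge": -0.31}
feats = atom_features(example_atom)
edges = {(0, 1), (1, 2), (2, 3)}
noisy = augment_edges(edges, n_nodes=5, p_drop=0.2, p_add=0.5, rng=random.Random(7))
```

Augmentation would normally be applied only to training graphs, and freshly at each epoch, so the model never sees the same perturbation twice.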

Implementation Protocols

Network Architecture Configuration

The implementation of parallel GNNs requires specific architectural configurations to effectively process separate protein and ligand representations:

GNNP Implementation Protocol:

- Input Representation: Prepare separate graph structures for protein binding pocket and ligand using RDKit [26] [1].

- Feature Engineering: Implement domain-aware featurization for protein and ligand atoms as specified in Table 1 of [26].

- Parallel GAT Streams: Configure separate GAT layers for protein and ligand graphs with independent attention mechanisms [26].

- Embedding Separation: Maintain separate embedding vectors throughout initial processing stages [27].

- Integration Layer: Concatenate the final embeddings from both streams for prediction [26].

- Output Head: Implement task-specific output layers for classification (activity prediction) or regression (affinity prediction) [26].
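The last protocol step, task-specific output heads, can be sketched as follows. Regression (affinity) keeps an unbounded linear output trained with squared error. Classification (activity) passes the same linear score through a sigmoid and trains with binary cross-entropy. These loss pairings are conventional choices assumed here, not details confirmed by [26]. All function names are hypothetical, and `z` stands for the integrated protein-ligand embedding.

```python
import math

def linear(z, w, b):
    """Shared linear scoring layer on the integrated embedding z."""
    return sum(wi * zi for wi, zi in zip(w, z)) + b

def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def regression_head(z, w, b):
    """Affinity prediction: unbounded scalar (e.g., pKd)."""
    return linear(z, w, b)

def classification_head(z, w, b):
    """Activity prediction: probability that the ligand is active."""
    return sigmoid(linear(z, w, b))

def mse_loss(y_hat, y):
    return (y_hat - y) ** 2

def bce_loss(p, y, eps=1e-12):
    p = min(max(p, eps), 1.0 - eps)        # clip to avoid log(0)
    return -(y * math.log(p) + (1 - y) * math.log(1.0 - p))

z = [0.2, -0.4, 1.0]                        # toy integrated embedding
w, b = [1.0, 0.5, 0.0], 0.0
affinity = regression_head(z, w, b)
p_active = classification_head(z, w, b)
```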

GNNF Implementation Protocol:

- Graph Combination: Construct a unified graph incorporating both protein and ligand nodes [26].

- Interaction Edges: Add edges between protein and ligand nodes based on spatial proximity (≤5.0Å) [1].

- Joint Feature Matrix: Create a combined feature matrix maintaining domain-specific features [26].