Generative AI for De Novo Molecular Design: 2025 Landscape, Methods, and Clinical Impact

Abstract

This article provides a comprehensive overview of the transformative role of generative artificial intelligence (AI) in de novo molecular design for drug discovery. Tailored for researchers and drug development professionals, it explores the foundational principles establishing generative AI as a paradigm shift from traditional methods. The content delves into the key architectural frameworks—including variational autoencoders, generative adversarial networks, transformers, and diffusion models—and their practical applications in designing novel, optimized molecules. It further addresses critical challenges such as data bias, model interpretability, and synthesizability, offering insights into advanced optimization strategies like reinforcement learning and multi-objective optimization. Finally, the article examines the validation landscape through real-world clinical candidates and benchmarking, synthesizing key takeaways and future directions for integrating generative AI into biomedical research and clinical pipelines.

The New Paradigm: How Generative AI is Reshaping Molecular Discovery

The paradigm of molecular discovery is undergoing a fundamental transformation, shifting from the screening of existing compound libraries to the computational creation of novel biological entities. De novo design represents this core paradigm shift, moving beyond traditional modification of natural templates to the generation of entirely novel molecular structures with predefined functions [1]. This approach leverages generative artificial intelligence to explore vast regions of the biochemical space that remain inaccessible to conventional methods, enabling researchers to design proteins, antibodies, and small molecules with atomic-level precision [2] [3]. The following application notes and protocols detail the methodologies, validation frameworks, and reagent solutions driving this transformative change in biomedical research.

Historical Context and Definition

Traditional drug discovery has relied heavily on screening natural products or modifying existing molecular scaffolds, approaches inherently limited by evolutionary history and experimental throughput [1]. De novo design fundamentally transcends these constraints by enabling the computational creation of molecules from first principles rather than through modification of natural templates [1]. Where conventional methods perform local searches within known biochemical space, de novo design employs generative AI to explore entirely novel regions of the protein functional universe, designing custom biomolecules with tailored architectures and binding specificities [2].

This paradigm shift represents a move from "discovery by luck" to "discovery by design" [4]. The implications are profound: instead of being limited to incremental improvements on natural templates, researchers can now engineer molecular solutions optimized for specific therapeutic challenges, including targets previously considered "undruggable" [3].

Market Trajectory and Adoption

Table 1: Market Growth Indicators for AI in Drug Discovery

| Metric | 2024/2025 Value | 2034 Projection | CAGR | Source |
|---|---|---|---|---|
| Global Generative AI in Drug Discovery Market | $250-318.55 million | $2847.43 million | 27.42% | [5] |
| Broader AI in Pharmaceuticals Market | $1.94 billion | $16.49 billion | 27% | [6] |
| AI-Driven Drug Success Rate (Phase I) | 80-90% | N/A | N/A | [7] [8] |
| Traditional Drug Success Rate (Phase I) | 40-65% | N/A | N/A | [8] |

The growth trajectory in Table 1 reflects strong confidence in AI-driven approaches. This investment is fueled by reported efficiencies, including development timelines potentially reduced from 10+ years to 3-6 years and cost reductions of up to 70% through better compound selection [7]. The higher reported Phase I success rates for AI-designed molecules further support the de novo approach's ability to generate viable candidates with optimized properties.
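
The growth rates in Table 1 can be sanity-checked with the standard compound annual growth rate (CAGR) formula. The sketch below is illustrative only: it assumes the upper 2024/2025 estimate ($318.55 million) as the base value and nine compounding years to 2034, neither of which is stated explicitly in the cited sources, and the function names are hypothetical.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate as a fraction (0.27 means 27%)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


def project(start_value: float, rate: float, years: float) -> float:
    """Value after compounding `rate` for `years` periods."""
    return start_value * (1.0 + rate) ** years


# Assumption: 2025 base of $318.55M and 9 compounding years to 2034.
implied_rate = cagr(318.55, 2847.43, 9)
print(f"Implied CAGR: {implied_rate:.2%}")
```

Under these assumptions the implied rate comes out close to the reported 27.42%; choosing a different base year or base value shifts the result.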

Technological Foundations

Key Architectural Frameworks

Generative AI for molecular design employs several specialized architectures, each with distinct advantages for de novo creation:

- Diffusion Models (e.g., RFdiffusion): progressively denoise random structures to generate novel protein backbones and antibody complementarity-determining regions (CDRs) with atomic-level precision [3]. These models can be fine-tuned on specific protein classes and conditioned on framework structures and target epitopes.

- Generative Adversarial Networks (GANs): simultaneously train generator and discriminator networks to create realistic molecular structures, particularly effective for small molecule design and medical image synthesis [9].

- Variational Autoencoders (VAEs): learn compressed representations of molecular space, enabling sampling and optimization in continuous latent spaces [10].

- Transformer-based Architectures: process biological sequences as linguistic data, predicting novel protein sequences and optimizing molecular properties through attention mechanisms [7].

Comparative Analysis: Traditional vs. De Novo Approaches

Table 2: Methodological Comparison in Molecular Design

| Aspect | Traditional Approaches | AI-Driven De Novo Design |
|---|---|---|
| Starting Point | Existing natural templates or compound libraries | First principles and functional specifications |
| Exploration Scope | Local search near known scaffolds | Global search across theoretical biochemical space |
| Throughput | 2,500-5,000 compounds over 5 years | Millions of virtual compounds in hours [7] |
| Primary Constraint | Experimental screening capacity | Computational resources and data quality |
| Typical Output | Optimized versions of existing molecules | Novel molecular architectures not found in nature |
| Dependency | Availability of suitable starting templates | Specification of desired function or properties |

The comparison in Table 2 illustrates the fundamental shift in methodology. De novo design explores the "protein functional universe"—the theoretical space encompassing all possible protein sequences, structures, and biological activities [1]. This universe remains largely unexplored because natural proteins represent only a tiny fraction of what is theoretically possible, constrained by evolutionary history rather than optimized for human therapeutic applications [1].

Application Notes: Success Stories and Methodologies

De Novo Antibody Design with RFdiffusion

Background: Antibody discovery has traditionally relied on immunization, random library screening, or isolation from patients [3]. These methods are laborious, time-consuming, and often fail to identify antibodies interacting with therapeutically relevant epitopes.

Protocol: RFdiffusion-Based Antibody Design

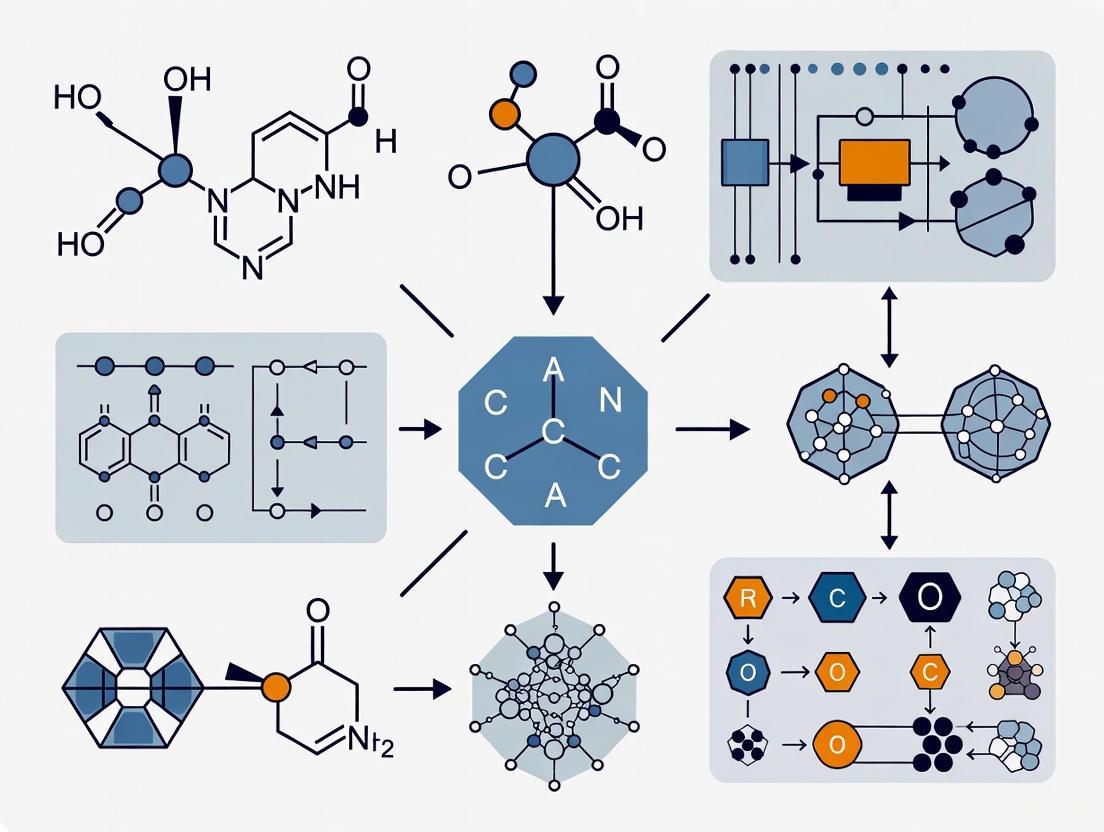

Figure 1: Computational workflow for de novo antibody design.

Step-by-Step Methodology:

Input Specification: Define target epitope coordinates and select antibody framework structure (e.g., humanized VHH framework for single-domain antibodies) [3].

Conditional Generation: A fine-tuned RFdiffusion network corrupts and then denoises backbone structures while the antibody framework is held fixed through the template track, which supplies pairwise distances and dihedral angles as an invariant structural reference [3].

CDR Sampling: The network designs novel complementarity-determining region (CDR) loops and optimizes rigid-body placement relative to the target epitope. Hotspot residues can be specified to direct binding to specific epitopes [3].

Sequence Design: ProteinMPNN designs sequences for the generated backbone structures, optimizing for stability and expressibility while maintaining structural integrity [3].

In Silico Validation: Fine-tuned RoseTTAFold predicts complex structures between designed antibodies and targets. Designs with high self-consistency (agreement between designed and predicted structures) are prioritized for experimental testing [3].

Experimental Screening: Express designed antibodies using yeast surface display and screen for binding against target antigens. Typical initial affinities range from tens to hundreds of nanomolar Kd [3].

Affinity Maturation: Employ continuous evolution systems like OrthoRep to improve binding affinity while maintaining epitope specificity, potentially achieving single-digit nanomolar affinities [3].
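
The self-consistency filter in the In Silico Validation step can be illustrated with a simple root-mean-square deviation (RMSD) check between designed and predicted backbone coordinates. This is a minimal sketch rather than the metric used in [3]: it assumes the two structures are already superimposed and atom-matched, and the 2.0 Å cutoff and function names are hypothetical.

```python
import math
from typing import Sequence, Tuple

Coord = Tuple[float, float, float]


def rmsd(designed: Sequence[Coord], predicted: Sequence[Coord]) -> float:
    """RMSD in the input units, assuming pre-aligned, atom-matched coordinates."""
    if not designed or len(designed) != len(predicted):
        raise ValueError("coordinate lists must be non-empty and of equal length")
    squared = sum(
        (a - b) ** 2
        for p, q in zip(designed, predicted)
        for a, b in zip(p, q)
    )
    return math.sqrt(squared / len(designed))


def passes_self_consistency(
    designed: Sequence[Coord],
    predicted: Sequence[Coord],
    cutoff: float = 2.0,  # hypothetical threshold in angstroms
) -> bool:
    """Keep a design only if the predicted structure stays close to the design."""
    return rmsd(designed, predicted) <= cutoff
```

A production pipeline would superimpose the structures first (for example with the Kabsch algorithm) and would typically combine RMSD with model confidence scores before prioritizing designs.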

Key Results: This protocol has successfully generated VHH binders targeting influenza haemagglutinin, Clostridium difficile toxin B (TcdB), RSV, SARS-CoV-2 RBD, and IL-7Rα [3]. Cryo-EM structures confirmed atomic-level accuracy of designed CDR loops, with high-resolution data verifying precise molecular recognition.

End-to-End Small Molecule Design

Background: Insilico Medicine's development of ISM001-055 (Rentosertib) for idiopathic pulmonary fibrosis represents the first fully AI-designed drug to reach Phase IIa clinical trials [8] [4].

Protocol: Integrated Target and Molecule Discovery

Figure 2: End-to-end AI drug discovery pipeline.

Step-by-Step Methodology:

Target Identification: PandaOmics AI platform analyzes multi-omic data to identify novel disease targets. For IPF, TNIK (Traf2 and NCK-interacting kinase) was identified as a novel fibrosis driver, previously studied primarily in cancer contexts [8] [4].

Generative Chemistry: Chemistry42 platform employs 30 AI models working in parallel to generate molecular structures optimized for target binding, selectivity, and drug-like properties [8].

Real-Time Optimization: Models share feedback and efficacy scores iteratively, exploring chemical space and refining compounds based on predictive ADMET (absorption, distribution, metabolism, excretion, toxicity) properties [7].

Experimental Validation: Top candidates undergo synthesis and in vitro testing, with results fed back into AI models for continuous improvement.

Key Results: The program advanced from target discovery to preclinical candidate in approximately 18 months and to Phase I trials in under 30 months—roughly half the industry average timeline [8] [4]. Phase IIa trials demonstrated dose-dependent improvement in forced vital capacity (98.4 mL improvement vs. 62.3 mL decline in placebo) [4].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents for AI-Driven De Novo Design

| Reagent/Category | Function | Example Implementations |
|---|---|---|
| Generative Modeling Software | Creates novel molecular structures from scratch | RFdiffusion (antibody/protein design) [3], Chemistry42 (small molecule generation) [8], Chroma [2] |
| Protein Sequence Design Tools | Optimizes amino acid sequences for generated backbones | ProteinMPNN [3], Rosetta sequence design [1] |
| Structure Prediction Networks | Validates designed structures and filters candidates | Fine-tuned RoseTTAFold [3], AlphaFold2 [2], AlphaFold3 [10] |
| Expression Systems | Produces designed proteins for experimental validation | Yeast surface display [3], E. coli expression [3], Mammalian cell systems |
| Affinity Maturation Platforms | Improves binding strength of initial designs | OrthoRep continuous evolution system [3], Phage display |
| Validation Technologies | Confirms structural accuracy and binding modes | Cryo-electron microscopy [3], Surface plasmon resonance [3] |
| Specialized Datasets | Trains and validates AI models | Protein Data Bank structures [3], AlphaFold Protein Structure Database [1], Proprietary binding data |

Discussion and Future Directions

The protocols and application notes presented demonstrate that de novo molecular design has transitioned from theoretical concept to practical toolset. The combination of generative architectures like RFdiffusion with robust experimental validation pipelines enables researchers to create functional proteins and antibodies with atomic-level precision [3]. The success of end-to-end platforms in producing clinical candidates supports the viability of the paradigm [8] [4].

Nevertheless, significant challenges remain. The "black box" nature of many deep learning models creates interpretability challenges for regulatory submissions [7] [9]. Data quality and scarcity continue to limit model generalizability, particularly for rare targets [5] [10]. The translation from computational design to in vivo efficacy remains non-trivial, as evidenced by failures like Recursion's REC-994 despite promising cellular data [8] [4].

Future developments will likely focus on integrating physicochemical priors through differentiable physical models, overcoming data scarcity via transfer learning, and enabling multimodal fusion of structural, omic, and phenotypic data [10]. As these technical challenges are addressed, de novo design promises to fundamentally expand drug discovery beyond nature's template library, unlocking therapeutic possibilities across previously inaccessible target classes.

The drug discovery and development pipeline is an interdisciplinary process engaging multiple research phases to generate effective therapies, yet it is characterized by lengthy cycle times and high failure rates for drug discovery projects prior to preclinical development [11]. Traditional drug discovery can take over a decade and costs approximately $2.8 billion on average, with nine out of ten therapeutic molecules failing Phase II clinical trials and regulatory approval [12]. This economic burden and temporal inefficiency have created an imperative for accelerated approaches that can reduce both time and cost while maintaining scientific rigor.

Generative artificial intelligence (AI) is increasingly applied across the pharmaceutical industry, where it supports molecular design by generating novel molecular structures tailored to specific functional properties [12] [13]. Chemical space is estimated to comprise more than 10^60 molecules; this vastness offers enormous scope for new drug molecules, but technological constraints have traditionally kept most of it out of reach of the drug development process [12]. This review quantifies the economic and temporal drivers necessitating accelerated discovery approaches and provides detailed protocols for implementing these technologies.

Quantitative Landscape of Drug Discovery Economics

The economic challenges in pharmaceutical research and development have prompted increased industrialization, creating a need for precise productivity indicators [14]. The pressure to reduce both costs and development timelines has become a central focus across industry and academia, with emphasis on developing more biologically relevant and diverse approaches to discovering chemical starting points [11].

Table 1: Economic and Temporal Challenges in Traditional Drug Discovery

| Metric | Value | Impact |
|---|---|---|
| Average Development Cost | $2.8 billion | High capital investment with significant risk [12] |
| Development Timeline | >10 years | Extended time-to-market for critical therapies [12] |
| Clinical Trial Attrition Rate | 90% failure in Phase II | High resource waste and inefficiency [12] |
| HTS Sample Throughput | Up to 10,000 reactions/hour | Throughput limitations in lead identification [11] |
| Data Volume Challenges | Overwhelming data generation | Computational bottlenecks in analysis [15] |

The industrialization of drug discovery has evolved through distinct phases of technology maturity, from fluid phases with extensive experimentation to specific phases emphasizing cost reduction [14]. This evolution creates an increased need to measure processes more precisely to gain efficiency, presenting challenges in maintaining researcher motivation and creativity while implementing rigorous performance metrics [14].

High-Throughput Mass Spectrometry: Accelerated Analytical Framework

Recent technological developments in mass spectrometry (MS) and automation have revolutionized the application of MS for high-throughput screens, allowing the targeting of unlabeled biomolecules in high-throughput assays [11]. These label-free MS assays are often cheaper, faster, and more physiologically relevant than competing assay technologies, expanding the breadth of targets for which high-throughput assays can be developed compared to traditional approaches [11].

Acoustic Ejection Mass Spectrometry (AEMS) Protocol

Principle: AEMS combines acoustic droplet ejection with an open port interface (OPI) and electrospray ionization mass spectrometry to achieve ultra-fast, high-throughput screening by transferring nanoliter sample droplets into the mass spectrometer without contact [15].

Materials:

- SCIEX Echo MS+ system or equivalent acoustic droplet ejector

- ZenoTOF 7600 mass spectrometer or equivalent high-speed MS

- 384 or 1536 well source plates

- DMSO-based compound libraries

Procedure:

- Sample Preparation: Prepare compound libraries in DMSO at appropriate concentrations (typically 1-10 mM). AEMS tolerates the presence of water in DMSO samples, permitting analysis of aged compound libraries [15].

- System Calibration: Calibrate acoustic ejector for precise nanoliter volume transfer (typically 2.5-10 nL).

- Plate Loading: Transfer samples to source plates using automated liquid handlers compatible with 384 or 1536 well formats.

- AEMS Analysis: Program the system to operate at one sample per second analysis speed. The system directly aspirates fluidic samples from screening plates, rapidly removes non-volatile assay components in an online fractionation step, and delivers purified analytes to the mass spectrometer [11] [15].

- Data Acquisition: Implement fast polarity switching and MS2 fragmentation capabilities for comprehensive metabolite profiling. The Orbitrap Exploris 240 MS provides sensitive high-resolution accurate mass measurements suitable for this application [16].

- Data Processing: Utilize intelligent data acquisition modes and advanced data processing software (e.g., Thermo Scientific Compound Discoverer) with predefined processing templates to enable metabolite profiling and identification [16].
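
The throughput figures quoted for these platforms translate into plate run times with simple arithmetic. The sketch below is an idealization that assumes no overhead between wells or plates; the function name is hypothetical, and the 2.5 s comparison uses the cycle time reported for RapidFire [11].

```python
def plate_runtime_minutes(wells: int, seconds_per_sample: float = 1.0) -> float:
    """Idealized time to read one plate, ignoring plate-handling overhead."""
    if wells <= 0 or seconds_per_sample <= 0:
        raise ValueError("wells and seconds_per_sample must be positive")
    return wells * seconds_per_sample / 60.0


for wells in (384, 1536):
    aems = plate_runtime_minutes(wells)             # one sample per second
    slower = plate_runtime_minutes(wells, 2.5)      # 2.5 s per sample
    print(f"{wells}-well plate: {aems:.1f} min at 1 s/sample, "
          f"{slower:.1f} min at 2.5 s/sample")
```

Real run times will be longer once plate changes, calibration, and quality-control injections are included.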

Figure 1: AEMS Workflow for Ultra-High-Throughput Screening

Affinity Selection Mass Spectrometry (AS-MS) Protocol

Principle: AS-MS is a label-free high-throughput screening technology for hit identification that enables screening of large collections of small molecules, natural products, or peptides in pools of various compressions [17]. This approach allows the simultaneous assessment of multiple compounds, significantly reducing the amount of target required and screening duration [17].

Materials:

- Target protein (typically 0.1-1 µM in assay)

- Compound pools (5-2000 compounds per pool)

- Size exclusion chromatography (SEC) columns

- Automated liquid handling systems

- High-resolution mass spectrometer

Procedure:

- Pool Design: Design compound pools with an algorithm that minimizes mass redundancy so that hits can be assigned unambiguously. The iterative permutation approach randomly assembles pools with a specified number of compounds, scores and sorts the pools by mass redundancy, and then permutes compounds out of the most redundant pools until the maximum mass redundancy across pools is minimized [17].

- Incubation: Incubate target protein with compound pools (typically 0.1-1 µM per compound, depending on maximal DMSO concentration tolerated in assay) for 30-60 minutes at appropriate temperature.

- Complex Separation: Separate target-ligand complexes from unbound compounds using size exclusion chromatography (SEC), ultrafiltration, or frontal affinity chromatography (FAC).

- Complex Denaturation: For affinity capture methods, denature ligand-target complex and filter to release bound ligands.

- MS Analysis: Analyze filtrate by LC-MS to determine structures of binding ligands. Implement data-independent acquisition MS strategies for global proteomics and phosphoproteomics analysis when required [11].

- Hit Identification: Process data using dedicated AS-MS software (e.g., Virscidian or Mestrelab solutions) to identify true binders before engaging valuable time and resources for hit evaluation [17].
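
The iterative permutation idea described in the Pool Design step can be sketched as a small randomized swap search. This is an illustrative approximation, not the algorithm from [17]: treating two compounds as mass-redundant when their masses agree to two decimal places is an assumption, and all names and defaults are hypothetical.

```python
import random
from collections import Counter
from typing import Dict, List


def redundancy(pool: List[str], masses: Dict[str, float], decimals: int = 2) -> int:
    """Count pool members whose rounded mass is shared with another member."""
    counts = Counter(round(masses[c], decimals) for c in pool)
    return sum(n for n in counts.values() if n > 1)


def design_pools(masses: Dict[str, float], pool_size: int,
                 max_iter: int = 10_000, seed: int = 0) -> List[List[str]]:
    """Randomly assemble pools, then swap compounds out of the worst pool."""
    rng = random.Random(seed)
    compounds = list(masses)
    rng.shuffle(compounds)
    pools = [compounds[i:i + pool_size] for i in range(0, len(compounds), pool_size)]
    if len(pools) < 2:
        return pools  # nothing to permute against
    for _ in range(max_iter):
        scores = [redundancy(p, masses) for p in pools]
        worst = max(range(len(pools)), key=scores.__getitem__)
        if scores[worst] == 0:
            break  # every pool is free of mass collisions
        other = rng.choice([i for i in range(len(pools)) if i != worst])
        i = rng.randrange(len(pools[worst]))
        j = rng.randrange(len(pools[other]))
        before = max(scores[worst], scores[other])
        pools[worst][i], pools[other][j] = pools[other][j], pools[worst][i]
        after = max(redundancy(pools[worst], masses), redundancy(pools[other], masses))
        if after > before:
            # Revert swaps that make the worse of the two pools more redundant.
            pools[worst][i], pools[other][j] = pools[other][j], pools[worst][i]
    return pools
```

For example, `design_pools({"A": 100.0, "B": 100.0, "C": 200.0, "D": 200.0}, pool_size=2)` separates the two isobaric pairs into different pools. Dedicated AS-MS software would also account for adducts, isotopes, and instrument mass accuracy.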

Table 2: Research Reagent Solutions for Accelerated Drug Discovery

| Reagent/Technology | Function | Application Context |
|---|---|---|
| Orbitrap Exploris 240 MS | High-resolution accurate mass measurements | Metabolite identification and lead optimization [16] |
| Thermo Scientific Compound Discoverer | Automated data processing with predefined templates | Metabolite profiling and identification [16] |
| RapidFire System | Automated microfluidic sample collection and purification | High-throughput ESI-MS analysis with cycling times of 2.5s per sample [11] |
| Acoustic Dispensers | Nanoliter volume compound transfer | Generation of high-compression pools with minimal compound consumption [17] |
| ZenoTOF 7600 System | Electron Activated Dissociation (EAD) | Producing distinctive MS/MS fragments for structural elucidation [15] |

Generative AI Framework for De Novo Molecular Design

Generative AI models have emerged as a transformative tool for addressing complex challenges in drug discovery, enabling the design of structurally diverse, chemically valid, and functionally relevant molecules [13]. These approaches are particularly valuable within the context of the economic and temporal imperatives, as they significantly reduce the trial-and-error processes traditionally associated with molecular design.

Property-Guided Molecular Generation Protocol

Principle: Property-guided generation advances molecular design by offering a guided approach to generating molecules with desirable objectives, combining predictive models with generative architectures to direct exploration of chemical space toward regions with higher probabilities of success [13].

Materials:

- Chemical databases (ChEMBL, PubChem, ZINC)

- Hardware: GPU-accelerated computing infrastructure

- Software: Python with PyTorch/TensorFlow, RDKit, deep learning frameworks

Procedure:

- Data Curation: Compile training datasets from public and proprietary sources. Implement rigorous data cleaning to address contamination, standardization, and population bias issues prevalent in public datasets [18] [19].

- Model Selection: Choose appropriate generative architecture based on task requirements:

  - Variational Autoencoders (VAEs): Encode input data into lower-dimensional latent representation and reconstruct from sampled points; suitable for smooth latent space exploration [13].

  - Generative Adversarial Networks (GANs): Employ generator and discriminator networks in adversarial training; effective for generating novel molecular structures [13].

  - Transformer Models: Utilize self-attention mechanisms for sequence-based molecular generation; capable of learning complex dependencies in molecular data [13].

- Model Training: Train the selected model on the curated dataset. For VAEs, minimize the reconstruction loss while regularizing the latent space. For GANs, alternate between generator and discriminator updates until training approaches equilibrium.

- Property Prediction: Integrate property prediction models into the generative process. The Guided Diffusion for Inverse Molecular Design (GaUDI) framework combines an equivariant graph neural network for property prediction with a generative diffusion model, achieving 100% validity in generated structures while optimizing for single and multiple objectives [13].

- Latent Space Exploration: Perform Bayesian optimization in the learned latent space to identify regions with desirable properties. This approach is particularly valuable when dealing with expensive-to-evaluate objective functions such as docking simulations or quantum chemical calculations [13].

- Molecular Generation: Decode promising latent vectors to generate novel molecular structures with optimized properties.
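
The training objectives named in the Model Training step are commonly written as follows. These are the standard textbook formulations rather than anything specific to the cited works; the weight \beta is an added assumption, and \beta = 1 recovers the usual VAE objective.

```latex
% VAE: reconstruction term plus KL regularization of the latent space
\mathcal{L}_{\mathrm{VAE}}(\theta, \phi; x) =
  -\mathbb{E}_{q_\phi(z \mid x)}\left[\log p_\theta(x \mid z)\right]
  + \beta \, D_{\mathrm{KL}}\!\left(q_\phi(z \mid x) \,\|\, p(z)\right)

% GAN: generator G and discriminator D play a minimax game
\min_G \max_D \;
  \mathbb{E}_{x \sim p_{\mathrm{data}}}\left[\log D(x)\right]
  + \mathbb{E}_{z \sim p(z)}\left[\log\left(1 - D(G(z))\right)\right]
```

Here x is a molecular representation (for example a SMILES string or graph), z is the latent vector, q_\phi is the encoder, p_\theta is the decoder, and p(z) is the latent prior, typically a standard normal distribution.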

Figure 2: Generative AI Framework for Molecular Design

Reinforcement Learning Optimization Protocol

Principle: Reinforcement learning (RL) has emerged as an effective tool in molecular design optimization, training an agent to navigate through molecular structures toward desirable chemical properties such as drug-likeness, binding affinity, and synthetic accessibility [13].

Materials:

- Molecular environment simulator (e.g., OpenAI Gym customized for chemistry)

- Reward function defining target molecular properties

- RL algorithms (Deep Q-Networks, Policy Gradient methods)

Procedure:

- Environment Setup: Create molecular environment that allows sequential modification of molecular structures through atom or bond additions/removals.

- Reward Function Design: Define comprehensive reward function incorporating multiple objectives:

  - Drug-likeness (QED score)

  - Target binding affinity (predicted or calculated)

  - Synthetic accessibility (SA score)

  - Structural similarity constraints when required

- Agent Training: Train RL agent using selected algorithm. The Graph Convolutional Policy Network (GCPN) uses RL to sequentially add atoms and bonds, constructing novel molecules with targeted properties [13].

- Exploration-Exploitation Balance: Implement Bayesian neural networks to manage uncertainty in action selection, combined with techniques like randomized value functions and robust loss functions to enhance the balance between exploring new chemical spaces and refining known high-reward regions [13].

- Multi-objective Optimization: For complex tasks, employ multi-objective reward structures. DeepGraphMolGen exemplifies this approach, employing a graph convolution policy and multi-objective reward to generate molecules with strong binding affinity to specific targets while minimizing off-target interactions [13].

- Validation: Experimentally validate top-generated compounds through synthesis and biochemical assays.
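
The multi-objective reward described in the Reward Function Design step can be sketched as a weighted sum of normalized property scores. This is a minimal illustration under stated assumptions, not the reward used by GCPN or DeepGraphMolGen: the property values are taken as precomputed inputs, the affinity term is assumed to be already scaled to the 0-1 range, and the weights, class, and function names are hypothetical. QED lies between 0 and 1, and the SA score runs from 1 (easy to synthesize) to 10 (hard).

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class PropertyScores:
    qed: float         # drug-likeness, 0..1, higher is better
    affinity: float    # predicted binding score, assumed pre-scaled to 0..1
    sa_score: float    # synthetic accessibility, 1 (easy) .. 10 (hard)
    similarity: float  # similarity to a reference scaffold, 0..1


def clamp01(x: float) -> float:
    return max(0.0, min(1.0, x))


def reward(scores: PropertyScores,
           weights: Tuple[float, float, float] = (0.4, 0.4, 0.2),  # hypothetical
           min_similarity: Optional[float] = None) -> float:
    """Scalar reward in 0..1; zero if the similarity constraint is violated."""
    if min_similarity is not None and scores.similarity < min_similarity:
        return 0.0
    sa_term = clamp01((10.0 - scores.sa_score) / 9.0)  # invert so easy synthesis scores high
    w_qed, w_aff, w_sa = weights
    return (w_qed * clamp01(scores.qed)
            + w_aff * clamp01(scores.affinity)
            + w_sa * sa_term)
```

A weighted sum is the simplest way to collapse several objectives into one reward; it lets a strong score on one property compensate for a weak score on another, which may or may not be desirable for a given program.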

Integrated AI-MS Workflow for Accelerated Discovery

The combination of generative AI with high-throughput mass spectrometry creates a powerful synergistic workflow that addresses both the economic and temporal imperatives in modern drug discovery.

AI-Driven Design with MS Validation Protocol

Principle: This integrated approach leverages AI for rapid molecular design and MS for experimental validation, creating a closed-loop optimization system that significantly reduces design-test cycles.

Materials:

- Generative AI platform

- High-throughput mass spectrometry system

- Automated compound management and sample preparation

- Data integration and analysis pipeline

Procedure:

- AI-Driven Molecular Generation: Use property-guided generative models to design novel compounds targeting specific therapeutic objectives.

- Virtual Screening: Apply multi-parameter optimization to prioritize candidates for synthesis, including predicted affinity, solubility, and metabolic stability.

- Automated Synthesis & Plating: Utilize automated chemistry platforms and acoustic dispensing for efficient compound preparation and plating in appropriate formats for MS analysis.

- High-Throughput MS Screening: Implement AEMS or AS-MS protocols for rapid experimental validation of AI-generated compounds.

- Data Integration & Model Retraining: Feed experimental results back into AI models to improve prediction accuracy and guide subsequent design cycles. This requires statistical discipline in data management to ensure traceability and reproducibility [19].

- Iterative Optimization: Repeat cycles until compounds with desired properties are identified, typically achieving significant reductions in both time and cost compared to traditional approaches.
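
The closed-loop procedure above can be summarized as a control-flow skeleton. This sketch only shows how the stages hand data to one another; the four callables stand in for the generative model, the virtual screen, the MS assay, and model retraining, and every name and parameter here is hypothetical.

```python
from typing import Callable, List, Tuple, TypeVar

Molecule = TypeVar("Molecule")


def design_test_loop(
    generate: Callable[[int], List[Molecule]],
    prioritize: Callable[[List[Molecule]], List[Molecule]],
    assay: Callable[[Molecule], float],
    retrain: Callable[[List[Tuple[Molecule, float]]], None],
    target: float,
    max_cycles: int,
    batch_size: int,
) -> List[Tuple[Molecule, float]]:
    """Run design-test cycles until a measured value reaches `target`."""
    history: List[Tuple[Molecule, float]] = []
    for _ in range(max_cycles):
        candidates = generate(batch_size)                 # AI-driven generation
        selected = prioritize(candidates)                 # virtual screening
        results = [(mol, assay(mol)) for mol in selected] # synthesis + MS readout
        history.extend(results)                           # keep every result traceable
        if any(value >= target for _, value in results):
            break                                         # stopping criterion met
        retrain(results)                                  # feed data back to the model
    return history
```

Keeping the full `history` rather than only the hits reflects the traceability requirement noted in the Data Integration step, since negative results are also informative for retraining.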

The economic and temporal imperatives in drug discovery have created an urgent need for accelerated approaches that can reduce both development timelines and costs while maintaining scientific rigor. The integration of generative AI with high-throughput mass spectrometry technologies represents a transformative framework that directly addresses these challenges. Through the implementation of the detailed protocols outlined in this review—including acoustic ejection mass spectrometry, affinity selection mass spectrometry, property-guided molecular generation, and reinforcement learning optimization—researchers can significantly accelerate the discovery and development of novel therapeutic agents. These advanced approaches enable more efficient exploration of chemical space, rapid experimental validation, and continuous model improvement through iterative design-test cycles, ultimately contributing to reduced attrition rates and more efficient translation of discoveries to clinical applications.

The process of drug discovery is undergoing a fundamental transformation, shifting from traditional, labor-intensive trial-and-error workflows to sophisticated, generative artificial intelligence (AI)-driven approaches. This paradigm shift represents nothing less than a redefinition of the speed and scale of modern pharmacology, replacing cumbersome human-driven processes with AI-powered discovery engines capable of compressing timelines and expanding chemical and biological search spaces [20]. Traditional molecular design has long been constrained by computational and experimental limitations, relying on combinatorial synthesis and optimization in a process that typically requires 14.6 years and approximately $2.6 billion to bring a new drug to market [6]. In stark contrast, AI-enabled workflows have demonstrated the potential to reduce the time and cost of bringing a new molecule to the preclinical candidate stage by up to 40% and 30%, respectively, for complex targets [6].

Generative AI (GenAI) has emerged as a transformative tool for addressing the complex challenges of drug discovery, enabling the design of structurally diverse, chemically valid, and functionally relevant molecules [13]. By leveraging sophisticated algorithms trained on vast chemical libraries and experimental data, GenAI models can propose novel molecular structures that satisfy precise target product profiles, including potency, selectivity, and absorption, distribution, metabolism, and excretion properties [20]. This shift in capability is evidenced by the compression of early-stage research and development timelines, with multiple AI-derived small-molecule drug candidates reaching Phase I trials in a fraction of the typical ~5 years needed for discovery and preclinical work [20]. For instance, Insilico Medicine's generative-AI-designed idiopathic pulmonary fibrosis drug progressed from target discovery to preclinical candidate in approximately 18 months and entered Phase I in under 30 months, compared to the multi-year timelines characteristic of traditional approaches [20].

Table 1: Key Performance Metrics Comparison Between Traditional and AI-Driven Workflows

| Performance Metric | Traditional Approach | AI-Driven Approach | Improvement Factor |
|---|---|---|---|
| Discovery to Preclinical Timeline | 4-5 years | 12-18 months [20] | 70-80% reduction |
| Cost to Preclinical Candidate | Industry standard | Up to 40% reduction [6] | Significant cost saving |
| Design Cycle Efficiency | Industry baseline | ~70% faster, 10× fewer compounds [20] | Substantial efficiency gain |
| Clinical Success Rate | ~10% candidates succeed | Potential to increase probability [6] | Meaningful improvement |
| Compounds Synthesized | Hundreds to thousands | 10× fewer required [20] | Dramatic reduction |

Fundamental Divergences: Core Philosophical and Methodological Differences

The divergence between traditional and generative AI-driven molecular design extends beyond mere implementation to foundational philosophical and methodological differences. Traditional trial-and-error workflows operate on a sequential "design-make-test-analyze" cycle that is both time-intensive and resource-prohibitive, requiring extensive manual intervention at each stage and limiting the exploration of chemical space to relatively narrow domains [21]. This approach relies heavily on researcher intuition, historical data, and systematic but slow experimental iteration, creating a fundamental bottleneck in molecular optimization.

Generative AI approaches, conversely, embrace a parallelized, multi-parameter optimization strategy that leverages deep learning architectures to explore chemical spaces with unprecedented breadth and depth [13]. These systems employ sophisticated generative models—including Generative Adversarial Networks (GANs), Variational Autoencoders (VAEs), diffusion models, and transformer-based architectures—each with unique characteristics suited to different aspects of molecular generation [13]. Unlike traditional methods that optimize for single parameters sequentially, GenAI models can simultaneously optimize multiple molecular properties, including target binding affinity, solubility, metabolic stability, and synthetic accessibility, through techniques such as reinforcement learning, multi-objective optimization, and Bayesian optimization [13].
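To make the idea of simultaneous multi-property optimization concrete, the following minimal sketch selects the Pareto-optimal subset of a small candidate pool. It is an illustration only: the molecule names and property values are invented, all three properties are assumed to be pre-scaled so that higher is better, and Pareto filtering is just one of several possible multi-objective strategies.

```python
from typing import Dict, List

# Hypothetical predicted properties (all scaled so that higher is better).
candidates: Dict[str, Dict[str, float]] = {
    "mol_A": {"affinity": 0.90, "solubility": 0.40, "stability": 0.70},
    "mol_B": {"affinity": 0.80, "solubility": 0.60, "stability": 0.70},
    "mol_C": {"affinity": 0.70, "solubility": 0.30, "stability": 0.60},
    "mol_D": {"affinity": 0.50, "solubility": 0.90, "stability": 0.50},
}


def dominates(a: Dict[str, float], b: Dict[str, float]) -> bool:
    """True if `a` is at least as good as `b` everywhere and strictly better once."""
    return all(a[k] >= b[k] for k in a) and any(a[k] > b[k] for k in a)


def pareto_front(pool: Dict[str, Dict[str, float]]) -> List[str]:
    """Return the names of candidates that no other candidate dominates."""
    front = []
    for name, props in pool.items():
        dominated = any(
            dominates(other, props)
            for other_name, other in pool.items()
            if other_name != name
        )
        if not dominated:
            front.append(name)
    return sorted(front)


print(pareto_front(candidates))
```

Here `mol_C` is dropped because `mol_A` and `mol_B` are at least as good on every property, while the remaining three represent different trade-offs that a project team would still need to weigh.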

This philosophical divergence creates a fundamental shift from "problem-solving" to "solution-generation." Traditional methods typically begin with a known molecular scaffold and iteratively modify it to improve specific properties—a deductive approach. Generative AI, however, operates inductively, using learned chemical principles and structure-property relationships to generate novel molecular structures de novo that inherently possess desired functional characteristics [13]. This represents a transition from human-guided exploration to AI-driven creation, with the algorithm proposing candidate molecules that may exist outside conventional chemical intuition yet still satisfy complex therapeutic requirements.

Technical Architectures: A Comparative Analysis

Traditional Molecular Design Infrastructure

Traditional molecular design relies on established computational chemistry frameworks centered on quantitative structure-activity relationship (QSAR) modeling, molecular docking simulations, and molecular dynamics calculations. These approaches depend heavily on hand-crafted molecular descriptors and force-field parameters that require significant domain expertise to implement effectively [21]. The infrastructure typically involves high-performance computing clusters running specialized software for quantum chemical calculations such as density functional theory (DFT), which provide accurate but computationally expensive predictions of molecular properties [21]. This creates a fundamental scalability limitation, as the exponential growth of chemical space with molecular size makes comprehensive exploration computationally prohibitive.

The traditional workflow employs sequential, modular components with clearly defined interfaces: compound libraries are screened using virtual or physical high-throughput screening, hits are optimized through systematic structural modification, lead compounds undergo experimental validation, and promising candidates advance to preclinical development. Each stage generates data that informs the next, but integration between stages is often manual, creating bottlenecks and discontinuities in the design process. While reliable and well-understood, this architecture fundamentally limits the exploration of novel chemical space and relies heavily on prior knowledge and existing compound libraries.

Generative AI Architecture for Molecular Design

Generative AI architectures for molecular design employ fundamentally different technical frameworks built around deep learning models capable of learning complex chemical representations directly from data. These systems typically utilize several interconnected components: (1) chemical representation layers that encode molecular structures as graphs, strings (SMILES), or 3D coordinates; (2) generative models that create novel molecular structures; (3) predictive models that estimate molecular properties; and (4) optimization algorithms that guide the generation toward desired characteristics [13].

The most advanced implementations create integrated, iterative workflows where these components operate in a tightly coupled fashion. For example, a workflow might employ a VAE to learn a continuous latent representation of chemical space, property prediction networks to estimate target properties for generated molecules, and reinforcement learning or Bayesian optimization to navigate the latent space toward regions containing molecules with optimized property profiles [13]. This creates a closed-loop system where each iteration improves both the generative model and the quality of candidates, progressively focusing on the most promising regions of chemical space.
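The latent-space navigation described above can be sketched in a few lines. This is a toy under explicit assumptions: `predicted_property` is a hypothetical surrogate with a known optimum, the latent space is two-dimensional, and a simple stochastic hill climb stands in for the reinforcement learning or Bayesian optimization step. Decoding the optimized latent vector back into a molecule is omitted.

```python
import random
from typing import List, Tuple


def predicted_property(z: List[float]) -> float:
    """Hypothetical surrogate model: best score (0.0) at z = (1.0, -0.5)."""
    return -((z[0] - 1.0) ** 2 + (z[1] + 0.5) ** 2)


def latent_hill_climb(
    z0: List[float], steps: int = 500, sigma: float = 0.2, seed: int = 0
) -> Tuple[List[float], float]:
    """Accept random latent-space perturbations only when the score improves."""
    rng = random.Random(seed)
    best, best_score = list(z0), predicted_property(z0)
    for _ in range(steps):
        proposal = [zi + rng.gauss(0.0, sigma) for zi in best]
        score = predicted_property(proposal)
        if score > best_score:
            best, best_score = proposal, score
    return best, best_score


start = [0.0, 0.0]
z_opt, score = latent_hill_climb(start)
print(f"start score {predicted_property(start):.3f} -> optimized score {score:.3f}")
```

In a full pipeline, each accepted latent point would be passed through the VAE decoder and the resulting structure checked for validity before it counts as a candidate.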

Table 2: Generative AI Model Architectures and Their Molecular Design Applications

| Model Architecture | Key Characteristics | Molecular Applications | Advantages |
|---|---|---|---|
| Variational Autoencoders (VAEs) | Encodes inputs to latent space; enables smooth interpolation [13] | Inverse molecular design, latent space optimization [13] | Continuous representation; enables optimization in latent space |
| Generative Adversarial Networks (GANs) | Generator-discriminator competition; iterative training [13] | Image synthesis, molecular generation [13] | High-quality sample generation; adversarial training |
| Transformer Models | Self-attention mechanisms; parallelizable architecture [13] | Sequence-based molecular generation [22] | Captures long-range dependencies; transfer learning capability |
| Diffusion Models | Progressive noising and denoising; probabilistic modeling [13] | High-quality molecular generation [13] | State-of-the-art sample quality; stable training |

Diagram 1: Architectural comparison between traditional and AI-driven workflows

Experimental Protocols and Methodologies

Protocol: Traditional Hit-to-Lead Optimization

Objective: To systematically optimize a screening hit compound through iterative structural modification to improve potency, selectivity, and drug-like properties.

Materials and Reagents:

- Primary Reference Compound: Initial hit compound identified from screening

- Analog Libraries: Commercially available or synthetically accessible structural analogs

- Assay Reagents: Cell lines, enzymes, substrates, and buffers for biochemical and cellular assays

- Analytical Instruments: HPLC systems for compound purity analysis, LC-MS for structural characterization

- Computational Tools: Molecular modeling software (e.g., Schrödinger Suite, MOE) for structure-based design

Procedure:

1. Initial Compound Characterization: Determine baseline potency (IC50/EC50), selectivity against related targets, and preliminary ADME properties of the hit compound.

2. Structure-Activity Relationship (SAR) Analysis: Design and synthesize or acquire structural analogs focusing on systematic modification of different regions of the molecule.

3. Iterative Testing Cycle:

   a. Test compounds in the primary assay to establish potency.
   b. Evaluate selected compounds in counter-screens and secondary assays.
   c. Assess promising compounds for early ADME properties (e.g., metabolic stability, permeability).
   d. Analyze data to identify key structural features driving activity and properties.

4. Lead Candidate Selection: Advance compounds meeting predefined criteria (e.g., potency <100 nM, selectivity >10-fold, acceptable ADME profile) for further optimization.

5. Cycle Repetition: Repeat steps 2-4 until compounds meet lead candidate criteria.

Timeline: Each optimization cycle typically requires 3-6 months, with 4-8 cycles often needed to identify a lead candidate.
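As a worked illustration of the selection gate and the timeline arithmetic, the sketch below encodes the example criteria from the procedure (potency below 100 nM, selectivity above 10-fold, acceptable ADME) and multiplies out the stated cycle ranges. The function names are illustrative, and real projects would set their own thresholds.

```python
from typing import Tuple


def meets_lead_criteria(ic50_nm: float, selectivity_fold: float, adme_ok: bool) -> bool:
    """Apply the example gate: potency <100 nM, selectivity >10-fold, acceptable ADME."""
    return ic50_nm < 100.0 and selectivity_fold > 10.0 and adme_ok


def campaign_months(
    cycle_months: Tuple[int, int] = (3, 6), n_cycles: Tuple[int, int] = (4, 8)
) -> Tuple[int, int]:
    """Best-case and worst-case campaign duration from per-cycle ranges."""
    return cycle_months[0] * n_cycles[0], cycle_months[1] * n_cycles[1]


print(meets_lead_criteria(ic50_nm=45.0, selectivity_fold=25.0, adme_ok=True))
print(meets_lead_criteria(ic50_nm=150.0, selectivity_fold=25.0, adme_ok=True))
print(campaign_months())
```

With 3-6 months per cycle and 4-8 cycles, the hit-to-lead stage alone spans roughly 12 to 48 months.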

Protocol: Generative AI-Driven Molecular Optimization

Objective: To generate novel molecular structures with optimized multi-property profiles using generative AI models.

Materials and Reagents:

- Training Data: Curated datasets of chemical structures with associated properties (e.g., ChEMBL, ZINC, proprietary corporate databases)

- Computational Infrastructure: GPU-accelerated computing resources for model training and inference

- Generative Models: Pre-trained or custom-built generative architectures (VAEs, GANs, diffusion models, or transformers)

- Property Predictors: Machine learning models for predicting molecular properties (e.g., random forests, graph neural networks)

- Validation Assays: High-throughput experimental systems for validating AI-generated compounds

Procedure:

1. Model Initialization and Training:

   a. Preprocess chemical structure data into an appropriate representation (e.g., SMILES, molecular graphs).
   b. Train the generative model on a chemical library to learn the chemical space distribution.
   c. Train property prediction models on structure-property data.

2. Goal-Directed Generation:

   a. Define the target property profile (e.g., potency range, solubility, metabolic stability).
   b. Implement an optimization strategy (reinforcement learning, Bayesian optimization, or conditional generation).
   c. Generate candidate molecules satisfying the target criteria.

3. Virtual Screening and Prioritization:

   a. Filter generated molecules for chemical validity, novelty, and synthetic accessibility.
   b. Apply property predictors to rank candidates by desired profile.
   c. Select top candidates for further evaluation.

4. Experimental Validation:

   a. Synthesize or acquire top-priority compounds.
   b. Test in relevant biological assays and ADME models.

5. Iterative Refinement:

   a. Incorporate experimental results back into the training data.
   b. Retrain or fine-tune models based on the new data.
   c. Repeat the generation cycle with the refined models.

Timeline: Initial generation cycle requires 2-4 weeks, with subsequent cycles of 1-2 weeks as models improve with additional data.
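The control flow of this procedure can be sketched as a single reusable cycle function. Everything below the function is a toy stand-in: molecule identifiers such as `cand_42` are invented, the "filter", "predictor", and "assay" are trivial placeholders, and model retraining is left as a comment rather than implemented.

```python
import random
from typing import Callable, List, Tuple

Record = Tuple[str, float]  # (molecule identifier, measured activity)


def design_cycle(
    generate: Callable[[int], List[str]],
    is_acceptable: Callable[[str], bool],
    predict: Callable[[str], float],
    assay: Callable[[str], float],
    training_data: List[Record],
    n_generate: int = 20,
    n_select: int = 3,
) -> List[Record]:
    """One generate -> filter -> rank -> test pass; returns the enlarged dataset."""
    known = {mol for mol, _ in training_data}
    # Keep acceptable molecules that have not been tested before (novelty).
    candidates = {m for m in generate(n_generate) if is_acceptable(m) and m not in known}
    # Rank by predicted score, breaking ties by name for reproducibility.
    ranked = sorted(candidates, key=lambda m: (-predict(m), m))
    measured = [(m, assay(m)) for m in ranked[:n_select]]
    return training_data + measured


# Toy stand-ins, for illustration only.
rng = random.Random(0)
toy_generate = lambda n: [f"cand_{rng.randrange(100):02d}" for _ in range(n)]
toy_filter = lambda mol: int(mol[-2:]) % 2 == 0
toy_predict = lambda mol: int(mol[-2:]) / 100.0
toy_assay = toy_predict

data: List[Record] = []
for _ in range(3):
    data = design_cycle(toy_generate, toy_filter, toy_predict, toy_assay, data)
    # A real workflow would retrain or fine-tune the models on `data` here.
print(len(data), "compounds tested")
```

Passing the generator, filter, predictor, and assay as callables keeps the loop independent of any particular model architecture.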

Table 3: Research Reagent Solutions for AI-Driven Molecular Design

| Reagent/Category | Specific Examples | Function in Workflow |
|---|---|---|
| Generative Models | GraphVAE, MolGPT, REINVENT | De novo molecular structure generation from learned chemical space |
| Property Predictors | Graph Neural Networks, Random Forests, Support Vector Machines | Rapid prediction of molecular properties without expensive simulations |
| Optimization Methods | Reinforcement Learning, Bayesian Optimization, Multi-objective Optimization | Guided exploration of chemical space toward desired property profiles |
| Molecular Representations | SMILES, SELFIES, Molecular Graphs, 3D Coordinates | Encoding chemical structures for machine learning processing |
| Benchmark Datasets | MOSES, GuacaMol, ChEMBL, ZINC | Training and evaluation of generative models and property predictors |

Quantitative Performance Benchmarking

The quantitative advantages of generative AI approaches over traditional molecular design workflows become evident across multiple performance dimensions. AI-driven platforms report design cycles approximately 70% faster than traditional methods while requiring 10× fewer synthesized compounds to identify viable candidates [20]. This efficiency gain translates to substantial cost reductions, with AI-enabled workflows demonstrating the potential to reduce drug discovery costs by up to 40% and slash development timelines from five years to as little as 12-18 months [6].

Clinical pipeline progression provides further validation of these accelerated timelines. By mid-2025, over 75 AI-derived molecules had reached clinical stages, representing exponential growth from essentially zero AI-designed drugs in human testing at the start of 2020 [20]. Notable examples include Insilico Medicine's Traf2- and Nck-interacting kinase inhibitor (ISM001-055) for idiopathic pulmonary fibrosis, which demonstrated positive Phase IIa results, and the Nimbus-originated TYK2 inhibitor, zasocitinib (TAK-279), which advanced to Phase III clinical trials, exemplifying the transition of AI-designed molecules into late-stage clinical testing [20].

Perhaps most significantly, generative AI approaches show potential to improve the probability of clinical success, a crucial metric in an industry where traditionally only about 10% of candidates successfully navigate clinical trials [6]. By analyzing large datasets and identifying candidates with optimized property profiles earlier in the process, AI-driven methods could increase the likelihood that molecules entering clinical development advance through trials. Industry projections suggest that by 2025, 30% of new drugs will be discovered using AI, marking a substantial shift in the drug discovery process [6].

Diagram 2: Performance metrics comparison between traditional and AI-driven approaches

Case Studies: Real-World Validation

Exscientia: Automated Precision Chemistry Platform

Exscientia's AI-driven platform exemplifies the paradigm shift in molecular design through its "Centaur Chemist" approach, which integrates algorithmic creativity with human domain expertise to iteratively design, synthesize, and test novel compounds [20]. The platform employs deep learning models trained on extensive chemical libraries and experimental data to propose molecular structures satisfying precise target product profiles. A distinctive innovation in Exscientia's approach is the incorporation of patient-derived biology into the discovery workflow through the acquisition of Allcyte in 2021, which enables high-content phenotypic screening of AI-designed compounds on real patient tumor samples [20]. This patient-first strategy enhances translational relevance by ensuring candidate drugs demonstrate efficacy not only in conventional in vitro systems but also in ex vivo disease models.

Exscientia achieved a significant milestone in 2020 when its algorithmically generated drug, DSP-1181, became the world's first AI-designed drug to enter Phase I trials for obsessive-compulsive disorder [20]. By 2023, the company had designed eight clinical compounds, both in-house and with partners, reaching development "at a pace substantially faster than industry standards" [20]. These include candidates for immuno-oncology (e.g., A2A receptor antagonist EXS-21546) and oncology (e.g., CDK7 inhibitor GTAEXS-617) [20]. The 2024 merger between Exscientia and Recursion Pharmaceuticals, valued at $688 million, created an integrated platform combining Exscientia's strengths in generative chemistry with Recursion's extensive phenomics and biological data resources, further accelerating the AI-driven drug discovery pipeline [20].

Iterative Deep Learning Workflow for Inverse Molecular Design

A sophisticated implementation of generative AI for molecular design demonstrates the power of iterative deep learning workflows for inverse design of molecules with specific optoelectronic properties [21]. This approach combines (1) the density-functional tight-binding method for dynamic generation of property training data, (2) a graph convolutional neural network surrogate model for rapid and reliable predictions of chemical and physical properties, and (3) a masked language model for molecular generation [21]. The workflow addresses a fundamental challenge in computational molecular design: the prohibitive cost of brute-force screening of entire chemical spaces.

In practice, this iterative workflow begins with the GDB-9 molecular dataset, which is fed into quantum chemical methods to compute target properties like the HOMO-LUMO gap [21]. A graph convolutional neural network surrogate model is then trained to predict these properties based solely on molecular structures, achieving prediction speeds orders of magnitude faster than quantum chemical calculations [21]. The masked language model generates novel molecular structures, which are evaluated by the surrogate model, with promising candidates selected for further iteration. Crucially, the workflow incorporates continuous model refinement, with the surrogate model retrained on newly generated molecules to maintain predictive accuracy as the chemical space expands beyond the initial training distribution [21]. This approach exemplifies the self-improving nature of advanced AI-driven molecular design systems, where each iteration enhances both the generative capabilities and predictive accuracy of the platform.

Implementation Roadmap: Transitioning to AI-Enhanced Workflows

For research organizations transitioning from traditional to AI-enhanced molecular design, a phased implementation strategy maximizes adoption success while managing risk. The roadmap begins with infrastructure assessment and development, evaluating existing computational resources, data quality and accessibility, and team capabilities. This phase typically includes procurement of GPU-accelerated computing resources, implementation of data standardization protocols, and initiation of training programs to build AI literacy across the research organization.

The second phase focuses on pilot program implementation, selecting well-defined projects with clear success metrics for initial AI deployment. Suitable pilot projects have several key characteristics: (1) availability of high-quality training data, (2) established experimental validation assays, (3) clear molecular design objectives, and (4) appropriate scope—neither too trivial to demonstrate value nor too complex to achieve meaningful progress. During this phase, organizations may leverage pre-trained models or established platforms (e.g., Orion, DeepChem) to accelerate initial implementation while building internal expertise.

The third phase involves workflow integration and scaling, incorporating successful AI approaches into standard research processes and expanding application across the portfolio. This requires developing robust pipelines for data generation, model training, compound generation, and experimental validation, with continuous feedback loops to improve model performance. Successful organizations establish cross-functional teams combining domain expertise (medicinal chemists, pharmacologists) with AI specialists to ensure generated molecules satisfy both computational metrics and practical drug discovery constraints.

Finally, continuous improvement and innovation focuses on staying current with rapidly advancing generative AI methodologies while contributing to the field through publication and collaboration. The most advanced implementations feature fully automated design-make-test-analyze cycles, where AI systems not only design molecules but also prioritize synthesis and testing, dynamically reallocating resources based on emerging data. This represents the culmination of the paradigm shift—transitioning from AI as a tool to AI as an active partner in the molecular design process.

The paradigm shift from traditional trial-and-error workflows to generative AI-driven molecular design represents a fundamental transformation in how we discover and optimize therapeutic compounds. The evidence demonstrates that AI approaches offer substantial advantages across multiple dimensions: dramatically compressed timelines, significantly reduced costs, expanded exploration of chemical space, and improved decision-making through multi-parameter optimization. As generative AI models continue to evolve—incorporating more sophisticated architectures, larger and higher-quality training datasets, and more accurate property predictors—their impact on molecular design will likely accelerate.

The future trajectory points toward increasingly integrated and autonomous discovery systems, where generative AI operates seamlessly across target identification, compound design, experimental planning, and clinical development. Emerging trends such as the combination of generative AI with automated synthesis and screening technologies promise to further accelerate the design-make-test cycle, while advances in explainable AI will enhance researcher trust and collaboration with these systems [20]. The organizations that successfully navigate this paradigm shift—embracing AI as a core capability while maintaining essential human expertise and oversight—will be positioned to lead the next era of therapeutic innovation, delivering better medicines to patients faster and more efficiently than ever before.

Artificial intelligence (AI) has progressed from an experimental curiosity to a clinical utility, fundamentally reshaping the landscape of drug discovery and development. By leveraging massive datasets, advanced algorithms, and high-performance computing, AI tools uncover patterns and insights that would be nearly impossible for human researchers to detect unaided [23]. This shift replaces labor-intensive, human-driven workflows with AI-powered discovery engines capable of compressing traditional timelines, expanding chemical and biological search spaces, and redefining the speed and scale of modern pharmacology [20]. The culmination of this progress is the emergence of AI-designed therapeutic candidates now actively progressing through human clinical trials, marking a concrete step forward in bringing AI-enabled drug discovery into the clinic [24]. This application note details the key milestones, experimental protocols, and reagent solutions that underpin this transformative era in pharmaceutical research.

The pipeline of AI-discovered drugs has experienced exponential growth. As of April 2024, at least 31 drugs developed by eight leading AI companies were undergoing human clinical trials [25]. The distribution of these candidates across development phases is summarized in Table 1.

Table 1: Clinical Status of AI-Designed Drug Candidates (as of April 2024)

| Clinical Phase | Number of Candidates | Notable Status Updates |
|---|---|---|
| Phase II/III | 9 | One reporting non-significant findings [25] |
| Phase I/II | 5 | One discontinued [25] |
| Phase I | 17 | One trial ended [25] |
| Completed Phase I (as of Dec 2023) | 21 | Success rate of 80-90%, significantly higher than traditional ~40% [26] |

This clinical progress is reflected in the significant financial investment the sector has attracted. In 2024 alone, global venture funding for AI in drug discovery reached $3.3 billion [27]. The previous year, nearly $5.6 billion was invested in biotech AI, accounting for nearly 30% of all healthcare startup funding [25].

Profiles of Leading AI Drug Discovery Platforms

Several pioneering AI-native biotech firms have demonstrated tangible progress in reducing development timelines and increasing efficiency. Their approaches and clinical-stage assets are profiled in Table 2.

Table 2: Leading AI Drug Discovery Platforms and Clinical-Stage Assets

| Company (AI Approach) | Key Clinical Candidate | Indication | Reported Milestone & Timeline |
|---|---|---|---|
| Insilico Medicine (Generative AI, Target ID) | ISM001-055 (TNIK inhibitor) | Idiopathic Pulmonary Fibrosis (IPF) | Phase I in 18 months from target discovery; positive Phase IIa results showing safety and signs of efficacy [24] [20] |
| Exscientia (Generative Chemistry, "Centaur Chemist") | DSP-1181 | Obsessive-Compulsive Disorder (OCD) | First AI-designed molecule to enter human trials (Phase I) [23] [20] |
| Schrödinger (Physics + ML) | Zasocitinib (TAK-279) | Autoimmune Conditions | Phase III; exemplifies physics-enabled design [20] |
| Recursion (Phenomics-first, AI) | REC-994 | Cerebral Cavernous Malformation | Promising Phase II data meeting primary safety/tolerability endpoints [25] |

Experimental Protocols for AI-Driven Molecular Design

The transition of AI-designed molecules to the clinic is underpinned by robust and iterative experimental protocols. The following section details a specific methodology for a generative AI workflow that integrates active learning.

Protocol: Variational Autoencoder (VAE) with Nested Active Learning (AL) Cycles

This protocol describes a workflow integrating a variational autoencoder with two nested active learning cycles, iteratively refined using chemoinformatics and molecular modeling predictors [28]. Its application has successfully generated novel, diverse, and drug-like molecules with high predicted affinity for targets like CDK2 and KRAS, with experimental validation yielding a high hit rate (8 out of 9 synthesized molecules showed in vitro activity for CDK2) [28].

Materials and Reagents

- Target Protein Structure: PDB file for the protein of interest (e.g., CDK2, KRAS).

- Compound Libraries: For initial training, use large, general-purpose libraries (e.g., ZINC, ChEMBL). For target-specific fine-tuning, use libraries with known actives for your target.

- Software & Computational Tools:
  - VAE Framework: A deep learning framework (e.g., PyTorch, TensorFlow) with a configured VAE for molecular SMILES generation.
  - Cheminformatics Suite: RDKit or OpenBabel for structure validation, descriptor calculation, and filter application.
  - Molecular Docking Software: AutoDock, AutoDock Vina, or Glide.
  - Molecular Dynamics (MD) Suite: GROMACS, AMBER, or OpenMM for absolute binding free energy (ABFE) calculations.

- Hardware: High-Performance Computing (HPC) cluster with multiple CPU nodes and high-memory GPU accelerators.

Procedure

Step 1: Data Preparation and Initial VAE Training

- Represent training molecules as SMILES strings.

- Tokenize SMILES strings and convert them into one-hot encoded vectors.

- Train the VAE on a large, general molecular dataset to learn the fundamental rules of chemical structure and validity.

- Perform initial fine-tuning of the pre-trained VAE on a target-specific training set to bias the model towards relevant chemical space.
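The tokenization and one-hot encoding steps above can be sketched with the standard library alone. The regular expression is a simplified assumption that handles bracket atoms, the two-letter halogens Cl and Br, and `%nn` ring closures; production tokenizers typically cover more cases, and padding to a fixed sequence length is omitted here.

```python
import re
from typing import Dict, List

# Bracket atoms, two-letter halogens, %nn ring closures, then any single character.
TOKEN_PATTERN = re.compile(r"\[[^\]]+\]|Br|Cl|%\d{2}|.")


def tokenize(smiles: str) -> List[str]:
    """Split a SMILES string into chemically meaningful tokens."""
    return TOKEN_PATTERN.findall(smiles)


def build_vocab(smiles_list: List[str]) -> Dict[str, int]:
    """Map every token seen in the corpus to a stable integer index."""
    tokens = sorted({tok for s in smiles_list for tok in tokenize(s)})
    return {tok: i for i, tok in enumerate(tokens)}


def one_hot(smiles: str, vocab: Dict[str, int]) -> List[List[int]]:
    """Encode a SMILES string as one one-hot row per token."""
    rows = []
    for tok in tokenize(smiles):
        row = [0] * len(vocab)
        row[vocab[tok]] = 1
        rows.append(row)
    return rows


corpus = ["CCO", "c1ccccc1Cl", "C[NH3+]"]
vocab = build_vocab(corpus)
encoded = one_hot("CCO", vocab)
print(tokenize("c1ccccc1Cl"))
print(len(vocab), "tokens;", len(encoded), "rows for CCO")
```

Treating `Cl` as one token rather than `C` followed by `l` is the main reason a regex is preferred over character-level splitting.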

Step 2: Nested Active Learning Cycles

This step involves two interconnected loops: an inner cycle focused on chemical properties and an outer cycle focused on target affinity.

- Molecule Generation: Sample the fine-tuned VAE to generate a batch of novel molecular structures.

Inner AL Cycle (Chemical Property Optimization):

a. Validation & Filtering: Pass generated molecules through cheminformatic oracles (filters) for:
   - Chemical Validity (e.g., via RDKit).
   - Drug-Likeness (e.g., Lipinski's Rule of Five).
   - Synthetic Accessibility (SA) Score.
b. Similarity Assessment: Assess molecular similarity against the cumulative set of molecules that have passed filters in previous cycles to promote diversity.
c. Fine-Tuning: Use the molecules that pass all filters (the "temporal-specific set") to further fine-tune the VAE, guiding subsequent generation towards drug-like and synthesizable structures.
d. Repeat molecule generation and steps a-c of the Inner AL Cycle for a predefined number of iterations.
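Two of the inner-cycle checks can be illustrated without a cheminformatics dependency, assuming descriptors and fingerprints have already been computed (for example with RDKit). The sketch below counts Rule-of-Five violations and applies a Tanimoto diversity check on fingerprint bit sets. The tolerance of one violation and the 0.7 similarity cutoff are illustrative assumptions, and the validity and SA-score filters are omitted.

```python
from typing import Dict, List, Set


def lipinski_violations(mw: float, logp: float, hbd: int, hba: int) -> int:
    """Count Rule-of-Five violations: MW > 500, logP > 5, HBD > 5, HBA > 10."""
    return sum([mw > 500, logp > 5, hbd > 5, hba > 10])


def tanimoto(a: Set[int], b: Set[int]) -> float:
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def passes_inner_filters(
    descriptors: Dict[str, float],
    fingerprint: Set[int],
    accepted_fps: List[Set[int]],
    max_similarity: float = 0.7,  # assumed cutoff, not taken from the source
) -> bool:
    """Drug-likeness gate followed by a diversity gate against accepted molecules."""
    if lipinski_violations(**descriptors) > 1:
        return False
    return all(tanimoto(fingerprint, fp) <= max_similarity for fp in accepted_fps)


desc = {"mw": 350.0, "logp": 2.1, "hbd": 2, "hba": 5}
fp = {1, 2, 3, 4}
print(passes_inner_filters(desc, fp, [{1, 2, 9, 10}]))    # dissimilar to accepted set
print(passes_inner_filters(desc, fp, [{1, 2, 3, 4, 5}]))  # near-duplicate of accepted set
```

Rejecting near-duplicates of already accepted molecules is what keeps successive fine-tuning rounds from collapsing onto a single scaffold.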

Outer AL Cycle (Target Affinity Optimization):

a. Molecular Docking: Take the accumulated "temporal-specific set" and run molecular docking simulations against the target protein structure.
b. Selection: Transfer molecules that meet a predefined docking score threshold to a "permanent-specific set."
c. Fine-Tuning: Use this high-quality, target-specific set to fine-tune the VAE, pushing the generative process towards structures with higher predicted affinity.
d. Return to molecule generation, initiating a new round of Inner AL cycles, now using the updated "permanent-specific set" for similarity comparisons.
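The nesting of the two cycles is easier to follow as control flow. The skeleton below is a sketch under stated assumptions: molecules are represented by integer identifiers, all four callables are toy placeholders, the temporal-specific set is reset at the start of each outer cycle, and a docking threshold of -8.0 (more negative is better) is an arbitrary example value.

```python
from typing import Callable, List


def nested_active_learning(
    generate: Callable[[], List[int]],
    passes_filters: Callable[[int, List[int]], bool],
    dock_score: Callable[[int], float],
    fine_tune: Callable[[List[int]], None],
    n_outer: int = 2,
    n_inner: int = 3,
    dock_threshold: float = -8.0,
) -> List[int]:
    """Inner loop refines drug-likeness; outer loop refines predicted affinity."""
    permanent_set: List[int] = []
    for _ in range(n_outer):
        temporal_set: List[int] = []  # assumption: reset every outer cycle
        for _ in range(n_inner):
            reference = temporal_set + permanent_set
            kept = [m for m in generate() if passes_filters(m, reference)]
            temporal_set.extend(kept)
            fine_tune(temporal_set)  # bias generator toward drug-like molecules
        hits = [m for m in temporal_set if dock_score(m) <= dock_threshold]
        permanent_set.extend(hits)
        fine_tune(permanent_set)  # bias generator toward high predicted affinity
    return permanent_set


# Toy stand-ins, for illustration only.
_ids = iter(range(1000))
fine_tune_calls: List[int] = []
toy_generate = lambda: [next(_ids) for _ in range(4)]
toy_filters = lambda mol, reference: mol % 2 == 0 and mol not in reference
toy_dock = lambda mol: -float(mol)
toy_fine_tune = lambda molecules: fine_tune_calls.append(len(molecules))

selected = nested_active_learning(toy_generate, toy_filters, toy_dock, toy_fine_tune)
print(selected)
```

The expensive oracle (docking) runs once per outer cycle on an already filtered set, which is the main computational argument for nesting the loops this way.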

Step 3: Candidate Selection and Experimental Validation

- After multiple Outer AL cycles, apply stringent filtration to the "permanent-specific set."

- Perform advanced molecular modeling (e.g., Monte Carlo simulations with Protein Energy Landscape Exploration (PELE) or Absolute Binding Free Energy (ABFE) calculations) on top-ranked candidates to refine and validate predictions.

- Select final candidates for chemical synthesis and in vitro biological assay (e.g., IC₅₀ determination).

The following workflow diagram illustrates this complex, iterative process:

Diagram 1: VAE with Nested Active Learning for Drug Design. This workflow integrates generative AI with iterative, physics-based refinement to optimize for drug-like properties and target affinity [28].

Property-Guided Generation with Reinforcement Learning (RL)

An alternative or complementary protocol to the VAE-AL approach involves the use of reinforcement learning (RL) for goal-directed molecular generation [13].

Procedure

- Agent and Environment Setup: Define the RL agent (e.g., a graph convolutional policy network) and the environment (the chemical space).

- Action Space Definition: Allow the agent to take actions that modify molecular structure (e.g., adding or removing atoms/bonds).

- Reward Function Shaping: Create a multi-objective reward function to guide the agent. Reward components can include:

- Predicted binding affinity from a surrogate model.

- Drug-likeness and selectivity metrics.

- Synthetic Accessibility (SA) score.

- Penalties for structural similarity to known compounds to encourage novelty.

- Model Training: Train the RL agent to maximize the cumulative reward, iteratively generating molecules that improve against the defined objectives.

- Validation: Subject the top-performing generated molecules to in silico validation (e.g., docking, MD) and subsequent experimental testing.
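The reward-shaping step can be illustrated with a simple weighted sum. This sketch covers only the reward, not the agent or its training loop. It assumes affinity, drug-likeness, and similarity inputs are already normalized to [0, 1], that the SA score lies on the usual 1 (easy) to 10 (hard) scale, and that the weights shown are arbitrary examples.

```python
from typing import Tuple


def reward(
    affinity: float,                  # predicted affinity, normalized to [0, 1]
    drug_likeness: float,             # e.g. a QED-style score in [0, 1]
    sa_score: float,                  # synthetic accessibility, 1 (easy) to 10 (hard)
    max_similarity_to_known: float,   # highest similarity to known compounds, [0, 1]
    weights: Tuple[float, float, float, float] = (0.5, 0.2, 0.2, 0.1),
) -> float:
    """Weighted multi-objective reward; higher is better."""
    sa_term = (10.0 - sa_score) / 9.0            # rescale so that easy synthesis -> 1.0
    novelty_term = 1.0 - max_similarity_to_known  # penalize look-alikes
    w_aff, w_dl, w_sa, w_nov = weights
    return (
        w_aff * affinity
        + w_dl * drug_likeness
        + w_sa * sa_term
        + w_nov * novelty_term
    )


print(round(reward(0.8, 0.7, 3.0, 0.4), 3))
print(round(reward(0.8, 0.7, 8.0, 0.9), 3))  # harder to make and less novel
```

A weighted sum is the simplest choice; it lets one strong component mask a weak one, so some implementations use products or hard thresholds instead.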

The Scientist's Toolkit: Essential Research Reagents & Solutions

Successful implementation of the aforementioned protocols relies on a suite of computational and experimental tools. Key components of this "toolkit" are listed below.

Table 3: Essential Research Reagents & Solutions for AI-Driven Molecular Design

| Tool/Reagent Name | Type | Primary Function in Workflow | Key Feature/Benefit |
|---|---|---|---|
| RDKit | Cheminformatics Software | Molecular representation, descriptor calculation, validity/SA filtering [28] | Open-source; provides critical functions for processing and filtering generated molecules |
| AutoDock Vina | Molecular Docking Software | Structure-based virtual screening; provides affinity predictions (docking scores) [29] [28] | Fast, accurate; serves as the "affinity oracle" in active learning cycles |
| AlphaFold2/3 [26], Boltz-2 [30] | Protein Structure Prediction | Generates high-accuracy 3D protein structures for targets with unknown experimental structures | Enables structure-based design without reliance on experimental crystallography |
| PharmBERT | Domain-Specific Large Language Model (LLM) | Extracts pharmacokinetic (ADME) and safety information from textual drug labels [26] | Enhances efficiency of text-related regulatory work and critical information extraction |
| CETSA (Cellular Thermal Shift Assay) | In vitro Target Engagement Assay | Validates direct drug-target binding in intact cells and native tissue environments [29] | Provides physiologically relevant confirmation of mechanistic action, bridging in silico predictions and cellular efficacy |
| GROMACS/AMBER | Molecular Dynamics (MD) Software | Performs Absolute Binding Free Energy (ABFE) calculations and binding pose stability analysis [28] | Provides high-precision, physics-based validation of binding affinity and mode |

The journey of AI-designed molecules from concept to clinic represents a paradigm shift in pharmaceutical R&D. The field has moved beyond proof-of-concept to deliver multiple clinical-stage assets, with early data suggesting potentially higher success rates in early-phase trials [26]. The experimental protocols, such as the integration of generative models with active learning and reinforcement learning, provide a rigorous, data-driven framework for discovering novel therapeutics. While challenges remain—including the need for broader validation across therapeutic areas and the refinement of models to handle extreme biological complexity [23]—the foundational tools and milestones established to date firmly position AI as an indispensable engine for the next generation of drug discovery.

Architectures in Action: A Technical Guide to Generative Models and Their Real-World Applications

Generative artificial intelligence (GenAI) has emerged as a transformative tool in computational molecular design, enabling the exploration of vast chemical spaces estimated to contain up to 10^60 possible molecules [31] [32]. This exploration is crucial for accelerating drug discovery and materials science, where traditional methods face fundamental limitations in efficiently navigating this immense structural diversity [33]. Core generative architectures—including Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), Transformers, and Diffusion Models—each offer unique mechanisms for addressing the complex challenges of de novo molecular design. These deep learning models have revolutionized computer-aided molecular design (CAMD) by moving beyond virtual screening of existing libraries to the automated generation of novel molecular structures with optimized properties [32] [13]. This article provides a comprehensive overview of these foundational architectures, their performance characteristics, experimental protocols, and implementation frameworks tailored for researchers and drug development professionals working in generative AI for molecular design.

Core Architectural Principles

Variational Autoencoders (VAEs) operate by encoding input data into a lower-dimensional latent representation and then reconstructing it from sampled points in this continuous space. This approach ensures a smooth latent space, enabling realistic data generation and making VAEs particularly valuable for molecular design tasks [13]. The conditional VAE (CVAE) variant incorporates property information directly into both encoding and decoding processes, allowing for explicit control over multiple molecular properties during generation [33].
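The sampling step and the regularizer that keeps the latent space smooth can be illustrated with a minimal, framework-free sketch. It assumes a diagonal Gaussian posterior and a standard normal prior; the function names and toy values are illustrative and do not come from any cited implementation.

```python
import math
import random

def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps element-wise, with eps ~ N(0, 1)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
            for m, lv in zip(mu, log_var)]

def kl_to_standard_normal(mu, log_var):
    """KL(N(mu, sigma^2) || N(0, 1)), summed over latent dimensions."""
    return 0.5 * sum(m * m + math.exp(lv) - 1.0 - lv
                     for m, lv in zip(mu, log_var))

# Toy encoder output for one molecule (hypothetical values).
rng = random.Random(0)
mu = [0.5, -1.0, 0.0]
log_var = [0.0, -2.0, 0.1]

z = reparameterize(mu, log_var, rng)     # latent point passed to the decoder
kl = kl_to_standard_normal(mu, log_var)  # penalty pulling the posterior toward the prior
```

The KL term is zero only when the encoder outputs exactly the prior, which is why it is usually balanced against the reconstruction loss rather than minimized on its own.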

Generative Adversarial Networks (GANs) employ two competing neural networks: a generator that creates synthetic data and a discriminator that distinguishes real from generated data. This adversarial training process enables the generation of increasingly realistic molecular structures [13].

Transformer networks, originally developed for natural language processing, utilize self-attention mechanisms to process sequential data like SMILES strings. Their architecture includes encoder-decoder structures with multi-head attention and positional encoding, allowing them to capture long-range dependencies in molecular representations [34] [13].
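As a rough illustration of the self-attention operation, the sketch below computes single-head scaled dot-product attention over three SMILES tokens in pure Python. The two-dimensional embeddings are made-up toy values, and learned projections, multiple heads, and positional encoding are deliberately omitted.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def scaled_dot_product_attention(queries, keys, values):
    """Return (outputs, weights) for single-head attention."""
    d_k = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
                  for k in keys]
        w = softmax(scores)
        weights.append(w)
        # Weighted average of the value vectors.
        outputs.append([sum(wj * v[c] for wj, v in zip(w, values))
                        for c in range(len(values[0]))])
    return outputs, weights

# Toy embeddings for the SMILES tokens "C", "C", "O" (hypothetical values).
embeddings = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
outputs, weights = scaled_dot_product_attention(embeddings, embeddings, embeddings)
```

Each row of `weights` sums to one, so every output vector is a convex combination of the value vectors; this is the mechanism that lets a token attend to distant positions in the string.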

Diffusion models generate data through a progressive denoising process. They work by gradually adding noise to training data and then learning to reverse this process, effectively generating novel structures from random noise [35] [32]. These models have demonstrated remarkable potential across diverse domains of generative AI, including molecular design [32].

Quantitative Performance Comparison

Table 1: Performance benchmarks of generative architectures on molecular design tasks

| Architecture | Representation | Validity Rate | Reconstruction Accuracy | Uniqueness | Novelty | Key Strengths |
|---|---|---|---|---|---|---|
| VAE (NP-VAE) | Graph | 100% [31] | 90.4% [31] | High [31] | High [31] | High interpretability, smooth latent space, property control [31] [33] |
| Transformer | SMILES/Sequence | Varies | - | Moderate [34] | Moderate [34] | Flexible architecture, attention mechanism [34] [13] |
| Diffusion Model | Graph/3D | 100% (GaUDI) [13] | - | High [35] | High [35] | High-quality generation, 3D structure capability [35] [32] |
| GAN (GCPN) | Graph | >90% [13] | - | High [13] | High [13] | Adversarial training, sequential molecular construction [13] |

Table 2: Specialized capabilities across molecular design applications

| Architecture | Large Molecule Handling | 3D Complexity | Multi-property Optimization | Synthetic Accessibility |
|---|---|---|---|---|
| VAE | Excellent (NP-VAE) [31] | Good (chirality support) [31] | Excellent (CVAE) [33] | Moderate |
| Transformer | Moderate | Limited | Good (conditioning) | Moderate |
| Diffusion Model | Good | Excellent (equivariant) [35] [32] | Excellent (guided) [13] | High [32] |
| GAN | Moderate | Limited | Good (RL integration) [13] | High (GCPN) [13] |

Application Notes & Experimental Protocols

Variational Autoencoders for Natural Product-Inspired Design

Protocol 1: NP-VAE for Large Molecular Structures with 3D Complexity

Background: Natural products often possess complex structures with chirality, presenting challenges for conventional generative models. NP-VAE addresses this by combining molecular decomposition into fragment units with tree structures, Extended Connectivity Fingerprints (ECFP), and Tree-LSTM networks [31].

Step-by-Step Procedure:

Data Preparation:

- Curate datasets from DrugBank and natural product libraries containing large molecular structures (MW > 500)

- Apply stereochemical descriptors to encode chirality using RDKit

- Split data into training (76,000 compounds), validation (5,000), and test sets (5,000) [31]
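A reproducible random split with the set sizes quoted above might look like the following sketch. The compound identifiers are placeholders, and a purely random split is an assumption; the source does not state whether the original split was random or scaffold-based.

```python
import random

def split_dataset(items, n_valid, n_test, seed=0):
    """Shuffle once with a fixed seed, then carve off test and validation sets."""
    if n_valid + n_test >= len(items):
        raise ValueError("validation + test must be smaller than the dataset")
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    test = shuffled[:n_test]
    valid = shuffled[n_test:n_test + n_valid]
    train = shuffled[n_test + n_valid:]
    return train, valid, test

# Placeholder identifiers standing in for 86,000 curated compounds.
compound_ids = [f"cmpd_{i}" for i in range(86_000)]
train, valid, test = split_dataset(compound_ids, n_valid=5_000, n_test=5_000)
```

Fixing the seed keeps the three sets disjoint and identical across runs, which matters when reconstruction accuracy is later reported on the test set.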

Model Configuration:

- Implement graph-based VAE with 12 million parameters

- Configure Tree-LSTM encoder with hierarchical attention mechanism

- Set latent dimension to 256 with Gaussian prior

- Initialize fragment vocabulary from training set clusters [31]

Training Protocol:

- Optimize using Adam with learning rate 0.001

- Apply gradient clipping at norm 1.0

- Use batch size of 32 for 100 epochs

- Monitor reconstruction loss and KL divergence [31]
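The optimizer settings in this list can be made concrete with a small pure-Python sketch of one update: gradients are clipped to a global norm of 1.0 and then passed to a single Adam step with learning rate 0.001. The beta and epsilon values are the commonly used Adam defaults and are an assumption here, as are the toy parameters and gradients.

```python
import math

def clip_by_global_norm(grads, max_norm=1.0):
    """Rescale gradients so their L2 norm does not exceed max_norm."""
    norm = math.sqrt(sum(g * g for g in grads))
    if norm <= max_norm:
        return list(grads), norm
    scale = max_norm / norm
    return [g * scale for g in grads], norm

def adam_step(params, grads, state, lr=0.001, beta1=0.9, beta2=0.999, eps=1e-8):
    """One Adam update with bias-corrected moment estimates."""
    state["t"] += 1
    t = state["t"]
    new_params = []
    for i, (p, g) in enumerate(zip(params, grads)):
        state["m"][i] = beta1 * state["m"][i] + (1 - beta1) * g
        state["v"][i] = beta2 * state["v"][i] + (1 - beta2) * g * g
        m_hat = state["m"][i] / (1 - beta1 ** t)
        v_hat = state["v"][i] / (1 - beta2 ** t)
        new_params.append(p - lr * m_hat / (math.sqrt(v_hat) + eps))
    return new_params

# Toy parameters and gradients (hypothetical values).
params = [1.0, -2.0]
state = {"t": 0, "m": [0.0, 0.0], "v": [0.0, 0.0]}
clipped, norm_before = clip_by_global_norm([3.0, 4.0], max_norm=1.0)
params = adam_step(params, clipped, state)
```

In a deep learning framework the same two operations are normally provided by the library; the sketch only shows what they compute.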

Latent Space Exploration:

- Apply interpolation between known active compounds

- Perform gradient-based optimization for target properties

- Implement novelty screening against training set [31]
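The interpolation step can be sketched as a straight line between the latent vectors of two known actives. Linear interpolation is an assumption (spherical interpolation is a common alternative), and the four-dimensional vectors below are toy stand-ins for the 256-dimensional latent codes.

```python
def interpolate_latents(z_start, z_end, num_points):
    """Return num_points latent vectors evenly spaced from z_start to z_end."""
    if num_points < 2:
        raise ValueError("num_points must be at least 2")
    path = []
    for i in range(num_points):
        t = i / (num_points - 1)
        path.append([(1.0 - t) * a + t * b for a, b in zip(z_start, z_end)])
    return path

# Hypothetical latent codes of two known active compounds.
z_active_a = [0.0, 1.0, -1.0, 2.0]
z_active_b = [2.0, 1.0, 1.0, 0.0]

path = interpolate_latents(z_active_a, z_active_b, num_points=5)
# Each point on the path would be decoded and screened against the training set.
```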

Validation Metrics:

- Reconstruction accuracy: >90.4% on test set [31]

- Validity rate: 100% (through fragment-based generation) [31]

- Novelty: >80% unseen structures in generated compounds [31]

- Chirality preservation: >95% in generated stereocenters [31]
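Validity, uniqueness, and novelty can be computed from lists of molecule strings as in the sketch below. The exact denominators differ between benchmarks, so the definitions used here are an assumption. The validity check is a toy parenthesis-balance test standing in for a real parser; in practice one would typically canonicalize with RDKit and treat a `None` result from `Chem.MolFromSmiles` as invalid.

```python
def generation_metrics(generated, training_set, is_valid):
    """Validity over all samples, uniqueness over valid, novelty over unique."""
    valid = [s for s in generated if is_valid(s)]
    unique = set(valid)
    novel = unique - set(training_set)
    return {
        "validity": len(valid) / len(generated) if generated else 0.0,
        "uniqueness": len(unique) / len(valid) if valid else 0.0,
        "novelty": len(novel) / len(unique) if unique else 0.0,
    }

def balanced_parentheses(smiles):
    """Toy stand-in for a chemistry-aware validity check."""
    depth = 0
    for ch in smiles:
        if ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:
                return False
    return depth == 0 and len(smiles) > 0

generated = ["CCO", "CCO", "CC(C)O", "CC(C", "c1ccccc1"]
training = {"CCO"}
metrics = generation_metrics(generated, training, balanced_parentheses)
```

With these toy inputs, four of five strings pass the check, three of those four are distinct, and two of the three distinct strings are absent from the training set.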

Conditional VAEs for Multi-Property Optimization

Protocol 2: CVAE for Simultaneous Multi-Property Control

Background: Molecular properties are often correlated, making independent optimization challenging. CVAE addresses this by incorporating property conditions directly into both encoder and decoder, enabling simultaneous control of multiple properties [33].

Step-by-Step Procedure:

Condition Vector Formulation:

- Encode continuous properties (MW, LogP, TPSA) with min-max normalization to [-1, 1]

- Represent integer properties (HBD, HBA) as one-hot vectors

- Concatenate all properties into condition vector c [33]
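The condition vector described above can be assembled as follows. The property ranges and the maximum donor and acceptor counts are hypothetical placeholders; in practice they would be taken from the training set.

```python
# Hypothetical min/max ranges for the continuous properties.
CONTINUOUS_RANGES = {"MW": (100.0, 800.0), "LogP": (-2.0, 8.0), "TPSA": (0.0, 200.0)}
# Hypothetical upper bounds for the integer properties.
MAX_HBD, MAX_HBA = 5, 10

def min_max_scale(value, lo, hi):
    """Map value from [lo, hi] onto [-1, 1]."""
    return 2.0 * (value - lo) / (hi - lo) - 1.0

def one_hot(index, size):
    """One-hot vector of length size with a 1.0 at position index."""
    if not 0 <= index < size:
        raise ValueError("index out of range for one-hot encoding")
    return [1.0 if i == index else 0.0 for i in range(size)]

def build_condition_vector(props):
    """Concatenate scaled continuous properties and one-hot integer properties."""
    c = [min_max_scale(props[name], *CONTINUOUS_RANGES[name])
         for name in ("MW", "LogP", "TPSA")]
    c += one_hot(props["HBD"], MAX_HBD + 1)
    c += one_hot(props["HBA"], MAX_HBA + 1)
    return c

target = {"MW": 450.0, "LogP": 3.0, "TPSA": 100.0, "HBD": 2, "HBA": 5}
c = build_condition_vector(target)  # 3 + 6 + 11 = 20 entries
```

The same vector would be supplied to both the encoder and the decoder during training, and set to the desired target values at generation time.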

Model Architecture:

- Implement 3-layer LSTM with 500 hidden units for both encoder and decoder

- Set embedding dimension to 300

- Use latent dimension of 128

- Apply stochastic write-out with 100 samples per latent vector [33]

Training Procedure:

- Use cross-entropy loss for reconstruction term

- Apply KL weight annealing from 0 to 1 over first 50 epochs

- Implement teacher forcing with probability 0.5

- Train for 120 epochs with early stopping [33]
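The KL weight annealing in this procedure can be written as a simple schedule. A linear ramp is assumed here, since the text specifies only the endpoints (0 to 1) and the duration (50 epochs); the loss values in the example are arbitrary.

```python
def kl_weight(epoch, warmup_epochs=50):
    """Linearly ramp the KL weight from 0 to 1, then hold it at 1."""
    if warmup_epochs <= 0:
        return 1.0
    return min(1.0, max(0.0, epoch / warmup_epochs))

def cvae_loss(reconstruction_loss, kl_divergence, epoch):
    """Total loss: reconstruction term plus the annealed KL term."""
    return reconstruction_loss + kl_weight(epoch) * kl_divergence

# Weight at a few points in a 120-epoch run.
schedule = [kl_weight(e) for e in (0, 25, 50, 119)]
```

Starting with a weight of zero lets the decoder learn to use the latent code before the KL penalty begins pulling the posterior toward the prior, a common remedy for posterior collapse in sequence VAEs.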

Property-Specific Generation:

- Set target property values in condition vector

- Sample from prior distribution N(0,I) for structural diversity

- Apply beam search during decoding for improved validity [33]

Validation Metrics:

- Multi-property satisfaction: >75% for all five target properties [33]

- Structural validity: >85% valid SMILES [33]

- Uniqueness: >90% non-duplicate structures [33]

- Property range achievement: Successful generation beyond training set ranges [33]

Diffusion Models for 3D Molecular Generation

Protocol 3: Equivariant Diffusion for Structure-Based Design

Background: Equivariant diffusion models generate molecules with 3D structural information, maintaining rotational and translational equivariance. This is crucial for structure-based drug design where molecular geometry determines binding affinity [35] [32].

Step-by-Step Procedure:

Data Preparation:

- Obtain 3D molecular structures from databases like PDBbind

- Align molecules to common coordinate framework

- Compute electron density maps for protein pockets [32]

Diffusion Process Configuration:

- Set noise schedule to cosine-based with 1000 steps

- Implement equivariant graph neural network for score estimation

- Configure rotationally and translationally invariant features [35]
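A cosine noise schedule with 1000 steps can be sketched as below, following the widely used formulation in which the cumulative signal level decays as a squared cosine. The offset `s = 0.008` and the clipping of each beta at 0.999 are conventional choices assumed here, not values stated in the protocol, and the coordinates are toy numbers.

```python
import math
import random

def cosine_alpha_bar(num_steps=1000, s=0.008):
    """Cumulative signal fraction alpha_bar[t] for t = 0..num_steps."""
    def f(t):
        return math.cos((t / num_steps + s) / (1.0 + s) * math.pi / 2.0) ** 2
    f0 = f(0)
    return [f(t) / f0 for t in range(num_steps + 1)]

def betas_from_alpha_bar(alpha_bar, max_beta=0.999):
    """Per-step noise variances implied by the cumulative schedule."""
    return [min(1.0 - alpha_bar[t] / alpha_bar[t - 1], max_beta)
            for t in range(1, len(alpha_bar))]

def add_noise(x0, t, alpha_bar, rng):
    """Forward process: x_t = sqrt(a) * x0 + sqrt(1 - a) * eps, a = alpha_bar[t]."""
    a = alpha_bar[t]
    return [math.sqrt(a) * x + math.sqrt(1.0 - a) * rng.gauss(0.0, 1.0)
            for x in x0]

alpha_bar = cosine_alpha_bar(1000)
betas = betas_from_alpha_bar(alpha_bar)

# Noising one atom's toy 3D coordinates halfway through the schedule.
rng = random.Random(0)
x_noisy = add_noise([1.2, -0.4, 0.7], 500, alpha_bar, rng)
```

In an equivariant model the noise on coordinates is usually also projected to zero center of mass; that step is omitted from this sketch.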

Conditional Generation Setup:

- Integrate property prediction network for guidance

- Implement classifier-free guidance scale of 2.5

- Set conditioning on protein pocket features [32]
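Classifier-free guidance combines a conditional and an unconditional noise prediction at every sampling step. The sketch below uses the convention in which a scale of 1 recovers the purely conditional prediction; some papers parameterize the scale differently, so this form is an assumption, and the prediction vectors are toy values.

```python
def classifier_free_guidance(eps_uncond, eps_cond, guidance_scale=2.5):
    """Extrapolate from the unconditional toward the conditional prediction."""
    return [u + guidance_scale * (c - u) for u, c in zip(eps_uncond, eps_cond)]

# Toy noise predictions without and with protein pocket conditioning.
eps_uncond = [0.0, 1.0, -0.5]
eps_cond = [1.0, 1.0, 0.5]

eps_guided = classifier_free_guidance(eps_uncond, eps_cond, guidance_scale=2.5)
```

Scales above 1 push samples further toward the conditioning signal, typically at some cost in diversity.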

Sampling Procedure:

- Initialize from random noise with protein pocket constraints

- Apply ancestral sampling with 100 steps

- Use correction steps for geometric constraints [35]

Validation Metrics:

- 3D structure validity: >95% with correct bond lengths/angles [35]

- Binding affinity: Improved over baseline methods [32]

- Synthetic accessibility: >80% with retrosynthetic analysis [32]

- Diversity: >70% unique scaffolds in generated set [35]

The Scientist's Toolkit

Table 3: Essential research reagents and computational tools for generative molecular design

| Category | Tool/Resource | Specification | Application Context |
|---|---|---|---|
| Chemical Databases | ZINC [33] | ~5 million drug-like molecules | Training data for generative models |
| | DrugBank [31] | Approved drugs with structures | Domain-specific training |
| | Natural Product Libraries [31] | Complex structures with chirality | Specialized model development |
| Software Libraries | RDKit [31] [33] | Cheminformatics toolkit | Molecular validation, descriptor calculation |
| | PyTorch [31] | Deep learning framework | Model implementation |
| | TensorFlow [13] | Deep learning framework | Model implementation |
| Molecular Representations | SMILES [33] | String-based representation | Sequence model input |
| | Molecular Graphs [31] | Atom/bond representation | Graph neural network input |
| | ECFP [31] | Extended Connectivity Fingerprints | Structural features for models |
| Evaluation Metrics | Reconstruction Accuracy [31] | Proportion of accurately reconstructed molecules | Model performance assessment |
| | Validity Rate [31] | Proportion of chemically valid structures | Generation quality |
| | Novelty [31] | Proportion of generated structures absent from the training set | Generation creativity |
| | Uniqueness [31] | Proportion of non-duplicate structures | Generation diversity |

The four core generative architectures (VAEs, GANs, Transformers, and Diffusion Models) each offer distinct advantages for molecular design challenges. VAEs provide interpretable latent spaces and effective property control, particularly through specialized implementations like NP-VAE for complex natural products and CVAE for multi-property optimization. Transformers offer flexible sequence processing but require careful validation to confirm that they have learned biologically meaningful patterns rather than statistical artifacts of the training data. Diffusion models excel at high-quality 3D molecular generation with precise spatial control, while GANs enable adversarial training for realistic molecular generation. The optimal choice of architecture depends on the research requirement: latent space exploration (VAEs), 3D structure generation (diffusion models), protein-sequence-based generation (transformers), or adversarial refinement (GANs). Future directions include hybrid architectures, improved integration of domain knowledge, and enhanced synthetic accessibility prediction to bridge the gap between computational generation and experimental realization.

The application of generative artificial intelligence (AI) has expanded beyond small-molecule discovery, establishing a new paradigm for the de novo design of complex biomolecules. This evolution marks a critical expansion in computational molecular science, enabling the precise design of proteins, antibodies, and peptides with tailored functions. Where traditional methods relied on immunization, random library screening, or structural analogs, generative AI now enables the atomically accurate, rational design of biomolecules from first principles [3]. This shift is powered by advanced architectures, including diffusion models, transformer networks, and specialized language models, that learn the complex language of biomolecular structure and function [36] [13]. These technologies have matured beyond theoretical potential to demonstrate experimental success, yielding novel bioactive entities validated against challenging disease targets. This document outlines application notes and standardized protocols for leveraging these advanced generative AI tools, providing researchers with practical methodologies for integrating computational design into experimental workflows for developing next-generation biotherapeutics and synthetic proteins.

Application Note: De Novo Antibody Design with RFdiffusion

Background and Principle