From In Silico to In Vitro: A Practical Framework for Validating Chemogenomic Predictions in Drug Discovery

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the critical process of validating chemogenomic predictions using robust in vitro assays. It covers the foundational principles of chemogenomics, the selection and development of appropriate methodological approaches, strategies for troubleshooting and optimization, and the final steps for rigorous validation and comparative analysis. By bridging the gap between computational predictions and experimental confirmation, this framework aims to enhance the efficiency and success rate of translating potential drug-target interactions into validated leads, ultimately accelerating the drug discovery pipeline.

Chemogenomics Unveiled: Laying the Groundwork for Predictive Drug Discovery

Chemogenomics represents a paradigm shift in early drug discovery, integrating large-scale genomic data with chemical screening to elucidate interactions between small molecules and biological targets across entire genomes or proteomes. This approach provides a systems-level framework for understanding mechanisms of drug action (MoA), enabling simultaneous exploration of multiple drug-target interactions rather than focusing on single targets in isolation [1] [2]. The fundamental premise of chemogenomics lies in its ability to connect chemical space with biological space, creating a comprehensive map of interactions that accelerates both target identification and validation processes [1].

The drug discovery pipeline has traditionally been cost-intensive and marked by high attrition rates, and chemogenomic approaches now offer a strategic advantage. By predicting drug-target interactions (DTIs) early in the discovery process, chemogenomics narrows the target search space, indirectly reducing the overall cost, time, and labor invested in bringing a drug to market [1]. This is particularly valuable given that conventional drug development has a clinical success rate of only 19% [1]. Chemogenomic methods have therefore gained substantial traction as in silico complements to traditional wet-lab experiments, supporting data-driven decision-making through the availability of extensive bioinformatics and genetic databases [1].

Core Methodologies in Chemogenomics

Experimental and Computational Approaches

Chemogenomic methodologies can be broadly categorized into experimental screening approaches and computational prediction frameworks, each with distinct advantages and applications.

Experimental chemogenomic profiling systematically screens chemical compounds against comprehensive genetic libraries. In model organisms such as Saccharomyces cerevisiae, two primary assays form the backbone of this approach: HaploInsufficiency Profiling (HIP) and Homozygous Profiling (HOP) [2]. The HIP assay exploits drug-induced haploinsufficiency: heterozygous strains deleted for one copy of an essential gene show specific sensitivity when exposed to a drug that targets that gene's product. The complementary HOP assay interrogates nonessential homozygous deletion strains to identify genes in the biological pathways of the drug target and genes required for drug resistance [2]. The combined HIPHOP chemogenomic profile provides a genome-wide view of the cellular response to a given compound, directly identifying drug target candidates while also revealing resistance mechanisms [2].
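As an illustration of how a HIP readout can be turned into target candidates, the sketch below scores each strain by the log2 ratio of its barcode abundance in control versus drug-treated pools and converts those ratios to robust z-scores. This scoring scheme, the strain names, the read counts, and the z ≥ 3 cutoff are assumptions chosen for illustration, not the pipeline used in [2].

```python
import math
import statistics

def fitness_defect_scores(control, treated, pseudocount=1.0):
    """Robust z-score per strain from barcode counts (hypothetical scoring scheme).

    control, treated: dicts mapping strain name -> barcode read count.
    A large positive score means the strain is depleted under drug,
    i.e. more drug-sensitive than the rest of the pool.
    """
    # log2(control / treated); the pseudocount guards against zero counts
    raw = {
        strain: math.log2((control[strain] + pseudocount) / (treated[strain] + pseudocount))
        for strain in control
    }
    values = list(raw.values())
    median = statistics.median(values)
    # Median absolute deviation, scaled so it approximates a standard deviation
    mad = 1.4826 * statistics.median(abs(v - median) for v in values)
    if mad == 0:
        raise ValueError("no spread in fitness ratios; cannot compute z-scores")
    return {strain: (v - median) / mad for strain, v in raw.items()}

def candidate_targets(scores, threshold=3.0):
    """Strains whose sensitivity exceeds the (assumed) z-score cutoff."""
    return sorted(strain for strain, z in scores.items() if z >= threshold)

# Hypothetical heterozygous deletion pool in which one strain drops out under drug
control = {"geneA": 1000, "geneB": 980, "geneC": 1020, "geneD": 1010, "geneE": 990}
treated = {"geneA": 60, "geneB": 950, "geneC": 1000, "geneD": 1030, "geneE": 940}
scores = fitness_defect_scores(control, treated)
print(candidate_targets(scores))  # ['geneA']
```

In a real HIP screen, a strain flagged this way is the heterozygote whose single remaining gene copy makes it hypersensitive, which is why its gene product becomes a target candidate.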

For computational prediction, multiple algorithmic strategies have been developed:

Table 1: Comparison of Computational Chemogenomic Approaches

| Method Category | Key Principles | Advantages | Limitations |
|---|---|---|---|
| Similarity Inference Methods | Based on "wisdom of crowd" principle using chemical/structural similarities [1] | High interpretability for justifying predictions [1] | May miss serendipitous discoveries; often uses binary interaction data rather than continuous binding affinity [1] |
| Network-based Methods | Utilize topological features of drug-target bipartite networks [1] | Do not require 3D protein structures or negative samples [1] | Suffer from "cold start" problem for new drugs; biased toward high-degree nodes [1] |
| Feature-based Machine Learning | Use manually extracted features from drugs and targets [1] | Can handle new drugs/targets without similarity information [1] | Feature selection is difficult; class imbalance issues in classification [1] |
| Deep Learning Methods | Employ neural networks for automatic feature learning [1] [3] | Avoid labor-intensive manual feature extraction [1] | Low interpretability; reliability of learned features may not match chemical knowledge [1] |
| Matrix Factorization | Decompose interaction matrices into lower-dimensional representations [1] | Do not require negative samples [1] | Better at modeling linear than non-linear relationships [1] |
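To make the matrix factorization row concrete, the following sketch approximates a small, hypothetical binary drug × target matrix Y as the product of low-rank drug and target factors (Y ≈ A·Bᵀ). Unobserved pairs are treated as zeros with a reduced weight, one common way such methods avoid needing confirmed negative samples. The weighting, learning rate, and rank are assumptions, and this is a generic weighted factorization rather than any specific published DTI method.

```python
import random

def score(A, B, i, j):
    """Predicted interaction score for drug i and target j."""
    return sum(a * b for a, b in zip(A[i], B[j]))

def factorize(Y, rank=2, unknown_weight=0.1, lr=0.05, reg=0.01, epochs=2000, seed=0):
    """Weighted matrix factorization Y ~ A @ B.T fitted by stochastic gradient descent.

    Known interactions (1) get weight 1; unobserved pairs (0) get `unknown_weight`,
    so they act as weak, not confirmed, negatives.
    """
    rng = random.Random(seed)
    n_drugs, n_targets = len(Y), len(Y[0])
    A = [[rng.gauss(0, 0.1) for _ in range(rank)] for _ in range(n_drugs)]
    B = [[rng.gauss(0, 0.1) for _ in range(rank)] for _ in range(n_targets)]
    for _ in range(epochs):
        for i in range(n_drugs):
            for j in range(n_targets):
                w = 1.0 if Y[i][j] == 1 else unknown_weight
                err = w * (Y[i][j] - score(A, B, i, j))
                for k in range(rank):
                    a, b = A[i][k], B[j][k]
                    # Gradient step on the weighted squared error plus an L2 penalty
                    A[i][k] += lr * (err * b - reg * a)
                    B[j][k] += lr * (err * a - reg * b)
    return A, B

# Hypothetical matrix: drug 3 has no recorded link to target 3,
# but it shares a target with drug 2, which does bind target 3.
Y = [
    [1, 1, 0, 0],
    [1, 1, 0, 0],
    [0, 0, 1, 1],
    [0, 0, 1, 0],
]
A, B = factorize(Y)
print(round(score(A, B, 3, 3), 2), round(score(A, B, 3, 0), 2))
```

The unobserved pair (drug 3, target 3) should score well above (drug 3, target 0) because the low-rank factors capture the shared neighbourhood; the bilinear form A·Bᵀ is also why such models capture linear structure more readily than non-linear relationships.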

Emerging Integrated Frameworks

Recent advances have introduced multitask learning frameworks that simultaneously predict drug-target interactions and generate novel drug candidates. The DeepDTAGen model exemplifies this approach by using shared feature representations for both predicting drug-target binding affinity and generating target-aware drug variants [4]. This integration addresses the intrinsically interconnected nature of these tasks in pharmacological research, potentially increasing clinical success rates by ensuring generated drugs are conditioned on specific target interactions [4].

Another innovative approach is DrugMAN, which integrates heterogeneous biological networks using graph attention networks and mutual attention mechanisms. This method extracts network-specific features for drugs and targets from multiplex functional interaction networks, then captures interaction patterns between them to improve prediction accuracy, particularly in real-world scenarios [3].

Experimental Design and Validation Workflows

Standardized Chemogenomic Profiling Protocols

Robust chemogenomic profiling requires standardized experimental workflows and validation frameworks. For NR4A nuclear receptor research, a comprehensive profiling approach was established using orthogonal assay systems to validate modulator activity [5]. This included:

- Gal4-hybrid-based and full-length receptor reporter gene assays to determine cellular NR4A modulation across all three receptor subtypes (NR4A1, NR4A2, NR4A3)

- Selectivity screening against a representative panel of nuclear receptors outside the NR4A family

- Cell-free binding validation using isothermal titration calorimetry (ITC) and differential scanning fluorimetry (DSF)

- Compound quality control through HPLC purity analysis, mass spectrometry identification, kinetic solubility assessment, and multiplex toxicity monitoring [5]

This multi-layered validation strategy ensures that chemical tools used in functional and phenotypic studies have well-characterized activities and specificities, addressing concerns that incompletely profiled tools can compromise biological findings [5].

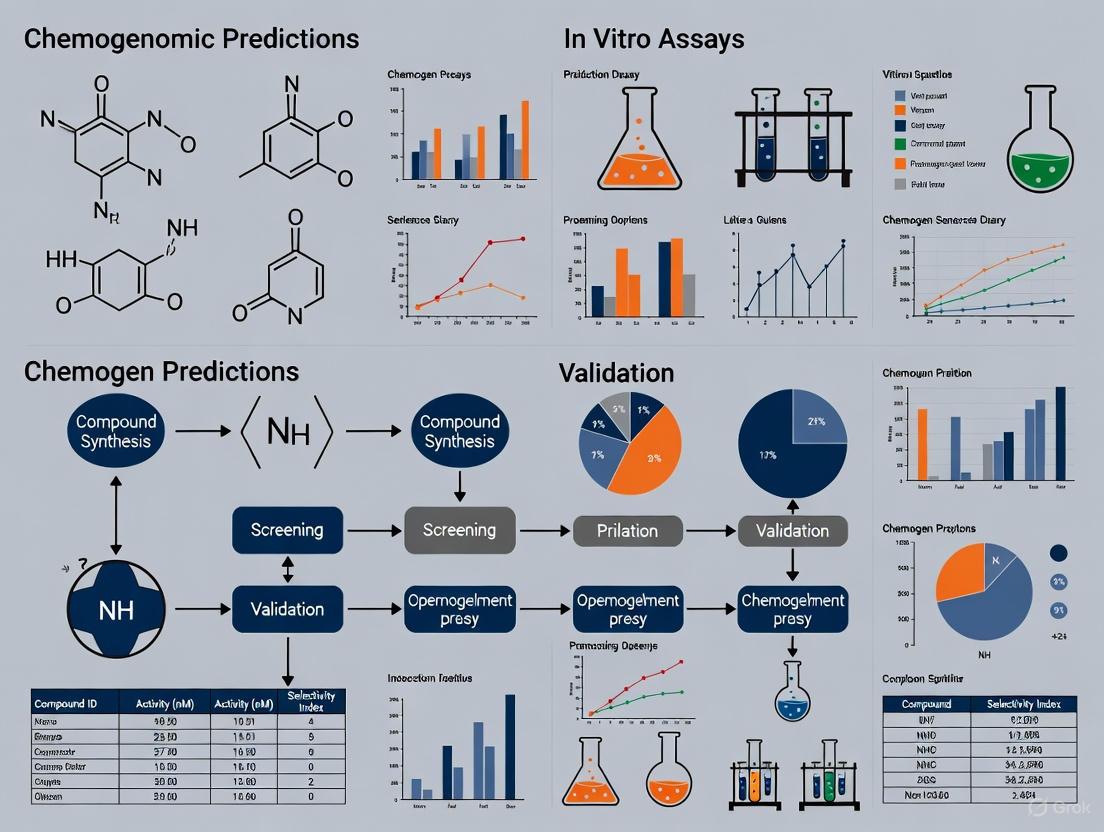

The diagram below illustrates a standardized workflow for chemogenomic prediction and validation:

Validation with Benchmark Datasets

Rigorous comparison of prediction methods requires standardized benchmarking. A 2025 systematic evaluation compared seven target prediction methods (MolTarPred, PPB2, RF-QSAR, TargetNet, ChEMBL, CMTNN, and SuperPred) using a shared dataset of FDA-approved drugs [6]. The study employed ChEMBL version 34 as the reference database, containing 15,598 targets, 2,431,025 compounds, and 20,772,701 interactions [6]. To ensure data quality, researchers filtered for high-confidence interactions with a minimum confidence score of 7 (indicating direct protein complex subunits assigned) and excluded non-specific or multi-protein targets [6].
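The filtering step described above can be sketched in plain Python. The record layout (`molecule_id`, `target_id`, `confidence_score`, `target_type`) is a hypothetical simplification of a ChEMBL activity export, and the set of excluded target types is an assumption standing in for "non-specific or multi-protein targets"; the exact criteria in [6] should be checked before reuse.

```python
# Hypothetical exclusion list standing in for "non-specific or multi-protein targets"
EXCLUDED_TARGET_TYPES = {"PROTEIN FAMILY", "SELECTIVITY GROUP", "NON-MOLECULAR"}

def filter_interactions(records, min_confidence=7):
    """Keep high-confidence, specific ligand-target records, one per (molecule, target) pair."""
    seen = set()
    kept = []
    for rec in records:
        if rec["confidence_score"] < min_confidence:
            continue  # below the high-confidence threshold
        if rec["target_type"] in EXCLUDED_TARGET_TYPES:
            continue  # non-specific target definition
        pair = (rec["molecule_id"], rec["target_id"])
        if pair in seen:
            continue  # drop duplicate records of the same interaction
        seen.add(pair)
        kept.append(rec)
    return kept

# Hypothetical records
records = [
    {"molecule_id": "M1", "target_id": "T1", "confidence_score": 9, "target_type": "SINGLE PROTEIN"},
    {"molecule_id": "M1", "target_id": "T1", "confidence_score": 9, "target_type": "SINGLE PROTEIN"},
    {"molecule_id": "M2", "target_id": "T2", "confidence_score": 4, "target_type": "SINGLE PROTEIN"},
    {"molecule_id": "M3", "target_id": "T3", "confidence_score": 8, "target_type": "PROTEIN FAMILY"},
    {"molecule_id": "M4", "target_id": "T4", "confidence_score": 7, "target_type": "PROTEIN COMPLEX"},
]
kept = filter_interactions(records)
print([(r["molecule_id"], r["target_id"]) for r in kept])  # [('M1', 'T1'), ('M4', 'T4')]
```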

Performance assessment in such benchmarks typically employs several metrics for binding affinity prediction, including Mean Squared Error (MSE), Concordance Index (CI), the modified squared correlation coefficient (r²m), and Area Under the Precision-Recall Curve (AUPR), while drug generation tasks are evaluated on the Validity, Novelty, and Uniqueness of the generated compounds [4].
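Two of these metrics are simple enough to compute directly. The sketch below implements mean squared error and the concordance index, i.e. the fraction of pairs with different true affinities whose predictions are ranked in the same order, with tied predictions counted as half-concordant. The affinity values are hypothetical, and r²m and AUPR are omitted for brevity.

```python
from itertools import combinations

def mse(y_true, y_pred):
    """Mean squared error between measured and predicted affinities."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

def concordance_index(y_true, y_pred):
    """Fraction of comparable pairs whose predicted order matches the true order."""
    concordant, comparable = 0.0, 0
    for i, j in combinations(range(len(y_true)), 2):
        if y_true[i] == y_true[j]:
            continue  # pairs with equal true affinity carry no ordering information
        comparable += 1
        hi, lo = (i, j) if y_true[i] > y_true[j] else (j, i)
        if y_pred[hi] > y_pred[lo]:
            concordant += 1.0
        elif y_pred[hi] == y_pred[lo]:
            concordant += 0.5  # tied predictions count as half-concordant
    return concordant / comparable

# Hypothetical affinities (e.g. pKd) for four drug-target pairs
y_true = [5.0, 6.2, 7.1, 8.0]
y_pred = [5.3, 6.0, 7.5, 7.4]
print(round(concordance_index(y_true, y_pred), 3), round(mse(y_true, y_pred), 4))  # 0.833 0.1625
```

Only the last pair is mis-ordered (7.5 vs 7.4 against true 7.1 vs 8.0), so five of six comparable pairs are concordant.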

Performance Comparison of Prediction Methods

Quantitative Benchmarking Results

Independent comparative studies provide crucial insights into the relative performance of different chemogenomic prediction approaches. A systematic comparison published in 2025 found MolTarPred to be the most effective of the seven target prediction tools evaluated [6]. The study also showed that, for MolTarPred, Morgan fingerprints with Tanimoto scores outperformed MACCS fingerprints with Dice scores [6].

For drug-target binding affinity prediction, the DeepDTAGen multitask framework achieved state-of-the-art performance across multiple benchmark datasets:

Table 2: Performance Comparison of DeepDTAGen with Previous Methods on Binding Affinity Prediction

| Dataset | Best Previous Method | DeepDTAGen Performance | Improvement Over Previous Best |
|---|---|---|---|
| KIBA | GraphDTA (CI: 0.891) [4] | MSE: 0.146, CI: 0.897, r²m: 0.765 [4] | 0.67% CI improvement, 11.35% r²m improvement [4] |
| Davis | SSM-DTA (r²m: 0.689) [4] | MSE: 0.214, CI: 0.890, r²m: 0.705 [4] | 2.4% r²m improvement, 2.2% MSE reduction [4] |
| BindingDB | GDilatedDTA (CI: 0.868) [4] | MSE: 0.458, CI: 0.876, r²m: 0.760 [4] | 0.9% CI improvement, 4.1% r²m improvement [4] |

The DrugMAN model demonstrated particularly strong performance in challenging real-world scenarios, showing the smallest decrease in AUROC, AUPRC, and F1-Score from warm-start to cold-start conditions compared to traditional methods like SVM, RF, DeepPurpose, DTINet, and NeoDTI [3]. This robustness highlights the advantage of integrating heterogeneous biological networks, especially when limited chemogenomic data is available for specific targets.

Experimental Validation Case Studies

Robust validation of chemogenomic predictions requires confirmation through experimental assays. In a notable case study, Archetype Therapeutics used generative chemogenomics to identify novel and repurposed small molecules for intercepting invasion in lung adenocarcinoma [7]. Their AI platform virtually screened billions of potential drugs before advancing candidates to experimental validation. The resulting molecules showed significant efficacy in both in vitro and in vivo (GEMM and xenograft) models, substantially outperforming previously published molecules for preventing metastasis in early-stage lung adenocarcinoma [7]. This translation from computational prediction to biological validation exemplifies the power of integrated chemogenomic approaches.

For NR4A receptor research, comparative profiling under uniform conditions revealed significant deviations from published activities for several putative ligands, with some compounds showing complete lack of target binding and modulation [5]. This underscores the importance of orthogonal validation, as compounds with flawed characterization data can lead to erroneous biological conclusions. From an initial set of literature-reported NR4A modulators, only eight chemically diverse compounds were validated as direct NR4A modulators suitable for reliable target identification studies [5].

Successful chemogenomics research requires leveraging specialized reagents, databases, and computational tools. The following table summarizes key resources for establishing a chemogenomics research pipeline:

Table 3: Essential Research Resources for Chemogenomics

| Resource Category | Specific Tools/Databases | Key Applications | Technical Considerations |
|---|---|---|---|
| Bioactivity Databases | ChEMBL [6], BindingDB [6], DrugBank [3] | Training data for prediction models; reference for ligand-target interactions | ChEMBL ideal for novel protein targets; DrugBank better for drug indications [6] |
| Chemical Tools | Validated NR4A modulators (agonists/inverse agonists) [5] | Target identification and validation studies | Require orthogonal validation (ITC, DSF, reporter assays) [5] |
| Target Prediction Servers | MolTarPred [6], PPB2 [6], TargetNet [6] | Ligand-centric target fishing | Performance varies; MolTarPred currently top-performing [6] |
| Experimental Models | Yeast HIPHOP platform [2], cell-based reporter assays [5] | Genome-wide chemogenomic profiling | Yeast systems provide standardized, reproducible fitness signatures [2] |
| Advanced Frameworks | DeepDTAGen [4], DrugMAN [3] | Integrated prediction and generation | DrugMAN excels in cold-start scenarios; DeepDTAGen enables multitask learning [3] [4] |

Chemogenomics has established itself as an indispensable approach in modern drug discovery, effectively bridging the gap between genomic sciences and chemical screening. The integration of diverse methodological approaches—from similarity-based methods to deep learning frameworks—provides researchers with a powerful toolkit for elucidating drug-target interactions across entire biological systems.

The most successful implementations combine computational predictions with orthogonal experimental validation, creating iterative refinement cycles that enhance both target identification and compound optimization. As evidenced by recent advances, future progress in chemogenomics will likely come from increased integration of heterogeneous data sources, development of multitask learning frameworks that simultaneously address prediction and generation tasks, and improved handling of cold-start scenarios for novel target classes.

For researchers embarking on chemogenomic studies, the current evidence supports a strategy that leverages multiple complementary methods rather than relying on a single approach, utilizes high-confidence benchmark datasets for method validation, and incorporates orthogonal experimental assays at early stages to verify computational predictions. This integrated methodology will maximize the potential of chemogenomics to accelerate drug discovery and improve our understanding of complex drug-target interaction networks.

The Crucial Role of In Vitro Validation in the Drug Discovery Pipeline

In the modern drug discovery landscape, where artificial intelligence (AI) and computational methods generate vast numbers of potential targets and candidates, the role of rigorous in vitro validation has never been more critical. These experimental assays form the essential bridge between in silico predictions and clinical success, providing the first real-world test of a molecule's biological activity. This guide examines the performance of various in vitro validation strategies, providing experimental data and protocols to help researchers navigate this complex, high-stakes phase of development.

The Validation Imperative: From In Silico to In Vitro

The first half of 2025 saw continued innovation in oncology therapeutics, with eight novel FDA approvals including targeted therapies, antibody-drug conjugates, and treatments for rare cancers [8]. This progress occurs against a challenging backdrop of persistently high attrition rates (approximately 95%) for novel drug discovery [8]. This high failure rate underscores why in vitro validation is not merely a procedural step, but a crucial strategic filter to mitigate risk before candidates advance to more costly in vivo studies and clinical trials.

Computational prediction followed by experimental validation forms a fundamental workflow in modern drug discovery.

Comparative Performance of In Vitro Validation Platforms

Different in vitro models offer varying strengths and limitations for validating chemogenomic predictions. The table below summarizes key performance characteristics of the primary platforms used in contemporary drug discovery pipelines:

| Model Type | Key Applications | Advantages | Limitations | Translational Relevance |
|---|---|---|---|---|
| 2D Cell Lines [8] | High-throughput cytotoxicity screening; drug efficacy testing; initial biomarker hypothesis generation | Reproducible and standardized; cost-effective; large established collections | Limited tumor heterogeneity; does not reflect the tumor microenvironment (TME) | Moderate for initial target validation |
| 3D Organoids [8] | Investigation of drug responses; evaluation of immunotherapies; predictive biomarker identification | Faithfully recapitulates the original tumor; preserves tumor architecture; suitable for HTS | Complex and time-consuming to create; cannot fully represent the complete TME | High, especially for patient-specific responses |
| PDX-Derived Models [8] | Biomarker discovery and validation; clinical stratification; drug combination strategies | Most clinically relevant preclinical model; preserves tumor heterogeneity; mirrors patient responses | Expensive and resource-intensive; not suitable for HTS; time-consuming | Very high; considered the "gold standard" |

Experimental Protocols for Target Validation

Protocol 1: Cellular Target Engagement Using CETSA

The Cellular Thermal Shift Assay (CETSA) has emerged as a leading method for validating direct drug-target interactions in physiologically relevant environments [9].


Detailed Methodology:

- Cell Treatment: Expose intact cells to varying concentrations of the test compound (typically 1-100 µM) for relevant exposure times (e.g., 1-6 hours) to allow cellular penetration and target engagement [9].

- Heat Denaturation: Divide cell suspensions into aliquots and heat at different temperatures (typically 45-65°C gradient) for 3-5 minutes using a precise thermal cycler [9].

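- Cell Lysis: Lyse cells by freeze-thaw cycles or mechanical disruption [9], then remove heat-aggregated protein (commonly by centrifugation) so that only the soluble fraction, which retains folded and ligand-stabilized target, is analyzed.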

- Protein Quantification: Analyze soluble target protein levels via Western blot, mass spectrometry, or ELISA. Recent advances enable high-resolution MS quantification of drug-target engagement, as demonstrated for DPP9 in rat tissue [9].

- Data Analysis: Calculate melting temperature (Tm) shifts. Stabilization of the target protein's thermal profile indicates direct binding and successful target engagement [9].
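The data analysis step can be illustrated with a small sketch that estimates Tm as the temperature at which the normalized soluble fraction falls to 50%, using linear interpolation between measured points, and reports the compound-induced shift (ΔTm). Published CETSA analyses usually fit a sigmoidal melting model instead; the interpolation approach and the readings below are hypothetical.

```python
def melting_temperature(temps, soluble):
    """Temperature at which the normalized soluble fraction first drops to 50%."""
    top = soluble[0]
    norm = [s / top for s in soluble]  # normalize to the lowest-temperature reading
    for t1, f1, t2, f2 in zip(temps, norm, temps[1:], norm[1:]):
        if f1 >= 0.5 > f2:
            # Linear interpolation between the two bracketing temperatures
            return t1 + (f1 - 0.5) * (t2 - t1) / (f1 - f2)
    raise ValueError("soluble fraction never falls below 50% in the measured range")

# Hypothetical soluble target levels (e.g. normalized band intensities)
temps = [45, 48, 51, 54, 57, 60, 63]
vehicle = [1.00, 0.95, 0.80, 0.45, 0.20, 0.08, 0.03]
treated = [1.00, 0.98, 0.92, 0.78, 0.52, 0.25, 0.10]

tm_v = melting_temperature(temps, vehicle)
tm_t = melting_temperature(temps, treated)
print(f"vehicle Tm {tm_v:.1f} C, treated Tm {tm_t:.1f} C, delta Tm {tm_t - tm_v:.2f} C")
# vehicle Tm 53.6 C, treated Tm 57.2 C, delta Tm 3.65 C
```

A positive ΔTm means the target stays soluble at higher temperatures in the presence of compound, the stabilization the protocol interprets as engagement.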

Protocol 2: Advanced Phenotypic Screening for Malaria Transmission-Blocking Compounds

Recent innovations in phenotypic screening demonstrate the sophistication of modern in vitro validation. A 2025 study established a robust platform for identifying Plasmodium falciparum transmission-blocking drugs using engineered parasites [10].

Key Experimental Steps:

- Parasite Engineering: Utilize transgenic NF54/iGP1_RE9Hulg8 parasites engineered to conditionally produce large numbers of stage V gametocytes expressing a red-shifted firefly luciferase viability reporter [10].

- Compound Exposure: Incubate mature stage V gametocytes with test compounds across a concentration range (typically 0.1 nM-10 µM) for 72 hours [10].

- Viability Assessment: Quantify gametocyte viability through luciferase reporter activity, providing a sensitive, quantitative readout of compound efficacy [10].

- Counter-Screening: Assess specificity by testing compounds against asexual blood stage parasites to identify stage-specific versus pan-active antimalarials [10].

- Secondary Validation: Confirm transmission-blocking activity in Standard Membrane Feeding Assays (SMFA) where mosquitoes feed on compound-exposed gametocytes [10].
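To show how the luciferase readout might be converted into a potency estimate, the sketch below normalizes raw signals to vehicle (100%) and background (0%) controls and interpolates the concentration giving 50% viability on a log scale. The control values, signals, and interpolation approach are hypothetical; a four-parameter logistic fit is the more usual choice in practice.

```python
import math

def percent_viability(signal, vehicle_mean, background_mean):
    """Scale a luciferase reading so vehicle = 100% and background = 0%."""
    return 100.0 * (signal - background_mean) / (vehicle_mean - background_mean)

def ic50_by_interpolation(concs_nM, viability):
    """Concentration at 50% viability, interpolated linearly in log10(concentration)."""
    points = sorted(zip(concs_nM, viability))
    for (c1, v1), (c2, v2) in zip(points, points[1:]):
        if v1 >= 50.0 > v2:
            x1, x2 = math.log10(c1), math.log10(c2)
            return 10 ** (x1 + (v1 - 50.0) * (x2 - x1) / (v1 - v2))
    return None  # 50% inhibition not reached within the tested range

# Hypothetical 72 h gametocyte dose-response, 0.1 nM to 10 uM
concs = [0.1, 1, 10, 100, 1000, 10000]
signals = [9900, 9500, 8000, 4500, 1500, 400]
viability = [percent_viability(s, vehicle_mean=10000, background_mean=200) for s in signals]
print(f"IC50 ~ {ic50_by_interpolation(concs, viability):.0f} nM")  # IC50 ~ 67 nM
```

Running the same calculation on the asexual-stage counter-screen gives a second potency value, and the ratio between the two indicates whether a compound is stage-specific.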

The Scientist's Toolkit: Essential Research Reagents

Successful in vitro validation requires specialized reagents and tools. The following table outlines essential solutions for establishing robust validation workflows:

| Research Reagent | Function/Purpose | Application Context |
|---|---|---|
| CETSA Platform [9] | Measures drug-target engagement via thermal stability shifts in intact cells | Mechanistic validation of direct target binding in physiologically relevant systems |
| Engineered Reporter Lines [10] | Express viability or pathway-specific reporters (e.g., luciferase) for compound screening | High-content phenotypic screening (e.g., malaria gametocyte viability assays) |
| Patient-Derived Organoids [8] | 3D cultures that preserve tumor architecture and genetic features | Assessment of tumor-specific drug responses and biomarker discovery |
| PDX-Derived Cells [8] | Cell lines originating from patient-derived xenograft models | Bridge between in vitro and in vivo studies; biomarker hypothesis generation |
| Bioactivity Database (ChEMBL) [6] | Curated bioactivity data from the scientific literature | Benchmarking and validation of target prediction methods |

Strategic Implementation for Pipeline Success

The most effective drug discovery pipelines employ these validation tools not in isolation but as part of an integrated, multi-stage approach in which each model feeds into the next.

This sequential framework enables researchers to leverage the unique advantages of each model. For example, initial biomarker hypotheses generated through high-throughput screening of PDX-derived cell lines can be refined using 3D organoids and ultimately validated in PDX models before clinical trials [8]. This systematic approach builds a robust evidentiary chain that de-risks pipeline progression and increases the probability of clinical success.

Future Directions in Validation Science

The field of in vitro validation continues to evolve rapidly. Several trends are shaping its future development:

- AI Integration: Machine learning models are increasingly used to predict compound efficacy and prioritize molecules for in vitro testing, with recent studies showing 50-fold enrichment rates compared to traditional methods [9].

- Complex Model Systems: As the FDA reduces animal testing requirements for certain drug classes, advanced models like organoids are gaining regulatory acceptance as complementary approaches [8].

- Functional Relevance: Technologies that provide direct, in situ evidence of drug-target interaction, such as CETSA, are transitioning from specialized tools to strategic assets essential for decision-making [9].

In conclusion, while computational methods have dramatically accelerated the initial phases of drug discovery, rigorous in vitro validation remains the critical gatekeeper ensuring that only the most promising candidates advance through the development pipeline. By implementing the comparative frameworks and experimental approaches outlined in this guide, research teams can enhance their decision-making, compress development timelines, and ultimately increase their chances of translational success.

The experimental determination of drug-target interactions (DTIs) is an expensive, time-consuming, and tedious process, creating a critical bottleneck in modern drug discovery pipelines [1]. Chemogenomic approaches have emerged as powerful computational strategies that leverage both chemical and genomic information to address this challenge, significantly narrowing the search space of interaction candidates that warrant further wet-lab investigation [1] [11]. These methods fundamentally frame DTI prediction as a machine learning problem, using known interactions along with the properties of drugs and targets to train predictive models [11]. The growing importance of polypharmacology (how drugs interact with multiple targets) has further intensified the need for reliable computational methods that can reveal hidden drug-target relationships for drug repurposing and safety profiling [6].

This guide provides a comprehensive comparison of three principal chemogenomic methodologies: ligand-based approaches, molecular docking, and machine learning-based methods. We objectively evaluate their performance characteristics, experimental requirements, and practical implementation considerations, with a specific focus on validating computational predictions through subsequent in vitro assays. As the field progresses, the integration of artificial intelligence with traditional computational methods has begun to transform the drug discovery landscape, enabling rapid screening of billions of compounds and improving the accuracy of binding affinity predictions [12] [13]. Understanding the relative strengths and limitations of each approach is essential for researchers selecting appropriate strategies for specific drug discovery scenarios.

Comparative Analysis of Chemogenomic Approaches

Table 1: Overall comparison of the three main chemogenomic approaches

| Approach | Core Principle | Data Requirements | Strengths | Limitations |
|---|---|---|---|---|
| Ligand-Based | "Wisdom of the crowd" principle using similarity between query molecule and known ligands [6] | Known ligands with annotated targets; compound structures [1] [6] | High interpretability; does not require protein structures; fast predictions [1] [6] | Struggles with novel targets/compounds (cold start problem); limited serendipitous discoveries [1] [6] |
| Molecular Docking | Predicts binding pose and affinity through computational simulation of physical interactions [14] [15] | 3D protein structures; compound structures [1] [15] | Provides structural insights; models physical interactions; can handle novel compounds [14] [15] | Limited by protein structure availability/quality; computationally intensive; scoring function inaccuracies [1] [6] |
| Machine Learning | Learns interaction patterns from known chemogenomic data using algorithms [1] [11] | Known drug-target interactions; compound and protein features [1] [11] | Handles new drugs/targets via features; no negative samples needed for some methods; high accuracy potential [1] [16] | Black-box nature; requires extensive training data; feature selection critical [1] [11] |

Table 2: Performance comparison of specific methods across different evaluation scenarios

| Method | Approach Category | Warm Start Performance | Cold Start Performance | Key Findings |
|---|---|---|---|---|
| ColdstartCPI [16] | Machine Learning (Induced-fit theory) | High performance | Excels, especially for unseen compounds and proteins | Treats proteins/compounds as flexible; outperforms state-of-the-art sequence-based models |
| MolTarPred [6] | Ligand-Centric (2D similarity) | Effective for known chemical space | Limited by ligand similarity | Most effective method in benchmark; performance depends on fingerprint choice |
| EnsemKRR [11] | Machine Learning (Ensemble) | AUC: 94.3% | Not specifically evaluated | Combines dimensionality reduction with ensemble learning |
| CoBDock [15] | Docking (Consensus blind docking) | Superior binding site and mode prediction vs. other blind docking | Not applicable | Machine learning consensus of multiple docking/cavity detection tools |
| ML-Guided Docking [13] | Hybrid (ML + Docking) | Identifies >87% of top-scoring compounds | Not applicable | Reduces docking computation by >1,000-fold for billion-compound libraries |

Experimental Protocols and Workflows

Ligand-Centric Similarity Searching (MolTarPred Protocol)

Ligand-centric methods operate on the principle that chemically similar compounds are likely to share molecular targets [6]. The experimental workflow for implementing similarity-based target prediction involves several standardized steps:

Database Preparation: Compile a comprehensive database of known ligand-target interactions, such as ChEMBL (version 34 contains 2.4 million compounds, 15,598 targets, and 20.8 million interactions) [6]. Filter entries to retain only high-confidence interactions (e.g., confidence score ≥7 in ChEMBL, indicating direct protein complex subunits assigned) and remove duplicates and non-specific targets.

Molecular Representation: Convert query compounds and database molecules into appropriate molecular representations. Common fingerprints include MACCS keys or Morgan fingerprints (hashed bit vector with radius two and 2048 bits) [6].

Similarity Calculation: Compute structural similarity between query molecule and all database compounds using Tanimoto similarity for Morgan fingerprints or Dice scores for MACCS fingerprints [6].

Consensus Prediction: Identify the top similar ligands (typically 1-15 nearest neighbors) from the database and extract their annotated targets. The frequency of target appearances among nearest neighbors indicates prediction confidence [6].

Validation: For experimental validation, select top-predicted targets for in vitro binding assays or functional cellular assays to confirm the computational predictions.
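The similarity and consensus steps above can be sketched without a cheminformatics toolkit by representing each fingerprint as a set of on-bit indices. In practice the Morgan (radius 2, 2048 bits) or MACCS fingerprints would come from a library such as RDKit; the fingerprints, target labels, and the vote-then-similarity ranking rule here are hypothetical and are not MolTarPred's actual code.

```python
from collections import Counter

def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity of two fingerprints given as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def dice(a, b):
    """Dice similarity, the score paired with MACCS keys in the benchmark."""
    total = len(a) + len(b)
    return 2 * len(a & b) / total if total else 0.0

def predict_targets(query_fp, reference, k=3, similarity=tanimoto):
    """Rank targets by how many of the k most similar reference ligands carry them.

    reference: list of (fingerprint_set, set_of_target_ids) pairs.
    Returns (target, votes, best_similarity) tuples; ties in votes are broken
    by the highest similarity of any supporting neighbour.
    """
    neighbours = sorted(reference, key=lambda rec: similarity(query_fp, rec[0]), reverse=True)[:k]
    votes, best = Counter(), {}
    for fp, targets in neighbours:
        sim = similarity(query_fp, fp)
        for target in targets:
            votes[target] += 1
            best[target] = max(best.get(target, 0.0), sim)
    return sorted(((t, votes[t], best[t]) for t in votes), key=lambda x: (-x[1], -x[2]))

# Hypothetical reference ligands (on-bit sets) with annotated targets
reference = [
    ({1, 2, 3, 4, 5}, {"TGT_A"}),
    ({1, 2, 3, 4, 6}, {"TGT_A"}),
    ({1, 2, 3, 7, 8}, {"TGT_B"}),
    ({20, 21, 22}, {"TGT_C"}),
]
query = {1, 2, 3, 4, 9}
hits = predict_targets(query, reference)
print([(t, n, round(s, 3)) for t, n, s in hits])  # [('TGT_A', 2, 0.667), ('TGT_B', 1, 0.429)]
```

Passing `similarity=dice` switches to the MACCS-style scoring; the top-ranked targets are the ones to carry forward into binding or functional assays.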

Structure-Based Molecular Docking (CoBDock Protocol)

Molecular docking predicts how small molecules bind to protein targets by exploring binding poses and scoring affinities [14] [15]. The CoBDock protocol implements a consensus blind docking approach:

Target Preparation:

- Input protein structure in PDB format and remove undesired elements (water, free ions, bound ligands) using PyMOL.

- Add hydrogen atoms with PDB2PQR at physiological pH 7.4, using the AMBER force field and PROPKA to assign titration states [15].

Ligand Preparation:

- Input ligand structures in SMILES, PDB, MOL, MOL2, or SDF formats.

- Add hydrogens to polar atoms using Open Babel at pH 7.4 and convert to appropriate formats for docking programs [15].

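Parallel Blind Docking and Cavity Detection:

- Run multiple blind docking programs and cavity detection tools in parallel over the whole protein, collecting the binding modes and cavities each one predicts [15].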

Consensus Binding Site Prediction:

- Superimpose a 10 Å-resolution grid over the entire protein surface.

- Assign each predicted binding mode and detected cavity to the closest grid box.

- Use a trained machine learning model to score and rank grid locations based on consensus from all methods [15].
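The grid-assignment idea can be sketched as follows. CoBDock scores grid locations with a trained machine learning model [15]; as a simple stand-in, this hypothetical version ranks 10 Å boxes by how many distinct tools place a pose or cavity inside them. Assigning a point to the box that contains it is equivalent to assigning it to the nearest box centre. Tool names and coordinates are made up.

```python
import math
from collections import defaultdict

def grid_box(point, spacing=10.0):
    """Index of the grid box (edge length `spacing` in angstroms) that contains a 3D point."""
    return tuple(math.floor(coord / spacing) for coord in point)

def rank_sites(predictions, spacing=10.0):
    """Rank grid boxes by the number of distinct tools that place a prediction in them.

    predictions: dict mapping tool name -> list of (x, y, z) pose centroids or cavity centres.
    """
    support = defaultdict(set)
    for tool, points in predictions.items():
        for point in points:
            support[grid_box(point, spacing)].add(tool)
    return sorted(support.items(), key=lambda item: len(item[1]), reverse=True)

# Hypothetical outputs from two docking tools and one cavity detector
predictions = {
    "dock_1": [(12.1, 3.4, -5.0)],
    "dock_2": [(14.8, 1.2, -2.3), (40.0, 40.0, 40.0)],
    "cavity_1": [(11.0, 8.9, -9.5)],
}
best_box, tools = rank_sites(predictions)[0]
print(best_box, sorted(tools))  # (1, 0, -1) ['cavity_1', 'dock_1', 'dock_2']
```

The top-ranked box would then define the search region for the local PLANTS docking step.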

Local Docking and Validation:

- Perform local docking with PLANTS at the top-ranked binding site to generate final pose predictions.

- Experimental validation typically involves X-ray crystallography of protein-ligand complexes to verify binding poses, or binding affinity assays (ITC, SPR) to quantify interaction strength [15].

Workflow for consensus blind docking (CoBDock)

Machine Learning Framework (ColdstartCPI Protocol)

ColdstartCPI represents a modern machine learning approach inspired by induced-fit theory, treating both compounds and proteins as flexible molecules during binding [16]:

Data Collection and Preprocessing:

- Collect compounds as SMILES strings and proteins as amino acid sequences.

- Source known drug-target interactions from databases like ChEMBL, BindingDB, or DrugBank.

- Implement rigorous data splitting strategies (warm start, compound cold start, protein cold start, blind start) to evaluate generalization capability [16].
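The four data-splitting settings can be made precise with a short sketch. It assumes interaction data as (compound_id, protein_id, label) triples and a simple random hold-out of entities; the fractions and seed are arbitrary, and the blind setting here discards pairs that mix seen and unseen entities, which is one reasonable convention rather than necessarily the one used in [16].

```python
import random

MODES = {"warm", "compound_cold", "protein_cold", "blind"}

def split_pairs(pairs, mode="warm", test_frac=0.2, seed=0):
    """Split (compound_id, protein_id, label) triples into (train, test) lists.

    warm          random pairs; compounds and proteins usually also occur in training
    compound_cold test pairs involve only compounds never seen in training
    protein_cold  test pairs involve only proteins never seen in training
    blind         test pairs involve unseen compounds AND unseen proteins
    """
    if mode not in MODES:
        raise ValueError(f"unknown mode: {mode}")
    rng = random.Random(seed)
    if mode == "warm":
        shuffled = list(pairs)
        rng.shuffle(shuffled)
        n_test = int(len(shuffled) * test_frac)
        return shuffled[n_test:], shuffled[:n_test]

    compounds = sorted({c for c, _, _ in pairs})
    proteins = sorted({p for _, p, _ in pairs})
    held_c = set(rng.sample(compounds, max(1, int(len(compounds) * test_frac))))
    held_p = set(rng.sample(proteins, max(1, int(len(proteins) * test_frac))))

    train, test = [], []
    for c, p, y in pairs:
        c_new, p_new = c in held_c, p in held_p
        if mode == "compound_cold":
            (test if c_new else train).append((c, p, y))
        elif mode == "protein_cold":
            (test if p_new else train).append((c, p, y))
        elif c_new and p_new:          # blind: both entities unseen
            test.append((c, p, y))
        elif not c_new and not p_new:  # blind: both entities seen
            train.append((c, p, y))
        # blind mode drops pairs that mix seen and unseen entities
    return train, test

# Hypothetical grid of 10 compounds x 5 proteins
pairs = [(f"C{i}", f"P{j}", (i + j) % 2) for i in range(10) for j in range(5)]
train, test = split_pairs(pairs, mode="blind")
print(len(train), len(test))  # 32 2
```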

Feature Extraction:

- Use Mol2Vec to generate substructure feature matrices for compounds, capturing semantic features of drug substructures.

- Apply ProtTrans to create amino acid feature matrices for proteins, encoding structural and functional information [16].

- Generate global representations of compounds and proteins using pooling functions on the feature matrices.

Feature Space Unification:

- Process features through four separate Multi-Layer Perceptrons (MLPs) to unify feature spaces and decouple feature extraction from CPI prediction [16].

Transformer-Based Interaction Modeling:

- Construct a joint matrix representation of the compound-protein pair.

- Feed the joint matrix into a Transformer module to learn compound and protein features by extracting inter- and intra-molecular interaction characteristics [16].

- This flexible representation allows compound features to change depending on binding proteins and vice versa, aligning with induced-fit theory.

Prediction and Experimental Validation:

- Concatenate the final compound and protein features.

- Process through a three-layer fully connected neural network with dropout to predict interaction probability [16].

- Experimental validation includes literature searches for known interactions, molecular docking simulations, binding free energy calculations, and molecular dynamics simulations to verify top predictions [16].
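The prediction head can be sketched as concatenation followed by three fully connected layers with dropout between them. The layer sizes, the ReLU activation, the dropout rate of 0.2 and the randomly initialized `params` are hypothetical choices for illustration. Dropout is active only when `training=True`, which then requires an `rng`; with dropout off, inference is deterministic.

```python
import numpy as np

def relu(x: np.ndarray) -> np.ndarray:
    return np.maximum(x, 0.0)

def dropout(x: np.ndarray, rate: float, rng, training: bool) -> np.ndarray:
    """Inverted dropout: zero units with probability `rate` in training, identity at inference."""
    if not training or rate == 0.0:
        return x
    mask = rng.random(x.shape) >= rate
    return x * mask / (1.0 - rate)

def predict_interaction(compound_vec, protein_vec, params, rate=0.2, rng=None, training=False):
    """Concatenate final features, apply three fully connected layers, return a probability."""
    h = np.concatenate([compound_vec, protein_vec])
    (w1, b1), (w2, b2), (w3, b3) = params
    h = dropout(relu(h @ w1 + b1), rate, rng, training)
    h = dropout(relu(h @ w2 + b2), rate, rng, training)
    logit = h @ w3 + b3
    return 1.0 / (1.0 + np.exp(-logit))  # sigmoid -> interaction probability

rng = np.random.default_rng(0)
d, h1, h2 = 64, 128, 32  # hypothetical layer sizes
params = [
    (rng.normal(scale=0.1, size=(2 * d, h1)), np.zeros(h1)),
    (rng.normal(scale=0.1, size=(h1, h2)), np.zeros(h2)),
    (rng.normal(scale=0.1, size=h2), 0.0),
]
p = predict_interaction(rng.normal(size=d), rng.normal(size=d), params)
```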

Performance Benchmarking and Experimental Validation

Performance Across Different Scenarios

The generalization capability of chemogenomic methods varies significantly across different validation scenarios, particularly between warm start (where drugs and targets appear in the training set) and cold start (predicting interactions for novel drugs or targets) conditions [16]:

- Ligand-based methods like MolTarPred perform well in warm start scenarios but struggle with cold start problems, particularly for compounds with low similarity to known database entries [1] [6].

- Traditional docking approaches can handle novel compounds but depend heavily on the availability and quality of protein structures [1] [15].

- Advanced machine learning methods like ColdstartCPI demonstrate robust performance across both warm start and cold start conditions, achieving area under receiver operating characteristic curve (AUROC) values exceeding 0.9 in warm start and maintaining competitive performance (AUROC >0.85) in challenging cold start scenarios [16].

Table 3: ColdstartCPI performance across different scenarios

| Evaluation Setting | AUROC | AUPRC | Key Advantage |
|---|---|---|---|
| Warm Start | >0.9 | >0.85 | Benefits from task-relevant feature extraction |
| Compound Cold Start | >0.85 | >0.8 | Handles novel compounds effectively |
| Protein Cold Start | >0.85 | >0.8 | Generalizes to unseen proteins |
| Blind Start | >0.8 | >0.75 | Works with completely novel drug-target pairs |
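The AUROC and AUPRC values above summarize ranking quality on held-out pairs. The following sketch computes both metrics without external dependencies. `auroc` uses the pairwise (Mann-Whitney) definition, counting ties as one half. `average_precision` is one common estimator of AUPRC. The quadratic pairwise loop is fine for illustration but slow on large test sets, where a rank-based formula or a library implementation is preferable.

```python
from typing import Sequence

def auroc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Probability that a random positive outscores a random negative (ties count 0.5)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def average_precision(labels: Sequence[int], scores: Sequence[float]) -> float:
    """AUPRC estimated as mean precision at each positive in the ranked list."""
    n_pos = sum(labels)
    if n_pos == 0:
        raise ValueError("need at least one positive")
    ranked = sorted(zip(scores, labels), key=lambda t: t[0], reverse=True)
    hits, total = 0, 0.0
    for rank, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            hits += 1
            total += hits / rank  # precision at this cut-off
    return total / n_pos
```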

Validation with Experimental Assays

Computational predictions require rigorous experimental validation to confirm biological relevance. Successful validation strategies include:

Binding Affinity Assays: Surface Plasmon Resonance (SPR) and Isothermal Titration Calorimetry (ITC) provide quantitative measurements of binding strength (Kd values) for predicted interactions [6].

Functional Cellular Assays: Cell-based reporter assays or phenotypic screening confirm whether predicted interactions translate to functional biological effects in relevant cellular contexts [6].

Structural Validation: X-ray crystallography or cryo-electron microscopy of protein-ligand complexes provides atomic-level confirmation of binding modes predicted by docking studies [14] [15].

Drug Repurposing Case Studies: Experimental validation of predictions for specific disease areas demonstrates real-world utility. For example, ColdstartCPI predictions for Alzheimer's Disease, breast cancer, and COVID-19 were validated through literature evidence, docking simulations, and binding free energy calculations [16].

Experimental validation workflow for computational predictions

Research Reagent Solutions

Table 4: Essential research reagents and databases for chemogenomic research

| Resource | Type | Function | Application Context |
|---|---|---|---|
| ChEMBL [6] | Database | Manually curated database of bioactive molecules with drug-like properties | Primary source for ligand-target interactions; training data for machine learning models |
| Protein Data Bank (PDB) [14] | Database | Repository of 3D protein structures determined by X-ray, NMR, Cryo-EM | Source of protein structures for molecular docking studies |
| AutoDock Vina [15] | Software | Molecular docking tool with empirical scoring function | Structure-based virtual screening and binding pose prediction |
| Mol2Vec [16] | Algorithm | Unsupervised machine learning for compound representation | Generates substructure-aware features for machine learning |
| ProtTrans [16] | Algorithm | Protein language model for sequence representation | Generates structural and functional protein features from sequences |
| SPR/Biacore [6] | Instrument | Surface plasmon resonance for binding affinity measurement | Experimental validation of binding affinity (Kd) |
| ITC | Instrument | Isothermal titration calorimetry for thermodynamics | Measures binding affinity and thermodynamic parameters |
| Enamine REAL [13] | Compound Library | Make-on-demand chemical library (70B+ compounds) | Ultralarge virtual screening for hit identification |

The comparative analysis of ligand-based, docking, and machine learning approaches reveals a complementary landscape of chemogenomic methodologies, each with distinct advantages for specific drug discovery scenarios. Ligand-based methods offer interpretability and speed but struggle with novelty, while docking provides physical insights but depends on structural data. Machine learning approaches, particularly recent induced-fit theory-guided models like ColdstartCPI, demonstrate superior performance in cold-start scenarios and show promising generalization capabilities [16].

The emerging trend of hybrid approaches that combine multiple methodologies represents the most promising direction for future research. Machine learning-guided docking screens exemplify this integration, reducing computational requirements by more than 1,000-fold while maintaining high sensitivity in identifying true binders [13]. These integrated workflows make virtual screening of multi-billion-compound libraries practical, greatly expanding the chemical space that can be explored for drug discovery.

For researchers validating chemogenomic predictions with in vitro assays, the selection of methodology should align with the specific discovery context: ligand-based approaches for target fishing of compounds with known analogs, docking for structure-enabled targets, and machine learning for scenarios with limited structural information or challenging cold-start problems. As artificial intelligence continues to transform computational drug discovery, the convergence of these approaches with experimental validation will accelerate the identification of novel therapeutic candidates and expand our understanding of polypharmacology.

In the field of chemogenomics, the reliable prediction of drug-target interactions (DTIs) is fundamental to accelerating drug discovery and repurposing efforts. Public bioactivity databases serve as the foundational infrastructure for building predictive computational models. Among these, ChEMBL and DrugBank have emerged as two of the most comprehensive and widely used resources by researchers and drug development professionals. These databases provide curated information on bioactive molecules, their protein targets, and experimentally determined interactions, enabling the training and validation of machine learning models for target prediction. The strategic selection of a database directly impacts the predictive performance of chemogenomic models and the success of subsequent experimental validation [17].

This guide provides an objective comparison of DrugBank and ChEMBL within the context of validating chemogenomic predictions. It details their respective contents, access models, and applicability for different research scenarios, supported by experimental data and methodologies from recent scientific literature.

Database Comparison: ChEMBL vs. DrugBank

The table below provides a detailed, side-by-side comparison of the core characteristics of ChEMBL and DrugBank, highlighting their distinct strategic focuses.

Table 1: Strategic Comparison of ChEMBL and DrugBank

| Feature | ChEMBL | DrugBank |
|---|---|---|
| Primary Focus | Large-scale bioactivity data for drug-like compounds and pre-clinical candidates [18] | Comprehensive drug data, including detailed drug and mechanism-of-action information [19] [18] |
| Core Content | Bioactivity data (e.g., IC₅₀, Kᵢ) from scientific literature and patents; extensive SAR data [20] [18] | FDA-approved and experimental drugs, with rich pharmacological and pharmaceutical data [19] [18] |
| Target Coverage | Extensive, focusing on a broad range of protein targets (e.g., kinases, GPCRs, enzymes) for research [20] [6] | Mappings to primary drug targets, with a focus on established therapeutic mechanisms [19] |
| Data Model | Manually curated bioactivity data integrated with drug information; distinction between research compounds and drugs [18] | Integrated drug and target information, with roughly half of each record devoted to drug data and half to pharmacological properties [19] |
| Access Model | Fully open-access [18] [17] | Freely available for non-commercial use; not fully open-access [18] |
| Ideal Use Case | Building generalizable target prediction models for novel compounds and protein targets [6] | Predicting new indications for known drugs and understanding established drug-target pathways [6] |

Performance Evaluation in Target Prediction

Independent, comparative studies are essential for objectively evaluating the utility of databases in practical research. One systematic benchmark study evaluated seven different target prediction methods, many of which are trained on ChEMBL data, on a shared dataset of FDA-approved drugs [6].

Table 2: Performance of ChEMBL-Based Target Prediction Methods

| Prediction Method | Type | Underlying Algorithm | Key Performance Finding |
|---|---|---|---|
| MolTarPred [6] | Ligand-centric | 2D similarity search | Identified as the most effective method in the benchmark study. |
| RF-QSAR [6] | Target-centric | Random Forest | Performance validated on a shared benchmark dataset. |
| CMTNN [6] | Target-centric | Multitask Neural Network | Performance validated on a shared benchmark dataset. |
| EnsemKRR Model [11] | Chemogenomic | Kernel Ridge Regression Ensemble | Achieved highest AUC (94.3%) for DTI prediction using ChEMBL data. |

The study concluded that ChEMBL is more suitable for predicting interactions with novel protein targets due to its extensive chemogenomic data, whereas DrugBank is ideal for predicting new drug indications against known targets because of its focus on drug-related information [6]. Furthermore, a separate study developed an ensemble chemogenomic model using ChEMBL and BindingDB data, reporting that 57.96% of known targets were identified in the top-10 predictions, representing an approximately 50-fold enrichment over random guessing [20].
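Top-k enrichment compares a model's recall with what a random ranking would achieve. This sketch assumes enrichment is defined as recall@k divided by k/N, where N is the number of candidate targets. The text does not state the exact definition or the N used in the cited study. `enrichment_over_random` is a hypothetical helper name, and the inputs in the test are illustrative only.

```python
def enrichment_over_random(recall_at_k: float, k: int, n_targets: int) -> float:
    """Fold-enrichment of top-k recall over a random ranking of n_targets candidates.

    Under a random ordering, each known target lands in the top k with probability k / n_targets.
    """
    if not 0 < k <= n_targets:
        raise ValueError("k must be between 1 and n_targets")
    if not 0.0 <= recall_at_k <= 1.0:
        raise ValueError("recall_at_k must be a fraction between 0 and 1")
    return recall_at_k / (k / n_targets)
```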

Experimental Protocols for Validation

Protocol 1: Building an Ensemble Chemogenomic Model

This protocol is adapted from a study that developed a high-performance ensemble model for target prediction [20].

- Data Collection: Extract compound-target interactions with associated bioactivity values (e.g., Kᵢ ≤ 100 nM for positive set) from ChEMBL and other databases like BindingDB.

- Descriptor Calculation:

- Model Training: Construct multiple individual chemogenomic models (e.g., using XGBoost) by combining the different molecular and protein descriptors. Each model learns to differentiate interacting compound-target pairs from non-interacting ones [20].

- Ensemble Construction: Select and combine the best-performing individual models into a final ensemble model to improve overall prediction accuracy and robustness [20].

- Target Prediction: For a query compound, create compound-target pairs with all potential targets in the database. Input these pairs into the ensemble model and rank the targets based on the output interaction scores. The top-k ranked targets are the highest-confidence predictions [20].
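The ensemble construction and target prediction steps can be sketched as follows: score every compound-target pair with each member model, then rank targets by the combined score. The combination rule here is a plain average of member scores, which is an assumption; the cited ensemble may weight or select models differently. The lookup-table scorers and target names are toy placeholders for trained XGBoost models.

```python
from typing import Callable, List, Sequence, Tuple

Scorer = Callable[[str, str], float]  # (compound_id, target_id) -> interaction score

def rank_targets(compound: str, targets: Sequence[str], models: List[Scorer],
                 top_k: int = 10) -> List[Tuple[str, float]]:
    """Average each model's score per compound-target pair and return the top-k targets."""
    scored = []
    for t in targets:
        ensemble_score = sum(m(compound, t) for m in models) / len(models)
        scored.append((t, ensemble_score))
    scored.sort(key=lambda pair: pair[1], reverse=True)  # highest confidence first
    return scored[:top_k]

# Toy member models: lookup tables standing in for trained classifiers (hypothetical values)
table_a = {("cpd1", "EGFR"): 0.9, ("cpd1", "ABL1"): 0.4, ("cpd1", "DRD2"): 0.1}
table_b = {("cpd1", "EGFR"): 0.7, ("cpd1", "ABL1"): 0.6, ("cpd1", "DRD2"): 0.2}
models = [lambda c, t: table_a[(c, t)], lambda c, t: table_b[(c, t)]]

top = rank_targets("cpd1", ["EGFR", "ABL1", "DRD2"], models, top_k=2)
```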

Protocol 2: Benchmarking Target Prediction Methods

This protocol outlines the methodology for a fair and precise comparison of different prediction tools, as seen in a recent benchmark study [6].

- Dataset Curation:

- Source a large set of ligand-target interactions from a recent ChEMBL release (e.g., version 34).

- Apply strict filtering: include only bioactivities with standard values (IC₅₀, Kᵢ, EC₅₀) below 10,000 nM; exclude non-specific protein targets; remove duplicate compound-target pairs.

- To prevent bias, create a separate benchmark set of FDA-approved drugs that are excluded from the main training database.

- Model Selection and Execution: Select a representative set of stand-alone and web-server prediction methods (e.g., MolTarPred, PPB2, RF-QSAR). Run these methods on the benchmark dataset.

- Performance Assessment: Evaluate methods based on their ability to recall the known targets for the benchmark drugs, with a focus on top-k prediction accuracy (e.g., whether the true target appears in the top 1 or top 10 predictions) [6].
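The filtering and top-k assessment steps can be sketched in plain Python. The record keys `standard_type`, `standard_value`, `standard_units` and `target_type` follow ChEMBL's activity and target field names. The keys `compound` and `target` and the `exclude` set of benchmark drugs are hypothetical. Keeping only `SINGLE PROTEIN` targets is one assumed way to implement "exclude non-specific protein targets".

```python
from typing import Dict, Iterable, List, Optional, Set, Tuple

ACCEPTED_TYPES = {"IC50", "Ki", "EC50"}

def curate(records: Iterable[dict], exclude: Optional[Set[str]] = None,
           max_nm: float = 10_000.0) -> Set[Tuple[str, str]]:
    """Return unique (compound, target) pairs that pass the activity and target filters."""
    exclude = exclude or set()
    pairs = set()
    for r in records:
        if r["compound"] in exclude:              # keep benchmark drugs out of the training data
            continue
        if r["target_type"] != "SINGLE PROTEIN":  # drop non-specific targets (assumed criterion)
            continue
        if (r["standard_type"] in ACCEPTED_TYPES and r["standard_units"] == "nM"
                and r["standard_value"] < max_nm):
            pairs.add((r["compound"], r["target"]))  # the set removes duplicate pairs
    return pairs

def top_k_hit_rate(predictions: Dict[str, List[str]], known: Dict[str, Set[str]], k: int) -> float:
    """Fraction of benchmark drugs with at least one known target among their top-k predictions."""
    hits = sum(1 for drug, ranked in predictions.items()
               if set(ranked[:k]) & known.get(drug, set()))
    return hits / len(predictions)
```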

Workflow Visualization

The following diagram illustrates the logical workflow for building and applying a chemogenomic model, culminating in experimental validation.

The Scientist's Toolkit: Research Reagent Solutions

The table below lists key computational and experimental "reagents" (databases, software, and assays) essential for conducting research in this field.

Table 3: Essential Research Reagents for Chemogenomic Prediction and Validation

| Research Reagent | Type | Function & Application |
|---|---|---|
| ChEMBL Database [18] [6] | Data Resource | Provides a vast, open-access repository of bioactive molecules and curated drug-target interactions for training predictive models. |
| DrugBank Database [19] [18] | Data Resource | Offers comprehensive information on drugs, their mechanisms, and targets, ideal for studies on drug repurposing and established pharmacology. |
| MolTarPred [6] | Software Tool | A ligand-centric, 2D similarity-based prediction method identified as a top-performing tool for target prediction. |
| EnsemKRR [11] | Software/Algorithm | An ensemble learning method that combines multiple classifiers to achieve high accuracy in predicting drug-target interactions. |
| Binding Affinity Assays (e.g., Kᵢ, IC₅₀) [20] [6] | Experimental Assay | Measures the strength of interaction between a compound and a purified target protein, used for experimental validation of computational predictions. |
| Gene Expression Profiling (e.g., CMap) [21] | Experimental/Data Resource | Measures transcriptomic changes in response to drug treatment; can be used for target prediction independent of chemical structure. |

Understanding Forward and Reverse Chemogenomics for Target Validation

Target validation is a critical stage in the drug discovery pipeline, establishing a causal link between the modulation of a target protein and a desired therapeutic effect [1] [22]. Within this process, chemogenomics has emerged as a powerful system-based strategy that utilizes small molecules as probes to elucidate the relationship between a biological target and a phenotypic outcome [23] [24] [22]. This paradigm operates on two complementary axes: forward chemogenomics and reverse chemogenomics. Both approaches are foundational to validating chemogenomic predictions, yet they differ fundamentally in their starting points and methodological workflows [23] [24]. This guide provides an objective comparison of these two strategies, detailing their performance, key experimental protocols, and essential reagent solutions, thereby offering a framework for researchers to select the appropriate methodology for their target validation challenges.

Strategic Comparison: Forward vs. Reverse Chemogenomics

The core distinction between forward and reverse chemogenomics lies in their initial discovery trigger. Forward chemogenomics begins with the observation of a phenotypic change in a cell or organism and aims to identify the molecular target responsible, effectively moving from phenotype to target [23] [24]. Conversely, reverse chemogenomics starts with a specific, isolated protein target and seeks compounds that modulate its activity, subsequently analyzing the resulting phenotype in a biological system, thus moving from target to phenotype [23] [24] [25]. This fundamental difference dictates their respective applications, advantages, and limitations within a research project aimed at in vitro validation.

Table 1: High-Level Strategic Comparison of Forward and Reverse Chemogenomics

| Feature | Forward Chemogenomics | Reverse Chemogenomics |
|---|---|---|
| Starting Point | Phenotypic screen in cells or whole organisms [23] [24] | Specific, known protein target (e.g., enzyme, receptor) [23] [24] |
| Primary Goal | Identify the molecular target(s) underlying an observed phenotype [23] | Find modulators (e.g., inhibitors) for a given target and validate its biological role [23] [24] |
| Typical Screening | Phenotypic assays (e.g., cell growth, morphology) [23] | Target-based in vitro assays (e.g., enzymatic activity, binding) [23] [24] |
| Key Challenge | Deconvoluting the mechanism of action and identifying the specific protein target [23] [24] | Confirming that target modulation produces the desired phenotypic effect in a biologically relevant system [23] |

Table 2: Comparison of Experimental Performance and Output

| Aspect | Forward Chemogenomics | Reverse Chemogenomics |
|---|---|---|
| Target Identification | Directly identifies novel, sometimes unexpected, targets [23] [24] | Requires a pre-selected, hypothesized target [23] |
| Hit Rate for Phenotypic Effect | High, as screening is based on the desired phenotype [23] | Variable; a potent in vitro inhibitor may not yield the desired cellular phenotype [23] |
| Suitability for Orphan Targets | Excellent for elucidating function of uncharacterized targets [23] | Less suitable unless the target is already cloned and available for screening [23] |
| Technical & Computational Demand | High, due to complex target deconvolution steps [23] [25] | Lower initial demand, but requires a robust in vitro assay [23] |
| Risk of Off-Target Effects | Discovered late, after phenotypic confirmation [24] | Can be assessed early via counter-screens and selectivity panels [24] |

Experimental Protocols for Target Validation

The validation of chemogenomic predictions relies on robust and well-established experimental methodologies. Below are detailed protocols for the key assays employed in both forward and reverse chemogenomics approaches.

Forward Chemogenomics: Phenotypic Screening & Target Deconvolution

Objective: To identify small molecules that induce a desired phenotype and subsequently determine their protein target(s) [23] [24].

Workflow Overview:

- Phenotypic Assay Development: A cell-based or whole-organism assay is designed to report on a specific disease-relevant phenotype (e.g., inhibition of tumor growth, alteration in cell morphology, or reversal of a disease marker) [23].

- High-Throughput Screening (HTS): A diverse library of small molecules is screened against the phenotypic assay to identify "hits" that produce the desired effect [23] [24].

- Target Deconvolution: This is the critical and most challenging step. Several methods can be employed:

- Chemogenomic Profiling in Model Organisms: In yeast, for example, a pool of barcoded gene deletion strains is grown competitively in the presence of the hit compound. Strains that are hypersensitive (or resistant) to the compound are identified by sequencing the barcodes, directly implicating the deleted genes in the compound's mechanism of action [25]. This can identify the direct target or genes in pathways that buffer the target.

- Haploinsufficiency Profiling (HIP): Used for essential genes, this method screens a library of heterozygous yeast deletion strains. A strain becomes hypersensitive if the compound inhibits the product of the single remaining gene copy, pointing to the direct target [25].

- Expression-Based Profiling: The genome-wide mRNA expression profile of cells treated with the compound is compared to a reference database of profiles from cells treated with compounds of known mechanism or from genetic mutants. The best-matched profile suggests a similar mechanism of action (guilt-by-association) [25].

- In Vitro Validation: The putative target identified is validated using biochemical assays, such as measuring direct binding (e.g., Surface Plasmon Resonance) or functional inhibition in a purified system [22].
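The two profiling readouts above reduce to simple calculations. This simplified sketch assumes barcode counts per strain for a DMSO control pool and a compound-treated pool. It also assumes expression profiles are equal-length lists of per-gene changes. Both helpers are hypothetical: `fitness_scores` computes a log2 depletion ratio with a pseudocount, and `best_reference_match` uses Pearson correlation against reference profiles. Published HIP/HOP and connectivity pipelines use more elaborate normalization and statistics.

```python
import math
from typing import Dict, List

def fitness_scores(control: Dict[str, int], treated: Dict[str, int],
                   pseudocount: float = 0.5) -> Dict[str, float]:
    """Log2 ratio of normalized barcode abundance (control over treated) per deletion strain.

    Positive values mean the strain is depleted under treatment, i.e. hypersensitive.
    """
    total_c, total_t = sum(control.values()), sum(treated.values())
    scores = {}
    for strain, count in control.items():
        frac_c = (count + pseudocount) / total_c
        frac_t = (treated.get(strain, 0) + pseudocount) / total_t
        scores[strain] = math.log2(frac_c / frac_t)
    return scores

def pearson(x: List[float], y: List[float]) -> float:
    """Pearson correlation; undefined (division by zero) for constant profiles."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def best_reference_match(query: List[float], references: Dict[str, List[float]]) -> str:
    """Guilt-by-association: the reference profile most correlated with the query profile."""
    return max(references, key=lambda name: pearson(query, references[name]))
```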

Reverse Chemogenomics: Target-Focused Screening & Phenotypic Validation

Objective: To discover compounds that interact with a predefined protein target and then validate that this interaction produces a relevant biological phenotype [23] [24].

Workflow Overview:

- Target Selection & Assay Development: A purified protein target (e.g., a kinase, protease, or GPCR) is used to develop a high-throughput in vitro assay. This assay measures direct binding or functional modulation of the target (e.g., fluorescence-based enzymatic activity assay) [23] [24].

- High-Throughput Screening (HTS): A focused or diverse chemical library is screened against the target-based assay to identify "hits" that modulate its activity [23].

- Hit Characterization & Optimization: Potent hits are characterized for potency (IC50/Ki), selectivity against related targets, and binding mode (e.g., via crystallography). Medicinal chemistry is often used to optimize lead compounds [23].

- Phenotypic Validation: The optimized compounds are then tested in a cellular or whole-organism model to determine if target modulation produces the anticipated therapeutic phenotype. For example, a kinase inhibitor identified in an enzymatic assay would be tested for its ability to block a downstream signaling pathway or inhibit cancer cell proliferation [23] [24] [22].

Workflow Visualization

The following diagrams, generated using Graphviz DOT language, illustrate the logical workflows and decision processes for both forward and reverse chemogenomics approaches.

Forward Chemogenomics: From Phenotype to Target

Reverse Chemogenomics: From Target to Phenotype

The Scientist's Toolkit: Key Research Reagent Solutions

The execution of chemogenomic studies depends on specialized reagents and tools. The table below details essential materials and their functions for setting up these experiments.

Table 3: Essential Research Reagents for Chemogenomic Target Validation

| Research Reagent / Tool | Function in Chemogenomics | Key Application Notes |
|---|---|---|
| Barcoded Yeast Deletion Libraries (e.g., YKO collection) [25] | Genome-wide competitive fitness profiling in a model organism. Allows for direct target identification via HIP/HOP assays. | Essential for efficient target deconvolution in forward chemogenomics in yeast. Available as homozygous, heterozygous, and DAmP collections [25]. |
| Focused Chemical Libraries [23] | Targeted libraries enriched with compounds known to bind specific protein families (e.g., GPCRs, kinases). | Increases hit rates in reverse chemogenomics. Based on the "privileged structure" concept and SAR homology [23]. |
| Diverse Compound Libraries | Screening a wide array of chemical space to find novel starting points for target modulation or phenotypic effect. | Used in both forward phenotypic screens and reverse target-based screens to identify novel chemotypes [23]. |
| Purified Recombinant Target Proteins | The essential reagent for developing in vitro assays in reverse chemogenomics. | Requires a robust protein production and purification pipeline. Protein quality is critical for assay performance [23]. |
| Phenotypic Reporter Assays | Quantifying complex cellular phenotypes (e.g., pathway activation, cell death, differentiation) in a high-throughput format. | The core of forward chemogenomics screens. Requires careful validation to ensure relevance to the disease biology [23]. |
| Reference Bioactive Compound Sets (e.g., with known MOA) [25] | Used as controls and for building reference profiles in expression-based or fitness-based profiling. | Enables "guilt-by-association" approaches for MOA prediction in forward chemogenomics [25]. |

Forward and reverse chemogenomics represent two powerful, complementary strategies for target validation within drug discovery. The choice between them hinges on the research question and available starting points. Forward chemogenomics is ideal for uncovering novel biology and therapeutic targets from phenotypic observations but faces the significant challenge of target deconvolution. Reverse chemogenomics offers a more direct path to drug development for well-hypothesized targets but carries the risk that target modulation may not yield the desired phenotypic outcome. A modern research program often integrates both approaches, using forward chemogenomics for novel target discovery and reverse chemogenomics for the rational optimization and validation of lead compounds, thereby creating a powerful, iterative cycle for advancing therapeutic candidates.

Building the Bridge: A Methodological Guide to In Vitro Assay Development for Chemogenomics

In modern drug discovery, the journey from a computational prediction to a validated drug candidate is bridged by experimental assays. Chemogenomic models can rapidly identify potential drug-target interactions from millions of possibilities, but these in silico predictions require empirical validation to confirm real-world biological activity [6] [26]. This validation process predominantly relies on two complementary approaches: binding assays, which measure the physical interaction between a compound and its target, and enzymatic activity assays, which quantify the functional modulation of enzyme activity. Understanding the distinction, application, and limitations of these methods is fundamental for researchers aiming to translate computational hypotheses into therapeutic leads effectively.

The choice between binding and activity assays is not merely technical but strategic, impacting the quality, relevance, and ultimate success of a drug discovery campaign. While binding assays determine the affinity and strength of the molecular interaction, enzymatic assays reveal functional consequences, providing critical insights into the mechanism of action and efficacy of potential inhibitors [27] [28]. This guide provides a detailed comparison of these two foundational methods, offering experimental data and protocols to inform assay selection within the context of chemogenomic validation.

Core Principles and Direct Comparison

At their core, these assays answer different but related questions. A binding assay asks, "Does the compound physically bind to the target?" whereas an enzymatic activity assay asks, "Does the compound alter the target's function?"

Fundamental Distinctions

- Binding Assays measure the formation of a physical complex between a small molecule (ligand) and its biological target (e.g., protein, receptor). The key parameter is the equilibrium dissociation constant (Kd), which quantifies the concentration of ligand required to occupy half the binding sites at equilibrium. A lower Kd indicates higher affinity [29] [28].

- Enzymatic Activity Assays measure the catalytic turnover of an enzyme, typically by monitoring the depletion of a substrate or the formation of a product over time. The key parameter is the half-maximal inhibitory concentration (IC50), which measures the potency of an inhibitor. It is related to the inhibition constant (Ki) through the Cheng-Prusoff equation for competitive inhibition:

Ki = IC50 / (1 + [S]/Km), where [S] is the substrate concentration and Km is the Michaelis constant [30] [28].
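The Cheng-Prusoff correction matters because a competitive inhibitor's apparent IC50 rises with substrate concentration. The sketch below uses hypothetical assay values. It applies only to competitive inhibition, and all concentrations must share one unit.

```python
def cheng_prusoff_ki(ic50: float, substrate_conc: float, km: float) -> float:
    """Ki from IC50 for a competitive inhibitor: Ki = IC50 / (1 + [S]/Km).

    ic50, substrate_conc and km must be in the same concentration unit (e.g. nM).
    """
    if km <= 0:
        raise ValueError("Km must be positive")
    return ic50 / (1.0 + substrate_conc / km)

# Hypothetical kinase assay: IC50 = 120 nM measured with ATP at 2x its Km (Km = 10 nM here)
print(cheng_prusoff_ki(120.0, substrate_conc=20.0, km=10.0))  # 40.0
```

With [S] equal to Km the correction halves the IC50. At [S] = 2 × Km, as in this example, Ki is one third of the IC50.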

Comparative Analysis: Strengths, Limitations, and Applications

The table below summarizes the critical characteristics of each assay type to guide initial selection.

| Feature | Binding Assays | Enzymatic Activity Assays |
|---|---|---|
| What It Measures | Physical interaction and affinity (Kd) | Functional modulation of catalytic activity (IC50, Ki) |
| Key Output | Affinity (Kd, Ka), binding kinetics | Potency (IC50), enzyme kinetics (Km, Vmax), mechanism of action |
| Primary Application | Target engagement, affinity screening, binding kinetics | Functional screening, mechanism of action studies, hit validation |
| Throughput | Typically high (e.g., using DSF, SPR) | High, especially with fluorescence/luminescence formats [31] [30] |
| Functional Insight | Indirect; binding does not guarantee inhibition [27] | Direct; measures the functional outcome of binding |
| Correlation to Cellular Activity | Can be weaker, as it ignores cellular permeability and context [28] | Stronger, but can still differ due to cell membrane and intracellular conditions [28] |
| Key Advantage | Can screen inactive kinases or proteins; measures affinity directly. | Confirms compound efficacy and provides mechanistic data. |
| Technical Complexity | Often simpler, label-free options (e.g., DSF) [27] | Can be complex, requiring active enzyme and coupled systems [30] |

Experimental Evidence and Correlation Data

Theoretical distinctions are borne out in experimental data. One study directly compared these methods by screening 244 kinase inhibitors against 15 kinase constructs using both Differential Scanning Fluorimetry (DSF, a binding assay) and a mobility shift activity assay [27].

Key Experimental Findings

- Baseline Correlation is Weak: Initial comparisons using single-dose activity measurements showed a poor correlation, where only 49% of compounds with a strong binding signal (Tm shift >4°C) also showed potent inhibition (IC50 <0.5 µM) [27].

- Correlation Can Be Improved: The study demonstrated that correlation significantly improves when more precise screening conditions are used. This includes using kinase constructs that include additional regulatory domains beyond just the catalytic domain and determining full IC50 dose-response curves instead of single-point inhibition percentages [27].

- Context is Critical: The functional outcome of binding is highly dependent on the enzyme's conformational state. For example, single-molecule FRET studies on adenylate kinase have shown that ligand binding and conformational dynamics are tightly coupled, and external factors like urea can alter both dynamics and activity without denaturing the enzyme [32].

This evidence underscores that while binding is a prerequisite for inhibition, the relationship is not always straightforward. Enzymatic activity assays are therefore indispensable for confirming that binding leads to the desired functional outcome.

Decision Workflow and Experimental Protocols

Selecting the appropriate assay depends on the research question, stage of the project, and available resources. The following workflow and detailed protocols provide a practical guide for implementation.

Assay Selection Workflow

The diagram below outlines a logical decision-making process for selecting between binding and enzymatic activity assays, particularly in the context of validating computational predictions.

Detailed Experimental Protocols

Protocol 1: Binding Assay using Differential Scanning Fluorimetry (DSF)

DSF is a popular, low-cost binding assay that detects ligand-induced thermal stabilization of a protein [27].

- Principle: A fluorescent dye binds to hydrophobic patches of the protein exposed during thermal denaturation. Ligand binding stabilizes the protein, increasing its melting temperature (Tm).

- Procedure:

- Reaction Setup: In a PCR plate, mix purified protein (e.g., kinase catalytic domain) with the candidate compound and the fluorescent dye (e.g., SYPRO Orange).

- Thermal Ramp: Load the plate into a real-time PCR instrument and gradually increase the temperature (e.g., from 25°C to 95°C) while monitoring fluorescence.

- Data Analysis: Plot fluorescence vs. temperature. Determine the Tm for the protein with and without the compound. A positive ΔTm indicates binding.

- Key Considerations: Run in duplicate or triplicate. Include a DMSO-only control. Compounds with intrinsic fluorescence can interfere [27].
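Tm is commonly read off as the temperature of the steepest fluorescence rise, the maximum of dF/dT. This NumPy sketch uses synthetic Boltzmann-shaped curves in place of instrument data. The curve shape, the 0.5 °C step and the 52/56 °C midpoints are illustrative assumptions. Real SYPRO Orange traces usually decline after the peak; the maximum-derivative approach tolerates this, but a full Boltzmann fit would need that region trimmed.

```python
import numpy as np

def melting_temperature(temps: np.ndarray, fluorescence: np.ndarray) -> float:
    """Tm estimated as the temperature where dF/dT is largest (first-derivative method)."""
    dF_dT = np.gradient(fluorescence, temps)  # central differences on the temperature grid
    return float(temps[np.argmax(dF_dT)])

def delta_tm(temps: np.ndarray, f_dmso: np.ndarray, f_compound: np.ndarray) -> float:
    """Thermal shift relative to the DMSO-only control; a positive value suggests stabilization."""
    return melting_temperature(temps, f_compound) - melting_temperature(temps, f_dmso)

def boltzmann(t: np.ndarray, tm: float, slope: float = 1.5) -> np.ndarray:
    """Synthetic two-state unfolding curve, used here only to generate example data."""
    return 1.0 / (1.0 + np.exp((tm - t) / slope))

temps = np.arange(25.0, 95.5, 0.5)  # 25-95 °C ramp in 0.5 °C steps
shift = delta_tm(temps, boltzmann(temps, 52.0), boltzmann(temps, 56.0))
```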

Protocol 2: Enzymatic Activity Assay using Mobility Shift

This is a robust, non-radiometric activity assay that directly measures substrate-to-product conversion [27].

- Principle: The kinase-mediated transfer of a phosphate group to a peptide substrate changes its net charge. Phosphorylated and non-phosphorylated peptides are separated via capillary electrophoresis and quantified by fluorescence.

- Procedure:

- Reaction Setup: Combine the active kinase, ATP (at a concentration of 2-4x its Km), the fluorescently-labeled peptide substrate, and the inhibitor compound in a suitable buffer.

- Incubation and Quenching: Allow the enzymatic reaction to proceed for a set time, then stop it with a quenching buffer.

- Separation and Detection: Load the mixture onto a microfluidics chip (e.g., Caliper system). An electric field separates the substrate and product, which are detected by their fluorescence.

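- Data Analysis: Calculate percent conversion from the product and substrate peaks (product / (substrate + product)). Provided the reaction stays in its linear range, this serves as a measure of reaction velocity. Determine the IC50 by fitting the activity at varying inhibitor concentrations to a dose-response curve [27].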

- Key Considerations: Use ATP concentrations near the Km to ensure sensitivity to competitive inhibitors. Use an active, preferably full-length, kinase construct for physiologically relevant results [27].
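The analysis steps above can be illustrated with a short Python sketch. It assumes a single-time-point reaction kept in its linear range, so that percent conversion stands in for velocity. The data are synthetic (a hypothetical 10-point, 3-fold dilution series with a true IC50 of 50 nM), the function names are illustrative, and the four-parameter logistic fit uses SciPy's `curve_fit`. The last step applies the Cheng-Prusoff relation for an ATP-competitive inhibitor, Ki = IC50 / (1 + [ATP]/Km). This relation explains why ATP concentration relative to Km matters: at [ATP] = Km the measured IC50 is 2 × Ki, and at 4 × Km it is 5 × Ki.

```python
import numpy as np
from scipy.optimize import curve_fit

def percent_conversion(substrate_peak, product_peak):
    """Percent of substrate converted to product, from the two peak heights."""
    return 100.0 * product_peak / (substrate_peak + product_peak)

def percent_activity(conversion, uninhibited, background=0.0):
    """Normalise conversion so that the DMSO (uninhibited) control is 100%."""
    return 100.0 * (conversion - background) / (uninhibited - background)

def four_pl(conc, bottom, top, ic50, hill):
    """Four-parameter logistic: activity falls from `top` to `bottom` as [I] rises."""
    return bottom + (top - bottom) / (1.0 + (conc / ic50) ** hill)

def cheng_prusoff_ki(ic50, atp, km_atp):
    """Ki for an ATP-competitive inhibitor: Ki = IC50 / (1 + [ATP] / Km)."""
    return ic50 / (1.0 + atp / km_atp)

# Synthetic data (illustrative): 10-point, 3-fold dilution from 1000 nM,
# true IC50 = 50 nM, and 30% conversion in the uninhibited control.
conc = 1000.0 / 3.0 ** np.arange(10)
true_activity = four_pl(conc, 0.0, 100.0, 50.0, 1.0)
product = 0.30 * true_activity / 100.0
substrate = 1.0 - product
conversion = percent_conversion(substrate, product)
activity = percent_activity(conversion, uninhibited=30.0)

# Bounded fit; p0 and bounds are assumptions chosen for this synthetic example.
params, _ = curve_fit(
    four_pl, conc, activity,
    p0=[0.0, 100.0, 100.0, 1.0],
    bounds=([-10.0, 50.0, 1e-2, 0.1], [50.0, 150.0, 1e5, 5.0]),
)
bottom, top, ic50, hill = params
ki = cheng_prusoff_ki(ic50, atp=20.0, km_atp=10.0)  # ATP at 2x its Km
print(f"IC50 ≈ {ic50:.1f} nM, Ki ≈ {ki:.1f} nM")
```

The Cheng-Prusoff conversion applies only to competitive inhibition under Michaelis-Menten conditions. Non-competitive or tight-binding inhibitors require different treatment.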

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful assay execution relies on high-quality reagents and instruments. The following table details key solutions for setting up binding and enzymatic activity assays.

| Item | Function & Application |
|---|---|
| Purified Protein Target | The isolated enzyme or protein used in both assay types. Full-length constructs including regulatory domains can improve correlation with cellular activity [27]. |
| SYPRO Orange Dye | A fluorescent dye used in DSF binding assays that binds to hydrophobic regions exposed during protein unfolding [27]. |
| Fluorescently-Labeled Peptide Substrate | A custom peptide serving as the phosphate acceptor in kinase activity assays (e.g., mobility shift). Its fluorescence allows for detection post-separation [27]. |
| Adenosine Triphosphate (ATP) | The essential co-substrate for kinase reactions. Its concentration must be carefully optimized (near Km) for sensitive inhibitor detection [27]. |
| Cytoplasm-Mimicking Buffer | A buffer designed to replicate intracellular conditions (high K+, crowding agents, specific pH). It can help align biochemical assay results with cell-based data [28]. |
| High-Throughput Microplates | 384- or 1536-well plates used to miniaturize assay volumes and increase screening throughput for both binding and activity assays [31] [33]. |

Binding and enzymatic activity assays are not competing techniques but rather complementary tools in the drug developer's arsenal. Binding assays offer a direct, function-agnostic measure of target engagement, making them ideal for initial, high-throughput affinity screening of compounds identified through chemogenomic models. Conversely, enzymatic activity assays provide functional validation, confirming that binding translates into the desired pharmacological effect and offering deeper mechanistic insights.

The future of assay development lies in creating more physiologically relevant conditions. As research highlights, performing biochemical assays in buffers that mimic the intracellular environment—considering factors like macromolecular crowding, viscosity, and salt composition—can significantly improve the correlation between biochemical Kd/IC50 values and cellular activity data [28]. This alignment is critical for building more predictive chemogenomic models and accelerating the successful translation of in silico predictions into viable therapeutic candidates. By strategically employing both binding and activity assays, researchers can build a robust and iterative cycle of computational prediction and experimental validation, ultimately de-risking the journey of drug discovery.

The growing complexity of drug discovery, particularly in the era of chemogenomics, demands experimental strategies that can efficiently validate predictions against multiple biological targets or pathways simultaneously. Universal assay platforms address this need by enabling high-content multiplexed analyses from a single sample, accelerating the validation of computational predictions while conserving scarce reagents and cellular material. These platforms can integrate multiple data types, such as protein and RNA expression, within a single experimental run, giving a more complete view of cellular responses to perturbation [34]. They also overcome a limitation of traditional, sequential data collection, which often fails to capture the interconnected biological responses that chemogenomic analyses reveal.

The core value of these platforms lies in their capacity for multiplexing, defined as the simultaneous evaluation of several experimental elements. This dramatically increases analytical throughput and reduces the time and cost burdens associated with investigating individual components in isolation [34]. For researchers validating chemogenomic models, which often generate vast lists of potential gene-compound interactions, this multiplexing capability is not merely a convenience but a necessity. It allows for the direct experimental interrogation of complex hypotheses regarding multi-target pharmacology and polypharmacology, which are increasingly recognized as fundamental to understanding drug efficacy and safety.

Comparative Analysis of Platform Technologies

This section objectively compares the performance, throughput, and applications of the major high-throughput screening platforms used for multi-target analysis, providing a foundation for selecting the appropriate technology for specific chemogenomic validation goals.

The selection of a universal assay platform involves trade-offs between throughput, content, and physiological relevance. High-Throughput Flow Cytometry (HTFC) excels in single-cell, multiparameter analysis, while integrated digital platforms provide a unified data architecture for the entire discovery workflow. AI-driven predictive models represent a complementary in silico approach that can prioritize experiments.

Table 1: Core Technology Comparison for Multi-Target Screening Platforms

| Platform Technology | Key Strengths | Typical Throughput | Multiplexing Capacity | Primary Applications in Chemogenomic Validation |
|---|---|---|---|---|
| High-Throughput Flow Cytometry (HTFC) | Single-cell resolution; Multi-parameter protein detection; Cell sorting capability | 50,000+ wells/day (384/1536-well) [35] | High (5+ colors, polychromatic) [36] | Immunophenotyping; Signaling profiling; Cell cycle analysis; Intracellular cytokine detection [36] |
| Integrated Digital Discovery Platforms | Unified data model; Workflow harmonization; AI/ML integration; Traceability from sequence to function | Process-wide (Design-Make-Test-Analyze cycles) [37] | Heterogeneous data integration (sequence, binding, expression) [37] | Antibody/biological optimization; Developability assessment; Large-molecule candidate management [37] |
| AI/ML with Metabolic Modeling (e.g., CALMA) | Simultaneous potency/toxicity prediction; Mechanistic interpretability; Pathway-level insight | In silico screening of vast combination spaces [38] | Analyzes multiple metabolic subsystems and pathways concurrently [38] | Prioritizing combination therapies; Identifying synergistic/antagonistic drug interactions; Mitigating toxicity [38] |

Quantitative Performance Metrics

A critical step in platform selection is the evaluation of empirical performance data. The following table summarizes key quantitative benchmarks for flow cytometry and AI-driven approaches, providing a basis for comparing their predictive accuracy and experimental efficiency.

Table 2: Experimental Performance Metrics of Screening Platforms

| Platform & Assay | Validated Prediction Accuracy / Correlation | Key Experimental Readouts | Sample Consumption |
|---|---|---|---|
| HTFC: CAR-T Cytotoxicity (Solid Tumors) | Functional characterization in a single assay [35] | Tumor cell killing; Immune cell activation markers; Cytokine secretion [35] | Adaptable to 384-/1536-well formats [35] |
| HTFC: Primary Cell Profiling | Multiplexed functional readouts in one well [35] | Cell surface markers; Intracellular phospho-proteins; Cytokines [35] | ~1/10th the cells of conventional methods [35] |
| AI Model: CALMA (E. coli) | R = 0.56, p ≈ 10⁻¹⁴ (171 pairwise combinations) [38] | Drug combination potency score; Toxicity score [38] | In silico (uses GEM-simulated flux profiles) [38] |
| AI Model: CALMA (M. tuberculosis) | R = 0.44, p ≈ 10⁻¹³ (232 multi-way combinations) [38] | Drug combination potency score; Treatment regimen efficacy [38] | In silico (uses GEM-simulated flux profiles) [38] |

Detailed Experimental Protocols for Platform Implementation

High-Throughput Flow Cytometry for Multiplexed Cell Signaling

This protocol, adapted from AstraZeneca's integrated systems, is designed for high-content analysis of cell signaling pathways in primary immune cells, enabling validation of chemogenomic predictions on kinase inhibitor function and immune cell activation [35].

Key Research Reagent Solutions:

- Fluorochrome-conjugated Antibodies: For simultaneous detection of cell surface and intracellular epitopes. Selection requires careful panel design to minimize spectral overlap.

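- Cell Barcoding Dyes (e.g., amine-reactive fluorescent dyes applied at distinct concentrations; palladium-based barcodes are the mass-cytometry counterpart): Allow pooling of multiple samples, reducing technical variation and reagent consumption [35].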

- Fixation/Permeabilization Buffers: Gentle, proprietary buffers are crucial for preserving surface epitopes while allowing intracellular antibody access.

- PrestoBlue Viability Reagent: A metabolism-based assay multiplexed with other probes to assess cell health [34].

- HyperCyt Sampling System: An automated sampler that serially aspirates samples from microplates, separating them with air bubbles for continuous acquisition, enabling throughput of 50,000+ wells per day [35].
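Because air bubbles separate consecutive samples, each well appears in the acquisition stream as a burst of events followed by an event-free gap. A minimal sketch of how such a stream could be split back into wells follows. It assumes only that each event carries a timestamp and that inter-well gaps exceed a chosen threshold. The vendor software performs this parsing itself; `split_by_gaps`, the 0.2 s threshold, and the simulated timings are hypothetical.

```python
import numpy as np

def split_by_gaps(event_times, min_gap):
    """Split a sorted stream of event timestamps wherever consecutive events are
    more than `min_gap` seconds apart; returns one index array per well."""
    gaps = np.diff(event_times)
    breaks = np.flatnonzero(gaps > min_gap) + 1
    return np.split(np.arange(event_times.size), breaks)

rng = np.random.default_rng(0)
# Hypothetical stream: 4 wells, each sampled for ~1.0 s, separated by >= 0.5 s bubbles.
starts = np.arange(4) * 1.5
times = np.sort(np.concatenate([s + rng.uniform(0.0, 1.0, size=200) for s in starts]))
wells = split_by_gaps(times, min_gap=0.2)
print([len(w) for w in wells])
```

A purely gap-based split is fragile if a well yields very few events or a bubble is missed; real systems can also use the known plate layout and timing to check the assignment.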

Workflow:

- Cell Preparation and Stimulation: Isolate primary cells (e.g., PBMCs) and plate in 384-well plates. Stimulate with cytokines, inhibitors, or other perturbagens in a dose-response format.

- Cell Barcoding and Staining: Barcode individual wells with unique combinations of fluorescent cell barcoding dyes. Pool wells, then incubate with antibody cocktails against surface markers (e.g., CD3, CD4, CD8). This step significantly reduces hands-on time and inter-well variability.

- Fixation and Permeabilization: Treat cells with a cross-linking fixative followed by a gentle permeabilization buffer. This critical step must be optimized to retain the integrity of surface markers while allowing access to intracellular targets.

- Intracellular Staining: Incubate with antibodies against intracellular targets (e.g., phospho-STAT5, phospho-S6, Ki-67) to quantify signaling pathway activation and cell cycle status.

- HTFC Acquisition: Acquire data using a high-throughput flow cytometer (e.g., IntelliCyt system) equipped with a HyperCyt autosampler. The system automatically parses data from the continuous stream into individual well-based files.

- Data Analysis: Use specialized software (e.g., Genedata Screener) for automated population gating, dose-response curve fitting, and calculation of IC₅₀/EC₅₀ values. The multiparameter data allows for deep analysis of heterogeneous cell responses.
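The barcoding step works because each well in a pool receives a distinct combination of dye intensity levels, which is decoded after acquisition. The sketch below assumes a hypothetical scheme of 2 dyes at 4 levels (16 wells per pool), log-scale intensities, and Gaussian staining noise. In practice deconvolution is done by gating in the analysis software, and cells falling between levels are usually excluded rather than forced into the nearest well, as this simplified version does.

```python
import itertools
import numpy as np

# Hypothetical barcode scheme: 2 dyes x 4 staining levels = 16 wells per pool.
LEVELS = np.array([1.0, 2.0, 3.0, 4.0])  # log10 intensity centres (illustrative)
N_DYES = 2
CODEBOOK = list(itertools.product(range(len(LEVELS)), repeat=N_DYES))  # well -> level tuple
LOOKUP = {code: well for well, code in enumerate(CODEBOOK)}

def decode_cells(log_intensity):
    """Assign each cell (row of log10 dye intensities) to a well index by snapping
    every dye channel to its nearest staining level and looking up the level tuple."""
    nearest = np.abs(log_intensity[:, :, None] - LEVELS[None, None, :]).argmin(axis=2)
    return np.array([LOOKUP[tuple(int(v) for v in row)] for row in nearest])

rng = np.random.default_rng(1)
true_wells = rng.integers(0, len(CODEBOOK), size=1000)
centres = LEVELS[np.array(CODEBOOK)[true_wells]]                # (1000, 2) ideal intensities
observed = centres + rng.normal(0.0, 0.1, size=centres.shape)   # simulated staining noise
decoded = decode_cells(observed)
print(f"correctly assigned: {np.mean(decoded == true_wells):.1%}")
```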

HTFC Multiplexed Signaling Workflow: This diagram outlines the key steps for a high-throughput flow cytometry assay, from cell preparation to automated data analysis.

Integrated AI & Metabolic Modeling for Combination Therapy Prediction

The CALMA (Combinatorial Antibiotic Therapy with Machine Learning) protocol provides a framework for predicting the potency and toxicity of drug combinations, serving as an in silico universal platform to guide experimental validation [38].

Key Research Reagent Solutions:

- Genome-Scale Metabolic Models (GEMs): Computational reconstructions of an organism's metabolic network at genome scale (e.g., iJO1366 for E. coli, iEK1008 for M. tuberculosis) [38].

- Chemogenomic/Transcriptomic Data: Used as constraints to simulate organism-specific metabolic states under drug treatment.

- Artificial Neural Network (ANN) Architecture: A customized model where the input layer structure is mapped to GEM subsystems, integrating mechanistic biology with deep learning.
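One way to express an input layer "mapped to GEM subsystems" is a weight mask that lets each feature connect only to the hidden unit for its own subsystem. The NumPy forward pass below is a hypothetical illustration of that idea, not CALMA's published architecture. The six features, three subsystems, tanh activation, and two-output head (potency, toxicity) are all assumptions. Training is omitted; during training the same mask would keep out-of-subsystem weights at zero.

```python
import numpy as np

# Hypothetical mapping of 6 joint-profile features to 3 GEM subsystems
# (e.g. 0 = central carbon metabolism, 1 = cell wall synthesis, 2 = nucleotides).
FEATURE_SUBSYSTEM = np.array([0, 0, 1, 1, 1, 2])
N_SUBSYSTEMS = 3

def subsystem_mask(feature_subsystem, n_subsystems):
    """Binary (features x subsystems) mask: 1 where a feature belongs to a subsystem."""
    mask = np.zeros((feature_subsystem.size, n_subsystems))
    mask[np.arange(feature_subsystem.size), feature_subsystem] = 1.0
    return mask

class SubsystemNet:
    """Toy forward pass: a masked first layer (one hidden unit per subsystem)
    followed by a dense layer with two outputs (potency score, toxicity score)."""

    def __init__(self, mask, rng):
        self.mask = mask
        self.w1 = rng.normal(size=mask.shape) * mask  # zero outside each subsystem block
        self.b1 = np.zeros(mask.shape[1])
        self.w2 = rng.normal(size=(mask.shape[1], 2))
        self.b2 = np.zeros(2)

    def forward(self, x):
        hidden = np.tanh(x @ (self.w1 * self.mask) + self.b1)  # subsystem-level activations
        return hidden @ self.w2 + self.b2                       # columns: [potency, toxicity]

rng = np.random.default_rng(0)
net = SubsystemNet(subsystem_mask(FEATURE_SUBSYSTEM, N_SUBSYSTEMS), rng)
x = rng.normal(size=(4, FEATURE_SUBSYSTEM.size))  # 4 hypothetical drug combinations
scores = net.forward(x)
print(scores.shape)
```

Because each hidden unit aggregates only one subsystem's features, its activation can be read as a subsystem-level signal, which is the kind of interpretability the masked design is meant to provide.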

Workflow:

- Flux Simulation under Drug Perturbation: Utilize GEMs to simulate metabolic reaction fluxes at a steady state. Constrain the models with chemogenomic (for E. coli) or transcriptomic (for M. tuberculosis) data from individual drug treatments.

- Joint Profile Feature Engineering: Process the individual reaction flux profiles. Discretize fluxes based on differential activity and generate joint profile features for drug combinations. These features (sigma and delta scores) mathematically represent the similarity and uniqueness of metabolic impacts between drugs.

- ANN Model for Prediction: Input the joint profile features into the custom ANN. The model's architecture groups inputs by metabolic subsystems (e.g., central carbon metabolism, cell wall synthesis), reflecting biological organization. The network then predicts both a potency score (efficacy against the pathogen) and a toxicity score (adverse effect on human cells).

- Experimental Validation: Prioritize drug combinations predicted to be high-potency and low-toxicity for in vitro validation. Use cell viability assays in bacterial cultures and human cell lines to confirm synergistic potency and reduced cytotoxicity, respectively [38].
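The three computational steps can be illustrated end to end on a toy network. The sketch below assumes a hypothetical four-reaction model and mimics each drug by lowering one reaction's upper bound. It then solves flux balance analysis as a linear program with SciPy's `linprog`, discretizes flux changes to -1/0/+1, and builds simplified sigma and delta features. These feature definitions are stand-ins chosen for clarity; the published CALMA scoring differs in detail, and a real GEM such as iJO1366 would normally be handled with a dedicated toolbox such as COBRApy.

```python
import numpy as np
from scipy.optimize import linprog

# Toy network (hypothetical): v0: -> A (uptake), v1: A -> B (efficient, capped),
# v2: 2 A -> B (inefficient), v3: B -> biomass (objective).
S = np.array([[1.0, -1.0, -2.0, 0.0],   # metabolite A
              [0.0, 1.0, 1.0, -1.0]])   # metabolite B
BASE_BOUNDS = [(0, 10), (0, 6), (0, 1000), (0, 1000)]
OBJECTIVE = np.array([0.0, 0.0, 0.0, -1.0])  # linprog minimises, so minimise -v3

def simulate(upper_bounds=None):
    """FBA: maximise biomass flux subject to S v = 0 (steady state) and flux bounds.
    `upper_bounds` maps reaction index -> reduced capacity (a crude drug effect)."""
    bounds = list(BASE_BOUNDS)
    for rxn, ub in (upper_bounds or {}).items():
        bounds[rxn] = (bounds[rxn][0], ub)
    res = linprog(OBJECTIVE, A_eq=S, b_eq=np.zeros(S.shape[0]), bounds=bounds, method="highs")
    if not res.success:
        raise RuntimeError(res.message)
    return res.x

def discretise(treated, reference, rel_tol=0.1, abs_tol=1e-6):
    """-1 / 0 / +1 per reaction: flux decreased, unchanged, or increased."""
    change = treated - reference
    significant = np.abs(change) > rel_tol * np.abs(reference) + abs_tol
    return np.where(significant, np.sign(change), 0.0).astype(int)

def joint_features(profiles):
    """Simplified sigma/delta features for a combination (hypothetical definitions).
    sigma: summed direction of change, so shared effects reinforce.
    delta: direction of change for reactions perturbed by exactly one drug."""
    profiles = np.asarray(profiles)
    sigma = profiles.sum(axis=0)
    unique = np.count_nonzero(profiles, axis=0) == 1
    delta = np.where(unique, sigma, 0)
    return np.concatenate([sigma, delta])

reference = simulate()                              # untreated flux profile
drug_x = discretise(simulate({1: 1.0}), reference)  # hypothetical drug X inhibits v1
drug_y = discretise(simulate({2: 0.0}), reference)  # hypothetical drug Y blocks v2
features = joint_features([drug_x, drug_y])         # input vector for a downstream model
print(reference, drug_x, drug_y, features)
```

For this toy model the untreated optimum is unique (v = [10, 6, 2, 8]). Genome-scale models often have alternative optimal flux distributions, which is one reason published pipelines add further constraints before comparing profiles.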