From Functional Features to Data Patterns: Comparing Traditional Pharmacophore and Modern Informacophore Approaches in Drug Discovery

Abstract

This article provides a comprehensive comparison between traditional pharmacophore modeling and the emerging informacophore paradigm in computer-aided drug design. Aimed at researchers, scientists, and drug development professionals, it explores the foundational concepts of both approaches, detailing their methodological workflows and key applications in virtual screening, lead optimization, and scaffold hopping. The content addresses common limitations and optimization strategies, and presents a comparative analysis of their performance, validation metrics, and suitability for different drug discovery scenarios. By bringing together these four themes (foundations, methods and applications, limitations and optimization, and comparative validation), this review serves as a strategic guide for selecting and implementing these complementary computational techniques to accelerate therapeutic development.

Pharmacophore vs. Informacophore: Understanding the Core Concepts and Evolutionary Journey

In the field of computer-aided drug design, the pharmacophore concept serves as an indispensable abstract model for understanding and predicting molecular recognition. According to the official definition by the International Union of Pure and Applied Chemistry (IUPAC), a pharmacophore represents "the ensemble of steric and electronic features that is necessary to ensure the optimal supramolecular interactions with a specific biological target structure and to trigger (or to block) its biological response" [1] [2] [3]. This definition emphasizes that pharmacophores do not represent specific molecular structures or functional groups, but rather an abstract description of the stereoelectronic molecular properties essential for biological activity. The fundamental premise underlying this concept is that structurally diverse molecules sharing common pharmacophoric features should be recognized by the same biological target and exhibit similar biological profiles [1].

The pharmacophore concept was popularized by Lemont Kier, who described the idea in 1967 and used the term itself in a 1971 publication [2]. Paul Ehrlich is often credited with the concept, but this is a common misconception: Ehrlich used the term "toxophore" instead, and the modern pharmacophore concept differs significantly from his original ideas [2] [3]. The traditional pharmacophore has evolved to become a cornerstone in medicinal chemistry, providing a framework for describing, explaining, and visualizing ligand-target binding modes in an intuitive manner that resonates with medicinal chemists [1]. This conceptual framework enables researchers to transcend specific chemical scaffolds and focus on the essential molecular interaction capacities required for biological activity, thereby facilitating critical drug discovery processes such as virtual screening, lead optimization, and scaffold hopping [1] [4].

Core Steric and Electronic Features of Traditional Pharmacophores

Fundamental Feature Types and Their Geometric Representations

The traditional pharmacophore model abstracts key molecular interactions into a limited set of fundamental feature types, each with specific geometric representations and complementary interaction partners. These features capture the essential steric and electronic properties that molecules must possess to interact effectively with biological targets. The table below summarizes the core pharmacophore features, their geometric representations, and their roles in molecular recognition.

Table 1: Fundamental pharmacophore features and their characteristics

| Feature Type | Geometric Representation | Complementary Feature Type(s) | Interaction Type(s) | Structural Examples |
|---|---|---|---|---|
| Hydrogen-Bond Acceptor (HBA) | Vector or Sphere | HBD | Hydrogen Bonding | Amines, Carboxylates, Ketones, Alcohols, Fluorine Substituents |
| Hydrogen-Bond Donor (HBD) | Vector or Sphere | HBA | Hydrogen Bonding | Amines, Amides, Alcohols |
| Aromatic (AR) | Plane or Sphere | AR, PI | π-Stacking, Cation-π | Any Aromatic Ring |
| Positive Ionizable (PI) | Sphere | AR, NI | Ionic, Cation-π | Ammonium Ion, Metal Cations |
| Negative Ionizable (NI) | Sphere | PI | Ionic | Carboxylates |
| Hydrophobic (H) | Sphere | H | Hydrophobic Contact | Halogen Substituents, Alkyl Groups, Alicycles |

Source: Adapted from [1]

The choice of feature set profoundly impacts model quality, with current software packages striving to balance generality and selectivity [1]. Overly specific feature sets may miss structurally diverse active compounds, while excessively general features may lack discriminatory power. The geometric representation of these features (spheres, vectors, or planes) depends on the directional nature of the interactions they represent. For instance, vector representations are typically used for directed interactions like hydrogen bonding, while spheres suffice for undirected interactions such as hydrophobic contacts [1].

Incorporating Shape Constraints and Exclusion Volumes

Beyond the core electronic features, traditional pharmacophore models incorporate shape constraints to account for spatial restrictions imposed by the binding site architecture. This is typically achieved through exclusion volumes that represent receptor areas where ligand atoms cannot occupy space without causing steric clashes [1]. These volumes can vary in size and are strategically placed based on the union of molecular shapes of aligned known actives or, more reliably, from X-ray structures of ligand-receptor complexes [1]. The inclusion of shape constraints ensures that pharmacophore models not only identify molecules capable of forming key interactions but also those with compatible three-dimensional shapes that can be accommodated within the binding site without unfavorable steric interactions [1].
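
As a minimal sketch of how feature spheres and exclusion volumes might be checked together, the Python snippet below tests one ligand conformer against a model. The `Sphere` class, the `satisfies` function, the `"EXCL"` label, and all coordinates and radii are illustrative assumptions rather than part of any pharmacophore package, and directional (vector or plane) features are deliberately left out.

```python
from dataclasses import dataclass
from math import dist


@dataclass(frozen=True)
class Sphere:
    """A spherical model element: feature type, center in Å, and radius in Å."""
    kind: str                              # e.g. "HBA", "HBD", "H", or "EXCL" (hypothetical label)
    center: tuple[float, float, float]
    radius: float


def satisfies(model, ligand_features, ligand_atoms):
    """True if every feature sphere is matched and no atom enters an exclusion volume."""
    features = [s for s in model if s.kind != "EXCL"]
    exclusions = [s for s in model if s.kind == "EXCL"]
    # Each model feature needs a ligand feature of the same type inside its sphere.
    for f in features:
        if not any(kind == f.kind and dist(pos, f.center) <= f.radius
                   for kind, pos in ligand_features):
            return False
    # Any ligand atom inside an exclusion sphere counts as a steric clash.
    return not any(dist(atom, e.center) < e.radius
                   for atom in ligand_atoms for e in exclusions)


# Invented example data: two required features and one exclusion volume.
model = [
    Sphere("HBA", (0.0, 0.0, 0.0), 1.0),
    Sphere("H", (5.0, 0.0, 0.0), 1.5),
    Sphere("EXCL", (2.5, 3.0, 0.0), 1.0),
]
ligand_features = [("HBA", (0.3, 0.0, 0.0)), ("H", (5.5, 0.5, 0.0))]
ligand_atoms = [(0.3, 0.0, 0.0), (2.5, 0.0, 0.0), (5.5, 0.5, 0.0)]
print(satisfies(model, ligand_features, ligand_atoms))
```

Treating exclusion volumes as hard spheres keeps the check simple. Real tools may apply tolerances or penalties instead of a strict pass/fail test.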

Methodological Approaches to Pharmacophore Model Development

Structure-Based Pharmacophore Modeling

Structure-based pharmacophore modeling leverages three-dimensional structural information about biological targets, typically obtained from X-ray crystallography or NMR spectroscopy, to derive pharmacophore features directly from ligand-receptor interactions [1] [4]. When a protein-ligand complex structure is available, the atomic coordinates guide precise placement of pharmacophoric features based on observed interactions, while receptor structure information facilitates the incorporation of shape constraints [1]. The workflow for structure-based pharmacophore modeling involves several critical steps: protein preparation, ligand-binding site detection, pharmacophore feature generation, and selection of relevant features for ligand activity [4].

Table 2: Comparison of structure-based pharmacophore generation techniques

| Method Aspect | X-ray Crystallography-Based | NMR Spectroscopy-Based |
|---|---|---|
| Protein Flexibility | Limited to crystal contacts and multiple structures | Inherently captures flexibility through ensemble of models |
| Pharmacophore Elements | More elements, often including peripheral features | Focused on essential, conserved interactions |
| Model Refinement | Often requires dropping peripheral elements for optimal performance | Optimal performance with all elements |
| Data Requirements | High-resolution structure with or without bound ligand | Ensemble of NMR models |
| Key Advantage | High precision of feature placement | Better representation of dynamic binding site |

Source: Adapted from [5]

As revealed in comparative studies, pharmacophore models derived from NMR ensembles often outperform those from crystal structures due to better representation of protein flexibility. NMR-based models naturally focus on the most essential interactions, while crystal structures may include peripheral, non-essential pharmacophore elements that arise from decreased protein flexibility in crystalline states [5].

Ligand-Based Pharmacophore Modeling

When three-dimensional target structures are unavailable, pharmacophore models can be derived exclusively from known active ligands through ligand-based approaches [1] [4]. This methodology requires a set of active molecules that bind to the same receptor site in the same orientation, and involves several key steps: selecting a training set of structurally diverse active molecules, generating low-energy conformations for each molecule, superimposing all combinations of these conformations, and abstracting the common molecular features into a pharmacophore hypothesis [2]. The fundamental assumption is that molecules sharing a common binding mode and biological activity will contain similar spatial arrangements of chemical features responsible for target recognition [1].

The quality of ligand-based pharmacophore models depends heavily on the conformational analysis and molecular alignment steps. The set of conformations that results in the best fit across active molecules is presumed to represent the bioactive conformation [2]. Additionally, the inclusion of known inactive compounds in the training set can help identify features that should be excluded from the model, thereby enhancing its discriminatory power [2]. The resulting model represents the largest common denominator of chemical features shared by active molecules, transformed into an abstract representation of essential pharmacophore elements [2].
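
The idea of a shared set of features across actives can be illustrated with a small Python sketch. It covers only the feature-type bookkeeping: conformer generation and 3D superposition are not shown. The function name `common_feature_counts` and the feature lists are hypothetical and assume that features have already been perceived for each ligand.

```python
from collections import Counter
from functools import reduce


def common_feature_counts(actives):
    """Multiset intersection of feature types across all active ligands.

    `actives` is a list of per-ligand feature-type lists, e.g. ["HBA", "AR", "H"].
    The result says how many features of each type every active can supply.
    """
    if not actives:
        return {}
    counters = [Counter(features) for features in actives]
    # Counter & Counter keeps the minimum count per key and drops zero counts.
    return dict(reduce(lambda a, b: a & b, counters))


# Invented feature lists for three structurally different actives.
actives = [
    ["HBA", "HBA", "HBD", "AR", "H"],
    ["HBA", "AR", "H", "H", "PI"],
    ["HBA", "HBD", "AR", "H"],
]
print(common_feature_counts(actives))
```

A feature type that survives this intersection is only a candidate. Whether the features can also be overlaid in space is decided by the alignment step described above.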

Experimental Validation and Performance Assessment

Benchmark Comparisons with Docking-Based Virtual Screening

The performance of pharmacophore-based virtual screening has been rigorously evaluated against docking-based methods in comprehensive benchmark studies across multiple protein targets. These comparisons provide valuable experimental data on the relative strengths and limitations of each approach under standardized conditions.

Table 3: Performance comparison of pharmacophore-based vs. docking-based virtual screening across eight protein targets

| Screening Method | Average Enrichment Factor | Hit Rate at 2% Database | Hit Rate at 5% Database | Key Strengths |
|---|---|---|---|---|
| Pharmacophore-Based (Catalyst) | Higher in 14/16 test cases | Much higher | Much higher | Better discrimination of actives from decoys |
| Docking-Based (DOCK) | Lower | Lower | Lower | Detailed binding pose prediction |
| Docking-Based (GOLD) | Lower | Lower | Lower | Handling of protein flexibility |
| Docking-Based (Glide) | Lower | Lower | Lower | Accurate scoring functions |

Source: Adapted from [6]

In a landmark study evaluating eight structurally diverse protein targets, pharmacophore-based virtual screening outperformed docking-based methods in retrieving active compounds from databases in the majority of test cases [6]. The superior enrichment factors and hit rates demonstrated by pharmacophore-based approaches highlight their effectiveness as powerful tools in early drug discovery stages, particularly for rapidly filtering large chemical databases to identify potential hit compounds [6].

Experimental Protocols for Pharmacophore Model Validation

The validation of pharmacophore models follows standardized experimental protocols to ensure their predictive power and reliability. A typical validation workflow includes several critical steps: First, a database of known active compounds and decoy molecules is prepared, with care taken to ensure structural diversity and appropriate activity cutoffs [5]. The pharmacophore model is then used as a search query against this database, and its ability to correctly identify active compounds while rejecting decoys is quantified using metrics such as enrichment factors, hit rates, and receiver operating characteristic curves [5] [6].
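
The metrics named above can be computed from a ranked screening list in a few lines. The following Python sketch assumes the database has already been sorted from best to worst pharmacophore fit and that each entry is labeled 1 (active) or 0 (decoy). The function names and the example ranking are illustrative, and tied scores are not handled.

```python
def hit_rate(ranked_labels, fraction):
    """Fraction of actives among the top `fraction` of the ranked database."""
    n_top = max(1, round(len(ranked_labels) * fraction))
    return sum(ranked_labels[:n_top]) / n_top


def enrichment_factor(ranked_labels, fraction):
    """Hit rate in the top slice divided by the hit rate of the whole database."""
    overall = sum(ranked_labels) / len(ranked_labels)
    return hit_rate(ranked_labels, fraction) / overall if overall else 0.0


def roc_auc(ranked_labels):
    """ROC AUC as the share of (active, decoy) pairs with the active ranked first."""
    n_actives = sum(ranked_labels)
    n_decoys = len(ranked_labels) - n_actives
    if n_actives == 0 or n_decoys == 0:
        raise ValueError("need at least one active and one decoy")
    actives_seen = 0
    correctly_ordered_pairs = 0
    for label in ranked_labels:
        if label:
            actives_seen += 1
        else:
            # Every active seen so far outranks this decoy.
            correctly_ordered_pairs += actives_seen
    return correctly_ordered_pairs / (n_actives * n_decoys)


# Invented ranking: 10 compounds, 3 actives, best-scoring compound first.
ranked = [1, 1, 0, 1, 0, 0, 0, 0, 0, 0]
print(hit_rate(ranked, 0.2), enrichment_factor(ranked, 0.2), roc_auc(ranked))
```

An enrichment factor above 1 means the top of the list is richer in actives than the database as a whole. An AUC of 0.5 corresponds to random ranking.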

Rigorous validation also includes assessing the model's sensitivity to the inclusion or exclusion of specific pharmacophore features, as demonstrated in studies where truncation of peripheral features in crystal-based models improved or maintained performance [5]. Additionally, the generation of multiple conformations for test compounds (typically with a heavy-atom RMSD constraint of 2 Å and an energy cutoff of 25 kcal/mol) ensures comprehensive coverage of potential binding orientations [5]. This systematic approach to validation provides medicinal chemists with confidence in applying pharmacophore models for virtual screening and lead optimization campaigns.
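
One plausible reading of these conformer settings is sketched below in Python: keep conformers within 25 kcal/mol of the lowest-energy one, and drop any conformer closer than 2 Å RMSD to one already kept. This interpretation, the function names, and the toy coordinates are assumptions, not the documented behavior of any conformer generator. The RMSD here is computed without superposition, which a real workflow would normally perform first.

```python
from math import sqrt


def rmsd(coords_a, coords_b):
    """RMSD in Å between two equally ordered heavy-atom coordinate lists (no alignment)."""
    squared = sum((p - q) ** 2
                  for atom_a, atom_b in zip(coords_a, coords_b)
                  for p, q in zip(atom_a, atom_b))
    return sqrt(squared / len(coords_a))


def prune_conformers(conformers, energy_window=25.0, rmsd_cutoff=2.0):
    """Keep low-energy, mutually distinct conformers.

    `conformers` is a list of (energy_kcal_per_mol, coords) tuples.
    """
    if not conformers:
        return []
    ordered = sorted(conformers, key=lambda conf: conf[0])
    lowest_energy = ordered[0][0]
    kept = []
    for energy, coords in ordered:
        if energy - lowest_energy > energy_window:
            break  # all remaining conformers are even higher in energy
        if all(rmsd(coords, kept_coords) >= rmsd_cutoff for _, kept_coords in kept):
            kept.append((energy, coords))
    return kept


# Invented two-atom "conformers" purely to exercise the filter.
conformers = [
    (5.0, [(0.0, 0.0, 0.0), (1.0, 0.5, 0.0)]),   # too similar to the lowest-energy one
    (0.0, [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]),   # lowest energy, always kept
    (40.0, [(0.0, 0.0, 0.0), (1.0, 9.0, 0.0)]),  # outside the energy window
    (10.0, [(0.0, 0.0, 0.0), (1.0, 4.0, 0.0)]),  # distinct and within the window
]
print([energy for energy, _ in prune_conformers(conformers)])
```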

The Traditional Pharmacophore in the Age of Informatics

Comparison with Emerging Informacophore Approaches

While the traditional pharmacophore is rooted in human-defined heuristics and chemical intuition, recent advances in data science have catalyzed the emergence of the "informacophore" concept, which extends the traditional approach by incorporating data-driven insights derived from computed molecular descriptors, fingerprints, and machine-learned representations of chemical structure [7]. This evolution represents a paradigm shift from intuition-based methods to predictive analytics leveraging ultra-large chemical datasets.

Table 4: Traditional pharmacophore vs. informacophore approaches

| Aspect | Traditional Pharmacophore | Informacophore |
|---|---|---|
| Basis | Human-defined heuristics and chemical intuition | Data-driven patterns from large datasets |
| Features | Steric and electronic features (HBA, HBD, hydrophobic, etc.) | Combined structural, computed descriptors, and ML representations |
| Interpretability | High - directly mappable to chemical structures | Variable - can be challenging to interpret |
| Data Requirements | Limited to known actives and structural biology data | Ultra-large chemical libraries and bioactivity data |
| Scaffold Exploration | Scaffold hopping within defined chemical space | Broader exploration of patentable chemical space |

Source: Adapted from [7]

The informacophore framework leverages machine learning algorithms to process vast amounts of chemical information rapidly and accurately, identifying hidden patterns beyond human heuristic capacity [7]. However, this enhanced predictive power often comes at the cost of interpretability, as learned features may become opaque and difficult to link back to specific chemical properties [7]. Hybrid approaches that combine interpretable chemical descriptors with machine-learned representations are emerging to bridge this interpretability gap, maintaining the chemical intuition valued by medicinal chemists while harnessing the power of big data [7].

Integration with Modern Deep Learning Approaches

Traditional pharmacophore concepts are finding new relevance in guiding modern deep learning approaches for bioactive molecular generation. Methods like the Pharmacophore-Guided deep learning approach for bioactive Molecule Generation (PGMG) use pharmacophore hypotheses as bridges to connect different types of activity data, enabling flexible generation without further fine-tuning in different drug design scenarios [8]. These approaches represent pharmacophores as complete graphs with nodes corresponding to pharmacophore features, allowing spatial information to be encoded as distances between node pairs [8].
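
The complete-graph representation can be sketched in a few lines of Python. This is an illustration of the data structure only, not PGMG's actual encoding: the function name `pharmacophore_graph` and the coordinates are hypothetical, and Euclidean distance is assumed here although the method in [8] may define pairwise distance differently.

```python
from itertools import combinations
from math import dist


def pharmacophore_graph(features):
    """Build a complete graph from (feature_type, (x, y, z)) tuples.

    Returns the node labels and a dict mapping each node pair (i, j), i < j,
    to the distance between the two feature centers.
    """
    nodes = [kind for kind, _ in features]
    edges = {
        (i, j): dist(features[i][1], features[j][1])
        for i, j in combinations(range(len(features)), 2)
    }
    return nodes, edges


# Invented three-feature hypothesis with coordinates in Å.
features = [
    ("HBA", (0.0, 0.0, 0.0)),
    ("HBD", (3.0, 4.0, 0.0)),
    ("AR", (0.0, 0.0, 5.0)),
]
nodes, edges = pharmacophore_graph(features)
print(nodes, edges)
```

Because the graph stores only pairwise distances, it is unchanged when the whole hypothesis is rotated or translated, which is one reason this kind of encoding suits a learning model.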

The integration of pharmacophore guidance with deep learning demonstrates how traditional medicinal chemistry concepts can enhance cutting-edge AI methods. By providing biologically meaningful constraints, pharmacophore guidance improves the efficiency of exploring chemical space and increases the likelihood of generating biologically active compounds with desired properties [8] [9]. This synergy between traditional knowledge and modern algorithms represents a promising direction for computational drug discovery, potentially accelerating the identification of novel therapeutic candidates while maintaining interpretability and chemical feasibility.

Research Reagent Solutions: Essential Tools for Pharmacophore Modeling

The experimental implementation of pharmacophore modeling and validation relies on a suite of specialized computational tools and databases that constitute the essential "research reagents" in this field.

Table 5: Essential research reagents for pharmacophore modeling and validation

| Tool/Database | Type | Primary Function | Key Applications |
|---|---|---|---|
| MOE | Software Suite | Pharmacophore model generation and virtual screening | Structure-based and ligand-based pharmacophore modeling |
| LigandScout | Software | 3D pharmacophore modeling from protein-ligand complexes | Structure-based pharmacophore generation |
| Catalyst/HipHop | Software | 3D pharmacophore modeling and virtual screening | Ligand-based pharmacophore generation and screening |
| Phase | Software | Pharmacophore model development and 3D-QSAR | Complex pharmacophore modeling and activity prediction |
| ChEMBL | Database | Bioactivity data for known active compounds | Training set creation and model validation |
| Protein Data Bank | Database | 3D structures of proteins and complexes | Structure-based pharmacophore generation |
| BOSS | Software | Molecular minimization and conformational analysis | Probe minimization in structure-based modeling |
| OMEGA | Software | Conformation generation for small molecules | Preparing compound databases for virtual screening |

Source: Adapted from [1] [2] [5]

These tools enable the entire pharmacophore modeling workflow, from initial data preparation through model generation and validation. The availability of comprehensive bioactivity databases like ChEMBL and structural databases like the Protein Data Bank provides the essential experimental foundation for developing and testing pharmacophore models, while specialized software implements the algorithms for feature identification, molecular alignment, and virtual screening [5] [4].

The traditional pharmacophore, with its focus on the essential steric and electronic features required for molecular recognition, remains a fundamental concept in drug discovery. Its power lies in the abstract representation of key interaction patterns independent of specific molecular scaffolds, enabling medicinal chemists to transcend structural biases and identify novel active compounds. While emerging informacophore approaches leverage big data and machine learning to enhance predictive power, they build upon the foundational framework established by traditional pharmacophore modeling. The integration of these approaches—combining the interpretability and chemical intuition of traditional methods with the scalability and pattern recognition capabilities of modern informatics—represents the most promising path forward for accelerating drug discovery and addressing unmet medical needs.

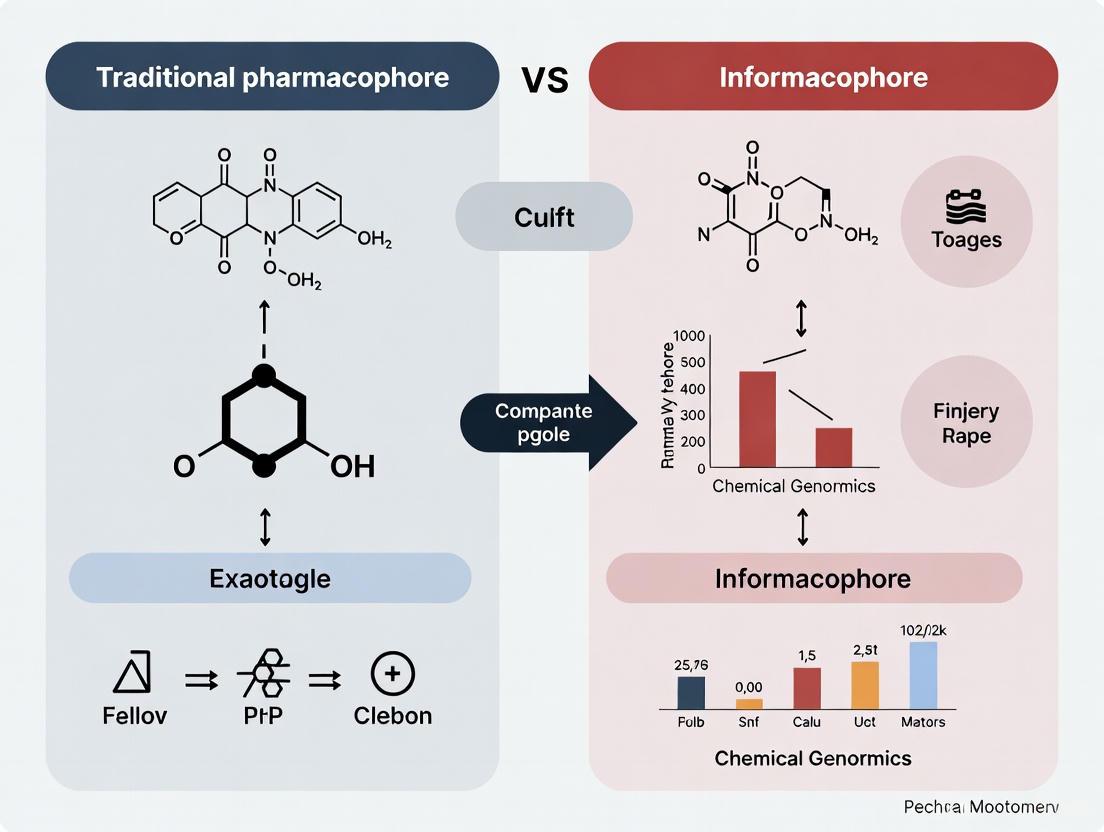

Diagram 1: Traditional pharmacophore modeling workflow, showing structure-based and ligand-based approaches converging to model validation and application.

The International Union of Pure and Applied Chemistry (IUPAC) defines a pharmacophore as "the ensemble of steric and electronic features that is necessary to ensure the optimal supramolecular interactions with a specific biological target structure and to trigger (or to block) its biological response" [10]. This definition establishes the pharmacophore as an abstract concept representing the essential functional components required for molecular recognition, rather than a specific molecular structure itself [3]. In practical terms, a pharmacophore captures the key molecular interaction capacities of a compound class toward its biological target through features including hydrogen-bond acceptors (HBA), hydrogen-bond donors (HBD), hydrophobic areas (H), positively and negatively ionizable groups (PI/NI), aromatic rings (AR), and metal-coordinating regions [4].

The emerging concept of the informacophore extends this foundational principle by integrating data-driven insights with traditional chemical intuition. The informacophore represents "the minimal chemical structure, combined with computed molecular descriptors, fingerprints, and machine-learned representations of its structure, that are essential for a molecule to exhibit biological activity" [7]. This evolution from human-defined heuristics to computational feature extraction represents a paradigm shift in how scientists conceptualize and optimize molecular interactions in drug discovery.

Table 1: Fundamental Definitions and Conceptual Frameworks

| Concept | Definition | Core Components | Primary Application |
|---|---|---|---|
| Pharmacophore | "Ensemble of steric and electronic features for optimal supramolecular interactions" (IUPAC) [10] | HBA, HBD, Hydrophobic, Ionizable, Aromatic features [4] | Structure-based and ligand-based drug design |
| Informacophore | "Minimal structure with computed descriptors and machine-learned representations" [7] | Molecular descriptors, fingerprints, ML representations, bioactivity data [7] | Data-driven drug discovery and AI-assisted molecular design |
| Supramolecular Chemistry | "Field related to species of greater complexity than molecules held together by intermolecular interactions" [11] | Supermolecules, membranes, vesicles, micelles, solid-state structures [11] | Drug delivery systems, material science, nanotechnology |

Methodological Comparison: Traditional vs. Contemporary Approaches

Traditional Pharmacophore Modeling Workflows

Traditional pharmacophore modeling employs two established methodological frameworks: structure-based and ligand-based approaches. Structure-based pharmacophore modeling relies on the three-dimensional structure of a macromolecular target, typically obtained from X-ray crystallography, NMR spectroscopy, or computational homology modeling [4]. The workflow initiates with critical protein structure preparation, including evaluation of residue protonation states, hydrogen atom positioning, and correction of structural artifacts. Subsequent binding site detection utilizes programs such as GRID or LUDI to identify potential interaction sites through geometric and energetic analyses [4]. Pharmacophore features are then generated through meticulous analysis of the interaction landscape between the target and known active ligands, with careful selection of only the most essential features for biological activity incorporated into the final model [4].

Ligand-based pharmacophore modeling represents a complementary approach employed when structural information for the biological target is unavailable. This methodology develops 3D pharmacophore hypotheses through comparative analysis of the physicochemical properties and spatial arrangements of known active ligands [4] [3]. Using tools like HypoGen or Phase, researchers identify common molecular interaction features across structurally diverse compounds that exhibit the desired biological activity, creating models that reflect the essential steric and electronic requirements for target engagement without explicit knowledge of the receptor structure [12].

Informacophore Development and Implementation

The informacophore framework incorporates machine learning and large-scale data analytics to transcend the limitations of human pattern recognition in chemical space. Whereas traditional pharmacophore models rely on medicinal chemists' intuition and visual structural motif recognition, informacophores leverage machine learning algorithms capable of processing vast chemical information repositories to identify patterns beyond human cognitive capacity [7]. This approach becomes particularly valuable when navigating ultra-large chemical spaces, such as the "make-on-demand" virtual libraries offered by suppliers like Enamine and OTAVA, which contain 65 and 55 billion novel compounds respectively [7].

The computational workflow for informacophore development typically involves featurization of molecular structures through descriptor calculation and fingerprint generation, followed by model training using various machine learning architectures (including deep learning models) on bioactivity data, and finally validation through both computational metrics and experimental verification in iterative design-make-test-analyze cycles [7]. A prominent example of this methodology is the Pharmacophore-Guided deep learning approach for bioactive Molecule Generation (PGMG), which uses graph neural networks to encode spatially distributed chemical features and transformer decoders to generate novel bioactive molecules matching specified pharmacophore hypotheses [8].

Table 2: Methodological Comparison of Implementation Approaches

| Methodological Aspect | Traditional Pharmacophore | Informacophore Approach |
|---|---|---|
| Feature Identification | Manual analysis of protein-ligand interactions or ligand alignment [4] | Automated extraction via ML algorithms from large datasets [7] |
| Data Requirements | Known active ligands or protein structure [4] | Large-scale bioactivity data, molecular descriptors [7] |
| Key Software/Tools | Catalyst, Discovery Studio, LigandScout, Phase [4] [3] | Deep learning frameworks, custom ML pipelines, PGMG [8] |
| Model Interpretability | High - features directly mappable to chemical functionalities [4] | Variable - potential "black box" challenge with complex models [7] |
| Scalability | Limited by human expertise and dataset size [7] | High - capable of screening billions of compounds [7] |

Experimental Protocols and Validation Frameworks

Structure-Based Pharmacophore Modeling Protocol

Building and validating a structure-based pharmacophore model follows a stepwise protocol designed to ensure biological relevance:

- Protein Preparation: Retrieve the 3D structure from the Protein Data Bank (PDB) and preprocess using tools like Molecular Operating Environment (MOE) or Discovery Studio. Critical steps include adding hydrogen atoms, optimizing protonation states of residues, correcting missing atoms/regions, and energy minimization [4].

- Binding Site Analysis: Identify the ligand-binding site using computational tools such as GRID or LUDI, which employ probe-based methods to detect energetically favorable interaction regions. Alternatively, analyze co-crystallized ligands if available [4].

- Feature Generation and Selection: Extract potential pharmacophore features from the binding site, including hydrogen bond donors/acceptors, hydrophobic regions, and ionic interaction sites. Select the most biologically relevant features through conservation analysis across multiple ligand complexes or essential residue identification through mutagenesis data [4].

- Exclusion Volume Definition: Add exclusion volumes to represent steric constraints of the binding pocket, preventing false positives with unfavorable steric clashes [4].

- Virtual Screening Validation: Employ the validated pharmacophore model as a 3D query to screen compound databases. Top-ranking compounds proceed to in vitro testing for experimental validation of predicted activity [4].

Informacophore Development and Testing Protocol

The development and validation of informacophores incorporate both computational and experimental phases:

- Data Curation and Featurization: Collect large-scale bioactivity data from sources like ChEMBL. Generate comprehensive molecular descriptors and fingerprints using tools such as RDKit [8].

- Model Training: Implement machine learning architectures (e.g., graph neural networks, transformers) to learn the mapping between chemical features and biological activity. For generative applications, employ latent variable models to handle the many-to-many relationship between pharmacophores and molecules [8].

- Computational Validation: Evaluate generated molecules using multiple metrics: validity (chemical correctness), uniqueness (absence of duplicates among the generated molecules), novelty (absence from the training set), and drug-likeness (adherence to physicochemical property guidelines) [8].

- Experimental Confirmation: Subject computationally prioritized compounds to biological functional assays including enzyme inhibition, cell viability, and pathway-specific readouts to establish real-world pharmacological relevance [7].

- Iterative Optimization: Use experimental results to refine the informacophore model, creating a continuous feedback loop for improved predictive performance [7].
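
The computational validation step in the protocol above can be sketched as follows. The definitions used here (validity over all generated molecules, uniqueness over valid ones, novelty over unique ones) follow a common convention for generative models and are assumed, not confirmed, to match [8]. The `is_valid` callback is a hypothetical stand-in for a real chemistry parser, and molecules are compared as plain strings, which presumes canonical SMILES.

```python
def generation_metrics(generated, training_set, is_valid):
    """Validity, uniqueness, and novelty for a batch of generated SMILES strings."""
    valid = [smiles for smiles in generated if is_valid(smiles)]
    unique = set(valid)
    novel = unique - set(training_set)
    return {
        "validity": len(valid) / len(generated) if generated else 0.0,
        "uniqueness": len(unique) / len(valid) if valid else 0.0,
        "novelty": len(novel) / len(unique) if unique else 0.0,
    }


def balanced_parentheses(smiles):
    """Toy validity check (assumption): only tests that branch parentheses match up."""
    return smiles.count("(") == smiles.count(")")


# Invented batch: one duplicate, one malformed string, one training-set molecule.
generated = ["CCO", "CCO", "c1ccccc1", "C(", "CCN"]
training_set = {"CCO"}
metrics = generation_metrics(generated, training_set, balanced_parentheses)
print(metrics)
```

In practice the validity check would be delegated to a cheminformatics toolkit such as RDKit, which the protocol already lists for featurization.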

Comparative Performance Analysis

Virtual Screening Performance

Traditional pharmacophore models have demonstrated consistent performance in virtual screening applications. When applied to database screening, these models typically achieve hit rates of 1-10% for compounds exhibiting micromolar activity, substantially outperforming random screening [4]. The strength of pharmacophore approaches lies in their scaffold-hopping capability—identifying structurally diverse compounds that share essential interaction features—making them particularly valuable for intellectual property expansion and lead series diversification [4] [3].

Informacophore-based screening methods show enhanced performance in navigating ultra-large chemical spaces. In benchmark studies, the PGMG approach generated molecules with strong docking affinities while maintaining high scores of validity (95.14%), uniqueness (98.98%), and novelty (85.60%) [8]. This demonstrates the capability of informacophore-guided approaches to explore chemical space more efficiently while maintaining structural novelty and drug-like properties.

Drug Discovery Timeline and Cost Implications

The traditional drug discovery pipeline remains lengthy and expensive, requiring an average of $2.6 billion and over 12 years from target identification to clinical approval [7]. Pharmacophore-based methods have historically helped accelerate the early hit identification phase, but still depend heavily on medicinal chemist intuition and iterative optimization cycles.

Informacophore approaches promise significant acceleration in the discovery phase by reducing biased intuitive decisions that may lead to systemic errors [7]. Case studies like Halicin, a novel antibiotic discovered using a neural network trained on molecules with known antibacterial properties, demonstrate how informacophore-like approaches can identify promising candidates with exceptional efficiency [7]. The automated analysis of ultra-large datasets enables more objective and precise decisions in compound prioritization, potentially compressing the discovery timeline by several years.

Table 3: Performance Metrics in Practical Applications

| Performance Metric | Traditional Pharmacophore | Informacophore Approach |
|---|---|---|
| Virtual Screening Hit Rate | 1-10% for µM activities [4] | No hit rate reported; generative benchmark shows high novelty (85.60%) and uniqueness (98.98%) [8] |
| Scaffold Hopping Efficiency | High - identifies diverse chemotypes [3] | Superior - navigates broader chemical space [7] |
| Typical Discovery Timeline | Several months to years for lead optimization [7] | Potentially reduced through accelerated screening [7] |
| Success Case Examples | Captopril, Lovastatin [7] | Halicin, Baricitinib repurposing [7] |
| Data Dependency | Moderate - limited by known actives or structures [4] | High - requires large datasets for optimal performance [7] |

Integration with Supramolecular Chemistry in Drug Delivery

Both pharmacophore and informacophore concepts find practical application within the broader context of supramolecular chemistry, particularly in drug delivery systems. Supramolecular chemistry—the study of species of greater complexity than molecules held together by intermolecular interactions—provides the theoretical foundation for understanding how pharmacophore features engage with biological targets [11]. These supramolecular interactions play pivotal roles in various aspects of drug delivery, including biocompatibility, drug loading, stability, crossing biological barriers, targeting, and controlled release [13].

Successful clinical applications of supramolecular principles include Sugammadex, a gamma-cyclodextrin derivative that exploits host-guest chemistry to reverse neuromuscular blockade through enhanced van der Waals and hydrophobic interactions [13]. Similarly, liposomal formulations like Doxil leverage supramolecular assembly for improved drug delivery, where phospholipids self-assemble into vesicles that encapsulate therapeutic agents [13]. These examples underscore how the abstract features defined in pharmacophore models manifest as concrete supramolecular interactions in biological systems.

Table 4: Key Research Resources for Pharmacophore and Informacophore Implementation

| Resource Category | Specific Tools/Software | Primary Function | Application Context |

|---|---|---|---|

| Pharmacophore Modeling | Discovery Studio, Catalyst, LigandScout, MOE [4] [3] | Structure-based and ligand-based pharmacophore development | Traditional pharmacophore modeling |

| Machine Learning Frameworks | PyTorch, TensorFlow, RDKit [8] | Descriptor calculation, model implementation, featurization | Informacophore development |

| Chemical Databases | ZINC, ChEMBL, Enamine, OTAVA [7] [12] | Source of compounds for screening and training data | Both approaches |

| Structural Databases | Protein Data Bank (PDB) [4] | Source of 3D protein structures for structure-based design | Traditional pharmacophore modeling |

| Specialized Algorithms | HypoGen, Phase, PGMG [8] [12] | Quantitative pharmacophore modeling, molecule generation | Both approaches |

Visualizing Methodological Workflows

Comparative Workflows in Molecular Design: This diagram illustrates the distinct methodological pathways between traditional pharmacophore and informacophore approaches, highlighting the human expert-driven versus data-driven processes that ultimately converge on validated bioactive compounds.

The IUPAC definition of a pharmacophore as an ensemble of features for optimal supramolecular interactions provides the foundational framework for understanding molecular recognition events in drug discovery [10]. Traditional pharmacophore approaches continue to offer high interpretability and successful application in many drug discovery campaigns, particularly when structural information or known active ligands are available [4]. The informacophore paradigm extends this established concept by integrating computational descriptors and machine-learned representations, enabling navigation of exponentially expanding chemical spaces [7].

Rather than representing competing methodologies, these approaches form a complementary continuum in modern drug discovery. Traditional pharmacophore models provide chemically intuitive frameworks that align with medicinal chemists' understanding of structure-activity relationships, while informacophores leverage the pattern recognition capabilities of machine learning to identify complex, non-intuitive relationships in large chemical datasets [7]. The most effective drug discovery strategies increasingly incorporate both methodologies, using informacophores for broad chemical space exploration and traditional pharmacophore approaches for focused optimization, ultimately accelerating the development of novel therapeutic agents through their synergistic application.

The systematic identification of key molecular features is fundamental to rational drug design. The pharmacophore, defined by the International Union of Pure and Applied Chemistry (IUPAC) as "the ensemble of steric and electronic features that is necessary to ensure the optimal supramolecular interactions with a specific biological target structure and to trigger (or to block) its biological response," has long served as the cornerstone for this process [4] [14]. Traditionally, this involves characterizing features like hydrogen bond donors (HBDs), hydrogen bond acceptors (HBAs), hydrophobic areas (H), and positively or negatively ionizable groups (PI/NI) [4] [15]. These features represent the essential chemical functionalities a molecule must possess to interact effectively with a biological target.

A paradigm shift is underway with the emergence of the informacophore, a data-driven extension of the classic model. While the traditional pharmacophore relies on human-defined heuristics and chemical intuition, the informacophore incorporates computed molecular descriptors, fingerprints, and machine-learned representations of chemical structure [7]. This evolution frames a critical comparison: the intuitive, feature-centric traditional pharmacophore versus the data-rich, pattern-based informacophore. This guide objectively compares the performance of these two approaches in identifying key pharmacophoric features, providing experimental protocols and data to inform researchers and drug development professionals.

Feature-by-Feature Comparison of Traditional and Informacophore Approaches

The following section details the defining characteristics, strengths, and limitations of each approach for identifying critical pharmacophore features.

The Traditional Pharmacophore Approach

Traditional pharmacophore modeling is a well-established strategy that abstracts key functional groups into generalized features. It operates on the theory that molecules sharing common chemical functionalities in a similar spatial arrangement will exhibit similar biological activity [4].

- Core Principle: The approach creates an abstract model of stereo-electronic features necessary for binding, represented as geometric entities like spheres, planes, and vectors in 3D space [4]. The most relevant features include Hydrogen Bond Donors (HBD), Hydrogen Bond Acceptors (HBA), Hydrophobic areas (H), and Positively/Negatively Ionizable groups (PI/NI) [4] [15].

- Methodology: The approach has two main variants:

  - Structure-Based: Uses the 3D structure of a macromolecular target (e.g., from X-ray crystallography or homology modeling) to identify essential interaction points in the binding site. This often involves analyzing a protein-ligand complex or using tools like GRID and LUDI to map interaction fields [4].

  - Ligand-Based: Derives common features from a set of known active ligands by aligning them and identifying their shared chemical functionalities, without requiring target structure information [4] [14].

- Performance and Limitations: This approach is highly interpretable, as features directly correspond to chemical intuitions. However, its reliance on pre-defined feature types and human expertise can introduce bias. It may also struggle with the complexity of multi-target activities or when active ligands are structurally diverse [7].
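
The geometric representation described above can be illustrated with a minimal sketch. It assumes the ligand's features have already been placed in the model's coordinate frame (real tools also search over conformers and alignments), and the `Feature` class, coordinates, and radii are hypothetical values chosen for illustration, not taken from any cited model.

```python
from dataclasses import dataclass
from math import dist
from typing import List, Tuple


@dataclass(frozen=True)
class Feature:
    kind: str                           # e.g. "HBD", "HBA", "H", "PI", "NI"
    center: Tuple[float, float, float]  # position in angstroms
    radius: float = 1.0                 # tolerance sphere radius in angstroms


def matches(model: List[Feature], ligand: List[Feature]) -> bool:
    """True if every model feature has a ligand feature of the same kind
    inside its tolerance sphere. Assumes a pre-aligned ligand and does not
    enforce a one-to-one assignment (a simplification)."""
    return all(
        any(lf.kind == mf.kind and dist(lf.center, mf.center) <= mf.radius
            for lf in ligand)
        for mf in model
    )


# Hypothetical three-feature model and two candidate ligands.
model = [
    Feature("HBD", (0.0, 0.0, 0.0), 1.0),
    Feature("HBA", (4.0, 0.0, 0.0), 1.0),
    Feature("H", (2.0, 3.0, 0.0), 1.5),
]
ligand_ok = [
    Feature("HBD", (0.3, 0.2, 0.0)),
    Feature("HBA", (4.1, -0.4, 0.1)),
    Feature("H", (2.5, 3.5, 0.5)),
]
ligand_bad = [
    Feature("HBD", (0.3, 0.2, 0.0)),
    Feature("HBA", (6.0, 0.0, 0.0)),  # acceptor lies outside the tolerance sphere
]

print(matches(model, ligand_ok), matches(model, ligand_bad))
```

The fixed radii in this sketch correspond to the "fixed tolerance ranges" that characterize traditional models; widening a radius makes the query more permissive at the cost of selectivity.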

The Informacophore Approach

The informacophore represents a modern, data-driven paradigm that leverages machine learning (ML) and large-scale chemical data analysis to define the minimal structural requirements for biological activity.

- Core Principle: The informacophore is defined as the minimal chemical structure combined with computed molecular descriptors, fingerprints, and machine-learned representations essential for a molecule's biological activity [7]. It acts as a "skeleton key" that identifies the molecular features that trigger biological responses.

- Methodology: This approach uses ML algorithms to process vast amounts of chemical information from ultra-large virtual libraries, identifying hidden patterns beyond human heuristic capacity [7]. It utilizes various molecular representations:

  - Molecular Descriptors and Fingerprints: Tools like CATS (Chemically Advanced Template Search) descriptors capture pharmacophore patterns, while MACCS keys or MAP4 fingerprints represent substructural features [9].

  - Learned Representations: Deep learning models, such as graph neural networks, can create complex, high-dimensional representations of molecules that encapsulate pharmacophoric properties in a latent space [7] [8].

- Performance and Advantages: By reducing intuitive bias, the informacophore can systematically explore chemical space and identify non-intuitive feature relationships. It is particularly powerful for screening ultra-large libraries (billions of compounds) that are infeasible to test empirically [7]. A challenge, however, is the potential opacity of machine-learned models, making direct interpretation of features more difficult compared to traditional methods [7].
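
To make the fingerprint-based representation concrete, the sketch below ranks a small library by Tanimoto similarity to a query. It assumes each fingerprint is stored as a set of "on" bit indices; the bit indices and compound names are invented for illustration and do not correspond to real MACCS keys or MAP4 hashes.

```python
from typing import Dict, List, Set


def tanimoto(bits_a: Set[int], bits_b: Set[int]) -> float:
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    if not bits_a and not bits_b:
        return 0.0  # convention chosen here for two empty fingerprints
    shared = len(bits_a & bits_b)
    return shared / (len(bits_a) + len(bits_b) - shared)


# Hypothetical fingerprints (bit indices are placeholders).
query: Set[int] = {1, 5, 9, 42, 77}
library: Dict[str, Set[int]] = {
    "cmpd_A": {1, 5, 9, 42, 80},
    "cmpd_B": {2, 5, 100},
    "cmpd_C": {1, 5, 9, 42, 77},
}

# Rank library members from most to least similar to the query.
ranked: List[str] = sorted(
    library, key=lambda name: tanimoto(query, library[name]), reverse=True
)
print(ranked)
```

In an actual workflow the fingerprints would come from a cheminformatics toolkit, and a learned embedding could replace the bit sets with dense vectors compared by cosine similarity.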

Comparative Analysis of Feature Identification

The table below summarizes the core differences between the two approaches in handling key pharmacophoric features.

Table 1: Comparative Analysis of Traditional Pharmacophore vs. Informacophore Approaches

| Aspect | Traditional Pharmacophore | Informacophore |

|---|---|---|

| Core Basis | Human-defined chemical features and intuition [4] | Data-driven, computed descriptors and ML patterns [7] |

| Feature Representation | 3D points, spheres, vectors (HBD, HBA, H, PI/NI) [4] | Molecular fingerprints, latent space vectors, learned embeddings [7] [9] |

| Interpretability | High; directly maps to chemical functionalities [4] | Variable; can be lower due to model complexity (the "black box" problem) [7] |

| Handling of Uncertainty | Fixed tolerance ranges (e.g., spatial distance) [16] | Implicitly managed through probabilistic models and similarity metrics [9] [8] |

| Scalability | Limited by the need for manual refinement and expert knowledge [4] | High; designed for automated analysis of ultra-large chemical libraries [7] |

| Dependency on Prior Knowledge | Requires either a known protein structure or a set of active ligands [4] | Can operate with minimal prior knowledge by learning from broad chemical databases [8] |

Experimental Performance and Validation Data

Objective comparison requires quantitative data from virtual screening and generative design experiments, which evaluate the ability of each approach to identify compounds with desired biological activity.

Performance Metrics in Virtual Screening

Virtual screening is a primary application where pharmacophore and informacophore models are used to prioritize compounds from large databases for biological testing. Key metrics include Enrichment Factor (EF), which measures the model's ability to "enrich" a selection of compounds with true actives, and the docking score, a computational proxy for predicted binding affinity [17].
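
As a worked illustration of the enrichment factor, the sketch below uses one common definition: the fraction of actives in the top-ranked selection divided by the fraction of actives in the whole library. The ranked label list is synthetic, and the function name and the rounding of the selection size are assumptions made for this example.

```python
from typing import Sequence


def enrichment_factor(ranked_labels: Sequence[int], fraction: float) -> float:
    """EF at a given top fraction of a ranked list.

    ranked_labels: 1 for active, 0 for inactive, ordered best score first.
    """
    n_total = len(ranked_labels)
    n_actives = sum(ranked_labels)
    if n_total == 0 or n_actives == 0:
        raise ValueError("need a non-empty list containing at least one active")
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    n_selected = max(1, round(n_total * fraction))
    hits = sum(ranked_labels[:n_selected])
    return (hits / n_selected) / (n_actives / n_total)


# Synthetic example: 100 compounds, 10 actives, 5 of them ranked in the top 10.
ranked_labels = [1, 1, 0, 1, 0, 1, 0, 0, 1, 0] + [1] * 5 + [0] * 85
print(enrichment_factor(ranked_labels, 0.10))
```

An EF of 1 corresponds to random selection, so values well above 1 at small fractions indicate that the model concentrates actives near the top of the ranking.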

Table 2: Performance Comparison in Virtual Screening Tasks

| Model / Method | Target / Benchmark | Key Performance Metric | Result | Reference |

|---|---|---|---|---|

| PharmacoForge (Generative Pharmacophore) | LIT-PCBA benchmark | Enrichment Factor (EF) | Surpassed other automated pharmacophore generation methods | [17] |

| Pharmacophore Search (General) | DUD-E dataset | Screening Speed | Orders of magnitude faster than molecular docking | [17] |

| PGMG (Pharmacophore-Guided Generation) | Estrogen Receptor (PDB: 8AWG) | Docking Score (vs. Baseline) | -6.47 to -7.09 (vs. -8.65 for baseline) | [9] |

| Traditional Pharmacophore (Structure-Based) | Not Specified | Computational Cost | Lower than iterative docking; requires protein structure | [4] |

Performance in Generative Molecular Design

In de novo molecule generation, models are tasked with creating novel, drug-like compounds that satisfy specific constraints. The "informacophore" approach, employing machine learning, shows distinct advantages in scalability and novelty.

Table 3: Performance in Generative Molecular Design

| Model / Method | Validity | Uniqueness | Novelty | Reference |

|---|---|---|---|---|

| PGMG (Pharmacophore-Guided) | High (comparable to top models) | High (comparable to top models) | Best in class (high ratio of available molecules) | [8] |

| Reinforcement Learning (FREED++) | High | 84.5% - 100% | 84.5% - 100% | [9] |

| SMILES LSTM (Benchmark) | High | High | Lower than PGMG | [8] |

| SyntaLinker (Benchmark) | High | High | Lower than PGMG | [8] |
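
The three metrics in the table can be computed as in the following sketch. Definitions vary between papers; this version assumes validity is the valid fraction of all generated strings, uniqueness is the distinct fraction of valid ones, and novelty is the fraction of distinct valid molecules absent from the training set. The `toy_is_valid` check (balanced parentheses only) is a stand-in for parsing with a cheminformatics toolkit, and real pipelines would also canonicalize SMILES before comparing.

```python
from typing import Callable, Dict, Iterable, List


def toy_is_valid(smiles: str) -> bool:
    """Placeholder validity check: non-empty with balanced parentheses."""
    depth = 0
    for ch in smiles:
        if ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:
                return False
    return depth == 0 and bool(smiles)


def generation_metrics(
    generated: List[str],
    training_set: Iterable[str],
    is_valid: Callable[[str], bool],
) -> Dict[str, float]:
    valid = [s for s in generated if is_valid(s)]
    unique = set(valid)
    novel = unique - set(training_set)
    return {
        "validity": len(valid) / len(generated) if generated else 0.0,
        "uniqueness": len(unique) / len(valid) if valid else 0.0,
        "novelty": len(novel) / len(unique) if unique else 0.0,
    }


# Synthetic example: one duplicate, one malformed string, one training molecule.
generated = ["CCO", "CCO", "c1ccccc1", "C(C", "CCN"]
training_set = {"CCO"}
metrics = generation_metrics(generated, training_set, toy_is_valid)
print(metrics)
```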

Detailed Experimental Protocols

To ensure reproducibility and provide practical guidance, this section outlines standard protocols for key experiments cited in the performance comparison.

Protocol 1: Structure-Based Pharmacophore Modeling

This protocol details the creation of a pharmacophore model using a protein's 3D structure [4].

- Protein Preparation: Obtain the 3D structure of the target protein from the RCSB Protein Data Bank (PDB). Critically evaluate the structure for quality, including resolution and any missing residues. Prepare the structure by adding hydrogen atoms, assigning correct protonation states, and optimizing hydrogen bonds.

- Ligand-Binding Site Identification: Define the binding site of interest. This can be done manually based on known literature or the location of a co-crystallized ligand. Alternatively, use automated tools like GRID or LUDI to detect potential binding pockets based on geometric and energetic properties [4].

- Pharmacophore Feature Generation: Analyze the binding site to identify key interaction points. Software will generate potential features (HBD, HBA, H, PI/NI) based on complementary protein residues.

- Feature Selection and Model Creation: From all generated features, select those that are essential for ligand bioactivity. This selection can be based on conservation in multiple protein-ligand structures, energy contribution to binding, or key functional residues from mutagenesis studies. Incorporate spatial constraints and exclusion volumes to represent the binding pocket's shape [4].

Protocol 2: Ligand-Based Ensemble Pharmacophore Modeling

This protocol is used when a protein structure is unavailable but a set of active ligands is known [14].

- Ligand Preparation and Conformational Analysis: Collect a set of diverse, known active ligands. Prepare each molecule by energy minimization and generate a set of low-energy conformations for each to account for flexibility.

- Molecular Alignment: Superimpose the active conformations of all ligands, aiming to maximize the overlap of their common chemical features.

- Pharmacophore Feature Extraction: For each aligned ligand, identify and map its key pharmacophoric features (e.g., hydrogen bond donors, acceptors, hydrophobic centers).

- Feature Clustering and Hypothesis Generation: Cluster the spatial coordinates of each feature type (e.g., all donor points) across all aligned ligands using an algorithm like k-means. Select the most representative clusters to define the final ensemble pharmacophore model, which captures the common features of the active set [14].
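
The clustering step can be sketched with a small pure-Python k-means over the 3D coordinates of one feature type. This is an illustrative simplification: the coordinates are synthetic, the farthest-point seeding and the `min_support` threshold are choices made for this example, and a production workflow would more likely use an established library implementation.

```python
from math import dist
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def kmeans(points: Sequence[Point], k: int, max_iter: int = 100):
    """Plain k-means; returns (centroids, clusters)."""
    if not 0 < k <= len(points):
        raise ValueError("k must be between 1 and the number of points")
    # Deterministic farthest-point seeding (an illustrative choice).
    centroids: List[Point] = [points[0]]
    while len(centroids) < k:
        centroids.append(
            max(points, key=lambda p: min(dist(p, c) for c in centroids))
        )
    clusters: List[List[Point]] = []
    for _ in range(max_iter):
        # Assign every point to its nearest centroid.
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: dist(p, centroids[i]))
            clusters[nearest].append(p)
        # Recompute centroids; keep the old one if a cluster is empty.
        updated = [
            tuple(sum(axis) / len(c) for axis in zip(*c)) if c else centroids[i]
            for i, c in enumerate(clusters)
        ]
        if updated == centroids:
            break
        centroids = updated
    return centroids, clusters


# Synthetic donor positions from several aligned ligands (angstroms).
donor_points: List[Point] = [
    (0.1, 0.0, 0.2), (-0.2, 0.1, 0.0), (0.0, -0.1, -0.1),
    (5.1, 0.2, 0.0), (4.9, -0.1, 0.1), (5.0, 0.0, -0.2),
]
centroids, clusters = kmeans(donor_points, k=2)

# Keep only clusters supported by enough ligands (hypothetical threshold).
min_support = 3
ensemble = [c for c, members in zip(centroids, clusters) if len(members) >= min_support]
print(ensemble)
```

Each retained centroid becomes one feature of the ensemble model, and the spread of its cluster members is a natural basis for that feature's tolerance radius.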

Protocol 3: Pharmacophore-Guided Molecular Generation with ML

This protocol describes a machine learning approach for generating novel molecules that match a given pharmacophore, as exemplified by PGMG [8] and other RL frameworks [9].

- Pharmacophore Representation: Represent the input pharmacophore as a complete graph. Each node corresponds to a pharmacophore feature (e.g., HBA, HBD), and edges represent the spatial distances between them. This graph is encoded using a graph neural network (GNN) [8].

- Model Architecture and Training: Employ a deep generative model architecture, such as a transformer decoder or a variational autoencoder, which is trained to translate the pharmacophore graph representation (and a latent variable) into a valid molecular structure (e.g., in SMILES format) [8].

- Reinforcement Learning (RL) Optimization: For frameworks like FREED++, design a reward function that balances multiple objectives. This function typically combines pharmacophoric similarity (e.g., using CATS descriptors and cosine similarity) with structural diversity (e.g., using MACCS keys and Tanimoto coefficient) and drug-likeness (QED score) [9].

- Sampling and Validation: Given a target pharmacophore, sample latent variables from a prior distribution and use the trained decoder to generate novel molecules. Validate the output molecules for validity, uniqueness, novelty, and synthetic accessibility (SA) score [9] [8].
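
The multi-objective reward described in the reinforcement learning step can be sketched as a weighted sum. This is not the published FREED++ reward: the linear combination, the weights, the descriptor vectors, and the key sets below are all assumptions for illustration, and the QED value is passed in as a precomputed number rather than calculated.

```python
from math import sqrt
from typing import Sequence, Set, Tuple


def cosine_similarity(u: Sequence[float], v: Sequence[float]) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    norm = sqrt(sum(a * a for a in u)) * sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def tanimoto(a: Set[int], b: Set[int]) -> float:
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def reward(
    candidate_desc: Sequence[float],   # CATS-like descriptor of the candidate
    target_desc: Sequence[float],      # descriptor of the target pharmacophore
    candidate_keys: Set[int],          # MACCS-like on-bits of the candidate
    reference_keys: Set[int],          # on-bits of a reference molecule
    qed: float,                        # precomputed drug-likeness in [0, 1]
    weights: Tuple[float, float, float] = (0.5, 0.25, 0.25),  # hypothetical
) -> float:
    pharm_sim = cosine_similarity(candidate_desc, target_desc)
    diversity = 1.0 - tanimoto(candidate_keys, reference_keys)
    w_pharm, w_div, w_qed = weights
    return w_pharm * pharm_sim + w_div * diversity + w_qed * qed


# Synthetic inputs: identical descriptors, low structural overlap, QED of 0.6.
score = reward([1, 0, 2, 1], [1, 0, 2, 1], {1, 2, 3}, {3, 4, 5}, qed=0.6)
print(round(score, 3))
```

Rewarding structural dissimilarity alongside pharmacophoric similarity is what pushes the generator toward new scaffolds that still satisfy the same feature pattern.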

Workflow Visualization

The diagram below illustrates the fundamental logical and operational differences between the traditional pharmacophore and informacophore approaches in a drug discovery pipeline.

This section catalogs key software, databases, and computational tools essential for conducting research in both traditional and informacophore-based approaches.

Table 4: Essential Research Reagents and Resources

| Category | Item/Software | Function/Brief Explanation | Relevant Approach |

|---|---|---|---|

| Software & Tools | RDKit [14] [8] | Open-source cheminformatics toolkit used for feature identification, fingerprint generation, and molecular manipulation. | Both |

| | GRID, LUDI [4] | Software for identifying potential interaction sites and favorable binding regions on a protein structure. | Traditional |

| | Pharmit, Pharmer [17] | Interactive tools for rapid pharmacophore-based virtual screening of compound libraries. | Traditional |

| | PharmacoForge [17] | A diffusion model for generating 3D pharmacophores conditioned on a protein pocket. | Informacophore |

| | PGMG [8] | A pharmacophore-guided deep learning model for generating bioactive molecules. | Informacophore |

| Databases | RCSB Protein Data Bank (PDB) [4] | Primary repository for 3D structural data of proteins and nucleic acids, essential for structure-based design. | Both |

| | BindingDB [18] | Database of measured binding affinities, focusing on interactions between drug targets and molecules. | Both |

| | ChEMBL [9] | Manually curated database of bioactive molecules with drug-like properties, containing SAR data. | Both |

| | Enamine, OTAVA [7] | Suppliers of "make-on-demand" ultra-large tangible chemical libraries for virtual screening. | Informacophore |

| Molecular Representations | CATS Descriptors [9] | Chemically Advanced Template Search descriptors; capture pharmacophore patterns for similarity search. | Informacophore |

| | MACCS Keys [9] | Molecular ACCess System; a binary fingerprint representing the presence/absence of 166 common substructures. | Informacophore |

| | MAP4 Fingerprint [9] | MinHashed Atom-Pair fingerprint; a more expressive molecular representation combining atom-pair relationships. | Informacophore |

The conceptual foundation of modern drug discovery was laid over a century ago by Paul Ehrlich (1854-1915), a German physician and Nobel laureate whose pioneering work established the fundamental principles of targeted therapy [19] [20]. Ehrlich introduced the revolutionary concept of the "magic bullet" (Zauberkugel)—a therapeutic agent that could selectively target disease-causing organisms without harming host cells [19] [20]. His research on cell-specific dye staining led to the side-chain theory, which proposed that cells possess specific receptors that interact with particular molecules, effectively establishing the first receptor-ligand interaction theory [19]. This theoretical framework, developed in the late 19th century, has evolved through decades of scientific advancement into today's computational approaches for drug design, creating a direct conceptual lineage from Ehrlich's foundational ideas to contemporary pharmacophore and informacophore methodologies [4] [7].

This guide objectively compares traditional pharmacophore modeling with the emerging informacophore approach, examining their performance through the lens of Ehrlich's original conceptual framework and providing experimental data to illustrate their respective capabilities in modern drug discovery pipelines.

Historical Foundations: Paul Ehrlich's Enduring Legacy

Core Conceptual Contributions

Paul Ehrlich's work established three pivotal concepts that continue to inform computational drug design:

Side-Chain Theory (1897): Ehrlich postulated that cells have specific side chains (receptors) that interact with complementary molecules (ligands), forming the basis of modern receptor theory [19]. He proposed that these interactions followed precise molecular complementarity, much like a key fitting into a lock.

Magic Bullet Concept: Ehrlich envisioned ideally targeted therapeutic agents that would selectively bind to pathogens or diseased cells while sparing healthy tissues [20]. This concept of selective toxicity became the fundamental goal of modern chemotherapy.

Systematic Drug Screening: In developing Salvarsan (arsphenamine), the first synthetic antimicrobial agent effective against syphilis, Ehrlich and his team systematically synthesized and tested 605 arsenic compounds over three years before identifying an effective candidate [19] [20]. This methodical approach established the prototype for modern high-throughput screening methodologies.

Table 1: Paul Ehrlich's Key Contributions to Targeted Therapy

| Concept | Year | Core Principle | Modern Computational Equivalent |

|---|---|---|---|

| Side-Chain Theory | 1897 | Cellular receptors specifically interact with complementary molecules | Molecular docking and receptor-ligand interaction simulations |

| Magic Bullet | 1906-1909 | Selective targeting of disease-causing organisms | Target-specific drug design with minimized off-target effects |

| Systematic Screening | 1907-1909 | Methodical testing of compound libraries | Virtual High-Throughput Screening (vHTS) |

| Structure-Activity Relationship | 1909 | Chemical structure determines biological effect | Quantitative Structure-Activity Relationship (QSAR) modeling |

Historical Trajectory to Computational Implementation

The evolution from Ehrlich's concepts to contemporary computational methods follows a clear trajectory. Ehrlich's side-chain theory, which explained how toxins and antitoxins interact through specific molecular configurations, directly informed the development of the pharmacophore concept in the 20th century [4]. His systematic approach to screening chemical compounds established the methodological foundation for today's virtual screening protocols [21]. The magic bullet ideal of selective targeting remains the ultimate objective of both pharmacophore and informacophore approaches, though pursued with increasingly sophisticated computational tools.

Methodological Frameworks: Traditional Pharmacophore vs. Modern Informacophore

Traditional Pharmacophore Modeling

The pharmacophore concept, directly descending from Ehrlich's side-chain theory, is defined by the International Union of Pure and Applied Chemistry (IUPAC) as "the ensemble of steric and electronic features that is necessary to ensure the optimal supramolecular interactions with a specific biological target structure and to trigger (or to block) its biological response" [4]. Traditional pharmacophore modeling encompasses two primary approaches:

Structure-Based Pharmacophore Modeling relies on three-dimensional structural information of the target protein, typically obtained from X-ray crystallography, NMR spectroscopy, or cryo-electron microscopy [4] [22]. The methodology involves:

- Protein Preparation: Evaluating and optimizing the quality of the target structure, including protonation states, hydrogen atom placement, and correction of structural errors [4].

- Ligand-Binding Site Detection: Identifying potential binding pockets using tools such as GRID or LUDI that analyze protein surface properties [4].

- Feature Generation and Selection: Mapping interaction points (hydrogen bond donors/acceptors, hydrophobic areas, charged groups) and selecting those essential for bioactivity [22].

Ligand-Based Pharmacophore Modeling is employed when the receptor structure is unknown, using the physicochemical properties and spatial arrangements of known active ligands [22]. This approach:

- Identifies common chemical features among active compounds

- Accounts for ligand conformational flexibility

- Requires extensive screening to determine protein targets and corresponding binding ligands [22]

Table 2: Traditional Pharmacophore Feature Definitions

| Feature Type | Chemical Description | Role in Molecular Recognition |

|---|---|---|

| Hydrogen Bond Acceptor (HBA) | Atoms that can accept hydrogen bonds (e.g., O, N) | Forms specific directional interactions with donor groups |

| Hydrogen Bond Donor (HBD) | Hydrogen atoms attached to electronegative atoms | Creates strong, specific bonds with acceptor atoms |

| Hydrophobic Areas (H) | Non-polar regions (e.g., alkyl chains) | Drives desolvation and van der Waals interactions |

| Positively Ionizable (PI) | Basic groups (e.g., amines) | Forms electrostatic interactions with acidic groups |

| Negatively Ionizable (NI) | Acidic groups (e.g., carboxylic acids) | Creates salt bridges with basic residues |

| Aromatic (AR) | Pi-electron systems (e.g., phenyl rings) | Enables pi-pi stacking and cation-pi interactions |

Informacophore Approach

The informacophore represents an evolution of the traditional pharmacophore concept, defined as "the minimal chemical structure, combined with computed molecular descriptors, fingerprints, and machine-learned representations of its structure, that are essential for a molecule to exhibit biological activity" [7]. This approach leverages machine learning and large-scale data analysis to overcome human cognitive limitations in pattern recognition across ultra-large chemical spaces.

Key characteristics of the informacophore approach include:

- Data-Driven Insights: Incorporates molecular descriptors, fingerprints, and machine-learned representations beyond human-defined heuristics [7]

- Ultra-Large Library Screening: Capable of processing make-on-demand virtual libraries containing billions of compounds [7]

- Reduced Human Bias: Minimizes intuition-based decisions that may lead to systemic errors [7]

- Multi-Modal Representation: Combines structural, physicochemical, and learned features into unified activity predictors [8]

Diagram 1: Workflow comparison between traditional pharmacophore and informacophore approaches

Performance Comparison: Experimental Data and Case Studies

Virtual Screening Performance Metrics

Multiple studies have quantitatively compared the performance of traditional pharmacophore methods against informacophore and other machine learning approaches across various target classes:

Table 3: Virtual Screening Performance Comparison

| Screening Method | Library Size | Hit Rate | Time Requirements | Cost per Compound | Key Limitations |

|---|---|---|---|---|---|

| Traditional Pharmacophore | Thousands to millions | 0.021% (HTS) to 35% (vHTS) [21] | Days to weeks | Low computational cost | Limited by human-defined features; scaffold bias |

| Informacophore (ML-Based) | Billions (make-on-demand) [7] | 6.3% improvement in available molecule ratio [8] | Hours to days | Moderate computational cost | Requires extensive training data; model interpretability challenges |

| Experimental HTS | ~400,000 compounds [21] | 0.021% [21] | Months to years | High laboratory costs | Low hit rate; extensive assay development |

Case Study: Tyrosine Phosphatase-1B Inhibitors

A direct comparison at Pharmacia (now Pfizer) demonstrated the efficiency of computational approaches versus traditional high-throughput screening [21]:

- Virtual Screening Approach: 365 compounds screened → 127 effective inhibitors identified (34.8% hit rate)

- Traditional HTS: 400,000 compounds tested → 85 showed inhibition (0.021% hit rate)

This case demonstrates how computational methods, including pharmacophore-based screening, achieve dramatically higher efficiency in lead identification compared to traditional experimental approaches.
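
The size of the efficiency gap can be checked with simple arithmetic. The sketch below recomputes the virtual screening hit rate from the counts given above and divides it by the reported HTS hit rate of 0.021%; the function name is illustrative.

```python
def hit_rate_percent(n_hits: int, n_tested: int) -> float:
    """Hit rate expressed as a percentage of compounds tested."""
    if n_tested <= 0:
        raise ValueError("n_tested must be positive")
    return 100.0 * n_hits / n_tested


vs_rate = hit_rate_percent(127, 365)   # virtual screening counts from the case study
hts_rate_reported = 0.021              # HTS hit rate in percent, as reported [21]
fold_improvement = vs_rate / hts_rate_reported

print(f"Virtual screening hit rate: {vs_rate:.1f}%")
print(f"Fold improvement over HTS: {fold_improvement:.0f}x")
```

On these figures the docking-selected set is enriched by more than three orders of magnitude relative to the unfiltered screen.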

Deep Learning Implementation: PGMG Case Study

The Pharmacophore-Guided deep learning approach for bioactive Molecule Generation (PGMG) represents a modern implementation combining pharmacophore principles with informacophore-like machine learning [8]. Experimental results demonstrate:

- Novelty and Diversity: PGMG generated molecules with strong docking affinities while maintaining high scores of validity (93.5%), uniqueness (83.7%), and novelty (82.4%) [8]

- Latent Variable Integration: Introduction of latent variables solved the many-to-many mapping problem between pharmacophores and molecules, enhancing structural diversity [8]

- Performance Metrics: PGMG showed 6.3% improvement in the ratio of available molecules compared to traditional generative models [8]

Experimental Protocols and Methodologies

Structure-Based Pharmacophore Modeling Protocol

Objective: Generate a structure-based pharmacophore model from a protein-ligand complex structure.

Materials and Software:

- Protein Data Bank (PDB) structure file

- Molecular modeling software (e.g., MOE, Discovery Studio, Schrödinger)

- Protein preparation tools (e.g., PROPREP, Protein Preparation Wizard)

- Pharmacophore generation module (e.g., LigandScout, PharmaGist)

Methodology:

Protein Structure Preparation:

- Add hydrogen atoms and assign protonation states at physiological pH (e.g., guided by PROPKA pKa predictions)

- Optimize the hydrogen-bonding network

- Correct structural anomalies (missing residues, atomic clashes)

- Perform energy minimization with force fields (e.g., OPLS4, CHARMM)

Binding Site Analysis:

- Identify binding pocket from co-crystallized ligand location

- Characterize interaction sites using GRID molecular interaction fields

- Map hydrophobic, hydrogen bonding, and electrostatic regions

Pharmacophore Feature Generation:

- Extract chemical features from protein-ligand interactions

- Define hydrogen bond donors/acceptors with vector directions

- Identify hydrophobic and aromatic regions

- Map charged features (positive/negative ionizable areas)

Model Validation:

- Test model against known active and inactive compounds

- Calculate the Güner-Henry (GH) score and enrichment factors

- Validate through molecular docking studies
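
The validation metrics can be sketched as follows, using the commonly cited form of the Güner-Henry score, GH = [Ha(3A + Ht) / (4·Ht·A)] × [1 − (Ht − Ha)/(D − A)], where Ha is the number of actives in the hit list, Ht the hit list size, A the number of actives in the database, and D the database size. The counts in the example are synthetic.

```python
from typing import Tuple


def guner_henry(ha: int, ht: int, a: int, d: int) -> Tuple[float, float]:
    """Return (GH score, enrichment factor) for a screening result.

    ha: actives retrieved in the hit list
    ht: total compounds in the hit list
    a:  total actives in the database
    d:  total compounds in the database
    """
    if not (0 < ht <= d and 0 < a < d and 0 <= ha <= min(ht, a)):
        raise ValueError("inconsistent counts")
    # Weighted mix of yield (ha/ht) and recall (ha/a), penalized by false positives.
    gh = (ha * (3 * a + ht)) / (4 * ht * a) * (1 - (ht - ha) / (d - a))
    ef = (ha / ht) / (a / d)
    return gh, ef


# Synthetic example: 1000-compound decoy set with 50 actives; 60 hits, 40 true.
gh, ef = guner_henry(ha=40, ht=60, a=50, d=1000)
print(f"GH = {gh:.3f}, EF = {ef:.1f}")
```

GH ranges from 0 to 1, and higher values indicate a hit list that is both rich in actives and recovers most of the actives present.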

Informacophore Model Development Protocol

Objective: Develop a machine learning-driven informacophore model for bioactivity prediction.

Materials and Software:

- Ultra-large chemical library (e.g., Enamine: 65B compounds, OTAVA: 55B compounds) [7]

- Molecular descriptor calculation tools (e.g., RDKit, Dragon)

- Machine learning frameworks (e.g., TensorFlow, PyTorch)

- High-performance computing infrastructure with GPU acceleration

Methodology:

Data Curation and Preprocessing:

- Collect bioactivity data from public repositories (ChEMBL, BindingDB)

- Calculate molecular descriptors and fingerprints (ECFP, MACCS)

- Standardize structures and remove duplicates

- Split data into training, validation, and test sets (80/10/10%)

Feature Representation Learning:

- Train graph neural networks on molecular structures

- Extract learned representations from intermediate layers

- Combine with traditional chemical descriptors

- Apply dimensionality reduction techniques (PCA, t-SNE)

Predictive Model Training:

- Implement ensemble methods (random forests, gradient boosting)

- Train deep neural networks with multi-task learning

- Optimize hyperparameters through Bayesian optimization

- Employ cross-validation to detect overfitting and estimate generalization performance

Model Interpretation and Validation:

- Apply SHAP (SHapley Additive exPlanations) for feature importance

- Validate against external test sets

- Perform prospective prediction with experimental confirmation

- Compare against traditional pharmacophore models
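
The deduplication and 80/10/10 split from the data curation step can be sketched in a few lines. The records below are placeholders (hypothetical compound identifiers paired with binary activity labels), and a plain random split is assumed; splitting by scaffold is a common alternative when the goal is to test generalization to new chemotypes.

```python
import random
from typing import Hashable, List, Sequence, Tuple


def split_dataset(
    records: Sequence[Hashable],
    seed: int = 0,
    fractions: Tuple[float, float, float] = (0.8, 0.1, 0.1),
) -> Tuple[List, List, List]:
    """Remove exact duplicates, shuffle reproducibly, and split the data."""
    if abs(sum(fractions) - 1.0) > 1e-9:
        raise ValueError("fractions must sum to 1")
    unique = list(dict.fromkeys(records))  # drops duplicates, keeps first occurrence
    random.Random(seed).shuffle(unique)
    n_train = int(len(unique) * fractions[0])
    n_val = int(len(unique) * fractions[1])
    train = unique[:n_train]
    val = unique[n_train:n_train + n_val]
    test = unique[n_train + n_val:]        # remainder goes to the test set
    return train, val, test


# Placeholder records: (identifier, activity label), with five duplicates appended.
records = [(f"CMPD{i}", i % 2) for i in range(100)]
records += records[:5]
train, val, test = split_dataset(records)
print(len(train), len(val), len(test))
```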

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 4: Essential Research Reagents and Computational Tools

| Tool/Category | Specific Examples | Primary Function | Access Method |

|---|---|---|---|

| Protein Structure Databases | RCSB PDB, AlphaFold DB | Provides 3D structural data for targets | Web access, API |

| Chemical Libraries | ZINC20, ChEMBL, Enamine REAL | Source compounds for virtual screening | Commercial & academic access |

| Pharmacophore Modeling Software | LigandScout, MOE, Discovery Studio | Structure-based & ligand-based pharmacophore generation | Commercial licenses |

| Machine Learning Platforms | TensorFlow, PyTorch, DeepChem | Implement informacophore models | Open source |

| Molecular Dynamics Software | GROMACS, AMBER, CHARMM | Simulate protein-ligand interactions & flexibility | Academic & commercial |

| Validation Assays | Enzyme inhibition, Cell viability, ADMET | Experimental confirmation of computational predictions | Laboratory implementation |

Diagram 2: Essential components and workflow in modern computational drug discovery

Comparative Analysis: Advantages, Limitations, and Applicability

Performance Across Drug Discovery Metrics

Table 5: Comprehensive Comparison of Pharmacophore vs. Informacophore Approaches

| Evaluation Metric | Traditional Pharmacophore | Informacophore | Interpretation |

|---|---|---|---|

| Interpretability | High (human-defined features) | Moderate to Low (black-box models) | Pharmacophore offers clearer structure-activity relationships |

| Chemical Space Coverage | Limited by human intuition | Extensive (billions of compounds) | Informacophore accesses broader structural diversity |

| Scaffold Hopping Capability | Moderate | High (data-driven pattern recognition) | ML approaches identify novel chemotypes beyond human intuition |

| Resource Requirements | Moderate computational resources | High computational resources | Informacophore requires significant GPU/CPU infrastructure |

| Target Flexibility | Works well when structural data or known actives are available | Adaptable to novel targets when knowledge can be transferred from related, data-rich targets | Informacophore transfers learning across target classes |

| Implementation Timeline | Days to weeks | Weeks to months (model training) | Pharmacophore provides faster initial implementation |

Synergistic Integration in Drug Discovery Pipelines

Rather than mutually exclusive approaches, traditional pharmacophore and informacophore methods demonstrate significant complementarity:

Hybrid Workflow Implementation:

- Initial Screening: Apply informacophore models to ultra-large libraries for hit identification

- Lead Optimization: Use traditional pharmacophore models for structure-based optimization

- Multi-Target Profiling: Employ informacophore for off-target prediction and toxicity assessment

- Experimental Validation: Confirm computational predictions through biological functional assays [7]

Successful Case Studies:

- Halicin Antibiotic Discovery: Neural network identification followed by biological validation of broad-spectrum antibiotic activity [7]

- Kinase Inhibitor Development: Combined structure-based pharmacophore with machine learning scoring functions [23]

- GPCR-Targeted Compounds: Ultra-large library docking with pharmacophore constraints [23]

The conceptual journey from Paul Ehrlich's magic bullets to contemporary computational methods represents a remarkable evolution in drug discovery philosophy. Ehrlich's fundamental insight—that therapeutic efficacy depends on specific molecular interactions—remains as relevant today as it was a century ago. The comparative analysis demonstrates that traditional pharmacophore and modern informacophore approaches each offer distinct advantages:

Traditional pharmacophore modeling provides interpretable, structure-based hypotheses grounded in medicinal chemistry principles, offering transparency in decision-making and efficient scaffold-based optimization. Informacophore approaches leverage machine learning to identify complex, multi-dimensional patterns beyond human perception, enabling exploration of ultra-large chemical spaces and identification of novel chemotypes.

The most effective drug discovery pipelines strategically integrate both methodologies, using informacophore for broad exploration of chemical space and traditional pharmacophore for focused optimization and mechanistic interpretation. This synergistic approach honors Ehrlich's legacy while leveraging contemporary computational power, creating a drug discovery paradigm that combines the interpretability of traditional methods with the scalability of machine learning. As these computational approaches continue to evolve, they remain firmly grounded in the fundamental principle Ehrlich established: that targeted molecular recognition is the foundation of effective therapeutic intervention.

The field of medicinal chemistry is undergoing a profound transformation, driven by the integration of artificial intelligence and the availability of ultra-large chemical datasets. This shift is moving the discipline from traditional, intuition-based methods toward a more quantitative, data-driven paradigm. At the heart of this transition lies the evolution from the classical pharmacophore to the modern informacophore [7]. For decades, the pharmacophore has been a cornerstone of rational drug design, defined by the International Union of Pure and Applied Chemistry (IUPAC) as "the ensemble of steric and electronic features that is necessary to ensure the optimal supramolecular interactions with a specific biological target structure and to trigger (or to block) its biological response" [4] [3]. This abstract representation identifies key molecular interaction features—such as hydrogen bond donors/acceptors, hydrophobic areas, and charged groups—spatially arranged to complement a biological target [4].

The emerging informacophore concept extends this foundational idea by integrating computed molecular descriptors, fingerprints, and machine-learned representations of chemical structure that are essential for biological activity [7]. Similar to a skeleton key unlocking multiple locks, the informacophore captures the minimal chemical features that trigger biological responses through in-depth analysis of ultra-large datasets [7]. This paradigm represents more than an incremental improvement; it constitutes a fundamental shift from human-defined heuristics to data-intelligent molecular patterns discovered through machine learning, potentially reducing biased intuitive decisions that may lead to systemic errors while significantly accelerating drug discovery processes [7].

Comparative Analysis: Fundamental Principles and Definitions

Conceptual Frameworks

Table 1: Core Conceptual Differences Between Pharmacophore and Informacophore

| Aspect | Traditional Pharmacophore | Informacophore |

|---|---|---|

| Definition | Ensemble of steric and electronic features for optimal supramolecular interactions with a biological target [4] [3] | Minimal chemical structure combined with computed molecular descriptors, fingerprints, and machine-learned representations essential for activity [7] |

| Basis | Human-defined heuristics and chemical intuition [7] | Data-driven insights from ultra-large chemical datasets and machine learning [7] |

| Feature Representation | Spatial arrangement of chemical functionalities (HBA, HBD, hydrophobic, ionizable groups) [4] | Molecular descriptors, fingerprints, and learned representations from ML models [7] |

| Interpretability | Highly interpretable; based on recognizable chemical features [7] | Potentially opaque; relies on machine-learned patterns that may not be directly explainable [7] |

| Data Requirements | Limited to known active compounds or protein structures [4] | Ultra-large datasets of potential lead compounds (billions of molecules) [7] |

| Underlying Approach | Structure-based or ligand-based modeling [4] | Inverse cheminformatics and pattern recognition in high-dimensional space [7] |

Methodological Foundations

The traditional pharmacophore approach operates through two primary methodologies: structure-based and ligand-based modeling [4]. Structure-based pharmacophore modeling relies on the three-dimensional structure of a macromolecular target, typically obtained from sources like the Protein Data Bank, to derive complementary interaction features [4]. The process involves protein preparation, ligand-binding site detection, pharmacophore feature generation, and selection of relevant features for ligand activity [4]. When experimental structural data is unavailable, computational techniques like homology modeling or molecular docking provide alternative strategies [4].

In contrast, ligand-based pharmacophore modeling develops 3D pharmacophore hypotheses using only the physicochemical properties of known active ligands, without requiring target structure information [4]. This approach is particularly valuable when structural data for the target protein is scarce or unavailable. The fundamental theory underpinning both traditional methods is that compounds sharing common chemical functionalities in similar spatial arrangements will likely exhibit biological activity on the same target [4].
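The premise that actives share the same functionalities in a similar spatial arrangement can be illustrated with a small geometric check. The sketch below represents a pharmacophore as a list of typed 3D points and tests whether a candidate conformer contains features of the same types whose pairwise distances agree with the query within a tolerance. It is a simplified illustration, not the algorithm of any particular modeling package: the function name, the feature labels, the coordinates, and the 1.0 Å tolerance are all assumptions, and a distance-only comparison cannot distinguish mirror-image arrangements.

```python
from itertools import permutations
from math import dist


def matches_pharmacophore(query, candidate, tolerance=1.0):
    """Return True if some same-type assignment of candidate features
    reproduces every pairwise distance of the query within `tolerance`."""
    k = len(query)
    for chosen in permutations(range(len(candidate)), k):
        # Feature types must agree position by position.
        if any(query[i][0] != candidate[c][0] for i, c in enumerate(chosen)):
            continue
        if all(
            abs(dist(query[i][1], query[j][1])
                - dist(candidate[chosen[i]][1], candidate[chosen[j]][1])) <= tolerance
            for i in range(k) for j in range(i + 1, k)
        ):
            return True
    return False


# Hypothetical three-point query: acceptor, donor, hydrophobic centre.
query = [("HBA", (0.0, 0.0, 0.0)), ("HBD", (3.0, 0.0, 0.0)), ("HYD", (0.0, 4.0, 0.0))]

# Hypothetical conformers, each described by its typed feature points.
candidate_hit = [("HYD", (1.0, 4.2, 0.5)), ("HBA", (1.0, 0.0, 0.5)),
                 ("HBD", (4.1, 0.0, 0.5)), ("HBA", (9.0, 9.0, 9.0))]
candidate_miss = [("HBA", (0.0, 0.0, 0.0)), ("HBD", (3.0, 0.0, 0.0)),
                  ("HYD", (0.0, 9.0, 0.0))]

print(matches_pharmacophore(query, candidate_hit))
print(matches_pharmacophore(query, candidate_miss))
```

The brute-force search over assignments is adequate for the handful of features in a typical hypothesis; screening tools use more efficient matching and also handle conformational flexibility.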

The informacophore paradigm transcends these traditional boundaries by incorporating machine learning algorithms that can process vast amounts of information rapidly and accurately, identifying hidden patterns beyond human recognition capacity [7]. This approach leverages ultra-large, "make-on-demand" virtual libraries consisting of billions of novel compounds that have not been physically synthesized but can be readily produced [7]. To navigate this expansive chemical space, informacophore-based methods employ ultra-large-scale virtual screening for hit identification, as direct empirical screening of billions of molecules remains infeasible [7].
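One practical consequence of screening at this scale is that the library cannot be held in memory or fully ranked; compounds are usually scored as a stream and only the best few are retained. The sketch below shows that pattern with the standard-library `heapq` module. The library generator and the scoring function are hypothetical stand-ins: in a real campaign the stream would come from a vendor catalogue and the score from a trained model or docking program.

```python
import heapq


def top_hits(compound_stream, score, k=3):
    """Score each compound once and keep only the k best in memory."""
    return heapq.nlargest(k, ((score(c), c) for c in compound_stream))


def library(n):
    """Hypothetical lazily generated library of compound identifiers."""
    for i in range(n):
        yield f"cpd-{i}"


def toy_score(name):
    """Placeholder score in [0, 1]; stands in for a model prediction."""
    i = int(name.split("-")[1])
    return (i * 37 % 101) / 100.0


top = top_hits(library(1000), toy_score, k=3)
for value, name in top:
    print(name, value)
```

Memory use depends on `k` rather than on the library size, so the same function applies unchanged whether the stream yields a thousand identifiers or billions.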

Experimental Performance and Benchmarking Data

Quantitative Performance Metrics

Table 2: Experimental Performance Comparison of Representative Approaches

| Metric | Traditional Pharmacophore | PGMG [8] | Pharmacophore-Guided RL [9] |

|---|---|---|---|

| Validity | Not applicable (screening existing compounds) | 0.947 | Not explicitly reported |

| Uniqueness | Not applicable | 0.995 | Not explicitly reported |

| Novelty | Limited to chemical space of screened library | 0.879 | 84.5%-100% |

| Docking Score | Varies by specific application | Strong docking affinities reported | -6.47 to -7.09 |

| QED (Drug-likeness) | Not optimized directly | Captures distribution of training molecules | 0.34-0.59 |

| Synthetic Accessibility Score | Not considered in initial screening | Not explicitly reported | 4.61-4.72 |

| Pharmacophore Similarity | Fundamental to approach | High fit to given pharmacophores | 0.83-0.94 (Cosine) |

Case Studies and Experimental Validation

The practical utility of these approaches is best demonstrated through specific case studies. Traditional pharmacophore methods have contributed to numerous successful drug discovery campaigns, with their effectiveness well-established in the literature [4]. However, the informacophore paradigm has enabled several groundbreaking applications that highlight its potential.

The PGMG (Pharmacophore-Guided deep learning approach for bioactive Molecule Generation) framework demonstrates how pharmacophore guidance can be integrated with deep learning for molecular generation [8]. This approach uses a graph neural network to encode spatially distributed chemical features and a transformer decoder to generate molecules [8]. A key innovation is the introduction of latent variables to model the many-to-many mapping between pharmacophores and molecules, enhancing diversity in the generated compounds [8]. In evaluation, PGMG generated molecules with strong docking affinities while achieving high scores of validity (0.947), uniqueness (0.995), and novelty (0.879) [8].
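Validity, uniqueness, and novelty are commonly computed as nested fractions over the generated set. The sketch below uses the definitions that are widespread in generative-chemistry benchmarks (valid among generated, unique among valid, unseen in training among unique); whether [8] uses exactly these denominators is an assumption. The validity check here is a deliberately crude placeholder that only tests for balanced parentheses; in practice a cheminformatics toolkit would parse each string, and the strings would be canonicalized before comparison.

```python
def generation_metrics(generated, training_set, is_valid):
    """Return (validity, uniqueness, novelty) for a list of generated strings."""
    valid = [s for s in generated if is_valid(s)]
    unique = set(valid)
    novel = unique - set(training_set)
    validity = len(valid) / len(generated) if generated else 0.0
    uniqueness = len(unique) / len(valid) if valid else 0.0
    novelty = len(novel) / len(unique) if unique else 0.0
    return validity, uniqueness, novelty


def toy_is_valid(smiles):
    """Placeholder validity test: parentheses must be balanced."""
    depth = 0
    for ch in smiles:
        if ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:
                return False
    return depth == 0


generated = ["CCO", "CCO", "CC(=O)O", "C(C", "c1ccccc1"]
training_set = {"CCO"}

validity, uniqueness, novelty = generation_metrics(generated, training_set, toy_is_valid)
print(validity, uniqueness, novelty)
```

In this toy set four of the five strings pass the check, three of those four are distinct, and two of those three are absent from the training set.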

In a separate study, a pharmacophore-guided reinforcement learning approach was implemented within the FREED++ framework, incorporating both structural and pharmacophoric similarity assessments against reference compounds [9]. This method employed CATS descriptors to capture pharmacophore patterns and MACCS keys or MAP4 fingerprints to represent structural features [9]. The reward function was explicitly designed to maximize pharmacophoric similarity while minimizing structural similarity to reference molecules, so that generated compounds are likely to retain biological activity while being structurally novel enough for patentability [9]. In a case study targeting estrogen receptor alpha (ERα) modulators for breast cancer, generated compounds maintained high pharmacophoric fidelity (cosine similarity 0.83-0.94) to known active molecules while introducing substantial structural novelty (84.5%-100%) [9].
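The idea of rewarding pharmacophoric similarity while penalizing structural similarity can be written down in a few lines. The sketch below is only one plausible form: the source does not give the exact reward used in [9], so the linear combination, the weights, and the function names are assumptions. Pharmacophore descriptors are treated as count vectors compared by cosine similarity, and structural fingerprints as sets of "on" bit positions compared by the Tanimoto coefficient.

```python
from math import sqrt


def cosine_similarity(u, v):
    """Cosine similarity between two equal-length descriptor vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = sqrt(sum(a * a for a in u)) * sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def tanimoto(bits_a, bits_b):
    """Tanimoto coefficient between two sets of 'on' fingerprint bits."""
    union = len(bits_a | bits_b)
    return len(bits_a & bits_b) / union if union else 0.0


def reward(pharm_vec, struct_bits, ref_pharm_vec, ref_struct_bits,
           w_pharm=1.0, w_struct=1.0):
    """Assumed reward: high pharmacophore similarity, low structural similarity."""
    return (w_pharm * cosine_similarity(pharm_vec, ref_pharm_vec)
            - w_struct * tanimoto(struct_bits, ref_struct_bits))


# Hypothetical descriptors for a reference active and a generated molecule.
ref_pharm, ref_bits = [2, 1, 0, 3], {1, 2, 3, 4}
gen_pharm, gen_bits = [2, 1, 0, 3], {3, 4, 5, 6}

print(reward(gen_pharm, gen_bits, ref_pharm, ref_bits))
```

A molecule identical to the reference scores near zero under this form, because its structural penalty cancels its pharmacophore reward, whereas a molecule with the same pharmacophore pattern on a different scaffold scores close to the maximum.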

Methodologies and Experimental Protocols

Traditional Pharmacophore Modeling Workflow

Informacophore-Guided Molecular Generation

Detailed Experimental Protocols

Protocol 1: Structure-Based Pharmacophore Modeling

This protocol outlines the key steps for developing structure-based pharmacophore models [4]:

Protein Structure Preparation: Obtain the 3D structure of the target protein from the Protein Data Bank (PDB). Critically evaluate structure quality, including residue protonation states, positioning of hydrogen atoms (typically absent in X-ray structures), presence of non-protein groups, and any missing residues or atoms. Check and, where necessary, correct stereochemical and energetic parameters so that the prepared structure is chemically and biologically plausible [4].

Ligand-Binding Site Detection: Identify the ligand-binding site by manually analyzing regions whose residues are implicated by experimental data (e.g., site-directed mutagenesis or X-ray structures of protein-ligand complexes). Alternatively, employ computational tools such as GRID or LUDI, which inspect the protein surface to identify potential binding sites based on geometric, energetic, or evolutionary properties [4].