From Algorithm to Assay: A 2025 Guide to Validating AI-Generated Drug Candidates

Abstract

This article provides a comprehensive framework for researchers and drug development professionals to bridge the gap between in silico predictions and biological reality. It explores the critical role of functional assays in validating AI-generated drug candidates, covering foundational principles, current methodological applications, strategies for troubleshooting common pitfalls, and rigorous benchmarking approaches. By synthesizing the latest trends and technologies, this guide aims to equip scientists with the knowledge to build robust, translatable AI-driven discovery pipelines that mitigate risk and increase the likelihood of clinical success.

The Critical Bridge: Why Biological Validation is Non-Negotiable in AI-Driven Discovery

The integration of artificial intelligence (AI) into pharmaceutical research represents nothing less than a paradigm shift, replacing labor-intensive, human-driven workflows with AI-powered discovery engines capable of compressing timelines and expanding chemical and biological search spaces [1]. By mid-2025, AI has progressed from experimental curiosity to clinical utility, with AI-designed therapeutics now in human trials across diverse therapeutic areas [1]. This transition promises to drastically shorten early-stage research and development timelines and cut costs by using machine learning (ML) and generative models to accelerate tasks that traditionally relied on cumbersome trial-and-error approaches [1].

Multiple AI-derived small-molecule drug candidates have reached Phase I trials in a fraction of the typical ~5 years needed for discovery and preclinical work, with some cases occurring within the first two years [1]. For instance, Insilico Medicine's generative-AI-designed idiopathic pulmonary fibrosis (IPF) drug progressed from target discovery to Phase I in just 18 months [1]. Similarly, pharma tech company Exscientia reports in silico design cycles approximately 70% faster and requiring 10x fewer synthesized compounds than industry norms [1].

However, this accelerated progress raises a critical question: Is AI truly delivering better success, or just faster failures? Despite accelerated progress into clinical stages, no AI-discovered drug has received full regulatory approval yet, with most programs remaining in early-stage trials [1]. This reality underscores the urgent need for robust validation frameworks, particularly through biological functional assays, to ensure that AI-accelerated discoveries translate into genuine therapeutic breakthroughs rather than merely expedited disappointments.

Quantitative Landscape: AI's Impact on Drug Development Timelines and Pipelines

Clinical Pipeline Progress of Leading AI Companies

The AI drug discovery sector has demonstrated tangible progress in advancing candidates through clinical development. By the end of 2024, over 75 AI-derived molecules had reached clinical stages, representing exponential growth since the first examples appeared around 2018-2020 [1]. The table below summarizes the clinical pipeline status of leading AI-driven drug discovery companies as of 2025:

Table 1: Clinical Pipeline Status of Leading AI Drug Discovery Companies

| Company | Key AI Platform Focus | Lead Clinical Candidate(s) | Therapeutic Area | Development Phase |
|---|---|---|---|---|
| Insilico Medicine | Generative chemistry | ISM001-055 (TNIK inhibitor) | Idiopathic Pulmonary Fibrosis | Phase IIa (positive results) [1] |
| Exscientia | Generative AI design, patient-derived biology | EXS-74539 (LSD1 inhibitor) | Oncology | Phase I (initiated 2024) [1] |
| Exscientia | Generative AI design, patient-derived biology | GTAEXS-617 (CDK7 inhibitor) | Solid Tumors | Phase I/II [1] |
| Recursion | Phenomic screening & AI | RXC-007 (mechanism not specified) | Neurovascular condition | Phase II (safe but limited efficacy) [2] |
| Schrödinger | Physics-enabled molecular design | Zasocitinib (TAK-279, TYK2 inhibitor) | Immunological disorders | Phase III [1] |
| BenevolentAI | Knowledge-graph target discovery | Undisclosed programs | Multiple | Early clinical (restructured 2024) [1] [2] |

Comparative Performance Metrics: AI vs Traditional Approaches

AI-driven drug discovery platforms claim significant advantages over traditional methods across key performance metrics. The following table quantifies these improvements based on reported data from leading AI companies:

Table 2: Performance Comparison: AI-Driven vs Traditional Drug Discovery

| Performance Metric | Traditional Discovery | AI-Driven Discovery | Exemplary Company/Platform |
|---|---|---|---|
| Early Discovery Timeline | 4-6 years | 1-2 years | Insilico Medicine (18 months target-to-Phase I) [1] |
| Compounds Synthesized per Program | High hundreds to thousands | 10x fewer compounds | Exscientia (70% faster design cycles) [1] |
| Preclinical Cost | $50-100 million+ | Significant reduction claimed | Multiple platforms [3] |
| Clinical Success Rate | ~10% from Phase I to approval | To be determined (most in early trials) | Industry aggregate [1] |
| Target Identification | Months to years | Weeks to months | AI knowledge-graph platforms [4] |

The AI drug discovery market reflects this growing adoption: it is projected to grow from $3.24 billion in 2024 to $65.83 billion by 2033, a compound annual growth rate (CAGR) of roughly 39.74% [5]. This growth is fueled by increasing R&D spending, demands for compressed timelines, and strategic collaborations between traditional pharmaceutical companies and AI specialists [5].
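As a quick sanity check on the market projection, the growth rate can be recomputed from the two endpoint figures. The sketch below assumes annual compounding over the nine periods from 2024 to 2033; the function name `cagr` is illustrative and not taken from the cited report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate, returned as a fraction (0.10 means 10%)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Market figures quoted in the text (USD billions).
# Assumption: 2024 -> 2033 is treated as 9 annual compounding periods.
market_cagr = cagr(3.24, 65.83, 2033 - 2024)
print(f"Implied CAGR: {market_cagr:.2%}")
```

Under these assumptions the implied rate lands at about 39.7%, in line with the quoted figure.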

Validation Imperative: Biological Assays as the Critical Bridge

The "Faster Failures" Dilemma and Quality Control Challenges

Despite promising acceleration, the AI drug discovery sector faces significant challenges. Recent developments highlight what industry observers call the "faster failures" dilemma – the risk that AI primarily accelerates the identification of non-viable candidates rather than increasing genuine success rates [1]. In 2024-2025, several AI biotech companies experienced setbacks, with Recursion tabling three prospective drugs in cost-cutting efforts following its merger with Exscientia, and BenevolentAI delisting from the stock exchange before merging with Osaka Holdings [2].

These struggles coincide with a broader conversation around generative AI's occasional failure to deliver quickly on lofty promises of productivity and efficiency. An MIT report found 95% of generative AI pilots at companies failed to accelerate revenue [2]. As one industry expert noted, "No matter how much data you have, human biology is still a mystery" [2]. This biological complexity necessitates robust validation systems to ensure that AI-predicted candidates demonstrate genuine therapeutic potential.

Technical limitations also present substantial hurdles. The drug development process is intentionally bottlenecked to ensure safety and efficacy, and AI typically addresses only specific segments of this pipeline [2]. As one expert explained, "That one early bottleneck of auditioning compounds is not the be-all and end-all of satisfying shareholders by announcing, 'We have approval for this compound as a drug'" [2]. This highlights why biological validation remains indispensable despite AI's computational power.

Essential Biological Validation Methodologies

Robust validation of AI-generated drug candidates requires a multi-dimensional approach leveraging complementary experimental techniques. The following methodologies represent critical components of an effective validation strategy:

Table 3: Essential Validation Methodologies for AI-Generated Drug Candidates

| Validation Method | Key Function | Specific Techniques | Data Output |
|---|---|---|---|
| Genetic Approaches | Establish target's role in disease mechanisms | CRISPR-Cas9 KO, CRISPR-i/siRNA KD, Overexpression via transfection/transduction [4] | Phenotypic confirmation of target-disease linkage |
| Expression Profiling | Assess target presence/distribution in diseased vs. healthy tissues | RNA-seq, Protein quantification, Tissue staining [4] | Differential expression patterns, tissue specificity |
| Functional Assays | Measure biological activity and target modulation effects | Biochemical assays (cell-free), Cell-based signaling assays [4] | Potency, efficacy, mechanism of action |
| Phenotypic Analysis | Understand comprehensive biological impact | HCS Morphology, Multi-electrode arrays, Transcriptomics/Proteomics [4] | Multiparametric phenotypic fingerprints, pathway effects |

These validation methodologies enable researchers to transition from in silico predictions to wet-lab confirmation, building essential confidence in AI-generated targets and candidates before advancing to costly clinical development stages [4]. As noted by Axxam, a company specializing in target validation, "By integrating evidence within interconnected knowledge networks, analytics can begin to trace biological pathways from mechanisms of action to patient impact providing insights with greater confidence" [4].

Integrated Workflow for AI Candidate Validation

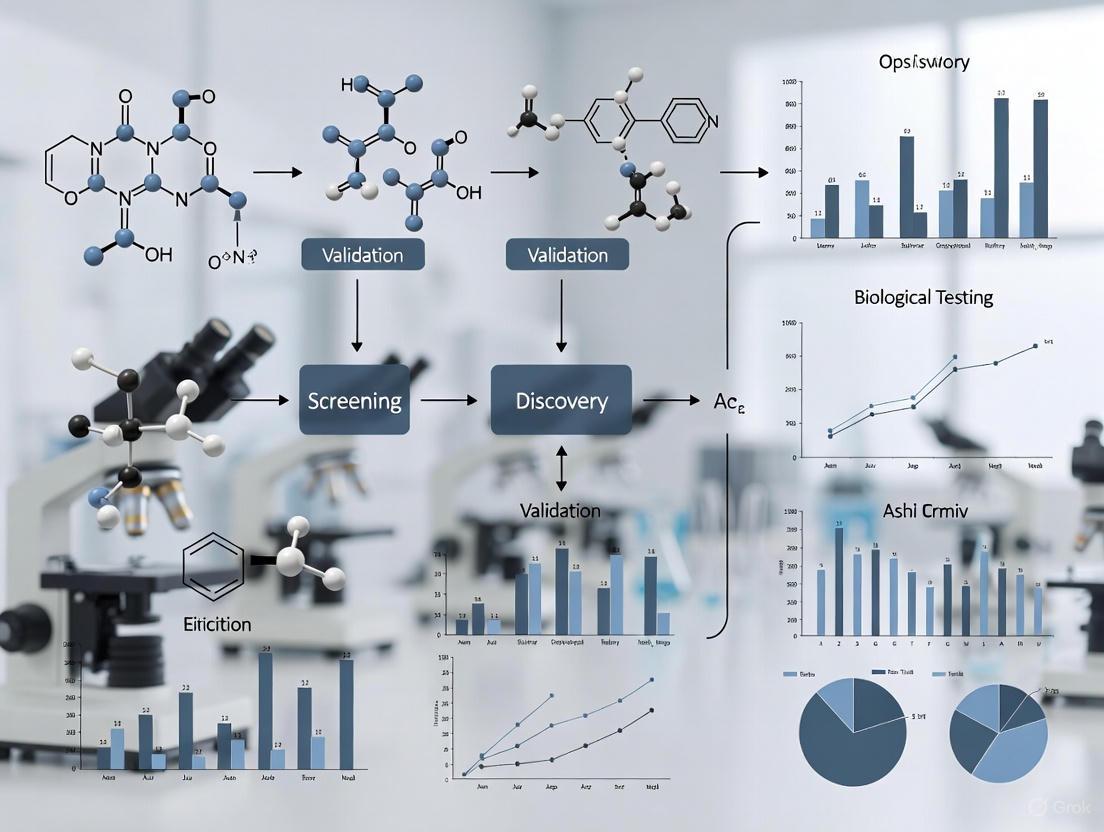

The diagram below illustrates a comprehensive validation workflow that integrates computational AI approaches with experimental biological assays:

Diagram 1: Integrated validation workflow for AI-generated drug candidates

This integrated workflow underscores the importance of moving from computational predictions to experimental validation across multiple biological contexts. Technologies showcased at ELRIG's Drug Discovery 2025 conference reflect a growing focus on human-relevant models, such as 3D cell cultures and organoids, to improve biological predictiveness [6]. Companies like mo:re are developing automated platforms, such as the MO:BOT, that standardize 3D cell culture to improve reproducibility and reduce the need for animal models [6]. As mo:re's CEO explained, "If you can present verified, human-relevant results to regulators, you build confidence and shorten timelines" [6].

Case Study: Integrated AI and Experimental Validation in Colorectal Cancer

Research Methodology and Experimental Workflow

A recent study on colorectal cancer (CRC) demonstrates the powerful synergy between AI-driven discovery and experimental validation [7]. Researchers analyzed 100 unselected Colombian patients with CRC to identify pathogenic (P) and likely pathogenic (LP) germline variants using next-generation sequencing (NGS). The study employed the BoostDM artificial intelligence method to identify oncodriver germline variants with potential implications for disease progression, comparing its results with the AlphaMissense pathogenicity prediction model [7].

The experimental workflow integrated computational and laboratory validation techniques as follows:

Diagram 2: AI and experimental validation workflow in colorectal cancer research

Key Findings and Experimental Outcomes

The study revealed that 12% of patients carried pathogenic/likely pathogenic (P/LP) variants according to ACMG/AMP criteria [7]. Using the BoostDM AI method, researchers identified oncodriver variants in 65% of cases, suggesting that AI-based prioritization can flag candidate variants beyond those captured by conventional classification [7].

Model performance was summarized with AUC (area under the curve) values. The average overall AUC was 0.788 for the entire BoostDM dataset and 0.803 for the genes within the study panel, with individual gene AUC values ranging from 0.606 to 0.983, so predictive performance varied considerably from gene to gene [7]. Functional validation through minigene assays revealed the generation of aberrant transcripts, potentially linked to the molecular etiology of the disease [7].
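To make the AUC figures concrete, the sketch below computes an AUC on a toy example, assuming the reported metric is the usual ROC AUC: the probability that a randomly chosen positive (for example, a known driver variant) receives a higher score than a randomly chosen negative. The labels and scores are hypothetical and are not data from the study; `roc_auc` is an illustrative helper, not part of BoostDM or AlphaMissense.

```python
def roc_auc(labels, scores):
    """ROC AUC via pairwise comparison of positives and negatives.

    Each (positive, negative) pair counts 1 if the positive scores higher,
    0.5 on a tie, and 0 otherwise; the AUC is the mean over all pairs.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical toy data: 1 = driver variant, 0 = passenger variant.
labels = [1, 1, 1, 1, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.6, 0.4, 0.7, 0.5, 0.3, 0.2]
auc = roc_auc(labels, scores)
print(f"AUC = {auc:.4f}")
```

An AUC of 0.5 corresponds to random ranking and 1.0 to perfect separation, which is why per-gene values spanning 0.606 to 0.983 point to uneven reliability across genes.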

Research Reagent Solutions for AI Validation

The following table details key research reagents and materials used in this integrated AI-experimental study, representing essential components for similar validation workflows:

Table 4: Research Reagent Solutions for AI-Driven Target Validation

| Reagent/Material | Function in Validation Workflow | Specific Application in CRC Study |
|---|---|---|
| Next-Generation Sequencing Kits | Comprehensive multigene analysis for variant identification | Whole-exome sequencing of 100 CRC patients [7] |
| Bioinformatics Pipelines (BWA, SAMtools) | Processing and alignment of sequencing data | Read mapping to hg19 reference genome [7] |
| AI Prediction Platforms (BoostDM, AlphaMissense) | Pathogenicity prediction and variant prioritization | Identification of oncodriver germline variants [7] |
| Minigene Assay Systems | Functional validation of splicing mutations | Analysis of intronic variants' impact on transcript processing [7] |
| CRISPR-Cas9 Tools | Genetic validation through targeted gene modulation | Not explicitly detailed but referenced as key validation approach [4] |
| High-Content Screening Platforms | Multiparametric phenotypic analysis | Morphological profiling and phenotypic fingerprinting [4] |

This case study exemplifies how integrating advanced genomic analysis with artificial intelligence enhances variant detection beyond conventional methods, while functional validation provides crucial insights into potential pathogenicity [7]. The findings underscore the necessity of a multifaceted approach to unravel the complex genetic landscape of human diseases.

Regulatory and Implementation Landscape

Evolving Regulatory Frameworks for AI in Drug Development

As AI transforms drug development, regulatory frameworks are evolving to oversee its implementation. The U.S. Food and Drug Administration (FDA) and European Medicines Agency (EMA) have adopted distinct approaches reflecting their broader regulatory philosophies [8]. The FDA employs a flexible, dialog-driven model that encourages innovation via individualized assessment but can create uncertainty about general expectations [8]. In contrast, the EMA has established a structured, risk-tiered approach that may slow early-stage AI adoption but provides more predictable paths to market [8].

By fall 2024, the FDA had received over 500 submissions incorporating AI components across various stages of drug development, yet stakeholders continue to report insufficient guidance about regulatory requirements for AI/ML applications, particularly in clinical phases [8]. The EMA's framework, articulated in its 2024 Reflection Paper, establishes a regulatory architecture that systematically addresses AI implementation across the entire drug development continuum [8].

Implementation Challenges and Strategic Considerations

Despite AI's promising potential, implementation faces significant challenges. Data privacy and regulatory compliance present substantial hurdles, as pharmaceutical research depends on sensitive patient health information and genomic data that must meet regulations like HIPAA and GDPR [5]. Any unauthorized access or misuse of data can lead to major legal and ethical issues [5].

Additionally, high implementation costs and technical complexity slow AI adoption in the pharmaceutical industry. Developing and integrating AI platforms requires massive investment in computing capabilities, technical expertise, and data management systems [5]. Small and medium-sized pharma firms may face particular financial and technical challenges in implementation [5].

The regulatory landscape is further complicated by emerging technical requirements. The EMA's framework mandates three key elements: traceable documentation of data acquisition and transformation, explicit assessment of data representativeness, and strategies to address class imbalances and potential discrimination [8]. The EMA expresses a clear preference for interpretable models but acknowledges the utility of black-box models when justified by superior performance, requiring explainability metrics and thorough documentation in such cases [8].

The integration of AI into drug discovery presents a dual reality of remarkable promise and substantial peril. On one hand, AI-driven platforms have demonstrated unprecedented capabilities to compress early-stage timelines from the traditional 4-6 years to as little as 1-2 years, while significantly reducing the number of compounds requiring synthesis and testing [1] [3]. The exponential growth of AI-derived molecules reaching clinical stages—with over 75 candidates by the end of 2024—testifies to the technology's transformative potential [1].

However, the fundamental challenge remains: without robust biological validation, AI may primarily deliver faster failures rather than better successes. The recent setbacks experienced by several AI biotech companies highlight the persistent uncertainties in translating computational predictions to clinical successes [2]. As one industry expert aptly noted, "No matter how much data you have, human biology is still a mystery" [2].

The path forward requires a balanced approach that leverages AI's computational power while maintaining rigorous experimental validation. Integrated workflows that combine AI-driven target identification with comprehensive biological functional assays offer the most promising framework for ensuring that accelerated timelines yield genuinely therapeutic breakthroughs rather than merely expedited disappointments. As the field evolves, the successful integration of AI into drug discovery will depend on maintaining this crucial balance between computational innovation and biological validation—harnessing the power of artificial intelligence while respecting the enduring complexity of human physiology.

In the evolving landscape of pharmaceutical research, the definition and application of biological functional assays have become pivotal in translating computational predictions into therapeutic realities. As artificial intelligence (AI) rapidly transforms drug discovery by identifying potential drug candidates with unprecedented speed, the scientific community faces a critical validation gap [9]. Functional assays provide the essential experimental bridge between in silico predictions and demonstrated biological effect, serving as the definitive proof mechanism for AI-generated drug candidates. The 2015 American College of Medical Genetics and Genomics (ACMG) and the Association for Molecular Pathology (AMP) guidelines established that "well-established" functional studies can serve as authoritative evidence in variant classification, articulating that such assays must reflect the biological environment and be analytically sound [10]. This framework, though developed for clinical genetics, provides a crucial foundation for understanding the role of functional validation across biomedical research.

The fundamental challenge in contemporary drug development lies in moving beyond correlative predictive metrics to establish causal mechanistic relationships. While AI algorithms can rapidly sift through vast chemical spaces and predict biological activity against specific drug targets, these computational approaches ultimately generate hypotheses that require experimental verification [9]. Functional assays represent the critical methodology for closing this validation loop, providing direct evidence of a compound's effect on biological systems. In functional precision oncology, for example, these assays have gained prominence precisely because they can capture complex biological responses that purely genomic approaches may miss [11]. This article explores how properly defined and executed functional assays provide the necessary mechanistic proof to advance AI-predicted compounds from computational hits to validated therapeutic candidates.

Defining Biological Functional Assays: Key Principles and Components

Conceptual Framework and Core Characteristics

Biological functional assays are experimental systems designed to directly measure a specific biological activity or capacity of a molecule, pathway, or cellular process in response to experimental perturbation. Unlike purely descriptive or correlative measurements, functional assays establish causal relationships between an intervention and a biological outcome. According to evaluations by Clinical Genome Resource (ClinGen) Variant Curation Expert Panels (VCEPs), well-established functional assays share several defining attributes: they must be reflective of the relevant biological environment, analytically sound, properly validated, reproducible, and robust across experimental replicates [10].

The core value proposition of functional assays lies in their ability to capture the complex interplay between genetic, epigenetic, and microenvironmental factors that influence biological outcomes [11]. This is particularly important in the context of AI-generated drug candidates, where computational predictions based on structural features or physicochemical properties require confirmation in biologically relevant systems. Functional assays provide this confirmation by measuring actual biological responses rather than predicting them, thus serving as the crucial validation step that moves beyond predictive metrics to mechanistic proof.

Essential Components of Validated Functional Assays

The development of a well-validated functional assay requires careful attention to multiple experimental parameters. Analysis of VCEP recommendations reveals that several key components are consistently identified as essential for assay validation:

- Appropriate Controls: Including positive, negative, and baseline controls to establish assay performance and provide reference points for interpreting results.

- Replication Strategy: Implementing sufficient technical and biological replicates to ensure statistical robustness and reproducibility.

- Quantification Thresholds: Establishing clear cut-off values that distinguish positive from negative results based on statistical significance and biological relevance.

- Validation Measures: Demonstrating that the assay consistently measures what it purports to measure through correlation with known standards or clinical outcomes [10].

These components form the foundation of assay reliability and must be explicitly addressed when developing functional assays for validating AI-generated drug candidates. The specific implementation of these components varies depending on the biological context and disease mechanism, reflecting the need for disease-specific assay validation [10].
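One way to turn the control and threshold requirements into a number is the Z'-factor, a widely used screening-assay quality statistic computed from positive and negative control wells: Z' = 1 - 3(σ_pos + σ_neg) / |μ_pos - μ_neg|. It is not named in the VCEP recommendations discussed above, so treat the sketch below as one possible illustration; the control readings are hypothetical.

```python
from statistics import mean, stdev


def z_prime(positive_controls, negative_controls):
    """Z'-factor: separation between control distributions relative to their spread."""
    mu_p, mu_n = mean(positive_controls), mean(negative_controls)
    sd_p, sd_n = stdev(positive_controls), stdev(negative_controls)
    window = abs(mu_p - mu_n)
    if window == 0:
        raise ValueError("control means are identical; assay has no signal window")
    return 1.0 - 3.0 * (sd_p + sd_n) / window


# Hypothetical luminescence readings (arbitrary units) from control wells.
positive = [100, 102, 98, 101, 99]   # e.g. vehicle-treated, full signal
negative = [10, 12, 8, 11, 9]        # e.g. reference cytotoxic compound
z = z_prime(positive, negative)
print(f"Z' = {z:.3f}")
```

A commonly cited rule of thumb treats Z' of 0.5 or higher as indicating a well-separated assay, though the acceptable cut-off should be set per assay and disease context.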

Comparative Analysis of Functional Assay Platforms

The landscape of functional assay platforms encompasses a diverse array of technologies, each with distinct advantages, limitations, and applications in drug discovery. Understanding these differences is crucial for selecting the appropriate validation strategy for AI-generated compounds.

Table 1: Comparison of Major Functional Assay Platforms in Drug Discovery

| Platform Type | Key Features | Applications | Strengths | Limitations |
|---|---|---|---|---|
| 2D Cell Viability Assays (e.g., MTT, ATP-luminescence) | Single-cell suspensions in monolayer format | High-throughput drug screening; initial compound validation | Rapid, scalable, cost-effective; suitable for large compound libraries | Lacks 3D architecture and microenvironmental fidelity [11] |
| 3D Organoid Cultures | Patient-derived cells forming 3D structures | Personalized therapeutic testing; disease modeling | Preserves tumor histology and architecture; strong clinical correlation [11] | Technically challenging; variable success rates between samples |
| Patient-Derived Xenografts (PDX) | Human tumor tissues implanted in immunocompromised mice | Preclinical efficacy assessment; biomarker discovery | Maintains tumor-stroma interactions; high physiological relevance [11] | Time-consuming and expensive; limited throughput |
| Single-Cell Multi-Omics Assays (e.g., Tapestri Platform) | Simultaneous DNA+RNA profiling at single-cell resolution | Mapping clonal evolution; linking genotype to phenotype | Directly connects mutations to functional consequences; reveals heterogeneity [12] | Specialized equipment required; complex data analysis |
| Phenotypic Profiling Systems (e.g., BioMAP) | Multi-parameter readouts across primary human cell systems | Mechanism-of-action classification; toxicity screening | Provides rich contextual data; captures complex biology [13] | Reference database dependent; specialized expertise required |

Platform Selection Considerations

The choice of functional assay platform depends heavily on the specific validation requirements and stage of the drug discovery pipeline. For initial high-throughput screening of AI-generated compounds, 2D cell viability assays offer practical advantages of scale and efficiency. However, as candidates progress toward preclinical development, more physiologically relevant systems like 3D organoids and PDX models provide greater predictive validity for clinical outcomes [11]. The emerging category of single-cell multi-omics platforms represents a particularly powerful approach for AI validation, as it can directly connect genetic alterations (predicted by AI) to functional consequences (measured experimentally) within the same cells [12].

Recent advances in functional assay technology have particularly impacted oncology drug development, where traditional genomic approaches have shown limited success for many cancer types. In soft tissue sarcomas, for example, functional assays using patient-derived materials have demonstrated promising correlation with clinical responses, providing a complementary approach to target-based drug discovery [11]. This application highlights the growing importance of functional validation in contexts where mechanistic complexity exceeds the predictive capacity of current AI models.

Experimental Protocols for Key Assay Types

3D Organoid Culture and Drug Sensitivity Testing

Methodology Overview: Patient-derived organoid cultures preserve the tumor architecture and some degree of microenvironmental complexity, making them highly relevant for functional validation of AI-predicted compounds [11].

Step-by-Step Protocol:

- Tumor Tissue Processing: Mechanically dissociate fresh patient tumor samples into small fragments (0.5-1 mm³) using sterile surgical blades.

- Enzymatic Digestion: Incubate tissue fragments with collagenase/hyaluronidase enzyme mix (1-2 mg/mL) for 30-60 minutes at 37°C with gentle agitation.

- Matrix Embedding: Resuspend digested tissue in reduced-growth factor basement membrane extract (BME) and plate as domes in pre-warmed culture plates.

- Culture Maintenance: Feed cultures every 2-3 days with specialized medium containing Wnt agonist R-spondin 1, Noggin, and EGF to maintain stemness.

- Drug Treatment: Passage organoids 3-5 times before experimental use. Dissociate to single cells and plate in BME for drug testing.

- Viability Assessment: After 7-14 days of drug exposure, measure cell viability using ATP-based luminescence assays normalized to vehicle-treated controls.

- Data Analysis: Calculate IC₅₀ values using non-linear regression and compare to clinical response data when available.

Validation Parameters: Establish reproducibility through technical and biological replicates (typically n≥3). Include reference compounds with known clinical activity as positive controls. Define response thresholds based on statistical significance (typically p<0.05) and effect size (e.g., >50% inhibition vs control) [11].
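The Data Analysis step can be sketched as a small least-squares fit. The example below assumes a two-parameter Hill model with viability fixed at 100% for vehicle and 0% at saturating dose, and uses a coarse grid search in place of a proper optimizer (in practice a four-parameter logistic fit with a dedicated curve-fitting library is more typical). The dose-response values are hypothetical, and `fit_ic50` is an illustrative helper.

```python
import math


def hill_viability(conc, ic50, hill):
    """Two-parameter Hill model: percent viability relative to vehicle control."""
    return 100.0 / (1.0 + (conc / ic50) ** hill)


def fit_ic50(concs, viabilities):
    """Least-squares fit by exhaustive grid search over log10(IC50) and Hill slope."""
    best_sse, best_ic50, best_hill = math.inf, None, None
    for i in range(-300, 301):        # log10(IC50) from -3 to 3 in steps of 0.01
        ic50 = 10.0 ** (i / 100.0)
        for j in range(5, 31):        # Hill slope from 0.5 to 3.0 in steps of 0.1
            hill = j / 10.0
            sse = sum((hill_viability(c, ic50, hill) - v) ** 2
                      for c, v in zip(concs, viabilities))
            if sse < best_sse:
                best_sse, best_ic50, best_hill = sse, ic50, hill
    return best_ic50, best_hill


# Hypothetical organoid data: concentration in uM, viability in % of vehicle control.
concs = [0.01, 0.1, 1.0, 10.0, 100.0]
viab = [99.0, 90.9, 50.0, 9.1, 1.0]

ic50, hill = fit_ic50(concs, viab)
# Effect-size threshold from the text: more than 50% inhibition versus control.
responder_at_10uM = (100.0 - viab[concs.index(10.0)]) > 50.0
print(f"IC50 ~ {ic50:.2f} uM, Hill slope ~ {hill:.1f}, >50% inhibition at 10 uM: {responder_at_10uM}")
```

Fitting in log-concentration space keeps the search evenly spaced across the dilution series, which is the usual convention for dose-response curves.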

Single-Cell Multi-Omics Functional Profiling

Methodology Overview: The Tapestri Single-Cell Targeted DNA + RNA Assay enables simultaneous measurement of genotypic and transcriptional readouts within individual cells, directly linking mutations to functional consequences [12].

Step-by-Step Protocol:

- Sample Preparation: Create single-cell suspensions from patient samples or cell lines, ensuring viability >80% and concentration of 100-200 cells/μL.

- Microfluidic Partitioning: Load cells into the Tapestri instrument where individual cells are encapsulated into droplets with barcoded beads.

- Lysis and Hybridization: Lyse cells within droplets to release nucleic acids, which hybridize to barcoded primers on the beads.

- Target Amplification: Perform PCR amplification of targeted DNA mutations (up to 1,000 amplicons) and cDNA synthesis for RNA expression (up to 200 transcripts).

- Library Preparation: Recover barcoded nucleic acids and prepare sequencing libraries using standard NGS protocols.

- Sequencing and Analysis: Sequence libraries on Illumina platforms and analyze data using Mission Bio's integrated bioinformatics pipeline.

- Data Integration: Correlate mutation status with gene expression patterns at single-cell resolution to identify functional relationships.

Validation Parameters: Assess assay sensitivity using cell lines with known mutation status. Establish detection thresholds for variant allele frequency (typically >1%) and gene expression changes (typically >2-fold). Verify technical reproducibility through replicate samples [12].
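The detection thresholds above can be expressed as a simple filter. This sketch assumes read counts and expression values have already been aggregated per variant and per cell population; it is not Mission Bio's pipeline, and the function names, inputs, and example numbers are hypothetical. The fold-change test is applied in both directions (up- or down-regulation).

```python
import math

VAF_THRESHOLD = 0.01          # variant allele frequency must exceed 1%
FOLD_CHANGE_THRESHOLD = 2.0   # expression must change more than 2-fold


def variant_allele_frequency(alt_reads, total_reads):
    """Fraction of reads at a locus that support the variant allele."""
    if total_reads <= 0:
        raise ValueError("total_reads must be positive")
    return alt_reads / total_reads


def passes_thresholds(alt_reads, total_reads, expr_mutant, expr_wildtype):
    """True if the variant is detectable and linked to a >2-fold expression shift."""
    vaf = variant_allele_frequency(alt_reads, total_reads)
    log2_fold = math.log2(expr_mutant / expr_wildtype)
    return vaf > VAF_THRESHOLD and abs(log2_fold) > math.log2(FOLD_CHANGE_THRESHOLD)


# Hypothetical examples: (alt reads, total reads, mean expression in mutant vs wild-type cells)
print(passes_thresholds(30, 1000, 8.0, 2.0))   # VAF 3%, 4-fold up
print(passes_thresholds(5, 1000, 8.0, 2.0))    # VAF 0.5%, below detection threshold
print(passes_thresholds(30, 1000, 3.0, 2.0))   # 1.5-fold, below expression threshold
```

Working in log2 space makes a 2-fold increase and a 2-fold decrease symmetric, so a single absolute-value comparison covers both.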

Figure 1: Single-Cell Multi-Omics Functional Profiling Workflow

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful implementation of functional assays requires carefully selected reagents and materials that maintain biological relevance while providing experimental robustness. The following table details key solutions for functional assay research:

Table 2: Essential Research Reagent Solutions for Functional Assays

| Reagent/Solution | Function | Application Examples | Key Considerations |
|---|---|---|---|
| Basement Membrane Extract (BME) | Provides 3D scaffolding for organoid growth | 3D organoid culture; invasion assays | Lot-to-lot variability; growth factor content [11] |
| Collagenase/Hyaluronidase Mix | Tissue dissociation while preserving cell viability | Primary tissue processing; PDX establishment | Concentration optimization; exposure time critical [11] |
| ATP-Luminescence Reagents | Quantifies metabolically active cells | Cell viability assays; high-throughput screening | Linear range establishment; interference by certain compounds |
| Barcoded Primers/Beads | Enables single-cell multiplexing | Single-cell RNA/DNA sequencing; clonal tracking | Barcode diversity; capture efficiency [12] |
| Specialized Media Formulations | Maintains cell phenotype and function | Primary cell culture; stem cell maintenance | Growth factor stability; batch consistency [13] |
| Viability Stains | Distinguishes live/dead cells | Flow cytometry; microscopy applications | Compatibility with other fluorophores; toxicity concerns |

Signaling Pathways and Experimental Workflows

Functional assays typically measure outputs within specific signaling pathways that are relevant to disease mechanisms. Understanding these pathways is essential for proper assay design and interpretation.

Figure 2: Functional Assay Validation Pathway for AI-Generated Candidates

Biological functional assays are an indispensable step in translating AI-generated predictions into mechanistically validated therapeutic candidates. As defined by rigorous standards such as those established by ClinGen VCEPs, well-validated functional assays must be reflective of the biological environment, analytically sound, and properly controlled [10]. The comparative analysis presented here shows that modern functional assay platforms, from 3D organoids to single-cell multi-omics, offer increasingly sophisticated approaches for establishing mechanistic proof that moves beyond correlative predictive metrics.

For researchers and drug development professionals, the integration of these functional validation strategies into AI-driven discovery pipelines represents a strategic imperative. The experimental protocols and methodologies detailed in this guide provide a foundation for implementing these critical assays, while the essential reagent solutions and workflow visualizations offer practical resources for laboratory implementation. As AI continues to transform the initial stages of drug discovery, robust functional assays will play an increasingly vital role in ensuring that computational predictions translate into genuine therapeutic advances, ultimately bridging the gap between predictive metrics and mechanistic proof.

The pharmaceutical industry is undergoing a computational revolution, with artificial intelligence (AI) and in silico methodologies dramatically accelerating early drug discovery. AI-designed therapeutics are now entering human trials across diverse therapeutic areas, compressing discovery timelines that traditionally required 4-5 years down to 18-24 months in notable cases [1] [14]. This paradigm shift replaces labor-intensive, human-driven workflows with AI-powered discovery engines capable of exploring vast chemical and biological search spaces [1]. However, this acceleration exposes a critical bottleneck: translational relevance, the ability of computational predictions to reliably correlate with biological outcomes in living systems. As of 2025, although more than 75 AI-derived molecules have reached clinical stages, none has achieved full regulatory approval, raising fundamental questions about whether AI is delivering faster success or merely accelerating failures [1] [14]. This comparison guide examines the experimental frameworks and validation strategies that bridge the in silico to in vivo gap, providing researchers with methodologies to assess the translational relevance of computational predictions.

Validation Frameworks: Establishing Credibility for Computational Models

Tiered Validation Approaches for Computational Predictions

Regulatory agencies increasingly accept in silico evidence in submissions, but require rigorous "qualification" of computational methods [15]. A structured, tiered validation scheme adapted from next-generation sequencing (NGS) validation provides a robust framework for computational drug discovery:

- Tier 1 (Technical Performance): Demonstrates the computational method can correctly process well-characterized reference data to generate high-quality outputs, establishing technical reproducibility [16].

- Tier 2 (Algorithmic Validation): Establishes the bioinformatics pipeline can accurately identify variations or relationships from reference standards, confirming analytical sensitivity for known positive controls [16].

- Tier 3 (Pathogenic Variant Detection): Specifically validates the ability to detect clinically relevant outcomes (e.g., pathogenic variants, efficacy signals, toxicity concerns) through targeted challenge sets, addressing the hardest-to-detect scenarios, which are most likely to produce false negatives in real-world applications [16].

For regulatory submissions, the ASME V&V-40 technical standard provides a methodological framework for credibility assessment of computational models, emphasizing context of use definition, risk analysis for acceptability thresholds, and comprehensive verification, validation, and uncertainty quantification [15].

The Regulatory Landscape for In Silico Evidence

Regulatory agencies worldwide are establishing pathways for computational evidence. The FDA's 2025 decision to phase out mandatory animal testing for many drug types signals a fundamental shift toward accepted in silico methodologies [14]. Model-informed drug development programs and virtual bioequivalence studies have gained regulatory acceptance as primary evidence in select cases, particularly when traditional trials are impractical or unethical [14]. This evolving landscape underscores the growing importance of robust validation frameworks to establish regulatory-grade credibility for computational predictions.

Comparative Analysis: In Silico-to-In Vivo Workflows

Workflow Comparison of Leading AI Drug Discovery Platforms

Table 1: Comparison of AI Platform Approaches to In Silico-In Vivo Validation

| Platform/Company | Primary AI Approach | In Silico Validation Methods | In Vivo Correlation Strategy | Clinical Stage Examples |

|---|---|---|---|---|

| Exscientia | Generative Chemistry + Automated Design-Make-Test | Centaur Chemist approach (AI-human collaboration), Patient-derived biology screening | High-content phenotypic screening on patient tumor samples, Ex vivo disease models | CDK7 inhibitor (GTAEXS-617) Phase I/II, LSD1 inhibitor (EXS-74539) Phase I [1] |

| Insilico Medicine | Generative Adversarial Networks (GANs) + Reinforcement Learning | Target identification via AI-predicted binding affinities, Generative chemistry | In vivo models for disease-specific efficacy validation | ISM001-055 (idiopathic pulmonary fibrosis) Phase IIa [1] |

| Schrödinger | Physics-based + Machine Learning | Mixed physical/ML models screening billions of compounds, Molecular dynamics simulations | Traditional in vivo pharmacological profiling | TYK2 inhibitor (zasocitinib/TAK-279) Phase III [1] |

| BenevolentAI | Knowledge-Graph Repurposing | AI analysis of drug-target interactions from large datasets | Validation in disease-relevant animal models | Baricitinib repurposing for COVID-19 [17] |

Case Study: RXR-Activating Compound Discovery

A 2025 study exemplifies the complete in silico-to-in vivo workflow for identifying retinoid-X receptor (RXR) activating chemicals, providing quantitative performance data at each stage [18]:

Table 2: Validation Results for RXR-Activating Compound Discovery

| Validation Stage | Methodology | Key Performance Metrics | Outcomes |

|---|---|---|---|

| In Silico Screening | Machine learning (NR-Toxpred model) on 57,277 chemicals | MCC: 0.87, Specificity: 100%, Sensitivity: 80%, Accuracy: 90% | 109 predicted RXR-active chemicals, 104 within applicability domain [18] |

| Molecular Docking | Ensemble docking with multiple RXRα conformations | Docking scores: -16.44 to -4.18 (mean: -8.87) | Identified binding poses and affinity rankings [18] |

| Binding Free Energy | MM-PBSA with explicit-solvent molecular dynamics | MM-PBSA values: -77.15 to -32.03 (mean: -49.79) | Binding stability assessments [18] |

| In Vitro Validation | Tox21 high-throughput screening (cHTS) | Dose-response activation curves | Confirmed RXR activation for tert-butylphenols [18] |

| In Vivo Validation | Xenopus laevis precocious metamorphosis assay | Morphological changes, thyroid hormone potentiation | 3 tert-butylphenols potentiated TH action at nanomolar concentrations [18] |
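
The in silico screening row above reports MCC, specificity, sensitivity, and accuracy. As a reminder of how these metrics relate to one another, the following minimal sketch computes them from confusion-matrix counts. The counts are hypothetical and chosen only for illustration; they are not taken from the study [18], and the function name is an assumption of this example.

```python
import math


def classification_metrics(tp: int, fp: int, tn: int, fn: int) -> dict:
    """Compute standard binary-classifier metrics from confusion-matrix counts.

    Assumes at least one true positive class member and one true negative
    class member, so the sensitivity and specificity denominators are non-zero.
    """
    sensitivity = tp / (tp + fn)          # fraction of actives recovered
    specificity = tn / (tn + fp)          # fraction of inactives rejected
    accuracy = (tp + tn) / (tp + fp + tn + fn)
    # Matthews correlation coefficient; defined as 0 when the denominator is 0.
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "accuracy": accuracy,
        "mcc": mcc,
    }


# Hypothetical counts for illustration only (not data from the RXR study).
metrics = classification_metrics(tp=45, fp=5, tn=40, fn=10)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

MCC is often preferred over accuracy when active and inactive classes are imbalanced, because it uses all four cells of the confusion matrix.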

Experimental Protocols for Cross-Platform Validation

Detailed Methodologies for Key Validation Experiments

In Silico Molecular Docking and Dynamics Protocol (adapted from [18])

- Protein Preparation: Retrieve RXRα structures from PDB. Remove native ligands, add hydrogen atoms, assign partial charges using appropriate force fields.

- Ligand Preparation: Obtain chemical structures from databases (e.g., Food Contact Chemicals Database). Generate 3D conformations, optimize geometry, assign atomic charges.

- Ensemble Docking: Perform molecular docking with multiple rigid receptor conformations using AutoDock Vina or similar software. Use grid boxes encompassing binding pocket.

- Molecular Dynamics: Run explicit-solvent MD simulations (AMBER or GROMACS) for top-ranked poses. Production phase: 100 ns simulation time.

- Binding Free Energy Calculations: Use Molecular Mechanics Poisson-Boltzmann Surface Area (MM-PBSA) method on stable trajectory segments. Calculate per-residue energy decomposition.
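
The ensemble docking step above produces one score per ligand per receptor conformation, and these must be combined before top-ranked poses are passed to molecular dynamics. The sketch below shows one common way to do this: keep each ligand's best (most negative) score across conformations and rank on that value. The aggregation rule, the ligand names, and the scores are all assumptions made for illustration; the cited study [18] may have used a different scheme.

```python
# Hypothetical docking scores: ligand -> {receptor conformation -> score}.
# More negative scores indicate more favorable predicted binding.
scores = {
    "ligand_A": {"conf_1": -9.2, "conf_2": -10.1, "conf_3": -8.7},
    "ligand_B": {"conf_1": -7.4, "conf_2": -6.9, "conf_3": -7.8},
    "ligand_C": {"conf_1": -11.3, "conf_2": -9.8, "conf_3": -10.6},
}


def rank_by_best_score(ensemble_scores: dict) -> list:
    """Return (ligand, best_score) pairs sorted from most to least favorable."""
    best = {
        ligand: min(per_conformation.values())
        for ligand, per_conformation in ensemble_scores.items()
    }
    return sorted(best.items(), key=lambda item: item[1])


ranking = rank_by_best_score(scores)
# Select the top two ligands for follow-up simulation (cutoff is arbitrary here).
top_ligands = [ligand for ligand, _ in ranking[:2]]
print(ranking)
print(top_ligands)
```

Averaging across conformations is an alternative to taking the minimum; the minimum rewards a ligand that fits any single receptor state well, whereas the mean favors ligands that bind consistently.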

In Vivo Xenopus laevis Precocious Metamorphosis Assay (adapted from [18])

- Animal Husbandry: House Xenopus laevis tadpoles (Nieuwkoop and Faber stage 45-46) in reconstituted reverse-osmosis water at 22°C with 12:12 light:dark cycle.

- Chemical Exposure: Expose tadpoles (n=10-15 per group) to test chemicals dissolved in DMSO (final concentration ≤0.1%) with or without sub-metamorphic thyroid hormone (T3) concentrations.

- Morphological Scoring: Assess developmental progression daily using standardized scoring systems measuring tail resorption, gill degeneration, and hindlimb growth.

- Statistical Analysis: Compare treatment groups to controls using ANOVA with post-hoc testing. Significance threshold: p<0.05.
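
The statistical analysis step can be illustrated with a short, dependency-free sketch. It computes the one-way ANOVA F statistic for three exposure groups and, as a substitute for the parametric F-distribution p-value, estimates significance with a permutation test. This substitution is a choice made for the example, not the method of the cited study [18], and post-hoc pairwise testing is not shown. The morphological scores and group names are hypothetical.

```python
import random
from statistics import mean


def f_statistic(groups):
    """One-way ANOVA F: between-group mean square over within-group mean square."""
    values = [v for g in groups for v in g]
    grand_mean = mean(values)
    group_means = [mean(g) for g in groups]
    k, n = len(groups), len(values)
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, group_means))
    ss_within = sum((v - m) ** 2 for g, m in zip(groups, group_means) for v in g)
    if ss_within == 0:
        return float("inf")
    return (ss_between / (k - 1)) / (ss_within / (n - k))


def permutation_anova(groups, n_permutations=2000, seed=0):
    """Return (observed F, permutation p-value) by shuffling group labels."""
    rng = random.Random(seed)
    observed = f_statistic(groups)
    pooled = [v for g in groups for v in g]
    sizes = [len(g) for g in groups]
    hits = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)
        shuffled, start = [], 0
        for size in sizes:
            shuffled.append(pooled[start:start + size])
            start += size
        if f_statistic(shuffled) >= observed:
            hits += 1
    # Add-one correction keeps the estimated p-value strictly above zero.
    return observed, (hits + 1) / (n_permutations + 1)


# Hypothetical morphological scores per tadpole (illustration only).
control = [1.0, 1.2, 0.9, 1.1, 1.3]
t3_only = [1.8, 2.1, 1.9, 2.2, 2.0]
t3_plus_chemical = [3.1, 2.9, 3.4, 3.0, 3.3]

f_obs, p_value = permutation_anova([control, t3_only, t3_plus_chemical])
print(f"F = {f_obs:.1f}, permutation p = {p_value:.4f}")
```

In practice a statistics package would typically be used for the parametric test and post-hoc comparisons; the permutation version is shown because it needs only the standard library and makes the logic of the test explicit.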

Computational Toxicology Workflow

The following diagram illustrates the complete in silico to in vivo validation workflow for identifying environmental chemicals that disrupt nuclear receptor signaling:

Research Reagent Solutions for Validation Studies

Essential Materials for In Silico-to-In Vivo Workflows

Table 3: Key Research Reagents for Validation Studies

| Reagent/Resource | Function in Validation | Examples/Sources |

|---|---|---|

| Reference Materials | Benchmarking computational predictions | NIST Genome in a Bottle samples, CDC GeT-RM DNA [16] |

| Curated Variant Lists | Establishing "must-test" challenge sets | ACMG CFTR variants, GeT-RM/ClinGen actionable variants [16] |

| In Silico Mutagenesis Tools | Supplementing physical reference materials | Custom bioinformatics pipelines for FASTQ mutagenesis [16] |

| Structural Databases | Molecular docking and dynamics | Protein Data Bank (PDB), AlphaFold Protein Structure Database [18] [17] |

| Chemical Databases | Compound sourcing and characterization | Food Contact Chemicals Database, CoMPARA, PubChem [18] |

| High-Throughput Screening | Intermediate in vitro validation | Tox21 program, EPA CompTox Chemicals Dashboard [18] |

| Model Organisms | In vivo functional validation | Xenopus laevis, zebrafish, rodent disease models [18] [19] |

Bridging the in silico to in vivo gap requires systematic, multi-tiered validation frameworks that progress from computational predictions to biological function. The most successful approaches integrate computational predictions with experimental validation across multiple biological scales, as demonstrated by the RXR-activating compound case study where machine learning predictions successfully identified compounds with nanomolar potency in vivo [18]. As regulatory agencies increasingly accept in silico evidence [14] [15], establishing standardized validation protocols becomes essential for translating computational predictions into clinically relevant therapeutics. The workflows, experimental protocols, and reagent solutions presented in this guide provide researchers with a structured approach to demonstrating translational relevance, ultimately accelerating the development of safer, more effective treatments through computational drug discovery.

The integration of artificial intelligence (AI) into drug discovery has catalyzed a paradigm shift, moving from theoretical promise to tangible clinical impact. By mid-2025, the landscape is characterized by an exponential growth in the number of AI-derived drug candidates entering human trials, with over 75 such molecules reaching clinical stages by the end of 2024 [1]. This surge signals a new era where AI-powered discovery engines are compressing traditional timelines, expanding chemical and biological search spaces, and redefining the speed and scale of modern pharmacology [1]. The critical validation of these AI-generated hypotheses through rigorous biological functional assays remains the cornerstone of this transformation, ensuring that computational acceleration translates into safe and effective therapeutics.

Tracking the Surge: AI-Derived Candidates in the Clinic

The following table summarizes notable AI-derived drug candidates that have progressed to clinical stages, illustrating the diversity of approaches and therapeutic areas.

Table 1: Selected AI-Derived Drug Candidates in Clinical Stages

| Drug Candidate | Company/Platform | AI Approach | Therapeutic Area & Target | Latest Reported Clinical Stage (2024-2025) |

|---|---|---|---|---|

| ISM001-055 [1] | Insilico Medicine | Generative Chemistry | Idiopathic Pulmonary Fibrosis (TNIK inhibitor) | Phase IIa (Positive results reported) [1] |

| Zasocitinib (TAK-279) [1] | Schrödinger (originated by Nimbus) | Physics-Enabled ML Design | Immunology (TYK2 inhibitor) | Phase III [1] |

| GTAEXS-617 [1] | Exscientia | Generative Chemistry | Oncology (CDK7 inhibitor) | Phase I/II [1] |

| EXS-74539 [1] | Exscientia | Generative Chemistry | Oncology (LSD1 inhibitor) | Phase I (IND approval in 2024) [1] |

| DSP-1181 [1] | Exscientia (with Sumitomo Dainippon Pharma) | Generative Chemistry | Obsessive Compulsive Disorder | Phase I (First AI-designed drug to enter trials, 2020) [1] |

This clinical progress was achieved through record-breaking timelines. For instance, Insilico Medicine's fibrosis drug advanced from target discovery to Phase I in under 30 months, a fraction of the typical 5-year timeline for discovery and preclinical work [1] [20]. Exscientia has also reported design cycles approximately 70% faster and requiring 10-fold fewer synthesized compounds than industry norms [1].

Comparative Analysis of Leading AI Drug Discovery Platforms

Different AI platforms employ distinct technological strategies to navigate the discovery pipeline. The table below compares the approaches of leading companies that have successfully advanced candidates into the clinic.

Table 2: Comparison of Leading AI Drug Discovery Platforms and Their Clinical Output

| Company/Platform | Core AI Technology | Key Differentiators | Therapeutic Focus Examples | Reported Clinical-Stage Output |

|---|---|---|---|---|

| Exscientia [1] | Generative Chemistry, Automated Design | "Centaur Chemist" integrating AI with human expertise; patient-derived biology [1] | Oncology, Immuno-oncology, Inflammation [1] | Multiple clinical compounds designed in-house and with partners [1] |

| Insilico Medicine [1] | Generative Chemistry, Target Discovery | End-to-end AI platform from target discovery to lead optimization [1] | Idiopathic Pulmonary Fibrosis, Oncology [1] | AI-designed drug (ISM001-055) in Phase IIa trials [1] |

| Schrödinger [1] | Physics-Based Simulation & ML | Fuse physics-based methods with machine learning for molecular design [1] | Immunology, Oncology [1] | TYK2 inhibitor (Zasocitinib) in Phase III trials [1] |

| BenevolentAI [1] [20] | Knowledge-Graph Driven Target Discovery | AI-powered analysis of vast scientific literature and data to propose novel targets and drugs [1] | Undisclosed | Platform used for rapid lead optimization; partners have advanced candidates [20] |

| Recursion [1] | Phenomics-First Screening | High-content cellular phenotyping with AI-driven pattern recognition [1] | Oncology, Rare Diseases [1] | Multiple candidates in clinical stages; merged with Exscientia in 2024 [1] |

The 2024 merger of Recursion and Exscientia exemplifies a strategic trend to create integrated "AI drug discovery superpowers," combining Recursion's extensive phenomic data with Exscientia's automated precision chemistry [1].

Validating AI Candidates: Core Experimental Methodologies

The transition from in silico predictions to viable clinical candidates hinges on experimental validation. AI-generated hypotheses must be confirmed through well-established functional assays that provide direct, measurable evidence of biological activity, target engagement, and safety.

Target Identification and Validation

AI platforms leverage large knowledge graphs to propose novel drug targets. These computational predictions require wet-lab confirmation to establish their role in disease mechanisms [4] [21].

Key Experimental Protocols:

- Genetic Modulation: Techniques like CRISPR/Cas9-mediated knock-out (KO) or siRNA-mediated knock-down (KD) are used in disease-relevant cellular models (e.g., primary cells, iPSCs) to validate if modulating the target produces the expected phenotypic effect [4].

- Expression Profiling: Assessing differential target expression in healthy versus diseased tissues (e.g., via RNA-seq) helps correlate the target with disease progression [4].

- Functional Cellular Assays: Cell-based assays measure downstream effects of target modulation, such as changes in proliferation, apoptosis, or pathway activation (e.g., calcium signaling, reporter gene assays) [4].

Candidate Screening and Optimisation

For AI-designed small molecules or antibodies, the primary validation involves assessing binding, potency, and specificity.

Key Experimental Protocols:

- Surface Plasmon Resonance (SPR): A gold-standard biophysical assay for quantifying binding affinity (KD), kinetics (kon, koff), and specificity of candidate molecules (e.g., antibodies, small molecules) to their purified targets [22].

- High-Throughput Screening (HTS): AI-prioritized compound libraries are screened in automated, cell-based or biochemical assays to confirm biological activity and determine IC50/EC50 values [1] [20].

- High-Content Imaging and Phenotypic Screening: Platforms like Recursion's use automated microscopy to capture multichannel images of treated cells. AI then analyzes the resulting morphological "phenoprints" to infer mechanism of action and detect off-target effects [1] [4].
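
The SPR parameters listed above are linked by a simple relationship: for a 1:1 binding model, the equilibrium dissociation constant is the ratio of the dissociation and association rate constants, KD = koff / kon. The sketch below applies it to hypothetical rate constants; the function name and the example values are assumptions made for illustration.

```python
def dissociation_constant(k_on: float, k_off: float) -> float:
    """Return KD in molar units for a 1:1 binding model.

    k_on  : association rate constant, in 1/(M*s)
    k_off : dissociation rate constant, in 1/s
    """
    if k_on <= 0:
        raise ValueError("k_on must be positive")
    return k_off / k_on


# Hypothetical kinetics for an AI-designed binder (illustration only).
kd_molar = dissociation_constant(k_on=1.0e5, k_off=1.0e-3)
kd_nanomolar = kd_molar * 1e9
print(f"KD = {kd_nanomolar:.1f} nM")
```

Two candidates with the same KD can differ substantially in kon and koff, which is one reason kinetic readouts are reported alongside affinity.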

Therapeutic Antibody and Biologics Validation

AI is particularly transformative for biologics discovery, as demonstrated by platforms like Jura Bio's VISTA, which generates massive-scale, AI-ready functional datasets for antibody and CAR-T development [23].

Key Experimental Protocols:

- Engineered Cell-Based Binding Assays: The VISTA platform delivers designed antibody sequences (e.g., scFvs) into human cells and tests binding against an array of DNA-barcoded targets and off-targets simultaneously. Single-cell sequencing reads out the sequence and its functional binding profile, creating a rich training dataset for AI models [23].

- Epitope Binning and Specificity Screening: For challenging targets like intracellular oncoproteins presented on HLA (e.g., PRAME, MAGE-A4), assays must demonstrate that TCR-mimic (TCRm) antibodies are highly specific to the peptide-HLA complex, with minimal off-target binding to other pHLAs [23].

The Scientist's Toolkit: Essential Reagents and Assays

Successful validation of AI-derived candidates relies on a suite of established and emerging research tools.

Table 3: Key Research Reagent Solutions for Validating AI-Derived Candidates

| Reagent / Assay Solution | Primary Function in Validation | Key Application Example |

|---|---|---|

| CRISPR/Cas9 Reagents [4] | Target gene knock-out to establish causal link between target and disease phenotype. | Functional validation of novel AI-predicted targets in iPSC-derived cells [4]. |

| siRNA/shRNA Libraries [4] | Target gene knock-down for high-throughput functional genomics screening. | Rapid validation of multiple AI-proposed targets in parallel [4]. |

| Surface Plasmon Resonance (SPR) Kits [22] | Label-free, quantitative analysis of binding affinity and kinetics. | Confirmatory testing of AI-designed antibody-antigen or small molecule-target interactions [22]. |

| Multiplexed Immunofluorescence Kits [4] | High-content imaging to capture complex phenotypic changes in cells. | Used in phenomic screening platforms (e.g., Recursion) to generate data for AI analysis [4]. |

| Engineered Cell Lines [23] | Provide a human cellular context for testing biologics (e.g., antibodies, CARs). | Jura Bio's VISTA system uses engineered human cells to test scFv binding at massive scale [23]. |

| Multi-Electrode Array (MEA) Platforms [4] | Measure functional electrical activity in neurons or cardiomyocytes. | Critical for neurotoxicity or cardiotoxicity screening of AI-designed compounds [4]. |

The surge of AI-derived candidates into clinical stages is a definitive marker of a technological revolution in drug discovery. The data from 2024-2025 demonstrate that AI platforms can generate clinical candidates at an unprecedented pace. However, the integration of AI with high-quality, massively scaled functional data is what ultimately de-risks the journey from digital design to clinical reality [23]. As the field matures, the focus will increasingly shift toward optimizing this human-AI collaboration, improving the explainability of AI models, and navigating the evolving regulatory landscape for AI-derived therapeutics [1] [24]. The continued synergy between computational power and robust experimental biology promises to deliver a new generation of precision medicines to patients faster than ever before.

The Validation Toolbox: Key Functional Assays for Confirming AI-Generated Hits

In modern drug discovery, particularly following the AI-driven identification of drug candidates, confirming that a compound physically engages its intended target in a physiologically relevant context is a critical step. The Cellular Thermal Shift Assay (CETSA) has emerged as a powerful, label-free biophysical technique that directly measures drug-target engagement in intact cells and tissues [25]. Its principle is based on ligand-induced thermal stabilization, where a drug bound to its target protein enhances the protein's thermal stability, reducing its susceptibility to denaturation and precipitation under heat stress [26] [25]. Unlike traditional methods that require chemical modification of compounds or work with purified proteins, CETSA operates in native cellular environments, providing a bridge between computational predictions and biological reality, and offering functional validation for AI-generated drug candidates [25].

CETSA in the Landscape of Target Engagement Assays

CETSA is one of several label-free methods developed to overcome the limitations of traditional affinity-based approaches. The following table provides a comparative overview of CETSA against other key techniques.

Table 1: Comparison of Label-Free Target Engagement Methods

| Method | Sensitivity | Throughput | Application Scope | Key Advantages | Major Limitations |

|---|---|---|---|---|---|

| CETSA | High (thermal stabilization) [25] | Medium (Western Blot) to High (MS/HTS) [25] [27] | Intact cells, target engagement, off-target effects [25] | Operates in native cellular environments; detects membrane proteins; suitable for diverse modalities [26] [25] | Requires protein-specific antibodies for WB; limited to soluble proteins in HTS formats [25] |

| DARTS | Moderate (protease-dependent) [25] | Low to Medium [25] | Cell lysates, purified proteins, novel target discovery [25] | Label-free; no compound modification; cost-effective [25] | Sensitivity depends on protease choice; challenges with low-abundance targets [25] |

| SPROX | High (domain-level stability shifts) [25] | Medium to High [25] | Lysates, weak binders, domain-specific interactions [25] | Provides binding site information via methionine oxidation [25] | Limited to methionine-containing peptides; requires MS expertise [25] |

| Affinity-Based (AfBPP) | High (if reagents are available) [25] | Low [25] | Purified proteins, lysates, validated target analysis [25] | High specificity; compatible with MS or fluorescence [25] | Requires compound modification (e.g., biotinylation); may alter binding properties [25] |

A key differentiator for CETSA is its unique ability to confirm target engagement in intact cells, making it ideal for assessing drug action under physiological conditions, studying membrane proteins, and understanding complex cellular events like drug resistance [25]. Its compatibility with high-throughput MS formats enables proteome-wide screening for both on-target and off-target interactions [26].

Core Principles and Key Experimental Protocols of CETSA

The fundamental CETSA protocol involves heating drug-treated and control samples across a temperature gradient. In intact cells, drug-bound target proteins remain stable and soluble, while unbound proteins denature and aggregate. Cells are lysed, and the soluble fraction is analyzed to quantify the remaining stable protein [25].

The following diagram illustrates the core CETSA workflow, from sample preparation to data analysis.

Detailed Methodologies

- Sample Preparation and Heating: Live cells or tissue samples are treated with the drug or a control vehicle. The samples are then aliquoted and heated to a range of precisely controlled temperatures (e.g., from 37°C to 65°C) [25].

- Cell Lysis and Soluble Protein Isolation: Post-heating, cells are lysed, typically through multiple freeze-thaw cycles (e.g., rapid freezing in liquid nitrogen followed by thawing at 37°C). The soluble proteins are separated from the denatured and aggregated proteins by high-speed centrifugation or filtration [25].

- Quantification and Data Analysis: The remaining soluble target protein in the supernatant is quantified. This can be done via:

- Western Blot (WB-CETSA): Used for hypothesis-driven validation of specific, known target proteins. It requires a specific antibody but is widely accessible [25].

- Mass Spectrometry (MS-CETSA or TPP): Enables unbiased, proteome-wide profiling of thermal stability, allowing for the simultaneous quantification of thousands of proteins and the discovery of novel targets or off-target effects [25] [28].

With either readout, the data are used to generate thermal melting curves, from which the protein melting temperature (Tm) is determined. A positive shift in Tm (ΔTm) in the drug-treated sample indicates successful target engagement [26] [25].
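
To make the Tm and ΔTm readout concrete, the sketch below estimates the melting temperature as the point where the normalized soluble fraction falls to 0.5, using linear interpolation between adjacent temperatures. This is a deliberately simple stand-in: published analyses usually fit a sigmoidal model to the curve, and the soluble-fraction values here are hypothetical.

```python
def melting_temperature(temps, soluble_fraction, threshold=0.5):
    """Estimate Tm as the temperature where the soluble fraction crosses `threshold`.

    `temps` must be in ascending order and `soluble_fraction` normalized so that
    the lowest temperature is approximately 1.0.
    """
    points = list(zip(temps, soluble_fraction))
    for (t0, f0), (t1, f1) in zip(points, points[1:]):
        if f0 >= threshold > f1:
            # Linear interpolation between the two bracketing measurements.
            return t0 + (f0 - threshold) * (t1 - t0) / (f0 - f1)
    raise ValueError("melting curve does not cross the threshold")


# Hypothetical normalized soluble-protein signal (illustration only).
temps_c = [37, 41, 45, 49, 53, 57, 61, 65]
vehicle = [1.00, 0.98, 0.90, 0.60, 0.30, 0.10, 0.04, 0.02]
treated = [1.00, 0.99, 0.96, 0.88, 0.65, 0.35, 0.12, 0.03]

tm_vehicle = melting_temperature(temps_c, vehicle)
tm_treated = melting_temperature(temps_c, treated)
delta_tm = tm_treated - tm_vehicle
print(f"Tm vehicle = {tm_vehicle:.1f} C, Tm treated = {tm_treated:.1f} C, dTm = {delta_tm:.1f} C")
```

A positive `delta_tm` corresponds to the ligand-induced stabilization described above; whether a given shift is meaningful depends on replicate variability, which this sketch does not model.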

Advanced CETSA Derivative Protocols

- Isothermal Dose-Response CETSA (ITDR-CETSA): This variant uses a fixed temperature (close to the protein's Tm) and a gradient of drug concentrations. It generates a dose-response curve, allowing for the calculation of the half-maximal effective concentration (EC50), which provides a quantitative measure of drug-binding affinity and potency in cells [25].

- Two-Dimensional Thermal Proteome Profiling (2D-TPP): This comprehensive approach combines a temperature range (TPP-TR) with a compound concentration range (TPP-CCR). It provides a high-resolution view of drug-protein interactions, simultaneously revealing binding dynamics and affinity [25].

- CETSA-Luminex Integrated Platform: An innovative hybrid platform that combines CETSA with Luminex xMAP bead-based technology. It allows for rapid, high-throughput multiplexed screening of drug interactions with dozens of pre-selected protein targets (e.g., cytokines) in a single well, bridging the gap between wide proteomic screens and targeted validation [27].
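
For the isothermal dose-response variant described above, the EC50 is obtained by fitting a dose-response model to the stabilized soluble fraction measured across drug concentrations. The following sketch fits a Hill curve with the slope fixed at 1 and the response normalized between 0 and 1, using a simple least-squares search over a log-spaced grid. The fixed slope, the grid search, and the data points are simplifying assumptions for illustration; dedicated curve-fitting tools would normally estimate all parameters.

```python
import math


def hill_response(conc: float, ec50: float, slope: float = 1.0) -> float:
    """Normalized Hill response (0 to 1) at a positive concentration."""
    return 1.0 / (1.0 + (ec50 / conc) ** slope)


def fit_ec50(concs, responses, n_grid: int = 400) -> float:
    """Return the EC50 minimizing squared error over a log-spaced grid.

    The grid spans the tested concentration range; concentrations must be > 0.
    """
    log_lo, log_hi = math.log10(min(concs)), math.log10(max(concs))
    best_sse, best_ec50 = float("inf"), None
    for i in range(n_grid + 1):
        candidate = 10 ** (log_lo + (log_hi - log_lo) * i / n_grid)
        sse = sum((hill_response(c, candidate) - r) ** 2 for c, r in zip(concs, responses))
        if sse < best_sse:
            best_sse, best_ec50 = sse, candidate
    return best_ec50


# Hypothetical ITDR data: concentration in micromolar, normalized stabilization.
concs_um = [0.01, 0.03, 0.1, 0.3, 1, 3, 10, 30, 100]
stabilization = [0.01, 0.03, 0.09, 0.23, 0.50, 0.75, 0.91, 0.97, 0.99]

ec50_um = fit_ec50(concs_um, stabilization)
print(f"Estimated EC50 = {ec50_um:.2f} uM")
```

Because the measurement is made in cells, the fitted EC50 reflects permeability and intracellular availability as well as intrinsic affinity, so it need not match a KD obtained with purified protein.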

Validating AI-Driven Discoveries with CETSA: A Case Study

The integration of AI-based screening with CETSA validation is a powerful paradigm in modern drug discovery. A 2025 study exemplifies this approach, where a deep learning model (TransformerCPI) was used to screen over 1,100 natural compounds from a Chinese herb library for binding to the pan-cancer marker CD133 [29]. The AI identified two candidates, Polyphyllin V (PP10) and Polyphyllin H (PP24) [29].

Despite their structural similarity, biological validation revealed distinct mechanisms. CETSA and other binding assays confirmed that both compounds bind CD133 directly, providing the foundational validation for the AI prediction. Subsequent mechanistic studies showed that the two compounds act on different downstream pathways: PP10 suppressed the PI3K-AKT pathway, while PP24 inhibited the Wnt/β-catenin pathway [29]. This case highlights CETSA's role in confirming AI-predicted targets and underscores that, while AI can identify binders, biological assays are essential for elucidating complex downstream mechanisms.

Signaling Pathways of AI-Identified Compounds

The diagram below summarizes the distinct mechanisms of action for the two AI-identified compounds, Polyphyllin V and H, as validated through biological assays.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of CETSA relies on specific reagents and instruments. The following table details key solutions required for a typical MS-CETSA workflow.

Table 2: Essential Research Reagent Solutions for CETSA

| Item | Function/Application | Key Considerations |

|---|---|---|

| Appropriate Cell Line or Tissue | The biological system for studying target engagement in a native environment. | Selection is critical; should express the target protein and reflect the physiological context of interest [25] [28]. |

| Compound of Interest | The drug candidate whose target engagement is being measured. | Solubility, stability, and cell permeability must be optimized for the cellular assay [25]. |

| Lysis Buffer | To disrupt cells and release proteins after heating, while preserving the stability state. | Must be compatible with downstream quantification (MS or WB); often contains protease and phosphatase inhibitors [25]. |

| Protein Quantification Platform | To measure the remaining soluble protein post-heating. | MS-CETSA: Requires a high-resolution mass spectrometer and isobaric labeling tags (e.g., TMT) for multiplexing [25] [28]. WB-CETSA: Requires specific, high-quality antibodies against the target protein [25]. |

| Thermocycler or Heat Blocks | For precise and controlled heating of multiple samples across a temperature gradient. | Temperature accuracy and uniformity across samples are paramount for reproducible melting curves [25]. |

| Centrifuge | To separate soluble proteins from denatured aggregates after lysis. | Must maintain low temperature during centrifugation to prevent artifactual protein refolding or denaturation [25]. |

CETSA has firmly established itself as an indispensable tool for direct target engagement validation in physiologically relevant settings. Its unique ability to work in intact cells and tissues, combined with its label-free nature, provides a critical data layer that strengthens the drug discovery pipeline. As the field increasingly relies on AI for initial candidate screening, CETSA and its advanced derivatives offer the necessary biological functional validation to bridge the gap between in silico predictions and successful clinical outcomes, ultimately de-risking drug development and driving the discovery of novel therapeutics.

The pharmaceutical industry is undergoing a significant transformation in preclinical drug development, moving away from traditional models that often fail to faithfully recapitulate human-specific responses toward more physiologically relevant systems [30]. Patient-derived models, particularly organoids and advanced cell cultures, are emerging as powerful tools that integrate authentic human biology early in the drug discovery pipeline [31]. These technologies preserve patient-specific genetic, epigenetic, and phenotypic features, enabling more accurate prediction of therapeutic efficacy and safety while supporting the advancement of precision medicine [30].

This comparison guide objectively evaluates the performance of patient-derived model systems against conventional approaches, with particular emphasis on their role in validating AI-generated drug candidates through biological functional assays. We present structured experimental data, detailed methodologies, and analytical frameworks to assist researchers in selecting appropriate model systems for their specific applications in phenotypic screening.

Comparative Performance Analysis: Patient-Derived Models vs. Conventional Systems

Table 1: Performance comparison of different preclinical screening platforms

| Screening Platform | Physiological Relevance | Predictive Value for Clinical Response | Personalization Capacity | Throughput Potential | Technical Complexity |

|---|---|---|---|---|---|

| Patient-Derived Organoids (PDOs) | High (3D architecture, multiple cell types) | Moderate to High (depends on protocol standardization) | High (retain patient-specific features) | Moderate (improving with automation) | High (specialized expertise needed) |

| Patient-Derived Cell Cultures (PDCs) | Moderate (typically 2D, limited heterogeneity) | Moderate (correlation demonstrated in hematological cancers) | High (direct patient origin) | High (adaptable to HTS formats) | Moderate (standard cell culture techniques) |

| Traditional Cell Lines | Low (immortalized, simplified systems) | Low (poor clinical correlation documented) | None (non-patient specific) | Very High (well-established HTS) | Low (standardized protocols) |

| Animal Models | Variable (species-specific differences) | Variable (high false-positive rate in clinical translation) | Limited (humanized models possible) | Low (cost and time-intensive) | Moderate to High |

Table 2: Experimental validation metrics for drug response prediction in patient-derived models

| Model System | Correlation Metric | Performance Value | Experimental Context | Reference |
|---|---|---|---|---|
| PDC Recommender System | Spearman Correlation (all drugs) | 0.791 | GDSC1 dataset, 81 cell lines | [32] |
| PDC Recommender System | Hit Rate in Top 10 Predictions | 6.6/10 correct | Selective drug identification | [32] |
| Compressed Phenotypic Screening | Hit Identification Accuracy | Consistently identified compounds with largest effects | Pooled screening with computational deconvolution | [33] |
| KGDRP Framework | Cold-start Scenario Improvement | 12% increase in Spearman's Correlation | Integration of PDD and TDD data | [34] |

Experimental Protocols and Methodologies

Patient-Derived Organoid Generation and Screening

Core Protocol: Establishment of patient-derived organoids from tumor biopsies for high-content phenotypic screening [30] [31].

Tissue Acquisition and Processing: Obtain fresh tumor biopsies via core needle or surgical resection. Mechanically dissociate tissue into fragments <1 mm³ using surgical scalpels or gentle mechanical chopping. Enzymatically digest with collagenase/hyaluronidase solution (1-3 mg/mL) for 30-60 minutes at 37°C with gentle agitation.

Cell Culture and Organoid Formation: Embed tissue fragments in extracellular matrix (Matrigel or similar) droplets. Plate matrix-cell mixture in pre-warmed culture plates and polymerize for 20-30 minutes at 37°C. Overlay with organoid-specific medium containing niche factors (Wnt-3A, R-spondin, Noggin), growth factors (EGF, FGF-10), and small molecules (A83-01, SB202190).

Expansion and Passaging: Culture for 7-14 days with medium changes every 2-3 days. Passage at 70-90% confluence using mechanical disruption and enzymatic digestion. For biobanking, cryopreserve in freezing medium containing 10% DMSO using controlled-rate freezing.

High-Content Phenotypic Screening: Plate organoids in 384-well format using automated liquid handling systems. Treat with compound libraries (typically 1-10 µM concentration range) for 5-7 days. Fix with 4% PFA and stain with multiplexed fluorescent dyes for high-content imaging.

Image Acquisition and Analysis: Acquire images using high-throughput confocal microscopy. Process with AI-powered segmentation algorithms for organoid identification and morphological feature extraction. Quantify phenotypic responses including viability, morphology, and differentiation status.

Machine Learning-Based Drug Response Prediction

Core Protocol: Transfer learning approach for predicting drug responses in new patient-derived cell lines [32].

Historical Database Establishment: Collate historical drug sensitivity profiles across diverse patient-derived cell lines. Include full dose-response curves (0.1 nM - 100 µM) for 100-500 compounds. Curate dataset to include AUC, IC50, and Emax values with standardized normalization procedures.

Probing Panel Selection: Select 30-50 representative compounds as probing panel based on mechanism diversity and response variance. Optimize panel using feature selection algorithms to maximize predictive power for full compound library.

New Sample Screening: Screen new patient-derived cell line against probing panel only. Generate dose-response curves using cell viability assays (CellTiter-Glo or similar). Perform technical triplicates to ensure data quality.

Model Training and Prediction: Train random forest model (50 trees, default parameters) using historical database. Use probing panel responses from new sample as input features. Predict responses across full compound library for the new sample.

Experimental Validation: Validate top 10-30 predicted hits experimentally. Compare prediction accuracy using Spearman correlation and hit identification rates in top-ranked compounds.
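The two validation metrics named in the final step can be sketched in a few lines of plain Python. This is a minimal illustration, not the implementation used in [32]: the function names are hypothetical, the response values are invented for demonstration, and the hit definition (overlap between the k compounds predicted most sensitive and the k measured most sensitive, with lower values meaning greater sensitivity) is an assumption, since the protocol above does not specify how a correct hit is scored.

```python
from typing import Sequence


def average_ranks(values: Sequence[float]) -> list[float]:
    """Return 1-based ranks, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to cover the run of tied values starting at position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman correlation, computed as the Pearson correlation of ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5


def top_k_hit_rate(predicted: Sequence[float], measured: Sequence[float], k: int) -> float:
    """Fraction of the k predicted-most-sensitive compounds that are also
    among the k measured-most-sensitive (assumed hit definition)."""
    idx = range(len(predicted))
    # Assumption: lower value (e.g. viability AUC) means higher sensitivity.
    top_pred = set(sorted(idx, key=lambda i: predicted[i])[:k])
    top_meas = set(sorted(idx, key=lambda i: measured[i])[:k])
    return len(top_pred & top_meas) / k


# Illustrative (made-up) predicted vs. measured responses for six compounds.
predicted_auc = [0.2, 0.5, 0.9, 0.4, 0.7, 0.1]
measured_auc = [0.3, 0.4, 0.8, 0.6, 0.7, 0.2]
print(spearman(predicted_auc, measured_auc))
print(top_k_hit_rate(predicted_auc, measured_auc, k=3))
```

Ranking before correlating makes the comparison insensitive to the scale of the predicted values, which is useful when the model's outputs are not calibrated to the assay readout.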

Compressed Phenotypic Screening with Pooled Perturbations

Core Protocol: Pooling approach to increase throughput of phenotypic screens with high-content readouts [33].

Pool Design: Combine N perturbations into unique pools of size P, ensuring each perturbation appears in R distinct pools. For 316-compound library, implement 10-fold compression with 32 pools.

Screening Execution: Treat cells with pooled compounds at standardized concentration (typically 1 µM). Incubate for a predetermined period (e.g., 24 hours for acute responses). Fix and stain with Cell Painting cocktail: Hoechst 33342 (nuclei), concanavalin A-AlexaFluor 488 (ER), MitoTracker Deep Red (mitochondria), phalloidin-AlexaFluor 568 (F-actin), wheat germ agglutinin-AlexaFluor 594 (Golgi/plasma membrane), SYTO14 (nucleoli/RNA).

Image Acquisition and Feature Extraction: Acquire 5-channel images using high-content imaging system. Segment individual cells and extract 886 morphological features. Normalize data using plate-based controls and batch correction algorithms.

Computational Deconvolution: Apply regularized linear regression with permutation testing to infer individual compound effects from pooled measurements. Calculate Mahalanobis distance between control and perturbation vectors to quantify effect size.

Hit Identification: Cluster compounds based on morphological profiles. Validate top hits from compressed screening in conventional individual compound assays.
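The pool design and deconvolution steps above can be sketched as follows. This is a simplified illustration under stated assumptions rather than the method of [33]: the function names are hypothetical; a pool size of 30 with 3 replicates per compound is chosen only because it reproduces the 32 pools quoted for a 316-compound library at 10-fold compression; the readout is a single control-subtracted number per pool rather than an 886-feature vector scored by Mahalanobis distance; and ridge regression stands in for whichever regularized regression a given study uses. The data are simulated.

```python
import numpy as np


def design_pools(n_compounds, pool_size, replicates, rng):
    """Assign every compound to `replicates` distinct pools.

    Returns a binary design matrix of shape (n_pools, n_compounds).
    """
    n_pools = (n_compounds * replicates + pool_size - 1) // pool_size
    design = np.zeros((n_pools, n_compounds))
    for compound in range(n_compounds):
        # Fill the emptiest pools first (random tie-break) to balance sizes.
        load = design.sum(axis=1) + rng.random(n_pools)
        chosen = np.argsort(load)[:replicates]
        design[chosen, compound] = 1.0
    return design


def ridge_deconvolve(design, readout, lam):
    """Estimate per-compound effects from pooled readouts (ridge regression)."""
    n = design.shape[1]
    gram = design.T @ design + lam * np.eye(n)
    return np.linalg.solve(gram, design.T @ readout)


def permutation_pvalues(design, readout, lam, n_perm, rng):
    """Empirical p-values: how often shuffled readouts give an effect at
    least as large in magnitude as the observed one."""
    observed = np.abs(ridge_deconvolve(design, readout, lam))
    exceed = np.zeros_like(observed)
    for _ in range(n_perm):
        null = np.abs(ridge_deconvolve(design, rng.permutation(readout), lam))
        exceed += null >= observed
    return (exceed + 1) / (n_perm + 1)


rng = np.random.default_rng(0)
design = design_pools(n_compounds=316, pool_size=30, replicates=3, rng=rng)

# Simulated ground truth: a few strong compounds among mostly inert ones.
true_effect = np.zeros(316)
true_effect[[5, 120, 250]] = [4.0, -3.0, 5.0]
readout = design @ true_effect + rng.normal(scale=0.2, size=design.shape[0])

estimate = ridge_deconvolve(design, readout, lam=1.0)
pvals = permutation_pvalues(design, readout, lam=1.0, n_perm=200, rng=rng)
ranked = np.argsort(-np.abs(estimate))[:10]  # candidate hits to re-test
print(design.shape, ranked)
```

Because there are far fewer pools than compounds, the system is underdetermined and the estimates are only a ranking aid. This is why the protocol ends by re-testing top hits in conventional single-compound assays.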

Visualizing Integration Workflows

AI Validation via Phenotypic Screening - Workflow integrating AI-generated candidates with patient-derived models for functional validation.

Compressed Phenotypic Screening - Experimental and computational workflow for pooled screening with deconvolution.

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 3: Key research reagent solutions for patient-derived model screening

| Reagent/Platform | Function | Application Notes |
|---|---|---|
| Matrigel/ECM Matrices | Provides 3D scaffolding for organoid growth | Basement membrane extract supporting polarized tissue structures; lot-to-lot variability requires qualification |
| Cell Painting Assay Kits | Multiplexed morphological profiling | 6-fluorophore system staining 8+ organelles; generates ~1,500 morphological features per cell |
| CellXpress.ai System | Automated organoid culture | Maintains consistent perfusion for large-scale organoid production (6-15 million per batch) |
| 3D Ready Organoids | Assay-ready organoid models | Pre-qualified for high-throughput screening; reduces protocol development time |
| CRISPR-Based Perturbation Systems | Functional genomic screening | Enables genetic validation of AI-predicted targets in human-relevant contexts |
| Multi-Omics Integration Platforms | Data integration and analysis | Combines transcriptomic, proteomic, and phenotypic data for mechanism elucidation |
| BioHG Knowledge Graphs | Biological network analysis | Integrates PPI, GO, pathway data for target prioritization [34] |

Patient-derived models represent a transformative approach for integrating human biological complexity early in drug discovery. The experimental data and methodologies presented in this guide demonstrate that organoids and advanced cell cultures provide superior physiological relevance compared to traditional systems, with machine learning frameworks further enhancing their predictive power for clinical responses [30] [32].

The convergence of patient-derived models, AI-generated candidates, and high-content phenotypic screening creates a powerful framework for validating therapeutic hypotheses in human-relevant systems before clinical investment. As regulatory agencies increasingly accept these human-relevant models [31], their strategic implementation will be crucial for reducing attrition rates and advancing precision medicine.

Researchers should select model systems based on their specific application needs, considering the trade-offs between physiological complexity, throughput capacity, and technical feasibility outlined in this comparison guide. The continued standardization and automation of these platforms will further enhance their reliability and broad adoption across the pharmaceutical industry.

The Design-Make-Test-Analyze (DMTA) cycle is the core iterative framework of modern medicinal chemistry, driving the optimization of drug candidates from initial hits to clinical development candidates [35]. In traditional drug discovery, this process is often hampered by sequential execution, data integration barriers, and resource coordination inefficiencies, typically resulting in cycle times of several months [35]. The integration of Artificial Intelligence (AI) is fundamentally transforming this workflow, compressing timelines and enhancing the quality of resulting candidates [1]. AI-guided DMTA cycles accelerate lead optimization by employing generative AI for molecular design, automation and AI planning for synthesis, high-throughput screening for testing, and machine learning for data analysis [26] [36]. This guide provides an objective comparison of leading AI platforms and experimental approaches, focusing on their validation through biological functional assays, a critical step for establishing translational confidence in AI-generated drug candidates.

Platform Performance Comparison

The following tables compare the performance and functional validation strategies of major AI-driven drug discovery platforms that have advanced candidates into clinical development.

Table 1: Clinical-Stage AI Drug Discovery Platforms (2024-2025)

| Platform/Company | Core AI Approach | Lead Clinical Candidate(s) | Therapeutic Area | Reported Discovery Timeline | Clinical Stage (as of 2025) |
|---|---|---|---|---|---|
| Insilico Medicine | Generative Chemistry & Target Discovery | ISM001-055 (TNIK Inhibitor) | Idiopathic Pulmonary Fibrosis | ~18 months (Target to Phase I) [1] | Phase IIa (Positive Results) [1] |
| Exscientia | Generative AI & Automated Design | DSP-1181; EXS-21546; GTAEXS-617 | Oncology, Immunology [1] | ~70% faster design cycles; 10x fewer compounds [1] | Phase I/II (Pipeline Prioritization in 2023) [1] |
| Schrödinger | Physics-Enabled ML Design | Zasocitinib (TAK-279) | Immunology (TYK2 Inhibition) [1] | Information Missing | Phase III [1] |
| Recursion | Phenomics-First AI | Multiple (Integrated with Exscientia post-merger) [1] | Oncology, Rare Disease [1] | Information Missing | Phase I/II [1] |
| BenevolentAI | Knowledge-Graph Target Discovery | Information Missing | Information Missing | Information Missing | Information Missing |

Table 2: Comparative Analysis of AI-Driven DMTA Acceleration

| Performance Metric | Traditional DMTA | AI-Accelerated DMTA | Key Supporting Data |
|---|---|---|---|
| Cycle Time (Design → Analyze) | Several months per cycle [35] | Weeks per cycle [26] | Hit-to-Lead phase compressed from months to weeks [26] |
| Compound Design Efficiency | High fraction of proposed compounds are not "drug-like" [36] | High success rate in generating drug-like candidates [36] | Eli Lilly's generative AI output 100% drug-like compounds vs. 1% with prior methods [36] |
| Synthesis Efficiency | Labor-intensive, low-throughput | AI-planned routes and automated execution | Exscientia reports 10x fewer synthesized compounds needed [1] |
| Target Validation Integration | Often separate from main cycle | Integrated functional validation (e.g., CETSA) | CETSA used for quantitative, in-cell target engagement [26] |
| Success Rate in Clinical Translation | High attrition rate (~90% failure) [37] | To be determined (Most candidates in early trials) [1] | Multiple AI-derived molecules in clinical stages, but none yet approved [1] |

Experimental Protocols for Validating AI-Generated Candidates

The credibility of AI-generated drug candidates hinges on rigorous validation through biologically relevant functional assays. The following protocols are critical for confirming predicted mechanisms of action.

Protocol 1: Cellular Thermal Shift Assay (CETSA) for Target Engagement

CETSA is a cornerstone functional assay that measures drug-target binding in intact cells, bridging the gap between computational prediction and cellular efficacy [26].

- Objective: To confirm direct, physical engagement between an AI-predicted drug candidate and its intended protein target within a physiologically relevant cellular environment.

- Materials:

- Cell line expressing the target protein (endogenous or engineered)

- AI-generated drug candidate (lyophilized powder, ≥95% purity)

- Vehicle control (e.g., DMSO)

- Thermal cycler (e.g., PCR machine)

- Lysis buffer (with protease and phosphatase inhibitors)

- Centrifugation equipment

- Target protein detection method (e.g., Western Blot, ELISA, or High-Resolution Mass Spectrometry)

- Procedure:

- Cell Treatment: Treat two aliquots of cells with either the candidate drug or vehicle control for a predetermined time (e.g., 1-2 hours).

- Heat Challenge: Subject the cell aliquots to a range of elevated temperatures (e.g., 45-65°C) for 3-5 minutes in a thermal cycler.

- Cell Lysis: Lyse the heat-challenged cells using a detergent-free buffer.

- Protein Solubility Separation: Centrifuge the lysates at high speed (e.g., 20,000 x g) to separate the soluble (non-denatured) protein from the insoluble (aggregated) protein.