Focused vs. Diverse Screening Libraries: A Strategic Guide for Hit Identification in Drug Discovery

Abstract

Selecting the optimal screening library is a critical, early-stage decision in drug discovery that significantly impacts project timelines, costs, and success. This article provides a comprehensive comparison of focused and diverse screening libraries for researchers and drug development professionals. It explores the foundational principles of both strategies, details their design methodologies and practical applications, and offers solutions for common optimization challenges. By synthesizing current data on performance validation and hit rates, this guide delivers actionable insights to help scientists align their library selection with specific project goals, from novel target exploration to lead optimization.

Core Concepts: Defining Focused and Diverse Library Strategies

What is a Focused Library? Targeting Protein Families and Known Pharmacophores

In the quest for new therapeutics, drug discovery teams are consistently challenged with optimizing their initial screening strategies to efficiently identify high-quality chemical starting points. While high-throughput screening (HTS) of vast, diverse compound libraries is a mainstay in the industry, the use of focused libraries—collections designed around specific protein targets or pharmacophores—has emerged as a powerful strategy to increase screening efficiency and hit rates. This guide provides an objective comparison of focused and diverse library approaches, detailing the design methodologies, experimental protocols, and performance data that define their utility in modern drug discovery.

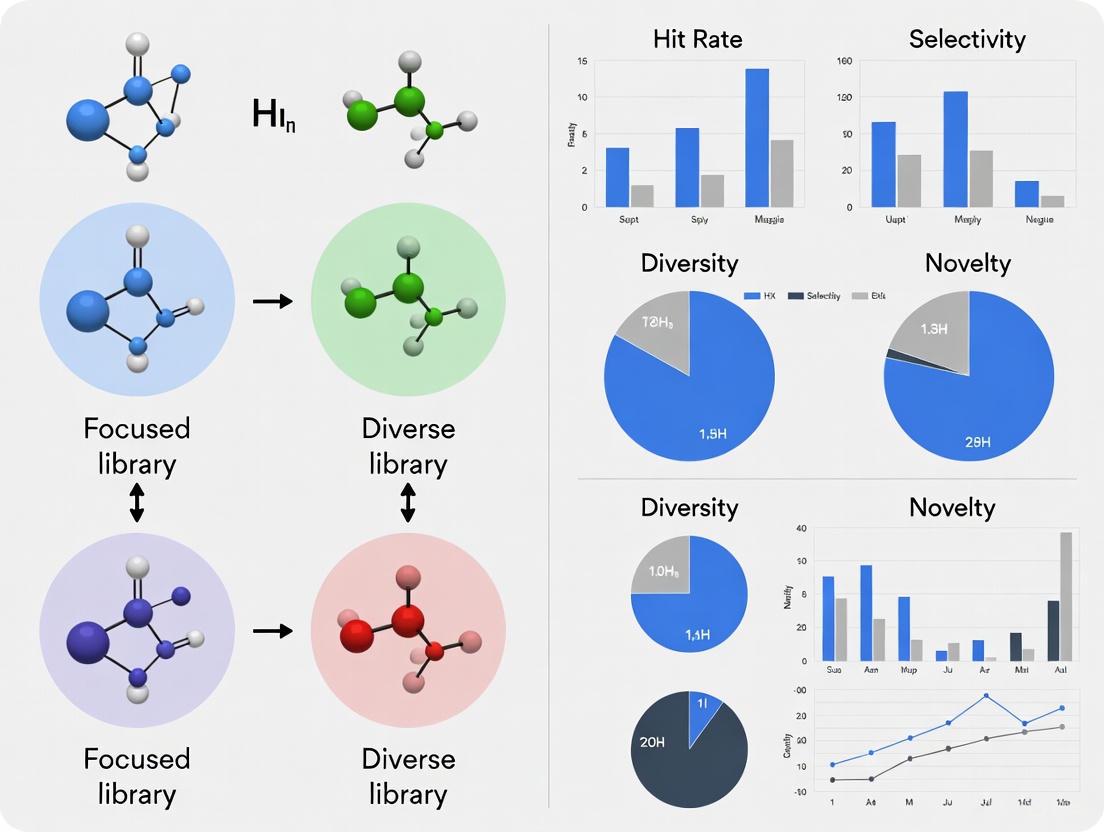

Focused vs. Diverse Libraries: A Strategic Comparison

Focused and diverse libraries serve complementary roles in the drug discovery pipeline. The table below summarizes their core distinctions and strategic applications.

| Feature | Focused Library | Diverse Library |
|---|---|---|
| Design Principle | Designed or assembled with a specific protein target or protein family in mind [1]. | Designed to cover a broad area of chemical space with minimal redundancy [2]. |
| Typical Size | Smaller, often containing 100-500 compounds for a given chemotype [1]. | Large, often containing 100,000 to over 1 million compounds [3] [2]. |
| Primary Application | Targeted screening when structural or ligand data for the target is available [1] [4]. | Initial screening for novel targets with little prior knowledge; phenotypic screening [3] [2]. |
| Key Advantages | Higher hit rates; discernable initial structure-activity relationships (SAR); reduced hit-to-lead times [1]. | Broad exploration of chemical space; potential for serendipitous, novel hits [2]. |
| Informed By | Target structural data, chemogenomic models, or known ligand properties [1]. | Molecular descriptors, physicochemical properties, and scaffold diversity analysis [2]. |

The fundamental premise of screening a focused library is that fewer compounds need to be screened to obtain hits, and these hits often exhibit clearer structure-activity relationships, facilitating rapid follow-up [1].

Design and Synthesis of Focused Libraries

The construction of a focused library is a rational process that leverages existing knowledge. The three primary design pathways are outlined below.

Structure-Based Design

When a high-resolution protein structure is available, computational methods like molecular docking are used to design scaffolds and select substituents that complement the binding site. For example, kinase-focused libraries often feature scaffolds with a hydrogen bond donor-acceptor pair to mimic ATP binding in the hinge region, with side chains designed to access additional selectivity pockets [1].

Ligand-Based Design

In the absence of structural data, known active ligands can serve as templates. Techniques like pharmacophore modeling and similarity searching are used to identify novel compounds that share essential features with known actives, a process known as "scaffold hopping" [1] [4]. The SpotXplorer approach exemplifies this by designing minimal fragment libraries that maximize coverage of experimentally confirmed binding pharmacophores derived from protein-fragment complexes in the Protein Data Bank (PDB) [5].

Chemogenomic Design

For target families like GPCRs and ion channels, where structural data may be scarce but sequence and mutagenesis data are abundant, models can be built to predict the properties of binding sites across the entire family. This allows for the design of libraries that can interact with multiple related targets [1].

Key Experimental Protocols and Validation

The value of a focused library is ultimately determined through experimental validation. Below are detailed protocols and data from seminal studies.

Case Study 1: Validating a Pharmacophore-Focused Fragment Library

The SpotXplorer0 pilot library of 96 fragments was designed to cover 425 non-redundant binding pharmacophores identified from PDB analysis [5].

- Experimental Protocol:

  - Library Design: Fragments were selected from commercial sources to maximally cover the identified pharmacophores, with an optimization algorithm ensuring diversity and coverage.

  - Biochemical Screening: The library was screened against a panel of targets including GPCRs (5-HT1A, 5-HT6, 5-HT7 receptors) and proteases (Factor Xa, thrombin) using cell-based radioligand binding and chromogenic assays.

  - Validation Metric: The efficiency was measured by calculating the percentage of known pharmacophores for each target that were covered by the fragment hits identified.

- Performance Data: The screening successfully identified multiple diverse fragment hits for each target. Retrospective analysis showed that the hits contained, on average, 70% of the known pharmacophores for these targets, validating the library's design principle [5].
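
The coverage idea behind this case study can be sketched in a few lines. The example below is a minimal illustration, not the optimization algorithm used for SpotXplorer0: it assumes each fragment has already been annotated with the set of pharmacophores it matches, and it swaps in a simple greedy set-cover heuristic. The fragment names, pharmacophore labels, and the functions `greedy_cover` and `coverage_percent` are all hypothetical.

```python
def greedy_cover(fragment_features, library_size):
    """Greedy set cover: repeatedly add the fragment that covers the most
    pharmacophores not yet covered, until the size limit is reached or no
    fragment adds anything new."""
    covered = set()
    chosen = []
    remaining = dict(fragment_features)
    while remaining and len(chosen) < library_size:
        # sorted() makes tie-breaking deterministic (alphabetical order)
        best = max(sorted(remaining), key=lambda name: len(remaining[name] - covered))
        gain = remaining[best] - covered
        if not gain:
            break
        chosen.append(best)
        covered |= gain
        del remaining[best]
    return chosen, covered


def coverage_percent(covered, reference):
    """Percentage of the reference pharmacophores present in `covered`."""
    return 100.0 * len(covered & reference) / len(reference)


# Hypothetical annotations: fragment -> pharmacophores it can present
fragments = {
    "frag_A": {"P1", "P2", "P3"},
    "frag_B": {"P3", "P4"},
    "frag_C": {"P5"},
    "frag_D": {"P1", "P2"},
}

chosen, covered = greedy_cover(fragments, library_size=3)

# Hypothetical set of pharmacophores known for one target
known_for_target = {"P1", "P4", "P5", "P6"}
pct = coverage_percent(covered, known_for_target)

print(chosen)  # fragments selected for the library
print(pct)     # percent of the target's known pharmacophores covered
```

The same `coverage_percent` function can be applied to the pharmacophores found in confirmed hits rather than in the whole library, which is closer to the retrospective validation metric described above.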

Case Study 2: Chemoproteomic Screening for Glo1 Inhibitors

This study re-screened an existing focused library of 1,800 indole-containing molecules to find new ligands for glyoxalase 1 (Glo1) [6].

- Experimental Protocol:

  - Screening Method: Gel-based protein profiling using a photo-affinity indole probe. The technique identified molecules that could compete with the probe for binding to Glo1.

  - Hit-to-Lead: Structure optimization of the initial hits yielded a potent inhibitor (Molecule 9).

  - Cellular Validation: The inhibitor's activity was confirmed in cells, where it increased cellular methylglyoxal levels and suppressed osteoclast formation.

- Performance Data: The study exemplifies how chemical proteomics can exploit existing focused libraries to discover uncharacterized ligand-protein pairs, leading to a novel inhibitor with a confirmed mechanism of action [6].

Case Study 3: Barcode-Free Screening of Massive Libraries

A 2025 study introduced "Self-Encoded Libraries" as a breakthrough in affinity selection screening, allowing for the untagged screening of hundreds of thousands of small molecules [7].

- Experimental Protocol:

  - Library Pooling: A library of nearly 500,000 members was pooled in a single experiment.

  - Affinity Selection: The pool was exposed to immobilized target proteins (e.g., Carbonic Anhydrase IX, CAIX).

  - Hit Identification: Bound ligands were identified using advanced mass spectrometry (SIRIUS-COMET tool) that decodes hits based on their mass signature and fragmentation patterns, without DNA barcodes.

- Performance Data: The method successfully identified known and novel nanomolar binders for CAIX. Furthermore, it identified inhibitors for Flap Endonuclease 1 (FEN1), a target previously considered intractable for DNA-encoded library technology because the large DNA tag would interfere with its DNA-binding site [7]. This highlights a key technical advantage of tag-free methods for specific target classes.
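
To make the "mass signature" step concrete, the sketch below shows only the simplest part of such decoding: matching an observed m/z value against the expected [M+H]+ values of library members within a ppm tolerance. It is not the SIRIUS-COMET workflow; the compound names, masses, tolerance, and the function `match_by_mass` are illustrative assumptions.

```python
PROTON_MASS = 1.007276  # Da, mass added when forming an [M+H]+ ion


def ppm_error(observed, theoretical):
    """Signed mass error in parts per million."""
    return (observed - theoretical) / theoretical * 1e6


def match_by_mass(observed_mz, library, tolerance_ppm=5.0):
    """Return names of library members whose [M+H]+ m/z lies within
    `tolerance_ppm` of the observed value."""
    matches = []
    for name, monoisotopic_mass in library.items():
        theoretical_mz = monoisotopic_mass + PROTON_MASS
        if abs(ppm_error(observed_mz, theoretical_mz)) <= tolerance_ppm:
            matches.append(name)
    return sorted(matches)


# Hypothetical library: compound -> monoisotopic mass in Da
library = {
    "cmpd_001": 350.1630,
    "cmpd_002": 350.1632,  # nearly isobaric with cmpd_001
    "cmpd_003": 412.2001,
}

hits = match_by_mass(351.1703, library)
print(hits)  # more than one candidate can match a single precursor mass
```

In this toy case two nearly isobaric members match the same precursor mass, which is why a mass-only lookup is not enough in a pooled library and fragmentation patterns are needed to tell candidates apart.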

The Scientist's Toolkit: Key Reagents & Solutions

Successful implementation of focused library screening relies on specialized tools and reagents.

| Tool / Reagent | Function / Description | Example Use Case |
|---|---|---|
| Photo-affinity Probe [6] | A chemical probe containing a photoreactive group that covalently captures protein-ligand interactions upon irradiation. | Chemoproteomic competition profiling to identify binders from a focused library [6]. |
| Spectral Library [8] | A curated database of peptide spectra used to identify and quantify proteins in mass spectrometry-based proteomics. | Critical for Data-Independent Acquisition (DIA) analysis in single-cell proteomics and target engagement studies [8]. |
| Self-Encoded Library [7] | A massive small molecule library screened without DNA barcodes; hits are decoded via their intrinsic mass signature using MS/MS. | Ultra-high-throughput affinity selection screening for historically challenging targets like DNA-binding proteins [7]. |
| Pharmacophore Model [5] [9] | An abstract model representing the spatial and electronic features essential for a molecule to interact with a biological target. | Designing the SpotXplorer0 library; used as a filter in virtual screening to select compounds from a focused set [5]. |
| Negative Image-Based (NIB) Model [9] | A pseudo-ligand model that represents the shape and electrostatic potential of a protein's binding cavity. | Used in docking rescoring (R-NiB) to improve enrichment of active ligands by comparing docking poses to the cavity's negative image [9]. |

Focused libraries represent a knowledge-driven approach to early drug discovery. The case studies above illustrate how they can deliver hits with interpretable initial SAR, consistent with the higher hit rates reported for focused sets relative to diverse screening sets [1], and how this can shorten the path to lead optimization. The field continues to evolve, with innovations such as barcode-free massive screening [7] and enrichment-driven pharmacophore optimization [9] pushing the boundaries of speed and accuracy.

The choice between a focused or diverse strategy is not a binary one; they are complementary. Many successful campaigns employ a sequential strategy, starting with a diverse set to scout chemical space, followed by more focused screens to efficiently optimize promising hits [2]. As structural and ligand data continue to expand, the design and application of focused libraries will become increasingly precise, solidifying their role as an indispensable tool for the modern drug discoverer.

What is a Diverse Library? Maximizing Coverage of Chemical Space

In the field of drug discovery, a diverse library is a collection of compounds designed to cover a broad swath of chemical space by encompassing a wide variety of molecular structures, scaffolds, and physicochemical properties [10]. The primary goal is to maximize the probability of identifying novel hit compounds during screening, particularly for targets with few known active chemotypes or in phenotypic assays where the mechanism of action is unknown [10].

This guide compares the performance of diverse screening libraries against focused libraries, providing objective data and methodologies to inform your screening strategy.

Core Concepts: Diverse vs. Focused Libraries

The fundamental distinction between diverse and focused libraries lies in their design philosophy and application. The following table outlines their core characteristics.

Table 1: Key Characteristics of Diverse and Focused Compound Libraries

| Feature | Diverse Library | Focused Library |
|---|---|---|
| Design Principle | Maximize structural and chemical diversity to broadly explore chemical space [10]. | Enrich compounds predicted to interact with a specific protein target or target family (e.g., kinases, GPCRs) [1] [3]. |
| Primary Use Case | Phenotypic screening, novel target probing, projects with little prior ligand data [10]. | Targets with abundant structural or ligand data, seeking higher hit rates and familiar SAR [1] [10]. |
| Typical Hit Rate | Generally lower, but hits can be more novel and spread across multiple targets/processes [1] [10]. | Generally higher, as the library is pre-enriched for binders to a specific target class [1]. |
| Chemical Space | Broad, heterogeneous coverage of many chemotypes [10]. | Narrow, deep coverage of specific, "privileged" chemotypes for a target family [1]. |
| Outcome | Identifies multiple, potentially novel scaffolds for further development [10]. | Delivers hit clusters with discernable structure-activity relationships (SAR) [1]. |

Experimental Comparisons and Performance Data

Direct comparisons in screening campaigns reveal the complementary strengths of diverse and focused approaches.

Case Study: Fragment Screening against AmpC β-lactamase

A study screening the enzyme AmpC β-lactamase provides quantitative data comparing an empirical diverse fragment screen with a computational focused approach [11].

Table 2: Experimental Results from NMR and Virtual Screening of AmpC β-lactamase

| Screening Method | Library Size | Confirmed Hits | Hit Rate | Exemplary Inhibitor Potency (Kᵢ) | Ligand Efficiency (LE) | Chemotype Novelty (Avg. Tanimoto Coefficient) |
|---|---|---|---|---|---|---|
| Diverse (NMR Screening) | 1,281 compounds | 9 inhibitors | 0.7% (9/1,281) | 0.2 mM | 0.14 - 0.31 | High (Avg. 0.21) |
| Focused (Virtual Screening) | 290,000 compounds | 10 inhibitors | 0.003% (10/290,000) | 0.03 mM | 0.19 - 0.43 | Lower than NMR hits |

Key Findings [11]:

- Novelty vs. Potency: The diverse NMR screen discovered fragments with higher topological novelty, while the focused virtual screen identified fragments with higher potency and ligand efficiency.

- Coverage: The focused screen successfully targeted chemotype holes missing from the smaller diverse empirical library.

- Synergy: The study concluded that combining both methods enables the discovery of unexpected chemotypes while efficiently filling gaps in coverage.
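
The hit rates in Table 2 follow directly from the counts it reports, and a short calculation makes the arithmetic explicit. The function name `hit_rate_percent` is a hypothetical helper; the numbers are those given in the table.

```python
def hit_rate_percent(confirmed_hits, library_size):
    """Hit rate as a percentage of the library size."""
    if library_size <= 0:
        raise ValueError("library_size must be positive")
    return 100.0 * confirmed_hits / library_size


# Counts taken from Table 2
nmr_rate = hit_rate_percent(9, 1281)         # diverse, NMR screen
virtual_rate = hit_rate_percent(10, 290_000)  # focused, virtual screen

print(f"NMR screen:     {nmr_rate:.2f}%")
print(f"Virtual screen: {virtual_rate:.4f}%")
print(f"Ratio:          {nmr_rate / virtual_rate:.0f}x")
```

The two denominators are different kinds of quantity: one is the number of fragments physically screened, the other the number of compounds evaluated computationally. The ratio is therefore best read as a description of the table rather than as a like-for-like efficiency comparison.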

Methodologies for Library Design and Analysis

Designing a Diverse Library for Global Health

A 2023 project designing a 30,000-compound diverse library for neglected diseases details a robust protocol for maximizing novel chemical space coverage [12].

Protocol Details [12]:

- Basis Set Creation: A representative subset of the vast virtual library (Enamine REAL) is generated to guide the selection of viable chemical reactions and building blocks.

- Filtering for Liabilities: Compounds are filtered to remove pan-assay interference compounds (PAINS), toxicophores, and reactive functionalities. Drug-likeness criteria like the Rule of Five and Veber's parameters are applied.

- Super-Set Generation: A larger set of compounds is enumerated from the selected reactions, adhering to strict "hit-like" physicochemical property ranges (e.g., Molecular Weight 320-420, LogP 0-4.5).

- Diversity Selection: A MaxMin diversity algorithm is applied to the super-set to select a final library that maximizes the coverage of chemical space with the fewest compounds.
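
The last two steps of this protocol can be sketched as follows. This is a minimal illustration under stated assumptions, not the project's actual pipeline: fingerprints are represented as plain sets of on-bit indices, distance is taken as 1 minus the Tanimoto coefficient, and the compound names, property values, and functions `in_hit_like_window` and `maxmin_select` are hypothetical. Only the property window (molecular weight 320-420, LogP 0-4.5) comes from the text.

```python
def tanimoto(a, b):
    """Tanimoto similarity between two sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def in_hit_like_window(props):
    """Property window quoted in the protocol: MW 320-420, LogP 0-4.5."""
    return 320 <= props["mw"] <= 420 and 0 <= props["logp"] <= 4.5


def maxmin_select(fingerprints, n_pick, seed_name):
    """Greedy MaxMin: start from a seed, then repeatedly add the compound
    whose minimum distance to the already-picked set is largest."""
    picked = [seed_name]
    # Minimum distance from each unpicked compound to the picked set
    min_dist = {
        name: 1.0 - tanimoto(fp, fingerprints[seed_name])
        for name, fp in fingerprints.items()
        if name != seed_name
    }
    while min_dist and len(picked) < n_pick:
        nxt = max(sorted(min_dist), key=min_dist.get)
        picked.append(nxt)
        del min_dist[nxt]
        for name in min_dist:
            d = 1.0 - tanimoto(fingerprints[name], fingerprints[nxt])
            if d < min_dist[name]:
                min_dist[name] = d
    return picked


# Hypothetical super-set with precomputed properties and toy fingerprints
super_set = {
    "c1": {"mw": 350, "logp": 2.1, "fp": {1, 2, 3, 4}},
    "c2": {"mw": 355, "logp": 2.3, "fp": {1, 2, 3, 5}},
    "c3": {"mw": 400, "logp": 3.9, "fp": {10, 11, 12}},
    "c4": {"mw": 510, "logp": 5.2, "fp": {20, 21}},  # outside the window
    "c5": {"mw": 330, "logp": 1.0, "fp": {1, 2, 10, 30}},
}

filtered = {n: p["fp"] for n, p in super_set.items() if in_hit_like_window(p)}
picked = maxmin_select(filtered, n_pick=3, seed_name="c1")
print(picked)
```

Here `c2` is left out because it is a close analog of the seed `c1`, which is the behavior a MaxMin selection is meant to produce. The choice of seed affects the result, so in practice it is usually fixed or randomized deliberately.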

Assessing Target Family Coverage

For focused libraries, a key methodological consideration is evaluating coverage and bias—whether the library can probe many members of a protein family or is biased toward a few specific targets [13].

Method: In silico target profiling can predict the interaction of each compound in a library across a panel of related targets. This generates a ligand-target interaction matrix, allowing researchers to visualize and optimize the library's coverage of the target family space before any physical screening occurs [13].
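
A ligand-target interaction matrix of this kind can be summarized with very little code. The sketch below assumes the predicted scores already exist (the profiling model itself is out of scope) and that a score at or above a chosen threshold counts as a predicted interaction. The compound and target names, the 0.5 threshold, and the functions shown are hypothetical.

```python
def target_coverage(matrix, threshold=0.5):
    """Count, per target, how many compounds are predicted to interact.

    matrix: {compound: {target: predicted score}}
    """
    counts = {}
    for scores in matrix.values():
        for target, score in scores.items():
            counts.setdefault(target, 0)
            if score >= threshold:
                counts[target] += 1
    return counts


def uncovered_targets(counts):
    """Targets with no predicted binder in the library."""
    return sorted(t for t, n in counts.items() if n == 0)


def bias_fraction(counts):
    """Share of all predicted interactions that fall on the most-hit target."""
    total = sum(counts.values())
    return max(counts.values()) / total if total else 0.0


# Hypothetical predicted scores for three compounds across a kinase panel
predicted = {
    "cmpd_1": {"KIN_A": 0.9, "KIN_B": 0.2, "KIN_C": 0.1},
    "cmpd_2": {"KIN_A": 0.8, "KIN_B": 0.7, "KIN_C": 0.3},
    "cmpd_3": {"KIN_A": 0.6, "KIN_B": 0.1, "KIN_C": 0.2},
}

counts = target_coverage(predicted)
gaps = uncovered_targets(counts)
bias = bias_fraction(counts)
print(counts, gaps, bias)
```

In this toy panel the library is biased toward one target and leaves another uncovered, which is the kind of imbalance the profiling step is intended to reveal before physical screening.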

The Scientist's Toolkit: Essential Reagents and Solutions

The construction and screening of high-quality compound libraries rely on several key components.

Table 3: Essential Research Reagents and Solutions for Compound Library Screening

| Tool / Reagent | Function / Description | Application in Screening |
|---|---|---|
| Pre-plated Diversity Sets | Chemically diverse compounds formatted in 96- or 384-well plates [10]. | Ready-to-use for high-throughput screening (HTS) in robotic systems [3] [10]. |
| Fragment Libraries (e.g., SLVer-Bio) | Collections of low molecular weight compounds (150-300 Da) optimized for solubility and 3D character [3]. | Fragment-Based Drug Discovery (FBDD); identifying low-affinity binders as efficient starting points [11]. |
| REAL Space / Virtual Libraries | Massive (billions) collections of easily synthesizable virtual compounds [12] [14]. | Source for designing novel, bespoke diverse libraries or for virtual screening to focus efforts [12]. |
| Covalent Fragment Libraries | Fragments featuring electrophilic warheads (e.g., acrylamides, chloroacetamides) [14]. | Screening for covalent binders to target previously "undruggable" targets [14]. |
| Target-Immobilized NMR (TINS) | A biophysical technique that detects binding of fragments to a protein target immobilized on a solid support [11]. | Primary screening for low-affinity fragment binding; used to identify the 9 novel AmpC inhibitors [11]. |
| Surface Plasmon Resonance (SPR) | A label-free technique for measuring binding kinetics and affinity (KD) in real time [11]. | Secondary, confirmatory assay to validate primary screening hits [11]. |

Diverse and focused strategies are not mutually exclusive. Trends in library design and use point toward a hybrid approach that combines the two [15] [14].

Performance Comparison Summary:

- Start with Diversity: For exploratory research, phenotypic screening, or against targets with little prior information, a diverse library maximizes the chance of finding completely novel chemical starting points [10].

- Focus for Efficiency: For well-characterized target families like kinases or GPCRs, a focused library will typically yield higher hit rates and more immediately tractable SAR, saving time and resources [1] [3].

- The Future is Integrated: The most effective modern strategies use diverse screens for novel discovery, followed by focused or AI-enabled libraries for lead optimization. They also leverage large virtual spaces to expand chemical coverage on demand [15] [12] [14].

The key to maximizing coverage of chemical space lies in understanding that no single library can cover it all. A strategic combination of broad-scale diverse screening for novelty and target-tailored focused screening for efficiency, powered by modern computational and AI tools, provides the most robust path to successful hit identification in drug discovery.

In the pursuit of new chemical probes and therapeutics, the construction of screening libraries is a foundational step that can determine the success or failure of a drug discovery campaign. Two predominant strategic philosophies guide this process: the Similar Property Principle (SPP), which leverages known active compounds to select structurally similar molecules, and Broad Exploration, which emphasizes wide coverage of chemical space to identify novel chemotypes. The SPP operates on the principle that "structurally similar molecules are likely to have similar biological activity" [16], making it the cornerstone of focused library design and ligand-based virtual screening. In contrast, Broad Exploration, often implemented through diversity-based library design, seeks to maximize the structural variety within a collection to increase the probability of discovering unprecedented lead matter, particularly when prior structure-activity relationship (SAR) knowledge is limited [2]. This guide objectively compares the performance, applications, and underlying methodologies of these two approaches, providing a framework for researchers to select the optimal strategy for their specific discovery context.

Conceptual Foundations and Strategic Rationale

The Similar Property Principle (Focused Design)

The Similar Property Principle (SPP) is the theoretical basis for focused screening. It enables researchers to exploit existing knowledge, such as a known active compound or a protein structure, to select compounds with a higher prior probability of activity [16] [2]. This approach is highly efficient, as it minimizes the number of compounds that need to be screened. The key implementation of SPP is ligand-based virtual screening, where large databases are ranked in descending order of their structural similarity to a reference molecule with known biological activity [16].

The core strength of this approach is its ability to rapidly identify close analogs and refine SAR. However, it is constrained by the "activity cliff" phenomenon, where small structural modifications can lead to drastic changes in biological properties, potentially causing promising chemotypes to be overlooked [16]. Furthermore, its effectiveness is inherently limited by the quality and relevance of the starting query compound.

The Broad Exploration Strategy (Diverse Design)

The Broad Exploration strategy aims to sample a wide and representative region of drug-like chemical space. Its primary goal is scaffold diversity—ensuring a variety of different chemotypes are represented—which is crucial when little is known about a target or when seeking to identify novel lead matter [2]. This approach is grounded in the reality that even large corporate screening collections, typically containing 1-10 million compounds, represent only a tiny fraction of the estimated ~10^13 drug-like compounds thought to exist [2].

The main advantage of Broad Exploration is its potential for scaffold hopping: the identification of active compounds that belong to a different lead series than the reference compound. Such novel chemotypes offer several advantages, including opportunities for novel intellectual property and potentially improved physicochemical or ADMET properties [2]. The trade-off is that this approach typically requires screening larger numbers of compounds, making it more resource-intensive.

Quantitative Performance Comparison

The following tables synthesize experimental data from published studies to compare the performance outcomes of the two strategies across key metrics.

Table 1: Comparative Performance in Hit Identification

| Performance Metric | Similar Property Principle (Focused) | Broad Exploration (Diverse) | Supporting Evidence |
|---|---|---|---|
| Typical Hit Rate | Generally higher hit rates | Lower hit rates, but more novel chemotypes | Cluster-based rational subset design gave higher hit rates than random subsets in Pfizer simulation [2] |
| Scaffold Novelty | Lower (analogs of known actives) | Higher (potential for scaffold hopping) | Designed to maximize scaffold diversity and identify new lead series [2] |
| Chemical Space Coverage | Narrow, focused around query | Broad, representative of drug-like space | Aim is to maximize coverage while minimizing redundancy [2] |
| Resource Efficiency | High (fewer compounds screened) | Lower (more compounds screened) | Efficient when prior knowledge exists; minimizes number of compounds to screen [2] |

Table 2: Practical Implementation and Library Composition

| Implementation Characteristic | Similar Property Principle (Focused) | Broad Exploration (Diverse) | Contextual Example |
|---|---|---|---|
| Library Size | Smaller, targeted sets | Larger collections (>100,000 compounds) | European Lead Factory: 500,000 compounds [17]; St. Jude: ~575,000 compounds [18] |
| Primary Selection Method | Structural similarity to known actives | Maximizing structural diversity and drug-likeness | Virtual screening based on similarity [16] vs. diversity analysis [2] |
| Typical Library Composition | Targeted analogs, series expansions | Diversity-oriented, drug-like compounds, natural products | St. Jude "Focused" sub-library vs. "Diversity" sub-library [18] |
| Optimal Use Case | Target class with known ligands, lead optimization | Novel targets, phenotypic screens, initial lead discovery | When structure-activity information is available vs. when little is known about the target [2] |

Experimental Protocols and Methodologies

Implementing the Similar Property Principle: Similarity Searching

The core experimental protocol for applying the SPP is similarity searching, which involves quantifying structural similarity between a query molecule and database compounds.

- Step 1: Molecular Representation (Fingerprinting). The query molecule and database compounds are encoded into molecular fingerprints. Common fingerprints include [16] [19] [20]:

  - Extended Connectivity Fingerprints (ECFP): Circular fingerprints that capture radial atom environments. ECFP4 (radius=2) and ECFP6 (radius=3) are standard variants [20].

  - MACCS Keys: A structural key of 166 predefined chemical fragments [19].

  - All-Shortest Paths (ASP): Encodes paths between all pairs of atoms in the molecule. Benchmarking has shown that ASP paired with the Braun-Blanquet similarity coefficient provides superior performance in predicting biological activity [16].

- Step 2: Similarity Calculation. The similarity between the query and each database compound is calculated using a similarity coefficient. While the Tanimoto coefficient is the most widely used, studies have found that the Braun-Blanquet coefficient can offer superior performance, particularly when combined with ASP fingerprints [16].

- Step 3: Compound Ranking and Selection. Database compounds are ranked in descending order of similarity to the query. The top-ranking compounds are selected for screening.
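
Steps 2 and 3 can be illustrated with a minimal sketch. It assumes Step 1 has already been done by a cheminformatics toolkit and that each fingerprint is available as a set of on-bit indices; the toy fingerprints, compound names, and the function `rank_by_similarity` are hypothetical. For bit sets A and B with c bits in common, the Tanimoto coefficient is c / (|A| + |B| - c) and the Braun-Blanquet coefficient is c / max(|A|, |B|).

```python
def tanimoto(a, b):
    """Tanimoto coefficient: shared bits over bits set in either fingerprint."""
    c = len(a & b)
    denom = len(a) + len(b) - c
    return c / denom if denom else 0.0


def braun_blanquet(a, b):
    """Braun-Blanquet coefficient: shared bits over the larger bit count."""
    denom = max(len(a), len(b))
    return len(a & b) / denom if denom else 0.0


def rank_by_similarity(query, database, coefficient, top_n):
    """Rank database compounds by descending similarity to the query.
    Ties are broken alphabetically by compound name."""
    scored = [(coefficient(query, fp), name) for name, fp in database.items()]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [(name, round(score, 3)) for score, name in scored[:top_n]]


# Toy fingerprints as sets of on-bit indices (hypothetical)
query = {1, 2, 3, 4, 5, 6}
database = {
    "db_1": {1, 2, 3, 4, 5, 7},
    "db_2": {1, 2, 3},
    "db_3": {8, 9, 10},
    "db_4": {1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12},
}

top_tanimoto = rank_by_similarity(query, database, tanimoto, top_n=2)
top_bb = rank_by_similarity(query, database, braun_blanquet, top_n=2)
print(top_tanimoto)
print(top_bb)
```

Passing the coefficient as a function makes it easy to compare rankings under different similarity measures on the same fingerprints, which is how benchmarking comparisons of the kind cited above are typically set up.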

Implementing Broad Exploration: Diversity-Based Selection

The protocol for designing a diverse screening library focuses on maximizing scaffold coverage and ensuring drug-like properties.

- Step 1: Define the Source Pool. A large collection of commercially available or in-house compounds is assembled. For example, the European Lead Factory library integrates compounds from pharmaceutical companies with completely new compounds from library synthesis [17].

- Step 2: Apply Drug-Likeness and Quality Filters. Compounds are filtered to remove undesirable chemotypes. Standard practice includes [18] [15]:

  - Adherence to Lipinski's Rule of Five, to favor oral bioavailability.

  - Elimination of pan-assay interference compounds (PAINS) and of compounds bearing toxicophores or reactive functional groups.

  - Assessment of physicochemical properties like molecular weight, calculated logP (clogP), and polar surface area to maintain a "drug-like" profile.

- Step 3: Diversity Analysis and Subset Selection. A diverse subset is selected using methods such as [2]:

  - Clustering: Compounds are clustered based on structural fingerprints, and a representative subset is selected from each cluster.

  - Dissimilarity-Based Selection: An iterative algorithm is used to select compounds that are maximally dissimilar to each other.

  - Scaffold-Centric Analysis: The collection is analyzed based on molecular frameworks to ensure a wide variety of core chemotypes are represented.

- Step 4: Quality Control (QC). For a physical library, rigorous QC is essential. The St. Jude Children's Research Hospital, for instance, uses LCMS to confirm that >87% of compounds in their test set maintained >80% purity after years of storage [18].
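
Steps 2 and 3 of this protocol can be sketched on precomputed data. The example below is an illustration under several assumptions: properties and a PAINS flag are taken as already calculated by an upstream tool, at most one Rule of Five violation is tolerated (a common convention, chosen here for illustration), and clustering uses a simple leader (sphere-exclusion) scheme rather than any specific published method. All compound names, values, and function names are hypothetical.

```python
def tanimoto(a, b):
    """Tanimoto similarity between two sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def ro5_violations(p):
    """Number of Lipinski Rule of Five criteria a compound violates."""
    return sum([p["mw"] > 500, p["clogp"] > 5, p["hbd"] > 5, p["hba"] > 10])


def passes_filters(p, max_violations=1):
    """Keep compounds with few Ro5 violations and no PAINS flag.
    The one-violation allowance is an assumption of this sketch."""
    return ro5_violations(p) <= max_violations and not p["pains_flag"]


def leader_cluster(fingerprints, threshold=0.6):
    """Leader clustering: each compound joins the first existing leader it
    resembles (similarity >= threshold); otherwise it starts a new cluster."""
    clusters = {}
    for name in sorted(fingerprints):
        for leader in clusters:
            if tanimoto(fingerprints[name], fingerprints[leader]) >= threshold:
                clusters[leader].append(name)
                break
        else:
            clusters[name] = [name]
    return clusters


# Hypothetical source pool with precomputed properties and toy fingerprints
pool = {
    "m1": {"mw": 320, "clogp": 2.5, "hbd": 1, "hba": 4, "pains_flag": False, "fp": {1, 2, 3, 4}},
    "m2": {"mw": 334, "clogp": 2.8, "hbd": 1, "hba": 4, "pains_flag": False, "fp": {1, 2, 3, 4, 5}},
    "m3": {"mw": 610, "clogp": 6.1, "hbd": 2, "hba": 8, "pains_flag": False, "fp": {6, 7}},
    "m4": {"mw": 290, "clogp": 1.2, "hbd": 2, "hba": 5, "pains_flag": True, "fp": {8, 9}},
    "m5": {"mw": 410, "clogp": 3.9, "hbd": 0, "hba": 6, "pains_flag": False, "fp": {10, 11, 12}},
}

kept = sorted(n for n, p in pool.items() if passes_filters(p))
clusters = leader_cluster({n: pool[n]["fp"] for n in kept})
representatives = sorted(clusters)  # one representative (the leader) per cluster
print(kept, clusters, representatives)
```

Taking the leader as the cluster representative is the simplest option; choosing the member closest to the cluster centre or the one with the best property profile are common alternatives.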

The following workflow diagrams summarize the key decision points and experimental processes for each strategy.

Diagram 1: Similar Property Principle Workflow

Diagram 2: Broad Exploration Workflow

The Scientist's Toolkit: Key Research Reagents and Solutions

The following table details essential materials and computational tools used in the design and implementation of both screening strategies.

Table 3: Essential Reagents and Tools for Library Design and Screening

| Tool/Reagent | Function/Purpose | Relevance to Strategy |
|---|---|---|
| Molecular Fingerprints (e.g., ECFP, MACCS) | Numerical representation of chemical structure for computational comparison. | Core to both; enables similarity calculation (SPP) and diversity analysis (Broad). |
| Similarity Coefficients (e.g., Tanimoto, Braun-Blanquet) | Algorithm to quantify the degree of structural overlap between two fingerprint vectors. | Critical for ranking compounds in SPP-based virtual screening [16]. |
| Automated Storage System (e.g., Brooks Life Sciences) | Manages large physical compound collections (e.g., millions of tubes) in DMSO at -20°C. | Essential for maintaining a large, diverse library for Broad Exploration [18]. |
| LCMS (Liquid Chromatography-Mass Spectrometry) | Quality control instrument to verify compound identity and purity after purchase and storage. | Crucial for both strategies to ensure screening results are reliable [18]. |
| Drug-like Filters (e.g., Rule of 5, PAINS) | Computational rules to remove compounds with poor pharmacokinetics or assay-interfering motifs. | Applied in both strategies, but foundational for building a high-quality diverse library [18] [15]. |
| Clustering Algorithms | Computational methods to group structurally similar compounds together. | Primarily used in Broad Exploration to select representative subsets and analyze coverage [2]. |

Integrated and Modern Approaches

The distinction between focused and diverse screening is increasingly blurred in modern drug discovery, where integrated and iterative approaches are becoming standard.

- Sequential Screening: This iterative process starts with a small, diverse set to derive initial structure-activity information, which is then used to select more focused sets in subsequent screening rounds [2]. This hybrid approach leverages the strengths of both strategies.

- Ultra-Large Virtual Screening: Advances in computational power now allow for the docking of billions of virtual compounds [21]. This represents a form of in silico Broad Exploration, which can be followed by the synthesis and testing of a very small, focused set of top-ranking hits, dramatically increasing efficiency.

- AI-Enhanced Library Design: Machine learning models, particularly support vector machines (SVMs), can significantly enhance the power of chemical fingerprints for predicting biological activity, offering a fivefold improvement relative to unsupervised similarity-based approaches [16]. Furthermore, AI can assist in triaging hits and guiding the design of novel compounds to fill gaps in chemical space [15].
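
The sequential screening idea from the first item can be sketched as a two-round loop. This is a toy illustration only: the "assay" is a lookup against a hypothetical set of actives, fingerprints are sets of on-bit indices, and the function `sequential_screen` and all compound names are assumptions made for the example.

```python
def tanimoto(a, b):
    """Tanimoto similarity between two sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def sequential_screen(fingerprints, diverse_subset, assay, n_focused):
    """Round 1: test a small diverse subset. Round 2: test the unscreened
    compounds most similar to any round-1 hit."""
    round1_hits = [name for name in diverse_subset if assay(name)]
    candidates = []
    for name, fp in fingerprints.items():
        if name in diverse_subset:
            continue
        best = max((tanimoto(fp, fingerprints[h]) for h in round1_hits), default=0.0)
        candidates.append((best, name))
    candidates.sort(key=lambda pair: (-pair[0], pair[1]))
    focused_set = [name for _, name in candidates[:n_focused]]
    round2_hits = [name for name in focused_set if assay(name)]
    return round1_hits, focused_set, round2_hits


# Toy collection: three chemotypes "a", "b", "c" (hypothetical)
fingerprints = {
    "a1": {1, 2, 3, 4},
    "a2": {1, 2, 3, 5},
    "a3": {1, 2, 4, 5},
    "b1": {10, 11, 12},
    "b2": {10, 11, 13},
    "c1": {20, 21, 22},
}

# Stand-in for a real assay: only the "a" chemotype is active
actives = {"a1", "a2", "a3"}


def assay(name):
    return name in actives


round1_hits, focused_set, round2_hits = sequential_screen(
    fingerprints, diverse_subset=["a1", "b1", "c1"], assay=assay, n_focused=2
)
print(round1_hits, focused_set, round2_hits)
```

In this example five of the six compounds are tested in total, and the second round concentrates entirely on analogs of the single first-round hit, which is the efficiency argument made for the hybrid approach.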

Both the Similar Property Principle and Broad Exploration are validated strategies with distinct strengths and optimal application domains. The SPP, implemented through focused libraries and similarity searching, offers a highly efficient path to potent analogs and is most effective when prior knowledge of active chemotypes exists. In contrast, Broad Exploration, implemented through diverse library design, is a powerful strategy for novel lead discovery, scaffold hopping, and interrogating targets with limited prior SAR. The modern drug discovery workflow does not force a binary choice but often synergistically combines both, for example, by using diverse sets for initial probing and focused approaches for lead optimization. The choice of strategy ultimately depends on the project goals, available resources, and the existing knowledge of the biological target.

In modern drug discovery, screening libraries are systematically organized collections of chemical compounds used to identify initial hit molecules against biological targets. The strategic choice between diverse libraries, which maximize coverage of chemical space, and focused libraries, designed around specific target knowledge, represents a critical early decision that significantly influences the success and efficiency of screening campaigns [22]. This guide provides an objective comparison of these two predominant library strategies, detailing their key characteristics, performance data, and ideal applications to inform selection for specific research objectives.

Comparative Analysis of Library Characteristics

Table 1: Key Characteristics of Diverse and Focused Screening Libraries

| Characteristic | Diverse Libraries | Focused Libraries |
|---|---|---|
| Typical Library Size | 100,000 to over 500,000 compounds [18] [3] | ~100 to 5,000 compounds [1] [3] |
| Primary Design Basis | Structural diversity and maximum coverage of drug-like chemical space [2] [15] | Known ligands, target structure, or specific protein family (e.g., kinases, GPCRs) [1] |
| Key Physicochemical Filters | Lipinski's Rule of Five, removal of PAINS and toxicophores [18] [15] | Target-family specific properties (e.g., hinge-binding motifs for kinases) [1] |
| Typical Hit Rates | Lower hit rates, but broader biological profile [2] | Higher hit rates for the intended target [1] |
| Ideal Use Cases | Novel target exploration, phenotypic screening, initial scouting [3] [2] | Target-based screening, lead optimization, scaffold hopping [1] [3] |
| Representative Examples | Corporate HTS collections (1-10M compounds) [2]; Academic libraries (~575,000 compounds) [18] | Kinase library (2,000 cmpds) [3]; CNS-penetrant library (7,100 cmpds) [3] |

Experimental Data and Performance Metrics

Performance in Real-World Screening Campaigns

Empirical data from screening campaigns provides tangible evidence of the differing performance profiles between library types.

Hit Rate Comparison: Focused libraries consistently yield higher hit rates against their intended targets compared to diverse sets. For instance, a kinase-focused library would be expected to produce a significantly higher hit rate when screened against a novel kinase target than a general diverse library [1]. One provider of target-focused libraries reported that their collections have led to over 100 patent filings and contributed to the discovery of several clinical candidates, underscoring the efficiency of this approach for generating valuable intellectual property and development candidates [1].

Case Study: The SJCRH Library Analysis: An academic institution's analysis of its ~575,000 compound library, which contains both diverse and focused sub-libraries, revealed distinct physicochemical property distributions. The "Focused" subset tended to contain compounds with higher molecular weight, lipophilicity, and number of aromatic rings compared to the "Diversity" subset, reflecting the common practice of adding functional groups for potency optimization during focused library design [18]. These real-world data confirm that a library's design basis directly shapes the chemical properties of its constituents.

Quality Control and Integrity Assessment

The utility of any screening library depends on the physical integrity of its compounds. A rigorous quality control (QC) protocol is essential for reliable results.

- SJCRH QC Protocol: A study assessed the quality of a representative subset of compounds stored in DMSO at -20°C over several years. The protocol involved:

- Sample Selection: Randomly selecting 779 compounds from both long-term storage (96-way tubes) and frequently used (384-way tubes) formats.

- LCMS Analysis: Using ultra-performance liquid chromatography with ultraviolet and evaporative light-scattering detectors to determine purity.

- Identity Confirmation: Employing mass spectrometry to verify compound identity.

- Results: The study found that 87.4% of tested compounds met the QC criteria of >80% purity, demonstrating good stability under proper storage conditions and validating the library's continued usability for screening [18]. This protocol provides a template for researchers to periodically validate their own collections.
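The pass-rate figure reported above is a simple proportion, and the same calculation can be repeated on any collection's purity data. The following is a minimal sketch, assuming purity is available as a percentage per compound; the function name and the example values are hypothetical and are not data from the cited study.

```python
from typing import Sequence


def qc_pass_rate(purities: Sequence[float], threshold: float = 80.0) -> float:
    """Fraction of compounds whose measured purity (%) exceeds the threshold."""
    if not purities:
        raise ValueError("no purity measurements supplied")
    passed = sum(1 for purity in purities if purity > threshold)
    return passed / len(purities)


# Hypothetical purity values (%), for illustration only.
example_purities = [99.1, 85.0, 79.9, 92.3, 60.2]
rate = qc_pass_rate(example_purities)  # three of the five values exceed 80%
```

Applied to the reported figures, a pass rate of 87.4% across 779 compounds corresponds to roughly 681 compounds meeting the purity criterion.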

Methodologies and Experimental Protocols

Design and Enhancement Workflows

The creation and maintenance of high-quality libraries follow structured workflows. The diagrams below illustrate the generalized processes for designing diverse libraries and enhancing existing collections.

Diagram 1: Diverse library design workflow emphasizing broad chemical space coverage and quality control.

Diagram 2: Library enhancement workflow to combat "novelty erosion" and maintain a modern collection.

Target-Focused Library Design Methodologies

The design of focused libraries employs sophisticated, target-aware strategies. The specific methodology depends on the available structural and ligand information.

Structure-Based Design: This approach is used when 3D structural information about the target (e.g., from X-ray crystallography) is available. It often involves computational docking of minimally substituted scaffolds into the target's binding site to assess binding poses. Successful scaffolds are then diversified with substituents designed to interact with specific sub-pockets [1]. For example, in kinase library design, scaffolds are evaluated against a panel of representative kinase structures in different conformational states (active/inactive, DFG-in/DFG-out) to ensure broad applicability across the kinome [1].

Ligand-Based Design: When structural data is scarce but known ligands exist, this method uses the properties of those active molecules to design new ones. Techniques include scaffold hopping—identifying novel core structures that maintain the essential spatial arrangement of functional groups—to discover chemotypes distinct from known actives [1] [2]. Molecular fingerprints and similarity metrics are commonly used to select compounds from larger collections that are structurally similar to known active ligands [23].
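The similarity-based selection described above can be sketched with the Tanimoto coefficient on binary fingerprints. This is an illustrative sketch only: fingerprints are represented as sets of "on" bit positions, the 0.7 cutoff is an arbitrary assumption, and the compound names are hypothetical. A real workflow would generate fingerprints with a cheminformatics toolkit.

```python
from typing import Dict, FrozenSet, List, Sequence, Tuple

Fingerprint = FrozenSet[int]  # positions of the bits that are set


def tanimoto(a: Fingerprint, b: Fingerprint) -> float:
    """Tanimoto coefficient: shared on-bits divided by total distinct on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def similar_to_actives(
    library: Dict[str, Fingerprint],
    actives: Sequence[Fingerprint],
    cutoff: float = 0.7,
) -> List[Tuple[str, float]]:
    """Keep compounds whose best similarity to any known active meets the cutoff."""
    hits = []
    for name, fp in library.items():
        best = max((tanimoto(fp, active) for active in actives), default=0.0)
        if best >= cutoff:
            hits.append((name, best))
    # Most similar compounds first.
    return sorted(hits, key=lambda item: item[1], reverse=True)


# Hypothetical fingerprints, for illustration only.
known_actives = [frozenset({1, 2, 3, 4})]
collection = {
    "analog-A": frozenset({1, 2, 3, 4, 5}),  # 4 shared bits out of 5
    "analog-B": frozenset({1, 2, 3}),        # 3 shared bits out of 4
    "unrelated": frozenset({7, 8, 9}),       # no shared bits
}
selected = similar_to_actives(collection, known_actives, cutoff=0.7)
```

Raising the cutoff yields closer analogs of the known actives, while lowering it admits more distant chemotypes at the cost of a weaker expectation of shared activity.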

Essential Research Reagents and Materials

Successful screening campaigns rely on high-quality reagents and robust infrastructure. The following table details key solutions referenced in the studies.

Table 2: Key Research Reagent Solutions for Screening

| Reagent / Solution | Function in Screening | Key Considerations |
|---|---|---|
| Protein Reagents [24] | Biological target for affinity selection and functional assays. | Purity, conformational integrity, and correct folding are critical. Quality assessed by SEC, DLS, DSF. |
| Automated Storage System [18] | Robotic management of compound DMSO solutions at -20°C. | Maintains sample integrity, enables efficient cherry-picking (e.g., Brooks Life Sciences system). |
| DNA-Encoded Libraries (DELs) [22] [24] | Ultra-high-throughput affinity screening via DNA barcoding. | Screen billions of compounds in a single tube; requires DNA-compatible chemistry. |
| LCMS Instrumentation [18] | Quality control for compound purity and identity. | Uses UPLC with UV and evaporative light-scattering detection. |
| Fragment Libraries [18] [3] | Low molecular weight (<300 Da) compounds for FBDD. | High solubility and structural diversity are crucial (e.g., Rule of 3 compliance). |
| Virtual Screening Software [3] [23] | In silico compound prioritization using AI/docking. | Filters vast virtual libraries to identify synthesizable, drug-like candidates. |

The choice between diverse and focused screening libraries is not a matter of superiority but of strategic alignment with project goals. Diverse libraries are the tool of choice for exploring novel biology and generating serendipitous discoveries, offering wide coverage of chemical space at the cost of lower hit rates. In contrast, focused libraries provide an efficient, knowledge-driven path to higher-quality hits for well-characterized target classes, streamlining the early discovery process. A robust quality control protocol, as detailed herein, is non-negotiable for either library type to ensure the integrity of screening results. Ultimately, many successful drug discovery programs leverage a hybrid strategy, initiating campaigns with a diverse scout screen to gather initial data, then transitioning to focused approaches for lead generation and optimization.

The composition of screening libraries has undergone a profound transformation, shifting from a paradigm of sheer quantity to one of strategic quality. This evolution has been driven by the need to improve the efficiency and success rates of drug discovery, moving away from massive, undirected collections to carefully curated and designed libraries. This guide compares the performance of two dominant modern library strategies: diverse libraries and focused libraries.

The Historical Pivot in Library Design

The earliest drug discovery efforts often relied on serendipitous findings from natural products or historical compound archives [15]. The introduction of High-Throughput Screening (HTS) in the 1990s created a demand for large compound libraries, which were initially fueled by in-house archives and combinatorial chemistry [15]. However, the promise of combinatorial chemistry often fell short; these libraries frequently lacked complexity and clinical relevance, with very few drugs, such as the kidney cancer treatment Sorafenib, tracing their origins back to purely combinatorial sources [15].

This failure prompted a strategic shift. The field moved from quantity-driven assembly to quality-focused design, guided by frameworks like Lipinski’s Rule of Five and filters for toxicity and assay interference [15]. This new approach prioritized molecular properties, scaffold diversity, and target-class relevance, giving rise to specialized subsets like covalent inhibitors and CNS-penetrant compounds [15]. The central lesson learned was that the quality of the initial screening set is a critical determinant of downstream success, as poor-quality libraries generate false positives and waste resources [15].

Performance Comparison: Focused vs. Diverse Libraries

The modern screening landscape is largely defined by two complementary approaches: target-focused libraries and diverse libraries. The table below summarizes their core characteristics, performance metrics, and ideal applications.

Table 1: Comparative Analysis of Focused vs. Diverse Screening Libraries

| Feature | Target-Focused Libraries | Diverse Libraries |
|---|---|---|
| Design Principle | Designed or selected to interact with a specific protein target or family (e.g., kinases, GPCRs) [1]. | Aim to maximize the coverage of chemical space and structural diversity without a specific target in mind [25]. |
| Typical Size | Smaller, often containing 100-500 compounds [1]. | Larger, often containing over 1 million compounds [25]. |
| Key Performance Metric (Hit Rate) | Significantly higher hit rates compared to diverse sets; hit clusters show discernable structure-activity relationships (SAR) [1]. | Lower hit rates, but capable of identifying novel starting points for targets with no prior chemical knowledge [1] [25]. |
| Hit Quality | Hits are often potent and selective, dramatically reducing hit-to-lead timelines [1]. | Hit quality can be variable; requires extensive triage to identify chemically tractable leads [15]. |
| Primary Application | Ideal for well-characterized target classes or when a target is known. Best for biochemical or target-based assays [1]. | Essential for phenotypic screens where the molecular target is unknown, and for exploring new biology [26] [25]. |
| Limitations | Requires prior knowledge of the target or target family. Can introduce a bias toward known chemotypes, limiting novelty [1] [27]. | Can contain many inactive compounds; prone to false positives from assay interferents; high cost and resource requirements [26] [28]. |

Supporting Experimental Data

A pivotal study analyzing over 30,000 compounds demonstrated that biological performance diversity does not always correlate with chemical diversity [26]. Researchers used high-dimensional cell morphology profiling ("cell painting") to measure the biological performance of compounds. They found that libraries selected for diverse biological profiles achieved higher hit rates in a variety of unrelated cell-based HTS assays than those selected for chemical diversity alone [26]. This provides experimental evidence that directly measuring biological activity can create more effective screening collections.

Furthermore, the performance of target-focused libraries is well-documented. For example, BioFocus' SoftFocus kinase libraries, designed using structural information, have led to over 100 patent filings and multiple co-crystal structures, directly contributing to clinical candidates [1].

Experimental Protocols for Library Analysis

To objectively compare and select libraries, researchers employ several key experimental and computational protocols.

Protocol 1: Cell Morphology Profiling for Performance Diversity

This protocol assesses the biological performance of a compound library directly, providing a filter to enrich for bioactive molecules.

Table 2: Key Research Reagent Solutions for Cell Morphology Profiling

| Research Reagent | Function in the Experiment |
|---|---|
| U-2 OS Osteosarcoma Cells | A human cell line used as a model system to treat with compounds and observe phenotypic changes [26]. |
| Multiplexed-Cytological (MC) "Cell-Painting" Assay Kits | A suite of fluorescent dyes staining six different cellular compartments/organelles, enabling high-content imaging of cell morphology [26]. |
| Automated High-Content Microscopy Systems | Automated imaging systems to capture thousands of high-resolution images of treated cells in an efficient manner [26]. |
| Image Analysis Software (e.g., CellProfiler) | Software to extract quantitative data (812 morphological features) from the cellular images to create a profile for each compound [26]. |

Workflow:

- Cell Treatment: U-2 OS cells are treated with each library compound at a single concentration for a fixed exposure time (e.g., 48 hours) [26].

- Staining and Imaging: Cells are stained with the fluorescent CellPainting panel and imaged using automated high-content microscopy [26].

- Feature Extraction: Image analysis software quantifies hundreds of morphological features (e.g., cell size, shape, texture, organelle morphology) to create a unique "profile" for each compound [26].

- Hit Identification: Compounds that induce a significant change in morphology compared to DMSO controls are classified as active using a statistical measure like the multidimensional perturbation value (mp value) [26].

- Library Enrichment: The set of compounds active in the CellPainting assay is significantly enriched for hits in independent cell-based HTS campaigns, demonstrating its utility as a predictive filter [26].

Figure 1: Cell Morphology Profiling Workflow
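The hit-identification step in the workflow above asks whether a compound's morphological profile differs from the DMSO controls by more than chance. The sketch below is a deliberately simplified stand-in for the mp value, not the published procedure: it compares group centroids by plain Euclidean distance on raw features and derives an exact permutation p-value. All names and example numbers are hypothetical.

```python
from itertools import combinations
from math import dist
from typing import List, Sequence

Profile = Sequence[float]  # one value per morphological feature


def centroid(profiles: List[Profile]) -> List[float]:
    """Feature-wise mean of a group of replicate profiles."""
    return [sum(column) / len(profiles) for column in zip(*profiles)]


def permutation_p_value(treated: List[Profile], controls: List[Profile]) -> float:
    """Exact permutation p-value for the treated-vs-control centroid distance.

    Simplified illustration: every relabeling of the pooled replicates is
    enumerated, and the p-value is the share of relabelings whose centroid
    distance is at least as large as the observed one.
    """
    pooled = list(treated) + list(controls)
    observed = dist(centroid(treated), centroid(controls))
    at_least_as_large = 0
    total = 0
    for indices in combinations(range(len(pooled)), len(treated)):
        chosen = set(indices)
        group_a = [pooled[i] for i in chosen]
        group_b = [pooled[i] for i in range(len(pooled)) if i not in chosen]
        total += 1
        # Small tolerance guards against floating-point round-off.
        if dist(centroid(group_a), centroid(group_b)) >= observed - 1e-12:
            at_least_as_large += 1
    return at_least_as_large / total


# Hypothetical two-feature profiles: three treated and three DMSO replicates.
treated_wells = [[5.0, 5.1], [5.2, 4.9], [4.9, 5.0]]
dmso_wells = [[0.0, 0.1], [0.1, -0.1], [-0.1, 0.0]]
p_value = permutation_p_value(treated_wells, dmso_wells)
```

With three replicates per group there are only 20 possible relabelings, so the smallest achievable p-value is 0.1. This is one reason profiling designs typically include many more control wells than treated replicates.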

Protocol 2: Cheminformatics Filtering for Library Curation

This computational protocol is used to remove problematic compounds and ensure desirable physicochemical properties.

Workflow:

- Standardize Structures: Convert compound structures into a standard format (e.g., SMILES, SDF) [28].

- Remove Problematic Compounds: Apply filters (e.g., PAINS - Pan Assay Interference Compounds, REOS - Rapid Elimination of Swill) to remove compounds with functional groups known to cause false positives [28]. This includes eliminating redox-cycling compounds, alkylators, and aggregators [28].

- Apply Property Filters: Filter compounds based on lead-like or drug-like properties, such as molecular weight, lipophilicity (cLogP), and hydrogen bond donors/acceptors (e.g., Lipinski's Rule of Five) [15] [28].

- Assess Diversity & Complexity: Use cheminformatics software to analyze and ensure scaffold diversity and molecular complexity. Techniques include Principal Component Analysis (PCA) and Principal Moments of Inertia (PMI) plots [25].

- Final Curated Library: The output is a cleaned, high-quality library ready for screening.

Figure 2: Cheminformatics Library Curation Workflow
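The filtering steps of the curation workflow above can be sketched as a small pipeline. This is a minimal illustration under stated assumptions: properties are taken as precomputed, the `flagged_interferent` field is a hypothetical placeholder for the outcome of a PAINS or REOS substructure match, and tolerating one Rule of Five violation is a common convention rather than a requirement of the protocol.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Compound:
    name: str
    mol_weight: float   # Da
    clogp: float        # calculated logP
    h_donors: int       # hydrogen bond donors
    h_acceptors: int    # hydrogen bond acceptors
    flagged_interferent: bool = False  # hypothetical PAINS/REOS match result


def rule_of_five_violations(compound: Compound) -> int:
    """Count how many Lipinski Rule of Five limits a compound exceeds."""
    return sum([
        compound.mol_weight > 500,
        compound.clogp > 5,
        compound.h_donors > 5,
        compound.h_acceptors > 10,
    ])


def curate(compounds: Iterable[Compound], max_violations: int = 1) -> List[Compound]:
    """Drop flagged interferents, then keep compounds within the violation limit."""
    return [
        c for c in compounds
        if not c.flagged_interferent
        and rule_of_five_violations(c) <= max_violations
    ]


# Hypothetical compounds, for illustration only.
raw_library = [
    Compound("drug-like", 350.0, 2.1, 2, 5),
    Compound("large-lipophilic", 620.0, 6.3, 1, 4),  # two violations
    Compound("interferent", 300.0, 1.0, 1, 3, flagged_interferent=True),
]
curated = curate(raw_library)
```

Running the interference filter before the property filter mirrors the order of the workflow, although the two filters are independent and could be applied in either order.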

Table 3: Essential Tools for Modern Library Design and Screening

| Tool / Resource | Category | Function in Library Design & Screening |
|---|---|---|
| Lipinski's Rule of Five | Computational Filter | A predictive model for assessing drug-likeness based on physicochemical properties [15]. |
| PAINS/REOS Filters | Computational Filter | Sets of structural alerts used to identify and remove compounds with high potential for assay interference [28]. |
| CellPainting Assay | Biological Profiling | A high-content, image-based assay used to measure the biological performance diversity of a compound library [26]. |
| DNA-Encoded Libraries (DEL) | Screening Technology | Extremely large libraries (billions of compounds) screened as mixtures via affinity selection, with compounds identified by DNA barcoding [24]. |
| AI/ML Platforms | Computational Design | Uses predictive models to virtually screen chemical space and design novel compounds with a higher likelihood of activity [15]. |
| Structure-Based Design | Focused Library Design | Utilizes protein crystallographic data to design libraries that fit a specific target's binding site [1]. |
| Diversity-Oriented Synthesis (DOS) | Chemistry | A synthetic strategy to produce small molecules with high scaffold and stereochemical diversity, exploring broader chemical space [26] [25]. |

The evolution from quantity-driven to quality-focused collections is a cornerstone of modern drug discovery. The choice between diverse and focused libraries is not a matter of superiority, but of strategic alignment with the screening goal. Focused libraries offer efficiency and higher hit rates for known targets, while diverse libraries remain indispensable for exploring novel biology. The most successful screening strategies now leverage both, augmented by advanced profiling techniques and computational tools like AI, to build intelligent libraries that maximize the probability of finding high-quality chemical starting points [15] [27].

Library Design and Implementation: From Concept to Screening

In modern drug discovery, the initial choice of a chemical library is a pivotal strategic decision that can determine the success or failure of a screening campaign. The debate between using target-focused libraries versus diverse screening collections represents a fundamental divide in approach, each with distinct advantages and limitations. Target-focused libraries, built using structural data and chemogenomic principles, prioritize efficiency and knowledge-based selection by concentrating on compounds with a higher probability of interacting with specific target classes or binding sites [29]. In contrast, diverse libraries emphasize broad chemical space coverage, aiming to identify novel chemotypes without strong preconceptions about required structural features, which is particularly valuable for poorly characterized targets or phenotypic screening [30].

This guide provides an objective performance comparison of these competing strategies, presenting quantitative data and detailed experimental methodologies to inform library selection. We examine how target-focused libraries leverage the growing wealth of structural biology information and sophisticated chemogenomic annotations to achieve superior enrichment rates, while also considering scenarios where diverse library screening maintains strategic value. By analyzing direct experimental comparisons and benchmarking studies, we aim to equip researchers with evidence-based criteria for matching library strategy to specific project goals and target biology.

Quantitative Performance Comparison: Focused vs. Diverse Libraries

Rigorous benchmarking studies provide crucial insights into the relative strengths of different library design strategies. The performance advantages of target-focused approaches become particularly evident when examining enrichment metrics and hit rates across various target classes.

Table 1: Performance Metrics for Different Library Design Strategies

| Library Strategy | Primary Application | Typical Hit Rate | Enrichment Factor | Key Advantages |
|---|---|---|---|---|
| Target-Focused | Known target classes, lead optimization | 5-20% [31] | 8- to 40-fold [32] | Higher hit rates, knowledge-driven, better target engagement |
| Structure-Based | Targets with known structures | ~14-44% [33] | EF1% = 16.72 [33] | Direct pose prediction, physics-based scoring |
| Diverse/Chemogenomic | Phenotypic screening, novel target space | Variable (highly target-dependent) | Not consistently reported | Target-agnostic, novel chemotype identification |

Table 2: Docking Program Performance in Structure-Based Library Design

| Docking Program | Pose Prediction Accuracy (RMSD < 2 Å) | Screening Power (AUC) | Key Strengths |
|---|---|---|---|
| Glide | 100% [32] | 0.61-0.92 [32] | Superior pose prediction and virtual screening accuracy |
| GOLD | 82% [32] | Not specified | Good balance of accuracy and speed |
| AutoDock | 59% [32] | Not specified | Widely accessible, moderate performance |
| RosettaVS | Not specified | Top 1% EF = 16.72 [33] | Excellent enrichment, models receptor flexibility |

The data reveal that target-focused libraries, particularly those designed using structure-based approaches, consistently achieve higher hit rates and enrichment factors compared to diverse screening collections. In a notable example, a target-focused campaign against the ubiquitin ligase KLHDC2 and sodium channel NaV1.7 yielded exceptional hit rates of 14% and 44% respectively, with all hits demonstrating single-digit micromolar binding affinity [33]. This performance substantially exceeds typical hit rates from diverse library screens, which often fall below 1% [34].

Experimental Protocols for Library Design and Validation

Structure-Based Focused Library Design Protocol

The development of a target-focused library requires meticulous attention to structural data and binding site characteristics. The following protocol has been validated for targets with known three-dimensional structures:

- Target Preparation: Obtain the protein structure from experimental methods (X-ray crystallography, cryo-EM) or prediction tools (AlphaFold, RoseTTAFold). Remove redundant chains, ligands, waters, and cofactors while retaining essential structural components. For COX enzyme benchmarks, researchers edited protein structures with DeepView software to create single-chain inputs and added heme molecules where missing [32].

- Binding Site Characterization: Define the binding pocket using the coordinates of a reference ligand or through computational detection of concave surface regions. For consistency in benchmarking studies, complexes were superimposed onto a reference structure (e.g., 5KIR for COX studies), and ligands not occupying the same site were excluded [32].

- Compound Selection and Docking: Screen a diverse chemical library against the defined binding site using multiple docking programs to mitigate individual algorithm biases. In benchmarking studies, programs like Glide, GOLD, AutoDock, FlexX, and Molegro Virtual Docker have been systematically evaluated [32].

- Pose Validation and Scoring: Evaluate docking poses using root-mean-square deviation (RMSD) calculations relative to experimental structures when available. An RMSD below 2 Å relative to the experimental pose is treated as a successful pose prediction [32].

- Library Assembly: Select top-ranking compounds based on consensus scoring and chemical diversity to create the focused library. Include chemically related analogs to explore structure-activity relationships around identified hits.
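The pose validation criterion in the protocol above reduces to a short calculation. The sketch below is illustrative and rests on several assumptions: both poses list the same atoms in the same order, both are already in the receptor's coordinate frame (no realignment is performed), and ligand symmetry is ignored. The function names and coordinates are hypothetical.

```python
from math import sqrt
from typing import Sequence, Tuple

Coord = Tuple[float, float, float]  # x, y, z in angstroms


def rmsd(predicted: Sequence[Coord], reference: Sequence[Coord]) -> float:
    """Root-mean-square deviation between matched atoms, without realignment."""
    if len(predicted) != len(reference) or not predicted:
        raise ValueError("poses must contain the same, non-zero number of atoms")
    squared = sum(
        (px - rx) ** 2 + (py - ry) ** 2 + (pz - rz) ** 2
        for (px, py, pz), (rx, ry, rz) in zip(predicted, reference)
    )
    return sqrt(squared / len(predicted))


def pose_is_acceptable(
    predicted: Sequence[Coord], reference: Sequence[Coord], cutoff: float = 2.0
) -> bool:
    """Apply the 2 angstrom success criterion used in the benchmarks above."""
    return rmsd(predicted, reference) < cutoff


# Hypothetical three-atom ligand: the docked pose is shifted 1 angstrom along x.
crystal_pose = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.5, 0.0)]
docked_pose = [(1.0, 0.0, 0.0), (2.5, 0.0, 0.0), (2.5, 1.5, 0.0)]
deviation = rmsd(docked_pose, crystal_pose)
```

For symmetric ligands a symmetry-aware RMSD is generally preferable, since a naive atom-by-atom comparison can penalize a pose that is chemically equivalent to the reference.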

Chemogenomic Library Design Methodology

For targets without structural information, chemogenomic approaches provide a powerful alternative for focused library design:

- Target Family Analysis: Identify the protein family and relevant structural motifs of the target. Collect known ligands for related targets from databases like ChEMBL, which contains over 1.6 million molecules with bioactivities against 11,224 unique targets [29].

- Scaffold Analysis and Selection: Use tools like ScaffoldHunter to decompose known active compounds into representative scaffolds and fragments through stepwise removal of terminal side chains and rings [29].

- Network Pharmacology Integration: Build a system pharmacology network integrating drug-target-pathway-disease relationships using graph databases like Neo4j. This enables identification of compounds that modulate specific biological pathways [29].

- Library Optimization: Apply filters for cellular activity, chemical diversity, availability, and target selectivity. One proven approach resulted in a minimal screening library of 1,211 compounds targeting 1,386 anticancer proteins [31].

- Morphological Profiling Integration: Incorporate high-content imaging data from assays like Cell Painting when available, linking compound-induced morphological changes to target modulation [29].

Diagram 1: Focused Library Design Workflow. This workflow integrates both structure-based and chemogenomic approaches for comprehensive library design.
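The library optimization step above aims for a small compound set that still covers many targets. One generic way to approximate this is a greedy set-cover heuristic, sketched below. This is an assumption about how such a selection could be done, not the procedure used in the cited work, and the compound and target annotations are hypothetical.

```python
from typing import Dict, List, Set


def greedy_minimal_library(compound_targets: Dict[str, Set[str]]) -> List[str]:
    """Greedily pick compounds until every annotated target is covered.

    Greedy set cover is a heuristic: it returns a small library, but not
    necessarily the smallest possible one.
    """
    uncovered: Set[str] = set().union(*compound_targets.values())
    chosen: List[str] = []
    while uncovered:
        # Sorting the names makes tie-breaking deterministic.
        best = max(
            sorted(compound_targets),
            key=lambda name: len(compound_targets[name] & uncovered),
        )
        chosen.append(best)
        uncovered -= compound_targets[best]
    return chosen


# Hypothetical compound-to-target annotations, for illustration only.
annotations = {
    "cmpd-A": {"EGFR", "HER2"},
    "cmpd-B": {"HER2"},
    "cmpd-C": {"CDK4", "CDK6", "EGFR"},
    "cmpd-D": {"CDK4"},
}
minimal_set = greedy_minimal_library(annotations)
```

A production design would weigh additional criteria named in the protocol, such as cellular activity, selectivity and availability, rather than target coverage alone.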

Virtual Screening Validation Protocol

Validating the performance of a designed library requires rigorous benchmarking against known active and decoy compounds:

- Dataset Curation: Collect known active compounds and generate decoy molecules with similar physicochemical properties but different 2D topology. Public resources like ChEMBL, BindingDB, and Directory of Useful Decoys (DUD) provide standardized datasets for validation [30].

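- Performance Assessment: Execute virtual screening using the integrated library design strategy and calculate enrichment metrics. Critical metrics include the enrichment factor in the top-ranked fraction (e.g., EF1%) and the area under the ROC curve (AUC), the two measures reported in the benchmark tables of this guide.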

- Experimental Confirmation: Select top-ranked compounds for experimental testing to determine actual hit rates. For the RosettaVS platform, this process led to the discovery of high-affinity binders for both KLHDC2 and NaV1.7 targets, with subsequent X-ray crystallography validating the predicted binding poses [33].
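The enrichment factor and ROC AUC used in the validation protocol above can both be computed from a ranked list of active and decoy labels. The following is a minimal sketch, assuming the list is ordered best-scored first and contains no tied scores. The function names and the example ranking are hypothetical.

```python
from typing import Sequence


def enrichment_factor(ranked_labels: Sequence[bool], fraction: float) -> float:
    """EF at a top fraction: hit rate in the top slice over the overall hit rate."""
    total_actives = sum(ranked_labels)
    if total_actives == 0:
        raise ValueError("no actives in the benchmark set")
    n_top = max(1, int(len(ranked_labels) * fraction))
    top_rate = sum(ranked_labels[:n_top]) / n_top
    return top_rate / (total_actives / len(ranked_labels))


def roc_auc(ranked_labels: Sequence[bool]) -> float:
    """AUC as the share of (active, decoy) pairs in which the active ranks higher."""
    actives_seen = 0
    correctly_ordered = 0
    for is_active in ranked_labels:
        if is_active:
            actives_seen += 1
        else:
            # Every active already seen outranks this decoy.
            correctly_ordered += actives_seen
    n_decoys = len(ranked_labels) - actives_seen
    if actives_seen == 0 or n_decoys == 0:
        raise ValueError("need at least one active and one decoy")
    return correctly_ordered / (actives_seen * n_decoys)


# Hypothetical ranking of ten compounds (True = known active), best score first.
ranking = [True, True, False, True, False, False, False, False, False, False]
ef_20 = enrichment_factor(ranking, fraction=0.2)
auc = roc_auc(ranking)
```

An EF of 1 and an AUC of 0.5 both correspond to random ranking, which is the baseline against which the reported values should be read.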

Practical Applications and Case Studies

Protein Family-Specific Library Design

The target-focused approach demonstrates particularly strong performance for well-characterized protein families. In cyclooxygenase (COX) inhibitor development, systematic benchmarking of docking programs revealed that Glide correctly predicted binding poses (RMSD < 2Å) for 100% of studied co-crystallized ligands of COX-1 and COX-2 enzymes, while other docking methods achieved only 59-82% success rates [32]. This precision in pose prediction directly translates to more effective focused library design for related targets.

Virtual screening results analyzed with ROC curves showed that optimized structure-based methods can achieve areas under the curve (AUCs) of 0.61-0.92, with enrichment factors of 8- to 40-fold for COX targets [32]. This substantial enrichment means that researchers can identify active compounds with significantly higher efficiency compared to random screening.

Phenotypic Screening Applications

Target-focused libraries also show value in phenotypic screening contexts when designed using chemogenomic principles. In glioblastoma research, a physically available library of 789 compounds covering 1,320 anticancer targets was deployed to profile patient-derived glioma stem cells [31]. The approach successfully identified patient-specific vulnerabilities and revealed highly heterogeneous phenotypic responses across patients and GBM subtypes, demonstrating how target-focused libraries can maintain relevance in complex phenotypic assays.

Diagram 2: Phenotypic Screening Deconvolution. Chemogenomic libraries enable target identification in phenotypic screening by linking morphological profiles to specific target modulations.

Successful implementation of target-focused library strategies requires access to specific computational tools, databases, and experimental resources. The following table details essential components of the modern library design toolkit:

Table 3: Essential Research Reagents and Resources for Library Design

| Resource Category | Specific Tools/Resources | Key Function | Access Considerations |
|---|---|---|---|
| Structural Data Resources | Protein Data Bank (PDB), AlphaFold Database | Provide 3D protein structures for binding site analysis | Publicly available |
| Bioactivity Databases | ChEMBL, BindingDB, PubChem | Source of compound bioactivity data for chemogenomic mapping | Publicly available |
| Docking Software | Glide, GOLD, AutoDock, RosettaVS | Predict ligand binding poses and affinities | Commercial and free options available |
| Chemogenomic Platforms | ScaffoldHunter, Neo4j with custom databases | Analyze compound scaffolds and build pharmacology networks | Open source and commercial options |
| Compound Libraries | Enamine, eMolecules, in-house collections | Source of compounds for screening | Purchase required for physical compounds |
| Validation Tools | DUD-E, CASF-2016 benchmarks | Assess docking and virtual screening performance | Publicly available |

The comparative analysis presented in this guide demonstrates that target-focused libraries, when appropriately designed using structural data and chemogenomic principles, consistently outperform diverse libraries in hit rate and enrichment efficiency. However, the optimal strategy depends heavily on project-specific parameters:

Implement target-focused library strategies when:

- The target structure is known or accurately predictable

- The target belongs to a well-characterized protein family with known ligands

- Project goals include rapid identification of lead compounds with defined mechanisms

- Resources for compound screening and validation are limited

Consider diverse library screening when:

- The target is poorly characterized with limited structural or ligand information

- The goal is identification of novel chemotypes or mechanisms of action

- Conducting phenotypic screening without predefined molecular targets

- Exploring polypharmacology or multi-target interventions

The most successful drug discovery programs often employ an integrated approach, using target-focused libraries for initial lead identification followed by diverse analog screening to explore surrounding chemical space during optimization. As artificial intelligence accelerates virtual screening platforms and chemical space coverage expands into billions of compounds [33] [35], the strategic advantage of well-designed focused libraries becomes increasingly pronounced. By applying the experimental protocols and performance benchmarks outlined in this guide, researchers can make evidence-based decisions in their library design strategies, ultimately accelerating the discovery of novel therapeutic agents.

The construction of a diverse screening set represents a foundational step in modern drug discovery, balancing the imperative to explore vast chemical spaces with the practical constraints of screening capacity. The similar property principle—that structurally similar molecules likely share similar biological activities—provides the fundamental rationale for diversity analysis, aiming to maximize structural space coverage while minimizing redundancy [2]. This practice is particularly crucial when little is known about a biological target, as diverse sets enable broader exploration of structure-activity relationships compared to focused libraries designed around known actives.

The landscape of chemical space is astronomically large, with conservative estimates suggesting approximately 10^13 drug-like molecules, far surpassing the 1-10 million compounds typically found in corporate screening collections [2]. This disparity has driven the development of sophisticated computational methods to select optimal subsets that efficiently sample this expansive territory. Whereas early diversity analysis relied primarily on two-dimensional fingerprints and physicochemical descriptors, contemporary approaches increasingly emphasize scaffold diversity and multiobjective optimization to ensure representation of varied chemotypes while maintaining favorable drug-like properties [2] [15].

The debate between random versus rational diversity selection continues, with simulation studies revealing conflicting outcomes. Some studies from organizations like Pfizer have demonstrated that rationally designed subsets yield higher hit rates than random selections, while other research has found minimal differences between the approaches [2]. This contradiction underscores that the performance of diversity methods depends significantly on context-specific factors, including target class, library size, and descriptor choice.

Computational Methodologies for Diversity Analysis

Molecular Descriptors and Representation

The foundation of any diversity analysis lies in the molecular descriptors used to represent and compare chemical structures. These computational representations capture key structural and physicochemical properties that influence biological activity.

Fingerprint-Based Descriptors: Derived from two-dimensional connection tables, these encode molecular substructures as bit strings, enabling rapid similarity calculations through measures like Tanimoto coefficients. They remain widely used for their computational efficiency and proven utility in similarity searching [2] [36].

Physicochemical Descriptors: These include calculated properties such as molecular weight, logP (lipophilicity), topological polar surface area, hydrogen bond donors/acceptors, and number of rotatable bonds. Such descriptors help enforce drug-likeness through rules like Lipinski's Rule of 5 while ensuring property diversity [15] [36].

Molecular Scaffolds: Focusing on core structures that characterize groups of molecules, scaffold-based diversity aims to ensure representation of different chemotypes. This approach supports "scaffold hopping" to identify active compounds belonging to different lead series from known actives, offering advantages in intellectual property and optimization potential [2].

3D Descriptors: Based on molecular conformations, these capture stereochemical and shape-based properties that can be critical for interaction with biological targets, though at higher computational cost [2].

Modern cheminformatics tools like RDKit provide extensive support for calculating these descriptors and performing structural analysis, forming the computational backbone of diversity selection workflows [36].
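To make the fingerprint comparison described above concrete, the following minimal sketch computes a Tanimoto coefficient in plain Python. It assumes each fingerprint is represented as a set of "on" bit indices; the example fingerprints and the function name `tanimoto` are hypothetical and are not taken from RDKit or any other toolkit.

```python
def tanimoto(fp_a: set, fp_b: set) -> float:
    """Tanimoto coefficient: shared bits divided by bits set in either fingerprint."""
    if not fp_a and not fp_b:
        return 1.0  # assumed convention: two empty fingerprints count as identical
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


# Hypothetical fingerprints: each integer is the index of a set bit.
mol_a = {1, 4, 7, 9, 15}
mol_b = {1, 4, 8, 9, 20}
mol_c = {2, 3, 5}

sim_ab = tanimoto(mol_a, mol_b)  # 3 shared bits out of 7 distinct bits
sim_ac = tanimoto(mol_a, mol_c)  # no shared bits
print(f"A vs B: {sim_ab:.3f}, A vs C: {sim_ac:.3f}")
```

A distance for diversity selection is commonly taken as one minus this similarity, so structurally unrelated molecules sit at a distance close to 1.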

Selection Methods and Clustering Approaches

Once molecular descriptors are calculated, selection methods identify representative subsets that maximize diversity.

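Dissimilarity-Based Selection: Methods such as MaxMin and MaxSum select compounds iteratively, adding at each step the candidate that is most dissimilar to those already chosen. MaxMin picks the candidate whose minimum distance to the selected molecules is largest, whereas MaxSum picks the candidate whose summed distance to them is largest; both directly optimize for diversity [2].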

Clustering: Compounds are grouped by descriptor similarity using algorithms such as k-means or hierarchical clustering, and representatives are then selected from each cluster. This approach ensures coverage of different regions of chemical space [2].

Partitioning Schemes: Chemical space is divided into cells based on descriptor ranges, with compounds selected from each cell. This method guarantees coverage but may select outliers in sparsely populated regions [2].

Multiobjective Optimization: Advanced methods like Pareto ranking simultaneously optimize multiple criteria—such as diversity, drug-likeness, and cost—acknowledging the practical trade-offs in library design [2].

Chemical Space Mapping: Visualization techniques like UMAP (Uniform Manifold Approximation and Projection) project high-dimensional descriptor data into two or three dimensions, enabling intuitive exploration of library diversity and coverage [36] [37].
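As an illustration of dissimilarity-based selection, the sketch below implements a simple MaxMin picker in plain Python. It assumes set-based fingerprints and a Tanimoto distance; the toy library, the seed choice, and the function names are hypothetical, and production toolkits provide faster implementations.

```python
def tanimoto_distance(a: set, b: set) -> float:
    """1 - Tanimoto similarity for fingerprints stored as sets of bit indices."""
    union = len(a | b)
    return 1.0 - (len(a & b) / union if union else 1.0)


def maxmin_select(fps, n_select, seed_index=0):
    """Greedy MaxMin: repeatedly add the compound farthest from its nearest pick."""
    selected = [seed_index]
    # min_dist[i] = distance from compound i to the closest already-selected compound
    min_dist = [tanimoto_distance(fp, fps[seed_index]) for fp in fps]
    while len(selected) < min(n_select, len(fps)):
        candidates = (i for i in range(len(fps)) if i not in selected)
        next_idx = max(candidates, key=lambda i: min_dist[i])
        selected.append(next_idx)
        for i, fp in enumerate(fps):
            min_dist[i] = min(min_dist[i], tanimoto_distance(fp, fps[next_idx]))
    return selected


# Hypothetical library: compounds 0/1 and 2/3 are near-duplicates, 4 is unrelated.
library = [
    {1, 2, 3, 4},
    {1, 2, 3, 5},
    {10, 11, 12},
    {10, 11, 13},
    {20, 21},
]
picked = maxmin_select(library, n_select=3)
print(picked)  # one representative from each of the three groups
```

Caching the running minimum distance keeps each step linear in library size, which is one reason this family of methods scales well.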

Table 1: Comparison of Diversity Selection Methods

| Method | Key Principle | Advantages | Limitations |
|---|---|---|---|
| Dissimilarity-Based | Maximize minimum distance between selected compounds | Computationally efficient, direct diversity optimization | May select outliers, sensitive to descriptor choice |
| Clustering | Group similar compounds, select from each cluster | Ensures coverage of distinct chemotypes, intuitive | Cluster results depend on algorithm and parameters |
| Partitioning | Divide space into cells, sample from each | Guarantees space coverage, computationally simple | May over-represent sparse regions, sensitive to binning |
| Multiobjective Optimization | Simultaneously optimize multiple criteria | Balances diversity with other key properties | Computationally intensive, requires parameter tuning |
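The multiobjective idea can be sketched with a small Pareto-ranking routine. The example assumes every objective has been expressed as "higher is better" (cost is negated), and the candidate sub-libraries and their scores are invented purely for illustration.

```python
def dominates(a, b):
    """True if a is at least as good as b everywhere and strictly better somewhere."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def pareto_ranks(scores):
    """Peel off successive non-dominated fronts; rank 1 is the Pareto front."""
    remaining = dict(scores)
    ranks = {}
    rank = 1
    while remaining:
        front = [
            name for name, s in remaining.items()
            if not any(dominates(o, s) for other, o in remaining.items() if other != name)
        ]
        for name in front:
            ranks[name] = rank
            del remaining[name]
        rank += 1
    return ranks


# Hypothetical candidate subsets scored as (diversity, drug-likeness, -cost).
candidate_scores = {
    "A": (0.9, 0.5, -10),
    "B": (0.6, 0.8, -6),
    "C": (0.5, 0.7, -8),   # worse than B on every objective
    "D": (0.9, 0.5, -12),  # same as A but more expensive
}
ranks = pareto_ranks(candidate_scores)
print(ranks)
```

Candidates with rank 1 represent different trade-offs that cannot be improved on one objective without losing on another; choosing among them remains a project-level decision.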

Experimental Protocols and Benchmarking Data

Evolutionary Algorithms for Ultra-Large Libraries

For make-on-demand libraries containing billions of compounds, exhaustive screening becomes computationally prohibitive, especially when receptor flexibility is incorporated. The REvoLd (RosettaEvolutionaryLigand) protocol addresses this with an evolutionary algorithm that explores combinatorial chemical space without enumerating all molecules [38].

Experimental Protocol:

- Initialization: Generate a random start population of 200 ligands from the combinatorial library [38].

- Evaluation: Dock each ligand against the target using flexible protein-ligand docking in RosettaLigand [38].

- Selection: Select the top 50 scoring individuals based on docking scores to advance to the next generation [38].

- Reproduction: Generate new candidate ligands from the selected individuals through crossover and mutation [38].

- Iteration: Repeat the evaluation-selection-reproduction cycle for 30 generations, balancing convergence and exploration [38].
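The cycle above can be sketched as a toy evolutionary loop. This is not the REvoLd implementation: the two-reagent combinatorial library, the `mock_score` function standing in for docking, the crossover and mutation operators, the mutation rate, and the carry-over of survivors are all assumptions made for illustration. Only the population size (200), survivor count (50), and generation count (30) come from the protocol.

```python
import random

N_A, N_B = 300, 300                      # hypothetical reagent lists: 90,000 virtual products
POP_SIZE, N_KEEP, N_GEN = 200, 50, 30    # values quoted in the protocol above


def mock_score(ligand):
    """Stand-in for a docking score (lower is better); NOT a real docking call."""
    a, b = ligand
    return abs(a - 123) + abs(b - 45)


def reproduce(parents, rng):
    """Assumed operators: recombine building blocks, then occasionally mutate one."""
    mother, father = rng.sample(parents, 2)
    child = (mother[0], father[1])
    if rng.random() < 0.3:               # assumed mutation rate
        if rng.random() < 0.5:
            child = (rng.randrange(N_A), child[1])
        else:
            child = (child[0], rng.randrange(N_B))
    return child


def evolve(seed=0):
    rng = random.Random(seed)
    population = [(rng.randrange(N_A), rng.randrange(N_B)) for _ in range(POP_SIZE)]
    scored = {}                          # cache: each unique ligand is scored once
    for _ in range(N_GEN):
        for ligand in population:        # evaluation
            if ligand not in scored:
                scored[ligand] = mock_score(ligand)
        survivors = sorted(set(population), key=scored.get)[:N_KEEP]   # selection
        children = [reproduce(survivors, rng) for _ in range(POP_SIZE - N_KEEP)]
        population = survivors + children                              # next generation
    best = min(scored, key=scored.get)
    return best, scored[best], len(scored)


best, best_score, n_scored = evolve()
print(best, best_score, f"{n_scored} of {N_A * N_B} products scored")
```

The point of the sketch is the bookkeeping: only ligands that enter a population are ever scored, so the number of evaluations stays far below the size of the virtual library.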

Performance Data: In benchmarks across five drug targets, REvoLd screened between 49,000 and 76,000 unique molecules per target, improving hit rates by 869- to 1,622-fold compared to random selection. The algorithm consistently identified hit-like molecules while exploring diverse regions of chemical space, with different runs revealing distinct scaffolds due to stochastic optimization [38].

AI-Accelerated Virtual Screening Platforms

The OpenVS platform exemplifies the integration of active learning with physics-based docking to efficiently screen ultra-large libraries [33].

Experimental Protocol:

- Initial Sampling: Dock a diverse subset of the library (typically 0.1-1%) using a rapid docking mode (VSX) [33].

- Model Training: Train a target-specific neural network on the docking results to predict binding scores [33].

- Active Learning: Iteratively select promising compounds based on model predictions, dock them, and retrain the model [33].

- Final Ranking: Apply high-precision docking (VSH) with full receptor flexibility to top candidates identified through active learning [33].
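The four steps above follow a generic active-learning pattern, sketched below under strong simplifications. The `mock_dock` function replaces real docking, a k-nearest-neighbor average replaces the target-specific neural network, and the two-dimensional descriptors, batch size, and number of rounds are all hypothetical choices rather than OpenVS settings.

```python
import random


def mock_dock(x):
    """Stand-in for a fast docking score (lower is better); not a real docking call."""
    return (x[0] - 0.7) ** 2 + (x[1] - 0.2) ** 2


def knn_predict(x, labelled, k=3):
    """Surrogate model: mean score of the k nearest already-docked compounds."""
    nearest = sorted(
        labelled,
        key=lambda item: (item[0][0] - x[0]) ** 2 + (item[0][1] - x[1]) ** 2,
    )[:k]
    return sum(score for _, score in nearest) / len(nearest)


def active_learning_screen(library, init_frac=0.01, batch=20, rounds=5, seed=0):
    rng = random.Random(seed)
    n_init = max(1, round(len(library) * init_frac))
    # Initial sampling: dock a small random subset of the library.
    docked = {i: mock_dock(library[i]) for i in rng.sample(range(len(library)), n_init)}
    for _ in range(rounds):
        # "Retraining" here just means rebuilding the labelled set the surrogate uses.
        labelled = [(library[i], s) for i, s in docked.items()]
        undocked = [i for i in range(len(library)) if i not in docked]
        undocked.sort(key=lambda i: knn_predict(library[i], labelled))
        for i in undocked[:batch]:       # dock only the most promising predictions
            docked[i] = mock_dock(library[i])
    return docked


lib_rng = random.Random(1)
library = [(lib_rng.random(), lib_rng.random()) for _ in range(2000)]
docked = active_learning_screen(library)
top_candidates = sorted(docked, key=docked.get)[:10]   # would go on to high-precision docking
print(f"docked {len(docked)} of {len(library)} compounds")
```

In this toy run only 120 of 2,000 compounds are ever docked; the surrogate decides which ones, which is the source of the speed-up the platform relies on.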

Performance Data: On the CASF-2016 benchmark, the RosettaGenFF-VS scoring function achieved a top 1% enrichment factor (EF1%) of 16.72, significantly outperforming other methods (second-best EF1% = 11.9). In real-world applications against targets KLHDC2 and NaV1.7, this approach identified hits with 14% and 44% hit rates, respectively, with screening completed in under seven days [33].
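For readers unfamiliar with the metric, an enrichment factor compares the hit rate in the top-ranked fraction of a screen with the hit rate of the whole library. The sketch below uses this common definition, which may differ in detail from the exact CASF-2016 procedure; the ranked list of labels is invented for illustration.

```python
def enrichment_factor(ranked_labels, fraction=0.01):
    """EF at a given fraction: hit rate in the top slice divided by the overall hit rate.

    ranked_labels: 1 for active, 0 for inactive, ordered from best to worst score.
    """
    n_total = len(ranked_labels)
    n_top = max(1, round(n_total * fraction))
    hits_top = sum(ranked_labels[:n_top])
    hits_total = sum(ranked_labels)
    if hits_total == 0:
        raise ValueError("enrichment factor is undefined without any actives")
    return (hits_top * n_total) / (n_top * hits_total)


# Hypothetical screen: 1,000 ranked compounds, 20 actives, 4 of them in the top 10.
labels = [0] * 1000
for position in (0, 2, 5, 9):
    labels[position] = 1
for position in range(100, 900, 50):     # 16 further actives spread down the list
    labels[position] = 1

ef_1pct = enrichment_factor(labels, fraction=0.01)
print(ef_1pct)  # (4 / 10) / (20 / 1000) = 20.0
```

An EF1% of 1 corresponds to random ranking, so a value near 17 means actives are roughly 17 times more concentrated in the top 1% than in the library overall.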

Focused Library Design with Computational Filtering

The construction of targeted screening libraries demonstrates how diversity principles apply within specific therapeutic domains, as illustrated by the Antioxidant Screening Library [37].

Experimental Protocol:

- Machine Learning Prediction: Apply models trained on bioactivity data (e.g., ABTS and DPPH assays from ChEMBL) to identify compounds with predicted antioxidant activity [37].

- Structure-Based Filtering: Remove undesirable scaffolds using PAINS (Pan-Assay Interference Compounds) filters and toxicophore alerts [37].

- Pharmacophore Prioritization: Flag molecules containing known antioxidant motifs (phenols, catechols, conjugated dienes) [37].

- Diversity Analysis: Apply dimensionality reduction (UMAP) and clustering to ensure representation of distinct chemotypes [37].
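The filtering cascade above can be sketched as a sequence of simple steps over annotated records. The sketch assumes the model predictions, PAINS flags, motif annotations, and cluster labels have already been computed by upstream tools; the field names, the 0.5 activity cutoff, and the five example compounds are hypothetical.

```python
from collections import defaultdict

ANTIOXIDANT_MOTIFS = {"phenol", "catechol", "conjugated_diene"}  # motifs named in the text

# Hypothetical, pre-annotated candidates (all values invented for illustration).
candidates = [
    {"id": "cpd-1", "pred_active": 0.91, "pains": False, "motifs": {"phenol"}, "cluster": 0},
    {"id": "cpd-2", "pred_active": 0.88, "pains": True, "motifs": {"catechol"}, "cluster": 0},
    {"id": "cpd-3", "pred_active": 0.35, "pains": False, "motifs": set(), "cluster": 1},
    {"id": "cpd-4", "pred_active": 0.77, "pains": False, "motifs": set(), "cluster": 1},
    {"id": "cpd-5", "pred_active": 0.82, "pains": False, "motifs": {"conjugated_diene"}, "cluster": 2},
]


def build_focused_set(records, activity_cutoff=0.5):
    # Step 1: keep compounds the model predicts to be active (cutoff is an assumption).
    kept = [r for r in records if r["pred_active"] >= activity_cutoff]
    # Step 2: drop compounds carrying an interference or toxicity alert.
    kept = [r for r in kept if not r["pains"]]
    # Step 3: flag, but do not require, known antioxidant motifs.
    kept = [dict(r, priority=bool(r["motifs"] & ANTIOXIDANT_MOTIFS)) for r in kept]
    # Step 4: group by cluster label to check that distinct chemotypes are represented.
    by_cluster = defaultdict(list)
    for r in kept:
        by_cluster[r["cluster"]].append(r["id"])
    return kept, dict(by_cluster)


focused, clusters = build_focused_set(candidates)
print([r["id"] for r in focused], clusters)
```

Keeping the motif check as a flag rather than a hard filter mirrors the protocol's wording, which prioritizes known motifs while leaving room for novel chemotypes.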

Performance Data: This methodology produced a focused set of 443 drug-like compounds with predicted antioxidant activity, balancing structural diversity with target relevance. The library contains clusters of structurally similar compounds for structure-activity relationship studies alongside diverse chemotypes for novel discovery [37].

Comparative Performance Analysis

Table 2: Performance Comparison of Screening Approaches

| Method | Library Size | Screening Efficiency | Hit Rate Enhancement | Key Advantages |
|---|---|---|---|---|
| Traditional HTS | 1-10 million compounds | Low: Requires experimental testing of entire library | Baseline | Experimentally validated results, no computational bias |
| Evolutionary Algorithms (REvoLd) | Billions (make-on-demand) | High: 49,000-76,000 compounds screened per target | 869-1622x over random | Explores vast spaces with minimal docking, identifies novel scaffolds |
| AI-Accelerated Platform (OpenVS) | Multi-billion compounds | Very High: Days instead of months | 14-44% hit rates in case studies | Combines physics-based docking with ML triage, open-source platform |
| Focused Library Design | Hundreds to thousands | Maximum: Pre-filtered for target relevance | Varies by application | High probability of activity, suitable for established target classes |

The performance data reveal several key trends. First, advanced computational methods enable exploration of chemical spaces orders of magnitude larger than traditional HTS approaches. Second, hit rates do not necessarily diminish with larger starting libraries when smart selection algorithms are applied. Third, the approaches offer complementary strengths: evolutionary algorithms excel at novel scaffold discovery, AI-accelerated platforms provide speed and accuracy, and focused libraries offer efficiency for well-characterized target classes.

Visualization of Method Workflows

Figure: Evolutionary Algorithm Screening Workflow (image not available)

Figure: AI-Accelerated Screening Platform (image not available)

Essential Research Reagents and Tools

Table 3: Key Research Reagents and Computational Tools

| Resource | Type | Function in Library Design | Access |
|---|---|---|---|
| Enamine REAL Library [38] | Make-on-Demand Chemical Library | Source of synthetically accessible compounds for ultra-large screening | Commercial |
| RDKit [36] [37] | Cheminformatics Toolkit | Calculate molecular descriptors, fingerprints, and structural filters | Open Source |
| RosettaLigand [38] [33] | Molecular Docking Software | Protein-ligand docking with full receptor flexibility | Academic License |
| PubChem [36] [39] | Chemical Database | Source of compounds and bioactivity data for library design | Free |
| ZINC [36] [39] | Database of Commercially Available Compounds | Source of purchasable compounds for virtual screening | Free |
| ChEMBL [37] | Bioactivity Database | Source of experimental data for model training and validation | Free |
| MolPILE [39] | Pretraining Dataset | Large-scale, curated dataset for molecular machine learning | Publicly Available |

The methodology for building diverse screening sets has evolved dramatically from simple fingerprint-based diversity analysis to sophisticated algorithms capable of navigating billion-compound spaces. The experimental data demonstrate that modern approaches, including evolutionary algorithms, AI-accelerated platforms, and computationally focused library design, can achieve remarkable efficiencies and hit rates that surpass random screening by orders of magnitude.

The integration of these methods creates powerful synergies: evolutionary algorithms discover novel scaffolds, AI-accelerated platforms enable rapid screening of ultra-large spaces, and focused library design efficiently targets specific therapeutic domains. Future developments will likely involve greater incorporation of synthetic accessibility constraints earlier in the selection process, more sophisticated treatment of receptor flexibility, and increased use of generative models to create entirely novel compounds that fill diversity gaps [15] [36].

As chemical libraries continue to expand into the billions of readily available compounds, the strategic implementation of these descriptor, clustering, and selection methods will become increasingly critical for maximizing discovery outcomes while managing computational and experimental resources. The continuing benchmarking and validation of these approaches against experimental data will further refine their application across different target classes and discovery scenarios.

The evolution of screening libraries from general diverse collections to targeted, specialized sets represents a paradigm shift in modern drug discovery. This strategic refinement addresses the high attrition rates in late-stage clinical development by ensuring that initial screening hits possess not only activity but also favorable physicochemical properties and reduced liability profiles. Specialized libraries, including natural product collections, fragment libraries, and covalent inhibitor libraries, enable researchers to interrogate biological targets through distinct yet complementary approaches, each offering unique advantages for probing challenging therapeutic targets. The selection between focused screening (using target-informed libraries) and diverse screening (emphasizing broad chemical space coverage) constitutes a fundamental strategic decision that directly influences screening outcomes and downstream success [2] [15].

This guide provides an objective comparison of these three specialized library types, presenting quantitative performance data, detailed experimental protocols, and practical implementation frameworks to inform library selection and deployment within integrated drug discovery campaigns.

Comparative Analysis of Library Characteristics and Performance

Table 1: Key Characteristics of Specialized Screening Libraries

| Parameter | Natural Product Libraries | Fragment Libraries | Covalent Inhibitor Libraries |

|---|---|---|---|