FAIR Data Principles in Bioinformatics: A Practical Guide to Implementation, Challenges, and Impact

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on applying FAIR (Findable, Accessible, Interoperable, Reusable) data principles in bioinformatics. It covers the foundational rationale behind FAIR, practical methodologies for implementation across diverse data types, solutions to common technical and cultural barriers, and a comparative analysis with other data frameworks. By synthesizing current use cases, challenges, and future directions, this resource aims to equip life sciences organizations with the knowledge to enhance data-driven discovery, improve collaboration, and accelerate translational research.

The 'Why' Behind FAIR: Understanding the Foundational Principles and Their Critical Role in Modern Bioinformatics

The volume, complexity, and creation speed of data in life sciences research are increasing at an unprecedented rate [1] [2]. In bioinformatics, researchers increasingly rely on computational systems to manage and extract meaning from this deluge of multi-modal data, which can include genomic sequences, imaging data, proteomics, and clinical records [3]. This dependency on computational support necessitates a structured framework to ensure that digital assets are not merely stored, but are genuinely usable for advanced analytics, artificial intelligence (AI), and machine learning (ML) applications. The FAIR Guiding Principles—standing for Findable, Accessible, Interoperable, and Reusable—provide exactly this framework [1].

Originally published in 2016 in Scientific Data, the FAIR principles were designed to enhance data stewardship by emphasizing machine-actionability, meaning the capacity of computational systems to find, access, interoperate, and reuse data with minimal human intervention [1] [4]. For bioinformatics and drug development professionals, the adoption of FAIR principles is transformative. It accelerates discovery by enabling faster time-to-insight, improves data return on investment (ROI), supports AI and multi-modal analytics, ensures reproducibility and traceability, and enables better collaboration across traditional organizational silos [3]. This guide provides a technical breakdown of each FAIR principle, detailing its components, significance, and practical application within bioinformatics research.

The Pillars of FAIR: A Detailed Technical Breakdown

The four pillars of FAIR are interrelated yet independent principles that together ensure digital objects are optimized for both human and computational use.

Findable – The Foundation for Discovery

The first step in (re)using data is finding it. Findability ensures that data and metadata are easy to locate for both humans and computers, which is an essential component of the FAIRification process [1].

Core Components:

- Persistent Identifiers: Datasets must be assigned a globally unique and persistent identifier (PID), such as a Digital Object Identifier (DOI) or a UUID [3] [4]. This provides an immutable reference to the data, ensuring it can be uniquely and permanently cited and discovered.

- Rich Metadata: Data must be described with a plurality of accurate and relevant metadata [5]. This metadata provides the contextual information (who, what, when, where, why, and how) that makes the dataset discoverable and understandable.

- Indexed in Searchable Resources: Both metadata and data should be registered or indexed in a searchable resource, such as a domain-specific repository (e.g., GenBank) or a general-purpose one (e.g., Zenodo, Dataverse) [1] [4]. This ensures that search engines and other discovery tools can locate them.

Bioinformatics Application: In a typical bioinformatics scenario, a dataset from a proteomics experiment would be assigned a DOI, described with rich metadata using a standard like the Proteomics Standards Initiative (PSI), and deposited in a repository like PRIDE. This allows other researchers (or their computational agents) to easily discover this dataset through a simple search [3].
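The three Findable components lend themselves to a simple automated check. The following is a minimal sketch, assuming a hypothetical dictionary layout for a dataset record: the field names `identifier`, `metadata`, and `indexed_in` are illustrative and do not correspond to any repository's API, the DOI is a placeholder, and the DOI pattern is only a heuristic.

```python
import re

# Heuristic pattern for DOI-like strings; an assumption for illustration,
# not an official validator.
DOI_PATTERN = re.compile(r"^10\.\d{4,9}/\S+$")


def findability_gaps(record: dict) -> list[str]:
    """Return human-readable gaps; an empty list means none were detected."""
    gaps = []
    identifier = record.get("identifier", "")
    if not DOI_PATTERN.match(identifier):
        gaps.append("no DOI-like persistent identifier")
    metadata = record.get("metadata", {})
    for field in ("title", "creators", "description", "keywords"):
        if not metadata.get(field):
            gaps.append(f"metadata field missing or empty: {field}")
    # The metadata should explicitly name the data it describes.
    if metadata.get("identifier") != identifier:
        gaps.append("metadata does not state the identifier of the data")
    if not record.get("indexed_in"):
        gaps.append("not registered in any searchable resource")
    return gaps


# Hypothetical proteomics record; the DOI is a placeholder, not a real dataset.
record = {
    "identifier": "10.1234/example.proteomics.1",
    "metadata": {
        "identifier": "10.1234/example.proteomics.1",
        "title": "Example phosphoproteomics experiment",
        "creators": ["Example Lab"],
        "description": "Placeholder description.",
        "keywords": [],
    },
    "indexed_in": ["PRIDE"],
}
gaps = findability_gaps(record)
print(gaps)
```

A check like this only detects missing fields; whether the metadata is genuinely "rich" still needs human or community-standard review.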

Accessible – Retrieval with Clarity

Once data are found, users must know how they can be accessed. Accessibility emphasizes the retrieval of data and metadata using standardized, open protocols.

Core Components:

- Standardized Retrieval Protocols: Data and metadata should be retrievable by their identifier using a standardized communications protocol that is open, free, and universally implementable (e.g., HTTPS, APIs) [5].

- Authentication and Authorization: The protocol should allow for authentication and authorization procedures where necessary [5]. It is critical to understand that FAIR does not mean "open." Data can be restricted and behind a secure login while still being FAIR, as long as the access conditions and the process to obtain authorization are clear to both humans and machines [3].

- Metadata Persistence: Metadata should remain accessible, even when the data is no longer available [5]. This provides a record of the data's existence and context, which is valuable for tracking research outputs even if the dataset itself is deprecated.

Bioinformatics Application: A clinical genomics dataset containing sensitive patient information may be stored in a controlled-access database like dbGaP. While the data itself is not publicly open, its metadata is freely accessible and clearly outlines the procedure for researchers to apply for access, thus fulfilling the principle of Accessibility [3] [6].
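Retrieval "by identifier using a standardized protocol" can be illustrated with a DOI resolved over HTTPS. The sketch below only builds the request and does not send it. The DOI is a placeholder, and the `Accept` media type is one that DOI content negotiation commonly supports; whether a given registration agency or repository honours it is an assumption to verify.

```python
from urllib.parse import quote
from urllib.request import Request


def build_metadata_request(doi: str) -> Request:
    """Build (but do not send) an HTTPS request for machine-readable DOI metadata."""
    url = "https://doi.org/" + quote(doi, safe="/")
    # Ask for citation metadata as JSON rather than the human landing page.
    headers = {"Accept": "application/vnd.citationstyles.csl+json"}
    return Request(url, headers=headers)


request = build_metadata_request("10.1234/example.dataset.1")  # placeholder DOI
print(request.full_url)
print(request.get_header("Accept"))
# Sending it would be urllib.request.urlopen(request), which needs network
# access; controlled-access data would additionally require credentials
# obtained through the repository's documented authorization process.
```

The design point is that the metadata request needs no special client: any tool that speaks HTTPS can issue it, even when the underlying data sits behind an access-request procedure.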

Interoperable – Ready for Integration

Data usually needs to be integrated with other data and used within applications or workflows for analysis, storage, and processing. Interoperability ensures that datasets can be combined and used alongside other data and tools [1].

Core Components:

- Standardized Vocabularies and Ontologies: Data and metadata should use a formal, accessible, shared, and broadly applicable language for knowledge representation [7]. This is achieved through the use of controlled vocabularies, keywords, and ontologies (e.g., GO for gene ontology, MeSH for medical subjects, SNOMED CT for clinical terms) [2] [6].

- Qualified References: Metadata should include qualified references to other metadata and data [4]. This means that references to related digital objects (e.g., a dataset that builds upon another) are not just simple links but are accompanied by context about the relationship.

Bioinformatics Application: A transcriptomics study might describe its samples using terms from the Cell Ontology (CL) and its analytical methods using the EDAM ontology. This allows a computational workflow to automatically understand the nature of the samples and the methods used, enabling seamless integration with complementary datasets from other public repositories for a meta-analysis [3] [6].
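To make the ontology-annotation idea concrete, the sketch below maps free-text cell type labels to Cell Ontology identifiers so that differently spelled labels resolve to the same term. The lookup table is hard-coded purely for illustration; the function name and output field names are hypothetical, and a real pipeline would query an ontology service instead.

```python
# Illustrative lookup from free-text labels to Cell Ontology (CL) CURIEs.
# Hard-coded here for the sketch; not a substitute for an ontology service.
CELL_TYPE_TERMS = {
    "t cell": "CL:0000084",
    "b cell": "CL:0000236",
}

OBO_PURL_BASE = "http://purl.obolibrary.org/obo/"


def annotate_sample(sample_id: str, cell_type_label: str) -> dict:
    """Attach a CL term to a sample, normalizing case and whitespace first."""
    key = cell_type_label.strip().lower()
    curie = CELL_TYPE_TERMS.get(key)
    if curie is None:
        raise KeyError(f"no ontology term known for label: {cell_type_label!r}")
    return {
        "sample_id": sample_id,
        "cell_type_label": cell_type_label,
        "cell_type_curie": curie,
        # OBO PURLs replace the colon in the CURIE with an underscore.
        "cell_type_iri": OBO_PURL_BASE + curie.replace(":", "_"),
    }


# Two labs spell the label differently; both resolve to the same term.
a = annotate_sample("lab1_s1", "T cell")
b = annotate_sample("lab2_s7", "  t cell ")
print(a["cell_type_iri"] == b["cell_type_iri"])
```

Once both datasets carry the same IRI, a workflow can join them on that term rather than on free text.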

Reusable – Maximizing Future Utility

The ultimate goal of FAIR is to optimize the reuse of data. Reusability ensures that data and metadata are well-described enough to be replicated, combined in different settings, and used for future investigations [1].

Core Components:

- Rich Provenance and Description: Data must be associated with detailed provenance and described with a plurality of accurate and relevant attributes [5]. Provenance documents the origin, history, and processing steps of the data (the "lineage").

- Clear Usage License: Data must be released with a clear and accessible data usage license (e.g., Creative Commons, Open Data Commons) [4] [8]. This removes legal ambiguity about how the data can be used, modified, and shared.

- Community Standards: Data should meet domain-relevant community standards [5]. Adhering to standards that are widely accepted in a field (e.g., MIAME for microarray data, BIDS for brain imaging) ensures the data is structured in a familiar and reliable way for other researchers.

Bioinformatics Application: A reusable dataset in bioinformatics would be one that is shared with a comprehensive README file, a clear MIT or CC-BY license, and details about the computational environment (e.g., a Docker container) used to generate the results. This level of documentation allows another research team to not only understand the data but also to replicate the analysis in their own environment [6].
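Provenance and licensing information is easier to reuse when it is captured as a structured record next to the data. The following is a minimal sketch under stated assumptions: the record layout and field names are hypothetical, the input bytes stand in for a real file, and the license string is just an example identifier.

```python
import hashlib
import json
import platform
from datetime import datetime, timezone


def provenance_record(data: bytes, source: str, steps: list[str],
                      license_id: str) -> dict:
    """Describe where a data file came from and how it was produced."""
    return {
        # The checksum lets a later user confirm they hold the same bytes.
        "sha256": hashlib.sha256(data).hexdigest(),
        "source": source,
        "processing_steps": steps,
        "license": license_id,
        "python_version": platform.python_version(),
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }


# Stand-in content for a small count table; not real experimental data.
content = b"gene_id,count\nGENE1,42\n"
record = provenance_record(
    content,
    source="hypothetical RNA-seq run",
    steps=["align reads", "count features"],
    license_id="CC-BY-4.0",
)
print(json.dumps(record, indent=2))
```

A record like this complements, rather than replaces, a README and a container image: it gives machines something to check, while the README explains intent to humans.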

Table 1: Summary of FAIR Principles and Their Core Requirements

| Principle | Core Objective | Key Requirements | Example in Bioinformatics |
|---|---|---|---|
| Findable | Easy discovery by humans and machines | Persistent Identifiers (e.g., DOI), Rich Metadata, Indexed in a searchable resource [1] [4] | A genome sequence deposited in GenBank with a unique accession number. |
| Accessible | Retrievable upon discovery | Standardized protocols (e.g., HTTPS), Clear authentication/authorization rules, Persistent metadata [5] | Controlled-access data in dbGaP with a documented data access request process. |
| Interoperable | Ready for integration with other data | Standardized vocabularies & ontologies, Qualified references to other data [4] [2] | Using Gene Ontology (GO) terms to annotate gene function in a dataset. |
| Reusable | Optimized for future use | Clear usage license, Detailed provenance, Meets community standards [1] [8] | A transcriptomics dataset shared with a CC-BY license and MIAME-compliant metadata. |

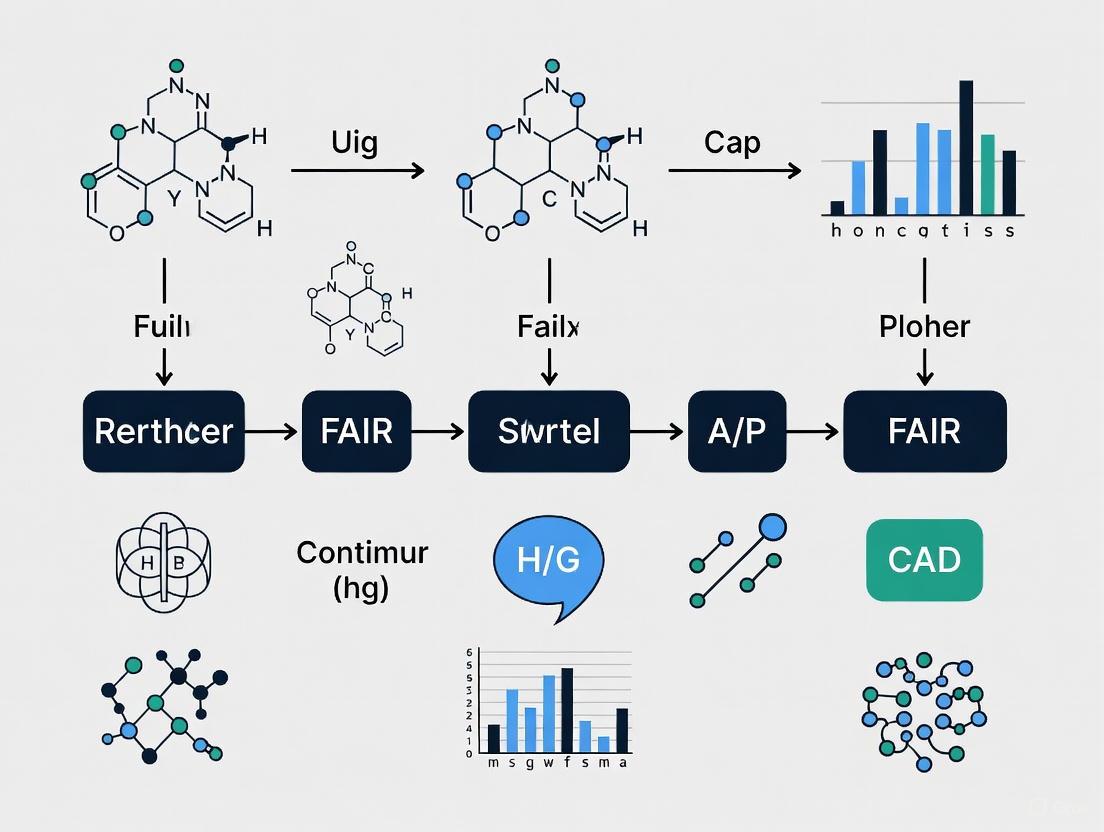

The FAIRification Process: A Step-by-Step Workflow

Implementing the FAIR principles, often called "FAIRification," is a process that can be broken down into a series of actionable steps. The following workflow diagram outlines the key stages and decision points in making research data FAIR.

FAIRification Workflow for Research Data

Detailed Methodologies for FAIRification

Step 1: Retrieve and Analyze Non-FAIR Data. The process begins by accessing all relevant data and performing a comprehensive analysis. This involves examining the data's structure, identifying the methodologies used for data generation, and understanding its provenance (origin and history) [2]. The goal is to establish a baseline and identify the specific gaps that need to be addressed to achieve FAIRness.

Step 2: Define a Semantic Model. To ensure interoperability, a semantic model must be defined. This involves selecting community- and domain-specific ontologies and controlled vocabularies (e.g., MeSH for medical sciences, dbSNP for genetic variations) to describe the dataset entities in an unambiguous, machine-actionable format [2] [6]. This step moves data from being merely understandable to humans to being interpretable by machines.

Step 3: Make Data Linkable. The defined semantic model is then applied to the raw data using Semantic Web or Linked Data technologies (e.g., RDF, the Resource Description Framework). This process transforms the data into a "linkable" state, where entities within the dataset are connected to each other and to external resources in a structured web of data, enhancing both interoperability and discoverability [2].
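As an illustration of Step 3, tabular rows can be turned into RDF statements. The sketch below serializes N-Triples by hand using only the standard library. The `https://example.org/` namespace and the `hasCellType` predicate are hypothetical placeholders, and the dataset DOI is not real; a production pipeline would more likely rely on an RDF library and predicates from an agreed semantic model.

```python
EX = "https://example.org/"  # placeholder namespace, an assumption
HAS_CELL_TYPE = EX + "vocab/hasCellType"  # hypothetical predicate
RDFS_LABEL = "http://www.w3.org/2000/01/rdf-schema#label"
DCTERMS_IS_PART_OF = "http://purl.org/dc/terms/isPartOf"


def iri(value: str) -> str:
    """Wrap an IRI in angle brackets, as N-Triples requires."""
    return f"<{value}>"


def literal(value: str) -> str:
    """Quote a plain string literal, escaping backslashes and quotes."""
    escaped = value.replace("\\", "\\\\").replace('"', '\\"')
    return f'"{escaped}"'


def rows_to_ntriples(rows: list[dict], dataset_iri: str) -> list[str]:
    """Emit three statements per row: membership, cell type, and label."""
    triples = []
    for row in rows:
        subject = iri(EX + "sample/" + row["sample_id"])
        triples.append(f"{subject} {iri(DCTERMS_IS_PART_OF)} {iri(dataset_iri)} .")
        triples.append(f"{subject} {iri(HAS_CELL_TYPE)} {iri(row['cell_type_iri'])} .")
        triples.append(f"{subject} {iri(RDFS_LABEL)} {literal(row['label'])} .")
    return triples


rows = [{
    "sample_id": "S1",
    "cell_type_iri": "http://purl.obolibrary.org/obo/CL_0000084",
    "label": "T cell sample",
}]
triples = rows_to_ntriples(rows, "https://doi.org/10.1234/example.dataset.1")
print("\n".join(triples))
```

Each line links a local entity either to another entity in the dataset or to an external ontology term, which is what makes the data "linkable".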

Step 4: Assign License and Metadata. A critical step for reusability is assigning a clear data usage license (e.g., Creative Commons) that informs users of their rights and obligations [2]. Concurrently, rich metadata is created to describe the data. This metadata must be comprehensive enough to support the FAIR principles, providing context and enabling discovery without necessarily accessing the data itself [2].

Step 5: Publish FAIR Data. The final step is to publish the FAIRified data, along with its metadata and license, in a trusted repository [7]. The repository should assign a persistent identifier (PID) and ensure the data is indexed by search engines. The data can now be accessed by users, with authentication and authorization procedures in place if necessary [2].

Table 2: Essential Research Reagent Solutions for FAIR Bioinformatics

| Tool Category | Example Solutions | Function in FAIRification |
|---|---|---|
| Persistent Identifier Services | DOI, UUID, PURL | Assigns a globally unique and permanent identifier to datasets, ensuring permanent citability and findability (Findable) [2]. |
| Metadata Standards & Ontologies | MeSH, GO, EDAM, SNOMED CT | Provides standardized, machine-readable vocabularies to describe data, enabling seamless integration and interpretation (Interoperable) [2] [6]. |
| Trusted Data Repositories | GenBank, PRIDE, Zenodo, Dataverse, dbGaP | Hosts data and metadata, provides PIDs, ensures long-term preservation and access, often with access control (Accessible) [2] [6]. |
| Data Management Platforms | REDCap, Electronic Lab Notebooks (ELNs) | Helps in structuring data collection, managing metadata, and documenting provenance from the start of a project (Reusable) [6]. |

FAIR in Action: Principles for Research Software (FAIR4RS)

The conceptual framework of FAIR has proven so powerful that it has been extended beyond data to encompass research software. In 2022, the FAIR for Research Software (FAIR4RS) Working Group released a community-endorsed set of principles to address the unique challenges of making software findable, accessible, interoperable, and reusable [5].

Research software is defined as "source code files, algorithms, scripts, computational workflows, and executables that were created during the research process or for a research purpose" [5]. The relationship between FAIR data and FAIR software is symbiotic, as illustrated below.

The Symbiotic Relationship Between FAIR Data and FAIR Software

Key Adaptations of FAIR4RS Principles

The FAIR4RS principles adapt the original guidelines to the specifics of software, emphasizing its executability, composite nature, and continuous evolution [5].

- Findable: Software is assigned a globally unique and persistent identifier, which can be a DOI for a specific release or a Software Heritage ID (SWHID) for the source code artifact. Different versions and components of the software are assigned distinct identifiers [5].

- Accessible: Software is retrievable by its identifier using a standardised protocol (e.g., HTTPS from a Git repository). The protocol allows for authentication and authorization where necessary, and metadata about the software remains accessible even if the software itself is no longer available [5].

- Interoperable: Software interoperates via APIs and by exchanging data in standard formats. It reads, writes, and exchanges data in a way that meets domain-relevant community standards and includes qualified references to other objects (e.g., datasets, other software) [5].

- Reusable: Software is both usable (can be executed) and reusable. This is achieved by describing it with accurate attributes, including a clear and accessible license, associating it with detailed provenance (e.g., version control history), specifying dependencies, and ensuring it meets domain-relevant standards [5].

Bioinformatics Application: A computational workflow for single-cell RNA sequencing analysis, such as a collection of Snakemake or Nextflow scripts, can be made FAIR by depositing a specific version in Zenodo to obtain a DOI (Findable), hosting the code on a public GitHub repository (Accessible), using standard file formats like H5AD or LOOM for its inputs and outputs (Interoperable), and documenting it thoroughly with a license, a Conda environment file listing all dependencies, and a container image for execution (Reusable) [5].
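Software metadata of the kind described above is often kept in a machine-readable file alongside the code. The sketch below writes a small record in the style of a `codemeta.json` file. The workflow name, repository URL, and DOI are placeholders, the helper function is hypothetical, and only a handful of properties are shown, so it should be read as an outline rather than a complete or validated CodeMeta document.

```python
import json
from typing import Optional


def codemeta_record(name: str, version: str, repository_url: str,
                    license_url: str, doi: Optional[str] = None) -> dict:
    """Assemble a minimal CodeMeta-style description of a software release."""
    record = {
        "@context": "https://doi.org/10.5063/schema/codemeta-2.0",
        "@type": "SoftwareSourceCode",
        "name": name,
        "version": version,
        "codeRepository": repository_url,
        "license": license_url,
    }
    if doi is not None:
        # A release-specific DOI, for example one minted on deposit.
        record["identifier"] = "https://doi.org/" + doi
    return record


# Placeholder values for a hypothetical single-cell workflow release.
record = codemeta_record(
    name="example-scrnaseq-workflow",
    version="1.2.0",
    repository_url="https://github.com/example-org/example-scrnaseq-workflow",
    license_url="https://spdx.org/licenses/MIT",
    doi="10.1234/example.software.1",
)
print(json.dumps(record, indent=2))
```

Keeping the version and identifier together in one record makes it explicit which release a DOI refers to, which matters because different versions receive distinct identifiers.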

The FAIR principles represent a fundamental shift in how the research community, particularly in data-intensive fields like bioinformatics, approaches data management and stewardship. By providing a structured framework that emphasizes machine-actionability, FAIR enables researchers and institutions to overcome the challenges posed by data volume and complexity. The implementation of these principles—making data Findable, Accessible, Interoperable, and Reusable—is not a one-time event but a strategic process that enhances collaboration, accelerates innovation, and ensures the long-term value and integrity of research assets.

The journey to full FAIR compliance involves technical, organizational, and cultural changes, including potential challenges such as fragmented data systems, a lack of standardized metadata, and the cost of transforming legacy data [3]. However, the benefits are clear: from enabling faster time-to-insight in drug discovery pipelines to supporting the rigorous reproducibility demanded by regulatory bodies. As the principles evolve and their application expands to include critical digital objects like research software, their role in building a robust, efficient, and collaborative research ecosystem in bioinformatics and beyond will only become more pronounced.

The FAIR Guiding Principles—Findable, Accessible, Interoperable, and Reusable—were formally introduced in a seminal 2016 paper in Scientific Data [9]. This manuscript provides an in-depth technical guide to the genesis, core tenets, and practical implementation of these principles, with a specific focus on their transformative impact on bioinformatics research. We detail the original rationale, provide actionable protocols for achieving FAIR compliance, and visualize the core relationships and workflows essential for researchers and drug development professionals navigating the modern data-intensive landscape.

The increasing volume, complexity, and creation speed of data in the life sciences have necessitated a paradigm shift in data stewardship [1]. Humans increasingly rely on computational support to manage these digital assets, highlighting an urgent need for infrastructure that improves the reuse of scholarly data [9]. Prior to FAIR, the digital ecosystem often prevented researchers from extracting maximum benefit from their investments. Data was frequently stored in fragmented repositories with inconsistent descriptors, creating significant barriers to discovery and reuse for both humans and machines [9] [2].

The FAIR Principles emerged from a workshop in Leiden, Netherlands, in 2014, named 'Jointly Designing a Data Fairport' [9]. A diverse consortium of stakeholders from academia, industry, funding agencies, and scholarly publishers convened with the goal of designing a concise and measurable set of guidelines to enhance the reusability of digital assets [9] [2]. The product of this collaboration was first formally published in 2016 as "The FAIR Guiding Principles for scientific data management and stewardship" [9]. A critical differentiator of FAIR from peer initiatives is its specific emphasis on enhancing the ability of machines to automatically find and use data, in addition to supporting its reuse by individuals [1] [9].

The Core FAIR Guiding Principles

The FAIR principles are a set of independent but related guidelines for scientific data management and stewardship, structured around four foundational pillars: Findability, Accessibility, Interoperability, and Reusability [1] [10]. The principles refer to three types of entities: data (any digital object), metadata (information about that digital object), and infrastructure [1].

Table 1: The Core FAIR Guiding Principles and Their Requirements

| Principle | Core Objective | Key Requirements |
|---|---|---|
| Findable [1] | The first step in (re)using data is to find it. Metadata and data should be easy to find for both humans and computers. | F1. (Meta)data are assigned a globally unique and persistent identifier [10]. F2. Data are described with rich metadata [10]. F3. Metadata clearly and explicitly include the identifier of the data they describe [10]. F4. (Meta)data are registered or indexed in a searchable resource [10]. |
| Accessible [1] | Once found, users need to know how data can be accessed, including authentication and authorisation. | A1. (Meta)data are retrievable by their identifier using a standardised communications protocol [10]. A1.1. The protocol is open, free, and universally implementable [10]. A1.2. The protocol allows for an authentication and authorization procedure, where necessary [10]. A2. Metadata are accessible, even when the data are no longer available [10]. |
| Interoperable [1] | Data must be integrated with other data and work with applications or workflows. | I1. (Meta)data use a formal, accessible, shared, and broadly applicable language for knowledge representation [10]. I2. (Meta)data use vocabularies that follow FAIR principles [10]. I3. (Meta)data include qualified references to other (meta)data [10]. |
| Reusable [1] | The ultimate goal is to optimise the reuse of data. | R1. (Meta)data are richly described with a plurality of accurate and relevant attributes [10]. R1.1. (Meta)data are released with a clear and accessible data usage license [10]. R1.2. (Meta)data are associated with detailed provenance [10]. R1.3. (Meta)data meet domain-relevant community standards [10]. |

The Critical Role of Machine-Actionability

A defining feature of the FAIR principles is their emphasis on machine-actionability—the capacity of computational systems to find, access, interoperate, and reuse data with minimal human intervention [1] [10]. This is crucial because the scale of data in modern research, particularly in fields like genomics, makes manual handling impractical [11]. The principles ensure that data provides sufficient information for a computational agent to autonomously identify its type, determine its usefulness, and take appropriate action, thereby enabling large-scale, data-intensive science [9] [2].

The FAIRification Framework: A Step-by-Step Experimental Protocol

Implementing the FAIR principles, often called "FAIRification," follows a structured sequence of steps. The following protocol, synthesized from community practices, provides an actionable methodology for researchers to make their data FAIR [2].

Protocol 1: The FAIRification Workflow for Research Data

Objective: To systematically transform conventional research datasets into FAIR-compliant digital assets.

Inputs: Raw data files (e.g., sequencing reads, clinical data tables, experimental measurements), associated documentation.

Required Tools & Infrastructure: A version control system (e.g., Git), a data repository that issues Persistent Identifiers (PIDs) (e.g., Zenodo, FigShare, or a domain-specific archive), and access to relevant ontology portals (e.g., OBO Foundry, FAIRsharing.org) [12] [2].

Procedure:

- Retrieve and Analyze Non-FAIR Data: Fully access and examine the target dataset. Analyze its structure and identify differences between data elements, including inconsistent identification methodologies and incomplete provenance information [2].

- Define a Semantic Model: Select community- and domain-specific ontologies and controlled vocabularies (e.g., SNOMED CT for clinical terms, Gene Ontology for gene functions) to describe the dataset entities unambiguously in a machine-actionable format [2]. This step is critical for achieving Interoperability.

- Make Data Linkable: Apply the semantic model to the data using Semantic Web or Linked Data technologies (e.g., RDF, JSON-LD). This creates explicit, machine-readable links within and between datasets, enhancing both Interoperability and Findability [2].

- Assign a License and Metadata:

  - Licensing: Attach a clear and accessible data usage license (e.g., CC0, MIT, or a custom license) to define the terms of reuse, fulfilling part of the Reusability principle (R1.1) [10] [2].

  - Metadata Curation: Describe the data with rich metadata. This includes the persistent identifier assigned in the publication step, detailed provenance (R1.2), and the community standards employed (R1.3). This supports all FAIR principles, especially Findability and Reusability [11] [2].

- Publish FAIR Data: Deposit the data, along with its comprehensive metadata, into a suitable repository that issues a Persistent Identifier (PID) such as a Digital Object Identifier (DOI) [2]. This action directly satisfies the Findability principle (F1, F4) and enables standardised Accessibility (A1).
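The end product of the procedure above is a dataset description that carries its identifier, license, and links to related objects in one machine-readable document. The following is a minimal sketch using schema.org `Dataset` terms expressed as JSON-LD. The helper function, DOIs, and descriptive values are placeholders, and a real repository will usually generate or require its own metadata format.

```python
import json
from typing import Optional


def dataset_jsonld(name: str, description: str, doi: str, license_url: str,
                   keywords: list[str],
                   derived_from: Optional[str] = None) -> dict:
    """Build a schema.org Dataset description as a JSON-LD document."""
    doi_url = "https://doi.org/" + doi
    doc = {
        "@context": "https://schema.org/",
        "@type": "Dataset",
        "@id": doi_url,
        # Stating the identifier inside the metadata supports F1 and F3.
        "identifier": doi_url,
        "name": name,
        "description": description,
        "license": license_url,   # R1.1
        "keywords": keywords,
    }
    if derived_from is not None:
        # A reference with stated meaning: this dataset is based on another.
        doc["isBasedOn"] = derived_from
    return doc


# Placeholder values; neither DOI refers to a real dataset.
doc = dataset_jsonld(
    name="Example variant calls",
    description="Hypothetical variant calls derived from example reads.",
    doi="10.1234/example.dataset.2",
    license_url="https://creativecommons.org/publicdomain/zero/1.0/",
    keywords=["genomics", "variant calling"],
    derived_from="https://doi.org/10.1234/example.dataset.1",
)
print(json.dumps(doc, indent=2))
```

The `isBasedOn` link is one way to express a qualified reference: it says not just that two datasets are related, but how.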

FAIR in Bioinformatics: From Theory to Practice

In bioinformatics, the FAIR principles have been extended to encompass research software—including scripts, computational workflows, and packages—which is fundamental to the field [12] [13]. The FAIR for Research Software (FAIR4RS) Working Group has reformulated the principles to address unique characteristics of software, such as its executability, composite nature, and versioning [13].

Table 2: Essential Toolkit for FAIR Bioinformatics Research

| Tool Category | Example Solutions | Function in FAIR Compliance |
|---|---|---|
| Persistent Identifiers | DOI, SWHID [13] | Provides a globally unique and persistent identifier for datasets and software (F1). |
| Data Repositories | Zenodo, FigShare, European Genome-phenome Archive [11] [9] | Indexes data and metadata in a searchable resource, often providing a PID (F4). |
| Metadata Standards | MIAME, CEDAR [11] | Provides domain-relevant community standards for describing data (R1.3). |
| Ontologies & Vocabularies | Gene Ontology (GO), SNOMED CT, FAIRsharing Registry [11] [12] | Enables interoperability by providing standard, machine-readable terms for data annotation (I1, I2). |
| Research Software Registries | bio.tools, Research Software Directory [13] | Makes research software findable and citable by providing rich metadata and identifiers (F1, F2). |

Logical Architecture of FAIR Principles

The following diagram illustrates the hierarchical and interconnected nature of the FAIR principles, demonstrating how they build upon one another to achieve the ultimate goal of reusable data.

Discussion and Future Directions

Since their publication, the FAIR principles have gained remarkable traction, evolving from a proposed guideline to a global movement. They were endorsed by the G20 leaders in 2016 and have been adopted by major funding agencies and publishers [10]. In bioinformatics and biopharma, implementing FAIR principles enables faster time-to-insight, improves data ROI, supports AI and multi-modal analytics, and ensures reproducibility and traceability [3]. Organizations like AstraZeneca have embarked on initiatives to FAIRify historical assay data to build more reliable models [2].

The movement continues to evolve with the development of complementary frameworks. The CARE Principles for Indigenous Data Governance (Collective benefit, Authority to control, Responsibility, and Ethics) ensure that data governance also addresses the interests of Indigenous peoples [10] [3]. Furthermore, the emergence of the FAIR4RS Principles ensures that the critical research software underpinning bioinformatics receives the same rigorous stewardship as data [13].

While challenges remain—including fragmented data systems, a lack of standardized metadata, and cultural resistance—the FAIR principles provide a proven, actionable framework for maximizing the value of research data and paving the way for accelerated discovery in bioinformatics and drug development [11] [3].

In the era of data-intensive science, particularly in fields like bioinformatics, the volume, complexity, and creation speed of data have surpassed human capacity for manual management [1]. The FAIR Guiding Principles, which emphasize the Findability, Accessibility, Interoperability, and Reuse of digital assets, were established precisely to address this challenge, with a core emphasis on machine-actionability [1]. Machine-actionability refers to the capacity of computational systems to find, access, interoperate, and reuse data with no or minimal human intervention [1]. This shift is not merely technical but fundamental to advancing scientific discovery in bioinformatics and drug development, where it enables the integration and analysis of complex datasets at scale. This paper explores the critical role of machine-actionable frameworks, demonstrating how they transform data management from an administrative exercise into a dynamic, integral component of the research lifecycle.

The Limitations of Traditional Data Management

Traditional data management practices, particularly those centered on static documents, present significant bottlenecks. Data Management Plans (DMPs), which describe the data used and produced during research, are typically created as free-form text documents [14]. This format renders them opaque to computational systems. Researchers often perceive them as an annoying administrative exercise rather than a useful part of research practice, which leads to generic answers that lack the specificity required for effective data reuse [14] [15]. Because a DMP is usually a static document written before a project begins, it reinforces that perception and does little to support day-to-day data management activities [14]. This passive-document model fails to integrate with the dynamic, automated workflows that characterize modern, data-intensive bioinformatics research.

The Machine-Actionable Paradigm: Definitions and Core Components

A machine-actionable approach structures information consistently so that computers can be programmed against this structure, enabling automated exchange, integration, and validation of information [15]. The core components of this paradigm include:

Machine-Actionable Data Management Plans (maDMPs)

Machine-actionable DMPs (maDMPs) represent a transformative evolution from static documents to dynamic, integrated components of the research infrastructure. They contain an inventory of key information about a project and its outputs, structured to be read and acted upon by software services [14]. This enables parts of the DMP to be automatically generated and shared, thus reducing administrative burdens and improving the quality of information [14]. For example, information from a DMP can trigger automated processes, such as a repository setting information on backup strategy and preservation policy in response to a data steward choosing that particular repository for data deposit [15].
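The repository example can be sketched in a few lines. The JSON below is a simplified, hypothetical maDMP fragment: its nesting (`dmp`, `dataset`, `distribution`, `host`) is loosely modelled on the RDA DMP Common Standard but should be treated as an assumption, and the repository name and policy values are invented for illustration.

```python
import json

MADMP_JSON = """
{
  "dmp": {
    "title": "Hypothetical single-cell atlas DMP",
    "dataset": [
      {"title": "Raw reads",
       "distribution": [{"host": {"title": "Example Repository"}}]},
      {"title": "Count matrices", "distribution": []}
    ]
  }
}
"""

# Invented policies that a repository service might publish about itself.
REPOSITORY_POLICIES = {
    "Example Repository": {"backup_frequency": "daily", "preservation_years": 10},
}


def apply_repository_policies(dmp_doc: dict, policies: dict) -> list[str]:
    """Copy known host policies into the plan; return datasets with no host yet."""
    unresolved = []
    for dataset in dmp_doc["dmp"]["dataset"]:
        distributions = dataset.get("distribution", [])
        if not distributions:
            unresolved.append(dataset["title"])
        for distribution in distributions:
            host = distribution["host"]
            host.update(policies.get(host["title"], {}))
    return unresolved


dmp_doc = json.loads(MADMP_JSON)
unresolved = apply_repository_policies(dmp_doc, REPOSITORY_POLICIES)
print(json.dumps(dmp_doc["dmp"]["dataset"][0], indent=2))
print("Datasets still needing a repository:", unresolved)
```

Because the plan is structured, the same pass that fills in backup details can also flag datasets for which no repository has been chosen, something a free-text DMP cannot support.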

Machine-Actionable Metadata Models

Metadata is the cornerstone of the FAIR principles [16]. Machine-actionable metadata models provide formal, structured representations of reporting guidelines, moving away from ambiguous narratives intended for human consumption [16]. These models are typically built using modern web technologies like JSON-Schema and JSON-LD, which decouple annotation requirements from a domain model and support the injection of semantic meaning through links to established ontologies [16]. This allows for automatic validation of metadata compliance and facilitates the creation of intelligent authoring tools.
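The idea of "injecting semantic meaning" through a context can be shown with a deliberately small example. The sketch below maps short field names to Dublin Core term IRIs and rewrites a record's keys accordingly. It is not a JSON-LD processor: it handles only flat key mapping, and the function name and sample record are hypothetical.

```python
# A context in the JSON-LD sense: short names mapped to vocabulary IRIs.
CONTEXT = {
    "title": "http://purl.org/dc/terms/title",
    "creator": "http://purl.org/dc/terms/creator",
    "license": "http://purl.org/dc/terms/license",
}


def expand_keys(document: dict, context: dict) -> tuple[dict, list[str]]:
    """Replace mapped keys with their IRIs; report keys lacking a mapping."""
    expanded = {}
    unmapped = []
    for key, value in document.items():
        if key in context:
            expanded[context[key]] = value
        else:
            unmapped.append(key)
    return expanded, unmapped


# Hypothetical record with one field that the context does not define.
record = {
    "title": "Example flow cytometry experiment",
    "creator": "Example Lab",
    "notes": "free text with no agreed meaning",
}
expanded, unmapped = expand_keys(record, CONTEXT)
print(expanded)
print("Fields without semantic annotation:", unmapped)
```

Keeping the mapping in a separate context is what decouples annotation from the data model: the record stays compact, while the context can be revised or extended without touching it.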

Table 1: Key Differences Between Traditional and Machine-Actionable Approaches

| Feature | Traditional Approach | Machine-Actionable Approach |
|---|---|---|
| Format | Free-form text document [14] | Structured data (e.g., JSON) [16] |
| Creation | Manually filled questionnaires [14] | Automatically populated from existing systems [15] |
| Interoperability | Low; information siloed | High; information can be exchanged between systems [14] |
| Dynamic Updates | Static; rarely updated | Live; can be updated as the project evolves [14] |
| Validation | Manual review | Automated checks against schemas [16] |

A Technical Framework for Implementation

Implementing machine-actionable systems requires a cohesive technical framework built on shared standards and identifiers.

The Application Profile for maDMPs

The Research Data Alliance (RDA) DMP Common Standards Working Group developed an application profile for machine-actionable DMPs. An application profile is a metadata design specification that uses a selection of terms from multiple metadata vocabularies, with added constraints, to meet application-specific requirements [15]. This profile serves as a common data model for exchanging DMP information, allowing for the atomization of information into specific, structured fields that can be consumed by various services [15].

Essential Technical Elements

The following elements are critical for a functional machine-actionable ecosystem:

- Persistent Identifiers (PIDs): The use of PIDs—such as ORCIDs for researchers, DOIs for datasets, and ROR IDs for institutions—is fundamental. They provide unambiguous links between entities described in a DMP [14].

- Common Data Models: As exemplified by the maDMP application profile, a common model ensures that all systems interpret the information in the same way [14] [15].

- Machine-Readable Policies: Policies for data access, licensing, and preservation must be expressed in a machine-readable language to enable automated compliance checking and action [14].
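Identifiers only provide unambiguous links if they are well-formed. As one small example, ORCID iDs end in a check character computed with the ISO 7064 MOD 11-2 algorithm, so a service can reject mistyped iDs before storing them in a DMP. The sketch below is a minimal implementation of that check; the function name is an assumption, and the test value is the sample iD used in ORCID's own documentation.

```python
def orcid_checksum_ok(orcid: str) -> bool:
    """Return True if a 16-character ORCID iD has a valid MOD 11-2 check character."""
    chars = orcid.replace("-", "").upper()
    body, check = chars[:15], chars[15:]
    if len(chars) != 16 or not (body.isascii() and body.isdigit()):
        return False
    total = 0
    for digit in body:
        total = (total + int(digit)) * 2
    result = (12 - total % 11) % 11
    expected = "X" if result == 10 else str(result)
    return check == expected


print(orcid_checksum_ok("0000-0002-1825-0097"))
```

A passing checksum only shows that the iD is syntactically plausible. Confirming that it belongs to a real researcher still requires resolving it against the ORCID registry.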

Diagram 1: Automated Workflow Enabled by Machine-Actionable DMPs

Experimental Protocols and Methodologies for Bioinformatics

Protocol: Creating a Machine-Actionable Metadata Profile for Flow Cytometry Data

This protocol details the process of formalizing a narrative reporting guideline, like the MIflowCyt standard, into a machine-actionable metadata profile.

- Checklist Decomposition: Analyze the textual MIflowCyt checklist and decompose it into its simplest, reusable entities (e.g., Sample, Instrument, Antibody) [16].

- Schema Definition: For each entity, create a JSON Schema file. The schema unambiguously defines the properties, data types, cardinality, and constraints for each field.

- Semantic Annotation: Create a JSON-LD context file to annotate each entity and field in the JSON Schema with terms from relevant ontologies (e.g., OBI, EFO). This injects explicit, machine-readable meaning.

- Validation: Use the resulting JSON Schemas to validate instance documents (e.g., metadata from a FlowRepository experiment). Software agents can automatically check for completeness and compliance against the standard [16].
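The steps above can be sketched for a single entity as follows. This is a minimal illustration, not the MIflowCyt profile itself: the fields of the `Instrument` entity, the placeholder ontology IRIs, and the `validate` helper are all assumptions. The hand-written check covers only the `required` and `type` keywords and stands in for a full JSON Schema validator.

```python
# Schema definition: a JSON Schema fragment for one decomposed entity.
# The fields are illustrative, not taken from the MIflowCyt checklist.
instrument_schema = {
    "$schema": "https://json-schema.org/draft/2020-12/schema",
    "title": "Instrument",
    "type": "object",
    "properties": {
        "manufacturer": {"type": "string"},
        "model": {"type": "string"},
        "laser_count": {"type": "integer"},
    },
    "required": ["manufacturer", "model"],
}

# Semantic annotation: a JSON-LD context mapping each field to an ontology term.
# The IRIs are placeholders, not real OBI or EFO identifiers.
instrument_context = {
    "@context": {
        "Instrument": "https://example.org/ontology/Instrument",
        "manufacturer": "https://example.org/ontology/manufacturer",
        "model": "https://example.org/ontology/model",
        "laser_count": "https://example.org/ontology/laserCount",
    }
}

_JSON_TYPES = {"string": str, "integer": int, "object": dict}


def validate(instance: dict, schema: dict) -> list[str]:
    """Validation: report missing required fields and wrong types (a subset of JSON Schema)."""
    errors = [
        f"missing required field: {name}"
        for name in schema.get("required", [])
        if name not in instance
    ]
    for name, rule in schema.get("properties", {}).items():
        if name in instance and not isinstance(instance[name], _JSON_TYPES[rule["type"]]):
            errors.append(f"{name}: expected {rule['type']}")
    return errors


print(validate({"manufacturer": "Acme", "laser_count": "three"}, instrument_schema))
```

Keeping the schema (structure) and the context (meaning) in separate documents is what allows the same structural rules to be reused with different ontologies.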

Protocol: Automated Validation and Compliance Assessment of Dataset Metadata

This methodology enables the automated FAIRness assessment of dataset metadata at scale.

- Profile Retrieval: A software agent retrieves the canonical, machine-actionable profile (set of JSON Schemas) for the relevant reporting standard.

- Metadata Harvesting: The agent harvests the dataset's metadata, ideally through a standardized API.

- Schema Validation: The agent validates the harvested metadata (JSON instance) against the JSON Schemas. This checks syntactic and structural compliance.

- Semantic Validation: The agent checks that the values in the metadata fields use the correct controlled terms and ontologies as specified in the JSON-LD context.

- Report Generation: The agent generates a quantitative report on the degree of annotation compliance, providing a verifiable measure of FAIRness [16].
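A minimal sketch of the schema validation, semantic validation, and report generation steps is shown below. The profile (required fields and expected ontology prefixes), the equal weighting of the two scores, and all names are assumptions made for illustration. Checking a CURIE prefix is also much weaker than resolving each term against the ontology itself.

```python
from dataclasses import dataclass

# Hypothetical profile: required fields, and which fields must hold a term
# from which ontology (identified here only by CURIE prefix).
REQUIRED_FIELDS = ["sample_id", "organism", "assay_type"]
ONTOLOGY_PREFIX = {"organism": "NCBITaxon:", "assay_type": "OBI:"}


@dataclass
class ComplianceReport:
    structural: float  # share of required fields that are present and non-empty
    semantic: float    # share of ontology-bound fields using the expected prefix

    @property
    def overall(self) -> float:
        # Equal weighting is an arbitrary choice for this sketch.
        return (self.structural + self.semantic) / 2


def assess(metadata: dict) -> ComplianceReport:
    """Score one harvested metadata record against the profile."""
    present = sum(1 for field in REQUIRED_FIELDS if metadata.get(field))
    valid_terms = sum(
        1
        for field, prefix in ONTOLOGY_PREFIX.items()
        if str(metadata.get(field, "")).startswith(prefix)
    )
    return ComplianceReport(
        structural=present / len(REQUIRED_FIELDS),
        semantic=valid_terms / len(ONTOLOGY_PREFIX),
    )


# Stand-in for metadata harvested through a repository API.
harvested = {"sample_id": "S1", "organism": "NCBITaxon:9606", "assay_type": "RNA-Seq"}
report = assess(harvested)
print(f"structural={report.structural:.2f} semantic={report.semantic:.2f}")
```

Here the record is structurally complete but uses free text for the assay type, so only half of the ontology-bound fields pass the semantic check.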

Table 2: The Scientist's Toolkit: Essential Reagents for Machine-Actionable Bioinformatics

| Item Name | Function in Machine-Actionable Research |

|---|---|

| JSON-Schema | A vocabulary to annotate and validate JSON documents, used to define the structure of metadata models [16]. |

| JSON-LD | A lightweight syntax to serialize Linked Data in JSON, used to add semantic context to metadata without disrupting the underlying data structure [16]. |

| Persistent Identifier (PID) | A long-lasting reference to a digital object, person, or organization (e.g., DOI, ORCID). Critical for creating unambiguous links in machine-readable data [14]. |

| Controlled Vocabulary/Ontology | A structured set of standard terms and their relationships (e.g., EDAM, OBI). Ensures consistent, machine-interpretable meaning in metadata [16]. |

| Application Profile | A metadata specification that combines terms from multiple vocabularies with constraints to meet specific application needs, such as the RDA's maDMP profile [15]. |

Quantitative Benefits and Stakeholder Impact

The implementation of machine-actionable systems creates tangible benefits for all stakeholders in the research data lifecycle. The following table summarizes the main benefits for each stakeholder group.

Table 3: Stakeholder Benefits from Machine-Actionable Data Management

| Stakeholder | Key Quantitative & Qualitative Benefits |

|---|---|

| Researcher | Automated DMP creation; streamlined data preservation; automated reporting; recognition via data citation [14]. |

| Funder | Structured information enables automated compliance monitoring, replacing manual processes [14]. |

| Repository Operator | Receives information on costs, licenses, and metadata upfront; enables capacity planning and facilitates data ingest [14]. |

| Bioinformatician | Rich, structured metadata allows for automatic discovery and integration of datasets into analysis workflows (e.g., bulk RNA-Seq, single-cell). |

| Research Institution | Gets a holistic view of data created within the institution, enabling better planning of data management infrastructure [14]. |

Diagram 2: How Machine-Actionability Enables each FAIR Principle

The emphasis on machine-actionability is a critical response to the realities of data-intensive science. By transforming data and its descriptions from passive documents into active, structured components of the digital research ecosystem, we unlock new potential for discovery. For bioinformatics and drug development, this shift is not optional but essential. It reduces administrative burdens, enhances data quality, and, most importantly, creates a robust foundation for the large-scale, automated data integration and analysis that will drive the next generation of scientific breakthroughs. The tools, standards, and frameworks, such as the RDA's maDMP application profile and machine-actionable metadata models, are now available. Widespread adoption across the research community is the necessary next step to fully realize the promise of FAIR and empower both humans and machines in the collective endeavor of scientific exploration.

The exponential growth in volume and complexity of biological data has rendered traditional data management practices insufficient, creating an urgent need for a systematic approach to data stewardship. The FAIR Guiding Principles—ensuring that digital assets are Findable, Accessible, Interoperable, and Reusable—establish a framework for managing this deluge of scientific data [9]. These principles emphasize machine-actionability, recognizing that computational systems must be able to autonomously find and use data due to the scale and complexity that exceeds human processing capabilities [1]. Within bioinformatics and drug development, where data integration and reuse are fundamental to advancement, the implementation of FAIR principles has transitioned from a recommendation to a critical necessity.

The absence of FAIR data management creates significant economic and scientific inefficiencies that impede research progress and innovation. This technical guide quantifies these impacts through empirical studies and economic analyses, providing bioinformatics researchers and drug development professionals with evidence-based insights for strategic data management planning. By examining concrete implementation case studies and their outcomes, we demonstrate how FAIRification serves as a fundamental enabler for advanced analytics, collaborative science, and accelerated discovery timelines.

Quantifying the Economic Impact of Non-FAIR Data

Multiple independent studies have attempted to quantify the substantial economic costs incurred when research data fails to meet FAIR standards. These analyses consider both direct financial losses and opportunity costs resulting from inefficient data handling practices.

Macroeconomic Costs

At a macroeconomic level, the European Commission conducted a comprehensive analysis estimating that the absence of FAIR research data costs the European economy at least €10.2 billion annually [17] [18] [19]. This conservative estimate accounts for measurable indicators including researcher time spent searching for and attempting to reuse non-FAIR data, additional storage costs for redundant data copies, unnecessary licensing fees, research retractions, and redundant studies receiving double funding.

When broader impacts on innovation are included, estimated by analogy with the European open data economy, a further €16 billion per year is added [17] [18]. This brings the total estimated impact to €26.2 billion per year in lost value for the European economy alone [20]. These figures show how much inefficiency enters the research ecosystem when data cannot be readily discovered and reused.

Table 1: Estimated Annual Economic Impact of Non-FAIR Research Data in the EU

| Cost Category | Conservative Estimate (€) | Including Innovation Impact (€) |

|---|---|---|

| Direct research inefficiencies | 10.2 billion | 10.2 billion |

| Lost innovation opportunity | Not quantified | 16 billion |

| Total Impact | 10.2 billion | 26.2 billion |

Organizational and Project-Level Costs

At the organizational level, the financial impact of poor data quality is similarly significant. Gartner research indicates that the average financial impact of poor data quality on organizations is $15 million per year [18] [19]. In the pharmaceutical sector, where research and development costs for a single new drug can reach $2.8 billion, the ability to reuse high-quality data represents a substantial opportunity for cost savings [21].

Empirical evidence from implementation studies demonstrates the potential for efficiency gains. A survey of experts using the FAIR4Health solution reported time savings of 56.57% in research data management activities, resulting in estimated savings of €16,800 per month for the surveyed organization [20]. These savings primarily stem from reduced time spent on data cleaning, preprocessing, curation, validation, normalization, and standardization tasks.

Table 2: FAIR4Health Solution Impact on Research Management Outcomes

| Metric | Before FAIR Implementation | With FAIR4Health Solution | Improvement |

|---|---|---|---|

| Time spent on data management tasks | Baseline | 56.57% reduction | 56.57% time saved |

| Economic cost | Baseline | €16,800/month saved | Significant cost saving |

| Key areas of improvement | Data cleaning, preprocessing, curation, validation, normalization, standardization | Streamlined processes | Major efficiency gains |

Methodologies for Quantifying FAIR Implementation Impact

The FAIR4Health Impact Assessment Protocol

The FAIR4Health project developed a rigorous methodology to analyze the impact of FAIR implementation on health research management outcomes, specifically measuring time and economic savings [20]. This protocol provides a reproducible framework for assessing FAIR implementation benefits.

Experimental Design

The study used a comparative survey distributed to data management experts experienced with the FAIR4Health solution. Participants had worked with both traditional research data management and the FAIR4Health approach, enabling direct comparison [20].

The survey instrument contained four structured sections:

- General Information: Collected demographic and professional background of participants, including organization type, research experience, and typical dataset sizes.

- General Data Science Practices: Assessed existing difficulties in finding and accessing appropriate data, organizational endorsement of FAIR principles, and technical approaches including AI techniques and metadata standards.

- Standalone Research without FAIR4Health: Documented current time investment per task in typical data management processes for recent research projects.

- Research with FAIR4Health Tools: Captured time investment for the same data management tasks using FAIR4Health tools (Data Curation Tool, Data Privacy Tool, and FAIR4Health platform).

Task-Specific Time Tracking

Participants provided detailed time expenditure data for specific research data management tasks:

- Data cleaning: Including pre-processing, curation, and validation activities to ensure data quality.

- Data normalization, standardization, and semantic modeling: Covering data integration and interoperability efforts across disparate sources.

- Data federation and exploratory analysis: Encompassing initial data exploration and hypothesis generation.

The protocol specifically asked researchers to reference a recently completed research project to ensure accurate recall and realistic time estimates for both scenarios [20].

Economic Calculation Method

The economic analysis converted time savings into financial metrics using the following approach:

- Recorded monthly time investment in research data management tasks.

- Calculated the proportional time reduction (56.57%) achieved through FAIR4Health tools.

- Converted time savings to financial metrics based on researcher compensation and operational costs.

- Aggregated savings across the organization to determine total economic impact (€16,800 monthly savings) [20].
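The arithmetic behind this conversion can be sketched as below. The sketch assumes that savings scale linearly with the baseline monthly cost of data management, which the source does not state explicitly. The implied baseline it prints is a derived illustration, not a figure reported by the study, and the function names are hypothetical.

```python
REDUCTION = 0.5657            # proportional time reduction reported in the survey
MONTHLY_SAVINGS_EUR = 16_800  # monthly savings reported for the surveyed organization


def monthly_savings(baseline_cost_eur: float, reduction: float = REDUCTION) -> float:
    """Savings = baseline monthly data-management cost x proportional reduction."""
    return baseline_cost_eur * reduction


def implied_baseline(savings_eur: float = MONTHLY_SAVINGS_EUR,
                     reduction: float = REDUCTION) -> float:
    """Invert the relation to see what baseline cost the reported figures would imply."""
    return savings_eur / reduction


baseline = implied_baseline()
print(f"Implied baseline: about EUR {baseline:,.0f} per month")
```

An organization repeating the assessment would substitute its own recorded hours and compensation rates for the baseline rather than back-calculating it.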

FAIRification Workflow and Technical Infrastructure

The FAIR4Health project implemented a structured FAIRification workflow based on GO FAIR guidance, adapted with specific restrictions and new steps for health data requirements [20]. This technical framework provides a replicable model for bioinformatics implementations.

Diagram 1: FAIRification workflow for health data

Core Technical Components

The FAIR4Health solution implemented two specialized applications to support the FAIRification workflow:

Data Curation Tool (DCT): Designed to extract, transform, and load existing healthcare and health research data into HL7 FHIR repositories, ensuring structural and semantic interoperability [20].

Data Privacy Tool (DPT): Implemented anonymization and de-identification techniques to address privacy challenges presented by sensitive health data, enabling compliant sharing and analysis [20].

The platform incorporated Privacy-Preserving Distributed Data Mining (PPDDM) methods to facilitate federated use of AI algorithms without transferring sensitive data between clinical sites. This approach generated partial models at each health data owner's facility, with the platform creating merged models from these distributed computations [20].
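The general pattern of partial models merged centrally can be illustrated with a toy example. The source does not specify how FAIR4Health merges models, so the sample-size-weighted averaging used here is an assumption chosen because it is a common strategy. The "model" is reduced to a single local mean to keep the sketch short, and all names are hypothetical.

```python
from typing import NamedTuple


class PartialModel(NamedTuple):
    n_samples: int
    weights: list[float]


def fit_local(values: list[float]) -> PartialModel:
    """Runs at a data owner's site: only summary parameters leave the site."""
    return PartialModel(n_samples=len(values), weights=[sum(values) / len(values)])


def merge(partials: list[PartialModel]) -> list[float]:
    """Runs on the platform: sample-size-weighted average of the site parameters."""
    total = sum(p.n_samples for p in partials)
    dims = len(partials[0].weights)
    return [
        sum(p.weights[i] * p.n_samples for p in partials) / total
        for i in range(dims)
    ]


site_a = fit_local([1.0, 2.0, 3.0])  # raw values stay at site A
site_b = fit_local([10.0])           # raw values stay at site B
print(merge([site_a, site_b]))
```

For this simple statistic the merged result equals what pooling all records would give. Note that aggregate parameters can still leak information about small sites, so production systems typically add further safeguards.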

Scientific Impact of FAIR Data in Bioinformatics and Drug Discovery

Accelerating Discovery Through Enhanced Data Reusability

The implementation of FAIR principles directly addresses critical bottlenecks in bioinformatics and pharmaceutical research. In drug discovery, where bringing a new medicine to market costs between $900 million and $2.8 billion [21], the ability to reuse existing data represents a substantial opportunity for efficiency gains. It has been estimated that availability of high-quality, reusable data could reduce capitalized R&D costs by approximately $200 million for each new drug brought to the clinic [21].

FAIR data enables the creation of "virtual clinical cohorts" from electronic health records, which can serve as placebo or control arms in Phase 2 and 3 trials [21]. This approach reduces the number of participants required for clinical studies and increases the proportion of enrolled participants who receive the investigational treatment.

Enabling Advanced Analytical Approaches

The pharmaceutical industry increasingly relies on artificial intelligence and machine learning to extract insights from complex biological data. These approaches are highly dependent on the quality, consistency, and scope of training data [21]. FAIR data provides the essential foundation for effective AI/ML implementation by ensuring that data assets carry all the supplemental details machines need to identify, qualify, and use them, including data the machines have never encountered before [22].

The COVID-19 pandemic highlighted the urgent need for FAIR data implementation, as researchers struggled to rapidly access and integrate virus, patient, and therapeutic discovery data from disparate sources [23]. The availability of such data in FAIR format could have accelerated the pandemic response by enabling large-scale, integrated analysis [23].

Implementation Framework and Research Toolkit

Successful FAIR implementation requires both technical infrastructure and organizational commitment. The following research toolkit outlines essential components for establishing FAIR-compliant bioinformatics research environments.

Table 3: FAIR Implementation Research Toolkit

| Tool Category | Representative Solutions | Function in FAIRification Process |

|---|---|---|

| Data Curation Tools | Data Curation Tool (DCT) [20], CENtree [18] | Extract, transform, and load data into standardized formats; support ontology management for data organization |

| Semantic Annotation | TERMite [18], Ontology Services | Named Entity Recognition coupled with controlled vocabularies to create rich, machine-readable data |

| Data Discovery Platforms | SciBite Search [18], FAIR4Health Platform [20] | Enable federated search across multiple data resources using semantic queries |

| Repository Infrastructure | HL7 FHIR Repositories [20], General-purpose repositories (Dataverse, FigShare) [9] | Provide standardized, persistent storage for FAIR data with unique identifiers |

| Privacy-Preserving Tools | Data Privacy Tool (DPT) [20] | Implement anonymization and de-identification techniques for sensitive data |

Implementation Challenges and Mitigation Strategies

Organizations implementing FAIR principles face several categories of challenges:

Technical Challenges: Associated with infrastructure, tools, and methodologies required for FAIRification, including persistent identifier services, metadata registries, and ontology services [23]. Mitigation requires engagement of IT professionals, data stewards, and domain experts.

Financial Challenges: Related to resources required to establish and maintain physical data infrastructures, employ personnel, and ensure long-term sustainability [23]. Successful implementation requires alignment with organizational business goals and development of a long-term data strategy.

Legal Challenges: Correspond to requirements for processing and sharing data, particularly regarding accessibility rights and compliance with data protection regulations like GDPR [23]. Mitigation requires involvement of data protection officers and legal consultants.

Organizational Challenges: Include providing training to personnel and developing an organizational culture that values and rewards FAIR data management practices [23]. Successful implementation requires engagement of data champions and data owners throughout the organization.

The empirical evidence and economic analyses presented in this technical guide demonstrate that the cost of maintaining non-FAIR data ecosystems is substantial, both in direct financial terms and in lost scientific opportunity. The quantified economic impact, €10.2 billion to €26.2 billion annually in the European Union alone, provides a compelling business case for strategic investment in FAIR implementation [20] [17].

For bioinformatics researchers and drug development professionals, FAIR data principles represent more than a data management framework—they serve as a fundamental enabler for 21st century scientific discovery. The implementation of FAIR principles allows research organizations to transition from fragmented, single-use data practices to integrated, reusable data assets that power advanced analytics, cross-disciplinary collaboration, and accelerated discovery timelines.

As the volume and complexity of biological data continue to grow, the strategic adoption of FAIR principles will increasingly determine which organizations can effectively leverage their data assets for scientific advancement and therapeutic innovation. The evidence clearly indicates that the cost of non-FAIR data is not merely financial—it is measured in delayed treatments, duplicated efforts, and missed opportunities for scientific breakthrough.

In the rapidly evolving world of biopharmaceutical research, data has emerged as both a critical asset and a significant challenge. The volume, complexity, and creation speed of data continue to accelerate, with organizations generating vast amounts of information from genomics, imaging, real-world evidence, and digital trial endpoints [24]. Yet much of this valuable data remains underutilized due to silos, inconsistent formats, weak metadata, and limited interoperability [24]. This data dilemma hampers analytics, delays regulatory submissions, and ultimately slows innovation in therapeutic development.

Against this backdrop, two distinct frameworks for data management and sharing have gained prominence: FAIR data principles and Open Data. While these terms are often misunderstood or used interchangeably, they represent fundamentally different approaches with specific goals and implications for biopharma [25]. The FAIR principles—Findable, Accessible, Interoperable, and Reusable—provide a framework for enhancing the utility of data, particularly for computational analysis, without necessarily making it publicly available [3]. Open Data, by contrast, focuses on making data freely available to everyone without restrictions, emphasizing transparency and collaborative innovation [25].

Understanding the distinction between these approaches is crucial for biopharma organizations seeking to maximize the value of their data assets while navigating the complex landscape of intellectual property, patient privacy, and regulatory requirements. This technical guide examines the key differences between FAIR and Open Data, their practical implications for bioinformatics research and drug development, and provides actionable methodologies for implementation within biopharma organizations.

Conceptual Foundations: Demystifying FAIR and Open Data

The FAIR Data Principles

The FAIR data principles were formally defined in 2016 through a seminal publication by Wilkinson et al., establishing guidelines to enhance the reusability of digital assets in scientific research [9]. These principles were developed to address the urgent need to improve infrastructure supporting the reuse of scholarly data, with particular emphasis on enhancing the ability of machines to automatically find and use data [9]. The acronym FAIR represents four foundational principles:

Findable: Data and metadata should be easy to find for both humans and computers through the assignment of persistent identifiers, rich metadata description, and registration in searchable resources [1]. This foundational step ensures that digital objects can be discovered through standard search operations with minimal specialized knowledge of the particular data resource.

Accessible: Once found, data should be retrievable by their identifier using a standardized communications protocol, which should be open, free, and universally implementable [1]. The protocol may include an authentication and authorization step where necessary, but metadata should remain accessible even when the data is no longer available.

Interoperable: Data must be able to be integrated with other data and work across applications or workflows for analysis, storage, and processing [1]. This requires the use of a formal, accessible, shared, and broadly applicable language for knowledge representation, along with qualified references to other metadata.

Reusable: The ultimate goal of FAIR is to optimize the reuse of data. This requires rich description with multiple accurate and relevant attributes, clear usage licenses, detailed provenance, and adherence to domain-relevant community standards [1] [13].

A distinctive emphasis of the FAIR principles is their focus on machine-actionability—the capacity of computational systems to find, access, interoperate, and reuse data with minimal human intervention [9]. This focus responds to the increasing volume and complexity of data in modern research, which exceeds human capacity for manual processing and analysis.

The Open Data Paradigm

Open Data represents a different philosophical approach to data sharing, rooted in principles of transparency, collaboration, and unrestricted access to promote innovation and societal benefit [25]. The core characteristics of Open Data include:

Availability and Access: Data must be available as a whole to everyone, at no more than a reasonable reproduction cost and preferably by download over the internet, without paywalls or complex permissions [25].

Reuse and Redistribution: There should be no legal or technical restrictions on how the data can be utilized, with terms that permit reuse and redistribution, including intermixing with other datasets [25].

Universal Participation: Anyone should be able to use, reuse, and redistribute Open Data without discrimination against fields of endeavor or against persons or groups [25].

In the life sciences sector, Open Data has been instrumental in accelerating research by providing unrestricted access to key datasets such as The Cancer Genome Atlas (TCGA) [25]. During the COVID-19 pandemic, for example, the availability of open genomic data on the SARS-CoV-2 virus enabled researchers worldwide to collaborate in developing vaccines and treatments [25].

Key Differences Between FAIR and Open Data

While FAIR and Open Data share common goals of enhancing data utility and promoting collaboration, they differ in several fundamental aspects that have significant implications for biopharma organizations. The table below summarizes these key distinctions:

Table 1: Comparative Analysis of FAIR Data vs. Open Data

| Aspect | FAIR Data | Open Data |

|---|---|---|

| Accessibility | Can be open or restricted based on use case; emphasizes defined access conditions | Always open to all without restrictions |

| Primary Focus | Ensures data is machine-readable and reusable | Promotes unrestricted sharing and transparency |

| Metadata Requirements | Rich metadata is essential for findability and reusability | Metadata may be present but is not strictly required |

| Interoperability Standards | Emphasizes standardized vocabularies and formats for integration | Doesn't necessarily adhere to specific interoperability standards |

| Licensing | Varies—can include access restrictions based on sensitivity | Typically utilizes open licenses like Creative Commons |

| Primary Users | Designed for researchers, institutions, and machines | Designed for public and scientific communities |

| Ideal Application | Structured data integration in R&D; proprietary data | Democratizing access to large public datasets |

Perhaps the most critical distinction lies in their approach to accessibility. FAIR data doesn't necessarily mean the data is open to everyone—the "Accessible" component specifically refers to data being "retrievable by their identifier using a standardized communications protocol" with the possibility of "an authentication and authorization procedure where necessary" [1]. This allows for appropriate data protection when required for patient privacy, intellectual property considerations, or competitive advantage in biopharma research [25].
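To make "retrievable by their identifier using a standardized communications protocol" concrete, the sketch below prepares an HTTPS request that asks the DOI resolver for machine-readable metadata through content negotiation. The request is built but not sent. The DOI used is that of the 2016 Scientific Data article defining the FAIR principles. The optional bearer token is an assumption about how a restricted hosting service might handle authorization, and the helper name is hypothetical.

```python
import urllib.request
from typing import Optional

DOI_RESOLVER = "https://doi.org/"


def build_metadata_request(doi: str, token: Optional[str] = None) -> urllib.request.Request:
    """Prepare (but do not send) an HTTPS request for a DOI's metadata."""
    # Content negotiation: ask for citation metadata as JSON instead of a web page.
    headers = {"Accept": "application/vnd.citationstyles.csl+json"}
    if token is not None:
        # Restricted resources: same protocol, plus an authorization step.
        headers["Authorization"] = f"Bearer {token}"
    return urllib.request.Request(DOI_RESOLVER + doi, headers=headers)


request = build_metadata_request("10.1038/sdata.2016.18")
print(request.full_url, request.get_header("Accept"))
# Sending it would be: urllib.request.urlopen(request)
```

The same identifier and protocol serve both open and restricted data. Only the authorization step differs, which is the sense in which FAIR data can be accessible without being open.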

The emphasis on machine readability also differentiates FAIR principles. FAIR data places strong emphasis on making data machine-actionable, which is crucial in life sciences where large-scale data analysis often requires computational methods [25]. Open Data, while it may be machine-readable, doesn't have this as a primary focus, potentially limiting its utility for automated analysis pipelines and AI/ML applications [3].

Furthermore, FAIR principles stress the importance of rich metadata and clear documentation to ensure data can be properly understood and reused, while Open Data may lack sufficient metadata, limiting its utility for complex research applications [25]. The FAIR framework also emphasizes the use of standardized vocabularies and formats to ensure data can be easily integrated and analyzed across different platforms, whereas Open Data doesn't necessarily adhere to specific interoperability standards [25].

Practical Implementation in BioPharma

The FAIRification Process: A Methodological Framework

Implementing FAIR principles—a process often called "FAIRification"—requires a systematic approach to transform existing data practices. The following workflow outlines the key stages in the FAIRification process for biopharma research data:

FAIRification Workflow for Biopharma Data

Based on established implementation frameworks [2], the FAIRification process can be broken down into five methodical steps:

Step 1: Retrieve and Analyze Non-FAIR Data. The initial phase involves comprehensive assessment of existing data assets to evaluate their current state and identify specific gaps in FAIR compliance. This requires full access to the data so that its structure, the differences between data elements, the identification schemes in use, and the available provenance can be examined [2]. For biopharma organizations, this typically involves auditing diverse data sources, from clinical trial records and genomic sequences to high-throughput screening results, to establish a baseline for FAIRification efforts.

Step 2: Define Semantic Model. This critical step involves selecting and implementing community- and domain-specific ontologies along with controlled vocabularies to describe dataset entities in an unambiguous, machine-actionable format [2]. In biopharma contexts, this might include standards like SNOMED CT for clinical terminology, HUGO Gene Nomenclature Committee (HGNC) terms for genomics, or CDISC standards for clinical trial data. The semantic model provides the foundational framework that enables meaningful data integration and interpretation.

Step 3: Make Data Linkable. The defined semantic model is applied to the raw data to create explicit relationships and connections using Semantic Web or Linked Data technologies [2]. This transformation enables computational systems to traverse and reason across connected data points, facilitating advanced analytics and knowledge discovery. For example, connecting drug compound data to their protein targets and associated disease pathways through standardized identifiers creates a networked knowledge graph that can power drug repurposing initiatives.

Step 4: Assign License and Metadata. A crucial but often overlooked aspect of FAIRification involves establishing clear usage rights through appropriate data licensing alongside comprehensive metadata description [2]. The data needs to be described by rich metadata to ensure the FAIR principles are supported, with careful attention to usage restrictions necessary for proprietary compounds, patient privacy, or competitive considerations. This balanced approach enables appropriate data sharing while protecting legitimate interests.

Step 5: Publish FAIR Data. The final step involves publishing the FAIRified data in appropriate repositories or platforms alongside the relevant license and metadata, making it discoverable and accessible to authorized users [2]. The data can now be indexed by search engines and accessed by users, with authentication and authorization protocols implemented where necessary to maintain appropriate access controls.
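Steps 2 and 3 can be sketched in a few lines. The example maps local labels to shared identifiers and rewrites free-text rows as subject-predicate-object triples, then follows the links from a compound to everything connected to it. All identifiers are placeholders rather than real ontology or database IDs, and a production system would use an RDF library and a triple store instead of a Python set.

```python
# Step 2 (semantic model): map local labels to shared identifiers.
# All identifiers below are placeholders, not real ontology or database IDs.
TERM_MAP = {
    "compound_x": "ex:compound/0001",
    "kinase_y": "ex:protein/0042",
    "disease_z": "ex:disease/0007",
}

# Non-FAIR input: free-text rows as they might appear in a spreadsheet export.
rows = [
    ("compound_x", "inhibits", "kinase_y"),
    ("kinase_y", "implicated_in", "disease_z"),
]

# Step 3 (make data linkable): rewrite rows as identifier-based triples.
triples = {(TERM_MAP[s], f"ex:{p}", TERM_MAP[o]) for s, p, o in rows}


def reachable(start: str, graph: set[tuple[str, str, str]]) -> set[str]:
    """Follow links outward from one node, as a drug repurposing query might."""
    seen: set[str] = set()
    frontier = [start]
    while frontier:
        node = frontier.pop()
        for subject, _, obj in graph:
            if subject == node and obj not in seen:
                seen.add(obj)
                frontier.append(obj)
    return seen


print(sorted(reachable("ex:compound/0001", triples)))
```

The compound is linked to the disease only through the shared protein identifier, which is the kind of indirect connection that free-text labels in separate spreadsheets would not expose.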

FAIR Data Assessment Methodology

Evaluating the FAIRness of existing data assets requires systematic assessment methodologies. One validated approach involves using structured questionnaires with strong internal consistency (Cronbach's α = 0.84) [26]. The following table outlines key assessment criteria across the FAIR dimensions:

Table 2: FAIR Data Assessment Criteria and Implementation Indicators

| FAIR Principle | Assessment Criteria | Implementation Indicators |

|---|---|---|

| Findable | Persistent identifiers assigned to datasets | Use of DOIs, UUIDs, or other persistent identifier schemes |

| | Rich metadata provided | Inclusion of descriptive, structural, and administrative metadata |

| | Metadata searchable and indexable | Registration in searchable resources or data catalogs |

| Accessible | Standardized retrieval protocol | Data retrievable via standard protocols (e.g., HTTPS, APIs) |

| | Authentication and authorization clarity | Well-defined access procedures when restrictions apply |

| | Metadata persistence | Metadata remains accessible even if data becomes unavailable |

| Interoperable | Use of formal knowledge representation | Standardized vocabularies, ontologies, and formal languages |

| | Qualified references to other data | Use of persistent identifiers when referencing related objects |

| | Community standards compliance | Adherence to domain-relevant standards and formats |

| Reusable | Clear usage licenses | Machine-readable license information |

| | Detailed provenance information | Clear documentation of data origin and processing history |

| | Community standards alignment | Meets domain-relevant standards for data quality |

Organizations can implement this assessment framework through systematic audits of their data assets, scoring each criterion to establish FAIRness baselines and track improvement over time. The maturity of FAIR implementation can be measured using standardized indicators that evaluate both the technical and organizational aspects of data management [27].
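
A scoring audit of this kind might look like the following sketch. The criteria mirror the rows of Table 2, but the short criterion names, the 0 / 0.5 / 1 scale, and the equal weighting are assumptions made for illustration rather than part of any validated instrument.

```python
# Criteria mirror Table 2. The short names, the 0 / 0.5 / 1 scale, and the
# equal weighting are assumptions made for this sketch.
CRITERIA = {
    "Findable": ["persistent_identifiers", "rich_metadata", "searchable_metadata"],
    "Accessible": ["standard_protocol", "access_procedure_clarity", "metadata_persistence"],
    "Interoperable": ["formal_representation", "qualified_references", "community_standards"],
    "Reusable": ["usage_license", "provenance", "quality_standards"],
}


def score_dataset(answers):
    """Return the mean score per FAIR principle plus an overall mean.

    `answers` maps a criterion name to 0 (not met), 0.5 (partly met) or
    1 (met). Criteria without an answer count as 0.
    """
    scores = {}
    for principle, criteria in CRITERIA.items():
        total = sum(answers.get(criterion, 0) for criterion in criteria)
        scores[principle] = total / len(criteria)
    # Computed before "Overall" is stored, so only the four principles count.
    scores["Overall"] = sum(scores.values()) / len(CRITERIA)
    return scores


# Hypothetical audit of one legacy dataset.
baseline = score_dataset({
    "persistent_identifiers": 1,
    "rich_metadata": 0.5,
    "searchable_metadata": 0,
})
print(baseline["Findable"], baseline["Overall"])  # prints 0.5 0.125
```

Re-running the same function after each remediation cycle gives a comparable number per principle, which is one simple way to track improvement against the baseline.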

Essential Infrastructure and Research Reagents

Successful FAIR implementation in biopharma requires both technical infrastructure and standardized research reagents. The following table details key components of the FAIR data technology stack:

Table 3: FAIR Data Implementation Toolkit for Biopharma Research

| Component | Function | Examples/Standards |
|---|---|---|
| Persistent Identifiers | Provide long-lasting references to digital objects | Digital Object Identifiers (DOIs), Uniform Resource Locators (URLs), Persistent URLs (PURLs) [2] |
| Metadata Standards | Describe dataset context, quality, and characteristics | Descriptive, structural, administrative, reference, and statistical metadata [2] |
| Ontologies & Vocabularies | Enable semantic interoperability through standardized terminology | SNOMED CT (clinical terms), HGNC (gene nomenclature), CDISC (clinical trials) [24] |
| Data Repositories | Provide FAIR-compliant storage and access infrastructure | GenBank, Worldwide Protein Data Bank, The Cancer Genome Atlas, institutional repositories [9] |
| Authentication & Authorization | Manage secure access to sensitive or proprietary data | Login credentials, API keys, OAuth protocols, role-based access controls [2] |
| Data Catalogs | Enable discovery of distributed data assets | Metadata-driven search platforms, data inventory systems [24] |

Real-World Applications and Use Cases in BioPharma

FAIR Data Implementation in Drug Discovery

The practical impact of FAIR principles extends across the biopharma value chain, with significant demonstrated benefits in drug discovery and development. At AstraZeneca, systematic FAIRification of historical assay data, including their protocols, has enabled more reliable modeling and enhanced decision-making in early-stage drug discovery [2]. By applying FAIR principles to assay data and their associated metadata, researchers can more effectively make sense of existing data assets and build predictive models that accelerate target identification and validation.

Another compelling example comes from the United Kingdom's Oxford Drug Discovery Institute, where researchers used AI-powered databases built on FAIR data to speed Alzheimer's drug discovery, reducing gene evaluation time from a few weeks to a few days [3]. This acceleration was enabled by the machine-actionable nature of FAIR data, which allowed computational systems to efficiently traverse and analyze complex biological relationships.

Clinical Trials and Regulatory Applications

In the clinical trials domain, FAIR data principles help integrate protocol, patient, imaging and outcome data, accelerating site selection, patient matching, real-world evidence linkage and regulatory submissions [24]. The implementation of metadata-driven search and retrieval of datasets for regulatory submissions has demonstrated potential to cut weeks or months out of preparation timelines, representing significant value in a highly regulated environment where time-to-market directly impacts patient access and commercial success [24].

The BeginNGS coalition provides another illustrative use case: researchers used query federation to access reproducible, traceable genomic data from the UK Biobank and the Mexico City Prospective Study, which helped them identify false-positive DNA differences and reduce their occurrence to fewer than 1 in 50 subjects tested [3]. This example highlights how FAIR data supports scientific rigor and quality control in genomic medicine.

Integration of FAIR and Open Data Strategies

Progressive biopharma organizations increasingly recognize the value of combining FAIR and Open Data approaches in a complementary strategy. A common pattern involves using FAIR principles to manage proprietary datasets internally while contributing anonymized, aggregated data to open repositories for public benefit [25]. Government-funded research institutions often follow FAIR principles internally and publish open data externally to comply with transparency mandates [25].

This hybrid approach enables organizations to balance competitive advantage with scientific collaboration, accelerating innovation while protecting legitimate intellectual property interests. It also demonstrates how FAIR and Open Data, while conceptually distinct, can be strategically integrated to maximize both scientific and business value.

The distinction between FAIR and Open Data has profound implications for pharmaceutical, biotechnology, and healthcare industries operating in an increasingly data-intensive research environment [25]. FAIR data principles offer a nuanced and flexible approach that can accommodate the need for data protection while still maximizing the value of research data [25]. This makes FAIR particularly well-suited to the complex needs of biopharma, where balancing data sharing with intellectual property protection, patient privacy, and competitive advantage remains an ongoing challenge.

Organizations that successfully operationalize FAIR principles achieve measurable advantages including faster insights, more efficient regulatory pathways, stronger collaboration, and accelerated innovation [24]. The implementation journey requires leadership commitment, modern data architecture, and a culture that values data stewardship [24]. While the path to comprehensive FAIR implementation presents significant challenges—including fragmented data systems, lack of standardized metadata, cultural resistance, and technical debt associated with legacy data [3]—the incremental gains can deliver meaningful value throughout the drug development pipeline.

As the life sciences continue to generate increasingly complex and voluminous data, the principles of FAIR data are likely to become even more critical [25]. While open data will continue to play an important role, particularly in publicly funded research, the structured approach of FAIR data is better suited to the sophisticated needs of biopharma organizations [25]. By adopting FAIR data principles, companies can enhance the value of their data assets, improve collaboration and data sharing, accelerate the pace of discovery and innovation, ensure better compliance with regulatory requirements, and increase the reproducibility of research findings [25].

The transformation from application-centric to data-centric research paradigms, enabled by FAIR implementation, represents a fundamental shift in how biopharma organizations conceptualize and utilize their most valuable digital assets. Those who embrace this transformation position themselves to maximize research value in an increasingly competitive and complex therapeutic landscape.

From Theory to Practice: A Step-by-Step Methodology for Implementing FAIR in Bioinformatics Workflows

In the data-intensive world of modern bioinformatics, the ability to effectively manage and steward digital assets is a critical conduit for knowledge discovery and innovation [9]. The vast volume, complexity, and speed of data generation in fields like genomics and drug development mean that humans increasingly rely on computational support. This reality underpins the FAIR Guiding Principles, which aim to make digital assets Findable, Accessible, Interoperable, and Reusable [1]. The principles place specific emphasis on enhancing the ability of machines to automatically find and use data, in addition to supporting its reuse by individuals [9]. This technical guide focuses on the first pillar of FAIR—Findability—by providing a detailed examination of how to implement its two core components: persistent identifiers and rich metadata. Findability is the essential first step in (re)using data; without it, even the most valuable datasets remain hidden and underutilized. For researchers, scientists, and drug development professionals, mastering these components is not merely a technical exercise but a fundamental requirement for accelerating discovery, ensuring reproducibility, and maximizing the return on research investments.

The Core Principles of Findability

The FAIR principles describe findability as the requirement that metadata and data be easy to find for both humans and computers [1]. This is operationalized through four key principles:

- F1: (Meta)data are assigned a globally unique and persistent identifier.

- F2: Data are described with rich metadata.

- F3: Metadata clearly and explicitly include the identifier of the data they describe.

- F4: (Meta)data are registered or indexed in a searchable resource.

Principles F1 and F2 form the foundational, actionable core of making data findable. A globally unique and persistent identifier (F1) acts as a permanent, unambiguous reference to a digital object, removing ambiguity in the meaning of published data [28]. Rich metadata (F2) provides the contextual information that enables both humans and machines to understand what the data is, how it was generated, and its potential utility. Principle F3 ensures the metadata and data are inextricably linked, while F4 guarantees that this information can be discovered through search engines and data registries [1] [29].

Implementing Persistent Identifiers

What Constitutes a Persistent Identifier?

A persistent identifier (PID) is more than just a random string of characters. To comply with FAIR principle F1, an identifier must be:

- Globally Unique: The identifier must be assigned by a service that uses algorithms guaranteeing its uniqueness, ensuring it cannot be reused or reassigned to refer to another digital object [28].

- Persistent: The identifier must be backed by a commitment to its long-term resolvability, meaning the link it provides will remain active and functional into the foreseeable future, overcoming the common problem of link rot [28].

Consider the bare number "163483", which can refer to a student ID, a bovine protease, or a sewing machine part: without a globally unique identifier scheme, nothing tells a reader or a machine which of these is meant [28].
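
The ambiguity of a bare number such as 163483 can be made concrete with a short sketch using Python's standard `uuid` module. The `example.org` namespaces are hypothetical and serve only to show how qualifying a local number removes ambiguity. Note that a UUID addresses uniqueness only; persistence still depends on an organization committing to keep the identifier resolvable.

```python
import uuid

# A bare local number is ambiguous: nothing says which registry issued it.
local_id = "163483"

# Hypothetical namespaces, used only to show how a prefix removes ambiguity.
qualified = {
    f"https://example.org/students/{local_id}": "student record",
    f"https://example.org/enzymes/{local_id}": "protease entry",
    f"https://example.org/parts/{local_id}": "sewing machine part",
}
print(len(qualified))  # prints 3: one local number, three distinct identifiers

# Version-4 UUIDs are generated from random bits, so collisions are extremely
# unlikely, but a UUID alone carries no promise of resolvability.
record_id = uuid.uuid4()

# Version-5 UUIDs are deterministic: the same namespace and name always
# produce the same identifier, and different names produce different ones.
enzyme_a = uuid.uuid5(uuid.NAMESPACE_URL, f"https://example.org/enzymes/{local_id}")
enzyme_b = uuid.uuid5(uuid.NAMESPACE_URL, f"https://example.org/enzymes/{local_id}")
part = uuid.uuid5(uuid.NAMESPACE_URL, f"https://example.org/parts/{local_id}")
print(enzyme_a == enzyme_b, enzyme_a == part)  # prints True False
```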

Technical Specifications and Service Providers

A PID system typically consists of two parts: the identifier itself and a resolving service that directs users to the current location of the described digital object. The table below summarizes common PID services and their primary applications in bioinformatics.

Table 1: Common Persistent Identifier Services for Bioinformatics Data

| Identifier Type | Example | Primary Use Case | Example Service/Registry |
|---|---|---|---|
| Digital Object Identifier (DOI) | doi:10.4121/uuid:5146dd0... | Citing published datasets, articles, and supplementary materials [30]. | DataCite, Crossref, Zenodo |
| Archival Resource Key (ARK) | https://escholarship.org/uc/item/9p9863nc | Providing persistent, long-term access to research objects. | EZID, NAAN |
| Universally Unique Identifier (UUID) | 5146dd06-98e4-426c... | Providing unique identifiers for data records within a system. | Various software libraries |
| Accession Number (e.g., EPI_ISL) | EPI_ISL_402124 (for SARS-CoV-2 sequence) | Identifying specific data records within specialized databases [29]. | GISAID, GenBank, UniProt |

The GISAID database provides a powerful, real-world example of F1 implementation in bioinformatics. It mints a globally unique and persistent identifier (an EPI_ISL ID) for each data record, such as EPI_ISL_402124 for the official reference SARS-CoV-2 sequence. This allows for granular traceability of a single genetic sequence and its associated metadata. Furthermore, GISAID mints an EPI_SET ID and a corresponding DOI for any curated collection of sequences, facilitating easy citation and data availability statements in scientific publications [29].
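
The split between an identifier and its resolving service can be sketched as follows. The code normalizes a DOI into a URL on the public `https://doi.org/` resolver and recognizes EPI_ISL-style accessions. Both regular expressions are simplifying assumptions: the DOI pattern is a loose approximation of DOI syntax, and the EPI_ISL pattern is inferred only from the example above rather than from a GISAID specification. The sample DOI is a hypothetical placeholder.

```python
import re

DOI_RESOLVER = "https://doi.org/"

# Loose approximation of DOI syntax: "10.", a registrant code, "/", a suffix.
DOI_PATTERN = re.compile(r"^10\.\d{4,9}/\S+$")

# Assumed pattern, inferred only from the EPI_ISL_402124 example.
EPI_ISL_PATTERN = re.compile(r"^EPI_ISL_\d+$")


def doi_to_url(doi):
    """Strip common prefixes from a DOI string and return its resolver URL."""
    name = doi.strip()
    for prefix in ("doi:", "https://doi.org/", "http://dx.doi.org/"):
        if name.lower().startswith(prefix):
            name = name[len(prefix):]
            break
    if not DOI_PATTERN.match(name):
        raise ValueError(f"not a recognisable DOI: {doi!r}")
    return DOI_RESOLVER + name


def classify(identifier):
    """Return 'epi_isl', 'doi', or 'unknown' for an identifier string."""
    if EPI_ISL_PATTERN.match(identifier):
        return "epi_isl"
    try:
        doi_to_url(identifier)
    except ValueError:
        return "unknown"
    return "doi"


# "10.1234/example-dataset" is a hypothetical DOI used for illustration.
print(doi_to_url("doi:10.1234/example-dataset"))
# prints https://doi.org/10.1234/example-dataset
print(classify("EPI_ISL_402124"), classify("163483"))
# prints epi_isl unknown
```

Keeping the resolver URL separate from the identifier string means stored records do not need to change if the resolving service moves.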

Designing and Applying Rich Metadata

The Role of Metadata in Findability

Rich metadata is the descriptive backbone that makes data discoverable and understandable. While a persistent identifier allows a dataset to be found, it is the metadata that explains what is being found and why it is relevant. As emphasized by the FAIR principles, machine-readable metadata is essential for the automatic discovery of datasets and services [1]. Without high-quality metadata, data remains a cryptic artifact, its potential for reuse severely limited.
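
To illustrate why sparse metadata limits discovery, the sketch below builds a tiny keyword index over two catalog entries and searches it. The catalog records, field names, and helper functions (`tokens`, `build_index`, `search`) are hypothetical; real data catalogs use far richer schemas and ranking, but the effect is the same in kind.

```python
import re
from collections import defaultdict

# Hypothetical catalog entries; identifiers and descriptions are placeholders.
CATALOG = [
    {"id": "dataset:001",
     "title": "RNA-seq of liver tissue",
     "keywords": ["transcriptomics", "liver", "human"]},
    {"id": "dataset:002",
     "title": "data_final_v2",
     "keywords": []},
]


def tokens(text):
    """Split text into a set of lowercase alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def build_index(catalog):
    """Map each token in a record's title and keywords to the record ids."""
    index = defaultdict(set)
    for record in catalog:
        text = " ".join([record["title"], *record["keywords"]])
        for token in tokens(text):
            index[token].add(record["id"])
    return index


def search(index, query):
    """Return the ids of records that match every token in the query."""
    terms = tokens(query)
    if not terms:
        return set()
    hits = [index.get(term, set()) for term in terms]
    return set.intersection(*hits)


index = build_index(CATALOG)
print(search(index, "human liver"))      # prints {'dataset:001'}
print(search(index, "transcriptomics"))  # prints {'dataset:001'}
```

The second record may hold equally valuable data, yet no scientifically meaningful query can reach it because its metadata says nothing about its content.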

Metadata Standards and Controlled Vocabularies