Evaluating Cellular Potency: Strategies for Assessing Compound Libraries in Drug Discovery

Abstract

This article provides a comprehensive framework for researchers and drug development professionals to evaluate the biological potency of compounds across diverse screening libraries. It covers foundational principles of library design and quality control, explores methodological approaches for cell-based potency assays, addresses common troubleshooting and optimization challenges, and outlines strategies for assay validation and comparative analysis. By integrating these elements, the article aims to guide the selection and application of potency assays to ensure consistent, reliable, and biologically relevant data for advancing therapeutic candidates.

Building a Foundation: Principles of Library Design and Potency Assessment

Defining Potency in Cellular Contexts and Its Critical Role in Drug Discovery

In modern drug discovery, cellular potency is a critical parameter that quantifies a compound's biological activity within a physiologically relevant cellular environment. Unlike biochemical assays, which assess compound binding in purified systems, cellular potency evaluations capture the combined effects of cell permeability, target engagement, metabolic processing, and functional activity in living systems. Accurate determination of cellular potency has become increasingly important for prioritizing lead compounds, predicting efficacious doses, and reducing late-stage attrition in the drug development pipeline.
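Cellular potency is commonly summarized as a half-maximal concentration (IC50 for inhibition, EC50 for activation). The sketch below estimates an IC50 by interpolating on a log-concentration axis between the two tested concentrations that bracket 50% inhibition. It is a deliberately simple stand-in for the four-parameter logistic regression that dose-response software normally performs; the function name and the example data are hypothetical.

```python
import math
from typing import Optional, Sequence


def interpolate_ic50(concentrations: Sequence[float],
                     inhibition_pct: Sequence[float]) -> Optional[float]:
    """Estimate IC50 by log-linear interpolation across the 50% crossing.

    concentrations: tested concentrations in ascending order (e.g. uM).
    inhibition_pct: percent inhibition at each concentration (0-100).
    Returns None if no adjacent pair of points brackets 50% on the way up.
    """
    points = list(zip(concentrations, inhibition_pct))
    for (c_lo, r_lo), (c_hi, r_hi) in zip(points, points[1:]):
        if r_lo < 50.0 <= r_hi:
            # Fraction of the way from r_lo to r_hi at which 50% is reached.
            frac = (50.0 - r_lo) / (r_hi - r_lo)
            # Interpolate linearly in log10(concentration) space.
            log_lo, log_hi = math.log10(c_lo), math.log10(c_hi)
            return 10 ** (log_lo + frac * (log_hi - log_lo))
    return None


# Hypothetical half-log dilution series (uM) and percent inhibition values.
conc = [0.01, 0.03, 0.1, 0.3, 1.0, 3.0, 10.0, 30.0]
resp = [2.0, 6.0, 15.0, 40.0, 60.0, 80.0, 92.0, 97.0]
print(f"IC50 ~ {interpolate_ic50(conc, resp):.3f} uM")  # IC50 ~ 0.548 uM
```

Interpolating in log space reflects the sigmoidal shape of dose-response curves on a log axis; a curve fit is preferable when the data are noisy or the plateaus are incomplete.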

The evaluation of cellular potency across diverse compound libraries presents significant technical challenges, particularly in accurately identifying and quantifying compound activity in complex biological matrices. Recent advances in analytical technologies, including liquid chromatography combined with high-resolution mass spectrometry (LC-HRMS) and cellular target engagement assays, have transformed how researchers measure and interpret cellular potency data. These methodologies provide the foundation for reliable comparison of compound libraries and enhance the predictive power of early-stage discovery efforts.

Experimental Platforms for Cellular Potency Assessment

Analytical Instrumentation for Compound Identification

Liquid chromatography combined with high-resolution mass spectrometry (LC-HRMS) has emerged as a cornerstone technology for suspect screening (SS) and non-target screening (NTS) in metabolomics and environmental toxicology [1]. This platform enables researchers to identify and quantify compounds within complex cellular matrices, providing essential data for potency determination. The technology's utility extends across multiple stages of drug discovery, from initial compound library screening to mechanistic studies of drug action.

Two primary acquisition modes are employed in LC-HRMS analysis: data-dependent acquisition (DDA) and data-independent acquisition (DIA). DDA uses a top-n strategy in which the n most intense precursor ions in each survey (MS1) scan are selected for MS2 fragmentation, yielding relatively clean spectra with few interferences. In contrast, DIA fragments all co-eluting ions within a selected m/z window in parallel, generating composite fragmentation spectra that are harder to interpret but provide comprehensive coverage of detectable compounds [1]. The choice between these acquisition modes is a trade-off between spectrum quality and compound coverage, with significant implications for potency assessment across compound libraries.
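The difference between the two modes can be illustrated with a toy model of a single MS1 survey scan, represented as a list of (m/z, intensity) peaks. In this sketch DDA picks the n most intense precursors for individual MS2 scans, while DIA assigns every precursor to a fixed-width isolation window so that ions sharing a window yield one composite spectrum. Real instruments add dynamic exclusion, charge-state filtering and overlapping windows, which are omitted; the window width and peak values are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Peak = Tuple[float, float]  # (m/z, intensity) from one MS1 survey scan


def dda_top_n(survey: List[Peak], n: int) -> List[float]:
    """DDA: select the n most intense precursors, each fragmented on its own."""
    ranked = sorted(survey, key=lambda peak: peak[1], reverse=True)
    return [mz for mz, _ in ranked[:n]]


def dia_windows(survey: List[Peak], start: float, width: float) -> Dict[int, List[float]]:
    """DIA: assign every precursor to a fixed-width isolation window.

    All ions sharing a window are fragmented together, so their product ions
    end up in one composite MS2 spectrum.
    """
    windows: Dict[int, List[float]] = defaultdict(list)
    for mz, _ in survey:
        windows[int((mz - start) // width)].append(mz)
    return dict(windows)


# Hypothetical survey scan: five co-eluting precursors.
survey = [(180.06, 5e5), (195.09, 2e6), (230.10, 8e4), (304.15, 1.2e6), (310.20, 3e4)]
print(dda_top_n(survey, n=2))  # [195.09, 304.15]
print(dia_windows(survey, start=100.0, width=25.0))
```

With n = 2, the low-abundance ions at m/z 230.10 and 310.20 are never fragmented under DDA. Under DIA every ion is fragmented, but m/z 310.20 shares a window with 304.15, so its fragments are mixed into the same spectrum.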

Cellular Target Engagement Technologies

Cellular Thermal Shift Assay (CETSA) has emerged as a leading technology for validating direct target engagement in intact cells and tissues, providing functional evidence of cellular potency [2]. This method measures the thermal stabilization of protein targets upon compound binding in physiologically relevant environments, bridging the gap between biochemical potency and cellular efficacy.

Recent advancements have integrated CETSA with high-resolution mass spectrometry to quantify drug-target engagement in complex biological systems. A 2024 study demonstrated this approach by measuring dose- and temperature-dependent stabilization of DPP9 in rat tissue, confirming target engagement both ex vivo and in vivo [2]. This capability to provide quantitative, system-level validation makes CETSA particularly valuable for cellular potency assessment, as it confirms that compounds not only bind their intended targets but do so under physiologically relevant conditions.
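A CETSA melting curve reports the fraction of target protein that remains soluble after heating to each temperature; ligand-induced stabilization appears as a shift of the apparent melting temperature (Tm) to higher values. The sketch below estimates Tm as the temperature at which the normalized soluble fraction falls through 0.5, using linear interpolation instead of the sigmoidal fit usually applied, and reports the shift between vehicle- and compound-treated samples. All values are hypothetical.

```python
from typing import Optional, Sequence


def apparent_tm(temps: Sequence[float], soluble_fraction: Sequence[float]) -> Optional[float]:
    """Temperature at which the normalized soluble fraction falls through 0.5.

    temps must be ascending; soluble_fraction is normalized to the lowest
    temperature (1.0 = fully soluble). Returns None if 0.5 is never crossed.
    """
    pairs = list(zip(temps, soluble_fraction))
    for (t1, f1), (t2, f2) in zip(pairs, pairs[1:]):
        if f1 >= 0.5 > f2:
            # Linear interpolation between the two bracketing temperatures.
            return t1 + (f1 - 0.5) * (t2 - t1) / (f1 - f2)
    return None


# Hypothetical melting curves (fraction of target remaining soluble).
temps = [37, 41, 44, 47, 50, 53, 56, 59, 63]
vehicle = [1.00, 0.98, 0.90, 0.70, 0.40, 0.15, 0.05, 0.02, 0.01]
treated = [1.00, 0.99, 0.96, 0.88, 0.70, 0.45, 0.20, 0.06, 0.02]

delta_tm = apparent_tm(temps, treated) - apparent_tm(temps, vehicle)
print(f"Tm shift: {delta_tm:.1f} C")  # Tm shift: 3.4 C
```

Holding the temperature fixed and varying compound concentration instead gives the isothermal dose-response format, from which an engagement EC50 can be estimated in the same way as a potency IC50.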

Comparative Performance of Identification Tools

Experimental Protocol for Tool Evaluation

A rigorous methodology was employed to evaluate the performance of various identification tools using both DDA and DIA HRMS spectra [1]. The experimental design challenged software tools with a diverse set of 32 compounds including pesticides, veterinary drugs, and their metabolites, with particular attention to isomeric compounds that present significant identification challenges.

Sample preparation involved analyzing compounds both in solvent standards and spiked into complex feed extracts to evaluate performance in clean versus biologically relevant matrices. Three mix solutions (A, B, and C) were prepared in methanol with compound concentrations ranging from 40-2000 µg/L, reflecting maximum residue limits and ensuring detectability [1]. Compounds were strategically distributed across mixes to avoid co-elution of compounds with identical molecular formulas.

Instrumental analysis was performed using LC-HRMS with both DDA and DIA acquisition modes. For DDA analysis, a standard top-n approach was implemented where the most intense ions were fragmented. For DIA, wider isolation windows were used to fragment multiple ions simultaneously, creating more complex composite spectra. This direct comparison allowed researchers to evaluate how acquisition mode impacts identification success rates across different software platforms [1].

Performance Comparison Across Platforms

The performance evaluation of four HRMS-spectra identification tools revealed significant differences in their capabilities to annotate compounds using DDA and DIA spectra [1]. The results provide crucial guidance for selecting appropriate tools based on acquisition mode and sample complexity.

Table 1: Compound Identification Success Rates in Solvent Standards

| Identification Tool | DDA Success Rate | DIA Success Rate |
|---|---|---|
| mzCloud | 84% | 66% |
| MSfinder | >75% | 72% |
| CFM-ID | >75% | 72% |
| Chemdistiller | >75% | 66% |

Table 2: Compound Identification Success Rates in Spiked Feed Extract

| Identification Tool | DDA Success Rate | DIA Success Rate |
|---|---|---|
| mzCloud | 88% | 31% |
| MSfinder | >75% | 75% |
| CFM-ID | >75% | 63% |
| Chemdistiller | >75% | 38% |

The mass spectral library mzCloud demonstrated the highest success rate for DDA spectra, with 84% and 88% of compounds correctly identified in the top three matches for solvent standards and spiked feed extract, respectively [1]. However, its performance declined significantly with DIA spectra, particularly in complex matrices (31% success rate in spiked feed extract), highlighting the limitations of direct spectral matching for complex fragmentation data.

The in silico tools (MSfinder, CFM-ID, and Chemdistiller) performed well with DDA data, all achieving identification success rates above 75% for both solvent standards and spiked feed extract [1]. MSfinder provided the highest identification success rates using DIA spectra (72% and 75% for solvent standard and spiked feed extract, respectively), suggesting that its rule-based in silico fragmentation prediction using hydrogen rearrangement rules is particularly well suited to complex DIA spectra. CFM-ID, which uses hybrid machine learning and rule-based fragmentation prediction, matched MSfinder in solvent standard (72%) but was less effective in spiked feed extract (63%) [1].
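The success rates in Tables 1 and 2 are top-k metrics: a compound counts as identified if its true structure appears among the first k candidates a tool returns (k = 3 for the mzCloud figures quoted above). A minimal sketch of that calculation, assuming each tool's output is available as a ranked candidate list per spiked compound; the compound names and rankings are hypothetical.

```python
from typing import Dict, List


def top_k_success_rate(ranked: Dict[str, List[str]], k: int = 3) -> float:
    """Fraction of compounds whose true identity is within the top-k candidates.

    ranked maps each true compound name to the tool's ranked candidate names.
    """
    hits = sum(1 for truth, candidates in ranked.items() if truth in candidates[:k])
    return hits / len(ranked)


# Hypothetical tool output for four spiked compounds.
results = {
    "carbendazim": ["carbendazim", "thiabendazole"],
    "imidacloprid": ["thiacloprid", "acetamiprid", "imidacloprid"],
    "sulfadiazine": ["sulfapyridine", "sulfamerazine", "sulfamethazine", "sulfadiazine"],
    "thiabendazole": [],  # no candidate returned
}
print(top_k_success_rate(results, k=3))  # 0.5
```

Because the metric depends on k, success rates are only comparable between tools when they are reported at the same cutoff.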

Emerging Trends and Strategic Implications

Artificial Intelligence and In Silico Approaches

Artificial intelligence has evolved from a disruptive concept to a foundational capability in modern drug discovery R&D [2]. Machine learning models now routinely inform target prediction, compound prioritization, pharmacokinetic property estimation, and virtual screening strategies. Recent research demonstrates that integrating pharmacophoric features with protein-ligand interaction data can boost hit enrichment rates by more than 50-fold compared to traditional methods [2]. These approaches not only accelerate lead discovery but also improve mechanistic interpretability, an increasingly important factor for regulatory confidence and clinical translation.
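Hit enrichment is usually expressed as an enrichment factor (EF): the hit rate among the compounds a model prioritizes divided by the hit rate expected from random selection across the whole library. The function below is a minimal sketch of that ratio; the library size and hit counts in the example are hypothetical and are not the data behind the 50-fold figure.

```python
def enrichment_factor(hits_selected: int, n_selected: int,
                      hits_total: int, n_total: int) -> float:
    """EF = (hits_selected / n_selected) / (hits_total / n_total)."""
    if n_selected == 0 or hits_total == 0:
        raise ValueError("selection size and total hit count must be non-zero")
    # Rearranged into a single division of integer products.
    return (hits_selected * n_total) / (n_selected * hits_total)


# Hypothetical: 100 actives in a 100,000-compound library (0.1% base rate);
# the model's top 1,000 picks contain 50 of them (5% hit rate).
print(enrichment_factor(50, 1_000, 100, 100_000))  # 50.0
```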

In silico screening has become a frontline tool for triaging large compound libraries early in the pipeline [2]. Computational approaches such as molecular docking, QSAR modeling, and ADMET prediction enable prioritization of candidates based on predicted efficacy and developability, reducing the resource burden on wet-lab validation. Platforms like AutoDock and SwissADME are now routinely deployed to filter for binding potential and drug-likeness before synthesis and in vitro screening [2].
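Drug-likeness filters applied before synthesis are often simple property rules. As an illustration, the sketch below applies Lipinski's rule of five (MW ≤ 500, logP ≤ 5, at most 5 hydrogen-bond donors and 10 acceptors, tolerating at most one violation) to precomputed descriptors. It does not reproduce SwissADME's own filters; the descriptor values are hypothetical and would in practice come from a cheminformatics toolkit.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Descriptors:
    name: str
    mol_weight: float
    clogp: float
    hbd: int  # hydrogen-bond donors
    hba: int  # hydrogen-bond acceptors


def ro5_violations(d: Descriptors) -> int:
    """Count Lipinski rule-of-five violations (True counts as 1)."""
    return sum([d.mol_weight > 500, d.clogp > 5, d.hbd > 5, d.hba > 10])


def drug_like(library: List[Descriptors], max_violations: int = 1) -> List[str]:
    """Names of compounds with no more than max_violations violations."""
    return [d.name for d in library if ro5_violations(d) <= max_violations]


library = [
    Descriptors("cpd-001", 342.4, 2.8, 2, 5),
    Descriptors("cpd-002", 612.7, 5.9, 3, 9),  # MW and logP violations
    Descriptors("cpd-003", 521.6, 4.1, 1, 7),  # one violation, tolerated
]
print(drug_like(library))  # ['cpd-001', 'cpd-003']
```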

Cellular Target Engagement and Validation

The shift toward cellular potency assessment reflects the growing recognition that biochemical binding assays alone are insufficient for predicting compound efficacy in physiological systems. As molecular modalities become more diverse—encompassing protein degraders, RNA-targeting agents, and covalent inhibitors—the need for physiologically relevant confirmation of target engagement has never been greater [2].

CETSA has emerged as a leading approach for addressing this need, enabling researchers to confirm pharmacological activity where it matters most: in the biological system of interest [2]. By providing direct, in situ evidence of drug-target interaction, technologies like CETSA have transitioned from optional validation methods to strategic assets that strengthen decision-making with functionally validated target engagement data.

Integration of Multidisciplinary Pipelines

Drug discovery teams are increasingly composed of multidisciplinary experts spanning computational chemistry, structural biology, pharmacology, and data science [2]. This integration enables the development of predictive frameworks that combine molecular modeling, mechanistic assays, and translational insight, leading to earlier and more confident go/no-go decisions while reducing late-stage surprises.

The convergence of computational and experimental approaches is particularly evident in the hit-to-lead (H2L) phase, which is being rapidly compressed through AI-guided retrosynthesis, scaffold enumeration, and high-throughput experimentation (HTE) [2]. These platforms enable rapid design–make–test–analyze (DMTA) cycles, reducing discovery timelines from months to weeks. In a 2025 study, deep graph networks were used to generate over 26,000 virtual analogs, resulting in sub-nanomolar MAGL inhibitors with over 4,500-fold potency improvement over initial hits [2].

Research Reagent Solutions

Table 3: Essential Research Reagents for Cellular Potency Assessment

| Reagent / Material | Function in Experimentation |
|---|---|
| LC-HRMS System | High-resolution mass spectrometry for precise compound identification and quantification in complex matrices [1] |
| ULC Grade Solvents (Methanol, Acetonitrile) | High-purity mobile phase components for chromatographic separation to minimize background interference [1] |
| Reference Standards | Authenticated compounds for method validation, calibration curves, and positive controls in potency assays [1] |
| Cell Culture Systems | Physiologically relevant cellular environments for assessing target engagement and functional potency [2] |
| CETSA Reagents | Components for Cellular Thermal Shift Assay to measure target engagement in intact cellular systems [2] |
| Formic Acid/Acetic Acid | Mobile phase modifiers for optimal chromatographic separation and ionization efficiency in MS detection [1] |

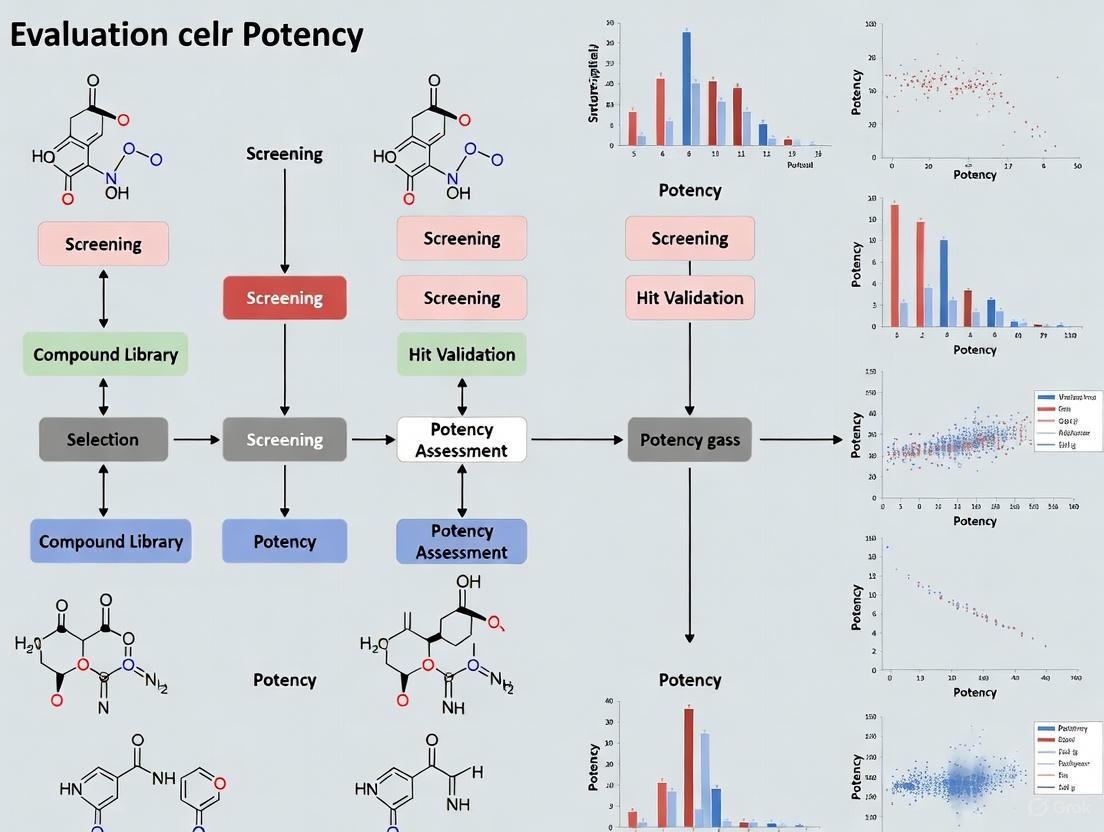

Experimental Workflow Visualization

Workflow for Cellular Potency Assessment

Technology Selection Framework

Technology Selection Decision Framework

The accurate assessment of cellular potency across compound libraries requires careful selection and integration of analytical technologies, with performance varying significantly based on acquisition mode and sample complexity. As demonstrated in the comparative evaluation, MSfinder emerges as the most versatile tool for DIA data in complex matrices, while mzCloud provides excellent performance for DDA spectra but struggles with complex DIA data. The integration of cellular target engagement assays like CETSA with advanced computational tools creates a powerful framework for establishing robust structure-activity relationships in physiologically relevant contexts, ultimately enhancing the predictive power of early discovery efforts and increasing the likelihood of clinical success.

The quality of a compound library is a key determining factor for the success of any high-throughput screening (HTS) campaign aimed at identifying lead compounds for drug discovery [3]. In both academic and industrial settings, screening libraries represent a significant investment and major asset for research institutions and companies engaged in drug discovery [3]. An ideal screening collection should be representative of biologically relevant chemical space, composed of chemically attractive compounds with tractable synthetic accessibility, and free of undesirable chemical functionalities [3]. The fundamental importance of library quality is underscored by the estimate that transitioning a therapeutic from research to clinical application can cost up to $2.8 billion, with low-quality initial hits necessitating extensive optimization efforts that consume years and significant resources [4].

This guide objectively compares screening library components across key parameters (diversity, purity, and annotation) within the context of evaluating cellular potency. We present experimental data compiled from published studies, together with standardized protocols, to enable direct comparison of library performance, providing researchers with a framework for selecting appropriate compound sources for their specific drug discovery applications.

Library Diversity: Structural and Property Space Considerations

Diversity Design Strategies

Diversity-based library design attempts to explore appropriate chemical space by optimizing biological relevance and compound diversity to provide multiple starting points for further hit/lead development [5]. For target classes with limited numbers of known active chemotypes or for phenotypic assays, structural diversity in screening libraries is strongly recommended, as this can increase the chances of detecting multiple promising scaffolds [5]. The rationale behind this approach is the belief that chemical diversity ultimately implies biological diversity and that a chemically diverse screening library should cover a broad spectrum of targets and molecular processes [5].

Two primary strategies exist for assembling diverse libraries:

- Diversity-based libraries: Designed for targets with few known active chemotypes, optimizing coverage of chemical space using molecular scaffolds and chemical descriptors [5]

- Focused libraries: Intended for well-studied targets (kinases, GPCRs, nuclear receptors) enriched with known active chemotypes using structure-based or ligand-based approaches [6] [5]
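Diversity selection is commonly implemented with fingerprint similarity. The sketch below represents each fingerprint as a set of "on" bit positions, computes the Tanimoto coefficient (shared bits divided by bits set in either fingerprint), and greedily keeps a compound only if its similarity to every compound already kept is below a cutoff, a simple sphere-exclusion scheme. The bit sets and the 0.7 cutoff are hypothetical; real workflows use toolkit-generated fingerprints such as Daylight-type fingerprints.

```python
from typing import Dict, FrozenSet, List

Fingerprint = FrozenSet[int]  # indices of bits set to 1


def tanimoto(a: Fingerprint, b: Fingerprint) -> float:
    """|A & B| / |A | B| for binary fingerprints (0.0 if both are empty)."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def sphere_exclusion(fps: Dict[str, Fingerprint], cutoff: float) -> List[str]:
    """Greedily keep compounds whose similarity to all kept ones is below cutoff."""
    kept: List[str] = []
    for name, fp in fps.items():
        if all(tanimoto(fp, fps[k]) < cutoff for k in kept):
            kept.append(name)
    return kept


fps = {
    "A": frozenset({1, 4, 7, 9, 12}),
    "B": frozenset({1, 4, 7, 9, 12, 13}),  # near-duplicate of A (Tanimoto 5/6)
    "C": frozenset({2, 5, 8, 20}),
    "D": frozenset({2, 5, 8, 21, 30}),     # Tanimoto 0.5 to C, so kept
}
print(sphere_exclusion(fps, cutoff=0.7))  # ['A', 'C', 'D']
```

The result depends on input order; diversity-picking tools often use MaxMin or clustering-based selection to reduce that dependence.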

Table 1: Diversity Metrics Across Commercial and Institutional Libraries

| Library Source | Library Size | Average MW | Average ClogP | Average HBD | Average HBA | Diversity Method |
|---|---|---|---|---|---|---|
| BOC Sciences [7] | 50,000 | 356.64 | 2.61 | 3.85 | 1.49 | Daylight fingerprints, Tanimoto similarity |
| St. Jude Children's Research Hospital [3] | 575,000 | Varies by sublibrary | Varies by sublibrary | Varies by sublibrary | Varies by sublibrary | Multiple sub-libraries (Bioactives, Diversity, Focused, Fragments) |
| University of Dundee [6] | 57,438 | Lead-like properties | 0-4 | <4 | <7 | Lead-like focus, clustering, visual inspection |
| Korea Chemical Bank [8] | 7,040 | Not specified | Filtered for cytotoxicity | Filtered for cytotoxicity | Filtered for cytotoxicity | Virtual screening, clustering, druggability assessment |

Lead-like versus Drug-like Properties

A critical consideration in library design is the choice between "lead-like" and "drug-like" compounds. The University of Dundee implemented a strategy selecting compounds that are smaller and less hydrophobic than typical drugs to leave opportunities for optimization during lead development [6]. Their criteria included ClogP/ClogD between zero and four, fewer than four hydrogen-bond donors, fewer than seven hydrogen-bond acceptors, and between ten and twenty-seven heavy atoms [6]. This approach reflects the understanding that molecular weight, lipophilicity, and the number of hydrogen-bond donors and acceptors typically increase during the lead optimization process [6].
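The Dundee lead-like criteria translate directly into a property filter. A minimal sketch, assuming ClogP, donor and acceptor counts, and heavy-atom counts have already been calculated; the compound records are hypothetical, and treating the ClogP and heavy-atom range ends as inclusive is an assumption.

```python
from typing import Dict, List


def is_lead_like(c: Dict[str, float]) -> bool:
    """Lead-like criteria as described for the Dundee library (bounds assumed inclusive)."""
    return (0 <= c["clogp"] <= 4
            and c["hbd"] < 4
            and c["hba"] < 7
            and 10 <= c["heavy_atoms"] <= 27)


compounds: List[Dict[str, float]] = [
    {"id": 1, "clogp": 2.1, "hbd": 1, "hba": 4, "heavy_atoms": 21},
    {"id": 2, "clogp": 4.6, "hbd": 2, "hba": 5, "heavy_atoms": 30},  # too lipophilic and large
    {"id": 3, "clogp": 1.5, "hbd": 4, "hba": 6, "heavy_atoms": 18},  # four donors
]
print([c["id"] for c in compounds if is_lead_like(c)])  # [1]
```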

The St. Jude Children's Research Hospital library employed a balanced approach, classifying their screening collection into four sub-libraries: Bioactives (molecules with known biological function), Diversity (commercial screening libraries following Rule of Five criteria), Focused (molecules for specific targets), and Fragments (low molecular weight compounds for fragment-based screening) [3]. Linear discriminant analysis revealed that, despite their different origins, the median compounds of the four sub-libraries had similar physicochemical property values, with Bioactives showing the broadest distribution [3].

Experimental Data: Cytotoxicity Profiling of a Diversity Library

Cytotoxicity profiling of the Korea Chemical Bank (KCB) diversity library provides valuable experimental data on the practical outcomes of diversity library design [8]. Researchers screened a subset of 5,181 compounds randomly selected from the 7,040-compound library using the WST-1 assay in five mammalian cell lines (HEK293, HFL1, HepG2, NIH3T3, and CHOK1) at concentrations of 30 µM and 10 µM, following 24 h and 48 h incubation periods [8]. Cytotoxic compounds were defined as those exhibiting >50% inhibition at 30 µM after 48 h.

The results demonstrated that only 17 of the 5,181 screened compounds showed consistent cytotoxicity across all five cell lines [8]. Comparative analysis of physicochemical properties revealed that cytotoxic compounds exhibited higher lipophilicity (ALogP/LogD) and a greater number of aromatic rings than non-cytotoxic compounds [8]. These findings indicate that the KCB diversity library consists largely of non-cytotoxic compounds, reflecting effective pre-filtering of compounds with toxicity-associated physicochemical properties during library design [8].
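The hit-calling rule used in this screen can be expressed compactly. The sketch assumes blank-corrected WST-1 absorbance readings normalized to a vehicle control (0% inhibition) and a no-cell background (100% inhibition), a common convention that the source does not spell out; the readings and helper names are hypothetical. A compound is flagged in a cell line when inhibition at 30 µM after 48 h exceeds 50%, and counts as consistently cytotoxic only if it is flagged in all five lines.

```python
from typing import Dict, Tuple

CELL_LINES = ("HEK293", "HFL1", "HepG2", "NIH3T3", "CHOK1")

# (sample, vehicle control, no-cell background) absorbance for one cell line.
Reading = Tuple[float, float, float]


def percent_inhibition(a_sample: float, a_vehicle: float, a_background: float) -> float:
    """Loss of WST-1 signal relative to vehicle (0%) and background (100%)."""
    return 100.0 * (a_vehicle - a_sample) / (a_vehicle - a_background)


def consistently_cytotoxic(readings_30um_48h: Dict[str, Reading],
                           threshold: float = 50.0) -> bool:
    """True only if inhibition exceeds the threshold in every one of the five lines."""
    return all(
        line in readings_30um_48h
        and percent_inhibition(*readings_30um_48h[line]) > threshold
        for line in CELL_LINES
    )


# Hypothetical readings for two compounds at 30 uM after 48 h.
cpd_x = {"HEK293": (0.35, 1.80, 0.10), "HFL1": (0.40, 1.60, 0.10),
         "HepG2": (0.30, 1.90, 0.10), "NIH3T3": (0.50, 1.70, 0.10),
         "CHOK1": (0.45, 1.75, 0.10)}
cpd_y = dict(cpd_x, HepG2=(1.20, 1.90, 0.10))  # only ~39% inhibition in HepG2

print(consistently_cytotoxic(cpd_x), consistently_cytotoxic(cpd_y))  # True False
```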

Compound Purity and Integrity: Quality Control Standards

Quality Control Experimental Protocols

Quality control remains a major technical challenge facing scientists who screen chemical libraries [9]. To ensure accurate screening results, library providers and users should implement rigorous QC protocols. A typical quality control workflow, as implemented at St. Jude Children's Research Hospital [3], involves:

Liquid Chromatography-Mass Spectrometry (LCMS) Analysis Protocol:

- Sample Preparation: Compound DMSO solutions are diluted in appropriate solvent mixtures compatible with LCMS systems [3]

- Instrumentation: Ultra-performance liquid chromatography system equipped with ultraviolet and evaporative light scattering detectors [3]

- Purity Calculation: Purity is calculated as the average of the two detection methods [3]

- Identity Confirmation: Mass spectrometry confirms compound identity [3]

- Validation Standards: Minimum purity threshold of 80% for usable compounds, with ideal collections showing >90% purity for most compounds [3]
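The purity rule in this protocol (mean of the UV and ELSD purities, judged against 80% and 90% thresholds) is easy to apply programmatically. A minimal sketch, assuming per-compound area-percent purities from each detector have already been exported; the record format and values are hypothetical. The same >90%, 80-90% and <80% bins appear in the storage-stability data for this library.

```python
from collections import Counter
from typing import Dict, Tuple


def combined_purity(uv_pct: float, elsd_pct: float) -> float:
    """Purity taken as the mean of UV and ELSD area-percent purities."""
    return (uv_pct + elsd_pct) / 2.0


def purity_bin(purity: float) -> str:
    """Assign a compound to the >90%, 80-90% or failing (<80%) bin."""
    if purity > 90.0:
        return ">90%"
    if purity >= 80.0:
        return "80-90%"
    return "<80% (fail)"


# Hypothetical (UV %, ELSD %) purities for four re-analyzed compounds.
qc: Dict[str, Tuple[float, float]] = {
    "cpd-A": (98.0, 95.0),
    "cpd-B": (88.0, 84.0),
    "cpd-C": (70.0, 82.0),
    "cpd-D": (93.0, 91.0),
}
bins = Counter(purity_bin(combined_purity(*v)) for v in qc.values())
pass_rate = 100.0 * (bins[">90%"] + bins["80-90%"]) / len(qc)
print(dict(bins))
print(f"pass rate: {pass_rate:.1f}%")  # pass rate: 75.0%
```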

St. Jude Children's Research Hospital implemented a robust QC procedure in which 12.5% of the compounds from each vendor plate are randomly checked by LCMS to confirm identity and purity at the time of purchase [3]. This sampling strategy balances thorough quality assessment against practical resource constraints.
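Sampling a fixed fraction of each plate is straightforward to script: at 12.5%, this is 12 wells of a 96-well plate or 48 wells of a 384-well plate. The sketch below draws such a sample with a seeded random generator so the selection can be reproduced later; the well-naming helper and the seed are hypothetical, and control or empty wells are not excluded here.

```python
import random
import string
from typing import List


def well_ids(rows: int, cols: int) -> List[str]:
    """Well labels such as A01..H12 for a plate with the given layout."""
    return [f"{string.ascii_uppercase[r]}{c + 1:02d}" for r in range(rows) for c in range(cols)]


def qc_sample(wells: List[str], fraction: float = 0.125, seed: int = 0) -> List[str]:
    """Randomly pick round(fraction * n) wells for LCMS re-analysis."""
    k = round(fraction * len(wells))
    return sorted(random.Random(seed).sample(wells, k))


plate_96 = well_ids(8, 12)
plate_384 = well_ids(16, 24)
print(len(qc_sample(plate_96)), len(qc_sample(plate_384)))  # 12 48
```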

Experimental QC Data from Long-term Storage Studies

Long-term storage stability is a critical factor for library integrity. Experimental data from St. Jude Children's Research Hospital provides insight into compound stability under typical storage conditions [3]. They assessed compound integrity after several years of storage in DMSO at -20°C in both 96-well and 384-well formats.

Table 2: Quality Control Assessment After Long-term Storage

| Storage Format | Sample Size | >90% Purity | 80-90% Purity | <80% Purity | Overall Pass Rate (>80%) |
|---|---|---|---|---|---|
| 96-way tubes [3] | 523 compounds | 77.8% | 9.6% | 12.6% | 87.4% |
| 384-way tubes [3] | 256 compounds | Similar profile to 96-way tubes | Similar profile to 96-way tubes | Similar profile to 96-way tubes | 87.4% (combined) |
| Industry Standard (GSK) [3] | Not specified | Not specified | Not specified | Not specified | 89% (after 6 years at -20°C) |

The study found little difference in quality between compounds stored in either tube format, and no significant correlation between purity and molecular weight, calculated logP, or the time since acquisition [3]. These results were encouraging and comparable to those reported by GSK, where 89% of compounds showed >80% purity after 6 years of storage at -20°C in sealed 384 deep-well blocks [3].

Impact of QC Failures on Research Outcomes

Inadequate quality control can significantly affect research outcomes and lead to erroneous conclusions. Nature Chemical Biology has highlighted cases in which validating the structures of compound 'hits' from chemical screens proved challenging [9]. In one instance, a compound initially identified as a screening hit showed no activity when independently synthesized [9]. In another, the structure of 'mirin' was incorrectly assigned in the original library, but the correct and misassigned structures were similar enough that standard analytical data did not readily reveal the error [9].

These examples underscore the importance of the "gold standard" validation experiment, which demonstrates that an independently synthesized hit compound has the same chemical characterization data and biological activity as the compound identified in the screen [9]. Library creators and suppliers need to adopt and enforce greater quality control standards to guarantee the integrity of chemical libraries, while users need to validate the chemical identities of their screening hits [9].

Compound Annotation and Screening Technologies

Annotation Methods for Hit Identification

Accurate compound annotation is crucial for hit identification and validation. Two encoding strategies used in affinity-selection screening are:

- DNA-encoded libraries (DELs): Feature small molecules linked to unique DNA sequences, enabling exploration of drug-like chemical space in affinity selection [10]

- Barcode-free self-encoded libraries (SELs): Combine tandem mass spectrometry with custom software for automated structure annotation, eliminating need for external tags [10]

Each approach has distinct advantages and limitations. DEL technology allows screening of vast libraries but requires DNA-compatible chemistry and is unsuitable for nucleic acid-binding targets [10]. SEL platforms enable direct screening of over half a million small molecules in a single experiment without encoding tags, making them suitable for targets like FEN1, a DNA-processing enzyme inaccessible to DELs [10].

Workflow Diagram: Library Assembly and Screening

[Figure: Library Assembly and Screening Workflow, illustrating the key decision points in library assembly and screening strategy]

Experimental Data: Annotation Performance Metrics

Recent technological advances have significantly improved annotation capabilities. Research on self-encoded libraries demonstrates that structure annotation based on MS/MS fragmentation spectra is essential for unequivocal compound identification, especially with high degrees of mass degeneracy in large libraries [10]. In decoding experiments, each nanoLC-MS/MS run produced approximately 80,000 MS1 and MS2 scans, making manual analysis impractical and highlighting the need for automated structure annotation [10].
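Mass degeneracy can be quantified by counting how many library members fall within the instrument's mass tolerance of an observed precursor. The sketch below does this with a ppm window over a dictionary of theoretical m/z values; the tolerance, masses and member names are hypothetical. Any count above one means the MS1 mass alone cannot decide the identity, which is why MS/MS-based annotation is needed.

```python
from typing import Dict, List


def ppm_error(observed: float, theoretical: float) -> float:
    """Mass error in parts per million."""
    return (observed - theoretical) / theoretical * 1e6


def candidates_within_tolerance(observed_mz: float,
                                library_mz: Dict[str, float],
                                tol_ppm: float = 5.0) -> List[str]:
    """Library members whose theoretical m/z lies within +/- tol_ppm of the observation."""
    return sorted(name for name, mz in library_mz.items()
                  if abs(ppm_error(observed_mz, mz)) <= tol_ppm)


# Hypothetical enumerated library: different building-block combinations
# can share an elemental formula and therefore the same mass.
library = {
    "BB1-BB7-BB3": 455.2081,
    "BB2-BB5-BB3": 455.2081,
    "BB4-BB1-BB9": 455.2093,
    "BB6-BB2-BB8": 471.1830,
}
print(candidates_within_tolerance(455.2085, library))
```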

Automated annotation using SIRIUS 6 and CSI:FingerID software enables reference spectra-free structure annotation of small molecules by scoring predicted molecular fingerprints against fingerprints of database structures [10]. For affinity selection experiments, the complete space of potential structures is known, and the computationally enumerated library can be used as a structure database to score compounds against, improving annotation accuracy [10].
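Restricting the search to the enumerated library turns annotation into a ranking problem over a known candidate set. The sketch below ranks candidates by a simple log-likelihood of their binary fingerprints under predicted per-bit probabilities. It is a simplified stand-in for illustration only, not the scoring function used by CSI:FingerID; the fingerprints, probabilities and candidate names are hypothetical.

```python
import math
from typing import Dict, List, Sequence, Tuple


def log_likelihood(pred_probs: Sequence[float], fp: Sequence[int], eps: float = 1e-6) -> float:
    """Sum of log p for bits set in the candidate and log(1 - p) for unset bits."""
    total = 0.0
    for p, bit in zip(pred_probs, fp):
        p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
        total += math.log(p if bit else 1.0 - p)
    return total


def rank_candidates(pred_probs: Sequence[float],
                    library: Dict[str, Sequence[int]]) -> List[Tuple[str, float]]:
    """Score every enumerated library member and sort best-first."""
    scored = [(name, log_likelihood(pred_probs, fp)) for name, fp in library.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


predicted = [0.95, 0.10, 0.80, 0.05, 0.70]  # predicted probability that each bit is set
library = {
    "member-17": [1, 0, 1, 0, 1],
    "member-42": [1, 1, 0, 0, 1],
    "member-88": [0, 0, 1, 1, 0],
}
print(rank_candidates(predicted, library)[0][0])  # member-17
```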

Research Reagent Solutions: Essential Materials and Tools

Table 3: Essential Research Reagents for Screening Library Quality Assessment

| Reagent/Technology | Function/Purpose | Example Applications | Performance Metrics |
|---|---|---|---|
| LC-MS Systems [3] | Compound purity and identity confirmation | Quality control of screening libraries | >80% purity threshold for usable compounds |
| Automated Storage Systems [3] | Compound library management at -20°C | Brooks Life Sciences systems holding DMSO solutions | 87.4% compound integrity after long-term storage |
| Tanimoto Similarity Algorithm [6] [7] | Compound diversity assessment based on structural fingerprints | Daylight fingerprints for clustering | Threshold 0.71-0.77 for diverse subsets |
| PAINS Filters [3] | Identification of compounds with suspect chemical moieties | Filtering reactive, unstable, or promiscuous compounds | Removes pan-assay interference compounds |
| SIRIUS 6 & CSI:FingerID [10] | Automated structure annotation of small molecules | Decoding hits from self-encoded libraries | Handles 80,000+ MS1 and MS2 scans per run |
| Pipeline Pilot [3] | Calculation of molecular descriptors | Analysis of physicochemical properties | Nine standard descriptors (MW, clogP, TPSA, etc.) |

The comparative analysis presented in this guide demonstrates that library quality encompasses multiple dimensions—diversity, purity, and annotation—that collectively determine screening success. Key findings indicate that lead-like properties with appropriate complexity [6], rigorous quality control protocols [3] [9], and advanced annotation technologies [10] significantly enhance the probability of identifying valid hits suitable for optimization.

Researchers should select screening libraries based on comprehensive quality assessment data rather than size alone, applying the standardized experimental protocols and comparison metrics outlined herein. As library technologies evolve, emerging approaches including self-encoded libraries [10] and ultra-large virtual screening [4] offer promising avenues for expanding accessible chemical space while maintaining high standards of quality and annotation.

In modern drug discovery, interrogating the physicochemical properties of small molecules is a critical step in predicting their behavior in complex biological systems. The pursuit of cellular potency is often guided by computational tools that decode molecular characteristics into predictive models. Two primary computational approaches—molecular descriptors and structural alerts—serve as foundational methodologies for these predictions. Molecular descriptors provide quantitative, continuous measures of a compound's physicochemical nature, while structural alerts offer discrete, binary flags for specific functional groups associated with undesirable properties like toxicity.

Framed within a broader thesis on evaluating cellular potency, this guide objectively compares the performance, application, and limitations of descriptor-based and alert-based approaches. As drug discovery increasingly leverages diverse compound libraries, understanding the strategic implementation of these tools becomes paramount for researchers aiming to optimize efficacy while mitigating safety risks early in the development pipeline.

Comparative Analysis: Molecular Descriptors vs. Structural Alerts

The following table summarizes the core characteristics, strengths, and limitations of molecular descriptor and structural alert approaches.

Table 1: Comparison of Molecular Descriptors and Structural Alerts

| Feature | Molecular Descriptors | Structural Alerts |
|---|---|---|
| Nature of Information | Quantitative, continuous | Qualitative, binary (presence/absence) |
| Data Representation | Numerical vectors (e.g., molecular weight, logP) | Structural patterns (e.g., aromatic nitro groups) |
| Primary Applications | Predictive QSAR/QSPR models, potency prediction, property optimization | Rapid toxicity risk assessment, early-stage hazard filtering |
| Interpretability | Varies; some require expert interpretation | Generally high and chemically intuitive |
| Model Dependency | Often used in complex machine learning models | Can be applied as standalone rules |
| Key Strength | Enables nuanced prediction of continuous properties | Offers high-speed, transparent screening for known risks |
| Main Limitation | May miss specific, rare toxicophores | Can be overly simplistic, leading to false negatives/positives |

Performance Evaluation in Key Drug Discovery Applications

Predicting Ionic Conductivity in Ionic Liquids

A systematic study comparing feature types for predicting ionic liquid conductivity demonstrated the performance impact of descriptor choice. Researchers used a dataset of 2,684 ionic liquids to evaluate graph neural networks (GNNs) for structural feature extraction against traditional molecular descriptors [11].

Table 2: Performance Comparison for Ionic Conductivity Prediction [11]

| Feature Set Used | Mean Absolute Error (MAE) | Root Mean Squared Error (RMSE) | Coefficient of Determination (R²) |
|---|---|---|---|
| Structural Features Only (GNN) | 0.509 | 0.738 | 0.925 |
| Molecular Descriptors Only | 0.592 | 0.831 | 0.905 |
| Combined Features | 0.470 | 0.677 | 0.937 |

The study concluded that models using only structural features learned through GNNs outperformed those using only pre-defined molecular descriptors, suggesting that learned structural representations can capture information relevant to physicochemical properties more effectively. However, the best prediction performance was achieved by combining both structural and molecular features, highlighting the complementary nature of these approaches [11].
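For reference, the three metrics in the conductivity table above can be computed as follows: MAE is the mean absolute residual, RMSE is the square root of the mean squared residual (so it penalizes large errors more heavily), and R² is one minus the ratio of the residual to the total sum of squares. The example values are hypothetical, not data from the ionic-liquid study.

```python
import math
from typing import Dict, Sequence


def regression_metrics(y_true: Sequence[float], y_pred: Sequence[float]) -> Dict[str, float]:
    """MAE, RMSE and R^2 for paired observed and predicted values."""
    n = len(y_true)
    residuals = [t - p for t, p in zip(y_true, y_pred)]
    mean_true = sum(y_true) / n
    ss_res = sum(r * r for r in residuals)
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return {
        "MAE": sum(abs(r) for r in residuals) / n,
        "RMSE": math.sqrt(ss_res / n),
        "R2": 1.0 - ss_res / ss_tot,
    }


y_true = [1.0, 2.0, 3.0, 4.0]
y_pred = [1.5, 2.0, 2.5, 4.0]
print(regression_metrics(y_true, y_pred))
```

Because RMSE squares residuals before averaging, it is always at least as large as MAE, consistent with every row of the table.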

Assessing Mitochondrial Toxicity Risk

In safety assessment, a large-scale study created a dataset of 5,761 compounds (824 mitochondrial toxicants, 4,937 non-toxicants) to evaluate machine learning and structural alerts for predicting mitochondrial toxicity [12].

Molecular Descriptor Approach: The team calculated 25 interpretable 2D descriptors and trained multiple machine learning models. The dataset's size enabled robust model training, and the descriptors successfully captured significant differences in the physicochemical property space between toxic and non-toxic compounds [12].

Structural Alert Approach: Using substructure analysis algorithms (SARpy, RDKit, MOE), the researchers identified 17 structural alerts with high positive predictive value (PPV > 0.6). These alerts included specific functional groups like polyhalogenated chains and aromatic nitro groups, providing a chemically intuitive mechanism for risk assessment [12].

Performance Insight: The combination of both methods proved most effective. Machine learning models offered broad screening capability, while the derived structural alerts provided immediate, interpretable flags for specific toxicophores and helped elucidate potential modes of action [12].

Experimental Protocols for Method Evaluation

Benchmarking Compound Activity Prediction (CARA Protocol)

The Compound Activity benchmark for Real-world Applications (CARA) provides a standardized protocol for evaluating predictive models, focusing on two key drug discovery stages [13].

1. Data Curation and Assay Classification:

- Source data from public repositories (e.g., ChEMBL), grouping activity data by Assay ID [13].

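- Classify assays into two types based on the pairwise similarity of the compounds they contain [13]:

  - Virtual screening (VS) assays, in which the tested compounds are structurally diverse (low pairwise similarity).

  - Lead optimization (LO) assays, in which the tested compounds form congeneric series (high pairwise similarity).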

2. Data Splitting:

- Implement separate train/test splitting schemes tailored to VS and LO tasks to reflect their distinct data distribution patterns [13].

3. Model Evaluation:

- Use metrics that avoid performance overestimation, considering the biased distribution of real-world data [13].

- Evaluate under both few-shot (limited task-specific data) and zero-shot (no task-specific data) scenarios [13].
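The assay-classification step can be illustrated by computing a mean pairwise Tanimoto similarity for each assay. This is a minimal sketch, not the CARA implementation: fingerprints are assumed to be precomputed as sets of "on" bit indices, and the cut-off `LO_SIMILARITY_THRESHOLD = 0.4` and the assay IDs are hypothetical values chosen only for illustration.

```python
from itertools import combinations
from typing import Dict, FrozenSet, List

# Hypothetical cut-off: assays whose compounds are, on average, at least this
# similar are treated as congeneric (LO); the value is illustrative only.
LO_SIMILARITY_THRESHOLD = 0.4


def tanimoto(a: FrozenSet[int], b: FrozenSet[int]) -> float:
    """Tanimoto (Jaccard) similarity between two sets of fingerprint bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def mean_pairwise_similarity(fps: List[FrozenSet[int]]) -> float:
    pairs = list(combinations(fps, 2))
    if not pairs:
        raise ValueError("need at least two compounds per assay")
    return sum(tanimoto(a, b) for a, b in pairs) / len(pairs)


def classify_assays(assays: Dict[str, List[FrozenSet[int]]]) -> Dict[str, str]:
    """Label each assay 'LO' (congeneric series) or 'VS' (diverse compounds)."""
    return {
        assay_id: "LO" if mean_pairwise_similarity(fps) >= LO_SIMILARITY_THRESHOLD else "VS"
        for assay_id, fps in assays.items()
    }


# Toy fingerprints keyed by hypothetical assay IDs
assays = {
    "assay_congeneric": [frozenset({1, 2, 3, 4}), frozenset({1, 2, 3, 5}), frozenset({1, 2, 4, 5})],
    "assay_diverse": [frozenset({1, 2}), frozenset({3, 4}), frozenset({5, 6, 1})],
}
print(classify_assays(assays))
```

In practice the fingerprints would come from a cheminformatics toolkit, and train/test splits would then be made separately within each assay type, as step 2 describes.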

Workflow for Developing and Validating Structural Alerts

The methodology for deriving structural alerts for mitochondrial toxicity demonstrates a rigorous, multi-step process [12].

1. Data Collection and Standardization:

- Aggregate data from multiple public sources (ChEMBL, PubChem, literature) [12].

- Standardize chemical structures using a workflow (e.g., in the KNIME platform):

  - Break bonds to metals, neutralize charges, and apply functional group standardization rules [12].

- Remove duplicates using InChIKeys and compounds with ambiguous activity labels [12].

2. Substructure Analysis:

- Apply multiple fragmentation algorithms (SARpy, RDKit, MOE) with different settings to generate comprehensive substructure lists [12].

- Calculate the Positive Predictive Value (PPV) for each fragment: PPV = (Number of active compounds containing the fragment) / (Total number of compounds containing the fragment) [12].

3. Alert Filtering and Validation:

- Set a minimum occurrence threshold and a minimum PPV (e.g., 0.6) [12].

- Manually inspect remaining fragments for chemical integrity and completeness, discarding incomplete ring systems or ubiquitous substructures [12].
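The PPV calculation and threshold filter in steps 2 and 3 reduce to counting. The sketch below assumes the substructure search has already been run (for example with RDKit), so each compound is represented by a set of hypothetical fragment identifiers plus a toxic/non-toxic label. `min_ppv` uses the 0.6 value from the text (applied as PPV > 0.6); the `min_count` occurrence cut-off of 3 is an arbitrary illustrative choice. The manual inspection in step 3 is not automated here.

```python
from collections import Counter
from typing import Dict, List, Set, Tuple

# Each record: (set of fragment IDs matched in the compound, True if toxic)
Record = Tuple[Set[str], bool]


def fragment_ppv(records: List[Record]) -> Dict[str, Tuple[int, float]]:
    """Return {fragment: (occurrences, PPV)}.

    PPV = toxic compounds containing the fragment / all compounds containing it.
    """
    total: Counter = Counter()
    toxic: Counter = Counter()
    for fragments, is_toxic in records:
        for frag in fragments:
            total[frag] += 1
            if is_toxic:
                toxic[frag] += 1
    return {frag: (n, toxic[frag] / n) for frag, n in total.items()}


def candidate_alerts(records: List[Record], min_count: int = 3, min_ppv: float = 0.6) -> Dict[str, float]:
    """Keep fragments seen at least `min_count` times with PPV above `min_ppv`.

    Survivors still need the manual chemical-integrity review described in step 3.
    """
    return {
        frag: ppv
        for frag, (n, ppv) in fragment_ppv(records).items()
        if n >= min_count and ppv > min_ppv
    }


# Toy data with hypothetical fragment names
dataset = [
    ({"aromatic_nitro", "phenyl"}, True),
    ({"aromatic_nitro", "phenyl"}, True),
    ({"aromatic_nitro"}, True),
    ({"aromatic_nitro", "amide"}, False),
    ({"phenyl", "amide"}, False),
    ({"phenyl"}, False),
    ({"polyhalogenated_chain"}, True),
]
print(candidate_alerts(dataset))
```

In this toy set, `polyhalogenated_chain` has a PPV of 1.0 but is dropped because it occurs only once; guarding against such small-sample artifacts is the purpose of the minimum-occurrence threshold.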

Diagram 1: Structural Alert Derivation Workflow

Table 3: Key Research Reagent Solutions for Computational Analysis

| Resource/Solution | Function | Application Context |
|---|---|---|
| ChEMBL Database | Curated database of bioactive molecules with drug-like properties | Primary source for compound activity data; provides assay results and molecular structures [13] [14] [12] |
| RDKit | Open-source cheminformatics library | Calculates molecular descriptors, performs substructure analysis, and standardizes chemical structures [11] [12] |
| KNIME Analytics Platform | Graphical analytics platform for data mining | Creates workflows for data standardization, descriptor calculation, and model building [12] |
| CARA Benchmark | Curated benchmark for compound activity prediction | Evaluates model performance in real-world virtual screening and lead optimization scenarios [13] |
| SARpy | Algorithm for automatic extraction of structural alerts | Generates meaningful substructures from datasets of active compounds [12] |

Integrated Workflow for Cellular Potency Optimization

Combining molecular descriptors and structural alerts yields an integrated workflow for cellular potency optimization within compound library design, one that draws on the strengths of each method while offsetting its individual limitations.

Diagram 2: Integrated Screening Workflow

This synergistic approach is particularly valuable for addressing the complex interplay between potency and safety. Studies probing the links between in vitro potency and ADMET properties have revealed that an excessive focus on nanomolar potency can introduce biases in physicochemical properties that are diametrically opposed to desirable ADMET characteristics [14]. Integrated screening helps identify compounds that balance potency with favorable drug-like properties.

Molecular descriptors and structural alerts are complementary tools in the computational chemist's arsenal. Molecular descriptors provide quantitative, continuous inputs for predictive modeling of complex properties such as ionic conductivity, where they performed best in combination with learned structural features [11], while structural alerts offer rapid, interpretable filtering for known toxicity risks [12].

The most effective strategy for interrogating physicochemical properties in cellular potency assessment leverages both approaches: using structural alerts for initial, high-throughput risk assessment and molecular descriptors within machine learning models for nuanced prediction and optimization. This integrated methodology, implemented within robust benchmarking frameworks like CARA [13], provides a comprehensive approach to navigating the complex trade-offs between efficacy and safety in modern drug discovery.
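A minimal sketch of this two-stage strategy, assuming alert matches and descriptor values are already computed: compounds carrying any alert are flagged rather than silently dropped, and the rest are ranked by a model's predicted potency. The alert names, the `Compound` record, and `toy_model` (a placeholder standing in for a trained descriptor-based model) are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Set, Tuple


@dataclass
class Compound:
    compound_id: str
    fragments: Set[str]             # precomputed substructure matches
    descriptors: Dict[str, float]   # precomputed descriptor values


def triage(
    compounds: List[Compound],
    alerts: Set[str],
    model: Callable[[Dict[str, float]], float],
) -> Tuple[List[Tuple[str, float]], List[Tuple[str, Set[str]]]]:
    """Stage 1: flag compounds carrying any structural alert.
    Stage 2: rank the remaining compounds by the model's predicted potency."""
    ranked, flagged = [], []
    for c in compounds:
        hits = c.fragments & alerts
        if hits:
            flagged.append((c.compound_id, hits))
        else:
            ranked.append((c.compound_id, model(c.descriptors)))
    ranked.sort(key=lambda item: item[1], reverse=True)  # most potent first
    return ranked, flagged


def toy_model(desc: Dict[str, float]) -> float:
    """Placeholder for a trained ML model; simply reads a precomputed value."""
    return desc["predicted_pIC50"]


# Toy usage with hypothetical alert names and compounds
alerts = {"aromatic_nitro", "polyhalogenated_chain"}
library = [
    Compound("cpd-1", {"aromatic_nitro"}, {"predicted_pIC50": 8.1}),
    Compound("cpd-2", {"amide"}, {"predicted_pIC50": 6.2}),
    Compound("cpd-3", set(), {"predicted_pIC50": 7.4}),
]
ranked, flagged = triage(library, alerts, toy_model)
print(ranked)
print(flagged)
```

Flagging instead of discarding keeps the interpretable alert visible to the chemist, so a highly potent but alert-bearing compound such as `cpd-1` can still be reviewed deliberately rather than lost.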

The systematic classification and application of compound libraries are fundamental to modern drug discovery, directly influencing the efficiency and success of identifying viable therapeutic candidates. Within the context of evaluating cellular potency, the strategic selection of an appropriate compound library is a critical first step that determines the quality of initial hits and the subsequent trajectory of the entire discovery pipeline. These libraries are not merely collections of chemicals; they are carefully curated and designed sets of molecules that serve distinct purposes in the multi-stage journey from target identification to lead compound optimization [15].

This guide provides a comparative analysis of four principal library types: Bioactive Compound Libraries, Diversity Sets, Focused Libraries, and Fragment Libraries. Each category possesses unique characteristics, optimal use cases, and performance metrics in biological screening. For researchers aiming to assess cellular potency, understanding the composition, strengths, and limitations of each library type enables a more rational screening strategy, ensuring that the right tool is used for the right job, thereby conserving resources and accelerating the discovery timeline [16]. The integration of advanced technologies, including artificial intelligence (AI) and high-throughput cellular thermal shift assays (CETSA), is further refining the utility of these libraries by providing deeper mechanistic insights and improving the predictability of early-stage screening outcomes [2].

Library Classifications and Comparative Analysis

Compound libraries are broadly categorized based on their design principles, chemical space coverage, and intended application in the drug discovery workflow. The following table summarizes the core characteristics of the four main sub-library types.

Table 1: Core Characteristics of Compound Sub-Libraries

| Library Type | Design Principle | Typical Size | Primary Screening Context | Key Advantages |
|---|---|---|---|---|
| Bioactive Compound Libraries | Collection of compounds with known or reported biological activity [17]. | 1,000 - 18,000+ compounds [17] [18]. | Target-based and phenotypic screening for drug repurposing and mechanism deconvolution. | Compounds have validated biological activity and clear targets; lower risk of non-specific effects. |
| Diversity Sets | Maximize structural and scaffold variety to broadly sample chemical space [19] [20]. | 1,000 - 50,000+ compounds [20] [16]. | Phenotypic screening and initial target-agnostic screening of new targets. | Maximizes chance of finding a hit against novel or less-understood targets [16]. |
| Focused Libraries | Compounds selected for predicted activity against a specific protein target or target family [21]. | 100 - 500 compounds per design hypothesis [21]. | Target-based screening against well-characterized target families (e.g., kinases, GPCRs). | Higher hit rates and more interpretable Structure-Activity Relationships (SAR) [21]. |
| Fragment Libraries | Collections of very small, low molecular weight compounds that represent minimal binding motifs [22]. | Information missing | Fragment-Based Drug Discovery (FBDD) using biophysical techniques. | High ligand efficiency; covers a vast chemical space with fewer compounds. |

The quantitative properties of these libraries can be further broken down to aid in selection. The table below provides representative data on the composition and properties of available commercial libraries, highlighting their suitability for different stages of research.

Table 2: Quantitative Comparison of Representative Commercial Libraries

| Library Name | Library Type | Total Compounds | Key Structural Metrics | Key Property Metrics |
|---|---|---|---|---|
| TargetMol Bioactive Library [17] | Bioactive | 18,720 | Based on 10,102 unique Bemis-Murcko scaffold classes. | 67% comply with Lipinski's Rule of Five; 54% highly orally absorbable. |
| Enamine Discovery Diversity Set-10 [19] | Diversity | 10,240 | Designed for high scaffold and building block diversity. | Novel, lead-like compounds; filtered for PAINS and undesirable motifs. |
| Otava PrimScreen [20] | Diversity | 1,000 - 10,000 | Average molecular diversity score of 0.868 - 0.891. | Curated for drug-like properties. |
| MCE Diversity Library [16] | Diversity | 50,000 | Representative diversity set for phenotypic and target-based HTS. | Information missing |
| Sygnature Leadfinder HTS [23] | Diversity (Virtual) | 8 million (in silico) | Optimized for broad, lead-like chemical space with stringent filters. | Information missing |
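Two kinds of metric in Table 2, Rule-of-Five compliance and an average diversity score, can be estimated for an in-house library with a short script. The sketch assumes molecular weight, cLogP, H-bond donor and acceptor counts, and fingerprint bit sets have been precomputed (for example with RDKit). It counts a compound as compliant when it has at most one violation, following Lipinski's original alert criterion; vendors may apply a stricter definition. The diversity score is taken as 1 minus the mean pairwise Tanimoto similarity, which is one common definition but not necessarily the one behind the Otava figures. All property values are invented.

```python
from itertools import combinations
from typing import Dict, FrozenSet, List


def ro5_violations(props: Dict[str, float]) -> int:
    """Count Lipinski Rule-of-Five violations from precomputed properties."""
    return sum([
        props["mw"] > 500,    # molecular weight (Da)
        props["clogp"] > 5,   # calculated logP
        props["hbd"] > 5,     # H-bond donors
        props["hba"] > 10,    # H-bond acceptors
    ])


def ro5_compliance(library: List[Dict[str, float]], max_violations: int = 1) -> float:
    """Fraction of compounds with at most `max_violations` violations."""
    ok = sum(ro5_violations(p) <= max_violations for p in library)
    return ok / len(library)


def diversity_score(fps: List[FrozenSet[int]]) -> float:
    """1 - mean pairwise Tanimoto similarity over all compound pairs."""
    sims = []
    for a, b in combinations(fps, 2):
        union = len(a | b)
        sims.append(len(a & b) / union if union else 0.0)
    return 1.0 - sum(sims) / len(sims)


# Invented property values for three compounds
library = [
    {"mw": 350.4, "clogp": 2.1, "hbd": 1, "hba": 5},  # 0 violations
    {"mw": 520.6, "clogp": 5.4, "hbd": 2, "hba": 8},  # 2 violations
    {"mw": 610.7, "clogp": 3.0, "hbd": 3, "hba": 9},  # 1 violation
]
print(ro5_compliance(library))                     # lenient: at most 1 violation
print(ro5_compliance(library, max_violations=0))   # strict: no violations
print(diversity_score([frozenset({1, 2}), frozenset({2, 3}), frozenset({4})]))
```

The two compliance calls show how much the reported percentage depends on the chosen definition, which is worth checking before comparing vendor figures.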

Experimental Protocols for Library Screening in Cellular Potency Assays

Evaluating cellular potency requires a robust experimental workflow that moves from library selection to validated hits. The following protocol outlines a generalized yet comprehensive approach for screening compound libraries in cell-based assays.

High-Throughput Screening (HTS) Workflow for Cellular Potency

1. Library Preparation and Plating:

- Procedure: Commercially available libraries are typically supplied as 10 mM DMSO solutions in 96-well or 384-well plates [17] [19]. Upon arrival, store libraries at recommended temperatures (often -80°C) and minimize freeze-thaw cycles. Using an automated liquid handler, perform a dilution series in cell culture medium to achieve the desired final testing concentrations, ensuring the final concentration of DMSO is kept low (e.g., 0.1-1.0%) to avoid cytotoxicity.

- Critical Reagents: Pre-plated compound library (e.g., TargetMol Bioactive Library or Enamine DDS-10), cell culture medium, DMSO.

2. Cell Seeding and Compound Treatment:

- Procedure: Seed cells expressing the target of interest (e.g., a specific kinase, ion channel, or disease-relevant pathway) into assay plates at a density optimized for logarithmic growth. After cell attachment, add the pre-diluted compounds to the cells. Include appropriate controls on each plate: a vehicle (negative) control containing DMSO only, a positive control (a known potent activator or inhibitor), and a background control (medium without cells).

- Critical Reagents: Relevant cell line (primary, immortalized, or engineered), fetal bovine serum (FBS), antibiotics (Penicillin-Streptomycin), trypsin/EDTA.

3. Incubation and Potency Signal Development:

- Procedure: Incubate cells with compounds for a predetermined time (e.g., 24-72 hours) under standard culture conditions (37°C, 5% CO2). The endpoint measurement depends on the assay: it could be cell viability (ATP quantitation via CellTiter-Glo), reporter gene activity (luciferase), phosphorylation status (ELISA or Western Blot), or caspase activity for apoptosis.

- Critical Reagents: CellTiter-Glo Luminescent Cell Viability Assay, Luciferase assay reagents, phospho-specific antibodies, caspase substrates.

4. Detection, Data Acquisition, and Hit Validation:

- Procedure: Read the assay plates using appropriate detectors (luminescence plate reader, fluorometer, etc.). Normalize data to the positive and negative controls on each plate. Calculate the percentage of activity or inhibition for each compound. Compounds showing significant activity (e.g., >50% inhibition/activation at a set concentration) are designated as "hits." These primary hits must be re-screened in dose-response (e.g., a 10-point IC50 curve) to confirm potency and efficacy.

- Critical Reagents: Hit compounds for resupply, DMSO for dose-response curves.
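The plate-level arithmetic in this workflow can be sketched in Python. The example assumes a 10 mM DMSO stock (as supplied in [17] [19]), an inhibition-type readout in which vehicle wells define 0% and positive-control wells define 100% inhibition, the 50% hit cut-off from step 4, and a hypothetical 10-point, 3-fold dilution series starting at 10 µM. The IC50 is estimated by log-linear interpolation between the two bracketing points, a deliberately simple stand-in for the four-parameter logistic fit that curve-fitting software typically performs.

```python
import math
from statistics import mean
from typing import List, Optional, Sequence


def final_dmso_percent(stock_mM: float, final_uM: float) -> float:
    """% v/v DMSO when a neat DMSO stock is diluted to the final test concentration."""
    dilution_factor = (stock_mM * 1000.0) / final_uM
    return 100.0 / dilution_factor


def percent_inhibition(signal: float, vehicle: Sequence[float], positive: Sequence[float]) -> float:
    """Normalize one well to plate controls: vehicle = 0 %, positive control = 100 %."""
    hi, lo = mean(vehicle), mean(positive)
    if hi == lo:
        raise ValueError("controls do not define an assay window")
    return 100.0 * (hi - signal) / (hi - lo)


def dilution_series(top_uM: float = 10.0, fold: float = 3.0, points: int = 10) -> List[float]:
    """Concentrations for a serial dilution, highest first."""
    return [top_uM / fold ** i for i in range(points)]


def interpolate_ic50(conc_uM: Sequence[float], inhibition: Sequence[float]) -> Optional[float]:
    """Log-linear interpolation of the 50 % crossing; None if the curve never crosses 50 %."""
    pairs = sorted(zip(conc_uM, inhibition))  # ascending concentration
    for (c1, y1), (c2, y2) in zip(pairs, pairs[1:]):
        if y1 < 50.0 <= y2:
            frac = (50.0 - y1) / (y2 - y1)
            log_ic50 = math.log10(c1) + frac * (math.log10(c2) - math.log10(c1))
            return 10 ** log_ic50
    return None


# Primary screen: 10 uM from a 10 mM stock is a 1000-fold dilution (0.1 % DMSO)
print(final_dmso_percent(10.0, 10.0))
vehicle, positive = [10000, 10400, 9600], [500, 520, 480]  # invented luminescence counts
inhibition = percent_inhibition(5000, vehicle, positive)
print(inhibition, inhibition > 50.0)  # percent inhibition and hit call

# Confirmation: synthetic curve generated with a true IC50 of 0.5 uM, Hill slope 1
concs = dilution_series()
responses = [100 * c / (c + 0.5) for c in concs]
print(interpolate_ic50(concs, responses))
```

Interpolation only uses the two points that bracket 50%, so it is sensitive to noise at those points; a full curve fit uses all ten.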

The following diagram illustrates the key decision-making workflow for selecting a compound library based on the research goal, and the subsequent experimental process for determining cellular potency.

The Scientist's Toolkit: Essential Reagents and Solutions

The following table details key reagents and materials required for executing the cellular potency screening protocols described above.

Table 3: Essential Research Reagent Solutions for Cellular Potency Screening

| Item | Function/Description | Example Use Case in Protocol |
|---|---|---|
| Pre-plated Compound Library | Collections of compounds in DMSO at standardized concentrations (e.g., 10 mM) in microtiter plates [17] [19]. | The starting point for all screening; provides the test agents. |
| Cell Line | A biologically relevant cellular system (primary, immortalized, or engineered) that models the disease or target pathway. | Used in the cell seeding and compound treatment step to provide the biological context for potency measurement. |
| Viability/Range Assay Kit | Reagents for quantifying cell health or a specific biochemical activity (e.g., CellTiter-Glo for ATP, Caspase-Glo for apoptosis). | The key reagent in the "Incubation and Potency Signal Development" step to read out the cellular response. |
| Automated Liquid Handler | Robotics system for precise, high-volume transfer of liquids, essential for miniaturization and reproducibility. | Used in "Library Preparation and Plating" to accurately dilute and transfer compounds and reagents. |
| Microplate Reader | Instrument for detecting optical signals (luminescence, fluorescence, absorbance) from assay plates. | Used in the "Data Acquisition" phase to collect raw data on cellular responses. |
| CETSA Reagents | Cellular Thermal Shift Assay reagents for confirming direct target engagement of hits within a cellular environment [2]. | Used in the "Hit Validation" phase to provide mechanistic confirmation that a hit compound binds the intended target. |

The strategic selection of compound sub-libraries—whether Bioactive, Diversity, Focused, or Fragment—is a foundational decision that directly shapes the outcome of cellular potency research. As the field advances, the integration of AI-driven in-silico screening and robust cellular validation techniques like CETSA is creating a more predictable and efficient discovery ecosystem [2]. By understanding the distinct profile and application of each library type, and by employing the detailed experimental frameworks and toolkits provided, researchers can make informed choices that maximize the potential of their screening campaigns, mitigate risks, and accelerate the journey toward discovering novel and potent therapeutic agents.

In the realm of drug discovery, compound integrity—encompassing chemical identity, purity, and concentration—is a foundational element that directly influences the reliability of cellular potency measurements. Hits identified through high-throughput screening (HTS) campaigns frequently undergo evaluation through cheminformatics and empirical approaches before confirmation. However, the integrity of these compounds often remains unverified at this critical decision point, as compounds in screening collections can undergo various changes such as degradation, polymerization, and precipitation during storage [24] [25]. This unknown integrity status presents a significant risk: potency measurements derived from cellular or biochemical assays may reflect artifacts of compound decomposition rather than true biological activity. When compound integrity assessment is performed as a separate, subsequent step, it can increase the overall cycle time by weeks due to sample reacquisition and lengthy analytical procedures, thereby delaying project timelines [24].

The context of cellular potency evaluation adds layers of complexity to this challenge. It is well understood that potency measured with recombinant enzyme and potency measured in a cellular environment may not coincide. While decreases in cellular potency are often anticipated, increases in compound potency can also occur in physiologically relevant settings due to factors including cellular metabolism of compounds, protein-protein interactions, post-translational modifications, and asymmetric intracellular localization of compounds [26] [27]. Because these phenomena can genuinely shift potency, the starting material must be of known quality so that observed shifts can be attributed to biology rather than to analytical artifacts. Robust practices for assessing compound integrity after storage are therefore not a mere formality but a prerequisite for accurately interpreting cellular potency data across different compound libraries.

Methodologies for Compound Integrity Assessment

Multiple analytical techniques are available for evaluating compound integrity, each with distinct strengths, limitations, and throughput considerations. The choice of methodology often depends on the specific integrity parameter being assessed (identity, purity, or concentration), the required throughput, and available instrumentation.

Core Analytical Technologies

Liquid Chromatography-Mass Spectrometry (LC-MS) stands as the workhorse for comprehensive integrity assessment, enabling simultaneous evaluation of compound identity through mass detection and purity through chromatographic separation. Modern implementations utilizing ultra-high-pressure liquid chromatography (UHPLC) platforms have significantly enhanced throughput, with systems capable of analyzing approximately 2,000 samples per instrument per week [24] [25]. This high-speed capability enables concurrent assessment of compound integrity during concentration-response curve (CRC) studies, providing chemists with simultaneous data on both compound quality and biological activity [25].
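As a rough consistency check, and assuming continuous 24-hour, 7-day operation, the quoted throughput implies a cycle time of about five minutes per sample, in line with the 3-5 minute gradients used in the protocols below:

```latex
t_{\text{cycle}} \approx \frac{7\ \text{days} \times 24\ \text{h} \times 60\ \text{min}}{2000\ \text{samples}}
  = \frac{10\,080\ \text{min}}{2000\ \text{samples}} \approx 5.0\ \text{min per sample}
```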

For concentration determination, traditional UV detection faces limitations with compounds lacking chromophores. This challenge has led to the adoption of complementary detection techniques:

- Evaporative Light Scattering Detector (ELSD) responds to all compounds less volatile than the mobile phase, making it particularly valuable for detecting compounds without UV chromophores [28].

- Chemiluminescent Nitrogen Detector (CLND) provides an equimolar response for all nitrogen-containing compounds, enabling quantification using a single nitrogen calibration standard and making it highly effective for concentration determination of diverse compound libraries [28].
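Because the CLND response is proportional to the moles of nitrogen injected, one nitrogen-containing calibrant can be used to quantify any nitrogenous analyte. A minimal sketch, assuming peak areas are already integrated and the detector responds linearly; the formula parser handles only simple formulas (no brackets, hydrates, or charges), and the calibrant (caffeine, C8H10N4O2), the analyte formula, and all numbers are illustrative.

```python
import re
from typing import Dict


def element_counts(formula: str) -> Dict[str, int]:
    """Parse a simple molecular formula such as 'C8H10N4O2'."""
    counts: Dict[str, int] = {}
    # One capital letter plus optional lowercase letter, so 'Na' is not read as 'N'
    for element, num in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] = counts.get(element, 0) + (int(num) if num else 1)
    return counts


def clnd_concentration(area_x: float, formula_x: str,
                       area_std: float, conc_std_uM: float, formula_std: str) -> float:
    """Equimolar-nitrogen quantification against a single calibration standard."""
    n_x = element_counts(formula_x).get("N", 0)
    n_std = element_counts(formula_std).get("N", 0)
    if n_x == 0 or n_std == 0:
        raise ValueError("CLND quantification requires nitrogen in analyte and standard")
    # Detector response per uM of nitrogen atoms, derived from the calibrant
    response_per_uM_N = area_std / (conc_std_uM * n_std)
    return area_x / (response_per_uM_N * n_x)


# 100 uM caffeine (4 N) gives area 400; an analyte with 2 N gives area 100
print(clnd_concentration(100.0, "C10H12N2O", 400.0, 100.0, "C8H10N4O2"))
```

The same calibration therefore covers every nitrogen-containing library member, which is what makes CLND attractive for concentration checks across diverse collections.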

Nuclear Magnetic Resonance (NMR) spectroscopy also finds application in compound integrity assessment, particularly for quantifying small amounts of material through integration of the total proton spectrum. While accurate and sensitive, throughput considerations and the need for specialized interpretation have somewhat limited its widespread implementation for routine QC [28].

Comparative Analysis of Integrity Assessment Methodologies

Table 1: Comparison of Key Compound Integrity Assessment Methodologies

| Methodology | Primary Applications | Throughput | Key Strengths | Significant Limitations |
|---|---|---|---|---|
| LC-UV/MS | Identity confirmation, purity assessment | High (~2000 samples/week) [24] | Comprehensive data (identity + purity); widely available | May miss non-UV-active or poorly ionizing compounds |
| ELSD | Purity assessment, concentration determination | Medium-High | Universal detection for non-volatile compounds; handles gradient elution [28] | Less sensitive than UV; not suitable for volatile compounds |
| CLND | Concentration determination | Medium | Universal response for N-containing compounds; single-point calibration [28] | Limited to nitrogen-containing compounds |
| NMR | Identity confirmation, quantification | Low-Medium | Structure-elucidation capability; absolute quantification [28] | High instrument cost; requires expert interpretation |
| Acoustic Auditing | Volume verification, DMSO hydration status | Very High | Non-invasive; rapid assessment of sample conditions [28] | Does not assess identity or purity directly |

Innovative Approaches and Workflow Integration

A paradigm shift in integrity assessment involves moving from post-assay analysis to parallel assessment, where compound integrity data are collected concurrently with the CRC stage of HTS. This approach can be implemented either through parallel processing of two distributions from the same liquid sample or serially using the original source liquid sample [24] [25]. This methodology ensures that both compound integrity and CRC potency results become available to medicinal chemists simultaneously, significantly enhancing the decision-making process for hit follow-up and progression.

Emerging non-destructive techniques such as acoustic auditing offer complementary capabilities for routine QC monitoring. This technology can rapidly and non-invasively determine the water content of DMSO stocks and identify low wells caused by evaporation or depletion, thereby preventing researchers from measuring the activity of null transfers [28]. While it does not replace chromatographic methods for comprehensive characterization, it provides a valuable intermediate QC checkpoint.

Experimental Protocols for Integrity Assessment

Protocol 1: Rapid Integrity Assessment Parallel to HTS

This protocol describes the procedure for implementing concurrent compound integrity assessment during concentration-response testing, enabling simultaneous availability of potency and integrity data [24] [25].

Workflow Overview:

Materials and Reagents:

- Source Compounds: HTS hits in DMSO solution (typically 1-10 mM concentration)

- Analytical Instrumentation: UHPLC system coupled with UV and mass spectrometric detection

- Liquid Handling System: Automated pipetting station for parallel aliquoting

- Chromatography Columns: Reversed-phase C18 column (1.7-2.0 μm particle size)

- Mobile Phases: A: Water with 0.1% formic acid; B: Acetonitrile with 0.1% formic acid

- Microplates: 96-well or 384-well plates compatible with UHPLC autosamplers

Step-by-Step Procedure:

- Sample Preparation: Using an automated liquid handler, prepare two identical sets of aliquots from the original HTS hit source plate.

- Parallel Distribution: Distribute one set of aliquots to concentration-response testing and the second set to compound integrity analysis.

- UHPLC-UV/MS Analysis:

  - Chromatographic Conditions: Apply a fast gradient separation (typically 3-5 minutes) with increasing organic modifier (acetonitrile or methanol) content.

  - UV Detection: Monitor at multiple wavelengths (e.g., 214 nm, 254 nm) to detect compounds with different chromophores.

  - Mass Spectrometry: Operate in positive and negative ionization modes with electrospray ionization for mass confirmation.

- Data Analysis:

  - Identity Confirmation: Compare the observed m/z values with those expected for the intact compound (e.g., [M+H]+ in positive mode and [M-H]- in negative mode, calculated from the expected molecular weight), within a ±5 Da tolerance.

  - Purity Assessment: Integrate chromatographic peaks and calculate the percentage of target compound (>90% purity is typically acceptable).

  - Concentration Estimation: Compare UV response with standards or use alternative detection (CLND) for absolute quantification.

- Data Integration: Correlate integrity results with CRC potency data to inform hit triaging decisions.
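The identity and purity checks in the data-analysis step can be expressed as simple pass/fail rules applied before CRC results are interpreted. This sketch assumes integrated UV peak areas and a list of observed m/z values per sample are available; it checks the [M+H]+ and [M-H]- ions (proton mass about 1.00728 Da), applies the >90% area-percent purity criterion from the protocol, and takes the ±5 Da window as a parameter so it can be tightened to the instrument's mass accuracy. The record fields and triage labels are hypothetical.

```python
from dataclasses import dataclass
from typing import List

PROTON_MASS = 1.00728  # Da


@dataclass
class IntegrityResult:
    sample_id: str
    expected_mw: float
    observed_mz: List[float]   # m/z values detected for the main peak
    target_peak_area: float
    total_peak_area: float     # sum of all integrated UV peaks


def identity_confirmed(r: IntegrityResult, tol_da: float = 5.0) -> bool:
    """True if [M+H]+ or [M-H]- lies within tol_da of any observed m/z."""
    expected_ions = (r.expected_mw + PROTON_MASS, r.expected_mw - PROTON_MASS)
    return any(abs(mz - ion) <= tol_da for mz in r.observed_mz for ion in expected_ions)


def area_percent_purity(r: IntegrityResult) -> float:
    return 100.0 * r.target_peak_area / r.total_peak_area


def triage_label(r: IntegrityResult, min_purity: float = 90.0, tol_da: float = 5.0) -> str:
    """Combine identity and purity into a label to read alongside CRC potency."""
    if not identity_confirmed(r, tol_da):
        return "reject: identity not confirmed"
    if area_percent_purity(r) <= min_purity:
        return "caution: purity below threshold"
    return "pass"


# Invented example samples for a compound of MW 300.35
for sample in [
    IntegrityResult("S1", 300.35, [301.4], 95.0, 100.0),
    IntegrityResult("S2", 300.35, [301.4, 317.4], 70.0, 100.0),
    IntegrityResult("S3", 300.35, [450.2], 98.0, 100.0),
]:
    print(sample.sample_id, triage_label(sample))
```

A "caution" label does not invalidate a potency value, but it signals that an impurity or degradant could be contributing to the observed activity.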

Quality Control Considerations: Include system suitability standards and quality control samples in each analysis batch. Monitor chromatographic performance (retention time stability, peak shape) and mass accuracy throughout the sequence.

Protocol 2: Compound Storage Integrity Monitoring

This protocol outlines a comprehensive approach for assessing compound integrity after long-term storage, providing critical data on collection quality and stability [29] [28] [30].

Workflow Overview:

Materials and Reagents:

- Storage Plates: Compound library stored in DMSO in 96-well or 384-well microplates

- Analytical Instrumentation: LC-UV-ELSD-MS system, acoustic auditor, CLND detector

- Reference Standards: Known compounds for system calibration and performance monitoring

- Solid Phase Extraction Plates: For conversion of trifluoroacetate salts to freebase form if needed

- Sealing Materials: Heat-sealing foils or adhesive seals to prevent moisture ingress

Step-by-Step Procedure:

- Study Design:

  - Sample Selection: Randomly select representative compounds from the storage collection (minimum 0.5-1% of total library).

  - Stratification: Include compounds with varying storage durations and chemical properties.

- Non-Invasive Assessment:

  - Acoustic Auditing: Use acoustic technology to determine DMSO hydration status and well volumes across storage plates [28].

  - Visual Inspection: Check for precipitation or discoloration.

- Comprehensive Chromatographic Analysis:

  - LC-UV-ELSD-MS Analysis: Perform chromatographic separation with dual detection (UV and ELSD) to capture compounds regardless of chromophore presence, with mass spectrometric confirmation.

  - CLND Quantification: For nitrogen-containing compounds, use CLND for accurate concentration determination without compound-specific calibration.

- Data Interpretation:

  - Integrity Scoring: Assign integrity scores based on purity, identity confirmation, and concentration accuracy.

  - Trend Analysis: Identify patterns of degradation related to compound structure or storage conditions.

  - Collection Health Reporting: Generate a comprehensive report on collection status with recommendations for remediation or repurification.
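The study-design and trend-analysis steps can be prototyped as stratified random sampling followed by a per-stratum pass rate. This is a sketch under stated assumptions: each record carries a hypothetical `storage_years` field, the storage-age bins in `AGE_BINS` are arbitrary, 1% of each stratum is sampled (the upper end of the 0.5-1% range), and the pass criterion is supplied by the caller (for example, purity and identity checks like those in Protocol 1). The records in the demonstration are synthetic.

```python
import math
import random
from collections import defaultdict
from typing import Callable, Dict, List

# Hypothetical storage-age bins in years: label -> (lower inclusive, upper exclusive)
AGE_BINS = {"<2 y": (0.0, 2.0), "2-5 y": (2.0, 5.0), ">=5 y": (5.0, math.inf)}


def age_bin(years: float) -> str:
    for label, (lo, hi) in AGE_BINS.items():
        if lo <= years < hi:
            return label
    raise ValueError(f"no bin for storage age {years}")


def stratified_sample(records: List[dict], fraction: float = 0.01, seed: int = 0) -> List[dict]:
    """Draw the same fraction from every storage-age stratum (at least one per stratum)."""
    rng = random.Random(seed)  # seeded so the audit selection is reproducible
    strata: Dict[str, List[dict]] = defaultdict(list)
    for rec in records:
        strata[age_bin(rec["storage_years"])].append(rec)
    sample: List[dict] = []
    for members in strata.values():
        k = max(1, round(fraction * len(members)))
        sample.extend(rng.sample(members, k))
    return sample


def pass_rate_by_stratum(sample: List[dict], passes: Callable[[dict], bool]) -> Dict[str, float]:
    """Fraction of sampled compounds meeting the integrity criterion, per stratum."""
    totals: Dict[str, int] = defaultdict(int)
    ok: Dict[str, int] = defaultdict(int)
    for rec in sample:
        label = age_bin(rec["storage_years"])
        totals[label] += 1
        ok[label] += passes(rec)
    return {label: ok[label] / totals[label] for label in totals}


# Synthetic demonstration: older plates given lower purity on purpose
records = [
    {"id": i, "storage_years": y, "purity": p}
    for i, (y, p) in enumerate([(1.0, 98.0)] * 500 + [(3.0, 95.0)] * 300 + [(7.0, 80.0)] * 200)
]
sample = stratified_sample(records, fraction=0.01, seed=42)
print(len(sample), pass_rate_by_stratum(sample, lambda r: r["purity"] > 90.0))
```

Stratifying guarantees that older, sparsely populated storage cohorts are represented, so a drop in pass rate with storage age is visible rather than diluted by the bulk of recent compounds.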

Quality Control Considerations: Implement regular QC of liquid handling equipment and track volume remaining in storage containers. Include control compounds with known stability profiles in each analysis batch.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Research Reagents and Materials for Compound Integrity Assessment

| Reagent/Material | Function | Application Notes |
|---|---|---|
| Deep Well Storage Plates | High-density compound storage | Reduce evaporation risk; enable automation compatibility; prevent cross-contamination [31] |
| Anhydrous DMSO | Primary solvent for compound dissolution | High purity essential; control water content (<0.1%) to minimize hydrolysis [28] |
| SPE Cartridges (PL-HCO3 MP SPE) | Conversion of TFA salts to freebase | Reduces compound degradation during storage; improves stability [28] |
| UHPLC Columns (C18, 1.7-2.0 μm) | High-resolution chromatographic separation | Enable fast analysis (3-5 min/sample); maintain peak capacity [24] |
| Mobile Phase Additives (Formic Acid) | Modulate ionization and separation | Enhance MS detection sensitivity; improve chromatographic peak shape |
| Quality Control Standards | System performance verification | Include compounds with varying properties to ensure analytical system suitability |
| Sealing Materials (Heat-Sealing Foils) | Prevent moisture ingress and evaporation | Critical for long-term storage integrity; compatible with automated retrieval [31] |

Impact on Cellular Potency Assessment

The relationship between compound integrity and cellular potency measurements is multifaceted and critically important. When compound integrity is compromised during storage, the resulting cellular potency data becomes unreliable and can lead to erroneous conclusions about structure-activity relationships [27].

Proper compound integrity assessment becomes particularly crucial when interpreting discrepancies between biochemical and cellular potency measurements. While decreases in cellular potency are often anticipated due to factors like limited cell permeability or efflux mechanisms, increases in cellular potency can occur through biological mechanisms including:

- Metabolic activation of prodrug compounds within cellular environments

- Altered protein-protein interactions in physiological contexts compared to recombinant systems

- Post-translational modifications that create or expose binding sites

- Asymmetric intracellular distribution leading to local concentration effects [26] [27]

Without verification of compound integrity prior to cellular testing, it becomes impossible to distinguish true biological potency enhancement from artifacts resulting from compound degradation or transformation during storage. For example, a compound that partially degrades during storage might show apparent increased potency if the degradation product is more active than the parent compound, leading to misguided medicinal chemistry optimization efforts.
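The size of this effect can be estimated under a simple assumption. If parent and degradant act on the same target and combine additively (Loewe, or concentration, additivity), the apparent IC50 of the mixture, expressed as total compound concentration, satisfies 1/IC50_app = Σ f_i/IC50_i, where f_i is the mole fraction of each species. The scenario below is hypothetical: 10% degradation to a product 100-fold more potent than the parent.

```python
from typing import Sequence


def apparent_ic50(fractions: Sequence[float], ic50s: Sequence[float]) -> float:
    """Apparent IC50 of a mixture under Loewe (concentration) additivity.

    fractions: mole fraction of each species in the sample (must sum to 1)
    ic50s: IC50 of each pure species, in the same units as the result
    """
    if len(fractions) != len(ic50s) or abs(sum(fractions) - 1.0) > 1e-9:
        raise ValueError("need one IC50 per species and fractions summing to 1")
    return 1.0 / sum(f / ic50 for f, ic50 in zip(fractions, ic50s))


parent_ic50 = 1.0  # uM, hypothetical
mixture_ic50 = apparent_ic50([0.9, 0.1], [parent_ic50, parent_ic50 / 100])
print(f"apparent IC50 = {mixture_ic50:.3f} uM "
      f"({parent_ic50 / mixture_ic50:.1f}-fold more potent than the parent)")
```

Under these assumptions, 10% conversion to a 100-fold more potent degradant makes the sample appear roughly 11-fold more potent (0.9 + 0.1 × 100 = 10.9 in units of the parent IC50), a shift large enough to mislead SAR interpretation.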

Implementation of the integrity assessment protocols described herein enables researchers to:

- Confirm that tested material corresponds to the intended chemical structure

- Verify that potency measurements are not biased by impurities or degradation products

- Make informed decisions about structure-activity relationships based on reliable compound quality

- Identify genuine biological phenomena leading to potency shifts in cellular contexts

Robust quality control practices for assessing compound integrity after storage are essential components of reliable drug discovery programs, particularly in the context of cellular potency evaluation across diverse compound libraries. The integration of rapid integrity assessment methodologies—including UHPLC-UV/MS platforms, complementary detection techniques like ELSD and CLND, and innovative non-destructive monitoring such as acoustic auditing—provides comprehensive tools for ensuring compound quality.

The parallel assessment approach, which generates compound integrity data concurrently with concentration-response studies, represents a significant advancement over traditional sequential workflows, reducing decision cycle times and enhancing the quality of hit triaging decisions [24] [25]. Furthermore, the implementation of systematic storage integrity monitoring protocols offers valuable insights into collection-wide compound stability, enabling proactive management and maintenance of screening libraries.

As drug discovery efforts increasingly focus on complex physiological systems and phenotypic screening approaches, the verification of compound integrity becomes ever more critical for deriving meaningful biological conclusions. By adopting these best practices, research organizations can ensure that observed cellular potency data reflects genuine structure-activity relationships rather than storage artifacts, thereby accelerating the identification and optimization of high-quality therapeutic candidates.

Methodological Approaches: Implementing Cell-Based Potency Assays

In the rigorous field of drug development, potency testing stands as a critical gatekeeper, ensuring that biological products possess the specific ability or capacity to affect their intended result before they are released for clinical use [32]. While various analytical methods exist, cell-based bioassays are widely regarded as the gold standard for quantifying the biological activity of complex therapeutics [33]. This guide provides an objective comparison of cell-based and non-cell-based potency assays, framing the evaluation within the context of cellular potency assessment for compound libraries. We summarize supporting experimental data, detail essential methodologies, and visualize the core concepts to equip researchers and drug development professionals with the knowledge to implement robust potency testing strategies.

Potency is defined by regulatory agencies as "the specific ability or capacity of the product to affect a given result" and is considered a Critical Quality Attribute (CQA) that must be measured for each product lot [32]. Unlike small molecule drugs, biologics—including monoclonal antibodies, cell and gene therapies, and other complex modalities—function through intricate, multifaceted biological mechanisms. Consequently, their potency cannot be fully characterized by mere physicochemical properties or quantitative analysis of a single component.

The primary objective of a potency assay is to reflect the therapeutic Mechanism of Action (MoA) and, ideally, to correlate with clinical outcomes [32]. Regulatory authorities, including the FDA and EMA, strongly recommend cell-based potency assays whenever possible because they can capture the functional complexity of biological products [33]. These functional assays provide a systems-level view, capturing the cumulative effect of a drug's interaction with a living biological system, which is why they are often required as a release specification for market approval.

Comparative Analysis: Cell-Based vs. Biochemical Potency Assays

Choosing the appropriate potency assay is a strategic decision that impacts every stage of drug development. The following table provides a direct comparison between the two primary categories of potency assays.

Table 1: Comparative Analysis of Cell-Based and Biochemical Potency Assays

| Feature | Cell-Based Assays | Biochemical (Ligand-Binding) Assays |
|---|---|---|
| Biological Context | Full physiological context with intact cellular pathways and systems [34] | Isolated system focusing on a specific binding interaction (e.g., antigen-antibody) |
| Mechanism of Action (MoA) Reflection | Measures functional, biologically relevant activity; can reflect complex, multi-step mechanisms [33] [32] | Measures binding affinity or concentration; may not reflect true biological function |
| Data Output | Functional response (e.g., cell death, proliferation, cytokine release, reporter activity) [34] [35] | Quantitative concentration of the analyte (e.g., ng/mL) |
| Therapeutic Modalities | Ideal for biologics, cell therapies (e.g., CAR-T), gene therapies, cancer immunotherapies [33] [32] | Suitable for well-characterized proteins where binding is the primary MoA |
| Regulatory Stance | Expected and strongly preferred by health agencies for potency where applicable [33] | Accepted for certain product types but may be insufficient for complex biologics |
| Throughput | Lower throughput, more complex execution [33] | High-throughput, easier to automate and miniaturize |
| Variability | Inherently higher due to biological systems; requires careful control strategies [33] | Generally lower variability and more robust |
| Information Gained | Functional potency, cell permeability, acute cytotoxicity, stability inside cells [34] | Specific analyte concentration and binding kinetics |

The increased complexity of modern biotherapeutic modalities, such as gene therapies and cancer immunotherapies, has magnified the importance of this functional approach. For these drugs, an "assay matrix"—a combination of multiple bioassays—is often needed to fully demonstrate potency by detecting the effectiveness of gene delivery, protein expression, and the downstream effect of transgenes [33].
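The higher variability of cell-based formats noted in Table 1 is commonly tracked with plate-level quality metrics. One widely used example, chosen here as an illustration rather than drawn from the cited sources, is the Z'-factor introduced by Zhang and colleagues in 1999, which compares the separation of positive and negative control means with their spread. The sketch below assumes replicate control signals are already available as plain lists; the function name `z_prime` is hypothetical.

```python
import statistics


def z_prime(positive, negative):
    """Z'-factor: 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|.

    Uses sample standard deviations of replicate control wells.
    """
    if len(positive) < 2 or len(negative) < 2:
        raise ValueError("need at least two replicates per control")
    sd_p = statistics.stdev(positive)
    sd_n = statistics.stdev(negative)
    window = abs(statistics.mean(positive) - statistics.mean(negative))
    if window == 0:
        raise ValueError("controls have identical means; no assay window")
    return 1.0 - 3.0 * (sd_p + sd_n) / window


# Hypothetical control signals for one plate.
print(round(z_prime([100, 102, 98], [10, 12, 8]), 3))  # 0.867
```

By the usual convention, values between 0.5 and 1 indicate a well-separated assay window, while values near or below 0 suggest the controls overlap too much for reliable hit calling.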

Key Experimental Data and Methodologies

Quantitative Data from Assay Types

The selection of a cell-based assay is dictated by the drug's MoA. The table below summarizes common assay types and the quantitative data they generate.

Table 2: Common Cell-Based Assay Types and Data Outputs

| Assay Type | Measurable Parameters (Quantitative Readouts) | Typical Experimental Output | Relevance to Potency |
|---|---|---|---|
| Reporter Gene Assays [34] [35] | Transcriptional activity (e.g., Luciferase, GFP intensity) | Luminescence (RLU), Fluorescence (RFU) | Measures activation or inhibition of a specific signaling pathway targeted by the drug. |
| Cell Proliferation/Cytotoxicity Assays [34] | Cell growth or death | Cell count, viability (%), IC50/EC50 values | Directly measures the drug's ability to kill target cells (e.g., oncology) or support growth (e.g., growth factors). |
| Second Messenger Assays (e.g., Calcium flux) [34] | Intracellular signaling events | Fluorescence intensity, kinetic curves | Probes early signaling events following receptor engagement, demonstrating target engagement and activation. |
| Cytokine Release Assays [32] | Secretion of specific proteins (e.g., IFN-γ, IL-2) | Concentration (pg/mL) via ELISA/MSD | Functional readout for immune cell activation (e.g., CAR-T potency). |
| High-Content Screening (HCS) [35] | Multiparametric: protein expression, localization, morphology, post-translational modifications | Multiplexed fluorescence metrics, spatial data | Provides a systems-level view of phenotypic response, ideal for complex MoAs. |
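The IC50/EC50 values listed in Table 2 are typically derived from a sigmoidal concentration-response model. A common choice is the four-parameter logistic (4PL) model, sketched below together with its inverse, which gives the concentration producing any chosen response level. This is one parameterization among several used by analysis packages; the function names are hypothetical, and estimating the four parameters from data would normally be done by nonlinear least squares (for example with `scipy.optimize.curve_fit`, not shown here).

```python
def four_pl(conc, bottom, top, ic50, hill):
    """Four-parameter logistic response at concentration conc (> 0).

    With hill > 0 the response falls from `top` toward `bottom` as conc rises,
    and equals the midpoint (top + bottom) / 2 exactly at conc == ic50.
    """
    return bottom + (top - bottom) / (1.0 + (conc / ic50) ** hill)


def conc_at_response(response, bottom, top, ic50, hill):
    """Invert the 4PL to find the concentration that produces `response`."""
    if not min(bottom, top) < response < max(bottom, top):
        raise ValueError("response must lie strictly between bottom and top")
    return ic50 * ((top - bottom) / (response - bottom) - 1.0) ** (1.0 / hill)


# Illustrative parameters: 0-100% response, IC50 = 1 µM, Hill slope = 1.
params = dict(bottom=0.0, top=100.0, ic50=1.0, hill=1.0)
print(four_pl(1.0, **params))            # 50.0
print(conc_at_response(80.0, **params))  # 0.25
```

The inverse is useful for reporting other benchmark concentrations (such as an IC80 or IC90) from the same fitted curve.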

Detailed Experimental Protocol: A CAR-T Cell Potency Example

A robust potency assay for a Chimeric Antigen Receptor T-cell (CAR-T) therapy must quantify its critical biological function: target cell killing. The following protocol outlines a standard co-culture cytotoxicity assay.

Objective: To quantify the specific lytic activity of a CAR-T product against antigen-positive tumor cells.

Materials:

- Effector Cells: The CAR-T cell therapy product.

- Target Cells: Tumor cell line expressing the target antigen. For a regulatory-ready assay, consider standardized tools like TruCytes custom cell mimics to ensure consistency [32].

- Culture Medium: Appropriate medium (e.g., RPMI-1640 with 10% FBS).

- Equipment: CO2 incubator, laminar flow hood, plate reader (for downstream detection).

- Detection Reagent: A kit such as the LYSO-ID Red cytotoxicity kit for lysosome-perturbing activity or a similar dye to measure cell death [34].

Methodology:

Target Cell Preparation:

- Harvest the target cells during log-phase growth.

- Label the cells with a fluorescent dye if required by the detection method (e.g., a membrane dye or a viability dye).

- Seed the target cells into a 96-well U-bottom plate at a predetermined density (e.g., 10,000 cells per well).

Effector Cell Addition:

- Serially dilute the CAR-T cell product to create a range of Effector-to-Target (E:T) ratios (e.g., 40:1, 20:1, 10:1, 5:1).

- Add the diluted effector cells to the wells containing the target cells. Include control wells for spontaneous target cell death (target cells alone) and maximum target cell death (target cells with a lysis buffer).
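Setting up the E:T series is simple arithmetic once the target density is fixed. The sketch below uses the 10,000 target cells per well and the 40:1 to 5:1 series from the protocol, and assumes a hypothetical 100 µL of effector suspension added per well. Whether effectors are counted as total T cells or as CAR-positive cells is a program-specific choice that this example does not address, and the `effector_plating` helper is hypothetical.

```python
def effector_plating(targets_per_well=10_000, ratios=(40, 20, 10, 5), volume_ul=100.0):
    """Effector cells per well and suspension concentration for each E:T ratio.

    volume_ul is a hypothetical volume of effector suspension added per well.
    Returns (label, effector cells per well, required cells per mL) tuples.
    """
    plan = []
    for ratio in ratios:
        cells_per_well = ratio * targets_per_well
        # cells per mL = cells per well / (volume in mL) = cells * 1000 / µL
        cells_per_ml = cells_per_well * 1000.0 / volume_ul
        plan.append((f"{ratio}:1", cells_per_well, cells_per_ml))
    return plan


for label, per_well, per_ml in effector_plating():
    print(f"{label}: {per_well} cells/well, {per_ml:.0f} cells/mL")
```

Because each ratio in this series is half the previous one, preparing only the 40:1 suspension (4 × 10^6 cells/mL under these assumptions) and performing 1:2 serial dilutions covers the whole range.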

Co-Culture Incubation:

- Incubate the co-culture plate for a specified duration (e.g., 18-24 hours) at 37°C in a 5% CO2 atmosphere.

Viability/Cytotoxicity Measurement:

- Following incubation, centrifuge the plate and measure the signal indicating cell death according to the detection kit's protocol. For a homogeneous assay, this could involve adding a fluorescent dye like LYSO-ID Red and reading fluorescence after a set period [34].

- Calculate the specific cytotoxicity (%) using the formula:

$$\text{Specific cytotoxicity (\%)} = \frac{\text{Experimental lysis} - \text{Spontaneous lysis}}{\text{Maximum lysis} - \text{Spontaneous lysis}} \times 100$$
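The specific-cytotoxicity calculation can be sketched as follows, assuming a readout in which signal increases with cell death and using the standard normalization (experimental − spontaneous) / (maximum − spontaneous) × 100 on replicate means. The function name and example signals are hypothetical. Results are deliberately not clipped, so noise can yield values slightly below 0% or above 100%, which is often worth flagging rather than hiding.

```python
from statistics import mean


def specific_cytotoxicity(experimental, spontaneous, maximum):
    """Percent specific cytotoxicity from replicate death-signal readings.

    experimental: wells with effector + target cells
    spontaneous:  target cells alone
    maximum:      target cells with lysis buffer
    """
    exp_m, spon_m, max_m = mean(experimental), mean(spontaneous), mean(maximum)
    window = max_m - spon_m
    if window <= 0:
        raise ValueError("maximum-lysis signal must exceed spontaneous signal")
    return 100.0 * (exp_m - spon_m) / window


# Hypothetical fluorescence readings for one E:T ratio.
print(specific_cytotoxicity([705, 705, 705], [200, 220, 210], [1200, 1190, 1210]))  # 50.0
```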

Data Analysis:

- Plot the percentage of specific cytotoxicity against the E:T ratios.

- The potency of the CAR-T lot can be reported as an EC50-type value (here, the E:T ratio at which cytotoxicity reaches 50% of its maximum) or as the percentage of cytotoxicity at a fixed E:T ratio, in either case relative to a reference standard.
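With only a few E:T ratios, a rough EC50-type value can be read off by interpolating on a log scale between the two ratios that bracket 50% of the maximum observed cytotoxicity, and a simple relative potency can then be taken as the reference-to-test ratio of those values. This is a minimal sketch under those assumptions, with hypothetical function names and data. Formal relative-potency estimation for bioassays usually relies on parallel-line or constrained curve models with a parallelism check, which this example does not attempt.

```python
import math


def et50(ratios, cytotox, max_cytotox=None):
    """E:T ratio giving 50% of maximal cytotoxicity, by log-linear interpolation.

    Assumes cytotoxicity rises with E:T ratio; returns the first crossing found.
    """
    pairs = sorted(zip(ratios, cytotox))
    top = max_cytotox if max_cytotox is not None else max(c for _, c in pairs)
    target = top / 2.0
    for (r_lo, c_lo), (r_hi, c_hi) in zip(pairs, pairs[1:]):
        if c_lo <= target <= c_hi and c_hi > c_lo:
            frac = (target - c_lo) / (c_hi - c_lo)
            log_r = math.log10(r_lo) + frac * (math.log10(r_hi) - math.log10(r_lo))
            return 10 ** log_r
    raise ValueError("50% of maximum is not bracketed by the tested ratios")


def relative_potency(reference_et50, test_et50):
    """Values > 1 mean the test lot needs fewer effectors than the reference."""
    return reference_et50 / test_et50


# Hypothetical % specific cytotoxicity at E:T = 5, 10, 20, 40.
ref = et50([5, 10, 20, 40], [10, 25, 45, 70])
test = et50([5, 10, 20, 40], [20, 40, 60, 80])
print(round(ref, 2), round(test, 2), round(relative_potency(ref, test), 2))  # 14.14 10.0 1.41
```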

This functional data, often combined with a cytokine release assay (e.g., IFN-γ measurement), provides a comprehensive picture of CAR-T potency that aligns directly with its biological MoA [32].

Visualizing the Workflow and Signaling Pathways

Conceptual Workflow for Cell-Based Potency Assay Development

The following diagram illustrates the logical flow and key decision points in developing a robust cell-based potency assay.

Signaling Pathway for a Reporter Gene Potency Assay

Many biologics, such as cytokine therapies or targeted antibodies, act by modulating specific intracellular signaling pathways. A reporter gene assay is a powerful tool to quantify this activity. The diagram below depicts a generalized pathway for a drug that activates a transcription factor.

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful development and execution of cell-based potency assays depend on high-quality, well-characterized reagents. The following table catalogs key solutions and their critical functions.

Table 3: Essential Reagents for Cell-Based Potency Assays

| Research Reagent / Solution | Function & Application in Potency Testing |
|---|---|
| Pathway-Targeted Reporter Cell Lines [33] [35] | Engineered cells containing a reporter gene (e.g., luciferase) under the control of a pathway-specific response element. Used in HTS to screen for agonists/antagonists. |
| Validated Antibodies for IHC/Flow Cytometry [35] | Essential for detecting and quantifying specific protein markers, phosphorylation events (post-translational modifications), and characterizing cell phenotypes in HCS. |
| Apoptosis & Cytotoxicity Kits (e.g., LYSO-ID Red) [34] | Fluorescent probes and kits to measure cell death mechanisms (e.g., caspase activation, lysosomal mass, membrane integrity), a key potency readout for many therapies. |
| Second Messenger Detection Kits (e.g., FLUOFORTE Calcium Assay) [34] | Fluorogenic dyes optimized to monitor rapid signaling events like intracellular calcium flux, providing insights into early target engagement. |
| Cytokine Detection Assays (e.g., ELISA/MSD) [32] | Immunoassays to quantify secreted proteins like IFN-γ, providing a functional readout for immune cell activation and potency. |
| Custom Cell Mimics (e.g., TruCytes) [32] | Synthetic particles or cells engineered to present specific antigens. They act as standardized, reproducible target cells in functional assays (e.g., for CAR-T testing), overcoming the variability of tumor cell lines. |
| SCREEN-WELL Compound Libraries [34] | Pharmaceutically relevant compound libraries used during assay development for validation and as controls to ensure the assay can reliably identify active compounds. |
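For the pathway-targeted reporter cell lines in Table 3, raw luminescence is usually normalized before curve fitting so that plates and runs can be compared. A minimal sketch, assuming replicate RLU values from treated wells, vehicle-control wells, and wells treated with a reference agonist (all hypothetical here, as are the function names), expresses the response either as fold induction over vehicle or as a percentage of the reference agonist's window.

```python
from statistics import mean


def fold_induction(treated_rlu, vehicle_rlu):
    """Mean reporter signal of treated wells divided by mean vehicle signal."""
    baseline = mean(vehicle_rlu)
    if baseline <= 0:
        raise ValueError("vehicle signal must be positive")
    return mean(treated_rlu) / baseline


def percent_of_reference(treated_rlu, vehicle_rlu, reference_rlu):
    """Response scaled so vehicle = 0% and the reference agonist = 100%."""
    base = mean(vehicle_rlu)
    window = mean(reference_rlu) - base
    if window <= 0:
        raise ValueError("reference must give a signal above vehicle")
    return 100.0 * (mean(treated_rlu) - base) / window


vehicle, treated, reference = [1000, 1000], [5000, 5000], [9000, 9000]
print(fold_induction(treated, vehicle))                   # 5.0
print(percent_of_reference(treated, vehicle, reference))  # 50.0
```

Percent-of-reference values can then be fitted with the same concentration-response models used for other readouts.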

Cell-based bioassays remain the gold standard for potency testing because they uniquely deliver a functional, physiologically relevant measurement of a biological product's activity, directly reflecting its Mechanism of Action [33]. While they present challenges in development time, variability, and execution complexity compared to biochemical methods, their ability to capture the complexity of biological systems is unmatched.

The strategic imperative for developers is to initiate potency assay development early in the drug development process [32]. This allows for the selection of an assay with a clear path to regulatory qualification, guides critical process decisions, and enables confident scale-up and comparability studies. Investing in a robust, mechanism-based potency assay is not merely a regulatory checkbox; it is a foundational element that de-risks development, builds regulatory trust, and ultimately accelerates the delivery of effective therapies to patients.

Understanding a compound's Mechanism of Action (MoA)—the specific biochemical interactions through which it produces a pharmacological effect—is a cornerstone of modern drug discovery [36]. A well-defined MoA is crucial for drug development, helping to rationalize phenotypic findings, anticipate side effects, and guide repurposing efforts [37]. This knowledge is especially critical when evaluating the cellular potency of compounds from diverse libraries, as it moves beyond simply measuring an effect to understanding the biological basis for that effect. Designing assays that accurately mimic the relevant MoA ensures that potency data is biologically relevant and predictive of clinical efficacy, forming a reliable bridge between high-throughput screening and therapeutic application.

The central challenge lies in moving from a simple confirmation of biological activity to a deeper, systems-level understanding of how a compound engages with its cellular environment. This requires a thoughtful integration of assay formats, where the choice of method is driven by the specific biological questions being asked about the compound's interaction with its target and downstream pathways [38]. This guide provides a structured comparison of assay platforms and methodologies, offering experimental protocols and data analysis frameworks to empower researchers to select and implement the most appropriate tools for robust MoA-driven potency assessment.

Assay Platform Comparison: Selecting the Right Tool for the Job

A wide array of platforms is available for measuring compound activity, each with distinct strengths and limitations. The choice of platform should be guided by the nature of the target, the required sensitivity, and the specific stage of the drug discovery pipeline [38].

Table 1: Comparison of Ligand Binding Assay Platforms for MoA Studies

| Platform | Principle of Detection | Key Advantages | Key Limitations | Best Suited for MoA Stage |
|---|---|---|---|---|
| ELISA | Enzyme-linked colorimetric or chemiluminescent readout | Universally accepted; high specificity; robust | Lower sensitivity than newer platforms; limited dynamic range | Target engagement validation |
| Gyrolab | Microfluidic nanoscale immunoassay | Very low sample consumption; high automation; excellent reproducibility | Specialized equipment required | Pharmacodynamic biomarker analysis |