Ensemble Methods for Protein-Ligand Binding Affinity Prediction: Boosting Accuracy and Generalization in Drug Discovery

Abstract

Accurate prediction of protein-ligand binding affinity is a critical challenge in structure-based drug design. Single-model predictors often generalize poorly. Ensemble methods, which combine multiple models to enhance predictive performance and robustness, are emerging as a powerful solution. This article explores the foundational principles, methodological advances, and practical applications of ensemble learning in this domain. We detail how techniques like bagging, boosting, and stacking are implemented in state-of-the-art frameworks such as EBA and MULTICOM_ligand to achieve superior results on benchmarks like CASF-2016 and in real-world drug screening scenarios. We also address troubleshooting strategies for common pitfalls such as data leakage and overfitting, and provide a comparative analysis of ensemble performance against traditional single-model approaches. This synthesis offers researchers and drug development professionals a comprehensive guide to leveraging ensemble methods for more reliable and effective virtual screening.

The Power of Many: Why Ensembles Outperform Single Models in Binding Affinity Prediction

Accurate prediction of protein-ligand binding affinity is a critical step in computational drug discovery, essential for identifying new drug candidates and therapeutic targets while reducing clinical trial failure rates [1]. While deep learning models have demonstrated potential in accelerating this identification process, their translation to real-world drug discovery has been significantly hampered by a fundamental limitation: poor generalization to novel structures [1]. Single-model predictors frequently achieve impressive performance on benchmark datasets during testing yet fail dramatically when confronted with never-before-seen proteins or ligands. This application note examines the mechanistic causes of this generalization problem, presents quantitative evidence of single-model limitations, and introduces experimental protocols that lay the groundwork for more robust ensemble-based solutions.

Quantitative Evidence of Single-Model Limitations

Performance Degradation on Novel Structures

Multiple independent studies have documented the systematic failure of single-model approaches when predicting interactions for novel chemical structures. The core issue lies in what has been termed "shortcut learning" – where models leverage statistical artifacts in training data rather than learning underlying physicochemical principles that govern binding interactions [1].

Table 1: Comparative Performance of Single-Model vs. Configuration Model on BindingDB Dataset

| Model Type | AUROC | AUPRC | Generalization Capability |
|---|---|---|---|
| DeepPurpose (Transformer-CNN) | 0.86 ± 0.005 | 0.64 ± 0.009 | Fails on novel structures |
| Network Configuration Model | 0.86 ± 0.005 | 0.61 ± 0.009 | Relies solely on topological shortcuts |
| AI-Bind Pipeline | Improved | Improved | Successfully generalizes to novel targets |

The striking similarity in performance between a sophisticated deep learning model (DeepPurpose) and a simple network configuration model that completely ignores molecular features reveals the fundamental flaw: state-of-the-art models often rely on topological shortcuts in the protein-ligand interaction network rather than learning meaningful structure-activity relationships [1].

Annotation Imbalance and Topological Shortcuts

The protein-ligand binding landscape follows a fat-tailed distribution where most proteins and ligands have few binding annotations, while a small number of "hub" nodes accumulate disproportionately many records [1]. This annotation imbalance creates a statistical bias that single-model predictors exploit instead of learning genuine binding determinants.

Table 2: Annotation Imbalance in BindingDB Data

| Parameter | Proteins | Ligands |
|---|---|---|
| Degree exponent (γ) | 2.84 | 2.94 |
| Spearman correlation (k, ⟨Kd⟩) | -0.47 | -0.29 |
| Annotation imbalance (ρ) | Close to 0 or 1 | Close to 0 or 1 |

This topological shortcut mechanism explains why models achieving AUROC scores of 0.86 in cross-validation fail to generalize to novel targets – they essentially learn to recognize frequently interacting proteins and ligands rather than the structural features that enable binding [1].

Mechanistic Analysis of Single-Model Failures

The Topological Shortcut Pathway

Current single-model architectures exhibit a systematic tendency to bypass feature learning in favor of topological heuristics. The following diagram illustrates this problematic pathway:

This shortcut learning phenomenon represents a fundamental architectural limitation of single-model approaches. Rather than processing the complex physicochemical information contained in protein sequences and ligand structures, models default to simpler topological patterns, severely compromising their utility for novel drug target identification [1].

Input Representation Limitations

Single-model approaches suffer from inherent limitations in their capacity to capture the complex, multi-scale interactions that determine binding affinity:

- 1D sequence-based methods (DeepDTA, CAPLA) utilize protein sequences and ligand SMILES strings but fail to incorporate 3D structural information and struggle to capture short-range direct interactions [2].

- Structure-based methods (KDEEP, Pafnucy) employ 3D grids or molecular graphs but require extensive computational resources and may miss long-range interactions [2].

- Hybrid methods attempt to combine structural and sequence features but often generate noisy representations with overlapping information capture [2].

Generalization remains a key challenge across all these architectures. For example, the CAPLA model performs well on the CASF-2016 and CASF-2013 benchmarks but poorly on the CSAR-HiQ datasets, which shows how single-model approaches often fail to transfer across datasets [2].

Experimental Protocols for Assessing Generalization

Protocol: Evaluating Novel Target Prediction

Purpose: To quantitatively assess model performance degradation on novel protein targets and ligands not represented in training data.

Materials:

- BindingDB dataset (or equivalent protein-ligand interaction database)

- Deep learning framework (PyTorch/TensorFlow)

- Model implementation (DeepPurpose or similar architecture)

Procedure:

- Stratified Data Partitioning: Split the protein-ligand interaction network such that test set proteins and ligands have no annotations in the training data [1].

- Feature Extraction:

  - Represent proteins by amino acid sequences and convert to structural descriptors

  - Represent ligands by SMILES strings and compute molecular fingerprints

- Baseline Establishment: Train a configuration model that uses only degree information for benchmarking [1].

- Model Training: Implement and train the target model using standard architectures.

- Generalization Assessment: Compare performance metrics (AUROC, AUPRC) between:

  - Standard random cross-validation

  - Novel target/ligand cross-validation
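The partitioning step above can be sketched as follows. This is a minimal illustration. It assumes interaction records are plain `(protein_id, ligand_id, label)` tuples. The function name `cold_split` and its per-node `test_frac` parameter are hypothetical and are not part of DeepPurpose or AI-Bind.

```python
import numpy as np

def cold_split(pairs, test_frac=0.2, seed=0):
    """Split (protein_id, ligand_id, label) records so that no test protein
    and no test ligand has any annotation in the training set.

    Proteins and ligands are assigned to the test pool independently. A record
    goes to test only if BOTH its protein and ligand are test nodes, to train
    only if NEITHER is, and is otherwise discarded to avoid leakage.
    """
    rng = np.random.default_rng(seed)
    proteins = sorted({p for p, _, _ in pairs})
    ligands = sorted({l for _, l, _ in pairs})
    n_p = max(1, int(test_frac * len(proteins)))
    n_l = max(1, int(test_frac * len(ligands)))
    test_prot = set(rng.choice(proteins, size=n_p, replace=False).tolist())
    test_lig = set(rng.choice(ligands, size=n_l, replace=False).tolist())
    train, test = [], []
    for p, l, y in pairs:
        if p in test_prot and l in test_lig:
            test.append((p, l, y))
        elif p not in test_prot and l not in test_lig:
            train.append((p, l, y))
        # mixed (train node, test node) records are dropped
    return train, test
```

Proteins and ligands are held out independently. As a result, only about `test_frac`² of a dense interaction matrix lands in the test set, and records that mix a training node with a test node are discarded.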

Interpretation: A significant performance drop (≥15% in AUPRC) in novel target prediction indicates substantial reliance on topological shortcuts rather than feature learning [1].

Protocol: Annotation Imbalance Quantification

Purpose: To measure the degree of annotation imbalance and its correlation with model predictions.

Materials:

- Protein-ligand interaction network data

- Statistical analysis software (Python/R)

Procedure:

- Degree Calculation: For each protein i and ligand j, compute:

  - Total degree: k = k⁺ + k⁻

  - Positive degree: k⁺ (binding annotations)

  - Negative degree: k⁻ (non-binding annotations)

- Degree Ratio Calculation: Compute annotation balance ρᵢ = kᵢ⁺/kᵢ for each node [1].

- Correlation Analysis: Calculate Spearman correlation between node degree and average Kd values.

- Prediction Bias Assessment: Plot model-predicted binding probabilities against ρ values.

Interpretation: Strong correlation (|r| > 0.4) between predicted binding probability and ρ indicates significant model dependency on topological shortcuts rather than molecular features [1].
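Below is a minimal sketch of the degree and balance calculations. It assumes binary labelled records of the form `(protein_id, ligand_id, binds)`. The Spearman helper averages tied ranks so that it needs no SciPy dependency. `shortcut_score` is a hypothetical degree-only baseline in the spirit of the configuration model; it is not the exact formulation used in [1].

```python
from collections import defaultdict
import numpy as np

def node_degrees(records):
    """records: iterable of (protein_id, ligand_id, binds) with binds in {0, 1}.
    Returns {("P", id) or ("L", id): (k_plus, k_minus, rho)} with rho = k_plus / k."""
    counts = defaultdict(lambda: [0, 0])  # node -> [k_plus, k_minus]
    for p, l, binds in records:
        for node in (("P", p), ("L", l)):  # tag nodes so protein and ligand ids never collide
            counts[node][0 if binds else 1] += 1
    return {node: (kp, km, kp / (kp + km)) for node, (kp, km) in counts.items()}

def average_ranks(x):
    """1-based ranks; tied values share the mean of their positions."""
    x = np.asarray(x, dtype=float)
    order = np.argsort(x, kind="mergesort")
    ranks = np.empty(len(x))
    i = 0
    while i < len(x):
        j = i
        while j + 1 < len(x) and x[order[j + 1]] == x[order[i]]:
            j += 1
        ranks[order[i:j + 1]] = (i + j) / 2.0 + 1.0
        i = j + 1
    return ranks

def spearman(a, b):
    """Spearman correlation = Pearson correlation of the (tie-averaged) ranks."""
    return float(np.corrcoef(average_ranks(a), average_ranks(b))[0, 1])

def shortcut_score(protein, ligand, degrees):
    """Hypothetical degree-only baseline: uses annotation balance, no molecular features."""
    return 0.5 * (degrees[("P", protein)][2] + degrees[("L", ligand)][2])
```

To apply the |r| > 0.4 check above, pass a model's predicted binding probabilities and the matching node ρ values to `spearman`.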

The Research Toolkit: Essential Materials and Reagents

Table 3: Key Research Reagents and Computational Tools

| Item | Function | Application Context |
|---|---|---|
| BindingDB Dataset | Source of protein-ligand binding annotations | Training and benchmarking predictive models |
| PDBBind Database | Curated protein-ligand complexes with affinity data | Model training and validation |
| DeepPurpose Framework | Deep learning toolkit for binding prediction | Implementing and testing single-model architectures |
| SMILES Strings | 1D representation of ligand chemical structures | Featurization for sequence-based methods |
| Molecular Fingerprints | Fixed-length vector representations of molecules | Capturing chemical features for machine learning |
| AUROC/AUPRC Metrics | Quantitative performance assessment | Evaluating model generalization capability |

Pathway to Robust Prediction: Overcoming Single-Model Limitations

The systematic failures of single-model predictors call for a shift toward more robust approaches. The evidence suggests that future methodologies must explicitly address the topological shortcut problem through new training strategies and architectural improvements.

Emerging solutions like AI-Bind demonstrate that combining network-based sampling strategies with unsupervised pre-training can significantly improve binding predictions for novel proteins and ligands [1]. Similarly, ensemble methods that integrate multiple feature representations and model architectures show substantially improved generalization capabilities, with some implementations achieving Pearson correlation coefficients up to 0.914 on benchmark datasets [2].

Together, these approaches target the fundamental limitation of single-model predictors. They encourage models to learn genuine molecular features rather than rely on topological shortcuts, which yields more reliable predictive tools for novel drug discovery applications.

In computational drug discovery, accurately predicting the binding affinity between a protein and a small molecule (ligand) is a fundamental challenge. The strength of this interaction directly influences a drug candidate's efficacy and safety, making its precise estimation crucial for virtual screening and lead optimization [3] [4]. Conventional scoring functions, often based on linear regression of a few energy terms, have long struggled with the complex, non-linear physical chemistry governing molecular recognition [3].

Ensemble learning has emerged as a powerful machine learning paradigm that addresses these limitations. Rather than relying on a single model, ensemble methods combine predictions from multiple base learners to achieve superior accuracy, robustness, and generalization compared to any individual constituent [5] [6]. This approach is particularly well-suited for protein-ligand binding affinity prediction, where capturing diverse and complex interactions from high-dimensional data is essential. Research has consistently demonstrated that ensemble models significantly outperform conventional scoring functions and even single complex models [3] [7].

This article details the core principles of the three primary ensemble techniques—Bagging, Boosting, and Stacking—and provides application notes for their implementation in binding affinity prediction.

Core Principles and Mechanisms

Bagging (Bootstrap Aggregating)

Principle: Bagging aims to reduce the variance of machine learning models by creating multiple versions of the original training data through bootstrap sampling (sampling with replacement) and then aggregating the predictions of models trained on each of these data subsets [5].

Key Mechanism:

- Bootstrap Sampling: From a dataset of size N, multiple subsets, each of size N, are created by random sampling with replacement. This means individual data points can appear multiple times in a single subset, while others may be omitted.

- Parallel Training: Base learners (typically high-variance models like deep decision trees or neural networks) are trained independently on each bootstrap sample.

- Aggregation: For regression tasks (like predicting binding affinity), the final prediction is the average of the predictions from all individual models.

Bagging is highly effective because the aggregation process smooths out the noisy predictions of individual learners. A prominent example is the Random Forest algorithm, which combines bagging with random feature selection for added diversity [5] [6]. In binding affinity prediction, the BgN-Score function, which employs an ensemble of neural networks via bagging, demonstrated a more than 25% improvement in prediction accuracy over conventional scoring functions [3].
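The bagging loop can be sketched in a few lines of NumPy. For illustration only, the sketch uses a 1-nearest-neighbour regressor as the high-variance base learner; BgN-Score itself uses neural networks. The names `fit_predict_1nn` and `bagged_predict` are hypothetical.

```python
import numpy as np

def fit_predict_1nn(X_train, y_train, X_test):
    """High-variance base learner: 1-nearest-neighbour regression."""
    d = ((X_test[:, None, :] - X_train[None, :, :]) ** 2).sum(axis=2)
    return y_train[d.argmin(axis=1)]

def bagged_predict(X_train, y_train, X_test, n_estimators=25, seed=0):
    """Bootstrap aggregating: fit one base learner per bootstrap sample of
    size N (drawn with replacement) and average their predictions."""
    rng = np.random.default_rng(seed)
    n = len(X_train)
    preds = np.empty((n_estimators, len(X_test)))
    for b in range(n_estimators):
        idx = rng.integers(0, n, size=n)  # bootstrap indices, sampled with replacement
        preds[b] = fit_predict_1nn(X_train[idx], y_train[idx], X_test)
    return preds.mean(axis=0)  # regression aggregation = simple average
```

The base learners are independent of one another, so the loop parallelizes trivially. This property is the "parallel training" listed above.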

Boosting

Principle: Boosting is a sequential technique that converts a collection of "weak" learners (models that perform slightly better than random guessing) into a single strong learner. It focuses on training new models to correct the errors made by previous ones.

Key Mechanism:

- Sequential Training: Models are trained one after the other.

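- Adaptive Weighting: After each iteration, the training data is re-weighted so that misclassified or poorly predicted instances receive more weight, which forces subsequent learners to focus on these difficult cases (as in AdaBoost). Gradient boosting variants such as GBM, XGBoost, and CatBoost achieve the same effect by fitting each new learner to the residual errors of the current ensemble.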

- Weighted Combination: The final model is a weighted sum (or vote) of all the weak learners, where models with better performance are assigned higher weights.

Boosting algorithms, such as Gradient Boosting Machines (GBM), XGBoost, and CatBoost, are widely used in binding affinity prediction due to their high predictive power [8] [6]. The BsN-Score scoring function, which uses boosting to combine neural networks, achieved a Pearson's correlation coefficient of 0.816 in binding affinity prediction, showcasing its state-of-the-art performance [3].
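The sketch below shows gradient boosting for squared loss, with one-split regression trees (stumps) as the weak learners. It is a teaching example under stated assumptions, not the XGBoost, CatBoost or BsN-Score algorithm. Each stump is fit to the current residuals, and a shrinkage factor `learning_rate` (an assumed default of 0.1) damps every update. All function names are hypothetical.

```python
import numpy as np

def fit_stump(X, r):
    """Least-squares regression stump on residuals r: best single-feature split."""
    n = len(r)
    best = (np.inf, 0, 0.0, r.mean(), r.mean())  # (sse, feature, threshold, left, right)
    for f in range(X.shape[1]):
        order = np.argsort(X[:, f])
        xs, r_sorted = X[order, f], r[order]
        csum, csq = np.cumsum(r_sorted), np.cumsum(r_sorted ** 2)
        for i in range(1, n):  # left leaf = the i smallest values of feature f
            if xs[i] == xs[i - 1]:
                continue  # no threshold separates identical values
            left_sum, right_sum = csum[i - 1], csum[-1] - csum[i - 1]
            sse = (csq[i - 1] - left_sum ** 2 / i) + (csq[-1] - csq[i - 1] - right_sum ** 2 / (n - i))
            if sse < best[0]:
                best = (sse, f, 0.5 * (xs[i - 1] + xs[i]), left_sum / i, right_sum / (n - i))
    return best[1:]

def gradient_boost(X, y, n_rounds=50, learning_rate=0.1):
    pred = np.full(len(y), y.mean())  # F_0: constant model
    model = {"base": float(y.mean()), "lr": learning_rate, "stumps": [],
             "train_mse": [float(np.mean((y - pred) ** 2))]}
    for _ in range(n_rounds):
        residual = y - pred  # negative gradient of 0.5 * (y - F)^2
        f, thr, left, right = fit_stump(X, residual)
        pred = pred + learning_rate * np.where(X[:, f] <= thr, left, right)
        model["stumps"].append((f, thr, left, right))
        model["train_mse"].append(float(np.mean((y - pred) ** 2)))
    return model

def boost_predict(model, X):
    pred = np.full(len(X), model["base"])
    for f, thr, left, right in model["stumps"]:
        pred = pred + model["lr"] * np.where(X[:, f] <= thr, left, right)
    return pred
```

A smaller `learning_rate` usually needs more rounds, but it reduces the tendency of boosting to overfit noisy affinity labels.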

Stacking (Stacked Generalization)

Principle: Stacking combines multiple different types of models (heterogeneous base learners) using a meta-learner. The premise is that different algorithms capture diverse patterns in the data, and that a higher-level model can learn how best to combine their predictions.

Key Mechanism:

- Base-Layer Predictions: Diverse base models (e.g., Support Vector Machines, Random Forests, Graph Neural Networks) are trained on the original training data.

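- Meta-Feature Generation: The predictions from these base models are used as input features (meta-features) for a new dataset. These predictions are ideally generated out-of-fold via k-fold cross-validation, so that no base model predicts on data it was trained on.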

- Meta-Learner Training: A final model (the meta-learner) is trained to make the final prediction based on these meta-features.

Stacking is a powerful advanced technique that can capture complex interactions between the predictions of various models. The StackCPA model is a successful application of this principle, using a stacking layer that integrates LightGBM, XGBoost, and CatBoost to predict compound-protein affinity based on multi-scale pocket features [8]. Similarly, the EBA (Ensemble Binding Affinity) method explores all possible ensembles of 13 different deep learning models to achieve superior performance, with one ensemble reaching a Pearson correlation of 0.914 on the CASF-2016 benchmark [7].
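An exhaustive search over averaging ensembles, in the spirit of EBA's exploration of all model combinations, might look like the following. The sketch assumes each base model's validation-set predictions are already available as rows of `val_preds` and that members are combined by a simple mean. The function name is hypothetical, and this is not the published EBA code.

```python
import itertools
import numpy as np

def best_average_ensemble(val_preds, y_val):
    """Score every non-empty subset of base models by the Pearson R of their
    averaged validation predictions; return the best subset and its R."""
    n_models = val_preds.shape[0]
    best_r, best_subset = -np.inf, None
    for size in range(1, n_models + 1):
        for subset in itertools.combinations(range(n_models), size):
            avg = val_preds[list(subset)].mean(axis=0)  # simple-mean ensemble
            r = np.corrcoef(avg, y_val)[0, 1]
            if r > best_r:
                best_r, best_subset = r, subset
    return best_subset, float(best_r)
```

With 13 models there are 2^13 − 1 = 8,191 non-empty subsets, which is cheap to enumerate. The subset is chosen on the validation set, so its performance should be reported on a separate test set.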

Comparative Analysis

Table 1: Comparative Summary of Bagging, Boosting, and Stacking

| Feature | Bagging | Boosting | Stacking |
|---|---|---|---|
| Primary Goal | Reduce variance | Reduce bias | Improve predictive accuracy by leveraging strengths of diverse models |
| Training Style | Parallel | Sequential | Two-phase (base learners then meta-learner) |
| Focus on Data | Bootstrap samples of the entire dataset | Successively focuses on mispredicted instances | Original training data for base learners; base model predictions for meta-learner |
| Base Learner Diversity | Typically homogeneous (same algorithm) | Typically homogeneous (same algorithm) | Encourages heterogeneous (different algorithms) |
| Advantages | Reduces overfitting, robust to noise, easily parallelized | Often higher accuracy, can handle complex relationships | Can model complex interactions between different model predictions, potentially the highest performance |
| Disadvantages | Less interpretable, can be computationally expensive | Prone to overfitting on noisy data, requires careful tuning | Computationally very expensive, complex to train and validate, high risk of overfitting without careful cross-validation |
| Example in Affinity Prediction | BgN-Score (Bagged Neural Networks) [3] | BsN-Score (Boosted Neural Networks) [3], SimBoost [8] | StackCPA [8], EBA [7] |

Experimental Protocols for Ensemble Construction in Affinity Prediction

This section outlines a generalized protocol for developing and benchmarking ensemble learning models for protein-ligand binding affinity prediction, based on established methodologies in the field [8] [7].

Data Preparation and Feature Engineering

Dataset Curation:

- Source: Obtain a high-quality dataset of protein-ligand complexes with experimentally measured binding affinities (e.g., Kd, Ki, IC50), typically expressed as pK (pKd, pKi, etc.) for regression. The PDBbind database is the most widely used benchmark [3] [8] [9].

- Splitting: Divide the data into training, validation, and test sets. A time-split strategy (e.g., complexes from before a certain year for training/validation and after for testing) is recommended to better simulate real-world drug discovery scenarios and assess model generalizability [9].

- Ensure the test set is non-redundant and diverse, such as the CASF core sets, to avoid data leakage and enable fair benchmarking [3] [9].
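The affinity conversion and the time split above can be sketched as follows. The unit table and the `year` field in each record are assumptions about how the curated data is stored. `to_pk` and `time_split` are hypothetical helper names.

```python
import math

def to_pk(value, unit="nM"):
    """Convert a Kd, Ki or IC50 value to pK = -log10(K expressed in mol/L)."""
    scale = {"M": 1.0, "mM": 1e-3, "uM": 1e-6, "nM": 1e-9, "pM": 1e-12}[unit]
    return -math.log10(value * scale)

def time_split(records, cutoff_year):
    """records: list of dicts with a 'year' key (hypothetical schema).
    Complexes from before cutoff_year go to training/validation, the rest to test."""
    train = [r for r in records if r["year"] < cutoff_year]
    test = [r for r in records if r["year"] >= cutoff_year]
    return train, test
```

For example, a Kd of 1 nM corresponds to a pKd of 9, and 10 µM corresponds to 5.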

Feature Extraction: Generate multi-scale features for each protein-ligand complex. The choice of features can vary, but common approaches include:

- Physicochemical & Geometrical Features: Hand-crafted features characterizing atom-level interactions, distances, angles, and energy terms [3].

- Structural Graph Representations: Represent the protein, ligand, and/or complex as a graph where nodes are atoms or residues and edges are bonds or spatial proximities. Use graph neural networks or embedding techniques (e.g., Mol2vec, graph2vec) to learn features [8] [9].

- Sequential & Textual Representations: Use protein amino acid sequences and ligand SMILES strings as input for 1D convolutional or transformer-based models [6].

- Pocket Multi-scale Features: Extract features at different granularities: atomic, residue, and subdomain levels of the protein binding pocket [8].
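As one concrete example of a hand-crafted interaction feature, the sketch below counts protein-ligand atom pairs by element type within a distance cutoff. It is similar in spirit to contact-count descriptors such as RF-Score. The four-element set and the 12 Å cutoff are illustrative assumptions. Coordinates are passed in as NumPy arrays rather than parsed from PDB/SDF files, and `contact_counts` is a hypothetical name.

```python
import numpy as np

ELEMENTS = ("C", "N", "O", "S")  # illustrative element set (assumption)

def contact_counts(prot_xyz, prot_elem, lig_xyz, lig_elem, cutoff=12.0):
    """Count protein-ligand atom pairs within `cutoff` angstroms for every
    (protein element, ligand element) combination; returns a flat vector."""
    d = np.linalg.norm(prot_xyz[:, None, :] - lig_xyz[None, :, :], axis=-1)
    close = d <= cutoff  # (n_protein_atoms, n_ligand_atoms) boolean contact map
    prot_elem, lig_elem = np.asarray(prot_elem), np.asarray(lig_elem)
    feats = []
    for pe in ELEMENTS:
        for le in ELEMENTS:
            mask = (prot_elem == pe)[:, None] & (lig_elem == le)[None, :]
            feats.append(int(np.count_nonzero(close & mask)))
    return np.array(feats)  # index = protein_element_index * 4 + ligand_element_index
```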

Model Training and Validation

Base Learner Training:

- For Bagging (e.g., Random Forest): Train multiple decision trees on different bootstrap samples of the training data. For neural network ensembles like BgN-Score, train multiple NNs on bootstrap samples [3].

- For Boosting (e.g., XGBoost): Sequentially train decision trees, where each new tree is fitted to the residual errors of the combined previous trees.

- For Stacking (e.g., StackCPA, EBA):

  - Step 1: Train a diverse set of base models (e.g., LightGBM, XGBoost, CatBoost, Graph Neural Networks, 3D-CNNs) on the training data using k-fold cross-validation [8] [7].

  - Step 2: Use the cross-validated predictions from these base models on the training set (to avoid overfitting) as features to train a meta-learner (e.g., a linear regression model or another boosting algorithm).
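Steps 1 and 2 can be sketched with two deliberately simple base learners and a least-squares linear meta-learner. Ridge regression and k-nearest neighbours are assumptions here, standing in for LightGBM, GNNs or 3D-CNNs, and all function names are hypothetical. The essential point is that the meta-learner only ever sees out-of-fold predictions.

```python
import numpy as np

def ridge_fit_predict(X_tr, y_tr, X_te, alpha=1.0):
    Xb = np.hstack([X_tr, np.ones((len(X_tr), 1))])
    A = Xb.T @ Xb + alpha * np.eye(Xb.shape[1])
    A[-1, -1] -= alpha  # leave the intercept unpenalised
    w = np.linalg.solve(A, Xb.T @ y_tr)
    return np.hstack([X_te, np.ones((len(X_te), 1))]) @ w

def knn_fit_predict(X_tr, y_tr, X_te, k=5):
    d = ((X_te[:, None, :] - X_tr[None, :, :]) ** 2).sum(-1)
    return y_tr[np.argsort(d, axis=1)[:, :k]].mean(axis=1)

BASE_LEARNERS = [ridge_fit_predict, knn_fit_predict]

def out_of_fold_predictions(X, y, k=5, seed=0):
    """Step 1: each training complex is predicted only by base models that
    were fit without it, giving leakage-free meta-features."""
    folds = np.array_split(np.random.default_rng(seed).permutation(len(y)), k)
    oof = np.zeros((len(y), len(BASE_LEARNERS)))
    for held_out in folds:
        train_idx = np.setdiff1d(np.arange(len(y)), held_out)
        for m, learner in enumerate(BASE_LEARNERS):
            oof[held_out, m] = learner(X[train_idx], y[train_idx], X[held_out])
    return oof

def fit_stack(X, y, X_test, k=5, seed=0):
    oof = out_of_fold_predictions(X, y, k, seed)
    # Step 2: linear meta-learner (with intercept) fit on out-of-fold meta-features
    meta_w = np.linalg.lstsq(np.hstack([oof, np.ones((len(y), 1))]), y, rcond=None)[0]
    # Base models are refit on all training data to build test-set meta-features
    test_meta = np.column_stack([learner(X, y, X_test) for learner in BASE_LEARNERS])
    return np.hstack([test_meta, np.ones((len(X_test), 1))]) @ meta_w
```

Refitting the base learners on the full training set to produce test-time meta-features is one common convention. Another is to average the k fold models' test predictions.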

Hyperparameter Optimization: Use the validation set and techniques like grid search or Bayesian optimization to tune hyperparameters for both base learners and meta-learners. Key parameters include tree depth, learning rate (for boosting), number of estimators, and network architecture.

Evaluation Metrics: Rigorously evaluate the final model on the held-out test set using standard metrics for regression:

- Pearson's Correlation Coefficient (R): Measures the linear correlation between predicted and experimental values.

- Root Mean Square Error (RMSE): Measures the average magnitude of prediction errors.

- Mean Absolute Error (MAE): Similar to RMSE but less sensitive to large errors.
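The three metrics can be computed directly with NumPy. `regression_metrics` is a hypothetical helper, and it assumes that predictions and experimental values share the same pK scale.

```python
import numpy as np

def regression_metrics(y_true, y_pred):
    """Pearson's R, RMSE and MAE for predicted vs. experimental affinities."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    err = y_pred - y_true
    return {
        "pearson_r": float(np.corrcoef(y_true, y_pred)[0, 1]),
        "rmse": float(np.sqrt(np.mean(err ** 2))),  # squares penalise large errors more
        "mae": float(np.mean(np.abs(err))),
    }
```

Pearson's R ignores systematic offsets. A model that predicts every affinity exactly one pK unit too high still scores R = 1, while its RMSE and MAE are both 1. This is why all three metrics are usually reported together.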

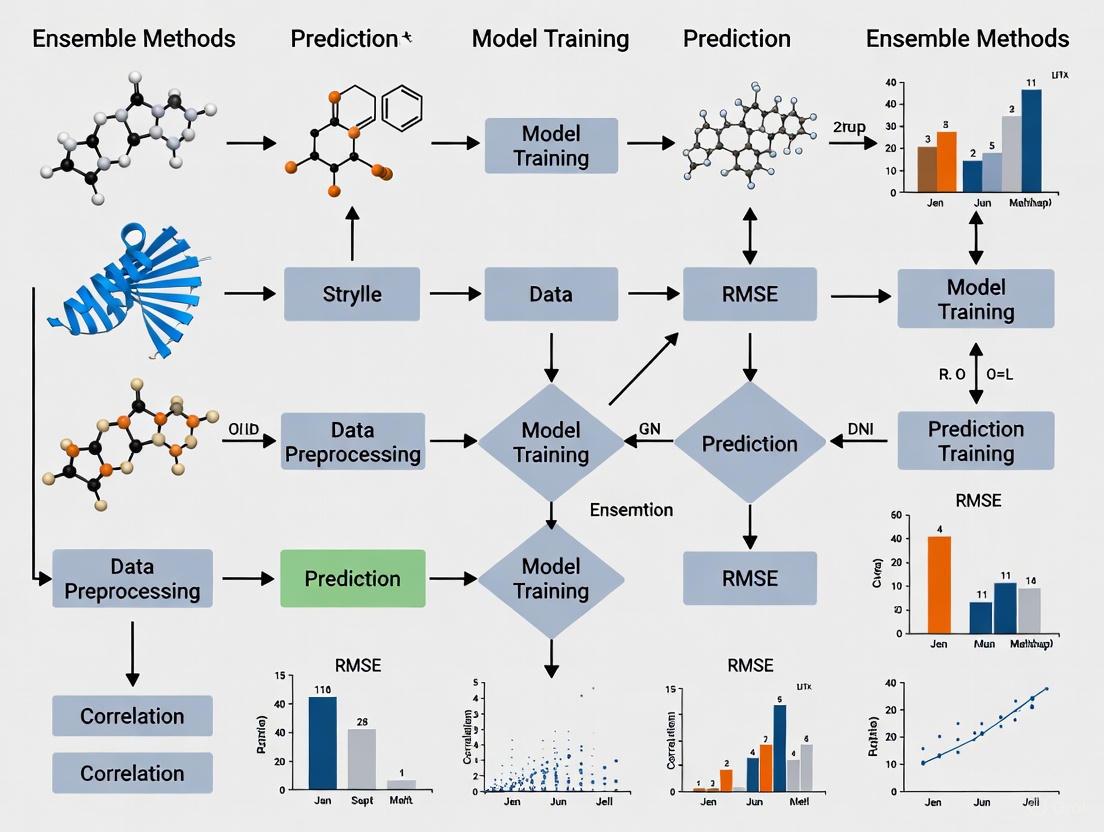

Workflow Visualization

The following diagram illustrates a generalized stacking workflow for binding affinity prediction, integrating multiple feature types and model architectures.

Table 2: Essential Tools and Datasets for Ensemble-based Affinity Prediction

| Category | Item / Resource | Function & Utility |
|---|---|---|
| Benchmark Datasets | PDBbind [8] [9] | A curated database of protein-ligand complexes with experimental binding affinities; the standard benchmark for training and testing scoring functions. |
| | CASF (Core Sets) [3] [9] | A diverse, non-redundant subset of PDBbind, specifically designed for objective benchmarking of scoring functions. |
| Feature Extraction | RDKit | Open-source cheminformatics software used for calculating molecular descriptors, fingerprints, and handling molecular data. |
| | Mol2vec [8] | An unsupervised machine learning approach to learn vector representations of molecular substructures, analogous to Word2vec in NLP. |
| | AlphaFold Protein Structure Database [8] | A database of highly accurate predicted protein structures, overcoming the limitation of scarce experimentally determined structures. |
| Base Learning Algorithms | XGBoost, LightGBM, CatBoost [8] [6] | High-performance gradient boosting frameworks that are commonly used as base learners or meta-learners in ensemble stacks. |
| | Graph Neural Networks (GNNs) [10] [9] | Neural networks that operate directly on graph-structured data, ideal for learning representations of molecules and protein pockets. |
| | 3D Convolutional Neural Networks (3D-CNNs) [7] [6] | Used to process 3D structural representations (voxelized grids) of protein-ligand complexes. |
| Evaluation Metrics | Pearson's R, RMSE, MAE [7] [9] | Standard statistical metrics used to quantify the predictive performance and accuracy of binding affinity models. |

Ensemble learning methods have fundamentally advanced the state of the art in protein-ligand binding affinity prediction. By strategically combining multiple models, these techniques mitigate the limitations of individual learners and conventional scoring functions, leading to marked improvements in accuracy and robustness. As the field progresses, the integration of more diverse and sophisticated base models—particularly those leveraging deep learning on 3D structural and graph data—within ensemble frameworks like stacking, promises to further accelerate the discovery of novel therapeutic agents. The experimental protocols and resources outlined herein provide a foundational roadmap for researchers aiming to deploy these powerful methods in computer-aided drug design.

Accurate prediction of protein-ligand binding affinity is a critical challenge in computational drug discovery, with deep learning models increasingly employed to enhance prediction accuracy. However, these models often suffer from high variance and bias, severely limiting their generalization capability to novel protein-ligand complexes. Recent research has revealed that benchmark performance metrics have been substantially inflated by data leakage and dataset redundancies, leading to overestimated real-world performance. This application note examines the statistical foundation of ensemble methods as a robust solution to these limitations, demonstrating how strategic combination of multiple models reduces variance, mitigates bias, and delivers consistently superior performance across diverse benchmarking scenarios. We provide detailed protocols for implementing ensemble strategies and validate their effectiveness through comprehensive experimental results.

The field of computational drug design relies on accurate scoring functions to predict binding affinities for protein-ligand interactions, a crucial task for virtual screening and drug development. Deep learning approaches have revolutionized binding affinity prediction, but their real-world application has been hampered by a significant generalization gap. Alarmingly, recent investigations have revealed that train-test data leakage between the PDBbind database and the Comparative Assessment of Scoring Functions (CASF) benchmark datasets has severely inflated the performance metrics of current deep-learning models [11].

This data leakage problem is substantial in scale. A structure-based clustering analysis identified nearly 600 similar pairs between PDBbind training complexes and CASF complexes, affecting 49% of all CASF complexes [11]. Nearly half of the test complexes therefore do not present genuinely new challenges to trained models, which can predict them accurately through memorization rather than through an understanding of protein-ligand interactions. When this leakage is addressed through proper dataset splitting, the performance of state-of-the-art models drops markedly, exposing their limited generalization capability [11].

The core statistical challenges manifest as high variance (models are sensitive to specific training data and exhibit large performance fluctuations across different test sets) and high bias (models make simplifying assumptions that prevent them from capturing complex protein-ligand interaction patterns). Ensemble methods address both limitations through strategic combination of diverse models, leveraging the statistical principle that aggregated predictions from multiple base learners exhibit reduced variance and more stable performance across diverse test scenarios.

Statistical Foundation of Ensemble Methods

The Bias-Variance Tradeoff in Binding Affinity Prediction

The bias-variance tradeoff provides a fundamental framework for understanding the limitations of single-model approaches in binding affinity prediction. Bias arises from overly simplistic assumptions in model architecture, leading to systematic errors in predicting affinities for complexes with novel structural features. Variance reflects a model's sensitivity to specific training data, resulting in unstable performance across different protein families or ligand types.

Single-model architectures inevitably struggle with this tradeoff. Graph neural networks may capture spatial relationships effectively but overlook important sequential motifs, while convolutional approaches process structural grids but miss long-range interactions. Sequence-based methods utilize evolutionary information but lack critical 3D structural context [2]. Each architecture introduces distinct biases that limit overall predictive performance.

Ensemble methods circumvent this limitation by combining multiple base learners with diverse inductive biases. The aggregated prediction F(x) for a protein-ligand complex x can be represented as:

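$$F(x) = \sum_{i=1}^{M} w_i \, f_i(x)$$

where $f_i(x)$ is the prediction of base model $i$, $w_i$ is its weight in the ensemble, and $M$ is the number of base models. When the weights are non-negative and sum to 1, the ensemble's bias is the weighted average of the members' biases. Its variance, meanwhile, falls to the extent that the members' errors are not perfectly correlated and therefore partially cancel out [2].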
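A standard identity makes the dependence on error correlation explicit. It is added here for illustration and is not taken from [2]. Assume $M$ base models with equal weights $w_i = 1/M$, where each prediction $f_i(x)$ has variance $\sigma^2$ (over the randomness of training) and every pair of members has the same correlation $c$:

$$\operatorname{Var}\left[\frac{1}{M}\sum_{i=1}^{M} f_i(x)\right] = \frac{1}{M^2}\left[M\sigma^2 + M(M-1)\,c\,\sigma^2\right] = c\,\sigma^2 + \frac{1-c}{M}\,\sigma^2$$

Adding members shrinks only the second term. For example, with $\sigma^2 = 1$ and $M = 10$, highly correlated members ($c = 0.9$) give a variance of $0.9 + 0.01 = 0.91$. Weakly correlated members ($c = 0.3$) give $0.3 + 0.07 = 0.37$. Base-model diversity therefore matters more than the raw number of models.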

Diversity Mechanism in Ensemble Construction

The effectiveness of ensemble methods depends critically on the diversity of base models. In binding affinity prediction, this diversity can be achieved through multiple strategies:

- Architectural diversity: Combining models with different structural inductive biases (CNNs, GNNs, Transformers)

- Feature diversity: Utilizing different input representations (sequence, structure, interaction fingerprints)

- Training diversity: Employing different training subsets or initialization parameters

Research demonstrates that ensembles incorporating diverse feature representations and architectural approaches achieve significantly more robust performance than any single model architecture [2] [9]. The ensemble approach enables different models to capture complementary aspects of protein-ligand interactions, leading to more comprehensive understanding.

Ensemble Implementation Protocols

Diverse Base Model Generation Protocol

This protocol outlines the systematic creation of diverse base models for ensemble construction in binding affinity prediction.

Materials and Reagents

- PDBbind database (general set, refined set, and core set for benchmarking)

- Hardware: GPU-accelerated computing environment

- Software: Python with deep learning frameworks (PyTorch/TensorFlow), RDKit for ligand featurization

Procedure

Feature Diversity Implementation

- Extract 1D sequential features: Protein sequences, ligand SMILES strings

- Generate 2D structural features: Atom-type matrices, interaction fingerprints

- Construct 3D structural features: Molecular graphs, atomic coordinate grids

- Calculate specialized features: Angle-based feature vectors for short-range direct interactions [2]

Architectural Diversity Implementation

- Implement Convolutional Neural Networks (CNNs) with 3D convolutional layers for spatial feature extraction from structural grids

- Implement Graph Neural Networks (GNNs) with message passing for molecular graph analysis

- Implement Transformer architectures with self-attention mechanisms for sequence context modeling

- Implement Hybrid architectures (e.g., CNN-BiGRU with attention) to capture both local and global molecular information [12]

Training Configuration Diversity

- Train each model architecture on different feature combinations

- Utilize varied training hyperparameters (learning rates, batch sizes)

- Apply different random initializations for weight initialization

- Employ bootstrap sampling to create varied training data subsets

Model Validation

- Validate each base model on a held-out validation set

- Assess diversity through correlation analysis of prediction errors

- Select models with strong individual performance and low error correlation for ensemble inclusion
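The diversity check in the last two validation steps can be sketched as follows. The sketch assumes that each base model's validation predictions are stacked as rows of an `(n_models, n_samples)` array. The greedy rule in `select_diverse` and its `max_corr=0.9` threshold are hypothetical choices, not a published selection procedure.

```python
import numpy as np

def error_correlation(val_preds, y_val):
    """Pairwise Pearson correlation of base-model residuals on the validation set."""
    errors = val_preds - y_val[None, :]
    return np.corrcoef(errors)  # rows are treated as variables (one per model)

def select_diverse(val_preds, y_val, max_corr=0.9):
    """Hypothetical greedy rule: visit models from lowest to highest validation RMSE
    and keep a model only if its error correlation with every kept model is below max_corr."""
    rmse = np.sqrt(((val_preds - y_val[None, :]) ** 2).mean(axis=1))
    corr = error_correlation(val_preds, y_val)
    kept = []
    for m in np.argsort(rmse):
        if all(corr[m, j] < max_corr for j in kept):
            kept.append(int(m))
    return kept
```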

Timing

- Base model training: 24-72 hours per model (varies by architecture and dataset size)

- Ensemble construction: 2-4 hours

Ensemble Integration and Validation Protocol

This protocol details the integration of trained base models into a unified ensemble and rigorous validation of ensemble performance.

Procedure

Ensemble Integration Methods

- Averaging: Compute simple or weighted average of base model predictions

- Stacking: Train a meta-model on base model predictions

- Boosting: Sequentially add models to focus on previously mispredicted complexes
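For the averaging option, one common heuristic is to weight each base model by the inverse of its validation mean squared error and normalize the weights to sum to 1. This heuristic is an assumption here and is not prescribed by the protocol. The helper names are hypothetical.

```python
import numpy as np

def inverse_mse_weights(val_preds, y_val, eps=1e-12):
    """Weights proportional to 1 / validation MSE, normalised to sum to 1.
    val_preds has shape (n_models, n_samples); eps guards against division by zero."""
    mse = ((val_preds - y_val[None, :]) ** 2).mean(axis=1)
    w = 1.0 / (mse + eps)
    return w / w.sum()

def weighted_average(test_preds, weights):
    """F(x) = sum_i w_i * f_i(x) for every test complex."""
    return weights @ test_preds
```

The weights should be fit on validation data, never on the test set, or the reported ensemble performance will be optimistic.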

Cross-Validation Framework

- Implement stratified k-fold cross-validation (k=5) preserving protein family distribution

- For each fold:

  - Train all base models on k-1 folds

  - Generate predictions on the held-out fold

  - Train ensemble integrator on base model predictions

- Aggregate results across all folds for robust performance estimation

Generalization Assessment

- Evaluate on strictly independent test sets (CASF core sets)

- Test on temporally split data (complexes deposited after training set cutoff)

- Validate on diverse protein families not represented in training

- Assess performance on different affinity ranges and complex types

Statistical Significance Testing

- Perform paired t-tests comparing ensemble vs. individual model performance

- Calculate confidence intervals for performance metrics

- Implement permutation tests to verify result significance
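One way to carry out the permutation step is a paired sign-flip permutation test on per-complex absolute errors. Unlike a paired t-test, it does not assume normally distributed differences. This sketch assumes that both models were evaluated on the same test complexes in the same order. The function name and the default of 10,000 permutations are assumptions.

```python
import numpy as np

def paired_permutation_test(err_a, err_b, n_perm=10000, seed=0):
    """Two-sided paired sign-flip permutation test on per-complex errors.
    H0: the distribution of err_a - err_b is symmetric about zero."""
    d = np.asarray(err_a, float) - np.asarray(err_b, float)
    observed = abs(d.mean())
    rng = np.random.default_rng(seed)
    signs = rng.choice([-1.0, 1.0], size=(n_perm, len(d)))  # random relabelling per complex
    null = np.abs((signs * d).mean(axis=1))
    # +1 in numerator and denominator counts the observed labelling itself
    return (np.count_nonzero(null >= observed) + 1) / (n_perm + 1)
```

Typical usage passes the absolute errors of a single model as `err_a` and those of the ensemble as `err_b`. A small p-value then indicates that the difference in mean absolute error is unlikely under random relabelling.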

Troubleshooting

- If the ensemble only matches the best base model, increase base-model diversity

- If the ensemble underperforms the best base model, adjust the ensemble weighting scheme

- If overfitting occurs, increase regularization in meta-model or reduce ensemble complexity

Performance Benchmarking and Analysis

Quantitative Performance Comparison

Table 1: Performance Comparison of Individual vs. Ensemble Methods on CASF-2016 Benchmark

| Method | Architecture Type | Pearson's R | RMSE | MAE |
|---|---|---|---|---|
| Pafnucy | 3D CNN | 0.780 | 1.420 | 1.150 |
| GenScore | Graph Neural Network | 0.816 | 1.310 | 1.020 |
| CAPLA | Sequence-based | 0.795 | 1.380 | 1.110 |
| EBA (Ensemble) | Multiple architectures with diverse features | 0.857 | 1.195 | 0.951 |
| GEMS (with CleanSplit) | Graph Neural Network with transfer learning | 0.842 | 1.240 | 0.980 |

Table 2: Impact of Data Splitting Strategy on Model Performance

| Data Partitioning Method | Pearson's R | RMSE | Generalization Assessment |
|---|---|---|---|
| Random splitting | 0.70 (average) | 1.35 (average) | Overoptimistic, inflated metrics |
| UniProt-based splitting | 0.52 (average) | 1.68 (average) | More realistic but challenging |
| CleanSplit (structure-based) | 0.55-0.65 (single models) | 1.55-1.65 (single models) | Eliminates data leakage |
| Ensemble with CleanSplit | 0.75-0.85 | 1.20-1.35 | Maintains performance without leakage |

Generalization Across Diverse Benchmarks

Ensemble methods show particularly strong advantages when evaluated on strictly independent test sets that eliminate data leakage. The EBA framework maintains robust performance across multiple challenging benchmarks. On the CSAR-HiQ test sets it achieves an improvement of more than 15% in Pearson correlation and 19% in RMSE relative to the second-best predictor [2]. This cross-benchmark consistency suggests that ensembles mitigate the variance problem that limits single-model approaches.

Under the PDBbind CleanSplit protocol, which removes structurally similar complexes between the training and test sets, ensemble methods retain high prediction accuracy while single-model performance drops substantially [11] [2]. This suggests that ensemble predictions depend less on exploiting similarities between training and test complexes and more on transferable features of protein-ligand interactions.
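CleanSplit itself applies a structure-based similarity criterion to PDBbind [11]. The sketch below is a much simpler, ligand-only illustration of the same idea. It drops training complexes whose ligand fingerprint is too similar (by Tanimoto similarity) to any test ligand. The binary fingerprints, the 0.9 threshold, and the function names are assumptions for illustration. In practice the fingerprints would come from a cheminformatics toolkit such as RDKit.

```python
import numpy as np

def tanimoto_matrix(A, B):
    """Tanimoto similarity between binary fingerprint rows of A (n, bits) and B (m, bits)."""
    A = A.astype(int)
    B = B.astype(int)
    inter = A @ B.T                        # shared "on" bits
    counts_a = A.sum(axis=1)[:, None]
    counts_b = B.sum(axis=1)[None, :]
    union = counts_a + counts_b - inter
    return np.divide(inter, union, out=np.zeros(inter.shape), where=union > 0)

def filter_leaky_training(train_fps, test_fps, threshold=0.9):
    """Indices of training ligands whose maximum similarity to any test ligand is below threshold."""
    sim = tanimoto_matrix(train_fps, test_fps)
    return np.where(sim.max(axis=1) < threshold)[0]

train_fps = np.array([[1, 1, 0, 0], [1, 1, 0, 0], [0, 0, 1, 1]])
test_fps = np.array([[1, 1, 0, 0]])
keep = filter_leaky_training(train_fps, test_fps)  # only the dissimilar third ligand remains
```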

Visualization of Ensemble Frameworks

[Figure: Ensemble construction workflow]

[Figure: Effects of data partitioning on model performance]

Research Reagent Solutions

Table 3: Essential Research Tools for Ensemble Binding Affinity Prediction

| Resource | Type | Function | Application Context |
|---|---|---|---|
| PDBbind Database | Data Resource | Curated collection of protein-ligand complexes with binding affinity data | Primary training and benchmarking data source |
| CASF Benchmark | Evaluation Framework | Standardized benchmark for scoring function assessment | Rigorous generalization testing |
| CleanSplit Protocol | Data Partitioning | Structure-based filtering to eliminate data leakage | Creating truly independent training-test splits |
| RDKit | Cheminformatics | Ligand structure analysis and descriptor calculation | Feature extraction for small molecules |
| ESM-2 | Protein Language Model | Protein sequence embedding and feature extraction | Transfer learning for protein representations |
| PLAsformer | Software | Hybrid CNN-BiGRU with attention mechanism | Base model for local and global feature capture |
| LGN | Software | Graph neural network with ligand feature enhancement | Base model for graph-based representation |

Ensemble methods offer a statistically grounded way to address the high variance and bias that limit protein-ligand binding affinity prediction. By strategically combining diverse base models, ensembles mitigate the limitations of individual architectures and feature representations. The resulting performance gains are most evident under rigorous evaluation protocols that eliminate data leakage. The implementation protocols and benchmarking analyses in this application note give researchers practical guidance for building ensembles that stay accurate across diverse protein families and ligand types, ultimately accelerating computational drug discovery through more reliable affinity prediction.

In computational research, particularly in high-stakes fields like drug discovery, the accuracy and robustness of predictive models are paramount. Ensemble learning has emerged as a powerful paradigm that addresses these demands by combining multiple machine learning models to achieve performance that surpasses that of any single constituent model. This approach is especially valuable in protein-ligand binding affinity prediction, where the complexity of molecular interactions, high-dimensional data, and limited experimental datasets present significant challenges. Ensemble methods mitigate these issues by leveraging the collective power of multiple learners, thereby reducing variance, minimizing bias, and enhancing generalization capability [2] [13].

A recent binding affinity study demonstrated the efficacy of ensemble methods. In that study, the best ensemble drawn from 13 trained deep learning models (EBA) reached a Pearson correlation coefficient (R) of 0.914 and a root mean square error (RMSE) of 0.957 on the CASF2016 benchmark. On the CSAR-HiQ test sets, EBA improved on single-model predictors by more than 15% in R and 19% in RMSE [2]. Such gains show why researchers building state-of-the-art predictive systems in structural bioinformatics and computer-aided drug design need to understand the core components of ensemble architectures: base learners, weak versus strong learners, and meta-models.

Defining the Core Terminology

Base Learners

Base learners (also referred to as base models, base estimators, or component models) are the fundamental building blocks of any ensemble system. These are individual machine learning models whose predictions are combined to form the ensemble's final output [13] [14]. In practice, base learners can be homogeneous (all of the same type, such as the decision trees in a Random Forest) or heterogeneous (of different types, such as a support vector machine, a neural network, and a decision tree combined) [13] [15]. Diversity among base learners is a critical factor in ensemble performance because diverse learners capture complementary patterns in the data. This is particularly valuable for the complex, multi-scale interactions that determine protein-ligand binding affinity [2] [16].

Weak Learners vs. Strong Learners

The concepts of weak and strong learners originate from computational learning theory and provide a formal framework for characterizing model performance.

Table 1: Characteristics of Weak vs. Strong Learners

| Feature | Weak Learner | Strong Learner |
|---|---|---|
| Formal Definition (Binary Classification) | Performs slightly better than random guessing (>50% accuracy) [17] [13] | Achieves arbitrarily high accuracy [17] |
| Colloquial Meaning | Model that performs slightly better than a naive baseline [17] | Model that achieves high, near-optimal performance [17] |
| Typical Examples | Decision stumps, shallow decision trees [17] [14] | Well-tuned Logistic Regression, SVM, Deep Neural Networks [17] |
| Training Cost | Easy to train, computationally inexpensive [17] | Difficult to train, computationally expensive [17] |
| Desirability | Not desirable for final prediction due to low skill [17] | Highly desirable as a final predictor [17] |
| Primary Ensemble Role | Fundamental building block in boosting ensembles [17] [18] | Used as base learners in stacking or as the target output of boosting [17] |

In the context of protein-ligand binding affinity prediction, the formal definition based on binary classification accuracy is often extended to regression tasks. Here, a weak learner would be one whose predictions are slightly more accurate than those made by a simple baseline (e.g., predicting the mean affinity), while a strong learner would demonstrate high correlation with experimental binding measurements and low error metrics [2] [19].
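The regression reading of "weak learner" can be checked directly. A depth-1 decision tree (a decision stump) should beat a predictor that always returns the training-set mean affinity, but only modestly. The sketch below uses scikit-learn's `DummyRegressor` and `DecisionTreeRegressor` on synthetic data standing in for affinity labels.

```python
import numpy as np
from sklearn.dummy import DummyRegressor
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeRegressor

rng = np.random.default_rng(3)
X = rng.normal(size=(400, 5))
y = 6.0 + X[:, 0] + 0.5 * np.sin(3 * X[:, 1]) + rng.normal(scale=0.3, size=400)  # synthetic "affinities"
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.25, random_state=0)

def rmse(a, b):
    return float(np.sqrt(np.mean((a - b) ** 2)))

baseline = DummyRegressor(strategy="mean").fit(X_tr, y_tr)              # always predicts the mean
stump = DecisionTreeRegressor(max_depth=1, random_state=0).fit(X_tr, y_tr)  # one split: a weak learner
rmse_base = rmse(baseline.predict(X_te), y_te)
rmse_stump = rmse(stump.predict(X_te), y_te)
```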

Meta-Models (Meta-Learners)

A meta-model (also known as a meta-learner or blender) is a higher-level model that learns how to optimally combine the predictions of base learners [13] [16]. Instead of making direct predictions from raw input features, the meta-model is trained on the outputs of the base learners, which serve as meta-features. The fundamental hypothesis is that this meta-learning process can capture the relative strengths and weaknesses of each base learner under different conditions, leading to more accurate and robust final predictions than simple averaging or voting schemes [15]. In stacking ensembles, which are particularly relevant for heterogeneous ensembles, the meta-model is trained on predictions generated via cross-validation to prevent data leakage and overfitting [18].

Theoretical Framework and Relationships

The theoretical foundation for combining weak learners stems from a crucial finding in computational learning theory: weak and strong learnability are equivalent. This means that a strong learner can be constructed from an ensemble of sufficiently many weak learners [17]. This proof provided the theoretical basis for the development of boosting algorithms, which explicitly transform collections of weak learners into a single strong learner through sequential, adaptive training processes [17] [14].
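The weak-to-strong idea appears in a few lines of least-squares gradient boosting, in which each new decision stump is fit to the residuals left by the current ensemble. This is a minimal sketch on synthetic data, and the helper names are hypothetical. Production code would normally use a library implementation such as scikit-learn's `GradientBoostingRegressor`.

```python
import numpy as np
from sklearn.tree import DecisionTreeRegressor

def boost_stumps(X, y, n_rounds=200, learning_rate=0.1):
    """Least-squares gradient boosting with decision stumps as weak learners."""
    f0 = float(np.mean(y))  # start from the mean prediction
    pred = np.full(len(y), f0)
    stumps = []
    for _ in range(n_rounds):
        residual = y - pred  # negative gradient of the squared error
        stump = DecisionTreeRegressor(max_depth=1, random_state=0).fit(X, residual)
        pred = pred + learning_rate * stump.predict(X)
        stumps.append(stump)
    return f0, learning_rate, stumps

def boosted_predict(model, X):
    f0, learning_rate, stumps = model
    return f0 + learning_rate * sum(s.predict(X) for s in stumps)

rng = np.random.default_rng(3)
X = rng.normal(size=(400, 5))
y = 6.0 + X[:, 0] + 0.5 * np.sin(3 * X[:, 1]) + rng.normal(scale=0.3, size=400)
X_tr, X_te, y_tr, y_te = X[:300], X[300:], y[:300], y[300:]

model = boost_stumps(X_tr, y_tr)
single = DecisionTreeRegressor(max_depth=1, random_state=0).fit(X_tr, y_tr)
rmse_boost = np.sqrt(np.mean((boosted_predict(model, X_te) - y_te) ** 2))
rmse_stump = np.sqrt(np.mean((single.predict(X_te) - y_te) ** 2))
```

The boosted sum of stumps reaches a much lower test error than any single stump.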

The relationship between these components varies significantly across different ensemble methodologies:

- Bagging (Bootstrap Aggregating): Primarily uses moderately strong but high-variance base learners (e.g., fully grown decision trees) trained in parallel on bootstrap samples of the training data. The final prediction typically results from averaging (regression) or majority voting (classification) without a dedicated meta-model [17] [20] [16].

- Boosting: Sequentially trains weak learners (e.g., decision stumps), with each new learner focusing on the errors of its predecessors. The combination is typically a weighted sum, and the entire ensemble functions as a strong learner without a separate meta-model [17] [18] [14].

- Stacking (Stacked Generalization): Employs diverse, often strong base learners, and uses a meta-model to learn how to best combine their predictions. The meta-model, which can be a linear model such as linear or logistic regression or a more complex algorithm, is trained on hold-out predictions from the base learners [18] [16] [15].
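The three strategies map onto ready-made scikit-learn estimators. The sketch below compares them by cross-validated RMSE on synthetic data. The base learners, hyperparameters, and data are illustrative assumptions.

```python
import numpy as np
from sklearn.ensemble import (BaggingRegressor, GradientBoostingRegressor,
                              RandomForestRegressor, StackingRegressor)
from sklearn.linear_model import RidgeCV
from sklearn.model_selection import cross_val_score
from sklearn.svm import SVR

rng = np.random.default_rng(5)
X = rng.normal(size=(300, 6))
y = 6.0 + X[:, 0] + 0.5 * np.sin(3 * X[:, 1]) + rng.normal(scale=0.3, size=300)

ensembles = {
    # Bagging: full-depth decision trees (the default estimator) on bootstrap samples, averaged
    "bagging": BaggingRegressor(n_estimators=50, random_state=0),
    # Boosting: depth-1 trees (stumps) added sequentially, each scaled by the learning rate
    "boosting": GradientBoostingRegressor(max_depth=1, n_estimators=300,
                                          learning_rate=0.1, random_state=0),
    # Stacking: heterogeneous base learners combined by a regularized linear meta-model,
    # trained on internal 5-fold out-of-fold predictions
    "stacking": StackingRegressor(
        estimators=[("svr", SVR()),
                    ("rf", RandomForestRegressor(n_estimators=50, random_state=0))],
        final_estimator=RidgeCV(),
        cv=5,
    ),
}
cv_rmse = {
    name: -cross_val_score(model, X, y, cv=5, scoring="neg_root_mean_squared_error").mean()
    for name, model in ensembles.items()
}
```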

Table 2: Component Roles in Different Ensemble Methods

| Ensemble Method | Typical Base Learner Type | Presence of Meta-Model | Combination Mechanism |
|---|---|---|---|
| Bagging | Strong, high-variance (e.g., deep trees) [17] | No | Averaging or majority vote [20] [16] |
| Random Forest (Bagging extension) | Strong, decorrelated trees [16] | No | Averaging or majority vote [20] |
| Boosting (e.g., AdaBoost, GBM) | Weak (e.g., decision stumps) [17] [14] | No | Weighted sum based on sequential error correction [18] [14] |
| Stacking | Strong, heterogeneous (e.g., SVM, RF, NN) [15] | Yes | Learned combination via a meta-model [18] [16] |

The following diagram illustrates the fundamental relationships and workflow between these components in a generic ensemble system:

Application in Protein-Ligand Binding Affinity Prediction

Research Context and Significance

Accurate prediction of protein-ligand binding affinity is a central challenge in structure-based drug design, as it directly influences the efficacy and selectivity of potential therapeutic compounds [2] [21]. Traditional computational approaches, including force-field, empirical, and knowledge-based scoring functions, often struggle with generalization across diverse protein families and binding modes due to their rigid functional forms and simplifying assumptions [19] [21]. Machine learning, and particularly ensemble methods, have emerged as powerful alternatives that can learn complex relationships between structural features and binding affinities directly from experimental data [2] [19].

The Ensemble Binding Affinity (EBA) study exemplifies the successful application of ensemble principles in this domain. The researchers trained 13 deep learning models using different combinations of five input features, then explored all possible ensembles to identify optimal combinations. Their best ensemble significantly outperformed existing state-of-the-art methods across multiple benchmark datasets, demonstrating the practical value of combining diverse base learners to achieve superior predictive performance and generalization [2]. This approach effectively addresses key challenges in binding affinity prediction, such as capturing both short-range and long-range molecular interactions and mitigating the limitations of individual feature representations.

Implementation Protocol: Developing an Ensemble for Binding Affinity Prediction

Objective: To construct a stacking ensemble model for predicting protein-ligand binding affinity using diverse structural and sequence-based features.

Materials and Computational Reagents:

Table 3: Essential Research Reagent Solutions for Binding Affinity Ensemble

| Reagent / Resource | Type/Description | Purpose in Protocol |
|---|---|---|
| PDBbind Database [2] [19] | Curated database of protein-ligand complexes with experimental binding affinities | Primary source of training and testing data |
| Molecular Feature Sets [2] | 1D sequential, structural features, angle-based features, etc. | Input representations for base learners |
| Cross-Attention/Self-Attention Networks [2] | Deep learning architectures for capturing molecular interactions | Base learner implementation for feature learning |
| Scikit-learn Library [18] [20] | Python machine learning library | Provides ensemble frameworks and meta-models |
| Cross-Validation Framework [18] | Resampling procedure (e.g., 5-fold CV) | Prevents overfitting in meta-model training |

Step-by-Step Procedure:

Data Preparation and Feature Engineering

Base Learner Selection and Training

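- Select a diverse set of base learning algorithms. In the EBA study, 13 deep learning models with different feature combinations were used [2]. For heterogeneous stacking, consider combining different algorithm families, such as support vector machines, random forests, and neural networks [15].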

- Train each base learner on the full training set using appropriate hyperparameters.

Generate Cross-Validation Predictions for Meta-Training

- For each base learner, perform K-fold cross-validation (e.g., 5-fold) on the training data [18].

- Collect the out-of-fold predictions for each training instance. These predictions become the meta-features for the meta-model training.

- Optionally, also generate predictions on the hold-out validation set to enrich the meta-training data.

Train the Meta-Model

- Construct a meta-training dataset where:

  - Features: The collected cross-validation predictions from all base learners.

  - Target: The true binding affinity values from the original training data.

- Train a meta-model (e.g., Logistic Regression for classification, Linear Regression for binding affinity prediction) on this dataset [18] [15].

- The meta-model learns the optimal weighting or combination of the base learners' predictions.


Final Model Evaluation and Deployment

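- Use the base learners trained on the entire training set, not the per-fold copies from cross-validation, to generate meta-features for the test set and for new complexes.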

- The final ensemble model is defined by the trained base learners and the trained meta-model.

- Evaluate the ensemble performance on the held-out test set using appropriate metrics (e.g., Pearson's R, RMSE for binding affinity prediction) [2].

- Compare ensemble performance against individual base learners and baseline methods to quantify improvement.
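The procedure above can be sketched with scikit-learn's `cross_val_predict`, which returns out-of-fold predictions for every training instance. Several pieces are assumptions standing in for the deep models and PDBbind features used in practice: the base learners (ridge regression, a random forest, and an SVR), the linear meta-model, and the synthetic data. `fit_stacking` and `predict_stacking` are hypothetical helper names.

```python
import numpy as np
from sklearn.base import clone
from sklearn.ensemble import RandomForestRegressor
from sklearn.linear_model import LinearRegression, Ridge
from sklearn.model_selection import KFold, cross_val_predict, train_test_split
from sklearn.svm import SVR

def fit_stacking(base_models, meta_model, X_tr, y_tr, n_splits=5, seed=0):
    cv = KFold(n_splits=n_splits, shuffle=True, random_state=seed)
    # Out-of-fold predictions from each base learner become the meta-features
    meta_X = np.column_stack([cross_val_predict(clone(m), X_tr, y_tr, cv=cv) for m in base_models])
    # The meta-model learns how to weight the base learners' predictions
    meta = clone(meta_model).fit(meta_X, y_tr)
    # Refit every base learner on the full training set for test-time predictions
    fitted = [clone(m).fit(X_tr, y_tr) for m in base_models]
    return fitted, meta

def predict_stacking(fitted, meta, X):
    return meta.predict(np.column_stack([m.predict(X) for m in fitted]))

rng = np.random.default_rng(11)
X = rng.normal(size=(400, 5))
y = 6.0 + X[:, 0] + 0.5 * np.sin(3 * X[:, 1]) + rng.normal(scale=0.3, size=400)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.25, random_state=0)

base_models = [Ridge(alpha=1.0), RandomForestRegressor(n_estimators=100, random_state=0), SVR(C=3.0)]
fitted, meta = fit_stacking(base_models, LinearRegression(), X_tr, y_tr)
y_hat = predict_stacking(fitted, meta, X_te)
r = np.corrcoef(y_hat, y_te)[0, 1]               # Pearson's R on the held-out test set
rmse = np.sqrt(np.mean((y_hat - y_te) ** 2))
```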

The following workflow diagram visualizes this stacking protocol for binding affinity prediction:

Advanced Considerations and Best Practices

Diversity and Complementarity of Base Learners

The performance gain in ensemble methods stems largely from the diversity and complementarity of the base learners. In the context of binding affinity prediction, this can be achieved by:

- Input Feature Diversity: Using different feature representations (e.g., 1D sequences, 2D distances, 3D structural features) for different base learners, as implemented in the EBA method [2]. This ensures that various aspects of protein-ligand interactions are captured.

- Algorithmic Diversity: Combining different learning algorithms (e.g., tree-based methods, neural networks, kernel methods) that make different structural assumptions about the data [15].

- Data Diversity: Training base learners on different subsets or bootstrapped samples of the training data, as in bagging [20] [16].

Mitigating Overfitting in Meta-Learning

The stacking process introduces additional complexity that can lead to overfitting if not properly regulated. Key strategies include:

- Using Cross-Validated Predictions: Training the meta-model on out-of-fold predictions from base learners, rather than on predictions made on their own training data, is essential to prevent data leakage [18].

- Regularizing the Meta-Model: Applying appropriate regularization to the meta-model (e.g., L1 or L2 regularization in linear models) to prevent it from overfitting to the meta-features [15].

- Feature Selection for Meta-Features: Reducing the dimensionality of meta-features by selecting the most informative base learner predictions or using dimensionality reduction techniques.

Computational Efficiency and Scalability

Ensemble methods, particularly those involving complex base learners like deep neural networks, can be computationally intensive. Practical considerations for large-scale binding affinity prediction include:

- Parallelization: Bagging methods are naturally parallelizable, as base learners can be trained independently on different computational nodes [14].

- Sequential Training: Boosting methods require sequential training, which can be time-consuming but may be optimized through efficient implementation [14].

- Resource Management: For very large datasets or model architectures, distributed computing frameworks and GPU acceleration may be necessary to achieve feasible training times.
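Because bagged members are independent, they can be trained concurrently. The sketch below assumes `joblib` (installed alongside scikit-learn) and uses thread-based workers. With heavier pure-Python models, process-based workers may be preferable. Boosting offers no such shortcut across rounds, since each learner depends on its predecessors.

```python
import numpy as np
from joblib import Parallel, delayed
from sklearn.tree import DecisionTreeRegressor

def fit_member(X, y, seed):
    """Fit one bagged tree on its own bootstrap sample (seeded for reproducibility)."""
    rng = np.random.default_rng(seed)
    idx = rng.integers(0, len(X), size=len(X))
    return DecisionTreeRegressor(random_state=seed).fit(X[idx], y[idx])

def fit_bagging_parallel(X, y, n_members=8, n_jobs=2):
    # Members do not depend on each other, so they can be fit concurrently
    return Parallel(n_jobs=n_jobs, prefer="threads")(
        delayed(fit_member)(X, y, seed) for seed in range(n_members)
    )

rng = np.random.default_rng(0)
X = rng.normal(size=(500, 10))
y = X[:, 0] + rng.normal(scale=0.2, size=500)
members = fit_bagging_parallel(X, y)
bagged_pred = np.mean([m.predict(X) for m in members], axis=0)  # simple averaging
```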

As the field progresses, ensemble methods are poised to play an increasingly critical role in AI-driven drug discovery pipelines, particularly with the growing availability of structural and interaction data, the phasing out of animal testing by regulatory agencies, and the emergence of more sophisticated AI virtual cells (AIVCs) for in silico biomolecular simulation [21].

Building Better Predictors: A Guide to Implementing Ensemble Architectures

Accurate prediction of protein-ligand binding affinity (PLA) is a fundamental prerequisite for structure-based drug discovery, serving as a critical preliminary stage that can significantly reduce costs and accelerate the development of novel therapeutics [2] [22]. The prediction of protein-ligand interactions presents a substantial computational challenge due to the complex interplay of molecular forces and structural dynamics that govern binding. While traditional methods relied on physics-based simulations or hand-crafted feature engineering, recent advances in machine learning, particularly deep learning, have revolutionized the field by enabling end-to-end learning from raw molecular data [2] [9].

A key insight driving modern approaches is that no single molecular representation comprehensively captures all aspects of protein-ligand interactions. Sequence-based descriptors offer accessibility but may lack structural precision, while structure-based methods provide geometrical accuracy but often require experimentally determined structures that may be unavailable [2] [23]. This limitation has motivated the development of integrative strategies that combine complementary descriptor types to achieve more robust and generalizable prediction models [2] [22].

This application note belongs to a broader thesis on ensemble methods for PLA prediction. That thesis holds that combining diverse feature representations and multiple models can overcome limitations inherent in single-modality, single-model approaches [2]. By strategically integrating 1D sequence information, 2D structural graphs, and 3D interaction descriptors, researchers can create more powerful prediction systems that maintain accuracy across diverse protein families and ligand types, ultimately accelerating computational drug discovery.

Diversity of Molecular Descriptors

1D Sequence-Based Descriptors

One-dimensional sequence descriptors utilize the primary amino acid sequences of proteins and the simplified molecular-input line-entry system (SMILES) representations of ligands to predict binding affinities. These methods leverage advances in natural language processing, treating biological sequences as textual data that can be processed with deep learning architectures.

Protein Language Models (pLMs) such as ESM-2 have emerged as particularly powerful tools for generating informative sequence embeddings [24]. These models, pre-trained on millions of protein sequences, learn fundamental principles of protein structure and function that transfer effectively to binding prediction tasks. The key advantage of sequence-based approaches is their applicability to proteins without experimentally determined structures, significantly expanding their utility in early-stage drug discovery [23] [24].

However, sequence-only methods face inherent limitations in capturing the spatial arrangements critical for molecular recognition. As noted in studies of methods like DeepDTA and CAPLA, these approaches may struggle to incorporate 3D structural information and often require large training datasets to achieve competitive performance [2].

Structural Graph Descriptors

Structural graph descriptors represent protein-ligand complexes as graph structures where nodes correspond to atoms and edges represent chemical bonds or spatial proximity relationships. This representation naturally captures the topological features of molecular complexes and enables the application of graph neural networks (GNNs) for affinity prediction.

Atom-level graphs treat both protein and ligand atoms as nodes within a unified graph, with edges determined either by covalent bonds or by spatial proximity within a defined cutoff distance (typically 4-5 Å) [22] [9]. These graphs can be enriched with chemical features such as atom types, hybridization states, and aromaticity flags.
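The proximity part of an atom-level graph can be built from coordinates alone. This sketch assumes Cartesian coordinates in Å and a 4.5 Å cutoff, within the typical range quoted above. Covalent-bond edges and chemical node features would come from a toolkit such as RDKit and are omitted.

```python
import numpy as np

def proximity_edges(coords, cutoff=4.5):
    """Undirected edges (i, j) with i < j between atoms closer than cutoff (in Å)."""
    diff = coords[:, None, :] - coords[None, :, :]
    dist = np.linalg.norm(diff, axis=-1)
    i, j = np.where(np.triu(dist < cutoff, k=1))  # upper triangle: no self-loops or duplicates
    return np.stack([i, j], axis=1), dist[i, j]

def protein_ligand_graph(prot_xyz, lig_xyz, cutoff=4.5):
    """Unified atom graph: nodes are protein atoms followed by ligand atoms."""
    coords = np.vstack([prot_xyz, lig_xyz])
    is_ligand = np.r_[np.zeros(len(prot_xyz), bool), np.ones(len(lig_xyz), bool)]
    edges, dists = proximity_edges(coords, cutoff)
    return coords, is_ligand, edges, dists

prot = np.array([[0.0, 0.0, 0.0], [10.0, 0.0, 0.0]])
lig = np.array([[3.0, 0.0, 0.0]])
coords, is_ligand, edges, dists = protein_ligand_graph(prot, lig)
```

Only the first protein atom lies within the cutoff of the ligand atom, so the graph has a single edge of length 3 Å.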

Multi-scale graph representations further enhance modeling capabilities by incorporating both atom-level and bond-level information. The Knowledge-enhanced and Structure-enhanced Method (KSM), for instance, employs dual graphs including an atom-atom graph with atomic distances as edges and a bond-bond graph with bond angles as edges, creating a more comprehensive structural representation [22].
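The bond-bond graph can be sketched the same way. Each bond becomes a node, and two bonds that share an atom are linked by an edge carrying the angle between them. This is a simplified illustration of the idea described for KSM, not the KSM implementation. The function name and the input layout (bonds as atom-index pairs) are assumptions.

```python
import numpy as np
from itertools import combinations

def bond_angle_graph(coords, bonds):
    """Bonds become nodes; two bonds sharing exactly one atom are linked by their angle (radians)."""
    edges, angles = [], []
    for p, q in combinations(range(len(bonds)), 2):
        shared = set(bonds[p]) & set(bonds[q])
        if len(shared) != 1:
            continue
        center = shared.pop()
        u = next(a for a in bonds[p] if a != center)  # outer atom of bond p
        v = next(a for a in bonds[q] if a != center)  # outer atom of bond q
        r1 = coords[u] - coords[center]
        r2 = coords[v] - coords[center]
        cos = np.dot(r1, r2) / (np.linalg.norm(r1) * np.linalg.norm(r2))
        edges.append((p, q))
        angles.append(float(np.arccos(np.clip(cos, -1.0, 1.0))))
    return edges, angles

# Toy three-atom fragment: atom 0 bonded to atoms 1 and 2 at a right angle
coords = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [0.0, 1.0, 0.0]])
bonds = [(0, 1), (0, 2)]
edges, angles = bond_angle_graph(coords, bonds)
```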

A significant challenge in structural graph approaches is the data heterogeneity between proteins and ligands. Proteins typically contain hundreds to thousands of atoms, while ligands are much smaller, often comprising only a few dozen atoms. This volume gap can lead to models that overfit to protein features while underutilizing ligand information [9].

3D Interaction Descriptors

Three-dimensional interaction descriptors explicitly encode the spatial relationships and chemical complementarity between proteins and ligands, providing critical information about binding geometry and interaction patterns.

Voxelized representations discretize the 3D space surrounding the binding site into a grid of volumetric pixels (voxels), with each voxel encoded using one-hot vectors to indicate the presence of specific atom types [12]. This representation allows the application of 3D convolutional neural networks that can learn spatial hierarchies of interaction features.
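A minimal voxelization sketch follows. It assumes a cubic box centered on the binding site, a 1 Å grid, and one channel per atom type. The box size, resolution, and type list are illustrative choices rather than the settings of any specific published model.

```python
import numpy as np

def voxelize(coords, atom_types, type_list, center, box_size=20.0, resolution=1.0):
    """One-hot voxel grid of shape (n_types, n, n, n) around a binding-site center."""
    n = int(round(box_size / resolution))
    grid = np.zeros((len(type_list), n, n, n), dtype=np.float32)
    channel = {t: k for k, t in enumerate(type_list)}
    origin = np.asarray(center, dtype=float) - box_size / 2.0
    idx = np.floor((np.asarray(coords, dtype=float) - origin) / resolution).astype(int)
    for (i, j, k), t in zip(idx, atom_types):
        # Skip unknown atom types and atoms that fall outside the box
        if t in channel and all(0 <= v < n for v in (i, j, k)):
            grid[channel[t], i, j, k] = 1.0  # presence flag, not a count
    return grid

coords = [[0.0, 0.0, 0.0], [1.5, 0.0, 0.0], [50.0, 0.0, 0.0]]
grid = voxelize(coords, ["C", "N", "C"], ["C", "N", "O"], center=(0.0, 0.0, 0.0))
```

The third atom lies outside the 20 Å box, so only two voxels are set. A 3D CNN would take `grid` as a multi-channel volume.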

Geometric learning approaches incorporate relative spatial information including distances, angles, and sometimes dihedral angles between atoms in the complex. As demonstrated by the KSM method, combining distance and angle information enables more discriminative representation learning than distance-only schemes, helping to distinguish between molecular structures with similar distances but different spatial arrangements [22].

Interaction fingerprints provide another valuable 3D descriptor type, encoding specific protein-ligand interactions such as hydrogen bonds, hydrophobic contacts, and pi-stacking into binary or continuous-valued vectors that can be efficiently processed by machine learning models [9].
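The sketch below is a deliberately simplified stand-in for a full interaction fingerprint. It counts protein-ligand atom pairs within a distance cutoff for each element pair. Detecting specific interactions such as hydrogen bonds or π-stacking requires geometric rules (angles, ring normals) that are omitted here. The elements, cutoff, and function name are assumptions.

```python
import numpy as np

def contact_fingerprint(prot_xyz, prot_el, lig_xyz, lig_el,
                        elements=("C", "N", "O", "S"), cutoff=4.0):
    """Flattened counts of protein-ligand atom pairs closer than cutoff, per element pair."""
    index = {e: i for i, e in enumerate(elements)}
    fp = np.zeros((len(elements), len(elements)), dtype=int)
    prot_xyz = np.asarray(prot_xyz, dtype=float)
    lig_xyz = np.asarray(lig_xyz, dtype=float)
    dist = np.linalg.norm(prot_xyz[:, None, :] - lig_xyz[None, :, :], axis=-1)
    for i, j in zip(*np.where(dist < cutoff)):
        if prot_el[i] in index and lig_el[j] in index:
            fp[index[prot_el[i]], index[lig_el[j]]] += 1
    return fp.ravel()  # fixed-length vector usable by standard ML models

fp = contact_fingerprint([[0, 0, 0], [10, 0, 0]], ["N", "C"], [[3, 0, 0]], ["O"])
```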

Experimental Protocols

Protocol 1: Sequence-Based Binding Site Prediction with LaMPSite

LaMPSite provides a methodology for predicting ligand binding sites using only protein sequences and ligand molecular graphs, without requiring 3D protein structures [24].

Input Preparation:

- Obtain protein amino acid sequence from UniProt or similar databases.

- Generate residue-level embeddings using the ESM-2 protein language model (a large variant is recommended; the largest released ESM-2 checkpoint has 15B parameters).

- Compute unsupervised contact maps from ESM-2 for geometric constraints.

- For ligands, process 2D molecular graphs (excluding hydrogen atoms) using RDKit.

- Generate initial 3D conformer for each ligand using RDKit's distance geometry.

- Compute ligand atom embeddings using a Graph Neural Network (GNN).

Interaction Modeling:

- Compute protein-ligand interaction embedding as element-wise product of protein residue embeddings and ligand atom embeddings.

- Update interaction embedding using geometric constraints from predicted protein contact maps and ligand distance maps.

- Apply mean pooling to interaction embedding to generate residue-level binding propensity scores.
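The interaction-modeling steps can be sketched with NumPy broadcasting. This is a simplified, hypothetical illustration rather than the LaMPSite implementation. The embeddings are random arrays. The geometric update is reduced to a single contact-map-weighted averaging over residues, and the ligand distance map is not used.

```python
import numpy as np

def residue_binding_scores(res_emb, atom_emb, contact_map):
    """Per-residue binding propensity (simplified).

    res_emb: (R, d) residue embeddings; atom_emb: (A, d) ligand-atom embeddings;
    contact_map: (R, R) non-negative contact weights with a positive diagonal.
    """
    # Element-wise product for every residue-atom pair -> (R, A, d)
    inter = res_emb[:, None, :] * atom_emb[None, :, :]
    # Simplified geometric update: mix each residue's features with those of its contacts
    weights = contact_map / contact_map.sum(axis=1, keepdims=True)
    inter = np.einsum("rs,sad->rad", weights, inter)
    # Mean pooling over ligand atoms and feature channels -> one score per residue
    return inter.mean(axis=(1, 2))

rng = np.random.default_rng(0)
R, A, d = 6, 4, 8
res_emb = rng.normal(size=(R, d))
atom_emb = rng.normal(size=(A, d))
# Toy contact map: self-contacts plus sequence neighbours
contacts = np.eye(R) + 0.5 * (np.abs(np.subtract.outer(np.arange(R), np.arange(R))) == 1)
scores = residue_binding_scores(res_emb, atom_emb, contacts)
ranking = np.argsort(scores)[::-1]  # residues ordered by binding propensity
```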

Output:

- Rank residues by binding propensity scores.

- Cluster high-scoring residues using contact map information to define binding site boundaries.

- Validate predictions against known binding sites using metrics such as DCC (distance between the centers of the predicted and true binding sites) and DCA (distance from the predicted site center to the closest ligand atom).
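Both metrics are straightforward to compute from coordinates. The sketch below assumes the predicted site is given as a set of atom coordinates (e.g., those of the residues in the top-ranked cluster) and defines its center as their mean. A prediction is commonly counted as successful when DCA is below 4 Å.

```python
import numpy as np

def dcc(pred_site_xyz, true_site_xyz):
    """Distance between the centers of the predicted and true binding sites."""
    return float(np.linalg.norm(np.mean(pred_site_xyz, axis=0) - np.mean(true_site_xyz, axis=0)))

def dca(pred_site_xyz, ligand_xyz):
    """Distance from the predicted site center to the closest ligand atom."""
    center = np.mean(pred_site_xyz, axis=0)
    return float(np.min(np.linalg.norm(np.asarray(ligand_xyz) - center, axis=1)))

pred_site = np.array([[0.0, 0.0, 0.0], [2.0, 0.0, 0.0]])  # center (1, 0, 0)
true_site = np.array([[1.0, 0.0, 0.0], [1.0, 2.0, 0.0]])  # center (1, 1, 0)
ligand = np.array([[4.0, 0.0, 0.0], [1.0, 5.0, 0.0]])
d_cc = dcc(pred_site, true_site)  # 1.0
d_ca = dca(pred_site, ligand)     # 3.0
```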

This protocol achieves performance competitive with methods that require experimental structures, making it particularly valuable for proteins without structural data [24].

Protocol 2: Structure-Based Affinity Prediction with Ensemble Learning

This protocol outlines the Ensemble Binding Affinity (EBA) method, which combines multiple deep learning models with diverse input features to achieve robust affinity prediction [2].

Feature Extraction:

- Extract five complementary input features:

  - Protein sequences (1D)

  - Ligand SMILES sequences (1D)

  - Structural features from complexes

  - Angle-based features for short-range interactions

  - 3D spatial descriptors

- Embed protein sequences using pre-trained protein language models.

- Process ligand SMILES with chemical-aware tokenization.

Model Training:

- Train 13 separate deep learning models using different combinations of the five input features.

- Implement cross-attention and self-attention mechanisms to capture both short- and long-range interactions.

- Use PDBbind v2016 or v2020 datasets for training, with standardized splitting protocols.

Ensemble Construction:

- Evaluate all possible combinations of the 13 trained models.

- Select optimal ensemble based on Pearson correlation and RMSE on validation sets.

- Implement weighted averaging of ensemble member predictions.
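The exhaustive search over ensemble combinations can be sketched directly. With 13 models there are 8,178 subsets of two or more members, few enough to score each one on a validation set. The sketch assumes that validation predictions are stored column-wise. It scores uniform averages by Pearson's R with RMSE as a tie-breaker and leaves the weighting step aside. These choices are assumptions, not details of the EBA method.

```python
import numpy as np
from itertools import combinations

def pearson(a, b):
    return float(np.corrcoef(a, b)[0, 1])

def best_subset(val_preds, y_val, min_size=2):
    """Score every subset of base models by the Pearson R of their averaged predictions.

    Ties on R are broken by lower RMSE. Returns (subset, r, rmse).
    """
    n_models = val_preds.shape[1]
    best = None
    for k in range(min_size, n_models + 1):
        for subset in combinations(range(n_models), k):
            avg = val_preds[:, list(subset)].mean(axis=1)
            r = pearson(avg, y_val)
            rmse = float(np.sqrt(np.mean((avg - y_val) ** 2)))
            key = (r, -rmse)
            if best is None or key > best[0]:
                best = (key, subset, r, rmse)
    return best[1], best[2], best[3]

rng = np.random.default_rng(4)
y_val = rng.normal(6.0, 1.0, size=200)
val_preds = np.column_stack([
    y_val + rng.normal(scale=0.3, size=200),  # good model
    y_val + rng.normal(scale=0.3, size=200),  # good model with independent errors
    y_val + rng.normal(scale=2.0, size=200),  # noisy model
    12.0 - y_val,                             # anti-correlated model
])
subset, r, rmse = best_subset(val_preds, y_val)  # expected to pick the two good models
```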

Validation:

- Evaluate final ensemble on benchmark datasets including CASF-2016, CASF-2013, and CSAR-HiQ.

- Compare performance against state-of-the-art single models.

- Assess generalization capability on temporally split test sets.

This ensemble approach achieves substantial improvements, reaching Pearson correlation coefficients of up to 0.914 on the CASF-2016 benchmark [2].

Protocol 3: Knowledge-Enhanced Structure-Based Prediction (KSM)

The KSM protocol integrates sequence and structure information through a specialized graph neural network architecture for enhanced affinity prediction [22].

Graph Construction:

- Build atom-atom graph with protein and ligand atoms as nodes.

- Define edges based on covalent bonds and spatial proximity (cutoff 5 Å).

- Construct bond-bond graph with bonds as nodes and bond angles as edges.

- Initialize node features with chemical properties (atom type, hybridization, etc.).

- Encode edge features with spatial information (distances, angles).

Multi-View Representation Learning:

- Process protein sequences through 1D convolutional layers.

- Process ligand SMILES through molecular graph encoders.

- Apply knowledge-enhanced and structure-enhanced GNN (KSGNN) to atom-atom and bond-bond graphs.

- Implement message passing with spatial geometry awareness.

Attentive Pooling and Prediction:

- Apply attentive pooling layer (APL) to cluster bond nodes with spatial information.

- Generate hierarchical graph-level representations.

- Concatenate sequence and structure representations for final affinity prediction.

- Regularize models with dropout and weight decay to prevent overfitting.
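Attention-based pooling can be illustrated with a generic softmax readout. Each node receives a score, the scores are normalized, and the graph vector is the weighted sum of node features. This generic sketch does not reproduce KSM's attentive pooling layer, which additionally clusters bond nodes using spatial information. The scoring vector `w` would be learned in practice and is random here.

```python
import numpy as np

def attentive_pool(node_feats, w, b=0.0):
    """Softmax attention over nodes followed by a weighted sum -> one graph-level vector."""
    scores = node_feats @ w + b
    scores = scores - scores.max()  # subtract max for numerical stability
    alpha = np.exp(scores)
    alpha = alpha / alpha.sum()     # attention weights sum to 1
    return alpha @ node_feats, alpha

rng = np.random.default_rng(0)
nodes = rng.normal(size=(10, 16))  # e.g., 10 bond-node embeddings of width 16
w = rng.normal(size=16)            # learned in practice; random here
graph_vec, alpha = attentive_pool(nodes, w)
```

With a zero scoring vector the attention weights become uniform and the readout reduces to mean pooling.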

This protocol reports RMSE improvements of 0.0536 on the PDBbind core set and 0.19 on the CSAR-HiQ dataset relative to 18 baseline methods [22].

Integrated Workflow

The strategic integration of diverse molecular descriptors enables comprehensive modeling of protein-ligand interactions. The following workflow diagram illustrates how 1D sequence, structural graph, and 3D interaction descriptors can be combined within an ensemble framework for enhanced binding affinity prediction.

Diagram 1: Integrated workflow for combining diverse molecular descriptors in protein-ligand binding affinity prediction. The framework processes 1D sequence, structural graph, and 3D interaction descriptors through specialized neural architectures, followed by feature fusion and ensemble prediction.

Performance Comparison

The integration of diverse molecular descriptors consistently demonstrates improved performance across benchmark datasets. The following table summarizes quantitative results from recent studies implementing feature diversity strategies.

Table 1: Performance comparison of feature diversity strategies on benchmark datasets

| Method | Descriptor Types | Dataset | Pearson (R) | RMSE | MAE |
|---|---|---|---|---|---|
| EBA [2] | Ensemble (1D+3D) | CASF-2016 | 0.914 | 0.957 | - |
| PLAsformer [12] | 1D+3D Fusion | PDBbind-2016 | 0.812 | 1.284 | - |
| KSM [22] | Sequence+Structure | PDBbind Core | - | 0.836* | - |
| LGN [9] | Complex+Ligand Graphs | PDBbind-2016 | 0.842 | - | - |
| Single Model [2] | 1D Sequence | CASF-2016 | ~0.79 | ~1.18 | - |
| GEMS [11] | Structure-Only (CleanSplit) | CASF-2016 | 0.816 | 1.210 | - |

Note: The asterisk (*) indicates a result reported as an improvement over baselines; KSM reports an RMSE improvement of 0.0536 over previous methods on the PDBbind core set [22].

The performance advantages of feature-diverse approaches are particularly evident in their generalization capabilities. Methods like EBA show significant improvements of more than 15% in Pearson correlation and 19% in RMSE on CSAR-HiQ test sets compared to single-model approaches [2]. Similarly, the structure-enhanced KSM method demonstrates superior performance on the challenging CSAR-HiQ dataset with an improvement of 0.19 in RMSE [22].

The Scientist's Toolkit

Successful implementation of feature diversity strategies requires specialized computational tools and resources. The following table outlines essential research reagents and their functions in descriptor integration workflows.

Table 2: Essential research reagents and computational tools for descriptor integration

| Tool/Resource | Type | Primary Function | Application Example |
|---|---|---|---|
| ESM-2 [24] | Protein Language Model | Generates residue-level embeddings from sequence | Sequence-based binding site prediction in LaMPSite |
| RDKit [23] | Cheminformatics Toolkit | Ligand conformer generation & molecular graph processing | 3D conformer initialization for geometric learning |
| HMMER [25] | Sequence Analysis | Profile HMM construction for binding site descriptors | Identifying conserved binding motifs from sequences |
| PDBbind [9] | Database | Curated protein-ligand complexes with binding affinities | Training and benchmarking affinity prediction models |
| CASF Benchmark [11] | Evaluation Suite | Standardized assessment of scoring functions | Comparative performance validation |
| CleanSplit [11] | Data Partitioning | Eliminates train-test leakage in PDBbind | Robust generalization assessment |
| Graph Neural Networks [22] | Deep Learning Architecture | Learns representations from molecular graphs | Structure-based affinity prediction in KSM |
| 3D CNN [12] | Deep Learning Architecture | Processes voxelized molecular structures | Learning from 3D interaction descriptors |

The strategic integration of 1D sequence, structural graph, and 3D interaction descriptors represents a paradigm shift in protein-ligand binding affinity prediction. As demonstrated by the experimental protocols and performance benchmarks outlined in this application note, feature diversity strategies consistently outperform single-descriptor approaches across multiple evaluation scenarios.

The ensemble framework emerging from recent research emphasizes that complementary molecular representations capture distinct yet interdependent aspects of binding interactions. Sequence descriptors provide evolutionary and functional context, structural graphs encode topological relationships, and 3D interaction descriptors model spatial complementarity. When combined through sophisticated machine learning architectures, these diverse perspectives enable more accurate, robust, and generalizable prediction systems.

For researchers and drug development professionals, the practical implication is clear: leveraging feature diversity through ensemble methods provides a tangible path toward more reliable computational drug discovery. The protocols and resources detailed in this document offer implementable strategies for advancing predictive capabilities in protein-ligand interaction studies, ultimately contributing to accelerated therapeutic development.

In structure-based drug discovery, accurate prediction of protein-ligand binding affinity is a critical challenge, with substantial implications for reducing the time and cost of developing new therapeutics [2]. Traditional computational methods have often struggled to balance accuracy with generalization across diverse protein-ligand complexes. Recently, ensemble learning strategies that integrate multiple deep learning models have emerged as a powerful approach to overcoming these limitations [2]. Central to the success of these ensembles are cross-attention and self-attention mechanisms, which enable models to capture complex protein-ligand interaction patterns that conventional methods struggle to represent.

This architecture deep dive explores how these attention mechanisms are engineered and integrated within modern ensemble frameworks for binding affinity prediction. By examining their fundamental principles, implementation architectures, and experimental applications, we provide researchers with both theoretical understanding and practical protocols for leveraging these advanced computational techniques in drug discovery workflows.

Theoretical Foundations of Attention Mechanisms

Core Concepts and Definitions

At its core, an attention mechanism in deep learning is a technique that enables models to dynamically focus on specific parts of their input when generating outputs, much like human cognitive attention [26]. This capability is particularly valuable in tasks where context is essential, as it allows models to weigh the importance of different input elements rather than treating all elements uniformly.

The fundamental building blocks of most attention mechanisms consist of three components [26]:

- Queries: Representations related to the current context or what the model is looking for

- Keys: Representations of the available input elements that can be attended to

- Values: The actual content associated with each key that gets aggregated in the output

The attention process mathematically computes a weighted average of values, where the weights are derived from compatibility functions between queries and keys [27] [26]. This operation allows the model to selectively focus on the most relevant information for a given task.
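One widely used compatibility function, introduced with the Transformer architecture, is the scaled dot product. The block below writes it out for query vectors $\mathbf{q}_i$, key vectors $\mathbf{k}_j$ of dimension $d_k$, and value vectors $\mathbf{v}_j$; it is offered as a representative choice, and the binding-affinity models cited here may use other scoring functions or multi-head variants.

$$
\alpha_{ij} = \frac{\exp\left(\mathbf{q}_i^{\top}\mathbf{k}_j/\sqrt{d_k}\right)}{\sum_{j'}\exp\left(\mathbf{q}_i^{\top}\mathbf{k}_{j'}/\sqrt{d_k}\right)},
\qquad
\mathbf{o}_i = \sum_{j}\alpha_{ij}\,\mathbf{v}_j,
\qquad
\mathrm{Attention}(Q,K,V) = \mathrm{softmax}\!\left(\frac{QK^{\top}}{\sqrt{d_k}}\right)V
$$

For each query the weights $\alpha_{ij}$ sum to one, so every output $\mathbf{o}_i$ is a weighted average (convex combination) of the value vectors.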

Self-Attention vs. Cross-Attention

Self-attention (also called intra-attention) operates within a single sequence or set of elements, allowing each element to attend to all other elements in the same set [28] [26]. This mechanism captures internal dependencies and contextual relationships, making it particularly powerful for understanding complex structural patterns. In protein-ligand affinity prediction, self-attention can model long-range interactions within protein structures or within ligand molecules that traditional convolutional networks might miss [2].

Cross-attention extends this concept by enabling interaction between two different sequences or sets of representations [29] [30]. Also known as encoder-decoder attention, this mechanism allows elements from one domain (e.g., ligand features) to attend to elements from another domain (e.g., protein features). This is especially valuable for tasks requiring the integration of heterogeneous information sources, such as capturing the critical binding interactions between a protein's active site and a ligand's functional groups [29].
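The difference between the two mechanisms comes down to where the queries, keys, and values come from. The sketch below, assuming scaled dot-product scoring and toy, hypothetical 2-D feature vectors that already live in a shared space (no learned projections), calls the same attention function twice: once with protein features as queries, keys, and values (self-attention), and once with ligand atoms as queries over protein residues (cross-attention).

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention on lists of vectors (lists of floats).

    Each query is scored against every key; its output is the
    softmax-weighted average of the corresponding value vectors.
    """
    d_k = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        w = softmax(scores)
        weights.append(w)
        outputs.append([sum(wj * v[c] for wj, v in zip(w, values)) for c in range(len(values[0]))])
    return outputs, weights


# Hypothetical features: 3 protein residues and 2 ligand atoms in a shared 2-D space.
protein = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
ligand = [[1.0, 0.2], [0.1, 0.9]]

# Self-attention: queries, keys and values all come from the protein set.
prot_self, prot_self_w = attention(protein, protein, protein)

# Cross-attention: ligand atoms (queries) attend to protein residues (keys/values).
lig_cross, lig_cross_w = attention(ligand, protein, protein)

print(len(prot_self), len(lig_cross))  # one output per query: 3 and 2
```

In the cross-attention call, each ligand atom receives a weight distribution over the three residues; the first atom, whose feature vector points in the same direction as the first residue, places its largest weight there.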

Table: Comparison of Self-Attention and Cross-Attention Mechanisms

| Characteristic | Self-Attention | Cross-Attention |
|---|---|---|
| Operational Domain | Single set of elements | Two different sets of elements |
| Primary Function | Capture internal dependencies | Model interactions between domains |
| Query Source | Elements from the input set | Elements from one modality |
| Key/Value Source | Same input set | Different modality |
| Applications in Drug Discovery | Protein structure analysis, Ligand chemistry encoding | Protein-ligand interaction mapping, Binding site analysis |

Attention Mechanisms in Protein-Ligand Affinity Prediction

Architectural Implementation Patterns

In modern protein-ligand binding affinity prediction systems, attention mechanisms are implemented in several distinct architectural patterns:

The Ensemble Binding Affinity (EBA) framework employs both self-attention and cross-attention layers to extract short- and long-range interactions from protein-ligand complexes [2]. EBA trains thirteen deep learning models on different combinations of five input features and then ensembles them to achieve state-of-the-art performance. The self-attention components in EBA capture complex structural patterns within proteins and ligands independently, while the cross-attention layers model the interactions between them [2].

The PLAGCA (Protein-Ligand binding Affinity prediction with Graph Cross-Attention) method introduces a hierarchical approach that combines global sequence features with local structural interactions [29]. PLAGCA uses sequence encoding and self-attention to extract global features from protein FASTA sequences and ligand SMILES strings, while simultaneously employing graph neural networks with cross-attention to capture local interaction features from protein binding pockets and ligand molecular structures [29]. These disparate feature representations are then concatenated and processed through multi-layer perceptrons for final affinity prediction.
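The final fusion step described above (concatenate the global and local feature vectors, then regress affinity with a multi-layer perceptron) can be sketched as follows. This is not PLAGCA's implementation: the feature values, layer sizes, and randomly initialized weights are hypothetical, and the upstream self-attention and graph cross-attention encoders are assumed to have already produced fixed-length pooled vectors.

```python
import random


def relu(x):
    return [max(0.0, v) for v in x]


def linear(x, weight, bias):
    """Affine map y = W x + b, with W given as a list of rows."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weight, bias)]


def init_layer(n_in, n_out, rng):
    """Small random weights and zero biases (hypothetical initialization)."""
    weight = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    return weight, [0.0] * n_out


def fuse_and_predict(global_feats, local_feats, layers):
    """Concatenate global (sequence) and local (pocket-graph) features, then apply an MLP."""
    h = global_feats + local_feats  # list concatenation == feature concatenation
    for i, (w, b) in enumerate(layers):
        h = linear(h, w, b)
        if i < len(layers) - 1:  # no activation on the final regression output
            h = relu(h)
    return h[0]  # scalar affinity estimate


rng = random.Random(0)
global_feats = [0.2, -0.1, 0.4, 0.0]  # hypothetical pooled sequence features (4-D)
local_feats = [0.3, 0.7, -0.2]        # hypothetical pooled graph features (3-D)
layers = [init_layer(7, 8, rng), init_layer(8, 4, rng), init_layer(4, 1, rng)]
pred = fuse_and_predict(global_feats, local_feats, layers)
```

Concatenation keeps the two views separate at the input of the MLP, so the first layer can learn how to weigh global sequence context against local pocket interactions.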

CheapNet addresses computational efficiency concerns through a novel interaction-based model that integrates atom-level representations with hierarchical cluster-level interactions via cross-attention [30]. By employing differentiable pooling of atom-level embeddings, CheapNet captures essential higher-order molecular representations while maintaining reasonable computational demands—a critical consideration for large-scale virtual screening applications.
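The idea of differentiable pooling can be illustrated with a soft cluster assignment in the style of DiffPool: each atom gets a softmax distribution over clusters, and each cluster embedding is the assignment-weighted sum of atom embeddings ($Z = S^{\top}X$). This is a simplified sketch, not CheapNet's code: it assumes a single linear layer produces the assignment logits (DiffPool uses a GNN for this), and the embeddings and weights are hypothetical.

```python
import math


def softmax(row):
    m = max(row)
    e = [math.exp(v - m) for v in row]
    s = sum(e)
    return [v / s for v in e]


def soft_cluster_pool(atom_feats, assign_weight):
    """Soft-pool N x d atom embeddings into C cluster embeddings.

    assign_weight: d x C matrix mapping each atom embedding to cluster logits.
    Returns (S, Z): S is N x C soft assignments (rows sum to 1),
    Z is C x d cluster embeddings computed as S^T X.
    """
    n_clusters = len(assign_weight[0])
    d = len(atom_feats[0])
    S = [
        softmax([sum(x[k] * assign_weight[k][c] for k in range(d)) for c in range(n_clusters)])
        for x in atom_feats
    ]
    Z = [
        [sum(S[i][c] * atom_feats[i][j] for i in range(len(atom_feats))) for j in range(d)]
        for c in range(n_clusters)
    ]
    return S, Z


atoms = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.8]]  # 4 hypothetical atom embeddings
w_assign = [[2.0, -2.0], [-2.0, 2.0]]                      # hypothetical weights for 2 clusters
S, clusters = soft_cluster_pool(atoms, w_assign)
```

Because every operation is smooth, gradients flow through the assignments, which is what lets the clustering be learned end to end; attention can then be computed over C clusters instead of N atoms, which is where the computational savings come from.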

Ensemble Strategies with Attention Mechanisms

The true power of attention mechanisms in binding affinity prediction emerges when they are deployed within ensemble frameworks. The EBA method demonstrates that combining models with different feature attention patterns can significantly enhance both accuracy and generalization capability [2]. By creating ensembles from models trained on different combinations of input features—including simple 1D sequential data and structural features—EBA achieves a Pearson correlation coefficient of 0.914 and RMSE of 0.957 on the CASF2016 benchmark, representing improvements of over 15% in R-value and 19% in RMSE compared to single-model approaches [2].
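The two reported metrics and the basic ensembling step can be made concrete with a short sketch. EBA's exact aggregation rule is not specified in this section, so equal-weight averaging of member predictions is assumed here, and the labels and predictions are hypothetical values, not results from any cited study.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def rmse(pred, true):
    """Root mean square error."""
    return math.sqrt(sum((p - t) ** 2 for p, t in zip(pred, true)) / len(true))


def ensemble_mean(member_preds):
    """Average per-complex predictions across ensemble members (equal weights)."""
    return [sum(col) / len(col) for col in zip(*member_preds)]


# Hypothetical pK labels and predictions from three members trained on different feature sets.
y_true = [4.0, 5.5, 6.0, 7.2, 8.1]
members = [
    [4.6, 5.0, 6.5, 6.8, 7.5],
    [3.5, 6.1, 5.6, 7.9, 8.4],
    [4.2, 5.2, 6.3, 6.9, 8.6],
]
y_ens = ensemble_mean(members)
for preds in members:
    print(round(pearson_r(preds, y_true), 3), round(rmse(preds, y_true), 3))
print("ensemble", round(pearson_r(y_ens, y_true), 3), round(rmse(y_ens, y_true), 3))
```

Since the ensemble's error vector is the average of the members' error vectors, its RMSE can never exceed the members' mean RMSE (triangle inequality); the gain is largest when members make uncorrelated errors, which is the motivation for training them on different feature combinations.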

Table: Performance Comparison of Attention-Based Ensemble Methods on Benchmark Datasets

| Method | Attention Mechanism | CASF2016 (R) | CASF2016 (RMSE) | CSAR-HiQ (R) | CSAR-HiQ (RMSE) |
|---|---|---|---|---|---|
| EBA (Ensemble) [2] | Cross-attention + Self-attention | 0.914 | 0.957 | >0.87* | <1.15* |
| PLAGCA [29] | Graph Cross-Attention | n/s | n/s | n/s | n/s |
| CheapNet [30] | Hierarchical Cross-attention | SOTA† | SOTA† | SOTA† | SOTA† |
| CAPLA [2] | Self-attention (single model) | ~0.79 | ~1.18 | ~0.72 | ~1.33 |

Notes: n/s = not specified; ~ = approximate. * Exact CSAR-HiQ values for EBA are not provided in the available literature; they are reported as >11% improvement in R and >14% improvement in RMSE over CAPLA [2]. † Reported as state-of-the-art (SOTA); exact values not provided.

Experimental Protocols and Implementation

Protocol: Implementing Cross-Attention for Binding Site Analysis

Objective: Implement and validate a cross-attention mechanism for identifying critical interaction regions in protein-ligand complexes.

Materials and Data Preparation:

- Protein Structures: Obtain 3D coordinates from the PDBbind database [2]

- Ligand Representations: Process SMILES strings and generate 3D conformations

- Binding Affinity Data: Curate experimental Kd, Ki, or IC50 values from the PDBbind or CSAR-HiQ datasets
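Affinity labels are usually modeled on a logarithmic scale, pK = -log10(K in molar), so that values spanning many orders of magnitude become a well-behaved regression target. The helper below is a minimal sketch of that conversion; the unit table and function name are illustrative choices. Note that IC50 values depend on assay conditions, so pooling them with Kd/Ki is a modeling decision rather than a strict equivalence.

```python
import math

# Multipliers converting common concentration units to molar (M).
UNIT_TO_MOLAR = {"M": 1.0, "mM": 1e-3, "uM": 1e-6, "nM": 1e-9, "pM": 1e-12, "fM": 1e-15}


def to_pk(value, unit):
    """Convert a Kd, Ki, or IC50 value to the p-scale: pK = -log10(value in molar)."""
    if value <= 0:
        raise ValueError("affinity must be positive")
    return -math.log10(value * UNIT_TO_MOLAR[unit])


print(round(to_pk(10, "nM"), 2))  # 8.0
print(round(to_pk(2.5, "uM"), 2))
```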

Methodology:

Feature Extraction:

- Encode protein sequences using learned embeddings from FASTA format

- Encode ligand structures using graph convolutional networks from SMILES strings

- Extract structural interaction features (distances, angles, molecular properties)
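Distance-based interaction features are among the simplest structural descriptors. As one hypothetical example (not the featurization of any specific cited model), the sketch below counts protein-ligand atom pairs that fall into distance shells; the shell edges in angstroms and the toy coordinates are assumptions chosen for illustration.

```python
import math


def contact_histogram(protein_xyz, ligand_xyz, edges=(0.0, 4.0, 6.0, 8.0)):
    """Count protein-ligand atom pairs per distance shell [edges[i], edges[i + 1]) in angstroms.

    Pairs farther than the last edge are ignored.
    """
    counts = [0] * (len(edges) - 1)
    for p_atom in protein_xyz:
        for l_atom in ligand_xyz:
            d = math.dist(p_atom, l_atom)
            for i in range(len(counts)):
                if edges[i] <= d < edges[i + 1]:
                    counts[i] += 1
                    break
    return counts


protein_xyz = [(0.0, 0.0, 0.0), (5.0, 0.0, 0.0), (0.0, 7.0, 0.0)]
ligand_xyz = [(1.0, 0.0, 0.0), (3.0, 0.0, 0.0)]
print(contact_histogram(protein_xyz, ligand_xyz))  # [3, 1, 2]
```

In practice such counts are often computed per element-type pair (for example, protein C against ligand N), which yields a richer fixed-length vector that can be fed to the models described below.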

Cross-Attention Implementation:

- Project protein and ligand features into shared dimensional space

- Compute attention scores with a compatibility function between protein queries and ligand keys

- Generate attended representations using softmax-normalized weights

- Combine with self-attention layers for intra-domain feature refinement
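The first three steps above can be sketched with plain Python: protein and ligand features of different native sizes are projected into a shared space, protein residues act as queries against ligand-atom keys, and softmax-normalized weights produce attended representations. The dimensions, random projection matrices, and scaled dot-product scoring are assumptions for illustration; a real model would learn the projections and typically use multiple heads.

```python
import math
import random


def matvec(weight, x):
    """Project vector x with a weight matrix given as a list of rows."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weight]


def rand_matrix(n_out, n_in, rng):
    """Random projection (stand-in for learned weights)."""
    return [[rng.gauss(0.0, 1.0 / math.sqrt(n_in)) for _ in range(n_in)] for _ in range(n_out)]


def cross_attend(protein_feats, ligand_feats, w_q, w_k, w_v):
    """Protein residues (queries) attend to ligand atoms (keys/values) in a shared space."""
    q = [matvec(w_q, p) for p in protein_feats]
    k = [matvec(w_k, a) for a in ligand_feats]
    v = [matvec(w_v, a) for a in ligand_feats]
    d = len(q[0])
    attended, weights = [], []
    for qi in q:
        scores = [sum(x * y for x, y in zip(qi, kj)) / math.sqrt(d) for kj in k]
        m = max(scores)
        exps = [math.exp(s - m) for s in scores]
        z = sum(exps)
        w = [e / z for e in exps]  # softmax-normalized weights over ligand atoms
        weights.append(w)
        attended.append([sum(wj * vj[c] for wj, vj in zip(w, v)) for c in range(d)])
    return attended, weights


rng = random.Random(42)
d_protein, d_ligand, d_shared = 6, 4, 8  # hypothetical feature sizes
protein = [[rng.random() for _ in range(d_protein)] for _ in range(5)]  # 5 residues
ligand = [[rng.random() for _ in range(d_ligand)] for _ in range(3)]    # 3 atoms
w_q = rand_matrix(d_shared, d_protein, rng)
w_k = rand_matrix(d_shared, d_ligand, rng)
w_v = rand_matrix(d_shared, d_ligand, rng)
attended, weights = cross_attend(protein, ligand, w_q, w_k, w_v)
```

The weight matrix returned here (one row per residue, one column per ligand atom) is also the quantity typically inspected when using attention to highlight candidate interaction regions.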

Training Protocol:

- Loss Function: Mean squared error between predicted and experimental binding affinities

- Optimization: Adam optimizer with learning rate scheduling

- Regularization: Dropout and weight decay to prevent overfitting

- Validation: k-fold cross-validation on benchmark datasets
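Random k-fold splits on PDBbind-style data can place near-identical complexes in both training and validation folds, which inflates scores (the leakage problem that CleanSplit addresses). One mitigation is to assign whole groups, such as protein clusters, to folds. The sketch below assumes hypothetical group labels and also defines the mean squared error loss named above.

```python
import random


def group_k_fold(groups, k, seed=0):
    """Yield (train_idx, val_idx) so that all samples sharing a group label land in the same fold.

    groups: one label per sample (e.g., a hypothetical protein-cluster ID).
    Folds are only non-empty if there are at least k distinct groups.
    """
    unique = sorted(set(groups))
    random.Random(seed).shuffle(unique)
    fold_of = {g: i % k for i, g in enumerate(unique)}
    for fold in range(k):
        val_idx = [i for i, g in enumerate(groups) if fold_of[g] == fold]
        train_idx = [i for i, g in enumerate(groups) if fold_of[g] != fold]
        yield train_idx, val_idx


def mse(pred, true):
    """Mean squared error between predicted and experimental affinities."""
    return sum((p - t) ** 2 for p, t in zip(pred, true)) / len(true)


groups = ["kinaseA", "kinaseA", "proteaseB", "gpcrC", "gpcrC", "nrD"]  # hypothetical labels
for train_idx, val_idx in group_k_fold(groups, k=3):
    print(sorted(val_idx))
```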

Ensemble Integration:

- Train multiple models with varying feature combinations and initialization

- Aggregate predictions using weighted averaging based on validation performance

- Calibrate ensemble weights on a hold-out validation set
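The protocol does not fix a weighting rule, so the sketch below assumes one simple option: weights proportional to the inverse of each member's hold-out RMSE, normalized to sum to one. The RMSE values and test predictions are hypothetical.

```python
def inverse_error_weights(val_rmse):
    """Turn per-model validation RMSEs into normalized weights (lower error -> larger weight)."""
    inv = [1.0 / r for r in val_rmse]
    total = sum(inv)
    return [w / total for w in inv]


def weighted_ensemble(member_preds, weights):
    """Weighted average of per-complex predictions across ensemble members."""
    return [sum(w * p for w, p in zip(weights, col)) for col in zip(*member_preds)]


val_rmse = [1.20, 1.05, 1.40]  # hypothetical hold-out RMSE for three members
weights = inverse_error_weights(val_rmse)
test_preds = [[6.1, 7.3], [6.4, 7.0], [5.8, 7.6]]  # hypothetical test predictions per member
print([round(w, 3) for w in weights])
print(weighted_ensemble(test_preds, weights))
```

Because the weights are fit on the hold-out set, that set should be disjoint from both the members' training data and the final test set; otherwise the reported ensemble performance will be optimistic.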

Protocol: Ablation Study for Attention Component Analysis

Objective: Systematically evaluate the contribution of different attention mechanisms to overall model performance.

Experimental Design:

- Baseline Model: Implement architecture without attention mechanisms

- Variants:

  - Model with self-attention only (protein and ligand separately)

  - Model with cross-attention only (protein-ligand interactions)

  - Full model with both self-attention and cross-attention

- Evaluation Metrics: Pearson R, RMSE, MAE on standardized test sets

- Statistical Analysis: Paired t-tests for performance differences across multiple runs
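A paired t-test compares two variants evaluated on matched runs (same seeds or same data splits) using the per-run differences. The sketch below computes the t statistic and degrees of freedom with the standard library; the per-seed Pearson R values are hypothetical. Converting t to a p-value requires the t distribution, which `scipy.stats.ttest_rel` handles directly if SciPy is available.

```python
import math
import statistics


def paired_t(a, b):
    """Paired t statistic and degrees of freedom for matched runs."""
    diffs = [x - y for x, y in zip(a, b)]
    n = len(diffs)
    sd = statistics.stdev(diffs)  # sample standard deviation (n - 1 denominator)
    t = statistics.mean(diffs) / (sd / math.sqrt(n))
    return t, n - 1


# Hypothetical Pearson R over 5 seeds: full model vs. cross-attention-only variant.
full = [0.81, 0.83, 0.80, 0.82, 0.84]
cross_only = [0.78, 0.80, 0.79, 0.80, 0.81]
t_stat, dof = paired_t(full, cross_only)
print(round(t_stat, 2), dof)
```

Pairing removes run-to-run variance shared by both variants (for example, an unusually hard split), which makes the test more sensitive than comparing the two sets of scores independently.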

Visualization Architectures

Workflow of Ensemble Binding Affinity Prediction with Attention Mechanisms

Graph Cross-Attention Mechanism in PLAGCA Architecture

Research Reagent Solutions

Table: Essential Computational Tools for Attention-Based Binding Affinity Prediction

| Tool/Resource | Type | Function | Implementation Notes |

|---|---|---|---|

| PDBbind Database [2] | Data Resource | Curated protein-ligand complexes with experimental binding affinity data | Use updated versions (2016/2020) for benchmarking |

| CASF Benchmark [2] | Evaluation Framework | Standardized benchmark for scoring function assessment | Includes core sets of diverse complexes |

| Graph Neural Networks [29] | Algorithm | Representation learning for molecular structures | Implement with PyTorch Geometric or DGL |

| Cross-Attention Layers [2] [29] | Algorithm | Modeling protein-ligand interactions | Custom implementation with multi-head support |