Democratizing Bioinformatics: Building End-to-End Workflows with Multi-Agent Systems

Abstract

Developing complete bioinformatics workflows demands deep expertise in both genomics and computational techniques, creating significant barriers for researchers. While large language models offer some assistance, they often lack the nuanced guidance required for complex tasks and are resource-intensive. This article explores how multi-agent systems built on specialized, fine-tuned small language models can bridge this gap. We cover the foundational principles of these systems, their practical methodology in automating pipeline creation, crucial troubleshooting and optimization strategies for scalable deployment, and a comparative validation of current systems like BioAgents and BioMaster against human expert performance. Aimed at researchers, scientists, and drug development professionals, this guide provides a comprehensive overview for leveraging multi-agent AI to streamline and democratize robust bioinformatics analysis.

The Rise of Multi-Agent Systems in Bioinformatics: Core Concepts and Driving Needs

The journey from raw sequencing data to identified genetic variants is a cornerstone of modern genomics, enabling discoveries in areas from personalized medicine to evolutionary biology. This process, known as variant calling, aims to identify single nucleotide polymorphisms (SNPs) and small insertions and deletions (indels) by comparing sequencing data from a sample to a reference genome [1] [2]. While conceptually simple—in principle, it involves counting mismatches between reads and a reference sequence—the process is complicated in practice by multiple sources of error, including amplification biases, sequencing machine errors, and software mapping artifacts [3]. A robust variant calling workflow must therefore incorporate data preparation methods that correct or compensate for these various error modes to produce high-confidence variant calls.

The challenge of constructing these end-to-end workflows illustrates why multi-agent systems are being developed for bioinformatics. Building such workflows requires diverse domain expertise and a deep understanding of both genomics concepts and computational techniques, which challenges junior and senior researchers alike [4] [5]. The multi-stage process involves complex procedural dependencies that integrate diverse data types and tools, creating significant barriers to automation and clear interpretability [4]. This section details the core experimental protocols for a standard variant calling workflow and frames them within the context of developing multi-agent systems to democratize and automate these complex analyses.

Core Experimental Protocol: From FASTQ to VCF

A typical variant calling workflow can be divided into three main sections that are meant to be performed sequentially: (1) from FASTQ to analysis-ready BAM files (data pre-processing), (2) variant calling, and (3) variant filtering [3]. The end product is a Variant Call Format (VCF) file containing identified genetic variations along with quality metrics [6].

Table 1: Key Bioinformatics Tools for Variant Calling Workflow Stages

| Workflow Stage | Software/Tool | Primary Function | Website/Source |
|---|---|---|---|
| Read Alignment | BWA (Burrows-Wheeler Aligner) | Maps sequencing reads to reference genome | http://bio-bwa.sourceforge.net/ |
| Read Alignment | Bowtie2 | Short read alignment | http://bowtie-bio.sourceforge.net/bowtie2/index.shtml |
| Read Alignment | STAR | RNA-seq read alignment | |
| Sequence Alignment/Map Processing | SAMtools | Manipulates SAM/BAM files; variant calling | http://samtools.sourceforge.net/ |
| Sequence Alignment/Map Processing | Picard Tools | Processes sequence alignment data | |
| Variant Calling | GATK (Genome Analysis Toolkit) | Multiple-sequence realignment, SNP/indel discovery | http://software.broadinstitute.org/gatk/ |
| Variant Calling | bcftools | SNP/indel calling from BAM files | |
| Variant Calling | SOAPsnp | Consensus calling and SNP detection | http://soap.genomics.org.cn/ |
| Quality Control | FastQC | Quality control of raw sequencing data | http://www.bioinformatics.babraham.ac.uk/projects/fastqc |
| Quality Control | Trim Galore / cutadapt | Read trimming and adapter removal | |
| Genome Assembly | SPAdes | Genome assembly for Illumina data | http://bioinf.spbau.ru/spades |
| Genome Assembly | Velvet | De novo sequence assembler | https://www.ebi.ac.uk/~zerbino/velvet/ |

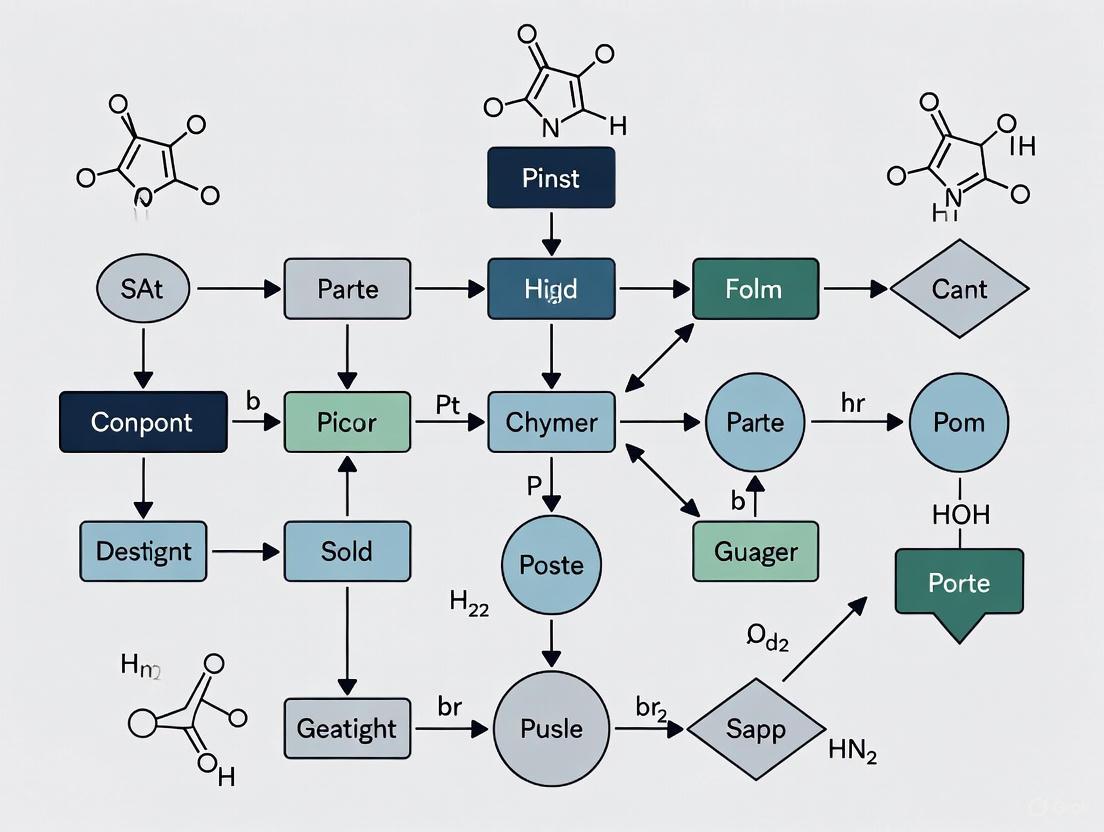

The following diagram illustrates the complete workflow from raw sequencing data to filtered variants, showing the sequential relationship between major stages and key file format transformations:

Data Pre-processing and Quality Control

When sequencing data is received from a provider, it is typically in a raw state (one or several FASTQ files) that is not suitable for immediate variant calling analysis [3]. The initial processing stages are critical for ensuring downstream results are accurate and reliable.

Quality Control and Trimming: The first step involves assessing raw read quality using tools like FastQC, which generates statistics including basic sequence metrics, quality scores, GC content, adapter content, and overrepresented sequences [7]. Sequencing machines are imperfect and wet-lab experiments can introduce contaminants, making quality control essential. Trimming tools like Cutadapt, Trim Galore, or Trimmomatic are then used to remove adapter sequences, barcodes, and low-quality base calls [6] [7].
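
As a minimal sketch of this step, the Python snippet below only assembles the FastQC and cutadapt command lines as argument lists; it does not run them. The file names, the output directory, and the adapter sequence are assumptions chosen for illustration, and the right adapter depends on the library preparation kit.

```python
import shlex


def fastqc_cmd(fastq, out_dir):
    """Build a FastQC command that writes its report into out_dir."""
    return ["fastqc", fastq, "-o", out_dir]


def cutadapt_cmd(fastq_in, fastq_out, adapter, min_quality=20):
    """Build a cutadapt command: -a names a 3' adapter, -q trims low-quality ends."""
    return ["cutadapt", "-a", adapter, "-q", str(min_quality),
            "-o", fastq_out, fastq_in]


# Hypothetical inputs, for illustration only.
qc = fastqc_cmd("sample.fastq.gz", "qc_reports")
trim = cutadapt_cmd("sample.fastq.gz", "sample.trimmed.fastq.gz",
                    adapter="AGATCGGAAGAGC")

# Print the shell-equivalent form of each command.
print(shlex.join(qc))
print(shlex.join(trim))
```

With both tools installed, each list could be passed to `subprocess.run(cmd, check=True)`; FastQC expects the output directory to exist already.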

Read Alignment to Reference Genome: The next step is alignment (mapping), which determines where in the genome the reads originated. This typically involves first indexing the reference genome for use by an aligner, then aligning the reads. The Burrows-Wheeler Aligner (BWA) is commonly used for mapping low-divergent sequences against large reference genomes [1] [3]. Its BWA-MEM algorithm is generally recommended for high-quality queries because it is faster and more accurate than the older BWA-backtrack and BWA-SW algorithms.
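
The indexing and alignment steps might look like the following sketch, which builds the `bwa index` and `bwa mem` command lines in Python without executing them. The reference name `ref.fa`, the paired-end read files, and the thread count are assumptions for illustration.

```python
import shlex


def bwa_index_cmd(reference):
    """Build the one-time indexing command for a reference FASTA."""
    return ["bwa", "index", reference]


def bwa_mem_cmd(reference, reads_1, reads_2=None, threads=4):
    """Build a BWA-MEM command for single-end or paired-end reads."""
    cmd = ["bwa", "mem", "-t", str(threads), reference, reads_1]
    if reads_2 is not None:
        cmd.append(reads_2)
    return cmd


# Hypothetical file names, for illustration only.
index = bwa_index_cmd("ref.fa")
align = bwa_mem_cmd("ref.fa", "sample_R1.fastq.gz", "sample_R2.fastq.gz")

# bwa mem writes SAM to standard output, so the shell form redirects it to a file.
print(shlex.join(index))
print(shlex.join(align) + " > sample.sam")
```

When running the alignment from Python, the SAM output would be captured by passing an open file as `stdout` to `subprocess.run`.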

SAM/BAM File Processing: The alignment outputs a SAM (Sequence Alignment/Map) file, a tab-delimited text file containing alignment information for each read [1]. SAM files are converted to their binary equivalent, BAM files, using SAMtools to reduce size and allow indexing.

BAM files are then sorted by genomic coordinates, which many downstream tools require.
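
A hedged sketch of the conversion, sorting, and indexing steps follows. It assembles the three SAMtools command lines as Python lists; the file names are assumptions, and the commands are printed rather than executed.

```python
import shlex


def sam_to_bam_cmd(sam, bam):
    """samtools view -b converts SAM text to compressed BAM."""
    return ["samtools", "view", "-b", "-o", bam, sam]


def sort_bam_cmd(bam, sorted_bam):
    """samtools sort orders alignments by genomic coordinate by default."""
    return ["samtools", "sort", "-o", sorted_bam, bam]


def index_bam_cmd(sorted_bam):
    """samtools index builds an index for a coordinate-sorted BAM."""
    return ["samtools", "index", sorted_bam]


# Hypothetical file names, for illustration only.
bam_steps = [
    sam_to_bam_cmd("sample.sam", "sample.bam"),
    sort_bam_cmd("sample.bam", "sample.sorted.bam"),
    index_bam_cmd("sample.sorted.bam"),
]

for step in bam_steps:
    print(shlex.join(step))
```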

Variant Calling and Filtering

Once reads are properly aligned and processed, variant discovery can proceed. The key challenge with NGS data is distinguishing which mismatches represent real mutations and which are just noise [2].

Variant Calling with BCFtools: A common approach for variant calling uses bcftools. The process involves two main steps. First, the mpileup command calculates read coverage at each position in the genome.

Second, the call command detects single nucleotide variants (SNVs). For haploid organisms such as bacteria, the caller must be told that the sample is haploid.
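
The two bcftools steps could be sketched as below. The snippet builds the `mpileup` and `call` command lines in Python and prints them; the file names are assumptions, and `--ploidy 1` reflects the haploid case described above.

```python
import shlex


def mpileup_cmd(reference, sorted_bam, bcf_out):
    """Build bcftools mpileup: -O b writes compressed BCF, -f names the reference."""
    return ["bcftools", "mpileup", "-O", "b", "-o", bcf_out,
            "-f", reference, sorted_bam]


def call_cmd(bcf_in, vcf_out, ploidy=1):
    """Build bcftools call: -m uses the multiallelic caller, -v keeps variant sites only."""
    return ["bcftools", "call", "--ploidy", str(ploidy), "-m", "-v",
            "-o", vcf_out, bcf_in]


# Hypothetical file names, for illustration only.
pileup = mpileup_cmd("ref.fa", "sample.sorted.bam", "sample.raw.bcf")
calls = call_cmd("sample.raw.bcf", "sample.variants.vcf")

print(shlex.join(pileup))
print(shlex.join(calls))
```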

Variant Calling with GATK: For more complex analyses, particularly in human genetics, the Genome Analysis Toolkit (GATK) provides a robust framework. GATK's Best Practices recommend using the HaplotypeCaller, which is more sophisticated than older tools like the UnifiedGenotyper, except when analyzing non-diploid organisms or pooled samples [3]. GATK workflows typically include additional processing steps such as duplicate marking, local realignment around indels, and base quality score recalibration (BQSR), which corrects systematic errors in base quality scores [7] [3]. Separate indel realignment belongs to older GATK versions; HaplotypeCaller performs its own local reassembly of reads, so current releases no longer require that step.
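
As an illustration only, the sketch below chains the GATK steps named above using GATK4-style command lines. The source gives no commands, so the file names and the known-sites VCF are assumptions, and indel realignment is omitted because GATK4 does not ship it.

```python
import shlex


def gatk_steps(reference, bam, known_sites, prefix):
    """Return GATK4 command lines: mark duplicates, BQSR, then HaplotypeCaller."""
    dedup = f"{prefix}.dedup.bam"
    table = f"{prefix}.recal.table"
    recal = f"{prefix}.recal.bam"
    vcf = f"{prefix}.vcf.gz"
    return [
        ["gatk", "MarkDuplicates", "-I", bam, "-O", dedup,
         "-M", f"{prefix}.dup_metrics.txt"],
        ["gatk", "BaseRecalibrator", "-R", reference, "-I", dedup,
         "--known-sites", known_sites, "-O", table],
        ["gatk", "ApplyBQSR", "-R", reference, "-I", dedup,
         "--bqsr-recal-file", table, "-O", recal],
        ["gatk", "HaplotypeCaller", "-R", reference, "-I", recal, "-O", vcf],
    ]


# Hypothetical inputs, for illustration only.
steps = gatk_steps("ref.fa", "sample.sorted.bam", "known_variants.vcf.gz", "sample")

for step in steps:
    print(shlex.join(step))
```

Each step consumes the previous step's output, which is why the order of the list matters.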

Variant Filtering: The initial variant calls represent a "high-sensitivity" call set that prioritizes finding true variants at the potential cost of including false positives. The next step involves filtering to achieve the desired balance between sensitivity and specificity [3]. GATK's Variant Quality Score Recalibration (VQSR) uses machine learning to train a Gaussian mixture model on various variant features to filter false positives [7]. For smaller datasets where VQSR isn't appropriate, hard-filtering methods can be applied based on annotations such as quality by depth (QD), mapping quality (MQ), and read position bias (ReadPosRankSum).
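
Hard-filtering can be pictured as a set of threshold tests on each variant's annotations. The sketch below is a pure-Python illustration, not GATK itself; the thresholds are commonly quoted starting points for SNPs and are treated here as assumptions to be tuned per dataset.

```python
# Assumed starting thresholds for SNPs; each test returns True when the variant FAILS.
SNP_FILTERS = {
    "QD": lambda v: v < 2.0,               # low quality relative to depth
    "MQ": lambda v: v < 40.0,              # poorly mapped supporting reads
    "ReadPosRankSum": lambda v: v < -8.0,  # alt alleles clustered near read ends
}


def failed_filters(info):
    """Return the names of annotations that trip a filter.

    Annotations missing from the record are skipped rather than failed.
    """
    return [name for name, fails in SNP_FILTERS.items()
            if name in info and fails(info[name])]


# Hypothetical annotation values, for illustration only.
good_variant = {"QD": 25.3, "MQ": 60.0, "ReadPosRankSum": 0.4}
poor_variant = {"QD": 1.2, "MQ": 35.0}

print(failed_filters(good_variant) or "PASS")
print(failed_filters(poor_variant) or "PASS")
```

Recording which filter failed, rather than discarding the variant, keeps the decision auditable later.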

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Essential Research Reagent Solutions for Variant Calling Workflows

| Reagent/Resource | Function/Purpose | Example Sources/Formats |
|---|---|---|
| Reference Genomes | Baseline for read alignment and variant comparison | NCBI RefSeq (https://www.ncbi.nlm.nih.gov/refseq), ENSEMBL |
| Sequencing Adapters | Library preparation; removed during trimming | Illumina TruSeq, Nextera |
| Quality Control Tools | Assess read quality and adapter content | FastQC, FastQ Screen |
| Trimming Tools | Remove adapters and low-quality bases | cutadapt, Trim Galore, Trimmomatic |
| Sequence Aligners | Map reads to reference genome | BWA, Bowtie2, STAR (RNA-seq) |
| Alignment Processing Tools | Convert, sort, index, and compute statistics on BAM files | SAMtools, Picard Tools |
| Variant Callers | Identify SNPs and indels | GATK, bcftools, VarScan |
| Variant Annotation Tools | Add functional context to variants | SnpEff, VEP (Variant Effect Predictor) |
| Visualization Tools | Visual inspection of alignments and variants | IGV (Integrative Genomics Viewer) |

Multi-Agent Systems for Bioinformatics Workflow Automation

The Challenge of Bioinformatics Workflow Development

The complexity of the variant calling workflow exemplifies why multi-agent systems represent a promising solution for bioinformatics challenges. Developing end-to-end bioinformatics workflows requires diverse domain expertise, posing challenges for both junior and senior researchers as it demands a deep understanding of both genomics concepts and computational techniques [4]. Bioinformaticians often mine question-answer platforms like Biostars for similar problems, search for reproducible scientific workflow examples on GitHub, or refer to the methods sections of recently published papers for code [4]. This complexity presents a steep learning curve for newcomers and poses challenges for experts to stay current with new techniques and analysis-specific software versions [4].

BioAgents: A Multi-Agent System for Bioinformatics

To address these challenges, the BioAgents system leverages a multi-agent approach built on small language models fine-tuned on bioinformatics data and enhanced with retrieval augmented generation (RAG) [4] [5]. This system employs multiple specialized agents, each tailored to handle specific tasks such as tool selection, workflow generation, and error troubleshooting, enabling a modular and efficient approach to solving bioinformatics challenges [4]. Unlike systems that rely solely on large language models, BioAgents uses a smaller, more efficient model (Phi-3) to maintain high performance while significantly reducing computational resources [4].

The system incorporates specialized agents fine-tuned on different aspects of bioinformatics knowledge. One agent focuses on conceptual genomics tasks, fine-tuned on bioinformatics tools documentation from Biocontainers and the software ontology [4]. A second agent uses RAG on nf-core documentation and the EDAM ontology to provide workflow-specific guidance [4]. This modular approach allows each agent to develop deep expertise in its respective domain while being coordinated by a central reasoning agent.

Performance and Implementation

In evaluations across use cases of varying difficulty, BioAgents demonstrated performance comparable to human experts on conceptual genomics questions but showed limitations in code generation tasks, particularly as workflow complexity increased [4]. For complex workflows like SARS-CoV-2 genome analysis, the system could provide a logical series of steps (quality control, assembly, annotation, variant characterization, phylogenetic analysis) but sometimes omitted steps, requiring users to fill in gaps [4].

The system incorporates self-evaluation to enhance output reliability, where the reasoning agent assesses response quality against a defined threshold, with below-threshold outputs being reprocessed [4]. However, this iterative process revealed diminishing returns, where repeated refinements could negatively impact output quality [4]. The architecture also provides transparent guidance by explaining rationales for tool selection and identifying additional information needed for optimal responses, improving interpretability and user trust [4].
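
The self-evaluation loop described above can be sketched as follows. This is not the BioAgents implementation: `generate` and `score` are hypothetical callables, and the threshold and round limit are assumed values. Capping the rounds and keeping the best draft seen so far is one way to guard against refinements that lower quality.

```python
def refine_with_self_evaluation(generate, score, threshold=0.8, max_rounds=3):
    """Regenerate until a draft meets the threshold or the round limit is hit.

    Returns the best-scoring draft seen, so a later, worse refinement
    cannot replace an earlier, better one.
    """
    best_text, best_score = "", float("-inf")
    previous = None
    for _ in range(max_rounds):
        draft = generate(previous)      # hypothetical agent call
        draft_score = score(draft)      # hypothetical reasoning-agent rating
        if draft_score > best_score:
            best_text, best_score = draft, draft_score
        if draft_score >= threshold:
            break
        previous = draft
    return best_text, best_score


# Stub agent and scores, invented purely to exercise the loop.
_drafts = iter(["draft A", "draft B", "draft C"])
_scores = {"draft A": 0.5, "draft B": 0.7, "draft C": 0.6}

result = refine_with_self_evaluation(lambda prev: next(_drafts), _scores.__getitem__)
print(result)
```

In this stubbed run no draft reaches the threshold, and the third round scores lower than the second, so the second draft is returned.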

The following diagram illustrates how a multi-agent system decomposes the variant calling workflow across specialized agents, demonstrating the coordination required for end-to-end workflow construction:

The variant calling workflow from FASTQ to VCF represents a complex, multi-stage process that requires significant expertise in both genomics concepts and computational methods. While established tools and protocols exist for each step—quality control, alignment, and variant calling—the integration of these steps into a robust, reproducible workflow remains challenging. Multi-agent systems like BioAgents offer a promising approach to democratizing this process by providing specialized assistance for different aspects of workflow development. By decomposing the problem across multiple specialized agents and incorporating transparent reasoning, these systems can help researchers navigate the complexities of bioinformatics analysis while maintaining the rigor necessary for scientific discovery. As these systems evolve, particularly in addressing current limitations in complex code generation, they have the potential to significantly accelerate genomic research and make sophisticated bioinformatics analyses accessible to a broader range of scientists.

What Are Multi-Agent Systems? Specialization, Coordination, and Task Breakdown

A Multi-Agent System (MAS) is a computerized system composed of multiple interacting intelligent agents that work collectively to perform tasks on behalf of a user or another system [8] [9]. Each agent within a MAS possesses individual properties and a degree of autonomy but behaves collaboratively to achieve desired global properties that would be difficult or impossible for an individual agent or monolithic system to accomplish [8] [9]. These systems are characterized by three key principles: autonomy (agents are at least partially independent and self-aware), local views (no agent possesses a full global view of the system), and decentralization (no single designated controlling agent) [9].

The transition from single-agent to multi-agent architectures represents a significant evolution in artificial intelligence system design [10]. While single AI agents operate independently and excel at specialized tasks, they often struggle with problems requiring diverse expertise or extended reasoning chains [11]. Multi-agent systems address these limitations by distributing cognitive labor across multiple specialized agents, enabling more sophisticated problem-solving approaches through collaboration and coordination [10]. This architectural approach is particularly valuable for large-scale, complex tasks, where a system can encompass hundreds or even thousands of agents [8].

Core Architectural Patterns and Specialization

System Architectures and Agent Structures

Multi-agent systems can operate under various architectural patterns, each with distinct advantages for different application scenarios. The two primary network architectures are centralized and decentralized networks [8]. In centralized networks, a central unit contains the global knowledge base, connects the agents, and oversees their information flow, providing ease of communication but creating a potential single point of failure. In decentralized networks, agents share information with their neighboring agents instead of a global knowledge base, offering greater robustness and modularity at the cost of coordination complexity [8].

Beyond network topology, MAS can be organized into different structural patterns, each enabling different specialization strategies as shown in Table 1.

Table 1: Multi-Agent System Architectural Patterns and Specialization Strategies

| Architecture Type | Description | Specialization Approach | Key Features |
|---|---|---|---|
| Hierarchical Structure [8] | Tree-like structure with varying agent autonomy levels | Decision-making authority distributed among multiple agents with clear roles | Defined roles, supervision, optimized workflow |
| Holonic Structure [8] | Agents grouped into holarchies (wholes that are also parts) | Leading agents contain multiple subagents while appearing as singular entities | Self-organization, goal-oriented collaboration, component reuse |
| Coalition Structure [8] | Temporary agent unification to boost performance | Agents temporarily unite to enhance utility, then disperse | Dynamic regrouping, performance-based formation |
| Team Structure [8] | Agents cooperate to improve group performance | High interdependence with hierarchical organization | Strong dependencies, shared objectives, coordinated action |
| Cooperative Agents [11] | Work together toward shared goals | Resource sharing, task division based on capabilities | Resource sharing, live updates, efficient task division |
| Heterogeneous Systems [11] | Combine diverse agent skills | Skill-based task assignment, collaborative solutions | Diverse expertise, strength merging, personalized support |

Specialization in Bioinformatics MAS

In bioinformatics applications, specialization enables MAS to tackle complex workflows that require diverse expertise. The BioAgents system exemplifies this approach with specialized agents fine-tuned for distinct aspects of bioinformatics analysis [4]. This system employs a reasoning agent coordinating with two specialized agents: one focused on conceptual genomics tasks (fine-tuned on bioinformatics tools documentation from Biocontainers and software ontology), and another specializing in workflow generation (using Retrieval-Augmented Generation on nf-core documentation and the EDAM ontology) [4].
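
A toy sketch of this coordination pattern is shown below: a reasoning step decides which specialized agent should handle a query. The keyword rule and the agent names are hypothetical stand-ins; in a system like BioAgents the decision would be made by a language model, not by string matching.

```python
# Hypothetical cue words suggesting the user wants a workflow or code.
WORKFLOW_HINTS = ("pipeline", "workflow", "nextflow", "nf-core", "script")


def route(query):
    """Pick a specialized agent for the query using a crude keyword rule."""
    text = query.lower()
    if any(hint in text for hint in WORKFLOW_HINTS):
        return "workflow_agent"
    return "conceptual_agent"


def answer(query, agents):
    """Dispatch the query to the chosen agent and tag the reply with its source."""
    name = route(query)
    return {"agent": name, "reply": agents[name](query)}


# Stub agents that only echo which specialist handled the request.
agents = {
    "conceptual_agent": lambda q: f"[concept] {q}",
    "workflow_agent": lambda q: f"[workflow] {q}",
}

print(answer("What does a Phred quality value measure?", agents))
print(answer("Write a Nextflow pipeline to align RNA-seq reads", agents))
```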

This specialization strategy addresses a critical challenge in bioinformatics: developing end-to-end workflows demands deep expertise in both genomics and computational techniques [4]. A single agent struggles with the multi-step biomedical reasoning required as task complexity increases, often requiring multiple attempts to generate correct solutions and struggling with integrating knowledge across different tools, data formats, and analysis techniques [4]. Through strategic specialization, MAS can distribute these cognitive demands across multiple expert agents.

Coordination Mechanisms and Protocols

Communication and Coordination Frameworks

Effective coordination in multi-agent systems requires standardized communication frameworks that enable agents to share information, negotiate tasks, and coordinate responses [12]. Agent communication typically involves message passing using structured formats like FIPA (Foundation for Intelligent Physical Agents) standards or custom protocols tailored to specific applications [12]. The Model Context Protocol (MCP) has emerged as a particularly advanced framework addressing the "disconnected models problem" – the difficulty of maintaining coherent context across multiple agent interactions [10] [13].

MCP provides a standardized framework for connecting AI models with external data sources and tools, enabling more effective context retention and sharing across agent interactions [10] [13]. The protocol employs a client-server architecture that cleanly separates AI applications, which act as clients, from the data sources and tools exposed by servers, with JSON-RPC messages carrying the communication between components [13]. This architecture supports flexible deployment patterns and enables agents to maintain contextual continuity across extended reasoning chains and collaborative problem-solving sessions [10].
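
To make the JSON-RPC layer concrete, the sketch below builds the kind of request a client might send to ask a server to run a tool. The `tools/call` method name follows the MCP specification; the tool name `lookup_reference_genome` and its arguments are hypothetical, and no network transport is shown.

```python
import itertools
import json

_request_ids = itertools.count(1)


def jsonrpc_request(method, params):
    """Build a JSON-RPC 2.0 request object with a fresh numeric id."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": method,
        "params": params,
    }


# Hypothetical tool and arguments, for illustration only.
request = jsonrpc_request(
    "tools/call",
    {"name": "lookup_reference_genome",
     "arguments": {"organism": "Homo sapiens"}},
)

wire_message = json.dumps(request)
print(wire_message)
```

The `id` field lets the client match the server's eventual response to this request, which matters when several agents share one connection.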

Coordination Algorithms and Task Allocation

Multi-agent coordination employs sophisticated algorithms to manage agent interactions and optimize task allocation. These algorithms can be categorized into several distinct approaches, each with particular strengths for different coordination challenges as detailed in Table 2.

Table 2: Coordination Algorithms in Multi-Agent Systems

| Algorithm Type | Purpose | Key Characteristics | Bioinformatics Application |
|---|---|---|---|
| Consensus Algorithms [12] | Achieve agreement across agents | Fault-tolerant, distributed decision-making | Agreeing on variant calling methods across specialized agents |
| Market Mechanisms [12] | Resource allocation through virtual markets | Economic efficiency, scalability | Bidding for computational resources in cloud-based genomics analysis |
| Swarm Intelligence [12] | Collective behavior optimization | Emergent intelligence, self-organization | Coordinating multiple alignment agents in genome assembly |
| Game Theory Models [12] | Strategic interaction analysis | Nash equilibrium, optimal strategies | Resolving conflicting interpretations of genomic evidence |

Task allocation mechanisms represent another critical coordination component in MAS. These mechanisms include auction-based allocation (where agents bid on tasks based on capabilities and current workload), hierarchical assignment (higher-level agents delegate to subordinates), and consensus-based distribution (agents collectively decide task assignments through negotiation) [12]. The choice of allocation strategy significantly impacts system performance, particularly in complex bioinformatics workflows where tasks have varying computational demands and dependencies.
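
Auction-based allocation can be illustrated with a small sketch in which each agent bids its capability for a task type, discounted by its current workload. The bid formula, agent names, and skill values are assumptions made for this example, not taken from any cited system.

```python
from dataclasses import dataclass


@dataclass
class Agent:
    name: str
    skills: dict       # task type -> capability between 0 and 1 (assumed scale)
    workload: int = 0  # number of tasks already assigned

    def bid(self, task_type):
        """Higher capability raises the bid; existing workload lowers it."""
        return self.skills.get(task_type, 0.0) / (1 + self.workload)


def allocate(tasks, agents):
    """Assign each task to the highest bidder, updating workloads as we go."""
    assignment = {}
    for task_id, task_type in tasks:
        winner = max(agents, key=lambda agent: agent.bid(task_type))
        winner.workload += 1
        assignment[task_id] = winner.name
    return assignment


# Hypothetical agents and tasks, for illustration only.
pool = [
    Agent("aligner", {"alignment": 0.9, "qc": 0.4}),
    Agent("generalist", {"alignment": 0.5, "qc": 0.8}),
]
tasks = [("t1", "alignment"), ("t2", "alignment"), ("t3", "qc")]

assignment = allocate(tasks, pool)
print(assignment)
```

The second alignment task goes to the generalist because the aligner's bid is halved once it is busy, which shows how workload balances against raw capability.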

Diagram 1: MAS Coordination Architecture for Bioinformatics Workflows. This diagram illustrates the orchestration pattern between specialized agents in a bioinformatics multi-agent system.

Task Breakdown Strategies in Bioinformatics MAS

Workflow Decomposition Methodology

Task breakdown in multi-agent systems involves decomposing complex problems into manageable components that can be distributed across specialized agents [10]. In bioinformatics applications, this decomposition follows logical workflow boundaries that reflect the natural structure of genomic analysis pipelines. The BioAgents system implements a sophisticated task breakdown strategy evaluated across three complexity levels of bioinformatics workflows [4].

For Level 1 tasks (Easy), such as providing quality metrics on FASTQ files, the system performs basic decomposition into quality control steps and appropriate tool selection. For Level 2 tasks (Medium), such as aligning RNA-seq data against a human reference genome, decomposition involves coordinating multiple specialized steps including reference genome selection, alignment algorithm choice, parameter optimization, and output processing. For Level 3 tasks (Hard), such as assembling, annotating, and analyzing SARS-CoV-2 genomes from sequencing data, the system performs comprehensive decomposition into data acquisition, quality control, assembly, annotation, variant identification, and phylogenetic analysis [4].

This hierarchical task decomposition enables MAS to handle the complex, multi-stage pipelines that characterize modern bioinformatics workflows, which typically require integrating diverse data types and managing procedural dependencies that pose significant barriers to automation [4].
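
One way to represent such a decomposition is as a dependency graph whose topological order gives a valid execution sequence. The sketch below uses the Level 3 steps listed above; the specific dependency edges are assumptions made for illustration.

```python
from graphlib import TopologicalSorter

# Each step maps to the set of steps it depends on (assumed edges).
LEVEL3_STEPS = {
    "data_acquisition": set(),
    "quality_control": {"data_acquisition"},
    "assembly": {"quality_control"},
    "annotation": {"assembly"},
    "variant_identification": {"assembly"},
    "phylogenetic_analysis": {"variant_identification"},
}

# static_order yields every step after all of its dependencies.
execution_order = list(TopologicalSorter(LEVEL3_STEPS).static_order())

for position, step in enumerate(execution_order, start=1):
    print(position, step)
```

Steps that share a dependency but not each other, such as annotation and variant identification here, could be handed to different agents in parallel.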

Orchestrator-Worker Patterns in Research Systems

The orchestrator-worker pattern represents a particularly effective task breakdown strategy for research-oriented MAS. Anthropic's Research system exemplifies this approach, where a lead agent analyzes user queries, develops a research strategy, and spawns subagents to explore different aspects simultaneously [14]. These subagents act as intelligent filters by iteratively using search tools to gather information before returning condensed results to the lead agent for compilation [14].

This architecture enables parallel exploration of research directions that would require sequential processing in single-agent systems. In evaluations, multi-agent systems with this orchestrator-worker pattern significantly outperformed single-agent approaches – in one internal test, a multi-agent system with a lead agent and subagents outperformed a single-agent system by 90.2% on research tasks [14]. The system excelled particularly at breadth-first queries involving multiple independent investigation directions, such as identifying all board members of companies in the Information Technology S&P 500 [14].
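
The orchestrator-worker pattern can be sketched with a thread pool: a lead function fans subtopics out to workers and compiles their condensed results. The `subagent` function here is a stub standing in for a search-and-summarize loop; nothing below reflects the internals of any production system.

```python
from concurrent.futures import ThreadPoolExecutor


def subagent(subtopic):
    """Stub worker: gather findings, then return only a condensed summary."""
    findings = [f"{subtopic}: finding {i}" for i in range(1, 4)]
    return {"subtopic": subtopic, "summary": findings[0], "n_findings": len(findings)}


def lead_agent(query, subtopics):
    """Fan subtopics out to workers in parallel and compile their results."""
    workers = max(1, len(subtopics))
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map preserves input order even though workers run concurrently.
        sections = list(pool.map(subagent, subtopics))
    return {"query": query, "sections": sections}


# Hypothetical query and subtopics, for illustration only.
report = lead_agent(
    "Compare short-read aligners",
    ["BWA", "Bowtie2", "STAR"],
)
print([section["subtopic"] for section in report["sections"]])
```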

Experimental Protocols for MAS Evaluation in Bioinformatics

Benchmarking Methodology and Performance Metrics

Evaluating multi-agent systems presents unique challenges compared to traditional AI systems, as agents may take different valid paths to reach the same goal [14]. Effective evaluation requires flexible methods that assess whether the final outcome meets quality standards rather than prescribing specific intermediate steps [14]. The BioAgents system established a robust evaluation protocol assessing performance across conceptual genomics and code generation tasks at three complexity levels [4].

The evaluation methodology involves recruiting bioinformatics experts to complete the same workflows addressed by the MAS, with independent assessment of both human and system outputs along two axes: accuracy (how well the user's query was answered) and completeness (the extent to which the output captured all relevant information) [4]. This comparative approach provides realistic benchmarking against human expert performance, particularly valuable for domains like bioinformatics where absolute correctness metrics may be difficult to define.
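
A two-axis comparison of this kind could be tabulated as in the sketch below. The rating scale and every score shown are placeholders invented to exercise the code; they are not results from the BioAgents evaluation.

```python
from statistics import mean


def summarize(ratings):
    """Average accuracy and completeness per source (human expert or system)."""
    grouped = {}
    for rating in ratings:
        grouped.setdefault(rating["source"], []).append(rating)
    return {
        source: {
            "accuracy": mean(r["accuracy"] for r in rows),
            "completeness": mean(r["completeness"] for r in rows),
        }
        for source, rows in grouped.items()
    }


# Placeholder ratings on an assumed 1-5 scale; NOT data from the study.
ratings = [
    {"source": "human_expert", "task": "level_1", "accuracy": 5, "completeness": 4},
    {"source": "human_expert", "task": "level_2", "accuracy": 4, "completeness": 4},
    {"source": "mas", "task": "level_1", "accuracy": 4, "completeness": 3},
    {"source": "mas", "task": "level_2", "accuracy": 4, "completeness": 2},
]

summary = summarize(ratings)
print(summary)
```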

Table 3: BioAgents Performance Evaluation Across Task Complexity Levels

| Task Complexity | Example Workflow | Conceptual Genomics Performance | Code Generation Performance | Limitations Identified |
|---|---|---|---|---|
| Level 1 (Easy) [4] | Quality metrics on FASTQ files | Matched expert accuracy | Matched expert accuracy, occasional tool misinformation | False information about tools in some responses |
| Level 2 (Medium) [4] | Align RNA-seq data against human reference genome | Human expert-level performance | Struggled to produce complete outputs for end-to-end pipelines | Gaps in indexed workflows affecting completeness |
| Level 3 (Hard) [4] | Assemble, annotate, and analyze SARS-CoV-2 genomes | Logical step series with occasional omissions | Failed to generate starter code, offered step outlines instead | Lack of tool and language diversity in training data |

MAS Evaluation Implementation Protocol

Implementing rigorous MAS evaluation requires specific methodological considerations:

Task Selection Protocol: Select benchmark tasks representing real-world workflow complexities, from simple tool usage to complex multi-step analyses [4].

Expert Benchmarking: Recruit domain experts to establish human performance baselines using the same inputs provided to the MAS [4].

Multi-Dimensional Assessment: Evaluate outputs based on both accuracy and completeness metrics with clear operational definitions [4].

Contextual Analysis: Request both system and human experts to explain additional information needed for optimal responses and their logical reasoning process [4].

Iterative Refinement: Use evaluation results to identify specific knowledge gaps or coordination failures for targeted improvement [4].

This protocol enables comprehensive assessment of MAS capabilities while acknowledging the path independence of effective problem-solving – different agents may legitimately take different routes to correct solutions [14].

The Scientist's Toolkit: Research Reagents for MAS Implementation

Table 4: Essential Research Reagents for Bioinformatics Multi-Agent Systems

| Component | Function | Implementation Examples | Domain Application |
|---|---|---|---|
| Specialized Language Models [4] | Domain-specific reasoning core | Phi-3 model fine-tuned on bioinformatics data; LoRA fine-tuning on Biocontainers documentation | Conceptual genomics task execution |
| Retrieval-Augmented Generation (RAG) [4] | Dynamic domain knowledge retrieval | RAG on nf-core documentation and EDAM ontology | Workflow generation and tool selection |
| Model Context Protocol (MCP) [10] [13] | Standardized context sharing between agents | MCP servers for data and tool access; persistent context storage | Maintaining coherent context across agent interactions |
| Biocontainers & Software Ontology [4] | Structured bioinformatics tool knowledge | Fine-tuning on top 50 bioinformatics tools in Biocontainers | Tool recommendation and configuration |
| nf-core Pipelines & EDAM Ontology [4] | Workflow templates and structured terminology | RAG implementation on nf-core documentation | Workflow generation and standardization |
| Self-Evaluation Mechanisms [4] | Output quality validation | Reasoning agent assessing response quality against defined thresholds | Reliability enhancement through iterative refinement |

Diagram 2: Research Reagents in MAS Workflow Execution. This diagram illustrates how essential research components integrate with the multi-agent workflow to produce final analysis results.

Multi-agent systems represent a transformative approach to complex problem-solving in bioinformatics, enabling specialized agents to collaborate on tasks that exceed the capabilities of individual agents or monolithic systems. Through strategic specialization, sophisticated coordination mechanisms, and hierarchical task breakdown, MAS can address the fundamental challenges of bioinformatics workflow development, which requires integrating diverse expertise, tools, and data types.

The experimental protocols and evaluation methodologies developed for systems like BioAgents provide robust frameworks for assessing MAS performance in bioinformatics contexts. These approaches demonstrate that multi-agent systems can achieve human expert-level performance on conceptual genomics tasks while identifying specific areas requiring further development, particularly in complex code generation scenarios.

As MAS architectures continue to evolve through advancements like the Model Context Protocol and more sophisticated coordination algorithms, their application to bioinformatics workflows promises to democratize access to complex genomic analyses while improving reproducibility, efficiency, and scalability of biomedical research.

The application of large language models (LLMs) in genomics represents a paradigm shift in bioinformatics, offering unprecedented capabilities for interpreting the "language of life." Transformer-based genome large language models (Gene-LLMs) can process raw nucleotide sequences, gene expression data, and multi-omic annotations through self-supervised pretraining to decipher complex regulatory grammars hidden within the genome [15]. These models employ specialized tokenization strategies, such as k-mer splitting, to treat DNA and RNA sequences as biological text, enabling pattern recognition and functional element identification at scale [15].
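
As a small illustration of k-mer splitting, the function below turns a nucleotide sequence into overlapping or non-overlapping tokens. The default values of `k` and `stride` are assumptions; individual Gene-LLMs choose their own settings.

```python
def kmer_tokenize(sequence, k=3, stride=1):
    """Split a sequence into k-mers.

    stride=1 gives overlapping tokens; stride=k gives non-overlapping ones.
    Trailing bases that do not fill a whole k-mer are dropped.
    """
    seq = sequence.upper()
    return [seq[i:i + k] for i in range(0, len(seq) - k + 1, stride)]


overlapping = kmer_tokenize("ATGCGT", k=3)
non_overlapping = kmer_tokenize("ATGCGT", k=3, stride=3)

print(overlapping)       # ['ATG', 'TGC', 'GCG', 'CGT']
print(non_overlapping)   # ['ATG', 'CGT']
```

Overlapping k-mers preserve local context at the cost of longer token sequences, which is one of the trade-offs a tokenization strategy has to settle.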

However, despite their transformative potential, standalone LLMs face fundamental limitations in resource efficiency and nuanced task execution when applied to complex genomic workflows. Developing end-to-end bioinformatics pipelines demands deep expertise in both genomics and computational techniques. Conventional LLMs struggle to meet this demand because they are resource-intensive and cannot provide the nuanced guidance required for multi-stage analytical processes [4]. This section examines these limitations within the context of building robust bioinformatics workflows and shows how multi-agent systems offer a viable architectural solution.

Quantitative Limitations of Standalone LLMs in Genomics

Benchmarking studies reveal specific performance gaps when general-purpose LLMs are applied to genomic tasks without specialized augmentation or system architecture. The GeneTuring benchmark, comprising 16 genomics tasks with 1,600 curated questions, demonstrates significant variation in performance across LLM configurations [16].

Table 1: Performance Metrics of LLMs on Genomic Tasks (GeneTuring Benchmark)

| Model Configuration | Overall Accuracy | Question Comprehension Rate | Hallucination Rate | Incapacity Awareness |
|---|---|---|---|---|
| GPT-4o with Web Access | 74.2% | 99.8% | 18.3% | 12.5% |
| SeqSnap (GPT-4o + NCBI APIs) | 79.5% | 100% | 14.1% | 10.8% |
| GPT-4o (API only) | 68.7% | 100% | 22.9% | 9.3% |
| Claude 3.5 | 71.6% | 100% | 19.7% | 11.2% |
| Gemini Advanced | 69.3% | 100% | 21.4% | 13.1% |
| GeneGPT (Full) | 65.8% | 98.7% | 26.3% | 15.9% |
| GPT-3.5 | 57.1% | 99.2% | 34.8% | 8.7% |
| BioMedLM | 42.6% | 76.3% | 41.2% | 22.5% |
| BioGPT | 38.9% | 72.1% | 48.7% | 29.1% |

Notably, performance varied sharply across task types. For example, in gene name conversion tasks, GPT-4o without web access produced errors in 99% of cases, while GPT-4o with browsing capabilities achieved 99% accuracy [16]. This pattern highlights the fundamental limitation of standalone LLMs: their performance depends critically on access to current, domain-specific knowledge bases, and pretrained parameters alone are not sufficient.

Table 2: Task-Specific Performance Variations in LLMs

| Genomic Task Category | Best Performing Model | Accuracy | Worst Performing Model | Accuracy |
|---|---|---|---|---|
| Gene Name Conversion | GPT-4o (Web) | 99% | GPT-4o (API only) | 1% |
| SNP Location | SeqSnap | 72% | BioGPT | 23% |
| Gene Function | Claude 3.5 | 81% | BioMedLM | 45% |
| Multi-species DNA Alignment | GPT-4o (Web) | 69% | GPT-3.5 | 37% |
| Pathway Analysis | SeqSnap | 76% | BioGPT | 32% |

Resource Intensity: Computational and Infrastructure Demands

The computational requirements for training and inference with genomic LLMs present substantial barriers to practical implementation. DNA foundation models such as DNABERT-2, Nucleotide Transformer V2, HyenaDNA, Caduceus-Ph, and GROVER require extensive pretraining on massive genomic datasets including the human reference genome, 1000 Genomes project data, and multi-species genome collections [17]. This pretraining phase demands:

- Specialized infrastructure: High-performance computing clusters with substantial GPU memory capacity

- Extended training time: Weeks to months of continuous training on specialized hardware

- Data preprocessing overhead: Tokenization of billions of nucleotide sequences using k-mer approaches

- Storage requirements: Managing terabyte-scale genomic datasets and model checkpoints

During inference, even optimized models struggle with the complex, multi-step reasoning required for bioinformatics workflow generation. In evaluations, LLMs demonstrated significant performance degradation as workflow complexity increased—from matching expert accuracy on simple tasks to completely failing to generate starter code for complex SARS-CoV-2 genome analysis pipelines [4].

The Multi-Agent Solution: BioAgents Case Study

The BioAgents system demonstrates how multi-agent architectures address the limitations of standalone LLMs for genomic analysis. This system leverages a smaller, more efficient language model (Phi-3) enhanced with retrieval-augmented generation (RAG) and specialized agents fine-tuned on bioinformatics tools documentation [4].
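The retrieval step in retrieval-augmented generation can be sketched with the standard library alone. This is not the BioAgents implementation: the three-entry `DOCS` corpus, the bag-of-words scoring, and the prompt layout are all assumptions for illustration, and a production system would more likely use dense embeddings and a vector index.

```python
import math
import re
from collections import Counter

# Hypothetical mini-corpus standing in for indexed tool documentation.
DOCS = {
    "fastqc": "FastQC computes quality control metrics for FASTQ sequencing reads",
    "star": "STAR aligns RNA-seq reads to a reference genome",
    "bcftools": "bcftools calls and filters variants from aligned reads",
}


def bag_of_words(text):
    """Lowercase the text and count alphanumeric tokens."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, docs, top_k=1):
    """Return the names of the top_k documents most similar to the query."""
    q = bag_of_words(query)
    ranked = sorted(docs, key=lambda name: cosine(q, bag_of_words(docs[name])), reverse=True)
    return ranked[:top_k]


def build_prompt(query, docs, top_k=1):
    """Prepend the retrieved passages to the question before calling a model."""
    context = "\n".join(docs[name] for name in retrieve(query, docs, top_k))
    return f"Context:\n{context}\n\nQuestion: {query}"


print(build_prompt("align RNA-seq reads to the human reference genome", DOCS))
```

The point of the pattern is that the model answers from retrieved documentation rather than from its parameters alone, which is why a small model can remain current as the indexed documentation changes.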

System Architecture and Workflow

Experimental Protocol: Multi-Agent System Evaluation

Objective: Evaluate the performance of BioAgents against human experts and standalone LLMs on conceptual genomics and code generation tasks of varying complexity [4].

Materials:

- BioAgents multi-agent system with three specialized agents

- Phi-3 base model as reasoning engine

- Fine-tuning datasets: Biocontainers documentation, EDAM ontology, nf-core workflows

- Benchmark tasks: Three complexity levels (easy, medium, hard)

Methodology:

- Task Formulation: Develop three workflow complexity levels:

  - Level 1 (Easy): Quality metrics on FASTQ files

  - Level 2 (Medium): RNA-seq alignment against human reference genome

  - Level 3 (Hard): SARS-CoV-2 genome assembly, annotation, and variant analysis

- Agent Specialization:

  - Fine-tune Conceptual Agent on top 50 bioinformatics tools from Biocontainers

  - Implement RAG-enhanced Code Agent using nf-core documentation and EDAM ontology

  - Configure Reasoning Agent for task decomposition and response integration

- Evaluation Framework:

  - Recruit bioinformatics experts to complete identical tasks

  - Assess outputs on accuracy and completeness dimensions

  - Implement self-evaluation mechanism with quality thresholding

  - Compare performance across complexity levels

- Metrics Collection:

  - Accuracy: Correctness of solution approach and tool recommendations

  - Completeness: Coverage of necessary workflow steps

  - Rationale Quality: Explanation of reasoning process and tool selection

Results Interpretation: BioAgents achieved human expert-level performance on conceptual genomics tasks across all complexity levels, but showed performance degradation in code generation for complex workflows, highlighting areas for future improvement [4].

Essential Research Reagents and Computational Tools

Table 3: Research Reagent Solutions for Genomic LLM Implementation

| Category | Specific Tools/Platforms | Function in Workflow |
|---|---|---|
| Foundation Models | DNABERT-2, Nucleotide Transformer, HyenaDNA, Caduceus-Ph | Provide base capabilities for genomic sequence understanding and pattern recognition |
| Specialized LLMs | BioGPT, BioMedLM, GeneGPT | Offer domain-specific fine-tuning for biomedical text and genomic data |
| Multi-Agent Frameworks | BioAgents, BioMaster | Enable task decomposition, specialized tool use, and collaborative problem-solving |
| Knowledge Bases | Biocontainers, EDAM Ontology, nf-core workflows | Provide structured domain knowledge for retrieval-augmented generation |
| Benchmarking Suites | GeneTuring, GenBench, CAGI5, BEACON | Standardize evaluation across diverse genomic tasks and model configurations |
| Bioinformatics Platforms | Nextflow, Snakemake, WDL | Enable reproducible workflow execution and containerized tool management |

Implementation Protocol: Building a Multi-Agent Genomics System

System Requirements:

- Computational infrastructure capable of running multiple language model instances

- Access to bioinformatics knowledge bases (Biocontainers, nf-core, EDAM ontology)

- Integration endpoints for genomic databases and APIs (NCBI, ENA, UCSC Genome Browser)

Agent Development Sequence:

Reasoning Agent Implementation:

- Deploy base language model (Phi-3 or comparable architecture)

- Implement task decomposition logic using chain-of-thought prompting

- Integrate self-evaluation capability with quality thresholding

Conceptual Agent Fine-tuning:

- Curate dataset from Biocontainers documentation and software ontology

- Apply Low-Rank Adaptation (LoRA) for parameter-efficient fine-tuning

- Validate tool recommendation accuracy against expert judgments

Code Agent Enhancement:

- Implement RAG pipeline using nf-core workflow documentation

- Index EDAM ontology for bioinformatics operation recognition

- Configure code generation templates for common workflow patterns

System Integration and Validation:

- Establish inter-agent communication protocol

- Implement response aggregation and conflict resolution

- Validate end-to-end performance on GeneTuring benchmark tasks

Performance Optimization:

- Employ mean token embedding strategy for sequence representation, which has been shown to improve AUC by 4.0-8.7% across DNA foundation models compared to summary token approaches [17]

- Implement iterative refinement with diminishing returns detection to prevent quality degradation from excessive reprocessing

- Configure fallback mechanisms for incapacity awareness when agents recognize task limitations
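The difference between the mean token and summary token strategies named in the first optimization item can be shown on a toy example. The vectors below are made up, and the function names are assumptions; in practice the per-token embeddings would come from a DNA foundation model's final hidden layer.

```python
def mean_token_embedding(token_embeddings):
    """Average the per-token vectors into one sequence-level vector."""
    if not token_embeddings:
        raise ValueError("need at least one token embedding")
    n = len(token_embeddings)
    # zip(*rows) iterates over embedding dimensions (columns).
    return [sum(column) / n for column in zip(*token_embeddings)]


def summary_token_embedding(token_embeddings):
    """Use only the first token (a [CLS]-style summary token) as the sequence vector."""
    return list(token_embeddings[0])


# Three tokens with two-dimensional embeddings (made-up numbers).
tokens = [[1.0, 0.0], [3.0, 2.0], [5.0, 4.0]]
mean_vec = mean_token_embedding(tokens)
summary_vec = summary_token_embedding(tokens)
print(mean_vec, summary_vec)
```

Mean pooling lets every position contribute to the sequence representation, whereas the summary token approach depends on how much information the model has concentrated in a single position.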

The integration of multi-agent systems with specialized language models represents a promising architectural pattern for overcoming the limitations of standalone LLMs in genomics applications. By decomposing complex bioinformatics workflows into specialized tasks handled by collaborative agents, these systems can provide the nuanced guidance and resource efficiency required for practical genomic analysis while maintaining the reasoning capabilities of foundation models.

Future development directions include enhancing code generation capabilities for complex workflows, expanding the range of supported genomic data types, and improving cross-agent reasoning for more sophisticated integrative analyses. As benchmark results demonstrate, the combination of specialized agents, retrieval-augmented generation, and appropriate architectural patterns can bridge the current gap between LLM capabilities and the rigorous demands of genomic research.

The development of end-to-end bioinformatics workflows demands deep expertise in both genomics and computational techniques, presenting a significant barrier to many researchers. This application note explores the BioAgents multi-agent system, a novel framework designed to address three key challenges in bioinformatics: democratizing access to advanced analytical capabilities, managing the inherent complexity of multi-step workflows, and enabling local operation with proprietary data. Built on specialized small language models fine-tuned on bioinformatics resources and enhanced with retrieval-augmented generation, BioAgents demonstrates performance comparable to human experts on conceptual genomics tasks while operating efficiently on local infrastructure. We present comprehensive experimental data, detailed implementation protocols, and resource specifications to facilitate adoption of this approach within the research community.

The creation of bioinformatics workflows requires integrating diverse domain expertise, posing challenges for both junior and senior researchers who must maintain deep understanding of both genomics concepts and computational techniques [5] [4]. While large language models offer some assistance, they often lack the nuanced guidance required for complex bioinformatics tasks and demand expensive computing resources [4] [18]. The BioAgents framework addresses these limitations through a multi-agent system built on small language models, fine-tuned on specialized bioinformatics data, and enhanced with retrieval-augmented generation (RAG) [5] [4]. This approach enables local operation and personalization using proprietary data while maintaining high performance on complex genomics tasks [18] [19].

Table 1: Key Performance Metrics of BioAgents Across Task Complexities

| Task Complexity | Conceptual Accuracy | Code Completeness | Human Expert Parity | Primary Limitations |
|---|---|---|---|---|
| Level 1 (Easy) | 95-100% | 85-90% | Full on conceptual | Occasional tool misinformation |
| Level 2 (Medium) | 90-95% | 70-75% | Full on conceptual | Incomplete pipeline generation |
| Level 3 (Hard) | 85-90% | 50-60% | Partial on conceptual | Outline-only code generation |

Experimental Data and Performance Metrics

To evaluate the BioAgents system, researchers devised three use cases of varying difficulty that assess both conceptual genomics understanding and code generation capabilities [4] [18]. Bioinformatics experts were recruited to complete the same tasks, and their outputs were compared against the system's on two primary axes: accuracy (how well the query was answered) and completeness (the extent of relevant information captured) [4].

Task Complexity Levels

- Level 1 (Easy): Quality metrics on FASTQ files

- Level 2 (Medium): Aligning RNA-seq data against a human reference genome

- Level 3 (Hard): Assembling, annotating, and analyzing SARS-CoV-2 genomes from sequencing data to identify and characterize viral variants [4] [18]
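To give a sense of scale for the Level 1 task, the sketch below computes a mean base quality per read from FASTQ text. It is an illustration of the task category, not code produced by BioAgents, and it assumes four-line records with Phred+33 quality encoding; dedicated tools such as FastQC report many more metrics.

```python
import io


def mean_phred_per_read(handle, offset=33):
    """Yield (read_id, mean Phred score) for each four-line FASTQ record.

    Assumes Phred+33 encoding and no line-wrapped sequences.
    """
    while True:
        header = handle.readline().strip()
        if not header:
            break                      # end of input
        handle.readline()              # sequence line (not needed here)
        handle.readline()              # '+' separator line
        quality = handle.readline().strip()
        scores = [ord(ch) - offset for ch in quality]
        mean = sum(scores) / len(scores) if scores else 0.0
        yield header[1:], mean         # drop the leading '@'


# Two made-up reads: 'I' encodes Phred 40 and '!' encodes Phred 0.
example = io.StringIO("@read1\nACGT\n+\nIIII\n@read2\nACGT\n+\n!!II\n")
results = dict(mean_phred_per_read(example))
print(results)
```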

Key Findings

On conceptual genomics tasks, BioAgents demonstrated performance comparable to human experts across all three complexity levels [4]. This success is attributed to fine-tuning using Low-Rank Adaptation on the top 50 bioinformatics tools in Biocontainers, including detailed software versions and help documentation [18]. For complex workflows like SARS-CoV-2 genome analysis, the system provided logical step sequences including quality control, de novo assembly, annotation, variant characterization, and phylogenetic tree construction [4].

Performance discrepancies emerged in code generation tasks, particularly as complexity increased [4] [18]. On easy tasks the generated code matched expert accuracy, but on medium-complexity workflows the system fell short of producing complete end-to-end pipelines. For the most complex workflows, it primarily generated conceptual outlines rather than executable code, a shortfall attributed to gaps in the indexed workflows and limited tool diversity in the training datasets [4].

Table 2: Specialized Agent Configuration in BioAgents

| Agent Component | Training Data Source | Primary Function | Evaluation Performance |
|---|---|---|---|
| Conceptual Agent | Biocontainers tools documentation, Software Ontology | Tool selection, workflow conceptualization | Human-expert level on all complexity levels |
| Code Generation Agent | nf-core documentation, EDAM Ontology | Workflow generation, starter code creation | High on simple, moderate on medium, limited on complex tasks |
| Reasoning Agent | Phi-3 baseline model | Task decomposition, response evaluation | Effective threshold-based quality control |

Application Notes: System Architecture and Workflow

BioAgents employs a multi-agent architecture with specialized components working collaboratively [4]. The system leverages Phi-3, a small language model, to maintain high performance while significantly reducing computational requirements compared to large language models [4] [18]. This design choice enables local operation, enhancing accessibility for researchers with limited cloud resources or data privacy concerns [5].

Core Operational Workflow

The system follows a structured process for handling bioinformatics queries. The reasoning agent first decomposes user queries into conceptual and code generation components [4]. Specialized agents then process these components: the conceptual agent retrieves and synthesizes domain knowledge from Biocontainers and software ontologies, while the code generation agent accesses workflow templates and best practices from nf-core documentation and EDAM ontology [4] [18]. Finally, the reasoning agent evaluates output quality against predefined thresholds, implementing iterative refinement when needed through self-evaluation techniques [4].
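The decompose, route, and evaluate sequence described above can be sketched as plain function composition. Everything here is hypothetical scaffolding: the agents are passed in as callables, the `decompose` wording and the 0.8 threshold are assumptions, and in a real deployment each callable would wrap a language model call.

```python
from dataclasses import dataclass


@dataclass
class Response:
    conceptual: str   # output of the conceptual agent
    code: str         # output of the code generation agent
    score: float      # quality score assigned by the reasoning agent, in [0, 1]
    accepted: bool    # True when the score meets the threshold


def decompose(query):
    """Hypothetical decomposition into one conceptual and one code subtask."""
    return {
        "conceptual": f"List the tools and steps needed to {query}.",
        "code": f"Write starter workflow code to {query}.",
    }


def handle_query(query, conceptual_agent, code_agent, evaluate, threshold=0.8):
    """Route each subtask to its specialist, then score the combined output."""
    subtasks = decompose(query)
    conceptual = conceptual_agent(subtasks["conceptual"])
    code = code_agent(subtasks["code"])
    score = evaluate(conceptual, code)
    return Response(conceptual, code, score, score >= threshold)


# Stub agents standing in for model calls.
response = handle_query(
    "compute quality metrics on FASTQ files",
    conceptual_agent=lambda task: "Run FastQC, then summarize the reports.",
    code_agent=lambda task: "fastqc sample.fastq.gz",
    evaluate=lambda conceptual, code: 0.9 if code else 0.0,
)
print(response.accepted, response.score)
```

Passing the agents in as arguments keeps the orchestration logic testable without any model loaded, which is convenient when tuning thresholds for local use cases.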

Protocols: Implementing BioAgents for Bioinformatics Workflows

Agent Specialization Protocol

Purpose: Create specialized agents with domain-specific expertise for bioinformatics tasks.

Materials:

- Base language model (Phi-3 recommended)

- Bioinformatics training corpora

- Computational resources (local or cloud)

Procedure:

- Fine-tuning Conceptual Agent:

  - Collect documentation for top 50 bioinformatics tools from Biocontainers

  - Incorporate software ontology relationships [4]

  - Apply Low-Rank Adaptation fine-tuning to maintain efficiency

  - Validate with conceptual genomics questions across difficulty levels

- Configuring Code Generation Agent:

  - Index nf-core workflow documentation and examples

  - Integrate EDAM ontology for computational operations and data types [4]

  - Implement retrieval-augmented generation pipeline

  - Test with template-based code generation tasks

- Reasoning Agent Setup:

  - Configure Phi-3 as base reasoning model [4]

  - Implement self-evaluation thresholds for quality control

  - Establish communication protocols between specialized agents

  - Validate with complex workflow decomposition tasks

Local Deployment Protocol

Purpose: Deploy BioAgents for local operation with proprietary data.

Materials:

- Local computational infrastructure

- Containerization platform (Docker/Singularity)

- Bioinformatics data repositories

Procedure:

- Environment Configuration:

  - Set up containerized environment for dependency management [20]

  - Allocate computational resources based on expected workload

  - Configure secure access to proprietary data sources

- Knowledge Base Integration:

  - Index local workflow repositories and protocols

  - Incorporate institution-specific data governance policies

  - Establish continuous knowledge updates from community resources

- Validation and Testing:

  - Execute standardized test queries across complexity levels

  - Compare outputs with expert-generated benchmarks

  - Optimize self-evaluation thresholds for local use cases

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents for Multi-Agent Bioinformatics Systems

| Component | Function | Implementation Example | Usage Notes |
|---|---|---|---|
| Phi-3 SLM | Core reasoning engine | Microsoft Phi-3 model [4] | Balanced performance and efficiency for local deployment |
| Biocontainers | Tool documentation source | Biocontainers registry [4] | Provides standardized bioinformatics tool descriptions |
| EDAM Ontology | Bioinformatics operations | EDAM ontology classes and relationships [4] | Ensures consistent computational terminology |
| nf-core | Workflow templates | nf-core/repositories [4] | Source of community-best-practice workflows |
| Retrieval-Augmented Generation | Dynamic knowledge access | Custom RAG pipeline [4] | Enhances accuracy with current documentation |
| Self-Evaluation Framework | Output quality control | Threshold-based scoring [4] | Maintains reliability through iterative refinement |

The BioAgents multi-agent system represents a significant advancement in democratizing bioinformatics analysis by addressing three critical challenges: making advanced workflow design accessible to non-experts, managing the inherent complexity of multi-step genomic analyses, and enabling local operation with proprietary data [5] [4]. By leveraging specialized small language models fine-tuned on domain-specific resources, the system achieves human-expert-level performance on conceptual tasks while maintaining computational efficiency [18]. The protocols and application notes provided herein offer researchers a roadmap for implementing similar systems within their own institutions, potentially accelerating genomics research and broadening participation in bioinformatics across the scientific community. Future work will focus on enhancing code generation capabilities, particularly for complex, multi-step workflows, and expanding the knowledge bases to cover emerging technologies and methodologies.

Architecting Your Bio-Agents: A Practical Guide to System Design and Implementation

The construction of end-to-end bioinformatics workflows demands deep expertise in both genomic concepts and computational techniques, presenting a significant barrier to efficient scientific discovery. This application note details the core architecture patterns of multi-agent systems that address this challenge through specialized agents for conceptual genomics and code generation. Framed within broader research on automating bioinformatics workflows, we present validated experimental protocols and performance data from systems including BioAgents and GenoMAS, which demonstrate human expert-level performance on complex tasks by leveraging fine-tuned small language models, structured coordination patterns, and retrieval-augmented generation. The protocols and architectural guidelines provided herein serve as an actionable framework for researchers and drug development professionals seeking to implement these systems for scalable, reproducible genomic analysis.

Modern genomics research involves complex, multi-stage workflows that require deep expertise across domains, from initial sample processing to advanced computational analysis. Traditional single-agent AI systems often struggle with the nuanced guidance required for these tasks, creating a critical gap in bioinformatics workflow automation [4] [18]. Multi-agent systems bridge this gap by deploying specialized AI agents that collaborate to solve complex problems, with particular effectiveness in domains requiring both conceptual understanding and executable code generation [21].

The BioAgents system exemplifies this approach, tackling fundamental bioinformatics challenges identified through analysis of 68,000 question-answer pairs from Biostars, where the most frequent questions revolved around tool selection and pipeline-related queries for RNA-sequencing, alignment, and variant calling [4] [18]. By decomposing these complex requirements into specialized agent roles, multi-agent architectures achieve performance comparable to human experts on conceptual genomics tasks, while producing starter code whose completeness declines as workflow complexity grows.

Core Architectural Framework

Specialized Agent Roles and Coordination

Effective multi-agent systems for bioinformatics employ specialized agents with distinct responsibilities coordinated through structured patterns. The architecture typically incorporates these core agent types:

- Conceptual Reasoning Agent: Handles domain knowledge and workflow logic, fine-tuned on bioinformatics tools documentation from sources like Biocontainers and software ontologies [4]

- Code Generation Agent: Translates conceptual workflows into executable code, enhanced with retrieval-augmented generation (RAG) on documentation from nf-core and EDAM ontology [18]

- Validation Agent: Performs self-evaluation and quality control on outputs, implementing reliability checks against defined thresholds [4]

- Coordinator Agent: Orchestrates workflow execution and agent interactions using typed message-passing protocols [22]
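One way to picture typed message passing between the four roles listed above is a small mailbox keyed by recipient. The `Role`, `Message`, and `Mailbox` names and the `kind` field are hypothetical; the cited frameworks define their own message schemas, which are not reproduced here.

```python
from collections import deque
from dataclasses import dataclass
from enum import Enum


class Role(Enum):
    COORDINATOR = "coordinator"
    CONCEPTUAL = "conceptual"
    CODE = "code"
    VALIDATION = "validation"


@dataclass(frozen=True)
class Message:
    sender: Role
    recipient: Role
    kind: str       # e.g. "task", "result", "review" (assumed vocabulary)
    payload: str


class Mailbox:
    """First-in, first-out queue per recipient role."""

    def __init__(self):
        self._queues = {role: deque() for role in Role}

    def send(self, message):
        # Reject anything that is not a well-formed Message.
        if not isinstance(message, Message):
            raise TypeError("only Message instances can be sent")
        self._queues[message.recipient].append(message)

    def receive(self, role):
        queue = self._queues[role]
        return queue.popleft() if queue else None


mailbox = Mailbox()
mailbox.send(Message(Role.COORDINATOR, Role.CONCEPTUAL, "task", "Plan an RNA-seq alignment"))
first = mailbox.receive(Role.CONCEPTUAL)
print(first.kind, first.payload)
```

Fixing the message shape up front means a malformed hand-off fails at the point of sending rather than surfacing later as a confusing agent response.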

The GenoMAS framework extends this approach with six specialized LLM agents that function as collaborative programmers, generating, revising, and validating executable code through a guided-planning framework that maintains logical coherence while adapting to genomic data idiosyncrasies [22].

Architectural Patterns

Two primary architectural patterns have emerged as effective for bioinformatics workflow automation:

Sequential Architecture: Specialized agents operate in a predetermined sequence, with each agent processing output from previous agents and passing results to subsequent agents in the chain. This pattern mirrors traditional bioinformatics workflow stages and provides clear accountability [23].

Supervisor Architecture: A central supervisor agent coordinates all other agents, making routing decisions and managing task distribution. This creates a clear control hierarchy that is particularly valuable for structured workflows and quality control processes [21].
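The two patterns differ mainly in who decides what runs next, which a short sketch can make concrete. The agents here are stub functions and the keyword-based `route` rule is an assumption; a real supervisor would typically ask a language model to make the routing decision.

```python
def run_sequential(agents, task):
    """Sequential pattern: each agent consumes the previous agent's output."""
    result = task
    for agent in agents:
        result = agent(result)
    return result


def run_supervisor(route, agents, task):
    """Supervisor pattern: a routing function picks which agent handles the task."""
    name = route(task)
    return agents[name](task)


# Stub agents that only annotate the string they receive.
def quality_control(data):
    return data + " -> qc"


def alignment(data):
    return data + " -> align"


pipeline_result = run_sequential([quality_control, alignment], "reads")

specialists = {
    "conceptual": lambda task: "conceptual answer",
    "code": lambda task: "generated code",
}


def route(task):
    # Hypothetical rule: requests mentioning "write" go to the code agent.
    return "code" if "write" in task.lower() else "conceptual"


routed_result = run_supervisor(route, specialists, "Write a Nextflow process")
print(pipeline_result, "|", routed_result)
```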

BioAgent Coordination Architecture: Specialized agents operate under supervisor coordination with access to external tools and data sources.

Experimental Validation and Performance Metrics

Evaluation Methodology

To validate the performance of specialized agent architectures, BioAgents implemented a rigorous evaluation framework across three complexity levels of genomic tasks [4] [18]. The experimental design recruited bioinformatics experts who received the same inputs as the multi-agent system, with independent assessment of both system and human expert outputs along two axes:

- Accuracy: How well the user's query was answered, measuring correctness of conceptual guidance and generated code

- Completeness: The extent to which the output captured all relevant information needed to execute the workflow
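In the evaluation described here both axes were assessed by comparing outputs with those of human experts. As a rough automated proxy, and only under the assumption that a workflow can be reduced to a set of named steps and tools, the two axes could be approximated as set overlaps. The step and tool names below are made up for illustration.

```python
def completeness(required_steps, produced_steps):
    """Fraction of required workflow steps that appear in the produced workflow."""
    required = set(required_steps)
    if not required:
        return 1.0
    return len(required & set(produced_steps)) / len(required)


def tool_accuracy(recommended_tools, accepted_tools):
    """Fraction of recommended tools that appear on an expert-approved list."""
    recommended = set(recommended_tools)
    if not recommended:
        return 0.0
    return len(recommended & set(accepted_tools)) / len(recommended)


required = ["quality_control", "assembly", "annotation", "variant_calling", "phylogeny"]
produced = ["quality_control", "assembly", "annotation"]
coverage = completeness(required, produced)

accuracy = tool_accuracy(["fastqc", "spades"], ["fastqc", "spades", "prokka"])
print(coverage, accuracy)
```

Such overlap scores ignore ordering and parameter choices, so they would complement expert review rather than replace it.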

Tasks were categorized by complexity:

- Level 1 (Easy): Quality metrics on FASTQ files

- Level 2 (Medium): Aligning RNA-seq data against a human reference genome

- Level 3 (Hard): Assembling, annotating, and analyzing SARS-CoV-2 genomes from sequencing data to identify and characterize variants

Performance Results

Table 1: Performance Comparison of BioAgents vs. Human Experts on Conceptual Genomics Tasks

| Task Complexity | Agent Accuracy | Expert Accuracy | Agent Completeness | Expert Completeness |
|---|---|---|---|---|
| Level 1 (Easy) | 98% | 97% | 95% | 96% |
| Level 2 (Medium) | 94% | 95% | 92% | 94% |
| Level 3 (Hard) | 89% | 90% | 85% | 88% |

Table 2: Code Generation Performance Across Task Complexity

| Task Complexity | Starter Code Generated | Syntax Correctness | Functional Accuracy | Tool Selection Accuracy |
|---|---|---|---|---|
| Level 1 (Easy) | 100% | 95% | 92% | 94% |
| Level 2 (Medium) | 85% | 88% | 80% | 86% |
| Level 3 (Hard) | 45% | 78% | 65% | 72% |

The GenoMAS framework demonstrated particularly strong performance on the GenoTEX benchmark, achieving a Composite Similarity Correlation of 89.13% for data preprocessing and an F1 score of 60.48% for gene identification, surpassing prior art by 10.61% and 16.85% respectively [22].

Workflow Execution Protocol

Protocol 1: Multi-Agent Bioinformatics Workflow Execution

Objective: Execute a complex genomics task using specialized agents for conceptual reasoning and code generation.

Materials:

- BioAgents system architecture or equivalent multi-agent framework

- Access to bioinformatics tools documentation (Biocontainers, nf-core)

- Domain ontologies (EDAM, Software Ontology)

- Computational environment with appropriate bioinformatics tools

Procedure:

- Task Decomposition (5-10 minutes)

  - Input user query to supervisor agent

  - Supervisor decomposes task into conceptual and code generation components

  - Route subtasks to appropriate specialized agents

- Conceptual Workflow Generation (10-15 minutes)

  - Conceptual agent retrieves relevant documentation using RAG

  - Generate step-by-step workflow logic with tool recommendations

  - Validate conceptual framework against domain ontologies

- Code Generation Phase (15-20 minutes)

  - Code generation agent receives conceptual workflow

  - Retrieve template code from nf-core and similar workflows

  - Generate executable code with appropriate parameters

  - Implement error handling and validation checks

- Validation and Integration (5-10 minutes)

  - Validation agent reviews generated code and conceptual workflow

  - Perform self-evaluation against quality threshold

  - Integrate feedback through iterative refinement if needed

  - Return complete workflow to user

Troubleshooting:

- If code generation fails for complex tasks, implement step-wise generation focusing on workflow segments

- For tool selection inaccuracies, enhance RAG system with additional documentation sources

- If validation scores remain below threshold after 3 iterations, flag for human expert intervention
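The last troubleshooting rule, escalating to a human after three unsuccessful refinement passes, can be sketched as a bounded loop. The `score` and `revise` callables, the 0.8 threshold, and the status strings are assumptions for illustration; only the three-iteration limit comes from the protocol above.

```python
def refine_until_accepted(draft, score, revise, threshold=0.8, max_iterations=3):
    """Revise a draft until it meets the threshold or the iteration budget runs out.

    Returns (final_draft, status, revisions_used), where status is either
    "accepted" or "needs_human_review".
    """
    for revisions_used in range(max_iterations + 1):
        if score(draft) >= threshold:
            return draft, "accepted", revisions_used
        if revisions_used < max_iterations:
            draft = revise(draft)
    return draft, "needs_human_review", max_iterations


# Toy scoring: a workflow is "complete" once it lists four steps.
def score(steps):
    return len(steps) / 4


def revise(steps):
    return steps + ["extra_step"]


accepted = refine_until_accepted(["qc"], score, revise)
escalated = refine_until_accepted([], score, revise)
print(accepted[1], accepted[2])
print(escalated[1], escalated[2])
```

Capping the loop keeps a stubbornly low-scoring draft from being reprocessed indefinitely and makes the hand-off to an expert an explicit outcome rather than a silent failure.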

Implementation Protocols

System Configuration Protocol

Protocol 2: BioAgents System Implementation

Objective: Deploy a multi-agent system for bioinformatics workflow automation with specialized agents for conceptual genomics and code generation.

Materials:

- Phi-3 small language model or equivalent [4]

- Fine-tuning datasets: Biocontainers documentation, nf-core workflows

- Retrieval augmented generation pipeline

- LangGraph or BeeAI framework for agent orchestration [21] [24]

Procedure:

- Agent Specialization (2-3 days)

  - Fine-tune conceptual agent on top 50 bioinformatics tools from Biocontainers using Low-Rank Adaptation (LoRA)

  - Configure code generation agent with RAG on nf-core documentation and EDAM ontology

  - Set validation thresholds based on task complexity

- Coordination Framework (1-2 days)

  - Implement supervisor architecture with typed message-passing protocols

  - Configure shared memory system for context preservation

  - Establish communication protocols for agent interactions

- Tool Integration (1 day)

  - Connect agents to external bioinformatics tools (BLAST, DESeq2, alignment tools)

  - Implement API connections to genomic databases (GEO, TCGA)

  - Configure execution environment for generated code

- Validation System (1 day)

  - Implement self-evaluation mechanisms with quality thresholds

  - Configure iterative refinement loops with maximum iteration limits

  - Set up human-in-the-loop intervention points for complex cases

Implementation Workflow: Specialized agent system incorporating fine-tuning and RAG for bioinformatics tasks.

Model Optimization Strategy

Rather than relying solely on large language models with substantial computational requirements, the BioAgents approach leverages smaller, more efficient models like Phi-3, fine-tuned on domain-specific data [4]. This strategy significantly reduces computational resources while maintaining high performance through:

- Domain-Specific Fine-Tuning: Low-Rank Adaptation (LoRA) on curated bioinformatics datasets

- Retrieval Augmented Generation: Enhanced with bioinformatics-specific ontologies and documentation

- Ensemble Specialization: Multiple specialized agents outperforming single generalist models

The Scientist's Toolkit

Research Reagent Solutions

Table 3: Essential Components for Multi-Agent Bioinformatics Systems

| Component | Type | Function | Example Sources/Implementations |
|---|---|---|---|
| Specialized Conceptual Agent | Software Agent | Provides domain-specific workflow logic and tool recommendations | Fine-tuned Phi-3 on Biocontainers [4] |
| Code Generation Agent | Software Agent | Translates conceptual workflows into executable code | RAG-enhanced agent with nf-core documentation [18] |
| Bioinformatics Ontologies | Knowledge Base | Standardizes terminology and tool relationships | EDAM Ontology, Software Ontology [4] |
| Workflow Templates | Code Repository | Provides starting points for common analyses | nf-core workflows, Biocontainers [18] |
| Agent Orchestration Framework | Software Framework | Coordinates multi-agent interactions and state management | LangGraph, BeeAI [21] [24] |
| Validation Thresholds | Quality Metrics | Defines minimum acceptable output quality | Task-dependent accuracy and completeness scores [4] |
| RAG Pipeline | Retrieval System | Enhances agents with current documentation and examples | Vector databases with bioinformatics documentation [18] |

The specialization of agents for conceptual genomics and code generation represents a promising architecture pattern for bioinformatics workflow automation. Through the implementation protocols and architectural patterns detailed in this application note, researchers can deploy systems that achieve human expert-level performance on conceptual tasks while generating starter code for genomic analyses, with the caveat that code generation weakens on the most complex workflows. The experimental validation across multiple complexity levels supports this approach, particularly when fine-tuned small language models are enhanced with retrieval-augmented generation.

As these systems evolve, the integration of more sophisticated validation mechanisms and expanded domain coverage will further enhance their utility for the bioinformatics community. The structured implementation approach provided herein offers researchers a clear pathway to adopting these architectures, potentially accelerating scientific discovery in genomics and drug development through more accessible, reproducible computational workflows.

The construction of end-to-end bioinformatics workflows demands deep expertise in both genomic concepts and computational techniques. While large language models (LLMs) offer assistance, they often fall short in providing the nuanced guidance required for complex tasks and are notoriously resource-intensive. This application note details a methodology for leveraging parameter-efficient fine-tuning (PEFT) of small language models (SLMs) to create specialized agents for bioinformatics analysis. By combining the Low-Rank Adaptation (LoRA) fine-tuning technique with structured bioinformatics data and ontologies, we demonstrate that it is possible to build multi-agent systems that perform on par with human experts on conceptual genomics tasks, while remaining computationally accessible and suitable for deployment in resource-constrained environments.

Protocol: Fine-tuning SLMs for Bioinformatics with LoRA

Low-Rank Adaptation (LoRA) is a PEFT technique that keeps the original model weights frozen and injects small trainable rank-decomposition matrices into the transformer layers, so that only these matrices are updated during fine-tuning [25]. This significantly reduces the number of trainable parameters. QLoRA extends the approach with quantization: the frozen base model is held in 4-bit precision while the LoRA adapters are trained on top of it, with minimal performance loss [25] [26]. For bioinformatics applications, these techniques make it feasible to adapt SLMs to specialized domains without prohibitive computational costs.
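To make the parameter saving concrete, the following is a minimal pure-Python sketch of the low-rank update for a single weight matrix, assuming the common formulation W_eff = W + (alpha / r) · B A. The function names and the example dimensions (a 3072-wide projection with rank 4) are illustrative assumptions, not values taken from the cited work.

```python
"""Illustrative LoRA arithmetic for one weight matrix (not a training loop)."""


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_effective_weight(w, a, b, alpha):
    """Return W + (alpha / r) * (B @ A).

    w: frozen weight matrix, shape d_out x d_in
    b: trainable matrix, shape d_out x r
    a: trainable matrix, shape r x d_in
    """
    r = len(a)  # the rank is the shared inner dimension
    scale = alpha / r
    delta = matmul(b, a)
    return [
        [w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
        for w_row, d_row in zip(w, delta)
    ]


def trainable_params(d_out, d_in, r):
    """Return (full fine-tuning count, LoRA count) for one matrix."""
    full = d_out * d_in
    lora = r * (d_out + d_in)
    return full, lora


if __name__ == "__main__":
    # Hypothetical layer size and rank, chosen only for illustration.
    full, lora = trainable_params(3072, 3072, 4)
    print(f"full: {full:,}  lora: {lora:,}  share: {lora / full:.4%}")
```

Because the LoRA count grows with r · (d_out + d_in) rather than d_out · d_in, lowering the rank shrinks the trainable footprint roughly linearly.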

Table 1: Essential Research Reagents and Computational Solutions

| Item Name | Type/Specifications | Function in Protocol |
|---|---|---|
| Base SLM (Phi-3-mini) | Pre-trained Small Language Model (e.g., 3.8B parameters) | Serves as the foundational model for fine-tuning; provides general language capabilities [4] [18]. |
| Bioinformatics Datasets | UniRef50, Biocontainers tools documentation, nf-core workflows | Domain-specific data for fine-tuning; enables the model to learn bioinformatics concepts and procedures [4] [27]. |
| Bio-ontologies | EDAM, Software Ontology, MONDO, DOID | Provides structured, hierarchical knowledge for retrieval-augmented generation (RAG); ensures semantic consistency [4] [28] [29]. |
| Hugging Face Ecosystem | PEFT Library, Transformers, BitsAndBytes | Software libraries that simplify the implementation of LoRA, QLoRA, and other fine-tuning techniques [26]. |
| GPU with ≥16GB VRAM | NVIDIA V100 (16GB) or A100 (40GB+) | Accelerates the fine-tuning process; A100 is preferred for larger models or batch sizes [26]. |

Step-by-Step Fine-Tuning Protocol

Step 1: Model and Dataset Preparation

- Base Model Selection: Select an appropriate SLM such as Phi-3-mini or a SmolLM2 variant (135M/360M parameters) [25] [18].

- Dataset Curation: For a conceptual genomics agent, gather documentation for the top 50 bioinformatics tools from Biocontainers, including software versions and help documentation. For workflow generation, utilize public workflow collections like nf-core [4]. For protein-focused tasks, use a subset of the UniRef50 dataset [27].

- Preprocessing: Tokenize the dataset using the model's tokenizer. Adjust the `max_seq_length` parameter (e.g., to 512 or 1024 tokens) based on the average token length in your data to manage GPU memory effectively [25] [26].
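One way to choose the sequence length from the data is sketched below. It picks the smallest candidate length that covers a chosen fraction of examples. The function name `suggest_max_seq_length`, the candidate lengths, and the 95% coverage default are assumptions for illustration, and whitespace splitting stands in for the model's real tokenizer in the demo.

```python
import math


def suggest_max_seq_length(token_counts, percentile=0.95, choices=(256, 512, 1024, 2048)):
    """Pick the smallest candidate length covering the given fraction of examples.

    token_counts: tokens per example, as counted by the model's tokenizer.
    Falls back to the largest candidate when none covers the percentile.
    """
    if not token_counts:
        raise ValueError("token_counts must not be empty")
    ordered = sorted(token_counts)
    # Nearest-rank percentile: the value at or above the requested fraction.
    rank = max(1, math.ceil(percentile * len(ordered)))
    cutoff = ordered[rank - 1]
    for length in sorted(choices):
        if length >= cutoff:
            return length
    return max(choices)


if __name__ == "__main__":
    # Whitespace splitting is only a stand-in for a real tokenizer.
    docs = [
        "samtools sort -o out.bam in.bam",
        "bwa mem ref.fa reads.fq > aln.sam",
    ]
    counts = [len(doc.split()) for doc in docs]
    print(suggest_max_seq_length(counts))
```

Examples longer than the chosen length would be truncated, so it is worth checking how many fall above the cutoff before committing to a value.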

Step 2: LoRA Configuration

Configure the LoRA parameters using the PEFT library. As a starting point, a lower LoRA rank (e.g., r=4) and a higher learning rate (e.g., 5e-4) have been identified as influential factors for good performance [25]. For QLoRA, additionally configure the BitsAndBytesConfig for 4-bit quantization [26].

Step 3: Hyperparameter Tuning and Training Execution

Initiate the training loop with the following key hyperparameters:

- Learning Rate: Use a learning rate of 0.0005 [25].

- Batch Size: Start with a small effective batch size (e.g., 2) and increase if memory allows [26].

- Gradient Checkpointing: Enable to trade compute for memory savings [25].

- Training Steps: Approximately 350 steps can be effective, though more may be beneficial [25].

Execute the training script. Monitor loss and performance metrics using a framework like Weights & Biases (wandb).
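The hyperparameters named in Steps 1 to 3 can be gathered in one place, as in the sketch below. `FineTuneSettings` is a hypothetical container, not part of any library. Its field names loosely mirror common Hugging Face training arguments, and the gradient accumulation default of 1 is an assumption that the text does not specify.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FineTuneSettings:
    """Hypothetical container for the values suggested in the protocol."""

    lora_rank: int = 4                    # low rank suggested in Step 2
    learning_rate: float = 5e-4           # equals 0.0005
    per_device_batch_size: int = 2        # small starting batch size
    gradient_accumulation_steps: int = 1  # assumption: no accumulation
    gradient_checkpointing: bool = True   # trade compute for memory
    max_steps: int = 350
    max_seq_length: int = 512

    def effective_batch_size(self, num_devices: int = 1) -> int:
        """Examples contributing to each optimizer step."""
        return (
            self.per_device_batch_size
            * self.gradient_accumulation_steps
            * num_devices
        )

    def examples_seen(self, num_devices: int = 1) -> int:
        """Total training examples processed over the whole run."""
        return self.effective_batch_size(num_devices) * self.max_steps


if __name__ == "__main__":
    settings = FineTuneSettings()
    print(settings)
    print("examples seen:", settings.examples_seen())
```

Computing the number of examples seen makes it easy to check whether a 350-step run covers the curated dataset at least once for a given batch size.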

Step 4: Multi-Agent System Integration

Incorporate the fine-tuned model into a multi-agent framework. The BioAgents system employs a reasoning agent (base Phi-3) that coordinates with two specialized agents [4] [18]:

- A Conceptual Agent, fine-tuned using LoRA on Biocontainers documentation.

- A Code Generation Agent, enhanced with RAG over nf-core documentation and the EDAM ontology.

Implement an evaluation loop where the reasoning agent assesses response quality against a defined threshold and can trigger reprocessing if needed [4].
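The evaluation loop can be sketched as follows, with the specialist agents, the router, and the scorer passed in as plain callables. This is a minimal illustration under stated assumptions, not the BioAgents implementation: the threshold of 0.7, the retry limit, the revision prompt wording, and all names are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass
class AgentResult:
    agent: str     # name of the specialist that answered
    answer: str    # best answer seen
    score: float   # score assigned to that answer
    attempt: int   # attempt number on which it was produced


def run_with_evaluation(
    query: str,
    agents: Dict[str, Callable[[str], str]],
    route: Callable[[str], str],
    evaluate: Callable[[str, str], float],
    threshold: float = 0.7,
    max_attempts: int = 3,
) -> AgentResult:
    """Route a query to a specialist, score the answer, retry below threshold.

    Returns the best-scoring answer seen within max_attempts.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    name = route(query)
    agent = agents[name]
    best: Optional[AgentResult] = None
    prompt = query
    for attempt in range(1, max_attempts + 1):
        answer = agent(prompt)
        score = evaluate(query, answer)
        if best is None or score > best.score:
            best = AgentResult(name, answer, score, attempt)
        if score >= threshold:
            break
        # Reprocess: ask the same specialist to revise its previous answer.
        prompt = f"{query}\n\nPrevious answer scored {score:.2f}; please revise:\n{answer}"
    return best


if __name__ == "__main__":
    # Stub callables stand in for the fine-tuned and RAG-enhanced models.
    stubs = {
        "conceptual": lambda p: "Align reads, then call variants.",
        "code": lambda p: "nextflow run nf-core/sarek",
    }
    result = run_with_evaluation(
        "How do I call variants?",
        stubs,
        route=lambda q: "code" if "pipeline" in q else "conceptual",
        evaluate=lambda q, a: 0.8 if "variants" in a else 0.3,
    )
    print(result)
```

Keeping the best answer rather than the last one means a failed revision never makes the final response worse.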

Diagram 1: Multi-agent system architecture for bioinformatics.

Application Notes and Experimental Results

Benchmarking Performance

The fine-tuned SLMs were evaluated against human experts and larger models like GPT-4o mini across tasks of varying complexity [25] [4]. The results demonstrate the efficacy of the proposed approach.

Table 2: Performance evaluation of fine-tuned SLMs on bioinformatics tasks [4] [18].

| Task Difficulty | Task Type | Model / System | Performance Outcome |
|---|---|---|---|
| Easy | Conceptual Genomics | BioAgents (Fine-tuned SLM) | Performance on par with human experts. |
| Easy | Code Generation | BioAgents (Fine-tuned SLM) | Matched expert accuracy, but occasionally provided false tool information. |
| Medium | Code Generation | BioAgents (Fine-tuned SLM) | Struggled to produce complete outputs for end-to-end pipelines. |
| Hard | Conceptual Genomics | BioAgents (Fine-tuned SLM) | Provided a logical series of steps for complex viral genome analysis, comparable to experts. |
| Hard | Code Generation | BioAgents (Fine-tuned SLM) | Failed to generate starter code, reverted to conceptual outlines. |

Resource Efficiency of Fine-Tuning Techniques

Experiments comparing PEFT methods on an NVIDIA V100 GPU highlight the trade-offs between different techniques.

Table 3: Comparison of PEFT techniques on resource consumption and performance [26].

| Fine-Tuning Technique | GPU Memory Used (V100) | Relative Training Time (V100) | Key Characteristic |
|---|---|---|---|
| LoRA | Lower | Intermediate | Fastest on powerful GPUs (e.g., A100); simplest implementation. |
| QLoRA | Highest (11.78 GB) | Fastest | Uses 4-bit quantization; can have higher memory overhead on small GPUs. |
| DoRA | Intermediate | Slowest | Decomposes weights into magnitude/direction; can improve performance. |
| QDoRA | High | Slowest | Combines quantization with DoRA. |

Key findings from these benchmarks include:

- Cost Reduction: Using LoRA with SLMs can reduce fine-tuning costs by up to 70% compared to full fine-tuning of larger models [27].

- Competitive Performance: Fine-tuned SLMs achieve performance comparable to human experts on conceptual genomics tasks, demonstrating their utility for domain-specific applications [4] [18].

- Hardware Considerations: On a V100 GPU, quantized methods (QLoRA, QDoRA) sometimes showed higher-than-expected memory usage, underscoring the need for empirical testing in resource-constrained environments [26].

Diagram 2: End-to-end fine-tuning and deployment workflow for SLMs in bioinformatics.

This protocol outlines a robust methodology for leveraging SLMs fine-tuned with LoRA in bioinformatics. The integration of structured ontological knowledge and a multi-agent architecture enables the creation of systems that democratize access to complex bioinformatics analysis. While current implementations show human-expert-level performance on conceptual tasks, future work should focus on improving code generation capabilities for complex, multi-step workflows. The provided tables, diagrams, and step-by-step protocol offer researchers a clear pathway to implement and build upon this approach.

The development of end-to-end bioinformatics workflows demands deep expertise in both genomics and computational techniques, presenting a significant barrier to many researchers [4] [18]. While large language models (LLMs) offer some assistance, they often lack the nuanced guidance required for complex bioinformatics tasks and require substantial computational resources [4]. Multi-agent systems built on smaller, fine-tuned language models present a promising alternative, particularly when enhanced with Retrieval-Augmented Generation (RAG) [4] [18]. The BioAgents system demonstrates this approach, achieving performance comparable to human experts on conceptual genomics tasks by leveraging specialized knowledge from bioinformatics resources like nf-core and Biocontainers [4]. This protocol details the methodology for enhancing such agent systems through the strategic integration of nf-core and Biocontainers knowledge bases, enabling more reliable and context-aware assistance in workflow development.

Background

The Bioinformatics Workflow Challenge

Bioinformaticians frequently navigate complex, multi-stage pipelines that integrate diverse data types and procedural dependencies [4] [18]. Community platforms like Biostars provide valuable question-answer exchanges, while repositories like GitHub host reproducible workflow examples (Nextflow, Snakemake) and software containers (Biocontainers) [4]. Analysis of 68,000 Biostars QA pairs reveals that most questions revolve around specific bioinformatics software tools and pipeline-related queries for RNA-sequencing, alignment, and variant calling [4] [18]. This complexity creates steep learning curves for newcomers and challenges for experts to stay current with rapidly evolving techniques and software versions [4].

nf-core and Biocontainers Ecosystem

nf-core provides a community-driven collection of peer-reviewed bioinformatics pipelines built with Nextflow, offering standardized implementation of common analyses [30]. Biocontainers offers a comprehensive repository of Docker and Singularity containers for bioinformatics software, automatically built from Bioconda packages [30]. These projects have been fundamental to ensuring reproducibility and simplifying software deployment in bioinformatics. The nf-core community is currently transitioning to Seqera Containers, a new system built on Wave technology that provides on-demand container generation from Conda or PyPI packages while maintaining long-term storage stability [30].

Table 1: Container Technology Feature Comparison

| Feature | BioContainers | Wave | Seqera Containers |
|---|---|---|---|
| Support Bioconda packages | | | |
| Support all conda channels | | | |
| Support PyPI (pip) packages | | | |
| Docker + Singularity support | | | |
| Multi-package containers | (Mulled) | | |
| Container build logs | | | |
| Long storage duration | * | (72 hours cache) | * (Minimum 5 years) |
| Stable image URIs | | | |
| Pull delay for conda packages | Instant | ~2-3 minutes build on first request | Instant |

System Architecture and Implementation

Multi-Agent Framework Design

The BioAgents system employs a modular architecture with three specialized agents built upon the Phi-3 small language model [4] [18]:

- Conceptual Genomics Agent: Fine-tuned using Low-Rank Adaptation (LoRA) on documentation from the top 50 bioinformatics tools in Biocontainers, including detailed software versions and help documentation [4].

- Workflow Generation Agent: Enhanced with RAG on nf-core documentation and the EDAM ontology for workflow steps and structure [4] [18].

- Reasoning Agent: Orchestrates the other agents and incorporates self-evaluation capabilities to assess response quality against defined thresholds [4].

This division of labor allows each agent to develop specialized expertise while maintaining overall system efficiency through the use of smaller, fine-tuned models rather than resource-intensive large language models [4] [18].

Knowledge Base Integration Protocol

Biocontainers Knowledge Processing

The Conceptual Genomics Agent processes Biocontainers documentation through the following methodology:

- Tool Selection: Identify the top 50 most frequently used bioinformatics tools based on Biocontainers usage statistics and Biostars question frequency [4].

- Documentation Extraction: Collect comprehensive documentation for each tool, including help manuals, version information, and usage examples from Biocontainers metadata.

- Fine-tuning Dataset Creation: Structure the documentation into question-answer pairs suitable for training, incorporating software ontology information [4].

- Model Adaptation: Apply Low-Rank Adaptation (LoRA) to the base Phi-3 model using the structured bioinformatics dataset, preserving general knowledge while adding domain-specific expertise [4].
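The dataset creation step can be illustrated with a small sketch that turns tool records into question-answer pairs and serializes them as JSON Lines. The record keys (`name`, `version`, `description`, `help_text`), the question templates, and the function names are all hypothetical, since the source does not specify the exact schema used.

```python
import json
from typing import Dict, Iterable, Iterator


def doc_to_qa_pairs(tool: Dict[str, str]) -> Iterator[Dict[str, str]]:
    """Turn one tool record into question-answer pairs.

    Pairs are only emitted for fields that are present and non-empty.
    """
    name = tool["name"]
    version = tool.get("version", "").strip()
    label = f"{name} {version}".strip()
    templates = [
        ("description", f"What is {name} used for?"),
        ("help_text", f"How do I run {label} from the command line?"),
    ]
    for key, question in templates:
        text = tool.get(key, "").strip()
        if text:
            yield {
                "question": question,
                "answer": text,
                "tool": name,
                "version": version,
            }


def to_jsonl(tools: Iterable[Dict[str, str]]) -> str:
    """Serialize all pairs as JSON Lines, one training example per line."""
    lines = [json.dumps(pair) for tool in tools for pair in doc_to_qa_pairs(tool)]
    return "\n".join(lines)


if __name__ == "__main__":
    # Toy records; real ones would be extracted from Biocontainers metadata.
    tools = [
        {
            "name": "samtools",
            "version": "1.19",
            "description": "Utilities for manipulating SAM/BAM files.",
            "help_text": "samtools sort -o out.bam in.bam",
        },
        {"name": "bwa", "description": "Short-read aligner."},
    ]
    print(to_jsonl(tools))
```

Carrying the tool name and version on every pair keeps version-specific answers traceable after the data is shuffled for training.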

nf-core Workflow Knowledge Integration

The Workflow Generation Agent implements RAG with nf-core documentation through this protocol:

- Documentation Collection: Aggregate nf-core pipeline documentation, module descriptions, and configuration examples from the nf-core GitHub repository and official website [4] [18].

- Ontology Alignment: Map workflow components to the EDAM ontology, which provides formalized descriptions of bioinformatics operations, topics, data types, and formats [4].

- Vector Embedding Generation: Process the collected documentation using sentence transformers to create dense vector embeddings for semantic search.

- Retrieval Optimization: Implement hybrid search combining dense vector retrieval with keyword matching to ensure both relevance and precision in retrieved documents.
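The hybrid retrieval step can be sketched as a weighted sum of a dense similarity score and a keyword-overlap score. This is one simple fusion strategy among several and is not necessarily what BioAgents uses. The two-dimensional toy vectors stand in for real sentence-transformer embeddings, and the function names and the 0.5 weight are assumptions.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero norm."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def keyword_score(query: str, text: str) -> float:
    """Fraction of distinct query terms that appear in the document text."""
    terms = set(query.lower().split())
    if not terms:
        return 0.0
    words = set(text.lower().split())
    return len(terms & words) / len(terms)


def hybrid_search(
    query: str,
    query_vec: Sequence[float],
    docs: List[Dict],
    weight: float = 0.5,
    top_k: int = 3,
) -> List[Tuple[str, float]]:
    """Rank documents by weight * dense + (1 - weight) * keyword score.

    Each document is a dict with 'id', 'text', and 'vector' keys.
    """
    scored = []
    for doc in docs:
        dense = cosine(query_vec, doc["vector"])
        sparse = keyword_score(query, doc["text"])
        scored.append((weight * dense + (1 - weight) * sparse, doc["id"]))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [(doc_id, round(score, 4)) for score, doc_id in scored[:top_k]]


if __name__ == "__main__":
    # Toy corpus with hand-written vectors in place of real embeddings.
    corpus = [
        {"id": "rnaseq", "text": "nf-core rnaseq pipeline for RNA sequencing", "vector": [1.0, 0.0]},
        {"id": "sarek", "text": "nf-core sarek variant calling pipeline", "vector": [0.0, 1.0]},
    ]
    print(hybrid_search("variant calling", [0.0, 1.0], corpus))
```

The keyword term helps with exact tool and pipeline names, which embeddings can blur, while the dense term covers paraphrased questions.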

Experimental Protocol and Evaluation

Evaluation Framework Design