Deep Learning for Protein-Ligand Binding Affinity Prediction: A Comprehensive Guide for Drug Discovery

Abstract

The prediction of protein-ligand binding affinity (PLA) is a cornerstone of modern drug discovery, crucial for identifying and optimizing potential therapeutic compounds. This article provides a comprehensive exploration of how deep learning (DL) has revolutionized this field, offering a faster and more computationally efficient alternative to traditional experimental and computational methods. Tailored for researchers, scientists, and drug development professionals, it covers the foundational concepts of PLA, the latest DL architectures—including Convolutional Neural Networks (CNNs), Graph Neural Networks (GNNs), and Transformers—and practical guidance on model training, optimization, and validation. By synthesizing current methodologies and addressing key challenges like data heterogeneity and model interpretability, this guide aims to bridge the gap between computational biology and deep learning, empowering professionals to leverage these advanced tools effectively.

The Foundation: Why Protein-Ligand Binding Affinity is Crucial for Drug Discovery

Protein-ligand binding affinity is a fundamental parameter in drug discovery, describing the strength of interaction between a biological target and a potential therapeutic compound [1]. Accurately predicting this affinity is crucial for identifying promising drug candidates, optimizing their properties, and reducing the time and cost associated with traditional experimental approaches [2] [1]. The binding affinity is quantitatively expressed as the dissociation constant (Kd), which represents the ligand concentration at which half of the protein binding sites are occupied [1]. With advancements in computational methods, deep learning has emerged as a transformative paradigm for affinity prediction, offering significant improvements over traditional docking scoring functions by leveraging complex patterns in protein and ligand data [3]. This technical guide explores the core concepts, measurement techniques, and the evolving role of deep learning frameworks in predicting protein-ligand interactions within the modern drug discovery pipeline.

Fundamental Concepts and Definitions

What is Binding Affinity?

Binding affinity quantifies the strength of the interaction between a protein and a ligand. In kinetic terms, it is defined by the affinity constant (Ka), which arises from the equilibrium between the binding (association) and dissociation rates of the interaction [1]. The formation of a protein-ligand complex is a reversible process:

L + P ⇌ LP

Where L is the ligand, P is the protein, and LP is the ligand-protein complex. The rates of the association (Von) and dissociation (Voff) reactions are given by:

- Von = kon[L][P]

- Voff = koff[LP]

Here, kon is the association rate constant (M⁻¹s⁻¹), and koff is the dissociation rate constant (s⁻¹). At equilibrium, the rates are equal (Von = Voff), leading to the definition of the affinity constant Ka [1]:

Ka = kon / koff = [LP] / ([L][P])

In practice, the dissociation constant (Kd) is more commonly used, as it has units of concentration (M) and represents the ligand concentration required to achieve half-maximal binding [1]:

Kd = 1 / Ka = koff / kon = [L][P] / [LP]

A lower Kd value indicates a tighter binding interaction and higher affinity, as less ligand is needed to occupy the protein's binding sites.
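The relations above can be checked numerically. The following is a minimal sketch, assuming simple single-site binding; the function names and the rate constants are illustrative and not taken from the cited sources. It computes Kd from the two rate constants and confirms that half of the sites are occupied when the free ligand concentration equals Kd.

```python
def dissociation_constant(k_on: float, k_off: float) -> float:
    """Kd (M) from kon (M^-1 s^-1) and koff (s^-1): Kd = koff / kon."""
    if k_on <= 0 or k_off < 0:
        raise ValueError("k_on must be positive and k_off non-negative")
    return k_off / k_on


def fraction_bound(free_ligand: float, kd: float) -> float:
    """Fraction of occupied sites for single-site binding: [L] / (Kd + [L])."""
    return free_ligand / (kd + free_ligand)


# Illustrative (made-up) rate constants
kd = dissociation_constant(k_on=1.0e6, k_off=1.0e-3)   # 1e-9 M, i.e. 1 nM
half = fraction_bound(free_ligand=kd, kd=kd)            # 0.5 when [L] equals Kd
```

The occupancy expression follows from Kd = [L][P] / [LP] by writing the bound fraction as [LP] / ([P] + [LP]).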

Models of Protein-Ligand Recognition

The mechanism by which proteins and ligands recognize and bind to each other is foundational to understanding affinity. Several models have been proposed to explain this process [1]:

- Lock and Key Model: Proposed by Emil Fischer in 1894, this model suggests that the ligand (key) has a shape that is perfectly complementary to the rigid binding site of the protein (lock) [1].

- Induced Fit Model: Proposed by Daniel Koshland in 1958, this model posits that both the ligand and the protein are flexible. The binding site conformation changes upon ligand binding to achieve optimal complementarity, much as a glove adapts to the shape of a hand [1].

- Conformational Selection Model: This more recent model suggests that proteins exist in an equilibrium of multiple conformational states. The ligand selectively binds to and stabilizes the pre-existing conformation that it fits best, shifting the equilibrium toward that state [1].

Current computational tools are primarily based on these models, which focus on the binding step. However, their inability to fully account for the dissociation rate (koff) and mechanisms like ligand trapping is a noted limitation in accurately predicting affinity [1].

Experimental and Computational Determination of Binding Affinity

Experimental Methodologies

Experimental techniques for measuring binding affinity provide the ground-truth data essential for validating computational predictions. Key methodologies include:

- Isothermal Titration Calorimetry (ITC): Directly measures the heat change associated with binding, allowing for the determination of Kd, reaction stoichiometry (n), enthalpy (ΔH), and entropy (ΔS).

- Surface Plasmon Resonance (SPR): A biosensor-based technique that measures biomolecular interactions in real-time without labeling, providing direct data on association (kon) and dissociation (koff) rates, from which Kd is calculated.

- Inhibition Constant (Ki) Measurements: For enzyme inhibitors, the inhibition constant (Ki) is often reported, representing the dissociation constant for the enzyme-inhibitor complex, typically determined through enzymatic activity assays [1].
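The quantities reported by ITC are linked through standard thermodynamics: ΔG = RT ln(Kd / 1 M) and ΔG = ΔH - TΔS. The sketch below applies those two relations, assuming a 1 M standard state and 298.15 K; the function names and the ΔH value are illustrative only.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)


def binding_free_energy(kd_molar: float, temperature_k: float = 298.15) -> float:
    """Standard binding free energy (kcal/mol): dG = RT ln(Kd / 1 M)."""
    return R_KCAL * temperature_k * math.log(kd_molar)


def entropic_term(delta_g: float, delta_h: float) -> float:
    """-T*dS (kcal/mol), rearranged from dG = dH - T*dS."""
    return delta_g - delta_h


dg = binding_free_energy(1e-9)          # about -12.3 kcal/mol for Kd = 1 nM
minus_t_ds = entropic_term(dg, -10.0)   # dH = -10 kcal/mol is a made-up value
```

On this scale, each tenfold change in Kd shifts ΔG by roughly 1.4 kcal/mol at room temperature, which is a useful yardstick when reading prediction errors quoted in kcal/mol.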

Computational Prediction and the Role of Docking

Computational approaches offer a faster, cost-effective alternative for affinity estimation, particularly in the early stages of drug discovery.

- Molecular Docking: Docking has two primary goals: predicting the binding pose of the ligand in the protein's binding site and predicting the binding affinity through a scoring function [1]. These scoring functions are mathematical models that evaluate the strength of interactions by considering factors like van der Waals forces, electrostatics, hydrogen bonding, and desolvation effects [1].

- Limitations of Traditional Scoring Functions: Despite success in pose prediction, the scoring functions of many popular docking programs (e.g., AutoDock, Glide, GOLD) often show poor correlation with experimentally determined binding affinities [1]. This can be attributed to inaccurate estimations of energetic contributions or a failure to model the complete biological mechanism of binding and dissociation [1].

Table 1: Types of Scoring Functions Used in Docking

| Type of Scoring Function | Description | Examples |
|---|---|---|
| Empirical | Parameterized using datasets of experimental structures and affinities. | Used in AutoDock, Glide, GOLD, MOE [1] |
| Force Field-Based | Based on molecular mechanics calculations; often combined with solvation terms. | MM/GBSA, MM/PBSA [1] |
| Knowledge-Based | Derived from statistical analysis of known protein-ligand complexes. | Linear regression models, machine learning algorithms [1] |
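To make the idea of an empirical scoring function concrete, the sketch below fits the weights of a weighted-sum score to affinities by least squares. It is a toy illustration, not the scoring function of any program named in the table: the three descriptors, the weights, and all numbers are synthetic assumptions.

```python
import numpy as np

# Rows: complexes; columns: hypothetical interaction descriptors
# (hydrogen-bond count, hydrophobic contact count, rotatable bonds).
# All numbers are synthetic and only serve the illustration.
features = np.array([
    [2.0, 10.0, 3.0],
    [4.0, 15.0, 5.0],
    [1.0,  8.0, 2.0],
    [5.0, 20.0, 6.0],
    [3.0, 12.0, 8.0],
])
true_weights = np.array([0.6, 0.15, -0.2])
bias = 2.0
measured_pkd = features @ true_weights + bias   # stand-in for experimental affinities

# Least-squares fit of the term weights plus an intercept
design = np.hstack([features, np.ones((len(features), 1))])
fitted, *_ = np.linalg.lstsq(design, measured_pkd, rcond=None)


def score(descriptors: np.ndarray) -> float:
    """Predicted pKd as a weighted sum of interaction terms."""
    return float(descriptors @ fitted[:-1] + fitted[-1])
```

Because the synthetic affinities are noise-free, the fit recovers the weights exactly. With real data the residual error reflects both measurement noise and the interactions the chosen terms fail to describe.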

Deep Learning for Binding Affinity Prediction

The Deep Learning Paradigm

Deep learning (DL) models have emerged as a powerful and computationally efficient alternative to traditional scoring functions [3]. They can learn complex, non-linear relationships directly from data, such as protein sequences, ligand structures, and 3D complex geometries, enabling more accurate and generalizable affinity predictions.

A Framework for Structure-Based Prediction: FDA

A significant innovation in this space is the Folding-Docking-Affinity (FDA) framework, which explicitly incorporates predicted 3D structural information [2]. This approach is particularly valuable when experimental protein-ligand complex structures are unavailable.

The FDA framework consists of three replaceable components [2]:

- Folding: Generating the 3D protein structure from its amino acid sequence using tools like ColabFold (based on AlphaFold2) [2].

- Docking: Predicting the binding pose of the ligand within the generated protein structure using deep learning-based docking tools like DiffDock [2].

- Affinity: Predicting the binding affinity from the computed 3D protein-ligand binding structure using graph neural networks (GNNs) like GIGN [2].

This framework demonstrates performance comparable to state-of-the-art docking-free methods and shows enhanced generalizability, particularly in challenging scenarios where proteins or ligands in the test set were not seen during training [2].
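The "replaceable components" design can be pictured as three functions chained together. The sketch below is one hypothetical way to express that in code; the class, type aliases, and stub functions are assumptions for illustration and do not reflect the actual interfaces of ColabFold, DiffDock, or GIGN.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical type aliases: structures and poses are passed around as file paths.
FoldFn = Callable[[str], str]          # amino acid sequence -> protein PDB path
DockFn = Callable[[str, str], str]     # (protein PDB path, ligand SMILES) -> complex path
AffinityFn = Callable[[str], float]    # complex path -> predicted affinity


@dataclass
class FDAPipeline:
    fold: FoldFn
    dock: DockFn
    score: AffinityFn

    def predict(self, sequence: str, smiles: str) -> float:
        protein_path = self.fold(sequence)
        complex_path = self.dock(protein_path, smiles)
        return self.score(complex_path)


# Stub components standing in for real folding, docking and affinity wrappers.
pipeline = FDAPipeline(
    fold=lambda seq: f"/tmp/{len(seq)}aa.pdb",
    dock=lambda pdb, smi: pdb.replace(".pdb", "_complex.pdb"),
    score=lambda complex_path: 6.5,   # placeholder value, not a real prediction
)
predicted = pipeline.predict("MKTAYIAKQR", "CCO")
```

Keeping each stage behind a plain callable means any one tool can be swapped without touching the other two.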

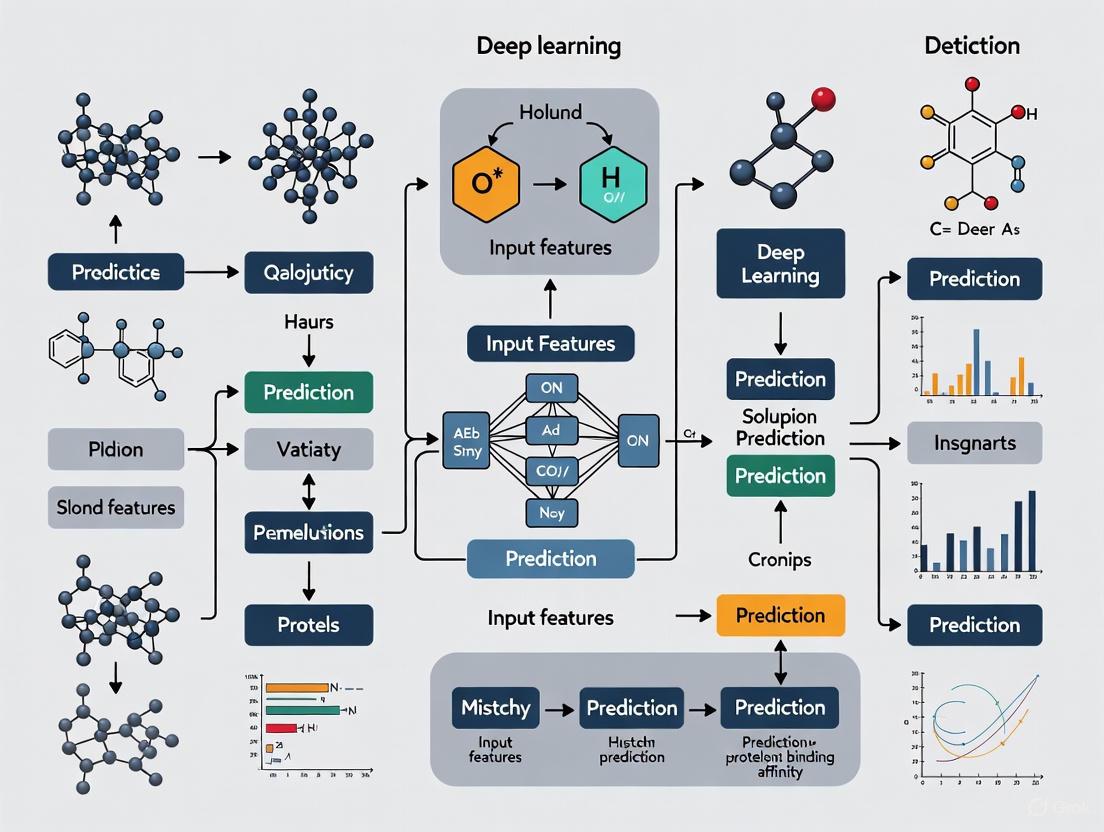

Diagram 1: FDA Framework for Affinity Prediction

Performance and Generalizability

Benchmarking the FDA framework on kinase-specific datasets (DAVIS and KIBA) under various data split scenarios revealed that its performance is on par with leading docking-free models [2]. Notably, in the most challenging "both-new" split (where both proteins and ligands in the test set are new), FDA outperformed its docking-free counterparts, indicating that explicitly modeling structural interactions improves generalizability to novel drug targets and compounds [2].
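A "both-new" split can be sketched as follows. This is one plausible construction under stated assumptions, not necessarily the procedure used in [2]: a fraction of proteins and of ligands is held out, test pairs must contain a held-out protein and a held-out ligand, and mixed pairs are discarded.

```python
import random


def both_new_split(pairs, test_fraction=0.2, seed=0):
    """Split (protein, ligand, affinity) records so that test pairs contain
    only proteins AND ligands that never occur in the training set."""
    rng = random.Random(seed)
    proteins = sorted({p for p, _, _ in pairs})
    ligands = sorted({l for _, l, _ in pairs})
    test_proteins = set(rng.sample(proteins, max(1, int(len(proteins) * test_fraction))))
    test_ligands = set(rng.sample(ligands, max(1, int(len(ligands) * test_fraction))))

    train, test = [], []
    for record in pairs:
        protein, ligand, _ = record
        if protein in test_proteins and ligand in test_ligands:
            test.append(record)
        elif protein not in test_proteins and ligand not in test_ligands:
            train.append(record)
        # Mixed pairs (one seen, one unseen) are dropped from this split.
    return train, test


# Synthetic toy data: 5 proteins x 5 ligands with dummy affinities
records = [(f"P{i}", f"L{j}", float(i + j)) for i in range(5) for j in range(5)]
train_set, test_set = both_new_split(records)
```

Note how few pairs survive in the test set: with one of five proteins and one of five ligands held out, only one of the 25 pairs qualifies, which is part of why this scenario is so demanding.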

Table 2: Benchmarking Results of FDA vs. Docking-Free Models (Pearson Correlation - Rp)

| Data Split Scenario | Dataset | FDA (ColabFold-DiffDock) | MGraphDTA | DGraphDTA |
|---|---|---|---|---|
| Both-New | DAVIS | 0.29 | 0.24 | 0.23 |
| Both-New | KIBA | 0.51 | 0.48 | 0.46 |
| New-Protein | DAVIS | 0.34 | 0.28 | 0.25 |
| New-Protein | KIBA | 0.46 | 0.53 | 0.45 |
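The metric reported in the table, the Pearson correlation coefficient (Rp), measures the linear agreement between predicted and measured affinities. A minimal standard-library sketch is shown below; the input values are made up and unrelated to the benchmark numbers.

```python
import math


def pearson_r(predicted, measured):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(predicted)
    if n != len(measured) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_p = sum(predicted) / n
    mean_m = sum(measured) / n
    cov = sum((p - mean_p) * (m - mean_m) for p, m in zip(predicted, measured))
    var_p = sum((p - mean_p) ** 2 for p in predicted)
    var_m = sum((m - mean_m) ** 2 for m in measured)
    return cov / math.sqrt(var_p * var_m)


# Made-up values for illustration only
r_perfect = pearson_r([5.0, 6.0, 7.0], [5.5, 6.5, 7.5])
r_inverse = pearson_r([5.0, 6.0, 7.0], [7.0, 6.0, 5.0])
```

Rp is insensitive to a constant offset or scale factor, so a model can score a high Rp while still being systematically wrong in absolute terms; an error metric such as RMSE is usually reported alongside it.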

Table 3: Key Resources for Protein-Ligand Binding Affinity Research

| Item / Resource | Function / Description | Example Tools / Databases |
|---|---|---|
| Protein Structure Prediction | Generates 3D protein structures from amino acid sequences. | ColabFold, AlphaFold2 [2] |
| Molecular Docking Software | Predicts the binding pose and orientation of a ligand in a protein's binding site. | DiffDock, AutoDock, Glide, GOLD [2] [1] |
| Affinity Prediction Models | Predicts binding affinity from protein-ligand pair information or 3D structures. | GIGN, GraphDTA, DeepDTA, KDBNet [2] |
| Experimental Affinity Datasets | Provides ground-truth data for training and benchmarking computational models. | PDBBind, DAVIS, KIBA [2] |
| Kinase-Specific Model | A specialized model that incorporates features from predefined 3D kinase binding pockets. | KDBNet [2] |

Detailed Experimental Protocol: The FDA Workflow

The following protocol outlines the steps for implementing the Folding-Docking-Affinity (FDA) framework to predict binding affinity for a novel protein-ligand pair.

Step 1: Protein Folding with ColabFold

Objective: Generate a reliable 3D protein structure from the amino acid sequence.

Methodology:

- Input the target protein's amino acid sequence in FASTA format into the ColabFold interface.

- Utilize the default multiple sequence alignment (MSA) settings to search databases like UniRef and BFD for evolutionary information.

- Run the structure prediction module, which employs a deep learning architecture based on AlphaFold2.

- The output is a predicted protein structure (PDB format). The model with the highest mean predicted local distance difference test (pLDDT) score is typically used; pLDDT is a per-residue confidence estimate.
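Model selection by pLDDT can be sketched as below. The sketch relies on the convention that AlphaFold2-style PDB files store pLDDT in the B-factor column; the function names and the synthetic two-residue "models" are assumptions for illustration, and in practice the PDB text would be read from the prediction output files.

```python
def mean_plddt(pdb_text: str) -> float:
    """Mean pLDDT over C-alpha atoms, read from the B-factor column
    (columns 61-66 of ATOM records)."""
    scores = [
        float(line[60:66])
        for line in pdb_text.splitlines()
        if line.startswith("ATOM") and line[12:16].strip() == "CA"
    ]
    if not scores:
        raise ValueError("no C-alpha atoms found")
    return sum(scores) / len(scores)


def best_model(models: dict) -> str:
    """Name of the model with the highest mean pLDDT."""
    return max(models, key=lambda name: mean_plddt(models[name]))


def _ca_line(serial: int, resseq: int, plddt: float) -> str:
    # Builds a fixed-width ATOM record for a synthetic test structure.
    return "ATOM  %5d  CA  ALA A%4d    %8.3f%8.3f%8.3f%6.2f%6.2f" % (
        serial, resseq, 0.0, 0.0, 0.0, 1.0, plddt)


# Two synthetic models with different confidence levels
models = {
    "model_a": "\n".join(_ca_line(i, i, 90.0) for i in (1, 2)),
    "model_b": "\n".join(_ca_line(i, i, 65.0) for i in (1, 2)),
}
top = best_model(models)
```

A high mean can hide poorly predicted loops, so it is worth inspecting the per-residue values around the expected binding site before docking.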

Step 2: Ligand Docking with DiffDock

Objective: Predict the most likely binding pose of the ligand within the folded protein structure.

Methodology:

- Provide the protein structure from Step 1 as a PDB file. DiffDock reads the structure directly, so the hydrogen and partial-charge preparation used for classical docking formats (e.g., .pdbqt for AutoDock) is not required at this stage.

- Input the ligand's structure, typically provided as a SMILES string or a 2D/3D molecular file.

- Run the DiffDock model, a diffusion-based deep learning method that generates candidate poses and ranks them by confidence.

- The output is a set of predicted protein-ligand complex structures, with the top-ranked pose selected for affinity prediction.

Step 3: Affinity Prediction with GIGN

Objective: Calculate the binding affinity from the predicted protein-ligand complex.

Methodology:

- Input the top-ranked protein-ligand complex structure (PDB file) from Step 2 into the GIGN model.

- GIGN constructs an interaction graph where nodes represent protein and ligand atoms, and edges represent their spatial relationships and interactions.

- The graph neural network processes this graph through several message-passing layers to learn complex interaction features.

- The final network layer outputs a single, continuous value representing the predicted binding affinity (e.g., pKd, defined as -log10(Kd) with Kd in mol/L).
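The graph-construction step can be illustrated with a distance cutoff between protein and ligand atoms. This is a simplified sketch, not GIGN's actual implementation: the 5 Å cutoff, the function name, and the coordinates are assumptions, and a real model would also attach atom features and intramolecular (covalent) edges.

```python
import math


def interaction_edges(protein_xyz, ligand_xyz, cutoff=5.0):
    """Return (protein_index, ligand_index, distance) for every protein-ligand
    atom pair closer than `cutoff` angstroms."""
    edges = []
    for i, p in enumerate(protein_xyz):
        for j, l in enumerate(ligand_xyz):
            d = math.dist(p, l)
            if d < cutoff:
                edges.append((i, j, d))
    return edges


# Synthetic coordinates (angstroms), for illustration only
protein_atoms = [(0.0, 0.0, 0.0), (10.0, 0.0, 0.0)]
ligand_atoms = [(3.0, 0.0, 0.0), (4.0, 4.0, 0.0)]
edges = interaction_edges(protein_atoms, ligand_atoms)
```

Only the first protein atom and the first ligand atom lie within the cutoff here, so the graph has a single intermolecular edge. For full-size complexes the double loop would normally be replaced by a spatial index or a vectorized distance matrix.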

Diagram 2: FDA Experimental Workflow

The accurate prediction of protein-ligand binding affinity remains a cornerstone of computational drug discovery. While classical methods and docking scoring functions have provided a foundation, their limitations in accuracy and generalizability are well-documented. The integration of deep learning represents a paradigm shift, enabling models to learn directly from complex structural and interaction data. Frameworks like FDA, which leverage AI for protein folding, docking, and affinity prediction, demonstrate the potential of a holistic, structure-based approach to improve predictive performance, especially for novel targets. Future progress in this field hinges on the development of unified models that more completely capture the physical mechanisms of binding, including the critical dissociation step, ultimately leading to more efficient and successful drug discovery pipelines.

The accurate prediction of protein-ligand binding affinity is a cornerstone of computer-aided drug design, serving as a critical indicator of a potential drug candidate's efficacy [4]. This process aims to quantify the strength of interaction between a biological target and a small molecule, which directly influences drug potency and selectivity [5]. For decades, the pharmaceutical industry has relied on traditional methodologies spanning both experimental and computational domains, yet these approaches carry significant limitations that impede the rapid discovery of new therapeutics. Experimental methods, while providing valuable insights, are notoriously resource-intensive, complex, and time-consuming [4] [6]. Concurrently, conventional computational techniques such as molecular docking with rigid scoring functions often oversimplify the complex physical interactions governing molecular recognition, leading to compromised accuracy and reliability [7] [8]. As drug discovery costs continue to escalate alongside declining approval rates, understanding these limitations becomes paramount for researchers and development professionals seeking to advance the field through innovative approaches like deep learning [5]. This technical examination delves into the specific constraints and associated costs of these traditional paradigms, establishing the foundational context for a broader thesis on data-driven solutions in structural bioinformatics.

The Substantial Costs of Experimental Binding Affinity Determination

Experimental techniques for determining binding affinity provide the ground truth data that computational models aim to predict. These methods measure interaction strength through various indicators such as inhibition constant (Ki), dissociation constant (Kd), and half-maximal inhibitory concentration (IC₅₀) [4]. The foundational workflow involves preparing the protein and ligand samples, establishing the binding reaction conditions, measuring the resulting signal, and finally calculating the affinity constants through data analysis. Each technique operates on different principles: isothermal titration calorimetry (ITC) measures heat changes during binding, surface plasmon resonance (SPR) detects changes in refractive index near a sensor surface, and fluorescence polarization (FP) monitors changes in fluorescence properties when small molecules bind to larger proteins [7] [4]. Despite their differences, these methods share common procedural stages that contribute to their overall cost and complexity, from initial reagent preparation through to data interpretation.
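Because IC₅₀ depends on assay conditions while Ki does not, the two are not interchangeable as training labels. For a competitive enzyme inhibitor they are related by the Cheng-Prusoff equation, Ki = IC₅₀ / (1 + [S]/Km). The sketch below applies it; the competitive mechanism is an assumption, and the assay values are made up.

```python
def ki_from_ic50(ic50: float, substrate_conc: float, km: float) -> float:
    """Cheng-Prusoff relation for a competitive inhibitor:
    Ki = IC50 / (1 + [S] / Km). All concentrations in the same unit."""
    if km <= 0:
        raise ValueError("Km must be positive")
    return ic50 / (1.0 + substrate_conc / km)


# Made-up assay values: IC50 = 100 nM measured at [S] = Km
ki_nm = ki_from_ic50(ic50=100.0, substrate_conc=10.0, km=10.0)   # 50 nM
```

Datasets that mix Ki, Kd, and IC₅₀ values without such corrections carry label noise that any model trained on them inherits.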

Diagram: Generalized Workflow for Experimental Binding Affinity Determination

Quantitative Analysis of Experimental Limitations

The operational workflow of experimental affinity determination translates directly into significant practical constraints. The specialized instrumentation required for techniques like ITC, SPR, and FP represents a substantial capital investment, often running to hundreds of thousands of dollars [4]. The process demands highly purified protein samples and characterized ligands, with reagent consumption and preparation creating recurring expenses. A single measurement typically requires hours to complete, with comprehensive studies needing multiple replicates and conditions for statistical reliability [6]. Perhaps most significantly, these methods struggle to capture dynamic structural changes in proteins and ligands during binding, providing limited insight into the atomic-level interactions that drive the binding process [7] [4].

Table 1: Comparative Analysis of Experimental Binding Affinity Measurement Techniques

| Method | Key Measurements | Time Requirements | Key Limitations | Primary Applications |
|---|---|---|---|---|
| Isothermal Titration Calorimetry (ITC) | Kd, ΔH, ΔS, stoichiometry | Hours per titration | High protein consumption, limited sensitivity for very tight/weak binding | Full thermodynamic characterization |
| Surface Plasmon Resonance (SPR) | Kd, kon, koff | Minutes to hours | Requires immobilization, surface effects possible | Kinetic profiling, fragment screening |
| Fluorescence Polarization (FP) | Kd, IC₅₀ | Minutes to hours | Requires fluorophore labeling, interference possible | High-throughput screening, competition assays |
| MTT Assay | IC₅₀, EC₅₀ | Hours to days | Cellular viability endpoint, indirect measurement | Cellular activity assessment |

Limitations of Traditional Computational Methods

Molecular Docking and Rigid Scoring Functions

Computational docking emerged as a complement to experimental approaches, predicting bound conformations and binding free energies of small molecules to macromolecular targets [8]. Tools like AutoDock Vina and AutoDock employ simplified representations of molecular systems to make conformational searching tractable, using rapid gradient-optimization or Lamarckian genetic algorithm search methods, respectively [8]. The critical simplification in these approaches lies in their scoring functions, which are mathematical approximations that estimate binding free energy from factors like van der Waals forces, hydrogen bonding, desolvation, and entropy [8] [5]. These functions are typically classified into three categories: force-field based (using molecular mechanics energy terms), empirical (fitting parameters to experimental data), and knowledge-based (deriving potentials from structural databases) [4]. Despite their utility for virtual screening, these scoring functions are oversimplifications that fail to capture crucial physical and chemical complexities of binding interactions.

Traditional docking protocols follow a systematic workflow, and each stage carries its own limitations.

Physical Simulation Methods and Their Computational Burden

More advanced physics-based simulation methods have gained prominence for structure-based affinity prediction, with Free Energy Perturbation (FEP) representing the current gold standard [6]. These methods directly model physical interactions between proteins and ligands at the atomic level, providing a more rigorous thermodynamic framework compared to docking scores. FEP calculates relative binding free energies by simulating the alchemical transformation of one ligand to another within the binding pocket, offering high accuracy for closely related compounds [6]. Similarly, Molecular Mechanics Poisson-Boltzmann Surface Area (MM/PBSA) and Molecular Mechanics Generalized Born Surface Area (MM/GBSA) approaches estimate binding affinities from molecular dynamics trajectories by combining molecular mechanics energies with implicit solvation models [4]. While these methods offer improved physical fidelity over docking scores, they come with extraordinary computational demands that limit their practical application.
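The end-point idea behind MM/PBSA and MM/GBSA can be summarized as ΔG_bind ≈ ⟨G_complex - G_receptor - G_ligand⟩, averaged over trajectory frames, where each G combines a molecular mechanics energy with an implicit-solvation term. The sketch below shows only this final averaging step under a single-trajectory assumption; the per-frame energies are synthetic, the function name is illustrative, and the conformational entropy correction is omitted.

```python
from statistics import mean


def endpoint_binding_energy(g_complex, g_receptor, g_ligand):
    """Single-trajectory end-point estimate (kcal/mol):
    dG_bind ~ <G_complex - G_receptor - G_ligand> over MD frames."""
    if not (len(g_complex) == len(g_receptor) == len(g_ligand)):
        raise ValueError("per-frame energy lists must have equal length")
    per_frame = [c - r - l for c, r, l in zip(g_complex, g_receptor, g_ligand)]
    return mean(per_frame)


# Synthetic per-frame energies (kcal/mol), not from a real simulation
dg_bind = endpoint_binding_energy(
    g_complex=[-5030.0, -5034.0, -5032.0],
    g_receptor=[-4800.0, -4802.0, -4801.0],
    g_ligand=[-200.0, -201.0, -200.0],
)
```

The result is a small difference between large numbers, which is one reason these estimates are noisy and why many frames, and hence long simulations, are needed.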

Table 2: Performance Limitations of Traditional Computational Methods

| Method Category | Representative Tools | Binding Affinity Error | Computational Cost | Key Limitations |
|---|---|---|---|---|
| Molecular Docking | AutoDock Vina, AutoDock, Glide, GOLD | ~2-3 kcal/mol [8] | Minutes to hours per ligand | Rigid receptor approximation, simplified scoring functions, inadequate entropy treatment |
| Classical Scoring Functions | X-Score, ChemScore, AutoDock scoring function | >2 kcal/mol [4] | Seconds per ligand | Oversimplified energy terms, poor generalization across targets, limited chemical space coverage |
| Free Energy Calculations | FEP, TI, MM/PBSA, MM/GBSA | ~1 kcal/mol [6] | Days to weeks per transformation | Extremely high computational cost, requires high-quality protein structures, limited to small structural changes |
| Semi-empirical QM Methods | PM6-D3H4, GFN2-xTB, DFTB3-D3H5 | Variable accuracy [7] | Hours per complex | Questionable reliability in nanoscale complexes, parameterization limitations |
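To put the kcal/mol errors in the table into perspective, a free-energy error ΔΔG corresponds to a multiplicative error of exp(ΔΔG / RT) in Kd. The sketch below does this conversion at 298.15 K; the function name is illustrative.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)


def kd_fold_error(delta_g_error: float, temperature_k: float = 298.15) -> float:
    """Multiplicative error in Kd implied by a free-energy error (kcal/mol)."""
    return math.exp(abs(delta_g_error) / (R_KCAL * temperature_k))


fold_1 = kd_fold_error(1.0)   # roughly 5-fold
fold_2 = kd_fold_error(2.0)   # roughly 29-fold
fold_3 = kd_fold_error(3.0)   # roughly 160-fold
```

An error of 2 to 3 kcal/mol therefore blurs the difference between, say, a 10 nM and a 1 µM binder, whereas an error near 1 kcal/mol still separates them.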

Quantitative Comparative Analysis: Accuracy vs. Cost Tradeoffs

The fundamental challenge in binding affinity prediction lies in navigating the accuracy-cost tradeoff between methodological approaches. Experimental techniques provide reference data but cannot realistically scale for screening thousands of compounds. Traditional computational methods offer speed but sacrifice accuracy and physical realism. This section provides a quantitative framework for understanding these relationships, highlighting the niche that modern machine learning approaches aim to fill.

Table 3: Comprehensive Method Comparison - Accuracy, Cost, and Throughput

| Methodology | Typical R² vs Experimental | Time per Compound | Hardware Requirements | Information Gained |
|---|---|---|---|---|
| Experimental Assays | Reference (R²=1.0) | Hours to days [4] | Specialized instruments (~$100K-$500K) | Direct measurement, kinetics, thermodynamics |
| Physical Simulations (FEP) | 0.6-0.8 [6] | Days to weeks [6] | High-performance computing clusters | Detailed mechanism, relative affinities for similar compounds |
| Molecular Docking | 0.3-0.5 [5] | Minutes to hours [8] | Standard workstations | Binding poses, approximate rankings |
| Semi-empirical Methods | Variable (dataset-dependent) [7] | Hours [7] | Computational clusters | Electronic structure insights, many-body effects |
| Deep Learning Models | 0.57-0.87 [7] [9] | Seconds to minutes [7] [9] | GPUs for training, CPUs for inference | Rapid screening, pattern recognition in structural data |

Table 4: Key Experimental and Computational Resources for Binding Affinity Studies

| Resource/Reagent | Category | Primary Function | Significance in Binding Studies |
|---|---|---|---|
| Purified Protein Samples | Experimental | Binding interaction participant | Determines system relevance; purity critical for accurate measurements |
| Characterized Ligand Library | Experimental | Binding interaction participant | Enables screening diversity; requires solubility and stability characterization |
| ITC Instrumentation | Experimental | Measures heat changes during binding | Provides full thermodynamic profile (Kd, ΔH, ΔS, n) without labeling |
| SPR Biosensors | Experimental | Detects mass changes on sensor surface | Enables kinetic profiling (kon, koff) with low sample consumption |
| Crystallographic Structures | Computational | Provides atomic-level complex coordinates | Essential for structure-based design; PDB primary source [5] |
| PDBbind Database | Computational | Curated protein-ligand complexes with binding data | Benchmarking for computational methods; >19,000 complexes [5] |
| AutoDock Suite | Computational | Molecular docking and virtual screening | Widely-used open-source platform for pose and affinity prediction [8] |
| BindingDB Database | Computational | Public binding affinity database | >1.6 million binding data points for model training/validation [5] |

The high costs and limitations of traditional experimental and computational methods for binding affinity prediction present significant bottlenecks in drug discovery. Experimental techniques provide essential ground truth data but cannot scale to meet the demands of modern screening campaigns. Traditional computational methods, particularly those relying on rigid scoring functions and simplified physical models, offer throughput but suffer from accuracy limitations that restrict their predictive utility [7] [8] [5]. Physical simulation methods like FEP provide improved accuracy but at computational costs that preclude their application to large compound libraries [6]. This methodological landscape, characterized by inescapable tradeoffs between accuracy, cost, and throughput, establishes the imperative for new approaches that can transcend these limitations. The emerging paradigm of deep learning for binding affinity prediction represents a promising avenue to integrate the physical insights of traditional methods with the scalability of data-driven approaches, potentially offering a path toward accurate, efficient, and generalizable predictions across diverse protein families and chemical space.

The prediction of protein-ligand binding affinity (PLA) is a cornerstone of computational drug discovery, directly influencing the efficiency and success of identifying viable therapeutic compounds [3]. Traditional computational methods, often hampered by time-consuming processes and limited accuracy, are being rapidly supplanted by deep learning (DL) models. These models offer a promising and computationally efficient paradigm, enabling rapid and scalable analysis while circumventing the rigid constraints of conventional scoring functions and the slow pace of experimental assays [3] [10]. This whitepaper provides an in-depth technical examination of how deep learning is catalyzing a paradigm shift in affinity prediction. We explore the core architectural innovations, detail rigorous experimental and benchmarking methodologies, address critical challenges such as data bias and generalization, and outline the integrated toolkit empowering modern researchers in this transformative field.

Conventional drug discovery is an expensive, time-consuming, and high-attrition process [11] [12]. The accurate prediction of how strongly a small molecule (ligand) binds to a protein target is crucial for speeding up drug research and design [10]. Before the rise of deep learning, computational methods relied heavily on classical scoring functions implemented in docking tools like AutoDock Vina and GOLD. These functions, based on force-fields, empirical data, or knowledge-based statistics, are often computationally intensive and show limited accuracy in binding affinity prediction [13].

Deep learning has emerged as a potent substitute, providing robust solutions to these challenging biological problems [11]. DL models leverage large datasets of protein-ligand complexes to learn the intricate, non-linear relationships between the structural features of a complex and its binding affinity. This data-driven approach avoids the need for manual feature engineering and can model complex interactions that are difficult to capture with pre-defined physical equations [14] [11]. The ability of DL to handle large datasets and learn complex non-linear relations has fueled a surge in deep learning-driven methodologies, revolutionizing the virtual screening pipeline and establishing a new, quantitative framework for studying drug-target relationships [11].

Core Deep Learning Architectures for Affinity Prediction

A variety of deep learning architectures have been deployed for PLA prediction, each with distinct advantages for processing structural and chemical information. These models can be broadly classified into several key categories based on their underlying neural network design.

The following table summarizes the primary architectures, their core principles, and respective strengths and weaknesses.

Table 1: Key Deep Learning Architectures for Binding Affinity Prediction

| Architecture | Core Principle | Input Representation | Strengths | Weaknesses |
|---|---|---|---|---|
| Convolutional Neural Networks (CNNs) [14] [10] | Applies filters to detect local spatial features in structured data. | 3D grid (voxel) representing the protein-ligand binding pocket. | Excellent at capturing spatial patterns and local atomic interactions. | Can be computationally expensive; sensitive to input orientation and alignment. |
| Graph Neural Networks (GNNs) [10] [13] | Operates on graph structures where nodes (atoms) are connected by edges (bonds). | Molecular graph of the protein and ligand. | Naturally represents molecular topology; invariant to rotation; captures both geometric and relational information. | Performance can depend on the quality of the graph construction and message-passing schemes. |
| Transformers & Attention-Based Models [10] [11] | Uses self-attention and cross-attention mechanisms to weigh the importance of different input elements. | Sequences (e.g., SMILES, amino acids) or graphs with attention. | Models long-range interactions; provides some interpretability via attention weights. | Can be data-hungry; computationally intensive for very large sequences or graphs. |
| Geometric Deep Learning (e.g., MaSIF) [15] | Learns from the geometric and chemical features of molecular surfaces. | Molecular surface meshes with chemical and shape descriptors. | Invariant to rotation and translation; can generalize to novel surfaces like protein-ligand "neosurfaces". | Requires specialized featurization of molecular surfaces. |
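The voxel input used by 3D CNNs can be illustrated with a simple occupancy grid. The sketch below is a minimal example under stated assumptions: a 20 Å box at 1 Å resolution, a single channel counting atoms, and synthetic coordinates. Published models typically use one channel per atom type or property, which is not shown here.

```python
import numpy as np


def voxelize(coords, center, box_size=20.0, resolution=1.0):
    """Count atoms per voxel in a cubic grid centred on `center`.
    coords: (N, 3) array in angstroms. Returns a (D, D, D) float array."""
    dim = int(round(box_size / resolution))
    grid = np.zeros((dim, dim, dim), dtype=np.float32)
    shifted = np.asarray(coords, dtype=float) - np.asarray(center, dtype=float) + box_size / 2.0
    indices = np.floor(shifted / resolution).astype(int)
    inside = np.all((indices >= 0) & (indices < dim), axis=1)
    for i, j, k in indices[inside]:
        grid[i, j, k] += 1.0
    return grid


# Synthetic atom positions; the last one lies outside the 20 A box
atoms = np.array([[0.2, 0.2, 0.2], [0.4, 0.1, 0.3], [-3.5, 2.0, 1.0], [50.0, 0.0, 0.0]])
grid = voxelize(atoms, center=(0.0, 0.0, 0.0))
```

Rotating the complex changes which voxels are filled, which is the orientation sensitivity listed as a weakness of CNNs in the table and the reason such models are often trained with random rotations as data augmentation.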

A common trend in modern development is the move towards hybrid and integrative models. For instance, the GEMS model reported in Nature Machine Intelligence combines a GNN architecture with transfer learning from protein language models to achieve state-of-the-art generalization by learning a sparse graph representation of protein-ligand interactions [13]. Similarly, other studies integrate graph-based representations of molecules with sequence-derived embeddings from large language models (LLMs) like ESM-2 and ProtBERT to enrich the feature set for prediction [11] [16].

Data, Benchmarking, and the Generalization Challenge

The performance and real-world utility of any deep learning model are inextricably linked to the data it is trained and evaluated on. The community has largely relied on publicly available databases like PDBbind, which provides protein-ligand structures and experimentally measured binding affinities [13].

The Critical Issue of Data Bias and Leakage

A seminal challenge identified in recent literature is the problem of train-test data leakage between the primary training set (PDBbind) and the standard benchmark for evaluation, the Comparative Assessment of Scoring Functions (CASF) [13]. Studies have revealed a high degree of structural similarity between complexes in these sets, meaning models can achieve high benchmark performance simply by memorizing training samples rather than genuinely learning to generalize. Alarmingly, some models performed well on CASF benchmarks even when critical protein or ligand information was omitted, confirming that their predictions were not based on a true understanding of protein-ligand interactions [13].
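A first-pass leakage check can be run before trusting a benchmark score. The sketch below flags test entries whose ligand fingerprint is nearly identical to one in the training set, using Tanimoto similarity on sets of "on" bits. It covers ligand similarity only; protein and binding-pose similarity would need their own measures. The 0.9 threshold, the function names, and the fingerprints are illustrative assumptions.

```python
def tanimoto(a: set, b: set) -> float:
    """Tanimoto (Jaccard) similarity between two feature sets."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def flag_leaky_test_items(train, test, threshold=0.9):
    """Return IDs of test entries whose most similar training entry
    reaches `threshold`. `train`/`test` map ID -> fingerprint bit set."""
    flagged = []
    for test_id, test_fp in test.items():
        best = max((tanimoto(test_fp, fp) for fp in train.values()), default=0.0)
        if best >= threshold:
            flagged.append(test_id)
    return flagged


# Synthetic fingerprints given as sets of "on" bit indices
train_fps = {"train_a": {1, 2, 3, 4}, "train_b": {10, 11, 12}}
test_fps = {"test_x": {1, 2, 3, 4}, "test_y": {20, 21}}
leaky = flag_leaky_test_items(train_fps, test_fps)
```

Reporting performance separately for flagged and unflagged test entries gives a quick indication of how much of a benchmark score may stem from memorization.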

Advanced Benchmarking and Data Filtration

To address this, researchers have proposed new, more rigorous data-splitting and benchmarking protocols:

- PDBbind CleanSplit: A new training dataset curated by a structure-based filtering algorithm that eliminates train-test data leakage and redundancies within the training set [13]. The algorithm uses a combined assessment of protein similarity, ligand similarity, and binding conformation similarity to ensure training and test complexes are strictly independent. When top-performing models were retrained on CleanSplit, their benchmark performance dropped substantially, revealing their previous high scores were inflated by data leakage [13].

- Target Identification Benchmark: This approach reframes the generalization test by asking whether a model can pick out the correct protein target for a given active molecule from a set of decoy proteins. The task is designed to expose the "inter-protein scoring noise problem" [17]. A 2025 benchmark found that even advanced models like Boltz-2 struggled with this task, indicating a lack of true generalization across different binding pockets [17].

- AbRank Framework: For antibody-antigen affinity prediction, the AbRank benchmark reframes affinity prediction as a pairwise ranking task instead of a regression task. It uses an m-confident ranking framework, filtering out comparisons with marginal affinity differences to focus training on reliable, high-confidence pairs and improve robustness to experimental noise [16].
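The pair-filtering idea behind the m-confident ranking framework can be illustrated with a minimal sketch. It assumes affinities are given on a scale where larger means tighter binding (for example pKd); the function name `confident_pairs` and the margin value `m=1.0` are hypothetical choices for illustration, not the settings used in AbRank.

```python
from itertools import combinations


def confident_pairs(affinities, m=1.0):
    """Return (stronger, weaker) index pairs whose affinity gap is at least m.

    affinities: list of floats where a larger value means tighter binding.
    m: hypothetical margin; pairs with a smaller gap are treated as
       too noisy to rank and are dropped.
    """
    pairs = []
    for i, j in combinations(range(len(affinities)), 2):
        gap = affinities[i] - affinities[j]
        if abs(gap) >= m:
            # Order each pair so the tighter binder comes first.
            pairs.append((i, j) if gap > 0 else (j, i))
    return pairs


pkd = [9.1, 8.9, 6.0, 7.4]
pairs = confident_pairs(pkd, m=1.0)
print(pairs)
```

Complexes 0 and 1 differ by only 0.2 units, so that comparison is discarded, while every pair with a gap of at least one unit is kept for training.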

Table 2: Key Datasets and Benchmarks for Model Development and Evaluation

| Dataset/Benchmark | Primary Purpose | Key Feature | Consideration for Model Generalization |
|---|---|---|---|
| PDBbind [10] [13] | Primary training data for structure-based models. | Comprehensive collection of protein-ligand complexes with binding affinity data. | Contains internal redundancies and significant similarity to common test sets like CASF. |
| CASF Benchmark [13] | Standard benchmark for evaluating scoring functions. | A curated set of complexes for objective comparison of different methods. | High structural similarity to PDBbind leads to data leakage and over-optimistic performance. |
| PDBbind CleanSplit [13] | A refined training and evaluation split. | Structure-based filtering to remove train-test leakage and internal redundancy. | Enables genuine assessment of model generalization to unseen complexes. |
| LIT-PCBA [17] | Benchmark for target identification. | Tests a model's ability to identify the correct protein target for active molecules. | Directly tests for the "inter-protein scoring noise problem," a harder generalization task. |
| AbRank [16] | Benchmark for antibody-antigen affinity. | Formulates prediction as a pairwise ranking task with m-confident pairs. | Improves robustness to experimental noise and assesses generalization across Ab-Ag space. |

Detailed Experimental Protocol for GNN-based Affinity Prediction

This section outlines a detailed methodology for training and evaluating a Graph Neural Network model for binding affinity prediction, incorporating best practices for mitigating data bias.

Data Preparation and Preprocessing

- Dataset Sourcing: Download the PDBbind database (e.g., v.2016 or later).

- Data Filtration: Apply the PDBbind CleanSplit protocol so that no proteins, ligands, or binding conformations in the training set are highly similar to those in the test set (e.g., CASF 2016) [13]. This involves:

  - Calculating protein similarity using the TM-score.

  - Calculating ligand similarity using the Tanimoto coefficient based on molecular fingerprints.

  - Calculating the binding conformation similarity using pocket-aligned ligand root-mean-square deviation (RMSD).

  - Removing any training complex that exceeds predefined similarity thresholds with any test complex.

- Graph Construction: Represent each protein-ligand complex as a heterogeneous graph.

  - Nodes: Represent protein residues as nodes featurized with amino acid type, and ligand atoms as nodes featurized with atom type, charge, and hybridization state.

  - Edges: Define edges within the protein and within the ligand based on covalent bonds. Define intermolecular edges between protein residues and ligand atoms based on spatial proximity (e.g., within a 5 Å cutoff).
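The filtering and edge-construction steps above can be sketched in plain Python. This is an illustration under stated assumptions, not the CleanSplit implementation: the thresholds (`tm_cut`, `tani_cut`, `rmsd_cut`), the rule that all three criteria must be met together, and the helper names are hypothetical, and TM-scores and pocket-aligned RMSDs are assumed to be precomputed by external tools.

```python
import math


def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between fingerprints given as sets of on-bits."""
    union = fp_a | fp_b
    return len(fp_a & fp_b) / len(union) if union else 0.0


def filter_training_set(train_ids, test_ids, similarity,
                        tm_cut=0.8, tani_cut=0.9, rmsd_cut=2.0):
    """Drop training complexes that closely resemble any test complex.

    similarity(train_id, test_id) must return a tuple
    (tm_score, tanimoto, pocket_rmsd). All cutoffs are assumed values.
    """
    kept = []
    for tr in train_ids:
        leaky = False
        for te in test_ids:
            tm, tani, rmsd = similarity(tr, te)
            if tm > tm_cut and tani > tani_cut and rmsd < rmsd_cut:
                leaky = True
                break
        if not leaky:
            kept.append(tr)
    return kept


def intermolecular_edges(protein_xyz, ligand_xyz, cutoff=5.0):
    """Return (protein_index, ligand_index) pairs within cutoff angstroms."""
    return [(i, j)
            for i, p in enumerate(protein_xyz)
            for j, l in enumerate(ligand_xyz)
            if math.dist(p, l) <= cutoff]


# Toy data: fingerprints as bit sets, precomputed TM-scores and RMSDs.
fps = {"trainA": {1, 2, 3, 4}, "trainB": {7, 8, 9}, "test1": {1, 2, 3, 4}}
tm = {("trainA", "test1"): 0.95, ("trainB", "test1"): 0.30}
rmsd = {("trainA", "test1"): 0.8, ("trainB", "test1"): 6.5}


def similarity(tr, te):
    return tm[(tr, te)], tanimoto(fps[tr], fps[te]), rmsd[(tr, te)]


kept = filter_training_set(["trainA", "trainB"], ["test1"], similarity)
edges = intermolecular_edges([(0, 0, 0), (10, 0, 0)], [(3, 0, 0), (4, 4, 0)])
print(kept, edges)
```

In the toy data, `trainA` matches the test complex on all three criteria and is removed, while only the first protein node lies within 5 Å of a ligand atom.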

Model Architecture and Training

- Model Selection: Implement a GNN architecture such as a Graph Attention Network (GAT) or a Message Passing Neural Network (MPNN). The GEMS model is a strong reference [13].

- Feature Integration: Enhance node features by incorporating pre-trained embeddings from protein language models (e.g., from ESM-2) for protein residues and molecular language models for ligand atoms [13] [16].

- Training Loop:

  - Loss Function: Consider a pairwise ranking loss (e.g., Bayesian Personalized Ranking loss) instead of, or alongside, a standard regression loss (like Mean Squared Error) to improve robustness, as demonstrated in the AbRank framework [16].

  - Optimization: Use the Adam optimizer with an initial learning rate of 0.001 and a batch size suited to available memory.

  - Regularization: Employ standard techniques like dropout and weight decay to prevent overfitting.
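To make the loss-function choice concrete, the following sketch computes a Bayesian Personalized Ranking style loss, -log(sigmoid(s_stronger - s_weaker)), for a list of ordered pairs. It is a framework-free illustration; the function names are hypothetical, and in practice the same expression would be written with the tensor operations of the chosen deep learning library so that gradients flow to the model.

```python
import math


def bpr_loss(score_stronger, score_weaker):
    """-log(sigmoid(diff)), evaluated stably as softplus(-diff)."""
    diff = score_stronger - score_weaker
    if diff >= 0:
        return math.log1p(math.exp(-diff))
    # For negative diff, rewrite to avoid overflow in exp(-diff).
    return -diff + math.log1p(math.exp(diff))


def batch_bpr_loss(scores, pairs):
    """Mean BPR loss over (stronger_index, weaker_index) pairs."""
    return sum(bpr_loss(scores[i], scores[j]) for i, j in pairs) / len(pairs)


predicted = [2.0, 0.5, 1.0]          # model scores for three complexes
ordered_pairs = [(0, 1), (0, 2)]     # complex 0 binds more tightly than 1 and 2
print(batch_bpr_loss(predicted, ordered_pairs))
```

The loss approaches zero when the tighter binder is scored well above the weaker one and grows roughly linearly when the order is inverted, so only the relative ordering of predictions is penalized.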

Model Evaluation and Validation

- Primary Metrics: Evaluate the model on the held-out CASF test set using standard metrics:

  - Root Mean Square Error (RMSE)

  - Pearson Correlation Coefficient (R)

  - Spearman's Rank Correlation Coefficient

- Generalization Test: Perform the target identification benchmark [17]. For a set of active ligands and their known targets, mixed with decoy proteins, the model should assign a higher predicted affinity to the correct target-ligand pair than to the decoy pairs.
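The three primary metrics listed above can be computed with a few lines of standard-library Python. This is a minimal reference sketch with hypothetical function names; established statistics packages provide equivalent, better-tested routines.

```python
import math


def rmse(y_true, y_pred):
    """Root mean square error between measured and predicted affinities."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def pearson(x, y):
    """Pearson correlation coefficient."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def average_ranks(values):
    """1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


measured = [4.0, 6.0, 8.0]
predicted = [5.0, 6.0, 7.0]
print(rmse(measured, predicted), pearson(measured, predicted), spearman(measured, predicted))
```

The toy predictions are perfectly correlated with the measurements but compressed toward the mean, which shows why RMSE and the correlation coefficients should be reported together.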

Table 3: Key Research Reagent Solutions for Deep Learning-based Affinity Prediction

| Tool / Resource | Type | Primary Function | Application in Workflow |
|---|---|---|---|
| PDBbind [10] [13] | Database | Provides a comprehensive collection of protein-ligand complexes with experimental binding affinity data. | Primary source of structured data for training and testing structure-based models. |
| CASF [13] | Benchmark | A standardized set of complexes for the comparative assessment of scoring functions. | Used for the objective evaluation and comparison of model performance against other methods. |
| AlphaFold3 / Boltz-1 [13] [16] | Prediction Tool | Predicts the 3D structure of protein-ligand complexes from sequence. | Generates input structures for affinity prediction when experimental structures are unavailable. |
| ESM-2 / ProtBERT [11] [16] | Protein Language Model | Generates semantically rich, contextual embeddings from protein sequences. | Provides powerful feature representations for protein residues, used as input to GNNs or other architectures. |
| MaSIF-neosurf [15] | Geometric DL Tool | Learns molecular surface fingerprints to design binders against protein-ligand "neosurfaces". | Enables the design of de novo proteins that bind to specific, ligand-induced protein surfaces. |
| Therapeutics Data Commons (TDC) [12] | Platform | Provides access to datasets, tools, and benchmarks for machine learning in drug discovery. | A centralized resource for accessing curated datasets and evaluation frameworks. |

Deep learning has undeniably instigated a paradigm shift in protein-ligand binding affinity prediction, moving the field from reliance on rigid scoring functions to adaptable, data-driven models capable of rapid and scalable analysis [3]. However, as this review highlights, the path to building models that genuinely understand molecular interactions, rather than merely memorizing data, is fraught with challenges. Critical issues of data bias, benchmark leakage, and poor generalization to novel targets must be front and center in model development [17] [13].

The future of this field will likely be shaped by several key trends: the continued integration of large language models to provide a deeper semantic understanding of protein and ligand sequences [11] [16]; the refinement of geometric deep learning for more sophisticated 3D reasoning [15]; a stronger emphasis on rigorous, leakage-free benchmarking [13]; and the exploration of alternative learning paradigms, such as pairwise ranking, to enhance robustness [16]. As these technical advancements mature, deep learning for affinity prediction is poised to become an even more indispensable tool, accelerating the discovery of new therapeutics and deepening our quantitative understanding of molecular recognition.

Key Challenges and Opportunities in Computational Drug Target Identification and Validation

Computational drug target identification and validation represents a critical frontier in modern therapeutic development, situated within the broader context of deep learning for protein-ligand binding affinity research. The traditional drug discovery paradigm, often characterized by the "one gene, one drug, one disease" hypothesis, has contributed to high failure rates in clinical trials and escalating development costs, now estimated at approximately $2.6 billion per approved drug [18]. In response, the field is undergoing a transformative shift toward integrated, data-driven approaches that leverage artificial intelligence (AI) and deep learning to mitigate attrition, shorten timelines, and increase translational predictivity [19].

Target identification involves discovering biomolecules crucially involved in disease pathways, while validation confirms their therapeutic relevance and "druggability" – the likelihood that a target can be effectively modulated by a drug molecule [20]. An ideal drug target must satisfy multiple criteria: close association with disease mechanisms, presence of bindable sites, functional modifiability, and evidence of pharmacological effects from ligand binding [20]. Within this framework, computational methods, particularly deep learning models for predicting protein-ligand binding affinity, have evolved from supplemental tools to foundational components of the drug discovery pipeline [3] [9].

This whitepaper examines the key challenges and opportunities in computational drug target identification and validation, with specific emphasis on how deep learning approaches are reshaping this landscape. We provide a technical analysis of emerging methodologies, performance benchmarks, experimental protocols, and essential research tools that are defining the next generation of therapeutic development.

Key Challenges in Computational Target Identification and Validation

Data Quality and Availability

The performance of deep learning models in drug target discovery is fundamentally constrained by the quality and comprehensiveness of training data. Binding affinity datasets suffer from significant experimental variability, as different laboratories often produce divergent results for the same protein-ligand complexes [21]. This inconsistency introduces noise that impedes model generalization. Furthermore, the issue of data leakage presents a persistent challenge, where inappropriate dataset splitting can lead to inflated performance metrics through memorization rather than genuine learning [21]. The problem is compounded by the scarcity of reliably negative samples – confirmed non-interactions between drugs and targets – which are essential for supervised learning but rarely documented in public databases [18].

Model Interpretability and Biological Plausibility

While deep learning models demonstrate impressive predictive accuracy, they often function as "black boxes" with limited mechanistic interpretability. This opacity creates significant barriers to regulatory acceptance and clinical translation, as understanding why a model makes a particular prediction is crucial for validating its biological relevance [3]. The challenge lies in designing models that not only achieve high statistical performance but also capture physiologically meaningful relationships between chemical structures, protein conformations, and binding dynamics [9]. Bridging this gap between computational prediction and biological plausibility remains a central challenge in the field.

Optimization Challenges in Multitask Learning

Multitask learning frameworks, which simultaneously predict drug-target binding affinities and generate novel drug candidates, face significant optimization hurdles due to gradient conflicts between distinct objectives [9]. When tasks compete during training, model performance can degrade rather than improve – a phenomenon observed in architectures like CoVAE, which uses separate feature spaces for predictive and generative tasks [9]. These optimization challenges necessitate specialized algorithms, such as the FetterGrad algorithm developed for the DeepDTAGen framework, which maintains gradient alignment across tasks by minimizing Euclidean distance between task gradients [9].
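Gradient conflict between two tasks can be illustrated with a small sketch. Note that this is not the FetterGrad algorithm, whose details are not given here; it shows a different, generic technique (a PCGrad-style projection) in which a task gradient that points against the other task's gradient has its conflicting component removed. The function names and the two-dimensional toy gradients are assumptions for illustration.

```python
def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def project_conflict(g_task, g_other):
    """Remove from g_task the component that opposes g_other.

    If the gradients do not conflict (non-negative dot product),
    g_task is returned unchanged.
    """
    d = dot(g_task, g_other)
    if d >= 0:
        return list(g_task)
    scale = d / dot(g_other, g_other)
    return [a - scale * b for a, b in zip(g_task, g_other)]


def combine(g_pred, g_gen):
    """Sum the two task gradients after mutual conflict removal."""
    p = project_conflict(g_pred, g_gen)
    q = project_conflict(g_gen, g_pred)
    return [a + b for a, b in zip(p, q)]


g_pred = [1.0, 0.0]    # toy gradient of the affinity-prediction loss
g_gen = [-1.0, 1.0]    # toy gradient of the generation loss
print(project_conflict(g_pred, g_gen), combine(g_pred, g_gen))
```

After projection, the prediction gradient is orthogonal to the generation gradient, so a step along it no longer increases the generation loss to first order.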

Translational Gaps Between Prediction and Clinical Efficacy

Computational predictions of drug-target interactions frequently fail to translate into clinical success due to the complex physiological environment not captured by in silico models. Factors including protein dynamics, cellular context, tissue-specific expression, and metabolic stability significantly influence therapeutic efficacy but are challenging to incorporate into predictive algorithms [19] [20]. This translational gap is particularly pronounced for targets with low connectivity in known drug-target networks, where traditional network-based approaches historically performed poorly [18]. While newer methods like deepDTnet show improved performance on low-connectivity targets, the fundamental challenge of predicting in vivo behavior from in silico data remains substantial [18].

Emerging Opportunities and Methodological Advances

Deep Learning Architectures for Binding Affinity Prediction

Deep learning approaches have emerged as a computationally efficient paradigm for predicting protein-ligand binding affinities, circumventing the time-consuming nature of experimental assays and the rigidity of conventional scoring functions [3]. Recent architectural innovations have substantially improved prediction accuracy and applicability across diverse target classes.

Table 1: Performance Comparison of Deep Learning Models for Drug-Target Binding Affinity Prediction

| Model | Architecture | KIBA (CI) | Davis (CI) | BindingDB (CI) | Key Innovation |
|---|---|---|---|---|---|
| DeepDTAGen | Multitask learning | 0.897 | 0.890 | 0.876 | Unified framework for affinity prediction & drug generation |
| GraphDTA | Graph neural networks | 0.891 | - | - | Graph representation of drug molecules |
| GDilatedDTA | Dilated convolutional networks | 0.920 | - | 0.867 | Expanded receptive fields for protein sequences |
| DeepDTA | 1D CNN | 0.863 | 0.878 | - | SMILES & protein sequence processing |
| KronRLS | Kernel-based learning | 0.836 | 0.872 | - | Kronecker product similarity matrices |
| SimBoost | Gradient boosting machines | 0.836 | 0.872 | - | Feature-based similarity learning |

The DeepDTAGen framework represents a significant advancement through its multitask architecture, which jointly optimizes binding affinity prediction and target-aware drug generation using a shared feature space [9]. This approach leverages common knowledge of ligand-receptor interactions across both tasks, which may increase the clinical relevance of generated compounds. On benchmark datasets, DeepDTAGen achieves a concordance index (CI) of 0.897 on KIBA and 0.890 on Davis, outperforming most of the baselines in Table 1, although GDilatedDTA reports a higher CI on KIBA (0.920) [9].
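Because the concordance index is the headline metric in this comparison, a minimal sketch of how it is computed may help. The CI is the fraction of pairs with different measured affinities whose predicted order matches the measured order, with tied predictions counted as half. The function name and toy values below are illustrative only.

```python
def concordance_index(y_true, y_pred):
    """Fraction of comparable pairs ranked in the correct order.

    Pairs with equal measured affinity are skipped; pairs with equal
    predictions contribute 0.5.
    """
    concordant = 0.0
    comparable = 0
    n = len(y_true)
    for i in range(n):
        for j in range(i + 1, n):
            if y_true[i] == y_true[j]:
                continue
            comparable += 1
            hi, lo = (i, j) if y_true[i] > y_true[j] else (j, i)
            if y_pred[hi] > y_pred[lo]:
                concordant += 1.0
            elif y_pred[hi] == y_pred[lo]:
                concordant += 0.5
    if comparable == 0:
        raise ValueError("no pairs with distinct measured affinities")
    return concordant / comparable


measured = [5.0, 6.0, 7.0, 8.0]
predicted = [5.2, 6.5, 6.1, 8.3]
ci = concordance_index(measured, predicted)
print(round(ci, 3))
```

In this example one of the six comparable pairs is mis-ordered, giving a CI of 5/6. A CI of 0.5 corresponds to random ordering and 1.0 to a perfect ranking.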

Network-Based Deep Learning for Target Identification

Network-based deep learning approaches have demonstrated remarkable efficacy in identifying novel molecular targets for known drugs. The deepDTnet methodology exemplifies this trend, embedding 15 types of chemical, genomic, phenotypic, and cellular network profiles to generate biologically relevant features through low-dimensional vector representations for both drugs and targets [18]. This heterogeneous network integration enables the identification of thousands of novel drug-target interactions with high accuracy (AUROC = 0.963), substantially outperforming traditional machine learning approaches and previous state-of-the-art methodologies [18].

A key innovation in deepDTnet is its use of the deep neural network for graph representations (DNGR) algorithm, which learns informative vector representations by integrating large-scale chemical, genomic, and phenotypic profiles [18]. Furthermore, the model employs a Positive-Unlabeled (PU) matrix completion algorithm to address the absence of experimentally confirmed negative samples, enabling robust inference without negative training data [18]. When validated experimentally, deepDTnet successfully identified topotecan as a novel direct inhibitor of human ROR-γt (IC₅₀ = 0.43 μM), demonstrating potential therapeutic efficacy in a mouse model of multiple sclerosis [18].

Experimental Validation Methods for Target Engagement

Computational predictions require empirical validation to confirm direct target engagement in physiologically relevant contexts. Several experimental methods have emerged as standards for this crucial validation step:

Cellular Thermal Shift Assay (CETSA): CETSA has become a leading approach for validating direct drug-target binding in intact cells and tissues by monitoring thermal stabilization of target proteins upon ligand binding [19]. The method quantitatively measures dose- and temperature-dependent stabilization, enabling system-level validation of target engagement. Recent work by Mazur et al. (2024) applied CETSA with high-resolution mass spectrometry to quantify drug-target engagement of DPP9 in rat tissue, confirming binding ex vivo and in vivo [19].

Drug Affinity Responsive Target Stability (DARTS): DARTS monitors changes in protein stability by observing whether ligands protect target proteins from proteolytic degradation [20]. This label-free technique can be applied to complex cell lysates or purified proteins without requiring protein modification [20]. The DARTS protocol involves: (1) sample preparation (cell lysates or purified proteins), (2) small molecule treatment, (3) protease digestion, (4) protein stability analysis via SDS-PAGE or mass spectrometry, and (5) target protein identification through comparison of treated and untreated groups [20].

Diagram 1: Experimental Workflow for Drug Target Validation

Multimodal Data Integration and Foundation Models

The integration of multimodal data sources represents a transformative opportunity in computational target identification. Approaches that combine chemical, genomic, phenotypic, and cellular network profiles demonstrate significantly improved prediction accuracy compared to methods relying on single data types [18] [22]. Emerging foundation models, such as ATOMICA, provide information-rich interaction embeddings that capture complex binding site characteristics [21]. These 32-dimensional vectors assigned to protein structures can be reduced to principal components that retain >99% variance, enabling efficient feature extraction for downstream prediction tasks [21].

The practical implementation of these approaches is exemplified by platforms like Sonrai Discovery, which integrate complex imaging, multi-omic, and clinical data into a single analytical framework [23]. By layering diverse datasets, researchers can uncover previously inaccessible relationships between molecular features and disease mechanisms, accelerating the identification of novel therapeutic targets [23].

Experimental Protocols and Methodologies

Protocol for Deep Learning-Based Target Identification Using deepDTnet

The deepDTnet methodology provides a robust protocol for identifying novel molecular targets through heterogeneous network embedding [18]:

Step 1: Network Construction

- Assemble a drug-target network from six data resources, incorporating 5,680 experimentally validated drug-target interactions connecting 732 approved drugs and 1,176 human targets [18].

- Integrate 15 types of chemical, genomic, phenotypic, and cellular network profiles to build a comprehensive heterogeneous network [18].

Step 2: Feature Learning

- Apply the Deep Neural Networks for Graph Representations (DNGR) algorithm to embed each vertex in the network into a low-dimensional vector space [18].

- Generate biologically and pharmacologically relevant features through learning low-dimensional but informative vector representations for both drugs and targets [18].

Step 3: Model Training

- Employ a Positive-Unlabeled (PU) matrix completion algorithm to handle the absence of experimentally reported negative samples [18].

- Implement 5-fold cross-validation, where 20% of experimentally validated drug-target pairs are randomly selected as positive samples with a matching number of randomly sampled non-interacting pairs as negative samples for the test set [18].
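The cross-validation setup in Step 3 can be sketched as follows. This is an assumption-laden illustration rather than the deepDTnet code: the function name `make_folds`, the dictionary layout, and the toy drug and target identifiers are hypothetical, and "negatives" are simply unlabeled pairs sampled at random, since confirmed non-interactions are not available.

```python
import random


def make_folds(positives, drugs, targets, k=5, seed=0):
    """Split known drug-target pairs into k folds with sampled test negatives.

    Each fold holds out 1/k of the positive pairs and pairs them with an
    equal number of randomly chosen unlabeled (drug, target) pairs.
    """
    rng = random.Random(seed)
    pos = list(positives)
    rng.shuffle(pos)
    known = set(pos)
    # Unlabeled pairs stand in for negatives; some may be true interactions.
    unlabeled = [(d, t) for d in drugs for t in targets if (d, t) not in known]
    folds = []
    for f in range(k):
        test_pos = pos[f::k]
        held = set(test_pos)
        folds.append({
            "train_pos": [p for p in pos if p not in held],
            "test_pos": test_pos,
            "test_neg": rng.sample(unlabeled, len(test_pos)),
        })
    return folds


drugs = [f"d{i}" for i in range(5)]
targets = [f"t{i}" for i in range(6)]
positives = [(f"d{i}", f"t{i}") for i in range(5)] + [(f"d{i}", f"t{i + 1}") for i in range(5)]
folds = make_folds(positives, drugs, targets, k=5, seed=0)
print(len(folds), len(folds[0]["test_pos"]), len(folds[0]["test_neg"]))
```

With ten positive pairs and five folds, each test set contains two held-out positives and two sampled unlabeled pairs, mirroring the 20% hold-out with matched negatives described above.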

Step 4: Experimental Validation

- Validate computational predictions through direct binding assays such as CETSA or DARTS [18] [20].

- Confirm functional efficacy in disease-relevant models, as demonstrated by the validation of topotecan as a ROR-γt inhibitor in a mouse model of multiple sclerosis [18].

Protocol for Binding Affinity Prediction Using DeepDTAGen

The DeepDTAGen framework provides a comprehensive protocol for predicting drug-target binding affinities while generating novel target-aware compounds [9]:

Step 1: Data Preparation

- Utilize benchmark datasets (KIBA, Davis, BindingDB) with standardized splitting procedures to prevent data leakage [9].

- Represent drugs as molecular graphs or SMILES strings and proteins as amino acid sequences or structural features [9].

Step 2: Model Implementation

- Implement a multitask learning architecture with shared encoder modules for both drugs and targets [9].

- Employ the FetterGrad algorithm to mitigate gradient conflicts between affinity prediction and drug generation tasks by minimizing Euclidean distance between task gradients [9].

Step 3: Model Evaluation

- Assess binding affinity predictions using Mean Squared Error (MSE), Concordance Index (CI), the modified squared correlation coefficient (r²m), and Area Under the Precision-Recall Curve (AUPR) [9].

- Evaluate generated compounds for validity (chemical correctness), novelty (absence from training data), uniqueness (structural diversity), and binding capability to intended targets [9].
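The generation metrics named above are commonly computed as simple ratios, sketched below. The definitions used here (validity over all generated strings, uniqueness over valid strings, novelty over unique valid strings) are one common convention and an assumption about how DeepDTAGen reports them. The `is_valid` callable is a hypothetical placeholder: a real pipeline would parse and canonicalize each SMILES with a cheminformatics toolkit, whereas the toy check here only tests for balanced parentheses.

```python
def generation_metrics(generated, training_set, is_valid):
    """Compute validity, uniqueness, and novelty of generated SMILES strings."""
    valid = [s for s in generated if is_valid(s)]
    unique = set(valid)
    novel = unique - set(training_set)
    return {
        "validity": len(valid) / len(generated) if generated else 0.0,
        "uniqueness": len(unique) / len(valid) if valid else 0.0,
        "novelty": len(novel) / len(unique) if unique else 0.0,
    }


def balanced_parentheses(smiles):
    """Toy stand-in for a real SMILES validity check."""
    return smiles.count("(") == smiles.count(")")


generated = ["CCO", "CCO", "C(C", "c1ccccc1"]
training = {"CCO"}
metrics = generation_metrics(generated, training, balanced_parentheses)
print(metrics)
```

Here three of four strings pass the toy check, two of the three valid strings are distinct, and one of those two is absent from the training set.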

Step 4: Compound Validation

- Perform quantitative structure-activity relationship (QSAR) analysis to validate generated compounds [9].

- Conduct chemical druggability assessment including solubility, drug-likeness, and synthesizability evaluations [9].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Essential Research Reagents and Computational Tools for Drug Target Identification

| Category | Specific Tools/Reagents | Function/Application | Key Features |
|---|---|---|---|
| Computational Frameworks | deepDTnet | Target identification & drug repurposing | Heterogeneous network embedding; AUROC=0.963 [18] |
| Computational Frameworks | DeepDTAGen | Binding affinity prediction & drug generation | Multitask learning; FetterGrad optimization [9] |
| Experimental Validation | CETSA | Cellular target engagement validation | Direct binding measurement in intact cells/tissues [19] |
| Experimental Validation | DARTS | Label-free target identification | Protein stability monitoring; no modification required [20] |
| Data Resources | BindingDB | Binding affinity data | 269,590 IC50 measurements; strict filtering recommended [21] |
| Data Resources | PLINDER-PL50 | Standardized dataset splits | Prevents data leakage; 66,671 compounds [21] |
| Automation Platforms | MO:BOT (mo:re) | 3D cell culture automation | Standardized organoid production; human-relevant models [23] |
| Automation Platforms | eProtein Discovery System (Nuclera) | Protein expression & purification | DNA to purified protein in <48 hours; 192 parallel conditions [23] |
| Data Management | Cenevo/Labguru | R&D data platform | Connects siloed data; AI-assisted search & analysis [23] |
| Data Management | Sonrai Discovery | Multi-omic data integration | Advanced AI pipelines for imaging, omics & clinical data [23] |

Integrated Workflow for Target Identification and Validation

Diagram 2: Integrated Computational-Experimental Workflow for Target Identification

Computational drug target identification and validation is undergoing rapid transformation through the integration of deep learning methodologies, particularly within protein-ligand binding affinity research. The field has progressed from single-task models to integrated multitask frameworks that simultaneously predict binding affinities and generate novel therapeutic candidates. Current approaches are beginning to address long-standing challenges, including data scarcity, model interpretability, and translational gaps, through heterogeneous data integration, advanced neural architectures, and rigorous experimental validation.

The convergence of computational prediction with high-throughput experimental validation creates an unprecedented opportunity to accelerate therapeutic development. As deep learning models continue to evolve toward greater biological plausibility and clinical relevance, they promise to fundamentally reshape the drug discovery landscape, enabling more efficient identification of novel targets and accelerating the development of effective therapeutics for diverse human diseases.

Deep Learning Architectures in Action: From CNNs to Transformers

The accurate prediction of protein-ligand binding affinity represents a cornerstone of computational drug discovery, where the strategic representation of molecular data directly influences model performance and generalizability. This technical guide examines the evolution and integration of key structural representations—from the simplicity of SMILES strings for ligands and amino acid sequences for proteins to the complex richness of 3D structural data. Within deep learning frameworks for binding affinity research, the choice of representation imposes specific inductive biases that ultimately determine a model's capacity to learn genuine physicochemical principles governing molecular interactions versus merely memorizing spurious correlations within training datasets [24] [13]. As the field confronts challenges of generalization and data bias, sophisticated data representation strategies have emerged as critical differentiators between models that succeed on benchmark datasets and those that maintain predictive power when encountering novel protein families or chemical series [13].

The progression from one-dimensional symbolic representations to three-dimensional structural encodings reflects the field's deepening understanding of the structural determinants of molecular recognition. SMILES (Simplified Molecular Input Line Entry System) provides a compact line notation for describing ligand structures using short ASCII strings, offering computational efficiency but limited structural context [25]. Similarly, amino acid sequences serve as the fundamental representation for proteins, with single-letter or multi-letter codes describing linear polypeptide chains [26]. While these sequential representations have enabled significant advances in bioinformatics and cheminformatics, they inherently lack the spatial information essential for understanding molecular interactions. This limitation has driven the adoption of 3D structural representations that encode the spatial coordinates of atoms, enabling models to leverage distance-dependent physicochemical interactions critical for accurate affinity prediction [24].

Fundamental Data Representation Formats

SMILES Strings for Molecular Representation

The Simplified Molecular Input Line Entry System (SMILES) is a line notation system that describes molecular structures using short ASCII strings, providing a compact and human-readable representation for chemical compounds [25]. Developed in the 1980s by David Weininger at the USEPA, SMILES has evolved into an open standard (OpenSMILES) maintained by the Blue Obelisk open-source chemistry community [25]. The specification encodes molecular graphs through a series of rules representing atoms, bonds, branches, and ring closures.

Key SMILES Syntax Elements:

- Atoms: Represented by standard chemical element symbols (e.g., `C`, `N`, `O`). Atoms that are not in the "organic subset" (B, C, N, O, P, S, F, Cl, Br, I), or that carry formal charges, explicitly specified hydrogens, or chirality, must be enclosed in brackets (e.g., `[Na+]`, `[OH-]`) [25].

- Bonds: Single bonds (`-`) are typically omitted between aliphatic atoms. Double, triple, and quadruple bonds are represented by `=`, `#`, and `$` respectively. Adjacency implies a single bond (or an aromatic bond between aromatic atoms) [25].

- Branches: Represented using parentheses, allowing description of complex molecular structures with multiple substituents.

- Rings: Indicated by breaking one bond of the cycle and marking the two atoms it joined with matching numerical labels (e.g., `C1CCCCC1` for cyclohexane) [25].

- Stereochemistry: Specified using `@` and `@@` for tetrahedral centers, and `/` and `\` for directional bonds that define double bond geometry [25].
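The syntax elements listed above map naturally onto a token-level view of a SMILES string, which is also how many sequence models consume ligands. The following sketch splits a string into bracket atoms, organic-subset atoms, bonds, branches, and ring-closure digits with a regular expression. The pattern covers only the elements described in this section and is an illustrative assumption, not a full OpenSMILES parser; it does not check chemical validity.

```python
import re

# Order matters: bracket atoms and two-letter halogens are tried first.
SMILES_TOKEN = re.compile(
    r"(\[[^\]]+\]|Br|Cl|[BCNOPSFI]|[bcnops]|%\d{2}|\d|[=#$\-+/\\:.~()@*])"
)


def tokenize(smiles):
    """Split a SMILES string into tokens, rejecting unrecognized characters."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"unrecognized characters in SMILES: {smiles!r}")
    return tokens


print(tokenize("C[C@H](N)C(=O)O"))   # L-alanine
print(tokenize("C1CCCCC1"))          # cyclohexane
print(tokenize("F/C=C\\F"))          # directional bonds around a double bond
```

Keeping `[C@H]` as a single token preserves the chirality annotation, and trying `Cl` and `Br` before single letters prevents chlorine from being read as carbon followed by an unknown symbol.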

For peptide representation, SMILES offers particular advantages in describing non-standard amino acids, post-translational modifications, and complex cyclization patterns that challenge traditional sequence-based representations [26]. The translation of peptide sequences from biological codes (single-letter or multi-letter amino acid abbreviations) to SMILES enables cheminformatic analysis using tools originally developed for small molecules, facilitating property prediction and database screening [26].

Table 1: SMILES Representation for Common Molecular Patterns

| Structural Feature | SMILES Example | Description |
|---|---|---|
| Ethanol | `CCO` | Aliphatic alcohol (implicit single bonds and hydrogens) |
| Carbon dioxide | `O=C=O` | Double bonds explicitly specified |
| Hydrogen cyanide | `C#N` | Triple bond representation |
| Cyclohexane | `C1CCCCC1` | Ring closure with numerical labels |
| Dioxane | `O1CCOCC1` | Heterocyclic ring structure |
| L-Alanine | `C[C@H](N)C(=O)O` | Stereochemistry specification |

Amino Acid Sequences and Biological Codes

Protein sequences are predominantly represented using standardized biological codes that describe the linear arrangement of amino acid residues. The single-letter code represents the 20 proteinogenic amino acids using uppercase letters (A, C, D, E, F, G, H, I, K, L, M, N, P, Q, R, S, T, V, W, Y), while D-enantiomers are typically indicated using lowercase letters in specialized contexts [26]. For non-proteinogenic amino acids, modified residues, or peptidomimetics, multi-letter codes (typically three characters) provide expanded representation capabilities, though these require careful annotation to ensure machine-readability [26].

Specialized representation systems have been developed to address the limitations of standard biological codes:

- HELM (Hierarchical Editing Language for Macromolecules): Employed by databases such as PubChem and ChEMBL, HELM provides a standardized notation for complex biomolecules including peptides, oligonucleotides, and conjugates, enabling precise description of modifications at atomic resolution [26].

- LINUCS: Originally designed for oligosaccharides, this code finds application in representing glycopeptides and other complex conjugates, particularly within the PubChem database [26].

The translation between biological sequence representations and chemical codes like SMILES enables integrated analysis across bioinformatics and cheminformatics platforms, facilitating research on modified peptides, peptidomimetics, and structure-activity relationships [26].
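As a small illustration of the single-letter code described above, the sketch below converts a peptide string into integer indices suitable as model input, flagging lowercase letters as D-residues. The alphabet ordering, the function name `encode_sequence`, and the decision to raise an error on any other symbol are assumptions made for this example; sequences containing non-proteinogenic residues would need a multi-letter or HELM-style representation instead.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"          # the 20 proteinogenic residues
AA_INDEX = {aa: i for i, aa in enumerate(AMINO_ACIDS)}


def encode_sequence(seq):
    """Map a single-letter sequence to (residue_index, is_d_enantiomer) tuples.

    Uppercase letters are read as L-residues and lowercase letters as
    D-residues; anything outside the 20-letter alphabet is rejected.
    """
    encoded = []
    for pos, ch in enumerate(seq):
        upper = ch.upper()
        if upper not in AA_INDEX:
            raise ValueError(f"unsupported residue {ch!r} at position {pos}")
        encoded.append((AA_INDEX[upper], ch.islower()))
    return encoded


print(encode_sequence("ACdY"))
```

Rejecting unknown symbols rather than silently skipping them makes it obvious when a sequence needs one of the richer notations discussed in this section.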

3D Structural Data and Molecular Descriptors

Three-dimensional structural representations encode spatial atomic coordinates, typically obtained from X-ray crystallography, NMR spectroscopy, or computational modeling. These representations enable the calculation of physicochemical descriptors critical for understanding molecular interactions and predicting binding affinity.

Principal Molecular Shape Descriptors:

- Normalized Principal Moment of Inertia (PMI): Quantifies molecular three-dimensionality by comparing moments of inertia along the principal axes, which allows normalized comparison across diverse structures [27]. PMI analysis reveals that most drug-like molecules exhibit predominantly linear or planar geometries, with only about 0.5% displaying highly 3D character [27].

- Plane of Best Fit: Calculates the deviation of atomic coordinates from a reference plane, providing a complementary measure of molecular planarity [27].

- Fraction of sp³ Carbons: A simple metric giving the fraction of carbon atoms with tetrahedral (sp³) hybridization, which correlates with molecular complexity and three-dimensionality [27].

Analysis of approved therapeutics and protein-bound ligands reveals a striking predominance of planar and linear topologies, with approximately 80% of DrugBank compounds exhibiting 3D scores <1.2 and only 0.5% displaying highly 3D geometries (scores >1.6) [27]. This topological bias reflects both synthetic accessibility constraints and adherence to drug-like property guidelines such as the Rule of Five, rather than optimal molecular recognition principles.

Table 2: 3D Structural Descriptors for Molecular Shape Characterization

| Descriptor | Calculation Method | Interpretation | Typical Range for Drug-like Molecules |
|---|---|---|---|
| Normalized PMI Ratios | I1/I3 and I2/I3, where I1 ≤ I2 ≤ I3 | Linear (0, 1), planar (0.5, 0.5), spherical (1, 1) | Most lie near the linear-planar edge [27] |
| 3D Score | I1/I3 + I2/I3 | Composite shape metric | About 80% of DrugBank compounds < 1.2; highly 3D (> 1.6): about 0.5% [27] |
| Fraction sp³ Carbons | sp³ C / Total C | Molecular complexity/saturation | Varies by chemical series |
| Plane of Best Fit RMSD | Atomic deviation from reference plane | Planarity quantification | Compound-specific |
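
The normalized PMI ratios and the 3D score from Table 2 can be computed directly from atomic coordinates. The sketch below is a standard-library-only illustration: it assumes unit atomic masses unless masses are supplied, and it uses the closed-form trigonometric method for the eigenvalues of a symmetric 3×3 matrix. The function names are hypothetical.

```python
import math


def inertia_tensor(coords, masses=None):
    """Return the 3x3 inertia tensor about the mass-weighted centroid."""
    masses = masses or [1.0] * len(coords)  # assumption: unit masses by default
    total = sum(masses)
    center = [sum(m * c[k] for m, c in zip(masses, coords)) / total for k in range(3)]
    t = [[0.0] * 3 for _ in range(3)]
    for m, c in zip(masses, coords):
        x, y, z = (c[k] - center[k] for k in range(3))
        t[0][0] += m * (y * y + z * z)
        t[1][1] += m * (x * x + z * z)
        t[2][2] += m * (x * x + y * y)
        t[0][1] -= m * x * y
        t[0][2] -= m * x * z
        t[1][2] -= m * y * z
    t[1][0], t[2][0], t[2][1] = t[0][1], t[0][2], t[1][2]
    return t


def symmetric_eigenvalues(a):
    """Eigenvalues of a symmetric 3x3 matrix in ascending order."""
    p1 = a[0][1] ** 2 + a[0][2] ** 2 + a[1][2] ** 2
    if p1 == 0.0:  # matrix is already diagonal
        return sorted(a[i][i] for i in range(3))
    q = (a[0][0] + a[1][1] + a[2][2]) / 3.0
    p2 = sum((a[i][i] - q) ** 2 for i in range(3)) + 2.0 * p1
    p = math.sqrt(p2 / 6.0)
    b = [[(a[i][j] - (q if i == j else 0.0)) / p for j in range(3)] for i in range(3)]
    det = (b[0][0] * (b[1][1] * b[2][2] - b[1][2] * b[2][1])
           - b[0][1] * (b[1][0] * b[2][2] - b[1][2] * b[2][0])
           + b[0][2] * (b[1][0] * b[2][1] - b[1][1] * b[2][0]))
    r = max(-1.0, min(1.0, det / 2.0))  # clamp against rounding error
    phi = math.acos(r) / 3.0
    largest = q + 2.0 * p * math.cos(phi)
    smallest = q + 2.0 * p * math.cos(phi + 2.0 * math.pi / 3.0)
    return [smallest, 3.0 * q - largest - smallest, largest]


def shape_descriptors(coords, masses=None):
    """Return (I1/I3, I2/I3, 3D score) with I1 <= I2 <= I3."""
    i1, i2, i3 = symmetric_eigenvalues(inertia_tensor(coords, masses))
    if i3 <= 0.0:
        raise ValueError("need at least two distinct atom positions")
    npr1, npr2 = i1 / i3, i2 / i3
    return npr1, npr2, npr1 + npr2
```

Under this definition a perfectly linear or perfectly planar arrangement scores 1.0 and a spherical one scores 2.0, which is why the thresholds of 1.2 and 1.6 quoted above sit inside the interval [1, 2].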

Data Representation in Binding Affinity Prediction

The Generalization Challenge in Structure-Based Models

Deep learning approaches for protein-ligand binding affinity prediction face significant generalization challenges when encountering novel protein families or ligand scaffolds unseen during training. Contemporary models frequently demonstrate degraded performance under rigorous leave-superfamily-out validation despite excellent benchmark metrics, indicating that reported performance often reflects data leakage and memorization rather than genuine learning of physicochemical principles [24] [13].

The root cause of this generalization failure lies in the competition between learning spurious correlations from structural motifs prevalent in training data versus acquiring transferable knowledge of distance-dependent molecular interactions [24]. Studies retraining state-of-the-art models on carefully curated datasets with reduced data leakage (PDBbind CleanSplit) observed marked performance drops, confirming that previous high benchmark scores were largely driven by dataset biases rather than model capability [13]. Alarmingly, some models maintain competitive performance even when critical protein or ligand information is omitted, suggesting they exploit dataset-specific artifacts rather than learning genuine structure-activity relationships [13].
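
One way to make the evaluation described above concrete is a leave-group-out split, in which every complex belonging to one protein superfamily is held out together. The following is a minimal sketch; the `group_of` mapping (complex ID to superfamily label) is a hypothetical input that would have to come from an external annotation source.

```python
from collections import defaultdict


def leave_group_out_splits(complex_ids, group_of):
    """Yield (held_out_group, train_ids, test_ids), one group at a time.

    group_of maps each complex ID to a group label, for example a protein
    superfamily annotation (hypothetical input; any grouping key works).
    """
    groups = defaultdict(list)
    for cid in complex_ids:
        groups[group_of[cid]].append(cid)
    for held_out in sorted(groups):
        test_ids = list(groups[held_out])
        train_ids = [cid for g, ids in groups.items() if g != held_out for cid in ids]
        yield held_out, train_ids, test_ids
```

Because no group ever appears on both sides of a split, a model cannot score well simply by recalling proteins it saw during training. This sketch does not address ligand similarity across the split, which would need a separate check.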

Advanced Architectures for Structure-Based Affinity Prediction

CORDIAL: Interaction-Focused Representation

The CORDIAL (COnvolutional Representation of Distance-dependent Interactions with Attention Learning) framework addresses generalization challenges through an inductive bias that explicitly avoids direct parameterization of chemical structures and instead learns distance-dependent physicochemical interaction signatures between proteins and ligands [24]. This interaction-centric representation maintains predictive performance under leave-superfamily-out validation, where conventional models degrade, demonstrating the value of encoding appropriate physicochemical principles into the model architecture [24].

CORDIAL Experimental Protocol:

- Input Representation: Protein-ligand complexes are represented using 3D voxelized grids encoding distance-dependent interaction potentials rather than explicit atomic coordinates or chemical structures.

- Feature Engineering: Physicochemical interaction descriptors are calculated based on spatial proximity, including electrostatic complementarity, van der Waals interactions, and hydrogen bonding potentials.

- Network Architecture: Convolutional layers with attention mechanisms process the interaction grids, enabling learning of spatially localized interaction patterns.

- Training Regimen: Models are trained using rigorous cross-validation strategies ensuring no similarity between training and validation complexes, with explicit monitoring for memorization versus genuine learning.

- Validation: Performance assessment under leave-superfamily-out conditions provides realistic estimation of generalizability to novel targets [24].
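
The idea of an interaction-focused input can be illustrated with a much simpler stand-in than the protocol above: counting protein-ligand atom pairs per element pair and distance bin. This is not the CORDIAL implementation and it replaces the voxel grid with a plain histogram; the bin width, cutoff, and function name are assumptions chosen for illustration.

```python
import math
from collections import Counter


def interaction_histogram(protein_atoms, ligand_atoms, bin_width=1.0, cutoff=8.0):
    """Count protein-ligand atom pairs per (element pair, distance bin).

    Atoms are (element, (x, y, z)) tuples with coordinates in Å.
    bin_width and cutoff are illustrative defaults, not values from CORDIAL.
    """
    counts = Counter()
    for p_elem, p_xyz in protein_atoms:
        for l_elem, l_xyz in ligand_atoms:
            d = math.dist(p_xyz, l_xyz)
            if d < cutoff:
                # Key: protein element, ligand element, index of distance bin.
                counts[(p_elem, l_elem, int(d // bin_width))] += 1
    return counts
```

The resulting features depend only on which atom types sit at which distances from each other, not on the identity of the protein fold or the ligand scaffold, which is the property the interaction-centric inductive bias is meant to exploit.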

GEMS: Graph Neural Network with Reduced Data Bias

The GEMS (Graph neural network for Efficient Molecular Scoring) architecture demonstrates how addressing data representation bias can substantially improve generalization capability [13]. By combining graph neural networks with transfer learning from protein language models and training on the rigorously filtered PDBbind CleanSplit dataset, GEMS maintains state-of-the-art performance on independent test sets while avoiding exploitation of data leakage [13].

GEMS Data Curation and Training Protocol:

- Multimodal Filtering: Training datasets are processed using a structure-based clustering algorithm that identifies and removes complexes with high similarity to test cases based on combined protein similarity (TM-scores), ligand similarity (Tanimoto coefficients), and binding conformation similarity (pocket-aligned ligand RMSD) [13].

- Redundancy Elimination: Similarity clusters within training data are identified and reduced to minimize memorization incentives, removing approximately 7.8% of training complexes to create a more diverse dataset [13].

- Graph Representation: Protein-ligand complexes are represented as sparse graphs with nodes for protein residues and ligand atoms, and edges encoding spatial relationships and interaction types.

- Transfer Learning: Protein language model embeddings provide evolutionary information, enabling better generalization to proteins with limited structural characterization.

- Ablation Validation: Controlled experiments confirm model reliance on genuine protein-ligand interactions rather than ligand memorization by demonstrating performance degradation when protein information is omitted [13].
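
The graph representation step can be sketched as follows. This is a simplified illustration rather than the GEMS code: it assumes one coordinate per residue (for example the C-alpha atom), connects nodes by a distance cutoff only, and omits residue-residue edges and language model embeddings. All names and the 5 Å cutoff are assumptions.

```python
import math


def build_complex_graph(residues, ligand_atoms, cutoff=5.0):
    """Build a sparse graph with residue nodes and ligand-atom nodes.

    residues:     list of (residue_name, (x, y, z)), e.g. C-alpha positions
    ligand_atoms: list of (element, (x, y, z))
    cutoff:       distance threshold in Å (illustrative, not from GEMS)
    """
    nodes = [{"kind": "residue", "label": n, "xyz": p} for n, p in residues]
    nodes += [{"kind": "ligand", "label": e, "xyz": p} for e, p in ligand_atoms]
    edges = []
    for i in range(len(nodes)):
        for j in range(i + 1, len(nodes)):
            a, b = nodes[i], nodes[j]
            if a["kind"] == b["kind"] == "residue":
                continue  # assumption: skip residue-residue edges for sparsity
            d = math.dist(a["xyz"], b["xyz"])
            if d <= cutoff:
                # Residues come first in `nodes`, so a ligand node at index i
                # implies the node at index j is also a ligand atom.
                kind = "intra_ligand" if a["kind"] == "ligand" else "protein_ligand"
                edges.append((i, j, {"distance": d, "type": kind}))
    return nodes, edges
```

In a fuller implementation, intra-ligand edges would normally follow covalent bonds rather than distance, and each residue node would carry a feature vector such as a protein language model embedding.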

Language Models for Structural Feature Prediction

Recent advances demonstrate that protein language models trained solely on sequence information can surprisingly capture three-dimensional structural features relevant to binding affinity prediction [28]. When applied to language representations combining reaction SMILES for substrates/products with amino acid sequence information for enzymes, these models can identify enzymatic binding sites with 52.13% accuracy compared to co-crystallized structures as ground truth [28]. This capability suggests that sequential representations implicitly encode substantial 3D structural information, bridging the gap between sequence-based and structure-based approaches.

Experimental Protocols and Methodologies

Data Curation and Cleaning Protocols

PDBbind CleanSplit Curation Methodology [13]:

- Train-Test Similarity Assessment: All CASF benchmark complexes are compared against all PDBbind training complexes using multimodal similarity metrics:

  - Protein structure similarity: TM-score ≥ 0.7

  - Ligand chemical similarity: Tanimoto coefficient ≥ 0.9

  - Binding conformation similarity: Pocket-aligned ligand RMSD ≤ 2.0 Å

- Leakage Elimination: All training complexes exceeding similarity thresholds with any test complex are removed from the training set (approximately 4% of complexes).

- Redundancy Reduction: Similarity clusters within training data are identified using adapted thresholds and iteratively removed until all clusters are resolved (additional 7.8% of complexes eliminated).

- Validation: Filtered training and test sets are verified to ensure no remaining complexes share biologically significant similarity that could enable prediction through memorization.
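
The leakage-elimination step above can be sketched as a simple filter over precomputed similarity values. The thresholds are those listed in the protocol; how the three criteria are combined is an assumption here (a pair is flagged only when all three are met), and the `similarity` callback is a hypothetical stand-in for the structural alignment and fingerprint tools that would supply the numbers.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between fingerprints given as sets of on-bits."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0


def is_leak(tm_score, tanimoto_sim, ligand_rmsd,
            tm_min=0.7, tanimoto_min=0.9, rmsd_max=2.0):
    """Flag a train/test pair as too similar.

    Assumption: a pair counts as leakage only if all three criteria hold.
    """
    return (tm_score >= tm_min
            and tanimoto_sim >= tanimoto_min
            and ligand_rmsd <= rmsd_max)


def filter_training_set(train_ids, test_ids, similarity):
    """Drop training complexes that leak against any test complex.

    similarity(train_id, test_id) is a hypothetical callback returning
    (tm_score, tanimoto_sim, ligand_rmsd) from precomputed comparisons.
    """
    return [t for t in train_ids
            if not any(is_leak(*similarity(t, s)) for s in test_ids)]
```

The redundancy-reduction step would apply the same kind of pairwise test within the training set and then remove members of each similarity cluster until none remain.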

Language Model Binding Site Prediction Protocol [28]:

Data Preparation:

- Collect enzyme sequences with known catalytic activity

- Obtain reaction SMILES for substrate-product pairs

- Align sequences and identify conserved regions

Model Architecture:

- Implement transformer-based language model architecture

- Process sequence and reaction SMILES through separate embedding layers

- Apply multi-head attention mechanisms to capture long-range dependencies

Training Procedure:

- Train using masked language modeling objective on large corpus of enzyme sequences

- Fine-tune on specific enzyme families with known binding sites

- Validate predictions against crystallographic data
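
The masked language modeling objective mentioned in the training procedure relies on hiding a random subset of input tokens and asking the model to recover them. Below is a minimal sketch of the masking step only; the 15% default rate is a commonly used value assumed here rather than taken from [28], and the `<mask>` token string is a placeholder.

```python
import random

MASK = "<mask>"  # placeholder token; real tokenizers define their own


def mask_sequence(tokens, mask_prob=0.15, seed=None):
    """Return (masked_tokens, labels) for one masked language modeling step.

    labels[i] holds the original token at masked positions and None
    elsewhere, so a loss would be computed only where input was hidden.
    """
    rng = random.Random(seed)
    masked, labels = [], []
    for token in tokens:
        if rng.random() < mask_prob:
            masked.append(MASK)
            labels.append(token)
        else:
            masked.append(token)
            labels.append(None)
    return masked, labels
```

Production implementations often also replace some selected tokens with random tokens or leave them unchanged; that refinement is omitted here for brevity.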

Binding Site Mapping:

- Extract attention weights from final layers

- Identify residues with highest attention scores for substrate recognition

- Map these residues to 3D structures to define putative binding pockets
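
The binding site mapping steps can be sketched as a ranking of residue tokens by the attention they receive from substrate tokens. The layout of the attention array and the token index lists are hypothetical, since they depend on how the reaction SMILES and the enzyme sequence are concatenated in a particular model.

```python
def top_attended_residues(attention, residue_positions, substrate_positions, k=5):
    """Rank residue tokens by mean attention received from substrate tokens.

    attention: list of heads, each a square matrix where attention[h][i][j]
               is the weight that token i (query) places on token j (key).
    residue_positions / substrate_positions: token indices of each segment
               (hypothetical layout, depends on input concatenation).
    Returns the k residue token indices with the highest mean attention.
    """
    scores = {}
    n_terms = len(attention) * len(substrate_positions)
    for j in residue_positions:
        total = sum(head[i][j] for head in attention for i in substrate_positions)
        scores[j] = total / n_terms
    # Sort by descending score; break ties by token index for determinism.
    return sorted(scores, key=lambda j: (-scores[j], j))[:k]
```

The returned token indices would then be translated back to residue numbers and located on a 3D structure to outline a putative pocket.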

Visualization of Data Representation Workflows

[Figure: Protein-Ligand Affinity Prediction Workflow]

[Figure: Data Representation Evolution in Drug Discovery]

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Resources for Protein-Ligand Binding Affinity Research

| Resource Name | Type | Primary Function | Application Context |
|---|---|---|---|
| PDBbind Database [13] | Structured Database | Curated protein-ligand complexes with binding affinity data | Training and benchmarking affinity prediction models |
| CASF Benchmark [13] | Evaluation Framework | Standardized test sets for scoring function comparison | Performance validation and model comparison |
| SwissADME [26] | Web Tool | Prediction of absorption, distribution, metabolism, excretion properties | Drug-likeness assessment and property optimization |
| CORDIAL Framework [24] | Deep Learning Architecture | Structure-based affinity prediction with focus on generalizability | Prediction for novel protein targets and chemical series |
| GEMS Model [13] | Graph Neural Network | Binding affinity prediction with reduced data bias | Robust screening with minimized overfitting risk |
| BioTriangle [26] | Computational Tool | Calculation of physicochemical and topological descriptors | Molecular representation and similarity assessment |
| HELM Notation [26] | Representation Standard | Standardized representation of complex biomolecules | Encoding modified peptides and biotherapeutics |
| OpenSMILES [25] | Chemical Representation | Open standard for molecular structure encoding | Ligand representation and database screening |

The evolution of data representation strategies—from sequential SMILES strings and amino acid sequences to sophisticated 3D structural encodings—has profoundly shaped the capabilities of deep learning frameworks in protein-ligand binding affinity research. The critical insight emerging from recent research is that representation choice directly influences model generalizability, with overly simplistic or biased representations encouraging memorization rather than genuine learning of physicochemical principles. Approaches that explicitly encode distance-dependent interaction signatures, such as CORDIAL, or that rigorously address dataset biases, such as GEMS trained on PDBbind CleanSplit, demonstrate markedly improved performance on novel targets unseen during training. As the field advances, the integration of representation learning with physics-based principles offers a promising path toward robust affinity prediction models that transcend the limitations of current benchmark-focused approaches, ultimately accelerating the discovery of novel therapeutic agents through computational design.

Convolutional Neural Networks (CNNs) for Spatial Feature Extraction from Molecular Structures

Accurate prediction of protein-ligand binding affinity is a cornerstone of rational drug discovery, serving as a critical determinant in identifying potential therapeutic compounds. Within this domain, deep learning has introduced powerful data-driven paradigms that complement and extend traditional physics-based strategies. Among these approaches, Convolutional Neural Networks (CNNs) have emerged as particularly significant for their ability to automatically extract spatially correlated features from molecular structures. Unlike conventional scoring functions that rely on predetermined physical equations, CNN-based methods learn the key features of protein-ligand interactions directly from structural data, enabling them to capture complex patterns that correlate with binding affinity. This capability is especially valuable for virtual screening and pose prediction, where accurately ranking potential drug candidates can dramatically reduce the time and cost associated with experimental assays [29] [30].