Data Quality and Standardization in Chemoinformatics: Foundational Principles, Methodologies, and Best Practices for Robust Research

Abstract

This article addresses the critical challenge of data quality and standardization in chemoinformatics, a field pivotal to accelerating drug discovery and materials science. It provides researchers and drug development professionals with a comprehensive framework covering the foundational sources of data inconsistency, practical methodologies for standardization and pipelining, strategies for troubleshooting common issues, and rigorous approaches for model validation and benchmarking. By synthesizing current best practices and emerging trends, the content aims to equip scientists with the knowledge to enhance the reliability, reproducibility, and impact of their computational research, ultimately fostering more efficient and successful R&D outcomes.

The Data Quality Imperative: Understanding the Core Challenges in Chemical Information

The Impact of Poor Data Quality on Predictive Modeling and Drug Discovery

Technical Troubleshooting Guides

Troubleshooting Guide 1: Resolving Inaccurate Predictive Model Outputs

Problem: A predictive model for compound toxicity is generating unreliable and inaccurate predictions, leading to failed experimental validation.

Explanation: Inaccurate model outputs are frequently caused by underlying data quality issues. The model's predictions are only as reliable as the data it was trained on. Inconsistencies, errors, or biases in the source data will be learned and amplified by the model [1] [2].

Solution: A systematic approach to diagnose and rectify data quality problems.

Step 1: Audit Training Data Provenance and Completeness

- Action: Trace the training data back to its original source. Check for documentation on how the data was generated, including experimental protocols and units of measurement.

- Check for: Missing values, incomplete experimental context (e.g., assay conditions), or a dataset that lacks both positive (active) and negative (inactive) compounds, which is crucial for robust model training [3] [4].

- Fix: Prioritize data from sources that practice rigorous manual curation and provide full provenance. Impute missing values carefully or consider removing entries with excessive missing data.

Step 2: Check for Entity Disambiguation Errors

- Action: Scrutinize the dataset for inconsistent representations of the same chemical or biological entity.

- Check for: A single protein target referred to by multiple names or identifiers, or a small molecule represented by different stereochemical or salt forms that are not standardized [1] [4].

- Fix: Reconcile all entities to authoritative constructs. Use standardized identifiers (e.g., InChIKeys for compounds, UniProt IDs for proteins) to cluster identical entities before retraining the model [1].
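The clustering step in the Fix above can be sketched as follows. This is a minimal illustration, assuming each record already carries an InChIKey computed from a standardized structure; the record layout and the field names `name` and `inchikey` are hypothetical.

```python
from collections import defaultdict

def cluster_by_inchikey(records):
    """Group records that share the same InChIKey.

    `records` is an iterable of dicts with the hypothetical keys
    'name' and 'inchikey'. Returns {inchikey: sorted list of names}.
    """
    clusters = defaultdict(set)
    for rec in records:
        # Normalize whitespace and case so trivial formatting differences
        # do not split one entity into several clusters.
        key = rec["inchikey"].strip().upper()
        clusters[key].add(rec["name"].strip())
    return {key: sorted(names) for key, names in clusters.items()}

records = [
    {"name": "aspirin", "inchikey": "BSYNRYMUTXBXSQ-UHFFFAOYSA-N"},
    {"name": "acetylsalicylic acid", "inchikey": "BSYNRYMUTXBXSQ-UHFFFAOYSA-N "},
    {"name": "caffeine", "inchikey": "RYYVLZVUVIJVGH-UHFFFAOYSA-N"},
]
clusters = cluster_by_inchikey(records)
```

Clusters with more than one name are the synonym groups to merge before retraining. The same pattern should apply to protein targets keyed by UniProt ID.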

Step 3: Validate Data Consistency and Normalization

- Action: Ensure that all numerical data, particularly bioactivity measurements (e.g., IC50, Ki), are on a consistent scale and in the same units.

- Check for: Mixed units (e.g., nM vs. µM) or data extracted from different assay types that are not comparable.

- Fix: Apply thorough data normalization. Convert all measurements to a standard unit. This process often requires human expertise to correctly interpret and reconcile data from scientific literature and patents [1].
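As a rough sketch of the unit conversion described in the Fix above, the snippet below maps common concentration units to nanomolar and to a negative log10 molar scale (often written pIC50). The function names and the unit table are illustrative assumptions, and the code does not address whether values from different assay types are comparable.

```python
import math

# Conversion factors from common concentration units to nanomolar (nM).
# Both the micro sign (U+00B5) and the Greek mu (U+03BC) are accepted.
TO_NM = {
    "pM": 1e-3,
    "nM": 1.0,
    "uM": 1e3,
    "\u00b5M": 1e3,
    "\u03bcM": 1e3,
    "mM": 1e6,
    "M": 1e9,
}

def to_nanomolar(value, unit):
    """Convert a concentration to nM; reject units that are not in the table."""
    if unit not in TO_NM:
        raise ValueError(f"Unrecognized unit: {unit!r}")
    return value * TO_NM[unit]

def to_pic50(value, unit):
    """Return -log10 of the concentration expressed in mol/L."""
    molar = to_nanomolar(value, unit) * 1e-9
    if molar <= 0:
        raise ValueError("Concentration must be positive")
    return -math.log10(molar)
```

Raising an error on unknown units, rather than guessing, keeps ambiguous entries visible for manual review.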

Prevention: Implement a robust data governance framework that enforces FAIR (Findable, Accessible, Interoperable, Reusable) data principles from the point of data generation [4] [2].

Troubleshooting Guide 2: Addressing Failure to Reproduce Published Results

Problem: Your team cannot reproduce the results of a key published study or an earlier internal experiment.

Explanation: The inability to reproduce results is often rooted in ambiguous or incorrect metadata, rather than a failure of experimental technique. This includes incomplete descriptions of chemical structures, biological materials, or experimental procedures [4] [5].

Solution: A forensic analysis of the methods and materials described.

Step 1: Verify Chemical Structure and Purity

- Action: Re-examine the chemical structure of the compound used, paying close attention to stereochemistry, hydration, or salt forms that may have been incorrectly reported or interpreted.

- Check for: Errors in structure-identifier mapping, such as an incorrect CAS Registry Number (CAS RN) associated with a structure [4].

- Fix: Source the compound from a reputable supplier and confirm its identity and purity via analytical methods (e.g., NMR, LC-MS) before use. Consult multiple databases to resolve discrepancies.

Step 2: Scrutinize Biological Reagents and Assay Conditions

- Action: Confirm the identity and passage number of cell lines, the construct and expression system for recombinant proteins, and all critical buffer components.

- Check for: Cell line misidentification or contamination, which is a common issue. Also, check for vague descriptions of assay conditions (e.g., "room temperature," "standard buffer").

- Fix: Use authenticated, low-passage cell lines from reliable repositories. Document all assay conditions in exhaustive detail, including pH, temperature, incubation times, and solvent concentrations.

Step 3: Evaluate Data Interpretation and Visualization

- Action: Critically assess the figures and data representations in the original publication.

- Check for: Unclear or inconsistent use of arrow symbols in pathway diagrams, which can be interpreted in multiple ways (e.g., conversion, translocation, activation, inhibition) [6].

- Fix: Reach out to the corresponding author of the publication to request clarification on ambiguous methodological details or data representations.

Prevention: Maintain detailed, standardized electronic lab notebooks (ELNs) that capture every aspect of an experiment, so that others can replicate it faithfully.

Data Quality Assurance Framework

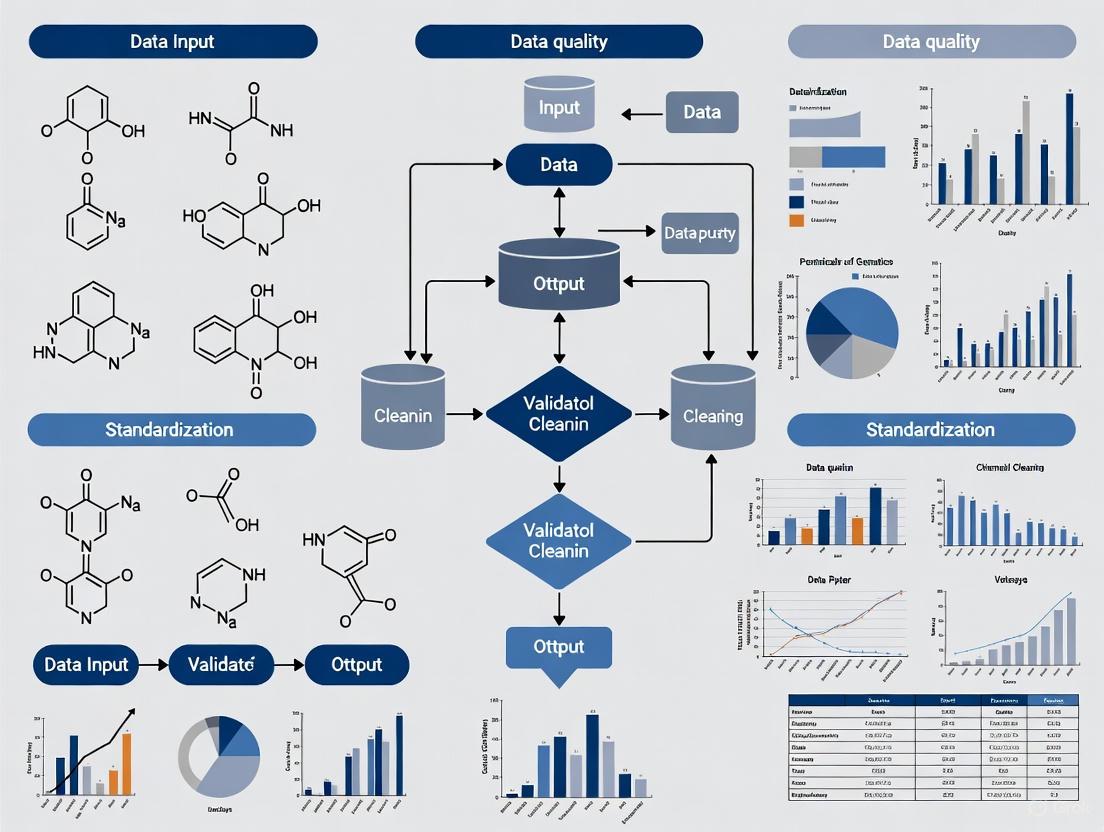

The following workflow outlines a comprehensive process for ensuring data quality, from initial profiling to ongoing governance.

Quantitative Impact of Poor Data Quality

The table below summarizes the tangible costs and operational impacts of data quality issues in drug discovery.

| Data Quality Issue | Impact on Predictive Modeling | Operational & Financial Cost |
|---|---|---|
| Inconsistent Entity Representation (e.g., multiple names for one protein) | Reduces model accuracy; creates false independent observations [1] | Wasted resources on testing misidentified compounds; delays in project timelines |
| Lack of Negative Data (e.g., reporting only active compounds) | Leads to models with poor selectivity and high false-positive rates [3] | Pursuit of non-viable lead compounds, increasing late-stage failure costs |
| Propagated Identifier Errors (e.g., incorrect CAS RN-structure links) | Generates fundamentally flawed training data, producing misleading predictions [4] | Costs of flawed research based on incorrect data; estimated average of $12.9M annually for companies [7] |
| Non-Standardized Units & Measurements | Makes data from different sources incompatible, reducing usable dataset size [1] | Time spent manually reconciling data; impedes automated data integration and analysis |

Frequently Asked Questions (FAQs)

Q1: What are the most common data quality issues in public chemical databases? The most frequent issues include incorrect associations between chemical structures and their identifiers (like CAS RNs), errors in representing stereochemistry, the propagation of errors from one database to another (data crosstalk), and a lack of clarity regarding data provenance and licensing [4]. These errors can be subtle but have a significant impact on predictive models.

Q2: How does poor data quality specifically impact AI and machine learning in drug discovery? AI/ML models are entirely dependent on their training data. Poor quality data leads to models that are inaccurate, unreliable, and prone to bias. For example, a model trained without carefully curated negative data (inactive compounds) will struggle to distinguish between active and inactive compounds in virtual screening [3] [8]. Furthermore, errors in chemical structures can lead the model to learn incorrect structure-activity relationships.

Q3: What is the difference between data quality assurance and data quality control? Data Quality Assurance (QA) is a proactive process focused on preventing data errors by establishing standards, protocols, and training. It is process-oriented. In contrast, Data Quality Control (QC) is a reactive process that involves detecting and correcting errors in existing datasets through activities like auditing, validation, and cleansing [9]. A robust data strategy requires both.

Q4: What are FAIR data principles and why are they important? FAIR stands for Findable, Accessible, Interoperable, and Reusable. These principles provide a framework for managing data to ensure it can be easily located, accessed, integrated, and reused by humans and machines. Adopting FAIR principles is crucial for accelerating drug discovery as it enhances data sharing, improves reproducibility, and ensures that data assets can be fully leveraged for future research [4] [2].

Q5: Our models are performing well on validation tests but failing in real-world applications. What could be wrong? This is often a sign of model overfitting or a data representativeness problem. Your training data may not adequately reflect the diversity of chemical space or biological contexts encountered in real-world scenarios. The training data might contain biases or lack critical negative examples, causing the model to perform poorly on novel, external compounds [3] [2]. Re-auditing the training data for coverage and bias is essential.

Standardized Experimental Protocol for Data Generation

Protocol: Validated Compound Bioactivity Data Generation

1. Objective: To generate accurate, reproducible, and well-annotated bioactivity data for a compound library against a specific protein target, ensuring fitness for use in predictive modeling.

2. Materials:

- Compound Library: Pre-formatted in DMSO, with confirmed identity and purity (e.g., >95% by LC-MS).

- Protein Target: Purified recombinant protein, with sequence and storage buffer fully documented.

- Assay Reagents: Substrate, co-factors, and detection reagents. Lot numbers for all critical reagents must be recorded.

- Equipment: Liquid handling robot, microplate reader, and data analysis software.

3. Procedure:

- Step 1: Plate Map Generation. Create a detailed electronic plate map that defines the location of each test compound, positive controls (known inhibitor), and negative controls (DMSO only). Include on-plate replicates for statistical robustness.

- Step 2: Assay Execution.

- Dilute the protein and compounds in the reaction buffer to the working concentrations.

- Using automated liquid handling, transfer compounds and then initiate the reaction by adding the protein/substrate mixture.

- Incubate the plate under defined conditions (temperature, time).

- Measure the signal according to the assay's detection method (e.g., fluorescence, absorbance).

- Step 3: Data Capture.

- Raw signal data from the plate reader is automatically uploaded to a database.

- The plate map file is electronically linked to the raw data file.

- Step 4: Data Analysis & Normalization.

- Calculate percent inhibition for each well using the mean of positive and negative controls on the same plate.

- Fit dose-response curves to generate IC50 values.

- All data transformation steps and calculation formulas must be documented and version-controlled.

4. Required Metadata & Documentation: This protocol must generate the following metadata to ensure data quality and reproducibility:

- Chemical Structure: Standardized InChI and SMILES strings for each compound.

- Sample Purity: Analytical data confirming compound purity and identity.

- Assay Buffer: Exact composition, pH, and preparation method.

- Control Data: Raw values for positive and negative controls on each plate.

- Data Processing Scripts: Versioned code used for curve fitting and IC50 calculation.
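The percent-inhibition calculation in Step 4 of the procedure can be sketched as below. This assumes the convention described in the plate map: DMSO-only wells define 0% inhibition and known-inhibitor wells define 100% inhibition. The function name and argument layout are illustrative, not a prescribed implementation.

```python
from statistics import mean

def percent_inhibition(signal, neg_controls, pos_controls):
    """Normalize one well's raw signal against same-plate controls.

    neg_controls: raw signals of DMSO-only wells (0 % inhibition)
    pos_controls: raw signals of known-inhibitor wells (100 % inhibition)
    """
    neg = mean(neg_controls)
    pos = mean(pos_controls)
    window = neg - pos
    if window == 0:
        raise ValueError("Controls are indistinguishable; plate cannot be normalized")
    # The same formula works whether the signal falls or rises on inhibition,
    # because the sign of the window flips together with the numerator.
    return 100.0 * (neg - signal) / window

inhibition = percent_inhibition(550, [1000, 1020, 980], [100, 90, 110])
```

Keeping this function in the versioned processing scripts makes the normalization step itself part of the recorded metadata.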

The Predictive Modeling Process: From Data to Insight

The following diagram illustrates the end-to-end workflow for building predictive models in drug discovery, highlighting critical data quality checkpoints.

| Tool / Resource Category | Specific Examples | Function & Relevance to Data Quality |
|---|---|---|
| Curated Public Databases | CAS BioFinder [1], ChEMBL [4], DSSTox/CompTox Chemicals Dashboard [4] | Provide pre-curated, high-quality chemical and bioactivity data with provenance, serving as reliable sources for model training. |
| Data Standardization Tools | Standardizer software, InChI/SMILES validators | Convert diverse data representations into consistent, standardized formats (e.g., canonical tautomers, neutral forms), ensuring data interoperability. |
| Automated Curation & FAIRification Platforms | Polly platform [2] | Use machine learning to automate the process of making data FAIR (Findable, Accessible, Interoperable, Reusable), crucial for handling large datasets. |
| Chemical Identifier Resolvers | PubChem Identifier Exchange Services, NCBI Utilities | Help resolve and cross-reference different chemical identifiers (e.g., names, CAS RN, structures) to ensure entity consistency. |
| Data Governance & Quality Frameworks | FAIR Data Principles [4] [2], Data Quality Pillars (Accuracy, Completeness, etc.) [9] | Provide the strategic foundation, policies, and metrics for maintaining high data quality across an organization. |

Molecular representations like SMILES, InChI, and MOL files serve as fundamental digital languages for chemistry, enabling data exchange, storage, and analysis in chemoinformatics. However, inconsistencies in these identifiers pose significant challenges for data integrity, affecting quantitative structure-activity relationship (QSAR) modeling, drug discovery, and chemical hazard assessment [10] [11]. This technical support guide addresses common pitfalls and provides troubleshooting methodologies to enhance data quality and standardization, which is crucial for reliable chemoinformatics research.

Frequently Asked Questions (FAQs)

1. Why does the same molecule generate different SMILES or InChI strings in different databases? Inconsistencies often arise from the use of different software tools and structure standardization rules across databases. Studies have shown that the consistency between systematic chemical identifiers and their corresponding MOL representation varies greatly between data sources (37.2% to 98.5%) [10]. When different chemistry business rules or normalization approaches are applied for data integration, the same structure can be represented by different identifiers.

2. My database search using an InChIKey failed to find a known compound. What could be wrong? InChIKey generation can vary between software due to differences in handling undefined stereochemistry, chiral flags, or input formats. For example, a molecule generated different InChIKeys from Marvin software versus the IUPAC standard due to an unset chiral flag in the MOL file [12]. Using non-standard InChI options can also produce different keys. Always ensure your input structure is properly defined and use standard, well-documented settings for identifier generation.

3. Why does my SMILES string fail to parse or generate an invalid structure? SMILES strings can contain syntax errors, valence errors, or kekulization failures. Common problems include unmatched parentheses, unclosed rings, or atoms with uncommon valence states [13]. For example, the pipe character ("|") is not a valid character in a SMILES string and will cause parsing to fail [14]. Always validate SMILES strings with a parsing tool before use in databases or applications.

4. How are salts and charged molecules handled inconsistently in InChI? InChI handles protonation and charged species differently depending on the functional groups involved. For example, penicillin G potassium salt uses the /p layer to indicate proton removal, while chloramine-T adjusts the formula and /h layer instead [15]. This inconsistency arises from algorithmic treatment of different chemical functionalities and can lead to confusion when comparing ionic species.

5. What is the impact of these inconsistencies on chemoinformatics research? Identifier inconsistencies directly impact QSAR prediction accuracy, chemical hazard and risk assessments, and can cause problems in chemical ordering and analytical standard identification [11]. When merging data from multiple sources, these inconsistencies can lead to incorrect structure-activity relationships and reduced model reliability.

Troubleshooting Guides

Guide 1: Diagnosing and Resolving SMILES Inconsistencies

Problem: SMILES strings for the same compound are not matching across different databases or software tools.

Investigation Protocol:

- Standardize Input Structures: Begin by applying consistent structure standardization rules to all compounds. This includes normalization of tautomers, neutralization of charges, and unambiguous stereochemistry representation [10].

- Generate Canonical SMILES: Use a reliable cheminformatics toolkit (e.g., RDKit, Open Babel) with consistent parameters to generate canonical isomeric SMILES from the standardized structures.

- Validate SMILES Syntax: Use a validating SMILES parser to check for and categorize errors. The workflow below outlines a diagnostic procedure adapted from manual validation techniques [13].

Diagram: SMILES Validation Workflow. A systematic approach to diagnose common SMILES string errors.

- Compare Parent Structures: For advanced troubleshooting, compare the parent structures (ignoring stereochemistry) by removing stereochemical descriptors. This can help determine if the core connectivity is consistent.

Solution: Implement a consistent structure standardization protocol before generating any SMILES strings. For database curation, use automated validation scripts to flag and manually review compounds with syntax or valence errors.
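A minimal sketch of the canonicalization, validation, and parent-structure comparison steps above, using RDKit. The helper names are illustrative. Note that `isomericSmiles=False` discards isotope labels as well as stereochemistry, so the parent comparison is slightly broader than "ignoring stereochemistry" alone.

```python
from rdkit import Chem, RDLogger

RDLogger.DisableLog("rdApp.*")  # silence parser messages for this demo

def canonical_smiles(smiles):
    """Return canonical isomeric SMILES, or None if the input cannot be parsed."""
    # MolFromSmiles returns None on syntax, valence, or kekulization errors.
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        return None
    return Chem.MolToSmiles(mol)  # canonical and isomeric by default

def same_parent(smiles_a, smiles_b):
    """Compare two structures with stereo (and isotope) information dropped."""
    mols = [Chem.MolFromSmiles(s) for s in (smiles_a, smiles_b)]
    if any(m is None for m in mols):
        return False
    flat = [Chem.MolToSmiles(m, isomericSmiles=False) for m in mols]
    return flat[0] == flat[1]
```

Entries for which `canonical_smiles` returns `None` are the ones to route to manual review.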

Guide 2: Addressing InChI and InChIKey Generation Discrepancies

Problem: Different software tools generate different InChI or InChIKey identifiers for the same molecular structure.

Investigation Protocol:

- Verify Input Structure Integrity: Ensure the input MOL file contains complete and correct stereochemical information. Check that the chiral flag is properly set, as this is a common source of discrepancy [12].

- Use Standard InChI Generation: Always generate standard InChI using the official IUPAC software or tools that adhere strictly to its specifications. Avoid non-standard options unless specifically required.

- Cross-Validate with Multiple Tools: Generate InChI/InChIKey using different reputable tools (e.g., RDKit, Open Babel, ChemAxon) and compare results. Inconsistency indicates a problem with the input structure or software configuration.

- For Salts and Charged Molecules: Carefully analyze the InChI layers (particularly /q, /p, and /f) to understand how charges and protons are being handled. Be aware that different protonation states of the same functional group may be treated differently [15].

Solution: For database indexing, always generate InChIKeys from standardized MOL files using a single, well-defined software configuration. If using RDKit, ensure you're using the latest version and consider known issues with specific structures [16]. For structures with undefined stereochemistry, explicitly define stereo centers or use consistent flags.
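To inspect the charge and proton layers mentioned in the protocol above, an InChI string can be split into its prefixed layers with plain string handling. This is a simplified sketch: the dictionary keys `version` and `formula` are hypothetical names, and when a prefix repeats (as it can after a /f fixed-H layer in non-standard InChI) only the first occurrence is kept.

```python
def inchi_layers(inchi):
    """Split an InChI string into {prefix: content}."""
    if not inchi.startswith("InChI="):
        raise ValueError("Not an InChI string")
    parts = inchi[len("InChI="):].split("/")
    layers = {"version": parts[0]}
    for part in parts[1:]:
        if not part:
            continue
        if part[0].islower():
            # Prefixed layer such as c (connectivity), h, q (charge), p (protons).
            layers.setdefault(part[0], part[1:])
        else:
            # The formula segment carries no prefix letter.
            layers.setdefault("formula", part)
    return layers

acetate = inchi_layers("InChI=1S/C2H4O2/c1-2(3)4/h1H3,(H,3,4)/p-1")
ethanol = inchi_layers("InChI=1S/C2H6O/c1-2-3/h3H,2H2,1H3")
```

Here the acetate anion is expressed through a /p-1 layer on the neutral formula rather than through a /q charge layer, which is the kind of difference worth checking when two ionic records fail to match.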

Guide 3: Identifying and Correcting Database Cross-Reference Errors

Problem: Chemical structures linked via cross-references between databases (e.g., PubChem, ChEBI, DrugBank) have inconsistent representations.

Investigation Protocol:

- Extract Cross-Referenced Compounds: Obtain pairs of compounds that are linked via cross-references between the databases of interest.

- Convert to Standard InChI: Generate Standard InChI strings from the MOL files of both structures in each pair, using the same software and version [10].

- Compare InChI Strings: Perform exact string matching of the full InChIs, and compare the InChIKeys, whose first 14-character block encodes the molecular connectivity.

- Analyze Discrepancies: For inconsistent pairs, examine the specific differences:

- Compare with stereochemistry ignored (using the FICTS standardization rules or similar) [10]

- Check for differences in tautomeric representation

- Identify charge and protonation state variations

- Detect isotopic labeling differences

Solution: When merging data from multiple sources, regenerate systematic identifiers starting from the MOL representation after applying consistent, well-documented chemistry standardization rules. Prefer structure-based matching (using standardized InChI) over literal identifier matching for data integration tasks.
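The comparison step of this protocol can be sketched as a small classifier over InChIKey pairs. It assumes both keys were generated with the same software and settings; the category labels are illustrative.

```python
def classify_pair(key_a, key_b):
    """Classify a cross-referenced pair of compounds by their InChIKeys."""
    if key_a == key_b:
        return "identical"
    # The first 14-character block hashes the connectivity. If it agrees,
    # the difference lies in layers such as stereochemistry or isotopic
    # labeling, or in the protonation flag.
    if key_a.split("-")[0] == key_b.split("-")[0]:
        return "same_connectivity"
    return "different"

# L-alanine versus alanine with no stereochemistry defined
stereo_case = classify_pair("QNAYBMKLOCPYGJ-REOHCLBHSA-N",
                            "QNAYBMKLOCPYGJ-UHFFFAOYSA-N")
```

Pairs labeled `same_connectivity` are candidates for the stereochemistry, isotope, and protonation checks listed above; pairs labeled `different` may still be tautomers and need structure-level standardization to resolve.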

Experimental Protocols and Data

Quantitative Analysis of Identifier Consistency

Research has quantified the consistency of systematic identifiers within and between chemical databases. The table below summarizes key findings from a study analyzing major chemical resources [10].

Table 1: Consistency of Systematic Chemical Identifiers Within Databases

| Database | MOL-InChI Consistency | MOL-SMILES Consistency | MOL-IUPAC Consistency | Notes |
|---|---|---|---|---|
| DrugBank | 98.2% | 99.9% | 99.7% | 6,506 compounds analyzed |
| ChEBI | 89.3% | 92.3% | 88.0% | 21,367 compounds analyzed |
| HMDB | 100.0% | 100.0% | 90.5% | 8,534 compounds analyzed |
| PubChem | 100.0% | 100.0% | 94.1% | Subset of 5M+ compounds |

Table 2: Impact of Structure Standardization on Cross-Database Consistency

| Standardization Applied | Minimum Consistency | Maximum Consistency | Observation |
|---|---|---|---|
| With Stereochemistry | 25.8% | 93.7% | Wide variation in MOL representation of cross-referenced compounds |
| Without Stereochemistry | 47.6% | 95.6% | Significant improvement in consistency after removing stereo information |

Standardization Protocol for Consistent Identifier Generation

Based on the FICTS rules developed by the NCI/CADD group, apply the following standardization steps before generating any systematic identifiers [10]:

- Remove small organic fragments (F)

- Ignore isotopic labels (I)

- Neutralize charges (C)

- Generate canonical tautomers (T)

- Ignore stereochemistry information (S) - Apply only for non-stereosensitive applications

Implementation code outline:
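The sketch below approximates the five steps with RDKit's `rdMolStandardize` module. It is not the original NCI/CADD implementation: keeping the largest fragment stands in for fragment removal, and RDKit's canonical tautomer will not necessarily match the one chosen by other toolkits. The function name `ficts_like_smiles` is hypothetical.

```python
from rdkit import Chem
from rdkit.Chem.MolStandardize import rdMolStandardize

def ficts_like_smiles(smiles, ignore_stereo=False):
    """Apply FICTS-style normalization and return a canonical SMILES."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"Cannot parse SMILES: {smiles!r}")
    # F: keep only the largest fragment (drops counter-ions and solvents)
    mol = rdMolStandardize.LargestFragmentChooser().choose(mol)
    # I: clear isotope labels
    for atom in mol.GetAtoms():
        atom.SetIsotope(0)
    # C: neutralize charges where possible
    mol = rdMolStandardize.Uncharger().uncharge(mol)
    # T: select RDKit's canonical tautomer
    mol = rdMolStandardize.TautomerEnumerator().Canonicalize(mol)
    # S: optionally discard stereochemistry (modifies mol in place)
    if ignore_stereo:
        Chem.RemoveStereochemistry(mol)
    return Chem.MolToSmiles(mol)
```

With this sketch, sodium acetate (`CC(=O)[O-].[Na+]`) and acetic acid (`CC(=O)O`) reduce to the same string. Be aware that tautomer canonicalization may itself drop stereochemistry at centers that can take part in tautomerism, so stereo-sensitive workflows should check the output even when `ignore_stereo` is left at `False`.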

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Molecular Representation Work

| Tool/Resource | Type | Primary Function | Application in Troubleshooting |
|---|---|---|---|
| RDKit | Cheminformatics Library | Molecular manipulation, property calculation, file conversion | Generate canonical SMILES, validate structures, convert between formats [17] |
| Open Babel | Chemical File Conversion Tool | Format translation, descriptor calculation | Batch conversion of chemical files, compare outputs from different tools [17] |
| InChI Software (IUPAC) | Reference Standard | Generate standard InChI/InChIKey | Provide benchmark identifiers for comparison [12] |
| PartialSMILES Parser | Validation Library | SMILES syntax validation | Diagnose specific SMILES parsing errors (syntax, valence, kekulization) [13] |
| FICTS Standardization Rules | Chemistry Standardization Protocol | Structure normalization | Preprocess structures before identifier generation to ensure consistency [10] |
| COD/CSD Databases | Curated Structure Databases | Source of validated molecular geometries | Reference data for validating molecular representations [18] |

Methodologies for Data Quality Assessment

Database Quality Control Protocol

For maintaining high-quality chemical databases, implement this systematic validation procedure:

- Structure-Identifier Consistency Check: Verify that all systematic identifiers (SMILES, InChI, IUPAC) correspond to the same MOL structure by converting all to Standard InChI and performing exact matching [10].

- Cross-Database Validation: For compounds with cross-references to external databases, compare the InChIKeys to identify inconsistent representations and correct the erroneous entries.

- Automated Error Flagging: Implement automated scripts to flag compounds with:

- Invalid SMILES syntax

- InChIKeys that don't match the structure

- Significant discrepancies with cross-referenced compounds

- Regular Revalidation: Schedule periodic database revalidation to maintain data quality as standardization methods and software tools evolve.
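As an illustration of the automated flagging step, the snippet below runs a few cheap, string-level checks that need no chemistry toolkit. These are pre-filters only and an assumption of this sketch, not a substitute for a validating parser: they catch unbalanced parentheses, a pipe character, and InChIKeys that do not follow the 14-10-1 uppercase block format, but they cannot tell whether a well-formed key matches its structure.

```python
import re

# An InChIKey has three hyphen-separated blocks of 14, 10, and 1 uppercase letters.
INCHIKEY_RE = re.compile(r"^[A-Z]{14}-[A-Z]{10}-[A-Z]$")

def quick_flags(smiles, inchikey):
    """Return a list of string-level problems found in one database record."""
    flags = []
    depth = 0
    for ch in smiles:
        if ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:  # closing parenthesis with no matching opener
                break
    if depth != 0:
        flags.append("unbalanced_parentheses")
    if "|" in smiles:
        flags.append("pipe_character")
    if not INCHIKEY_RE.match(inchikey):
        flags.append("malformed_inchikey")
    return flags
```

Records that pass these checks would still go through toolkit-based parsing and identifier regeneration; the pre-filter simply keeps obviously broken entries out of the slower steps.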

This comprehensive approach to identifying and resolving molecular representation inconsistencies will significantly enhance the reliability of chemoinformatics research and drug development workflows.

The Critical Role of Stereochemistry and Tautomerism in Data Ambiguity

Troubleshooting Guides

Guide 1: Resolving Tautomerism-Related Compound Registration Errors

Problem: During database registration, a new compound is flagged as a duplicate of an existing entry, but the structures appear different when viewed. This often leads to failed registration attempts and confusion about compound uniqueness.

Explanation: This is a classic symptom of tautomerism, where a single compound can exist as multiple, readily interconverting structural isomers [19]. Database lookup tools often normalize these different forms to a single canonical structure. If your submitted compound is a different tautomer of an already registered structure, the system will identify it as a duplicate [20].

Solution:

- Confirm Tautomeric Relationship: Use chemoinformatics toolkits (e.g., CACTVS, OpenEye) to enumerate possible tautomers of your submitted structure. Compare the canonical tautomer of your compound with that of the alleged duplicate [19] [20].

- Verify "Sameness" Experimentally (if critical): For crucial compounds, confirm the tautomeric relationship analytically. Following the approach of a large-scale study, obtain the samples and analyze them by ¹H and ¹³C NMR spectroscopy. Samples registered as different tautomers of the same compound give identical NMR spectra under the same conditions, confirming they are the same "stuff in the bottle" [19].

- Standardize Before Submission: Implement a pre-registration standardization step that converts all structures to a consistent, canonical tautomeric form. This prevents future duplication issues [21] [22].
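A pre-registration duplicate check along these lines can be sketched with RDKit's tautomer canonicalizer. RDKit is used here in place of the toolkits named above, so its canonical form may differ from theirs; the helper names are illustrative.

```python
from rdkit import Chem
from rdkit.Chem.MolStandardize import rdMolStandardize

_enumerator = rdMolStandardize.TautomerEnumerator()

def canonical_tautomer_smiles(smiles):
    """Return the canonical SMILES of RDKit's canonical tautomer."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"Cannot parse SMILES: {smiles!r}")
    return Chem.MolToSmiles(_enumerator.Canonicalize(mol))

def are_tautomer_duplicates(smiles_a, smiles_b):
    """True if both inputs reduce to the same canonical tautomer."""
    return canonical_tautomer_smiles(smiles_a) == canonical_tautomer_smiles(smiles_b)

# 2-hydroxypyridine and 2-pyridone are drawn differently but interconvert.
is_duplicate = are_tautomer_duplicates("Oc1ccccn1", "O=c1cccc[nH]1")
```

Storing the canonical tautomer string alongside the submitted structure lets the registration system explain why a submission was flagged, while preserving the form the chemist drew.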

Guide 2: Addressing Inconsistent Biological Screening Data for Stereoisomers

Problem: Screening data for a compound is inconsistent between different tests or collaborator sites. One test shows high activity, while another shows low or no activity, and the cause cannot be traced to obvious experimental error.

Explanation: This frequently occurs with chiral compounds. If a screening library uses a racemic mixture (a 50/50 mix of both enantiomers), the observed biological activity is an average of the activities of the two individual enantiomers [23]. One enantiomer (the eutomer) may be highly active, while the other (the distomer) may be inactive or even antagonistic. Slight variations in the composition of the screened material can lead to significant differences in the readout.

Solution:

- Chiral Resolution: Separate the racemic mixture into its pure enantiomers using chiral chromatography or asymmetric synthesis [23].

- Test Enantiomers Individually: Screen each pure enantiomer separately to determine their individual activities and potencies. This provides a clear structure-activity relationship (SAR) and eliminates ambiguity [23].

- Audit Screening Libraries: Review the composition of your compound libraries. Prefer libraries that supply stereoisomers as separate, defined entries rather than as racemic mixtures to ensure consistent and interpretable screening results [23].

Guide 3: Correcting Invalid Stereochemical Descriptors in Computed InChIKeys

Problem: After processing a chemical structure through an informatics pipeline, the generated InChIKey lacks stereochemical descriptors, even though the original structure had defined stereocenters.

Explanation: The standard InChI algorithm involves a normalization process that can remove certain types of stereochemical information. This includes converting relative stereochemistry to absolute or handling double bonds with undefined stereochemistry ("either" bonds) based on atom coordinates [22]. If the structure was not drawn with precise coordinates or used "either" bonds, the canonicalization step may generate an InChIKey that does not fully represent the intended stereochemistry.

Solution:

- Use the Original Connection Table: For database registration, treat the original connection table (e.g., from a MOL or SDF file) as the primary source of structural truth, not the InChI or InChIKey [22].

- Validate Cross-Representations: Use validation tools like the Chemical Validation and Standardization Platform (CVSP) to cross-validate that the InChI, SMILES, and connection table all represent the same stereochemistry [22].

- Employ Non-Standard InChI: For internal workflows where loss of stereo information is detrimental, consider using a "non-standard" InChI option that preserves this information, acknowledging that this will reduce interoperability with public databases [22].

Frequently Asked Questions (FAQs)

FAQ 1: How prevalent is tautomerism in real-world chemical databases, and why does it matter for drug discovery?

Tautomerism is not a rare edge case; it is a widespread phenomenon. A large-scale analysis of over 100 million unique chemical structures found that more than two-thirds are capable of tautomerism, with the potential to generate hundreds of millions of distinct tautomeric forms [20].

The impact on drug discovery is significant [21] [24]:

- Data Inconsistency: Different tautomers may be registered as distinct compounds in databases, fracturing associated bioassay data and misleading machine learning models.

- Resource Waste: Organizations may inadvertently request assays for multiple tautomeric forms of the same compound, leading to duplicated efforts and increased costs [21].

- Pharmacological Effects: Different tautomers can have different binding affinities and metabolic pathways. For example, the antibiotic erythromycin exists in three tautomeric forms, but only the ketonic form is active, necessitating larger doses to be effective [24].

FAQ 2: Can tautomerism and stereochemistry interact, and what are the consequences?

Yes, tautomerism and stereochemistry can interact, leading to complex and sometimes unexpected consequences [20]:

- Loss of Chirality: The migration of a double bond during tautomerism can eliminate a chiral center, effectively causing racemization.

- Creation of New Stereocenters: Conversely, tautomerism can create new chiral centers or E/Z double-bond stereochemistry.

- Altered Properties: This interconversion can change a molecule's shape, hydrogen-bonding pattern, and surface, thereby affecting its computed properties, database registration, and predicted biological activity.

FAQ 3: What are the best practices for standardizing chemical structures to minimize data ambiguity?

To ensure high-quality, unambiguous chemical data, implement the following best practices:

- Adopt a Canonical Tautomer Form: Establish and consistently use a single, rule-based canonical tautomer for all compounds in your database. This is crucial for reliable searching and machine learning [21].

- Validate All Representations: Use tools like the Chemical Validation and Standardization Platform (CVSP) to cross-validate connection tables, SMILES strings, and InChI identifiers against each other and catch inconsistencies [22].

- Treat Stereoisomers as Distinct Entities: Register and manage individual stereoisomers as separate compounds. Develop and use chiral analytical methods (e.g., chiral HPLC) early in the discovery process to monitor and control stereochemical integrity [23].

Experimental Protocols

Protocol: Experimental Verification of Tautomer Identity via NMR Spectroscopy

Objective: To determine whether two commercially available samples, which are suspected to be different tautomers of the same chemical compound, are indeed the same substance ("stuff in the bottle") [19].

Background: Tautomeric equilibria can be influenced by solvent, temperature, and concentration. NMR spectroscopy provides a direct method to analyze the actual composition of a sample in solution. If two samples are different tautomers of the same compound, their NMR spectra should be identical once both have equilibrated in the same solvent, because they relax to the same equilibrium mixture under the given conditions [19].

Materials:

- Research Reagent Solutions:

Methodology:

- Sample Preparation: Precisely weigh 2-5 mg of each commercial sample into separate, clean vials. Dissolve each sample in 0.6 mL of the same deuterated solvent. Transfer each solution to a separate, labeled NMR tube [19].

- Data Acquisition:

- Acquire standard ¹H NMR spectra for both samples using the NMR spectrometer. Ensure acquisition parameters (temperature, number of scans, pulse sequence) are identical for both samples.

- Acquire ¹³C NMR spectra for both samples to compare the carbon skeletons.

- Data Analysis:

- Compare the ¹H and ¹³C NMR spectra of the two samples.

- If the spectra are superimposable, the two samples are the same compound, existing in an identical equilibrium of tautomers in the chosen solvent.

- If the spectra are markedly different, the samples are likely different chemical compounds, and the initial tautomer hypothesis is incorrect.
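
The comparison in the Data Analysis step can be approximated programmatically once peak lists have been picked. The following is a minimal sketch, not a validated method: the function name `spectra_superimposable`, the 0.02 ppm tolerance, and the example shifts are all illustrative assumptions, and sorted one-to-one pairing only works for well-separated peaks.

```python
def spectra_superimposable(peaks_a, peaks_b, tol_ppm=0.02):
    """Return True if two peak lists match one-to-one within tol_ppm.

    tol_ppm is an assumed tolerance; choose it from your own instrument
    and referencing precision.
    """
    if len(peaks_a) != len(peaks_b):
        return False
    # Sorted pairing is a simplification that assumes well-separated peaks.
    return all(abs(a - b) <= tol_ppm
               for a, b in zip(sorted(peaks_a), sorted(peaks_b)))


# Illustrative 1H chemical shifts in ppm (made-up values).
sample_1 = [7.26, 6.91, 3.81, 2.35]
sample_2 = [2.36, 3.80, 6.90, 7.27]
sample_3 = [7.80, 4.12, 1.25]

print(spectra_superimposable(sample_1, sample_2))  # True
print(spectra_superimposable(sample_1, sample_3))  # False
```

A peak-list match of this kind is only a screening aid; overlaying the full spectra remains the deciding check.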

Workflow Diagram:

Data Presentation

Table 1: Impact of Tautomerism on Compound Uniqueness in a Large Commercial Database

The following data summarizes a study of the Aldrich Market Select (AMS) database, which identified numerous cases of the same chemical being sold as different products due to tautomerism [19].

| Database Analyzed | Tautomer Pairs/Triplets Identified | Experimental Analysis | Experimental Confirmation Rate |
|---|---|---|---|
| Aldrich Market Select (AMS) (~6M samples) | 30,000 cases of multiple products being different tautomers | 166 purchased pairs/triplets analyzed by ¹H/¹³C NMR | Essentially all prototropic transforms were confirmed. Some ring-chain transforms were too "aggressive." |

Table 2: Prevalence and Impact of Tautomerism and Stereochemistry

This table consolidates data on the prevalence of tautomerism and the regulatory and practical implications of stereochemistry.

| Concept | Metric | Impact/Regulatory Guidance |
|---|---|---|
| Tautomerism Prevalence | >66% of 103.5M unique structures [20] | Creates ~680M tautomeric forms; causes registration duplicates and data fragmentation [19] [20]. |
| Stereochemistry in Screening | Racemate screening shows averaged activity [23] | Can mask true activity of a single enantiomer; requires chiral resolution for accurate SAR [23]. |
| Regulatory Guidance (ICH/FDA/EMA) | Requires stereochemical composition identification [23] | Mandates chiral analytical methods and justification for developing racemates over single enantiomers [23]. |

Standardization Workflow

Diagram: Chemical Data Standardization Workflow

The following diagram outlines a standardized workflow for processing chemical structures to minimize ambiguities related to tautomerism and stereochemistry, suitable for populating a high-quality chemical registration system.

Technical Support Center

Troubleshooting Guides

This section addresses common technical challenges faced when integrating chemical and biological data, providing root cause analyses and step-by-step solutions.

Problem 1: Inconsistent Molecular Structure Representations

- Symptoms: Failed structure searches, incorrect similarity calculations, errors in predictive model outputs.

- Root Cause: The same molecule can be represented in different ways (e.g., varying tautomeric forms, stereochemistry assignments, or salt forms) across data sources [25] [26]. Legacy data may have been generated using outdated or incorrect representation rules.

- Solution:

- Standardize Structures: Implement a consistent structural standardization workflow using tools like RDKit, ChemAxon JChem, or Schrödinger LigPrep [26]. This process should include:

- Aromatization of rings.

- Removal of counterions and salts, if required for the analysis.

- Standardization of specific chemotypes and functional groups.

- Validation of stereochemistry.

- Handle Tautomers: Apply consistent tautomer representation rules, such as those established by Sitzmann et al., to choose the most populated tautomer [26].

- Manual Verification: For complex molecules or a representative sample of the dataset, perform manual checks to identify errors that automated tools may miss [26].


Problem 2: Discrepant or Non-Reproducible Bioactivity Data

- Symptoms: Large activity value variances for the same compound-target pair, poor-performing QSAR models, inability to confirm published results.

- Root Cause: Data originates from different laboratories using varied assay technologies (e.g., tip-based vs. acoustic dispensing), experimental conditions, or data processing protocols [26]. Subtle experimental variations can significantly influence the measured response.

- Solution:

- Identify Duplicates: Process the dataset to find chemical duplicates (identical compounds) and compare their reported bioactivities [26].

- Analyze Variability: For duplicate entries, calculate the mean and standard deviation of the activity values. Establish a threshold for acceptable variance based on the assay type.

- Curate the Data: Decide on a consolidation strategy. This could involve:

- Taking the mean or median of the activity values.

- Applying a weighted average based on the perceived reliability of the data source or assay method.

- Flagging or removing extreme outliers for further investigation [26].

- Document Metadata: Always record key experimental metadata (e.g., assay type, target information, measurement units) to provide context for the integrated data.
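
The duplicate-analysis and consolidation steps above can be sketched as follows. This is a minimal illustration under stated assumptions: compounds are assumed to be already keyed by a standardized identifier, IC50 values are assumed to be in nM and are converted to pIC50 before averaging, and the function name `consolidate` and the 0.5 log-unit threshold are hypothetical choices, not values taken from the cited work.

```python
import math
import statistics
from collections import defaultdict


def consolidate(records, max_sd=0.5):
    """Group by (compound, target); report median pIC50 and a review flag.

    max_sd is an assumed threshold in log units; set it per assay type.
    """
    groups = defaultdict(list)
    for compound_id, target, ic50_nm in records:
        # Work on the log scale: pIC50 = 9 - log10(IC50 in nM).
        groups[(compound_id, target)].append(9.0 - math.log10(ic50_nm))

    summary = {}
    for key, values in groups.items():
        sd = statistics.stdev(values) if len(values) > 1 else 0.0
        summary[key] = {
            "n": len(values),
            "median_pic50": statistics.median(values),
            "sd": sd,
            "flag_for_review": sd > max_sd,
        }
    return summary


# Made-up example records: (compound id, target, IC50 in nM).
records = [
    ("CPD-1", "hERG", 100.0),
    ("CPD-1", "hERG", 120.0),
    ("CPD-2", "hERG", 10.0),
    ("CPD-2", "hERG", 10000.0),
]
summary = consolidate(records)
for key, stats in summary.items():
    print(key, stats)
```

In this example the two CPD-1 measurements agree closely, while the CPD-2 measurements differ by three log units and are flagged for review rather than silently averaged.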

Problem 3: Heterogeneous and Incompatible Analytical Data Formats

- Symptoms: Inability to open or read data files from different instruments, loss of metadata during format conversion, hindered data assembly for multi-technique analysis.

- Root Cause: Analytical instruments from various vendors use proprietary data formats for techniques like chromatography (HPLC, UHPLC), mass spectrometry (MS), and spectroscopy (NMR, IR) [27]. This heterogeneity obstructs the assembly of interrelated datasets.

- Solution:

- Select a Standardized Format: Convert proprietary data into a standardized, non-proprietary format to ensure long-term accessibility and interoperability. Consider using open standards or actively maintained proprietary platforms that support a wide range of vendor formats [27].

- Leverage Data Platforms: Utilize platforms like the ACD/Labs Spectrus Platform, which natively supports over 150 proprietary analytical data formats, acting as a bridge between different vendor systems [27].

- Prioritize Metadata-Rich Formats: When converting data, choose formats that preserve the original metadata (e.g., experimental parameters, processing steps) to maintain data provenance and reproducibility [27].

Frequently Asked Questions (FAQs)

Q1: What are the primary types of heterogeneity we encounter in chemoinformatics data?

You will typically face three main types of heterogeneity [28] [29]:

- Format Heterogeneity: Data comes in different file and encoding formats (e.g., vendor-specific instrument formats, JSON, XML, CSV, SD-files) [30] [27].

- Semantic Heterogeneity: The same term can have different meanings, or different terms can mean the same thing across datasets. For example, "IC50" might be defined or measured differently in various labs [28] [26].

- Structural Heterogeneity: Data spans structured (e.g., database tables), semi-structured (e.g., XML, JSON), and unstructured (e.g., text in scientific literature) forms [29].

Q2: Our QSAR models are underperforming. Could integrated data quality be the issue?

Yes, this is a common cause. The accuracy of QSAR models is highly dependent on the quality of the underlying data [26]. To diagnose and fix this:

- Curate Chemical Structures: Ensure all molecular structures are correct and standardized. Errors in structures directly lead to errors in calculated descriptors and model predictions [26] [31].

- Curate Bioactivity Data: Identify and resolve discrepancies in activity data for chemical duplicates, as datasets with many inconsistent duplicates can lead to over-optimistic or inaccurate models [26].

- Check for Data Balance: Ensure your dataset includes both active and inactive (negative data) compounds, as this is essential for training reliable classification models [25].

Q3: What is the difference between data standardization and data normalization/harmonization?

These are two critical, distinct steps in data preparation [27]:

- Data Standardization homogenizes data from different sources into a consistent technical format. For example, converting all molecular structures into a single notation like SMILES or InChI, or converting all chromatographic data files into a unified format like AnIML or Allotrope [32] [27].

- Data Normalization/Harmonization translates the data content itself into an agreed-upon ontology or vocabulary. This ensures semantic consistency, for instance, mapping all terms for "dimethyl sulfoxide" to a standard identifier like "DMSO" across all assay data [27].
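
The harmonization step can be illustrated with a small controlled-vocabulary lookup. This is a sketch only: the synonym table `SOLVENT_VOCAB` and the function `harmonize_solvent` are hypothetical, and a production system would normally map to identifiers from a maintained ontology rather than a hand-written dictionary.

```python
# Hypothetical synonym table; real deployments would use a curated ontology.
SOLVENT_VOCAB = {
    "dimethyl sulfoxide": "DMSO",
    "dimethylsulfoxide": "DMSO",
    "dmso": "DMSO",
    "me2so": "DMSO",
}


def harmonize_solvent(raw_term):
    """Map a free-text solvent name to the controlled term, or None if unmapped."""
    # Normalize case and collapse runs of whitespace before the lookup.
    key = " ".join(raw_term.strip().lower().split())
    return SOLVENT_VOCAB.get(key)


terms = ["DMSO", " Dimethyl  Sulfoxide ", "dimethylsulfoxide", "acetone"]
harmonized = [harmonize_solvent(t) for t in terms]
print(harmonized)  # ['DMSO', 'DMSO', 'DMSO', None]
```

Returning `None` for unmapped terms, instead of passing them through, makes gaps in the vocabulary visible so they can be curated.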

Q4: How can we prepare heterogeneous data for AI/ML applications?

AI/ML places a premium on well-curated, standardized data [25] [27]. Follow these steps:

- Engineer Your Data: Perform thorough data curation, standardization, and normalization as described in the previous FAQs [26].

- Choose Machine-Readable Formats: Use flexible, widely compatible formats like JSON or domain-specific standards like Chemical Markup Language (CML), which are well-suited for AI/ML workflows and cloud-based platforms [27].

- Implement Robust Metadata Management: Ensure all data is accompanied by rich, structured metadata to guarantee data provenance and reproducibility, which is critical for interpreting AI/ML outputs [27].

Experimental Protocols for Data Integration

Protocol 1: Integrated Chemical and Biological Data Curation Workflow

This protocol provides a detailed methodology for curating chemogenomics data prior to integration and model development, based on established best practices [26].

- Objective: To verify the accuracy, consistency, and reproducibility of both chemical structures and bioactivity data in a chemogenomics dataset.

Materials:

- Raw dataset of chemical structures and associated bioactivities.

- Cheminformatics software (e.g., RDKit, ChemAxon JChem, KNIME with chemistry plugins).

- Access to chemical databases (e.g., PubChem, ChEMBL, ChemSpider) for verification.

Procedure:

- Chemical Structure Curation:

- Remove Incompatible Compounds: Filter out inorganic, organometallic compounds, mixtures, and large biologics if the subsequent analysis tools are not equipped to handle them [26].

- Structural Cleaning: Use software to detect and correct valence violations, extreme bond lengths/angles, and other structural errors [26].

- Standardization: Aromatize rings, normalize chemotypes, and standardize tautomeric forms to a consistent representation [26].

- Stereochemistry Verification: Check the correctness of stereocenters by comparing to similar compounds in trusted databases like PubChem or ChemSpider [26].

- Biological Data Curation:

- Identify Chemical Duplicates: Find all instances of the same compound in the dataset.

- Compare Bioactivities: For each set of duplicates, compare the reported bioactivity values (e.g., IC50, Ki).

- Resolve Discrepancies: Apply a pre-defined rule to consolidate multiple activity values (e.g., calculate the mean or median) or flag entries with high variance for further review [26].

- Validation:

- Manually inspect a representative or "suspicious" subset of the curated data to verify the automated process.

- If a crowd-curated platform like ChemSpider is available, leverage community expertise for verification [26].


The following workflow diagram illustrates the key steps and decision points in this protocol:

Protocol 2: Standardization of Analytical Data for AI/ML

- Objective: To homogenize analytical data (e.g., from NMR, LC/MS) from diverse instrument sources into a standardized, machine-readable format suitable for AI/ML pipelines.

Materials:

- Raw analytical data files in various proprietary vendor formats.

- Data standardization tool (e.g., ACD/Labs Spectrus Platform, custom scripts with vendor SDKs, format converters).

- A target standardized data format (e.g., AnIML, Allotrope, JSON).

Procedure:

- Inventory Data Sources: Catalog all analytical techniques and instrument vendors from which data will be integrated.

- Select a Target Format: Choose a data format based on needs for longevity, metadata completeness, and AI/ML compatibility. JSON is often preferred for its flexibility and compatibility with modern AI/ML platforms, while domain-specific formats like AnIML offer high fidelity for analytical data [27].

- Convert Data: Use the chosen tool to batch-convert proprietary data files into the target standardized format. Ensure the conversion process preserves critical metadata (e.g., instrument parameters, acquisition date, processing methods) [27].

- Validate and Assemble: Check a sample of converted files for accuracy and completeness. Then, assemble the standardized datasets from multiple techniques (e.g., NMR, LC/UV/MS) to create a unified data resource for analysis [27].
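
The validation step of this protocol can be partly automated by checking that each converted record still carries the metadata you care about. The sketch below assumes JSON as the target format and a record layout with a top-level `metadata` object; the layout, the `REQUIRED_METADATA` set, and the function `missing_metadata` are illustrative assumptions, not part of AnIML, Allotrope, or any vendor tool.

```python
import json

# Hypothetical minimum metadata set; adapt it to your own data dictionary.
REQUIRED_METADATA = {"instrument", "technique", "acquired_on", "processing"}


def missing_metadata(json_text):
    """Return the required metadata keys absent from a converted record."""
    record = json.loads(json_text)
    metadata = record.get("metadata", {})
    return sorted(REQUIRED_METADATA - set(metadata))


# Made-up converted records for illustration.
complete = json.dumps({
    "metadata": {
        "instrument": "vendor-A LC/MS",
        "technique": "LC/UV/MS",
        "acquired_on": "2024-01-15",
        "processing": "baseline-corrected",
    },
    "signal": [0.0, 1.2, 3.4],
})
stripped = json.dumps({"metadata": {"technique": "NMR"}, "signal": [0.1, 0.2]})

print(missing_metadata(complete))  # []
print(missing_metadata(stripped))  # ['acquired_on', 'instrument', 'processing']
```

Running a check like this over a sample of converted files gives an early signal that a converter is dropping provenance information.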

The Scientist's Toolkit: Research Reagent Solutions

The following table details key resources and tools essential for tackling heterogeneous data integration in chemoinformatics.

| Item | Function & Application |
|---|---|
| RDKit | An open-source toolkit for cheminformatics used for structural standardization, descriptor calculation, and machine learning [26]. |
| ChemAxon JChem | A commercial software suite that includes tools for structure standardization, tautomer normalization, and chemical database management [26]. |
| KNIME Analytics Platform | A visual programming platform with extensive chemistry extensions (e.g., RDKit, CDK) used to build customizable, automated data curation workflows [26]. |
| PubChem | A public database of chemical compounds and their biological activities, useful for verifying chemical structures and finding related bioactivity data [32] [26]. |
| ChEMBL | A manually curated database of bioactive molecules with drug-like properties, providing high-quality data for building predictive models [32] [26]. |
| AnIML (Analytical Information Markup Language) | An XML-based standard designed for storing and sharing analytical data, helping to overcome instrument vendor format heterogeneity [27]. |
| Allotrope Framework | A suite of standards, including the Allotrope Data Format (ADF) and Ontology, for managing complex laboratory data throughout its lifecycle, improving interoperability [27]. |
| JSON (JavaScript Object Notation) | A lightweight, human-readable data format that is highly flexible and widely used for data exchange in AI/ML workflows [27]. |

Data Integration Standards and Formats

The table below summarizes key data formats and standards relevant to chemoinformatics, highlighting their primary use cases and types.

| Format/Standard | Primary Use Case | Type |
|---|---|---|
| SMILES | Linear string representation of molecular structures; ideal for database storage and fast searching [32]. | Open Standard |
| InChI | Standardized, non-proprietary identifier for molecular structures; ensures global uniqueness for data exchange [25] [32]. | Open Standard |
| AnIML | Storing and sharing data from a wide range of analytical techniques using XML [27]. | Open Standard |
| Allotrope Data Format (ADF) | Managing complex laboratory data from analytical instruments within a standardized framework [27]. | Consortium-based Standard |
| JCAMP-DX | Storing and exchanging spectral data [27]. | Open Standard |
| JSON | Data interchange format particularly well-suited for AI/ML workflows and web-based applications [27]. | Open Standard |

The Evolution from Proprietary Systems to Open Science and FAIR Principles

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: What are the FAIR Principles and why are they critical for modern chemoinformatics?

The FAIR Principles are a set of guiding criteria to make data Findable, Accessible, Interoperable, and Reusable by both humans and machines [33]. They are critical for modern chemoinformatics because the field is grappling with a data deluge and issues of data quality and reproducibility. Adhering to FAIR principles ensures that chemical data from different sources can be integrated and trusted, which is foundational for building reliable machine learning models and enabling collaborative open science [3] [34]. Initiatives like the Open Science Framework (OSF) provide robust, user-friendly tools to help researchers implement these principles effectively [34].

Q2: My ML model for toxicity prediction performs poorly on new compound series. What could be wrong?

This is a common problem often traced to data quality and applicability domain issues. The model may have been trained on low-quality, inconsistent data. For instance, a recent study found almost no correlation between IC50 values for the same compounds tested in the "same" assay by different groups [35]. Furthermore, the model's applicability domain—the chemical space where it can make reliable predictions—may not cover your new series.

- Troubleshooting Checklist:

- Audit Training Data: Verify the source and experimental consistency of your training data. Prefer data from standardized, high-throughput experiments over data manually curated from dozens of disparate publications.

- Define Applicability Domain: Implement methods to systematically analyze the relationship between your training data and the new compounds. This helps identify when a prediction is being made outside the model's reliable scope [35].

- Test Locally vs. Globally: Evaluate if a series-specific (local) model would outperform your current global model. The OpenADMET initiative is generating datasets to enable such comparisons [35].
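
One simple way to approach the applicability-domain item in this checklist is a nearest-neighbor similarity check against the training set. The sketch below is one of several possible approaches and is not the method of the cited study: fingerprints are represented as plain sets of on-bit indices (in practice they would come from a cheminformatics toolkit), and the function names and the 0.4 threshold are assumptions chosen for illustration.

```python
def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity between two sets of on-bit indices."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def in_domain(query_fp, training_fps, threshold=0.4):
    """Return (inside, nearest) where nearest is the best training similarity.

    threshold is an assumed cutoff; calibrate it against held-out error.
    """
    nearest = max((tanimoto(query_fp, fp) for fp in training_fps), default=0.0)
    return nearest >= threshold, nearest


# Made-up fingerprints as sets of on-bit indices.
training_fps = [{1, 2, 3, 4}, {2, 3, 5, 8}]
similar_query = {1, 2, 3, 9}
novel_query = {20, 21, 22}

print(in_domain(similar_query, training_fps))  # (True, 0.6)
print(in_domain(novel_query, training_fps))    # (False, 0.0)
```

Predictions for compounds that fall outside the threshold can then be reported with a warning or routed to a series-specific model.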

Q3: How can I make my proprietary research data FAIR without compromising intellectual property?

You can implement FAIR principles for proprietary data without public disclosure. The key is to ensure data is FAIR for authorized users within your organization or consortium.

- Recommended Actions:

- Rich Internal Metadata: Use descriptive titles, detailed metadata, and standard ontologies within your internal data management systems. This fulfills the "Findable" and "Interoperable" pillars internally [34].

- Clear Access Protocols: Use access permission controls on collaborative platforms. You can make project components public (e.g., protocols) while keeping sensitive data private but accessible under clear terms, thus addressing "Accessibility" [34].

- Non-Proprietary Formats: Store data in standardized, non-proprietary file formats (e.g., CSV, TXT) with comprehensive documentation. This ensures "Interoperability" and "Reusability" for future projects, even if the data remains internal [34].

Q4: What are the biggest challenges in transitioning from proprietary software to open-source/open science platforms?

The transition faces several challenges, including resource disparities and motivational conflicts. Industry dominates key AI research elements—computing power, large datasets, and skilled researchers—and may lack motivation to create public scientific goods, instead prioritizing proprietary control to maintain competitive advantage [36]. For individual researchers, challenges include:

- Lack of Computational Resources: Access to large-scale GPU clusters and high-performance computing is often limited in academia [36].

- Data Silos and Standardization: Proprietary systems often use closed formats, hindering interoperability.

- High Implementation Costs & Skill Gaps: Integrating new open platforms with existing infrastructure and training staff requires significant investment [37].

Troubleshooting Guides

Problem: Inconsistent Molecular Representation Causing Data Interoperability Failures

- Symptoms: Errors when sharing files between different software, failure to accurately represent complex chemistry (stereochemistry, metal complexes), poor performance of ML models.

- Background: Molecular notations like SMILES and InChI are widely used but have limitations in consistently representing complex chemical information, which is critical for data interoperability and predictive modeling [3].

- Solution:

- Standardize Input: For data exchange, use the non-proprietary InChI identifier. For database storage and ML, SMILES is common but ensure generation is standardized using a tool like RDKit [3] [38].

- Validate Structures: Always include a structure validation step in your preprocessing workflow using toolkits like RDKit to correct errors and ensure consistency [38].

- Use Multiple Representations: For machine learning, do not rely on a single representation. Experiment with molecular graphs, fingerprints, and descriptors to find the most robust representation for your specific task [35] [38].

- Prevention: Adopt and document a standard operating procedure (SOP) for molecular representation and structure validation in your lab or organization.

Problem: Failure to Reproduce Literature-Based QSAR Model Predictions

- Symptoms: A published QSAR model performs poorly when applied to your in-house compounds, or you cannot recreate the model's published performance metrics.

- Background: Many published models are trained on public data aggregated from various sources, which can suffer from inconsistent experimental protocols, uncurated negative data, and reporting biases [3] [35].

- Solution:

- Scrutinize the Training Data: Investigate the source and composition of the model's training data. Check if it includes well-balanced positive and negative data, which is essential for reliability [3].

- Replicate Assay Conditions: If possible, compare the biological assay conditions used to generate your internal data with those from the literature. Key differences here are often the root cause.

- Generate High-Quality, Local Data: The most reliable solution is to build your own models using high-quality, consistently generated experimental data from relevant assays, similar to the approach taken by OpenADMET [35].

- Escalation: If reproduction is critical, contact the model's original authors for clarification on data preprocessing, hyperparameters, and applicability domain.

Experimental Protocols & Data Standards

Detailed Methodology for a High-Quality ADMET Data Generation Campaign

This protocol is designed to generate consistent, high-quality data for building robust machine learning models, addressing common data quality issues.

1. Objective: To systematically generate absorption, distribution, metabolism, excretion, and toxicity (ADMET) data for a diverse library of 10,000 compounds against a panel of key avoidome targets (e.g., hERG, CYP450s) [35].

2. Experimental Workflow:

- Compound Curation: Select compounds from commercial libraries and in-house collections to ensure chemical diversity and drug-like properties. Standardize all structures using RDKit and confirm purity (>95% by HPLC) [38].

- Assay Development: Establish standardized, high-throughput assays for each ADMET endpoint. Use a single, consistent protocol for each target across all compounds to minimize inter-assay variability [35].

- Data Generation:

- Run all assays in triplicate with appropriate controls (positive, negative, vehicle) on each plate.

- Include reference compounds with known activity in every run to monitor for assay drift.

- Record raw data and calculated activity values (e.g., IC50) in a centralized database.

- Data Processing:

- Apply quality control checks; flag and repeat outliers.

- Curate a dataset that includes both active and inactive compounds, as negative data is crucial for training discriminatory models [3].
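
Monitoring reference compounds for assay drift, as described in the Data Generation step, can be sketched as a simple control-limit check. This is an illustrative assumption about how such monitoring might be done: the function `drifting_runs`, the three-standard-deviation limit, and the pIC50 values are all hypothetical.

```python
import statistics


def drifting_runs(history, new_runs, k=3.0):
    """Return run ids whose reference value lies more than k SDs from the historical mean.

    k=3.0 is an assumed control limit, not a prescribed value.
    """
    mean = statistics.mean(history)
    sd = statistics.stdev(history)
    return [run_id for run_id, value in new_runs.items()
            if abs(value - mean) > k * sd]


# Made-up pIC50 values for one reference compound.
history = [6.50, 6.55, 6.45, 6.52, 6.48]
new_runs = {"run-06": 6.53, "run-07": 6.10}

print(drifting_runs(history, new_runs))  # ['run-07']
```

Runs flagged this way would be candidates for the "flag and repeat" quality-control step before their data enter the central database.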

3. FAIR Data Packaging:

- Findable: Assign a Digital Object Identifier (DOI) to the final dataset. Register it in a public repository like OSF with rich metadata (research field, tags, resource type) [34].

- Accessible: Provide a clear README file with access instructions. Use OSF's permission controls to manage access if needed [34].

- Interoperable: Save data in standard, non-proprietary formats (e.g., CSV). Include a data dictionary explaining all variables, units, and the standardized assay protocols [34].

- Reusable: Attach an open license (e.g., Creative Commons) to the dataset. Document all methodologies, analysis scripts, and the version of the chemical toolkits used [34].
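
The Interoperable and Reusable items above can be sketched as a small packaging step that writes the data as CSV alongside a machine-readable manifest. Everything named here is an assumption for illustration: the file names, the manifest layout, the function `package_dataset`, and the license string are placeholders, and depositing to OSF or minting a DOI is outside the scope of the sketch.

```python
import csv
import hashlib
import json
import tempfile
from pathlib import Path


def package_dataset(rows, data_dictionary, out_dir):
    """Write a CSV plus a manifest holding the data dictionary and a checksum."""
    out = Path(out_dir)
    data_path = out / "admet_results.csv"  # hypothetical file name
    with data_path.open("w", newline="", encoding="utf-8") as handle:
        writer = csv.DictWriter(handle, fieldnames=list(data_dictionary))
        writer.writeheader()
        writer.writerows(rows)

    manifest = {
        "data_file": data_path.name,
        "sha256": hashlib.sha256(data_path.read_bytes()).hexdigest(),
        "license": "CC-BY-4.0",  # placeholder; pick the license that fits your data
        "columns": data_dictionary,
    }
    (out / "manifest.json").write_text(json.dumps(manifest, indent=2),
                                       encoding="utf-8")
    return manifest


# Made-up data dictionary and rows.
data_dictionary = {
    "compound_id": "Internal compound identifier",
    "target": "Assay target name",
    "pic50": "Negative log10 of IC50 in molar units",
}
rows = [
    {"compound_id": "CPD-1", "target": "hERG", "pic50": 5.2},
    {"compound_id": "CPD-2", "target": "CYP3A4", "pic50": 6.1},
]

with tempfile.TemporaryDirectory() as tmp:
    manifest = package_dataset(rows, data_dictionary, tmp)
    written = sorted(p.name for p in Path(tmp).iterdir())

print(written)  # ['admet_results.csv', 'manifest.json']
```

Storing the checksum and column descriptions next to the data lets a later user confirm the file is unchanged and interpret every variable without contacting the original authors.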

Data Quality Metrics for Cheminformatics

The following table summarizes key metrics to assess data quality, a common source of problems in chemoinformatics.

| Metric | Description | Target Benchmark | Tool/Method for Assessment |
|---|---|---|---|
| Structure Validity | Percentage of molecules with chemically valid, interpretable structures. | >99.5% | RDKit, Open Babel [38] |
| Assay Reproducibility | Correlation (e.g., R²) of IC50 values for control compounds across different experimental batches. | R² > 0.9 | Internal quality control protocols [35] |
| Data Consistency | Uniformity in molecular representation (e.g., SMILES, InChI) and units of measurement across the dataset. | 100% | Standardized data preprocessing pipelines [38] |
| Negative Data Inclusion | Proportion of datasets that include confirmed inactive compounds alongside active ones. | Should be standard practice | Manual curation, literature review [3] |
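
As an illustration of the Assay Reproducibility metric, the sketch below computes R² as the squared Pearson correlation between control-compound values measured in two batches and compares it with the 0.9 benchmark from the table. The function name `r_squared` and the batch values are made up, and working in pIC50 rather than raw IC50 is an assumption.

```python
def r_squared(x, y):
    """Squared Pearson correlation between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return (sxy * sxy) / (sxx * syy)


# Made-up pIC50 values for the same control compounds in two batches.
batch_a = [5.0, 6.0, 7.0, 8.0]
batch_b = [5.1, 5.9, 7.2, 7.9]

r2 = r_squared(batch_a, batch_b)
print(round(r2, 3), "meets benchmark" if r2 > 0.9 else "below benchmark")
```

A high R² alone does not rule out a systematic offset between batches, so it is worth inspecting the mean difference as well.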

Workflow Visualization: Implementing FAIR in Cheminformatics

The diagram below outlines a logical workflow for implementing FAIR principles in a typical chemoinformatics research cycle, from data generation to model sharing.

The Scientist's Toolkit: Key Research Reagent Solutions

This table details essential resources for conducting robust, data-driven chemoinformatics research.

| Item | Function | Relevance to Open Science & FAIR |
|---|---|---|
| RDKit | An open-source toolkit for cheminformatics, used for descriptor calculation, structure manipulation, and machine learning [38]. | Promotes interoperability and reproducibility through open-source, standardized algorithms. |
| Open Science Framework (OSF) | A free, open-source platform for managing, sharing, and documenting research projects and data throughout the entire project lifecycle [34]. | Directly enables FAIRness by providing infrastructure for persistent identifiers, metadata, and access control. |
| PubChem/ChEMBL | Large, public databases of chemical molecules and their biological activities [3]. | Key examples of open data resources that accelerate research through data sharing and reuse. |
| FAIR Data Steward | A professional specializing in data governance, quality, and lifecycle management to ensure data is accurate and compliant with standards [33]. | Critical for the successful implementation of FAIR principles within a research team or organization. |
| Hugging Face (Science Hub) | A platform hosting a vast number of open-source pre-trained models and datasets, including scientific models [36]. | Fosters model transparency, reproducibility, and community-driven development in scientific AI. |

Building a Robust Data Foundation: Standardization Protocols and Cheminformatics Pipelines

Implementing Automated Validation and Standardization with Tools like CVSP

Troubleshooting Guides and FAQs

Frequently Asked Questions (FAQs)

Q1: What is the primary purpose of the Chemical Validation and Standardization Platform (CVSP)?

CVSP is a freely available internet-based platform designed to validate and standardize chemical structure datasets from various sources. It processes chemical structure files through tested validation and standardization protocols to ensure that data released into public databases is pre-validated, thereby improving data quality and homogeneity for exchange between online databases [39] [40] [41].

Q2: What common data quality issues does CVSP help to resolve?

CVSP detects a myriad of issues that can exist with chemical structure representations online. These include inconsistencies between connection tables (in MOL/SDF files) and associated identifiers like SMILES and InChI, problems with atoms and bonds (e.g., query atoms and bonds), valences, stereochemistry, and the presence of chemically suspicious molecular patterns [39] [41].

Q3: The standalone CVSP website was taken down. Where can I now access its functionality?

The original standalone CVSP website was taken offline in November 2018. However, its core functionality and evolved ruleset have been integrated into the ChemSpider deposition system available at deposit.chemspider.com. The original codebase also remains available on GitHub [40].

Q4: What are the different severity levels of issues identified by CVSP?

CVSP categorizes identified issues into three levels of severity to help users prioritize review:

- Error: Indicates a critical issue that very likely requires correction.

- Warning: Highlights a potential problem that should be reviewed.

- Information: Provides informational messages about the data for user awareness [41].
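
When reviewing a validation report, the three severity levels can be used to order the work. The sketch below is a generic illustration and does not reproduce CVSP's actual output format or API: the `Severity` enum, the tuple layout of each issue, and the example messages are all hypothetical.

```python
from enum import IntEnum


class Severity(IntEnum):
    """Hypothetical encoding of the three levels, ordered by urgency."""
    INFORMATION = 1
    WARNING = 2
    ERROR = 3


def prioritize(issues):
    """Sort (record_id, severity, message) tuples so errors come first."""
    return sorted(issues, key=lambda issue: issue[1], reverse=True)


# Made-up issues for illustration.
issues = [
    ("rec-3", Severity.WARNING, "undefined stereocenter"),
    ("rec-1", Severity.INFORMATION, "salt form detected"),
    ("rec-2", Severity.ERROR, "SMILES does not match connection table"),
]

for record_id, severity, message in prioritize(issues):
    print(f"{severity.name:<12}{record_id}  {message}")
```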

Q5: Why is cross-validating connection tables with SMILES and InChIs important?

Often, the connection table (e.g., within an SDF file) is the primary source of structural data, while SMILES and InChIs are derived from it. Errors can occur during these derivations or through incorrect manual association. Cross-validation ensures that all representations of the same molecule are consistent, preventing the propagation of incorrect data [41].

Troubleshooting Common Issues

Issue 1: Inconsistent Stereochemistry Representation

- Problem: Stereochemical information (e.g., chiral centers, double bond geometry) is lost or incorrectly interpreted when converting between file formats or generating identifiers.

- Solution: CVSP includes validation of stereochemistry. It is crucial to ensure that the original connection table accurately represents the intended stereochemistry. Be aware that standard InChI normalization can convert relative stereo to absolute and does not distinguish between undefined and explicitly marked "unknown" sp3 stereo, which can lead to information loss upon conversion [41].

Issue 2: Validation Errors with Organometallics or Special Structures

- Problem: The validation tool flags errors for organometallic compounds or other structures with non-standard bonding.

- Solution: Current exchange formats and standards, including InChI, have incomplete support for structures like organometallics. CVSP uses predefined molecular patterns to flag such chemically suspicious structures for manual review. This is an expected outcome, and careful manual curation is required for these complex structures [41].

Issue 3: Data Rejection during Database Deposition

- Problem: A dataset prepared for deposition to a database like ChemSpider is rejected due to quality issues.

- Solution: Prior to deposition, process your chemical structure files using the validation and standardization rules available in the ChemSpider deposition system (which incorporates the CVSP legacy). This pre-validation step helps you identify and correct issues in structure representation, identifier consistency, and related areas, leading to a higher acceptance rate [40] [41].

Experimental Protocols and Methodologies

Protocol: Large-Scale Dataset Validation using CVSP

This protocol outlines the methodology for using CVSP to validate and standardize a chemical dataset, as described in its foundational research [39] [41].

1. Principle

The platform validates and standardizes chemical structure representations according to sets of systematic rules. It detects issues using pre-defined or user-defined dictionary-based molecular patterns and assigns a severity level to each identified issue [39].

2. Key Reagents and Solutions

| Research Reagent / Solution | Function in the Experiment |
|---|---|
| SDF (Structure-Data File) Input | The standard form of submission for collections of chemical data. It contains the connection tables and associated data fields [39]. |
| Cheminformatics Toolkits (Indigo, OpenEye) | The underlying computational engines that power the CVSP's structure processing, validation, and standardization capabilities [41]. |
| Pre-defined Molecular Pattern Dictionary | A set of rules identifying chemically suspicious structures (e.g., certain functional groups, bonding patterns) that require manual review [39]. |
| Standardization Ruleset | A systematic set of procedures (e.g., for aromatization, neutralization) applied to structures to produce a homogeneous representation [39]. |

3. Procedure

- Step 1: Data Submission. Submit the chemical dataset in SDF file format to the platform.

- Step 2: Field Mapping. Map the data fields within the SDF (e.g., SMILES, InChI, chemical names) to predefined CVSP fields for cross-validation.

- Step 3: Automated Processing. The CVSP automatically processes each record through its validation and standardization pipelines.

- Step 4: Results Review. The platform generates a report in which issues are categorized by severity (Information, Warning, Error), so that users can browse and review subsets of their data by category [39].
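
Steps 1 and 2 above can be approximated locally before submission. The sketch below reads the data items of an SDF file (the `> <FIELD>` blocks that follow each connection table) and maps them to a small set of target fields. The `FIELD_MAP` names, the sample record, and the helper functions are hypothetical; the parser handles only the common case and is not a substitute for a full SDF reader.

```python
import re

# A data-item header looks like "> <SMILES>"; the field name is inside <...>.
FIELD_HEADER = re.compile(r"^>.*<([^>]+)>")

# Hypothetical mapping from depositor field names to pipeline field names.
FIELD_MAP = {"SMILES": "smiles", "InChI": "inchi", "STD_INCHI": "inchi", "Name": "name"}


def parse_sdf_fields(sdf_text):
    """Return one {field_name: value} dict per record (data items only)."""
    records = []
    for chunk in sdf_text.split("$$$$"):  # "$$$$" terminates each record
        if not chunk.strip():
            continue
        fields, current = {}, None
        for line in chunk.splitlines():
            header = FIELD_HEADER.match(line)
            if header:
                current = header.group(1)
                fields[current] = []
            elif current is not None:
                if line.strip():
                    fields[current].append(line.strip())
                else:
                    current = None  # a blank line ends the data item
        records.append({name: "\n".join(value) for name, value in fields.items()})
    return records


def map_fields(record, field_map=FIELD_MAP):
    """Split a record into mapped fields and the names that were not mapped."""
    mapped = {field_map[k]: v for k, v in record.items() if k in field_map}
    unmapped = sorted(k for k in record if k not in field_map)
    return mapped, unmapped


SAMPLE_SDF = """ethanol
  sketch

  3  2  0  0  0  0  0  0  0  0999 V2000
    0.0000    0.0000    0.0000 C   0  0
    1.5000    0.0000    0.0000 C   0  0
    2.2500    1.3000    0.0000 O   0  0
  1  2  1  0
  2  3  1  0
M  END
> <SMILES>
CCO

> <InChI>
InChI=1S/C2H6O/c1-2-3/h3H,2H2,1H3

> <BATCH_ID>
B-001

$$$$
"""

parsed = parse_sdf_fields(SAMPLE_SDF)
mapped, unmapped = map_fields(parsed[0])
print(mapped, unmapped)
```

Reporting unmapped field names explicitly makes it easier to notice when a depositor uses a field label that the mapping does not yet cover.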

4. Expected Outcome

A standardized dataset accompanied by a detailed validation report, which allows researchers to identify, review, and correct problematic structures before public deposition or further analysis [39].

5. Workflow Diagram

The Scientist's Toolkit: Essential Materials for Chemical Data Validation

| Item | Brief Explanation of Function |
|---|---|
| CVSP / ChemSpider Deposition | The core platform for automated validation and standardization of chemical structure files using systematic rules [39] [40]. |
| SDF (Structure-Data File) Format | The standard file format for submitting collections of chemical structures and associated properties for validation [39]. |
| SMILES Strings | A line notation for encoding molecular structures; used for cross-validation against the connection table in the SDF file [39] [32]. |
| InChI Identifiers | A standardized, non-proprietary identifier for chemical substances; used for cross-validation and as a consistent identifier across databases [39] [32]. |
| Pre-defined Validation Rules | A dictionary of molecular patterns that are chemically suspicious, used to automatically flag records for manual review [39]. |
| Cheminformatics Toolkits (e.g., Indigo, OpenEye) | Software libraries that provide the underlying algorithms for handling chemical structures, performing calculations, and executing standardization rules [41]. |

In cheminformatics, data pipelines form the industrial backbone, automating the collection, processing, and analysis of chemical data from diverse sources like lab experiments, computational simulations, and public databases [42]. Effective data pipelining is critical for managing the vast volumes of chemical data generated in fields like drug discovery and materials science [42]. This technical support guide addresses common pipeline challenges, focusing on the crucial decisions between ETL (Extract, Transform, Load) and ELT (Extract, Load, Transform), as well as batch versus real-time processing, all within the overarching framework of ensuring data quality and standardization.

Understanding ETL and ELT: Which Pattern to Choose?

The choice between ETL and ELT determines when and where your data transformations occur, impacting flexibility, performance, and infrastructure costs.

ETL (Extract, Transform, Load) is the traditional approach where data is transformed before loading into the target data warehouse. This process is ideal for scenarios requiring strict data governance and when working with smaller datasets that can be efficiently processed on external servers [43].

ELT (Extract, Load, Transform) reverses this sequence, loading raw data directly into the target system (like a cloud data platform) and performing transformations within that destination. ELT has gained popularity due to optimized cloud compute costs, the simplicity of modern data platforms like Snowflake and Databricks, and its ability to handle raw, unstructured data effectively [43].

Decision Matrix: ETL vs. ELT

| Criteria | ETL | ELT |
|---|---|---|
| Transformation Sequence | Transform before loading | Load before transforming |
| Ideal Workload | Pre-defined, structured data | Exploratory analysis, raw/unstructured data |
| Infrastructure Demand | High on transformation engine | High on target data warehouse |
| Data Governance | Strong, as data is cleaned before storage | Can be lower, raw data is stored |
| Best for | Compliance-sensitive environments, pre-aggregated reporting | Agile environments, data science exploration |

For most modern cheminformatics workloads involving large-scale, exploratory data analysis, ELT is generally the recommended approach as it offers greater flexibility to researchers [43].
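
The difference between the two patterns can be illustrated with a small sketch in which an in-memory SQLite database stands in for the target warehouse. In the ETL variant, rows are cleaned in Python before loading; in the ELT variant, raw rows are loaded unchanged and cleaned inside the database with a SQL view. The table names, column names, and sample rows are hypothetical, and a production warehouse would use its own SQL dialect.

```python
import sqlite3

# Hypothetical raw assay export: (compound_id, smiles, ic50_um as text).
RAW_ROWS = [
    ("cmpd-1", " CCO ", "12.5"),
    ("cmpd-2", "c1ccccc1", "n/a"),  # unparseable assay value
    ("cmpd-3", "CC(=O)O", "3.1"),
]


def clean(row):
    """Transform step: trim the SMILES and parse the value, or reject the row."""
    compound_id, smiles, value = row
    try:
        return compound_id, smiles.strip(), float(value)
    except ValueError:
        return None


def run_etl(conn, rows):
    """ETL: transform outside the warehouse, then load only clean rows."""
    conn.execute("CREATE TABLE assay_clean (compound_id TEXT, smiles TEXT, ic50_um REAL)")
    cleaned = [r for r in map(clean, rows) if r is not None]
    conn.executemany("INSERT INTO assay_clean VALUES (?, ?, ?)", cleaned)


def run_elt(conn, rows):
    """ELT: load raw rows as-is, then transform inside the warehouse."""
    conn.execute("CREATE TABLE assay_raw (compound_id TEXT, smiles TEXT, ic50_um TEXT)")
    conn.executemany("INSERT INTO assay_raw VALUES (?, ?, ?)", rows)
    conn.execute(
        """
        CREATE VIEW assay_clean AS
        SELECT compound_id, TRIM(smiles) AS smiles, CAST(ic50_um AS REAL) AS ic50_um
        FROM assay_raw
        WHERE ic50_um GLOB '[0-9]*'
        """
    )


etl_conn = sqlite3.connect(":memory:")
run_etl(etl_conn, RAW_ROWS)
elt_conn = sqlite3.connect(":memory:")
run_elt(elt_conn, RAW_ROWS)

etl_clean = etl_conn.execute("SELECT COUNT(*) FROM assay_clean").fetchone()[0]
elt_raw = elt_conn.execute("SELECT COUNT(*) FROM assay_raw").fetchone()[0]
elt_clean = elt_conn.execute("SELECT COUNT(*) FROM assay_clean").fetchone()[0]
print(etl_clean, elt_raw, elt_clean)
```

In the ELT variant the rejected row is still present in `assay_raw`, so the cleaning rule can be revised later without re-extracting the source data. That retained raw copy is the flexibility the text refers to, and also the reason governance needs more attention.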

Batch vs. Real-Time Processing: Selecting the Right Paradigm

Choosing the correct processing mode is fundamental to meeting your project's timeliness requirements without introducing unnecessary complexity.

Batch Processing involves collecting and processing data in discrete chunks at scheduled intervals (e.g., daily or hourly). It is efficient for handling large volumes of data where immediate insight is not critical [42] [43].

Real-Time Processing (or Streaming) handles data continuously, as it arrives, enabling immediate analysis and decision-making. This is powered by technologies like change data capture (CDC) and stream-processing platforms [43].

Decision Matrix: Batch vs. Real-Time Processing

| Criteria | Batch Processing | Real-Time Processing |
|---|---|---|
| Data Flow | Periodic, in large chunks | Continuous, record-by-record or in micro-batches |
| Latency | High (hours/days) | Low (milliseconds/seconds) |
| Complexity & Cost | Lower | Significantly higher |
| Ideal Cheminformatics Use Cases | Daily lab instrument data sync, periodic QSAR model retraining, generating routine reports | High-throughput screening (HTS) analysis, real-time reaction monitoring, live dashboarding of active experiments |
| Technical Examples | Apache Airflow, AWS Batch, Cron jobs | Apache Kafka, Striim, AWS Kinesis |

Recommendation: Stick to batch processing unless your project has a definitive, time-sensitive requirement for real-time data. Real-time pipelines are complex to build, maintain, and troubleshoot [44] [45].
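
To make the contrast concrete, the sketch below applies the same summary function once to a full batch and then to micro-batches as records arrive. The readings are invented, the chunk size is arbitrary, and a real streaming system would add ordering, retry, and back-pressure handling that this illustration omits.

```python
from itertools import islice
from statistics import mean


def micro_batches(stream, size):
    """Yield lists of up to `size` records from an iterable as they arrive."""
    iterator = iter(stream)
    while True:
        chunk = list(islice(iterator, size))
        if not chunk:
            return
        yield chunk


def summarize(readings):
    """Same processing logic for both modes: mean signal, rounded."""
    return round(mean(readings), 3)


# Hypothetical plate-reader signal, invented for illustration.
readings = [0.91, 0.88, 0.95, 0.40, 0.42, 0.39, 0.90]

# Batch: one result after all data has been collected.
batch_result = summarize(readings)

# Micro-batch: one result per small chunk, available much earlier.
stream_results = [summarize(chunk) for chunk in micro_batches(readings, size=3)]

print(batch_result, stream_results)
```

The micro-batch results expose the drop in signal in the second chunk as soon as it occurs, whereas the batch result averages it away. That earlier visibility is what the extra complexity of real-time processing buys.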

Common Data Quality Issues & Troubleshooting Guide

Data quality is the cornerstone of reliable cheminformatics research. Here are common root causes of pipeline issues and how to resolve them.

FAQ: Troubleshooting Common Pipeline Problems

Q1: Why does my pipeline fail immediately after a code update?

- Root Cause: A bug was introduced in the new version of the pipeline code [46].

- Solution: Use version control software like Git to compare the new production code with a prior stable version. Revert to the last working version and implement a robust CI/CD (Continuous Integration/Continuous Deployment) process with automated testing for data logic [47] [46].

Q2: Why is my pipeline stuck in a "queued" state and not executing?

- Root Cause: An infrastructure error, such as maxed-out memory, exceeded API call limits, or a failure in the underlying cluster (e.g., Apache Spark) [46].

- Solution: Check your infrastructure's resource utilization and quotas. Implement an observability platform to monitor memory, CPU, and API usage. For orchestrated pipelines, verify the health of your scheduler (e.g., Airflow) [47] [46].

Q3: Why is the molecular structure data in my database incorrect or nonsensical?

- Root Cause: This is often caused by information loss when chemical structures are digitized. Common file formats (such as MOL and SMILES) or conversion algorithms can fail to capture essential information such as stereochemistry or special bond types (e.g., dative bonds in complexes), or can misinterpret abbreviations (e.g., "L" for ligand) [48].

- Solution:

  - Validate at the Source: Use chemical drawing tools that enforce validation rules.

  - Implement Parsing Checks: Add data quality checks in your pipeline to flag structures with missing stereochemistry or invalid valences.

  - Standardize Abbreviations: Maintain and enforce a controlled vocabulary for structural abbreviations used in your organization [48].
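
As a rough sketch of the valence half of such a parsing check, the following Python sums bond orders per atom in a V2000 molblock and flags atoms that exceed a simple neutral-valence table. The `MAX_VALENCE` table, the function names, and the demo molecules are assumptions for illustration. The check ignores formal charges, aromatic bond types, and implicit hydrogens, so flagged records still need manual review; detecting missing stereochemistry requires a cheminformatics toolkit and is not attempted here.

```python
# Simplified maximum valences for neutral atoms (an assumption, not exhaustive).
MAX_VALENCE = {"H": 1, "C": 4, "N": 3, "O": 2, "F": 1, "Cl": 1, "Br": 1, "I": 1}


def valence_issues(molblock):
    """Return (atom_index, symbol, bond_order_sum, limit) for over-valent atoms."""
    lines = molblock.splitlines()
    counts = lines[3]  # fourth line of a V2000 molblock is the counts line
    n_atoms, n_bonds = int(counts[0:3]), int(counts[3:6])
    symbols = [lines[4 + i][31:34].strip() for i in range(n_atoms)]
    totals = [0] * n_atoms
    for line in lines[4 + n_atoms: 4 + n_atoms + n_bonds]:
        a1, a2, order = int(line[0:3]), int(line[3:6]), int(line[6:9])
        if order > 3:
            continue  # aromatic or query bond types are skipped in this sketch
        totals[a1 - 1] += order
        totals[a2 - 1] += order
    issues = []
    for index, (symbol, total) in enumerate(zip(symbols, totals), start=1):
        limit = MAX_VALENCE.get(symbol)
        if limit is not None and total > limit:
            issues.append((index, symbol, total, limit))
    return issues


def make_molblock(symbols, bonds):
    """Build a minimal fixed-width V2000 molblock for the demo (coordinates zeroed)."""
    header = ["demo", "  sketch", ""]
    counts = f"{len(symbols):3d}{len(bonds):3d}  0  0  0  0  0  0  0  0999 V2000"
    atom_lines = [f"{0:10.4f}{0:10.4f}{0:10.4f} {s:<3} 0  0" for s in symbols]
    bond_lines = [f"{a:3d}{b:3d}{o:3d}  0" for a, b, o in bonds]
    return "\n".join(header + [counts] + atom_lines + bond_lines + ["M  END"])


methane = make_molblock(["C", "H", "H", "H", "H"],
                        [(1, 2, 1), (1, 3, 1), (1, 4, 1), (1, 5, 1)])
bad_carbon = make_molblock(["C", "H", "H", "H", "H", "H"],
                           [(1, 2, 1), (1, 3, 1), (1, 4, 1), (1, 5, 1), (1, 6, 1)])

print(valence_issues(methane))
print(valence_issues(bad_carbon))
```

A check like this is cheap enough to run on every record at ingestion, with flagged records routed to a toolkit-based or manual review step.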

Q4: Why did multiple pipeline jobs fail overnight without an obvious system error?

- Root Cause: When no system error is logged, the most likely cause is a scheduling change that made jobs run out of order, or a permission issue that prevented data access [46].

- Solution: Audit the orchestration logs for schedule changes. Verify that the service accounts running the pipelines have the correct read/write permissions on all source and target systems [46].

Q5: How can I ensure my data meets regulatory standards throughout the pipeline?

- Root Cause: Lack of built-in governance and lineage tracking.

- Solution: Choose ETL/ELT frameworks that embed strong controls, including field-level access control for sensitive data, immutable audit logs, and end-to-end lineage tracking from raw source to final report [47].
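
One common way to make an audit log tamper-evident is hash chaining, in which each entry stores a hash of its content together with the hash of the previous entry. The sketch below illustrates the idea with the Python standard library. The entry layout and event fields are hypothetical, and this is an illustration of the principle rather than a feature of any specific framework mentioned here.

```python
import copy
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(event, prev_hash):
    """Hash the event together with the previous entry's hash."""
    payload = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log, event):
    """Append an event, chaining it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash, "hash": _digest(event, prev_hash)})


def verify(log):
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical lineage events for one dataset.
audit_log = []
append_entry(audit_log, {"step": "extract", "source": "lims_export.sdf", "rows": 1200})
append_entry(audit_log, {"step": "standardize", "rows_in": 1200, "rows_out": 1187})
append_entry(audit_log, {"step": "load", "target": "assay_clean", "rows": 1187})

tampered = copy.deepcopy(audit_log)
tampered[1]["event"]["rows_out"] = 1200  # silently hide the dropped rows

print(verify(audit_log), verify(tampered))
```

Because each hash depends on all earlier entries, changing a past record requires recomputing every later hash, which is easy to detect if the latest hash is stored separately.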

Essential Tools & Research Reagent Solutions

The "research reagents" for building robust cheminformatics pipelines are the software and platforms that handle data movement, transformation, and orchestration.

The Scientist's Toolkit: Key Pipeline Components

| Tool Category | Function | Example "Reagents" |
|---|---|---|
| Orchestration | Schedules, manages, and monitors workflow execution. | Apache Airflow, Dagster, Prefect [44] [47] |
| Data Integration | Core ETL/ELT engine for moving and transforming data. | Fivetran (SaaS), Airbyte (Open Source), Talend (Hybrid) [44] |
| Stream Processing | Ingests and processes continuous data streams. | Apache Kafka, Kafka Streams, AWS Kinesis [44] [43] [45] |
| Chemical Data Management | Standardized handling and representation of molecular data. | RDKit, ChemDraw, SMILES/InChI parsers [48] [49] |
| Observability | Provides visibility into pipeline health and data quality. | IBM Databand, Prometheus, Grafana [47] [46] |

Visual Guide: Pipeline Architecture & Decision Flow

To synthesize the concepts, the following diagrams illustrate a high-level pipeline architecture and the logical decision process for choosing the right pipeline design.

Cheminformatics Data Pipeline Architecture

Pipeline Design Decision Flowchart

Best Practices for Data Standardization and Normalization of Analytical Data

Technical Support Center

Troubleshooting Guides

Guide 1: Addressing Poor AI/ML Model Performance with Chemical Data

Problem: Machine learning models for property prediction (e.g., solubility, toxicity) show poor accuracy and fail to generalize on new compounds.

Diagnosis & Solution: This typically stems from issues in data preprocessing and molecular representation. Systematically check your data pipeline.

Step 1: Verify Molecular Representation Integrity

Step 2: Assess Data Quality for Negative Data

- Problem: Models trained only on "active" or "successful" compounds lack the ability to identify "inactive" or "invalid" patterns, reducing predictive reliability [25].

- Solution: Curate a balanced dataset that includes both positive and negative data (e.g., both toxic and non-toxic compounds). Actively source negative data from public repositories or historical screening data [25].
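
Before curating additional negative data, it helps to quantify how unbalanced the current labels are. The sketch below computes class fractions and flags a dataset whose rarest class falls below a threshold. The `min_fraction` default of 0.2 and the sample labels are arbitrary assumptions chosen for illustration; an appropriate threshold depends on the endpoint and the modeling method.

```python
from collections import Counter


def class_balance(labels):
    """Return the fraction of records in each class."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()}


def is_imbalanced(labels, min_fraction=0.2):
    """Flag datasets with a single class or a very rare minority class."""
    fractions = class_balance(labels)
    return len(fractions) < 2 or min(fractions.values()) < min_fraction


# Hypothetical label sets, invented for illustration.
actives_only = ["toxic"] * 50
skewed = ["toxic"] * 9 + ["non-toxic"] * 1
balanced = ["toxic"] * 6 + ["non-toxic"] * 4

for name, labels in [("actives_only", actives_only), ("skewed", skewed), ("balanced", balanced)]:
    print(name, class_balance(labels), is_imbalanced(labels))
```

Treating a single-class dataset as imbalanced catches the "actives only" case described above, where the model never sees a negative example.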

Step 3: Evaluate Feature Engineering Strategy