Data Harmonization in Multi-Omics Studies: 2025 Best Practices for Robust Integration and Clinical Translation

This article provides a comprehensive guide to data harmonization best practices tailored for researchers, scientists, and drug development professionals working with multi-omics data.

Abstract

This article provides a comprehensive guide to data harmonization best practices tailored for researchers, scientists, and drug development professionals working with multi-omics data. It covers the foundational principles of multi-omics integration, explores advanced methodological strategies for combining diverse datasets, offers solutions for common troubleshooting and optimization challenges, and outlines rigorous validation and comparative analysis frameworks. By addressing these four areas, the article aims to equip practitioners with the knowledge to transform complex, heterogeneous biological data into reliable, actionable insights for precision medicine and therapeutic discovery.

Laying the Groundwork: Core Principles and the Imperative for Multi-Omics Harmonization

Defining Data Harmonization in the Multi-Omics Context

Frequently Asked Questions (FAQs)

1. What is the fundamental difference between data harmonization and data integration in multi-omics studies?

Data harmonization is the crucial preparatory step that ensures different omics datasets are comparable and ready for integration. It involves mapping data to common ontologies, normalizing data to comparable scales or units, and applying consistent filtering criteria to mitigate technical variations like batch effects [1]. Data integration, conversely, is the subsequent step of jointly analyzing these harmonized datasets using statistical or machine learning methods (e.g., MOFA, DIABLO) to extract biological insights [2]. Simply put, harmonization makes the data uniform, while integration finds the meaning in the combined data.

2. How can I check if my datasets are compatible for multi-omics integration?

Before integration, verify the following aspects of your experimental design [1]:

- Sample Context: Ensure datasets originate from the same biological sample type (e.g., disease tissue vs. healthy control, same cell population).

- Population Consistency: Confirm that samples are from a comparable population regarding factors like sex, age, or treatment history.

- Metadata Alignment: Carefully read the metadata for each dataset to ensure key variables (e.g., clinical outcomes, experimental conditions) are defined and measured consistently across studies.
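A first compatibility check can be automated before any statistics are run. The sketch below is a minimal illustration, assuming each omics layer exposes a list of sample identifiers that already follow a common naming scheme; the function name `check_sample_overlap` and the sample IDs are hypothetical.

```python
from typing import Dict, List, Set


def check_sample_overlap(layers: Dict[str, List[str]]) -> Dict[str, object]:
    """Report sample IDs shared by all layers and the IDs each layer lacks.

    Assumes at least one layer is supplied and IDs are already harmonized.
    """
    id_sets: Dict[str, Set[str]] = {name: set(ids) for name, ids in layers.items()}
    all_ids = set().union(*id_sets.values())
    shared = set.intersection(*id_sets.values())
    # For every layer, list the samples seen elsewhere but absent here.
    missing = {name: sorted(all_ids - ids) for name, ids in id_sets.items()}
    return {"shared": sorted(shared), "missing_per_layer": missing}


# Hypothetical sample IDs for three omics layers.
layers = {
    "transcriptomics": ["S1", "S2", "S3", "S4"],
    "proteomics": ["S2", "S3", "S4", "S5"],
    "metabolomics": ["S1", "S2", "S3"],
}
report = check_sample_overlap(layers)
print(report["shared"])             # samples usable for matched integration
print(report["missing_per_layer"])  # gaps to resolve, impute, or exclude
```

A small shared set relative to the total is an early signal that a method tolerant of missing layers, or a different cohort selection, may be needed.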

3. What are the best practices for handling missing data in multi-omics datasets?

Missing data is a common challenge, often arising from technological limits where molecules like proteins might be undetectable in one sample but present in another [2]. Best practices include:

- Generative Models: Advanced AI methods, such as Variational Autoencoders (VAEs) or Generative Adversarial Networks (GANs), can learn the underlying data distribution to impute plausible values for missing data points [3].

- Factorization Methods: Tools like MOFA (Multi-Omics Factor Analysis) are designed to handle missing values by inferring latent factors that explain the observed data, without requiring complete datasets [2].

- Quality Filtering: As a foundational step, prioritize data from carefully quality-controlled (QC-ed) studies to minimize non-random missingness from poor sample quality [1].

4. Which integration method should I choose for my specific biological question?

The choice of integration method is not one-size-fits-all and should be guided by your research goal. The table below summarizes the purpose of several state-of-the-art methods.

| Method | Primary Purpose | Key Characteristics |

|---|---|---|

| MOFA [2] | Unsupervised discovery of latent factors driving variation across omics layers. | Probabilistic, Bayesian framework; identifies shared and data-specific factors; does not require a pre-defined outcome. |

| DIABLO [2] | Supervised integration for biomarker discovery and phenotype prediction. | Uses known phenotype labels; performs feature selection to identify molecules predictive of a specific category (e.g., disease vs. healthy). |

| SNF [2] [4] | Unsupervised sample clustering and network-based fusion. | Constructs and fuses sample-similarity networks from each omics data type to identify patient subgroups. |

| Correlation Networks [4] | Uncover relationships between different molecular entities (e.g., genes and metabolites). | Uses statistical correlations (e.g., Pearson) to build interaction networks, helping identify key regulatory nodes and pathways. |
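To make the correlation-network row concrete, the following sketch builds gene-metabolite edges from Pearson correlations. It is an illustration only: the feature names, values, and the 0.9 cutoff are invented, and real analyses would also correct for multiple testing.

```python
import math
from typing import Dict, List, Tuple


def pearson(x: List[float], y: List[float]) -> float:
    """Pearson correlation of two equal-length vectors (neither may be constant)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)


def correlation_edges(
    genes: Dict[str, List[float]],
    metabolites: Dict[str, List[float]],
    threshold: float = 0.9,
) -> List[Tuple[str, str, float]]:
    """Return (gene, metabolite, r) for pairs whose |r| meets the threshold."""
    edges = []
    for gene, gene_values in genes.items():
        for metabolite, met_values in metabolites.items():
            r = pearson(gene_values, met_values)
            if abs(r) >= threshold:
                edges.append((gene, metabolite, round(r, 3)))
    return edges


# Hypothetical measurements across the same five samples.
genes = {"GENE_A": [1.0, 2.0, 3.0, 4.0, 5.0], "GENE_B": [5.0, 3.0, 4.0, 1.0, 2.0]}
metabolites = {"MET_X": [2.1, 3.9, 6.2, 8.1, 9.8], "MET_Y": [1.0, 1.2, 0.9, 1.1, 1.0]}
edges = correlation_edges(genes, metabolites)
print(edges)
```

The resulting edge list can be exported to a network tool for visualization and hub detection.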

5. How can I address the "batch effect" problem when combining datasets from different studies or labs?

Batch effects, where technical variations obscure biological signals, are a major harmonization hurdle. Key strategies include:

- Standardization and Transformation: Apply consistent normalization methods across all datasets. Transforming data to a ranking system is a common practice to alleviate batch effects [1].

- Similarity Network Fusion (SNF): This method can be effective as it fuses data based on sample-similarity patterns, which can be more robust to batch effects than raw data integration [2] [4].

- Data Transformation: Normalize data to a consistent scale (e.g., 0-1) before integration to make them comparable, a technique often used in target prioritization pipelines [1].
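The two transformations mentioned above, ranking and 0-1 scaling, are simple enough to sketch directly. This is a minimal illustration applied to one feature at a time; the function names are hypothetical, and ties are given their average rank as one common convention.

```python
from typing import List


def rank_transform(values: List[float]) -> List[float]:
    """Replace values by ranks (1 = smallest); tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of tied values starting at position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = average_rank
        i = j + 1
    return ranks


def min_max_scale(values: List[float]) -> List[float]:
    """Rescale values linearly to the 0-1 interval; constant input maps to 0."""
    low, high = min(values), max(values)
    if high == low:
        return [0.0 for _ in values]
    return [(v - low) / (high - low) for v in values]


print(rank_transform([10.0, 30.0, 20.0, 30.0]))
print(min_max_scale([2.0, 4.0, 6.0]))
```

Ranks discard absolute magnitudes, which is what makes them robust to study-specific scales, but it also means effect sizes are no longer comparable after the transform.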

Troubleshooting Guides

Issue: Incompatible Data Formats and Ontologies

Problem: You have collected transcriptomics and metabolomics data, but they are in different formats (e.g., raw count matrices vs. peak intensity tables), use different gene/protein identifiers, and lack standardized metadata.

Solution: Implement a comprehensive standardization and harmonization workflow.

Methodology:

- Format Conversion: Convert all data into a matrix format where rows are features (e.g., genes, proteins) and columns are samples.

- Identifier Mapping: Map all gene, protein, and metabolite identifiers to a consistent ontology or database (e.g., Ensembl IDs for genes, HMDB IDs for metabolites).

- Metadata Annotation: Create a unified metadata table for all samples, ensuring clinical or phenotypic terms are drawn from controlled vocabularies.

- Normalization: Apply appropriate normalization techniques for each data type (e.g., TPM for RNA-seq, quantile normalization for proteomics) to make distributions comparable.
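The identifier-mapping step can be sketched as follows. This is an illustration under stated assumptions: the lookup table is a hand-written stand-in for a real annotation resource, and summing rows that collapse onto the same target ID is a choice that suits count data but not every data type.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def map_identifiers(
    matrix: Dict[str, List[float]], id_map: Dict[str, str]
) -> Tuple[Dict[str, List[float]], List[str]]:
    """Re-key a feature-by-sample matrix to target IDs.

    Rows that map to the same target ID are summed per sample;
    source IDs without a mapping are returned for manual review.
    """
    grouped: Dict[str, List[List[float]]] = defaultdict(list)
    unmapped: List[str] = []
    for source_id, values in matrix.items():
        target_id = id_map.get(source_id)
        if target_id is None:
            unmapped.append(source_id)
        else:
            grouped[target_id].append(values)
    mapped = {t: [sum(col) for col in zip(*rows)] for t, rows in grouped.items()}
    return mapped, unmapped


# Illustrative mapping only; in practice, load it from a curated annotation file.
id_map = {"TP53": "ENSG00000141510", "P53": "ENSG00000141510"}
matrix = {"TP53": [10, 20], "P53": [1, 2], "UNKNOWN1": [5, 5]}
mapped, unmapped = map_identifiers(matrix, id_map)
print(mapped)    # alias rows merged under one identifier
print(unmapped)  # features needing manual curation
```

Reporting unmapped identifiers, rather than silently dropping them, keeps the harmonization auditable.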

Issue: High-Dimensionality and Data Sparsity

Problem: Your integrated dataset has thousands of molecular features (high dimensionality) but only a limited number of biological samples, and some data types (e.g., metabolomics) are inherently sparse, leading to overfitting and poor model performance.

Solution: Employ dimensionality reduction and feature selection techniques.

Methodology:

- Feature Filtering: Remove low-variance features and those with a high proportion of missing values.

- Factorization / Latent Variable Models: Use methods like MOFA to reduce dimensionality by inferring a small number of latent factors that capture the major sources of biological variation across all omics datasets [2] [3].

- Supervised Feature Selection: When a phenotype is known, use supervised methods like DIABLO, which incorporates penalization (e.g., Lasso) to select only the most informative features for integration and prediction [2].

- AI-Driven Integration: Leverage deep learning architectures like autoencoders to learn compressed, lower-dimensional representations of the data that are suitable for downstream tasks [3].
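The feature-filtering step is the simplest of these to illustrate. The sketch below assumes a feature-by-sample mapping with `None` marking missing values; the thresholds are arbitrary placeholders and should be tuned per data type.

```python
from statistics import pvariance
from typing import Dict, List, Optional


def filter_features(
    matrix: Dict[str, List[Optional[float]]],
    max_missing: float = 0.3,
    min_variance: float = 0.01,
) -> Dict[str, List[Optional[float]]]:
    """Keep features with acceptable missingness and non-trivial variance."""
    kept = {}
    for feature, values in matrix.items():
        observed = [v for v in values if v is not None]
        missing_fraction = 1 - len(observed) / len(values)
        if missing_fraction > max_missing:
            continue  # too many missing values
        if len(observed) < 2 or pvariance(observed) < min_variance:
            continue  # (near-)constant feature carries little information
        kept[feature] = values
    return kept


# Hypothetical features measured in four samples.
matrix = {
    "f1": [1.0, 2.0, 3.0, 4.0],
    "f2": [5.0, 5.0, 5.0, 5.0],
    "f3": [1.0, None, None, 2.0],
}
kept = filter_features(matrix)
print(list(kept))
```

Because variance depends on scale, the variance cutoff is best applied after normalization within each omics layer.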

Issue: Interpreting Biologically Meaningful Results from Integrated Models

Problem: After running an integration model, you have a list of features or factors but struggle to translate these statistical outputs into actionable biological hypotheses.

Solution: Combine integration outputs with downstream functional analysis.

Methodology:

- Factor Interpretation (for MOFA): Examine the top features (genes, proteins) with the highest weights ("loadings") for each inferred factor. Then, perform pathway enrichment analysis on these top-feature sets.

- Network Integration: Map the results onto shared biochemical networks. For example, connect a prioritized transcription factor (from transcriptomics) to the transcripts it regulates and the associated metabolites from the metabolic pathways it influences [4] [5].

- Multi-Omics Pathway Analysis: Use pathway enrichment methods that are specifically designed for and can incorporate multiple types of omics data simultaneously, rather than analyzing each result in isolation [4] [1].
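Pathway enrichment on a top-feature set is commonly computed as an over-representation test. The sketch below uses the hypergeometric tail probability, which is one standard choice rather than the method of any specific tool named here; the gene sets are invented, and real use requires multiple-testing correction across pathways.

```python
from math import comb
from typing import Set


def enrichment_p_value(n_background: int, n_pathway: int, n_selected: int, n_overlap: int) -> float:
    """P(overlap >= n_overlap) when drawing n_selected features without replacement."""
    upper = min(n_pathway, n_selected)
    total = comb(n_background, n_selected)
    tail = sum(
        comb(n_pathway, i) * comb(n_background - n_pathway, n_selected - i)
        for i in range(n_overlap, upper + 1)
    )
    return tail / total


def enrichment_from_sets(background: Set[str], pathway: Set[str], selected: Set[str]) -> float:
    """Convenience wrapper that restricts both sets to the measured background."""
    pathway = pathway & background
    selected = selected & background
    return enrichment_p_value(
        len(background), len(pathway), len(selected), len(pathway & selected)
    )


# Hypothetical example: 10 measured genes, 5 in the pathway, all 5 top features hit it.
background = {f"g{i}" for i in range(10)}
pathway = {f"g{i}" for i in range(5)}
top_features = {f"g{i}" for i in range(5)}
p = enrichment_from_sets(background, pathway, top_features)
print(p)
```

Using the set of features actually measured as the background, rather than the whole genome, avoids inflating significance.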

The Scientist's Toolkit: Essential Research Reagents & Solutions

The following table details key computational tools and resources essential for conducting robust multi-omics data harmonization and integration.

| Tool/Resource Name | Function | Application in Harmonization/Integration |

|---|---|---|

| MOFA+ [2] | Unsupervised multi-omics data integration | Discovers latent factors that capture the main sources of variation across multiple omics datasets. Ideal for exploratory analysis. |

| DIABLO [2] | Supervised multi-omics integration | Integrates data in relation to a categorical outcome for biomarker discovery and sample classification. |

| WGCNA [4] | Weighted Gene Co-expression Network Analysis | Identifies modules of highly correlated features; modules can be related to external traits or other omics data. |

| Cytoscape [4] | Network visualization and analysis | Visualizes complex interaction networks (e.g., gene-metabolite networks) derived from integrated data. |

| TCGA [2] [3] | Publicly available multi-omics database | Provides a vast resource of matched multi-omics data for method development, validation, and benchmarking. |

| Omics Playground [2] | Integrated analysis platform | Offers a code-free interface with multiple state-of-the-art integration methods and visualization capabilities. |

Conceptual Framework & Data Harmonization Strategies

What is multi-omics data integration and why is harmonization critical?

Multi-omics data integration involves combining and collectively analyzing disparate biological data layers, such as genomics, transcriptomics, proteomics, and metabolomics, to gain a comprehensive understanding of complex biological systems [6]. Data harmonization is the process of reconciling these various types, levels, and sources of data into formats that are compatible and comparable, making them useful for integrated analysis and decision-making [7]. This is essential because without effective harmonization, multi-omics analysis becomes more complex and resource-intensive without proportional gains in insight or productivity [8].

What are the primary strategies for integrating multi-omics data?

The integration of vertical or heterogeneous data (data from different omics levels) can be approached through several distinct strategies [8]. The choice of strategy depends on the biological question, data characteristics, and computational resources.

Table 1: Overview of Multi-Omics Data Integration Strategies

| Integration Strategy | Description | Key Advantages | Key Limitations |

|---|---|---|---|

| Early Integration | Concatenates all omics datasets into a single matrix prior to analysis [8]. | Simple and easy to implement [8]. | Creates a complex, high-dimensional matrix that is noisy and discounts data distribution differences [8]. |

| Mixed Integration | Separately transforms each dataset into a new representation before combining them [8]. | Reduces noise, dimensionality, and dataset heterogeneities [8]. | - |

| Intermediate Integration | Simultaneously integrates datasets to output common and omics-specific representations [8]. | Captures interactions between omics layers [8]. | Often requires robust pre-processing to handle data heterogeneity [8]. |

| Late Integration | Analyzes each omics dataset separately and combines the final predictions or results [8]. | Circumvents challenges of assembling different datatypes [8]. | Does not capture inter-omics interactions during the analysis [8]. |

| Hierarchical Integration | Focuses on including prior knowledge of regulatory relationships between omics layers [8]. | Truly embodies the intent of trans-omics analysis [8]. | A nascent field; methods are often less generalizable [8]. |

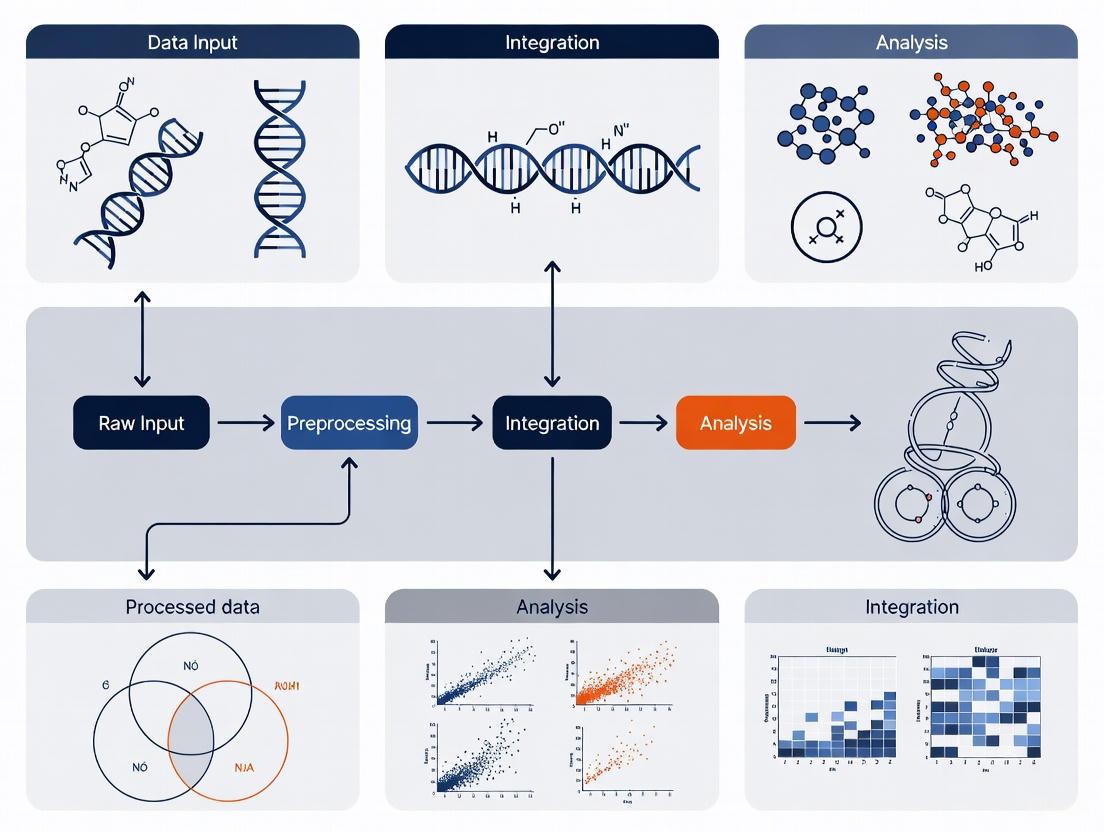

The following diagram illustrates the logical flow and differences between these primary integration strategies:
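The contrast between early and late integration in Table 1 can be shown with a toy sketch. Both functions are hypothetical illustrations: early integration is reduced to concatenating per-sample feature vectors, and late integration to averaging per-layer prediction scores that are assumed to come from models trained elsewhere.

```python
from typing import Dict, List


def early_integration(
    layers: Dict[str, Dict[str, List[float]]], samples: List[str]
) -> Dict[str, List[float]]:
    """Concatenate each sample's feature vectors from all layers into one row."""
    return {s: [v for layer in layers.values() for v in layer[s]] for s in samples}


def late_integration(per_layer_scores: Dict[str, Dict[str, float]]) -> Dict[str, float]:
    """Average per-layer prediction scores for samples scored in every layer."""
    shared = set.intersection(*(set(scores) for scores in per_layer_scores.values()))
    n_layers = len(per_layer_scores)
    return {
        s: sum(scores[s] for scores in per_layer_scores.values()) / n_layers
        for s in sorted(shared)
    }


# Hypothetical feature vectors and model scores for one sample.
layers = {"rna": {"S1": [1.0, 2.0]}, "protein": {"S1": [3.0]}}
combined = early_integration(layers, ["S1"])
scores = late_integration({"rna": {"S1": 0.75}, "protein": {"S1": 0.25}})
print(combined)
print(scores)
```

The early-integration row grows with every added layer, which is the dimensionality cost noted in the table; the late-integration result never sees cross-layer feature interactions.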

Troubleshooting Common Multi-Omics Challenges

How do I handle missing values and the High Dimension Low Sample Size (HDLSS) problem?

Problem: Omics datasets often contain missing values due to technical limitations, and frequently have thousands of variables (e.g., genes, proteins) but only a small number of samples [8]. This HDLSS problem can cause machine learning algorithms to overfit, reducing their generalizability [8].

Solutions:

- Missing Data: Implement an additional imputation process to infer missing values in incomplete datasets before applying statistical analyses [8]. The choice of imputation method (e.g., mean, k-nearest neighbors, model-based) should be carefully considered based on the nature of the missingness.

- HDLSS & Overfitting: Employ dimensionality reduction techniques (e.g., PCA, autoencoders) or feature selection methods to reduce the number of variables. Use regularization techniques (e.g., Lasso, Ridge regression) within your models and always validate models using held-out test sets or cross-validation to ensure generalizability [8].
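Cross-validation in an HDLSS setting benefits from folds that preserve class balance. The following sketch shows one simple way to build stratified folds; the function names and sample labels are hypothetical, and the round-robin assignment is a simplification of what mature libraries offer.

```python
from collections import defaultdict
from typing import Dict, Iterator, List, Tuple


def stratified_folds(labels: Dict[str, str], k: int) -> List[List[str]]:
    """Assign samples to k folds so that each class is spread evenly across folds."""
    by_class: Dict[str, List[str]] = defaultdict(list)
    for sample, label in sorted(labels.items()):
        by_class[label].append(sample)
    folds: List[List[str]] = [[] for _ in range(k)]
    position = 0
    for label in sorted(by_class):
        for sample in by_class[label]:
            folds[position % k].append(sample)  # round-robin within each class
            position += 1
    return folds


def train_test_splits(folds: List[List[str]]) -> Iterator[Tuple[List[str], List[str]]]:
    """Yield (train, test) sample lists, holding out one fold at a time."""
    for i, test in enumerate(folds):
        train = [s for j, fold in enumerate(folds) if j != i for s in fold]
        yield train, test


# Hypothetical cohort: three cases and three controls.
labels = {"S1": "case", "S2": "case", "S3": "case",
          "S4": "control", "S5": "control", "S6": "control"}
folds = stratified_folds(labels, k=3)
splits = list(train_test_splits(folds))
print(folds)
```

Feature selection, imputation, and scaling should be fitted on the training samples of each split only; fitting them on the full dataset leaks information from the test fold and inflates performance estimates.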

Our data is heterogeneous and lacks pre-processing standards. How can we harmonize it effectively?

Problem: The sheer heterogeneity of omics data—comprising different data modalities, distributions, and types—poses a significant challenge. The absence of standardized pre-processing protocols means each data type requires tailored processing, introducing variability [8] [2].

Solutions:

- Adopt Established Data Standards: Utilize existing minimum information standards and data formats developed by the omics communities.

- Flexible Harmonization: Recognize that stringent harmonization (using identical measures) is not always possible. Instead, aim for flexible harmonization, which ensures datasets are inferentially equivalent even if not identical, and transform them into a common format [7]. This involves resolving heterogeneity across three dimensions:

  - Syntax: Convert data into a common technical format (e.g., .csv, .json).

  - Structure: Reconcile how variables relate to each other (e.g., from event data to panel data).

  - Semantics: Carefully map the intended meaning of variables and ensure consistent operationalization of concepts across datasets [7].

- Common Data Elements (CDEs): For clinical and cohort data, develop and use CDEs—standardized concepts that precisely define a question with a specified set of responses—to promote standardized data capture and retrospective harmonization [10].
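Semantic harmonization against a CDE often comes down to explicit recoding tables. The sketch below is a minimal illustration: the CDE definition, the study names, and their source codings are all invented for the example.

```python
from typing import Dict, Optional

# Hypothetical CDE: one concept with a closed set of permitted responses.
CDE_SEX = {"name": "sex", "allowed": {"female", "male", "unknown"}}

# Hypothetical per-study recoding rules from native codes to CDE responses.
STUDY_RECODES: Dict[str, Dict[str, str]] = {
    "study_a": {"F": "female", "M": "male"},
    "study_b": {"1": "male", "2": "female", "9": "unknown"},
}


def harmonize_value(study: str, raw: object) -> Optional[str]:
    """Map a raw study value to the CDE response, or None if no rule applies."""
    value = STUDY_RECODES[study].get(str(raw).strip())
    if value is None or value not in CDE_SEX["allowed"]:
        return None  # leave unmapped values visible for manual review
    return value


print(harmonize_value("study_a", "F"))
print(harmonize_value("study_b", 2))
print(harmonize_value("study_a", "X"))
```

Returning `None` for unmapped codes, instead of defaulting to "unknown", keeps mapping gaps distinguishable from genuinely unknown responses.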

How do we choose the right integration method from the many available?

Problem: A wide array of computational tools exists for multi-omics integration, leading to confusion about which method is best suited for a specific dataset or biological objective [11] [2].

Solutions:

- Align Method with Objective: The choice of integration tool should be driven by the primary scientific objective of your study [11]. The table below maps common objectives to suitable tools and methods.

Table 2: Matching Integration Tools to Scientific Objectives

| Scientific Objective | Recommended Method Type | Example Tools & Brief Description |

|---|---|---|

| Subtype Identification | Unsupervised methods that group samples based on shared multi-omics profiles [11]. | MOFA+ [2]: Unsupervised factor analysis to uncover latent sources of variation. SNF [2]: Fuses sample-similarity networks from each omics layer. |

| Detect Disease-Associated Molecular Patterns | Supervised or unsupervised methods that identify features correlated with a phenotype [11]. | DIABLO [2]: Supervised method for biomarker discovery and classification. MCIA [2]: Multivariate method to find correlated patterns across omics. |

| Understand Regulatory Processes | Methods that can model interactions and hierarchies between omics layers [11]. | Hierarchical Integration [8]: Incorporates prior knowledge of regulatory relationships (e.g., genomic variants influencing transcript levels). |

| Diagnosis/Prognosis & Drug Response Prediction | Supervised methods that build predictive models from multi-omics input [11]. | DIABLO [2]: Can be used for classification. Various machine learning models (e.g., random forests, neural networks) using late or intermediate integration. |

- Use Multiple Methods: For robust findings, consider using multiple integration methods to see if they yield consistent results [2].

- Leverage Validated Platforms: To reduce the bioinformatics bottleneck, consider using integrated analysis platforms like Omics Playground, which provide access to multiple state-of-the-art methods through a user-friendly interface [2].

How can we ensure the quality and biological relevance of our integrated results?

Problem: The outputs of integration algorithms can be statistically complex and challenging to interpret, with a risk of drawing spurious biological conclusions [2].

Solutions:

- Robust Validation: Implement a rigorous validation workflow. For subtype identification, validate clusters by assessing survival differences, clinical enrichment, or using external datasets. For supervised models, use held-out test sets and cross-validation [11].

- Downstream Biological Analysis: Use pathway and network analysis tools on the features (e.g., genes, proteins) highlighted by the integration model to place them in a functional biological context [2].

- Iterative Harmonization Checks: During data preparation, programmatically validate harmonized data. Check for adherence to controlled response options, data structure and format, value ranges, and conditional field consistency. Assign "Pass," "Fail," or "Warning" statuses to fields for review [10].
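The field-level checks described above can be sketched as a small rule-driven validator. This is an illustration, not the validation code used in [10]: the rule format, the 10% missingness threshold, and the decision to treat rule violations as "Fail" and excess missingness as "Warning" are all assumptions.

```python
from typing import Dict, List, Optional, Set, Tuple


def validate_field(
    values: List[object],
    allowed: Optional[Set[object]] = None,
    value_range: Optional[Tuple[float, float]] = None,
    max_missing: float = 0.1,
) -> str:
    """Return "Fail" on rule violations, "Warning" on excess missingness, else "Pass"."""
    observed = [v for v in values if v is not None]
    if allowed is not None and any(v not in allowed for v in observed):
        return "Fail"  # value outside the controlled response options
    if value_range is not None:
        low, high = value_range
        if any(not (low <= v <= high) for v in observed):
            return "Fail"  # implausible value
    missing_fraction = 1 - len(observed) / len(values) if values else 1.0
    return "Warning" if missing_fraction > max_missing else "Pass"


def validate_records(records: List[dict], rules: Dict[str, dict]) -> Dict[str, str]:
    """Apply each field's rule to that field's values across all records."""
    return {
        field: validate_field([r.get(field) for r in records], **rule)
        for field, rule in rules.items()
    }


# Hypothetical harmonized records and rules.
records = [
    {"sex": "female", "age": 54, "site": "A"},
    {"sex": "male", "age": None, "site": "A"},
    {"sex": "F", "age": 61, "site": "A"},
]
rules = {
    "sex": {"allowed": {"female", "male", "unknown"}},
    "age": {"value_range": (0, 120)},
    "site": {"allowed": {"A", "B"}},
}
statuses = validate_records(records, rules)
print(statuses)
```

Conditional-field checks (one variable required when another is present) would need record-level rules and are omitted here for brevity.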

The following workflow outlines a robust process for preparing and validating harmonized data:

Experimental Protocols & Methodologies

Protocol for a Retrospective Multi-Omics Data Harmonization Project

This protocol is adapted from large-scale consortia experiences, such as the NHLBI CONNECTS program [10].

Objective: To harmonize pre-existing multi-omics and clinical datasets from different studies or cohorts into a FAIR (Findable, Accessible, Interoperable, Reusable) resource for integrated analysis.

Materials:

- Input Data: Raw multi-omics data files (e.g., FASTQ, BAM, abundance matrices) and associated clinical/phenotypic data from multiple sources.

- Computing Infrastructure: High-performance computing or cloud-based environment (e.g., NHLBI BioData Catalyst) with sufficient storage and processing power.

- Software/Tools: Statistical programming environments (e.g., R, Python, SAS), data validation scripts, and potentially a metadata management tool.

Step-by-Step Methodology:

1. Project Scoping & Team Formation:

   - Define the research objectives and the specific omics datasets to be included.

   - Assemble a multidisciplinary harmonization team including data managers, biostatisticians, bioinformaticians, and domain scientists [10].

2. Develop a Harmonization Data Dictionary:

   - Define the target Common Data Elements (CDEs) that all data will be mapped to. This includes precisely defining each variable and its allowed values [10].

   - Create a harmonization template that guides mappers on how to transform original study variables to the CDEs.

3. Execute Variable Mapping and Transformation:

   - Data managers and statisticians from each study team map their native variables to the target CDEs. This process requires careful consideration of content equivalence [10].

   - Programmatically transform the raw study data according to the mapping instructions. This is often done using scripts in R, Python, or SAS [10].

4. Automated and Manual Validation:

   - Run automated validation scripts (e.g., in R) to assess the harmonized data [10]. Checks should include:

     - Data structure and format (type, length).

     - Adherence to controlled terminologies.

     - Plausibility of value ranges and handling of missing data.

     - Conditional logic (e.g., if variable A is present, variable B must also be present).

   - Assign "Pass," "Fail," or "Warning" status to each field. Manually review and resolve all failures and warnings [10].

5. Data Packaging and Sharing:

   - Export the validated, harmonized data into widely accessible formats (e.g., comma-delimited files).

   - Prepare comprehensive metadata and documentation describing the harmonization process, assumptions, and limitations.

   - Deposit both the raw and harmonized datasets, along with documentation, into a designated repository or cloud ecosystem (e.g., BioData Catalyst) to create a FAIR resource [10].

Visualization & Workflow Diagrams

Multi-Omics FAIR Data Generation Workflow

This diagram visualizes the end-to-end process of generating a standardized, harmonized multi-omics dataset ready for integration and analysis.

The Scientist's Toolkit: Research Reagent Solutions

Public Data Repositories & Knowledgebases

Table 3: Key Public Resources for Multi-Omics Research

| Resource Name | Type | Omics Content | Link |

|---|---|---|---|

| The Cancer Genome Atlas (TCGA) | Repository | Genomics, epigenomics, transcriptomics, proteomics [11] | portal.gdc.cancer.gov |

| Answer ALS | Repository | Whole-genome sequencing, RNA transcriptomics, ATAC-sequencing, proteomics, deep clinical data [11] | dataportal.answerals.org |

| jMorp | Database/Repository | Genomics, methylomics, transcriptomics, metabolomics [11] | jmorp.megabank.tohoku.ac.jp |

| Fibromine | Database | Transcriptomics and proteomics data focused on fibrosis [11] | fibromine.com |

Computational Tools & Software

Table 4: Essential Tools for Multi-Omics Data Integration

| Tool Name | Category | Primary Function | Key Features |

|---|---|---|---|

| MOFA+ | Integration Tool | Unsupervised discovery of latent factors across multi-omics data [2]. | Probabilistic Bayesian framework; identifies shared and specific sources of variation [2]. |

| DIABLO | Integration Tool | Supervised integration for biomarker discovery and classification [2]. | Uses multiblock sPLS-DA; integrates data in relation to a categorical outcome [2]. |

| SNF | Integration Tool | Fuses sample-similarity networks from different omics types [2]. | Network-based; captures shared cross-sample similarity patterns [2]. |

| OmicsIntegrator | Utility Tool | Streamlines the process of harmonizing and integrating multi-omics datasets [6]. | Robust data integration capabilities [6]. |

| Omics Playground | Analysis Platform | Provides an all-in-one, code-free interface for multi-omics analysis [2]. | Integrates multiple state-of-the-art methods (MOFA, DIABLO, SNF) with visualization [2]. |

Troubleshooting Guide: Common Multi-Omics Data Harmonization Issues

This guide addresses frequent challenges encountered during multi-omics experiments, providing step-by-step solutions to ensure robust and reproducible data integration.

FAQ 1: My multi-omics datasets are in different formats and scales. How do I make them compatible for integration?

- Problem: Data from genomics, transcriptomics, and proteomics platforms arrive in disparate formats (e.g., FASTQ, BAM, raw mass spectrometry counts) with different measurement units and scales, making direct integration impossible [12] [2].

- Diagnosis: This is a standard pre-processing issue requiring data harmonization. Confirm the issue by checking for varying data distributions and value ranges across your datasets.

- Solution: Implement a standardized data harmonization pipeline [13] [14].

- Step 1: Data Acquisition & Extraction: Identify and collect all relevant data sources, including databases, APIs, and spreadsheets, noting their original formats [14].

- Step 2: Mapping: Create a unified data model or schema that defines common data elements, types, and relationships all data must follow [13] [14].

- Step 3: Ingest and Clean: Ingest raw data and clean it by removing errors, redundancies, and missing values. Normalize units, date formats, and naming conventions [13] [14].

- Step 4: Harmonize and Evaluate: Apply the defined schema to transform the raw data. This includes two critical steps: normalization (e.g., TPM or FPKM for RNA-seq data) to account for technical variation such as sequencing depth and gene length, and batch effect correction (e.g., with tools like ComBat) to remove non-biological noise introduced by different technicians, reagents, or processing times [15] [12].

- Step 5: Deployment: Store the harmonized data in a centralized repository like a data warehouse or lake, making it accessible for analysis [13].
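As a worked example of the normalization in Step 4, TPM divides each gene's read count by its length, then rescales so the values in a sample sum to one million. The sketch below applies this to a single sample; gene names, counts, and lengths are invented.

```python
from typing import Dict


def tpm(counts: Dict[str, float], lengths_kb: Dict[str, float]) -> Dict[str, float]:
    """Transcripts per million for one sample.

    counts: raw read counts per gene; lengths_kb: gene lengths in kilobases.
    Assumes at least one gene has a non-zero count.
    """
    # Length-normalized read rate per gene.
    rates = {gene: counts[gene] / lengths_kb[gene] for gene in counts}
    total_rate = sum(rates.values())
    # Rescale so that all genes in the sample sum to one million.
    return {gene: rate / total_rate * 1e6 for gene, rate in rates.items()}


# Hypothetical sample: longer genes collect proportionally more reads.
counts = {"g1": 100, "g2": 200, "g3": 300}
lengths_kb = {"g1": 1.0, "g2": 2.0, "g3": 3.0}
result = tpm(counts, lengths_kb)
print(result)
```

In this example the three genes receive equal TPM values because their counts scale exactly with length, which is the bias the length normalization is meant to remove.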

FAQ 2: After integration, my results are dominated by technical noise, not biological signals. What went wrong?

- Problem: The final integrated dataset or model is skewed by "batch effects" or other technical artifacts, leading to spurious conclusions [12] [2].

- Diagnosis: This indicates inadequate correction for batch effects during the pre-processing/harmonization phase. Diagnose by using Principal Component Analysis (PCA) to see if samples cluster more by processing batch than by biological group.

- Solution:

- Proactive Design: During experimental design, randomize samples across processing batches whenever possible [12].

- Statistical Correction: Apply batch effect correction algorithms after normalization but before data integration. Common methods include ComBat, Harmony, or ARSyN [12].

- Validation: Always validate that the correction worked by repeating the PCA to confirm that biological groups are now the primary source of variation.
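To show what a batch correction does mechanically, the sketch below centers one feature within each batch and restores the overall mean. This is a deliberately simple stand-in, not ComBat, Harmony, or ARSyN; sample IDs, values, and batch labels are invented.

```python
from collections import defaultdict
from typing import Dict, List


def center_by_batch(values: Dict[str, float], batches: Dict[str, str]) -> Dict[str, float]:
    """Remove per-batch mean shifts for one feature, keeping the overall mean."""
    overall_mean = sum(values.values()) / len(values)
    grouped: Dict[str, List[float]] = defaultdict(list)
    for sample, value in values.items():
        grouped[batches[sample]].append(value)
    batch_mean = {batch: sum(v) / len(v) for batch, v in grouped.items()}
    return {
        sample: value - batch_mean[batches[sample]] + overall_mean
        for sample, value in values.items()
    }


# Hypothetical feature with a large offset between two processing batches.
values = {"S1": 1.0, "S2": 3.0, "S3": 11.0, "S4": 13.0}
batches = {"S1": "b1", "S2": "b1", "S3": "b2", "S4": "b2"}
corrected = center_by_batch(values, batches)
print(corrected)
```

If biological groups are confounded with batch (for example, all cases processed in one batch), this kind of centering removes the biological difference along with the technical one, which is why randomizing samples across batches matters.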

FAQ 3: I have missing data for some omics layers in a subset of my samples. Can I still perform an integrated analysis?

- Problem: The dataset is incomplete, with some samples lacking data for one or more omics modalities (e.g., a patient has genomic data but is missing proteomic measurements) [12].

- Diagnosis: This is a common scenario in multi-omics studies, especially with clinical samples. Using only complete cases can severely bias your analysis and reduce statistical power.

- Solution: Choose an integration strategy and tools that are robust to missing data.

- Use "Late Integration" Methods: These methods build a separate model for each omics dataset from the samples available in that layer and then combine the predictions, so a sample missing one modality can still contribute through the others [12].

- Employ Robust Imputation: Use imputation methods to estimate missing values. For example, k-nearest neighbors (k-NN) imputation can estimate a missing proteomic profile based on the profiles of samples with similar genomic and transcriptomic data [12].

- Leverage Specific Algorithms: Some multi-omics algorithms, like Multi-Omics Factor Analysis (MOFA), are designed to handle missing data by learning a latent representation from the available measurements [2].
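The k-NN imputation idea can be sketched in a few lines. This illustration assumes each sample is a single concatenated vector (for example, transcript features followed by protein features), that donors are complete, and that features are already on comparable scales; names and values are hypothetical.

```python
import math
from typing import List, Optional


def knn_impute(
    target: List[Optional[float]], donors: List[List[float]], k: int = 2
) -> List[float]:
    """Fill missing entries of target with the mean of its k nearest donors.

    Distance is Euclidean, computed only over the target's observed positions.
    """
    observed = [i for i, v in enumerate(target) if v is not None]

    def distance(donor: List[float]) -> float:
        return math.sqrt(sum((target[i] - donor[i]) ** 2 for i in observed))

    nearest = sorted(donors, key=distance)[:k]
    return [
        v if v is not None else sum(d[i] for d in nearest) / len(nearest)
        for i, v in enumerate(target)
    ]


# Hypothetical sample with two observed features and one missing measurement.
target = [1.0, 2.0, None]
donors = [[1.0, 2.0, 10.0], [1.1, 2.1, 12.0], [9.0, 9.0, 100.0]]
imputed = knn_impute(target, donors, k=2)
print(imputed)
```

Imputed values carry no new information, so downstream variance is understated; sensitivity analyses with and without imputation help gauge the impact.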

FAQ 4: How do I choose the right data integration method for my specific biological question?

- Problem: With many multi-omics integration methods available (e.g., MOFA, DIABLO, SNF), selecting the most appropriate one is confusing [2].

- Diagnosis: The optimal method depends on your study's goal, data structure (matched vs. unmatched samples), and whether you have a specific outcome variable to predict [12] [2].

- Solution: Select your method based on the experimental goal, as summarized in the table below.

| Integration Method | Best For This Goal | Key Principle | Advantages |

|---|---|---|---|

| MOFA [2] | Unsupervised exploration; identifying latent factors that drive variation across omics layers. | Uses a Bayesian framework to infer sources of variation (factors) shared across multiple omics datasets. | Unsupervised; does not require sample labels. Handles missing data well. |

| DIABLO [2] | Supervised biomarker discovery; classifying patient groups (e.g., disease vs. healthy). | Uses a supervised, multi-block classification method to identify features that discriminate between predefined groups. | Ideal for prediction and biomarker identification. |

| SNF [12] [2] | Disease subtyping; integrating multiple data types measured on the same samples. | Constructs and fuses sample-similarity networks from each omics data type into a single network. | Effective for identifying disease subtypes. Requires matched samples across omics layers, because the networks are fused sample by sample. |

FAQ 5: The results from my integrated analysis are difficult to interpret biologically. How can I translate them into insights?

- Problem: The output of a complex integration model (especially AI/ML models) is a "black box," providing patterns or feature lists without clear biological meaning [16].

- Diagnosis: This is a key bottleneck in multi-omics. The solution lies in post-integration biological interpretation.

- Solution:

- Pathway & Enrichment Analysis: Input the list of key features (genes, proteins, metabolites) identified by your model into enrichment tools (e.g., g:Profiler, Enrichr) to see if they cluster in known biological pathways [2].

- Network Integration: Map your results onto shared biochemical networks. Connect analytes (e.g., genes, proteins, metabolites) based on known interactions (e.g., a transcription factor to the transcript it regulates) to improve mechanistic understanding [5].

- Use Interpretable Models: Prioritize models that provide interpretable outputs. For instance, MOFA reveals which factors are important and which omics layers they affect, while DIABLO shows which features are most discriminative for a class [2] [16].

The Scientist's Toolkit: Essential Reagents & Materials for Multi-Omics

The following table details key reagents and solutions critical for generating robust multi-omics data, the quality of which directly impacts downstream harmonization success [15].

| Research Reagent / Material | Function in Multi-Omics Workflow |

|---|---|

| Next-Generation Sequencing (NGS) Library Prep Kits | Prepares DNA or RNA samples for sequencing by fragmenting, amplifying, and adding platform-specific adapters. Essential for genomics, epigenomics, and transcriptomics data generation. |

| Mass Spectrometry Grade Solvents & Enzymes | High-purity solvents (e.g., acetonitrile, methanol) and enzymes (e.g., trypsin) are critical for reproducible proteomics and metabolomics sample preparation and analysis, minimizing background noise. |

| Single-Cell Barcoding Reagents | Unique molecular identifiers (UMIs) and cell barcodes are used in single-cell RNA-seq (e.g., 10x Genomics) to tag molecules from individual cells, allowing for sample multiplexing and accurate transcript counting. |

| Antibodies for Protein Assays | Used in proteomics techniques like Western blot, immunoassays, or multiplexed antibody-based panels (e.g., Olink) to specifically target and quantify protein abundance and post-translational modifications. Aptamer-based platforms such as SomaScan serve a similar purpose with non-antibody affinity reagents. |

| Bisulfite Conversion Reagent | Chemically modifies unmethylated cytosines in DNA to uracils, allowing for subsequent sequencing to determine genome-wide methylation patterns in epigenomics studies. |

| Cross-Linking Reagents | Chemicals like formaldehyde are used in techniques such as ChIP-seq (Chromatin Immunoprecipitation sequencing) to fix protein-DNA interactions in place, enabling the study of the epigenome and transcriptional regulation. |

Experimental Protocol: A Standardized Multi-Omics Data Harmonization Workflow

This protocol outlines a generalized methodology for harmonizing disparate omics datasets, such as those from transcriptomics and proteomics, into a unified analysis-ready format [15] [13] [14].

1. Objective: To standardize, clean, and integrate raw data from multiple omics platforms into a cohesive dataset for downstream integrated analysis (e.g., using MOFA, DIABLO, or ML models).

2. Materials & Software:

- Input Data: Raw or pre-processed data matrices from various omics platforms (e.g., RNA-seq count matrix, proteomics intensity data).

- Computing Environment: R, Python, or a specialized platform like Omics Playground [2].

- Key R/Python Packages: limma, sva (ComBat), mixOmics, MOFA2, INTEGRATE [15] [2].

3. Procedure:

- Step 1: Data Acquisition and Profiling

- Step 2: Schema Definition and Mapping

- Define a unified target schema. This includes deciding on common sample identifiers, feature naming conventions (e.g., using standard gene symbols), and data formats [13].

- Map the fields from each source dataset to the target schema.

- Step 3: Data Cleaning and Normalization

- Clean: Remove duplicates, handle missing values (e.g., via imputation or filtering), and correct obvious errors [14].

- Normalize: Apply platform-specific normalization to make data comparable within each omics type (e.g., TPM for RNA-seq, intensity normalization for proteomics).

- Step 4: Batch Effect Correction and Harmonization

- Step 5: Validation and Deployment

- Evaluate: Use visualization (e.g., PCA plots) to confirm that technical batch effects are minimized and biological signals are preserved.

- Deploy: Output the final harmonized data in an agreed-upon format (e.g., an H5 file, or multiple CSV files with aligned samples) and store it in a centralized system for analysis [13] [14].
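As a rough illustration of Steps 2 and 4, the sketch below maps source sample IDs onto a shared schema, keeps only the samples measured in every table, and applies per-batch mean centering. All names (`to_schema_id`, `center_by_batch`, the toy `rna` and `protein` tables, the `batch_of` annotation) are hypothetical. Mean centering is a deliberately simplified stand-in for a dedicated method such as ComBat, and it can remove real biology when batch is confounded with condition.

```python
from statistics import mean

# Hypothetical inputs: feature -> {source sample ID -> log-scale value}.
rna = {"TP53": {"S01_rna": 5.0, "S02_rna": 7.0, "S03_rna": 6.0, "S04_rna": 8.0}}
protein = {"TP53": {"s01-prot": 1.0, "s02-prot": 3.0, "s03-prot": 2.0}}
# Hypothetical batch annotation keyed by the unified sample ID.
batch_of = {"S01": "A", "S02": "A", "S03": "B", "S04": "B"}


def to_schema_id(source_id):
    """Step 2: map a source sample ID (e.g. 's01-prot') to the target schema ('S01')."""
    return source_id.replace("-", "_").split("_")[0].upper()


def remap(table):
    """Rename every sample ID in a feature table to its schema ID."""
    return {feat: {to_schema_id(s): v for s, v in vals.items()}
            for feat, vals in table.items()}


def shared_samples(*tables):
    """Samples measured in every table, sorted for a stable order."""
    per_table = [set().union(*(vals.keys() for vals in t.values())) for t in tables]
    return sorted(set.intersection(*per_table))


def center_by_batch(values, batch_of):
    """Step 4 (simplified): subtract each batch's mean from its samples."""
    by_batch = {}
    for sample, value in values.items():
        by_batch.setdefault(batch_of[sample], []).append(value)
    batch_mean = {b: mean(vs) for b, vs in by_batch.items()}
    return {s: v - batch_mean[batch_of[s]] for s, v in values.items()}


rna_h, protein_h = remap(rna), remap(protein)
samples = shared_samples(rna_h, protein_h)  # S04 has no protein data and is dropped
rna_tp53 = center_by_batch({s: rna_h["TP53"][s] for s in samples}, batch_of)
```

Note that after alignment batch B contains a single sample, so centering sets it to zero. Batches this small cannot be corrected meaningfully, which is one reason to check batch sizes before Step 4.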

4. Diagram: Multi-Omics Harmonization Workflow The following diagram visualizes the core steps of the data harmonization protocol.

Multi-Omics Integration Strategies at a Glance

The timing of data integration is a critical strategic decision. The table below compares the three primary approaches, which are also visualized in the subsequent diagram [12].

| Strategy | Timing | Advantages | Disadvantages |
|---|---|---|---|
| Early Integration | Data is merged before analysis. | Captures all possible cross-omics interactions; preserves raw information. | Extremely high dimensionality; computationally intensive; prone to noise. |
| Intermediate Integration | Data is transformed, then merged during analysis. | Reduces complexity; can incorporate biological context (e.g., networks). | May lose some raw information; requires careful method selection. |
| Late Integration | Models are built on each data type and merged after analysis. | Handles missing data well; computationally efficient; robust. | May miss subtle cross-omics interactions captured only by joint analysis. |

Diagram: Multi-Omics Integration Strategies

Adopting the FAIR Principles for Findable, Accessible, Interoperable, and Reusable Data

FAQs: Core FAIR Principles in Multi-Omics

What are the FAIR Data Principles and why are they critical for multi-omics research?

The FAIR Guiding Principles are a set of guidelines established in 2016 to improve the Findability, Accessibility, Interoperability, and Reuse of digital assets and data [17] [18]. In multi-omics studies, which involve integrating massive, complex datasets from genomics, transcriptomics, proteomics, and metabolomics, adhering to these principles is not merely beneficial but essential. FAIR provides the framework to manage the volume, velocity, and variety of multi-omics data, ensuring it can be discovered, integrated, and repurposed by both humans and computational systems to accelerate scientific discovery [5] [12] [19].

How is 'Interoperability' specifically achieved for heterogeneous omics data?

Achieving interoperability requires a multi-faceted approach centered on standardization. This involves:

- Standardized Vocabularies and Ontologies: Using shared, machine-readable languages and controlled vocabularies (e.g., SNOMED CT, LOINC) to describe data [20] [21].

- Common Data Elements (CDEs): Implementing CDEs across research teams and projects to ensure data is collected and structured consistently [22].

- Formal Semantics: Annotating data using formal semantics and common coordinate frameworks to ensure relationships between datasets are computationally accessible [22].

What is the difference between FAIR data and Open data?

FAIR and Open are distinct concepts. FAIR data is structured and described to be computationally actionable; it can be closed access, with strict security and permissions, yet still be Findable, Accessible, Interoperable, and Reusable by authorized users and systems [19]. Open data is defined by its lack of access restrictions and is made freely available to everyone. Not all open data is FAIR (e.g., a publicly available CSV file with no metadata), and not all FAIR data is open (e.g., a clinically sensitive genomic dataset in a secure, access-controlled repository) [19].

Troubleshooting Guides: Common FAIR Implementation Challenges

Issue 1: Data and Metadata Are Not Easily Discoverable

| Symptom | Possible Cause | Solution |
|---|---|---|
| Other researchers cannot locate your dataset. | Data is stored in personal or institutional storage without a persistent identifier. | Deposit data in a trusted repository that assigns a globally unique and persistent identifier (e.g., a DOI or Handle) [18] [20]. |
| Your dataset does not appear in relevant search engines. | Metadata is incomplete, uses non-standard terms, or is not registered in a searchable resource. | Create rich, machine-readable metadata using community-standardized schemas and ensure it is registered or indexed in a disciplinary resource [17] [20]. |

Issue 2: Inability to Integrate Multi-Omics Datasets

| Symptom | Possible Cause | Solution |
|---|---|---|
| Genomic and proteomic data from the same sample cannot be correlated. | Data formats are proprietary or inconsistent, and vocabularies are not aligned. | Use open, standard file formats (e.g., CSV, XML) and shared, broadly applicable ontologies (e.g., from the OBO Foundry) for all data and metadata [19] [20]. |
| Batch effects obscure biological signals when combining datasets from different labs. | A lack of harmonized protocols for sample preparation, data generation, and processing. | Implement and document Common Data Elements (CDEs) and standard operating procedures (SOPs) across all collaborating labs from the project's start [22]. |

Issue 3: Data Reuse is Hindered by Poor Provenance and Documentation

| Symptom | Possible Cause | Solution |
|---|---|---|
| You or others cannot replicate the analysis or understand the data's context. | Missing or unclear data usage license, provenance information, and methodological details. | Release data with a clear usage license and provide detailed provenance documentation that describes how the data was generated, processed, and analyzed [18] [20]. |
| The data's applicability for a new research question is uncertain. | Metadata lacks domain-relevant context and does not meet community standards. | Ensure metadata is richly described with a plurality of accurate attributes and is structured to meet domain-relevant community standards [20]. |

Experimental Protocols for FAIR Data Harmonization

Protocol: Implementing a Data Harmonization Framework for Team Science

Purpose: To establish a shared foundation for collecting, structuring, and sharing data within a large, interdisciplinary multi-omics consortium, enabling downstream integrated analyses [22].

Methodology:

- Establish Communication and Common Language: Facilitate workshops to build a shared vocabulary across computational and experimental researchers. This bridges disciplinary gaps and is the first step toward technical harmonization [22].

- Develop and Adopt Common Data Elements (CDEs): Collaboratively define the core set of data items that will be collected uniformly across all teams and experiments (e.g., standardized fields for sample ID, organism, tissue source, etc.) [22].

- Agree on Metadata Standards and Ontologies: Select and implement a minimal metadata standard specific to the project's data types (e.g., based on existing standards like the 3D Microscopy Metadata Standards). Mandate the use of agreed-upon controlled vocabularies and ontologies for semantic interoperability [22].

- Define the Data and Code Sharing Infrastructure: Select a common repository or platform with a defined dataset structure (e.g., the SPARC dataset structure) for publishing final, curated datasets. This ensures compliance with minimal metadata standards and facilitates discovery [22].

- Create a Data Management Plan (DMP): Document all agreed-upon standards, protocols, and infrastructure decisions in a DMP. This living document serves as the project's rulebook for FAIR data practices throughout the research lifecycle [20].

Workflow: The FAIRification Process for a Multi-Omics Dataset

The following diagram visualizes the pathway from raw, siloed data to a harmonized, FAIR-compliant dataset ready for integrated analysis.

| Tool Category | Example(s) | Function in FAIRification |
|---|---|---|
| Trusted Repositories | Zenodo, Figshare, Dataverse, Discipline-specific DBs [23] [20] | Provides a permanent home for data, assigns a Persistent Identifier (PID), and makes data discoverable and accessible. |
| Metadata Standards | ISA, SPARC Dataset Structure, 3D-MMS, CDISC [22] [20] [21] | Provides a structured schema for rich metadata collection, ensuring data is well-described and reusable. |
| Ontologies & Vocabularies | SNOMED CT, LOINC, OBO Foundry Ontologies [22] [21] | Provides standardized, machine-readable terms for data annotation, enabling semantic interoperability. |
| Data Formats | CSV, XML, JSON, RDF [20] | Open, non-proprietary formats ensure data can be read and processed by different computational systems in the long term. |
| Persistent Identifiers | Digital Object Identifier (DOI), Handle [18] [20] | A globally unique and permanent name for a dataset, making it reliably findable and citable. |

FAIR in Action: Multi-Omics Integration Workflow

The diagram below illustrates how FAIR principles enable the integration of disparate omics data layers through a unified computational analysis pipeline, leading to holistic biological insights.

The Critical Role of Rich Metadata and Standardized Ontologies

Frequently Asked Questions (FAQs) and Troubleshooting Guides

FAQ Category: Fundamentals of Metadata and Ontologies

Q1: What is the difference between data standardization and data harmonization? Standardization aims to unify data using a uniform methodology from the outset and can be seen as the most extreme form of stringent harmonization. Harmonization, however, is the practice of reconciling various types, levels, and sources of existing data into formats that are compatible and comparable for analysis [7]. It resolves heterogeneity in syntax (data format), structure (conceptual schema), and semantics (intended meaning) [7].

Q2: Why are minimum metadata requirements advocated over fixed standards in some areas of microbiome research? Due to the rapid technological progress in microbiome research, a flexible system that can be constantly improved is more practical than a rigid standard. Minimum requirements ensure essential information is captured while allowing for the evolution of new parameters as the field advances [24].

Q3: What are the core components of the FAIR principles that metadata should adhere to? Metadata should be curated to make data:

- Findable: Easy to locate by humans and computers.

- Accessible: Stored for long-term retrieval.

- Interoperable: Ready for integration with other data.

- Reusable: Fully described to allow replication and reuse [24].

FAQ Category: Implementation and Practical Challenges

Q4: I am preparing to submit my omics data to a public repository. What are the typical minimum metadata requirements? Common repositories often base their requirements on the MIxS (Minimum Information about any (x) Sequence) checklists [24]. While requirements can vary, the following table summarizes core elements often required:

| Metadata Category | Examples of Required Information |
|---|---|
| Investigation Details | Investigation type, project name [24] |
| Sample Details | Collection date, geographic location (latitude, longitude, country) [24] |
| Environmental Details | Biome, feature, material, selected environmental package [24] |
| Technical Methods | Sequencing method, library preparation protocols [24] |
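A pre-submission check for these core elements can be sketched in a few lines. The field keys below are illustrative labels taken from the table, not verified MIxS term names, and `missing_fields` is a hypothetical helper. The checklist of the target repository remains the authority on what is actually required.

```python
# Illustrative keys derived from the table above; real MIxS term names may differ.
REQUIRED_FIELDS = (
    "investigation_type",
    "project_name",
    "collection_date",
    "geographic_location",
    "biome",
    "sequencing_method",
)


def missing_fields(record):
    """Return the required fields that are absent, None, or blank in a metadata record."""
    missing = []
    for field in REQUIRED_FIELDS:
        value = record.get(field)
        if value is None or not str(value).strip():
            missing.append(field)
    return missing


# Hypothetical sample record with one absent and one blank field.
sample_record = {
    "investigation_type": "metagenome",
    "project_name": "Gut cohort pilot",
    "collection_date": "2024-03-18",
    "geographic_location": "52.37 N 4.90 E, Netherlands",
    "sequencing_method": "  ",
}
problems = missing_fields(sample_record)
```

Running a check like this before upload catches blank or missing entries early, while the submitter still has access to the original sample records.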

Q5: A common error is the inconsistent use of ontologies, leading to data harmonization failures. How can I troubleshoot this?

- Problem: The same term is used for different concepts (e.g., "young adults" defined as 18-25 in one dataset and 18-30 in another).

- Solution: Implement a centralized reference ontology. For example, the OHDSI Standardized Vocabularies, which contain over 10 million concepts, provide a common framework that standardizes semantically equivalent concepts and supports international coding schemes, ensuring consistent meaning across datasets [25].
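For the age-group example, one way to remove the ambiguity is to discard each dataset's local label and recode from the underlying value against a single shared definition. The sketch below is a minimal illustration of that idea and does not use the OHDSI vocabularies. The `AGE_GROUPS` boundaries and the `harmonized_age_group` helper are hypothetical choices that a project would have to agree on.

```python
# Hypothetical shared definition: (label, minimum age, maximum age), bounds inclusive.
AGE_GROUPS = [
    ("child", 0, 17),
    ("young_adult", 18, 25),
    ("adult", 26, 64),
    ("older_adult", 65, 120),
]


def harmonized_age_group(age_years):
    """Assign the shared age-group label from the raw age, ignoring local labels."""
    for label, low, high in AGE_GROUPS:
        if low <= age_years <= high:
            return label
    raise ValueError(f"age out of range: {age_years}")


# Two source records that both carry the local label "young adults",
# although the datasets define that label differently.
dataset_a = {"age": 22, "local_group": "young adults"}  # defined locally as 18-25
dataset_b = {"age": 28, "local_group": "young adults"}  # defined locally as 18-30

group_a = harmonized_age_group(dataset_a["age"])
group_b = harmonized_age_group(dataset_b["age"])
```

Recoding is only possible when the raw value was retained. When a dataset stores nothing but the local label, the mismatch has to be documented rather than resolved.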

Q6: My multi-omics dataset has different data types with unique noise profiles and missing values. What is the first step to make them interoperable? The critical first step is preprocessing, which includes standardization and harmonization [15].

- Standardization: Ensures data is collected, processed, and stored consistently using agreed-upon protocols. This can involve normalizing data to account for differences in sample size, converting to a common scale, or removing technical biases [15].

- Harmonization: Aligns data from different sources by mapping them onto a common scale or reference, often using domain-specific ontologies [15].

FAQ Category: Advanced Data Integration and Integrity

Q7: What are the key challenges specific to multi-omics data integration? The table below outlines the primary challenges and their implications:

| Challenge | Description | Potential Consequence |
|---|---|---|
| Lack of Pre-processing Standards [2] | Each omics type (e.g., genomics, proteomics) has unique data structure, distribution, and batch effects. | Introduces variability, challenging harmonization. |
| Specialized Bioinformatics Expertise [2] | Requires cross-disciplinary knowledge in biostatistics, machine learning, and programming. | Major bottleneck in analysis. |
| Choice of Integration Method [2] | Multiple methods exist (e.g., MOFA, DIABLO, SNF), each with different approaches and outputs. | Confusion about the best method for a specific biological question. |
| Interpretation of Results [2] | Translating integrated outputs into actionable biological insight is complex. | Risk of drawing spurious conclusions. |

Q8: I've discovered a critical error in the metadata of a published dataset I am re-using. What should I do? Metadata integrity is a fundamental determinant of research credibility [26]. If you discover an error:

- Document the Error: Clearly identify the specific metadata field and the nature of the inaccuracy.

- Contact the Data Submitter: If contact information is available in the repository, reach out to them directly to alert them of the issue.

- Notify the Repository Curator: Submit a formal notice to the data repository (e.g., GEO, ENA) where the dataset is housed. They can place a note on the dataset record or contact the original submitters.

Raising awareness of metadata errors is essential for maintaining the integrity of public data and preventing the propagation of incorrect findings [26].

Experimental Protocols and Workflows

Protocol 1: A Standardized Workflow for Multi-Omics Data Harmonization

This protocol provides a general methodology for harmonizing multi-omics data to ensure robustness and reproducibility.

Title: Multi-Omics Data Harmonization Workflow

Detailed Methodology:

- Data Preprocessing: Perform quality control, imputation of missing values, and noise reduction tailored to each omics data type (e.g., RNA-Seq, proteomics) [2].

- Standardization: Normalize data to account for differences in sample size or concentration. Convert data to a common scale or unit of measurement to ensure compatibility across platforms [15].

- Ontology Mapping: Map source data and metadata to a common, comprehensive reference ontology (e.g., OHDSI Standardized Vocabularies). This step standardizes semantically equivalent concepts and assigns domains according to clinical or biological categories [25].

- Data Harmonization: Resolve structural and semantic heterogeneity. This involves aligning data from different sources so they can be integrated, ensuring that the intended meaning of variables is consistent across all datasets [7].

- Apply Integration Method: Utilize a specific computational method (e.g., MOFA, DIABLO, SNF) to perform the integration based on the research question (supervised vs. unsupervised) [2].

Protocol 2: Implementing the FAIR Principles for Data Reusability

This protocol outlines key steps to make omics data Findable, Accessible, Interoperable, and Reusable.

Title: FAIR Data Principles Cycle

Detailed Methodology:

- Findable:

- Assign a persistent digital identifier (e.g., DOI) to your dataset.

- Describe the data with rich metadata, including the core elements from the MIxS checklist [24].

- Accessible:

- Deposit the data and metadata in a trusted, community-recognized repository (e.g., ENA, SRA, GEO).

- Ensure the data can be retrieved by their identifier using a standardized communication protocol.

- Interoperable:

- Use a formal, accessible, shared, and broadly applicable language for knowledge representation. This is achieved by using standardized ontologies and vocabularies (e.g., OHDSI, GO) [25].

- Qualify relationships between metadata elements using ontology terms.

- Reusable:

- Provide multiple, accurate, and relevant attributes to describe the data. Metadata should meet domain-specific community standards [24].

- Clearly state the license under which the data can be reused and associate detailed provenance information.

The Scientist's Toolkit: Essential Research Reagents and Solutions

The following table details key resources for managing metadata and performing data harmonization in multi-omics studies.

| Tool / Resource Name | Type | Primary Function | Relevance to Data Harmonization |
|---|---|---|---|
| MIxS Checklists [24] | Reporting Standard | Defines minimum information for sequencing data. | Provides a common set of fields for describing genomic, metagenomic, and marker gene sequences, ensuring basic interoperability. |
| OHDSI Standardized Vocabularies [25] | Reference Ontology | A large-scale, centralized ontology for international health data. | Supports data harmonization by standardizing semantically equivalent concepts from over 136 source vocabularies, enabling cross-study analysis. |
| MOFA [2] | Integration Algorithm | Unsupervised factorization to infer latent factors from multi-omics data. | Discovers the principal sources of variation shared across different omics data modalities. |
| DIABLO [2] | Integration Algorithm | Supervised integration for biomarker discovery. | Integrates multiple omics datasets to find components that discriminate between known phenotypic groups. |
| SNF [2] | Integration Algorithm | Fuses sample similarity networks from different data types. | Constructs an overall integrated matrix capturing complementary information from all omics layers. |
| Omics Playground [2] | Analysis Platform | An all-in-one, code-free platform for multi-omics analysis. | Democratizes data integration by providing a cohesive interface with guided workflows and multiple state-of-the-art integration methods. |

Integration in Action: Strategic Frameworks and Analytical Techniques for Multi-Omics Data

FAQs on Multi-Omics Data Fusion

1. What are the main types of data fusion strategies, and how do they differ?

The three primary strategies for multi-omics data fusion are early, intermediate, and late fusion. Their core difference lies in the stage at which data from different omics layers are combined.

- Early Fusion (also known as data-level or feature-level fusion) involves concatenating raw or pre-processed features from each modality into a single, unified dataset before model training [27] [12].

- Intermediate Fusion (or joint fusion) integrates modality-specific features during the learning process itself, allowing the model to learn complex inter-modal relationships [28].

- Late Fusion (decision-level fusion) processes each modality through independent models and combines their predictions at the final decision stage [27] [29].

2. When should I choose late fusion over early fusion?

Late fusion is particularly advantageous when your dataset has a low sample-to-feature ratio, which is common in bioinformatics [29]. It is more robust to overfitting in scenarios with high-dimensional data (e.g., features on the order of 10⁵) and a limited number of patient samples (e.g., 10 to 10³) [29]. It also handles data heterogeneity effectively, as each modality can be processed with its own optimal pipeline [27] [29]. If your different omics data types have varying levels of informativeness or noise, late fusion allows the model to naturally weigh each modality based on its predictive power [29].

3. What are the common pitfalls of early fusion and how can they be mitigated?

The most significant pitfall of early fusion is the "curse of dimensionality", where concatenating features creates an extremely high-dimensional feature space that can lead to model overfitting, especially with small sample sizes [27] [12]. It also struggles with data heterogeneity, as different omics types may have unique data structures, scales, and noise profiles [29].

Mitigation strategies include:

- Applying robust dimensionality reduction (e.g., PCA, autoencoders) before concatenation [29].

- Using strong regularization techniques in the subsequent model to prevent overfitting [29].

- Ensuring careful data normalization and harmonization across all modalities to make features more compatible [12].
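The normalization point can be illustrated with a small early-fusion sketch: each modality is z-scored feature by feature, and only then are the feature vectors concatenated, so that no modality dominates purely because of its measurement scale. The function names and toy matrices are hypothetical, and dimensionality reduction and regularization are left out for brevity.

```python
from statistics import mean, pstdev


def zscore_columns(matrix):
    """Scale each feature (column) of a samples x features matrix to mean 0, SD 1."""
    scaled_columns = []
    for column in zip(*matrix):
        mu, sd = mean(column), pstdev(column)
        # Constant features carry no information; map them to 0 instead of dividing by 0.
        scaled_columns.append([(x - mu) / sd if sd > 0 else 0.0 for x in column])
    return [list(row) for row in zip(*scaled_columns)]


def early_fusion(*modalities):
    """Concatenate per-sample feature vectors after scaling each modality separately.

    Assumes every modality lists the same samples in the same order.
    """
    scaled = [zscore_columns(m) for m in modalities]
    return [sum(rows, []) for rows in zip(*scaled)]


# Toy data: 3 samples, 2 transcript features and 1 protein feature on very different scales.
rna = [[10.0, 200.0], [20.0, 400.0], [30.0, 600.0]]
protein = [[0.1], [0.2], [0.3]]
fused = early_fusion(rna, protein)  # 3 samples x 3 features
```

In a real analysis the scaling parameters would be estimated on the training split only and then applied to held-out samples, to avoid leaking information into the evaluation.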

4. How does intermediate fusion capture relationships between omics layers?

Unlike early and late fusion, intermediate fusion uses specialized model architectures that allow interaction between modalities during feature learning [28]. Techniques such as attention mechanisms can learn to weight the importance of specific features from different omics [27], while neural networks with shared layers can learn a joint representation that captures non-linear dependencies between, for instance, gene expression and protein abundance data [28]. This often leads to more biologically insightful models [28].

5. Is there a one-size-fits-all best fusion strategy?

No, the optimal fusion strategy is highly problem-specific and data-dependent [29]. The best choice depends on factors like sample size, data dimensionality, heterogeneity, and the specific biological question. Research indicates that late fusion often outperforms others in classical bioinformatics settings with limited samples and high-dimensional features [29], whereas early or intermediate fusion may be more effective in scenarios with larger sample sizes and fewer total features [29].

Comparison of Fusion Strategies

Table 1: Advantages and challenges of different multi-omics integration strategies.

| Strategy | Description | Advantages | Challenges |
|---|---|---|---|
| Early Fusion | Raw or pre-processed features from all omics are combined into a single input vector [27] [12]. | Simplicity of implementation; potential to capture all cross-omics interactions [12]. | High risk of overfitting with small sample sizes; requires all modalities to be present for each sample [27] [29]. |
| Intermediate Fusion | Data is integrated during model training, often using specialized architectures [28]. | Can capture complex, non-linear relationships between omics layers [27] [28]. | Increased model complexity; can be computationally intensive [28]. |
| Late Fusion | Separate models are built for each omics type, and their predictions are combined [27] [29]. | Robustness to overfitting and missing data; allows modality-specific preprocessing [27] [29]. | May miss subtle cross-omics interactions [12]. |

Table 2: Guide to selecting a fusion strategy based on data characteristics and research objectives.

| Criterion | Recommended Strategy | Rationale |
|---|---|---|
| Small Sample Size (n) & High Dimensionality (p) | Late Fusion | Reduces overfitting risk by building simpler, modality-specific models [29]. |
| Large Sample Size & Lower Dimensionality | Early or Intermediate Fusion | Sufficient data is available to learn complex, cross-modal patterns without overfitting [29]. |
| Primary Goal: Robust Prediction | Late Fusion | Reported to provide higher accuracy and robustness in survival prediction for cancer patients [29]. |
| Primary Goal: Biological Insight | Intermediate Fusion | Can reveal how different omics layers interact, providing mechanistic understanding [28]. |
| Presence of Missing Modalities | Late Fusion | Individual models can be trained on available data, and predictions are combined afterward [12]. |

Experimental Protocols

Protocol 1: Implementing a Late Fusion Workflow for Survival Prediction

This protocol is based on a machine learning pipeline that consistently outperformed single-modality approaches in cancer survival prediction using TCGA data [29].

1. Data Preprocessing and Dimensionality Reduction per Modality:

- Input: Separate datasets for each omics modality (e.g., transcripts, proteins, metabolites, clinical data) [29].

- Normalization: Apply modality-specific normalization (e.g., TPM for RNA-seq, intensity normalization for proteomics) [12].

- Feature Selection: For each modality, reduce dimensionality using supervised feature selection. In the referenced study, linear or monotonic methods (e.g., Pearson or Spearman correlation with the target) outperformed non-linear methods in this context [29].

- Output: A reduced, informative feature set for each omic type.

2. Train Unimodal Survival Models:

- For each processed omics modality, train an independent predictive model. The referenced pipeline found that ensemble methods like gradient boosting or random forests can be effective [29].

- Validate each model's performance rigorously using multiple training-test splits and report confidence intervals for metrics like the C-index [29].

3. Fuse Predictions:

- Combine the predictions (e.g., risk scores) from each unimodal model into a final ensemble prediction.

- Use a simple averaging or a weighted averaging scheme, where weights can be based on the unimodal model's performance [29] [12].
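Steps 2 and 3 can be sketched as follows: a concordance index (Harrell's C) is computed for each unimodal risk score, and the scores are then combined by weighted averaging. The weighting rule used here (C-index minus 0.5, floored at zero) is only one hypothetical choice and is not taken from the referenced pipeline. The sketch also assumes that all risk scores are already on a comparable scale and that tied event times are ignored.

```python
def c_index(times, events, risk):
    """Harrell's C: share of comparable pairs in which the earlier event has the higher risk.

    A pair (i, j) is comparable when sample i has an observed event (events[i] == 1)
    strictly before time j. Ties in risk count as half concordant.
    """
    concordant, comparable = 0.0, 0
    for i in range(len(times)):
        for j in range(len(times)):
            if events[i] == 1 and times[i] < times[j]:
                comparable += 1
                if risk[i] > risk[j]:
                    concordant += 1.0
                elif risk[i] == risk[j]:
                    concordant += 0.5
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable


def late_fusion(risk_by_modality, weights):
    """Weighted average of per-modality risk scores, sample by sample."""
    total = sum(weights.values())
    n_samples = len(next(iter(risk_by_modality.values())))
    return [
        sum(weights[m] * scores[i] for m, scores in risk_by_modality.items()) / total
        for i in range(n_samples)
    ]


# Toy cohort: follow-up times, event indicators (1 = event observed, 0 = censored).
times = [2, 4, 6, 8]
events = [1, 1, 0, 1]
risk_by_modality = {
    "rna": [0.9, 0.7, 0.4, 0.1],
    "protein": [0.8, 0.2, 0.6, 0.3],
}

unimodal_c = {m: c_index(times, events, r) for m, r in risk_by_modality.items()}
# Hypothetical weighting: modalities no better than chance (C <= 0.5) get zero weight.
weights = {m: max(c - 0.5, 0.0) for m, c in unimodal_c.items()}
fused_risk = late_fusion(risk_by_modality, weights)
fused_c = c_index(times, events, fused_risk)
```

Because the weights here are derived from the same samples used to score the fused prediction, `fused_c` is optimistic. In practice the weights would be estimated on a validation split and the fused model scored on held-out data.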

Protocol 2: An Intermediate Fusion Approach Using a Neural Network

This protocol outlines the steps for using a neural network to learn joint representations of multi-omics data, suitable for tasks like subtype classification [28].

1. Input Stream Setup:

- Design separate input branches for each omics data type (e.g., genomics, transcriptomics, proteomics). Each branch should accept a feature vector from its respective modality [28].

2. Feature Learning and Compression:

- Each input branch can consist of one or more fully connected layers that act as a modality-specific encoder. The goal is to transform the raw input into a meaningful representation [28].

- Alternatively, use a method like a Variational Autoencoder (VAE) per modality to compress the data into a lower-dimensional latent space [12].

3. Representation Fusion and Model Training:

- Concatenate the outputs (the learned features) from all modality-specific branches. This concatenated vector forms the joint representation [28].

- Feed this joint representation into a final set of fully connected layers to perform the prediction task (e.g., classification or regression) [28].

- Train the entire network (all branches and the joint head) end-to-end, allowing the model to learn which cross-modal features are most relevant for the task [28].
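The architecture described in steps 1 to 3 can be made concrete with a forward pass written in plain Python. This is a structural sketch only: the weights are random and untrained, and the layer sizes and names (`init_layer`, `forward`, the `rna` and `protein` branches) are hypothetical. End-to-end training would normally be done in a deep learning framework with automatic differentiation.

```python
import random


def linear(x, weights, bias):
    """Fully connected layer: one output per weight row."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def relu(values):
    return [max(0.0, v) for v in values]


def init_layer(n_in, n_out, rng):
    """Random (untrained) weights and zero biases for a layer of shape n_in -> n_out."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


def forward(inputs, encoders, head):
    """Encode each modality in its own branch, concatenate, then apply the joint head."""
    joint = []
    for name, features in inputs.items():
        weights, bias = encoders[name]
        joint.extend(relu(linear(features, weights, bias)))  # modality-specific encoder
    head_weights, head_bias = head
    return linear(joint, head_weights, head_bias)  # one logit per class


rng = random.Random(0)
encoders = {
    "rna": init_layer(4, 3, rng),      # 4 transcript features -> 3 learned features
    "protein": init_layer(2, 3, rng),  # 2 protein features -> 3 learned features
}
head = init_layer(6, 2, rng)           # joint representation (3 + 3) -> 2 classes

sample = {"rna": [0.2, -1.0, 0.5, 1.3], "protein": [0.7, -0.4]}
logits = forward(sample, encoders, head)
```

The key design point is that gradients from the prediction loss would flow back through the joint head into every branch, which is what lets the encoders learn features that are useful in combination rather than in isolation.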

Multi-Omics Fusion Workflows

The Scientist's Toolkit

Table 3: Essential computational tools and reagents for multi-omics data fusion.

| Tool / Reagent | Type | Primary Function | Example Use Case |
|---|---|---|---|
| Seurat [30] | Software Tool | Weighted nearest-neighbor integration for single-cell multi-omics data. | Integrating mRNA expression and chromatin accessibility data from the same cell [30]. |
| MOFA+ [30] | Software Tool | Factor analysis-based integration to disentangle variation across omics layers. | Identifying common sources of variation in unmatched multi-omics datasets (e.g., mRNA, DNA methylation) [30]. |
| GLUE (Graph-Linked Unified Embedding) [30] | Software Tool | Variational autoencoder that uses prior biological knowledge to anchor features for integration. | Triple-omic integration of chromatin accessibility, DNA methylation, and mRNA data [30]. |
| The Cancer Genome Atlas (TCGA) [11] | Data Repository | Provides large-scale, publicly available multi-omics datasets (genomics, epigenomics, transcriptomics, proteomics) from cancer patients. | Benchmarking and training multi-omics fusion models for cancer subtype classification or survival prediction [11]. |
| Autoencoders (AEs) / Variational Autoencoders (VAEs) [12] | ML Method | Neural networks for non-linear dimensionality reduction, creating a lower-dimensional latent representation of high-dimensional omics data. | Compressing transcriptomics and proteomics data into a shared latent space for intermediate fusion [12]. |

Leveraging AI and Machine Learning for Pattern Recognition and Data Fusion

Frequently Asked Questions (FAQs)

Q1: What are the most significant data-related challenges when beginning a multi-omics study? The primary challenges, often called the "four Vs" of big data, are Volume (high-dimensional data where features far exceed samples), Variety (structural differences between data types like discrete mutations vs. continuous protein measurements), Velocity (managing real-time data streams), and Veracity (distinguishing biological signals from technical noise and batch effects) [31]. Computational scalability and the "curse of dimensionality" are also major hurdles [31].

Q2: Which AI models are best suited for integrating disparate omics data types? No single model is best for all scenarios, but several have proven effective [31] [32] [11]:

- Graph Neural Networks (GNNs) are ideal for modeling known biological structures, such as protein-protein interaction networks perturbed by mutations [31].

- Multi-modal Transformers excel at fusing fundamentally different data types, such as MRI radiomics with transcriptomic data [31].

- Fully Connected Neural Networks (FCNs), especially when enhanced with contrastive learning and domain-specific embeddings (e.g., BioBERT), are highly effective for harmonizing metadata and variable descriptions across cohort studies [32].

- Convolutional Neural Networks (CNNs) are used for image-based data, such as automatically quantifying protein staining in tissue samples with pathologist-level accuracy [31].

Q3: How can I handle missing data in one or more omics layers? Advanced imputation strategies are recommended over simply removing features or samples. Matrix factorization and deep learning (DL)-based reconstruction methods can intelligently estimate missing values based on patterns in the available data [31]. The pervasive nature of missing data due to technical limitations makes this a critical step in the preprocessing workflow [31].
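Before turning to matrix factorization or deep learning, it helps to have a simple baseline to compare against. The sketch below drops features with too many missing values and fills the rest with the feature median. It is a baseline only, not one of the advanced methods mentioned above, and the `max_missing_fraction` threshold of 0.5 is an arbitrary illustrative default.

```python
from statistics import median


def impute_median(matrix, max_missing_fraction=0.5):
    """Filter sparse features, then fill remaining gaps with the feature median.

    matrix: samples x features, with None marking a missing value.
    Returns (imputed_matrix, kept_feature_indices).
    """
    n_samples = len(matrix)
    kept, medians = [], {}
    for j in range(len(matrix[0])):
        observed = [row[j] for row in matrix if row[j] is not None]
        missing_fraction = (n_samples - len(observed)) / n_samples
        if observed and missing_fraction <= max_missing_fraction:
            kept.append(j)
            medians[j] = median(observed)
    imputed = [
        [row[j] if row[j] is not None else medians[j] for j in kept]
        for row in matrix
    ]
    return imputed, kept


# Toy proteomics-like matrix: 4 samples x 3 features.
raw = [
    [1.0, None, 5.0],
    [2.0, None, None],
    [3.0, 4.0, 7.0],
    [None, None, 9.0],
]
imputed, kept = impute_median(raw)  # feature 1 is dropped (3 of 4 values missing)
```

Median filling ignores the relationships between features, which is exactly what the more advanced reconstruction methods exploit. It also assumes values are missing at random, which often does not hold for abundance-dependent dropouts in proteomics.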

Q4: What does "data harmonization" mean in this context, and can it be automated? Data harmonization is the process of standardizing disparate variables and metadata across multiple datasets into a unified format [32]. This is crucial for cross-study analysis. Yes, it can be automated using Natural Language Processing (NLP). For example, one method uses a Fully Connected Neural Network with BioBERT embeddings to classify variable descriptions from different studies (e.g., "SystolicBP" vs. "SBPvisit1") into unified medical concepts with high accuracy (AUC of 0.99) [32].

Q5: Why are my AI models performing well on training data but failing to generalize to new datasets? This is often due to batch effects—technical variations introduced by different sequencing platforms, laboratories, or protocols. To improve generalizability, employ rigorous batch correction tools like ComBat and ensure your model validation includes external validation on a completely independent dataset [31]. Techniques like federated learning also allow for model training across institutions without sharing raw data, which can improve robustness [31].

Troubleshooting Guides

Issue 1: Poor Model Performance Due to Technical Batch Effects

Problem: Your model's predictive accuracy drops significantly when applied to data generated from a different site or platform.

Solution: Implement a rigorous batch correction and validation pipeline.

- Step 1: Diagnose Batch Effects. Use Principal Component Analysis (PCA) or other visualization tools to see if samples cluster more strongly by batch (e.g., lab ID) than by biological condition.

- Step 2: Apply Batch Correction. Utilize tools like ComBat or other normalization methods to remove technical artifacts while preserving biological signals [31].

- Step 3: Validate Externally. Always test the final model on an external cohort that was not used in any part of the training or tuning process [31]. This is the gold standard for assessing true generalizability.
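Steps 1 and 2 can be illustrated with a small, self-contained sketch. It projects synthetic samples onto the first principal component, measures how far apart the two batches sit, and repeats the check after subtracting each batch's per-feature mean. The per-batch centering is a deliberately crude stand-in for ComBat, the site labels and effect sizes are invented for illustration, and the design is assumed to be balanced (equal numbers of cases and controls in each batch). External validation (Step 3) is not shown.

```python
import math
import random

def first_pc_scores(rows, iters=200):
    """Project samples onto the first principal component (power iteration)."""
    n, p = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(p)]
    centered = [[r[j] - means[j] for j in range(p)] for r in rows]
    v = [1.0 / math.sqrt(p)] * p  # starting direction
    for _ in range(iters):
        scores = [sum(c[j] * v[j] for j in range(p)) for c in centered]
        w = [sum(centered[i][j] * scores[i] for i in range(n)) for j in range(p)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    return [sum(c[j] * v[j] for j in range(p)) for c in centered]

def batch_gap(scores, batches):
    """Absolute difference between the mean PC1 scores of the two batches."""
    groups = {}
    for s, b in zip(scores, batches):
        groups.setdefault(b, []).append(s)
    m = [sum(g) / len(g) for g in groups.values()]
    return abs(m[0] - m[1])

def center_per_batch(rows, batches):
    """Subtract each batch's per-feature mean (simplified, not ComBat)."""
    p = len(rows[0])
    means = {}
    for b in set(batches):
        members = [r for r, rb in zip(rows, batches) if rb == b]
        means[b] = [sum(r[j] for r in members) / len(members) for j in range(p)]
    return [[r[j] - means[b][j] for j in range(p)] for r, b in zip(rows, batches)]

# Synthetic data: 20 samples x 6 features, a strong site effect on every
# feature and a smaller case/control effect on feature 0 only.
rng = random.Random(0)
batches = ["site_A"] * 10 + ["site_B"] * 10
conditions = ["case", "control"] * 10
data = []
for b, c in zip(batches, conditions):
    row = [rng.gauss(0, 1) + (5.0 if b == "site_B" else 0.0) for _ in range(6)]
    row[0] += 2.0 if c == "case" else 0.0
    data.append(row)

gap_before = batch_gap(first_pc_scores(data), batches)
gap_after = batch_gap(first_pc_scores(center_per_batch(data, batches)), batches)
print(f"PC1 batch gap before: {gap_before:.2f}, after: {gap_after:.2e}")
```

A large gap before correction and a gap near zero afterwards indicate that the leading axis of variation no longer tracks the batch label. With an imbalanced design this simple centering would also remove part of the biological signal, which is one reason dedicated tools model the biological covariates explicitly.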

Issue 2: Inability to Integrate Heterogeneous Data Types

Problem: You have genomic, proteomic, and image data, but cannot effectively fuse them into a single analytical framework.

Solution: Choose an integration method based on your scientific objective. The table below summarizes the main approaches.

Table 1: Multi-Omics Data Integration Methods and Tools

| Scientific Objective | Description | Example Methods | Reference |
|---|---|---|---|
| Subtype Identification | Discover novel disease subtypes by grouping patients based on multi-omics profiles. | Clustering (e.g., iCluster), Matrix Factorization | [11] |
| Detect Disease-Associated Patterns | Identify complex molecular patterns and biomarkers correlated with a condition. | Multi-Kernel Learning, Pattern Recognition | [11] |
| Understand Regulatory Processes | Uncover how changes at one molecular level (e.g., epigenomics) affect another (e.g., transcriptomics). | Network Inference (e.g., GNNs), Bayesian Networks | [31] [11] |
| Diagnosis/Prognosis | Build classifiers to predict patient outcome or disease state. | Supervised ML/DL (e.g., Transformers, CNNs) | [31] [11] |
| Drug Response Prediction | Predict a patient's sensitivity or resistance to a specific therapy. | Regression Models, "Digital Twin" simulations | [31] |

Issue 3: The "Black Box" Problem – Lack of Model Interpretability

Problem: Your model makes accurate predictions, but you cannot understand how it arrived at them, which is critical for biological insight and clinical trust.

Solution: Integrate Explainable AI (XAI) techniques into your workflow.

- Step 1: Use Inherently Interpretable Models. For simpler tasks, start with models like decision trees or logistic regression, which are more transparent.

- Step 2: Apply Post-Hoc Explanation Methods. For complex models like deep neural networks, use techniques such as SHapley Additive exPlanations (SHAP). SHAP quantifies the contribution of each input feature (e.g., a specific gene mutation) to the final prediction, making the model's decision process clearer [31].

- Step 3: Biological Validation. Use the feature importance scores from XAI to prioritize findings (e.g., key genes or pathways) for downstream experimental validation in the lab.
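To make the idea behind Step 2 concrete, the sketch below computes exact Shapley values by brute force for a tiny three-feature model. It does not use the SHAP library; the toy model, the feature names, and the all-zero baseline are assumptions chosen so the result can be checked by hand. Exhaustive enumeration is only feasible for a handful of features, which is why practical tools rely on approximations.

```python
from itertools import combinations
from math import factorial

def shapley_values(predict, x, baseline):
    """Exact Shapley values of predict(x) relative to predict(baseline)."""
    n = len(x)

    def value(subset):
        # Features in the subset take the patient's value, the rest the baseline.
        z = [x[i] if i in subset else baseline[i] for i in range(n)]
        return predict(z)

    phi = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        total = 0.0
        for k in range(n):
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for s in combinations(others, k):
                total += weight * (value(set(s) | {i}) - value(set(s)))
        phi.append(total)
    return phi

# Hypothetical risk score: two main effects plus one interaction term.
FEATURES = ["mutation_A", "expression_B", "methylation_C"]

def toy_model(z):
    return 2.0 * z[0] - 1.0 * z[1] + 0.5 * z[0] * z[2]

patient = [1.0, 1.0, 1.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(toy_model, patient, baseline)
for name, contribution in zip(FEATURES, phi):
    print(f"{name}: {contribution:+.2f}")
```

The contributions sum to the difference between the patient's prediction and the baseline prediction, and the interaction term is split evenly between the two features involved.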

Experimental Protocols

Protocol 1: NLP-Based Automated Data Harmonization

This protocol details the method for using a Fully Connected Neural Network (FCN) to harmonize variable metadata, as described in [32].

1. Objective: To automatically map free-text variable names and descriptions from different biomedical datasets into harmonized medical concepts.

2. Materials & Reagents:

- Datasets: Metadata (variable names and descriptions) from cohort studies (e.g., ARIC, MESA, FHS).

- Pretrained Language Model: BioBERT (Bidirectional Encoder Representations from Transformers for Biomedical Text Mining).

- Computing Environment: Standard deep learning framework (e.g., PyTorch, TensorFlow).

3. Procedure:

- Step 1 - Data Preparation: Extract all variable descriptions. Manually annotate a subset into predefined harmonized concepts (e.g., "systolic blood pressure," "diabetes medication") to create a labeled ground truth.

- Step 2 - Generate Embeddings: Convert each variable description into a 768-dimensional semantic vector using the pretrained BioBERT model.

- Step 3 - Create Paired Dataset: Frame the task as a binary classification. Generate pairs of variable descriptions and label them as either belonging to the same concept (matched pair) or not (non-matched pair). Keep the ratio of matched to non-matched pairs fixed (e.g., 1:3) so that the far more numerous non-matched pairs do not dominate training.

- Step 4 - Model Training: Train an FCN classifier. The input is the cosine similarity between the BioBERT embedding vectors of a paired description. The network uses binary cross-entropy loss and the Adam optimizer.

- Step 5 - Inference: For a new, unlabeled variable description, the model calculates its similarity to all known concept representatives and assigns it to the concept with the highest similarity score.

4. Expected Results: The published FCN model achieved a top-5 accuracy of 98.95% and an Area Under the Curve (AUC) of 0.99, significantly outperforming a logistic regression baseline (AUC 0.82) [32].

Diagram 1: NLP-based data harmonization workflow.
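The data flow of Steps 2, 3, and 5 can be sketched without any deep learning dependency. In the example below, four-dimensional toy vectors stand in for the 768-dimensional BioBERT embeddings, the FCN training of Step 4 is omitted, and concept representatives are assumed to be simple centroids. Apart from "SystolicBP" and "SBPvisit1", all variable names and all vector values are hypothetical.

```python
import math
import random
from itertools import combinations

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

# (variable name, harmonized concept, toy embedding standing in for BioBERT)
LABELED = [
    ("SystolicBP", "systolic blood pressure", [1.0, 0.0, 0.0, 0.1]),
    ("SBPvisit1", "systolic blood pressure", [0.9, 0.1, 0.0, 0.0]),
    ("DiastolicBP", "diastolic blood pressure", [0.0, 1.0, 0.0, 0.1]),
    ("DBPvisit1", "diastolic blood pressure", [0.1, 0.9, 0.0, 0.0]),
    ("DiabMed", "diabetes medication", [0.0, 0.0, 1.0, 0.1]),
    ("InsulinUse", "diabetes medication", [0.0, 0.1, 0.9, 0.0]),
    ("BMI", "body mass index", [0.0, 0.0, 0.1, 1.0]),
    ("BodyMassIdx", "body mass index", [0.1, 0.0, 0.0, 0.9]),
]

def make_pairs(labeled, negatives_per_positive=3, seed=0):
    """Return (cosine similarity, label) pairs at a fixed 1:N matched ratio."""
    matched, non_matched = [], []
    for (_, ca, va), (_, cb, vb) in combinations(labeled, 2):
        target = matched if ca == cb else non_matched
        target.append((cosine(va, vb), int(ca == cb)))
    rng = random.Random(seed)
    k = min(len(non_matched), negatives_per_positive * len(matched))
    return matched + rng.sample(non_matched, k)

def assign_concept(vector, labeled):
    """Assign a new description to the concept with the most similar centroid."""
    by_concept = {}
    for _, concept, vec in labeled:
        by_concept.setdefault(concept, []).append(vec)
    centroids = {
        c: [sum(col) / len(vecs) for col in zip(*vecs)] for c, vecs in by_concept.items()
    }
    return max(centroids, key=lambda c: cosine(vector, centroids[c]))

pairs = make_pairs(LABELED)
n_matched = sum(label for _, label in pairs)
predicted = assign_concept([0.8, 0.2, 0.0, 0.05], LABELED)
print(len(pairs), n_matched, predicted)
```

In the published workflow the similarity features feed a trained classifier rather than a direct nearest-centroid rule, so this sketch shows only the shape of the inputs and outputs, not the reported performance.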

Protocol 2: AI-Driven Multi-Omics Integration for Patient Subtyping

1. Objective: To integrate genomic, transcriptomic, and proteomic data to identify novel, clinically relevant disease subtypes.

2. Materials & Reagents:

- Omics Data: Matched genomic (SNVs/CNVs), transcriptomic (RNA-seq), and proteomic (mass spectrometry) data from the same patient cohort.

- Data Repositories: Publicly available data from sources like The Cancer Genome Atlas (TCGA) [11].

- Computational Tools: Cloud-based analytics platforms (e.g., AWS with SageMaker, HealthOmics, Athena) or local high-performance computing clusters [33].

3. Procedure:

- Step 1 - Data Preprocessing & Harmonization: Independently preprocess each omics layer. This includes quality control, normalization (e.g., DESeq2 for RNA-seq), and batch effect correction (e.g., using ComBat) [31].

- Step 2 - Dimensionality Reduction: Apply feature selection or extraction (e.g., PCA) to each data modality to reduce noise and computational complexity.

- Step 3 - Data Integration: Use an intermediate integration method designed for subtype identification. A common approach is multi-omics matrix factorization, which learns a joint representation of the patient across all data types in a lower-dimensional space.

- Step 4 - Clustering: Apply a clustering algorithm (e.g., k-means, hierarchical clustering) on the integrated patient representations to identify distinct molecular subtypes.

- Step 5 - Clinical Validation: Correlate the identified subtypes with clinical outcomes (e.g., overall survival, response to therapy) to assess their biological and clinical relevance.

4. Expected Results: Discovery of patient subgroups with distinct multi-omics profiles and significantly different survival outcomes, which may not be identifiable using single-omics data alone. For example, one study reported integrated classifiers with AUCs of 0.81–0.87 for early-detection tasks [31].

Diagram 2: Multi-omics integration and subtyping workflow.
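The core of Steps 1, 3, and 4 can be sketched on synthetic data. The example standardizes each layer, weights layers so that none dominates by feature count, extracts a single shared patient factor from the concatenated matrix by power iteration, and then runs two-cluster k-means on that factor. This single-factor decomposition is a simplified stand-in for multi-omics matrix factorization, not a specific published method. The layer sizes, effect sizes, and function names are assumptions, and per-layer dimensionality reduction (Step 2) and clinical validation (Step 5) are omitted.

```python
import math
import random

def zscore_columns(rows):
    """Standardize every feature (column) to mean 0 and unit variance."""
    n, p = len(rows), len(rows[0])
    out = [[0.0] * p for _ in range(n)]
    for j in range(p):
        col = [r[j] for r in rows]
        mean = sum(col) / n
        sd = math.sqrt(sum((x - mean) ** 2 for x in col) / n)
        for i in range(n):
            out[i][j] = (rows[i][j] - mean) / sd
    return out

def joint_factor(layers, iters=200, seed=0):
    """Leading shared patient factor of the weighted, concatenated layers."""
    n = len(layers[0])
    blocks = []
    for layer in layers:
        w = 1.0 / math.sqrt(len(layer[0]))  # equalize layer contributions
        blocks.append([[w * x for x in row] for row in zscore_columns(layer)])
    joined = [sum((b[i] for b in blocks), []) for i in range(n)]
    p = len(joined[0])
    rng = random.Random(seed)
    v = [rng.gauss(0, 1) for _ in range(p)]  # random starting direction
    for _ in range(iters):
        scores = [sum(r[j] * v[j] for j in range(p)) for r in joined]
        w_vec = [sum(joined[i][j] * scores[i] for i in range(n)) for j in range(p)]
        norm = math.sqrt(sum(x * x for x in w_vec))
        v = [x / norm for x in w_vec]
    return [sum(r[j] * v[j] for j in range(p)) for r in joined]

def kmeans_1d(values, iters=20):
    """Two-cluster k-means on one dimension, initialized at the extremes."""
    c = [min(values), max(values)]
    labels = [0] * len(values)
    for _ in range(iters):
        labels = [0 if abs(x - c[0]) <= abs(x - c[1]) else 1 for x in values]
        for k in (0, 1):
            members = [x for x, l in zip(values, labels) if l == k]
            c[k] = sum(members) / len(members)
    return labels

def make_layer(rng, n_features):
    """Ten synthetic patients; patients 5-9 carry a shifted profile."""
    return [
        [rng.gauss(0, 0.5) + (4.0 if i >= 5 else 0.0) for _ in range(n_features)]
        for i in range(10)
    ]

rng = random.Random(1)
genomic, transcriptomic, proteomic = make_layer(rng, 4), make_layer(rng, 6), make_layer(rng, 3)
subtypes = kmeans_1d(joint_factor([genomic, transcriptomic, proteomic]))
print(subtypes)
```

In practice several factors would be retained, the number of clusters would be chosen with a stability or silhouette criterion, and the resulting subtypes would still need the clinical validation described in Step 5.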

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for AI-Driven Multi-Omics Research

| Tool / Resource Name | Type | Primary Function in Multi-Omics | Reference / Link |
|---|---|---|---|
| BioBERT | Pretrained Language Model | Generates domain-specific semantic embeddings for biomedical text, enabling automated metadata harmonization. | [32] |
| ComBat | Statistical Algorithm | Removes batch effects from high-dimensional datasets to improve data quality and model generalizability. | [31] |
| SHAP (SHapley Additive exPlanations) | Explainable AI (XAI) Library | Interprets complex AI model outputs by quantifying the contribution of each feature to a prediction. | [31] |
| Graph Neural Networks (GNNs) | AI Model Architecture | Models biological networks (e.g., protein-protein interactions) to uncover dysregulated pathways. | [31] |
| The Cancer Genome Atlas (TCGA) | Data Repository | Provides curated, publicly available multi-omics datasets from cancer patients for analysis and benchmarking. | [11] |
| AWS HealthOmics & SageMaker | Cloud Computing Platform | Offers managed services for storing, processing, and analyzing multi-omics data at scale. | [33] |
| Multi-Kernel Learning | Data Integration Method | Fuses different omics data types by assigning each a separate "kernel" function, then combining them. | [11] |

FAQs and Troubleshooting Guides

This section addresses common challenges researchers face during data pre-processing for multi-omics studies, providing targeted solutions and best practices.

FAQ 1: How should I handle missing data in my multi-omics dataset before running machine learning models?

- Problem: Machine learning models often fail or perform poorly when faced with missing values, which are pervasive in real-world omics data [34] [35].

- Solutions:

- Do not simply ignore missing values. Most algorithms cannot handle them and will produce errors [34].

- Use imputation. Replacing missing values with plausible estimates is the standard approach. The best method depends on your data and the missingness mechanism [36] [35].

- Impute before feature selection. Research indicates that performing imputation before feature selection leads to better model performance, as measured by recall, precision, F1-score, and accuracy [35].

- Troubleshooting: If your model's performance is poor after imputation, investigate the pattern of missingness (e.g., MCAR, MAR, NMAR) and try a more advanced imputation method. Simple methods like mean imputation can distort data distribution and variance [35].
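The variance distortion caused by mean imputation can be seen with a few numbers. The values below are invented for illustration: five observed measurements of one feature and three missing ones that are replaced by the observed mean.

```python
import statistics

observed = [2.0, 4.0, 6.0, 8.0, 10.0]  # measured values of one feature
n_missing = 3                           # samples where the feature is missing

mean_value = statistics.mean(observed)
imputed = observed + [mean_value] * n_missing

var_observed = statistics.pvariance(observed)  # 8.0
var_imputed = statistics.pvariance(imputed)    # 5.0
print(var_observed, var_imputed)
```

Every imputed value sits exactly at the mean, so the spread of the feature shrinks (here from 8.0 to 5.0) even though no new information was added. Downstream methods that depend on variance or correlation structure are affected accordingly.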

FAQ 2: My data comes from different experimental batches. How can I correct for technical batch effects without removing true biological signals?

- Problem: Batch effects are technical biases from different library preps, sequencing runs, or sample handling that can obscure real biology and generate false signals [37].

- Solutions:

- Use established correction methods. Algorithms like ComBat and limma are designed to model and remove batch effects while preserving biological variation [38].

- Consider data incompleteness. For omic data with many missing values, standard tools may fail. Use methods specifically designed for incomplete data, such as Batch-Effect Reduction Trees (BERT) or HarmonizR, which retain more numeric values during integration [38].

- Leverage covariates and references. When batch designs are imbalanced, specify categorical covariates (e.g., biological conditions) or use reference samples to guide the correction process for more robust results [38].

- Troubleshooting: After correction, always validate that known biological signals persist. There is a risk of both under-correction (leaving residual bias) and over-correction (removing true biological variation) [37].
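The value of specifying biological covariates in an imbalanced design can be shown with a noise-free toy example. The sketch fits an ordinary least-squares model with an intercept, a condition term, and a batch term, then subtracts only the estimated batch component, and compares this with naive per-batch centering. It is a linear-model illustration of the principle, not the algorithm implemented by ComBat, limma, BERT, or HarmonizR; the design and effect sizes (true condition effect 2.0, batch effect 3.0) are assumptions.

```python
def solve_linear(a, b):
    """Gauss-Jordan elimination with partial pivoting for a small square system."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]

def ols(design, y):
    """Least-squares coefficients via the normal equations."""
    p = len(design[0])
    xtx = [[sum(r[i] * r[j] for r in design) for j in range(p)] for i in range(p)]
    xty = [sum(r[i] * yi for r, yi in zip(design, y)) for i in range(p)]
    return solve_linear(xtx, xty)

# Imbalanced design: batch 0 is mostly cases, batch 1 is mostly controls.
condition = [1, 1, 1, 0, 1, 0, 0, 0]  # 1 = case, 0 = control
batch = [0, 0, 0, 0, 1, 1, 1, 1]
y = [10.0 + 2.0 * c + 3.0 * b for c, b in zip(condition, batch)]

def case_control_gap(values):
    cases = [v for v, c in zip(values, condition) if c == 1]
    controls = [v for v, c in zip(values, condition) if c == 0]
    return sum(cases) / len(cases) - sum(controls) / len(controls)

# Naive correction: subtract each batch's mean, ignoring the condition.
batch_means = {
    b: sum(v for v, vb in zip(y, batch) if vb == b) / batch.count(b) for b in set(batch)
}
naive = [v - batch_means[b] for v, b in zip(y, batch)]

# Covariate-aware correction: estimate the batch effect jointly with the
# condition effect, then remove only the batch component.
design = [[1.0, float(c), float(b)] for c, b in zip(condition, batch)]
_, beta_condition, beta_batch = ols(design, y)
aware = [v - beta_batch * b for v, b in zip(y, batch)]

gap_naive = case_control_gap(naive)
gap_aware = case_control_gap(aware)
print(round(gap_naive, 3), round(gap_aware, 3))
```

Naive centering shrinks the case-control difference to 1.5 because part of the biology is confounded with batch, while the covariate-aware fit retains the full difference of 2.0. This is the over-correction risk the troubleshooting note warns about.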

FAQ 3: What is the difference between data normalization for databases and for machine learning?

- Problem: The term "normalization" is used in two distinct contexts, which can cause confusion.

- Solutions:

- Database Normalization: This is a structural process for organizing data in a relational database to reduce redundancy and improve integrity. It follows rules called "normal forms" (1NF, 2NF, 3NF) [39] [40].

- Machine Learning Normalization (Feature Scaling): This is a mathematical process of bringing numeric features to a common scale to prevent algorithms with distance-based calculations from being skewed by the original magnitude of the features [39] [34].

- Troubleshooting: For machine learning, if your model is converging slowly or is dominated by a few features, you likely need to apply feature scaling (e.g., z-score standardization or min-max normalization) to your numerical data [34].
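As a brief illustration of feature scaling in the machine learning sense, the sketch below applies z-score standardization and min-max normalization to two made-up features measured on very different scales. The feature names and values are hypothetical.

```python
import statistics

def standardize(values):
    """Z-score scaling: subtract the mean, divide by the standard deviation."""
    mean = statistics.mean(values)
    sd = statistics.pstdev(values)
    return [(v - mean) / sd for v in values]

def min_max(values):
    """Min-max scaling: map the observed range onto [0, 1]."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) for v in values]

# Two features on very different scales (illustrative values).
expression_counts = [10.0, 20.0, 30.0, 40.0, 50.0]
protein_ratio = [0.1, 0.2, 0.3, 0.4, 0.5]

scaled_counts = min_max(expression_counts)
scaled_ratio = min_max(protein_ratio)
z_counts = standardize(expression_counts)
print(scaled_counts)
print([round(z, 3) for z in z_counts])
```

After scaling, both features span the same range, so a distance-based method no longer weights the count feature more heavily simply because its raw numbers are larger. In a real pipeline the scaling parameters should be estimated on the training data only and then applied unchanged to validation and test data.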

FAQ 4: Should I perform imputation before or after normalizing or correcting batch effects in a multi-omics workflow?

- Problem: The order of operations in a pre-processing pipeline can significantly impact the final results.