Cross-Attention in Protein-Ligand Interaction: A New Paradigm for AI-Driven Drug Discovery


Abstract

This article explores the transformative impact of cross-attention mechanisms in predicting protein-ligand interactions, a cornerstone of modern drug discovery. We begin by establishing the foundational principles of cross-attention and its superiority over traditional methods in capturing complex biomolecular relationships. The discussion then progresses to a detailed analysis of cutting-edge methodologies, including EZSpecificity, CAT-DTI, and KEPLA, which leverage cross-attention for tasks ranging from binding affinity prediction to substrate specificity and binding site identification. We further address critical troubleshooting and optimization strategies to enhance model generalizability and efficiency, tackling challenges like data imbalance and domain shift. Finally, the article provides a rigorous comparative validation of these AI-driven approaches against established benchmarks, demonstrating their significant performance gains. This resource is tailored for researchers, scientists, and drug development professionals seeking to understand and implement state-of-the-art computational techniques in their workflows.

The Foundational Shift: Why Cross-Attention is Revolutionizing Protein-Ligand Prediction

Limitations of Traditional Docking and Machine Learning Methods

Molecular docking, a cornerstone of computational drug discovery, is undergoing a significant transformation driven by artificial intelligence (AI). While traditional methods have served as indispensable tools for predicting protein-ligand interactions, they face substantial limitations in accuracy, physical plausibility, and generalization. The emergence of deep learning (DL) approaches has introduced new capabilities but also revealed novel challenges. This application note systematically examines the limitations of both traditional and DL-based molecular docking methods, contextualized within a research framework utilizing cross-attention mechanisms for protein-ligand interaction studies. We provide a comprehensive analysis of current limitations, quantitative performance comparisons, and detailed protocols for evaluating docking methods, specifically designed for researchers and drug development professionals.

Critical Limitations of Traditional and Deep Learning Docking Methods

Fundamental Constraints of Traditional Docking Approaches

Traditional physics-based docking tools like Glide SP and AutoDock Vina operate on a search-and-score framework, combining conformational search algorithms with scoring functions to estimate binding affinities [1]. These methods face several inherent limitations that constrain their predictive accuracy and practical utility in drug discovery pipelines.

A primary limitation is the oversimplified treatment of molecular flexibility. Most traditional methods allow ligand flexibility while treating the protein receptor as rigid, neglecting critical induced-fit effects where proteins undergo conformational changes upon ligand binding [2]. This simplification becomes particularly problematic in real-world scenarios such as cross-docking (docking to alternative receptor conformations) and apo-docking (using unbound structures), where protein flexibility significantly impacts binding pose accuracy.

The scoring function problem represents another critical limitation. Traditional scoring functions struggle to accurately predict binding affinities because they cannot adequately capture the complex physics of molecular recognition or account for entropic contributions and solvation effects [3]. Consequently, while these functions may successfully identify correct binding poses, they frequently fail in ranking compounds by binding affinity, limiting their utility for virtual screening [3] [4].

From a computational perspective, traditional methods face sampling and efficiency challenges. The computational demand of exploring high-dimensional conformational spaces forces traditional methods to sacrifice accuracy for speed, particularly problematic for large-scale virtual screening against rapidly expanding compound libraries [2] [5].

Emerging Challenges in Deep Learning-Based Docking

Deep learning approaches have introduced transformative capabilities but also revealed distinct limitations. Current DL docking methods can be categorized into generative diffusion models, regression-based architectures, and hybrid frameworks, each with specific strengths and weaknesses [1].

A significant concern is the generalization gap. DL models exhibit performance degradation when encountering novel protein binding pockets, sequences, or ligand scaffolds not represented in their training data [1] [6]. This limitation restricts their applicability in real-world drug discovery targeting unprecedented binding sites.

The physical plausibility problem particularly affects regression-based DL methods, which often generate chemically invalid structures with improper bond lengths, angles, or steric clashes despite favorable root-mean-square deviation (RMSD) scores [1] [2]. Evaluation using the PoseBusters toolkit reveals that many DL methods produce physically implausible structures, with some regression-based methods achieving PB-valid rates below 20% on challenging datasets [1].

Furthermore, biological relevance deficiencies persist even in geometrically accurate predictions. DL models frequently fail to recapitulate key protein-ligand interactions essential for biological activity, limiting their utility for understanding mechanism of action or guiding structure-based optimization [1].

Table 1: Quantitative Performance Comparison Across Docking Method Types

| Method Category | Pose Accuracy (RMSD ≤ 2 Å) | Physical Validity (PB-valid) | Combined Success Rate | Virtual Screening Efficacy | Generalization to Novel Pockets |
|---|---|---|---|---|---|
| Traditional Methods | Moderate (e.g., Glide SP: 81.18% on Astex) | High (e.g., Glide SP: >94% across datasets) | High (e.g., Glide SP: 70.59% on Astex) | Moderate | Moderate |
| Generative Diffusion | High (e.g., SurfDock: 91.76% on Astex) | Moderate to Low (e.g., SurfDock: 63.53% on Astex) | Moderate (e.g., SurfDock: 61.18% on Astex) | Variable | Limited |
| Regression-based DL | Variable | Low (often <20% on challenging sets) | Low | Limited | Poor |
| Hybrid Methods | Moderate to High (e.g., Interformer: 81.18% on Astex) | Moderate to High (e.g., Interformer: 72.94% on Astex) | High (e.g., Interformer: 68.24% on Astex) | Promising | Moderate |

Cross-Attention Mechanisms for Protein-Ligand Interaction Modeling

Cross-attention layers offer a promising architectural framework for addressing key limitations in both traditional and DL-based docking approaches. These mechanisms enable explicit, learnable interactions between protein and ligand representations, capturing binding patterns in a ligand-aware manner [7].

The LABind framework exemplifies this approach, utilizing a graph transformer to capture binding patterns within the local spatial context of proteins while employing cross-attention to learn distinct binding characteristics between proteins and ligands [7]. This architecture allows the model to integrate protein sequence and structural information with ligand chemical properties encoded via pre-trained molecular language models, creating a unified representation of the interaction landscape.

Cross-attention mechanisms specifically address the generalization challenge by learning transferable binding patterns across diverse ligand types, including unseen ligands not present in training data [7]. Additionally, they mitigate the biological relevance deficiency by explicitly modeling interaction patterns rather than relying solely on geometric fitting.

Experimental Protocols for Method Evaluation

Protocol 1: Comprehensive Docking Performance Assessment

Objective: Systematically evaluate docking method performance across multiple dimensions including pose accuracy, physical validity, interaction recovery, and generalization.

Materials:

- Benchmark Datasets: Curate evaluation sets including the Astex diverse set (known complexes), PoseBusters benchmark (unseen complexes), and DockGen dataset (novel protein binding pockets) [1] [6]

- Docking Software: Select representative methods from each category: Traditional (Glide SP, AutoDock Vina), Generative Diffusion (SurfDock, DiffBindFR), Regression-based (KarmaDock, QuickBind), and Hybrid (Interformer) [1]

- Evaluation Tools: PoseBusters for physical plausibility assessment [1]

Procedure:

- Dataset Preparation: Prepare protein structures and ligands for each benchmark dataset, ensuring proper formatting and protonation states

- Pose Prediction: Run each docking method with default parameters to generate predicted binding poses

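- Accuracy Assessment: Calculate the RMSD between predicted and experimental poses as $\mathrm{RMSD} = \sqrt{\frac{1}{N}\sum_{i=1}^{N}\lVert \mathbf{x}_i^{\mathrm{pred}} - \mathbf{x}_i^{\mathrm{exp}} \rVert^{2}}$, where $\mathbf{x}_i^{\mathrm{pred}}$ and $\mathbf{x}_i^{\mathrm{exp}}$ are the predicted and experimental coordinates of atom $i$ and $N$ is the number of atoms compared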

- Physical Validity Check: Evaluate poses using PoseBusters to assess chemical and geometric consistency, including bond lengths, angles, stereochemistry, and clash detection

- Interaction Analysis: Compare key protein-ligand interactions (hydrogen bonds, hydrophobic contacts, salt bridges) between predicted and experimental poses

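- Generalization Testing: Stratify results by dataset difficulty (Astex diverse set, PoseBusters benchmark, DockGen) to quantify how performance changes from known complexes to unseen complexes and novel binding pockets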

Expected Outcomes: Traditional methods will demonstrate superior physical validity, while diffusion models will excel in pose accuracy. Hybrid methods are expected to provide the most balanced performance across evaluation metrics [1].
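The accuracy and combined-success calculations in this protocol can be sketched as follows. This is a minimal illustration, not the evaluation code of any cited benchmark: it assumes predicted and reference poses share the same atom ordering, applies no symmetry correction, and takes the PB-valid flags as a precomputed list of booleans (for example, exported from a separate PoseBusters run). The function names `pose_rmsd` and `success_rates` are hypothetical.

```python
import numpy as np

def pose_rmsd(pred, ref):
    """RMSD (in Å) between two poses with identical atom ordering, no realignment."""
    pred = np.asarray(pred, dtype=float)
    ref = np.asarray(ref, dtype=float)
    if pred.shape != ref.shape:
        raise ValueError("poses must share the shape (n_atoms, 3)")
    sq_dist = np.sum((pred - ref) ** 2, axis=1)  # squared displacement per atom
    return float(np.sqrt(sq_dist.mean()))

def success_rates(rmsds, pb_valid, cutoff=2.0):
    """Fractions of poses that are accurate, physically valid, and both."""
    accurate = np.asarray(rmsds, dtype=float) <= cutoff
    valid = np.asarray(pb_valid, dtype=bool)
    return {
        "rmsd_ok": float(accurate.mean()),
        "pb_valid": float(valid.mean()),
        "combined": float((accurate & valid).mean()),
    }

# Toy pose: a three-atom reference and a copy shifted by 1 Å along x.
ref = np.array([[0.0, 0.0, 0.0], [1.5, 0.0, 0.0], [1.5, 1.5, 0.0]])
pred = ref + np.array([1.0, 0.0, 0.0])
print(pose_rmsd(pred, ref))

# Four hypothetical docking results: RMSD values and PB-valid flags.
rates = success_rates([0.8, 1.9, 3.5, 1.2], [True, False, True, True])
print(rates)
```

Reporting the combined rate alongside the two individual rates makes visible the case highlighted in Table 1, where a method scores well on RMSD but loses many poses to physical-validity checks.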

Protocol 2: Cross-Attention Model Training and Validation

Objective: Train and validate a cross-attention based model for ligand-aware binding site prediction.

Materials:

- Protein-Ligand Complex Data: Curated structures from PDBBind with binding site annotations [7]

- Feature Extraction Tools: Ankh protein language model and MolFormer molecular encoder [7]

- Computational Framework: Graph neural network implementation with cross-attention layers (e.g., PyTorch Geometric)

Procedure:

- Data Preprocessing: Extract protein sequences and structures with corresponding ligand SMILES strings from curated datasets

- Feature Generation: Encode protein sequences using Ankh to obtain sequence embeddings and process 3D structures to extract geometric features including angles, distances, and directions between residues [7]

- Ligand Encoding: Generate ligand representations using MolFormer pre-trained on SMILES sequences [7]

- Model Architecture: Implement graph transformer for protein feature extraction with cross-attention mechanism between protein and ligand representations

- Training Protocol: Train model to predict binding residues using multi-task learning objective with evaluation metrics including F1 score, Matthews correlation coefficient (MCC), and area under precision-recall curve (AUPR) [7]

- Validation: Evaluate generalization to unseen ligands and proteins using held-out test sets

Expected Outcomes: The cross-attention model should demonstrate improved binding site prediction accuracy, particularly for novel ligands, by explicitly modeling protein-ligand interactions rather than relying on pattern matching alone [7].
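To make the data flow of this protocol concrete, the sketch below runs one untrained forward pass: protein residues attend to ligand tokens through multi-head cross-attention, and a small MLP with a sigmoid turns the fused features into per-residue binding probabilities. It is an illustration under stated assumptions, not LABind's implementation: random arrays stand in for Ankh and MolFormer features, the graph transformer is omitted, the residual fusion is a choice made here, and all names and dimensions are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def multi_head_cross_attention(protein, ligand, w_q, w_k, w_v, n_heads):
    """Protein residues (queries) attend to ligand tokens (keys and values)."""
    n_res, d_model = protein.shape
    d_head = d_model // n_heads
    # Project, then split the feature axis into heads: (heads, length, d_head).
    q = (protein @ w_q).reshape(n_res, n_heads, d_head).transpose(1, 0, 2)
    k = (ligand @ w_k).reshape(-1, n_heads, d_head).transpose(1, 0, 2)
    v = (ligand @ w_v).reshape(-1, n_heads, d_head).transpose(1, 0, 2)
    weights = softmax(q @ k.transpose(0, 2, 1) / np.sqrt(d_head))  # (heads, n_res, n_lig)
    out = weights @ v                                              # (heads, n_res, d_head)
    return out.transpose(1, 0, 2).reshape(n_res, d_model), weights

def binding_probabilities(fused, w1, b1, w2, b2):
    """Two-layer MLP followed by a sigmoid, giving one probability per residue."""
    hidden = np.maximum(fused @ w1 + b1, 0.0)  # ReLU
    logits = (hidden @ w2 + b2).squeeze(-1)
    return 1.0 / (1.0 + np.exp(-logits))

# Hypothetical sizes; random features stand in for real embeddings.
d_model, n_heads, n_res, n_lig = 64, 8, 120, 30
protein = rng.normal(size=(n_res, d_model))
ligand = rng.normal(size=(n_lig, d_model))
w_q, w_k, w_v = (rng.normal(scale=d_model ** -0.5, size=(d_model, d_model)) for _ in range(3))
w1, b1 = rng.normal(scale=0.1, size=(d_model, 32)), np.zeros(32)
w2, b2 = rng.normal(scale=0.1, size=(32, 1)), np.zeros(1)

attended, weights = multi_head_cross_attention(protein, ligand, w_q, w_k, w_v, n_heads)
probs = binding_probabilities(protein + attended, w1, b1, w2, b2)  # residual fusion (assumption)
print(attended.shape, weights.shape, probs.shape)
```

Because the ligand enters only through the keys and values, swapping in a different ligand changes the per-residue probabilities without changing the protein encoder, which is what "ligand-aware" means in this protocol.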

Table 2: Research Reagent Solutions for Docking Method Development

| Reagent Category | Specific Tools | Function | Application Context |

|---|---|---|---|

| Benchmark Datasets | Astex Diverse Set, PoseBusters Benchmark, DockGen | Method evaluation across difficulty levels | Performance validation and comparison |

| Evaluation Toolkits | PoseBusters | Physical plausibility assessment | Quality control for predicted structures |

| Protein Encoders | Ankh, ESMFold | Protein sequence and structure representation | Feature extraction for ML models |

| Ligand Encoders | MolFormer, RDKit | Molecular property calculation and representation | Ligand feature generation |

| Docking Software | Glide SP, AutoDock Vina, SurfDock, DiffBindFR | Traditional and DL-based pose generation | Baseline comparisons and hybrid approaches |

| Analysis Frameworks | Scikit-learn, PyTorch Geometric | Model implementation and evaluation | Custom method development |

Integration Strategies and Future Directions

To address the identified limitations, researchers should adopt integrated strategies that leverage the complementary strengths of different approaches. Hybrid methods that combine traditional conformational sampling with DL-based scoring represent a promising direction, offering improved balance between accuracy and physical plausibility [1]. Additionally, incorporating protein flexibility through molecular dynamics ensembles or specialized flexible docking algorithms can enhance performance for challenging targets with induced-fit effects [2] [8].

The integration of cross-attention mechanisms with physical constraints presents a particularly valuable research direction. By combining the representational power of DL with physics-based priors, these approaches could address both the physical plausibility and generalization challenges simultaneously [7]. Future work should focus on developing unified frameworks that explicitly model the dynamic nature of protein-ligand interactions while maintaining computational efficiency suitable for large-scale virtual screening.

Cross-attention mechanisms are revolutionizing the prediction of pairwise interactions in computational biology, particularly in the critical areas of protein-ligand and protein-protein binding. This architectural innovation enables deep, bidirectional information exchange between molecular entities, moving beyond traditional methods that process proteins and their partners in isolation. By allowing each residue in a protein to dynamically attend to the most relevant atoms or residues in a ligand or partner protein, cross-attention provides a powerful framework for modeling the complex, interdependent nature of molecular recognition events. This application note details the implementation, experimental protocols, and practical applications of cross-attention models, serving as an essential resource for researchers and drug development professionals engaged in structure-based interaction prediction.

The core innovation lies in cross-attention's ability to create a learnable communication channel between two distinct molecular graphs or sequences. In practical terms, this means that when predicting how a protein interacts with a specific ligand, the model doesn't just look at the protein and ligand separately—it enables the protein's representation to be influenced by the ligand's chemical characteristics, and vice versa. This bidirectional flow of information allows the model to capture subtle binding preferences and specific interaction patterns that would be missed by methods treating the interaction partners independently. Implementations such as LABind, Pair-EGRET, KEPLA, and PLAGCA have demonstrated that this approach significantly improves prediction accuracy for binding sites, interaction residues, and binding affinity, providing valuable tools for accelerating drug discovery and understanding fundamental biological processes.

Core Architectural Framework

Fundamental Mechanism of Cross-Attention

At its essence, cross-attention operates as an information-bridging mechanism between two distinct input sources—typically designated as "query" and "key-value" pairs. In protein-ligand interaction contexts, the protein often serves as the query source, while the ligand provides keys and values, or vice versa. The mechanism computes attention weights by comparing each element from the query source against all elements from the key source, determining how much focus to place on different parts of the key source when constructing updated representations for the query elements. These attention weights are then used to create weighted combinations of the value vectors, producing contextually enriched representations that incorporate relevant information from the interaction partner.

The mathematical formulation follows the standard attention mechanism: Attention(Q, K, V) = softmax(QKᵀ/√dₖ)V where Q (queries) originates from one modality (e.g., protein residues), and K (keys) and V (values) originate from the other modality (e.g., ligand atoms or molecular representation). The scaling factor √dₖ stabilizes gradients during training. The resulting output contains transformed query representations that now incorporate the most relevant information from the key-value source, effectively modeling the pairwise dependencies between the two interacting entities.
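The formula can be checked on a toy case with a few lines of NumPy. The sketch below is a single-head version with no learned projections; the two "residue" queries and three "ligand atom" keys and values are made-up vectors chosen so the attention weights are easy to reason about.

```python
import numpy as np

def cross_attention(Q, K, V):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = Q.shape[-1]
    scores = Q @ K.T / np.sqrt(d_k)               # (n_query, n_key) similarity scores
    scores -= scores.max(axis=-1, keepdims=True)  # stabilise the exponentials
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ V, weights

Q = np.array([[1.0, 0.0], [0.0, 1.0]])              # two protein residues (queries)
K = np.array([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])  # three ligand atoms (keys)
V = np.array([[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]])  # their value vectors

out, weights = cross_attention(Q, K, V)
print(weights.round(3))
print(out.round(3))
```

Each row of `weights` sums to one, so every updated residue representation is a convex combination of ligand value vectors. The first residue scores equally against the first and third ligand atoms and lower against the second, which the printed weights reflect.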

Implementation Variants in Current Methods

Recent advanced implementations have adapted this core mechanism to various molecular data representations:

Graph-based Cross-Attention: Methods like Pair-EGRET operate on graph representations of protein structures, where each residue forms a node connected to its spatial neighbors. Cross-attention is applied between graphs of interacting protein pairs, allowing interfacial residues to focus on their binding partners across the molecular interface [9]. Similarly, LABind encodes protein structures as graphs with spatial features and applies cross-attention between protein residue representations and ligand representations derived from SMILES sequences [10] [7].

Hierarchical Cross-Attention: KEPLA implements a dual-objective framework where cross-attention operates at both local and global levels. Local cross-attention captures fine-grained interactions between specific protein residues and ligand atoms, while global alignment ensures consistency with broader biochemical knowledge from Gene Ontology and ligand property databases [11].

Multi-Modal Cross-Attention: PLAGCA integrates multiple data types by employing cross-attention between different feature representations—specifically between global sequence features extracted from protein FASTA sequences and ligand SMILES strings, and local structural features derived from 3D molecular graphs of binding pockets [12].

Quantitative Performance Analysis

Binding Site Prediction Accuracy

Table 1: Performance comparison of cross-attention methods for protein-ligand binding site prediction

| Method | Dataset | AUPR | MCC | F1 Score | Key Advantage |
|---|---|---|---|---|---|
| LABind | DS1 | 0.723 | 0.581 | 0.662 | Generalization to unseen ligands |
| LABind | DS2 | 0.695 | 0.554 | 0.641 | Ligand-aware binding characteristics |
| LABind | DS3 | 0.708 | 0.567 | 0.653 | Unified model for small molecules & ions |
| GraphBind | DS1 | 0.642 | 0.492 | 0.583 | Hierarchical GNN without cross-attention |
| DeepSurf | DS1 | 0.587 | 0.451 | 0.539 | Surface-based features only |
| P2Rank | DS1 | 0.601 | 0.468 | 0.551 | Conservation & pocket detection |

LABind demonstrates marked advantages over competing methods across multiple benchmark datasets, with particularly strong performance in AUPR (Area Under Precision-Recall Curve), which is especially informative for imbalanced classification tasks where binding sites represent a small minority of residues [10] [7]. The integration of ligand information through cross-attention enables the model to learn distinct binding patterns for different ligand types while maintaining robustness when applied to ligands not present in the training data.
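A small worked example shows why AUPR is the more informative number when binding residues are rare. The sketch below computes AUPR as average precision (the step-wise sum of precision at each recovered positive) in plain Python. It assumes there are no tied scores, and the labels and scores are invented for illustration.

```python
def average_precision(y_true, y_score):
    """AUPR estimated as average precision; assumes no tied scores."""
    n_pos = sum(y_true)
    if n_pos == 0:
        raise ValueError("need at least one positive label")
    order = sorted(range(len(y_score)), key=lambda i: y_score[i], reverse=True)
    true_pos = 0
    total = 0.0
    for rank, idx in enumerate(order, start=1):
        if y_true[idx]:
            true_pos += 1
            total += true_pos / rank  # precision at this recall step
    return total / n_pos

# Ten residues, two of which bind (20% prevalence); scores are hypothetical.
y_true = [0, 0, 1, 0, 0, 0, 1, 0, 0, 0]
y_score = [0.10, 0.20, 0.90, 0.30, 0.05, 0.40, 0.35, 0.15, 0.12, 0.02]

ap = average_precision(y_true, y_score)
prevalence = sum(y_true) / len(y_true)          # AUPR expected from random ranking
all_negative_accuracy = 1.0 - prevalence        # accuracy of predicting "no site" everywhere
print(round(ap, 4), prevalence, all_negative_accuracy)
```

A classifier that labels every residue as non-binding already reaches 80% accuracy on this toy set, whereas AUPR is judged against the 0.2 prevalence baseline, so it rewards only models that actually rank binding residues first.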

Interaction Affinity and Interface Prediction

Table 2: Performance of cross-attention methods for affinity prediction and interface residue identification

| Method | Dataset | RMSE | Pearson's r | MAE | Prediction Task |
|---|---|---|---|---|---|
| KEPLA | PDBbind | 0.991 | 0.831 | 0.745 | Binding affinity |
| PLAGCA | PDBbind | 1.028 | 0.815 | 0.768 | Binding affinity |
| Pair-EGRET | DSiB | 0.894* | 0.862* | N/A | Interface residues |
| KEPLA | CSAR-HiQ | 1.124 | 0.812 | 0.853 | Binding affinity |
| *Baseline (no cross-attention) | PDBbind | 1.123 | 0.786 | 0.842 | Binding affinity |

Note: * indicates metrics converted from method-specific evaluation criteria; DSiB refers to partner-specific interaction benchmark [9] [11] [12].

For binding affinity prediction, KEPLA achieves significant improvements, reducing RMSE by 5.28% on PDBbind and 12.42% on CSAR-HiQ compared to state-of-the-art baselines [11]. This enhancement stems from the effective integration of biochemical knowledge with structural information through the cross-attention mechanism. Similarly, Pair-EGRET demonstrates remarkable performance in partner-specific protein-protein interaction site prediction, accurately identifying interfacial residues through learned cross-attention patterns between protein pairs [9].
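For readers reproducing numbers of this kind, the regression metrics in Table 2 and a relative RMSE reduction can be computed as in the sketch below. The affinity values are invented, and `relative_reduction` is a hypothetical helper name; which baseline a published percentage refers to has to be taken from the cited paper, not from this example.

```python
import math

def rmse(y_true, y_pred):
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

def mae(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def pearson_r(y_true, y_pred):
    mean_t = sum(y_true) / len(y_true)
    mean_p = sum(y_pred) / len(y_pred)
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred))
    var_t = sum((t - mean_t) ** 2 for t in y_true)
    var_p = sum((p - mean_p) ** 2 for p in y_pred)
    return cov / math.sqrt(var_t * var_p)

def relative_reduction(baseline, improved):
    """Percentage by which `improved` is lower than `baseline`."""
    return 100.0 * (baseline - improved) / baseline

# Hypothetical experimental and predicted affinities (pKd units).
y_true = [5.0, 6.5, 7.2, 8.1]
y_pred = [5.4, 6.1, 7.5, 7.9]

print(round(rmse(y_true, y_pred), 4))
print(round(mae(y_true, y_pred), 4))
print(round(pearson_r(y_true, y_pred), 4))
print(relative_reduction(2.0, 1.5))  # illustrative RMSE values only
```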

Experimental Protocols

Protocol 1: Protein-Ligand Binding Site Prediction with LABind

Purpose: To identify binding residues for small molecules and ions in a ligand-aware manner, including generalization to unseen ligands.

Input Requirements:

- Protein structure file (PDB format) or sequence for structure prediction

- Ligand SMILES string

- Optional: Experimental binding site annotations for validation

Procedure:

Data Preprocessing

- Generate protein graph representation from 3D structure

- Calculate node spatial features: angles, distances, directions from atomic coordinates

- Compute edge spatial features: directions, rotations, and distances between residues

- Extract protein sequence embeddings using Ankh protein language model

- Calculate DSSP features for structural information

- Concatenate sequence embeddings and DSSP features to form protein-DSSP embedding

Ligand Representation

- Input ligand SMILES sequence into MolFormer pre-trained model

- Extract molecular representation capturing chemical properties

- Project representation to compatible dimensionality with protein features

Cross-Attention Implementation

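- Implement the attention-based learning interaction module using the standard formulation given earlier: $\mathrm{Attention}(Q_{\mathrm{protein}}, K_{\mathrm{ligand}}, V_{\mathrm{ligand}}) = \mathrm{softmax}\left(Q_{\mathrm{protein}} K_{\mathrm{ligand}}^{\top} / \sqrt{d_k}\right) V_{\mathrm{ligand}}$

- Where: $Q_{\mathrm{protein}}$ = learned queries from the protein representation; $K_{\mathrm{ligand}}$, $V_{\mathrm{ligand}}$ = keys and values from the ligand representation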

- Apply multi-head attention (typically 8 heads) to capture different interaction aspects

Binding Site Prediction

- Process cross-attention output through Multi-Layer Perceptron classifier

- Apply sigmoid activation for per-residue binding probability

- Use optimal threshold (maximizing MCC) for binary predictions

Validation & Analysis

- Calculate performance metrics: AUPR, MCC, F1, Recall, Precision

- Compare with ground truth binding residues

- Visualize attention weights to interpret binding determinants

Technical Notes: LABind maintains robust performance even with predicted protein structures from ESMFold or OmegaFold, extending applicability to proteins without experimental structures [10] [7].
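The thresholding step of this protocol (choosing the cut-off that maximizes MCC, then reporting MCC and F1) can be sketched in plain Python. The labels and probabilities are invented, the 0.01-step grid is an arbitrary choice, and in practice the threshold would normally be selected on a validation split and then applied unchanged to the test set.

```python
import math

def confusion(y_true, y_prob, threshold):
    """Return (tp, fp, tn, fn) for predictions y_prob >= threshold."""
    tp = fp = tn = fn = 0
    for label, prob in zip(y_true, y_prob):
        predicted = prob >= threshold
        if predicted and label:
            tp += 1
        elif predicted:
            fp += 1
        elif label:
            fn += 1
        else:
            tn += 1
    return tp, fp, tn, fn

def mcc(tp, fp, tn, fn):
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return (tp * tn - fp * fn) / denom if denom else 0.0

def f1(tp, fp, fn):
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0

def best_threshold(y_true, y_prob, grid=None):
    """Grid-search the threshold with the highest MCC (first one wins on ties)."""
    grid = grid or [i / 100 for i in range(1, 100)]
    return max(grid, key=lambda th: mcc(*confusion(y_true, y_prob, th)))

# Ten residues with hypothetical binding probabilities; three are true binders.
y_true = [1, 1, 0, 0, 0, 0, 0, 1, 0, 0]
y_prob = [0.90, 0.70, 0.60, 0.20, 0.10, 0.30, 0.05, 0.65, 0.40, 0.15]

th = best_threshold(y_true, y_prob)
tp, fp, tn, fn = confusion(y_true, y_prob, th)
print(th, mcc(tp, fp, tn, fn), f1(tp, fp, fn))
```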

Protocol 2: Protein-Protein Interaction Site Prediction with Pair-EGRET

Purpose: To accurately predict interfacial residues in protein-protein complexes using partner-specific modeling.

Input Requirements:

- 3D structures of both interacting proteins (receptor and ligand)

- Optional: PDB file of complex for validation

Procedure:

Graph Construction

- Represent both proteins as directed k-nearest neighbor graphs

- Define graph nodes corresponding to amino acid residues

- Create directed edges to k closest neighbors based on average inter-atom distances

- Calculate edge features: inter-residue distance and relative orientation

Feature Extraction

- Generate node features using ProtBERT embeddings (1024-dimensional)

- Append physicochemical properties (16 dimensions): hydrophobicity, polarity, flexibility, etc.

- Final node feature dimension: 1040

Cross-Attention Between Protein Pairs

- Implement edge-aggregated graph attention network (GAT)

- Apply cross-attention mechanism between receptor and ligand graphs:

- Where M represents edge feature aggregation from both proteins

- Use learned attention coefficients to weight neighbor contributions

Interface Prediction

- Process final node representations through output layer

- Predict interaction probability for each residue

- Generate pairwise residue interaction predictions if needed

Interpretation & Validation

- Analyze cross-attention matrix to identify critical residue pairs

- Visualize interfacial residues on 3D structure

- Calculate interface region accuracy and pairwise precision

Technical Notes: Pair-EGRET excels at both interface region prediction and specific residue-residue interaction identification, providing comprehensive interaction mapping [9].
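The graph-construction and feature-assembly steps of this protocol can be illustrated with NumPy. This sketch simplifies the method described above in one important way: it uses a single representative coordinate per residue (for example the Cα atom) instead of average inter-atom distances. The function name `knn_graph`, the toy coordinates, and the zero-filled feature arrays are all placeholders.

```python
import numpy as np

def knn_graph(coords, k):
    """Directed k-nearest-neighbour graph over residue coordinates.

    Returns edge_index of shape (2, n * k), where column e is an edge from
    residue edge_index[0, e] to one of its k nearest neighbours
    edge_index[1, e], plus the matching edge distances.
    """
    coords = np.asarray(coords, dtype=float)
    n = len(coords)
    if not 0 < k < n:
        raise ValueError("k must satisfy 0 < k < number of residues")
    dist = np.linalg.norm(coords[:, None, :] - coords[None, :, :], axis=-1)
    np.fill_diagonal(dist, np.inf)                 # exclude self-loops
    neighbours = np.argsort(dist, axis=1)[:, :k]   # (n, k) nearest indices
    src = np.repeat(np.arange(n), k)
    dst = neighbours.reshape(-1)
    return np.stack([src, dst]), dist[src, dst]

# Five residues placed on a line (x coordinates chosen to avoid distance ties).
coords = np.array([[0.0, 0, 0], [1.0, 0, 0], [2.5, 0, 0], [4.5, 0, 0], [10.0, 0, 0]])
edge_index, edge_dist = knn_graph(coords, k=2)
edges = set(zip(edge_index[0].tolist(), edge_index[1].tolist()))
print(sorted(edges))

# Node features: 1024-d language-model embedding + 16 physicochemical values.
plm_embeddings = np.zeros((len(coords), 1024))  # placeholder for ProtBERT output
physchem = np.zeros((len(coords), 16))          # placeholder property vector
node_features = np.concatenate([plm_embeddings, physchem], axis=1)
print(node_features.shape)
```

The graph is directed because nearest-neighbour relations are not symmetric: the isolated fifth residue points to the fourth, but the fourth residue's two nearest neighbours lie on its other side.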

Protocol 3: Binding Affinity Prediction with KEPLA

Purpose: To predict protein-ligand binding affinity incorporating biochemical knowledge from Gene Ontology and ligand properties.

Input Requirements:

- Protein amino acid sequence (FASTA format)

- Ligand molecular graph or SMILES string

- Optional: 3D structure for local feature extraction

Procedure:

Input Encoding

- Protein: Encode sequence using ESM (Evolutionary Scale Modeling)

- Ligand: Encode molecular graph using GCN (Graph Convolutional Network)

- Generate both global and local representations for both molecules

Knowledge Integration

- Retrieve Gene Ontology annotations for protein

- Extract ligand properties: hydrogen bond donors/acceptors, molecular descriptors

- Construct knowledge graph embeddings for both entities

- Align structural representations with knowledge embeddings

Cross-Attention Module

- Implement local interaction mapping between protein and ligand representations

- Apply cross-attention to capture fine-grained interactions:

- Fuse outputs from structural and knowledge pathways

Affinity Prediction

- Process joint representation through MLP decoder

- Output continuous binding affinity value (pKd or pKi)

- Apply regularization to prevent overfitting

Cross-Domain Evaluation

- Implement cluster-based pair split for domain shift simulation

- Train on source domain (60% protein clusters + 60% ligand clusters)

- Evaluate on target domain (remaining clusters)

- Assess generalization capability

Technical Notes: KEPLA's knowledge enhancement provides scientific interpretability through attention visualization and knowledge graph relations, moving beyond black-box predictions [11].
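The cross-domain evaluation step can be sketched as a cluster-based pair split. The code below assumes cluster assignments are already available as dictionaries (how proteins and ligands are clustered is outside this sketch) and that pairs mixing a source cluster with a target cluster are discarded; that handling of mixed pairs is an assumption made here, as are all identifiers.

```python
import random

def cluster_pair_split(pairs, protein_cluster, ligand_cluster, frac=0.6, seed=0):
    """Split (protein, ligand) pairs into source and target domains by cluster.

    A pair goes to the source domain if both its protein and ligand clusters
    were sampled, to the target domain if neither was, and is dropped otherwise.
    """
    rng = random.Random(seed)
    p_clusters = sorted(set(protein_cluster.values()))
    l_clusters = sorted(set(ligand_cluster.values()))
    source_p = set(rng.sample(p_clusters, round(frac * len(p_clusters))))
    source_l = set(rng.sample(l_clusters, round(frac * len(l_clusters))))

    source, target = [], []
    for prot, lig in pairs:
        in_p = protein_cluster[prot] in source_p
        in_l = ligand_cluster[lig] in source_l
        if in_p and in_l:
            source.append((prot, lig))
        elif not in_p and not in_l:
            target.append((prot, lig))
    return source, target

# Toy data: five proteins and five ligands, each in its own cluster, all pairs measured.
protein_cluster = {f"p{i}": i for i in range(5)}
ligand_cluster = {f"l{i}": i for i in range(5)}
pairs = [(p, l) for p in protein_cluster for l in ligand_cluster]

source, target = cluster_pair_split(pairs, protein_cluster, ligand_cluster)
print(len(source), len(target))
```

With 60% of five clusters sampled on each side, 3 x 3 pairs land in the source domain and 2 x 2 in the target domain, and no protein or ligand appears in both, which is the property that simulates domain shift.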

Visualization Framework

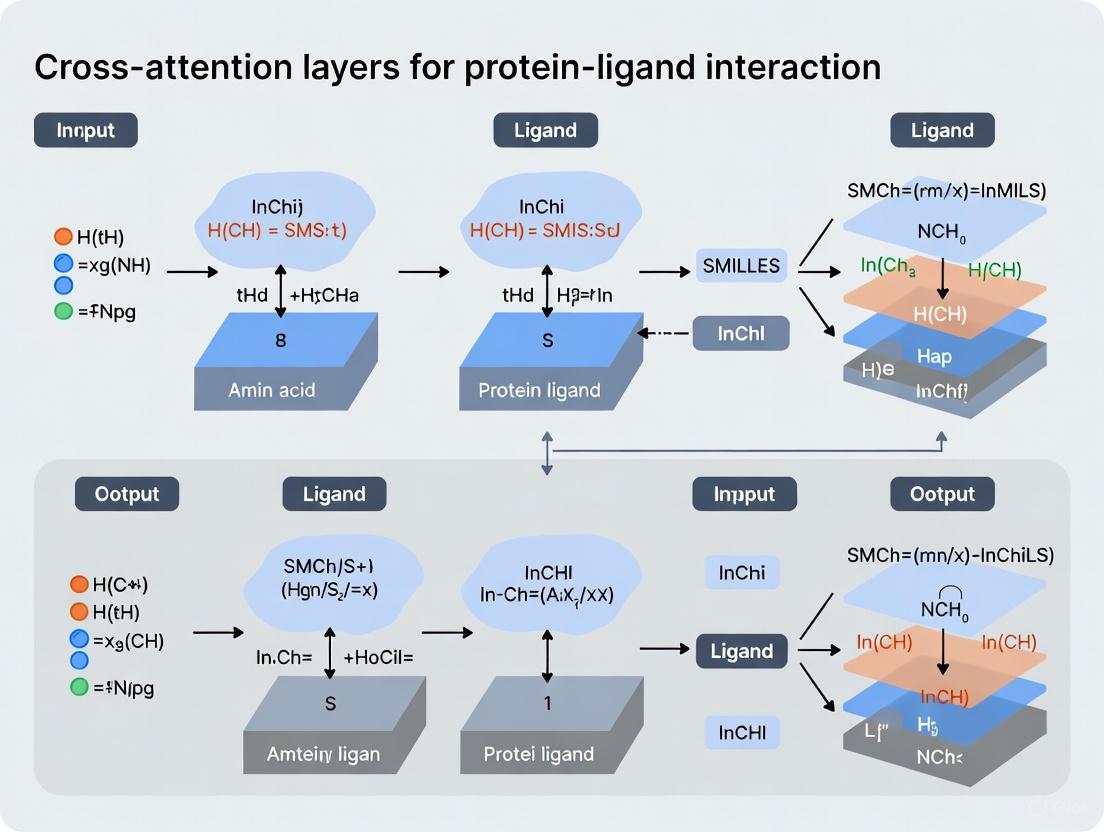

[Workflow diagram: LABind Cross-Attention Workflow]

[Architecture diagram: Cross-Attention Mechanism Architecture]

Research Reagent Solutions

Table 3: Essential computational tools and resources for cross-attention implementation

| Resource | Type | Application | Access |
|---|---|---|---|
| ProtBERT | Protein Language Model | Generating contextual residue embeddings from protein sequences | HuggingFace Model Hub |
| Ankh | Protein Language Model | Sequence representation in LABind | OpenSource |
| MolFormer | Molecular Language Model | Ligand representation from SMILES strings | NVIDIA NGC Catalog |
| ESMFold/OmegaFold | Structure Prediction | Generating 3D structures from sequences when experimental structures unavailable | OpenSource |
| DSSP | Structural Feature Tool | Calculating secondary structure and solvent accessibility | GitHub Repository |
| PDBbind | Benchmark Dataset | Training and evaluation for affinity prediction | Public Database |
| Gene Ontology | Knowledge Base | Biochemical knowledge integration in KEPLA | Public Database |
| RDKit | Cheminformatics | Molecular descriptor calculation and SMILES processing | OpenSource |

Implementation Considerations

Data Preparation Guidelines

Successful implementation of cross-attention models requires careful data preparation. For protein inputs, ensure consistent preprocessing of 3D structures, including proper hydrogen addition and residue numbering alignment. For ligand inputs, standardize SMILES representation using tools like RDKit to avoid representation variances. When working with binding affinity data, carefully curate the dataset to remove ambiguous complexes and ensure consistent measurement types (Kd, Ki, IC50). Implement rigorous data splitting strategies, such as cluster-based splits that separate proteins and ligands by similarity to prevent data leakage and properly evaluate generalization capability [11].
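One part of this curation, putting affinity labels on a common scale and filtering by measurement type, can be sketched as follows. The conversion p = -log10(concentration in mol/L) is standard; the decision to keep only Kd and Ki and to drop IC50 records is an illustrative policy chosen here (IC50 values depend on assay conditions), and the record layout and identifiers are hypothetical.

```python
import math

UNIT_TO_MOLAR = {"M": 1.0, "mM": 1e-3, "uM": 1e-6, "nM": 1e-9, "pM": 1e-12}

def to_p_value(value, unit):
    """Convert a Kd/Ki/IC50 concentration to -log10 of its molar value."""
    if value <= 0:
        raise ValueError("concentration must be positive")
    return -math.log10(value * UNIT_TO_MOLAR[unit])

# Hypothetical raw records with mixed measurement types and units.
records = [
    {"id": "cplx_a", "type": "Kd", "value": 10.0, "unit": "nM"},
    {"id": "cplx_b", "type": "Ki", "value": 2.5, "unit": "uM"},
    {"id": "cplx_c", "type": "IC50", "value": 50.0, "unit": "nM"},
]

keep_types = {"Kd", "Ki"}  # illustrative policy: exclude IC50 measurements
curated = [
    dict(rec, p_affinity=to_p_value(rec["value"], rec["unit"]))
    for rec in records
    if rec["type"] in keep_types
]
for rec in curated:
    print(rec["id"], rec["type"], round(rec["p_affinity"], 3))
```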

Computational Requirements and Optimization

Cross-attention models are computationally intensive, particularly for large protein complexes or high-throughput screening. Recommended implementation includes GPU acceleration with at least 16GB VRAM for training, and batch size optimization to balance memory constraints and training stability. For attention computation, consider implementing memory-efficient variants such as factored attention or block-sparse patterns when working with very large inputs. Monitoring attention entropy during training can help identify collapsed attention heads that may require reinitialization or regularization.
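Attention-entropy monitoring can be sketched in a few lines of NumPy. The code below computes the mean Shannon entropy of each head's attention distributions and flags heads whose entropy falls below a fraction of the maximum possible value. The 10% cut-off and the function names are assumptions for illustration, and "collapsed" is taken here to mean near one-hot attention.

```python
import numpy as np

def attention_entropy(weights, eps=1e-12):
    """Mean entropy (nats) per head; weights has shape (heads, n_query, n_key)."""
    w = np.clip(weights, eps, 1.0)          # avoid log(0)
    entropy = -(w * np.log(w)).sum(axis=-1)  # (heads, n_query)
    return entropy.mean(axis=-1)

def flag_collapsed_heads(weights, frac=0.1):
    """Flag heads whose mean entropy is below frac * log(n_key)."""
    max_entropy = np.log(weights.shape[-1])
    return attention_entropy(weights) < frac * max_entropy

# Two heads, two queries, four keys: head 0 is uniform, head 1 is near one-hot.
uniform = np.full((2, 4), 0.25)
peaked = np.tile(np.array([0.997, 0.001, 0.001, 0.001]), (2, 1))
weights = np.stack([uniform, peaked])

entropies = attention_entropy(weights)
flags = flag_collapsed_heads(weights)
print(entropies.round(4), flags)
```

Logging these per-head values every few hundred steps is cheap, since the attention weights are already computed in the forward pass.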

Interpretation and Validation Strategies

The cross-attention weights provide inherent interpretability, but require careful analysis. Implement attention visualization tools to map attention patterns onto 3D structures, identifying potential binding hotspots. Validate predictions through multiple metrics beyond overall accuracy, including performance on specific ligand classes and statistical significance testing. For binding site predictions, complement computational validation with experimental literature evidence when available, and consider employing ensemble methods to improve robustness across diverse protein families and ligand types.

In the field of computational drug discovery, accurately predicting how small molecules (ligands) interact with protein targets is a fundamental challenge. Traditional methods often struggle to capture the complex, long-range dependencies that govern these interactions, where atoms distant in sequence can be spatially close and critical for binding. Cross-attention mechanisms, a core component of modern transformer architectures, are emerging as a powerful solution to this challenge. These mechanisms allow for direct, dynamic communication between all elements of a protein and all elements of a ligand, enabling models to identify and weigh the importance of specific inter-molecular relationships regardless of their positional separation. This application note details how cross-attention is revolutionizing protein-ligand interaction research by capturing these non-local dependencies, providing researchers with protocols, data, and tools for implementation.

Quantitative Superiority of Cross-Attention Models

Cross-attention-based models have demonstrated state-of-the-art performance across multiple benchmarks related to protein-ligand interactions, from predicting binding affinity to identifying binding sites.

Table 1: Performance of Cross-Attention Models on Binding Affinity Prediction (CASF-2016 Benchmark)

| Model | Core Principle | Pearson's R (↑) | RMSE (↓) | MAE (↓) | CI (↑) |
|---|---|---|---|---|---|
| DAAP [13] | Distance features + Attention | 0.909 | 0.987 | 0.745 | 0.876 |
| PLAGCA [14] | Graph Cross-Attention | 0.864 | 1.120 | 0.860 | 0.847 |
| LumiNet [15] | Physics-integrated GNN | 0.850 | - | - | - |

Table 2: Performance of Cross-Attention Models on Binding Site Prediction

| Model | Task | Key Metric | Performance |
|---|---|---|---|
| LABind [7] [10] | Ligand-aware Binding Site Prediction | AUPR | Superior to P2Rank, DeepSurf, and DeepPocket |
| EZSpecificity [16] | Enzyme Substrate Specificity | Identification Accuracy | 91.7% (vs. 58.3% for previous model) |

The DAAP (Distance plus Attention for Affinity Prediction) model highlights the value of combining physics-inspired distance features with an attention mechanism, achieving a Pearson correlation coefficient of 0.909 on the standard CASF-2016 benchmark [13]. Similarly, PLAGCA integrates global sequence features with local 3D structural features via graph cross-attention and is reported to generalize better at lower computational cost than the methods it was compared against [14]. For binding site identification, LABind uses a graph transformer and cross-attention to learn distinct binding characteristics from protein structures and ligand SMILES sequences, enabling it to predict sites even for unseen ligands [7] [10].
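The four metrics reported in the affinity table above can be computed directly from experimental and predicted affinities. The sketch below is a minimal, dependency-free illustration; the function names and the toy values are assumptions made for this example and are not taken from the cited benchmarks. The concordance index here counts tied predictions as half-concordant, which is one common convention.

```python
import math

def pearson_r(y_true, y_pred):
    """Pearson correlation between experimental and predicted affinities."""
    n = len(y_true)
    mean_t = sum(y_true) / n
    mean_p = sum(y_pred) / n
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred))
    var_t = sum((t - mean_t) ** 2 for t in y_true)
    var_p = sum((p - mean_p) ** 2 for p in y_pred)
    return cov / math.sqrt(var_t * var_p)

def rmse(y_true, y_pred):
    """Root mean square error."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def concordance_index(y_true, y_pred):
    """Fraction of comparable pairs whose predicted order matches the true order."""
    concordant, comparable = 0.0, 0
    n = len(y_true)
    for i in range(n):
        for j in range(i + 1, n):
            if y_true[i] == y_true[j]:
                continue  # pairs with equal true affinity are not comparable
            comparable += 1
            d_true = y_true[i] - y_true[j]
            d_pred = y_pred[i] - y_pred[j]
            if d_pred == 0:
                concordant += 0.5  # tied prediction counts as half
            elif (d_true > 0) == (d_pred > 0):
                concordant += 1.0
    return concordant / comparable

# Toy pKd values (hypothetical, for illustration only).
experimental = [4.0, 5.0, 6.0, 7.0]
predicted = [4.5, 5.5, 6.5, 7.5]
report = {
    "R": pearson_r(experimental, predicted),
    "RMSE": rmse(experimental, predicted),
    "MAE": mae(experimental, predicted),
    "CI": concordance_index(experimental, predicted),
}
```

Note that a constant offset, as in the toy data, leaves R and CI perfect while RMSE and MAE are non-zero, which is why benchmark tables usually report both kinds of metric.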

Experimental Protocols for Cross-Attention Implementation

Protocol A: Implementing a Graph Cross-Attention Workflow for Affinity Prediction (Based on PLAGCA)

This protocol outlines the procedure for predicting protein-ligand binding affinity by integrating global and local features with cross-attention [14].

1. Input Representation and Feature Extraction:
   - Protein Global Features: Input the protein's FASTA sequence. Use a self-attention block or a pre-trained protein language model (e.g., Ankh [7]) to generate a global feature representation of the entire protein sequence.
   - Ligand Global Features: Input the ligand's SMILES string. Use a self-attention block or a pre-trained molecular language model (e.g., MolFormer [7]) to generate a global feature representation of the ligand.
   - Local Structure Representation:
     - Generate the 3D structure of the protein's binding pocket and the ligand.
     - Represent the pocket and ligand as a molecular graph, where nodes are atoms/residues and edges represent bonds or spatial proximity.
     - Use a Graph Neural Network (GNN) to generate initial atomic-level embeddings for both molecules.

2. Feature Interaction via Graph Cross-Attention:
   - Input the protein pocket and ligand graph embeddings into a cross-attention module.
   - In this module, the ligand embeddings serve as the Query, and the protein pocket embeddings serve as the Key and Value (or vice-versa). This allows each ligand atom to attend to and aggregate relevant information from all protein pocket atoms.
   - The output is a refined ligand representation that is context-aware of the protein pocket's structure.

3. Feature Fusion and Prediction:
   - Concatenate the protein global features, ligand global features, and the refined local interaction features from the cross-attention module.
   - Feed the combined feature vector into a Multi-Layer Perceptron (MLP) regressor.
   - The final output is the predicted binding affinity (e.g., pKd, pKi).
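Steps 2 and 3 of Protocol A can be sketched in a few lines of NumPy. This is an illustrative sketch under stated assumptions, not the PLAGCA implementation: the embeddings and weights are random placeholders, the dimensions are arbitrary, mean pooling over ligand atoms is an assumed readout, and the MLP has a single hidden layer.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def cross_attention(query_feats, context_feats, w_q, w_k, w_v):
    """Scaled dot-product cross-attention: queries from one molecule,
    keys and values from the other."""
    q = query_feats @ w_q
    k = context_feats @ w_k
    v = context_feats @ w_v
    scores = q @ k.T / np.sqrt(q.shape[-1])
    weights = softmax(scores, axis=-1)   # one distribution over context nodes per query
    return weights @ v, weights

rng = np.random.default_rng(0)
d_in, d_attn = 16, 8                               # assumed embedding sizes
ligand_atoms = rng.normal(size=(5, d_in))          # stand-in for GNN ligand embeddings
pocket_atoms = rng.normal(size=(12, d_in))         # stand-in for GNN pocket embeddings
w_q, w_k, w_v = (rng.normal(size=(d_in, d_attn)) * 0.1 for _ in range(3))

# Step 2: each ligand atom (Query) attends to all pocket atoms (Key/Value).
refined_ligand, attn = cross_attention(ligand_atoms, pocket_atoms, w_q, w_k, w_v)

# Step 3: concatenate global and local features, then regress affinity.
protein_global = rng.normal(size=32)               # stand-in for sequence-encoder output
ligand_global = rng.normal(size=32)                # stand-in for SMILES-encoder output
local_interaction = refined_ligand.mean(axis=0)    # assumed mean-pool readout
fused = np.concatenate([protein_global, ligand_global, local_interaction])

w1 = rng.normal(size=(fused.size, 16)) * 0.1       # untrained MLP weights
w2 = rng.normal(size=16) * 0.1
hidden = np.maximum(fused @ w1, 0.0)               # ReLU hidden layer
predicted_affinity = float(hidden @ w2)            # e.g. a pKd estimate after training
```

Swapping the first two arguments of `cross_attention` gives the "vice-versa" direction, in which pocket atoms query the ligand.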

Protocol B: Ligand-Aware Binding Site Prediction with Cross-Attention (Based on LABind)

This protocol describes a method for predicting which protein residues form a binding site for a specific small molecule or ion [7] [10].

1. Input Encoding:
   - Ligand Encoding: Input the SMILES sequence of the ligand into a pre-trained molecular language model (MolFormer) to obtain a comprehensive ligand representation.
   - Protein Encoding:
     - Sequence Features: Input the protein sequence into a pre-trained protein language model (Ankh) to obtain per-residue embeddings.
     - Structural Features: Process the protein's 3D structure with a tool like DSSP to obtain geometric features (e.g., angles, distances, solvent accessibility).
     - Graph Construction: Convert the protein structure into a graph where nodes are residues. Node features are a combination of sequence embeddings and DSSP features. Edge features include spatial distances and directions between residues.

2. Protein-Ligand Interaction with Cross-Attention:
   - Process the protein graph through a graph transformer to capture internal residue-residue relationships and binding patterns.
   - The ligand representation and the transformed protein residue representations are processed through a cross-attention mechanism.
   - This mechanism enables the protein residues to "query" the ligand representation, learning the distinct binding characteristics for that specific ligand.

3. Binding Site Classification:
   - The output representation for each residue, now enriched with protein-ligand interaction information, is fed into an MLP classifier.
   - The classifier predicts a probability for each residue, indicating its likelihood of being part of a binding site for the query ligand.
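Steps 2 and 3 of Protocol B, where residues query the ligand and each residue is then classified, might look roughly like the following. This is a hedged sketch rather than LABind's code: the parameter names, the dimensions, the residual connection, and the single linear classifier are all assumptions, and the random arrays stand in for Ankh, DSSP, and MolFormer features.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def ligand_aware_site_probabilities(residue_feats, ligand_tokens, params):
    """Residues act as queries over ligand tokens; the enriched residue
    vectors are scored one by one by a linear classifier."""
    q = residue_feats @ params["w_q"]                  # (n_res, d)
    k = ligand_tokens @ params["w_k"]                  # (n_tok, d)
    v = ligand_tokens @ params["w_v"]                  # (n_tok, d)
    attn = softmax(q @ k.T / np.sqrt(q.shape[-1]), axis=-1)
    enriched = q + attn @ v                            # residual connection (assumption)
    return sigmoid(enriched @ params["w_out"])         # (n_res,) binding probabilities

rng = np.random.default_rng(1)
d_res, d_lig, d = 24, 12, 8                            # assumed feature sizes
params = {
    "w_q": rng.normal(size=(d_res, d)) * 0.3,
    "w_k": rng.normal(size=(d_lig, d)) * 0.3,
    "w_v": rng.normal(size=(d_lig, d)) * 0.3,
    "w_out": rng.normal(size=d) * 0.3,
}
residues = rng.normal(size=(50, d_res))                # stand-in per-residue features
ligand_a = rng.normal(size=(20, d_lig))                # stand-in token embeddings, ligand A
ligand_b = rng.normal(size=(9, d_lig))                 # stand-in token embeddings, ligand B

probs_a = ligand_aware_site_probabilities(residues, ligand_a, params)
probs_b = ligand_aware_site_probabilities(residues, ligand_b, params)
```

Because the ligand enters through the keys and values, the same protein yields different per-residue probabilities for different ligands, which is the sense in which the prediction is ligand-aware.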

Visualizing Workflows and Architectures

Graph 1: Hierarchical Workflow for Affinity Prediction. This diagram illustrates the integration of global sequence features and local 3D structural features through a cross-attention mechanism, as seen in models like PLAGCA [14] and LABind [7].

Graph 2: Core Cross-Attention Architecture. This diagram details the core cross-attention mechanism where the ligand representation queries the protein context, enabling ligand-aware prediction of binding sites, a key feature of LABind [7] [10].

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Research Reagent Solutions for Cross-Attention Research

| Item Name | Function/Application | Specific Examples |
|---|---|---|
| PDBbind Database | Provides curated experimental protein-ligand structures and binding affinities for training and benchmarking. | PDBbind v2016, v2020 [13] [14] |
| CASF Benchmark | Standardized benchmark set for rigorous evaluation of scoring power (affinity prediction). | CASF-2016 [13] [15] |
| Pre-trained Language Models | Provides rich, contextualized initial representations for proteins and ligands, boosting model performance. | Ankh (Protein), MolFormer (Ligand) [7] [10] |
| Graph Neural Network (GNN) Libraries | Framework for building models that operate directly on molecular graph structures. | PyTorch Geometric, Deep Graph Library (DGL) [17] [15] |
| Structure Analysis Tools | Extracts secondary structure and solvent accessibility features from protein 3D structures. | DSSP [7] [10] |
| Cross-Attention Implementation | The core algorithmic component that models interactions between protein and ligand representations. | Custom modules in PyTorch/TensorFlow [17] [14] |

The Transition from Sequence-Based to Interaction-Aware Models

The field of computational biology is undergoing a significant paradigm shift, moving from models that analyze biomolecular sequences in isolation to those that explicitly capture the intricate interactions between molecular entities. This transition is particularly transformative in protein-ligand interaction research, where accurately predicting binding affinity and docking poses is crucial for drug discovery. Traditional sequence-based models, which process protein and ligand information through separate encoders, have demonstrated limitations in generalizability and predictive accuracy because they fail to capture the complex, dynamic interactions that occur at the binding interface [12] [18].

The integration of cross-attention layers represents a cornerstone of this evolution, enabling models to learn the conditional relationships between protein residues and ligand atoms directly from data. These attention mechanisms allow for the creation of interaction-aware models that can identify specific non-covalent bonds, such as hydrogen bonds and hydrophobic interactions, which are critical for understanding binding mechanisms and predicting drug efficacy [18]. This application note details this methodological transition, provides experimental protocols for implementing interaction-aware models, and highlights the superior performance of these approaches through quantitative benchmarks.

The Paradigm Shift: From Sequential to Interaction-Aware Modeling

Limitations of Sequence-Based Models

Traditional sequence-based models for protein-ligand interaction have primarily relied on processing protein sequences (e.g., via FASTA) and ligand information (e.g., via SMILES strings) through separate, parallel encoders [12]. These encoders typically utilize convolutional neural networks (CNNs) or recurrent neural networks (RNNs) to extract global features from each molecule independently. The extracted features are then concatenated and passed to a final classifier or regression head to predict binding affinity or other properties.

The fundamental limitation of this architecture is its inability to model intermolecular interactions. By processing protein and ligand features in separate silos, these models lack a dedicated mechanism to identify which protein residues interact with which ligand atoms, or to capture the specific physicochemical nature of these interactions [18]. This often results in models that learn superficial correlations from the training data rather than the underlying binding mechanisms, leading to poor generalization on unseen protein-ligand pairs [12].

The Rise of the Interaction-Aware Paradigm

Interaction-aware models address these limitations by architecturally prioritizing the modeling of inter-molecular relationships. The core innovation is the use of cross-attention mechanisms that allow features from the protein and ligand to dynamically interact and influence each other during the computation of representations.

In this paradigm, the model learns to:

- Attend to relevant protein residues given a specific ligand atom, and vice versa.

- Weight the importance of different potential interactions.

- Integrate this interaction information directly into the molecular representations.

This approach is biologically grounded, as it mirrors the actual process of binding where local and specific interactions collectively determine the binding affinity and pose [18]. Models like Interformer and PLAGCA exemplify this shift, employing graph-transformers and cross-attention layers to explicitly model non-covalent interactions, thereby achieving new state-of-the-art performance in docking and affinity prediction tasks [18] [12].

Architectural Implementation of Cross-Attention Mechanisms

Graph-Transformer Hybrid Architectures

The Graph-Transformer architecture has emerged as a powerful framework for interaction-aware modeling, as demonstrated by the Interformer model [18]. This hybrid design effectively captures both the local connectivity within molecules and the global dependencies between them.

Table 1: Core Components of a Graph-Transformer for Protein-Ligand Interaction

| Component | Function | Implementation in Interformer |
|---|---|---|
| Input Representation | Represents protein binding site and ligand as graphs. | Nodes: atoms; Features: pharmacophore types. Edges: based on Euclidean distance [18]. |
| Intra-Blocks | Updates node features by capturing intra-molecular interactions (within protein or ligand). | Self-attention layers that operate on individual molecular graphs [18]. |
| Inter-Blocks | Captures inter-molecular interactions between protein and ligand atom pairs. | Cross-attention layers where one molecule's nodes attend to the other's, generating an "Inter-representation" [18]. |
| Interaction-Aware MDN | Models the conditional probability of distances for atom pairs, focusing on specific interactions. | Uses mixture density network (MDN) with Gaussian functions to model hydrogen bonds and hydrophobic interactions explicitly [18]. |

The following diagram illustrates the flow of information in a Graph-Transformer architecture like Interformer:

Figure 1: Graph-Transformer Architecture for Docking and Affinity Prediction. Intra-Blocks process individual molecules, while the Inter-Block uses cross-attention to model their interactions.

Hierarchical Cross-Attention for Binding Affinity Prediction

The PLAGCA and CheapNet models showcase another effective pattern: using hierarchical representations with cross-attention for the specific task of binding affinity prediction [12] [19]. These models integrate multiple levels of molecular information to achieve robust performance.

- Global Feature Extraction: PLAGCA uses sequence encoding and self-attention to extract global features from protein FASTA sequences and ligand SMILES strings [12].

- Local Interaction Feature Extraction: Simultaneously, it employs graph neural networks (GNNs) to extract local, 3D structural features from the protein binding pocket and the ligand [12].

- Feature Integration via Cross-Attention: A cross-attention mechanism is applied to these hierarchical representations, allowing the model to focus on the most relevant local features conditioned on the global context, and vice versa. The final prediction is made by a multi-layer perceptron (MLP) on the concatenated features [12].

CheapNet refines this concept by introducing cluster-level cross-attention. It generates hierarchical cluster-level representations from atom-level embeddings via differentiable pooling, which efficiently captures essential higher-order interactions that are critical for accurate binding affinity prediction while maintaining computational efficiency [19].
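The cluster-level idea can be illustrated with a soft assignment matrix in the style of differentiable pooling, followed by cross-attention between the pooled clusters. This is a simplified sketch, not CheapNet's code: in a trained model the assignment logits come from a learned network, whereas here they are random placeholders, and the cluster counts and feature size are arbitrary assumptions.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def soft_pool(atom_feats, assign_logits):
    """Differentiable pooling step: each atom is softly assigned to clusters
    (rows of S sum to 1) and cluster features are S^T X."""
    s = softmax(assign_logits, axis=-1)        # (n_atoms, n_clusters)
    return s.T @ atom_feats                    # (n_clusters, d)

def cross_attend(query_feats, context_feats):
    """Parameter-free scaled dot-product cross-attention, kept minimal."""
    d = query_feats.shape[-1]
    weights = softmax(query_feats @ context_feats.T / np.sqrt(d), axis=-1)
    return weights @ context_feats, weights

rng = np.random.default_rng(0)
d = 16
protein_atoms = rng.normal(size=(200, d))      # stand-in atom-level embeddings
ligand_atoms = rng.normal(size=(30, d))

# Placeholder assignment logits; a trained model would predict these.
protein_clusters = soft_pool(protein_atoms, rng.normal(size=(200, 8)))
ligand_clusters = soft_pool(ligand_atoms, rng.normal(size=(30, 4)))

# Ligand clusters attend to protein clusters.
ligand_context, attn = cross_attend(ligand_clusters, protein_clusters)

atom_level_pairs = ligand_atoms.shape[0] * protein_atoms.shape[0]   # 30 * 200
cluster_level_pairs = attn.size                                     # 4 * 8
```

Comparing `atom_level_pairs` with `cluster_level_pairs` shows where the efficiency gain comes from: the attention matrix shrinks from one entry per atom pair to one entry per cluster pair.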

Quantitative Performance Benchmarks

The transition to interaction-aware models is quantitatively justified by their superior performance on established benchmarks for docking accuracy and binding affinity prediction.

Table 2: Performance Comparison of Interaction-Aware Models on Docking Tasks

| Model | Architecture | Benchmark | Performance (Top-1 Success Rate, RMSD < 2 Å) |
|---|---|---|---|
| Interformer [18] | Graph-Transformer + Interaction-Aware MDN | PDBBind Time-Split | 63.9% |
| DiffDock [18] | GNN-based | PDBBind Time-Split | 53.6% |
| GNINA [18] | CNN-based | PDBBind Time-Split | 22.3% |
| Interformer [18] | Graph-Transformer + Interaction-Aware MDN | PoseBusters Benchmark | 84.09% |
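The success-rate metric in the docking comparison above counts a complex as a hit when its top-ranked pose lies within 2 Å RMSD of the crystal pose. The following sketch shows that calculation on toy coordinates. It assumes predicted and reference atoms are listed in the same order and applies no symmetry correction, which benchmark tooling normally handles; the function names are illustrative.

```python
import math

def rmsd(pred_coords, ref_coords):
    """Heavy-atom RMSD between two poses with identical atom ordering."""
    squared = sum(
        (p - r) ** 2
        for atom_p, atom_r in zip(pred_coords, ref_coords)
        for p, r in zip(atom_p, atom_r)
    )
    return math.sqrt(squared / len(ref_coords))

def top1_success_rate(top1_poses, reference_poses, threshold=2.0):
    """Fraction of complexes whose top-ranked pose is within `threshold` Å."""
    hits = sum(
        rmsd(pred, ref) < threshold
        for pred, ref in zip(top1_poses, reference_poses)
    )
    return hits / len(reference_poses)

# Toy two-atom ligand (hypothetical coordinates in Å).
crystal = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0)]
near_native = [(x + 0.5, y, z) for x, y, z in crystal]   # shifted by 0.5 Å
misplaced = [(x + 3.0, y, z) for x, y, z in crystal]     # shifted by 3.0 Å

rate = top1_success_rate([near_native, misplaced], [crystal, crystal])
```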

Table 3: Performance of Interaction-Aware Models on Affinity Prediction

| Model | Architecture | Key Feature | Performance |
|---|---|---|---|
| PLAGCA [12] | GNN + Cross-Attention | Integrates global sequence and local 3D graph features | Outperforms state-of-the-art methods, superior generalization |
| CheapNet [19] | Hierarchical Cross-Attention | Atom-level and cluster-level interactions | State-of-the-art across multiple affinity prediction tasks |

Experimental Protocols

Protocol 1: Training an Interaction-Aware Docking Model

This protocol outlines the procedure for training a model like Interformer for protein-ligand docking and pose scoring.

A. Input Preparation and Featurization

- Data Source: Obtain protein-ligand complexes with 3D structures from databases like PDBBind.

- Graph Construction:
  - For each complex, define a protein graph (binding site residues) and a ligand graph.
  - Node Features: Use pharmacophore atom types (e.g., hydrogen bond donor/acceptor, hydrophobic, aromatic) for both protein and ligand atoms [18].
  - Edge Features: For intra-molecular edges, use Euclidean distance between atoms. For inter-molecular edges, compute distances between all protein and ligand atom pairs within a specified cutoff (e.g., 5 Å).
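The inter-molecular edge step can be sketched with a pairwise distance matrix and a cutoff mask. This is a minimal illustration using the 5 Å example cutoff from the protocol; the function name and the toy coordinates are assumptions, and a real pipeline would read coordinates from the structure files.

```python
import numpy as np

def intermolecular_edges(protein_xyz, ligand_xyz, cutoff=5.0):
    """Return (protein_index, ligand_index, distance) for all atom pairs
    whose Euclidean distance is at most `cutoff` Å."""
    diff = protein_xyz[:, None, :] - ligand_xyz[None, :, :]   # (n_prot, n_lig, 3)
    dist = np.linalg.norm(diff, axis=-1)                      # (n_prot, n_lig)
    p_idx, l_idx = np.nonzero(dist <= cutoff)
    return p_idx, l_idx, dist[p_idx, l_idx]

# Toy coordinates in Å (hypothetical).
protein_xyz = np.array([[0.0, 0.0, 0.0], [10.0, 0.0, 0.0]])
ligand_xyz = np.array([[3.0, 0.0, 0.0], [20.0, 0.0, 0.0]])

p_idx, l_idx, edge_dist = intermolecular_edges(protein_xyz, ligand_xyz)
```

The full distance matrix costs memory proportional to the number of atom pairs, so for large binding sites a spatial index such as a k-d tree is a common alternative.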

B. Model Training Cycle

- Pre-Training (Optional): Pre-train the Intra-Blocks on large-scale molecular datasets using masked atom prediction or related self-supervised tasks.

- Supervised Docking Training:
  - Feed the featurized protein and ligand graphs into the model.
  - The Intra-Blocks process each graph independently to generate refined atom representations.
  - The Inter-Block performs cross-attention between the protein and ligand representations.
  - The Interaction-Aware MDN predicts parameters for multiple Gaussian distributions to model distances for different interaction types (general, hydrophobic, hydrogen bond) [18].
  - The loss function is a negative log-likelihood loss against the true distances from crystal structures.

- Pose Scoring and Affinity Prediction Head Training:
  - Use the generated docking poses (from Monte Carlo sampling using the predicted energy function) as input.
  - A virtual node collects information from the pose via a final self-attention layer.
  - This virtual node's embedding is used to predict a confidence (pose) score and a binding affinity value (e.g., pIC50) [18].
  - Employ a contrastive pseudo-Huber loss, which uses both good (native-like) and poor (decoy) poses to teach the model to discriminate between them [18].
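The negative log-likelihood objective of the supervised docking step can be written compactly for a generic Gaussian mixture over pair distances. The sketch below is a simplified illustration, not Interformer's loss: it uses a single mixture per atom pair and does not separate the general, hydrophobic, and hydrogen-bond terms; the array shapes and the toy values are assumptions.

```python
import numpy as np

def mdn_nll(true_dist, pi_logits, mu, sigma):
    """Mean negative log-likelihood of observed distances under a Gaussian mixture.

    true_dist : (n_pairs,)            crystal-structure distances
    pi_logits : (n_pairs, n_comp)     unnormalised mixture weights
    mu, sigma : (n_pairs, n_comp)     component means and standard deviations
    """
    # Log-softmax over components gives the log mixture weights.
    log_pi = pi_logits - np.logaddexp.reduce(pi_logits, axis=-1, keepdims=True)
    z = (true_dist[:, None] - mu) / sigma
    log_gauss = -0.5 * z ** 2 - np.log(sigma) - 0.5 * np.log(2.0 * np.pi)
    # Log-sum-exp over components keeps the computation numerically stable.
    log_prob = np.logaddexp.reduce(log_pi + log_gauss, axis=-1)
    return float(-log_prob.mean())

rng = np.random.default_rng(0)
true_dist = np.array([2.9, 3.8, 4.5])                       # Å, toy values
pi_logits = rng.normal(size=(3, 3))                         # placeholder network outputs
mu = np.abs(rng.normal(loc=3.5, scale=0.5, size=(3, 3)))
sigma = np.full((3, 3), 0.5)

loss = mdn_nll(true_dist, pi_logits, mu, sigma)
```

With one component centred exactly on the observed distance and unit variance, the loss reduces to 0.5·ln(2π), which is a convenient sanity check when implementing the same objective in a deep learning framework.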

Protocol 2: Assessing Binding Affinity with Cross-Attention

This protocol describes the methodology for training a model like PLAGCA or CheapNet for predicting protein-ligand binding affinity.

A. Multi-Modal Input Processing

- Sequence-Based Inputs:
  - Encode the protein primary sequence from its FASTA format into a one-hot or embedding matrix.
  - Encode the ligand's SMILES string into a sequential representation.

- Structure-Based Inputs:
  - Extract or define the 3D binding pocket from the protein structure.
  - Represent the pocket and the ligand as 3D graphs where nodes are atoms and edges are based on spatial proximity.
  - Use atom features like element type, charge, and hybridization state.
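The sequence-based inputs can be encoded with very little code. The sketch below shows a one-hot protein encoding and a character-level SMILES encoding. Both are simplifying assumptions for illustration: non-standard residues map to an all-zero row, and character-level tokenization splits two-letter atoms such as `Cl` or `Br`, which production tokenizers treat as single tokens.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"   # the 20 standard residues

def one_hot_protein(sequence):
    """Encode a protein sequence as a list of 20-dimensional one-hot rows.
    Unknown or non-standard residues become all-zero rows."""
    index = {aa: i for i, aa in enumerate(AMINO_ACIDS)}
    matrix = []
    for residue in sequence.upper():
        row = [0] * len(AMINO_ACIDS)
        if residue in index:
            row[index[residue]] = 1
        matrix.append(row)
    return matrix

def encode_smiles(smiles, vocab=None):
    """Map each SMILES character to an integer id (0 is reserved for padding).
    The vocabulary grows as new characters are seen and is returned for reuse."""
    if vocab is None:
        vocab = {}
    ids = [vocab.setdefault(ch, len(vocab) + 1) for ch in smiles]
    return ids, vocab

protein_matrix = one_hot_protein("MKTAYIAK")          # toy sequence
ligand_ids, smiles_vocab = encode_smiles("CC(=O)O")   # acetic acid
```

Passing the returned `smiles_vocab` back into later calls keeps token ids consistent across a dataset.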

B. Hierarchical Feature Integration and Prediction

- Global Feature Extraction: Process the protein and ligand sequences through separate encoders (e.g., CNNs or Transformers) to get global feature vectors [12].

- Local Feature Extraction: Process the protein pocket and ligand graphs through a GNN to get a set of local, atom-level features [12] [19].

- Cross-Attention Fusion:
  - Apply a cross-attention layer where the global protein features are the query, and the local ligand features are the key and value (and vice versa). This allows the model to identify which local ligand features are most relevant given the global protein context.
  - In CheapNet, an additional cross-attention step is performed on hierarchically pooled cluster-level representations to capture higher-order interactions [19].

- Affinity Prediction: Concatenate the final global and local, interaction-aware representations. Feed this combined feature vector into an MLP regressor to predict the binding affinity value [12].

The workflow for this protocol is summarized below:

Figure 2: Binding Affinity Prediction Workflow. The model integrates global sequence information and local 3D structural features via cross-attention.

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Computational Tools and Datasets for Interaction-Aware Research

| Resource Name | Type | Function/Purpose | Relevance to Interaction-Aware Models |
|---|---|---|---|
| PDBBind [18] | Dataset | Curated database of protein-ligand complexes with 3D structures and binding affinity data. | Primary source for training and benchmarking docking and affinity prediction models. |
| PoseBusters Benchmark [18] | Benchmark | Evaluates physical plausibility and correctness of docking poses. | Critical for validating the real-world performance of docking models like Interformer. |
| ESM-2 [20] | Pre-trained Model | Protein Language Model that generates embeddings from amino acid sequences. | Can be used to initialize protein feature encoders, providing evolutionarily informed input representations. |
| Monte Carlo (MC) Sampling [18] | Algorithm | A method for sampling conformational space by making random changes and accepting them based on an energy function. | Used in the docking pipeline (e.g., in Interformer) to generate candidate ligand poses by minimizing a model-predicted energy function. |
| Differentiable Pooling [19] | Algorithm | A method for hierarchically coarsening graph representations in a way that maintains differentiability for gradient-based learning. | Used in models like CheapNet to efficiently generate cluster-level features from atom-level graphs. |
| Spectral-Normalized Neural Gaussian Process (SNGP) [21] | Method | Enhances a model's ability to provide uncertainty estimates for its predictions. | Can be integrated to identify out-of-distribution samples and improve model reliability, though not yet common in interaction-aware models. |

Architectural Deep Dive: Implementing Cross-Attention for Specific Prediction Tasks

Accurately predicting the binding affinity between a protein and a small molecule (ligand) is a cornerstone of structure-based drug discovery, as it quantifies the strength of the protein-ligand interaction and helps in ranking candidate drugs [22]. Traditional computational methods, ranging from molecular dynamics simulations to machine learning-based scoring functions, often face a trade-off between computational overhead and prediction accuracy [22] [23]. Recently, deep learning models have emerged as powerful tools capable of automatically learning complex patterns from protein and ligand data without relying heavily on domain-specific feature engineering [22] [14].

A significant architectural innovation in this domain is the adoption of the cross-attention mechanism. Unlike models that process protein and ligand features in isolation, cross-attention explicitly models the mutual interactions between amino acids in a protein and atoms in a ligand [14]. This allows the model to identify and weigh which specific parts of the protein are most influenced by which parts of the ligand, and vice versa, leading to a more nuanced and physically meaningful representation of the binding interaction [19] [14]. This document details the application and protocols for several state-of-the-art architectures that utilize cross-attention, namely EBA, CheapNet, and PLAGCA, providing a framework for their implementation in drug discovery research.

Comparative Analysis of Architectures

The following table summarizes the core characteristics, strengths, and performance metrics of the key architectures discussed in this protocol.

Table 1: Comparative Analysis of Protein-Ligand Binding Affinity Prediction Architectures

| Architecture | Core Innovation | Input Features | Key Mechanism | Reported Performance (Benchmark) |
|---|---|---|---|---|
| EBA (Ensemble Binding Affinity) [22] | Ensemble of 13 deep learning models | Combinations of 5 simple 1D sequential and structural features | Self-attention & cross-attention layers; model ensembling | CASF-2016: R=0.914, RMSE=0.957 [22] |
| CheapNet [19] [24] | Hierarchical cluster-level interactions | Molecular structures (3D) | Cross-attention between protein and ligand clusters | State-of-the-art across multiple tasks with high efficiency [19] |
| PLAGCA [14] | Integration of global and local features | Protein sequence, ligand SMILES, and 3D pocket structure | Graph cross-attention on local pockets; self-attention on sequences | Outperforms state-of-the-art on PDBBind2016 core set and CSAR-HiQ sets [14] |
| DEAttentionDTA [25] | Dynamic word embeddings | Protein sequence, pocket sequence, ligand SMILES | Self-attention on dynamically embedded sequences | Superior results on PDBBind2020 and CASF benchmarks [25] |

Detailed Architectures and Experimental Protocols

EBA: Ensemble Binding Affinity Prediction

The EBA framework addresses the challenge of low generalization in single-model approaches by leveraging the power of model ensembling. It trains multiple deep learning models, each with different combinations of input features, and combines their predictions to achieve superior accuracy and robustness [22].

Key Components:

- Input Features: EBA uses five types of 1D sequential and structural features, including a novel angle-based feature vector for short-range direct interactions, rather than relying on elaborate 3D features of the protein-ligand complex [22].

- Model Architecture: Individual models employ both self-attention and cross-attention layers. Self-attention captures long-range interactions within a protein or ligand sequence, while cross-attention layers are specifically designed to capture the interaction between proteins and ligands [22].

- Ensemble Strategy: Thirteen models are trained on various combinations of the five input features. The final prediction is generated by aggregating the outputs of the best-performing ensemble of these models [22].

Experimental Protocol:

- Data Preparation:

- Model Training:

- Train the 13 distinct deep learning models, each with a unique combination of input features.

- Each model should be trained using a regression loss function, such as Mean Squared Error (MSE), to predict the binding affinity (e.g., -logKd, -logKi).

- Ensemble Construction:

- Evaluate all possible combinations (ensembles) of the trained models on a held-out validation set.

- Select the ensemble that achieves the highest Pearson Correlation Coefficient (R) and the lowest Root Mean Square Error (RMSE).

- Evaluation:

- Benchmark the performance of the selected EBA ensemble on standard test sets like CASF-2016 and CSAR-HiQ, comparing R, RMSE, and MAE metrics against state-of-the-art predictors [22].
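The ensemble construction step, which evaluates every combination of trained models on a validation set, can be sketched as an exhaustive search. This is an illustrative sketch under stated assumptions: predictions are combined by a simple mean, candidates are ranked by Pearson R (rounded to four decimals) with RMSE as the tie-breaker, and the model names and values are toy placeholders. The source does not specify EBA's exact aggregation or tie-breaking rules.

```python
import math
from itertools import combinations

def pearson_r(y_true, y_pred):
    n = len(y_true)
    mean_t, mean_p = sum(y_true) / n, sum(y_pred) / n
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred))
    var_t = sum((t - mean_t) ** 2 for t in y_true)
    var_p = sum((p - mean_p) ** 2 for p in y_pred)
    return cov / math.sqrt(var_t * var_p)

def rmse(y_true, y_pred):
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

def select_ensemble(val_preds, y_val):
    """Search all non-empty model subsets; return (members, R, RMSE) of the best.

    val_preds maps a model name to its validation predictions.
    Ranking: highest rounded R first, then lowest RMSE (assumed rule).
    """
    best = None
    names = sorted(val_preds)
    for size in range(1, len(names) + 1):
        for combo in combinations(names, size):
            # Mean of member predictions (assumed aggregation).
            avg = [sum(val_preds[m][i] for m in combo) / size for i in range(len(y_val))]
            r, err = pearson_r(y_val, avg), rmse(y_val, avg)
            key = (-round(r, 4), err)
            if best is None or key < best[0]:
                best = (key, combo, r, err)
    return best[1], best[2], best[3]

# Toy validation data (hypothetical).
y_val = [5.0, 6.0, 7.0, 8.0]
val_preds = {
    "model_a": [5.5, 6.5, 7.5, 8.5],   # systematically too high
    "model_b": [4.5, 5.5, 6.5, 7.5],   # systematically too low
    "model_c": [8.0, 5.0, 7.0, 6.0],   # poorly correlated
}
members, best_r, best_rmse = select_ensemble(val_preds, y_val)
```

For 13 models the search covers 2^13 − 1 = 8191 subsets, which is cheap because only stored validation predictions are recombined and no model is retrained.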

CheapNet: Cross-Attention on Hierarchical Representations

CheapNet addresses the computational inefficiency and noise associated with atom-level modeling by introducing a hierarchical representation that integrates atom-level and cluster-level interactions [19] [24].

Key Components:

- Hierarchical Representations: CheapNet goes beyond atom-level interactions. It uses differentiable pooling to group atoms into meaningful clusters, thereby capturing higher-order molecular representations that are crucial for binding [19] [24].

- Cross-Attention Mechanism: The core of CheapNet is a cross-attention mechanism that operates between the protein clusters and ligand clusters. This allows the model to focus on biologically relevant binding interactions at a more abstract and efficient level [19].

Experimental Protocol:

- Environment Setup:

- Data Preprocessing:

- Download preprocessed datasets (e.g., for Cross-dataset Evaluation, Diverse Protein Evaluation, or LEP) using the provided commands in the repository, which automatically fetch data from sources like GIGN and ATOM3D [24].

- Training:

- Prediction and Evaluation:

PLAGCA: Graph Cross-Attention for Local Pockets

PLAGCA is designed to integrate both global sequence information and local three-dimensional structural features of the protein binding pocket, addressing the limitation of methods that ignore local interaction features [14].

Key Components:

- Feature Integration: PLAGCA extracts three types of features:

- Global features from protein FASTA sequences and ligand SMILES strings using self-attention blocks.

- Local interaction features from the 3D molecular structures of protein binding pockets and ligands using a graph neural network (GNN) [14].

- Graph Cross-Attention: A graph cross-attention mechanism is applied to the local 3D graphs to learn the critical interaction features between the protein pocket and the ligand, highlighting residues with high contribution to binding [14].

Experimental Protocol:

- Data and Representation:

- Use protein-ligand complexes from PDBbind. Extract the global FASTA sequence and ligand SMILES.

- For local features, generate a molecular graph for the protein binding pocket and the ligand. Nodes represent atoms, and edges represent bonds or distances.

- Model Implementation:

- Implement a dual-pathway network:

- Global Pathway: Use an embedding layer followed by self-attention blocks to process sequences.

- Local Pathway: Use a GNN followed by a graph cross-attention layer to process the 3D graphs.

- Concatenate the output features from both pathways and feed them into a Multi-Layer Perceptron (MLP) for final affinity prediction [14].

- Interpretability Analysis:

- Analyze the attention scores from the graph cross-attention layer to identify critical functional residues in the protein pocket that contribute most to the binding affinity prediction [14].
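The interpretability analysis amounts to aggregating the cross-attention matrix over ligand atoms and ranking pocket residues by the attention they receive. The sketch below assumes one pocket node per residue and uses mean aggregation; the residue labels and attention values are made up for illustration.

```python
import numpy as np

def rank_pocket_residues(attn, residue_ids, top_k=3):
    """Rank pocket residues by the mean attention they receive.

    attn        : (n_ligand_atoms, n_pocket_residues) weights, rows sum to 1
    residue_ids : labels for the pocket residues, in column order
    """
    importance = attn.mean(axis=0)                       # average over ligand atoms
    order = np.argsort(importance)[::-1][:top_k]         # highest first
    return [(residue_ids[i], float(importance[i])) for i in order]

# Hypothetical attention weights for a two-atom ligand and three residues.
attn = np.array([
    [0.7, 0.2, 0.1],
    [0.5, 0.1, 0.4],
])
residue_ids = ["ASP86", "GLY12", "PHE80"]               # illustrative labels

top_residues = rank_pocket_residues(attn, residue_ids, top_k=2)
```

Attention weights indicate where the model looked rather than proving a causal contribution to binding, so highlighted residues are best treated as hypotheses to check against structural or mutational data.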

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Research Reagents and Resources for Implementation

| Resource Name | Type | Description / Function | Example Source / Tool |
|---|---|---|---|
| PDBbind Database | Dataset | Comprehensive collection of protein-ligand complexes with binding affinity data for training and testing. | http://www.pdbbind.org.cn/ [14] |
| CASF Benchmark | Dataset | Well-known benchmark sets (e.g., CASF2016, CASF2013) for standardized performance evaluation. | PDBbind website [22] |
| SMILES String | Data Format | 1D string representation of a ligand's molecular structure. | Open Babel for conversion from SDF [25] |
| GNN & Transformer | Software Library | Libraries for building graph neural networks and attention mechanisms. | PyTorch, PyTorch Geometric, DeepMind's Graph Nets |

| Cross-Attention Module | Algorithmic Component | Core mechanism to model interactions between protein and ligand representations. | Custom implementation in model architectures [19] [14] |

Workflow and Architectural Diagrams

Generic Cross-Attention Model Workflow

The following diagram illustrates a high-level workflow common to many cross-attention based binding affinity prediction models, integrating steps from EBA, CheapNet, and PLAGCA.

Generic Cross-Attention Model Workflow: This diagram outlines the common steps in a cross-attention based pipeline, from data sourcing and feature extraction to encoding, interaction modeling, and final affinity prediction.

CheapNet's Hierarchical Cross-Attention Architecture

CheapNet's Hierarchical Architecture: This diagram details CheapNet's specific two-stage process, which first processes atoms and then groups them into clusters for efficient cross-attention.

CAT-DTI is a deep learning model designed to predict drug-target interactions (DTIs) by capturing the feature representations of drugs and proteins together with their interaction characteristics. The framework is engineered to improve generalization in real-world scenarios, which are often characterized by out-of-distribution data. Its primary innovation is the combination of a cross-attention mechanism with a Transformer-based architecture and a domain adaptation component. This allows the model to efficiently learn the complex relationships between drug molecules and protein targets, a critical task for accelerating drug discovery and reducing development costs [17].

The prediction of drug-target interactions is a cornerstone of computer-aided drug discovery. Traditional methods such as molecular docking are often limited by computational inefficiency and by the relatively low accuracy of their scoring functions, whereas deep learning methods have shown significant promise. However, many existing deep learning models fail to fully capture global context information while retaining local features, or fail to adequately model the crucial local interaction sites between the drug molecule and the target protein. The CAT-DTI framework was proposed to address these specific limitations, achieving superior predictive performance by combining a protein feature encoder built from convolutional neural networks (CNNs) and a Transformer with a cross-attention module for feature fusion [17] [26].

The CAT-DTI framework processes drug and target inputs through separate feature encoders before fusing their representations to predict the interaction. The following diagram illustrates the core workflow and architecture of the CAT-DTI model.

Protein Feature Encoder

The protein feature encoder is a critical component that processes the amino acid sequence of a target protein. It employs a convolutional neural network (CNN) combined with a Transformer to encode the distance relationships between amino acids within the protein sequence. The CNN is effective at capturing local residue patterns and motifs from the amino acid sequence. The Transformer then uses self-attention to capture global context and long-distance dependencies between these local subsequences, which is crucial for understanding the full protein structure. This hybrid approach allows the model to consider both local features and global context simultaneously, addressing a key limitation of models that rely solely on CNNs [17] [26].

Drug Feature Encoder

For drug representation, the model begins by converting the drug's SMILES string into a corresponding 2D molecular graph. Each atom node in the graph is initialized with a 74-dimensional integer vector that encapsulates atom attributes such as type, degree, number of implicit hydrogens, formal charge, and hybridization. A three-layer Graph Convolutional Network (GCN) is then used to transmit and aggregate information on the drug molecular structure. Each GCN layer updates the feature representation of each atomic node using the information of its neighboring nodes, thereby effectively capturing the correlation information between adjacent atoms in the drug molecule. The output is a node-level drug feature map, which is retained for subsequent explicit learning of interactions with protein fragments [17].
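The neighbor-aggregation step can be illustrated with the widely used graph convolution update ReLU(D^(-1/2) (A + I) D^(-1/2) H W). Whether CAT-DTI uses exactly this normalization is an assumption; the three-atom graph, the 2-dimensional features (instead of 74), and the identity weight matrices are toy values for illustration only.

```python
import math

def matmul(a, b):
    """Plain dense matrix product for small nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]

def gcn_layer(adj, feats, weight):
    """One graph convolution: ReLU(D^-1/2 (A + I) D^-1/2 . H . W)."""
    n = len(adj)
    # Self-loops let each atom keep its own features during aggregation.
    a_hat = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)] for i in range(n)]
    deg = [sum(row) for row in a_hat]
    # Symmetric degree normalisation.
    norm = [[a_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)] for i in range(n)]
    hidden = matmul(matmul(norm, feats), weight)
    return [[max(0.0, v) for v in row] for row in hidden]

def drug_encoder(adj, feats, weights):
    """Stack one GCN layer per weight matrix; returns node-level features."""
    h = feats
    for w in weights:
        h = gcn_layer(adj, h, w)
    return h

# Toy chain of three heavy atoms (C-C-O); channel 0 = carbon, channel 1 = oxygen.
adj = [[0, 1, 0], [1, 0, 1], [0, 1, 0]]
feats = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]

one_layer = gcn_layer(adj, feats, identity)
node_features = drug_encoder(adj, feats, [identity] * 3)  # three layers, as in the text
```

After a single layer the oxygen node already carries a non-zero carbon channel, which is the "correlation information between adjacent atoms" described above. The output stays node-level (one row per atom) so that a later fusion step can attend to individual atoms.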

Cross-Attention Module for Feature Fusion

After the feature maps for the drug and protein are obtained, they are passed to a cross-attention module. Rather than simply concatenating the two feature maps, this module lets the protein and drug features interact with each other during fusion. The mechanism allows the model to capture the interaction relationship between specific drug substructures and protein regions. Specifically, the key and value from the protein attention are swapped with those from the drug attention, so that each modality's queries attend to the other modality's keys and values. This process helps the model preserve the internal features of drugs and proteins while also exploring the interaction information between them, addressing a common oversight in models that focus only on extracting internal features [17].
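The key/value swap can be made concrete with a minimal scaled dot-product sketch. It assumes identity query/key/value projections and tiny 2-dimensional features, neither of which comes from the original paper; a real model would use learned projections and multiple heads.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of scores."""
    top = max(xs)
    exps = [math.exp(x - top) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attend(queries, keys, values):
    """Scaled dot-product attention; returns (outputs, attention weights)."""
    d = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        w = softmax(scores)
        weights.append(w)
        outputs.append([sum(wj * v[c] for wj, v in zip(w, values))
                        for c in range(len(values[0]))])
    return outputs, weights

def cross_attention(drug, protein):
    """Drug queries read protein keys/values, and protein queries read drug keys/values."""
    drug_ctx, drug_to_protein = attend(drug, protein, protein)
    protein_ctx, protein_to_drug = attend(protein, drug, drug)
    return drug_ctx, protein_ctx, drug_to_protein, protein_to_drug

# Toy inputs: 2 atom embeddings and 3 residue-window embeddings.
drug = [[1.0, 0.0], [0.0, 1.0]]
protein = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
drug_ctx, protein_ctx, d2p, p2d = cross_attention(drug, protein)
```

Here `d2p[i][j]` is the weight atom `i` places on protein position `j`. Weights of this kind are what make it possible to inspect which drug substructures and protein regions the model pairs up.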

Domain Adaptation and Decoder

To enhance the model's generalization to novel drug-target pairs in real-world scenarios, CAT-DTI integrates a Conditional Domain Adversarial Network (CDAN). This component is employed to align DTI representations under diverse distributions, facilitating effective knowledge transfer from the source domain (training data) to a target domain with different data characteristics. Finally, the fused and domain-adapted features are processed through a decoder, typically a fully connected neural network, to produce the final DTI prediction [17].
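In the general CDAN formulation, the domain discriminator is conditioned on both the fused features and the classifier's prediction through their outer product, and a gradient reversal layer sits between the feature extractor and the discriminator. The sketch below shows only these two building blocks; whether CAT-DTI uses exactly this conditioning map, and the names `multilinear_map` and `GradientReversal`, are assumptions for illustration.

```python
def multilinear_map(features, class_probs):
    """Flattened outer product of features and class probabilities.
    The result is what a CDAN-style domain discriminator would receive."""
    return [f * g for g in class_probs for f in features]

class GradientReversal:
    """Identity in the forward pass; multiplies gradients by -lam in the backward pass."""

    def __init__(self, lam=1.0):
        self.lam = lam

    def forward(self, x):
        return list(x)

    def backward(self, grad_output):
        return [-self.lam * g for g in grad_output]

# Toy fused DTI feature (3 dims) and a binary interaction prediction.
fused = [1.0, 2.0, 3.0]
probs = [0.25, 0.75]  # P(no interaction), P(interaction)
conditioned = multilinear_map(fused, probs)

grl = GradientReversal(lam=0.5)
passed_through = grl.forward(conditioned)
reversed_grad = grl.backward([1.0, -2.0])
```

Because the backward pass flips the sign, the discriminator still learns to tell source from target samples while the encoders upstream are pushed in the opposite direction, toward features that the discriminator cannot separate.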

Performance Evaluation and Benchmarking

The performance of CAT-DTI has been rigorously evaluated against multiple baseline models on several public benchmark datasets. The following tables summarize key quantitative results.

Table 1: Performance Comparison of CAT-DTI and Baseline Models on the BindingDB, BioSNAP, and Human Datasets (Values are AUROC)

| Model | BindingDB | BioSNAP | Human |
|---|---|---|---|
| SVM | 0.939 | 0.862 | 0.913 |
| RF | 0.942 | 0.860 | 0.939 |
| DeepConv-DTI | 0.945 | 0.886 | 0.978 |
| GraphDTA | 0.951 | 0.887 | 0.965 |
| MolTrans | 0.952 | 0.895 | 0.981 |
| DrugBAN | 0.960 | 0.903 | 0.981 |
| CAT-DTI | 0.965 | 0.909 | 0.983 |
| NFSA-DTI | 0.965 | 0.909 | 0.987 |

Table 2: Detailed Performance of CAT-DTI on the DrugBank Dataset

| Metric | Performance |
|---|---|
| Accuracy | 82.02% |
| Precision | 81.90% |
| MCC | 64.29% |
| F1 Score | 82.09% |

As shown in Table 1, CAT-DTI matches the best reported Area Under the Receiver Operating Characteristic Curve (AUROC) on BindingDB (0.965) and BioSNAP (0.909), where it ties with NFSA-DTI, and ranks second on Human (0.983 versus 0.987 for NFSA-DTI). It outperforms every other baseline on all three datasets, including advanced models such as DrugBAN and MolTrans. On the DrugBank dataset (Table 2), it reaches 82.02% accuracy, 81.90% precision, an MCC of 64.29%, and an F1 score of 82.09% [26] [27].

Experimental Protocol for CAT-DTI Implementation

This protocol provides a detailed methodology for replicating the CAT-DTI training and evaluation process as described in the foundational research.

Data Preprocessing and Preparation

- Drug Molecular Graph Construction: Convert drug SMILES strings into 2D molecular graphs. For each atom, generate a 74-dimensional feature vector encoding atom type, degree, number of implicit Hs, formal charge, number of radical electrons, hybridization, number of total Hs, and aromaticity. Set a maximum number of nodes per graph (e.g., m_d = 100) to ensure uniform input size [17].

- Protein Sequence Encoding: Represent protein amino acid sequences as numerical embeddings. The specific method for initial embedding generation (e.g., one-hot encoding, learned embeddings) should be detailed based on the original implementation [17].

- Dataset Splitting: Randomly split the chosen benchmark dataset (e.g., DrugBank, Davis, KIBA) into training, validation, and test sets using a standard ratio such as 8:1:1. This split is crucial for unbiased evaluation [27].
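Two of the preparation steps above, fixing the node count per graph and producing an 8:1:1 random split, can be sketched as follows. Zero-padding with virtual nodes, truncating oversized molecules, the fixed seed, and the function names are assumptions for illustration; the original implementation may handle these cases differently.

```python
import random

def pad_nodes(atom_features, max_nodes=100, dim=74):
    """Return exactly `max_nodes` rows: real atoms first, then all-zero virtual nodes.
    Molecules with more atoms than `max_nodes` are truncated (assumption)."""
    rows = [list(row) for row in atom_features[:max_nodes]]
    rows += [[0.0] * dim for _ in range(max_nodes - len(rows))]
    return rows

def split_8_1_1(pairs, seed=0):
    """Shuffle drug-target pairs reproducibly and split them 8:1:1."""
    items = list(pairs)
    random.Random(seed).shuffle(items)
    n_train = len(items) * 8 // 10
    n_val = len(items) // 10
    train = items[:n_train]
    val = items[n_train:n_train + n_val]
    test = items[n_train + n_val:]
    return train, val, test

# Toy molecule with 3 atoms, each with a 74-dimensional feature vector.
padded = pad_nodes([[1.0] * 74 for _ in range(3)])

# Toy dataset of 100 (drug_id, target_id, label) records.
records = [(f"drug{i}", f"target{i % 7}", i % 2) for i in range(100)]
train, val, test = split_8_1_1(records)
```

A purely random split measures in-distribution performance. Evaluating the domain adaptation component would call for a split in which test drugs or targets are dissimilar from those seen in training.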

Model Training Procedure

- Initialization: Initialize model parameters, including the GCN for drugs, the CNN-Transformer hybrid for proteins, and the cross-attention modules.

- Loss Function: Use a binary cross-entropy loss function for the DTI classification task.

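- Domain Adaptation Integration: Incorporate the Conditional Domain Adversarial Network (CDAN) loss during training. This involves a gradient reversal layer, which flips the sign of the gradient coming from the domain classifier so that the feature encoders are trained to maximize the domain classification loss while the domain classifier itself minimizes it. The result is a set of features that are closer to domain-invariant [17].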

- Optimization: Train the model using a stochastic gradient descent-based optimizer (e.g., Adam). Utilize the validation set for early stopping to prevent overfitting. Monitor key metrics like AUC and AUPR on the validation set.
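The loss and stopping rule from the steps above can be sketched without any deep learning framework. The patience value and the choice to monitor a metric where higher is better (such as validation AUROC) are assumptions, and `EarlyStopping` is a hypothetical helper rather than part of any specific library.

```python
import math

def binary_cross_entropy(probs, labels, eps=1e-12):
    """Mean binary cross-entropy between predicted probabilities and 0/1 labels."""
    total = 0.0
    for p, y in zip(probs, labels):
        p = min(max(p, eps), 1.0 - eps)  # guard against log(0)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(labels)

class EarlyStopping:
    """Signal a stop once the monitored metric has not improved for `patience` epochs."""

    def __init__(self, patience=5):
        self.patience = patience
        self.best = float("-inf")
        self.bad_epochs = 0

    def step(self, val_metric):
        """Record one epoch's validation metric; return True when training should stop."""
        if val_metric > self.best:
            self.best = val_metric
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience

loss = binary_cross_entropy([0.5, 0.5], [1, 0])

stopper = EarlyStopping(patience=2)
decisions = [stopper.step(auc) for auc in [0.80, 0.85, 0.84, 0.83]]
```

In a full training loop the checkpoint saved at the best validation epoch (here the second one) would be restored before evaluating on the test set.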

Model Evaluation and Validation

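- Performance Metrics: Evaluate the trained model on the held-out test set. Report standard metrics for binary classification, including the AUROC and AUPR monitored during training and the accuracy, precision, MCC, and F1 score reported in Table 2.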

- Comparative Analysis: Compare the model's performance against established baseline models to contextualize the results.
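For reference, the threshold-based metrics and AUROC can be computed from first principles as shown below. The 0.5 decision threshold and the convention of returning 0.0 when a denominator is zero are assumptions; in practice an established metrics library would normally be used.

```python
import math

def binary_metrics(y_true, y_score, threshold=0.5):
    """Accuracy, precision, F1, and MCC from thresholded scores."""
    y_pred = [1 if s >= threshold else 0 for s in y_score]
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)

    def ratio(num, den):
        return num / den if den else 0.0

    precision = ratio(tp, tp + fp)
    recall = ratio(tp, tp + fn)
    mcc_den = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return {
        "accuracy": ratio(tp + tn, len(y_true)),
        "precision": precision,
        "f1": ratio(2 * precision * recall, precision + recall),
        "mcc": ratio(tp * tn - fp * fn, mcc_den),
    }

def auroc(y_true, y_score):
    """Probability that a random positive is scored above a random negative (ties count 0.5)."""
    pos = [s for t, s in zip(y_true, y_score) if t == 1]
    neg = [s for t, s in zip(y_true, y_score) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

y_true = [1, 1, 0, 0]
y_score = [0.9, 0.4, 0.6, 0.1]
metrics = binary_metrics(y_true, y_score)
area = auroc(y_true, y_score)
```

The pairwise AUROC definition is quadratic in the number of samples, which is fine for illustration but too slow for large benchmark sets, where a rank-based computation is preferable.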

Table 3: Key Research Reagents and Computational Tools for DTI Research

| Item / Resource | Function / Description |
|---|---|
| SMILES Strings | A standardized line notation for representing molecular structures of drugs, serving as the primary input for the drug encoder. |
| Amino Acid Sequences | The primary structure of the target protein, provided as a string of one-letter codes, serving as input for the protein encoder. |
| Molecular Graphs | A graph representation of a drug molecule where nodes are atoms and edges are bonds; used by GCNs to capture topological information. |
| Graph Convolutional Network (GCN) | A type of neural network that operates directly on graph structures to learn node embeddings by aggregating information from neighbors. |
| CNN-Transformer Hybrid Encoder | A feature extraction module that combines the local feature detection of CNNs with the global context capture of Transformer self-attention. |
| Cross-Attention Mechanism | A neural network layer that enables the model to jointly attend to and fuse information from two different modalities (e.g., drug and protein features). |
| Conditional Domain Adversarial Network (CDAN) | A technique to improve model generalization by aligning feature distributions across different domains (e.g., different experimental settings). |
| Benchmark Datasets (e.g., DrugBank, Davis, KIBA) | Publicly available, curated datasets containing known drug-target interactions used for training and evaluating DTI prediction models. |

Logical Flow of the CAT-DTI Framework

The following diagram illustrates the logical sequence of operations and decision points within the CAT-DTI framework, from input processing to final prediction.

Enzyme substrate specificity, the precise recognition and catalytic action of an enzyme on particular target molecules, is a cornerstone of biological function and a critical parameter in biotechnology and drug discovery [28]. The traditional "lock and key" analogy has been superseded by a more nuanced understanding of induced fit and enzyme promiscuity, where enzymes can dynamically adjust their conformation and even catalyze reactions beyond their primary function [28]. Accurately predicting these interactions has been a persistent challenge, impeding the efficient application of enzymes in fundamental research and industry.

The emergence of artificial intelligence (AI) is revolutionizing this field. This Application Note focuses on EZSpecificity, a novel AI tool that leverages a cross-attention-empowered SE(3)-equivariant graph neural network to achieve unprecedented accuracy in predicting enzyme-substrate pairs [28] [16] [29]. Developed by researchers at the University of Illinois Urbana-Champaign, EZSpecificity represents a significant leap forward, providing researchers with a powerful, freely available online tool to accelerate their work [28] [30].

EZSpecificity: Core Technology and Performance

Architectural Innovation and Training Regime

EZSpecificity's predictive power stems from its architecture and the comprehensive dataset on which it was trained. The model is built on a cross-attention graph neural network that operates directly on the 3D structural representations of enzymes and substrates [16] [29]. The cross-attention mechanism is pivotal because it allows the model to learn the specific chemical interactions between amino acid residues in the enzyme's active site and functional groups on the substrate [30]. The network is also SE(3)-equivariant, which means its predictions do not change when the input structures are rotated or translated, a crucial property for analyzing molecular interactions [16].
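The practical meaning of robustness to rotations and translations can be checked numerically: geometric quantities such as inter-atomic distances are identical before and after a rigid-body motion, so a prediction built on them cannot depend on how the complex happens to be oriented. The sketch below demonstrates only this invariance property with made-up coordinates; it is not EZSpecificity's network, and the function names are hypothetical.

```python
import math

def rotate_z(point, angle):
    """Rotate a 3D point about the z-axis by `angle` radians."""
    x, y, z = point
    c, s = math.cos(angle), math.sin(angle)
    return (c * x - s * y, s * x + c * y, z)

def rigid_transform(points, angle, shift):
    """Apply a rotation followed by a translation, i.e. one element of SE(3)."""
    return [tuple(a + b for a, b in zip(rotate_z(p, angle), shift)) for p in points]

def pairwise_distances(points):
    """All inter-atomic distances, in a fixed pair order."""
    return [math.dist(points[i], points[j])
            for i in range(len(points)) for j in range(i + 1, len(points))]

# Made-up coordinates (in angstroms) for three atoms.
coords = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.2, 0.3)]
moved = rigid_transform(coords, angle=0.7, shift=(3.0, -2.0, 5.0))

before = pairwise_distances(coords)
after = pairwise_distances(moved)
max_change = max(abs(a - b) for a, b in zip(before, after))
```

The coordinates change completely while `max_change` stays at floating-point noise. An equivariant network builds this symmetry into its layers rather than relying on data augmentation to learn it.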

The model was trained on a vast, tailor-made database of enzyme-substrate interactions that integrated both sequence and structural information [16]. To overcome the scarcity of experimental data, the team employed extensive molecular docking simulations, performing millions of calculations to create a large-scale computational dataset of enzyme-substrate pairs [28] [30]. This hybrid training approach, which combined limited experimental data with expansive computational data, was key to building a highly accurate and generalizable model [30].

Quantitative Performance Benchmarking

EZSpecificity's performance was rigorously evaluated against ESP, the existing state-of-the-art model for enzyme substrate specificity prediction. The validation involved benchmark tests across multiple scenarios and experimental follow-up on a challenging enzyme class.

Table 1: Comparative Performance of EZSpecificity vs. ESP Model

| Evaluation Metric | EZSpecificity | ESP (State-of-the-Art) |
|---|---|---|
| Overall Accuracy (Top Prediction) | 91.7% [28] [16] | 58.3% [28] [16] |
| Validation Case | 8 Halogenase enzymes vs. 78 substrates [28] [16] | 8 Halogenase enzymes vs. 78 substrates [28] [16] |

The experimental validation on halogenases, a class of enzymes with poorly characterized specificity that is increasingly used to synthesize bioactive molecules, underscores EZSpecificity's practical utility and superior accuracy in real-world applications [28] [16].

The following diagram illustrates the integrated computational and experimental workflow for developing and validating EZSpecificity:

Application Notes: Harnessing EZSpecificity in Research

Accessing the Tool

EZSpecificity has been developed as a freely available online tool to maximize its accessibility to the research community [28]. Users can access the model through a user-friendly web interface. The researchers have made the tool open source with no restrictions, though a patent has been filed to protect the intellectual property [30]. The official demo can be accessed via the Shukla Group's website or the publication links associated with the Nature paper [29].

Input Requirements and Workflow

To use EZSpecificity, researchers must provide two key pieces of information about the system they wish to analyze:

- Protein Sequence: The amino acid sequence of the enzyme of interest.

- Substrate Structure: The chemical structure of the target substrate molecule [28].

The model processes these inputs through its cross-attention graph neural network to predict the compatibility of the enzyme-substrate pair, outputting a prediction of whether the substrate is likely to be accepted by the enzyme [28] [30].

Practical Use-Cases and Applications

EZSpecificity is designed to accelerate research and development across multiple disciplines:

- Drug Discovery: Identify novel substrates for enzymes involved in biosynthetic pathways of therapeutic agents, or predict off-target effects of drug candidates [28] [30].

- Synthetic Biology and Metabolic Engineering: Determine the optimal enzyme and substrate combinations to efficiently produce desired chemicals, fuels, and materials in engineered biological systems [28] [30].

- Enzyme Engineering and Characterization: Rapidly screen and prioritize enzyme mutants for experimental testing, streamlining the process of designing enzymes with new or improved functions [28]. The tool is particularly valuable for characterizing understudied enzyme families, such as halogenases [16].

Experimental Protocol: Validation of Halogenase Substrate Specificity

The following section details the experimental protocol used to validate EZSpecificity's predictions for halogenase enzymes, a process that can be adapted for testing computational predictions in other enzyme systems.

Reagent Setup

Table 2: Essential Research Reagents for Enzyme Specificity Validation