Chemoinformatics: The Data-Driven Revolution Reshaping Modern Chemical Research


Abstract

This article explores the transformative role of chemoinformatics as an indispensable pillar of modern chemical research and drug discovery. Tailored for researchers, scientists, and drug development professionals, it details how this interdisciplinary field integrates chemistry, computer science, and data analysis to accelerate innovation. The scope covers foundational concepts, core methodologies and applications in drug and material design, strategies to overcome data integrity and skill gap challenges, and a comparative analysis of leading software platforms. The article concludes by synthesizing key takeaways and forecasting future directions, including the impact of AI, quantum computing, and self-driving labs on biomedical research.

Chemoinformatics Demystified: From Molecules to Manageable Data

Chemoinformatics is an interdisciplinary field that integrates chemistry, computer science, and data analysis to solve complex chemical problems and enhance research efficiency. This technical guide explores the foundational principles, applications, and methodologies of chemoinformatics within the context of modern chemical research. As the volume of chemical data continues to grow exponentially, chemoinformatics has emerged as a critical discipline for managing, analyzing, and extracting valuable insights from chemical information systems. The field leverages computational tools, artificial intelligence, and machine learning to drive innovation across various domains, particularly in drug discovery and materials science. This whitepaper provides a comprehensive overview of the core components of chemoinformatics, detailed experimental protocols, key research reagents and tools, and visual representations of critical workflows. Aimed at researchers, scientists, and drug development professionals, this document underscores the pivotal role of chemoinformatics as an indispensable pillar of contemporary chemical research, enabling data-driven decision-making and accelerating scientific discovery.

Chemoinformatics, defined as "the application of informatics methods to solve chemical problems" [1], represents a transformative intersection of chemistry, computer science, and data analysis. This interdisciplinary field has evolved from its origins in the pharmaceutical industry during the late 1990s into a cornerstone of modern chemical research [1] [2]. The primary impetus behind its development has been the need to manage and extract meaningful patterns from the enormous volumes of chemical data generated by high-throughput screening, automated synthesis, and advanced analytical techniques [1]. As chemical research undergoes digital transformation, chemoinformatics provides the critical computational framework for handling increasing information complexity, thereby accelerating discovery processes across multiple domains.

The significance of chemoinformatics in contemporary research landscapes cannot be overstated. It encompasses a wide array of computational techniques designed to handle chemical data, ranging from molecular modeling to the design of novel compounds and materials [1]. The field has expanded beyond its initial pharmaceutical applications to include data-driven approaches that facilitate the storage, retrieval, and analysis of chemical data on an unprecedented scale [1]. This expansion has been accelerated by initiatives promoting public databases such as PubChem and ChEMBL, which have democratized access to chemical information and fostered global research collaboration [1] [2]. Furthermore, the formal integration of chemoinformatics into university curricula reflects its growing importance in equipping future researchers with essential computational skills for modern chemical problem-solving [1].

The Interdisciplinary Foundation of Chemoinformatics

The structural foundation of chemoinformatics rests upon three interconnected pillars: chemistry, computer science, and information science. This triad forms a synergistic relationship where each discipline contributes essential components to create a robust framework for chemical data analysis and prediction.

Chemistry: The Molecular Basis

The chemical domain provides the fundamental molecular context for all chemoinformatics applications. Key aspects include:

Molecular Modeling: Computational representation of molecular structures, properties, and behaviors using mathematical approaches [1] [3]. This includes techniques such as quantum mechanics, molecular mechanics, and molecular dynamics simulations that enable researchers to predict and visualize molecular characteristics without synthetic experimentation.

Chemical Database Management: Systematic organization, storage, and retrieval of chemical information [3]. This component addresses the challenges of handling diverse chemical data types, including structures, properties, spectra, and biological activities, while ensuring data integrity and accessibility.

Structure-Activity Relationship (SAR) Analysis: Quantitative exploration of the relationships between chemical structures and their biological activities or properties [1] [3]. SAR methodologies enable the prediction of compound behavior based on structural features, guiding the optimization of lead compounds in drug discovery.

Computer Science: The Computational Engine

The computer science pillar provides the algorithmic and software infrastructure necessary for processing chemical information:

Software Development for Chemoinformatics: Creation of specialized applications and tools tailored to chemical data manipulation [3]. This includes the development of open-source platforms such as RDKit and the Chemistry Development Kit (CDK) that provide fundamental cheminformatics functionalities to the research community [2].

Data Mining and Machine Learning Applications: Implementation of advanced algorithms to discover patterns, relationships, and predictive models from large chemical datasets [3]. Machine learning techniques, particularly deep learning, have significantly enhanced the ability to analyze complex chemical data and predict molecular properties [1] [4].

Computational Chemistry Algorithms: Development and optimization of mathematical procedures for solving chemical problems [3]. These algorithms enable tasks such as molecular docking, conformational analysis, and quantum chemical calculations that form the computational core of chemoinformatics applications.

Information Science: The Data Management Framework

The information science component focuses on the systematic handling and interpretation of chemical data:

Data Integration and Analysis: Combining heterogeneous chemical data from multiple sources and extracting meaningful insights [3]. This approach facilitates comprehensive analyses that leverage diverse data types, including chemical structures, assay results, and literature information.

Knowledge Management in Chemical Research: Organizing and preserving chemical knowledge to support research decision-making [3]. This includes the implementation of electronic laboratory notebooks, data standards, and ontology development to capture and formalize chemical expertise.

Information Retrieval Systems for Chemical Data: Designing specialized search and retrieval systems for chemical databases [3]. These systems enable efficient access to chemical information through structure, substructure, similarity, and property-based searching methodologies.
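The similarity searching mentioned above is often implemented by comparing molecular fingerprints with the Tanimoto coefficient. The following is a minimal sketch under stated assumptions: fingerprints are represented as plain Python sets of "on" bit indices, the compound names and bit values are invented for illustration, and the `threshold` parameter is a hypothetical choice. In practice a toolkit such as RDKit would generate the fingerprints.

```python
def tanimoto(fp_a: set[int], fp_b: set[int]) -> float:
    """Tanimoto coefficient |A & B| / |A | B| for two sets of on-bits."""
    union = len(fp_a | fp_b)
    if union == 0:
        return 0.0  # convention chosen here for two empty fingerprints
    return len(fp_a & fp_b) / union


def similarity_search(query, library, threshold=0.5):
    """Return (name, score) pairs at or above the threshold, best first."""
    scored = [(name, tanimoto(query, fp)) for name, fp in library.items()]
    kept = [(name, score) for name, score in scored if score >= threshold]
    return sorted(kept, key=lambda pair: pair[1], reverse=True)


# Hypothetical fingerprints: each set holds the indices of bits that are set.
library = {
    "cmpd_A": {1, 4, 7, 9, 15},
    "cmpd_B": {1, 4, 8, 22},
    "cmpd_C": {30, 31, 32},
}
query = {1, 4, 7, 9}

hits = similarity_search(query, library, threshold=0.3)
```

Here `cmpd_A` shares four of five bits with the query and ranks first, while `cmpd_C` shares none and is filtered out.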

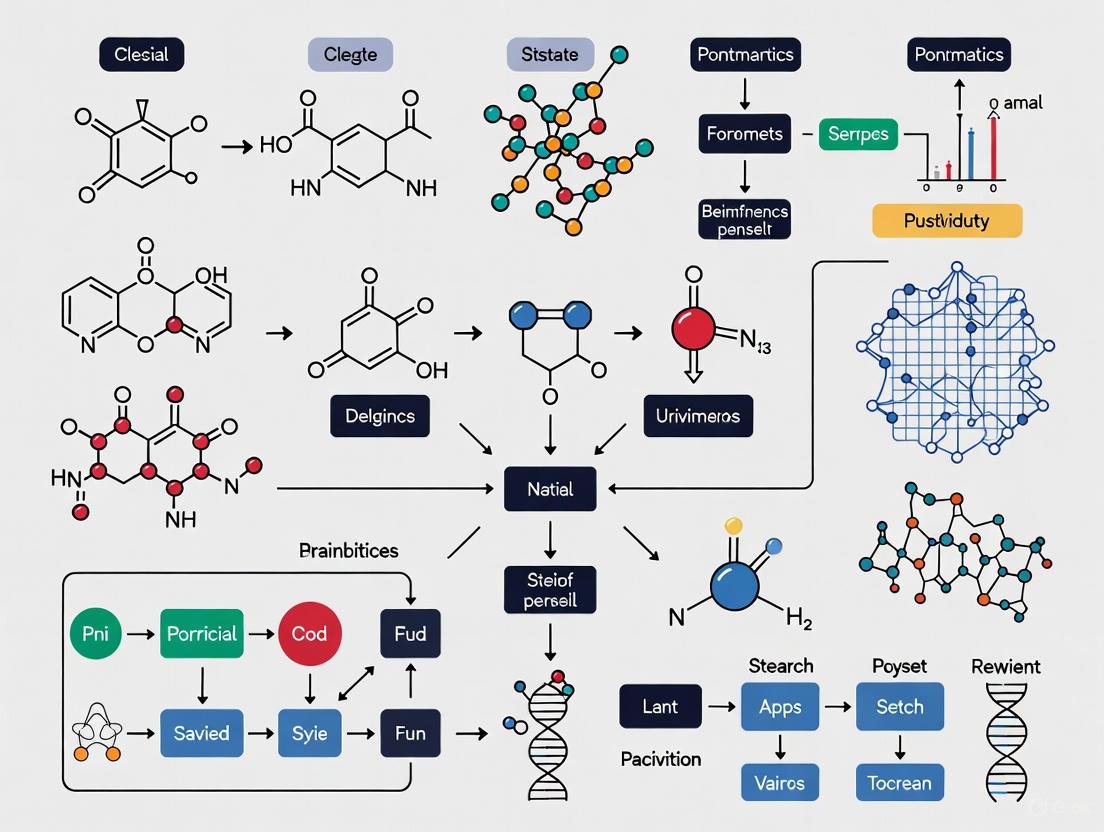

The following diagram illustrates the interconnectedness of these three foundational disciplines and their collective contribution to chemoinformatics applications:

Figure 1: Interdisciplinary Foundation of Chemoinformatics

Key Applications in Modern Chemical Research

Drug Discovery and Development

Chemoinformatics has revolutionized pharmaceutical research by significantly accelerating and de-risking the drug discovery pipeline:

Virtual Screening and Hit Identification: Chemoinformatics streamlines virtual screening by analyzing extensive chemical libraries from sources like ChEMBL and PubChem [5]. Ligand-based (LBVS) and structure-based virtual screening (SBVS) techniques, combined with molecular docking, predict drug-target interactions and rank candidates based on binding affinity. Machine learning enhances these predictions by identifying complex patterns in large datasets that might escape conventional analysis methods. For example, the Exscalate4Cov project demonstrated the power of virtual screening by utilizing high-performance computing to screen vast chemical libraries to identify molecules that could inhibit the SARS-CoV-2 virus [4].

Lead Optimization and ADMET Predictions: Quantitative Structure-Activity Relationship (QSAR) modeling predicts biological activity based on molecular structure, guiding strategic modifications to improve potency and selectivity [5]. ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) predictions assess critical pharmacokinetic parameters, ensuring drug candidates have favorable safety and metabolic profiles. Machine learning models such as Deep-PK, which uses graph neural networks to predict pharmacokinetics and toxicity, exemplify how cheminformatics tools enhance molecular optimization while reducing the risk of late-stage failures [5].

De-risking Drug Development: By predicting compound properties before costly experimental validation, cheminformatics enhances efficiency and resource allocation in drug discovery. This approach is particularly valuable in early-phase research, where computational assessments can prioritize the most promising candidates for synthesis and testing. Real-world applications include the use of cheminformatics to identify brachyury inhibitors for chordoma treatment and to discover disease-modulating compounds for Alzheimer's research [5].

Materials Science and Green Chemistry

Beyond pharmaceutical applications, chemoinformatics plays an increasingly important role in materials design and sustainable chemistry:

Materials Informatics: The application of chemoinformatics principles to design novel materials with tailored properties for specific applications [1]. This includes the development of materials for energy storage, electronics, and nanotechnology through computational prediction of material characteristics based on molecular structure.

Green Chemistry and Sustainability: AI-driven retrosynthesis tools optimize synthetic routes by minimizing waste, reducing reliance on hazardous reagents, and lowering energy consumption [4]. These advanced tools align with global efforts to promote more sustainable chemical practices by identifying environmentally benign synthetic pathways that maintain efficiency while reducing ecological impact.

Polymer and Nanomaterial Design: Chemoinformatics facilitates the design of complex polymeric structures and nanomaterials with precise characteristics. For instance, researchers have applied QSPR (Quantitative Structure-Property Relationship) modeling to predict the cytotoxicity of metal oxide nanoparticles, enabling safer nanomaterial design [4].

Analytical Chemistry and Automation

The integration of chemoinformatics with laboratory automation has transformed chemical research workflows:

High-Throughput Screening (HTS) Enhancement: Chemoinformatics manages large HTS datasets, identifies true active compounds, and reduces false positives [5]. Machine learning methods such as Minimum Variance Sampling Analysis (MVS-A) flag likely false positives and prioritize true hits without relying on assumptions about the interference mechanism, processing HTS data in under 30 seconds per assay even on low-resource hardware [5].

Smart Labs and Automated Workflows: The evolution of chemical laboratories into automated, intelligent environments integrates robotics, AI, cheminformatics, and data analytics [4]. These "smart labs" enhance efficiency, accuracy, and safety by performing repetitive tasks with high precision while enabling real-time monitoring and process optimization through advanced sensors.

Analytical Data Interpretation: Chemoinformatics tools assist in interpreting complex analytical data, including spectral information from NMR, MS, and IR spectroscopy. For example, platforms like NMRShiftDB provide open-access databases of NMR chemical shifts that facilitate structural elucidation through comparative analysis [2].

Market Context and Growth Projections

The expanding role of chemoinformatics in chemical research is reflected in its significant market growth and adoption across industries. The following table summarizes key market projections and growth factors:

Table 1: Chemoinformatics Market Size and Growth Projections

| Metric | 2024 Value | 2025 Value | 2029 Projection | 2034 Projection | CAGR (Compound Annual Growth Rate) |
|---|---|---|---|---|---|
| Market Size | USD 3.88 billion [3] | USD 4.36-4.49 billion [3] [6] | USD 5.21 billion [6] | USD 16.69 billion [3] | 15.71% (2025-2034) [3] |
| Software Segment Share | 41% [3] | - | - | - | - |
| Chemical Analysis Application Share | 30% [3] | - | - | - | - |
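As a quick arithmetic check on the table, the compound annual growth rate can be recomputed from the start and end values. This sketch assumes the usual definition, CAGR = (end / start)^(1 / years) - 1, and a nine-year span from 2025 to 2034; the function name is illustrative.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10% per year)."""
    return (end_value / start_value) ** (1 / years) - 1


years = 2034 - 2025

# The 2025 estimate is reported as a range, so both ends are checked.
rate_high_base = cagr(4.49, 16.69, years)
rate_low_base = cagr(4.36, 16.69, years)

print(f"from USD 4.49 billion: {rate_high_base:.2%}")
print(f"from USD 4.36 billion: {rate_low_base:.2%}")
```

Under these assumptions the quoted 15.71% corresponds to the upper 2025 estimate (USD 4.49 billion); starting from the lower estimate gives a rate of roughly 16%.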

Table 2: Key Market Growth Drivers and Regional Distribution

| Growth Driver | Significance | Regional Leadership | Fastest-Growing Region |
|---|---|---|---|
| Drug Discovery Demands | Primary driver due to need for efficient pharmaceutical R&D [3] | North America (35% revenue share in 2024) [3] | Asia-Pacific [3] [6] |
| Material Science Applications | Expanding role in designing and optimizing advanced materials [3] | - | - |
| Personalized Medicine | FDA CDER approved 12 personalized medicines (34% of therapeutic NMEs) in 2022 [6] | - | - |
| Technological Advancements | AI and machine learning integration enhancing capabilities [3] | - | - |

This substantial market growth underscores the increasing reliance on chemoinformatics across chemical industries and research institutions. The field's expansion is particularly driven by the pharmaceutical sector's need to improve R&D efficiency and success rates, with 90% of drugs failing during clinical trials (52% due to lack of efficacy and 24% due to safety issues) [5]. Chemoinformatics addresses these challenges by enabling earlier and more accurate prediction of compound properties, thereby reducing late-stage failures.

Essential Methodologies and Experimental Protocols

Molecular Property Prediction Using QSAR

Objective: To predict biological activity or chemical properties based on molecular structure using Quantitative Structure-Activity Relationship (QSAR) modeling.

Protocol:

Dataset Curation:

- Collect a set of chemical structures with associated experimental biological activities or properties.

- Ensure data quality by removing duplicates and correcting erroneous entries.

- Apply chemical standardization (e.g., using RDKit) to normalize structures [4].

- Divide the dataset into training (70-80%), validation (10-15%), and test sets (10-15%).

Molecular Descriptor Calculation:

Model Building:

- Select appropriate machine learning algorithms (e.g., random forest, support vector machines, neural networks).

- Train models using the training set and optimize hyperparameters via cross-validation.

- Validate model performance using the validation set and metrics such as R², RMSE, or AUC.

Model Application:

- Apply the trained model to predict activities or properties for new compounds.

- Utilize applicability domain assessment to evaluate prediction reliability.

Key Considerations: The availability of high-quality negative (inactive) data is essential for improving the reliability and generalizability of QSAR models, particularly in drug discovery where distinguishing between active and inactive compounds enhances virtual screening accuracy [1].
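The dataset split and the regression metrics named in the protocol can be sketched in plain Python. This is a minimal illustration, not a full QSAR pipeline: the 80/10/10 fractions are one choice within the ranges given above, the records are placeholders, and the function names are hypothetical.

```python
import math
import random


def split_dataset(records, train_frac=0.8, valid_frac=0.1, seed=0):
    """Shuffle records reproducibly and split into train/validation/test."""
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)
    n_train = round(len(shuffled) * train_frac)
    n_valid = round(len(shuffled) * valid_frac)
    train = shuffled[:n_train]
    valid = shuffled[n_train:n_train + n_valid]
    test = shuffled[n_train + n_valid:]  # whatever remains
    return train, valid, test


def rmse(observed, predicted):
    """Root-mean-square error between observed and predicted values."""
    squared = [(o - p) ** 2 for o, p in zip(observed, predicted)]
    return math.sqrt(sum(squared) / len(squared))


def r_squared(observed, predicted):
    """Coefficient of determination: 1 - SS_residual / SS_total."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot


# Placeholder records: (compound id, measured activity) pairs.
records = [(f"compound_{i}", float(i)) for i in range(100)]
train, valid, test = split_dataset(records)
```

A random split is only one option. When close structural analogues dominate a dataset, splitting by scaffold or cluster usually gives a more conservative estimate of how the model generalizes.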

Virtual Screening for Hit Identification

Objective: To computationally identify potential bioactive compounds from large chemical libraries.

Protocol:

Library Preparation:

- Curate a virtual compound library from databases such as ZINC, PubChem, or in-house collections.

- Prepare structures by adding hydrogens, generating tautomers, and enumerating stereoisomers.

- Generate multiple conformations for each molecule using tools like OMEGA or RDKit.

Target Preparation:

- Obtain the three-dimensional structure of the biological target (e.g., from Protein Data Bank).

- Prepare the protein by adding hydrogens, assigning protonation states, and removing water molecules.

- Define the binding site based on known ligand positions or pocket detection algorithms.

Molecular Docking:

Post-processing:

- Apply filters based on drug-likeness (e.g., Lipinski's Rule of Five) and ADMET properties.

- Cluster results to select diverse chemotypes for experimental validation.

- Visually inspect top-ranking complexes to confirm binding mode plausibility.

Key Considerations: Structure-based virtual screening (SBVS) requires high-quality protein structures, while ligand-based approaches (LBVS) depend on known active compounds for similarity searching or pharmacophore modeling [5].
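The drug-likeness filter in the post-processing step can be sketched as a simple rule check. This example assumes the properties have already been computed by a cheminformatics toolkit; the `Compound` class, the property values, and the tolerance of one violation are illustrative choices rather than part of the protocol above.

```python
from dataclasses import dataclass


@dataclass
class Compound:
    name: str
    mol_weight: float       # g/mol
    log_p: float            # log10 of the octanol-water partition coefficient
    h_bond_donors: int
    h_bond_acceptors: int


def lipinski_violations(c: Compound) -> int:
    """Count violations of Lipinski's Rule of Five."""
    checks = [
        c.mol_weight > 500,
        c.log_p > 5,
        c.h_bond_donors > 5,
        c.h_bond_acceptors > 10,
    ]
    return sum(checks)


def passes_rule_of_five(c: Compound, max_violations: int = 1) -> bool:
    """Common reading of the rule: tolerate at most one violation."""
    return lipinski_violations(c) <= max_violations


# Hypothetical docking hits with precomputed properties.
hits = [
    Compound("hit_1", 342.4, 2.8, 2, 5),
    Compound("hit_2", 612.7, 6.3, 4, 9),
    Compound("hit_3", 488.5, 5.4, 1, 7),
]
drug_like = [c.name for c in hits if passes_rule_of_five(c)]
```

In this made-up set, `hit_2` fails on both molecular weight and log P, while `hit_3` is kept despite a single log P violation.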

Retrosynthetic Analysis Using AI

Objective: To plan synthetic routes for target molecules using AI-powered retrosynthetic analysis.

Protocol:

Target Input:

- Define the target molecule using SMILES notation or structure drawing.

- Specify constraints such as available starting materials or excluded reagents.

Pathway Generation:

- Utilize AI-powered platforms such as IBM RXN, AiZynthFinder, or ASKCOS [4].

- Generate multiple retrosynthetic pathways through iterative bond disconnections.

- Apply reaction templates and neural network models to predict feasible transformations.

Pathway Evaluation:

- Assess generated routes based on criteria including step count, yield, cost, and safety.

- Prioritize pathways with commercial availability of intermediates and reagents.

- Consider green chemistry principles by minimizing hazardous reagents and waste.

Experimental Validation:

- Select top-ranked synthetic routes for laboratory execution.

- Optimize reaction conditions (catalyst, solvent, temperature) based on predictive models.

- Iteratively refine the route based on experimental outcomes.

Key Considerations: AI-driven retrosynthesis tools can identify unconventional yet viable reaction routes that might be overlooked by human intuition, expanding the accessible synthetic landscape [4].
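Part of the pathway evaluation step can be illustrated with a small ranking routine. The sketch below is a simplification under stated assumptions: routes are linear, per-step yields are known fractions, and only overall yield and step count are considered. Cost, safety, and reagent availability, which the protocol also lists, are left out, and all route names and numbers are hypothetical.

```python
from math import prod


def overall_yield(step_yields):
    """Overall yield of a linear route is the product of its step yields."""
    return prod(step_yields)


def rank_routes(routes):
    """Sort routes by overall yield (descending), then by fewer steps."""
    return sorted(
        routes.items(),
        key=lambda item: (-overall_yield(item[1]), len(item[1])),
    )


# Hypothetical candidate routes with fractional yields per step.
routes = {
    "route_A": [0.90, 0.85, 0.80],
    "route_B": [0.95, 0.90, 0.90, 0.85],
    "route_C": [0.70, 0.60],
}
ranked = [name for name, _ in rank_routes(routes)]
```

The example shows why step count alone is a poor criterion: the four-step `route_B` has a higher overall yield than the two-step `route_C`.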

The following diagram illustrates a generalized chemoinformatics workflow integrating these key methodologies:

Figure 2: Generalized Chemoinformatics Workflow

Successful implementation of chemoinformatics methodologies requires a comprehensive toolkit of software, databases, and computational resources. The following table details essential components of the modern chemoinformatics research environment:

Table 3: Essential Chemoinformatics Research Tools and Resources

| Tool Category | Specific Tools | Function | Access |
|---|---|---|---|
| Cheminformatics Toolkits | RDKit [4] [2], Chemistry Development Kit (CDK) [2], Open Babel [2] | Provides fundamental cheminformatics functionalities including molecular representation, descriptor calculation, and substructure searching | Open Source |
| Molecular Modeling Suites | Schrödinger [4], AutoDock [4] [5], MOE [5] | Enables molecular visualization, docking simulations, and protein-ligand interaction analysis | Commercial (Schrödinger, MOE); Open Source (AutoDock) |
| Retrosynthesis Platforms | IBM RXN [4], AiZynthFinder [4], ASKCOS [4], Synthia [4] | AI-powered synthesis planning and reaction prediction | Varies (Commercial/Open) |
| Chemical Databases | PubChem [1] [2], ChEMBL [1] [2], ChemSpider [5] | Provides access to chemical structures, properties, and bioactivity data | Open Access |
| Machine Learning Libraries | DeepChem [4], Chemprop [4], kMoL [7] | Specialized ML frameworks for chemical data analysis and property prediction | Open Source |
| Workflow Platforms | KNIME [5] [2], Jupyter Notebooks [2] | Integrates multiple cheminformatics tools into reproducible analytical workflows | Open Source |
| Molecular Representation | SMILES [1], InChI [1] [2], MOL files [1] | Standardized formats for chemical structure encoding and exchange | Open Standards |

The evolution of these tools from proprietary systems to open-source platforms has dramatically democratized access to cheminformatics capabilities. This shift, championed by initiatives such as the Blue Obelisk movement and the adoption of the FAIR principles (Findable, Accessible, Interoperable, Reusable), has fostered collaborative innovation and transparency in chemical research [2]. The development of standardized molecular representations like the International Chemical Identifier (InChI) has further enhanced data interoperability across diverse platforms and databases [1] [2].

Chemoinformatics has established itself as an indispensable discipline at the intersection of chemistry, computer science, and data analysis, fundamentally transforming modern chemical research methodologies. By providing sophisticated computational tools for managing, analyzing, and predicting chemical information, this interdisciplinary field addresses the critical challenges posed by the increasing volume and complexity of chemical data. The integration of artificial intelligence and machine learning has further enhanced the predictive capabilities of chemoinformatics, enabling more accurate molecular design, property prediction, and synthesis planning. As evidenced by its substantial market growth and expanding applications across drug discovery, materials science, and sustainable chemistry, chemoinformatics represents a foundational pillar of contemporary chemical research. For researchers, scientists, and drug development professionals, proficiency in cheminformatics principles and tools is no longer optional but essential for driving innovation and maintaining competitive advantage in an increasingly data-driven scientific landscape. The continued evolution of open science initiatives, collaborative platforms, and advanced computational methodologies will further solidify the role of chemoinformatics as a catalyst for scientific discovery and technological advancement in the chemical sciences.

Quantitative Structure-Activity Relationship (QSAR) modeling represents a cornerstone of computational chemistry and chemoinformatics, providing a critical framework for predicting the biological activity and physicochemical properties of molecules from their structural features. The evolution of QSAR from its conceptual origins in the 19th century to today's artificial intelligence (AI)-driven paradigms encapsulates the broader transformation of chemical research into a data-rich, interdisciplinary science [1]. This journey reflects the expanding role of chemoinformatics—defined as the application of informatics methods to solve chemical problems—in modern chemical research [1] [8].

The development of QSAR has fundamentally reshaped drug discovery and chemical risk assessment, creating a predictive modeling environment that accelerates the identification of therapeutic candidates while reducing reliance on costly experimental screening. This whitepaper traces the technical evolution of QSAR methodologies, examining how the integration of increasingly sophisticated computational approaches has established chemoinformatics as an indispensable pillar of contemporary chemical research and development.

The Foundations: Early QSAR (1860s-1950s)

The conceptual foundations of QSAR emerged from systematic observations of relationships between simple chemical properties and biological effects, long before the formal establishment of the field.

Key Historical Milestones

Table 1: Foundational Developments in Early QSAR

| Year | Researcher(s) | Contribution | Significance |
|---|---|---|---|
| 1868 | Crum-Brown and Fraser | First general QSAR equation: Physiological action = f(Chemical constitution) [9] [10] | Established the fundamental principle that biological activity is a function of chemical structure |
| 1893 | Richet | Inverse relationship between toxicity and aqueous solubility for alcohols, ethers, and ketones [9] [10] | Demonstrated that physicochemical properties could quantitatively predict biological effects |
| 1897-1899 | Meyer and Overton | Correlation between lipophilicity (oil-water partition coefficients) and narcotic activity [9] [10] | Identified hydrophobicity as a critical determinant of biological activity |
| 1935-1937 | Hammett | Developed sigma (σ) constants and the Linear Free-Energy Relationship (LFER) [9] [10] | Provided the first electronic parameters quantifying substituent effects on reactivity |
| 1952 | Taft | Introduced the first steric parameter (Eₛ) and method for separating polar, steric, and resonance effects [10] | Completed the triumvirate of key physicochemical properties: electronic, steric, and hydrophobic |

The earliest quantitative observations established linear relationships between simple physicochemical properties and biological outcomes. These foundational studies introduced the crucial concept that molecular properties could be numerically encoded and correlated with biological activity, setting the stage for more sophisticated modeling approaches [9] [10].

Experimental Protocols in Early QSAR

The experimental determination of key parameters in early QSAR studies followed rigorous methodologies:

Partition Coefficient Measurement: Researchers determined lipophilicity by shaking a compound vigorously between n-octanol and water phases in a separatory funnel, allowing phases to separate, and quantifying the compound concentration in each phase through spectroscopic methods or titration. The partition coefficient (P) was calculated as the ratio of concentrations in the octanol and water phases [10].

Hammett σ Constant Determination: Scientists derived electronic parameters by measuring the dissociation constants (K) of substituted benzoic acids in water at 25°C using potentiometric titration. The σ value for a substituent was calculated as log(K/K₀), where K₀ represents the dissociation constant of unsubstituted benzoic acid [10].

Taft Eₛ Steric Parameter Determination: Researchers determined steric parameters by measuring the hydrolysis rates of substituted aliphatic esters under acidic conditions, comparing them to the hydrolysis rates of reference acetate esters, effectively isolating steric effects from electronic contributions [10].
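The first two calculations described above reduce to simple logarithms. The sketch below shows them under stated assumptions: the concentrations and pKa values are illustrative rather than measured data from the cited studies, and the function names are hypothetical. The reference pKa of 4.20 is close to the commonly quoted value for benzoic acid; 3.44 stands in for an acid bearing an electron-withdrawing substituent.

```python
import math


def log_p(conc_octanol: float, conc_water: float) -> float:
    """log10 of the partition coefficient P = [octanol] / [water]."""
    return math.log10(conc_octanol / conc_water)


def hammett_sigma(k_substituted: float, k_reference: float) -> float:
    """sigma = log10(K / K0) for a substituted benzoic acid."""
    return math.log10(k_substituted / k_reference)


def hammett_sigma_from_pka(pka_substituted: float, pka_reference: float) -> float:
    """Equivalent form: since pKa = -log10(K), sigma = pKa0 - pKa."""
    return pka_reference - pka_substituted


# Illustrative numbers only.
lipophilicity = log_p(conc_octanol=2.0e-3, conc_water=2.0e-5)  # P is about 100
sigma = hammett_sigma_from_pka(pka_substituted=3.44, pka_reference=4.20)
```

A positive σ indicates a substituent that makes the acid stronger than the unsubstituted reference, which corresponds to electron withdrawal.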

The Formalization of Modern QSAR (1960s-1990s)

The 1960s marked the critical transition of QSAR from observational correlations to a formalized predictive science, establishing methodological frameworks that remain relevant today.

The Hansch-Fujita Approach

Corwin Hansch and Toshio Fujita pioneered the multiparameter approach that became the foundation of modern QSAR. Their methodology expressed biological activity as a linear function of hydrophobic, electronic, and steric parameters [11] [9]. The general form of the Hansch equation is:

Log(1/C) = a(log P) + b(log P)² + cσ + dEₛ + k [9]

Where C represents the molar concentration producing a defined biological effect, P is the octanol-water partition coefficient, σ represents Hammett electronic constants, Eₛ represents Taft steric parameters, a-d are coefficients determined by multiple regression analysis, and k is the regression constant (intercept) [9]. The inclusion of the squared (log P)² term addressed the parabolic relationship often observed between hydrophobicity and biological activity, reflecting transport processes where optimal activity occurs at an intermediate lipophilicity [10]. For such a maximum to exist, the fitted coefficient b must be negative.
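To make the parabolic dependence concrete, the Hansch equation can be evaluated directly, and the optimal lipophilicity follows from setting the derivative with respect to log P to zero, which gives log P = -a / (2b) when b is negative. The coefficients below are hypothetical and chosen only to illustrate the shape of the curve.

```python
def hansch_activity(log_p, sigma, e_s, a, b, c, d, k):
    """log(1/C) = a*logP + b*logP**2 + c*sigma + d*Es + k."""
    return a * log_p + b * log_p ** 2 + c * sigma + d * e_s + k


def optimal_log_p(a, b):
    """Vertex of the parabola in log P; it is a maximum only when b < 0."""
    if b >= 0:
        raise ValueError("no interior maximum: b must be negative")
    return -a / (2 * b)


# Hypothetical regression coefficients.
coeffs = dict(a=1.2, b=-0.3, c=0.8, d=0.4, k=2.0)

best = optimal_log_p(coeffs["a"], coeffs["b"])
at_best = hansch_activity(best, sigma=0.2, e_s=-0.5, **coeffs)
too_polar = hansch_activity(best - 1.0, sigma=0.2, e_s=-0.5, **coeffs)
too_greasy = hansch_activity(best + 1.0, sigma=0.2, e_s=-0.5, **coeffs)
```

With these numbers the predicted activity peaks at log P = 2 and falls off symmetrically on either side, which is the behavior the squared term was introduced to capture.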

The Free-Wilson Model

Concurrently, Free and Wilson developed an additive model based on the presence or absence of specific substituents at defined molecular positions. This approach expressed biological activity as:

BA = Σaᵢxᵢ + μ

Where BA is the biological activity, aᵢ represents the contribution of substituent i, xᵢ indicates the presence (1) or absence (0) of that substituent, and μ is the overall average activity [9]. The model was solved using multiple linear regression, with the primary advantage being that it required no explicit physicochemical parameters, relying instead on the structural framework of the molecules themselves [9].
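The additive model can be sketched in a few lines, assuming the usual form in which the activity equals the overall average μ plus the sum of the contributions aᵢ of the substituents present (xᵢ = 1). The contribution values, position labels, and function names are hypothetical; in a real study the aᵢ would come from the regression.

```python
def free_wilson_activity(substituents, contributions, mu):
    """BA = mu + sum(a_i * x_i), with x_i = 1 for each substituent present."""
    return mu + sum(contributions[s] for s in substituents)


def indicator_row(substituents, all_substituents):
    """Encode one analogue as the 0/1 row used in the regression matrix."""
    return [1 if s in substituents else 0 for s in all_substituents]


# Hypothetical contributions keyed by (position, substituent).
contributions = {
    ("R1", "Cl"): 0.35,
    ("R1", "CH3"): -0.10,
    ("R2", "OH"): 0.20,
    ("R2", "OCH3"): 0.05,
}
mu = 5.0  # overall average activity (placeholder)

analogue = {("R1", "Cl"), ("R2", "OH")}
predicted = free_wilson_activity(analogue, contributions, mu)
row = indicator_row(analogue, list(contributions))
```

The indicator row is what the multiple linear regression operates on: one column per position and substituent combination, one row per analogue.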

The Mixed Approach

Kubinyi later developed a hybrid approach that combined elements of both the Hansch and Free-Wilson methods:

Log BA = Σaᵢⱼ + Σkᵢφⱼ + k [9]

Where Σaᵢⱼ represents the Free-Wilson component for substituents, and Σkᵢφⱼ represents the Hansch-type contributions of the parent skeleton [9]. This integrated methodology leveraged the strengths of both approaches, providing greater flexibility in model construction.

Classical QSAR Experimental Workflow

The standard workflow for classical QSAR studies involved:

Compound Selection: A series of 20-50 congeneric compounds with varying substituents and measured biological activities was assembled [9].

Descriptor Calculation: Physicochemical parameters (log P, σ, Eₛ) were either experimentally determined or obtained from published values [9].

Model Construction: Multiple linear regression analysis was performed using statistical packages to derive coefficients relating descriptors to biological activity [9].

Model Validation: The coefficient of determination (R²), cross-validated R² (Q²), and standard error of estimate were calculated to assess model robustness [9].
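The model construction and validation steps can be illustrated with a single-descriptor regression in plain Python. This is a simplified sketch: classical studies used multiple linear regression over several descriptors, whereas this example fits one descriptor and computes the fitted R² alongside a leave-one-out Q² (1 - PRESS / SS_total). The data points are invented.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def r_squared(xs, ys):
    """Fitted R^2 = 1 - SS_residual / SS_total on the full data set."""
    slope, intercept = fit_line(xs, ys)
    mean_y = sum(ys) / len(ys)
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1 - ss_res / ss_tot


def q_squared_loo(xs, ys):
    """Leave-one-out Q^2: refit without each compound, then predict it."""
    mean_y = sum(ys) / len(ys)
    press = 0.0
    for i in range(len(xs)):
        slope, intercept = fit_line(xs[:i] + xs[i + 1:], ys[:i] + ys[i + 1:])
        press += (ys[i] - (slope * xs[i] + intercept)) ** 2
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1 - press / ss_tot


# Hypothetical congeneric series: log P values and measured log(1/C).
log_p_values = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0]
activities = [3.1, 3.6, 3.9, 4.5, 4.8, 5.4]

r2 = r_squared(log_p_values, activities)
q2 = q_squared_loo(log_p_values, activities)
```

Q² is never larger than the fitted R² for a least-squares model, so a large gap between the two is a common warning sign of overfitting.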

The Computational Revolution: QSAR in the Cheminformatics Era (2000s-2010s)

The emergence of chemoinformatics as a distinct discipline in the late 1990s transformed QSAR from a specialized technique to a high-throughput computational approach [1] [12]. This transition was characterized by several key developments.

Expansion of Molecular Descriptors

The descriptor repertoire expanded dramatically from the classic triumvirate of hydrophobicity, electronic, and steric parameters to thousands of computationally-derived molecular features [11] [1]. These included:

- Topological Descriptors: Encoding molecular connectivity patterns, branching, and shape [11]

- Geometric Descriptors: Capturing 3D molecular dimensions and surface properties [13]

- Quantum Chemical Descriptors: Derived from molecular orbital calculations (HOMO-LUMO energies, electrostatic potentials) [11] [13]

- Electronic Descriptors: Extending beyond Hammett constants to include dipole moments, polarizabilities, and hydrogen-bonding parameters [11]

Software packages such as DRAGON, PaDEL, and RDKit emerged as essential tools for high-throughput descriptor calculation, enabling the numerical representation of chemical structures on an unprecedented scale [13].
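As a concrete example of a topological descriptor, the Wiener index sums the shortest-path bond distances between all pairs of heavy atoms and so reflects branching. The sketch below computes it from a hand-written adjacency list. It is a plain-Python illustration, not the API of any of the packages named above, and the graph encoding is an assumption.

```python
from collections import deque


def wiener_index(adjacency):
    """Sum of shortest-path lengths over all unordered atom pairs."""
    total = 0
    for source in adjacency:
        # Breadth-first search gives bond-count distances from `source`.
        distance = {source: 0}
        queue = deque([source])
        while queue:
            atom = queue.popleft()
            for neighbor in adjacency[atom]:
                if neighbor not in distance:
                    distance[neighbor] = distance[atom] + 1
                    queue.append(neighbor)
        total += sum(distance.values())
    return total // 2  # each pair was counted from both ends


# Hydrogen-suppressed graphs: keys are carbon atoms, values are bonded neighbors.
n_butane = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
isobutane = {0: [1], 1: [0, 2, 3], 2: [1], 3: [1]}

print(wiener_index(n_butane), wiener_index(isobutane))  # prints: 10 9
```

The two isomers share a molecular formula but differ in the descriptor value, with the more compact, branched isomer scoring lower.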

Advanced Modeling Techniques

With the expansion of molecular descriptors, QSAR modeling incorporated more sophisticated machine learning algorithms capable of handling high-dimensional, non-linear relationships:

- Support Vector Machines (SVM): Effective for classification tasks and non-linear regression with limited samples [13]

- Random Forests (RF): Ensemble method robust to noisy data and irrelevant descriptors [13]

- Partial Least Squares (PLS): Superior to multiple linear regression for correlated descriptors [13]

Table 2: Evolution of QSAR Modeling Techniques

| Era | Primary Methods | Key Descriptors | Typical Dataset Size | Software/Tools |
|---|---|---|---|---|
| 1960s-1980s (Classical) | Multiple Linear Regression, Hansch Analysis, Free-Wilson | log P, σ, Eₛ | 20-50 compounds | Manual calculation, early statistical packages |
| 1990s-2000s (Chemoinformatics) | PLS, PCA, k-NN, Early SVM | Topological, 3D, quantum chemical descriptors | Hundreds to thousands | DRAGON, SYBYL, MOE |
| 2010s-Present (AI-Driven) | Deep Learning, Random Forest, Gradient Boosting, Graph Neural Networks | Learned representations, molecular graphs, fingerprints | Thousands to millions | RDKit, TensorFlow, PyTorch, DeepChem |

High-Dimensional QSAR Approaches

The era saw the development of dimensionality reduction techniques and higher-dimensional QSAR approaches:

- 3D-QSAR: Techniques like Comparative Molecular Field Analysis (CoMFA) and Comparative Molecular Similarity Index Analysis (CoMSIA) incorporated spatial molecular interaction fields.

- 4D-QSAR: Added an ensemble dimension by considering multiple molecular conformations [13]

- Descriptor Selection and Reduction: Recursive Feature Elimination (RFE) identifies the most relevant descriptors, while Principal Component Analysis (PCA) projects correlated descriptors onto a smaller set of components; both address the "curse of dimensionality" [13]

The Contemporary Landscape: AI-Integrated QSAR (2010s-Present)

The integration of artificial intelligence, particularly deep learning, has marked the most transformative development in QSAR methodology, enabling the analysis of extremely complex structure-activity relationships across vast chemical spaces.

Deep Learning Architectures in QSAR

Modern AI-driven QSAR employs sophisticated neural network architectures that fundamentally reshape how molecular structures are represented and analyzed:

- Graph Neural Networks (GNNs): Operate directly on molecular graph representations, with atoms as nodes and bonds as edges, automatically learning relevant features through message-passing mechanisms [13]

- SMILES-Based Transformers: Apply natural language processing techniques to Simplified Molecular Input Line Entry System strings, capturing syntactic and semantic patterns in chemical structures [13]

- Convolutional Neural Networks (CNNs): Process grid-based molecular representations such as molecular surfaces or interaction fields [13]

- Autoencoders: Generate compressed, informative molecular representations (deep descriptors) in an unsupervised manner [13]

These approaches enable automatic feature learning, reducing the need for manual descriptor engineering and capturing complex, hierarchical molecular patterns that traditional descriptors might miss [13].

Integrative Modeling Approaches

Contemporary QSAR increasingly functions within integrated computational workflows that combine multiple methodologies.

Experimental Protocols in AI-Driven QSAR

The methodology for developing AI-integrated QSAR models involves distinct computational phases:

Data Curation and Preprocessing:

Model Training and Validation:

- Implementation of neural architectures using frameworks like TensorFlow or PyTorch [13]

- Hyperparameter optimization via grid search or Bayesian methods [13]

- Rigorous validation using train-validation-test splits and cross-validation [13]

- Application of metrics including AUC-ROC, precision-recall, and Matthews correlation coefficient [13]
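Of the metrics listed above, the Matthews correlation coefficient is the least familiar to many readers, so a minimal pure-Python sketch of it follows. The function names and the toy labels are assumptions made for illustration only; in practice a library such as Scikit-learn would normally supply this metric.

```python
import math


def confusion_counts(y_true, y_pred):
    """Count true/false positives and negatives for binary labels (1 = active)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return tp, tn, fp, fn


def matthews_corrcoef(y_true, y_pred):
    """MCC = (TP*TN - FP*FN) / sqrt((TP+FP)(TP+FN)(TN+FP)(TN+FN))."""
    tp, tn, fp, fn = confusion_counts(y_true, y_pred)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denom == 0:
        # Convention: return 0 when any row or column of the matrix is empty.
        return 0.0
    return (tp * tn - fp * fn) / denom


# Toy example (made-up labels): 3 actives, 5 inactives.
y_true = [1, 1, 1, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 0, 0, 0, 0, 1]
print(round(matthews_corrcoef(y_true, y_pred), 3))
```

Unlike accuracy, MCC uses all four cells of the confusion matrix, which makes it a reasonable choice for the imbalanced active/inactive datasets common in QSAR.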

Model Interpretation and Explainability:

- Application of SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations) to elucidate feature importance [13]

- Attention mechanisms in transformer models to identify structurally significant regions [13]

- Saliency maps for graph neural networks to highlight atoms and bonds critical to activity predictions [13]

Table 3: Key Research Reagents and Computational Tools in QSAR

| Category | Tool/Resource | Specific Examples | Primary Function |

|---|---|---|---|

| Chemical Databases | Public Compound Repositories | PubChem, ChEMBL, ZINC [1] | Source of chemical structures and associated bioactivity data |

| Descriptor Calculation | Cheminformatics Software | RDKit, DRAGON, PaDEL [13] | Generation of molecular descriptors from chemical structures |

| Modeling Frameworks | Machine Learning Libraries | Scikit-learn, TensorFlow, PyTorch [13] | Implementation of machine learning and deep learning algorithms |

| Specialized QSAR | Integrated Platforms | KNIME, Orange, BioSolveIT [12] | End-to-end QSAR workflow management |

| Validation & Analysis | Statistical Analysis Tools | QSARINS, R, Python SciPy [13] | Model validation, statistical analysis, and visualization |

The evolution of QSAR from its origins in simple linear correlations to today's sophisticated AI-integrated approaches exemplifies the transformative impact of chemoinformatics on chemical research. This journey has witnessed several paradigm shifts: from manual to automated descriptor calculation, from linear to complex non-linear models, and from isolated technique to integrated predictive framework. Throughout this evolution, the fundamental principle has remained constant: quantitative relationships connect molecular structure to biological activity.

The integration of artificial intelligence has positioned QSAR at the forefront of data-driven chemical discovery, enabling the analysis of increasingly complex biological endpoints and the exploration of vast chemical spaces. As QSAR continues to evolve, it will undoubtedly face challenges related to model interpretability, regulatory acceptance, and ethical implementation. However, its trajectory suggests an increasingly central role in addressing global challenges through the rational design of therapeutic agents, materials, and environmentally benign chemicals. Within the broader context of chemoinformatics, QSAR stands as a testament to the power of interdisciplinary approaches in advancing chemical research and development.

In modern chemical research, the ability to represent molecular structures in a computer-readable format is foundational. Cheminformatics, which integrates chemistry, computer science, and data analysis, relies on these representations to drive innovation in areas like drug discovery and materials science [1]. Molecular representations translate physical molecular structures into standardized digital formats, enabling the storage, retrieval, analysis, and prediction of chemical properties on a large scale [14]. The core data representations—SMILES, InChI, and molecular fingerprints—serve as the critical bridge between chemical structures and the computational models that accelerate scientific discovery [1] [14]. This guide provides a technical examination of these representations, framing them within the essential role of chemoinformatics in contemporary research.

Technical Deep Dive: SMILES

The Simplified Molecular-Input Line-Entry System (SMILES) is a line notation that uses short ASCII strings to describe the structure of chemical species [15]. Developed in the 1980s by David Weininger and funded by the US Environmental Protection Agency, its design allows molecule editors to convert these strings back into two-dimensional drawings or three-dimensional models [15].

Core Specification Rules

The SMILES syntax is governed by a set of precise rules for encoding molecular graphs:

- Atoms: Atoms are represented by their standard chemical symbols. Atoms in the "organic subset" (B, C, N, O, P, S, F, Cl, Br, I) are typically written without brackets if they are neutral, have implicit hydrogens, and are standard isotopes. All other atoms (e.g., [Au] for gold) must be enclosed in square brackets [15] [16]. Formal charges are indicated with a plus (+) or minus (-) sign following the atom symbol within brackets (e.g., [NH4+] for ammonium). Multiple charges can be represented by a digit or by repeating the sign [15].

- Bonds: Bonds are represented by specific symbols. Single bonds (-), double bonds (=), triple bonds (#), and aromatic bonds (:) can be explicitly noted. Single bonds between aliphatic atoms are usually omitted for brevity [15] [16]. A "non-bond" (e.g., for ionic compounds) is indicated by a period (.) [15].

- Branches: Branches are specified by enclosing them in parentheses. For example, isobutyl alcohol can be written as CC(C)CO [16].

- Rings: Ring structures are defined by breaking a bond in the ring and assigning the same numerical label to the two atoms that form the ring closure. For example, cyclohexane is written as C1CCCCC1 [15] [16].

- Aromaticity: Aromatic rings can be represented in Kekulé form (e.g., C1=CC=CC=C1 for benzene) or, more commonly, by using lower-case atomic symbols for aromatic atoms (e.g., c1ccccc1) [15] [16].
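The rules above map naturally onto a small tokenizer. The sketch below is a simplified illustration, not a full SMILES parser: the regular expression and the function name are assumptions, it covers only the token classes described in this section (plus the / and \ marks used for double-bond geometry), and it does not check valence or ring-closure pairing.

```python
import re

# One alternative per token class described in the rules above.
SMILES_TOKEN = re.compile(
    r"(\[[^\]]+\]"   # bracket atom, e.g. [Au] or [NH4+]
    r"|Br|Cl"        # two-letter organic-subset atoms (must precede B and C)
    r"|[BCNOPSFI]"   # one-letter organic-subset atoms
    r"|[bcnops]"     # aromatic atoms written in lower case
    r"|%\d{2}|\d"    # ring-closure labels
    r"|[-=#:/\\.]"   # bonds, double-bond geometry marks, and the non-bond period
    r"|[()])"        # branch open / close
)


def tokenize(smiles):
    """Split a SMILES string into tokens; raise ValueError on unknown characters."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"unrecognized characters in SMILES: {smiles!r}")
    return tokens


print(tokenize("CC(C)CO"))       # isobutyl alcohol
print(tokenize("c1ccccc1"))      # benzene, aromatic form
print(tokenize("[NH4+].[Cl-]"))  # ammonium chloride written with a non-bond
```

Tokenization of this kind is also the first step when SMILES strings are fed to sequence models, where each token becomes one vocabulary entry.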

Canonical and Isomeric SMILES

A single molecule can have many valid SMILES strings (e.g., CCO, OCC, and C(O)C for ethanol). Canonical SMILES algorithms generate a unique, standardized string for a given molecular structure, which is essential for database indexing and ensuring uniqueness [15]. Isomeric SMILES extend the notation to include stereochemical information, such as configuration at tetrahedral centers and double bond geometry, which cannot be specified by connectivity alone [15].

Technical Deep Dive: InChI

The International Chemical Identifier (InChI) is an open standard developed by IUPAC to provide a non-proprietary, unique identifier for chemical substances [16]. While SMILES is often considered more human-readable, InChI was designed as a standardized representation to facilitate data exchange [15] [1].

The InChI Layers

The strength of InChI lies in its layered structure, which systematically encodes different types of chemical information. The following diagram illustrates the relationship between these layers and the final InChIKey.

The InChI identifier is built from several layers that encode specific structural information [16]:

- Main Layer: Contains the molecular formula and atom connectivity information.

- Charge Layer: Specifies the net charge of the molecule.

- Stereochemical Layer: Encodes double bond and tetrahedral stereochemistry.

- Isotopic Layer: Records isotopic specifications.

- Fixed-H Layer: Describes the positions of fixed hydrogens (e.g., in tautomers).
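Because the layers are separated by slashes and most are introduced by a single-letter prefix, an InChI string can be taken apart with plain string handling. The following sketch is illustrative only: the function name and the returned dictionary shape are assumptions, and it assumes the string contains a formula layer (which is not true for every InChI).

```python
def split_inchi(inchi):
    """Split an InChI string into version, formula, and prefixed layers.

    Layers are returned as (prefix, content) pairs in their original order,
    because a prefix can legitimately appear more than once (for example
    after the fixed-H 'f' layer).
    """
    prefix = "InChI="
    if not inchi.startswith(prefix):
        raise ValueError("not an InChI string")
    version, *segments = inchi[len(prefix):].split("/")
    formula = segments[0] if segments else ""
    layers = [(seg[0], seg[1:]) for seg in segments[1:] if seg]
    return {"version": version, "formula": formula, "layers": layers}


# Standard InChI for ethanol: formula, connectivity (c) and hydrogen (h) sublayers.
parsed = split_inchi("InChI=1S/C2H6O/c1-2-3/h3H,2H2,1H3")
print(parsed["version"], parsed["formula"])
for tag, content in parsed["layers"]:
    print(tag, content)
```

In the InChI syntax, the c and h prefixes belong to the main layer; q and p carry charge information, b, t, m, and s carry stereochemistry, i marks the isotopic layer, and f marks the fixed-H layer.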

Technical Deep Dive: Molecular Fingerprints

Molecular fingerprints are another form of representation, but unlike SMILES and InChI, they are not human-readable. They are high-dimensional vectors (often binary bit strings) designed to capture structural or chemical features for efficient computational comparison and machine learning [17] [14].

Types of Fingerprints

Fingerprints can be categorized based on their generation method:

- Path-Based Fingerprints: These enumerate linear or branched atom paths of a given length within a molecule. The RDKit fingerprint and hashed Atom Pair fingerprint are examples of this type [17].

- Circular Fingerprints (ECFP/FCFP): The Extended Connectivity Fingerprint (ECFP) is a widely used circular fingerprint that iteratively captures circular atom environments (topological neighborhoods) around each atom up to a defined radius. It is designed to be invariant to atom numbering. The FCFP variant uses pharmacophoric features instead of atom types [17].

- Predefined Substructure Fingerprints: These fingerprints, such as MACCS keys, use a fixed dictionary of SMARTS patterns (substructural queries). Each bit in the fingerprint indicates the presence or absence of a specific predefined substructure within the molecule [17].

- Topological Torsion Fingerprints: This type encodes sequences of four bonded atoms, providing a local characterization of the molecular structure [17].
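To make the idea of a hashed, path-based fingerprint concrete, here is a deliberately simplified sketch. It is not the RDKit fingerprint algorithm: the function name, the hand-written atom and bond lists, the use of SHA-1 for hashing, and the 64-bit length are all assumptions chosen for illustration, and bond orders are ignored.

```python
import hashlib


def path_fingerprint(atoms, bonds, max_atoms=3, n_bits=64):
    """Toy hashed path fingerprint; returns the set of 'on' bit positions.

    atoms: list of element symbols, indexed by atom number
    bonds: list of (i, j) index pairs (bond order is ignored in this sketch)
    """
    neighbours = {i: [] for i in range(len(atoms))}
    for a, b in bonds:
        neighbours[a].append(b)
        neighbours[b].append(a)

    on_bits = set()

    def walk(path):
        labels = [atoms[i] for i in path]
        # A path and its reverse are the same fragment: keep one spelling so
        # the result does not depend on atom numbering.
        key = min("-".join(labels), "-".join(reversed(labels)))
        digest = hashlib.sha1(key.encode()).digest()
        on_bits.add(int.from_bytes(digest[:4], "big") % n_bits)
        if len(path) < max_atoms:
            for nxt in neighbours[path[-1]]:
                if nxt not in path:
                    walk(path + [nxt])

    for start in neighbours:
        walk([start])
    return on_bits


# Ethanol written with two different atom numberings gives the same bits.
fp_a = path_fingerprint(["C", "C", "O"], [(0, 1), (1, 2)])
fp_b = path_fingerprint(["O", "C", "C"], [(0, 1), (1, 2)])
print(sorted(fp_a), fp_a == fp_b)
```

Hashing into a fixed number of bits means distinct fragments can collide on the same position, which is one reason fingerprints are described as lossy.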

Comparative Analysis

The table below provides a consolidated technical comparison of the three core molecular representations.

Table 1: Comparative analysis of SMILES, InChI, and molecular fingerprints

| Feature | SMILES | InChI | Molecular Fingerprints |

|---|---|---|---|

| Representation Type | Line notation (ASCII string) | Layered identifier (string) | Binary bit vector or integer vector |

| Human Readability | High (for simple molecules) | Low | None |

| Primary Design Goal | Compactness and human-input | Standardization and unique identification | Similarity searching and machine learning |

| Canonical Form | Yes (algorithm-dependent) | Yes (standardized) | Not applicable |

| Stereochemistry Support | Yes (isomeric SMILES) | Yes (in separate layers) | Varies by type |

| Key Strength | Compact, intuitive, widely supported | Standardized, non-proprietary, unique | Fast similarity computation, model input |

| Key Limitation | Multiple valid strings per molecule | Less human-readable, complex | Lossy representation; not reversible |

Applications in Modern Cheminformatics & Drug Discovery

These core representations are the bedrock upon which modern, data-driven chemical research is built. Their applications are vast and critical to accelerating discovery.

- Enabling AI and Machine Learning: SMILES and fingerprints are the primary inputs for AI models in drug discovery. Language models, such as Transformers, tokenize SMILES strings to predict molecular properties or generate novel compounds [14]. Graph Neural Networks (GNNs) use graph-based representations, often derived from SMILES, to learn from molecular structure [18] [14]. Molecular fingerprints are extensively used in Quantitative Structure-Activity Relationship (QSAR) modeling to build predictive models for properties like toxicity and bioavailability [19] [14].

- Virtual Screening and Scaffold Hopping: Fingerprints are indispensable for virtual screening, where large chemical libraries are rapidly searched to identify molecules similar to a known active compound [19] [14]. This facilitates scaffold hopping—the discovery of new core structures (scaffolds) that retain biological activity—by identifying molecules that are functionally similar but structurally distinct [14].

- Chemical Database Management: Canonical SMILES and InChI are vital for managing chemical databases. They ensure unique indexing of molecules, prevent duplicates, and enable efficient structure and substructure searching across vast repositories like PubChem and ChEMBL [1] [19].

Experimental Protocol: From Fingerprint to Validated Molecule

The following workflow is common in AI-driven drug discovery for generating and validating novel compounds.

Table 2: Research reagents and tools for generative cheminformatics

| Tool/Reagent | Type | Primary Function in Protocol |

|---|---|---|

| RDKit | Cheminformatics Toolkit | Molecular representation conversion, fingerprint generation, descriptor calculation [19]. |

| ECFP4 | Molecular Fingerprint | Serves as the input representation for the generative model [17]. |

| Transformer Model | AI Architecture | Acts as the Neural Machine Translation (NMT) engine to decode the fingerprint into a SMILES string [17]. |

| SELFIES | Molecular Representation | An alternative to SMILES that guarantees 100% syntactic validity; can be used as an intermediate or output format [17]. |

| ChemProp | Machine Learning Package | Predicts molecular properties (e.g., solubility, toxicity) of the generated SMILES for virtual validation [18]. |

| AutoDock/Gnina | Docking Software | Performs structure-based validation of the generated molecule's binding affinity to a target protein [20] [18]. |

Protocol Steps:

- Input: The process begins with a target molecular fingerprint (e.g., ECFP4) that encodes desired chemical features [17].

- Translation: A pre-trained Neural Machine Translation (NMT) model, often based on the Transformer architecture, decodes the fingerprint representation into a SMILES string. Studies have shown this reconstruction can be achieved with high accuracy, overcoming the traditionally "lossy" nature of fingerprints [17].

- Validity Check: The generated SMILES string is checked for chemical validity and sanity using a toolkit like RDKit. If invalid, the process can be iterated.

- Virtual Validation: The valid SMILES undergoes multi-stage in silico validation:

  - Property Prediction: Models like ChemProp predict key ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) properties and physicochemical profiles [18].

  - Molecular Docking: Tools like Gnina simulate the binding of the generated molecule to a protein target, assessing the binding mode and affinity [18].

- Output: Molecules that pass these virtual validation filters become high-priority candidates for synthesis and experimental testing.
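The protocol steps above amount to a filter cascade in which each candidate is dropped at the first stage it fails. The sketch below shows only that control flow. Every predicate in it is a hypothetical placeholder: the parenthesis check stands in for a real validity check with a toolkit such as RDKit, the length cut-off stands in for a property model such as ChemProp, and the made-up score table stands in for docking output.

```python
def triage(candidates, stages):
    """Run each candidate through (name, predicate) stages in order.

    Returns the survivors plus a log of which stage rejected each failure.
    """
    survivors, rejected = [], {}
    for smiles in candidates:
        for name, passes in stages:
            if not passes(smiles):
                rejected[smiles] = name
                break
        else:
            survivors.append(smiles)
    return survivors, rejected


# --- Hypothetical placeholders, not real validation methods ---
def balanced_parentheses(smiles):
    return smiles.count("(") == smiles.count(")")


toy_docking_scores = {"CCO": -4.2, "c1ccccc1O": -6.8}  # invented numbers

stages = [
    ("validity", balanced_parentheses),
    ("property", lambda s: len(s) <= 12),
    ("docking", lambda s: toy_docking_scores.get(s, 0.0) <= -6.0),
]

survivors, rejected = triage(["CCO", "CC(C", "c1ccccc1O"], stages)
print(survivors)
print(rejected)
```

Ordering the stages from cheapest to most expensive keeps docking, usually the slowest step, for the candidates that have already passed the faster checks.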

Future Perspectives and Challenges

The field of molecular representation continues to evolve. SELFIES (SELF-referencIng Embedded strings) is a new representation designed to guarantee 100% syntactic validity when generated by AI models, addressing a key limitation of SMILES [17]. Graph-based representations, which natively model atoms as nodes and bonds as edges, are becoming increasingly important for capturing structural information more directly for GNNs [14]. Multimodal and contrastive learning approaches that combine multiple representations (e.g., SMILES, graphs, and 3D information) are emerging as powerful strategies for learning more robust molecular embeddings [14].

Despite these advances, challenges remain. Data quality and standardization are persistent issues, and no single representation is perfect for all tasks [1] [21]. The future will likely see a focus on developing more comprehensive, flexible, and interoperable representations to further improve the predictive power of chemoinformatic models [1] [14]. As these tools mature, their role in enabling autonomous laboratories and accelerating the discovery of new medicines and materials will only grow more profound [4] [21].

Chemical databases constitute the foundational infrastructure of modern chemoinformatics, serving as critical repositories for the structures, properties, and biological activities of molecules. The field of chemoinformatics leverages computational methods to solve chemical problems, and its advancement is intrinsically linked to the quality, scope, and accessibility of underlying chemical data [22]. In the early 2000s, researchers faced a significant dearth of publicly accessible chemistry and bioactivity data [23]. The subsequent emergence of large-scale public resources has transformed the research landscape, enabling data-driven approaches across chemical biology, medicinal chemistry, and drug discovery.

This whitepaper examines three pivotal public chemical databases—PubChem, ChEMBL, and ChemSpider—that capture the majority of open chemical structure records and have become massively enabling resources for the scientific community [24]. These platforms function as meta-portals that subsume and link to a major proportion of public bioactivity data extracted from literature, patents, and screening assays [24]. Understanding their distinct characteristics, content coverage, and specialized functionalities is essential for researchers to effectively leverage their capabilities. The integration of these resources into the chemoinformatics workflow represents a paradigm shift in how chemical information is curated, accessed, and utilized to accelerate scientific discovery.

PubChem

Established in 2004 as a component of the NIH Molecular Libraries Roadmap Initiative, PubChem has evolved into the largest public repository of chemical information [25] [26]. Maintained by the National Center for Biotechnology Information (NCBI), it serves as a key resource for cheminformatics, chemical biology, and drug discovery communities [22] [26]. PubChem organizes its data into three interlinked databases: Substance (depositor-provided chemical descriptions), Compound (unique chemical structures derived from Substance records), and BioAssay (biological screening results and experimental data) [25] [26].

The system employs a submitter-based model where chemical structures conforming to standardization rules are accepted as primary database records assigned to discrete submitters via Substance Identifiers (SIDs) [24]. These are subsequently merged according to PubChem chemistry rules into non-redundant Compound Identifiers (CIDs) [24]. As of 2021, PubChem contained more than 293 million substance descriptions, 111 million unique chemical structures, and 271 million bioactivity data points from 1.2 million biological assays [25]. The resource integrates data from hundreds of sources worldwide, including government agencies, academic institutions, pharmaceutical companies, and chemical vendors [25] [26].

ChEMBL

ChEMBL is a manually curated database of bioactive molecules with drug-like properties, maintained by the European Bioinformatics Institute (EMBL-EBI) [27] [28]. Launched in 2009, it has grown into a Global Core Biodata Resource that provides high-quality, open, and FAIR (Findable, Accessible, Interoperable, Reusable) data on bioactive compounds [27] [29]. Unlike PubChem's submitter-driven model, ChEMBL employs expert curation to extract bioactivity data from medicinal chemistry literature and selected patents, focusing particularly on quantitative measurements of drug-target interactions [27] [29].

The database captures bioactivity data across all stages of drug discovery, with particular strength in containing carefully standardized potency values (e.g., IC₅₀, Kᵢ) that enable direct comparison across experiments [27] [29]. A significant feature introduced in 2013 is the pChEMBL value, which provides a negative logarithmic transformation of potency measurements to facilitate comparative analysis [29]. As of release 33 (2023), ChEMBL contains information extracted from over 88,000 publications and patents, encompassing more than 20.3 million bioactivity measurements for 2.4 million unique compounds [27].
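The negative logarithmic transformation is simple to reproduce: the potency is expressed in mol/L and the value is -log10 of that number, so a 10 nM IC₅₀ corresponds to 8. The sketch below is a minimal illustration; the function name and the unit table are assumptions, not part of any ChEMBL client.

```python
import math

# Conversion factors from common concentration units to mol/L.
UNIT_TO_MOLAR = {"M": 1.0, "mM": 1e-3, "uM": 1e-6, "nM": 1e-9, "pM": 1e-12}


def negative_log_potency(value, unit="nM"):
    """Return -log10 of a potency value (IC50, Ki, ...) expressed in mol/L."""
    if value <= 0:
        raise ValueError("potency must be positive")
    return -math.log10(value * UNIT_TO_MOLAR[unit])


for value, unit in [(10, "nM"), (1, "uM"), (250, "nM")]:
    print(f"{value} {unit} -> {negative_log_potency(value, unit):.2f}")
```

On this scale, higher numbers mean more potent compounds, and a difference of one unit corresponds to a tenfold change in concentration.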

ChemSpider

ChemSpider, managed by the Royal Society of Chemistry, serves as a central hub for chemical structure data, integrating and validating information from hundreds of data sources [24]. As of 2018, it was reported to contain 63 million chemical structures [24]. The platform excels in structure-centric integration, providing access to physical property data, spectra, synthetic pathways, and safety information [24].

A key distinguishing feature of ChemSpider is its focus on curation and validation of chemical structures and associated data, employing both automated and community-driven approaches to ensure data quality [24]. The platform serves as a foundational resource for the chemical sciences, linking chemical structures to relevant research articles, patents, and other online resources [24].

Table 1: Key Characteristics of Major Chemical Databases

| Feature | PubChem | ChEMBL | ChemSpider |

|---|---|---|---|

| Primary Focus | Comprehensive chemical repository with bioactivity data | Manually curated bioactivity data from literature | Chemical structure integration and validation |

| Managing Organization | NCBI (NIH, USA) | EMBL-EBI (Europe) | Royal Society of Chemistry (UK) |

| Content Scope | 111M+ compounds, 293M+ substances, 1.2M+ assays [25] | 2.4M+ compounds, 20.3M+ bioactivities [27] | 63M+ structures (2018 estimate) [24] |

| Data Curation Approach | Submitter-driven with standardization | Expert manual curation | Automated and community curation |

| Key Unique Features | Integration with NCBI resources, diverse data types | pChEMBL values, drug annotation | Structure validation, spectral data |

Table 2: Data Content Comparison Across Databases

| Data Category | PubChem | ChEMBL | ChemSpider |

|---|---|---|---|

| Chemical Structures | 111 million unique compounds (2021) [25] | 2.4 million compounds (2023) [27] | 63 million structures (2018) [24] |

| Bioactivity Measurements | 271 million data points (2021) [25] | 20.3 million measurements (2023) [27] | Limited information |

| Biological Assays | 1.25 million assays (2021) [25] | 1.6 million assays (2023) [27] | Not applicable |

| Target Coverage | >10,000 protein target sequences [25] | >17,000 targets (∼10,600 proteins) [27] | Not applicable |

| Contributing Sources | 629 data sources (2018) [25] | 420 deposited datasets, >88,000 documents [27] | 282 sources (2018) [24] |

Research Applications and Experimental Protocols

Typical Research Use Cases

Chemical databases support diverse research applications across multiple domains. Lead identification and optimization represents a primary application, where researchers mine structure-activity relationship (SAR) data to guide medicinal chemistry efforts [22]. For example, PubChem's bioactivity data enables similarity searching for analogs of known active compounds and profiling of selectivity and promiscuity patterns [22].

Chemical biology and target discovery represents another major application area. ChEMBL's curated data on compound-target interactions facilitates polypharmacology studies and the identification of tool compounds for probing novel biological targets [27] [29]. The database has been instrumental in projects such as mapping the "PROTACtable genome" for targeted protein degradation and identifying drug repurposing opportunities for COVID-19 and heart failure [27].

Chemical space analysis leverages the extensive compound collections in these databases to explore structural diversity, scaffold distributions, and property relationships [22]. Researchers have analyzed drug-like and lead-like compounds from PubChem using multiple structural descriptors to visualize and navigate chemical space [22]. Similarly, ChEMBL data has enabled analyses of target and scaffold trends over time, revealing historical patterns in medicinal chemistry research [27].

Experimental Data Access Protocols

Accessing data from chemical databases typically follows standardized protocols:

1. Structure and Identity Searching:

- Exact structure search identifies compounds with identical connectivity, accounting for stereochemistry

- Similarity search employs molecular fingerprints (e.g., PubChem fingerprints) to find structurally analogous compounds [22]

- Substructure search retrieves compounds containing specific molecular frameworks

- Search by identifier using database-specific codes (CID, SID, AID for PubChem; ChEMBL ID for ChEMBL) [22] [25]

2. Bioactivity Data Retrieval:

- Target-centric queries retrieve all bioactive compounds for specific protein targets

- Compound-centric queries extract all bioactivity data for specific compounds across multiple assays

- Assay-centric queries access complete results from specific screening experiments

3. Programmatic Access:

- PubChem provides Power User Gateway (PUG) services for programmatic access [25]

- ChEMBL offers RESTful web services and data downloads in multiple formats

- Most databases provide bulk download options via FTP servers [25]
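As an illustration of programmatic access, the sketch below builds a PUG-REST request URL for compound properties using only the standard library. The helper names are assumptions; the URL layout (compound / namespace / identifier / property / list / format) follows the PUG-REST pattern as commonly documented, and the property names should be checked against the current PubChem documentation before use. The fetch helper is defined but not called, so the example runs without network access.

```python
import json
from urllib.parse import quote
from urllib.request import urlopen

PUG_REST = "https://pubchem.ncbi.nlm.nih.gov/rest/pug"


def compound_property_url(identifier, properties, namespace="name", fmt="JSON"):
    """Build a PUG-REST URL requesting the given properties for one compound."""
    ident = quote(str(identifier), safe="")  # escape spaces, slashes, etc.
    props = ",".join(properties)
    return f"{PUG_REST}/compound/{namespace}/{ident}/property/{props}/{fmt}"


def fetch_json(url, timeout=30):
    """Download and decode a JSON response (network access required)."""
    with urlopen(url, timeout=timeout) as response:
        return json.load(response)


# CID 2244 is aspirin in PubChem.
url = compound_property_url(2244, ["MolecularFormula", "InChIKey"], namespace="cid")
print(url)
# data = fetch_json(url)  # uncomment to perform the request
```

For batch retrieval, requests should be throttled in line with the usage policy published by the database.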

Diagram 1: Chemical Database Query Workflow

Essential Research Reagent Solutions

The effective utilization of chemical databases requires a suite of computational tools and resources that constitute the modern chemoinformatician's toolkit.

Table 3: Essential Research Reagents for Database Mining

| Tool/Resource | Function | Application Example |

|---|---|---|

| Molecular Fingerprints | Structural representation for similarity searching | PubChem fingerprints for compound clustering [22] |

| Standardization Algorithms | Structural normalization for cross-database comparison | Tautomer normalization for consistent registration |

| Programmatic Interfaces | Automated data access via APIs | PUG-REST for batch retrieval from PubChem [25] |

| Cheminformatics Toolkits | Fundamental computational chemistry operations | RDKit for descriptor calculation and scaffold analysis |

| Data Analysis Platforms | Integrated environments for data exploration | ChemMine Tools for PubChem data import and analysis [22] |

| Visualization Tools | Interactive chemical data exploration | Avogadro for structure retrieval and visualization [22] |

Integration in Cheminformatics Workflows

The complementary nature of PubChem, ChEMBL, and ChemSpider enables their integrated use in comprehensive chemoinformatics workflows. A typical research pipeline might begin with structural identity checking in ChemSpider to validate chemical structures, proceed to bioactivity profiling in ChEMBL to gather potency data against relevant targets, and expand to broad activity screening in PubChem to assess promiscuity and off-target effects [24] [30].

This integration is facilitated by cross-database identifiers, particularly the International Chemical Identifier (InChI) system, which provides a standardized representation of chemical structures [24]. The InChIKey, a fixed-length hash of the InChI, serves as a universal identifier that enables structure matching across databases, overcoming differences in internal registration systems and curation practices [24].
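A minimal sketch of InChIKey-based record linking follows. The record layout and function names are assumptions made for illustration; the identifiers shown for aspirin and ethanol are the ones used by PubChem and ChEMBL, but the records themselves are hand-written rather than retrieved from either database.

```python
def merge_by_inchikey(*sources):
    """Group database-specific IDs under their shared InChIKey.

    Each source is a (source_name, records) pair, where every record is a
    dict with 'id' and 'inchikey' entries.
    """
    merged = {}
    for source_name, records in sources:
        for record in records:
            merged.setdefault(record["inchikey"], {})[source_name] = record["id"]
    return merged


def connectivity_block(inchikey):
    """Return the first 14-character block, which encodes the molecular skeleton."""
    return inchikey.split("-")[0]


pubchem = [
    {"id": "CID 2244", "inchikey": "BSYNRYMUTXBXSQ-UHFFFAOYSA-N"},  # aspirin
    {"id": "CID 702", "inchikey": "LFQSCWFLJHTTHZ-UHFFFAOYSA-N"},   # ethanol
]
chembl = [
    {"id": "CHEMBL25", "inchikey": "BSYNRYMUTXBXSQ-UHFFFAOYSA-N"},  # aspirin
]

for key, ids in merge_by_inchikey(("pubchem", pubchem), ("chembl", chembl)).items():
    print(key, ids)
```

Matching on the full key requires identical stereochemistry and isotopic labelling; matching on the first block alone is a looser comparison that groups stereoisomers together.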

The role of these databases extends beyond simple data retrieval to enabling predictive modeling and machine learning applications. The large-scale, high-quality bioactivity data in ChEMBL has been instrumental in developing target prediction models based on conformal prediction [27]. Similarly, PubChem's extensive HTS data has supported the development of bioassay ontologies and semantic tools for assay characterization [22].

Diagram 2: Chemical Data Ecosystem and Flow

PubChem, ChEMBL, and ChemSpider collectively form an indispensable infrastructure for modern chemoinformatics and drug discovery research. Despite their differing architectures and curation philosophies—with PubChem emphasizing comprehensiveness, ChEMBL focusing on curated bioactivity data, and ChemSpider specializing in structure validation and integration—these resources exhibit powerful complementarity [24] [30]. Their existence has fundamentally transformed the practice of chemical research by providing open access to chemical information that was previously fragmented or inaccessible.

The continued evolution of these databases reflects emerging challenges and opportunities in chemical data science. The growing volume of deposited versus extracted data in ChEMBL, the expanding patent coverage in PubChem, and the increasing sophistication of cross-database integration strategies all point toward a future where chemical knowledge becomes increasingly FAIR (Findable, Accessible, Interoperable, and Reusable) [27] [29]. For researchers in chemical biology and drug discovery, proficiency in leveraging these resources has become an essential competency, enabling more informed experimental design, efficient resource utilization, and accelerated discovery timelines. As the field advances, these databases will continue to serve as both repositories of existing knowledge and platforms for the generation of new insights through large-scale data analysis and integration.

From Data to Discovery: Core Cheminformatics Methods and Real-World Applications

Virtual screening (VS) has emerged as a fundamental computational methodology in early drug discovery, enabling the rapid and cost-effective identification of hit compounds from vast chemical libraries. By leveraging chemoinformatics, artificial intelligence (AI), and molecular modeling, VS allows researchers to prioritize molecules with the highest potential for experimental testing. This technical guide explores the core principles, methodologies, and cutting-edge applications of VS, framed within the critical role of chemoinformatics as the backbone of modern, data-driven chemical research [1] [31].

Chemoinformatics, defined as the application of informatics methods to solve chemical problems, has become a cornerstone of modern chemical research [1]. It provides the essential toolkit for managing, analyzing, and extracting knowledge from the enormous datasets generated in contemporary science. In drug discovery, this translates to powerful applications in virtual screening, quantitative structure-activity relationships (QSAR), and molecular property prediction [4] [1].

The traditional drug discovery pipeline is notoriously time-consuming and expensive. Virtual screening addresses this bottleneck by acting as a computational filter. It is a technique that uses computer programs to search for potential hits from virtual fragment libraries, significantly increasing the hit rate compared to traditional high-throughput screening (HTS) alone [31]. By computationally evaluating vast libraries of compounds, VS helps identify a manageable subset of promising candidates for synthesis and biological testing, saving substantial resources and accelerating the initial phases of research [31].

Core Principles and Types of Virtual Screening

Virtual screening methodologies are broadly classified into two categories, each with distinct approaches and applications.

Structure-Based Virtual Screening (SBVS)

SBVS relies on the three-dimensional structure of a biological target, typically obtained from X-ray crystallography, NMR, or cryo-EM. The core technology is molecular docking, which predicts how a small molecule (ligand) binds to the target's binding site and scores the strength and quality of that interaction [31].

- Process: A library of small molecules is computationally "docked" into the target's binding site.

- Output: Each molecule receives a score predicting its binding affinity.

- Application: Ideal for targets with well-characterized structures, allowing for the identification of novel chemotypes that complement the binding site's topology and chemistry.

Ligand-Based Virtual Screening (LBVS)

LBVS is used when the 3D structure of the target is unknown but information about known active compounds is available. It operates on the principle of molecular similarity, which assumes that structurally similar molecules are likely to exhibit similar biological activities [31].

- Methods:

  - 2D Fingerprint Similarity: Compares molecular structures based on the presence or absence of specific substructures.

  - Pharmacophore Modeling: Identifies molecules that share a common set of steric and electronic features necessary for biological activity.

  - 3D Shape Screening: Overlays and compares the three-dimensional shapes of molecules to find those with similar steric profiles [32] [31].
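
As a rough illustration of 2D fingerprint similarity, the sketch below ranks a toy library against a reference compound using the Tanimoto coefficient, a commonly used similarity measure for binary fingerprints. The source does not say which metric a given tool uses, so Tanimoto is an assumption here, and the fingerprints and compound names are invented purely for illustration.

```python
from __future__ import annotations


def tanimoto(fp_a: set[int], fp_b: set[int]) -> float:
    """Tanimoto coefficient between two sets of 'on' bit positions."""
    if not fp_a and not fp_b:
        return 0.0  # convention chosen here for two empty fingerprints
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


# Hypothetical fingerprints: each integer is the index of a substructure bit that is set.
reference = {1, 4, 7, 9, 12}
library = {
    "cmpd_A": {1, 4, 7, 9, 13},
    "cmpd_B": {2, 3, 5},
    "cmpd_C": {1, 4, 7, 9, 12},
}

# Rank library compounds by similarity to the reference, most similar first.
ranked = sorted(
    ((name, tanimoto(reference, fp)) for name, fp in library.items()),
    key=lambda item: item[1],
    reverse=True,
)
for name, score in ranked:
    print(f"{name}: {score:.2f}")
```

In practice the bit sets would come from a cheminformatics toolkit rather than being written by hand; the ranking logic stays the same.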

The following table summarizes the key characteristics of these two approaches.

Table 1: Comparison of Structure-Based and Ligand-Based Virtual Screening

| Feature | Structure-Based Virtual Screening (SBVS) | Ligand-Based Virtual Screening (LBVS) |
|---|---|---|
| Prerequisite | 3D structure of the target protein | Set of known active ligands |
| Core Method | Molecular docking | Molecular similarity, pharmacophore modeling |
| Key Output | Predicted binding pose and affinity | Similarity score to known actives |
| Primary Use Case | Target with a known structure, novel hit identification | Target with unknown structure, scaffold hopping |
| Advantages | Can discover novel scaffolds; provides structural insights | Does not require a protein structure; generally faster |
| Limitations | Dependent on quality and relevance of the protein structure; computationally intensive | Limited by the quality and diversity of known actives |

AI-Enhanced Virtual Screening: A Case Study

Recent advances integrate AI and machine learning (ML) to create hybrid VS pipelines that aim for both efficiency and precision. A study by Ji et al. illustrates this combination in a search for inhibitors of the understudied GluN1/GluN3A NMDA receptor [32].

Experimental Protocol and Workflow

The researchers employed a multi-stage AI-enhanced method to screen a massive library of 18 million molecules [32]:

- Initial Shape Screening: The library was first ranked using ROCS (Rapid Overlay of Chemical Structures), a 3D shape similarity tool, to identify molecules with shapes similar to a known reference compound [32].

- AI-Driven Refinement: The top-ranking compounds were then processed by a Graph Neural Network (GNN)-based drug-target interaction model. This step enhanced the accuracy of the subsequent docking simulation by incorporating more complex structure-activity relationships [32].

- Molecular Docking: The refined compound set was subjected to molecular docking against the GluN1/GluN3A receptor structure.

- Experimental Validation: The final computational hits were synthesized and tested experimentally using calcium flux assays (FDSS/μCell) and manual patch-clamp recordings for functional validation [32].
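
The computational stages above form a funnel: each stage scores the survivors of the previous one and passes on only the best. The sketch below shows that pattern in plain Python. It is not the authors' code; the three scoring functions are toy stand-ins for a shape-similarity tool, a GNN interaction model, and a docking program, and the cutoffs are arbitrary. It also assumes that a lower docking score is better, which is a common convention but depends on the program.

```python
from typing import Callable, Iterable, List


def keep_top(molecules: Iterable[str], score: Callable[[str], float],
             n: int, higher_is_better: bool = True) -> List[str]:
    """Rank molecules by a scoring function and keep the best n."""
    return sorted(molecules, key=score, reverse=higher_is_better)[:n]


def index_of(mol: str) -> int:
    """Extract the integer suffix from a toy identifier such as 'mol_7'."""
    return int(mol.split("_")[1])


# Hypothetical scoring functions; real ones would call external tools or models.
def toy_shape_similarity(mol: str) -> float:
    return (index_of(mol) * 7 % 10) / 10


def toy_gnn_interaction(mol: str) -> float:
    return (index_of(mol) * 3 % 10) / 10


def toy_docking_energy(mol: str) -> float:
    return -float(index_of(mol))  # more negative is treated as better


library = [f"mol_{i}" for i in range(10)]

after_shape = keep_top(library, toy_shape_similarity, n=6)      # stage 1: shape ranking
after_gnn = keep_top(after_shape, toy_gnn_interaction, n=4)     # stage 2: AI refinement
hits = keep_top(after_gnn, toy_docking_energy, n=2, higher_is_better=False)  # stage 3: docking

print(hits)  # candidates that would go on to experimental validation
```

Ordering the stages from cheapest to most expensive means the costly docking step only runs on a small fraction of the original library.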

Key Findings and Results

This hybrid workflow successfully identified two potent inhibitors with IC~50~ values below 10 μM. One candidate exhibited particularly strong inhibitory activity, with an IC~50~ of 5.31 ± 1.65 μM, a result that was confirmed by patch-clamp electrophysiology [32]. This case highlights how AI can streamline the VS process, enabling the efficient exploration of ultra-large libraries for challenging biological targets.

The workflow for this integrated approach is summarized in the following diagram.

The Chemoinformatics Foundation: Data and Tools

The execution of any virtual screen depends on a robust chemoinformatics infrastructure for handling chemical data and applying computational tools.

Chemical Structure Representation

To be processed by computers, chemical structures must be converted into machine-readable formats [31].

- SMILES (Simplified Molecular Input Line Entry System): A line notation that uses ASCII strings to represent the structure of a molecule. It is compact and widely used for database storage and searching [33] [31].

- Connection Tables: Explicit representations of molecular topology, storing atom types, bond types, and connectivity. Common file formats include SDF (Structure Data File) and MOL2 [31].

- InChI (International Chemical Identifier): A non-proprietary, standardized identifier developed by IUPAC and NIST, designed to provide a unique string representation for each compound [33].
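
To make the difference between these representations concrete, the sketch below writes ethanol as a minimal connection table (atoms plus bonds) and derives its molecular formula from it. This is a simplified illustration, not a parser for SDF or MOL2 files: the valence table covers only a few neutral elements, and the function names are hypothetical.

```python
from collections import Counter

# Ethanol in three representations:
#   SMILES: CCO
#   InChI:  InChI=1S/C2H6O/c1-2-3/h3H,2H2,1H3
#   Connection table (heavy atoms only), as plain Python data:
atoms = ["C", "C", "O"]           # atom types, indexed from 0
bonds = [(0, 1, 1), (1, 2, 1)]    # (atom_i, atom_j, bond_order)

# Simplified default valences for neutral atoms (assumption for this sketch).
DEFAULT_VALENCE = {"C": 4, "N": 3, "O": 2}


def implicit_hydrogens(atoms, bonds):
    """Hydrogens needed to fill each heavy atom's default valence."""
    used = [0] * len(atoms)
    for i, j, order in bonds:
        used[i] += order
        used[j] += order
    return [DEFAULT_VALENCE[el] - u for el, u in zip(atoms, used)]


def molecular_formula(atoms, bonds):
    """Formula with C and H first, then other elements alphabetically."""
    counts = Counter(atoms)
    counts["H"] += sum(implicit_hydrogens(atoms, bonds))
    first = [el for el in ("C", "H") if counts[el]]
    rest = sorted(el for el in counts if el not in ("C", "H"))
    return "".join(
        f"{el}{counts[el] if counts[el] > 1 else ''}" for el in first + rest
    )


print(implicit_hydrogens(atoms, bonds))
print(molecular_formula(atoms, bonds))
```

The line notation is compact enough to store in a database column, while the connection table exposes the connectivity directly to code such as the hydrogen count above.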

Key Software and Tools

A diverse ecosystem of software tools supports different aspects of the VS workflow.

- For Docking (SBVS): AutoDock, Schrödinger Suite [4] [34].

- For Ligand-Based Methods (LBVS): Tools like ROCS for 3D shape screening [32].

- For Cheminformatics and ML: RDKit (open-source toolkit for cheminformatics), Chemprop (message-passing neural networks for property prediction), and DeepChem [4].

- For Retrosynthesis and Library Design: IBM RXN, AiZynthFinder, and Reactor for enumerating virtual chemical libraries from validated reaction schemes [4] [33].

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful virtual screening campaigns rely on both computational and experimental resources. The following table details key solutions and their functions in the workflow.

Table 2: Key Research Reagent Solutions for Virtual Screening and Hit Identification

| Research Reagent / Solution | Function in the VS Workflow |
|---|---|
| Virtual Compound Libraries (e.g., ZINC, REAL Database) | Large collections of commercially available or easily synthesizable compounds used as the input for screening [33] [31]. |
| Target Protein Structure (e.g., from PDB) | The 3D atomic coordinates of the biological target, essential for structure-based virtual screening and docking studies [31]. |
| Known Active Ligands | A set of compounds with confirmed biological activity against the target; serves as the reference for ligand-based virtual screening [31]. |
| Functional Assay Kits (e.g., Calcium Flux FDSS/μCell) | Cell-based or biochemical assays used for the experimental validation of computational hits and determination of IC~50~ values [32]. |
| Patch-Clamp Electrophysiology Setup | A gold-standard technique for validating the functional activity of hits on ion channel targets, providing detailed mechanistic data [32]. |

Advanced Methodologies: Free Energy Perturbation (FEP)

Beyond standard docking, more sophisticated physics-based methods like Free Energy Perturbation (FEP) are increasingly used for lead optimization. FEP provides highly accurate predictions of the relative binding free energies between closely related ligands [34]. This allows medicinal chemists to prioritize which synthetic analogs are most likely to have improved potency.

- Recent Advances: Improvements in FEP include automated lambda window selection for better efficiency, refined force fields with QM-derived torsional parameters, and better handling of charge changes and water placement within binding sites [34].

- Active Learning FEP: Emerging workflows combine the accuracy of FEP with the speed of ligand-based QSAR methods. FEP is run on a small subset of a virtual library, and the results are used to train a QSAR model that predicts the binding affinity for the entire library, creating an efficient, iterative exploration cycle [34].

- Absolute Binding FEP (ABFE): ABFE calculates the binding free energy of a single ligand without a direct reference molecule, offering potential for virtual screening of diverse compounds, though it is computationally more demanding than relative FEP [34].
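
The active learning cycle described above can be sketched as a loop that alternates between an expensive calculation on a few compounds and a cheap surrogate prediction over the whole library. Everything in the example below is a toy assumption: `toy_fep` stands in for a real FEP calculation, compounds are reduced to a single numeric descriptor, and a 1-nearest-neighbour lookup stands in for a QSAR model.

```python
from __future__ import annotations

import random


def toy_fep(descriptor: float) -> float:
    """Hypothetical stand-in for an expensive FEP run; lower means tighter binding."""
    return (descriptor - 0.7) ** 2


def predict(labeled: dict[float, float], x: float) -> float:
    """1-nearest-neighbour surrogate standing in for a trained QSAR model."""
    nearest = min(labeled, key=lambda known: abs(known - x))
    return labeled[nearest]


def active_learning(library: list[float], n_initial: int = 3,
                    batch: int = 2, rounds: int = 3, seed: int = 0) -> dict[float, float]:
    rng = random.Random(seed)
    # Seed the cycle with "FEP" results on a small random subset.
    labeled = {x: toy_fep(x) for x in rng.sample(library, n_initial)}
    for _ in range(rounds):
        candidates = [x for x in library if x not in labeled]
        if not candidates:
            break
        # Use the surrogate to rank unlabeled compounds, best predicted first.
        candidates.sort(key=lambda x: predict(labeled, x))
        # Spend the expensive calculation only on the most promising batch.
        for x in candidates[:batch]:
            labeled[x] = toy_fep(x)
    return labeled


library = [i / 20 for i in range(21)]
labeled = active_learning(library)
best = min(labeled, key=labeled.get)
print(f"evaluated {len(labeled)} of {len(library)} compounds; best descriptor = {best}")
```

A purely greedy selection like this can get stuck near the initial samples; practical workflows usually mix in some exploration, which is omitted here for brevity.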

Virtual screening, powered by the tools and principles of chemoinformatics, has substantially changed early drug discovery. The integration of AI and machine learning, as exemplified by hybrid screening pipelines, is improving both efficiency and success rates. More accurate simulation techniques such as FEP and the growth of large, synthetically accessible virtual libraries compound these benefits. As these computational methodologies continue to evolve and integrate more deeply with automated synthesis and smart labs, they are likely to reinforce the role of chemoinformatics as a central pillar in accelerating the discovery of new therapeutic agents.

Chemoinformatics has emerged as a cornerstone of modern chemical research, fundamentally transforming how scientists approach the discovery and design of new molecules. Defined as "the application of informatics methods to solve chemical problems," this interdisciplinary field bridges chemistry, computer science, and data analysis [1]. In the context of predictive modeling, chemoinformatics provides the framework and tools for managing chemical data at very large scale, enabling the extraction of meaningful patterns from complex molecular datasets [1] [8]. The integration of artificial intelligence (AI) and machine learning (ML) has significantly advanced this capability, allowing researchers to predict molecular properties and biological activities before any compound is synthesized [1].

Quantitative Structure-Activity Relationship (QSAR) modeling represents one of the most impactful applications of chemoinformatics, establishing quantitative correlations between chemical structures and their biological effects or physicochemical properties [13]. Originally introduced in the 1960s through classical approaches such as Hansch analysis, QSAR has evolved dramatically with the advent of machine learning and deep learning techniques [35]. This evolution has helped shift drug discovery from a trial-and-error process toward a data-driven science, significantly reducing the time and cost associated with traditional approaches [13] [36]. The emergence of what is now termed "deep QSAR" marks a pivotal advancement, leveraging deep neural networks to automatically learn relevant features from molecular structures without manual descriptor engineering [35]. This technical guide explores the core methodologies, protocols, and applications of QSAR and machine learning within the expanding domain of chemoinformatics, providing researchers with the practical knowledge to implement these approaches in their work.

Molecular Descriptors: The Foundation of QSAR

QSAR modeling depends fundamentally on molecular descriptors—numerical representations that encode various chemical, structural, or physicochemical properties of compounds [13]. These descriptors serve as the input features for machine learning models, creating mathematical relationships between molecular structure and activity or property endpoints.
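
The simplest form of such a relationship is a straight line relating one descriptor to one activity value, in the spirit of classical Hansch-type analysis. The sketch below fits that line by ordinary least squares in plain Python. The logP and pIC50 values are invented for illustration and do not come from any dataset; a real model would use many descriptors, a proper ML library, and held-out validation.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical training data: one descriptor (logP) and one endpoint (pIC50).
logp = [1.0, 2.0, 3.0, 4.0]
pic50 = [5.1, 5.9, 7.1, 7.9]

slope, intercept = fit_line(logp, pic50)
print(f"pIC50 = {slope:.2f} * logP + {intercept:.2f}")

# Predict the endpoint for a new, unsynthesized compound with logP = 2.5.
predicted = slope * 2.5 + intercept
print(f"predicted pIC50 at logP 2.5: {predicted:.2f}")
```

The fitted coefficients are only meaningful within the descriptor range covered by the training compounds, which is one reason QSAR workflows pay attention to a model's applicability domain.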

Classification and Types of Molecular Descriptors

Molecular descriptors are typically categorized based on the dimensionality of the structural information they encode, each offering distinct advantages for different modeling scenarios [13].

Table: Classification of Molecular Descriptors in QSAR Modeling

| Descriptor Type | Description | Examples | Applications |
|---|---|---|---|
| 1D Descriptors | Based on bulk properties and chemical composition | Molecular weight, atom count, bond count, molecular formula | Preliminary screening, simple property prediction |
| 2D Descriptors | Derived from molecular topology and connectivity | Topological indices, connectivity indices, graph-theoretical descriptors | High-throughput virtual screening, toxicity prediction |
| 3D Descriptors | Represent spatial molecular geometry | Surface area, volume, molecular shape, steric/electrostatic parameters | Protein-ligand docking, conformational analysis, 3D-QSAR |
| 4D Descriptors | Incorporate conformational flexibility and ensemble information | Conformer ensembles, interaction pharmacophores | Refined QSAR, ligand-based pharmacophore modeling |
| Quantum Chemical Descriptors | Derived from quantum mechanical calculations | HOMO-LUMO energies, dipole moment, electrostatic potential surfaces | Electronic property prediction, reaction mechanism studies |
| Deep Learning Descriptors | Learned representations from neural networks | Graph neural network embeddings, SMILES-based latent vectors | Data-driven pipelines across diverse chemical spaces |
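
The 1D descriptors in the first row of the table can be computed from a molecular formula alone. The sketch below does so for aspirin (C9H8O4) using a small table of standard atomic masses. The function names are hypothetical, the parser handles only simple formulas without parentheses or charges, and the mass table covers only four elements.

```python
import re

# Standard atomic masses (g/mol) for a few common elements.
ATOMIC_MASS = {"C": 12.011, "H": 1.008, "N": 14.007, "O": 15.999}


def parse_formula(formula: str) -> dict:
    """Turn a simple formula such as 'C9H8O4' into element counts."""
    counts = {}
    for element, digits in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] = counts.get(element, 0) + int(digits or 1)
    return counts


def descriptors_1d(formula: str) -> dict:
    """Compute a few composition-based (1D) descriptors."""
    counts = parse_formula(formula)
    return {
        "molecular_weight": sum(ATOMIC_MASS[el] * n for el, n in counts.items()),
        "atom_count": sum(counts.values()),
        "heavy_atom_count": sum(n for el, n in counts.items() if el != "H"),
    }


aspirin = descriptors_1d("C9H8O4")
print(aspirin)
```

Higher-dimensional descriptors need connectivity or 3D coordinates and are normally computed with a dedicated cheminformatics toolkit rather than by hand.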