Chemogenomics Methods: A Comprehensive Guide to Target Discovery and Drug Development

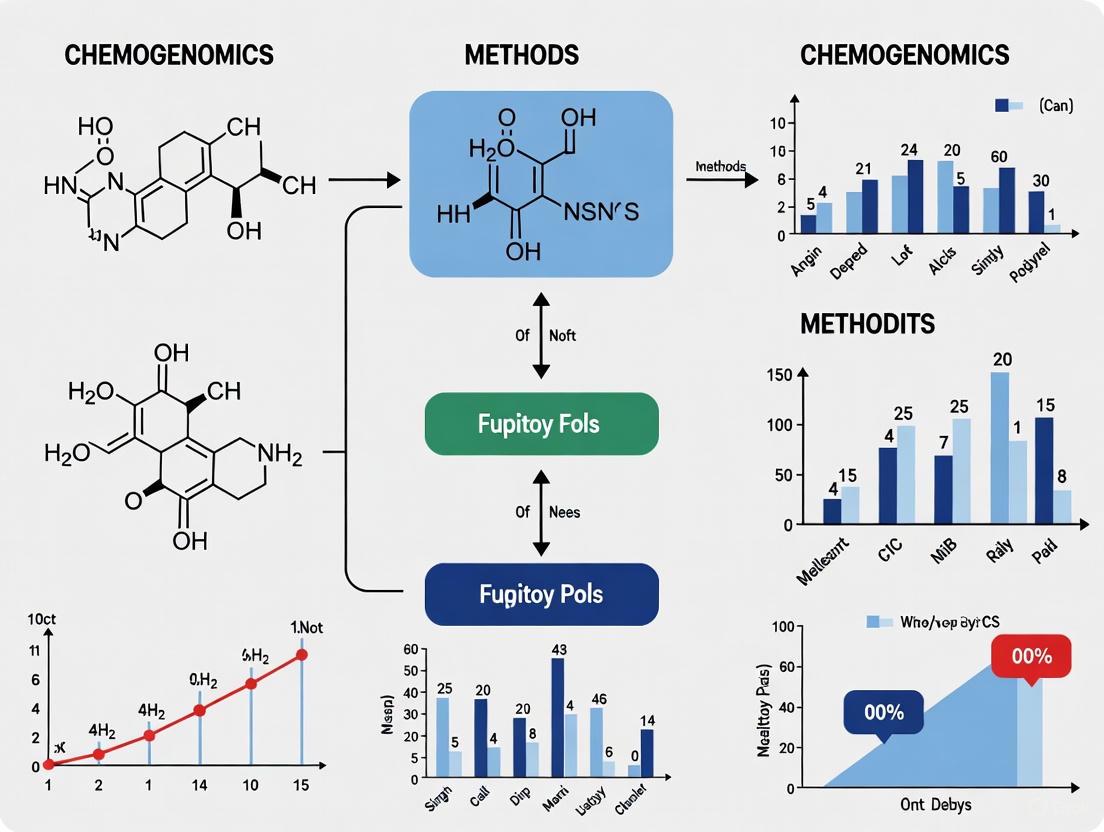

Abstract

This article provides a comprehensive overview of chemogenomics, an interdisciplinary field that systematically links small molecules to biological targets to accelerate drug discovery. Tailored for researchers, scientists, and drug development professionals, it covers foundational principles, key methodological approaches—including both experimental and computational techniques—and practical guidance for troubleshooting and optimizing screens. Furthermore, it explores validation strategies and comparative analyses of large-scale datasets, offering insights into the robustness and future applications of chemogenomics in bridging phenotypic screening with target-based drug discovery.

Core Principles: Defining Chemogenomics and Its Role in Modern Biology

What is Chemogenomics? Bridging Chemistry and Genomics

Chemogenomics represents a systematic, large-scale strategy in drug discovery that aims to identify all possible interactions between chemical compounds and biological targets within a gene family. This field stands at the intersection of chemistry and genomics, leveraging organized chemical libraries to probe families of functionally related proteins, with the ultimate goal of parallel identification of novel drugs and drug targets [1] [2]. The table below summarizes its core defining characteristics.

| Aspect | Description |
|---|---|
| Core Objective | Systematic screening of targeted chemical libraries against families of drug targets to identify novel drugs and drug targets [1]. |
| Primary Strategy | Uses targeted chemical libraries (containing known ligands for some family members) to identify ligands for other, often uncharacterized, members of the same protein family [1]. |
| Key Principle | Leverages the concept that compounds designed for one protein family member often bind to other members of the same family, facilitating the exploration of the entire target space [1] [3]. |
| Experimental Approaches | Divided into forward chemogenomics (phenotype-based) and reverse chemogenomics (target-based) [1]. |

Core Concepts and Strategic Approaches

The completion of the Human Genome Project provided an abundance of potential targets for therapeutic intervention, creating a need for systematic methods to characterize them [1]. Chemogenomics addresses this need by integrating target and drug discovery, using active compounds as chemical probes to characterize proteome functions [1]. The interaction between a small molecule and a protein induces a phenotype, allowing researchers to associate a protein with a specific molecular event [1]. A key advantage over genetic approaches is the ability to modify protein function reversibly and in real time [1].

Forward vs. Reverse Chemogenomics

Two complementary experimental approaches form the backbone of chemogenomic investigation.

Forward Chemogenomics (Classical/Phenotype-based): This approach begins with a desired phenotype, such as the arrest of tumor growth. Researchers screen for small molecules that induce this phenotype without prior knowledge of the specific molecular target. Once active compounds (modulators) are identified, they are used as tools to isolate and identify the protein responsible for the observed effect. The main challenge lies in designing phenotypic assays that can efficiently lead from screening to target identification [1].

Reverse Chemogenomics (Target-based): This strategy starts with a specific, known protein target. Researchers first identify small molecules that perturb the target's function in a controlled, in vitro enzymatic assay. Subsequently, the biological phenotype induced by these modulators is analyzed in cellular or whole-organism models. This method is used to validate the biological role of the target and is enhanced by modern capabilities for parallel screening and lead optimization across entire target families [1].

Key Methodologies and Experimental Protocols

Chemogenomics relies on a variety of sophisticated experimental and computational protocols to link compounds to their targets and functions.

Fitness-Based Chemogenomic Profiling for Target Identification

A powerful method for identifying a small molecule's target involves fitness-based profiling using barcoded yeast libraries [4]. In this competitive assay, a pool of thousands of unique yeast strains (e.g., gene deletion or overexpression strains) is grown in the presence and absence of the small molecule of interest. The relative abundance of each strain in the pool is tracked over time by sequencing the unique DNA barcodes. Strains whose altered genes are required to survive drug treatment drop out of the population, while strains whose alterations confer resistance become more abundant. The resulting fitness profile points to the drug's mechanism of action (MoA) and potential target [4].

Protocol Summary: Competitive Fitness-Based Profiling [4]

- Library Preparation: A barcoded yeast library (e.g., deletion collection, DAmP collection, or MoBY-ORF collection) is cultured.

- Competitive Growth: The pooled strains are grown competitively in two conditions: with the small molecule (drug condition) and without (control condition).

- Sample Harvesting: Genomic DNA is harvested from the pools at multiple time points during growth.

- Barcode Amplification & Sequencing: The unique molecular barcodes are amplified via PCR and sequenced using high-throughput sequencing.

- Data Analysis: The abundance of each barcode in the drug condition is compared to the control. Strains showing significant sensitivity or resistance are identified, and Gene Ontology (GO) analysis of these genes is performed to infer the molecule's MoA and potential target.
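The data analysis step above can be sketched in a few lines of Python. This is a minimal illustration, not the pipeline from [4]: the strain names, counts, pseudocount, and hit threshold are all hypothetical, and a real analysis would use replicates and a statistical test rather than a fixed cutoff.

```python
import math

def fitness_scores(control_counts, drug_counts, pseudocount=1.0):
    """Return log2(drug / control) of depth-normalized barcode counts per strain."""
    control_total = sum(control_counts.values())
    drug_total = sum(drug_counts.values())
    scores = {}
    for strain in control_counts:
        # Convert raw counts to counts per million so sequencing depth cancels out.
        control_cpm = 1e6 * control_counts[strain] / control_total
        drug_cpm = 1e6 * drug_counts.get(strain, 0) / drug_total
        # The pseudocount (an assumption) avoids division by zero for dropped-out strains.
        scores[strain] = math.log2((drug_cpm + pseudocount) / (control_cpm + pseudocount))
    return scores

def call_hits(scores, threshold=1.0):
    """Split strains into drug-sensitive (depleted) and drug-resistant (enriched)."""
    sensitive = sorted(s for s, v in scores.items() if v <= -threshold)
    resistant = sorted(s for s, v in scores.items() if v >= threshold)
    return sensitive, resistant

# Hypothetical barcode counts for four strains in the two conditions.
control = {"geneA_del": 1000, "geneB_del": 1000, "geneC_del": 1000, "geneD_del": 1000}
drug = {"geneA_del": 100, "geneB_del": 1000, "geneC_del": 1900, "geneD_del": 1000}

scores = fitness_scores(control, drug)
sensitive, resistant = call_hits(scores, threshold=0.8)
print("sensitive:", sensitive)
print("resistant:", resistant)
```

The genes behind the sensitive and resistant strains would then be passed to GO enrichment analysis, as described in the protocol.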

In Silico Chemogenomic Approaches for Drug-Target Interaction Prediction

The increasing volume of chemogenomic data has enabled the development of computational methods to predict drug-target interactions (DTIs). These in silico approaches are crucial for reducing the drug/target search space, thereby lowering the cost, time, and labor involved in the drug discovery pipeline [3]. The table below compares the major categories of these methods.

| Method Category | Key Advantage | Key Disadvantage |
|---|---|---|
| Similarity Inference | High interpretability based on the "wisdom of the crowd" principle. | May miss novel interactions (serendipitous results) and often ignores continuous binding affinity data [3]. |
| Network-Based (NBI) | Does not require 3D target structures or negative samples for training. | Suffers from the "cold start" problem (cannot predict for new drugs) and is biased toward well-connected nodes [3]. |
| Feature-Based Machine Learning | Can handle new drugs/targets by relying on their features, not just known interactions. | Feature selection is critical and difficult; class imbalance can be an issue in classification models [3]. |
| Matrix Factorization | Does not require negative samples and is efficient for large datasets. | Primarily models linear relationships, struggling with complex non-linear drug-target interactions [3]. |
| Deep Learning | Automates manual feature extraction, potentially capturing complex patterns. | Low interpretability ("black box" nature); reliability of auto-learned features can be a concern [3]. |

These computational models are often powered by integrated databases like CHEMGENIE, which harmonize compound-target association data from multiple public and in-house sources, creating a "model-ready" resource for predictive analytics [5].
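To make the network-based row of the comparison table more concrete, the sketch below implements one common formulation of network-based inference: two-step resource spreading on the bipartite drug-target graph. It is an illustration under assumptions, not necessarily the exact variant reviewed in [3]; the drug and target names are hypothetical. It also shows the "cold start" limitation, since a drug with no known targets receives no scores.

```python
def nbi_scores(interactions, query_drug):
    """Score every target for query_drug by two-step resource spreading.

    interactions maps each drug to the set of its known targets.
    """
    # Invert the map so each target knows which drugs bind it.
    target_to_drugs = {}
    for drug, targets in interactions.items():
        for target in targets:
            target_to_drugs.setdefault(target, set()).add(drug)

    # Step 1: each known target of the query holds one unit of resource
    # and splits it equally among all drugs linked to that target.
    drug_resource = {}
    for target in interactions.get(query_drug, set()):
        linked = target_to_drugs[target]
        for drug in linked:
            drug_resource[drug] = drug_resource.get(drug, 0.0) + 1.0 / len(linked)

    # Step 2: each drug splits the resource it received equally among its targets.
    scores = {target: 0.0 for target in target_to_drugs}
    for drug, resource in drug_resource.items():
        for target in interactions[drug]:
            scores[target] += resource / len(interactions[drug])
    return scores

def rank_new_targets(interactions, query_drug):
    """Rank targets not yet annotated for the query drug, best first."""
    scores = nbi_scores(interactions, query_drug)
    known = interactions.get(query_drug, set())
    candidates = [(t, s) for t, s in scores.items() if t not in known and s > 0]
    return sorted(candidates, key=lambda item: (-item[1], item[0]))

# Hypothetical drug-target annotations.
interactions = {
    "drugA": {"T1", "T2"},
    "drugB": {"T2", "T3"},
    "drugC": {"T1", "T3", "T4"},
    "drugNew": set(),  # no known targets: the cold-start case
}

print(rank_new_targets(interactions, "drugA"))
print(rank_new_targets(interactions, "drugNew"))
```

In this toy network, T3 outranks T4 for drugA because it is reachable through two neighboring drugs rather than one, which also illustrates the bias toward well-connected nodes.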

Target Deconvolution by Limited Proteolysis-Mass Spectrometry (LiP-MS)

This protocol is used to identify protein targets of small molecules directly in a complex cellular lysate. It is based on the principle that a small molecule binding to a protein will induce structural changes that alter its susceptibility to proteolysis by a non-specific protease. These changes are detected and quantified using mass spectrometry [6].

Protocol Summary: Target Deconvolution by LiP-MS [6]

- Treatment: A native cell lysate is divided and incubated with either the small molecule of interest or a vehicle control (e.g., DMSO).

- Limited Proteolysis: Both samples are subjected to a brief, controlled digestion with a robust, non-specific protease like proteinase K.

- Protease Inactivation: The protease is inactivated, and the proteins are denatured.

- Complete Digestion: The entire protein mixture is digested to completion with a sequence-specific protease (e.g., trypsin).

- LC-MS/MS Analysis: The resulting peptides are analyzed by Liquid Chromatography with Tandem Mass Spectrometry (LC-MS/MS).

- Data Analysis: Proteomic software identifies and quantifies the peptides. Peptides from the LiP step that show significant abundance changes between the drug and control conditions indicate structural alterations due to drug binding, enabling the identification of the target protein.
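The comparison in the final step can be sketched as follows. This is a simplified illustration, not the software used in [6]: peptide identifiers, intensities, and both thresholds are hypothetical, and a real analysis would compute p-values with multiple-testing correction instead of a fixed cutoff on the t statistic.

```python
import math
from statistics import mean, variance

def welch_t(a, b):
    """Welch's t statistic for two samples with possibly unequal variances."""
    se = math.sqrt(variance(a) / len(a) + variance(b) / len(b))
    return (mean(a) - mean(b)) / se if se > 0 else 0.0

def changed_peptides(control, treated, min_abs_log2fc=1.0, min_abs_t=4.0):
    """Return peptides whose abundance differs between drug and vehicle samples."""
    hits = []
    for peptide in control:
        c, t = control[peptide], treated[peptide]
        log2fc = math.log2(mean(t) / mean(c))
        t_stat = welch_t(t, c)
        # Both thresholds are placeholder assumptions for this sketch.
        if abs(log2fc) >= min_abs_log2fc and abs(t_stat) >= min_abs_t:
            hits.append((peptide, round(log2fc, 2), round(t_stat, 1)))
    return hits

# Hypothetical peptide intensities (three replicates), keyed as "protein|peptide".
control = {
    "ProteinX|pep1": [1000, 1100, 900],
    "ProteinX|pep2": [500, 520, 480],
    "ProteinY|pep1": [800, 790, 810],
}
treated = {
    "ProteinX|pep1": [300, 320, 280],
    "ProteinX|pep2": [510, 490, 505],
    "ProteinY|pep1": [805, 795, 800],
}

hits = changed_peptides(control, treated)
candidate_targets = sorted({peptide.split("|")[0] for peptide, _, _ in hits})
print(hits)
print(candidate_targets)
```

Only one peptide of ProteinX changes here, which mirrors the idea that drug binding alters protease accessibility locally rather than across the whole protein.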

Applications and Case Studies in Drug Discovery

Chemogenomics has proven its value across multiple facets of modern drug development, from understanding traditional medicines to creating new clinical candidates.

Determining Mechanism of Action (MoA) for Traditional Medicines

Chemogenomics has been applied to identify the mode of action of compounds used in traditional medicine systems, such as Traditional Chinese Medicine (TCM) and Ayurveda [1]. The compounds in these medicines often have "privileged structures" and known safety profiles, making them attractive starting points for drug development. In one case study, databases of traditional medicine compounds and their known phenotypic effects were analyzed in silico. For a class of TCM "toning and replenishing medicine," the approach predicted sodium-glucose transport proteins and PTP1B as targets relevant to the observed hypoglycemic (blood sugar-lowering) phenotype, providing a novel, molecular understanding of its action [1].

From Chemical Probe to Clinical Candidate: BET Bromodomain Inhibitors

A seminal example of chemogenomics in practice is the development of Bromodomain and Extra-Terminal (BET) inhibitors for cancer therapy [7].

- The Probe: (+)-JQ1, a potent and selective chemical probe for the BET family of bromodomains, was developed through molecular modeling. It was instrumental in validating BET proteins as compelling targets in cancer, demonstrating anti-proliferative effects in numerous haematological and solid tumors [7].

- The Challenge: Despite its utility as a probe, (+)-JQ1 had a short half-life, making it unsuitable for clinical use [7].

- The Clinical Candidates: The structural and biological insights from (+)-JQ1 inspired the development of multiple clinical candidates via medicinal chemistry optimization:

  - I-BET762 (Molibresib): Identified via a phenotypic screen, this triazolodiazepine compound is structurally similar to JQ1 but was optimized for improved potency, pharmacokinetic properties, and stability. It has entered clinical trials for acute myeloid leukemia (AML), breast cancer, and prostate cancer [7].

  - OTX015: Another JQ1-derived candidate that showed potent BET inhibition and entered clinical trials for hematological malignancies and solid tumors [7].

  - CPI-0610: Constellation Pharmaceuticals drew on the JQ1 structure when designing its BET inhibitor, CPI-0610, starting from a fragment-based screening approach [7].

This pipeline from probe to candidate underscores how chemogenomic tools can accelerate drug discovery by providing a validated target and a high-quality chemical starting point.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful chemogenomics research relies on a suite of specialized reagents and tools, as detailed in the following table.

| Tool / Reagent | Function / Application |
|---|---|
| Targeted Chemical Library | A collection of small molecules designed to target specific protein families (e.g., kinases, GPCRs). It contains known ligands to facilitate the identification of ligands for orphan targets within the same family [1]. |
| Barcoded Yeast Libraries (YKO, DAmP, MoBY-ORF) | Collections of yeast strains where each strain has a unique gene deletion or alteration and a unique DNA barcode. Used in competitive fitness-based profiling to identify drug targets and mechanisms of action [4]. |
| Chemogenomic Databases (e.g., CHEMGENIE, ChEMBL, STITCH) | Integrated databases that harmonize compound-target interaction data from multiple sources. They are essential for data mining, predictive modeling, and target deconvolution [5]. |
| Nanoluciferase (NanoLuc) / HiBiT Tags | A small, bright luciferase enzyme used in Bioluminescence Resonance Energy Transfer (BRET) and Cellular Thermal Shift Assays (CETSA) to study protein-protein interactions and target engagement in live cells [6]. |
| Cysteine-Reactive Alkyne Probes | Chemical tools used to profile the engagement and selectivity of covalent cysteine-reactive inhibitors on a proteome-wide scale via chemical proteomics [6]. |
| 3D Spheroid Cultures | Three-dimensional cell cultures that better mimic the in vivo tumor microenvironment. Used in high-throughput phenotypic screening of small-molecule libraries for activities like invasion inhibition [6]. |

The foundational principle of modern chemogenomics, often termed the similar property principle, posits that chemically similar compounds are likely to exhibit similar biological activities and interact with similar protein targets [8]. This core hypothesis enables the prediction of drug-target interactions (DTIs) on a large scale, facilitating the acceleration of drug discovery, drug repositioning, and the understanding of polypharmacology [9] [3]. The transition from traditional phenotypic screening to target-based approaches has underscored the need for precise target identification and mechanism of action (MoA) understanding [9]. In silico target prediction methods have thus become indispensable, as they leverage the growing wealth of chemogenomic data from public repositories like ChEMBL, PubChem, and BindingDB to systematically explore the relationship between chemical structures and biological targets [9] [10] [3]. While this hypothesis provides a powerful framework, its reliability is contingent upon the quality of the underlying data and the sophistication of the computational methods employed to navigate the complex landscape of chemical and biological space [10].

Theoretical Foundations and Methodological Frameworks

The validation of the "similar compounds, similar targets" hypothesis relies on computational methodologies that can be broadly categorized into ligand-centric and target-centric approaches.

Ligand-Centric Methods: These methods operate on the principle that a query molecule's targets can be inferred by comparing its structure to a database of known bioactive molecules. The similarity between molecules is typically quantified using molecular fingerprints and similarity coefficients, such as the Tanimoto coefficient [8]. For instance, the MolTarPred method uses 2D structural similarity (e.g., MACCS or Morgan fingerprints) to identify known ligands that are most similar to a query compound, with the assumption that their annotated targets are potential targets for the query [9]. The effectiveness of this approach is highly dependent on the comprehensiveness of the knowledgebase of known ligand-target interactions.
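As a small illustration of the similarity coefficients mentioned above, the sketch below computes the Tanimoto and Dice coefficients for fingerprints represented as sets of on-bit indices. That representation is an assumption made to keep the example dependency-free; in practice a cheminformatics toolkit such as RDKit would generate the fingerprints, and the bit sets shown here are hypothetical.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient: shared on-bits divided by on-bits set in either fingerprint."""
    if not fp_a and not fp_b:
        return 0.0  # convention chosen for this sketch when both fingerprints are empty
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)

def dice(fp_a, fp_b):
    """Dice coefficient: twice the shared on-bits divided by the total on-bit count."""
    total = len(fp_a) + len(fp_b)
    return 2 * len(fp_a & fp_b) / total if total else 0.0

# Hypothetical fingerprints given as sets of on-bit indices.
query = {1, 4, 7, 9, 12}
ligand_1 = {1, 4, 7, 9, 15}   # shares four of five bits with the query
ligand_2 = {2, 3, 12}         # shares a single bit with the query

for name, fp in [("ligand_1", ligand_1), ("ligand_2", ligand_2)]:
    print(name, round(tanimoto(query, fp), 3), round(dice(query, fp), 3))
```

For the same pair of fingerprints, Dice is never lower than Tanimoto, so similarity thresholds are not interchangeable between the two metrics.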

Target-Centric Methods: This alternative approach involves building predictive models for specific biological targets. Methods such as Quantitative Structure-Activity Relationship (QSAR) modeling use machine learning algorithms (e.g., Random Forest, Naïve Bayes) to correlate chemical structure with biological activity for a given target [9]. Structure-based methods, such as molecular docking, leverage the 3D structure of a protein to predict how strongly a small molecule will bind to it [9]. While powerful, these methods can be limited by the availability of high-quality protein structures, a gap that is increasingly being filled by computational tools like AlphaFold [9].

More advanced chemical similarity network approaches have been developed to overcome the limitations of simple pairwise similarity comparisons. Methods like CSNAP (Chemical Similarity Network Analysis Pull-down) classify compounds into subnetworks based on shared chemical scaffolds (chemotypes). A network-based scoring function then predicts drug targets for a query compound based on the most common targets among its network neighbors, potentially capturing more complex relationships than direct similarity [11]. This has been extended into the 3D realm with CSNAP3D, which combines 3D molecular shape and pharmacophore features to identify "scaffold hopping" compounds—structurally distinct molecules that share a similar 3D environment and can interact with the same target [11].

Table 1: Overview of In Silico Target Prediction Methods

| Method Category | Representative Examples | Core Algorithm/Principle | Key Requirements |
|---|---|---|---|
| Ligand-Centric | MolTarPred, SEA, SuperPred | 2D/3D Chemical Similarity | Database of known active ligands |
| Target-Centric (Ligand-Based) | RF-QSAR, TargetNet, ChEMBL | QSAR with Machine Learning (e.g., Random Forest) | Bioactivity data for the target |
| Target-Centric (Structure-Based) | Molecular Docking | Protein-Ligand Docking Simulations | 3D Structure of the target protein |
| Network-Based | CSNAP, CSNAP3D | Chemical Similarity Network Analysis | A dataset of compounds with annotated targets |

Experimental Validation and Performance Benchmarking

A precise, comparative evaluation of target prediction methods is critical for assessing their practical utility. A 2025 benchmark study systematically evaluated seven methods (MolTarPred, PPB2, RF-QSAR, TargetNet, ChEMBL, CMTNN, and SuperPred) using a shared dataset of FDA-approved drugs to ensure a fair comparison [9].

The study evaluated these methods using metrics such as recall, the fraction of true interactions that a method recovers. It found that high-confidence filtering (e.g., keeping only ChEMBL interactions with a confidence score ≥7) can reduce recall, potentially making such filtering less suitable for broad drug repurposing applications where sensitivity is key [9]. The choice of molecular representation was also critical: for MolTarPred, Morgan fingerprints with Tanimoto scores outperformed MACCS fingerprints with Dice scores [9]. Overall, the benchmark concluded that MolTarPred was the most effective of the methods tested [9].
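Recall for ranked target predictions can be computed as in the sketch below. This is a generic recall-at-k calculation, not the evaluation code from [9]; the drugs, targets, and rankings are hypothetical.

```python
def recall_at_k(predicted, known, k):
    """Fraction of known targets recovered among the top-k ranked predictions."""
    if not known:
        raise ValueError("recall is undefined when there are no known targets")
    top_k = set(predicted[:k])
    return len(top_k & set(known)) / len(known)

def mean_recall_at_k(predictions, annotations, k):
    """Average recall-at-k over all drugs that have annotated targets."""
    values = [recall_at_k(predictions[drug], annotations[drug], k) for drug in annotations]
    return sum(values) / len(values)

# Hypothetical ranked predictions (best first) and annotated true targets.
predictions = {
    "drug1": ["T1", "T5", "T2", "T9"],
    "drug2": ["T7", "T3", "T8", "T4"],
}
annotations = {
    "drug1": {"T1", "T2"},
    "drug2": {"T3", "T4"},
}

for k in (1, 2, 4):
    print(k, mean_recall_at_k(predictions, annotations, k))
```

Recall rises as k grows because more of each ranked list is accepted, which is why a stricter filter that shortens the list of candidate predictions tends to lower it.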

Table 2: Key Findings for Selected Target Prediction Methods (2025 Benchmark and Related Studies)

| Method | Type | Key Algorithm | Key Finding |
|---|---|---|---|
| MolTarPred | Ligand-centric | 2D Similarity | Most effective method in the benchmark; optimized with Morgan fingerprints. |
| RF-QSAR | Target-centric | Random Forest | Performance varies with the target and training data quality. |
| CSNAP3D | Network-based | 3D Shape & Pharmacophore | Achieved >95% success rate in predicting targets for 206 known drugs. |
| DeepDTAGen | Deep Learning | Multitask Deep Learning | Predicts drug-target affinity and generates novel drugs simultaneously. |

Beyond target prediction, the hypothesis is also being leveraged with deep learning for generative tasks. The DeepDTAGen framework uses a multitask learning approach to predict drug-target binding affinity and simultaneously generate novel, target-aware drug molecules [12]. On benchmark datasets like KIBA, Davis, and BindingDB, it achieved a Concordance Index (CI) of 0.897, 0.890, and 0.876, respectively, demonstrating strong predictive performance [12]. This showcases an advanced application of the core hypothesis, where understanding the structure-activity relationship is used not just for prediction, but also for the de novo design of new therapeutic compounds.
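For readers unfamiliar with the Concordance Index, the sketch below shows how it is commonly computed for affinity prediction: it is the fraction of comparable pairs whose predicted ordering matches the true ordering, with prediction ties counted as half. The affinity values are hypothetical, and this is a generic implementation rather than the DeepDTAGen evaluation code.

```python
def concordance_index(y_true, y_pred):
    """Fraction of pairs with different true affinities that are ranked correctly."""
    concordant = 0.0
    comparable = 0
    for i in range(len(y_true)):
        for j in range(i + 1, len(y_true)):
            if y_true[i] == y_true[j]:
                continue  # pairs with tied true affinities are not comparable
            comparable += 1
            true_diff = y_true[i] - y_true[j]
            pred_diff = y_pred[i] - y_pred[j]
            if pred_diff == 0:
                concordant += 0.5  # tied predictions earn half credit
            elif (true_diff > 0) == (pred_diff > 0):
                concordant += 1.0
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable

# Hypothetical measured and predicted binding affinities (e.g., pKd values).
y_true = [5.0, 6.2, 7.1, 8.4]
y_pred = [5.3, 6.0, 7.5, 7.2]

print(round(concordance_index(y_true, y_pred), 3))
```

A CI of 0.5 corresponds to random ordering and 1.0 to perfect ordering, which puts the reported values of roughly 0.88 to 0.90 in context.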

Essential Protocols for Chemogenomic Analysis

Protocol 1: Ligand-Based Target Fishing with MolTarPred

This protocol outlines the steps for using a MolTarPred-like, ligand-centric approach to predict potential targets for a query small molecule [9].

- Database Preparation: Obtain a comprehensive database of known ligand-target interactions, such as ChEMBL (version 34 or newer). Standardize the data by selecting for high-confidence interactions (e.g., confidence score ≥ 7) and filtering for specific activity types (e.g., IC50, Ki, EC50 ≤ 10,000 nM). Remove entries associated with non-specific or multi-protein complexes to ensure target specificity [9].

- Molecular Representation: Encode the chemical structures in the database and the query molecule(s) using a suitable molecular fingerprint. The Morgan fingerprint (radius 2, 2048 bits) is recommended based on benchmark results [9].

- Similarity Calculation: For the query molecule, calculate the pairwise structural similarity against all molecules in the database. The Tanimoto coefficient is the standard metric for this calculation [9] [8].

- Target Inference: Rank the database molecules based on their similarity to the query. The top k most similar molecules (e.g., top 1, 5, 10, or 15) are identified, and the targets annotated to these molecules are retrieved as putative targets for the query compound [9].

- Hypothesis Generation & Validation: The resulting list of potential targets forms a MoA hypothesis, which must be validated through subsequent experimental assays (e.g., in vitro binding assays) [9].
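The steps of Protocol 1 can be sketched end to end as follows. This is a simplified illustration in the spirit of a MolTarPred-like workflow, not the tool itself: fingerprints are represented as sets of on-bit indices instead of real Morgan fingerprints, and the ligand records, field names, and target names are hypothetical.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient for fingerprints given as sets of on-bit indices."""
    shared = len(fp_a & fp_b)
    union = len(fp_a) + len(fp_b) - shared
    return shared / union if union else 0.0

def curate(records, min_confidence=7, max_activity_nm=10_000):
    """Keep high-confidence interactions with activity at or below the cutoff."""
    return [
        r for r in records
        if r["confidence"] >= min_confidence and r["activity_nm"] <= max_activity_nm
    ]

def predict_targets(query_fp, records, k=5):
    """Return targets of the k most similar ligands with their best similarity."""
    ranked = sorted(records, key=lambda r: tanimoto(query_fp, r["fp"]), reverse=True)
    best = {}
    for record in ranked[:k]:
        similarity = tanimoto(query_fp, record["fp"])
        best[record["target"]] = max(best.get(record["target"], 0.0), similarity)
    return sorted(best.items(), key=lambda item: (-item[1], item[0]))

# Hypothetical knowledgebase entries (field names are assumptions for this sketch).
records = [
    {"ligand": "L1", "fp": {1, 2, 3, 4}, "target": "KinaseA", "confidence": 9, "activity_nm": 50},
    {"ligand": "L2", "fp": {1, 2, 3, 9}, "target": "KinaseB", "confidence": 8, "activity_nm": 800},
    {"ligand": "L3", "fp": {1, 2, 3, 4, 5}, "target": "GPCR_X", "confidence": 4, "activity_nm": 20},
    {"ligand": "L4", "fp": {7, 8}, "target": "ProteaseC", "confidence": 9, "activity_nm": 100},
    {"ligand": "L5", "fp": {1, 2, 4, 6}, "target": "KinaseA", "confidence": 9, "activity_nm": 50_000},
]

query_fp = {1, 2, 3, 4, 5}
knowledgebase = curate(records)
predictions = predict_targets(query_fp, knowledgebase, k=2)
print(predictions)
```

Note that the ligand identical to the query (L3) is discarded by the confidence filter, so its target never appears in the output. This is the sensitivity cost of strict curation in miniature.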

Protocol 2: Constructing a Chemical Space Network (CSN)

This protocol describes creating a CSN to visualize and analyze relationships within a compound dataset, which can help identify clusters of compounds sharing similar targets [13].

- Data Curation: Load a dataset of compounds (e.g., SMILES strings and bioactivity data). Clean the data by removing entries with missing values, checking for and handling salts, and merging duplicate compounds by averaging their activity values [13].

- Compute Pairwise Relationships: For every pair of compounds in the curated dataset, compute a similarity value. This can be a 2D Tanimoto similarity based on RDKit fingerprints or a maximum common substructure (MCS)-based similarity [13].

- Define Network Edges: Apply a similarity threshold to determine which compound pairs are sufficiently similar to be connected in the network. For example, only draw an edge if the Tanimoto similarity is ≥ 0.65 [13].

- Build the Network Graph: Use a network analysis library like NetworkX. Represent each compound as a node and each validated similarity relationship as an edge [13].

- Visualize and Analyze: Plot the network, using node color to represent a property like bioactivity (Ki value) and node size to represent a network property like degree centrality. Analyze the network to identify densely connected clusters, which often correspond to groups of compounds with similar structures and, by the core hypothesis, potentially similar targets [13].
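The network construction and analysis steps can be sketched without any third-party dependency, as below. Plain dictionaries stand in for the graph here to keep the example self-contained; NetworkX provides `Graph`, `degree_centrality`, and `connected_components` for the same operations. The compound names and bit-set fingerprints are hypothetical, and the 0.65 threshold follows the example in the protocol.

```python
from itertools import combinations

def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient for fingerprints given as sets of on-bit indices."""
    shared = len(fp_a & fp_b)
    union = len(fp_a) + len(fp_b) - shared
    return shared / union if union else 0.0

def build_csn(fingerprints, threshold=0.65):
    """Connect every compound pair whose similarity meets the threshold."""
    adjacency = {name: set() for name in fingerprints}
    for a, b in combinations(fingerprints, 2):
        if tanimoto(fingerprints[a], fingerprints[b]) >= threshold:
            adjacency[a].add(b)
            adjacency[b].add(a)
    return adjacency

def degree_centrality(adjacency):
    """Degree divided by the maximum possible degree (n - 1)."""
    n = len(adjacency)
    return {node: (len(nb) / (n - 1) if n > 1 else 0.0) for node, nb in adjacency.items()}

def connected_components(adjacency):
    """Group nodes into clusters using a depth-first traversal."""
    seen, components = set(), []
    for start in adjacency:
        if start in seen:
            continue
        stack, component = [start], set()
        while stack:
            node = stack.pop()
            if node in component:
                continue
            component.add(node)
            stack.extend(adjacency[node] - component)
        seen |= component
        components.append(component)
    return components

# Hypothetical fingerprints for five compounds from two chemotypes.
fingerprints = {
    "cpd1": {1, 2, 3, 4, 5},
    "cpd2": {1, 2, 3, 4, 6},
    "cpd3": {1, 2, 3, 4, 5, 6},
    "cpd4": {10, 11, 12},
    "cpd5": {10, 11, 12, 13},
}

csn = build_csn(fingerprints, threshold=0.65)
centrality = degree_centrality(csn)
clusters = connected_components(csn)
print(clusters)
print(centrality)
```

The two clusters recovered here correspond to the two hypothetical chemotypes; in a real dataset, such clusters would be the starting point for checking whether their members share annotated targets.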

[Figure: Chemical Space Network Revealing Target-Cluster Relationships]

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful chemogenomics research relies on a suite of computational tools, databases, and software libraries. The following table details key resources for conducting target prediction and chemical space analysis.

Table 3: Essential Reagents and Tools for Chemogenomics Research

| Item Name | Type/Source | Function in Research |
|---|---|---|
| ChEMBL Database | Public Bioactivity Database | A manually curated database of bioactive molecules with drug-like properties. It provides annotated drug-target interactions, inhibitory concentrations (e.g., IC50), and binding affinities (e.g., Ki) for training and validating predictive models [9] [10]. |
| RDKit | Open-Source Cheminformatics Library | A core software library used for cheminformatics tasks, including reading and writing chemical structures, generating molecular fingerprints (e.g., Morgan), calculating molecular descriptors, and performing substructure searches [13]. |
| NetworkX | Python Library for Network Analysis | Used to create, manipulate, and study the structure, dynamics, and functions of complex networks. It is essential for building and analyzing Chemical Space Networks (CSNs) [13]. |
| Molecular Fingerprints (e.g., Morgan, MACCS) | Computational Molecular Descriptors | Mathematical representations of a molecule's structure that enable quantitative similarity comparisons. They are the fundamental input for most ligand-centric prediction methods and similarity searches [9] [8]. |
| Tanimoto Coefficient | Similarity Metric | A standard measure for quantifying the similarity between two molecules represented by fingerprints. A higher score indicates greater structural similarity, forming the basis for target inference [9] [8]. |
| Confidence Score (ChEMBL) | Data Quality Metric | A score (0-9) assigned to target assignments in ChEMBL, indicating the level of confidence in the interaction. Filtering for high-confidence scores (e.g., ≥7) during database preparation improves data quality for modeling [9]. |

[Figure: General Workflow for Ligand-Based Target Prediction]

The core hypothesis that "similar compounds have similar targets" remains a powerful and productive principle in chemogenomics. While its application in simple similarity searching is effective, the field is rapidly advancing with more sophisticated methodologies. Network-based approaches like CSNAP3D and multitask deep learning models like DeepDTAGen are pushing the boundaries, enabling the deorphanization of novel compounds and the generation of new drug candidates, all while accounting for the complex, polypharmacological nature of small molecules [11] [12]. The continued growth of high-quality, public chemogenomics data, coupled with rigorous data curation practices and benchmarked computational methods, ensures that this core hypothesis will continue to be a cornerstone of modern, data-driven drug discovery [9] [10].

Chemogenomics is an innovative approach in chemical biology that systematically investigates the interactions between small molecules and biological systems to identify therapeutic targets and active compounds [14]. This methodology synergizes combinatorial chemistry with genomic and proteomic sciences, creating a powerful framework for modern drug discovery [14]. The core premise involves using carefully designed compound libraries to probe biological systems, generating multidimensional data through various readout technologies that reveal complex bioactivity relationships [15]. As the field has evolved, it has shifted from single-target profiling to multidimensional biological fingerprinting, reflecting a growing awareness of polypharmacology and biological networks [15]. This guide examines the three fundamental components—compound libraries, biological systems, and readouts—that form the foundation of chemogenomics research, providing researchers with technical insights into their integration and application.

Compound Libraries: Design and Curation

Library Composition and Characteristics

Chemogenomics libraries consist of carefully selected, chemically diverse compounds systematically organized to probe biological space [14]. These libraries are designed to cover broad areas of chemical space while including targeted sets for specific protein families. The composition of a typical chemogenomics library includes several key categories of compounds with distinct characteristics and applications, as detailed in Table 1.

Table 1: Composition and Characteristics of Chemogenomics Libraries

| Compound Category | Key Characteristics | Primary Applications | Examples |
|---|---|---|---|
| Kinase Inhibitors | High selectivity, ATP-competitive or allosteric | Pathway analysis, cancer research | Selective kinase modulators |
| GPCR Ligands | Agonists, antagonists, allosteric modulators | Signal transduction studies | Receptor-specific probes |
| Epigenetic Modifiers | Target histone modifications, DNA methylation | Epigenetics research, oncology | HDAC inhibitors, bromodomain ligands |
| Pharmacological Probes | Well-annotated, high selectivity | Mechanism of action studies | Bioactive probe molecules |

Library Sourcing and Management

Contemporary chemogenomics libraries are sourced through both commercial acquisition and custom synthesis. Recent announcements highlight the acquisition of libraries containing over 1,600 diverse, highly selective, and well-annotated pharmacologically active probe molecules [16]. These libraries are stored and managed in specialized compound management facilities that ensure the highest standards of quality, integrity, and logistical efficiency [16]. Proper library management enables seamless integration of screening compounds into research projects while maximizing reliability and reproducibility in drug discovery efforts.

Beyond specialized chemogenomic sets, broader screening libraries include diversity collections of approximately 100,000 compounds rigorously analyzed for full-scale high-throughput screening (HTS) or cost-effective pilot studies [16]. Fragment libraries represent another essential component, with collections of approximately 1,300 fragments incorporating bespoke, structurally unique fragments designed by expert chemists [16]. These fragments typically follow the "rule of three" (molecular weight <300 Da, cLogP ≤3, hydrogen bond donors ≤3, and hydrogen bond acceptors ≤3) for optimal probe development.
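A rule-of-three filter is simple to express in code. The sketch below assumes the four descriptors have already been calculated by a cheminformatics toolkit; the fragment names and property values are hypothetical.

```python
def passes_rule_of_three(mol_weight, clogp, h_donors, h_acceptors):
    """Check the four rule-of-three criteria for a fragment."""
    return (
        mol_weight < 300
        and clogp <= 3
        and h_donors <= 3
        and h_acceptors <= 3
    )

# Hypothetical fragments with precomputed descriptors.
fragments = [
    {"name": "frag_1", "mol_weight": 152.1, "clogp": 1.2, "h_donors": 1, "h_acceptors": 2},
    {"name": "frag_2", "mol_weight": 310.4, "clogp": 2.0, "h_donors": 2, "h_acceptors": 3},
    {"name": "frag_3", "mol_weight": 240.0, "clogp": 3.6, "h_donors": 0, "h_acceptors": 1},
]

compliant = [
    f["name"]
    for f in fragments
    if passes_rule_of_three(f["mol_weight"], f["clogp"], f["h_donors"], f["h_acceptors"])
]
print(compliant)
```

Here frag_2 fails on molecular weight and frag_3 on cLogP, so only frag_1 is retained.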

Biological Systems in Chemogenomics

Model Organisms and Cellular Systems

Biological systems in chemogenomics range from simple microbial models to complex human cell lines, each offering distinct advantages for specific research applications. The selection of an appropriate biological system is critical for generating meaningful data that can be translated to therapeutic insights.

Table 2: Biological Systems Used in Chemogenomics Screening

| System Type | Specific Examples | Advantages | Common Readouts |
|---|---|---|---|
| Yeast Mutant Libraries | Heterozygous/homozygous deletions, overexpression strains [17] | Genetic tractability, high-throughput capability | Growth rates, viability assays |
| Cancer Cell Lines | Diverse panels (NCI-60) [17] | Human relevance, disease modeling | Viability, proliferation assays |
| Primary Cells | Patient-derived cells | Clinical relevance | Functional assays, secretion profiles |
| Complex Organisms | C. elegans, zebrafish [17] | Whole-organism context | Developmental, behavioral phenotypes |

Genetic Manipulation Strategies

Different genetic manipulation strategies enable distinct approaches to chemogenomic screening. Three primary library types for yeast systems include:

- Heterozygous deletion libraries: Contain diploid strains in which one copy of a single gene is deleted, useful for identifying drug-target interactions through haploinsufficiency [17]

- Homozygous deletion libraries: Contain diploid strains in which both copies of a non-essential gene are deleted (haploid deletion collections are used in the same way), revealing genes required for resistance or sensitivity [17]

- Overexpression libraries: Enable identification of suppressors or enhancers of compound activity through gene dosage effects [17]

Similar approaches have been adapted for mammalian systems using RNA interference (RNAi), CRISPR-Cas9 gene editing, and cDNA overexpression libraries to systematically probe gene-compound relationships.

Readout Technologies and Data Generation

Phenotypic and Functional Readouts

Readout technologies transform biological responses into quantifiable data, enabling researchers to decode compound mechanisms. These technologies span multiple dimensions of biological effects, from cellular phenotypes to molecular interactions.

Table 3: Readout Technologies in Chemogenomics

| Readout Category | Specific Technologies | Data Type | Information Gained |

|---|---|---|---|

| Viability/Proliferation | Growth rates, metabolic activity assays | Quantitative | Compound efficacy, toxicity |

| Gene Expression | DNA microarrays, RNA-seq [17] | Genome-wide | Transcriptional responses, pathways |

| Protein Activity | Target engagement assays, phosphorylation | Quantitative | Mechanism of action, potency |

| Morphological | High-content screening, imaging | Multivariate | Phenotypic profiling, off-target effects |

| Binding | Affinity selection, thermal shift | Binary/Quantitative | Direct target identification |

Experimental Designs for Readout Acquisition

Two fundamental experimental designs govern how readouts are acquired in chemogenomic screens:

- Non-competitive arrays: Each mutant strain is cultured separately in an arrayed format, with compounds tested individually against each strain [17]. This approach allows for clear attribution of phenotypes to specific genetic perturbations but requires substantial resources.

- Competitive mutant pools: All mutant strains are cultured together in a pooled format, with relative abundance measured before and after compound treatment [17]. This enables highly parallel screening but requires specialized detection methods such as molecular barcoding.

The selection between these designs involves trade-offs between throughput, resolution, and resource requirements, with the optimal approach dependent on specific research goals and constraints.
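
For the pooled design, the "relative abundance before and after treatment" comparison is often summarized as a per-strain log2 fold change. The following is a minimal sketch under stated assumptions: the strain names and read counts are invented, and the pseudocount of 0.5 is an arbitrary choice to avoid division by zero, not a value taken from the cited protocol.

```python
import math


def relative_abundance(counts, pseudocount=0.5):
    """Convert raw barcode read counts into fractions of the pool."""
    adjusted = {strain: n + pseudocount for strain, n in counts.items()}
    total = sum(adjusted.values())
    return {strain: n / total for strain, n in adjusted.items()}


def log2_fold_changes(before, after):
    """Per-strain log2(after / before) of relative abundance."""
    strains = list(before)
    freq_before = relative_abundance(before)
    # Strains missing from the post-treatment sample count as zero reads.
    freq_after = relative_abundance({s: after.get(s, 0) for s in strains})
    return {s: math.log2(freq_after[s] / freq_before[s]) for s in strains}


# Hypothetical barcode counts before and after compound treatment.
before = {"strain_A": 1000, "strain_B": 1000, "strain_C": 1000}
after = {"strain_A": 1000, "strain_B": 250, "strain_C": 1000}

lfc = log2_fold_changes(before, after)
most_depleted = min(lfc, key=lfc.get)
print(most_depleted, round(lfc[most_depleted], 2))
```

Because abundances are relative, strains that are unaffected appear slightly enriched when another strain drops out, which is one reason pooled screens are usually interpreted by ranking strains rather than by absolute values.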

Integrated Workflows and Experimental Protocols

Comprehensive Screening Workflow

The integration of compound libraries, biological systems, and readout technologies occurs through standardized workflows that ensure reproducibility and data quality. The following diagram illustrates a generalized chemogenomics screening workflow:

Protocol: Yeast Chemogenomic Haploinsufficiency Screen

This protocol outlines a standardized approach for identifying cellular targets of small molecules using yeast haploinsufficiency screening [17]:

Materials and Reagents:

- Yeast heterozygous deletion library (arrayed format)

- Compound library dissolved in DMSO

- Solid or liquid growth media compatible with screening format

- Robotic pinning equipment or liquid handling systems

- Plate readers for absorbance/turbidity measurements

- Barcoding primers for competitive pool screens

Procedure:

- Library Preparation: Culture individual deletion strains in separate wells of 384-well plates. For pooled approaches, mix all strains in equal proportions.

- Compound Treatment: Transfer compounds to assay plates using robotic systems. Include DMSO-only controls for normalization.

- Inoculation and Growth: Apply yeast libraries to compound plates. Incubate at 30°C with appropriate humidity control.

- Phenotypic Measurement: Monitor growth by measuring optical density (OD600) at 24-hour intervals for 48-72 hours.

- Data Collection: Calculate growth inhibition relative to DMSO controls. For pooled screens, harvest cells and sequence barcodes to determine strain abundance.

Data Analysis:

- Calculate Z-scores for each strain-compound combination: $Z = (\text{growth}_{\text{compound}} - \text{mean}_{\text{control}}) / \text{SD}_{\text{control}}$

- Identify sensitive strains with Z-score < -2.0

- Map sensitive strains to biological pathways using enrichment analysis

- Compare sensitivity profiles across multiple compounds to identify common mechanisms
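
The first two analysis steps above (Z-score calculation and the Z < -2.0 cutoff) can be sketched as follows. The strain names and growth values are hypothetical, and the sketch assumes each strain has its own set of DMSO-only replicate wells from which the control mean and standard deviation are estimated.

```python
from statistics import mean, stdev


def z_score(growth_compound, control_values):
    """(growth_compound - mean_control) / SD_control for one strain."""
    sd = stdev(control_values)
    if sd == 0:
        raise ValueError("control replicates have zero variance")
    return (growth_compound - mean(control_values)) / sd


def sensitive_strains(compound_growth, control_growth, threshold=-2.0):
    """Return {strain: Z} for strains whose Z-score falls below the threshold."""
    scores = {
        strain: z_score(growth, control_growth[strain])
        for strain, growth in compound_growth.items()
    }
    return {strain: z for strain, z in scores.items() if z < threshold}


# Hypothetical normalized growth values (e.g. final OD600 relative to plate median).
control_growth = {
    "strain_1": [1.00, 0.95, 1.05],  # DMSO-only replicates
    "strain_2": [0.98, 1.02, 1.00],
}
compound_growth = {"strain_1": 0.60, "strain_2": 0.99}

hits = sensitive_strains(compound_growth, control_growth)
print(hits)
```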

Protocol: Mammalian Cell Line Profiling

For mammalian systems, the following protocol enables chemogenomic profiling using cancer cell line panels:

Materials and Reagents:

- Panel of cancer cell lines (e.g., NCI-60, CCLE)

- Compound library with appropriate controls

- Cell culture media and reagents

- Cell viability assay kits (e.g., ATP-based, resazurin)

- High-content imaging systems (optional)

- RNA/DNA extraction kits for omics readouts

Procedure:

- Cell Preparation: Plate cells in 384-well plates at optimized densities. Include controls for background subtraction.

- Compound Treatment: Add compound libraries using concentration-response formats (e.g., 8-point 1:3 serial dilutions).

- Incubation: Maintain cells for 72-120 hours based on doubling times.

- Viability Assessment: Add viability reagent and measure signal according to manufacturer protocols.

- Secondary Assays: For hits, perform additional assays (apoptosis, cell cycle, high-content imaging).

- Molecular Profiling: Extract RNA/DNA from treated cells for transcriptomic or genetic analyses.

Data Analysis:

- Calculate IC50 values using nonlinear regression

- Generate sensitivity scores based on area under the curve (AUC)

- Correlate sensitivity with genomic features (mutations, expression)

- Identify biomarkers predictive of compound response
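
As an illustration of the concentration-response format and the AUC-based sensitivity score mentioned above, the sketch below builds an 8-point 1:3 dilution series and integrates viability over log10 concentration with the trapezoidal rule. The top concentration and viability values are hypothetical, and normalizing the area by the log-concentration range (so that 1.0 means no effect) is one common convention assumed here, not one specified by the protocol. IC50 estimation by nonlinear regression is not shown.

```python
import math


def serial_dilution(top_conc, n_points=8, fold=3.0):
    """Concentrations for an n-point 1:fold dilution series, highest first."""
    return [top_conc / fold**i for i in range(n_points)]


def normalized_auc(concentrations, viability):
    """Trapezoidal area under viability vs log10(concentration), scaled to 0-1."""
    points = sorted(zip(concentrations, viability))
    xs = [math.log10(c) for c, _ in points]
    ys = [v for _, v in points]
    area = sum(
        (xs[i + 1] - xs[i]) * (ys[i] + ys[i + 1]) / 2 for i in range(len(xs) - 1)
    )
    return area / (xs[-1] - xs[0])


concs = serial_dilution(10.0)  # hypothetical 10 uM top concentration
# Hypothetical fractional viability, aligned with concs (highest dose first).
viability = [0.05, 0.10, 0.30, 0.60, 0.85, 0.95, 1.00, 1.00]

auc = normalized_auc(concs, viability)
print(f"AUC = {auc:.3f}")
```

A lower normalized AUC indicates a more sensitive cell line, which makes the score convenient for correlating against genomic features across a panel.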

The Scientist's Toolkit: Essential Research Reagents

Successful chemogenomics research requires specialized reagents and tools that enable precise interrogation of compound-biological system interactions. The following table details essential components of the chemogenomics research toolkit:

Table 4: Essential Research Reagents for Chemogenomics

| Reagent Category | Specific Examples | Function | Considerations |

|---|---|---|---|

| Curated Compound Libraries | BioAscent Chemogenomic Library (1,600+ compounds) [16] | Phenotypic screening, target identification | Selectivity, annotation quality |

| Genetic Perturbation Libraries | Yeast deletion collection, CRISPR guides | Target deconvolution, pathway analysis | Coverage, efficiency |

| Viability Assays | ATP-lite, resazurin, colony formation | Quantifying cellular responses | Dynamic range, compatibility |

| High-Content Screening Platforms | Automated microscopy, image analysis | Multiparametric phenotyping | Throughput, information content |

| Omics Profiling Tools | RNA-seq, proteomics platforms | Mechanism of action studies | Cost, data complexity |

The integration of carefully designed compound libraries, appropriate biological systems, and multidimensional readout technologies forms the foundation of successful chemogenomics research. As the field advances, the systematic application of these core components enables researchers to navigate the complex landscape of small molecule-biological system interactions with increasing precision. The protocols and frameworks presented in this guide provide a roadmap for implementing chemogenomics approaches that can accelerate target identification, mechanism elucidation, and ultimately, therapeutic development. Future directions will likely involve even more sophisticated integration of chemical and biological data types, enhanced by artificial intelligence and machine learning approaches, to further decode the complex relationships between small molecules and living systems [15].

Chemogenomics represents a paradigm shift in pharmaceutical research, integrating large-scale chemical and biological data to understand the interactions between small molecules and their protein targets across entire biological systems. This approach has become indispensable for addressing the high costs and protracted timelines of traditional drug discovery, which can cost more than $2.6 billion and take 10-15 years per new drug [18]. Within this framework, target deconvolution and drug repositioning have emerged as two pivotal applications that leverage chemogenomic principles to accelerate therapeutic development. Target deconvolution identifies the molecular targets of bioactive compounds discovered in phenotypic screens, while drug repositioning finds new therapeutic uses for existing drugs or candidates [19] [20]. Both applications rely on the systematic mapping of chemical space to biological target space, enabled by advances in computational biology, high-throughput screening, and artificial intelligence.

The fundamental premise of chemogenomics is that comprehensive understanding of compound-target interactions facilitates both the elucidation of mechanisms of action for phenotypic hits and the discovery of novel therapeutic indications for known compounds. This review provides an in-depth examination of the methodologies, experimental protocols, and computational tools driving innovation in these two major application areas, with particular emphasis on their integration within modern drug discovery pipelines.

Target Deconvolution: Elucidating Mechanisms of Action

Conceptual Framework and Significance

Target deconvolution refers to the process of identifying the direct molecular target(s) of a bioactive small molecule within a complex biological system [20]. This process is particularly crucial following phenotype-based screening, where compounds are selected for their ability to induce a desired cellular or physiological response without prior knowledge of their specific molecular mechanisms [21] [22]. The primary challenge lies in bridging the gap between observed phenotypic effects and the precise protein targets responsible for these effects.

The significance of target deconvolution extends beyond mere mechanism elucidation. It enables researchers to: (1) assess potential on-target and off-target effects early in development; (2) guide structure-activity relationship (SAR) studies for lead optimization; (3) understand potential toxicity profiles; and (4) facilitate intellectual property protection by defining precise mechanisms of action [20]. Furthermore, comprehensive target deconvolution can reveal unexpected polypharmacology that may enhance therapeutic efficacy or identify potential resistance mechanisms.

Experimental Methodologies for Target Deconvolution

Several well-established experimental approaches facilitate target deconvolution, each with distinct strengths, limitations, and appropriate application contexts (Table 1).

Table 1: Experimental Methodologies for Target Deconvolution

| Method | Principle | Key Steps | Sensitivity | Throughput | Best For |

|---|---|---|---|---|---|

| Affinity-Based Pull-Down | Immobilized compound captures binding proteins from lysate [20] | 1. Compound immobilization; 2. Incubation with cell lysate; 3. Affinity enrichment; 4. MS identification | High (nM range) | Medium | High-affinity binders; stable complexes |

| Activity-Based Protein Profiling (ABPP) | Bifunctional probes label active sites covalently [20] | 1. Probe design with reactive group; 2. Live cell or lysate labeling; 3. Enrichment via handle; 4. MS identification | Medium (μM range) | High | Enzymes with nucleophilic residues |

| Photoaffinity Labeling (PAL) | Photoreactive group forms covalent bonds upon UV exposure [20] | 1. Trifunctional probe design; 2. Binding equilibrium; 3. UV crosslinking; 4. Enrichment and MS | Medium (μM range) | Medium | Transient interactions; membrane proteins |

| Stability-Based Profiling | Ligand binding alters protein thermal stability [20] | 1. Compound treatment; 2. Thermal or chemical denaturation; 3. Proteome-wide quantification; 4. Stability shift analysis | Variable | High | Native conditions; proteome-wide coverage |

Affinity-Based Pull-Down Assays

Protocol for Affinity-Based Chemoproteomics:

- Probe Design: Modify the compound of interest with a linker (e.g., PEG spacer) and an immobilization handle (e.g., biotin, alkyne) at a position that does not interfere with biological activity.

- Matrix Preparation: Pre-equilibrate streptavidin/sepharose beads in lysis buffer (e.g., 50 mM Tris-HCl, pH 7.5, 150 mM NaCl, 0.1% NP-40).

- Lysate Preparation: Harvest cells of interest and lyse in appropriate buffer containing protease inhibitors. Clarify by centrifugation at 15,000 × g for 15 minutes.

- Affinity Enrichment: Incubate cell lysate (1-5 mg total protein) with immobilized compound (10-100 μM) for 1-2 hours at 4°C with gentle rotation.

- Washing: Pellet beads and wash sequentially with lysis buffer, high-salt buffer (500 mM NaCl), and low-salt buffer (50 mM NaCl) to remove non-specific binders.

- Elution: Competitively elute bound proteins with excess free compound (100-500 μM) or denature directly in SDS-PAGE loading buffer.

- Identification: Separate proteins by SDS-PAGE, excise bands, trypsin-digest, and analyze by liquid chromatography-tandem mass spectrometry (LC-MS/MS).

This approach is particularly effective for high-affinity interactions (Kd < 1 μM) but requires careful optimization to minimize non-specific binding [20]. Controls including bare beads and structurally unrelated immobilized compounds are essential for distinguishing specific interactions.

Photoaffinity Labeling (PAL)

Protocol for Photoaffinity Labeling:

- Probe Design: Synthesize a trifunctional probe containing: (a) the compound of interest, (b) a photoreactive group (e.g., diazirine, benzophenone), and (c) an enrichment handle (e.g., alkyne for click chemistry).

- Cell Treatment: Incubate live cells or cell lysates with the photoaffinity probe (1-50 μM) for 30-60 minutes in the dark at physiological temperature.

- Crosslinking: Irradiate with UV light (365 nm for diazirines, 350-365 nm for benzophenones) for 5-15 minutes on ice to initiate covalent bonding.

- Cell Lysis: Lyse cells in RIPA buffer with protease inhibitors.

- Click Chemistry: If using an alkyne handle, perform copper-catalyzed azide-alkyne cycloaddition with biotin-azide (100 μM, 1 hour, room temperature).

- Enrichment: Capture biotinylated proteins with streptavidin beads (2 hours, 4°C).

- Stringent Washing: Wash beads with sequential buffers including 1% SDS to remove non-specific binders.

- Elution and Analysis: Elute proteins and identify by LC-MS/MS.

PAL is particularly valuable for capturing transient interactions and studying membrane protein targets that are challenging to address with other methods [20].

Computational Approaches for Target Deconvolution

Computational methods have dramatically enhanced target deconvolution efforts by enabling in silico prediction of potential targets before experimental validation.

Knowledge Graph Approaches

Protein-protein interaction knowledge graphs (PPIKG) have emerged as powerful tools for narrowing candidate targets from phenotypic screens [21]. The workflow typically involves:

- Graph Construction: Integrate protein-protein interaction data from multiple databases (e.g., STRING, BioGRID) with compound-target relationships and pathway information.

- Phenotype Contextualization: Annotate nodes with phenotypic associations and pathway relevance.

- Candidate Prioritization: Apply graph algorithms to identify proteins closely connected to the phenotype of interest.

- Experimental Integration: Combine with molecular docking to predict binding potential.

In a recent application to p53 pathway activators, a PPIKG approach reduced candidate proteins from 1088 to 35, dramatically streamlining the subsequent experimental validation that identified USP7 as a direct target of UNBS5162 [21].
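
The candidate prioritization step can be pictured as a graph search. The source does not specify which graph algorithm the PPIKG study used, so the sketch below substitutes a simple stand-in: breadth-first search from a phenotype anchor node, keeping candidates within a distance cutoff. The graph, node names, and cutoff are all hypothetical.

```python
from collections import deque


def shortest_path_lengths(graph, source):
    """Breadth-first search distances from source in an unweighted graph."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for neighbor in graph.get(node, ()):
            if neighbor not in dist:
                dist[neighbor] = dist[node] + 1
                queue.append(neighbor)
    return dist


def prioritize(graph, anchor, candidates, max_distance=2):
    """Keep candidates within max_distance of the anchor, closest first."""
    dist = shortest_path_lengths(graph, anchor)
    kept = [(dist[c], c) for c in candidates if c in dist and dist[c] <= max_distance]
    return [c for _, c in sorted(kept)]


# Toy undirected interaction graph (adjacency lists); not real interaction data.
graph = {
    "anchor": ["prot_A", "prot_B"],
    "prot_A": ["anchor", "prot_C"],
    "prot_B": ["anchor"],
    "prot_C": ["prot_A", "prot_D"],
    "prot_D": ["prot_C"],
    "prot_E": [],  # disconnected from the anchor
}

shortlist = prioritize(graph, "anchor", ["prot_D", "prot_C", "prot_B", "prot_E"])
print(shortlist)
```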

Molecular Docking and Virtual Screening

Structure-based virtual screening leverages protein-ligand complementarity to predict potential targets:

- Compound Preparation: Generate 3D conformations and optimize geometry.

- Target Library Preparation: Curate a diverse set of protein structures with defined binding sites.

- High-Throughput Docking: Screen compound against target library using software like AutoDock Vina or Glide.

- Scoring and Ranking: Prioritize targets based on docking scores, interaction patterns, and conservation of binding motifs.

This approach benefits from integration with functional annotation to filter biologically plausible targets [21] [23].

The following diagram illustrates the integrated computational-experimental workflow for target deconvolution:

Integrated Workflow for Target Deconvolution

Drug Repositioning: Discovering New Therapeutic Applications

Rationale and Economic Impact

Drug repositioning (also called drug repurposing) identifies new therapeutic uses for existing drugs or drug candidates beyond their original indications [18]. This strategy leverages established safety profiles and pharmacological data, significantly reducing development risks, costs, and timelines compared to de novo drug discovery. While traditional drug development costs approximately $2.6 billion and requires 10-15 years, repositioned drugs can reach patients with approximately $300 million investment and in as little as 3-6 years [18].

The economic advantage stems from bypassing much of the preclinical testing and having existing manufacturing processes, allowing repositioned drugs to advance directly to Phase II trials for new indications in many cases. Notable success stories include sildenafil (repurposed from angina to erectile dysfunction), minoxidil (hypertension to hair loss), and imatinib (CML to GIST) [19]. During the COVID-19 pandemic, drug repositioning gained particular prominence with the rapid identification of baricitinib (from rheumatoid arthritis) as an effective treatment [18].

Methodological Frameworks for Drug Repositioning

AI-Driven Repositioning Approaches

Artificial intelligence has revolutionized drug repositioning by enabling integration of heterogeneous data types and detection of non-obvious drug-disease relationships (Table 2).

Table 2: AI and Machine Learning Approaches for Drug Repositioning

| Method Category | Key Algorithms | Data Types Utilized | Strengths | Limitations |

|---|---|---|---|---|

| Classical ML | Random Forest, SVM, Logistic Regression [18] | Molecular descriptors, target annotations | Interpretability; works with small datasets | Limited ability with complex patterns |

| Deep Learning | CNN, LSTM, Autoencoders [24] [18] | Chemical structures, omics profiles, clinical data | Automatic feature extraction; handles complexity | Large data requirements; black box nature |

| Network-Based | Graph Neural Networks, Network Propagation [24] [18] | PPI networks, drug-target-disease networks | Captures system-level biology | Dependent on network completeness |

| Multi-Task Learning | Multi-task DNN, Parameter Sharing [24] | Multiple bioactivity assays, omics datasets | Transfer learning across tasks | Complex implementation |

Machine learning (ML) algorithms learn patterns from existing drug-target-disease relationships to predict new associations. Supervised approaches use labeled training data (known drug-indication pairs), while unsupervised methods identify novel clusters and patterns without pre-existing labels [18]. Deep learning architectures, particularly graph neural networks (GNNs), excel at modeling the complex relationships between drugs, targets, and diseases by representing them as interconnected networks [24].

Network-Based Repositioning Strategies

Network pharmacology approaches conceptualize drug action within the context of biological systems rather than isolated targets [24]. The fundamental premise is that diseases arise from perturbations in cellular networks, and effective therapeutics should restore network homeostasis.

Protocol for Network-Based Drug Repositioning:

Network Construction:

- Assemble protein-protein interaction (PPI) network from databases like STRING or BioGRID

- Annotate nodes with disease associations from DisGeNET or OMIM

- Integrate drug-target interactions from DrugBank or ChEMBL

Disease Module Identification:

- Define disease-associated proteins based on genetic association, expression profiling, or literature mining

- Identify significantly interconnected disease modules using algorithms like Molecular Complex Detection (MCODE)

Proximity Analysis:

- Calculate network-based distance between drug targets and disease modules

- Compute $d_{s,t}$, the average shortest path length between drug targets and disease proteins

- Compare to a null distribution of random targets to assess significance

Signature-Based Matching:

- Obtain disease signatures from transcriptomic databases (e.g., LINCS L1000, GEO)

- Calculate drug signatures from perturbation experiments

- Use pattern-matching algorithms (e.g., Kolmogorov-Smirnov statistic) to identify inverse correlations between drug and disease signatures

Multi-scale Integration:

- Combine network proximity with functional enrichment, side effect similarity, and genetic evidence

- Apply machine learning classifiers to integrate multiple evidence types and prioritize candidates
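
The proximity analysis step in this protocol can be sketched as follows: compute the average shortest path length between drug targets and disease proteins, then compare it with a null distribution built from randomly chosen target sets. This is a simplified illustration on a toy path graph. The function names, graph, and number of random draws are assumptions, and the random sets are drawn uniformly rather than matched on node degree, which published implementations often do.

```python
import random
from collections import deque
from statistics import mean, stdev


def bfs_distances(graph, source):
    """Unweighted shortest path lengths from source to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for neighbor in graph[node]:
            if neighbor not in dist:
                dist[neighbor] = dist[node] + 1
                queue.append(neighbor)
    return dist


def average_shortest_path(graph, drug_targets, disease_proteins):
    """Mean shortest path length over all reachable target-protein pairs."""
    lengths = []
    for target in drug_targets:
        dist = bfs_distances(graph, target)
        lengths.extend(dist[p] for p in disease_proteins if p in dist)
    if not lengths:
        raise ValueError("no path between drug targets and disease proteins")
    return mean(lengths)


def proximity_z_score(graph, drug_targets, disease_proteins, n_random=200, seed=0):
    """Observed distance and its Z-score against random target sets."""
    rng = random.Random(seed)
    nodes = sorted(graph)
    observed = average_shortest_path(graph, drug_targets, disease_proteins)
    null = [
        average_shortest_path(
            graph, rng.sample(nodes, len(drug_targets)), disease_proteins
        )
        for _ in range(n_random)
    ]
    return observed, (observed - mean(null)) / stdev(null)


# Toy network: a chain n0 - n1 - ... - n9 (hypothetical, for illustration only).
names = [f"n{i}" for i in range(10)]
graph = {n: [] for n in names}
for a, b in zip(names, names[1:]):
    graph[a].append(b)
    graph[b].append(a)

observed, z = proximity_z_score(graph, ["n0"], ["n1", "n2"])
print(observed, round(z, 2))
```

A negative Z-score indicates that the drug targets sit closer to the disease module than random targets would, which is the pattern a repositioning candidate is expected to show.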

The following diagram illustrates the network-based drug repositioning approach:

Network-Based Drug Repositioning Workflow

Successful drug repositioning relies on integration of diverse data types from publicly available repositories (Table 3).

Table 3: Key Databases for Drug Repositioning Research

| Database | Primary Content | Key Features | Application in Repositioning |

|---|---|---|---|

| DrugBank | Drug-target interactions, mechanisms, pharmacokinetics [24] [19] | Comprehensive drug information with target links | Identify shared targets between indications |

| ChEMBL | Bioactivity data for drug-like molecules [24] | Curated bioactivity data, SAR information | Multi-target activity profiling |

| TTD | Therapeutic targets, approved drugs, clinical trials [24] | Focus on known therapeutic targets | Target-disease indication mapping |

| KEGG | Pathways, diseases, drugs [19] | Integrated pathway information | Pathway-centric repositioning |

| DepMap | Cancer dependency screens [19] | CRISPR screening data across cancer lines | Identify cancer-specific dependencies |

| DrugComb | Drug combination screens [19] | Synergy and sensitivity data | Combination therapy opportunities |

Integrated Chemogenomic Platforms and Case Studies

Exemplary Integrated Workflows

The most effective applications of chemogenomics integrate both target deconvolution and repositioning strategies within unified platforms. The EUbOPEN initiative provides a notable example with its chemogenomic sets covering 1000 targets by the end of 2025, organized into major target families including protein kinases, membrane proteins, and epigenetic modulators [25]. These compound sets enable systematic linking of chemical perturbations to phenotypic outcomes and subsequent target identification.

Another integrated approach combines pharmacotranscriptomics with high-throughput screening, where drug-induced gene expression signatures serve as functional fingerprints that can be matched to disease states [26]. This methodology has been particularly valuable for elucidating mechanisms of Traditional Chinese Medicine and identifying repositioning opportunities for known compounds.
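
Matching drug signatures to disease states usually means looking for compounds whose expression changes oppose the disease signature. The sketch below ranks compounds by Pearson correlation with a disease signature, most negative first. It is a deliberately simple stand-in for the rank-based statistics used in practice (such as the Kolmogorov-Smirnov approach named earlier), and all gene names, compound names, and values are hypothetical.

```python
import math
from statistics import mean


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def reversal_ranking(disease_signature, drug_signatures):
    """Rank drugs from most anti-correlated to most correlated with the disease."""
    genes = sorted(disease_signature)
    disease = [disease_signature[g] for g in genes]
    scores = {
        drug: pearson(disease, [signature[g] for g in genes])
        for drug, signature in drug_signatures.items()
    }
    return sorted(scores.items(), key=lambda item: item[1])


# Hypothetical log fold changes for four genes.
disease_signature = {"g1": 2.0, "g2": -1.5, "g3": 0.5, "g4": -1.0}
drug_signatures = {
    "drug_X": {"g1": -2.0, "g2": 1.5, "g3": -0.5, "g4": 1.0},  # reverses disease
    "drug_Y": {"g1": 2.0, "g2": -1.5, "g3": 0.5, "g4": -1.0},  # mimics disease
}

ranking = reversal_ranking(disease_signature, drug_signatures)
print(ranking[0][0])
```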

Case Study: p53 Pathway Activator Discovery

A recent study demonstrated the power of integrating knowledge graphs with experimental validation for target deconvolution [21]:

- Phenotypic Screening: Identified UNBS5162 as a p53 pathway activator using a high-throughput luciferase reporter assay.

- Knowledge Graph Construction: Built a protein-protein interaction knowledge graph (PPIKG) focused on p53 signaling.

- Candidate Prioritization: The PPIKG analysis reduced candidate targets from 1088 to 35 potentially interacting proteins.

- Molecular Docking: Screened UNBS5162 against prioritized candidates, predicting strong binding to USP7.

- Experimental Validation: Confirmed USP7 as a direct target through binding assays and functional studies.

This case highlights how computational prioritization dramatically streamlines the experimental workload in target deconvolution.

Case Study: AI-Driven Repositioning for COVID-19

During the COVID-19 pandemic, the DeepCE model demonstrated how AI could accelerate drug repositioning by predicting gene expression changes induced by novel chemicals [22]. This approach enabled high-throughput phenotypic screening in silico, generating lead compounds consistent with clinical evidence. The platform integrated chemical structure data with transcriptomic responses to prioritize candidates for further testing, showcasing the potential of AI-driven repositioning in public health emergencies.

Successful implementation of chemogenomic approaches requires specialized computational tools, experimental reagents, and data resources (Table 4).

Table 4: Essential Research Reagents and Resources for Chemogenomics

| Resource Type | Specific Tools/Reagents | Function/Application | Key Features |

|---|---|---|---|

| Chemical Probes | EUbOPEN Chemogenomic Sets [25] | Target family-focused screening | Covers 1000 targets; quality-controlled |

| Computational Tools | RDKit [23] | Cheminformatics and molecular modeling | Open-source; comprehensive descriptor calculation |

| Database Platforms | DrugBank [24] [19] | Drug-target interaction data | Annotated with mechanistic and pharmacological data |

| Target Deconvolution Services | TargetScout, OmicScouts [20] | Experimental target identification | Affinity-based and photoaffinity labeling approaches |

| AI/ML Platforms | DeepCE [22] | Predictive modeling for repositioning | Gene expression-based compound screening |

Future Directions and Concluding Remarks

The fields of target deconvolution and drug repositioning are rapidly evolving, driven by advances in artificial intelligence, multi-omics technologies, and systems biology. Several emerging trends promise to further accelerate these chemogenomic applications:

- Generative AI models are being increasingly applied to design novel polypharmacological compounds with specific multi-target profiles [24].

- Federated learning approaches enable model training across multiple institutions while preserving data privacy, potentially unlocking valuable clinical datasets for repositioning [24].

- Single-cell multi-omics provides unprecedented resolution for understanding compound effects in heterogeneous cell populations, enhancing both deconvolution and repositioning efforts [22].

- Integrative phenotypic screening combines high-content imaging with transcriptomics and proteomics to create rich compound signatures that facilitate both target identification and repurposing [22].

In conclusion, target deconvolution and drug repositioning represent two major applications of chemogenomics that are transforming pharmaceutical research. By systematically mapping the complex relationships between small molecules and biological targets, these approaches accelerate therapeutic development, reduce costs, and increase success rates. As computational and experimental methods continue to advance and integrate, chemogenomics promises to play an increasingly central role in delivering novel treatments for human disease.

Practical Approaches: Experimental and Computational Chemogenomic Workflows

Building and Annotating Chemogenomic Libraries

Chemogenomic (CG) libraries are structured collections of small molecules designed to systematically probe the functions of a wide range of proteins within the druggable proteome. Unlike highly selective chemical probes, chemogenomic compounds may bind to multiple targets but are exceptionally valuable due to their well-characterized target profiles. When several compounds with diverse off-target activity profiles are combined into a collection, they enable powerful target deconvolution based on selectivity patterns, forming a cornerstone of modern chemical biology and early drug discovery research [27].

The strategic development and comprehensive annotation of these libraries represent a core methodology for expanding the explored druggable proteome. This guide details the contemporary principles, technical protocols, and analytical frameworks for constructing and annotating high-quality chemogenomic libraries, contextualized within initiatives like EUbOPEN and Target 2035, which aim to provide pharmacological modulators for most human proteins [27].

Library Design and Planning

Defining Scope and Objectives

The initial design phase requires clear objectives. Libraries can be designed for broad target-family coverage or for specific phenotypic screening contexts, such as precision oncology.

- Target-Family Focus: Design libraries to cover specific protein families (e.g., kinases, GPCRs, E3 ubiquitin ligases, Solute Carriers (SLCs)). The EUbOPEN consortium, for example, has assembled a CG library covering one-third of the druggable genome [27].

- Phenotypic Screening Focus: For projects like profiling glioblastoma patient cells, library design prioritizes compounds with known or predicted activity against pathways relevant to the disease phenotype [28].

Family-specific criteria must be established, considering ligandability, availability of characterized compounds, and the necessity for multiple chemotypes per target [27].

Virtual Library Enumeration and Scoring

Before synthesis, virtual libraries are enumerated and scored for drug-like properties.

- Building Block Selection: Start from comprehensive catalogs of available chemical building blocks.

- Property Calculation: Enumerate a virtual library and calculate key properties for each member. Common parameters include molecular weight (MW), logP, hydrogen bond donors (HBD), hydrogen bond acceptors (HBA), and topological polar surface area (TPSA) [29].

- Scoring and Filtering: A scoring system is applied to select optimal building blocks. For instance, each library member can receive a point for each satisfied Lipinski's rule parameter, which is then translated into a combined score for ranking building blocks for purchase [29].

Table 1: Key Drug-Like Property Ranges for Virtual Library Filtering

| Property | Target Range | Scoring Purpose |

|---|---|---|

| Molecular Weight (MW) | Typically < 500 Da | Reduce attrition in later development stages |

| logP | Typically < 5 | Ensure favorable solubility and permeability |

| Hydrogen Bond Donors (HBD) | ≤ 5 | Optimize compound absorption |

| Hydrogen Bond Acceptors (HBA) | ≤ 10 | Optimize compound absorption |

| Topological Polar Surface Area (TPSA) | Variable based on target | Estimate membrane permeability |

The final selected building blocks should generate a library where the majority of compounds satisfy these drug-like criteria, substantially improving the library's overall quality compared to the original virtual enumeration [29].
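
The point-per-parameter scoring described above might look like the sketch below. The thresholds follow Table 1; how per-member points are combined into a building-block score is not specified in the text, so averaging over all library members that contain a block is an assumption made here. Building-block identifiers and property values are hypothetical, and TPSA is omitted because its target range is described as target-dependent.

```python
from collections import defaultdict
from statistics import mean


def lipinski_score(mw, logp, hbd, hba):
    """One point per satisfied parameter (0-4), using the Table 1 thresholds."""
    return sum([mw < 500, logp < 5, hbd <= 5, hba <= 10])


def rank_building_blocks(members):
    """Rank building blocks by the mean score of the members that contain them."""
    scores_by_block = defaultdict(list)
    for member in members:
        score = lipinski_score(
            member["mw"], member["logp"], member["hbd"], member["hba"]
        )
        for block in member["blocks"]:
            scores_by_block[block].append(score)
    ranked = sorted(
        ((mean(scores), block) for block, scores in scores_by_block.items()),
        reverse=True,
    )
    return [block for _, block in ranked]


# Hypothetical enumerated library: each member is built from two blocks.
virtual_library = [
    {"blocks": ("bb1", "bb3"), "mw": 420, "logp": 3.2, "hbd": 2, "hba": 6},
    {"blocks": ("bb1", "bb4"), "mw": 480, "logp": 4.5, "hbd": 3, "hba": 8},
    {"blocks": ("bb2", "bb3"), "mw": 560, "logp": 5.8, "hbd": 2, "hba": 7},
    {"blocks": ("bb2", "bb4"), "mw": 610, "logp": 6.1, "hbd": 6, "hba": 11},
]

purchase_order = rank_building_blocks(virtual_library)
print(purchase_order)
```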

Library Synthesis and Production

Synthesis Strategies and Platforms

Two primary synthesis strategies are employed: DNA-encoded libraries (DELs) and barcode-free self-encoded libraries (SELs).

- DNA-Encoded Library (DEL) Synthesis: This traditional approach involves alternating steps of chemical synthesis and enzymatic DNA ligation. While powerful, it is limited by the requirement for all chemical reactions to be water- and DNA-compatible, which restricts the chemistry that can be used. Furthermore, the DNA tag can be over 50 times larger than the small molecule, potentially interfering with target binding [29].

- Barcode-Free Self-Encoded Library (SEL) Synthesis: This emerging platform uses solid-phase combinatorial synthesis to generate large libraries (e.g., 500,000 members) without physical barcodes. Compounds are later identified through tandem mass spectrometry (MS/MS) and automated structure annotation. This method allows for a wider range of chemical reactions and is not compromised by nucleic acid-binding targets, making it ideal for previously inaccessible targets like the DNA-processing enzyme FEN1 [29].

Exemplary SEL Synthesis Protocols

Protocol 1: Sequential Attachment (SEL 1)

This protocol is adapted from Fmoc-based solid-phase peptide synthesis.

- Step 1: Sequentially attach two amino acid building blocks to the solid support.

- Step 2: Add a carboxylic acid decorator using optimized coupling conditions.

- Quality Control: Analyze the crude library using LC-MS to confirm synthesis quality and diversity [29].

Protocol 2: Trifunctional Benzimidazole Synthesis (SEL 2)

This protocol creates a diverse library based on a benzimidazole core.

- Step 1: Systematically optimize the route towards trifunctional benzimidazoles.

- Step 2: Test the scope of primary amines for nucleophilic aromatic substitution. In one study, a large fraction of 92 tested amines resulted in >65% conversion.

- Step 3: Investigate heterocyclization efficiency with a panel of aldehydes. Of 95 aldehydes tested, 65 gave >55% conversion to the final compound.

- Final Analysis: Use crude LC-MS traces to validate the quality of the synthesized library [29].

Protocol 3: Suzuki-Miyaura Cross-Coupling (SEL 3)

This protocol employs palladium-catalyzed cross-coupling.

- Step 1: Link an amino acid building block to an aryl bromide on solid phase.

- Step 2: Optimize Suzuki-Miyaura reaction conditions for the solid-phase system.

- Step 3: Test the scope of bifunctional aryl bromides and boronic acids. In one analysis, 9 of 19 aryl bromides and 50 of 86 boronic acids gave >65% conversion.

- Final Analysis: Confirm coupling efficiency and library quality via LC-MS [29].
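The scope-testing steps in these protocols amount to filtering building blocks by measured conversion and then counting the combinations that remain. The sketch below shows that bookkeeping under stated assumptions: the building-block names and conversion values are invented, and only the counts in the final line (9 aryl bromides, 50 boronic acids) come from the SEL 3 description above.

```python
from math import prod


def select_building_blocks(conversions, min_conversion):
    """Return the names of building blocks whose conversion exceeds the cutoff."""
    return sorted(name for name, value in conversions.items() if value > min_conversion)


def library_size(*positions):
    """Number of products when one block is chosen from each position."""
    return prod(len(blocks) for blocks in positions)


# Hypothetical scope-test results (fractional conversion measured by LC-MS).
aryl_bromides = {"ArBr-01": 0.82, "ArBr-02": 0.41, "ArBr-03": 0.70}
boronic_acids = {"BA-01": 0.91, "BA-02": 0.66, "BA-03": 0.30, "BA-04": 0.77}

kept_bromides = select_building_blocks(aryl_bromides, min_conversion=0.65)
kept_boronic = select_building_blocks(boronic_acids, min_conversion=0.65)
pilot_size = library_size(kept_bromides, kept_boronic)

# Using the counts reported for SEL 3: 9 aryl bromides x 50 boronic acids
# gives 450 cross-coupling combinations per amino acid building block.
reported_pairs = prod([9, 50])

print(kept_bromides, kept_boronic, pilot_size, reported_pairs)
```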

The following workflow diagram illustrates the core steps in creating a barcode-free Self-Encoded Library (SEL), from design to hit identification.

Library Annotation and Analysis

Annotation through Profiling

Compound annotation is what transforms a simple collection into a powerful chemogenomic tool. This involves profiling each compound across a wide array of assays.

- Biochemical Profiling: Test compounds for potency and selectivity against purified target proteins, such as in kinase or GPCR panels.

- Cellular Profiling: Assess target engagement, cellular activity, and toxicity in relevant cell models. The EUbOPEN consortium, for instance, profiles compounds in patient-derived disease assays for conditions like inflammatory bowel disease, cancer, and neurodegeneration [27].

- Criteria for Annotation: High-quality annotation includes data on potency (e.g., IC50, Ki), selectivity (at least 30-fold over related proteins), cellular target engagement, and a reasonable cellular toxicity window [27].
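The 30-fold selectivity criterion can be checked directly from profiling data. The following is a minimal sketch, assuming IC50 values in nM and taking the most potent off-target as the reference; the function names and the example values are hypothetical.

```python
def selectivity_fold(on_target_ic50_nm, off_target_ic50_nm):
    """Ratio of the most potent off-target IC50 to the on-target IC50."""
    if not off_target_ic50_nm:
        raise ValueError("at least one off-target measurement is required")
    return min(off_target_ic50_nm.values()) / on_target_ic50_nm


def meets_selectivity(on_target_ic50_nm, off_target_ic50_nm, min_fold=30.0):
    """True if the compound is at least `min_fold` selective over related proteins."""
    return selectivity_fold(on_target_ic50_nm, off_target_ic50_nm) >= min_fold


# Hypothetical panel results for two candidate compounds.
fold_a = selectivity_fold(10.0, {"related_1": 450.0, "related_2": 2000.0})
fold_b = selectivity_fold(10.0, {"related_1": 200.0})

print(fold_a, meets_selectivity(10.0, {"related_1": 450.0, "related_2": 2000.0}))
print(fold_b, meets_selectivity(10.0, {"related_1": 200.0}))
```

Using the closest off-target rather than an average is a conservative choice: a single potent off-target is enough to fail the criterion.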

Hit Identification and Decoding in SELs

For barcode-free SELs, decoding hit compounds after affinity selection is a critical, multi-step process.

- Sample Complexity: The final sample from an affinity selection may contain hundreds of compounds with a high degree of mass degeneracy (isobaric compounds).

- MS/MS Analysis: The sample is analyzed via nanoLC-MS/MS, which can produce ~80,000 MS1 and MS2 scans in a single run.

- Automated Structure Annotation: Manual analysis is impractical at this scale. Instead, software such as SIRIUS 6 and CSI:FingerID is used for reference spectra-free structure annotation. Because the complete space of potential library structures is known, the enumerated library serves as a custom database against which candidate structures are scored and identified [29].
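To make the mass-degeneracy problem concrete, the sketch below performs only the first, simplest part of decoding: looking up which enumerated library members match an observed precursor mass within a ppm tolerance. It is not how SIRIUS or CSI:FingerID work internally. The library identifiers, masses, 5 ppm tolerance, and the assumption of singly protonated [M+H]+ ions are all illustrative.

```python
PROTON_MASS = 1.007276  # Da; assumes singly protonated [M+H]+ precursor ions


def candidates_for_precursor(observed_mz, library, tolerance_ppm=5.0):
    """Return IDs of library members whose monoisotopic mass matches the precursor."""
    neutral_mass = observed_mz - PROTON_MASS
    matches = []
    for compound_id, mono_mass in library.items():
        error_ppm = abs(mono_mass - neutral_mass) / mono_mass * 1e6
        if error_ppm <= tolerance_ppm:
            matches.append(compound_id)
    return sorted(matches)


# Hypothetical slice of an enumerated library: ID -> monoisotopic mass (Da).
enumerated_library = {
    "SEL-000101": 412.2111,
    "SEL-000102": 412.2111,  # isomer of SEL-000101, identical mass
    "SEL-000103": 412.2475,
    "SEL-000104": 398.1954,
}

hits = candidates_for_precursor(413.2184, enumerated_library)
print(hits)
```

Here two isomeric candidates survive the mass filter, which is why fragmentation (MS2) spectra are needed to rank structures that MS1 alone cannot distinguish.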

Analyzing Library Relationships with Chemical Space Networks

Chemical Space Networks (CSNs) provide a powerful visual and analytical method to interpret relationships within a curated chemogenomic dataset.

- Network Construction: CSNs are created using tools like RDKit and NetworkX. Compounds (nodes) are connected by edges, defined by a pairwise relationship such as a 2D fingerprint Tanimoto similarity value or a maximum common substructure similarity [13].

- Visualization and Analysis: Nodes can be colored based on bioactivity (e.g., Ki values), and edges can be styled based on similarity thresholds. This helps in visualizing compound clusters and structure-activity relationships. Network properties like the clustering coefficient, degree assortativity, and modularity can be calculated to quantitatively analyze the library's structure [13].
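The network construction described above can be sketched without any external dependencies. In the example below, fingerprints are represented as plain sets of on-bit indices and the graph as an adjacency dictionary; both are simplifying assumptions, as are the compound names and the 0.55 similarity threshold. In a real workflow the fingerprints would come from RDKit and the graph would typically be a NetworkX object, which also provides the clustering and modularity measures mentioned above.

```python
from itertools import combinations


def tanimoto(bits_a, bits_b):
    """Tanimoto similarity between two sets of fingerprint on-bits."""
    union = len(bits_a | bits_b)
    return len(bits_a & bits_b) / union if union else 0.0


def build_network(fingerprints, threshold):
    """Connect every pair of compounds whose similarity meets the threshold."""
    adjacency = {name: set() for name in fingerprints}
    for left, right in combinations(sorted(fingerprints), 2):
        if tanimoto(fingerprints[left], fingerprints[right]) >= threshold:
            adjacency[left].add(right)
            adjacency[right].add(left)
    return adjacency


def connected_components(adjacency):
    """Group nodes into clusters using an iterative depth-first search."""
    seen, components = set(), []
    for start in sorted(adjacency):
        if start in seen:
            continue
        stack, component = [start], set()
        while stack:
            node = stack.pop()
            if node in component:
                continue
            component.add(node)
            stack.extend(adjacency[node] - component)
        seen |= component
        components.append(sorted(component))
    return components


# Hypothetical fingerprints (sets of on-bit indices).
fingerprints = {
    "cpd_A": {1, 2, 3, 4},
    "cpd_B": {1, 2, 3, 5},
    "cpd_C": {2, 3, 4, 5},
    "cpd_D": {10, 11, 12},
    "cpd_E": {10, 11, 13},
}

network = build_network(fingerprints, threshold=0.55)
clusters = connected_components(network)
edge_count = sum(len(neighbors) for neighbors in network.values()) // 2
print(clusters, edge_count)
```

Lowering the threshold to 0.5 would also link `cpd_D` and `cpd_E`, which shows how sensitive the apparent cluster structure is to the similarity cutoff.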

Table 2: Key Platforms for Chemogenomic Library Synthesis and Annotation

| Platform / Technique | Core Function | Key Advantage | Consideration |
|---|---|---|---|
| DNA-Encoded Library (DEL) | Combinatorial synthesis with DNA barcoding for hit ID | Mature technology, very large library sizes | Chemistry limited by DNA-compatibility; unsuitable for nucleic acid-binding targets |
| Self-Encoded Library (SEL) | Barcode-free synthesis; hit ID via MS/MS annotation | Broader reaction scope; target-agnostic | Relies on advanced MS and software for decoding |
| Chemical Space Networks (CSN) | Visualization & analysis of compound relationships | Reveals SAR and clustering not apparent in lists | Most useful for datasets of 10s to 1000s of compounds |
| SIRIUS/CSI:FingerID | Software for automated MS/MS structure annotation | Does not require a reference spectral database | Requires a known virtual library for scoring in SEL decoding |

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential reagents, materials, and software used in the construction and annotation of chemogenomic libraries.

Table 3: Essential Research Reagents and Tools for Chemogenomics

| Item Name | Function / Application | Example Use Case |
|---|---|---|
| Solid Support Resin | A solid, insoluble substrate for combinatorial synthesis. | Foundation for solid-phase synthesis in SEL production [29]. |
| Fmoc-Amino Acids | Protected amino acid building blocks for synthesis. | Used as core scaffolds in library design (e.g., SEL 1) [29]. |
| DNA Barcodes & Ligation Enzymes | Encoding tags and tools for their attachment. | Essential for constructing DNA-encoded libraries (DELs) [29]. |
| Chemogenomic (CG) Compound Sets | Pre-assembled, well-annotated collections of small molecules. | EUbOPEN provides a CG set covering 1/3 of the druggable genome for screening [27]. |
| NanoLC-MS/MS System | High-sensitivity analytical instrument for separation and mass analysis. | Identifying hit structures from barcode-free affinity selections [29]. |
| SIRIUS & CSI:FingerID Software | Computational tools for interpreting MS/MS data. | Automated annotation of compound structures from fragmentation spectra [29]. |
| RDKit | Open-source cheminformatics toolkit. | Calculating molecular descriptors, fingerprints, and generating chemical space networks [13]. |
| NetworkX | Python library for network analysis. | Creating, analyzing, and visualizing Chemical Space Networks (CSNs) [13]. |

Building and annotating chemogenomic libraries is a multidisciplinary process that integrates sophisticated chemical design, robust synthesis, and comprehensive bioactivity profiling. The emergence of barcode-free technologies like SELs, coupled with advanced computational annotation and visualization tools like CSNs, is expanding the accessible druggable proteome. These libraries, when developed and annotated to high standards, serve as indispensable resources for the research community. They accelerate early drug discovery and target validation, directly contributing to the ambitious goals of global initiatives like Target 2035. By providing a framework for systematic, open-access chemical tool generation, as exemplified by the EUbOPEN consortium, chemogenomics continues to empower scientists to unlock novel biology and develop new therapeutic strategies [27].

Phenotypic Screening with Targeted Compound Sets

Chemogenomics represents a research paradigm that explores the systematic interaction between chemical compounds and biological systems, typically through targeted compound libraries designed to perturb specific protein families or pathways. When applied to phenotypic drug discovery (PDD), this approach enables the identification of novel therapeutic agents based on their effects on disease-relevant phenotypes without requiring prior knowledge of specific molecular targets [30]. This methodology has re-emerged as a powerful strategy over the past decade, contributing to a disproportionate number of first-in-class medicines compared to target-based approaches [30] [31].

The fundamental premise of using targeted compound sets in phenotypic screening lies in their ability to provide immediate mechanistic insights while maintaining the biological context of complex disease models. Unlike conventional phenotypic screening that uses diverse compound libraries with unknown mechanisms, targeted sets offer a strategic advantage by covering defined portions of the druggable genome, enabling researchers to connect observed phenotypes to specific target classes or pathways [32]. Modern PDD combines this original concept with advanced tools and strategies, including improved disease models, high-content readouts, and computational analytics, to systematically pursue drug discovery based on therapeutic effects in biologically relevant systems [30].

The Rationale for Targeted Compound Sets in Phenotypic Screening

Expanding Druggable Target Space

Phenotypic screening using targeted compound libraries has significantly expanded the "druggable target space" to include unexpected cellular processes and novel mechanisms of action (MOA). Notable successes include:

- Small molecule splicing modifiers like risdiplam for spinal muscular atrophy, which work by stabilizing the U1 snRNP complex to correct SMN2 pre-mRNA splicing [30]

- CFTR correctors such as lumacaftor, tezacaftor, and elexacaftor that enhance the folding and plasma membrane insertion of the mutant CFTR protein in cystic fibrosis [30]

- Molecular glues like lenalidomide that redirect E3 ubiquitin ligase substrate specificity to promote degradation of target proteins [30]

These examples demonstrate how phenotypic strategies with targeted compounds can reveal new target classes and MOAs that might not have been discovered through target-based approaches.

Balancing Mechanistic Insight and Biological Complexity

Targeted compound sets offer a strategic middle ground between fully target-agnostic phenotypic screening and reductionist target-based approaches. While conventional PDD does not rely on knowledge of specific drug targets, the use of targeted libraries provides:

- Immediate target hypotheses for follow-up validation

- Coverage of biologically relevant target space with chemical probes

- Functional annotation of compounds within physiological contexts

- Polypharmacology assessment by evaluating multi-target engagement in disease-relevant models [30]

This balanced approach addresses one of the major challenges of traditional PDD—target deconvolution—while maintaining the advantages of phenotypic screening in complex biological systems [31].

Designing Targeted Compound Libraries for Phenotypic Screening

Library Composition and Coverage

The effectiveness of targeted compound sets in phenotypic screening depends heavily on library design and composition. Chemogenomics libraries typically include compounds with known target annotations, but even the best libraries interrogate only a fraction of the human genome: approximately 1,000–2,000 targets out of 20,000+ genes, or roughly 5–10% at most [33]. This limitation underscores the importance of strategic library design to maximize biological relevance within practical constraints.

Table 1: Representative Chemogenomic Libraries for Phenotypic Screening

| Library Name | Source | Compound Count | Target Coverage | Special Features |
|---|---|---|---|---|
| Pfizer Chemogenomic Library | Pfizer | Not specified | Broad target coverage | Industry-developed |
| GSK Biologically Diverse Compound Set (BDCS) | GSK | Not specified | Diverse biological activities | Industry-developed |
| Prestwick Chemical Library | Prestwick | Not specified | FDA-approved drugs | Repurposing focus |
| Library of Pharmacologically Active Compounds | Sigma-Aldrich | Not specified | Known bioactivities | Commercial availability |
| MIPE Library | NCATS | Not specified | Translational focus | Public screening program |
| Custom Network Pharmacology Library | Academic [32] | 5,000 | Diverse targets | Integrated morphological profiling |

Library Design Strategies

Effective library design incorporates multiple considerations:

- Target diversity: Coverage across major target classes (kinases, GPCRs, ion channels, nuclear receptors, etc.)

- Chemical diversity: Structural and physicochemical diversity within target classes

- Polypharmacology potential: Compounds with known multi-target activities

- Chemical tractability: Compounds with properties suitable for optimization

- Biological context: Alignment between library targets and disease biology [32]

Advanced library design may incorporate systems pharmacology networks that integrate drug-target-pathway-disease relationships, as well as morphological profiles from assays such as Cell Painting, to enhance biological relevance [32].

Experimental Workflows and Methodologies

Core Screening Workflow

The following diagram illustrates a generalized experimental workflow for phenotypic screening with targeted compound sets:

Advanced Screening Approaches

Compressed Screening for Enhanced Throughput

Compressed screening represents an innovative approach that pools multiple perturbations to reduce sample requirements, cost, and labor while maintaining the ability to deconvolve individual compound effects. The methodology works by:

- Pool construction: Combining N perturbations into unique pools of size P

- Experimental testing: Screening pools in complex disease models

- Computational deconvolution: Using regularized linear regression and permutation testing to infer individual perturbation effects [34]
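The pooling and deconvolution steps can be illustrated with a toy example. The sketch below uses ridge regression as one possible form of regularized linear regression and omits permutation testing entirely; the pool design, readouts, penalty value, and function names are assumptions chosen for illustration and are not taken from the cited method. Six perturbations are screened in four pools of three, and only perturbation 0 has a true effect.

```python
def solve_linear_system(matrix, rhs):
    """Solve matrix @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    # Work on an augmented copy so the inputs are left untouched.
    aug = [row[:] + [rhs[i]] for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(aug[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (aug[i][n] - tail) / aug[i][i]
    return x


def deconvolve_pools(pools, readouts, n_perturbations, ridge=0.1):
    """Estimate per-perturbation effects from pooled readouts via ridge regression."""
    # Design matrix: design[i][j] is 1 if perturbation j is present in pool i.
    design = [[1.0 if j in pool else 0.0 for j in range(n_perturbations)]
              for pool in pools]
    n_pools = len(pools)
    # Normal equations: (X^T X + ridge * I) beta = X^T y
    xtx = [[sum(design[i][r] * design[i][c] for i in range(n_pools))
            + (ridge if r == c else 0.0)
            for c in range(n_perturbations)]
           for r in range(n_perturbations)]
    xty = [sum(design[i][r] * readouts[i] for i in range(n_pools))
           for r in range(n_perturbations)]
    return solve_linear_system(xtx, xty)


# Each perturbation appears in exactly two pools, and no two share the same pair.
pools = [{0, 1, 2}, {0, 3, 4}, {1, 3, 5}, {2, 4, 5}]
# Noise-free pooled phenotype if only perturbation 0 is active (effect size 1).
readouts = [1.0, 1.0, 0.0, 0.0]

effects = deconvolve_pools(pools, readouts, n_perturbations=6)
top_hit = max(range(6), key=lambda j: effects[j])
print(top_hit, [round(e, 3) for e in effects])
```

Because there are fewer pools than perturbations, the estimated coefficients are shrunken and should be read as a ranking rather than as effect sizes; a significance test such as the permutation step named above would be needed before calling hits.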