Chemogenomics Library Development for Phenotypic Screening: A Comprehensive Guide to Design, Application, and Target Deconvolution


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the development and application of chemogenomics libraries in phenotypic screening. It covers the foundational principles of how annotated small-molecule collections bridge the gap between phenotypic observations and target identification. The content explores practical methodologies for library design, including the integration of genomic data and cheminformatics. It addresses key challenges such as limited target coverage and polypharmacology, offering strategic solutions for optimization. Finally, it examines validation frameworks and comparative analyses of existing libraries, concluding with future directions involving AI and multi-omics integration to expand the druggable genome and accelerate the discovery of novel therapeutics.

The Core Concept: How Chemogenomics Libraries Bridge Phenotypic Discovery and Target Identification

Chemogenomics represents a paradigm shift in chemical biology and drug discovery, moving beyond the traditional "one target–one drug" approach to a more comprehensive systems-level perspective. This innovative method systematically utilizes collections of annotated small molecules to study the response of complex biological systems, enabling the functional annotation of proteins and the discovery and validation of therapeutic targets [1] [2] [3]. At the heart of this strategy lies the chemogenomics library—a carefully curated collection of chemically diverse compounds designed to probe biological function across a wide target space. The resurgence of interest in phenotypic drug discovery (PDD) has further elevated the importance of chemogenomics libraries, as they provide critical tools for bridging the gap between observed phenotypic outcomes and their underlying molecular mechanisms [2] [4].

Unlike highly selective chemical probes that must meet stringent selectivity criteria, chemogenomics libraries typically comprise tool compounds that may not be exclusively selective for single targets [1]. This intentional relaxation of selectivity constraints enables coverage of a much larger portion of the druggable genome, which currently encompasses approximately 3,000 targets but continues to expand as new target areas such as the ubiquitin system and solute carriers are explored [1]. The fundamental premise of chemogenomics is that by systematically screening these annotated compound collections against biological systems, researchers can simultaneously identify bioactive small molecules and gain insights into their mechanisms of action, thereby accelerating both target validation and drug discovery [3].

Defining Characteristics and Composition of Chemogenomics Libraries

Core Definitions and Distinctions

A chemogenomics library is fundamentally distinct from general compound collections in its design philosophy and application. While chemical probes are cell-active, small-molecule ligands that selectively bind to specific biomolecular targets and typically require extensive validation, chemogenomics compounds serve as well-annotated tool compounds for functional annotation in complex cellular systems [1] [5]. The small-molecule modulators (agonists, antagonists, etc.) used in chemogenomic studies may not be exclusively selective, which allows them to cover a larger target space than would be possible with highly selective chemical probes alone [1]. This distinction is crucial: whereas chemical probes prioritize selectivity for deconvoluting specific biological functions, chemogenomics libraries embrace a broader targeting strategy to explore larger biological and chemical spaces.

The composition of chemogenomics libraries is typically organized into subsets covering major target families such as protein kinases, membrane proteins, and epigenetic modulators [1]. For example, the EUbOPEN consortium has established peer-reviewed criteria for inclusion of small molecules into their chemogenomic library, with the ambitious goal of covering approximately 30% of all currently known druggable targets [1]. This systematic approach to library design aims to provide broad coverage of biological mechanisms while maintaining sufficient annotation for meaningful biological interpretation.

Quantitative Analysis of Library Characteristics

Table 1: Comparative Analysis of Selected Chemogenomics Libraries

| Library Name | Size (Compounds) | Key Characteristics | Target Coverage | Primary Applications |
|---|---|---|---|---|
| EUbOPEN Chemogenomic Library | Not specified | Organized by target families; peer-reviewed inclusion criteria | ~30% of druggable proteome (≈900 targets) | Target annotation and validation [1] |
| BioAscent Chemogenomic Library | ~1,600 | Diverse, selective, well-annotated probes | Multiple target classes | Phenotypic screening and MoA studies [6] |
| C3L Minimal Screening Library | 1,211 | Optimized for anticancer targets | 1,386 anticancer proteins | Precision oncology [7] |
| Phenotypic Screening Library | 5,000 | Integrates drug-target-pathway-disease relationships | Diverse panel of drug targets | Phenotypic screening and target deconvolution [2] |
| MIPE 4.0 | 1,912 | Known mechanism of action | Multiple target classes | Mechanism interrogation [8] |

Table 2: Polypharmacology Index (PPindex) of Common Chemogenomics Libraries

| Library | PPindex (All Compounds) | PPindex (Without 0-target Compounds) | Relative Target Specificity |
|---|---|---|---|
| DrugBank | 0.9594 | 0.7669 | Highest specificity [8] |
| LSP-MoA | 0.9751 | 0.3458 | Moderate specificity [8] |
| MIPE 4.0 | 0.7102 | 0.4508 | Moderate specificity [8] |
| Microsource Spectrum | 0.4325 | 0.3512 | Lower specificity [8] |

The polypharmacology index (PPindex) serves as a crucial quantitative metric for evaluating the target specificity of chemogenomics libraries. Derived from Boltzmann distributions of known targets per compound, this index helps researchers select appropriate libraries based on their specific needs—higher PPindex values indicate greater target specificity, which is particularly valuable for target deconvolution in phenotypic screening [8]. Interestingly, analysis reveals that the bin of compounds with no annotated target often represents the single largest category in many libraries, highlighting the ongoing challenge of comprehensive target annotation [8].
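To make the idea behind the index concrete, the following is a minimal Python sketch of a PPindex-style score. It assumes, as a simplification, that the histogram of annotated targets per compound decays roughly exponentially (Boltzmann-like), so a straight-line fit to the logarithm of each bin's frequency yields a slope whose magnitude grows as the library becomes more target-specific. The function name `ppindex_sketch` and the fitting details are illustrative assumptions; the cited analysis [8] may fit or normalize differently, so this sketch will not reproduce the values in Table 2.

```python
import math
from collections import Counter

def ppindex_sketch(targets_per_compound, drop_zero=False):
    """Hypothetical PPindex-style score: |slope| of a least-squares line through
    ln(fraction of compounds) versus number of annotated targets.

    targets_per_compound: one integer per compound (its annotated target count).
    drop_zero: if True, ignore compounds with no annotated target, mirroring
    the "Without 0-target Compounds" column in Table 2.
    """
    counts = [n for n in targets_per_compound if not (drop_zero and n == 0)]
    hist = Counter(counts)
    total = len(counts)
    xs = sorted(hist)  # only occupied bins; ln(0) is undefined
    if len(xs) < 2:
        raise ValueError("need at least two occupied bins to fit a slope")
    ys = [math.log(hist[x] / total) for x in xs]
    mx = sum(xs) / len(xs)
    my = sum(ys) / len(ys)
    slope = (sum((x - mx) * (y - my) for x, y in zip(xs, ys))
             / sum((x - mx) ** 2 for x in xs))
    # A steeper decay means most compounds hit few targets: a more specific library
    return abs(slope)
```

For example, a toy library in which 80% of compounds have one target scores higher than one whose compounds are spread evenly across one to four targets, which matches the direction of the interpretation given above.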

Design Strategies for Chemogenomics Libraries

Criteria for Compound Selection and Annotation

The construction of a high-quality chemogenomics library requires rigorous criteria for compound selection and annotation. The EUbOPEN consortium, for instance, has established peer-reviewed criteria conducted by independent experts, though specific details of these criteria are not fully elaborated in the available literature [1]. Generally, selection parameters include drug-like properties, structural diversity, and well-annotated mechanisms of action. For example, the BioAscent library selection process considers medicinal chemistry suitability and the presence of diverse Murcko Scaffolds and Frameworks to ensure broad chemical coverage [6].

A critical consideration in library design is the balance between target selectivity and polypharmacology. While selective compounds are valuable for precise target modulation, appropriately promiscuous compounds can provide advantages for complex diseases like cancer, neurological disorders, and diabetes, which often involve multiple molecular abnormalities rather than single defects [2]. This understanding has led to the development of libraries specifically designed for selective polypharmacology, where compounds are chosen for their ability to modulate a collection of targets across different signaling pathways relevant to specific disease states [4].

Specialized Library Design Approaches

Recent advances in chemogenomics library design have incorporated sophisticated computational and systems biology approaches. One innovative strategy involves creating rational libraries for phenotypic screening through structure-based molecular docking of chemical libraries to disease-specific targets identified using genomic profiles and protein-protein interaction networks [4]. For instance, in glioblastoma multiforme (GBM) research, researchers have identified druggable binding sites on proteins implicated in GBM through differential expression analysis of patient RNA sequencing data, then mapped these onto protein-protein interaction networks to construct disease-specific subnetworks for library enrichment [4].
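The subnetwork-construction step can be sketched in a few lines of Python. This is an illustrative simplification, not the procedure of [4]: it assumes the interaction map is an undirected edge list and that "disease proteins" are already flagged by the expression and mutation analysis. The `include_neighbors` option, which also keeps direct interaction partners, is a hypothetical expansion rule added for illustration.

```python
def disease_subnetwork(edges, disease_proteins, include_neighbors=False):
    """Build a disease-specific subnetwork from an undirected PPI edge list.

    edges: iterable of (protein_a, protein_b) pairs.
    disease_proteins: proteins flagged by differential expression / mutation data.
    include_neighbors: hypothetical option to also keep first-degree partners.
    Returns (nodes, edges) of the subnetwork; edges are alphabetically sorted tuples.
    """
    edges = list(edges)
    adjacency = {}
    for a, b in edges:
        adjacency.setdefault(a, set()).add(b)
        adjacency.setdefault(b, set()).add(a)
    # Keep only disease proteins that actually appear in the interaction map
    nodes = set(disease_proteins) & set(adjacency)
    if include_neighbors:
        for protein in list(nodes):  # iterate over a snapshot while expanding
            nodes |= adjacency[protein]
    sub_edges = {tuple(sorted((a, b))) for a, b in edges if a in nodes and b in nodes}
    return nodes, sub_edges
```

Whether to include neighbors is a design choice: the strict version keeps only interactions among disease proteins, while the expanded version pulls in partners that may offer additional druggable binding sites.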

Another emerging approach involves the development of minimal screening libraries that maximize target coverage while minimizing library size. Recent research has demonstrated the feasibility of creating a library of just 1,211 compounds to target 1,386 anticancer proteins, optimized through analytical procedures that consider cellular activity, chemical diversity, availability, and target selectivity [7]. Such designed libraries are particularly valuable for precision oncology applications, where patient-specific vulnerabilities can be identified through targeted screening of patient-derived cells.
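One common way to trade library size against target coverage is a greedy set-cover heuristic. The Python sketch below is a stand-in for illustration only: it considers target coverage alone and ignores the cellular activity, chemical diversity, availability, and selectivity criteria used in [7]. The compound and target names in the usage example are placeholders.

```python
def greedy_minimal_library(compound_targets, wanted_targets):
    """Greedy set-cover sketch: repeatedly add the compound that covers the most
    still-uncovered targets until every target is covered or no compound helps.

    compound_targets: {compound_id: iterable of target ids}
    wanted_targets: iterable of target ids the library should cover
    Returns (chosen compounds in pick order, targets left uncovered).
    """
    uncovered = set(wanted_targets)
    remaining = {c: set(t) for c, t in compound_targets.items()}
    chosen = []
    while uncovered and remaining:
        # sorted() makes ties resolve to the alphabetically first compound
        best = max(sorted(remaining), key=lambda c: len(remaining[c] & uncovered))
        gain = remaining.pop(best) & uncovered
        if not gain:
            break
        chosen.append(best)
        uncovered -= gain
    return chosen, uncovered
```

For example, with `cpd1` covering T1 to T3, `cpd2` covering T3 and T4, and `cpd3` covering T4 and T5, the heuristic returns `["cpd1", "cpd3"]` and covers all five targets with two compounds. Greedy set cover is not guaranteed to be optimal, but it is simple and usually close in practice.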

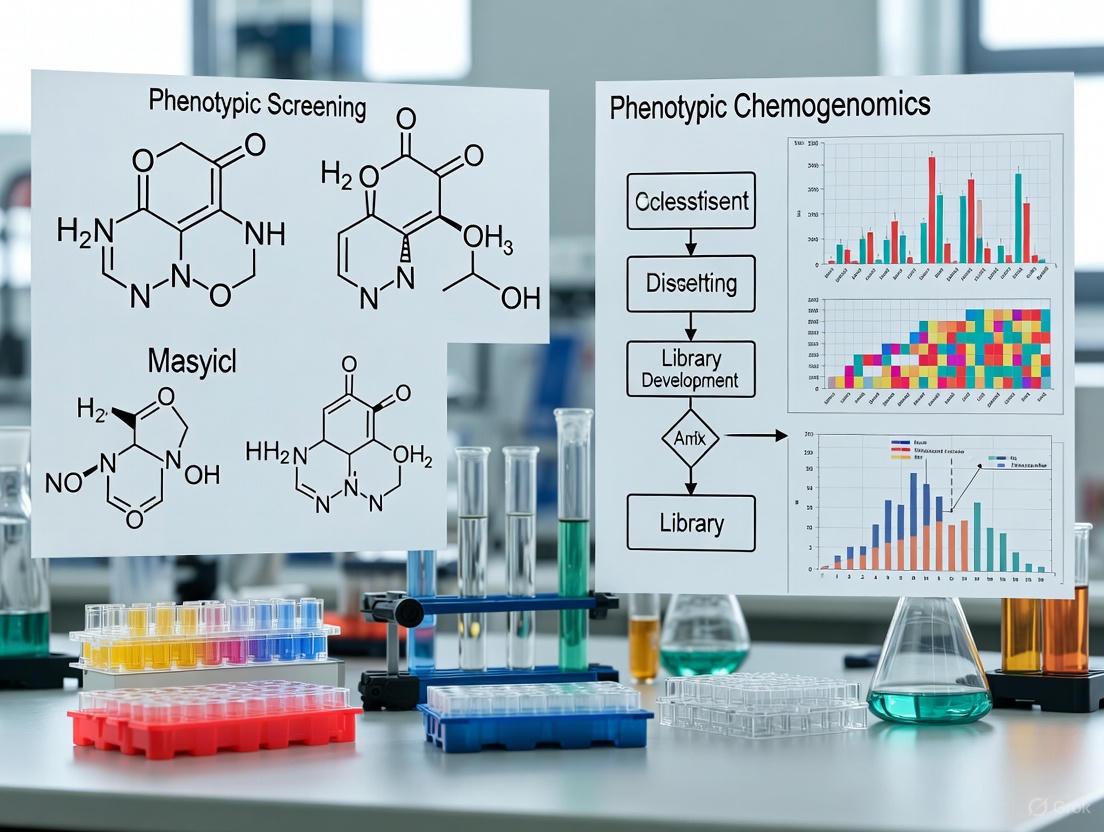

Diagram 1: A generalized workflow for designing chemogenomics libraries, highlighting key stages from target identification to experimental validation.

Applications in Phenotypic Screening and Target Deconvolution

Phenotypic Drug Discovery Paradigm

The resurgence of phenotypic screening in drug discovery has created a natural synergy with chemogenomics approaches. Phenotypic drug discovery strategies re-emerged as promising approaches for identifying novel and safe drugs, particularly for complex diseases where multiple molecular abnormalities coexist [2]. However, a significant challenge in phenotypic screening is the lack of knowledge about specific drug targets, necessitating combination with chemical biology approaches like chemogenomics to identify therapeutic targets and mechanisms of action associated with observable phenotypes [2].

Chemogenomics libraries serve as powerful tools in this context by enabling researchers to connect morphological or phenotypic perturbations to specific molecular targets. For example, advanced phenotypic profiling approaches such as the Cell Painting assay, which uses automated image analysis to measure hundreds to thousands of morphological features in cells, can be integrated with chemogenomics libraries to create systems pharmacology networks linking drug-target-pathway-disease relationships [2]. This integration allows for more efficient target identification and mechanism deconvolution from phenotypic assays.

Experimental Protocols and Workflows

A typical chemogenomics screening workflow involves several well-defined stages, from library preparation to data analysis. The following protocol outlines key steps in utilizing chemogenomics libraries for phenotypic screening:

Library Preparation and Quality Control: Chemogenomics libraries are typically maintained as DMSO solutions (e.g., 2 mM and 10 mM stocks) in single-use tubes to preserve compound integrity [6]. Quality control measures include assessment of compound purity, stability, and potential assay interference (e.g., pan-assay interference compounds, or PAINS, which can produce false positives).
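Because stocks are held at fixed concentrations in DMSO, every dosing step involves a C1·V1 = C2·V2 calculation and a check on the final DMSO content. The Python sketch below assumes that the stock is neat DMSO and treats the well volume as the final assay volume; the function names are hypothetical.

```python
def transfer_volume_nl(stock_mM, final_uM, well_volume_uL):
    """Solve C1*V1 = C2*V2 for the stock volume V1, returned in nanolitres."""
    stock_uM = stock_mM * 1000.0                    # express both concentrations in uM
    v1_uL = final_uM * well_volume_uL / stock_uM
    return v1_uL * 1000.0                           # uL -> nL

def dmso_percent(transfer_nl, well_volume_uL):
    """Final DMSO (% v/v), assuming the stock solvent is 100% DMSO."""
    return 100.0 * (transfer_nl / 1000.0) / well_volume_uL
```

For a 10 µM final concentration in a 50 µL well, the 10 mM stock needs a 50 nL transfer (0.1% DMSO), whereas the 2 mM stock needs 250 nL (0.5% DMSO). This illustrates why the more concentrated stock is useful when cells are sensitive to solvent.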

Biological System Selection and Assay Development: Choose disease-relevant biological systems and develop assays whose readouts capture the phenotype of interest.

Phenotypic Screening Implementation:

- Treat biological systems with chemogenomics library compounds across appropriate concentration ranges

- Implement relevant phenotypic endpoints (cell viability, morphological changes, functional responses)

- Utilize high-content imaging technologies where applicable [2]

- Include appropriate controls and replicates for statistical rigor
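Control wells are typically used both to judge whether a plate is usable and to normalize compound wells. The Python sketch below uses the conventional Z'-factor statistic and a simple percent-of-control scaling. It assumes that a lower signal means a stronger effect (as in a viability readout). The function names are illustrative, and the ~0.5 acceptance threshold is a common convention rather than a requirement from the cited sources.

```python
from statistics import mean, stdev

def z_prime(positive, negative):
    """Z'-factor from control wells: 1 - 3*(SD_pos + SD_neg) / |mean_pos - mean_neg|.
    Values above ~0.5 are conventionally read as a robust assay window."""
    return 1 - 3 * (stdev(positive) + stdev(negative)) / abs(mean(positive) - mean(negative))

def percent_inhibition(signal, positive, negative):
    """Scale one well between plate controls: 0% = negative (e.g., DMSO), 100% = positive control."""
    mu_neg, mu_pos = mean(negative), mean(positive)
    return 100.0 * (mu_neg - signal) / (mu_neg - mu_pos)
```

With DMSO wells near 100 and positive-control wells near 10, a compound well reading 55 maps to 50% inhibition.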

Data Integration and Analysis:

- Collect and process multi-dimensional data (e.g., morphological profiles from Cell Painting) [2]

- Integrate results with existing biological knowledge networks

- Apply computational approaches for target prediction and pathway analysis
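Before morphological profiles can be compared or integrated, each feature is usually normalized against vehicle controls on the same plate. The Python sketch below assumes robust z-scores (median and median absolute deviation of DMSO wells), followed by a per-feature median across replicates. This is one common choice, not necessarily the pipeline used in [2]. The zero-spread guard and function names are hypothetical.

```python
from statistics import median

def robust_z_profile(treated_features, dmso_wells):
    """Robust z-score each feature of one treated well against DMSO control wells.

    treated_features: list of feature values for one treated well.
    dmso_wells: list of feature lists, one per DMSO well, in the same feature order.
    z = (x - median_DMSO) / (1.4826 * MAD_DMSO); 1.4826 scales MAD to an SD under normality.
    """
    profile = []
    for j, x in enumerate(treated_features):
        ref = [well[j] for well in dmso_wells]
        med = median(ref)
        mad = median([abs(v - med) for v in ref])
        # Hypothetical guard: features with zero spread in controls are left unscaled
        scale = 1.4826 * mad if mad > 0 else 1.0
        profile.append((x - med) / scale)
    return profile

def consensus_profile(replicate_profiles):
    """Collapse replicate profiles into one per-compound profile (per-feature median)."""
    return [median(values) for values in zip(*replicate_profiles)]
```

Using the median and MAD rather than the mean and SD keeps a few outlier control wells from distorting every compound's profile.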

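Target Deconvolution and Validation: Confirm candidate targets with orthogonal methods, such as thermal proteome profiling for direct target engagement [4] and CRISPR-based genetic perturbation [2].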

Diagram 2: The integration of chemogenomics libraries with phenotypic screening and multi-omics approaches facilitates target deconvolution and mechanism of action studies.

Research Reagent Solutions: Essential Tools for Chemogenomics

Table 3: Essential Research Reagents for Chemogenomics Applications

| Reagent/Resource | Function | Example Applications | Key Characteristics |
|---|---|---|---|
| Chemogenomic Compound Libraries | Modulate specific target families | Phenotypic screening, target validation | Well-annotated, target-focused [1] [6] |
| Cell Painting Assay | High-content morphological profiling | Phenotypic characterization, mechanism study | 1,779+ morphological features [2] |
| CRISPR-Cas Tools | Gene editing for target validation | Genetic perturbation studies, confirmation | Enables functional genomics [2] |
| Patient-Derived Cells | Disease-relevant biological systems | Personalized medicine, translational research | Maintain disease pathophysiology [4] [7] |
| 3D Culture Systems | Better mimic tissue microenvironment | Spheroid/organoid screening | Enhanced physiological relevance [4] |
| Thermal Proteome Profiling | Identify direct drug targets | Target deconvolution | Proteome-wide engagement [4] |
| Network Analysis Tools | Integrate multi-omics data | Systems pharmacology | Pathway/network visualization [2] |

The successful implementation of chemogenomics approaches relies on a suite of specialized research reagents and tools. BioAscent's chemogenomic library, for instance, comprises over 1,600 diverse, highly selective, and well-annotated pharmacologically active probe molecules, making it a powerful tool for phenotypic screening and mechanism of action studies [6]. These libraries typically include classes of compounds targeting key protein families such as kinases, GPCRs, ion channels, nuclear receptors, and epigenetic regulators.

Complementary technologies like the Cell Painting assay provide robust morphological profiling capabilities, measuring hundreds of cellular features across different cellular compartments to create distinctive fingerprints for different biological states and compound treatments [2]. When integrated with chemogenomics libraries, these tools enable the construction of comprehensive networks linking compound structure to target engagement to phenotypic outcome.

Advanced target deconvolution methods have become increasingly important in chemogenomics. Thermal proteome profiling, for example, has been successfully used to confirm engagement of multiple targets by compounds identified through phenotypic screens of enriched chemogenomics libraries [4]. This mass spectrometry-based method monitors protein thermal stability changes upon compound binding across the proteome, providing direct evidence of target engagement in cellular contexts.
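The core readout of thermal proteome profiling is a shift in a protein's melting temperature (ΔTm) between vehicle- and compound-treated samples. The Python sketch below is a simplification under stated assumptions: it estimates Tm by linear interpolation at the 50% soluble-fraction point for a single curve. Real TPP analyses fit sigmoidal melting curves across replicates and apply statistical tests. The temperatures and fractions in the usage example are toy values.

```python
def melting_temperature(temps, soluble_fraction):
    """Interpolate the temperature at which the soluble fraction first falls to 0.5.
    Assumes ascending temperatures and a roughly monotonically decreasing curve."""
    points = list(zip(temps, soluble_fraction))
    for (t1, f1), (t2, f2) in zip(points, points[1:]):
        if f1 >= 0.5 >= f2 and f1 != f2:
            return t1 + (f1 - 0.5) * (t2 - t1) / (f1 - f2)
    raise ValueError("soluble fraction never crosses 0.5 in the measured range")

def delta_tm(temps, vehicle, treated):
    """Positive delta Tm suggests compound-induced thermal stabilization (engagement)."""
    return melting_temperature(temps, treated) - melting_temperature(temps, vehicle)
```

In the toy example used for testing, a protein melting at 47 °C with vehicle and 51 °C with compound gives ΔTm = +4 °C. This would be read as candidate evidence of direct binding that still needs replicate and proteome-wide statistical support.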

Case Studies and Experimental Evidence

Glioblastoma Application

A compelling case study demonstrating the power of chemogenomics approaches comes from glioblastoma multiforme (GBM) research. Researchers created a rational library for phenotypic screening by using structure-based molecular docking to prioritize compounds targeting GBM-specific proteins identified through the tumor's RNA sequence and mutation data combined with cellular protein-protein interaction data [4]. This approach involved:

- Identifying druggable binding sites on proteins implicated in GBM through differential expression analysis of patient samples

- Mapping these proteins onto large-scale protein-protein interaction networks to construct a GBM-specific subnetwork

- Screening an in-house library of approximately 9,000 compounds against 316 druggable binding sites on proteins in this subnetwork

- Selecting compounds predicted to simultaneously bind to multiple proteins for phenotypic screening
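The final selection step, which keeps compounds predicted to bind several subnetwork proteins at once, can be sketched as a simple filter over docking results. The Python code below assumes a score convention where lower (more negative) values are better, along with a single hypothetical cutoff. The knowledge-based scoring and selection rules used in [4] are not specified here, so treat both as placeholders.

```python
def multi_target_hits(dock_scores, cutoff, min_targets=2):
    """Select compounds predicted to bind at least `min_targets` proteins.

    dock_scores: {compound: {protein: docking score}}, lower = better (assumed).
    cutoff: hypothetical score threshold for calling a predicted binder.
    Returns (compound, bound proteins, best score) tuples, broadest first.
    """
    hits = []
    for cpd, scores in dock_scores.items():
        bound = sorted(p for p, s in scores.items() if s <= cutoff)
        if len(bound) >= min_targets:
            hits.append((cpd, bound, min(scores[p] for p in bound)))
    # Rank by number of predicted targets, then by best score, then by name
    hits.sort(key=lambda h: (-len(h[1]), h[2], h[0]))
    return hits
```

Ranking by breadth first reflects the stated goal of selective polypharmacology. A different study could equally rank by best score or by pathway diversity of the predicted targets.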

This strategy yielded several active compounds, including one designated IPR-2025, which inhibited cell viability of patient-derived GBM spheroids with single-digit micromolar IC₅₀ values—substantially better than standard-of-care temozolomide—while showing no effect on primary hematopoietic CD34+ progenitor spheroids or astrocyte cell viability [4]. Subsequent RNA sequencing and thermal proteome profiling confirmed that the compound engages multiple targets, demonstrating selective polypharmacology that effectively inhibits GBM phenotypes without affecting normal cell viability.

Reproducibility and Robustness Assessment

The reproducibility of chemogenomics approaches has been systematically evaluated in large-scale studies. One comprehensive analysis compared two major yeast chemogenomics datasets, one from an academic laboratory (HIPLAB) and another from the Novartis Institutes for BioMedical Research (NIBR), comprising over 35 million gene-drug interactions and more than 6,000 unique chemogenomic profiles [9]. Despite substantial differences in experimental and analytical pipelines, the combined datasets revealed robust chemogenomic response signatures characterized by consistent gene signatures, enrichment for biological processes, and mechanisms of drug action.

This study identified that the majority (66.7%) of cellular response signatures were conserved across both datasets, providing strong evidence for the biological relevance of these systems-level response patterns [9]. Such reproducibility assessments are crucial for validating chemogenomics approaches and providing guidelines for implementing similar high-dimensional screens in mammalian systems, including parallel CRISPR screens in human cells.
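A basic building block of such cross-dataset comparisons is checking whether the same compound produces correlated gene-level profiles in both screens. The Python sketch below computes a per-compound Pearson correlation over the genes measured in both datasets. It is a simpler, compound-level check than the signature-level analysis in [9]. The 0.5 concordance threshold and all names are hypothetical.

```python
import math

def pearson(x, y):
    """Pearson correlation of two equal-length numeric sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        raise ValueError("correlation undefined for a constant profile")
    return cov / (sx * sy)

def concordant_compounds(profiles_a, profiles_b, threshold=0.5):
    """Compare compounds profiled in both datasets over their shared genes.

    profiles_*: {compound: {gene: fitness score}}
    Returns {compound: (r, r >= threshold)}; threshold is a hypothetical cutoff.
    """
    result = {}
    for cpd in sorted(set(profiles_a) & set(profiles_b)):
        genes = sorted(set(profiles_a[cpd]) & set(profiles_b[cpd]))
        r = pearson([profiles_a[cpd][g] for g in genes],
                    [profiles_b[cpd][g] for g in genes])
        result[cpd] = (r, r >= threshold)
    return result
```

Restricting the comparison to shared genes matters because two independently built strain or guide collections rarely measure exactly the same gene set.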

The field of chemogenomics continues to evolve, with several emerging trends shaping its future development. There is growing emphasis on creating more targeted libraries for specific therapeutic areas, such as the minimal screening libraries developed for precision oncology applications [7]. These libraries are designed to maximize target coverage while minimizing size, making them particularly valuable for screening patient-derived cells in resource-constrained settings.

Integration of chemogenomics with increasingly sophisticated phenotypic readouts represents another important direction. As advanced technologies in cell-based phenotypic screening continue to develop—including improved iPS cell technologies, gene-editing tools, and high-content imaging assays—the demand for well-annotated chemogenomics libraries tailored for these applications will likely increase [2]. Furthermore, the systematic assessment of library characteristics, such as the polypharmacology index, provides quantitative frameworks for library selection and optimization [8].

In conclusion, chemogenomics libraries represent powerful resources that bridge chemical biology and functional genomics. By providing well-annotated collections of tool compounds, these libraries enable researchers to systematically probe biological function, identify novel therapeutic targets, and deconvolute mechanisms of action in phenotypic screening. As library design strategies become more sophisticated and integrated with multi-omics technologies, chemogenomics approaches will continue to play an increasingly important role in accelerating drug discovery and understanding biological systems.

The Resurgence of Phenotypic Screening and the Need for Mechanism-Based Tools

Phenotypic drug discovery (PDD), an empirical strategy that interrogates biological systems without requiring complete understanding of underlying molecular pathways, has experienced a major resurgence over the past decade [10]. This revival follows compelling evidence that phenotypic screening disproportionately contributes to first-in-class medicines: between 1999 and 2008, 28 of 50 first-in-class new molecular entities were discovered through phenotypic approaches [11]. Modern PDD combines the original concept of observing therapeutic effects on disease physiology with advanced tools and strategies, enabling systematic drug discovery based on therapeutic effects in realistic disease models [10].

Despite its successes, a fundamental challenge persists: the translation of observed phenotypic effects to understanding of molecular mechanism of action (MoA). This guide examines the resurgence of phenotypic screening, its proven value in expanding druggable target space, and the critical development of mechanism-based tools—particularly advanced chemogenomics libraries—necessary to bridge the gap between phenotype and mechanism.

The Resurgence and Rationale of Phenotypic Screening

Historical Context and Modern Resurgence

The drug discovery paradigm has shifted from a reductionist vision to a more complex systems pharmacology perspective. Traditional "one target—one drug" approaches have demonstrated limitations, with drug candidates often failing in advanced clinical stages due to insufficient efficacy or safety concerns [2]. Phenotypic screening re-emerged as a powerful alternative after analysis revealed that a majority of first-in-class drugs between 1999 and 2008 were discovered empirically without a predefined target hypothesis [10].

Modern phenotypic screening is defined by its focus on modulating a disease phenotype or biomarker rather than a pre-specified target to provide therapeutic benefit [10]. This approach has matured into an accepted discovery modality in both academia and the pharmaceutical industry, driven by notable successes including ivacaftor and lumacaftor for cystic fibrosis, risdiplam for spinal muscular atrophy, and daclatasvir for hepatitis C [10].

Key Advantages Over Target-Based Approaches

Phenotypic screening offers several distinct advantages that explain its resurgence:

Expansion of Druggable Target Space: PDD reveals unexpected cellular processes and novel mechanisms of action, expanding beyond traditional target classes to include processes like pre-mRNA splicing, target protein folding, and multi-component cellular machines [10].

Polypharmacology by Design: Phenotypic approaches can identify molecules that engage multiple targets simultaneously, which may be advantageous for complex, polygenic diseases with multiple underlying mechanisms [10].

Biology-First Interrogation: By allowing cells or organisms to reveal targets necessary for desired phenotypes, PDD avoids preconceptions about disease pathways and can identify previously unknown biology [11].

Table 1: Comparison of Phenotypic vs. Target-Based Screening Approaches

| Parameter | Phenotypic Screening | Target-Based Screening |
|---|---|---|
| Discovery Basis | Functional biological effects | Predefined target modulation |
| Discovery Bias | Unbiased, allows novel target identification | Hypothesis-driven, limited to known pathways |
| Mechanism of Action | Often unknown initially, requires deconvolution | Defined from the outset |
| Target Space | Expands druggable target space | Limited to previously validated targets |
| Technical Requirements | High-content imaging, functional genomics, AI | Structural biology, computational modeling |
| Success in First-in-Class Drugs | Disproportionately high | Less represented |

Phenotypic Screening Successes and Novel Mechanisms

Phenotypic screening has contributed numerous therapeutic advances with unprecedented mechanisms of action:

Cystic Fibrosis Therapies

Target-agnostic compound screens using cell lines expressing disease-associated CFTR variants identified compounds that improved CFTR channel gating (potentiators such as ivacaftor), as well as compounds with an unexpected mechanism that enhances CFTR folding and membrane insertion (correctors such as tezacaftor and elexacaftor) [10]. The triple combination of elexacaftor, tezacaftor, and ivacaftor, which addresses approximately 90% of CF patients, was approved in 2019 [10].

Spinal Muscular Atrophy

Phenotypic screens identified small molecules that modulate SMN2 pre-mRNA splicing to increase full-length SMN protein [10]. These compounds work by stabilizing the U1 snRNP complex—an unprecedented drug target and mechanism—with risdiplam gaining FDA approval in 2020 as the first oral disease-modifying therapy for SMA [10].

Cancer Therapeutics

Lenalidomide, an optimized analogue of thalidomide, gained FDA approval for several blood cancer indications, although its unprecedented molecular target and MoA were elucidated only several years after approval [10]. Lenalidomide binds to the E3 ubiquitin ligase Cereblon and redirects its substrate selectivity to promote degradation of specific transcription factors [10].

Table 2: Notable Recent Successes from Phenotypic Screening

| Therapeutic Area | Compound | Target/Mechanism | Significance |
|---|---|---|---|
| Cystic Fibrosis | Ivacaftor, Elexacaftor, Tezacaftor | CFTR potentiators and correctors | First disease-modifying therapies for most CF patients |
| Spinal Muscular Atrophy | Risdiplam, Branaplam | SMN2 pre-mRNA splicing modification | First oral disease-modifying therapy for SMA |
| Hepatitis C | Daclatasvir | NS5A protein modulation | Key component of curative DAA combinations |
| Multiple Myeloma | Lenalidomide | Cereblon E3 ligase modulation | Novel mechanism inspiring targeted protein degradation field |
| Osteoarthritis | Kartogenin | Filamin A/CBFβ interaction disruption | Induces chondrocyte differentiation |

The Central Challenge: Mechanism of Action Deconvolution

A major historical barrier to using phenotypic assays has been the challenge in determining the mechanism of action for compounds of interest [11]. Without understanding molecular targets, further optimization and safety profiling become exceptionally difficult. Several methodologies have been developed to address this challenge:

Affinity-Based Methods

Affinity chromatography, photo-crosslinking, and mass spectrometry-based approaches enable identification of direct protein targets. For example, kartogenin—identified in a screen for chondrocyte differentiation—was determined to bind filamin A and disrupt its interaction with CBFβ, leading to CBFβ translocation to the nucleus and RUNX-mediated transcription of chondrocyte genes [11].

Gene Expression Profiling

Array-based profiling and RNA-Seq can uncover modulated pathways and dependencies. Treatment of human mesenchymal stem cells with kartogenin resulted in changes to only 39 genes after six hours, five of which were involved in RUNX transcriptional pathways, providing crucial mechanistic insight [11].

Genetic Modifier Screening

shRNA and CRISPR screening enable chemical genetic epistasis analysis, where loss of target function can be identified through modification of compound effects [11].
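In a pooled CRISPR modifier screen, the basic signal is how each gene's guides change in abundance under compound treatment relative to vehicle. The Python sketch below computes a per-gene median log2 fold change of library-size-normalized sgRNA counts. It is a deliberately simple stand-in for dedicated screen-analysis tools. The pseudocount, function name, and guide IDs are illustrative assumptions.

```python
import math
from statistics import median

def gene_modifier_scores(counts_drug, counts_vehicle, guide_to_gene, pseudocount=0.5):
    """Median per-gene log2 fold change (drug vs vehicle) of normalized sgRNA counts.

    counts_*: {guide_id: read count}; guide_to_gene: {guide_id: gene}.
    Positive scores: knockout enriched under drug (candidate resistance genes).
    Negative scores: knockout depleted under drug (candidate sensitizers).
    """
    total_drug = sum(counts_drug.values())
    total_vehicle = sum(counts_vehicle.values())
    per_gene = {}
    for guide, gene in guide_to_gene.items():
        d = (counts_drug.get(guide, 0) + pseudocount) / total_drug
        v = (counts_vehicle.get(guide, 0) + pseudocount) / total_vehicle
        per_gene.setdefault(gene, []).append(math.log2(d / v))
    return {gene: median(lfcs) for gene, lfcs in per_gene.items()}
```

Taking the median across guides limits the influence of a single guide with strong off-target effects. This is one reason genes are usually targeted by several independent guides.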

Computational Profiling

Modern approaches include classification methodologies inspired by sequence alignment tools that hypothesize MoA based on pairwise associations of phenotypic fingerprints [12]. These methods use machine learning classifiers to provide accurate prediction frameworks based on morphological profiling [12].
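As a concrete, much simpler relative of these classifiers, a k-nearest-neighbor vote over annotated reference profiles can propose an MoA for a new phenotypic hit. The Python sketch below uses cosine similarity and majority voting. It is a swapped-in baseline technique, not the alignment-inspired method of [12]. The MoA labels and vectors in the usage example are toy data.

```python
import math
from collections import Counter

def cosine(u, v):
    """Cosine similarity of two equal-length feature vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

def predict_moa(query, references, k=3):
    """k-NN MoA hypothesis for one query profile.

    references: list of (profile, moa_label) for annotated library compounds.
    Majority vote among the k most similar references; ties go to the label
    with the larger summed similarity.
    """
    ranked = sorted(references, key=lambda r: cosine(query, r[0]), reverse=True)[:k]
    votes, sim_sum = Counter(), Counter()
    for profile, label in ranked:
        votes[label] += 1
        sim_sum[label] += cosine(query, profile)
    return max(votes, key=lambda lab: (votes[lab], sim_sum[lab]))
```

The output is a hypothesis to test with orthogonal deconvolution methods. It is only as good as the annotations of the reference compounds, which is one reason annotation quality matters in library design.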

Diagram 1: MoA Deconvolution Methods

Chemogenomics Libraries: Bridging Phenotype and Mechanism

The Rationale for Chemogenomics Libraries

Chemogenomics libraries are systematic collections of small molecules designed to modulate a diverse panel of protein targets across the human proteome [2]. Their coverage, however, remains limited: even the best chemogenomics libraries interrogate only a small fraction of the human genome, approximately 1,000–2,000 targets out of more than 20,000 genes [13]. This estimate aligns with comprehensive studies of chemically addressed proteins and highlights vast unexplored regions of biological space [13].

Advanced chemogenomics libraries integrate drug-target-pathway-disease relationships with morphological profiles from assays like Cell Painting, creating systems pharmacology networks that assist in target identification and mechanism deconvolution [2].

Library Design and Composition

Modern chemogenomics libraries for phenotypic screening are constructed with several key considerations:

Target Diversity: Libraries should encompass a large and diverse panel of drug targets involved in diverse biological effects and diseases, often achieved through scaffold-based selection to ensure structural and functional diversity [2].

Annotation Quality: High-quality target annotations derived from databases like ChEMBL provide crucial mechanistic links between compound activity and biological pathways [2].

Tumor Genomic Tailoring: For disease-specific applications like glioblastoma, libraries can be enriched by docking compounds to targets identified through tumor RNA sequence and mutation data combined with protein-protein interaction networks [4].

Table 3: Essential Research Reagent Solutions for Phenotypic Screening

| Reagent/Category | Function/Application | Key Characteristics |
|---|---|---|
| Cell Painting Assay | High-content morphological profiling | Multiparametric imaging of cell structures, 1,779+ morphological features |
| CRISPR-Cas9 Tools | Functional genomic screening | Gene knockout/modification for target identification |
| 3D Spheroid Models | Physiologically relevant screening | Better mimics tumor microenvironment vs 2D cultures |
| iPSC-Derived Cells | Disease-relevant models | Patient-specific screening, differentiation potential |
| Protein Interaction Maps | Target pathway analysis | ~8,000 proteins, ~27,000 interactions for network analysis |
| Chemogenomic Library | Targeted phenotypic screening | ~5,000 compounds with diverse target annotations |

Integrated Workflow: Phenotypic Screening to Mechanism

An effective modern phenotypic screening workflow integrates multiple approaches:

Diagram 2: Integrated Screening Workflow

Experimental Protocol: Target-Informed Phenotypic Screening

For glioblastoma multiforme (GBM), researchers developed a protocol integrating genomic data with phenotypic screening:

Target Identification: Analyze TCGA RNA-seq data to identify genes overexpressed in GBM (p < 0.001, FDR < 0.01, log2FC > 1) combined with somatic mutation data [4].

Network Mapping: Map protein products onto human protein-protein interaction networks (approximately 8,000 proteins and 27,000 interactions) to construct disease-specific subnetworks [4].

Binding Site Analysis: Identify druggable binding sites on proteins in the GBM subnetwork, classifying sites as catalytic (ENZ), protein-protein interaction interfaces (PPI), or allosteric (OTH) [4].

Virtual Screening: Dock in-house compound libraries (approximately 9,000 compounds) to druggable binding sites using knowledge-based scoring methods [4].

Phenotypic Screening: Test selected compounds (47 candidates in the GBM example) in 3D spheroids of patient-derived GBM cells with counter-screening in normal cells [4].

MoA Studies: Employ RNA sequencing and thermal proteome profiling to identify engaged targets and mechanisms [4].
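The thresholds quoted in the target-identification step can be applied as shown in the Python sketch below. It computes Benjamini-Hochberg adjusted p-values and keeps genes that pass all three cutoffs. It assumes per-gene log2 fold changes and raw p-values are already available from an upstream differential expression tool. It does not cover the somatic mutation data, and the exact statistical pipeline of [4] is not reproduced. The function names and gene IDs are hypothetical.

```python
def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted p-values (FDR q-values) in input order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):  # walk from the largest p-value down
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted

def overexpressed_genes(stats, p_cut=0.001, fdr_cut=0.01, lfc_cut=1.0):
    """stats: {gene: (log2_fold_change, p_value)}.
    Keep genes with log2FC > lfc_cut, p < p_cut, and BH q < fdr_cut."""
    genes = sorted(stats)
    q = benjamini_hochberg([stats[g][1] for g in genes])
    return [g for g, qv in zip(genes, q)
            if stats[g][0] > lfc_cut and stats[g][1] < p_cut and qv < fdr_cut]
```

Requiring a positive fold change restricts the list to overexpressed genes. Downregulated genes would need a separate rule if they were of interest.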

This approach identified compound IPR-2025, which inhibited GBM spheroid viability with single-digit micromolar IC50 values, blocked endothelial tube formation, and showed no effect on normal cells, demonstrating selective polypharmacology [4].
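IC50 values such as these are normally obtained by fitting a four-parameter logistic curve to dose-response data. As a rough, dependency-free approximation, the Python sketch below interpolates linearly in log10(concentration) between the two tested doses that bracket 50% viability. It assumes ascending concentrations and viability expressed as a percentage of vehicle control. The function name and the example values are illustrative, not data from [4].

```python
import math

def ic50_log_interp(concs_uM, viability_pct):
    """Estimate IC50 (same units as concs_uM) by log-linear interpolation between
    the two tested concentrations whose viabilities bracket 50%.
    Returns None if viability never crosses 50% in the tested range."""
    points = list(zip(concs_uM, viability_pct))
    for (c1, v1), (c2, v2) in zip(points, points[1:]):
        if v1 >= 50 >= v2 and v1 != v2:
            x1, x2 = math.log10(c1), math.log10(c2)
            return 10 ** (x1 + (v1 - 50) * (x2 - x1) / (v1 - v2))
    return None
```

For toy viabilities of 98%, 80%, 20%, and 5% at 0.1, 1, 10, and 100 µM, the estimate is 10^0.5 ≈ 3.2 µM. A fitted curve is preferable when replicates and enough doses are available.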

The resurgence of phenotypic screening represents not a return to traditional methods but an evolution toward integrated, systematic approaches that combine the unbiased discovery potential of phenotypic observation with increasingly sophisticated mechanism-based tools. Key future directions include:

Advanced Model Systems: Continued development of physiologically relevant models including organoids, organ-on-chip devices, and patient-derived iPSC models that better recapitulate human disease [10] [14].

Artificial Intelligence Integration: AI and machine learning will enhance image analysis, pattern recognition, and mechanism prediction from complex phenotypic data [12] [14].

Multi-Omics Integration: Combining phenotypic data with genomics, proteomics, and transcriptomics for deeper mechanistic insights [14].

Functional Genomics Coupling: Combining CRISPR-based genetic screens with small-molecule phenotypic screening to accelerate target identification [13] [10].

The greatest challenge remains the efficient translation of phenotypic effects to mechanistic understanding. Chemogenomics libraries represent a crucial tool in this effort, creating structured bridges between observable biology and molecular targets. As these libraries expand in diversity and specificity, and as deconvolution methodologies advance, phenotypic screening is poised to maintain its critical role in identifying first-in-class therapies for complex diseases, truly embracing the promise of systems pharmacology in drug discovery.

In the evolving paradigm of modern drug discovery, the shift from a reductionist, target-based approach to a systems pharmacology perspective has catalyzed the resurgence of phenotypic screening [15]. This strategy allows for the identification of novel therapeutic agents without prior knowledge of specific molecular targets, operating within a physiologically relevant biological context [16] [17]. However, a significant challenge emerges following the identification of active compounds: the subsequent process of target deconvolution, which is essential for understanding a compound's mechanism of action (MoA) and for its further optimization as a drug candidate [16] [17]. Within this framework, chemogenomic libraries composed of annotated compounds—small molecules with known or suspected target affinities—provide a powerful solution. These libraries bridge the critical gap between observing a phenotypic effect and identifying its underlying molecular cause, thereby accelerating the translation of phenotypic hits into viable therapeutic starting points.

The Chemogenomics Library: A Bridge between Phenotype and Target

A chemogenomics library for phenotypic screening is a carefully curated collection of small molecules designed to interrogate a wide but defined portion of the druggable genome. Unlike diversity libraries, the value of a chemogenomics library lies in the annotations associated with each compound—the known or predicted protein targets, pathways, and biological processes they modulate [15]. The fundamental principle of using such a library is that if a compound induces a phenotype of interest, its annotation provides a direct, testable hypothesis about which targets and pathways are responsible for that phenotype.

Composition and Design of an Annotated Library

The development of a chemogenomics library involves integrating heterogeneous data sources to create a system pharmacology network. A representative library, as described in the literature, integrates the following elements into a graph database [15]:

- Bioactivity Data: Sources like ChEMBL provide standardized bioactivity data (e.g., Ki, IC50, EC50) for millions of molecules against thousands of targets [15].

- Pathway Information: Databases like Kyoto Encyclopedia of Genes and Genomes (KEGG) link targets to their involvement in broader biological pathways [15].

- Disease Ontology: Resources like the Human Disease Ontology (DO) associate targets and pathways with human diseases [15].

- Morphological Profiling: Data from high-content imaging assays, such as the Cell Painting assay, provide a quantitative, multivariate readout of cellular morphology. When a compound from the library is profiled in such an assay, it generates a unique morphological "fingerprint" that can be compared to other annotated compounds. Similar profiles suggest functional or target-level similarities, even between chemically distinct compounds [15].

To ensure chemical diversity and broad coverage, molecules are often processed using software like ScaffoldHunter to identify representative core structures, organizing the chemical space hierarchically [15].
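The sketch below shows how an integrated compound-target-pathway-disease network can turn phenotypic hits into testable hypotheses. It uses plain Python dictionaries in place of a graph database. All identifiers are hypothetical placeholders, not real ChEMBL, KEGG, or Disease Ontology records. The code counts how many hits support each target, pathway, and disease, counting each hit once per node.

```python
from collections import Counter
from typing import Dict, List, Set

# Hypothetical annotation edges standing in for a graph database
# (placeholders, not real ChEMBL, KEGG, or Disease Ontology records).
compound_targets: Dict[str, Set[str]] = {
    "CPD_1": {"TARGET_X", "TARGET_Y"},
    "CPD_2": {"TARGET_Y"},
    "CPD_3": {"TARGET_Z"},
}
target_pathways: Dict[str, Set[str]] = {
    "TARGET_X": {"PATHWAY_1"},
    "TARGET_Y": {"PATHWAY_1", "PATHWAY_2"},
    "TARGET_Z": {"PATHWAY_3"},
}
pathway_diseases: Dict[str, Set[str]] = {
    "PATHWAY_1": {"DISEASE_A"},
    "PATHWAY_2": {"DISEASE_B"},
}


def annotation_hypotheses(hits: List[str]) -> Dict[str, Counter]:
    """Follow compound -> target -> pathway -> disease edges for each hit and
    count how many hits support each node (each hit counted once per node)."""
    counts = {"targets": Counter(), "pathways": Counter(), "diseases": Counter()}
    for cpd in hits:
        targets = compound_targets.get(cpd, set())
        pathways = set().union(*(target_pathways.get(t, set()) for t in targets))
        diseases = set().union(*(pathway_diseases.get(p, set()) for p in pathways))
        counts["targets"].update(targets)
        counts["pathways"].update(pathways)
        counts["diseases"].update(diseases)
    return counts


result = annotation_hypotheses(["CPD_1", "CPD_2"])
print(result["targets"].most_common())  # [('TARGET_Y', 2), ('TARGET_X', 1)]
```

A target supported by several structurally unrelated hits is a stronger hypothesis than one supported by a single compound. This is one reason library designers aim for scaffold diversity.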

Table 1: Key Public and Commercial Chemogenomics Libraries

| Library Name | Developer/Provider | Key Characteristics | Primary Application |
|---|---|---|---|
| Mechanism Interrogation PlatE (MIPE) | National Center for Advancing Translational Sciences (NCATS) | Publicly available; designed for mechanistic studies [15]. | Phenotypic screening and target deconvolution in an academic setting. |
| Pfizer Chemogenomic Library | Pfizer | Industrially curated; targets a diverse set of protein families [15]. | Internal drug discovery programs. |
| Biologically Diverse Compound Set (BDCS) | GlaxoSmithKline (GSK) | Industrially curated; focuses on biological and chemical diversity [15]. | Internal drug discovery programs. |
| Sygnature's AI-Informed Platform | Sygnature Discovery | Combines AI-driven analytics (e.g., AI4Lit) with highly curated data sources and expert guidance [18]. | Customized target identification and validation for client projects. |

Coverage and Limitations

It is critical to recognize that even the most comprehensive chemogenomic libraries interrogate only a fraction of the human genome. Current best-in-class libraries cover approximately 1,000 to 2,000 distinct protein targets out of over 20,000 protein-coding genes [13]. This inherent limitation means that phenotypic screens using these libraries are biased towards the "druggable" proteome with known ligands. Furthermore, a single compound in the library is rarely completely specific and may interact with several unintended "off-targets," which can both confound and serendipitously inform the deconvolution process [16].

Experimental Methodologies for Target Deconvolution

When an annotated compound from a chemogenomics library shows activity in a phenotypic assay, its annotation provides a starting hypothesis. This hypothesis must then be validated using rigorous experimental target deconvolution techniques. The following are key methodologies, often used in combination.

Affinity Chromatography

This is a foundational chemical proteomics approach for directly isolating target proteins.

Detailed Protocol:

- Probe Design: The hit compound is modified with a chemical handle (e.g., an alkyne or azide group) for immobilization. This modification must be strategically placed at a site that does not interfere with its biological activity, often informed by structure-activity relationship (SAR) data [16].

- Immobilization: The modified compound is covalently attached to a solid support, such as sepharose beads or high-performance magnetic beads, which simplifies washing and separation steps [16].

- Pull-Down Experiment: The immobilized "bait" is incubated with a cell lysate or a complex protein mixture. Proteins that bind to the compound are captured on the beads.

- Washing and Elution: The beads are extensively washed with buffer to remove non-specifically bound proteins. Specifically bound proteins are then eluted, either by using a high concentration of the free competitor compound (to displace specific binders) or by denaturing conditions.

- Target Identification: The eluted proteins are separated by gel electrophoresis and identified using liquid chromatography-tandem mass spectrometry (LC-MS/MS) [16] [17].
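After LC-MS/MS, specific binders are commonly separated from background by comparing each protein's abundance in the bait pull-down with a control in which excess free compound competes for binding. The sketch below makes several assumptions:

- Protein intensities for the two conditions are hypothetical and already quantified.
- A pseudocount avoids division by zero.
- Proteins whose log2 bait/competition ratio meets an illustrative cutoff are flagged.

Real analyses use replicates and statistical testing, which are omitted here.

```python
import math
from typing import Dict, List, Tuple


def competition_log2_ratios(bait: Dict[str, float], competed: Dict[str, float],
                            pseudocount: float = 1.0) -> Dict[str, float]:
    """log2((bait + pc) / (competed + pc)) for every protein seen in either pull-down."""
    proteins = set(bait) | set(competed)
    return {p: math.log2((bait.get(p, 0.0) + pseudocount) /
                         (competed.get(p, 0.0) + pseudocount))
            for p in proteins}


def specific_binders(bait: Dict[str, float], competed: Dict[str, float],
                     min_log2_ratio: float = 2.0) -> List[Tuple[str, float]]:
    """Proteins depleted by the free competitor, strongest depletion first."""
    ratios = competition_log2_ratios(bait, competed)
    kept = [(p, r) for p, r in ratios.items() if r >= min_log2_ratio]
    return sorted(kept, key=lambda pr: pr[1], reverse=True)


# Hypothetical LC-MS/MS intensities (arbitrary units).
bait_pulldown = {"PROT_TARGET": 1023.0, "PROT_STICKY": 511.0, "PROT_BACKGROUND": 63.0}
competition_control = {"PROT_TARGET": 31.0, "PROT_STICKY": 511.0, "PROT_BACKGROUND": 63.0}
print(specific_binders(bait_pulldown, competition_control))  # [('PROT_TARGET', 5.0)]
```

The "sticky" protein is abundant on the beads but is not displaced by free compound, so its ratio stays near zero. This is why the competition control is more informative than raw pull-down abundance.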

Photoaffinity Labeling (PAL)

PAL is particularly valuable for capturing weak or transient protein-ligand interactions and for studying integral membrane proteins.

Detailed Protocol:

- Probe Synthesis: A trifunctional probe is synthesized, containing: a) the compound of interest, b) a photoreactive group (e.g., diazirine or benzophenone), and c) an enrichment handle (e.g., an alkyne for subsequent "click" chemistry) [16] [17].

- Cellular Treatment and Cross-Linking: Living cells or cell lysates are treated with the probe, allowing it to bind to its cellular targets. The sample is then exposed to UV light, which activates the photoreactive group, forming a covalent bond between the probe and its target protein(s).

- Tag Conjugation and Enrichment: After cell lysis, a reporter tag (e.g., biotin for streptavidin-based enrichment) is conjugated to the handle via copper-catalyzed azide-alkyne cycloaddition (CuAAC) "click chemistry" [16].

- Purification and Identification: The biotin-tagged protein complexes are captured using streptavidin-coated beads, purified, and analyzed by LC-MS/MS [17].

Table 2: Comparison of Key Target Deconvolution Techniques

| Technique | Key Principle | Advantages | Disadvantages | Suitability for Annotated Compounds |
|---|---|---|---|---|
| Affinity Chromatography | Immobilized compound pulls down direct binding partners from a lysate [16] [17]. | Direct; provides dose-response data; works for a wide range of target classes [17]. | Requires a high-affinity ligand and a site for modification without losing activity [16] [17]. | High; the annotation provides confidence for probe design. |
| Photoaffinity Labeling (PAL) | Photoreactive probe covalently cross-links to targets upon UV exposure [16] [17]. | Captures transient/weak interactions; suitable for membrane proteins [17]. | Probe synthesis can be complex; potential for non-specific cross-linking. | High; ideal for validating targets suggested by annotation. |
| Activity-Based Protein Profiling (ABPP) | Probe with reactive electrophile labels active enzymes based on their catalytic mechanism [16]. | Reports on enzyme activity, not just abundance; high sensitivity. | Limited to enzyme classes with reactive nucleophiles (e.g., serine hydrolases, cysteine proteases) [16]. | Moderate; useful if the annotated target is an enzyme from a susceptible class. |
| Label-Free Methods (e.g., TPP, CETSA) | Monitor protein thermal stability shifts induced by ligand binding [17]. | No chemical modification needed; works in a native, cellular context [17]. | Can be challenging for low-abundance, very large, or membrane proteins [17]. | High; excellent for orthogonal validation without probe synthesis. |

Integrating Morphological Profiling for MoA Deconvolution

Beyond direct target identification, the Cell Painting assay can be used to generate hypotheses about a compound's MoA. The fundamental principle here is that compounds targeting the same protein or pathway often produce similar morphological profiles [15]. The workflow is as follows:

- Profile Library Compounds: The entire annotated chemogenomics library is screened in the Cell Painting assay to establish a reference database of morphological fingerprints.

- Profile Uncharacterized Hit: A novel hit from a separate phenotypic screen is profiled under the same conditions.

- Pattern Matching: The hit's morphological profile is computationally compared to the reference database. If it clusters closely with annotated compounds, the MoA of those compounds provides a strong hypothesis for the hit's MoA, guiding subsequent target deconvolution efforts [15].
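The pattern-matching step can be illustrated as a nearest-neighbour search over morphological profiles. The sketch makes several assumptions:

- Each profile is already a normalized feature vector of equal length. Real Cell Painting profiles have hundreds of features, not four.
- Similarity is measured with Pearson correlation; cosine similarity is another common choice.
- Reference compounds are ranked by similarity to an uncharacterized hit.

Compound names, annotations, and values are hypothetical.

```python
import math
from typing import Dict, List, Tuple


def pearson(a: List[float], b: List[float]) -> float:
    """Pearson correlation between two equal-length profiles (0.0 if either is constant)."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    norm_a = math.sqrt(sum((x - mean_a) ** 2 for x in a))
    norm_b = math.sqrt(sum((y - mean_b) ** 2 for y in b))
    return cov / (norm_a * norm_b) if norm_a and norm_b else 0.0


def rank_neighbours(hit: List[float],
                    reference: Dict[str, Tuple[List[float], str]],
                    top_k: int = 2) -> List[Tuple[str, str, float]]:
    """Return (compound, annotation, similarity) for the top_k most similar references."""
    scored = [(name, annotation, pearson(hit, profile))
              for name, (profile, annotation) in reference.items()]
    scored.sort(key=lambda item: item[2], reverse=True)
    return scored[:top_k]


# Hypothetical reference profiles and their target annotations.
reference = {
    "REF_TUBULIN_BINDER": ([2.1, -1.0, 0.5, 3.0], "tubulin"),
    "REF_HDAC_INHIBITOR": ([-0.5, 2.2, 1.8, -1.0], "HDAC"),
    "REF_KINASE_INHIBITOR": ([0.1, 0.3, -2.0, 0.2], "kinase X"),
}
hit_profile = [1.9, -0.8, 0.7, 2.6]

for name, annotation, r in rank_neighbours(hit_profile, reference):
    print(f"{name} ({annotation}): r = {r:.2f}")
```

The annotation of the closest neighbour (here, tubulin) becomes the working MoA hypothesis. It still has to be confirmed by the direct deconvolution methods above.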

The Scientist's Toolkit: Key Reagents and Solutions

Successful target deconvolution relies on a suite of specialized reagents and tools.

Table 3: Essential Research Reagent Solutions for Target Deconvolution

| Reagent / Solution | Function | Example Use Case |
|---|---|---|
| Annotated Chemogenomics Library | A collection of small molecules with known target annotations to link phenotype to potential target [15]. | Primary tool for initial phenotypic screening and hypothesis generation. |
| Click Chemistry Reagents | A set of reagents (e.g., azide/alkyne tags, copper catalyst, biotin-azide) for bioorthogonal conjugation of tags to probes after cellular processing [16]. | Used in PAL and ABPP to attach affinity/visualization tags post-binding. |
| Photoaffinity Probes | Trifunctional molecules containing the ligand, a photoreactive group (e.g., diazirine), and a clickable handle [17]. | For covalently capturing protein targets in live cells for PAL. |
| Streptavidin-Magnetic Beads | Solid support for affinity purification; magnetic properties enable rapid washing and separation [16]. | Used to isolate biotin-tagged protein complexes in affinity purification and PAL. |
| Stable Cell Lines | Cells engineered to express a protein target or a reporter gene under a specific promoter. | For validating target engagement and functional consequences in a relevant cellular context. |
| LC-MS/MS System | High-sensitivity mass spectrometry system for protein identification and quantification. | The core analytical platform for identifying proteins isolated by affinity methods. |

Phenotypic drug discovery (PDD) has re-emerged as a powerful strategy for identifying novel therapeutic agents without presupposing a specific molecular target, allowing for the interrogation of complex biological systems [13] [15]. Within this paradigm, chemogenomic (CG) libraries have become indispensable tools. These libraries are collections of well-annotated, small-molecule pharmacological agents designed to modulate a wide range of protein targets [19]. A fundamental premise of their use is that when a compound from a CG library produces a phenotype, its known target annotations provide immediate starting hypotheses for the mechanism of action (MoA), thereby bridging the gap between phenotypic observation and target-based validation [19] [20].

Despite their utility, these libraries face a significant limitation. The human genome encodes over 20,000 proteins, yet the best chemogenomics libraries interrogate only approximately 1,000–2,000 of them [13]. This coverage represents just 5-10% of the protein-coding genome, leaving a vast expanse of biological space unexplored and creating a critical gap in our ability to fully leverage phenotypic screening for novel biology and first-in-class therapies [13] [21]. This whitepaper details the current scope of chemogenomic libraries, quantifies the existing gaps, and outlines the experimental and collaborative strategies being deployed to address them.

Quantitative Analysis of Current Coverage and Gaps

The following tables summarize the quantitative landscape of chemogenomic library coverage, highlighting both the current scope and the specific nature of the gaps.

Table 1: Current Coverage of the Human Proteome by Chemogenomic Libraries

| Metric | Current Figure | Source / Context |
|---|---|---|
| Total Human Proteins | ~20,000+ | [13] |
| Targets Addressed by Best CG Libraries | 1,000 - 2,000 | [13] |
| Percentage of Genome Covered | ~5% - 10% | Calculated from [13] |
| EUbOPEN Project Target Goal (Druggable Proteome) | ~1/3 | [21] |
| Publicly Available Compounds (Bioactivity ≤10 μM) | 566,735 | [21] |
| Human Targets with Associated Bioactive Compounds | 2,899 | [21] |

Table 2: Characterization of Gaps and Mitigation Strategies

| Gap Category | Description | Example Target Families | Current Initiatives for Coverage |
|---|---|---|---|
| Established but Uneven | Coverage is heavily skewed towards historically "druggable" families. | Kinases, GPCRs (over-represented) | EUbOPEN CG library assembly; focus shifts to other families [21] [22]. |
| Emerging Target Families | Proteins with therapeutic potential but lacking quality chemical tools. | Solute Carriers (SLCs), E3 Ubiquitin Ligases | Dedicated chemical probe development programs within EUbOPEN and SGC [21] [23]. |
| Undrugged Proteome | Proteins with no known potent or selective small-molecule modulators. | Many proteins implicated by disease genetics but with unknown function. | Target 2035 initiative; computational hit-finding (CACHE); Open Chemistry Networks (OCN) [21] [23]. |

Experimental Protocols for Library Development and Application

Closing the coverage gap requires standardized methodologies for developing new chemical tools and applying existing libraries in phenotypic screens. The following protocols are critical to the field.

Protocol for Assembling and Annotating a Chemogenomic Library

This methodology outlines the creation of a systems pharmacology-informed CG library, as demonstrated in recent research [15].

- Data Integration and Network Construction: Assemble a network pharmacology database using a graph database platform (e.g., Neo4j). Integrate heterogeneous data sources, including:

- Bioactivity Data: From public databases like ChEMBL, containing molecules, targets, and standard bioactivity values (Ki, IC50, EC50).

- Pathway Information: From resources like the Kyoto Encyclopedia of Genes and Genomes (KEGG).

- Gene Ontology (GO): For functional annotation of proteins.

- Disease Ontology (DO): To link targets and pathways to human diseases.

- Morphological Profiles: From high-content imaging datasets like the Cell Painting assay (BBBC022), which provides hundreds of quantitative morphological features.

- Compound Selection and Scaffold Analysis: From the integrated network, select a diverse set of small molecules with robust bioactivity and annotation. Use software like ScaffoldHunter to decompose molecules into hierarchical scaffolds, ensuring chemical and target diversity in the final library.

- Functional Enrichment Analysis: Use tools like the R package clusterProfiler to perform GO, KEGG, and DO enrichment analyses on the target sets of the selected compounds. This verifies that the library covers the intended biological processes, pathways, and diseases.

- Quality Control and Annotation: Critically, compounds must be characterized for identity and purity. Comprehensive cell-based annotation is also essential to control for general cell health effects, as described in the image-based viability annotation protocol that follows.
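Over-representation analysis of the kind clusterProfiler performs rests on a one-sided hypergeometric test. Suppose a background contains N annotated genes, K of which belong to a term. The test asks how surprising it is that k of the n library targets fall in that term. The sketch below computes that p-value with the Python standard library only. The counts are hypothetical. The sketch also omits the multiple-testing correction that enrichment tools apply across many terms.

```python
from math import comb


def hypergeom_enrichment_p(N: int, K: int, n: int, k: int) -> float:
    """One-sided P(X >= k) for X ~ Hypergeometric(population N, K in term, n drawn)."""
    upper = min(K, n)
    tail = sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1))
    return tail / comb(N, n)


# Hypothetical counts: 20,000 background genes, 200 annotated to one pathway,
# 1,500 library targets, 40 of which fall in that pathway.
N, K, n, k = 20000, 200, 1500, 40
expected = n * K / N  # targets expected in the pathway by chance
p = hypergeom_enrichment_p(N, K, n, k)
print(f"expected {expected:.0f}, observed {k}, p = {p:.2e}")
```

Observing 40 targets where about 15 are expected gives a very small p-value, indicating the library is enriched for that pathway. The same test can flag terms the library covers poorly.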

Protocol for Image-Based Viability Annotation of Chemogenomic Compounds

This live-cell high-content imaging protocol is designed to annotate CG libraries for effects on basic cellular functions, distinguishing specific phenotypes from non-specific cytotoxicity [20].

- Cell Seeding and Compound Treatment: Plate adherent cells (e.g., U2OS, HEK293T, MRC9) in multi-well plates and allow to adhere. Treat cells with CG compounds over a range of concentrations and time points (e.g., 24, 48, 72 hours), including DMSO vehicle and reference cytotoxic compounds as controls.

- Live-Cell Staining: Simultaneously stain living cells with a panel of low-concentration, non-toxic fluorescent dyes:

- Nuclear Stain: Hoechst 33342 (e.g., 50 nM) to identify nuclei and assess morphology.

- Microtubule Stain: BioTracker 488 Green Microtubule Cytoskeleton Dye to visualize tubulin and cytoskeletal integrity.

- Mitochondrial Stain: MitoTracker Red or MitoTracker Deep Red to monitor mitochondrial mass and health.

- High-Content Imaging and Image Analysis: Acquire images on a high-throughput microscope at regular intervals. Use automated image analysis software (e.g., CellProfiler) to identify single cells and measure features related to:

- Nuclear morphology (size, shape, texture, intensity).

- Cytoskeletal structure.

- Mitochondrial content and distribution.

- Machine Learning-Based Phenotype Classification: Train a supervised machine-learning algorithm on reference compounds to gate cells into distinct phenotypic categories:

- Healthy

- Early Apoptotic

- Late Apoptotic

- Necrotic

- Lysed

- Data Integration and Compound Triage: Calculate IC50 values for the loss of healthy cells over time. Use the multi-dimensional data to flag compounds that induce rapid, non-specific cytotoxicity, allowing researchers to exclude them from follow-up mechanistic studies or to contextualize a phenotypic readout.
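The IC50 and triage step can be approximated without a curve-fitting library. The approach is to interpolate, on a log-concentration scale, where the fraction of healthy cells drops below 50%. This simplifies the four-parameter logistic fits normally used. The concentrations, healthy-cell fractions, and the "IC50 at or below 1 µM at 24 h" triage rule are hypothetical choices for illustration, not values from the cited protocol.

```python
import math
from typing import Dict, List, Optional


def interpolated_ic50(conc_um: List[float], healthy_frac: List[float]) -> Optional[float]:
    """Concentration (uM) where the healthy-cell fraction crosses 0.5, interpolated in log10 space."""
    pairs = sorted(zip(conc_um, healthy_frac))
    for (c0, f0), (c1, f1) in zip(pairs, pairs[1:]):
        if f0 >= 0.5 >= f1 and f0 != f1:
            x0, x1 = math.log10(c0), math.log10(c1)
            return 10 ** (x0 + (f0 - 0.5) * (x1 - x0) / (f0 - f1))
    return None  # no crossing within the tested range


def flag_rapid_cytotoxicity(ic50_by_hour: Dict[int, Optional[float]],
                            early_hour: int = 24, max_ic50_um: float = 1.0) -> bool:
    """Hypothetical triage rule: flag compounds that are already potent at the early time point."""
    early = ic50_by_hour.get(early_hour)
    return early is not None and early <= max_ic50_um


# Hypothetical healthy-cell fractions from the ML gating step, per time point.
conc = [0.01, 0.1, 1.0, 10.0]
healthy = {
    24: [0.98, 0.90, 0.30, 0.05],
    72: [0.95, 0.40, 0.10, 0.02],
}
ic50s = {hour: interpolated_ic50(conc, fractions) for hour, fractions in healthy.items()}
print({hour: round(v, 3) for hour, v in ic50s.items() if v is not None})
print("exclude from MoA follow-up:", flag_rapid_cytotoxicity(ic50s))
```

Tracking IC50 across time points helps separate compounds that kill cells quickly from those whose effects accumulate slowly. The latter may still yield a specific phenotype at early time points.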

Workflow for a Phenotypic Screen Using a Chemogenomic Library

The following diagram illustrates the logical workflow for deploying a CG library in a phenotypic screen and deconvoluting the results.

Phenotypic Screening with a CG Library

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials and Reagents for Chemogenomics Research

| Item / Reagent | Function / Application | Key Characteristics |
|---|---|---|
| EUbOPEN Chemogenomic Library | A large, openly available set of compounds covering kinases, GPCRs, SLCs, E3 ligases, and epigenetic targets for phenotypic screening and target deconvolution. | Profiled in patient-derived assays; aims to cover one-third of the druggable proteome [21] [22]. |
| Kinase Chemogenomic Set (KCGS) | A well-annotated set of kinase inhibitors for probing kinase-related phenotypes and signaling pathways. | Includes inhibitors with narrow and broad profiles to explore kinome inhibition [22]. |
| High-Quality Chemical Probes | The gold standard for target validation; potent, selective, cell-active small molecules for specific protein targets. | Potency <100 nM; selectivity >30-fold; evidence of cellular target engagement; often accompanied by a matched negative control [21] [23]. |
| Cell Painting Assay Kits | A high-content imaging-based assay for morphological profiling; used to generate a high-dimensional phenotypic fingerprint for genetic or compound perturbations. | Stains the nucleus (DNA), endoplasmic reticulum, nucleoli and cytoplasmic RNA, F-actin, Golgi apparatus and plasma membrane, and mitochondria [15] [24]. |
| Live-Cell Staining Dyes (Hoechst, MitoTracker, BioTracker) | For real-time, multiplexed assessment of cell health, morphology, and cytotoxicity in high-content imaging assays. | Low cytotoxicity at working concentrations; compatible with live-cell imaging over extended time courses [20]. |
| opnMe.com (Boehringer Ingelheim) | A portal providing access to high-quality, pre-clinical tool compounds ("Molecules to Order") for open research. | Free-of-charge, no-strings-attached access to well-characterized probe molecules [23]. |
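The chemical probe criteria in the table lend themselves to a simple checklist. The sketch below encodes them for hypothetical compound records. The dataclass and its field names are invented for illustration. The matched negative control is reported as a recommendation rather than a hard requirement, following the table's "often accompanied by" wording. Real probe assessment involves expert review beyond these numeric thresholds.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ProbeCandidate:
    """Hypothetical compound record; field names are illustrative only."""
    name: str
    potency_nm: float          # in vitro potency on the intended target
    selectivity_fold: float    # fold selectivity over the closest off-target tested
    cellular_engagement: bool  # evidence of target engagement in cells
    has_negative_control: bool # matched inactive analogue available


def assess_probe(c: ProbeCandidate) -> List[str]:
    """List unmet criteria; an empty list means every check passes."""
    unmet = []
    if c.potency_nm >= 100:
        unmet.append("potency must be < 100 nM")
    if c.selectivity_fold <= 30:
        unmet.append("selectivity must be > 30-fold")
    if not c.cellular_engagement:
        unmet.append("no evidence of cellular target engagement")
    if not c.has_negative_control:
        unmet.append("recommended: matched negative control")
    return unmet


good = ProbeCandidate("PROBE_1", potency_nm=12, selectivity_fold=120,
                      cellular_engagement=True, has_negative_control=True)
weak = ProbeCandidate("CPD_7", potency_nm=250, selectivity_fold=8,
                      cellular_engagement=True, has_negative_control=False)
print(assess_probe(good))  # []
print(assess_probe(weak))
```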

The critical gap in genomic coverage by current chemogenomic libraries represents both a challenge and a catalyst for innovation in drug discovery. Major international initiatives like Target 2035 and EUbOPEN are proactively addressing this gap through a multi-pronged strategy: expanding CG library coverage, developing high-quality chemical probes for understudied target families, and leveraging open science principles [21] [23]. The integration of advanced technologies—including high-content morphological profiling, artificial intelligence for data integration and MoA prediction, and automated high-throughput biology—is crucial for scaling these efforts [25] [24].

The future of phenotypic screening hinges on our collective ability to close this coverage gap. By building more comprehensive and richly annotated chemogenomic libraries, the research community will empower itself to move more efficiently from phenotypic observation to validated target, ultimately accelerating the discovery of novel therapies for patients.

Chemogenomic libraries represent a transformative tool in modern drug discovery, bridging the gap between phenotypic screening and target-based approaches. These carefully curated collections of biologically active small molecules, annotated with their known target information, enable researchers to rapidly deconvolute complex biological phenomena and accelerate therapeutic development. This technical guide examines two cornerstone applications of chemogenomic library screening: drug repurposing and predictive toxicology. For drug discovery professionals, these approaches provide a strategic framework to identify new therapeutic uses for existing compounds beyond their original indications and to proactively address safety concerns that account for over half of all project failures. By integrating advanced computational biology, high-content screening technologies, and machine learning, chemogenomic libraries have evolved into indispensable resources for maximizing efficiency in pharmaceutical research and development.

Phenotypic Drug Discovery (PDD) has re-emerged as a powerful approach for identifying first-in-class therapeutics, accounting for a disproportionate number of innovative medicines compared to strictly target-based approaches [10]. However, a significant challenge in PDD remains the identification of specific molecular targets and mechanisms of action responsible for observed phenotypic effects. Chemogenomic libraries address this bottleneck directly.

A chemogenomic library is a collection of selective small-molecule pharmacological agents designed to represent a large and diverse panel of drug targets involved in a broad range of biological effects and diseases [2] [19]. When used in phenotypic screens, hits from these libraries immediately suggest that the annotated target or targets of that pharmacological agent may be involved in perturbing the observable phenotype [19]. This approach systematically connects chemical starting points to potential biological targets, transforming phenotypic discovery into a more target-informed process.

These libraries are constructed by integrating heterogeneous data sources—including drug-target-pathway-disease relationships, morphological profiling data from assays like Cell Painting, and cheminformatics analyses of chemical scaffolds [2]. The resulting resource enables a system pharmacology perspective that mirrors the complex polypharmacology of most effective drugs, where therapeutic effects often arise from modulation of multiple targets rather than a single protein [10].

Drug Repurposing Applications

Framework and Strategic Advantages

Drug repurposing, also known as drug repositioning, identifies new therapeutic uses for existing drugs beyond their original indications [26]. Chemogenomic libraries are well suited to this application when they include approved or clinical-stage compounds, which come with established safety profiles and often extensive clinical data. Screening such compounds in disease-relevant phenotypic assays can rapidly reveal novel therapeutic applications while substantially de-risking the development process.

The advantages of this approach are substantial:

- Accelerated Timelines: Repurposing bypasses many early-stage discovery processes, shortening the path to clinical translation [26].

- Reduced Costs: Development costs are significantly lower as repurposing leverages existing chemical matter and safety data [26].

- Higher Success Rates: Compounds with established human safety profiles have lower failure rates in clinical trials for new indications [26].

Notable Success Cases

Several landmark examples demonstrate the power of chemogenomic approaches in repurposing:

Table 1: Notable Drug Repurposing Successes

| Drug Name | Original Indication | Repurposed Indication | Mechanistic Insights |
|---|---|---|---|
| Thalidomide [26] [10] | Sedative | Multiple Myeloma, Erythema Nodosum Leprosum (ENL) | Binds cereblon (CRBN), the substrate receptor of a CRL4 E3 ubiquitin ligase, redirecting substrate specificity to degrade transcription factors IKZF1/IKZF3 [10] |
| Sildenafil [26] | Hypertension, Angina | Erectile Dysfunction | Unexpected discovery of PDE5 inhibition effects on blood flow |
| Metformin [26] | Type 2 Diabetes | PCOS; Cancer (Under Investigation) | AMPK activation affecting multiple metabolic pathways |
| Bupropion [26] | Depression | Seasonal Affective Disorder, Obesity (with Naltrexone) | Noradrenaline/dopamine reuptake inhibition affecting multiple neurological pathways |

Experimental Protocol: Repurposing Screening Workflow

A robust phenotypic screening protocol for drug repurposing involves these critical steps:

Library Curation: Select compounds from chemogenomic libraries representing diverse target classes and mechanisms. Prioritize compounds with established safety profiles but unexplored potential in the target disease area.

Phenotypic Assay Development: Establish a disease-relevant phenotypic assay with quantifiable readouts. For cardiovascular applications, zebrafish embryos cultured in 96-well microtiter plates have been successfully employed, with phenotypic abnormalities examined by visual inspection or automated analysis [27].

High-Throughput Screening: Implement robotic liquid-handling systems to efficiently screen compound libraries. Use appropriate controls and replication strategies to ensure statistical robustness.

Hit Validation: Confirm primary hits through dose-response studies and orthogonal assay systems to exclude false positives.

Target Deconvolution: Leverage the annotated targets of hit compounds from the chemogenomic library as starting points for mechanistic studies, followed by experimental validation using genetic approaches (e.g., CRISPR) or biochemical techniques.

Clinical Translation: Develop biomarkers based on the phenotypic readouts to facilitate clinical proof-of-concept studies.

Drug Repurposing Workflow

Predictive Toxicology Applications

Framework and Strategic Advantages

Predictive toxicology is a critical application of chemogenomic libraries. It addresses the concerning statistic that safety concerns account for 56% of drug discovery project failures, making toxicity a leading cause of attrition [28]. Traditional toxicity assessment methods face significant limitations: in vitro tests often lack physiological relevance, while in vivo studies are expensive, time-consuming, and raise ethical concerns [28].

Chemogenomic libraries enable a paradigm shift by providing:

- Early Risk Identification: Potential toxicity issues can be flagged earlier in the discovery process, avoiding costly late-stage failures.

- Mechanistic Insights: Annotated targets allow correlation of specific pharmacological activities with toxicity endpoints.

- Polypharmacology Assessment: Understanding a compound's full target signature helps predict off-target toxicities.

Key Toxicity Endpoints and Predictive Models

Table 2: Key Predictive Toxicology Applications

| Toxicity Endpoint | Predictive Assays | Chemogenomic Targets | Validation Methods |
|---|---|---|---|
| Cardiotoxicity [28] | hERG inhibition assays, cardiomyocyte functional assays | hERG potassium channel, other ion channels | ECG parameters in preclinical models, clinical monitoring |
| Hepatotoxicity [28] | 3D spheroid models, organ-on-a-chip systems | Metabolic enzymes (CYPs), transporters | Liver enzyme monitoring, histopathology |
| Genetic Toxicity | Ames test, micronucleus assay | DNA-interacting proteins | Genetic toxicology screening battery |
| Organ-Specific Toxicity | Cell Painting morphology [2] | Diverse target classes | Histopathological examination |

Experimental Protocol: Predictive Toxicology Screening

A comprehensive predictive toxicology screening protocol incorporates these elements:

Data Integration and Model Training:

- Collate historical in vitro and in vivo toxicity data for chemogenomic library compounds

- Train machine learning models using chemical features and target annotations to predict toxicity endpoints

- Incorporate high-content imaging data from assays like Cell Painting, which captures 1,779 morphological features across cell, cytoplasm, and nucleus compartments [2]

In Silico Prediction:

- Screen virtual compounds against predictive models before synthesis

- Prioritize compounds with favorable predicted toxicity profiles

- Identify structural alerts and problematic target engagements

In Vitro Validation:

- Employ advanced model systems such as 3D spheroids or organ-on-a-chip technologies that better replicate in vivo conditions [28]

- For cardiotoxicity risk, utilize hERG inhibition assays as a well-established proxy [28]

- Implement high-content imaging to capture complex morphological changes indicative of toxicity

Mechanistic Investigation:

- Use target annotations from hit compounds to investigate toxicity mechanisms

- Explore structure-activity relationships to separate efficacy from toxicity

- Validate mechanisms using genetic approaches (e.g., CRISPR knockouts)
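The model-training step can be illustrated with k-nearest-neighbour voting that predicts a binary toxicity label. Each compound is represented as a set of features combining fingerprint-style substructure bits with annotated targets, and similarity is the Tanimoto (Jaccard) coefficient. The compounds, feature names, and labels are hypothetical. KCNH2 is used as a target label because it is the gene encoding the hERG channel. A real model would be trained and validated on curated historical data and would usually rely on richer learners.

```python
from typing import FrozenSet, List, Tuple

Features = FrozenSet[str]


def tanimoto(a: Features, b: Features) -> float:
    """Tanimoto (Jaccard) similarity between two feature sets."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def knn_predict(query: Features, training: List[Tuple[Features, bool]],
                k: int = 3) -> Tuple[bool, float]:
    """Majority vote of the k most similar training compounds; returns (label, toxic fraction)."""
    neighbours = sorted(training, key=lambda item: tanimoto(query, item[0]), reverse=True)[:k]
    toxic_fraction = sum(1 for _, is_toxic in neighbours if is_toxic) / len(neighbours)
    return toxic_fraction > 0.5, toxic_fraction


# Hypothetical training set: substructure bits plus annotated targets, with invented labels.
training = [
    (frozenset({"bit:basic_amine", "bit:aryl", "target:KCNH2"}), True),
    (frozenset({"bit:basic_amine", "bit:aryl", "target:DRD2"}), True),
    (frozenset({"bit:carboxylic_acid", "target:PTGS2"}), False),
    (frozenset({"bit:carboxylic_acid", "bit:aryl", "target:PPARG"}), False),
]
query = frozenset({"bit:basic_amine", "bit:aryl", "bit:halogen", "target:KCNH2"})

label, fraction = knn_predict(query, training)
print(label, round(fraction, 2))  # True 0.67
```

Combining chemical and target-annotation features is what lets a chemogenomic library inform such a model. Two structurally different compounds that share an annotated liability target still end up close together.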

Predictive Toxicology Workflow

Integrated Experimental Protocols

Core Methodologies for Chemogenomic Library Screening

Phenotypic High-Throughput Screening Protocol

Phenotypic high-throughput screening forms the foundation of both repurposing and toxicology applications. The essential methodology includes:

Assay Design: Develop a biologically relevant and quantifiable phenotypic endpoint. For example, screens investigating exocytosis used BSC1 cells expressing a temperature-sensitive mutant of the vesicular stomatitis virus glycoprotein fused to green fluorescent protein (VSVGts-GFP) to track protein trafficking [27].

Automation Implementation: Utilize robotic liquid-handling devices for compound transfer to 96-, 384-, or 1536-well microtiter plates.

Controls and Normalization: Include appropriate positive and negative controls on each plate. Apply statistical normalization to correct for plate-to-plate variability and positional effects. Z-score analysis rescales each plate, and B-score analysis additionally removes row and column effects by median polish [27].

Hit Identification: Establish statistically robust thresholds for hit identification. A common choice is a response at least 3 standard deviations from the mean assay response (|Z| ≥ 3).

Counter-Screening: Implement secondary assays to exclude compounds acting through nuisance mechanisms (e.g., cytotoxicity, assay interference).
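The normalization and hit-calling steps can be sketched in standard-library Python. The sketch assumes a single plate of sample wells held as a row-by-column list. It computes classical Z-scores and B-scores, where a B-score is a residual from a Tukey median polish scaled by the residuals' median absolute deviation (MAD). Wells with |score| ≥ 3 are flagged. The function names, the 1.4826 MAD scaling, and the simulated plate are illustrative choices, not taken from [27].

```python
import statistics
from typing import List, Tuple

Plate = List[List[float]]  # plate[row][col]: raw readouts for sample wells


def z_scores(plate: Plate) -> Plate:
    """Classical Z-score: (x - plate mean) / plate standard deviation."""
    flat = [v for row in plate for v in row]
    mu, sd = statistics.mean(flat), statistics.stdev(flat)
    return [[(v - mu) / sd for v in row] for row in plate]


def median_polish(plate: Plate, n_iter: int = 10) -> Plate:
    """Tukey two-way median polish: repeatedly subtract row and column medians,
    leaving residuals free of additive row/column (positional) effects."""
    resid = [list(row) for row in plate]
    n_rows, n_cols = len(resid), len(resid[0])
    for _ in range(n_iter):
        for r in range(n_rows):
            m = statistics.median(resid[r])
            resid[r] = [v - m for v in resid[r]]
        for c in range(n_cols):
            m = statistics.median([resid[r][c] for r in range(n_rows)])
            for r in range(n_rows):
                resid[r][c] -= m
    return resid


def b_scores(plate: Plate) -> Plate:
    """B-score: median-polish residuals divided by their scaled MAD."""
    resid = median_polish(plate)
    flat = [v for row in resid for v in row]
    centre = statistics.median(flat)
    mad = 1.4826 * statistics.median([abs(v - centre) for v in flat])
    if mad == 0:
        raise ValueError("residual MAD is zero; B-scores are undefined")
    return [[v / mad for v in row] for row in resid]


def call_hits(scores: Plate, threshold: float = 3.0) -> List[Tuple[int, int]]:
    """(row, col) positions whose |score| meets the threshold, in row-major order."""
    return [(r, c) for r, row in enumerate(scores)
            for c, s in enumerate(row) if abs(s) >= threshold]


if __name__ == "__main__":
    # Simulated 4 x 6 plate: row-wise drift, alternating noise, one active well.
    plate = [[100 + 10 * r + (-1) ** (r + c) for c in range(6)] for r in range(4)]
    plate[1][2] += 200
    print(call_hits(b_scores(plate)))  # [(1, 2)]
    print(call_hits(z_scores(plate)))  # [(1, 2)]
```

The B-score is built from medians, so a single strong active barely shifts the row and column corrections. By contrast, a large active inflates the plate standard deviation that the plain Z-score divides by.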

Cell Painting Assay for Morphological Profiling

The Cell Painting assay provides a powerful multiparametric approach for both phenotypic screening and toxicity assessment:

Sample Preparation: Plate cells (e.g., U2OS osteosarcoma cells) in multiwell plates, perturb with test treatments, then stain with six fluorescent dyes targeting different cellular components [2].

Image Acquisition: Acquire high-resolution images on a high-throughput microscope. The six dyes are imaged in five fluorescence channels.

Image Analysis: Use automated image analysis software (e.g., CellProfiler) to identify individual cells and measure morphological features (size, shape, texture, intensity, etc.) across different subcellular compartments [2].

Profile Generation: Create a morphological profile for each treatment from the extracted features (1,779 in the cited dataset [2]).

Pattern Recognition: Apply machine learning algorithms to identify compounds with similar profiles, suggesting similar mechanisms of action or toxicity.
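The profile-generation and pattern-recognition steps can be sketched as follows, assuming per-cell feature vectors have already been extracted (for example by CellProfiler). The sketch first collapses the cells in each well to a per-well median profile. It then converts each feature to a robust z-score against negative-control wells and ranks annotated reference compounds by Pearson correlation with a query profile. These are common choices rather than the specific pipeline of [2]. All names and the toy profiles are hypothetical.

```python
import math
import statistics
from typing import Dict, List

Profile = List[float]


def well_profile(cells: List[List[float]]) -> Profile:
    """Collapse single-cell feature vectors (one list per cell) to per-well medians."""
    return [statistics.median(feature) for feature in zip(*cells)]


def normalize_to_controls(profile: Profile, controls: List[Profile]) -> Profile:
    """Robust z-score of each feature against negative-control (e.g. DMSO) wells."""
    normalized = []
    for j, value in enumerate(profile):
        ctrl = [p[j] for p in controls]
        med = statistics.median(ctrl)
        mad = 1.4826 * statistics.median([abs(v - med) for v in ctrl])
        normalized.append((value - med) / mad if mad > 0 else 0.0)
    return normalized


def pearson(a: Profile, b: Profile) -> float:
    """Pearson correlation between two equal-length profiles."""
    ma, mb = statistics.mean(a), statistics.mean(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    norm = math.sqrt(sum((x - ma) ** 2 for x in a) * sum((y - mb) ** 2 for y in b))
    return cov / norm if norm else 0.0


def nearest_neighbours(query: Profile, library: Dict[str, Profile], top: int = 3) -> List[str]:
    """Reference compounds ranked by profile correlation with the query."""
    ranked = sorted(library, key=lambda name: pearson(query, library[name]), reverse=True)
    return ranked[:top]


if __name__ == "__main__":
    reference = {  # invented, already-normalized profiles over three features
        "cmpd_X": [2.0, -1.0, 0.5],
        "cmpd_Y": [-2.0, 1.0, -0.5],
        "cmpd_Z": [0.1, 0.2, 0.3],
    }
    print(nearest_neighbours([1.8, -0.9, 0.6], reference, top=1))  # ['cmpd_X']
```

A query whose nearest neighbours share an annotated mechanism (or a known toxicity) suggests, but does not prove, that the query acts similarly.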

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Research Reagents for Chemogenomic Screening

| Reagent/Resource | Function | Example Applications |
|---|---|---|
| Chemogenomic Library [2] [19] | Collection of annotated bioactive compounds | Phenotypic screening, target deconvolution |
| Cell Painting Dyes [2] | Multiplexed staining of cellular components | Morphological profiling, mechanism identification |
| High-Content Imaging System | Automated image acquisition and analysis | Quantitative phenotypic assessment |
| Organ-on-a-Chip Systems [28] | Microfluidic devices mimicking human organs | Physiologically relevant toxicity screening |
| CRISPR-Cas9 Tools [10] | Precision gene editing | Target validation, mechanism studies |
| Bioinformatics Databases (ChEMBL, KEGG, GO) [2] | Structured biological and chemical knowledge | Target annotation, pathway analysis |

Emerging Technologies and Future Directions

The field of chemogenomic library screening continues to evolve through several technological advancements:

Artificial Intelligence Integration: AI and machine learning algorithms are being applied to predict drug-disease interactions and identify repurposing candidates based on shared molecular pathways [26]. These approaches can analyze large-scale data from chemogenomic screens to uncover hidden relationships.

Advanced Disease Models: Improved model systems, including patient-derived organoids and humanized animal models, provide more physiologically relevant contexts for phenotypic screening [10].

Functional Genomics Integration: Combining chemogenomic libraries with CRISPR-based screening enables comprehensive mapping of compound mechanisms [10].

Massively Parallel Reporter Assays (MPRAs): Techniques like perturbation MPRAs allow high-throughput functional assessment of non-coding regulatory elements, expanding the scope of investigable biology [29].

Network Pharmacology Approaches: Integrating chemogenomics with systems biology enables understanding of polypharmacology and complex mechanism-of-action profiles [2] [10].

These technological advances are progressively enhancing the predictive power and efficiency of chemogenomic approaches, solidifying their role as cornerstone methodologies in modern drug discovery.

Building Better Libraries: Strategic Design and Practical Applications in Disease Research

The drug discovery paradigm has shifted from a reductionist "one target–one drug" vision to a systems pharmacology perspective that acknowledges a "one drug–several targets" reality [2]. This shift is driven partly by the high failure rates of drug candidates in late clinical stages due to lack of efficacy and safety. Such failures are particularly common for complex diseases such as cancer, neurological disorders, and diabetes, which often stem from multiple molecular abnormalities rather than a single defect [2]. Within this context, high-throughput phenotypic screening (pHTS) has re-emerged as a powerful approach for small-molecule discovery that prioritizes a candidate's cellular bioactivity over a precise understanding of its mechanism of action (MoA) [30]. Phenotypic screens are run in physiologically relevant systems (cells, organoids, or whole organisms), so their hits may have a higher probability of success in later development stages than hits from traditional target-based screening (tHTS) [30].

The central challenge in phenotypic screening is target deconvolution: identifying the molecular targets responsible for the observed phenotype once active compounds are found [30] [2]. Chemogenomics libraries have emerged as a critical tool for addressing this challenge. These are collections of well-annotated small molecules, often with known or postulated mechanisms of action, designed to cover a broad spectrum of biological targets [2] [31]. The underlying assumption is that knowing a hit compound's primary target allows the phenotype to be attributed directly to that target, making deconvolution straightforward. However, the utility of these libraries depends strongly on the polypharmacology of their constituent compounds. Most drug-like molecules interact with multiple molecular targets, averaging six known targets per molecule even after optimization [30]. This polypharmacology works against the target specificity needed for straightforward deconvolution, so libraries must be carefully characterized and designed [30].

Numerous chemogenomics libraries have been developed by both academic institutions and pharmaceutical companies. These libraries vary in their design principles, size, target coverage, and intended applications. Below is a detailed examination of several prominent publicly available and commercial libraries.

Table 1: Key Publicly Available and Commercial Chemogenomics Libraries

| Library Name | Developer | Size (Compounds) | Key Characteristics | Primary Application |
|---|---|---|---|---|
| MIPE 4.0 (Mechanism Interrogation PlatE) | National Center for Advancing Translational Sciences (NCATS) [2] | ~1,912 [30] | Small-molecule probes with known mechanism of action [30] | Target deconvolution in phenotypic screening [30] |
| LSP-MoA (Laboratory of Systems Pharmacology – Mechanism of Action) | Laboratory of Systems Pharmacology | Not explicitly stated | Designed to optimally target 1,852 genes in the liganded genome; data-driven design for binding selectivity and target coverage [31] | Mechanism of action studies and phenotypic screening [31] |
| The Spectrum Collection | Microsource Discovery Systems | ~1,761 [30] | Bioactive compounds for HTS or target-specific assays [30] | General bioactive screening |
| DrugBank Library | University of Alberta | ~9,700 [30] | Includes approved, biotech, and experimental drugs; not all compounds have annotated targets [30] | Broad drug repurposing and screening |
| Phenotypic Screening Library (PSL) | Enamine | ~5,760 [32] | Combines approved drugs, compounds with known MoA, and potent inhibitors; designed for multipurpose use with rich biological annotation [32] | Specialized phenotypic screens investigating new MoAs and targets [32] |

Other notable libraries mentioned in the literature include the Pfizer chemogenomic library, the GlaxoSmithKline (GSK) Biologically Diverse Compound Set (BDCS), Prestwick Chemical Library, and the Sigma-Aldrich Library of Pharmacologically Active Compounds (LOPAC) [2]. The design and curation of these libraries are paramount, as the scientific community relies heavily on historical chemogenomics data to guide small-molecule bioactivity screens and chemical probe development [33]. Establishing the highest quality standards for data deposited in chemogenomics databases is therefore a critical concern for the field [33].

Quantitative Comparison and Polypharmacology Assessment

A critical factor distinguishing chemogenomics libraries is their degree of polypharmacology. A quantitative assessment method based on a polypharmacology index (PPindex) was developed to compare libraries objectively [30]. The number of known targets of each compound in a library is plotted as a histogram, which typically fits a Boltzmann distribution. The slope of the linearized distribution (the PPindex) indicates the overall polypharmacology of the library. Larger absolute values (steeper slopes) indicate a more target-specific library, and smaller values a more polypharmacologic one [30].
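One way to write this linearization is shown below. It assumes the sorted histogram decays roughly exponentially (the Boltzmann-type form) and that the slope is obtained by ordinary least squares. The symbols are introduced here for illustration, and the cited work may differ in fitting details.

```latex
% n_{(1)} \ge n_{(2)} \ge \dots \ge n_{(m)}: histogram bin counts (compounds per
% number-of-targets bin) sorted in descending order; r = 1, \dots, m is the rank.
n_{(r)} \approx A\,e^{-\lambda r}
\quad\Longrightarrow\quad
y_r \equiv \ln n_{(r)} \approx \ln A - \lambda r

\hat{\beta}_1 = \frac{\sum_{r=1}^{m} (r-\bar{r})(y_r-\bar{y})}{\sum_{r=1}^{m} (r-\bar{r})^2},
\qquad
\mathrm{PPindex} = \lvert \hat{\beta}_1 \rvert \approx \lambda
```

A larger λ means the counts fall off quickly after the first few bins. In other words, most compounds are concentrated in a small number of low-target-count categories, which is why a larger PPindex is read as a more target-specific library.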

Table 2: Polypharmacology Index (PPindex) for Various Compound Libraries [30]

| Database / Library | PPindex (All Data) | PPindex (Excluding Compounds with 0 Targets) | PPindex (Excluding Compounds with 0 or 1 Target) |
|---|---|---|---|
| DrugBank | 0.9594 | 0.7669 | 0.4721 |
| LSP-MoA | 0.9751 | 0.3458 | 0.3154 |
| MIPE 4.0 | 0.7102 | 0.4508 | 0.3847 |
| DrugBank Approved | 0.6807 | 0.3492 | 0.3079 |
| Microsource Spectrum | 0.4325 | 0.3512 | 0.2586 |

The initial analysis using all data suggested that DrugBank and LSP-MoA were highly target-specific [30]. This can be misleading, however, because of data sparsity. Many compounds in large libraries such as DrugBank may have zero or only one annotated target simply because they have not been screened against others. To reduce this bias, the PPindex is recalculated excluding compounds with zero or one annotated target. This adjusted view shows that the LSP-MoA, MIPE, and Microsource libraries exhibit substantial polypharmacology. The Microsource Spectrum library is the most polypharmacologic of the sets tested, with the lowest adjusted PPindex (0.2586) [30]. This quantitative comparison distinguishes the libraries and informs their selection. For instance, a more target-specific library is more useful for straightforward target deconvolution in a phenotypic screen [30].

Beyond polypharmacology, chemical diversity is another vital metric. Analysis of structural similarity using Tanimoto distances shows that libraries like MIPE, LSP-MoA, Microsource, and DrugBank generally exhibit high chemical diversity, with similar distributions of cluster sizes when compounds are grouped by structural similarity [30]. This suggests that, despite differences in polypharmacology, major public chemogenomics libraries maintain a broad coverage of chemical space.
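Grouping compounds by Tanimoto distance can be sketched with Taylor-Butina clustering, one common leader-style algorithm for this purpose. Here, fingerprints are represented as sets of on-bit indices; in practice these would come from a cheminformatics toolkit such as RDKit. The 0.6 distance cutoff and the toy fingerprints are hypothetical, and [30] may have used a different clustering procedure. The all-pairs neighbour search is O(n²), which is manageable for libraries of a few thousand compounds.

```python
from collections import Counter
from typing import Dict, List, Set

Fingerprint = Set[int]  # indices of "on" bits in a binary structural fingerprint


def tanimoto(a: Fingerprint, b: Fingerprint) -> float:
    """Tanimoto similarity of two binary fingerprints; 0.0 if both are empty."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def butina_clusters(fps: Dict[str, Fingerprint],
                    distance_cutoff: float = 0.6) -> List[List[str]]:
    """Taylor-Butina clustering on Tanimoto distance (1 - similarity)."""
    names = sorted(fps)
    neighbours = {
        n: {m for m in names
            if m != n and 1.0 - tanimoto(fps[n], fps[m]) <= distance_cutoff}
        for n in names
    }
    unassigned = set(names)
    clusters: List[List[str]] = []
    # Compounds with the most neighbours become cluster centroids first.
    for centroid in sorted(names, key=lambda n: (-len(neighbours[n]), n)):
        if centroid not in unassigned:
            continue
        members = [centroid] + sorted(neighbours[centroid] & unassigned)
        unassigned.difference_update(members)
        clusters.append(members)
    return clusters


def cluster_size_distribution(clusters: List[List[str]]) -> Dict[int, int]:
    """Map cluster size -> number of clusters of that size."""
    return dict(Counter(len(c) for c in clusters))


if __name__ == "__main__":
    toy = {  # invented fingerprints
        "a": {1, 2, 3, 4}, "b": {1, 2, 3, 5}, "c": {1, 2, 3, 4, 5},
        "d": {10, 11, 12}, "e": {10, 11, 13}, "f": {20, 21},
    }
    clusters = butina_clusters(toy)
    print(clusters)                             # [['a', 'b', 'c'], ['d', 'e'], ['f']]
    print(cluster_size_distribution(clusters))  # {3: 1, 2: 1, 1: 1}
```

Comparing `cluster_size_distribution` across libraries is one way to check whether they share similar cluster-size profiles. A diverse library yields many small clusters and singletons.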

Experimental Protocols for Library Analysis and Design

Protocol 1: Calculating the Polypharmacology Index (PPindex)

The PPindex provides a quantitative measure of a library's target specificity [30].

Materials:

- Chemical Library: Curated list of compounds with standardized identifiers (e.g., ChEMBL ID, PubChem CID, SMILES).

- Target Annotation Database: A source of reliable bioactivity data (e.g., ChEMBL, DrugBank).

- Software: MATLAB (with Curve Fitting Toolbox) or Python (with NumPy/SciPy) for data fitting.

Method:

- Compound Standardization: Convert all compound identifiers in the library to canonical Simplified Molecular-Input Line-Entry System (SMILES) strings, handling salt forms and stereochemistry consistently [30].

- Target Identification: Query the target annotation database for all known molecular targets of each compound. Include in vitro binding data (Ki, IC50, EC50) and apply a threshold (e.g., measured affinity below the upper limit of the assay) to define a true interaction. To account for incomplete data, also query compounds with high structural similarity (e.g., Tanimoto coefficient > 0.99) [30].

- Data Aggregation: For each compound, count the number of unique, validated molecular targets.

- Histogram Generation: Create a histogram where the x-axis represents the number of targets per compound, and the y-axis represents the frequency (number of compounds) [30].

- Distribution Fitting: Fit the histogram values to a Boltzmann distribution. Most libraries will show a distribution where the bin for compounds with no annotated targets is the largest single category [30].

- Linearization and Slope Calculation: Sort the histogram values in descending order and transform them using the natural logarithm. The absolute value of the slope of this linearized distribution is the PPindex [30].
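The aggregation, histogram, and slope steps above can be sketched in standard-library Python under several stated assumptions:

- Bioactivity records arrive as (compound, target, affinity in nM) tuples.
- The 10 µM cutoff is a hypothetical stand-in for the assay-specific upper limit.
- The similarity-based expansion (Tanimoto > 0.99) is omitted.
- Empty histogram bins are dropped before taking logarithms.
- The slope is fitted by ordinary least squares to ln(frequency) against the rank of the sorted bins, not by an explicit Boltzmann fit.

The hypothetical `min_targets` parameter mirrors the three exclusion settings of Table 2.

```python
import math
from collections import Counter
from typing import Dict, Iterable, Tuple

# (compound_id, target_id, affinity_nM), e.g. Ki/IC50/EC50 records from ChEMBL
Record = Tuple[str, str, float]


def targets_per_compound(library: Iterable[str], records: Iterable[Record],
                         affinity_cutoff_nm: float = 10_000.0) -> Dict[str, int]:
    """Count unique targets per library compound with affinity below the cutoff.
    Compounds without any qualifying record keep a count of 0."""
    targets = {cid: set() for cid in library}
    for cid, target, affinity in records:
        if cid in targets and affinity < affinity_cutoff_nm:
            targets[cid].add(target)
    return {cid: len(t) for cid, t in targets.items()}


def pp_index(counts: Dict[str, int], min_targets: int = 0) -> float:
    """|slope| of ln(bin frequency) vs. rank, with bins sorted in descending order.
    min_targets=1 drops 0-target compounds; min_targets=2 also drops 1-target ones."""
    kept = [n for n in counts.values() if n >= min_targets]
    freqs = sorted(Counter(kept).values(), reverse=True)  # non-empty bins only
    if len(freqs) < 2:
        raise ValueError("need at least two non-empty histogram bins")
    xs = list(range(len(freqs)))
    ys = [math.log(f) for f in freqs]
    x_bar, y_bar = sum(xs) / len(xs), sum(ys) / len(ys)
    slope = (sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
             / sum((x - x_bar) ** 2 for x in xs))
    return abs(slope)


if __name__ == "__main__":
    # Invented library: 8 compounds with 0 targets, 4 with 1, 2 with 2, 1 with 3.
    counts = {f"cmpd_{i}": n for i, n in enumerate([0] * 8 + [1] * 4 + [2] * 2 + [3])}
    print(round(pp_index(counts), 4))                 # 0.6931 (= ln 2)
    print(round(pp_index(counts, min_targets=2), 4))  # 0.6931
```

In this invented library, each bin holds half as many compounds as the one before it. The log-transformed frequencies therefore fall on a straight line with slope -ln 2, and the same value is recovered for every exclusion setting.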

Protocol 2: Building a Network Pharmacology Database for Library Curation

This protocol, adapted from a study that developed a 5,000-compound chemogenomics library, outlines a systems pharmacology approach to library design [2].

Materials:

- Data Sources: ChEMBL database (for bioactivity data), Kyoto Encyclopedia of Genes and Genomes (KEGG) (for pathways), Gene Ontology (GO) (for biological functions), Disease Ontology (DO) (for disease associations), and morphological profiling data (e.g., Cell Painting data from the Broad Bioimage Benchmark Collection) [2].