Chemogenomics for Pathway Discovery: Integrating AI, Multi-Omics, and Systems Pharmacology for Next-Generation Drug Development

Abstract

This article provides a comprehensive exploration of chemogenomic approaches for biological pathway identification, a key strategy in modern drug discovery. It covers the foundational principles of systematically screening chemical libraries against target families to elucidate novel pathways and drug targets. The scope extends to advanced methodological applications, including machine learning, multi-omics integration, and network-based analysis for uncovering complex pathway biology. The article also addresses critical challenges in data interpretation, pathway annotation biases, and model generalizability, offering practical troubleshooting and optimization strategies. Finally, it examines validation frameworks and comparative analyses of computational tools, positioning chemogenomics as an indispensable platform for accelerating systems pharmacology and precision medicine.

Foundations of Chemogenomics: From Target Families to Biological Pathways

Chemogenomics is a systematic approach to drug discovery that aims to identify all possible ligands and modulators for all gene products within a biological system [1]. In the post-genomic era, this discipline represents a paradigm shift from a "one drug, one target" model to a broad exploration of chemical space against families of biologically relevant targets [1]. Building on the genomic data made available by the sequencing of the human genome, chemogenomics systematically explores the interactions between chemical compounds and protein families to accelerate the identification of effective new medicines and biological probes [1] [2].

The strategy brings together diverse disciplines including chemistry, genetics, chemo- and bioinformatics, structural biology, and high-throughput biological screening in both phenotypic and target-based assays [1]. This integrated approach allows for the accelerated exploration of biological function and the simultaneous discovery of new targets and their effector molecules, making it a powerful framework for modern drug discovery and biological pathway research [1].

Core Principles and Strategic Frameworks

The Chemogenomic Approach to Expanding the Druggable Proteome

Traditional small-molecule drug development has concentrated on a few well-established target families that define the explored druggable proteome [3]. Although the number of target families has grown substantially over the past few decades, many proteins within both established and yet-to-be-discovered target families remain unexplored [3]. Chemogenomics addresses this gap through systematic efforts to develop chemical tools for understudied proteins.

The primary tools in chemogenomics include chemical probes—highly characterized, potent, and selective, cell-active small molecules that modulate protein function—and chemogenomic (CG) compounds, which are potent inhibitors or activators with narrow but not exclusive target selectivity [3]. These CG tools are powerful reagents when several small molecules with diverse off-target activity profiles are combined into collections that allow target deconvolution based on selectivity patterns [3].

Global Initiatives: Target 2035 and EUbOPEN

The Target 2035 initiative is an international federation of biomedical scientists from public and private sectors leveraging 'open' principles to develop a pharmacological tool for every human protein by the year 2035 [4]. This ambitious goal represents a global effort to make chemical and biological tools and data freely available to the research community [3].

A major contributor to these efforts is the EUbOPEN consortium (Enabling and Unlocking Biology in the OPEN), a public-private partnership with the goal of creating, distributing, and annotating the largest openly available set of high-quality chemical modulators for human proteins [3]. EUbOPEN's activities are structured around four pillars:

- Chemogenomic library collections covering one third of the druggable proteome

- Chemical probe discovery and technology development for hit-to-lead chemistry

- Profiling of bioactive compounds in patient-derived disease assays

- Collection, storage and dissemination of project-wide data and reagents [3]

Table 1: Key Global Chemogenomics Initiatives

| Initiative | Primary Objective | Key Outputs | Participating Organizations |
|---|---|---|---|
| Target 2035 | Develop pharmacological tools for every human protein by 2035 [4] | Open science resources, chemical probes, data standards | Global federation of academia and industry |
| EUbOPEN | Create openly available chemical modulators for human proteins [3] | 100 chemical probes, CG libraries, disease assay data | 22 partners from academia and pharmaceutical industry |
| CACHE | Benchmark computational hit-finding methods [4] | Experimental validation of predicted compounds, benchmarking data | Public-private partnership including Bayer, SGC |
| Open Chemistry Networks (OCN) | Develop probes for understudied targets through open collaboration [4] | Small molecule binders, open data sets | International network of chemists and biochemists |

Experimental Methodologies and Workflows

Chemogenomic Screening and Data Generation

Chemogenomic screening involves large-scale testing of compound libraries against panels of biological targets such as kinases, GPCRs, or cytochromes [5]. These efforts have led to the rapid expansion of publicly available chemogenomics repositories including ChEMBL, PubChem, and PDSP, which provide foundational data for developing computational models of chemical bioactivity [5].

The screening process must address several methodological considerations:

- Screening Technologies: Subtle experimental details, such as the screening technology used, can significantly influence results. For example, the dispensing technique used in HTS (tip-based versus acoustic) can change the responses measured for the same compounds tested in the same assay [5].

- Target Families: Focused screening on protein families allows for leveraging structural and functional relationships to interpret results and identify selective compounds.

- Assay Types: Both biochemical (target-based) and phenotypic (cell-based) assays provide complementary information about compound activity.

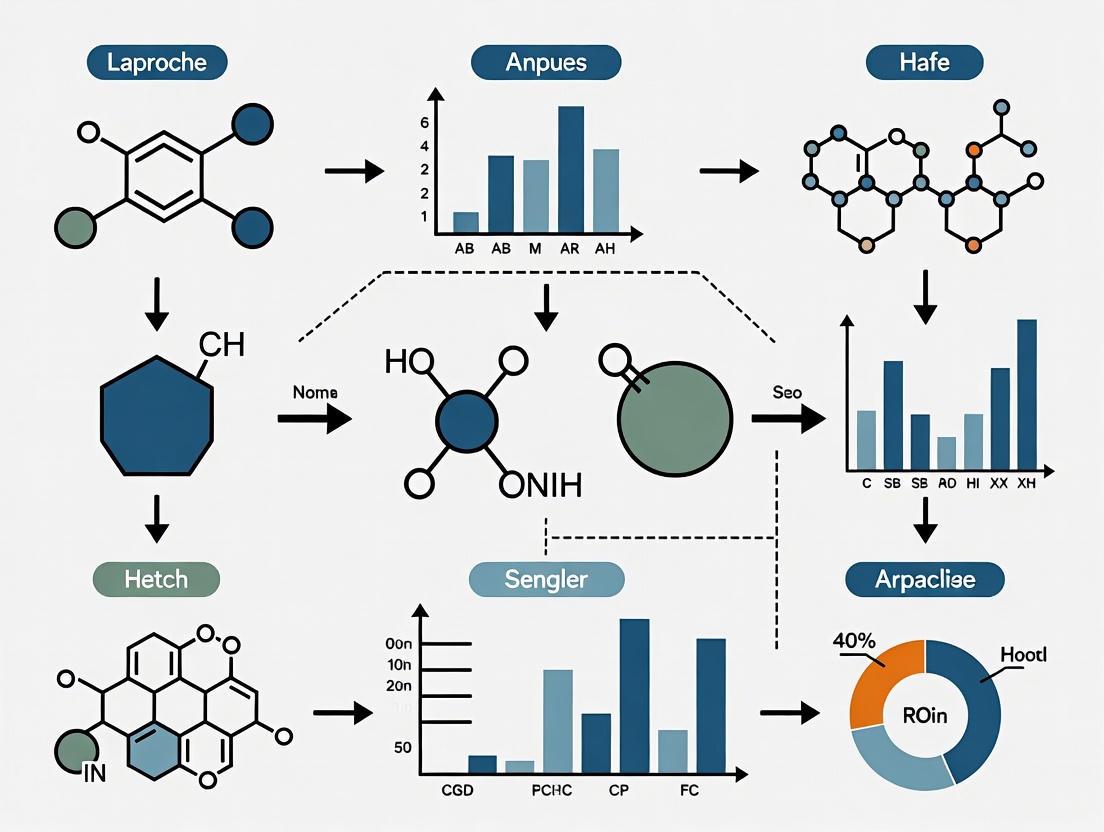

Diagram 1: Chemogenomic Screening Workflow. This flowchart outlines the key stages in systematic screening of chemical libraries against target families, highlighting the major protein classes typically investigated.

Data Curation and Quality Control

The quality and reproducibility of chemogenomics data are critical challenges that require rigorous curation protocols. Studies have shown concerning error rates in published chemical and biological data, with an average of two molecules with erroneous chemical structures per medicinal chemistry publication and an overall error rate of 8% for compounds in some databases [5].

An integrated workflow for chemical and biological data curation includes these essential steps:

Chemical Structure Curation:

- Removal of records unsuitable for standard cheminformatics processing (inorganics, organometallics, biologics, mixtures) and stripping of counterions

- Structural cleaning (detection of valence violations, extreme bond lengths/angles)

- Ring aromatization and normalization of specific chemotypes

- Standardization of tautomeric forms using tools like Molecular Checker/Standardizer, RDKit, or LigPrep [5]

- Verification of stereochemistry correctness

Bioactivity Data Processing:

- Detection and handling of chemical duplicates where the same compound is recorded multiple times

- Comparison of bioactivities reported for retrieved duplicates

- Assessment of experimental variability and technical reproducibility

Manual Verification:

- Manual checking of complex structures or compounds with many atoms

- Generation of representative dataset samples for quality assessment

- Engagement of scientific community in crowd-sourced curation efforts [5]
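The duplicate-handling steps under Bioactivity Data Processing can be sketched in a few lines. This is a minimal illustration, not the workflow from [5]: it assumes structures have already been standardized upstream (for example with RDKit) and reduced to a structure key such as an InChIKey, that activities are on a -log10 molar scale (pIC50 and similar), and it uses a hypothetical 1-log-unit (10-fold) tolerance for deciding when replicate values disagree.

```python
from collections import defaultdict
from statistics import mean


def flag_inconsistent_duplicates(records, max_spread=1.0):
    """Group bioactivity records by structure key and flag discordant duplicates.

    records: iterable of (structure_key, p_activity) tuples. structure_key is
        assumed to come from an upstream standardization step (e.g. an InChIKey
        of the standardized parent structure); p_activity is a -log10 molar value.
    max_spread: hypothetical tolerance in log units (1.0 means 10-fold).
    Returns (merged, flagged): merged maps key -> mean activity for single or
    consistent records; flagged maps key -> raw values needing manual review.
    """
    grouped = defaultdict(list)
    for key, value in records:
        grouped[key].append(value)

    merged, flagged = {}, {}
    for key, values in grouped.items():
        spread = max(values) - min(values)
        if len(values) > 1 and spread > max_spread:
            # Replicates disagree by more than the tolerance: route to manual checking.
            flagged[key] = values
        else:
            # Single measurement or consistent replicates: keep the mean value.
            merged[key] = mean(values)
    return merged, flagged
```

Averaging consistent replicates and setting aside discordant ones, rather than silently averaging everything, keeps unreliable measurements out of downstream models while leaving them available for the manual verification step.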

Table 2: Chemical Probe Criteria and Characterization Standards

| Parameter | Minimum Standard | Characterization Methods | Purpose |
|---|---|---|---|
| Potency | < 100 nM in vitro activity [2] | IC₅₀, Kᵢ, KD measurements | Ensure effective target modulation |
| Selectivity | >30-fold over related proteins [2] | Profiling against industry standard target panels | Minimize off-target effects |
| Cellular Activity | Target engagement <1 μM (or <10 μM for PPIs) [3] | Cellular target engagement assays | Confirm activity in physiological context |
| Toxicity Window | Reasonable cellular toxicity window [3] | Cytotoxicity assays | Distinguish target-mediated from non-specific effects |
| Negative Control | Structurally similar inactive compound [3] | Matched control synthesis | Control for off-target effects |
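The numeric thresholds in Table 2 can be applied mechanically once potency data are available. The sketch below is illustrative only: `ProbeCandidate` and `check_probe_criteria` are hypothetical names, all potencies are assumed to be in nM, selectivity is computed as the fold difference between the on-target value and the most potent related off-target, and the toxicity window is not modeled because Table 2 gives no numeric cutoff for it.

```python
from dataclasses import dataclass, field


@dataclass
class ProbeCandidate:
    # Hypothetical record; field names are illustrative, not a standard schema.
    name: str
    on_target_nm: float                  # in vitro IC50/Ki/KD against the intended target (nM)
    off_target_nm: list = field(default_factory=list)  # potencies against related proteins (nM)
    cellular_nm: float = float("inf")    # cellular target-engagement potency (nM)
    is_ppi: bool = False                 # PPI targets use the relaxed 10 uM cellular cutoff
    has_negative_control: bool = False   # structurally similar inactive compound available


def check_probe_criteria(c: ProbeCandidate) -> dict:
    """Apply the numeric thresholds from Table 2 (toxicity window not modeled)."""
    # Fold selectivity over the closest (most potent) related off-target.
    closest_off_target = min(c.off_target_nm, default=float("inf"))
    selectivity_fold = closest_off_target / c.on_target_nm
    cellular_cutoff_nm = 10_000 if c.is_ppi else 1_000
    return {
        "potency": c.on_target_nm < 100,
        "selectivity": selectivity_fold > 30,
        "cellular_activity": c.cellular_nm < cellular_cutoff_nm,
        "negative_control": c.has_negative_control,
    }
```

A candidate with 20 nM on-target potency and 900 nM against its nearest related protein, for example, has a 45-fold window and passes the selectivity criterion; at 50 nM versus 1,000 nM (20-fold) it would not.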

Advanced Detection Methods: Phospholipidosis Screening Example

Recent advances in detection methodologies combine high-content imaging with machine learning to address specific screening challenges. For example, drug-induced phospholipidosis (DIPL)—characterized by excessive accumulation of phospholipids in lysosomes—can lead to clinical adverse effects and alter phenotypic responses in functional studies using chemical probes [6].

The approach reported in [6] combines several elements:

- High-Content Live-Cell Imaging: A versatile imaging approach used to evaluate chemogenomic and lysosomal modulation libraries [6].

- Machine Learning Integration: Training and evaluating several machine learning models using comprehensive sets of publicly available compounds [6].

- Model Interpretation: Using SHapley Additive exPlanations (SHAP) to interpret the best-performing model [6].

- Probe Analysis: Applying the algorithm to high-quality chemical probes from the Chemical Probes Portal revealed that closely related molecules, such as chemical probes and their matched negative controls, can differ in their ability to induce phospholipidosis [6].

This integrated approach highlights the importance of identifying phospholipidosis for robust target validation in chemical biology and demonstrates how advanced detection methods enhance the reliability of chemogenomic screening [6].
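SHAP attributes a model's prediction to its input features using Shapley values. For a model with only a few features these values can be computed exactly by enumerating feature coalitions, which makes the idea concrete. The sketch below is not the model from [6]: `toy_dipl_score` is a hypothetical score loosely inspired by the known association between cationic amphiphilic character (high lipophilicity plus a basic amine) and phospholipidosis, and features outside a coalition are replaced by baseline values, which is only one of several possible value functions.

```python
from itertools import combinations
from math import factorial


def exact_shapley(model, x, baseline):
    """Exact Shapley values by enumerating all coalitions (feasible only for few features).

    Features outside a coalition take their baseline value; this is an assumed
    value function, one of several that SHAP implementations support.
    """
    n = len(x)
    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):  # coalitions of size 0..n-1 drawn from the other features
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for coalition in combinations(others, size):
                present = set(coalition)
                without_i = [x[j] if j in present else baseline[j] for j in range(n)]
                with_i = list(without_i)
                with_i[i] = x[i]  # add feature i to the coalition
                phi[i] += weight * (model(with_i) - model(without_i))
    return phi


def toy_dipl_score(features):
    # Hypothetical score: rises with lipophilicity (cLogP), basicity (pKa) and their interaction.
    clogp, basic_pka = features
    return 0.3 * clogp + 0.2 * basic_pka + 0.05 * clogp * basic_pka
```

The attributions always sum to the difference between the prediction for the compound and the prediction for the baseline, which is what makes them interpretable as a decomposition of a single prediction. Exact enumeration grows exponentially with the number of features, which is why SHAP relies on model-specific algorithms or sampling approximations for realistic descriptor sets.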

Key Research Reagents and Materials

Table 3: Essential Research Reagents for Chemogenomics Screening

| Reagent / Material | Function | Examples / Specifications |
|---|---|---|
| Chemogenomic Compound Libraries | Systematic coverage of chemical space against target families | EUbOPEN library covering 1/3 of druggable proteome [3] |
| Chemical Probes | Highly characterized, potent, selective modulators | Peer-reviewed probes with negative controls [3] |
| Patient-Derived Cell Assays | Disease-relevant biological context | Inflammatory bowel disease, cancer, neurodegeneration models [3] |
| Target Protein Panels | Comprehensive coverage of protein families | Kinases, E3 ligases, solute carriers (SLCs) [3] [5] |
| Public Data Repositories | Data storage, annotation, and dissemination | ChEMBL, PubChem, PDSP, EUbOPEN project resource [3] [5] |

Data Analysis and Computational Integration

Pathway Analysis and Bioinformatics Integration

The biological interpretation of chemogenomics data requires sophisticated bioinformatics approaches. Pathway analysis tools enable researchers to connect compound-target interactions to broader biological systems:

- Gene Set Enrichment Analysis (GSEA): Determines whether defined sets of genes show statistically significant differences between biological states [7].

- Kyoto Encyclopedia of Genes and Genomes (KEGG): Systematic analysis of gene functions, linking genomic information with higher-order functional information [7].

- Protein-Protein Interaction (PPI) Networks: Mapping interactions among the proteins of interest using databases such as STRING, then building and visualizing the network in tools such as Cytoscape [7].

- Hub Gene Identification: Using algorithms such as Molecular Complex Detection (MCODE), which finds densely connected modules, and cytoHubba, which ranks nodes by network topology, to identify key nodes in interaction networks [7].
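Pathway-level interpretation of a hit list often starts with an over-representation test: given the genes (or protein targets) implicated by a screen, is a KEGG pathway represented more often than chance would predict? The sketch below computes the one-sided hypergeometric p-value for this question. Note that this is over-representation analysis, a simpler technique than GSEA, which instead tests whether a gene set is concentrated at the extremes of a ranked list; the function name and argument names are illustrative.

```python
from math import comb


def hypergeometric_enrichment_p(k, n_selected, k_pathway, n_universe):
    """One-sided over-representation p-value, P(X >= k).

    k: number of selected genes that belong to the pathway
    n_selected: number of genes in the hit list
    k_pathway: number of pathway genes in the background universe
    n_universe: total number of genes in the background universe
    """
    upper = min(n_selected, k_pathway)
    total = comb(n_universe, n_selected)
    # Sum the probability of observing k or more pathway genes by chance.
    tail = sum(
        comb(k_pathway, i) * comb(n_universe - k_pathway, n_selected - i)
        for i in range(k, upper + 1)
    )
    return tail / total
```

Because one test is run per pathway, the resulting p-values are normally adjusted for multiple testing (for example with the Benjamini-Hochberg procedure) before a pathway is reported as enriched. The choice of background universe also matters: it should contain only genes that could have appeared in the hit list.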

Machine Learning for Drug-Target Interaction Prediction

Computational prediction of drug-target interactions (DTIs) plays an increasingly important role in chemogenomics. The EmbedDTI framework illustrates recent progress in this area by improving the learned representations of both proteins and compounds:

- Protein Sequence Embedding: Leveraging language modeling for pre-training feature embeddings of amino acids, followed by convolutional neural networks for further representation learning [8].

- Multi-Level Compound Representation: Building two levels of graphs to represent compound structural information—atom graph and substructure graph—and adopting graph convolutional network with an attention module to learn embedding vectors [8].

- Model Architecture: Combining these enhanced representations through convolutional neural network blocks for proteins and graph convolutional networks for compounds, then concatenating feature vectors for binding affinity prediction [8].

This approach has demonstrated superior performance compared to existing DTI predictors on benchmark datasets, achieving the lowest mean square error (MSE) and highest concordance index (CI) in comparative evaluations [8].
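The two metrics cited above are straightforward to compute. MSE measures the average squared error of predicted affinities, while the concordance index (CI) asks a ranking question: for every pair of drug-target pairs with different measured affinities, does the model order them correctly? The sketch below uses the common convention that tied predictions count as half-correct; the function names are illustrative, and the quadratic pair loop is fine for small benchmarks but not optimized.

```python
def concordance_index(y_true, y_pred):
    """Fraction of comparable pairs (different true affinities) ranked in the
    correct order by the predictions; tied predictions count as 0.5."""
    concordant, comparable = 0.0, 0
    n = len(y_true)
    for i in range(n):
        for j in range(n):
            if y_true[i] > y_true[j]:
                comparable += 1
                if y_pred[i] > y_pred[j]:
                    concordant += 1.0
                elif y_pred[i] == y_pred[j]:
                    concordant += 0.5
    if comparable == 0:
        raise ValueError("CI is undefined when all true affinities are equal")
    return concordant / comparable


def mean_squared_error(y_true, y_pred):
    """Average squared difference between measured and predicted affinities."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)
```

A CI of 0.5 corresponds to random ordering and 1.0 to perfect ranking, so the two metrics are complementary: a model can rank compounds well (high CI) while still being systematically offset in absolute affinity (high MSE).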

Diagram 2: Drug-Target Interaction Prediction Architecture. This computational workflow illustrates how modern machine learning approaches integrate protein sequence information and compound structural data to predict binding affinities.

Applications in Biological Pathway Research

Target Deconvolution and Pathway Identification

Chemogenomic approaches are particularly powerful for target deconvolution and pathway identification in complex biological systems. The use of compound sets with diverse but overlapping selectivity profiles enables researchers to connect phenotypic effects to specific molecular targets [3]. When multiple compounds with known but varying target affinities produce similar phenotypic outcomes, researchers can infer the involvement of specific pathways in the observed biological response.

This approach is especially valuable for studying:

- Understudied Target Families: Proteins with limited characterization can be connected to biological pathways through their chemical perturbants.

- Polypharmacology: Understanding how multi-target drugs produce their therapeutic effects through action on multiple pathway components.

- Pathway Crosstalk: Identifying connections between seemingly distinct biological processes through shared chemical sensitivities.
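The selectivity-pattern logic behind target deconvolution can be illustrated with a toy scoring scheme: targets whose set of modulating compounds closely matches the set of compounds that produced the phenotype are the most likely mediators. The sketch below uses Jaccard similarity as a deliberately simple stand-in; real analyses typically use potency-weighted or statistical scores. The compound and target names are hypothetical.

```python
def rank_targets_by_pattern(compound_targets, phenotype_hits):
    """Rank targets by Jaccard similarity between the compounds that modulate
    each target and the compounds that produced the phenotype.

    compound_targets: dict mapping compound -> set of annotated targets
    phenotype_hits: iterable of compounds that were active in the phenotypic assay
    """
    hits = set(phenotype_hits)
    # Invert the annotation: target -> set of compounds acting on it.
    by_target = {}
    for compound, targets in compound_targets.items():
        for target in targets:
            by_target.setdefault(target, set()).add(compound)
    scores = {
        target: len(cmpds & hits) / len(cmpds | hits)
        for target, cmpds in by_target.items()
    }
    # Highest similarity first; ties broken alphabetically for reproducibility.
    return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))


# Hypothetical annotations for four compounds with overlapping selectivity.
compound_targets = {
    "cmpd-1": {"KINASE_A", "KINASE_B"},
    "cmpd-2": {"KINASE_A", "KINASE_C"},
    "cmpd-3": {"KINASE_B", "KINASE_C"},
    "cmpd-4": {"KINASE_A"},
}
ranked = rank_targets_by_pattern(compound_targets, {"cmpd-1", "cmpd-2", "cmpd-4"})
```

Here only KINASE_A is shared by every active compound and by no inactive one, so it scores highest; no single compound could have singled it out, which is why overlapping selectivity profiles are combined into collections.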

Clinical Translation and Drug Discovery

Chemical probes developed through chemogenomic approaches have proven valuable for validating disease-modifying targets, facilitating investigation of target function, safety, and translation [2]. While probes and drugs often differ in their properties, chemical probes provide useful starting points for small molecule drugs and can accelerate the drug discovery process [2].

Notable examples of clinical candidates inspired by chemical probes include:

- BET bromodomain inhibitors: (+)-JQ1, a potent inhibitor of BRD4, inspired the development of multiple clinical candidates including I-BET762/GSK525762/molibresib and OTX015/MK-8628 for cancer treatment [2].

- Epigenetic modulators: Probes targeting various epigenetic reader domains have led to clinical candidates for hematological malignancies and solid tumors [2].

- Kinase inhibitors: Selective probes for understudied kinases have provided starting points for therapeutic development in inflammatory diseases and cancer.

The systematic nature of chemogenomics ensures that these discoveries contribute to a growing knowledge base that can be leveraged for future drug discovery efforts, particularly through open science initiatives that make high-quality chemical probes and data freely available to the research community [3] [4].

Chemogenomics represents a systematic approach in modern drug discovery and functional genomics that investigates the interactions between small molecules and biological target families on a genome-wide scale. The core premise of chemogenomics is the systematic screening of targeted chemical libraries against families of functionally related protein targets (such as GPCRs, kinases, nuclear receptors, proteases, and ion channels) with the dual goal of identifying novel therapeutic compounds and elucidating the functions of uncharacterized targets [9] [10]. This approach has changed how researchers tackle biological pathway identification by integrating chemical and biological spaces to establish ligand-target relationships that are not evident from either discipline alone [9].

In the specific context of biological pathway identification, chemogenomics provides powerful tools for deconvoluting complex cellular networks. Where traditional genetics modifies gene function permanently, chemogenomics uses small molecules as reversible, temporal probes to modulate protein function, allowing researchers to observe real-time interactions and phenotypic consequences that can be interrupted upon compound withdrawal [10]. This dynamic intervention provides unique insights into pathway architecture, compensation mechanisms, and functional redundancies that might be obscured in genetic models. The field operates through two principal, complementary paradigms: forward chemogenomics and reverse chemogenomics, each with distinct strategic approaches for pathway elucidation.

Forward Chemogenomics: From Phenotype to Target

Conceptual Framework and Workflow

Forward chemogenomics, also termed "classical chemogenomics," initiates the investigation with a phenotypic observation and works toward identifying the molecular mechanisms responsible [10]. In this approach, researchers first identify small molecules that induce a specific phenotype of interest in cells or whole organisms, then use these bioactive compounds as tools to identify the protein targets responsible for the observed phenotypic effect [9] [10]. The fundamental strategy involves screening diverse compound libraries against model biological systems to identify modulators that produce a target phenotype—such as inhibition of tumor growth, alteration of metabolic activity, or changes in cellular morphology. Once phenotype-inducing compounds are identified, the subsequent challenge is target deconvolution, determining which proteins these compounds interact with to produce the observed effect [10].

This phenotype-first approach is particularly valuable for investigating biological pathways where the molecular basis of a desired phenotype is unknown, making it a powerful method for discovering novel components of signaling networks and metabolic pathways. The main challenge of forward chemogenomics lies in designing phenotypic assays that can efficiently transition from screening to target identification, which requires sophisticated methods to link chemically induced phenotypes to specific protein targets and pathway nodes [10].

Key Experimental Methodologies

Pooled Competitive Fitness Screening with Barcoded Libraries: A powerful forward chemogenomics methodology utilizes pooled, barcoded yeast deletion collections, enabling genome-wide screening in a single culture [11] [12]. This approach involves:

- Library Preparation: The approximately 6,000 strains in the yeast deletion collection, each with a unique 20 bp DNA "barcode," are pooled and cultured together [11].

- Chemical Treatment: The pooled culture is divided and grown in the presence or absence of the small molecule of interest.

- Competitive Growth: Strains compete for growth within the shared culture, and the relative fitness of each deletion strain under chemical treatment is determined by comparing barcode abundances between treated and untreated cultures [12].

- Microarray Analysis: Genomic DNA is isolated from pooled cultures, barcodes are amplified via PCR, and barcode intensities are measured by microarray to quantify the relative abundance of each strain [11].

- Data Analysis: Sensitivity or resistance profiles (chemogenomic profiles) are generated by comparing strain fitness across conditions. Strains showing hypersensitivity to a compound often identify genes that buffer the target pathway, while resistant strains may indicate the drug target or efflux mechanisms [12].
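The core of the data-analysis step is a ratio: how much each strain's share of the pool changes under treatment. The sketch below computes a log2 fold change of normalized barcode abundance per strain. It assumes the abundances are counts (as from sequencing-based barcode readouts; microarray intensities would need their own normalization), adds a pseudocount so that strains lost entirely from the treated pool still get a finite score, and uses hypothetical strain names.

```python
from math import log2


def barcode_fitness(treated, control, pseudocount=1.0):
    """log2 fold change in each strain's share of the pool, treated vs. control.

    treated, control: dicts mapping strain -> barcode count (assumed count data).
    Negative scores indicate hypersensitivity to the compound; positive scores
    indicate relative resistance.
    """
    strains = list(control)
    n = len(strains)
    # Normalize to library size; the pseudocount keeps zero counts finite.
    t_total = sum(treated.get(s, 0) for s in strains) + pseudocount * n
    c_total = sum(control[s] for s in strains) + pseudocount * n
    scores = {}
    for s in strains:
        t_frac = (treated.get(s, 0) + pseudocount) / t_total
        c_frac = (control[s] + pseudocount) / c_total
        scores[s] = log2(t_frac / c_frac)
    return scores


# Hypothetical counts for three strains.
control = {"yfg1": 1000, "yfg2": 1000, "yfg3": 1000}
treated = {"yfg1": 100, "yfg2": 1000, "yfg3": 1900}
fitness = barcode_fitness(treated, control)
```

Sorting strains by this score yields the chemogenomic profile: the most negative values (here `yfg1`) are the candidate hypersensitive deletions whose genes can then be examined for shared pathway membership.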

Fitness-Based Profiling for Mechanism of Action (MOA): Beyond simple viability, fitness-based chemogenomic profiling can suggest a compound's broader MOA. Gene Ontology (GO) analysis of the resulting sensitivity profile identifies biological pathways and processes enriched among sensitive deletion strains, helping infer the pathway affected by the compound [12]. For example, if a compound causes hypersensitivity in multiple deletion strains all involved in cell wall integrity, this strongly suggests the compound's MOA involves disrupting cell wall biosynthesis pathways.

Applications and Strengths

Forward chemogenomics has been successfully applied to identify genes in previously uncharacterized biological pathways. A notable example involved elucidating the biosynthesis pathway of diphthamide, a modified histidine residue on translation elongation factor 2. Researchers used chemogenomic cofitness data from Saccharomyces cerevisiae—which measures the similarity of growth fitness under various conditions between deletion strains—to identify a strain (ylr143w) with high cofitness to strains lacking known diphthamide biosynthesis genes. This forward approach led to the discovery that YLR143W was the missing diphthamide synthetase responsible for the final step in the pathway [10].
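Cofitness, as used in the diphthamide example, is commonly computed as the correlation between two strains' fitness profiles across many conditions, so genes acting in the same pathway tend to show highly correlated profiles. The sketch below uses Pearson correlation (an assumption; other similarity measures are also used) and hypothetical strain names and fitness values.

```python
from math import sqrt


def pearson(a, b):
    """Pearson correlation between two equal-length fitness profiles."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / sqrt(var_a * var_b)


def top_cofit_partners(profiles, query, n=3):
    """Return the n strains whose fitness profiles correlate best with the query strain."""
    q = profiles[query]
    scores = {s: pearson(q, p) for s, p in profiles.items() if s != query}
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)[:n]


# Hypothetical fitness scores for three deletion strains across five conditions.
profiles = {
    "known_pathway_gene": [-2.0, 0.1, -1.5, 0.0, -1.8],
    "candidate_gene": [-1.9, 0.2, -1.4, 0.1, -1.7],
    "unrelated_gene": [0.3, -0.2, 0.1, 0.4, -0.1],
}
best = top_cofit_partners(profiles, "known_pathway_gene", n=1)
```

Querying with a strain lacking a known pathway gene and inspecting its top cofitness partners is the logic that pointed to YLR143W; a high correlation nominates a candidate, and biochemical experiments are still needed to confirm the function.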

The principal strength of forward chemogenomics is its unbiased nature; it requires no preconceived notions about which specific protein or pathway is involved, allowing for truly novel discoveries. It directly links chemically induced phenotypes to biological functions in a physiologically relevant context, making it ideal for investigating complex cellular processes and pathways where key components remain unknown.

Reverse Chemogenomics: From Target to Phenotype

Conceptual Framework and Workflow

Reverse chemogenomics represents the complementary approach to forward chemogenomics, beginning with a specific protein target of interest and working toward understanding its biological function and phenotypic influence [10]. This strategy initially identifies small molecules that interact with and perturb the function of a predefined enzyme or receptor in a simplified in vitro system, such as a purified protein assay. Once target-specific modulators are identified, the phenotypic consequences of this targeted perturbation are analyzed in more complex biological systems—first in cellular models and potentially progressing to whole organisms [10].

This target-first approach closely resembles traditional target-based drug discovery but is enhanced by capabilities for parallel screening across multiple members of a target family and the application of chemogenomic profiling to understand downstream effects [9] [10]. The underlying principle is that by specifically modulating one protein target and observing the resulting phenotypic changes, researchers can confirm the protein's role in biological pathways and elucidate its connections to broader cellular networks. Reverse chemogenomics is particularly powerful for annotating the functions of orphan receptors or proteins of unknown function that belong to well-characterized gene families [9].

Key Experimental Methodologies

In Silico Chemogenomics and Virtual Screening: A cornerstone of modern reverse chemogenomics involves computational approaches to predict interactions between small molecules and protein targets across gene families [9]. The workflow typically includes:

- Target Family Characterization: Collecting amino acid sequences, structural data (NMR, crystal structures, homology models), and known ligand information for all members of a gene family.

- Ligand-Target Modeling: Using machine learning algorithms (e.g., Support Vector Machines) to build models that predict binding between chemicals and targets. The model is trained on known interacting and non-interacting pairs, representing each target-chemical pair by a vector Φ(t, c) to compute a linear function f(t, c) = w⊤Φ(t, c) whose sign predicts binding potential [9].

- Virtual Screening: Applying these models to screen large virtual compound libraries in silico to identify potential ligands for other members of the gene family, including orphan targets [9].

- Experimental Validation: Testing computationally predicted ligands in biochemical and cellular assays to confirm activity and determine phenotypic effects.
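The linear model f(t, c) = w⊤Φ(t, c) described above needs a concrete pair representation Φ. One common choice in pairwise-kernel chemogenomics is the tensor (outer) product of a target feature vector and a ligand feature vector; whether [9] uses exactly this form is an assumption here. Its useful property is that the inner product of two pair vectors factorizes into a target similarity times a ligand similarity, so a kernel SVM never has to build Φ explicitly and can transfer information between related targets, including orphans. Function names and feature vectors below are illustrative.

```python
def tensor_feature(target_vec, ligand_vec):
    """Phi(t, c): flattened outer product of target and ligand feature vectors."""
    return [a * b for a in target_vec for b in ligand_vec]


def dot(u, v):
    return sum(x * y for x, y in zip(u, v))


def pair_score(w, target_vec, ligand_vec):
    """f(t, c) = w^T Phi(t, c); a positive value predicts binding."""
    return dot(w, tensor_feature(target_vec, ligand_vec))


# Hypothetical feature vectors for two targets and two ligands.
t1, c1 = [1.0, 0.0, 2.0], [0.5, 1.0]
t2, c2 = [0.0, 1.0, 1.0], [2.0, 1.0]

# Kernel identity: <Phi(t1, c1), Phi(t2, c2)> = <t1, t2> * <c1, c2>,
# which lets an SVM use K_pair = K_target * K_ligand directly.
lhs = dot(tensor_feature(t1, c1), tensor_feature(t2, c2))
rhs = dot(t1, t2) * dot(c1, c2)
```

In practice the weight vector w is learned from known interacting and non-interacting pairs, and target similarity can come from sequence or binding-site comparisons, which is what allows predictions for family members with no known ligands.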

Target-Based High-Throughput Screening (HTS): Experimental reverse chemogenomics employs HTS of chemical libraries against purified protein targets or cellular models expressing specific targets. For example, in GPCR-targeted reverse chemogenomics, screening technologies might include:

- Competitive Ligand-Binding Assays (CLBA): A classical technique that quantifies the interaction between a GPCR and a radiolabeled reference ligand by titration with the molecule of interest [13]. This method provides high specificity and sensitivity for characterizing direct target engagement.

- Functional Assays: These measure downstream signaling events following target activation or inhibition, such as calcium mobilization, cAMP accumulation, or β-arrestin recruitment, providing insights into the functional consequences of ligand binding [13].
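In a competitive ligand-binding assay, the measured IC₅₀ depends on how much radioligand is present, so it is usually converted to an inhibition constant (Kᵢ) that can be compared across experiments. The sketch below applies the Cheng-Prusoff relation, which assumes competitive binding at a single site under equilibrium conditions; the function name is illustrative and all concentrations are in the same units (here nM).

```python
def cheng_prusoff_ki(ic50_nm, radioligand_nm, radioligand_kd_nm):
    """Convert a competition-binding IC50 to Ki via Ki = IC50 / (1 + [L] / Kd).

    ic50_nm: concentration of test compound displacing 50% of specific binding
    radioligand_nm: radioligand concentration used in the assay ([L])
    radioligand_kd_nm: dissociation constant of the radioligand (Kd)
    Assumes competitive, single-site binding at equilibrium.
    """
    return ic50_nm / (1.0 + radioligand_nm / radioligand_kd_nm)
```

For example, an IC₅₀ of 100 nM measured with radioligand at its own Kd gives a Kᵢ of 50 nM; as the radioligand concentration approaches zero, Kᵢ approaches the IC₅₀.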

Applications and Strengths

Reverse chemogenomics has proven highly effective in identifying new therapeutic applications for existing drugs and tool compounds by revealing previously unknown "off-target" interactions. For instance, the application of in silico chemogenomics has successfully identified new targets for approved drugs including aprindine, gentamicin, clotrimazole, tetrabenazine, griseofulvin, and cinnarizine [9]. This approach can repurpose known compounds for new indications based on their newly discovered polypharmacology.

In pathway elucidation, reverse chemogenomics helps validate the functional role of specific proteins within biological networks. For example, when a compound designed to inhibit a specific kinase in vitro also produces an anti-proliferative phenotype in cells, this supports a role for that kinase in proliferation pathways, provided off-target effects can be ruled out (for instance with a structurally distinct inhibitor or an inactive matched control). Furthermore, by screening a compound against multiple related targets, researchers can map specificity and cross-reactivity within gene families, revealing functional redundancies and compensatory mechanisms within pathways [9].

The strength of reverse chemogenomics lies in its straightforward target-to-phenotype logic, which often enables more direct interpretation of results than forward approaches. The initial focus on well-defined molecular targets simplifies the optimization of chemical probes through structure-activity relationship (SAR) studies and facilitates the generation of hypotheses about biological function that can be tested in increasingly complex model systems.

Comparative Analysis: Strategic Selection Guide

The decision to employ forward or reverse chemogenomics depends on the research goals, available tools, and biological context. The table below summarizes the core characteristics of each approach.

Table 1: Strategic Comparison of Forward and Reverse Chemogenomics

| Feature | Forward Chemogenomics | Reverse Chemogenomics |
|---|---|---|
| Fundamental Objective | Identify drug targets responsible for a phenotype [10] | Validate phenotypes resulting from a drug-target interaction [10] |
| Screening Approach | Phenotype-based screening in cells or organisms [10] | Target-based screening against defined proteins [9] |
| Starting Point | Biological phenotype (e.g., loss-of-function) [10] | Protein target (e.g., enzyme, receptor) [10] |
| Typical Assay Systems | Pooled competitive growth assays, phenotypic cellular assays [11] [12] | In vitro enzymatic assays, binding assays (e.g., CLBA) [10] [13] |
| Target Identification | Required post-screening; can be challenging [10] | Defined prior to screening |
| Pathway Elucidation Strength | Unbiased discovery of novel pathway components [10] | Systematic validation of target function within pathways [9] |
| Key Challenge | Designing assays that enable direct target identification [10] | Recapitulating relevant physiology in reductionist assays [12] |

The following workflow diagram illustrates the conceptual framework and key decision points for both strategies:

Successful implementation of chemogenomics strategies requires specialized biological and chemical reagents. The table below details key resources essential for designing and executing both forward and reverse chemogenomics studies.

Table 2: Essential Research Reagents and Resources for Chemogenomics

| Resource Category | Specific Examples | Function and Application |
|---|---|---|
| Chemical Libraries | GlaxoSmithKline Biologically Diverse Compound Set; Pfizer Chemogenomic Library; LOPAC1280; Prestwick Chemical Library [9] | Provide diverse small molecules for screening; target-focused libraries enrich for activity against specific gene families. |
| Barcoded Deletion Collections | Yeast Deletion Collection (YKO) [11] [12] | Enable genome-wide pooled competitive fitness assays in forward chemogenomics. |
| Gene Dosage Variant Libraries | Heterozygous Deletion Collection; DAmP Collection; MoBY-ORF Collection [12] | Allow direct drug target identification via haploinsufficiency and homozygous profiling (HIP/HOP) assays; libraries with partial or increased gene dosage help pinpoint targets. |
| Public Bioactivity Databases | ChEMBL; PubChem; BindingDB; ExCAPE-DB [5] [14] | Provide annotated chemogenomics data for building predictive models and validating findings. ExCAPE-DB offers a standardized, integrated dataset [14]. |
| Standardized Cell Assay Systems | GPCR-expressing Cell Lines; Reporter Gene Assays [13] | Enable target-specific screening and functional characterization in reverse chemogenomics. |

Integrated Applications and Future Perspectives in Pathway Research

The power of forward and reverse chemogenomics is magnified when integrated, creating a virtuous cycle of discovery and validation. For instance, a hit from a forward phenotypic screen can be advanced through reverse chemogenomics approaches to optimize its selectivity and understand its broader interaction profile across the proteome. Conversely, unexpected "off-target" effects discovered during reverse chemogenomics profiling can serve as starting points for forward chemogenomics to explore new biology and identify novel pathway connections [9] [10].

Modern cheminformatics platforms are crucial for this integration, leveraging publicly available chemogenomics repositories like ChEMBL and PubChem [5] [14]. However, researchers must be aware of data quality challenges, including chemical structure errors and bioactivity variability, necessitating rigorous curation workflows before model development [5]. Standardization of chemical structures, bioactivity annotations, and target identifiers—as implemented in resources like ExCAPE-DB—is essential for building reliable predictive models of polypharmacology and off-target effects [14].

Emerging artificial intelligence (AI) technologies are poised to further transform chemogenomics. Deep learning methods, such as chemogenomic neural networks, can learn complex representations from molecular graphs and protein sequences to predict drug-target interactions (DTIs) across large chemical and biological spaces [9]. These computational advances, combined with high-throughput experimental platforms (particularly for challenging target classes like GPCRs), will continue to enhance the efficiency and precision of both forward and reverse chemogenomics strategies for biological pathway identification [13].

In conclusion, forward and reverse chemogenomics provide complementary and powerful frameworks for deconstructing biological pathways. The strategic choice between them depends on the specific research question, with forward approaches excelling at unbiased discovery of novel pathway components, and reverse approaches providing targeted validation of specific protein functions within broader networks. As chemical and genomic technologies continue to advance and integrate, chemogenomics will remain a cornerstone strategy for elucidating complex biological systems and accelerating therapeutic discovery.

The Role of Privileged Structures and Target-Family Focus (e.g., GPCRs, Kinases)

The pursuit of innovative drug discovery paradigms has increasingly centered on chemogenomic approaches that leverage privileged molecular scaffolds and target-family specialization to interrogate biological pathways. This technical guide examines the strategic integration of privileged structures with focused research on two major drug target families: G protein-coupled receptors (GPCRs) and kinases. We present quantitative analyses of target family importance, detailed experimental methodologies for pathway identification, and visualization of key signaling cascades. Within the context of chemogenomic research, this framework enables systematic mapping of biological pathways through targeted chemical intervention, accelerating the identification of novel therapeutic opportunities and enhancing our understanding of complex cellular networks.

The concept of "privileged structures" represents a foundational element in modern chemogenomic approaches to biological pathway identification. Privileged structures are molecular scaffolds with versatile binding properties that enable a single scaffold to provide potent and selective ligands for multiple biological targets through strategic modification of functional groups [15]. These scaffolds typically have favorable drug-like properties, which carry over into the compound libraries and development candidates derived from them. The strategic application of privileged structures allows researchers to target distinct protein families systematically, including GPCRs, ligand-gated ion channels (LGIC), and enzymes/kinases [15]. This approach has proven particularly valuable in chemogenomic studies where understanding structure-target relationships facilitates the design of focused libraries for pathway elucidation.

In the context of biological pathway identification, privileged structures serve as chemical probes to interrogate protein function and network relationships. By applying these versatile scaffolds across multiple targets within a protein family, researchers can map common and divergent signaling mechanisms, revealing how molecular interactions translate to cellular responses. This methodology aligns with the goals of initiatives such as Target 2035, which aims to develop chemical tools for all human proteins to comprehensively understand biological pathways [16]. Currently, available chemical tools target only 3% of the human proteome yet cover 53% of human biological pathways, demonstrating the efficiency of targeted approaches using privileged scaffolds [16].
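The apparent mismatch between 3% and 53% follows from how coverage is counted. A pathway is covered if at least one of its members has a chemical tool, and hub proteins sit in several pathways. The sketch below computes both fractions for a toy dataset. Its assumptions:

- The pathway memberships and liganded set are invented.
- The "at least one member" rule is how such statistics are usually defined, not a rule confirmed from [16].
- The proteome is approximated as the union of pathway members.

```python
def coverage(pathways: dict, liganded: set):
    """Return (fraction of proteins liganded, fraction of pathways covered, covered names).

    The 'proteome' here is approximated as the union of all pathway members, and a
    pathway counts as covered when at least one member has a chemical tool.
    """
    proteome = set().union(*pathways.values())
    protein_fraction = len(liganded & proteome) / len(proteome)
    covered = sorted(name for name, members in pathways.items() if members & liganded)
    return protein_fraction, len(covered) / len(pathways), covered


# Hypothetical toy data: protein C is a hub shared by three pathways.
pathways = {
    "P1": {"A", "B", "C"},
    "P2": {"C", "D", "E"},
    "P3": {"F", "G"},
    "P4": {"C", "H"},
}
protein_frac, pathway_frac, covered = coverage(pathways, liganded={"C"})
print(f"{protein_frac:.1%} of proteins liganded, {pathway_frac:.0%} of pathways covered: {covered}")
```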

Target-Family Focus in Chemogenomic Research

Quantitative Significance of GPCRs and Kinases

Target-family focused approaches have emerged as powerful strategies in chemogenomic research, with GPCRs and kinases representing two of the most therapeutically significant protein families. The tabulated data below illustrates their quantitative importance in drug discovery and research attention trends.

Table 1: Quantitative Significance of GPCRs and Kinases in Drug Discovery

| Parameter | GPCRs | Kinases |
|---|---|---|
| Percentage of FDA-approved drug targets | 34% [17] | Approximately 2.5% (extrapolated from market data) |
| Percentage of all marketed drugs targeting the family | 33-50% [18] [19] | Growing percentage (increasing research attention) [20] |
| Number of human genes | Nearly 800 [19] (≈4% of the protein-coding genome [17]) | >500 human protein kinases [21] |
| Global drug sales volume | $180 billion (2018 estimate) [17] | Significant and growing market share |
| Research attention trend (1998-2017) | Steady increase, recently outpaced by kinases in compound and paper counts [20] | Steepest upward trend, surpassing GPCRs in compound counts (2013) and paper counts (2015) [20] |

Table 2: Research Attention Metrics for Major Target Families (1998-2017)

| Target Family | Unique Compounds Trend | Paper Counts Trend | Unique Targets Trend | Drug-Target Annotations |
|---|---|---|---|---|
| GPCRs | Steady increase, high counts | Consistently high, smooth increase | Relatively flat 2005-2017 | Steady increase with relative enrichment from 2005 |
| Kinases | Rapid increase, surpassing GPCRs from 2013 | Rapid increase, surpassing GPCRs from 2015 | Large fluctuations with peaks in 2008, 2011 | Significant peaks in 2011, 2017 from large-scale studies |
| Ion Channels | Moderate increase | Outperformed proteases | Moderate numbers | - |
| Nuclear Receptors | - | - | - | Outperformed others 1998-2004 in drug annotations |

The research attention trends reveal distinct innovation patterns between these target families. Kinase research has been characterized by large-scale screening studies that dramatically accelerated target investigation, such as comprehensive kinase inhibitor selectivity screens in 2008 and 2011 [20]. In contrast, GPCR research has demonstrated more consistent, steady growth despite the technical challenges associated with membrane protein purification and crystallization [20]. These differential trends highlight how technical advances and community resources shape target family investigation within chemogenomic research.

GPCRs as Privileged Targets

G protein-coupled receptors represent the largest family of membrane receptors in eukaryotes and serve as a paradigm for target-family focused research. GPCRs share a common architecture of seven transmembrane α-helical domains, with an extracellular N-terminus, three extracellular loops, three intracellular loops, and an intracellular C-terminus [17] [19]. This structural conservation across nearly 800 human GPCRs enables targeted approaches using privileged scaffolds that exploit common binding features [19].

GPCRs recognize tremendously diverse signals, including light energy, peptides, lipids, sugars, proteins, odors, pheromones, hormones, and neurotransmitters [18] [17]. They regulate a wide array of physiological functions, from sensation to growth to hormone responses, which makes them valuable probes for pathway identification [18]. Ligand binding induces a conformational change that promotes interaction with heterotrimeric G proteins. The activated receptor then acts as a guanine nucleotide exchange factor (GEF), catalyzing GDP-GTP exchange on the Gα subunit [19]. This initiates diverse intracellular signaling cascades through second messengers, including cyclic AMP (cAMP), diacylglycerol (DAG), and inositol 1,4,5-trisphosphate (IP3) [18].

Table 3: GPCR Classification and Signaling Mechanisms

| Classification System | Categories | Key Features |
|---|---|---|
| Classical System | Class A (Rhodopsin-like) | Largest class (85% of GPCRs); includes olfactory receptors |
| | Class B (Secretin receptor family) | Large extracellular N-terminal domain; mainly peptide hormone receptors (e.g., secretin, glucagon) |
| | Class C (Glutamate receptor family) | Includes metabotropic glutamate receptors |
| GRAFS System | Glutamate | Corresponds to Class C |
| | Rhodopsin | Corresponds to Class A |
| | Adhesion | Unique structural and functional features |
| | Frizzled/Taste2 | Includes taste receptors |
| | Secretin | Corresponds to Class B |
| Primary G Protein Coupling | Gs | Stimulates adenylyl cyclase, increases cAMP |
| | Gi/o | Inhibits adenylyl cyclase, decreases cAMP |
| | Gq/11 | Activates phospholipase C-β, generates IP3 and DAG |
| | G12/13 | Regulates cytoskeletal changes, Rho GTPase activation |
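The coupling rules in Table 3 translate directly into assay choices for target-family screens. The lookup below encodes them. The suggested readouts are illustrative choices reflecting common practice, not a prescribed protocol:

- Gi/o: cAMP assays with forskolin pre-stimulation.
- Gq/11: calcium flux or IP1 accumulation.
- G12/13: RhoA activation.

The function name is hypothetical. The example receptors use their canonical couplings: β2-adrenergic (Gs), M2 muscarinic (Gi/o), and M3 muscarinic (Gq/11).

```python
# Coupling rules from Table 3, plus commonly used functional readouts (illustrative choices).
COUPLING = {
    "Gs": ("adenylyl cyclase (stimulated)", "cAMP increases", "cAMP accumulation assay"),
    "Gi/o": ("adenylyl cyclase (inhibited)", "cAMP decreases",
             "inhibition of forskolin-stimulated cAMP"),
    "Gq/11": ("phospholipase C-beta", "IP3 and DAG increase",
              "intracellular Ca2+ flux or IP1 accumulation"),
    "G12/13": ("Rho GTPase signaling", "RhoA activation", "RhoA activation assay"),
}


def plan_assay(receptor: str, coupling: str) -> str:
    """Summarize the expected second-messenger change and a candidate readout."""
    try:
        effector, change, readout = COUPLING[coupling]
    except KeyError:
        raise ValueError(f"unknown G protein family: {coupling!r}") from None
    return f"{receptor}: {coupling} -> {effector}; {change}; readout: {readout}"


for receptor, family in [("ADRB2", "Gs"), ("CHRM2", "Gi/o"), ("CHRM3", "Gq/11")]:
    print(plan_assay(receptor, family))
```

Forskolin appears in the Gi/o readout because a decrease in cAMP is only measurable once adenylyl cyclase has first been stimulated.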

The diagram below illustrates the core GPCR signaling pathway, highlighting key second messenger systems and downstream effects:

Figure 1: GPCR Signaling Pathway and Second Messenger Systems

Kinases as Privileged Targets

Kinases represent another major family of drug targets that have received increasing research attention, particularly in recent years. The human genome encodes approximately 500 protein kinases that control multiple aspects of cell and organism growth, differentiation, and function [21]. Kinases regulate the function of their substrate proteins by transferring the terminal (γ) phosphate of ATP to the hydroxyl group of tyrosine, serine, or threonine residues [21]. This mechanism underlies their central role in signal transduction networks.

Tyrosine kinases fall into two primary categories: receptor tyrosine kinases (RTKs) and non-receptor tyrosine kinases. Approximately 20 RTK families and at least 9 distinct groups of non-receptor tyrosine kinases have been identified in humans [21]. RTKs are single-pass transmembrane proteins. They bind polypeptide ligands (e.g., growth factors) through their extracellular domains and engage cytoplasmic effector proteins through their intracellular domains. Ligand binding promotes receptor dimerization and autophosphorylation of tyrosine residues. This stabilizes the active kinase conformation and creates binding sites for downstream adaptor, scaffold, and effector proteins [21].

Table 4: Major Kinase Families and Their Functions

| Kinase Category | Key Examples | Primary Functions |
|---|---|---|
| Receptor Tyrosine Kinases | EGFR/ErbB family, PDGFR, FGFR | Growth factor signaling, cell proliferation, differentiation |
| Non-receptor Tyrosine Kinases | Src family, Abl, Jak, Fak | Immune signaling, cell adhesion, migration |
| Tec Family Kinases | Tec, Btk, Itk | B-cell and T-cell receptor signaling |
| MAPK Pathway Kinases | ERK, p38, JNK | Cellular stress responses, proliferation signals |
| Serine/Threonine Kinases | PKC, AKT/PKB | Cell survival, metabolism, apoptosis regulation |

The diagram below illustrates the core kinase signaling pathway, highlighting key cascades and downstream effects:

Figure 2: Kinase Signaling Pathways and Major Cascades

Experimental Methodologies for Pathway Identification

Target Discovery Approaches

Chemogenomic pathway identification relies on sophisticated experimental methodologies that leverage privileged structures and target-family knowledge. The following table summarizes key approaches for target discovery and pathway mapping:

Table 5: Experimental Methods for Target Discovery and Pathway Identification

| Method | Principle | Applications in Pathway Identification |
|---|---|---|
| Drug Affinity Responsive Target Stability (DARTS) | Monitors changes in protein stability when ligands protect targets from protease degradation [22] | Identify direct protein targets of privileged scaffolds in complex biological samples |
| Multiomics Analysis | Integrates proteomic, genomic, and transcriptomic data to map pathway relationships | Systems-level understanding of target family signaling networks |
| Gene Editing | CRISPR/Cas9 and related technologies to knock out or modify potential target genes | Functional validation of pathway components and synthetic lethal interactions |
| Network-Based Inference | Uses protein-protein interaction networks to predict new drug targets based on guilt-by-association [22] | Expand known pathways and identify novel nodes for therapeutic intervention |
| Machine Learning DTI Prediction | Algorithms learn patterns from known drug-target interactions to predict new interactions [22] | Accelerate discovery of novel pathway components amenable to modulation by privileged structures |
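The guilt-by-association idea behind network-based inference fits in a few lines. Proteins that interact with many known targets of a compound are ranked as candidate additional targets. The toy network and the known-target set below are for illustration only. Published methods typically use weighted networks with random-walk or diffusion scores rather than this simple neighbour fraction.

```python
from collections import defaultdict


def build_graph(edges):
    """Undirected adjacency sets from (protein, protein) edge tuples."""
    graph = defaultdict(set)
    for a, b in edges:
        graph[a].add(b)
        graph[b].add(a)
    return graph


def gba_scores(graph, known_targets):
    """Rank non-target proteins by the fraction of their neighbours that are known targets."""
    scores = {
        protein: len(neighbours & known_targets) / len(neighbours)
        for protein, neighbours in graph.items()
        if protein not in known_targets and neighbours
    }
    return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))


# Toy interaction network for illustration only.
edges = [("EGFR", "GRB2"), ("ERBB2", "GRB2"), ("GRB2", "SOS1"),
         ("EGFR", "SHC1"), ("SHC1", "GRB2"), ("SOS1", "HRAS")]
graph = build_graph(edges)
print(gba_scores(graph, known_targets={"EGFR", "ERBB2"}))
```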

Detailed Protocol: Drug Affinity Responsive Target Stability (DARTS)

The DARTS method provides a label-free approach for identifying direct molecular targets of privileged scaffolds, making it particularly valuable for chemogenomic pathway mapping [22]. The detailed experimental workflow includes:

Sample Preparation: Prepare cell lysates or purified protein libraries representing the biological system of interest. Maintain physiological conditions to preserve native protein conformations.

Small Molecule Treatment: Incubate aliquots of the protein sample with the privileged scaffold compound or control vehicle. Typical concentrations range from nanomolar to micromolar, depending on expected binding affinity.

Protease Digestion: Divide the treated protein samples into multiple aliquots and digest with a nonspecific protease (typically thermolysin or proteinase K) across a range of concentrations. Include undigested controls for reference.

Protein Stability Analysis: Terminate protease reactions and analyze protein patterns using SDS-PAGE or mass spectrometry. Compare digestion patterns between compound-treated and control samples.

Target Identification: Identify proteins showing reduced degradation in compound-treated samples compared to controls. These stabilized proteins represent potential direct binding partners of the privileged scaffold.

Validation: Confirm putative targets through complementary approaches such as cellular thermal shift assay (CETSA), surface plasmon resonance (SPR), or functional assays.

The DARTS method is particularly advantageous for chemogenomic studies as it requires no chemical modification of the privileged scaffold, works with complex protein mixtures, and can detect interactions with low-abundance targets [22]. However, proper controls are essential to eliminate false positives from nonspecific stabilization effects.
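The comparison made in the protein stability analysis and target identification steps reduces to a per-protein protection ratio. The minimal sketch below assumes band or spectral intensities have already been quantified and normalized to the undigested control. It uses two hypothetical thresholds, chosen to reflect the need to control for nonspecific stabilization:

- A 1.5-fold protection cutoff.
- Protection required at two or more protease concentrations.

```python
def darts_hits(intensities, fold_cutoff=1.5, min_concentrations=2):
    """Flag proteins protected from proteolysis by compound treatment.

    intensities: {protein: [(vehicle, compound), ...]}, one tuple per protease
    concentration; each value is the band intensity remaining, normalized to the
    undigested control. Returns {protein: maximum compound/vehicle ratio}.
    """
    hits = {}
    for protein, pairs in intensities.items():
        ratios = [compound / vehicle for vehicle, compound in pairs if vehicle > 0]
        n_protected = sum(ratio >= fold_cutoff for ratio in ratios)
        # Requiring protection at several protease concentrations guards
        # against one-off, nonspecific differences.
        if n_protected >= min_concentrations:
            hits[protein] = round(max(ratios), 2)
    return hits


# Hypothetical quantified band intensities at increasing protease concentrations.
intensities = {
    "candidate_1": [(0.80, 0.90), (0.40, 0.75), (0.10, 0.50)],
    "bystander": [(0.90, 0.95), (0.50, 0.55), (0.20, 0.22)],
    "noisy": [(0.30, 0.60), (0.10, 0.10)],
}
print(darts_hits(intensities))
```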

Detailed Protocol: Kinase Inhibitor Profiling

Large-scale kinase inhibitor profiling represents a powerful target-family focused approach for pathway identification. The methodology involves:

Kinase Panel Selection: Curate a diverse panel of purified human kinases representing major kinase families and signaling pathways. Include both well-characterized and understudied kinases.

Compound Screening: Screen privileged scaffold compounds against the kinase panel using activity-based assays. Common formats include mobility shift assays, fluorescence resonance energy transfer (FRET), or radiolabeled ATP incorporation.

Concentration-Response Analysis: For hits showing significant inhibition, perform detailed concentration-response studies to determine IC50 values and selectivity profiles.

Cellular Target Engagement: Validate direct target engagement in cellular contexts using techniques such as thermal proteome profiling or chemical proteomics.

Pathway Mapping: Integrate kinase inhibition profiles with known signaling networks to map pathways affected by privileged scaffold compounds.

Functional Validation: Use genetic approaches (RNAi, CRISPR) to validate pathway components and confirm phenotypic effects observed with chemical inhibition.

Large-scale kinase inhibitor profiling studies following this general workflow identified novel targets and pathways and sparked increased research interest in kinase biology [20].
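The concentration-response and selectivity steps can be sketched as follows. The sketch makes two simplifications:

- IC50 is estimated by log-linear interpolation between the two tested concentrations that bracket 50% inhibition. This simplifies the four-parameter logistic fits normally used and assumes inhibition rises monotonically with concentration.
- Selectivity is summarized as the fraction of the panel inhibited below a concentration cutoff.

All data, function names, and the 100 nM cutoff are hypothetical.

```python
import math


def interpolate_ic50(concs_nm, inhibition_pct):
    """Estimate IC50 (nM) by log-linear interpolation between the two tested
    concentrations that bracket 50% inhibition; None if 50% is never crossed.
    Assumes inhibition increases with concentration."""
    points = sorted(zip(concs_nm, inhibition_pct))
    for (c_lo, i_lo), (c_hi, i_hi) in zip(points, points[1:]):
        if i_lo < 50.0 <= i_hi:
            frac = (50.0 - i_lo) / (i_hi - i_lo)
            log_ic50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ic50
    return None


def selectivity_score(ic50_by_kinase, cutoff_nm=100.0):
    """Fraction of the panel inhibited with IC50 below cutoff_nm (lower = more selective)."""
    hits = sorted(k for k, v in ic50_by_kinase.items() if v is not None and v < cutoff_nm)
    return len(hits) / len(ic50_by_kinase), hits


# Hypothetical concentration-response data for one compound against one kinase.
ic50 = interpolate_ic50([1, 10, 100, 1000], [5, 30, 70, 95])
print(f"IC50 ~ {ic50:.1f} nM")

# Hypothetical panel results (None = no 50% inhibition at the top concentration).
panel = {"KIN_A": ic50, "KIN_B": 850.0, "KIN_C": None, "KIN_D": 12.0}
print(selectivity_score(panel))
```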

The Scientist's Toolkit: Research Reagent Solutions

Table 6: Essential Research Reagents for GPCR and Kinase Studies

| Reagent Category | Specific Examples | Function and Application |
|---|---|---|
| GPCR-Targeted Reagents | GTPγS (non-hydrolyzable GTP analog) | Measures G protein activation in GPCR functional assays |
| | Forskolin (adenylyl cyclase activator) | Modulates cAMP pathways in GPCR second messenger assays |
| | β-arrestin recruitment assays | Measures GPCR desensitization and internalization |
| | BRET/FRET-based GPCR signaling biosensors | Monitors real-time GPCR activation and signaling dynamics |
| Kinase-Targeted Reagents | ATP-competitive affinity matrices | Purifies kinase targets and identifies kinase-compound interactions |
| | Phospho-specific antibodies | Detects phosphorylation status of kinase substrates |
| | Kinase profiling panels | Assesses selectivity of kinase inhibitors across the kinome |
| | Akt/PKB pathway inhibitors (e.g., MK-2206) | Probes PI3K/AKT survival signaling pathways |
| General Pathway Mapping Tools | Protease enzymes (thermolysin, proteinase K) | DARTS experiments for target identification |
| | Bimolecular fluorescence complementation (BiFC) | Visualizes protein-protein interactions in pathway mapping |
| | CRISPR/Cas9 gene editing systems | Functional validation of pathway components |
| | Tandem mass spectrometry (LC-MS/MS) | Identifies protein targets and phosphorylation sites |

Integration with Chemogenomic Pathway Identification

The strategic combination of privileged structures and target-family focus creates a powerful framework for chemogenomic pathway identification. This integrated approach enables systematic mapping of biological pathways through several key mechanisms:

First, privileged scaffolds provide versatile chemical starting points that can be optimized for multiple targets within a protein family, revealing connections between molecular targets and downstream phenotypic effects. The application of privileged structure libraries against focused target families like GPCRs or kinases generates rich datasets that illuminate both on-target and polypharmacological effects [15].

Second, target-family specialization allows researchers to leverage conserved structural features and assay technologies across multiple targets. For example, conserved binding pockets in GPCRs or ATP-binding sites in kinases enable development of standardized screening approaches that accelerate pathway mapping [18] [21].

Third, initiatives like Target 2035 aim to develop chemical probes for all human proteins, with current tools already covering 53% of human biological pathways despite targeting only 3% of the human proteome [16]. This demonstrates the efficiency of targeted approaches using privileged scaffolds against key protein families.

The integration of these approaches within chemogenomic research continues to evolve with emerging technologies including machine learning-based drug-target interaction prediction, multiomics integration, and advanced gene editing techniques [22]. These innovations promise to accelerate biological pathway identification and therapeutic discovery through more systematic mapping of the interface between chemical space and biological systems.

Linking Small Molecule-Protein Interactions to Phenotypic Outcomes and Pathway Hypotheses

The fundamental paradigm of modern chemogenomics posits that small molecule compounds can be used as targeted perturbagens to elucidate protein function and deconvolve complex biological pathways. This approach bridges the gap between molecular interactions and phenotypic outcomes by systematically mapping chemical tools to their protein targets and subsequent pathway modulations. The core hypothesis suggests that compounds with similar interaction profiles will influence biological systems in related ways, enabling researchers to generate testable hypotheses about pathway organization and function through controlled chemical interventions [23]. This methodology represents a significant shift from traditional reductionist "magic bullet" approaches toward a more holistic systems biology perspective that acknowledges the inherent promiscuity of small molecules and their effects on entire biological networks [23].

Advanced computational platforms now enable the creation of multiscale interactomic signatures that describe compound behavior across multiple biological scales, from direct protein binding to pathway modulation and phenotypic outcomes [23]. These signatures allow compounds to be compared with one another under the hypothesis that similar signatures yield similar biological behavior. This enables more accurate prediction of therapeutic potential and the generation of novel drug candidates. The integration of heterogeneous data types creates a comprehensive framework for understanding how molecular interactions propagate through biological systems to produce observable phenotypes [23]. These data types include drug side effects, protein pathways, protein-protein interactions, protein-disease associations, and Gene Ontology terms.

Computational Frameworks for Multiscale Analysis

Signature-Based Profiling Platforms

The Computational Analysis of Novel Drug Opportunities (CANDO) platform exemplifies the multiscale therapeutic discovery approach by generating "multiscale interactomic signatures" for each compound that describe its functional behavior as vectors of real values [23]. These signatures integrate multiple data types:

- Compound-protein interactions scored using bioanalytical docking protocols

- Protein-pathway associations from databases like Reactome

- Protein-disease associations from curated resources

- Drug side effect profiles from sources like OFFSIDES

- Gene Ontology annotations for functional context

The platform employs a graph feature embedding algorithm (node2vec) to create multiscale interactomic signatures from heterogeneous biological networks [23]. The hypothesis is that compounds with similar signatures will have similar effects in biological systems and therefore can be repurposed accordingly. Benchmarking results indicate that networks incorporating side effect data significantly enhance performance, suggesting that adverse drug reactions contain rich information describing compound effects on biological systems [23].
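The signature-comparison hypothesis can be illustrated by ranking compounds by the similarity of their real-valued signature vectors. This sketch uses cosine similarity on small made-up vectors. It does not reproduce CANDO's node2vec embeddings or its own comparison metric, which may differ.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length real-valued vectors."""
    if len(u) != len(v):
        raise ValueError("signatures must have the same length")
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def most_similar(query, signatures, top_k=2):
    """Rank other compounds by signature similarity to the query compound."""
    ranked = sorted(
        ((cosine(signatures[query], vec), name)
         for name, vec in signatures.items() if name != query),
        reverse=True,
    )
    return [(name, round(score, 3)) for score, name in ranked[:top_k]]


# Hypothetical 4-dimensional signatures; real signatures are far longer.
signatures = {
    "drug_A": [0.9, 0.1, 0.0, 0.4],
    "drug_B": [0.8, 0.2, 0.1, 0.5],
    "drug_C": [0.0, 0.9, 0.8, 0.1],
    "drug_D": [0.1, 0.8, 0.9, 0.0],
}
print(most_similar("drug_A", signatures))
```

Under the repurposing hypothesis, the top-ranked neighbour (here drug_B) would be the first candidate to share drug_A's therapeutic or adverse effects.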

Pathway-Centric Chemogenomic Mapping

The Probe my Pathway (PmP) database provides a specialized resource that directly maps high-quality chemical probes and chemogenomic compounds onto human biological pathways from the Reactome database [24]. This portal enables researchers to:

- Browse pathway coverage via interactive icicle charts that visualize the extent of chemical tool availability across pathway hierarchies

- Identify under-explored pathways with limited chemical coverage for targeted probe development

- Select appropriate chemical tools for specific pathway modulation experiments

- Explore structural chemistry of ligands targeting specific cellular machineries

PmP currently contains 554 chemical probes, 484 chemogenomic compounds, 11,175 proteins, and 2,673 pathways, updated annually with high-quality, well-characterized compounds [24]. This resource is particularly valuable for designing experiments that test specific pathway hypotheses through controlled chemical perturbations.
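The pathway coverage that PmP presents visually can be approximated per pathway as the fraction of member proteins with at least one chemical tool. Pathways with low fractions are candidates for targeted probe development. The function, the 25% cutoff, and the toy data are hypothetical and do not use PmP's data format or any PmP interface.

```python
def rank_underexplored(pathways, tool_targets, max_fraction=0.25):
    """List pathways whose fraction of members with a chemical tool is <= max_fraction.

    Sorted from least to most covered; larger pathways come first among ties.
    """
    report = []
    for name, members in pathways.items():
        fraction = len(members & tool_targets) / len(members) if members else 0.0
        if fraction <= max_fraction:
            report.append((name, fraction, len(members)))
    return sorted(report, key=lambda row: (row[1], -row[2], row[0]))


# Hypothetical pathway memberships and tool-covered proteins.
pathways = {
    "Pathway_1": {"A", "B", "C", "D"},
    "Pathway_2": {"E", "F"},
    "Pathway_3": {"G", "H", "I", "J", "K"},
    "Pathway_4": {"L", "M", "N"},
}
for name, fraction, size in rank_underexplored(pathways, tool_targets={"A", "B", "G"}):
    print(f"{name}: {fraction:.0%} of {size} proteins have a chemical tool")
```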

Table 1: Key Computational Platforms for Linking Small Molecules to Pathways

| Platform/Resource | Primary Function | Data Types Integrated | Key Applications |
|---|---|---|---|
| CANDO [23] | Multiscale interactomic signature generation | Protein interactions, pathways, side effects, gene ontology, disease associations | Drug repurposing, therapeutic candidate generation, adverse effect prediction |
| Probe my Pathway (PmP) [24] | Chemical tool to pathway mapping | Chemical probes, chemogenomic compounds, Reactome pathways | Pathway coverage analysis, chemical tool selection, target identification |
| PDBe Tools [25] | Structural analysis of small molecules in PDB | Protein-ligand structures, chemical descriptors, interaction patterns | Ligand characterization, interaction analysis, functional role assignment |

Structural Analysis and Interaction Mapping

Specialized tools for analyzing small molecule structures within the Protein Data Bank (PDB) provide critical insights into the molecular basis of compound-protein interactions. PDBe has developed several resources to address the complexity of small-molecule data in the PDB:

- PDBe CCDUtils: A chemistry toolkit for accessing and enriching ligand data from PDB reference dictionaries, computing physicochemical properties, and identifying core chemical substructures [25]

- PDBe Arpeggio: For analyzing interactions between ligands and macromolecules at the atomic level

- PDBe RelLig: For identifying the functional roles of ligands within protein-ligand complexes

These tools help researchers navigate the complexities of small molecules and their roles in biological systems, facilitating mechanistic understanding of biological functions [25]. The resources are particularly valuable for understanding how specific molecular interactions translate to functional consequences at the protein level, which then propagate to pathway and phenotypic levels.
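At its simplest, atom-level interaction analysis starts from interatomic distances. The sketch below lists protein-ligand atom pairs within a distance cutoff. It is not Arpeggio's algorithm, which additionally classifies contacts by interaction type using atom typing and geometric rules. The coordinates, atom labels, and the 4.0 Å cutoff are hypothetical.

```python
import math


def find_contacts(ligand_atoms, protein_atoms, cutoff=4.0):
    """Return (ligand_atom, protein_atom, distance) for atom pairs within cutoff (angstroms)."""
    found = []
    for lig_name, lig_xyz in ligand_atoms.items():
        for prot_name, prot_xyz in protein_atoms.items():
            d = math.dist(lig_xyz, prot_xyz)
            if d <= cutoff:
                found.append((lig_name, prot_name, round(d, 2)))
    return sorted(found, key=lambda contact: contact[2])


# Hypothetical coordinates (angstroms) for two ligand atoms and three protein atoms.
ligand = {"LIG:N1": (0.0, 0.0, 0.0), "LIG:O2": (5.0, 0.0, 0.0)}
protein = {"RES1:OD1": (2.9, 0.0, 0.0), "RES2:CD1": (0.0, 3.8, 0.0), "RES3:CZ": (10.0, 0.0, 0.0)}
for contact in find_contacts(ligand, protein):
    print(contact)
```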

Experimental Methodologies and Workflows

Multi-Omics Pathway Identification Protocol

Systematic identification of cancer pathways through integrated transcriptomics and proteomics analysis provides a robust methodology for linking molecular profiles to pathway hypotheses [26]. The experimental workflow comprises:

Sample Preparation and Data Collection:

- Utilize cancer cell lines from resources like the Cancer Cell Line Encyclopedia (CCLE)

- Generate RNA-Seq transcriptomics data measuring RNA transcript abundance

- Perform tandem mass tag (TMT)-based quantitative proteomics for large-scale protein quantification

- Use paired transcriptomics and proteomics data when possible (paired data were available for 371 of 375 cell lines in the published study) [26]

Data Analysis and Significance Testing:

- Apply statistical approaches to identify significant transcripts and proteins for each cancer type

- Use an optimal combination of Gini purity and FDR-adjusted P-values to call differentially expressed features

- Typical results range from 5,756-11,143 significant transcripts and 409-2,443 significant proteins per cancer type [26]

- Within the significant transcript sets, identify protein-coding transcripts whose products are also significant at the protein level

Pathway Enrichment and Characterization:

- Analyze significant transcripts and proteins for enrichment of biological pathways separately

- Identify overlapping pathways derived from both transcripts and proteins as characteristic

- Number of characteristic pathways typically ranges from 4-112 per cancer type [26]

- Prioritize pathways present in multiple analyses for experimental validation
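The enrichment-and-overlap logic of this protocol can be sketched end to end in three steps:

1. Run a one-sided hypergeometric over-representation test for each pathway.
2. Apply Benjamini-Hochberg adjustment across pathways.
3. Intersect the transcript-derived and protein-derived results to obtain characteristic pathways.

This is a generic over-representation analysis under stated assumptions: a 1,000-gene universe, toy gene sets, and q < 0.05. The cited study's exact statistics, including its Gini-purity criterion, are not reproduced.

```python
from math import comb


def hypergeom_sf(k, N, K, n):
    """P(X >= k) for X ~ Hypergeometric(population N, K pathway members, n draws)."""
    upper = min(K, n)
    return sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1)) / comb(N, n)


def benjamini_hochberg(pvalues):
    """Benjamini-Hochberg adjusted P-values, returned in the input order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):  # from the largest P-value down, enforcing monotonicity
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


def enriched_pathways(significant, pathways, universe_size, alpha=0.05):
    """Pathways over-represented in a significant feature set (BH-adjusted)."""
    names = sorted(pathways)
    pvals = [hypergeom_sf(len(significant & pathways[p]), universe_size,
                          len(pathways[p]), len(significant)) for p in names]
    return {p for p, q in zip(names, benjamini_hochberg(pvals)) if q < alpha}


def genes(start, stop):
    """Hypothetical gene identifiers g<start> .. g<stop-1>."""
    return {f"g{i}" for i in range(start, stop)}


pathways = {"PW1": genes(0, 20), "PW2": genes(100, 120), "PW3": genes(200, 220)}
transcripts = genes(0, 10) | genes(100, 110) | genes(500, 580)  # 100 significant transcripts
proteins = genes(0, 8) | genes(200, 208) | genes(600, 634)       # 50 significant proteins

from_transcripts = enriched_pathways(transcripts, pathways, universe_size=1000)
from_proteins = enriched_pathways(proteins, pathways, universe_size=1000)
characteristic = from_transcripts & from_proteins
print(sorted(from_transcripts), sorted(from_proteins), sorted(characteristic))
```

Requiring support from both data layers discards pathways that reach significance in only one of them (PW2 and PW3 in this toy case). This is the rationale for treating overlapping pathways as characteristic.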

Table 2: Representative Pathway-Drug Associations Identified Through Multi-Omics Analysis

| Cancer Type | Characteristic Pathway | Targeting Drugs | Validation Status |
|---|---|---|---|
| Acute Myeloid Leukemia | Olfactory Transduction | Multiple candidates identified | Literature corroboration |
| Urinary Tract Cancer | Alpha-6 Beta-1 and Alpha-6 Beta-4 Integrin Signaling | Under investigation | Experimental validation pending |
| Breast Cancer | Signaling by GPCR | Multiple candidates identified | FDA-approved for some |
| Stomach Cancer | Axon Guidance | Under investigation | Novel hypothesis |

Protein-Protein Interaction Inhibition Strategies

Structure-based approaches for developing protein-protein interaction (PPI) inhibitors provide a methodology for testing specific pathway hypotheses through targeted complex disruption [27]. The workflow involves:

Target Selection and Validation:

- Select biologically relevant targets with PPI interfaces amenable to disruption

- Utilize gene knockdown strategies (RNAi or CRISPR-Cas9) to define biological relevance

- Employ synthetic lethality assays to elucidate proteins linked with disease states

- Leverage computational prediction algorithms to identify binary PPIs and multi-protein complexes

Hot Spot Identification and Compound Design:

- Perform computational analysis of protein complexes to identify critical binding regions

- Utilize alanine scanning mutagenesis to identify hot spot residues (ΔΔG ≥1 kcal/mol upon substitution) [27]

- Measure changes in solvent-accessible surface area (ΔSASA) upon binding

- Design small molecules or peptidomimetics that reproduce functionality of hot spot residues

Compound Optimization and Validation:

- Develop orthosteric modulators that mimic critical features of the binding interface

- Explore allosteric modulators that alter protein conformation

- Consider PPI stabilizers as an alternative to inhibitors for certain targets

- Validate through biochemical and cellular assays confirming pathway modulation
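The hot-spot criterion in this workflow can be made concrete. If binding affinities are measured as dissociation constants, the free-energy change caused by an alanine substitution is ΔΔG = RT ln(Kd,mut/Kd,wt). At 25 °C a 10-fold loss in affinity therefore corresponds to about 1.36 kcal/mol, which passes the ≥1 kcal/mol threshold. The sketch below applies that threshold and computes ΔSASA as unbound minus bound solvent-accessible surface area. All residue names and values are hypothetical.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)


def ddg_from_kd(kd_wt, kd_mut, temp_k=298.15):
    """ddG (kcal/mol) = RT ln(Kd_mut / Kd_wt); positive values mean weaker binding."""
    return R_KCAL * temp_k * math.log(kd_mut / kd_wt)


def classify_residues(residues, threshold=1.0):
    """residues: {name: (kd_wt, kd_mut, sasa_unbound, sasa_bound)}.

    Kd values share one unit; SASA values are in square angstroms.
    Returns (name, ddG, dSASA, is_hot_spot) tuples.
    """
    rows = []
    for name, (kd_wt, kd_mut, sasa_unbound, sasa_bound) in residues.items():
        ddg = ddg_from_kd(kd_wt, kd_mut)
        dsasa = sasa_unbound - sasa_bound  # area buried on complex formation
        rows.append((name, round(ddg, 2), round(dsasa, 1), ddg >= threshold))
    return rows


# Hypothetical alanine-scan results (Kd in nM) for three interface residues.
residues = {
    "Trp_A": (10.0, 1000.0, 180.0, 40.0),
    "Leu_B": (10.0, 30.0, 120.0, 70.0),
    "Ser_C": (10.0, 11.0, 60.0, 55.0),
}
for row in classify_residues(residues):
    print(row)
```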

Visualization and Data Integration

Experimental Workflow for Pathway Hypothesis Generation

The following diagram illustrates the integrated computational and experimental workflow for generating pathway hypotheses from small molecule-protein interactions:

Pathway-Centric Chemical Tool Selection Framework

The following diagram outlines the decision process for selecting chemical tools to test specific pathway hypotheses:

Table 3: Key Research Reagents and Computational Resources for Chemogenomic Pathway Analysis

| Resource/Reagent | Type | Primary Function | Application Context |
|---|---|---|---|
| Chemical Probes Portal [24] | Compound Database | Curated collection of high-quality chemical probes with selectivity profiles | Identification of well-characterized tools for specific protein targets |
| Reactome [24] | Pathway Database | Hierarchical representation of human biological pathways | Pathway context analysis and mapping of chemical tools |
| PDBe CCDUtils [25] | Computational Tool | Chemistry toolkit for accessing and analyzing PDB ligand data | Structural analysis of small molecules and interaction patterns |
| CANDO Platform [23] | Computational Platform | Multiscale interactomic signature generation and analysis | Drug repurposing, mechanism prediction, and candidate generation |
| Kinase Chemogenomic Set (KCGS) [24] | Compound Library | Well-characterized kinase-focused chemical compounds | Selective modulation of kinase signaling pathways |
| Cancer Cell Line Encyclopedia [26] | Biological Resource | Multi-omics data for 1000+ cancer cell lines across 40+ cancer types | Model systems for pathway analysis and drug screening |
| node2vec Algorithm [23] | Computational Method | Graph feature embedding for network analysis | Generation of multiscale interactomic signatures from heterogeneous data |
| RDKit [25] | Computational Library | Cheminformatics and machine learning for small molecules | Chemical descriptor calculation and substructure analysis |

The integration of small molecule-protein interaction data with multiscale biological networks represents a powerful framework for generating and testing pathway hypotheses. By leveraging computational platforms that create holistic signatures of compound behavior, researchers can move beyond single-target thinking toward a systems-level understanding of how chemical perturbations propagate through biological networks to produce phenotypic outcomes. The methodologies and resources outlined in this guide provide a foundation for designing experiments that systematically connect molecular interactions to pathway modulation, enabling more efficient drug discovery and repurposing while advancing our fundamental understanding of biological systems. As chemical probe coverage expands and computational methods mature, the vision of comprehensively mapping the human pathome through controlled chemical perturbations moves increasingly toward reality.

The Evolution from 'One-Drug, One-Target' to Systems-Level Pathway Analysis

The paradigm of drug discovery has undergone a fundamental transformation, shifting from the reductionist 'one-drug, one-target' approach to embracing the complexity of biological systems through pathway-level analysis. This evolution represents a response to the limitations of traditional methods in addressing complex diseases and the growing recognition that cellular processes operate through interconnected networks rather than isolated molecular components. Enabled by advances in high-throughput omics technologies and sophisticated computational methods, systems-level pathway analysis now provides a framework for understanding drug effects in their physiological context, leading to more effective therapeutic strategies with improved safety profiles and enhanced efficacy.

The dominant 'one-drug, one-target' paradigm that guided drug discovery for decades aimed to design selective drug molecules acting on individual biological targets [28]. This approach was built on a simplistic perspective of human anatomy and physiology, where health was determined by individual diagnostic markers, and drugs were developed to modulate specific targets to return these markers to normal ranges [29]. While this reductionist model yielded important therapeutic breakthroughs, it ignored the cellular and physiological context of drugs' mechanisms of action, making it difficult to address safety and toxicity issues adequately in drug development [28].

The emergence of systems biology and precision medicine has catalyzed a fundamental re-evaluation of this paradigm [29]. Complex diseases such as cancer, cardiovascular diseases, and neurological disorders typically result from the dysfunction of multiple pathways rather than a small number of individual genes [28]. This recognition, coupled with an appreciation of staggering human biological complexity—including approximately 19,000 coding genes, 20,000 gene-coded proteins, 250,000-1 million protein variants, and ~40,000 metabolites—has necessitated a more holistic approach to therapeutic intervention [29].

The advent of high-throughput omics technologies has enabled researchers to collect large-scale datasets on various properties of compounds, features of target genes/proteins, and responses in the human physiological system [28]. These technological advances, combined with sophisticated computational methods, have paved the way for pathway-based analysis as a powerful framework for drug target inference and validation.

Limitations of the Traditional 'One-Drug, One-Target' Model

Scientific and Clinical Shortcomings

The traditional drug discovery model has demonstrated significant limitations in both scientific rationale and clinical performance:

Insufficient efficacy: Most drugs developed under the one-drug, one-target paradigm benefit only a subset of patients. Analyses indicate that drugs are typically effective in only 30-75% of patients, with response rates as low as 25% in oncology and substantial non-response rates in Alzheimer's disease (70%), arthritis (50%), diabetes (43%), and asthma (40%) [29].

Safety concerns: Drug promiscuity remains a significant issue; individual drugs are estimated to interact with 6-28 off-target moieties on average [29]. Between 1994 and 2015, the FDA recalled 26 drugs from the market, primarily because of safety concerns [29].

High attrition rates: The drug development process faces high failure rates of 46% in Phase I, 66% in Phase II, and 30% in Phase III clinical trials, and only approximately 8% of lead compounds successfully make it through clinical trials [29].
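Chaining the per-phase failure rates shows how attrition compounds across the clinical pipeline. The sketch below is a minimal illustration that assumes each failure rate applies only to compounds entering that phase and that the phases are independent; both assumptions are ours, not the source's.

```python
# Per-phase failure rates quoted in the text, as fractions of compounds entering each phase
PHASE_FAILURE_RATES = {"Phase I": 0.46, "Phase II": 0.66, "Phase III": 0.30}


def cumulative_success(failure_rates):
    """Probability that a compound passes every listed phase.

    Assumes each rate is conditional on entering that phase and that phases are independent.
    """
    probability = 1.0
    for rate in failure_rates.values():
        probability *= 1.0 - rate
    return probability


if __name__ == "__main__":
    p = cumulative_success(PHASE_FAILURE_RATES)
    print(f"Passing Phases I-III: {p:.1%}")  # prints "Passing Phases I-III: 12.9%"
```

Under these assumptions the three phases alone give roughly 13% cumulative success, somewhat higher than the approximately 8% cited above; the gap presumably reflects losses outside Phases I-III (for example, at regulatory review) or a different starting population, which the source does not specify.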

Economic Challenges

The economic implications of these limitations are substantial:

Prolonged development timelines: Moving a drug from discovery to product launch still takes 12-15 years on average [29].

Extraordinary costs: The total capitalized cost of bringing a new drug to market was recently estimated at $2.87 billion [29].

These challenges collectively highlight the need for a more sophisticated approach that accounts for biological complexity and the network properties of disease mechanisms.

Quantitative Foundations of Successful Drug Targets

Analysis of systems-level properties of human genes and proteins targeted by 919 FDA-approved drugs has revealed distinct quantitative characteristics that distinguish successful drug targets from other genes and proteins [30] [31].

Table 1: Quantitative Properties of Successful Drug Targets Compared to Average Human Genes

| Property | Successful Drug Targets | Average Human Genes | Statistical Significance |
|---|---|---|---|
| Network Connectivity | Higher, but not the most highly connected | Lower | P-value = 0.0064 |
| Betweenness Centrality | Higher values | Lower | P-value = 0.0004 (HPRD network) |
| Tissue Expression Entropy | Lower entropy (more tissue-specific) | Higher entropy | Highly significant |
| Non-synonymous/Synonymous SNP Ratio (Cratio) | Significantly smaller | Larger | P-value = 0.0007 |
| Target Distribution | 36% receptors, 35% enzymes, 21% transport/storage proteins | Varies widely | Functional bias |

Network Topology Properties

In molecular interaction networks, successful drug targets occupy distinct topological niches:

Moderate connectivity: Successful drug targets are more highly connected than the average node in molecular interaction networks (approximately 9.1 interactions in the GeneWays network, compared with the network average), but they are far from the most highly connected nodes, whose connectivity reaches 346 [30]. This suggests they occupy influential but not maximally central positions in cellular networks.

Elevated betweenness: Drug targets show higher betweenness values, indicating they tend to bridge multiple clusters of interacting molecules rather than residing within tightly-knit modules [30] [31]. This positioning may allow for more specific modulation of pathway activity.
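Degree (connectivity) and betweenness capture different notions of centrality. The following pure-Python sketch computes both on a small hypothetical network of two densely connected modules joined by a single bridging node `T`; the node names and topology are invented for illustration, and betweenness is computed with Brandes' algorithm for unweighted, undirected graphs.

```python
from collections import deque


def build_toy_network():
    """Hypothetical network: two 4-node cliques joined through a single bridge node T."""
    modules = (["A1", "A2", "A3", "A4"], ["B1", "B2", "B3", "B4"])
    edges = [("A1", "T"), ("T", "B1")]
    for module in modules:
        edges += [(u, v) for i, u in enumerate(module) for v in module[i + 1:]]
    graph = {}
    for u, v in edges:
        graph.setdefault(u, set()).add(v)
        graph.setdefault(v, set()).add(u)
    return graph


def degree(graph):
    """Connectivity: number of interaction partners per node."""
    return {node: len(neighbours) for node, neighbours in graph.items()}


def betweenness(graph):
    """Unnormalized betweenness centrality (Brandes' algorithm), unweighted undirected graph."""
    centrality = {v: 0.0 for v in graph}
    for source in graph:
        # Breadth-first search counting shortest paths from the source
        order, preds = [], {v: [] for v in graph}
        sigma = dict.fromkeys(graph, 0)
        dist = dict.fromkeys(graph, -1)
        sigma[source], dist[source] = 1, 0
        queue = deque([source])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in graph[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        # Accumulate pair dependencies in reverse BFS order
        delta = dict.fromkeys(graph, 0.0)
        for w in reversed(order):
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1.0 + delta[w])
            if w != source:
                centrality[w] += delta[w]
    # Each undirected pair was counted once from each endpoint
    return {v: c / 2.0 for v, c in centrality.items()}


if __name__ == "__main__":
    net = build_toy_network()
    deg, btw = degree(net), betweenness(net)
    for node in sorted(net):
        print(node, deg[node], btw[node])
```

In this toy network `T` has fewer interaction partners than the module hubs `A1` and `B1`, yet the highest betweenness, because every shortest path between the two modules passes through it. This is the bridging position described above, in miniature.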

Sequence and Expression Properties

At the sequence and expression levels, successful drug targets demonstrate:

Evolutionary conservation: The significantly lower ratio of non-synonymous to synonymous SNPs (Cratio) suggests successful drug targets tend to be less polymorphic at the population level [30]. This reduced genetic variation may increase the likelihood that drugs targeting these proteins will be effective across diverse populations.

Tissue specificity: Lower entropy of tissue expression indicates successful drug targets show more restricted expression patterns across tissues [30] [31]. This tissue specificity may contribute to more selective drug action and reduced off-target effects.
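Both properties reduce to simple summary statistics. The source does not give its exact definitions, so the sketch below assumes tissue expression entropy is the Shannon entropy (in bits) of a gene's expression values normalized to proportions across tissues, and that Cratio is the plain ratio of non-synonymous to synonymous SNP counts; the example profiles are hypothetical.

```python
import math


def tissue_entropy(expression):
    """Shannon entropy (bits) of an expression profile across tissues (assumed definition).

    Lower values mean expression is concentrated in fewer tissues.
    """
    if any(x < 0 for x in expression):
        raise ValueError("expression values must be non-negative")
    total = sum(expression)
    if total <= 0:
        raise ValueError("expression profile must have a positive sum")
    entropy = 0.0
    for x in expression:
        if x > 0:  # tissues with zero expression contribute nothing
            p = x / total
            entropy -= p * math.log2(p)
    return entropy


def snp_ratio(n_nonsynonymous, n_synonymous):
    """Ratio of non-synonymous to synonymous SNP counts for one gene (assumed Cratio form)."""
    if n_synonymous == 0:
        raise ValueError("ratio is undefined without synonymous SNPs")
    return n_nonsynonymous / n_synonymous


if __name__ == "__main__":
    ubiquitous = [10, 10, 10, 10]   # hypothetical: even expression in four tissues
    restricted = [37, 1, 1, 1]      # hypothetical: expression concentrated in one tissue
    print(tissue_entropy(ubiquitous), tissue_entropy(restricted))
    print(snp_ratio(3, 6))
```

An evenly expressed gene across four tissues reaches the maximum of 2 bits, while a tissue-restricted gene scores well below that, which is the direction of the difference reported for successful drug targets.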

Technological Enablers: Omics Platforms for Pathway Analysis

The shift to systems-level pharmacology has been enabled by advanced technological platforms that provide comprehensive molecular profiling capabilities.

Table 2: Omics Technologies for Drug Target Discovery

| Technology Platform | Key Methods | Applications in Drug Discovery | Limitations |
|---|---|---|---|
| Genomics | Microarrays, next-generation sequencing (NGS), RNA-seq | Identify genetic alterations, measure transcript levels, discover novel isoforms | Cannot directly capture protein-level information |
| Proteomics | 2D gel electrophoresis, mass spectrometry, iTRAQ, MRM | Target identification, efficacy/toxicity biomarkers, protein/drug interaction analysis | Technical challenges in comprehensive coverage |
| Metabolomics | NMR, liquid chromatography, mass spectrometry | Measure small molecule metabolites, capture rapid physiological responses | Complex data interpretation, limited reference databases |

Genomic Technologies

Genomic technologies characterize the physiological state of biological systems from the perspective of the genome:

Microarray technology: Developed in the mid-1990s, microarrays enable affordable genotyping and expression profiling, with applications including gene expression arrays, genotyping arrays, and comparative genomic hybridization (CGH) for copy number variation analysis [28].

Next-generation sequencing (NGS): NGS technologies provide more sensitive and accurate measurements than microarrays and support broader applications, including identification of genetic alterations, measurement of transcript levels (RNA-seq), discovery of novel isoforms, and inference of epigenetic status [28] [32]. The NGS market was projected to reach $21.62 billion by 2025, reflecting its growing importance [32].

Proteomic and Metabolomic Technologies

Proteomic technologies: These platforms profile protein expression levels and modifications, providing more direct information on drug targets since proteins are the functional units in biological systems [28]. Advanced methods include protein sequence tags (PST), multidimensional protein identification technology (MudPIT), and isotope-coded affinity tagging (ICAT) [28].

Metabolomic technologies: Metabolomics measures concentrations of small molecule metabolites using nuclear magnetic resonance (NMR), liquid chromatography, and mass spectrometry [28]. A key advantage of metabolomics is its ability to capture rapid metabolic responses (seconds to minutes) compared to genetic responses (days to weeks) [28].

Computational Methods for Pathway-Based Drug Target Inference

Computational approaches for drug target identification have evolved to leverage pathway information from multi-omics data.

Approaches for Drug-Target Interaction Prediction

Table 3: Computational Approaches for Drug Target Identification

| Approach | Methodology | Pros | Cons |
|---|---|---|---|
| Ligand-based | QSAR, chemical structure similarity | Easily applied to new drugs with similar structures | Requires many known ligands for target proteins |
| Target-based | Docking analysis, protein structure/sequence similarity | Rich information on various target proteins | Not designed for genome-scale computation |
| Phenotype-based | Connectivity Map, expression response profiling | Genome-scale computation feasible | May overlook valuable information from other data sources |
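To make the ligand-based row concrete, the sketch below shows a simplified nearest-neighbour form of chemical-similarity target inference: a query compound inherits the annotated targets of known ligands whose fingerprints exceed a Tanimoto similarity threshold. Fingerprints are represented as sets of on-bit indices (in practice they would come from a cheminformatics toolkit such as RDKit), and the ligands, targets, and the 0.5 threshold are hypothetical.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto (Jaccard) similarity of two fingerprints given as sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def predict_targets(query_fp, reference_ligands, threshold=0.5):
    """Assign the query the targets of sufficiently similar known ligands.

    reference_ligands: dict of ligand name -> (fingerprint set, set of target names).
    Returns {target: best supporting similarity}, highest first.
    """
    scores = {}
    for fingerprint, targets in reference_ligands.values():
        similarity = tanimoto(query_fp, fingerprint)
        if similarity >= threshold:
            for target in targets:
                # Keep the strongest similarity that supports each target
                scores[target] = max(scores.get(target, 0.0), similarity)
    return dict(sorted(scores.items(), key=lambda item: -item[1]))


if __name__ == "__main__":
    reference = {  # hypothetical annotated ligands
        "ligand_1": ({1, 2, 3, 4, 5}, {"TARGET_X"}),
        "ligand_2": ({10, 11, 12}, {"TARGET_Y"}),
    }
    print(predict_targets({1, 2, 3, 4, 6}, reference))
```

The "many known ligands" limitation in the table follows directly from this design: a target with no annotated ligands similar to the query can never be predicted.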

Pathway Analysis Methodologies

Pathway analysis translates gene lists into functional insights by mapping measured molecules to known pathways. Two widely used computational approaches are Gene Set Enrichment Analysis (GSEA) and Over-Representation Analysis (ORA).


Gene Set Enrichment Analysis (GSEA)

GSEA evaluates whether predefined gene sets are enriched at the top or bottom of a ranked gene list based on expression changes:

- Rank genes: Genes are ranked based on the magnitude of their differential expression between experimental conditions [33].

- Calculate enrichment: GSEA checks if genes from a particular pathway cluster together at either extreme of this ranked list [33].

- Score normalization: An enrichment score (ES) is computed and normalized (NES) against a permutation-based null distribution to account for differences in gene set size [33].

- Interpretation: A positive NES indicates enrichment at the top of the ranked list, suggesting pathway activation, while a negative NES indicates enrichment at the bottom, suggesting suppression [33].

GSEA is particularly valuable when biological pathways are globally upregulated or downregulated, even if not all individual genes in the pathway show significant differential expression [33].
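The GSEA steps above can be sketched in pure Python: a running sum walks down the ranked list, stepping up at gene-set members (weighted by the magnitude of their ranking metric) and down at non-members, and ES is the maximum deviation from zero. For the null distribution this sketch uses random gene sets of equal size, as in the preranked variant; the original method permutes phenotype labels when sample-level data are available. The pseudocount in the p-value and the example genes are assumptions made for illustration.

```python
import random


def enrichment_score(ranked_genes, scores, gene_set, weight=1.0):
    """Signed GSEA enrichment score (ES) for one gene set.

    ranked_genes: genes ordered from most up- to most down-regulated.
    scores: ranking metric for each gene in the same order (e.g. signed fold change).
    weight: exponent on |score| for hits; 1.0 is the default weighting of the original GSEA.
    """
    hits = [gene in gene_set for gene in ranked_genes]
    n_miss = len(ranked_genes) - sum(hits)
    hit_norm = sum(abs(s) ** weight for s, hit in zip(scores, hits) if hit)
    if n_miss == 0 or hit_norm == 0:
        raise ValueError("gene set must overlap (with non-zero scores), but not cover, the list")
    running, es = 0.0, 0.0
    for s, hit in zip(scores, hits):
        # Step up at gene-set members, down at non-members
        running += abs(s) ** weight / hit_norm if hit else -1.0 / n_miss
        if abs(running) > abs(es):
            es = running  # keep the maximum deviation from zero, with its sign
    return es


def normalized_es(ranked_genes, scores, gene_set, n_perm=1000, seed=0):
    """Return (NES, empirical p-value) using random equal-size gene sets as the null."""
    rng = random.Random(seed)
    es = enrichment_score(ranked_genes, scores, gene_set)
    size = len(set(ranked_genes) & set(gene_set))
    null = [
        enrichment_score(ranked_genes, scores, set(rng.sample(ranked_genes, size)))
        for _ in range(n_perm)
    ]
    # Normalize against null scores of the same sign as the observed ES
    same_sign = [x for x in null if (x >= 0) == (es >= 0)]
    mean_null = abs(sum(same_sign) / len(same_sign)) if same_sign else 0.0
    if mean_null == 0.0:
        raise ValueError("degenerate null distribution; increase n_perm")
    nes = es / mean_null
    # Fraction of same-sign null scores at least as extreme (with a +1 pseudocount)
    p_value = (sum(abs(x) >= abs(es) for x in same_sign) + 1) / (len(same_sign) + 1)
    return nes, p_value


if __name__ == "__main__":
    genes = [f"G{i}" for i in range(20)]        # hypothetical ranked genes
    metric = [10 - i for i in range(20)]        # hypothetical ranking metric
    print(normalized_es(genes, metric, {"G0", "G1", "G2", "G3"}))
```

A gene set concentrated at the top of the list yields an ES near +1 and a large positive NES, while one concentrated at the bottom yields an ES near -1.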

Over-Representation Analysis (ORA)

ORA takes a simpler approach, identifying pathways that are over-represented among differentially expressed genes (DEGs):

- Identify DEGs: Select genes showing significant differential expression between conditions [33].

- Test over-representation: Examine whether DEGs are disproportionately represented in predefined pathways compared to random chance [33].

- Statistical testing: Use Fisher's exact test or the equivalent one-sided hypergeometric test to calculate the significance (p-value) of the overlap [33].

- Interpretation: A significant p-value, typically after correction for multiple testing across pathways, indicates the pathway is over-represented and likely biologically relevant [33].

ORA is ideal for smaller datasets or when researchers need a quicker, more straightforward analysis focused specifically on differentially expressed genes [33].
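The ORA steps reduce to a hypergeometric upper-tail probability per pathway followed by multiple-testing correction. The sketch below computes the tail exactly with `math.comb` and applies Benjamini-Hochberg correction; restricting both DEGs and pathway members to a measured background, and the gene and pathway names in the example, are illustrative assumptions.

```python
from math import comb


def hypergeom_upper_tail(k, population, successes, draws):
    """P(X >= k) for X ~ Hypergeometric(population, successes, draws)."""
    upper = min(successes, draws)
    tail = sum(
        comb(successes, i) * comb(population - successes, draws - i)
        for i in range(k, upper + 1)
    )
    return tail / comb(population, draws)


def ora(degs, background, pathways):
    """One-sided over-representation test per pathway with Benjamini-Hochberg q-values.

    Returns a list of (pathway, overlap, p_value, q_value) tuples in input order.
    """
    universe = set(background)
    hits = set(degs) & universe
    rows = []
    for name, members in pathways.items():
        members = set(members) & universe
        overlap = len(members & hits)
        p = hypergeom_upper_tail(overlap, len(universe), len(members), len(hits))
        rows.append((name, overlap, p))
    # Benjamini-Hochberg: q_(i) = min over j >= i of p_(j) * m / j, capped at 1
    m = len(rows)
    ranked = sorted(range(m), key=lambda i: rows[i][2])
    q = [1.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):
        idx = ranked[rank - 1]
        running_min = min(running_min, rows[idx][2] * m / rank)
        q[idx] = running_min
    return [(name, k, p, q[i]) for i, (name, k, p) in enumerate(rows)]


if __name__ == "__main__":
    background = [f"g{i}" for i in range(100)]   # hypothetical measured genes
    degs = [f"g{i}" for i in range(10)]           # hypothetical DEGs
    pathways = {
        "pathway_A": [f"g{i}" for i in list(range(5)) + list(range(50, 55))],
        "pathway_B": [f"g{i}" for i in range(60, 70)],
    }
    for row in ora(degs, background, pathways):
        print(row)
```

Where SciPy is available, the same tail probability is given by `scipy.stats.hypergeom.sf(k - 1, M, n, N)`, with `M` background genes, `n` pathway genes, and `N` DEGs.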

Experimental Protocols for Pathway-Based Target Discovery

Multi-Omics Integration Protocol

A 2025 study demonstrated a protocol for systematic identification of cancer pathways through integrated transcriptomics and proteomics analysis [26]:

Sample Collection: 1,023 human cancer cell lines were collected, including 1,019 with RNA-Seq data and 375 with proteomics data (371 with both data types) [26].

Differential Expression Analysis: Identify significant transcripts and proteins for each cancer type using an optimized combination of Gini purity and FDR-adjusted p-values [26].

Pathway Enrichment: Analyze significant transcripts and proteins for enrichment of biological pathways using databases like KEGG, Reactome, and WikiPathways [26].

Consensus Pathway Identification: Select overlapping pathways derived from both transcripts and proteins as characteristic for each cancer type [26].

Drug-Pathway Mapping: Retrieve potential anti-cancer drugs targeting these pathways from pharmacological databases [26].

This approach identified between 4 (stomach cancer) and 112 (acute myeloid leukemia, AML) characteristic pathways per cancer type, with the number of corresponding therapeutic drugs ranging from 1 (ovarian cancer) to 97 (AML and non-small cell lung cancer, NSCLC) [26].
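Once each omics layer has produced its list of enriched pathways, the consensus and drug-mapping steps of this protocol reduce to set operations. The sketch below assumes the enrichment results and a drug-to-pathway annotation table are already available as Python sets and dicts; all cancer-type, pathway, and drug identifiers are hypothetical placeholders, and the Gini-purity/FDR selection step is not reproduced because its exact formulation is not given here.

```python
def consensus_pathways(transcript_hits, protein_hits):
    """Pathways enriched at both the transcript and the protein level for one cancer type."""
    return set(transcript_hits) & set(protein_hits)


def drugs_for_pathways(pathways, drug_annotations):
    """Map each drug to the consensus pathways it is annotated to act on.

    drug_annotations: dict of drug name -> iterable of pathway identifiers (hypothetical table).
    """
    matches = {}
    for drug, annotated in drug_annotations.items():
        shared = sorted(set(annotated) & set(pathways))
        if shared:
            matches[drug] = shared
    return matches


def summarize(per_cancer_hits, drug_annotations):
    """Count consensus pathways and candidate drugs per cancer type.

    per_cancer_hits: dict of cancer type -> (transcript pathway set, protein pathway set).
    """
    summary = {}
    for cancer, (transcript_hits, protein_hits) in per_cancer_hits.items():
        consensus = consensus_pathways(transcript_hits, protein_hits)
        drugs = drugs_for_pathways(consensus, drug_annotations)
        summary[cancer] = (len(consensus), len(drugs))
    return summary


if __name__ == "__main__":
    per_cancer = {
        "cancer_type_1": ({"PW_A", "PW_B", "PW_C"}, {"PW_B", "PW_C", "PW_D"}),
        "cancer_type_2": ({"PW_E"}, {"PW_F"}),
    }
    annotations = {"drug_1": ["PW_B"], "drug_2": ["PW_C", "PW_X"], "drug_3": ["PW_E"]}
    print(summarize(per_cancer, annotations))
```

Requiring agreement between transcript- and protein-level evidence shrinks the candidate list, which is why a cancer type can end up with few consensus pathways, or none, even when each layer alone reports several.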

Chemical-Genomic Profiling Protocol

Chemical-genetic approaches systematically assess how genetic changes affect drug response:

Perturbation Design: Treat diverse genetic variants (e.g., yeast deletion strains or human cancer cell lines) with chemical compounds [34].

Phenotypic Screening: Measure growth inhibition or other phenotypic responses at multiple compound concentrations [34].

Dose-Response Analysis: Calculate GI50 values (concentration for 50% growth inhibition) for each compound-genotype combination [34].

Correlation Mapping: Cluster compounds with similar response profiles and correlate with molecular target data [34].

Target Validation: Use secondary assays to confirm predicted drug-target relationships [34].
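The dose-response and correlation-mapping steps can be sketched as follows. GI50 is estimated by log-linear interpolation between the two tested concentrations that bracket 50% growth; this is a simplification that expresses growth relative to untreated controls only, without the time-zero correction used in NCI-60 screening. Compounds are then compared by the Pearson correlation of their log10(GI50) profiles across the same cell lines, in the spirit of NCI's COMPARE analysis. The compound names, concentrations, and profiles are hypothetical.

```python
import math


def gi50(concentrations, growth_fraction):
    """Concentration giving 50% growth inhibition, by log-linear interpolation.

    concentrations: ascending, positive (e.g. molar).
    growth_fraction: growth relative to untreated control (1.0 = no inhibition).
    Returns None if 50% growth is not bracketed by the tested range.
    """
    points = list(zip(concentrations, growth_fraction))
    for (c1, g1), (c2, g2) in zip(points, points[1:]):
        if g1 >= 0.5 >= g2 and g1 != g2:
            frac = (g1 - 0.5) / (g1 - g2)
            log_c = math.log10(c1) + frac * (math.log10(c2) - math.log10(c1))
            return 10 ** log_c
    return None


def pearson(x, y):
    """Pearson correlation of two equal-length profiles."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("profiles must vary across cell lines")
    return sxy / math.sqrt(sxx * syy)


def rank_similar(profiles, query, min_r=0.8):
    """Compounds whose log10(GI50) profile correlates with the query's, best first."""
    reference = profiles[query]
    hits = []
    for name, profile in profiles.items():
        if name != query:
            r = pearson(reference, profile)
            if r >= min_r:
                hits.append((name, r))
    return sorted(hits, key=lambda item: -item[1])


if __name__ == "__main__":
    print(gi50([1e-8, 1e-7, 1e-6, 1e-5], [1.0, 0.8, 0.3, 0.1]))
    profiles = {  # hypothetical log10(GI50) values across four cell lines
        "cmpd_A": [-6.0, -7.0, -5.0, -8.0],
        "cmpd_B": [-6.1, -7.2, -5.0, -7.9],
        "cmpd_C": [-8.0, -5.0, -7.0, -6.0],
    }
    print(rank_similar(profiles, "cmpd_A"))
```

Compounds that rank highly against a reference with a known target become candidates for sharing that target or pathway, which the secondary assays in the final step are meant to confirm.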