Chemogenomics: A Comprehensive Guide to Accelerating Target Discovery in Drug Development

Abstract

This article provides a comprehensive overview of chemogenomics, an innovative strategy that integrates combinatorial chemistry, genomics, and proteomics to systematically identify and validate novel therapeutic targets and bioactive compounds. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles of forward and reverse chemogenomics, details cutting-edge methodological approaches including chemogenomic library screening and in silico prediction tools like the Komet algorithm, addresses key troubleshooting and optimization challenges, and presents validation frameworks and comparative analyses of computational techniques. The content synthesizes how chemogenomics is transforming drug discovery by enabling rapid, parallel identification of targets and drug candidates, ultimately aiming to de-risk and expedite the development of new treatments for human diseases.

Demystifying Chemogenomics: Core Concepts and Strategic Frameworks for Target Identification

Chemogenomics represents a transformative, interdisciplinary strategy in modern drug discovery and chemical biology. It is defined as the systematic screening of targeted chemical libraries of small molecules against individual drug target families—such as G protein-coupled receptors (GPCRs), nuclear receptors, kinases, and proteases—with the ultimate goal of identifying novel drugs and drug targets [1]. This approach strives to study the intersection of all possible drugs on all potential targets emerging from genomic sequencing, moving beyond single-target focus to a global perspective on pharmacological space [1] [2].

The foundational premise of chemogenomics rests on two key assumptions: first, that compounds sharing chemical similarity often share biological targets; and second, that targets sharing similar ligands frequently share similar binding sites or structural patterns [2]. By leveraging these principles, researchers can systematically explore the largely uncharted territory where an estimated 3000 druggable targets exist in the human genome, only approximately 800 of which have been seriously investigated by the pharmaceutical industry [2].
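The first assumption can be made concrete with a similarity search: an unannotated compound inherits target hypotheses from its closest annotated neighbors. The following is a minimal sketch, assuming each compound is already encoded as a set of substructure bits; the compound names, bit values, target labels, and the 0.5 threshold are all hypothetical, and a real workflow would compute fingerprints with a cheminformatics toolkit.

```python
def tanimoto(a: set, b: set) -> float:
    """Tanimoto (Jaccard) coefficient between two feature sets."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def nearest_neighbor_targets(query, annotated, threshold=0.5):
    """Return (similarity, compound, targets) for annotated compounds whose
    similarity to the query meets the threshold, most similar first."""
    hits = []
    for name, (features, targets) in annotated.items():
        sim = tanimoto(query, features)
        if sim >= threshold:
            hits.append((sim, name, targets))
    hits.sort(key=lambda h: h[0], reverse=True)
    return hits


# Toy fingerprints: each integer stands for a hypothetical substructure bit.
library = {
    "cmpd_A": ({1, 2, 3, 4, 5}, ["kinase_X"]),
    "cmpd_B": ({1, 2, 3, 9}, ["kinase_Y"]),
    "cmpd_C": ({20, 21, 22}, ["GPCR_Z"]),
}
query_fp = {1, 2, 3, 4}
candidates = nearest_neighbor_targets(query_fp, library)

for sim, name, targets in candidates:
    print(f"{name}: similarity {sim:.2f}, candidate targets {targets}")
```

The threshold is a tunable choice rather than a fixed rule; stricter values return fewer but more plausible target hypotheses.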

Core Principles and Strategic Approaches

Fundamental Concepts

At its core, chemogenomics integrates target and drug discovery by using active compounds as molecular probes to systematically characterize proteome functions [1]. The interaction between a small molecule and a protein induces an observable phenotype, enabling researchers to associate specific proteins with molecular events [1]. Unlike genetic approaches, chemogenomic techniques modify the function of the protein rather than the gene itself, which allows interactions and their reversibility to be observed in real time [1].

The field operates through two complementary experimental paradigms:

Table 1: Comparison of Chemogenomic Approaches

| Approach | Screening Direction | Primary Goal | Starting Point | Validation Method |
|---|---|---|---|---|
| Forward Chemogenomics | Phenotype → Compound → Target | Identify drug targets by discovering molecules that induce specific phenotypes [1] | Desired phenotype with unknown molecular basis [1] | Use modulators to identify responsible proteins [1] |
| Reverse Chemogenomics | Target → Compound → Phenotype | Validate phenotypes by finding molecules that interact with specific proteins [1] | Known protein target [1] | Analyze induced phenotype in cellular or whole-organism tests [1] |

Experimental Workflows

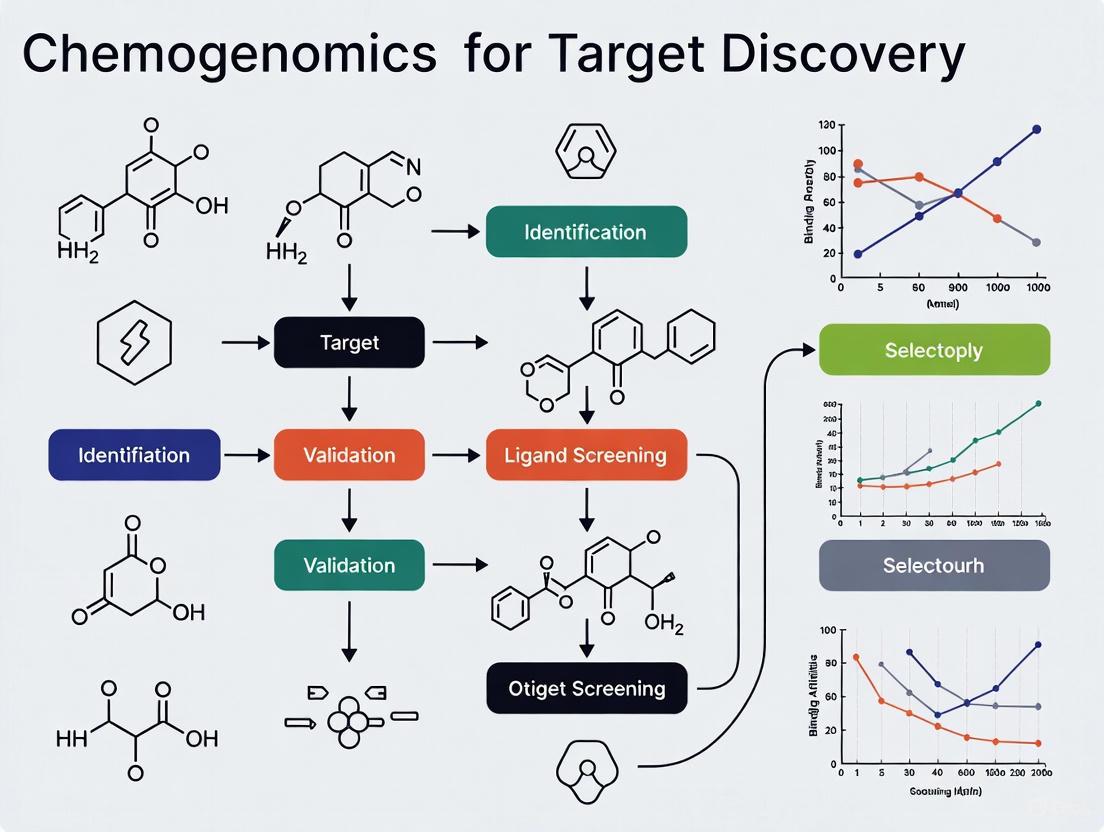

The implementation of chemogenomic strategies requires carefully designed workflows that integrate computational and experimental components. The following diagram illustrates the two primary screening approaches:

Practical Implementation and Methodologies

Data Curation and Quality Control

The reliability of chemogenomics studies depends critically on rigorous data curation. As chemogenomics repositories such as ChEMBL, PubChem, and PDSP continue to expand, concerns about data quality and reproducibility have emerged [3]. Studies have revealed error rates ranging from 0.1% to 3.4% for chemical structures in public and commercial databases, with some analyses indicating that only 20-25% of published assertions about biological functions for novel deorphanized proteins could be consistently reproduced [3].

An integrated chemical and biological data curation workflow should include:

- Chemical Structure Curation: Identification and correction of structural errors, removal of incomplete records (inorganics, organometallics, counterions, biologics, mixtures), structural cleaning (detection of valence violations, extreme bond lengths/angles), ring aromatization, normalization of specific chemotypes, and standardization of tautomeric forms [3].

- Stereochemistry Verification: Careful checking of stereocenters, particularly for complex molecules with multiple asymmetric carbons [3].

- Bioactivity Data Processing: Detection and resolution of chemical duplicates where the same compound is recorded multiple times with potentially different experimental responses [3].

Specialized software tools facilitate these curation tasks, including Molecular Checker/Standardizer (Chemaxon JChem), RDKit program tools, and LigPrep (Schrodinger Suite) [3]. For large datasets, manual inspection of at least a subset of compounds remains essential, particularly for complex structures or molecules with numerous atoms [3].
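The duplicate-resolution step can be sketched in a few lines. This is an illustrative example only: it assumes structures have already been standardized upstream into a unique key (for instance a canonical identifier), that activities are on a log scale, and that a spread of one log unit between replicates is the cutoff for manual review. The function name, keys, and cutoff are hypothetical choices, not part of any cited workflow.

```python
from collections import defaultdict
from statistics import median


def merge_duplicates(records, max_spread=1.0):
    """Group records by structure key and merge replicate measurements.

    records: iterable of (structure_key, p_activity) pairs.
    Returns (merged, discordant): merged maps key -> median activity;
    discordant lists keys whose replicates differ by more than max_spread
    log units and are held back for manual inspection.
    """
    grouped = defaultdict(list)
    for key, value in records:
        grouped[key].append(value)

    merged, discordant = {}, []
    for key, values in grouped.items():
        if max(values) - min(values) > max_spread:
            discordant.append(key)
        else:
            merged[key] = median(values)
    return merged, discordant


records = [
    ("KEY-1", 6.0), ("KEY-1", 6.5),   # concordant replicates
    ("KEY-2", 5.0), ("KEY-2", 8.0),   # discordant replicates
    ("KEY-3", 7.0),                   # single measurement
]
merged, discordant = merge_duplicates(records)
print(merged)      # concordant and single records, one value per structure
print(discordant)  # structures needing manual review
```

Holding discordant records back, rather than averaging them, keeps conflicting measurements from silently entering a modeling set.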

Chemogenomics Libraries and Screening

Central to the chemogenomics approach is the development of specialized compound collections known as chemogenomics libraries. These libraries are strategically designed to target specific protein families by including known ligands of at least one—and preferably several—family members [1] [4]. The underlying rationale is that ligands designed for one family member will often bind to additional related targets, enabling comprehensive coverage of the target family [1].

Table 2: Essential Research Reagents and Solutions for Chemogenomics

| Research Reagent | Function/Purpose | Key Characteristics | Application Examples |
|---|---|---|---|
| Targeted Chemical Libraries | Systematic screening against protein families [1] | Contains known ligands for target family members; designed for broad coverage [1] | GPCR screening, kinase inhibitor profiling [1] |
| Barcoded Yeast Libraries | Competitive fitness-based chemogenomic profiling [5] | Enables pooling of strains for high-throughput screening [5] | Target identification via HIP/HOP assays [5] |
| Protein Family-Specific Assays | Functional screening of compound libraries [1] | Optimized for specific target classes (GPCRs, kinases, etc.) | High-throughput binding or functional assays [2] |
| Liquid Handling Automation | Miniaturization and parallelization of screening [6] | Enables high-throughput compound testing with reproducibility | Benchtop systems (e.g., Tecan Veya) to multi-robot workflows [6] |
| 3D Cell Culture Systems | Biologically relevant compound screening [6] | Provides human-relevant tissue models (e.g., organoids) | MO:BOT platform for standardized 3D culture [6] |

High-throughput screening technologies form the operational backbone of chemogenomics implementation. Modern systems range from simple, accessible benchtop systems to complex, unattended multi-robot workflows [6]. The primary objective of these automated platforms is to replace human variation with stable, reproducible systems that generate trustworthy data [6]. As noted by industry experts, "If AI is to mean anything, we need to capture more than results. Every condition and state must be recorded, so models have quality data to learn from" [6].

Applications in Target Discovery Research

Mechanism of Action Elucidation

Chemogenomics has proven particularly valuable for identifying the mode of action (MOA) for therapeutic compounds, including those derived from traditional medicine systems [1]. For traditional Chinese medicine and Ayurvedic formulations, chemogenomic approaches can predict ligand targets relevant to known phenotypes, helping to bridge empirical knowledge with modern molecular understanding [1]. In one case study, targets such as sodium-glucose transport proteins and PTP1B were identified as relevant to the hypoglycemic phenotype of "toning and replenishing medicine" in traditional Chinese medicine [1].

Fitness-based chemogenomic profiling approaches in model systems like yeast have enabled systematic MOA determination [5]. These methods utilize barcoded yeast libraries—including the YKO homozygous and haploid non-essential gene deletion collection, heterozygous deletion collection, DAmP collection, and MoBY-ORF collections—to quantitatively rank genes by their importance for resistance to compounds or ability to confer resistance [5].
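The ranking step in a pooled fitness assay can be illustrated as follows. This is a simplified sketch, assuming barcode read counts are available for a control and a compound-treated pool; the log2-ratio score, the pseudocount, and the strain names are illustrative assumptions, and published pipelines apply more elaborate normalization and statistics.

```python
import math


def fitness_defect_scores(control_counts, treated_counts, pseudocount=1.0):
    """Log2 ratio of control to treated barcode abundance per strain.

    Counts are scaled by each sample's total so that sequencing depth does
    not bias the ratio. Larger positive scores mean the strain was depleted
    under compound treatment.
    """
    control_total = sum(control_counts.values())
    treated_total = sum(treated_counts.values())
    scores = {}
    for strain, c in control_counts.items():
        t = treated_counts.get(strain, 0)
        c_norm = (c + pseudocount) / control_total
        t_norm = (t + pseudocount) / treated_total
        scores[strain] = math.log2(c_norm / t_norm)
    return scores


# Toy barcode counts for three hypothetical deletion strains.
control = {"geneA": 1000, "geneB": 1000, "geneC": 1000}
treated = {"geneA": 100, "geneB": 1000, "geneC": 1100}

scores = fitness_defect_scores(control, treated)
ranked = sorted(scores, key=scores.get, reverse=True)
print(ranked)  # most compound-sensitive strain first
```

In a heterozygous deletion pool, the strains at the top of such a ranking are candidates for encoding the compound's target or a closely related pathway component.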

Novel Target Identification

Chemogenomics profiling enables the discovery of completely new therapeutic targets through systematic analysis of chemical-protein interactions. In antibacterial development, researchers have leveraged existing ligand libraries for enzymes in essential bacterial pathways to identify new targets for known ligands [1]. For example, mapping a murD ligase ligand library to other members of the mur ligase family (murC, murE, murF, murA, and murG) revealed new targets for existing ligands, potentially leading to broad-spectrum Gram-negative inhibitors [1].

[Diagram not reproduced: generalized workflow for target identification using chemogenomics approaches.]

Biological Pathway Elucidation

Beyond single target identification, chemogenomics approaches can illuminate complete biological pathways. In a notable example, researchers used chemogenomics thirty years after the initial discovery of diphthamide (a modified histidine derivative) to identify the enzyme responsible for the final step in its synthesis [1]. By analyzing Saccharomyces cerevisiae cofitness data—which represents similarity of growth fitness under various conditions between different deletion strains—scientists identified the YLR143W gene product as the missing diphthamide synthetase [1]. This finding demonstrated how chemogenomic profiles could resolve long-standing biochemical mysteries by identifying genes with functional relationships to known pathway components.
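Cofitness can be computed as a correlation between fitness profiles. The sketch below assumes each deletion strain has a vector of fitness scores measured across the same set of conditions and uses Pearson correlation as the similarity measure; the strain names and values are invented for illustration and are not data from the study described above.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length vectors."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        return 0.0
    return cov / (sx * sy)


def rank_cofit_partners(query, profiles):
    """Rank all other strains by cofitness with the query strain."""
    q = profiles[query]
    scored = [(pearson(q, p), name) for name, p in profiles.items() if name != query]
    scored.sort(reverse=True)
    return scored


# Toy fitness profiles across four hypothetical conditions.
profiles = {
    "known_pathway_gene": [-2.0, -0.1, -1.5, 0.0],
    "candidate_1": [-1.8, 0.0, -1.4, 0.1],
    "candidate_2": [0.1, -1.9, 0.0, -1.2],
}
partners = rank_cofit_partners("known_pathway_gene", profiles)
print(partners[0])  # strain whose fitness profile tracks the query most closely
```

A gene of unknown function that ranks highly against several known pathway members becomes a candidate for the missing step in that pathway.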

Current Challenges and Future Directions

Despite its considerable promise, chemogenomics faces significant challenges in implementation. Data quality and reproducibility remain persistent concerns, with subtle experimental variations—such as differences in dispensing techniques (tip-based versus acoustic)—significantly influencing experimental responses and potentially compromising computational models built from these datasets [3]. The problem is sufficiently pressing that NIH has launched rigor and reproducibility initiatives and maintains a web portal dedicated to enhancing research reproducibility [3].

The future of chemogenomics is increasingly intertwined with artificial intelligence and machine learning. However, as experts note, most organizations are still grappling with fragmented, siloed data and inconsistent metadata—fundamental barriers that prevent automation and AI from delivering full value [6]. Success in this arena requires both "inside-out" approaches that embed intelligent tools directly into software scientists already use, and "outside-in" strategies that enable clean data surfacing into corporate data lakes and AI models [6].

Initiatives such as Target 2035 represent coordinated international efforts to address these challenges by creating open collaborative frameworks for target discovery [7]. These consortia provide platforms for computational scientists to benchmark hit-finding algorithms in real-world settings, with experimental testing of model predictions [7]. As these efforts mature, they will likely accelerate the systematic mapping of the pharmacological space, bringing us closer to the ultimate goal of chemogenomics: the comprehensive identification of ligands for all potential therapeutic targets in the human genome.

In the field of chemogenomics, small molecule probes serve as indispensable tools for bridging the gap between genomic information and functional protein understanding. These chemically synthesized compounds are designed to interact with specific proteins or protein families, enabling researchers to modulate and monitor protein activity within complex biological systems. The strategic use of these probes has revolutionized target discovery research by providing a direct means to validate protein function and assess therapeutic potential [8]. Unlike genetic approaches that permanently alter gene expression, small molecule probes offer reversible, dose-dependent, and often domain-specific protein inhibition, allowing for precise temporal control over protein function interrogation [8]. This capability is particularly valuable for investigating proteins with multiple functional domains or complex roles in cellular processes, where destructive validation methods would obscure important biological insights.

The integration of small molecule probes into chemogenomics workflows has accelerated the drug discovery process by providing well-characterized starting points for therapeutic development. These probes adhere to strict criteria: in vitro potency below 100 nM, greater than 30-fold selectivity over sequence-related proteins, comprehensive profiling against pharmacologically relevant targets, and demonstrable on-target cellular effects at concentrations below 1 μM [8]. By meeting these rigorous standards, chemical probes deliver high-quality pharmacological tools that yield more reliable data for target validation studies, ultimately reducing attrition rates in later stages of drug development. As chemogenomics continues to evolve toward systematic proteome exploration, small molecule probes represent a core component of the integrated strategy to translate genomic findings into clinical breakthroughs.
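Probe criteria of this kind lend themselves to a simple automated filter. The sketch below encodes the widely used thresholds (in vitro potency below 100 nM, more than 30-fold selectivity, broad profiling, and cellular on-target activity below 1 μM); the class, field names, and example values are hypothetical and serve only to illustrate the check.

```python
from dataclasses import dataclass


@dataclass
class ProbeCandidate:
    name: str
    potency_nm: float             # in vitro potency against the target, nM
    nearest_off_target_nm: float  # potency against the closest related protein, nM
    cellular_activity_um: float   # on-target cellular activity, micromolar
    broadly_profiled: bool        # profiled against pharmacologically relevant targets


def failed_criteria(c: ProbeCandidate) -> list:
    """Return the names of the probe criteria the candidate does not meet."""
    failures = []
    if c.potency_nm >= 100:
        failures.append("potency")
    # Fold selectivity; a non-positive potency is treated as no selectivity.
    fold = c.nearest_off_target_nm / c.potency_nm if c.potency_nm > 0 else 0.0
    if fold <= 30:
        failures.append("selectivity")
    if not c.broadly_profiled:
        failures.append("profiling")
    if c.cellular_activity_um >= 1.0:
        failures.append("cellular activity")
    return failures


good = ProbeCandidate("probe_1", 20.0, 2000.0, 0.3, True)
weak = ProbeCandidate("probe_2", 250.0, 1000.0, 5.0, False)
print(failed_criteria(good))
print(failed_criteria(weak))
```

Returning the list of failed criteria, rather than a single pass/fail flag, shows which property a candidate compound would need to improve.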

Technological Advances in Probe-Based Proteome Interrogation

Pooled Protein Tagging with Ligandable Domains

Recent breakthroughs in genomic engineering have enabled innovative approaches for proteome-wide functional studies using small molecule probes. Pooled protein tagging with ligandable domains represents a transformative methodology that allows researchers to systematically investigate thousands of proteins in parallel rather than through traditional single-protein experiments [9]. This approach involves generating complex cell libraries where each cell expresses a different protein fused to a generic, ligand-binding domain that serves as a universal handle for small molecule interaction [9]. These "ligandable domains" include versatile protein tags such as HaloTag, which covalently binds to chloroalkane ligands with efficiency comparable to biotin-streptavidin interactions, providing fast bio-orthogonal labeling in mammalian cells [9].

The power of this platform lies in its ability to couple pooled tag systems with specialized chemical modulators or fluorescent ligands, enabling researchers to simultaneously map subcellular localization changes, manipulate protein stability, induce non-native protein-protein interactions, and monitor dynamic cellular processes across the entire proteome [9]. By moving beyond single-protein experiments, this approach reveals system-level insights into protein behavior and network interactions that were previously inaccessible through conventional methods. The scalability of this technology makes it particularly valuable for functional annotation of understudied proteins and profiling the "ligandability" of proteomes – identifying which proteins are capable of binding small molecules with high affinity and specificity [10].

CRISPR-Based Endogenous Tagging Systems

The implementation of CRISPR-based methodologies has addressed critical limitations in traditional protein tagging approaches by enabling precise, endogenous tagging of proteins under native regulatory control. Several innovative CRISPR-based tagging systems have been developed, each with distinct advantages for specific research applications:

Table 1: Comparison of Endogenous Protein Tagging Methods

| Method | Key Feature | Integration Mechanism | Primary Application | Fusion Type |
|---|---|---|---|---|
| Homology-Independent Intron Targeting | Inserts synthetic exons within introns | CRISPR-induced DSBs + NHEJ | Screening multiple fusion variants per gene | Internal gene fusions |
| HITAG System | C-terminal tagging near stop codons | CRISPR-induced DSBs + NHEJ | Systematic C-terminal tagging | C-terminal fusions |
| Prime Editing-Based Tagging | Precise, indel-free integration | Prime editing without DSBs | N- or C-terminal tagging with short sequences | Terminal fusions |

Homology-independent intron targeting utilizes CRISPR-induced double-strand breaks (DSBs) combined with non-homologous end-joining (NHEJ) to integrate synthetic exons within intronic regions [9]. This approach capitalizes on the abundance of viable CRISPR target sites in introns and produces scarless fusions, as any indels occurring during integration are restricted to the intron rather than the coding sequence [9]. The HITAG (High-Throughput Insertion of Tags Across the Genome) system employs a different strategy, favoring tag insertion within exons at or near protein termini and using downstream selection markers and exogenous stop codons to ensure that the reading frame is preserved [9]. For applications requiring the highest precision, prime editing-based pooled tagging enables exact, indel-free N- or C-terminal tagging of endogenous genes without relying on NHEJ, though it is currently limited to tags that can be encoded within a prime editing guide RNA (pegRNA) [9].

These CRISPR-based technologies have dramatically accelerated the functional characterization of proteins by ensuring that tagged proteins are expressed under native regulatory control, preserving physiological expression patterns, stoichiometries, and post-transcriptional regulation that are often disrupted in overexpression systems [9].

Experimental Frameworks and Methodologies

Proteome-Wide Localization Studies

The integration of pooled protein tagging with multifunctional ligand-binding domains enables systematic profiling of protein localization dynamics across the entire proteome. The experimental workflow begins with the generation of a complex cell library where each cell expresses a different protein fused to a ligand-binding domain such as HaloTag [9]. Following library validation, cells are treated with fluorescently-labeled ligands specifically designed to bind the ligandable domain – for HaloTag, this involves chloroalkane-functionalized fluorophores that form covalent bonds with the tag [9]. The labeled cells are then subjected to high-content imaging or sorted via fluorescence-activated cell sorting (FACS) to capture localization patterns.

Critical to this methodology is the subsequent deconvolution of the pooled library to identify which protein is tagged in each cell exhibiting a phenotype of interest. This is typically achieved through next-generation sequencing of integrated barcodes or amplification of genomic integration sites [9]. The resulting data provide a comprehensive map of protein localization under baseline conditions or in response to various perturbations, offering insights into protein function, trafficking mechanisms, and compartment-specific interactions. This approach has revealed novel insights into dynamic protein redistribution during cellular processes such as mitosis, stress response, and differentiation.
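The deconvolution step amounts to mapping sequencing reads back to tagged proteins and counting them. The following sketch assumes each read carries a fixed-length barcode at a known position and that an exact-match lookup table from barcode to protein exists; the read layout, barcode sequences, and protein names are hypothetical, and real pipelines typically also tolerate sequencing errors.

```python
from collections import Counter


def count_tagged_proteins(reads, barcode_to_protein, start=0, length=8):
    """Count reads per tagged protein by exact barcode lookup.

    Returns (counts, unmatched), where unmatched is the number of reads
    whose barcode is absent from the lookup table.
    """
    counts = Counter()
    unmatched = 0
    for read in reads:
        barcode = read[start:start + length]
        protein = barcode_to_protein.get(barcode)
        if protein is None:
            unmatched += 1
        else:
            counts[protein] += 1
    return counts, unmatched


# Toy lookup table and reads from a hypothetical sorted cell population.
barcode_to_protein = {"ACGTACGT": "protein_1", "TTGGCCAA": "protein_2"}
reads = ["ACGTACGTGGG", "ACGTACGTCCC", "TTGGCCAAGGG", "NNNNNNNNGGG"]

counts, unmatched = count_tagged_proteins(reads, barcode_to_protein)
print(counts.most_common())
print("unmatched reads:", unmatched)
```

Comparing these counts between a sorted population and the unsorted library indicates which tagged proteins are enriched for the phenotype of interest.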

Protein Stability and Degradation Profiling

Small molecule probes enable sophisticated interrogation of protein stability and targeted degradation through several complementary approaches. Direct stability assessment utilizes pulse-chase strategies with fluorescent ligands to monitor protein turnover rates in live cells [9]. Cells expressing tagged proteins are briefly pulsed with a cell-permeable fluorescent ligand, followed by tracking of fluorescence intensity over time to determine degradation kinetics. This method can be combined with pharmacological inhibitors to identify specific degradation pathways involved in protein turnover.
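Degradation kinetics from a pulse-chase time course can be estimated with a simple fit. This sketch assumes single-exponential decay of the fluorescence signal, F(t) = F0 · exp(−k·t), and fits ln F against time by least squares; the time points and intensities are synthetic values generated for a half-life of four hours, not measurements.

```python
import math


def fit_half_life(times, intensities):
    """Least-squares fit of ln(intensity) versus time.

    Assumes single-exponential decay. Returns (rate_constant, half_life)
    in the time units of the input.
    """
    logs = [math.log(v) for v in intensities]
    n = len(times)
    mean_t = sum(times) / n
    mean_l = sum(logs) / n
    slope = (
        sum((t - mean_t) * (l - mean_l) for t, l in zip(times, logs))
        / sum((t - mean_t) ** 2 for t in times)
    )
    k = -slope
    return k, math.log(2) / k


# Synthetic chase: signal halves every 4 hours.
times_h = [0, 2, 4, 8]
signal = [1000 * 0.5 ** (t / 4) for t in times_h]

k, half_life = fit_half_life(times_h, signal)
print(f"rate constant {k:.3f} per hour, half-life {half_life:.2f} hours")
```

Repeating the fit in the presence of a pathway inhibitor and comparing the half-lives is one way to attribute turnover to a specific degradation route.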

For targeted protein degradation, bifunctional small molecules (PROTACs) are employed that simultaneously bind both the ligandable domain and components of the ubiquitin-proteasome system, such as E3 ubiquitin ligases [9]. These heterobifunctional probes effectively recruit target proteins to degradation machinery, resulting in selective depletion from cells. The experimental protocol involves treating the pooled library with degradation-inducing compounds, followed by quantitative proteomics or sequencing-based abundance measurements to identify successfully degraded targets and assess degradation kinetics.

A third approach leverages destabilizing domains that conditionally control protein stability based on the presence or absence of specific small molecule ligands [9]. In this system, proteins are fused to domains that are inherently unstable but can be stabilized by ligand binding. Treatment with the corresponding small molecule probe rapidly stabilizes the tagged protein, while washout initiates degradation, enabling precise temporal control over protein abundance for functional studies.

Table 2: Small Molecule Probe Applications in Protein Function Studies

| Application | Probe Type | Key Readout | Information Gained |
|---|---|---|---|
| Subcellular Localization | Fluorescent ligands | High-content imaging | Protein trafficking, compartmentalization |
| Protein-Protein Interactions | Dimerizing probes | Proximity labeling/MS | Interaction networks, complex formation |
| Targeted Degradation | PROTACs | Protein abundance | Essentiality, functional consequences |
| Protein Stability | Stabilizing ligands | Turnover kinetics | Degradation pathways, half-life |
| Enzyme Activity | Activity-based probes | Catalytic activity | Functional states, inhibition |

Protein-Protein Interaction Mapping

Small molecule probes facilitate systematic mapping of protein-protein interactions through induced proximity approaches. Chemically-induced dimerization strategies utilize bifunctional small molecules that simultaneously bind two different ligandable domains, forcing physical interaction between their fusion partners [9]. This approach allows researchers to examine the functional consequences of specific protein interactions and identify downstream signaling events. Alternatively, proximity-labeling techniques employ enzymes such as engineered biotin ligases or peroxidases fused to the protein of interest, which catalyze the labeling of nearby proteins with biotin upon addition of small molecule substrates [9]. The biotinylated proteins can then be purified and identified by mass spectrometry, providing a snapshot of the proximal proteome.

The experimental workflow for interaction mapping begins with treatment of the pooled library with dimerizing or proximity-labeling probes, followed by activation of the labeling system if necessary. For proximity labeling, cells are typically incubated with the small molecule substrate (e.g., biotin phenol for APEX2) for a short duration before quenching and cell lysis [9]. Biotinylated proteins are then captured using streptavidin beads, digested with trypsin, and analyzed by liquid chromatography-tandem mass spectrometry (LC-MS/MS). The resulting interaction networks are reconstructed by matching identified proteins to their corresponding barcodes in the original library, enabling system-level analysis of protein complexes and interaction dynamics in response to cellular perturbations.

Research Reagent Solutions Toolkit

The effective implementation of small molecule probe strategies requires a comprehensive toolkit of specialized reagents and technologies. The following table details essential research reagent solutions for probe-based protein function studies:

Table 3: Essential Research Reagent Solutions for Probe-Based Studies

| Reagent/Category | Function | Key Characteristics | Example Applications |
|---|---|---|---|
| HaloTag System | Covalent protein labeling | Derived from bacterial haloalkane dehalogenase; fast bio-orthogonal labeling | Protein localization, pulse-chase studies, protein trafficking [9] |
| CRISPR Tagging Tools | Endogenous gene tagging | CRISPR-Cas9 with NHEJ or HDR; requires only sgRNA | Endogenous protein tagging under native regulation [9] |
| ORFeome Libraries | Exogenous protein expression | Collection of ORF clones; strong promoter-driven expression | Studying proteins not natively expressed in cell lines [9] |
| Fluorescent Ligands | Visualization and tracking | Chloroalkane-functionalized fluorophores for HaloTag | Live-cell imaging, high-content screening, FACS analysis [9] |
| PROTAC Molecules | Targeted protein degradation | Heterobifunctional degraders; recruit to ubiquitin ligases | Protein knockdown, functional redundancy studies [9] |
| Destabilizing Domains | Conditional protein stability | Domains stabilized by specific small molecule ligands | Rapid protein control, essentiality testing [9] |
| High-Content Imaging Systems | Automated phenotype analysis | Automated microscopy + computational analysis | Subcellular localization, morphological changes [9] |
| Acoustic Droplet MS | Label-free screening | Acoustic droplet ejection mass spectrometry | Pharmacological inhibition studies [10] |

Applications in Drug Target Discovery and Validation

From Chemical Probes to Clinical Candidates

Small molecule probes have repeatedly demonstrated their value as starting points for drug development programs, bridging the gap between basic research and clinical applications. The journey from chemical probe to clinical candidate is exemplified by the development of BET bromodomain inhibitors for cancer therapy. The initial chemical probe (+)-JQ1 was instrumental in validating BET proteins as therapeutic targets through its potent inhibition of BRD4 (K_D = 50-90 nM) and anti-proliferative effects across multiple cancer types [8]. While (+)-JQ1 itself was unsuitable for clinical use due to its short half-life, it served as the structural template for optimized compounds including I-BET762 (GSK525762), which maintained similar target engagement while achieving improved pharmacokinetic properties [8].

The optimization process from probe to drug candidate involves systematic medicinal chemistry to enhance drug-like properties while maintaining target potency and selectivity. For I-BET762, researchers addressed stability issues associated with the triazolobenzodiazepine core by eliminating the nitrogen at the 3-position and replacing the phenylcarbamate with an ethylacetamide, resulting in lowered log P and molecular weight while improving oral bioavailability [8]. This compound advanced to clinical trials for NUT carcinoma and other solid tumors, demonstrating target engagement with once-daily dosing and clinical benefit in some patients [8]. Similarly, OTX015 was developed as another triazolothienodiazepine-based BET inhibitor with structural similarities to (+)-JQ1 but with modifications that substantially improved drug-likeness and oral bioavailability [8]. These examples illustrate how chemical probes serve as valuable structural templates that inspire drug discovery efforts even when the original probe lacks optimal drug-like properties.

Target Validation and Safety Assessment

Beyond providing starting points for drug development, small molecule probes play a crucial role in target validation and safety assessment during early drug discovery. The stringent selectivity requirements for high-quality chemical probes (typically >30-fold selectivity over related targets) make them ideal tools for establishing confidence in a target's therapeutic potential before committing significant resources to drug development [8]. By using selective probes to modulate target activity in disease-relevant models, researchers can evaluate both efficacy and potential safety concerns associated with target inhibition.

This approach is particularly valuable for assessing the therapeutic window of novel targets. For example, probes targeting epigenetic readers and writers have been extensively used to evaluate the consequences of modulating specific chromatin regulatory pathways, revealing both therapeutic opportunities and potential toxicities [8]. The reversible, dose-dependent nature of small molecule probe effects enables more nuanced safety assessment than genetic knockout approaches, allowing researchers to establish relationships between target engagement, pathway modulation, and phenotypic outcomes. This information is critical for establishing go/no-go decisions in target selection and for guiding compound optimization efforts to maximize therapeutic index.

Visualizing Experimental Workflows and Signaling Pathways

[Figure not available: Pooled Protein Tagging and Screening Workflow]

[Figure not available: Chemical Probe to Drug Candidate Pipeline]

Future Perspectives and Concluding Remarks

The integration of small molecule probes with advanced genomic technologies continues to transform chemogenomics and target discovery research. Emerging directions include the development of more versatile ligandable domains beyond current workhorses like HaloTag, expanding the toolbox of available probes and enabling more sophisticated multiplexed experiments [9]. The ongoing Target 2035 initiative represents an ambitious collaborative effort to develop chemical probes for the entire human proteome, mirroring the comprehensive scope of earlier genomics projects [8]. This systematic approach to probe development promises to dramatically accelerate functional annotation of the proteome and identification of new therapeutic targets.

Advancements in artificial intelligence and data integration are poised to further enhance the utility of small molecule probes in drug discovery. As noted in recent analyses, successful implementation of AI in pharmaceutical research requires high-quality, well-structured data with comprehensive metadata annotation [6]. The standardized experimental frameworks enabled by pooled protein tagging approaches generate precisely the type of consistent, comparable datasets needed to train predictive models for target identification and compound optimization. Additionally, the growing emphasis on human-relevant model systems, including 3D organoids and complex co-cultures, creates new opportunities to apply small molecule probes in more physiologically authentic contexts [6].

In conclusion, small molecule probes represent a core strategic asset in modern chemogenomics and target discovery research. Their unique combination of specificity, reversibility, and temporal control enables researchers to move beyond correlation to establish causal relationships between protein function and disease phenotypes. As technological advances continue to enhance the scale, precision, and analytical depth of probe-based experiments, these powerful tools will play an increasingly central role in bridging the gap between genomic information and therapeutic innovation, ultimately accelerating the development of novel treatments for human disease.

Forward chemogenomics represents a powerful, phenotype-first approach in modern drug discovery. In contrast to target-based strategies that begin with a known molecular target, forward chemogenomics starts with the observation of a desired phenotypic change in a biologically relevant system and works to identify the protein target(s) responsible for that phenotype [11]. This approach has gained significant traction based on its potential to address the incompletely understood complexity of diseases and its proven track record in delivering first-in-class drugs [11]. The fundamental premise relies on using well-characterized chemical modulators as molecular probes to unravel biological pathways and identify novel therapeutic targets, effectively bridging the gap between phenotypic observations and target identification.

The resurgence of interest in phenotypic screening approaches, coupled with major advances in cell-based screening technologies and 'omics' tools, has positioned forward chemogenomics as a strategic capability within comprehensive drug discovery portfolios [11]. This methodology is particularly valuable for investigating orphan targets or poorly understood disease mechanisms where the complete signaling networks remain unmapped. By employing sets of chemically diverse modulators against specific protein families or entire target classes, researchers can systematically probe biological systems and establish causal relationships between target engagement and phenotypic outcomes.

Core Principles and Framework

Conceptual Foundation

The forward chemogenomics workflow operates on a well-defined conceptual framework centered on the principle of using chemical tools to elucidate biological function. The process begins with the selection of a compound set representing diverse chemotypes against target classes of interest. These compounds are then screened in phenotypic assays relevant to disease states, with active "hit" compounds selected for further investigation [12]. The critical step of target deconvolution follows, employing various biochemical and computational methods to identify the molecular target(s) responsible for the observed phenotype. Finally, rigorous validation confirms the causal relationship between target engagement and phenotypic outcome, ultimately leading to new target hypotheses for therapeutic development.

The chain of translatability forms a crucial concept in forward chemogenomics, emphasizing the need for strong linkage between the cellular disease model used for phenotypic screening, the relevant human disease biology, and the compound-induced phenotypic changes [11]. This chain ensures that observations made in experimental systems have genuine relevance to human pathophysiology, addressing one of the historical challenges in phenotypic screening. Modern implementations incorporate 'omics knowledge—including genomic, transcriptomic, and proteomic data—to precisely define cellular disease phenotypes in the era of precision medicine, significantly enhancing the predictive value of these approaches [11].

Comparative Analysis with Reverse Chemogenomics

Table 1: Key Differences Between Forward and Reverse Chemogenomics

| Aspect | Forward Chemogenomics | Reverse Chemogenomics |
|---|---|---|
| Starting Point | Phenotypic observation | Known molecular target |
| Primary Screening | Phenotypic assays | Target-based assays |
| Target Identification | Post-screening (deconvolution) | Pre-defined |
| Hit Validation | Confirmation of target-phenotype linkage | Optimization of target binding affinity |
| Strengths | Identifies novel targets; addresses complex biology | High throughput; straightforward optimization |
| Challenges | Target deconvolution; off-target effects | Limited to known targets; may miss complex biology |

Forward chemogenomics differs fundamentally from reverse approaches, which begin with a validated molecular target and screen for compounds that modulate its activity. The reverse approach benefits from straightforward optimization pathways and well-defined structure-activity relationships but is limited to known targets with established roles in disease [11]. In contrast, forward chemogenomics offers the advantage of target-agnostic discovery, potentially identifying entirely novel therapeutic targets and mechanisms, though it faces challenges in target deconvolution and establishing direct causal relationships [11].

Experimental Methodologies and Workflows

Core Workflow and Signaling Pathways

Figure: The complete forward chemogenomics workflow, from initial compound selection through target validation.

Critical Experimental Protocols

Compound Library Design and Profiling

The foundation of successful forward chemogenomics lies in the careful design and validation of compound libraries. As demonstrated in NR4A receptor studies, comparative profiling under uniform conditions across orthogonal test systems is essential for establishing high-quality chemical tools [12]. The protocol involves:

Compound Selection: Prioritize chemically diverse compounds with documented activity against target families of interest. Include both agonists and inverse agonists where possible to enable bidirectional modulation studies [12].

Orthogonal Assay Systems: Implement multiple complementary screening approaches:

- Gal4-hybrid-based reporter gene assays

- Full-length receptor reporter gene assays

- Cell-free binding assays (ITC, DSF)

- Selectivity screening against representative panels of unrelated targets

Compound Validation: Rigorously characterize all compounds for:

- Purity and identity (HPLC, MS/NMR)

- Kinetic solubility

- Multiplex toxicity profiling (cell confluence, metabolic activity, apoptosis, necrosis)

- Direct binding confirmation through biophysical methods [12]

This comprehensive profiling approach identified significant deviations from published activities for several putative NR4A ligands, with some compounds showing complete lack of on-target binding, highlighting the critical importance of experimental validation [12].
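The validation checks listed above can be read as a simple gate that every candidate tool compound must pass. The following is a minimal sketch of that idea, not the procedure used in [12]: the `CompoundProfile` fields, the 95% purity cutoff, and the example compounds are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class CompoundProfile:
    """Hypothetical summary of one compound's profiling results."""
    name: str
    purity_pct: float          # e.g. from HPLC
    soluble: bool              # kinetic solubility acceptable at assay concentration
    toxic: bool                # flagged in multiplex toxicity profiling
    reporter_active: bool      # active in a cellular reporter gene assay
    binding_confirmed: bool    # direct binding seen in a cell-free assay (e.g. ITC, DSF)


def validation_failures(profile, min_purity_pct=95.0):
    """Return the list of checks a compound fails (empty list means it passes).

    The purity threshold is an assumed default, not a value from the source.
    """
    failures = []
    if profile.purity_pct < min_purity_pct:
        failures.append("purity")
    if not profile.soluble:
        failures.append("solubility")
    if profile.toxic:
        failures.append("toxicity")
    if not profile.reporter_active:
        failures.append("cellular activity")
    if not profile.binding_confirmed:
        failures.append("direct binding")
    return failures


# Made-up example compounds.
candidates = [
    CompoundProfile("cmpd_1", 98.5, True, False, True, True),
    CompoundProfile("cmpd_2", 99.0, True, False, True, False),
]
report = {c.name: validation_failures(c) for c in candidates}
validated = [name for name, failures in report.items() if not failures]
print(report)
print(validated)
```

Here `cmpd_2` mirrors the situation described above: it looks active in cells but is rejected because direct binding could not be confirmed.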

Phenotypic Screening Implementation

Phenotypic screening requires careful consideration of the biological system and assay design to ensure relevance and translatability:

Model System Selection: Choose disease-relevant cellular models that accurately recapitulate key aspects of human pathophysiology. Advanced systems including induced pluripotent stem cells (iPSCs), 3D organoids, and microphysiological systems (organs-on-chips) offer enhanced biological relevance [11].

Assay Development: Design assays measuring functionally relevant endpoints connected to disease biology. Apply the phenotypic screening "rule of 3" framework, which asks that the assay system, the stimulus, and the endpoint readout each be relevant to the disease, to enhance predictive validity [11].

Readout Selection: Incorporate high-content imaging and multi-parameter readouts to capture complex phenotypic responses. Transcriptomic profiling and pathway reporter genes can provide molecular signatures of compound activity [11].

A key example includes the development of glomerulus-on-a-chip microdevices for modeling diabetic nephropathy, which enabled more physiologically relevant screening compared to traditional 2D culture systems [11].

Target Deconvolution Methods

Target deconvolution represents the most technically challenging aspect of forward chemogenomics. Several complementary approaches are employed:

Chemical Proteomics: Utilize compound-conjugated matrices for affinity purification of interacting proteins from cell lysates. Combine with quantitative mass spectrometry (SILAC, TMT) to distinguish specific binders from non-specific interactions.

Genome-wide CRISPR Screening: Implement positive selection screens to identify genetic modifiers of compound sensitivity, revealing components of the compound's mechanism of action.

Transcriptomic Profiling: Employ connectivity mapping approaches comparing compound-induced gene expression signatures to reference databases containing signatures of compounds with known mechanisms [11].

Biophysical Methods: Use surface plasmon resonance (SPR) and thermal shift assays to confirm direct compound-target interactions identified through other methods.

The integration of multiple deconvolution approaches significantly enhances confidence in identified targets and helps address false positives from individual methods.
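One simple way to integrate several deconvolution methods is to keep only candidate targets supported by more than one independent method. The sketch below illustrates that idea under stated assumptions: each method is assumed to report a normalized score between 0 and 1 per candidate target, and the target names, scores, and the `min_methods` cutoff are hypothetical.

```python
from collections import defaultdict


def consensus_targets(method_hits, min_methods=2):
    """Rank candidate targets by how many independent methods support them.

    method_hits maps a method name to {target: score in [0, 1]}.
    Returns (target, n_supporting_methods, mean_score) tuples, best first.
    """
    support = defaultdict(list)
    for hits in method_hits.values():
        for target, score in hits.items():
            support[target].append(score)

    ranked = [
        (target, len(scores), sum(scores) / len(scores))
        for target, scores in support.items()
        if len(scores) >= min_methods
    ]
    # More supporting methods first, then higher mean score, then name for determinism.
    ranked.sort(key=lambda row: (-row[1], -row[2], row[0]))
    return ranked


# Made-up evidence for a single phenotypic hit compound.
evidence = {
    "chemical_proteomics": {"KINASE_A": 0.9, "HSP_X": 0.7},
    "crispr_screen": {"KINASE_A": 0.8, "TRANSPORTER_B": 0.6},
    "connectivity_map": {"KINASE_A": 0.5, "HSP_X": 0.4},
}
ranking = consensus_targets(evidence)
for target, n_methods, mean_score in ranking:
    print(target, n_methods, round(mean_score, 2))
```

A target seen by only one method (`TRANSPORTER_B` here) is dropped from the shortlist. In practice it would still deserve a follow-up experiment rather than outright dismissal.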

Technological Enablers and Computational Integration

Advanced Computational Approaches

Modern forward chemogenomics increasingly relies on computational methods to enhance efficiency and precision:

Multitask Deep Learning: Frameworks like DeepDTAGen demonstrate the power of integrated models that simultaneously predict drug-target binding affinities and generate novel target-aware drug variants using shared feature spaces [13]. These approaches utilize common pharmacological knowledge to link predictive and generative tasks.

Gradient Conflict Resolution: Advanced algorithms such as FetterGrad address optimization challenges in multitask learning by minimizing Euclidean distance between task gradients, ensuring aligned learning from shared feature spaces [13].

Binding Affinity Prediction: Modern DTA prediction models employ convolutional neural networks (CNNs) that process drug SMILES strings and protein sequences, with enhanced performance through graph representations of drug molecules and text-based information incorporation [13].

These computational approaches have demonstrated robust performance in predicting drug-target interactions, with DeepDTAGen achieving a mean squared error (MSE) of 0.146, a concordance index (CI) of 0.897, and an r²m of 0.765 on the KIBA benchmark dataset, outperforming traditional machine learning models by 7.3% in CI and 21.6% in r²m [13].
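For readers less familiar with these metrics, the sketch below shows how MSE and the concordance index are commonly computed for binding affinity predictions. It follows the usual textbook definitions rather than the DeepDTAGen evaluation code, and the affinity values are made up.

```python
def mean_squared_error(y_true, y_pred):
    """Average squared difference between measured and predicted affinities."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def concordance_index(y_true, y_pred):
    """Fraction of comparable pairs whose predicted order matches the true order.

    Pairs with identical true values are skipped; tied predictions count as 0.5.
    """
    concordant = 0.0
    comparable = 0
    n = len(y_true)
    for i in range(n):
        for j in range(i + 1, n):
            if y_true[i] == y_true[j]:
                continue
            comparable += 1
            true_diff = y_true[i] - y_true[j]
            pred_diff = y_pred[i] - y_pred[j]
            if pred_diff == 0:
                concordant += 0.5
            elif (true_diff > 0) == (pred_diff > 0):
                concordant += 1.0
    if comparable == 0:
        raise ValueError("no comparable pairs: all true values are identical")
    return concordant / comparable


# Made-up measured and predicted affinities for four drug-target pairs.
measured = [5.0, 6.2, 7.1, 8.4]
predicted = [5.3, 6.0, 7.5, 7.2]
mse = mean_squared_error(measured, predicted)
ci = concordance_index(measured, predicted)
print(round(mse, 4), round(ci, 4))
```

A CI of 1.0 means every pair is ranked correctly and 0.5 corresponds to random ordering, so CI rewards correct ranking of compounds even when absolute affinities are off.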

Emerging Experimental Technologies

Several innovative methodologies are enhancing forward chemogenomics capabilities:

DNA-Encoded Libraries (DELs): Enable high-throughput screening of millions of compounds against biological targets by utilizing DNA as a unique identifier for each compound, dramatically increasing screening efficiency [14].

Targeted Protein Degradation (TPD): Technologies like PROTACs employ small molecules to tag undruggable proteins for degradation via cellular machinery, expanding the druggable target space [14].

Click Chemistry: Streamlines synthesis of diverse compound libraries through highly efficient and selective reactions, particularly Cu-catalyzed azide-alkyne cycloaddition (CuAAC), facilitating rapid hit discovery and optimization [14].

Research Reagent Solutions and Tools

Table 2: Essential Research Reagents for Forward Chemogenomics

| Reagent/Tool Category | Specific Examples | Function and Application |
|---|---|---|
| Validated Chemical Tools | NR4A modulator set (8 compounds) [12] | Pre-validated direct modulators for target identification studies; includes 5 agonists and 3 inverse agonists |
| Cell-Based Assay Systems | Gal4-hybrid reporter assays [12] | Standardized systems for measuring transcriptional activity and compound modulation |
| Biophysical Characterization | Isothermal Titration Calorimetry (ITC) [12] | Cell-free validation of direct compound-target binding and affinity measurement |
| Phenotypic Screening Models | Glomerulus-on-a-chip microdevices [11] | Physiologically relevant systems for disease modeling and compound screening |
| Computational Frameworks | DeepDTAGen [13] | Multitask deep learning for binding affinity prediction and target-aware drug generation |
| Pathway Analysis Tools | Connectivity Map [11] | Reference database of gene expression signatures for mechanism identification |

The NR4A modulator set exemplifies the ideal chemical tool characteristics for forward chemogenomics applications. This collection includes Cytosporone B (NR4A1 agonist, Kd = 0.115 nM), Isoxazolo-pyridinone 7 (pan-NR4A agonist, EC50 = 0.5-1.3 μM), and several structurally diverse compounds with confirmed binding through orthogonal validation [12]. Such well-characterized tool compounds enable confident target identification and validation studies by providing multiple chemical starting points with established mechanism of action.

Case Studies and Applications

NR4A Receptor Profiling and Applications

A comprehensive example of forward chemogenomics implementation involved the systematic profiling of NR4A family modulators. This study evaluated reported and commercially available compounds under uniform conditions, revealing a lack of on-target binding for several putative ligands while validating a set of eight direct modulators with diverse chemotypes [12]. The validated set enabled:

Target Identification in ER Stress: Prospective applications uncovered novel roles of NR4A receptors in endoplasmic reticulum stress response, linking specific receptor modulation to cytoprotective effects [12].

Adipocyte Differentiation Studies: Demonstrated NR4A involvement in adipocyte differentiation, revealing new regulatory mechanisms in metabolic disease pathways [12].

Tool Compound Establishment: Created a highly annotated chemical toolset for broad research community use, emphasizing commercial availability to promote unrestricted application [12].

This work highlights the importance of compound validation and the power of well-characterized tool sets in connecting orphan targets to biologically relevant phenotypes.

Integration with Multi-Omics Approaches

Advanced forward chemogenomics implementations successfully integrate multiple 'omics technologies to enhance target discovery:

Transcriptomic-Driven Insights: Tissue transcriptome analysis identified epidermal growth factor as a chronic kidney disease biomarker, demonstrating how omics data can guide target hypothesis generation [11].

Molecular Phenotyping: Combined molecular information with biological relevance and patient data to improve early drug discovery productivity, creating comprehensive compound profiles beyond simple efficacy metrics [11].

Toxicogenomics Integration: Incorporated toxicogenomics data with high-throughput screening to identify safety liabilities early in the discovery process, enhancing compound selection criteria [11].

These integrated approaches demonstrate the evolution of forward chemogenomics from simple phenotypic screening to sophisticated systems-level analysis, significantly enhancing its predictive power and clinical translatability.

Forward chemogenomics continues to evolve with emerging technologies and methodologies. The integration of artificial intelligence and machine learning approaches is poised to address increasing target complexity and enhance prediction accuracy [14]. Multitask learning frameworks that simultaneously predict binding affinities and generate novel target-aware compounds represent particularly promising directions [13]. Additionally, the growing emphasis on diverse biological contexts—including population-specific genomic variation and rare disease mechanisms—will likely expand the application space for forward chemogenomics approaches [11].

The demonstrated success of forward chemogenomics in identifying first-in-class drugs and novel therapeutic targets underscores its enduring value in drug discovery portfolios. By maintaining a focus on physiological relevance through sophisticated disease models and leveraging advances in computational prediction and multi-omics integration, this approach will continue to provide crucial insights into disease mechanisms and therapeutic opportunities. As the field progresses, increased attention to tool compound quality, standardized validation methodologies, and data sharing will further enhance the impact and efficiency of forward chemogenomics in target discovery and drug development.

Reverse chemogenomics is a systematic approach in chemical biology and drug discovery that begins with a validated protein target and aims to identify or design small molecules that modulate its activity, subsequently analyzing the phenotypic outcomes in cellular or organismal systems [1]. This strategy stands in contrast to forward chemogenomics, which starts with a phenotypic screen to find active compounds before identifying their protein targets [15] [1]. The reverse approach is particularly valuable for target validation and mechanism of action studies, as it allows researchers to explore the functional consequences of modulating specific, pre-validated targets in disease-relevant contexts [16] [17].

The fundamental premise of reverse chemogenomics is that selective chemical modulators can serve as powerful tools to establish causal relationships between a target protein and observed biological phenomena [16]. This approach has been enhanced by parallel screening capabilities and the ability to perform lead optimization across multiple targets within the same protein family [1]. By leveraging known target information, reverse chemogenomics provides a more direct path to understanding the pharmacological consequences of target modulation while facilitating the discovery of novel therapeutic agents with defined mechanisms of action [1].

Conceptual Framework and Workflow

Defining the Reverse Approach

In the reverse chemogenomics paradigm, the initial focus is on a validated biological target with established relevance to a particular disease process or signaling pathway [16] [17]. This target-first approach mirrors reverse genetics in molecular biology, where specific genes are manipulated to observe resulting phenotypes [17]. The process typically begins with target selection and credentialing, demonstrating the protein's relevance to a biological pathway, process, or disease of interest [17]. Once validated, the presumption is that binders or inhibitors of this protein will affect the desired process, though this impact must be characterized through observation of compound-induced phenotypes [17].

The reverse approach is sometimes described as "reverse drug discovery" because it analyzes in detail the results of exposing a biological system to compounds with known effects on specific targets [18]. This allows for a more precise understanding of the mechanism of action as well as potential side effects, enabling more intelligent subsequent screening with better, more relevant assay readouts [18]. The method identifies or confirms the role of the target protein in biological responses by observing phenotypes induced by target-specific small molecules in cellular tests or whole organisms [1].

Comparative Workflow: Forward vs. Reverse Chemogenomics

Figure: Key conceptual differences and directional approaches between forward and reverse chemogenomics.

Technical Workflow in Practice

The implementation of reverse chemogenomics follows a structured experimental pathway from target to phenotype.

Computational Approaches for Target Identification

Reverse Screening Methodologies

Reverse screening computational methods are essential for identifying potential protein targets of small molecules in reverse chemogenomics [19]. Also known as in silico target fishing, these approaches differ from conventional virtual screening by identifying potential targets of a given compound from large receptor databases rather than finding ligands for a specific target [19]. Three primary computational methods have emerged as cornerstone approaches in this field.

Table 1: Computational Reverse Screening Methods for Target Identification

| Method | Principle | Key Tools/Software | Applications | Advantages/Limitations |
|---|---|---|---|---|
| Shape Screening | Compares 3D molecular shape similarity to known ligands in annotated databases [19] | ChemMapper, TargetHunter, SEA | Initial target hypothesis generation; Drug repurposing [19] | Fast and simple; Limited by database coverage and annotation quality |
| Pharmacophore Screening | Matches essential chemical features responsible for biological activity [19] | PharmMapper, Pharmer | Mechanism of action studies; Polypharmacology prediction [19] | Captures key functional interactions; Dependent on pharmacophore model quality |
| Reverse Docking | Docks a query compound into multiple protein structures to assess binding affinity [19] | INVDOCK, idTarget | Off-target effect prediction; Side effect mechanism elucidation [19] | Provides structural insights; Computationally intensive and time-consuming |

Practical Implementation of Computational Methods

The workflow for computational target identification typically begins with shape-based or pharmacophore-based screening to generate initial target hypotheses, followed by reverse docking for validation and detailed binding analysis [19]. For example, researchers used shape screening to discover that curcumin suppresses human colon cancer cell proliferation by targeting CDK2 [19]. Similarly, reverse docking revealed that the marine compound wentilactone B induces G2/M phase arrest and apoptosis in hepatocellular carcinoma cells by co-targeting Ras/Raf/MAPK signaling pathway proteins [19].

These computational approaches are particularly valuable for exploring molecular mechanisms of compounds derived from natural products or traditional medicines, where cellular activities may be observed but precise molecular targets remain unknown [19]. The integration of large-scale databases such as ChEMBL, BindingDB, and the Protein Data Bank has significantly enhanced the power and accuracy of these computational predictions [19].
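The ligand-based methods above share one core idea: a query compound is predicted to hit the targets of the annotated ligands it most resembles. The sketch below illustrates this with Tanimoto similarity over 2D fingerprints, which is a deliberately simpler stand-in for the 3D shape and pharmacophore comparisons described in the text. Fingerprints are represented as plain sets of feature indices, and all fingerprints, target names, and the 0.5 threshold are hypothetical.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of 'on' bits."""
    a, b = set(fp_a), set(fp_b)
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def fish_targets(query_fp, annotated_ligands, threshold=0.5):
    """Score each target by its most similar annotated ligand.

    annotated_ligands is a list of (fingerprint, target_name) pairs.
    Returns (target, best_similarity) pairs above the threshold, best first.
    """
    best = {}
    for fp, target in annotated_ligands:
        sim = tanimoto(query_fp, fp)
        if sim >= threshold and sim > best.get(target, 0.0):
            best[target] = sim
    return sorted(best.items(), key=lambda kv: (-kv[1], kv[0]))


# Made-up fingerprints standing in for an annotated ligand database.
database = [
    ({1, 2, 3, 4, 6}, "TARGET_A"),
    ({1, 2, 7, 8}, "TARGET_B"),
    ({1, 2, 3, 4, 5, 9}, "TARGET_C"),
    ({2, 3, 4, 5}, "TARGET_A"),
]
query = {1, 2, 3, 4, 5}
predictions = fish_targets(query, database)
for target, similarity in predictions:
    print(target, round(similarity, 3))
```

In a real workflow the fingerprints would come from a cheminformatics toolkit and the ligand annotations from a resource such as ChEMBL or BindingDB. The top-ranked targets would then be passed to reverse docking or experimental confirmation.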

Experimental Protocols and Methodologies

Chemogenomic Library Development

The foundation of successful reverse chemogenomics research lies in the development of high-quality chemogenomic libraries. These are carefully curated collections of chemically diverse compounds designed to systematically target specific protein families or the broader druggable genome [20] [1]. Unlike general compound libraries, chemogenomic libraries typically contain hundreds to thousands of selective small molecules with known or potential targets or functions [15].

Library design principles include comprehensive target coverage, chemical diversity, and well-annotated compound information. As described in one research effort, a system pharmacology network integrating drug-target-pathway-disease relationships was used to develop a chemogenomic library of 5,000 small molecules representing a large and diverse panel of drug targets involved in various biological effects and diseases [20]. This library was designed specifically to assist in target identification and mechanism deconvolution for phenotypic assays [20].

Quality control measures for chemogenomic libraries include structural identity verification, purity assessment, solubility testing, and comprehensive annotation of biological activities [21]. The EUbOPEN project represents a large-scale initiative to assemble an open-access chemogenomic library covering more than 1,000 proteins with well-annotated compounds and chemical probes [21].

Phenotypic Screening and Annotation

Once target-specific compounds are identified, they are subjected to phenotypic analysis in biologically relevant systems. Modern approaches often employ high-content screening technologies that capture multiparametric data on cellular responses [21]. The following protocol outlines a comprehensive phenotypic screening approach for annotating chemogenomic libraries:

Protocol: High-Content Phenotypic Profiling for Compound Annotation

Objective: To comprehensively characterize the phenotypic effects of target-specific compounds on cellular health and function.

Materials:

- Cell Lines: Adherent cell lines such as U2OS (osteosarcoma), HeLa (cervical cancer), or HEK293T (human embryonic kidney)

- Live-Cell Dyes:

  - Hoechst33342 (50 nM final concentration): Nuclear staining

  - MitotrackerRed or MitotrackerDeepRed: Mitochondrial staining

  - BioTracker 488 Green Microtubule Cytoskeleton Dye: Microtubule network visualization

- Compound Library: Chemogenomic compounds dissolved in DMSO with appropriate controls

- Equipment: High-content imaging system with environmental control for live-cell imaging

Procedure:

- Cell Seeding: Plate cells in multi-well imaging plates at appropriate density (e.g., 5,000 cells/well for U2OS) and culture for 24 hours.

- Compound Treatment: Add chemogenomic compounds at multiple concentrations (typically 1 nM to 10 μM), with a DMSO-only well as vehicle control.

- Staining: Add optimized dye combinations directly to culture medium without washing steps.

- Image Acquisition: Acquire images at multiple time points (e.g., 24, 48, 72 hours) using automated microscopy with environmental control (37°C, 5% CO₂).

- Image Analysis: Use automated image analysis software (e.g., CellProfiler) to segment cells and extract morphological features.

- Phenotype Classification: Implement machine learning algorithms to classify cells into distinct phenotypic categories based on nuclear morphology, cytoskeletal organization, and mitochondrial health.

Data Analysis:

- Calculate IC₅₀ values for cytotoxicity over time

- Classify compounds based on kinetic profiles of phenotypic effects

- Identify specific phenotypic signatures associated with target modulation

- Distinguish primary target effects from secondary cytotoxicity [21]

This protocol enables time-dependent characterization of compound effects, capturing the kinetics of different cell death mechanisms and cellular responses. For example, membrane-permeabilizing agents like digitonin show rapid cytotoxicity, while epigenetic target inhibitors such as JQ1 exhibit slower and more gradual effects [21].
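The time-resolved IC₅₀ calculation in the data analysis step can be sketched as follows. This is a simplified illustration: it estimates IC₅₀ by log-linear interpolation between the two doses that bracket 50% viability, whereas a full analysis would usually fit a four-parameter dose-response curve. The concentrations and viability values are made up.

```python
import math


def ic50_by_interpolation(concs_molar, viability_pct):
    """Estimate IC50 as the concentration where viability crosses 50%.

    Interpolates linearly on a log10 concentration axis between the two
    neighbouring doses that bracket 50%. Returns None if viability never
    drops below 50% within the tested range.
    """
    points = sorted(zip(concs_molar, viability_pct))
    for (c_lo, v_lo), (c_hi, v_hi) in zip(points, points[1:]):
        if v_lo >= 50.0 > v_hi:
            frac = (v_lo - 50.0) / (v_lo - v_hi)
            log_ic50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ic50
    return None


# Made-up viability (% of DMSO control) for one compound, 1 nM to 10 uM.
concentrations = [1e-9, 1e-8, 1e-7, 1e-6, 1e-5]
viability_by_hour = {
    24: [100, 98, 95, 80, 60],
    48: [100, 97, 85, 55, 25],
    72: [100, 95, 70, 30, 10],
}
ic50_by_hour = {
    hour: ic50_by_interpolation(concentrations, values)
    for hour, values in viability_by_hour.items()
}
for hour, ic50 in ic50_by_hour.items():
    print(hour, "no IC50 in range" if ic50 is None else f"{ic50:.2e} M")
```

A compound with no measurable IC₅₀ at 24 hours but a sub-micromolar value at 72 hours shows the slow kinetic profile described above, which a single endpoint measurement would miss.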

Research Reagent Solutions

Successful implementation of reverse chemogenomics requires carefully selected reagents and tools. The following table outlines essential research reagents and their applications in reverse chemogenomics studies:

Table 2: Essential Research Reagents for Reverse Chemogenomics

| Reagent Category | Specific Examples | Function/Application | Technical Considerations |
|---|---|---|---|
| Chemical Libraries | Pfizer chemogenomic library; GSK Biologically Diverse Compound Set; NCATS MIPE library [20] | Target identification and validation; Structure-activity relationship studies | Select libraries with known target annotation and chemical diversity |
| Cell Line Models | U2OS (osteosarcoma); HEK293T (embryonic kidney); MRC9 (non-transformed fibroblasts) [21] | Phenotypic screening in disease-relevant contexts; Mechanism of action studies | Use multiple cell lines to assess context-specific effects |
| Live-Cell Imaging Dyes | Hoechst33342 (nuclear); Mitotracker Red/Deep Red (mitochondria); BioTracker microtubule dyes [21] | Multiparametric phenotypic characterization; Real-time kinetic analysis | Optimize dye concentrations to minimize cytotoxicity while maintaining signal |
| Computational Tools | PharmMapper; ChemMapper; INVDOCK; idTarget [19] | In silico target prediction; Binding affinity estimation; Off-target effect prediction | Use multiple complementary approaches to increase prediction confidence |
| Target Annotation Databases | ChEMBL; BindingDB; Protein Data Bank; KEGG Pathways [20] [19] | Target validation; Pathway analysis; Polypharmacology assessment | Regularly update databases to incorporate latest structural and interaction data |

Case Studies and Applications

Elucidating Nur77 Signaling Pathways

Reverse chemogenomics has been successfully applied to characterize the orphan nuclear receptor Nur77 (NR4A1), a transcription factor involved in apoptosis, autophagy, inflammation, and metabolism [15]. Researchers at Xiamen University constructed a targeted chemical library of over 300 derivatives based on the natural product cytosporone-B (Csn-B), initially identified as a Nur77 agonist [15].

Through systematic phenotypic analysis, they discovered that different Nur77-targeting compounds induced distinct biological outcomes:

Compound TMPA: Bound to the Nur77 ligand-binding domain, causing conformational changes that disrupted Nur77 association with LKB1. This resulted in LKB1 release into the cytoplasm, where it phosphorylated and activated AMPK, ultimately downregulating glucose levels in diabetic mice [15].

Compound THPN: Triggered Nur77 translocation to mitochondria through interaction with Nix, where it localized to the mitochondrial inner membrane and interacted with ANT1. This caused opening of the mitochondrial permeability transition pore and mitochondrial membrane depolarization, leading to irreversible autophagic death of melanoma cells [15].

These findings illustrate how reverse chemogenomics can decipher complex signaling networks and identify context-specific therapeutic strategies targeting the same protein.

COVID-19 Drug Discovery Applications

The COVID-19 pandemic highlighted the utility of reverse chemogenomics approaches for rapid therapeutic development. Researchers employed computer-aided drug discovery methods, including chemogenomics and drug repositioning, to identify potential treatments for SARS-CoV-2 infection [22]. This involved screening existing drug libraries against key viral targets such as the main protease (Mpro) and RNA-dependent RNA polymerase (RdRp) [22].

Successful outcomes included the identification of remdesivir (RdRp inhibitor) and molnupiravir (which induces viral RNA mutations) as effective antivirals against SARS-CoV-2 [22]. These applications demonstrate how reverse chemogenomics can accelerate drug discovery by leveraging existing target knowledge and compound libraries to address emerging health threats.

Signaling Pathway Diagrams

Nur77-Mediated Signaling Networks

The reverse chemogenomics approach to characterizing Nur77 revealed its involvement in multiple signaling pathways with distinct phenotypic outcomes.

Integrated Experimental Workflow

A comprehensive reverse chemogenomics study integrates multiple methodological approaches from target validation to phenotypic analysis.

Reverse chemogenomics represents a powerful target-centric approach for elucidating biological mechanisms and discovering novel therapeutic strategies. By beginning with validated protein targets and systematically identifying chemical modulators, researchers can establish causal relationships between target modulation and phenotypic outcomes. The integration of computational prediction methods with experimental validation creates a robust framework for understanding complex biological systems.

The continued development of annotated chemogenomic libraries, improved phenotypic screening technologies, and advanced computational algorithms will further enhance the power and applicability of reverse chemogenomics. As these methodologies mature, they promise to accelerate the discovery of novel therapeutic agents while deepening our understanding of biological pathways and their roles in health and disease.

The Role of Chemogenomic Libraries in Systematic Screening

The drug discovery paradigm has significantly evolved, shifting from a reductionist model of "one target–one drug" to a more complex systems pharmacology perspective of "one drug–several targets" [23]. This transition responds to the high failure rates of drug candidates in advanced clinical stages due to insufficient efficacy or safety concerns, particularly for complex diseases like cancers, neurological disorders, and diabetes that often stem from multiple molecular abnormalities rather than single defects [23]. Chemogenomics has emerged as a powerful strategy at the intersection of chemical biology and genomics, defined as the systematic screening of targeted chemical libraries of small molecules against specific drug target families with the dual goal of identifying novel drugs and elucidating novel drug targets [1].

A chemogenomic library is a collection of well-defined, selective small-molecule pharmacological agents where a hit in a phenotypic screen suggests that the annotated target(s) of that pharmacological agent are involved in perturbing the observed phenotype [24] [25]. These libraries serve as essential tools for bridging the gap between phenotypic screening approaches, which observe compound effects in complex biological systems without requiring prior knowledge of specific molecular targets, and target-based approaches, which focus on modulating specific, pre-validated targets [23] [24]. The strategic application of chemogenomic libraries considerably expedites the conversion of phenotypic screening projects into target-based drug discovery campaigns, while also enabling applications in drug repositioning, predictive toxicology, and novel pharmacological modality discovery [24] [25].
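The inference from a phenotypic hit back to its annotated target becomes more convincing when several compounds sharing that annotation are active. One way to quantify this is a hypergeometric enrichment test over the hit list, sketched below. The library, annotations, hit list, and significance cutoff are hypothetical, and no multiple-testing correction is applied in this minimal version.

```python
from math import comb


def hypergeom_sf(k, N, K, n):
    """P(X >= k) when n compounds are drawn from N, of which K share the annotation."""
    upper = min(K, n)
    total = comb(N, n)
    return sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1)) / total


def enriched_targets(annotations, hits, alpha=0.05):
    """Find annotated targets over-represented among screening hits.

    annotations maps compound id -> set of annotated targets; hits is a set of ids.
    Returns (target, hits_with_target, library_compounds_with_target, p_value) tuples.
    """
    N = len(annotations)
    n = len(hits)
    targets = set().union(*annotations.values())
    results = []
    for target in sorted(targets):
        members = {c for c, ts in annotations.items() if target in ts}
        k = len(members & hits)
        if k == 0:
            continue
        p_value = hypergeom_sf(k, N, len(members), n)
        if p_value <= alpha:
            results.append((target, k, len(members), p_value))
    results.sort(key=lambda row: row[3])
    return results


# Made-up 20-compound library: 4 compounds per target T1 and T2, 12 for T3.
library = {f"c{i}": {"T1"} for i in range(4)}
library.update({f"c{i}": {"T2"} for i in range(4, 8)})
library.update({f"c{i}": {"T3"} for i in range(8, 20)})
screen_hits = {"c0", "c1", "c2", "c8"}

enriched = enriched_targets(library, screen_hits)
for target, k, K, p in enriched:
    print(target, f"{k}/{K} annotated compounds active, p = {p:.4f}")
```

In this toy screen three of the four T1-annotated compounds are hits, so T1 is flagged, while the single T3 hit is consistent with chance given how many T3 compounds the library contains.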

Core Concepts and Strategic Approaches

Fundamental Principles of Chemogenomic Library Design

Chemogenomic libraries are constructed with several fundamental design principles that distinguish them from general compound collections. First, they typically include known ligands of at least one, and preferably several, members of a target family, operating on the principle that ligands designed for one family member will often bind to additional family members due to structural similarities [1]. This approach ensures that the compounds collectively bind to a high percentage of the target family proteome. Second, these libraries prioritize well-annotated compounds with comprehensively characterized mechanisms of action, potency, and selectivity profiles, enabling meaningful interpretation of screening results [24]. Third, they encompass chemical diversity while maintaining target focus, covering a wide range of protein targets and biological pathways implicated in various disease areas [23] [26].

The composition of chemogenomic libraries can vary significantly depending on their intended application. For example, the EUbOPEN consortium is assembling an open-access chemogenomic library comprising approximately 5,000 well-annotated compounds covering roughly 1,000 different proteins, alongside synthesizing at least 100 high-quality, open-access chemical probes [27]. Similarly, researchers have developed a chemogenomic library of 5,000 small molecules representing a large panel of drug targets implicated in diverse biological effects and diseases, designed specifically to assist in target identification and mechanism deconvolution for phenotypic assays [23].

Forward versus Reverse Chemogenomic Approaches

Chemogenomic screening employs two complementary experimental strategies, each with distinct applications and workflows.

Forward chemogenomics (also known as classical chemogenomics) begins with a particular phenotype whose molecular basis is unknown and identifies small molecules that modulate it [1]. Once modulators are identified, they serve as tools to identify the protein responsible for the phenotype. For example, a loss-of-function phenotype such as arrest of tumor growth would be studied to identify compounds that induce this phenotype, followed by target identification efforts. The primary challenge of forward chemogenomics lies in designing phenotypic assays that enable immediate transition from screening to target identification [1].

Reverse chemogenomics first identifies small molecules that perturb the function of an enzyme or other specific target in an in vitro assay, then analyzes the phenotype induced by the molecule in cellular or whole-organism tests [1]. This approach confirms the role of the target in the biological response. Historically, it was virtually identical to the target-based approaches applied in drug discovery over the past decades. Modern reverse chemogenomics, however, is enhanced by parallel screening capabilities and the ability to perform lead optimization on multiple targets belonging to one target family simultaneously [1].

Table 1: Comparison of Forward and Reverse Chemogenomics Approaches

| Aspect | Forward Chemogenomics | Reverse Chemogenomics |
|---|---|---|
| Starting Point | Phenotype of interest | Known protein target |
| Screening Approach | Phenotypic assays on cells or organisms | In vitro target-based assays |
| Primary Challenge | Target identification after hit discovery | Phenotypic characterization after target engagement |
| Typical Application | Novel target discovery | Target validation and function elucidation |
| Throughput Potential | Moderate (complex assays) | High (simplified assay systems) |

Composition and Design of Chemogenomic Libraries

Quantitative Design Considerations

The design of a targeted screening library of bioactive small molecules presents significant challenges because most compounds exert their effects through multiple protein targets with varying degrees of potency and selectivity [26]. Effective library design requires analytic procedures that balance multiple factors, including library size, cellular activity, chemical diversity, availability, and target selectivity [26]. Researchers have implemented systematic strategies for designing anticancer compound libraries adjusted for these parameters, resulting in a minimal screening library of 1,211 compounds capable of targeting 1,386 anticancer proteins [26].
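The trade-off between library size and target coverage can be illustrated with a greedy set-cover heuristic. This is a minimal sketch and not the procedure used in [26]: it considers target coverage only and ignores potency, selectivity, cellular activity, and availability. The compound and target names are hypothetical placeholders.

```python
from typing import Dict, List, Set


def greedy_minimal_library(compound_targets: Dict[str, Set[str]]) -> List[str]:
    """Greedily select compounds until every annotated target is covered."""
    uncovered: Set[str] = set()
    for targets in compound_targets.values():
        uncovered |= targets

    selected: List[str] = []
    while uncovered:
        # Pick the compound covering the most still-uncovered targets.
        # Iterating in sorted order makes tie-breaking deterministic.
        best = max(
            sorted(compound_targets),
            key=lambda name: len(compound_targets[name] & uncovered),
        )
        selected.append(best)
        uncovered -= compound_targets[best]
    return selected


# Hypothetical annotations: compound -> set of annotated protein targets.
annotations = {
    "cmpd_A": {"EGFR", "ERBB2"},
    "cmpd_B": {"ERBB2"},
    "cmpd_C": {"CDK4", "CDK6"},
    "cmpd_D": {"EGFR", "CDK4", "CDK6"},
}
library = greedy_minimal_library(annotations)
print(library)
```

Here two of the four compounds suffice to cover all four targets. A realistic design would weight each compound by the additional criteria listed above rather than by coverage alone.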

Notable examples of chemogenomic libraries include the Pfizer chemogenomic library, the GlaxoSmithKline (GSK) Biologically Diverse Compound Set (BDCS), Prestwick Chemical Library, the Sigma-Aldrich Library of Pharmacologically Active Compounds, and the publicly available Mechanism Interrogation PlatE (MIPE) library developed by the National Center for Advancing Translational Sciences (NCATS) [23]. These libraries vary in size, composition, and specific application focus, but share the common characteristic of containing well-annotated compounds with defined biological activities.

Table 2: Exemplary Chemogenomic Libraries and Their Characteristics

| Library Name | Developer/Provider | Key Characteristics | Reported Size |
|---|---|---|---|
| EUbOPEN Library | EUbOPEN Consortium | Open access, ~1,000 proteins covered | ~5,000 compounds [27] |
| Minimal Anticancer Library | Academic Research | Covers 1,386 anticancer targets | 1,211 compounds [26] |
| MIPE Library | NCATS | Public screening programs | Not specified [23] |
| GSK BDCS | GlaxoSmithKline | Biologically diverse compound set | Not specified [23] |
| Prestwick Chemical Library | Prestwick Chemical | Focus on marketed drugs | Not specified [23] |

Scaffold-Based Organization and Chemical Space Networks

A critical aspect of chemogenomic library design involves the organization of compounds based on their molecular scaffolds to ensure appropriate diversity and coverage of chemical space. Software tools like ScaffoldHunter enable the systematic decomposition of each molecule into different representative scaffolds and fragments through a stepwise process: (1) removing all terminal side chains while preserving double bonds directly attached to rings, and (2) removing one ring at a time using deterministic rules to preserve the most characteristic "core structure" until only one ring remains [23]. These scaffolds are then distributed across different levels based on their relationship distance from the original molecule node, creating a hierarchical organization that facilitates navigation of chemical space and compound selection [23].
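Step (1) of this decomposition can be sketched on a toy molecular graph. The code below is an illustration only and does not use ScaffoldHunter or any cheminformatics toolkit: atoms are integer indices, bonds map atom pairs to a bond order, and the ring-by-ring removal of step (2) is omitted because its prioritization rules are not given here. All function names are hypothetical.

```python
from typing import Dict, FrozenSet, Set

Bonds = Dict[FrozenSet[int], int]  # {atom_i, atom_j} -> bond order


def degree(atom: int, atoms: Set[int], bonds: Bonds) -> int:
    """Number of bonds from `atom` to other atoms still in `atoms`."""
    return sum(1 for bond in bonds if atom in bond and bond <= atoms)


def prune_side_chains(atoms: Set[int], bonds: Bonds) -> Set[int]:
    """Repeatedly strip terminal atoms until only rings and linkers remain."""
    kept = set(atoms)
    changed = True
    while changed:
        changed = False
        for atom in sorted(kept):
            if degree(atom, kept, bonds) <= 1:
                kept.discard(atom)
                changed = True
    return kept


def in_ring(bond: FrozenSet[int], core: Set[int], bonds: Bonds) -> bool:
    """A bond lies in a ring if its ends stay connected without it."""
    start, goal = tuple(bond)
    seen, stack = {start}, [start]
    while stack:
        atom = stack.pop()
        for other in bonds:
            if other == bond or atom not in other or not other <= core:
                continue
            (nxt,) = other - {atom}
            if nxt == goal:
                return True
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return False


def scaffold(atoms: Set[int], bonds: Bonds) -> Set[int]:
    """Framework atoms plus atoms double-bonded directly to a ring atom."""
    core = prune_side_chains(atoms, bonds)
    ring_atoms: Set[int] = set()
    for bond in bonds:
        if bond <= core and in_ring(bond, core, bonds):
            ring_atoms |= bond
    result = set(core)
    for bond, order in bonds.items():
        # Restore exocyclic double bonds attached to a ring atom.
        if order == 2 and len(bond & ring_atoms) == 1:
            result |= bond
    return result


# Toy molecule: six-membered ring (atoms 0-5), exocyclic double bond 0=6,
# and a two-atom side chain 3-7-8.
ring = [(0, 1), (1, 2), (2, 3), (3, 4), (4, 5), (5, 0)]
toy_bonds: Bonds = {frozenset(pair): 1 for pair in ring}
toy_bonds[frozenset((0, 6))] = 2
toy_bonds[frozenset((3, 7))] = 1
toy_bonds[frozenset((7, 8))] = 1
toy_scaffold = scaffold(set(range(9)), toy_bonds)
print(sorted(toy_scaffold))
```

The side chain (atoms 7 and 8) is removed, while atom 6 is kept because it is double-bonded to a ring atom. An acyclic molecule yields an empty scaffold under this simplification.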

Chemical Space Networks (CSNs) provide powerful visualization tools for representing relationships within chemogenomic libraries. In a typical CSN, compounds are represented as nodes connected by edges, where edges represent defined relationships such as 2D fingerprint-based Tanimoto similarity, substructure-based similarity, or asymmetric Tversky similarity [28]. CSNs enable researchers to visualize and interpret complex relationships within small molecule datasets, typically representing datasets containing tens to thousands of compounds with some level of similarity or other definable relationship [28]. These network representations facilitate the application of established network science algorithms and statistical calculations, including clustering coefficient, degree assortativity, and modularity analysis, providing quantitative insights into library composition and compound relationships [28].
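The construction of a CSN can be sketched in a few lines. This example assumes fingerprints are represented as sets of "on" bit indices and uses an arbitrary similarity threshold. The compound names, bit sets, and threshold are hypothetical; a real workflow would compute fingerprints with a cheminformatics toolkit and would likely use a network library for the statistics.

```python
from itertools import combinations
from typing import Dict, Set


def tanimoto(a: Set[int], b: Set[int]) -> float:
    """Shared bits divided by bits set in either fingerprint."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def tversky(a: Set[int], b: Set[int], alpha: float, beta: float) -> float:
    """Asymmetric similarity; alpha and beta weight the unique bits."""
    common = len(a & b)
    denom = common + alpha * len(a - b) + beta * len(b - a)
    return common / denom if denom else 0.0


def build_csn(fps: Dict[str, Set[int]], threshold: float) -> Dict[str, Set[str]]:
    """Connect two compounds when their Tanimoto similarity meets the threshold."""
    adjacency: Dict[str, Set[str]] = {name: set() for name in fps}
    for x, y in combinations(sorted(fps), 2):
        if tanimoto(fps[x], fps[y]) >= threshold:
            adjacency[x].add(y)
            adjacency[y].add(x)
    return adjacency


def clustering_coefficient(adjacency: Dict[str, Set[str]], node: str) -> float:
    """Fraction of a node's neighbour pairs that are themselves connected."""
    nbrs = sorted(adjacency[node])
    k = len(nbrs)
    if k < 2:
        return 0.0
    links = sum(1 for u, v in combinations(nbrs, 2) if v in adjacency[u])
    return 2 * links / (k * (k - 1))


# Hypothetical fingerprints as sets of on-bit indices.
fingerprints = {
    "c1": {1, 2, 3, 4},
    "c2": {1, 2, 3, 5},
    "c3": {1, 2, 3, 4, 5},
    "c4": {7, 8, 9},
}
csn = build_csn(fingerprints, threshold=0.55)
print(csn)
print(clustering_coefficient(csn, "c1"))
```

With alpha = 1 and beta = 0, `tversky(c1, c3, 1, 0)` equals 1.0 because every bit of c1 is present in c3, whereas the reverse comparison gives 0.8. This asymmetry is what makes Tversky similarity useful for substructure-like relationships.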

Diagram 1: Chemogenomic Screening Workflow. This workflow illustrates the integrated process of chemogenomic library design and screening application, highlighting the parallel paths for target-focused and phenotypic-focused approaches.

Implementation and Experimental Protocols

Phenotypic Screening and Morphological Profiling

Advanced cell-based phenotypic screening technologies have re-emerged as powerful approaches for identifying and developing novel therapeutics, facilitated by developments in induced pluripotent stem (iPS) cell technologies, gene-editing tools like CRISPR-Cas, and advanced imaging assays [23]. The Cell Painting assay is a high-content, image-based, high-throughput phenotypic profiling method that enables comprehensive morphological characterization of cellular responses to compound treatments [23].

In a typical Cell Painting protocol, U2OS osteosarcoma cells are plated in multiwell plates, perturbed with test treatments, stained with fluorescent dyes, fixed, and imaged on a high-throughput microscope [23]. Automated image analysis using CellProfiler software then identifies individual cells and measures hundreds of morphological features across different cellular compartments (cell, cytoplasm, and nucleus), including intensity, size, area shape, texture, entropy, correlation, granularity, and spatial relationships [23]. For the BBBC022 dataset, 1,779 morphological features are measured; after quality control, features are filtered to those with non-zero standard deviation and less than 95% correlation, yielding a rich morphological profile for each compound [23]. These profiles enable researchers to group compounds into functional pathways, identify phenotypic impacts of chemical perturbations, and discover signatures of disease [23].
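The feature-filtering step can be sketched as follows. This is one plausible reading of the criteria, assuming the 95% cutoff refers to the absolute pairwise Pearson correlation between features and that the first feature of a correlated pair is the one retained. The feature names and values are made up for illustration and are not CellProfiler output.

```python
from math import sqrt
from statistics import mean, pstdev
from typing import Dict, List


def pearson(x: List[float], y: List[float]) -> float:
    """Pearson correlation of two equal-length, non-constant series."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def filter_features(profiles: Dict[str, List[float]], max_corr: float = 0.95) -> List[str]:
    """Keep features that vary and are not redundant with one already kept."""
    kept: List[str] = []
    for name, values in profiles.items():
        if pstdev(values) == 0:
            continue  # constant feature carries no information
        if any(abs(pearson(values, profiles[k])) >= max_corr for k in kept):
            continue  # nearly collinear with a retained feature
        kept.append(name)
    return kept


# Hypothetical per-well measurements for four features.
profiles = {
    "nucleus_area": [1.0, 2.0, 3.0, 4.0],
    "nucleus_perimeter": [2.0, 4.0, 6.0, 8.1],
    "cell_texture": [4.0, 1.0, 3.0, 2.0],
    "constant_flag": [5.0, 5.0, 5.0, 5.0],
}
selected_features = filter_features(profiles)
print(selected_features)
```

The perimeter feature is dropped because it is almost perfectly correlated with area, and the constant feature is dropped for having zero standard deviation.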

Data Integration and Network Pharmacology

The true power of chemogenomic screening emerges through data integration and network pharmacology approaches that combine heterogeneous data sources into unified analytical frameworks. Researchers have developed system pharmacology networks that integrate drug-target-pathway-disease relationships with morphological profiles from Cell Painting assays using high-performance NoSQL graph databases like Neo4j [23]. This architecture consists of nodes representing specific objects (molecules, scaffolds, proteins, pathways, diseases) linked by edges representing relationships between them (a scaffold being part of a molecule, a molecule targeting a protein, a target acting in a pathway, etc.) [23].
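The node-and-edge structure described above can be illustrated with a small in-memory stand-in. This sketch does not use Neo4j or its query language. The class, the relation names (`PART_OF`, `TARGETS`, `ACTS_IN`, `LINKED_TO`), and all node identifiers are hypothetical, and the pathway-to-disease relation is an assumption about what the "etc." covers.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple


class PharmacologyGraph:
    """Typed nodes connected by directed, labelled edges."""

    def __init__(self) -> None:
        self.labels: Dict[str, str] = {}
        self.out: Dict[str, List[Tuple[str, str]]] = defaultdict(list)

    def add_node(self, name: str, label: str) -> None:
        self.labels[name] = label

    def add_edge(self, source: str, relation: str, target: str) -> None:
        self.out[source].append((relation, target))

    def follow(self, start: str, relations: List[str]) -> Set[str]:
        """Walk a fixed sequence of relation types from a start node."""
        frontier = {start}
        for relation in relations:
            frontier = {
                target
                for node in frontier
                for rel, target in self.out.get(node, [])
                if rel == relation
            }
        return frontier


graph = PharmacologyGraph()
for name, label in [
    ("scaffold_S1", "Scaffold"),
    ("mol_M1", "Molecule"),
    ("mol_M2", "Molecule"),
    ("protein_P1", "Protein"),
    ("protein_P2", "Protein"),
    ("pathway_W1", "Pathway"),
    ("disease_D1", "Disease"),
]:
    graph.add_node(name, label)

graph.add_edge("scaffold_S1", "PART_OF", "mol_M1")
graph.add_edge("scaffold_S1", "PART_OF", "mol_M2")
graph.add_edge("mol_M1", "TARGETS", "protein_P1")
graph.add_edge("mol_M2", "TARGETS", "protein_P2")
graph.add_edge("protein_P1", "ACTS_IN", "pathway_W1")
graph.add_edge("pathway_W1", "LINKED_TO", "disease_D1")

proteins = graph.follow("scaffold_S1", ["PART_OF", "TARGETS"])
diseases = graph.follow("scaffold_S1", ["PART_OF", "TARGETS", "ACTS_IN", "LINKED_TO"])
print(proteins, diseases)
```

A graph database performs the same kind of multi-hop traversal declaratively and at scale; the point here is only that a scaffold can be linked to candidate diseases by chaining the relationship types.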

This network pharmacology approach enables the identification of proteins modulated by chemicals that correlate with specific morphological perturbations at the cellular level, potentially leading to identifiable phenotypes, diseases, or adverse outcomes [23]. The integration of additional biological context through databases like ChEMBL (bioactivity data), Kyoto Encyclopedia of Genes and Genomes (KEGG) (pathways), Gene Ontology (GO) (biological processes and functions), and Human Disease Ontology (DO) (disease classifications) creates a comprehensive systems biology framework for interpreting chemogenomic screening results [23]. Statistical enrichment analyses using tools like the R package clusterProfiler enable identification of significantly overrepresented biological pathways, processes, and disease associations among hit compounds from chemogenomic screens [23].
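The statistic behind this kind of over-representation analysis can be written out directly. The sketch below computes a one-sided hypergeometric p-value in plain Python. It is not the clusterProfiler API, it applies no multiple-testing correction, and the counts in the example are invented for illustration.

```python
from math import comb


def enrichment_p_value(hits_in_set: int, n_hits: int, set_size: int, universe: int) -> float:
    """P(X >= hits_in_set) when n_hits targets are drawn from the universe.

    universe    -- number of annotated targets considered in total
    set_size    -- number of those targets belonging to the pathway
    n_hits      -- number of targets associated with screening hits
    hits_in_set -- number of hit targets that fall in the pathway
    """
    upper = min(n_hits, set_size)
    total = comb(universe, n_hits)
    tail = sum(
        comb(set_size, k) * comb(universe - set_size, n_hits - k)
        for k in range(hits_in_set, upper + 1)
    )
    return tail / total


# Hypothetical screen: 20 annotated targets, 5 in the pathway of interest,
# 4 targets linked to hit compounds, 3 of which fall in the pathway.
p_value = enrichment_p_value(hits_in_set=3, n_hits=4, set_size=5, universe=20)
print(round(p_value, 4))
```

In practice the test is repeated for every pathway, process, or disease term, so the resulting p-values need adjustment for multiple comparisons before any term is called enriched.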

Diagram 2: Data Integration Framework. This diagram illustrates the integration of multiple data sources into a unified network pharmacology database for comprehensive analysis and target identification.

Applications in Drug Discovery and Chemical Biology

Target Identification and Mechanism of Action Studies

A primary application of chemogenomic library screening involves target identification and mechanism of action (MOA) studies for compounds emerging from phenotypic screens. When a compound from a chemogenomic library produces a hit in a phenotypic screen, its annotated targets provide immediate hypotheses about which specific proteins or pathways might be mediating the observed phenotypic effect [24]. This approach significantly accelerates the often challenging process of target deconvolution that traditionally follows phenotypic screening hits.

Chemogenomics has been successfully applied to determine MOA even for complex traditional medicines, including Traditional Chinese Medicine (TCM) and Ayurveda [1]. For example, when analyzing the therapeutic class of "toning and replenishing medicine" from TCM, researchers identified sodium-glucose transport proteins and PTP1B (an insulin signaling regulator) as targets linked to the hypoglycemic phenotype [1]. Similarly, for Ayurvedic anti-cancer formulations, target prediction programs enriched for cancer progression targets like steroid-5-alpha-reductase and synergistic targets such as the efflux pump P-glycoprotein [1]. These target-phenotype links help identify novel MOAs for complex natural product mixtures.

Drug Repositioning and Predictive Toxicology

Beyond novel target identification, chemogenomic screening enables drug repositioning by revealing new therapeutic indications for existing drugs or clinical candidates [24]. When known drugs or compounds with well-characterized target profiles produce unexpected hits in phenotypic screens for different disease areas, these findings immediately suggest potential new therapeutic applications. This approach leverages existing safety and pharmacokinetic data for these compounds, which could substantially shorten development timelines for new indications.