Chemogenomic Library Screening in Precision Oncology: A Strategic Guide to Target Discovery and Drug Development

Abstract

This article provides a comprehensive overview of chemogenomic library screening and its pivotal role in advancing precision oncology. Aimed at researchers, scientists, and drug development professionals, it explores the foundational principles of using annotated small-molecule libraries to deconvolute complex disease biology. The scope ranges from the design and application of these libraries in phenotypic and target-based screens to the critical troubleshooting of limitations and the rigorous validation of screening hits. By integrating insights from functional genomics, cheminformatics, and machine learning, this guide serves as a strategic resource for leveraging chemogenomic approaches to identify novel therapeutic targets and develop more effective, personalized cancer treatments.

The Foundation of Chemogenomics in Precision Oncology: From Concepts to Library Design

In the pursuit of precision oncology, the strategic design of screening libraries represents a critical frontier. A chemogenomic library is not merely a collection of compounds but a systematically designed resource of targeted small molecules screened against specific drug target families—such as kinases, GPCRs, and nuclear receptors—with the dual goal of identifying novel drugs and elucidating novel drug targets [1]. Unlike simple compound collections, these libraries are constructed with intentionality: they integrate target and drug discovery by using bioactive compounds as probes to characterize proteome functions and link molecular targets to phenotypic outcomes [1]. This approach is fundamentally transforming oncology research by enabling the identification of patient-specific vulnerabilities and driving the development of targeted therapeutic strategies.

The completion of the Human Genome Project provided an abundance of potential targets for therapeutic intervention, and chemogenomics aims to study the interaction of all possible drugs with all of these potential targets [1]. In precision oncology, this translates to designing libraries that cover a wide range of protein targets and biological pathways implicated across various cancers, making it possible to identify patient-specific treatment vulnerabilities [2] [3]. The strategic value of these libraries lies in their targeted nature: because they include known ligands for several members of a target family, the compounds collectively bind a high percentage of that family, enabling more efficient discovery workflows [1].

Quantitative Characterization of Chemogenomic Libraries

Library Composition and Target Coverage

The structural and functional composition of a chemogenomic library determines its utility in precision oncology research. The following table summarizes key quantitative parameters from established library designs and their applications.

Table 1: Characterization of Chemogenomic Library Designs and Applications

| Library / Strategy | Size (Compounds) | Target Coverage | Primary Application | Key Design Considerations |

|---|---|---|---|---|

| Minimal Screening Library [2] | 1,211 | 1,386 anticancer proteins | Phenotypic profiling in glioblastoma | Library size, cellular activity, chemical diversity and availability, target selectivity |

| Physical Screening Library [2] [3] | 789 | 1,320 anticancer targets | Pilot screening of glioma stem cells | Adjustment for cellular activity and target selectivity |

| EUbOPEN Initiative [4] | Not specified | ~30% of druggable proteome (~900 targets) | Functional annotation of proteins | Less stringent selectivity criteria than chemical probes; coverage of major target families |

| Optimized Library Design [5] | Variable | Focused on reducing polypharmacology | Enhanced target deconvolution in phenotypic screens | Sequential elimination of highly promiscuous compounds while prioritizing target coverage |

Polypharmacology Index Comparison

A critical consideration in library design is the degree of polypharmacology—the tendency of compounds to interact with multiple targets. Researchers have developed a quantitative polypharmacology index (PPindex) to compare libraries, where larger absolute values indicate more target-specific libraries [5].

Table 2: Polypharmacology Index (PPindex) of Various Compound Libraries

| Library | PPindex (All Compounds) | PPindex (Without 0-target compounds) | PPindex (Without 0 & 1-target compounds) |

|---|---|---|---|

| DrugBank | 0.9594 | 0.7669 | 0.4721 |

| LSP-MoA | 0.9751 | 0.3458 | 0.3154 |

| MIPE 4.0 | 0.7102 | 0.4508 | 0.3847 |

| Microsource Spectrum | 0.4325 | 0.3512 | 0.2586 |

| DrugBank Approved | 0.6807 | 0.3492 | 0.3079 |

The variation in PPindex values across libraries highlights their different design philosophies. Libraries with higher PPindex values (closer to 1) are more target-specific and potentially more useful for target deconvolution in phenotypic screens [5]. This quantitative assessment enables researchers to select libraries based on the specific needs of their experimental approach—whether target identification or phenotypic screening.

Experimental Protocols for Library Application

Protocol: Phenotypic Screening for Patient-Specific Vulnerabilities

This protocol details the application of chemogenomic libraries to identify patient-specific vulnerabilities in cancer cells, as demonstrated in glioblastoma (GBM) research [2] [3].

Research Reagent Solutions

Table 3: Essential Research Reagents for Phenotypic Screening

| Reagent / Material | Function / Application | Specifications |

|---|---|---|

| Chemogenomic Physical Library | Targeted perturbation of biological pathways | 789 compounds covering 1,320 anticancer targets [2] |

| Glioma Stem Cells (GSCs) | Patient-derived model system | Isolated from glioblastoma patients; represent tumor heterogeneity |

| Cell Culture Media | Maintenance of stem cell properties | Serum-free conditions with appropriate growth factors |

| High-Content Imaging System | Phenotypic profiling | Automated microscopy and image analysis for cell survival quantification |

| Viability Assays | Assessment of cell survival and proliferation | Multiparametric measurements (e.g., ATP content, apoptosis markers) |

Step-by-Step Workflow

Library Preparation:

- Reformulate compounds to ensure consistent solubility and concentration

- Arrange compounds in screening plates using appropriate controls (DMSO, positive controls)

- Store at -20°C until use to maintain compound integrity

Cell Culture and Plating:

- Maintain patient-derived glioma stem cells in serum-free media with appropriate growth factors

- Passage cells at 70-80% confluence to maintain stemness properties

- Plate cells in 384-well imaging plates at optimized density (e.g., 1,000-2,000 cells/well)

- Allow cells to adhere and recover for 24 hours before compound treatment

Compound Treatment:

- Transfer compounds from library storage plates to cell culture plates using liquid handling robotics

- Use appropriate dilution series (e.g., 1, 5, 10 μM) to assess dose-dependent effects

- Include DMSO controls (typically 0.1% final concentration) for normalization

- Incubate cells with compounds for 72-96 hours to assess phenotypic effects
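The dosing arithmetic behind this step can be sketched as follows. This is a minimal illustration, not part of the cited protocol: it assumes compounds are dissolved in 100% DMSO and that the final DMSO fraction is held constant across doses, and the 50 µL well volume and the function names are hypothetical.

```python
def required_stock_mm(final_um, dmso_percent=0.1):
    """Stock concentration (mM, in 100% DMSO) that yields `final_um` micromolar
    in the well when the final DMSO content is `dmso_percent` percent (v/v)."""
    if dmso_percent <= 0:
        raise ValueError("dmso_percent must be positive")
    dilution_factor = 100.0 / dmso_percent      # 0.1% DMSO is a 1:1000 dilution
    return final_um * dilution_factor / 1000.0  # convert µM to mM


def transfer_volume_nl(well_volume_ul, dmso_percent=0.1):
    """Volume of DMSO stock (nL) to add to a well of `well_volume_ul` microlitres."""
    return well_volume_ul * 1000.0 * dmso_percent / 100.0


if __name__ == "__main__":
    well_volume_ul = 50.0  # hypothetical 384-well assay volume
    for dose_um in (1, 5, 10):  # dilution series from the protocol
        stock = required_stock_mm(dose_um)
        volume = transfer_volume_nl(well_volume_ul)
        print(f"{dose_um} µM final: {stock:.1f} mM stock, {volume:.0f} nL transfer")
```

Holding the transfer volume fixed and varying the stock concentration keeps the DMSO content identical in every well, so the DMSO-only controls remain a valid baseline for all three doses.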

Phenotypic Profiling:

- Fix cells and stain with appropriate markers for viability, apoptosis, and differentiation

- Alternatively, use live-cell imaging for kinetic assessment of phenotypic responses

- Acquire images using high-content imaging system with 20× objective

- Capture multiple fields per well to ensure statistical robustness

Image and Data Analysis:

- Quantify cell number, viability, and morphological features using image analysis software

- Normalize data to DMSO controls (100% viability) and positive controls (0% viability)

- Calculate Z-scores for each compound to identify significant vulnerabilities

- Apply appropriate statistical corrections for multiple comparisons
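The analysis steps above can be sketched in a few lines of standard-library Python. This is an illustrative sketch rather than the pipeline used in the cited studies: it assumes Z-scores are computed against the DMSO control wells and uses the Benjamini-Hochberg procedure as one possible multiple-comparison correction; all function names are hypothetical.

```python
from statistics import mean, stdev


def percent_viability(raw, dmso_wells, positive_wells):
    """Rescale a raw signal so the DMSO mean maps to 100% and the
    positive-control mean maps to 0%."""
    neg = mean(dmso_wells)
    pos = mean(positive_wells)
    if neg == pos:
        raise ValueError("controls are indistinguishable")
    return 100.0 * (raw - pos) / (neg - pos)


def z_score_vs_dmso(value, dmso_wells):
    """Z-score of one well relative to the DMSO control distribution (assumption)."""
    return (value - mean(dmso_wells)) / stdev(dmso_wells)


def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


if __name__ == "__main__":
    dmso = [980.0, 1010.0, 1005.0, 995.0]  # toy control signals
    killed = [48.0, 52.0, 50.0, 50.0]      # toy positive-control signals
    print(percent_viability(525.0, dmso, killed))
    print(z_score_vs_dmso(525.0, dmso))
    print(benjamini_hochberg([0.01, 0.04, 0.03, 0.005]))
```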

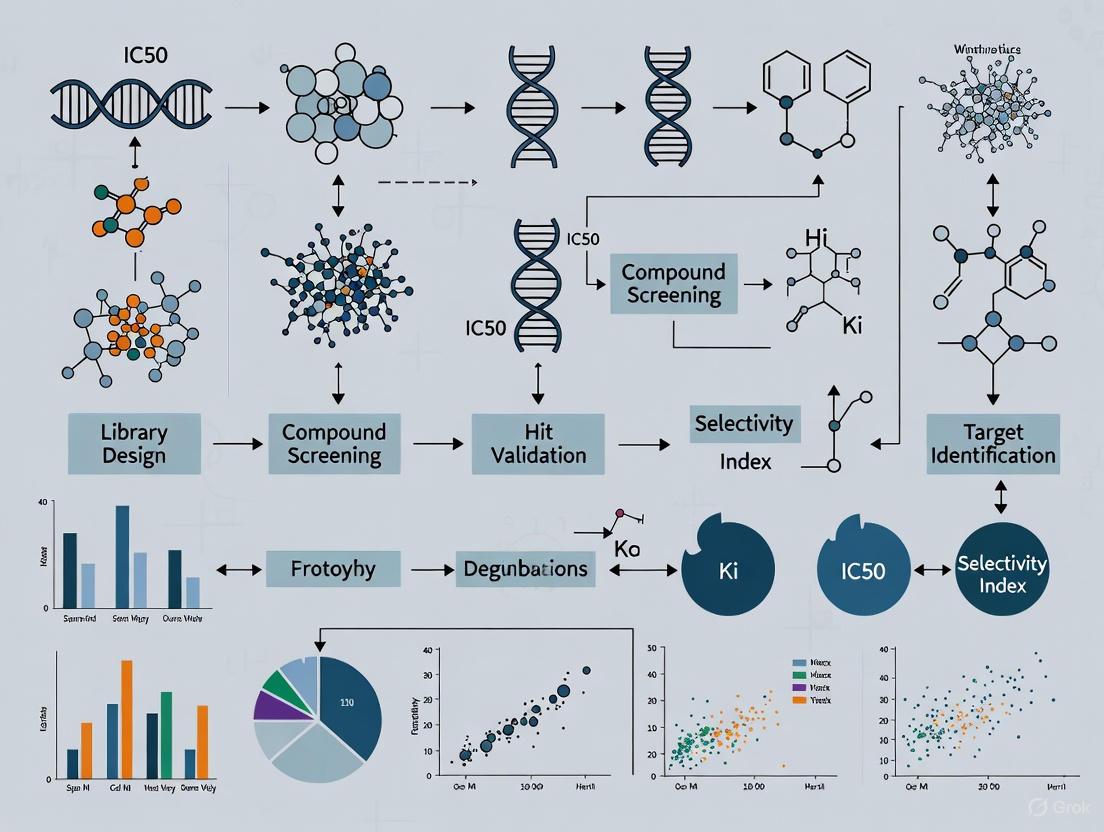

Diagram 1: Phenotypic screening workflow for identifying patient-specific vulnerabilities using a designed chemogenomic library, illustrating the process from library design to vulnerability identification.

Protocol: Target Deconvolution Using Forward Chemogenomics

This protocol outlines the forward chemogenomics approach for identifying molecular targets responsible for observed phenotypic effects [1].

Research Reagent Solutions

| Reagent / Material | Function / Application | Specifications |

|---|---|---|

| Phenotypic Assay System | Detection of desired phenotypic response | Optimized for robustness and reproducibility |

| Target Family Library | Coverage of relevant target classes | Kinases, GPCRs, epigenetic regulators, etc. |

| Affinity Beads | Pull-down of compound-binding proteins | Streptavidin, glutathione, or nickel beads |

| Mass Spectrometry System | Protein identification and quantification | High-resolution LC-MS/MS instrumentation |

| CRISPR-Cas9 System | Functional validation of candidate targets | Gene knockout or knockdown capabilities |

Step-by-Step Workflow

Phenotypic Screening:

- Implement a robust phenotypic assay measuring a therapeutically relevant endpoint (e.g., tumor growth inhibition, differentiation, apoptosis)

- Screen the chemogenomic library against the assay system

- Identify compounds that induce the desired phenotype with appropriate potency (e.g., IC50 < 10 μM)

Target Identification:

- For confirmed hits, immobilize compounds on solid support (e.g., via biotin linkage)

- Incubate immobilized compounds with cell lysates from sensitive models

- Pull down compound-binding proteins using appropriate affinity beads

- Wash extensively to remove non-specific binders

- Elute specifically bound proteins for identification

Protein Identification:

- Digest pulled-down proteins with trypsin

- Analyze peptides by liquid chromatography coupled to tandem mass spectrometry (LC-MS/MS)

- Identify proteins using database searching algorithms

- Prioritize candidate targets based on spectral counts, peptide abundance, and specific interaction

Target Validation:

- Validate direct binding using biophysical methods (SPR, ITC) or cellular assays (CETSA)

- Use CRISPR-Cas9 to knock out candidate targets in sensitive cell lines

- Assess whether target knockout phenocopies compound treatment

- Perform rescue experiments to confirm specificity

Diagram 2: Forward chemogenomics workflow for target deconvolution, illustrating the process from phenotypic screening to target validation.

Computational Validation and Benchmarking

DeepTarget: A Computational Framework for MOA Prediction

Computational tools such as DeepTarget represent a significant advance in chemogenomic library applications. DeepTarget integrates large-scale drug and genetic knockdown viability screens with omics data to predict the mechanisms of action (MOA) through which a drug kills cancer cells [6]. The approach builds on the principle that CRISPR-Cas9 knockout of a drug's target gene can mimic the drug's effects, so genes whose deletion phenocopies drug treatment are candidate targets [6].

DeepTarget Protocol for MOA Prediction

Data Integration:

- Collect drug response profiles across a panel of cancer cell lines (e.g., DepMap database)

- Obtain genome-wide CRISPR-KO viability profiles for the same cell lines

- Integrate corresponding omics data (gene expression and mutation status)

Primary Target Prediction:

- Compute Drug-KO Similarity (DKS) scores using Pearson correlation

- Identify genes whose knockout induces similar viability patterns as drug treatment

- Apply linear regression to correct for screen confounding factors

- Prioritize targets with highest DKS scores as primary targets

Context-Specific Secondary Target Prediction:

- Perform de novo decomposition of drug response into gene knockout effects

- Compute Secondary DKS scores in cell lines lacking primary target expression

- Identify alternative mechanisms active when primary targets are absent

Mutation Specificity Analysis:

- Compare drug-target relationships in different genetic contexts

- Calculate mutant-specificity scores by comparing DKS scores in mutant vs. wild-type cell lines

- Identify preferential targeting of mutant or wild-type protein forms
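The core of the primary target prediction step, a Pearson correlation between a drug's viability profile and each gene's knockout profile across the same cell lines, can be sketched as below. This is a simplified illustration and not the DeepTarget implementation: it omits the regression-based confounder correction and the secondary-target decomposition, and the gene names and data are toy placeholders.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation of two equal-length numeric sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    if vx == 0 or vy == 0:
        return 0.0  # a constant profile carries no similarity information
    return cov / sqrt(vx * vy)


def drug_ko_similarity(drug_response, ko_profiles):
    """Rank genes by the similarity of their knockout viability profile to the
    drug response profile (both measured over the same ordered cell lines)."""
    scores = {gene: pearson(drug_response, profile)
              for gene, profile in ko_profiles.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    drug = [0.2, 0.9, 0.4, 0.8]  # toy viability after drug treatment, 4 cell lines
    knockouts = {                # toy viability after gene knockout, same lines
        "GENE_A": [0.25, 0.85, 0.45, 0.75],
        "GENE_B": [0.90, 0.10, 0.80, 0.30],
    }
    for gene, score in drug_ko_similarity(drug, knockouts):
        print(gene, round(score, 3))
```

A mutant-specificity score in the same spirit could be obtained by computing the similarity separately within mutant and wild-type cell lines and comparing the two values.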

Benchmarking Performance

DeepTarget has been benchmarked against structure-based methods using eight gold-standard datasets of high-confidence cancer drug-target pairs. It separated positive from negative pairs with a mean AUC of 0.73 across all datasets, compared with 0.58 for RoseTTAFold and 0.53 for Chai-1, and outperformed the other models in 7 of the 8 datasets tested [6].
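For readers unfamiliar with the metric, the AUC reported here is the probability that a randomly chosen true drug-target pair receives a higher score than a randomly chosen false pair. A minimal sketch of that calculation is shown below; the scores and labels are toy values, not data from the benchmark.

```python
def roc_auc(scores, labels):
    """Area under the ROC curve via pairwise comparison.
    `labels` uses 1 for true drug-target pairs and 0 for negatives;
    tied scores count as half a win."""
    positives = [s for s, l in zip(scores, labels) if l == 1]
    negatives = [s for s, l in zip(scores, labels) if l == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative pair")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


if __name__ == "__main__":
    toy_scores = [0.9, 0.8, 0.3, 0.1]
    toy_labels = [1, 0, 1, 0]
    print(roc_auc(toy_scores, toy_labels))
```

An AUC of 0.5 corresponds to random ranking, which puts the reported values of 0.53 and 0.58 close to chance and 0.73 clearly above it.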

Diagram 3: DeepTarget computational workflow for predicting mechanisms of action, showing the integration of multiple data types to generate comprehensive MOA profiles.

Chemogenomic libraries represent a paradigm shift in precision oncology, moving beyond simple compound collections to strategically designed resources that integrate target and drug discovery. The effective application of these libraries requires careful consideration of design principles—including library size, cellular activity, chemical diversity, and target selectivity—as well as robust experimental and computational protocols for library screening and target deconvolution. As demonstrated in glioblastoma and other cancer models, these approaches can reveal patient-specific vulnerabilities and novel therapeutic opportunities, ultimately advancing the goal of personalized cancer therapy. The continued refinement of chemogenomic libraries, coupled with advanced computational tools like DeepTarget, promises to accelerate drug discovery and development in oncology by providing a more systematic framework for understanding drug mechanisms of action in relevant cellular contexts.

Precision oncology represents a paradigm shift from traditional, one-size-fits-all cancer treatment toward a personalized approach rooted in the molecular characteristics of individual tumors [7]. This evolution is driven by advancements in molecular biology, high-throughput sequencing, and computational tools that effectively integrate complex multi-omics data [7]. The fundamental principle of precision oncology involves customizing treatments based on specific genetic, epigenetic, and transcriptomic aberrations that drive tumorigenesis, enabling therapies that target discrete oncogenic drivers or signaling pathways essential for tumor cell proliferation and survival [8].

The clinical implementation of precision oncology relies heavily on comprehensive molecular profiling to identify actionable biomarkers. These biomarkers can arise from various sources, including tumor tissues, blood, and other bodily fluids, encompassing DNA, RNA, proteins, and metabolites [7]. The identification of specific mutations, such as those in the EGFR gene in non-small cell lung cancer (NSCLC) or BRCA1/2 mutations in breast and ovarian cancers, provides critical indicators for targeted therapies like EGFR inhibitors or PARP inhibitors, significantly improving patient outcomes [7] [8]. Furthermore, the characterization of predictive biomarkers including homologous recombination deficiency (HRD), microsatellite instability (MSI), and tumor mutational burden (TMB) has refined patient stratification and expanded opportunities for individualized treatment selection [8].

Table 1: Key Biomarker Categories in Precision Oncology

| Biomarker Category | Molecular Components | Clinical Applications | Examples |

|---|---|---|---|

| Genomic | DNA mutations, copy number variations, structural rearrangements | Targeted therapy selection, prognosis | EGFR, BRAF, KRAS, TP53 mutations [7] |

| Transcriptomic | Gene expression levels, fusion genes, splice variants | Diagnostics, therapy resistance mechanisms | ALK, ROS1, NTRK fusions [8] |

| Proteomic | Protein expression, post-translational modifications | Treatment target identification, response prediction | PD-L1, HER2 expression [9] |

| Epigenomic | DNA methylation, histone modifications | Early detection, therapeutic targeting | MLH1 hypermethylation [7] |

Experimental Protocols: Integrating Chemogenomic Library Screening with Molecular Profiling

Protocol 1: Design and Implementation of Targeted Anticancer Compound Libraries

Principle: Systematic design of focused small-molecule libraries for phenotypic screening in patient-derived models enables efficient identification of patient-specific vulnerabilities [10].

Materials and Reagents:

- Patient-derived cancer cells (e.g., glioma stem cells for glioblastoma)

- Comprehensive anti-Cancer small-Compound Library (C3L) or similar annotated library

- Cell culture reagents and appropriate media

- High-content imaging systems for phenotypic analysis

- Compound management and liquid handling systems

Procedure:

- Target Space Definition: Compile a comprehensive list of protein targets associated with cancer development using resources from The Human Protein Atlas and PharmacoDB, resulting in a target space of approximately 1,655 proteins covering all "hallmarks of cancer" categories [10].

- Compound Curation: Identify and curate small-molecule compounds targeting these proteins from public databases and commercial sources, including both approved/investigational compounds (AICs) and experimental probe compounds (EPCs) [10].

- Library Optimization: Apply multi-objective optimization to maximize cancer target coverage while minimizing library size. Implement filtering procedures based on cellular activity, chemical diversity, and commercial availability [10].

- Phenotypic Screening: Array the final screening library (e.g., 1,211 compounds covering 84% of cancer-associated targets) for cell survival profiling in patient-derived models [10].

- Data Analysis: Identify patient-specific vulnerabilities and heterogeneous phenotypic responses across cancer subtypes through quantitative analysis of screening data.

Expected Results: The protocol enables identification of patient-specific drug sensitivities with potential clinical applications. In a pilot study using glioma stem cells from glioblastoma patients, highly heterogeneous phenotypic responses were observed across patients and subtypes, demonstrating the utility of this approach for personalized therapy identification [10].
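The trade-off in the Library Optimization step, covering as many targets as possible with as few compounds as possible, can be illustrated with a greedy set-cover heuristic. This is a deliberately simplified stand-in for the multi-objective optimization described in [10], which also weighs cellular activity, diversity, and availability; the compound identifiers and target annotations below are invented for the example.

```python
def greedy_library(compound_targets, coverage_goal=1.0):
    """Select compounds greedily until `coverage_goal` (fraction of all
    annotated targets) is reached.

    compound_targets: dict mapping compound id -> set of target names.
    Returns (selected compound ids in pick order, set of covered targets).
    """
    all_targets = set().union(*compound_targets.values())
    remaining = dict(compound_targets)
    covered, selected = set(), []
    while remaining and len(covered) < coverage_goal * len(all_targets):
        # Pick the compound adding the most uncovered targets; sorting the
        # ids first makes tie-breaking deterministic.
        best = max(sorted(remaining), key=lambda c: len(remaining[c] - covered))
        gain = remaining[best] - covered
        if not gain:
            break  # nothing left adds coverage
        selected.append(best)
        covered |= gain
        del remaining[best]
    return selected, covered


if __name__ == "__main__":
    annotations = {  # hypothetical compound-target annotations
        "cpd1": {"EGFR", "ERBB2"},
        "cpd2": {"EGFR"},
        "cpd3": {"BRAF"},
        "cpd4": {"BRAF", "ERBB2"},
    }
    picked, covered = greedy_library(annotations)
    print(picked, sorted(covered))
```

Lowering `coverage_goal` (for example to 0.84) shows how accepting slightly incomplete coverage can shrink the library, which mirrors the reduction from the large-scale set to the screening set.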

Protocol 2: Comprehensive Molecular Profiling for Biomarker Discovery

Principle: Integration of multi-omics data provides complementary insights into cancer biology, enabling identification of therapeutic biomarkers and patient stratification strategies [7] [8].

Materials and Reagents:

- Tumor tissue samples (fresh frozen or FFPE)

- Blood samples for liquid biopsy and germline DNA

- DNA/RNA extraction kits

- Next-generation sequencing platforms

- Bioinformatics software for data analysis

Procedure:

- Sample Collection: Obtain matched tumor-normal pairs from patients, with blood samples for germline comparison and circulating tumor DNA analysis.

- DNA Sequencing: Perform whole-genome sequencing (WGS) or whole-exome sequencing (WES) to identify somatic mutations, copy number variations, and structural rearrangements. WGS interrogates the entire genome (~3.2 billion base pairs), while WES targets only the protein-coding regions (~1-2% of the genome) [8].

- RNA Sequencing: Conduct whole-transcriptome sequencing (RNA-Seq) to identify gene fusions, alternative splicing events, and expression patterns. RNA-Seq is particularly powerful for detecting oncogenic fusions (e.g., ALK, ROS1, NTRK) that may evade DNA-based detection [8].

- Computational Analysis: Utilize bioinformatics pipelines including GATK for variant calling, STAR for alignment, DESeq2 for differential expression analysis, and integrative platforms like cBioPortal for multi-omics data interpretation [7].

- Clinical Interpretation: Annotate variants according to established guidelines (e.g., AMP/ASCO/CAP) and identify actionable alterations for targeted therapy selection.

Expected Results: Comprehensive molecular profiling identifies clinically actionable genomic alterations, including driver mutations, fusion genes, and biomarkers such as TMB, MSI, and HRD status, informing targeted therapy selection and clinical trial eligibility [8].
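Of the biomarkers listed, TMB is the simplest to express as a calculation: the number of qualifying somatic mutations divided by the size of the sequenced territory in megabases. The sketch below shows only that arithmetic; which variant classes count and how much territory is considered callable are assay-specific choices, so the inputs here are assumptions for illustration.

```python
def tumor_mutational_burden(somatic_mutation_count, covered_bases):
    """Mutations per megabase over the adequately covered territory.

    somatic_mutation_count: number of somatic variants retained after
        filtering (the filtering rules are assay-specific and not shown).
    covered_bases: number of bases with sufficient coverage for calling.
    """
    if covered_bases <= 0:
        raise ValueError("covered_bases must be positive")
    return somatic_mutation_count / (covered_bases / 1_000_000)


if __name__ == "__main__":
    # Hypothetical exome: 300 retained variants over 30 Mb of callable sequence.
    print(tumor_mutational_burden(300, 30_000_000))
```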

Data Presentation: Quantitative Analysis of Screening Libraries and Biomarker Performance

Table 2: Composition and Target Coverage of the C3L Chemogenomic Library [10]

| Library Component | Compound Count | Target Coverage | Key Characteristics |

|---|---|---|---|

| Theoretical Set | 336,758 | 1,655 cancer-associated proteins | In silico collection from established target-compound pairs |

| Large-Scale Set | 2,288 | Same target space as theoretical set | Filtered by activity and similarity thresholds |

| Screening Set | 1,211 | 84% of cancer targets (1,386 targets) | Optimized for physical screening; purchasable compounds |

Table 3: Performance Comparison of PD-L1 Assessment Methods in NSCLC [9]

| Assessment Method | Hazard Ratio (Durvalumab vs Chemotherapy) | Biomarker Positive Prevalence | Median Overall Survival (Months) |

|---|---|---|---|

| Visual Scoring (TC ≥50%) | 0.69 (CI 0.46-1.02) | 29.7% | Not specified |

| PD-L1 QCS-PMSTC | 0.62 (CI 0.46-0.82) | 54.3% | 19.9 |

| GMM Classifier | Similar to TC ≥50% | 52.7% | 20.9 |

Visualization: Workflow Diagrams for Precision Oncology Implementation

Chemogenomic Screening and Molecular Profiling Workflow

Molecular Data Integration and Clinical Decision Pathway

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Research Reagents and Platforms for Precision Oncology Investigations

| Reagent/Platform | Category | Function/Application | Examples/Specifications |

|---|---|---|---|

| Ambient-Stable NGS Library Prep | Sequencing Reagents | Facilitates next-generation sequencing without cold-chain requirements | Lyophilized reagents for library preparation; enables NGS in limited infrastructure settings [11] |

| C3L Compound Library | Chemical Screening | Targeted phenotypic screening for patient-specific vulnerabilities | 1,211 compounds covering 1,386 anticancer targets; optimized for cellular activity and diversity [10] |

| Bioinformatics Platforms | Computational Tools | Analysis of multi-omics data for biomarker discovery | Galaxy, DNAnexus, cBioPortal, GATK, DESeq2 [7] |

| PD-L1 QCS System | Digital Pathology | Quantitative continuous scoring of PD-L1 expression | Computer vision system for granular cell-level quantification in whole slide images [9] |

| Single-Cell Analysis Software | Computational Biology | Identifies rare cellular subpopulations and heterogeneity | Seurat for single-cell RNA sequencing data analysis [7] |

Discussion: Integrating Chemogenomic Approaches with Evolving Diagnostic Modalities

The integration of chemogenomic library screening with comprehensive molecular profiling represents a powerful strategy for advancing precision oncology. Chemogenomic libraries like the C3L provide a structured approach to interrogate cancer vulnerabilities across defined target spaces, while multi-omics profiling enables the detailed molecular characterization necessary for patient stratification [10]. This combined approach addresses the fundamental challenge of tumor heterogeneity by identifying patient-specific dependencies that may not be evident through genomic analysis alone.

Recent technological advancements are further enhancing the implementation of precision oncology. The development of ambient-stable, lyophilized reagents for NGS library preparation helps remove cold-chain barriers, simplify workflows, and expand access to precision oncology testing in settings with limited infrastructure [11]. Similarly, computational pathology approaches like the PD-L1 Quantitative Continuous Scoring (QCS) system demonstrate how artificial intelligence can improve biomarker quantification beyond subjective visual assessment, potentially expanding patient populations that may benefit from targeted immunotherapies [9].

The successful clinical implementation of these approaches requires structured interdisciplinary frameworks. The ESMO Precision Oncology Working Group has established recommendations for Molecular Tumor Boards (MTBs), emphasizing the need for interdisciplinary expertise, structured reporting, and quality indicators for monitoring clinical effectiveness [12]. These recommendations support the harmonization of precision oncology practices while allowing adaptation to local resources and center volumes.

Future directions in precision oncology will likely focus on enhanced multi-omics integration, improved computational capabilities for biomarker discovery, and the development of more sophisticated chemogenomic libraries that encompass emerging therapeutic modalities. As these technologies evolve, they hold the potential to transform complex molecular data into actionable strategies for precision-driven cancer care, ultimately improving therapeutic efficacy and patient outcomes across diverse cancer types.

In modern precision oncology research, phenotypic drug discovery (PDD) strategies have re-emerged as powerful approaches for identifying novel therapeutic agents. These strategies do not rely on preconceived knowledge of specific molecular targets but instead focus on observing phenotypic changes in disease-relevant cellular models [13]. Chemogenomic libraries serve as the cornerstone of this approach, comprising carefully curated collections of small molecules with annotated biological activities. These libraries enable researchers to probe complex biological systems and deconvolute the mechanisms of action underlying observed phenotypes, thereby bridging the gap between phenotypic screening and target identification [13]. The core value of these libraries lies in their strategic design, which balances chemical diversity with comprehensive target coverage across the human proteome, facilitating the translation of genomic information into effective new drugs for cancer treatment [14].

Core Components of a Chemogenomic Library

Structural and Functional Diversity

A well-constructed chemogenomic library must encompass sufficient structural diversity to probe a wide range of biological targets and pathways. This diversity is achieved through several complementary strategies. Scaffold-based analysis provides a systematic method for ensuring structural diversity by classifying compounds according to their core ring structures and then progressively simplifying these structures through deterministic rules in a stepwise fashion [13]. This hierarchical approach to chemical classification helps maximize the exploration of chemical space while maintaining representative core structures.

Functional diversity is equally critical and is often achieved by incorporating multiple classes of bioactive compounds. These typically include:

- High-quality chemical probes with well-characterized selectivity and potency

- Approved drugs with established mechanisms of action

- Experimental compounds targeting novel or underexplored biological pathways

- Nuisance compounds that expose assay interference patterns, such as the "Collection of Useful Nuisance Compounds" (CONS), which helps verify assay integrity [15]

The integration of morphological profiling data, such as that from the Cell Painting assay, further enhances functional characterization by capturing subtle phenotypic changes induced by compound treatment [13].

Comprehensive Annotation and Metadata

Robust annotation transforms a simple compound collection into a powerful chemogenomic tool. Essential annotations include target specificity (primary targets and off-target interactions), potency metrics (IC₅₀, Kᵢ, EC₅₀), mechanism of action, and pathway associations. These annotations are typically sourced from manually curated databases such as ChEMBL, Guide to Pharmacology, and BindingDB [15].

Recent advances have enabled the integration of additional data layers, including morphological profiles from high-content imaging and toxicological properties from sources like the EPA Integrated Risk Information System [16]. The application of network pharmacology approaches allows for the integration of these heterogeneous data sources into unified frameworks that capture drug-target-pathway-disease relationships, creating system-level understanding of compound activities [13].

Table 1: Essential Annotation Types for Chemogenomic Libraries

| Annotation Type | Description | Example Sources |

|---|---|---|

| Target Affinities | Quantitative binding/activity measurements | ChEMBL, BindingDB [16] |

| Pathway Associations | Involvement in biological pathways | KEGG, Reactome [13] |

| Disease Relevance | Connections to human pathologies | Disease Ontology [13] |

| Morphological Impact | Phenotypic profiles from cell painting | BBBC022 dataset [13] |

| Safety & Toxicity | Adverse effect and risk assessment | EPA IRIS, MotherToBaby [16] |

Strategic Target Coverage

The primary objective of a chemogenomic library is to achieve maximal coverage of the "druggable genome" – those genes encoding proteins that can be targeted by small molecules. However, even comprehensive libraries cover only a fraction of the human proteome. Current estimates indicate that the best chemogenomic libraries interrogate approximately 1,000-2,000 targets out of the 20,000+ protein-coding genes in the human genome [17].

Strategic focus often prioritizes target classes with established or potential relevance to cancer biology, including kinases, GPCRs, ion channels, nuclear receptors, and epigenetic regulators [2] [18]. In precision oncology applications, libraries must be specifically designed to cover protein targets implicated in various cancers. For example, one reported minimal screening library of 1,211 compounds targets 1,386 anticancer proteins, providing coverage of critical pathways dysregulated in malignancies [2].

Table 2: Target Family Distribution in a High-Quality Chemical Probe Set

| Target Family | Representative Targets | Coverage in HQCP Set |

|---|---|---|

| Kinases | EGFR, BRAF, CDKs, BCR-ABL | Extensive coverage of kinome [18] |

| Epigenetic Regulators | HDACs, BET bromodomains, HMTs | Growing representation [18] |

| Nuclear Receptors | Estrogen receptor, AR, RAR | Moderate coverage [13] |

| GPCRs | 5-HT receptors, chemokine receptors | Selective coverage [13] |

| Ion Channels | TRP channels, voltage-gated channels | Emerging coverage [18] |

Application in Precision Oncology

Chemogenomic libraries enable the identification of patient-specific vulnerabilities through phenotypic screening of patient-derived cells. In a pilot study screening glioma stem cells from glioblastoma (GBM) patients, researchers used a physical library of 789 compounds covering 1,320 anticancer targets, which revealed highly heterogeneous phenotypic responses across patients and GBM subtypes [2]. This approach exemplifies how targeted libraries can identify patient-specific dependencies that might be missed in genomic analyses alone.

The integration of chemogenomic screening with multi-omic profiling (genomics, transcriptomics, proteomics) significantly enhances therapeutic decision-making in Molecular Tumor Boards (MTBs). As demonstrated in a study incorporating reverse phase protein array (RPPA) proteomic analysis, protein-level data complemented NGS-based genomic profiling and supported additional therapeutic considerations for 54% of profiled patients [19]. This multi-omic approach is particularly valuable given that genomic variation and transcriptomic expression are often loosely correlated with protein activity and abundance in cancer tissues [19].

Diagram 1: Multi-omic workflow for precision oncology. This workflow integrates chemogenomic library screening with genomic and proteomic data to inform therapeutic decisions in Molecular Tumor Boards (MTBs).

Essential Protocols

Protocol: Construction of a Targeted Chemogenomic Library

Objective: Assemble a targeted screening library of bioactive small molecules for precision oncology applications, optimized for library size, cellular activity, chemical diversity, and target selectivity [2].

Materials:

- Compound databases (ChEMBL, Guide to Pharmacology, BindingDB)

- Target and pathway annotations (KEGG, Reactome, Gene Ontology)

- Morphological profiling data (Cell Painting from BBBC022)

- Disease association data (Disease Ontology)

- Scaffold analysis software (Scaffold Hunter)

- Database management system (Neo4j for graph database)

Procedure:

- Data Integration: Extract compounds with bioactivity data from ChEMBL (more than 1.6 million distinct compounds in version 22), focusing on human targets with cancer relevance [13].

- Target Prioritization: Prioritize proteins implicated in oncogenic processes using KEGG pathway maps and Disease Ontology annotations for cancer subtypes [13].

- Selectivity Filtering: Apply selectivity criteria based on fold-change between primary and secondary targets (e.g., 100x selectivity based on biochemical and cell-based assays) [15].

- Scaffold Diversity Analysis: Process compounds using Scaffold Hunter to generate hierarchical scaffold representations, ensuring coverage of diverse chemical space [13].

- Network Integration: Construct a network pharmacology model using Neo4j graph database, linking compounds to targets, pathways, diseases, and morphological profiles [13].

- Library Validation: Validate coverage against known anticancer targets (aim for >1,000 targets with 1,200-1,500 compounds) [2].

- Physical Library Assembly: Source compounds from reliable suppliers, prepare DMSO stock solutions, and create assay-ready plates for high-throughput screening.
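
The selectivity-filtering step above can be illustrated with a short sketch. The compound names and IC50 values are hypothetical, and fold selectivity is assumed here to be the ratio of the most potent off-target IC50 to the primary-target IC50; other definitions are possible.

```python
# Hypothetical potency records: IC50 values in nM for the primary target
# and for known off-targets (illustrative numbers only).
compounds = {
    "cmpd_A": {"primary_ic50_nM": 5.0, "off_target_ic50_nM": [900.0, 2500.0]},
    "cmpd_B": {"primary_ic50_nM": 20.0, "off_target_ic50_nM": [150.0, 4000.0]},
    "cmpd_C": {"primary_ic50_nM": 2.0, "off_target_ic50_nM": []},
}


def fold_selectivity(record):
    """Ratio of the most potent off-target IC50 to the primary-target IC50."""
    off_targets = record["off_target_ic50_nM"]
    if not off_targets:
        return None  # no off-target data, so selectivity cannot be assessed
    return min(off_targets) / record["primary_ic50_nM"]


def passes_selectivity(record, min_fold=100.0):
    """Keep a compound only if its fold selectivity is known and large enough."""
    fold = fold_selectivity(record)
    return fold is not None and fold >= min_fold


selective = [name for name, rec in compounds.items() if passes_selectivity(rec)]
print(selective)
```

Treating compounds without off-target data as failing the filter is a conservative design choice; a real pipeline might instead flag them for follow-up profiling.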

Protocol: Phenotypic Screening Using Cell Painting Assay

Objective: Identify compounds inducing morphological changes in cancer cells and link these phenotypes to potential mechanisms of action using chemogenomic library annotations.

Materials:

- U2OS osteosarcoma cells or patient-derived cancer cells

- Cell Painting staining cocktail (Mitochondria, ER, Nucleus, Golgi, Cytoskeleton dyes)

- High-content imaging system

- Image analysis software (CellProfiler)

- Annotated chemogenomic library (5,000 compounds)

- Data analysis tools (R package clusterProfiler for GO and KEGG enrichment)

Procedure:

- Cell Preparation: Plate U2OS osteosarcoma cells or patient-derived glioma stem cells in multiwell plates [13].

- Compound Treatment: Treat cells with compounds from the chemogenomic library at appropriate concentrations (typically 1-10 µM) for 24-72 hours.

- Staining and Fixation: Label mitochondria in live cells, fix and permeabilize the cells, then apply the remaining Cell Painting dyes before imaging [13].

- Image Acquisition: Acquire images on a high-throughput microscope, capturing multiple channels corresponding to different cellular compartments.

- Image Analysis: Process images using CellProfiler to identify individual cells and measure morphological features (intensity, size, shape, texture, granularity) across different cellular compartments [13].

- Profile Generation: Create morphological profiles for each compound by averaging features across replicate wells.

- Pattern Recognition: Compare profiles to identify compounds with similar morphological impacts, potentially indicating shared mechanisms of action.

- Mechanism Deconvolution: Use the chemogenomic library annotations to hypothesize potential targets for compounds inducing similar phenotypic profiles.
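
The profile-generation, pattern-recognition, and mechanism-deconvolution steps can be sketched as follows. This is a minimal illustration, assuming three already-normalized features per well, cosine similarity as the comparison metric, and hypothetical compound names and annotations; real Cell Painting profiles contain hundreds to thousands of features.

```python
import math
from statistics import mean

# Hypothetical per-well feature vectors (already normalized), keyed by compound.
replicate_wells = {
    "cmpd_X": [[1.0, 0.2, -0.5], [1.2, 0.0, -0.7]],
    "cmpd_Y": [[0.9, 0.1, -0.6], [1.1, 0.3, -0.4]],
    "cmpd_Z": [[-0.8, 1.0, 0.9], [-1.0, 1.2, 0.7]],
}
# Hypothetical library annotations for the reference compounds.
annotations = {"cmpd_Y": "mechanism class 1", "cmpd_Z": "mechanism class 2"}


def average_profile(wells):
    """Average each feature across replicate wells."""
    return [mean(values) for values in zip(*wells)]


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


profiles = {name: average_profile(wells) for name, wells in replicate_wells.items()}


def nearest_annotated(query):
    """Return the annotated compound whose profile is most similar to the query."""
    scored = [
        (cosine_similarity(profiles[query], profiles[ref]), ref)
        for ref in annotations
        if ref != query
    ]
    score, ref = max(scored)
    return ref, annotations[ref], score


print(nearest_annotated("cmpd_X"))
```

A high similarity to an annotated compound only suggests a shared mechanism; it is a hypothesis to test, not a target assignment.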

Protocol: Multivariate Phenotypic Screening for Lead Prioritization

Objective: Implement a multivariate screening approach to thoroughly characterize compound activity across multiple parasite fitness traits, as demonstrated in macrofilaricidal lead discovery [18].

Materials:

- B. malayi microfilariae or cancer cell models

- Tocriscreen 2.0 library or equivalent chemogenomic library

- Automated imaging systems

- Metabolic assay reagents

- Motility tracking software

Procedure:

- Primary Bivariate Screening: Screen compounds against microfilariae or cancer cells at 1-100 µM, assessing motility at 12 hours and viability at 36 hours post-treatment [18].

- Hit Identification: Apply statistical thresholds (Z-score >1) to identify initial hits from primary screening.

- Dose-Response Characterization: Generate 8-point dose-response curves for confirmed hits.

- Multivariate Secondary Screening: Multiplex adult parasite or 3D cancer spheroid assays to characterize hits across multiple phenotypic endpoints: neuromuscular control (motility), fecundity (reproductive capacity), metabolic activity, and viability.

- Potency Determination: Calculate EC₅₀ values for each phenotype to identify compounds with differential potency across traits.

- Stage-Specific Activity: Compare potency against different life stages (microfilariae vs. adults) or cancer cell types (primary vs. metastatic).

- Target Validation: Leverage known human targets of hit compounds to explore homologous parasite or cancer pathways.
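
The potency-determination step can be illustrated with a simple estimate of EC₅₀ per phenotype. The sketch below assumes an 8-point ascending dose series, responses expressed as percent of vehicle control, and log-linear interpolation between the two doses that bracket 50%. It is a simplification of a full four-parameter curve fit, and all numbers are hypothetical.

```python
import math


def interpolate_ec50(concentrations_uM, responses_pct):
    """Estimate EC50 by linear interpolation in log10(concentration).

    Assumes ascending concentrations and responses given as percent of
    vehicle control (100 = no effect) that decrease with dose.
    Returns None if the curve never crosses 50%.
    """
    points = list(zip(concentrations_uM, responses_pct))
    for (c_lo, r_lo), (c_hi, r_hi) in zip(points, points[1:]):
        if r_lo >= 50.0 >= r_hi and r_lo != r_hi:
            frac = (r_lo - 50.0) / (r_lo - r_hi)
            log_ec50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ec50
    return None


doses = [0.01, 0.03, 0.1, 0.3, 1.0, 3.0, 10.0, 30.0]  # hypothetical 8-point series
motility = [100, 98, 90, 70, 30, 10, 5, 2]             # hypothetical responses
viability = [100, 100, 99, 97, 92, 80, 60, 40]

ec50_motility = interpolate_ec50(doses, motility)
ec50_viability = interpolate_ec50(doses, viability)
print(ec50_motility, ec50_viability)
```

Comparing the two estimates shows differential potency across traits: in this made-up example the compound affects motility at a much lower concentration than viability.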

Diagram 2: Multivariate screening workflow. This tiered screening approach efficiently identifies and characterizes bioactive compounds across multiple phenotypic endpoints.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagent Solutions for Chemogenomic Screening

| Reagent/Resource | Function | Application Notes |

|---|---|---|

| High-Quality Chemical Probe Set | Selective modulation of specific targets | 875 compounds for 637 primary targets; 213 available free from SGC/opnMe [15] |

| Collection of Useful Nuisance Compounds | Identify assay interference patterns | 103 compounds for establishing high-quality HTS assays [15] |

| Cell Painting Assay Kit | Morphological profiling using 6 fluorescent dyes | Enables mechanism of action prediction [13] |

| CZ-OPENSCREEN Bioactive Library | Phenotypic screening collection | High content of approved drugs and probes with chemogenomic annotations [15] |

| ChEMBL Database | Bioactive molecule data with drug-like properties | Manually curated database with 1.6M+ compounds and 11K+ targets [14] |

| PubChem | Public chemical database with bioactivity data | 119M+ compounds, 295M+ bioactivities; integrated literature/patent data [16] |

| Scaffold Hunter Software | Hierarchical scaffold analysis for diversity assessment | Ensures representative coverage of chemical space [13] |

| Neo4j Graph Database | Network pharmacology integration | Connects compounds to targets, pathways, diseases [13] |

Well-constructed chemogenomic libraries are indispensable tools in modern precision oncology research, enabling the translation of phenotypic observations into mechanistic understanding and therapeutic hypotheses. Combining structural diversity, comprehensive annotation, and broad target coverage creates a drug discovery platform that bridges phenotypic screening and target-based approaches. As precision oncology evolves, pairing chemogenomic libraries with multi-omic profiling technologies and advanced screening methodologies is expected to yield novel therapeutic strategies for cancer patients, particularly those with limited treatment options. The protocols and frameworks outlined here provide a roadmap for researchers to develop and implement these resources in their own precision oncology initiatives.

Systems pharmacology is an interdisciplinary field that utilizes network analysis to understand drug action within the complex regulatory systems of the human body. By moving beyond the traditional "one drug, one target" paradigm, it provides a framework for analyzing drug actions and side effects in the context of the entire genome and the intricate networks within which drug targets and disease gene products function [20]. This approach is particularly valuable in precision oncology, where understanding the multi-target mechanisms of drugs can help address the therapeutic challenges posed by complex diseases like cancer [21]. The core premise of systems pharmacology is that drugs exert their effects by perturbing biological networks, and that analyzing these networks can reveal novel therapeutic opportunities while improving the safety and efficacy of existing medications [20].

Chemogenomic libraries are a key technological enabler for applying systems pharmacology principles in precision oncology research. These libraries are structured collections of small molecules designed to systematically interrogate biological systems, typically targeting a defined subset of the genome. In oncology applications, they allow researchers to identify patient-specific vulnerabilities by screening against disease models, connecting compound-target interactions to network-level perturbations [2]. However, even comprehensive chemogenomic libraries interrogate only a fraction of the human genome (approximately 1,000–2,000 targets out of 20,000+ genes), which highlights the need for strategic library design and network-based interpretation of screening results [22].

Key Concepts and Network Fundamentals

Network Components and Relationships

In systems pharmacology, networks are constructed with nodes (representing biological entities such as proteins, genes, drugs, or diseases) connected by edges (representing interactions or relationships between these entities) [20]. These networks can be analyzed to identify important topological properties, such as hubs (highly connected nodes) and centrality measures, which help pinpoint biologically significant elements within complex systems [20].
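
As a small illustration of nodes, edges, hubs, and centrality, the sketch below builds an undirected network from an edge list and computes degree centrality (degree divided by the number of other nodes). The edges are illustrative only and are not taken from a curated interactome.

```python
from collections import defaultdict

# Illustrative edge list (not a curated interaction set).
edges = [
    ("EGFR", "GRB2"),
    ("GRB2", "SOS1"),
    ("SOS1", "KRAS"),
    ("KRAS", "BRAF"),
    ("KRAS", "PIK3CA"),
    ("EGFR", "PIK3CA"),
    ("BRAF", "MAP2K1"),
]

# Build an undirected adjacency map: node -> set of neighbors.
adjacency = defaultdict(set)
for a, b in edges:
    adjacency[a].add(b)
    adjacency[b].add(a)

n_nodes = len(adjacency)
degree_centrality = {
    node: len(neighbors) / (n_nodes - 1) for node, neighbors in adjacency.items()
}

# Treat the single most connected node as the hub of this toy network.
hubs = sorted(degree_centrality, key=degree_centrality.get, reverse=True)[:1]
print(hubs, degree_centrality[hubs[0]])
```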

Table 1: Types of Networks Used in Systems Pharmacology Analysis

| Network Type | Node Entities | Edge Relationships | Primary Application in Drug Discovery |

|---|---|---|---|

| Protein-Protein Interaction | Proteins | Physical interactions between proteins | Identify downstream effects of target modulation and potential side effects [20] |

| Drug-Target | Drugs and proteins | Known interactions between compounds and their protein targets | Understand polypharmacology and drug repurposing opportunities [20] |

| Chemical Space | Compounds | Structural similarity (e.g., Tanimoto similarity) [23] | Library design and compound prioritization based on structural relationships |

| Disease-Gene | Diseases and genes | Known associations between genetic factors and diseases | Identify novel therapeutic targets for complex diseases [21] |

| Metabolic | Metabolites | Biochemical reactions connecting metabolites | Analyze metabolic pathway vulnerabilities in cancer [21] |

Network Visualization and Analysis Principles

Effective network visualization requires careful consideration of color contrast and symbolism to ensure clear interpretation. The Systems Biology Graphical Notation (SBGN) provides standardized symbols for biological network visualization, including distinct representations for stimulation (empty arrowhead), inhibition (bar perpendicular to arc), and catalysis (empty circle) [24]. When creating network visualizations, sufficient color contrast between elements and their background is essential for readability, which can be calculated using relative luminance values and contrast ratios [25].
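
The contrast calculation mentioned above can be sketched as follows. This example assumes the relative luminance and contrast ratio definitions from the WCAG 2.x guidelines, which may or may not be the exact formulation used in [25]; colors are sRGB triples with 0-255 channels.

```python
def relative_luminance(rgb):
    """Relative luminance of an sRGB color (0-255 channels), WCAG 2.x definition."""
    def linearize(channel):
        c = channel / 255.0
        return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

    r, g, b = (linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(color_a, color_b):
    """Contrast ratio between two colors, ranging from 1 (identical) to 21."""
    lum_a, lum_b = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(lum_a, lum_b), min(lum_a, lum_b)
    return (lighter + 0.05) / (darker + 0.05)


node_color = (0, 0, 0)          # black node label
background = (255, 255, 255)    # white canvas
print(round(contrast_ratio(node_color, background), 2))
```

A helper like this can be used to check node, edge, and label colors against the background before a network figure is finalized.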

Application Note: Network-Based Analysis of Glioblastoma Vulnerabilities

Experimental Protocol: Chemogenomic Screening for Patient-Specific Vulnerabilities

Purpose: To identify patient-specific therapeutic vulnerabilities in glioblastoma (GBM) through phenotypic screening of glioma stem cells using a targeted chemogenomic library.

Materials and Reagents:

- Primary glioma stem cells from patient-derived xenografts (representing major GBM subtypes)

- Targeted chemogenomic library (1,211 compounds covering 1,386 anticancer proteins) [2]

- Cell culture reagents appropriate for maintaining stem cell properties

- High-content imaging system for phenotypic profiling

- Viability assay reagents (e.g., ATP-based luminescence)

Procedure:

- Library Design and Curation:

- Select compounds based on coverage of protein targets and pathways implicated in cancer

- Apply filters for cellular activity, chemical diversity, and target selectivity

- Format library for high-throughput screening (e.g., 384-well plates)

- Cell Preparation and Plating:

- Culture patient-derived glioma stem cells under conditions that maintain stemness

- Harvest cells at logarithmic growth phase

- Plate cells in assay-compatible plates at optimized density

- Allow cells to adhere and recover for appropriate duration

- Compound Treatment:

- Dispense compounds across concentration range (typically 0.1-10 μM)

- Include appropriate controls (DMSO vehicle, positive cytotoxicity controls)

- Incubate for predetermined duration (e.g., 72-96 hours)

- Phenotypic Profiling:

- Fix and stain cells for relevant phenotypic markers

- Acquire high-content images using automated microscopy

- Quantify multiple phenotypic features (cell number, morphology, death markers)

- Data Analysis:

- Normalize data to vehicle controls

- Calculate viability and phenotypic scores

- Identify hits based on statistical significance and effect size

- Perform network analysis to connect compound targets to biological pathways

Troubleshooting Notes:

- Heterogeneous responses across patients and subtypes are expected; ensure sufficient biological replicates

- Confirm stem cell maintenance throughout assay duration using marker expression

- Validate screening hits through secondary orthogonal assays
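
Because heterogeneous responses are expected, it can help to separate hits shared across patients from hits seen in a single patient line. The sketch below assumes viability already normalized to vehicle controls, a hypothetical hit cutoff of 50% of control, and made-up patient and compound identifiers.

```python
# Hypothetical normalized viability (% of DMSO control) per patient line and compound.
viability = {
    "patient_1": {"cmpd_A": 25.0, "cmpd_B": 95.0, "cmpd_C": 40.0},
    "patient_2": {"cmpd_A": 30.0, "cmpd_B": 35.0, "cmpd_C": 90.0},
    "patient_3": {"cmpd_A": 20.0, "cmpd_B": 88.0, "cmpd_C": 92.0},
}
HIT_THRESHOLD = 50.0  # assumed cutoff: below 50% of control counts as a hit

# Compounds that count as hits in each patient line.
hits_by_patient = {
    patient: {cmpd for cmpd, pct in readings.items() if pct < HIT_THRESHOLD}
    for patient, readings in viability.items()
}

# Hits observed in every patient line.
shared_hits = set.intersection(*hits_by_patient.values())

# Hits observed in exactly one patient line.
patient_specific = {}
for patient, hits in hits_by_patient.items():
    others = set().union(*(h for p, h in hits_by_patient.items() if p != patient))
    patient_specific[patient] = hits - others

print(shared_hits, patient_specific)
```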

Diagram 1: Experimental workflow for network-based chemogenomic screening in glioblastoma.

Research Reagent Solutions

Table 2: Essential Research Reagents for Network Pharmacology in Oncology

| Reagent/Category | Specific Examples | Function in Research | Considerations for Implementation |

|---|---|---|---|

| Chemogenomic Libraries | Targeted anticancer library (1,211 compounds) [2] | Systematic perturbation of cancer-relevant targets | Balance coverage with practicality; ensure target selectivity and cellular activity [2] |

| Bioinformatics Databases | DrugBank, TCMSP, PharmGKB, STRING [21] | Provide drug-target-disease relationship data | Integrate multiple databases for comprehensive coverage; address data heterogeneity |

| Network Analysis Tools | Cytoscape, NetworkX [23] | Network construction, visualization, and topological analysis | Choose tools based on scalability and integration capabilities with existing workflows |

| Compound-Target Annotation | ChEMBL, BindingDB | Link screening hits to potential mechanisms | Critical for interpreting phenotypic screening results and building networks [22] |

| Pathway Analysis Resources | KEGG, Gene Ontology, Reactome [21] | Functional interpretation of network components | Use multiple resources to overcome biases in individual databases |

Protocol: Constructing and Analyzing Chemical Space Networks

Computational Methods for Network Construction

Purpose: To create Chemical Space Networks (CSNs) that visualize relationships between compounds based on structural similarity, enabling compound prioritization and library analysis.

Software and Tools:

- RDKit (cheminformatics functionality)

- NetworkX (network analysis and manipulation)

- Matplotlib (visualization)

- Pandas (data handling) [23]

Procedure:

- Data Curation:

- Import compound structures (SMILES format)

- Remove salts and standardize structures using RDKit

- Check for and merge duplicate compounds

- Verify structure validity and unique identifiers

- Similarity Calculation:

- Generate molecular fingerprints (e.g., RDKit 2D fingerprints)

- Compute pairwise similarity matrix (e.g., Tanimoto similarity)

- Apply similarity threshold (e.g., 0.5-0.7) to define edges [23]

- Network Construction:

- Initialize NetworkX graph object

- Add nodes (compounds) with attributes (structure, properties)

- Add edges between nodes exceeding similarity threshold

- Store edge weights based on similarity values

- Network Visualization:

- Define node positions using layout algorithms (e.g., spring layout)

- Map node colors to biological activity or properties

- Adjust edge styles based on similarity values

- Optionally replace node symbols with chemical structures [23]

- Network Analysis:

- Calculate topological properties (clustering coefficient, degree distribution)

- Identify network communities (modularity analysis)

- Correlate network position with biological activity

Code Example (Key steps for CSN creation):
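
A minimal, dependency-free sketch of the similarity and network-construction steps is given here. It is not the original code from [23]: fingerprints are represented as hypothetical sets of "on" bit indices rather than RDKit fingerprint objects, and the network is stored as plain Python sets and dictionaries rather than a NetworkX graph, so that the example runs with the standard library alone.

```python
from itertools import combinations

# Hypothetical fingerprints: each compound is the set of its "on" bit indices.
# In a real workflow these would be generated by a cheminformatics toolkit.
fingerprints = {
    "cmpd_1": {1, 2, 3, 4, 5},
    "cmpd_2": {1, 2, 3, 4, 6},
    "cmpd_3": {1, 2, 7, 8, 9},
    "cmpd_4": {10, 11, 12},
}


def tanimoto(a, b):
    """Tanimoto similarity: shared on-bits divided by total distinct on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def build_csn(fps, threshold=0.5):
    """Return (nodes, edges); edges map compound pairs to their similarity."""
    nodes = set(fps)
    edges = {}
    for u, v in combinations(sorted(fps), 2):
        similarity = tanimoto(fps[u], fps[v])
        if similarity >= threshold:
            edges[(u, v)] = similarity  # edge weight is the similarity value
    return nodes, edges


nodes, edges = build_csn(fingerprints, threshold=0.5)
print(edges)
```

Compounds with no edge above the threshold (here `cmpd_3` and `cmpd_4`) remain in the network as singletons, which is often informative in itself when assessing library diversity.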

Diagram 2: Computational workflow for constructing chemical space networks.

Data Presentation and Interpretation

Table 3: Key Network Properties and Their Biological Interpretation in Chemical Space Networks

| Network Property | Calculation Method | Interpretation in Drug Discovery Context | Application Example |

|---|---|---|---|

| Clustering Coefficient | Proportion of triangles around node | Identifies structurally similar compound clusters | Guide compound selection to explore diverse chemotypes [23] |

| Degree Centrality | Number of connections per node | Highlights compounds with many structural analogs | Identify privileged scaffolds or potential promiscuous binders |

| Modularity | Strength of network division into modules | Reveals natural grouping of compounds by structural class | Support library design by ensuring coverage of multiple structural classes |

| Degree Assortativity | Correlation between degrees of connected nodes | Measures tendency of nodes to connect with similar nodes | Understand network connectivity patterns and information flow [23] |
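
To make two of the properties in the table concrete, the sketch below computes node degree and the local clustering coefficient on a four-node toy network. The adjacency map is hypothetical, and the clustering coefficient is taken as the fraction of neighbor pairs that are themselves connected.

```python
from itertools import combinations

# Hypothetical undirected network: node -> set of neighbors.
adjacency = {
    "A": {"B", "C", "D"},
    "B": {"A", "C"},
    "C": {"A", "B"},
    "D": {"A"},
}


def degree(node):
    return len(adjacency[node])


def local_clustering(node):
    """Fraction of pairs of neighbors that are directly connected to each other."""
    neighbors = adjacency[node]
    k = len(neighbors)
    if k < 2:
        return 0.0  # fewer than two neighbors: no triangles are possible
    links = sum(1 for u, v in combinations(neighbors, 2) if v in adjacency[u])
    return 2.0 * links / (k * (k - 1))


for node in adjacency:
    print(node, degree(node), round(local_clustering(node), 3))
```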

Integrating Network Analysis with Multi-Omics Data

Protocol: Multi-Scale Network Construction for Target Identification

Purpose: To integrate drug-target networks with genomic and transcriptomic data to identify therapeutic targets in the context of cancer subtypes.

Materials:

- Drug-target interaction data (DrugBank, ChEMBL)

- Protein-protein interaction networks (STRING, BioGRID)

- Genomic data (mutations, copy number variations)

- Transcriptomic data (RNA-seq, gene expression)

- Patient clinical and outcome data

Procedure:

- Data Layer Acquisition:

- Compile drug-target interactions from public databases

- Obtain protein-protein interaction networks

- Import genomic and transcriptomic data for patient cohorts

- Align all data using standardized gene identifiers

- Network Integration:

- Construct bipartite drug-target network

- Overlay with protein-protein interaction network

- Integrate genomic alterations as node attributes

- Incorporate gene expression as node weights

- Network Analysis:

- Identify network neighborhoods of drug targets

- Calculate topological parameters (degree, betweenness centrality)

- Perform pathway enrichment analysis of network components

- Correlate network features with therapeutic response

- Validation and Prioritization:

- Prioritize targets based on network topology and genomic alterations

- Validate predictions using orthogonal approaches (e.g., CRISPR screens)

- Develop multi-scale models connecting network perturbations to cellular phenotypes
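
The integration and prioritization steps can be sketched in a few lines. The example below overlays a drug-target map on a protein-protein interaction edge list and ranks drugs by how many genes in the targets' immediate network neighborhood carry an alteration. The drug names, edges, and altered-gene set are all hypothetical, and counting altered neighbors is only one of many possible scoring schemes.

```python
# Hypothetical drug-target map (bipartite layer).
drug_targets = {"drug_1": {"EGFR"}, "drug_2": {"CDK4", "CDK6"}}

# Illustrative protein-protein interaction edges (not a curated interactome).
ppi = {
    ("EGFR", "GRB2"),
    ("EGFR", "PIK3CA"),
    ("CDK4", "CCND1"),
    ("CDK6", "CCND1"),
    ("CCND1", "RB1"),
}

# Hypothetical genomic alterations for one patient, used as node attributes.
altered_genes = {"PIK3CA", "CCND1", "CDK4"}


def neighbors(gene):
    """Direct interaction partners of a gene in the PPI layer."""
    return {b if a == gene else a for a, b in ppi if gene in (a, b)}


def altered_neighborhood(drug):
    """Altered genes among a drug's targets and their direct neighbors."""
    neighborhood = set(drug_targets[drug])
    for target in drug_targets[drug]:
        neighborhood |= neighbors(target)
    return neighborhood & altered_genes


ranked = sorted(drug_targets, key=lambda d: len(altered_neighborhood(d)), reverse=True)
print(ranked)
```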

Diagram 3: Multi-scale network integration for target identification in precision oncology.

The integration of systems pharmacology and network-based approaches provides a powerful framework for advancing precision oncology through chemogenomic library screening. By conceptualizing drug action as network perturbations rather than isolated target interactions, researchers can better understand therapeutic and adverse effects, identify patient-specific vulnerabilities, and develop more effective combination therapies. The protocols and application notes presented here offer practical guidance for implementing these approaches in oncology drug discovery, with particular relevance for addressing the challenges of tumor heterogeneity and adaptive resistance. As the field evolves, the integration of increasingly sophisticated network analysis with multi-omics data and artificial intelligence will further enhance our ability to map the complex relationship between chemical space and biological activity, ultimately accelerating the development of personalized cancer therapies.

In the evolving landscape of precision oncology, the ability to connect complex cellular phenotypes to specific molecular targets is paramount. Phenotypic screening represents an empirical strategy for interrogating biological systems without requiring complete prior knowledge of the underlying molecular pathways [22]. This approach has led to the discovery of first-in-class therapies with unprecedented mechanisms of action, such as pharmacological chaperones for cystic fibrosis and gene-specific splicing correctors for spinal muscular atrophy [22]. Chemogenomic libraries—targeted collections of bioactive small molecules—serve as the critical bridge linking observed phenotypic outcomes to the protein targets and biological pathways that drive them [2] [26]. These libraries are strategically designed to cover a wide spectrum of proteins and pathways implicated in cancer, making them particularly valuable for identifying patient-specific vulnerabilities in precision oncology research [2] [27]. The fundamental premise is that by observing phenotypic changes induced by chemical probes with known or partially known target annotations, researchers can work backward to identify the key biological targets and pathways responsible for disease phenotypes.

Quantitative Foundation: Chemogenomic Library Scope and Coverage

Designing a targeted screening library of bioactive small molecules requires careful consideration of library size, cellular activity, chemical diversity, availability, and target selectivity [2]. The resulting compound collections must balance comprehensive coverage with practical screening constraints. The table below summarizes the quantitative scope of typical chemogenomic libraries and their coverage of the human genome.

Table 1: Chemogenomic Library Coverage of the Human Genome

| Library Type | Representative Compound Count | Targeted Proteins | Approximate Human Genome Coverage | Key Characteristics |

|---|---|---|---|---|

| Minimal Screening Library | 1,211 [2] | 1,386 [2] | ~7% (1,386/20,000+) [22] | Covers essential anticancer proteins; optimized for efficiency. |

| Physical Screening Library | 789 [2] | 1,320 [2] | ~6.6% (1,320/20,000+) [22] | Used in pilot studies; practical implementation of virtual library. |

| Comprehensive Chemogenomic Library | 1,000 - 2,000 compounds [22] | 1,000 - 2,000 targets [22] | ~5-10% (1,000-2,000/20,000+) [22] | Interrogates the "druggable" genome; targets with known ligands. |

Despite their value, it is crucial to recognize that even the best chemogenomic libraries interrogate only a fraction of the human genome—approximately 1,000–2,000 targets out of more than 20,000 genes [22]. This limitation highlights a significant opportunity for expanding the druggable genome and developing compounds for novel targets. The highly curated virtual library of 1,211 compounds designed to target 1,386 anticancer proteins demonstrates the efficient design principles that maximize target coverage with minimal compound redundancy [2]. In practice, a physical library of 789 compounds covering 1,320 of these targets has been successfully deployed for phenotypic screening in patient-derived glioma stem cells, revealing highly heterogeneous responses across patients and glioblastoma subtypes [2].
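
The coverage figures quoted above follow from simple arithmetic, reproduced here as a quick check. The sketch assumes a round figure of 20,000 genes as the denominator, matching the approximation used in the text.

```python
GENOME_SIZE = 20_000  # approximate gene count used as the denominator in the text

libraries = {
    "minimal (virtual)": {"compounds": 1211, "targets": 1386},
    "physical": {"compounds": 789, "targets": 1320},
}


def coverage_pct(targets, genome_size=GENOME_SIZE):
    """Percentage of the genome represented by the library's targets."""
    return 100.0 * targets / genome_size


for name, lib in libraries.items():
    targets_per_compound = lib["targets"] / lib["compounds"]
    print(
        f"{name}: {coverage_pct(lib['targets']):.2f}% coverage, "
        f"{targets_per_compound:.2f} targets per compound"
    )
```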

Experimental Protocols

Protocol 1: Phenotypic Screening Using a Chemogenomic Library

Purpose: To identify compounds that induce a desired phenotypic change in a disease-relevant cellular model, thereby revealing potential therapeutic targets.

Materials:

- Cell Model: Patient-derived cells (e.g., glioma stem cells for glioblastoma [2]), primary cells, or relevant cell lines.

- Chemogenomic Library: A curated library of bioactive small molecules (e.g., a 789-compound library [2]).

- Assay Reagents: Cell culture media, stains, or dyes compatible with high-content imaging or other endpoint measurements.

- Equipment: Automated liquid handler, multi-well plates, high-content imaging system or plate reader, and data analysis software.

Procedure:

- Cell Seeding: Seed cells into 384-well plates at an optimized density using an automated liquid handler to ensure consistency. Allow cells to adhere and recover for 24 hours.

- Compound Transfer: Using a pintool or acoustic dispenser, transfer the chemogenomic library compounds into the assay plates. Include DMSO vehicle controls and appropriate positive controls on each plate.

- Incubation: Incubate compound-treated cells for a predetermined period (e.g., 72-144 hours) under standard culture conditions (37°C, 5% CO₂).

- Phenotypic Endpoint Measurement:

- Fixation and Staining: Fix cells with 4% paraformaldehyde, then permeabilize with 0.1% Triton X-100. Stain with fluorescent dyes (e.g., Hoechst for nuclei, phalloidin for actin, antibodies for specific markers).

- High-Content Imaging: Acquire images using a high-content microscope with a 20x objective. Capture multiple fields per well to ensure statistical robustness.

- Image and Data Analysis:

- Extract quantitative features (e.g., cell count, nuclear size, cytoskeletal integrity) from the images using analysis software.

- Normalize data to vehicle control wells (set as 100% viability or baseline phenotype) and positive control wells (set as 0% viability or maximal effect).

- Calculate Z-scores to identify hits that significantly alter the phenotype beyond a defined threshold (e.g., Z-score > 2 or < -2).
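
The normalization and hit-calling steps can be sketched as follows. The raw signals are hypothetical, and Z-scores are computed here against the vehicle control wells, which is one common convention; computing them against the plate-wide sample distribution is another.

```python
from statistics import mean, stdev

vehicle_wells = [1000.0, 980.0, 1020.0]   # hypothetical DMSO control signals
positive_wells = [50.0, 40.0, 60.0]       # hypothetical cytotoxic control signals
compound_wells = {"cmpd_A": 990.0, "cmpd_B": 500.0, "cmpd_C": 1030.0}

vehicle_mean, vehicle_sd = mean(vehicle_wells), stdev(vehicle_wells)
positive_mean = mean(positive_wells)


def percent_of_control(raw):
    """Scale so that vehicle wells read 100% and positive-control wells read 0%."""
    return 100.0 * (raw - positive_mean) / (vehicle_mean - positive_mean)


def z_score(raw):
    """Distance from the vehicle mean in units of vehicle standard deviation."""
    return (raw - vehicle_mean) / vehicle_sd


# Call a hit when the signal deviates by more than 2 SD in either direction.
hits = [name for name, raw in compound_wells.items() if abs(z_score(raw)) > 2.0]
print(hits)
```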

Troubleshooting Note: The limited throughput of complex phenotypic models can be a bottleneck. Prioritize assays with the highest biological relevance and implement automation where possible to increase throughput [22].

Protocol 2: Target Deconvolution for Phenotypic Hits

Purpose: To identify the molecular target(s) responsible for the observed phenotypic effect of a confirmed hit compound.

Materials:

- Biotinylated Analogues: Synthesize or source biotin-tagged versions of the hit compound.

- Cell Lysates: Prepare from the same cell line used in the phenotypic screen.

- Streptavidin Beads: For pull-down experiments.

- Mass Spectrometry (MS)-Grade Reagents: Water, acetonitrile, trypsin, and compatible buffers.

Procedure:

- Cellular Protein Interaction:

- Treat cells with the biotinylated hit compound at the active concentration determined in the primary screen. Include a vehicle control and an inactive, structurally similar analogue as negative controls.

- Lyse cells using a non-denaturing RIPA buffer supplemented with protease and phosphatase inhibitors.

- Affinity Purification:

- Incubate clarified cell lysates with streptavidin-conjugated magnetic beads for 2-4 hours at 4°C with gentle rotation.

- Wash beads stringently with lysis buffer followed by PBS to remove non-specifically bound proteins.

- Protein Elution and Digestion:

- Elute bound proteins by boiling beads in SDS-PAGE loading buffer or via competitive elution with free biotin.

- Separate proteins by SDS-PAGE and perform in-gel tryptic digestion. Alternatively, perform on-bead digestion.

- Mass Spectrometric Analysis and Target Identification:

- Analyze resulting peptides by liquid chromatography-tandem mass spectrometry (LC-MS/MS).

- Identify proteins by searching fragmentation spectra against a human protein database.

- Compare protein lists from the active compound pull-down to the negative controls. Proteins significantly enriched in the active sample are potential cellular targets.
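
The comparison of active and control pull-downs can be illustrated with a simple enrichment score. The sketch below assumes spectral counts as the quantitative readout, a pseudocount of 1 to avoid division by zero, and an arbitrary log2 fold-change cutoff of 2. Protein names and counts are hypothetical, and a real analysis would use replicate-based statistics rather than a single threshold.

```python
import math

# Hypothetical spectral counts per protein in each pull-down condition.
spectral_counts = {
    "PROT_1": {"active": 40, "inactive_analogue": 2, "vehicle": 1},
    "PROT_2": {"active": 15, "inactive_analogue": 14, "vehicle": 12},
    "PROT_3": {"active": 8, "inactive_analogue": 0, "vehicle": 0},
}
PSEUDOCOUNT = 1      # assumed; avoids division by zero for absent proteins
MIN_LOG2_FC = 2.0    # assumed enrichment cutoff


def log2_enrichment(counts):
    """Log2 ratio of the active sample to the stronger of the two negative controls."""
    control = max(counts["inactive_analogue"], counts["vehicle"])
    return math.log2((counts["active"] + PSEUDOCOUNT) / (control + PSEUDOCOUNT))


candidates = [
    protein
    for protein, counts in spectral_counts.items()
    if log2_enrichment(counts) >= MIN_LOG2_FC
]
print(candidates)
```

Comparing against the stronger of the two controls is a deliberately conservative choice: a protein must be enriched over both the vehicle and the inactive analogue to be retained.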

Validation: Confirm target engagement using complementary techniques such as cellular thermal shift assays (CETSA), surface plasmon resonance (SPR), or genetic knockdown/knockout to see if modulating the target recapitulates the phenotype.

Computational and Data Analysis Approaches

The era of big data in drug discovery necessitates robust computational tools to analyze and interpret the complex datasets generated from phenotypic and target identification screens [28]. Visual analytics frameworks such as Scaffold Hunter combine techniques from data mining and information visualization to support the analysis of chemical compound data [28]. This platform allows researchers to interactively explore high-dimensional chemical and biological data through multiple interconnected views, including scaffold trees, dendrograms, heat maps, and molecule clouds [28].

Table 2: Key Computational Tools for Data Analysis in Phenotype-to-Target Workflows

| Tool/Approach | Primary Function | Application in Phenotype-to-Target |

|---|---|---|

| Scaffold Hunter [28] | Visual analytics framework for chemical data. | Interactive analysis of structure-activity relationships; visualization of chemical space and bioactivity data. |

| CDD Visualization [29] | Browser-based software for plotting and analyzing large data sets. | Identification of patterns and outliers in screening data; generation of publication-quality graphics. |

| Machine Learning (ML) [30] | Predictive modeling of molecular properties and interactions. | Prediction of drug-target interactions; optimization of lead compounds; analysis of high-content screening data. |

| Chemogenomic Methods [26] | In silico prediction of drug-target interactions. | Classification of drug-target interactions using features from chemical and genomic spaces. |

Machine learning approaches, particularly deep learning, are revolutionizing the field by enabling precise predictions of molecular properties, protein structures, and ligand-target interactions [30]. These methods are especially valuable for prioritizing compounds and targets for experimental validation, thereby accelerating the drug discovery process. Furthermore, the application of natural language processing tools like SciBERT and BioBERT can streamline the extraction of relevant biomedical knowledge from the vast scientific literature, potentially uncovering novel drug-disease relationships [30].

Diagram 1: Phenotype to Target Workflow. This diagram outlines the key experimental stages in linking chemical tools to biological outcomes.

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key reagents and resources essential for implementing the described phenotype-to-target pipeline.

Table 3: Essential Research Reagents for Phenotype-to-Target Studies

| Research Reagent | Specification/Example | Critical Function in Workflow |

|---|---|---|

| Curated Chemogenomic Library | 789 compounds targeting 1,320 anticancer proteins [2] | Provides the foundational chemical tools to probe biological systems and induce phenotypic changes. |

| Patient-Derived Cell Models | Glioma stem cells (GSCs) from glioblastoma patients [2] | Offers a clinically relevant, patient-specific model system that preserves tumor heterogeneity. |

| High-Content Imaging System | Automated microscope with 20x or higher objective [2] | Enables quantitative, multi-parameter analysis of complex phenotypic endpoints at single-cell resolution. |

| Affinity Purification Reagents | Biotinylated compound analogues and streptavidin beads [26] | Facilitates the physical pull-down of compound-bound proteins for target identification via mass spectrometry. |

| Visual Analytics Software | Scaffold Hunter [28] or CDD Visualization [29] | Allows interactive exploration and interpretation of high-dimensional chemical and biological screening data. |

Diagram 2: Relationship Between Compound, Target, and Phenotype. This diagram illustrates the logical chain of causality from compound-target engagement to phenotypic outcome.

Screening in Action: Methodologies and Translational Applications in Cancer Research

High-throughput phenotypic profiling has emerged as a powerful strategy in precision oncology, enabling the functional characterization of cellular responses to genetic and chemical perturbations. Within this domain, the Cell Painting assay has established itself as a cornerstone method for generating rich, morphological profiles that can serve as cellular "fingerprints" for drug mechanisms and disease states [31]. By multiplexing fluorescent dyes to mark multiple organelles, this assay creates high-dimensional data that captures subtle phenotypic changes often invisible to targeted assays [32] [31].

The integration of phenotypic profiling with chemogenomic libraries—collections of compounds with known target annotations—creates a powerful framework for identifying patient-specific vulnerabilities and accelerating targeted therapy development [33] [3]. This approach is particularly valuable in oncology, where tumor heterogeneity and evolving resistance mechanisms demand functional assessment of drug responses. These profiling methods enable drug repositioning, mechanism of action (MoA) deconvolution, and the identification of novel therapeutic vulnerabilities based on functional phenotypes rather than predetermined molecular hypotheses [31] [33].

Core Methodologies: From Cell Painting to Advanced Multiplexing

The Standard Cell Painting Assay

The foundational Cell Painting protocol utilizes six fluorescent dyes imaged across five channels to capture morphological information from eight cellular components [31]. This standardized approach balances comprehensiveness with practical implementation for high-throughput screening.

Table 1: Standard Cell Painting Reagent Configuration

| Cellular Component | Fluorescent Dye | Imaging Channel |
|---|---|---|
| Nucleus | Hoechst | DNA (e.g., 405 nm) |
| Nucleoli & Cytoplasmic RNA | SYTO 14 | RNA |
| Endoplasmic Reticulum | Concanavalin A, Alexa Fluor 488 conjugate | ER |
| Actin Cytoskeleton | Phalloidin (e.g., Alexa Fluor 568 conjugate) | Actin |
| Golgi Apparatus | Wheat Germ Agglutinin, Alexa Fluor 594 conjugate | Golgi |
| Mitochondria | MitoTracker Deep Red | Mito (e.g., 640 nm) |

The experimental workflow follows a standardized sequence: (1) cell plating in multi-well plates (typically 384-well format for high-throughput applications), (2) chemical or genetic perturbation (usually for 24-48 hours), (3) fixation and multiplexed staining, (4) high-content imaging using automated microscopy systems, and (5) automated image analysis to extract ~1,500 morphological features per cell [31] [34]. These features include measurements of size, shape, texture, intensity, and spatial relationships across all stained compartments.

Cell Painting PLUS: Enhanced Multiplexing Capacity

The Cell Painting PLUS (CPP) assay represents a significant advancement that addresses key limitations of the standard approach [32]. Through an innovative iterative staining-elution cycle method, CPP expands the multiplexing capacity to at least seven fluorescent dyes that label nine different subcellular compartments separately, including the addition of lysosomes which are not typically included in standard Cell Painting [32].

The key innovation in CPP is the development of an optimized dye elution buffer (0.5 M L-Glycine, 1% SDS, pH 2.5) that efficiently removes staining signals while preserving cellular morphology for subsequent staining rounds [32]. This enables fully sequential imaging of each dye in separate channels, achieving complete spectral separation and generating more specific phenotypic profiles without signal bleed-through compromises.
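As a bench-side convenience, the buffer composition quoted above can be turned into weigh-out amounts. The sketch below is illustrative only: it assumes that "1% SDS" means 1% w/v (the source does not state this), uses the molar mass of glycine (75.07 g/mol), and leaves the pH adjustment to 2.5 as a separate manual step. The function name `elution_buffer_recipe` is hypothetical.

```python
GLYCINE_MW_G_PER_MOL = 75.07  # molar mass of glycine


def elution_buffer_recipe(volume_ml, glycine_molar=0.5, sds_percent_wv=1.0):
    """Return grams of glycine and SDS needed for `volume_ml` of buffer.

    Assumes the SDS percentage is weight/volume (g per 100 mL).
    The pH must still be adjusted to 2.5 after dissolving.
    """
    volume_l = volume_ml / 1000.0
    glycine_g = glycine_molar * volume_l * GLYCINE_MW_G_PER_MOL
    sds_g = sds_percent_wv / 100.0 * volume_ml
    return {"glycine_g": round(glycine_g, 2), "sds_g": round(sds_g, 2)}


# Amounts for 100 mL of elution buffer
recipe = elution_buffer_recipe(100)
print(recipe)
```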

Diagram 1: Cell Painting PLUS workflow showing iterative staining.

Table 2: Comparison of Standard vs. PLUS Cell Painting Methods

| Parameter | Standard Cell Painting | Cell Painting PLUS |
|---|---|---|
| Dyes/Compartments | 6 dyes, 8 compartments | 7+ dyes, 9+ compartments |
| Imaging Channels | 5 channels (with merging) | Separate channel per dye |
| Lysosome Staining | Not typically included | Included |
| Signal Specificity | Compromised by channel merging | Optimal (no merging) |
| Customization | Fixed panel | Highly customizable |
| Experimental Time | Standard protocol | Extended due to cycles |
| Information Content | High | Enhanced organelle specificity |

Practical Implementation and Protocol Adaptation

Protocol for 96-Well Plate Format

While high-throughput screening often utilizes 384-well plates, adaptation to 96-well plates increases accessibility for laboratories with medium-throughput requirements [34]. The following protocol has been validated for U-2 OS human osteosarcoma cells:

Cell Culture and Plating:

- Culture U-2 OS cells in McCoy's 5a medium supplemented with 10% FBS and 1% penicillin-streptomycin [34]

- Maintain cells below 80-90% confluence and use within three passages after thawing

- Seed cells at 5,000 cells/well in 96-well plates 24 hours prior to chemical exposures

- Critical consideration: Cell seeding density significantly influences phenotypic profiles and requires optimization for different cell lines [34]

Chemical Exposure:

- Prepare compound stocks in DMSO at 200× treatment concentration

- Dilute in exposure media to final DMSO concentration of 0.5% v/v

- Include appropriate controls: vehicle (DMSO), phenotypic negative control (sorbitol), and cytotoxic control (staurosporine)

- Expose cells for 24 hours before fixation and staining [34]
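The dilution arithmetic behind the exposure steps can be checked with a few lines of code. This is a minimal sketch: the 200× stock factor comes from the protocol above, while the 100 µL final well volume and the function name `dosing_plan` are assumptions made for illustration.

```python
def dosing_plan(final_conc_um, stock_fold=200.0, well_volume_ul=100.0):
    """Return (stock concentration in uM, stock volume per well in uL, final DMSO % v/v).

    A stock prepared at `stock_fold` times the treatment concentration is
    diluted 1:stock_fold, so the final DMSO fraction is 100 / stock_fold percent.
    """
    stock_conc_um = final_conc_um * stock_fold
    stock_volume_ul = well_volume_ul / stock_fold
    dmso_percent = 100.0 / stock_fold
    return stock_conc_um, stock_volume_ul, dmso_percent


# A 10 uM treatment needs a 2 mM DMSO stock; 0.5 uL per 100 uL gives 0.5% DMSO
stock_um, stock_ul, dmso = dosing_plan(10.0)
print(stock_um, stock_ul, dmso)
```

The result confirms that a 200× stock and a final DMSO concentration of 0.5% v/v are consistent with each other.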

Staining and Image Acquisition:

- Fix cells and stain according to standard Cell Painting protocols [31]

- Acquire images using high-content imaging systems (e.g., Opera Phenix)

- Extract features using image analysis software (e.g., CellProfiler, Columbus)

- Generate ~1,300 morphological features per cell for subsequent analysis [34]

Quantitative Analysis and Benchmark Concentrations

Concentration-response modeling of phenotypic profiles enables derivation of benchmark concentrations (BMCs) for chemical hazard assessment [34]. The analysis workflow includes:

- Feature normalization to vehicle control cells

- Multivariate analysis (principal component analysis)

- Mahalanobis distance calculation for each treatment concentration

- Concentration-response modeling to determine BMCs
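The first three steps of this workflow can be sketched with NumPy. This is a simplified illustration rather than the pipeline used in [34]: it assumes well-level feature vectors, z-score normalization against vehicle wells, a PCA fitted on all wells, and a Mahalanobis distance measured from the vehicle distribution in the reduced space. The function name, the choice of two components, and the synthetic data are all assumptions. The final concentration-response fit is not shown.

```python
import numpy as np


def mahalanobis_by_concentration(features, concentrations, n_components=2):
    """Distance of each treatment concentration from vehicle wells (concentration 0)."""
    features = np.asarray(features, dtype=float)
    concentrations = np.asarray(concentrations, dtype=float)
    is_vehicle = concentrations == 0

    # 1. Normalize every feature to the vehicle wells (z-score)
    mu = features[is_vehicle].mean(axis=0)
    sd = features[is_vehicle].std(axis=0, ddof=1)
    sd[sd == 0] = 1.0
    z = (features - mu) / sd

    # 2. PCA via SVD of the centered, normalized matrix
    centered = z - z.mean(axis=0)
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    scores = centered @ vt[:n_components].T

    # 3. Mahalanobis distance of each concentration's mean profile from vehicle
    center = scores[is_vehicle].mean(axis=0)
    cov = np.atleast_2d(np.cov(scores[is_vehicle], rowvar=False))
    cov_inv = np.linalg.pinv(cov)
    distances = {}
    for conc in np.unique(concentrations[~is_vehicle]):
        delta = scores[concentrations == conc].mean(axis=0) - center
        distances[float(conc)] = float(np.sqrt(max(delta @ cov_inv @ delta, 0.0)))
    return distances


# Synthetic example: 20 vehicle wells and 3 concentrations with growing effect size
rng = np.random.default_rng(0)
blocks = [rng.normal(0.0, 1.0, size=(20, 5))]
doses = [0.0] * 20
for dose, shift in [(0.1, 0.5), (1.0, 2.0), (10.0, 6.0)]:
    wells = rng.normal(0.0, 1.0, size=(5, 5))
    wells[:, :2] += shift  # perturb the first two features
    blocks.append(wells)
    doses += [dose] * 5

distances = mahalanobis_by_concentration(np.vstack(blocks), np.array(doses))
print(distances)
```

The resulting distance-versus-concentration values are the input to concentration-response modeling, which would then yield a BMC.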

Studies demonstrate that BMCs derived from 96-well and 384-well formats show good concordance, with most differing by less than one order of magnitude [34]. This supports the robustness and transferability of Cell Painting across laboratory settings and plate formats.
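The "less than one order of magnitude" criterion corresponds to an absolute log10 ratio below 1. A minimal sketch, using made-up BMC values purely for illustration (they are not data from [34]):

```python
import math


def within_one_order(bmc_a, bmc_b):
    """True if two BMCs (same units) differ by less than a factor of 10."""
    return abs(math.log10(bmc_a / bmc_b)) < 1.0


# Hypothetical BMC pairs (96-well, 384-well) in uM
print(within_one_order(3.0, 12.0))   # 4-fold difference
print(within_one_order(0.5, 20.0))   # 40-fold difference
```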

Research Reagent Solutions

Table 3: Essential Materials for Cell Painting Implementation

| Reagent/Equipment | Function/Purpose | Implementation Notes |
|---|---|---|
| U-2 OS cells (human osteosarcoma) | Standard cell model for phenotypic profiling | Also applicable: MCF-7, HepG2, A549, patient-derived cells [32] [34] |
| Multiplexed fluorescent dyes (Hoechst, Phalloidin, etc.) | Staining of specific organelles | Standard set: 6 dyes; CPP: 7+ dyes with elution capability [32] [31] |
| Opera Phenix or similar HCS system | Automated high-content imaging | Enables high-throughput acquisition of multiparametric image data [34] |
| CellProfiler/Columbus | Image analysis and feature extraction | Extracts ~1,300-1,500 morphological features/cell [34] [35] |
| 96-well or 384-well plates | Experimental format | 384-well for high-throughput; 96-well for medium-throughput [34] |
| Dye elution buffer (CPP-specific) | Signal removal between staining cycles | 0.5 M L-Glycine, 1% SDS, pH 2.5 [32] |

Data Analytics and Computational Workflows

The scale of data generated by Cell Painting requires specialized computational approaches. For the JUMP-Cell Painting dataset—comprising more than 2 billion cell images—innovative analytics workflows have been developed [35].

The Equivalence Score (Eq. Score) is a multivariate metric that compares treatment effects against negative controls, enabling efficient large-scale profiling [35]. In k-nearest neighbor classification of morphological profiles, Eq. Scores outperformed both principal component analysis and raw feature analysis [35], which underscores the value of computational methods designed specifically for phenotypic data.

Diagram 2: Computational workflow for phenotypic profiling.

Integration with Chemogenomic Libraries for Precision Oncology

The combination of phenotypic profiling with chemogenomic libraries creates a powerful platform for precision oncology discovery. These libraries contain compounds with known target annotations, enabling hypothesis-driven investigation of cellular vulnerabilities [33] [3].

In glioblastoma, this approach has identified patient-specific vulnerabilities by screening glioma stem cells from patients against a library of 789 compounds covering 1,320 anticancer targets [3]. The resulting phenotypic profiles revealed highly heterogeneous responses across patients and molecular subtypes, highlighting the potential for functional precision oncology beyond genomic markers alone.

The integration framework follows a logical progression:

- Library Design: Curate compounds based on target coverage, chemical diversity, and relevance to cancer pathways

- Phenotypic Screening: Apply Cell Painting to capture multidimensional responses

- Profile Analysis: Cluster compounds and perturbations based on phenotypic similarity

- Target Inference: Annotate unknown compounds or patient-specific vulnerabilities based on known reference compounds
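The last two steps, profile comparison and target inference, can be illustrated with a nearest-reference lookup. The sketch below assumes cosine similarity between profiles and a similarity-weighted vote among the k closest annotated compounds. This is one common choice, not a method prescribed by the cited studies. Compound names, target labels, and profile values are hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length profiles."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def infer_targets(query, references, k=3):
    """Rank candidate targets for `query` by summed similarity of the k nearest references.

    `references` is a list of (compound_name, annotated_target, profile) tuples.
    """
    nearest = sorted(references, key=lambda r: cosine(query, r[2]), reverse=True)[:k]
    votes = {}
    for _, target, profile in nearest:
        votes[target] = votes.get(target, 0.0) + cosine(query, profile)
    return sorted(votes.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical annotated reference profiles (three features for brevity)
references = [
    ("ref_1", "EGFR", [1.0, 0.0, -0.1]),
    ("ref_2", "EGFR", [0.8, 0.2, -0.3]),
    ("ref_3", "HDAC1", [-0.5, 0.9, 0.1]),
    ("ref_4", "TUBB", [0.0, -0.2, 1.0]),
]
query_profile = [0.9, 0.1, -0.2]  # unannotated compound
ranking = infer_targets(query_profile, references)
print(ranking[0][0])
```

In practice, profiles contain hundreds to thousands of features and are usually normalized and reduced before comparison, so this lookup is only the final step of a longer pipeline.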

This integrated approach is particularly valuable for identifying therapeutic options for tumors without clear genomic drivers or with rare mutations, expanding the scope of precision oncology beyond conventional biomarker-guided therapy.

Functional genomics represents a powerful approach for directly annotating gene functions by uncovering their roles and interactions in biological processes, thereby establishing causal links between genes and diseases [36]. Perturbomics, a key functional genomics strategy, systematically analyzes phenotypic changes resulting from targeted gene perturbation to infer gene function [36]. The advent of CRISPR-Cas technology has revolutionized perturbomics by enabling precise, scalable gene editing with fewer off-target effects than earlier RNAi methods, making it particularly valuable for identifying novel therapeutic targets in oncology [36].

Within precision oncology, chemogenomic library screening integrates chemical and genetic perturbation data to identify patient-specific vulnerabilities and optimize therapeutic strategies [3]. Combining CRISPR screening with chemogenomic approaches provides a framework for identifying novel drug targets and understanding drug mechanisms of action across diverse cancer types and patient populations [3] [37].

Technical Foundations of CRISPR-Cas Screening

Basic Screening Design and Workflow

The fundamental CRISPR-Cas9 system consists of two core components: the Cas9 nuclease that induces double-strand DNA breaks and the guide RNA (gRNA) that directs Cas9 to specific genomic loci [36]. Following DNA cleavage, cellular repair via non-homologous end joining often introduces frameshifting insertion or deletion mutations that effectively disrupt gene function [36]. A standard pooled CRISPR screening workflow involves several key steps: (1) designing gRNA libraries targeting either genome-wide gene sets or specific pathways; (2) synthesizing and cloning gRNAs into viral vectors; (3) transducing a large population of Cas9-expressing cells with the viral library; (4) applying selective pressures such as drug treatments or nutrient deprivation; (5) harvesting genomic DNA from selected populations and amplifying gRNA sequences; and (6) sequencing and computational analysis to identify gRNAs enriched or depleted under selection [36].
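Step (6) of this workflow, identifying enriched or depleted gRNAs, can be sketched as a log2 fold-change calculation on read counts. This is a deliberately simplified illustration: it assumes depth normalization to counts per million, a pseudocount of 1, and a median across guides as the gene-level score. Dedicated screen-analysis tools apply proper statistical models. Guide names, gene names, and counts are hypothetical.

```python
import math
from collections import defaultdict
from statistics import median


def guide_log2_fold_changes(control_counts, treated_counts, pseudocount=1.0):
    """Log2 fold change (treated vs. control) per gRNA after depth normalization."""
    control_total = sum(control_counts.values())
    treated_total = sum(treated_counts.values())
    lfc = {}
    for guide, control in control_counts.items():
        treated = treated_counts.get(guide, 0)
        control_cpm = control / control_total * 1e6 + pseudocount
        treated_cpm = treated / treated_total * 1e6 + pseudocount
        lfc[guide] = math.log2(treated_cpm / control_cpm)
    return lfc


def gene_scores(lfc, guide_to_gene):
    """Summarize guide-level fold changes as the median per gene."""
    per_gene = defaultdict(list)
    for guide, value in lfc.items():
        per_gene[guide_to_gene[guide]].append(value)
    return {gene: median(values) for gene, values in per_gene.items()}


# Hypothetical read counts before and after drug selection
control = {"A_g1": 500, "A_g2": 500, "B_g1": 500, "B_g2": 500, "C_g1": 500, "C_g2": 500}
treated = {"A_g1": 50, "A_g2": 60, "B_g1": 1500, "B_g2": 1400, "C_g1": 495, "C_g2": 495}
guide_to_gene = {"A_g1": "GENE_A", "A_g2": "GENE_A", "B_g1": "GENE_B",
                 "B_g2": "GENE_B", "C_g1": "CTRL", "C_g2": "CTRL"}

scores = gene_scores(guide_log2_fold_changes(control, treated), guide_to_gene)
print(scores)
```

In this toy example, guides against GENE_A are depleted under selection (a candidate sensitizing gene), while guides against GENE_B are enriched (a candidate resistance gene).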

Advanced CRISPR Screening Modalities

Beyond simple knockout screens, several advanced CRISPR screening modalities have expanded the applications for target discovery:

- CRISPR interference (CRISPRi) utilizes nuclease-inactive dCas9 fused to transcriptional repressors like KRAB to silence gene expression without introducing DNA breaks, enabling studies of essential genes, non-coding RNAs, and genomic enhancers [36].

- CRISPR activation (CRISPRa) employs dCas9 fused to transcriptional activators such as VP64, VPR, or SAM to enhance gene expression, facilitating gain-of-function studies that complement loss-of-function approaches [36].