Chemogenomic Libraries for Phenotypic Screening: A Guide to Accelerating Target Deconvolution and Drug Discovery


Abstract

This article provides a comprehensive resource for researchers and drug development professionals on the application of chemogenomic libraries in phenotypic screening. It explores the foundational principles of these targeted compound collections, which are annotated with known biological activities, and their role in bridging the gap between phenotypic observation and molecular target identification. The content covers practical strategies for library design, screening methodologies, and the critical interpretation of complex polypharmacology data. Furthermore, it addresses common challenges and limitations in the field, such as library coverage and assay relevance, while presenting validation frameworks and future directions, including the integration of computational and multi-omics data for enhanced predictive power in discovering novel therapeutics.

Foundations of Chemogenomics: Bridging Phenotypic Screening and Target-Based Discovery

Defining Chemogenomic Libraries and Their Core Components

Chemogenomic libraries represent a strategic intersection of chemical and biological sciences, serving as powerful tools for phenotypic screening in modern drug discovery. These annotated collections of small molecules enable researchers to deconvolute complex biological responses and identify novel therapeutic targets by linking observable phenotypic changes to specific protein targets or pathways. This technical guide details the core components, construction methodologies, and applications of chemogenomic libraries, with particular emphasis on their implementation within phenotypic screening workflows. We provide comprehensive experimental protocols, quantitative analyses of library compositions, and visualization frameworks to support researchers in developing and utilizing these resources for targeted therapeutic discovery.

Chemogenomic libraries are systematically designed collections of well-annotated small molecules used to interrogate biological systems through phenotypic screening [1]. Unlike traditional compound libraries selected for chemical diversity, chemogenomic libraries are curated based on biological target coverage, with each compound serving as a pharmacological probe for specific proteins or pathways. The fundamental premise is that when a compound from such a library produces a phenotypic effect, its annotated targets become candidates for mediating the observed phenotype, thereby facilitating target deconvolution [2] [1].

The resurgence of phenotypic drug discovery (PDD) has increased the importance of these libraries, as they help bridge the gap between phenotypic observations and molecular mechanisms [2]. Where traditional phenotypic screening identifies compounds that modulate phenotypes without target knowledge, chemogenomic approaches integrate target-pathway-disease relationships to create a framework for mechanistic interpretation [2]. This strategy has proven particularly valuable for complex diseases like cancer, neurological disorders, and metabolic diseases, which often involve multiple molecular abnormalities rather than single defects [2].

Core Components of Chemogenomic Libraries

Structural and Chemical Elements

The chemical composition of a chemogenomic library requires careful balancing of multiple factors to ensure both broad target coverage and interpretable results:

Scaffold Diversity: Libraries should incorporate multiple chemical scaffolds to increase the probability of capturing diverse phenotypes. Chemically distinct compounds are also less likely to share unknown off-target effects, which provides orthogonality when several of them address the same target [3]. The 34-compound NR3 library, for example, spans at least 29 distinct molecular skeletons [3].

Selectivity Profiles: Individual compounds are characterized for activity against both primary targets and potential off-targets. The ideal compound exhibits high potency for its intended target (typically ≤1 μM) with minimal off-target interactions (≤5 annotated off-targets) [3].

Physicochemical Properties: Compounds are optimized for cellular permeability and low cytotoxicity to ensure phenotypic effects reflect target modulation rather than general toxicity. Cytotoxicity profiling in relevant cell lines (e.g., HEK293T) assesses effects on growth rate, metabolic activity, and apoptosis induction [3].
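The selection criteria above can be expressed as a simple filter. The following is a minimal sketch, assuming each candidate has already been annotated with a primary-target potency, a count of annotated off-targets, and a cytotoxicity flag. The `Candidate` class, the compound names, and all values are hypothetical; only the thresholds (≤1 μM, ≤5 off-targets) come from the text.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    potency_um: float    # potency at the primary target, in µM
    n_off_targets: int   # number of annotated off-targets
    cytotoxic: bool      # flagged during cytotoxicity profiling


def passes_criteria(c, max_potency_um=1.0, max_off_targets=5):
    """Return True if a candidate meets potency, selectivity and toxicity limits."""
    return (
        c.potency_um <= max_potency_um
        and c.n_off_targets <= max_off_targets
        and not c.cytotoxic
    )


# Hypothetical candidates for illustration only.
candidates = [
    Candidate("cmpd_A", 0.05, 2, False),
    Candidate("cmpd_B", 3.0, 1, False),   # too weak
    Candidate("cmpd_C", 0.4, 9, False),   # too promiscuous
    Candidate("cmpd_D", 0.8, 5, True),    # cytotoxic
    Candidate("cmpd_E", 1.0, 5, False),   # exactly at both limits
]

selected = [c.name for c in candidates if passes_criteria(c)]
print(selected)
```

For less explored targets the potency limit could be relaxed by passing `max_potency_um=10.0`, in line with the ≤10 μM range listed in Table 1.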

Table 1: Quantitative Analysis of Chemogenomic Library Components Based on Recent Implementations

| Library Component | Typical Range | Specific Examples | Key Considerations |
|---|---|---|---|
| Library Size | 34 - 5,000 compounds | 34-compound NR3-focused library [3], 5,000-compound diverse target library [2] | Balance between coverage and screening feasibility |
| Target Potency | ≤1 μM for well-covered targets, ≤10 μM for less explored targets | NR3C1 ligands (sub-μM) [3], NR3B ligands (≤10 μM) [3] | Concentration selection is critical for adequate target engagement |
| Chemical Diversity | 29+ molecular scaffolds in 34-compound library [3] | NR3 library with low pairwise Tanimoto similarity [3] | Reduces probability of shared unknown off-targets |
| Target Coverage | ~1,000-2,000 of 20,000+ human genes [4] | Kinase-focused, GPCR-focused libraries [2] | Even the best libraries cover only a fraction of the druggable genome |

Biological and Annotation Elements

The biological annotations transform a chemical collection into a true chemogenomic resource:

Target Annotations: Each compound is annotated with primary molecular targets, supported by standardized bioactivity data (Ki, IC50, EC50) from databases like ChEMBL [2] [3]. The NR3 library development, for example, integrated data from ChEMBL, PubChem, IUPHAR/BPS, BindingDB, and Probes&Drugs [3].

Pathway Context: Integration with pathway databases (KEGG, Gene Ontology) places targets within broader biological systems, enabling interpretation of phenotypic outcomes in pathway contexts [2].

Mechanism of Action Diversity: Libraries incorporate compounds with diverse mechanisms (agonists, antagonists, inverse agonists, modulators, degraders) for each target where available, providing richer biological information [3].
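One way to picture these annotation layers is as a small record per compound. The sketch below is illustrative only: the class names, field names, and example values are assumptions, not the schema of ChEMBL or of any library described here. It also shows the standard conversion of a potency in nM to its negative log molar value (pKi, pIC50, pEC50), which makes the different bioactivity measures easier to compare against a threshold.

```python
import math
from dataclasses import dataclass, field


@dataclass
class Activity:
    target: str       # target name or gene symbol
    measure: str      # "Ki", "IC50" or "EC50"
    value_nm: float   # potency in nM
    mode: str         # e.g. "agonist", "antagonist", "inverse agonist"
    source: str       # database the record was taken from

    @property
    def p_value(self):
        # pX = -log10(value in mol/L) = 9 - log10(value in nM)
        return 9.0 - math.log10(self.value_nm)


@dataclass
class Compound:
    name: str
    activities: list = field(default_factory=list)
    pathways: set = field(default_factory=set)

    def targets(self, min_p=6.0):
        """Targets hit with pX >= min_p (6.0 corresponds to 1 µM)."""
        return sorted({a.target for a in self.activities if a.p_value >= min_p})


# Hypothetical annotation record for illustration only.
cmpd = Compound(
    name="cmpd_A",
    activities=[
        Activity("NR3C1", "IC50", 10.0, "antagonist", "ChEMBL"),
        Activity("ESRRA", "IC50", 5000.0, "inverse agonist", "BindingDB"),
    ],
    pathways={"hypothetical_pathway_1"},
)

print(cmpd.targets())            # targets at or below 1 µM
print(cmpd.targets(min_p=5.0))   # relaxed to 10 µM
```

Keeping the mode of action on each activity record, rather than on the compound, allows one compound to be an agonist at one receptor and an antagonist at another.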

Applications in Phenotypic Screening

Target Identification and Validation

Chemogenomic libraries excel in connecting phenotypic outcomes to molecular targets. In a proof-of-concept application, an NR3 chemogenomic library identified roles for ERR (NR3B) and GR (NR3C1) in regulating and resolving endoplasmic reticulum stress, revealing previously unexplored therapeutic potential for these nuclear receptors [3]. This demonstrates how focused libraries can elucidate novel biology even for well-characterized target families.

Addressing Complex Diseases

The selective polypharmacology approach enabled by chemogenomic libraries is particularly valuable for complex diseases like glioblastoma (GBM), which involves multiple signaling pathways. Library screening in patient-derived GBM spheroids identified compound IPR-2025, which inhibited cell viability with single-digit micromolar IC50 values—substantially better than standard-of-care temozolomide—while sparing normal cells [5]. Subsequent thermal proteome profiling confirmed engagement with multiple targets, illustrating how rationally designed libraries can yield compounds with optimal polypharmacological profiles [5].

Integration with Advanced Phenotyping Technologies

Modern chemogenomic libraries leverage advanced phenotyping platforms like the Cell Painting assay, which uses high-content imaging to capture comprehensive morphological profiles [2]. This integration creates powerful networks linking drug-target-pathway-disease relationships with morphological outcomes, enabling more sophisticated deconvolution of screening results [2].

Table 2: Experimental Applications of Chemogenomic Libraries in Disease Research

| Disease Area | Library Characteristics | Screening Model | Key Outcomes |
|---|---|---|---|
| Glioblastoma (GBM) [5] | Library enriched for GBM-specific targets using tumor RNA sequencing and mutation data | 3D patient-derived spheroids | Identified compound with selective polypharmacology, superior to temozolomide |
| Steroid Hormone Signaling [3] | 34 compounds covering all NR3 subfamilies | Cellular models of endoplasmic reticulum stress | Revealed novel roles for ERR and GR in stress resolution |
| Biofuel Production [6] | DNA-barcoded mutant libraries | Microbial growth in plant hydrolysates | Identified tolerance genes in Z. mobilis and S. cerevisiae |

Library Design and Curation Methodologies

Compound Selection and Prioritization

The development of a high-quality chemogenomic library follows a rigorous curation pipeline:

Target Identification: Define the target space based on scientific objectives, whether focusing on specific protein families (e.g., NR3 receptors) [3] or disease-associated targets (e.g., GBM subnetwork) [5].

Candidate Compilation: Filter available ligands based on potency (typically ≤1 μM), commercial availability, and initial selectivity profiles [3]. For the NR3 library, this began with 9,361 annotated ligands filtered to 40 candidates [3].

Diversity Optimization: Apply computational methods to maximize chemical diversity. The NR3 library used pairwise Tanimoto similarity computed on Morgan fingerprints with a diversity picker to ensure low molecular similarity [3].

Experimental Validation: Profile selected compounds for cytotoxicity, selectivity, and liability targets before final library assembly [3].
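The diversity optimization step can be sketched in a few lines. The example below assumes fingerprints are available as sets of on-bit indices (in practice they would be generated with a cheminformatics toolkit) and uses a greedy MaxMin selection. MaxMin is an assumption chosen for illustration, since the text does not name the diversity-picking algorithm; the compound names and bit sets are hypothetical.

```python
def tanimoto(a, b):
    """Tanimoto similarity of two fingerprints given as sets of on-bit indices."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def maxmin_pick(fps, n_pick, seed=None):
    """Greedy MaxMin: repeatedly add the compound that is farthest
    (1 - Tanimoto) from its nearest already-picked neighbour."""
    names = list(fps)
    first = seed if seed is not None else names[0]
    picked = [first]
    # Distance from every compound to its closest picked compound.
    min_dist = {n: 1.0 - tanimoto(fps[n], fps[first]) for n in names}
    while len(picked) < min(n_pick, len(names)):
        remaining = [n for n in names if n not in picked]
        nxt = max(remaining, key=lambda n: min_dist[n])
        picked.append(nxt)
        for n in names:
            min_dist[n] = min(min_dist[n], 1.0 - tanimoto(fps[n], fps[nxt]))
    return picked


# Hypothetical fingerprints: cmpd_2 is a close analogue of cmpd_1.
fingerprints = {
    "cmpd_1": {1, 2, 3, 4},
    "cmpd_2": {1, 2, 3, 5},
    "cmpd_3": {10, 11, 12},
    "cmpd_4": {20, 21, 22, 23},
}

picked = maxmin_pick(fingerprints, n_pick=3)
print(picked)
```

Because the close analogue `cmpd_2` shares most bits with the seed, it is the one left out when three of the four compounds are selected.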

Computational Enrichment Strategies

Advanced libraries incorporate structural and systems biology data for target-focused enrichment. In the GBM application, researchers identified druggable binding sites on proteins within a GBM-specific interaction network, then used molecular docking to screen compounds against 316 druggable binding sites [5]. This rational enrichment strategy improved the probability of identifying compounds with desired polypharmacology against disease-relevant targets.

Essential Research Reagents and Tools

Table 3: Key Research Reagent Solutions for Chemogenomic Library Development and Screening

| Reagent/Tool Category | Specific Examples | Function in Workflow |
|---|---|---|
| Bioactivity Databases | ChEMBL [2] [3], PubChem [3], BindingDB [3] | Source of standardized compound-target bioactivity data for library annotation |
| Pathway Resources | KEGG [2], Gene Ontology [2] | Contextualizing targets within biological pathways and processes |
| Selectivity Panels | Nuclear receptor reporter assays [3], kinase profiling [3] | Experimental determination of compound selectivity across target families |
| Liability Screens | Differential scanning fluorimetry (DSF) panels [3] | Identifying interactions with promiscuous targets that could confound results |
| Cytotoxicity Assays | Growth rate, metabolic activity, apoptosis induction [3] | Ensuring compounds are non-toxic at concentrations used for phenotypic screening |
| Morphological Profiling | Cell Painting assay [2], High-content imaging [2] | Generating multidimensional phenotypic profiles for mechanism interrogation |

Experimental Workflows and Protocols

Library Assembly and Characterization Protocol

The following workflow details the comprehensive characterization of candidate compounds for chemogenomic library inclusion, based on established methodologies [3]:

Initial Compound Acquisition

- Source compounds from commercial vendors with purity ≥95%

- Prepare stock solutions in DMSO with standardized concentration

Cytotoxicity Profiling

- Culture HEK293T cells in appropriate medium

- Treat cells with compound concentrations >>EC50/IC50 (typically 0.3-10 μM)

- Assess multiple toxicity endpoints:

  - Growth rate measurement over 72 hours

  - Metabolic activity using MTT or similar assays

  - Apoptosis/necrosis induction via flow cytometry

Selectivity Screening

- Perform uniform hybrid reporter gene assays for broad target families

- Test agonistic, antagonistic, and inverse agonistic activity

- Include representative receptors from NR1, NR2, NR4, and NR5 families

- Conduct assays at concentrations >>EC50/IC50 for primary targets

Liability Target Screening

- Employ differential scanning fluorimetry (DSF) for promiscuous targets

- Test at 20 μM concentration against panel of kinases and bromodomains

- Identify compounds with minimal liability target interactions

Final Compound Selection

- Compare characterized candidates based on comprehensive profiles

- Prioritize compounds with complementary selectivity and mode of action

- Optimize for full target family coverage with minimal redundancy
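The last step, covering the whole target family with minimal redundancy, resembles a set-cover problem. The sketch below uses the standard greedy heuristic as one possible approach; it is not the procedure used in [3]. It considers target coverage only, whereas a real selection would also weigh mode of action, selectivity, and toxicity data. The compound names and their target assignments are hypothetical.

```python
def greedy_cover(compound_targets, required_targets):
    """Pick compounds one at a time, always taking the one that covers
    the most still-uncovered targets. Returns (chosen, uncovered)."""
    uncovered = set(required_targets)
    chosen = []
    while uncovered:
        best = max(compound_targets, key=lambda c: len(compound_targets[c] & uncovered))
        gain = compound_targets[best] & uncovered
        if not gain:
            break  # no candidate addresses the remaining targets
        chosen.append(best)
        uncovered -= gain
    return chosen, uncovered


# Hypothetical candidate-to-target assignments for illustration only.
candidates = {
    "c1": {"ERa", "ERb"},
    "c2": {"GR", "MR", "PR"},
    "c3": {"AR"},
    "c4": {"GR"},
    "c5": {"ERa"},
}
family = {"ERa", "ERb", "GR", "MR", "PR", "AR", "ERRa"}

chosen, uncovered = greedy_cover(candidates, family)
print(chosen)      # compounds selected, in order of selection
print(uncovered)   # targets with no suitable ligand among the candidates
```

Targets left in `uncovered` point to gaps where additional ligands, possibly with relaxed potency criteria, would have to be sourced.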

Phenotypic Screening Implementation

For phenotypic screening with assembled chemogenomic libraries [5]:

Model System Selection

- Employ disease-relevant models (patient-derived spheroids, primary cells)

- Implement 3D culture systems where appropriate

- Include relevant normal cell controls for selectivity assessment

Screening Execution

- Treat systems with library compounds at validated concentrations

- Include appropriate controls (DMSO, reference compounds)

- Monitor phenotypic endpoints relevant to disease biology

Hit Validation

- Confirm phenotype in secondary assays

- Exclude cytotoxic compounds through counter-screening

- Prioritize compounds with novel mechanism potential

Target Deconvolution

- Employ multi-omics approaches (RNA sequencing, proteomics)

- Utilize thermal proteome profiling for target engagement confirmation

- Integrate chemogenomic annotations with phenotypic data
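Integrating annotations with hit lists can be as simple as asking whether a target is over-represented among the active compounds. The sketch below applies a one-sided hypergeometric test per target. It is an illustrative approach rather than the analysis used in [5], it applies no multiple-testing correction, and the library, targets, and hits are hypothetical.

```python
from math import comb


def hypergeom_sf(k, N, K, n):
    """P(X >= k) when n hits are drawn from N compounds,
    K of which are annotated to the target."""
    upper = min(K, n)
    total = sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1))
    return total / comb(N, n)


def target_enrichment(annotations, hits):
    """Rank targets by enrichment among hits.
    annotations: dict compound -> set of annotated targets."""
    N, n = len(annotations), len(hits)
    all_targets = set().union(*annotations.values())
    results = {}
    for t in all_targets:
        K = sum(1 for targets in annotations.values() if t in targets)
        k = sum(1 for c in hits if t in annotations[c])
        results[t] = (k, K, hypergeom_sf(k, N, K, n))
    # Smallest p-value first.
    return sorted(results.items(), key=lambda kv: kv[1][2])


# Hypothetical 10-compound library and screening hits.
library = {
    "cmpd_01": {"T1", "T2"}, "cmpd_02": {"T1"}, "cmpd_03": {"T1"},
    "cmpd_04": {"T2"}, "cmpd_05": {"T2"}, "cmpd_06": {"T2"}, "cmpd_07": {"T2"},
    "cmpd_08": {"T3"}, "cmpd_09": {"T3"}, "cmpd_10": {"T3"},
}
hits = ["cmpd_01", "cmpd_02", "cmpd_03"]

ranked = target_enrichment(library, hits)
for target, (k, K, p) in ranked:
    print(f"{target}: {k}/{K} annotated compounds are hits, p = {p:.4f}")
```

A target supported by several chemically distinct hits is a stronger candidate than one supported by a single compound, which is why the count `k` is reported alongside the p-value.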

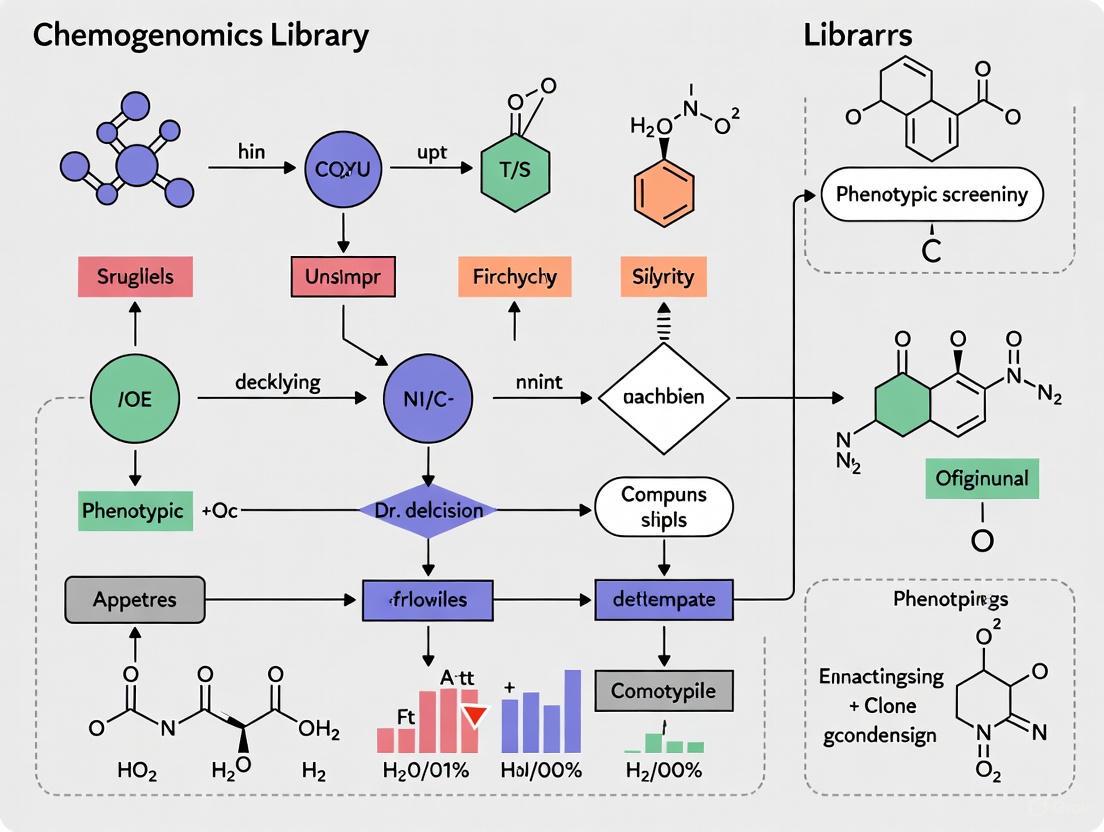

Visualizing Workflows and Relationships

[Diagram: Chemogenomic Library Development Workflow]

[Diagram: Phenotypic Screening and Target Identification]

Despite their utility, current chemogenomic libraries face limitations, covering only approximately 1,000-2,000 of the 20,000+ protein-coding genes in the human genome [4]. This coverage gap represents both a challenge and opportunity for library development. Future advancements will likely focus on expanding target coverage, particularly for poorly explored protein families, and improving library design through integration of structural biology, chemoproteomics, and artificial intelligence approaches.

The integration of chemogenomic libraries with emerging technologies—including CRISPR-based functional genomics, high-content morphological profiling, and multi-omics analyses—will further enhance their utility for phenotypic drug discovery [2] [4]. These integrated approaches promise to accelerate the identification and validation of novel therapeutic targets, particularly for complex diseases that have proven intractable to single-target strategies.

In conclusion, chemogenomic libraries represent a powerful platform for phenotypic screening that facilitates the conversion of phenotypic observations into target-based discovery approaches. Through careful design, comprehensive annotation, and strategic implementation, these libraries serve as essential tools for modern drug discovery, enabling researchers to navigate the complexity of biological systems and identify novel therapeutic opportunities.

The Resurgence of Phenotypic Screening in Modern Drug Discovery

For decades, target-based drug discovery (TDD) has dominated the pharmaceutical landscape, operating on the reductionist principle of "one target—one drug." However, the disproportionate number of first-in-class medicines originating from phenotypic approaches has driven a major resurgence in phenotypic drug discovery (PDD) [7]. Modern PDD represents a fundamental shift from this target-centric view to a biology-first approach that examines the effects of chemical or genetic perturbations on cells, tissues, or whole organisms without presupposing molecular targets [8]. This strategy is particularly valuable for complex, polygenic diseases such as cancers, neurological disorders, and diabetes, which often result from multiple molecular abnormalities rather than a single defect [2].

The renewed utilization of PDD has started to change how we conceptualize drug discovery and has served as an important testing ground for technical innovations in the life sciences [7]. By combining target-agnostic screening with modern tools like high-content imaging, functional genomics, and artificial intelligence (AI), researchers can now capture complex cellular responses and discover active compounds with novel mechanisms of action (MoA), particularly in systems where the biological target is unknown or difficult to isolate [9].

The Phenotypic Screening Workflow and Key Methodologies

Core Experimental Framework

Modern phenotypic screening employs a systematic workflow that integrates biology, chemistry, and computational analysis. The process typically involves disease-relevant models (including primary cells, co-cultures, and 3D systems), chemical or genetic perturbations, multiparameter readouts (often via high-content imaging), and computational deconvolution to identify hits and their mechanisms of action [7] [8]. This framework allows researchers to identify compounds that modulate cells to produce a desired outcome even when the phenotype requires targeting several biological pathways or systems simultaneously [10].

The following diagram illustrates the integrated workflow of a modern phenotypic screening campaign, highlighting the closed-loop feedback between experimental and computational phases:

Advanced Profiling Technologies

Cell Painting has emerged as a particularly powerful high-content imaging assay for phenotypic screening. This multiplexed approach uses fluorescent dyes to visualize multiple cellular compartments simultaneously—including the nucleus, endoplasmic reticulum, mitochondria, Golgi apparatus, actin cytoskeleton, and cytoplasmic RNA [2] [9]. The resulting images capture a wealth of morphological information that serves as a "fingerprint" of cellular state, enabling unsupervised pattern recognition and detection of subtle phenotypic changes that might escape traditional single-parameter assays [9].

Recent advances have enhanced Cell Painting with live-cell multiplexed assays that classify cells based on nuclear morphology—an excellent indicator for cellular responses such as early apoptosis and necrosis. When combined with measurements of cytoskeletal morphology, cell cycle, and mitochondrial health, this provides a comprehensive, time-dependent characterization of compound effects on cellular health in a single experiment [11].

Chemogenomic Libraries for Phenotypic Screening

The design of specialized chemical libraries is critical for effective phenotypic screening. Chemogenomic libraries represent collections of selective small molecules that modulate protein targets across the human proteome and can induce phenotypic perturbations [2]. Unlike target-focused libraries, these collections are optimized for phenotypic studies by covering a large and diverse panel of drug targets involved in diverse biological effects and diseases [2].

Table 1: Key Components of Chemogenomic Libraries for Phenotypic Screening

| Library Component | Description | Key Features | Applications |
|---|---|---|---|
| Bioactive Compounds | Small molecules with known or potential biological activity | Well-annotated targets, diverse chemotypes, cellular activity | Primary screening, hit identification |
| Chemical Probes | Highly selective compounds with narrow target profiles | Defined mechanism of action, minimal off-target effects | Target validation, pathway elucidation |
| Reference Compounds | Compounds with established phenotypic profiles | Known morphological impact, well-characterized effects | Assay controls, profile comparison |
| Scaffold-Diverse Collection | Structurally diverse compound families | Broad coverage of chemical space, representative scaffolds | Novel mechanism discovery, chemical biology |

In one implementation, researchers developed a chemogenomic library of 5,000 small molecules representing a large panel of drug targets involved in diverse biological effects and diseases. This library was designed through a system pharmacology network integrating drug-target-pathway-disease relationships as well as morphological profiles from Cell Painting assays [2]. For precision oncology applications, other researchers have created minimal screening libraries—such as a collection of 1,211 compounds targeting 1,386 anticancer proteins—designed based on cellular activity, chemical diversity, and target selectivity [12].

The AI and Computational Revolution in Phenotypic Screening

Machine Learning and Active Learning Frameworks

Artificial intelligence has transformed phenotypic screening by enabling the analysis of complex, high-dimensional data that exceeds human interpretation capacity. Modern AI platforms like Ardigen phenAID leverage deep learning in computer vision and AI-cheminformatics to bridge the gap between cell imaging and small molecule design [9]. Such systems are reported to yield up to 40% more accurate hits, flag undesirable effects early, explore millions of molecules across chemical space, and extract scientific insights from morphological profiling [9].

A notable computational advance is the development of closed-loop active reinforcement learning frameworks. In one implementation, researchers created a model called DrugReflector that was initially trained on compound-induced transcriptomic signatures from the Connectivity Map [10]. The system uses a closed-loop feedback process that incorporates additional experimental transcriptomic data to iteratively improve the model. Testing showed that DrugReflector provided an order of magnitude improvement in hit-rate compared with screening of a random drug library, and benchmarking demonstrated its superiority over alternative algorithms for predicting phenotypic screening outcomes [10].

Mechanism of Action Deconvolution

A significant challenge in phenotypic screening is identifying the molecular mechanisms through which hit compounds achieve their effects. Modern computational approaches address this through several strategies:

The idTRAX platform utilizes a machine learning-based approach that relates cell-based screening of small-molecule compounds to their kinase inhibition data to directly identify effective and readily druggable targets [13]. This method efficiently identifies cancer-selective targets—for example, revealing that inhibiting AKT selectively kills MFM-223 and CAL148 triple-negative breast cancer cells, while inhibiting FGFR2 only kills MFM-223 [13].

AI-driven morphological profiling can predict mechanisms of action by comparing novel compound profiles to extensive reference databases. Platforms like Ardigen phenAID apply machine learning models to extract features from Cell Painting images and compare them to annotated reference profiles, enabling prediction of bioactivity and MoA inference through identification of phenotypic similarities to known drugs [9].
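The similarity-based inference described above can be illustrated with a nearest-neighbour lookup. This is a minimal sketch and not the method used by any named platform: it assumes profiles are already normalized feature vectors, ranks reference compounds by cosine similarity, and lets the closest references vote on the mechanism. All profiles and MoA labels are hypothetical.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length feature vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu and nv else 0.0


def infer_moa(query, references, top_k=3):
    """references: list of (name, moa, profile) tuples.
    Returns the MoA with the highest summed similarity among the top_k
    neighbours, plus the neighbours themselves."""
    ranked = sorted(references, key=lambda r: cosine(query, r[2]), reverse=True)[:top_k]
    votes = {}
    for _name, moa, profile in ranked:
        # Negative similarities contribute nothing to the vote.
        votes[moa] = votes.get(moa, 0.0) + max(cosine(query, profile), 0.0)
    return max(votes, key=votes.get), ranked


# Hypothetical three-feature reference profiles.
references = [
    ("ref_1", "tubulin inhibitor", [2.0, 0.1, -1.0]),
    ("ref_2", "tubulin inhibitor", [1.8, 0.0, -0.8]),
    ("ref_3", "HDAC inhibitor", [-0.5, 2.0, 0.3]),
    ("ref_4", "HDAC inhibitor", [-0.4, 1.7, 0.5]),
]
query_profile = [1.9, 0.2, -0.9]

moa, neighbours = infer_moa(query_profile, references)
print(moa)
print([name for name, _moa, _profile in neighbours])
```

A prediction of this kind is a hypothesis to be tested experimentally, since similar morphology does not guarantee a shared target.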

Multi-Omics Integration

The most advanced phenotypic screening platforms now integrate imaging data with multiple omics layers to provide biological context and enhance target identification. Multi-omics approaches combine transcriptomics, proteomics, metabolomics, and epigenomics with phenotypic profiles to gain a systems-level view of biological mechanisms that single-omics analyses cannot detect [8].

Table 2: Multi-Omics Data Integration in Phenotypic Screening

| Omics Layer | Data Type | Relevance to Phenotypic Screening | Technologies |
|---|---|---|---|
| Transcriptomics | Gene expression patterns | Identifies pathway activation, compensatory mechanisms | RNA-seq, single-cell RNA-seq |
| Proteomics | Protein abundance and post-translational modifications | Reveals signaling network perturbations, target engagement | Mass spectrometry, phosphoproteomics |
| Metabolomics | Metabolic pathway fluxes | Contextualizes stress response and disease mechanisms | LC/MS, GC/MS |
| Epigenomics | Chromatin accessibility, histone modifications | Provides insights into regulatory modifications | ATAC-seq, ChIP-seq |
| Functional Genomics | Gene essentiality and genetic interactions | Maps genotype-phenotype relationships | CRISPR screens, Perturb-seq |

This integration enables network pharmacology, which combines network science and chemical biology to bring together heterogeneous data sources and to examine a drug's action on several protein targets and their related regulatory processes [2].

Experimental Protocols and Implementation

Cell Painting Assay Protocol

The Cell Painting assay provides a standardized approach for generating rich morphological profiles. The following protocol outlines key steps for implementation:

Cell Culture and Plating: Plate appropriate cell lines (e.g., U2OS osteosarcoma cells or disease-relevant primary cells) in multiwell plates, typically 96-well or 384-well format for screening.

Compound Treatment: Perturb cells with test compounds at appropriate concentrations and time points, including DMSO controls and reference compounds with known phenotypic effects.

Staining and Fixation: Apply the six-dye Cell Painting staining cocktail:

- Hoechst 33342: Labels nuclei

- Concanavalin A conjugated to Alexa Fluor 488: Labels endoplasmic reticulum

- Wheat Germ Agglutinin conjugated to Alexa Fluor 555: Labels Golgi apparatus and plasma membrane

- Phalloidin conjugated to Alexa Fluor 568: Labels actin cytoskeleton

- SYTO 14 green fluorescent: Labels nucleoli and cytoplasmic RNA

- MitoTracker Deep Red FM: Labels mitochondria

After staining, fix cells with formaldehyde to preserve morphological structures [2] [9].

Image Acquisition: Acquire images using a high-throughput microscope capable of capturing multiple fluorescence channels. Typically, 9-25 fields per well are imaged to ensure adequate cell sampling.

Image Analysis and Feature Extraction: Process images using CellProfiler or similar software to identify individual cells and measure morphological features. The BBBC022 dataset, for example, includes 1,779 morphological features measuring intensity, size, area shape, texture, entropy, correlation, granularity, and angle between neighbors across three cellular compartments: cell, cytoplasm, and nucleus [2].

Phenotypic Screening Data Analysis Pipeline

The computational analysis of phenotypic screening data involves multiple stages:

Quality Control and Normalization: Apply robust normalization techniques to remove technical artifacts and batch effects. Use control compounds to assess assay quality and performance.

Feature Selection and Compression: Identify informative features while removing redundant or non-informative measurements. Techniques include removing features with zero standard deviation and pruning highly correlated features (e.g., >95% correlation) so that only one representative of each correlated group is retained [2].

Profile Generation and Similarity Analysis: Create morphological profiles for each treatment by averaging feature values across replicates. Calculate similarity scores between compound profiles using appropriate distance metrics (e.g., Pearson correlation, cosine similarity).

Hit Identification and Prioritization: Apply machine learning models to identify compounds that induce desired phenotypic changes. Active learning approaches like DrugReflector can iteratively improve hit selection based on experimental feedback [10].

Mechanism of Action Prediction: Compare novel compound profiles to reference databases to infer potential mechanisms of action through similarity analysis [9].
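The first two stages of this pipeline can be sketched with the standard library alone. The example assumes a robust z-score against DMSO control wells for normalization (median and median absolute deviation), followed by removal of constant features and greedy pruning of features correlated above 0.95. The normalization choice and the greedy, order-dependent pruning are assumptions for illustration; feature names and values are hypothetical.

```python
import math
import statistics


def robust_z(values, controls):
    """Scale values by the median and MAD of the control wells."""
    med = statistics.median(controls)
    mad = statistics.median([abs(x - med) for x in controls])
    scale = 1.4826 * mad  # makes the MAD comparable to a standard deviation
    return [(v - med) / scale if scale else 0.0 for v in values]


def pearson(x, y):
    """Pearson correlation of two equal-length, non-constant sequences."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def select_features(table, max_corr=0.95):
    """table: dict feature name -> list of per-well values.
    Drops constant features, then keeps a feature only if it is not
    correlated above max_corr with a feature that was already kept."""
    kept = []
    for name, column in table.items():
        if statistics.pstdev(column) == 0:
            continue
        if any(abs(pearson(column, table[k])) > max_corr for k in kept):
            continue
        kept.append(name)
    return kept


# Hypothetical per-well measurements for four features.
features = {
    "nucleus_area": [1.0, 2.0, 3.0, 4.0],
    "nucleus_perimeter": [2.1, 4.0, 6.2, 8.0],   # nearly collinear with area
    "er_texture": [0.5, -0.2, 0.9, 0.1],
    "constant_flag": [1.0, 1.0, 1.0, 1.0],
}

dmso_wells = [10.0, 12.0, 11.0, 9.0, 13.0]
z_scores = robust_z([11.0, 14.0], dmso_wells)
kept = select_features(features)
print(z_scores)
print(kept)
```

Replicate-averaged profiles built from the retained, normalized features are then the input to the similarity analysis and MoA prediction stages.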

Success Stories and Clinical Impact

Phenotypic screening has generated numerous therapeutic successes in recent years, often with novel mechanisms of action that would have been difficult to identify through target-based approaches:

Cystic Fibrosis (CF): Target-agnostic compound screens using cell lines expressing disease-associated CFTR variants identified both potentiators (ivacaftor) that improve CFTR channel gating and correctors (tezacaftor, elexacaftor) that enhance CFTR folding and plasma membrane insertion [7]. The triple combination of elexacaftor, tezacaftor, and ivacaftor was approved in 2019 and addresses 90% of the CF patient population [7].

Spinal Muscular Atrophy (SMA): Phenotypic screens identified small molecules that modulate SMN2 pre-mRNA splicing to increase levels of functional SMN protein [7]. The resulting compound, risdiplam, was approved by the FDA in 2020 as the first oral disease-modifying therapy for SMA. It works by stabilizing the U1 snRNP complex—an unprecedented drug target and mechanism of action [7].

Oncology Applications: Lenalidomide, a thalidomide analogue optimized on the basis of its phenotypic activity, had its molecular mechanism elucidated only several years after approval. The drug binds to the E3 ubiquitin ligase Cereblon and redirects its substrate selectivity to promote degradation of specific transcription factors [7]. This mechanism is now being intensively explored in targeted protein degraders.

The following diagram illustrates how phenotypic screening reveals novel mechanisms of action, using these successful therapies as examples:

Implementation Guide: Establishing a Phenotypic Screening Platform

Research Reagent Solutions and Essential Materials

Successful implementation of phenotypic screening requires careful selection of reagents and tools. The following table details key components of the phenotypic screening toolkit:

Table 3: Essential Research Reagents and Platforms for Phenotypic Screening

| Category | Specific Tools/Reagents | Function | Considerations |
|---|---|---|---|
| Cell Models | Primary cells, iPSCs, 3D organoids, co-culture systems | Provide disease-relevant biological context | Physiological relevance, scalability, reproducibility |
| Chemogenomic Libraries | Targeted compound collections (e.g., 1,211-5,000 compounds) | Enable systematic perturbation of biological pathways | Target coverage, chemical diversity, annotation quality |
| Staining Reagents | Cell Painting dye cocktail (6-plex fluorescent dyes) | Multiplexed visualization of cellular compartments | Signal intensity, minimal bleed-through, compatibility |
| Imaging Platforms | High-content screening systems with automated microscopy | Acquisition of high-resolution cellular images | Throughput, resolution, environmental control |
| Analysis Software | CellProfiler, Genedata Screener, Ardigen phenAID | Image analysis, feature extraction, data management | Algorithm performance, scalability, interoperability |
| AI/ML Platforms | DrugReflector, idTRAX, custom deep learning models | Hit identification, MoA prediction, virtual screening | Model interpretability, training data requirements, validation |

Practical Implementation Considerations

Establishing an effective phenotypic screening platform requires addressing several practical considerations:

Assay Design and Validation: Develop disease-relevant phenotypic endpoints that capture meaningful biology while remaining practical for screening. Validate assays using reference compounds with known effects and ensure robustness through appropriate Z'-factor calculations and quality control measures.

Data Management Infrastructure: Implement scalable data storage and computational resources capable of handling large image datasets (often terabytes per screen) and complex analysis workflows. Platforms like Genedata Screener provide solutions for automating assay analysis, validating raw data and assay result quality, and consolidating assay information across the enterprise [14].

Integration with Existing Workflows: Ensure seamless connectivity between phenotypic screening platforms and other research tools, including electronic lab notebooks (ELNs), laboratory information management systems (LIMS), and compound management systems. Open architecture and flexible APIs enable automated data flow and reduce manual effort [14].

Cross-functional Collaboration: Foster collaboration between biologists, chemists, data scientists, and computational researchers to effectively design, execute, and interpret phenotypic screens. Centralized platforms that provide structured, secure data access keep multidisciplinary teams aligned [14] [9].
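As a concrete illustration of the Z'-factor check mentioned under Assay Design and Validation, the sketch below computes the standard statistic, Z' = 1 - 3(σ_pos + σ_neg) / |μ_pos - μ_neg|, from positive- and negative-control wells. The function name and the control readouts are hypothetical; a real screen would calculate this per plate from its own control layout.

```python
from statistics import mean, stdev


def z_prime(positive, negative):
    """Z'-factor from positive- and negative-control well readouts."""
    separation = abs(mean(positive) - mean(negative))
    if separation == 0:
        raise ValueError("control means are identical; Z' is undefined")
    # Sample standard deviations of both control groups, scaled by 3.
    spread = 3.0 * (stdev(positive) + stdev(negative))
    return 1.0 - spread / separation


# Hypothetical per-well readouts (arbitrary units), for illustration only.
pos_ctrl = [95.0, 100.0, 105.0]
neg_ctrl = [8.0, 10.0, 12.0]

z = z_prime(pos_ctrl, neg_ctrl)
print(f"Z' = {z:.3f}")
```

Values closer to 1 indicate a wider separation between the controls relative to their variability. The acceptance threshold is an assay-specific decision and is not prescribed here.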

Phenotypic screening has evolved from a serendipity-dependent process to a systematic, technology-driven approach that combines biology-first experimentation with advanced computational analysis. The integration of high-content imaging, chemogenomic libraries, and AI-powered analytics has created a powerful platform for identifying novel therapeutic mechanisms, particularly for complex diseases that have eluded target-based approaches.

The future of phenotypic screening will likely involve even deeper integration of multiple data modalities, including single-cell technologies, spatial transcriptomics, and real-time live-cell imaging. As AI models become more sophisticated and reference datasets expand, phenotypic approaches will continue to enhance our understanding of biological complexity and accelerate the discovery of transformative medicines.

By embracing this integrated approach, researchers can leverage phenotypic screening not as a standalone technique, but as a central component of a comprehensive drug discovery strategy that bridges the gap between observable biology and therapeutic intervention.

The Critical Challenge of Target Deconvolution

Target deconvolution is the process of identifying the molecular target or targets of a chemical compound discovered through phenotypic screening [15]. This process provides a critical link between initial phenotype-based screens and subsequent stages of compound optimization, mechanistic interrogation, and preclinical characterization [15]. In the drug discovery pipeline, phenotypic screening assesses chemical compounds for their ability to evoke a desired phenotype without prior knowledge of specific molecular targets. While this approach can more accurately reflect complex biological contexts and has demonstrated efficient translation into clinical innovations, it creates a fundamental challenge: the mechanism of action remains unknown without identifying the specific cellular targets through which the compound functions [15].

The resurgence of phenotypic screening in modern drug discovery has made target deconvolution increasingly vital. Between 1999 and 2008, over half of FDA-approved first-in-class small-molecule drugs were discovered through phenotypic screening [5]. This approach is particularly valuable for complex diseases like cancer, neurological disorders, and diabetes, which often result from multiple molecular abnormalities rather than a single defect [2]. However, the success of phenotypic screening hinges on effectively addressing the critical challenge of target deconvolution to elucidate mechanistic underpinnings of promising hits.

The Chemogenomics Library Framework

Definition and Role in Phenotypic Screening

Chemogenomics libraries are specialized collections of small molecules designed to systematically probe biological systems. They typically consist of compounds with known mechanisms of action, often with target-specific annotations, enabling researchers to connect phenotypic observations to potential molecular targets [2]. When a compound from a chemogenomics library produces a desired phenotypic effect, its annotated target is presumed to be responsible for the observed activity, which facilitates target deconvolution [16].
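One way to make this inference less dependent on a single compound is to ask whether an annotated target is over-represented among the hits. The sketch below is a minimal illustration, assuming a dictionary of compound-to-target annotations and a list of distinct hit compounds; it scores each target with a hypergeometric upper-tail probability. All compound and target names are hypothetical placeholders, and no multiple-testing correction is applied.

```python
from collections import Counter
from math import comb


def target_enrichment(annotations, hits):
    """Map each target seen among hits to (hit_count, library_count, p_value).

    p_value is the hypergeometric upper tail: the probability of drawing at
    least hit_count compounds annotated with the target when len(hits)
    compounds are sampled at random from the library without replacement.
    """
    library_size = len(annotations)
    n_hits = len(hits)
    library_counts = Counter(
        t for targets in annotations.values() for t in set(targets)
    )
    hit_counts = Counter(t for c in hits for t in set(annotations[c]))
    total = comb(library_size, n_hits)
    results = {}
    for target, k in hit_counts.items():
        K = library_counts[target]
        tail = sum(
            comb(K, i) * comb(library_size - K, n_hits - i)
            for i in range(k, min(K, n_hits) + 1)
        )
        results[target] = (k, K, tail / total)
    return results


# Hypothetical annotations: compound ID -> annotated targets.
annotations = {
    "cpd1": ["TARGET_A"],
    "cpd2": ["TARGET_A", "TARGET_B"],
    "cpd3": ["TARGET_A"],
    "cpd4": ["TARGET_C"],
    "cpd5": ["TARGET_D"],
    "cpd6": ["TARGET_E"],
}
hits = ["cpd1", "cpd2", "cpd3"]

enrichment = target_enrichment(annotations, hits)
for target, (k, K, p) in sorted(enrichment.items(), key=lambda kv: kv[1][2]):
    print(f"{target}: {k}/{K} annotated compounds are hits, p = {p:.3f}")
```

A target supported by several structurally distinct hits is a stronger hypothesis than one supported by a single compound, particularly when the compounds carry additional annotated targets.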

The development of advanced chemogenomics libraries involves creating system pharmacology networks that integrate drug-target-pathway-disease relationships alongside morphological profiling data, such as that obtained from the Cell Painting assay [2]. This integration enables the construction of specialized libraries containing thousands of small molecules that represent a large and diverse panel of drug targets involved in diverse biological effects and diseases [2]. Such platforms significantly assist in target identification and mechanism deconvolution for phenotypic assays.

The Polypharmacology Challenge

A significant complication in using chemogenomics libraries for target deconvolution is the inherent polypharmacology of most bioactive compounds. Even after optimization, drug molecules interact with six known molecular targets on average [16]. This polypharmacology conflicts directly with the target specificity that chemogenomics libraries assume, creating a fundamental challenge for accurate target deconvolution.

Research has quantified this challenge through a "polypharmacology index" (PPindex), which measures the overall target specificity of compound libraries [16]. Studies comparing prominent libraries reveal substantial differences in their polypharmacology profiles:

Table 1: Polypharmacology Index (PPindex) of Selected Compound Libraries

| Library Name | PPindex (All Data) | PPindex (Without 0-target compounds) | PPindex (Without 0 & 1-target compounds) |

|---|---|---|---|

| DrugBank | 0.9594 | 0.7669 | 0.4721 |

| LSP-MoA | 0.9751 | 0.3458 | 0.3154 |

| MIPE 4.0 | 0.7102 | 0.4508 | 0.3847 |

| Microsource Spectrum | 0.4325 | 0.3512 | 0.2586 |

| DrugBank Approved | 0.6807 | 0.3492 | 0.3079 |

Source: Adapted from [16]

The table demonstrates that polypharmacology profiles vary significantly between libraries, with steeper slopes (higher PPindex values) indicating more target-specific libraries. This variation profoundly impacts the effectiveness of target deconvolution efforts, as libraries with higher polypharmacology create greater ambiguity in linking phenotypic effects to specific molecular targets.

Experimental Methodologies for Target Deconvolution

Affinity-Based Chemoproteomics

Affinity-based pull-down assays represent a foundational workhorse technology for target deconvolution [15]. This approach involves modifying a compound of interest to enable its immobilization on a solid support, then exposing this "bait" to cell lysates. Proteins binding to the immobilized compound are isolated through affinity enrichment and identified via mass spectrometry [15].

Table 2: Key Experimental Approaches for Target Deconvolution

| Method | Principle | Applications | Requirements | Commercial Examples |

|---|---|---|---|---|

| Affinity-Based Pull-down | Immobilized compound used as bait to capture binding proteins from lysates [15] | Broad applicability across target classes; provides dose-response data [15] | Requires high-affinity probe that can be immobilized without disrupting function [15] | TargetScout [15] |

| Activity-Based Protein Profiling (ABPP) | Uses bifunctional probes with reactive groups that covalently bind targets; competition assays assess compound binding [15] | Identifying reactive residues in accessible regions of target proteins [15] | Requires reactive residues in accessible protein regions [15] | CysScout [15] |

| Photoaffinity Labeling (PAL) | Trifunctional probe with photoreactive moiety forms covalent bonds with targets upon light exposure [15] | Studying integral membrane proteins; capturing transient compound-protein interactions [15] | Optimization of photoreactive group positioning [15] | PhotoTargetScout [15] |

| Label-Free Thermal Stability Assays | Measures changes in protein thermal stability upon ligand binding [15] | Studying compound-protein interactions under native conditions [15] | Challenging for low-abundance, very large, or membrane proteins [15] | SideScout [15] |

Experimental Protocol: Affinity Pull-Down and Mass Spectrometry

Procedure:

- Chemical Probe Design: Modify the compound of interest to incorporate a functional handle (e.g., biotin, azide, or alkyne) while preserving its biological activity [15].

- Immobilization: Covalently attach the chemical probe to a solid support matrix (e.g., agarose beads) [15].

- Sample Preparation: Prepare cell lysates from relevant biological systems, maintaining native protein structures and interactions.

- Affinity Enrichment: Incubate the immobilized bait with cell lysates to allow target proteins to bind. Wash extensively to remove non-specifically bound proteins [15].

- Elution: Release bound proteins using competitive elution (with excess free compound) or denaturing conditions.

- Protein Identification: Digest eluted proteins with trypsin and analyze peptides via liquid chromatography-tandem mass spectrometry (LC-MS/MS) [15].

- Data Analysis: Identify specific binders by comparing to control samples (e.g., beads alone or with inactive compound analog).

Critical Considerations:

- Validate that the chemical probe maintains similar potency and selectivity to the parent compound.

- Include appropriate controls to distinguish specific binding from non-specific interactions.

- Use quantitative proteomics methods (e.g., SILAC, TMT) to enhance specificity of target identification.

- Correlate binding affinity with functional activity through dose-response experiments [15].
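The Data Analysis step of the protocol above can be sketched as a simple enrichment filter. The example assumes one summed intensity per protein for the bait pull-down and for the control (beads alone or inactive analog); the protein names, intensities, pseudocount, and fold-change cutoff are all hypothetical. A real analysis would use replicate measurements and a statistical test rather than a single ratio.

```python
from math import log2


def specific_binders(bait, control, min_log2_fc=2.0, pseudocount=1.0):
    """Return proteins enriched in the bait pull-down relative to control.

    bait and control map protein name -> intensity. Proteins absent from the
    control are treated as intensity 0; the pseudocount avoids division by
    zero. The result maps protein -> log2 fold change, best first.
    """
    enriched = {}
    for protein, bait_intensity in bait.items():
        control_intensity = control.get(protein, 0.0)
        fold_change = log2(
            (bait_intensity + pseudocount) / (control_intensity + pseudocount)
        )
        if fold_change >= min_log2_fc:
            enriched[protein] = fold_change
    return dict(sorted(enriched.items(), key=lambda kv: kv[1], reverse=True))


# Hypothetical intensities, for illustration only.
bait_run = {"PROT_A": 1023.0, "PROT_B": 499.0, "PROT_C": 799.0}
control_run = {"PROT_B": 249.0, "PROT_C": 799.0}

candidates = specific_binders(bait_run, control_run)
print(candidates)
```

Here PROT_C is equally abundant in both runs and is discarded as background, while PROT_B falls below the cutoff; only PROT_A is retained as a candidate binder.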

Integration of Knowledge Graphs and Molecular Docking

Novel computational approaches are emerging to complement experimental methods. Protein-protein interaction knowledge graphs (PPIKG) integrate biological data to predict potential targets, significantly narrowing candidate proteins for experimental validation [17]. For example, in deconvoluting the target of p53 pathway activator UNBS5162, a PPIKG approach reduced candidate proteins from 1088 to 35, dramatically saving time and resources before molecular docking identified USP7 as a direct target [17].

This integrated methodology combines phenotypic screening with computational prediction:

- Conduct phenotype-based high-throughput screening to identify active compounds [17].

- Construct a knowledge graph incorporating protein-protein interactions, pathways, and compound-target relationships [17].

- Use graph analysis algorithms to prioritize potential targets based on network proximity to the phenotypic pathway.

- Perform molecular docking of the active compound against prioritized targets [17].

- Validate top predictions through experimental assays.
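The network-proximity step in this workflow can be illustrated with a breadth-first search over an interaction graph. This is a minimal sketch, not the PPIKG method from [17]: it assumes an undirected, unweighted edge list and ranks candidates by the number of interaction steps to the nearest pathway protein. All node names are hypothetical placeholders.

```python
from collections import deque


def rank_by_proximity(edges, pathway_proteins, candidates):
    """Rank candidates by shortest-path distance to any pathway protein.

    Returns a list of (candidate, distance) sorted by distance, then name.
    Candidates with no path to the pathway are omitted.
    """
    graph = {}
    for a, b in edges:
        graph.setdefault(a, set()).add(b)
        graph.setdefault(b, set()).add(a)

    # Multi-source breadth-first search starting from all pathway proteins.
    distance = {p: 0 for p in pathway_proteins if p in graph}
    queue = deque(distance)
    while queue:
        node = queue.popleft()
        for neighbor in graph[node]:
            if neighbor not in distance:
                distance[neighbor] = distance[node] + 1
                queue.append(neighbor)

    reachable = [(distance[c], c) for c in candidates if c in distance]
    return [(c, d) for d, c in sorted(reachable)]


# Toy interaction network, for illustration only.
toy_edges = [
    ("PATHWAY_1", "CAND_A"),
    ("CAND_A", "CAND_B"),
    ("CAND_B", "CAND_C"),
    ("CAND_D", "CAND_E"),  # disconnected from the pathway
]
ranking = rank_by_proximity(
    toy_edges, {"PATHWAY_1"}, ["CAND_C", "CAND_B", "CAND_E", "CAND_A"]
)
print(ranking)
```

The shortlist produced this way would then feed the docking step; in practice, edge confidence scores and pathway annotations would likely be used to weight the search.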

Case Study: Glioblastoma Multiforme (GBM) Drug Discovery

Rational Library Design for Phenotypic Screening

A compelling application of advanced target deconvolution strategies appears in glioblastoma multiforme (GBM) research, where researchers created a rational library for phenotypic screening by integrating tumor genomic data with structural biology [5]. This approach involved:

- Target Selection: Analyzing GBM tumor RNA sequencing data to identify differentially expressed genes and somatic mutations [5].

- Network Mapping: Mapping these genes onto protein-protein interaction networks to construct a GBM-specific subnetwork [5].

- Druggable Site Identification: Identifying druggable binding pockets on proteins within this subnetwork [5].

- Virtual Screening: Molecular docking of approximately 9,000 compounds against these druggable sites to select candidates predicted to simultaneously bind multiple GBM-relevant proteins [5].

This rationally designed library of 47 candidates led to the identification of compound IPR-2025, which demonstrated promising activity in patient-derived GBM spheroids and endothelial tube formation assays while sparing normal cells [5]. Subsequent target deconvolution using thermal proteome profiling confirmed that the compound engages multiple targets, exemplifying selective polypharmacology [5].

Research Reagent Solutions for GBM Target Deconvolution

Table 3: Essential Research Reagents for Target Deconvolution in Phenotypic Screening

| Reagent / Resource | Function in Target Deconvolution | Application Example |

|---|---|---|

| TargetScout Service | Affinity-based pull-down and profiling service for target identification [15] | Isolating and identifying target proteins from cell lysates [15] |

| CysScout Platform | Proteome-wide profiling of reactive cysteine residues using activity-based protein profiling [15] | Identifying targets through cysteine-reactive competitive binding [15] |

| PhotoTargetScout | Photoaffinity labeling service for identifying compound-protein interactions [15] | Studying membrane proteins and transient interactions [15] |

| SideScout Service | Label-free proteome-wide protein stability assay [15] | Detecting ligand binding through thermal stability shifts [15] |

| ChEMBL Database | Public database of bioactive molecules with drug-like properties and assay data [2] | Annotating compound-target interactions and polypharmacology profiles [2] |

| Cell Painting Assay | High-content morphological profiling using fluorescent dyes [2] | Generating phenotypic profiles for comparing compound effects [2] |

| Thermal Proteome Profiling | Mass spectrometry-based method detecting protein thermal stability changes upon ligand binding [5] | Identifying direct and indirect targets in complex biological systems [5] |

Target deconvolution remains a critical challenge in phenotypic screening, but integrated approaches combining advanced chemoproteomics, computational methods, and rationally designed chemogenomics libraries are progressively overcoming these hurdles. The future of target deconvolution lies in multidisciplinary strategies that leverage:

- Advanced Chemoproteomics: Continued development of more sensitive, comprehensive, and physiologically relevant methods for capturing compound-target interactions.

- AI-Driven Platforms: Artificial intelligence and machine learning approaches that can integrate diverse data types to predict targets and mechanisms of action [18].

- Knowledge Graphs: Expanding biological knowledge bases that contextualize targets within broader cellular networks and pathway biology [17].

- Rational Library Design: More sophisticated chemogenomics libraries with optimized polypharmacology profiles that balance target coverage with deconvolution feasibility [5].

As these technologies mature, they promise to accelerate the identification of novel therapeutic targets and streamline the transition from phenotypic observations to mechanistically understood drug candidates, ultimately enhancing the efficiency and success rate of modern drug discovery.

The drug discovery paradigm has significantly shifted from a reductionist, single-target approach to a more complex systems pharmacology perspective that acknowledges a single drug often interacts with several targets [2]. This evolution has driven the resurgence of phenotypic drug discovery (PDD), where compounds are screened in complex biological systems without prior assumption of a specific molecular target. The primary challenge in PDD, however, is target deconvolution—identifying the molecular mechanism of action (MoA) after a bioactive compound is found [16]. Chemogenomic libraries have emerged as a powerful solution to this challenge.

These libraries are composed of small molecules with well-annotated targets and/or mechanisms of action. When used in phenotypic screens, they provide a direct link between an observed phenotype and a specific target or set of targets, thereby accelerating the deconvolution process [19]. This technical guide provides an in-depth analysis of key chemogenomic libraries, their quantitative properties, and their practical application in phenotypic screening research.

Core Chemogenomic Libraries: A Comparative Analysis

Several publicly available and corporate chemogenomic libraries have been established as key resources for the research community. The following table summarizes the core characteristics of these foundational libraries.

Table 1: Core Chemogenomic Libraries and Their Properties

| Library Name | Key Features & Composition | Primary Application Context | Notable Characteristics |

|---|---|---|---|

| MIPE (Mechanism Interrogation PlatE) | 1,912 small molecule probes with known MoA [16]. | Phenotypic screening for target identification and drug repurposing [16]. | Publicly available; compounds selected for their established biological activity. |

| LSP-MoA (Laboratory of Systems Pharmacology - Method of Action) | A chemical library optimized to cover the liganded kinome [16]. | Deconvolution of kinase-driven phenotypes [16]. | Rationally designed for target family coverage; used in systems biology approaches. |

| Microsource Spectrum | A collection of 1,761 bioactive compounds, including drugs, bioactive alkaloids, and other mediators [16]. | High-throughput or target-specific phenotypic assays [16]. | Commercially available; contains a wide range of known bioactives. |

| EUbOPEN Library | Aims to assemble an open-access library covering >1,000 proteins with well-annotated compounds and chemical probes [19]. | Target identification and validation across a large swath of the druggable genome [19]. | Product of a major IMI consortium; emphasizes high-quality chemical probes. |

Quantitative Comparison: The Polypharmacology Index (PPindex)

A critical consideration when selecting a chemogenomic library is the inherent polypharmacology of its constituents, that is, the tendency of a compound to bind multiple targets. Even after optimization, drug molecules interact with six known molecular targets on average [16]. High polypharmacology within a library can complicate target deconvolution.

To objectively compare libraries, a quantitative Polypharmacology Index (PPindex) has been developed. This metric is derived from the linearized slope of the Boltzmann distribution that fits a histogram of the number of known targets per compound in a library. A larger PPindex (slope closer to a vertical line) indicates a more target-specific library, whereas a smaller PPindex (slope closer to a horizontal line) indicates a more polypharmacologic library [16].
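To make the idea of a linearized slope concrete, the sketch below bins compounds by their number of annotated targets, takes the natural logarithm of each bin's fraction, and fits a straight line by least squares; the magnitude of the slope serves as a PPindex-like score. This is an illustrative assumption about the procedure, not the published implementation in [16], and it will not reproduce the tabulated values. The function name, the `drop_bins` parameter, and the example libraries are hypothetical.

```python
from collections import Counter
from math import log


def ppindex_sketch(targets_per_compound, drop_bins=()):
    """Magnitude of the log-linear slope of the targets-per-compound histogram.

    A steeper decay (larger value) means most compounds have few annotated
    targets; a flatter decay means a more polypharmacologic library.
    """
    counts = Counter(n for n in targets_per_compound if n not in drop_bins)
    bins = sorted(counts)
    if len(bins) < 2:
        raise ValueError("need at least two occupied bins to fit a slope")
    total = sum(counts.values())
    xs = bins
    ys = [log(counts[b] / total) for b in bins]
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    covariance = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    variance = sum((x - x_mean) ** 2 for x in xs)
    return -covariance / variance


# Hypothetical libraries: number of annotated targets for each compound.
selective_library = [1] * 64 + [2] * 16 + [3] * 4 + [4] * 1
promiscuous_library = [1] * 8 + [2] * 4 + [3] * 2 + [4] * 1

selective_score = ppindex_sketch(selective_library)
promiscuous_score = ppindex_sketch(promiscuous_library)
print(f"selective: {selective_score:.3f}, promiscuous: {promiscuous_score:.3f}")
```

Passing `drop_bins=(0,)` or `drop_bins=(0, 1)` mimics the second and third columns of the table, where compounds with zero or one annotated target are excluded before fitting.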

Table 2: Polypharmacology Index (PPindex) of Major Libraries [16]

| Database | PPindex (All Data) | PPindex (Without 0-target bin) | PPindex (Without 0 & 1-target bins) |

|---|---|---|---|

| DrugBank | 0.9594 | 0.7669 | 0.4721 |

| LSP-MoA | 0.9751 | 0.3458 | 0.3154 |

| MIPE | 0.7102 | 0.4508 | 0.3847 |

| Microsource Spectrum | 0.4325 | 0.3512 | 0.2586 |

| DrugBank Approved | 0.6807 | 0.3492 | 0.3079 |

The data reveal that although DrugBank appears highly target-specific when all compounds are included, this impression is influenced by data sparsity. After removing the bias introduced by compounds with zero or one known target, the LSP-MoA and MIPE libraries occupy a middle ground of polypharmacology, making them potentially more useful for deconvoluting complex phenotypes than highly promiscuous libraries [16].

Experimental Protocol: Annotating Libraries with Phenotypic Profiling

The utility of a chemogenomic library is enhanced by comprehensive annotation that goes beyond target affinity to include a compound's effect on basic cellular functions. The following workflow, HighVia Extend, is a live-cell multiplexed assay designed for this purpose [19].

Figure 1: Workflow for the HighVia Extend live-cell phenotypic profiling assay.

Detailed Methodology

Step 1: Cell Seeding and Compound Treatment

- Plate adherent cells (e.g., HeLa, U2OS, MRC9) in multiwell plates suitable for high-content imaging.

- Treat cells with compounds from the chemogenomic library at a range of concentrations (e.g., 1 nM - 10 µM). Include DMSO as a vehicle control and known cytotoxic agents (e.g., staurosporine, digitonin) as reference controls [19].

Step 2: Staining with Live-Cell Dyes

Prepare a dye mixture in culture medium containing:

- Hoechst 33342 (50 nM): Labels nuclear DNA. This low concentration minimizes dye-induced toxicity and allows for long-term imaging [19].

- BioTracker 488 Green Microtubule Cytoskeleton Dye: Labels the tubulin network to assess cytoskeletal morphology.

- MitoTracker Red or DeepRed: Labels mitochondria to assess mitochondrial health and mass.

Add the dye mixture to cells concurrently with or shortly after compound addition.

Step 3: Continuous Live-Cell Imaging

- Place the plate in a high-content imaging system maintained at 37°C and 5% CO₂.

- Acquire images from multiple sites per well at regular intervals (e.g., every 4-6 hours) for an extended period (e.g., 72 hours) [19].

Step 4: Image and Data Analysis

- Use automated image analysis software (e.g., CellProfiler) to identify individual cells and segment cellular compartments (nucleus, cytoplasm).

- Extract morphological features for each cell (e.g., nuclear size and shape, cytoskeletal texture, mitochondrial granularity).

- Employ a supervised machine-learning algorithm to gate cells into distinct phenotypic categories based on the extracted features [19]:

- Healthy

- Early Apoptotic (characterized by nuclear pyknosis)

- Late Apoptotic (characterized by nuclear fragmentation)

- Necrotic

- Lysed

Step 5: Profiling and Annotation

- Generate time-dependent IC₅₀ values for the loss of healthy cells for each compound.

- Create a phenotypic profile for each compound based on its kinetic response and the population distribution across the different health categories.

- Annotate the chemogenomic library with this information, flagging compounds that cause rapid, non-specific cytotoxicity or cytoskeletal disruption.
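The time-dependent IC₅₀ values in Step 5 can be approximated as follows. This sketch swaps in a simple log-linear interpolation between the two concentrations that bracket 50% healthy cells, rather than a full dose-response curve fit, which is what a production analysis would more likely use. The concentrations, time points, and healthy-cell fractions are hypothetical.

```python
from math import log10


def ic50_log_interpolated(concentrations, healthy_fraction):
    """Concentration at which the healthy fraction first drops below 0.5.

    Interpolates linearly in log10(concentration) between the bracketing
    points. Returns None when the response never crosses 0.5.
    """
    points = sorted(zip(concentrations, healthy_fraction))
    for (c_lo, f_lo), (c_hi, f_hi) in zip(points, points[1:]):
        if f_lo >= 0.5 > f_hi:
            position = (f_lo - 0.5) / (f_lo - f_hi)
            log_ic50 = log10(c_lo) + position * (log10(c_hi) - log10(c_lo))
            return 10 ** log_ic50
    return None


# Hypothetical data: concentrations in µM, healthy fraction per time point (h).
conc_um = [0.001, 0.01, 0.1, 1.0, 10.0]
healthy_by_time = {
    24: [1.00, 0.95, 0.90, 0.70, 0.30],
    72: [0.98, 0.90, 0.60, 0.20, 0.05],
}

ic50_by_time = {
    hours: ic50_log_interpolated(conc_um, fractions)
    for hours, fractions in healthy_by_time.items()
}
for hours, value in ic50_by_time.items():
    print(f"{hours} h: IC50 ≈ {value:.3g} µM")
```

A value that falls sharply between early and late time points suggests a slow-acting effect, whereas a low value already at the first time point is the kind of rapid, non-specific cytotoxicity the annotation step is meant to flag.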

The Scientist's Toolkit: Essential Reagents for Profiling

Table 3: Key Research Reagent Solutions for Phenotypic Annotation

| Item / Reagent | Function in the Protocol | Key Parameters & Notes |

|---|---|---|

| Live-Cell Dyes | Multiplexed staining of organelles and cellular structures. | Use low, non-toxic concentrations (e.g., 50 nM Hoechst 33342). Validate dye combinations for lack of interference [19]. |

| Cell Health Reference Compounds | Assay validation and training set for machine learning. | Include compounds with diverse MoAs: e.g., Staurosporine (cytotoxic), JQ1 (slow cytostatic), Digitonin (membrane permeabilization) [19]. |

| High-Content Imaging System | Automated, kinetic image acquisition in a controlled environment. | Must maintain 37°C and 5% CO₂ for long-term live-cell imaging. |

| Image Analysis Software (e.g., CellProfiler) | Cell segmentation, feature extraction, and population classification. | Requires development of a custom pipeline for segmentation and a trained classifier for population gating [19]. |

Emerging Trends and Future Perspectives

Expanding the Druggable Genome

Current chemogenomic libraries cover only a fraction of the ~20,000 genes in the human genome, with estimates of about 2,000 targets covered [20]. Initiatives like EUbOPEN and Target 2035 aim to expand this coverage by generating high-quality chemical probes and chemogenomic compounds for the entire druggable proteome [19]. This expansion is critical for ensuring that phenotypic screens can effectively interrogate a wider array of biological pathways.

Integrating Novel Data Types and AI

The field is moving towards richer annotation of libraries by integrating diverse data types:

- Morphological Profiling: Assays like Cell Painting generate high-dimensional morphological profiles that can be used to connect compound-induced phenotypes to those caused by genetic perturbations [2].

- Chemical Proteomics: Techniques like thermal proteome profiling (TPP) can experimentally map a compound's engagement with its cellular targets on a proteome-wide scale, providing unbiased annotation of its polypharmacology [5].

- AI-Driven Mining: Computational frameworks are being developed to mine large-scale phenotypic HTS data to identify compounds with likely novel MoAs, effectively creating next-generation chemogenomic libraries with expanded target coverage [20]. These approaches identify "Gray Chemical Matter" (GCM)—compounds that show selective phenotypic activity in multiple assays but lack a known MoA, offering a path to discover novel biology [20].

Rational Library Design for Complex Diseases

For complex diseases like glioblastoma (GBM), rational library design is being employed. This involves:

- Using the tumor's genomic profile (e.g., RNA sequencing, mutation data) to identify overexpressed proteins and key network nodes.

- Mapping these proteins onto a human protein-protein interaction network to define a disease-relevant subnetwork.

- Using molecular docking to virtually screen compound libraries against multiple druggable binding sites on proteins within this subnetwork.

- Selecting a focused set of compounds predicted to simultaneously engage multiple disease-relevant targets for phenotypic screening in physiologically relevant models (e.g., patient-derived spheroids) [5].
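The final selection step above can be sketched as a filter over a table of docking scores. The example assumes the common convention that more negative scores indicate better predicted binding; the score cutoff, minimum target count, compound IDs, and site names are all hypothetical and are not taken from [5].

```python
def select_multitarget(docking_scores, score_cutoff=-8.0, min_targets=2):
    """Keep compounds predicted to bind at least min_targets sites.

    docking_scores maps compound -> {binding site: score}. A site counts as
    engaged when its score is at or below score_cutoff. Returns a dict of
    compound -> sorted list of engaged sites.
    """
    selected = {}
    for compound, scores in docking_scores.items():
        engaged = sorted(
            site for site, score in scores.items() if score <= score_cutoff
        )
        if len(engaged) >= min_targets:
            selected[compound] = engaged
    return selected


# Hypothetical docking scores, for illustration only.
scores = {
    "cpd_001": {"SITE_A": -9.1, "SITE_B": -8.4, "SITE_C": -5.0},
    "cpd_002": {"SITE_A": -9.8, "SITE_B": -6.2, "SITE_C": -4.9},
    "cpd_003": {"SITE_A": -7.5, "SITE_B": -7.9, "SITE_C": -7.0},
}

focused_set = select_multitarget(scores)
print(focused_set)
```

Only the compound predicted to engage two sites passes the filter. Raising `min_targets` or tightening `score_cutoff` trades library size against the breadth of predicted multi-target engagement.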

This strategy intentionally aims for selective polypharmacology, where a single compound modulates a collection of targets across different signaling pathways that drive the disease phenotype, potentially leading to more efficacious therapies with reduced toxicity [5].

For decades, drug discovery was dominated by the "one target–one drug" paradigm, which aimed to develop highly selective ligands for individual disease proteins to maximize therapeutic benefit and minimize off-target effects [21]. While this strategy achieved some successes, it possesses major limitations in addressing complex diseases, with approximately 90% of such candidates failing in late-stage clinical trials due to lack of efficacy or unexpected toxicity [21]. These failures often stem from the reductionist oversight of the complex, redundant, and networked nature of human biology, where targeting a single node in a complex network can easily be circumvented by the system, leading to lack of long-term efficacy or emergence of resistance [21].

The recognition of these limitations has driven a fundamental transformation toward systems pharmacology and rational polypharmacology. This approach embraces the deliberate design of small molecules that act on multiple therapeutic targets simultaneously, offering a transformative approach to overcome biological redundancy, network compensation, and drug resistance [21]. This shift represents a move from "magic bullets" to "magic shotguns" – single therapeutic agents capable of modulating multiple disease-relevant targets in a coordinated manner [21]. The clinical success of many promiscuous drugs, initially termed "dirty drugs," further supports this paradigm shift, suggesting that a certain degree of multi-target activity could be advantageous [21].

The Scientific Rationale for Polypharmacology

Theoretical Foundations and Advantages

Polypharmacology provides several distinct advantages over single-target approaches, particularly for complex diseases. By addressing several key disease drivers simultaneously, multi-target drugs can achieve synergistic therapeutic effects greater than single-target approaches [21]. The simultaneous modulation of multiple pathways helps prevent biological systems from simply "rerouting" signaling to escape a solitary blockade, a common limitation in targeted therapies [21].

Additionally, polypharmacology offers a powerful strategy for mitigating drug resistance. Pathogens and cancer cells frequently develop resistance to highly specific drugs through mutations in the drug's target. A drug that inhibits several unrelated targets substantially lowers the probability that a single genetic change confers full resistance, as the organism would need to simultaneously adapt to multiple inhibitory actions [21].

From a clinical perspective, single polypharmacological agents also offer practical benefits over combination therapies (polypharmacy), including reduced risk of drug-drug interactions, simplified dosing schedules, and improved patient compliance [21]. A multi-target drug guarantees that all its activities are delivered in a fixed ratio, reaching targets simultaneously in the correct balance, thereby avoiding the pharmacokinetic variability that arises when separate drugs with different absorption and elimination profiles are used in combination [21].

Therapeutic Applications Across Disease Areas

Table 1: Multi-Target Drug Applications in Complex Diseases

| Disease Area | Key Targets/Pathways | Example Agents | Therapeutic Rationale |

|---|---|---|---|

| Oncology | Multiple kinases in oncogenic signaling cascades (e.g., PI3K/Akt/mTOR) | Sorafenib, Sunitinib | Block redundant signaling pathways; prevent tumor escape and resistance; induce synthetic lethality |

| Neurodegenerative Disorders | Cholinesterase; β-amyloid aggregation; oxidative stress pathways | Memoquin (MTDL) | Address multiple pathological processes simultaneously: protein aggregation, neurotransmitter deficits, neuroinflammation |

| Metabolic Disorders | GLP-1/GIP receptors; PPAR pathways | Tirzepatide | Simultaneously address glycemic control, weight loss, and cardiovascular risk factors |

| Infectious Diseases | Multiple bacterial targets (e.g., quinolone targets + membrane disruptors) | Antibiotic hybrids | Reduce resistance emergence by requiring simultaneous mutations in different pathways |

The insufficiency of one-target therapies is most evident in complex, multifactorial diseases [21]. In cancer, polypharmacology is especially advantageous for cancers driven by intricate networks, as multi-target agents can induce synthetic lethality and prevent compensatory mechanisms, resulting in more durable responses [21]. In neurodegenerative diseases like Alzheimer's and Parkinson's, single-target therapies have largely failed, prompting a shift toward multi-target-directed ligands (MTDLs) that integrate activities like cholinesterase inhibition and anti-amyloid effects within one molecule [21]. For metabolic disorders, drugs that can simultaneously address multiple abnormalities are particularly valuable for improving adherence and reducing side effects compared to multiple single-target therapies [21]. In infectious diseases, multi-target antimicrobials can attack multiple bacterial targets simultaneously, reducing the risk of resistance development [21].

Computational Frameworks for Polypharmacology

Machine Learning and AI-Driven Approaches

The complex and nonlinear nature of multi-target drug discovery requires computational methods that can efficiently model interactions across diverse chemical and biological spaces. Machine learning (ML) has emerged as a powerful approach to address these challenges, offering the flexibility to integrate heterogeneous data, learn hidden patterns, and make predictions at scale [22]. ML algorithms can learn from diverse data sources—including molecular structures, omics profiles, protein interactions, and clinical outcomes—to prioritize promising drug-target pairs, predict off-target effects, and propose novel compounds with desirable polypharmacological profiles [22].

Deep learning (DL) architectures, particularly graph neural networks (GNNs) and transformer-based models, are increasingly being leveraged to capture sequential, contextual, and multimodal biological information [22]. These approaches allow for the integration of chemical structure, target profiles, gene expression, and clinical phenotypes into unified predictive frameworks. The incorporation of systems pharmacology principles enables ML models to go beyond molecule-level predictions by considering the effects of drugs across pathways, tissues, and disease networks, facilitating a more holistic view of therapeutic efficacy and safety [22].

Table 2: Machine Learning Approaches in Multi-Target Drug Discovery

| ML Approach | Key Features | Applications in Polypharmacology | Data Sources |
|---|---|---|---|
| Classical ML (SVMs, Random Forests) | Interpretability; robustness with curated datasets | Drug-target interaction prediction; adverse effect prediction | Molecular descriptors; bioactivity data |
| Deep Learning (Neural Networks) | Handling complex, nonlinear relationships; automatic feature learning | Polypharmacology prediction; de novo molecular design | High-dimensional chemical and biological data |
| Graph Neural Networks (GNNs) | Learning from molecular graphs and biological networks | Predicting drug-target interactions; network pharmacology | Molecular structures; protein-protein interaction networks |
| Transformer-based Models | Capturing sequential, contextual biological information | Protein function prediction; multi-modal data integration | Amino acid sequences; omics data; literature mining |

Experimental Workflow for Chemogenomics Screening

The following diagram illustrates the integrated computational and experimental workflow for polypharmacology-focused drug discovery within a chemogenomics framework:

Key Research Reagents and Tools

Table 3: Essential Research Reagent Solutions for Polypharmacology Studies

| Reagent/Tool Category | Specific Examples | Function in Polypharmacology Research |
|---|---|---|
| Chemogenomic Libraries | Pfizer chemogenomic library; GSK Biologically Diverse Compound Set; NCATS MIPE library [2] | Provide targeted chemical collections covering diverse protein families for systematic screening |
| Bioactivity Databases | ChEMBL; BindingDB; DrugBank; STITCH [2] [22] | Curate drug-target interaction data, binding affinities, and multi-label activity profiles for model training |
| Pathway and Ontology Resources | KEGG Pathway; Gene Ontology (GO); Disease Ontology (DO) [2] | Annotate protein targets with biological context, pathway membership, and disease associations |
| Morphological Profiling Assays | Cell Painting; High-content screening (HCS) [2] | Generate high-dimensional phenotypic profiles connecting compound treatment to cellular phenotypes |
| Functional Genomics Tools | CRISPR-Cas screens; siRNA libraries [4] | Systematically perturb genes to identify synthetic lethal interactions and validate network dependencies |

Experimental Protocols and Methodologies

Development of Chemogenomics Libraries for Phenotypic Screening

The development of advanced chemogenomics libraries is a core methodology for phenotypic screening in polypharmacology research. These libraries are designed to represent a large and diverse panel of drug targets implicated in a wide range of biological effects and diseases [2]. A typical protocol involves:

Library Curation and Assembly: Select compounds with known target annotations from databases like ChEMBL (containing approximately 1.6 million molecules with bioactivities and 11,224 unique targets) [2]. Apply scaffold-based diversity analysis using tools like ScaffoldHunter to ensure structural representation across different chemotypes [2]. This step ensures coverage of the druggable genome while maintaining chemical diversity.
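The scaffold-based selection step can be sketched in a few lines. This is a minimal illustration, not the procedure used in [2]: it assumes every record already carries a scaffold identifier from an upstream scaffold analysis and a potency value, and all identifiers and numbers below are hypothetical.

```python
from collections import defaultdict

# Hypothetical records: (compound_id, scaffold_id, potency as a pActivity value).
# Scaffold IDs are assumed to come from an upstream scaffold analysis tool.
RECORDS = [
    ("cpd_1", "scaf_A", 7.2),
    ("cpd_2", "scaf_A", 8.1),
    ("cpd_3", "scaf_B", 6.5),
    ("cpd_4", "scaf_C", 9.0),
    ("cpd_5", "scaf_C", 5.9),
    ("cpd_6", "scaf_A", 6.0),
]


def pick_representatives(records, per_scaffold=1):
    """Keep the most potent compound(s) from each scaffold group."""
    groups = defaultdict(list)
    for compound_id, scaffold_id, potency in records:
        groups[scaffold_id].append((potency, compound_id))

    selected = {}
    for scaffold_id, members in groups.items():
        # Highest potency first; compound ID breaks ties deterministically.
        members.sort(key=lambda member: (-member[0], member[1]))
        selected[scaffold_id] = [cid for _, cid in members[:per_scaffold]]
    return selected


representatives = pick_representatives(RECORDS)
```

Raising `per_scaffold` trades library size for redundancy within each chemotype, which can be useful when a single representative might fail quality control.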

Network Pharmacology Integration: Construct a systems pharmacology network integrating drug-target-pathway-disease relationships using graph databases (e.g., Neo4j) [2]. Incorporate heterogeneous data sources including:

- Drug-target interactions from ChEMBL

- Pathway information from KEGG

- Functional annotations from Gene Ontology

- Disease classifications from Disease Ontology

- Morphological profiling data from Cell Painting assays [2]
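To make the drug-target-pathway-disease structure concrete, the sketch below uses a small in-memory graph as a stand-in for a graph database. The relation names (`TARGETS`, `IN_PATHWAY`, `LINKED_TO`) and all node identifiers are assumptions chosen for illustration; they are not the schema used in [2].

```python
from collections import defaultdict

# Hypothetical typed edges (source, relation, target). Identifiers are
# placeholders, not real annotations from ChEMBL, KEGG, or Disease Ontology.
EDGES = [
    ("cpd_1", "TARGETS", "PROT_A"),
    ("cpd_1", "TARGETS", "PROT_B"),
    ("cpd_2", "TARGETS", "PROT_B"),
    ("PROT_A", "IN_PATHWAY", "pathway_X"),
    ("PROT_B", "IN_PATHWAY", "pathway_Y"),
    ("pathway_X", "LINKED_TO", "disease_1"),
    ("pathway_Y", "LINKED_TO", "disease_2"),
]


def build_graph(edges):
    """Index edges as graph[source][relation] -> set of target nodes."""
    graph = defaultdict(lambda: defaultdict(set))
    for source, relation, target in edges:
        graph[source][relation].add(target)
    return graph


def follow(graph, start_nodes, relation):
    """Return all nodes reachable from start_nodes via one hop of `relation`."""
    reached = set()
    for node in start_nodes:
        reached |= graph.get(node, {}).get(relation, set())
    return reached


def diseases_for_compound(graph, compound_id):
    """Walk compound -> targets -> pathways -> diseases."""
    targets = follow(graph, {compound_id}, "TARGETS")
    pathways = follow(graph, targets, "IN_PATHWAY")
    return follow(graph, pathways, "LINKED_TO")


graph = build_graph(EDGES)
```

In a graph database the same three-hop traversal would be expressed as a single path query; the in-memory version only shows the shape of the data model.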

Morphological Profiling Integration: Implement high-content imaging-based high-throughput phenotypic profiling using the Cell Painting assay [2]. This protocol involves:

- Plating U2OS osteosarcoma cells in multiwell plates

- Perturbing with library compounds

- Staining with fluorescent dyes, fixing, and imaging on a high-throughput microscope

- Automated image analysis using CellProfiler to identify individual cells and measure morphological features (typically 1,779 features measuring intensity, size, shape, texture, granularity) [2]

- Generating cell profiles for comparison across compound treatments
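The final two steps, collapsing per-cell measurements into a profile and comparing profiles across treatments, can be sketched as follows. This is a simplified illustration under stated assumptions: feature values are invented, only three features are used instead of the full feature set, and normalization against control wells (usually applied before comparison) is omitted.

```python
import math
from statistics import median


def aggregate_profile(cells):
    """Collapse per-cell feature vectors into one profile (feature-wise median)."""
    return [median(feature_values) for feature_values in zip(*cells)]


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length profiles; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Hypothetical per-cell measurements (rows = cells, columns = features).
well_a = [[1.0, 2.0, 0.5], [1.2, 1.8, 0.7], [0.8, 2.2, 0.6]]
well_b = [[2.0, 4.0, 1.2], [2.2, 3.8, 1.0], [1.8, 4.2, 1.4]]
well_c = [[2.0, 0.1, 3.0], [2.2, 0.1, 2.8], [1.8, 0.3, 3.2]]

profile_a = aggregate_profile(well_a)
profile_b = aggregate_profile(well_b)
profile_c = aggregate_profile(well_c)

sim_ab = cosine_similarity(profile_a, profile_b)  # same direction, different magnitude
sim_ac = cosine_similarity(profile_a, profile_c)  # different feature pattern
```

The median is used here because it is less sensitive to outlier cells than the mean; other aggregation and similarity choices (for example Pearson correlation) are equally plausible.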

Target Deconvolution and Validation

A significant challenge in phenotypic screening is target identification for active compounds. The following diagram outlines the integrated target deconvolution workflow:

Chemical Proteomics Workflow:

- Prepare cell lysates from relevant disease models

- Incubate with immobilized compound (affinity matrix)

- Capture direct binding proteins

- Identify bound proteins via mass spectrometry

- Validate interactions through orthogonal binding assays (SPR, ITC) [4]

CRISPR Functional Genomics:

- Perform arrayed or pooled CRISPR screens in disease-relevant cell lines

- Identify genetic vulnerabilities and synthetic lethal interactions

- Cross-reference with compound sensitivity profiles

- Validate network dependencies through rescue experiments [4]
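One common way to cross-reference CRISPR results with compound sensitivity is to correlate the two across a shared panel of cell lines: a gene whose knockout effect tracks the compound's effect is a candidate target or pathway member. The sketch below assumes this correlation-based approach, which the source does not specify, and uses invented scores and gene names.

```python
import math


def pearson(xs, ys):
    """Pearson correlation; returns 0.0 when either series has no variance."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / math.sqrt(var_x * var_y)


# Hypothetical scores over the same five cell lines, in the same order.
# More negative = stronger loss of viability.
compound_sensitivity = [-2.0, -1.5, -0.2, -0.1, -1.8]
gene_dependency = {
    "GENE_A": [-1.9, -1.4, -0.3, 0.0, -1.7],   # tracks the compound closely
    "GENE_B": [-0.1, -0.2, -1.8, -1.9, -0.3],  # opposite pattern
    "GENE_C": [-0.5, -0.5, -0.5, -0.5, -0.5],  # no variation across lines
}

# Rank genes from most to least correlated with the compound profile.
ranked = sorted(
    ((pearson(compound_sensitivity, scores), gene)
     for gene, scores in gene_dependency.items()),
    reverse=True,
)
```

A high correlation is only a prioritization signal; the rescue experiments listed above remain necessary to confirm a dependency.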

Machine Learning-Based Target Prediction:

- Generate molecular representations (fingerprints, graph embeddings)

- Train multi-task learning models on known drug-target interactions

- Predict polypharmacological profiles using similarity-based and deep learning approaches

- Integrate network-based prioritization using protein-protein interaction data [22]
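The similarity-based part of this workflow can be illustrated with a nearest-neighbor sketch. It assumes fingerprints are represented as sets of "on" bit indices and scores each target by the highest Tanimoto similarity of any annotated reference compound; the reference set, bit indices, target names, and the 0.5 threshold are all hypothetical.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0


# Hypothetical reference set: compound -> (fingerprint on-bits, annotated targets).
REFERENCE = {
    "ref_1": ({1, 2, 3, 4}, {"TARGET_A"}),
    "ref_2": ({1, 2, 3, 5}, {"TARGET_A", "TARGET_B"}),
    "ref_3": ({7, 8, 9}, {"TARGET_C"}),
}


def predict_targets(query_fp, reference, threshold=0.5):
    """Score each target by its most similar annotated reference compound."""
    scores = {}
    for fingerprint, targets in reference.values():
        similarity = tanimoto(query_fp, fingerprint)
        if similarity < threshold:
            continue  # ignore reference compounds that are too dissimilar
        for target in targets:
            scores[target] = max(scores.get(target, 0.0), similarity)
    # Highest score first; target name breaks ties deterministically.
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))


predictions = predict_targets({1, 2, 3, 4, 5}, REFERENCE)
```

Because a query can exceed the threshold for several reference compounds with different annotations, the output is naturally a multi-target profile rather than a single predicted target.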

Challenges and Future Perspectives

Despite significant advances, polypharmacology faces several challenges. Limited target coverage remains a constraint: even the best chemogenomics libraries interrogate only a small fraction of the human genome, approximately 1,000–2,000 targets out of 20,000+ genes, or roughly 5–10% [4]. This leaves significant gaps in our ability to probe the entire druggable genome. Additionally, model interpretability and generalizability present ongoing challenges for ML approaches in polypharmacology, with concerns about transparency, fairness, and reproducibility requiring careful attention [22].

Looking forward, several promising directions are emerging. Generative AI models for de novo design of multi-target compounds are showing increasing sophistication, with some generated compounds demonstrating biological efficacy in vitro [21]. Federated learning approaches offer potential for leveraging distributed datasets while addressing privacy concerns [22]. The integration of multi-omics data and CRISPR functional screens will further enhance our ability to guide multi-target design [21]. Finally, patient-specific therapy design through the integration of systems pharmacology with personalized disease models represents the frontier of precision polypharmacology [22].

As these technologies mature, AI-enabled polypharmacology is poised to become a cornerstone of next-generation drug discovery, with potential to deliver more effective therapies tailored to the complexity of human disease [21]. The integration of systems-level understanding with sophisticated computational methods will continue to drive the transition from serendipitous drug discovery to rational, network-targeted therapeutic design.

Implementing Phenotypic Screens: From Library Design to Hit Identification

Strategies for Rational Library Design and Curation


Within the modern drug discovery paradigm, which has shifted from a reductionist "one target—one drug" vision to a more complex systems pharmacology perspective, chemogenomics libraries have become indispensable tools [2]. These libraries, consisting of carefully selected small molecules, are particularly crucial for phenotypic drug discovery (PDD). Since phenotypic screening does not rely on prior knowledge of specific molecular targets, it must be combined with chemical biology approaches to identify the therapeutic targets and mechanisms of action underlying an observable phenotype [2]. The strategic design and rigorous curation of these chemical libraries are therefore foundational to their success, enabling the deconvolution of complex biological responses and accelerating the identification of novel therapeutic agents. This guide outlines the core strategies and methodologies for constructing and curating chemogenomics libraries tailored for phenotypic screening research, providing a practical framework for researchers and drug development professionals.

Core Strategies for Rational Library Design

The design of a targeted screening library is a complex endeavor, as most small molecules exert their effects by modulating multiple protein targets with varying potency and selectivity [12]. Rational design strategies must balance multiple, often competing, parameters to create a collection that is both practically manageable and scientifically comprehensive.

Defining Library Objectives and Scope

The initial phase involves a precise definition of the library's purpose. For precision oncology, for instance, the goal may be to identify patient-specific vulnerabilities, necessitating a library that covers a wide range of protein targets and biological pathways implicated in various cancers [12]. Key considerations include:

- Cellular Activity Prioritization: Selection should favor compounds with demonstrated cellular activity and bioavailability to ensure relevance in phenotypic assays conducted in cell-based systems [12].

- Druggable Genome Coverage: The library should encompass a large and diverse panel of drug targets involved in a wide spectrum of biological effects and diseases, effectively representing the "druggable genome" [2].

- Scaffold Diversity: Filtering based on chemical scaffolds is essential to ensure structural diversity, which supports the exploration of a broad chemical space and reduces bias toward specific chemotypes [2].

Analytic Procedures for Compound Selection

Systematic analytic procedures are required to translate strategic objectives into a physical compound list. These procedures adjust for critical factors including library size, chemical diversity, commercial availability, and target selectivity [12]. The outcome can range from extensive libraries, such as the 5,000-molecule library developed for systems pharmacology network building, to minimal screening libraries, such as one documented to target 1,386 anticancer proteins with 1,211 compounds [12]. This process often involves a stepwise filtration of large compound collections from sources like the ChEMBL database to select molecules with robust bioactivity data [2].
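Choosing a small compound set that still covers a required target list resembles the classic set-cover problem. The sketch below uses a greedy heuristic as an illustration of that idea; it is not the analytic procedure described in [12], and the compound-target annotations are hypothetical.

```python
def greedy_minimal_library(compound_targets, required_targets):
    """Greedy set cover: repeatedly add the compound that covers the most
    still-uncovered targets. Returns (selected compounds, uncoverable targets)."""
    uncovered = set(required_targets)
    selected = []
    while uncovered:
        # Iterating in sorted order makes ties resolve to the lowest compound ID.
        best = max(
            sorted(compound_targets),
            key=lambda cid: len(compound_targets[cid] & uncovered),
        )
        gain = compound_targets[best] & uncovered
        if not gain:
            break  # no remaining compound hits any uncovered target
        selected.append(best)
        uncovered -= gain
    return selected, uncovered


# Hypothetical annotations: compound -> set of targets it modulates.
COMPOUND_TARGETS = {
    "cpd_1": {"A", "B", "C"},
    "cpd_2": {"C", "D"},
    "cpd_3": {"D", "E"},
    "cpd_4": {"A"},
}

library, missing = greedy_minimal_library(COMPOUND_TARGETS, {"A", "B", "C", "D", "E", "F"})
```

The greedy result is an approximation rather than a guaranteed minimum, and a real selection would also weigh potency, selectivity, and commercial availability. Reporting the uncovered targets (`missing`) makes coverage gaps explicit instead of silently ignoring them.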

Table 1: Key Design Considerations for Chemogenomics Libraries

| Design Consideration | Description | Example Implementation |
|---|---|---|
| Cellular Activity | Prioritize compounds with proven activity in cellular assays to ensure biological relevance. | Select compounds with reported IC50, Ki, or EC50 values in cell-based assays from ChEMBL [2]. |
| Target Coverage | Ensure the library covers a wide range of protein targets and biological pathways relevant to the disease area. | Design a minimal library of 1,211 compounds to target 1,386 anticancer proteins [12]. |
| Chemical Diversity | Incorporate diverse chemical scaffolds to enable exploration of broad structure-activity relationships and reduce bias. | Use software like ScaffoldHunter to classify molecules and select representatives from different scaffold levels [2]. |
| Target Selectivity | Include compounds with varying degrees of selectivity to enable polypharmacology studies and deconvolution of complex phenotypes. | Analytic procedures that assess and balance the selectivity profiles of compounds during library selection [12]. |

A Practical Workflow for Data Curation

The accuracy of any model or screening outcome is inherently tied to the quality of the underlying data. Data curation—the process of verifying the accuracy, consistency, and reproducibility of reported chemical and biological data—is therefore a critical, non-negotiable step preceding model development or screening campaigns [23]. An integrated workflow addresses both chemical and biological data quality.

Chemical Structure Curation

The curation of chemical structures is a non-trivial task that involves identifying and correcting structural errors to ensure a standardized representation [23]. This process includes several key steps:

- Removal of Incompatible Compounds: Incomplete or confusing records, such as inorganics, organometallics, counterions, biologics, and mixtures, should be removed, as many cheminformatics programs are not equipped to handle them [23].

- Structural Cleaning and Standardization: This involves detecting and correcting valence violations and extreme bond lengths and angles, applying a consistent ring aromatization, normalizing specific chemotypes, and standardizing tautomeric forms [23]. The treatment of tautomers is particularly challenging and can be managed using empirical rules to represent the most populated tautomer of a given chemical [23].

- Verification of Stereochemistry: Bioactive chemicals often contain stereocenters, and errors in their assignment are common. The correctness of stereochemistry should be verified, potentially by comparing chemical entries to similar compounds in online databases [23].

Several software tools are available to automate these tasks, including:

- Molecular Checker/Standardizer (available in Chemaxon JChem, free for academic organizations) [23].

- RDKit (free, open-source software) [23].

- LigPrep (available in the Schrödinger Small Molecule Discovery Suite for subscribers) [23].

These functions can be integrated into sharable workflows using platforms like KNIME to streamline the curation procedure [23]. Despite these automated tools, manual curation remains critical for identifying errors that are obvious to trained chemists but not to computers [23].
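As a rough illustration of the first curation step (flagging incompatible records), the sketch below applies string-level heuristics to SMILES using only the Python standard library. It is a pre-filter under stated assumptions, not a substitute for the cheminformatics toolkits listed above: it does not parse or validate structures, the set of "organic" elements is an assumption, and flagged records are meant for review rather than automatic deletion.

```python
import re

# Elements treated as "organic" for this pre-filter; the exact set is an assumption.
ORGANIC_ELEMENTS = {"H", "B", "C", "N", "O", "F", "Si", "P", "S", "Cl", "Br", "I", "Se"}

# Captures the element symbol at the start of a bracket atom, after an optional
# isotope number, e.g. "[Na+]" -> "Na", "[13C]" -> "C", "[nH]" -> "n".
BRACKET_ATOM = re.compile(r"\[\d*([A-Za-z][a-z]?)")


def flag_record(smiles):
    """Return the reasons a SMILES record needs attention (empty list = passes)."""
    if not smiles or not smiles.strip():
        return ["empty_structure"]

    reasons = []
    if "." in smiles:
        # A dot separates disconnected components: salts, counterions, mixtures.
        reasons.append("multi_component")

    # Metals and other unusual elements can only appear inside brackets in SMILES.
    symbols = {match.capitalize() for match in BRACKET_ATOM.findall(smiles)}
    for symbol in sorted(symbols):
        if symbol not in ORGANIC_ELEMENTS:
            reasons.append(f"non_organic_element:{symbol}")
    return reasons


# Example inputs: aspirin, sodium chloride, cisplatin.
aspirin_flags = flag_record("CC(=O)Oc1ccccc1C(=O)O")
salt_flags = flag_record("[Na+].[Cl-]")
cisplatin_flags = flag_record("N[Pt](N)(Cl)Cl")
```

Returning reasons instead of a yes/no decision keeps the output useful for the manual review step, where a chemist decides whether to strip a counterion, correct the record, or drop it.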