Chemogenomic Libraries for High-Throughput Phenotypic Screening: A Comprehensive Guide to Design, Application, and Validation


Abstract

This article provides a comprehensive examination of chemogenomic libraries in high-throughput phenotypic screening, addressing both their transformative potential and significant limitations in modern drug discovery. Tailored for researchers, scientists, and drug development professionals, it covers foundational principles of chemogenomic library design and composition, practical methodologies for implementation across diverse assay systems, strategic troubleshooting for common experimental challenges, and rigorous validation frameworks for data interpretation. By synthesizing current best practices with emerging computational and AI-driven approaches, this resource aims to enhance screening effectiveness and accelerate the identification of novel therapeutic targets and mechanisms through phenotypic drug discovery.

Building the Foundation: Understanding Chemogenomic Libraries and Phenotypic Screening Principles

The drug discovery paradigm has significantly shifted from a reductionist vision (one target—one drug) to a more complex systems pharmacology perspective (one drug—several targets) over the past two decades [1]. This evolution is largely driven by the recognition that complex diseases like cancers, neurological disorders, and diabetes are often caused by multiple molecular abnormalities rather than single defects [1]. Chemogenomic libraries represent a strategic response to this complexity, serving as curated collections of small molecules with defined biological activities against specific protein targets or families. These libraries occupy a crucial niche between target-based and phenotypic drug discovery, providing researchers with annotated chemical tools to deconvolute complex biological mechanisms observed in phenotypic screens [1] [2].

The resurgence of phenotypic screening in drug discovery has highlighted a critical challenge: while phenotypic assays can identify compounds that produce desirable changes in disease-relevant models, they do not inherently reveal the specific molecular targets or mechanisms of action responsible for these effects [1] [3]. Chemogenomic libraries bridge this gap by providing target-annotated compounds that can help researchers connect observable phenotypes to underlying molecular mechanisms. However, it is important to recognize that even the most comprehensive chemogenomic libraries interrogate only a fraction of the human genome—approximately 1,000–2,000 targets out of 20,000+ genes—highlighting both their utility and limitations [3].

Core Components and Design Principles

Structural and Informational Architecture

A modern chemogenomic library integrates multiple dimensions of chemical and biological information into a unified framework. The structural architecture typically involves:

Scaffold-based Organization: Compounds are systematically classified using software like ScaffoldHunter, which cuts each molecule into different representative scaffolds and fragments through a stepwise process of removing terminal side chains and rings to identify characteristic core structures [1]. This hierarchical organization enables researchers to explore structure-activity relationships across compound classes.

Target Annotation: Each compound is annotated with its known protein targets, typically drawn from resources like ChEMBL (which contained 1,678,393 molecules with bioactivities and 11,224 unique targets as of version 22) [1]. This annotation includes quantitative bioactivity data such as Ki, IC50, and EC50 values.

Pathway and Disease Context: Beyond direct target annotations, compounds are linked to broader biological contexts through integration with KEGG pathways, Gene Ontology terms, and Human Disease Ontology resources [1]. This enables researchers to place compound activities within meaningful biological networks.
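The three annotation layers described above can be pictured as one record per compound. The following is a minimal sketch only: the class names (`TargetActivity`, `CompoundRecord`), the field names, and all example values are hypothetical and do not correspond to the schema of ChEMBL or any specific library.

```python
from dataclasses import dataclass, field
import math


@dataclass
class TargetActivity:
    """One quantitative bioactivity annotation (hypothetical schema)."""
    target: str        # e.g. a gene symbol
    measure: str       # "Ki", "IC50", or "EC50"
    value_nm: float    # activity in nanomolar

    def p_value(self) -> float:
        """Negative log10 of the molar activity (e.g. pIC50)."""
        return -math.log10(self.value_nm * 1e-9)


@dataclass
class CompoundRecord:
    """Scaffold, target, and pathway/disease layers for one compound."""
    name: str
    scaffold: str                                   # core-structure label
    activities: list[TargetActivity] = field(default_factory=list)
    pathways: list[str] = field(default_factory=list)
    diseases: list[str] = field(default_factory=list)

    def targets(self) -> set[str]:
        """Distinct targets with at least one bioactivity annotation."""
        return {a.target for a in self.activities}


# Placeholder values for illustration only.
record = CompoundRecord(
    name="example-compound",
    scaffold="example-scaffold",
    activities=[TargetActivity("TARGET_A", "IC50", 10.0),
                TargetActivity("TARGET_B", "Ki", 250.0)],
    pathways=["example pathway"],
    diseases=["example disease"],
)
print(sorted(record.targets()))
print(round(record.activities[0].p_value(), 2))
```

Keeping the quantitative values alongside the target names makes it straightforward to filter by potency later, rather than treating an annotation as a simple yes/no flag.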

Quantitative Prioritization of Tool Compounds

Not all compounds in a chemogenomic library are equally useful as chemical probes. A systematic, evidence-based approach to compound prioritization is essential for creating effective screening collections. The Tool Score (TS) methodology provides a quantitative metric for ranking compounds based on integrated large-scale, heterogeneous bioactivity data [4]. This meta-analysis approach evaluates compounds across multiple dimensions:

- Strength of Target Engagement: Prioritizing compounds with potent and well-characterized interactions with their primary targets.

- Selectivity Profiles: Identifying compounds with minimal off-target activities, particularly across unrelated target families.

- Evidence Quality: Weighting compounds with multiple independent confirmations of activity and selectivity more highly.

Validation studies have demonstrated that high-TS tools show more reliably selective phenotypic profiles in cell-based pathway assays compared to lower-TS compounds [4]. This approach also helps identify frequently tested but non-selective compounds that may produce misleading results in phenotypic screens.
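To make the idea of combining potency, selectivity, and evidence into one ranking concrete, here is a toy scoring function. It is not the published Tool Score formula, which is derived from a meta-analysis of heterogeneous bioactivity data and is not reproduced here; the function name, the weighting, and the example values are all assumptions chosen for illustration.

```python
import math


def toy_tool_score(on_target_nm, off_target_nm, n_independent_sources):
    """Toy ranking score (NOT the published Tool Score formula).

    on_target_nm: potency against the primary target, in nM
    off_target_nm: potencies against off-targets, in nM (may be empty)
    n_independent_sources: independent reports confirming the activity
    """
    potency = 9.0 - math.log10(on_target_nm)          # pActivity, higher is better
    if off_target_nm:
        # Selectivity window: log10 fold-difference to the most potent off-target.
        selectivity = math.log10(min(off_target_nm) / on_target_nm)
    else:
        selectivity = 0.0                              # no off-target data, no credit
    evidence = math.log2(1 + n_independent_sources)    # diminishing returns
    return potency + selectivity + evidence


# Hypothetical compounds: same potency, very different selectivity.
candidates = {
    "selective_probe": toy_tool_score(5.0, [5000.0, 20000.0], 3),
    "promiscuous_hit": toy_tool_score(5.0, [8.0, 12.0], 3),
    "weak_binder": toy_tool_score(900.0, [], 1),
}
ranked = sorted(candidates, key=candidates.get, reverse=True)
print(ranked)
```

In this sketch the promiscuous compound ranks below the selective one despite equal potency, which mirrors the point made above about frequently tested but non-selective compounds.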

Implementation Protocols and Workflows

Library Assembly and Curation Protocol

Creating a high-quality chemogenomic library requires meticulous attention to compound selection, annotation, and quality control. The following protocol outlines key steps for library development:

Table 1: Chemogenomic Library Assembly Protocol

| Step | Description | Key Resources | Quality Metrics |
|---|---|---|---|
| 1. Compound Sourcing | Select compounds from commercial vendors, in-house collections, and published chemical probes | ChEMBL, DrugBank, commercial vendors | Chemical diversity, target coverage, structural integrity |
| 2. Target Annotation | Annotate compounds with known targets and bioactivity data | ChEMBL, IUPHAR, PubChem | Bioactivity values (Ki, IC50), species specificity, assay type |
| 3. Scaffold Analysis | Classify compounds by chemical scaffolds and structural relationships | ScaffoldHunter, RDKit | Scaffold diversity, representation of privileged structures |
| 4. Pathway Mapping | Link targets to biological pathways and processes | KEGG, Reactome, Gene Ontology | Pathway coverage, disease relevance, network connectivity |
| 5. Quality Control | Verify compound identity, purity, and solubility | LC-MS, NMR, solubility assays | ≥95% purity, confirmed structure, DMSO solubility |
| 6. Database Integration | Compile data into searchable database or network | Neo4j, SQL databases | Data completeness, cross-references, query performance |
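As an illustration of the database integration step, the sketch below loads a few placeholder records into an in-memory SQLite database and queries compounds by pathway. The table layout, column names, and the 100 nM default cutoff are assumptions made for this example, not a schema prescribed by the protocol.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE compound (
    id INTEGER PRIMARY KEY, name TEXT, scaffold TEXT, purity_pct REAL
);
CREATE TABLE activity (
    compound_id INTEGER REFERENCES compound(id),
    target TEXT, measure TEXT, value_nm REAL
);
CREATE TABLE target_pathway (target TEXT, pathway TEXT);
""")

# Placeholder rows for illustration only.
conn.executemany("INSERT INTO compound VALUES (?, ?, ?, ?)",
                 [(1, "cmpd-1", "scaffold-A", 98.5),
                  (2, "cmpd-2", "scaffold-B", 96.0)])
conn.executemany("INSERT INTO activity VALUES (?, ?, ?, ?)",
                 [(1, "TARGET_A", "IC50", 12.0),
                  (1, "TARGET_B", "Ki", 300.0),
                  (2, "TARGET_B", "IC50", 45.0)])
conn.executemany("INSERT INTO target_pathway VALUES (?, ?)",
                 [("TARGET_A", "pathway-X"), ("TARGET_B", "pathway-Y")])


def compounds_for_pathway(pathway, max_nm=100.0):
    """Names of compounds with a sufficiently potent target in the pathway."""
    rows = conn.execute("""
        SELECT DISTINCT c.name
        FROM compound c
        JOIN activity a ON a.compound_id = c.id
        JOIN target_pathway tp ON tp.target = a.target
        WHERE tp.pathway = ? AND a.value_nm <= ?
        ORDER BY c.name
    """, (pathway, max_nm)).fetchall()
    return [row[0] for row in rows]


print(compounds_for_pathway("pathway-Y"))
```

A relational layout like this is enough for simple lookups; a graph database such as Neo4j becomes more attractive once queries need to traverse several relationship types in one step.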

Phenotypic Screening and Mechanism Deconvolution

Once assembled, chemogenomic libraries can be deployed in phenotypic screening campaigns with built-in capabilities for mechanism deconvolution. A representative workflow for glioblastoma multiforme (GBM) research illustrates this approach [5]:

Target Selection: Identify differentially expressed genes and somatic mutations from GBM patient data (e.g., from The Cancer Genome Atlas). Filter based on protein-protein interaction networks to identify 117 proteins with druggable binding sites [5].

Virtual Screening: Dock approximately 9,000 compounds against 316 druggable binding sites on proteins in the GBM subnetwork using knowledge-based scoring methods [5].

Phenotypic Screening: Test selected compounds in 3D spheroids of patient-derived GBM cells while assessing toxicity in non-transformed primary cell lines (e.g., CD34+ progenitor cells and astrocytes).

Angiogenesis Assessment: Evaluate effects on tube formation in brain endothelial cells to identify compounds with anti-angiogenic properties [5].

Mechanism Elucidation: Employ RNA sequencing and thermal proteome profiling to identify potential targets and mechanisms of action for hit compounds [5].

This integrated approach led to the identification of compound IPR-2025, which inhibited GBM cell viability with single-digit micromolar IC50 values—substantially better than standard-of-care temozolomide—while sparing normal cells [5].

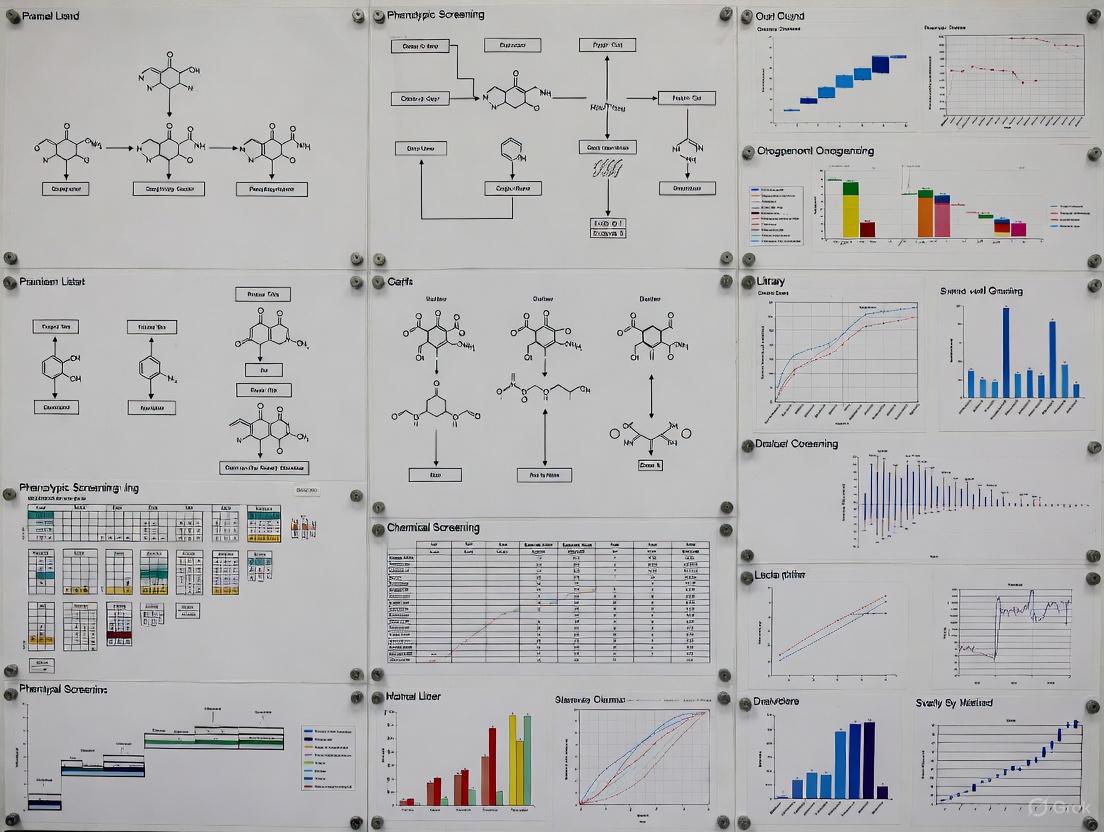

Diagram 1: Integrated Chemogenomic Screening Workflow for GBM

Quality Control and Annotation Standards

Comprehensive Compound Characterization

The utility of a chemogenomic library depends heavily on the quality and completeness of compound annotation. Beyond target affinity, comprehensive characterization should include:

Chemical Quality: Verification of structural identity (e.g., by NMR or LC-MS) and purity (typically ≥95%) [2]. Solubility in DMSO and aqueous buffers should be quantified to ensure compounds remain in solution under assay conditions.

Biological Specificity: Assessment of effects on basic cellular functions including cell viability, mitochondrial health, membrane integrity, cell cycle progression, and cytoskeletal integrity [2]. The HighVia Extend protocol provides a live-cell multiplexed assay that classifies cells based on nuclear morphology and other indicators of cellular health over time [2].

Morphological Profiling: Integration with high-content imaging approaches like Cell Painting, which captures hundreds of morphological features across multiple cellular compartments [1]. This creates distinctive "morphological fingerprints" that can help connect compound activity to specific phenotypic outcomes.

Table 2: Essential Quality Metrics for Chemogenomic Library Compounds

| Quality Dimension | Assessment Method | Acceptance Criteria | Purpose |
|---|---|---|---|
| Chemical Integrity | LC-MS, NMR | ≥95% purity, structure confirmation | Ensure compound identity and minimize impurities |
| Solubility | Kinetic solubility assay | ≥100 µM in DMSO, no precipitation in buffer | Avoid false negatives from compound aggregation |
| Membrane Integrity | HighVia Extend assay | IC50 > 10× target engagement concentration | Discern specific from non-specific cytotoxic effects |
| Mitochondrial Health | MitoTracker Red staining | No depolarization at working concentrations | Identify mitochondrial toxicants |
| Cytoskeletal Effects | Tubulin staining | No aberrant polymerization/depolymerization | Exclude tubulin-interfering compounds |
| Nuclear Morphology | Hoechst 33342 staining | Normal nuclear size and shape | Detect apoptosis and other nuclear abnormalities |
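The numeric acceptance criteria in Table 2 lend themselves to an automated check. The sketch below covers only the three criteria with explicit thresholds (purity, solubility, and the cytotoxicity window); the `QcResult` class, its field names, and the example values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class QcResult:
    """Measured QC values for one compound (hypothetical field names)."""
    name: str
    purity_pct: float
    dmso_solubility_um: float
    precipitates_in_buffer: bool
    cytotox_ic50_um: float         # membrane-integrity readout
    target_engagement_um: float    # concentration needed for on-target activity


def qc_failures(r: QcResult) -> list[str]:
    """Return the names of the Table 2 criteria that the compound fails."""
    failures = []
    if r.purity_pct < 95.0:
        failures.append("purity")
    if r.dmso_solubility_um < 100.0 or r.precipitates_in_buffer:
        failures.append("solubility")
    # Cytotoxicity must occur above 10x the target engagement concentration.
    if r.cytotox_ic50_um <= 10.0 * r.target_engagement_um:
        failures.append("cytotoxicity window")
    return failures


good = QcResult("cmpd-ok", 98.0, 10000.0, False, 50.0, 0.5)
bad = QcResult("cmpd-bad", 92.0, 10000.0, True, 2.0, 1.0)
print(qc_failures(good))
print(qc_failures(bad))
```

Returning the list of failed criteria, rather than a single pass/fail flag, keeps the reason for exclusion visible when the library is later audited.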

Table 3: Key Research Reagent Solutions for Chemogenomic Screening

| Reagent/Resource | Function | Application Notes |
|---|---|---|
| ChEMBL Database | Bioactivity data for target annotation | Source standardized bioactivity data (Ki, IC50, EC50) for 1.6M+ compounds |
| Cell Painting Assay | Morphological profiling | Extract 1,779+ morphological features for phenotypic classification |
| ScaffoldHunter | Chemical scaffold analysis | Hierarchically organize compounds by structural relationships |
| Neo4j Graph Database | Network pharmacology integration | Connect compounds, targets, pathways, and diseases in queryable network |
| HighVia Extend Assay | Live-cell health assessment | Multiplexed viability, mitochondrial, and cytoskeletal profiling over time |
| Hoechst 33342 | Nuclear staining | 50 nM optimal for live-cell imaging without cytotoxicity |
| MitoTracker Red/Deep Red | Mitochondrial staining | Assess mass and membrane potential; use at non-toxic concentrations |
| BioTracker 488 Microtubule Dye | Tubulin visualization | Taxol-derived dye for cytoskeletal integrity assessment |

Applications in Phenotypic Drug Discovery

System Pharmacology Networks

The true power of chemogenomic libraries emerges when they are integrated into system pharmacology networks that connect multiple layers of biological information. These networks typically incorporate:

- Drug-Target Relationships: Annotated compound-protein interactions with quantitative binding data [1].

- Pathway Context: KEGG pathway mappings that place targets within broader signaling networks [1].

- Disease Associations: Disease Ontology linkages that connect targets and pathways to human diseases [1].

- Morphological Profiles: Cell Painting data that links compound treatment to observable phenotypic changes [1].

This multi-layered integration enables researchers to move beyond single-target thinking and explore the polypharmacological profiles of compounds in a systematic way. For example, a compound that produces a specific morphological phenotype can be connected to its known targets, which can then be placed within relevant disease-associated pathways [1].
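The traversal described above, from a compound to its targets and on to pathways and diseases, can be sketched with a small in-memory graph. The node names and relation labels (`targets`, `in_pathway`, `associated_with`) are placeholders invented for this example; a production network would typically live in a graph database.

```python
from collections import defaultdict

# (source, relation, destination) edges; all names are placeholders.
edges = [
    ("cmpd-1", "targets", "TARGET_A"),
    ("cmpd-1", "targets", "TARGET_B"),
    ("TARGET_A", "in_pathway", "pathway-X"),
    ("TARGET_B", "in_pathway", "pathway-Y"),
    ("pathway-X", "associated_with", "disease-1"),
    ("pathway-Y", "associated_with", "disease-1"),
    ("pathway-Y", "associated_with", "disease-2"),
]

graph = defaultdict(lambda: defaultdict(set))
for source, relation, destination in edges:
    graph[source][relation].add(destination)


def follow(nodes, relation):
    """All nodes reachable from `nodes` via one edge of type `relation`."""
    reached = set()
    for node in nodes:
        reached |= graph.get(node, {}).get(relation, set())
    return reached


def explain(compound):
    """Walk compound -> targets -> pathways -> diseases."""
    targets = follow({compound}, "targets")
    pathways = follow(targets, "in_pathway")
    diseases = follow(pathways, "associated_with")
    return {"targets": sorted(targets),
            "pathways": sorted(pathways),
            "diseases": sorted(diseases)}


context = explain("cmpd-1")
print(context)
```

A morphological profile layer could be attached in the same way, as an additional relation from compound nodes to phenotype nodes.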

Selective Polypharmacology for Intractable Diseases

The selective polypharmacology approach—where compounds are designed or selected to modulate multiple specific targets simultaneously—is particularly promising for complex diseases like glioblastoma [5]. This strategy acknowledges that suppressing tumor growth in cancers harboring numerous mutations may require coordinated modulation of multiple signaling pathways.

In the GBM case study, the enriched chemogenomic library approach identified compound IPR-2025, which engaged multiple targets while sparing normal cells [5]. This selective polypharmacology profile—confirmed through thermal proteome profiling—enabled potent anti-tumor effects without general cytotoxicity, demonstrating the power of target-informed phenotypic screening.

Diagram 2: Selective Polypharmacology Mechanism

The field of chemogenomic libraries continues to evolve toward broader target coverage and more sophisticated annotation. Initiatives like the EUbOPEN project aim to assemble an open-access chemogenomic library covering more than 1,000 proteins with well-annotated compounds and chemical probes [2]. The ultimate goal of Target 2035 is to expand this collection to cover the entire druggable proteome [2].

Artificial intelligence and machine learning are playing increasingly important roles in analyzing the complex datasets generated from chemogenomic screening [6]. These technologies enable predictive modeling of compound activities and enhance pattern recognition in high-dimensional data. Additionally, the integration of chemogenomic libraries with advanced cellular models—including patient-derived organoids and complex co-culture systems—promises to increase the physiological relevance of phenotypic screening [5] [3].

In conclusion, chemogenomic libraries have evolved from simple collections of target-annotated compounds to sophisticated system pharmacology networks that integrate chemical, biological, and phenotypic information. When properly designed, characterized, and implemented, these resources provide powerful platforms for bridging the gap between phenotypic observations and molecular mechanisms, accelerating the discovery of novel therapeutic strategies for complex diseases.

The landscape of drug discovery has witnessed a significant paradigm shift with the resurgence of phenotypic drug discovery (PDD) after decades of dominance by target-based approaches. Between 1999 and 2008, phenotypic screening was responsible for the discovery of over half of FDA-approved first-in-class small-molecule drugs, demonstrating its disproportionate impact on pharmaceutical innovation [5]. This resurgence stems from the recognition that complex polygenic diseases often require modulation of multiple targets or pathways, which can be more effectively identified through phenotypic observation rather than single-target reductionism [7]. Modern PDD combines the original concept of observing therapeutic effects on disease physiology with advanced tools and strategies, including more sophisticated disease models, high-content screening technologies, and computational analytics [7]. This article examines the advantages of phenotypic screening over target-based approaches and provides detailed protocols for implementation within high-throughput chemogenomic library research.

Advantages of Phenotypic Drug Discovery

Expansion of Druggable Target Space and Novel Mechanisms

Phenotypic screening has uniquely expanded the "druggable target space" to include unexpected cellular processes and novel target classes that would be difficult to identify through rational target-based design [7]. This approach has revealed therapeutic interventions acting via non-traditional targets including membranes, ion channels, ribosomes, microtubules, and large complex molecular structures like ATP synthase [8]. Unlike target-based discovery, which typically focuses on enzymes and receptors with well-characterized activities, PDD can identify compounds working through novel mechanisms of action (MoA) even when the functional roles of targets in disease are not fully understood [8].

Table 1: Recently Approved Therapies Identified Through Phenotypic Drug Discovery

| Drug Name | Therapeutic Area | Year Approved | Novel Target/Mechanism |
|---|---|---|---|
| Risdiplam [8] | Spinal Muscular Atrophy | 2020 | SMN2 pre-mRNA splicing modifier |
| Vamorolone [8] | Duchenne Muscular Dystrophy | 2023 | Dissociative steroid receptor modulator |
| Daclatasvir [8] | Hepatitis C | 2014 | NS5A replication complex inhibitor |
| Lumacaftor [8] | Cystic Fibrosis | 2015 | CFTR corrector (protein folding/trafficking) |
| Perampanel [8] | Epilepsy | 2012 | Non-competitive AMPA receptor antagonist |

Effective Polypharmacology and Systems-Level Approaches

PDD naturally accommodates polypharmacology, in which a compound modulates multiple targets simultaneously, and this can be advantageous for treating complex diseases with redundant or networked pathophysiology [7]. Suppressing tumor growth in cancers like glioblastoma multiforme (GBM) without toxicity may be best achieved by small molecules that selectively modulate a collection of targets across different signaling pathways, an approach known as selective polypharmacology [5]. Target-based drug discovery (TDD) often suffers high attrition because of flawed target hypotheses or an incomplete understanding of compensatory mechanisms; phenotypic screening, by contrast, captures the complexity of cellular signaling networks and the adaptive resistance mechanisms seen in clinical settings [9].

Higher Success Rates for First-in-Class Medicines

Systematic analyses demonstrate that PDD generates a disproportionate number of first-in-class medicines compared to target-based approaches [7]. A review of new FDA-approved treatments between 1999 and 2008 found that PDD accounted for 28 first-in-class small-molecule drugs, compared with 17 from target-based methods [8]. From 2012 to 2022, the share of PDD projects in the portfolios of large pharmaceutical companies grew from less than 10% to an estimated 25-40%, reflecting increased recognition of its value [8].

Application Note: Phenotypic Screening for Glioblastoma Multiforme

Background and Rationale

Glioblastoma multiforme (GBM) remains the most aggressive brain tumor with a median survival of only 14-16 months and a five-year survival rate of 3-5%, responding poorly to standard-of-care therapies [5]. The intratumoral genetic instability of GBM allows these malignancies to modulate cell survival pathways, angiogenesis, and invasion, making single-target approaches largely ineffective [5]. This application note describes a rational approach to create chemical libraries tailored for phenotypic screening to generate small molecules with selective polypharmacology that inhibit GBM growth without affecting nontransformed normal cell lines.

Experimental Workflow and Design

The integrated workflow combined tumor genomic data with virtual screening and phenotypic validation in biologically relevant models [5]. The process began with identification of druggable pockets on protein structures from the Protein Data Bank (PDB), classified based on whether they occurred at a catalytic site (ENZ), a protein-protein interaction interface (PPI), or an allosteric site (OTH) [5]. Gene expression profiles from 169 GBM tumors and 5 normal samples from The Cancer Genome Atlas (TCGA) were analyzed to identify genes overexpressed in GBM (p < 0.001, FDR < 0.01, and log2 fold change > 1) [5]. The 755 identified genes with somatic mutations that were overexpressed in GBM were mapped onto a large-scale protein-protein interaction network to construct a GBM subnetwork, resulting in 117 proteins with at least one druggable binding site [5].
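The gene-level filtering in this workflow can be sketched as two set operations. The thresholds below are the ones stated in the text (p < 0.001, FDR < 0.01, log2 fold change > 1); the gene names, statistics, and druggable-site counts are placeholders, and the protein-protein interaction network mapping step is omitted for brevity.

```python
def overexpressed_genes(stats, p_max=0.001, fdr_max=0.01, log2fc_min=1.0):
    """Genes passing all three differential-expression thresholds.

    stats maps gene -> (p_value, fdr, log2_fold_change).
    """
    return {gene for gene, (p, fdr, log2fc) in stats.items()
            if p < p_max and fdr < fdr_max and log2fc > log2fc_min}


# Placeholder statistics for illustration only.
stats = {
    "GENE_A": (1e-5, 1e-3, 2.4),   # passes all thresholds
    "GENE_B": (1e-4, 5e-3, 0.6),   # fold change too small
    "GENE_C": (2e-2, 8e-2, 3.1),   # not significant
    "GENE_D": (1e-6, 1e-4, 1.8),   # passes, but carries no somatic mutation
}
mutated = {"GENE_A", "GENE_B", "GENE_C"}
druggable_sites = {"GENE_A": 2, "GENE_B": 0, "GENE_D": 1}  # sites per protein

# Overexpressed AND mutated, then keep proteins with at least one druggable site.
candidates = overexpressed_genes(stats) & mutated
selected = sorted(g for g in candidates if druggable_sites.get(g, 0) >= 1)
print(selected)
```

Applying the expression and mutation filters before the druggability check keeps the structural analysis focused on a much smaller set of proteins.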

Diagram 1: GBM Phenotypic Screening Workflow

Key Results and Validation

Screening the rationally enriched library of 47 candidates yielded several active compounds, including compound 1 (IPR-2025), which showed the following properties [5]:

- Inhibited the viability of low-passage patient-derived GBM spheroids with single-digit micromolar IC50 values, substantially better than standard-of-care temozolomide

- Blocked tube formation of endothelial cells in Matrigel with submicromolar IC50 values

- Had no effect on the viability of primary hematopoietic CD34+ progenitor spheroids or astrocytes, indicating selective toxicity toward tumor cells

- Engaged multiple targets, as confirmed by mass spectrometry-based thermal proteome profiling

Table 2: Experimental Results for Phenotypic Screening Hit IPR-2025

| Assay Type | Model System | Endpoint | Result | Comparison to Control |
|---|---|---|---|---|
| Viability assay [5] | Patient-derived GBM spheroids | IC50 | Single-digit μM | Superior to temozolomide |
| Angiogenesis assay [5] | Endothelial cells (Matrigel) | Tube formation IC50 | Submicromolar | Not applicable |
| Specificity assay [5] | Hematopoietic CD34+ progenitors | Viability | No effect | Favorable toxicity profile |
| Specificity assay [5] | Astrocytes | Viability | No effect | Favorable toxicity profile |
| Target engagement [5] | Thermal proteome profiling | Multiple targets confirmed | Positive | Polypharmacology confirmed |

Detailed Experimental Protocols

Protocol 1: Target Enrichment and Library Design for Phenotypic Screening

Principle: Create focused chemical libraries for phenotypic screening by structure-based molecular docking of chemical libraries to disease-specific targets, which are identified by combining tumor RNA sequencing and mutation data with cellular protein-protein interaction data [5].

Materials:

- Tumor genomic data (e.g., from TCGA)

- Protein Data Bank structures

- Protein-protein interaction networks (e.g., literature-curated and experimentally determined networks)

- Chemical library (~9000 compounds)

- Molecular docking software and scoring function (e.g., support vector machine knowledge-based scoring)

Procedure:

- Identify Druggable Binding Sites: Search for druggable binding sites on proteins implicated in the disease context and classify them by functional importance (catalytic site, protein-protein interaction interface, or allosteric site) [5].

- Differential Expression Analysis: Collect gene expression profiles from relevant disease and normal samples. Perform differential expression analysis to identify significantly overexpressed genes (p < 0.001, FDR < 0.01, and log2FC > 1) [5].

- Somatic Mutation Integration: Retrieve somatic mutation data from disease samples and identify genes with both mutations and overexpression [5].

- Network Mapping: Map identified genes onto combined protein-protein interaction networks (literature-curated and experimentally determined) to construct a disease-specific subnetwork [5].

- Virtual Screening: Dock in-house compound library to the set of druggable binding sites on proteins in the disease subnetwork using appropriate scoring methods to predict binding affinities [5].

- Compound Selection: Select small molecules predicted to simultaneously bind to multiple proteins for phenotypic screening [5].
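The final selection step, picking compounds predicted to bind several proteins at once, can be sketched as a threshold-and-count over docking scores. Everything specific here is an assumption for illustration: the convention that lower scores mean better predicted binding, the cutoff of -8.0, the minimum of two proteins, and the site labels.

```python
def select_multitarget(scores, site_to_protein, cutoff=-8.0, min_proteins=2):
    """Compounds predicted to bind sites on at least `min_proteins` proteins.

    scores maps compound -> {binding_site: docking_score}; lower is better
    (an assumed convention). Several sites on one protein count only once.
    """
    selected = {}
    for compound, by_site in scores.items():
        proteins = {site_to_protein[site]
                    for site, score in by_site.items() if score <= cutoff}
        if len(proteins) >= min_proteins:
            selected[compound] = sorted(proteins)
    return selected


# Placeholder sites labeled by pocket class (ENZ, PPI, OTH) and scores.
site_to_protein = {"P1:ENZ": "P1", "P1:OTH": "P1", "P2:PPI": "P2", "P3:ENZ": "P3"}
scores = {
    "cmpd-1": {"P1:ENZ": -9.1, "P2:PPI": -8.4, "P3:ENZ": -5.0},
    "cmpd-2": {"P1:ENZ": -9.5, "P1:OTH": -8.8, "P2:PPI": -6.0},  # one protein only
    "cmpd-3": {"P3:ENZ": -7.0},
}
picked = select_multitarget(scores, site_to_protein)
print(picked)
```

Counting proteins rather than binding sites avoids rewarding a compound that merely fits two pockets on the same protein.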

Protocol 2: Phenotypic Screening Using 3D Spheroid Models

Principle: Screen compounds against three-dimensional spheroids of patient-derived cells to better represent the tumor microenvironment, complemented by testing in nontransformed normal cell lines to assess selective toxicity [5].

Materials:

- Patient-derived disease cells (e.g., GBM spheroids)

- Normal primary cell lines (e.g., CD34+ progenitor cells, astrocytes)

- Endothelial cells for angiogenesis assays

- Matrigel for tube formation assays

- Cell viability assay reagents

- High-content imaging system

Procedure:

- Spheroid Generation: Culture patient-derived cells in low-adherence plates with appropriate media to form three-dimensional spheroids [5].

- Compound Treatment: Treat spheroids with test compounds across a concentration range (e.g., 0.1-100 μM) for 72-120 hours.

- Viability Assessment: Measure cell viability using appropriate assays (e.g., ATP-based assays). Calculate IC50 values using nonlinear regression [5].

- Selectivity Testing: Test active compounds in parallel against nontransformed normal cell lines in both 2D (e.g., astrocytes) and 3D (e.g., CD34+ progenitor spheroids) formats [5].

- Angiogenesis Assay: Seed endothelial cells on Matrigel and treat with compounds. Quantify tube formation after 6-18 hours using image analysis [5].

- Mechanistic Studies: For promising compounds, perform RNA sequencing of treated versus untreated cells to identify potential mechanisms of action [5].

- Target Engagement: Confirm compound binding to predicted targets using thermal proteome profiling or cellular thermal shift assays [5].
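For the viability assessment step, the sketch below fits a two-parameter Hill curve to dose-response data. It uses a coarse exhaustive grid search as a dependency-free stand-in for a proper nonlinear least-squares optimizer, fixes the top and bottom of the curve at 100% and 0%, and runs on synthetic noise-free data; these simplifications are assumptions of the example, not part of the protocol.

```python
import math


def hill(conc_um, ic50_um, slope):
    """Percent viability under a two-parameter Hill model (top=100, bottom=0)."""
    return 100.0 / (1.0 + (conc_um / ic50_um) ** slope)


def fit_ic50(concs_um, viability_pct):
    """Least-squares fit by exhaustive grid search over IC50 and Hill slope."""
    best = None
    for i in range(-200, 301):              # log10(IC50 in uM): -2.00 to 3.00
        ic50 = 10 ** (i / 100)
        for j in range(5, 41):              # Hill slope: 0.5 to 4.0
            slope = j / 10
            sse = sum((hill(c, ic50, slope) - v) ** 2
                      for c, v in zip(concs_um, viability_pct))
            if best is None or sse < best[0]:
                best = (sse, ic50, slope)
    return best[1], best[2]


# Synthetic, noise-free data generated from a known curve (IC50 = 3 uM, slope = 1.2).
concs = [0.1, 0.3, 1.0, 3.0, 10.0, 30.0, 100.0]
viability = [hill(c, 3.0, 1.2) for c in concs]

ic50_fit, slope_fit = fit_ic50(concs, viability)
print(f"IC50 ~ {ic50_fit:.2f} uM, slope ~ {slope_fit:.1f}")
```

The same fit could be applied to the normal-cell data from the selectivity step, with the ratio of the two IC50 values serving as a rough selectivity index. Real data would also call for replicate handling and free top and bottom parameters.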

Protocol 3: Multi-Modal Profiling for Compound Activity Prediction

Principle: Integrate chemical structures with phenotypic profiles (imaging and gene expression) to predict compound bioactivity using machine learning approaches, enhancing hit identification and prioritization [10].

Materials:

- Chemical structure databases

- Cell Painting assay reagents for morphological profiling

- L1000 assay reagents for gene expression profiling

- Machine learning frameworks (e.g., graph convolutional networks)

Procedure:

- Chemical Structure Profiling: Compute chemical structure profiles using graph convolutional nets or similar approaches [10].

- Morphological Profiling: Perform Cell Painting assay using appropriate fluorescent dyes and high-content imaging. Extract morphological features using CellProfiler or similar software [10].

- Gene Expression Profiling: Conduct L1000 assay to measure gene expression profiles [10].

- Assay Selection: Select diverse assays representative of the screening center's activities, filtered to reduce similarity [10].

- Model Training: Train machine learning models using a multi-task setting with 5-fold cross-validation using scaffold-based splits to evaluate ability to predict hits in held-out compounds with dissimilar structures [10].

- Data Fusion: Implement late data fusion by building assay predictors for each modality independently, then combine output probabilities using max-pooling [10].

- Validation: Assess performance using area under the receiver operating characteristic curve (AUROC), with AUROC > 0.9 considered well-predicted [10].
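The data fusion and validation steps can be illustrated on toy numbers. The sketch below combines per-modality hit probabilities by element-wise maximum and scores the result with a rank-based AUROC. The probabilities and labels are invented for the example, and the outcome on real data will depend on the assay; max-pooling is not guaranteed to help.

```python
def late_fusion_max(prob_by_modality):
    """Element-wise maximum over per-modality hit probabilities."""
    return [max(ps) for ps in zip(*prob_by_modality.values())]


def auroc(labels, scores):
    """Probability that a random positive outranks a random negative.

    Ties contribute 0.5, which matches the usual rank-based definition.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Toy predictions for six held-out compounds (first three are true hits).
labels = [1, 1, 1, 0, 0, 0]
probs = {
    "structure":  [0.9, 0.2, 0.3, 0.4, 0.1, 0.2],
    "morphology": [0.3, 0.8, 0.2, 0.3, 0.2, 0.1],
    "expression": [0.2, 0.1, 0.7, 0.2, 0.3, 0.1],
}
fused = late_fusion_max(probs)

for name, p in probs.items():
    print(name, round(auroc(labels, p), 3))
print("fused", round(auroc(labels, fused), 3))
```

In this constructed case each modality detects a different hit, so the fused prediction ranks all hits above all non-hits. That is the complementary-information scenario late fusion is meant to exploit.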

Diagram 2: Multi-Modal Bioactivity Prediction

The Scientist's Toolkit: Essential Research Reagents and Technologies

Table 3: Key Research Reagent Solutions for Phenotypic Drug Discovery

| Reagent/Technology | Function | Application Note |
|---|---|---|
| 3D Spheroid Culture Systems [5] | Mimics tumor microenvironment | Provides more physiologically relevant screening format compared to 2D monolayers |
| Cell Painting Assay [10] | High-content morphological profiling | Uses fluorescent dyes to label multiple cell components; enables unsupervised detection of subtle phenotypic changes |
| L1000 Gene Expression Profiling [10] | Transcriptomic profiling at scale | Measures 978 "landmark" genes to infer entire transcriptome; cost-effective for large compound libraries |
| Thermal Proteome Profiling [5] | Target identification and engagement | Monitors protein thermal stability changes upon compound binding; confirms direct target engagement |
| Protein-Protein Interaction Knowledge Graph (PPIKG) [11] | Target deconvolution | Integrates heterogeneous biological data; narrows candidate targets from thousands to dozens for experimental validation |
| Patient-Derived Cells [5] | Disease-relevant screening models | Maintains genetic and phenotypic characteristics of original tumors; better predicts clinical efficacy |
| High-Content Imaging Systems [9] | Automated phenotypic analysis | Enables quantitative multiparametric analysis of complex cellular phenotypes in high-throughput format |
| Knowledge Graph Embedding Methods [11] | Predictive target discovery | Maps entities and relationships to vector space; predicts potential targets for phenotypic screening hits |

Discussion and Future Perspectives

The resurgence of phenotypic drug discovery represents a maturation rather than a transient trend, with PDD now serving as an accepted discovery modality in both academia and the pharmaceutical industry [7]. Future advances will be driven by several key technological innovations:

Artificial Intelligence and Machine Learning: AI is rapidly reshaping phenotypic screening by enhancing efficiency, lowering costs, and driving automation in drug discovery [8] [6]. Machine learning algorithms can analyze massive datasets generated from high-throughput screening platforms with unprecedented speed and accuracy, reducing the time needed to identify potential drug candidates [6] [10]. The integration of AI with robotics and cloud-based platforms offers scalability, real-time monitoring, and enhanced collaboration across global research teams [6].

Advanced Disease Models: The field is moving beyond traditional 2D cell cultures to more physiologically relevant models including organoids, microphysiological systems, and human-based phenotypic platforms [12]. These advanced models better capture the complexity of human disease and are being applied throughout the discovery process for hit triage and prioritization, elimination of hits with unsuitable mechanisms, and supporting clinical strategies through pathway-based decision frameworks [12].

Integrated Workflows: Future success will depend on adaptive, integrated workflows that leverage the strengths of both phenotypic and target-based approaches [9]. The convergence of high-throughput screening, structural biology, and computational modeling creates powerful pipelines for addressing complex biological challenges [9]. As these approaches increase in use, they will gain power for driving better decisions, generating better leads faster, and in turn promoting greater adoption of PDD [12].

The demonstrated ability of phenotypic screening to identify first-in-class medicines with novel mechanisms positions it as an essential component of modern drug discovery, particularly for complex diseases where single-target approaches have shown limited success.

Chemogenomic libraries are strategically designed collections of small molecules used to systematically probe biological systems. Within high-throughput phenotypic screening, these libraries serve as powerful tools for identifying novel therapeutic agents and deconvoluting complex mechanisms of action without prior knowledge of specific molecular targets. The resurgence of phenotypic drug discovery (PDD) has heightened the importance of these libraries, with studies indicating that over half of FDA-approved first-in-class small-molecule drugs discovered between 1999 and 2008 originated from phenotypic screening approaches [3]. The effectiveness of a chemogenomic library is not determined by a single parameter but rather by the careful optimization of three interdependent components: size, diversity, and target coverage. This application note details the essential characteristics of effective chemogenomic libraries and provides protocols for their construction and application in high-throughput phenotypic screening.

Library Design: Core Components and Quantitative Benchmarks

The construction of a high-quality chemogenomic library requires careful balancing of multiple physicochemical and biological parameters. The primary goal is to create a collection that broadly samples the biologically relevant chemical space (BioReCS) while ensuring sufficient depth in probing the druggable genome.

Table 1: Key Design Parameters for Chemogenomic Libraries

| Parameter | Recommended Range | Rationale & Impact on Screening |
|---|---|---|
| Library Size | 3,000 - 5,000 compounds [13] | Balances practical screening throughput with sufficient coverage of target diversity. |
| Molecular Weight | Up to 800 g/mol [14] | Accommodates beyond Rule of 5 (bRo5) compounds while maintaining generally favorable pharmacokinetics. |
| Target Coverage | ~1,000 - 2,000 protein targets [3] | Interrogates roughly 5-10% of the 20,000+ human genes, the portion for which annotated compounds currently exist. |
| Potency Criteria | Nanomolar range (<1000 nM) [14] | Ensures inclusion of high-quality chemical starting points with strong structure-activity relationships. |

Target Coverage and the Druggable Genome

A central limitation in library design is that even the best chemogenomic libraries interrogate only a small fraction of the human genome—approximately 1,000–2,000 targets out of 20,000+ genes [3]. This highlights a significant opportunity for expanding into underexplored regions of biological target space. Effective libraries must therefore be designed to maximize the breadth and relevance of their target coverage.

Chemical Diversity and Scaffold Representation

Diversity is not merely a function of the number of unique structures but of the breadth of distinct molecular scaffolds represented. A common practice involves using software like ScaffoldHunter to deconstruct molecules into representative core structures, distributing them across different levels based on their relationship distance from the parent molecule node [13]. This hierarchical scaffold analysis ensures the library covers a wide array of distinct chemotypes, reducing redundancy and increasing the probability of identifying novel bioactive compounds.

Experimental Protocol: Construction of a Phenotypic Screening Library

This protocol outlines the systematic development of a chemogenomic library tailored for high-throughput phenotypic screening, integrating public bioactivity data and chemical informatics tools.

Data Collection and Curation

- Step 1: Source Bioactivity Data. Extract compounds with associated bioactivity data (e.g., IC50, Ki, EC50, KD) from public databases such as ChEMBL [14] [13]. The raw data set from ChEMBL can exceed 11 million entries, providing a foundation for filtering [14].

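- Step 2: Apply Potency and Property Filters. Filter the raw dataset to retain compounds that meet the Table 1 criteria: potency in the nanomolar range (<1000 nM) and molecular weight up to 800 g/mol [14].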

- Step 3: Remove Undesirable Chemotypes. Exclude compounds with:
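The potency and property filter in Step 2 can be sketched in a few lines of Python. This is a minimal illustration, not the curation pipeline used in the cited work: the thresholds come from Table 1, while the `Activity` record layout, the compound identifiers, and the example values are hypothetical.

```python
from dataclasses import dataclass

# Thresholds taken from Table 1; everything else here is an illustrative assumption.
MAX_POTENCY_NM = 1000.0
MAX_MOL_WEIGHT = 800.0
POTENCY_TYPES = {"IC50", "Ki", "EC50", "KD"}


@dataclass(frozen=True)
class Activity:
    """Hypothetical, simplified bioactivity record."""
    compound_id: str
    target_id: str
    activity_type: str
    value_nm: float
    mol_weight: float


def passes_filters(rec: Activity) -> bool:
    """Keep records with a supported readout, sub-micromolar potency, and MW <= 800."""
    return (
        rec.activity_type in POTENCY_TYPES
        and 0 < rec.value_nm < MAX_POTENCY_NM
        and rec.mol_weight <= MAX_MOL_WEIGHT
    )


records = [
    Activity("CPD-1", "TGT-A", "IC50", 12.0, 420.5),
    Activity("CPD-2", "TGT-A", "IC50", 5000.0, 390.1),  # too weak
    Activity("CPD-3", "TGT-B", "Ki", 85.0, 912.3),      # too heavy
    Activity("CPD-4", "TGT-C", "EC50", 640.0, 655.0),
]

kept_ids = [r.compound_id for r in records if passes_filters(r)]
print(kept_ids)
```

In practice the same predicate would be applied to millions of rows, so a database query or a dataframe filter would likely replace the list comprehension.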

Library Assembly and Enrichment

- Step 4: Scaffold Analysis and Diversity Selection. Process the filtered compound set using ScaffoldHunter [13] to generate a hierarchical representation of molecular scaffolds. Select compounds to maximize the number of unique, representative scaffolds within the desired library size (e.g., 5,000 compounds).

- Step 5: Functional Enrichment (Optional). For disease-specific screening, enrich the library using structure-based virtual screening. As demonstrated in glioblastoma (GBM) research, dock an in-house library against druggable binding sites on proteins identified from the tumor's genomic and protein-protein interaction network [5]. Select compounds predicted to bind multiple key targets to enable selective polypharmacology.

- Step 6: Physical Library Assembly. Procure selected compounds from commercial vendors (e.g., Enamine's REAL Space) [14]. Prepare stock solutions in DMSO and store in barcoded plates at -20°C to -80°C to ensure stability and enable automated handling.
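The diversity selection in Step 4 can be illustrated with a simple round-robin pick across scaffolds. This is only a sketch under stated assumptions: it presumes each compound already carries a scaffold key from an upstream scaffold analysis, it uses potency as a tie-breaker within a scaffold, and it is not the algorithm ScaffoldHunter itself implements. The function name and example data are hypothetical.

```python
from collections import defaultdict


def select_diverse(compounds, library_size):
    """Pick compounds scaffold by scaffold so every scaffold is represented first.

    compounds: iterable of (compound_id, scaffold_key, potency_nm) tuples.
    Within a scaffold, more potent compounds (lower nM) are picked earlier.
    """
    by_scaffold = defaultdict(list)
    for compound_id, scaffold, potency_nm in compounds:
        by_scaffold[scaffold].append((potency_nm, compound_id))
    for members in by_scaffold.values():
        members.sort()  # most potent first

    selected = []
    rank = 0  # 0 = best compound of each scaffold, 1 = second best, ...
    while len(selected) < library_size:
        added = False
        for scaffold in sorted(by_scaffold):
            members = by_scaffold[scaffold]
            if rank < len(members):
                selected.append(members[rank][1])
                added = True
                if len(selected) == library_size:
                    break
        if not added:  # every scaffold is exhausted
            break
        rank += 1
    return selected


compounds = [
    ("C1", "quinazoline", 5.0),
    ("C2", "quinazoline", 50.0),
    ("C3", "indole", 20.0),
    ("C4", "indole", 2.0),
    ("C5", "purine", 300.0),
]
picked = select_diverse(compounds, library_size=4)
print(picked)
```

The first pass takes one compound per scaffold, so a small library still spans all chemotypes; later passes add depth only once breadth is exhausted.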

Experimental Protocol: Phenotypic Screening and Target Deconvolution

This protocol describes the application of the constructed chemogenomic library in a high-content phenotypic screen followed by mechanistic investigation.

Phenotypic Screening Using Cell Painting Assay

- Step 1: Cell Culture and Plating. Plate disease-relevant cells (e.g., U2OS osteosarcoma cells or patient-derived primary cells) in multiwell plates. For complex phenotypes, use 3D spheroid or organoid models to better capture the disease microenvironment [3] [5].

- Step 2: Compound Treatment. Treat cells with library compounds across a range of concentrations (e.g., 1 nM - 10 µM) using automated liquid handling, including appropriate positive and negative controls.

- Step 3: Staining and Imaging. Stain fixed cells with the Cell Painting dye cocktail [13]:

- Mitochondria: MitoTracker Deep Red

- Nuclei: Hoechst 33342

- Endoplasmic Reticulum: Concanavalin A, Alexa Fluor 488 conjugate

- Nucleoli and Cytoplasmic RNA: SYTO 14 green fluorescent nucleic acid stain

- F-Actin and Golgi: Phalloidin (Alexa Fluor 568 conjugate) and Wheat Germ Agglutinin (Alexa Fluor 555 conjugate)

- Step 4: Image Analysis and Feature Extraction. Acquire high-resolution images on a high-content microscope. Use automated image analysis software (e.g., CellProfiler) to identify individual cells and extract morphological features (size, shape, texture, intensity, granularity) for each cellular compartment (cell, cytoplasm, nucleus) [13]. A typical profile may contain over 1,700 morphological features.

Diagram 1: Phenotypic screening and target deconvolution workflow.

Target Deconvolution and Mechanism of Action Studies

- Step 5: Transcriptomic Profiling. Treat cells with hit compounds and perform RNA sequencing (RNA-seq). Analyze differential gene expression to generate hypotheses about affected pathways and targets [5]. Alternatively, use predictive tools like DeepCE to infer chemical-induced gene expression profiles for novel compounds [16].

- Step 6: Proteomic Target Engagement. Confirm direct target engagement using mass spectrometry-based thermal proteome profiling (TPP) [5]. This method identifies proteins whose thermal stability shifts upon compound binding, providing direct evidence of physical interaction within a cellular context.

- Step 7: Network Pharmacology Integration. Construct a systems pharmacology network using a graph database (e.g., Neo4j) to integrate drug-target interactions, pathways (KEGG), gene ontologies (GO), disease ontologies (DO), and morphological profiles [13]. This enables the prediction of mechanisms of action by connecting phenotypic signatures to known biological networks.
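The idea behind Step 7, connecting hit compounds to pathways through their annotated targets, can be sketched without a graph database. The example below uses plain dictionaries as a stand-in for a Neo4j graph; the compound names and the compound-target and target-pathway mappings are illustrative assumptions, not curated annotations.

```python
from collections import Counter

# Illustrative annotations only; a real network would be loaded from curated sources.
compound_targets = {
    "CPD-1": {"EGFR", "ERBB2"},
    "CPD-2": {"EGFR"},
    "CPD-3": {"HDAC1"},
}
target_pathways = {
    "EGFR": {"ErbB signaling", "MAPK signaling"},
    "ERBB2": {"ErbB signaling"},
    "HDAC1": {"Chromatin remodeling"},
}


def rank_pathways(hits):
    """Count, for each pathway, how many hit compounds reach it via any target."""
    counts = Counter()
    for compound in hits:
        pathways = set()
        for target in compound_targets.get(compound, ()):
            pathways |= target_pathways.get(target, set())
        counts.update(pathways)  # each compound counts once per pathway
    return sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))


ranking = rank_pathways(["CPD-1", "CPD-2", "CPD-3"])
print(ranking)
```

Pathways supported by several independent hits rise to the top, which is the kind of evidence a mechanism-of-action hypothesis would start from.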

Table 2: Key Research Reagents and Computational Tools

| Tool or Resource | Function / Application | Key Features / Notes |
|---|---|---|
| ChEMBL Database | Public repository of bioactive molecules with drug-like properties [14] [13]. | Provides curated bioactivity data (IC50, Ki, etc.) for library construction and benchmarking. |
| Cell Painting Assay | High-content morphological profiling for phenotypic screening [13]. | Uses 6 fluorescent dyes to label 8 cellular components; generates >1,700 morphological features. |
| ScaffoldHunter | Software for hierarchical scaffold analysis and diversity assessment [13]. | Deconstructs molecules to reveal core structures, enabling diversity-based library design. |
| Enamine REAL Space | Commercially accessible virtual chemical library [14]. | Contains billions of make-on-demand compounds for library expansion and hit optimization. |
| Neo4j | Graph database platform for network pharmacology integration [13]. | Enables integration of drug-target-pathway-disease relationships for mechanism deconvolution. |
| RDKit | Open-source cheminformatics toolkit [15]. | Handles chemical data preprocessing, descriptor calculation, and similarity searching. |
| DeepCE | Deep learning model for predicting gene expression profiles [16]. | Uses graph neural networks to predict cellular responses to de novo chemicals. |

Well-designed chemogenomic libraries represent a critical resource for advancing phenotypic drug discovery. By strategically balancing size, diversity, and target coverage—as quantified in this application note—researchers can construct screening collections that maximize the probability of identifying novel therapeutic agents with complex mechanisms of action. The integrated experimental protocols provided here, from library construction through target deconvolution, offer a roadmap for applying these principles in practice. As chemical biology evolves, the continued refinement of these libraries, particularly through expansion into underexplored regions of chemical and target space, will be essential for addressing increasingly challenging therapeutic areas.

The druggable genome, defined as the subset of human genes encoding proteins that can interact with drug-like molecules, represents the universe of potential therapeutic targets. However, current chemogenomic libraries (collections of compounds with known biological annotations) cover only a fraction of this potential. Research indicates that even the most comprehensive chemogenomic libraries interrogate just 1,000–2,000 of the more than 20,000 human genes [3]. This narrow coverage creates significant blind spots in phenotypic screening campaigns, potentially causing researchers to miss crucial biological mechanisms and therapeutic opportunities.

This limitation stems from a fundamental imbalance in drug development focus. Studies of drugs with specified mechanisms of action reveal that 75.9% of targeted genes are modulated by inhibitors, while only 23.2% are targeted by activator drugs [17]. This bias toward inhibition mechanisms leaves entire protein classes unexplored. Furthermore, the overreliance on immortalized cell lines and simplistic two-dimensional assays in traditional screening approaches fails to capture the complex pathophysiology of diseases, further limiting the effective investigation of the druggable genome [5] [3].

Table 1: Quantitative Analysis of the Druggable Genome Coverage Gap

| Metric | Current Coverage | Total Potential | Coverage Gap |
|---|---|---|---|
| Protein-coding genes targeted by annotated compounds | 1,000-2,000 [3] | ~20,000+ | 90-95% |
| Genes targeted by approved or investigational drugs | 2,553 [17] | ~20,000+ | ~87% |
| Genes targeted by activator drugs | 592 [17] | Unknown | Significant imbalance |
| Genes targeted by inhibitor drugs | 1,937 [17] | Unknown | Less severe gap |
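The percentages in the table and in the preceding paragraph follow from simple arithmetic, reproduced below as a check. The only assumption is that 20,000 is used as the denominator, the lower bound of the "20,000+" figure, so the computed gaps are slight underestimates.

```python
GENOME_SIZE = 20_000  # assumed lower bound for the "~20,000+" genes quoted above
DRUG_TARGET_GENES = 2_553


def coverage_gap(covered, total=GENOME_SIZE):
    """Percentage of genes NOT covered."""
    return 100.0 * (1 - covered / total)


gaps = {
    "annotated compounds (1,000 genes)": round(coverage_gap(1_000), 1),
    "annotated compounds (2,000 genes)": round(coverage_gap(2_000), 1),
    "approved or investigational drugs": round(coverage_gap(DRUG_TARGET_GENES), 1),
}

# Share of drug-targeted genes addressed by each direction of effect
inhibitor_share = round(100 * 1_937 / DRUG_TARGET_GENES, 1)
activator_share = round(100 * 592 / DRUG_TARGET_GENES, 1)

for label, gap in gaps.items():
    print(f"{label}: {gap}% uncovered")
print(f"inhibitors: {inhibitor_share}%, activators: {activator_share}%")
```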

Experimental Protocols for Comprehensive Target Identification

Protocol: Druggable Genome-Wide Mendelian Randomization (MR)

Purpose: To identify and prioritize causal disease genes with therapeutic potential using genetic evidence [18] [19] [20].

Methodology Details:

- Druggable Gene Identification: Curate druggable genes from sources like the Drug-Gene Interaction Database (DGIdb) and published literature, yielding approximately 4,463-5,583 potential targets [18] [20].

- Instrumental Variable (IV) Selection:

- Obtain blood cis-eQTL (expression quantitative trait loci) data from consortia such as eQTLGen (31,684 individuals, 19,250 transcripts) [18] [20].

- Select genetic variants within ±1 Mb of gene coding sequences that are significantly associated with gene expression (P < 5 × 10⁻⁸).

- Clump variants to ensure independence (linkage disequilibrium r² < 0.01, window size = 10 Mb).

- Calculate F-statistics to exclude weak instruments (F < 10 indicates potential bias) [18].

- MR Analysis:

- Implement inverse-variance weighted (IVW) method as primary analysis for multiple IVs.

- Use Wald ratio method for single IV scenarios.

- Perform sensitivity analyses including MR-Egger, weighted median, and weighted mode to assess pleiotropy.

- Apply Bonferroni correction for multiple testing (e.g., P < 2.94 × 10⁻⁶ for 16,987 genes) [20].

- Validation:

- Conduct Bayesian colocalization analysis to assess shared causal variants between gene expression and disease (posterior probability for H4 > 80% considered significant) [18] [20].

- Perform Steiger filtering to ensure correct causal direction.

- Implement Summary-data-based MR (SMR) with HEIDI test to distinguish pleiotropy from linkage [18].

- Safety Profiling:
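Returning to the instrument selection and MR analysis steps above, the core calculations can be sketched numerically. This is a simplified illustration rather than the TwoSampleMR implementation: the F-statistic is approximated per variant as (beta/SE)², the Wald ratio standard error uses a first-order approximation, and the IVW estimate is the fixed-effect form. All summary statistics in the example are invented.

```python
import math


def f_statistic(beta_exp, se_exp):
    """Per-variant instrument strength, approximated as (beta / SE)^2."""
    return (beta_exp / se_exp) ** 2


def wald_ratio(beta_exp, beta_out, se_out):
    """Single-instrument estimate with a first-order standard error."""
    return beta_out / beta_exp, se_out / abs(beta_exp)


def ivw(instruments):
    """Fixed-effect inverse-variance weighted estimate.

    instruments: list of (beta_exp, se_exp, beta_out, se_out) tuples.
    """
    num = sum(bx * by / sy ** 2 for bx, _, by, sy in instruments)
    den = sum(bx ** 2 / sy ** 2 for bx, _, _, sy in instruments)
    return num / den, math.sqrt(1 / den)


def mr_estimate(instruments, min_f=10.0):
    """Drop weak instruments (F < 10), then use Wald ratio or IVW as appropriate."""
    strong = [iv for iv in instruments if f_statistic(iv[0], iv[1]) >= min_f]
    if not strong:
        return None
    if len(strong) == 1:
        bx, _, by, sy = strong[0]
        return wald_ratio(bx, by, sy)
    return ivw(strong)


# Invented summary statistics: (beta_exposure, se_exposure, beta_outcome, se_outcome)
instruments = [
    (0.20, 0.02, 0.10, 0.05),  # F = 100
    (0.10, 0.02, 0.05, 0.05),  # F = 25
    (0.01, 0.02, 0.30, 0.05),  # F = 0.25, excluded as a weak instrument
]
estimate, std_err = mr_estimate(instruments)

# Bonferroni threshold for 16,987 tested genes, matching the value quoted above
bonferroni = 0.05 / 16_987
print(f"IVW estimate = {estimate:.3f} (SE {std_err:.3f}); threshold = {bonferroni:.2e}")
```

Sensitivity analyses such as MR-Egger or the weighted median, as well as colocalization, are outside the scope of this sketch.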

Protocol: AI-Enhanced Direction of Effect (DOE) Prediction

Purpose: To predict whether therapeutic benefit requires activation or inhibition of identified targets, addressing the activator drug gap [17].

Methodology Details:

- Feature Engineering:

- Tabular Features: Compile 41 gene-level characteristics including LOEUF (loss-of-function observed/expected upper bound fraction), haploinsufficiency predictions, mode of inheritance associations, protein localization, and functional class [17].

- Embedding Features: Generate 256-dimensional GenePT embeddings from NCBI gene summaries and 128-dimensional ProtT5 embeddings from amino acid sequences to capture functional context [17].

- Model Training:

- Train multi-class classifiers to predict DOE-specific druggability (activator, inhibitor, other mechanisms) for 19,450 protein-coding genes.

- Implement calibration to ensure predicted probabilities match observed frequencies.

- Validate using known drug-target pairs from ChEMBL, clinical trial databases, and pharmaceutical pipelines [17].

- Application:

- Apply classification thresholds chosen to maximize the F1 score (activator: 0.18, inhibitor: 0.30) for candidate selection.

- Integrate allelic series data across allele frequency spectrum (common to ultrarare variants) to infer dose-response relationships [17].
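The thresholding step in the Application stage can be illustrated as follows. This is a sketch under assumptions: it presumes the classifier outputs calibrated per-class probabilities, that a gene is nominated for a mechanism when its probability meets or exceeds the threshold, and that a gene may be nominated for both mechanisms. The gene names and probabilities are placeholders.

```python
# Thresholds quoted in the protocol above; the >= comparison is an assumption.
THRESHOLDS = {"activator": 0.18, "inhibitor": 0.30}


def candidate_mechanisms(probabilities):
    """Return the mechanisms whose predicted probability clears its threshold."""
    return sorted(
        mechanism
        for mechanism, threshold in THRESHOLDS.items()
        if probabilities.get(mechanism, 0.0) >= threshold
    )


# Placeholder predictions for four hypothetical genes
predictions = {
    "GENE_A": {"activator": 0.25, "inhibitor": 0.10, "other": 0.65},
    "GENE_B": {"activator": 0.05, "inhibitor": 0.45, "other": 0.50},
    "GENE_C": {"activator": 0.20, "inhibitor": 0.35, "other": 0.45},
    "GENE_D": {"activator": 0.02, "inhibitor": 0.08, "other": 0.90},
}
calls = {gene: candidate_mechanisms(p) for gene, p in predictions.items()}
print(calls)
```

The lower activator threshold reflects the class imbalance noted earlier: activator targets are rarer, so a lower cut-off is needed to recover them at a useful rate.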

Protocol: Computationally Enriched Library Design for Phenotypic Screening

Purpose: To create focused chemical libraries tailored to disease-specific molecular networks [5].

Methodology Details:

- Target Selection:

- Identify overexpressed genes in disease-relevant tissues (e.g., glioblastoma multiforme tumors from TCGA: p < 0.001, FDR < 0.01, log₂FC > 1) [5].

- Integrate somatic mutation data to identify dysregulated pathways.

- Map implicated genes onto protein-protein interaction networks to construct disease-specific subnetworks.

- Binding Site Identification:

- Classify druggable binding sites on protein structures from Protein Data Bank as catalytic sites (ENZ), protein-protein interaction interfaces (PPI), or allosteric sites (OTH) [5].

- Virtual Screening:

- Dock in-house compound libraries (~9,000 molecules) to multiple druggable binding sites simultaneously.

- Use knowledge-based scoring functions (e.g., SVR-KB) to predict binding affinities.

- Prioritize compounds predicted to engage multiple targets within the disease network [5].

- Experimental Validation:

- Screen selected compounds in disease-relevant models (e.g., patient-derived spheroids).

- Include counter-screens against normal primary cells (e.g., CD34+ progenitors, astrocytes) to assess selectivity [5].
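The target selection criteria in the first step above (p < 0.001, FDR < 0.01, log₂FC > 1) translate directly into a filter over differential expression results. The sketch below assumes the statistics have already been computed by an upstream pipeline; the `GeneStat` layout and the gene names are hypothetical.

```python
from typing import NamedTuple


class GeneStat(NamedTuple):
    """Hypothetical per-gene differential expression summary."""
    gene: str
    p_value: float
    fdr: float
    log2_fc: float


def is_overexpressed(g, p_max=0.001, fdr_max=0.01, log2_fc_min=1.0):
    """Apply the three cut-offs quoted in the protocol; all must be satisfied."""
    return g.p_value < p_max and g.fdr < fdr_max and g.log2_fc > log2_fc_min


stats = [
    GeneStat("GENE_1", 1e-6, 1e-4, 2.3),
    GeneStat("GENE_2", 5e-4, 0.02, 1.8),  # fails the FDR cut-off
    GeneStat("GENE_3", 1e-8, 1e-6, 0.4),  # fails the fold-change cut-off
    GeneStat("GENE_4", 2e-5, 5e-3, 1.1),
]
selected = [g.gene for g in stats if is_overexpressed(g)]
print(selected)
```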

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Expanded Druggable Genome Research

| Resource Category | Specific Examples | Key Applications | Coverage Capabilities |
|---|---|---|---|
| Druggable Genome Databases | DGIdb [20], Finan et al. list [18] | Therapeutic target identification | 4,463-5,583 druggable genes |
| Genetic Datasets | eQTLGen Consortium (blood cis-eQTLs) [18] [20], UK Biobank Proteomics (pQTLs) [20], OneK1K (sc-eQTLs) [18] | Causal gene inference | 31,684 individuals, 19,250 transcripts [18] |
| Disease GWAS Resources | FinnGen [18] [20], other large-scale biobanks | Genetic association data | 484,589 individuals for POAG [18] |
| Compound Libraries | UF Scripps Drug Discovery Library [21], specialized chemogenomic collections [13] | Phenotypic and target-based screening | 665,000+ unique compounds [21] |
| Computational Tools | TwoSampleMR R package [18], molecular docking platforms (CB-Dock2) [18], Neo4j graph databases [13] | Data integration, MR analysis, virtual screening | Enables multi-omic integration |

Discussion and Future Perspectives

The integration of genetic evidence with computational and experimental approaches provides a powerful framework for expanding the effective coverage of the druggable genome. Mendelian randomization serves as a robust method for prioritizing causal genes, with studies successfully identifying novel therapeutic targets for conditions including primary open-angle glaucoma (YWHAG, GFPT1) [18], osteoporosis (TAS1R3, TMX2, SREBF1) [19], and low back pain (P2RY13) [20]. The addition of single-cell eQTL data further enables cell-type-specific target identification, as demonstrated by the discovery of GFPT1's paradoxical effect in CD4+ memory T cells [18].

Future efforts should focus on developing more sophisticated multi-omic integration platforms that combine genetic, transcriptomic, proteomic, and chemical data. The expanding availability of single-cell sequencing technologies and protein-protein interaction maps will further enhance our ability to construct comprehensive disease networks for targeted library design [5] [13]. Additionally, the application of advanced machine learning methods, including gene and protein embeddings, shows promising results for predicting direction of effect and expanding the repertoire of activator targets [17].

By implementing these complementary protocols—druggable genome MR, DOE prediction, and computationally enriched library design—research teams can systematically address the critical limitation of narrow druggable genome coverage in phenotypic screening. This integrated approach enables more comprehensive exploration of therapeutic possibilities, ultimately increasing the likelihood of discovering first-in-class therapies for complex diseases.

The resurgence of phenotypic screening in drug discovery has created an urgent need for more intelligent chemical library design. Chemogenomic libraries have emerged as powerful tools that bridge the gap between target-based and phenotypic approaches by providing well-annotated, target-focused compound collections. These libraries consist of small molecules with defined pharmacological activities against specific protein targets, enabling researchers to deconvolute complex phenotypic readouts and identify mechanisms of action [1]. Unlike traditional diversity libraries, chemogenomic libraries are curated to cover a significant portion of the druggable genome, allowing for systematic exploration of biological pathways and networks [22].

The fundamental challenge in chemogenomic library design lies in balancing three competing demands: achieving sufficient chemical diversity to explore broad biological space, maintaining drug-like properties to ensure clinical translatability, and incorporating biological relevance for specific disease contexts. This application note details structured strategies and practical protocols for designing chemogenomic libraries that optimize these parameters, with a specific focus on applications in high-throughput phenotypic screening for oncology and other complex diseases. We present quantitative frameworks for library optimization, detailed experimental protocols for validation, and visual workflows to guide implementation.

Strategic Frameworks for Chemogenomic Library Design

Quantitative Approaches to Library Optimization

Designing a targeted screening library of bioactive small molecules requires careful analytic procedures adjusted for multiple parameters. Effective libraries must balance comprehensive target coverage with practical screening constraints while maintaining chemical and biological relevance [23]. The table below summarizes key design parameters and their quantitative optimization targets based on published successful implementations.

Table 1: Key Parameters for Chemogenomic Library Design and Optimization

| Design Parameter | Optimization Target | Implementation Example |
|---|---|---|
| Library Size | 1,200-5,000 compounds for minimal screening [23] [1] | 1,211 compounds targeting 1,386 anticancer proteins [23] |
| Target Coverage | 1,000+ proteins from druggable genome [1] [22] | 1,320 anticancer targets covered by 789 compounds [23] |
| Chemical Diversity | High scaffold diversity (e.g., 57k Murcko scaffolds for 86k compounds) [24] | Murcko Frameworks and scaffold analysis [24] |
| Cellular Activity | Prioritization of compounds with demonstrated cellular activity [23] | Inclusion of FDA-approved drugs and clinical candidates [1] |
| Target Selectivity | Balanced selectivity and polypharmacology profiles [5] | Selective polypharmacology for complex diseases [5] |

Integration of Disease-Specific Context

A particularly powerful approach involves tailoring libraries to specific disease contexts through systematic analysis of genomic and proteomic data. For glioblastoma multiforme (GBM), researchers have demonstrated how tumor genomic profiles can drive library enrichment [5]. This process involves:

- Target Identification: Analysis of differential gene expression and somatic mutations from patient data (e.g., TCGA) to identify disease-relevant targets [5].

- Network Mapping: Construction of disease-specific subnetworks using protein-protein interaction data to identify key nodal targets [5].

- Druggability Assessment: Identification of druggable binding sites (catalytic sites, protein-protein interfaces, allosteric sites) on target proteins [5].

- Virtual Screening: Computational docking of compounds against multiple disease-relevant targets to identify selective polypharmacology agents [5].

This strategy enables the creation of focused libraries that target the specific pathogenic pathways operative in a given disease context, moving beyond one-target-one-drug paradigms to address disease complexity [5].
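The final virtual screening step, picking compounds predicted to engage several disease-relevant targets, can be sketched as a ranking over a docking score matrix. The assumptions are labeled in the code: scores are treated as "higher is better" predicted affinities, the cut-off of 6.5 and the minimum of two targets are arbitrary illustrative values, and compound and target names are placeholders.

```python
def rank_multi_target(scores, cutoff, min_targets=2):
    """Rank compounds by the number of targets they are predicted to bind.

    scores: {compound: {target: predicted_score}}, where a higher score is
    assumed to mean stronger predicted binding.
    """
    ranked = []
    for compound, per_target in scores.items():
        hits = sorted(t for t, s in per_target.items() if s >= cutoff)
        if len(hits) >= min_targets:
            ranked.append((compound, hits))
    # Most targets first; ties broken alphabetically for reproducibility
    ranked.sort(key=lambda item: (-len(item[1]), item[0]))
    return ranked


# Placeholder docking scores against three hypothetical network targets
docking = {
    "CPD-A": {"T1": 7.2, "T2": 6.8, "T3": 4.1},
    "CPD-B": {"T1": 5.0, "T2": 4.9, "T3": 4.5},
    "CPD-C": {"T1": 7.5, "T2": 7.1, "T3": 6.9},
    "CPD-D": {"T1": 8.0, "T2": 3.0, "T3": 2.5},  # potent but single-target
}
shortlist = rank_multi_target(docking, cutoff=6.5)
print(shortlist)
```

Note that CPD-D has the best single score yet is excluded, which is the point of selecting for polypharmacology rather than for maximal potency against one target.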

Figure 1: Chemogenomic Library Design Workflow. This strategy integrates disease genomics with compound selection for phenotypic screening.

Experimental Protocols for Library Implementation and Validation

Protocol: Development of a Phenotypic Screening Platform Using Chemogenomic Libraries

This protocol describes the implementation of a multivariate phenotypic screening platform that leverages chemogenomic libraries for target deconvolution and mechanism of action studies. The methodology is adapted from established approaches in filarial nematode research [25] and cancer biology [23] [5], with specific adaptations for live-cell imaging and high-content analysis.

Materials and Reagents

Table 2: Essential Research Reagent Solutions for Phenotypic Screening

| Reagent Category | Specific Examples | Function/Purpose |
|---|---|---|
| Cell Lines | Patient-derived GBM spheroids, U2OS, HEK293T, MRC9 fibroblasts [5] [22] | Disease-relevant models for phenotypic screening |
| Viability Assays | alamarBlue, Hoechst 33342, MitoTracker Red [22] | Multiplexed cell health assessment |
| Cell Painting Reagents | Fluorescent dyes for nuclei, cytoplasm, mitochondria, ER, Golgi, nucleoli, cytoskeleton [1] | Morphological profiling |
| Chemogenomic Libraries | Tocriscreen 2.0 (1280 compounds), EUbOPEN collection (1000+ proteins) [25] [22] | Target-annotated compound sources |
| Image Analysis | CellProfiler, HighVia Extend protocol [1] [22] | Automated feature extraction |

Procedure

Library Preparation

- Select a core chemogenomic library of 1,200-5,000 compounds with known target annotations and diverse mechanisms of action [23] [25].

- Prepare compound plates in DMSO at recommended storage concentrations (typically 2-10 mM) using low-protein-binding plates to prevent adsorption [24].

- Include appropriate control compounds: known cytotoxic agents (e.g., staurosporine, camptothecin), pathway-specific modulators, and vehicle controls (DMSO) [22].

Cell Culture and Plating

- Culture disease-relevant cell lines (e.g., patient-derived glioblastoma stem cells for cancer research) in appropriate media [23] [5].

- For 3D models: Generate spheroids using low-attachment plates or hanging drop methods, allowing 3-5 days for mature spheroid formation [5].

- Plate cells in assay-ready plates: 2D monolayers at 50-70% confluence, 3D spheroids at optimal density for imaging.

Compound Treatment and Staining

- Treat cells with chemogenomic library compounds across appropriate concentration ranges (typically 1 nM-10 μM) using liquid handling systems.

- For multiplexed viability assessment at multiple time points (e.g., 12, 24, 48, 72h), employ the HighVia Extend protocol [22]:

- Stain with 50 nM Hoechst 33342 (nuclear marker)

- Add 100 nM MitoTracker Red (mitochondrial health)

- Include BioTracker 488 Green Microtubule Cytoskeleton Dye (cytoskeletal integrity)

- For fixed-cell morphological profiling (Cell Painting), follow established protocols that use six fluorescent dyes to label eight cellular components [1].
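The 1 nM-10 μM treatment range above spans four orders of magnitude, so doses are usually spaced logarithmically. The sketch below generates such a series; the half-log spacing (two points per decade) is an assumption chosen for illustration, since the protocol specifies only the range.

```python
import math


def log_dose_series(low_nm=1.0, high_nm=10_000.0, points_per_decade=2):
    """Return log-spaced concentrations (nM) from low_nm to high_nm inclusive."""
    decades = math.log10(high_nm / low_nm)
    n_steps = round(decades * points_per_decade)
    return [low_nm * 10 ** (i / points_per_decade) for i in range(n_steps + 1)]


doses = log_dose_series()
print([f"{d:.3g} nM" for d in doses])
```

With the default arguments this yields nine concentrations, which fits comfortably in one row or column of a 96- or 384-well plate alongside controls.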

Image Acquisition and Analysis

- Acquire images using high-content imaging systems (e.g., ImageXpress, Opera, or CellInsight).

- For live-cell imaging: Maintain environmental control (37°C, 5% CO₂) throughout time course experiments [22].

- Extract morphological features using CellProfiler or similar platforms, typically generating 1,000+ features per cell [1].

- Apply machine learning algorithms for cell classification into phenotypic categories (healthy, early/late apoptotic, necrotic) [22].

Data Integration and Target Deconvolution

- Integrate phenotypic profiles with target annotation data from chemogenomic library.

- Apply cluster analysis to group compounds with similar phenotypic profiles and target annotations.

- Use network pharmacology approaches to connect compound targets to pathways and biological processes [1].
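The integration steps above rest on a "guilt by association" idea: an unannotated hit whose phenotypic profile resembles that of an annotated reference compound may share its target. A minimal sketch of that comparison is shown below, using cosine similarity on short made-up feature vectors. Real profiles contain a thousand or more normalized features, and the choice of cosine similarity, the reference names, and the target labels are all assumptions for illustration.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length feature vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def nearest_annotated(query, references):
    """Find the reference compound whose profile is most similar to the query.

    references: {compound: (profile, annotated_target)}
    Returns (compound, annotated_target, similarity).
    """
    best = max(references, key=lambda c: cosine(query, references[c][0]))
    profile, target = references[best]
    return best, target, cosine(query, profile)


# Made-up three-feature profiles for two annotated reference compounds
references = {
    "REF-1": ([1.0, 0.0, 2.0], "tubulin"),
    "REF-2": ([0.0, 3.0, -1.0], "HDAC"),
}
match = nearest_annotated([2.0, 0.1, 3.9], references)
print(match)
```

A high similarity is a hypothesis, not proof of a shared target; it should be followed by the orthogonal target engagement experiments described elsewhere in this guide.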

Figure 2: Phenotypic Screening Workflow. This protocol enables comprehensive compound profiling and target identification.

Timing and Optimization Notes

- The complete protocol typically requires 2-3 weeks from library preparation to data analysis.

- Critical optimization points include: dye concentration titration to minimize phototoxicity in live-cell imaging [22], cell density optimization for each cell type, and compound concentration ranging to capture both efficacy and toxicity.

- For specific applications like angiogenesis assessment, include specialized assays such as endothelial tube formation [5].

Application Case Studies in Oncology Drug Discovery

Glioblastoma Patient-Dependent Vulnerability Profiling

In a pioneering study applying chemogenomic library screening to glioblastoma, researchers developed a minimal screening library of 1,211 compounds targeting 1,386 anticancer proteins [23]. This library was screened against glioma stem cells derived from multiple glioblastoma patients, revealing highly heterogeneous phenotypic responses across patients and molecular subtypes [23]. Key findings included:

- Identification of patient-specific vulnerabilities despite common diagnosis

- Compound-induced phenotypes varied significantly across patient-derived cells

- The approach successfully matched targeted therapies to patient-specific dependency networks

This case demonstrates how chemogenomic libraries can uncover personalized therapeutic opportunities that might be missed in conventional one-target-one-drug approaches.

Selective Polypharmacology for Complex Tumor Phenotypes

Another innovative approach combined tumor genomic data with virtual screening to create focused libraries for phenotypic screening [5]. Researchers:

- Identified 755 overexpressed and mutated genes in GBM from TCGA data

- Mapped these onto protein-protein interaction networks to identify 117 proteins with druggable binding sites

- Docked approximately 9,000 compounds against 316 druggable sites

- Selected 47 candidates for phenotypic screening

This rational library enrichment strategy yielded compound IPR-2025, which demonstrated:

- Superior inhibition of patient-derived GBM spheroids compared to the standard-of-care agent temozolomide

- Antiangiogenic activity in endothelial tube formation assays

- Minimal toxicity to normal cells

- Engagement of multiple targets confirmed by thermal proteome profiling [5]

This case highlights how targeted library design can identify selective polypharmacology agents that address the complexity of cancer signaling networks.

Discussion and Future Perspectives

The strategic design of chemogenomic libraries represents a critical advancement in phenotypic drug discovery. By systematically balancing diversity, drug-likeness, and biological relevance, these libraries enable more efficient deconvolution of mechanisms of action while maintaining translational potential. The integration of disease genomics with chemoinformatic selection creates a powerful framework for addressing complex diseases like cancer, neurological disorders, and infectious diseases [1] [5] [25].

Future developments in this field will likely include more dynamic library designs that can be iteratively refined based on screening data, increased integration of artificial intelligence for compound selection and optimization, and expanded target coverage approaching the full druggable genome [15] [26]. The ongoing development of open-access initiatives like EUbOPEN and Target 2035 will further accelerate this field by providing well-annotated chemical tools for the research community [22].

As phenotypic screening continues to evolve, the strategic design of chemogenomic libraries will remain essential for translating complex phenotypic observations into actionable therapeutic strategies with clear mechanisms of action. The protocols and frameworks presented here provide a foundation for implementing these approaches in both academic and industrial drug discovery settings.

Implementation Strategies: Practical Approaches for Screening and Mechanism Deconvolution

The convergence of induced pluripotent stem (iPS) cell technology, CRISPR-Cas9 gene editing, and high-content imaging represents a transformative approach in modern phenotypic screening and drug discovery. Induced pluripotent stem cells (iPSCs), reprogrammed from somatic cells using Yamanaka factors (Oct4, Klf4, Sox2, and c-Myc), provide a virtually unlimited source of human cells that can be differentiated into any cell type [27]. When combined with the precision of CRISPR-Cas9 gene editing and the analytical power of high-content imaging and analysis, researchers can now conduct high-throughput phenotypic screens on physiologically relevant human cell models with genetically defined backgrounds [28] [29]. This integration enables the systematic functional annotation of genes in disease-relevant cell types and accelerates the identification of novel therapeutic targets and candidates, particularly for complex and incurable diseases like glioblastoma and neurodegenerative disorders [5] [29].

Applications in Phenotypic Screening and Drug Discovery

The integration of these technologies enables several key applications in high-throughput phenotypic screening, each contributing to different stages of the drug discovery pipeline.

Table 1: Key Applications of Integrated Technologies in Phenotypic Screening

| Application | Description | CRISPR Tool | Readout | Reference |
|---|---|---|---|---|
| Functional Genomics | Systematic identification of gene functions in disease-relevant cell types | CRISPRn, CRISPRi, CRISPRa | Survival, FACS, scRNA-seq, imaging | [29] |
| Disease Modeling | Generation of isogenic cell lines with specific disease-causing mutations | CRISPRn (HDR) | High-content imaging, functional assays | [30] [27] |
| Compound Screening | Testing drug efficacy and toxicity in physiologically relevant models | CRISPRi/a (modulators) | Multiparametric phenotypic profiling | [28] [31] |
| Target Identification | Uncovering novel therapeutic targets through genetic screening | CRISPRn/i (knockout/knockdown) | High-content imaging, transcriptomics | [5] [29] |
| Pathway Analysis | Elucidating signaling pathways and mechanisms of disease | CRISPRa (activation) | Phosphorylation, localization, morphology | [29] |

The global high-content screening market, valued at $3.1 billion in 2023 and projected to reach $5.1 billion by 2029, reflects the growing adoption of these integrated approaches [31]. Similarly, the high-throughput screening market is expected to grow from $26.12 billion in 2025 to $53.21 billion by 2032, driven by the need for faster drug discovery processes [6].

Research Reagent Solutions and Essential Materials

Table 2: Essential Research Reagents and Materials for Integrated Screening Platforms

| Category | Specific Product/Technology | Function | Example Use Cases |
|---|---|---|---|
| Stem Cell Culture | mTeSR Plus, StemFlex Medium | Maintain iPSCs in feeder-free conditions | Culturing iPSCs prior to differentiation [30] |
| Gene Editing | Alt-R S.p. HiFi Cas9 Nuclease V3, sgRNAs | Precision genome editing | Introducing disease-relevant mutations [30] |
| HDR Enhancers | ssODN templates, HDR enhancer (IDT) | Improve homology-directed repair efficiency | Introducing point mutations with high efficiency [30] |
| Cell Survival Enhancers | CloneR (STEMCELL Technologies), Revitacell | Improve single-cell survival after editing | Critical for clonal expansion after nucleofection [30] |
| Nucleofection System | Lonza Nucleofector System | Deliver CRISPR components to iPSCs | Transfection with RNP complexes [30] |
| High-Content Imagers | ImageXpress Micro Confocal, CellVoyager CQ1 | Automated acquisition of cellular images | High-throughput phenotypic screening [31] |
| Analysis Software | Harmony Software (PerkinElmer) | Analyze high-content imaging data | Multiparametric analysis of cell phenotypes [31] |
| 3D Culture | Nunclon Sphera Plates, Matrigel | Support 3D spheroid and organoid growth | Creating physiologically relevant models [31] |

Detailed Experimental Protocols

High-Efficiency CRISPR-Cas9 Gene Editing in iPSCs

This protocol introduces point mutations into human iPSCs through homology-directed repair (HDR). Reported editing efficiencies exceed 90% when p53 inhibition is combined with pro-survival molecules [30].

Materials:

- Human iPSCs maintained in StemFlex or mTeSR Plus medium on Matrigel

- Alt-R S.p. HiFi Cas9 Nuclease V3 (IDT #108105559)

- Target-specific sgRNA (IDT)

- Single-stranded oligonucleotide (ssODN) repair template

- pCXLE-hOCT3/4-shp53-F plasmid (Addgene #27077)

- CloneR (STEMCELL Technologies #05888)

- Revitacell (Gibco #A2644501)

- Accutase (VWR # AT104)

- Nucleofection system and appropriate kits

Procedure:

- Culture Preparation: Maintain iPSCs in StemFlex or mTeSR Plus medium on Matrigel-coated plates. Change to cloning media (StemFlex with 1% Revitacell and 10% CloneR) 1 hour before nucleofection.

- RNP Complex Formation: Combine 0.6 µM sgRNA with 0.85 µg/µL HiFi Cas9 nuclease and incubate at room temperature for 20-30 minutes.

- Cell Preparation: When iPSCs reach 80-90% confluency, dissociate with Accutase for 4-5 minutes.

- Nucleofection Mixture: Combine RNP complex with 0.5 µg pmaxGFP, 5 µM ssODN, and 50 ng/µL pCXLE-hOCT3/4-shp53-F plasmid.

- Nucleofection: Perform nucleofection according to manufacturer's instructions for iPSCs.

- Post-nucleofection Culture: Plate transfected cells in cloning media and transition to standard culture conditions after 24-48 hours.

- Clone Isolation and Validation: After 5-7 days, pick individual clones for expansion and validate editing through sequencing (e.g., ICE analysis) and functional assays.

Critical Steps:

- Include silent mutations in the repair template to disrupt the PAM site and prevent re-cutting [30]

- Use HDR enhancers and pro-survival additives throughout the process

- Confirm karyotypic stability through G-banding after editing [30]
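The first critical step, disrupting the PAM so that the edited allele is not cut again, can be sanity-checked in silico before ordering a repair template. The following is a minimal sketch, assuming SpCas9 with an NGG PAM directly 3' of the protospacer and sequences written 5'->3' on the same strand. The function name and the example sequences are hypothetical, and the check does not verify that the introduced change is silent at the protein level.

```python
def pam_is_disrupted(edited_locus: str, protospacer: str) -> bool:
    """Return True if no intact protospacer + NGG PAM remains in edited_locus.

    Assumptions (not from the source protocol): SpCas9, NGG PAM immediately
    3' of the protospacer, exact protospacer matches only. Mismatch-tolerant
    cutting and the reverse strand are not modeled.
    """
    locus = edited_locus.upper()
    guide = protospacer.upper()
    start = locus.find(guide)
    while start != -1:
        pam = locus[start + len(guide): start + len(guide) + 3]
        if len(pam) == 3 and pam[1:] == "GG":
            return False  # an intact target site would still be recognized
        start = locus.find(guide, start + 1)
    return True


# Hypothetical 20-nt protospacer and flanking sequence, for illustration only.
guide = "GACCTGAAGTTCATCTGCAC"
wild_type = "TT" + guide + "CGG" + "AAGC"   # intact NGG PAM
edited = "TT" + guide + "CGA" + "AAGC"      # PAM changed from CGG to CGA

wild_type_safe = pam_is_disrupted(wild_type, guide)
edited_safe = pam_is_disrupted(edited, guide)
```

Here `wild_type_safe` is `False` because the original site is still targetable, while `edited_safe` is `True`. A template that mutates the protospacer itself also passes, since the exact guide match disappears.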

High-Content Screening of CRISPR-Edited iPSC-Derived Models

This protocol enables high-throughput phenotypic screening of genetically defined iPSC-derived cell models using high-content imaging and analysis.

Materials:

- CRISPR-edited iPSCs with disease-relevant mutations

- Differentiation reagents specific for target cell type

- 384-well imaging-optimized microplates

- High-content imaging system (e.g., ImageXpress Micro Confocal or equivalent)

- Cell painting dyes (if performing Cell Painting)

- Fixation and permeabilization reagents (if endpoint assay)

- Phenotypic reference compounds (if screening chemical libraries)

- Harmony High-Content Analysis Software or equivalent

Procedure:

- Cell Differentiation: Differentiate CRISPR-edited iPSCs into target cell type (e.g., neurons, cardiomyocytes, hepatocytes) using established protocols.

- Assay Plate Preparation: Seed differentiated cells into 384-well plates at optimized density. Include appropriate controls (wild-type, diseased, and corrected isogenic lines).

- Compound Treatment: For chemical screens, add compounds from chemogenomic libraries using automated liquid handling systems. Include DMSO controls.

- Staining and Fixation: For Cell Painting assays, stain cells with fluorescent dyes targeting multiple cellular compartments. Alternatively, use specific antibodies for phenotypic markers of interest.

- Image Acquisition: Acquire images using high-content imaging system with appropriate magnification (20x or 40x) and channels. Automate using plate handling robotics.

- Image Analysis: Extract quantitative features using specialized software. For multiparametric analysis, include measurements of cell morphology, texture, intensity, and spatial relationships.

- Data Integration: Combine phenotypic data with genetic perturbation or compound information. Use machine learning approaches for pattern recognition and hit identification.

Critical Steps:

- Optimize cell density and differentiation efficiency before large-scale screening

- Include isogenic controls to account for genetic background effects

- Validate key hits through orthogonal assays and dose-response experiments
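The DMSO controls included during compound treatment are typically used to put every well on a common scale before hits are called. The source does not prescribe a normalization method; the sketch below uses one common choice, a robust z-score based on the median and median absolute deviation (MAD) of the DMSO wells. Well identifiers, readout values, and the threshold of 3 are illustrative assumptions.

```python
from statistics import median


def robust_z_scores(values: dict[str, float], dmso_wells: list[str]) -> dict[str, float]:
    """Score every well against the plate's DMSO controls using median and MAD."""
    controls = [values[w] for w in dmso_wells]
    center = median(controls)
    mad = median(abs(v - center) for v in controls)
    if mad == 0:
        raise ValueError("DMSO controls have zero spread; cannot scale")
    # 1.4826 rescales the MAD so it is comparable to a standard deviation
    # when the control values are approximately normally distributed.
    scale = 1.4826 * mad
    return {well: (v - center) / scale for well, v in values.items()}


def call_hits(z: dict[str, float], dmso_wells: list[str], threshold: float = 3.0) -> list[str]:
    """Return non-control wells whose absolute robust z-score reaches the threshold."""
    controls = set(dmso_wells)
    return sorted(w for w, s in z.items() if w not in controls and abs(s) >= threshold)


# Hypothetical single-feature readout for a handful of wells on one plate.
plate = {
    "A01": 100.0, "A02": 102.0, "A03": 98.0, "A04": 101.0,  # DMSO controls
    "B01": 99.5, "B02": 140.0, "B03": 60.0,                 # compound wells
}
dmso = ["A01", "A02", "A03", "A04"]

z = robust_z_scores(plate, dmso)
hits = call_hits(z, dmso)
```

In this toy plate, wells B02 and B03 are flagged while B01 stays within the control range. In a multiparametric screen the same scaling would be applied per feature and per plate before profiles are compared.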

Signaling Pathways and Experimental Workflows

[Figure: High-Content Screening Workflow Integration]

[Figure: CRISPR Editing Efficiency Enhancement Pathway]

Data Analysis and Integration

High-content screening generates complex multiparametric data requiring sophisticated analysis approaches. The integration of high-content imaging data with genetic and chemical perturbation information enables comprehensive phenotypic profiling [32]. Key considerations include:

- Multiparametric Analysis: Extract hundreds of morphological and intensity features from each cell to create detailed phenotypic profiles

- Machine Learning Approaches: Apply unsupervised and supervised learning methods to identify patterns and classify phenotypes

- Multiomics Integration: Combine high-content imaging data with transcriptomic, proteomic, and genomic information to build comprehensive models of cellular responses

- Visualization Techniques: Use dimensionality reduction (t-SNE, UMAP) and interactive visualization to explore complex datasets and generate hypotheses [33]
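To make the multiparametric analysis step concrete, the sketch below collapses per-cell measurements into one profile per well and compares two profiles. Median aggregation and cosine similarity are common choices rather than methods specified in the source, and the feature names and values are hypothetical. The inputs are assumed to be already normalized against controls, since raw features on different scales would otherwise dominate the similarity.

```python
from math import sqrt
from statistics import median


def well_profile(cells: list[dict[str, float]], features: list[str]) -> list[float]:
    """Collapse per-cell measurements into one per-well profile (median per feature)."""
    return [median(cell[f] for cell in cells) for f in features]


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two phenotypic profiles of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = sqrt(sum(x * x for x in a))
    norm_b = sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero profile")
    return dot / (norm_a * norm_b)


# Hypothetical, control-normalized features for three cells in each of two wells.
features = ["nucleus_area", "mito_intensity", "cell_eccentricity"]
well_a = [
    {"nucleus_area": 1.0, "mito_intensity": -2.0, "cell_eccentricity": 0.5},
    {"nucleus_area": 1.2, "mito_intensity": -1.8, "cell_eccentricity": 0.7},
    {"nucleus_area": 0.8, "mito_intensity": -2.2, "cell_eccentricity": 0.3},
]
well_b = [
    {"nucleus_area": -1.0, "mito_intensity": 2.0, "cell_eccentricity": -0.5},
    {"nucleus_area": -1.1, "mito_intensity": 2.1, "cell_eccentricity": -0.4},
    {"nucleus_area": -0.9, "mito_intensity": 1.9, "cell_eccentricity": -0.6},
]

profile_a = well_profile(well_a, features)
profile_b = well_profile(well_b, features)
similarity = cosine_similarity(profile_a, profile_b)
```

The two toy wells have opposite phenotypes, so their similarity is close to -1. Perturbations with a shared mechanism would be expected to give values near +1, which is the basis for clustering compounds or gene knockouts by profile.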

Advanced software platforms like Harmony (PerkinElmer) and ZEN (Zeiss) provide automated analysis workflows, while cloud-based storage solutions enable collaborative analysis of large datasets [31]. The application of artificial intelligence further enhances pattern recognition and predictive modeling in high-throughput screening [6].

Modern drug discovery is increasingly leveraging sophisticated computational pipelines to deconvolute complex biological interactions and cellular phenotypes. Two particularly powerful approaches, network pharmacology and morphological profiling, are transforming high-throughput phenotypic screening of chemogenomic libraries. Network pharmacology moves beyond the traditional "one-drug-one-target" paradigm to understand drug actions within the interconnected network of biological systems [34]. Meanwhile, advanced morphological profiling technologies, particularly when enhanced by fractal analysis and artificial intelligence (AI), can capture subtle, disease-relevant phenotypic changes that are otherwise obscured in standard assays [35]. When integrated, these approaches provide a comprehensive framework for predicting compound bioactivity, elucidating mechanisms of action (MoA), and accelerating the identification of novel therapeutic candidates [10]. This Application Note provides detailed protocols for implementing these computational pipelines within chemogenomic library research.

Network Pharmacology Pipeline

Conceptual Framework and Workflow

Network pharmacology represents a paradigm shift from targeted drug discovery to a holistic, systems-level approach. It is founded on the principle that complex diseases arise from perturbations in biological networks rather than single targets, and that therapeutic interventions act through multi-target mechanisms, especially multi-component natural products such as those used in Traditional Chinese Medicine (TCM) [34] [36]. The core workflow involves constructing and analyzing complex networks that integrate chemical information, multi-omics data (genomics, transcriptomics, proteomics, metabolomics), and clinical efficacy evidence to elucidate the "multi-component-multi-target-multi-pathway" mode of action [36].

Table 1: Key Data Types and Resources for Network Pharmacology

| Data Category | Specific Data Types | Representative Resources/Databases | Application in Pipeline |
|---|---|---|---|
| Chemical Information | Compound structures, bioactivity, ADMET properties | ZINC, ChEMBL, PubChem | Identify active compounds, predict target interactions |
| Omics Data | Genomics, transcriptomics, proteomics, metabolomics | GEO, TCGA, Human Protein Atlas | Identify disease-associated genes/proteins |
| Network & Pathway | Protein-protein interactions, signaling pathways | STRING, KEGG, Reactome | Construct biological networks |
| Knowledge Bases | Drug-target interactions, disease-gene associations | DrugBank, DisGeNET, OMIM | Contextualize findings and validate predictions |

Protocol: AI-Driven Multi-Scale Network Analysis

Purpose: To systematically identify therapeutic mechanisms of multi-component treatments from molecular to patient levels.

Materials & Computational Tools:

- Data Resources: Compound-target databases (e.g., ChEMBL, BindingDB), protein-protein interaction networks (e.g., STRING), pathway databases (e.g., KEGG, Reactome).

- Software/Packages: R/Python environments with specialized libraries (e.g., clusterProfiler for gene ontology analysis [37]), Cytoscape for network visualization, and deep learning frameworks such as PyTorch or TensorFlow.

- AI Models: Graph Neural Networks (GNNs), Graph Convolutional Networks (GCNs), and natural language processing (NLP) models for literature mining [36].

Experimental Procedure:

Data Collection and Curation

- Compile lists of active compounds and their chemical descriptors from the chemogenomic library.

- Retrieve known and predicted protein targets for each compound using similarity search, docking, or AI-based prediction tools.

- Gather disease-relevant multi-omics data (e.g., transcriptomic profiles of diseased vs. healthy tissues) from public repositories (GEO, TCGA) or in-house studies.

Network Construction and Target Identification

- Construct a compound-target network by linking compounds to their respective protein targets.

- Build a disease-specific network by integrating:

- Differentially expressed genes/proteins from omics data.

- Protein-protein interactions (PPI) from reference databases.

- Key signaling pathways implicated in the disease pathology.

- Overlay the compound-target network onto the disease network to identify key network nodes (proteins) and edges (interactions) modulated by the compounds.
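The overlay step above can be sketched with plain sets and dictionaries. This is a minimal illustration, not the pipeline's actual implementation: all compound and protein identifiers are placeholders, and "modulated" is simplified to mean either a direct target that is a disease node or a disease node one PPI step away from a target.

```python
from collections import defaultdict


def build_adjacency(ppi_edges: set[tuple[str, str]]) -> dict[str, set[str]]:
    """Turn an undirected edge list into a neighbor lookup."""
    adjacency: dict[str, set[str]] = defaultdict(set)
    for a, b in ppi_edges:
        adjacency[a].add(b)
        adjacency[b].add(a)
    return adjacency


def overlay(
    compound_targets: dict[str, set[str]],
    disease_genes: set[str],
    ppi_edges: set[tuple[str, str]],
) -> dict[str, dict[str, set[str]]]:
    """For each compound, list disease nodes hit directly or via one PPI step."""
    adjacency = build_adjacency(ppi_edges)
    result = {}
    for compound, targets in compound_targets.items():
        direct = targets & disease_genes
        neighbors: set[str] = set()
        for target in targets:
            neighbors |= adjacency.get(target, set()) & disease_genes
        result[compound] = {"direct": direct, "via_ppi": neighbors - direct}
    return result


# Toy data with placeholder identifiers (not curated interactions).
compound_targets = {"cmpd_A": {"P1", "P2"}, "cmpd_B": {"P5"}}
disease_genes = {"P2", "P3", "P4"}
ppi_edges = {("P1", "P3"), ("P2", "P4"), ("P5", "P6")}

overlap = overlay(compound_targets, disease_genes, ppi_edges)
```

In this toy network, cmpd_A hits the disease node P2 directly and reaches P3 and P4 through interactions, whereas cmpd_B does not touch the disease network at all. Ranking compounds by the size of these sets gives a first, crude prioritization before the AI-enhanced analysis.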

AI-Enhanced Analysis and Validation

- Cluster Analysis: Apply clustering algorithms (e.g., hierarchical clustering, or K-means with the gap statistic to select the optimal number of clusters [37]) to group genes or compounds with similar patterns. Perform Gene Ontology (GO) and pathway enrichment analysis on each cluster to identify biologically relevant modules.

- Model Prediction: Utilize GNNs to learn from the heterogeneous biological network and predict novel drug-target-disease associations. Employ explainable AI (XAI) techniques like SHAP to interpret model predictions and identify critical features [36].

- Experimental Validation: Prioritize predicted targets and pathways for experimental validation using techniques such as:

- In vitro binding assays (e.g., SPR)

- Functional cellular assays (e.g., reporter gene assays, knock-down/knock-out experiments)

- Analysis of patient-derived samples or relevant animal models

Figure 1: AI-Driven Network Pharmacology Workflow. This pipeline integrates diverse data types to predict multi-scale mechanisms of action.
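The enrichment analysis performed on each cluster is usually an over-representation test: given a cluster of genes, how surprising is its overlap with a pathway or GO term relative to the background? The sketch below computes the one-sided hypergeometric p-value with the standard library only. It illustrates the statistic and is not a reimplementation of clusterProfiler; the gene identifiers are placeholders.

```python
from math import comb


def enrichment_p_value(cluster: set[str], pathway: set[str], background: set[str]) -> float:
    """One-sided hypergeometric test: P(overlap >= observed) under random sampling.

    Genes outside the background are ignored in both the cluster and the pathway.
    """
    n_background = len(background)
    n_pathway = len(pathway & background)
    n_cluster = len(cluster & background)
    observed = len(cluster & pathway & background)
    total = comb(n_background, n_cluster)
    tail = sum(
        comb(n_pathway, i) * comb(n_background - n_pathway, n_cluster - i)
        for i in range(observed, min(n_pathway, n_cluster) + 1)
    )
    return tail / total


# Placeholder gene identifiers: 20 background genes, a 5-gene pathway,
# and a 4-gene cluster that shares 3 genes with the pathway.
background = {f"g{i}" for i in range(20)}
pathway = {"g0", "g1", "g2", "g3", "g4"}
cluster = {"g0", "g1", "g2", "g10"}

p_value = enrichment_p_value(cluster, pathway, background)
```

For this toy case the p-value is 155/4845, roughly 0.032. Because many terms are tested per cluster, p-values from this test should be corrected for multiple testing before any module is called enriched.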

Morphological Profiling Pipeline

Conceptual Framework and Advanced Readouts