Chemogenomic Libraries: A Guide to Target Discovery and Phenotypic Screening in Modern Drug Development

Abstract

This article provides a comprehensive overview of chemogenomic libraries, which are curated collections of small molecules with annotated biological activities used to systematically probe protein families and biological pathways. Aimed at researchers, scientists, and drug development professionals, it covers the foundational principles of chemogenomics, strategic design and assembly of these libraries, and their pivotal applications in phenotypic screening, target deconvolution, and drug repurposing. It further addresses key methodological challenges and limitations, explores advanced computational and machine learning approaches for validation, and discusses the future trajectory of chemogenomics in accelerating the discovery of novel therapeutics and drug targets.

What is a Chemogenomic Library? Defining the Core Concepts and Strategic Goals

Systematic Screening of Targeted Chemical Libraries

Systematic screening of targeted chemical libraries represents a cornerstone methodology in modern chemogenomics, enabling the parallel exploration of chemical and biological spaces to accelerate drug discovery. This whitepaper examines the core principles, experimental methodologies, and practical applications of targeted library screening within chemogenomic research. By integrating chemical genomics with high-throughput screening technologies, researchers can efficiently identify novel therapeutic agents and elucidate the functions of previously uncharacterized targets. We present detailed protocols for both forward and reverse chemogenomic approaches, quantitative analysis of library composition trends, and visualization of key workflow relationships. The strategic implementation of targeted screening libraries continues to transform early drug discovery, particularly for complex diseases requiring multi-target approaches, by providing a systematic framework for linking chemical structures to biological functions across entire gene families.

Chemogenomics constitutes an interdisciplinary research paradigm that systematically investigates the interactions between chemical compounds and biological target families, with the ultimate goal of identifying novel drugs and drug targets [1]. At the heart of this approach lies the chemogenomic library – a carefully curated collection of small molecules designed to target specific protein families such as G-protein-coupled receptors (GPCRs), kinases, nuclear receptors, proteases, and ion channels [1] [2]. These libraries operate on the fundamental principle that "similar receptors bind similar ligands," allowing researchers to extrapolate known ligand-target relationships to unexplored family members [3].

The strategic value of chemogenomic libraries stems from their ability to efficiently explore the ligand-target space, which encompasses all potential interactions between compounds in the library and their protein targets [4]. This systematic exploration enables parallel processing of multiple targets within gene families, significantly increasing the efficiency of lead identification compared to traditional single-target approaches [5] [3]. As pharmaceutical research has shifted from a reductionist "one target—one drug" vision to a more complex systems pharmacology perspective, chemogenomic libraries have emerged as essential tools for addressing the multi-factorial nature of complex diseases like cancer, neurological disorders, and diabetes [5].

The composition of these libraries varies significantly based on their intended application, ranging from focused libraries targeting specific protein families to diverse collections designed for broad phenotypic screening [6] [7]. What distinguishes chemogenomic libraries from general compound collections is their intentional design around target family principles and the annotation of compounds with known target information, creating a knowledge-rich resource for predictive drug discovery [4].

Core Principles and Strategic Approaches

Fundamental Concepts

Chemogenomics operates on several interconnected principles that guide library design and screening strategies. The similarity principle – that similar targets bind similar ligands – forms the theoretical foundation for knowledge transfer within target families [3]. This principle enables researchers to predict ligands for orphan receptors (those with no known ligands) based on their similarity to well-characterized family members [1]. The reverse is also true: compounds with structural similarities to known active molecules may interact with related targets, enabling the discovery of novel target relationships [3].

A key advantage of chemogenomic approaches is that they modulate protein function directly rather than altering gene expression, so phenotypic changes can be observed in real time and reversed when the compound is withdrawn [1]. This dynamic intervention provides insights into protein function that complement genetic knockout studies, particularly for essential genes where knockout would be lethal [1]. The approach also facilitates the identification of polypharmacology, in which a single compound interacts with multiple targets, an effect that has emerged as a valuable therapeutic strategy for complex diseases [5].

Forward vs. Reverse Chemogenomics

Chemogenomic screening strategies are broadly categorized into two complementary approaches, each with distinct experimental workflows and applications:

Forward Chemogenomics (also called classical chemogenomics) begins with the observation of a particular phenotype and aims to identify small molecules that induce or modify this phenotype [1]. The molecular basis of the desired phenotype is initially unknown, and the identified modulators serve as tools to discover the protein responsible for the phenotype [1]. For example, researchers might screen for compounds that arrest tumor growth without prior knowledge of the specific molecular target involved. The primary challenge in forward chemogenomics lies in designing phenotypic assays that facilitate the transition from screening to target identification [1].

Reverse Chemogenomics starts with a specific protein target and identifies small molecules that perturb its function in vitro, then characterizes the phenotypic effects induced by these modulators in cellular or whole-organism systems [1]. This approach validates the role of the target in biological responses and has been enhanced through parallel screening and lead optimization across multiple targets within the same family [1]. Reverse chemogenomics closely resembles traditional target-based drug discovery but leverages systematic profiling across target families to increase efficiency [1].

Table 1: Comparison of Forward and Reverse Chemogenomic Approaches

| Characteristic | Forward Chemogenomics | Reverse Chemogenomics |
|---|---|---|
| Starting Point | Phenotypic observation | Known protein target |
| Screening Focus | Identification of modulators that affect phenotype | Identification of ligands that bind to target |
| Primary Challenge | Target deconvolution | Phenotypic validation |
| Typical Applications | Discovery of novel mechanisms, pathway analysis | Target validation, selectivity profiling |
| Throughput Capacity | Generally lower due to complex assays | Generally higher with purified targets |

Composition and Design of Targeted Libraries

Library Diversity and Source Materials

Chemogenomic libraries incorporate compounds from diverse sources, each offering distinct advantages for drug discovery. Synthetic compounds represent the largest category, typically including known drugs, clinical candidates, and specialized chemical probes [6]. These are often supplemented with natural products and their derivatives, which provide exceptional structural diversity evolved through biological optimization and frequently demonstrate favorable absorption, distribution, metabolism, excretion, and toxicity (ADME/Tox) profiles [8] [7]. Many organizations also include fragment libraries composed of low molecular weight compounds (<300 Da) that efficiently probe chemical space and serve as efficient starting points for medicinal chemistry optimization [7].

The strategic composition of a chemogenomic library depends on its intended application. Focused libraries target specific protein families or therapeutic areas and often yield higher hit rates with cleaner structure-activity relationship data [7]. By contrast, diverse screening collections aim for broad coverage of chemical space and are particularly valuable for exploratory research and phenotypic screening where the molecular targets may be unknown [6] [7]. In practice, many research institutions maintain both types; for example, the Stanford HTS facility offers both diverse collections (~127,500 compounds) and targeted libraries for kinases, CNS targets, and covalent inhibitors [6].

Quality Filtering and Compound Selection

The effectiveness of a chemogenomic library depends heavily on rigorous filtering to ensure compound quality and appropriate physicochemical properties. Standard practice involves multiple filtering steps to eliminate problematic compounds and optimize library composition:

Removal of problematic functionalities: Compounds with functional groups associated with assay interference or promiscuous binding are eliminated using filters such as the Rapid Elimination of Swill (REOS) and Pan Assay Interference Compounds (PAINS) [8]. These include reactive groups like aldehydes, alkyl halides, Michael acceptors, and redox-active compounds that can generate false positives [8].

Physicochemical property filtering: Most libraries apply modified "Rule of Five" criteria to maintain drug-like properties, typically including a molecular weight between 100 and 500 Da, a calculated logP between -5 and 5, and limited numbers of hydrogen bond donors and acceptors [6]. However, these criteria may be adjusted for specific target classes, such as central nervous system targets, where blood-brain barrier penetration is desired [6].

Structural diversity analysis: Computational tools like Bayesian categorization and clustering algorithms ensure appropriate structural diversity and novelty relative to existing internal collections [6]. This step maximizes the exploration of chemical space while maintaining sufficient compound density around privileged scaffolds for structure-activity relationship studies.
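The physicochemical property filter described above amounts to a set of range checks on precomputed descriptors. The sketch below assumes the descriptors (molecular weight, cLogP, donor and acceptor counts) have already been calculated by a cheminformatics toolkit. The `Compound` record and `DEFAULT_RULES` dictionary are hypothetical, and the donor/acceptor caps of 5 and 10 are the classic Lipinski limits, assumed here rather than taken from [6].

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Compound:
    """Precomputed descriptors for one library member (hypothetical record layout)."""
    compound_id: str
    mol_weight: float  # Da
    clogp: float       # calculated logP
    h_donors: int
    h_acceptors: int


# MW and cLogP windows follow the text; donor/acceptor caps are assumed Lipinski limits.
DEFAULT_RULES = {"mw": (100.0, 500.0), "clogp": (-5.0, 5.0), "max_hbd": 5, "max_hba": 10}


def passes_property_filter(c: Compound, rules: dict = DEFAULT_RULES) -> bool:
    """True if every descriptor lies inside its (inclusive) window."""
    mw_lo, mw_hi = rules["mw"]
    logp_lo, logp_hi = rules["clogp"]
    return (
        mw_lo <= c.mol_weight <= mw_hi
        and logp_lo <= c.clogp <= logp_hi
        and c.h_donors <= rules["max_hbd"]
        and c.h_acceptors <= rules["max_hba"]
    )


def filter_library(compounds, rules: dict = DEFAULT_RULES) -> list:
    """Return the IDs of compounds that pass all property windows."""
    return [c.compound_id for c in compounds if passes_property_filter(c, rules)]


library = [
    Compound("CPD-1", 320.4, 2.1, 2, 5),
    Compound("CPD-2", 612.7, 4.8, 3, 9),  # fails: too heavy
    Compound("CPD-3", 250.3, 6.2, 1, 3),  # fails: too lipophilic
]
print(filter_library(library))  # ['CPD-1']
```

Because the windows live in a dictionary, a tighter rule set for CNS-focused collections can be passed as `rules` without changing the filter itself; the actual CNS thresholds would have to come from the library's own design criteria.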

Table 2: Representative Chemogenomic Libraries and Their Characteristics

| Library Name | Size | Focus/Target | Key Features | Applications |
|---|---|---|---|---|
| GSK Biologically Diverse Compound Set | Not specified | Diverse targets | Biological and chemical diversity | Broad phenotypic screening |
| Pfizer Chemogenomic Library | Not specified | Target-specific | Ion channels, GPCRs, kinases | Probe-based screening |
| Prestwick Chemical Library | 1,280+ | Approved drugs | FDA/EMA-approved compounds | Drug repurposing, safety profiling |
| LOPAC1280 | 1,280 | Pharmacologically active | Known bioactives | Assay validation |
| NCATS MIPE 3.0 | Not specified | Oncology | Kinase inhibitor dominated | Anticancer phenotypic screening |
| ChemDiv Kinase Library | 10,000 | Kinases | Mitotic & tyrosine kinase focused | Kinase inhibitor discovery |

Emerging Library Technologies

Recent advances in library technologies have expanded the scope and efficiency of chemogenomic screening. DNA-encoded chemical libraries (DELs) represent a transformative approach where each small molecule is covalently linked to a unique DNA barcode that records its synthetic history [7]. This technology enables the creation and screening of libraries containing billions of compounds through affinity selection followed by next-generation sequencing, dramatically reducing the resources required for ultra-high-throughput screening [7]. Several DEL-derived candidates have advanced to clinical trials, validating this approach for hit identification.
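After affinity selection, a DEL experiment reduces to counting sequencing reads per barcode. A common way to rank hits is to compare each barcode's frequency after selection with its frequency in the unselected (naive) library. The sketch below assumes barcodes have already been decoded from reads into strings; the pseudocount, the function name, and the toy barcodes are illustrative choices, not part of any specific DEL platform.

```python
from collections import Counter


def enrichment_scores(selected_reads, naive_reads, pseudocount=1.0):
    """Fold-enrichment of each barcode after selection relative to the naive library.

    Both arguments are iterables of decoded barcode strings, one per sequencing read.
    """
    sel, naive = Counter(selected_reads), Counter(naive_reads)
    sel_total, naive_total = sum(sel.values()), sum(naive.values())
    barcodes = set(sel) | set(naive)
    n = len(barcodes)
    scores = {}
    for bc in barcodes:
        # Pseudocounts keep barcodes that are absent from one sample finite
        f_sel = (sel[bc] + pseudocount) / (sel_total + pseudocount * n)
        f_naive = (naive[bc] + pseudocount) / (naive_total + pseudocount * n)
        scores[bc] = f_sel / f_naive
    return scores


naive = ["AAA"] * 10 + ["CCC"] * 10 + ["GGG"] * 10
selected = ["AAA"] * 18 + ["CCC"] + ["GGG"]
scores = enrichment_scores(selected, naive)
ranked = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
print(ranked[0][0])  # AAA
```

Real DEL analyses usually also account for sequencing depth, synthesis yield, and replicate selections; this ratio is only the simplest starting point.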

Fragment-based libraries have gained prominence due to their superior efficiency in exploring chemical space and higher hit rates (typically 3-10%) compared to conventional high-throughput screening [7]. The small size of fragments (<300 Da) makes them excellent starting points for medicinal chemistry optimization, often resulting in lead compounds with improved ligand efficiency and physicochemical properties [7]. Fragment screening typically requires biophysical methods such as surface plasmon resonance or protein crystallography to detect the weak binding affinities characteristic of fragment-target interactions.
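Ligand efficiency, mentioned above, is commonly defined as binding free energy per heavy (non-hydrogen) atom: LE = -ΔG / N_heavy ≈ 1.37 × pKd / N_heavy kcal/mol near room temperature. The sketch below applies that definition to illustrative, not measured, affinities to show why a weak fragment can be more atom-efficient than a potent but larger lead.

```python
import math

# 2.303 * R * T expressed in kcal/mol; about 1.36-1.37 near room temperature
KCAL_PER_LOG_UNIT = 1.37


def ligand_efficiency(kd_molar: float, heavy_atoms: int) -> float:
    """Binding free energy per heavy atom, in kcal/mol per non-hydrogen atom."""
    if kd_molar <= 0 or heavy_atoms <= 0:
        raise ValueError("Kd and heavy-atom count must be positive")
    p_kd = -math.log10(kd_molar)
    return KCAL_PER_LOG_UNIT * p_kd / heavy_atoms


# Illustrative values only: a 1 mM fragment with 12 heavy atoms vs. a 10 nM lead with 35
fragment_le = ligand_efficiency(1e-3, 12)
lead_le = ligand_efficiency(1e-8, 35)
print(f"fragment LE = {fragment_le:.2f}, lead LE = {lead_le:.2f}")  # fragment LE = 0.34, lead LE = 0.31
```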

Experimental Protocols and Methodologies

High-Throughput Screening Workflows

The systematic screening of targeted chemical libraries typically follows established high-throughput screening (HTS) protocols adapted for specific assay formats and readouts. A standard workflow encompasses several critical stages:

Library Preparation and Assay Optimization: Prior to screening, compound libraries are formatted in 384-well or 1536-well microplates, typically as 1-10 mM dimethyl sulfoxide (DMSO) solutions [6]. Assay development involves optimizing reagent concentrations, incubation times, and detection parameters using appropriate positive and negative controls. For cell-based assays, cell density, viability, and reporter system functionality must be established across the plate format to ensure robust signal-to-noise ratios and Z'-factors >0.5, indicating excellent assay quality [8].

Primary Screening Implementation: Most HTS campaigns screen each compound at a single concentration (typically 1-10 μM) to identify "hits" that modulate the target or phenotype beyond a predetermined threshold (usually 3 standard deviations from the mean) [8]. Alternatively, quantitative HTS (qHTS) screens compounds at multiple concentrations in the primary screen, providing immediate concentration-response data but requiring significantly more resources [8]. Screening throughput varies from 10,000 to 100,000+ compounds per day, depending on assay complexity and automation capabilities.

Hit Validation and Counter-Screening: Initial hits undergo confirmation screening to exclude false positives resulting from compound interference or assay artifacts. This includes re-testing in dose-response format, assessing compound purity and identity, and counter-screening against orthogonal assays [6]. Specifically, potential promiscuous inhibitors are evaluated using tools like the Scripps assay interference checker or Badapple promiscuity predictor [6].
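Two of the quality gates in this workflow reduce to short calculations: the Z'-factor, Z' = 1 - 3(σp + σn) / |μp - μn|, computed from positive- and negative-control wells, and the single-concentration hit call at a fixed number of standard deviations from the mean. The sketch below uses only the standard library; the well names and signal values are made up for illustration.

```python
from statistics import mean, stdev


def z_prime(pos_controls, neg_controls):
    """Z'-factor from control wells: 1 - 3*(sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    spread = 3 * (stdev(pos_controls) + stdev(neg_controls))
    window = abs(mean(pos_controls) - mean(neg_controls))
    return 1 - spread / window


def call_hits(signals, n_sd=3.0):
    """Return wells whose signal is more than n_sd sample SDs from the mean of all wells."""
    values = list(signals.values())
    mu, sd = mean(values), stdev(values)
    return sorted(well for well, v in signals.items() if abs(v - mu) > n_sd * sd)


pos = [10, 12, 11, 9, 13]      # e.g. full-inhibition control signal
neg = [100, 102, 98, 101, 99]  # e.g. DMSO-only control signal
print(round(z_prime(pos, neg), 2))  # 0.89

offsets = [0, 1, -1, 0, 2, -2, 0, 1, -1, 0, 0, 1, -1, 0, 2, -2, 0, 1, -1]
plate = {f"W{i:02d}": 100.0 + d for i, d in enumerate(offsets, start=1)}
plate["W20"] = 20.0  # strongly inhibited well
print(call_hits(plate))  # ['W20']
```

Because a strong hit also inflates the plate standard deviation, many groups compute the threshold from negative-control wells or use robust statistics (median and median absolute deviation) instead; that substitution only changes how `mu` and `sd` are computed.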

Phenotypic Screening Protocols

Phenotypic screening using chemogenomic libraries requires specialized protocols that differ from target-based approaches. The Cell Painting protocol represents a prominent example of high-content phenotypic screening that generates multivariate morphological profiles [5]. The standard workflow includes:

Cell culture and compound treatment: U2OS osteosarcoma cells or other relevant cell lines are plated in multiwell plates and perturbed with library compounds at appropriate concentrations, typically for 24-48 hours [5].

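Staining and fixation: Cells are stained with a panel of fluorescent dyes targeting multiple cellular compartments: MitoTracker (mitochondria), concanavalin A (endoplasmic reticulum), SYTO 14 (nucleoli and cytoplasmic RNA), Hoechst 33342 (nucleus), phalloidin (actin cytoskeleton), and wheat germ agglutinin (Golgi apparatus and plasma membrane) [5]. MitoTracker is applied to live cells before fixation; the remaining dyes are applied after fixation and permeabilization.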

Image acquisition and analysis: High-content imaging systems capture multiple fields per well, and automated image analysis software (e.g., CellProfiler) extracts morphological features including intensity, size, shape, texture, and granularity parameters for each cellular compartment [5]. Typically, 1,000+ morphological features are measured per cell, with subsequent data reduction to eliminate correlated parameters.

Profile comparison and clustering: Morphological profiles induced by test compounds are compared to reference compounds with known mechanisms using similarity metrics, enabling classification of novel compounds into functional pathways [5].
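The profile-comparison step can be illustrated with a nearest-neighbour lookup: each compound is summarized as a vector of already-normalized morphological features, and the query is assigned the annotation of the reference profile it correlates with most strongly. Pearson correlation is one common similarity metric and is assumed here; the reference labels and feature values are hypothetical.

```python
import math
from statistics import mean


def pearson(a, b):
    """Pearson correlation between two equal-length feature vectors."""
    ma, mb = mean(a), mean(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    norm = math.sqrt(sum((x - ma) ** 2 for x in a) * sum((y - mb) ** 2 for y in b))
    return cov / norm  # raises ZeroDivisionError for a constant profile


def nearest_reference(query, references):
    """Return (label, correlation) for the reference profile most correlated with query."""
    scored = {label: pearson(query, profile) for label, profile in references.items()}
    best = max(scored, key=scored.get)
    return best, scored[best]


# Hypothetical profiles, e.g. per-feature z-scores relative to DMSO wells
references = {
    "MoA-A": [1.8, -0.9, 0.4, 2.7],
    "MoA-B": [-1.0, 2.0, 0.0, -0.5],
}
query = [2.0, -1.0, 0.5, 3.0]
label, r = nearest_reference(query, references)
print(label)  # MoA-A
```

With real Cell Painting data the vectors would hold hundreds to thousands of features after the correlated-feature reduction described above, but the lookup logic is unchanged.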

Target Deconvolution Methods

For forward chemogenomic approaches, target identification represents a critical step following phenotypic screening. Common deconvolution methods include:

Affinity-based purification: Compound analogs equipped with photoaffinity tags or solid supports are used to capture interacting proteins from cell lysates, followed by mass spectrometry identification [1]. This approach directly identifies physical interactors but may capture both functional targets and non-specific binders.

Genomic and genetic approaches: CRISPR-based gene knockout or knockdown screens can identify genes whose modification abolishes compound activity [5]. Similarly, yeast chemogenomic profiling screens compound libraries against comprehensive deletion or overexpression strains to identify genetic modifiers of compound sensitivity [1].

Bioinformatics-based prediction: Computational methods leverage chemogenomic databases to predict targets based on structural similarity to known bioactive compounds or morphological similarity to compounds with characterized mechanisms [5] [4]. These in silico predictions provide testable hypotheses for experimental validation.
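Similarity-based target prediction, in its simplest form, ranks targets by how closely their known ligands resemble the query compound. The sketch below assumes fingerprints are already available as sets of "on" bit indices (any fingerprint method could produce them) and uses a nearest-neighbour rule with an arbitrary 0.4 Tanimoto cutoff; the target names and bit sets are hypothetical, and published methods typically use more elaborate statistics over whole ligand sets.

```python
def tanimoto(a: set, b: set) -> float:
    """Tanimoto similarity of two fingerprints given as sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def predict_targets(query_fp: set, annotated, min_sim: float = 0.4):
    """Rank targets by the best similarity between the query and any of their annotated ligands.

    annotated: iterable of (fingerprint_set, target_name) pairs.
    """
    best = {}
    for fp, target in annotated:
        s = tanimoto(query_fp, fp)
        if s >= min_sim and s > best.get(target, 0.0):
            best[target] = s
    return sorted(best.items(), key=lambda kv: kv[1], reverse=True)


annotated = [
    ({1, 2, 3, 4, 6}, "TARGET_KINASE"),
    ({1, 2, 3, 7, 8}, "TARGET_GPCR"),
    ({10, 11, 12}, "TARGET_PROTEASE"),
]
preds = predict_targets({1, 2, 3, 4, 5}, annotated)
print(preds)
```

The ranked list is a set of hypotheses, consistent with the text: each predicted target still needs experimental confirmation.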

Visualization of Chemogenomic Workflows

Experimental Strategy Selection

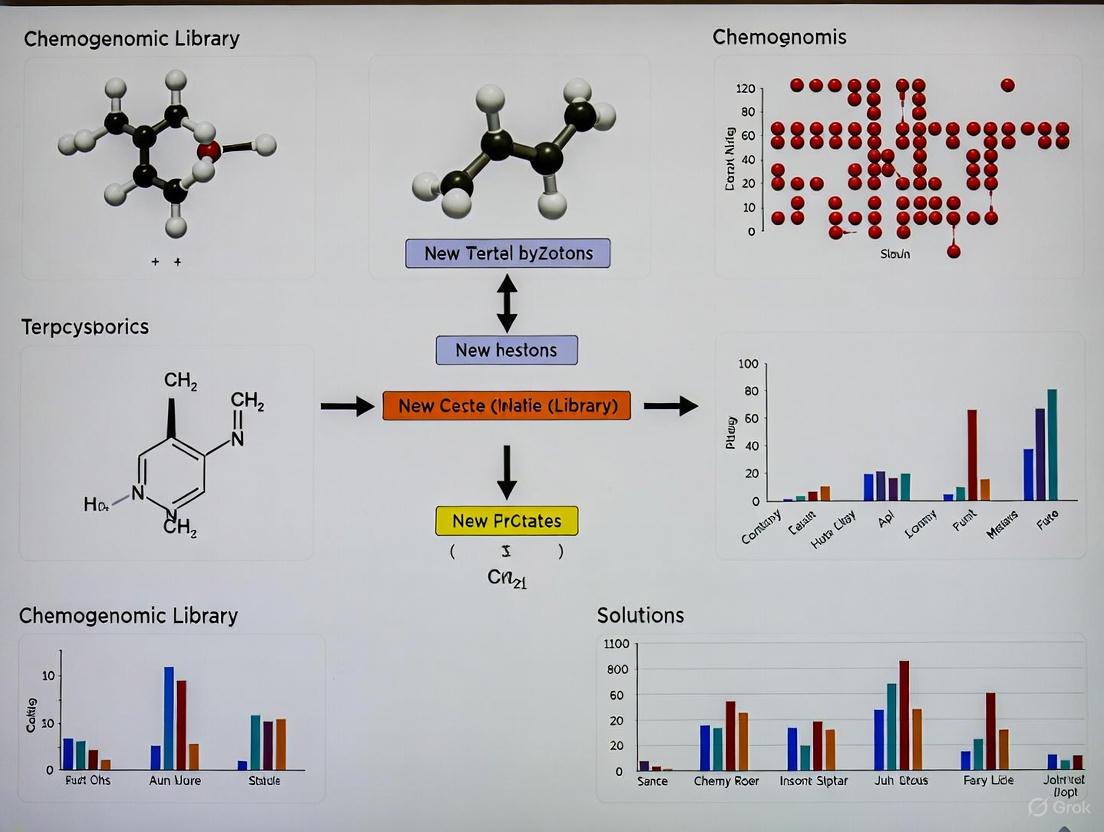

The following diagram illustrates the decision process for selecting appropriate chemogenomic screening strategies based on research objectives and available target information:

Diagram 1: Chemogenomic Screening Strategy Selection

Integrated Screening Workflow

This diagram outlines the complete integrated workflow for systematic screening of targeted chemical libraries, encompassing both experimental and computational components:

Diagram 2: Integrated Screening Workflow

The Scientist's Toolkit: Essential Research Reagents

Successful implementation of chemogenomic library screening requires specialized reagents and tools. The following table details essential components of the screening toolkit:

Table 3: Essential Research Reagents for Chemogenomic Screening

| Reagent/Tool Category | Specific Examples | Function/Purpose |
|---|---|---|
| Curated Compound Libraries | ChemDiv, SPECS, ChemBridge collections [6] | Source of chemical diversity for screening |
| Bioactive Reference Sets | LOPAC1280, NIH Clinical Collection, MicroSource Spectrum [6] | Assay validation and control compounds |
| Specialized Targeted Libraries | Kinase-focused (ChemDiv), CNS-penetrant (Enamine), Covalent inhibitors [6] | Targeting specific protein families or properties |
| Cell-Based Assay Reagents | Cell Painting dyes (MitoTracker, Hoechst, Phalloidin) [5] | Phenotypic profiling and high-content imaging |
| Protein Production Systems | Recombinant expression, Purification kits | Target protein production for biochemical assays |
| Automation & Liquid Handling | Robotic dispensers, Plate handlers | High-throughput screening implementation |
| Detection & Readout Systems | Plate readers, High-content imagers | Signal detection and data acquisition |
| Cheminformatics Software | Pipeline Pilot, OpenEye, MOE [8] | Compound filtering, library design, data analysis |
| Data Analysis Platforms | CellProfiler, KNIME, R/Bioconductor [5] | Image analysis, hit identification, pattern recognition |

Applications in Drug Discovery and Chemical Biology

Target Identification and Validation

Chemogenomic library screening has proven particularly valuable for identifying and validating novel therapeutic targets, especially for orphan receptors with no known ligands or biological functions [1] [3]. By screening focused libraries against multiple members of a target family, researchers can simultaneously identify ligands for characterized targets and orphan family members, accelerating the functional annotation of the genome [1]. For example, chemogenomic approaches have identified ligands for understudied GPCRs and kinases, revealing their roles in disease pathways and establishing their therapeutic potential [3].

The ability to profile compounds across multiple related targets also enables the intentional exploration of polypharmacology – where compounds are designed or selected to interact with multiple specific targets [5]. This approach has shown particular promise for complex diseases like cancer and neurological disorders, where multi-target therapies may offer superior efficacy compared to highly selective single-target agents [5]. The systematic mapping of compound-target interactions also helps identify off-target effects early in development, potentially reducing late-stage failures due to toxicity or lack of efficacy [2].

Mechanism of Action Elucidation

Chemogenomic libraries serve as powerful tools for elucidating mechanisms of action (MOA) for both new chemical entities and traditional medicines [1]. By comparing the phenotypic profiles or target interaction patterns of uncharacterized compounds to those with known mechanisms, researchers can generate testable hypotheses about MOA [5] [1]. This approach has been applied to traditional medicine systems like Ayurveda and Traditional Chinese Medicine, where target prediction programs have identified potential mechanisms underlying observed therapeutic effects [1].

In oncology, chemogenomic profiling has revealed patient-specific vulnerabilities and targeted therapeutic opportunities [9]. For example, a recent study screening a minimal library of 1,211 compounds targeting 1,386 anticancer proteins against glioblastoma patient cells identified highly heterogeneous phenotypic responses across patients and molecular subtypes, highlighting the potential for personalized treatment approaches [9]. Such applications demonstrate how chemogenomic libraries can bridge the gap between molecular target identification and patient-specific therapeutic strategies.

Pathway Analysis and Biological Discovery

Beyond direct drug discovery applications, chemogenomic libraries have contributed fundamental biological insights by revealing novel pathway components and functional relationships [1]. Forward chemogenomic screens in model organisms like yeast have identified genes involved in specific biological processes based on compound sensitivity profiles [1]. For instance, chemogenomic approaches helped identify the enzyme responsible for the final step in diphthamide biosynthesis after thirty years of unsuccessful conventional approaches [1].

The integration of chemogenomic screening data with other functional genomics datasets (transcriptomics, proteomics) creates multi-dimensional views of biological systems that enhance our understanding of pathway architecture and regulatory mechanisms [5] [9]. These integrated approaches are particularly powerful for mapping complex signaling networks and identifying nodes that may be susceptible to pharmacological intervention [9].

Systematic screening of targeted chemical libraries represents a sophisticated methodology that continues to evolve through advances in library design, screening technologies, and data analysis approaches. By intentionally exploring the intersection of chemical and biological spaces, chemogenomic strategies accelerate the identification of novel therapeutic agents and the functional annotation of biological targets. The integration of forward and reverse chemogenomic approaches provides a powerful framework for linking phenotypic observations to molecular mechanisms, addressing a critical challenge in modern drug discovery.

As chemogenomic methodologies mature, several trends are shaping their future development: the increasing application of artificial intelligence and machine learning for library design and hit prioritization; the growing use of DNA-encoded libraries that dramatically expand accessible chemical space; and the tighter integration of multi-omics data to contextualize screening results and identify patient-specific therapeutic opportunities [7] [9]. These advances, combined with the foundational principles and methodologies described in this whitepaper, suggest that systematic screening of targeted chemical libraries will remain an essential component of biomedical research and therapeutic development.

The paradigm of drug discovery has progressively shifted from a reductionist, single-target model to a more holistic, systems-level approach. This evolution has given rise to chemogenomic libraries, which are systematic collections of small molecules designed to interact with a wide range of biological targets. These libraries serve as a foundational resource for phenotypic drug discovery (PDD), where the initial screening is based on observable changes in cells or organisms rather than predefined molecular targets [5]. Parallel identification, the ultimate goal of chemogenomics, combines this phenotypic screening approach with advanced computational and experimental techniques to uncover novel therapeutic compounds and their protein targets simultaneously, thereby de-risking and accelerating the early drug discovery pipeline.

This parallel strategy is crucial for addressing complex diseases such as cancers, neurological disorders, and diabetes, which are often driven by multiple molecular abnormalities rather than a single defect [5]. By investigating compound bioactivity and target engagement concurrently, researchers can more efficiently map the complex polypharmacology of small molecules and elucidate their mechanisms of action (MoA), which remains a significant challenge in phenotypic screening [5] [10].

Foundational Concepts and Strategic Approaches

The Architecture of a Chemogenomic Library

A modern chemogenomic library is not merely a diverse collection of chemicals; it is a strategically assembled set of compounds designed for maximum utility in deconvoluting biological mechanisms. The design incorporates several key principles:

- Target Diversity: The library should encompass a large and diverse panel of drug targets involved in a wide spectrum of biological processes and diseases. For example, one published systems pharmacology network integrates drug-target-pathway-disease relationships and includes a library of 5,000 small molecules representing this diversity [5].

- Chemical Diversity and Scaffold Representation: To ensure broad coverage of chemical space, compounds are selected based on representative scaffolds. Software such as ScaffoldHunter classifies molecules by their core structural frameworks and arranges the resulting scaffolds in hierarchical levels according to their distance from the parent molecule node [5].

- Data Integration: The true power of a chemogenomic library is unlocked by embedding it within a network pharmacology framework. This involves integrating heterogeneous data sources—including bioactivity data (e.g., from ChEMBL), pathways (e.g., KEGG, Gene Ontology), diseases (e.g., Disease Ontology), and morphological profiling data (e.g., from Cell Painting assays)—into a unified, queryable system, often using graph databases like Neo4j [5].
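The drug-target-pathway-disease integration described above is, at its core, a typed graph traversal. The sketch below uses a small in-memory adjacency map as a stand-in for a graph database such as Neo4j; the relation names (`INHIBITS`, `IN_PATHWAY`, `ASSOCIATED_WITH`) and node identifiers are hypothetical and do not reflect the schema of the network in [5].

```python
from collections import defaultdict


class PharmacologyGraph:
    """Minimal typed graph: (node, relation) -> set of neighbouring nodes."""

    def __init__(self):
        self._edges = defaultdict(set)

    def add(self, src: str, relation: str, dst: str) -> None:
        self._edges[(src, relation)].add(dst)

    def neighbors(self, node: str, relation: str) -> set:
        # .get avoids creating empty entries in the defaultdict on lookup
        return self._edges.get((node, relation), set())

    def compounds_for_disease(self, disease: str, compounds) -> list:
        """Compounds reachable via compound -> target -> pathway -> disease."""
        hits = set()
        for cpd in compounds:
            for target in self.neighbors(cpd, "INHIBITS"):
                for pathway in self.neighbors(target, "IN_PATHWAY"):
                    if disease in self.neighbors(pathway, "ASSOCIATED_WITH"):
                        hits.add(cpd)
        return sorted(hits)


g = PharmacologyGraph()
g.add("CPD-1", "INHIBITS", "TARGET-A")
g.add("TARGET-A", "IN_PATHWAY", "PATHWAY-X")
g.add("PATHWAY-X", "ASSOCIATED_WITH", "DISEASE-1")
g.add("CPD-2", "INHIBITS", "TARGET-B")
g.add("TARGET-B", "IN_PATHWAY", "PATHWAY-Y")
print(g.compounds_for_disease("DISEASE-1", ["CPD-1", "CPD-2"]))  # ['CPD-1']
```

In a graph database the same question would be expressed as a single path-pattern query; the in-memory version only illustrates the traversal logic.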

The Parallel Discovery Workflow

The parallel identification process is a multi-stage, iterative cycle. The diagram below illustrates the integrated workflow that connects computational and experimental modules to achieve parallel discovery.

Core Methodologies for Parallel Identification

Computational & AI-Driven Frameworks

Advanced computational models are the engine of parallel discovery, enabling the prediction of interactions and the generation of novel candidates before costly wet-lab experiments.

Multitask Deep Learning for DTA Prediction and Generation

A key innovation is the development of multitask learning frameworks like DeepDTAGen. These models unify two critically interconnected tasks that are often treated separately:

- Drug-Target Affinity (DTA) Prediction: This regression task predicts the strength of the interaction between a drug and a target, providing richer information than a simple binary interaction prediction [11].

- Target-Aware Drug Generation: This generative task designs novel molecular structures conditioned on a specific target protein [11].

By using a shared feature space for both tasks, these models aim to ensure that the generated drugs are informed by the structural properties of the molecules, the conformational dynamics of the proteins, and the bioactivity relationships between them. This shared knowledge is intended to improve the prospects of the generated compounds as viable drug candidates. A critical technical advancement in such frameworks is the development of algorithms like FetterGrad to mitigate gradient conflicts between the distinct tasks during model training, promoting stable and effective learning [11].
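The idea of a gradient conflict can be made concrete with two task gradients over shared parameters: they conflict when their dot product is negative, so a step that helps one task hurts the other. The sketch below does not implement FetterGrad, whose details are given in [11]; it substitutes the simpler, published PCGrad ("gradient surgery") rule, which removes from each task gradient its component along the other when the two conflict. Plain Python lists stand in for flattened parameter gradients, and the gradient values are hypothetical.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def project_if_conflicting(g, other):
    """PCGrad-style step: if g points against `other`, remove g's component along `other`."""
    d = dot(g, other)
    if d >= 0:
        return list(g)  # no conflict, keep gradient unchanged
    scale = d / dot(other, other)
    return [a - scale * b for a, b in zip(g, other)]


def combine_task_gradients(g_affinity, g_generation):
    """Project each task gradient against the other's original gradient, then sum."""
    g1 = project_if_conflicting(g_affinity, g_generation)
    g2 = project_if_conflicting(g_generation, g_affinity)
    return [a + b for a, b in zip(g1, g2)]


g_affinity = [1.0, 0.0]     # hypothetical gradient of the DTA regression loss
g_generation = [-1.0, 1.0]  # hypothetical gradient of the generation loss
print(combine_task_gradients(g_affinity, g_generation))  # [0.5, 1.5]
```

After projection, the affinity gradient is orthogonal to the generation gradient, so the combined update no longer directly undoes progress on either task.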

Table 1: Performance of DeepDTAGen on Benchmark Datasets for DTA Prediction

| Dataset | MSE (↓) | Concordance Index (CI) (↑) | R²m (↑) |
|---|---|---|---|
| KIBA | 0.146 | 0.897 | 0.765 |
| Davis | 0.214 | 0.890 | 0.705 |
| BindingDB | 0.458 | 0.876 | 0.760 |

Performance metrics (Mean Squared Error, Concordance Index, and R²m) demonstrate the model's accuracy in predicting binding affinity. Lower MSE and higher CI/R²m are better [11].
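The two simpler metrics in the table can be computed as follows. MSE is the mean squared difference between measured and predicted affinities; the concordance index is the fraction of pairs with different measured affinities whose predicted ordering agrees with the measured ordering, with tied predictions counted as half. The sketch below is an O(n²) reference implementation on made-up values; R²m is not shown.

```python
from itertools import combinations


def mse(y_true, y_pred):
    """Mean squared error between measured and predicted affinities."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def concordance_index(y_true, y_pred):
    """Fraction of comparable pairs whose predicted order matches the measured order."""
    concordant, comparable = 0.0, 0
    for i, j in combinations(range(len(y_true)), 2):
        if y_true[i] == y_true[j]:
            continue  # pairs with equal measured affinity carry no ordering information
        comparable += 1
        hi, lo = (i, j) if y_true[i] > y_true[j] else (j, i)
        if y_pred[hi] > y_pred[lo]:
            concordant += 1.0
        elif y_pred[hi] == y_pred[lo]:
            concordant += 0.5
    return concordant / comparable


measured = [5.0, 6.0, 7.0, 8.0]  # e.g. pKd values (illustrative)
predicted = [5.2, 5.9, 7.5, 7.4]
print(round(concordance_index(measured, predicted), 3))  # 0.833
print(round(mse(measured, predicted), 3))
```

Here five of the six pairs are ordered correctly; only the last two compounds are swapped, which is why CI rewards correct ranking even when absolute errors are non-zero.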

Multi-Agent Systems for End-to-End Discovery

For a fully integrated pipeline, multi-agent frameworks represent the cutting edge. These systems orchestrate specialized AI agents that autonomously or semi-autonomously perform different stages of the discovery process. As demonstrated in one study, a team of agents can:

- Mine scientific literature for novel target associations.

- Generate novel molecular structures for prioritized targets.

- Predict bioactivity, selectivity, and ADME/Tox properties (Absorption, Distribution, Metabolism, Excretion, and Toxicity) using robust machine learning classifiers [12].

This orchestration creates a cohesive, end-to-end discovery engine from target identification to optimized hit candidates. The application of such a system to Alzheimer's disease led to the identification and generation of novel inhibitors for multiple protein targets (SGLT2, sEH, HDAC, and DYRK1A), showcasing its utility in parallel, multi-target drug discovery [12].
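The agent hand-off described above can be sketched as a sequence of functions that each read and extend a shared state object. Every "agent" below is a hypothetical stub (a fixed target list, placeholder candidate names, a toy scoring function) standing in for the literature-mining, generative, and property-prediction models of [12]; the sketch illustrates only the orchestration pattern, not any real model.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class DiscoveryState:
    disease: str
    targets: List[str] = field(default_factory=list)
    candidates: Dict[str, List[str]] = field(default_factory=dict)
    shortlist: Dict[str, float] = field(default_factory=dict)


Agent = Callable[[DiscoveryState], DiscoveryState]


def literature_agent(state: DiscoveryState) -> DiscoveryState:
    # Stub: a real agent would mine publications for disease-target associations
    state.targets = ["TARGET-A", "TARGET-B"]
    return state


def generator_agent(state: DiscoveryState) -> DiscoveryState:
    # Stub: a real agent would generate target-conditioned molecular structures
    for target in state.targets:
        state.candidates[target] = [f"{target}-cand{i}" for i in range(3)]
    return state


def make_property_agent(predict: Callable[[str], float], threshold: float) -> Agent:
    """Build an agent that keeps candidates whose predicted score meets the threshold."""
    def agent(state: DiscoveryState) -> DiscoveryState:
        for cands in state.candidates.values():
            for cand in cands:
                score = predict(cand)
                if score >= threshold:
                    state.shortlist[cand] = score
        return state
    return agent


def toy_predict(name: str) -> float:
    """Placeholder for a bioactivity or ADME/Tox classifier."""
    return 0.9 if name.endswith("cand0") else 0.2


def run_pipeline(state: DiscoveryState, agents: List[Agent]) -> DiscoveryState:
    for agent in agents:
        state = agent(state)
    return state


result = run_pipeline(
    DiscoveryState(disease="DISEASE-1"),
    [literature_agent, generator_agent, make_property_agent(toy_predict, threshold=0.5)],
)
print(sorted(result.shortlist))  # ['TARGET-A-cand0', 'TARGET-B-cand0']
```

Keeping each stage behind the same `Agent` signature is what allows one component (for example, the generator) to be swapped for a different model without changing the pipeline.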

Experimental Validation & Target Deconvolution

When a compound shows a desired phenotypic effect in a screen, the next critical step is to identify its molecular target(s). This process, known as target deconvolution, is the experimental cornerstone of parallel identification.

Affinity-Based Pull-Down Methods

These methods rely on chemically modifying the small molecule of interest to "pull" its target out of a complex biological mixture.

- On-Bead Affinity Matrix: A linker is used to covalently attach the small molecule to a solid support (e.g., agarose beads). This matrix is then exposed to a cell lysate, and bound proteins are eluted and identified via SDS-PAGE and mass spectrometry [10].

- Biotin-Tagged Approach: A biotin tag is attached to the small molecule. After incubation with cells or lysates, the target proteins are captured using streptavidin-coated beads and subsequently identified. While cost-effective, the strong biotin-streptavidin interaction often requires harsh denaturing conditions for elution, which can compromise protein activity [10].

- Photoaffinity Tagged Approach (PAL): This powerful method uses a probe containing a photoreactive group (e.g., diazirine) and an affinity tag (e.g., biotin). Upon exposure to light, the photoreactive group forms a permanent covalent bond with the target protein, enabling stringent purification and identification. This method is highly specific and sensitive, and is particularly useful for capturing transient or low-affinity interactions [10].

The following diagram illustrates the key experimental workflows for target deconvolution.

Label-Free Methods

To avoid potential pitfalls of chemical modification, label-free methods identify targets using the small molecule in its natural state.

- Drug Affinity Responsive Target Stability (DARTS): This technique exploits the principle that a protein's structure often becomes more stable and less susceptible to proteolytic degradation when bound to a small molecule. By comparing protease digestion patterns in the presence and absence of the drug, the target protein can be identified [10].

- Stability of Proteins from Rates of Oxidation (SPROX): This method measures the change in a protein's thermodynamic stability upon ligand binding by monitoring the oxidation of its methionine residues (typically by hydrogen peroxide) across a series of chemical denaturant concentrations. Ligand binding stabilizes the protein and shifts the denaturant concentration at which buried methionines become exposed and oxidized; this shift is detected by mass spectrometry [10].
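The comparison step in DARTS can be illustrated with a minimal sketch. The function below ranks proteins by how much more of each survives protease digestion in drug-treated lysate than in vehicle-treated lysate. It assumes the abundances (band or mass-spectrometry intensities) are already normalized and were measured at the same protease dose. The function name, the example values, and the 2-fold cutoff (`min_log2_fc=1.0`) are illustrative choices, not part of a published protocol. Real studies typically add protease titrations and replicates.

```python
import math


def darts_protection(vehicle: dict[str, float], drug: dict[str, float],
                     min_log2_fc: float = 1.0) -> list[tuple[str, float]]:
    """Rank proteins by log2 fold protection from proteolysis in the presence of drug.

    vehicle and drug map protein IDs to the abundance remaining after protease
    digestion. Proteins protected at least 2**min_log2_fc-fold are returned,
    most protected first.
    """
    hits = []
    for protein, veh_level in vehicle.items():
        drug_level = drug.get(protein, 0.0)
        if veh_level <= 0 or drug_level <= 0:
            continue  # not quantified in both conditions; cannot form a ratio
        log2_fc = math.log2(drug_level / veh_level)
        if log2_fc >= min_log2_fc:
            hits.append((protein, log2_fc))
    return sorted(hits, key=lambda hit: hit[1], reverse=True)


# Hypothetical post-digestion intensities for three proteins.
vehicle = {"P1": 100.0, "P2": 50.0, "P3": 80.0}
drug = {"P1": 110.0, "P2": 400.0, "P3": 200.0}
print(darts_protection(vehicle, drug))  # P2 (8-fold) ranks above P3 (2.5-fold); P1 is filtered out
```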

Table 2: Comparison of Key Target Deconvolution Techniques

| Method | Principle | Key Advantage | Key Limitation |
|---|---|---|---|
| On-Bead Affinity | Molecule immobilized on beads captures target from lysate. | Does not require a specific tag; can handle complex molecules. | Requires a site for immobilization that does not affect bioactivity. |
| Biotin-Tagged Pull-Down | Biotinylated molecule captures target on Streptavidin beads. | Simple, cost-effective, and widely adopted. | Harsh elution conditions; tag may affect cell permeability/bioactivity. |
| Photoaffinity Labeling (PAL) | Photoreactive probe covalently crosslinks to target upon UV exposure. | Captures transient/weak interactions; high specificity. | Requires complex synthetic chemistry; potential for non-specific crosslinking. |
| DARTS | Target binding confers resistance to proteolysis. | Uses native compound; no chemical modification needed. | May miss targets that are not protease-sensitive or whose stability doesn't change. |
| SPROX | Target binding increases resistance to chemical denaturation/oxidation. | Uses native compound; can work with complex mixtures. | Relies on methionine content; may not detect all binding events. |

Implementation and Practical Application

Building a Targeted Screening Library

For precision oncology and other focused applications, the design of a targeted chemogenomic library requires strategic prioritization. One approach involves analytic procedures that balance:

- Library Size: Designing a minimal, manageable screening library. A virtual library of 1,211 compounds can be designed to target 1,386 anticancer proteins, which can then be translated into a physical screening library of several hundred compounds [9].

- Cellular Activity: Prioritizing compounds with known cellular bioactivity.

- Chemical Diversity and Availability: Ensuring broad scaffold coverage and compound procurability.

- Target Selectivity: Including compounds with varying degrees of selectivity to enable polypharmacology studies and MoA deconvolution [9].
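The source does not say which selection algorithm balances these criteria. One common way to approximate a minimal library that still covers a target list is greedy set cover. It is sketched below as a swapped-in heuristic: at each step it picks the compound annotated to the largest number of still-uncovered targets. The sketch handles only the library-size and coverage criteria. Cellular activity, availability, and selectivity filters would have to be applied to the candidate pool beforehand. Compound names and their target annotations in the example are placeholders.

```python
from typing import Optional


def greedy_target_cover(compound_targets: dict[str, set[str]],
                        required_targets: set[str],
                        max_compounds: Optional[int] = None) -> tuple[list[str], set[str]]:
    """Greedy set cover: pick compounds until every required target is hit.

    Returns the chosen compounds (in selection order) and any targets left uncovered.
    """
    uncovered = set(required_targets)
    remaining = {cpd: set(tgts) for cpd, tgts in compound_targets.items()}
    chosen: list[str] = []
    while uncovered and remaining:
        if max_compounds is not None and len(chosen) >= max_compounds:
            break
        # Most newly covered targets wins; iterating in sorted order breaks ties alphabetically.
        best = max(sorted(remaining), key=lambda cpd: len(remaining[cpd] & uncovered))
        gain = remaining.pop(best) & uncovered
        if not gain:
            break  # no remaining compound reaches an uncovered target
        chosen.append(best)
        uncovered -= gain
    return chosen, uncovered


library = {
    "cpd_A": {"EGFR", "ERBB2"},
    "cpd_B": {"EGFR"},
    "cpd_C": {"CDK4", "CDK6"},
    "cpd_D": {"BRAF"},
}
chosen, missing = greedy_target_cover(library, {"EGFR", "ERBB2", "CDK4", "CDK6", "BRAF"})
print(chosen, missing)  # ['cpd_A', 'cpd_C', 'cpd_D'] set()
```

Greedy selection does not guarantee the smallest possible library. It is, however, simple and deterministic, and the `max_compounds` cap lets a size budget be imposed directly.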

In a pilot glioblastoma (GBM) study, a physical library of 789 compounds covering 1,320 anticancer targets was used to profile patient-derived glioma stem cells. The results revealed highly heterogeneous phenotypic responses across patients and GBM subtypes, successfully identifying patient-specific vulnerabilities and validating the library's utility in precision oncology [9].

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful parallel discovery relies on a suite of specialized reagents and tools.

Table 3: Key Research Reagent Solutions for Parallel Drug and Target Identification

| Reagent / Tool | Function | Application in Parallel Discovery |
|---|---|---|
| Chemogenomic Library | A curated collection of small molecules targeting diverse proteins. | The core resource for initial phenotypic screening and hit identification. |
| Affinity Tags (Biotin) | A high-affinity ligand for streptavidin. | Used to create biotin-conjugated small molecule probes for affinity-based pull-down assays [10]. |
| Photoaffinity Probes | Small molecules incorporating a photoreactive group (e.g., diazirine) and a tag. | Enables covalent crosslinking of a small molecule to its target protein for stringent isolation and identification [10]. |
| Streptavidin-Coated Beads | Solid support for immobilizing biotinylated molecules. | Used to capture and purify biotin-tagged small molecule-protein complexes from cell lysates [10]. |
| Cell Painting Assay Kits | A multiplexed fluorescence imaging assay using 6 dyes to label 8 cellular components. | Generates rich morphological profiles for phenotypic screening and MoA hypothesis generation [5]. |
| Graph Databases (e.g., Neo4j) | A database that uses graph structures for semantic queries with nodes and edges. | Integrates heterogeneous data (drug, target, pathway, disease) into a unified network pharmacology platform for knowledge mining [5]. |

The parallel identification of novel drugs and their targets represents a powerful, integrative frontier in drug discovery. By leveraging strategically designed chemogenomic libraries as a starting point, and then combining multitask AI models for prediction and generation with robust experimental target deconvolution methods, researchers can systematically navigate the complexity of biological systems. This approach directly addresses the critical bottleneck of MoA elucidation in phenotypic screening and is particularly suited for complex, polygenic diseases.

While challenges remain—such as the need for high-quality, accessible data to power AI models and the complex chemistry required for some probe molecules—the framework outlined in this guide provides a realistic and actionable path forward. The future of this field lies in the continued refinement of a human-in-the-loop paradigm, where expert oversight guides the curation of data, the validation of models, and the interpretation of complex, multi-modal results from these integrated parallel workflows.

Chemogenomic libraries represent a paradigm shift in drug discovery, moving from a single-target to a systems-level approach. These libraries are systematically designed collections of small molecules used to interrogate entire families of biological targets simultaneously. The core components of these libraries—annotated ligands with known activities and probes for orphan receptors with unknown ligands—create a powerful platform for elucidating complex biological pathways and identifying novel therapeutic opportunities. This technical guide examines the fundamental architecture of chemogenomic libraries, detailing their construction, screening methodologies, and application in modern drug development, with particular emphasis on the critical role of orphan receptor deorphanization in expanding the druggable genome.

Chemogenomics systematically screens targeted chemical libraries of small molecules against specific drug target families (e.g., GPCRs, nuclear receptors, kinases, proteases) with the ultimate goal of identifying novel drugs and drug targets [1]. This approach integrates target and drug discovery by using active compounds as chemical probes to characterize proteome functions, creating an intersectional map of all possible drugs against all potential therapeutic targets [13] [1].

The fundamental premise of chemogenomics rests on two key principles: first, that chemically similar compounds are likely to share biological targets, and second, that proteins with similar binding sites may be targeted by similar ligands [13]. This enables researchers to fill the sparse chemogenomic matrix—a conceptual grid mapping all compounds against all potential targets—by predicting unknown compound-target relationships from known data points [13].

Core Components of a Chemogenomic Library

Annotated Ligands

Annotated ligands are small molecules with previously characterized biological activities against specific targets. These compounds serve as reference points within chemogenomic libraries and are essential for establishing structure-activity relationships across target families.

Key characteristics of annotated ligands include:

- Verified biological activity: Demonstrated modulation of target function (e.g., IC50, Ki, EC50 values)

- Chemical tractability: Well-defined chemical structures amenable to modification

- Target annotation: Known interactions with specific protein targets or pathways

- Mechanism of action: Understanding of how the ligand modulates target function

In library design, annotated ligands provide the foundation for navigating "ligand space" through molecular descriptors ranging from 1D global properties (molecular weight, log P) to 2D topological fingerprints and 3D conformational properties [13]. The most popular similarity metric for comparing these molecular fingerprints is the Tanimoto coefficient, which quantifies chemical similarity from 0 (completely dissimilar) to 1 (identical compounds) [13].
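The metric can be made concrete with a short sketch. It computes the Tanimoto coefficient for binary fingerprints represented as Python sets of "on" bit positions, T = c / (a + b - c). Here a and b are the numbers of bits set in each fingerprint and c is the number of bits they share. The bit positions are invented for illustration. Real fingerprints would come from a cheminformatics toolkit (for example RDKit Morgan/ECFP fingerprints), which this sketch does not use.

```python
def tanimoto(fp_a: set[int], fp_b: set[int]) -> float:
    """Tanimoto coefficient c / (a + b - c) for fingerprints given as sets of on-bit indices."""
    shared = len(fp_a & fp_b)                  # c: bits set in both
    union = len(fp_a) + len(fp_b) - shared     # a + b - c: bits set in either
    return shared / union if union else 0.0    # two empty fingerprints: 0.0 by convention here


query = {1, 4, 7, 9}
neighbor = {1, 4, 8}
print(tanimoto(query, neighbor))  # 2 shared bits / 5 bits in either = 0.4
print(tanimoto(query, query))     # identical fingerprints -> 1.0
```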

Orphan Receptors

Orphan receptors are proteins identified through genomic sequencing that have structural homology to known receptors but whose endogenous ligands remain unknown [14] [15]. These receptors represent significant opportunities for novel target discovery, as their deorphanization (identification of native ligands) can reveal new regulatory pathways and therapeutic interventions.

Orphan receptors are particularly prominent in two protein families:

- G protein-coupled receptors (GPCRs): Nearly 100 receptor-like genes remain orphans, typically designated with "GPR" prefixes (e.g., GPR21) [14]

- Nuclear receptors: Transcription factors including Rev-Erbα, RORs, and HNF4α, many of which regulate metabolic processes and development [16] [15]

The strategic value of orphan receptors lies in their potential to reveal entirely new biological systems that impact human health. As noted in research, "Orphan nuclear receptors provide a unique resource for uncovering novel regulatory systems that impact human health and provide excellent drug targets for a variety of human diseases" [16].

Complementary Elements

Beyond the core components, chemogenomic libraries incorporate several additional elements:

Table 1: Supplementary Components of Chemogenomic Libraries

| Component | Description | Function |
|---|---|---|
| Target Libraries | Collections of related proteins (e.g., kinase families, GPCR panels) | Enable systematic screening across target families |
| Biological Systems | Cell-based assays, whole organisms, pathway reporters | Provide physiological context for compound evaluation |
| Readout Technologies | Binding assays, gene expression profiling, high-content imaging | Quantify biological responses to library compounds |
| Chemical Scaffolds | Core structural frameworks with demonstrated biological relevance | Facilitate exploration of structure-activity relationships |

These components work synergistically to enable comprehensive mapping of chemical-biological interactions, supporting both target discovery and compound optimization.

Library Design and Curation Strategies

Chemical Space Navigation

Effective library design requires systematic navigation of chemical space using molecular descriptors that encode critical compound properties:

1D Descriptors: Global properties including molecular weight, atom counts, polar surface area, and lipophilicity (log P), which are commonly used to estimate absorption, distribution, metabolism, excretion, and toxicity (ADMET) properties [13].

2D Topological Descriptors: Structural fingerprints encoding molecular connectivity, fragments, and substructures that enable rapid similarity searching and clustering [13]. Simplified molecular input line entry system (SMILES) strings provide linear representations for computational handling [13].

3D Conformational Descriptors: Spatial properties including pharmacophore patterns, molecular shapes, and interaction fields that capture structural complementarity to biological targets [13].

Diversity and Selectivity Balancing

A critical challenge in library design lies in balancing target coverage with compound specificity. The polypharmacology index (PPindex) quantifies this balance by analyzing the distribution of known targets per compound across a library [17]. Libraries with higher PPindex values demonstrate greater target specificity, which is particularly valuable for phenotypic screening approaches where target deconvolution is challenging [17].
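The cited work [17] derives the PPindex from the distribution of known targets per compound. Its exact fitting and normalization are not described here, so the sketch below only illustrates the idea under a stated assumption. It treats the index as the negated slope of a least-squares line through ln(compound count) versus number of annotated targets, so a steeper decay reads as higher target specificity. It will not reproduce the PPindex values tabulated below. The function name and both example libraries are hypothetical.

```python
import math
from collections import Counter


def ppindex_sketch(targets_per_compound: list[int], drop_low_bins: bool = False) -> float:
    """Negative least-squares slope of ln(compound count) versus number of annotated targets.

    Larger values mean counts fall off faster as targets per compound increase,
    i.e. a more target-specific library. drop_low_bins=True ignores the 0- and
    1-target bins, loosely mirroring the 'without 0/1 target bins' variant.
    """
    counts = Counter(targets_per_compound)
    bins = sorted(k for k in counts if not (drop_low_bins and k <= 1))
    if len(bins) < 2:
        raise ValueError("need at least two populated bins to fit a slope")
    xs = [float(k) for k in bins]
    ys = [math.log(counts[k]) for k in bins]
    x_mean, y_mean = sum(xs) / len(xs), sum(ys) / len(ys)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    return -sxy / sxx


# Hypothetical libraries: most compounds hit one target vs. a flatter distribution.
selective = [1] * 80 + [2] * 15 + [3] * 5
promiscuous = [1] * 40 + [2] * 30 + [3] * 30
print(ppindex_sketch(selective) > ppindex_sketch(promiscuous))  # True
```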

Comparative studies reveal significant variation in polypharmacology profiles across commonly used libraries:

Table 2: Polypharmacology Index of Selected Chemogenomic Libraries

| Library Name | Size (Compounds) | PPindex (All Targets) | PPindex (Without 0/1 Target Bins) | Primary Application |
|---|---|---|---|---|
| DrugBank | 9,700+ | 0.9594 | 0.4721 | Broad drug discovery |
| LSP-MoA | Not specified | 0.9751 | 0.3154 | Kinome targeting |
| MIPE 4.0 | 1,912 | 0.7102 | 0.3847 | Mechanism interrogation |
| Microsource Spectrum | 1,761 | 0.4325 | 0.2586 | Bioactive compounds |

Recent library design strategies emphasize optimal target coverage with minimal polypharmacology. For example, one precision oncology approach developed a minimal screening library of 1,211 compounds targeting 1,386 anticancer proteins, maximizing target diversity while maintaining compound specificity [9].

Existing Library Frameworks

Several well-established chemogenomic libraries provide valuable models for library construction:

Commercial Libraries:

- ChemDiv's Chemogenomic Library for Phenotypic Screening (90,959 compounds) [18]

- Target Identification TIPS Library (27,664 compounds) [18]

- Selective Target Activity Profiling Library (14,839 compounds) [18]

Specialized Collections:

- Human Transcription Factors Annotated Library (5,114 compounds) [18]

- Human Receptors Annotated Library (5,398 compounds) [18]

- CNS Target Activity Set (7,000 compounds) [18]

These libraries exemplify the strategic grouping of compounds by target class, mechanism, or therapeutic application, enabling focused screening campaigns against specific biological domains.

Experimental Methodologies

Orphan Receptor Deorphanization

Deorphanization strategies employ multiple complementary approaches to identify native ligands for orphan receptors:

Cell-Based Reporter Assays: Mammalian cells transfected with orphan receptor constructs (often fused to Gal4 DNA-binding domains) and reporter genes (e.g., luciferase) are treated with candidate ligand libraries, with receptor activation measured via reporter activity [16].
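Reporter readouts from such an assay can be reduced to hit calls, as in the minimal sketch below, which assumes raw luciferase counts per well. Fold activation is each compound's mean signal divided by the vehicle mean. Plate quality is summarized with the widely used Z'-factor, Z' = 1 - 3(σ_pos + σ_neg) / |μ_pos - μ_neg|. This is a standard high-throughput screening metric, not something specified in the source. The sketch also assumes a positive-control condition exists; for a true orphan receptor that may have to be a surrogate ligand or a constitutively active construct. The 3-fold threshold, function names, and well values are illustrative assumptions.

```python
from statistics import mean, stdev


def z_prime(pos_ctrl: list[float], neg_ctrl: list[float]) -> float:
    """Z'-factor: 1 - 3*(sd_pos + sd_neg) / |mean_pos - mean_neg| (>= 0.5 is conventionally an excellent assay)."""
    return 1 - 3 * (stdev(pos_ctrl) + stdev(neg_ctrl)) / abs(mean(pos_ctrl) - mean(neg_ctrl))


def fold_activation(wells: list[float], vehicle_wells: list[float]) -> float:
    """Mean reporter signal relative to vehicle (e.g. DMSO) wells."""
    return mean(wells) / mean(vehicle_wells)


def call_hits(plate: dict[str, list[float]], vehicle_wells: list[float],
              threshold: float = 3.0) -> dict[str, float]:
    """Compounds whose fold activation meets the threshold, with their fold values."""
    folds = {cpd: fold_activation(wells, vehicle_wells) for cpd, wells in plate.items()}
    return {cpd: fold for cpd, fold in folds.items() if fold >= threshold}


vehicle = [1000.0, 1100.0, 900.0]             # vehicle-treated receptor-Gal4 wells
reference_agonist = [9000.0, 9500.0, 8500.0]  # positive-control wells
plate = {"cpd_1": [5000.0, 5200.0, 4800.0], "cpd_2": [1200.0, 1000.0, 1100.0]}

print(round(z_prime(reference_agonist, vehicle), 3))  # 0.775
print(call_hits(plate, vehicle))                      # {'cpd_1': 5.0}
```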

Direct Binding Approaches: Immobilized orphan receptors are exposed to potential ligand sources (cell lysates, compound libraries), with bound ligands subsequently eluted and characterized through analytical methods like mass spectrometry [16].

Interaction-Based Screening: Techniques including fluorescence resonance energy transfer (FRET) and Amplified Luminescent Proximity Homogeneous Assay (AlphaScreen) detect ligand-induced interactions between receptors and coactivators, providing high-throughput screening capabilities [16].

Structural Biology Methods: X-ray crystallography of ligand-binding domains reveals electron density for endogenous ligands or synthetic compounds, as demonstrated by the identification of cholesterol as a RORα ligand through structural analysis [16].

Virtual Screening: Computational docking of compound libraries into orphan receptor binding sites, guided by crystal structures, enables rapid identification of potential ligands for experimental validation [16].

Phenotypic Screening Applications

Forward chemogenomics utilizes phenotypic screening to identify compounds that induce desired phenotypic changes, with subsequent target identification through the annotated compounds producing those phenotypes [1]. Advanced phenotypic profiling methods include:

Cell Painting: A high-content imaging assay that uses multiple fluorescent dyes to label various cellular components, generating rich morphological profiles that can connect compound treatment to specific phenotypic outcomes [19]. The BBBC022 dataset incorporates 1,779 morphological features measuring intensity, size, texture, and granularity across cellular compartments [19].

High-Content Screening (HCS): Automated microscopy and image analysis enable quantification of complex phenotypic responses to library compounds, facilitating connection of chemical structure to biological effect [19].
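The profile-to-mechanism step can be sketched under simplifying assumptions. Each well is already summarized as a vector of per-cell-averaged features (in BBBC022 this would be 1,779 values; three are used here). Features are z-scored against negative-control (DMSO) wells, and a query compound is assigned the annotated reference profile it correlates with best. Production pipelines commonly use image-analysis software such as CellProfiler, robust median/MAD normalization, and feature selection. The reference names and all values below are hypothetical.

```python
import math
from statistics import mean, pstdev


def normalize_to_controls(profile: list[float], control_profiles: list[list[float]]) -> list[float]:
    """z-score each feature against the negative-control (e.g. DMSO) wells."""
    normalized = []
    for i, value in enumerate(profile):
        ctrl = [c[i] for c in control_profiles]
        sd = pstdev(ctrl)
        normalized.append((value - mean(ctrl)) / sd if sd > 0 else 0.0)
    return normalized


def pearson(a: list[float], b: list[float]) -> float:
    """Pearson correlation of two equal-length profiles (0.0 if either is constant)."""
    ma, mb = mean(a), mean(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    scale = math.sqrt(sum((x - ma) ** 2 for x in a) * sum((y - mb) ** 2 for y in b))
    return cov / scale if scale else 0.0


def nearest_reference(query: list[float], references: dict[str, list[float]]) -> str:
    """Name of the annotated reference profile most correlated with the query."""
    return max(references, key=lambda name: pearson(query, references[name]))


dmso_wells = [[1.0, 2.0, 3.0], [1.2, 2.2, 2.8], [0.8, 1.8, 3.2]]
# Hypothetical control-normalized profiles of compounds with annotated MoA.
references = {"reference_A": [5.0, 0.5, -9.0], "reference_B": [-3.0, 4.0, 2.0]}

query = normalize_to_controls([2.0, 2.0, 1.0], dmso_wells)
print(nearest_reference(query, references))  # reference_A
```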

Target Deconvolution Techniques

Once phenotypic hits are identified, target deconvolution establishes their mechanisms of action:

Chemogenomic Profiling: Screening active compounds against panels of known targets based on structural similarity to annotated ligands [1].

Network Pharmacology Integration: Constructing interaction networks that connect compounds to targets, pathways, and diseases using database resources like ChEMBL, KEGG, and Gene Ontology [19]. These networks enable prediction of compound mechanisms through enrichment analysis of targeted pathways [19].
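Enrichment analysis of this kind is commonly based on a one-sided hypergeometric test, the test behind over-representation analysis in tools such as clusterProfiler. Given N annotated genes, a pathway of K genes, and n compound targets, it asks how likely it is to see at least k of the targets in the pathway by chance. The sketch below implements that test with the standard library only. Gene and pathway names are placeholders. A real analysis would also correct for testing many pathways (for example with Benjamini-Hochberg).

```python
from math import comb


def hypergeom_sf(k: int, N: int, K: int, n: int) -> float:
    """P(X >= k) for X ~ Hypergeometric(N genes in universe, K in pathway, n targets drawn)."""
    upper = min(K, n)
    hits = sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1))
    return hits / comb(N, n)


def pathway_enrichment(targets: set[str], pathways: dict[str, list[str]],
                       universe: list[str]) -> list[tuple[str, int, float]]:
    """(pathway, overlap, p-value) for each pathway, most significant first."""
    background = set(universe)
    hits = set(targets) & background  # only targets present in the annotation universe count
    results = []
    for name, members in pathways.items():
        in_pathway = set(members) & background
        k = len(hits & in_pathway)
        results.append((name, k, hypergeom_sf(k, len(background), len(in_pathway), len(hits))))
    return sorted(results, key=lambda row: row[2])


universe = [f"G{i}" for i in range(20)]
pathways = {"pathway_A": universe[0:5], "pathway_B": universe[10:15]}
for name, overlap, p in pathway_enrichment({"G0", "G1", "G2", "G15"}, pathways, universe):
    print(name, overlap, round(p, 4))
# pathway_A 3 0.032
# pathway_B 0 1.0
```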

Affinity-Based Proteomics: Chemical proteomics approaches using immobilized active compounds to capture and identify interacting proteins from complex biological samples.

Below is the experimental workflow integrating these methodologies:

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Chemogenomics Research

| Reagent Category | Specific Examples | Function/Application |
|---|---|---|
| Compound Libraries | MIPE 4.0 (1,912 compounds), LSP-MoA library, Microsource Spectrum (1,761 compounds) [17] | Targeted screening with annotated mechanisms |
| Bioactive Collections | ChemDiv Chemogenomic Library (90,959 compounds), Target Identification TIPS Library (27,664 compounds) [18] | Phenotypic screening and target identification |
| Specialized Libraries | Human Transcription Factors Library (5,114 compounds), CNS Annotated Library (704 compounds) [18] | Target-class specific screening |
| Assay Systems | Cell Painting assays, Gal4-reporter systems, AlphaScreen assays [19] [16] | Functional characterization of compound activity |
| Database Resources | ChEMBL, KEGG Pathways, Gene Ontology, Disease Ontology [19] | Target-pathway-disease annotation and network construction |
| Analysis Tools | Scaffold Hunter, Neo4j, RDKit, clusterProfiler [19] [17] | Chemical scaffold analysis, network visualization, similarity searching |

Applications in Drug Discovery

Mechanism of Action Elucidation

Chemogenomics provides powerful approaches for determining mechanisms of action (MOA) for compounds with observed phenotypic effects. This has been particularly valuable for characterizing traditional medicines, where chemogenomic profiling has identified potential targets for traditional Chinese medicine and Ayurvedic formulations, connecting phenotypic effects to specific molecular targets [1].

Novel Target Identification

Systematic mapping of compound-target interactions reveals new therapeutic opportunities. In antibacterial development, chemogenomics approaches have identified ligands for multiple enzymes in the peptidoglycan synthesis pathway (murC, murE, murF), suggesting potential broad-spectrum Gram-negative inhibitors [1].

Pathway Mapping

Chemogenomics facilitates discovery of genes within biological pathways through analysis of cofitness data and phenotypic profiling. This approach identified YLR143W as the missing diphthamide synthetase in Saccharomyces cerevisiae, completing the pathway for this modified histidine derivative thirty years after its initial discovery [1].
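Cofitness is typically computed as the correlation between two deletion strains' fitness profiles across many chemical or environmental conditions. Genes acting in the same pathway tend to show similar sensitivity patterns. The sketch below ranks candidate genes by Pearson correlation with a query gene. The gene labels and fitness values are invented for illustration and are not data from the diphthamide study.

```python
import math
from statistics import mean


def pearson(a: list[float], b: list[float]) -> float:
    """Pearson correlation of two equal-length profiles (0.0 if either is constant)."""
    ma, mb = mean(a), mean(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    scale = math.sqrt(sum((x - ma) ** 2 for x in a) * sum((y - mb) ** 2 for y in b))
    return cov / scale if scale else 0.0


def top_cofit_partners(query: str, fitness: dict[str, list[float]],
                       top_n: int = 3) -> list[tuple[str, float]]:
    """Genes whose fitness profiles correlate best with the query gene's profile."""
    scores = [(gene, pearson(fitness[query], profile))
              for gene, profile in fitness.items() if gene != query]
    return sorted(scores, key=lambda item: item[1], reverse=True)[:top_n]


# Invented fitness scores (negative = growth defect) across four conditions.
fitness = {
    "pathway_gene": [-2.0, -0.1, -1.5, 0.2],
    "candidate_1": [-1.8, -0.2, -1.6, 0.3],
    "candidate_2": [0.5, -1.0, 0.4, -1.2],
}
for gene, r in top_cofit_partners("pathway_gene", fitness):
    print(gene, round(r, 2))
```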

Orphan Receptor Deorphanization Successes

Successful orphan receptor deorphanization has created important new therapeutic targets:

Nuclear Receptors: The farnesoid X receptor (FXR) was "adopted" (deorphanized) through the identification of bile acids as its endogenous ligands [14]. The retinoid X receptor (RXR) and peroxisome proliferator-activated receptors (PPARs) have become important drug targets for metabolic disorders [16] [14].

GPCRs: Multiple orphan GPCRs have been deorphanized, revealing new signaling systems and potential therapeutic applications [14].

The relationship between library components and drug discovery applications is visualized below:

Chemogenomic libraries represent a powerful infrastructure for modern drug discovery, integrating annotated ligands and orphan receptor probes to systematically explore the druggable genome. The strategic design of these libraries—balancing diversity, specificity, and comprehensive target coverage—enables both target-based and phenotypic screening approaches. As library design methodologies advance and deorphanization efforts continue to expand the landscape of druggable targets, chemogenomic approaches will play an increasingly central role in elucidating complex biological pathways and identifying novel therapeutic interventions for diverse human diseases. The continued refinement of these libraries, incorporating emerging chemical and biological data, promises to accelerate the transition from genomic information to therapeutic breakthroughs.

Chemogenomics, also known as chemical genomics, represents a systematic strategy in early drug discovery that involves screening targeted chemical libraries of small molecules against distinct families of drug targets, such as G-protein-coupled receptors (GPCRs), kinases, nuclear receptors, and proteases [1] [2]. The ultimate goal is the parallel identification of novel drugs and the biological targets they modulate [1]. This approach is grounded in the principle that ligands designed for one member of a protein family often exhibit binding affinity for other related family members, enabling the collective compounds in a targeted library to interact with a significant portion of the target family [1]. Chemogenomics integrates target and drug discovery by using small molecules as chemical probes to characterize the functions of individual proteins and, collectively, of the proteome [1]. When a small molecule binds a protein and alters its activity, the resulting phenotypic change allows researchers to associate that protein with a molecular event [1]. A key advantage of chemogenomics over genetic techniques is its ability to modify protein function reversibly and in real time, observing phenotypic changes upon compound addition and their reversal after its withdrawal [1].

Core Concepts and Definitions

Forward Chemogenomics

Forward chemogenomics, also termed classical chemogenomics, begins with the investigation of a particular phenotype of interest, such as the arrest of tumor growth, with the aim of identifying small molecules that induce this phenotype [1] [2]. The molecular basis of the desired phenotype is initially unknown [1]. Once modulator compounds that produce the target phenotype are identified, they are used as tools to isolate and identify the specific proteins and genes responsible for the observed effect [1]. The primary challenge of this strategy lies in designing robust phenotypic assays that can seamlessly transition from screening to target identification [1]. This approach is considered "unbiased" because it interrogates the entire genome without preconceived notions about which specific targets are involved, often utilizing methods like chemical mutagenesis to uncover drug-target interactions [20].

Reverse Chemogenomics

Reverse chemogenomics starts from a known, validated protein target [1]. This approach first identifies small molecules that perturb the function of a specific enzyme or receptor in a controlled in vitro system [1]. Following the identification of these modulators, the phenotypic consequences of the molecule are analyzed in cellular assays or whole organisms to confirm the biological role of the target and understand its functional impact in a complex biological context [1]. Historically, this strategy was virtually identical to target-based approaches applied in drug discovery over the past decades, but it is now enhanced by parallel screening capabilities and the ability to perform lead optimization across multiple targets belonging to a single protein family [1]. This approach has been likened to "reverse drug discovery," where a compound with a known effect is studied in detail to understand its precise mechanism of action [21].

Table 1: Comparative Overview of Forward and Reverse Chemogenomics

| Feature | Forward Chemogenomics | Reverse Chemogenomics |
|---|---|---|
| Starting Point | Phenotype (e.g., loss-of-function) [1] | Known protein target [1] |
| Primary Goal | Identify drug targets underlying a phenotype [1] [2] | Validate phenotypes induced by modulating a specific target [1] [2] |
| Screening Context | Cells or whole organisms (phenotypic screening) [1] | In vitro enzymatic or binding assays (target-based screening) [1] |
| Key Challenge | Designing assays that enable direct target identification [1] | Confirming the phenotypic role of the in vitro target [1] |
| Information Flow | Phenotype → Compound → Target Identification [1] | Target → Compound → Phenotype Validation [1] |

Experimental Methodologies and Workflows

Workflow for Forward Chemogenomics

The forward chemogenomics workflow begins with the establishment of a biologically relevant phenotypic assay. The following protocol outlines key steps for a genetic screening approach using chemical mutagenesis to uncover drug-target interactions [20].

- Phenotypic Assay Design: Establish a robust cellular or organismal model system that accurately reports on the phenotype of interest (e.g., cell death, growth arrest, or morphological change). The assay must be scalable for high-throughput screening [1] [20].

- Genetic Perturbation with Chemical Mutagenesis: Treat the model system with an alkylating chemical mutagen (e.g., ENU) to induce random single nucleotide changes across the genome. This creates a library of genetic variants [20].

- Selection and Screening: Challenge the mutagenized population with the drug or compound of interest. Select and isolate clones that show resistance or altered sensitivity to the compound, indicating a potential perturbation of the drug-target interaction [20].

- Next-Generation Sequencing (NGS): Prepare genomic DNA libraries from the selected resistant clones. Perform whole-genome or exome sequencing using high-throughput NGS platforms to identify the mutations that confer resistance [20].

- Target Identification via Mutation Mapping: Analyze sequencing data to map the mutations. The underlying assumption is that mutations conferring drug resistance will cluster at the direct drug-target interaction site, thereby identifying the drug's target and the specific binding interface at amino acid resolution [20].
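The mapping step rests on recurrence: a gene mutated independently in several resistant clones is a stronger target candidate than one hit only once. The minimal sketch below assumes variant calls have already been made and filtered against the unmutagenized parental genome. It counts how many independent clones carry a mutation in each gene and lists the distinct protein changes, so that clustering of residues (here positions 123 to 125) can be inspected by eye. Gene names, clone IDs, and mutations are hypothetical.

```python
from collections import defaultdict


def rank_candidate_targets(clone_mutations: dict[str, list[tuple[str, str]]],
                           min_clones: int = 2) -> list[tuple[str, int, list[str]]]:
    """Rank genes by the number of independent resistant clones mutated in them."""
    clones_per_gene: dict[str, set[str]] = defaultdict(set)
    changes_per_gene: dict[str, set[str]] = defaultdict(set)
    for clone_id, mutations in clone_mutations.items():
        for gene, protein_change in mutations:
            clones_per_gene[gene].add(clone_id)
            changes_per_gene[gene].add(protein_change)
    ranked = [(gene, len(clones), sorted(changes_per_gene[gene]))
              for gene, clones in clones_per_gene.items() if len(clones) >= min_clones]
    # Most recurrent first; gene name breaks ties so the output is deterministic.
    return sorted(ranked, key=lambda row: (-row[1], row[0]))


resistant_clones = {
    "clone_1": [("GENE_T", "A123V"), ("GENE_B", "R45*")],
    "clone_2": [("GENE_T", "A123T")],
    "clone_3": [("GENE_T", "G125D"), ("GENE_C", "L10P")],
    "clone_4": [("GENE_B", "Q50K")],
}
for gene, n_clones, changes in rank_candidate_targets(resistant_clones):
    print(gene, n_clones, changes)
# GENE_T 3 ['A123T', 'A123V', 'G125D']
# GENE_B 2 ['Q50K', 'R45*']
```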

Figure 1: The Forward Chemogenomics Workflow begins with a phenotype and progresses to target identification.

Workflow for Reverse Chemogenomics

The reverse chemogenomics workflow initiates with a defined protein target. Below is a detailed methodology combining biochemical and computational reverse screening.

- Target Selection and Assay Development: Select a purified, validated protein target (e.g., a specific kinase). Develop a high-throughput in vitro biochemical assay (e.g., fluorescence-based, luminescence) to measure the target's enzymatic activity or ligand binding [1] [22].

- High-Throughput In Vitro Screening: Screen a targeted chemical library against the purified target. Identify "hits" – compounds that significantly modulate the target's activity in the in vitro system [1].

- Cellular Phenotype Analysis: Take the confirmed hits from the in vitro screen and test them in cell-based assays. The goal is to analyze the phenotype induced by the molecule and confirm that modulating the selected target produces the expected biological effect [1].

- Computational Target Fishing (Reverse Screening): For a given active compound, use computational methods to identify additional potential protein targets (in silico target fishing) [22]. This step helps in predicting polypharmacology and off-target effects.

- Shape Screening: Compare the 3D shape of the query molecule to a database of known ligands annotated with target information (e.g., using ChemMapper) [22].

- Pharmacophore Screening: Match the key pharmacophoric features of the query molecule against a database of pharmacophore models derived from known active compounds (e.g., using PharmMapper) [22].

- Reverse Docking: Dock the query molecule successively into the binding sites of a large database of protein 3D structures (e.g., from the PDB) to identify potential targets with favorable binding affinity (e.g., using INVDOCK) [22].

- Experimental Validation: Select the top-ranked potential targets from the computational prediction and validate the interactions experimentally using techniques such as cellular thermal shift assays (CETSA), surface plasmon resonance (SPR), or other binding/functional assays [22].
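The similarity principle behind ligand-based target fishing can be sketched in a few lines. The sketch below is a simplified 2D nearest-neighbor scheme: each target is scored by the highest Tanimoto similarity between the query fingerprint and any ligand annotated to that target. It stands in for the idea only. It is not the algorithm of ChemMapper (3D shape similarity), SEA (a statistical model over whole ligand sets), or PharmMapper (pharmacophore matching). Fingerprints are sets of invented bit indices, and the target labels are placeholders.

```python
def tanimoto(fp_a: set[int], fp_b: set[int]) -> float:
    """Tanimoto coefficient for fingerprints given as sets of on-bit indices."""
    shared = len(fp_a & fp_b)
    union = len(fp_a) + len(fp_b) - shared
    return shared / union if union else 0.0


def fish_targets(query_fp: set[int], annotated_ligands: list[tuple[set[int], str]],
                 top_n: int = 3) -> list[tuple[str, float]]:
    """Rank targets by the highest similarity between the query and any ligand annotated to them."""
    best: dict[str, float] = {}
    for ligand_fp, target in annotated_ligands:
        score = tanimoto(query_fp, ligand_fp)
        best[target] = max(score, best.get(target, 0.0))
    return sorted(best.items(), key=lambda item: item[1], reverse=True)[:top_n]


# Hypothetical reference set: (fingerprint bits, annotated target).
reference_set = [
    ({1, 2, 3, 5}, "TARGET_X"),
    ({1, 2, 3, 4, 6}, "TARGET_Y"),
    ({7, 8, 9}, "TARGET_Z"),
    ({2, 9}, "TARGET_Z"),
]
print(fish_targets({1, 2, 3, 4}, reference_set))
# [('TARGET_Y', 0.8), ('TARGET_X', 0.6), ('TARGET_Z', 0.2)]
```

The top-ranked targets from a scheme like this would then go forward to the experimental validation step described above.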

Figure 2: The Reverse Chemogenomics Workflow begins with a known target and incorporates computational fishing.

Essential Research Reagents and Tools

Successful implementation of chemogenomics approaches relies on a suite of specialized reagents, compound libraries, and databases. The table below details key resources essential for building a chemogenomics research platform.

Table 2: The Scientist's Toolkit: Key Reagents and Resources for Chemogenomics

| Resource Category | Specific Examples | Function and Application |
|---|---|---|
| Targeted Chemical Libraries | Kinase Chemogenomic Set (KCGS) [23], EUbOPEN Chemogenomics Library [23], Pfizer/GSK In-house Libraries [2] | Pre-annotated sets of compounds designed to target specific protein families (e.g., kinases, GPCRs), enabling parallel profiling across multiple related targets. |
| Public Bioactivity Databases | ChEMBL [24] [25], PubChem [24] [25], BindingDB [22], ExCAPE-DB [25] | Large-scale repositories of chemical structures and their associated bioactivity data against biological targets. Serve as the foundation for building predictive models and validation. |
| Protein Structure Databases | Protein Data Bank (PDB) [22] | A repository of 3D structural data of proteins and nucleic acids. Critical for structure-based reverse docking and understanding binding interactions. |
| Computational Target Fishing Tools | Similarity and shape screening: ChemMapper, SEA [22]; pharmacophore screening: PharmMapper [22]; reverse docking: INVDOCK, idTarget [22] | Software and web services used to predict the protein targets of a given small molecule, aiding in mechanism of action studies and drug repositioning. |
| Data Curation & Standardization Tools | RDKit [24], Molecular Checker/Standardizer (Chemaxon) [24], AMBIT [25] | Cheminformatics toolkits used to standardize chemical structures (e.g., tautomers, stereochemistry) and bioactivity data, which is vital for ensuring data quality and model reliability. |

Applications in Drug Discovery and Research

Chemogenomics strategies have been successfully applied to various challenges in modern drug discovery and biological research.

Determining Mechanism of Action (MOA): Chemogenomics has been used to elucidate the MOA of traditional medicines, such as Traditional Chinese Medicine (TCM) and Ayurveda [1]. By linking the phenotypic effects of these remedies (e.g., anti-inflammatory, hypoglycemic) with computational target prediction, researchers can identify potential protein targets relevant to the observed therapeutic effects, such as sodium-glucose transport proteins or steroid-5-alpha-reductase [1].

Identifying Novel Drug Targets: Chemogenomics profiling enables the discovery of completely new therapeutic targets. For instance, leveraging a ligand library for the bacterial enzyme murD and applying the chemogenomics similarity principle led to the identification of new ligands for other members of the mur ligase family (murC, murE, etc.), revealing new targets for developing broad-spectrum Gram-negative antibiotics [1].

Drug Repositioning and Polypharmacology: Reverse screening methods are particularly valuable for finding new therapeutic indications for existing drugs (drug repositioning) and for predicting "off-target" effects that contribute to a drug's efficacy or its side effects [2] [22]. By computationally screening an approved drug against a large panel of protein targets, new unexpected interactions can be discovered and experimentally validated.
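Ligand-based reverse screening can be illustrated with a nearest-neighbour sketch: the query drug is compared with the annotated ligands of every target in a panel, and targets whose closest ligand exceeds a similarity cut-off are reported as candidate primary or off-targets. This is a simplified stand-in for the tools listed in the table above (SEA, for instance, evaluates similarity against whole ligand sets with a statistical model); the bit-set fingerprints, target names, and the 0.3 cut-off are all hypothetical.

```python
from __future__ import annotations


def tanimoto(a: frozenset[int], b: frozenset[int]) -> float:
    """Tanimoto coefficient between two sets of fingerprint bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def fish_targets(query: frozenset[int],
                 panel: dict[str, dict[str, frozenset[int]]],
                 min_score: float = 0.3) -> list[tuple[str, float, str]]:
    """Return (target, best similarity, closest ligand id), best first.

    panel maps each target to its annotated ligands (ligand id -> bits).
    """
    hits = []
    for target, ligands in panel.items():
        if not ligands:
            continue
        # Closest annotated ligand of this target to the query drug.
        ligand_id, bits = max(ligands.items(),
                              key=lambda item: tanimoto(query, item[1]))
        score = tanimoto(query, bits)
        if score >= min_score:
            hits.append((target, round(score, 3), ligand_id))
    return sorted(hits, key=lambda hit: hit[1], reverse=True)


# Hypothetical panel and query drug.
toy_panel = {
    "PrimaryTarget": {"lig_1": frozenset({1, 2, 3, 4, 5, 6})},
    "OffTargetX": {"lig_2": frozenset({1, 2, 3, 9})},
    "UnrelatedY": {"lig_3": frozenset({20, 21})},
}
query_drug = frozenset({1, 2, 3, 4, 5})

for target, score, ligand in fish_targets(query_drug, toy_panel):
    print(f"{target}: Tanimoto {score} (closest ligand {ligand})")
```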

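Uncovering Genes in Biological Pathways: Chemogenomics can help identify missing genes in complex biological pathways. In one example, researchers used cofitness data from Saccharomyces cerevisiae (yeast) deletion strains, that is, the correlation between the fitness profiles of different deletion strains across many chemical and environmental conditions, to identify the previously unknown enzyme (YLR143W) responsible for the final step in the biosynthesis of diphthamide, a post-translationally modified histidine residue of translation elongation factor 2 [1].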
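Cofitness analysis reduces to correlating fitness profiles. The sketch below computes the Pearson correlation between the fitness profile of a known pathway gene and that of every other deletion strain across a set of conditions, then ranks candidates by that correlation; a strongly correlated, uncharacterized gene becomes a candidate member of the same pathway. The gene labels and fitness values are hypothetical toy numbers, not data from the diphthamide study.

```python
from __future__ import annotations

from math import sqrt


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation coefficient of two equal-length profiles."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0  # a flat profile carries no cofitness information
    return sxy / sqrt(sxx * syy)


def rank_by_cofitness(query_gene: str,
                      profiles: dict[str, list[float]]) -> list[tuple[str, float]]:
    """Rank all other strains by correlation with the query strain's profile."""
    query = profiles[query_gene]
    scores = [(gene, pearson(query, profile))
              for gene, profile in profiles.items() if gene != query_gene]
    return sorted(scores, key=lambda item: item[1], reverse=True)


# Hypothetical fitness scores (e.g., log2 ratios) across five conditions.
toy_profiles = {
    "known_pathway_gene": [-2.0, 0.1, -1.5, 0.0, -0.2],
    "uncharacterized_orf": [-1.8, 0.2, -1.4, 0.1, -0.3],
    "unrelated_gene": [0.1, -0.9, 0.3, -1.2, 0.5],
}

for gene, r in rank_by_cofitness("known_pathway_gene", toy_profiles):
    print(f"{gene}: r = {r:.2f}")
```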

Data Curation: A Critical Prerequisite

The power of chemogenomics depends heavily on the quality of the underlying data. Concerns about the reproducibility of published scientific data have highlighted the need for rigorous data curation before predictive models are built [24]. An integrated workflow for chemical and biological data curation is therefore essential. Key steps include:

- Chemical Structure Curation: Standardization of structures, removal of inorganic and organometallic compounds, correction of valence violations, normalization of tautomeric forms, and verification of stereochemistry [24].

- Bioactivity Data Processing: Identification and handling of chemical duplicates (the same compound tested multiple times), aggregation of multiple activity values for the same compound-target pair, and filtering based on reliable assay types and physicochemical properties (e.g., molecular weight < 1000 Da) [24] [25].
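The bioactivity-processing steps above can be sketched in plain Python. This is a deliberately simplified illustration, not the workflow of [24]: real structure curation (valence checks, tautomer and stereochemistry handling) requires a cheminformatics toolkit such as RDKit. Here the inorganic/organometallic filter only inspects the molecular formula against a short, hypothetical metal list, and the record fields, the ChEMBL-style assay-type code "B" (binding), the 1,000 Da cut-off, and the 1-log-unit consistency threshold are assumptions chosen for the example.

```python
from __future__ import annotations

import re
from collections import defaultdict
from statistics import median

# Assumption: a short, incomplete list of metals used only for illustration.
METALS = {"Fe", "Co", "Ni", "Cu", "Zn", "Ru", "Pd", "Ag", "Pt", "Au", "Hg", "Sn"}
ALLOWED_ASSAY_TYPES = {"B"}   # assumption: keep binding assays only
MAX_MW = 1000.0               # Da, molecular-weight cut-off
MAX_SPREAD = 1.0              # log units; larger replicate spreads are flagged


def elements(formula: str) -> set[str]:
    """Element symbols in a molecular formula such as 'C9H8O4'."""
    return {symbol for symbol, _count in re.findall(r"([A-Z][a-z]?)(\d*)", formula)}


def is_organic(formula: str) -> bool:
    """Crude filter: must contain carbon and none of the listed metals."""
    found = elements(formula)
    return "C" in found and not (found & METALS)


def curate(records: list[dict]) -> dict[tuple[str, str], dict]:
    """Filter records, then aggregate replicates per compound-target pair."""
    grouped: dict[tuple[str, str], list[float]] = defaultdict(list)
    for rec in records:
        if not is_organic(rec["formula"]) or rec["mw"] >= MAX_MW:
            continue
        if rec["assay_type"] not in ALLOWED_ASSAY_TYPES:
            continue
        grouped[(rec["compound"], rec["target"])].append(rec["p_activity"])

    curated = {}
    for pair, values in grouped.items():
        curated[pair] = {
            "p_activity": median(values),   # robust to a single outlier
            "n": len(values),
            "inconsistent": max(values) - min(values) > MAX_SPREAD,
        }
    return curated


# Hypothetical records; p_activity is a -log10 activity value.
FIELDS = ("compound", "formula", "mw", "target", "p_activity", "assay_type")
toy_records = [dict(zip(FIELDS, row)) for row in [
    ("cpd1", "C9H8O4", 180.16, "T1", 6.0, "B"),
    ("cpd1", "C9H8O4", 180.16, "T1", 6.4, "B"),
    ("cpd1", "C9H8O4", 180.16, "T1", 7.5, "B"),
    ("cpd2", "Cl2H6N2Pt", 300.05, "T1", 5.0, "B"),       # inorganic: no carbon
    ("cpd3", "C60H100N20O20", 1421.6, "T2", 8.0, "B"),   # above the MW cut-off
    ("cpd4", "C6H6O", 94.11, "T2", 5.5, "F"),            # functional assay
    ("cpd5", "C7H8", 92.14, "T3", 5.0, "B"),
]]

for pair, summary in curate(toy_records).items():
    print(pair, summary)
```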

Adhering to these best practices helps ensure that data extracted from public repositories such as ChEMBL and PubChem are reliable and suitable for robust chemogenomic analysis and model development [24].

The traditional drug discovery model, often characterized as 'one-drug-one-target,' has increasingly revealed limitations in addressing complex diseases such as cancers, neurological disorders, and metabolic conditions. These diseases typically arise from multiple molecular abnormalities rather than single defects, necessitating a more comprehensive therapeutic approach [5]. Over the past two decades, the field has witnessed a paradigm shift toward systems pharmacology, which acknowledges that most small molecules interact with multiple protein targets, a phenomenon known as polypharmacology [26] [5]. This shift has been driven by the high failure rates of drug candidates in advanced clinical stages due to insufficient efficacy and safety concerns, highlighting the need for a more holistic understanding of drug action within biological systems [5].

Central to this modern approach is chemogenomics, which utilizes well-annotated collections of small molecules to probe protein functions in complex cellular systems [27]. A chemogenomic library is defined as a collection of selective small-molecule pharmacological agents, where a hit in a phenotypic screen suggests that the annotated target(s) of that pharmacological agent may be involved in perturbing the observable phenotype [26] [28]. These libraries, combined with quantitative and systems pharmacology (QSP) approaches, enable researchers to model the dynamic interactions between drugs and biological systems as a whole, rather than focusing on individual constituents [29]. This integrative framework combines physiology and pharmacology to accelerate medical research, moving beyond a narrow focus on single pathways to consider multiple receptors, cell types, metabolic pathways, and signaling networks simultaneously [29].

Chemogenomic Libraries: Design, Quality Control, and Characterization

Fundamental Concepts and Design Principles

Chemogenomic libraries are strategically designed collections of small molecules that together cover a significant portion of the druggable genome. They are curated to include compounds with well-defined mechanisms of action against specific protein families or biological pathways [5] [27]. The design philosophy acknowledges that high-quality chemical probes with high selectivity exist for only a small fraction of potential targets; chemogenomic libraries may therefore include compounds with less stringent selectivity criteria in order to cover a larger target space [27]. Initiatives such as EUbOPEN aim to cover approximately 30% of the druggable proteome (estimated at about 3,000 targets), that is, on the order of 900 targets, through their chemogenomic compound collections [27].

Library design involves careful consideration of multiple factors:

- Cellular activity and potency against intended targets

- Chemical diversity and structural representation

- Target selectivity profiles and polypharmacology

- Pathway coverage across biological processes implicated in disease

- Physicochemical properties ensuring compatibility with screening assays

Analytic procedures have been developed to design anticancer compound libraries that are optimized for library size, cellular activity, chemical diversity and availability, and target selectivity [30]. The resulting compound collections cover a wide range of protein targets and biological pathways implicated in various cancers, which makes them particularly applicable to precision oncology [30].
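One simple way to trade library size against target coverage is a greedy maximum-coverage heuristic. The sketch below is not the procedure of [30]; it is a swapped-in illustration that keeps only target annotations meeting a potency threshold (pActivity ≥ 6, an assumption), then repeatedly picks the compound that adds the most not-yet-covered targets, preferring more selective compounds on ties, until a size budget is reached. Compound and target identifiers and their potencies are hypothetical.

```python
from __future__ import annotations


def select_library(candidates: dict[str, dict[str, float]], max_size: int,
                   min_potency: float = 6.0) -> tuple[list[str], set[str]]:
    """Greedy maximum-coverage selection of compounds.

    candidates maps compound -> {target: pActivity}. Only annotations with
    pActivity >= min_potency count toward coverage.
    """
    usable = {
        compound: {t for t, p in activities.items() if p >= min_potency}
        for compound, activities in candidates.items()
    }
    remaining = {c: targets for c, targets in usable.items() if targets}
    chosen: list[str] = []
    covered: set[str] = set()
    while remaining and len(chosen) < max_size:
        # Most new targets first; on ties, fewer total targets (more selective), then name.
        best = min(remaining,
                   key=lambda c: (-len(remaining[c] - covered), len(remaining[c]), c))
        gain = remaining.pop(best) - covered
        if not gain:
            break  # no remaining compound adds coverage
        chosen.append(best)
        covered |= gain
    return chosen, covered


# Hypothetical candidate pool: compound -> {target: pActivity}.
toy_candidates = {
    "cpdA": {"T1": 8.0, "T2": 7.5},
    "cpdB": {"T1": 7.0},
    "cpdC": {"T3": 7.2, "T4": 7.0},
    "cpdD": {"T5": 5.0},            # below the potency threshold, never selected
    "cpdE": {"T2": 6.5, "T3": 6.1},
}

chosen, covered = select_library(toy_candidates, max_size=3)
print(chosen, sorted(covered))
```

Greedy selection is a standard approximation for maximum coverage; real designs would add further terms, such as chemical diversity, cellular activity, and compound availability, to the selection criterion.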

Quality Control and Characterization

Rigorous quality control is essential for chemogenomic libraries, as compounds with incorrect identity or insufficient purity can lead to misleading biological activity data [31]. Liquid chromatography-mass spectrometry (LC-MS) has emerged as a medium-throughput, semi-automated quality control method suitable for chemogenomic libraries [31]. This rapid method can cover a broad chemical space of small organic compounds with diverse physicochemical properties such as polarity and lipophilicity, confirming both compound identity and purity [31].

The process involves:

- Confirmation of compound identity through mass spectrometry

- Assessment of purity using chromatographic separation

- Minimal material requirements to enable comprehensive testing

- Semi-automated workflows to handle library scale
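The identity and purity checks listed above can be expressed as a simple pass/fail rule. This sketch assumes a high-resolution instrument with positive-mode [M+H]+ detection, a 10 ppm mass tolerance, and a 90% purity threshold (area percent of the main chromatographic peak, for example from a UV trace). All of these are illustrative assumptions, not the acceptance criteria used in [31].

```python
from __future__ import annotations

PROTON_MASS = 1.007276  # Da, mass of a proton


def ppm_error(observed_mz: float, expected_mz: float) -> float:
    """Mass error in parts per million."""
    return (observed_mz - expected_mz) / expected_mz * 1e6


def qc_call(monoisotopic_mass: float, observed_mz: float,
            peak_areas: list[float], main_peak: int,
            ppm_tol: float = 10.0, min_purity: float = 90.0) -> dict:
    """Combine an identity check ([M+H]+ m/z) with an area-percent purity check."""
    expected_mz = monoisotopic_mass + PROTON_MASS
    error = ppm_error(observed_mz, expected_mz)
    identity_ok = abs(error) <= ppm_tol
    total_area = sum(peak_areas)
    purity = 100.0 * peak_areas[main_peak] / total_area if total_area > 0 else 0.0
    return {
        "ppm_error": round(error, 2),
        "identity_ok": identity_ok,
        "purity_pct": round(purity, 1),
        "pass": identity_ok and purity >= min_purity,
    }


# Aspirin (C9H8O4, monoisotopic mass 180.0423 Da); peak areas are toy values.
print(qc_call(180.0423, 181.0497, [950.0, 30.0, 20.0], main_peak=0))
# Wrong compound in the well: the observed m/z does not match.
print(qc_call(180.0423, 195.0652, [950.0, 30.0, 20.0], main_peak=0))
```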

Beyond chemical quality control, comprehensive bioinformatic annotation is crucial for maximizing the utility of chemogenomic libraries. This includes mapping compounds to their primary targets, secondary targets, associated biological pathways, and related disease areas [5]. The integration of diverse data sources such as ChEMBL, KEGG pathways, Gene Ontology, and Disease Ontology creates a rich knowledge network that enhances the interpretability of screening results [5].
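At its core, this annotation step is a join across mapping tables. The following is a minimal sketch, assuming two hypothetical in-memory tables: compound to targets, as might be extracted from ChEMBL, and target to pathways, as might be extracted from KEGG. It maps each compound to the pathways it reaches and records which targets support each link. All identifiers are placeholders.

```python
from __future__ import annotations

from collections import defaultdict


def annotate_pathways(compound_targets: dict[str, list[str]],
                      target_pathways: dict[str, list[str]]
                      ) -> dict[str, dict[str, list[str]]]:
    """Map compound -> pathway -> sorted list of supporting targets."""
    annotation = {}
    for compound, targets in compound_targets.items():
        support: dict[str, set[str]] = defaultdict(set)
        for target in targets:
            # Targets without pathway annotations simply contribute nothing.
            for pathway in target_pathways.get(target, []):
                support[pathway].add(target)
        annotation[compound] = {pw: sorted(ts) for pw, ts in sorted(support.items())}
    return annotation


# Placeholder identifiers standing in for ChEMBL targets and KEGG pathways.
toy_compound_targets = {"cpd1": ["T1", "T2"], "cpd2": ["T3"]}
toy_target_pathways = {"T1": ["PW_A"], "T2": ["PW_A", "PW_B"]}

print(annotate_pathways(toy_compound_targets, toy_target_pathways))
```

Keeping the supporting targets alongside each pathway makes it easy to see later whether a pathway-level hypothesis rests on one target or several.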

Table 1: Key Components of Chemogenomic Library Design and Characterization

| Component | Description | Data Sources/Methods |
|---|---|---|
| Compound Selection | Covers major target families (kinases, GPCRs, epigenetic modulators) with cellular activity | ChEMBL, commercial libraries, in-house collections [5] [27] |
| Structural Diversity | Representative scaffolds and fragments ensuring chemical diversity | ScaffoldHunter software, stepwise ring removal [5] |
| Target Annotation | Mapping compounds to protein targets, pathways, and diseases | ChEMBL, KEGG, GO, Disease Ontology [5] |
| Quality Control | Confirmation of compound identity and purity | LC-MS with semi-automated workflows [31] |
| Morphological Profiling | Linking compounds to cellular phenotypes | Cell Painting assay, high-content imaging [5] |

Applications in Phenotypic Screening and Target Deconvolution

Phenotypic Drug Discovery

The revival of phenotypic screening in drug discovery has been facilitated by advances in cell-based screening technologies, including induced pluripotent stem (iPS) cell technologies, gene-editing tools such as CRISPR-Cas, and imaging assay technologies [5]. Phenotypic screening does not rely on prior knowledge of molecular targets, instead focusing on observable changes in cellular or organismal phenotypes in response to compound treatment [26]. This approach has re-emerged as a promising strategy for identifying novel and safe drugs, particularly for complex diseases where the precise molecular pathology may not be fully understood [5].

Chemogenomic libraries are particularly valuable in phenotypic screening because a hit from such a collection suggests that the annotated target or targets of the active probe molecules are involved in the phenotypic perturbation [26]. This provides a direct link between phenotypic observations and potential molecular mechanisms, helping to bridge phenotypic and target-based screening approaches [26] [28]. Integrating chemogenomic libraries with high-content imaging approaches, such as the Cell Painting assay, yields morphological profiles that can connect compound-induced phenotypes to specific biological pathways [5]. Cell Painting uses multiplexed fluorescent dyes to label several cellular compartments; in the implementation described in [5], U2OS osteosarcoma cells were stained in multiwell plates and imaged, and the images were analyzed automatically with CellProfiler to identify individual cells and measure hundreds of morphological features [5].
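Comparing morphological profiles usually involves normalizing each feature to negative-control wells and then measuring the similarity between compounds. The sketch below shows one common recipe under stated assumptions, not the pipeline of [5]. It takes per-well feature vectors (for example, CellProfiler measurements aggregated per well) and converts them to robust z-scores against DMSO control wells using the median and scaled median absolute deviation. It then assigns a query compound to the reference compound whose profile is most similar by cosine similarity. Feature values and reference labels are hypothetical.

```python
from __future__ import annotations

from math import sqrt
from statistics import median

MAD_SCALE = 1.4826  # makes the MAD comparable to a standard deviation for normal data


def robust_z(profile: list[float], controls: list[list[float]]) -> list[float]:
    """Robust z-score of each feature relative to control wells (median/MAD)."""
    scores = []
    for j, value in enumerate(profile):
        column = [well[j] for well in controls]
        center = median(column)
        mad = MAD_SCALE * median([abs(v - center) for v in column])
        scores.append((value - center) / mad if mad > 0 else 0.0)
    return scores


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two profiles."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = sqrt(sum(x * x for x in a))
    norm_b = sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def nearest_reference(query: list[float],
                      references: dict[str, list[float]]) -> tuple[str, float]:
    """Reference compound whose (already normalized) profile is most similar."""
    name = max(references, key=lambda ref: cosine(query, references[ref]))
    return name, cosine(query, references[name])


# Hypothetical data: three morphological features per well.
dmso_wells = [[1.0, 10.0, 5.0], [1.2, 11.0, 5.5], [0.8, 9.0, 4.5], [1.0, 10.5, 5.2]]
reference_profiles = {            # robust z-scores of annotated reference compounds
    "reference_moa_A": [5.0, 0.5, -0.2],
    "reference_moa_B": [-0.3, 4.0, 3.0],
}
query_z = robust_z([2.0, 10.25, 5.1], dmso_wells)
print(nearest_reference(query_z, reference_profiles))
```

A nearest reference with a known annotation suggests, but does not establish, a shared mechanism; the hypothesis still needs orthogonal validation.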

Target Identification and Mechanism Deconvolution

A significant challenge in phenotypic drug discovery is the subsequent identification of therapeutic targets and mechanisms of action responsible for the observed phenotypes [5]. Chemogenomic approaches facilitate this target deconvolution through their annotated nature, where the biological activities of library components provide clues about which targets and pathways might be modulating the phenotype [26].

Advanced computational methods have been developed to support this process:

- Systems pharmacology networks integrating drug-target-pathway-disease relationships

- Graph databases (e.g., Neo4j) incorporating heterogeneous biological data

- Network analysis connecting morphological profiles to biological pathways

- Enrichment calculations for Gene Ontology, KEGG pathways, and Disease Ontology

These approaches enable researchers to move from observed phenotypic changes to hypotheses about underlying molecular mechanisms, creating a reverse translation framework from phenotype to target [5]. For example, a study that profiled glioma stem cells from patients with glioblastoma (GBM), using a chemogenomic library of 789 compounds covering 1,320 anticancer targets, revealed highly heterogeneous phenotypic responses across patients and GBM subtypes and identified patient-specific vulnerabilities [30].
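The enrichment calculations listed above are often framed as a one-sided hypergeometric test. Given a library of N compounds in which K are annotated to a target, the test asks how surprising it is that k of the n screening hits carry that annotation. The sketch below applies this test to each target. It is a minimal illustration, assuming a simple compound-to-targets mapping with hypothetical identifiers. It does not correct for multiple testing, which a real analysis across many targets or pathways should do (for example, with the Benjamini-Hochberg procedure).

```python
from __future__ import annotations

from math import comb


def hypergeom_sf(k: int, N: int, K: int, n: int) -> float:
    """P(X >= k) for X ~ Hypergeometric(population N, successes K, draws n)."""
    upper = min(K, n)
    tail = sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1))
    return tail / comb(N, n)


def target_enrichment(hits: set[str],
                      compound_targets: dict[str, set[str]]
                      ) -> list[tuple[str, int, int, float]]:
    """Return (target, hits with target, library compounds with target, p-value)."""
    N = len(compound_targets)
    hits = {c for c in hits if c in compound_targets}  # ignore unknown compounds
    n = len(hits)
    library_counts: dict[str, int] = {}
    hit_counts: dict[str, int] = {}
    for compound, targets in compound_targets.items():
        for target in targets:
            library_counts[target] = library_counts.get(target, 0) + 1
            if compound in hits:
                hit_counts[target] = hit_counts.get(target, 0) + 1
    results = [
        (target, k, library_counts[target],
         hypergeom_sf(k, N, library_counts[target], n))
        for target, k in hit_counts.items()
    ]
    return sorted(results, key=lambda row: row[3])  # most significant first


# Hypothetical 20-compound library with two annotated targets.
library = {f"c{i}": set() for i in range(20)}
for i in range(4):
    library[f"c{i}"].add("T_hit")
for i in range(4, 14):
    library[f"c{i}"].add("T_background")
screen_hits = {"c0", "c1", "c2", "c4"}

for row in target_enrichment(screen_hits, library):
    print(row)
```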

Diagram 1: Phenotypic Screening and Target Deconvolution Workflow. This diagram illustrates the iterative process of using chemogenomic libraries in phenotypic screening to generate target hypotheses.

Integration with Quantitative and Systems Pharmacology

Foundations of Quantitative and Systems Pharmacology

Quantitative and Systems Pharmacology (QSP) is a quantitative approach that integrates physiology and pharmacology to provide a holistic understanding of the interactions between the human body, diseases, and drugs [29]. QSP is defined as the quantitative analysis of the dynamic interactions between drugs and a biological system that aims to understand the behavior of the system as a whole, as opposed to the behavior of its individual constituents [29]. It employs mathematical models, frequently formulated as systems of ordinary differential equations (ODEs), to capture the mechanistic details of pathophysiology across multiple scales [29].
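The sketch below makes the ODE formulation concrete by integrating a deliberately minimal PK/PD system with a fixed-step, fourth-order Runge-Kutta scheme. After a bolus dose, drug concentration C decays by first-order elimination (dC/dt = -k_el·C). A biomarker R follows a standard indirect-response (turnover) model in which the drug inhibits production: dR/dt = k_in·(1 - I_max·C/(IC50 + C)) - k_out·R. This toy model is far smaller than a real QSP model, and all parameter values and units are hypothetical.

```python
from __future__ import annotations

# Hypothetical parameters (time in hours, concentration in arbitrary units).
PARAMS = {
    "kel": 0.1,    # 1/h, first-order elimination rate of the drug
    "kin": 10.0,   # response units/h, zero-order production of the biomarker
    "kout": 0.5,   # 1/h, first-order loss of the biomarker
    "imax": 0.9,   # maximal fractional inhibition of production
    "ic50": 1.0,   # concentration giving half-maximal inhibition
}


def derivatives(state: list[float], p: dict[str, float]) -> list[float]:
    """Right-hand side [dC/dt, dR/dt] of the PK/PD system."""
    conc, response = state
    inhibition = p["imax"] * conc / (p["ic50"] + conc)
    d_conc = -p["kel"] * conc
    d_response = p["kin"] * (1.0 - inhibition) - p["kout"] * response
    return [d_conc, d_response]


def rk4_step(state: list[float], p: dict[str, float], h: float) -> list[float]:
    """One classical fourth-order Runge-Kutta step of size h."""
    k1 = derivatives(state, p)
    k2 = derivatives([s + 0.5 * h * k for s, k in zip(state, k1)], p)
    k3 = derivatives([s + 0.5 * h * k for s, k in zip(state, k2)], p)
    k4 = derivatives([s + h * k for s, k in zip(state, k3)], p)
    return [s + h / 6.0 * (a + 2 * b + 2 * c + d)
            for s, a, b, c, d in zip(state, k1, k2, k3, k4)]


def simulate(initial_conc: float, p: dict[str, float],
             t_end: float = 72.0, h: float = 0.1):
    """Simulate from an initial concentration, with R starting at baseline kin/kout."""
    state = [initial_conc, p["kin"] / p["kout"]]
    times, trajectory = [0.0], [state]
    for step in range(1, int(round(t_end / h)) + 1):
        state = rk4_step(state, p, h)
        times.append(step * h)
        trajectory.append(state)
    return times, trajectory


times, traj = simulate(10.0, PARAMS)
responses = [r for _, r in traj]
nadir = min(responses)
print(f"baseline {responses[0]:.1f}, nadir {nadir:.1f} "
      f"at t = {times[responses.index(nadir)]:.1f} h, value at 72 h {responses[-1]:.1f}")
```

The biomarker falls after dosing and recovers toward its baseline as the drug is eliminated. This delayed, system-driven response is exactly the kind of behavior that ODE-based models capture.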

A major advantage of QSP is its ability to combine data and knowledge along two dimensions, horizontal and vertical [29]:

- Horizontal integration involves simultaneously considering multiple receptors, cell types, metabolic pathways, or signaling networks, moving beyond a narrow focus on specific pathways or targets

- Vertical integration spans multiple time and space scales, from molecular interactions (hours) to disease progression (months to years)

QSP models are versatile and can be developed to encompass both individual and population scales, capturing physiological dynamics unique to individual patients while accounting for variability across populations by adjusting physiological parameters [29]. This multi-scale capability makes QSP particularly valuable for understanding and predicting drug actions at different levels of granularity, from molecular targets to patient populations.
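Population-scale variability is often represented by drawing individual parameters from log-normal distributions around typical values, as in pharmacometric mixed-effects models. The sketch below generates a hypothetical virtual population for a one-compartment bolus model, C(t) = C0·exp(-k_el·t). It reports the fraction of virtual patients whose trough concentration at 24 h stays at or above their individual IC50. All parameter values, variability magnitudes, and the trough criterion are illustrative assumptions.

```python
from __future__ import annotations

import math
import random


def simulate_population(n: int, c0: float, kel_typical: float, ic50_typical: float,
                        omega_kel: float, omega_ic50: float,
                        t_trough: float = 24.0, seed: int = 0) -> float:
    """Fraction of virtual patients with C(t_trough) >= their individual IC50.

    Individual parameters follow P_i = P_typical * exp(eta_i), eta_i ~ N(0, omega^2).
    """
    rng = random.Random(seed)  # seeded for a reproducible virtual population
    covered = 0
    for _ in range(n):
        kel_i = kel_typical * math.exp(rng.gauss(0.0, omega_kel))
        ic50_i = ic50_typical * math.exp(rng.gauss(0.0, omega_ic50))
        trough = c0 * math.exp(-kel_i * t_trough)
        if trough >= ic50_i:
            covered += 1
    return covered / n


# Hypothetical typical values; log-scale standard deviations of 0.5 (kel) and 0.3 (IC50).
fraction = simulate_population(n=2000, c0=10.0, kel_typical=0.1, ic50_typical=1.0,
                               omega_kel=0.5, omega_ic50=0.3)
print(f"fraction of virtual patients above IC50 at trough: {fraction:.2f}")
```

With no variability, every virtual patient behaves like the typical individual, so the fraction is either 0 or 1. With variability, a typical patient who falls just below IC50 at trough can still leave a substantial subpopulation above it, which a single-patient model would miss.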

Integration of Chemogenomics and QSP

The integration of chemogenomics and QSP creates a powerful framework for modern drug discovery, combining the target-focused annotation of chemogenomic libraries with the system-level modeling capabilities of QSP. This integration facilitates what has been termed "integrated pharmacometrics and SP (iPSP)" models – mathematical frameworks that use a combination of pharmacometrics and systems pharmacology approaches [32]. These integrated models incorporate:

- Mechanistic/detailed biological components and relationships based on prior knowledge (systems pharmacology)

- Typical PK and PD biomarker observations or clinical outcomes in humans/animals (pharmacometrics)

- Variability between individuals and populations (pharmacometrics)

According to [32], approximately 19% of research articles in this area implement the iPSP approach, indicating substantial adoption. The integration enables researchers to leverage the strengths of both fields: the well-annotated compound-target relationships from chemogenomics and the system-level, multiscale modeling from QSP, which can predict emergent behaviors that are not apparent from reductionist approaches [32].

Table 2: QSP Model Applications in Drug Development

| Application Area | QSP Contribution | Exemplary Models |