Chemogenomic Compound Annotation: Foundational Concepts, Methodologies, and Best Practices for Drug Discovery

Abstract

This article provides a comprehensive overview of chemogenomic compound annotation strategies, a key discipline at the intersection of chemistry, biology, and informatics that systematically links small molecules to their biological targets. Aimed at researchers, scientists, and drug development professionals, it covers foundational principles, including the definition of ligand and target spaces and the role of annotated chemical libraries. The scope extends to methodological approaches for ligand and target description, computational tools for interaction prediction, and practical applications in target deconvolution and drug repositioning. It further addresses common challenges and optimization techniques, and concludes with critical validation frameworks and comparative analyses of annotation tools to guide robust, data-driven decision-making in modern drug discovery pipelines.

The Core Principles of Chemogenomics and Annotated Libraries

The completion of the Human Genome Project marked a transformative moment in biomedical science. It unveiled thousands of genes potentially associated with disease, but it also posed a formidable challenge: systematically converting this genetic information into effective therapeutics. Chemogenomics has emerged as the interdisciplinary field addressing this challenge through the comprehensive exploration of the interaction between chemical and genomic spaces. This represents a fundamental shift from traditional single-target drug discovery toward a systems-based approach that focuses on entire gene families, enabling parallel processing of multiple targets for more efficient pharmaceutical development. Defined as "the determination and practical application of the relationships between chemical and genomic spaces," chemogenomics aims to systematically identify all ligands and modulators for all gene products, thereby accelerating the exploration of biological function across entire gene families [1] [2].

The field sits at the intersection of multiple disciplines, including chemistry, genetics, bioinformatics, structural biology, and high-throughput screening, integrating these traditionally separate domains into a unified framework for simultaneous target and drug discovery. This review examines the core principles, methodologies, and applications of modern chemogenomics, providing researchers with both the theoretical foundation and practical toolkit for implementing chemogenomic strategies in contemporary drug development pipelines.

Core Principles: From Single-Target to Systems-Based Approaches

The Evolution from Reductionist to Systematic Discovery

Traditional drug discovery has long followed a reductionist paradigm, the "one target, one drug" approach that dominated pharmaceutical research for decades. This methodology optimizes ligand properties (potency, selectivity, pharmacokinetics) toward a single macromolecular target; an estimated 800 proteins have been investigated in this way, although approximately 3,000 are considered "druggable" targets [3]. In contrast, chemogenomics operates on two fundamental assumptions: first, that compounds sharing chemical similarity should share biological targets; and second, that targets sharing similar ligands should share similar binding patterns [3]. This establishes a systematic framework in which data on "unliganded" targets can be inferred from the closest "liganded" neighboring targets, and data on "untargeted" ligands can be inferred from the closest "targeted" ligands.

Table: Comparison of Traditional vs. Chemogenomics Approaches in Drug Discovery

| Aspect | Traditional Drug Discovery | Chemogenomics Approach |
|---|---|---|
| Scope | Single target investigation | Entire gene families & pathways |
| Chemical Space | Focused libraries for specific targets | Diverse libraries annotated across multiple targets |
| Target Selection | Based on individual disease association | Based on gene family relationships & structural similarity |
| Data Structure | Isolated structure-activity relationships | Annotated ligand-target interaction matrices |
| Knowledge Transfer | Limited between projects | Systematic extrapolation across target classes |
| Primary Goal | Optimize potency against one target | Understand ligand interactions across target families |

The Ligand-Target Interaction Matrix

The conceptual foundation of chemogenomics is the ligand-target interaction space: a two-dimensional matrix in which compounds are represented as rows and targets as columns, with values typically representing binding or inhibition constants (Ki, IC₅₀) or functional effects (EC₅₀) [3]. This matrix is inherently sparse, because only a small fraction of all compound-target pairs has actually been tested. Predictive chemogenomics attempts to fill these gaps using computational approaches that leverage both ligand-based and target-based similarities, creating a knowledge system that becomes more valuable with each additional data point. Systematically annotating compounds according to their targets allows genome sequence information to be associated directly with ligands, so that ligands for closely related targets can be identified on the basis of gene homology [1].
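To make the gap-filling idea concrete, here is a minimal sketch of ligand-based imputation. It assumes activities are stored as pKi-like values with `NaN` for untested pairs and that a ligand-ligand similarity matrix (for example, Tanimoto similarities) has already been computed. The function name, the k-nearest-neighbour weighting, and the toy values are illustrative assumptions, not a method prescribed in [3].

```python
import numpy as np

def impute_from_similar_ligands(activity: np.ndarray,
                                ligand_sim: np.ndarray,
                                k: int = 2) -> np.ndarray:
    """Fill NaN cells of a ligand x target activity matrix.

    Rows are ligands, columns are targets. A missing value for
    (ligand i, target j) is estimated as the similarity-weighted mean of
    the k most similar ligands that were actually measured on target j.
    """
    filled = activity.copy()
    n_ligands, n_targets = activity.shape
    for i in range(n_ligands):
        for j in range(n_targets):
            if not np.isnan(activity[i, j]):
                continue  # measured value: keep it unchanged
            measured = np.flatnonzero(~np.isnan(activity[:, j]))
            if measured.size == 0:
                continue  # no ligand was tested on this target; leave the gap
            # the k measured ligands most similar to ligand i
            nearest = measured[np.argsort(-ligand_sim[i, measured])][:k]
            weights = ligand_sim[i, nearest]
            if weights.sum() > 0:
                filled[i, j] = weights @ activity[nearest, j] / weights.sum()
    return filled

# Toy example: 3 ligands x 2 targets, pKi values, NaN = untested.
activity = np.array([[7.0, np.nan],
                     [6.0, 5.0],
                     [np.nan, 8.0]])
ligand_sim = np.array([[1.0, 0.8, 0.2],
                       [0.8, 1.0, 0.3],
                       [0.2, 0.3, 1.0]])
filled = impute_from_similar_ligands(activity, ligand_sim)
print(filled)  # the two gaps become approximately 5.6 (row 0) and 6.4 (row 2)
```

Swapping the roles of rows and columns (a target-target similarity matrix and neighbours taken along each row) gives the complementary target-based inference.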

Computational Methodologies in Chemogenomics

Navigating Chemical Space

Effective navigation through chemical space requires robust methods for compound description and comparison. Ligands are typically described using molecular descriptors ranging from 1D to 3D representations:

- 1D descriptors include global properties such as molecular weight, atom counts, and predicted properties like log P, which are computationally efficient and useful for preliminary filtering [3].

- 2D topological descriptors encode structural patterns, connectivity tables, and structural fingerprints that capture molecular substructures without spatial information [3].

- 3D conformational descriptors represent spatial properties including pharmacophores, molecular shapes, and fields that directly model stereochemical requirements for binding [3].

For similarity searching with binary fingerprints, the Tanimoto coefficient serves as the predominant metric, calculated as Tc = c/(a+b-c), where a and b are the numbers of bits set in compounds A and B, respectively, and c is the number of bits set in both [3]. Simplified Molecular Input Line Entry System (SMILES) strings provide a compact line notation for chemical structures, enabling efficient storage and comparison of compounds in large databases [3].
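The formula translates directly into code. The sketch below assumes each fingerprint is represented as a Python set of on-bit indices, which keeps the counting of a, b, and c explicit; cheminformatics toolkits provide optimized equivalents (for example RDKit's `DataStructs.TanimotoSimilarity`). Returning 0.0 for two empty fingerprints is a convention chosen here, not part of the definition.

```python
def tanimoto(bits_a: set[int], bits_b: set[int]) -> float:
    """Tanimoto coefficient Tc = c / (a + b - c) for two binary fingerprints,
    each given as the set of indices of its 'on' bits."""
    a = len(bits_a)           # bits set in compound A
    b = len(bits_b)           # bits set in compound B
    c = len(bits_a & bits_b)  # bits set in both compounds
    if a + b - c == 0:
        return 0.0  # both fingerprints empty: convention chosen for this sketch
    return c / (a + b - c)

fp_a = {1, 4, 7, 9}
fp_b = {1, 4, 8}
print(tanimoto(fp_a, fp_b))  # 2 / (4 + 3 - 2) = 0.4
```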

Characterizing Target Space

Protein targets are similarly classified using hierarchical descriptor systems:

- 1D sequence information enables clustering by gene families using databases like UniProt and Pfam, with motif-based analyses focusing on conserved functional regions [3].

- 2D structural classifications capture fold similarities through databases such as SCOP and CATH, identifying structural motifs conserved across evolutionarily related targets [3].

- 3D binding site characterization focuses specifically on the structural features of ligand-binding pockets, where similarities often persist even when overall sequence homology is low [3].

The integration of these target characterization methods with ligand similarity approaches enables powerful cross-target prediction, where known ligands for characterized targets can serve as starting points for identifying ligands of uncharacterized but related targets.

Advanced Integrative Approaches

Modern chemogenomics has evolved to combine phenotypic screening with multi-omics data and artificial intelligence, creating an integrated discovery platform. This approach captures subtle, disease-relevant phenotypes at scale through high-content imaging, single-cell technologies, and functional genomics, then contextualizes these observations with genomic, transcriptomic, proteomic, metabolomic, and epigenomic data layers [4]. AI and machine learning models fuse these multimodal datasets, which were previously too complex to analyze collectively, enabling the detection of patterns that escape traditional analytical methods [4].

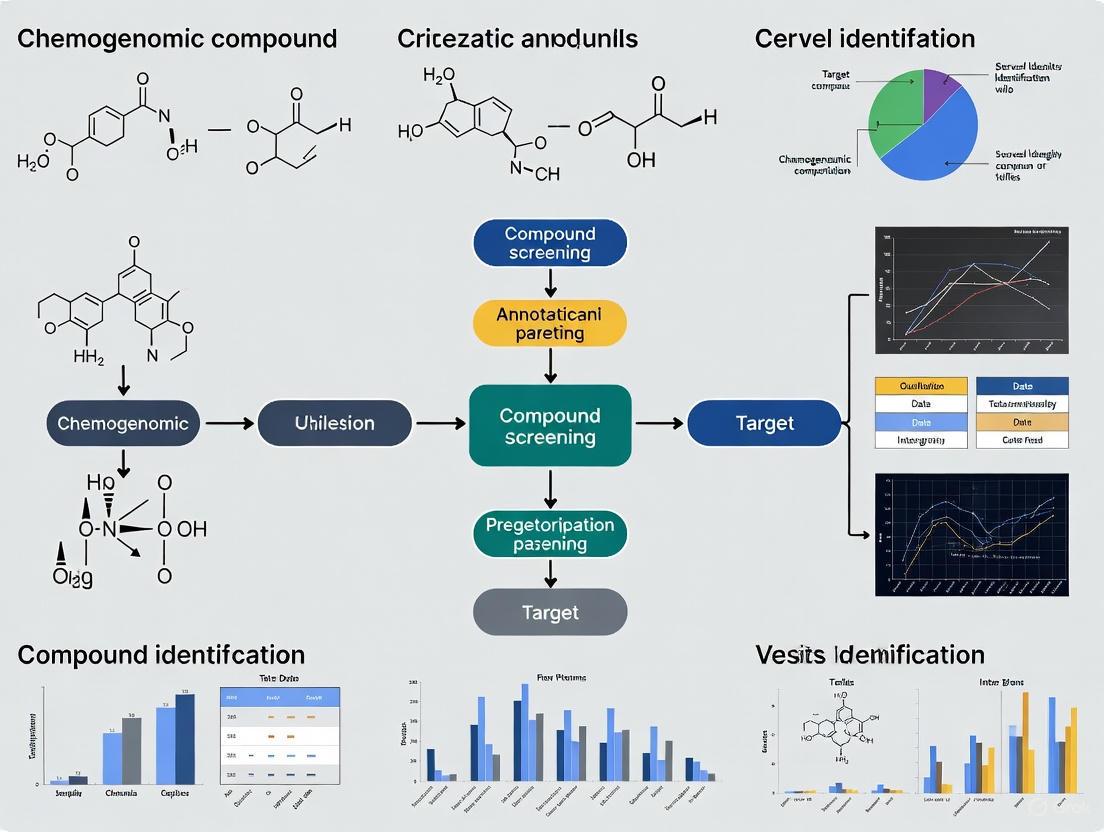

Diagram: Modern chemogenomics integrates diverse data types through AI to accelerate multiple aspects of drug discovery.

Experimental Frameworks and Protocols

Annotated Chemical Libraries

Annotated chemical libraries serve as the experimental cornerstone of chemogenomics, functioning as information-rich databases that integrate biological and chemical data [1]. These libraries systematically associate compounds with their molecular targets, creating a knowledge base that enables:

- Target validation through identification of selective chemical modulators

- Lead discovery by identifying novel ligands for pharmaceutically relevant targets

- Selectivity analysis by determining structural basis of ligand selectivity across target families

- Library design by informing the creation of target-focused combinatorial libraries

The practical implementation involves testing compound libraries against diverse target panels, with binding or functional data recorded in structured databases. This creates the ligand-target interaction matrix that forms the foundation for knowledge-based discovery.
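As an illustration of how such a matrix supports target validation, the sketch below queries annotation records for selective chemical modulators of one target. It assumes records are (compound, target, pActivity) triples, where pActivity is -log10 of a molar Ki or IC₅₀; the compound and target IDs are hypothetical, and the potency cutoff and the 100-fold (2 log unit) selectivity window are illustrative thresholds, not values from [1].

```python
from collections import defaultdict

# Hypothetical annotation records: (compound_id, target_id, pActivity).
records = [
    ("cmpd1", "KIN_A", 8.2), ("cmpd1", "KIN_B", 5.9),
    ("cmpd2", "KIN_A", 7.5), ("cmpd2", "KIN_B", 7.1),
    ("cmpd3", "KIN_B", 6.8),
]

def selective_modulators(records, target, min_potency=7.0, min_window=2.0):
    """Return compounds with pActivity >= min_potency on `target` that are at
    least min_window log units (2.0 = 100-fold) weaker on every other
    annotated target."""
    profiles = defaultdict(dict)
    for compound, tgt, p_act in records:
        profiles[compound][tgt] = p_act
    hits = []
    for compound, profile in profiles.items():
        on_target = profile.get(target)
        if on_target is None or on_target < min_potency:
            continue
        off_target = [p for t, p in profile.items() if t != target]
        # A compound annotated only on `target` passes trivially (all([]) is True),
        # so in practice it should be flagged as "selectivity unknown".
        if all(on_target - p >= min_window for p in off_target):
            hits.append(compound)
    return hits

print(selective_modulators(records, "KIN_A"))  # ['cmpd1']
```

The trivial pass for sparsely annotated compounds is exactly why the breadth of target panels matters: selectivity claims are only as good as the number of off-targets actually tested.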

High-Content Phenotypic Screening

Modern phenotypic screening has evolved significantly from traditional observation-based approaches. Current best practices incorporate:

- High-content imaging using assays like Cell Painting that visualize multiple organelles and cellular components [4]

- Single-cell technologies including Perturb-seq that capture heterogeneity in phenotypic responses [4]

- Pooled perturbations with computational deconvolution to enhance scalability and reduce costs [4]

- Multiplexed assays that simultaneously capture multiple phenotypic endpoints

These approaches generate rich, multidimensional phenotypic profiles that, when integrated with omics data and AI analysis, can identify bioactive compounds without presupposing molecular targets [4].

Table: Research Reagent Solutions for Chemogenomic Screening

| Reagent/Technology | Function | Application in Chemogenomics |
|---|---|---|
| Cell Painting Assay | Multiplexed imaging of cellular components | Generates morphological profiles for phenotypic screening [4] |
| Perturb-seq | Single-cell RNA sequencing after genetic perturbation | Links genetic perturbations to transcriptional phenotypes [4] |
| Annotated Compound Libraries | Chemically diverse libraries with target annotations | Enables target deconvolution and selectivity profiling [1] |
| Target-Directed Combinatorial Libraries | Libraries focused on specific protein families | Increases hit rates for targets with known ligand preferences [1] |
| Functional Genomics Libraries | CRISPR, RNAi, or cDNA collections | Enables systematic target identification and validation |

Multi-Omics Integration Protocols

Integrating phenotypic data with omics layers provides biological context to observed phenotypes. Standardized protocols include:

- Transcriptomics to identify gene expression changes associated with compound treatment

- Proteomics to characterize signaling and post-translational modifications

- Metabolomics to contextualize stress response and disease mechanisms

- Epigenomics to reveal regulatory modifications induced by compound exposure

Multi-omics integration follows a workflow of data generation, preprocessing, dimensional reduction, and multimodal data fusion, typically employing specialized bioinformatics pipelines and AI models to detect systems-level patterns not apparent from single-omics analyses [4].
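The preprocessing, dimensional reduction, and fusion steps can be sketched in a few lines. This is a minimal illustration, assuming each omics layer is a samples x features matrix with rows aligned to the same compound treatments; standardizing each block, reducing it with PCA (via SVD), and concatenating the per-block scores is one simple fusion strategy chosen here for clarity. Pipelines of the kind described in [4] may instead use dedicated factor models or neural encoders, and the random data are placeholders.

```python
import numpy as np

def reduce_block(block: np.ndarray, n_components: int) -> np.ndarray:
    """Standardize one omics block (samples x features) and return its
    top principal-component scores, computed via SVD."""
    mean = block.mean(axis=0)
    std = block.std(axis=0)
    std[std == 0] = 1.0  # leave constant features at zero instead of dividing by 0
    z = (block - mean) / std
    u, s, _ = np.linalg.svd(z, full_matrices=False)
    return u[:, :n_components] * s[:n_components]

def fuse_blocks(blocks: dict, n_components: int = 2) -> np.ndarray:
    """Reduce each block separately, then concatenate the scores so every
    omics layer contributes the same number of columns, regardless of how
    many raw features it has."""
    return np.hstack([reduce_block(b, n_components) for b in blocks.values()])

rng = np.random.default_rng(0)
n_samples = 6  # e.g. six compound treatments
blocks = {
    "transcriptomics": rng.normal(size=(n_samples, 50)),
    "proteomics": rng.normal(size=(n_samples, 20)),
    "metabolomics": rng.normal(size=(n_samples, 10)),
}
fused = fuse_blocks(blocks, n_components=2)
print(fused.shape)  # (6, 6): 2 components from each of 3 blocks
```

Reducing each block before concatenation keeps a 50-feature transcriptomics layer from dominating a 10-feature metabolomics layer; the fused matrix can then be passed to a downstream model.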

Implementation and Applications

Predictive Drug-Target Affinity Modeling

Modern deep learning approaches have significantly advanced chemogenomic prediction capabilities. Frameworks such as DeepDTAGen exemplify the state of the art, employing multitask learning to simultaneously predict drug-target binding affinities and generate novel target-aware drug variants [5]. Such models address the need to quantify interaction strength rather than merely classify pairs as interacting or non-interacting.

The implementation typically involves:

- Representation learning for both compounds (using SMILES, molecular graphs, or fingerprints) and targets (using sequences or structural features)

- Multitask architecture that shares feature learning between affinity prediction and compound generation tasks

- Gradient alignment algorithms like FetterGrad to mitigate optimization conflicts between tasks

- Validation frameworks incorporating drug selectivity analysis, quantitative structure-activity relationship studies, and cold-start testing [5]

DeepDTAGen performs robustly across benchmark datasets including KIBA, Davis, and BindingDB, achieving a mean squared error (MSE) of 0.146 and a concordance index (CI) of 0.897 on the KIBA test set [5].
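Both metrics are simple to compute. The sketch below follows the CI definition commonly used in affinity benchmarks such as Davis and KIBA: among pairs with different measured affinities, count the share whose predictions are ordered the same way, with tied predictions counting one half. It loops over all pairs, so it is O(n²) and meant for illustration rather than full test sets; the toy affinities are made up.

```python
from itertools import combinations

def mse(y_true, y_pred):
    """Mean squared error between measured and predicted affinities."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

def concordance_index(y_true, y_pred):
    """Share of comparable pairs (different measured affinity) whose predicted
    affinities are ordered the same way; tied predictions score 0.5."""
    concordant, comparable = 0.0, 0
    for (t_i, p_i), (t_j, p_j) in combinations(zip(y_true, y_pred), 2):
        if t_i == t_j:
            continue  # equal measured affinities carry no ordering information
        comparable += 1
        if t_i < t_j:  # orient the pair so index i has the higher measured affinity
            p_i, p_j = p_j, p_i
        if p_i > p_j:
            concordant += 1.0
        elif p_i == p_j:
            concordant += 0.5
    return concordant / comparable

# Toy affinities (e.g. pKd); values are illustrative only.
y_true = [5.0, 6.0, 7.0, 8.0]
y_pred = [5.2, 6.5, 6.4, 8.1]
print(concordance_index(y_true, y_pred))  # 5 of 6 pairs ordered correctly, ~0.833
print(mse(y_true, y_pred))                # ~0.165
```

MSE rewards getting the absolute affinity right, while CI only rewards ranking compounds correctly, which is often what matters when prioritizing candidates for synthesis or assay.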

Knowledge-Based Library Design

Chemogenomics informs the design of targeted combinatorial libraries through systematic analysis of structure-activity relationship data across gene families. The methodology involves:

- Target family analysis to identify conserved binding features

- Ligand-based design using known active compounds as templates

- Structure-based design when protein structural data is available

- Diversity-oriented synthesis to explore regions of chemical space underrepresented in screening libraries

This approach creates libraries with higher probabilities of success against particular target classes while maintaining sufficient diversity to explore structure-activity relationships [1].

Diagram: The iterative knowledge-building cycle in chemogenomics library design and screening.

Success Stories and Clinical Applications

Chemogenomic approaches have yielded successful applications across therapeutic areas:

- COVID-19 drug repurposing: The DeepCE model predicted gene expression changes induced by novel chemicals, enabling high-throughput phenotypic screening for COVID-19 therapeutics and generating lead compounds consistent with clinical evidence [4].

- Oncology: Archetype AI identified AMG900 and novel invasion inhibitors in lung cancer using patient-derived phenotypic data integrated with omics layers [4].

- Infectious disease: GNEprop and PhenoMS-ML models uncovered novel antibiotics by interpreting imaging and mass spectrometry phenotypes [4].

- Triple-negative breast cancer: idTRAX machine learning approaches identified cancer-selective targets through integrated chemogenomic analysis [4].

These successes demonstrate how integrative chemogenomic platforms can reduce discovery timelines and enhance confidence in hit validation across diverse disease areas.

Future Directions and Challenges

Emerging Trends

The future of chemogenomics is being shaped by several converging technological trends:

- Automation and robotics are increasing throughput and reproducibility while reducing manual labor in screening workflows [6].

- Human-relevant models including 3D cell cultures and organoids are improving the translational predictive value of phenotypic screening [6].

- Foundation models in AI are being applied to extract features from complex imaging and omics data at unprecedented scales [6].

- Multi-modal data integration platforms are enabling unified analysis of previously siloed data types [6].

These advances are supported by developments in laboratory information management systems that ensure data traceability and metadata richness, both essential for training reliable AI models [6].

Persistent Challenges

Despite significant progress, chemogenomics faces several ongoing challenges:

- Data heterogeneity resulting from different formats, ontologies, and resolutions continues to complicate integration [4].

- Privacy and ethical concerns around sensitive health data require robust compliance frameworks and debiasing approaches [4].

- Interpretability limitations of complex AI models can hinder clinical adoption and trust [4].

- Infrastructure demands for multi-modal AI necessitate substantial computing resources, creating barriers to widespread implementation [4].

Addressing these challenges requires continued development of FAIR data standards, open biobank initiatives, user-friendly machine learning toolkits, and explainable AI methodologies [4].

Chemogenomics represents a fundamental paradigm shift from single-target reductionism to systems-based drug discovery. By systematically exploring the relationships between chemical and genomic spaces, this approach enables more efficient identification of novel therapeutic agents across gene families. The integration of annotated chemical libraries, multi-omics data, phenotypic screening, and artificial intelligence has created a powerful framework that accelerates both target validation and lead optimization simultaneously.

As the field continues to evolve, focusing on improved data standardization, model interpretability, and human-relevant experimental systems will further enhance the impact of chemogenomics on therapeutic development. For researchers and drug development professionals, mastering chemogenomic principles and methodologies is increasingly essential for success in the modern pharmaceutical landscape, where systematic, knowledge-based approaches are replacing serendipitous discovery.

The core conceptual framework of modern chemogenomics is built upon the ligand-target matrix, a two-dimensional knowledge space where the biological targets form one axis and the chemical ligands form the other [7]. Each intersection within this matrix represents a potential interaction—a binding event or functional modulation that forms the basis of chemical biology and drug discovery. This conceptual organization enables systematic navigation of chemical and biological spaces, transforming the complex problem of compound annotation into a structured, computable format.

The ligand-target knowledge space serves as the foundational element for predicting protein-ligand interactions, identifying off-target effects, and de-orphaning phenotypic screening hits [8] [7]. Each row in this matrix represents the activity profile of a single ligand across multiple targets, while each column represents the binding profile of a single target across multiple ligands. This bidirectional relationship creates a powerful framework for knowledge-based drug discovery strategies, allowing researchers to project target spaces into ligand domains and vice versa [7].

The Bow-Pharmacological Space: An Integrated Conceptual Framework

Theoretical Foundation and Three-Dimensional Integration

The bow-pharmacological space (BOW space) represents an advanced evolution of the basic ligand-target matrix by explicitly incorporating three distinctive subspaces: the protein space, ligand space, and crucially, the interaction space that connects them [8]. This framework addresses a critical limitation of conventional chemogenomic approaches that typically utilize only one or two of these subspaces. The conceptual "bow tie" shape emerges from the interconnected nature of these three domains, with the interaction space forming the central knot that binds the protein and ligand information spaces together.

The protein space encodes sequence-derived features and structural information, the ligand space contains chemical descriptors and fingerprint representations, while the interaction space quantitatively represents the known relationships between proteins and ligands [8]. This tripartite structure enables more accurate modeling of the complex relationships between chemical structures and their biological functions by explicitly accounting for the pharmacological context in which these interactions occur.

Computational Implementation and Feature Engineering

In practical implementation, the BOW space is encoded as 439 distinct features spanning the three subspaces [8]. Feature selection analysis using the Boruta algorithm has demonstrated that all three subspaces contribute non-redundant information to prediction models, with approximately half of the features classified as "strictly important" and nearly two-thirds as "selected features" when including tentative classifications [8]. The distribution of relevant features across all subspaces confirms the theoretical value of this integrated approach.

Experimental validation of this framework has demonstrated that models trained without the bow-interaction space component suffer approximately 10% degradation in area under the curve (AUC) performance metrics, with sensitivity (true positive rate) being particularly affected [8]. This evidence strongly supports the inclusion of all three subspaces for optimal predictive performance in ligand-target interaction mapping.

Quantitative Assessment of Predictive Modeling Approaches

Performance Benchmarking Across Machine Learning Algorithms

The bow-pharmacological space framework enables accurate prediction of protein-ligand interactions when coupled with appropriate machine learning algorithms. Bayesian Additive Regression Trees (BART) has demonstrated particular efficacy, providing both high-accuracy classification and reliable probabilistic estimates of interaction likelihood [8].

Table 1: Performance Comparison of Machine Learning Algorithms Applied to Bow-Pharmacological Space

| Algorithm | Accuracy Range | Sensitivity | Specificity | AUC |
|---|---|---|---|---|
| BART | 94.5-98.4% | High | High | >0.9 |
| Random Forest | 94-98% | High | Low | >0.9 |
| SVM | 90-94% | Low | High | >0.9 |
| Decision Trees | 85-90% | Moderate | Moderate | >0.9 |
| Logistic Regression | 88-92% | Moderate | Moderate | >0.9 |

BART's "sum-of-trees" model architecture, constrained by regularized priors to maintain weak learner status for individual trees, demonstrates particular strength in balanced sensitivity and specificity—correctly classifying both interacting and non-interacting pairs with high reliability [8]. The Bayesian framework also provides natural uncertainty quantification through posterior inference, enabling prioritization of experimental assays based on prediction confidence [8].
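For readers who want the model itself, the standard BART formulation for a binary outcome is sketched below. We assume, without confirmation from [8], that the interaction classifier uses the usual probit link; here x is the bow-space feature vector of a protein-ligand pair, T_j and M_j are the structure and leaf values of the j-th of m trees, and Φ is the standard normal cumulative distribution function.

```latex
\[
  f(\mathbf{x}) = \sum_{j=1}^{m} g\left(\mathbf{x};\, T_j, M_j\right),
  \qquad
  P\left(\text{interaction} \mid \mathbf{x}\right) = \Phi\left(f(\mathbf{x})\right)
\]
```

The regularizing priors shrink each g(x; T_j, M_j) toward a small contribution, which is what keeps individual trees weak; averaging Φ(f(x)) over posterior draws of the trees yields both a predicted probability and a credible interval that can be used to rank pairs for experimental follow-up.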

Dataset-Specific Performance Metrics

The bow-pharmacological space framework has been validated across major target classes using established benchmark datasets [8]. The consistently high performance across diverse protein families demonstrates the generalizability of this approach.

Table 2: Performance of BART Model Across Protein Target Classes

| Target Class | Target Count | Ligand Count | Known Interactions | Accuracy | Evaluation Method |
|---|---|---|---|---|---|
| Enzymes | 664 | 445 | 2,926 | 94.5% | 10-fold CV |
| Ion Channels | 204 | 210 | 1,476 | 96.7% | 10-fold CV |
| GPCRs | 95 | 223 | 635 | 98.4% | 10-fold CV |
| Nuclear Receptors | 26 | 54 | 90 | 95.6% | 10-fold CV |

The performance consistency across target classes with varying dataset sizes (from 26 nuclear receptors to 664 enzymes) highlights the robustness of the bow-pharmacological space representation. Ten-fold cross-validation was employed in all cases to ensure reliable performance estimation [8].
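A quick calculation from the dataset sizes in Table 2 shows how sparse these matrices are, and why accuracy alone needs context. The sketch assumes, purely for illustration, that every unobserved pair is treated as a negative; under that assumption a classifier that always predicts "no interaction" would already score very high accuracy. The reported accuracies are therefore only interpretable together with the negative-sampling scheme used in [8], which Table 2 does not state.

```python
# Dataset sizes as reported in Table 2: (targets, ligands, known interactions).
datasets = {
    "Enzymes": (664, 445, 2926),
    "Ion Channels": (204, 210, 1476),
    "GPCRs": (95, 223, 635),
    "Nuclear Receptors": (26, 54, 90),
}

summary = {}
for name, (n_targets, n_ligands, n_known) in datasets.items():
    n_pairs = n_targets * n_ligands   # all possible target-ligand pairs
    density = n_known / n_pairs       # fraction of pairs with a known interaction
    # accuracy of always predicting "no interaction" if all other pairs were negatives
    trivial_accuracy = 1 - density
    summary[name] = {"pairs": n_pairs, "density": density,
                     "trivial_accuracy": trivial_accuracy}
    print(f"{name:<18} pairs={n_pairs:>7,} density={density:.2%} "
          f"always-negative accuracy={trivial_accuracy:.2%}")
```

For the enzyme set this gives about 0.99% density and roughly 99.0% always-negative accuracy, above the reported 94.5%, which is consistent with the evaluation having used a balanced or subsampled set of negatives rather than all unobserved pairs.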

Experimental Methodologies for Target Identification

Direct Biochemical Approaches

Direct biochemical methods represent the most straightforward approach for experimental target identification, relying on physical interactions between small molecules and their protein targets [9]. Affinity purification techniques form the cornerstone of this approach, wherein compounds are immobilized on solid supports and exposed to protein lysates to capture interacting targets [9].

Diagram: Direct biochemical target identification.

Critical considerations for affinity purification experiments include:

- Immobilization Strategy: Selection of appropriate tethers that maintain compound activity while bound to solid supports [9]

- Control Design: Using inactive analogs or capped beads without compound to distinguish specific from nonspecific binding [9]

- Stringency Optimization: Balancing wash conditions to retain genuine interactions while reducing background noise [9]

- Elution Methods: Competitive elution with free compound or direct protein digestion for mass spectrometry-based identification [9]

Advanced variations include photoaffinity cross-linking to covalently capture low-affinity interactions, and peptide-based immobilization systems that preserve compound accessibility [9].
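The control and elution logic above can be expressed as a simple filter on pull-down mass spectrometry data. This is a hypothetical sketch: it assumes per-protein abundances (e.g. spectral counts) from three experiments (compound beads, control beads, and compound beads competed with free compound), and the pseudocount, log2 thresholds, and protein IDs are illustrative choices rather than a published protocol from [9].

```python
import math

PSEUDOCOUNT = 1.0  # avoids log(0) for proteins absent from one pull-down

def log2_ratio(a: float, b: float) -> float:
    return math.log2((a + PSEUDOCOUNT) / (b + PSEUDOCOUNT))

def specific_binders(compound_beads, control_beads, competed_beads,
                     min_enrichment=2.0, min_competition=1.0):
    """Flag proteins that are enriched on compound beads relative to control
    beads AND lose signal when free compound competes for binding.

    Each argument maps protein ID -> abundance (e.g. spectral counts).
    Thresholds are in log2 units and purely illustrative."""
    hits = []
    for protein, signal in compound_beads.items():
        enrichment = log2_ratio(signal, control_beads.get(protein, 0.0))
        competition = log2_ratio(signal, competed_beads.get(protein, 0.0))
        if enrichment >= min_enrichment and competition >= min_competition:
            hits.append(protein)
    return hits

# Hypothetical counts: PROT_B binds beads nonspecifically; PROT_C is enriched
# but not competed away, so it may bind the linker rather than the compound.
compound = {"PROT_A": 40, "PROT_B": 55, "PROT_C": 30}
control = {"PROT_A": 2, "PROT_B": 50, "PROT_C": 3}
competed = {"PROT_A": 4, "PROT_B": 52, "PROT_C": 28}
print(specific_binders(compound, control, competed))  # ['PROT_A']
```

Requiring both criteria mirrors the two controls described above: the bead control removes background binders, and competition with free compound removes proteins captured through the tether or solid support.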

Genetic Interaction Methods

Genetic approaches to target identification leverage cellular systems to detect changes in compound sensitivity following genetic manipulation [9]. These methods can be deployed in both hypothesis-driven and unbiased screening formats.

Diagram: Genetic interaction target identification.

Key genetic interaction methodologies include:

- Resistance Mutagenesis: Selection for spontaneous mutations that confer compound resistance, followed by identification of mutated genes [9]

- Overexpression Screening: Identification of targets whose overexpression diminishes compound activity [9]

- CRISPR/Cas9 Screens: Systematic knockout libraries to identify genes whose disruption alters compound sensitivity [9]

- RNAi Screening: Targeted gene knockdown approaches to validate putative targets [9]

Computational Inference Methods

Computational inference approaches generate target hypotheses through pattern recognition rather than direct physical or genetic evidence [9]. These methods compare compound-induced profiles to reference databases.

Diagram: Computational inference target identification.

Primary computational inference strategies include:

- Chemical Similarity Searching: Comparison to compounds with known targets based on structural or descriptor similarity [7]

- Gene Expression Profiling: Comparison of transcriptomic signatures to reference compounds with established mechanisms [9]

- Proteomic Profiling: Pattern matching of protein expression or phosphorylation changes [9]

- Bioactivity Spectrum Analysis: Comparison across multiple assay readouts to identify similar phenotypic responses [9]
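The profile-comparison strategies share one core step: scoring a query signature against a reference collection. The minimal sketch below ranks reference compounds by Pearson correlation with the query; it assumes signatures are aligned vectors over the same genes (for example, per-gene log fold changes), and the reference names and values are hypothetical. Connectivity-style methods often use rank-based scores instead; Pearson is used here only for simplicity.

```python
import numpy as np

def rank_references(query: np.ndarray, references: dict) -> list:
    """Rank reference compounds by Pearson correlation between their
    signature and the query signature (highest first)."""
    scores = {name: float(np.corrcoef(query, sig)[0, 1])
              for name, sig in references.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)

# Hypothetical 5-gene signatures for two references with known mechanisms.
references = {
    "ref_A": np.array([2.0, 1.5, -1.0, 0.2, -0.5]),
    "ref_B": np.array([-1.0, 0.3, 2.2, -1.8, 0.9]),
}
query = np.array([1.8, 1.2, -0.8, 0.0, -0.6])
ranking = rank_references(query, references)
print(ranking[0][0])  # ref_A, the reference whose mechanism becomes the hypothesis
```

A high correlation yields a mechanism hypothesis, not a target assignment; as noted below, such hypotheses still require biochemical or genetic confirmation.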

Research Reagent Solutions for Chemogenomic Studies

Essential Materials for Ligand-Target Interaction Studies

Table 3: Key Research Reagents for Chemogenomic Compound Annotation

| Reagent Category | Specific Examples | Function/Application |
|---|---|---|
| Compound Libraries | Synthetic small molecules, Natural products | Source of chemical diversity for screening [9] |
| Protein Production Systems | Recombinant expression, Cell-free translation | Target protein production [9] |
| Immobilization Supports | Affinity resins, Activated beads | Compound immobilization for pull-down assays [9] |
| Detection Reagents | Fluorescent dyes, Antibodies, Mass tags | Readout generation for binding events [9] |
| Cell-Based Assay Systems | Engineered cell lines, Reporter constructs | Phenotypic screening and validation [9] |
| Genetic Tools | CRISPR libraries, RNAi collections, Mutant strains | Genetic interaction studies [9] |
| Bioinformatic Databases | Chemogenomic knowledgebases, Protein-ligand interaction databases | Reference data for computational inference [8] [7] |

Integration of Multi-Method Evidence for Target Validation

The most robust target identification strategies integrate evidence from multiple complementary approaches [9]. Direct biochemical methods provide physical evidence of interaction but may miss functionally relevant low-affinity binders. Genetic methods establish functional relevance but may identify downstream effectors rather than direct targets. Computational methods generate testable hypotheses efficiently but require experimental validation.

Successful integration involves iterative hypothesis generation and testing, where initial computational predictions guide focused biochemical experiments, with genetic approaches providing functional validation in biologically relevant contexts [9]. This multi-faceted strategy increases confidence in target identification while simultaneously illuminating mechanisms of action and potential off-target effects.

The bow-pharmacological space framework serves as a unifying conceptual structure for integrating these diverse data types, providing a computational representation that can incorporate protein features, ligand descriptors, and interaction evidence into a coherent predictive model [8]. This integrated approach represents the state of the art in chemogenomic compound annotation and has produced successful prospective predictions, such as the identification of KIF11 ligands subsequently validated by independent crystallographic studies [8].

The Central Role of Annotated Chemical Libraries as Knowledge Bases

Annotated chemical libraries represent a pivotal knowledge base in modern chemogenomics, serving as information-rich repositories that integrate biological data with chemical structures to facilitate the systematic exploration of ligand-target interactions [1]. In the post-genomic era, the discovery of a multitude of genes associated with pathologic conditions has opened new horizons in drug discovery, creating an urgent need for systematic approaches to characterize the function of chemical compounds against biological targets [1]. Annotated libraries fundamentally bridge the chemical space and the genomic space, creating a structured ligand-target knowledge space where compounds are systematically categorized according to their protein targets and biological effects [1]. This formalized annotation transforms simple compound collections into powerful discovery tools that enable knowledge-based exploration of biological mechanisms and accelerate the identification of novel therapeutic leads.

The chemogenomic framework positions annotated libraries as central assets for elucidating the complex relationships between chemical structures and their effects on biological systems. By applying chemical-genetic approaches, researchers can perform unbiased functional annotation of chemical libraries, using cellular response patterns to elucidate compound mode of action [10]. This strategy is particularly powerful in model organisms like Saccharomyces cerevisiae, where comprehensive genetic tools enable high-throughput profiling of compound effects across thousands of defined genetic backgrounds [10]. The resulting chemical-genetic interaction profiles provide diagnostic functional information that, when compared with compendiums of genetic interaction profiles, enables prediction of biological processes targeted by specific compounds [10]. This systematic annotation creates a virtuous cycle of knowledge generation, wherein each newly characterized compound enhances the predictive power of the entire library for future investigations.

Practical Implementation and Screening Methodologies

High-Throughput Chemical-Genetic Screening Platform

The practical implementation of annotated library screening involves sophisticated experimental platforms designed to generate rich biological data at scale. A highly parallel and unbiased yeast chemical-genetic screening system exemplifies this approach, comprising three critical components: a diagnostic mutant collection constructed in a drug-sensitive genetic background, a multiplexed barcode sequencing protocol for simultaneous assessment of hundreds of mutants, and a computational framework for comparing chemical-genetic profiles with a comprehensive compendium of genetic interactions [10]. This integrated system enables functional annotation of thousands of compounds by quantitatively measuring fitness defects or advantages when mutant strains are grown in compound presence, generating chemical-genetic interaction profiles that reveal a compound's biological activity [10].
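The fitness-scoring step of such a platform can be sketched as follows, assuming barcode read counts per deletion strain from a compound-treated pool and a solvent-control pool. The reads-per-million normalization and log2 ratio are a common simplification chosen for this sketch, not necessarily the exact scoring used in [10], and the strain names and counts are made up.

```python
import math

def fitness_scores(treated: dict, control: dict, pseudocount: float = 0.5) -> dict:
    """Chemical-genetic score per mutant strain from barcode read counts.

    Counts are converted to reads per million within each pool, then scored as
    log2(treated / control): negative values mean the strain was depleted in
    the presence of compound (hypersensitive), positive values mean it was
    relatively enriched (resistant)."""
    t_total = sum(treated.values())
    c_total = sum(control.values())
    scores = {}
    for strain, c_count in control.items():
        t_rpm = treated.get(strain, 0) / t_total * 1e6
        c_rpm = c_count / c_total * 1e6
        scores[strain] = math.log2((t_rpm + pseudocount) / (c_rpm + pseudocount))
    return scores

# Made-up barcode counts for three hypothetical deletion strains.
control = {"mutant_A": 1000, "mutant_B": 900, "mutant_C": 1100}
treated = {"mutant_A": 120, "mutant_B": 150, "mutant_C": 1300}
scores = fitness_scores(treated, control)
print(min(scores, key=scores.get))  # mutant_A (most depleted strain)
```

The vector of scores across all strains is the compound's chemical-genetic profile. Because counts are normalized within each pool, strong depletion of some strains raises the apparent scores of the rest (mutant_C here), which is one reason real pipelines may warrant more robust normalization.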

A key innovation in optimizing these screening platforms is the development of sensitized genetic backgrounds that enhance detection of bioactive compounds. Research demonstrates that a pdr1Δ pdr3Δ snq2Δ (3Δ) drug-sensitized yeast strain detects approximately 5-fold more growth-inhibitory compounds than wild-type cells [10]. Across 13,524 compounds tested, this sensitized background raises the "hit rate" from approximately 7% in wild-type strains to about 35%, and it also enhances detection of specific chemical-genetic interactions for well-characterized compounds such as benomyl and micafungin [10]. The increased sensitivity enables more efficient identification of compound-to-mode-of-action relationships, even at lower compound concentrations.

Diagnostic Gene Set Selection and Optimization

Strategic reduction of screening complexity is essential for scalable annotation of large compound libraries. Rather than using the complete set of ~5,000 viable yeast deletion mutants, computational approaches can identify optimized subsets of diagnostic mutant strains that retain predictive power across all major biological processes [10]. One implemented design selected 310 deletion mutant strains (~6% of all nonessential genes) that span a functional space similar to that of the entire nonessential deletion collection [10]. The subset was curated for predictive power in gene similarity-based target prediction, not merely for proportional representation of bioprocesses. This preserves informative genetic interaction signatures while substantially increasing screening throughput.

The optimization of signal detection parameters is crucial for generating high-quality chemical-genetic profiles. Systematic evaluation of inoculum size, incubation time, and PCR amplification cycles revealed that incubation time has the most pronounced effect on the signal-to-noise ratio of chemical-genetic profiles, with optimal outcomes observed after 48 hours of incubation [10]. This extended incubation enabled efficient depletion of gene deletion mutants defective in microtubule functions (CIN1, CIN4, GIM3, TUB3) from cultures grown in the presence of benomyl, clearly revealing compound-specific sensitivity patterns [10]. The robustness of the assay to variations in inoculum density and PCR amplification cycles further supports its utility for high-throughput screening applications.

Cheminformatics Approaches for Library Enumeration and Annotation

The construction and enumeration of virtual chemical libraries is a complementary computational approach to library annotation. Chemoinformatics-based methods systematically generate virtual compound collections from pre-validated reactions and accessible chemical reagents. Libraries such as CHIPMUNK (95 million compounds) and GDB-17 (about 166 billion compounds) demonstrate the scale these approaches can reach [11]. The process typically uses linear notation systems to represent chemical structures in machine-readable formats [11]:

- SMILES (Simplified Molecular Input Line Entry System)
- SMARTS (SMILES Arbitrary Target Specification)
- InChI (International Chemical Identifier)

These representations allow large numbers of molecules to be stored and processed efficiently. They also make it possible to apply computational filters for synthetic feasibility, drug-likeness, and the absence of problematic structural motifs associated with toxicity or assay interference.

Several specialized software tools have been developed to support the enumeration of virtual chemical libraries. Reactor, DataWarrior, and KNIME offer accessible platforms for library generation using pre-validated chemical reactions, while commercial solutions like Schrödinger and Molecular Operating Environment (MOE) provide robust environments for scaffold-based library design [11]. These tools enable researchers to explore chemical space systematically, focusing on regions with higher probabilities of biological relevance. The resulting annotated virtual libraries serve as valuable resources for virtual screening campaigns, leveraging structural similarity principles to identify novel compounds with potential activity against pharmaceutically relevant targets.

Table 1: Key Software Tools for Chemical Library Enumeration

| Tool Name | Access | Primary Approach | Key Features |
|---|---|---|---|
| Reactor | Academic license available | Pre-validated reactions | Reaction-based enumeration |
| DataWarrior | Free open access | Pre-validated reactions | Combined with data analysis and visualization |
| KNIME | Free open access | Pre-validated reactions | Workflow-based, extensible platform |
| Schrödinger | Commercial | Scaffold replacement | Comprehensive drug discovery suite |
| Molecular Operating Environment (MOE) | Commercial | Scaffold replacement | Advanced molecular modeling and simulation |
| D-Peptide Builder | Free webserver | Combinatorial peptide libraries | Specialized for linear/cyclic peptides |

Experimental Protocols and Methodologies

Protocol: Pooled Chemical-Genetic Screening in Yeast

This protocol describes a highly multiplexed method for generating chemical-genetic interaction profiles using a pooled yeast deletion mutant collection in a drug-sensitized background [10].

Materials and Reagents

- Drug-sensitized yeast strain (pdr1Δ pdr3Δ snq2Δ) with integrated diagnostic mutant collection

- Compound libraries dissolved in appropriate solvent (typically DMSO)

- YPD growth medium

- PCR reagents for barcode amplification

- Multiplexed sequencing platform

Procedure

- Pool Preparation: Grow individual diagnostic mutant strains to mid-log phase and combine equal volumes to create the mutant pool. Determine cell density by spectrophotometry.

- Compound Treatment: Dispense the mutant pool into 384-well microtiter plates containing test compounds, and include solvent-only controls on each plate. Choose the final compound concentration from a preliminary activity assessment; it is typically between 10 and 50 μM.

- Incubation: Incubate plates at 30°C for 48 hours with continuous shaking. This extended incubation optimizes signal-to-noise ratio for chemical-genetic interaction detection [10].

- Harvesting and DNA Extraction: Transfer aliquots from each well to separate tubes. Centrifuge to pellet cells and extract genomic DNA using standard yeast protocols.

- Barcode Amplification: Amplify the unique molecular barcodes from each sample by multiplexed PCR. Limit amplification to 12-14 cycles so that it stays within the linear range.

- Sequencing Library Preparation: Pool PCR products and prepare sequencing libraries using platform-specific protocols. The implemented system supports 768-plex barcode sequencing [10].

- Sequencing and Data Acquisition: Sequence barcode libraries on an appropriate high-throughput platform. Sequence to sufficient depth to ensure >100x coverage per barcode in each sample.

Data Analysis

- Barcode Counting: Quantify barcode abundance for each strain in each condition using demultiplexed sequencing data.

- Fitness Calculation: Calculate relative fitness for each mutant in each compound treatment compared to solvent controls.

- Profile Generation: Generate chemical-genetic interaction profiles by Z-score normalization of fitness values across all mutants for each compound.

- Functional Annotation: Compare chemical-genetic profiles to a reference database of genetic interaction profiles to predict targeted biological processes.
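The barcode counting, fitness calculation, and Z-score steps above can be sketched as follows. This is a minimal illustration and not the published pipeline. It assumes that relative fitness is the log2 ratio of pseudocount-smoothed barcode frequencies (compound versus solvent control). The strain names, counts, and `pseudocount` value are hypothetical.

```python
import math
from statistics import mean, pstdev


def normalize_counts(counts, pseudocount=1.0):
    """Convert raw barcode counts to relative frequencies.

    A pseudocount (an assumption) keeps fully depleted barcodes from producing log(0).
    """
    total = sum(counts.values()) + pseudocount * len(counts)
    return {strain: (n + pseudocount) / total for strain, n in counts.items()}


def log2_fitness(treated, control, pseudocount=1.0):
    """Relative fitness per mutant: log2(frequency with compound / frequency in solvent)."""
    if set(treated) != set(control):
        raise ValueError("treated and control samples must contain the same strains")
    t = normalize_counts(treated, pseudocount)
    c = normalize_counts(control, pseudocount)
    return {strain: math.log2(t[strain] / c[strain]) for strain in control}


def zscore_profile(fitness):
    """Z-score the fitness values of all mutants for one compound."""
    values = list(fitness.values())
    mu, sd = mean(values), pstdev(values)
    if sd == 0:
        return {strain: 0.0 for strain in fitness}
    return {strain: (v - mu) / sd for strain, v in fitness.items()}


# Hypothetical barcode counts: two microtubule mutants are depleted by the compound.
control = {"cin1": 500, "tub3": 400, "strain_a": 600, "strain_b": 500}
treated = {"cin1": 50, "tub3": 40, "strain_a": 700, "strain_b": 650}
profile = zscore_profile(log2_fitness(treated, control))
```

In this toy profile, the depleted `cin1` and `tub3` strains receive negative Z-scores. These are the sensitivity signals that the functional-annotation step compares against reference genetic interaction profiles.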

Protocol: Chemoinformatics-Based Library Enumeration

This protocol describes the computational enumeration of target-focused chemical libraries using open-source tools and pre-validated reaction schemes [11].

Materials and Software

- Chemical sketching software (MarvinSketch, ACD/ChemSketch, or ChemDraw)

- Library enumeration tool (DataWarrior, KNIME, or Reactor)

- List of available building blocks or reagents

- Defined reaction schemes or scaffold templates

Procedure

- Reaction Definition: Select appropriate chemical transformations for library assembly. Prioritize reactions with demonstrated broad substrate scope and high yield.

- Reagent Selection: Curate building block collections for each reaction position. Apply property filters (molecular weight, lipophilicity, presence of undesirable functional groups) to ensure chemical feasibility.

- Reaction Encoding: Represent the selected reaction using appropriate chemical representation language (SMIRKS, SMARTS, or RXN notation).

- Library Enumeration: Execute the combinatorial enumeration using selected software tools. For large libraries, consider using structure-based clustering to select diverse subsets.

- Post-Processing: Filter enumerated structures based on computed physicochemical properties, structural alerts for toxicity, and potential pan-assay interference compounds (PAINS).

- Annotation: Add metadata including synthetic history, building block sources, and computed molecular properties to each compound.

- Output Generation: Export the annotated library in standard formats (SDF, SMILES) for integration with screening databases.
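The enumeration, property-filtering, and annotation steps can be illustrated with a minimal standard-library sketch of a single amide-coupling reaction. It does not generate real product structures; a production workflow would do that with a cheminformatics toolkit, for example RDKit reaction SMARTS via `AllChem.ReactionFromSmarts`. Instead, the sketch estimates each product's molecular weight as acid + amine minus water and applies an assumed 500 g/mol cutoff. It then attaches building-block provenance as metadata. The building-block IDs and vendor names are hypothetical, and `acid-003` is a made-up heavy reagent included only to exercise the filter.

```python
from itertools import product

WATER_MW = 18.015  # g/mol lost when a carboxylic acid and an amine condense to an amide


def enumerate_amides(acids, amines, max_mw=500.0):
    """Enumerate every acid x amine pair, estimate product MW, filter, and annotate.

    acids / amines: lists of (building_block_id, molecular_weight, source) tuples.
    """
    library = []
    for (acid_id, acid_mw, acid_src), (amine_id, amine_mw, amine_src) in product(acids, amines):
        product_mw = acid_mw + amine_mw - WATER_MW
        if product_mw > max_mw:
            continue  # drop products outside the assumed molecular-weight window
        library.append({
            "id": f"{acid_id}+{amine_id}",
            "reaction": "amide coupling",
            "building_blocks": {acid_id: acid_src, amine_id: amine_src},
            "mw": product_mw,
        })
    return library


acids = [
    ("acid-001", 60.05, "vendor-A"),   # acetic acid
    ("acid-002", 122.12, "vendor-B"),  # benzoic acid
    ("acid-003", 450.00, "vendor-A"),  # hypothetical heavy acid
]
amines = [
    ("amine-001", 31.06, "vendor-C"),  # methylamine
    ("amine-002", 93.13, "vendor-C"),  # aniline
]
library = enumerate_amides(acids, amines)
```

Here six combinations are enumerated and one (acid-003 with aniline, about 525 g/mol) is filtered out. The exported records carry the provenance fields that the annotation step calls for.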

Data Analysis, Interpretation, and Target Prediction

The transformation of raw screening data into biological insights requires sophisticated computational approaches that leverage the annotated knowledge base. The core analytical strategy involves comparing chemical-genetic interaction profiles with a compendium of genetic interaction profiles to identify functional similarities [10]. This approach leverages the principle that if a bioactive compound inhibits a specific target protein, loss-of-function mutations in the corresponding target gene should partially mimic the compound's bioactivity, resulting in similar interaction profiles [10]. For example, the genetic interaction profile of a partial loss-of-function mutation in ERG11 closely resembles the chemical-genetic interaction profile of fluconazole, confirming the relationship between compound and target [10].

Advanced similarity metrics and clustering algorithms enable the systematic assignment of compounds to biological processes based on their chemical-genetic profiles. This process involves calculating similarity scores between each compound profile and reference genetic interaction profiles from the global genetic network [10]. Compounds are then annotated to specific biological processes according to the functional enrichment of their most similar genetic profiles. This methodology has been successfully applied to screen seven different compound libraries totaling 13,524 compounds, enabling functional diversity assessment, biological process prediction validation, and identification of compounds with dual modes of action [10].
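The two-stage logic can be sketched as follows: similarity scoring against reference genetic profiles, then annotation by functional enrichment. This is a simplified illustration, not the published scoring method. It assumes Pearson correlation as the similarity metric and a one-sided hypergeometric test over the top-k most similar genes. The gene names, profiles, and process labels are hypothetical.

```python
import math


def pearson(x, y):
    """Pearson correlation between two equal-length profiles (0.0 if either is constant)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy) if sx and sy else 0.0


def hypergeom_sf(k, population, successes, draws):
    """P(X >= k) for a hypergeometric variable: a one-sided enrichment p-value."""
    upper = min(successes, draws)
    hits = sum(
        math.comb(successes, i) * math.comb(population - successes, draws - i)
        for i in range(k, upper + 1)
    )
    return hits / math.comb(population, draws)


def annotate(compound_profile, reference_profiles, gene_to_process, top_k=3):
    """Rank reference genes by profile similarity, then test processes for enrichment in the top hits."""
    ranked = sorted(
        reference_profiles,
        key=lambda gene: pearson(compound_profile, reference_profiles[gene]),
        reverse=True,
    )
    top = ranked[:top_k]
    n_genes = len(reference_profiles)
    enrichment = {}
    for process in set(gene_to_process.values()):
        in_process = sum(1 for g in reference_profiles if gene_to_process[g] == process)
        hits = sum(1 for g in top if gene_to_process[g] == process)
        if hits:
            enrichment[process] = hypergeom_sf(hits, n_genes, in_process, top_k)
    return top, enrichment


# Hypothetical 4-mutant genetic interaction profiles and process labels.
references = {
    "gene1": [-3.0, -2.5, 0.2, 0.1], "gene2": [-2.8, -2.2, 0.3, 0.0],
    "gene3": [-2.5, -2.0, 0.1, 0.2], "gene4": [0.1, 0.2, -3.0, -2.4],
    "gene5": [0.0, 0.3, -2.7, -2.6], "gene6": [0.5, -0.2, 0.3, -0.1],
}
processes = {"gene1": "microtubule", "gene2": "microtubule", "gene3": "microtubule",
             "gene4": "ergosterol", "gene5": "ergosterol", "gene6": "cell wall"}
top_genes, enrichment = annotate([-2.9, -2.4, 0.2, 0.1], references, processes)
```

The compound's three closest genetic profiles all belong to the hypothetical "microtubule" group, giving an enrichment p-value of 1/20 = 0.05 in this tiny example.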

The integration of structural and biological data in annotated libraries enables additional analysis dimensions through chemogenomics knowledge-based strategies [1]. By systematically relating compound structural features to biological activities across target families, researchers can develop predictive models for target deconvolution and selectivity estimation. These approaches are particularly valuable for profiling compound libraries against gene families like kinases or GPCRs, where structural knowledge of conserved binding elements guides the interpretation of screening data and prioritization of compounds for further development.

Table 2: Quantitative Assessment of Screening Platform Performance

| Performance Metric | Wild-Type Strain | Drug-Sensitized Strain (3Δ) | Improvement Factor |
|---|---|---|---|
| Compound hit rate (≥20% growth inhibition) | ~7% | ~35% | 5× |
| Specific chemical-genetic interactions detected with benomyl (34.4 μM) | Not detected with TUB3 mutant | Clearly detected with TUB3 mutant | Significant enhancement |
| Specific chemical-genetic interactions detected with micafungin (25 nM) | Not detected with BCK1 mutant | Clearly detected with BCK1 mutant | Significant enhancement |
| Number of diagnostic mutants required for functional coverage | ~5,000 | 310 | ~16× reduction |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents for Chemical-Genomic Screening

| Reagent / Material | Function and Application | Technical Specifications |
|---|---|---|
| Diagnostic Mutant Collection | Set of engineered strains for chemical-genetic profiling | 310 gene deletion mutants in pdr1Δ pdr3Δ snq2Δ background; covers major biological processes [10] |
| DNA Barcode System | Unique molecular identifiers for multiplexed screening | 20-bp sequences for each strain; compatible with 768-plex sequencing [10] |
| Drug-Sensitized Yeast Background | Enhanced sensitivity for detecting bioactive compounds | pdr1Δ pdr3Δ snq2Δ (3Δ) triple deletion strain; 5× increase in hit detection [10] |
| Multiplexed Sequencing Platform | High-throughput barcode quantification | Enables parallel processing of 768 samples; optimized PCR cycle number (12-14 cycles) [10] |
| Annotated Compound Libraries | Reference collections with known mechanisms | Libraries with varying structural diversity; include compounds with verified targets for validation |
| Cheminformatics Software Tools | Library enumeration and analysis | DataWarrior, KNIME, or Reactor for library building; SMILES/SMARTS for structure representation [11] |

Visualizing Workflows and Relationships

Diagram 1: High-Throughput Chemical-Genetic Screening Workflow

Diagram 2: Chemogenomic Data Integration Framework

Within pharmaceutical research, a significant paradigm shift has occurred from traditional receptor-specific studies to a cross-receptor view to increase the efficiency of modern drug discovery [12]. Receptors are no longer viewed as single entities but are grouped into sets of related proteins or receptor families that are explored systematically [12]. This interdisciplinary approach, which attempts to derive predictive links between the chemical structures of bioactive molecules and the receptors with which they interact, is referred to as chemogenomics [12]. The field is built upon core assumptions that similar receptors bind similar ligands and that compounds sharing chemical similarity should share targets [12] [3]. These principles allow for the rational compilation of screening sets and knowledge-based design of chemical libraries to accelerate lead finding [12].

Core Principles and Theoretical Foundations

The Fundamental Assumptions of Chemogenomics

Chemogenomics operates on two foundational principles that enable the systematic exploration of chemical and target spaces:

Chemical Similarity Principle: Compounds sharing some chemical similarity should also share targets [3]. This principle enables ligand-based approaches where known ligands of a target can serve as starting points for discovering ligands for similar targets.

Target Family Principle: Targets sharing similar ligands should share similar patterns in their binding sites [3]. This allows for target-based approaches where knowledge about well-characterized targets can be transferred to less-studied, similar targets.

These assumptions facilitate a more efficient exploration of the pharmacological space by establishing predictive links between chemical structures and biological targets [12]. Sir James Black's notion that "the most fruitful basis for the discovery of a new drug is to start with an old drug" encapsulates the practical application of these principles [12].

Defining Similarity in Ligand and Target Spaces

The operationalization of chemogenomic approaches requires precise definitions of what constitutes "similarity" for both ligands and targets.

Ligand Similarity

Table 1: Molecular Descriptors for Quantifying Ligand Similarity

| Descriptor Dimension | Nature | Examples | Common Applications |
|---|---|---|---|
| 1-D | Global properties | Molecular weight, atom counts, log P | ADMET prediction, drug-likeness classification [3] |
| 2-D | Topological | Structural fingerprints, substructures, graph-based methods | Similarity searching, clustering, virtual screening [3] |
| 3-D | Conformational | Pharmacophores, molecular shapes, fields | Structure-based design, scaffold hopping [3] |

To navigate ligand space efficiently, compounds must be described by appropriate properties (descriptors), and a similarity metric must be chosen to measure distances between them [3]. The most popular similarity index is the Tanimoto coefficient [3]. It ranges from 0, when two fingerprints share no features, to 1, when the fingerprints are identical. Identical fingerprints usually, but not always, correspond to identical compounds.
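For binary fingerprints, the Tanimoto coefficient is T = c / (a + b - c). Here a and b are the numbers of bits set in each fingerprint, and c is the number of bits set in both. Below is a minimal sketch that represents each fingerprint as a Python set of "on" bit positions. In practice those bits would come from a toolkit such as RDKit (e.g. Morgan/ECFP fingerprints). The bit positions used here are hypothetical, and returning 0.0 for two empty fingerprints is an assumed convention.

```python
def tanimoto(fp_a: set, fp_b: set) -> float:
    """Tanimoto coefficient between two fingerprints given as sets of 'on' bit positions."""
    shared = len(fp_a & fp_b)                  # c: bits set in both
    union = len(fp_a) + len(fp_b) - shared     # a + b - c
    return shared / union if union else 0.0   # assumed convention for two empty fingerprints


# Hypothetical fingerprints: 2 shared bits out of 5 distinct bits overall.
score = tanimoto({1, 4, 7, 9}, {1, 4, 8})
```

Here `score` is 2 / (4 + 3 - 2) = 0.4, and comparing any non-empty fingerprint with itself gives 1.0.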

Target Similarity

Table 2: Classification Schemes for Target Similarity

| Dimension | Classification Scheme | Database Examples | Application in Chemogenomics |
|---|---|---|---|
| 1-D | Sequence | UniProt, Pfam | Family-level classification (e.g., GPCRs, kinases) [3] |
| Patterns | Sequence motifs | PRINTS, PROSITE | Identification of functional domains [3] |
| 2-D | Secondary structure fold | SCOP, CATH | Fold-based target grouping [3] |
| 3-D | Atomic coordinates | PDB, MODBASE | Binding site comparison and analysis [3] |

In chemogenomic approaches, the focus is often on the ligand-binding site, where structural similarities among related targets are usually much higher than when considering the full 1-D sequence or 3-D structure [3].

Ligand-Based Chemogenomic Approaches

Ligand-based approaches apply the principle that "similar receptors bind similar ligands" by focusing on the chemical similarity between compounds without directly considering target information [12].

Practical Implementation and Case Studies

GPCR-Focused Library Design: Researchers at Chemical Diversity Lab Inc. developed a scoring scheme based on physicochemical properties for classifying 'GPCR-ligand-like' and 'non-GPCR-ligand-like' compounds [12]. A neural network model trained with thousands of known GPCR ligands and non-GPCR ligands correctly classified over 90% of randomly selected compound sets [12]. This model was used to select 30,000 compounds as a GPCR-focused collection from the company's larger compound repository [12].

Purinergic GPCR Library Synthesis: Scientists at Sanofi-Aventis designed and synthesized chemical libraries targeting the subfamily of purinergic GPCRs [12]. They identified common chemical scaffolds and three-dimensional pharmacophores within known ligands of purinergic GPCRs and synthesized libraries comprising 2,400 compounds around 5 chemical scaffolds [12]. Screening these libraries against the adenosine A1 receptor yielded three novel antagonist series, validating the ligand-based approach [12].

Experimental Protocol: Ligand-Based Virtual Screening

- Reference Ligand Collection: Compile a set of known ligands for the target family of interest from databases such as ChEMBL [3] or PubChem [13].

- Descriptor Calculation: Compute molecular descriptors or fingerprints for all reference ligands and the screening database [3].

- Similarity Assessment: Calculate pairwise similarity (e.g., using Tanimoto coefficient) between reference ligands and database compounds [3].

- Compound Prioritization: Rank database compounds by their similarity to reference ligands and select top-ranking compounds for testing [12].

- Experimental Validation: Test selected compounds in relevant biological assays to confirm activity [12].
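The similarity-assessment and prioritization steps can be sketched as follows. Each database compound is scored by its maximum Tanimoto similarity to any reference ligand, then ranked. This is a minimal illustration, not a prescribed protocol. Max-fusion over the reference set is one common choice among several (mean or summed similarity are alternatives). The compound IDs and fingerprint bit sets are hypothetical.

```python
def tanimoto(a: set, b: set) -> float:
    """Tanimoto coefficient for fingerprints stored as sets of 'on' bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def rank_by_max_similarity(references: dict, database: dict, top_n=None):
    """Score each database compound by its best match to any reference ligand, highest first."""
    scores = {
        cid: max(tanimoto(fp, ref_fp) for ref_fp in references.values())
        for cid, fp in database.items()
    }
    ranked = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
    return ranked[:top_n] if top_n else ranked


# Hypothetical fingerprints for two known ligands and three screening compounds.
references = {"ref1": {1, 2, 3, 4}, "ref2": {10, 11, 12}}
database = {"cpdA": {1, 2, 3, 5}, "cpdB": {10, 11, 20}, "cpdC": {30, 31}}
selected = rank_by_max_similarity(references, database, top_n=2)
```

The two compounds passed on to experimental validation are `cpdA` (0.6, closest to `ref1`) and `cpdB` (0.5, closest to `ref2`).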

Target-Based Chemogenomic Approaches

Target-based approaches compare and classify receptors based on ligand-binding sites using sequence motifs or 3D structural information [12]. These methods often focus on residues important for ligand binding, sometimes referred to as 'chemoprints' [12].

Practical Implementation and Case Studies

CRTH2 Receptor Target Hopping: A notable example of target-based chemogenomics involved the prostaglandin D2-binding GPCR, CRTH2 [12]. Researchers found that the ligand-binding cavity of CRTH2 closely resembled that of the angiotensin II type 1 receptor in terms of physicochemical properties, despite low overall sequence homology [12]. Using a 3D pharmacophore model adapted from angiotensin II antagonists, they performed an in silico screen of 1.2 million compounds [12]. Experimental testing of 600 selected molecules yielded several potent CRTH2 antagonist series [12].

Orphan Receptor Ligand Prediction: In a more advanced target-based approach, researchers used machine learning models trained on descriptors of ligands and receptors to predict ligands for 55 orphan receptors from the NCI database [12]. This approach merged descriptors describing putative ligand-receptor complexes and used matrices of biological activity data for compounds profiled against multiple targets [12].

Experimental Protocol: Binding Site Comparison

- Binding Site Identification: Identify key residues in the binding site of the reference target through structural data or mutagenesis studies [12].

- Target Comparison: Compare binding sites across multiple targets in the family using sequence alignment or 3D structural superposition [3].

- Similarity Quantification: Calculate binding site similarity based on physicochemical properties or spatial arrangements of key residues [12].

- Knowledge Transfer: Apply known ligand chemotypes from similar targets to the target of interest [12].

- Library Design and Screening: Design focused libraries or select screening candidates based on transferred knowledge and test experimentally [12].
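The similarity-quantification step can be illustrated with a deliberately simple sketch. It compares two binding sites whose key residues have already been aligned position by position and reports both residue identity and agreement in physicochemical class. The CRTH2 example above shows why the second number matters: cavities can look alike in physicochemical terms despite low sequence identity. The residue-class grouping below is an assumption (many groupings exist). The two 8-residue sites are hypothetical.

```python
# Simplified physicochemical residue classes (an assumed grouping; alternatives exist).
RESIDUE_CLASS = {
    residue: cls
    for cls, residues in {
        "aliphatic": "AVLIM", "aromatic": "FWY", "polar": "STNQC",
        "positive": "KRH", "negative": "DE", "special": "GP",
    }.items()
    for residue in residues
}


def binding_site_similarity(site_a: str, site_b: str) -> dict:
    """Compare two aligned binding-site residue strings (one-letter codes)."""
    if len(site_a) != len(site_b):
        raise ValueError("binding sites must be aligned to the same length")
    n = len(site_a)
    identical = sum(a == b for a, b in zip(site_a, site_b))
    same_class = sum(RESIDUE_CLASS[a] == RESIDUE_CLASS[b] for a, b in zip(site_a, site_b))
    return {"identity": identical / n, "property_similarity": same_class / n}


# Hypothetical aligned cavities: only half the residues are identical, but every
# position keeps the same physicochemical class.
result = binding_site_similarity("LYRKDFSW", "IYKRDFTW")
```

Here `result` is `{"identity": 0.5, "property_similarity": 1.0}`: a target pair that sequence identity alone would undervalue as a candidate for knowledge transfer.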

Data Curation and Quality Considerations

The reliability of chemogenomic approaches depends heavily on the quality of the underlying chemical and biological data [13]. Several studies have highlighted concerns about data quality and reproducibility in public chemogenomics repositories [13].

Data Curation Workflow

Key Curation Steps

Chemical Structure Curation:

Bioactivity Data Curation:

Assay Metadata Standardization:

Table 3: Essential Research Reagents and Computational Tools for Chemogenomics

| Resource Category | Specific Tools/Databases | Key Function | Access |
|---|---|---|---|
| Chemical Databases | ChEMBL, PubChem, PDSP | Source of annotated chemical structures and bioactivities [13] | Public |
| Curated Databases | ChemSpider, DrugBank | Community-curated chemical structures with stereochemistry confirmation [13] | Public |
| Target Databases | UniProt, PDB, Pfam | Protein sequence, structure, and family information [3] | Public |
| Curation Tools | RDKit, ChemAxon JChem, Schrödinger LigPrep | Structural cleaning, standardization, tautomer treatment [13] | Various |
| Modeling Platforms | QSPRpred, DeepChem, KNIME | QSAR modeling, descriptor calculation, machine learning [14] | Open source/Commercial |
| Descriptor Tools | Multiple implementations in QSPRpred, DeepChem | Calculation of 1D, 2D, and 3D molecular descriptors [14] | Open source |

The assumptions of chemical similarity and target family relationships form the conceptual foundation of modern chemogenomics [12]. These principles enable systematic approaches to drug discovery that increase efficiency by leveraging knowledge across related targets and compounds [12]. Ligand-based methods exploit chemical similarity to extrapolate knowledge to new targets [12], while target-based approaches utilize binding site similarity to transfer knowledge across protein families [12]. The effectiveness of both approaches depends critically on rigorous data curation and quality control [13]. As chemogenomics continues to evolve, these core assumptions will remain central to strategies for comprehensively exploring chemical and target spaces to accelerate drug discovery [1].

In modern chemogenomics and computational drug discovery, the Compound-Target Interaction Matrix represents a foundational data structure for systematizing and predicting the interactions between chemical compounds and their biological targets. This matrix provides a computational framework where rows typically represent individual chemical compounds or drugs, and columns represent protein targets or other biomolecules. Each cell within the matrix contains quantitative or categorical data describing the nature and strength of the interaction, such as binding affinity values, inhibition constants (Ki), dissociation constants (Kd), or half-maximal inhibitory concentration (IC50) measurements [15] [16]. The structural organization of this matrix enables researchers to identify patterns, predict new interactions, and elucidate mechanisms of action across vast chemical and biological spaces.
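The sketch below builds this matrix from (compound, target, value) records, with compounds as rows and targets as columns. It leaves untested pairs empty (`None`) rather than treating them as non-binders, since most compound-target pairs are never measured. The IDs and the pKd-style values are hypothetical. Letting later duplicates overwrite earlier ones is a simplifying assumption; a curation step would normally aggregate them.

```python
def build_interaction_matrix(records):
    """Build a compound x target matrix from (compound_id, target_id, value) records.

    Unmeasured pairs are None. Duplicate pairs: the last record wins (simplifying assumption).
    """
    cells = {(cpd, tgt): value for cpd, tgt, value in records}
    compounds = sorted({cpd for cpd, _ in cells})
    targets = sorted({tgt for _, tgt in cells})
    matrix = [[cells.get((cpd, tgt)) for tgt in targets] for cpd in compounds]
    return compounds, targets, matrix


def density(matrix):
    """Fraction of matrix cells that hold a measurement."""
    total = sum(len(row) for row in matrix)
    filled = sum(value is not None for row in matrix for value in row)
    return filled / total if total else 0.0


# Hypothetical pKd values for two compounds against three targets.
records = [("cpd-1", "T1", 7.5), ("cpd-1", "T3", 5.2), ("cpd-2", "T2", 6.8)]
compounds, targets, matrix = build_interaction_matrix(records)
```

The resulting 2 × 3 matrix is half filled. Real compound-target matrices are far sparser, which is why predicting the missing cells is a central task for the models discussed below.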

The importance of this data structure extends throughout the drug development pipeline, from initial target identification to lead optimization. By providing a unified representation of compound-target relationships, the matrix serves as the backbone for machine learning models, chemoinformatic analyses, and systems pharmacology approaches [15] [17]. Within the context of chemogenomic compound annotation strategies, this matrix enables the integration of heterogeneous biological and chemical data, facilitating the discovery of structure-activity relationships and polypharmacological profiles that are essential for developing effective therapeutic interventions.

Core Structural Components and Data Dimensions

A well-constructed Compound-Target Interaction Matrix incorporates multiple dimensions of data to comprehensively capture the complexity of drug-target interactions. The core components can be categorized into three primary domains: compound descriptors, target descriptors, and interaction measurements.

Table 1: Core Components of the Compound-Target Interaction Matrix

| Component Category | Specific Descriptors | Data Type | Description |
|---|---|---|---|
| Compound Descriptors | Molecular graphs, SMILES strings, MACCS keys, structural fingerprints | Graph, String, Binary | Encodes chemical structure, functional groups, and physicochemical properties [15] [16] |
| Target Descriptors | Amino acid sequences, dipeptide compositions, structural motifs, domain information | String, Numerical, Categorical | Represents protein sequence, structure, and functional domains [15] [16] |
| Interaction Measurements | Binding affinity (Kd, Ki, IC50), mechanism of action (activation/inhibition), interaction context | Numerical, Binary, Categorical | Quantifies interaction strength and defines pharmacological relationship [15] [18] |
| Contextual Metadata | Tissue specificity, cellular localization, experimental conditions | Categorical, Numerical | Provides biological context for the interaction [17] |
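One of the target descriptors listed above, dipeptide composition, turns a protein sequence into a fixed-length numeric vector. It records the frequency of each of the 400 ordered pairs of standard amino acids. Here is a minimal sketch. It assumes frequencies are normalized by the number of counted pairs and that pairs containing non-standard residues (e.g. X) are skipped; both are assumptions, since some implementations scale to percentages instead. The sequence is a toy example.

```python
from itertools import product

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
DIPEPTIDES = ["".join(pair) for pair in product(AMINO_ACIDS, repeat=2)]  # 400 ordered pairs


def dipeptide_composition(sequence: str) -> list:
    """400-dimensional vector of ordered dipeptide frequencies for one protein sequence."""
    seq = sequence.upper()
    counts = dict.fromkeys(DIPEPTIDES, 0)
    total = 0
    for i in range(len(seq) - 1):
        pair = seq[i:i + 2]
        if pair in counts:  # skip pairs containing non-standard residues
            counts[pair] += 1
            total += 1
    return [counts[d] / total if total else 0.0 for d in DIPEPTIDES]


vector = dipeptide_composition("MKKM")  # pairs MK, KK, KM, each with frequency 1/3
```

Because every protein maps to the same 400-dimensional space regardless of length, such vectors can be concatenated with compound fingerprints to form feature vectors for the target columns of the matrix.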

The matrix structure must also accommodate different levels of evidence supporting each interaction, ranging from FDA-approved drug indications to pre-clinical experimental data and computational predictions [18]. High-quality matrices incorporate confidence scores or evidence codes that reflect the source and reliability of each data point, enabling researchers to weight interactions appropriately during analysis. The integration of temporal and spatial dimensions further enhances the utility of the matrix by capturing how interactions vary across biological contexts, developmental stages, or disease states [17].

Constructing a comprehensive Compound-Target Interaction Matrix requires the integration of data from multiple heterogeneous sources, each contributing different types of evidence and covering various aspects of compound-target relationships. The major data sources include experimental databases, clinical resources, and computational predictions, which must be harmonized to create a unified representation.

Table 2: Key Data Sources for Matrix Construction

| Data Source Category | Example Resources | Data Provided | Evidence Level |
|---|---|---|---|
| Experimental Databases | BindingDB, DCDB, ALMANAC, PDX-based screens | Quantitative binding affinities, synergy scores, dose-response data | High [18] [16] |
| Clinical Resources | FDA approvals, NCCN Guidelines, ClinicalTrials.gov | Approved indications, clinical trial outcomes, therapeutic guidelines | Highest [18] |
| Computational Predictions | REFLECT, DTIAM, Komet, MDCT-DTA | Predicted interactions, affinity scores, mechanism of action | Variable [15] [18] [16] |
| Biomarker Databases | OncoDrug+, VICC, DGIdb | Genomic biomarkers, mutation-specific responses, companion diagnostics | Context-dependent [18] |

The integration process involves significant data harmonization challenges, as different sources often use varying identifiers, measurement units, and experimental protocols. Successful matrix construction requires the implementation of entity resolution algorithms to normalize compound and target identifiers across databases, as well as quality control pipelines to identify and handle conflicting data points [18] [16]. For computational predictions, it is essential to include confidence metrics that reflect the reliability of each prediction, such as the interaction scores provided by the REFLECT method or the probability outputs from machine learning models like DTIAM [15] [18].
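A small harmonization step might look like the sketch below. It maps compound synonyms to a canonical ID, converts concentration-type measurements (Ki, Kd, IC50) in mixed units to a common -log10(molar) scale, and merges replicates. Pairs whose values disagree by more than a chosen threshold are flagged. Several choices here are assumptions, not a standard: the synonym table (which would normally come from an entity-resolution step), the use of the median, and the 1-log-unit conflict threshold. All records are hypothetical.

```python
import math
from statistics import median

# Concentration units to molar; "µM" (micro sign) and "μM" (Greek mu) are both common in exports.
UNIT_TO_MOLAR = {"M": 1.0, "mM": 1e-3, "uM": 1e-6, "µM": 1e-6, "μM": 1e-6, "nM": 1e-9, "pM": 1e-12}


def to_p_activity(value, unit):
    """Convert a Ki/Kd/IC50 value to -log10(molar)."""
    return -math.log10(value * UNIT_TO_MOLAR[unit])


def harmonize(records, id_map, max_spread=1.0):
    """Merge (compound, target, value, unit) records into one entry per canonical pair.

    Pairs whose measurements span more than max_spread log units are flagged as conflicts.
    """
    grouped = {}
    for cpd, tgt, value, unit in records:
        key = (id_map.get(cpd, cpd), tgt)
        grouped.setdefault(key, []).append(to_p_activity(value, unit))
    return {
        key: {"p_activity": median(vals), "n": len(vals), "conflict": max(vals) - min(vals) > max_spread}
        for key, vals in grouped.items()
    }


records = [
    ("CPD-1", "T1", 10, "nM"), ("cpd1", "T1", 0.012, "uM"),  # same compound, two identifiers
    ("CPD-1", "T2", 5, "uM"), ("CPD-1", "T2", 20, "nM"),     # disagree by about 2.4 log units
]
merged = harmonize(records, id_map={"cpd1": "CPD-1"})
```

Here the two T1 measurements (10 nM and 12 nM) merge cleanly to a value of about 7.96. The T2 pair is flagged for manual review instead of being silently averaged.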

Experimental Methodologies and Protocols

The data populating Compound-Target Interaction Matrices is generated through diverse experimental methodologies, each with specific protocols and applications. These methods span from high-throughput screening approaches to precise mechanistic studies, providing different levels of detail about compound-target interactions.

Biochemical and Cellular Assay Protocols

Standardized experimental protocols are essential for generating consistent, high-quality data for inclusion in interaction matrices. For biochemical binding assays, the protocol typically involves incubating the purified target protein with the test compound under controlled conditions, followed by separation of bound and unbound compound and quantification of binding parameters [19]. Key steps include:

- Target Preparation: Purification of the recombinant target protein and verification of structural integrity and activity.

- Compound Dilution Series: Preparation of compound solutions across a range of concentrations (typically 8-12 points in a 3- or 10-fold dilution series).

- Binding Reaction: Incubation of target and compound for a defined period at controlled temperature.

- Separation and Detection: Application of appropriate detection methods (e.g., fluorescence polarization, surface plasmon resonance, radioligand binding) to quantify bound complex.

- Data Analysis: Calculation of binding parameters (Kd, Ki) through nonlinear regression of the concentration-response data [19].
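The dilution-series and data-analysis steps rest on a few standard relationships, sketched below: a serial dilution series, the four-parameter logistic (Hill) concentration-response model commonly fitted in these assays, and the Cheng-Prusoff equation Ki = IC50 / (1 + [L]/Kd). The last converts an IC50 from a competitive displacement assay into Ki. This is an illustrative sketch only. The actual nonlinear regression would typically be done with a fitting routine such as `scipy.optimize.curve_fit` (not shown here), and the concentrations used are hypothetical.

```python
def dilution_series(top_conc, fold=3.0, points=10):
    """Concentrations of a serial dilution, starting at top_conc and dividing by `fold` each step."""
    return [top_conc / fold ** i for i in range(points)]


def four_param_logistic(conc, bottom, top, ic50, hill):
    """Four-parameter logistic response: `top` at low concentration, `bottom` at high concentration."""
    return bottom + (top - bottom) / (1 + (conc / ic50) ** hill)


def cheng_prusoff_ki(ic50, ligand_conc, kd_ligand):
    """Ki from IC50 for competitive inhibition: Ki = IC50 / (1 + [L] / Kd)."""
    return ic50 / (1 + ligand_conc / kd_ligand)


series = dilution_series(10.0)                     # 10 points, 3-fold, starting at 10 (e.g. μM)
midpoint = four_param_logistic(1.0, 0.0, 100.0, 1.0, 1.0)  # response at conc == IC50
ki = cheng_prusoff_ki(ic50=100.0, ligand_conc=10.0, kd_ligand=10.0)  # all in nM
```

At the IC50, the logistic model returns the midpoint between `top` and `bottom` (50 here). When the labeled ligand is used at its own Kd, Cheng-Prusoff halves the IC50 to give Ki (50 nM in this example).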

For cellular target engagement assays, protocols must account for compound permeability, metabolism, and cellular context. The five-star matrix framework emphasizes the importance of measuring not just binding but also proximal functional effects (dimension 3) and downstream biological consequences (dimension 4) to fully characterize the interaction [17]. These protocols typically include:

- Cell Line Selection and Culture: Use of disease-relevant cell models with appropriate expression of the target.

- Compound Treatment: Exposure of cells to compound across a concentration range for defined time periods.

- Target Engagement Measurement: Application of techniques like cellular thermal shift assays (CETSA) or resonance energy transfer methods.

- Functional Readouts: Measurement of immediate downstream signaling events (e.g., phosphorylation, second messenger production).

- Phenotypic Assessment: Evaluation of ultimate cellular responses (e.g., proliferation, apoptosis, differentiation) [17].

High-Throughput Screening Workflows

Large-scale interaction data generation employs high-throughput screening (HTS) protocols that enable testing of thousands to millions of compound-target combinations. These protocols are optimized for efficiency, reproducibility, and miniaturization:

Diagram 1: High-Throughput Screening Workflow

The HTS process begins with assay optimization to ensure robustness and suitability for automation, typically evaluated using metrics such as the Z'-factor. Automated screening then tests compound libraries against targets in 384- or 1536-well microtiter plates, generating raw data that undergo quality control and normalization before hits are identified against predefined activity thresholds [18]. For drug combination studies, such as those captured in resources like OncoDrug+, matrix-style screening protocols test pairwise compound combinations across multiple concentrations; the resulting synergy scores are computed against reference models such as Bliss independence or Loewe additivity [18].
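Two of the quantities named above are simple enough to show directly. The sketch below computes the Z'-factor from positive- and negative-control wells using the standard definition Z' = 1 - 3(σ_pos + σ_neg)/|μ_pos - μ_neg|, and the Bliss independence expectation for a pair of fractional effects. The control values are made up for illustration, and Loewe additivity is omitted because it requires full single-agent dose-response curves.

```python
import statistics


def z_prime(positive_controls, negative_controls):
    """Z'-factor: 1 - 3*(sd_pos + sd_neg) / |mean_pos - mean_neg| (sample SDs)."""
    sd_p = statistics.stdev(positive_controls)
    sd_n = statistics.stdev(negative_controls)
    separation = abs(statistics.mean(positive_controls) - statistics.mean(negative_controls))
    return 1.0 - 3.0 * (sd_p + sd_n) / separation


def bliss_expected(effect_a, effect_b):
    """Expected combined fractional effect (0-1) if drugs A and B act independently."""
    return effect_a + effect_b - effect_a * effect_b


def bliss_excess(effect_a, effect_b, observed):
    """Observed minus Bliss-expected effect; > 0 suggests synergy, < 0 antagonism."""
    return observed - bliss_expected(effect_a, effect_b)


# Illustrative control-well signals from one plate (arbitrary units, made-up values).
pos = [100, 98, 102, 101, 99]
neg = [10, 12, 8, 11, 9]
print(f"Z' = {z_prime(pos, neg):.3f}")  # Z' >= 0.5 is conventionally treated as excellent
print(f"Bliss excess = {bliss_excess(0.3, 0.5, 0.8):.2f}")
```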

Computational Frameworks and Machine Learning Approaches

Computational methods play an increasingly important role in predicting compound-target interactions, especially for novel compounds or targets with limited experimental data. These approaches treat the known entries of the interaction matrix as training labels for machine learning models that can then generalize to new regions of chemical and biological space.

Feature Representation and Model Architectures

The performance of computational prediction models heavily depends on how compounds and targets are represented as feature vectors. Advanced frameworks like DTIAM employ multi-task self-supervised pre-training on molecular graphs of compounds and primary sequences of proteins to learn meaningful representations that capture substructure and contextual information [15]. These representations are then used for downstream prediction tasks including binary interaction prediction, binding affinity regression, and mechanism of action classification.

For compound representation, contemporary approaches utilize:

- Molecular graph encoders that learn from atom and bond features using graph neural networks

- SMILES string processing using natural language processing techniques like Transformer architectures

- Chemical fingerprint extraction including extended-connectivity fingerprints (ECFPs) and MACCS keys [16]
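For the SMILES-based route, Transformer models first split each string into atom-level tokens and map them to integer ids. The sketch below uses a regular expression of the kind commonly used in published SMILES-Transformer work; treat the exact pattern as an assumption, since tokenization schemes differ between models. It covers the organic subset plus bracket atoms and is not a full SMILES parser.

```python
import re

# Atom-level SMILES tokens: bracket atoms, two-letter halogens, aromatic atoms,
# bonds, branches, ring-closure digits (including %nn two-digit closures).
SMILES_TOKEN_RE = re.compile(
    r"(\[[^\]]+]|Br?|Cl?|N|O|S|P|F|I|b|c|n|o|s|p|\(|\)|\.|=|#|-|\+|\\|/|:|~|@|\?|>|\*|\$|%[0-9]{2}|[0-9])"
)


def tokenize_smiles(smiles):
    """Split a SMILES string into tokens; raise if any character is not covered."""
    tokens = SMILES_TOKEN_RE.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"unrecognized characters in SMILES: {smiles!r}")
    return tokens


def encode(tokens, vocab):
    """Map tokens to integer ids, growing the vocabulary as new tokens appear."""
    return [vocab.setdefault(tok, len(vocab)) for tok in tokens]


vocab = {}
aspirin = tokenize_smiles("CC(=O)Oc1ccccc1C(=O)O")
print(aspirin[:6])  # ['C', 'C', '(', '=', 'O', ')']
print(encode(aspirin, vocab)[:6])
print(tokenize_smiles("[NH4+].[Cl-]"))  # bracket atoms stay whole
```

Checking that the tokens re-join to the input string is a cheap guard against silently dropped characters.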

For target representation, common approaches include:

- Amino acid sequence encoding using convolutional neural networks or protein language models

- Dipeptide composition and other sequence-derived features

- Structural feature extraction when 3D structures are available [15] [16]
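Dipeptide composition is the simplest of these target encodings to illustrate: a fixed 400-dimensional vector holding the frequency of each ordered pair of standard amino acids. The sketch below is one common formulation, assuming overlapping dipeptides and normalization by the number of dipeptides counted (some implementations divide by sequence length minus one instead); pairs involving non-standard residues are skipped.

```python
from itertools import product

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard residues
DIPEPTIDES = ["".join(pair) for pair in product(AMINO_ACIDS, repeat=2)]  # 400 features
_INDEX = {dp: i for i, dp in enumerate(DIPEPTIDES)}


def dipeptide_composition(sequence):
    """Return a 400-dim vector of overlapping dipeptide frequencies for a protein sequence.

    Dipeptides containing non-standard residues (e.g. X, U) are skipped, and
    frequencies are normalized by the number of dipeptides actually counted.
    """
    seq = sequence.upper()
    vector = [0.0] * len(DIPEPTIDES)
    counted = 0
    for i in range(len(seq) - 1):
        idx = _INDEX.get(seq[i:i + 2])
        if idx is not None:
            vector[idx] += 1
            counted += 1
    if counted:
        vector = [v / counted for v in vector]
    return vector


features = dipeptide_composition("MKTAYIAKQR")  # arbitrary illustrative fragment
print(len(features), round(sum(features), 6))
```

Because the vector length is independent of sequence length, proteins of any size can be fed to the same downstream model.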

Addressing Data Challenges

Real-world compound-target interaction datasets present significant challenges that require specialized computational solutions. The data imbalance problem, in which confirmed interactions are vastly outnumbered by unknown or non-interacting pairs, is particularly pronounced. To address it, Generative Adversarial Networks (GANs) have been used to generate synthetic samples of the minority (interacting) class, reducing false negatives and improving model sensitivity [16]. In one implementation, a GAN-based oversampling step combined with Random Forest classification was reported to achieve an accuracy of 97.46%, a precision of 97.49%, and a ROC-AUC of 99.42% on the BindingDB-Kd dataset [16].
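A GAN is beyond the scope of a short example, but the underlying problem is easy to demonstrate. The sketch below shows why accuracy alone is misleading under heavy imbalance (a model that never predicts an interaction still scores 99% accuracy) and uses plain random oversampling, a much simpler technique than the GAN approach in [16], swapped in here purely for illustration. Function names are hypothetical.

```python
import random


def classification_metrics(y_true, y_pred):
    """Accuracy, precision and recall for binary labels (1 = interaction)."""
    tp = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    fp = sum(t == 0 and p == 1 for t, p in zip(y_true, y_pred))
    fn = sum(t == 1 and p == 0 for t, p in zip(y_true, y_pred))
    tn = len(y_true) - tp - fp - fn
    return {
        "accuracy": (tp + tn) / len(y_true),
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }


def random_oversample(samples, labels, seed=0):
    """Duplicate randomly chosen minority-class samples until both classes are equal in size."""
    rng = random.Random(seed)
    pos = [s for s, y in zip(samples, labels) if y == 1]
    neg = [s for s, y in zip(samples, labels) if y == 0]
    minority, majority, min_label = (pos, neg, 1) if len(pos) < len(neg) else (neg, pos, 0)
    if not minority:
        raise ValueError("cannot oversample an empty class")
    extra = rng.choices(minority, k=len(majority) - len(minority))
    return list(samples) + extra, list(labels) + [min_label] * len(extra)


# 10 known interactions among 1,000 pairs; a model that always predicts "no interaction":
y_true = [1] * 10 + [0] * 990
print(classification_metrics(y_true, [0] * 1000))  # accuracy 0.99, recall 0.0
```

Oversampling should be applied only to the training split, never to evaluation data, or the reported metrics become optimistic.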

The cold start problem, that is, predicting interactions for novel compounds or targets that have no known interactions, represents another significant challenge. Frameworks like DTIAM address this through self-supervised pre-training on large amounts of unlabeled data, enabling the model to learn generalizable representations that transfer well to new entities [15]. The model architecture incorporates Transformer encoders for both compounds and targets, followed by an interaction module that models the relationships between the two representations.

Diagram 2: DTIAM Model Architecture
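Cold-start performance is only meaningful if the evaluation split actually withholds the new entities. A minimal sketch of a "cold compound" split, assuming interaction records are stored as hypothetical `(compound, target, label)` tuples: whole compounds are held out, so no test compound is ever seen during training. A cold-target split is the same idea keyed on the target identifier.

```python
import random


def cold_compound_split(pairs, test_fraction=0.2, seed=0):
    """Split (compound, target, label) records so no test compound occurs in training."""
    compounds = sorted({compound for compound, _, _ in pairs})
    random.Random(seed).shuffle(compounds)
    n_test = max(1, round(len(compounds) * test_fraction))
    held_out = set(compounds[:n_test])
    train = [p for p in pairs if p[0] not in held_out]
    test = [p for p in pairs if p[0] in held_out]
    return train, test


# Hypothetical records: 5 compounds x 3 targets with arbitrary 0/1 labels.
pairs = [(f"cpd{c}", f"T{t}", (c + t) % 2) for c in range(5) for t in range(3)]
train, test = cold_compound_split(pairs)
print(len(train), len(test))  # 12 3
```

A random split over pairs, by contrast, would let most test compounds appear in training with other targets and would overstate cold-start performance.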

The Five-Star Matrix: A Translational Framework

Beyond mere interaction cataloging, the Compound-Target Interaction Matrix serves as the foundation for translational frameworks that bridge basic research and clinical applications. The five-star matrix represents an advanced implementation of this concept, providing a comprehensive framework for translational drug discovery organized across five dimensions and five systems [17].

The five dimensions include:

- Biodistribution: Compound concentration at the target site

- Target Binding/Occupancy: Direct interaction between compound and target

- Proximal Effect: Immediate functional consequences of target engagement

- Biological Effect: Downstream phenotypic consequences

- Disease Effect: Clinically relevant outcomes [17]

This multidimensional framework enables researchers to systematically evaluate compound-target interactions across different levels of biological complexity, from biochemical systems to clinical applications. By populating this expanded matrix with experimental and clinical data, researchers can identify gaps in the translational pathway and develop targeted experiments to address these gaps [17].
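One way to make the gap analysis concrete is to hold the matrix as a grid keyed by (dimension, system) and list the empty cells. In the sketch below the five dimensions follow the list above, but the five systems are not named in this article, so `system_1` to `system_5` are hypothetical placeholders, as are the evidence identifiers.

```python
DIMENSIONS = (
    "biodistribution",
    "target_binding",
    "proximal_effect",
    "biological_effect",
    "disease_effect",
)
# Hypothetical placeholder labels for the five systems of the framework.
SYSTEMS = ("system_1", "system_2", "system_3", "system_4", "system_5")


def empty_matrix():
    """One cell per (dimension, system); None marks missing evidence."""
    return {(d, s): None for d in DIMENSIONS for s in SYSTEMS}


def record(matrix, dimension, system, evidence):
    """Attach evidence (e.g. an assay identifier) to a cell, rejecting unknown keys."""
    key = (dimension, system)
    if key not in matrix:
        raise KeyError(f"unknown cell {key}")
    matrix[key] = evidence


def gaps(matrix, dimension=None):
    """List empty cells, optionally restricted to a single dimension."""
    return [key for key, value in matrix.items()
            if value is None and (dimension is None or key[0] == dimension)]


m = empty_matrix()
record(m, "target_binding", "system_1", "assay-001")  # hypothetical evidence ID
record(m, "proximal_effect", "system_1", "assay-002")
print(len(gaps(m)))  # 23 of 25 cells still lack evidence
print(gaps(m, "target_binding"))
```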

Research Reagent Solutions and Essential Materials

The experimental generation of data for Compound-Target Interaction Matrices requires specific research reagents and tools that enable precise measurement of interactions across different biological systems.

Table 3: Essential Research Reagents and Materials

| Reagent/Material | Function | Application Context |
|---|---|---|
| Recombinant Proteins | Purified targets for biochemical binding assays | In vitro binding studies, high-throughput screening [19] |
| Validated Cell Lines | Disease-relevant cellular models | Cellular target engagement, functional assays [17] |
| Chemical Probes | Well-characterized tool compounds | Target validation, assay controls [17] |
| Antibodies | Detection of targets and downstream effectors | Immunoassays, Western blotting, cellular imaging [19] |
| Microtiter Plates | Miniaturized reaction vessels | High-throughput screening, dose-response studies [18] |
| Detection Reagents | Fluorescent, luminescent, or colorimetric readouts | Signal measurement in various assay formats [19] |

The selection of appropriate research reagents is critical for generating high-quality, reproducible data for inclusion in interaction matrices. For example, chemical probes with well-characterized target profiles enable proper validation of screening assays and serve as positive controls in interaction studies [17]. Similarly, patient-derived cell models and xenograft systems provide more physiologically relevant contexts for evaluating compound-target interactions in disease-specific backgrounds [18].

Applications in Drug Discovery and Development

The Compound-Target Interaction Matrix serves as a critical tool throughout the drug discovery and development pipeline, enabling data-driven decisions at multiple stages. In target identification and validation, the matrix helps prioritize targets with favorable "druggability" profiles and minimal safety concerns based on known interaction patterns [17]. During lead identification and optimization, the matrix facilitates structure-activity relationship analysis by revealing how structural modifications affect interactions across multiple targets, enabling the design of compounds with improved selectivity and reduced off-target effects [15].
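Reading selectivity off a matrix row can be as simple as counting how many tested targets a compound binds below an affinity cutoff, in the spirit of the selectivity scores used in kinase profiling. The sketch assumes Kd values in nM, a 3 µM cutoff, and `None` for untested pairs; the two rows are made-up values for a hypothetical parent compound and analog.

```python
def selectivity_score(profile, threshold=3000.0):
    """Fraction of tested targets bound more tightly than `threshold`.

    profile maps target -> affinity (e.g. Kd in nM), or None if untested.
    Lower scores indicate a more selective compound.
    """
    tested = [value for value in profile.values() if value is not None]
    if not tested:
        raise ValueError("no tested targets in profile")
    return sum(value < threshold for value in tested) / len(tested)


# Illustrative rows of an interaction matrix (made-up Kd values in nM).
parent = {"T1": 5.0, "T2": 40.0, "T3": 900.0, "T4": 12000.0, "T5": None}
analog = {"T1": 8.0, "T2": 7000.0, "T3": 15000.0, "T4": 20000.0, "T5": None}
for name, row in (("parent", parent), ("analog", analog)):
    print(name, selectivity_score(row))
```

Comparing scores across a structural series is one simple way to see whether a modification narrowed or broadened the target profile.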

In clinical development, interaction matrices enriched with biomarker information enable patient stratification strategies and identification of predictive biomarkers for treatment response. Resources like OncoDrug+ exemplify this application by systematically linking drug combinations with specific cancer types and genetic biomarkers, supporting evidence-based clinical decision-making [18]. The matrix framework also supports drug repurposing efforts by revealing novel therapeutic applications for existing drugs based on their interaction profiles, potentially shortening development timelines and reducing risks [15] [18].
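Profile-based repurposing often starts from a similarity search over interaction profiles. A minimal sketch, assuming each drug's profile is reduced to the set of targets it hits and compared with the Jaccard (Tanimoto) coefficient; the drug and target names are hypothetical.

```python
def jaccard(a, b):
    """Jaccard (Tanimoto) similarity between two sets of target identifiers."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def rank_by_profile_similarity(query, profiles):
    """Rank drugs by similarity of their target sets to a query drug's targets."""
    scores = {drug: jaccard(profiles[query], targets)
              for drug, targets in profiles.items() if drug != query}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Hypothetical drugs and targets, for illustration only.
profiles = {
    "drug_A": {"T1", "T2", "T3"},
    "drug_B": {"T2", "T3", "T4"},
    "drug_C": {"T7", "T8"},
}
print(rank_by_profile_similarity("drug_A", profiles))
```

Drugs that share much of a query drug's profile become candidates for the query's indications, to be confirmed experimentally.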

The integration of interaction matrices with other data types, such as gene expression profiles and patient clinical data, creates even more powerful frameworks for precision medicine. This integrated approach enables the development of patient-specific interaction networks that can predict individual treatment responses and guide personalized therapeutic strategies [17] [18].

Computational Strategies and Practical Applications in Annotation

Molecular descriptors are mathematical representations of chemical compounds that serve as the foundational bridge between chemical structures and their biological, chemical, or physical properties. Within chemogenomic compound annotation strategies, the systematic application of 1D, 2D, and 3D descriptors enables efficient exploration of ligand-target space, facilitating target validation, deconvolution of biological mechanisms, and the discovery of bioactive small molecules. This section provides an in-depth technical examination of molecular descriptor methodologies, their computational protocols, and their role in the rational design of annotated chemical libraries for modern drug discovery platforms [20] [21] [1].

Chemogenomics is an innovative approach in chemical biology that synergizes combinatorial chemistry with genomic and proteomic data to systematically study biological system responses to compound libraries [20]. Central to this strategy is the annotated chemical library, where ligands are classified according to their protein targets, creating a rich ligand-target knowledge space for data mining and target discovery [1]. The effective exploration of this space requires sophisticated molecular representation techniques that translate chemical structures into computer-readable formats [21].

Molecular representation forms the cornerstone of computational chemistry and drug design, enabling the application of machine learning (ML) and deep learning (DL) models to tasks including virtual screening, activity prediction, and scaffold hopping [21]. The evolution of these representations from simple numerical descriptors to complex, AI-driven embeddings has significantly expanded our ability to navigate and characterize the vast chemical space [21].

Classical Molecular Descriptor Taxonomies

Traditional molecular representation methods rely on explicit, rule-based feature extraction. These can be broadly categorized into one-dimensional (1D), two-dimensional (2D), and three-dimensional (3D) descriptors, each capturing distinct aspects of molecular structure and properties.

1D Molecular Descriptors

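1D descriptors are global molecular properties, typically single numerical values representing physicochemical characteristics. Most are calculated from the molecular formula or connectivity without requiring geometric information; electronic descriptors such as HOMO/LUMO energies and dipole moments are the exception, since they depend on a computed 3D geometry.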

Table 1: Common 1D Molecular Descriptors and Their Applications

| Descriptor Category | Example Descriptors | Calculation Method | Primary Applications |
|---|---|---|---|
| Constitutional | Molecular Weight, Atom Count, Bond Count | Direct counting from molecular graph | Quick filtering, drug-likeness rules (e.g., Lipinski's Rule of 5) |
| Physicochemical | LogP (lipophilicity), Molar Refractivity, TPSA (Topological Polar Surface Area) | Empirical or additive atom-based methods | ADMET prediction, solubility, permeability assessment |
| Electronic | pKa, HOMO/LUMO energies, Dipole Moment | Quantum mechanical or empirical calculations | Reactivity prediction, ionization state analysis |

Experimental Protocol: Calculating 1D Descriptors

- Input Preparation: Obtain molecular structure in SMILES (Simplified Molecular-Input Line-Entry System) or MOL file format [21].

- Descriptor Selection: Choose relevant 1D descriptors based on the target property (e.g., LogP for permeability studies).

- Descriptor Calculation:

  - Utilize cheminformatics software (e.g., RDKit, OpenBabel, alvaDesc).

  - For constitutional descriptors: Parse molecular graph and count atoms/bonds.

  - For physicochemical descriptors: Apply fragment contribution methods (e.g., Crippen's method for LogP).

  - For electronic descriptors: Apply semi-empirical quantum methods if needed.

- Data Normalization: Apply standardization techniques (z-score, min-max scaling) for machine learning applications.
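The constitutional, rule-based, and normalization steps can be illustrated without a cheminformatics toolkit. The sketch below computes average molecular weight from a simple molecular formula (a limited table of conventional atomic weights, so unsupported elements raise `KeyError`), counts Lipinski Rule-of-5 violations from already computed descriptors, and applies z-score and min-max scaling. It is a stand-in for illustration only; LogP and hydrogen-bond counts would in practice come from software such as RDKit, and donor/acceptor counting conventions vary between tools.

```python
import re
import statistics

# Conventional standard atomic weights for common drug-like elements.
ATOMIC_WEIGHTS = {"H": 1.008, "C": 12.011, "N": 14.007, "O": 15.999, "F": 18.998,
                  "P": 30.974, "S": 32.06, "Cl": 35.45, "Br": 79.904, "I": 126.904}


def molecular_weight(formula):
    """Average molecular weight from a simple formula such as 'C9H8O4'."""
    if not re.fullmatch(r"(?:[A-Z][a-z]?\d*)+", formula):
        raise ValueError(f"unsupported formula: {formula!r}")
    total = 0.0
    for element, count in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        total += ATOMIC_WEIGHTS[element] * (int(count) if count else 1)
    return total


def lipinski_violations(mw, logp, hbd, hba):
    """Count Rule-of-5 violations (MW > 500, LogP > 5, HBD > 5, HBA > 10)."""
    return sum([mw > 500, logp > 5, hbd > 5, hba > 10])


def z_score(values):
    """Standardize to zero mean and unit (population) standard deviation."""
    mean, sd = statistics.fmean(values), statistics.pstdev(values)
    return [(v - mean) / sd for v in values]


def min_max(values):
    """Rescale values to the [0, 1] interval."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) for v in values]


print(round(molecular_weight("C9H8O4"), 2))  # aspirin: 180.16
print(min_max([2.0, 4.0, 6.0]))  # [0.0, 0.5, 1.0]
```

Both scaling functions divide by a spread, so a descriptor that is constant across the dataset must be dropped (or handled separately) before normalization.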

2D Molecular Descriptors

2D descriptors are derived from molecular topology (connectivity) and include structural fingerprints and topological indices. They capture patterns of atom connectivity without considering three-dimensional conformation.

Table 2: Key 2D Molecular Descriptors and Their Characteristics

| Descriptor Type | Representative Examples | Representation Format | Strengths | Common Uses |
|---|---|---|---|---|
| Topological Indices | Wiener Index, Zagreb Index, Balaban J | Numerical values | Graph invariance, low dimensionality | QSAR, similarity searching |
| Molecular Fingerprints | ECFP (Extended-Connectivity Fingerprints), FCFP (Functional-Class Fingerprints) | Bit strings (binary vectors) | High throughput, effective similarity assessment | Virtual screening, clustering, machine learning [21] |
| Fragment-Based | MACCS Keys, PubChem Fingerprint | Bit strings (predefined structural keys) | Interpretability, standardization | Rapid similarity search, substructure filtering |
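Two entries from the table lend themselves to a short sketch. The Wiener index is the sum of shortest-path (bond-count) distances over all pairs of heavy atoms in the hydrogen-suppressed graph, so branching lowers it; Tanimoto similarity is the usual comparison for bit-string fingerprints. Here molecules are hand-written adjacency lists and the fingerprints are toy sets of "on" bit indices, not real ECFP or MACCS output, which would normally come from a toolkit such as RDKit.

```python
from collections import deque


def wiener_index(adjacency):
    """Sum of shortest-path (bond-count) distances over all pairs of heavy atoms."""
    total = 0
    for source in adjacency:
        dist = {source: 0}
        queue = deque([source])
        while queue:  # breadth-first search from `source`
            atom = queue.popleft()
            for neighbor in adjacency[atom]:
                if neighbor not in dist:
                    dist[neighbor] = dist[atom] + 1
                    queue.append(neighbor)
        total += sum(dist.values())
    return total // 2  # each unordered pair was counted twice


def tanimoto(bits_a, bits_b):
    """Tanimoto similarity between two fingerprints given as sets of 'on' bit indices."""
    union = len(bits_a | bits_b)
    return len(bits_a & bits_b) / union if union else 0.0


# Hydrogen-suppressed carbon skeletons: n-butane (linear) vs isobutane (branched).
n_butane = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
isobutane = {0: [1, 2, 3], 1: [0], 2: [0], 3: [0]}
print(wiener_index(n_butane), wiener_index(isobutane))  # 10 9
print(tanimoto({1, 5, 9, 42}, {1, 5, 7, 42}))
```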

Experimental Protocol: Generating 2D Molecular Fingerprints

- Molecular Standardization:

  - Input structures in SMILES format [21].

  - Apply standardization: sanitization, neutralization, tautomer standardization.

- Fingerprint Selection:

  - ECFP/ECFP4: Captures circular atom environments; radius=2 for ECFP4.