Chemical Biology Approaches for Target Validation: Current Strategies and Future Directions in Drug Discovery

Abstract

This article provides a comprehensive overview of modern chemical biology approaches for target validation in drug discovery. Ranging from foundational principles to cutting-edge methodologies, it explores affinity-based techniques, label-free methods, computational approaches, and chemical probes. It is intended for researchers and drug development professionals seeking to reduce attrition rates, enhance translational predictivity, and make informed decisions on target selection and validation strategies. The content addresses key challenges in the field while highlighting emerging technologies, such as AI integration and functional validation platforms, that are reshaping early-stage research and development.

The Foundation of Target Validation: Principles and Paradigms in Chemical Biology

Target validation is a critical stage in the drug discovery pipeline, in which the predicted molecular target of a therapeutic compound is rigorously verified. This process establishes a causal relationship between target modulation and the desired therapeutic outcome, determining whether a drug candidate merits progression through costly clinical development. This section provides a technical examination of core concepts, methodologies, and experimental frameworks. We define essential terminology, outline key validation techniques with detailed protocols, and present quantitative assessment criteria to guide researchers in establishing robust evidence for target-disease relationships.

Core Concepts and Definitions

Target validation is the process by which the predicted molecular target – for example, a specific protein or nucleic acid – of a small molecule is verified [1]. This foundational step moves beyond mere target identification to demonstrate that modulating the target produces a therapeutically relevant effect in disease models.

The molecular target typically constitutes a biologically active macromolecule such as an enzyme, receptor, ion channel, or nucleic acid whose activity can be modulated by a therapeutic agent. Validation establishes pharmacological linkage between compound binding and functional downstream consequences.

Within chemical biology, target validation employs chemical probes—selective small molecules designed to perturb specific protein functions—to illuminate fundamental biology and assess therapeutic potential [2]. These probes serve as critical tools for establishing causal relationships between target modulation and phenotypic outcomes.

The validation process must distinguish between correlative observations (where target activity associates with disease states) and causal relationships (where target modulation directly alters disease phenotypes). Chemical biology approaches are particularly powerful for establishing causality through controlled, temporal perturbation of biological systems.

Key Methodologies in Target Validation

Experimental Approaches and Techniques

Multiple orthogonal methodologies are employed to build compelling evidence for target engagement and biological relevance. These approaches can be categorized into genetic, biochemical, and chemical strategies, each providing complementary evidence for target validation.

Table 1: Core Methodologies in Target Validation

| Method Category | Specific Techniques | Key Applications | Evidence Provided |
|---|---|---|---|
| Genetic Perturbation | CRISPR-Cas9, RNAi, Overexpression | Functional genomics | Target-disease linkage |
| Biochemical & Biophysical | ITC, BLI, DSF, SPR | Binding quantification | Direct target engagement |
| Chemical Proteomics | Affinity chromatography, Thermal stability profiling | Target identification | Cellular target engagement |
| Structural Biology | X-ray crystallography, cryo-EM | Mechanism of action | Structural binding evidence |
| Phenotypic Screening | High-content imaging, Functional assays | Biological consequence | Functional impact |

Genetic perturbation methods establish functional relationships between targets and disease phenotypes. Knockdown or overexpression of the presumed target provides evidence for its functional role in disease-relevant pathways [1]. CRISPR-based editing enables precise genetic manipulation to assess consequent phenotypic changes [2].

Biochemical and biophysical approaches quantitatively measure direct compound-target interactions. Isothermal Titration Calorimetry (ITC) determines ligand binding constants in solution by measuring binding heats, revealing thermodynamic driving forces that give rise to ligand binding [2]. Biolayer Interferometry (BLI) serves as a label-free direct detection method for studying protein-ligand interactions, enabling determination of binding constants and kinetic parameters [2]. Differential Scanning Fluorimetry (Thermal Shift Assays) leverages ligand-induced thermal stabilization of proteins to evaluate binding, applicable to any stable protein in solution with minimal optimization [2].
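The relationship between the kinetic parameters reported by BLI and the thermodynamic quantities reported by ITC can be sketched in a few lines. This is a minimal illustration using the standard relations Kd = k_off / k_on, ΔG = RT ln(Kd), and ΔG = ΔH − TΔS; the rate constants, the ΔH value, and all function names are hypothetical and not taken from any cited study.

```python
import math

R_KCAL = 1.987e-3  # gas constant in kcal/(mol*K)


def kd_from_kinetics(k_on: float, k_off: float) -> float:
    """Equilibrium dissociation constant (M) from k_on (1/(M*s)) and k_off (1/s)."""
    return k_off / k_on


def binding_free_energy(kd_molar: float, temp_k: float = 298.15) -> float:
    """Standard binding free energy in kcal/mol: dG = RT * ln(Kd / 1 M)."""
    return R_KCAL * temp_k * math.log(kd_molar)


def entropy_term(delta_g: float, delta_h: float) -> float:
    """Entropic contribution -T*dS (kcal/mol), rearranged from dG = dH - T*dS."""
    return delta_g - delta_h


# Hypothetical BLI rate constants for an illustrative tool compound.
kd = kd_from_kinetics(k_on=1.0e5, k_off=1.0e-2)   # 1e-7 M, i.e. 100 nM
dg = binding_free_energy(kd)                      # roughly -9.5 kcal/mol at 25 °C

# Hypothetical ITC enthalpy; the remainder of dG is attributed to entropy.
minus_t_ds = entropy_term(dg, delta_h=-12.0)

print(f"Kd = {kd * 1e9:.0f} nM, dG = {dg:.2f} kcal/mol, -TdS = {minus_t_ds:.2f} kcal/mol")
```

A negative ΔH combined with a positive −TΔS, as in this made-up case, would indicate enthalpy-driven binding partly offset by an entropic penalty.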

Chemical proteomics represents a powerful chemical biology approach that integrates compound affinity chromatography with protein mass spectrometry to identify proteins that bind to compounds in cell or tissue lysates [2]. This methodology exposes compounds to an entire competitive cellular proteome (~6,000 natural full-length proteins with posttranslational modifications), providing physiologically relevant context for evaluating cellular effects.

Thermal Stability Profiling represents an emerging methodology that enables profiling of small molecules and metabolites in intact living cells by monitoring ligand-induced thermal stabilization of proteins [2]. This approach allows target engagement assessment in physiologically relevant cellular environments.

Experimental Protocols

Chemical Proteomics Workflow

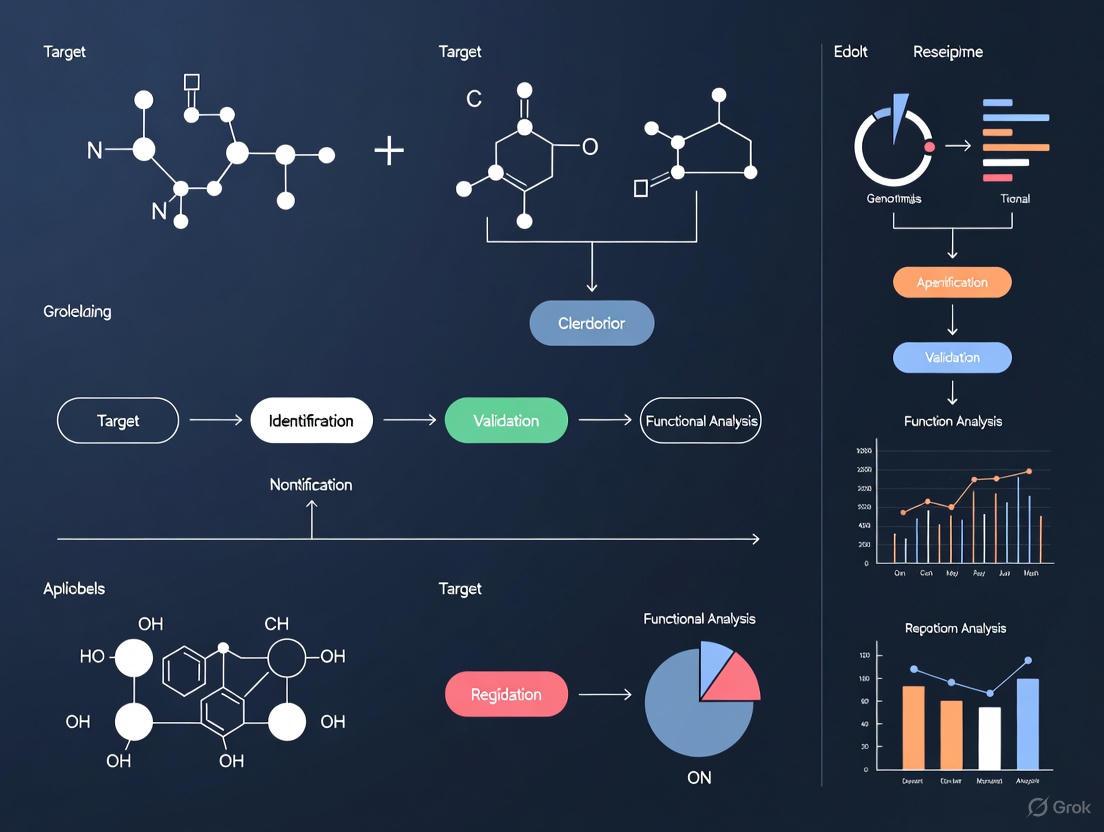

The following diagram illustrates the key steps in a standard chemical proteomics experiment for target validation:

Detailed Protocol:

- Compound immobilization: Covalently link the compound of interest to solid support beads (e.g., sepharose) via appropriate chemical linkers.

- Lysate preparation: Prepare whole-cell or tissue lysates in physiological buffers containing detergents to maintain protein structure while enabling accessibility.

- Affinity purification: Incubate compound-conjugated beads with lysate for 1-4 hours at 4°C with gentle rotation.

- Washing: Perform sequential washes with lysis buffer followed by mild detergent buffers to remove nonspecifically bound proteins.

- Competitive elution: Elute specifically bound proteins using excess free compound or buffer conditions that disrupt interactions.

- Protein identification: Digest eluted proteins with trypsin and analyze peptides by liquid chromatography-tandem mass spectrometry (LC-MS/MS).

- Data analysis: Identify specific binders through statistical comparison against control beads using bioinformatic tools.
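The final data-analysis step above can be sketched as follows. This is a simplified illustration, not the pipeline of any particular bioinformatic tool: it assumes replicate MS intensities are already available per protein for compound beads and control beads, and it flags candidates using a log2 enrichment and a Welch t-statistic. The protein names, intensities, and thresholds are all hypothetical.

```python
import math
from statistics import mean, variance


def log2_enrichment(compound, control, pseudocount=1.0):
    """log2 ratio of mean intensities; the pseudocount avoids division by zero."""
    return math.log2((mean(compound) + pseudocount) / (mean(control) + pseudocount))


def welch_t(compound, control):
    """Welch's t-statistic for two replicate groups with unequal variances."""
    diff = mean(compound) - mean(control)
    se = math.sqrt(variance(compound) / len(compound) + variance(control) / len(control))
    if se == 0.0:
        return 0.0 if diff == 0.0 else math.copysign(math.inf, diff)
    return diff / se


def candidate_binders(data, min_log2=2.0, min_t=4.0):
    """Return proteins enriched on compound beads relative to control beads."""
    hits = []
    for protein, (compound, control) in data.items():
        if log2_enrichment(compound, control) >= min_log2 and welch_t(compound, control) >= min_t:
            hits.append(protein)
    return hits


# Hypothetical intensities: (compound-bead replicates, control-bead replicates).
intensities = {
    "TARGET_A": ([980.0, 1040.0, 1010.0], [55.0, 60.0, 48.0]),
    "STICKY_B": ([510.0, 470.0, 495.0], [480.0, 520.0, 500.0]),
}

hits = candidate_binders(intensities)
print(hits)
```

A real analysis would typically add multiple-testing correction and a competition control with free compound; the fixed thresholds here are only placeholders.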

Thermal Shift Assay Protocol

Differential Scanning Fluorimetry (Thermal Shift Assay) measures protein thermal stabilization upon ligand binding [2].

Reagents and Equipment:

- Purified target protein (>90% purity)

- Fluorescent dye (e.g., SYPRO Orange)

- Real-time PCR instrument capable of temperature ramping

- Ligands/inhibitors for testing

- Appropriate protein storage buffer

Procedure:

- Prepare protein-dye mixture in optimized buffer (typically 1-5 μM protein concentration).

- Dispense 18 μL protein-dye mixture into PCR plate wells.

- Add 2 μL test compound or control solution (DMSO).

- Ramp the temperature from 25°C to 95°C at 1°C per minute.

- Monitor fluorescence intensity continuously as protein unfolds and exposes hydrophobic regions.

- Calculate melting temperature (Tm) from fluorescence inflection point.

- Determine ΔTm values (Tm with compound - Tm without compound) as indicator of binding.

Interpretation: Significant positive ΔTm values (typically >1°C) suggest compound binding and stabilization of protein structure.
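The Tm and ΔTm calculation from the procedure above can be sketched as follows. This is a minimal example on synthetic data: the melt curves are generated from an idealized Boltzmann sigmoid, and Tm is estimated as the midpoint of the interval with the steepest fluorescence increase. Real instruments often fit the curve or smooth the derivative first, and real curves decay after the transition; the function names and parameter values are illustrative assumptions.

```python
import math


def boltzmann_curve(temps, tm, slope=2.0, low=100.0, high=1000.0):
    """Idealized melt curve: fluorescence rises sigmoidally around tm."""
    return [low + (high - low) / (1.0 + math.exp((tm - t) / slope)) for t in temps]


def melting_temperature(temps, fluorescence):
    """Estimate Tm as the midpoint of the interval with the steepest increase."""
    best_slope, best_tm = float("-inf"), None
    for i in range(len(temps) - 1):
        slope = (fluorescence[i + 1] - fluorescence[i]) / (temps[i + 1] - temps[i])
        if slope > best_slope:
            best_slope = slope
            best_tm = (temps[i] + temps[i + 1]) / 2.0
    return best_tm


# 25 °C to 95 °C in 1 °C steps, matching the ramp in the procedure.
temps = [25.0 + i for i in range(71)]

# Synthetic curves: DMSO control and a hypothetical stabilizing compound.
tm_dmso = melting_temperature(temps, boltzmann_curve(temps, tm=52.5))
tm_compound = melting_temperature(temps, boltzmann_curve(temps, tm=56.5))

delta_tm = tm_compound - tm_dmso
print(f"Tm (DMSO) = {tm_dmso} °C, Tm (compound) = {tm_compound} °C, dTm = {delta_tm} °C")
```

With these synthetic inputs the shift exceeds the >1°C guideline given in the interpretation, so the hypothetical compound would be scored as a stabilizing binder.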

Quantitative Assessment and Criteria

Establishing minimally acceptable criteria (MAC) for target validation provides objective thresholds for decision-making. This approach adapts the targeted test evaluation framework, originally developed for diagnostic tests, to target validation [3].

Table 2: Minimally Acceptable Criteria for Target Validation

| Validation Parameter | Assessment Method | Minimally Acceptable Criteria | Evidence Level |
|---|---|---|---|
| Binding Affinity | ITC, BLI, SPR | Kd < 10 μM for tool compounds | Direct engagement |
| Cellular Activity | Functional assays | IC50/EC50 < 10x biochemical potency | Cellular engagement |
| Target Modulation | Western blot, qPCR | >50% target modulation | Functional consequence |
| Selectivity | Chemical proteomics | <5 significant off-targets | Selectivity evidence |
| Phenotypic Concordance | Phenotypic screening | Consistent with target biology | Disease relevance |

The framework involves defining minimally acceptable criteria (MAC) for key validation parameters before initiating studies [3]. These criteria should be established based on the intended therapeutic context and the consequences of target modulation.
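One way to make pre-specified criteria concrete is to encode them before any data are collected and then evaluate results against them mechanically. The sketch below uses the thresholds from Table 2; the data structure, field names, and example values are hypothetical, and the cellular-activity rule reflects one reading of "IC50/EC50 < 10x biochemical potency" (cellular IC50 less than ten times the biochemical IC50).

```python
from dataclasses import dataclass


@dataclass
class ValidationData:
    kd_um: float                   # binding affinity, in micromolar
    biochemical_ic50_um: float     # potency against purified target
    cellular_ic50_um: float        # potency in a cell-based functional assay
    target_modulation_pct: float   # e.g. from Western blot or qPCR
    significant_off_targets: int   # from chemical proteomics
    phenotype_consistent: bool     # concordance with known target biology


def evaluate_mac(d: ValidationData) -> dict:
    """Check each parameter against the Table 2 thresholds."""
    return {
        "binding_affinity": d.kd_um < 10.0,
        "cellular_activity": d.cellular_ic50_um < 10.0 * d.biochemical_ic50_um,
        "target_modulation": d.target_modulation_pct > 50.0,
        "selectivity": d.significant_off_targets < 5,
        "phenotypic_concordance": d.phenotype_consistent,
    }


# Hypothetical tool compound with a large biochemical-to-cellular potency drop.
example = ValidationData(
    kd_um=0.2,
    biochemical_ic50_um=0.05,
    cellular_ic50_um=0.8,
    target_modulation_pct=70.0,
    significant_off_targets=2,
    phenotype_consistent=True,
)

results = evaluate_mac(example)
failed = [name for name, passed in results.items() if not passed]
print("All criteria met" if not failed else f"Criteria not met: {failed}")
```

In this made-up case every criterion passes except cellular activity, which is the kind of gap (for example, poor permeability) that the criteria are meant to surface early.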

For diagnostic applications in target validation, the framework proposes establishing a target region in ROC (receiver operating characteristic) space defined by minimally acceptable sensitivity and specificity criteria [3]. A test is considered acceptable when both point estimates and confidence intervals for sensitivity and specificity fall within this target region.
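The target-region check can be sketched as follows. Because sensitivity and specificity cannot exceed 1, requiring the whole confidence interval to lie in the region reduces to requiring its lower bound to meet the minimum. The Wilson score interval is used here as one reasonable choice of interval; the source does not specify which interval the framework uses, and the counts and thresholds are hypothetical.

```python
import math


def wilson_interval(successes: int, total: int, z: float = 1.96):
    """Wilson score interval for a binomial proportion (default ~95%)."""
    p = successes / total
    denom = 1.0 + z * z / total
    centre = (p + z * z / (2.0 * total)) / denom
    half = z * math.sqrt(p * (1.0 - p) / total + z * z / (4.0 * total * total)) / denom
    return centre - half, centre + half


def in_target_region(tp, fn, tn, fp, min_sens, min_spec):
    """True if the lower confidence bounds for sensitivity and specificity
    both meet the minimally acceptable criteria."""
    sens_lower, _ = wilson_interval(tp, tp + fn)
    spec_lower, _ = wilson_interval(tn, tn + fp)
    return sens_lower >= min_sens and spec_lower >= min_spec


# Same point estimates (95% sensitivity, 90% specificity), different sample sizes.
large_study = in_target_region(tp=95, fn=5, tn=90, fp=10, min_sens=0.85, min_spec=0.80)
small_study = in_target_region(tp=19, fn=1, tn=18, fp=2, min_sens=0.85, min_spec=0.80)

print(f"large study acceptable: {large_study}, small study acceptable: {small_study}")
```

The two calls illustrate why the framework looks at confidence intervals as well as point estimates: the smaller study has identical point estimates but intervals too wide to fall inside the target region.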

Chemical Biology Approaches

Chemical biology provides unique tools and perspectives for target validation, emphasizing the use of chemical probes to modulate and study biological systems [2] [4]. These approaches bridge chemistry and biology to create reagents that explore protein function and assess therapeutic potential.

Photopharmacology represents an emerging chemical biology approach that uses light to change the shape and/or properties of a therapeutic agent [4]. This enables precise temporal and spatial control over compound activity, allowing researchers to establish causal relationships between target engagement and phenotypic outcomes with high resolution.

Photoaffinity labeling utilizes photoreactive small-molecule probes to covalently capture protein-ligand interactions [4]. When combined with mass spectrometry, this approach enables identification of cellular targets and binding sites, providing insight into a compound's mechanism of action.

Chemical biology approaches to target validation are particularly valuable for characterizing inhibitors developed in medicinal chemistry efforts [4]. These methods help establish the relationship between chemical structure, target engagement, and functional outcomes, strengthening the validation evidence.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagent Solutions for Target Validation

| Reagent/Category | Function/Application | Key Characteristics |
|---|---|---|
| Selective Chemical Probes | Target perturbation | High potency (IC50 < 100 nM), >30-fold selectivity |
| CRISPR-Cas9 Systems | Genetic knockout | Gene-specific gRNAs, efficient delivery systems |
| Affinity Matrices | Chemical proteomics | Compound-conjugated beads, appropriate linker chemistry |
| Activity-Based Probes | Target engagement monitoring | Reporter tags (fluorescent/biotin), maintained target affinity |
| Proteomics Kits | Sample preparation | Lysis buffers, digestion enzymes, clean-up columns |
| Cell Line Panels | Specificity assessment | Disease-relevant models, diverse genetic backgrounds |

The selection of appropriate research reagents is critical for robust target validation. Chemical probes should demonstrate high potency (typically <100 nM), >30-fold selectivity against related targets, and pharmacological specificity confirmed in cellular models [2]. These characteristics ensure that observed phenotypes can be confidently attributed to modulation of the intended target.

CRISPR-Cas9 systems enable precise genetic perturbation with specific guide RNAs designed to minimize off-target effects while maximizing editing efficiency [1]. Proper controls, including multiple independent guides targeting the same gene and rescue experiments, strengthen validation evidence.

Affinity matrices for chemical proteomics require careful consideration of linker chemistry and attachment points that preserve compound affinity while enabling efficient capture of interacting proteins [2]. Control beads without compound or with inactive analogs are essential for distinguishing specific binders.

Target validation represents a multidisciplinary endeavor that integrates chemical, biological, and computational approaches to build compelling evidence for therapeutic target selection. Chemical biology provides particularly powerful tools through the development and application of selective chemical probes that enable temporal and spatial control over target modulation. The field continues to evolve with emerging technologies such as photopharmacology, advanced chemoproteomics, and structural biology methods that provide increasingly sophisticated insights into target engagement and mechanism of action. By applying orthogonal validation strategies and establishing rigorous, pre-specified criteria for success, researchers can enhance the efficiency of drug discovery and improve the probability of clinical success for new therapeutic modalities.

The evolution from classical genetics to modern chemical biology represents a fundamental paradigm shift in how scientists investigate and manipulate biological systems. Classical genetics, the oldest discipline in genetics, was based solely on the visible results of reproductive acts, going back to Gregor Mendel's experiments on Mendelian inheritance [5]. This field consisted of techniques and methodologies used before the advent of molecular biology and focused primarily on the transmission of genetic traits via reproductive acts [5]. In contrast, chemical biology is a modern scientific discipline that combines chemistry and biology by using chemistry and chemical techniques to study biological systems [6]. The main difference between chemical biology and biochemistry is that chemical biology involves adding novel chemical compounds to a biological system, while biochemistry focuses on studying chemical reactions that naturally occur inside organisms [6].

This evolution has proven particularly significant in the context of target validation for drug discovery. Target validation is a crucial element of drug discovery, especially given the wealth of potential targets emerging from cancer genome sequencing and functional genetic screens [7]. The time and cost of downstream drug discovery efforts make it essential to build confidence in proposed targets using different technical approaches, with complementary biological and chemical biology strategies being essential for robust target validation [7]. The historical progression from observing phenotypic traits to actively manipulating biological systems with chemical tools has transformed our approach to understanding disease mechanisms and developing therapeutic interventions.

Foundations: Classical Genetics and its Principles

Classical genetics originated with Gregor Mendel's experiments with garden peas in the 19th century, where he formulated and defined the fundamental biological concept known as Mendelian inheritance [5]. Mendel's work established the basic mechanisms of heredity through his observations of phenotypic characteristics in peas, including seed color, flower color, and seed shape [5]. His systematic crossing of peas with differing phenotypic characteristics allowed him to deduce how parental plants passed traits to their offspring and to determine which traits were dominant versus recessive based on the distribution of phenotypes in subsequent generations [5].

The fundamental concepts and definitions established by classical genetics continue to underpin modern genetic research:

- Gene: The hereditary factor tied to a particular simple feature or character [5]

- Genotype: The set of genes for one or more characters possessed by an individual [5]

- Phenotype: An individual's visible, physical traits [5]

- Allele: One of the alternative forms of a gene; in a diploid individual, a pair of alleles controls the same trait [5]

A key discovery of classical genetics in eukaryotes was genetic linkage: some genes do not segregate independently at meiosis, which violates Mendel's law of independent assortment and provides a method to map characteristics to specific locations on chromosomes [5]. Linkage maps are still used today, especially in plant breeding programs [5].

The Mendelian inheritance patterns established through classical genetics provided the foundational framework for understanding how traits are transmitted across generations. Mendel's work with monohybrid crosses (showing a 3:1 ratio) and dihybrid crosses (showing a 9:3:3:1 ratio) established patterns of inheritance that could be explained by the basic mechanisms of heredity [5]. These patterns were later explained at the molecular level after advances in molecular biology, but the fundamental principles established through classical approaches remain intact and in use today [5].
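The 3:1 and 9:3:3:1 ratios follow directly from enumerating the gamete combinations of heterozygous parents. The short sketch below does that enumeration, assuming complete dominance and independent assortment (no linkage); the genotype encoding, with an uppercase letter for the dominant allele, and the function names are illustrative choices.

```python
from collections import Counter
from itertools import product


def gametes(genotype):
    """All gametes for a genotype given as allele pairs, e.g. ["Aa", "Bb"]."""
    return ["".join(combo) for combo in product(*genotype)]


def cross(parent1, parent2):
    """Count offspring phenotypes, assuming complete dominance per gene."""
    counts = Counter()
    for g1, g2 in product(gametes(parent1), gametes(parent2)):
        # A gene shows the dominant phenotype if either gamete carries
        # the uppercase (dominant) allele.
        phenotype = "".join(
            a.upper() if (a.isupper() or b.isupper()) else a.lower()
            for a, b in zip(g1, g2)
        )
        counts[phenotype] += 1
    return counts


monohybrid = cross(["Aa"], ["Aa"])
dihybrid = cross(["Aa", "Bb"], ["Aa", "Bb"])

print(dict(monohybrid))  # dominant vs recessive counts, 3:1
print(dict(dihybrid))    # four phenotype classes, 9:3:3:1
```

Linked genes would break the independence assumed by `product`, which is why linkage shows up experimentally as a deviation from the 9:3:3:1 ratio.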

The Transition to Molecular Understanding

The transition from classical to molecular genetics was marked by several pivotal discoveries that fundamentally changed how scientists approached biological research. After the discovery of the genetic code and cloning tools such as restriction enzymes, the avenues of investigation open to geneticists were greatly broadened [5]. While some classical genetic ideas were supplanted with the mechanistic understanding brought by molecular discoveries, many classical concepts remained intact and simply gained molecular explanations [5].

Friedrich Miescher's work in the latter half of the 19th century represented an important early step in this transition when he used chemical compounds to isolate and break down the nuclei of cells [6]. He obtained substances that would later be termed "nucleic acids," which we now recognize as the carriers of the cell's genetic information [6]. Earlier, in 1828, German chemist Friedrich Wöhler had synthesized urea by combining inorganic chemicals such as ammonium chloride and silver cyanate [6]. This was particularly significant because urea had previously only been obtained from living organisms, and the demonstration that a biological compound could be made from inorganic materials challenged the widespread belief in a "vital force" necessary for all biological compounds [6].

The development of cellular imaging techniques during the 19th century, including stains such as aniline dyes, further bridged the gap between classical observation and molecular investigation [6]. Additionally, chemical intervention in biological systems began with compounds like Salvarsan, developed by Paul Ehrlich in the early 20th century to treat syphilis by targeting the bacterium that causes it [6]. This represented an early application of chemical compounds to modulate biological systems for therapeutic purposes.

As molecular biology developed, it gave rise to reverse genetics (sometimes equated with molecular genetics), in which a specific gene of interest is targeted for mutation, deletion, or functional ablation, followed by a broad search for the resulting phenotype [8]. This approach contrasted with the forward genetics approach of classical genetics, where researchers would identify a phenotype of interest and then work to identify the gene or genes responsible [8]. This shift in approach mirrored the broader transition from observation-based genetics to intervention-based molecular biology.

Emergence of Chemical Biology as a Discipline

Chemical biology began to be recognized as a distinct field in the 20th century, with the term only coming into widespread use in the 1990s [6]. The discipline encompasses a wide range of research topics including enzymology, medicinal chemistry, structural biology, and proteomics (the study of proteins), and typically involves extensive collaboration between scientists specializing in biology or chemistry [6]. The field represents a convergence of chemical and biological approaches, leveraging the principles and techniques of both disciplines to address complex biological questions.

The philosophical and methodological differences between chemical biology and related fields are significant. While biochemistry is concerned with the chemical processes that naturally occur in cells and tends to focus on larger molecules like proteins and nucleic acids, chemical biology involves adding chemical compounds to biological systems to observe effects and typically studies smaller molecules [6]. Chemical biology aims to develop techniques that can eventually be applied to cells in living organisms, with particular relevance for treatment options for cancer and other diseases [6].

Chemical biology's emergence as a distinct discipline coincided with important methodological advances. The advent of affinity purification techniques provided a direct approach to finding target proteins that bind to small molecules of interest [8]. Early work in this area involved monitoring chromatographic fractions for enzyme activity after exposing extracts to compounds immobilized on a column, followed by elution [8]. Such approaches have been used successfully to identify protein targets of both natural and synthetic small molecules [8]. Modern approaches have evolved to include methods based on chemical or ultraviolet light-induced cross-linking, which use covalent modification of the protein target to increase the likelihood of capturing low-abundance proteins or those with low affinity for the small molecule [8].

Table: Key Historical Developments in the Emergence of Chemical Biology

| Time Period | Development | Key Contributors | Significance |
|---|---|---|---|
| 1828 | Synthesis of Urea | Friedrich Wöhler | Demonstrated biological compounds could be made from inorganic materials |
| Late 19th Century | Cellular Staining | Various | Enabled visualization of cellular structures |
| Late 19th Century | Nucleic Acid Isolation | Friedrich Miescher | Isolated the substances later recognized as carrying genetic information |
| Early 20th Century | Pathogen-Targeted Therapy | Paul Ehrlich | Early example of targeted chemical intervention |
| 1990s | Formalization of Field | Multiple | "Chemical biology" recognized as distinct discipline |

Chemical Biology in Target Validation and Drug Discovery

Target validation is a crucial element of modern drug discovery, particularly given the wealth of potential targets emerging from cancer genome sequencing and functional genetic screens [7]. The significant time and cost of downstream drug discovery efforts make it essential to build confidence in proposed targets, ideally using different technical approaches [7]. Chemical biology has emerged as a powerful approach for this validation process, with chemical probes playing an essential role in supporting the unbiased interpretation of biological experiments necessary for rigorous preclinical target validation [9].

The approach of using fully profiled chemical probes represents a fundamental shift in how researchers approach target validation. By developing a 'chemical probe tool kit' and a framework for its use, chemical biology can play a more central role in identifying targets of potential relevance to disease, avoiding many of the biases that complicate target validation as currently practiced [9]. This approach has been particularly valuable given the pharmaceutical industry's struggles with high attrition rates in clinical development, primarily due to a lack of clinical efficacy demonstrated by candidate drugs [10].

Two fundamental approaches to understanding the action of small molecules on biological systems mirror the historical divide between classical and molecular genetics:

Reverse Chemical Genetics: Analogous to reverse genetics, this approach involves selecting and purifying a protein target before conducting a high-throughput screen [8]. After target validation or credentialing, binders or inhibitors of this protein are tested for their impact on biological processes [8].

Forward Chemical Genetics: Analogous to forward genetics, this approach tests small molecules directly for their impact on biological processes, often in cells or whole animals [8]. Phenotypic screens expose candidate compounds to proteins in biologically relevant contexts without preconceived notions of relevant targets and signaling pathways [8].

Table: Comparison of Approaches to Biological Investigation

| Characteristic | Classical Genetics (Forward) | Reverse Genetics | Forward Chemical Genetics | Reverse Chemical Genetics |
|---|---|---|---|---|
| Starting Point | Phenotype observation | Known gene/protein | Phenotypic screening | Known protein target |
| Methodology | Identify genes responsible for phenotype | Ablate gene and observe phenotype | Test compounds for biological impact | Screen compounds against purified target |
| Advantages | Unbiased discovery | Precise targeting | Biologically relevant context | High-throughput capability |
| Limitations | Time-consuming | May not reflect natural context | Target identification required | May lack biological context |

Several important drug programs have been inspired by phenotypic screening results, demonstrating the power of the forward chemical genetics approach. Notable examples include the effects of cyclosporine A and FK506 on T-cell receptor signaling, which led to the discoveries of FKBP12, calcineurin, and mTOR [8]. Similarly, the performance of trapoxin A in differentiation and proliferation assays led to the discovery of histone deacetylases [8]. These successes highlight how such assays 'prevalidate' the small molecule and its initially unknown protein target as an effective means of perturbing the biological process or disease model under study [8].

Key Methodologies and Experimental Protocols

Affinity Purification and Chemoproteomics

Affinity purification provides the most direct approach to identifying target proteins that bind to small molecules of interest [8]. The general protocol involves immobilizing the compound of interest on a solid support, incubating with cell lysates or protein mixtures, washing away non-specifically bound proteins, and then identifying specifically bound proteins typically through mass spectrometry [8]. Key considerations in these experiments include preparing immobilized affinity reagents that retain cellular activity, using appropriate controls (such as beads loaded with an inactive analog or capped without compound), and selecting appropriate tethers that minimize nonspecific interactions [8].

Recent advances in affinity-based methods have addressed various challenges in target identification. Photoaffinity labeling approaches use covalent modification of the protein target to increase the likelihood of capturing low-abundance proteins or those with low affinity for the small molecule [8]. A variation on this method couples covalent modification to two-dimensional gel electrophoresis to deconvolve nonspecific interactions [8]. Another approach involves immobilizing small molecules to peptides that allow recovery of the probe-protein complex by immunoaffinity purification, addressing the issue of functional group masking during coupling reactions [8].

Phenotypic Screening and Target Deconvolution

Phenotypic screening followed by target deconvolution represents a powerful chemical biology approach that has led to important biological discoveries [8]. The general workflow begins with screening compounds in cell-based or organism-based assays that measure relevant phenotypic outputs. Once compounds with desired phenotypic effects are identified, the challenging process of target identification begins, often using a combination of methods to build confidence in the identification [8].

The process of target deconvolution can be approached through three distinct and complementary strategies:

Direct Biochemical Methods: These involve labeling the protein or small molecule of interest, incubating the two populations, and directly detecting binding, usually following wash procedures [8].

Genetic Interaction Methods: These use genetic manipulation to identify protein targets by modulating presumed targets in cells, thereby changing small-molecule sensitivity [8].

Computational Inference Methods: These use pattern recognition to compare small-molecule effects to those of known reference molecules or genetic perturbations, generating target hypotheses rather than directly identifying targets [8].

In practice, most target-identification projects proceed through a combination of these methods, with researchers using both direct measurements and inferences to test increasingly specific target hypotheses [8]. The analytical integration of multiple, complementary approaches generally provides the most robust solution to the target identification challenge [8].
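The computational inference strategy described above can be illustrated with a toy profile comparison. The sketch ranks reference perturbations by Pearson correlation with a query compound's phenotypic profile, producing target hypotheses rather than direct identifications. The profile vectors, reference names, and function names are all made up for illustration; real profiles would have hundreds or thousands of features.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length profiles."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)


def rank_target_hypotheses(query, references):
    """Sort reference perturbations by similarity to the query profile."""
    scored = [(name, pearson(query, profile)) for name, profile in references.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Hypothetical five-feature phenotypic profiles (e.g. normalized assay readouts).
reference_profiles = {
    "reference_inhibitor_A": [2.0, -0.5, 1.5, -1.0, 0.2],
    "reference_inhibitor_B": [-1.0, 1.2, -0.8, 0.9, 0.1],
    "gene_knockdown_C": [0.1, 0.3, -0.2, 0.2, 1.5],
}
query_profile = [2.1, -0.4, 1.8, -1.2, 0.3]

ranked = rank_target_hypotheses(query_profile, reference_profiles)
for name, score in ranked:
    print(f"{name}: r = {score:.2f}")
```

A high correlation with a reference only suggests a shared mechanism; as the text notes, such a hypothesis still needs to be tested with direct biochemical or genetic methods.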

Diagram 1: Workflow for phenotypic screening and target deconvolution in chemical biology.

Chemical Probe Development and Optimization

The development of high-quality chemical probes is essential for rigorous target validation [9]. Fully profiled chemical probes support the unbiased interpretation of biological experiments necessary for rigorous preclinical target validation [9]. The process of chemical probe development involves iterative optimization of compound properties to ensure selectivity, potency, and appropriate pharmacokinetic properties.

Recent advances in chemical probe development include the use of "silent" reporters containing click handles onto which fluorescent dyes can be appended intracellularly [10]. These probes provide a more accurate picture of subcellular distribution and target engagement since the physicochemistry of a fluorometric dye can perturb the function of a chemical tool [10]. Similarly, the development of bifunctional probes that simultaneously target multiple proteins, such as the HDAC/BET inhibitors designed by Atkinson and co-workers, provides unique tools for studying epigenetic modulation [10].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table: Key Research Reagent Solutions in Chemical Biology

| Reagent/Material | Function/Application | Example Use Cases |
|---|---|---|
| Immobilized Affinity Matrices | Purification of target proteins using small molecule baits | Identification of direct protein targets through pull-down assays [8] |
| Photoaffinity Labels | Covalent cross-linking of small molecules to their protein targets | Capture of low-abundance proteins or low-affinity interactions [8] |
| Click Chemistry Handles | Bioorthogonal conjugation for visualization and purification | Target visualization and identification through clickable tags [10] |
| Chemical Libraries | Collections of compounds for screening | Phenotypic screening and structure-activity relationship studies [10] |
| Activity-Based Probes | Reporting on enzyme activity in complex proteomes | Optimization of selective inhibitors in complex proteomes [10] |
| Bifunctional Chemical Modulators | Simultaneous targeting of multiple proteins | Study of epigenetic mechanisms using dual pharmacology tools [10] |

Case Studies and Applications

Discovery of BET Bromodomain Inhibitors

An affinity-based chemoproteomic approach was originally used to identify the BET bromodomains as targets of a phenotypic screening hit bearing the benzodiazepine unit [10]. This discovery, together with the subsequent development of BET inhibitors made widely accessible through the Structural Genomics Consortium, has helped elucidate bromodomain biology, particularly in oncology and inflammation [10]. This case exemplifies the power of combining phenotypic screening with rigorous target identification approaches to open new therapeutic avenues.

Targeting the Ubiquitin-Proteasome System

The chemical tools developed by Linder and co-workers to inhibit the ubiquitin-proteasome system (UPS) demonstrate how classic mechanistic investigation can yield important pharmacological insights [10]. By studying the biochemical effects of their inhibitor in detail, the researchers gained further understanding of this modality's pharmacology, highlighting how chemical biology approaches can illuminate complex biological systems.

Identification of MLKL in Necroptosis

Lei and co-workers described an impressive example of target identification using affinity pull-down experiments [10]. Through SAR optimization of a hit from a phenotypic screen for necroptosis, they developed 'necrosulfonamide' (NSA). Immobilization of this inhibitor using a rigid polyproline linker, which improved isolation of low abundance proteins, identified Mixed Lineage Kinase Domain-Like Protein (MLKL) as a direct target for NSA [10]. This case illustrates the importance of linker optimization in affinity purification approaches.

Diagram 2: Historical evolution from classical genetics to modern chemical biology approaches.

The historical evolution from classical genetics to modern chemical biology represents a continuous refinement of our approach to understanding and manipulating biological systems. Classical genetics provided the foundational principles of heredity and trait transmission [5], while molecular biology offered mechanistic explanations at the molecular level [8]. Chemical biology has emerged as a powerful synthesis of chemical and biological approaches, enabling both the understanding and targeted manipulation of biological systems for therapeutic applications [6].

The application of chemical biology to target validation and drug discovery has addressed critical challenges in pharmaceutical development, particularly the high attrition rates due to lack of clinical efficacy [10] [9]. By developing and applying high-quality chemical probes, researchers can build confidence in proposed targets before committing to extensive downstream development efforts [7] [9]. The integration of complementary approaches—including affinity-based methods, genetic interactions, and computational inference—provides a robust framework for target identification and validation [8].

Future advances in chemical biology will likely focus on improving the quality and characterization of chemical probes, developing more sophisticated methods for target deconvolution, and increasingly leveraging computational approaches to integrate diverse data types [8] [9]. As chemical biology continues to mature, its central role in identifying targets of potential relevance to disease and providing rigorous validation of these targets will be essential for advancing therapeutic development and improving human health.

The Critical Role of Target Validation in Reducing Drug Attrition

Clinical development success remains very low across all drug modalities, with typical success rates from Phase I entry to regulatory approval in the single digits. Industry analyses indicate that the overall Likelihood of Approval (LOA) has fallen from approximately 10% in 2014 to just 6-7% in recent years [11]. This high attrition rate, particularly in Phase II clinical trials, drives enormous research and development costs and significantly depresses return on investment for pharmaceutical companies. Insufficient validation of drug targets in the early stages of development has been strongly linked to these costly clinical trial failures and lower drug approval rates [12]. Within this challenging landscape, robust target validation emerges as a critical foundation for improving R&D productivity. It is the process that confirms whether modulating a specific biological target offers genuine therapeutic potential before significant resources are committed to drug development [12].

Quantitative Analysis of Drug Attrition Across Modalities

Drug attrition rates vary significantly across different therapeutic modalities, though all face substantial challenges. The table below summarizes clinical phase transition success rates and overall likelihood of approval for major drug classes based on comprehensive industry data (2005-2025) [11].

Table 1: Clinical Attrition Rates by Drug Modality

| Modality | Phase I→II Success | Phase II→III Success | Phase III→Approval | Overall LOA |
|---|---|---|---|---|
| Small Molecules | 52.6% | 28.0% | ~57.0% | 5.7% |
| Peptides | 52.3% | Data Missing | Data Missing | 8.0% |
| Monoclonal Antibodies | 54.7% | Data Missing | 68.1% | 12.1% |
| Protein Biologics | 51.6% | Data Missing | 89.7% | 9.4% |
| Antibody-Drug Conjugates | 41-42% | 41-42% | ~100% | Data Missing |
| Oligonucleotides (ASO) | 61.0% | Data Missing | 66.7% | 5.2% |
| Oligonucleotides (RNAi) | ~70.0% | Data Missing | 100% | 13.5% |
| Cell & Gene Therapies | 48-52% | Data Missing | Data Missing | 10-17% |

Phase II represents the most significant hurdle across all modalities, with only approximately 28% of all programs advancing beyond this stage [11]. The biological and translational factors driving attrition differ by modality: small molecules and peptides frequently fail due to toxicity and pharmacokinetic issues; oligonucleotides face delivery and stability challenges; antibody-drug conjugates confront complex engineering hurdles; proteins and antibodies risk immunogenic responses; and cell/gene therapies navigate manufacturing and immune challenges [11].
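To make the compounding effect of phase attrition concrete, the following minimal sketch multiplies the three small-molecule transition rates tabulated above. The function name is illustrative. Note that this product (about 8.4%) is not the same as the tabulated overall LOA of 5.7%; the overall figure presumably reflects steps or cohorts not broken out in the table, so the calculation is meant only to show how transition probabilities compound.

```python
from math import prod


def cumulative_success(transitions):
    """Multiply per-phase transition probabilities into one overall probability."""
    return prod(transitions)


# Small-molecule transitions as tabulated above:
# Phase I->II, Phase II->III, Phase III->approval.
small_molecule = [0.526, 0.280, 0.57]

overall = cumulative_success(small_molecule)
print(f"Product of tabulated transitions: {overall:.1%}")
```

Because the probabilities multiply, improving the weakest transition (Phase II) raises the overall figure proportionally more than the same absolute gain at a stronger stage.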

Foundational Principles of Target Validation

Target validation constitutes the process of subjecting a potential drug target to rigorous experiments that confirm its direct involvement in a specific disease pathway and demonstrate that modulating its activity can produce a therapeutic effect [12]. This process begins after target identification and serves as the critical gatekeeper determining whether a target progresses further in the drug development pipeline [12].

The validation process typically follows a logical workflow that progresses from computational assessment to increasingly complex biological systems.

The Target Validation Toolbox

Modern chemical biology employs diverse methodological approaches for target validation, each with distinct applications and limitations:

Table 2: Target Validation Methodologies

| Method Category | Key Technologies | Primary Applications | Limitations |
|---|---|---|---|
| Genetic/Genomic | CRISPR/Cas9 knockout/activation [13], RNA interference [14], Antisense oligonucleotides [11] | Functional genomics, pathway analysis, loss/gain-of-function studies | Off-target effects, compensatory mechanisms |
| Proteomic | Cellular Thermal Shift Assay (CETSA) [12], Activity-Based Protein Profiling (ABPP) [12], Chemical proteomics [12] | Target engagement verification, identification of binding partners | Technical complexity, limited dynamic range |
| Cell-Based | High-Throughput Screening (HTS) [13], Cell viability/proliferation assays [12] | Compound screening, phenotypic assessment | Translation to in vivo systems |
| In Vivo | Mouse xenograft models [12], Genetic animal models | Therapeutic efficacy, toxicology assessment | Species differences, cost, time |

Experimental Protocols for Target Validation

High-Throughput siRNA-Based Functional Validation

RNA interference (RNAi) provides a powerful approach for functional target validation through gene-specific knockdown. The protocol below outlines a robust methodology for siRNA-based screening [14]:

Workflow Overview:

- Screen Design: Set up a functional cell-based screen for a biological process of interest using libraries of small interfering RNA (siRNA) molecules

- Gene Knockdown: Utilize siRNAs as potent gene-specific inhibitors in cultured mammalian cells

- Confirmation: Verify siRNA-mediated knockdown of target genes by TaqMan analysis

- Functional Assessment: Select genes with impacts on biological functions of interest for further analysis

- Target Prioritization: Advance confirmed and validated genes for HTS to yield lead compounds
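The confirmation step in the workflow above can be quantified from TaqMan Ct values. The sketch below uses the common 2^-ΔΔCt calculation, assuming roughly 100% amplification efficiency and a stable reference gene. The function name and the Ct values are illustrative and are not taken from the cited protocol.

```python
def percent_knockdown(ct_target_si, ct_ref_si, ct_target_ctrl, ct_ref_ctrl):
    """Percent mRNA knockdown from Ct values using the 2^-ddCt method."""
    d_ct_si = ct_target_si - ct_ref_si        # siRNA sample, normalized to reference gene
    d_ct_ctrl = ct_target_ctrl - ct_ref_ctrl  # control sample, normalized the same way
    dd_ct = d_ct_si - d_ct_ctrl
    remaining = 2 ** (-dd_ct)                 # fraction of transcript remaining
    return (1 - remaining) * 100


# Hypothetical Ct values: the target appears 3 cycles later after siRNA treatment.
kd = percent_knockdown(ct_target_si=27.0, ct_ref_si=18.0,
                       ct_target_ctrl=24.0, ct_ref_ctrl=18.0)
print(f"Knockdown: {kd:.1f}%")
```

A three-cycle shift corresponds to an eightfold drop in transcript, which is why small Ct differences translate into large knockdown percentages.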

Key Technical Considerations:

- Ensure siRNA specificity to minimize off-target effects

- Include appropriate positive and negative controls

- Implement robust statistical analysis for hit identification

- Validate findings with multiple siRNAs targeting the same gene
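One way to combine the statistical and multiple-siRNA considerations above is a robust z-score per well followed by a per-gene agreement rule. This is a sketch under stated assumptions: the median/MAD scoring, the threshold of -3, the two-siRNA rule, and all gene names and readouts are hypothetical choices, not values from the cited protocol.

```python
from collections import Counter
from statistics import median


def robust_z_scores(values):
    """Z-scores based on the median and the median absolute deviation (MAD)."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    scale = 1.4826 * mad  # makes the MAD comparable to a standard deviation
    if scale == 0:
        raise ValueError("MAD is zero; cannot scale the scores")
    return [(v - med) / scale for v in values]


# (gene, siRNA id, normalized assay readout); all values are made up.
wells = [
    ("GENE_A", "si1", 1.02), ("GENE_A", "si2", 0.98),
    ("GENE_B", "si1", 1.00), ("GENE_B", "si2", 0.35),
    ("GENE_C", "si1", 0.30), ("GENE_C", "si2", 0.40),
    ("NEG", "ctrl1", 1.05), ("NEG", "ctrl2", 0.97), ("NEG", "ctrl3", 1.01),
]

scores = robust_z_scores([readout for _, _, readout in wells])

# Count, per gene, how many independent siRNAs score at or below the threshold.
active = Counter(gene for (gene, _, _), z in zip(wells, scores) if z <= -3)
hits = sorted(gene for gene, n in active.items() if n >= 2)
print(hits)
```

In this toy data set GENE_B is scored by only one of its two siRNAs and is therefore not called, which is the kind of result the multiple-siRNA rule is meant to flag as a possible off-target effect.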

CRISPR/Cas9 Screening for Systematic Target Validation

CRISPR/Cas9 technology enables genome-wide functional validation through precise gene editing. The following workflow details a pooled screening approach [13]:

Protocol Specifications:

- Library Selection: Choose between knockout (Toronto KnockOut) or activation (SAM) libraries based on validation needs

- Virus Production: Produce high-titer lentivirus using multi-plasmid systems with proper safety controls

- Transduction Optimization: Determine optimal multiplicity of infection (MOI) to ensure single integration events

- Selection & Screening: Apply appropriate selective pressure (e.g., antibiotics) followed by phenotypic screening

- Sequencing & Analysis: Extract genomic DNA, amplify target regions, and sequence to identify enriched/depleted guides
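The rationale for a low MOI in the transduction step can be illustrated with a simple Poisson model, in which the number of integrations per cell is assumed to follow a Poisson distribution with mean equal to the MOI. This is a common simplifying assumption rather than part of the cited protocol, and the function name and the MOI of 0.3 are illustrative.

```python
from math import exp


def transduction_stats(moi):
    """Return (fraction of cells transduced, fraction of those with exactly one integration)."""
    frac_transduced = 1 - exp(-moi)   # P(k >= 1) under a Poisson model
    frac_single = moi * exp(-moi)     # P(k == 1)
    return frac_transduced, frac_single / frac_transduced


transduced, single_given_transduced = transduction_stats(0.3)
print(f"Transduced: {transduced:.1%}; single-copy among transduced: {single_given_transduced:.1%}")
```

Under this model, lowering the MOI increases the share of transduced cells carrying a single guide but reduces the share of cells transduced at all, so more starting cells are needed to maintain library coverage.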

Chemical Biology Approaches for Direct Target Engagement

Chemical biology provides direct methods for establishing target engagement and mechanism of action:

Cellular Thermal Shift Assay (CETSA) Protocol [12]:

- Compound Treatment: Expose cells or tissue samples to the drug compound of interest

- Heat Denaturation: Subject samples to different temperatures to denature proteins

- Protein Extraction: Separate soluble (stable) proteins from insoluble (denatured) proteins

- Target Quantification: Detect target protein levels in soluble fractions using immunoblotting or mass spectrometry

- Data Analysis: Calculate thermal shift as evidence of compound-target engagement
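The data analysis step above can be sketched as follows. The example estimates an apparent melting temperature (Tm) as the temperature at which the soluble fraction falls to 50%, using linear interpolation between measured points, and reports the shift between vehicle and compound-treated samples. The interpolation approach, function name, and all data points are illustrative assumptions; in practice a sigmoidal curve fit is often used instead.

```python
def melting_temperature(temps, soluble_fraction, threshold=0.5):
    """Interpolate the temperature at which the soluble fraction crosses the threshold."""
    points = list(zip(temps, soluble_fraction))
    for (t0, f0), (t1, f1) in zip(points, points[1:]):
        if f0 >= threshold > f1:
            # Linear interpolation between the two flanking measurements.
            return t0 + (f0 - threshold) * (t1 - t0) / (f0 - f1)
    raise ValueError("curve does not cross the threshold")


# Hypothetical soluble fractions, normalized to the lowest temperature.
temps = [40, 44, 48, 52, 56, 60, 64]
vehicle = [1.00, 0.95, 0.80, 0.40, 0.15, 0.05, 0.02]
treated = [1.00, 0.98, 0.92, 0.75, 0.45, 0.15, 0.05]

tm_vehicle = melting_temperature(temps, vehicle)
tm_treated = melting_temperature(temps, treated)
delta_tm = tm_treated - tm_vehicle
print(f"Tm vehicle: {tm_vehicle:.1f} C, Tm treated: {tm_treated:.1f} C, shift: {delta_tm:.1f} C")
```

A positive shift is consistent with ligand-induced stabilization of the target, although its size depends on the protein and the assay conditions.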

Chemical Proteomics Workflow [12]:

- Probe Design: Create chemical probes that specifically bind to desired proteins

- Affinity Purification: Retrieve probe-bound proteins from complex biological samples

- Mass Spectrometry: Identify interacting proteins using advanced proteomic techniques

- Network Analysis: Integrate results into biological pathways to understand mechanism
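After the mass spectrometry step, candidate binders are usually separated from background by comparing the probe pull-down with a control pull-down. The sketch below ranks proteins by log2 fold enrichment. The control sample, the pseudocount, the twofold-in-log2 cutoff, and the protein names and signal values are all hypothetical and are not specified in the workflow above.

```python
from math import log2


def enriched_proteins(probe, control, min_log2_fc=2.0, pseudocount=1.0):
    """Return proteins enriched in the probe pull-down, sorted by log2 fold change."""
    hits = {}
    for protein, probe_signal in probe.items():
        ctrl_signal = control.get(protein, 0.0)  # absent from control means zero signal
        fc = log2((probe_signal + pseudocount) / (ctrl_signal + pseudocount))
        if fc >= min_log2_fc:
            hits[protein] = fc
    return dict(sorted(hits.items(), key=lambda kv: kv[1], reverse=True))


# Hypothetical spectral counts per protein.
probe_counts = {"P1": 63, "P2": 40, "P3": 7}
control_counts = {"P1": 3, "P2": 38}

candidates = enriched_proteins(probe_counts, control_counts)
print(candidates)
```

Here P2 is abundant in both samples and is filtered out as a likely nonspecific binder, while P1 and P3 are retained for follow-up.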

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagent Solutions for Target Validation

| Reagent Category | Specific Examples | Function & Application |
|---|---|---|
| CRISPR Libraries | Toronto KnockOut v3 (4 gRNAs/gene, 70,948 total gRNAs) [13] | Genome-wide knockout screening for functional validation |
| RNAi Reagents | siRNA libraries, ASOs (antisense oligonucleotides) [14] | Gene-specific knockdown for target prioritization |
| Cell-Based Assay Systems | Reporter cell lines, primary cells, co-culture systems [13] | Phenotypic screening and functional assessment |
| Proteomic Tools | CETSA reagents, activity-based probes, mass spectrometry kits [12] | Direct target engagement and binding confirmation |
| Animal Models | Tumor cell line xenografts, genetically engineered mouse models [12] | In vivo target validation and therapeutic efficacy testing |
| Detection Reagents | TaqMan assays, antibodies for Western blot, fluorescent markers [14] | Target quantification and visualization |

Impact on Drug Development Success

Comprehensive target validation directly addresses the primary causes of clinical phase attrition. By front-loading the discovery pipeline with rigorous validation, organizations can significantly reduce failure rates in later, more expensive stages of development [12]. Effective target validation and early proof-of-concept studies could substantially reduce phase II clinical trial failures, consequently lowering the overall cost of developing new molecular entities [12].

The strategic implementation of chemical biology approaches—including CRISPR functional genomics, chemical proteomics, and high-throughput screening—provides the multidimensional evidence needed to build confidence in therapeutic targets before committing to full-scale drug development. As novel modalities like cell and gene therapies, oligonucleotides, and ADCs continue to emerge, robust target validation becomes even more crucial for navigating their unique biological complexities and achieving developmental success [11].

In the challenging landscape of pharmaceutical R&D, where overall likelihood of approval has declined to approximately 6-7% [11], target validation represents the critical foundation for improving success rates. The integration of advanced chemical biology approaches—including CRISPR screening, chemical proteomics, and high-throughput functional genomics—provides powerful tools for de-risking drug discovery pipelines. By employing these methodologies systematically and early in the development process, researchers can significantly reduce costly late-stage attrition, enhance R&D productivity, and ultimately deliver more effective therapeutics to patients. As the field continues to evolve with novel modalities and complex targets, the role of comprehensive target validation will only grow in importance for achieving sustainable drug development success.

Within chemical biology, the systematic use of small molecules to decipher complex biological processes provides a powerful framework for target validation and drug discovery. This whitepaper delineates the two principal methodologies governing this approach: forward and reverse chemical genetics. Forward chemical genetics initiates with a phenotypic screen of small molecules in a biological system, progressing to identify the molecular targets responsible for the observed effects. Conversely, reverse chemical genetics begins with a predefined protein target of interest and seeks small molecules that modulate its function, subsequently observing the resulting phenotypic outcomes [15] [16] [17]. This guide offers an in-depth technical comparison of these strategies, detailing their experimental workflows, core methodologies, and applications in target validation research. It further provides a structured analysis of their respective advantages and challenges, serving as a comprehensive resource for researchers and drug development professionals.

Chemical genetics is a multidisciplinary field that utilizes small molecules as probes to perturb and understand biological systems, thereby linking gene and protein function to phenotypic outcomes [15] [17]. Unlike classical genetics, which directly alters genetic information, chemical genetics targets the proteins, offering reversible, dose-dependent, and temporal control over biological processes [16] [17]. This makes it particularly valuable for studying essential genes or transient biological events where traditional genetic knockouts might be lethal or uninformative.

The field is bifurcated into two complementary research strategies. Forward chemical genetics mirrors forward classical genetics; it starts with an observable phenotype and works backward to identify the responsible genotype and its protein products [17]. Reverse chemical genetics, analogous to reverse genetics, begins with a known gene or protein and investigates its function by identifying modulating compounds and characterizing the resulting phenotype [15] [18]. Both strategies serve as a critical bridge between phenotypic screening and the comprehensive exploration of underlying mechanisms of action (MoA), playing an indispensable role in elucidating biological pathways and advancing the drug discovery process [15].

Forward Chemical Genetics: From Phenotype to Target

Core Principles and Workflow

Forward chemical genetics is a hypothesis-generating approach that prioritizes phenotypic relevance. It is characterized by its unbiased nature, allowing for the discovery of novel druggable targets and compounds with unique therapeutic effects without prior knowledge of the specific protein target [15] [19]. The process typically involves three fundamental steps, as outlined in Table 1 [16].

Table 1: Key Steps in a Forward Chemical Genetics Screen

| Step | Description | Key Considerations |
|---|---|---|
| 1. Phenotypic Screening | A library of small molecules is screened in a cellular or organismal system for a desired phenotypic change [16] [19]. | Assay design (e.g., image-based) is critical; must be robust and relevant. Cellular uptake and bioavailability can cause false negatives [15] [20]. |
| 2. Target Identification | Active compounds ("hits") are immobilized, and their interacting protein targets are isolated and identified [16]. | The most significant bottleneck. Methods include affinity pull-down, chemoproteomics, and tagged library approaches [15] [16]. |
| 3. Target Validation | The putative target is confirmed through competition assays and genetic studies (e.g., mutants, transgenic lines) [16]. | Critical to confirm specificity and that the phenotypic effect is due to engagement with the identified target [16]. |

The following diagram illustrates the conceptual workflow and the critical decision points in a forward chemical genetics screen.

Key Methodologies and Protocols

High-Throughput Phenotypic Screening

Modern forward chemical genetics employs automation to screen large chemical libraries efficiently. A representative protocol for a high-throughput screen using Arabidopsis thaliana involves several key stages, as described in [20]:

- Library Preparation: A library of 50,000 small molecules is received in 96-well format. A dilution library is created using a liquid handling robot to transfer compounds into v-bottom plates containing water, achieving a working concentration.

- Assay Setup: A media-seed mixture is prepared. Half-strength Murashige and Skoog (MS) media with 0.1% agar is used. Arabidopsis seeds are sterilized, vernalized, and added to the media at a density of 0.1 g/100 mL. The liquid handling robot then dispenses this media-seed mixture into 96-well flat-bottom plates containing the diluted compounds.

- Incubation and Analysis: Plates are incubated under controlled conditions. After a set period, plates are visualized under a dissecting microscope to identify compounds that induce phenotypic alterations such as short roots, altered coloration, or inhibited germination [20].
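The scale of the screen described above can be estimated with a short planning calculation. The sketch computes how many 96-well plates a 50,000-compound library occupies and how much stock is needed per well to reach a working concentration via C1·V1 = C2·V2. The number of control wells, the stock and working concentrations, and the well volume are hypothetical, since the protocol summary does not state them.

```python
from math import ceil


def plates_needed(n_compounds, wells_per_plate=96, control_wells=0):
    """Number of plates required when some wells per plate are reserved for controls."""
    usable = wells_per_plate - control_wells
    if usable <= 0:
        raise ValueError("no wells left for compounds")
    return ceil(n_compounds / usable)


def stock_volume_ul(stock_conc_um, final_conc_um, final_volume_ul):
    """Volume of stock to add so that C1 * V1 = C2 * V2 (concentrations in uM)."""
    return final_conc_um * final_volume_ul / stock_conc_um


full_plates = plates_needed(50_000)                      # every well holds a compound
with_controls = plates_needed(50_000, control_wells=16)  # hypothetical: 16 control wells per plate
transfer = stock_volume_ul(10_000, 50, 200)              # hypothetical: 10 mM stock, 50 uM final, 200 uL well
print(full_plates, with_controls, transfer)
```

Reserving wells for controls increases the plate count noticeably at this library size, which is one reason automation is needed for screens of this kind.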

Target Deconvolution via Chemoproteomics

Once a bioactive compound is identified, the primary challenge is target identification. Chemoproteomics has emerged as a straightforward and effective approach [15]. It can be broadly classified into two strategies:

- Chemical Probe-Based Methods: The hit compound is functionalized to create a chemical probe, often incorporating tags for affinity enrichment (e.g., biotin) and photoaffinity groups (e.g., diazirines) for covalent cross-linking upon UV irradiation. This allows for the pull-down of engaged proteins, which are then identified via high-resolution mass spectrometry [15]. Click chemistry can further improve the efficiency and sensitivity of this process.

- Probe-Free Methods: Recently developed methods detect protein-ligand interactions without modifying the parent ligand molecule. These techniques directly monitor the changes in protein properties or stability upon compound binding, such as in thermal proteome profiling or drug affinity responsive target stability (DARTS) [15].

Reverse Chemical Genetics: From Target to Phenotype

Core Principles and Workflow

Reverse chemical genetics is a hypothesis-driven approach that starts with a known gene or protein target and aims to discover or design small molecules that modulate its activity, thereby elucidating its biological function [15] [17] [18]. This method is highly targeted and facilitates rational drug design and structure-activity relationship (SAR) analysis [15]. A common application is in comprehensive fitness profiling to understand drug-target interactions and mechanisms of resistance [21]. The workflow, detailed in Table 2, involves a defined sequence of steps.

Table 2: Key Steps in a Reverse Chemical Genetics Screen

| Step | Description | Key Considerations |
|---|---|---|
| 1. Target Selection | A specific, well-defined protein target (e.g., an enzyme, receptor) is selected based on genomic or proteomic data [15] [18]. | Requires prior biological knowledge. The target must be "druggable" (able to bind a small molecule with high affinity and specificity). |
| 2. Compound Screening | Libraries of small molecules are screened against the purified target or in a cellular system engineered for the target [17] [18]. | Screening assays are designed to measure binding (e.g., SPR) or functional modulation (e.g., enzyme activity). |
| 3. Phenotypic Characterization | Active compounds are introduced into cells or model organisms to observe the resulting phenotypic effects [17]. | The observed phenotype may not fully recapitulate the complex pathophysiology of a human disease [15]. |
| 4. Resistance & Validation | For anti-infectives/anti-cancer drugs, resistance alleles can be profiled to understand target interactions and validate target engagement [21]. | Identifies mutations that confer resistance, confirming the drug's mechanism of action and predicting clinical resistance. |

The diagram below outlines the core workflow for a reverse chemical genetics approach, highlighting its targeted nature.

Key Methodologies and Protocols

Comprehensive Fitness Profiling

A powerful reverse chemical genetics method involves profiling the fitness of numerous target variants against a drug. A study on the anti-cancer drug methotrexate (MTX) and its target, dihydrofolate reductase (encoded by DFR1 in yeast), exemplifies this [21]:

- Variant Library Creation: A "variomics" library is employed, containing approximately 200,000 plasmid-borne point mutation alleles for the yeast DFR1 gene, maintained within a heterozygous diploid knockout strain [21].

- Competitive Resistance Assay: The diploid library is sporulated to generate a haploid pool. Both diploid and haploid pools are grown competitively in the presence of MTX over a 6-day time course. Cells harboring mutant dfr1 alleles that confer resistance will be enriched in the population [21].

- Sequencing and Statistical Analysis: The dfr1 alleles from the drug-treated pools are PCR-amplified and sequenced using next-generation sequencing. A Bayesian statistical model (RVD) is applied to the sequencing data to identify point mutations whose frequency is significantly correlated with drug resistance [21].

- Validation: Candidate mutant alleles are synthesized and tested individually in vivo to confirm they confer MTX resistance, validating their functional role [21].
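The enrichment logic behind the competitive resistance assay can be sketched with a simple log-ratio of allele frequencies before and after drug exposure. This is a deliberately simplified stand-in and not the Bayesian RVD model used in the cited study; the allele names, read counts, and pseudocount are hypothetical.

```python
from math import log2


def allele_enrichment(counts_start, counts_end, pseudocount=0.5):
    """log2 change in allele frequency between the start and end of drug selection."""
    total_start = sum(counts_start.values())
    total_end = sum(counts_end.values())
    scores = {}
    for allele, start_reads in counts_start.items():
        f0 = (start_reads + pseudocount) / total_start
        f1 = (counts_end.get(allele, 0) + pseudocount) / total_end
        scores[allele] = log2(f1 / f0)
    return scores


# Hypothetical read counts per allele before and after growth in the drug.
before = {"WT": 9000, "mutA": 500, "mutB": 500}
after = {"WT": 2000, "mutA": 7500, "mutB": 500}

enrichment = allele_enrichment(before, after)
top_allele = max(enrichment, key=enrichment.get)
print(top_allele, round(enrichment[top_allele], 2))
```

A point estimate like this ignores sequencing error and sampling noise, which is what a statistical model such as the one used in the study is intended to account for.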

Comparative Analysis: Advantages, Limitations, and Applications

Strategic Comparison for Target Validation

The choice between forward and reverse chemical genetics is strategic and depends on the research goals. The following table provides a side-by-side comparison of the two approaches.

Table 3: Comparative Analysis of Forward and Reverse Chemical Genetics

| Aspect | Forward Chemical Genetics | Reverse Chemical Genetics |
|---|---|---|
| Starting Point | Phenotype (cellular/organismal) [15] [22] | Known gene/protein target [15] [22] |
| Approach | Phenotype → Genotype → Protein [17] | Protein → Compound → Phenotype [17] |
| Hypothesis Nature | Hypothesis-generating, unbiased discovery [15] [22] | Hypothesis-driven, targeted investigation [15] [22] |
| Primary Challenge | Target deconvolution is a major bottleneck [15] [16] [20] | Poor translatability; disparity between molecular function and disease phenotype [15] |
| Key Advantage | Identifies novel targets and pathways; examines complex, therapeutically relevant phenotypes [15] [17] | Avoids target deconvolution difficulties; enables rational drug design and SAR [15] |
| Throughput | High-throughput phenotypic screening is possible but can be labor-intensive [20] | Highly efficient for testing known targets [22] |
| Druggability | Can reveal druggable targets for previously "undruggable" processes [15] | Limited to known, presumed druggable targets [15] |

Application in Drug Discovery

Both approaches have proven instrumental in drug discovery:

- Forward Chemical Genetics: Has been used to identify inhibitors of various processes (e.g., auxin transport, vacuolar sorting) in plants, providing tools for basic research and potential agrochemical leads [16]. In medicine, it systematizes the discovery of small molecules for basic biological research, which can serve as starting points for drug development [19].

- Reverse Chemical Genetics: The development of COX-2 inhibitors is a classic example. After the COX-2 enzyme was discovered as a key mediator of inflammation, targeted screens were employed to find small molecules that selectively inhibit it, aiming to create pain relievers without the gastrointestinal side effects of non-selective COX inhibitors like aspirin [17]. Furthermore, reverse genetics in the classical (non-chemical) sense is pivotal in vaccine development, where engineered, attenuated viral strains are created based on known genetic sequences [23].

The Scientist's Toolkit: Essential Research Reagents

Successful execution of chemical genetics screens relies on a suite of essential reagents and tools. The following table details key components of the research toolkit.

Table 4: Essential Research Reagents for Chemical Genetics

| Reagent / Tool | Function | Application Notes |
|---|---|---|
| Chemical Library | A collection of diverse small molecules for screening [17]. | Libraries can contain 10,000 to over 150,000 compounds. Organizations like the NIH are developing extensive public libraries [17] [20]. |
| Liquid Handling Robot | Automates the transfer of liquids (compounds, media) in microtiter plates [20]. | Critical for high-throughput screens; increases speed, minimizes error, and reduces labor [20]. |
| Affinity/Biotin Tags | Chemical moieties (e.g., biotin) covalently linked to a bioactive compound [15]. | Enables immobilization of the compound on a solid support (e.g., streptavidin beads) for target pull-down in forward genetics [15] [16]. |
| Photoaffinity Labels | Chemical groups (e.g., diazirines) that form covalent bonds with proximal proteins upon UV light exposure [15]. | Used in chemoproteomic probes to "trap" transient drug-target interactions, facilitating isolation and identification [15]. |
| Mass Spectrometer | An analytical instrument for identifying and quantifying proteins [15]. | Used after affinity enrichment to identify the specific proteins bound to a chemical probe [15]. |
| Variomics Library | A library of organisms (e.g., yeast) expressing thousands of point mutations in a target gene [21]. | Used in reverse genetics to comprehensively profile drug resistance mutations and understand target interactions [21]. |

Forward and reverse chemical genetics represent two fundamental, complementary paradigms for leveraging small molecules in biological research and target validation. The forward approach, beginning with phenotype, is a powerful engine for unbiased discovery, capable of revealing novel biology and therapeutic opportunities. The reverse approach, starting with a known target, offers a streamlined, hypothesis-driven path for interrogating specific proteins and developing targeted therapies. The integration of both approaches—using forward genetics to identify novel targets and pathways, and reverse genetics to validate and mechanistically characterize them—provides a comprehensive strategy for functional discovery. As technological advancements in automation, chemoproteomics, and functional genomics continue to evolve, both forward and reverse chemical genetics will remain indispensable in the toolkit of researchers and drug developers striving to decipher biological complexity and translate these insights into new medicines.

In the field of chemical biology and drug discovery, the identification and validation of key biomolecules as therapeutic targets is a fundamental process. A drug target is defined as a biological entity, usually a protein or gene, that interacts with and whose activity is modulated by a particular compound to elicit a therapeutic effect [24]. The journey from a biological hypothesis to a clinically validated target is intricate, requiring a multidisciplinary approach that integrates knowledge of disease pathophysiology, molecular biology, and sophisticated validation technologies. This whitepaper provides an in-depth technical examination of the primary classes of therapeutic targets—with a focus on enzymes and receptors—within the context of modern chemical biology approaches for target validation research. We explore the mechanistic roles these biomolecules play in disease processes, detail experimental methodologies for their identification and validation, and discuss emerging technologies that are reshaping the target validation landscape. The overarching goal is to provide researchers and drug development professionals with a comprehensive framework for navigating the complexities of target assessment in biomedical research.

Major Classes of Therapeutic Biomolecules

Nuclear Receptors

Nuclear receptors (NRs) represent a superfamily of ligand-activated transcription factors that regulate gene expression in response to metabolic, hormonal, and environmental signals [25]. These receptors act as intracellular sensors, converting metabolic and hormonal signals into transcriptional changes that govern critical processes including energy homeostasis, lipid and glucose metabolism, inflammation, immune responses, and cellular differentiation [25]. Unlike membrane-bound receptors, NRs directly bind to DNA at hormone response elements (HREs) in target gene promoters. Upon ligand binding, NRs undergo conformational changes, recruit co-regulators, and modify chromatin to activate or repress transcription [25].

Type I NRs, or steroid hormone receptors, are typically localized in the cytoplasm in an inactive state, bound to heat shock proteins (HSPs). Upon ligand binding, they dissociate from chaperone proteins, dimerize, and translocate to the nucleus to bind specific HREs [25]. The therapeutic relevance of NRs is substantial, with several drugs targeting NRs already approved and many others under investigation. For instance, PPARγ agonists (e.g., pioglitazone, rosiglitazone) are used for diabetes management, FXR agonists (e.g., obeticholic acid) for liver diseases, and selective thyroid hormone receptor agonists (e.g., resmetirom) for Metabolic dysfunction-Associated Steatohepatitis (MASH) [25].

Table 1: Key Nuclear Receptor Families and Their Therapeutic Applications

| Nuclear Receptor | Primary Functions | Therapeutic Applications | Example Drugs |
|---|---|---|---|
| PPARs (α, γ, δ) | Lipid metabolism, glucose homeostasis, inflammation, energy expenditure [25] | Type 2 diabetes, cardiovascular diseases, metabolic syndrome [25] | Pioglitazone, Rosiglitazone [25] |
| FXR | Bile acid sensor, regulates cholesterol metabolism, bile acid synthesis, lipid homeostasis [25] | MASLD, MASH, cholestatic liver diseases [25] | Obeticholic Acid [25] |
| LXRs | Cholesterol homeostasis, reverse cholesterol transport, inflammation, glucose metabolism [25] | Atherosclerosis, lipid disorders [25] | (Modulators under investigation) |
| VDR | Calcium/phosphate regulation, immune function, insulin sensitivity [25] | Chronic kidney disease, osteoporosis [25] | Calcitriol, Paricalcitol [25] |

Enzymes as Therapeutic Targets

Enzymes, as biological catalysts, drive a vast array of metabolic reactions under physiological conditions and represent a major class of druggable targets [26]. Their high substrate specificity enables precise modulation of metabolic and physiological processes, making them exceptionally attractive for therapeutic intervention. Enzyme-based therapies have been particularly successful in treating genetic disorders caused by enzyme deficiencies, such as the lysosomal storage diseases Gaucher's disease and Pompe disease, where enzyme replacement therapy (ERT) restores normal metabolic function [26].

Anti-inflammatory enzymes represent a promising therapeutic alternative to conventional drugs like NSAIDs and corticosteroids, which are often limited by adverse side effects, long-term toxicity, and drug resistance [26]. These enzymes function by scavenging reactive oxygen species (ROS), inhibiting cytokine transcription, degrading circulating cytokines, and blocking cytokine release by targeting exocytosis-related receptors [26].

Table 2: Major Classes of Therapeutic Enzymes and Their Applications

| Enzyme Class | Mechanism of Action | Therapeutic Applications | Example Enzymes |
|---|---|---|---|
| Oxidoreductases | Neutralize reactive oxygen species (ROS), mitigate oxidative stress [26] | Inflammation-associated tissue damage [26] | Catalase, Superoxide Dismutase [26] |
| Hydrolases | Degrade pro-inflammatory mediators, proteins, and other molecules [26] | Anti-inflammatory, digestive disorders, removal of necrotic tissue [26] | Trypsin, Chymotrypsin, Nattokinase, Bromelain, Papain [26] |
| Recombinant Enzymes | Target-specific metabolic pathways or genetic deficiencies [26] | Lysosomal storage diseases, cancer, thrombosis [26] | L-Asparaginase (ALL), Streptokinase (thrombolysis), Glucocerebrosidase (Gaucher's) [26] |

The global market for therapeutic enzymes was valued at USD 7,322.4 million in 2023 and is projected to reach USD 16,750 million by 2030, a compound annual growth rate (CAGR) of 12.6% [26], underscoring their growing importance in modern pharmacology.
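The quoted growth figures can be sanity-checked with the standard CAGR formula. The sketch below is only an arithmetic illustration: it assumes seven compounding periods (2023 to 2030), which the source does not state explicitly, and the function names are hypothetical.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.126 means 12.6%)."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding `rate` annually for `years` periods."""
    return start_value * (1.0 + rate) ** years


# Figures quoted in the text (USD million); the 7-year horizon is an assumption.
implied = cagr(7322.4, 16750.0, 7)
projected = project(7322.4, 0.126, 7)

print(f"implied CAGR: {implied:.1%}")
print(f"2030 value at 12.6% CAGR: USD {projected:,.0f} million")
```

Under that assumption the implied rate is roughly 12.5%, so the three quoted numbers are mutually consistent to within rounding.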

Chemical Biology Approaches for Target Identification

Target identification can be approached through two fundamental paradigms: target deconvolution, which begins with a drug that appears efficacious, and target discovery, which starts with a hypothesis about a target's role in disease [24]. Chemical biology provides a diverse toolkit for both approaches.

Direct Biochemical Methods

Affinity Purification provides the most direct approach for identifying proteins that bind a small molecule of interest [8]. The bioactive small molecule is immobilized on a solid support to create an affinity matrix, which is then exposed to cell lysates or tissue extracts. After extensive washing to remove non-specifically bound proteins, the specifically bound proteins are eluted and identified, typically by mass spectrometry [8].

Key Considerations for Affinity Purification:

- Immobilization Strategy: The small molecule must be coupled to the solid support through a chemical tether that does not interfere with its biological activity [8].

- Control Experiments: Essential controls include beads loaded with an inactive analog or capped without compound to distinguish specific binding from background [8].

- Challenge of Weak Interactions: Stringent washing conditions may bias identification toward high-affinity interactions, potentially missing lower-affinity targets that might be biologically relevant [8].

Recent advancements include photoaffinity labeling, which uses covalent modification via ultraviolet light-induced cross-linking to capture low-abundance proteins or those with low affinity for the small molecule [8].
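The role of the control beads described above can be illustrated with a small scoring routine. This is a minimal sketch, not a published analysis pipeline: it assumes each pull-down yields a per-protein spectral count, and the protein names, the pseudocount, and the log2 fold-change cutoff are all hypothetical choices made for illustration.

```python
import math


def rank_enriched(compound_counts, control_counts, pseudocount=1.0, min_log2_fc=2.0):
    """Rank proteins by log2 enrichment on compound beads over control beads.

    The pseudocount avoids division by zero for proteins absent from the control.
    """
    hits = []
    for protein, count in compound_counts.items():
        control = control_counts.get(protein, 0)
        log2_fc = math.log2((count + pseudocount) / (control + pseudocount))
        if log2_fc >= min_log2_fc:
            hits.append((protein, log2_fc))
    # Highest enrichment first
    return sorted(hits, key=lambda item: item[1], reverse=True)


# Hypothetical spectral counts: compound-loaded beads vs. inactive-analog beads
compound = {"KINASE_A": 48, "HSP90": 120, "TUBULIN": 60, "BROMO_B": 9}
control = {"HSP90": 110, "TUBULIN": 58, "BROMO_B": 1}

hits = rank_enriched(compound, control)
for protein, log2_fc in hits:
    print(f"{protein}: log2 enrichment = {log2_fc:.2f}")
```

Abundant "sticky" proteins such as the hypothetical HSP90 and TUBULIN entries appear on both bead types and drop out, which is the background-subtraction purpose of the controls. A fixed cutoff is a simplification; real datasets would normally use replicates and a statistical test.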

Genetic Interaction Methods

Genetic approaches modulate presumed targets in cells to alter small-molecule sensitivity. RNA interference (RNAi) using small interfering RNAs (siRNAs) is a particularly popular method for temporary suppression of a gene product, allowing researchers to mimic the effect of a drug and observe the resulting phenotypic effect [24]. This approach demonstrates the functional "value" of the target without requiring the drug itself.

Advantages and Limitations of siRNA:

- Advantages: Investigate target inhibition without a drug; more accurate mimic of drug effect than gene knockout; no protein structure knowledge required; inexpensive [24].

- Disadvantages: Down-regulating a gene is not equivalent to inhibiting a specific protein domain; can produce exaggerated effects compared to pharmacological inhibition; incomplete knockdown; delivery challenges [24].

CRISPR-based gene-editing technologies have further expanded both the toolkit for target validation and the therapeutic potential of enzymes, enabling precise genetic modifications for treating inherited disorders and for developing personalized medicine strategies [26].

Computational Inference Methods

Computational approaches generate target hypotheses by comparing small-molecule effects to those of known reference molecules or genetic perturbations [8]. Molecular interaction networks (network medicine) represent a powerful emerging approach that applies network science and systems biology to analyze complex biological systems and disease [27]. Using comprehensive protein-protein interaction networks (interactomes) as templates, researchers can identify subnetworks governing specific diseases, unveil potential disease drivers, and study the effects of novel or repurposed drugs [27].
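To make the interactome idea concrete, the sketch below scores proteins by how many known disease-associated ("seed") proteins they interact with directly. It is a deliberately simple first-neighbor heuristic, not the method of [27]; the edge list and seed set are hypothetical, and real network-medicine analyses use far larger interactomes and more careful statistics.

```python
from collections import defaultdict


def build_graph(edges):
    """Build an undirected adjacency map from (protein_a, protein_b) pairs."""
    graph = defaultdict(set)
    for a, b in edges:
        graph[a].add(b)
        graph[b].add(a)
    return graph


def candidate_neighbors(graph, seeds):
    """Score non-seed proteins by the number of seed proteins they touch."""
    scores = defaultdict(int)
    for seed in seeds:
        for neighbor in graph.get(seed, ()):
            if neighbor not in seeds:
                scores[neighbor] += 1
    # Most seed contacts first; ties broken alphabetically for stable output
    return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))


# Hypothetical protein-protein interactions and disease seed proteins
edges = [("A", "B"), ("A", "C"), ("B", "C"), ("B", "D"), ("C", "D"), ("D", "E")]
seeds = {"A", "B"}

ranking = candidate_neighbors(build_graph(edges), seeds)
print(ranking)
```

Proteins that contact several seeds are candidate members of the disease subnetwork and therefore candidate targets, subject to experimental follow-up.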

Graph Neural Networks (GNNs) and other deep learning approaches are increasingly applied to predict drug-target interactions (DTI) by learning the chemical and structural characteristics of molecules represented as graphs [28]. Frameworks like DeepNC utilize GNN algorithms to learn features of drugs and targets, then predict binding affinity values, demonstrating improved performance in terms of mean square error and concordance index on benchmarked datasets [28].
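The two evaluation metrics named above can be computed without any deep learning framework. The following sketch uses the standard definitions of mean squared error and the pairwise concordance index; it does not reproduce DeepNC itself, and the affinity values are made up for illustration.

```python
def mean_squared_error(y_true, y_pred):
    """Average squared difference between measured and predicted affinities."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def concordance_index(y_true, y_pred):
    """Fraction of comparable pairs whose predicted order matches the true order.

    Pairs with identical true values are skipped; tied predictions count as 0.5.
    """
    concordant = 0.0
    comparable = 0
    n = len(y_true)
    for i in range(n):
        for j in range(i + 1, n):
            if y_true[i] == y_true[j]:
                continue
            comparable += 1
            true_diff = y_true[i] - y_true[j]
            pred_diff = y_pred[i] - y_pred[j]
            if pred_diff == 0:
                concordant += 0.5
            elif (true_diff > 0) == (pred_diff > 0):
                concordant += 1.0
    return concordant / comparable if comparable else float("nan")


# Hypothetical measured vs. predicted binding affinities (e.g., pKd units)
measured = [5.0, 6.2, 7.1, 8.4]
predicted = [5.3, 6.0, 7.5, 7.4]

mse = mean_squared_error(measured, predicted)
ci = concordance_index(measured, predicted)
print(f"MSE = {mse:.4f}, CI = {ci:.3f}")
```

Lower MSE indicates more accurate affinity values, while a concordance index closer to 1 indicates that the model ranks drug-target pairs in the correct order, which is often what matters for prioritizing candidates.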

Figure 1: Direct Biochemical Target Identification Workflow

Methodologies for Target Validation

Target validation is the crucial process of demonstrating the functional role of an identified target in the disease phenotype [24]. The GOT-IT recommendations provide a framework for systematic target assessment, focusing on aspects such as target-related safety issues, druggability, assayability, and potential for therapeutic differentiation [29].

Key Validation Steps

A robust validation protocol includes two key steps [24]:

- Reproducibility: Once a drug target is identified, the initial experiment must be repeated to confirm it can be successfully reproduced.

- Introduction of Variation: This involves systematically altering parameters to establish a causal relationship:

  - Modulate the drug's affinity to the target by modifying the drug molecule's structure.

  - Vary the cell or tissue type to determine if this alters the drug's effect.

  - Introduce mutations into the binding domain of the protein target, which should result in modulation or loss of the drug's activity.
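The binding-domain mutation test lends itself to a simple quantitative readout: the fold shift in potency between the wild-type and mutant target. The sketch below is illustrative only; the IC50 values are hypothetical and the 10-fold threshold is an arbitrary assumption, not a criterion taken from the source.

```python
def fold_shift(ic50_wild_type_nm: float, ic50_mutant_nm: float) -> float:
    """Ratio of mutant to wild-type IC50; values above 1 mean potency was lost."""
    return ic50_mutant_nm / ic50_wild_type_nm


def supports_on_target(ic50_wild_type_nm, ic50_mutant_nm, min_fold=10.0):
    """True if the binding-site mutation weakens the drug by at least `min_fold`."""
    return fold_shift(ic50_wild_type_nm, ic50_mutant_nm) >= min_fold


# Hypothetical dose-response results (IC50 in nM)
wild_type_ic50 = 25.0
binding_site_mutant_ic50 = 1800.0
distal_mutant_ic50 = 30.0

print(fold_shift(wild_type_ic50, binding_site_mutant_ic50))          # potency loss
print(supports_on_target(wild_type_ic50, binding_site_mutant_ic50))  # binding-site mutant
print(supports_on_target(wild_type_ic50, distal_mutant_ic50))        # control mutant
```

A large shift for the binding-site mutant combined with little or no shift for a mutation elsewhere in the protein is the pattern expected when the drug acts through the proposed target.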

Integrative Validation Approaches

Given the complexity of biological systems, target validation typically requires multiple orthogonal methods to build a compelling case. Chemical biology contributes significantly through:

- Chemical Probes: Well-characterized small molecules used to perturb specific protein targets and interrogate biological function [8].

- Phenotypic Screening in Relevant Models: Exposing cells, isolated tissues, or animal models to small molecules to determine whether a specific candidate molecule exerts the desired effect in a disease-relevant context [24].

- Mechanistic Studies: Following initial target identification, additional functional studies help identify unwanted off-target effects or establish new roles for the target protein in biological networks [8].

Figure 2: Multi-Method Target Validation Strategy

The Scientist's Toolkit: Research Reagent Solutions

Successful target identification and validation relies on a suite of specialized reagents and tools. The following table details essential materials used in the featured experiments and their functions.

Table 3: Essential Research Reagents for Target Identification and Validation

| Research Reagent | Function/Application | Key Characteristics |
|---|---|---|
| siRNA/shRNA | Gene knockdown to validate target function and mimic drug effect [24] | Temporary suppression of gene expression; requires efficient delivery systems [24] |
| Affinity Beads/Resins | Immobilization of small molecules for affinity purification [8] | Compatible with various coupling chemistries; low nonspecific binding [8] |
| Photoaffinity Probes | Covalent cross-linking of small molecules to targets for capturing transient interactions [8] | Contain photoreactive groups (e.g., diazirines, aryl azides); enable target identification [8] |
| Chemical Probes | Highly characterized small molecules for selective target modulation in cellular studies [8] | Well-defined potency and selectivity; used for mechanistic studies [8] |
| CRISPR-Cas9 Systems | Precise gene editing for functional validation of targets [26] | Enables gene knockout, knock-in, or mutation; high specificity [26] |

The systematic identification and validation of key biomolecules—particularly enzymes and receptors—as therapeutic targets remains a cornerstone of chemical biology and drug discovery. The process has evolved from single-target, reductionist approaches to more integrated strategies that acknowledge the complexity of biological networks and the prevalence of polypharmacology. Successful target assessment now requires a multidisciplinary toolkit, combining direct biochemical methods, genetic interactions, and computational inference, with rigorous validation through phenotypic studies in disease-relevant models. As technologies such as graph neural networks for drug-target prediction, CRISPR-based gene editing, and sophisticated chemical probe design continue to advance, they promise to enhance the efficiency and success rate of target validation. However, as articulated by the GOT-IT recommendations, a timely focus on comprehensive target assessment, including druggability, safety issues, and potential for differentiation, is essential for facilitating the transition from academic discovery to clinical development [29]. Ultimately, a deeper understanding of target biology within its full pathological context, combined with these advanced chemical biology approaches, will be crucial for delivering the next generation of safe and effective therapeutics.

In the landscape of modern drug discovery, the Target Assessment Framework constitutes a critical, foundational paradigm. This systematic approach for evaluating and validating molecular targets is designed to confirm their direct involvement in disease pathways and their potential for therapeutic intervention [12]. In an era characterized by high attrition rates in pharmaceutical development, a rigorous target validation process serves as a crucial gatekeeper, ensuring that only the most promising targets progress through the costly later stages of drug development [1]. Insufficient validation of drug targets in early development has been directly linked to costly clinical trial failures and lower drug approval rates, underscoring the immense economic and scientific implications of this foundational phase [12]. This framework operates within a broader chemical biology context, integrating diverse methodologies from genetics, proteomics, computational biology, and high-throughput screening to build compelling evidence for target-disease relationships before substantial resources are committed.

Defining Target Identification and Validation

Within the drug discovery pipeline, target identification and validation represent distinct but interconnected processes. Target identification entails pinpointing the specific molecular entity (such as a protein, nucleic acid, or signaling pathway) whose behavior or function changes when it is bound by a drug candidate; it is the critical first step in understanding a compound's mechanism of action [12]. The process synthesizes available information to single out the peptides, enzymes, or signaling pathways associated with a disease [12].

Following identification, target validation constitutes a series of rigorous experiments and investigations that confirm the target's direct involvement in a specific biological pathway and demonstrate its capacity to produce a therapeutic effect [12]. This process answers the fundamental question: Does modulation of this target produce a clinically relevant therapeutic benefit? The validation process typically includes initial computer modeling to screen targets for potential drug interactions, followed by in vivo or in vitro validation techniques utilizing methods like gene knockouts, RNA interference, antisense technology, and analysis of resulting phenotypes such as cellular fitness and proliferation [12]. Successful target validation establishes a solid foundation for subsequent drug development campaigns and provides critical insights for medicinal chemistry optimization efforts [8].

Key Methodologies in Target Validation

The target validation toolbox encompasses diverse methodological approaches, each with distinct strengths and applications. These can be broadly categorized into direct biochemical methods, genetic interaction strategies, and computational inference techniques.

Direct Biochemical Methods