Building Trust in AI-Driven Drug Discovery: A 2025 Roadmap for Enhanced Reliability and Transparency

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the critical challenges and solutions for ensuring reliability and transparency in AI-driven drug discovery. Covering the foundational regulatory landscape from the FDA and EMA, it delves into practical methodologies like Explainable AI (xAI) and robust data governance. The content further addresses troubleshooting for bias and data drift, outlines frameworks for model validation and credibility assessment, and concludes with a forward-looking synthesis on fostering trustworthy AI to accelerate the delivery of safe and effective therapeutics.

The New Frontier: Understanding the Urgency for Transparency in AI-Driven Drug Discovery

Traditional drug discovery is long and resource-intensive: it often spans more than a decade, costs more than $2 billion, and has a success rate below 10% from clinical trials to market [1] [2]. Artificial intelligence (AI) is disrupting this model, compressing discovery timelines that traditionally took 4-6 years into periods as short as 12-18 months [3] [4]. This shift replaces sequential, labor-intensive workflows with AI-powered discovery engines that integrate multi-omics data streams and run tasks from target identification to lead optimization in parallel [3] [1].

By leveraging machine learning (ML), deep learning (DL), and generative models, AI-driven platforms analyze vast chemical and biological datasets to uncover patterns and insights nearly impossible for human researchers to detect unaided [1]. This has enabled notable achievements such as Insilico Medicine's generative-AI-designed drug for idiopathic pulmonary fibrosis, which progressed from target discovery to Phase I trials in just 18 months, and Exscientia's AI-designed small molecule for obsessive-compulsive disorder, which reached human trials in under 12 months [3] [1]. The industry is projected to see 30% of new drugs discovered using AI by 2025, signaling a fundamental transformation in pharmaceutical research and development [4].

Quantifying the Acceleration: Data on AI-Driven Timelines and Costs

AI implementation delivers substantial improvements in both time and cost efficiency across the drug discovery pipeline. The following table summarizes key performance metrics and comparative case studies.

Table 1: AI Impact on Discovery Timelines and Success Rates

| Metric | Traditional Discovery | AI-Accelerated Discovery | Source |
|---|---|---|---|
| Preclinical Timeline | 4-6 years | 12-18 months | [3] [1] |
| Cost per Molecule to Preclinical | Industry average: ~$2.6B | Savings of 30-40% | [4] [2] |
| Design Cycle Efficiency | Industry standard cycles | ~70% faster, 10x fewer compounds synthesized | [3] |
| Clinical Success Rate | ~10% (Phase I to approval) | Potential for significant increase (early data) | [4] [2] |
| Hit Rate from Screening | ~2.5% (HTS) | Substantially improved via virtual screening | [2] |

Table 2: Documented Case Studies of AI-Accelerated Discovery

| Company/Drug | Therapeutic Area | AI Application | Reported Timeline Compression |
|---|---|---|---|
| Insilico Medicine (ISM001-055) | Idiopathic Pulmonary Fibrosis | Generative chemistry for novel target and drug design | Target to Phase I: 18 months (vs. 4-6 years) [3] |
| Exscientia (DSP-1181) | Obsessive-Compulsive Disorder | Generative AI for small molecule design | Design to clinic: <12 months [1] |
| Exscientia (Platform) | Oncology, Inflammation | End-to-end AI design platform | Design cycles ~70% faster [3] |
| Schrödinger (Zasocitinib) | Immunology (TYK2 inhibitor) | Physics-enabled molecular design | Advanced to Phase III trials [3] |

Technical Support Center: Troubleshooting AI-Driven Research Workflows

Frequently Asked Questions (FAQs)

Q1: Our AI model for virtual screening identifies compounds with excellent predicted binding affinity, but they consistently show poor activity in biological assays. What could be the issue?

A: This common problem, often termed the "generalization gap," typically stems from several technical root causes:

- Training Data Bias: The model was trained on a chemical space that is not representative of the compounds being screened. The training set may lack diversity or contain systematic errors [5] [2].

- Lab Data Discrepancy: A disconnect exists between the in silico prediction endpoint (e.g., binding affinity) and the actual experimental readout (e.g., cellular activity) due to unmodeled biological complexity [5].

- Algorithmic Blind Spots: The model may be overfitting to irrelevant patterns or "shortcuts" in the training data instead of learning the true underlying structure-activity relationship [5].

Q2: How can we ensure our AI-driven discovery process will be transparent enough for regulatory scrutiny?

A: Building trust with regulators requires proactive implementation of Explainable AI (xAI) principles:

- Implement xAI Techniques: Utilize methods like counterfactual explanations and feature importance scoring (e.g., SHAP, LIME) to interpret model predictions. This allows researchers to ask "what if" questions and understand which molecular features drive the output [5].

- Document the "AI Trail": Maintain rigorous documentation of training data provenance, model versioning, hyperparameters, and all pre- and post-processing steps [5] [2].

- Adhere to Emerging Frameworks: Follow guidelines from the EU AI Act, which classifies AI systems in healthcare as "high-risk" and mandates transparency and human oversight. Note that exemptions exist for systems used "for the sole purpose of scientific research and development," but building compliant processes is a best practice [5].
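The "AI trail" described above can be made concrete with a small, machine-readable record per training run. The following is a minimal sketch, not a prescribed regulatory format: the class name `ModelRunRecord`, its fields, and the example values are all hypothetical and only illustrate the kind of information (data fingerprint, version, hyperparameters, processing steps) the text recommends capturing.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


def sha256_of_bytes(data: bytes) -> str:
    """Return the hex SHA-256 digest used to fingerprint a dataset snapshot."""
    return hashlib.sha256(data).hexdigest()


@dataclass(frozen=True)
class ModelRunRecord:
    """Hypothetical record of one training run; field names are illustrative."""
    model_name: str
    model_version: str
    training_data_sha256: str
    hyperparameters: dict
    preprocessing_steps: tuple
    postprocessing_steps: tuple = ()

    def to_json(self) -> str:
        # sort_keys makes the serialization deterministic, so identical runs
        # produce byte-identical records that can be diffed or hashed.
        return json.dumps(asdict(self), sort_keys=True)


# Toy dataset snapshot standing in for a real training file (assumption).
dataset_bytes = b"smiles,label\nCCO,1\nc1ccccc1,0\n"

record = ModelRunRecord(
    model_name="virtual_screen_classifier",
    model_version="0.3.1",
    training_data_sha256=sha256_of_bytes(dataset_bytes),
    hyperparameters={"n_estimators": 500, "max_depth": 12},
    preprocessing_steps=("standardize_smiles", "remove_salts"),
    postprocessing_steps=("calibrate_scores",),
)
record_json = record.to_json()
```

Storing the dataset hash rather than a file path means the record still identifies the exact training data after files are moved or renamed.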

Q3: Our AI-predicted ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) properties often do not align with later experimental results. How can we improve reliability?

A: This indicates a problem with model applicability or data quality:

- Expand and Curate Training Data: Ensure your ADMET training datasets are large, high-quality, and chemically diverse. Pay special attention to the accuracy of experimental data used for training, as noise here directly impacts predictive performance [2].

- Apply Applicability Domain Analysis: Implement techniques to define the chemical space where the model makes reliable predictions. Flag compounds that fall outside this domain for priority experimental validation [2].

- Use Ensemble Modeling: Combine predictions from multiple, diverse algorithms (e.g., Random Forest, Graph Neural Networks) to reduce variance and improve overall robustness [2].
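As an illustration of the last two points, the sketch below combines a nearest-neighbour applicability-domain check with simple ensemble averaging. It assumes fingerprints are represented as sets of "on" bit indices and uses a Tanimoto threshold of 0.4; both the representation and the threshold are illustrative assumptions, and the two toy "models" are placeholders for real predictors.

```python
from statistics import mean


def tanimoto(a: frozenset, b: frozenset) -> float:
    """Tanimoto similarity between two fingerprints stored as sets of on-bits."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def in_domain(query: frozenset, training_fps, threshold: float = 0.4) -> bool:
    """True if the nearest training compound is at least `threshold` similar."""
    return max(tanimoto(query, fp) for fp in training_fps) >= threshold


def ensemble_predict(query: frozenset, models) -> float:
    """Average the predictions of several models (any callables)."""
    return mean(model(query) for model in models)


# Toy training fingerprints and queries (assumed data, not from the article).
training_fps = [frozenset({1, 2, 3, 4}), frozenset({2, 3, 5, 8})]
query_near = frozenset({1, 2, 3, 9})   # shares 3 of 5 bits with the first compound
query_far = frozenset({20, 21, 22})    # shares no bits with any training compound

# Placeholder models; in practice these would be, e.g., a random forest and a GNN.
toy_models = [lambda fp: len(fp) / 10, lambda fp: 0.5]

near_ok = in_domain(query_near, training_fps)
far_ok = in_domain(query_far, training_fps)
near_prediction = ensemble_predict(query_near, toy_models)
```

Compounds for which `in_domain` returns `False` would be the ones to route to priority experimental validation rather than trusting the ensemble score.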

Q4: We've discovered a significant performance gap in our predictive model for one demographic group. How can we address this bias?

A: Uncovering model bias is a critical finding. Mitigation requires a multi-faceted approach:

- Audit with xAI: Use explainable AI tools to pinpoint the source of bias by identifying which input features disproportionately influence the skewed predictions [5].

- Augment Training Data: Strategically balance underrepresented groups in your datasets. This can involve collecting new data or using validated synthetic data generation techniques to fill gaps without compromising patient privacy [5].

- Continuous Monitoring: Bias is not a "one-time fix." Establish ongoing monitoring protocols to regularly audit model performance across different demographic and biological subgroups [5].

Essential Research Reagent Solutions for AI-Hybrid Workflows

Table 3: Key Research Reagents and Platforms for AI-Driven Discovery

| Reagent/Platform Type | Specific Example | Primary Function in AI Workflow |
|---|---|---|
| Automated Liquid Handlers | Tecan Veya, Eppendorf Research 3 neo pipette | Provides reproducible, high-throughput assay data for training and validating AI models [6]. |
| 3D Cell Culture Systems | mo:re MO:BOT Platform | Generates human-relevant, high-quality biological data on drug efficacy/toxicity, improving AI prediction accuracy [6]. |
| Protein Production Systems | Nuclera eProtein Discovery System | Rapidly produces soluble, active proteins for structural data and experimental screening, feeding AI with critical protein-ligand information [6]. |
| Data Management Platforms | Cenevo (Labguru, Mosaic), Sonrai Discovery | Unifies siloed data from instruments and assays into a structured, AI-ready format with rich metadata [6]. |
| Phenotypic Screening Platforms | Recursion's Phenomics Platform | Generates high-content cellular imaging data at scale, which is analyzed by AI to identify novel drug candidates and mechanisms [3]. |

Experimental Protocols for Validating AI Discoveries

Protocol: In Vitro Validation of an AI-Discovered Hit Compound

Objective: To experimentally confirm the biological activity and preliminary selectivity of a small-molecule hit identified through an AI-based virtual screen.

Methodology:

- Compound Acquisition/Preparation: Source or synthesize the top-ranked AI-predicted hit compounds. Prepare a 10 mM stock solution in DMSO and serially dilute it for assays.

- Primary Target Assay: Perform a dose-response experiment using a target-specific biochemical or biophysical assay (e.g., kinase activity assay, SPR binding assay) to determine the IC50 or Kd value.

- Counter-Screen for Selectivity: Test the compound against a panel of related targets (e.g., kinase panel, GPCR panel) at a single concentration (e.g., 10 µM) to assess initial selectivity.

- Cellular Efficacy Assay: Evaluate the compound's functional activity in a cell-based model relevant to the disease (e.g., a reporter gene assay or a measure of cell viability) to determine the EC50.

- Cytotoxicity Assessment: Perform a viability assay (e.g., MTT, CellTiter-Glo) on a relevant non-target cell line to gauge the preliminary therapeutic window.

Validation Criteria:

- Potency: IC50/EC50 < 1 µM in primary and cellular assays.

- Selectivity: Less than 50% inhibition at 10 µM for more than 90% of the off-targets in the panel.

- Cytotoxicity: CC50 > 10-fold over the cellular EC50.
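The three criteria above are simple threshold checks, so they can be encoded directly. The sketch below is one possible reading of them; the function name, argument names, and the example values are hypothetical, and all concentrations are assumed to be in µM.

```python
def passes_hit_criteria(ic50_um, ec50_um, cc50_um, off_target_inhibition_pct):
    """Evaluate the potency, selectivity, and cytotoxicity criteria for one hit.

    off_target_inhibition_pct: percent inhibition at 10 uM for each panel member.
    """
    # Potency: both the biochemical IC50 and the cellular EC50 must be below 1 uM.
    potency_ok = ic50_um < 1.0 and ec50_um < 1.0

    # Selectivity: more than 90% of off-targets must show under 50% inhibition.
    clean = sum(1 for pct in off_target_inhibition_pct if pct < 50.0)
    selectivity_ok = clean / len(off_target_inhibition_pct) > 0.90

    # Cytotoxicity: CC50 must exceed the cellular EC50 by more than 10-fold.
    cytotoxicity_ok = cc50_um > 10.0 * ec50_um

    return {
        "potency": potency_ok,
        "selectivity": selectivity_ok,
        "cytotoxicity": cytotoxicity_ok,
        "all": potency_ok and selectivity_ok and cytotoxicity_ok,
    }


# Illustrative panel of 20 off-targets: 19 are below 50% inhibition, 1 is not.
panel = [5.0] * 19 + [80.0]
good_hit = passes_hit_criteria(ic50_um=0.2, ec50_um=0.5, cc50_um=30.0,
                               off_target_inhibition_pct=panel)

# Same compound with a narrow therapeutic window (CC50 only 4x the EC50).
toxic_hit = passes_hit_criteria(ic50_um=0.2, ec50_um=0.5, cc50_um=2.0,
                                off_target_inhibition_pct=panel)
```

Returning the individual flags alongside the overall verdict makes it easier to see which criterion caused a compound to be dropped.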

Protocol: Benchmarking an AI ADMET Prediction Model

Objective: To validate the performance of a newly developed AI model for predicting human liver microsomal (HLM) stability against internal and external test sets.

Methodology:

- Data Curation: Compile a high-quality dataset of HLM stability measurements (e.g., % remaining after 30 min) for diverse chemical structures.

- Data Splitting: Split the data into a training set (80%) and a hold-out test set (20%). Ensure the test set is representative and not used in any model training.

- Experimental Testing: Select 50-100 compounds from an external chemical library not used in training. Experimentally measure their HLM stability using standard LC-MS/MS methods.

- Model Prediction & Comparison: Run the AI model's predictions on both the hold-out test set and the external test set.

- Statistical Analysis: Calculate performance metrics for both test sets (e.g., ROC-AUC and Precision-Recall AUC if stability is binarized into stable/unstable classes, Mean Absolute Error for the continuous % remaining readout) and compare them against baseline models (e.g., random forest, linear regression).

Validation Criteria:

- The model demonstrates a ROC-AUC > 0.8 on the external test set.

- The performance drop from the hold-out test set to the external test set is < 10%, indicating good generalizability.
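A worked example of these two criteria is sketched below. It assumes HLM stability has been binarized into stable (1) and unstable (0) labels, and it interprets the "< 10%" drop as a relative decrease in ROC-AUC; the protocol does not say whether the drop is relative or absolute, so that interpretation is an assumption. ROC-AUC is computed from its pairwise-ranking definition to keep the example dependency-free, and the scores are toy data.

```python
def roc_auc(labels, scores):
    """ROC-AUC as the probability that a positive outranks a negative (ties count 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Toy predictions (assumed data): 1 = stable, 0 = unstable.
holdout_labels = [1, 1, 1, 0, 0, 0]
holdout_scores = [0.9, 0.8, 0.7, 0.3, 0.2, 0.1]
external_labels = [1, 1, 1, 0, 0, 0]
external_scores = [0.9, 0.8, 0.4, 0.5, 0.2, 0.1]

holdout_auc = roc_auc(holdout_labels, holdout_scores)
external_auc = roc_auc(external_labels, external_scores)

# Relative drop from the hold-out set to the external set.
relative_drop = (holdout_auc - external_auc) / holdout_auc

meets_auc_criterion = external_auc > 0.8
meets_drop_criterion = relative_drop < 0.10
```

In this toy case the external ROC-AUC clears 0.8 while the relative drop exceeds 10%, which shows why the two criteria are checked separately.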

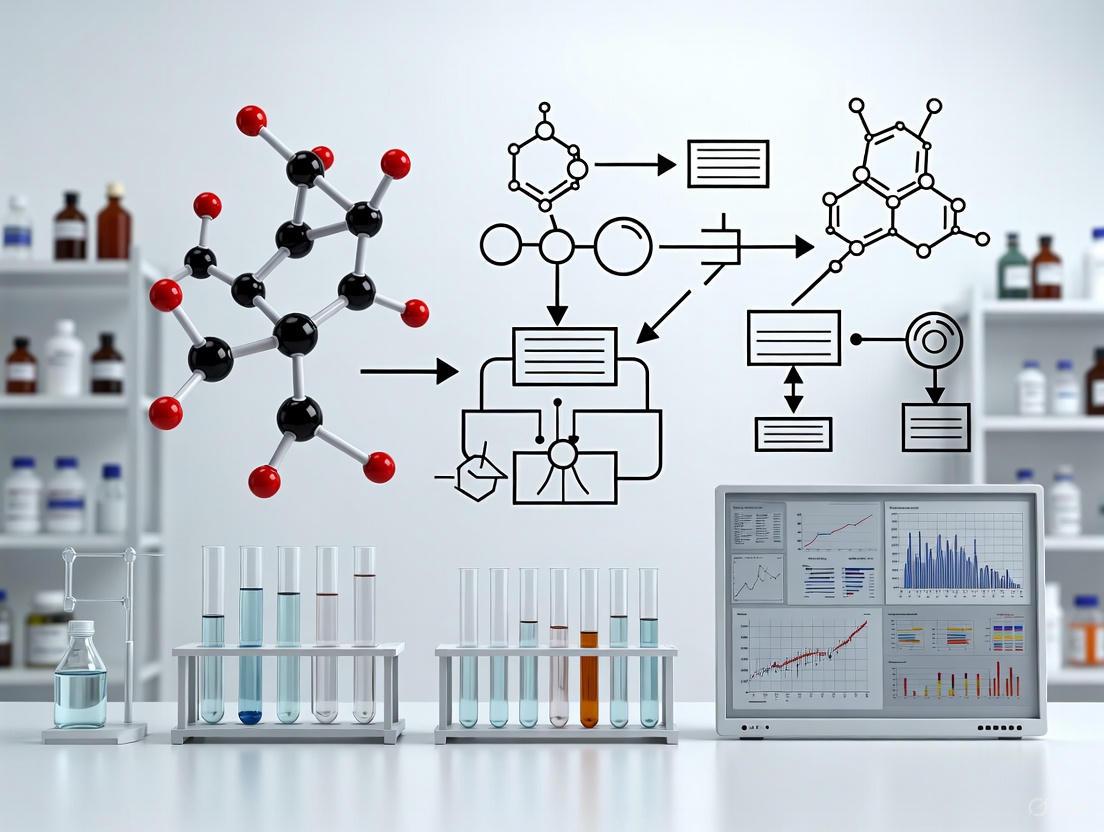

Visualizing the Workflow: From AI Prediction to Experimental Validation

The following diagram illustrates the integrated, iterative cycle that defines modern AI-driven drug discovery, bridging in silico predictions with robust experimental validation.

Frequently Asked Questions (FAQs)

FAQ 1: What exactly is the "black box" problem in the context of AI for drug discovery?

The "black box" problem refers to the inability to understand the internal reasoning process of complex AI models, particularly deep learning systems. These models provide outputs—such as predicting a compound's efficacy or toxicity—without revealing how they arrived at those conclusions [5] [7]. In pharmaceutical R&D, this opacity is a critical barrier because knowing why a model makes a certain prediction is as important as the prediction itself for building scientific trust, ensuring safety, and meeting regulatory standards [5].

FAQ 2: Why is explainability so critical for AI used in drug development compared to other industries?

Explainability is paramount in drug development due to the high-stakes nature of the field, where decisions directly impact human health and safety. Unlike other applications, AI in pharma must support rigorous scientific validation and regulatory scrutiny. Unexplainable models can obscure critical failures, such as hidden biases or incorrect assumptions, which can lead to costly clinical trial failures or patient harm [7]. Furthermore, regulators are increasingly mandating transparency for high-risk AI systems used in healthcare [5].

FAQ 3: How can biased data impact my AI-driven drug discovery project, and can Explainable AI (XAI) help?

Biased data can severely skew AI predictions, leading to drugs that are less effective or safe for patient populations underrepresented in the training data (e.g., specific genders, ethnicities, or age groups) [5]. This can perpetuate healthcare disparities and undermine the goal of personalized medicine. XAI serves as a powerful tool to uncover and mitigate these biases by providing transparency into model decision-making. It highlights which features most influence predictions, allowing researchers to identify when bias may be corrupting results and take corrective actions, such as rebalancing datasets or refining algorithms [5].

FAQ 4: What are the key regulatory considerations for using AI in drug development?

Regulatory landscapes are evolving rapidly. A key development is the EU AI Act, which classifies AI systems used in healthcare and drug development as "high-risk" [5]. This mandates strict requirements for transparency and accountability, requiring that these systems be "sufficiently transparent" so users can correctly interpret their outputs. While AI systems used solely for scientific R&D may be exempt, those influencing clinical decisions face stringent oversight [5]. The U.S. FDA is also actively developing a risk-based regulatory framework for AI in drug development, emphasizing the need for trustworthiness and robust validation [8].

FAQ 5: Are there specific XAI techniques I can implement in my research workflow today?

Yes, several XAI techniques are readily applicable in drug research. Two of the most prominent are:

- SHAP (SHapley Additive exPlanations): This method quantifies the contribution of each input feature (e.g., a molecular descriptor) to a single prediction, explaining the output of any complex model [9].

- LIME (Local Interpretable Model-agnostic Explanations): LIME approximates a complex "black box" model with a simpler, interpretable model locally around a specific prediction to explain its outcome [9].

Additionally, counterfactual explanations are gaining traction, allowing scientists to ask "what-if" questions to understand how a model's prediction would change if specific input features were altered [5].
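To make the idea behind SHAP more tangible, the sketch below computes exact Shapley values for a toy scoring function by averaging each feature's marginal contribution over every feature ordering. This is the quantity the SHAP library estimates with faster approximations; it is not the library's own API, and the brute-force approach is only feasible for a handful of features. The model, the descriptor names, and the all-zero baseline are illustrative assumptions.

```python
from itertools import permutations


def shapley_values(model, instance, baseline):
    """Exact Shapley values: average marginal contribution over all feature orderings."""
    names = list(instance)
    totals = {name: 0.0 for name in names}
    orderings = list(permutations(names))
    for order in orderings:
        current = dict(baseline)          # start from the reference compound
        previous_output = model(current)
        for name in order:
            current[name] = instance[name]  # switch one feature to its real value
            output = model(current)
            totals[name] += output - previous_output
            previous_output = output
    return {name: total / len(orderings) for name, total in totals.items()}


def toy_model(x):
    """Hypothetical activity score with one additive term and one interaction."""
    return 1.0 * x["logp"] + 2.0 * x["hbd"] * x["rings"]


compound = {"logp": 2.0, "hbd": 1.0, "rings": 3.0}
reference = {"logp": 0.0, "hbd": 0.0, "rings": 0.0}

attributions = shapley_values(toy_model, compound, reference)
```

The attributions sum to the difference between the model output for the compound and for the reference, and the interaction term is split equally between `hbd` and `rings`, which is the behaviour the "additive" in SHAP's name refers to.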

Troubleshooting Common XAI Implementation Issues

Problem 1: Model Predictions are Inconsistent with Known Domain Knowledge

- Symptoms: The AI model suggests active compounds with unstable chemical structures or prioritizes drug targets that contradict established biological pathways.

- Diagnosis: The model may be learning spurious correlations from the data rather than causally relevant biological signals.

- Solution: Use XAI to audit feature importance.

- Step 1: Employ a technique like SHAP to generate a list of the top features driving the model's predictions for a set of correct and incorrect outputs [9].

- Step 2: Have domain experts (e.g., medicinal chemists, biologists) review this list. Features with high importance that lack biological plausibility are key indicators of a model learning the wrong patterns.

- Step 3: Retrain the model using feature selection that incorporates domain knowledge, or use the insights to correct biases in the dataset itself.

Problem 2: Difficulty Convincing Stakeholders to Trust AI-Generated Leads

- Symptoms: Project managers or regulatory teams are hesitant to advance AI-prioritized candidates into expensive experimental phases due to a lack of rationale.

- Diagnosis: A trust deficit caused by the model's opacity.

- Solution: Integrate XAI reports directly into candidate review workflows.

- Step 1: For each short-listed compound, automatically generate an XAI summary.

- Step 2: This summary should include, at a minimum:

  - A SHAP summary plot visualizing global feature importance.

  - Local explanations for the specific compound using LIME or SHAP, detailing which structural fragments or properties contributed to its high score [9].

  - Counterfactual examples showing similar compounds that the model predicted would be inactive and why [5].

- Step 3: Present this dossier alongside the raw prediction score to provide a comprehensive, evidence-based case for each candidate.

Problem 3: Suspected Performance Disparities Across Patient Subgroups

- Symptoms: The model performs well on average but shows degraded accuracy for specific demographic groups, raising concerns about equitable application.

- Diagnosis: Underlying bias in the training data, such as the underrepresentation of certain populations [5].

- Solution: Proactively use XAI for bias detection and fairness auditing.

- Step 1: Stratify your validation set by key demographic variables (e.g., sex, genetic ancestry).

- Step 2: Run XAI analysis on predictions for each subgroup. Look for significant differences in the influential features between groups.

- Step 3: If bias is confirmed, techniques like data augmentation (e.g., carefully generating synthetic data for underrepresented groups) can be applied to create a more balanced dataset for retraining [5].
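Step 1 above, stratifying the validation set, can be sketched in a few lines. The example computes accuracy per subgroup and flags groups that trail the best-performing group by more than a chosen margin. The 10-percentage-point margin, the group labels, and the records are illustrative assumptions; a real audit would use metrics and thresholds appropriate to the endpoint.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """records: iterable of (group, y_true, y_pred) tuples."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for group, y_true, y_pred in records:
        totals[group] += 1
        hits[group] += int(y_true == y_pred)
    return {group: hits[group] / totals[group] for group in totals}


def flag_underperforming(per_group, max_gap=0.10):
    """Return groups whose accuracy trails the best group by more than max_gap."""
    best = max(per_group.values())
    return sorted(g for g, acc in per_group.items() if best - acc > max_gap)


# Toy validation records (assumed data): (subgroup, true label, predicted label).
validation_records = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 0),
    ("group_b", 1, 0), ("group_b", 0, 0), ("group_b", 1, 1), ("group_b", 0, 1),
]

per_group_accuracy = accuracy_by_group(validation_records)
flagged_groups = flag_underperforming(per_group_accuracy)
```

Groups returned by `flag_underperforming` are the natural starting point for the per-subgroup XAI analysis in Step 2.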

Key Quantitative Data in Explainable AI Research

Table 1: Bibliometric Analysis of XAI in Drug Research (2002-2024)

| Country | Total Publications (TP) | Total Citations (TC) | TC/TP (Quality Indicator) | Publication Year Start |
|---|---|---|---|---|
| China | 212 | 2949 | 13.91 | 2013 |
| USA | 145 | 2920 | 20.14 | 2006 |
| Germany | 48 | 1491 | 31.06 | 2002 |
| UK | 42 | 680 | 16.19 | 2007 |
| Switzerland | 19 | 645 | 33.95 | 2006 |
| Thailand | 19 | 508 | 26.74 | 2015 |

Source: Adapted from a 2025 bibliometric study analyzing 573 representative articles [9].

Table 2: Impact of AI and XAI on Drug Discovery Metrics

| Metric | Traditional Drug Discovery | AI-Accelerated Discovery | Role of XAI |
|---|---|---|---|
| Timeline for Novel Compound Design | ~5-6 years [10] | Can be as low as 46 days [10] | Provides rationale for generated structures, speeding up validation [5]. |
| Cost per Approved Compound | Exceeds $2.6 billion [10] | Significant reduction in early R&D costs [10] | Reduces risk of late-stage failure by ensuring model decisions are sound [5]. |
| Key Application: Drug Repurposing | Relies on serendipity and lengthy literature review | AI identified Baricitinib for COVID-19 in early 2020 [10] | Uncovers hidden connections and provides evidence for the new therapeutic application [11]. |

Experimental Protocol: Validating an AI-Discovered Compound with XAI

This protocol outlines the steps to experimentally validate a hit compound identified by an AI model, using XAI insights to guide the process.

Objective: To confirm the predicted activity and mechanism of action of an AI-generated CDK20 inhibitor for idiopathic pulmonary fibrosis (inspired by a real-world case [10]).

Materials and Reagents:

- AI-Generated Hit Compound: The small molecule designed by the generative AI model (e.g., Insilico Medicine's Chemistry42 platform [10]).

- Control Compound: A known inactive compound with similar chemical properties.

- In vitro Model: Human cell lines relevant to the disease pathology (e.g., lung fibroblasts).

- Target Protein: Purified CDK20 protein.

- Assay Kits: Cell viability assay (e.g., MTT), apoptosis detection kit, kinase activity assay.

- XAI Tool: Software/library capable of running SHAP or LIME analysis (e.g., SHAP Python library).

Methodology:

In silico Rationalization with XAI:

- Input: The AI model and the proposed hit compound.

- Action: Run SHAP analysis to determine which molecular features (e.g., specific functional groups, topological torsion) the model deemed most important for predicting CDK20 inhibition and low cytotoxicity.

- Output: A ranked list of critical features and their impact on the prediction. This forms the "hypothesis" for why the compound should work [5] [9].

Biochemical Validation:

- Experiment: Conduct a kinase activity assay using the purified CDK20 protein.

- Measurement: Measure the IC50 value of the hit compound and compare it to the control.

- XAI Integration: If the XAI analysis highlighted specific binding interactions, consider conducting molecular dynamics simulations to visually confirm these interactions.

Cellular Validation:

- Experiment: Treat the relevant human cell lines with the hit compound and control.

- Measurements:

- Assess anti-fibrotic activity (e.g., reduction in collagen deposition).

- Measure cell viability and apoptosis to confirm the predicted low cytotoxicity.

- XAI Integration: If the model's prediction of low toxicity was driven by specific metabolic features, design targeted assays to probe that specific metabolic pathway.

Data Correlation and Iteration:

- Correlate the experimental results with the initial XAI insights. If the compound is active, the XAI features should align with the biological mechanism. If it fails, the XAI analysis can help diagnose the failure, guiding the next round of AI-driven compound generation [5].

Visualizing the XAI Workflow for Drug Discovery

The diagram below illustrates a robust workflow integrating XAI into the AI-driven drug discovery pipeline to enhance transparency and reliability.

XAI Integration Workflow in Drug Discovery

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Tools for Explainable AI in Pharmaceutical Research

| Tool / Technique | Type | Primary Function in XAI | Example Use Case in Drug Discovery |
|---|---|---|---|
| SHAP | Software Library | Explains the output of any ML model by quantifying each feature's contribution to a prediction [9]. | Identifying which molecular descriptors most strongly influenced a toxicity prediction. |
| LIME | Software Library | Creates a local, interpretable model to approximate the predictions of any black-box classifier [9]. | Understanding why a specific compound was classified as "active" by a complex deep learning model. |
| Counterfactual Explanations | Methodology | Generates "what-if" scenarios to show how minimal changes to input features would alter the model's output [5]. | Guiding medicinal chemists on how to modify a lead compound to reduce predicted off-target effects. |
| Knowledge Graphs | Data Structure | Integrates disparate biological data to create a network of relationships, providing context for AI predictions [10]. | Validating an AI-predicted drug target by examining its connected pathways and entities in the graph. |
| AlphaFold | AI System | Provides highly accurate protein structure predictions, offering a structural basis for interpreting AI models [11]. | Visualizing how an AI-designed small molecule is predicted to bind to its protein target. |

The integration of Artificial Intelligence (AI) into drug discovery and development represents a paradigm shift, offering the potential to dramatically accelerate target identification, compound screening, and clinical trial design [12]. However, this technological revolution introduces unprecedented challenges in regulatory oversight, including the "black box" problem of complex AI models, pervasive risks of data bias, and the need for ongoing performance monitoring [5] [13]. For researchers and scientists, navigating the evolving regulatory expectations is crucial for ensuring that AI-driven discoveries are both innovative and compliant. This technical support guide provides a comparative analysis of the U.S. Food and Drug Administration (FDA) and European Medicines Agency (EMA) approaches to AI oversight in 2025, framed within the broader thesis of improving reliability and transparency in AI-driven research. By understanding these frameworks, research professionals can better design experiments, implement AI tools, and prepare for regulatory interactions throughout the drug development lifecycle.

Comparative Analysis: FDA vs. EMA Regulatory Philosophies

The FDA and EMA share the common goal of ensuring that AI technologies used in pharmaceutical development are safe and effective, but they have developed distinct regulatory philosophies and implementation frameworks [14].

Foundational Regulatory Approaches

FDA's Flexible, Risk-Based Model: The FDA has adopted a flexible, risk-based framework that emphasizes a "Total Product Life Cycle (TPLC)" approach and "Good Machine Learning Practice (GMLP)" principles [15] [14]. This approach allows for case-by-case evaluation and encourages early engagement between sponsors and the agency. The FDA focuses significantly on post-market surveillance and continuous monitoring, requiring that AI models demonstrate reliability and effectiveness over time, even after deployment [16] [14].

EMA's Structured, Risk-Tiered Framework: The EMA has established a more formalized and structured regulatory architecture based on a detailed risk classification system [17] [13]. Its 2024 Reflection Paper outlines specific requirements for "high patient risk" and "high regulatory impact" applications [13]. The EMA places greater emphasis on rigorous upfront validation and requires comprehensive documentation and clinical evidence before AI tools can be incorporated into drug development processes [14].

Side-by-Side Comparison of Key Regulatory Elements

Table: Comparative Overview of FDA and EMA AI Oversight for Drug Development

| Regulatory Element | U.S. FDA Approach | European EMA Approach |
|---|---|---|
| Core Philosophy | Flexible, risk-based, product life cycle-focused [15] [14] | Structured, risk-tiered, precautionary [13] [14] |
| Primary Guidance | Draft Guidance (Jan 2025) on AI in drug development [18] | Reflection Paper on AI in the medicinal product lifecycle (2024) [17] |
| Risk Classification | Based on device risk classification (Class I-III) [15] | Focus on "high patient risk" and "high regulatory impact" [13] |
| Validation Emphasis | Context-specific validation with ongoing monitoring [16] | Rigorous pre-market validation and documentation [14] |
| Model Changes | Predetermined Change Control Plans (PCCPs) [15] | Prohibits incremental learning during trials; frozen models required [13] |
| Transparency | Explainability required to the extent possible [16] | Preference for interpretable models; justification needed for black-box [13] |
| Regulatory Engagement | Encourages early and ongoing stakeholder engagement [14] | Formal consultations via Innovation Task Force, Scientific Advice [13] |

Technical Requirements: Building Compliant AI Systems

Data Integrity and Governance

Both agencies emphasize data integrity as a foundational requirement for AI systems used in regulated drug development environments.

FDA Data Integrity Expectations: The FDA requires that data used in AI models complies with ALCOA+ principles (Attributable, Legible, Contemporaneous, Original, Accurate, plus Complete, Consistent, Enduring, and Available) [16]. This includes maintaining robust data lineage, version control, and immutable audit trails throughout the model lifecycle.
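One common way to approximate an immutable audit trail in software is a hash chain, in which each entry stores the hash of the previous one so that later edits become detectable. The sketch below illustrates that idea only; the `AuditTrail` class and its fields are hypothetical, and a hash chain makes tampering evident rather than impossible, so it is not by itself a claim of ALCOA+ compliance.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Deterministic SHA-256 over the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditTrail:
    """Append-only log in which every entry commits to its predecessor."""

    def __init__(self):
        self._entries = []

    def append(self, actor: str, action: str, timestamp: str) -> None:
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS_HASH
        body = {"actor": actor, "action": action,
                "timestamp": timestamp, "prev_hash": prev_hash}
        self._entries.append({**body, "hash": _digest(body)})

    def verify(self) -> bool:
        """Recompute every hash and check that the chain links are intact."""
        prev_hash = GENESIS_HASH
        for entry in self._entries:
            body = {k: entry[k] for k in ("actor", "action", "timestamp", "prev_hash")}
            if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True


# Illustrative usage with made-up actors and timestamps.
trail = AuditTrail()
trail.append("analyst_1", "uploaded training set v3", "2025-01-15T09:00:00Z")
trail.append("analyst_2", "retrained model 0.3.1", "2025-01-15T11:30:00Z")
chain_is_valid = trail.verify()
```

Because each hash covers the previous entry's hash, changing any historical record invalidates every entry after it, which is what lets a reviewer trust the recorded lineage.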

EMA Data Quality Framework: The EMA's updated Annex 11 (2025) places Quality Risk Management at the center of computerized system oversight, requiring continuous validation and controlled data governance systems [19]. Data sources must be thoroughly documented, with explicit assessment of data representativeness and strategies to address class imbalances [13].

Model Transparency and Explainability

Overcoming the "black box" problem is a central concern for both regulators, though with nuanced expectations.

FDA Explainability Requirements: The FDA mandates documentation of what data trained the model, how features were selected, and the model's decision logic to the extent possible [16]. The agency recognizes that complete explainability may not always be feasible but requires sufficient transparency for regulatory assessment.

EMA Interpretability Standards: The EMA explicitly states a preference for interpretable models but acknowledges that black-box models may be justified by superior performance [13]. In such cases, developers must provide explainability metrics and thorough documentation of model architecture and performance characteristics.

Bias Detection and Mitigation

Algorithmic bias represents a significant risk to patient safety and generalizability of research findings, with both agencies implementing requirements to address this challenge.

FDA Bias Mitigation Framework: The FDA requires models to demonstrate fairness assessments, bias detection mechanisms, corrective measures, and ongoing monitoring [16]. The agency's recent warning letters emphasize the importance of representative training data and performance across diverse patient populations.

EMA Bias Prevention Strategy: The EMA mandates systematic assessment of data representativeness and requires strategies to address class imbalances and potential discrimination [13]. The framework emphasizes proactive identification of bias risks, particularly for applications affecting safety or regulatory decision-making.

Table: Essential Components for AI Bias Mitigation in Drug Development

| Component | Implementation Requirements | Validation Approach |
|---|---|---|
| Data Representativeness | Documentation of demographic, clinical, and genetic diversity in training data [5] | Statistical analysis of feature distribution across subpopulations [13] |
| Bias Detection | Implementation of fairness metrics and disparate impact analysis [16] | Performance testing across relevant patient subgroups [13] |
| Bias Mitigation | Techniques such as reweighting, adversarial debiasing, or synthetic data augmentation [5] | Comparative analysis of model performance pre- and post-mitigation [13] |
| Ongoing Monitoring | Continuous performance tracking across deployment environments [20] | Statistical process control for detecting performance drift [16] |
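Of the mitigation techniques listed in the table, reweighting is the simplest to illustrate. The sketch below uses plain inverse-frequency weights so that every subgroup contributes the same total weight during training; this is one basic variant chosen for illustration, not a method prescribed by either agency, and the group labels are toy data.

```python
from collections import Counter


def inverse_frequency_weights(groups):
    """Per-sample weights that give every group the same total weight.

    Each sample in group g receives N / (K * n_g), where N is the number of
    samples, K the number of groups, and n_g the size of group g.
    """
    counts = Counter(groups)
    n_total = len(groups)
    n_groups = len(counts)
    return [n_total / (n_groups * counts[g]) for g in groups]


# Toy cohort (assumed data): group_b is underrepresented 3 to 1.
sample_groups = ["group_a"] * 6 + ["group_b"] * 2
weights = inverse_frequency_weights(sample_groups)

# Total weight carried by each group after reweighting.
weight_per_group = {}
for group, weight in zip(sample_groups, weights):
    weight_per_group[group] = weight_per_group.get(group, 0.0) + weight
```

Weights of this kind can typically be passed to a learner's per-sample weight argument; comparing subgroup performance before and after retraining then provides the pre- and post-mitigation analysis named in the table.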

Experimental Protocols: Methodologies for Compliant AI Research

AI Model Validation Framework

A robust validation strategy is essential for regulatory compliance. The following workflow outlines key stages in developing AI models for regulated drug development environments.

Figure 1. AI Model Validation Workflow for Regulatory Compliance

The validation workflow consists of these critical phases:

Context of Use (COU) Definition: Precisely specify the AI's intended function within the drug development process. This forms the basis for all subsequent validation activities and determines the regulatory scrutiny level [18].

Data Curation and Representativeness Assessment: Implement rigorous processes to document data provenance, transformation pipelines, and assess representativeness across relevant patient demographics and clinical conditions [13].

Prospective Performance Testing: Conduct validation using predefined performance metrics and statistical boundaries established in the validation protocol. Testing should reflect real-world operating conditions [13].

Comprehensive Documentation: Maintain detailed records of model architecture, training data, hyperparameters, and performance characteristics. Documentation must support regulatory assessment and facilitate explainability [16].

Post-Market Performance Monitoring: Implement continuous monitoring systems to detect performance degradation, data drift, or concept drift in real-world deployment environments [20].
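
As one possible illustration of the data drift check in the monitoring phase, the sketch below computes a Population Stability Index (PSI) between a reference sample of one input feature and a newer sample. PSI is swapped in here as a common drift statistic; the source does not prescribe it. The bin edges and sample values are hypothetical.

```python
import math

def bin_fractions(values, bin_edges, eps=1e-6):
    """Share of values per bin; empty bins are floored at eps to keep the log finite."""
    counts = [0] * (len(bin_edges) - 1)
    for v in values:
        for i in range(len(counts)):
            is_last = i == len(counts) - 1
            # The last bin is closed on the right so the top edge is included.
            upper_ok = v <= bin_edges[i + 1] if is_last else v < bin_edges[i + 1]
            if bin_edges[i] <= v and upper_ok:
                counts[i] += 1
                break
    total = sum(counts)
    if total == 0:
        raise ValueError("no values fall inside the bin edges")
    return [max(c / total, eps) for c in counts]

def population_stability_index(reference, current, bin_edges):
    """PSI = sum over bins of (current - reference) * ln(current / reference)."""
    ref = bin_fractions(reference, bin_edges)
    cur = bin_fractions(current, bin_edges)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))

# Hypothetical feature values at training time and after deployment.
edges = [0.0, 0.25, 0.5, 0.75, 1.0]
training_sample = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]
deployed_sample = [0.6, 0.7, 0.8, 0.9, 0.9, 0.95, 0.85, 0.7]

psi_same = population_stability_index(training_sample, training_sample, edges)
psi_shift = population_stability_index(training_sample, deployed_sample, edges)
print(psi_same, psi_shift)
```

Identical distributions give a PSI of zero, and larger values indicate a larger shift. Rules of thumb for alert thresholds exist in other industries, but the trigger for corrective action should come from the monitoring plan agreed for the specific context of use.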

Digital Twin Implementation Protocol

The use of "digital twins" – computational replicas of patients or trial cohorts – represents an emerging application of AI in clinical development that illustrates regulatory adaptation [13].

Methodology for Validated Digital Twin Deployment:

Model Specification: Define the mathematical framework and underlying assumptions of the digital twin model, including how it will emulate control-arm outcomes.

Data Integration Pipeline: Establish validated processes for integrating multimodal data sources (e.g., clinical records, genomic data, real-world evidence) while maintaining data integrity.

Comparative Validation: Execute prospective studies comparing digital twin predictions against traditional control arms where ethically feasible, with predefined success criteria [13].

Uncertainty Quantification: Implement robust methods to quantify and communicate uncertainty in digital twin predictions, including confidence intervals and sensitivity analyses.

Regulatory Engagement: Pursue early regulatory consultation through appropriate channels (e.g., FDA's Q-Submission program, EMA's Innovation Task Force) to align on validation requirements [13].
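
The uncertainty quantification step above could, for example, report a bootstrap confidence interval for the mean difference between digital twin predictions and observed control-arm outcomes. The sketch below is a minimal percentile bootstrap using only the standard library. The difference values are invented, and the percentile bootstrap is one option among several rather than a method the source specifies.

```python
import random
import statistics

def bootstrap_mean_ci(values, n_resamples=2000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for the mean of `values`."""
    rng = random.Random(seed)  # fixed seed so the result is reproducible
    n = len(values)
    means = sorted(
        statistics.fmean(rng.choices(values, k=n)) for _ in range(n_resamples)
    )
    lo_idx = int((alpha / 2) * n_resamples)
    hi_idx = int((1 - alpha / 2) * n_resamples) - 1
    return means[lo_idx], means[hi_idx]

# Hypothetical differences: twin-predicted outcome minus observed control outcome.
differences = [-1.2, 0.4, 0.8, -0.3, 1.1, 0.2, -0.6, 0.9, 0.1, -0.4]

ci_low, ci_high = bootstrap_mean_ci(differences)
print(statistics.fmean(differences), (ci_low, ci_high))
```

An interval that comfortably contains zero is consistent with the twin tracking the control arm on average. The predefined success criteria should state in advance how wide an interval is acceptable.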

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Components for AI-Driven Drug Discovery Research

| Tool/Component | Function | Regulatory Considerations |
|---|---|---|
| Explainable AI (xAI) Libraries | Provide interpretability for complex models through feature importance, counterfactual explanations, and model distillation [5] | Must be validated for use in regulated contexts; documentation required for explainability metrics [13] |
| Bias Detection Frameworks | Identify and quantify potential algorithmic bias across protected attributes and patient subgroups [16] | Required for fairness assessments; should align with FDA and EMA expectations for demographic representation [13] |
| Data Version Control Systems | Track dataset revisions, maintain provenance, and ensure reproducibility of model training [19] | Essential for ALCOA+ compliance and data integrity requirements [16] |
| Model Monitoring Platforms | Detect performance degradation, data drift, and concept drift in deployed models [20] | Must be included in post-market surveillance plans with defined triggers for corrective action [16] |
| Synthetic Data Generators | Create artificially balanced datasets to address class imbalances and improve model generalizability [5] | Use requires careful validation; synthetic data must accurately represent underlying biological relationships [13] |

Frequently Asked Questions: Troubleshooting AI Compliance Challenges

Q1: Our AI model for predicting compound efficacy shows excellent overall performance but exhibits significant performance variation across ethnic subgroups. How should we address this before regulatory submission?

A1: This indicates potential algorithmic bias that must be addressed prior to submission. Implement the following troubleshooting protocol:

- Conduct comprehensive bias auditing using disaggregated analysis across all relevant demographic and clinical subgroups [5].

- Apply bias mitigation techniques such as reweighting, adversarial debiasing, or synthetic data augmentation to improve fairness [5].

- Document all mitigation efforts and validate model performance separately in each subgroup to demonstrate equitable performance [13].

- Prepare a bias impact statement for regulatory submission that acknowledges the initial limitation, describes mitigation approaches, and presents post-mitigation performance metrics [16].
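
Of the mitigation techniques listed above, reweighting is the simplest to sketch. The example below assigns each training sample an inverse-frequency weight so that every subgroup contributes the same total weight to the loss. The function name and group labels are hypothetical, and this is only one of several reweighting schemes.

```python
from collections import Counter

def inverse_frequency_weights(groups):
    """Weight each sample by n / (number_of_groups * size_of_its_group)."""
    counts = Counter(groups)
    n_groups = len(counts)
    n = len(groups)
    return [n / (n_groups * counts[g]) for g in groups]

# Hypothetical training set with one subgroup under-represented 4:1.
groups = ["X"] * 8 + ["Y"] * 2
weights = inverse_frequency_weights(groups)

total_x = sum(w for g, w in zip(groups, weights) if g == "X")
total_y = sum(w for g, w in zip(groups, weights) if g == "Y")
print(weights[0], weights[-1], total_x, total_y)
```

Many training APIs accept per-sample weights of this kind. Whether reweighting actually closes the subgroup gap still has to be confirmed with the per-subgroup validation described above.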

Q2: We need to update our AI model with new training data to improve performance. What regulatory considerations apply to model retraining?

A2: Model updates trigger different regulatory requirements based on the agency and significance of changes:

- For FDA submissions, implement a Predetermined Change Control Plan (PCCP) that proactively outlines the scope of anticipated modifications and the validation procedures that will be used to ensure continued safety and effectiveness [15].

- For EMA applications, note that substantial model changes during clinical development may require submission of a substantial modification notice. The EMA generally prohibits incremental learning during pivotal trials, requiring frozen, documented models [13].

- For both agencies, maintain rigorous version control and document all retraining activities, including the rationale for updates, data used, and performance changes [19].

Q3: How can we demonstrate explainability for our complex deep learning model when complete interpretability isn't technically feasible?

A3: When full interpretability isn't achievable, implement a layered explainability strategy:

- Develop local explainability approaches that provide insight into model decisions for individual predictions rather than global model behavior [5].

- Implement counterfactual explanations that show how changes to input features would alter model outputs, providing biological insights for researchers [5].

- Conduct comprehensive sensitivity analysis to identify which input features most significantly impact predictions [13].

- Document the model's decision logic to the extent possible, including feature selection rationale and performance characteristics across diverse scenarios [16].

- For the EMA, provide justification for using a black-box model by demonstrating its superior performance compared to more interpretable alternatives [13].
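
The sensitivity analysis in the list above can be sketched as a one-at-a-time perturbation: nudge each input feature, hold the others fixed, and record how much the prediction moves. The model below is a hypothetical linear stand-in with invented feature names. A real deep learning model would replace `toy_model`, and features are assumed to be on comparable (for example standardized) scales.

```python
def one_at_a_time_sensitivity(predict, baseline, delta=0.1):
    """Approximate change in prediction per unit change of each feature."""
    base = predict(baseline)
    effects = {}
    for name, value in baseline.items():
        perturbed = dict(baseline)  # copy so other features stay at baseline
        perturbed[name] = value + delta
        effects[name] = (predict(perturbed) - base) / delta
    return effects

def toy_model(x):
    """Hypothetical stand-in for a trained model (not a real efficacy predictor)."""
    return 2.0 * x["feature_a"] - 0.5 * x["feature_b"] + 0.0 * x["feature_c"]

baseline = {"feature_a": 1.0, "feature_b": 0.2, "feature_c": -0.7}
effects = one_at_a_time_sensitivity(toy_model, baseline)

# Rank features by the magnitude of their effect on the prediction.
ranking = sorted(effects, key=lambda name: abs(effects[name]), reverse=True)
print(effects, ranking)
```

This approach ignores interactions between features, so it complements rather than replaces local attribution methods and counterfactual explanations.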

Q4: What are the key differences in real-world performance monitoring expectations between the FDA and EMA?

A4: While both agencies emphasize post-market monitoring, their approaches differ in focus:

- The FDA places stronger emphasis on continuous real-world performance monitoring and has issued specific requests for public comment on approaches for measuring AI-enabled device performance in real-world settings [20]. The FDA expects ongoing lifecycle evaluation with drift monitoring and retraining controls [16].

- The EMA focuses more on structured post-authorization studies and integrates monitoring within established pharmacovigilance systems [13]. While allowing more flexible AI deployment post-authorization, the EMA requires ongoing validation and performance monitoring [13].

- Both agencies expect defined triggers for corrective action when performance degradation is detected, and comprehensive documentation of all monitoring activities [16] [13].

Q5: Our AI tool is used exclusively in early-stage drug discovery for target identification. Does it fall under FDA or EMA regulatory oversight?

A5: The regulatory status depends on the context and eventual use:

- AI used solely for early research with no immediate impact on patient care or regulatory decisions typically faces less regulatory scrutiny [13].

- The EU AI Act explicitly excludes AI systems used "for the sole purpose of scientific research and development" from its scope, provided they are not used in clinical management [5].

- The FDA focuses oversight on AI that "influences regulated decisions" related to safety, effectiveness, or quality claims [16].

- However, if research outputs eventually support regulatory submissions, you must maintain documentation and validation records sufficient to demonstrate credibility, even for early-stage tools [18]. Implement "fit-for-purpose" validation based on the potential risk and impact of AI-driven decisions [13].

Technical Support Center: Troubleshooting AI in Drug Discovery

This technical support center provides practical, evidence-based guidance for researchers navigating the challenges of implementing AI in drug discovery pipelines. The following troubleshooting guides and FAQs address specific, high-frequency issues encountered in real-world experimental settings.

Frequently Asked Questions (FAQs)

Q1: Our AI model identified a promising target, but the resulting drug candidate failed in preclinical testing due to unexpected toxicity. What are the most likely causes?

- A: This common failure point often stems from one of three issues [21] [5]:

- Biological Complexity Over-simplification: The AI model may have focused on a single pathway without fully accounting for the target's role in other biological systems (e.g., a kinase target relevant in fibrosis also playing a role in cancer) [22]. Solution: Implement causal AI models that infer biological mechanism, not just correlation, and validate against multi-omic datasets [23].

- Data Bias in Training Sets: The data used to train the target identification model may have been unrepresentative or lacked sufficient toxicology annotations [5]. Solution: Adopt FAIR (Findable, Accessible, Interoperable, Reusable) data principles and use specialized Laboratory Information Management Systems (LIMS) to ensure data quality and structure from the outset [24].

- "Black Box" Decisions: The model may have made a correct prediction for the wrong, unverifiable reasons [5]. Solution: Integrate Explainable AI (xAI) tools that provide counterfactual explanations (e.g., "How would the prediction change if this molecular feature were altered?") to build trust and uncover flawed logic before experimental validation [5].

Q2: We are preparing an Investigational New Drug (IND) application for an AI-discovered molecule. What regulatory challenges should we anticipate?

- A: Regulatory bodies are developing specific frameworks for AI in drug development [25] [23] [26]. Key considerations are:

- Model Transparency: The U.S. Food and Drug Administration (FDA) has released draft guidance emphasizing a risk-based framework for assessing AI model credibility. Be prepared to explain your model's rationale, not just its output [25] [5] [26].

- Data Provenance: You must document the source, quality, and handling of all data used to train and validate your AI models [24] [26].

- Real-World Evidence: The FDA and EMA are increasingly open to Real-World Evidence (RWE) and adaptive trial designs supported by AI. Engage with regulators early through pre-submission meetings to align on your AI strategy and data requirements [23].

Q3: Our generative AI designed a novel molecule with excellent predicted binding affinity, but it has poor solubility and metabolic stability. How can we improve the chemical realism of AI-generated compounds?

- A: This indicates a disconnect between the AI's optimization goal and real-world drug-like properties [21].

- Refine the Reward Function: Ensure your generative model's optimization algorithm penalizes compounds with out-of-range lipophilicity (e.g., LogP), poor predicted solubility, known metabolic soft spots, or poor chemical stability [11] [26].

- Integrate Multi-Objective Optimization: Use AI platforms that balance multiple parameters simultaneously, such as binding affinity, solubility, selectivity, and predicted ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity), rather than optimizing for a single parameter like potency [22] [26].

- Leverage Specialized Tools: Incorporate in silico ADMET prediction tools early in the design cycle to filter out non-viable molecules before synthesis [26].
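
A minimal sketch of the reward-function idea above: combine several predicted properties into one score and subtract a penalty when LogP leaves an acceptable window. The weights, the LogP window, the penalty size, and the property values are all hypothetical placeholders, and each property other than LogP is assumed to be pre-scaled to the range 0 to 1.

```python
def composite_score(props, weights, logp_window=(1.0, 3.0), logp_penalty=0.5):
    """Weighted sum of scaled properties minus a penalty for out-of-window LogP."""
    weighted = sum(weights[k] * props[k] for k in weights)
    low, high = logp_window
    # Distance outside the window; zero when LogP is inside it.
    excess = max(low - props["logp"], 0.0, props["logp"] - high)
    return weighted - logp_penalty * excess

# Hypothetical weights for the optimization objectives.
weights = {"affinity": 0.4, "solubility": 0.3, "stability": 0.3}

# Two hypothetical generated molecules with predicted properties.
potent_but_greasy = {"affinity": 0.95, "solubility": 0.2, "stability": 0.3, "logp": 5.5}
balanced = {"affinity": 0.8, "solubility": 0.7, "stability": 0.7, "logp": 2.5}

score_potent = composite_score(potent_but_greasy, weights)
score_balanced = composite_score(balanced, weights)
print(score_potent, score_balanced)
```

With these made-up numbers the balanced molecule outscores the more potent one, which is the behavior a single-objective potency reward would miss.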

Q4: Our clinical trial for an AI-discovered drug failed to meet its primary endpoint. Did AI fail, or is there value in the resulting data?

- A: A failed trial does not necessarily mean the AI approach was invalid [21] [23].

- Conduct Deep Subgroup Analysis: Use biology-first AI to re-analyze trial data. Identify patient subgroups (based on biomarkers, genomics, or proteomics) that did respond to the therapy. This can rescue a program by refining the patient population for a subsequent trial [23].

- Learn for the Next Program: Even unsuccessful programs generate valuable data. Publish timelines, costs, and failure analyses to help establish industry benchmarks. This transparency helps the entire field improve by understanding the failure modes of AI-driven discovery [21].

Troubleshooting Guide: Data and Bias Management

A primary source of experimental failure in AI-driven discovery is biased or poor-quality data. The following workflow provides a systematic protocol for identifying and mitigating these issues.

Diagram 1: A systematic workflow for diagnosing and correcting bias in AI models using Explainable AI (xAI).

Experimental Protocol: Mitigating Gender Bias in a Predictive Model for Drug Dosage

- Objective: To identify and correct a gender-based performance bias in a model predicting optimal drug dosage.

- Background: Models trained on historically male-dominated clinical trial data may perform poorly for female patients, leading to adverse events [5].

- Methodology [5]:

- Interrogate with xAI: Use SHAP (SHapley Additive exPlanations) or LIME (Local Interpretable Model-agnostic Explanations) on your initial model. This will reveal which features are most influential in its predictions.

- Check for Imbalance: Audit the training dataset's demographic composition. A significant under-representation of female patients is a clear risk factor.

- Data Augmentation: If an imbalance is found, employ techniques like synthetic data generation (using Generative Adversarial Networks) to create a balanced dataset that preserves biological reality without compromising patient privacy.

- Retrain & Validate: Retrain the model on the augmented, balanced dataset. Crucially, validate its performance on a separate, balanced hold-out test set, reporting performance metrics disaggregated by sex.

- Expected Outcome: A model whose predictive accuracy and dosage recommendations are equitable across demographic groups, thereby improving patient safety and clinical trial success rates.
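
The final validation step, reporting metrics disaggregated by sex, might look like the following sketch for a dosage regression model. Mean absolute error is used as an example metric, and the patient data are invented for illustration.

```python
from collections import defaultdict

def mae_by_group(groups, y_true, y_pred):
    """Mean absolute error computed separately for each group label."""
    errors = defaultdict(list)
    for g, t, p in zip(groups, y_true, y_pred):
        errors[g].append(abs(t - p))
    return {g: sum(e) / len(e) for g, e in errors.items()}

# Hypothetical hold-out set: sex, optimal dose (mg), and model-predicted dose (mg).
sex = ["F", "F", "F", "M", "M", "M"]
true_dose = [50, 60, 55, 70, 80, 75]
pred_dose = [60, 72, 63, 71, 79, 76]

mae = mae_by_group(sex, true_dose, pred_dose)
print(mae)
```

An aggregate error over all six patients would hide the fact that, in this toy example, predictions for female patients are off by ten times as much as those for male patients.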

Quantitative Landscape of AI-Discovered Drugs in Clinical Development

Concrete progress is best measured by the advancement of AI-discovered drugs through the clinical pipeline. The following tables summarize the current state as of 2025.

Table 1: AI Drug Clinical Pipeline Highlights (2025)

| Drug Candidate | Company | Target | Indication | Key 2025 Milestone | Regulatory Status |
|---|---|---|---|---|---|
| Rentosertib (ISM001-055) [27] [22] | Insilico Medicine | TNIK | Idiopathic Pulmonary Fibrosis | Phase IIa: +98.4 mL FVC gain at 60 mg [27] | Orphan Drug (FDA) [27] |
| ISM5411 [27] | Insilico Medicine | PHD1/2 | Ulcerative Colitis | Phase I: safe, gut-restricted PK profile [27] | — |
| ISM6331 [27] | Insilico Medicine | Pan-TEAD | Mesothelioma / Hippo-pathway tumours | First patient dosed in global Phase I [27] | Orphan Drug (FDA) [27] |
| REC-994 [25] [22] | Recursion Pharmaceuticals | N/A | Cerebral Cavernous Malformation | Phase II: safety endpoints met, long-term efficacy not confirmed [25] [22] | — |
| DSP-0038 [26] | N/A | N/A | N/A | Advancing in clinical trials [26] | — |

Table 2: AI-Driven Clinical Trial Success Rates (2024-2025 Analysis)

| Trial Phase | Industry Average Success Rate | AI-Driven Candidate Success Rate | Key Factors for AI Performance |
|---|---|---|---|
| Phase I | 40–65% [22] [26] | 80–90% [22] [26] | Superior prediction of safety and drug-like properties in silico [26]. |
| Phase II | ~40% [22] | ~40% (on par) [22] | Efficacy remains a complex biological challenge; AI helps with patient stratification [21] [23]. |
| Phase III | N/A | Limited data | No novel AI-discovered drug had achieved clinical approval as of 2024 [21]. |

Experimental Protocols: From Target Identification to Clinical Trials

Protocol: End-to-End AI-Driven Drug Discovery

The following workflow, exemplified by companies like Insilico Medicine, outlines a proven protocol for generating a preclinical drug candidate.

Diagram 2: A closed-loop AI pipeline for integrated target discovery and molecule design.

Detailed Methodology [22]:

- Target Identification: Use an AI platform (e.g., PandaOmics) to analyze complex biological datasets (genomics, transcriptomics, proteomics). The AI identifies and ranks novel disease-associated targets (e.g., TNIK for idiopathic pulmonary fibrosis) based on genetic evidence, pathway analysis, and literature mining.

- Target Validation: The AI-prioritized target is validated experimentally in relevant cellular and animal models of the disease to confirm its functional role.

- Generative Molecule Design: A generative chemistry AI (e.g., Chemistry42) uses multiple AI models in parallel to design novel small-molecule structures that are predicted to bind to the validated target. The AI optimizes for potency, selectivity, and drug-likeness.

- In Silico Screening & Optimization: The generated molecules are virtually screened and ranked based on predicted ADMET properties and synthetic feasibility. The best candidates are synthesized.

- Preclinical Candidate Nomination: The synthesized compounds undergo rigorous in vitro and in vivo testing. A candidate is selected based on a favorable efficacy and safety profile.

- Benchmark: This end-to-end process, from program initiation to preclinical candidate nomination, has been achieved in approximately 18 months, significantly faster than the 4-6 years typical of traditional methods [27] [22].

Protocol: AI-Optimized Patient Stratification for Clinical Trials

Objective: To improve Phase II trial success rates by using AI to identify biomarkers that predict patient response.

Methodology (Bayesian Causal AI Approach) [23]:

- Define Mechanistic Priors: Start with a biological hypothesis. Integrate known genetic variants, proteomic signatures, and metabolomic shifts related to the drug's mechanism of action.

- Integrate Multi-modal Baseline Data: Collect rich baseline data from trial participants, including genomic, transcriptomic, and proteomic profiles, in addition to standard clinical metrics.

- Model Training & Causal Inference: Train a Bayesian causal AI model on this data. Unlike correlation-based models, this approach infers causal relationships between biomarkers and treatment outcomes.

- Identify Responder Subgroups: The model will identify distinct patient subgroups based on a granular biological understanding, not just broad clinical categories. For example, it may find that patients with a specific metabolic phenotype respond significantly better.

- Adapt Trial Design: Use these insights to refine inclusion/exclusion criteria for subsequent trial phases, enriching for the patient population most likely to benefit.
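
The Bayesian updating behind responder-subgroup identification can be illustrated with a deliberately simplified sketch. It places a Beta prior on each subgroup's response rate, updates it with observed responders, and estimates the probability that one subgroup responds better than the other. This is not a causal model and not the platform method cited in [23]. The prior, subgroup labels, and counts are hypothetical.

```python
import random

def beta_posterior(responders, n, prior_a=1.0, prior_b=1.0):
    """Beta posterior parameters after observing `responders` out of `n` patients."""
    return prior_a + responders, prior_b + (n - responders)

def posterior_mean(posterior):
    a, b = posterior
    return a / (a + b)

def prob_first_exceeds(post_1, post_2, draws=5000, seed=0):
    """Monte Carlo estimate of P(rate_1 > rate_2) under the two posteriors."""
    rng = random.Random(seed)
    wins = sum(
        rng.betavariate(*post_1) > rng.betavariate(*post_2) for _ in range(draws)
    )
    return wins / draws

# Hypothetical trial counts: responders / patients in each biomarker subgroup.
post_pos = beta_posterior(responders=14, n=20)  # metabolic phenotype present
post_neg = beta_posterior(responders=6, n=30)   # metabolic phenotype absent

p_better = prob_first_exceeds(post_pos, post_neg)
print(posterior_mean(post_pos), posterior_mean(post_neg), p_better)
```

A high probability that the biomarker-positive subgroup responds better would support enriching the next trial for that subgroup, subject to the causal and mechanistic checks described in the methodology.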

The Scientist's Toolkit: Essential Research Reagents & Platforms

Table 3: Key Research Reagent Solutions for AI-Driven Discovery

| Tool / Reagent Category | Example(s) | Primary Function in AI Workflow |
|---|---|---|
| AI Target Discovery Platform | PandaOmics [22], BenevolentAI's platform [11] | Analyzes complex multi-omic and clinical data to identify and prioritize novel therapeutic targets. |
| Generative Chemistry AI | Chemistry42 [22], Atomwise (CNNs) [11] [22] | Designs novel, synthesizable small molecules and biologics with optimized properties de novo. |
| Protein Structure Prediction | AlphaFold 2 & 3 [11] [26], ProteinMPNN [26] | Provides high-accuracy protein structure predictions, crucial for structure-based drug design. |
| Specialized Biologics LIMS | Biologics LIMS [24] | Centralizes and structures complex biological data (samples, plate layouts, assay results), making it AI-ready and FAIR-compliant. |
| Explainable AI (xAI) Tool | Counterfactual Explanation Tools [5], SHAP, LIME | Unpacks "black box" AI decisions, providing biological insights and helping to identify model bias or errors. |
| Bayesian Causal AI Model | BPGbio's platform [23] | Infers causality from biological data, enabling smarter clinical trial design and patient stratification. |

In the high-stakes field of drug discovery, the integration of artificial intelligence (AI) promises to revolutionize research by accelerating target identification and compound efficacy prediction [5]. However, the tremendous potential of these tools is often gated by a significant challenge: the "black box" problem, where AI models produce outputs without revealing their reasoning [5]. This lack of transparency is a critical barrier in a scientific context where understanding why a model makes a prediction is as important as the prediction itself [5]. Establishing a common language for AI, Machine Learning (ML), and Explainable AI (xAI) is not an academic exercise; it is a foundational requirement for ensuring reliability, facilitating peer review, meeting regulatory standards, and building trust in AI-driven insights [28] [5]. This guide provides the essential definitions and troubleshooting support to help research teams navigate this complex landscape.

Core Definitions: AI, ML, and Explainable AI

To build a shared understanding, it is crucial to define the key terms that form the backbone of AI-driven research.

- Artificial Intelligence (AI) is a broad field of computer science dedicated to creating systems capable of performing tasks that typically require human intelligence. In scientific research, this encompasses everything from rule-based expert systems to advanced machine learning models [28].

- Machine Learning (ML) is a subset of AI that focuses on developing algorithms that can learn patterns and make decisions from data, without being explicitly programmed for every task [28]. ML models are often trained on large datasets to identify complex relationships, making them powerful for tasks like predicting molecular binding affinities.

- Explainable AI (XAI) refers to a set of processes and methods that make the decision-making processes of AI and ML systems transparent and understandable to human users [28] [29]. Unlike "black box" models, XAI provides insights into how an AI model reaches its conclusions, allowing researchers to interpret, trust, and verify the outputs [28] [29]. This is particularly critical in high-stakes applications like healthcare and drug discovery [29].

The table below summarizes the key comparisons and techniques associated with XAI.

Table 1: Explainable AI (XAI) at a Glance

| Aspect | Description |
|---|---|
| Core Objective | To allow human users to comprehend and trust the results and output created by machine learning algorithms [28]. |
| The "Black Box" Problem | The inability to comprehend how a complex AI algorithm arrived at a specific result, common in deep learning and neural networks [28] [5]. |
| Key XAI Techniques | Prediction Accuracy: Using methods like LIME to validate model output [28]. Traceability: Using techniques like DeepLIFT to trace decisions back to inputs [28]. Decision Understanding: Educating teams on how the AI makes decisions to build trust [28]. |
| XAI vs. Responsible AI | XAI analyzes results after they are computed, while Responsible AI focuses on building fairness and accountability during the planning stages [28]. |

Troubleshooting Guide: Common Issues in AI for Drug Discovery

This section addresses specific, technical problems that researchers may encounter when developing and deploying AI/ML models.

Model Performance & Validation

Q: Our AI model demonstrates high accuracy on training data but performs poorly on external validation datasets. What could be the cause and how can we address this?

This is a classic sign of overfitting, where the model has learned the noise and specific patterns of the training data rather than generalizable biological principles.

Root Causes:

- Data Silos and Non-Representative Training Data: The model was trained on data that is not representative of the broader patient population or chemical space due to biased or fragmented data sources [5].

- Data Leakage: Information from the test set inadvertently influences the training process, leading to overly optimistic performance estimates [30].

- Inadequate Performance Metric Selection: Relying solely on metrics like accuracy without reporting more informative metrics like Positive Predictive Value (PPV) or Negative Predictive Value (NPV), which are critical for clinical applicability [30].

Methodology for Resolution:

- Audit Training Data: Use XAI techniques to uncover potential biases in the training data. Check for representation across key subgroups (e.g., demographic, genetic) [5].

- Implement Robust Validation: Ensure a strict separation between training, validation, and test sets. Employ external validation cohorts from independent sources [30].

- Expand Performance Reporting: Beyond sensitivity and specificity, always calculate and report prevalence-dependent metrics like PPV and NPV [30]. The table below, based on an analysis of FDA-reviewed AI devices, shows the relative lack of reporting for these critical metrics.

Table 2: Transparency in Performance Metrics for AI/ML Medical Devices (Analysis of 1,012 FDA Summaries)

| Performance Metric | Percentage of Devices Reporting the Metric [30] |
|---|---|
| Sensitivity | 23.9% |
| Specificity | 21.7% |
| AUROC (Area Under the ROC Curve) | 10.9% |
| Positive Predictive Value (PPV) | 6.5% |
| Accuracy | 6.4% |
| Negative Predictive Value (NPV) | 5.3% |
| No performance metrics reported | 51.6% |
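
To see why PPV and NPV are called prevalence-dependent, the short sketch below derives both from sensitivity, specificity, and prevalence using the standard definitions. The example values are illustrative only.

```python
def ppv_npv(sensitivity, specificity, prevalence):
    """Positive and negative predictive value from test characteristics and prevalence."""
    tp = sensitivity * prevalence              # true positive fraction
    fp = (1 - specificity) * (1 - prevalence)  # false positive fraction
    tn = specificity * (1 - prevalence)        # true negative fraction
    fn = (1 - sensitivity) * prevalence        # false negative fraction
    return tp / (tp + fp), tn / (tn + fn)

# Same hypothetical model (90% sensitivity and specificity) in two populations.
ppv_rare, npv_rare = ppv_npv(0.9, 0.9, prevalence=0.01)
ppv_common, npv_common = ppv_npv(0.9, 0.9, prevalence=0.5)
print(ppv_rare, npv_rare)
print(ppv_common, npv_common)
```

With identical sensitivity and specificity, the PPV falls from 0.9 at 50% prevalence to roughly 0.08 at 1% prevalence, which is why reporting sensitivity and specificity alone can overstate clinical usefulness.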

Data Quality & Bias

Q: We are concerned that our compound efficacy predictions may be skewed by biases in our historical dataset. How can we detect and mitigate this?

Bias in datasets is a profound challenge that can lead to unfair or inaccurate outcomes, perpetuating healthcare disparities and undermining patient stratification [5].

Root Causes:

- Underrepresentation: Historical data may insufficiently represent certain demographic groups or molecular subtypes [5].

- The Gender Data Gap: For example, if training data predominantly comes from male subjects, dosage recommendations and efficacy predictions may be less accurate for females [5].

- Systemic Bias Reproduction: AI models, including generative AI and large language models, can learn and amplify existing biases present in their training data [5].

Methodology for Resolution:

- Bias Audit with XAI: Leverage XAI tools to highlight which features most influence predictions. This can reveal if a model is disproportionately relying on a feature correlated with a bias, such as a specific demographic marker [5].

- Data Augmentation: Use techniques like synthetic data generation to carefully balance the representation of underrepresented groups in the training set without compromising patient privacy [5].

- Continuous Monitoring: Implement a framework for continuous monitoring with XAI to detect performance "drift" or degradation when models are exposed to new, real-world data that differs from the training set [28].

Transparency & Regulatory Preparedness

Q: With the evolving regulatory landscape (e.g., EU AI Act), how can we ensure our AI-driven research tools are sufficiently transparent?

Regulatory bodies are increasingly mandating transparency for high-risk AI systems. A core principle of the EU AI Act, for example, is that such systems must be "sufficiently transparent" so users can correctly interpret their outputs [5].

Root Causes:

- Lack of Reporting Standards: Many AI/ML devices have historically been approved with significant gaps in transparency reporting. A 2025 study found the average transparency score for FDA-reviewed devices was only 3.3 out of 17 [30].

- Unclear Documentation: Failure to document dataset demographics, model characteristics, and clinical study details [30].

Methodology for Resolution:

- Adopt a Transparency Framework: Systematically document the AI model's lifecycle. The table below outlines categories for transparency reporting based on regulatory insights.

- Implement Counterfactual Explanations: Use XAI techniques that allow scientists to ask "what if" questions (e.g., "How would the prediction change if this molecular feature were different?") [5]. This extracts biological insights directly from the model and helps refine drug design.

- Proactive Governance: Embed ethical principles and transparency requirements into the AI development process from the start, adopting a responsible AI approach alongside XAI [28].

Table 3: Essential Transparency Reporting Categories for AI in Research

| Reporting Category | Specific Information to Document |
|---|---|
| Dataset Characteristics | Data source; dataset size (number of patients/images); demographic composition (age, sex, etc.) [30]. |
| Model Characteristics | Primary input modality (e.g., image, language); model architecture (e.g., convolutional neural network) [30]. |
| Model Performance | A full suite of metrics including sensitivity, specificity, AUROC, PPV, and NPV, with clear context on study design (retrospective/prospective) [30]. |
| Clinical Validation | Details of the clinical study, including sample size and whether it was prospective or retrospective [30]. |

The Scientist's Toolkit: Essential Research Reagents & Solutions

This table details key methodological "reagents" and their functions for implementing XAI and ensuring robust AI-driven research.

Table 4: Key Research Reagent Solutions for Transparent AI

| Research Reagent | Function & Application |
|---|---|
| LIME (Local Interpretable Model-agnostic Explanations) | Explains the predictions of any classifier by perturbing the input and seeing how the prediction changes, creating a local, interpretable model [28]. |
| Counterfactual Explanations | Allows researchers to interrogate the model by slightly altering input features (e.g., molecular descriptors) to see how the output changes, providing biological insight [5]. |
| DeepLIFT (Deep Learning Important FeaTures) | Compares the activation of each neuron to its reference activation, providing a traceable link between each activated neuron and the model's output [28]. |
| Synthetic Data | Artificially generated data that mimics real-world data, used to augment training datasets and address imbalances (e.g., gender data gap) without compromising privacy [5]. |
| AI Characteristics Transparency Reporting (ACTR) Score | A novel scoring metric to systematically quantify the transparency of an AI model across 17 categories, helping teams prepare for regulatory scrutiny [30]. |

Experimental Protocol: Workflow for Implementing XAI in a Drug Discovery Pipeline

The following diagram maps the logical workflow for integrating XAI into a typical AI-driven drug discovery experiment to ensure reliability and transparency.

From Theory to Bench: Implementing Explainable AI and Transparent Workflows

Frequently Asked Questions (FAQs)

1. What is the fundamental "black box" problem in AI-driven drug discovery? While AI models, particularly complex deep learning models, demonstrate tremendous predictive capabilities in tasks like target identification and compound efficacy prediction, their internal decision-making processes are often opaque [5]. This lack of transparency makes it difficult for researchers to understand or verify the reasoning behind predictions, which is a critical barrier in drug discovery where scientific rationale is as important as the output itself [5] [31]. This opacity can hinder trust, acceptance, and the formulation of testable scientific hypotheses.

2. How do counterfactual explanations (CFs) make AI predictions more interpretable? Counterfactual explanations provide interpretability by generating hypothetical, minimally modified versions of a test instance that lead to an opposing prediction outcome [32]. In drug discovery, for a compound predicted as active, a counterfactual would be a very similar molecule predicted to be inactive [32]. The structural differences between the original molecule and its counterfactual directly highlight the specific chemical features or substructures that the model deems critical for its prediction, making the output intuitive and actionable for medicinal chemists [32] [33].
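
The idea of a minimally modified instance with the opposite prediction can be shown with a toy search over binary structural features. The sketch tries every single-feature change, then every pair, and returns the first change that flips the prediction. The feature names and the rule-based `toy_activity_model` are hypothetical stand-ins for a trained classifier, and the search does not check chemical validity.

```python
from itertools import combinations

def minimal_counterfactual(predict, instance, max_changes=2):
    """Find the smallest set of binary feature flips that changes the prediction."""
    original = predict(instance)
    names = sorted(instance)
    for k in range(1, max_changes + 1):  # smallest edits are tried first
        for subset in combinations(names, k):
            candidate = dict(instance)
            for name in subset:
                candidate[name] = 1 - candidate[name]  # flip 0 <-> 1
            if predict(candidate) != original:
                return candidate, subset
    return None, ()

def toy_activity_model(x):
    """Hypothetical rule standing in for a trained activity classifier."""
    return int(x["has_hbond_donor"] and x["has_aromatic_ring"] and not x["has_nitro_group"])

molecule = {
    "has_aromatic_ring": 1,
    "has_halogen": 1,
    "has_hbond_donor": 1,
    "has_nitro_group": 0,
}

counterfactual, changed = minimal_counterfactual(toy_activity_model, molecule)
print(toy_activity_model(molecule), toy_activity_model(counterfactual), changed)
```

Here removing the aromatic ring is enough to flip the prediction from active to inactive, so the model treats that feature as critical. Real molecular counterfactuals operate on structures rather than independent flags, which is where the plausibility problem discussed in the next question arises.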

3. My counterfactual explanations seem chemically implausible. What could be wrong? Chemically implausible counterfactuals are a known limitation of some generation methods. Traditional masking strategies that simply remove atoms or features often create structures that fall outside the training data distribution, leading to invalid molecules and unreliable explanations [33]. To address this, use advanced methods like counterfactual masking, which replaces masked substructures with chemically reasonable fragments sampled from generative models (e.g., CReM, DiffLinker) trained to complete molecular graphs, ensuring the generated examples are valid and in-distribution [33].

4. How can I use XAI to identify and mitigate bias in my predictive models? Bias in AI models often stems from unrepresentative or imbalanced training datasets, which can lead to skewed predictions and perpetuate healthcare disparities [5]. Explainable AI (XAI) acts as a tool to uncover these biases by providing transparency into model decision-making. By highlighting which features most influence predictions, XAI allows researchers to audit AI systems, identify gaps in data coverage (e.g., underrepresentation of certain demographic groups or chemical spaces), and take corrective actions such as rebalancing datasets, refining algorithms, or using data augmentation to improve fairness and generalizability [5].

5. Are there regulatory guidelines for using AI and XAI in pharmaceutical research? The regulatory landscape is still evolving. The EU AI Act, for instance, classifies certain AI systems in healthcare and drug development as "high-risk," mandating strict requirements for transparency and accountability [5]. These systems must be "sufficiently transparent" so users can interpret their outputs. Exemptions exist, however: AI systems used "for the sole purpose of scientific research and development" are generally excluded from the Act's scope [5]. Nonetheless, employing XAI is a proactive step toward building the trust and transparency that regulators increasingly demand.

Troubleshooting Guides

Issue 1: Model Predictions Lack Actionable Insights for Chemists

Problem: Your model accurately predicts compound activity, but the output is a simple "active/inactive" label. Research chemists cannot use this information to guide the rational design of improved molecules because the structural drivers of the prediction are unclear.

Solution: Implement counterfactual explanation (CF) techniques to generate "what-if" scenarios.

- Recommended Technique: Structure-based counterfactual generation via molecular recombination [32].

- Step-by-Step Protocol:

  - Input: Start with your test compound (e.g., a kinase inhibitor predicted as active) [32].

  - Core Decomposition: Use an algorithm (e.g., the Compound-Core Relationship (CCR) algorithm) to decompose the test compound and a set of its analogues into a core structure and a set of substituents at defined substitution sites [32].

  - Substituent Library: Create a comprehensive library of chemically diverse substituents. This can be a general library of common fragments found in bioactive compounds, augmented with substituents specific to your chemical series (e.g., from kinase inhibitors) [32].

  - Systematic Recombination: For the core of your test compound, systematically recombine it with all substituents from your library at one or two substitution sites simultaneously. This generates a large set of candidate molecules [32].

  - Counterfactual Identification: Run these candidate molecules through your trained predictive model. Identify those candidates that are structurally very similar to your test compound but receive an opposing prediction (e.g., "inactive"). These are your counterfactual explanations [32].

- Expected Outcome: You will obtain a set of analogous molecules that "flip" the model's prediction. By comparing the original compound with its counterfactuals, chemists can immediately see which specific substituents or structural moieties the model associates with activity or inactivity, providing a direct, testable hypothesis for lead optimization [32].
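The recombination and identification steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the CCR-based implementation from [32]. Compounds are reduced to a dictionary of substitution sites, the substituent library is a short hypothetical list, and `toy_predict` is a made-up stand-in for a trained model. A real pipeline would build actual molecules with a cheminformatics toolkit and call the trained classifier instead.

```python
from itertools import combinations, product


def enumerate_candidates(core_sites, library, max_sites=2):
    """Yield (candidate, n_changed) for every 1- or 2-site substitution.

    core_sites: dict mapping site name -> substituent on the test compound.
    library:    iterable of substituent labels (hypothetical fragment names).
    """
    sites = sorted(core_sites)
    for k in range(1, max_sites + 1):
        for chosen in combinations(sites, k):
            for subs in product(library, repeat=k):
                # Skip combinations that leave a chosen site unchanged.
                if any(core_sites[s] == r for s, r in zip(chosen, subs)):
                    continue
                candidate = dict(core_sites)
                candidate.update(zip(chosen, subs))
                yield candidate, k


def find_counterfactuals(core_sites, library, predict):
    """Return the original label and all candidates with a different label."""
    original = predict(core_sites)
    hits = [
        (n_changed, cand)
        for cand, n_changed in enumerate_candidates(core_sites, library)
        if predict(cand) != original
    ]
    hits.sort(key=lambda h: h[0])  # fewest changed sites first
    return original, hits


def toy_predict(sites):
    """Hypothetical stand-in for a trained activity model."""
    if sites["R1"] == "F" and sites["R2"] != "NO2":
        return "active"
    return "inactive"


test_compound = {"R1": "F", "R2": "OMe"}
library = ["H", "F", "Cl", "OMe", "NO2"]
label, counterfactuals = find_counterfactuals(test_compound, library, toy_predict)

for n_changed, cand in counterfactuals[:5]:
    print(n_changed, cand)
```

Sorting by the number of changed sites is a crude proxy for structural similarity. The single-site hits are usually the most informative ones for a chemist, because each isolates one substituent that the model treats as decisive.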

Issue 2: Explanations are Unreliable Due to "Out-of-Distribution" Masking

Problem: When using perturbation-based explanation methods (like atom masking) to interpret graph neural network (GNN) predictions, the "masked" molecules are chemically invalid, causing the model to fail and provide nonsensical explanations.

Solution: Adopt the Counterfactual Masking (CM) framework, which ensures all masked structures remain valid, in-distribution molecules [33].

- Recommended Technique: Counterfactual Masking with generative fragment replacement [33].

- Step-by-Step Protocol:

  - Identify Important Subgraph: Use a standard explanation method (e.g., GNNExplainer) on your input molecule to identify a connected subgraph of atoms deemed important for the prediction [33].

  - Define Context: Remove this important subgraph from the full molecular graph. The remaining atoms and bonds form the "context". The atoms that were connected to the removed subgraph are defined as "attachment points" [33].

  - Generative Replacement: Use a generative model (e.g., CReM or DiffLinker) conditioned on the defined context and attachment points. The model is trained to fill in missing fragments in a chemically valid way. Sample multiple new fragments from this model to replace the originally important subgraph [33].

  - Evaluation & Explanation: The set of newly generated molecules, which are now valid counterfactuals, allows for a robust evaluation of the original explanation. In classification, you can present molecules that are predicted to be in a different class as counterfactual examples. This process reveals how changes to specific structural elements affect the property of interest [33].

- Expected Outcome: This method produces chemically realistic alternative molecules, leading to more robust and trustworthy explanations. It bridges the gap between explainability and molecular design by showing how to structurally alter a compound to change its properties [33].
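The "Define Context" step is simple graph bookkeeping, and a small sketch may make the terms concrete. The code below assumes a molecule represented as a plain adjacency dictionary (atom index to set of neighbour indices). The bond list and the "important" atom set are hypothetical, standing in for the output of an explainer such as GNNExplainer. The generative replacement step is not shown, since it depends on the chosen model.

```python
def build_adjacency(bonds):
    """Build an undirected adjacency dict from a list of (atom, atom) bonds."""
    adjacency = {}
    for a, b in bonds:
        adjacency.setdefault(a, set()).add(b)
        adjacency.setdefault(b, set()).add(a)
    return adjacency


def is_connected(adjacency, atoms):
    """Check that the given atoms form one connected subgraph."""
    atoms = set(atoms)
    if not atoms:
        return False
    seen, stack = set(), [next(iter(atoms))]
    while stack:
        atom = stack.pop()
        if atom in seen:
            continue
        seen.add(atom)
        stack.extend((adjacency[atom] & atoms) - seen)
    return seen == atoms


def split_context(adjacency, important):
    """Remove the important subgraph; return the context and attachment points.

    The context keeps only bonds between remaining atoms. Attachment points
    are the remaining atoms that were bonded to a removed atom.
    """
    important = set(important)
    missing = important - set(adjacency)
    if missing:
        raise ValueError(f"atoms not in graph: {sorted(missing)}")
    context = {
        atom: nbrs - important
        for atom, nbrs in adjacency.items()
        if atom not in important
    }
    attachment_points = sorted(a for a in context if adjacency[a] & important)
    return context, attachment_points


# Hypothetical six-atom graph: chain 0-1-2-3-4 with a branch 2-5.
adjacency = build_adjacency([(0, 1), (1, 2), (2, 3), (3, 4), (2, 5)])
important = {2, 5}  # assumed output of an explanation method

assert is_connected(adjacency, important)
context, attachment_points = split_context(adjacency, important)
print(context)
print(attachment_points)
```

A generative model would then be asked to propose fragments that bond to the attachment points, so every replacement yields a complete molecule rather than a structure with dangling valences.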

Issue 3: Difficulty Quantifying Feature Importance in Complex Multi-Task Models

Problem: You are using a multi-task model (e.g., predicting activity against multiple kinase targets) but cannot decipher which molecular features are important for which specific task, leading to a lack of selectivity insights.

Solution: Combine model-agnostic explanation methods with multi-task modeling to disentangle feature contributions.

- Recommended Technique: SHapley Additive exPlanations (SHAP) analysis on a multi-task Random Forest (RFC) classifier [32] [34].

- Step-by-Step Protocol:

  - Model Training: Train a multi-task Random Forest classifier, for example, to distinguish inhibitors across six classes of kinase targets. Use a representative and balanced dataset for each class to avoid training bias [32].

  - Hyperparameter Optimization: Optimize model hyperparameters (e.g., number of trees, minimum samples per leaf) using a method like grid search on a validation set to ensure robust performance [32] [34].

  - Calculate SHAP Values: For a given test compound and its prediction, use the SHAP library to compute Shapley values. This quantifies the marginal contribution of each input feature (e.g., the presence or absence of a specific molecular fingerprint bit) to the model's output probability for each kinase class [34].

  - Analysis: Analyze the resulting SHAP values. Features with high positive SHAP values for a specific class are strong drivers for that class's prediction. You can create summary plots to visualize the global importance of features across the entire dataset, or force plots to deconstruct an individual prediction [34].

- Expected Outcome: You will obtain a quantitative measure of how much each molecular feature contributes to the prediction for each individual kinase target. This can reveal substructures associated with activity across several kinases (low selectivity) or, conversely, highly specific features tied to a single kinase, guiding the design of more selective drug candidates [32] [34].
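To make "marginal contribution of each input feature" concrete, the sketch below computes exact Shapley values by enumerating every feature subset. It does not use the SHAP library, which relies on far more efficient estimators. Exact enumeration grows exponentially with the number of features and is only practical for a handful of them. The two scoring functions are hypothetical per-class outputs of a multi-task model, and "absent" features are represented by an assumed all-zero baseline.

```python
from itertools import combinations
from math import factorial


def shapley_values(f, x, baseline):
    """Exact Shapley values of f at x, relative to a baseline input.

    Features outside a coalition are set to their baseline value.
    """
    n = len(x)

    def value(coalition):
        z = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return f(z)

    phi = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        total = 0.0
        for size in range(n):
            # Shapley weight for coalitions of this size: |S|! (n-|S|-1)! / n!
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {i}) - value(s))
        phi.append(total)
    return phi


# Hypothetical per-class scores over three fingerprint bits.
def score_kinase_a(bits):
    return 0.1 + 0.5 * bits[0] + 0.2 * bits[0] * bits[1]


def score_kinase_b(bits):
    return 0.2 + 0.6 * bits[2]


x = [1, 1, 1]         # test compound: all three bits set
baseline = [0, 0, 0]  # assumed reference: no bits set

phi_a = shapley_values(score_kinase_a, x, baseline)
phi_b = shapley_values(score_kinase_b, x, baseline)
print("kinase A:", [round(v, 3) for v in phi_a])
print("kinase B:", [round(v, 3) for v in phi_b])
```

In this toy case bit 0 dominates the kinase A score, bit 1 matters only through its interaction with bit 0, and bit 2 contributes only to kinase B. That per-task separation is the kind of selectivity signal the protocol aims to surface. The values for each class sum to the difference between the score at `x` and the score at the baseline.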

Data Presentation

Table 1: Quantitative Performance of Machine Learning Models for Cardiac Toxicity (TdP Risk) Prediction

This table summarizes the performance of various ML models in classifying Torsades de Pointes (TdP) risk, demonstrating how XAI can be used to select optimal models and biomarkers. AUC (Area Under the Curve) scores are used, where 1.0 is a perfect classifier [34].

| Model / Classifier | High-Risk AUC | Intermediate-Risk AUC | Low-Risk AUC | Key Biomarkers (Selected via SHAP) |
|---|---|---|---|---|
| Artificial Neural Network (ANN) | 0.92 | 0.83 | 0.98 | dVm/dtrepol, dVm/dtmax, APD90, APD50, APDtri, CaD90, CaD50, Catri, CaDiastole, qInward, qNet [34] |
| XGBoost | 0.89 | 0.80 | 0.95 | Varies based on model-specific SHAP analysis [34] |
| Support Vector Machine (SVM) | 0.87 | 0.78 | 0.93 | Varies based on model-specific SHAP analysis [34] |
| Random Forest (RF) | 0.85 | 0.75 | 0.90 | Varies based on model-specific SHAP analysis [34] |
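For readers who want to see how a per-class AUC like those in Table 1 can be obtained, here is a minimal one-vs-rest sketch. It uses the rank interpretation of AUC: the probability that a randomly chosen drug from the class of interest receives a higher score than a randomly chosen drug from the other classes, with ties counted as one half. The scores and labels are invented for illustration and are not data from [34].

```python
def auc_one_vs_rest(scores, labels, positive):
    """AUC for one class against all others, via pairwise comparisons."""
    pos = [s for s, y in zip(scores, labels) if y == positive]
    neg = [s for s, y in zip(scores, labels) if y != positive]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative sample")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in pos
        for n in neg
    )
    return wins / (len(pos) * len(neg))


# Hypothetical model scores for the "high" risk class on five drugs.
labels = ["high", "high", "low", "intermediate", "low"]
high_scores = [0.9, 0.4, 0.5, 0.3, 0.1]

auc_high = auc_one_vs_rest(high_scores, labels, positive="high")
print(f"High-risk AUC: {auc_high:.3f}")
```

The pairwise form is quadratic in the number of samples, which is fine for a small drug panel. Library implementations compute the same quantity from sorted ranks.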

Table 2: Country-Specific Research Output and Influence in XAI for Drug Research (2002-2024)

This bibliometric analysis shows the global distribution of research activity and impact in the field of Explainable AI for drug research, based on total publications (TP) and total citations (TC) until June 2024 [9].

| Country | Total Publications (TP) | Percentage of Total (%) | Total Citations (TC) | TC/TP (Avg. Citations per Paper) |
|---|---|---|---|---|
| China | 212 | 37.00% | 2949 | 13.91 |
| USA | 145 | 25.31% | 2920 | 20.14 |
| Germany | 48 | 8.38% | 1491 | 31.06 |
| United Kingdom | 42 | 7.33% | 680 | 16.19 |
| Switzerland | 19 | 3.32% | 645 | 33.95 |
| Thailand | 19 | 3.32% | 508 | 26.74 |

Experimental Protocols

Protocol 1: Systematic Generation of Counterfactuals for Kinase Inhibitor Profiling

Objective: To explain predictions of a multi-task kinase inhibitor model by generating structurally analogous counterfactual compounds that flip the predicted class [32].

Materials:

- Compounds & Data: Curated sets of inhibitors for six kinase targets (e.g., EGFR, VEGFR2, FLT3, JAK2, Src, MET) from ChEMBL. Data should be balanced across classes (e.g., 623 compounds per class via random undersampling) [32].

- Molecular Representation: Extended Connectivity Fingerprint (ECFP4, 2048 bits) for featurization [32].

- Software: RDKit for cheminformatics, scikit-learn for Random Forest implementation, and custom scripts for core decomposition and recombination [32].
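The class balancing mentioned under "Compounds & Data" (random undersampling to a fixed number of compounds per class) could look roughly like the sketch below. It uses only the standard library. The record layout, compound identifiers, and class sizes are hypothetical, and by default every class is reduced to the size of the smallest one.

```python
import random
from collections import Counter, defaultdict


def undersample(records, label_of, n_per_class=None, seed=0):
    """Randomly keep the same number of records from every class.

    records:     list of arbitrary items (here: (compound_id, target) tuples).
    label_of:    function returning the class label of a record.
    n_per_class: target size; defaults to the smallest class size.
    """
    by_class = defaultdict(list)
    for record in records:
        by_class[label_of(record)].append(record)
    if n_per_class is None:
        n_per_class = min(len(group) for group in by_class.values())
    rng = random.Random(seed)  # fixed seed keeps the split reproducible
    balanced = []
    for label in sorted(by_class):
        balanced.extend(rng.sample(by_class[label], n_per_class))
    return balanced


# Hypothetical, imbalanced toy dataset: 5 EGFR, 3 JAK2, 8 MET compounds.
records = (
    [(f"egfr_{i}", "EGFR") for i in range(5)]
    + [(f"jak2_{i}", "JAK2") for i in range(3)]
    + [(f"met_{i}", "MET") for i in range(8)]
)

balanced = undersample(records, label_of=lambda r: r[1])
print(Counter(label for _, label in balanced))
```

Undersampling discards data from the larger classes, so it is worth recording the seed and the discarded compounds to keep the experiment reproducible and auditable.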

Methodology:

- Model Training:

  - Train a multi-task Random Forest classifier on the balanced kinase inhibitor dataset.

  - Perform hyperparameter optimization via grid search (e.g., number of trees: 25-400, min samples per leaf: 1-10) using a 70/30 train/validation split [32].

  - Evaluate final model performance on a held-out test set using metrics like balanced accuracy (BA) and the Matthews correlation coefficient (MCC) [32].

- Counterfactual Generation:

  - For a test compound, extract its molecular core and all possible R-group substitution sites using a core decomposition algorithm (e.g., CCR) [32].

  - Recombine the core with a large library of substituents (e.g., 666 fragments from common bioactive compounds and kinase inhibitors) at single sites and pairs of sites to generate thousands of candidate molecules [32].

  - Predict the class of all candidate molecules using the trained multi-task model.

- Selection: Identify counterfactuals as candidates that are structurally very close (low Tanimoto distance) to the test compound but are predicted to belong to a different kinase class [32].

- Explanation: Analyze the structural differences between the test compound and its counterfactuals. The changing substituents indicate the chemical features critical for the model's class distinction.
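The selection step can be illustrated with a short sketch. It assumes fingerprints are available as sets of "on" bit indices (for example, the set bits of an ECFP4 vector) and that each candidate already carries a predicted class. The bit sets, candidate names, and the 0.6 similarity cut-off are hypothetical choices for illustration, not values from [32]. Tanimoto distance is simply one minus the similarity computed here.

```python
def tanimoto(bits_a, bits_b):
    """Tanimoto similarity between two sets of on-bit indices."""
    a, b = set(bits_a), set(bits_b)
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def select_counterfactuals(test_bits, test_class, candidates, min_similarity=0.6):
    """Keep candidates that are similar to the test compound but change class.

    candidates: list of (name, on_bits, predicted_class) tuples.
    Returns (name, predicted_class, similarity), most similar first.
    """
    hits = []
    for name, bits, predicted in candidates:
        similarity = tanimoto(test_bits, bits)
        if predicted != test_class and similarity >= min_similarity:
            hits.append((name, predicted, similarity))
    return sorted(hits, key=lambda h: -h[2])


# Hypothetical test compound and recombined candidates.
test_bits = {1, 5, 9, 12, 20}
test_class = "EGFR"
candidates = [
    ("A", {1, 5, 9, 12, 21}, "JAK2"),      # similar, different class
    ("B", {1, 5, 9, 12, 20, 33}, "EGFR"),  # similar, same class: skipped
    ("C", {2, 3, 9}, "MET"),               # different class, too dissimilar
    ("D", {1, 5, 9, 20}, "Src"),           # similar, different class
]

selected = select_counterfactuals(test_bits, test_class, candidates)
for name, predicted, similarity in selected:
    print(name, predicted, round(similarity, 3))
```

The threshold trades off how many counterfactuals are returned against how easy they are to interpret. A stricter cut-off yields fewer candidates, each differing from the test compound in a smaller structural change.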

Protocol 2: Using SHAP to Identify Optimal In-silico Biomarkers for Cardiac Toxicity

Objective: To identify the most influential in-silico biomarkers for predicting drug-induced Torsades de Pointes (TdP) risk using Explainable AI, and to build an optimized classifier [34].

Materials:

- Data: In-vitro patch clamp data for 28 drugs (IC50, Ki, Kd values for ion channels like hERG, ICaL) from the CiPA initiative [34].

- Simulation: O'Hara-Rudy (ORd) human ventricular cell model to simulate action potentials and calculate biomarkers under drug effects [34].

- Biomarkers: Twelve in-silico biomarkers, including APD90, APD50, dVm/dtmax, dVm/dtrepol, CaD90, CaD50, qNet, and qInward [34].

- Models: A suite of ML classifiers (ANN, SVM, RF, XGBoost, KNN, RBF) [34].

Methodology: