Building Resilient Bioinformatics Pipelines: Error Handling and Self-Correction in Multi-Agent AI Systems

Abstract

This article explores the critical challenge of error handling and self-correction in multi-agent AI systems for bioinformatics. As these systems tackle complex tasks from sequence alignment to variant calling, faulty agents and cascading errors pose significant risks to data integrity and scientific conclusions. We examine the foundational principles of resilient system design, survey methodological advances in self-correction and rollback mechanisms, provide troubleshooting strategies for common failure modes, and present validation frameworks for comparative performance assessment. Targeted at researchers, scientists, and drug development professionals, this review synthesizes current research and practical approaches for building robust, self-correcting bioinformatics multi-agent systems that can maintain reliability in production environments.

The Critical Need for Error Resilience in Bioinformatics Multi-Agent Systems

In modern bioinformatics, the integrity of data and analytical processes forms the foundation of scientific discovery and clinical application. The principle of "garbage in, garbage out" (GIGO) is particularly critical in this field, where errors in input data or processing can cascade through entire analysis pipelines and lead to flawed conclusions with serious consequences [1]. These consequences range from misdiagnoses in clinical settings where genomic data informs patient treatment, to millions in wasted research funding when drug development targets are identified from low-quality data [1]. By one estimate, up to 30% of published research contains errors traceable to data quality issues at the collection or processing stage [1].

The emergence of multi-agent systems (MAS) represents a promising frontier for addressing these challenges through enhanced error detection and self-correction capabilities. BioAgents, a MAS built on small language models fine-tuned on bioinformatics data, demonstrates how specialized autonomous agents can work collaboratively to troubleshoot complex bioinformatics pipelines [2]. By incorporating self-evaluation mechanisms, these systems can assess the accuracy of their own outputs against defined thresholds, reprocessing responses that fall below quality standards to enhance reliability [2]. This article explores the high stakes of bioinformatics errors and establishes a technical support framework with practical troubleshooting guidance, all within the context of advancing self-correction capabilities in bioinformatics multi-agent systems research.

Technical Support Center

Frequently Asked Questions (FAQs)

FAQ 1: What are the most critical points in a bioinformatics workflow where errors commonly occur? Errors can manifest at multiple stages, but the most critical points include: (1) Sample collection and preparation, where issues like mislabeling or contamination occur; (2) Raw data generation, where low sequencing quality scores (Phred scores) or adapter contamination compromise data; (3) Read alignment, characterized by low alignment rates or poor mapping quality; and (4) Variant calling, where inadequate quality filtering leads to false positives/negatives [1]. Implementing quality control checkpoints at each of these stages is essential for error prevention.

FAQ 2: How can I determine if my sequencing data is of sufficient quality for analysis? Utilize quality assessment tools like FastQC to generate key metrics including base call quality scores (Phred scores), read length distributions, GC content analysis, adapter content evaluation, and sequence duplication rates [1] [3]. Establish minimum quality thresholds for these metrics before proceeding to downstream analyses, as recommended by resources like the European Bioinformatics Institute [1].
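As a minimal sketch of what the Phred metric means, the Python below decodes FASTQ quality strings, assuming the Phred+33 encoding used by Illumina 1.8+ data, and summarizes mean base quality and the fraction of bases at or above Q30. The 80% acceptance rule in `passes_threshold` is a hypothetical example, not a published standard; set real cutoffs from your lab's or sequencing provider's guidance.

```python
from statistics import mean


def phred_scores(quality_line: str, offset: int = 33) -> list[int]:
    """Decode one FASTQ quality string: Q = ASCII code - offset (Phred+33 assumed)."""
    return [ord(ch) - offset for ch in quality_line]


def error_probability(q: int) -> float:
    """Phred definition Q = -10 * log10(P_error), so P_error = 10 ** (-Q / 10)."""
    return 10 ** (-q / 10)


def summarize_reads(quality_lines: list[str], q_cutoff: int = 30) -> dict[str, float]:
    """Mean base quality and fraction of bases at or above q_cutoff, across all reads."""
    scores = [q for line in quality_lines for q in phred_scores(line)]
    return {
        "mean_q": mean(scores),
        "frac_ge_cutoff": sum(q >= q_cutoff for q in scores) / len(scores),
    }


def passes_threshold(summary: dict[str, float], min_frac: float = 0.8) -> bool:
    """Hypothetical acceptance rule: at least 80% of bases at or above the cutoff."""
    return summary["frac_ge_cutoff"] >= min_frac
```

For example, `summarize_reads(["IIII", "####"])` reports a mean of Q21 with half the bases at Q30 or above, because `I` encodes Q40 and `#` encodes Q2.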

FAQ 3: What is the difference between quality control (QC) and quality assurance (QA) in bioinformatics? Quality Control (QC) focuses on identifying defects in specific outputs through activities like raw data validation and processing checks. Quality Assurance (QA) is a proactive, systematic process that aims to prevent errors by implementing standardized protocols, validation metrics, and comprehensive documentation throughout the entire data lifecycle [3].

FAQ 4: How does a multi-agent system improve error detection and correction? Multi-agent systems like BioAgents employ specialized agents for specific tasks (tool selection, workflow generation, error troubleshooting) that communicate and coordinate to solve complex problems [2]. Through self-evaluation, the system assesses response quality against a threshold, automatically reprocessing subpar outputs. This creates an iterative self-correction loop that enhances reliability without constant human intervention [2].

FAQ 5: Why is biological replication more important than sequencing depth for statistical power? While deeper sequencing can improve detection of rare features, it is primarily the number of biological replicates—independent samples that represent the population—that enables robust statistical inference [4]. High-throughput technologies can create the illusion of large datasets, but without adequate replication, conclusions cannot be generalized beyond the specific samples measured [4].

Troubleshooting Guides

Guide 1: Addressing Poor Data Quality in Raw Sequencing Files

- Symptoms: Low Phred scores, high adapter content, unusual GC distributions, or elevated duplication rates in reports from tools like FastQC.

- Investigation Steps:

- Verify that the same issue appears across multiple samples to rule out isolated sample preparation failures.

- Check laboratory protocols for deviations in sample preparation, library construction, or sequencing machine calibration.

- Consult the sequencing center's quality report to determine if the issue is batch-wide.

- Solutions:

- Trimming and Filtering: Use Trimmomatic to remove low-quality bases and adapter sequences, and Picard (MarkDuplicates) to flag or remove PCR duplicates [1].

- Re-sequencing: If quality is uniformly poor across samples, consider repeating the sequencing run.

- Threshold Implementation: Establish and enforce minimum quality thresholds for raw data before proceeding to analysis [1].

- Multi-Agent System Context: In a MAS, an agent specialized in data quality could automatically parse FastQC reports, flag datasets falling below thresholds, and recommend appropriate preprocessing tools, creating a self-correcting data ingestion pipeline [2].
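The data-quality agent described above could start from FastQC's `summary.txt`, which lists one tab-separated PASS/WARN/FAIL status per analysis module. In this sketch, which modules count as critical and the suggested fixes in `CRITICAL_MODULES` are hypothetical policy choices, and the function and class names are illustrative rather than part of any agent framework.

```python
from dataclasses import dataclass, field

# Hypothetical policy: modules whose FAIL status blocks downstream analysis,
# each paired with a suggested preprocessing action.
CRITICAL_MODULES = {
    "Per base sequence quality": "trim low-quality bases (e.g., Trimmomatic)",
    "Adapter Content": "trim adapters (e.g., Trimmomatic)",
}


@dataclass
class QCVerdict:
    sample: str
    status: str = "pass"  # "pass", "review", or "reject"
    actions: list[str] = field(default_factory=list)


def triage_fastqc_summary(text: str) -> QCVerdict:
    verdict = QCVerdict(sample="")
    for line in text.strip().splitlines():
        # summary.txt rows are tab-separated: STATUS, module name, file name
        status, module, filename = line.split("\t")
        verdict.sample = filename
        if status == "FAIL" and module in CRITICAL_MODULES:
            verdict.status = "reject"
            verdict.actions.append(CRITICAL_MODULES[module])
        elif status in ("WARN", "FAIL") and verdict.status == "pass":
            # Non-critical problems escalate to review but never downgrade a reject
            verdict.status = "review"
    return verdict
```

A report with `FAIL` for `Adapter Content` yields `status="reject"` plus an adapter-trimming action, while a report containing only `WARN` rows yields `"review"`, leaving the final call to a human or a reasoning agent.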

Guide 2: Resolving Pipeline Failures in Alignment or Variant Calling

- Symptoms: Abnormally low alignment rates in tools like STAR or HISAT2; excessive false positive/negative variant calls in GATK outputs; workflow execution errors.

- Investigation Steps:

- Reference Genome Check: Confirm the reference genome version and index compatibility with your alignment tool.

- Parameter Audit: Review alignment and variant calling parameters for appropriateness to your data type (e.g., RNA-seq vs. DNA-seq).

- Quality Metric Verification: Examine mapping quality scores (MAPQ) and coverage depth and uniformity using tools like SAMtools or Qualimap [1].

- Solutions:

- Reference Reconciliation: Ensure consistency in reference genome versions across all pipeline steps.

- Parameter Optimization: Recalibrate parameters based on tool best practices documentation (e.g., GATK Best Practices).

- Validation: Employ orthogonal validation methods (e.g., PCR for variants) to confirm key findings [1].

- Multi-Agent System Context: A multi-agent system could deploy a specialized agent to cross-reference tool parameters against curated knowledge sources (such as the EDAM ontology) and suggest corrections, demonstrating collaborative problem-solving [2].
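For the reference genome check, one lightweight test compares contig names and lengths in the alignment header (for example, the output of `samtools view -H`) with the reference's `.fai` index. The sketch below assumes both are already available as text; the function names are illustrative.

```python
def contigs_from_fai(fai_text: str) -> dict[str, int]:
    """Parse a .fai index: NAME, LENGTH, OFFSET, LINEBASES, LINEWIDTH (tab-separated)."""
    contigs = {}
    for line in fai_text.strip().splitlines():
        name, length, *_ = line.split("\t")
        contigs[name] = int(length)
    return contigs


def contigs_from_sam_header(header_text: str) -> dict[str, int]:
    """Collect SN (name) and LN (length) tags from @SQ header lines."""
    contigs = {}
    for line in header_text.strip().splitlines():
        if not line.startswith("@SQ"):
            continue  # skip @HD, @RG, @PG and other header records
        tags = dict(item.split(":", 1) for item in line.split("\t")[1:])
        contigs[tags["SN"]] = int(tags["LN"])
    return contigs


def reference_mismatches(fai_text: str, header_text: str) -> list[str]:
    """List contigs that are missing on one side or differ in length."""
    ref = contigs_from_fai(fai_text)
    bam = contigs_from_sam_header(header_text)
    problems = []
    for name in sorted(set(ref) | set(bam)):
        if name not in bam:
            problems.append(f"{name}: in reference only")
        elif name not in ref:
            problems.append(f"{name}: in alignment header only")
        elif ref[name] != bam[name]:
            problems.append(f"{name}: length {ref[name]} vs {bam[name]}")
    return problems
```

A frequent hit is a naming mismatch such as `1` versus `chr1`, which this check reports as contigs present on only one side even when the underlying sequences are identical.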

Guide 3: Correcting for Batch Effects and Technical Artifacts

- Symptoms: Samples cluster by processing date, sequencing batch, or other technical factors rather than biological groups in PCA plots.

- Investigation Steps:

- Correlate technical metadata (sequencing date, library batch, technician) with expression/variant patterns.

- Include control samples across batches to detect systematic technical variation.

- Solutions:

- Experimental Design: Randomize sample processing across batches whenever possible [4].

- Statistical Correction: Apply batch effect correction algorithms (e.g., ComBat, RUV) in the statistical analysis phase, while being cautious not to remove biological signal.

- Inclusion of Covariates: Account for batch variables in statistical models.
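Batch correction and batch covariates only help if the design leaves some within-batch contrast between biological groups. The sketch below, using hypothetical (batch, group) tuples from a sample sheet, catches the extreme case where every batch holds a single group, so adjusting for batch would also remove the biological effect. It does not quantify partial confounding, which still calls for PCA plots and model diagnostics.

```python
from collections import defaultdict


def groups_per_batch(samples: list[tuple[str, str]]) -> dict[str, set[str]]:
    """Map each batch to the set of biological groups processed in it."""
    table: dict[str, set[str]] = defaultdict(set)
    for batch, group in samples:
        table[batch].add(group)
    return dict(table)


def single_group_batches(samples: list[tuple[str, str]]) -> list[str]:
    """Batches containing only one group, which offer no within-batch contrast."""
    return sorted(b for b, groups in groups_per_batch(samples).items() if len(groups) < 2)


def group_effect_separable(samples: list[tuple[str, str]]) -> bool:
    """False when every batch holds one group: batch and biology are then aliased."""
    return len(single_group_batches(samples)) < len(groups_per_batch(samples))
```

A design that processes all controls in one batch and all treated samples in another returns `False`, which is the situation randomizing samples across batches is meant to prevent.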

Quantitative Impact of Bioinformatics Errors

The consequences of bioinformatics errors can be quantified in terms of financial cost, scientific integrity, and clinical impact. The following table summarizes key data points from recent analyses.

Table 1: Quantitative Impact of Data Quality Issues in Bioinformatics

| Impact Category | Statistical Evidence | Source |
|---|---|---|
| Research Reproducibility | Up to 70% of researchers have failed to reproduce another scientist's experiments; over 50% have failed to reproduce their own. | [3] |
| Published Error Rates | Recent studies indicate that up to 30% of published research contains errors traceable to data quality issues. | [1] |
| Clinical Sample Errors | A 2022 survey of clinical sequencing labs found up to 5% of samples had labeling or tracking errors before corrective measures. | [1] |
| Financial Implications | Improving data quality could reduce drug development costs by up to 25%, saving millions in research funding. | [3] |

Essential Protocols for Error Prevention

Protocol 1: Implementing a Multi-Layer Quality Control System

A robust QC system requires checkpoints at multiple stages of the bioinformatics workflow [1] [3].

- Raw Data Assessment: Run FastQC on raw sequencing files. Scrutinize Phred scores (Q≥30 is generally good), GC content, overrepresented sequences, and adapter contamination.

- Alignment QC: After alignment, generate metrics including alignment rate (e.g., >70-90% depending on organism and data type), insert size distribution (if applicable), and coverage depth using tools like SAMtools or Qualimap.

- Variant Calling QC: For variant call sets, apply variant quality score recalibration (VQSR) or hard-filtering based on QualByDepth (QD), FisherStrand strand bias (FS), and other context-specific metrics as outlined in GATK Best Practices.

- Expression Analysis QC: For transcriptomic data, assess RNA integrity numbers (RIN), read distribution across features, and outlier detection via PCA.
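To make the hard-filtering option concrete, the sketch below applies three thresholds commonly cited in GATK's SNP hard-filtering guidance (QD < 2.0, FS > 60.0, MQ < 40.0) to raw VCF text. It is illustrative only: in practice GATK's own `VariantFiltration` tool applies such expressions, and thresholds should be tuned per dataset. Passing variants that lack an annotation, rather than failing them, is an assumption of this sketch.

```python
# Commonly cited GATK starting thresholds for SNPs; tune for your data.
# A variant FAILS a rule when its lambda returns True.
SNP_RULES = {
    "QD": lambda v: v < 2.0,   # QualByDepth: variant confidence normalized by depth
    "FS": lambda v: v > 60.0,  # FisherStrand: Phred-scaled strand-bias p-value
    "MQ": lambda v: v < 40.0,  # RMS mapping quality of supporting reads
}


def parse_info(info: str) -> dict[str, str]:
    """Split the INFO column into KEY=VALUE pairs; flags without '=' map to ''."""
    pairs = (item.split("=", 1) for item in info.split(";") if item)
    return {p[0]: (p[1] if len(p) == 2 else "") for p in pairs}


def hard_filter(vcf_line: str) -> str:
    """Return 'PASS' or the semicolon-joined names of failed rules for one record."""
    fields = vcf_line.rstrip("\n").split("\t")
    info = parse_info(fields[7])  # column 8 of a VCF data line is INFO
    failed = [
        name for name, fails in SNP_RULES.items()
        if name in info and fails(float(info[name]))  # missing annotations are not failed
    ]
    return ";".join(failed) if failed else "PASS"
```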

Protocol 2: Power Analysis for Optimal Experimental Design

To avoid underpowered studies that cannot reliably detect real effects, or overpowered studies that spend more on samples than necessary, conduct a power analysis before data collection [4].

- Define Parameters: Determine four of the following five parameters to calculate the fifth:

- Significance Level (α): Typically set at 0.05.

- Power (1-β): Typically set at 0.8 or 0.9.

- Effect Size: The minimum biological effect you want to detect (e.g., 2-fold gene expression change).

- Sample Size (n): The number of biological replicates per group.

- Variance: The expected variability within each group.

- Estimate Inputs: Use pilot data, previous literature, or logical reasoning from first principles to estimate the effect size and variance.

- Calculate: Use statistical software (e.g., R's pwr package) to perform the calculation, typically to solve for the required sample size.

- Implement: Design the experiment with the calculated number of biological replicates, ensuring true independence to avoid pseudoreplication.
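As a worked example of the Calculate step, the sketch below uses the normal approximation for a two-sided comparison of two group means, n = 2 (z_(1-α/2) + z_(1-β))² / d² per group, where d is the effect size divided by the within-group standard deviation. The example inputs (a 2-fold change, i.e., 1 unit on the log2 scale, with an assumed SD of 0.7 log2 units) are hypothetical.

```python
from math import ceil
from statistics import NormalDist


def samples_per_group(effect: float, sd: float, alpha: float = 0.05, power: float = 0.8) -> int:
    """Replicates per group for a two-sided, two-sample comparison (normal approximation)."""
    d = effect / sd                      # standardized effect size (Cohen's d)
    z = NormalDist()                     # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2)   # critical value for two-sided alpha
    z_beta = z.inv_cdf(power)            # quantile matching the desired power
    return ceil(2 * ((z_alpha + z_beta) / d) ** 2)
```

With `effect=1.0, sd=0.7` this returns 8 replicates per group, and with d = 1 it returns 16. The normal approximation runs slightly below the exact t-test answer (R's `pwr.t.test` gives roughly 17 for d = 1), so treat the result as a lower bound.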

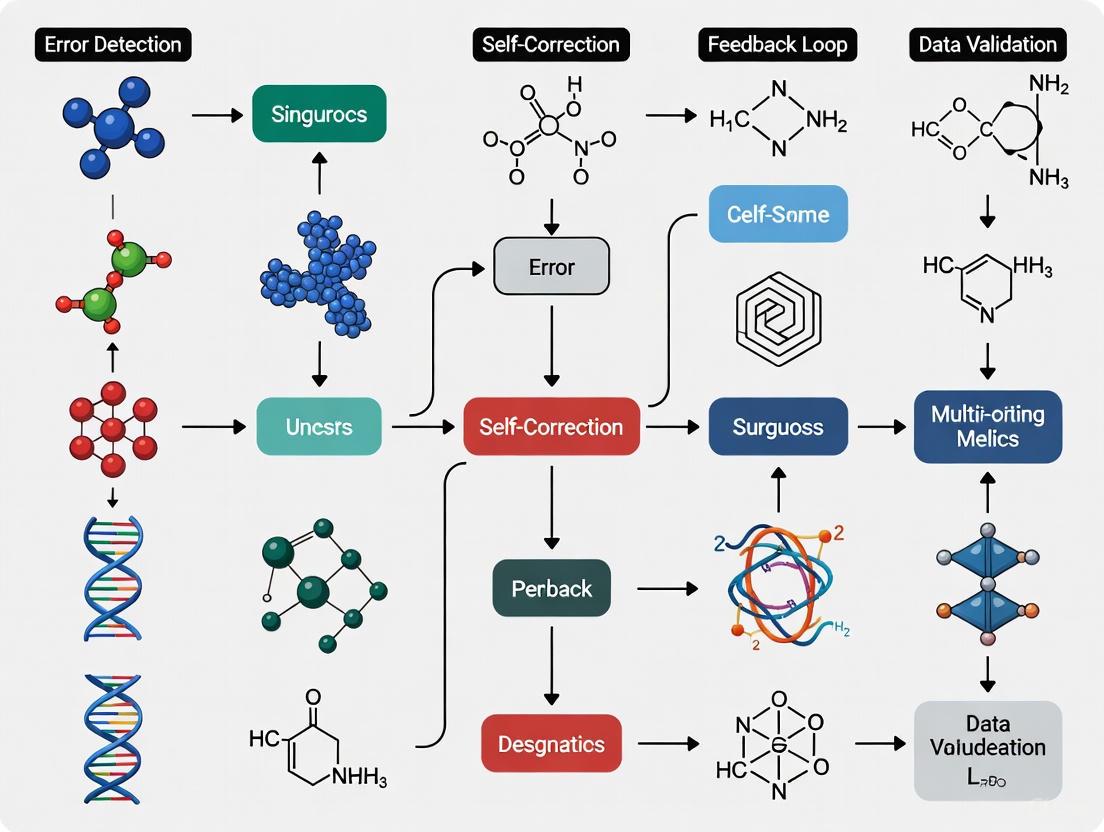

Visualization of Workflows and Logical Relationships

Multi-Agent System for Bioinformatics Error Handling

Bioinformatics Quality Assurance Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials and Tools for Robust Bioinformatics Analysis

| Item/Tool Name | Type | Primary Function |
|---|---|---|
| FastQC | Software Tool | Provides quality control metrics for raw sequencing data, including base quality scores, GC content, and adapter contamination [1] [3]. |
| Biocontainers | Software Resource | Provides standardized, portable environments (Docker, Singularity) for bioinformatics software, ensuring reproducibility and version control [2]. |
| Reference Standards | Biological/Data Material | Well-characterized samples with known properties used to validate bioinformatics pipelines and identify systematic errors [3]. |
| EDAM Ontology | Bioinformatics Ontology | A structured framework of well-established concepts in data analysis and life science, used to standardize tool annotations and improve discoverability [2]. |
| nf-core | Workflow Repository | A community-driven collection of peer-reviewed, curated bioinformatics pipelines (e.g., for RNA-seq, variant calling) built with Nextflow [2]. |
| STRING Database | Protein Network Database | Compiles, scores, and integrates protein-protein association information from multiple sources, used for functional enrichment analysis [5]. |

In bioinformatics, multi-agent systems are increasingly deployed to automate complex, multi-stage analytical workflows, such as genome sequencing, variant calling, and phylogenetic analysis [2]. These systems distribute tasks across specialized, autonomous agents that collaborate to achieve overarching research goals [6]. While this architecture offers significant advantages in processing complex biological data, it also introduces unique vulnerabilities. Cascading failures and state synchronization challenges represent two critical threats to system reliability and data integrity. When a single agent malfunctions or operates on outdated information, the error can propagate through the system, compromising the entire workflow and leading to erroneous scientific conclusions [7] [8]. This technical support guide addresses these vulnerabilities within the context of bioinformatics research, providing actionable troubleshooting protocols, FAQs, and mitigation strategies to ensure the robustness of self-correcting multi-agent systems.

Frequently Asked Questions (FAQs)

Q1: What is a cascading failure in a bioinformatics multi-agent system? A cascading failure occurs when a localized error or performance degradation in one agent triggers a chain reaction of failures in downstream agents [7]. In a bioinformatics context, this might manifest as a quality control agent producing incorrectly validated data, which is then processed by an alignment agent, and finally used by a variant calling agent, ultimately resulting in a flawed analysis. These failures are particularly problematic because individual agents may function correctly in isolation, but their interactions produce unintended, emergent behaviors that corrupt the entire scientific workflow [7] [8].

Q2: What causes state synchronization failures, and how do they impact genomic analysis? State synchronization failures occur when autonomous agents develop inconsistent views of shared system state. This is primarily caused by stale state propagation, conflicting state updates, or partial state visibility [8]. For example, in an order fulfillment system, if a payment agent updates an order status to "paid" but an inventory agent reads the status before receiving the update, it may refuse to allocate inventory [8]. In genomics, an analogous situation could involve a data preprocessing agent and an assembly agent working with different versions of a dataset, leading to assembly errors or haplotype misidentification.

Q3: What are the most common communication-related failures? The most prevalent communication failures include:

- Message Ordering Violations: When messages arrive out of sequence, violating causal dependencies (e.g., an execution signal arriving before a price update in a trading system) [8].

- Timeout and Retry Ambiguity: An agent times out waiting for a response, retries an operation, and inadvertently causes duplicate processing (e.g., double-charging a payment) [8].

- Schema Evolution Incompatibility: Different agent versions with incompatible message schemas lead to parsing failures or incorrect data interpretation [8].
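The first two failure modes have standard countermeasures: per-sender sequence numbers let a receiver hold back messages that arrive early, and a stable message ID (an idempotency key) lets it discard retried duplicates. The single-sender, in-memory class below is a hypothetical sketch of both; a production system would persist `seen_ids` and expire old entries rather than let the set grow without bound. Schema incompatibility is a separate concern, typically handled by versioning message schemas.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Message:
    msg_id: str   # stable ID reused on every retry (idempotency key)
    seq: int      # per-sender sequence number, starting at 0
    payload: str


class OrderedIdempotentInbox:
    def __init__(self) -> None:
        self.next_seq = 0
        self.pending: dict[int, Message] = {}
        self.seen_ids: set[str] = set()
        self.delivered: list[str] = []

    def receive(self, msg: Message) -> None:
        if msg.msg_id in self.seen_ids:
            return  # duplicate from a timeout-triggered retry: process only once
        self.seen_ids.add(msg.msg_id)
        self.pending[msg.seq] = msg
        # Release buffered messages only in contiguous sequence order
        while self.next_seq in self.pending:
            self.delivered.append(self.pending.pop(self.next_seq).payload)
            self.next_seq += 1
```

If `call_variants` (seq 1) arrives before `reference_updated` (seq 0), and its retry then arrives as well, the inbox still delivers `reference_updated` followed by `call_variants`, each exactly once.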

Q4: How can I monitor my multi-agent system for emergent risks? Implement runtime monitoring for specific risk signals [7]:

- Safety Drift: Gradual deviation of agent behavior from expected parameters.

- Anomalous Sequence Detection: Unusual patterns in agent-to-agent communications.

- Invalid Tool Usage: Agents attempting to use tools in unintended ways.

Effective monitoring requires structured logging of agent interactions and systems to flag behavioral anomalies across chains [7] [9].
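The invalid-tool-usage and anomalous-sequence signals can be approximated with static rules before any statistical monitoring is in place. The sketch below checks logged events against a hypothetical allowlist of tools per agent and of permitted handoffs; the agent and tool names are illustrative. Detecting gradual safety drift would additionally require baselines tracked over time, which this does not attempt.

```python
# Hypothetical policy for a QC -> alignment -> variant-calling chain
ALLOWED_TOOLS = {
    "qc_agent": {"fastqc", "multiqc"},
    "align_agent": {"hisat2", "samtools"},
    "variant_agent": {"gatk"},
}
ALLOWED_HANDOFFS = {("qc_agent", "align_agent"), ("align_agent", "variant_agent")}


def audit(events: list[tuple[str, str, str]]) -> list[str]:
    """Events are (kind, source, target) with kind 'tool' or 'handoff'."""
    alerts = []
    for kind, source, target in events:
        if kind == "tool" and target not in ALLOWED_TOOLS.get(source, set()):
            alerts.append(f"invalid tool use: {source} -> {target}")
        elif kind == "handoff" and (source, target) not in ALLOWED_HANDOFFS:
            alerts.append(f"unexpected handoff: {source} -> {target}")
    return alerts
```

An alignment agent calling `curl`, or a QC agent handing work directly to the variant caller, would each raise an alert here.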

Troubleshooting Guides

Protocol for Diagnosing Cascading Failures

Objective: Identify the root cause and propagation path of a cascading failure in a bioinformatics multi-agent workflow.

Materials:

- Distributed tracing tools (e.g., Jaeger, Zipkin)

- System logs from all agent components

- Workflow orchestration metadata

Methodology:

- Activate Distributed Tracing: Implement tracing that tracks requests across all agent interactions, preserving causal relationships and timing information [8]. Ensure traces capture:

- Causal Chains: Complete execution paths from initial request through all agent invocations.

- Temporal Relationships: Precise timing of agent invocations, message passing, and state updates.

- Context Flow: Information flow between agents, including context size and transformation points [8].

Reconstruct the Failure Chain:

- Use tracing data to identify the originating agent where the failure first manifested.

- Map the propagation path to downstream agents, noting how each agent amplified or transformed the error.

- Look for patterns of resource contention or coordination overhead that may have exacerbated the cascade [9] [8].

Simulate the Failure:

- In a testing environment, replay the traced execution with the same input data and system conditions.

- Systematically vary parameters to isolate the specific conditions triggering the cascade.

- Implement and validate circuit breakers or rollback mechanisms to contain similar failures in the future [7].
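Circuit breakers, mentioned in the last step, stop a failing agent from passing errors downstream. The class below is a minimal, single-threaded sketch with assumed parameters (`max_failures`, `reset_after_s`); after the cool-down it allows one trial call, and a further failure reopens the circuit immediately. The injectable `clock` exists only to make the behavior testable.

```python
import time
from typing import Callable, Optional


class CircuitOpenError(RuntimeError):
    pass


class CircuitBreaker:
    def __init__(self, max_failures: int = 3, reset_after_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_failures = max_failures
        self.reset_after_s = reset_after_s
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after_s:
                raise CircuitOpenError("downstream agent isolated; not forwarding work")
            self.opened_at = None  # half-open: let one trial call through
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()  # open (or reopen) the circuit
            raise
        self.failures = 0  # a success closes the circuit
        return result
```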

Protocol for Resolving State Synchronization Issues

Objective: Detect and resolve state inconsistencies between agents in a multi-agent bioinformatics system.

Materials:

- State transition logs

- Versioned state tracking system

- Conflict detection algorithms

Methodology:

- Implement State Consistency Validation:

Diagnose Synchronization Gaps:

Apply Remediation Strategies:

- For stale state issues, implement heartbeat mechanisms or state checksum comparisons to ensure timely propagation.

- For conflicting updates, introduce distributed locking mechanisms or implement conflict-free replicated data types (CRDTs).

- For partial visibility, revise state partitioning strategies to ensure agents have access to relevant state information [8].
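For stale state and conflicting updates, one common pattern is optimistic concurrency: every write states the version it was based on, and the store rejects writes built on an outdated read. The in-memory sketch below pairs that with a SHA-256 checksum an agent can compare against its cached copy. Class and method names are hypothetical, and in a distributed deployment the compare-and-set must be performed atomically by the shared store itself.

```python
import hashlib
import json


class StaleStateError(RuntimeError):
    pass


class VersionedStore:
    def __init__(self) -> None:
        self.version = 0
        self.state: dict = {}

    @staticmethod
    def checksum(state: dict) -> str:
        # Canonical JSON (sorted keys) so equal states always hash identically
        return hashlib.sha256(json.dumps(state, sort_keys=True).encode()).hexdigest()

    def read(self) -> tuple[int, dict, str]:
        """Return (version, copy of state, checksum) for an agent to cache."""
        return self.version, dict(self.state), self.checksum(self.state)

    def write(self, expected_version: int, updates: dict) -> int:
        # Compare-and-set: reject writes that are based on an outdated read
        if expected_version != self.version:
            raise StaleStateError(f"read v{expected_version}, store is at v{self.version}")
        self.state.update(updates)
        self.version += 1
        return self.version
```

If a preprocessing agent writes a new dataset version after an assembly agent has read the store, the assembly agent's next write raises `StaleStateError`, forcing a re-read instead of an assembly against outdated data.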

Table 1: State Synchronization Failure Patterns and Mitigations

| Failure Pattern | Root Cause | Impact on Bioinformatics Workflows | Mitigation Strategy |
|---|---|---|---|
| Stale State Propagation | Slow state updates between agents | Variant calls based on outdated quality metrics | Implement state checksums with validation |
| Conflicting State Updates | Concurrent modifications without coordination | Contradictory annotations from parallel analysis | Introduce distributed locking mechanisms |
| Partial State Visibility | Information silos between specialized agents | Incomplete phylogenetic analysis due to missing data | Redesign state sharing protocols |

Failure Mode Visualization

Cascading Failures and State Sync Issues

Quantitative Failure Data

Table 2: Multi-Agent System Failure Metrics and Detection

| Failure Category | Performance Impact | Detection Metrics | Threshold for Alert |
|---|---|---|---|
| Coordination Latency | 100-500 ms per interaction [8] | Handoff latency accumulation | Total workflow latency exceeds single-agent baseline |
| State Synchronization | Data corruption that is difficult to quantify | State propagation latency | SLA thresholds based on application needs [8] |
| Resource Contention | API rate limit exhaustion | Aggregate consumption across agents | Aggregate consumption approaches 80% of total system capacity [9] |
| Communication Breakdown | Exponential load from retry storms | Retry rates across agents | Correlated spikes > 3 standard deviations [8] |
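The retry-storm threshold in the last row can be sketched as a per-agent z-score against a recent baseline, with an alert only when several agents spike in the same window. The baseline window, the three-standard-deviation cutoff, and the two-agent minimum for "correlated" are assumptions to tune for a given system.

```python
from statistics import mean, stdev


def spiking_agents(history: dict[str, list[float]], current: dict[str, float],
                   z_threshold: float = 3.0) -> list[str]:
    """Agents whose current retry rate exceeds baseline mean + z_threshold * SD."""
    flagged = []
    for agent, baseline in history.items():
        mu, sigma = mean(baseline), stdev(baseline)  # stdev needs >= 2 baseline points
        value = current.get(agent, mu)
        if sigma == 0:
            spiking = value > mu  # flat baseline: any increase is anomalous
        else:
            spiking = (value - mu) / sigma > z_threshold
        if spiking:
            flagged.append(agent)
    return flagged


def retry_storm(history: dict[str, list[float]], current: dict[str, float],
                min_agents: int = 2) -> bool:
    """'Correlated' is approximated as several agents spiking in the same window."""
    return len(spiking_agents(history, current)) >= min_agents
```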

Research Reagent Solutions

Table 3: Essential Research Tools for Multi-Agent System Reliability

| Tool/Category | Function | Application in Bioinformatics |
|---|---|---|
| Distributed Tracing (e.g., Jaeger) | Tracks requests across agent interactions | Debugging genome analysis workflows [8] |
| Galileo Evaluation Tools | Simulates agent workflows and inspects failure cascades | Pre-deployment validation of pipeline reliability [7] |
| Containerization Technologies | Isolates and manages agent resource needs | Preventing resource contention in shared environments [10] |
| De Bruijn Graph Methods | Error correction using k-mer frequency | Self-correction of sequencing reads in ONT data [11] [12] |
| MAESTRO Framework | Layered threat modeling for agent systems | Comprehensive vulnerability assessment [7] |
| Retrieval-Augmented Generation (RAG) | Dynamically retrieves domain-specific knowledge | Enhancing agent decision-making in specialized analyses [2] |

Systemic Risk Mitigation Framework

Troubleshooting Guide: FAQs on Multi-Agent System Failures

Q1: Why does my multi-agent system provide correct conceptual steps but fail to generate executable code for complex workflows?

A: This is a known performance discrepancy in agentic systems. In evaluation, systems like BioAgents demonstrated human-expert-level performance on conceptual genomics tasks but struggled with code generation as workflow complexity increased. For medium-complexity tasks (e.g., RNA-seq alignment pipelines), systems often produce incomplete outputs, while for hard tasks (e.g., SARS-CoV-2 genome analysis), they may default to conceptual outlines instead of starter code [2] [13]. This limitation stems from gaps in indexed workflows and insufficient tool diversity in training data [13].

Q2: How can prompt injection attacks affect my bioinformatics multi-agent system, and what are the observable symptoms?

A: Prompt injection remains one of the most potent attack vectors against AI agents [14]. In a bioinformatics context, attackers can manipulate agents to:

- Leak sensitive data, including proprietary genomic information or database schemas [14]

- Misuse integrated tools to execute unintended actions, such as corrupting alignment data or modifying workflow parameters maliciously [14]

- Subvert agent behavior to ignore safety rules and execute harmful code [14]

Observable symptoms include unexpected tool usage patterns, retrieval of internal system information, and execution of commands outside normal workflow parameters [14].

Q3: What are the signs that my agent's tools have been exploited, particularly in a bioinformatics context?

A: Tool exploitation manifests through several indicators:

- Unauthorized access to internal network resources through compromised web reader tools [14]

- Unexpected remote code execution through code interpreter tools, potentially compromising sensitive genomic data [14]

- Credential leakage leading to impersonation and privilege escalation within computational infrastructure [14]

In bioinformatics systems, watch for abnormal database queries, unexpected file system access, or unauthorized execution of computational tools like sequence aligners or variant callers [14].
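One concrete input-sanitization control for the unexpected file-system access described above is to confine a file-reading tool to a single workspace directory. The sketch below (Python 3.9+ for `Path.is_relative_to`) resolves `..` segments and symlinks before checking containment; the workspace path and exception name are hypothetical, and the check complements rather than replaces operating-system access controls.

```python
from pathlib import Path


class ToolInputRejected(ValueError):
    pass


def safe_path(workspace: str, requested: str) -> Path:
    """Resolve a tool-supplied path and refuse anything outside the workspace."""
    root = Path(workspace).resolve()
    # resolve() collapses ".." and follows symlinks before the containment check;
    # an absolute `requested` path replaces `root` entirely and is caught below
    candidate = (root / requested).resolve()
    if not candidate.is_relative_to(root):
        raise ToolInputRejected(f"path escapes workspace: {requested}")
    return candidate
```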

Q4: Why does iterative self-correction sometimes degrade rather than improve my agent's output quality?

A: BioAgents research incorporated self-evaluation to enhance reliability, where the reasoning agent assessed response quality against a defined threshold, with below-threshold outputs being reprocessed [2] [13]. However, the iterative process revealed diminishing returns, where repeated refinements negatively impacted output quality and did not necessarily lead to improved outcomes [2] [13]. This suggests limited effectiveness of simple self-correction loops without additional safeguards.
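One safeguard consistent with this finding is to bound the loop and never return a revision that scores worse than the best draft so far. The sketch below assumes you supply `score` and `revise` callables (for example, a reasoning agent's quality score and a re-prompt); both, along with the threshold and round limit, are hypothetical parameters, not the BioAgents implementation.

```python
from typing import Callable


def self_correct(draft: str,
                 score: Callable[[str], float],
                 revise: Callable[[str], str],
                 threshold: float = 0.8,
                 max_rounds: int = 3) -> tuple[str, float]:
    """Revise until the score clears the threshold or rounds run out; keep the best."""
    best, best_score = draft, score(draft)
    for _ in range(max_rounds):
        if best_score >= threshold:
            break  # good enough: further rounds risk degrading the output
        candidate = revise(best)  # always revise from the best draft, not the latest
        candidate_score = score(candidate)
        if candidate_score > best_score:
            best, best_score = candidate, candidate_score  # never return a worse revision
    return best, best_score
```

With drafts scoring 0.5, 0.7, and then 0.6 against a 0.9 threshold, the function returns the 0.7 draft rather than the degraded later one.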

Q5: How can I determine the optimal number of specialized agents for my bioinformatics workflow without overwhelming the system?

A: Performance can vary with agent count. In one diagnostic study using GPT-4 as the base model, "Most Likely Diagnosis" accuracy in primary consultations was 31.31% (2 agents), 32.45% (3 agents), 34.11% (4 agents), and 31.79% (5 agents) [15]. Accuracy peaked at four agents, but the differences are small and come from a single medical task, so treat 3-4 agents as a starting point to validate on your own workflow rather than a general rule. Adding agents beyond this range yielded no further gain and may trigger token limitations that prevent completion of complex workflows [15].

Quantitative Analysis of Agent Performance and Failures

Table 1: Performance Comparison of Multi-Agent Systems Across Domains

| System / Metric | Conceptual Task Accuracy | Code Generation Completeness | Optimal Agent Count | Key Limitations |
|---|---|---|---|---|
| BioAgents (Bioinformatics) | Comparable to human experts [2] | Poor for complex workflows [13] | 2 specialized agents + 1 reasoning agent [13] | Code generation gaps; tool misinformation [2] |
| MAC Framework (Medical Diagnosis) | 34.11% (most likely diagnosis) [15] | N/A (Diagnostic focus) | 4 doctor agents + supervisor [15] | Performance plateaus with additional agents [15] |
| Investment Advisory Assistant | N/A | N/A | 3 specialized agents [14] | Vulnerable to prompt injection; tool exploitation [14] |

Table 2: Attack Vectors Against Vulnerable AI Agents

| Attack Vector | Impact Severity | Framework Agnostic | Primary Mitigation |
|---|---|---|---|
| Prompt Injection | High: Data leakage, tool misuse, behavior subversion [14] | Yes [14] | Content filtering; prompt hardening [14] |
| Tool Exploitation | Critical: RCE, credential theft, unauthorized access [14] | Yes [14] | Input sanitization; access controls [14] |
| Intent Breaking | Medium-High: Goal manipulation, workflow disruption [14] | Yes [14] | Safeguards in agent instructions [14] |
| Resource Overload | Medium: Performance degradation, unresponsiveness [14] | Yes [14] | Resource monitoring; quota enforcement [14] |

Experimental Protocols for Agent Security and Reliability Testing

Protocol 1: Assessing Vulnerability to Prompt Injection Attacks

Objective: Evaluate agent resistance to malicious prompt injections that attempt to exfiltrate data or manipulate behavior [14].

Methodology:

- Deploy a test environment with three cooperating agents: orchestration agent, news agent, and stock agent mimicking the investment advisory architecture [14]

- Implement identical tools: search engine, web content reader, database interface, stock API, and code interpreter [14]

- Craft malicious prompts designed to:

- Extract agent instructions and tool schemas

- Gain unauthorized access to internal networks via web tools

- Leak credentials through manipulated outputs

- Execute attacks at the beginning of new sessions to eliminate the influence of previous interactions [14]

- Measure success rates of exfiltration attempts and behavior manipulation

Evaluation Metrics:

- Percentage of successful instruction/tool schema extractions

- Rate of unauthorized internal resource access

- Success of credential leakage attempts

Protocol 2: Evaluating Self-Correction Capabilities in Bioinformatics Context

Objective: Assess the effectiveness of self-evaluation and correction mechanisms in specialized domains [2] [13].

Methodology:

- Implement a BioAgents-style architecture with two specialized agents and one reasoning agent [13]

- Fine-tune the first agent on bioinformatics tool documentation from Biocontainers and a software ontology [2]

- Implement the second agent with RAG on nf-core documentation and the EDAM ontology [2]

- Devise three use cases of varying difficulty:

- Level 1 (Easy): Quality metrics on FASTQ files

- Level 2 (Medium): RNA-seq alignment against human reference genome

- Level 3 (Hard): SARS-CoV-2 genome assembly, annotation, and variant analysis [2]

- Implement self-evaluation threshold with reprocessing of below-threshold responses [2]

- Measure accuracy and completeness against expert bioinformatician outputs [2]

Evaluation Metrics:

- Accuracy: How well user queries are answered

- Completeness: Extent outputs capture all relevant information

- Self-correction effectiveness: Improvement rate through iteration cycles
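The self-correction effectiveness metric could be computed from per-query score trajectories, as in the sketch below. The definition used here (the share of iteration steps that raised the score, plus the mean change per step) is one reasonable assumption; the protocol does not fix a formula.

```python
from statistics import mean


def improvement_summary(trajectories: list[list[float]]) -> dict[str, float]:
    """Each trajectory holds one query's score after iteration 0, 1, 2, ..."""
    deltas = [b - a for t in trajectories for a, b in zip(t, t[1:])]
    if not deltas:
        raise ValueError("need at least one trajectory with two or more scores")
    return {
        "improvement_rate": sum(d > 0 for d in deltas) / len(deltas),  # share of steps that helped
        "mean_delta": mean(deltas),                                      # average change per step
    }
```

For trajectories `[0.5, 0.7, 0.65]` and `[0.6, 0.6]`, one of three steps improved and the mean change is about +0.05, the kind of pattern that signals diminishing returns from further iteration.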

Workflow Visualization: Agent Architectures and Attack Vectors

BioAgent Workflow and Attack Vectors

Self-Correction with Diminishing Returns

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for Robust Multi-Agent Bioinformatics Systems

| Component | Function | Implementation Example |
|---|---|---|
| Small Language Model Base | Provides reasoning capability with reduced computational requirements vs. LLMs [2] | Phi-3 model [2] [13] |
| Retrieval Augmented Generation (RAG) | Enhances responses with domain-specific knowledge; improves adaptability to new tools [2] | nf-core documentation; EDAM ontology [2] |
| Fine-tuning Framework | Specializes agents for domain-specific conceptual tasks [2] | Low-Rank Adaptation (LoRA) on Biocontainers documentation [2] |
| Tool Sanitization Layer | Prevents tool exploitation attacks through input validation and access controls [14] | Input sanitization; strict access controls [14] |
| Content Filtering | Detects and blocks prompt injection attempts at runtime [14] | Real-time content analysis; pattern detection [14] |
| Self-Evaluation Mechanism | Enables quality assessment against defined thresholds [2] | Reasoning agent with quality scoring [2] |

The Garbage In, Garbage Out (GIGO) Principle in Bioinformatics Data Pipelines

In bioinformatics, the Garbage In, Garbage Out (GIGO) principle dictates that the quality of your output is directly determined by the quality of your input. Flawed, biased, or poor-quality input data will inevitably produce unreliable and misleading results, regardless of the computational sophistication of your analysis pipelines [1] [16]. The stakes are exceptionally high; studies indicate that up to 30% of published bioinformatics research contains errors traceable to data quality issues at the collection or processing stage, which can adversely affect patient diagnoses in clinical genomics, waste millions in drug discovery, and misdirect scientific fields for years [1].

Troubleshooting Guides

Common Data Quality Issues and Solutions

Table 1: Common GIGO-Related Issues and Troubleshooting Steps

| Problem Category | Specific Symptoms | Diagnostic Steps | Corrective Actions |
|---|---|---|---|
| Data Quality Issues [1] [17] | Low Phred scores in FASTQ files; unexpected GC content; high adapter content. | 1. Run FastQC for initial quality metrics [17]. 2. Use MultiQC to aggregate results across samples [17]. 3. Check for contamination signals. | 1. Trim adapters and low-quality bases with Trimmomatic [1]. 2. Filter out low-quality reads. 3. Re-sequence samples if quality is irrecoverable. |
| Sample & Labeling Errors [1] | Inconsistent results from technical replicates; genotype-phenotype mismatch. | 1. Verify sample tracking in a LIMS. 2. Use genetic markers to confirm sample identity. 3. Check for batch effects via PCA. | 1. Implement barcode labeling systems. 2. Establish and enforce SOPs for sample handling. 3. Minimize batch effects through experimental design and correct for them statistically during analysis. |
| Tool Compatibility & Versioning [17] | Pipeline fails with cryptic errors; inconsistent results between runs. | 1. Check software versions and dependencies. 2. Analyze log files for error messages. 3. Use Git to track changes in pipeline scripts [17]. | 1. Use Conda to create isolated, version-controlled environments [18]. 2. Consult tool manuals and community forums. 3. Use workflow managers like Nextflow or Snakemake for reproducibility [2] [18]. |
| Technical Artifacts [1] | PCR duplicates skewing coverage; systematic sequencing errors. | 1. Use Picard tools to mark duplicates. 2. Analyze alignment metrics with SAMtools or Qualimap [1]. | 1. Remove PCR duplicates. 2. Re-run analyses with corrected parameters or tools. |
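To make the first row of Table 1 concrete, the sketch below computes two per-read metrics that FastQC also summarizes (mean Phred score and GC content) and flags reads that fall outside thresholds. It is an illustration, not a replacement for FastQC or MultiQC. It assumes Phred+33 quality encoding and 4-line FASTQ records, and the default thresholds (mean Phred 20, GC fraction 0.35 to 0.65) are hypothetical values chosen for the example rather than published cut-offs.

```python
"""Minimal per-read FASTQ quality screen (illustrative sketch, not a FastQC replacement).

Assumptions: Phred+33 quality encoding, 4-line FASTQ records, and hypothetical
thresholds (mean Phred >= 20, GC fraction between 0.35 and 0.65).
"""
from dataclasses import dataclass
from typing import Iterable, Iterator, List, Tuple


@dataclass
class ReadQC:
    read_id: str
    mean_phred: float
    gc_fraction: float


def parse_fastq(lines: Iterable[str]) -> Iterator[Tuple[str, str, str]]:
    """Yield (read_id, sequence, quality) tuples from 4-line FASTQ records."""
    it = (line.rstrip("\n") for line in lines)
    for header in it:
        if not header:
            continue  # tolerate blank lines between or after records
        try:
            seq, plus, qual = next(it), next(it), next(it)
        except StopIteration:
            raise ValueError(f"truncated FASTQ record: {header!r}") from None
        if not header.startswith("@") or not plus.startswith("+"):
            raise ValueError(f"malformed FASTQ record: {header!r}")
        if not seq or len(seq) != len(qual):
            raise ValueError(f"empty read or sequence/quality length mismatch: {header!r}")
        yield header[1:], seq, qual


def read_qc(read_id: str, seq: str, qual: str) -> ReadQC:
    """Compute the mean Phred score (Phred+33 decoding) and GC fraction of one read."""
    phred = [ord(ch) - 33 for ch in qual]
    gc = sum(base in "GCgc" for base in seq)
    return ReadQC(read_id, sum(phred) / len(phred), gc / len(seq))


def flag_reads(lines: Iterable[str], min_mean_phred: float = 20.0,
               gc_range: Tuple[float, float] = (0.35, 0.65)) -> List[ReadQC]:
    """Return QC records for reads that fail either threshold."""
    low, high = gc_range
    failing = []
    for read_id, seq, qual in parse_fastq(lines):
        qc = read_qc(read_id, seq, qual)
        if qc.mean_phred < min_mean_phred or not low <= qc.gc_fraction <= high:
            failing.append(qc)
    return failing


if __name__ == "__main__":
    records = [
        "@r1", "ACGTACGTGC", "+", "IIIIIIIIII",  # 'I' encodes Phred 40
        "@r2", "AAAAAAAAAT", "+", "##########",  # '#' encodes Phred 2
    ]
    for qc in flag_reads(records):
        print(qc)
```

In a real pipeline these checks would run on the FastQC/MultiQC reports rather than on raw reads, but the logic of "metric plus explicit threshold plus flagged record" is the same.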

A Multi-Agent System for Automated GIGO Prevention

Multi-agent systems (MAS) represent an advanced framework for building self-correcting bioinformatics pipelines. These systems decompose complex tasks among specialized, collaborative agents, enhancing error detection and correction [2] [13].

Experimental Protocol: Implementing a Multi-Agent QC Pipeline

Agent Specialization: Deploy multiple specialized agents, each fine-tuned for a specific task [2] [13].

- A Data Ingestion Agent validates raw data formats and metadata upon pipeline initiation.

- A Quality Control Agent runs tools like FastQC and MultiQC, interpreting results against predefined thresholds [17].

- An Alignment & Analysis Agent monitors stage-specific metrics (e.g., alignment rates, coverage depth) using SAMtools [1].

- A Supervisor/Reasoning Agent synthesizes findings from all agents, makes final decisions on data quality, and triggers re-runs or alerts [15].

Knowledge Integration: Enhance agents using fine-tuning on domain-specific data (e.g., bioinformatics tool documentation) and Retrieval-Augmented Generation (RAG) from curated sources like the EDAM ontology and nf-core documentation to ensure recommendations are accurate and current [2] [13].

Self-Evaluation Loop: Implement a self-evaluation step where the reasoning agent assesses the quality of the collective output against a confidence threshold. If the score is low, the system can automatically re-trigger analysis with adjusted parameters [2] [13].
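The agent roles and the supervisor's decision step described above can be sketched in plain Python. This is a hypothetical illustration, not the BioAgents implementation: each specialized agent is reduced to a function that returns a structured report, and the supervisor applies a simple assumed rule (any unrecoverable failure raises an alert, any other failure triggers a re-run, otherwise the data pass). The agent names, the 80% alignment-rate threshold, and the `recoverable` flag are all assumptions made for the example.

```python
"""Hypothetical supervisor that folds specialised-agent reports into one decision.

Agent names, metrics and thresholds are illustrative assumptions; in a real
system each report would come from a separate tool-calling or LLM agent.
"""
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Tuple


class Decision(Enum):
    PASS = "pass"    # data and results accepted
    RERUN = "rerun"  # re-trigger analysis with adjusted parameters
    ALERT = "alert"  # escalate to a human; a re-run is unlikely to help


@dataclass
class AgentReport:
    agent: str
    passed: bool
    recoverable: bool = True  # could a re-run with new parameters plausibly fix it?
    notes: List[str] = field(default_factory=list)


def supervise(reports: List[AgentReport]) -> Tuple[Decision, List[str]]:
    """Any unrecoverable failure -> ALERT; any other failure -> RERUN; else PASS."""
    failures = [r for r in reports if not r.passed]
    reasons = [f"{r.agent}: {note}" for r in failures for note in r.notes]
    if any(not r.recoverable for r in failures):
        return Decision.ALERT, reasons
    if failures:
        return Decision.RERUN, reasons
    return Decision.PASS, reasons


def alignment_agent(alignment_rate: float, min_rate: float = 0.8) -> AgentReport:
    """Toy alignment check; a real agent might parse `samtools flagstat` output."""
    ok = alignment_rate >= min_rate
    notes = [] if ok else [f"alignment rate {alignment_rate:.0%} < {min_rate:.0%}"]
    return AgentReport("alignment", ok, recoverable=True, notes=notes)


if __name__ == "__main__":
    reports = [AgentReport("ingestion", True), AgentReport("qc", True), alignment_agent(0.62)]
    decision, reasons = supervise(reports)
    print(decision.value, reasons)
```

Keeping the reports structured (rather than free text) is what lets the supervisor's decision be audited later, which matters once an LLM replaces the fixed rule.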

The following diagram illustrates the workflow and interactions of these agents within a self-correcting pipeline:

Frequently Asked Questions (FAQs)

Q1: What is the most critical step to prevent GIGO in my bioinformatics pipeline? The most critical step is implementing rigorous Quality Control (QC) at the very beginning with your raw data. As the GIGO principle states, no amount of sophisticated downstream analysis can compensate for fundamentally flawed input [1] [16]. Using tools like FastQC to scrutinize raw sequencing files before proceeding with alignment or variant calling is non-negotiable.

Q2: How can multi-agent systems help mitigate the GIGO problem? Multi-agent systems combat GIGO by introducing modular, specialized oversight. Instead of one monolithic pipeline, multiple agents act as independent validators. For example, in the BioAgents system, one agent fine-tuned on tool documentation can catch incorrect software usage, while another using RAG on workflow best practices can identify suboptimal parameter choices, effectively creating a collaborative safety net [2] [13].

Q3: My pipeline ran to completion without errors. Does that mean my data and results are good? Not necessarily. A lack of fatal errors only confirms that the tools executed, not that they executed correctly on high-quality data. Technical artifacts like batch effects or low-level contamination can produce biologically plausible but entirely inaccurate results [1]. Always validate key findings using independent methods if possible and perform sanity checks on the results (e.g., check expression of housekeeping genes in RNA-seq).

Q4: What are the best practices for ensuring reproducibility and data integrity?

- Version Control: Use Git for all your code and scripts [17] [18].

- Environment Management: Use Conda to create reproducible software environments for each project [18].

- Workflow Management: Use Snakemake or Nextflow to ensure pipeline steps are documented and reproducible [1] [18].

- Documentation: Maintain detailed records of all parameters, software versions, and data transformations [1] [17].
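The documentation practice above can be partly automated by writing a provenance record alongside each run. The sketch below is one possible shape, assuming a hypothetical JSON schema (the field names are not a community standard): it captures run parameters, declared tool versions, and SHA-256 checksums of the inputs so a result can later be traced to the exact data and settings that produced it. The version string `"x.y.z"` is a placeholder.

```python
"""Write a provenance record for one pipeline run (illustrative schema).

Captures parameters, declared tool versions and SHA-256 checksums of the
inputs so a result can be traced to exactly what produced it. Field names
are assumptions rather than a community standard.
"""
import hashlib
import json
import platform
import tempfile
from datetime import datetime, timezone
from pathlib import Path
from typing import Dict, List


def sha256sum(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream the file in chunks so large FASTQ/BAM files need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def provenance_record(inputs: List[Path], params: Dict, tool_versions: Dict[str, str]) -> Dict:
    """Assemble a JSON-serialisable record describing one run."""
    return {
        "created_utc": datetime.now(timezone.utc).isoformat(),
        "python": platform.python_version(),
        "platform": platform.platform(),
        "parameters": params,
        "tool_versions": tool_versions,
        "inputs": {str(p): sha256sum(p) for p in inputs},
    }


def write_record(record: Dict, out: Path) -> None:
    out.write_text(json.dumps(record, indent=2, sort_keys=True))


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        fastq = Path(tmp, "sample.fastq")
        fastq.write_bytes(b"@r1\nACGT\n+\nIIII\n")
        # "x.y.z" is a placeholder; record the real output of each tool's version flag.
        record = provenance_record([fastq], {"min_mean_phred": 20}, {"fastqc": "x.y.z"})
        write_record(record, Path(tmp, "provenance.json"))
        print(json.dumps(record["inputs"], indent=2))
```

Workflow managers such as Nextflow and Snakemake already record much of this; a record like this is mainly useful for ad hoc scripts that sit outside a managed workflow.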

Q5: Where can I find reliable, pre-validated pipelines to reduce GIGO risk? The nf-core community provides a collection of peer-reviewed, curated bioinformatics pipelines written in Nextflow [18]. These pipelines incorporate best practices for quality control and analysis, making them an excellent starting point that minimizes errors from faulty workflow design.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Digital "Reagents" for Robust Bioinformatics Research

| Tool Name | Category | Primary Function | Role in Combating GIGO |
|---|---|---|---|
| FastQC [17] | Quality Control | Provides quality metrics for raw sequencing data. | Identifies quality issues at the earliest stage, preventing propagation of "garbage" data. |
| MultiQC [17] | Quality Control | Aggregates results from multiple tools (FastQC, etc.) into a single report. | Allows holistic assessment of data quality across an entire project, which can reveal batch effects. |
| Conda/Bioconda [18] | Environment Management | Manages isolated software environments with specific versioned dependencies. | Eliminates "works on my machine" problems, ensuring tool behavior is consistent and reproducible. |
| Nextflow/Snakemake [2] [18] | Workflow Management | Orchestrates complex, multi-step computational pipelines. | Ensures workflow reproducibility and provides built-in mechanisms for failure recovery and caching. |
| Git [17] [18] | Version Control | Tracks changes in code and scripts over time. | Creates an audit trail for all analytical decisions, making it possible to pinpoint when errors were introduced. |
| BioAgents (MAS) [2] [13] | Multi-Agent System | Provides interactive, expert-like assistance in pipeline design and troubleshooting. | Democratizes expert knowledge, helping users avoid common pitfalls in tool selection and workflow logic. |

Visualizing the GIGO Principle in a Standard Bioinformatics Workflow

The following diagram maps the GIGO principle and key quality control checkpoints onto a standard bioinformatics workflow, showing how errors can propagate and where MAS agents can intervene.

This technical support document addresses a known performance issue in bioinformatics multi-agent systems (MAS): declining reliability on complex code-generation tasks for genomics workflows. As these systems are deployed for more advanced research and drug development, understanding and mitigating these drops is crucial for maintaining robust, automated workflows. The content is framed within the broader research thesis that effective error handling and self-correction mechanisms are fundamental to trustworthy agentic bioinformatics.

Frequently Asked Questions (FAQs)

1. What specific performance drops are observed in bioinformatics multi-agent systems? Performance degradation follows a clear pattern as task complexity increases. Systems like BioAgents demonstrate human-expert-level performance on conceptual genomics questions but show significant declines in code generation tasks, especially for medium and high-complexity workflows [2] [13]. In the most complex scenarios, the system may fail to generate starter code entirely, reverting to a conceptual outline [2].

2. Why does task complexity so severely impact code generation? The primary reasons are gaps in the system's knowledge and training data. Performance drops have been attributed to "gaps in the indexed workflows, and a lack of tool and language diversity in the training dataset" [2] [13]. Furthermore, complex tasks require successful coordination among multiple agents; a single point of failure can lead to a cascade of errors [19].

3. What are the common failure modes in multi-agent systems? Failures can be categorized using MAST, the Multi-Agent System Failure Taxonomy, which groups them into specification and system-design issues, inter-agent misalignment, and task verification and termination failures [19]. Key failure modes include:

- Communication Ambiguity: Agents misinterpret each other's outputs [19].

- Poor Task Decomposition: A planning agent breaks down a problem into poorly defined or incompatible subtasks [19].

- Uncoordinated Agent Outputs: Agents produce work based on mismatched assumptions (e.g., different data formats) [19].

- Lack of Oversight: No effective "judge" agent exists to validate the overall correctness of the workflow output [19].

4. How can self-correction mechanisms like self-evaluation help? Systems like BioAgents implement self-evaluation where a reasoning agent assesses response quality against a defined threshold. Outputs scoring below this threshold are reprocessed [2] [13]. However, this approach can show diminishing returns, where repeated refinement attempts can sometimes negatively impact output quality, indicating that simple retries are an insufficient self-correction strategy [2].
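A threshold-based self-evaluation loop can guard against the degradation described above with two small changes to a naive retry: a hard cap on attempts, and returning the best-scoring attempt rather than the last one. The sketch below is a hypothetical illustration, not the BioAgents implementation; `generate`, `evaluate`, and `revise` stand in for the working agent, the reasoning agent's scorer, and the reprocessing step, and the threshold of 0.8 and cap of 3 are assumed values.

```python
"""Threshold-based self-evaluation loop with a retry cap (sketch).

`generate`, `evaluate` and `revise` are hypothetical callables for the working
agent, the reasoning agent's quality score, and the reprocessing step. The
threshold and cap are assumptions. The loop returns the best-scoring attempt
rather than the last one, because a later refinement can be worse.
"""
from typing import Any, Callable, List, Tuple


def self_evaluate(
    generate: Callable[[], Any],
    evaluate: Callable[[Any], float],
    revise: Callable[[Any, float], Any],
    threshold: float = 0.8,
    max_attempts: int = 3,
) -> Tuple[Any, float, List[float]]:
    output = generate()
    best_output, best_score = output, float("-inf")
    history: List[float] = []
    for attempt in range(max_attempts):
        score = evaluate(output)
        history.append(score)
        if score > best_score:
            best_output, best_score = output, score  # keep the best, not the latest
        if score >= threshold or attempt == max_attempts - 1:
            break
        output = revise(output, score)  # reprocess only when below threshold
    return best_output, best_score, history


if __name__ == "__main__":
    toy_scores = {1: 0.6, 2: 0.7, 3: 0.5}  # made-up scores: the third attempt regresses
    best, score, history = self_evaluate(
        generate=lambda: 1,
        evaluate=toy_scores.__getitem__,
        revise=lambda out, s: out + 1,
    )
    print(best, score, history)
```

When the loop exits without reaching the threshold, the returned history gives the orchestrator what it needs to escalate instead of retrying again.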

5. What is the role of Retrieval-Augmented Generation (RAG) in improving reliability? RAG enhances an agent's access to domain-specific knowledge. Frameworks like MARWA emphasize a "retrieval-augmented framework to strengthen tool command accuracy," which incorporates multi-perspective LLM-augmented descriptions of tools and workflows [20]. This grounds the agent's responses in verified documentation, reducing hallucinations and improving accuracy.
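One inexpensive way to exploit a RAG index for reliability is a post-generation grounding check: any command in generated code that does not appear in the indexed documentation is treated as a possible hallucination. The sketch below assumes a hypothetical `KNOWN_TOOLS` set standing in for tool names harvested from the indexed sources (for example Biocontainers or nf-core), and uses a deliberately crude heuristic that takes the first word of each shell line as the command. `superalign` in the example is an invented name used to trigger the check.

```python
"""Flag command names in generated code that the RAG index does not know about.

`KNOWN_TOOLS` is a hypothetical stand-in for tool names harvested from the
indexed documentation (e.g. Biocontainers or nf-core); extracting the first
word of each shell line is a deliberately crude heuristic.
"""
import re
from typing import List, Set

KNOWN_TOOLS = {"fastqc", "multiqc", "samtools", "star", "hisat2", "trimmomatic", "picard"}
SHELL_BUILTINS = {"cd", "mkdir", "echo", "export", "for", "do", "done", "if", "then", "fi"}

# First word on each line, optionally after a "$ " prompt; [ \t] avoids spanning lines.
COMMAND_RE = re.compile(r"^[ \t]*(?:\$[ \t]*)?([A-Za-z][\w.-]*)", re.MULTILINE)


def ungrounded_tools(generated: str, known: Set[str] = KNOWN_TOOLS) -> List[str]:
    """Return unknown command names, in order of first appearance."""
    unknown: List[str] = []
    for name in COMMAND_RE.findall(generated):
        key = name.lower()
        if key not in known and key not in SHELL_BUILTINS and key not in unknown:
            unknown.append(key)
    return unknown


if __name__ == "__main__":
    draft = "fastqc sample.fastq\n$ samtools sort in.bam -o out.bam\nsuperalign --fast reads.fq\n"
    print(ungrounded_tools(draft))  # a judge agent would reject or query these names
```

A flagged name is not necessarily wrong (the index may simply be incomplete), so the natural response is to retrieve more documentation or ask for confirmation rather than silently dropping the step.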

Troubleshooting Guide

This guide outlines steps to diagnose and address performance issues in your multi-agent bioinformatics workflows.

Problem: Incomplete or Missing Code for Complex Workflows

| Step | Action | Details |
|---|---|---|
| 1 | Verify RAG Knowledge Base | Confirm that the indexed documentation (e.g., nf-core, EDAM ontology, Biocontainers) contains examples of the target workflow or its components [2] [13]. |
| 2 | Simplify Task Decomposition | Instruct the planner agent to break the task into smaller, more atomic subtasks. Validate that each subtask has a clear, single objective [19]. |
| 3 | Check Agent Specialization | Ensure that specialized agents (e.g., for tool selection, code generation) are fine-tuned on relevant, high-quality data to maintain their expertise [2]. |
| 4 | Implement Output Validation | Introduce a verifier or "judge" agent to check the syntactic and logical correctness of generated code snippets before they are integrated [19]. |
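Part of step 4 can be done without a language model at all. The sketch below is a hypothetical pre-check a judge agent could run on generated Python snippets: it confirms the snippet parses (using the standard-library `ast` module) and that every absolute import is on an allow-list. The allow-list is an assumed project policy, and the check says nothing about logical correctness, which still needs tests or expert review.

```python
"""Judge-style pre-check for generated Python snippets (illustrative).

It only verifies that the code parses and that every absolute import's
top-level package is on an allow-list; logical correctness still needs tests
or review. The allow-list is a hypothetical project policy.
"""
import ast
from typing import List

ALLOWED_MODULES = {"os", "sys", "subprocess", "pathlib", "pysam", "pandas"}


def judge_snippet(code: str) -> List[str]:
    """Return a list of problems; an empty list means the snippet passed."""
    try:
        tree = ast.parse(code)
    except SyntaxError as exc:
        return [f"syntax error at line {exc.lineno}: {exc.msg}"]
    problems = []
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            names = [node.module]  # relative imports (level > 0) are skipped
        else:
            continue
        for name in names:
            if name.split(".")[0] not in ALLOWED_MODULES:
                problems.append(f"unexpected import: {name}")
    return problems


if __name__ == "__main__":
    print(judge_snippet("import pysam\nimport magicgenomics\n"))
    print(judge_snippet("def f(:\n    pass\n"))
```

Because parsing never executes the snippet, this check is safe to run on untrusted model output before anything reaches an execution sandbox.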

Problem: Cascading Errors and Agent Miscommunication

| Step | Action | Details |
|---|---|---|
| 1 | Audit Communication Protocols | Enforce a standardized data format (e.g., JSON) for all inter-agent communication to prevent misinterpretation [19]. |
| 2 | Improve Context Passing | Implement a robust memory manager to ensure critical context from earlier steps is selectively and accurately passed to downstream agents [21]. |
| 3 | Define Clear Termination Conditions | Set explicit success/failure criteria for each agent's subtask to prevent infinite loops or premature termination [19]. |
| 4 | Isolate Failing Agents | Run agents individually with their subtask input to identify the specific agent or module that is the source of the error [21]. |
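Steps 1 and 3 can be combined in a single validated message envelope: every agent emits the same JSON fields, and a required `status` field doubles as an explicit termination signal for the sender's subtask. The sketch below uses a hypothetical schema (field names and allowed status values are assumptions) and rejects malformed messages instead of letting the receiving agent guess what they mean.

```python
"""Validated JSON envelope for inter-agent messages (hypothetical schema).

All agents emit the same fields, so a receiving agent can reject a malformed
or ambiguous message instead of guessing. `status` doubles as an explicit
termination signal for the sender's subtask. Field names are assumptions.
"""
import json
from dataclasses import asdict, dataclass
from typing import Any, Dict

ALLOWED_STATUS = {"success", "failure", "needs_input"}
REQUIRED = {"sender", "task_id", "status", "payload"}
OPTIONAL = {"schema_version"}


@dataclass(frozen=True)
class AgentMessage:
    sender: str
    task_id: str
    status: str
    payload: Dict[str, Any]  # task-specific content, e.g. {"bam": "sample.bam"}
    schema_version: int = 1

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)

    @classmethod
    def from_json(cls, raw: str) -> "AgentMessage":
        data = json.loads(raw)
        if not isinstance(data, dict):
            raise ValueError("message must be a JSON object")
        keys = set(data)
        missing, unexpected = REQUIRED - keys, keys - REQUIRED - OPTIONAL
        if missing or unexpected:
            raise ValueError(f"missing={sorted(missing)} unexpected={sorted(unexpected)}")
        if data["status"] not in ALLOWED_STATUS:
            raise ValueError(f"unknown status: {data['status']!r}")
        if not isinstance(data["payload"], dict):
            raise ValueError("payload must be a JSON object")
        return cls(**data)


if __name__ == "__main__":
    msg = AgentMessage("aligner", "task-1", "success", {"bam": "sample.bam"})
    wire = msg.to_json()
    print(wire)
    print(AgentMessage.from_json(wire) == msg)
```

A JSON Schema validator could replace the hand-written checks; the point is that validation happens at the boundary between agents, where a mismatch is cheapest to diagnose.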

Experimental Protocols for Performance Evaluation

To systematically study performance drops, the following experimental methodology can be employed, based on established research practices [2] [13].

Protocol 1: Tiered Task Difficulty Evaluation

Objective: To quantify performance degradation across varying levels of task complexity.

- Task Design: Create a set of tasks categorized into three tiers:

- Level 1 (Easy): Single-step tasks (e.g., "Provide quality metrics on FASTQ files").

- Level 2 (Medium): Multi-step, established pipelines (e.g., "Align RNA-seq data against a human reference genome").

- Level 3 (Hard): Complex, multi-objective workflows (e.g., "Assemble, annotate, and analyze SARS-CoV-2 genomes to characterize variants").

- Output Generation: For each task, prompt the MAS to generate two outputs: a conceptual genomics plan and executable code/workflow.

- Expert Assessment: A bioinformatics expert reviews all outputs based on two axes:

- Accuracy: How well the query was answered.

- Completeness: The extent to which the output captured all relevant information.

- Data Analysis: Compare system performance against human expert benchmarks for each tier and output type.
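The data-analysis step of Protocol 1 amounts to comparing mean system scores with mean expert-benchmark scores for each tier, output type, and assessment axis. A minimal sketch of that comparison follows, assuming each expert judgement is stored as a small record on an assumed 1 to 5 scale, with `rater` set to `"system"` or `"expert"`. The numbers in the example are placeholders, not results from any study.

```python
"""Aggregate expert ratings from a tiered evaluation (illustrative sketch).

Each Rating is one expert judgement on an assumed 1-5 scale; `rater` is
"system" for MAS output or "expert" for the human benchmark answer. The
example numbers are placeholders, not results from any study.
"""
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, List, NamedTuple, Tuple


class Rating(NamedTuple):
    tier: int         # 1 = easy, 2 = medium, 3 = hard
    output_type: str  # "conceptual" or "code"
    rater: str        # "system" or "expert"
    accuracy: float
    completeness: float


def performance_gaps(ratings: Iterable[Rating]) -> Dict[Tuple[int, str, str], float]:
    """Mean(system) - mean(expert) per (tier, output_type, axis); negative = system worse."""
    scores: Dict[Tuple[int, str, str, str], List[float]] = defaultdict(list)
    for r in ratings:
        for axis in ("accuracy", "completeness"):
            scores[(r.tier, r.output_type, r.rater, axis)].append(getattr(r, axis))
    gaps = {}
    for (tier, output_type, rater, axis), values in scores.items():
        baseline = scores.get((tier, output_type, "expert", axis))
        if rater == "system" and baseline:
            gaps[(tier, output_type, axis)] = mean(values) - mean(baseline)
    return gaps


if __name__ == "__main__":
    placeholder = [
        Rating(1, "code", "system", 4, 4), Rating(1, "code", "expert", 4, 5),
        Rating(3, "code", "system", 1, 2), Rating(3, "code", "expert", 5, 5),
    ]
    for key, gap in sorted(performance_gaps(placeholder).items()):
        print(key, gap)
```

Reporting the gap per axis, rather than one combined score, keeps the distinction the protocol draws between accuracy and completeness.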

Protocol 2: Self-Correction Feedback Loop Analysis

Objective: To evaluate the efficacy of self-evaluation and iterative refinement mechanisms.

- Setup: Configure the MAS to use a self-evaluation agent that scores all outputs on a predefined scale (e.g., 1-10).

- Threshold Setting: Define a quality threshold below which outputs are automatically reprocessed.

- Iteration Cycle: For a set of failing tasks, allow a fixed number of refinement iterations (e.g., 3-5).

- Metric Tracking: For each iteration, record the self-evaluation score and the subsequent expert-assessed score.

- Outcome Analysis: Determine if iterative refinement leads to genuine improvement, stagnation, or degradation in output quality, identifying the point of diminishing returns.
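The outcome-analysis step can be made explicit with a small helper that classifies a recorded score trace as improvement, stagnation, or degradation, and reports where refinement should have stopped. This is a sketch under stated assumptions: the trace holds one expert-assessed score per iteration (iteration 0 is the first attempt), and the `min_gain` tolerance of 0.5 is an assumed value to be tuned to the scoring scale.

```python
"""Locate the point of diminishing returns in a refinement trace (sketch).

`scores` holds the expert-assessed score after each iteration (iteration 0 is
the first attempt). The `min_gain` tolerance is an assumption to tune.
"""
from typing import List, Optional, Tuple


def classify_trace(scores: List[float], min_gain: float = 0.5) -> Tuple[str, int]:
    """Return (outcome, best_iteration).

    outcome is "improvement" if the final score beats the first by at least
    min_gain, "degradation" if it is worse by at least min_gain, otherwise
    "stagnation". best_iteration is where refinement should have stopped.
    """
    if not scores:
        raise ValueError("empty trace")
    best_iteration = max(range(len(scores)), key=scores.__getitem__)
    delta = scores[-1] - scores[0]
    if delta >= min_gain:
        outcome = "improvement"
    elif delta <= -min_gain:
        outcome = "degradation"
    else:
        outcome = "stagnation"
    return outcome, best_iteration


def first_plateau(scores: List[float], min_gain: float = 0.5) -> Optional[int]:
    """Index of the first iteration whose gain over its predecessor is < min_gain."""
    for i in range(1, len(scores)):
        if scores[i] - scores[i - 1] < min_gain:
            return i
    return None


if __name__ == "__main__":
    trace = [5.0, 7.0, 7.2, 6.1]  # placeholder scores for one task
    print(classify_trace(trace), first_plateau(trace))
```

Running the same helper on the self-evaluation scores and on the expert scores shows whether the agent's own judgement tracks the expert's, which is the question Protocol 2 is really asking.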

The logical workflow for this self-correction analysis is outlined below.

Quantitative Performance Data

The following tables summarize typical performance data observed in studies of systems like BioAgents, illustrating the core challenge of performance drops [2] [13].

Table 1: Performance Across Task Difficulty Levels

| Task Difficulty | Conceptual Genomics | Code Generation | Key Observations |
|---|---|---|---|
| Level 1 (Easy) | High Accuracy & Completeness | Matches Expert Accuracy | Occasional tool hallucinations in code. |
| Level 2 (Medium) | High Accuracy & Completeness | Struggles with Complete Outputs | Fails to produce full end-to-end pipelines. |
| Level 3 (Hard) | High Accuracy & Completeness | Fails to Generate Starter Code | Reverts to conceptual step outlines. |

Table 2: Common MAS Failure Modes (MAST Framework) [19]

| Failure Category | Specific Issue | Impact on Performance |
|---|---|---|
| Specification & Design | Ambiguous Initial Instructions | Agents diverge in behavior and understanding. |
| Specification & Design | Poor Task Decomposition | Subtasks are too granular or not serializable. |
| Inter-Agent Misalignment | Communication Ambiguity | Outputs from one agent are unusable by the next. |
| Inter-Agent Misalignment | Uncoordinated Agent Outputs | Outputs are in incompatible formats (e.g., YAML vs. JSON). |
| Termination Gaps | Lack of Oversight/Judge | Incorrect or incomplete results are not caught. |
| Termination Gaps | Inadequate Loop Detection | Agents run indefinitely, wasting computational resources. |
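The last row of Table 2 can be addressed with a small, model-independent loop detector in the orchestrator. The sketch below fingerprints each turn as an (agent, normalized message) pair and signals termination when the same fingerprint recurs too often or a total turn budget is exceeded. Both limits, and the choice to normalize only case and whitespace, are assumptions to tune per workflow.

```python
"""Loop detection for agent conversations (illustrative sketch).

Each turn is fingerprinted as (agent, normalised message). If a fingerprint
recurs more than `max_repeats` times, or the run exceeds `max_turns`, the
orchestrator should stop and escalate. Both limits are assumptions to tune.
"""
import hashlib
from collections import Counter
from typing import Optional


class LoopDetector:
    def __init__(self, max_repeats: int = 2, max_turns: int = 50) -> None:
        self.max_repeats = max_repeats
        self.max_turns = max_turns
        self.turns = 0
        self.seen = Counter()  # fingerprint -> number of occurrences

    @staticmethod
    def fingerprint(agent: str, message: str) -> str:
        normalised = " ".join(message.lower().split())  # ignore case/whitespace noise
        return hashlib.sha256(f"{agent}\x00{normalised}".encode()).hexdigest()

    def should_stop(self, agent: str, message: str) -> Optional[str]:
        """Record one turn; return a reason string if the run should terminate."""
        self.turns += 1
        fp = self.fingerprint(agent, message)
        self.seen[fp] += 1
        if self.seen[fp] > self.max_repeats:
            return f"{agent} repeated the same message {self.seen[fp]} times"
        if self.turns > self.max_turns:
            return f"turn budget of {self.max_turns} exceeded"
        return None


if __name__ == "__main__":
    detector = LoopDetector(max_repeats=2)
    for turn in ["Retry alignment", "retry  alignment", "Retry alignment"]:
        reason = detector.should_stop("planner", turn)
        if reason:
            print("stopping:", reason)
            break
```

Exact-match fingerprints miss paraphrased loops; a production system might compare embeddings instead, at the cost of an extra model call per turn.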

The Scientist's Toolkit: Research Reagent Solutions

The following tools and data sources are essential for developing and troubleshooting bioinformatics multi-agent systems.

Table 3: Essential Resources for Bioinformatics MAS Development

| Item | Function in Research | Reference/Source |
|---|---|---|
| Biocontainers | Provides standardized, containerized bioinformatics software packages whose tool documentation is used to fine-tune agents. | [2] [13] |
| EDAM Ontology | A comprehensive ontology of bioinformatics operations, topics, and data types, used to structure knowledge for agents. | [2] [13] |
| nf-core | A community-driven collection of peer-reviewed, versioned bioinformatics pipelines. Serves as a gold-standard source for workflow retrieval (RAG). | [2] [13] |
| Phi-3 / Small Language Models (SLMs) | A class of smaller, more efficient language models that enable local operation and reduce computational resource demands for agents. | [2] [13] |
| Biostars QA Dataset | A repository of 68,000+ bioinformatics question-answer pairs used to understand common user challenges and inform agent design. | [2] [13] |
| Low-Rank Adaptation (LoRA) | A parameter-efficient fine-tuning technique used to adapt base language models for specialized bioinformatics tasks without full retraining. | [2] [13] |

System Architecture and Error Flow

A high-level view of a multi-agent system like BioAgents helps visualize where performance bottlenecks and errors can occur. The following diagram maps the information flow and critical points of failure.

Architectural Patterns and Self-Correction Mechanisms for Resilient Systems

In the context of bioinformatics multi-agent systems, the underlying organizational architecture directly influences capabilities in error handling, self-correction, and troubleshooting efficiency. Hierarchical, flat, and linear structures each present distinct advantages and limitations for managing complex computational workflows. As bioinformatics pipelines grow increasingly sophisticated—encompassing data preprocessing, alignment, variant calling, and analysis—the choice of system architecture becomes critical for ensuring reliability and facilitating rapid problem resolution. Research on systems like BioAgents demonstrates how multi-agent frameworks leverage these structural paradigms to democratize bioinformatics analysis, enabling researchers to develop and troubleshoot complex pipelines through specialized agents working in coordination [2] [13].

This technical support center provides structured guidance for researchers navigating bioinformatics challenges within these system architectures. By framing troubleshooting methodologies within specific organizational contexts, we aim to enhance error handling capabilities and support the self-correction mechanisms essential for robust bioinformatics research.

Defining Organizational Structures

Hierarchical structures resemble pyramids with clear vertical chains of command, where authority cascades down from a single person at the top to multiple management layers [22] [23]. This traditional model features specialized departments with clearly defined reporting relationships and is commonly found in large organizations with extensive workforces.

Flat structures eliminate multiple middle management layers, creating shorter, wider organizations where employees typically report directly to leadership [22] [23]. This model fosters collaborative environments with distributed decision-making authority and is frequently adopted by startups and smaller research teams.

Linear structures represent one of the simplest organizational forms, with self-contained departments and clear, unified lines of authority flowing directly from top to bottom [24]. This structure maintains strict accountability through simplified reporting relationships without matrixed connections.

Quantitative Structural Comparison

Table 1: Comparative analysis of organizational structure characteristics

| Characteristic | Hierarchical Structure | Flat Structure | Linear Structure |
|---|---|---|---|
| Management Layers | Multiple layers [22] | Few or no middle management [22] [23] | Minimal, direct layers [24] |
| Decision-Making Approach | Top-down [23] | Collaborative/Decentralized [23] | Centralized at top [24] |
| Communication Flow | Vertical through formal channels [22] | Direct and horizontal [23] | Vertical, simplified chain [24] |
| Employee Autonomy | Lower autonomy [23] | Higher autonomy [23] | Limited to role [24] |
| Role Definition | Clearly defined, specialized roles [22] | Broader roles with overlapping responsibilities [23] | Strictly defined departmental roles [24] |
| Error Handling | Formal escalation procedures | Peer collaboration and direct resolution | Direct supervisor intervention |
| Best Suited For | Large organizations with complex operations [22] | Small teams and dynamic environments [23] | Stable environments with routine tasks [24] |

Table 2: Performance metrics in bioinformatics contexts

| Performance Metric | Hierarchical Structure | Flat Structure | Linear Structure |
|---|---|---|---|
| Response to Simple Errors | Slow (requires escalation) [23] | Rapid (direct action) [23] | Moderate (direct supervisor) [24] |
| Complex Problem-Solving | Structured but bureaucratic [22] | Innovative but potentially unfocused [23] | Methodical but inflexible [24] |
| Adaptability to New Tools | Slow adoption process [22] | Rapid integration [23] | Standardized implementation [24] |
| Cross-Domain Collaboration | Limited by departmental boundaries [23] | Naturally facilitated [23] | Formally channeled [24] |
| Knowledge Transfer | Formal training systems | Organic sharing | Structured documentation |

Architectural Implementation in Bioinformatics Multi-Agent Systems

Multi-Agent System Architectures for Bioinformatics

Bioinformatics multi-agent systems represent a practical application of these organizational structures for specialized research tasks. Systems like BioAgents utilize a coordinated approach where different architectural paradigms govern how specialized agents collaborate on complex bioinformatics workflows [2] [13]. The system employs two specialized agents—one fine-tuned on bioinformatics tools documentation, and another utilizing retrieval-augmented generation (RAG) on nf-core documentation and EDAM ontology—with a central reasoning agent coordinating their activities [2].

Research demonstrates that implementing self-evaluation mechanisms within these multi-agent systems enhances reliability by allowing agents to assess response quality against defined thresholds [2] [13]. This structural approach to error handling mirrors the accountability pathways in human organizational structures while leveraging computational advantages for iterative improvement.

Experimental Protocol: Evaluating Architecture Performance

Objective: To quantify error handling efficiency across hierarchical, flat, and linear architectures in bioinformatics multi-agent systems.

Methodology:

- Task Design: Implement three use cases of varying complexity:

- Level 1 (Easy): Quality metrics on FASTQ files

- Level 2 (Medium): RNA-seq alignment against human reference genome

- Level 3 (Hard): SARS-CoV-2 genome assembly, annotation, and variant analysis [2]

Agent Configuration:

- Deploy specialized agents for conceptual genomics and code generation tasks

- Implement reasoning agent with self-evaluation capabilities

- Establish communication protocols matching each architectural paradigm

Evaluation Metrics:

- Accuracy: How well the user's query was answered

- Completeness: Extent to which output captured all relevant information

- Time to Resolution: Duration from error identification to correction

- Explanation Quality: Logical reasoning provided for solutions [2]

Validation: Expert bioinformaticians review system outputs and compare with human expert performance on identical tasks [2].
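Before running agents, the three communication paradigms can be compared with a toy model. The sketch below is hypothetical and not part of the cited protocol: each topology maps an agent to the agent it escalates to, `AUTHORITY` lists which agents may apply a fix, and the number of escalation hops from the detecting agent to the first agent with authority serves as a crude proxy for the "Response to Simple Errors" row of Table 2. All agent names and authority assignments are assumptions.

```python
"""Toy model of escalation cost in three agent topologies (hypothetical).

Each topology maps an agent to the agent it escalates to; AUTHORITY lists the
agents allowed to apply a fix. Hops from the detecting agent to the first
agent with authority give one crude proxy for time to resolution.
"""
from typing import Dict, Optional, Set

TOPOLOGIES: Dict[str, Dict[str, Optional[str]]] = {
    # worker -> team lead -> coordinator -> reasoning agent
    "hierarchical": {"qc_worker": "qc_lead", "qc_lead": "coordinator",
                     "coordinator": "reasoner", "reasoner": None},
    # peers escalate straight to a shared reasoner
    "flat": {"qc_worker": "reasoner", "reasoner": None},
    # single chain with one supervisor per stage
    "linear": {"qc_worker": "qc_supervisor", "qc_supervisor": "reasoner", "reasoner": None},
}

AUTHORITY: Dict[str, Set[str]] = {
    "hierarchical": {"coordinator", "reasoner"},
    "flat": {"qc_worker", "reasoner"},  # peers may fix simple errors directly
    "linear": {"qc_supervisor", "reasoner"},
}


def escalation_hops(topology: str, detector: str) -> Optional[int]:
    """Follow escalation links until an agent with authority is reached."""
    chain, authority = TOPOLOGIES[topology], AUTHORITY[topology]
    hops, agent, visited = 0, detector, set()
    while agent is not None:
        if agent in authority:
            return hops
        if agent in visited:  # guard against cyclic escalation paths
            return None
        visited.add(agent)
        agent = chain.get(agent)
        hops += 1
    return None  # nobody in the chain can fix it


if __name__ == "__main__":
    for name in TOPOLOGIES:
        print(name, escalation_hops(name, "qc_worker"))
```

Hop counts ignore how long each agent takes to act, so they complement, rather than replace, the measured time-to-resolution metric.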

Diagram 1: Three organizational structures for bioinformatics teams.

Troubleshooting Guides: Architecture-Specific Error Resolution

Hierarchical Structure Troubleshooting

Problem: Slow response to pipeline errors

- Symptoms: Bioinformatics pipeline issues require multiple approval layers before implementation of fixes, causing significant downtime [23].

- Resolution Protocol:

- Pre-authorize specific technical decisions for common pipeline failures

- Implement tiered response system with clear authority thresholds

- Establish direct technical channels for time-critical errors while maintaining reporting protocols

- Architectural Advantage: Clear accountability and specialized depth for complex, multi-faceted errors [22]

Problem: Communication silos between specialized teams

- Symptoms: Alignment team resolves mapping issues without notifying variant calling team, causing downstream errors [23].

- Resolution Protocol:

- Implement cross-functional liaison roles between departments

- Schedule regular inter-departmental technical syncs

- Create shared documentation repository with cross-indexed error solutions

Flat Structure Troubleshooting

Problem: Ambiguous responsibility for pipeline failures

- Symptoms: RNA-seq quality control errors remain unaddressed as team members assume others will handle them [23].

- Resolution Protocol:

- Implement rotating "pipeline lead" role with clearly defined responsibility periods

- Establish peer review checkpoints for critical workflow stages

- Create public task assignment system for error resolution

- Architectural Advantage: Rapid response capability and collaborative problem-solving for novel challenges [23]

Problem: Inconsistent tool implementation

- Symptoms: Different team members implement conflicting versions of alignment tools, causing reproducibility issues [17].

- Resolution Protocol:

- Develop standardized containerization approach (Docker/Singularity)

- Implement tool version registry with mandatory compliance

- Establish lightweight approval process for new tool incorporation

Linear Structure Troubleshooting

Problem: Single point of failure in workflow expertise

- Symptoms: When the alignment specialist is unavailable, variant calling pipeline halts entirely [24].

- Resolution Protocol:

- Develop cross-training program for adjacent technical domains

- Create detailed standard operating procedures for all specialized tasks

- Implement "buddy system" for critical technical roles

- Architectural Advantage: Clear escalation paths and standardized procedures for routine errors [24]

Problem: Inflexible response to novel errors

- Symptoms: Unprecedented quality control metrics in novel sequencing data cause pipeline stagnation [24].

- Resolution Protocol:

- Establish defined innovation periods for protocol development

- Create external consultation channel for novel problems

- Implement periodic workflow review against community standards

Self-Correction Mechanisms in Multi-Agent Systems

Implementation Framework

Bioinformatics multi-agent systems incorporate self-correction mechanisms that mirror effective error handling in human organizational structures. These systems employ several technical approaches to enable autonomous problem-resolution:

Self-evaluation mechanisms allow agents to assess their output quality against defined thresholds before delivering responses to users [2] [13]. Outputs scoring below established quality thresholds trigger reprocessing, where agents independently reanalyze prompts to generate improved responses.

Collaborative reasoning frameworks enable multi-agent systems to provide transparent explanations for their bioinformatics recommendations, similar to how effective research teams document their decision-making processes [2]. For example, when recommending alignment tools like STAR or HISAT2 for RNA-seq data, these systems specify factors influencing tool selection such as dataset size and desired accuracy levels [2].
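The pattern of pairing each recommendation with its rationale and supporting sources can be captured in a simple data structure. The sketch below is illustrative only: the single decision rule (available RAM) is a hypothetical placeholder rather than a benchmark-backed recommendation, and the 32 GB figure is an assumed approximation of the memory needed for a human STAR index that should be checked against the STAR manual. The point is that the rationale and its sources travel with the answer.

```python
"""Recommendation object that carries its own rationale (illustrative sketch).

The decision rule is a hypothetical placeholder, not a benchmark-backed
recommendation; the RAM figure is an assumed approximation to verify
against the STAR manual.
"""
from dataclasses import dataclass, field
from typing import List

STAR_HUMAN_INDEX_RAM_GB = 32  # assumption: approximate RAM for a human STAR index


@dataclass
class Recommendation:
    tool: str
    rationale: List[str] = field(default_factory=list)
    sources: List[str] = field(default_factory=list)  # docs the reasoning is grounded in


def recommend_rnaseq_aligner(available_ram_gb: float) -> Recommendation:
    """Pick a splice-aware aligner and explain why (single hypothetical criterion)."""
    if available_ram_gb >= STAR_HUMAN_INDEX_RAM_GB:
        return Recommendation(
            "STAR",
            [f"{available_ram_gb:g} GB RAM meets the assumed {STAR_HUMAN_INDEX_RAM_GB} GB "
             "needed for a human STAR index"],
            ["STAR manual (memory requirements)"],
        )
    return Recommendation(
        "HISAT2",
        [f"{available_ram_gb:g} GB RAM is below the assumed {STAR_HUMAN_INDEX_RAM_GB} GB for STAR",
         "HISAT2 is a splice-aware aligner with a smaller memory footprint"],
        ["HISAT2 manual"],
    )


if __name__ == "__main__":
    rec = recommend_rnaseq_aligner(16)
    print(rec.tool)
    for reason in rec.rationale:
        print(" -", reason)
```

A real agent would weigh more factors, such as the dataset size and accuracy requirements mentioned above, but each factor would be recorded in `rationale` in the same way so that users can audit the choice.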

Diagram 2: Self-correction workflow in bioinformatics multi-agent systems.

Research Reagent Solutions for Multi-Agent Systems

Table 3: Essential components for bioinformatics multi-agent systems

| Component | Function | Implementation Example |
|---|---|---|
| Specialized Agents | Domain-specific task execution | Bioinformatics tool selection agent fine-tuned on Biocontainers documentation [2] |
| Reasoning Engine | Coordinates agent activities and evaluates outputs | Phi-3 model serving as central reasoning agent [2] [13] |
| Retrieval-Augmented Generation (RAG) | Enhances responses with current domain knowledge | RAG implementation on nf-core documentation and EDAM ontology [2] |
| Self-Evaluation Module | Quality assessment of generated solutions | Threshold-based scoring system for response quality [2] |
| Bioinformatics Knowledge Base | Domain-specific data for training and reference | Biocontainers tools documentation and software ontology [2] |
| Workflow Management Interface | Pipeline orchestration and error tracking | Integration with Nextflow, Snakemake, or Galaxy workflows [17] |

Frequently Asked Questions (FAQs)

Q1: How does organizational structure impact bioinformatics pipeline efficiency? A1: Organizational structure directly influences error response time, cross-team collaboration, and innovation capacity. Hierarchical structures provide clear accountability for complex errors but may slow response times, while flat structures enable rapid innovation but may struggle with coordination in large projects [22] [23]. The optimal structure depends on team size, project complexity, and error handling requirements.

Q2: What self-correction mechanisms show promise in bioinformatics multi-agent systems? A2: Current research indicates that self-evaluation mechanisms, where agents assess response quality against defined thresholds before delivery, enhance output reliability [2], although repeated refinement can yield diminishing returns [2]. Additionally, collaborative reasoning frameworks that provide transparent explanations for bioinformatics recommendations improve trust and facilitate human-agent collaboration in troubleshooting complex workflows.

Q3: How can we mitigate communication silos in hierarchical bioinformatics teams? A3: Effective strategies include implementing cross-functional liaison roles, scheduling regular inter-departmental technical syncs, creating shared documentation repositories with cross-indexed error solutions, and establishing center of excellence groups for key bioinformatics methodologies [23].

Q4: What are the most common pitfalls in flat organizational structures for research teams? A4: Flat structures often struggle with ambiguous responsibility for pipeline failures, inconsistent tool implementation across team members, power struggles in the absence of formal authority structures, and difficulty maintaining specialized expertise without clear career progression paths [23]. These can be mitigated through rotating leadership roles and standardized protocols.

Q5: How do linear structures maintain efficiency in routine bioinformatics operations? A5: Linear structures excel in environments with well-established workflows through clear escalation paths, standardized procedures for common errors, direct accountability, and simplified communication channels [24]. However, they may struggle with novel problems requiring interdisciplinary collaboration.

Q6: What metrics should we use to evaluate error handling in bioinformatics teams? A6: Key performance indicators include time to error identification, time to resolution, error recurrence rates, cross-disciplinary collaboration incidents, solution scalability, and reproducibility of error fixes across similar scenarios [2] [17].

The comparative analysis of hierarchical, flat, and linear architectures reveals distinct advantages for different bioinformatics research contexts. Hierarchical structures provide the specialized depth and clear accountability necessary for complex, multi-faceted computational challenges, while flat architectures foster the innovation and rapid iteration valuable in emerging research domains. Linear structures offer efficiency and stability for established workflows with well-characterized error profiles.

In multi-agent bioinformatics systems, architectural choices directly influence self-correction capabilities and error handling efficiency. By implementing appropriate organizational structures aligned with research goals and error profiles, bioinformatics teams can enhance troubleshooting effectiveness and advance the reliability of computational research in drug development and genomic medicine.

Implementing Self-Evaluation and Self-Correction Loops in Agent Workflows

In bioinformatics multi-agent systems, self-evaluation and self-correction loops are critical for enhancing the reliability and trustworthiness of automated workflows. These systems break down complex tasks, such as genome sequence analysis or variant calling, across multiple specialized agents that must coordinate effectively [2] [6]. Research attributes a substantial share of multi-agent system failures to poor task specification (32%) and coordination problems (28%), both of which robust internal validation and error-recovery mechanisms can help mitigate [25]. This guide provides targeted support for researchers implementing these self-healing capabilities.

Frequently Asked Questions (FAQs)

What are self-evaluation and self-correction loops in agent systems? Self-evaluation is an agent's ability to assess the quality and accuracy of its own outputs against defined criteria [2] [13]. Self-correction refers to the subsequent processes where the agent attempts to rectify identified errors, often by re-processing prompts, adjusting its reasoning, or employing alternative tools [26].

Why do my agents get stuck in repetitive loops during self-correction? Repetitive loops often occur due to a lack of effective stopping criteria or escalation protocols. Implementing a maximum retry threshold and a structured fallback plan—such as handing the task to a different specialized agent or flagging it for human review—can prevent this [27] [25].
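A maximum retry threshold combined with a fallback chain can be expressed in a few lines. The sketch below assumes each agent is a plain function that returns None on failure; the agent names and the "human_review" marker are hypothetical placeholders for whatever routing your orchestration framework provides.

```python
from typing import Callable, Optional

# An agent takes a task description and returns a result, or None if it failed.
Agent = Callable[[str], Optional[str]]


def run_with_fallback(task: str, agents: list[tuple[str, Agent]],
                      max_retries: int = 2) -> tuple[str, Optional[str]]:
    """Try each agent at most max_retries times, in order, then escalate to a human."""
    for name, agent in agents:
        for _ in range(max_retries):
            result = agent(task)
            if result is not None:
                return name, result
    # Stopping criterion reached: no agent succeeded, so flag the task for human review.
    return "human_review", None


attempts = {"primary": 0}


def primary_aligner(task: str) -> Optional[str]:
    attempts["primary"] += 1
    return None  # always fails in this toy example


def backup_aligner(task: str) -> Optional[str]:
    return f"alignment plan for: {task}"


outcome = run_with_fallback("align RNA-seq reads",
                            [("primary", primary_aligner), ("backup", backup_aligner)])
```

Because every agent gets a bounded number of attempts and the chain always terminates in a human hand-off, the loop cannot spin indefinitely.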

How can I ensure my multi-agent system remains transparent in its decisions? Transparency is achieved by mandating that agents provide rationales for their decisions. Using reasoning frameworks like Chain-of-Thought (CoT) or ReAct forces agents to explain their step-by-step logic, making the decision-making process interpretable [2] [13]. Furthermore, linking every predicted workflow step or parameter back to its source evidence in the literature is a proven method for ensuring traceability [28].
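One lightweight way to enforce this is to require every decision to be emitted as a structured record and to flag any step that lacks a rationale or an evidence link. The schema below is an illustrative assumption, and the evidence identifier is a hypothetical placeholder rather than a real citation.

```python
from dataclasses import dataclass, field


@dataclass
class DecisionRecord:
    """One workflow decision with the reasoning and evidence behind it (illustrative schema)."""
    step: str
    choice: str
    rationale: str
    evidence: list[str] = field(default_factory=list)  # e.g. document or citation IDs


def untraceable(records: list[DecisionRecord]) -> list[str]:
    """Return the steps that lack either a rationale or at least one evidence link."""
    return [r.step for r in records if not r.rationale.strip() or not r.evidence]


trail = [
    DecisionRecord("alignment", "STAR", "Splice-aware aligner suited to RNA-seq reads",
                   ["source:star-manual"]),  # hypothetical evidence identifier
    DecisionRecord("quantification", "featureCounts", "", []),
]
print(untraceable(trail))  # ['quantification']
```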

What is the most common cause of agent failure in tool execution? A frequent cause is unhandled edge cases or unexpected outputs from external tools and APIs. Agents can fail to complete a task if a tool returns an ambiguous response, encounters a network timeout, or receives data in an unanticipated format [27]. Implementing robust function call validation and retry mechanisms with exponential backoff can mitigate these issues [26].
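The sketch below combines output validation with retries and exponential backoff. It assumes the tool call is wrapped as a zero-argument function and that timeouts, connection errors, and outputs that fail validation are all worth retrying; the InvalidToolOutput exception and the retry schedule are illustrative choices, not taken from a specific library.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class InvalidToolOutput(Exception):
    """Raised when a tool returns output the agent cannot safely use."""


RETRYABLE = (TimeoutError, ConnectionError, InvalidToolOutput)


def call_with_backoff(call: Callable[[], T], validate: Callable[[T], bool],
                      max_attempts: int = 4, base_delay: float = 0.5,
                      sleep: Callable[[float], None] = time.sleep) -> T:
    """Call a tool, validate its output, and retry transient failures with backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            result = call()
            if not validate(result):
                raise InvalidToolOutput(f"unexpected tool output: {result!r}")
            return result
        except RETRYABLE:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error to the caller
            # Exponential backoff (0.5 s, 1 s, 2 s, ...) plus up to 10% random jitter.
            delay = base_delay * 2 ** attempt
            sleep(delay + random.uniform(0, 0.1 * delay))
    raise RuntimeError("unreachable")


calls = {"n": 0}


def flaky_alignment_tool() -> dict:
    calls["n"] += 1
    if calls["n"] < 3:
        raise TimeoutError("cluster API timed out")
    return {"bam_path": "sample1.sorted.bam"}


delays: list[float] = []
out = call_with_backoff(flaky_alignment_tool,
                        validate=lambda r: isinstance(r, dict) and "bam_path" in r,
                        sleep=delays.append)
```

The sleep function is injectable so the schedule can be exercised in tests without real waiting; the jitter spreads out retries when many agents hit the same failing service at once.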

Troubleshooting Guides

Problem 1: Inconsistent or Hallucinated Outputs

Symptoms: The agent generates plausible but incorrect tool names, parameter settings, or workflow steps that are not grounded in source documentation.

Solutions:

- Implement a "Judge" Agent: Introduce an independent agent whose sole role is to validate the outputs of other working agents against predefined criteria and source materials. This breaks groupthink and catches hallucinations before they propagate [25].

- Enhance Retrieval-Augmented Generation (RAG): Build a unified vector index over full-text publications, tables, and figures. Use this index to ground the agent's responses in citable evidence, and implement automated consistency checks to suppress ungrounded information [28].

- Enforce Structured Outputs: Move away from free-form prose. Require agents to output structured data (e.g., JSON) that conforms to a strict schema, which is easier to validate automatically for completeness and correctness [25].
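A judge check over structured output might look like the following minimal sketch. It assumes the working agent returns a JSON list of steps, each with step, tool, and parameters fields, and it grounds tool names against a hypothetical KNOWN_TOOLS registry (in practice such a list could be derived from curated tool metadata). A production system would more likely use a full JSON Schema validator; plain standard-library checks keep the example self-contained.

```python
import json

REQUIRED_FIELDS = {"step": str, "tool": str, "parameters": dict}
# Hypothetical registry of tools the judge accepts as grounded.
KNOWN_TOOLS = {"fastqc", "star", "salmon", "featurecounts"}


def judge(raw_output: str) -> list[str]:
    """Return a list of problems; an empty list means the output passes."""
    try:
        steps = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(steps, list):
        return ["top-level value must be a list of steps"]
    problems = []
    for i, step in enumerate(steps):
        if not isinstance(step, dict):
            problems.append(f"step {i}: not an object")
            continue
        # Schema check: every required field present with the expected type.
        for name, typ in REQUIRED_FIELDS.items():
            if not isinstance(step.get(name), typ):
                problems.append(f"step {i}: '{name}' missing or not {typ.__name__}")
        # Grounding check: tool names must appear in the registry.
        tool = step.get("tool")
        if isinstance(tool, str) and tool.lower() not in KNOWN_TOOLS:
            problems.append(f"step {i}: unknown tool '{tool}' (possible hallucination)")
    return problems


draft = ('[{"step": "qc", "tool": "FastQC", "parameters": {}},'
         ' {"step": "align", "tool": "SuperAligner9000", "parameters": {"threads": 8}}]')
print(judge(draft))  # ["step 1: unknown tool 'SuperAligner9000' (possible hallucination)"]
```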

Problem 2: Coordination Failures in Multi-Agent Systems

Symptoms: Agents duplicate work, provide conflicting instructions, or are unable to synthesize their results into a cohesive final output.

Solutions:

- Implement Structured Communication Protocols: Replace unstructured chat with schema-validated message types (e.g., request, inform, commit). This clarifies intent and reduces ambiguity in inter-agent communication [25].

- Define Clear Agent Roles and Resource Ownership: Explicitly assign ownership of specific resources (e.g., a particular data file, database table, or workflow step) to a single agent to prevent conflicts over shared resources [25].

- Use a Planner-Executor Loop: Separate the planning of steps from their execution. A planning agent can first generate a validated workflow, which execution agents then carry out, reducing runtime coordination errors [26].
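The first two practices can be combined in a small coordinator: messages carry an explicit message type from a closed set, and only a resource's registered owner may commit changes to it. The Message and Coordinator classes, the resource names, and the rule that only commit messages are ownership-checked are assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Performative(Enum):
    REQUEST = "request"
    INFORM = "inform"
    COMMIT = "commit"


@dataclass
class Message:
    sender: str
    receiver: str
    performative: Performative
    resource: str
    content: dict


class Coordinator:
    """Validates message types and enforces single-owner writes to shared resources."""

    def __init__(self, owners: dict[str, str]):
        self.owners = owners           # resource name -> owning agent
        self.log: list[Message] = []   # accepted messages, useful for auditing

    def send(self, msg: Message) -> None:
        if not isinstance(msg.performative, Performative):
            raise TypeError(f"unknown message type: {msg.performative!r}")
        owner = self.owners.get(msg.resource)
        # Only commits change a resource, so only commits are ownership-checked here.
        if msg.performative is Performative.COMMIT and owner != msg.sender:
            raise PermissionError(f"{msg.sender} cannot commit {msg.resource}; owner is {owner}")
        self.log.append(msg)


coord = Coordinator({"alignments.bam": "aligner", "variants.vcf": "caller"})
coord.send(Message("planner", "aligner", Performative.REQUEST, "alignments.bam",
                   {"reads": "sample1.fastq"}))
coord.send(Message("aligner", "planner", Performative.COMMIT, "alignments.bam",
                   {"status": "written"}))
try:
    coord.send(Message("caller", "planner", Performative.COMMIT, "alignments.bam", {}))
except PermissionError as err:
    print(err)  # caller cannot commit alignments.bam; owner is aligner
```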

Problem 3: Self-Correction Leads to Performance Degradation

Symptoms: The system's output quality worsens with repeated self-correction attempts, or agents become stuck in infinite loops.

Solutions:

- Set an Evaluation Threshold and Retry Limit: Define a quantitative quality score for self-evaluation. If an output does not meet the threshold after 1-2 retry attempts, the system should escalate the problem rather than continue iterating, as studies report diminishing returns from repeated self-correction [2] [13].

- Incorporate Dynamic Human-in-the-Loop: Design the system to flag low-confidence outputs or persistent errors for human expert review. This feedback can then be incorporated into the system's memory for continuous learning [27] [29].
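The threshold-and-limit policy can be sketched as a bounded loop that also guards against degradation: it keeps the best-scoring attempt and stops as soon as a revision fails to improve on it. The generate and score callables are hypothetical stand-ins for the agent and its self-evaluation module; the 0.7 threshold and two retries mirror the values in the evaluation protocol that follows, and the early stop on no improvement is an added design choice.

```python
from typing import Callable


def self_correct(generate: Callable[[str, str], str], score: Callable[[str], float],
                 prompt: str, threshold: float = 0.7, max_retries: int = 2) -> dict:
    """Bounded self-correction that never returns a worse answer than it already had."""
    best = generate(prompt, "")            # initial attempt (no previous draft)
    best_score = score(best)
    for _ in range(max_retries):
        if best_score >= threshold:
            break                          # good enough: stop iterating
        candidate = generate(prompt, best)  # revise using the previous best draft
        candidate_score = score(candidate)
        if candidate_score <= best_score:
            break                          # no improvement: stop rather than drift
        best, best_score = candidate, candidate_score
    return {"output": best, "score": best_score,
            "needs_human_review": best_score < threshold}


drafts = iter(["draft A", "draft B", "draft C"])
toy_scores = {"draft A": 0.5, "draft B": 0.4, "draft C": 0.9}
result = self_correct(lambda prompt, previous: next(drafts), toy_scores.__getitem__,
                      "How do I align RNA-seq data against a human reference genome?")
```

In this toy run the revision scores lower than the first draft, so the loop keeps draft A and flags it for human review instead of iterating further.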

Experimental Protocols & Data

Protocol: Evaluating Self-Evaluation Loops in a Bioinformatics Agent

This methodology is adapted from the evaluation of the BioAgents system [2] [13].

- Agent Setup: Fine-tune a base language model (e.g., Phi-3) on bioinformatics-specific data, such as tool documentation from BioContainers and the EDAM ontology. Equip the agent with a RAG system indexed on nf-core workflows and scientific literature.

- Task Design: Present the agent with conceptual genomics and code generation tasks of varying complexity (e.g., "How do I align RNA-seq data against a human reference genome?").

- Self-Evaluation Trigger: After the agent generates an initial answer, trigger its self-evaluation module. The agent should score its own response on a scale of 0-1 for confidence/accuracy.

- Correction Cycle: If the score is below a set threshold (e.g., 0.7), the agent re-analyzes the prompt and attempts to generate an improved output. Limit this to 1-2 cycles.

- Human Evaluation: An expert bioinformatician reviews both the initial and final outputs, scoring them for Accuracy (correctness of the answer) and Completeness (inclusion of all relevant steps/information) without knowing which is which.
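Blinding in the human-evaluation step is easy to get wrong by hand. A minimal sketch, assuming each task yields one initial and one final output as plain text, randomizes which version is shown as A or B and keeps a separate key for unblinding after scoring. The function names and sheet layout are hypothetical.

```python
import random


def blind_pairs(pairs: list[tuple[str, str, str]], seed: int = 0):
    """pairs: (task_id, initial_output, final_output).

    Returns reviewer sheets with the origin hidden, plus a key for unblinding later.
    """
    rng = random.Random(seed)
    sheets, key = [], {}
    for task_id, initial, final in pairs:
        versions = [("initial", initial), ("final", final)]
        rng.shuffle(versions)  # randomize which version is shown as A or B
        for label, (origin, text) in zip(("A", "B"), versions):
            sheets.append({"task": task_id, "label": label, "text": text})
            key[(task_id, label)] = origin
    return sheets, key


def unblind(scores: dict[tuple[str, str], float],
            key: dict[tuple[str, str], str]) -> dict[str, float]:
    """Average the reviewer's scores separately for initial and final outputs."""
    grouped: dict[str, list[float]] = {"initial": [], "final": []}
    for sheet_id, value in scores.items():
        grouped[key[sheet_id]].append(value)
    return {origin: sum(vals) / len(vals) for origin, vals in grouped.items() if vals}


sheets, key = blind_pairs([
    ("rnaseq-align", "initial answer text", "corrected answer text"),
    ("variant-call", "initial pipeline", "corrected pipeline"),
])
```

The reviewer only ever sees the sheets; the key stays with whoever runs the analysis until all Accuracy and Completeness scores are in.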

Results from a Comparative Study [2] [13]:

Table: Performance Comparison on Conceptual Genomics Tasks

| Task Complexity | BioAgents (with Self-Evaluation) Accuracy | Human Expert Accuracy | Key Observation |
|---|---|---|---|
| Easy | High | High | Matched expert performance |
| Medium | High | High | Provided tool rationales on par with experts |
| Hard | High | High | Occasionally omitted steps, but provided a logical sequence of steps |

Table: Performance Comparison on Code Generation Tasks

| Task Complexity | BioAgents (with Self-Evaluation) Accuracy | Human Expert Accuracy | Key Observation |
|---|---|---|---|
| Easy | High | High | Sometimes gave false tool information |
| Medium | Struggled | High | Failed to produce complete, executable pipelines |
| Hard | Failed | High | Generated conceptual outlines instead of code |

System Workflow Diagram

Multi-Agent Architecture Diagram

The Scientist's Toolkit

Table: Essential Reagents & Frameworks for Agent Research

| Item Name | Type | Function in Research |
|---|---|---|
| LangChain [26] | Software Framework | Facilitates building agent workflows with memory management, tool integration, and error handling. |
| AutoGen [25] | Software Framework | Well-suited for creating and managing conversational multi-agent workflows. |
| Phi-3 [2] [13] | Small Language Model (SLM) | A base model that can be fine-tuned for bioinformatics, enabling high performance with lower computational cost. |
| FAISS Vector Store [28] | Similarity Search Library | Enables efficient similarity search in RAG systems, crucial for grounding agent responses in scientific literature. |
| BioContainers/EDAM [2] [13] | Tool Registry / Bioinformatics Ontology | BioContainers supplies containerized bioinformatics tools and their documentation; EDAM provides structured, standardized terminology for bioinformatics operations, data, and formats. Both are used for fine-tuning agents. |
| Model Context Protocol (MCP) [26] | Communication Protocol | Enforces structured, schema-validated communication between agents and tools, reducing coordination errors. |
| Pinecone/Weaviate [26] | Vector Database | Used for robust state recovery and long-term memory, allowing agents to learn from past errors. |

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between using database snapshots and compensating transactions for rollback in a bioinformatics multi-agent system?

A1: The core difference lies in their approach to reversing changes. Database snapshots capture the entire state of the data at a specific point in time, allowing you to restore the system to that exact previous state. This is akin to a system-wide "undo" that reverts all changes, both good and bad, made after the snapshot was taken [30]. In contrast, a compensating transaction is a new, specially designed transaction that semantically reverses the effects of a previously committed transaction. It applies business logic to undo a specific action—for example, crediting an account that was previously debited—without affecting other, potentially valid, work done in the interim [31] [32]. Snapshots are often simpler but less granular, while compensating transactions offer precise control but require more complex design.
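The difference can be seen in a toy in-memory store: restoring a snapshot discards every change made after it, while a compensating action removes only the faulty write. The ResultStore class and compensate_write function are illustrative stand-ins for a real database and its rollback facilities.

```python
import copy


class ResultStore:
    """Toy in-memory stand-in for a results database."""

    def __init__(self) -> None:
        self.rows: dict[str, str] = {}
        self._snapshots: dict[str, dict[str, str]] = {}

    def snapshot(self, name: str) -> None:
        self._snapshots[name] = copy.deepcopy(self.rows)

    def restore(self, name: str) -> None:
        # Snapshot rollback: every change made after the snapshot is discarded.
        self.rows = copy.deepcopy(self._snapshots[name])


def compensate_write(store: ResultStore, key: str) -> None:
    # Compensating transaction: semantically undo one specific write, nothing else.
    store.rows.pop(key, None)


store = ResultStore()
store.snapshot("before_run")
store.rows["sample1"] = "variants called"    # valid work
store.rows["sample2"] = "corrupted output"   # faulty work from a failing agent

compensate_write(store, "sample2")
after_compensation = dict(store.rows)        # {'sample1': 'variants called'}

store.restore("before_run")
after_restore = dict(store.rows)             # {}: the valid sample1 result is lost too
```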

Q2: During a long-running genome assembly workflow, one agent commits data to a database, but a subsequent agent fails. A full snapshot rollback would undo hours of work. What's a better strategy?

A2: For these long-running processes, the Saga pattern with compensating transactions is the recommended strategy [31] [32]. Instead of one large transaction, you break the workflow into a sequence of independent, smaller transactions, each scoped to a single agent's task. If a subsequent agent fails, instead of a full rollback, you execute a series of compensating transactions that semantically undo the work of the previously completed steps in reverse order.

- Example: An agent that submitted a job to a computational cluster would have a compensating transaction that cancels that job. An agent that wrote preliminary results to a database would have a compensator that deletes or flags that data [31]. This allows you to recover from the failure without losing the entire workflow's progress.
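A minimal saga orchestrator, assuming each step is an action paired with its compensator, runs the steps in order and, on failure, invokes the compensators of the completed steps in reverse order. The step names below are hypothetical, and this sketch deliberately does not handle a compensator that itself fails (one of the limitations discussed in Q4).

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class SagaStep:
    name: str
    action: Callable[[], None]
    compensate: Callable[[], None]


def run_saga(steps: list[SagaStep]) -> bool:
    """Run each step; if one fails, compensate the completed steps in reverse order."""
    completed: list[SagaStep] = []
    for step in steps:
        try:
            step.action()
        except Exception:
            # The failed step is assumed to have left no committed changes of its own.
            for done in reversed(completed):
                done.compensate()
            return False
        completed.append(step)
    return True


events: list[str] = []


def scaffold() -> None:
    raise RuntimeError("scaffolding agent crashed")


ok = run_saga([
    SagaStep("submit_job", lambda: events.append("assembly job submitted"),
             lambda: events.append("assembly job cancelled")),
    SagaStep("write_contigs", lambda: events.append("contigs written"),
             lambda: events.append("contigs flagged as invalid")),
    SagaStep("scaffold", scaffold, lambda: events.append("never reached")),
])
```

Here the scaffolding failure triggers the contig compensator first and the job cancellation second, so upstream work is unwound without touching anything outside this saga.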

Q3: Our multi-agent system for drug discovery analysis sometimes produces "garbage" data due to upstream errors. How can we prevent this from corrupting our results?

A3: This is a classic "Garbage In, Garbage Out" (GIGO) scenario. Prevention requires a multi-layered approach to data quality [1]:

- Implement Quality Control (QC) Checkpoints: Integrate automated QC agents into your workflow. These agents should validate data at key stages using metrics appropriate for your data type (e.g., Phred scores for sequencing data, checks for batch effects) [1].

- Data Validation: Ensure data makes biological sense by checking for expected patterns or using cross-validation with alternative methods [1].

- Standardized Protocols: Use Standard Operating Procedures (SOPs) for data handling and agent interactions to reduce variability and errors [1].

- Leverage Rollbacks: If a QC agent detects anomalous data, use a rollback mechanism (snapshot or compensating transaction) to revert the system to a state before the garbage data was introduced, allowing for re-analysis or corrective action [30] [33].
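A QC checkpoint for sequencing data can be as simple as gating a batch on per-read mean Phred scores. The sketch assumes FASTQ quality strings in the Phred+33 (Sanger / Illumina 1.8+) encoding; the Q20 cut-off and the 10% tolerated failure fraction are illustrative thresholds, not recommendations from the cited source.

```python
def mean_phred(quality: str, offset: int = 33) -> float:
    """Mean Phred score of one FASTQ quality string (Phred+33 encoding by default)."""
    if not quality:
        raise ValueError("empty quality string")
    return sum(ord(ch) - offset for ch in quality) / len(quality)


def qc_gate(reads: list[tuple[str, str]], min_mean_q: float = 20.0,
            max_fail_fraction: float = 0.1) -> dict:
    """reads: (read_id, quality_string). Fails the batch if too many reads are low quality."""
    if not reads:
        raise ValueError("no reads to check")
    failing = [read_id for read_id, qual in reads if mean_phred(qual) < min_mean_q]
    fraction = len(failing) / len(reads)
    return {"passed": fraction <= max_fail_fraction,
            "fail_fraction": fraction,
            "failing_reads": failing}


# 'I' encodes Q40, '5' encodes Q20 and '+' encodes Q10 in Phred+33.
verdict = qc_gate([("read1", "IIII"), ("read2", "++++"), ("read3", "5555")])
if not verdict["passed"]:
    print("QC failed; trigger rollback for:", verdict["failing_reads"])
```

Dedicated tools such as FastQC report far richer metrics; the point here is only that the gate returns a machine-readable verdict that a rollback mechanism can act on.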

Q4: What are the key limitations of using compensating transactions?

A4: While powerful, compensating transactions have several important limitations [31]:

- No True Isolation: The original transaction's results are visible to other processes before compensation. This can lead to "dirty reads" where another agent acts on data that is later undone.

- Complexity: Designing the logic to perfectly reverse every operation is complex and can be as difficult as designing the original workflow.

- Compensation Failure: The compensating transaction itself can fail, requiring a robust error-handling strategy for this scenario.

- Incomplete Reversal: Some actions, like sending an email notification or triggering a physical instrument, cannot be fully reversed. The compensation can only attempt to mitigate the effects (e.g., sending a follow-up email).
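One commonly described countermeasure for the missing isolation, often called a semantic lock, is to mark writes as pending until the saga completes and to let other agents read only committed data. The ProvisionalStore below is a hypothetical in-memory sketch of that idea, not a feature of any particular database.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Record:
    value: str
    status: str = "pending"  # pending -> committed, or pending -> compensated


class ProvisionalStore:
    """Toy store where saga writes stay invisible to other agents until committed."""

    def __init__(self) -> None:
        self.records: dict[str, Record] = {}

    def write_pending(self, key: str, value: str) -> None:
        self.records[key] = Record(value)

    def commit(self, key: str) -> None:
        self.records[key].status = "committed"

    def compensate(self, key: str) -> None:
        self.records[key].status = "compensated"

    def read_committed(self, key: str) -> Optional[str]:
        """Other agents read through this method, so they never see pending data."""
        record = self.records.get(key)
        if record is not None and record.status == "committed":
            return record.value
        return None


store = ProvisionalStore()
store.write_pending("hits/sample1", "candidate targets v1")
visible_before = store.read_committed("hits/sample1")   # None: saga still running
store.commit("hits/sample1")
visible_after = store.read_committed("hits/sample1")    # "candidate targets v1"
```

The trade-off is that downstream agents must wait for, or skip, data that is still pending, which reduces dirty reads at the cost of some latency.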

Troubleshooting Guides

Problem: Irreversible Action Taken by an Agent

Symptoms: An agent in the system performed a destructive, non-recoverable action, such as deleting a critical file or stopping an essential service.

- Solution 1: Pre-Action Simulation. Before executing an action, especially one flagged as high-risk, the system should simulate it in a sandboxed environment to assess its impact [33].

- Solution 2: Action Validation and Constraints. Implement a rule-based layer that rejects actions deemed irreversible or destructive before they are executed. In the STRATUS system, for example, "every action must be undoable," and proposals like deleting a production database are rejected outright [33].

- Solution 3: Escalation to Human Operators. For actions that cannot be made safe through the above methods, the system should be designed to escalate the decision to a human operator [33].
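The three solutions can be chained into a single gate that every proposed action passes through before execution. In this sketch the FORBIDDEN and HIGH_RISK sets, the sandbox_ok callback (standing in for a sandboxed dry run), and the verdict strings are hypothetical; it illustrates the "every action must be undoable" rule described for STRATUS but is not that system's implementation.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical policy lists; real deployments would derive these from their own risk review.
FORBIDDEN = {"drop_database", "delete_reference_genome"}
HIGH_RISK = {"delete_file", "stop_service"}


@dataclass
class ProposedAction:
    name: str
    target: str
    undo: Optional[Callable[[], None]] = None  # None means no known way to reverse it


def gate(action: ProposedAction, simulate: Callable[[ProposedAction], bool]) -> str:
    """Return 'reject', 'escalate', or 'execute' for an action proposed by an agent."""
    # Rule layer: forbidden or irreversible actions never run.
    if action.name in FORBIDDEN or action.undo is None:
        return "reject"
    # High-risk actions are dry-run in a sandbox first; an unsafe result goes to a human.
    if action.name in HIGH_RISK and not simulate(action):
        return "escalate"
    return "execute"


def sandbox_ok(action: ProposedAction) -> bool:
    # Placeholder for a real sandboxed dry run; here only temporary files count as safe.
    return action.target.startswith("/tmp/")


def restore_from_trash() -> None:
    # Hypothetical undo hook, e.g. moving a file back out of a trash directory.
    return None


print(gate(ProposedAction("delete_file", "/data/run1.bam", undo=restore_from_trash), sandbox_ok))
```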

Problem: Rollback Mechanism Itself Fails

Symptoms: The process of restoring a system snapshot or executing a compensating transaction encounters an error.