Boosting Binding Affinity Prediction: How Transfer Learning from Language Models is Revolutionizing Drug Discovery

Abstract

Accurate prediction of drug-target binding affinity is a critical yet challenging task in computational drug discovery, traditionally hampered by limited labeled data and poor generalization. This article explores the paradigm shift enabled by transfer learning from protein and molecular language models. We first establish the foundational principles of language models like ESM and ChemBERTa for encoding biological and chemical sequences. The discussion then progresses to methodological architectures that integrate these pre-trained embeddings, from simple concatenation to advanced geometry-aware and conditioning approaches. A critical troubleshooting section addresses pervasive issues of data bias and dataset leakage, offering solutions for robust model evaluation. Finally, we survey the validation landscape, comparing the performance of these novel approaches against traditional methods on established benchmarks, underscoring their superior generalization and growing impact on accelerating therapeutic development.

The Foundation: From Biological Sequences to Semantic Embeddings

The Data Scarcity Problem in Traditional Binding Affinity Prediction

The accurate prediction of binding affinity, the strength of interaction between a drug candidate and its biological target, is a cornerstone of modern drug discovery. Traditional methods for assessing affinity, whether through wet-lab experiments or physics-based computational simulations, are notoriously constrained by a fundamental limitation: data scarcity. This scarcity manifests not only in the sheer volume of data but also in its quality, diversity, and accessibility. The recent integration of artificial intelligence (AI) and machine learning (ML) has promised to revolutionize the field. However, these data-driven models are themselves critically hampered by the very data scarcity they aim to overcome, creating a cyclical challenge that impedes rapid therapeutic development. This whitepaper delineates the multifaceted nature of the data scarcity problem and frames the emerging paradigm of transfer learning from protein and molecular language models as a transformative solution. By leveraging knowledge pre-trained on vast, unlabeled biological and chemical corpora, researchers can build accurate and generalizable predictive models even when high-quality, labeled binding affinity data is exceedingly limited.

The Dimensions of Data Scarcity

The data scarcity problem in binding affinity prediction is not monolithic but can be decomposed into several interconnected challenges, each inflating the cost and timeline of drug discovery.

The High Cost of Experimental Data Generation

The gold-standard data for binding affinity comes from experimental techniques such as Isothermal Titration Calorimetry (ITC) or Surface Plasmon Resonance (SPR). These methods are low-throughput, requiring significant time, specialized equipment, and costly reagents. Consequently, the generation of new, high-fidelity data points is a slow and expensive process, creating a natural bottleneck. This experimental barrier fundamentally limits the size of datasets available for training robust machine learning models.

The Data Leakage and Generalization Crisis

A more insidious aspect of data scarcity is the problem of data leakage in benchmark datasets, which has led to a widespread overestimation of model performance. When models are trained and tested on non-independent data, they learn to "memorize" structural similarities rather than generalizable principles of binding.

A 2025 study by Graber et al. exposed substantial data leakage between the widely used PDBbind training database and the Comparative Assessment of Scoring Functions (CASF) benchmark. Their analysis revealed that nearly 49% of CASF test complexes had highly similar counterparts (in terms of protein structure, ligand identity, and binding pose) in the training set [1]. This allowed models to achieve high benchmark performance through memorization rather than genuine understanding. When models were retrained on a rigorously filtered dataset called PDBbind CleanSplit, which removes these redundancies, the performance of state-of-the-art models dropped markedly [1]. This crisis shows that the effective amount of data available for learning generalizable rules is even smaller than previously assumed.

Table 1: Impact of Data Leakage on Model Generalization

| Training Scenario | Description | Reported Performance | True Generalization |
|---|---|---|---|
| Standard PDBbind | Training and test sets contain structurally similar complexes. | Spuriously high (e.g., Pearson R ~0.80+ in some models) | Overestimated; models fail on novel targets. |
| PDBbind CleanSplit | Training set is strictly filtered to be independent of test sets. | Lower, more realistic performance metrics | Accurately reflects model's ability to predict for unseen complexes. |
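
The difference between the two scenarios in Table 1 comes down to how complexes are assigned to the training and test sets. The following is a minimal sketch of a group-aware split, assuming each record already carries a cluster label (for example from sequence or structure clustering). The record fields, the `group_split` helper, and the cluster names are hypothetical and are not part of PDBbind or CleanSplit.

```python
import random
from collections import defaultdict


def group_split(records, group_key, test_fraction=0.2, seed=0):
    """Split records so that no group (e.g. protein cluster) spans train and test."""
    groups = defaultdict(list)
    for rec in records:
        groups[rec[group_key]].append(rec)

    # Shuffle whole groups, not individual records.
    group_ids = sorted(groups)
    random.Random(seed).shuffle(group_ids)

    n_test_target = test_fraction * len(records)
    train, test = [], []
    for gid in group_ids:
        # Fill the test set group by group until it reaches the target size;
        # every remaining group goes to the training set.
        target = test if len(test) < n_test_target else train
        target.extend(groups[gid])
    return train, test


# Toy data: cluster labels are hypothetical placeholders.
records = [
    {"complex": "c1", "cluster": "kinase_A", "pKd": 7.2},
    {"complex": "c2", "cluster": "kinase_A", "pKd": 6.9},
    {"complex": "c3", "cluster": "protease_B", "pKd": 5.1},
    {"complex": "c4", "cluster": "protease_B", "pKd": 5.6},
    {"complex": "c5", "cluster": "gpcr_C", "pKd": 8.3},
    {"complex": "c6", "cluster": "nuclear_receptor_D", "pKd": 6.0},
]
train, test = group_split(records, "cluster", test_fraction=0.3)
train_clusters = {r["cluster"] for r in train}
test_clusters = {r["cluster"] for r in test}
```

A random per-record split would place `c1` and `c2` on opposite sides of the split with high probability, which is the kind of overlap that inflates benchmark scores.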

The Challenge of Data for Complex Therapeutics

The problem is further exacerbated for advanced therapeutic modalities like Antibody-Drug Conjugates (ADCs). The development of ADCs involves optimizing three components—an antibody, a linker, and a cytotoxic payload—which creates a massive combinatorial space. Data on conjugation site effects, linker stability, and payload release kinetics is exceptionally sparse compared to small molecules [2]. This "data sparsity for rare conjugation chemistries" forces developers to rely heavily on empirical approaches, slowing down the rational design of next-generation ADCs [3].

Overcoming Scarcity with Transfer Learning from Language Models

Transfer learning from large language models (LLMs) presents a powerful framework to bypass the data scarcity bottleneck. The core idea is to pre-train a model on a vast, unlabeled corpus to learn fundamental representations of biological sequences and chemical structures. These pre-trained representations encapsulate deep semantic and syntactic knowledge, which can then be fine-tuned on small, task-specific datasets (like binding affinity measurements) to achieve high performance.

Protein and Molecular Language Models

Language models originally developed for human language have been successfully adapted to the "languages" of biology and chemistry.

- Protein Language Models (pLMs): Models like ProtT5 and ESM-2 are trained on millions of protein sequences from diverse organisms. They learn to represent amino acids in the context of their surrounding sequence, capturing evolutionary constraints, structural features, and functional sites without ever seeing a 3D structure [4] [5].

- Molecular Language Models: Models like ChemBERTa and MolFormer are trained on string-based representations of small molecules, such as SMILES (Simplified Molecular-Input Line-Entry System). They learn the grammatical rules of chemical structures and the relationships between molecular substructures and properties [6] [5].

Experimental Protocol: Implementing a pLM-Based Affinity Prediction Workflow

The following protocol details a typical pipeline for developing a binding affinity predictor using transfer learning from pLMs, as exemplified by the BAPULM framework [5].

Objective: To predict the binding affinity between a protein target and a small-molecule ligand using only their sequence information, leveraging pre-trained language models.

Inputs:

- Protein amino acid sequence (e.g., in FASTA format)

- Ligand SMILES string

Procedure:

Feature Extraction with Pre-trained Models:

- Proteins: Pass the protein sequence through a pre-trained pLM (e.g., ProtT5-XL-U50). Extract the per-residue embeddings from the last hidden layer and average them across the sequence to obtain a fixed-dimensional, dense vector representation of the entire protein.

- Ligands: Pass the SMILES string through a pre-trained molecular LM (e.g., MolFormer) to obtain a fixed-dimensional, dense vector representation of the ligand.

Data Integration and Splitting:

- Concatenate the protein and ligand feature vectors to form a unified representation of the complex.

- Use a rigorous data partitioning strategy to create training, validation, and test sets. Critical Step: Avoid random splitting. Instead, use structure-based clustering (e.g., CleanSplit algorithm [1]) or UniProt-based partitioning [4] to ensure no proteins in the test set are highly similar to those in the training set. This is essential for evaluating true generalization.

Model Training and Fine-Tuning:

- Construct a regression model, typically a fully connected neural network, that takes the concatenated feature vector as input and outputs a predicted binding affinity value (e.g., pKd, pKi).

- Initialize the model weights and train the network on the training set. Optionally, the feature extractors (pLMs) can be fine-tuned alongside the regression head if the dataset is sufficiently large, or their weights can be frozen.

Validation and Testing:

- Evaluate the model's performance on the held-out validation and test sets using metrics such as Pearson's R (for scoring power), Root-Mean-Square Error (RMSE), and Concordance Index (CI).
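
The three metrics named in the validation step can be computed in a few lines. The sketch below uses only the standard library; the function names and the toy affinity values are illustrative assumptions, and ties in the predictions are counted as half-concordant, which is one common convention for the concordance index.

```python
import math


def pearson_r(y_true, y_pred):
    """Pearson correlation between measured and predicted affinities."""
    n = len(y_true)
    mean_t = sum(y_true) / n
    mean_p = sum(y_pred) / n
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred))
    var_t = sum((t - mean_t) ** 2 for t in y_true)
    var_p = sum((p - mean_p) ** 2 for p in y_pred)
    return cov / math.sqrt(var_t * var_p)


def rmse(y_true, y_pred):
    """Root-mean-square error in the same units as the affinity values."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def concordance_index(y_true, y_pred):
    """Fraction of comparable pairs whose predicted order matches the true order."""
    concordant, comparable = 0.0, 0
    for i in range(len(y_true)):
        for j in range(i + 1, len(y_true)):
            if y_true[i] == y_true[j]:
                continue  # pairs with equal true affinity are not comparable
            comparable += 1
            d_true = y_true[i] - y_true[j]
            d_pred = y_pred[i] - y_pred[j]
            if d_pred == 0:
                concordant += 0.5  # tied prediction counts as half
            elif (d_true > 0) == (d_pred > 0):
                concordant += 1.0
    return concordant / comparable


# Toy pKd values, not real measurements.
y_true = [5.0, 6.5, 7.2, 8.1]
y_pred = [5.4, 6.1, 7.6, 7.9]
r = pearson_r(y_true, y_pred)
err = rmse(y_true, y_pred)
ci = concordance_index(y_true, y_pred)
```

Pearson's R and the concordance index reward correct ranking, while RMSE penalizes absolute error, so reporting all three gives a fuller picture than any single number.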

Case Study: BAPULM Framework Efficacy

The BAPULM framework demonstrates the power of this approach. By using ProtT5 for proteins and MolFormer for ligands, it achieved state-of-the-art results on multiple benchmark datasets without using any 3D structural information, proving that sequence-based models pre-trained on large corpora can effectively predict binding affinity [5].

Table 2: Performance of a Sequence-Based Model (BAPULM) on Benchmark Datasets

| Dataset | Scoring Power (Pearson R) | Key Implication |
|---|---|---|
| benchmark1k2101 | 0.925 ± 0.043 | High accuracy is achievable without 3D structural data. |
| Test2016_290 | 0.914 ± 0.004 | Robust performance on established benchmarks. |
| CSAR-HiQ_36 | 0.813 ± 0.001 | Effective even on smaller, high-quality test sets. |

Complementary Strategies for Data Efficiency

Beyond transfer learning, other computational strategies are being developed to maximize learning from limited data.

Multitask Learning

Frameworks like DeepDTAGen jointly perform binding affinity prediction and target-aware drug generation. These shared tasks force the model to learn a more robust and generalizable representation of the underlying drug-target interaction space, improving performance on both tasks, especially when data for either is limited [7].

Data Augmentation with Synthetic Complexes

To combat data scarcity, researchers are turning to AI to generate synthetic protein-ligand complexes. Co-folding models like Boltz-1 can predict the 3D structure of a complex from sequence and SMILES information. However, a 2025 study by Hsu et al. highlighted a critical caveat: quality supersedes quantity. They found that augmenting training data with a smaller set of high-confidence synthetic complexes improved model performance, while adding a larger set of lower-quality complexes provided no benefit or was even detrimental [8]. This underscores the need for rigorous quality filtering in data augmentation.

The following table catalogues essential computational tools and datasets for conducting transfer learning research in binding affinity prediction.

Table 3: Key Research Reagents for Binding Affinity Prediction with Transfer Learning

| Resource Name | Type | Function in Research | Relevance to Data Scarcity |
|---|---|---|---|
| ESM-2 / ProtT5 | Protein Language Model | Generates semantically rich, numerical embeddings from protein sequences. | Provides pre-trained knowledge of protein evolution and function, reducing need for labeled affinity data. |
| MolFormer / ChemBERTa | Molecular Language Model | Generates numerical embeddings from molecular representations (SMILES). | Provides pre-trained knowledge of chemical space and structure-property relationships. |
| PDBbind CleanSplit | Curated Dataset | Provides a benchmark training set free of data leakage for rigorous model evaluation. | Enables accurate assessment of true model generalization, addressing overestimation from data leakage. |
| BindingDB | Affinity Database | A public repository of experimental drug-target binding affinities. | Serves as a primary source of ground-truth data for model training and fine-tuning. |
| Target2035 Initiative | Research Consortium | Aims to generate high-quality, open-source binding data for thousands of human proteins. | A long-term, community-wide effort to systematically address the root cause of data scarcity. |

The data scarcity problem has long been a fundamental constraint in traditional binding affinity prediction. The advent of AI and ML promised a way forward but initially stumbled over issues of generalization stemming from inadequate and leaky data. The integration of transfer learning from protein and molecular language models represents a paradigm shift. By pre-training on the vast "texts" of evolution and chemistry, these models develop a foundational understanding of their respective domains. This knowledge allows researchers to build accurate predictive models for binding affinity that require only small, focused datasets for fine-tuning, effectively bypassing the historical data bottleneck. As the field moves forward, the combination of these advanced modeling techniques with rigorously curated, non-redundant datasets and strategic data augmentation will continue to mitigate the data scarcity problem, accelerating the discovery of novel therapeutics.

Protein Language Models (pLMs) and Molecular Language Models (mLMs) are specialized branches of artificial intelligence that apply the principles of natural language processing (NLP) to biological and chemical sequences. Just as large language models like ChatGPT learn statistical patterns from vast text corpora, pLMs are trained on millions of protein amino acid sequences, while mLMs typically learn from string-based molecular representations such as SMILES (Simplified Molecular Input Line Entry System) [9]. These models have emerged as revolutionary technologies that bring transformative changes to drug discovery and therapeutic research by acquiring rich representational capabilities from large-scale sequence datasets [10]. The critical functions of proteins in biological processes often arise through interactions with small molecules, making the intersection of pLMs and mLMs particularly important for understanding these interactions in contexts such as drug design, bioengineering, and cellular metabolism [11].

The foundational architecture behind most modern pLMs and mLMs is the Transformer model, which employs self-attention mechanisms to capture long-range dependencies in sequential data [12]. Two primary training paradigms dominate the field: Masked Language Modeling (MLM), where the model learns to predict randomly masked tokens in the input sequence (exemplified by BERT-style models), and Autoregressive Modeling, where the model predicts the next token in a sequence (exemplified by GPT-style models) [10]. Protein language models such as ESM-2 (Evolutionary Scale Modeling) and ProtTrans learn the statistical patterns of evolutionary relationships from sequence data alone, without explicit supervision, capturing fundamental principles of protein biochemistry, structure, and function [13] [12]. This pre-training enables them to encode knowledge about protein biochemistry and evolution in their internal representations, known as embeddings, which encapsulate everything from biochemical characteristics of individual amino acids to complex higher-order interactions reflecting structural and functional properties [13].
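
The two training paradigms differ mainly in how training examples are built from a raw sequence. The following sketch constructs both kinds of example for a short amino acid string. It is a simplification under stated assumptions: the `<mask>` token name, the 15% masking rate, and the helper names are illustrative, and the extra corruption rules used by BERT-style models (such as occasionally substituting a random token) are omitted.

```python
import random

MASK = "<mask>"  # placeholder token name, chosen for illustration


def make_mlm_example(sequence, mask_prob=0.15, seed=0):
    """Masked language modeling: hide random residues, predict them from context."""
    rng = random.Random(seed)
    inputs, labels = [], []
    for residue in sequence:
        if rng.random() < mask_prob:
            inputs.append(MASK)
            labels.append(residue)  # the model must recover this residue
        else:
            inputs.append(residue)
            labels.append(None)     # no loss is computed at unmasked positions
    return inputs, labels


def make_autoregressive_examples(sequence):
    """Autoregressive modeling: predict each residue from the prefix before it."""
    tokens = list(sequence)
    return [(tokens[:i], tokens[i]) for i in range(1, len(tokens))]


seq = "MKTAYIAKQRQISFVKSHFSRQ"
mlm_inputs, mlm_labels = make_mlm_example(seq)
ar_examples = make_autoregressive_examples("MKT")
```

In the masked setting the model sees context on both sides of each hidden residue, which is one reason encoder-style models are often preferred for embedding extraction, whereas the prefix-only view of the autoregressive setting maps naturally onto sequence generation.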

Core Architectures and Model Types

Protein Language Models (pLMs)

Protein language models can be systematically classified based on their architectures and information sources. The primary architectural distinction lies between encoder-style models (like BERT) and decoder-style models (like GPT). Encoder models are typically pre-trained using masked language modeling objectives and excel at producing rich contextual embeddings for downstream prediction tasks. In contrast, decoder models are generally pre-trained using next-token prediction and demonstrate stronger capabilities in generative applications [10] [13].

ESM-2 (Evolutionary Scale Modeling 2) represents a family of pLMs that scale from 8 million to 15 billion parameters, with the larger models demonstrating enhanced capabilities in capturing complex patterns in protein sequence space [13]. ProtTrans includes models like ProtBERT and ProtT5, which apply the transformer architecture to massive protein datasets; ProtBERT, for instance, has 420 million parameters and was trained on 2 billion protein sequences [12]. ESM3 represents the cutting edge with 98 billion parameters and has demonstrated remarkable capabilities in generating functional protein sequences [13].

Recent trends have also seen the development of multimodal pLMs that integrate co-evolutionary information, structural data, and functional annotations, as well as domain-specific models specialized for particular protein families such as antibodies and T-cell receptors [10]. These specialized models often outperform general-purpose pLMs on their specific domains by incorporating relevant inductive biases and training data.

Molecular Language Models (mLMs)

Molecular Language Models operate on string-based representations of chemical structures, most commonly SMILES notation, which encodes molecular graphs as linear sequences of characters [9]. Similar to pLMs, mLMs can be based on either encoder or decoder architectures, with each serving different purposes in drug discovery pipelines.
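
Before a SMILES string reaches an mLM it has to be split into tokens. The sketch below shows one simple way to do this with a regular expression. The pattern is a reduced illustration that covers common organic-subset symbols, bracket atoms, bonds, and ring closures; it is an assumption for demonstration and not the tokenizer of ChemBERTa, MolFormer, or any other specific model.

```python
import re

# Order matters: bracket atoms and two-letter elements are tried before
# single-letter atoms so that "Cl" is not split into "C" and "l".
SMILES_TOKEN = re.compile(
    r"(\[[^\]]+\]|Br|Cl|%\d{2}|[BCNOPSFI]|[bcnops]|\d|[=#\-+\\/().:~@*$])"
)


def tokenize(smiles):
    """Split a SMILES string into tokens, failing loudly on unknown symbols."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"unrecognized characters in SMILES: {smiles!r}")
    return tokens


def build_vocab(token_lists):
    """Map every distinct token to an integer id (ids follow sorted order)."""
    vocab = sorted({tok for tokens in token_lists for tok in tokens})
    return {tok: idx for idx, tok in enumerate(vocab)}


aspirin_tokens = tokenize("CC(=O)Oc1ccccc1C(=O)O")
salt_tokens = tokenize("[Na+].[Cl-]")
vocab = build_vocab([aspirin_tokens, salt_tokens])
aspirin_ids = [vocab[tok] for tok in aspirin_tokens]
```

Treating a bracket atom such as `[Na+]` as a single token keeps charge and isotope information attached to the atom it describes.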

Encoder-style mLMs excel at learning rich representations of molecular structures that can be used for property prediction tasks such as binding affinity, solubility, toxicity, and other pharmacologically relevant characteristics [9]. Decoder-style mLMs demonstrate stronger performance in de novo molecular design, where the goal is to generate novel drug-like molecules with desired properties [9]. Systems such as ChemCrow and Coscientist go a step further, coupling language models with chemistry tools to automate experiments and assist in directed synthesis and chemical reaction prediction [9].

Table 1: Comparison of Major Protein Language Model Architectures

| Model | Architecture | Parameters | Training Data | Primary Use Cases |
|---|---|---|---|---|
| ESM-2 | Transformer Encoder | 8M - 15B | 250M sequences | Feature extraction, variant effect prediction |
| ProtBERT | Transformer Encoder | 420M | 2B sequences | Protein function prediction, embeddings |
| ESM3 | Transformer Decoder | 98B | Multi-modal data | Protein design, function prediction |
| ProtT5 | Transformer Encoder-Decoder | Not specified | Large-scale sequences | Sequence generation, feature extraction |
| ESM-MSA | Transformer Encoder | Not specified | 26M MSAs | MSA-based predictions |

Application to Binding Affinity Prediction

Binding affinity prediction represents one of the most valuable applications of pLMs and mLMs in drug discovery, as it directly impacts the identification and optimization of therapeutic compounds. The accurate prediction of protein-ligand binding affinities enables researchers to prioritize compounds for synthesis and testing, dramatically reducing the time and cost associated with experimental screening [11] [9].

Methodological Approaches

Several architectural paradigms have emerged for combining pLMs and mLMs in binding affinity prediction:

Sequence-Based Methods utilize only 1D amino acid sequence data as input, making them widely applicable even when 3D structural information is unavailable [12]. These approaches convert protein sequences into numerical embeddings using pre-trained pLMs, while molecular structures are typically represented as SMILES strings or molecular graphs. The CGPDTA framework exemplifies this approach, leveraging transfer learning from both protein and molecular language models while incorporating molecular substructure graphs and protein pocket sequences to represent local features of drugs and targets [14]. A key advantage of sequence-based methods is their applicability to proteins without experimentally determined structures, though they may sacrifice some accuracy compared to structure-aware methods.

Structure-Based Methods incorporate 3D structural information of both proteins and ligands, typically using geometric deep learning architectures such as Graph Neural Networks (GNNs) [1] [15]. In these approaches, protein structures are represented as graphs where nodes correspond to amino acids and edges represent spatial relationships, while small molecules are represented as molecular graphs with atoms as nodes and bonds as edges. The GEMS (Graph neural network for Efficient Molecular Scoring) model exemplifies this approach, leveraging a sparse graph modeling of protein-ligand interactions combined with transfer learning from language models to achieve state-of-the-art predictions on benchmark datasets [1].

Hybrid Methods combine the strengths of both sequence-based and structure-based approaches. One recent hybrid model integrates pLM embeddings as node features in a 3D Graph Attention Network (GAT), effectively combining sequential information encoded in protein sequences with spatial relationships within the protein structure [15]. Research has shown that while using experimental protein structure almost always improves binding site prediction accuracy, complex pLMs still contain substantial structural information that leads to good predictive performance even without explicit 3D structure [15].

Critical Data Considerations

A significant challenge in binding affinity prediction is the issue of data leakage between standard training and test datasets, which has led to inflated performance metrics and overestimation of model generalization capabilities [1]. The widely used PDBbind database and Comparative Assessment of Scoring Functions (CASF) benchmark datasets exhibit substantial similarities, with nearly 600 high-similarity pairs detected between training and test complexes, affecting 49% of all CASF complexes [1].

To address this problem, researchers have developed PDBbind CleanSplit, a training dataset curated by a structure-based filtering algorithm that eliminates train-test data leakage as well as redundancies within the training set [1]. This algorithm uses a combined assessment of protein similarity (TM-scores), ligand similarity (Tanimoto scores), and binding conformation similarity (pocket-aligned ligand RMSD) to identify and remove problematic overlaps. When state-of-the-art models like GenScore and Pafnucy were retrained on CleanSplit, their performance dropped substantially, confirming that previous high scores were largely driven by data leakage rather than genuine understanding of protein-ligand interactions [1].
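
The filtering idea can be illustrated with a small sketch that flags a training complex when it resembles a test complex on all three criteria at once. The thresholds, the rule for combining them, and the helper names are hypothetical choices for illustration and are not the published CleanSplit settings. TM-scores and pocket-aligned RMSDs are assumed to have been computed beforehand with external structure-alignment tools.

```python
def tanimoto(bits_a, bits_b):
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    a, b = set(bits_a), set(bits_b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def is_leaky_pair(tm_score, ligand_tanimoto, pocket_rmsd,
                  tm_min=0.8, tanimoto_min=0.9, rmsd_max=2.0):
    """Hypothetical rule: similar protein AND similar ligand AND similar pose."""
    return (tm_score >= tm_min
            and ligand_tanimoto >= tanimoto_min
            and pocket_rmsd <= rmsd_max)


def filter_training_set(train_ids, pair_metrics):
    """Drop training complexes that form a leaky pair with any test complex.

    pair_metrics maps (train_id, test_id) -> (tm_score, tanimoto, rmsd).
    """
    flagged = {train_id
               for (train_id, _test_id), metrics in pair_metrics.items()
               if is_leaky_pair(*metrics)}
    return [t for t in train_ids if t not in flagged]


# Toy values; identifiers and numbers are made up.
pair_metrics = {
    ("train_1", "test_A"): (0.95, tanimoto({1, 2, 3}, {1, 2, 3}), 0.8),
    ("train_2", "test_A"): (0.92, tanimoto({1, 2, 3}, {7, 8, 9, 1}), 1.1),
    ("train_3", "test_B"): (0.35, 0.95, 4.2),
}
clean_train = filter_training_set(["train_1", "train_2", "train_3"], pair_metrics)
```

Only `train_1` is removed here: `train_2` shares the protein fold but binds a different ligand, and `train_3` shares the ligand but not the protein or pose.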

Table 2: Performance Comparison of Binding Affinity Prediction Methods

| Model | Architecture | Training Data | CASF-2016 Performance | Key Innovation |
|---|---|---|---|---|
| GEMS | Graph Neural Network | PDBbind CleanSplit | State-of-the-art | Sparse graph modeling + transfer learning |
| CGPDTA | Transfer Learning | Traditional PDBbind | Not specified | Molecular substructure graphs + protein pockets |
| GenScore | Deep Learning | PDBbind | Performance drops on CleanSplit | Structure-based scoring function |
| Pafnucy | 3D CNN | PDBbind | Performance drops on CleanSplit | Volumetric grid representation |
| Search Algorithm | Similarity-based | PDBbind | Pearson R=0.716, competitive RMSE | Simple similarity search baseline |
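
The last row of the table is worth dwelling on, because a baseline with no learning at all shows how much a leaky benchmark rewards lookup. A rough sketch of such a similarity search follows. The choice of averaging over the `k` most similar training complexes, the value of `k`, and the function name are assumptions made for illustration, and the similarity scores are taken as precomputed inputs.

```python
def similarity_search_predict(similarity_to_train, train_affinity, k=5):
    """Predict affinity as the mean label of the k most similar training complexes.

    similarity_to_train: {train_id: similarity of the query to that complex}
    train_affinity:      {train_id: measured affinity (e.g. pKd)}
    """
    ranked = sorted(similarity_to_train, key=similarity_to_train.get, reverse=True)
    top = ranked[:k]
    return sum(train_affinity[train_id] for train_id in top) / len(top)


# Toy query: two near-duplicates in the training set dominate the prediction.
similarity_to_train = {"a": 0.95, "b": 0.90, "c": 0.20}
train_affinity = {"a": 7.0, "b": 8.0, "c": 3.0}
predicted = similarity_search_predict(similarity_to_train, train_affinity, k=2)
```

When near-duplicates of the test complexes are removed from the training set, a lookup of this kind has nothing useful to retrieve, which is why leakage-free splits give a more honest comparison.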

Experimental Protocols and Methodologies

Protocol: pLM Embedding Extraction for Binding Affinity Prediction

Objective: Extract meaningful protein representations from pLMs for downstream binding affinity prediction tasks.

Materials and Reagents:

- Protein sequences in FASTA format

- Pre-trained pLM (e.g., ESM-2, ProtBERT)

- Computational environment with appropriate deep learning frameworks (PyTorch/TensorFlow)

- Hardware with GPU acceleration for efficient inference

Procedure:

- Sequence Preprocessing: Input protein sequences are truncated or padded to the maximum sequence length acceptable by the chosen pLM (e.g., 1022 residues for ESM-1v).

- Embedding Extraction: Pass each preprocessed sequence through the pLM to obtain residue-level embeddings from the final hidden layer.

- Embedding Compression: Apply mean pooling (averaging embeddings across all sequence positions) to generate a single fixed-dimensional representation for each protein. Research has systematically demonstrated that mean pooling generally outperforms alternative compression methods across diverse prediction tasks [13].

- Feature Integration: Combine protein embeddings with molecular representations (e.g., molecular graphs or mLM embeddings) to create input features for the affinity prediction model.

- Model Training: Train a regression model (e.g., regularized linear models, neural networks) using the combined representations to predict experimental binding affinities (typically pKd, pKi, or pIC50 values).

Validation: Evaluate model performance using strictly independent test sets such as PDBbind CleanSplit to ensure genuine generalization capability rather than data leakage [1].
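
The preprocessing, compression, and integration steps of this protocol can be sketched without any deep learning framework by treating the pLM output as a plain list of per-residue vectors. Everything below is illustrative: the embeddings are tiny made-up vectors standing in for real model output, the helper names are hypothetical, and the mask argument reflects the assumption that padded positions should not contribute to the average.

```python
def truncate(sequence, max_len=1022):
    """Clip a protein sequence to the model's maximum input length."""
    return sequence[:max_len]


def mean_pool(residue_embeddings, mask=None):
    """Average per-residue vectors, skipping positions where mask is 0 (padding)."""
    if mask is None:
        mask = [1] * len(residue_embeddings)
    kept = [vec for vec, keep in zip(residue_embeddings, mask) if keep]
    if not kept:
        raise ValueError("cannot pool an empty or fully masked sequence")
    dim = len(kept[0])
    return [sum(vec[d] for vec in kept) / len(kept) for d in range(dim)]


def build_features(protein_residue_embeddings, ligand_embedding, mask=None):
    """Concatenate the pooled protein vector with the ligand vector."""
    return mean_pool(protein_residue_embeddings, mask) + list(ligand_embedding)


# Stand-in for pLM output: three real residues plus one padded position.
residue_embeddings = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [0.0, 0.0]]
padding_mask = [1, 1, 1, 0]
ligand_embedding = [0.5, 0.25, 0.125]

protein_vector = mean_pool(residue_embeddings, padding_mask)
features = build_features(residue_embeddings, ligand_embedding, padding_mask)
```

Forgetting the mask would pull the pooled vector toward zero for short proteins in a padded batch, which makes the representation depend on batch composition rather than on the protein alone.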

Protocol: Structure-Based Affinity Prediction with GEMS

Objective: Implement the GEMS architecture for structure-based binding affinity prediction with robust generalization.

Materials and Reagents:

- 3D structures of protein-ligand complexes (PDB format)

- PDBbind CleanSplit dataset

- Graph neural network framework (PyTorch Geometric)

- Pre-trained pLM for protein initialization

Procedure:

- Graph Construction: Represent each protein-ligand complex as a sparse graph where:

  - Nodes correspond to protein residues and ligand atoms

  - Edges represent spatial proximity and chemical interactions

- Feature Initialization: Initialize protein residue nodes using pre-trained pLM embeddings and ligand atom nodes using chemical features (atom type, hybridization, etc.).

- Graph Neural Network: Apply multiple layers of message passing to update node representations based on local neighborhood information.

- Global Pooling: Aggregate node representations to form a global graph embedding.

- Affinity Prediction: Map the graph embedding to a single binding affinity value through fully connected layers.

Key Innovation: The sparse graph representation explicitly models protein-ligand interactions while transfer learning from pLMs incorporates evolutionary information, enabling the model to generalize to novel complexes not seen during training [1].
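
The generic steps of this protocol (graph construction, message passing, pooling, prediction) can be traced end to end on a toy example. The sketch below is not the GEMS implementation: it uses a plain distance cutoff for edges, an unweighted neighborhood average in place of learned message-passing layers, and a fixed linear readout. The cutoff, coordinates, features, and weights are all made-up values.

```python
import math


def build_edges(coords, cutoff=5.0):
    """Connect every pair of nodes closer than the distance cutoff."""
    edges = []
    for i in range(len(coords)):
        for j in range(i + 1, len(coords)):
            if math.dist(coords[i], coords[j]) <= cutoff:
                edges.append((i, j))
    return edges


def message_passing(features, edges):
    """One round: each node becomes the mean of itself and its neighbors."""
    neighbors = {i: [i] for i in range(len(features))}
    for i, j in edges:
        neighbors[i].append(j)
        neighbors[j].append(i)
    dim = len(features[0])
    return [
        [sum(features[k][d] for k in neighbors[i]) / len(neighbors[i]) for d in range(dim)]
        for i in range(len(features))
    ]


def global_mean_pool(features):
    """Aggregate all node vectors into a single graph-level vector."""
    dim = len(features[0])
    return [sum(vec[d] for vec in features) / len(features) for d in range(dim)]


def predict_affinity(graph_embedding, weights, bias):
    """Linear readout standing in for the fully connected layers."""
    return sum(w * x for w, x in zip(weights, graph_embedding)) + bias


# Node 0: protein residue, node 1: nearby ligand atom, node 2: distant residue.
coords = [(0.0, 0.0, 0.0), (3.0, 0.0, 0.0), (10.0, 0.0, 0.0)]
node_features = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]]

edges = build_edges(coords)
updated = message_passing(node_features, edges)
graph_embedding = global_mean_pool(updated)
affinity = predict_affinity(graph_embedding, weights=[1.0, 2.0], bias=0.5)
```

In a real model the residue features would come from a pLM and the aggregation weights would be learned, but the data flow is the same: only nodes within the cutoff exchange information, which keeps the graph sparse.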

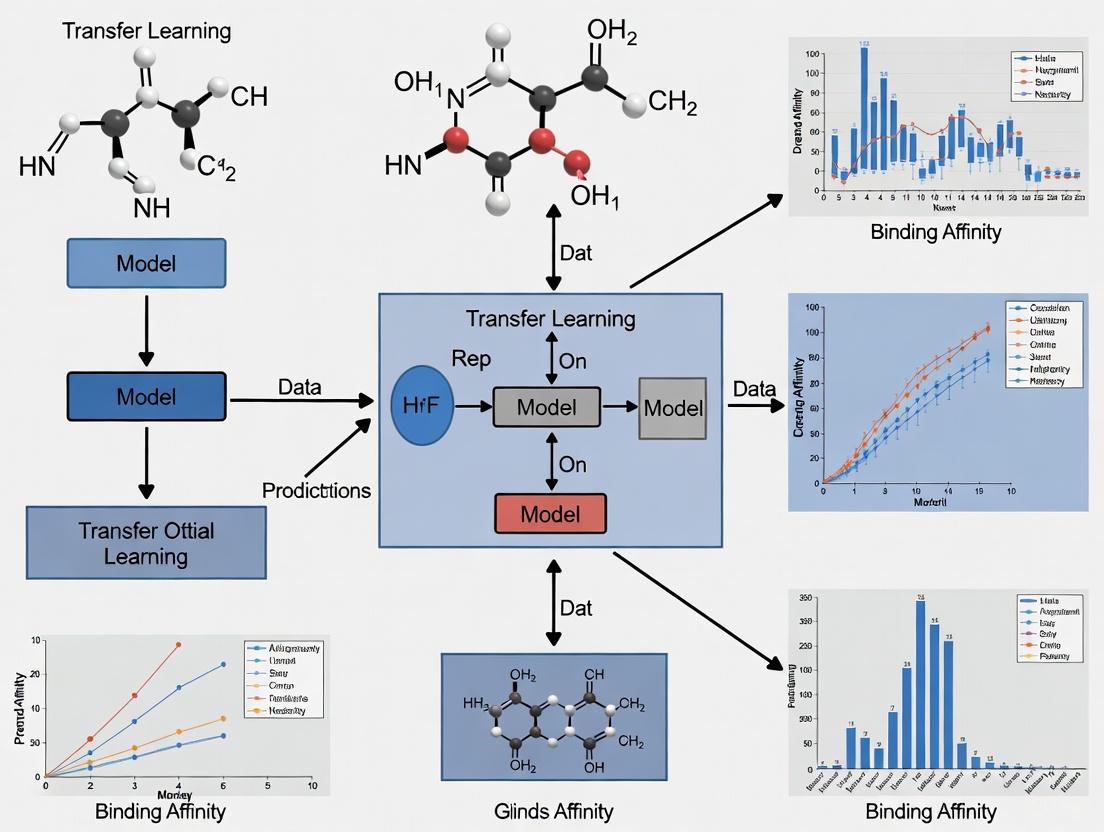

Visualization of Model Architectures and Workflows

Diagram 1: pLM Feature Extraction Workflow for Binding Affinity Prediction

Diagram 2: GEMS Architecture for Structure-Based Binding Affinity Prediction

Table 3: Essential Research Resources for pLM and mLM Applications in Binding Affinity Prediction

| Resource | Type | Description | Application in Binding Affinity Research |
|---|---|---|---|
| PDBbind Database | Dataset | Comprehensive collection of protein-ligand complexes with binding affinity data | Primary training and benchmarking data for affinity prediction models |
| PDBbind CleanSplit | Dataset | Curated version of PDBbind with minimized data leakage | Rigorous evaluation of model generalization capabilities |
| ESM-2 Models | Pre-trained Model | Protein language model family (8M to 15B parameters) | Feature extraction for protein sequence representation |
| ProtTrans Models | Pre-trained Model | Transformer-based pLMs (ProtBERT, ProtT5) trained on billions of sequences | Alternative protein representation learning |
| GEMS | Software | Graph neural network for molecular scoring | Structure-based binding affinity prediction with generalization |
| CASF Benchmark | Evaluation Suite | Comparative Assessment of Scoring Functions | Standardized performance comparison of affinity prediction methods |
| RDKit | Software | Cheminformatics and machine learning tools | Molecular representation, feature extraction, and manipulation |
| PyTorch Geometric | Software | Library for deep learning on graphs | Implementation of GNNs for structure-based affinity prediction |
| sc-PDB | Dataset | Database of druggable binding sites from Protein Data Bank | Binding site prediction and analysis |

Future Directions and Challenges

The field of protein and molecular language models continues to evolve rapidly, with several promising research directions emerging. Multimodal integration represents a key frontier, where models combine sequence, structure, and functional information to create more comprehensive representations of proteins and their interactions [10]. The recent development of generative pLMs like ESM3, which can design novel protein sequences with desired functions, points toward a future where AI plays a central role in de novo protein design [13].

Interpretability remains a significant challenge, as the internal decision-making processes of complex pLMs are often opaque. Recent work using sparse autoencoders to identify interpretable features within pLM representations shows promise for opening the "black box" and understanding what features models use for their predictions [16]. This enhanced explainability is particularly important for building trust in model predictions for critical applications like drug discovery.

Efficiency considerations are also gaining attention, as researchers question whether larger models are always better. Surprisingly, medium-sized models (e.g., ESM-2 650M and ESM C 600M) have demonstrated consistently good performance, falling only slightly behind their larger counterparts despite being many times smaller [13]. This suggests that model selection should be guided by specific application requirements and data availability rather than simply pursuing the largest available architectures.

As the field matures, the integration of pLMs and mLMs into end-to-end drug discovery pipelines holds the potential to dramatically reduce the time and cost of developing new therapeutics. However, realizing this potential will require addressing ongoing challenges related to data quality, model generalization, and biological validation [9].

How pLMs like ESM and ProtT5 Learn the 'Grammar of Life' from Sequence Data

The advent of protein Language Models (pLMs) represents a paradigm shift in computational biology, leveraging the architectural principles of large language models to decipher the complex patterns within protein sequences. Models such as ESM (Evolutionary Scale Modeling) and ProtT5 are trained on hundreds of millions of protein sequences, learning the underlying "grammar" that governs protein structure and function without explicit supervision. These models offer an important alternative way to capture the information encoded in a protein sequence computationally, advancing our understanding of the language of life as written in proteins [17]. Within the specific context of binding affinity research, a critical area for drug discovery and understanding cellular processes, pLMs offer a transformative approach. They enable the prediction of protein-protein and protein-ligand interactions directly from sequence, providing a powerful tool when structural data is scarce or uncertain. By leveraging transfer learning, where knowledge gained from broad pre-training is fine-tuned for specific predictive tasks, pLMs are establishing new benchmarks for accuracy and efficiency in computational biology.

Architectural Foundations: How pLMs Process Sequence Information

The ability of pLMs to learn the grammar of life stems from their underlying transformer architecture and their training on massive, diverse sequence corpora.

Core Model Architectures and Training

ESM and ProtT5, while sharing the transformer foundation, implement it in distinct ways. ESM2 utilizes an encoder-only transformer architecture, pre-trained using a masked language modeling objective where random amino acids in a sequence are hidden and the model must predict them based on their context [18]. In contrast, ProtT5 adopts an encoder-decoder design based on the T5 (Text-to-Text Transfer Transformer) framework, which is also pre-trained on large-scale protein databases using a masked language modeling objective [19] [18]. This pre-training on hundreds of millions of sequences allows both models to learn contextual relationships among amino acids that reflect evolutionary conservation, structural constraints, and higher-level functional patterns. The self-attention mechanism within the transformer is particularly crucial, as it directly calculates the pairwise associations between all residues in a sequence, enabling the model to capture long-range interactions and dependencies that are fundamental to protein folding and function [20].
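To make the masked language modeling objective concrete, the following minimal sketch hides a random subset of residues in a toy sequence and records the residues a model would be asked to recover. The `<mask>` token name, the 15% masking rate, and the example sequence are illustrative assumptions, not details taken from the ESM2 or ProtT5 training recipes.

```python
import random

MASK = "<mask>"  # placeholder token name; real model vocabularies differ

def mask_sequence(seq, mask_rate=0.15, seed=0):
    """Hide a random subset of residues; return (masked tokens, {position: original residue})."""
    rng = random.Random(seed)
    tokens = list(seq)
    n_mask = max(1, round(mask_rate * len(tokens)))
    positions = sorted(rng.sample(range(len(tokens)), n_mask))
    targets = {i: tokens[i] for i in positions}
    for i in positions:
        tokens[i] = MASK
    return tokens, targets

# Toy 22-residue sequence (made up for illustration).
masked, targets = mask_sequence("MKTAYIAKQRQISFVKSHFSRQ")
print(masked)   # the model sees this input
print(targets)  # and is trained to predict these residues from context
```

During pre-training, the loss is computed only at the masked positions, so the model can succeed only by using the surrounding residues as context.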

From Sequence to Representation: Generating Embeddings

The primary output of a pLM is a set of embedding vectors—fixed-size, numerical representations that capture the contextual information of each amino acid in a sequence. For a given protein sequence, models like ProtT5 generate a sequence of 1,024-dimensional residue embeddings [19]. These embeddings can be used directly for residue-level prediction tasks or pooled (e.g., by averaging) to create a single, global representation for a whole protein [19]. These embeddings implicitly encode a remarkable amount of structural and functional information. Studies have shown they capture tendencies for secondary structure formation, intrinsic disorder, and even aspects of long-range residue interactions, making them suitable for tasks that traditionally relied on explicit structural information [19] [18]. The quality of these representations is evidenced by the performance of pLMs in various downstream tasks, where ProtT5, for instance, has been shown to outperform other embedding methods like ESM-1b and ProGen2 in characterizing amino acid sequences for protein-protein binding events [20].
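Pooling by averaging can be sketched in a few lines. The example below uses a toy 3 x 4 matrix in place of real per-residue embeddings (which would have 1,024 dimensions for ProtT5); the function name `mean_pool` is a hypothetical helper, not part of any pLM library.

```python
def mean_pool(residue_embeddings):
    """Average per-residue vectors (L x D) into a single protein-level vector (D)."""
    if not residue_embeddings:
        raise ValueError("need at least one residue embedding")
    length = len(residue_embeddings)
    dim = len(residue_embeddings[0])
    return [sum(vec[d] for vec in residue_embeddings) / length for d in range(dim)]

# Toy stand-in: 3 residues, 4 dimensions each.
toy = [
    [1.0, 0.0, 2.0, 4.0],
    [3.0, 0.0, 2.0, 0.0],
    [2.0, 3.0, 2.0, 2.0],
]
protein_vector = mean_pool(toy)
print(protein_vector)  # [2.0, 1.0, 2.0, 2.0]
```

Averaging yields a vector of the same size regardless of sequence length, which is what allows proteins of different lengths to feed a fixed-size downstream predictor.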

Quantitative Performance in Binding Affinity Prediction

The effectiveness of pLMs is best demonstrated by their performance on specific, challenging prediction tasks relevant to drug discovery and basic research. The following table summarizes the performance of several pLM-based methods on key benchmarks.

Table 1: Performance of pLM-Based Methods on Binding Prediction Benchmarks

| Method | Task | Key Model Components | Performance Metrics |
|---|---|---|---|
| ProtT-Affinity [19] | Protein-Protein Binding Affinity Prediction | ProtT5 embeddings + Lightweight Transformer | Pearson's R: 0.628 & 0.459 on two test sets; MAE: ~1.72 kcal/mol |
| PepENS [21] | Protein-Peptide Binding Residue Prediction | Ensemble of ProtT5, PSSM, HSE, EfficientNetB0, CatBoost, Logistic Regression | Precision: 0.596; AUC: 0.860 (Dataset 1) |
| EDLMPPI [22] [20] | Protein-Protein Interaction Site Identification | ProtT5 + Multi-source Biological Features + BiLSTM + Capsule Network | Average Precision improvement of nearly 10% over state-of-the-art methods |
| Fine-tuned ESM2/ProtT5 [18] | Amino Acid-Level Feature Prediction (20 features, e.g., active site, binding site) | Fine-tuned ESM2 (3B parameter) and ProtT5 | High performance across features (e.g., AUROC > 0.8 for many features) |

As the data show, pLM-based approaches are competitive with, and often superior to, traditional methods. While sequence-only models like ProtT-Affinity may not always surpass the highest-performing structure-based methods, they provide a practical and robust alternative when structural data is missing or unreliable [19]. Furthermore, hybrid models that combine pLM embeddings with evolutionary and structural features, such as PepENS and EDLMPPI, report state-of-the-art results on their respective benchmarks, demonstrating the integrative power of these representations.

Experimental Protocols: From Pre-training to Fine-tuning

Applying pLMs to binding affinity research follows a structured pipeline, from data curation to model adaptation and evaluation. The workflow below illustrates the major stages of a typical pLM-based binding prediction study.

Diagram 1: pLM-Based Binding Prediction Workflow

Data Curation and Feature Extraction

The first critical step involves assembling a high-quality, non-redundant dataset. A standard practice is to use publicly available databases like BioLiP (for peptide-binding proteins) or PDBBind (for protein-ligand complexes) and then apply strict homology filtering to remove sequences with high identity, ensuring the model generalizes to new protein families [21] [19]. For instance, one protocol uses the "blastclust" tool from the BLAST package to exclude sequences with over 30% sequence identity [21]. Subsequently, protein sequences are fed into a pre-trained pLM to generate feature embeddings. For example, in the EDLMPPI method, each protein sequence is passed through ProtT5 to obtain a 1,024-dimensional vector representation for each residue [22] [20]. These embeddings can be used alone or combined with other features. The PepENS model, for example, creates a powerful multi-modal feature set by integrating ProtT5 embeddings with Position-Specific Scoring Matrices (PSSM) and structure-based Half-Sphere Exposure (HSE) metrics [21].

Model Design, Training, and Fine-tuning Strategies

With features in hand, the next step is to design a predictive model. Architectures vary widely based on the task:

- Lightweight Predictors: For affinity prediction, a lightweight transformer with cross-attention can be used to model interactions between two protein embedding sets [19].

- Complex Ensembles: For binding site prediction, an ensemble of deep learning models (e.g., combining BiLSTM and Capsule Networks) can be trained on the combined features to improve robustness [20].

- Transfer Learning: A common and powerful technique is to fine-tune the pre-trained pLM itself on the specific binding task. This involves continuing the training of the pLM (e.g., ESM2 or ProtT5) on the labeled binding data, often using parameter-efficient methods like LoRA (Low-Rank Adaptation). LoRA inserts small, trainable low-rank matrices into the transformer's attention layers while keeping the original weights frozen, which sharply reduces the number of trainable parameters and the computational cost and helps limit overfitting [18]. In a study that fine-tuned models for 20 protein features, this approach yielded models that significantly outperformed classifiers built on frozen embeddings [18].
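The low-rank idea behind LoRA can be illustrated with a small pure-Python sketch. It assumes the common formulation in which a frozen weight matrix W is augmented by a scaled product of two small matrices A and B, with B initialized to zero so that training starts from the pre-trained behavior. The matrix sizes, the scaling constant `alpha`, and all helper names are illustrative assumptions rather than details from [18].

```python
def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def lora_forward(x, w_frozen, lora_a, lora_b, alpha):
    """Return x @ W + (alpha / r) * (x @ A) @ B; only A and B would be trained."""
    rank = len(lora_b)  # B has shape (r, d_out)
    base = matmul(x, w_frozen)
    update = matmul(matmul(x, lora_a), lora_b)
    scale = alpha / rank
    return [[b + scale * u for b, u in zip(b_row, u_row)]
            for b_row, u_row in zip(base, update)]

def trainable_fraction(d_in, d_out, rank):
    """Share of parameters that are trainable relative to the full d_in x d_out matrix."""
    return rank * (d_in + d_out) / (d_in * d_out)

x = [[1.0, 2.0, 3.0]]                               # one input vector, d_in = 3
w_frozen = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]     # frozen weights, 3 x 2
lora_a = [[0.1], [0.2], [0.3]]                      # trainable, 3 x r with r = 1
lora_b_init = [[0.0, 0.0]]                          # trainable, r x 2, zero at start
lora_b_trained = [[1.0, -1.0]]                      # made-up values after some training

y_init = lora_forward(x, w_frozen, lora_a, lora_b_init, alpha=2.0)
y_trained = lora_forward(x, w_frozen, lora_a, lora_b_trained, alpha=2.0)
fraction = trainable_fraction(1024, 1024, 8)
print(y_init, y_trained, fraction)
```

With B at zero the adapted layer reproduces the frozen layer exactly, and for a 1,024 x 1,024 weight matrix at rank 8 the adapter adds roughly 1.6% as many parameters as the matrix it modifies.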

Performance Evaluation and Validation

Finally, models are rigorously evaluated on held-out test sets. Standard metrics include:

- For affinity prediction: Pearson correlation coefficient (R), Mean Absolute Error (MAE) in kcal/mol [19].

- For binding site prediction: Area Under the Curve (AUC), Precision, Average Precision (AP), and Matthews Correlation Coefficient (MCC) [21] [22].

Performance should be benchmarked against existing state-of-the-art methods to validate improvements. Furthermore, interpretability analyses can provide biological insights, such as highlighting which residues the model deems critical for binding, thereby building trust in the predictions [20].
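For reference, three of the metrics named above can be computed directly from their standard definitions. The sketch below uses only the Python standard library; the affinity values and binary labels are made-up toy data, not results from any cited study.

```python
import math

def pearson_r(y_true, y_pred):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(y_true)
    mean_t, mean_p = sum(y_true) / n, sum(y_pred) / n
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred))
    var_t = sum((t - mean_t) ** 2 for t in y_true)
    var_p = sum((p - mean_p) ** 2 for p in y_pred)
    return cov / math.sqrt(var_t * var_p)

def mean_absolute_error(y_true, y_pred):
    """Mean absolute error, in the same units as the inputs."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def matthews_corrcoef(labels, preds):
    """Matthews correlation coefficient for binary labels (0 or 1)."""
    tp = sum(1 for l, p in zip(labels, preds) if l == 1 and p == 1)
    tn = sum(1 for l, p in zip(labels, preds) if l == 0 and p == 0)
    fp = sum(1 for l, p in zip(labels, preds) if l == 0 and p == 1)
    fn = sum(1 for l, p in zip(labels, preds) if l == 1 and p == 0)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return (tp * tn - fp * fn) / denom if denom else 0.0

# Toy binding free energies in kcal/mol.
true_affinity = [-9.0, -7.5, -6.0, -10.5]
pred_affinity = [-8.0, -7.0, -6.5, -9.5]
r = pearson_r(true_affinity, pred_affinity)
mae = mean_absolute_error(true_affinity, pred_affinity)

# Toy per-residue binding-site labels.
site_labels = [1, 1, 0, 0, 1, 0]
site_preds = [1, 0, 0, 0, 1, 1]
mcc = matthews_corrcoef(site_labels, site_preds)
print(round(r, 3), mae, round(mcc, 3))
```

MCC is often preferred for binding site prediction because binding residues are typically a small minority, and MCC accounts for all four cells of the confusion matrix.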

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Resources for pLM-Based Binding Research

| Resource Category | Specific Tool / Database | Function and Utility |
|---|---|---|
| Pre-trained pLMs | ProtT5 (ProtT5-XL-UniRef50), ESM2 (various sizes) | Provides foundational sequence representations and embeddings for downstream tasks. [21] [18] |
| Benchmark Datasets | PDBBind, BioLiP, Dset_448, Dset_72, Dset_164 | Provides curated, experimentally-verified data for training and fair evaluation of models. [21] [19] [20] |
| Feature Tools | PSI-BLAST (for PSSM), DSSP (for HSE, SS) | Generates complementary evolutionary and structural features to enrich pLM embeddings. [21] |
| Efficient Fine-Tuning | LoRA (Low-Rank Adaptation) | Enables parameter-efficient adaptation of large pLMs to specific tasks with limited data. [18] |
| Model Architectures | Transformers, BiLSTM, Capsule Networks, CNN (e.g., EfficientNetB0) | Serves as the predictive backbone that processes pLM embeddings for final output. [21] [20] |

Protein Language Models like ESM and ProtT5 have fundamentally changed the landscape of binding affinity research by providing deep, context-aware sequence representations that capture the grammatical rules of protein function. Their ability to be fine-tuned for specific tasks or integrated into complex ensemble models makes them uniquely powerful for predicting interactions in the absence of high-resolution structures. As these models continue to evolve, future developments will likely involve more sophisticated multimodal approaches that seamlessly combine sequence, structure, and dynamics information [17]. Furthermore, addressing challenges such as predicting the effects of higher-order mutations and understanding multi-protein complexes will be key. For now, pLMs have firmly established themselves as an indispensable tool in the computational biologist's arsenal, accelerating drug discovery and deepening our understanding of life's molecular mechanisms.

The application of large language models (LLMs) to molecular science represents a paradigm shift in computational chemistry and drug discovery. Chemical Language Models (CLMs), which interpret Simplified Molecular-Input Line-Entry System (SMILES) strings, have emerged as powerful tools for molecular property prediction, a critical task in accelerating drug development. These models adapt the transformer architectures that revolutionized natural language processing (NLP) to the specialized "language" of chemistry, where SMILES strings serve as sentences and molecular substructures as words [23] [24].

Framed within the broader context of transfer learning for binding affinity research, CLMs offer a promising pathway to overcome the data scarcity that often plagues computational drug design. By pre-training on vast unlabeled molecular databases and subsequently fine-tuning on specific property prediction tasks, these models demonstrate remarkable sample efficiency [25] [23]. This technical guide examines the architectural foundations, training methodologies, and practical applications of SMILES-interpreting models like ChemBERTa, with particular emphasis on their evolving role in predicting drug-target interactions and binding affinities—a cornerstone of modern therapeutic development.

SMILES Representation and Tokenization Strategies

The SMILES notation provides a linear string representation of molecular structure, translating atomic connectivity into a sequence of characters that can be processed by NLP techniques. However, raw SMILES strings require segmentation into meaningful tokens before they can be embedded into a numerical representation learnable by neural networks. Two predominant philosophies have emerged in this tokenization process, each with distinct implications for model performance and efficiency [24].

Table 1: Comparison of SMILES Tokenization Strategies

| Strategy | Description | Vocabulary Size | Training Data Requirements | Chemical Awareness |
|---|---|---|---|---|
| Chemistry-Agnostic | Treats SMILES as generic text using standard NLP tokenizers (BPE, character-level) | ~591 tokens (ChemBERTa-2) | High (77M compounds) | Learned from data |
| Chemistry-Aware | Uses chemical substructures (e.g., Morgan fingerprints) as tokens | ~13,325 tokens (MolBERT) | Low (4M compounds) | Injected via tokenization |

The chemistry-agnostic approach, exemplified by ChemBERTa, treats SMILES strings as generic text, allowing the model to learn chemical grammar and semantics entirely from data. This strategy requires substantial training data but offers broad generalizability. In contrast, the chemistry-aware approach, implemented in MolBERT, leverages domain knowledge by using molecular substructures (such as those generated by Morgan fingerprints) as tokens. This method injects chemical expertise directly into the tokenization process, significantly reducing data and computational requirements for effective training [24].
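A small example shows why the choice of segmentation matters. The sketch below compares naive character-level splitting with a simple regex that keeps bracket atoms and two-letter halogens intact. This regex is an illustrative assumption: it is neither the BPE tokenizer used by ChemBERTa nor the substructure vocabulary described for MolBERT, and it does not validate SMILES syntax.

```python
import re

# Bracket atoms first, then two-letter halogens, then two-digit ring closures,
# and finally any remaining single character.
SMILES_TOKEN = re.compile(r"\[[^\]]+\]|Br|Cl|%\d{2}|.")

def char_tokens(smiles):
    """Chemistry-agnostic baseline: every character is its own token."""
    return list(smiles)

def atom_tokens(smiles):
    """Atom-level split that keeps multi-character atoms together."""
    return SMILES_TOKEN.findall(smiles)

smiles = "Clc1ccccc1[N+](=O)[O-]"  # 1-chloro-2-nitrobenzene
print(char_tokens(smiles)[:2])  # ['C', 'l']  (chlorine is split into two symbols)
print(atom_tokens(smiles)[:2])  # ['Cl', 'c']
```

Character-level splitting turns chlorine into a carbon-like `C` followed by `l`, and it breaks the charged nitrogen `[N+]` into four separate symbols, so the model must learn from data that these fragments belong together.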

Model Architectures and Training Methodologies

Core Architectures

Chemical language models primarily utilize transformer architectures, with encoder-only configurations being particularly prevalent for property prediction tasks. ChemBERTa adapts the RoBERTa architecture with 6 layers and 12 attention heads, processing tokenized SMILES sequences through self-attention mechanisms to capture long-range dependencies in molecular structure [24]. The recently introduced ChemBERTa-3 framework provides an open-source training ecosystem for chemical foundation models, emphasizing scalability through distributed computing implementations like AWS-based Ray deployments and on-premise high-performance computing clusters [26].

These models employ masked language modeling (MLM) as their primary self-supervised pre-training objective, where randomly masked tokens in SMILES sequences must be predicted from context. This forces the model to learn fundamental principles of chemical validity and molecular syntax. ChemBERTa-2 introduced an alternative multi-task regression (MTR) approach that simultaneously predicts hundreds of molecular properties during pre-training, demonstrating consistent outperformance over standard MLM across downstream tasks [24].

Transfer Learning Framework

Effective application of CLMs to specialized domains like binding affinity prediction typically follows a three-stage transfer learning pipeline, exemplified by the ChemLM framework [23]:

- Self-supervised pre-training: The model learns general chemical principles from large unlabeled datasets (e.g., 10 million compounds from ZINC).

- Domain adaptation: Further self-supervised training on domain-specific molecules refines the model's understanding of relevant chemical space.

- Supervised fine-tuning: The model is optimized on labeled data for specific property prediction tasks.

Domain adaptation addresses the "domain shift" between general chemical knowledge and task-specific requirements, which is particularly crucial for binding affinity prediction where training data may be limited. Data augmentation through SMILES enumeration—generating alternative valid SMILES representations of the same molecule—has been shown to significantly enhance model robustness during this stage [23].

Experimental Protocols and Benchmarking

Performance Evaluation

Rigorous benchmarking of CLMs reveals both their capabilities and limitations. A comprehensive evaluation of 25 molecular embedding models across 25 datasets found that while CLMs achieve competitive performance, traditional chemical fingerprints like ECFP remain surprisingly difficult to outperform. Only one model (CLAMP) demonstrated statistically significant improvement over ECFP in this extensive comparison [27].

Table 2: Selected Benchmark Results for Molecular Property Prediction

| Model | Architecture | Tokenization | Tox21 (ROC-AUC) | ClinTox (ROC-AUC) | SIDER (ROC-AUC) |
|---|---|---|---|---|---|
| ChemBERTa-2 | Transformer (Encoder) | Chemistry-Agnostic | ~0.830 | ~0.920 | ~0.605 |
| MolBERT | Transformer (Encoder) | Chemistry-Aware | 0.839 | ~0.940 | ~0.625 |
| D-MPNN | Graph Neural Network | N/A | ~0.820 | ~0.885 | ~0.580 |

However, benchmarks focusing specifically on binding affinity prediction have uncovered significant challenges with data leakage and evaluation rigor. Studies analyzing the PDBbind database and Comparative Assessment of Scoring Function (CASF) benchmarks identified substantial train-test leakage, with nearly 50% of CASF complexes having highly similar counterparts in the training data. This inflation of reported performance metrics has led to overestimation of model generalization capabilities [1].

Out-of-Distribution Generalization

The critical challenge of out-of-distribution (OOD) generalization for molecular property prediction was systematically examined in the BOOM benchmark, which evaluated over 140 model-task combinations. Results revealed that even top-performing models exhibited average OOD errors approximately 3× larger than in-distribution errors. Current chemical foundation models, including transformer-based architectures, did not demonstrate strong OOD extrapolation capabilities, highlighting a key frontier for model development [28].

Application to Binding Affinity Research

Addressing Data Challenges

Binding affinity prediction presents particular challenges for CLMs due to limited labeled data and the complexity of protein-ligand interactions. The PDBbind CleanSplit dataset was recently developed to address data leakage issues by applying structure-based filtering to eliminate similarities between training and test complexes [1]. This curated benchmark enables genuine evaluation of model generalizability to unseen protein-ligand complexes.

CLMs enhance binding affinity prediction through several mechanisms:

- Representation learning: Pre-trained embeddings capture nuanced chemical similarities that inform binding potential.

- Transfer learning: Knowledge from large molecular corpora transfers to affinity prediction with limited data.

- Data augmentation: SMILES enumeration expands limited training datasets for improved generalization [23].

Case Study: Pathoblocker Identification for Pseudomonas aeruginosa

A practical demonstration of CLMs in drug discovery involved identifying pathoblockers targeting Pseudomonas aeruginosa. ChemLM was fine-tuned on just 219 compounds with varying potency against the quorum-sensing receptor PqsR. The model achieved substantially higher accuracy in identifying highly potent pathoblockers compared to state-of-the-art graph neural networks and other language models, validating its utility in real-world drug discovery scenarios with limited data [23].

Implementation Guide

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Resources

| Resource | Type | Function | Example Sources |
|---|---|---|---|
| ZINC20 | Dataset | Large-scale unlabeled compounds for pre-training | [26] |
| PDBbind CleanSplit | Dataset | Curated protein-ligand complexes without data leakage | [1] |
| ChemBERTa-3 Framework | Software | Open-source training framework for chemical foundation models | [26] |
| SMILES Enumeration | Algorithm | Data augmentation through alternative SMILES representations | [23] |
| Morgan Fingerprints | Algorithm | Chemistry-aware tokenization for efficient learning | [24] |

Optimization Guidelines

Hyperparameter optimization significantly impacts CLM performance. Analysis of ChemLM revealed that the number of SMILES augmentations during domain adaptation and embedding aggregation strategies were the most influential factors, while the number of attention heads and layers had minimal impact [23]. For binding affinity prediction specifically, critical considerations include:

- Data splitting: Implement structure-based splits to avoid data leakage and properly evaluate generalization.

- Domain adaptation: Incorporate target-specific compounds during self-supervised training stages.

- Regularization: Employ L2 regularization and early stopping to prevent overfitting on limited affinity data.

- Multi-task learning: Jointly predict related molecular properties to improve feature learning [29] [23].
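The early stopping mentioned under regularization can be sketched as a simple rule applied to the validation loss after each epoch. The patience value and the loss curve below are made-up illustrations; in practice both depend on the dataset and training setup.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (best_epoch, stop_epoch) for a sequence of per-epoch validation losses.

    Training stops once the loss has failed to improve by more than `min_delta`
    for `patience` consecutive epochs; the best epoch's weights would be restored.
    """
    best_loss = float("inf")
    best_epoch = -1
    waited = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch, waited = loss, epoch, 0
        else:
            waited += 1
            if waited >= patience:
                return best_epoch, epoch
    return best_epoch, len(val_losses) - 1

# Toy validation curve: improves until epoch 2, then plateaus.
losses = [1.20, 0.95, 0.90, 0.92, 0.93, 0.91, 0.89]
best_epoch, stop_epoch = early_stopping_epoch(losses, patience=3)
print(best_epoch, stop_epoch)  # 2 5
```

In this toy curve the rule stops at epoch 5 and never sees the slightly lower loss at epoch 6, which illustrates the trade-off that the patience setting controls.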

Chemical language models interpreting SMILES strings represent a transformative technology for molecular property prediction, with particular relevance to binding affinity research. Models like ChemBERTa demonstrate how transfer learning from large unlabeled molecular datasets can overcome data limitations in drug discovery. However, challenges remain in out-of-distribution generalization, evaluation rigor, and architectural optimization. Future developments will likely focus on multi-modal approaches combining SMILES representations with structural information, improved pre-training objectives that better capture physical principles of molecular interactions, and more robust benchmarking methodologies. As these models mature, they hold significant promise for accelerating the identification of therapeutic candidates through more accurate and generalizable binding affinity prediction.

Transfer learning, the process of repurposing knowledge gained from solving one problem to address a different but related challenge, has emerged as a transformative paradigm in artificial intelligence and computational research. In biological sciences and drug discovery, this approach enables researchers to overcome data scarcity and improve model generalization by leveraging pre-existing knowledge. The core intuition is that a model trained on a large and general dataset effectively serves as a generic model of its domain, whose learned feature maps can be repurposed for specialized tasks without starting from scratch [30]. This capability is particularly valuable in binding affinity research, where experimental data is often limited and expensive to acquire.

The fundamental principle of transfer learning involves initial training on a source task with abundant data, followed by knowledge transfer to a target task with limited data. This process stands in contrast to traditional machine learning approaches that treat each problem in isolation. In the context of binding affinity prediction, transfer learning allows models to incorporate general biochemical knowledge before fine-tuning on specific protein-ligand interaction data, resulting in more robust and accurate predictions [1]. Recent advances have demonstrated that this approach significantly enhances model performance, especially when applied to strictly independent test datasets that avoid the pitfalls of data leakage [1].

Within drug discovery, the application of transfer learning from language models represents a particularly promising frontier. Inspired by breakthroughs in natural language processing (NLP), researchers have developed bioinformatics equivalents of word-embedding technologies that capture functional relationships between biological entities rather than treating them as independent identifiers [31]. This functional representation approach has proven especially valuable for analyzing gene signatures and predicting drug-target interactions, where it substantially improves sensitivity in detecting weak molecular signals that traditional identity-based methods often miss [31].

Transfer Learning from Language Models: Core Concepts and Biological Applications

Fundamental Analogy: From Natural Language to Biological Data

The application of language model principles to biological data represents one of the most significant advances in computational drug discovery. This approach draws a direct analogy between natural language and biological systems: just as words gain meaning from their context in sentences, genes and proteins derive functional significance from their context in biological pathways and networks [31]. Early NLP analyses used one-hot encoding of words where each word was encoded by its identity, treating "cat" and "kitty" as equally distant as "cat" and "rock." Similarly, traditional bioinformatics methods treated genes as independent identifiers, ignoring their underlying functional relationships [31].

The breakthrough came with the introduction of word-embedding technologies like word2vec in NLP, which capture semantic meanings by representing words as vectors in a high-dimensional space where synonyms are positioned close together [31]. This inspired the development of similar embedding approaches for biological entities. For example, the Functional Representation of Gene Signatures (FRoGS) approach maps individual human genes into high-dimensional coordinates that encode their biological functions, trained such that genes with similar Gene Ontology annotations and experimental expression profiles are positioned near each other in the embedding space [31]. This functional representation enables more meaningful comparisons between gene signatures by capturing pathway-level similarities even when the specific genes involved show little overlap.
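The difference between identity-based and embedding-based representations can be seen with cosine similarity on toy vectors. The two-dimensional "embeddings" below are invented for illustration and do not come from word2vec or FRoGS.

```python
import math

def cosine(u, v):
    """Cosine similarity between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

# One-hot encoding: each word is only its identity.
one_hot = {"cat": [1, 0, 0], "kitty": [0, 1, 0], "rock": [0, 0, 1]}

# Made-up dense embeddings in which related words point in similar directions.
dense = {"cat": [0.9, 0.1], "kitty": [0.8, 0.2], "rock": [0.1, 0.9]}

for name, table in (("one-hot", one_hot), ("dense", dense)):
    print(name,
          round(cosine(table["cat"], table["kitty"]), 3),
          round(cosine(table["cat"], table["rock"]), 3))
```

Under one-hot encoding both pairs have zero similarity, whereas the dense vectors place "cat" far closer to "kitty" than to "rock". Gene embeddings aim for the same effect, with functional relatedness taking the place of word meaning.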

Technical Implementation of Biological Language Models

Implementing transfer learning from language models for biological data involves several key steps. First, pre-training occurs on large-scale biological datasets to learn fundamental representations of genes, proteins, or compounds. For example, protein language models like ProtTrans are trained on millions of protein sequences to learn structural and functional principles [32]. Similarly, molecular models like MG-BERT are pre-trained on chemical compound databases to learn fundamental biochemical properties [32].

The second step involves fine-tuning these pre-trained models on specific downstream tasks, such as binding affinity prediction or drug-target interaction identification. During this phase, the model adapts its general biological knowledge to the specific problem domain with a smaller, task-specific dataset [32]. This approach has proven particularly valuable for addressing the sparseness intrinsic to experimental signatures, where technical variations often lead to limited overlap between gene signatures studying the same biological pathway [31].

Table: Comparison of Language Model Applications in Natural Language Processing and Biological Research

| Aspect | Natural Language Processing | Biological Research |
|---|---|---|
| Basic Units | Words | Genes, Proteins, Compounds |
| Embedding Method | word2vec, BERT | FRoGS, ProtTrans, ChemBERTa |
| Relationship Captured | Semantic similarity | Functional similarity |
| Primary Advantage | Understands synonyms and context | Identifies functional pathways beyond gene identity |
| Typical Application | Text classification, translation | Drug-target prediction, binding affinity |

Application in Binding Affinity and Drug-Target Interaction Research

Critical Challenges in Binding Affinity Prediction

Binding affinity prediction represents a cornerstone of computational drug design, yet it faces significant challenges that transfer learning approaches aim to address. A primary issue is data bias and leakage, where similarities between training and test datasets artificially inflate performance metrics. Recent research has revealed that train-test data leakage between the PDBbind database and Comparative Assessment of Scoring Function (CASF) benchmarks has severely inflated the performance metrics of many deep-learning-based binding affinity prediction models, leading to overestimation of their generalization capabilities [1]. Alarmingly, some models perform comparably well on CASF benchmarks even after omitting all protein or ligand information from their input data, suggesting their predictions are based on memorization rather than genuine understanding of protein-ligand interactions [1].

Another significant challenge is the sparseness of experimental signatures, where each signature consists of only a sparse sampling of the genes underlying regulated pathways. If we randomly sample 10 genes from a hypothetical 100-gene pathway twice, the chance that the two samples share three or more genes is only about 6%, even though both represent the same pathway [31]. This sparseness is intrinsic to all experimental signatures and arises from various technical factors including RNA-seq signal alterations, read dropouts with lower gene expression levels, and regulatory variations in transcription factor binding sites [31].
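The overlap probability in this example follows a hypergeometric distribution: once the first sample of 10 genes is fixed, the second sample draws 10 genes from a pool containing 10 "hits" and 90 "misses". The short calculation below reproduces the figure; the function name is a hypothetical helper.

```python
from math import comb

def prob_overlap_at_least(k, pathway_size=100, sample_size=10):
    """Probability that two independent random samples share at least k genes."""
    total = comb(pathway_size, sample_size)
    below_k = sum(
        comb(sample_size, j) * comb(pathway_size - sample_size, sample_size - j)
        for j in range(k)
    )
    return 1 - below_k / total

p = prob_overlap_at_least(3)
print(f"{p:.3f}")  # 0.060
```

The expected overlap is only one gene (10 x 10 / 100), so identity-based comparison rarely detects that two such signatures come from the same pathway.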

Transfer Learning Solutions for Enhanced Generalization

To address these challenges, researchers have developed sophisticated transfer learning approaches that improve model generalization. The GEMS (Graph neural network for Efficient Molecular Scoring) model exemplifies this trend: it combines a novel graph neural network architecture with transfer learning from language models and is trained on the filtered PDBbind CleanSplit dataset [1]. It maintains high benchmark performance even when trained on data with reduced leakage, which indicates genuine generalization rather than exploitation of dataset similarities [1].

Another innovative framework, EviDTI, utilizes evidential deep learning for uncertainty quantification in drug-target interaction prediction [32]. This approach integrates multiple data dimensions—including drug 2D topological graphs, 3D spatial structures, and target sequence features—with pre-trained knowledge from language models. Through evidential deep learning, EviDTI provides uncertainty estimates for its predictions, allowing researchers to prioritize drug-target pairs with higher confidence for experimental validation [32]. This capability is particularly valuable in drug discovery, where well-calibrated uncertainty information enhances efficiency by reducing false positives.

Table: Performance Comparison of EviDTI with Baseline Models on DrugBank Dataset

| Model | Accuracy (%) | Precision (%) | MCC (%) | F1 Score (%) | AUC (%) |
|---|---|---|---|---|---|
| EviDTI | 82.02 | 81.90 | 64.29 | 82.09 | Not specified |
| RF | 71.07 | Not specified | Not specified | Not specified | Not specified |
| SVM | Not specified | Not specified | Not specified | Not specified | Not specified |
| NB | Not specified | Not specified | Not specified | Not specified | Not specified |

Experimental Protocols and Methodologies

FRoGS Implementation for Gene Signature Analysis

The Functional Representation of Gene Signatures (FRoGS) approach employs a specific methodology for comparing gene signatures through functional embedding. The protocol begins with embedding generation, where individual human genes are mapped into high-dimensional coordinates encoding their functions based on Gene Ontology annotations and ARCHS4 experimental expression profiles [31]. The model is trained to assign coordinates so that neighboring genes share similar annotations and expression correlations.

For similarity assessment, the protocol generates two foreground gene sets and one background gene set for a given pathway W. Each foreground set is seeded with λ random genes from within W and 100-λ random genes from outside W, simulating experimentally derived signatures from perturbations that co-target the same pathway. The background set contains no genes from W. The process is repeated 200 times, and the similarity score distributions are compared using a one-sided Wilcoxon signed-rank test to determine whether foreground-foreground similarity scores exceed foreground-background similarity scores [31].
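The sampling scheme in this protocol can be sketched as follows. The pathway size, gene universe size, and the use of raw gene overlap as the similarity score are assumptions made for illustration; FRoGS itself scores similarity in its learned embedding space, which is not reproduced here.

```python
import random

def draw_foreground(rng, pathway, outside, lam, set_size):
    """Foreground set: lam genes from inside pathway W plus (set_size - lam) from outside."""
    return set(rng.sample(pathway, lam)) | set(rng.sample(outside, set_size - lam))

def simulate_overlaps(lam=5, set_size=100, n_trials=200,
                      pathway_size=200, universe_size=20000, seed=0):
    """Return (foreground-foreground, foreground-background) overlap counts per trial."""
    rng = random.Random(seed)
    pathway = list(range(pathway_size))                 # hypothetical gene ids inside W
    outside = list(range(pathway_size, universe_size))  # hypothetical gene ids outside W
    pairs = []
    for _ in range(n_trials):
        fg1 = draw_foreground(rng, pathway, outside, lam, set_size)
        fg2 = draw_foreground(rng, pathway, outside, lam, set_size)
        background = set(rng.sample(outside, set_size))  # contains no genes from W
        pairs.append((len(fg1 & fg2), len(fg1 & background)))
    return pairs

pairs = simulate_overlaps()
wins = sum(ff > fb for ff, fb in pairs)
print(f"foreground pair overlaps more than background in {wins} of {len(pairs)} trials")
```

The paired scores produced this way are the kind of input a one-sided Wilcoxon signed-rank test takes, for example via `scipy.stats.wilcoxon` with `alternative="greater"`. With identity-based overlap and a weak signal such as λ = 5, most trials show little or no overlap in either comparison, which is the sparseness problem that functional embeddings are meant to address.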

The validation phase uses t-SNE projection to visually confirm that genes cluster by function in the embedding space. In comparisons against OPA2Vec, Gene2vec, clusDCA, and Fisher's exact test, FRoGS performs best, most notably under weak signals (λ = 5), a regime in which most embedding methods already outperform Fisher's exact test [31]. This protocol provides the foundation for sensitive gene signature comparisons in drug target prediction.

PDBbind CleanSplit Dataset Curation

Addressing data leakage in binding affinity prediction requires careful dataset curation. The PDBbind CleanSplit protocol employs a structure-based clustering algorithm to identify and remove structural similarities between training and test datasets [1]. The method involves multimodal filtering that combines assessment of protein similarity (TM scores), ligand similarity (Tanimoto scores), and binding conformation similarity (pocket-aligned ligand root-mean-square deviation) [1].

The specific protocol includes these critical steps:

- Similarity identification: Compare all CASF complexes with all PDBbind complexes using combined similarity metrics

- Training data filtering: Exclude all training complexes that closely resemble any CASF test complex

- Ligand-based filtering: Remove training complexes with ligands identical to those in CASF test complexes (Tanimoto > 0.9)

- Redundancy reduction: Apply adapted filtering thresholds to identify and eliminate similarity clusters within the training dataset

This rigorous protocol resulted in the removal of 4% of training complexes due to train-test similarity and an additional 7.8% due to internal redundancies [1]. The resulting CleanSplit dataset enables genuine evaluation of model generalization to unseen protein-ligand complexes by ensuring strict separation from benchmark datasets.
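The train-test filtering steps can be illustrated with a short sketch that works on precomputed pairwise similarities. It is an assumption-laden illustration rather than the CleanSplit code: the `PairSimilarity` type, the function names, the way the three metrics are combined, and the `TM_CUT`, `TANIMOTO_CUT`, and `RMSD_CUT` values are all hypothetical. Only the 0.9 Tanimoto cutoff for identical ligands comes from the text, and the internal redundancy-reduction step is not covered.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PairSimilarity:
    tm_score: float     # protein similarity
    tanimoto: float     # ligand similarity
    pocket_rmsd: float  # pocket-aligned ligand RMSD, in angstroms


# Hypothetical thresholds for illustration only
TM_CUT, TANIMOTO_CUT, RMSD_CUT = 0.8, 0.8, 2.0
# Ligand identity cutoff stated in the protocol
LIGAND_IDENTITY_CUT = 0.9


def is_leak(sim):
    """Flag a train-test pair as too similar to keep the training complex."""
    structurally_similar = (
        sim.tm_score > TM_CUT
        and sim.tanimoto > TANIMOTO_CUT
        and sim.pocket_rmsd < RMSD_CUT
    )
    same_ligand = sim.tanimoto > LIGAND_IDENTITY_CUT
    return structurally_similar or same_ligand


def filter_training_set(train_ids, test_ids, similarity):
    """Keep training complexes that do not resemble any test complex.

    `similarity` maps (train_id, test_id) to a PairSimilarity; pairs that
    are missing from the mapping are treated as dissimilar.
    """
    kept = []
    for train_id in train_ids:
        leaks = any(
            (train_id, test_id) in similarity
            and is_leak(similarity[(train_id, test_id)])
            for test_id in test_ids
        )
        if not leaks:
            kept.append(train_id)
    return kept
```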

EviDTI Framework for Drug-Target Interaction Prediction

The EviDTI framework employs a comprehensive experimental protocol for drug-target interaction prediction with uncertainty quantification. The methodology consists of three main components [32]:

- Protein feature encoding: The ProtTrans pre-trained model generates the initial target representation, which is then passed through a light attention mechanism to capture local interaction patterns

- Drug feature encoding: 2D topological information is encoded by the MG-BERT pre-trained model and processed by a 1D CNN, while 3D spatial structure is encoded by a GeoGNN module that converts the structure into atom-bond and bond-angle graphs

- Evidential layer processing: The target and drug representations are concatenated and fed into an evidential layer, which outputs the parameter α used to calculate the prediction probability and its uncertainty
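How α yields both a probability and an uncertainty can be shown with a small worked example. The text does not give the formulas, so this sketch assumes the standard Dirichlet formulation used in evidential deep learning, where α is the per-class evidence plus one. EviDTI's exact parameterization may differ, and the function name is hypothetical.

```python
def dirichlet_summary(evidence):
    """Turn non-negative per-class evidence into probabilities and uncertainty.

    Assumed formulation: alpha_k = evidence_k + 1, S = sum(alpha),
    probability_k = alpha_k / S, uncertainty = K / S.
    """
    if any(e < 0 for e in evidence):
        raise ValueError("evidence must be non-negative")
    alpha = [e + 1.0 for e in evidence]
    strength = sum(alpha)
    probabilities = [a / strength for a in alpha]
    uncertainty = len(alpha) / strength
    return probabilities, uncertainty


# No evidence: classes are equally likely and uncertainty is maximal (1.0)
print(dirichlet_summary([0.0, 0.0]))
# Strong evidence for class 0: confident prediction with low uncertainty
print(dirichlet_summary([8.0, 0.0]))
```

Under this formulation a prediction with little total evidence is flagged as uncertain even when one class is nominally favored, which is what makes the output useful for prioritizing experimental follow-up.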

The evaluation protocol tests the model on three benchmark datasets (DrugBank, Davis, and KIBA), each randomly split into training, validation, and test sets in an 8:1:1 ratio. Performance is assessed using seven metrics: accuracy, recall, precision, Matthews correlation coefficient, F1 score, area under the ROC curve, and area under the precision-recall curve [32]. In this evaluation, EviDTI performs competitively against 11 baseline models while also providing calibrated uncertainty estimates.
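As a concrete illustration of the evaluation setup, the sketch below performs a random 8:1:1 split and computes the Matthews correlation coefficient for binary labels. The function names and the seed are assumptions made for the example, and it is not taken from the EviDTI code.

```python
import math
import random


def split_8_1_1(items, seed=0):
    """Shuffle items and split them into train/validation/test at 8:1:1."""
    items = list(items)
    random.Random(seed).shuffle(items)
    n_train = len(items) * 8 // 10
    n_val = len(items) // 10
    train = items[:n_train]
    val = items[n_train:n_train + n_val]
    test = items[n_train + n_val:]
    return train, val, test


def matthews_corrcoef(y_true, y_pred):
    """Matthews correlation coefficient for binary labels in {0, 1}."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    # Return 0.0 when the coefficient is undefined (an empty row or column)
    return (tp * tn - fp * fn) / denom if denom else 0.0
```

Unlike accuracy, the coefficient stays informative when interacting and non-interacting pairs are imbalanced, which is one reason it is commonly reported alongside the other metrics.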

Visualization of Workflows and Relationships

[Figure: Transfer Learning from Language Models for Binding Affinity]

[Figure: FRoGS Functional Representation Methodology]

Table: Key Research Reagents and Computational Resources for Transfer Learning in Binding Affinity Research

| Resource Name | Type | Function in Research | Example Applications |
|---|---|---|---|
| PDBbind Database | Database | Provides curated protein-ligand complexes with binding affinity data for training and validation | Training data for binding affinity prediction models [1] |
| CASF Benchmark | Benchmark Dataset | Standardized sets for evaluating scoring function performance | Model validation and comparison [1] |
| FRoGS (Functional Representation of Gene Signatures) | Computational Method | Embeds genes based on functional similarity rather than identity | Comparing gene signatures, identifying shared pathways [31] |
| ProtTrans | Pre-trained Model | Protein language model trained on millions of sequences | Protein feature extraction for binding prediction [32] |
| MG-BERT | Pre-trained Model | Molecular graph representation learning | Drug compound feature encoding [32] |
| EviDTI Framework | Computational Framework | Drug-target interaction prediction with uncertainty quantification | Prioritizing high-confidence drug-target pairs [32] |
| PDBbind CleanSplit | Curated Dataset | Filtered training dataset minimizing data leakage | Genuine evaluation of model generalization [1] |
| GEMS (Graph neural network for Efficient Molecular Scoring) | Model Architecture | Graph neural network with transfer learning for binding affinity | Structure-based affinity prediction [1] |

Transfer learning from language models represents a paradigm shift in binding affinity research and computational drug discovery. By leveraging broad knowledge from large-scale biological data, researchers can develop more accurate and generalizable models for specific tasks such as drug-target interaction prediction and binding affinity estimation. The approaches discussed, from functional representation of gene signatures to evidential deep learning frameworks, demonstrate significant improvements over traditional methods that treat biological entities as independent identifiers rather than functionally related components.

Future research directions will likely focus on multimodal integration that combines diverse data types including genomic, structural, and clinical information. Additionally, improved uncertainty quantification methods like those implemented in EviDTI will become increasingly important for prioritizing experimental validation and reducing false positives in drug discovery pipelines. As the field addresses critical challenges like data leakage through rigorous dataset curation, transfer learning approaches will continue to enhance their reliability and applicability to real-world drug discovery problems.

The integration of language model principles with biological domain knowledge creates a powerful framework for understanding complex biomolecular interactions. By representing biological entities through their functional relationships rather than isolated identities, these approaches capture the essential nature of biological systems as interconnected networks rather than collections of independent components. This conceptual advancement, combined with sophisticated computational implementations, positions transfer learning as a cornerstone technology for the next generation of binding affinity research and drug discovery.

The emergence of protein language models (pLMs) represents a paradigm shift in computational biology, establishing embeddings as a universal key for a wide range of downstream prediction tasks. These models capture the fundamental "grammar of the language of life" from protein sequences, generating compact, information-rich vector representations that can serve as the exclusive input for supervised prediction methods [33] [34]. This technical review examines the theoretical foundations, practical advantages, and transformative applications of embeddings, with particular focus on binding affinity prediction in structure-based drug design. We show that pLM-based approaches now significantly outperform traditional multiple sequence alignment (MSA)-dependent methods in accuracy while consuming substantially fewer computational resources [33]. Through detailed experimental protocols and performance analyses, we argue that embeddings provide a universal, task-agnostic foundation that enables robust generalization across diverse protein prediction challenges.

From Sequence to Vector: The Embedding Process

Protein language models process amino acid sequences through deep neural networks trained on millions of diverse protein sequences, learning evolutionary patterns and biochemical principles without explicit supervision. The resulting embeddings are fixed-size vector representations that implicitly encapsulate structural, functional, and evolutionary information [33] [34]. Unlike traditional bioinformatics approaches that rely on explicit evolutionary information from multiple sequence alignments, pLMs derive this knowledge directly from sequence statistics, enabling MSA-free prediction with comparable or superior accuracy.
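One common way to obtain a fixed-size vector from a variable-length sequence is to average the per-residue embeddings. The text does not specify the pooling step, so mean pooling is an assumption here, and the toy vectors below merely stand in for real pLM output.

```python
def mean_pool(residue_embeddings):
    """Average per-residue vectors into one fixed-size protein vector."""
    if not residue_embeddings:
        raise ValueError("sequence has no residues")
    dim = len(residue_embeddings[0])
    if any(len(vec) != dim for vec in residue_embeddings):
        raise ValueError("all residue embeddings must share one dimension")
    n = len(residue_embeddings)
    return [sum(vec[i] for vec in residue_embeddings) / n for i in range(dim)]


# Toy per-residue embeddings (hypothetical values) for proteins of length 2 and 3
short_protein = [[1.0, 2.0], [3.0, 4.0]]
long_protein = [[0.0, 0.0], [3.0, 3.0], [6.0, 9.0]]

# Both proteins map to vectors of the same dimension regardless of length
print(mean_pool(short_protein))
print(mean_pool(long_protein))
```

Because the output dimension depends only on the model and not on sequence length, the pooled vectors can be fed directly to a small downstream predictor.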

The Universal Key Hypothesis

The "universal key" hypothesis posits that protein embeddings provide a sufficiently rich, task-agnostic representation to serve as the exclusive input for diverse downstream prediction tasks. This is a significant departure from the preceding 33-year paradigm, in which evolutionary information extracted from MSAs through simple averaging was the most successful approach to protein prediction [33]. Embeddings condense biological grammar so efficiently that downstream methods succeed with remarkably small models, requiring few free parameters even in an era of increasingly complex deep neural architectures [34].

Theoretical Foundations and Comparative Advantages

Resource Efficiency and Performance Benefits

The transition to embedding-based methods offers substantial practical advantages for research implementation, particularly in resource-constrained environments or high-throughput applications.

Table 1: Comparative Analysis of MSA-Based vs. Embedding-Based Approaches

| Characteristic | MSA-Based Methods | Embedding-Based Methods | Practical Implication |
|---|---|---|---|
| Computational Demand | High (per-prediction alignment) | Low (once pre-training complete) | Scalability for large datasets |
| Evolutionary Information | Explicit from family alignment | Implicit from sequence statistics | No family knowledge required |
| Protein Specificity | Family-dependent | Protein-specific solutions | Novel protein applications |
| Model Size | Larger downstream models | Small downstream models | Faster deployment/inference |
| Accuracy Trend | Established baseline | Significantly improved for many tasks | State-of-the-art performance |

The resource advantage emerges primarily after the initial pLM pre-training phase. Once this foundation is established, pLM-based solutions consume substantially fewer computational resources than MSA-based alternatives, making them particularly valuable for large-scale screening applications in drug discovery [33].

Embeddings as Task-Agnostic Foundations

Universal embeddings differ fundamentally from task-specific representations by capturing intrinsic data patterns without optimization for predefined objectives. This quality enables their application across diverse downstream tasks including classification, regression, similarity search, and outlier detection [35]. In tabular data applications, this approach transforms entities and rows into vector representations that serve as foundations for multiple analytical applications without retraining [35]. Similarly, in protein science, pLM embeddings provide a universal substrate for predicting structure, function, solubility, domains, and binding properties from the same foundational representation [33].

Application in Binding Affinity Prediction

The Generalization Challenge in Scoring Functions

Accurate prediction of protein-ligand binding affinities remains a critical challenge in computational drug design. Traditional scoring functions implemented in docking tools such as AutoDock Vina show limited accuracy in binding affinity prediction [1]. While deep learning approaches have demonstrated improved performance, the generalization capability of many models is overestimated because of train-test data leakage between the PDBbind database and the Comparative Assessment of Scoring Functions (CASF) benchmarks [1].

Recent investigations reveal that nearly 50% of CASF complexes have exceptionally similar counterparts in training data, sharing similar ligand and protein structures with comparable ligand positioning and closely matched affinity labels [1]. This data leakage enables models to achieve inflated performance metrics through memorization rather than genuine understanding of protein-ligand interactions.

Advanced Architectures: GEMS Model

The Graph neural network for Efficient Molecular Scoring (GEMS) represents a state-of-the-art approach that addresses generalization challenges through a novel architecture combining graph neural networks with transfer learning from protein language models [1].

Table 2: GEMS Model Components and Functions

| Component | Type/Architecture | Function in Binding Affinity Prediction |
|---|---|---|
| Protein Representation | pLM Embeddings (Transfer Learning) | Encodes structural and evolutionary information |
| Graph Construction | Sparse Graph of Protein-Ligand Interactions | Models atomic-level interactions |
| Neural Architecture | Graph Neural Network (GNN) | Processes structured interaction data |
| Training Data | PDBbind CleanSplit | Prevents data leakage, ensures generalization |
| Output | Binding Affinity Prediction | Quantitative estimate of binding strength |

GEMS leverages a sparse graph modeling of protein-ligand interactions and transfer learning from language models to generalize to strictly independent test datasets [1]. Ablation studies confirm that the model fails to produce accurate predictions when protein nodes are omitted, demonstrating that its predictions derive from genuine understanding of protein-ligand interactions rather than exploiting dataset artifacts [1].
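To make the idea of a sparse interaction graph concrete, the sketch below connects protein and ligand atoms only when they lie within a distance cutoff, and it can drop protein nodes to mimic the ablation input. It is not the GEMS graph construction: the node and edge definitions, the 5.0 Å cutoff, and the function name are all assumptions chosen for illustration.

```python
import math


def build_interaction_graph(protein_atoms, ligand_atoms, cutoff=5.0, include_protein=True):
    """Build a sparse bipartite graph from (name, (x, y, z)) atom records.

    Ligand atoms always become nodes. A protein atom becomes a node only if
    it lies within `cutoff` of at least one ligand atom, which keeps the
    graph sparse. With include_protein=False, only ligand nodes remain.
    """
    nodes = [("ligand", name) for name, _ in ligand_atoms]
    edges = []
    if include_protein:
        for p_name, p_xyz in protein_atoms:
            near = [
                l_name
                for l_name, l_xyz in ligand_atoms
                if math.dist(p_xyz, l_xyz) <= cutoff
            ]
            if near:
                nodes.append(("protein", p_name))
                edges.extend((("protein", p_name), ("ligand", l_name)) for l_name in near)
    return nodes, edges


# Toy coordinates (hypothetical): one protein atom near the ligand, one far away
protein = [("CA_1", (0.0, 0.0, 0.0)), ("CA_2", (20.0, 0.0, 0.0))]
ligand = [("C1", (3.0, 0.0, 0.0))]

full_nodes, full_edges = build_interaction_graph(protein, ligand)
ablated_nodes, ablated_edges = build_interaction_graph(protein, ligand, include_protein=False)
```

In the ablated graph no protein-ligand edges remain, so a model that still predicted well on it would be relying on ligand information alone.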

Experimental Protocol: Robust Binding Affinity Prediction

Dataset Preparation: PDBbind CleanSplit

The PDBbind CleanSplit dataset addresses critical data leakage issues through structure-based filtering:

Similarity Assessment: Compute multimodal similarity between all protein-ligand complexes using:

- Protein similarity (TM-scores)

- Ligand similarity (Tanimoto scores)

- Binding conformation similarity (pocket-aligned ligand RMSD)

Leakage Elimination: Remove all training complexes that closely resemble any CASF test complex according to combined similarity thresholds.

Redundancy Reduction: Apply adapted filtering thresholds to identify and eliminate similarity clusters within the training dataset, removing 7.8% of training complexes to minimize memorization.

Ligand Independence: Exclude all training complexes with ligands identical to those in CASF test complexes (Tanimoto > 0.9).

This protocol produces a training dataset strictly separated from CASF benchmarks, enabling genuine evaluation of model generalizability to unseen protein-ligand complexes [1].
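The ligand similarity measure used in these steps is the Tanimoto coefficient, the ratio of shared to total fingerprint features. The sketch below computes it on fingerprints represented as sets of "on" bit indices. The set representation, the function names, and the toy fingerprints are assumptions for illustration, and a real pipeline would derive the fingerprints with a cheminformatics toolkit.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    a, b = set(fp_a), set(fp_b)
    union = a | b
    if not union:
        # Convention chosen here: two empty fingerprints count as dissimilar
        return 0.0
    return len(a & b) / len(union)


def is_identical_ligand(fp_a, fp_b, cutoff=0.9):
    """Apply the protocol's Tanimoto > 0.9 criterion for identical ligands."""
    return tanimoto(fp_a, fp_b) > cutoff


# Toy fingerprints (hypothetical bit indices)
print(tanimoto({1, 2, 3}, {2, 3, 4}))             # 2 shared of 4 total -> 0.5
print(is_identical_ligand({1, 2, 3}, {1, 2, 3}))  # True
```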

Model Training and Evaluation