Binding Affinity Prediction: The AI Revolution in Drug Discovery


Abstract

Accurate prediction of drug-target binding affinity is a cornerstone of modern computational drug discovery, enabling the rapid identification and optimization of therapeutic candidates. This article provides a comprehensive overview for researchers and drug development professionals, covering the foundational principles of binding affinity. It explores the evolution of predictive methodologies from physics-based simulations to cutting-edge deep learning and multimodal AI models. The content addresses critical challenges including data bias, generalization, and model optimization, and concludes with a forward-looking analysis of validation frameworks and the future trajectory of AI-driven, personalized drug design.

The Foundation of Drug-Target Interactions: What is Binding Affinity?

In the field of drug discovery, binding affinity prediction represents a fundamental pursuit—the ability to accurately quantify and forecast the strength of interactions between a potential drug molecule and its biological target. Understanding these interactions is crucial for designing compounds with optimal efficacy and specificity. This guide provides a comprehensive technical examination of the key parameters used to define binding affinity, from fundamental equilibrium constants to the more complex influences of protonation states. For researchers and drug development professionals, mastering these concepts is not merely academic; it directly enables the rational design of therapeutic molecules, the interpretation of high-throughput screening data, and the successful navigation of the hit-to-lead optimization process. The accurate prediction of binding affinity remains a central challenge in structure-based drug design, where computational models strive to bridge the gap between structural data and biological activity [1].

Fundamental Equilibrium Constants: Kd and Ki

At its core, binding affinity describes the tendency of a molecule (ligand) to bind to a target (receptor or enzyme). The most direct measure of this affinity is the Dissociation Constant (Kd), a thermodynamic parameter that describes the equilibrium between the bound and unbound states of a protein-ligand complex [2]. It equals the ratio of the dissociation rate constant (k~off~ or k~-1~) to the association rate constant (k~on~ or k~1~), and equivalently the ratio of the equilibrium concentrations:

$$K_d = \frac{k_{off}}{k_{on}} = \frac{[P][L]}{[PL]}$$

where [P] is the free protein concentration, [L] is the free ligand concentration, and [PL] is the concentration of the protein-ligand complex. A lower Kd value indicates a tighter binding interaction, because a lower concentration of free ligand is required to occupy half of the binding sites.
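To make the meaning of Kd concrete, the short sketch below computes the fraction of occupied binding sites and the corresponding standard binding free energy from the textbook relation ΔG° = RT ln Kd. It assumes simple 1:1 binding with the free ligand concentration known and a 1 M standard state; the function names are illustrative and do not come from the cited sources.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)


def fraction_bound(free_ligand_molar: float, kd_molar: float) -> float:
    """Fraction of binding sites occupied at equilibrium (1:1 binding)."""
    return free_ligand_molar / (kd_molar + free_ligand_molar)


def binding_free_energy(kd_molar: float, temperature_k: float = 298.15) -> float:
    """Standard binding free energy in kcal/mol, relative to a 1 M standard state."""
    return R_KCAL * temperature_k * math.log(kd_molar)


kd = 1e-9  # hypothetical 1 nM binder
for ligand in (1e-10, 1e-9, 1e-8):
    print(f"[L] = {ligand:.0e} M -> fraction bound = {fraction_bound(ligand, kd):.3f}")
print(f"dG = {binding_free_energy(kd):.2f} kcal/mol")
```

When the free ligand concentration equals Kd, exactly half of the sites are occupied, which matches the definition given in the text.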

Closely related to Kd is the Inhibition Constant (Ki), which is a specific type of dissociation constant applied to enzyme inhibitors [2]. The Ki value represents the equilibrium dissociation constant for the binding of an inhibitor to an enzyme. However, a critical distinction is that the kinetic mechanism of inhibition (e.g., competitive, uncompetitive, non-competitive, mixed) dictates the precise binding equilibrium described by the Ki. Unlike the more general Kd, Ki is specifically measured through inhibition kinetics rather than direct binding measurements.

Table 1: Comparison of Fundamental Binding Affinity Constants

| Parameter | Full Name | Definition | Key Characteristics | Preferred Measurement Methods |
|---|---|---|---|---|
| Kd | Dissociation Constant | Concentration of ligand at which half the protein binding sites are occupied at equilibrium. | A true thermodynamic constant; general measure of binding affinity. | Isothermal Titration Calorimetry (ITC), Surface Plasmon Resonance (SPR), Bio-Layer Interferometry (BLI) [3] |
| Ki | Inhibition Constant | Dissociation constant for an enzyme-inhibitor complex. | Mechanism-dependent (competitive, uncompetitive, etc.); measured via functional inhibition [2]. | Enzyme kinetics assays; derived from IC50 values with knowledge of mechanism and substrate concentration [2]. |

Functional Assay Parameters: IC50 and EC50

In practical drug discovery, especially in high-throughput screening, functional assays are often used, which yield different but related parameters. The Half-Maximal Inhibitory Concentration (IC50) is the concentration of an inhibitor required to reduce a given biological activity or process to half of its uninhibited value [2]. It is crucial to understand that IC50 is not a direct measure of a binding equilibrium. Instead, it is a functional potency value that can be influenced by the assay conditions, particularly the substrate concentration and the mechanism of inhibition.

The relationship between IC50 and the more fundamental Ki is governed by the mechanism of inhibition and the assay conditions. For a competitive inhibitor, the relationship is given by:

$$IC_{50} = K_i \left(1 + \frac{[S]}{K_m}\right)$$

where [S] is the substrate concentration and K~m~ is the Michaelis constant. This relationship, known as the Cheng-Prusoff equation, shows that for competitive inhibition the IC50 increases with substrate concentration and approaches Ki only when [S] is much smaller than K~m~; at [S] = K~m~, for example, IC50 is twice Ki [2] [3].
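The competitive-inhibition relationship IC50 = Ki (1 + [S]/Km) is easy to apply in both directions. The sketch below is a minimal illustration with made-up numbers; the function names are hypothetical, and the conversion is only valid for a purely competitive mechanism.

```python
def ic50_from_ki(ki: float, substrate: float, km: float) -> float:
    """Expected IC50 for a competitive inhibitor (same units as ki)."""
    return ki * (1.0 + substrate / km)


def ki_from_ic50(ic50: float, substrate: float, km: float) -> float:
    """Convert a measured IC50 back to Ki for a competitive inhibitor."""
    return ic50 / (1.0 + substrate / km)


# Hypothetical inhibitor: Ki = 10 nM, enzyme Km = 50 uM.
ki_nm, km_um = 10.0, 50.0
for s_um in (0.5, 50.0, 500.0):
    print(f"[S] = {s_um:6.1f} uM -> IC50 = {ic50_from_ki(ki_nm, s_um, km_um):6.1f} nM")
```

The same inhibitor appears roughly ten times weaker at [S] = 10 × Km than at [S] far below Km, which is why IC50 values from different assays should not be compared without knowing the substrate concentration.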

The Half-Maximal Effective Concentration (EC50) is a more general term for the concentration of a drug that induces a response halfway between the baseline and maximum. It is typically used for agonists or in systems where the compound does not completely inhibit a process, even at high concentrations. While IC50 specifically quantifies inhibition, EC50 can quantify any effect, making it essential for characterizing partial inhibitors or activators [2].

Table 2: Comparison of Functional Potency Parameters from Assays

| Parameter | Full Name | Definition | Key Characteristics | Relationship to Binding Constants |
|---|---|---|---|---|
| IC50 | Half-Maximal Inhibitory Concentration | Concentration required for 50% inhibition of a biological activity. | Highly dependent on assay conditions (e.g., substrate concentration); not a direct binding constant. | For competitive inhibition: IC~50~ = K~i~ (1 + [S]/K~m~) [2] |
| EC50 | Half-Maximal Effective Concentration | Concentration that produces 50% of the maximum possible effect. | Used for agonists or partial inhibitors; reflects efficacy, not just binding. | Reports on binding affinity regardless of efficacy for partial inhibitors [2]. |

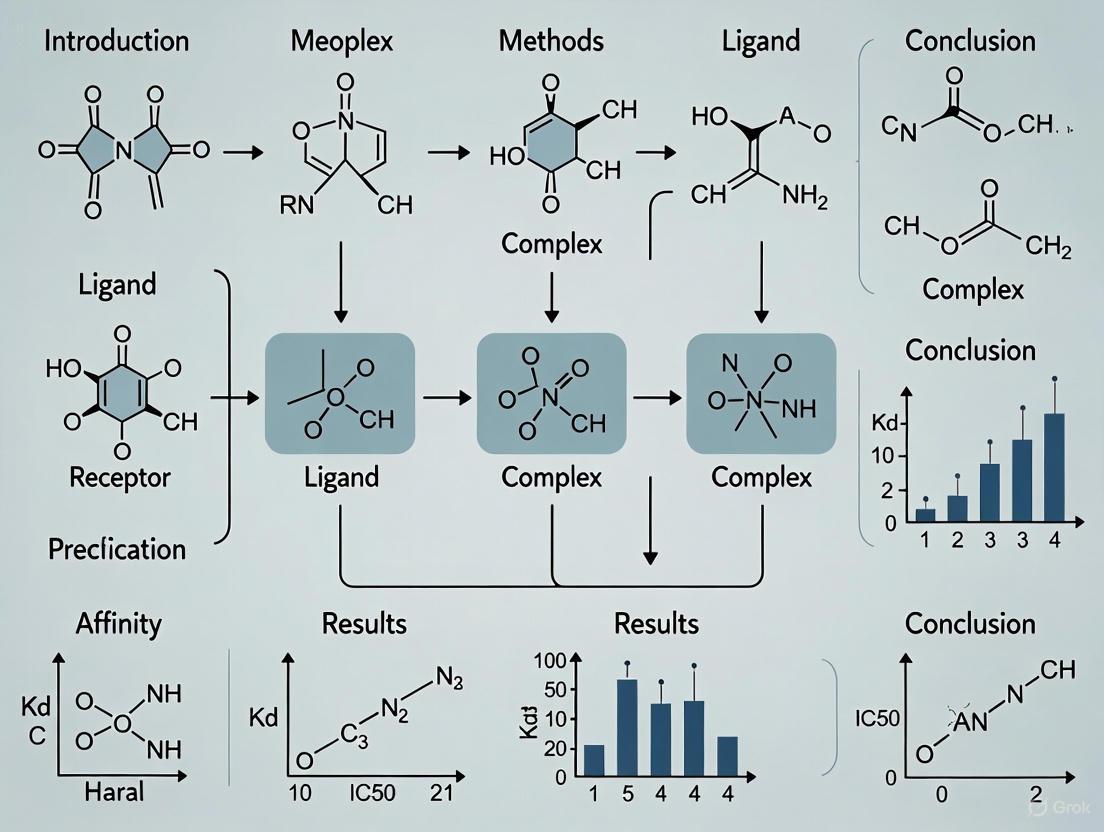

The following diagram illustrates the logical relationship between these core parameters and the experimental contexts from which they are derived.

The Influence of Protonation and pK Values on Binding Affinity

The binding affinity between a protein and a ligand is not solely determined by their static structures. A critical and often overlooked factor is the change in protonation states of ionizable groups upon binding. The pK~a~ of an ionizable group (e.g., on a lysine side chain or a ligand carboxylic acid) can shift significantly during complex formation, altering the group's charge state and profoundly impacting the binding energy [4].

The physical origins of these pK shifts can be decomposed into two primary contributions [4]:

- The Born (Desolvation) Contribution (ΔpK~Born~): When a charged group becomes buried in a less polar protein environment upon ligand binding, it is energetically costly. This desolvation penalty can make it more favorable for the group to protonate (if acidic) or deprotonate (if basic), shifting its pK~a~.

- The Background Interaction Contribution (ΔpK~Back~): This includes new electrostatic interactions formed between the ionizable group and partial charges on the ligand, as well as alterations in interactions with other groups within the protein due to binding-induced conformational changes.

The energetic consequences of these protonation state changes can be substantial—often exceeding several kcal/mol—making them significant contributors to the overall binding free energy. Consequently, the binding affinity of a drug candidate can exhibit strong pH dependence. If the complex formation is associated with a net uptake or release of protons, the optimal binding will occur at a specific pH [4]. This has direct implications for drug design, as the sub-cellular environment of the target must be considered. Furthermore, the common practice in molecular docking of using a single, fixed protonation state for the receptor and ligand can lead to inaccurate affinity predictions if these changes are not accounted for [4].
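The size of these effects can be estimated with two standard relations: the Henderson-Hasselbalch equation for the protonated fraction of a group, and the conversion of a pK~a~ shift into free energy, 2.303·RT per pK unit (about 1.36 kcal/mol at 25 °C). The sketch below is illustrative only; the pK~a~ values are hypothetical and the function names are not taken from the cited work.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)


def fraction_protonated(ph: float, pka: float) -> float:
    """Henderson-Hasselbalch: fraction of a titratable group that is protonated."""
    return 1.0 / (1.0 + 10.0 ** (ph - pka))


def pka_shift_free_energy(delta_pka: float, temperature_k: float = 298.15) -> float:
    """Magnitude of the free energy (kcal/mol) equivalent to a pKa shift."""
    return math.log(10.0) * R_KCAL * temperature_k * abs(delta_pka)


# Hypothetical ligand carboxylic acid: pKa 4.0 in solution, 6.5 in the complex.
ph = 7.4
print(f"protonated, free : {fraction_protonated(ph, 4.0):.4f}")
print(f"protonated, bound: {fraction_protonated(ph, 6.5):.4f}")
print(f"shift of 2.5 pK units ~ {pka_shift_free_energy(2.5):.2f} kcal/mol")
```

A shift of two to three pK units therefore corresponds to roughly 3 to 4 kcal/mol, which is comparable to the affinity differences that separate a weak hit from an optimized lead.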

Experimental Protocols for Determining Binding Affinity

Determining Kd via a Convergence IC50 Method (Competitive Immunoassay)

A practical method for determining the affinity constant (Kd) of an antibody-antigen pair with standard immunoassay technology relies on the principle that the molar IC50 of a competitive assay asymptotically approaches the Kd as the reagent concentrations are progressively diluted toward zero [3].

Protocol:

- Assay Setup: Develop a competitive immunoassay where the ligand (e.g., a monovalent antigen/hapten) competes with a labeled version of the same ligand for binding to the protein (e.g., an antibody).

- 2D Dilution Series: Perform a two-dimensional dilution of the key reagents. Typically, this involves creating a dilution series of the protein (antibody) and, for each protein concentration, running a full dilution series of the competing ligand.

- Data Analysis: For each protein concentration curve, determine the IC50 value (the molar concentration of ligand that gives 50% inhibition).

- Convergence to Kd: Plot the observed IC50 values against the corresponding protein concentrations used in the assay. The y-intercept of this plot, as the protein concentration theoretically approaches zero, provides an estimate of the Kd. In practice, the experiment is repeated with progressively lower reagent concentrations until the IC50 values stabilize and converge on the Kd [3].
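The extrapolation step in this protocol can be sketched as a straight-line fit of IC50 against protein concentration, reading the Kd estimate from the y-intercept. The data below are invented for illustration, and the linear form is an assumption: the source only states that the IC50 converges on Kd as the protein concentration approaches zero.

```python
def linear_fit(xs, ys):
    """Ordinary least-squares line; returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical 2D dilution result: one IC50 (nM) per antibody concentration (nM).
protein_nm = [8.0, 4.0, 2.0, 1.0, 0.5]
ic50_nm = [6.1, 4.0, 3.1, 2.5, 2.2]

slope, kd_estimate_nm = linear_fit(protein_nm, ic50_nm)
print(f"slope = {slope:.2f}, extrapolated Kd ~ {kd_estimate_nm:.2f} nM")
```

In practice the lowest-concentration points carry the most information, so it is worth checking that the intercept is stable when the highest protein concentrations are dropped from the fit.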

Utilizing Surface Plasmon Resonance (SPR) for Kinetic and Affinity Measurements

Surface Plasmon Resonance (SPR), commercialized in instruments such as Biacore, is a dominant technique for determining affinity constants because it provides both kinetic (on-rate k~on~, off-rate k~off~) and thermodynamic (Kd) data [3].

Protocol:

- Immobilization: One binding partner (typically the protein target) is immobilized on a dextran-coated gold chip.

- Ligand Injection: The other partner (the ligand/drug candidate) is flowed over the chip surface in a series of concentrations.

- Real-time Monitoring: The SPR signal, proportional to the mass of the bound complex, is monitored in real-time during the association (ligand injection) and dissociation (buffer injection) phases.

- Data Fitting: The resulting sensorgrams (signal vs. time plots) are globally fitted to a binding model to extract the association rate constant (k~on~) and the dissociation rate constant (k~off~). The equilibrium dissociation constant is calculated as Kd = k~off~ / k~on~ [3].
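The binding model most often fitted to sensorgrams is the 1:1 (Langmuir) interaction model. The sketch below evaluates its closed-form association and dissociation curves for hypothetical rate constants and shows how Kd = k~off~ / k~on~ follows. It illustrates the model only; it is not the fitting routine of any particular instrument software, and it ignores mass transport and bulk refractive-index effects.

```python
import math


def association_response(t, conc, k_on, k_off, r_max):
    """Response during analyte injection for a 1:1 interaction."""
    k_obs = k_on * conc + k_off                # observed rate constant (1/s)
    r_eq = r_max * conc / (conc + k_off / k_on)  # plateau at this concentration
    return r_eq * (1.0 - math.exp(-k_obs * t))


def dissociation_response(t, r_start, k_off):
    """Response during buffer flow: single-exponential decay."""
    return r_start * math.exp(-k_off * t)


# Hypothetical rate constants.
k_on = 1.0e5    # 1/(M*s)
k_off = 1.0e-3  # 1/s
kd = k_off / k_on
print(f"Kd = {kd:.1e} M")

r_end = association_response(180.0, 5 * kd, k_on, k_off, r_max=100.0)
print(f"response after 180 s at 5x Kd: {r_end:.1f} RU")
print(f"after 300 s of dissociation : {dissociation_response(300.0, r_end, k_off):.1f} RU")
```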

Key Considerations:

- Immobilization can potentially disturb the native structure or function of the protein.

- The method is sensitive to refractive index changes and non-specific binding.

- For bivalent molecules like antibodies, immobilizing the antigen can lead to avidity effects that overestimate the monovalent affinity (that is, the apparent Kd is lower than the true monovalent Kd). Using a monovalent antigen (hapten) in solution for competitive formats can circumvent this issue [3].

The Scientist's Toolkit: Key Reagents and Technologies

Table 3: Essential Research Reagent Solutions for Binding Affinity Studies

| Item / Technology | Function in Affinity Determination |
|---|---|
| Monovalent Hapten | A small molecule with a single epitope used in competitive assays to prevent multivalent binding (avidity), allowing measurement of the true intrinsic affinity constant (Kd) [3]. |
| SPR/BLI Chips | Functionalized sensor surfaces (e.g., with dextran for covalent protein immobilization) used in Surface Plasmon Resonance (SPR) and Bio-Layer Interferometry (BLI) to capture one binding partner for label-free interaction analysis [3]. |
| Fluorescently Labeled Ligands | Ligands conjugated to fluorophores for use in homogeneous binding assays such as Fluorescence Anisotropy/Polarization or Microscale Thermophoresis (MST) [3]. |
| High-Throughput Experimentation (HTE) Kits | Miniaturized, pre-packaged reaction arrays enabling the rapid synthesis and screening of large chemical libraries to generate structure-activity relationship (SAR) and affinity data [5]. |

Computational Prediction of Binding Affinity and Current Challenges

The accurate in silico prediction of binding affinity is a major goal in structure-based drug design. While classical scoring functions implemented in docking tools have limitations, deep learning models offer new potential [1]. These models, particularly Graph Neural Networks (GNNs) and convolutional networks, learn to predict binding affinities from structural data of protein-ligand complexes.

A significant challenge in this field has been the overestimation of model performance due to train-test data leakage. This occurs when the protein-ligand complexes used to train a model are structurally very similar to those in the benchmark test sets. Models can then "memorize" affinities rather than learning generalizable principles of molecular interaction. A 2025 study highlighted this issue, showing that a simple search algorithm that finds the most similar training complex could match the performance of some deep learning models, indicating reliance on data leakage [1].

To address this, rigorously curated datasets like PDBbind CleanSplit have been developed. These datasets use structure-based filtering algorithms to remove complexes from the training set that have high similarity (in protein structure, ligand chemistry, and binding pose) to those in the test sets, ensuring a more genuine evaluation of a model's ability to generalize to novel targets [1]. When state-of-the-art models are retrained on such clean splits, their performance often drops substantially, confirming that previous benchmark results were inflated. Promisingly, models like GEMS (Graph neural network for Efficient Molecular Scoring) that leverage sparse graph modeling and transfer learning have demonstrated robust performance even on strictly independent test datasets, marking a step toward reliable affinity prediction for drug discovery [1].
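The idea of similarity-based filtering can be illustrated with a deliberately simplified sketch: drop every training entry whose ligand fingerprint is too similar to any test entry. This is not the PDBbind CleanSplit algorithm, which the text describes as also comparing protein structure and binding pose; the fingerprints (sets of feature indices), entry names, and thresholds here are hypothetical.

```python
def tanimoto(a: frozenset, b: frozenset) -> float:
    """Tanimoto (Jaccard) similarity between two sets of fingerprint bits."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def filter_training_set(train: dict, test: dict, threshold: float = 0.9) -> dict:
    """Keep training entries whose highest similarity to any test entry is below threshold."""
    kept = {}
    for name, fingerprint in train.items():
        max_sim = max((tanimoto(fingerprint, t) for t in test.values()), default=0.0)
        if max_sim < threshold:
            kept[name] = fingerprint
    return kept


# Toy fingerprints: each ligand is a set of "on" bit indices.
train = {
    "c1": frozenset({1, 2, 3, 4}),  # identical to the test ligand
    "c2": frozenset({1, 2, 3, 5}),  # close analogue
    "c3": frozenset({7, 8, 9}),     # unrelated chemotype
}
test = {"t1": frozenset({1, 2, 3, 4})}

print(sorted(filter_training_set(train, test, threshold=0.9)))
print(sorted(filter_training_set(train, test, threshold=0.5)))
```

The same `tanimoto` function also gives the nearest-neighbour baseline mentioned above: predicting each test affinity from its most similar training entry shows how much of a benchmark score can be reached by lookup alone.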

The following workflow diagram integrates both experimental and computational approaches to binding affinity determination, highlighting the path to a robust prediction model.

The successful development of new therapeutics hinges on the precise and efficient exploration of molecular interactions, with binding affinity prediction serving as the fundamental pillar throughout the drug discovery pipeline. Binding affinity—the strength of interaction between a drug candidate and its biological target—directly influences drug efficacy and therapeutic potential [6] [7]. Accurate prediction of these affinities enables researchers to better understand molecular interactions and dramatically accelerates the identification of promising drug candidates by reducing the number of compounds that need to be synthesized and tested [6] [7]. This whitepaper examines how computational advances in binding affinity prediction are revolutionizing three critical phases of drug discovery: hit identification, lead optimization, and drug repurposing, ultimately creating a more efficient and targeted approach to pharmaceutical development.

The challenges of traditional drug discovery are substantial, often requiring over a decade and billions of dollars to bring a single drug to market [7] [8]. Early computational strategies for binding affinity prediction relied mainly on physics-based methods like molecular docking and molecular dynamics (MD) simulations [7]. While these approaches offer detailed structural insights, they typically demand extensive computational resources and accurate structural input, limiting their applicability in large-scale screening [7] [9]. The integration of artificial intelligence (AI) and machine learning (ML) has transformed this landscape, enabling data-driven approaches that learn from known drug-target binding data to reduce reliance on computationally intensive simulations [7] [10] [8].

Hit Identification: Accelerating Initial Candidate Discovery

Hit identification focuses on discovering initial compounds with measurable activity against a therapeutic target. This stage has been revolutionized by high-throughput technologies and computational methods that can rapidly screen vast chemical spaces.

Advanced Screening Technologies

DNA-encoded libraries (DELs) have emerged as a powerful technology for hit identification, enabling ultra-high-throughput screening of millions of compounds against selected molecular targets [11]. DELs utilize DNA as a unique identifier for each compound, facilitating simultaneous testing of enormous chemical libraries while generating vast numbers of drug-target interaction data points at minimal cost [11] [12]. Complementary approaches such as Proteome Integral Solubility Alteration (PISA) assays assess proteome-wide ligand-induced thermal stability shifts, offering indirect quantitative information about binding affinity and target engagement, though they remain experimentally demanding and low throughput [11].

Computational Screening and Generative AI

Computational approaches bridge the gap between experimental throughput and mechanistic resolution, enabling prediction of binding affinities across large chemical and proteomic spaces [11]. Modern deep learning frameworks like MMAtt-DTA, an attention-based architecture, can predict binding affinities for over 452,000 compounds and 1,251 human protein targets with high accuracy [11]. Generative AI models have further expanded possibilities for hit identification. For instance, BoltzGen represents a breakthrough as the first model capable of generating novel protein binders ready to enter the drug discovery pipeline, having been rigorously validated on 26 targets including therapeutically relevant cases and targets explicitly chosen for their dissimilarity to training data [9].

Table 1: Key Databases for Drug-Target Interaction Data in Hit Identification

| Database | Primary Focus | Key Metrics | Expert Ranking Score |
|---|---|---|---|
| ChEMBL | Bioactivity measurements | >21 million measurements, >2.4 million ligands, >16,000 targets [11] | 10/10 [11] |
| BindingDB | Experimentally determined binding affinities | ~2.4 million measurements, ~1.3 million unique ligands, ~9,000 targets [11] | 9/10 [11] |
| GtoPdb | Expert-curated pharmacological data | 3,039 targets, 12,163 ligands with emphasis on GPCRs, ion channels, nuclear receptors [11] | 8/10 [11] |

Experimental Protocol: DNA-Encoded Library Screening

Objective: Identify hit compounds against a protein target from a DNA-encoded chemical library.

Materials:

- Target protein: Purified and biotinylated

- DEL: DNA-encoded chemical library containing millions to billions of compounds

- Streptavidin-coated magnetic beads: For target immobilization

- Selection buffer: Typically PBS with 0.05% Tween-20 and BSA

- PCR reagents: For amplification of enriched DNA tags

- Next-generation sequencing platform: For tag identification

Procedure:

- Incubation: Mix the biotinylated target protein with the DEL in selection buffer for 2-16 hours at 4°C with gentle rotation.

- Capture: Add streptavidin-coated magnetic beads and incubate for 30 minutes.

- Washing: Separate beads using a magnet and wash 3-5 times with selection buffer to remove non-binders.

- Elution: Release bound compounds by heat denaturation or specific elution conditions.

- Amplification and Sequencing: PCR-amplify the associated DNA tags and sequence them using NGS.

- Hit Identification: Map sequencing reads back to their corresponding chemical structures; compounds with significant enrichment are considered hits.
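The final hit-calling step amounts to comparing how often each DNA tag is seen after selection with how often it was present in the input library. The sketch below computes a simple enrichment ratio with a pseudocount; the read counts, tag names, and the cut-off of 2 are invented for illustration, and production pipelines typically use more careful statistical models than a plain ratio.

```python
def enrichment_scores(selected_counts: dict, input_counts: dict, pseudocount: float = 1.0) -> dict:
    """Ratio of post-selection to input read frequency for every library tag."""
    n_tags = len(input_counts)
    total_selected = sum(selected_counts.values()) + pseudocount * n_tags
    total_input = sum(input_counts.values()) + pseudocount * n_tags
    scores = {}
    for tag, input_reads in input_counts.items():
        selected_freq = (selected_counts.get(tag, 0) + pseudocount) / total_selected
        input_freq = (input_reads + pseudocount) / total_input
        scores[tag] = selected_freq / input_freq
    return scores


# Hypothetical read counts for a four-member library.
input_counts = {"A": 100, "B": 100, "C": 100, "D": 100}
selected_counts = {"A": 5, "B": 80, "C": 10, "D": 5}

scores = enrichment_scores(selected_counts, input_counts)
hits = sorted(tag for tag, score in scores.items() if score >= 2.0)
print({tag: round(score, 2) for tag, score in scores.items()})
print("hits:", hits)
```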

Lead Optimization: Enhancing Drug Properties

Once hit compounds are identified, lead optimization focuses on improving their affinity, selectivity, and drug-like properties through systematic chemical modification.

Computational Methods for Lead Optimization

Free Energy Perturbation (FEP) has become a leading structure-based approach for predicting relative binding free energies [6]. FEP methods are widely trusted because they directly model physical interactions between proteins and ligands at the atomic level, and their use has surged with advances in force-field accuracy and large increases in computing power [6]. However, FEP has limitations, including high computational cost, the requirement for a high-quality protein structure, and applicability limited to a narrow window of structural changes around a reference ligand [6].

Physics-informed machine learning represents a groundbreaking alternative, overcoming the need for assumptions regarding ligand conformations and alignments [6]. These models dynamically identify and refine optimal ligand poses as parameters evolve, effectively learning both structure and physical interactions simultaneously while achieving accuracy comparable to FEP at roughly 0.1% of the computational cost [6]. Frameworks like HPDAF (Hierarchically Progressive Dual-Attention Fusion) integrate protein sequences, drug molecular graphs, and structural information from protein-binding pockets through specialized feature extraction modules, demonstrating a 7.5% increase in Concordance Index and 32% reduction in Mean Absolute Error compared to baseline models like DeepDTA [7].

Table 2: Comparison of Lead Optimization Methods

| Method | Key Features | Computational Cost | Domain Applicability |
|---|---|---|---|
| Free Energy Perturbation (FEP) | Physics-based, atomic-level modeling [6] | Very high (requires supercomputing resources) [6] | Narrow window around reference ligand [6] |
| Physics-Informed ML | Dynamically refines ligand poses, physically meaningful parameters [6] | ~1000x lower than FEP [6] | Broader applicability to new chemical scaffolds [6] |
| Multitask Learning (DeepDTAGen) | Predicts affinity and generates novel drugs simultaneously [10] | Moderate (single model for multiple tasks) [10] | Can generate target-aware drug variants [10] |

Synergistic Approaches

The most effective lead optimization strategies combine multiple approaches. Using FEP and physics-informed ML in parallel has been shown to improve accuracy because their prediction errors tend to be uncorrelated [6]. A sequential approach can also yield dramatic efficiency improvements: physics-informed ML methods first screen larger or more chemically diverse compound libraries at high throughput, then more computationally intensive FEP methods are applied only to the top candidates [6].
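Why uncorrelated errors help can be seen from a short variance calculation. As a sketch under simplifying assumptions that are not stated in the source (both predictors are unbiased, their errors $e_1$ and $e_2$ have standard deviations $\sigma_1$ and $\sigma_2$ with correlation $\rho$, and the two predictions are combined by a plain average), the error variance of the averaged prediction is:

$$
\mathrm{Var}\!\left(\frac{e_1 + e_2}{2}\right) = \frac{\sigma_1^2 + \sigma_2^2 + 2\rho\,\sigma_1\sigma_2}{4}
$$

For two equally accurate methods ($\sigma_1 = \sigma_2 = \sigma$) with uncorrelated errors ($\rho = 0$), this reduces to $\sigma^2/2$, so the root-mean-square error falls by a factor of $1/\sqrt{2} \approx 0.71$. With perfectly correlated errors ($\rho = 1$) the variance stays at $\sigma^2$ and averaging gives no benefit.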

Diagram 1: Lead optimization workflow combining machine learning and physics-based simulations.

Experimental Protocol: Surface Plasmon Resonance (SPR) for Binding Affinity Measurement

Objective: Quantitatively measure the binding affinity (KD) and kinetics (association rate constant ka, dissociation rate constant kd) of lead compounds.

Materials:

- SPR instrument: e.g., Biacore series

- Sensor chip: CM5 for covalent immobilization

- Running buffer: HBS-EP (10 mM HEPES, 150 mM NaCl, 3 mM EDTA, 0.05% surfactant P20, pH 7.4)

- Immobilization reagents: EDC/NHS for activation, ethanolamine HCl for deactivation

- Analytes: Purified lead compounds in running buffer

- Target protein: Purified for immobilization

Procedure:

- System Preparation: Prime the SPR instrument with running buffer.

- Ligand Immobilization:

  - Activate the sensor chip surface with a 1:1 mixture of 0.4 M EDC and 0.1 M NHS for 7 minutes.

  - Dilute the target protein to 10-50 μg/mL in 10 mM sodium acetate buffer (pH 4.0-5.0) and inject over the activated surface for immobilization.

  - Deactivate the surface with 1 M ethanolamine HCl (pH 8.5) for 7 minutes.

- Binding Analysis:

  - Inject a series of analyte concentrations (typically 0.1-100 × KD) over the immobilized target.

  - Use a multi-cycle kinetics approach with a contact time of 60-180 seconds and dissociation time of 300-900 seconds.

  - Include a reference flow cell for background subtraction.

- Data Analysis:

  - Subtract reference cell and buffer injection responses.

  - Fit the sensorgrams to a 1:1 binding model to calculate association (ka) and dissociation (kd) rate constants.

  - Calculate equilibrium dissociation constant KD = kd/ka.
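As a rough illustration of the data-analysis step, the sketch below recovers the dissociation rate constant from a reference-subtracted dissociation phase by a log-linear least-squares fit, then forms KD = kd/ka. It is a simplification: instrument software normally fits association and dissociation phases globally across all concentrations, and the synthetic, noise-free data and the assumed ka value are purely hypothetical.

```python
import math


def fit_dissociation_rate(times_s, responses_ru):
    """Estimate kd (1/s) from R(t) = R0 * exp(-kd * t) via the slope of ln(R) against t."""
    log_r = [math.log(r) for r in responses_ru]
    n = len(times_s)
    mean_t = sum(times_s) / n
    mean_y = sum(log_r) / n
    sxx = sum((t - mean_t) ** 2 for t in times_s)
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(times_s, log_r))
    return -sxy / sxx


# Synthetic dissociation phase: 600 s sampled every 60 s, starting at 80 RU.
true_rate = 2.0e-3
times = [float(t) for t in range(0, 601, 60)]
responses = [80.0 * math.exp(-true_rate * t) for t in times]

k_d = fit_dissociation_rate(times, responses)
k_a = 4.0e5  # 1/(M*s); assumed here, in practice taken from the association-phase fit
K_D = k_d / k_a
print(f"kd = {k_d:.2e} 1/s, KD = {K_D:.1e} M")
```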

Drug Repurposing: Leveraging Existing Compounds

Drug repurposing represents a cost-effective and expedited alternative to traditional drug development pipelines, with the potential to address unmet clinical needs by systematically identifying new indications for existing approved drugs [11].

Data-Driven Repurposing Frameworks

Effective drug repurposing relies on comprehensive drug-target interaction (DTI) data from extensively curated resources. Recent analyses have manually classified targets into 12 high-level biological families and mapped 817 clinically approved drug indications into 28 broader therapeutic groups, creating a structured framework for systematic profiling of physicochemical properties among approved drugs across therapeutic categories [11]. This framework enables identification of associations between physicochemical characteristics and therapeutic groups, providing practical guidance for indication-specific compound prioritization [11].

Pathway-based computational pipelines can predict repositioning opportunities for FDA-approved drugs across disease types. For example, one implemented approach demonstrated adaptability across 10 major cancer types, providing a reference framework that can be readily extended to other therapeutic indications [11]. These analyses have revealed distinct clustering patterns among indication groups and physicochemical properties that may guide the design of novel therapeutics tailored to specific indication groups [11].

Computational Models for Repurposing

DeepDTAGen represents a novel multitask learning framework that simultaneously predicts drug-target binding affinities and generates new target-aware drug variants using common features for both tasks [10]. This approach addresses optimization challenges through the FetterGrad algorithm, which mitigates gradient conflicts by minimizing Euclidean distance between task gradients [10]. On benchmark datasets including KIBA, Davis, and BindingDB, DeepDTAGen achieved state-of-the-art performance with MSE of 0.146, CI of 0.897, and r²m of 0.765 on the KIBA test set, outperforming traditional machine learning models by 7.3% in CI and 21.6% in r²m while reducing MSE by 34.2% [10].

Table 3: Multitask Learning Performance for Binding Affinity Prediction and Drug Generation

| Model | MSE (KIBA) | CI (KIBA) | r²m (KIBA) | Validity | Novelty |
|---|---|---|---|---|---|
| KronRLS | 0.222 [10] | 0.836 [10] | 0.629 [10] | - | - |
| SimBoost | 0.222 [10] | 0.836 [10] | 0.629 [10] | - | - |
| GraphDTA | 0.147 [10] | 0.891 [10] | 0.687 [10] | - | - |
| DeepDTAGen | 0.146 [10] | 0.897 [10] | 0.765 [10] | 95.2% [10] | 99.8% [10] |
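For readers less familiar with the metrics in this table, the sketch below implements the mean squared error and the concordance index (CI) in their usual pairwise form: CI is the fraction of pairs with different true affinities whose predictions are ordered the same way, with prediction ties counted as half. The affinity values are invented for illustration, and this plain O(n²) version is not the evaluation code of any cited model.

```python
from itertools import combinations


def mean_squared_error(y_true, y_pred):
    """Average squared difference between measured and predicted affinities."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def concordance_index(y_true, y_pred):
    """Fraction of comparable pairs whose predicted order matches the true order."""
    concordant = 0.0
    comparable = 0
    for (t1, p1), (t2, p2) in combinations(zip(y_true, y_pred), 2):
        if t1 == t2:
            continue  # pairs with equal true values carry no ranking information
        comparable += 1
        if p1 == p2:
            concordant += 0.5
        elif (p1 > p2) == (t1 > t2):
            concordant += 1.0
    if comparable == 0:
        raise ValueError("need at least two distinct true values")
    return concordant / comparable


# Hypothetical measured and predicted affinities (e.g., pKd units).
y_true = [5.0, 6.2, 7.1, 8.3]
y_pred = [5.4, 6.0, 7.5, 7.2]
print(f"MSE = {mean_squared_error(y_true, y_pred):.4f}")
print(f"CI  = {concordance_index(y_true, y_pred):.4f}")
```

A CI of 0.5 corresponds to random ranking and 1.0 to a perfect ranking, which is why differences in the second decimal place, as in the table, can still be meaningful.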

Experimental Protocol: Thermal Shift Assay for Target Engagement

Objective: Identify potential new targets for existing drugs by detecting protein thermal stability changes.

Materials:

- Real-time PCR instrument: With protein melt curve capability

- SYPRO Orange dye: 5000× concentrate in DMSO

- Protein targets: Purified human proteins (e.g., kinase panel)

- Compound library: FDA-approved drugs in DMSO

- Assay buffer: PBS or appropriate protein buffer

Procedure:

- Plate Preparation:

  - Dilute each protein target to 1 μM in assay buffer.

  - Add 1 μL of compound (10 μM final) or DMSO control to designated wells.

  - Add 19 μL of protein solution to each well.

  - Add 5 μL of 20× SYPRO Orange dye (final 4× in the 25 μL reaction volume).

- Thermal Denaturation:

  - Seal the plate and centrifuge at 1000 × g for 1 minute.

  - Program the real-time PCR instrument with a thermal gradient from 25°C to 95°C with 1°C increments and 1-minute holds.

  - Monitor fluorescence with FRET filter set.

- Data Analysis:

  - Plot fluorescence vs. temperature for each well.

  - Calculate Tm as the temperature at maximum derivative of fluorescence.

  - Identify hits as compounds causing ΔTm > 1°C compared to DMSO control.

  - Validate hits through secondary binding assays.
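The Tm calculation in the data-analysis step can be sketched with a finite-difference derivative: Tm is taken as the midpoint of the temperature interval with the steepest fluorescence increase. The melt curves below are idealized sigmoids generated for illustration; real curves are noisy and usually fall again after the transition, so smoothing or curve fitting is normally needed. The function names and curve parameters are hypothetical.

```python
import math


def melting_temperature(temps, fluorescence):
    """Midpoint of the interval with the largest dF/dT (finite differences)."""
    best_slope = None
    best_tm = None
    for i in range(len(temps) - 1):
        slope = (fluorescence[i + 1] - fluorescence[i]) / (temps[i + 1] - temps[i])
        if best_slope is None or slope > best_slope:
            best_slope = slope
            best_tm = 0.5 * (temps[i] + temps[i + 1])
    return best_tm


def sigmoid_melt_curve(temps, tm, width=2.0):
    """Idealized two-state unfolding curve, normalized between 0 and 1."""
    return [1.0 / (1.0 + math.exp((tm - t) / width)) for t in temps]


temps = [25.0 + i for i in range(71)]  # 25 to 95 degrees C in 1 degree steps
control = sigmoid_melt_curve(temps, tm=50.5)   # DMSO well
treated = sigmoid_melt_curve(temps, tm=53.5)   # compound well

delta_tm = melting_temperature(temps, treated) - melting_temperature(temps, control)
is_hit = delta_tm > 1.0
print(f"delta Tm = {delta_tm:.1f} C, hit = {is_hit}")
```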

Diagram 2: Computational drug repurposing workflow integrating multiple data sources and validation.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Research Reagent Solutions for Binding Affinity Studies

| Reagent/Material | Function | Application Examples |
|---|---|---|
| DNA-Encoded Libraries (DELs) | Ultra-high-throughput screening of compound libraries [11] [12] | Hit identification against protein targets [11] |
| Streptavidin-Coated Magnetic Beads | Immobilization of biotinylated target proteins [11] | DEL selection, pull-down assays [11] |
| SPR Sensor Chips (CM5) | Covalent immobilization of proteins for binding studies [7] | Kinetic characterization of lead compounds [7] |
| SYPRO Orange Dye | Fluorescent dye that binds hydrophobic protein regions [11] | Thermal shift assays for target engagement [11] |
| Click Chemistry Reagents | Modular synthesis of compound libraries [12] | PROTAC synthesis, library diversification [12] |

Binding affinity prediction serves as the crucial link connecting hit identification, lead optimization, and drug repurposing in modern drug discovery. The integration of computational methods—from physical simulation-based approaches to machine learning and generative AI—has created a powerful synergy that accelerates and refines each stage of the drug development process. As these technologies continue to evolve, supported by rigorous experimental validation and standardized data frameworks, they promise to further reduce development timelines, increase success rates, and drive the creation of innovative therapies for unmet medical needs. The future of drug discovery lies in the intelligent integration of these computational and experimental approaches, creating a more efficient and targeted path from basic research to clinical application.

The accurate prediction of protein-ligand binding affinity, which characterizes the strength of interaction between a drug candidate and its target protein, represents one of the most fundamental challenges in modern drug discovery [13]. This parameter guides critical stages of development, from initial hit identification and lead optimization to final candidate selection, ensuring compounds demonstrate both strong binding and appropriate selectivity for their biological targets [13]. Traditionally, this process has relied heavily on experimental methods—in vitro assays and in vivo animal studies—that are extraordinarily resource-intensive, time-consuming, and costly [14]. The high attrition rate of drug candidates during clinical development, often due to poor pharmacokinetic and metabolic properties, has further intensified the need for more predictive and efficient early-stage screening methodologies [15].

In response to these challenges, in silico methods—biological experiments conducted entirely via computer simulation—have emerged as a transformative approach [14] [16]. By leveraging advances in computational biology, artificial intelligence (AI), and regulatory science, these methods are rapidly displacing traditional reliance on animal and early-phase human trials for many applications [16]. This whitepaper examines the compelling economic and scientific justification for shifting to in silico methodologies for binding affinity prediction, detailing the limitations of traditional approaches, the capabilities of modern computational tools, and the integrated workflows that maximize their potential for drug discovery researchers and development professionals.

The Economic and Practical Limitations of Traditional Methods

Traditional drug discovery has long been hampered by a process of trial and error, with binding affinity assessment typically progressing through sequential experimental stages [13]. In vitro studies, conducted in controlled laboratory environments outside living organisms, offer valuable early advantages for cellular and molecular investigation but cannot replicate the precise cellular conditions and natural functioning of a whole biological system [14]. Consequently, they frequently yield results that do not correspond to what occurs within a living organism, potentially overlooking critical interactions and compensatory mechanisms [14].

In vivo studies, conducted within whole living organisms, offer more reliable observation of overall experimental effects where interactions, metabolism, and distribution contribute to the final observable outcome [14]. However, these studies present significant ethical considerations, regulatory complexities, and far greater costs and time requirements [14] [16]. The resource intensity of this traditional paradigm is staggering: bringing a new therapeutic agent to market typically requires over a decade and costs billions of dollars [17], with high attrition rates creating substantial economic inefficiencies [15].

Table 1: Comparative Analysis of Experimental Approaches in Drug Discovery

| Approach | Throughput | Cost | Biological Relevance | Key Limitations |
|---|---|---|---|---|
| In Silico | Very High | Very Low | Limited to modeled biology | Dependent on model accuracy and training data |
| In Vitro | High | Moderate | Lacks systemic complexity | Fails to replicate full organism context [14] |
| In Vivo | Low | Very High | High - full physiological context | Ethical concerns, time-consuming, expensive [14] [16] |

The fundamental economic challenge lies in the traditional sequence of experimentation, where resource-intensive methods are deployed before sufficient mechanistic understanding is achieved. This often leads to late-stage failures that could potentially be identified earlier through computational profiling and prediction [15]. With regulatory agencies such as the FDA announcing plans to phase out mandatory animal testing for many drug types [16], the field is poised for a fundamental restructuring of validation approaches that places greater emphasis on computational and human-relevant systems.

The Rise of In Silico Methods for Binding Affinity Prediction

Computational Approaches and Their Evolution

In silico methods for binding affinity prediction have evolved significantly from early conventional approaches to sophisticated AI-driven platforms. Conventional methods typically relied on ab initio quantum mechanical calculations or empirical approaches derived from experimental data, often formulated as physics-based models or parametric equations [13]. While these methods provided valuable insights, they tended to be rigid and performed well only in specific scenarios, such as with particular protein families [13].

The introduction of traditional machine learning methods around 2005 marked a significant advancement, with algorithms applied to human-engineered features extracted from complex structures achieving measurable improvements over conventional approaches [13]. These methods proved less rigid and often more accurate, particularly for binding affinity scoring and ranking tasks [13]. More recently, deep learning approaches have begun to dominate the field, leveraging the growing number of protein-ligand samples in standard benchmarks and relying less on human-engineered features [13]. Together, these advances have steadily enhanced our ability to explore vast chemical spaces, investigate molecular interactions, predict binding affinity, and optimize drug candidates with unprecedented accuracy and efficiency [17].

Key Methodological Categories

Modern binding affinity prediction methods generally fall into three primary categories, each with distinct advantages and applications:

Physical Simulation-based Methods, such as free energy perturbation (FEP), have gained prominence for protein targets with known structures [6]. These methods are widely trusted as they directly model physical interactions between proteins and ligands at the atomic level [6]. Recent advances in accurate force-field energetics combined with enormous increases in computing power have driven their increased utilization [6]. However, these approaches face limitations including high computational cost, the requirement for a high-quality protein structure, and restricted applicability to structural changes around a reference ligand [6].

Machine Learning-based Scoring Functions encompass both traditional machine learning and deep learning approaches [18] [13]. These methods typically use algorithms trained on vast chemical libraries and experimental data to propose molecular structures satisfying precise target product profiles, including potency, selectivity, and ADME properties [19]. Pioneering approaches like multiple-instance machine learning overcome the need for assumptions regarding ligand conformations and alignments, instead dynamically identifying and refining optimal ligand poses as parameters evolve [6].

Hybrid Methods that combine physical simulations with machine learning represent an emerging powerful category. Methods such as physics-informed ML embed physical domain knowledge to predict binding affinity while automatically solving molecular pose problems [6]. These approaches explicitly model physical factors governing molecular recognition—accounting for ligand shape, electrostatics, hydrogen-bonding preferences, and conformational strain—while capturing the physical interactions driving affinity rather than relying solely on statistical correlations [6].

Table 2: Performance Comparison of Binding Affinity Prediction Methods

| Method Type | Accuracy | Computational Cost | Domain Applicability | Structure Requirement |
|---|---|---|---|---|
| Physical Simulation (FEP) | High (target-dependent) | Very High | Narrow (around reference ligand) | High-quality structure needed [6] |
| Traditional Machine Learning | Moderate | Low | Broad chemical space | Not always required |
| Deep Learning | Improving with data | Moderate | Broad chemical space | Not always required |
| Physics-Informed ML | Comparable to FEP | ~1000x lower than FEP | Broad, including new scaffolds [6] | Not always required [6] |

Quantitative Advantages: The Business Case for In Silico Methods

The justification for adopting in silico methods extends beyond scientific curiosity to compelling business economics. Companies leveraging these approaches report dramatically compressed discovery timelines; for instance, Insilico Medicine's generative-AI-designed idiopathic pulmonary fibrosis drug progressed from target discovery to Phase I trials in just 18 months, compared to the typical ~5 years needed for traditional discovery and preclinical work [19]. Similarly, Exscientia reports in silico design cycles approximately 70% faster and requiring 10× fewer synthesized compounds than industry norms [19].

The economic argument becomes particularly compelling when examining computational efficiency. Physics-informed ML methods achieve accuracy comparable to free energy perturbation at roughly 0.1% of the computational cost [6]. This extraordinary efficiency gain enables researchers to evaluate significantly more compounds and explore wider chemical spaces using the same computational resources, potentially identifying more promising candidates while consuming fewer wet-lab resources [6].

The throughput advantages are equally impressive. A 2025 study demonstrated that integrating pharmacophoric features with protein-ligand interaction data could boost hit enrichment rates by more than 50-fold compared to traditional methods [20]. Furthermore, deep graph networks have been used to generate over 26,000 virtual analogs, resulting in sub-nanomolar inhibitors with dramatic potency improvements over initial hits [20]. These quantitative advantages translate directly into reduced resource consumption, accelerated discovery timelines, and potentially higher-quality drug candidates.

Integrated Workflows and Experimental Protocols

Synergistic Methodologies

The most effective modern drug discovery pipelines leverage in silico and experimental methods not as competitors but as complementary components of an integrated workflow [6] [20]. This synergistic approach recognizes that direct physical simulation and physically motivated ML methods make largely orthogonal assumptions, meaning their prediction errors tend to be uncorrelated [6]. Using these methods in parallel and averaging their predictions has been demonstrated to improve overall accuracy [6].
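The benefit of averaging predictors with uncorrelated errors can be illustrated with a small simulation. This is a minimal sketch on synthetic data: the affinity range, the 1.0 kcal/mol noise level, and the names `method_a` and `method_b` are illustrative assumptions, not results from [6]. For two methods with independent errors of equal size, the error of their average is expected to shrink by roughly a factor of 1/√2.

```python
import math
import random


def rmse(pred, truth):
    """Root-mean-square error between two equal-length sequences."""
    return math.sqrt(sum((p - t) ** 2 for p, t in zip(pred, truth)) / len(truth))


rng = random.Random(0)  # fixed seed so the sketch is reproducible

# Synthetic "true" binding free energies in kcal/mol (assumed range).
truth = [rng.uniform(-12.0, -6.0) for _ in range(5000)]

# Two hypothetical predictors with independent Gaussian errors (sigma = 1.0).
method_a = [t + rng.gauss(0.0, 1.0) for t in truth]
method_b = [t + rng.gauss(0.0, 1.0) for t in truth]

# Consensus prediction: simple arithmetic mean of the two methods.
consensus = [(a + b) / 2.0 for a, b in zip(method_a, method_b)]

rmse_a = rmse(method_a, truth)
rmse_b = rmse(method_b, truth)
rmse_consensus = rmse(consensus, truth)

print(f"A: {rmse_a:.2f}  B: {rmse_b:.2f}  consensus: {rmse_consensus:.2f}")
```

If the two methods shared a systematic bias, their errors would be correlated and the gain from averaging would be smaller, which is why the orthogonality of assumptions matters.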

Two primary integration strategies have emerged as particularly effective:

Parallel Implementation, where multiple prediction methods are applied simultaneously and results are combined to improve accuracy through consensus approaches. This strategy leverages the fact that different methodological categories produce uncorrelated errors, potentially yielding more robust predictions than any single method [6].

Sequential Implementation, where physics-informed ML methods first screen larger or more chemically diverse compound libraries at high throughput, after which more computationally intensive FEP methods are applied only to the top candidates [6]. This approach creates a funnel-like filtering process that maximizes efficiency while maintaining high confidence in final selections.
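The sequential strategy can be sketched as a two-stage funnel. The function below is a minimal illustration, assuming that both scoring callables return a predicted binding free energy where lower is better. The names `funnel_screen`, `toy_fast`, `toy_slow`, and all compound labels and scores are hypothetical stand-ins for a physics-informed ML model and an FEP calculation.

```python
from typing import Callable, List, Tuple


def funnel_screen(
    library: List[str],
    fast_score: Callable[[str], float],
    slow_score: Callable[[str], float],
    keep_top: int,
) -> List[Tuple[str, float]]:
    """Rank the whole library with the cheap method, then re-score only the
    best `keep_top` compounds with the expensive method (lower = better)."""
    shortlist = sorted(library, key=fast_score)[:keep_top]
    refined = [(name, slow_score(name)) for name in shortlist]
    return sorted(refined, key=lambda item: item[1])


# Hypothetical scores in kcal/mol, used only to exercise the funnel.
fast_scores = {"cpd-A": -9.1, "cpd-B": -6.2, "cpd-C": -8.4, "cpd-D": -7.0, "cpd-E": -5.5}
slow_scores = {"cpd-A": -8.7, "cpd-B": -6.0, "cpd-C": -9.3, "cpd-D": -7.2, "cpd-E": -5.1}
slow_calls: List[str] = []  # records how often the expensive method runs


def toy_fast(name: str) -> float:
    return fast_scores[name]


def toy_slow(name: str) -> float:
    slow_calls.append(name)
    return slow_scores[name]


result = funnel_screen(list(fast_scores), toy_fast, toy_slow, keep_top=2)
print(result, "expensive evaluations:", len(slow_calls))
```

The expensive method runs only `keep_top` times regardless of library size, so the cost of the second stage stays fixed as the library grows.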

Diagram 1: Integrated in silico and experimental workflow for efficient drug discovery.

Detailed Experimental Protocols

Free Energy Perturbation (FEP) Protocol: FEP calculations require several methodical steps beginning with system preparation, where protein structures are obtained from crystallography or homology modeling and prepared with protonation states and solvation [6]. Ligand parameterization follows using appropriate force fields, with system setup placing the protein-ligand complex in a water box with ions [6]. Equilibration through molecular dynamics ensures system stability, followed by production simulations using alchemical transformation pathways between ligand pairs [6]. Finally, free energy differences are calculated using thermodynamic integration or Bennett acceptance ratio methods, with results validated against known experimental data where available [6].

Physics-Informed ML Screening Protocol: This approach begins with feature engineering that incorporates physically meaningful molecular representations capturing 3D shape, charge, and stereochemistry [6]. Model training follows using multiple-instance learning frameworks that dynamically identify optimal ligand poses during parameter evolution [6]. The trained model then functions analogously to a protein pocket, allowing new molecules to be fitted using a process directly akin to molecular docking and scoring [6]. Virtual screening of compound libraries ranks candidates by predicted affinity and drug-like properties, with top candidates advanced to experimental validation or further computational refinement [6].

CETSA Target Engagement Validation: For experimental confirmation, the Cellular Thermal Shift Assay (CETSA) protocol begins with compound treatment of intact cells or tissue samples, followed by heating to denature and precipitate unbound target proteins [20]. Centrifugation separates soluble fractions, with subsequent detection and quantification of remaining target proteins using immunoblotting or mass spectrometry [20]. Finally, data analysis determines temperature-dependent stabilization (Tm shifts) and dose-response relationships to confirm direct target engagement in physiologically relevant environments [20].
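The Tm-shift analysis in the final CETSA step can be approximated as follows. This is a simplified sketch: it estimates Tm by linear interpolation at the point where half of the protein remains soluble, whereas published analyses typically fit a sigmoidal melting curve. The temperatures and soluble fractions are invented for illustration, and `melting_temperature` is a hypothetical helper name.

```python
from typing import Sequence


def melting_temperature(temps_c: Sequence[float], soluble_fraction: Sequence[float]) -> float:
    """Temperature at which the soluble fraction crosses 0.5, by linear
    interpolation between the two bracketing measurements."""
    points = list(zip(temps_c, soluble_fraction))
    for (t_lo, f_lo), (t_hi, f_hi) in zip(points, points[1:]):
        if f_lo >= 0.5 >= f_hi and f_lo != f_hi:
            return t_lo + (f_lo - 0.5) * (t_hi - t_lo) / (f_lo - f_hi)
    raise ValueError("melting curve does not cross a soluble fraction of 0.5")


# Illustrative melting curves (fraction of target protein remaining soluble).
temps = [40.0, 44.0, 48.0, 52.0, 56.0, 60.0]
vehicle = [1.00, 0.90, 0.60, 0.20, 0.05, 0.00]
treated = [1.00, 0.95, 0.85, 0.60, 0.20, 0.05]

tm_vehicle = melting_temperature(temps, vehicle)
tm_treated = melting_temperature(temps, treated)
delta_tm = tm_treated - tm_vehicle  # positive shift suggests ligand-induced stabilization

print(f"Tm vehicle: {tm_vehicle:.1f} C, Tm treated: {tm_treated:.1f} C, shift: {delta_tm:.1f} C")
```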

Essential Research Reagent Solutions

The successful implementation of in silico drug discovery workflows relies on both computational tools and experimental reagents that facilitate validation. The table below details key resources mentioned in recent literature.

Table 3: Essential Research Reagent Solutions for Binding Affinity Studies

| Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| PDBbind Database | Dataset | Curated experimental binding affinities from PDB | Training and benchmarking binding affinity predictors [13] |
| CETSA (Cellular Thermal Shift Assay) | Experimental Assay | Measure target engagement in intact cells/tissues | Confirm computational predictions in physiologically relevant systems [20] |
| AutoDock | Software Platform | Molecular docking and virtual screening | Filter compounds for binding potential before synthesis [20] |
| SwissADME | Web Tool | Predict absorption, distribution, metabolism, excretion | Evaluate drug-likeness and pharmacokinetic properties [20] |
| Zebrafish Model | In Vivo System | Bridge in vitro and in vivo testing | Provide complex in vivo data with ethical/economic advantages [14] |

The economic and scientific evidence supporting the shift toward in silico methods for binding affinity prediction is compelling and multifaceted. The dramatically lower computational costs (approximately 0.1% of FEP for physics-informed ML), substantially accelerated timelines (70% faster design cycles), and enhanced exploration of chemical space (50-fold improvement in hit enrichment) collectively present an undeniable case for computational integration [6] [19] [20]. Furthermore, regulatory developments such as the FDA's plan to phase out mandatory animal testing for many drug types signal a fundamental paradigm shift toward computational and human-relevant systems [16].

For researchers and drug development professionals, the strategic implication is clear: organizations that fail to integrate in silico methodologies throughout their discovery pipelines risk being outpaced by those leveraging these technologies. The most successful approaches will not completely replace experimental validation but will strategically deploy computational methods to de-risk decision-making and concentrate resources on the most promising candidates [6] [20]. As methodological improvements continue to address current limitations in accuracy, interpretability, and computational requirements, in silico binding affinity prediction will increasingly become the foundational pillar of efficient, effective, and ethical drug discovery. Within the coming decade, failure to employ these methods may be viewed not merely as outdated, but as scientifically and economically indefensible [16].

The Role of Datasets in Binding Affinity Prediction

Binding affinity prediction is a critical component of modern computational drug discovery. It aims to quantify the strength of interaction between a drug molecule (ligand) and its protein target, which directly influences the drug's efficacy and specificity [10]. The development of reliable computational models for this task, particularly machine learning and deep learning scoring functions, is heavily dependent on large, high-quality datasets that provide three-dimensional structural information of protein-ligand complexes alongside experimentally measured binding affinities [21] [22].

These datasets serve dual purposes: as training resources for parameterizing models and as standardized benchmarks for objectively comparing different computational approaches. The quality, size, and composition of these datasets directly impact the accuracy and generalizability of the resulting predictive models [23] [24].

Core Datasets and Benchmarks

PDBbind: The Comprehensive Reference Set

Initiated in 2004, PDBbind is a curated database that links protein-ligand complex structures from the Protein Data Bank (PDB) with their experimentally measured binding affinity data [21].

| Feature | Description |
|---|---|
| Data Source | Protein Data Bank (PDB) structures with experimental binding data [21] |
| Key Metric | Binding affinity (\(K_d\), \(K_i\), \(IC_{50}\)) [21] |
| Organization | General set (~19,500 complexes), Refined set (higher quality), Core set (benchmarking) [21] [23] |
| Primary Use | Training and testing scoring functions (both classical and ML-based) [21] [25] |
| Noted Considerations | Contains structural artifacts; potential data leakage between subsets [21] [23] |

The PDBbind workflow involves extracting structures from the PDB, annotating binding data from scientific literature, and curating the data into hierarchical subsets. The "general" set serves as a broad training resource, while the "refined" and "core" sets provide high-quality complexes for testing and validation [21]. However, recent analyses indicate that PDBbind suffers from structural artifacts and potential data leakage, where high similarity between training and test complexes can lead to overly optimistic performance estimates [21] [23]. Initiatives like HiQBind-WF and LP-PDBBind have emerged to address these issues through improved curation and data splitting protocols [21] [23].

BindingDB: The Binding Affinity Repository

BindingDB is a public database focusing primarily on measured binding affinities between drug-like compounds and protein targets [21] [26].

| Feature | Description |
|---|---|
| Data Source | Scientific literature and patents [21] |
| Key Metric | Binding affinity (\(K_d\), \(K_i\), \(IC_{50}\)) [21] |
| Scale | ~2.9 million binding measurements, ~1.3 million compounds [21] |
| Primary Use | Binding affinity prediction, bioactivity modeling, virtual screening [10] [23] |
| Noted Considerations | Rich affinity data, often used with structural data from other sources [23] |

BindingDB's strength lies in its extensive collection of binding measurements, which far outnumber the structurally characterized complexes in PDBbind (~2.9 million measurements versus ~19,500 complexes). It is commonly used to augment structural data from other sources or to create independent test sets like BDB2020+ for validating model performance on truly novel complexes [23].

CASF: The Standardized Benchmark

The Comparative Assessment of Scoring Functions (CASF) benchmark is not a dataset itself, but a standardized protocol built upon the PDBbind core set to objectively evaluate scoring functions [21] [25].

| Feature | Description |
|---|---|
| Data Source | PDBbind core set [21] |
| Evaluation Metrics | Scoring, ranking, docking, and screening power [25] |
| Organization | Periodic releases (CASF-2007, CASF-2013, CASF-2016) built on the corresponding PDBbind core sets [21] |
| Primary Use | Standardized comparison of scoring function performance [25] |
| Noted Considerations | Benchmarking results can be influenced by data quality in PDBbind [21] |

CASF evaluates four key capabilities of scoring functions: scoring power (accuracy of affinity prediction), ranking power (ability to rank ligands by affinity for a specific target), docking power (identification of correct binding poses), and screening power (discrimination of true binders from non-binders) [25]. This comprehensive assessment provides a holistic view of a scoring function's practical utility in drug discovery pipelines.
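Scoring power and ranking power are usually summarized with correlation statistics. The sketch below computes a Pearson correlation (linear agreement, as used for scoring power) and a Spearman rank correlation (ordering agreement, as used for ranking power) in plain Python. The affinity values are made up for illustration, and the full CASF protocol evaluates ranking power per target cluster rather than over one pooled list, so this is only a simplified view of the metrics.

```python
import math
from typing import List, Sequence


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


# Illustrative experimental and predicted affinities (pKd units).
experimental = [5.0, 6.2, 7.1, 8.4, 9.0]
predicted = [5.5, 6.0, 7.9, 7.5, 9.2]

scoring_power = pearson(experimental, predicted)
ranking_power = spearman(experimental, predicted)
print(f"Pearson R = {scoring_power:.3f}, Spearman rho = {ranking_power:.3f}")
```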

DUD-E: The Decoy Database

The Directory of Useful Decoys: Enhanced (DUD-E) was developed to address the critical need for benchmarking virtual screening methods—the ability to distinguish true binders from non-binders [27] [28].

| Feature | Description |
|---|---|
| Data Source | Original targets from PDB with known active compounds [28] |
| Key Components | Active ligands and property-matched decoy molecules [27] |
| Scale | 102 targets, ~20,000 active ligands, ~50 decoys per active [28] |
| Primary Use | Evaluating virtual screening and enrichment capabilities [27] [28] |
| Noted Considerations | Some formatting issues in provided structures [27] |

DUD-E's methodology involves selecting protein targets with known active ligands, then generating decoy molecules that match the actives in simple physicochemical properties (such as molecular weight and lipophilicity) but differ from them in chemical topology. Because actives and decoys cannot be separated on those simple properties alone, this design helps prevent artificial enrichment and provides a more realistic assessment of a method's ability to identify true binders [27].
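Performance on an active/decoy benchmark of this kind is commonly reported as an enrichment factor: the fraction of actives in the top-ranked slice of the library divided by the fraction of actives in the whole library. The following sketch assumes that lower scores mean stronger predicted binding. The score lists are synthetic, and `enrichment_factor` is an illustrative helper rather than part of any DUD-E tooling.

```python
from typing import Sequence


def enrichment_factor(
    scores: Sequence[float],
    is_active: Sequence[bool],
    top_fraction: float = 0.01,
) -> float:
    """Enrichment factor at `top_fraction` of the ranked list (lower score = better)."""
    n_total = len(scores)
    n_top = max(1, int(round(n_total * top_fraction)))
    order = sorted(range(n_total), key=lambda i: scores[i])
    actives_in_top = sum(1 for i in order[:n_top] if is_active[i])
    total_actives = sum(1 for flag in is_active if flag)
    if total_actives == 0:
        raise ValueError("no actives in the library")
    return (actives_in_top / n_top) / (total_actives / n_total)


# Synthetic library: 10 actives (5 scored well, 5 scored poorly) and 90 decoys.
active_scores = [-10.0, -9.9, -9.8, -9.7, -9.6, -3.0, -3.0, -3.0, -3.0, -3.0]
decoy_scores = [-9.0 + 0.05 * k for k in range(90)]
all_scores = active_scores + decoy_scores
labels = [True] * len(active_scores) + [False] * len(decoy_scores)

ef_10_percent = enrichment_factor(all_scores, labels, top_fraction=0.10)
print(f"EF at 10%: {ef_10_percent:.1f}")
```

An enrichment factor of 1 corresponds to random selection, so values well above 1 indicate that the scoring method concentrates actives near the top of the ranking.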

Experimental Workflows and Protocols

Dataset Curation and Preparation

High-quality dataset preparation requires meticulous structural curation to address common issues in original PDB structures. The HiQBind/PDBBind-Opt workflow exemplifies this process [21] [24]:

Diagram: High-Quality Dataset Curation Workflow.

This workflow applies critical filters to exclude problematic complexes: covalent binders (require different treatment than non-covalent interactions), rare elements (challenging for models due to sparse data), and steric clashes (physically unrealistic interactions) [21] [24]. Structure-fixing modules then correct common issues with bond orders, protonation states, and missing atoms before final refinement.
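The exclusion filters described above can be expressed as simple predicates over per-complex annotations. This sketch is not the HiQBind or PDBBind-Opt implementation: the record fields, the set of "common" elements, the 2.0 Å clash cutoff, and the complex identifiers are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import List

# Assumed whitelist of elements that are well represented in ligand data.
COMMON_ELEMENTS = {"H", "C", "N", "O", "F", "P", "S", "Cl", "Br", "I"}


@dataclass
class ComplexRecord:
    """Hypothetical pre-computed annotations for one protein-ligand complex."""
    complex_id: str
    is_covalent: bool
    ligand_elements: List[str]
    min_heavy_atom_distance: float  # closest protein-ligand heavy-atom contact, in angstroms


def passes_filters(record: ComplexRecord, clash_cutoff: float = 2.0) -> bool:
    """Return True if the complex survives all three exclusion filters."""
    if record.is_covalent:
        return False  # covalent binders need separate treatment
    if not set(record.ligand_elements) <= COMMON_ELEMENTS:
        return False  # ligand contains an element outside the whitelist
    if record.min_heavy_atom_distance < clash_cutoff:
        return False  # steric clash: contact is unphysically short
    return True


records = [
    ComplexRecord("cplx-1", False, ["C", "N", "O"], 3.1),
    ComplexRecord("cplx-2", True, ["C", "O"], 3.0),
    ComplexRecord("cplx-3", False, ["C", "Ru", "O"], 3.2),
    ComplexRecord("cplx-4", False, ["C", "N"], 1.4),
]

kept = [r.complex_id for r in records if passes_filters(r)]
print(kept)
```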

Benchmarking Scoring Functions

The CASF benchmark provides a standardized methodology for comprehensive scoring function evaluation [25]:

Diagram: CASF Benchmarking Methodology for Scoring Functions.

Each test in the CASF protocol addresses a distinct capability: scoring power measures correlation between predicted and experimental affinities, ranking power evaluates correct ordering of ligands by affinity for specific targets, docking power assesses identification of native-like binding poses, and screening power measures enrichment of true binders over non-binders [25].

The Scientist's Toolkit

| Research Reagent / Resource | Function in Research |
|---|---|
| RCSB Protein Data Bank (PDB) | Primary repository of 3D structural data for biological macromolecules [21] |
| Chemical Component Dictionary (CCD) | Reference for chemical nomenclature, geometry, and bond ordering [21] |
| RDKit | Open-source cheminformatics toolkit for molecule manipulation and feature generation [25] |
| PDBFixer | Tool for adding missing atoms and residues to protein structures [24] |
| Schrödinger Protein Preparation Wizard | Commercial tool for comprehensive structure preparation and optimization [25] |
| Lemon Data Mining Framework | Efficient framework for accessing and organizing PDB data for benchmark creation [27] |
| MMTF (Macromolecular Transmission Format) | Compact binary format for efficient storage and processing of PDB data [27] |
| Chemfiles I/O Library | Multi-format library for reading and writing chemical structure files [27] |

The field of binding affinity prediction continues to evolve with several emerging trends. Multitask learning frameworks like DeepDTAGen that jointly predict binding affinities and generate novel drug candidates represent a promising integration of predictive and generative approaches [10]. There is also growing emphasis on developing balanced scoring functions that perform well across all key tasks (scoring, ranking, docking, screening) rather than excelling at just one [25].

Addressing dataset quality issues remains an active research area, with initiatives like HiQBind, LP-PDBBind, and PDBBind-Opt providing more rigorous curation protocols [21] [23] [24]. The creation of time-split and similarity-controlled benchmarks like BDB2020+ helps ensure more realistic assessment of model generalizability to novel targets and compounds [23].
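A time-split benchmark can be sketched in a few lines. The example below assumes each record carries a release year and trains only on entries released before a cutoff, dropping any later entry whose exact protein-ligand pair already appears in the training data. The field names, identifiers, and the 2020 cutoff are illustrative; real protocols such as those cited above also control for sequence and ligand similarity, which this sketch does not attempt.

```python
from typing import Dict, List, Tuple

Record = Dict[str, object]


def time_split(records: List[Record], cutoff_year: int) -> Tuple[List[Record], List[Record]]:
    """Train on records released before `cutoff_year`; test on later records
    whose (protein, ligand) pair was never seen during training."""
    train = [r for r in records if r["year"] < cutoff_year]
    seen_pairs = {(r["protein"], r["ligand"]) for r in train}
    test = [
        r
        for r in records
        if r["year"] >= cutoff_year and (r["protein"], r["ligand"]) not in seen_pairs
    ]
    return train, test


# Hypothetical records; identifiers are placeholders, not real database entries.
records = [
    {"protein": "P1", "ligand": "L1", "year": 2015},
    {"protein": "P1", "ligand": "L2", "year": 2018},
    {"protein": "P2", "ligand": "L3", "year": 2021},
    {"protein": "P1", "ligand": "L1", "year": 2022},  # re-measured pair, already in training
]

train_set, test_set = time_split(records, cutoff_year=2020)
print(len(train_set), "training records;", len(test_set), "test records")
```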

These datasets and benchmarks collectively provide the foundation for developing and validating computational methods that accelerate drug discovery. As the field progresses toward more integrated and generalized approaches, these resources will continue to play a crucial role in translating computational predictions into therapeutic advances.

From Docking to Deep Learning: A Landscape of Predictive Methods

The process of drug discovery is both time-intensive and costly, with the initial identification of candidate molecules that can effectively bind to a specific biological target being a critical step. A molecule's therapeutic potential is fundamentally governed by the strength with which it binds to its target protein, a property quantified as its binding affinity [29]. Accurate prediction of binding affinity allows researchers to computationally screen vast libraries of compounds, prioritizing the most promising candidates for further laboratory testing and thereby accelerating the entire research pipeline [30].

Binding affinity represents the free energy change (ΔG) associated with the formation of a protein-ligand complex. More negative values indicate a thermodynamically more favorable and stronger binding interaction [29]. In practice, the binding affinities for drug-like molecules typically fall within a range of approximately -15 kcal/mol to -4 kcal/mol [29]. The core challenge in computational drug discovery is to predict this value accurately and efficiently, a task addressed by methods spanning a wide spectrum of computational cost and accuracy, from fast, approximate techniques to highly detailed, resource-intensive simulations.
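The link between a measured dissociation constant and the binding free energy is the standard relation ΔG° = RT ln(Kd / c°), with c° = 1 M. The helpers below are a minimal sketch of that conversion, assuming a temperature of 298.15 K. The function names are illustrative. The last helper shows how an error in ΔG translates into a multiplicative error in Kd.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)


def delta_g_from_kd(kd_molar: float, temperature_k: float = 298.15) -> float:
    """Standard binding free energy (kcal/mol) from Kd in mol/L (1 M standard state)."""
    return R_KCAL * temperature_k * math.log(kd_molar)


def kd_from_delta_g(delta_g_kcal: float, temperature_k: float = 298.15) -> float:
    """Dissociation constant (mol/L) from a binding free energy in kcal/mol."""
    return math.exp(delta_g_kcal / (R_KCAL * temperature_k))


def fold_error_in_kd(error_kcal: float, temperature_k: float = 298.15) -> float:
    """Multiplicative error in Kd implied by an absolute error in the free energy."""
    return math.exp(abs(error_kcal) / (R_KCAL * temperature_k))


for label, kd in [("1 mM", 1e-3), ("1 uM", 1e-6), ("1 nM", 1e-9)]:
    print(f"Kd = {label}: dG = {delta_g_from_kd(kd):.2f} kcal/mol")

print(f"A 2 kcal/mol error corresponds to a ~{fold_error_in_kd(2.0):.0f}-fold error in Kd")
```

Under these assumptions, the quoted range of about -4 to -15 kcal/mol spans roughly millimolar to ten-picomolar binders, and each 1.36 kcal/mol of error corresponds to about one order of magnitude in Kd.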

Molecular Docking

Core Principles

Molecular docking is a computational technique that predicts the preferred orientation (the "pose") of a small molecule (ligand) when bound to a target protein. Following pose prediction, a scoring function estimates the binding affinity. Docking functions by performing a conformational search of the ligand in the protein's binding site and then ranking the generated poses based on a scoring algorithm that typically approximates the free energy of binding [30]. These scoring functions can be physics-based (estimating energy terms), empirical (using weighted chemical descriptors), or knowledge-based (derived from statistical analyses of known protein-ligand structures) [30].

Performance and Applications

Docking is valued for its high speed, typically taking less than a minute per compound on standard CPU hardware, making it the primary tool for virtual screening of large compound libraries [29]. However, this speed comes at the cost of accuracy. The root-mean-square error (RMSE) of docking-predicted affinities is generally in the range of 2–4 kcal/mol, with correlation coefficients to experimental data often being low and system-dependent [29]. Its main application is in the rapid filtering of thousands to millions of compounds to identify a manageable number of hits for further experimental investigation.

Experimental Protocol: A Standard Docking Workflow

A typical molecular docking protocol involves several key steps to prepare the protein and ligand, run the docking simulation, and analyze the results [31]:

- Protein Preparation: Obtain the 3D protein structure from a database like the RCSB PDB (e.g., PDB ID: 1LUG). Prepare the structure by adding polar hydrogen atoms, assigning charges, and defining the binding site.

- Ligand Preparation: Build or source the ligand structure. Generate likely 3D conformations and optimize them using energy minimization.

- Docking Execution: Use a docking program (e.g., AutoDock Vina) with an appropriate force field (e.g., the zinc-optimized AD4Zn for metalloenzymes). The software performs a conformational search, generating multiple potential binding poses.

- Pose Analysis and Scoring: The generated poses are ranked based on the program's scoring function. The top-ranked poses are visually inspected to assess the plausibility of the binding mode and key interactions (e.g., hydrogen bonds, hydrophobic contacts).

Free Energy Perturbation (FEP)

Core Principles

Free Energy Perturbation is an alchemical method for calculating the free energy difference between two similar states. In drug discovery, it is most often used to compute the relative binding free energy between two similar ligands that bind to the same protein [32]. This is achieved by performing molecular dynamics (MD) simulations that gradually and computationally "mutate" one ligand into another within the binding site. By using a thermodynamic cycle, FEP provides highly accurate comparisons of binding affinity, making it a gold standard for lead optimization where small, systematic changes are made to a lead compound [32] [33].

Performance and Applications

FEP is at the high-accuracy end of the prediction spectrum but is computationally intensive. It can achieve impressive accuracy, with mean absolute errors (MAE) often reported between 0.8–1.2 kcal/mol and Pearson correlation coefficients (R) ranging from 0.5 to over 0.9, depending on the system and implementation [32]. However, this high accuracy requires substantial computational resources, with simulations often taking 12 or more hours of GPU time per calculation, rendering it impractical for screening tens of thousands of candidates [29] [32]. Its primary application is in the lead optimization phase, where it guides medicinal chemists in selecting the most potent derivatives from a congeneric series.

Experimental Protocol: An FEP Workflow

A standard FEP workflow involves setting up a series of simulations that transform one ligand into another, both in the binding site and in solution [32]:

- System Setup: The protein-ligand complex is prepared and solvated in an explicit water model. A similar system is created for the ligand in solution.

- λ-Schedule Definition: A pathway for the alchemical transformation is defined by a series of intermediate "λ-windows" (e.g., 12-24 windows), where λ controls the coupling between the initial and final states.

- Molecular Dynamics Simulation: Independent MD simulations are run at each λ-window, sampling the configurations of the system as the transformation occurs.

- Free Energy Analysis: The free energy difference for the transformation is calculated from the ensemble of configurations collected at each window, using methods like the Bennett Acceptance Ratio (BAR) or Multistate BAR (MBAR). The relative binding free energy is then derived via the thermodynamic cycle.
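The bookkeeping behind the steps above can be illustrated with a toy calculation. Note the substitution: the workflow names BAR and MBAR as the estimators used in practice, whereas this sketch uses the simpler one-directional exponential-averaging (Zwanzig) estimator, ΔG = -kT ln⟨exp(-ΔU/kT)⟩, because it fits in a few lines. The energy samples are invented, and the function names are illustrative.

```python
import math
from typing import List, Sequence

K_B = 1.987204e-3  # Boltzmann constant per mole, kcal/(mol*K)


def zwanzig_delta_g(delta_u_samples: Sequence[float], temperature_k: float = 300.0) -> float:
    """Free energy change between adjacent lambda-windows from sampled energy
    differences dU (kcal/mol), using exponential averaging."""
    kt = K_B * temperature_k
    scaled = [-du / kt for du in delta_u_samples]
    shift = max(scaled)  # log-sum-exp shift for numerical stability
    log_mean = shift + math.log(sum(math.exp(s - shift) for s in scaled) / len(scaled))
    return -kt * log_mean


def transformation_delta_g(windows: List[Sequence[float]], temperature_k: float = 300.0) -> float:
    """Sum the per-window free energy changes along the lambda schedule."""
    return sum(zwanzig_delta_g(w, temperature_k) for w in windows)


def relative_binding_free_energy(dg_complex: float, dg_solvent: float) -> float:
    """Thermodynamic cycle: ddG_bind(A->B) = dG(A->B, complex) - dG(A->B, solvent)."""
    return dg_complex - dg_solvent


# Invented dU samples for a two-window schedule in each leg of the cycle.
complex_windows = [[0.4, 0.5, 0.6], [0.2, 0.3, 0.4]]
solvent_windows = [[0.7, 0.8, 0.9], [0.3, 0.4, 0.5]]

dg_complex = transformation_delta_g(complex_windows)
dg_solvent = transformation_delta_g(solvent_windows)
ddg_bind = relative_binding_free_energy(dg_complex, dg_solvent)
print(f"ddG_bind = {ddg_bind:.2f} kcal/mol")
```

A negative value under this sign convention means the second ligand is predicted to bind more strongly than the first.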

MM/GBSA and MM/PBSA

Core Principles

The Molecular Mechanics/Generalized Born Surface Area (MM/GBSA) and Molecular Mechanics/Poisson-Boltzmann Surface Area (MM/PBSA) methods aim to fill the gap between the high speed of docking and the high accuracy of FEP [29]. These are end-state methods, meaning they calculate binding free energy using snapshots from MD simulations of the free protein, free ligand, and the complex. The binding free energy (\(\Delta G_{\mathrm{bind}}\)) is approximated by the equation:

\[ \Delta G_{\mathrm{bind}} = \Delta H_{\mathrm{gas}} + \Delta G_{\mathrm{solvent}} - T\Delta S \approx \Delta E_{\mathrm{MM}} + \Delta G_{\mathrm{solv}} - T\Delta S \]

Here, \(\Delta E_{\mathrm{MM}}\) is the gas-phase molecular mechanics energy (van der Waals and electrostatic terms from a force field), \(\Delta G_{\mathrm{solv}}\) is the solvation free energy (calculated by a Generalized Born (GB) or Poisson-Boltzmann (PB) model for the polar component, plus a non-polar term based on the solvent-accessible surface area, SASA), and \(-T\Delta S\) is the entropic contribution, often estimated using normal-mode or quasi-harmonic analysis [29] [34] [31].

Performance and Applications

MM/GBSA offers an intermediate balance, providing more accuracy than docking while being significantly faster than FEP. It has been shown to achieve correlation coefficients of ~0.55–0.77 for specific test sets, such as carbonic anhydrase inhibitors [31]. Its performance is highly sensitive to the choice of parameters, particularly the atomic charges used for the ligand [31]. A known challenge is the large and often noisy entropic term (-TΔS), which is sometimes omitted from the calculation due to its computational cost and uncertainty [29]. MM/GBSA is commonly used to re-score the top poses obtained from molecular docking to improve the ranking of ligands.

Experimental Protocol: An MM/GBSA Workflow

A typical MM/GBSA calculation involves running a molecular dynamics simulation to generate an ensemble of structures, which are then used for the energy calculations [29] [31]:

- MD Simulation Setup: A protein-ligand complex is prepared, solvated, energy-minimized, and heated to the target temperature (e.g., 300 K). An equilibrium MD simulation is run (e.g., 10 ns of equilibration followed by a production run).

- Snapshot Extraction: Hundreds of snapshots are extracted at regular intervals from the stable part of the MD trajectory (e.g., every 10 ps, yielding 300 snapshots).

- Free Energy Calculation: For each snapshot, the MM/GBSA energy components (ΔEMM, ΔGGB, ΔGSASA) are calculated. The entropic term (-TΔS) can be calculated for a subset of snapshots or estimated.

- Averaging and Analysis: The per-snapshot binding free energies are averaged over all snapshots to produce the final ΔGbind estimate. The estimates for a series of ligands are then correlated with experimental data.
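
The averaging and correlation steps of the protocol above can be sketched in a few lines. This is an illustration under stated assumptions, not a prescribed analysis: the function names and all values are hypothetical, and the standard error shown treats snapshots as independent, which tends to underestimate the uncertainty for correlated MD frames.

```python
import math
import statistics


def summarize_snapshots(dg_per_snapshot):
    """Return (mean, standard error of the mean) of per-snapshot energies."""
    n = len(dg_per_snapshot)
    if n < 2:
        raise ValueError("need at least two snapshots")
    mean = statistics.fmean(dg_per_snapshot)
    # Naive SEM: assumes uncorrelated snapshots (an assumption of this sketch).
    sem = statistics.stdev(dg_per_snapshot) / math.sqrt(n)
    return mean, sem


def pearson_r(xs, ys):
    """Pearson correlation between predicted and experimental values."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


# Hypothetical per-snapshot energies (kcal/mol) for one ligand.
snapshots = [-30.0, -32.0, -31.0, -29.0, -33.0]
mean_dg, sem_dg = summarize_snapshots(snapshots)

# Hypothetical averaged predictions vs. experimental values for three ligands.
predicted = [-31.0, -25.0, -28.0]
experimental = [-9.0, -7.0, -8.0]
r = pearson_r(predicted, experimental)
print(mean_dg, sem_dg, r)
```

Because MM/GBSA energies are usually far larger in magnitude than experimental free energies, the correlation (ranking quality) is typically more informative than the absolute values.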

Comparative Analysis

The table below provides a direct comparison of the three conventional approaches based on key performance and resource metrics.

Table 1: Comparative Analysis of Conventional Binding Affinity Prediction Methods

| Feature | Molecular Docking | MM/GBSA | Free Energy Perturbation (FEP) |
|---|---|---|---|
| Computational Speed | Fast (minutes on CPU) [29] | Medium (hours on GPU) [29] | Slow (12+ hours on GPU per calculation) [29] |
| Accuracy (RMSE) | 2-4 kcal/mol [29] | >1 kcal/mol (system-dependent) | ~1 kcal/mol or below [32] |
| Accuracy (Correlation) | Low (e.g., ~0.3) [29] | Medium (e.g., 0.55-0.77) [31] | High (e.g., 0.5-0.9) [32] |
| Primary Application | Virtual screening of large libraries | Re-scoring docking poses, moderate-throughput screening | Lead optimization of congeneric series |
| Key Limitation | Low accuracy of scoring functions | Noisy entropic term, sensitivity to charges/parameters [29] [31] | High computational cost, limited to similar ligands [29] |
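
To put the RMSE row in perspective, an error in ΔG can be translated into a multiplicative error in the dissociation constant through the standard relation ΔG = RT ln Kd. The short sketch below does that conversion; the function name and the default temperature of 298.15 K are assumptions made for illustration.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)


def fold_error_in_kd(delta_g_error_kcal, temperature_k=298.15):
    """Multiplicative error in Kd implied by an error in the binding free energy."""
    return math.exp(abs(delta_g_error_kcal) / (R_KCAL * temperature_k))


for error in (1.0, 2.0, 4.0):
    print(f"{error:.0f} kcal/mol -> {fold_error_in_kd(error):.1f}-fold error in Kd")
```

At room temperature, an error of 1 kcal/mol corresponds to about a 5.4-fold error in Kd, and the factor grows exponentially with the error. This is why the difference between 1 and 2-4 kcal/mol matters so much in practice.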

Advanced Methodologies and Recent Developments

Addressing Key Challenges with Enhanced Workflows

Researchers are continuously developing enhanced protocols to overcome the limitations of conventional methods. For instance, the accuracy of MM/GBSA can be significantly improved by using quantum mechanics-derived atomic charges (e.g., from B3LYP-D3(BJ) DFT calculations) instead of standard force-field charges; a study on carbonic anhydrase inhibitors that took this approach achieved an R² of 0.77 [31]. Similarly, hybrid methods like QCharge-VM2 combine the Mining Minima (M2) method with QM/MM-derived charges, achieving a Pearson correlation of 0.81 and an MAE of 0.60 kcal/mol across diverse targets, rivaling FEP accuracy at a lower computational cost [32].

Another significant challenge is accounting for protein flexibility. Advanced workflows now integrate ensemble docking, where docking is performed against multiple protein conformations generated through methods like Anisotropic Network Models (ANM) or MD simulations [35]. This approach is crucial for capturing binding-site dynamics and improving prediction quality for flexible targets. Furthermore, specialized methods have been developed for complex systems like membrane proteins, extending the applicability of MM/PBSA by incorporating multi-trajectory approaches and automated membrane parameterization [34].
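
One simple way to combine results from ensemble docking is to keep, for each ligand, the most favorable score obtained across all receptor conformers. The sketch below shows that aggregation only. The function names and the scores are hypothetical, and best-score selection is just one possible choice (averaging or Boltzmann weighting are alternatives); it is not claimed to be the scheme used in [35].

```python
def best_over_ensemble(scores_by_conformer):
    """Return (conformer, score) with the lowest, i.e. most favorable, docking score."""
    best_conformer = min(scores_by_conformer, key=scores_by_conformer.get)
    return best_conformer, scores_by_conformer[best_conformer]


def rank_ligands(ensemble_results):
    """Rank ligands by their best score over all receptor conformers."""
    best_scores = {
        ligand: best_over_ensemble(scores)[1]
        for ligand, scores in ensemble_results.items()
    }
    return sorted(best_scores, key=best_scores.get)


# Hypothetical docking scores (kcal/mol) against two receptor conformers.
ensemble_results = {
    "lig_a": {"conf_1": -7.2, "conf_2": -8.9},
    "lig_b": {"conf_1": -8.1, "conf_2": -7.5},
}
print(rank_ligands(ensemble_results))
print(best_over_ensemble(ensemble_results["lig_a"]))
```

In this toy case a single rigid structure (`conf_1`) would rank `lig_b` first, while the ensemble ranks `lig_a` first, which illustrates why receptor flexibility can change the outcome of a screen.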

The Emergence of Machine Learning

A major trend in the field is the integration of machine learning (ML) with conventional physics-based approaches. ML models, particularly Graph Neural Networks (GNNs) like PLAIG and message-passing neural networks, can learn complex patterns from protein-ligand structures and achieve high prediction speeds [36] [37]. The most powerful emerging paradigms are hybrid models that combine the strengths of both worlds. For example, the DockBind framework leverages docking poses generated by tools like DiffDock and augments them with physics-based and chemical descriptors (e.g., neural potential energy, molecular fingerprints) within an ML model to enhance affinity estimation [38]. At the frontier, foundation models like Boltz-2 claim to approach the accuracy of FEP, achieving a Pearson correlation of 0.62 on a standard benchmark while being over 1000 times faster, which signals a potential shift in the speed-accuracy landscape of affinity prediction [33].

Essential Research Reagents and Computational Tools

The following table details key software, tools, and "reagents" essential for conducting research in conventional binding affinity prediction.

Table 2: Key Research Reagents and Tools for Binding Affinity Prediction

| Tool/Reagent Name | Type/Category | Primary Function in Research |
|---|---|---|
| AutoDock Vina [31] | Docking Software | Widely-used program for predicting protein-ligand binding poses and scoring. |
| AD4Zn Force Field [31] | Docking Parameter | A zinc-optimized scoring function for accurate docking with metalloenzymes. |
| AMBER [34] | MD & Analysis Suite | Software package for running MD simulations and performing MM/PBSA/GBSA calculations. |
| QM/MM Charges [32] [31] | Computational Parameter | High-accuracy atomic charges for ligands derived from quantum mechanical calculations, used to improve MM/GBSA electrostatic terms. |
| ANM (Anisotropic Network Model) [35] | Sampling Tool | A coarse-grained elastic model used to efficiently generate an ensemble of plausible protein conformers for ensemble docking. |
| PDBbind [30] [36] | Benchmark Dataset | A curated database of protein-ligand complexes with experimentally measured binding affinities, used for training and validating prediction methods. |
| BindingDB [29] | Experimental Database | A public database of measured binding affinities, focusing on drug-like molecules and protein targets. |

Workflow Visualization

The diagram below illustrates the decision-making process for selecting an appropriate binding affinity prediction method based on the research goal and available resources.

Method Selection Workflow

Molecular Docking, Free Energy Perturbation, and MM/GBSA represent foundational pillars in the computational prediction of protein-ligand binding affinity. Each method occupies a distinct niche in the trade-off between computational speed and predictive accuracy, making them suited for different stages of the drug discovery pipeline. Docking enables the initial vast exploration of chemical space, FEP provides high-precision guidance for lead optimization, and MM/GBSA offers a valuable intermediate option. The field continues to evolve rapidly, with current research focused on integrating these conventional physics-based approaches with powerful machine-learning models and enhancing their accuracy through advanced quantum mechanical and sampling techniques. This synergy promises to deliver increasingly robust and efficient tools, solidifying the role of in silico prediction as an indispensable component of modern drug development.

Drug-target binding affinity (DTA) prediction is a critical component of modern computational drug discovery, providing a quantitative measure of the interaction strength between a drug candidate and its protein target. Unlike binary classification of interactions, affinity prediction offers a continuous value that more accurately reflects biological reality and helps prioritize lead compounds. This whitepaper examines foundational machine learning approaches that helped establish the DTA prediction field, focusing on three key methodologies: the similarity-based KronRLS method, the feature-engineered SimBoost model, and early feature-based frameworks. We present detailed methodologies, performance benchmarks on standard datasets, and practical implementation protocols to guide researchers in applying these techniques. The transition from traditional wet-lab experiments, which are notoriously time-consuming and expensive, to these computational methods has significantly accelerated early-stage drug screening and repositioning efforts.

The process of drug discovery traditionally relies on identifying compounds that can selectively bind to specific protein targets to produce therapeutic effects. Drug-target binding affinity (DTA) quantifies the strength of these interactions, typically measured through dissociation constant (Kd), inhibition constant (Ki), or half-maximal inhibitory concentration (IC50) values [39] [40]. Accurate DTA prediction is crucial because it determines dosage requirements and potential efficacy; compounds with insufficient binding affinity rarely progress through development pipelines.

Traditional experimental methods for assessing binding affinity involve extensive wet-lab procedures that are costly, time-consuming, and resource-intensive, and they contribute to a development process that typically requires 10-15 years and billions of dollars to bring a single drug to market [41] [7]. Computational DTA prediction methods emerged to address these limitations by leveraging machine learning to screen compounds in silico before experimental validation. Early approaches focused primarily on binary classification, predicting only whether a drug-target pair interacts, but this failed to capture the continuum of interaction strengths that determines therapeutic potential [39] [40].

The shift to regression-based affinity prediction represented a significant advancement, enabling researchers to prioritize compounds based on predicted binding strength rather than mere interaction likelihood [39]. This whitepaper explores the machine learning foundations that enabled this transition, focusing on methodologies that remain influential in contemporary deep learning architectures for drug discovery.

Foundational Machine Learning Approaches

Similarity-Based Methods: KronRLS

The Kronecker Regularized Least Squares (KronRLS) method represents an early similarity-based approach to DTA prediction that leverages drug-drug and target-target similarity matrices [39] [40]. KronRLS operates on the principle that similar drugs should interact similarly with similar targets, formulating DTA prediction as a regularized optimization problem in a reproducing kernel Hilbert space.

The mathematical foundation of KronRLS relies on the Kronecker product of drug similarity matrix Kd and target similarity matrix Kt to define a similarity measure for drug-target pairs. The resulting kernel matrix K = Kd ⊗ Kt encompasses all possible pair similarities, enabling the prediction of continuous binding affinity values through the minimization of a regularized loss function. For a drug-target pair (di, tj), the prediction f(di, tj) is expressed as a linear combination of the kernel evaluations with the training pairs.
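
The construction described above can be illustrated with a small, dependency-free sketch. It builds the pairwise kernel K = Kd ⊗ Kt, solves the regularized system (K_train + λI)α = y on the observed pairs, and predicts a held-out pair as a kernel-weighted sum. All names, the toy similarity matrices, the affinities, and the default λ are assumptions for illustration. The dense direct solve is a simplification chosen for readability; practical implementations typically exploit the Kronecker structure for efficiency.

```python
def kron(a, b):
    """Kronecker product of two matrices stored as lists of rows."""
    return [
        [a_ij * b_kl for a_ij in row_a for b_kl in row_b]
        for row_a in a
        for row_b in b
    ]


def solve(matrix, rhs):
    """Solve matrix @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [row[:] + [rhs[i]] for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        tail = sum(aug[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (aug[r][n] - tail) / aug[r][r]
    return x


def kronrls_predict(k_drug, k_target, observed, query, lam=0.1):
    """Fit on observed pairs and predict the affinity of one query pair.

    observed: dict mapping (drug_index, target_index) -> affinity.
    query: (drug_index, target_index) of the pair to predict.
    """
    n_targets = len(k_target)
    k_pairs = kron(k_drug, k_target)  # pairwise kernel over all drug-target pairs

    def flat(pair):
        # Row/column of pair (i, j) in the Kronecker kernel.
        return pair[0] * n_targets + pair[1]

    train = [flat(p) for p in observed]
    y = list(observed.values())
    # Regularized system (K_train + lam * I) alpha = y.
    gram = [[k_pairs[i][j] + (lam if i == j else 0.0) for j in train] for i in train]
    alpha = solve(gram, y)
    q = flat(query)
    # Prediction: linear combination of kernel evaluations with the training pairs.
    return sum(k_pairs[q][m] * a for m, a in zip(train, alpha))


# Toy similarity matrices and affinities (hypothetical values).
k_drug = [[1.0, 0.8], [0.8, 1.0]]
k_target = [[1.0, 0.5], [0.5, 1.0]]
observed = {(0, 0): 7.0, (0, 1): 5.0, (1, 0): 6.8}
prediction = kronrls_predict(k_drug, k_target, observed, query=(1, 1))
print(round(prediction, 2))
```

The regularization strength `lam` controls how closely the model fits the observed affinities; as it approaches zero, predictions on training pairs reproduce the measured values.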

KronRLS utilizes Tanimoto similarity for drugs based on molecular fingerprints and Smith-Waterman similarity for protein sequences, capturing structural and sequential relationships without explicit feature engineering [40]. This approach effectively captures linear dependencies in the interaction data but may overlook complex non-linear relationships that deeper models can exploit.
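
For readers unfamiliar with the drug-side similarity, the following sketch computes Tanimoto similarity between fingerprints represented as sets of on-bit indices and assembles a drug-drug similarity matrix. The fingerprints are made up for illustration; in practice they would come from a cheminformatics toolkit, which this sketch deliberately does not depend on.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 1.0  # convention assumed here for two empty fingerprints
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def similarity_matrix(fingerprints):
    """Symmetric matrix of pairwise Tanimoto similarities."""
    return [[tanimoto(a, b) for b in fingerprints] for a in fingerprints]


# Hypothetical fingerprints: each set lists the indices of bits that are on.
fingerprints = [{1, 2, 3, 4}, {3, 4, 5, 6}, {1, 2, 3, 7}]
k_drug = similarity_matrix(fingerprints)
for row in k_drug:
    print([round(value, 2) for value in row])
```

A matrix built this way can serve as the drug similarity matrix Kd in the Kronecker construction; the target-side matrix would be derived analogously from normalized Smith-Waterman alignment scores.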

Feature-Based Methods: SimBoost

SimBoost introduces a non-linear approach to DTA prediction using gradient boosting machines to overcome the limitations of linear methods like KronRLS [39]. As a feature-based method, SimBoost constructs comprehensive feature vectors for drug-target pairs by combining three feature types: drug-specific features, target-specific features, and pairwise interaction features.

SimBoost's feature engineering process includes:

- Drug features: Similarity scores with other drugs in the dataset

- Target features: Similarity scores with other targets in the dataset

- Pair features: Graph-theoretic measures derived from the drug-target interaction network, such as the number of common neighbors or topological similarity
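
The three feature groups listed above can be illustrated with a simplified sketch. The specific features computed here (mean similarity, network degree, and a similarity-weighted neighbor affinity) are illustrative choices, not the published SimBoost feature set, and every name and value below is hypothetical.

```python
def mean(values):
    values = list(values)
    return sum(values) / len(values) if values else 0.0


def pair_features(drug, target, drug_sim, target_sim, observed):
    """Build a small feature dict for one drug-target pair.

    drug_sim / target_sim: nested dicts of pairwise similarities.
    observed: dict mapping (drug, target) -> measured affinity (training data).
    """
    other_drugs = [d for d in drug_sim if d != drug]
    other_targets = [t for t in target_sim if t != target]

    # Network neighbors; the pair being described is excluded by construction.
    drugs_on_target = [d for d in other_drugs if (d, target) in observed]
    targets_of_drug = [t for t in other_targets if (drug, t) in observed]

    # Similarity-weighted affinity of other drugs measured on the same target.
    weights = [drug_sim[drug][d] for d in drugs_on_target]
    weighted = [w * observed[(d, target)] for w, d in zip(weights, drugs_on_target)]
    neighbor_affinity = sum(weighted) / sum(weights) if sum(weights) > 0 else 0.0

    return {
        "drug_mean_sim": mean(drug_sim[drug][d] for d in other_drugs),
        "target_mean_sim": mean(target_sim[target][t] for t in other_targets),
        "drug_degree": len(targets_of_drug),
        "target_degree": len(drugs_on_target),
        "neighbor_affinity": neighbor_affinity,
    }


# Toy similarities and affinities (hypothetical values).
drug_sim = {
    "d1": {"d1": 1.0, "d2": 0.6, "d3": 0.2},
    "d2": {"d1": 0.6, "d2": 1.0, "d3": 0.4},
    "d3": {"d1": 0.2, "d2": 0.4, "d3": 1.0},
}
target_sim = {"t1": {"t1": 1.0, "t2": 0.5}, "t2": {"t1": 0.5, "t2": 1.0}}
observed = {("d1", "t1"): 7.0, ("d2", "t1"): 6.0, ("d2", "t2"): 5.0, ("d3", "t2"): 8.0}

features = pair_features("d1", "t2", drug_sim, target_sim, observed)
print(features)
```

Each pair becomes one row of a feature table, with the measured affinity as the regression target for the downstream model.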

The model employs a gradient boosting framework with regression trees as base learners, sequentially building an ensemble that minimizes the residual errors of previous trees. This approach captures complex non-linear relationships between features and binding affinities, typically outperforming linear methods on benchmark datasets [39]. Additionally, SimBoostQuant extends this framework to generate prediction intervals using quantile regression, providing confidence estimates for affinity predictions that are crucial for decision-making in drug discovery pipelines.
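
The residual-fitting idea behind gradient boosting can be shown with a deliberately small sketch. It uses depth-1 regression trees (stumps) and squared loss, which is a simplification for illustration and not the tree configuration used by SimBoost. Function names, hyperparameters, and data are all hypothetical.

```python
def fit_stump(rows, residuals):
    """Find the single-feature threshold split with the lowest squared error."""
    overall = sum(residuals) / len(residuals)
    best = (float("inf"), 0, float("inf"), overall, overall)  # fallback: no split
    for f in range(len(rows[0])):
        values = sorted({row[f] for row in rows})
        for lo, hi in zip(values, values[1:]):
            thr = (lo + hi) / 2
            left = [r for row, r in zip(rows, residuals) if row[f] <= thr]
            right = [r for row, r in zip(rows, residuals) if row[f] > thr]
            if not left or not right:
                continue
            l_mean, r_mean = sum(left) / len(left), sum(right) / len(right)
            sse = sum((r - l_mean) ** 2 for r in left)
            sse += sum((r - r_mean) ** 2 for r in right)
            if sse < best[0]:
                best = (sse, f, thr, l_mean, r_mean)
    return best[1:]  # (feature index, threshold, left value, right value)


def stump_predict(stump, row):
    f, thr, left_value, right_value = stump
    return left_value if row[f] <= thr else right_value


def fit_boosting(rows, ys, n_rounds=100, learning_rate=0.1):
    """Sequentially fit stumps to the residuals of the current ensemble."""
    base = sum(ys) / len(ys)
    preds = [base] * len(ys)
    stumps = []
    for _ in range(n_rounds):
        # For squared loss, the residual is the negative gradient (up to a constant).
        residuals = [y - p for y, p in zip(ys, preds)]
        stump = fit_stump(rows, residuals)
        stumps.append(stump)
        preds = [
            p + learning_rate * stump_predict(stump, row)
            for p, row in zip(preds, rows)
        ]
    return base, learning_rate, stumps


def predict(model, row):
    base, learning_rate, stumps = model
    return base + learning_rate * sum(stump_predict(s, row) for s in stumps)


# Toy pair features and affinities (hypothetical values).
rows = [[0.9, 0.2], [0.8, 0.3], [0.3, 0.7], [0.2, 0.9]]
ys = [8.1, 7.9, 5.2, 5.0]
model = fit_boosting(rows, ys)
print(round(predict(model, [0.85, 0.25]), 2), round(predict(model, [0.25, 0.8]), 2))
```

The learning rate shrinks each tree's contribution, so many small corrections accumulate instead of a single tree dominating. Production libraries add deeper trees, regularization, and subsampling on top of this basic loop.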

Additional Feature-Based Frameworks

Beyond SimBoost, other feature-based approaches have contributed significantly to DTA prediction methodologies. These methods typically combine chemical descriptors for drugs with sequence or structural descriptors for proteins to create feature vectors for standard machine learning algorithms.

Early feature-based implementations utilized:

- Support Vector Machines (SVM) for regression tasks

- Random Forests for handling high-dimensional feature spaces

- Deep Neural Networks (DNNs) for automatic feature hierarchy learning

These approaches differ from similarity-based methods by relying on explicit feature engineering rather than pairwise similarity matrices, potentially capturing more nuanced structure-activity relationships. The primary challenge lies in designing features that effectively represent the complex physicochemical properties governing molecular interactions while maintaining computational efficiency for large-scale screening applications.

Experimental Protocols & Benchmarking

Standardized Datasets for DTA Evaluation

Robust evaluation of DTA prediction models requires standardized benchmarks. The following datasets have emerged as community standards:

Table 1: Standard Datasets for DTA Prediction Benchmarking

| Dataset | Description | Affinity Measure | Statistics | Data Transformation |

|---|---|---|---|---|

| Davis | Kinase inhibitors binding data | Kd (dissociation constant) | 68 drugs, 442 targets, 30,056 interactions | pKd = -log10(Kd/1e9) [40] |