Beyond the Price Tag: A Comprehensive Analysis of NGS Cost-Effectiveness in Modern Chemogenomics


Abstract

This article provides a rigorous, evidence-based assessment of the cost-effectiveness of Next-Generation Sequencing (NGS) against traditional single-gene testing methods in chemogenomics and drug development. Tailored for researchers, scientists, and drug development professionals, it synthesizes the latest clinical data, market trends, and economic models. The analysis covers foundational principles, diverse methodological applications, strategies for troubleshooting and cost optimization, and direct comparative validations from recent studies in oncology and infectious diseases. The findings demonstrate that while NGS requires higher initial investment, it delivers superior long-term value through comprehensive genomic profiling, faster turnaround times, and more efficient resource utilization, ultimately accelerating precision medicine and therapeutic discovery.

The Economic and Technological Landscape of NGS in Chemogenomics

The integration of genomic technologies, particularly Next-Generation Sequencing (NGS), into clinical practice necessitates rigorous economic evaluation to demonstrate value for money and inform healthcare resource allocation. Economic evaluations in genomic medicine compare the costs and health outcomes of alternative testing strategies, such as NGS versus traditional single-gene testing (SGT), to determine which approach provides the best return on investment. For researchers, scientists, and drug development professionals, understanding the key metrics and methodologies used in these assessments is crucial for designing cost-effective genomic testing strategies and justifying their adoption in healthcare systems. The core question these evaluations address is whether the clinical benefits achieved through advanced genomic diagnostics justify their additional costs compared to standard approaches.

The fundamental metrics for evaluating cost-effectiveness are the Incremental Cost-Effectiveness Ratio (ICER) and the Quality-Adjusted Life-Year (QALY). Health technology assessment agencies and payers increasingly require evidence of cost-effectiveness, in addition to clinical validity and utility, to support coverage and reimbursement decisions for genomic tests. This is particularly relevant as healthcare systems worldwide grapple with the financial implications of implementing precision medicine, where evidence of clinical utility alone is often insufficient for widespread adoption without concurrent demonstration of economic value [1] [2].

Core Metrics and Methodological Framework

Quality-Adjusted Life-Year (QALY)

The Quality-Adjusted Life-Year (QALY) is a standardized measure of health outcome that combines both the quantity and quality of life into a single metric. One QALY represents one year of life in perfect health. The QALY calculation incorporates utility weights (values typically ranging from 0, representing death, to 1, representing perfect health) that reflect patient preferences for specific health states.

- Calculation Methodology: QALYs are calculated by multiplying the time spent in a health state by the utility weight associated with that health state. For example, if a genomic test enables a treatment that provides 4 additional years of life at a utility weight of 0.8, followed by 2 years at a utility weight of 0.6, the total QALY gain would be: (4 × 0.8) + (2 × 0.6) = 3.2 + 1.2 = 4.4 QALYs.

- Application in Genomics: In genomic medicine, QALYs capture how genetic testing influences both survival and health-related quality of life through more accurate diagnosis, targeted treatments, and avoidance of ineffective therapies or adverse drug reactions.
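The QALY arithmetic above can be sketched in a few lines of Python. This is a minimal illustration, not a validated health-economics tool; the function name `total_qalys` and the `(years, utility)` input layout are assumptions made for this example.

```python
def total_qalys(health_states):
    """Sum QALYs over a sequence of (years, utility_weight) pairs."""
    total = 0.0
    for years, utility in health_states:
        # Utility weights conventionally run from 0 (death) to 1 (perfect health).
        if not 0.0 <= utility <= 1.0:
            raise ValueError("utility weight must lie between 0 and 1")
        if years < 0:
            raise ValueError("time in a health state cannot be negative")
        total += years * utility
    return total


# Worked example from the text: 4 years at 0.8, then 2 years at 0.6.
example = [(4, 0.8), (2, 0.6)]
print(round(total_qalys(example), 2))  # 4.4
```

Real evaluations usually also discount future QALYs; that step is left out here to keep the example aligned with the undiscounted calculation in the text.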

Incremental Cost-Effectiveness Ratio (ICER)

The Incremental Cost-Effectiveness Ratio (ICER) represents the additional cost per unit of health gain (typically per QALY gained) when comparing an intervention to an alternative. It is the primary metric used to determine whether a healthcare intervention provides good value for money.

- Calculation Formula: ICER = (Cost of Intervention - Cost of Comparator) / (Effectiveness of Intervention - Effectiveness of Comparator)

- Interpretation Framework: The calculated ICER value is compared against a willingness-to-pay (WTP) threshold, which represents the maximum amount a healthcare system is willing to pay for an additional QALY. These thresholds vary by country, with common benchmarks including:

  - 1-3 times per capita GDP per QALY gained, following WHO recommendations [3]

  - Country-specific thresholds (e.g., £20,000-£30,000 per QALY in the UK; $50,000-$150,000 per QALY in the US)

- Decision Rules:

  - ICER < WTP threshold: Intervention is considered cost-effective

  - ICER > WTP threshold: Intervention is not considered cost-effective

  - Dominant: Intervention is both more effective and less costly (automatically cost-effective)

  - Dominated: Intervention is both less effective and more costly (automatically not cost-effective)
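The ICER formula and decision rules listed above can be expressed as a small Python function. This is a hedged sketch: the function name `evaluate_icer`, the verdict strings, and all numbers in the example are hypothetical. Treating an ICER exactly equal to the threshold as not cost-effective is an assumption, since the rules above do not cover ties.

```python
def evaluate_icer(cost_new, cost_old, effect_new, effect_old, wtp_threshold):
    """Return (icer, verdict) for an intervention versus its comparator."""
    d_cost = cost_new - cost_old
    d_effect = effect_new - effect_old

    if d_effect > 0 and d_cost < 0:
        return None, "dominant"        # more effective and less costly
    if d_effect < 0 and d_cost > 0:
        return None, "dominated"       # less effective and more costly
    if d_effect == 0:
        return None, "undefined (no difference in effectiveness)"

    icer = d_cost / d_effect
    if d_effect < 0:
        # Cheaper but less effective: the usual threshold rule does not apply directly.
        return icer, "less costly and less effective (interpret separately)"
    # Assumption: a tie with the threshold counts as not cost-effective.
    verdict = "cost-effective" if icer < wtp_threshold else "not cost-effective"
    return icer, verdict


# Hypothetical inputs: the new test costs 3,000 more and yields 0.4 extra QALYs.
icer, verdict = evaluate_icer(12_000, 9_000, 4.4, 4.0, wtp_threshold=50_000)
print(f"ICER = {icer:,.0f} per QALY ({verdict})")  # ICER = 7,500 per QALY (cost-effective)
```

Returning `None` for dominant and dominated cases avoids reporting a negative ratio, which is easy to misread as a very favorable ICER.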

Table 1: ICER Interpretation Framework Based on Common WTP Thresholds

| ICER Value Relative to Threshold | Decision Interpretation |
|---|---|
| Less than per capita GDP | Highly cost-effective |
| 1-3 times per capita GDP | Cost-effective |
| More than 3 times per capita GDP | Not cost-effective |
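The GDP-multiple bands in Table 1 can be written as a short classifier. This is a sketch under stated assumptions: the function name `classify_by_gdp` is hypothetical, and assigning exactly 1× and exactly 3× GDP to the middle band is one reading of the table. The example plugs in two figures reported elsewhere in this article for the mNGS study (an ICER of ¥36,700 and a per capita GDP of ¥89,000); note that this ICER is expressed per timely diagnosis rather than per QALY.

```python
def classify_by_gdp(icer, gdp_per_capita):
    """Map an ICER onto the GDP-multiple bands of Table 1."""
    if gdp_per_capita <= 0:
        raise ValueError("per capita GDP must be positive")
    ratio = icer / gdp_per_capita
    if ratio < 1:
        return "highly cost-effective"
    if ratio <= 3:
        return "cost-effective"
    return "not cost-effective"


# Figures taken from the mNGS example discussed in this article.
print(classify_by_gdp(36_700, 89_000))  # highly cost-effective
```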

Quantitative Comparison: NGS vs. Traditional Testing Approaches

Economic evidence demonstrates that the cost-effectiveness of NGS depends heavily on clinical context, testing volume, and the number of biomarkers analyzed. The following tables summarize key comparative findings across different applications and settings.

Table 2: Cost-Effectiveness of NGS vs. Single-Gene Testing in Oncology [4] [5] [6]

| Application Context | Testing Scenario | Economic Finding | Key Determinants |
|---|---|---|---|
| Targeted Panel Testing | 4+ genes requiring testing | Cost-saving versus SGT | Reduced turnaround time, staff requirements, hospital visits |
| Large Panels (hundreds of genes) | Routine oncology practice | Generally not cost-effective | High test cost without proportional clinical benefit |
| Italian Hospitals Study (NSCLC & mCRC) | 15 of 16 testing cases | Cost-saving alternative to SGT | Savings of €30-€1249 per patient; economies of scale |
| Metastatic Cancer | Including targeted therapy costs | ICER above common thresholds | High drug costs outweigh testing savings |

Table 3: Cost-Effectiveness of NGS in Infectious Disease and Rare Diseases [3] [7]

| Application Context | Testing Scenario | Economic Finding | Key Metrics |
|---|---|---|---|
| CNS Infections (mNGS vs. culture) | Post-neurosurgical patients in ICU | ICER of ¥36,700 per timely diagnosis | Cost-effective at China's GDP-based WTP threshold |
| Rare Disease Diagnosis | Exome sequencing as first-tier test | Cost-saving with highest diagnostic yield (36%) | Reduces diagnostic odyssey and associated costs |
| Non-Invasive Prenatal Testing | Cell-free DNA screening | Willingness to pay AU$323 for expanded screening | Patients value broader condition detection |

Experimental Protocols for Cost-Effectiveness Research

Decision-Analytic Modeling for Genomic Test Evaluation

Decision-analytic modeling provides a systematic framework for evaluating the long-term costs and outcomes of genomic testing strategies, particularly when long-term clinical trial data are unavailable.

Model Structure Selection:

- Decision Trees: Appropriate for short-term outcomes (e.g., diagnostic accuracy studies)

- Markov Models: Suitable for chronic conditions requiring simulation of disease progression over time

- Partitioned Survival Models: Commonly used in oncology to model progression-free and overall survival

Data Input Requirements:

- Test characteristics: Sensitivity, specificity, turnaround time

- Clinical management pathways: Based on test results

- Health state utilities: Quality of life weights for relevant health states

- Cost components: Test costs, treatment costs, healthcare utilization costs

- Clinical outcomes: Disease progression, survival, treatment response rates

Analysis Methodology:

- Define comparative strategies (e.g., NGS panel vs. sequential single-gene testing)

- Model clinical pathways and associated costs and outcomes for each strategy

- Calculate incremental costs and QALYs between strategies

- Compute ICER and compare to WTP threshold

- Conduct sensitivity analyses to assess parameter uncertainty
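The modeling steps above can be illustrated with a minimal Markov cohort model. Everything numeric here is hypothetical: the three health states (stable, progressed, dead), the transition probabilities, costs, utilities, the 10-cycle horizon, and the 3% discount rate are placeholders chosen for illustration, not values from any cited study. The function name `run_markov` is likewise an assumption.

```python
def run_markov(transitions, state_costs, state_utilities,
               upfront_cost, cycles=10, discount=0.03):
    """Run a cohort through annual cycles; return (total cost, total QALYs)."""
    n = len(transitions)
    for row in transitions:
        if abs(sum(row) - 1.0) > 1e-9:
            raise ValueError("each transition row must sum to 1")
    cohort = [1.0] + [0.0] * (n - 1)          # everyone starts in the first state
    total_cost, total_qalys = float(upfront_cost), 0.0
    for cycle in range(cycles):
        weight = 1.0 / (1.0 + discount) ** cycle   # discount factor for this cycle
        total_cost += weight * sum(p * c for p, c in zip(cohort, state_costs))
        total_qalys += weight * sum(p * u for p, u in zip(cohort, state_utilities))
        # Move the cohort one cycle forward through the transition matrix.
        cohort = [sum(cohort[i] * transitions[i][j] for i in range(n))
                  for j in range(n)]
    return total_cost, total_qalys


# Hypothetical states: stable, progressed, dead.
STATE_COSTS = [5000.0, 20000.0, 0.0]
STATE_UTILITIES = [0.8, 0.5, 0.0]
SGT = [[0.80, 0.15, 0.05], [0.0, 0.70, 0.30], [0.0, 0.0, 1.0]]
NGS = [[0.85, 0.10, 0.05], [0.0, 0.70, 0.30], [0.0, 0.0, 1.0]]

cost_sgt, qaly_sgt = run_markov(SGT, STATE_COSTS, STATE_UTILITIES, upfront_cost=1000.0)
cost_ngs, qaly_ngs = run_markov(NGS, STATE_COSTS, STATE_UTILITIES, upfront_cost=2500.0)

d_cost, d_qaly = cost_ngs - cost_sgt, qaly_ngs - qaly_sgt
print(f"Incremental cost: {d_cost:.0f}, incremental QALYs: {d_qaly:.3f}")
if d_qaly > 0:
    # A negative ratio here would mean the NGS strategy is dominant.
    print(f"ICER: {d_cost / d_qaly:.0f} per QALY gained")
```

A one-way sensitivity analysis can be approximated by re-running `run_markov` while varying a single input (for example, the upfront test cost) across a plausible range and recording how the ICER moves.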

Prospective Cost-Effectiveness Analysis alongside Clinical Studies

The following experimental protocol is adapted from a study of metagenomic NGS for central nervous system infections in postoperative neurosurgical patients [3]:

Study Design:

- Randomized controlled trial with 1:1 allocation (mNGS vs. conventional culture)

- Setting: Intensive Care Unit, Beijing Tiantan Hospital

- Participants: 60 patients with clinically confirmed CNS infections post-neurosurgery

- Timeframe: March 2023-January 2024

Intervention and Comparator:

- Intervention Group: Cerebrospinal fluid pathogen culture + mNGS

- Control Group: Pathogen culture only

Cost Measurement:

- Direct medical costs: Detection costs, anti-infective therapy, hospitalization

- Cost data collection: Patient-level microcosting from hospital accounting systems

- Time horizon: Duration of hospitalization

Effectiveness Measurement:

- Primary outcome: Incremental treatment response score at discharge (0-2 scale)

- Secondary outcomes: Turnaround time, antibiotic costs, length of stay

- QALY measurement: Not feasible in acute infection setting; therefore, surrogate endpoint used

Analysis Plan:

- Decision-tree model constructed using TreeAge Pro 2022

- ICER calculation: ICER = (Cost_mNGS - Cost_culture) / (Effectiveness_mNGS - Effectiveness_culture)

- WTP threshold: 1-3 times China's 2023 per capita GDP (¥89,000)

- Statistical analysis: Independent sample t-tests, Mann-Whitney tests, χ² tests

Visualizing Cost-Effectiveness Analysis Workflows

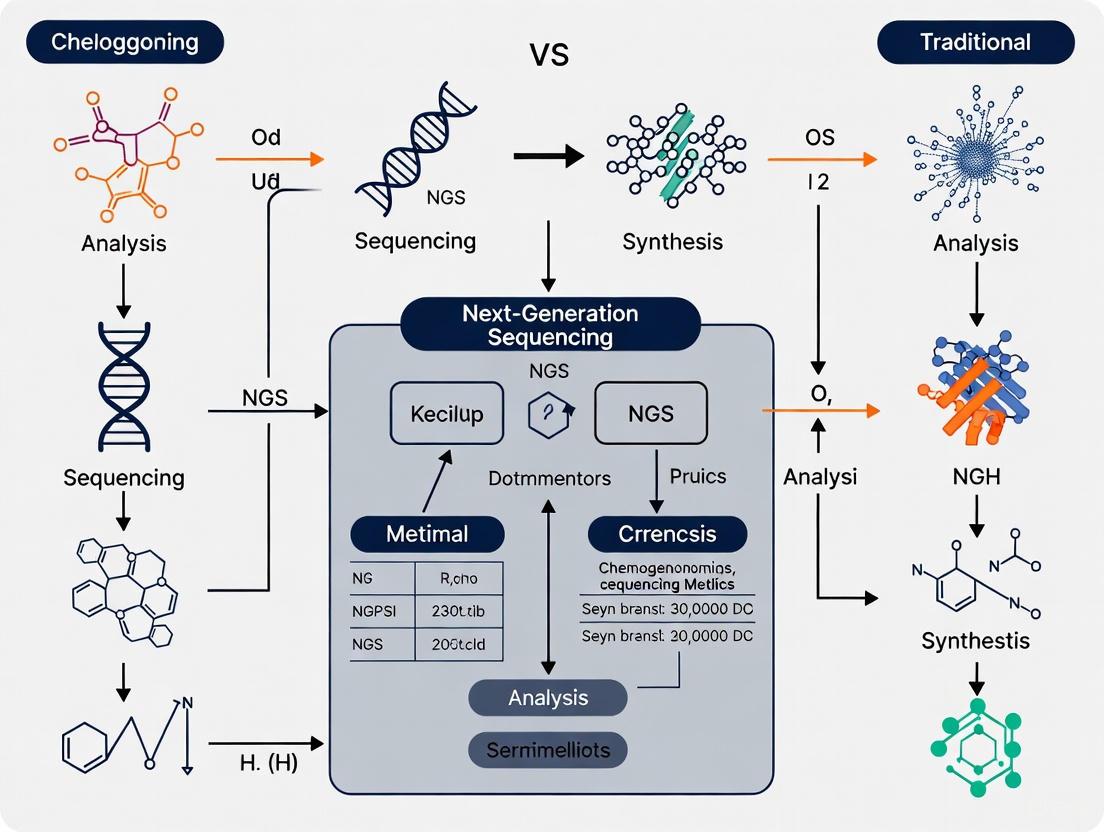

The following diagram illustrates the conceptual workflow for conducting cost-effectiveness analyses of genomic technologies, highlighting key decision points and methodological considerations.

Figure 1: Cost-Effectiveness Analysis Workflow for Genomic Technologies

Table 4: Key Tools and Resources for Genomic Cost-Effectiveness Studies

| Tool/Resource | Function | Application Example |
|---|---|---|
| TreeAge Pro | Decision-analytic modeling software | Building Markov models and decision trees for lifetime horizon analyses [3] |
| Genomics Costing Tool (GCT) | Systematic cost estimation for sequencing | Estimating establishment and operational costs for genomic surveillance [8] |
| WHO CHOICE Guidelines | Standardized methods for cost-effectiveness analysis | Setting WTP thresholds at 1-3× GDP per capita for international comparisons [3] |
| Quality of Life Instruments (EQ-5D, SF-6D) | Health state utility measurement | Eliciting preference-based weights for QALY calculation [7] |
| Clinical Guidelines (NCCN, ESMO) | Standard care pathways definition | Establishing comparator strategies and clinical management algorithms [4] |

The economic evaluation of genomic diagnostics relies on standardized metrics (ICER, QALY) and methodologies that enable comparison across diverse healthcare contexts and technologies. Current evidence indicates that targeted NGS panels demonstrate cost-effectiveness compared to sequential single-gene testing when 4+ genes require analysis, particularly through savings in turnaround time, staff requirements, and hospital visits [4] [5]. The field continues to evolve with emerging methodologies that incorporate patient-centered outcomes, equity considerations, and broader elements of value beyond traditional cost-per-QALY frameworks. For researchers and drug development professionals, rigorous economic evaluation is increasingly essential for demonstrating the value of genomic technologies and guiding their appropriate integration into clinical practice.

Within drug development and chemogenomics research, selecting the optimal biomarker testing strategy is paramount for efficient target identification and validation. The debate often centers on the choice between traditional, low-plex methods and next-generation sequencing (NGS), with cost-effectiveness being a critical deciding factor [4]. This section provides an objective technical and economic comparison of these approaches, offering researchers and scientists a clear framework for decision-making. The analysis demonstrates that while traditional methods like Sanger sequencing retain utility for targeted interrogation, NGS workflows offer superior economic and technical value in most modern research contexts, particularly as the scale of genomic inquiry increases [4] [9].

Technical Comparison: NGS vs. Traditional Methods

The fundamental difference between these technologies lies in their scale of operation. Traditional Sanger sequencing, a first-generation method, processes a single DNA fragment at a time [9]. In contrast, NGS is a massively parallel process, simultaneously sequencing millions to billions of DNA fragments [10] [9]. This core distinction drives differences in application, data output, and required infrastructure.

Methodological Principles and Evolution

- First-Generation Sequencing (Sanger Sequencing): This method, pioneered by Frederick Sanger, is based on the chain-termination principle [10]. It uses dideoxynucleotides (ddNTPs) to halt DNA synthesis at specific bases, generating DNA fragments of varying lengths that are separated by capillary electrophoresis to reveal the sequence [10] [9]. It is characterized by high per-read accuracy and long read lengths (500-1000 base pairs) but has extremely low throughput [9].

- Next-Generation Sequencing (NGS): Also known as second-generation sequencing, NGS encompasses several platforms that rely on parallel sequencing-by-synthesis [10] [11]. The process involves fragmenting DNA, attaching adapters to create a library, amplifying these fragments on a flow cell (e.g., via bridge amplification), and then sequencing them through iterative cycles of fluorescently-labeled nucleotide incorporation and imaging [9] [11]. Semiconductor sequencing (e.g., Ion Torrent) represents another NGS approach, detecting pH changes during nucleotide incorporation rather than using optical methods [10] [11].

- Third-Generation Sequencing: Emerging technologies like Single-Molecule Real-Time (SMRT) sequencing (PacBio) and Nanopore sequencing constitute the third generation [10] [9]. They sequence single DNA molecules in real-time, producing very long reads (averaging 10,000-30,000 base pairs) that are invaluable for resolving complex genomic regions, albeit with historically higher error rates that are now rapidly improving [10] [12].

Table 1: Core Characteristics of Sequencing Technology Generations

| Feature | Sanger (First-Gen) | NGS (Second-Gen) | Long-Read (Third-Gen) |
|---|---|---|---|
| Sequencing Principle | Chain termination with ddNTPs and electrophoresis [9] | Massively parallel sequencing-by-synthesis or semiconductor detection [10] [11] | Single-molecule real-time sequencing or nanopore detection [10] |
| Throughput | Low (one fragment per reaction) | Very High (millions to billions of fragments per run) [9] | High (hundreds of thousands of long fragments) |
| Typical Read Length | Long (500 - 1000 bp) [9] | Short (50 - 600 bp) [9] | Very Long (10,000 - 30,000+ bp) [10] |
| Primary Applications | Validating single genes or variants, cloning | Whole genomes, exomes, transcriptomes, targeted panels, epigenomics [10] [13] | De novo genome assembly, resolving complex structural variants, haplotype phasing [9] |

Workflow and Data Output Comparison

The end-to-end workflow for NGS is more complex than for Sanger sequencing, necessitating specialized infrastructure and expertise.

Figure: NGS vs. Sanger Workflow

The NGS workflow involves more steps upfront in library preparation, including fragmentation and adapter ligation, which are not required for Sanger sequencing [11]. However, this initial complexity enables the massive multiplexing that is the hallmark of NGS. The data output differs radically: a single Sanger reaction yields one sequence read, while a single run on a high-throughput instrument such as the Illumina NovaSeq X can generate up to 26 billion reads [12]. Consequently, the data management challenge for NGS is significant: high-throughput runs can generate terabytes of data that require sophisticated bioinformatics pipelines for alignment, variant calling, and annotation [9] [11].

Economic and Cost-Effectiveness Analysis

The cost conversation has evolved from a simple comparison of per-test list prices to a more nuanced analysis that incorporates throughput, scalability, and the holistic impact on research outcomes and downstream healthcare costs.

Direct and Holistic Cost Comparisons

The most straightforward economic comparison is of direct testing costs. A systematic review of cost-effectiveness studies found that targeted NGS panels (2-52 genes) become cost-effective compared to sequential single-gene testing when four or more genes require analysis [4]. This is because the cost of multiple individual Sanger tests quickly surpasses the single cost of an NGS panel.
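The break-even logic in the paragraph above can be sketched as follows. The prices are hypothetical placeholders, deliberately chosen so that the result coincides with the four-gene threshold reported in the review; actual break-even points depend on local test prices. The function name `break_even_genes` is an assumption for this example.

```python
import math


def break_even_genes(cost_per_single_gene_test, ngs_panel_cost):
    """Smallest gene count at which sequential testing costs at least as much as the panel."""
    if cost_per_single_gene_test <= 0:
        raise ValueError("single-gene test cost must be positive")
    return math.ceil(ngs_panel_cost / cost_per_single_gene_test)


SINGLE_GENE_COST = 250.0   # hypothetical price per single-gene test
PANEL_COST = 900.0         # hypothetical price of one targeted NGS panel

for genes in range(1, 7):
    sequential = genes * SINGLE_GENE_COST
    cheaper = "NGS panel" if PANEL_COST <= sequential else "single-gene testing"
    print(f"{genes} gene(s): sequential={sequential:.0f}, panel={PANEL_COST:.0f} -> {cheaper}")

print(break_even_genes(SINGLE_GENE_COST, PANEL_COST))  # 4
```

This compares direct test prices only; the indirect factors discussed next (turnaround time, staff time, sample consumption) would shift the break-even point further.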

However, a holistic analysis that includes indirect costs reveals further advantages for NGS. This broader view accounts for factors such as:

- Turnaround Time: NGS can provide comprehensive results in hours to days, significantly faster than the weeks often needed for sequential single-gene testing [4]. This accelerates research cycles and, in clinical diagnostics, can lead to faster therapeutic decisions.

- Personnel and Resource Utilization: The streamlined, multiplexed NGS workflow reduces hands-on technical time and laboratory resources per data point compared to managing numerous individual assays [4].

- Sample Requirements: NGS can generate comprehensive genomic data from a limited sample quantity, a critical advantage in fields like oncology where biopsy material is often scarce [4].

Table 2: Economic Comparison of Single-Gene Testing vs. NGS Panels

| Cost Factor | Single-Gene Testing (e.g., Sanger) | NGS Targeted Panel | Economic Implication |
|---|---|---|---|
| Direct Cost per Gene | Low (for one gene) | Higher fixed cost | NGS becomes cost-effective when 4+ genes are tested [4] |
| Total Cost for Multi-Gene Analysis | Increases roughly linearly with each additional gene | Largely fixed for a given panel, regardless of how many of its genes are needed | NGS offers significant savings for comprehensive profiling [4] |
| Turnaround Time | Slow for multiple sequential tests | Fast, simultaneous results for all targets | NGS reduces time-to-result, accelerating R&D [4] |
| Personnel & Resource Cost | High per-data-point effort | Lower per-data-point effort | NGS improves operational efficiency [4] |
| Sample Consumption | High if multiple tests are run | Low (single test) | NGS preserves precious research samples (e.g., tumor biopsies) [4] |

Cost-Effectiveness in Specific Applications

Evidence from various medical and research fields supports the cost-effectiveness of NGS under specific conditions.

- Oncology Biomarker Testing: The aforementioned systematic review, which spanned 12 countries and 6 oncology indications, concluded that the current literature supports the cost-effectiveness of NGS as a biomarker testing strategy, particularly when holistic costs are considered [4].

- Infectious Disease Diagnostics: A 2025 prospective pilot study on postoperative central nervous system infections found that while metagenomic NGS (mNGS) had a higher direct detection cost (¥4,000 vs. ¥2,000 for culture), its shorter turnaround time (1 day vs. 5 days) and resultant reduction in anti-infective costs (¥18,000 vs. ¥23,000) made it cost-effective, with an Incremental Cost-Effectiveness Ratio (ICER) of ¥36,700 per additional timely diagnosis [14] [15].

- Broad Trends: The overall cost of sequencing a human genome has plummeted from nearly $3 billion during the Human Genome Project to under $1,000 today, and even below $200 on some of the latest platforms, a reduction that massively outpaces Moore's Law [9] [12]. This dramatic cost reduction has democratized access to whole-genome sequencing for research and is a primary driver of its growing integration into drug development pipelines [16].

Experimental Protocols and Supporting Data

To illustrate the practical application of these technologies, this section details a typical experimental setup for comparing NGS and traditional methods in a chemogenomics context, such as profiling cancer cell lines for drug response biomarkers.

Detailed Methodologies

Protocol 1: Sequential Single-Gene Sanger Sequencing for Mutation Profiling

1. Sample Preparation: Extract genomic DNA from cell lines or tissues. Quantify and assess quality using spectrophotometry or fluorometry.

2. Target-Specific PCR: Design and optimize PCR primers for each gene target of interest (e.g., KRAS, EGFR, BRAF). Perform individual PCR reactions for each gene for each sample.

3. PCR Product Purification: Treat PCR products with exonuclease I and shrimp alkaline phosphatase (ExoSAP) to remove excess primers and nucleotides.

4. Sanger Sequencing Reaction: Set up sequencing reactions for each purified PCR product using BigDye Terminator chemistry. This involves cycle sequencing with fluorescently labeled ddNTPs.

5. Purification of Sequencing Reactions: Remove unincorporated dyes using column-based or precipitation methods.

6. Capillary Electrophoresis: Load purified reactions onto a genetic analyzer for capillary electrophoresis. The instrument detects the fluorescent signal as DNA fragments are separated by size.

7. Data Analysis: Use sequence analysis software (e.g., Sequence Scanner) to review chromatograms, call bases, and compare sequences to a reference to identify variants. Each variant must be confirmed by repeat PCR and sequencing.

Protocol 2: Targeted Gene Panel Sequencing via NGS

1. Sample Preparation: Extract genomic DNA as in Protocol 1.

2. Library Preparation:

   - Fragmentation: Shear genomic DNA to a desired size (e.g., 200-500 bp) using acoustic shearing or enzymatic fragmentation.

   - End-Repair and A-Tailing: Convert the fragmented DNA into blunt-ended, 5'-phosphorylated fragments, then add a single 'A' base to the 3' ends.

   - Adapter Ligation: Ligate universal adapters containing sequencing primer binding sites and sample-specific barcodes (indexes) to the A-tailed fragments. This allows for multiplexing, that is, pooling dozens or hundreds of samples in a single sequencing run.

   - Library Amplification: Perform a limited-cycle PCR to amplify the adapter-ligated library.

3. Target Enrichment: Hybridize the library to biotinylated probes designed to capture the exons of a defined set of cancer-related genes. Capture the probe-bound library using streptavidin-coated magnetic beads, and wash away non-specific fragments. Elute the enriched library.

4. Sequencing: Pool the enriched, barcoded libraries and load onto an NGS platform (e.g., Illumina MiSeq, NextSeq, or NovaSeq). The system performs cluster generation (bridge amplification on the flow cell) followed by sequencing-by-synthesis with fluorescent reversible terminator nucleotides [11].

5. Bioinformatics Analysis:

   - Demultiplexing: Assign raw sequencing reads to each sample based on their unique barcode.

   - Quality Control & Trimming: Assess read quality and trim adapter sequences and low-quality bases.

   - Alignment: Map the cleaned reads to a reference human genome (e.g., GRCh38).

   - Variant Calling: Use specialized algorithms (e.g., GATK, VarScan) to identify single nucleotide variants (SNVs), insertions/deletions (indels), and copy number variations (CNVs) across all targeted genes simultaneously.

   - Annotation: Interpret the functional impact of identified variants using public databases (e.g., ClinVar, COSMIC).
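To make the demultiplexing step of the bioinformatics analysis concrete, here is a toy sketch in plain Python. It rests on simplifying assumptions that do not hold for production pipelines: the barcode is assumed to sit at the start of each read and must match exactly, whereas real instruments typically read indexes separately and dedicated demultiplexing software tolerates mismatches. The function name, barcodes, and sample names are all hypothetical.

```python
from collections import defaultdict


def demultiplex(reads, barcode_to_sample, barcode_length):
    """Group reads by sample using an exact-match barcode at the start of each read."""
    by_sample = defaultdict(list)
    for read in reads:
        barcode, insert = read[:barcode_length], read[barcode_length:]
        # Reads whose barcode is not recognized are kept aside, not discarded.
        sample = barcode_to_sample.get(barcode, "undetermined")
        by_sample[sample].append(insert)
    return dict(by_sample)


# Hypothetical 4-base barcodes and short reads for illustration only.
barcodes = {"ACGT": "sample_A", "TGCA": "sample_B"}
reads = ["ACGTTTGA", "TGCAGGCC", "ACGTCCAA", "NNNNAAAA"]

for sample, inserts in demultiplex(reads, barcodes, barcode_length=4).items():
    print(sample, inserts)
```

Keeping an explicit "undetermined" bin mirrors common practice and makes it easy to spot index problems when that bin is unexpectedly large.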

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Kits for Sequencing Workflows

| Item | Function in Workflow | Example in Protocol |
|---|---|---|
| Nucleic Acid Extraction Kits | Isolate high-quality, pure DNA/RNA from biological samples (cell lines, tissues, blood). | Used in the initial step of both protocols [11]. |
| PCR Reagents & Primers | Amplify specific genomic regions for Sanger or amplify adapter-ligated libraries for NGS. | Target-specific primers for Sanger; universal primers for NGS library amplification [11]. |
| NGS Library Prep Kits | Convert fragmented DNA/RNA into a sequencing-ready library by end-repair, A-tailing, and adapter ligation. | Kits containing enzymes and buffers for steps in Protocol 2, Part 2 [11]. |
| Target Enrichment Panels | Biotinylated probe sets to capture and enrich specific genomic regions of interest from a whole-genome library. | Cancer gene panel used in Protocol 2, Part 3 [4]. |
| Indexing (Barcoding) Oligos | Unique DNA sequences ligated to each sample's library, enabling multiplexing of many samples in a single run. | Adapters containing barcodes in Protocol 2, Part 2 [11]. |
| Sequencing Chemistries | Fluorescently labeled reversible-terminator nucleotides (Illumina SBS) or unmodified nucleotides detected through pH change (Ion Torrent) that enable the sequencing reaction. | Reversible terminators for Illumina platforms [10] [11]. |

The technical and economic comparison between NGS and traditional methods reveals a clear trajectory in genomics research. Sanger sequencing remains a powerful, unambiguous tool for validating a limited number of specific genetic variants. However, for the broad, discovery-driven profiling intrinsic to modern chemogenomics and drug development, NGS provides unparalleled scale, speed, and comprehensive data. The economic argument is equally compelling: NGS becomes the cost-effective solution when the research question expands beyond a handful of genes, as its multiplexing capability drastically reduces the per-gene cost and operational burden [4]. As sequencing costs continue to fall and bioinformatics tools become more sophisticated and integrated with AI [13] [16], the adoption of NGS as the default technology for genomic analysis in research and clinical diagnostics is set to expand further, solidifying its role in advancing personalized medicine.

Market Dynamics and Growth Trajectory of the NGS Sector (2025-2032 Forecasts)

The next-generation sequencing (NGS) market is experiencing transformative growth, propelled by technological advancements, declining costs, and expanding applications across biomedical research and clinical diagnostics. This sector represents a paradigm shift in genomics, enabling ultra-high throughput, scalability, and speed that have revolutionized biological investigation [17].

The global NGS market is on a strong growth trajectory, with valuations and forecasts indicating substantial expansion through 2032 and, in one analysis, 2034. Table 1 summarizes the quantitative market projections from leading industry analyses.

Table 1: Global NGS Market Size and Forecasts

| Market Research Firm | Baseline Value (Year) | Forecast Value (Year) | Compound Annual Growth Rate (CAGR) |
|---|---|---|---|
| Coherent Market Insights | USD 18.94 Bn (2025) | USD 49.49 Bn (2032) | 14.7% [18] |
| Fortune Business Insights | USD 10.44 Bn (2025) | USD 27.55 Bn (2032) | 14.9% [17] |
| Precedence Research | USD 15.53 Bn (2025) | USD 60.33 Bn (2034) | 16.20% (2025-2034) [19] |
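The growth rates in Table 1 can be cross-checked with the standard compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1. The short script below is an illustrative check using the baseline and forecast values from the table; the function and variable names are assumptions for this example.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate as a fraction (0.147 means 14.7%)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start value and number of years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


# (firm, baseline USD Bn, baseline year, forecast USD Bn, forecast year, reported CAGR %)
FORECASTS = [
    ("Coherent Market Insights", 18.94, 2025, 49.49, 2032, 14.7),
    ("Fortune Business Insights", 10.44, 2025, 27.55, 2032, 14.9),
    ("Precedence Research", 15.53, 2025, 60.33, 2034, 16.2),
]

for firm, start, y0, end, y1, reported in FORECASTS:
    implied = 100 * cagr(start, end, y1 - y0)
    print(f"{firm}: implied {implied:.1f}% vs reported {reported}%")
```

The implied rates land within roughly a tenth of a percentage point of the reported figures, which suggests the table is internally consistent even though the three firms start from quite different baseline valuations.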

Several key factors are driving this growth:

- Precision Medicine: The rising demand for precision medicine is a significant driver. NGS technology enables rapid sequencing of large portions of an individual's genome, facilitating the creation of tailored treatments instead of a one-size-fits-all approach [19].

- Expanding Clinical Applications: NGS has diversified applications in diagnostics, particularly in oncology, rare genetic diseases, reproductive health, and infectious disease management. Its use in identifying genetic mutations for targeted cancer therapies and non-invasive prenatal testing (NIPT) has become standard practice [18] [20].

- Technological Innovation and Cost Reduction: The cost of sequencing has decreased dramatically, increasing accessibility for laboratories of all sizes. There has been a 96% decrease in the average cost-per-genome since 2013, with the industry now achieving benchmarks like the sub-$100 genome [21] [22].

- Government and Institutional Initiatives: Increased research and development funding, along with national genome projects (e.g., the Genome India Project), are accelerating NGS adoption and integration into healthcare systems worldwide [18] [17].

Product and Technology Comparison

The NGS landscape features multiple platforms and technologies, each with distinct strengths, operational costs, and ideal use cases. A comparative analysis is essential for informed decision-making.

Sequencing Platforms and Operational Costs

Table 2 provides a detailed comparison of selected high-throughput sequencers available on the market, based on data from platform providers.

Table 2: High-Throughput Sequencer Comparison (Data as of Q3 2024)

| Sequencer | Manufacturer | Approx. Instrument Cost | Cost per Genome (30x) | Key Strengths | Considerations |
|---|---|---|---|---|---|
| DNBSEQ-T7 | Complete Genomics | Not Specified | $150 [22] | Low operational cost, field-tested DNBSEQ technology [22]. | |
| NovaSeq X Plus | Illumina | >2x DNBSEQ-T7 cost [22] | $200 [22] | Established ecosystem, extensive support, and community [21]. | Higher initial investment and cost per genome [22]. |
| UG100 | Ultima Genomics | 2.5x DNBSEQ-T7 cost [22] | $100 [22] | Lowest consumable cost per genome [22]. | New, unproven technology platform [22]. |

Sequencing Technologies and Technical Specifications

Different sequencing technologies underlie these platforms, each with specific performance characteristics. Table 3 outlines the major technologies.

Table 3: NGS Technology Comparison

| Technology | Representative Platform | Amplification Type | Typical Read Length | Common Applications |
|---|---|---|---|---|
| Sequencing by Synthesis (SBS) | Illumina | Bridge amplification | Short (36-300 bp) [10] | Whole-genome, exome, transcriptome, targeted sequencing [17] [10]. |
| Ion Semiconductor | Ion Torrent | Emulsion PCR | Short (200-400 bp) [10] | Targeted sequencing, infectious disease, cancer panels [10]. |
| Single-Molecule Real-Time (SMRT) | PacBio | None (amplification-free) | Long (avg. 10,000-25,000 bp) [10] | De novo assembly, resolving complex regions, full-length transcript sequencing [10]. |
| Nanopore | Oxford Nanopore | None (amplification-free) | Long (avg. 10,000-30,000 bp) [10] | Real-time sequencing, field applications, metagenomics [10]. |

Figure: NGS Technology Decision Workflow

Cost-Effectiveness Analysis: NGS vs Traditional Methods in Chemogenomics

A core thesis for NGS adoption in chemogenomics research is its superior cost-effectiveness compared to traditional molecular methods. While initial instrument investment may be higher, NGS provides a broader discovery power that can replace multiple single-use tests.

Conceptual Framework for Economic Evaluation

Economic evaluations of NGS must account for the total cost of ownership and downstream value. Key considerations for a model-based cost-effectiveness analysis (CEA) in a research context include [23]:

- Beyond Reagent Costs: The evaluation should include instrument purchase, library preparation, labor, data analysis, and storage costs, not just sequencing consumables [21] [23].

- Value of Comprehensive Data: Unlike traditional methods that target specific pre-defined markers (e.g., Sanger sequencing, PCR), NGS identifies variants across thousands of regions simultaneously with single-base resolution. This unbiased approach can uncover novel biomarkers and mechanisms in a single experiment, accelerating discovery [20].

- Replacement of Multiple Assays: One NGS run can potentially replace several separate experiments (e.g., SNP arrays, expression arrays, Sanger sequencing), consolidating costs and saving valuable research time [21].
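To make the total-cost-of-ownership idea concrete, the following minimal Python sketch folds instrument depreciation, library preparation, labor, analysis, and storage into a per-sample figure. Every cost value, the `RunCosts` and `cost_per_sample` names, and the straight-line depreciation model are illustrative assumptions, not figures from the cited sources.

```python
from dataclasses import dataclass


@dataclass
class RunCosts:
    """Per-run cost components in one currency; all values are hypothetical."""
    consumables: float    # sequencing reagents and flow cell
    library_prep: float   # library preparation for all samples in the run
    labor: float          # hands-on technician and analyst time
    data_analysis: float  # compute for alignment, quantification, etc.
    storage: float        # data storage attributable to this run


def cost_per_sample(run: RunCosts, samples_per_run: int,
                    instrument_price: float, lifetime_runs: int) -> float:
    """Fully loaded cost per sample with straight-line instrument depreciation."""
    if samples_per_run <= 0 or lifetime_runs <= 0:
        raise ValueError("samples_per_run and lifetime_runs must be positive")
    depreciation_per_run = instrument_price / lifetime_runs
    run_total = (run.consumables + run.library_prep + run.labor
                 + run.data_analysis + run.storage + depreciation_per_run)
    return run_total / samples_per_run


# Placeholder inputs chosen only to illustrate the calculation.
example = RunCosts(consumables=2000.0, library_prep=1200.0, labor=600.0,
                   data_analysis=300.0, storage=100.0)
full = cost_per_sample(example, samples_per_run=24,
                       instrument_price=250_000.0, lifetime_runs=500)
reagent_only = example.consumables / 24
print(f"Reagent-only: {reagent_only:.2f}  Fully loaded: {full:.2f}")
```

With these placeholder inputs the fully loaded figure is more than twice the reagent-only figure, which is the gap a consumables-only comparison would miss.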

Experimental Protocol: Gene Expression Profiling in Drug Response

This protocol compares NGS-based RNA-Seq to the traditional method of quantitative PCR (qPCR) for profiling gene expression changes in cell lines treated with novel chemical compounds.

1. Hypothesis: RNA-Seq provides a more cost-effective and comprehensive profile of transcriptomic changes in response to compound treatment compared to a targeted qPCR panel.

2. Sample Preparation:

- Treat a human cell line (e.g., HepG2) with a chemical compound of interest and a DMSO vehicle control in triplicate.

- After 24 hours, extract total RNA and assess quality and integrity.

3. Traditional Method (qPCR):

- Reverse Transcription: Convert total RNA to cDNA.

- qPCR Workflow: Perform qPCR reactions using a pre-designed panel of 50 genes involved in drug metabolism and stress response. This requires prior knowledge of which genes to target.

- Data Analysis: Calculate fold-change using the ΔΔCt method for the 50 pre-selected genes.

4. NGS Method (RNA-Seq):

- Library Preparation: Use a poly-A selection kit to enrich for mRNA from the same RNA samples. Prepare sequencing libraries with dual indexing to allow for sample multiplexing.

- Sequencing: Pool all libraries and sequence on a benchtop sequencer (e.g., Illumina NextSeq 1000/2000) to a depth of 25 million reads per sample [20].

- Data Analysis: Align reads to the reference genome, quantify gene-level counts, and perform differential expression analysis. The results will cover all ~20,000 genes in the transcriptome.

5. Key Metrics for Comparison:

- Total Cost per Sample: Include all consumables and instrument depreciation for both methods.

- Number of Genes Analyzed: 50 (qPCR) vs. all detected genes (RNA-Seq).

- Novel Findings: The number of significantly dysregulated genes or pathways discovered by RNA-Seq that were not on the original qPCR panel.

- Time to Results: Total hands-on and instrument time.

Expected Outcome: While the per-sample cost for RNA-Seq may be higher in a single experiment, its ability to generate a hypothesis-free, genome-wide expression profile often makes it more cost-effective in the long run by revealing unexpected drug targets or mechanisms of action that would require multiple, sequential qPCR experiments to uncover.
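The ΔΔCt calculation named in the qPCR arm of the protocol can be sketched in a few lines of Python. The sketch assumes roughly 100% amplification efficiency and a single reference gene; the Ct values and the function name `fold_change_ddct` are hypothetical and serve only to show the arithmetic.

```python
from statistics import mean


def fold_change_ddct(target_treated, ref_treated, target_control, ref_control):
    """Relative expression by the 2^-ddCt method (assumes ~100% PCR efficiency)."""
    # Normalize the target gene to the reference gene within each condition.
    d_ct_treated = mean(target_treated) - mean(ref_treated)
    d_ct_control = mean(target_control) - mean(ref_control)
    # Compare the treated condition with the vehicle control.
    dd_ct = d_ct_treated - d_ct_control
    return 2 ** (-dd_ct)


# Hypothetical triplicate Ct values for one gene on the 50-gene panel.
fc = fold_change_ddct(
    target_treated=[22.0, 22.1, 21.9],
    ref_treated=[18.0, 18.1, 17.9],
    target_control=[24.0, 24.2, 23.8],
    ref_control=[18.0, 17.9, 18.1],
)
print(f"Fold change (treated vs. DMSO): {fc:.2f}")
```

Here the target gene crosses threshold two cycles earlier after normalization, which corresponds to an approximately four-fold induction.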

Research Reagent Solutions for NGS in Chemogenomics

Table 4 details essential materials and reagents for a typical NGS workflow in a chemogenomics setting.

Table 4: Essential Research Reagent Solutions for NGS

| Item | Function | Example in Chemogenomics |

|---|---|---|

| Nucleic Acid Extraction Kits | Isolate high-quality DNA or RNA from complex biological samples (cells, tissues). | Extract RNA from compound-treated cell lines for transcriptomic studies [20]. |

| Library Preparation Kits | Fragment DNA/cDNA and attach adapter sequences for sequencing. | Kits for RNA-Seq, whole-genome sequencing, or targeted panels for oncogenes [18] [20]. |

| Sequence-Specific Baits | For hybrid capture in targeted sequencing, enriching genomic regions of interest. | Focus sequencing on a defined set of 500 genes involved in drug metabolism and pharmacokinetics (DMPK) [20]. |

| Quality Control Kits/Instruments | Quantify and assess the integrity of nucleic acids and final libraries. | Use of a nucleic acid quantitation instrument and quality analyzer is critical pre-sequencing [21]. |

| Indexing Oligonucleotides | Barcode individual samples to allow multiplexing in a single sequencing run. | Pooling RNA-Seq libraries from dozens of compound treatments to reduce cost per sample [21]. |

| Cluster & Sequencing Kits | Flow cell reagents and enzymes for on-instrument cluster generation and sequencing-by-synthesis. | Platform-specific consumables (e.g., Illumina's SBS chemistry) required to execute the sequencing run [18] [10]. |
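The cost benefit of indexing and pooling comes down to simple arithmetic, sketched below in Python. The 25 million reads per sample comes from the RNA-Seq protocol above; the run output of 400 million reads and the run cost are hypothetical placeholders, as are the function names.

```python
def samples_per_run(run_output_reads: int, reads_per_sample: int) -> int:
    """Number of indexed libraries that fit in one run at the target depth."""
    if reads_per_sample <= 0:
        raise ValueError("reads_per_sample must be positive")
    return run_output_reads // reads_per_sample


def sequencing_cost_per_sample(run_cost: float, n_samples: int) -> float:
    """Share of the run cost carried by each pooled sample."""
    if n_samples <= 0:
        raise ValueError("n_samples must be positive")
    return run_cost / n_samples


READS_PER_SAMPLE = 25_000_000   # depth stated in the RNA-Seq protocol
RUN_OUTPUT_READS = 400_000_000  # hypothetical run output
RUN_COST = 1600.0               # hypothetical consumable cost per run

n = samples_per_run(RUN_OUTPUT_READS, READS_PER_SAMPLE)
print(f"{n} samples per run at {sequencing_cost_per_sample(RUN_COST, n):.2f} each")
```

Running fewer samples than the run can hold leaves capacity unused, so the per-sample cost rises in proportion.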

Regional Analysis and End-User Adoption

The adoption and growth of NGS technology vary significantly across regions and end-user segments, reflecting differences in infrastructure, funding, and research focus.

Regional Dominance and Growth: North America is the dominant region, with estimates of its global market share in 2024-2025 ranging from 44.2% to 55.65% depending on the market report [18] [17]. This leadership is attributed to a strong presence of key market players, robust research infrastructure, significant R&D investments, and the early integration of genomics into clinical applications. However, the Asia Pacific region is projected to be the fastest-growing market, driven by rising healthcare needs, technological advancements, falling sequencing costs, and supportive government genome initiatives in countries like India, Japan, and China [18] [17] [19].

End-User Segmentation: The market is segmented by end-users who leverage NGS for different purposes.

- Hospitals & Clinics: This segment is increasingly integrating NGS for precision diagnostics and personalized treatment planning, especially in oncology [18].

- Pharmaceutical & Biotechnology Companies: These entities represent a major end-user segment, utilizing NGS extensively in drug discovery and development, particularly for identifying therapeutic targets and developing companion diagnostics [17].

- Academic & Research Institutes: This segment continues to hold a notable market share, using NGS as a fundamental tool for a wide array of basic and applied research projects [19].

Future Outlook and Emerging Trends

The NGS sector continues to evolve rapidly, with several key trends shaping its future trajectory beyond 2025.

- Multiomics and AI Integration: The integration of genomic data with other molecular data types (epigenomics, transcriptomics, proteomics)—known as multiomics—is becoming a standard for comprehensive biological insight. Artificial intelligence (AI) and machine learning (ML) are critical for analyzing these complex, high-dimensional datasets to uncover novel biomarkers and biological pathways [24].

- The Rise of Long-Read Sequencing: While short-read sequencing dominates the market, long-read technologies (e.g., PacBio, Oxford Nanopore) are gaining traction for their ability to resolve complex genomic regions, detect structural variations, and perform de novo assemblies without inference [10] [24].

- Spatial Genomics: A shift toward in situ sequencing of cells within intact tissue is emerging. This spatial context provides unparalleled insight into cellular interactions and the tissue microenvironment, which is crucial for understanding cancer and developmental biology [24].

- Decentralization and Commoditization: Sequencing is becoming more accessible, moving beyond large central genomic centers to individual laboratories and clinics. As the technology matures and costs decline further, a trend toward commoditization is expected, shifting competitive focus toward ease-of-use, workflow integration, and data analysis capabilities [22] [24].

The Expanding Role of Chemogenomics in Targeted Therapy and Drug Discovery

The integration of chemogenomics into targeted therapy and drug discovery represents a paradigm shift in how researchers approach disease treatment. This approach, which systematically investigates the interactions between chemical compounds and biological targets, is increasingly reliant on advanced genomic technologies. Next-generation sequencing (NGS) has emerged as a pivotal tool in this domain, enabling comprehensive genomic profiling that reveals drug-target interactions on an unprecedented scale. While the scientific value of NGS is widely acknowledged, its adoption in research and clinical settings hinges critically on demonstrating cost-effectiveness compared to traditional single-gene testing methods. A growing body of evidence indicates that when considering the full testing workflow—including turnaround time, personnel requirements, and the number of hospital visits—targeted NGS panels provide significant cost savings over conventional biomarker testing approaches, particularly when four or more genes require analysis [4]. This economic rationale, coupled with its technical capabilities, positions NGS as a cornerstone technology for advancing chemogenomic applications in precision medicine.

NGS vs. Traditional Methods: A Quantitative Cost-Effectiveness Analysis

The economic evaluation of NGS versus traditional single-gene testing methods reveals clear advantages under specific conditions. Traditional methods, while inexpensive and readily accessible for individual biomarker detection, become increasingly costly and inefficient when multiple genetic alterations need assessment. Comparative analyses across various oncology indications and geographical regions demonstrate that targeted panel testing (2-52 genes) becomes cost-effective when four or more genes require simultaneous analysis [4].

Table 1: Cost-Effectiveness Comparison of NGS vs. Traditional Single-Gene Testing

| Evaluation Metric | Traditional Single-Gene Testing | Targeted NGS Panels (2-52 genes) | Large NGS Panels (100+ genes) |

|---|---|---|---|

| Cost per single gene | Low | Moderate | High |

| Cost efficiency threshold | N/A | 4+ genes | Generally not cost-effective |

| Turnaround time | Variable (sequential testing) | Reduced (parallel testing) | Varies by platform |

| Personnel requirements | Higher (multiple tests) | Lower (single workflow) | Lower (single workflow) |

| Tissue requirements | Higher (sequential consumption) | Lower (single consumption) | Lower (single consumption) |

| Hospital visits | Potentially more | Reduced | Reduced |

The holistic value of NGS extends beyond direct testing costs. Studies evaluating long-term patient outcomes and healthcare system costs demonstrate that NGS reduces turnaround time, healthcare staff requirements, number of hospital visits, and overall hospital costs [4]. This comprehensive economic advantage positions NGS as a transformative technology for chemogenomic research and clinical application, particularly in complex diseases like cancer where multiple genetic drivers may be present simultaneously.
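The gene-count threshold can be illustrated with a simple break-even calculation in Python. This sketch considers direct test costs only, whereas the review evidence [4] also reflects turnaround time, staffing, and hospital visits. The two prices are hypothetical and were picked so that the break-even falls at four genes; they are not taken from the cited studies.

```python
import math


def break_even_gene_count(single_gene_cost: float, panel_cost: float) -> int:
    """Smallest gene count at which one panel costs no more than sequential tests."""
    if single_gene_cost <= 0:
        raise ValueError("single_gene_cost must be positive")
    return max(1, math.ceil(panel_cost / single_gene_cost))


def cheaper_strategy(n_genes: int, single_gene_cost: float, panel_cost: float) -> str:
    """Compare n sequential single-gene tests with one targeted panel."""
    sequential_cost = n_genes * single_gene_cost
    return "panel" if panel_cost <= sequential_cost else "single-gene"


SINGLE_GENE_COST = 300.0  # hypothetical price of one single-gene assay
PANEL_COST = 1100.0       # hypothetical price of one targeted NGS panel

threshold = break_even_gene_count(SINGLE_GENE_COST, PANEL_COST)
print(f"Panel is no more expensive from {threshold} genes upward")
for n in range(1, 7):
    print(n, cheaper_strategy(n, SINGLE_GENE_COST, PANEL_COST))
```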

Experimental Protocols in Chemogenomics: Integrating NGS with Functional Drug Screening

Advanced chemogenomic approaches integrate genomic profiling with functional drug response data to identify patient-specific treatment options. The following detailed methodology from a clinical study on acute myeloid leukemia (AML) illustrates this integrated approach [25].

Patient Sampling and Preparation

- Sample Collection: Obtain bone marrow aspirates (5-10 mL) and peripheral blood (20 mL) in EDTA tubes from relapsed/refractory AML patients.

- Blast Enrichment: Isolate mononuclear cells using Ficoll density gradient centrifugation (400-500 × g, 30 minutes, room temperature).

- Cryopreservation: Suspend cells in fetal bovine serum with 10% DMSO, freeze at -80°C using controlled-rate freezing, transfer to liquid nitrogen for long-term storage.

Targeted Next-Generation Sequencing Protocol

- DNA Extraction: Use the QIAamp DNA Blood Mini Kit according to the manufacturer's instructions, quantify via NanoDrop, and assess quality by agarose gel electrophoresis.

- Library Preparation: Employ custom targeted panels (e.g., 40-gene panel covering recurrent AML mutations) with Illumina Nextera Flex for enrichment, following the manufacturer's protocol with 100 ng input DNA.

- Sequencing Parameters: Run on Illumina NextSeq 550 platform with 2×150 bp paired-end reads, minimum coverage 500x, target coverage 1000x.

- Bioinformatic Analysis: Process raw data through FastQC for quality control, align to GRCh37 with BWA, perform variant calling with GATK, annotate variants using SnpEff and custom databases.

Ex Vivo Drug Sensitivity and Resistance Profiling (DSRP)

- Thawing and Viability: Rapidly thaw cryopreserved cells in 37°C water bath, wash twice in pre-warmed RPMI-1640, assess viability via trypan blue exclusion (minimum 80% required).

- Drug Panel Preparation: Prepare 76-drug panel in 10 concentration points (0.1 nM - 10 µM) using DMSO stocks stored at -80°C, include controls (medium only, DMSO, cytotoxic controls).

- Cell Culture and Dosing: Plate 5,000 viable cells/well in 384-well plates, add drug dilutions using automated liquid handler, incubate for 72 hours at 37°C, 5% CO₂.

- Viability Assessment: Measure cell viability using CellTiter-Glo luminescent assay according to manufacturer's protocol, read luminescence on microplate reader.

- Data Analysis: Calculate EC50 values using nonlinear regression (sigmoidal dose-response model in GraphPad Prism), normalize data against controls, generate Z-scores for cross-patient comparison.
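The drug-panel layout described above can be checked with a short Python sketch that generates the ten concentration points and counts the wells they occupy. The protocol does not state how the points are spaced, so equal spacing on a log scale is an assumption here. The plate count is a lower bound that ignores control wells and replicates.

```python
import math


def log_dilution_series(low_nm: float, high_nm: float, n_points: int):
    """Concentrations (nM) equally spaced on a log scale, endpoints included."""
    if n_points < 2 or low_nm <= 0 or high_nm <= low_nm:
        raise ValueError("need n_points >= 2 and 0 < low_nm < high_nm")
    step = (high_nm / low_nm) ** (1 / (n_points - 1))
    return [low_nm * step ** i for i in range(n_points)]


N_DRUGS = 76           # panel size stated in the protocol
WELLS_PER_PLATE = 384  # plate format stated in the protocol

concs_nm = log_dilution_series(0.1, 10_000.0, 10)  # 0.1 nM to 10 uM
drug_wells = N_DRUGS * len(concs_nm)
min_plates = math.ceil(drug_wells / WELLS_PER_PLATE)

print([f"{c:.3g}" for c in concs_nm])
print(f"{drug_wells} drug wells -> at least {min_plates} plates before controls")
```

Under this assumption each step is roughly a 3.6-fold dilution, and the 760 drug wells nearly fill two 384-well plates, so control wells would push a single-replicate screen onto a third plate or require a reduced layout.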

Data Integration and Treatment Strategy

- Multidisciplinary Review: Convene molecular biologists, clinicians, and bioinformaticians to review integrated NGS and DSRP data.

- Drug Selection Criteria: Apply Z-score threshold <-0.5 to identify sensitive drugs, prioritize drugs targeting actionable mutations identified by NGS.

- Combination Design: Consider drug accessibility, potential toxicities, and literature support for combinations when designing polytherapy regimens.
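The Z-score selection rule can be sketched in Python as follows. The source does not specify how the Z-scores are computed, so this sketch assumes they are taken on log10 EC50 values relative to a reference cohort, using the sample standard deviation. Drug names, EC50 values, and function names are hypothetical.

```python
import math
from statistics import mean, stdev


def ec50_z_score(patient_ec50_nm: float, cohort_ec50_nm) -> float:
    """Z-score of a patient's log10 EC50 against a reference cohort."""
    logs = [math.log10(v) for v in cohort_ec50_nm]
    return (math.log10(patient_ec50_nm) - mean(logs)) / stdev(logs)


def sensitive_drugs(patient: dict, cohort: dict, threshold: float = -0.5) -> dict:
    """Drugs whose Z-score falls below the threshold, most sensitive first."""
    hits = {}
    for drug, ec50 in patient.items():
        z = ec50_z_score(ec50, cohort[drug])
        if z < threshold:
            hits[drug] = z
    return dict(sorted(hits.items(), key=lambda item: item[1]))


# Hypothetical EC50 values in nM.
cohort = {"drug_A": [10.0, 100.0, 1000.0], "drug_B": [10.0, 100.0, 1000.0]}
patient = {"drug_A": 10.0, "drug_B": 100.0}

hits = sensitive_drugs(patient, cohort)
print(hits)
```

A lower EC50 than the cohort average yields a negative Z-score, so only drug_A passes the -0.5 threshold in this toy example.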

Table 2: Essential Research Reagent Solutions for Chemogenomic Studies

| Reagent Category | Specific Examples | Function in Workflow |

|---|---|---|

| Nucleic Acid Extraction | QIAamp DNA Blood Mini Kit | High-quality DNA isolation for NGS library preparation |

| Targeted Enrichment | Illumina Nextera Flex | Capture and amplify genes of interest for sequencing |

| Sequencing Chemistry | Illumina SBS reagents | Enable sequencing-by-synthesis with fluorescent detection |

| Cell Culture Media | RPMI-1640 with supplements | Maintain cell viability during drug sensitivity testing |

| Viability Assays | CellTiter-Glo | Quantify ATP levels as surrogate for cell viability |

| Drug Libraries | Custom 76-compound panel | Test broad range of targeted and chemotherapeutic agents |

Technological Advancements: The Evolving NGS Landscape in Chemogenomics

The NGS technology landscape has evolved rapidly, with significant implications for chemogenomic applications. Key developments across sequencing platforms have enhanced the feasibility of comprehensive genomic profiling in research and clinical contexts.

Platform-Specific Technical Advancements

- Oxford Nanopore Technologies: The 2024 launch of Q30 Duplex Kit14 enables dual-strand sequencing with accuracy exceeding 99.9%, rivaling short-read platforms while maintaining advantages in read length and real-time analysis [26].

- Pacific Biosciences: The Revio system with HiFi chemistry produces highly accurate long reads (10-25 kb, Q30-Q40 accuracy) through circular consensus sequencing, ideal for detecting complex structural variations [26].

- Illumina: The NovaSeq X series provides ultra-high throughput (up to 16 terabases per run), dramatically reducing per-genome sequencing costs and enabling large-scale cohort studies [26].

Emerging Methodological Innovations

Recent innovations focus on multi-omic integration and spatial context. Pacific Biosciences' SPRQ chemistry, launched in late 2024, combines DNA sequence information with regulatory data by using a transposase to label accessible chromatin regions with 6-methyladenine marks, enabling simultaneous assessment of sequence and structure from the same molecule [26]. Spatial biology approaches are also advancing, with 2025 expected to bring increased adoption of in situ sequencing of cells within native tissue contexts, allowing researchers to explore complex cellular interactions and disease mechanisms with unprecedented resolution [24].

Data Analysis and Integration: AI-Enhanced Computational Frameworks

The massive datasets generated by chemogenomic approaches require sophisticated computational tools for meaningful interpretation. Artificial intelligence (AI) and machine learning have become indispensable for extracting biological insights from integrated genomic and drug response data.

AI-based computational tools now play pivotal roles in strategic experiment planning, assisting researchers in predicting outcomes, optimizing protocols, and anticipating potential challenges [27]. In genomic analysis specifically, tools like Google's DeepVariant utilize deep learning to identify genetic variants with greater accuracy than traditional methods, while other AI models analyze polygenic risk scores to predict disease susceptibility and drug responses [13]. The integration of AI with multi-omics data has further enhanced its capacity to predict biological outcomes, contributing significantly to advancements in precision medicine [13].

Cloud computing platforms have emerged as essential infrastructure for managing chemogenomic data. Services like Amazon Web Services (AWS) and Google Cloud Genomics provide scalable solutions for storing, processing, and analyzing terabytes of sequencing data, enabling global collaboration while maintaining compliance with regulatory frameworks such as HIPAA and GDPR [13]. This computational infrastructure makes advanced chemogenomic analysis accessible to research institutions without significant local computational resources.

The expanding role of chemogenomics in targeted therapy and drug discovery is intrinsically linked to advancements in NGS technologies and their demonstrated cost-effectiveness compared to traditional testing approaches. The economic evidence is clear: when considering the complete testing workflow and clinical decision-making process, targeted NGS panels provide significant advantages over sequential single-gene testing for multi-genic conditions. As sequencing costs continue to decline and platforms evolve toward multi-omic integration, the value proposition of comprehensive chemogenomic profiling will further strengthen. The convergence of more affordable sequencing, enhanced computational tools, and standardized analytical frameworks will accelerate the adoption of these approaches, ultimately enabling more precise and personalized therapeutic interventions across a broadening spectrum of diseases. Future developments will likely focus on streamlining the integration of diverse data types—genomic, transcriptomic, epigenomic, and proteomic—into unified chemogenomic models that better predict drug efficacy and identify novel therapeutic opportunities.

Visual Workflows: Experimental Design and Data Integration

Chemogenomic Workflow Diagram

NGS Cost-Effectiveness Decision Pathway

Strategic Implementation and Clinical Applications of NGS

Next-generation sequencing (NGS) has emerged as a transformative technology for comprehensive genomic profiling in advanced non-small cell lung cancer (NSCLC), enabling simultaneous detection of multiple biomarkers to guide targeted therapy decisions. This case study objectively compares the performance, cost-effectiveness, and clinical utility of NGS-based approaches against traditional single-gene testing methods within chemogenomics research. The analysis demonstrates that targeted NGS panels become cost-effective when four or more genes require testing, with comprehensive profiling significantly increasing patient eligibility for personalized treatments compared to limited panels. While implementation requires consideration of bioinformatics infrastructure and testing workflows, NGS technologies provide researchers and clinicians with a powerful tool for advancing precision oncology in NSCLC management.

Non-small cell lung cancer constitutes approximately 85% of all lung cancer diagnoses and remains the leading cause of cancer-related mortality worldwide [28]. The identification of oncogenic driver mutations in genes such as EGFR, ALK, ROS1, KRAS, MET, RET, BRAF, and NTRK has revolutionized NSCLC management, enabling precision medicine approaches that significantly improve patient outcomes [28] [29]. These mutations define distinct molecular subsets with specific therapeutic vulnerabilities, making comprehensive molecular profiling a critical component of modern NSCLC management.

International guidelines now recommend comprehensive molecular profiling for all patients with advanced NSCLC to identify actionable mutations and guide optimal treatment strategies [29]. The prevalence of actionable genomic alterations in early-stage NSCLC is comparable to that in advanced disease, supporting the integration of genomic analysis as a cornerstone for therapeutic decision-making across disease stages [28]. Traditionally, single-gene testing approaches have been used for biomarker detection, but these methods present significant limitations in tissue utilization, turnaround time, and cost efficiency when multiple biomarkers require assessment.

Methodology: NGS Versus Traditional Testing Approaches

Next-Generation Sequencing Technology

Next-generation sequencing represents a paradigm shift in genomic analysis, enabling the simultaneous sequencing of millions of DNA fragments in a high-throughput and cost-effective manner [10]. Unlike traditional Sanger sequencing, which is time-intensive and costly at scale, NGS allows comprehensive genomic characterization through parallel sequencing, providing researchers with detailed information about genome structure, genetic variations, and gene expression profiles [13]. Second-generation platforms such as Illumina, Ion Torrent, and SOLiD greatly increased throughput and speed through massively parallel sequencing of amplified DNA fragments, while third-generation technologies from Pacific Biosciences and Oxford Nanopore offer real-time, long-read sequencing capabilities [10].

Table 1: Comparison of Major NGS Platforms for Cancer Genomics

| Platform | Technology | Amplification Type | Read Length | Primary Applications in NSCLC |

|---|---|---|---|---|

| Illumina | Sequencing-by-synthesis | Bridge PCR | 36-300 bp | Targeted panels, whole exome, transcriptome |

| Ion Torrent | Semiconductor sequencing | Emulsion PCR | 200-400 bp | Targeted gene panels, hotspot identification |

| PacBio SMRT | Single-molecule real-time | Without PCR | 10,000-25,000 bp | Structural variant detection, fusion genes |

| Oxford Nanopore | Electrical impedance detection | Without PCR | 10,000-30,000 bp | Real-time sequencing, fusion identification |

Traditional Single-Gene Testing Methods

Conventional biomarker testing in NSCLC has largely relied on single-gene assays that detect individual alterations through techniques such as polymerase chain reaction (PCR), Sanger sequencing, and fluorescent in situ hybridization (FISH) [4]. While these methods are established and readily accessible, they have inherent limitations for comprehensive genomic profiling. Each single-gene test typically interrogates only one gene or alteration, so assessing multiple biomarkers requires sequential testing that consumes valuable tissue, extends turnaround time, and increases overall costs [4]. The limited scope of single-gene testing also means it misses complex genomic alterations, co-mutations with prognostic significance, and novel biomarkers beyond currently established targets.

Experimental Protocols for NGS in NSCLC

For researchers implementing NGS-based genomic profiling in NSCLC, the following core experimental workflow represents standard methodology:

Sample Preparation and Quality Control

- Obtain formalin-fixed paraffin-embedded (FFPE) tumor specimens or liquid biopsy samples

- Perform manual microdissection to select representative tumor areas with sufficient tumor cellularity (typically >20%)

- Extract genomic DNA using specialized kits (e.g., QIAamp DNA FFPE Tissue kit)

- Quantify DNA concentration using fluorometric methods (e.g., Qubit dsDNA HS Assay) and assess purity via spectrophotometry (A260/A280 ratio 1.7-2.2)

- Require a minimum of 20 ng DNA for library preparation [30]
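The acceptance criteria in this list can be expressed as a small pre-library QC gate. The Python sketch below uses the thresholds stated above (tumor cellularity above 20%, A260/A280 between 1.7 and 2.2, at least 20 ng DNA). The `FfpeSample` structure, the function name, and the example samples are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class FfpeSample:
    sample_id: str
    tumor_cellularity_pct: float  # estimated tumor cell fraction, in percent
    a260_a280: float              # spectrophotometric purity ratio
    dna_ng: float                 # total DNA available, in nanograms


def qc_failures(sample: FfpeSample) -> List[str]:
    """Return the reasons a sample fails pre-library QC (empty list = pass)."""
    reasons = []
    if sample.tumor_cellularity_pct <= 20.0:
        reasons.append("tumor cellularity not above 20%")
    if not 1.7 <= sample.a260_a280 <= 2.2:
        reasons.append("A260/A280 outside 1.7-2.2")
    if sample.dna_ng < 20.0:
        reasons.append("less than 20 ng DNA")
    return reasons


good = FfpeSample("S1", tumor_cellularity_pct=40.0, a260_a280=1.85, dna_ng=55.0)
poor = FfpeSample("S2", tumor_cellularity_pct=15.0, a260_a280=1.50, dna_ng=12.0)
print(good.sample_id, qc_failures(good))
print(poor.sample_id, qc_failures(poor))
```

Returning the list of reasons rather than a single pass/fail flag makes it easier to decide whether a sample should be re-extracted, re-dissected, or redirected to liquid biopsy.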

Library Preparation and Target Enrichment

- Utilize hybrid capture methods for DNA library preparation (e.g., Agilent SureSelectXT Target Enrichment Kit)

- Employ targeted gene panels (e.g., SNUBH Pan-Cancer v2.0 targeting 544 genes) [30]

- Assess library size (250-400 bp) and quantity using bioanalyzer systems

- Sequence on appropriate NGS platforms (e.g., Illumina NextSeq 550Dx)

Data Analysis and Variant Calling

- Align reads to reference genome (hg19)

- Detect single nucleotide variants and small insertions/deletions using specialized tools (e.g., Mutect2) with variant allele frequency threshold ≥2%

- Identify copy number variations (e.g., CNVkit, average CN ≥5 for amplification)

- Detect gene fusions using structural variant callers (e.g., LUMPY, read counts ≥3)

- Determine microsatellite instability status and tumor mutational burden

- Classify variants according to established guidelines (e.g., Association for Molecular Pathology tiers) [30]
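The reporting thresholds listed above can be written as simple post-calling filters. This Python sketch does not call Mutect2, CNVkit, or LUMPY; it only applies the stated cut-offs (VAF of at least 2%, average copy number of at least 5, at least 3 supporting reads) to values such tools would report. The function names and example numbers are hypothetical.

```python
def variant_allele_frequency(alt_reads: int, depth: int) -> float:
    """Fraction of reads at a position that support the alternate allele."""
    if depth <= 0:
        raise ValueError("depth must be positive")
    return alt_reads / depth


def passes_snv_indel_filter(alt_reads: int, depth: int, min_vaf: float = 0.02) -> bool:
    """Keep SNVs and small indels with VAF at or above the threshold."""
    return variant_allele_frequency(alt_reads, depth) >= min_vaf


def is_amplification(average_copy_number: float, min_cn: float = 5.0) -> bool:
    """Call a gene amplified when its average copy number reaches the cut-off."""
    return average_copy_number >= min_cn


def fusion_is_supported(supporting_reads: int, min_reads: int = 3) -> bool:
    """Report a fusion only with enough supporting reads."""
    return supporting_reads >= min_reads


# Hypothetical calls from one tumor sample.
print(passes_snv_indel_filter(alt_reads=25, depth=1000))  # VAF 2.5%
print(passes_snv_indel_filter(alt_reads=19, depth=1000))  # VAF 1.9%
print(is_amplification(6.2), fusion_is_supported(2))
```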

Performance Comparison: Analytical Metrics

Detection Capabilities and Mutational Spectrum

Comprehensive genomic profiling via NGS demonstrates superior detection capabilities compared to traditional single-gene testing approaches. In a real-world study of 990 patients with advanced solid tumors, NGS testing successfully identified tier I variants (variants of strong clinical significance) in 26.0% of cases, with KRAS (10.7%), EGFR (2.7%), and BRAF (1.7%) representing the most frequently altered genes [30]. The broader mutational spectrum detected by NGS includes both actionable driver mutations and co-alterations with significant prognostic implications, such as TP53 mutations present in nearly half of NSCLC cases and associated with poor survival in EGFR-mutant tumors [28].

Table 2: Mutation Detection Rates in NSCLC Genomic Profiling

| Gene/Alteration | Prevalence in NSCLC | Detection Method | Therapeutic Implications |

|---|---|---|---|

| EGFR mutations | 30-50% in Asian populations [29] | PCR, Sanger sequencing, NGS | EGFR TKIs (gefitinib, osimertinib) |

| ALK rearrangements | 3-7% [29] | FISH, IHC, NGS | ALK inhibitors (alectinib, lorlatinib) |

| ROS1 fusions | 1-2% [29] | FISH, NGS | ROS1 inhibitors (crizotinib, entrectinib) |

| BRAF V600E | 1-3% [29] | PCR, NGS | BRAF/MEK inhibitors (dabrafenib/trametinib) |

| KRAS mutations | 10.7% (tier I variants in a 990-patient advanced solid tumor cohort) [30] | PCR, NGS | Emerging targeted therapies |

Liquid Biopsy Applications

NGS-based liquid biopsy represents a particularly valuable application for patients with limited tissue availability. In a study of 48 NSCLC patients with inadequate tumor tissue for molecular profiling, liquid biopsy using broad-panel NGS identified mutations in 58.3% of cases, with actionable mutations detected in 41.6% of patients [29]. The most common alterations identified were EGFR mutations, followed by ALK rearrangements and other less common targets. Among patients who received targeted therapy based on liquid biopsy results, 14.3% achieved complete metabolic response and 71.4% had partial response, demonstrating the clinical utility of this approach when tissue sampling is inadequate [29].

Cost-Effectiveness Analysis

Direct Economic Comparisons

Economic evaluations demonstrate that the cost-effectiveness of NGS-based approaches depends on the number of genes requiring assessment. Systematic review evidence indicates that targeted panel testing (2-52 genes) reduces costs compared with conventional single-gene testing when four or more genes require analysis [4]. The cost advantage of NGS becomes particularly evident when considering holistic testing costs, including turnaround time, healthcare personnel requirements, number of hospital visits, and associated hospital expenses [4].

Table 3: Cost-Effectiveness Comparison of Testing Approaches

| Cost Parameter | Single-Gene Testing | Targeted NGS Panels | Comprehensive NGS Panels |

|---|---|---|---|

| Testing cost per patient (multiple biomarkers) | Higher when ≥4 genes | Lower when ≥4 genes [4] | Variable based on panel size |

| Personnel time & resources | Higher (sequential testing) | Lower (parallel testing) [4] | Moderate to high |

| Turnaround time | Extended (weeks to months) | Reduced (days to weeks) [4] | Similar to targeted NGS |

| Tissue consumption | Higher | Lower | Lower |

| Cost to identify one eligible patient, by cancer type (small panels of ≤60 biomarkers vs. comprehensive panels of >60 biomarkers) | | | |

| - NSCLC | | $2,800 | $5,000 [31] |

| - Cholangiocarcinoma | | $4,400 | $4,400 [31] |

| - Pancreatic carcinoma | | $27,000 | $5,500 [31] |

| - Gastro-oesophageal | | Not measurable (0% eligible) | $5,200 [31] |

Impact on Treatment Eligibility and Personalized Therapy

Comprehensive genomic profiling significantly increases patient eligibility for personalized treatments compared to limited testing approaches. Research comparing small NGS panels (≤60 biomarkers) with comprehensive panels (>60 biomarkers) demonstrated improved eligibility for personalized therapies across multiple cancer types [31]. In NSCLC, comprehensive panels increased eligibility only marginally, from 37% to 39%. More substantial improvements were observed in other malignancies: cholangiocarcinoma (17% to 43%), pancreatic carcinoma (3% to 35%), and gastro-oesophageal carcinoma (0% to 40%) [31].
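One simple way to connect eligibility rates to economics is to divide the cost per test by the fraction of tested patients who become eligible. The Python sketch below applies that formulation to the eligibility rates quoted above. Whether the cited study [31] used exactly this formula is an assumption, and both per-test costs are hypothetical placeholders.

```python
from typing import Optional


def cost_to_find_eligible(cost_per_test: float, eligibility_rate: float) -> Optional[float]:
    """Expected testing spend per eligible patient; None when nobody is eligible."""
    if not 0.0 <= eligibility_rate <= 1.0:
        raise ValueError("eligibility_rate must be between 0 and 1")
    if eligibility_rate == 0.0:
        return None  # no finite cost can be computed
    return cost_per_test / eligibility_rate


# Eligibility rates (small panel, comprehensive panel) as quoted in the text [31].
ELIGIBILITY = {
    "NSCLC": (0.37, 0.39),
    "Cholangiocarcinoma": (0.17, 0.43),
    "Pancreatic carcinoma": (0.03, 0.35),
    "Gastro-oesophageal carcinoma": (0.00, 0.40),
}
SMALL_PANEL_COST = 800.0     # hypothetical cost per small-panel test
COMPREHENSIVE_COST = 1900.0  # hypothetical cost per comprehensive test

results = {
    cancer: (cost_to_find_eligible(SMALL_PANEL_COST, small),
             cost_to_find_eligible(COMPREHENSIVE_COST, comprehensive))
    for cancer, (small, comprehensive) in ELIGIBILITY.items()
}
for cancer, (small_cost, comp_cost) in results.items():
    small_text = "not measurable" if small_cost is None else f"{small_cost:,.0f}"
    print(f"{cancer}: small panel {small_text}, comprehensive {comp_cost:,.0f}")
```

The sketch shows why a more expensive test can still be the cheaper route to an eligible patient: when eligibility rises from 3% to 35%, the denominator grows far faster than the test price.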

The implementation of Molecular Tumour Boards (MTBs) further enhances the value of NGS testing by facilitating interpretation of complex genomic data. MTB discussion accounts for only 2-3% of the total diagnostic journey cost per patient (approximately €113/patient) while significantly optimizing the selection of appropriate targeted therapies [31]. The combination of NGS and MTB review has been shown to reduce inappropriate targeted therapy prescriptions and enable patient access to off-label treatments or clinical trials [31].

Key Oncogenic Signaling Pathways in NSCLC

The major signaling pathways driven by oncogenic alterations in NSCLC represent critical targets for therapeutic intervention. The following diagram illustrates these key pathways and their interactions:

NSCLC Signaling Pathways and Therapeutic Targets

Research Reagent Solutions for NGS Implementation

Successful implementation of NGS-based comprehensive genomic profiling requires specific research reagents and laboratory materials. The following toolkit outlines essential solutions for researchers developing NGS capabilities in NSCLC:

Table 4: Essential Research Reagents for NGS-Based Genomic Profiling

| Reagent Category | Specific Products | Function in Workflow |

|---|---|---|

| DNA Extraction Kits | QIAamp DNA FFPE Tissue kit | Isolation of high-quality DNA from archived tumor samples |

| DNA Quantification | Qubit dsDNA HS Assay, NanoDrop Spectrophotometer | Accurate measurement of DNA concentration and purity |

| Library Preparation | Agilent SureSelectXT Target Enrichment Kit | Target capture and library construction for sequencing |

| Target Enrichment | SNUBH Pan-Cancer v2.0 Panel (544 genes) | Comprehensive genomic coverage of NSCLC-relevant genes |

| Sequencing Platforms | Illumina NextSeq 550Dx, NovaSeq X | High-throughput sequencing with appropriate coverage |

| Bioinformatics Tools | Mutect2 (variant calling), CNVkit (copy number), LUMPY (fusions) | Detection and annotation of genomic alterations |

| Quality Control | Agilent 2100 Bioanalyzer, High Sensitivity DNA Kit | Assessment of library size and quantity before sequencing |

Comprehensive genomic profiling using NGS technologies represents a cost-effective and clinically valuable approach for advanced NSCLC biomarker testing, particularly when four or more genes require assessment. The implementation of NGS-based testing, complemented by Molecular Tumour Board review, significantly enhances patient eligibility for personalized treatments while optimizing resource utilization in cancer diagnostics. For researchers and drug development professionals, NGS platforms provide unprecedented capabilities for discovering novel biomarkers, understanding resistance mechanisms, and developing targeted therapeutic strategies. As sequencing costs continue to decline and bioinformatics pipelines become more sophisticated, NGS is poised to become the standard approach for genomic profiling in NSCLC and other malignancies, ultimately advancing the goals of precision oncology through biologically informed, patient-centered treatment strategies.

Central nervous system (CNS) infections remain formidable challenges in clinical practice, with reported mortality rates of 10-30% or higher and significant diagnostic complexity [14]. Traditional diagnostic paradigms rely heavily on conventional microbiological tests (CMTs), including cultures, nucleic acid amplification tests, and serologic assays. These methods have inherent limitations: cerebrospinal fluid (CSF) cultures demonstrate sensitivity as low as 5-10% in post-neurosurgical infections, with time-to-result averaging 5-7 days [14]. This diagnostic delay frequently leads to empirical antimicrobial therapy that is either suboptimal or unnecessarily broad-spectrum, potentially compromising patient outcomes and contributing to antimicrobial resistance [32].

Metagenomic next-generation sequencing (mNGS) has emerged as a transformative diagnostic technology that enables unbiased detection of microbial nucleic acids (DNA and/or RNA) directly from clinical specimens without prior knowledge of the causative pathogen [33] [10]. This hypothesis-free approach is particularly valuable for CNS infections where the differential diagnosis encompasses diverse pathogens including bacteria, viruses, fungi, and parasites with overlapping clinical presentations [34]. This case study provides a comprehensive comparison of mNGS performance against traditional diagnostic methods for CNS infections, framed within the broader context of cost-effectiveness in clinical genomics research.

Performance Comparison: mNGS vs. Traditional Methods

Diagnostic Accuracy and Pathogen Detection

Multiple clinical studies have demonstrated the superior sensitivity of mNGS compared to conventional methods across various patient populations with suspected CNS infections.

Table 1: Comparative Diagnostic Performance of mNGS vs. Conventional Methods

| Metric | mNGS Performance | Conventional Methods Performance | Study Details |
|---|---|---|---|
| Overall Sensitivity | 63.1% [34] | 45.9% (CSF direct detection) [34] | 7-year study of 4,828 samples [34] |
| Positivity Rate in CNS Infection | 67.5% [32] | 18.3% [32] | 338 patients with suspected CNS infections [32] |
| CSF Culture Comparison | 77.11% pathogen identification [35] | 6.36% pathogen identification [35] | 110 patients with suspected CNS infections [35] |
| Detection of Culture-Difficult Pathogens | Superior for viruses, fungi, and fastidious bacteria [34] | Limited for viruses and intracellular pathogens [34] | Broad pathogen spectrum [34] |

The agnostic nature of mNGS is particularly valuable for detecting unexpected, rare, or fastidious pathogens. In a substantial 7-year study of 4,828 samples, mNGS identified 797 organisms from 697 (14.4%) samples, consisting of 363 (45.5%) DNA viruses, 211 (26.4%) RNA viruses, 132 (16.6%) bacteria, 68 (8.5%) fungi, and 23 (2.9%) parasites [34]. This broad detection capability extends to pathogens that traditional culture methods often miss, including Mycobacterium tuberculosis, Coccidioides species, and arboviruses [34].

Turnaround Time and Clinical Impact

The significantly reduced time-to-result for mNGS testing represents one of its most clinically valuable attributes, directly influencing patient management decisions.

Table 2: Turnaround Time and Clinical Management Impact

| Parameter | mNGS | Conventional Methods | Clinical Implications |
|---|---|---|---|
| Turnaround Time | 24-48 hours [32] [14] | 72-120 hours (culture) [35] [14] | Faster targeted therapy initiation |
| Time to Clinical Improvement | Median: 14 days [32] | Median: 17 days [32] | Significant reduction (p=0.032) [32] |
| 14-day Clinical Improvement Rate | 42.6% [32] | 31.4% [32] | Significantly higher (p=0.032) [32] |
| Therapy Modification | 63% of mNGS-positive cases [33] | Limited by delayed results [33] | Enables targeted escalation/de-escalation |

The rapid turnaround time of mNGS (typically 24-48 hours) compared to conventional culture methods (3-5 days) enables clinicians to make earlier evidence-based decisions regarding antimicrobial therapy [35] [32] [14]. This temporal advantage translates directly to improved clinical outcomes, including significantly reduced time to clinical improvement and higher rates of improvement within 14 days [32]. Furthermore, the comprehensive pathogen detection facilitated by mNGS leads to modification of antimicrobial therapy in approximately 63% of positive cases, allowing for both appropriate escalation when needed and de-escalation or discontinuation when broad-spectrum coverage is unnecessary [33].

Experimental Design and Methodologies

Standardized mNGS Wet-Lab Protocol

The experimental workflow for CSF mNGS testing involves multiple critical steps to ensure accurate and reproducible results:

Sample Processing and Nucleic Acid Extraction: CSF samples (1.5-3 ml) are collected via lumbar puncture under sterile conditions [35]. Samples are vigorously agitated with glass beads for 30 minutes at 2800-3200 rpm, followed by the addition of lysozyme for cell wall disruption [35]. DNA and RNA are co-extracted using commercial kits such as the TIANamp Micro DNA Kit (DP316) and TIANamp Micro RNA Kit (DP431) according to manufacturer's protocols [35].

Library Preparation and Sequencing: Extracted RNA undergoes reverse transcription to generate cDNA [35]. DNA libraries are constructed through enzymatic fragmentation, end repair, adapter ligation, and PCR amplification using kits such as the PMseq RNA Infection Pathogen High-throughput Detection Kit [35]. Each library is uniquely barcoded to enable multiplexing, followed by quality assessment using an Agilent 2100 Bioanalyzer [35]. Pooled libraries are sequenced on platforms such as the BGISEQ-50/MGISEQ-2000, generating tens of millions of reads per sample [35].

Bioinformatic Analysis Pipeline

The computational analysis of mNGS data involves a multi-step process to distinguish pathogen sequences from host background and environmental contamination:

Quality Control and Host Sequence Subtraction: Raw sequencing data first undergo quality filtering to remove low-quality reads and adapter sequences [35]. The remaining high-quality sequences are aligned to the human reference genome (hg38) using tools such as the Burrows-Wheeler Aligner (BWA), and reads that map to the human genome are computationally subtracted to enrich for microbial reads [35].
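The filtering logic described above can be sketched in a few lines. This is a simplified illustration, not the pipeline used in the cited study: the `Read` type, the function names, and the quality and length thresholds are all assumptions, and `host_read_ids` stands in for the set of read IDs that an external aligner (for example BWA against hg38) reported as human.

```python
from dataclasses import dataclass
from typing import Iterable, List, Set, Tuple


@dataclass(frozen=True)
class Read:
    read_id: str
    sequence: str
    qualities: Tuple[int, ...]  # per-base Phred scores


def mean_quality(read: Read) -> float:
    """Average Phred score of a read (0.0 for an empty read)."""
    return sum(read.qualities) / len(read.qualities) if read.qualities else 0.0


def strip_adapter(read: Read, adapter: str) -> Read:
    """Cut the read at the first occurrence of the adapter sequence, if any."""
    idx = read.sequence.find(adapter)
    if idx == -1:
        return read
    return Read(read.read_id, read.sequence[:idx], read.qualities[:idx])


def filter_and_subtract(
    reads: Iterable[Read],
    host_read_ids: Set[str],
    adapter: str,
    min_mean_q: float = 20.0,  # assumed threshold, not from the source
    min_length: int = 35,      # assumed threshold, not from the source
) -> List[Read]:
    """Trim adapters, drop short or low-quality reads, then drop host reads."""
    kept = []
    for read in reads:
        trimmed = strip_adapter(read, adapter)
        if len(trimmed.sequence) < min_length:
            continue
        if mean_quality(trimmed) < min_mean_q:
            continue
        if trimmed.read_id in host_read_ids:
            continue  # read mapped to the human reference: subtract it
        kept.append(trimmed)
    return kept
```

The reads that survive are the candidates passed on to microbial classification. Production pipelines typically rely on dedicated trimming and alignment tools rather than pure Python for these steps.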

Microbial Classification and Interpretation: Non-human sequences are aligned against comprehensive pathogen databases such as the NCBI RefSeq database containing 4,945 viral taxa, 6,350 bacterial genomes or scaffolds, 1,064 fungi, and 234 parasites associated with human infections [35] [34]. Positive results are determined using established criteria: bacteria (excluding mycobacteria and nocardia) and viruses are reported when coverage is 10-fold greater than any other microorganism; Mycobacterium tuberculosis is reported with ≥1 genus-specific read; nontuberculous mycobacteria and nocardia are reported when read numbers rank in the top 10 of the bacteria list; fungi are reported with 5-fold greater coverage than other microorganisms [35].
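The reporting criteria above amount to a small rule set, sketched below under stated assumptions. The `Taxon` record, the category labels, and the function names are hypothetical; "10-fold greater" and "5-fold greater" are interpreted as at least 10x or 5x the coverage of every other detected organism; and parasites are left out because the text gives no threshold for them.

```python
from dataclasses import dataclass
from typing import List

# Categories that count toward the "bacteria list" used for ranking.
BACTERIAL_CATEGORIES = {"bacterium", "mtb", "ntm", "nocardia"}


@dataclass(frozen=True)
class Taxon:
    name: str
    category: str   # "bacterium", "virus", "fungus", "mtb", "ntm", or "nocardia"
    coverage: float
    reads: int


def dominates(taxon: Taxon, all_taxa: List[Taxon], fold: float) -> bool:
    """True if coverage is at least `fold` times that of every other taxon."""
    others = [t.coverage for t in all_taxa if t is not taxon]
    return taxon.coverage > 0 and all(taxon.coverage >= fold * c for c in others)


def reportable(all_taxa: List[Taxon]) -> List[str]:
    """Names of taxa that meet the category-specific reporting rule."""
    bacteria_by_reads = sorted(
        (t for t in all_taxa if t.category in BACTERIAL_CATEGORIES),
        key=lambda t: t.reads,
        reverse=True,
    )
    top10 = {t.name for t in bacteria_by_reads[:10]}

    reported = []
    for t in all_taxa:
        if t.category in ("bacterium", "virus"):
            ok = dominates(t, all_taxa, 10.0)
        elif t.category == "fungus":
            ok = dominates(t, all_taxa, 5.0)
        elif t.category == "mtb":
            ok = t.reads >= 1            # a single specific read suffices
        elif t.category in ("ntm", "nocardia"):
            ok = t.reads >= 1 and t.name in top10
        else:
            ok = False                   # category not covered by this sketch
        if ok:
            reported.append(t.name)
    return reported
```

Encoding the rules as data-driven checks makes the asymmetry explicit: a single read is enough for Mycobacterium tuberculosis, whereas common bacteria and viruses must clearly dominate the sample before they are reported.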

Cost-Effectiveness Analysis in Clinical Genomics

Health Economic Evaluation

While mNGS has higher upfront costs compared to conventional methods, comprehensive economic analyses demonstrate its value proposition through improved outcomes and optimized resource utilization.

Table 3: Cost-Effectiveness Comparison of Diagnostic Approaches

| Economic Factor | mNGS | Conventional Methods | Study Details |
|---|---|---|---|
| Test Cost | ~¥4,000 (≈$550) [14] | ~¥2,000 (≈$275) [14] | Per-test direct cost [14] |
| Antimicrobial Costs | ¥18,000 (≈$2,475) [14] | ¥23,000 (≈$3,162) [14] | Significant reduction (p=0.02) [14] |
| Incremental Cost-Effectiveness Ratio (ICER) | ¥36,700 per additional timely diagnosis [14] | Reference [14] | Below China's WTP threshold (¥89,000) [14] |
| Overall Hospitalization Costs | No significant difference [14] | No significant difference [14] | Despite higher test cost [14] |

A prospective pilot study conducted in a critical care neurosurgical setting demonstrated that although mNGS detection costs were approximately double those of conventional pathogen cultures (¥4,000 vs. ¥2,000; p<0.001), overall anti-infective treatment costs were significantly lower in the mNGS group (¥18,000 vs. ¥23,000; p=0.02) [14]. The calculated incremental cost-effectiveness ratio (ICER) of ¥36,700 per additional timely diagnosis falls well below China's GDP-based willingness-to-pay (WTP) threshold of ¥89,000, indicating that mNGS is a cost-effective diagnostic approach in this setting [14].
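For readers less familiar with the metric, the ICER is the difference in cost between two strategies divided by the difference in effect. The sketch below shows the calculation and the threshold comparison. The function names are illustrative, the worked inputs are hypothetical (the source reports the ICER but not its components), and only the ¥36,700 ICER and ¥89,000 WTP values come from the text.

```python
def icer(cost_new: float, cost_ref: float, effect_new: float, effect_ref: float) -> float:
    """Incremental cost-effectiveness ratio: extra cost per extra unit of effect."""
    delta_effect = effect_new - effect_ref
    if delta_effect == 0:
        raise ValueError("ICER is undefined when the effects are equal")
    return (cost_new - cost_ref) / delta_effect


def is_cost_effective(icer_value: float, wtp_threshold: float) -> bool:
    """Simple decision rule for a strategy that is both costlier and more effective."""
    return icer_value <= wtp_threshold


# Values reported in the text [14].
REPORTED_ICER_CNY = 36_700.0
WTP_THRESHOLD_CNY = 89_000.0
print(is_cost_effective(REPORTED_ICER_CNY, WTP_THRESHOLD_CNY))

# Hypothetical inputs, for illustration only: mean cost per patient (CNY)
# and the proportion of patients receiving a timely diagnosis.
example = icer(cost_new=52_000.0, cost_ref=47_000.0, effect_new=0.75, effect_ref=0.25)
print(f"Hypothetical ICER: {example:,.0f} CNY per additional timely diagnosis")
```

The decision rule here covers only the common case in which the new strategy costs more and works better. A strategy that is cheaper and more effective is dominant and needs no threshold comparison.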

Antimicrobial Stewardship Impact

The diagnostic precision of mNGS directly facilitates antimicrobial stewardship efforts. In immunocompromised pediatric patients with malignancies or hematopoietic cell transplantation, mNGS detected pathogens in 69-86% of episodes of culture-negative sepsis or persistent febrile neutropenia, compared to 18-56% for culture/PCR methods [33]. Early testing (<48 hours) shortened fever duration by approximately 1.5 days and reduced antimicrobial costs by 25-30% in this high-risk population [33]. These findings underscore the role of mNGS in promoting judicious antibiotic use through rapid de-escalation of empirical therapy when broad-spectrum coverage is unwarranted, while simultaneously enabling appropriate escalation for identified pathogens that would otherwise remain undetected.

Essential Research Reagents and Materials

The successful implementation of mNGS for pathogen detection requires specific laboratory reagents and bioinformatic resources.

Table 4: Essential Research Reagents and Computational Tools for mNGS

| Category | Specific Product/Resource | Application/Function |
|---|---|---|
| Nucleic Acid Extraction | TIANamp Micro DNA Kit (DP316), TIANamp Micro RNA Kit (DP431) [35] | Extraction of DNA and RNA from CSF samples |
| Library Preparation | PMseq RNA Infection Pathogen High-throughput Detection Kit [35] | Library construction for sequencing, including fragmentation, adapter ligation, and amplification |
| Sequencing Platform | BGISEQ-50/MGISEQ-2000 [35] | High-throughput sequencing generating tens of millions of reads per sample |
| Bioinformatic Tools | Burrows-Wheeler Aligner (BWA) [35] | Alignment to human reference genome (hg38) for host sequence subtraction |
| Pathogen Databases | NCBI RefSeq [35] | Comprehensive microbial database for pathogen classification |
| Quality Control | Agilent 2100 Bioanalyzer [35] | Assessment of library quality before sequencing |

This comparative analysis shows that mNGS advances the diagnosis of CNS infections, offering higher sensitivity, broader pathogen coverage, and faster turnaround than conventional microbiological methods. Although its direct per-test cost is higher, its clinical utility in guiding appropriate antimicrobial therapy and supporting stewardship initiatives translates into improved patient outcomes and favorable cost-effectiveness within accepted health economic thresholds. Integrating mNGS into diagnostic algorithms for complex CNS infections, particularly in immunocompromised and critically ill patients, provides a powerful tool for precision infectious disease management, with growing evidence supporting its routine clinical implementation.

Leveraging NGS in Pharmacogenomics for Drug Response Prediction

Pharmacogenomics (PGx) investigates how an individual's genetic makeup influences their response to drugs, aiming to customize treatments for improved safety and efficacy [36]. For years, traditional methods like polymerase chain reaction (PCR) and microarrays were the standard for PGx testing. However, these technologies are limited to interrogating predetermined, common variants [37]. Next-generation sequencing (NGS) has emerged as a transformative technology that enables comprehensive profiling of pharmacogenes by detecting known variants, novel variants, and complex structural variations in a single assay [37] [38]. This guide provides an objective comparison of NGS performance against traditional methods, supported by experimental data, within the critical context of cost-effectiveness for research and drug development.

Performance Comparison: NGS vs. Traditional PGx Methods

Detection Capabilities and Diagnostic Yield

Traditional PGx technologies, such as single-gene tests or microarrays, are inexpensive and accessible but can only detect specific, known mutations [4] [37]. In contrast, NGS can simultaneously test multiple genes and identify variants of unknown significance, providing a more comprehensive genetic profile.

Table 1: Comparative Analysis of PGx Testing Technologies

| Feature | Traditional Methods (PCR, Microarrays) | Targeted NGS Panels | Whole Genome Sequencing (WGS) |

|---|---|---|---|