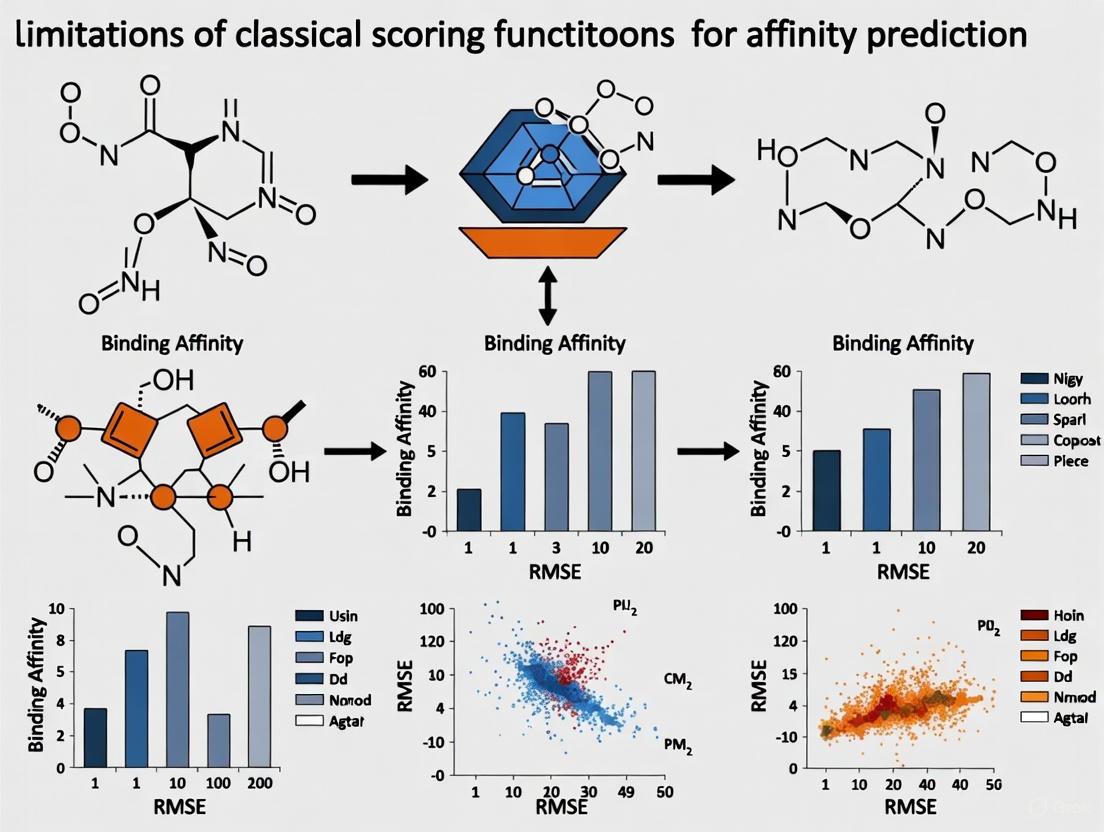

Beyond the Plateau: Uncovering the Critical Limitations of Classical Scoring Functions in Binding Affinity Prediction

Abstract

Accurate prediction of protein-ligand binding affinity is a cornerstone of computer-aided drug discovery, yet the performance of classical scoring functions has remained stagnant. This article provides a comprehensive analysis for researchers and drug development professionals on the fundamental and methodological limitations of classical force-field-based, empirical, and knowledge-based scoring functions. We explore their rigid functional forms, inadequate treatment of key physical forces, and failure to generalize across diverse protein families. The content further details how these limitations manifest in practical applications like virtual screening and lead optimization, examines current challenges in model validation due to dataset biases, and synthesizes emerging solutions. By contrasting classical approaches with modern machine-learning-based alternatives, this review offers a clear-eyed perspective on the path toward more reliable and predictive computational tools for drug design.

The Roots of Stagnation: Core Principles and Inherent Flaws of Classical Scoring Functions

In computational chemistry and molecular modelling, scoring functions are mathematical functions used to approximate the binding affinity between two molecules after they have been docked [1]. The drug discovery process is notoriously expensive and time-consuming, and structure-based virtual screening (VS) has become a widely used approach to triage unpromising compounds early in the pipeline [2]. By predicting the binding mode and affinity of a small molecule within the binding site of a target protein, molecular docking helps researchers understand key properties of the binding process [2]. Fast evaluation of docking poses and accurate prediction of binding affinity are both essential in these protocols. Scoring functions offer a straightforward and fast strategy and, despite their limited accuracy, remain the main alternative for VS experiments [2]. This technical guide details the historical triad of classical scoring functions, framing their development within the context of their inherent limitations for binding affinity prediction.

The Classical Triad: Frameworks and Methodologies

Scoring functions are typically divided into three main classes: force field-based, empirical, and knowledge-based [2] [1]. Although large-scale comparative assessments are relatively rare, the strengths and limitations of current functions are fairly evident: they generally perform well in reproducing binding modes but struggle to accurately quantitate binding affinities or the effects of small structural changes [3]. The following sections and Table 1 provide a detailed breakdown of each class.

Table 1: Comparative Overview of Classical Scoring Function Types

| Function Class | Theoretical Basis | Energy Terms/Descriptors | Parameterization Method | Representative Examples |
|---|---|---|---|---|
| Force Field-Based [1] | Molecular mechanics, classical force fields | Sum of intermolecular van der Waals and electrostatic energies; sometimes includes internal ligand strain energy and implicit solvation (GBSA/PBSA) [1]. | Parameters derived from fundamental physical chemistry and quantum mechanics calculations [4]. | DOCK [2], DockThor [2] |
| Empirical [2] | Linear Free Energy Relationships | Weighted sum of physicochemical terms: hydrophobic contacts, hydrogen bonds, rotatable bonds immobilized, etc. [1]. | Multiple linear regression (MLR) or machine learning on datasets of protein-ligand complexes with known affinities [2] [5]. | LUDI [2], ChemScore [2], GlideScore [2] |
| Knowledge-Based [1] | Inverse Boltzmann statistics from structural databases | Pairwise atom-atom "potentials of mean force" derived from observed contact frequencies in databases like the PDB [2] [1]. | Statistical analysis of large 3D structural databases (e.g., PDB, Cambridge Structural Database) [1]. | DrugScore [2], PMF [2] |

Force Field-Based Scoring Functions

Force field-based scoring functions estimate affinities by summing the strength of intermolecular interactions, primarily van der Waals and electrostatic terms, using a molecular mechanics force field [1]. The intramolecular energies (strain energy) of the binding partners are also frequently included [1]. Since binding occurs in aqueous solution, a critical consideration is the treatment of solvation effects, which can be incorporated using implicit solvation models such as GBSA or PBSA [1]. The parameters for these functions are derived from fundamental physical chemistry and quantum mechanics calculations, rather than from fitting to binding affinity data [4]. Popular force fields that provide parameters for small molecules include the General AMBER Force Field (GAFF), the CHARMM General Force Field (CGenFF), and those in the OPLS and GROMOS families [4].
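The summation described above can be sketched in a few lines. This is a minimal illustration, not the scoring function of DOCK or any other named program: the atom record layout, the 12-6 Lennard-Jones form with Lorentz-Berthelot mixing, the constant dielectric of 4.0, and the 12 Å cutoff are all assumptions chosen for the example, and strain and solvation terms are omitted.

```python
import math

# Converts q1*q2/r (elementary charges, Angstroms) to kcal/mol.
COULOMB_CONST = 332.0637

# Hypothetical atom record: (x, y, z, partial_charge, lj_epsilon, lj_sigma)


def pair_energy(a, b, dielectric=4.0):
    """12-6 Lennard-Jones plus Coulomb energy for one protein-ligand atom pair."""
    r = math.dist(a[:3], b[:3])
    eps = math.sqrt(a[4] * b[4])   # geometric mean of well depths (assumed mixing rule)
    sigma = 0.5 * (a[5] + b[5])    # arithmetic mean of zero-crossing distances
    sr6 = (sigma / r) ** 6
    e_vdw = 4.0 * eps * (sr6 * sr6 - sr6)
    e_el = COULOMB_CONST * a[3] * b[3] / (dielectric * r)
    return e_vdw + e_el


def force_field_score(protein_atoms, ligand_atoms, cutoff=12.0):
    """Sum intermolecular pair energies over all pairs within the cutoff."""
    total = 0.0
    for p in protein_atoms:
        for l in ligand_atoms:
            if math.dist(p[:3], l[:3]) <= cutoff:
                total += pair_energy(p, l)
    return total
```

Because every parameter here comes from the force field rather than from affinity data, nothing in this sum is calibrated against experimental binding constants, which is the trade-off the paragraph above describes.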

Empirical Scoring Functions

Empirical scoring functions are founded on the idea that the binding free energy can be correlated to a set of descriptors capturing key interactions involved in binding [2]. These functions take the form of a weighted sum of physicochemical terms, such as hydrophobic contacts, hydrogen bonds, and the number of rotatable bonds immobilized upon complex formation [1]. The central methodology involves using a dataset composed of three-dimensional structures of diverse protein-ligand complexes with associated experimental binding affinity data [2]. The coefficients (weights) of the functional terms are then obtained through regression analysis, traditionally using multiple linear regression (MLR), to calibrate the model and establish a relationship between the descriptors and the experimental affinity [2] [5]. The first empirical scoring function, LUDI, was developed by Böhm, pioneering this approach for predicting binding free energy [2].
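As a rough sketch of the calibration step, the snippet below fits the weights of a three-descriptor function by ordinary least squares. The descriptor choice, the toy values, and the "true" weights are synthetic and purely illustrative; they are not taken from LUDI or any published function, and the pure-Python solver stands in for whatever regression package a real project would use.

```python
def solve_linear(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(A)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        s = sum(M[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (M[i][n] - s) / M[i][i]
    return x


def fit_mlr(descriptors, affinities):
    """Least-squares weights (intercept first) from the normal equations."""
    X = [[1.0] + list(row) for row in descriptors]
    k = len(X[0])
    XtX = [[sum(r[i] * r[j] for r in X) for j in range(k)] for i in range(k)]
    Xty = [sum(r[i] * y for r, y in zip(X, affinities)) for i in range(k)]
    return solve_linear(XtX, Xty)


def empirical_score(weights, descriptor_row):
    """Weighted sum of descriptors plus intercept."""
    return weights[0] + sum(w * d for w, d in zip(weights[1:], descriptor_row))


# Synthetic training set: (n_hbonds, lipophilic_contact_area, n_frozen_rotors)
toy_descriptors = [(2, 100, 3), (4, 150, 5), (1, 80, 2), (3, 200, 7), (5, 120, 1)]
true_w = [-1.0, -0.5, -0.02, 0.3]  # made-up weights used to generate the labels
toy_affinities = [empirical_score(true_w, row) for row in toy_descriptors]
fitted = fit_mlr(toy_descriptors, toy_affinities)
```

On noise-free synthetic labels the fit recovers the generating weights; with real affinity data the quality of the weights depends directly on the size and diversity of the training set.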

Knowledge-Based Scoring Functions

Knowledge-based scoring functions, also known as potentials of mean force, are based on the statistical analysis of interacting atom pairs from a large database of experimentally determined protein-ligand complexes [2] [1]. The underlying principle is that intermolecular interactions between certain types of atoms that occur more frequently than expected in a random distribution are likely to be energetically favorable [1]. These observed frequencies are converted into a pseudopotential that describes the preferred geometries and interactions for protein-ligand atom pairs [2]. The resulting scoring function thus captures the implicit knowledge of molecular recognition "learned" from the structural data in repositories like the Protein Data Bank (PDB) [1].
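The conversion from observed frequencies to a pseudopotential is usually written as an inverse Boltzmann relation, u(r) = -kT ln[g_obs(r) / g_ref(r)]. The sketch below applies it to binned contact counts for a single atom-type pair. The bin layout, the pseudocount, and the way the reference distribution is supplied are assumptions for illustration; published potentials such as DrugScore or PMF differ in exactly these choices.

```python
import math

KT = 0.5925  # kcal/mol at roughly 298 K


def pair_potential(observed_counts, reference_counts, pseudocount=1e-6):
    """Inverse Boltzmann potential per distance bin for one atom-type pair.

    observed_counts: contacts seen in the structural database, per bin
    reference_counts: contacts expected for the reference state, per bin
    """
    n_obs = sum(observed_counts)
    n_ref = sum(reference_counts)
    potential = []
    for obs, ref in zip(observed_counts, reference_counts):
        g_obs = obs / n_obs + pseudocount  # pseudocount avoids log(0) in empty bins
        g_ref = ref / n_ref + pseudocount
        potential.append(-KT * math.log(g_obs / g_ref))
    return potential


# Toy histogram: the middle bin is over-represented relative to the reference.
toy_potential = pair_potential([10, 30, 10], [20, 20, 10])
```

Bins that occur more often than the reference predicts receive a negative (favorable) value, and under-represented bins receive a positive one.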

Experimental Protocols for Development and Validation

The development and validation of scoring functions, particularly empirical ones, follow a structured protocol. The general workflow for developing an empirical scoring function, as detailed in recent literature [2] [5], is outlined below.

Empirical Scoring Function Development Workflow

Protocol: Building an Empirical Scoring Function

As defined by Pason and Sotriffer, the development of an empirical scoring function requires three core components [2] [5]:

- Descriptors: A set of descriptors that quantitatively describe the binding event. These are typically structural and physicochemical features derived from the 3D complex, such as hydrophobic contacts, hydrogen bonds, and the number of rotatable bonds immobilized upon complex formation [1].

- Training Dataset: A curated dataset of three-dimensional structures of protein–ligand complexes, each associated with reliable experimental binding affinity data (e.g., Kd, Ki, IC50). The diversity and quality of this dataset are crucial for the generalizability of the resulting model [2].

- Regression/Classification Algorithm: A statistical or machine-learning algorithm to calibrate the model by establishing a relationship between the descriptors and the experimental affinity. Classical methods use multiple linear regression (MLR), but recent efforts increasingly employ machine-learning techniques such as Random Forests (RF) or Support-Vector Machines (SVM) to capture potential non-linear relationships [2] [5].

Key Benchmarks for Validation

To assess a scoring function's practical utility, it is rigorously tested on three distinct tasks, which also represent its primary goals in a docking workflow [2]:

- Pose Prediction (Binding Mode Prediction): The ability to identify the experimentally observed binding mode (the "native pose") by ranking it with the most favorable score among a set of generated decoy poses. Current methods generally perform this task with satisfactory accuracy [2].

- Binding Affinity Prediction: The ability to correctly predict the absolute binding free energy of a complex, ranking different ligands correctly according to their potency. This is the most challenging task, and the accuracy of current scoring functions remains a significant bottleneck, particularly in lead optimization [2] [3].

- Virtual Screening (Active/Inactive Classification): The ability to distinguish active compounds from inactive ones in a large database, which is critical for identifying novel hit molecules. The performance of scoring functions in this task is considered sufficient to make virtual screening a practically useful endeavor [2] [3].
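Each of the three tasks above is typically summarized with a simple metric. The helpers below are one plausible set, assuming a 2.0 Å RMSD success threshold for pose prediction, Pearson correlation for affinity prediction, and an enrichment factor for virtual screening; the function names, thresholds, and the convention that a higher score means "predicted more active" are illustrative choices rather than a fixed standard.

```python
import math


def pose_success_rate(top_pose_rmsds, threshold=2.0):
    """Fraction of complexes whose best-scored pose lies within the RMSD threshold."""
    hits = sum(1 for rmsd in top_pose_rmsds if rmsd <= threshold)
    return hits / len(top_pose_rmsds)


def pearson_r(predicted, experimental):
    """Linear correlation between predicted scores and measured affinities."""
    n = len(predicted)
    mx = sum(predicted) / n
    my = sum(experimental) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(predicted, experimental))
    sx = math.sqrt(sum((x - mx) ** 2 for x in predicted))
    sy = math.sqrt(sum((y - my) ** 2 for y in experimental))
    return cov / (sx * sy)


def enrichment_factor(scores, is_active, top_fraction=0.01):
    """Active rate in the top-ranked fraction divided by the overall active rate."""
    ranked = sorted(zip(scores, is_active), key=lambda t: t[0], reverse=True)
    n_top = max(1, int(round(top_fraction * len(ranked))))
    hits_top = sum(active for _, active in ranked[:n_top])
    return (hits_top / n_top) / (sum(is_active) / len(ranked))
```

A function can do well on one of these metrics and poorly on another, which is why the three tasks are evaluated separately.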

The development and application of classical scoring functions rely on a suite of computational tools and data resources. The table below catalogs key "research reagents" essential for work in this field.

Table 2: Essential Research Reagents and Resources for Scoring Function Research

| Resource Name | Type | Primary Function / Application | Key Features / Notes |
|---|---|---|---|
| Protein Data Bank (PDB) [1] | Database | Primary repository for experimentally determined 3D structures of proteins and protein-ligand complexes. | Essential for training knowledge-based functions and for benchmarking pose prediction; provides structural data for empirical function development. |
| Cambridge Structural Database (CSD) [1] | Database | Repository for experimentally determined small-molecule organic and metal-organic crystal structures. | Used in knowledge-based function development to derive statistical potentials for intermolecular interactions. |
| AutoDock Vina [2] | Docking Software | Widely used molecular docking program that includes its own scoring function. | Employs a hybrid scoring function; commonly used as a platform for testing and validating new scoring methods. |
| Glide (Schrödinger) [2] | Docking Software | Commercial docking program with the empirical GlideScore function. | Known for its high accuracy in pose prediction; often used as a benchmark in performance comparisons. |
| GOLD [2] | Docking Software | Docking software using a genetic algorithm for pose exploration and its own empirical scoring function. | Supports multiple scoring functions; widely used in virtual screening campaigns. |
| DOCK [2] | Docking Software | One of the earliest docking programs, using a force field-based scoring function. | Allows for explicit consideration of solvent and user-defined scoring terms. |
| GAFF / GAFF2 [4] | Force Field | General AMBER Force Field for small molecules. | Provides parameters for force field-based scoring and molecular dynamics simulations; compatible with AMBER protein FFs. |
| CGenFF [4] | Force Field | CHARMM General Force Field for small molecules. | Provides parameters for a wide range of drug-like molecules within the CHARMM force field ecosystem. |
| OPLS3e [4] | Force Field | Optimized Potentials for Liquid Simulations force field. | Includes extensive parameters for drug-like compounds and a ligand-specific charge model; implemented in Schrödinger software. |

Critical Limitations and the Path Forward

The traditional triad of scoring functions, while foundational, faces profound challenges that limit their predictive accuracy, particularly for binding affinity. A core limitation is the simplified treatment of entropy and solvent effects [2]. While some empirical functions include a term for conformational entropy based on rotatable bonds, this is a crude approximation. Furthermore, the explicit and dynamic role of water molecules in binding, which can be crucial for affinity and specificity, is often poorly captured [2] [3].

Another fundamental issue is the inherent difficulty of the parameterization process. The development of empirical and knowledge-based functions is intrinsically linked to the quality, size, and diversity of the experimental data used for training. Inconsistencies in experimental data and the limited coverage of chemical and target space in current datasets can lead to functions that do not generalize well [2] [5]. The approximations used by these functions suggest that the best available classical functions may be close to the limit of what can be achieved with these empirical approaches [3].

The field is now moving beyond the classical triad. The most significant trend is the shift towards machine-learning (ML) and deep-learning (DL) based scoring functions [2] [1] [6]. Unlike classical functions, ML-based models do not assume a predetermined functional form, allowing them to infer complex, non-linear relationships directly from data. These methods have consistently been found to outperform classical functions at binding affinity prediction for diverse protein-ligand complexes [1]. Recent advances also include integrating knowledge-guided pre-training strategies that incorporate additional semantic information, such as molecular descriptors and fingerprints, to learn more robust molecular representations, significantly improving predictive performance [6]. Furthermore, efforts are underway to incorporate more sophisticated physics, such as explicit polarization and quantum mechanical effects, and to develop more automated and intelligent parameterization toolkits for force fields [4]. This evolution points toward a future of hybrid models that leverage the strengths of data-driven learning while respecting the physical principles that govern molecular recognition.

Research Directions Overcoming Classical Limitations

In computational drug discovery, the accurate prediction of drug-target binding affinity is a cornerstone for identifying and optimizing lead compounds. For decades, this field has been dominated by classical scoring functions: mathematical models that estimate binding strength using predetermined equations with fixed functional forms [7]. These models typically express the binding free energy (ΔG) as a weighted sum of physicochemically inspired terms, such as van der Waals forces, electrostatic interactions, hydrogen bonding, and desolvation penalties [7]. While this approach benefits from interpretability and computational efficiency, its inherent rigidity fundamentally limits accuracy and flexibility. Because the mathematical relationship between variables is defined a priori by the researcher, a fixed architecture fails to capture the complex, non-linear, and context-dependent nature of molecular recognition. This section examines the technical limitations imposed by these rigid functional forms, compares their reported performance with that of more flexible methods, and explores emerging methodologies that promise to overcome these constraints through data-driven approaches to affinity prediction.

The Architecture of Classical Scoring Functions and Their Inherent Limitations

Deconstruction of Standard Functional Forms

Classical scoring functions for binding affinity prediction are historically categorized into physics-based, empirical, and knowledge-based approaches, though the boundaries are often blurred [7]. A typical physics-based scoring function, for instance, often adopts a functional form akin to:

ΔG(binding) = ΔE(VdW) + ΔE(el) + ΔE(H-bond) + ΔG(solv) [7]

In this predefined equation, each term represents a specific type of interaction: van der Waals (ΔE(VdW)), electrostatic (ΔE(el)), hydrogen bonding (ΔE(H-bond)), and solvation free energy (ΔG(solv)). The model's final form is a linear combination of these components. Similarly, empirical functions fit coefficients to these terms using experimental binding data, while knowledge-based functions derive potentials of mean force from structural databases. The critical shared limitation is not necessarily the choice of terms but the fixed combinatorial rule—the assumption that the total binding energy can be expressed as a simple, weighted sum of independent contributions. This form cannot capture synergistic or emergent effects between different interaction types, leading to an oversimplified representation of the highly cooperative and complex process of molecular binding.
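One way to see what "independent contributions" rules out is a mixed-difference test: for any additive function, the effect of switching on two interactions together equals the sum of their separate effects. The toy functions and weights below are hypothetical and serve only to make that point concrete; the cross term is an invented stand-in for a cooperative effect.

```python
def additive_score(hbond, hydrophobic, w_hb=-1.0, w_hp=-0.5):
    """Classical form: a weighted sum of independent terms."""
    return w_hb * hbond + w_hp * hydrophobic


def cooperative_score(hbond, hydrophobic, w_hb=-1.0, w_hp=-0.5, w_coop=-0.8):
    """Hypothetical alternative: an H-bond is worth more next to a hydrophobic contact."""
    return w_hb * hbond + w_hp * hydrophobic + w_coop * hbond * hydrophobic


def interaction_term(f):
    """Mixed second difference; it vanishes for every additive function."""
    return f(1, 1) - f(1, 0) - f(0, 1) + f(0, 0)


additive_gap = interaction_term(additive_score)        # no cooperativity representable
cooperative_gap = interaction_term(cooperative_score)  # the cross term shows up here
```

No choice of weights in the additive form can make its mixed difference non-zero, so any cooperativity present in the data ends up as unexplained error.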

Core Technical Limitations of Rigid Forms

The reliance on predetermined equations introduces several fundamental technical constraints that curtail predictive accuracy:

- Inability to Capture Complex Non-linearities: Biological binding interactions are inherently non-linear. For example, the strength of a hydrogen bond can be context-dependent, influenced by the local dielectric environment or the presence of co-operative effects with nearby hydrophobic patches. A linear additive model cannot represent such higher-order interactions.

- Constraint of Parameter Space: The flexibility of a model is determined by its functional form and its number of free parameters. Classical scoring functions possess a limited number of tunable parameters (e.g., the weights in the linear combination), which restricts their ability to fit diverse and complex datasets. This is a classic case of underfitting, where the model is not expressive enough to capture the true underlying function governing the data [8].

- Systematic Bias Towards Training Conditions: The fixed form often embeds assumptions that reflect the data on which it was originally developed. For instance, a potential parameterized on equilibrium crystal structures may perform poorly for highly disordered states or non-equilibrium systems, a phenomenon observed in MEAM potentials for Al-Cu alloys [8]. This limits transferability across different target classes or chemical spaces.

- Limited Adaptability to New Data: Incorporating new knowledge or experimental data into a model with a rigid form often requires a complete re-parameterization or, in some cases, is fundamentally impossible without altering the core equation itself. This makes the model brittle and difficult to improve incrementally.

Quantitative Performance Analysis: Rigid Forms vs. Emerging Approaches

The limitations of rigid functional forms become starkly evident when their performance is quantitatively compared with more flexible, data-driven methods on benchmark tasks. The following table synthesizes key performance metrics from comparative studies, highlighting the accuracy gap.

Table 1: Quantitative Performance Comparison of Scoring Function Paradigms

| Model Category | Representative Example | Key Functional Form Characteristic | Reported Performance | Primary Limitation Illustrated |
|---|---|---|---|---|
| Classical Scoring Function | Physics-based / empirical SFs [7] | Linear combination of pre-defined energy terms. | Lower accuracy, struggles with target identification [9] | Inability to generalize across diverse protein targets. |
| Machine Learning Model | Random Forest (RF) on molecular vibrations [10] [11] | Ensemble of decision trees; non-linear, data-derived rules. | R² > 0.94 for affinity prediction [10] [11] | Highlights the predictive power of flexible, non-parametric models. |
| Symbolic Regression (SR) | SR-derived interatomic potentials [8] | Equation discovered via RL/MCTS; no pre-defined form. | Outperformed Sutton-Chen EAM potentials [8] | Demonstrates that discovered equations can be both accurate and interpretable. |
| Deep Learning (DL) | Boltz-2 & other DL SFs [9] [7] | Multi-layer neural networks; highly non-linear function approximators. | Approaches FEP performance in some domains [9] | Struggles with generalization/memorization on target ID benchmarks [9]. |

A critical issue known as the "inter-protein scoring noise problem" further exposes the weakness of classical functions. While these functions can sometimes enrich active molecules for a single specific target, they generally fail to identify the correct protein target for a given active molecule, because scores vary between different binding pockets [9]. A truly robust affinity prediction method must perform both tasks reliably, a hurdle that rigid forms have not yet cleared.
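The following toy example illustrates how both observations can hold at once. It assumes, purely for illustration, that a scoring function returns the true affinity plus a pocket-specific offset. Real scoring noise is unlikely to be a clean constant, and the ligand names, target names, and numbers here are invented.

```python
# Invented "true" affinities on a pKd-like scale (higher means tighter binding).
true_affinity = {
    ("ligA", "T1"): 8.0, ("ligB", "T1"): 6.0,
    ("ligA", "T2"): 5.0, ("ligB", "T2"): 7.0,
}
# Assumed pocket-dependent bias of the scoring function.
pocket_offset = {"T1": 0.0, "T2": 4.0}


def score(ligand, target):
    """Toy score: true affinity distorted by a target-specific offset."""
    return true_affinity[(ligand, target)] + pocket_offset[target]


def rank_ligands(target, ligands):
    """Within-target ranking, as in a single-target virtual screen."""
    return sorted(ligands, key=lambda lig: score(lig, target), reverse=True)


def predict_target(ligand, targets):
    """Cross-target ranking, as in target identification."""
    return max(targets, key=lambda t: score(ligand, t))


t1_ranking = rank_ligands("T1", ["ligA", "ligB"])
t2_ranking = rank_ligands("T2", ["ligA", "ligB"])
liga_target = predict_target("ligA", ["T1", "T2"])
```

The offset cancels whenever ligands are compared on the same target, so both within-target rankings are correct, but it dominates the comparison across targets and sends `ligA` to the wrong protein.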

Case Studies and Experimental Protocols

Case Study 1: Symbolic Regression for Advanced Interatomic Potentials

This case study demonstrates how moving beyond fixed forms can improve accuracy even in a closely related field, materials science, providing a template for drug discovery.

Experimental Protocol: The methodology for developing Symbolic Regression (SR)-derived potentials involves a multi-step, data-driven workflow [8].

- Data Generation: High-quality training data is generated using nested ensemble sampling with Density Functional Theory (DFT) calculations. This creates a diverse dataset spanning crystalline, disordered, and defective atomic configurations [8].

- Symbolic Search: A Reinforcement Learning (RL) search engine, specifically Continuous-Action Monte Carlo Tree Search (MCTS), explores the space of possible mathematical expressions. This is combined with gradient descent to optimize constants within candidate equations [8].

- Validation: The discovered potential functions (e.g., SR1 and SR2 for copper) are rigorously validated against a suite of material properties, including lattice constants, elastic constants, defect energies, and crucially, melting behavior through two-phase solid-amorphous interface simulations [8].
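To give a feel for the search-then-fit loop, the sketch below replaces both of the study's components with much simpler stand-ins: exhaustive enumeration over a tiny hand-written library instead of RL/MCTS, and a closed-form least-squares constant instead of gradient descent. The candidate expressions and the toy data are hypothetical and unrelated to the SR1 and SR2 potentials.

```python
import math

# Hypothetical candidate library: each model has the form y = c * g(x).
CANDIDATES = {
    "c * x": lambda x: x,
    "c * x**2": lambda x: x * x,
    "c / x": lambda x: 1.0 / x,
    "c * exp(-x)": lambda x: math.exp(-x),
    "c / x**6": lambda x: x ** -6,
}


def fit_constant(g, xs, ys):
    """Closed-form least-squares constant for y = c * g(x)."""
    gx = [g(x) for x in xs]
    return sum(a * b for a, b in zip(gx, ys)) / sum(a * a for a in gx)


def search(xs, ys):
    """Return (expression, constant, squared_error) for the best candidate."""
    best = None
    for name, g in CANDIDATES.items():
        c = fit_constant(g, xs, ys)
        err = sum((c * g(x) - y) ** 2 for x, y in zip(xs, ys))
        if best is None or err < best[2]:
            best = (name, c, err)
    return best


# Toy data generated from y = 2.5 / x, so the search should recover that form.
toy_xs = [1.0, 1.5, 2.0, 2.5, 3.0]
toy_ys = [2.5 / x for x in toy_xs]
best_expression = search(toy_xs, toy_ys)
```

The essential difference from a classical workflow is that the functional form is an output of the procedure rather than an input; a guided search such as MCTS matters once the expression space is too large to enumerate.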

Key Workflow Diagram: The following diagram illustrates the contrast between the classical and SR approaches to model development.

Case Study 2: Molecular Vibration Descriptors with Random Forest

This study directly addresses drug-target affinity (DTA) prediction and showcases a high-performing machine learning model that bypasses classical rigid forms.

Experimental Protocol: The detailed methodology for constructing the quantitative prediction model is as follows [10] [11]:

- Data Curation: Large benchmark datasets were compiled from public databases (PubChem, DrugBank, ChEMBL, UniProt). The Kd dataset contained 10,923 ligand-target-Kd pairs, and the EC50 dataset contained 11,076 ligand-target-EC50 pairs [10] [11].

- Descriptor Calculation & Screening: Initially, 1,874 molecular descriptors were calculated using the PaDEL software. Critically, these were filtered down to 813 descriptors associated with molecular vibrations, as these reflect underlying physicochemical properties like electronegativity and bond polarity [11]. Protein sequence descriptors (e.g., Normalized Moreau-Broto autocorrelation G3) were also computed [10] [11].

- Model Training with a "Whole System" View: The model was trained using a Random Forest (RF) algorithm. The input features combined the screened molecular vibration descriptors for the drug and the protein sequence descriptors for the target, treating the drug-target pair as a single holistic system [10] [11].

- Validation: Model performance was evaluated via internal cross-validation and external tests, achieving a coefficient of determination (R²) greater than 0.94 [10] [11].
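For reference, the validation quantities mentioned in the final step can be computed as follows. This is a generic sketch of the coefficient of determination and a plain k-fold split, not the study's evaluation code; the contiguous, unshuffled folds are a simplification chosen to keep the example short.

```python
def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def kfold_indices(n_samples, k):
    """Yield (train_indices, test_indices) for k contiguous folds."""
    sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in sizes:
        test = list(range(start, start + size))
        train = [i for i in range(n_samples) if i < start or i >= start + size]
        yield train, test
        start += size
```

How the pairs are assigned to folds affects what a high R² means: if the same ligand or target appears on both sides of a split, the score measures interpolation rather than generalization to new chemistry or new proteins.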

Key Workflow Diagram: The holistic "whole system" approach is visualized below.

Table 2: Key Research Reagents and Computational Tools for Advanced Affinity Prediction

| Item / Resource | Function / Purpose | Relevance to Overcoming Rigid Forms |
|---|---|---|
| PaDEL-Descriptor [10] [11] | Software to calculate a comprehensive set of molecular descriptors from chemical structure. | Enables featurization based on holistic molecular properties (e.g., vibrations) rather than pre-defined interaction terms. |
| Density Functional Theory (DFT) [8] | Ab initio quantum mechanical method for calculating electronic structure. | Provides high-quality, quantum-accurate training data for developing and validating more flexible models like SR potentials. |
| Random Forest Algorithm [10] [11] | A machine learning method that constructs multiple decision trees for regression or classification. | Provides a powerful, non-parametric alternative to linear models, capable of capturing complex non-linearities without a fixed equation. |
| Reinforcement Learning (RL) & MCTS [8] | A search strategy for exploring large combinatorial spaces (e.g., of mathematical expressions). | The core engine in symbolic regression that allows for the discovery of novel, interpretable functional forms directly from data. |
| Benchmark Datasets (Kd, EC50) [10] [11] | Curated datasets of drug-target pairs with experimentally measured binding affinities. | Essential for training and fairly evaluating the performance of new, flexible models against classical baselines. |
| LIT-PCBA Benchmark Set [9] | A demanding benchmark set designed for evaluating target identification capability. | Tests generalizability, a key weakness of rigid functions, by requiring models to rank affinities across different proteins. |

The evidence is compelling: the rigid functional forms underpinning classical scoring functions constitute a significant bottleneck in the pursuit of accurate, generalizable, and predictive models for binding affinity. Their inability to capture the complex, non-linear physics of molecular interactions inherently limits their accuracy and domain of applicability, as quantified by their struggle with the inter-protein scoring noise problem [9] [7]. Emerging paradigms, including machine learning models that leverage holistic molecular descriptors [10] [11] and symbolic regression that discovers physically interpretable equations directly from data [8], demonstrate a clear path forward. These approaches reject the constraint of predetermined equations in favor of flexibility and data-driven discovery. For the field of computational drug discovery to advance, the research community must increasingly embrace these flexible modeling paradigms, fostering a shift from assuming the form of the solution to letting high-quality data and intelligent algorithms reveal it.

Classical scoring functions are pivotal tools in structure-based drug design, tasked with predicting the binding affinity of a small molecule to a target protein. Despite their long-standing utility, their predictive accuracy has plateaued, largely due to two fundamental omissions: the inadequate treatment of solvation effects and protein flexibility [12] [13]. These molecular phenomena are central to the process of binding, yet classical approaches handle them through drastic simplifications that limit their realism and predictive power. This review delineates how these shortcomings have constrained the reliability of affinity prediction and surveys the emerging computational strategies that are beginning to redress these gaps, thereby framing the limitations within the broader thesis on the evolution of scoring function research.

Classical scoring functions are broadly categorized as force-field, empirical, or knowledge-based [14]. Regardless of type, they share a common methodological constraint: the imposition of a predetermined, theory-inspired functional form for the relationship between the variables characterizing the protein-ligand complex and the predicted binding affinity [12]. This rigid approach leads to poor predictivity for complexes that do not conform to the underlying modeling assumptions. Furthermore, for the sake of computational efficiency, these functions employ a minimal description of protein flexibility and an implicit treatment of solvent, ignoring the dynamic and solvation-driven nature of the binding process [12]. The following sections will dissect the specific challenges posed by solvation and flexibility and detail how modern approaches are integrating them into a new generation of predictive models.

The Critical Role of Solvation in Binding Affinity

The Physics of Solvation and its Inadequate Representation

Solvation effects play a critical role in determining the binding free energy in protein-ligand interactions [14]. When a ligand binds to a protein, it undergoes a desolvation process, whereby water molecules are displaced from both the ligand's and the protein's binding site. This process involves a complex balance of energetic contributions: the screening of electrostatic interactions by water, the hydrophobic effect for nonpolar atoms, and the hydrophilic effect for polar groups [14]. Classical scoring functions often neglect these contributions entirely or account for them through oversimplified terms, such as a simple solvent-accessible surface area (SASA)-based energy term, which fails to capture the nuanced physics of water-mediated interactions [14].

The inherent challenge in incorporating solvation is the parameterization of pairwise potentials, solvation, and entropy, which belong to different energetic categories [14]. Consequently, despite the recognized importance of solvation in ligand binding, most classical knowledge-based scoring functions do not explicitly include its contributions, partly due to the difficulty in deriving the corresponding pair potentials and the resulting double-counting problem [14]. This omission represents a significant source of error in binding affinity predictions.

Advanced Methods for Incorporating Solvation Effects

Recent research has developed novel computational models to explicitly include solvation and entropic effects. One prominent method involves an iterative approach to simultaneously derive effective pair potentials and atomic solvation parameters [14]. The binding energy score is expressed as:

$$\Delta G_{\text{bind}} = \sum_{ij} u_{ij}(r) + \sum_{i} \sigma_i \, \Delta SA_i$$

where $u_{ij}(r)$ is the pair potential, $\sigma_i$ is the solvation parameter for atom type $i$, and $\Delta SA_i$ is the change in the solvent-accessible surface area [14]. The solvation parameters $\sigma_i$ are iteratively improved by comparing the predicted and observed SASA changes in the training set complexes, effectively learning the solvation contribution from the data itself [14].
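Evaluating the two sums in the expression above is straightforward once the pair potentials and solvation parameters are available. The sketch below shows only that evaluation step, with a made-up square-well potential and invented parameter values; it does not reproduce the iterative derivation of the parameters described in [14].

```python
def binding_score(pair_terms, sasa_changes, pair_potential, solvation_params):
    """Pairwise potential sum plus SASA-weighted solvation term.

    pair_terms: iterable of (protein_atom_type, ligand_atom_type, distance)
    sasa_changes: iterable of (atom_type, change_in_SASA_on_binding)
    pair_potential: callable (protein_type, ligand_type, r) -> energy
    solvation_params: dict mapping atom_type -> sigma
    """
    pair_energy = sum(pair_potential(p, l, r) for p, l, r in pair_terms)
    solvation = sum(solvation_params[t] * dsa for t, dsa in sasa_changes)
    return pair_energy + solvation


def toy_potential(protein_type, ligand_type, r):
    """Hypothetical square-well potential: -1.0 inside 4 Angstroms, zero outside."""
    return -1.0 if r < 4.0 else 0.0


toy_score = binding_score(
    pair_terms=[("C", "C", 3.5), ("N", "O", 5.0)],
    sasa_changes=[("C", -20.0), ("O", -10.0)],  # surface buried on binding
    pair_potential=toy_potential,
    solvation_params={"C": 0.01, "O": -0.03},   # invented sigma values
)
```

Keeping the solvation term separate from the pair sum makes it easy to inspect how much of a score comes from buried surface, which is the contribution many classical knowledge-based functions leave out.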

Another approach is seen in the development of physics-based scoring functions like DockTScore, which incorporate optimized terms for solvation and lipophilic interactions, moving beyond simplistic models to better represent the protein-ligand recognition process [15]. Similarly, machine-learning scoring functions circumvent the need for a predetermined functional form, allowing the collective effect of solvation and other interactions to be implicitly inferred from large experimental datasets [12].

Table 1: Computational Methods for Incorporating Solvation Effects

| Method Name | Underlying Approach | Key Solvation Terms | Reported Performance |
|---|---|---|---|
| ITScore/SE [14] | Knowledge-based with iterative parameter fitting | SASA-based energy term with atomic solvation parameters | R² = 0.76 on validation set of 77 complexes |
| DockTScore [15] | Empirical, physics-based with machine learning | Optimized solvation and lipophilic interaction terms | Competitive performance on DUD-E datasets |
| Machine-Learning SFs [12] | Data-driven, non-linear regression | Implicitly learned from comprehensive feature sets | Outperform classical SFs in binding affinity prediction |

The Challenge of Protein Flexibility and Dynamics

Protein Flexibility as a Major Limiting Factor

Protein flexibility stands out as one of the most important and challenging issues for binding mode prediction in molecular docking [13]. Proteins are dynamic entities that undergo continuous conformational changes of varying magnitudes, which are essential for biological processes like molecular recognition [16] [17]. However, classical docking tools and their embedded scoring functions often treat the protein receptor as a rigid body, an approximation that fails to capture the induced-fit and conformational selection mechanisms that frequently characterize binding [13].

The major limitation of treating proteins as rigid is the failure to account for the conformational entropy contribution to the binding free energy and the structural rearrangements that can open or close binding pockets [12] [13]. This simplification is primarily driven by the astronomical computational cost associated with sampling the full conformational space of a protein during docking. As a result, the reliability of structure-based affinity prediction is severely compromised for targets that undergo significant structural changes upon ligand binding [13].

Computational Strategies for Modeling Protein Flexibility

A variety of conformational sampling methods have been proposed to tackle the challenge of protein flexibility, ranging from techniques that account for local binding-site sidechain rearrangements to those that model full protein flexibility [13].

- Ensemble Docking: This method involves docking ligands into an ensemble of multiple protein conformations, typically derived from experimental structures (e.g., from crystallography) or computational simulations like Molecular Dynamics (MD) [13]. This approach implicitly accounts for large-scale conformational changes and is particularly useful for virtual screening experiments.

- Molecular Dynamics (MD) Simulations: All-atom MD simulations provide valuable, atomistically detailed information on protein conformational behavior on various timescales [16]. Databases like ATLAS provide large-scale, standardized MD simulations, offering insights into dynamic properties of functional protein regions [16]. MD ensembles have been shown to enhance docking performance by providing a more realistic representation of the protein's conformational landscape [16].

- On-the-fly Sampling: For applications requiring accurate binding mode prediction (geometry prediction), methods that explore the flexibility of the whole protein-ligand complex during the docking process might be necessary [13]. These methods, while computationally expensive, can capture synergistic motions between the ligand and the protein that are crucial for forming the complex.

The choice of the best method depends heavily on the system under study and the research application, with a trade-off always existing between computational cost and the level of flexibility accounted for [13].
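For ensemble docking, the per-conformation scores have to be combined into a single value per ligand before ranking. The sketch below shows two simple aggregation rules, best score and mean score. Both rules, the function names, and the toy scores are assumptions made for illustration; the cited work does not prescribe a particular aggregation.

```python
def ensemble_score(scores_by_conformer, mode="best"):
    """Collapse docking scores from several receptor conformations into one value.

    scores_by_conformer: dict mapping conformer id -> docking score (lower is better)
    mode: "best" keeps the most favourable score, "mean" averages over the ensemble
    """
    values = list(scores_by_conformer.values())
    if not values:
        raise ValueError("no scores supplied")
    if mode == "best":
        return min(values)
    if mode == "mean":
        return sum(values) / len(values)
    raise ValueError(f"unknown mode: {mode}")


def rank_ligands(ensemble_results, mode="best"):
    """Return ligand ids ordered from most to least favourable aggregated score."""
    aggregated = {lig: ensemble_score(s, mode) for lig, s in ensemble_results.items()}
    return sorted(aggregated, key=aggregated.get)


# Hypothetical scores for two ligands docked into one crystal and two MD snapshots.
results = {
    "lig_A": {"xtal": -7.1, "md_1": -8.4, "md_2": -6.0},
    "lig_B": {"xtal": -7.9, "md_1": -7.5, "md_2": -7.8},
}
print(rank_ligands(results, mode="best"))
print(rank_ligands(results, mode="mean"))
```

In this toy case the two rules disagree on the ranking, which illustrates why the aggregation choice should be fixed and reported alongside the ensemble itself.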

Diagram 1: Computational workflows for incorporating protein flexibility in docking. Methods branch from a single input structure and converge on producing improved docking poses, which are suitable for different applications.

The Emergence of Machine Learning and Advanced Physical Models

The Paradigm Shift to Machine-Learning Scoring Functions

The limitations of classical scoring functions have catalyzed a shift towards machine-learning scoring functions (ML-SFs) [12] [18]. Unlike classical functions that assume a predetermined functional form (e.g., linear regression with a small number of expert-selected features), ML-SFs use non-linear regression models to infer the functional form directly from the data [12]. This data-driven approach allows ML-SFs to exploit very large volumes of structural and interaction data effectively, capturing complex, non-additive interactions that are hard to model explicitly.

The performance gap between classical and machine-learning SFs is significant and is expected to widen as more training data becomes available [12]. For instance, the ML-SF RF-Score-VS demonstrated a dramatic improvement in virtual screening performance: its top 0.1% of molecules achieved an 88.6% hit rate, compared to just 27.5% for Vina [18]. In binding affinity prediction, RF-Score-VS also substantially outperformed Vina, with Pearson correlations of 0.56 and -0.18, respectively [18]. Other deep learning models, such as DAAP, which uses distance-based features and attention mechanisms, have achieved state-of-the-art performance, with a Pearson correlation of 0.909 on the CASF-2016 benchmark [19].

Integrating Physics-Based Terms with Machine Learning

A promising trend is the development of hybrid scoring functions that integrate precise, physics-based descriptors with powerful machine-learning regression algorithms. The DockTScore suite of functions is a prime example, which explicitly accounts for physics-based terms—including optimized MMFF94S force-field terms, solvation and lipophilic interactions, and an improved ligand torsional entropy estimate—combined with machine learning models like Support Vector Machine (SVM) and Random Forest (RF) [15]. This approach aims to retain the physical interpretability of the interaction terms while leveraging the ability of machine learning to model complex, non-linear relationships, thereby avoiding the over-optimistic accuracy estimates sometimes associated with purely black-box models [15].

Table 2: Comparison of Scoring Function Performance on Benchmark Tasks

| Scoring Function Type | Example | Virtual Screening Hit Rate (Top 1%) | Binding Affinity Prediction (Pearson R) | Key Advantages |
|---|---|---|---|---|
| Classical SF | Vina | 16.2% [18] | -0.18 [18] | Speed, simplicity |
| Machine-Learning SF | RF-Score-VS | 55.6% [18] | 0.56 [18] | Handles large datasets, non-linearity |
| Deep Learning SF | DAAP | N/A | 0.909 [19] | Captures complex interactions directly from structure |
| Physics-Based ML SF | DockTScore (MLR) | Competitive on DUD-E [15] | Competitive on core set [15] | Balance of physical interpretability and accuracy |

Experimental Protocols and the Scientist's Toolkit

Detailed Methodology: Incorporating Solvation and Entropy

The iterative method for developing the ITScore/SE knowledge-based scoring function provides a clear protocol for integrating solvation and entropy [14]:

- Training Set Curation: A set of high-quality protein-ligand complexes with known 3D structures and binding affinities is assembled.

- Feature Calculation: For each complex, compute the initial set of knowledge-based pair potentials and the change in Solvent-Accessible Surface Area (ΔSASA) for each atom type upon binding. The SASA is calculated using an algorithm of uniform atom-based spherical grids with a probe radius of 1.4 Å.

- Iterative Parameter Optimization: The effective pair potentials $u_{ij}(r)$ and atomic solvation parameters $\sigma_i$ are derived simultaneously using an iterative algorithm. At each step $n$, the parameters are updated as follows:

  $$u_{ij}^{(n+1)}(r) = u_{ij}^{(n)}(r) + \lambda k_B T \left[ g_{ij}^{(n)}(r) - g_{ij}^{\mathrm{obs}}(r) \right]$$

  $$\sigma_i^{(n+1)} = \sigma_i^{(n)} + \lambda k_B T \left( f_{\Delta SA_i}^{(n)} - f_{\Delta SA_i}^{\mathrm{obs}} \right)$$

  Here, $g_{ij}^{\mathrm{obs}}(r)$ and $f_{\Delta SA_i}^{\mathrm{obs}}$ are the observed pair distribution and SASA change fractions from the native structures, while $g_{ij}^{(n)}(r)$ and $f_{\Delta SA_i}^{(n)}$ are the Boltzmann-weighted averages predicted by the current potentials over native and decoy structures.

- Convergence Check: The iteration continues until the difference between the predicted and observed distribution functions falls below a predefined threshold.

- Validation: The final scoring function is validated on independent test sets for binding mode identification and affinity prediction.
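The update rule in the protocol above can be sketched as a single iteration step. In this sketch the predicted distributions are passed in as plain inputs; a real implementation would recompute them at every iteration by Boltzmann-weighting native and decoy structures with the current potentials. The function names, the step size `lam`, and the value of `kT` (about 0.593 kcal/mol near room temperature) are assumptions for illustration.

```python
def update_parameters(u, sigma, g_pred, g_obs, f_pred, f_obs, lam=0.5, kT=0.593):
    """One iteration of the pair-potential and solvation-parameter update.

    u, g_pred, g_obs: dict (type_i, type_j) -> list of values, one per distance bin
    sigma, f_pred, f_obs: dict atom type -> float
    lam: step size (assumed value); kT: thermal energy in kcal/mol (assumed value)
    """
    new_u = {
        pair: [
            u_r + lam * kT * (gp - go)
            for u_r, gp, go in zip(u[pair], g_pred[pair], g_obs[pair])
        ]
        for pair in u
    }
    new_sigma = {t: sigma[t] + lam * kT * (f_pred[t] - f_obs[t]) for t in sigma}
    return new_u, new_sigma


def max_deviation(g_pred, g_obs):
    """Largest absolute gap between predicted and observed pair distributions."""
    return max(
        abs(gp - go)
        for pair in g_obs
        for gp, go in zip(g_pred[pair], g_obs[pair])
    )


# Toy inputs: one atom-type pair with two distance bins, one atom type.
u0 = {("C", "O"): [0.0, -0.2]}
sigma0 = {"C": 0.01}
g_pred = {("C", "O"): [1.2, 0.8]}
g_obs = {("C", "O"): [1.0, 1.0]}
f_pred = {"C": 0.30}
f_obs = {"C": 0.25}

u1, sigma1 = update_parameters(u0, sigma0, g_pred, g_obs, f_pred, f_obs)
print(u1, sigma1, max_deviation(g_pred, g_obs))
```

Where a contact is predicted more often than it is observed, the potential in that bin is raised, which makes that contact less favourable in the next round; the convergence check then compares `max_deviation` against a chosen threshold.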

Table 3: Key Resources for Advanced Scoring Function Development

| Resource Name | Type | Function in Research |
|---|---|---|
| PDBbind [12] [15] | Database | A comprehensive, curated database of protein-ligand complexes with binding affinity data, used for training and benchmarking scoring functions. |
| DUD-E [18] | Benchmark Dataset | "Directory of Useful Decoys: Enhanced" provides benchmark sets for virtual screening, containing known actives and property-matched decoys for many targets. |
| CASF Benchmark [19] | Benchmark Suite | A standardized benchmark for evaluating scoring functions on core tasks like binding affinity prediction, pose prediction, and virtual screening. |
| ATLAS [16] | MD Simulation Database | A database of standardized all-atom molecular dynamics simulations, providing insights into protein dynamics for a representative set of proteins. |
| CHARMM36m Force Field [16] | Molecular Model | A force field used in MD simulations to compute potential energy, parameterized for balanced sampling of folded and disordered proteins. |
| GROMACS [16] | Software | A high-performance molecular dynamics package used to simulate the Newtonian equations of motion for systems with hundreds to millions of particles. |

The inadequate treatment of solvation effects and protein flexibility has been a fundamental bottleneck in the accuracy of classical scoring functions. As this review outlines, these omissions stem from necessary but limiting simplifications made to maintain computational feasibility. The emergence of machine-learning scoring functions represents a paradigm shift, leveraging large datasets to infer complex relationships without being constrained by a predetermined functional form [12] [18]. Simultaneously, the integration of more rigorous physics-based terms, such as explicit solvation and entropy contributions, is providing a more realistic description of the binding process [14] [15]. The synergy of these two approaches—data-driven machine learning and theory-inspired physical models—is paving the way for a new generation of scoring functions with enhanced predictive power and greater generality.

Future progress will depend on continued advances in several areas. The development of large-scale, standardized dynamical data, as exemplified by the ATLAS database, will be crucial for modeling protein flexibility in a consistent manner [16]. Furthermore, the creation of target-specific scoring functions for challenging target classes like protein-protein interactions demonstrates a move away from a one-size-fits-all approach, promising better performance for specific therapeutic applications [15] [20]. As computational power grows and algorithms become more sophisticated, the explicit and accurate integration of solvation, entropy, and full flexibility will transition from a specialist's challenge to a standard component of the drug designer's toolkit, finally overcoming the key omissions that have long limited structure-based affinity prediction.

The additivity assumption posits that the total binding energy of a protein-ligand complex can be represented as the sum of independent, localized interactions. This principle underpins classical scoring functions in molecular recognition, where the affinity for any given molecular structure is calculated by summing contributions from individual atoms, functional groups, or residue pairs. The computational efficiency of this approach has made it a cornerstone in structural bioinformatics and early-stage drug discovery, particularly for rapid virtual screening of compound libraries.

However, mounting experimental evidence from quantitative biochemistry reveals that molecular recognition in biological systems frequently deviates from perfect additivity. Non-additive effects emerge from complex, cooperative interactions within and between molecules—effects that simple summing functions cannot capture. This whitepaper examines the fundamental limitations of the additivity assumption through key case studies and quantitative data, providing researchers with a framework for critically evaluating scoring function performance in affinity prediction research.

Quantitative Evidence: Case Studies Challenging Additivity

Protein-DNA Recognition: A Benchmark System

Protein-DNA interactions serve as an ideal model system for testing additivity due to their well-defined binding interfaces and the discrete nature of nucleotide positions. A re-analysis of seminal studies on the Mnt repressor protein and mouse EGR1 protein binding provides compelling quantitative evidence against purely additive models [21].

Table 1: Correlation Between Measured Binding Affinities and Additive Model Predictions

| Zif268 Variant | Mononucleotide BAM (123) | Dinucleotide BAM (12*3) | Dinucleotide BAM (1*23) |
|---|---|---|---|
| Wild-type | 0.973 | 0.986 | 0.987 |
| RGPD | 0.883 | 0.942 | 0.941 |
| REDV | 0.999 | 0.999 | 0.999 |
| LRHN | 0.927 | 0.978 | 0.956 |
| KASN | 0.695 | 0.791 | 0.718 |

While the mononucleotide Best Additive Model (BAM) shows strong correlations for some proteins (e.g., REDV at 0.999), performance substantially degrades for others (KASN at 0.695) [21]. For every variant, the dinucleotide models, which incorporate some positional interdependencies, match or exceed the mononucleotide model, indicating that positional interdependence contributes measurably to binding affinity. For the KASN variant, the dinucleotide model (12*3) achieves a correlation of 0.791 compared to 0.695 for the mononucleotide model, a relative increase of about 14% in the correlation coefficient [21].

Beyond DNA: Evidence from Protein-Ligand Interactions

The limitations of additivity extend to protein-ligand interactions central to drug discovery. Fragment-Based Drug Discovery (FBDD) highlights the importance of non-additive synergy when fragments are combined [22]. While fragments themselves follow approximately additive rules due to their small size and simple interactions, their optimization into lead compounds frequently reveals cooperative effects that deviate from predictions based on fragment properties alone.

Modern machine learning approaches explicitly address these limitations. The ProBound framework, which models transcription factor binding affinity from sequencing data, incorporates cooperativity terms and multi-protein complex interactions that fundamentally violate simple additivity [23]. Similarly, the SCAGE architecture for molecular property prediction employs a multitask pretraining framework that captures complex relationships between molecular structure and function beyond what additive models can represent [24].

Experimental Protocols for Quantifying Non-Additivity

Comprehensive Binding Affinity Profiling

Objective: Systematically measure positional interdependence in molecular recognition.

Methodology:

- Library Design: Generate complete combinatorial libraries covering all possible sequence variations at target positions (e.g., all 16 dinucleotides for two positions, all 64 trinucleotides for three positions) [21] [23].

- Affinity Measurement: Utilize high-throughput binding assays (SELEX, KD-seq, protein-binding microarrays) to determine binding constants for all library variants [23].

- Additive Model Fitting: Calculate the Best Additive Model (BAM) by converting association constants to binding probabilities and determining position-specific weights that maximize correlation with experimental data [21].

- Deviation Quantification: Compute correlation coefficients between measured affinities and BAM predictions across all variants. Significant deviations indicate non-additive effects.

Critical Controls:

- Multiple experimental replicates to assess measurement error (e.g., 9 replicates in the EGR1 study) [21].

- Comparison of multiple additive models (mononucleotide vs. dinucleotide) to isolate specific types of interdependencies.
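The additive-model fitting and deviation steps above can be sketched on a toy two-position library. Note the substitution: instead of the BAM procedure of [21] (binding probabilities with correlation-maximizing weights), this sketch uses an ordinary least-squares additive fit on log-affinities, which for a complete, balanced library reduces to per-position averages. All data values and function names are hypothetical.

```python
import itertools
import math

BASES = "ACGT"


def fit_additive_model(log_affinity):
    """Least-squares additive fit for a complete, balanced sequence library.

    log_affinity: dict sequence -> log affinity, covering every sequence of one length.
    Returns the grand mean and, per position, a dict base -> weight.
    """
    seqs = list(log_affinity)
    grand_mean = sum(log_affinity.values()) / len(seqs)
    weights = []
    for pos in range(len(seqs[0])):
        w = {}
        for base in BASES:
            vals = [v for s, v in log_affinity.items() if s[pos] == base]
            w[base] = sum(vals) / len(vals) - grand_mean
        weights.append(w)
    return grand_mean, weights


def predict(seq, grand_mean, weights):
    """Additive prediction: grand mean plus one weight per position."""
    return grand_mean + sum(weights[p][b] for p, b in enumerate(seq))


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def additive_fit_correlation(data):
    """Correlation between measured values and the additive model's predictions."""
    grand_mean, weights = fit_additive_model(data)
    seqs = sorted(data)
    return pearson([data[s] for s in seqs],
                   [predict(s, grand_mean, weights) for s in seqs])


# Hypothetical per-position contributions to log affinity.
pos0 = {"A": 0.0, "C": -1.0, "G": -2.0, "T": -0.5}
pos1 = {"A": 0.0, "C": -0.3, "G": -1.5, "T": -0.7}
additive_data = {a + b: pos0[a] + pos1[b]
                 for a, b in itertools.product(BASES, repeat=2)}

# Same library with one hypothetical coupling between the two positions.
cooperative_data = dict(additive_data)
cooperative_data["GC"] += 2.0

r_additive = additive_fit_correlation(additive_data)
r_cooperative = additive_fit_correlation(cooperative_data)
print(f"additive data: r = {r_additive:.3f}")
print(f"with coupling: r = {r_cooperative:.3f}")
```

A single coupled dinucleotide is enough to pull the correlation visibly below 1 in this toy library, which is the kind of deviation the protocol is designed to detect.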

Fragment-Based Binding Analysis

Objective: Quantify cooperative effects in molecular assembly.

Methodology:

- Fragment Library Screening: Employ diverse biophysical techniques (NMR, SPR, X-ray crystallography) to identify fragment hits against protein targets [22] [25].

- Binding Site Mapping: Determine spatial relationships between fragment binding sites using structural biology methods.

- Linker Optimization: Systematically explore chemical space connecting fragment hits while monitoring changes in binding affinity.

- Cooperativity Calculation: Compare measured affinity of optimized compounds against predictions based on fragment affinities and linking chemistry.
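The cooperativity calculation in the last step can be sketched by converting dissociation constants to binding free energies and comparing the linked compound against the sum of its fragments. This simple additive reference is an assumption for illustration: it ignores any contribution from the linker itself and any change in rigid-body entropy on linking. The function names and Kd values are hypothetical.

```python
import math

R_KCAL = 0.0019872  # gas constant in kcal/(mol*K)


def binding_free_energy(kd_molar, temperature=298.15):
    """Standard binding free energy (kcal/mol) from a dissociation constant in M."""
    return R_KCAL * temperature * math.log(kd_molar)


def linking_cooperativity(kd_frag_a, kd_frag_b, kd_linked, temperature=298.15):
    """Observed minus additive binding free energy for a linked compound.

    Negative values mean the linked compound binds more tightly than the
    sum of the fragment free energies would suggest.
    """
    additive = (binding_free_energy(kd_frag_a, temperature)
                + binding_free_energy(kd_frag_b, temperature))
    observed = binding_free_energy(kd_linked, temperature)
    return observed - additive


# Hypothetical fragments with Kd of 1 mM and 100 uM, linked compound at 10 nM.
coop = linking_cooperativity(1e-3, 1e-4, 1e-8)
print(f"cooperativity: {coop:.2f} kcal/mol")
```

With these toy numbers the additive expectation corresponds to a Kd of 100 nM, so a measured 10 nM represents roughly 1.4 kcal/mol of extra stabilization.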

Computational Approaches Overcoming Additivity Limitations

Advanced Machine Learning Architectures

Next-generation computational models address additivity failures through several innovative approaches:

Multitask Pretraining Frameworks: SCAGE incorporates four pretraining tasks (molecular fingerprint prediction, functional group prediction, 2D atomic distance prediction, and 3D bond angle prediction) to learn comprehensive molecular representations that capture complex structure-activity relationships [24].

Cooperativity Modeling: ProBound explicitly models cooperative binding in multi-TF complexes through energy terms that depend on relative positioning and orientation of binding partners [23].

Geometric Learning: Incorporation of 3D structural information (atomic distances, bond angles, conformational flexibility) enables models to capture spatial relationships that violate simple additivity [24] [23].

Interpretability and Explainability

Modern non-additive models provide biochemical insights through:

- Attention Mechanisms: Identifying atomic-level contributions to molecular activity [24].

- Functional Group Annotation: Assigning unique functional groups to each atom to enhance understanding of molecular activity [24].

- Methylation Awareness: Quantifying position-specific impacts of epigenetic modifications on binding affinity [23].

Table 2: Comparison of Molecular Recognition Modeling Approaches

| Model Type | Key Assumptions | Strengths | Limitations |
|---|---|---|---|
| Additive (BAM) | Position independence | Computational efficiency; Simple interpretation | Fails for cooperative systems; Limited accuracy |
| Dinucleotide BAM | Dinucleotide interdependence | Captures nearest-neighbor effects; Improved accuracy | Still misses longer-range interactions |
| ProBound | Multi-experiment integration | Quantifies cooperativity; Handles modifications | Computational intensity; Complex implementation |
| SCAGE | Multitask representation learning | Captures complex structure-activity relationships | Requires extensive pretraining data |

Research Reagent Solutions

Table 3: Essential Research Materials for Non-Additivity Studies

| Reagent/Technology | Function | Application Context |
|---|---|---|
| SELEX-seq | High-throughput profiling of protein-DNA interactions | Comprehensive binding affinity measurement [23] |
| KD-seq | Absolute affinity determination using input, bound and unbound fractions | Direct measurement of binding constants [23] |
| Fragment Libraries (~1400 compounds) | Screening for molecular recognition elements | Identifying privileged substructures [25] |
| Multi-TF SELEX | Characterization of cooperative complexes | Quantifying cooperativity in multi-protein assemblies [23] |
| Methylated DNA Libraries | Profiling epigenetic effects on recognition | Methylation-aware binding models [23] |

Visualizing Experimental Workflows

Protein-DNA Binding Analysis

Machine Learning Framework for Non-Additive Binding

The empirical evidence against universal additivity in molecular recognition is substantial and growing. Quantitative studies of protein-DNA interactions reveal significant positional interdependencies, while fragment-based drug discovery demonstrates cooperative effects in molecular assembly. These non-additive phenomena necessitate advanced modeling approaches that explicitly account for cooperativity, spatial relationships, and contextual effects.

Modern machine learning frameworks like ProBound and SCAGE point the way forward by integrating diverse data types, modeling cooperativity explicitly, and maintaining biophysical interpretability. As molecular recognition research advances, the field must move beyond the convenient but limited additive assumption toward more sophisticated models that capture the complex, emergent properties of biological systems. This paradigm shift will enable more accurate affinity prediction, rational design of molecular interventions, and ultimately, more efficient drug discovery pipelines.

From Theory to Practice: How Methodological Shortcomings Hinder Real-World Drug Discovery

Structure-based virtual screening (VS) has become an indispensable tool in computational drug discovery, yet its effectiveness is fundamentally constrained by the accuracy of scoring functions (SFs). Classical SFs, which rely on empirical, force-field-based, or knowledge-based approaches, have hit a persistent performance plateau in their ability to discriminate between binders and non-binders. This whitepaper delineates the core limitations of these classical SFs and frames them within the broader thesis of affinity prediction research. We explore the emergence of machine-learning (ML) scoring functions as a transformative solution, presenting quantitative benchmarks and detailed methodologies that underscore their superior performance in enriching true actives and predicting binding affinities.

The primary goal of structure-based virtual screening is to identify novel bioactive molecules from vast chemical libraries by computationally docking them into a target protein's structure. The efficacy of this process hinges entirely on the scoring function's ability to rank compounds based on their predicted affinity. Classical SFs, embedded in popular docking tools, estimate binding energy using simplified physical models or statistical potentials derived from known protein-ligand structures. Despite their long-standing utility, these functions suffer from well-documented limitations: they often inadequately account for conformational entropy, solvation effects, and specific interaction nuances, leading to inaccurate affinity predictions and poor enrichment of true binders [18]. Consequently, the field has witnessed a performance plateau, where incremental improvements in classical SFs have yielded diminishing returns, creating a critical bottleneck in the early drug discovery pipeline [18] [26]. This paper examines the evidence for this plateau and the subsequent paradigm shift towards data-driven ML approaches, which learn the complex relationships between protein-ligand structural features and binding affinities directly from large-scale experimental data.

Quantitative Evidence of the Performance Plateau

Extensive benchmarking studies across diverse protein targets provide concrete evidence of the limitations of classical SFs. The data reveal that while these functions can serve as loose classifiers, their performance, particularly in early enrichment, is significantly surpassed by modern machine-learning scoring functions.

Table 1: Virtual Screening Performance Comparison on the DUD-E Benchmark (102 Targets)

| Scoring Function | Type | Hit Rate at Top 1% | Hit Rate at Top 0.1% | Binding Affinity Pearson Correlation |
|---|---|---|---|---|
| RF-Score-VS | Machine Learning | 55.6% | 88.6% | 0.56 |
| AutoDock Vina | Classical (Empirical) | 16.2% | 27.5% | -0.18 |
| DOCK3.7 | Classical (Force-Field) | ~15% (est.) | - | - |

The data in Table 1, derived from a large-scale study on the DUD-E benchmark, are telling. The machine-learning SF, RF-Score-VS, achieves a hit rate at the top 1% of ranked molecules (55.6%) that is more than three times that of a classical SF like Vina (16.2%) [18]. The absolute gap is even larger in the ultra-early enrichment zone (top 0.1%), where 88.6% of the molecules selected by RF-Score-VS are true actives, against 27.5% for Vina. Furthermore, the poor Pearson correlation of Vina's scores with experimental binding affinity (-0.18) underscores its inability to provide a meaningful quantitative estimate of binding strength, a core limitation in affinity prediction research [18].

This performance gap is not isolated. A 2025 benchmarking study on Plasmodium falciparum Dihydrofolate Reductase (PfDHFR) variants further corroborates these findings. The study showed that re-scoring initial docking poses with ML SFs like CNN-Score dramatically improved early enrichment. For the wild-type enzyme, re-scoring with CNN-Score achieved an enrichment factor at 1% (EF1%) of 28, a substantial improvement over the baseline docking tools [27].

Detailed Experimental Protocols for Benchmarking Scoring Functions

To ensure the reproducibility of VS benchmarks and the rigorous validation of new SFs, researchers adhere to standardized protocols. The following methodology outlines a comprehensive benchmarking workflow.

Data Set Curation and Preparation

Data Provenance: Public benchmark sets like DUD-E (Directory of Useful Decoys: Enhanced) and DEKOIS 2.0 are commonly used. These sets provide, for a given protein target, a list of known active molecules and a set of decoy molecules—structurally similar but presumed inactive molecules that act as negative controls [18] [27].

- Protein Preparation: Crystal structures of the target protein are obtained from the Protein Data Bank (PDB). The preparation steps, often performed with tools like OpenEye's "Make Receptor," involve:

  - Removing water molecules, unnecessary ions, and redundant chains.

  - Adding and optimizing hydrogen atoms.

  - Defining the binding site and saving the prepared structure in the required format for docking (e.g., PDB, OEB) [27].

- Ligand and Decoy Preparation: Active and decoy molecules are processed to generate viable 3D conformations. This typically involves:

  - Using tools like Omega to generate multiple conformers per ligand or a single, energy-minimized representative.

  - Converting the prepared structures into file formats compatible with specific docking programs (e.g., PDBQT for AutoDock Vina, mol2 for PLANTS) [27].

Docking and Validation Strategy

Docking Experiments: The prepared ligand and decoy libraries are docked into the prepared protein structure using one or more docking programs (e.g., AutoDock Vina, FRED, PLANTS). The grid box dimensions are set to encompass the entire binding site [27].

Performance Validation: To guard against overfitting and to test generalizability, a strict data split is combined with early-recognition metrics:

- Vertical Split: The training and test sets contain data from entirely different protein targets. This simulates the scenario of predicting ligands for a novel target with no known binders and is the most stringent test of generalizability [18].

- pROC-AUC and Enrichment Factor (EF): The primary metrics for VS performance. The pROC-AUC, the area under a semi-logarithmic ROC curve, measures discrimination between actives and decoys with added weight on early recognition, while the EF, particularly at early stages (e.g., EF1%), measures how strongly true actives are concentrated at the very top of the ranked list relative to random selection, which is critical for practical VS campaigns [27].
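The hit rate and enrichment factor used throughout these benchmarks can be computed directly from a ranked list. The sketch below uses the usual definition of EF as the hit rate in the top fraction divided by the overall fraction of actives. The function names and the toy library are hypothetical, and scores are assumed to be docking-style (lower is better).

```python
def hit_rate_at(scores, labels, fraction, lower_is_better=True):
    """Fraction of true actives among the top-ranked `fraction` of molecules.

    scores: one score per molecule; labels: 1 for active, 0 for decoy.
    """
    ranked = sorted(zip(scores, labels), key=lambda p: p[0],
                    reverse=not lower_is_better)
    n_top = max(1, int(round(len(ranked) * fraction)))
    return sum(label for _, label in ranked[:n_top]) / n_top


def enrichment_factor(scores, labels, fraction, lower_is_better=True):
    """Hit rate in the top fraction divided by the overall fraction of actives."""
    overall = sum(labels) / len(labels)
    return hit_rate_at(scores, labels, fraction, lower_is_better) / overall


# Toy library: 1000 molecules, 20 actives, 6 of them ranked in the top 10.
n_molecules = 1000
active_ranks = set(range(6)) | set(range(100, 114))
scores = [float(i) for i in range(n_molecules)]  # lower score = better rank
labels = [1 if i in active_ranks else 0 for i in range(n_molecules)]

hr_1pct = hit_rate_at(scores, labels, 0.01)
ef_1pct = enrichment_factor(scores, labels, 0.01)
print(f"hit rate at 1%: {hr_1pct:.2f}, EF1%: {ef_1pct:.1f}")
```

Because EF is normalized by the overall active fraction, its maximum depends on the composition of the library, so EF values are only comparable between benchmarks with similar active-to-decoy ratios.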

Table 2: Key Research Reagents and Computational Tools for Virtual Screening Benchmarking

| Reagent / Tool Name | Type/Category | Primary Function in VS Workflow |
|---|---|---|
| DUD-E / DEKOIS 2.0 | Benchmark Dataset | Provides curated sets of active molecules and property-matched decoys for rigorous performance assessment. |
| AutoDock Vina | Docking Program | Generates plausible binding poses and provides an initial score using an empirical scoring function. |
| RF-Score-VS / CNN-Score | Machine-Learning Scoring Function | Re-scores docking poses to significantly improve the ranking of active molecules over decoys. |
| PDBbind Database | Training Dataset | A comprehensive collection of protein-ligand complexes with binding affinity data for training ML scoring functions. |
| OpenBabel / SPORES | File Format Tool | Converts and processes chemical file formats between different docking and analysis software. |

Virtual Screening Benchmarking Workflow

Emerging Solutions and Persistent Hurdles

The transition to machine-learning scoring functions is not a panacea. While they show remarkable performance on established benchmarks, significant challenges remain that define the current frontier of affinity prediction research.

The Data Leakage and Generalization Crisis

A critical issue undermining the perceived progress in ML-based affinity prediction is train-test data leakage. A 2025 analysis revealed that the standard benchmark used for evaluating SFs, the Comparative Assessment of Scoring Functions (CASF), shares a high degree of structural similarity with the PDBbind database used to train these models. This means models can perform well by memorizing similarities rather than by genuinely learning protein-ligand interactions [28]. When models like GenScore and Pafnucy were retrained on a rigorously filtered dataset (PDBbind CleanSplit) to eliminate this leakage, their performance dropped markedly, revealing an overestimation of their true generalization capabilities [28]. This highlights a core challenge: developing models that generalize to genuinely novel targets and not just those structurally related to training examples.
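A simple guard against this kind of leakage, in the spirit of the vertical split described earlier, is to assign whole protein groups to either the training or the test set. The sketch below assumes each complex already carries a precomputed cluster label; the `target_cluster` field, the function name, and the toy records are hypothetical. It is not the filtering procedure used to build PDBbind CleanSplit, which relies on its own structure-based similarity criteria [28].

```python
import random


def vertical_split(complexes, group_key, test_fraction=0.2, seed=0):
    """Split complexes so that no protein group appears in both train and test.

    complexes: list of records; group_key: callable returning a record's group id.
    """
    groups = sorted({group_key(c) for c in complexes})
    rng = random.Random(seed)
    rng.shuffle(groups)
    n_test = max(1, int(round(len(groups) * test_fraction)))
    test_groups = set(groups[:n_test])
    train = [c for c in complexes if group_key(c) not in test_groups]
    test = [c for c in complexes if group_key(c) in test_groups]
    return train, test


# Toy data: 20 complexes spread evenly over 5 hypothetical protein clusters.
complexes = [
    {"pdb": "cplx_%02d" % i, "target_cluster": "cluster_%d" % (i % 5)}
    for i in range(20)
]
train, test = vertical_split(complexes, lambda c: c["target_cluster"],
                             test_fraction=0.2, seed=1)
print(len(train), len(test))
```

The quality of such a split depends entirely on how the groups are defined: if clusters are too fine, closely related complexes can still end up on both sides.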

The Rise of Target-Specific and Advanced Architecture Models

To combat generalization issues and improve accuracy, researchers are developing specialized approaches:

- Target-Specific Scoring Functions: Instead of a one-size-fits-all SF, models are trained specifically on data for a single target. For example, graph convolutional neural networks (GCNs) have been used to create target-specific SFs for cGAS and kRAS, which showed significant superiority over generic SFs in virtual screening accuracy and robustness [29].

- Sparsely Connected Graph Neural Networks: Models like GEMS (Graph neural network for Efficient Molecular Scoring) leverage a sparse graph representation of protein-ligand interactions and transfer learning from protein language models. When trained on the cleaned PDBbind CleanSplit dataset, GEMS maintained high performance, suggesting a better ability to generalize to strictly independent test data [28].

The performance plateau of classical scoring functions in virtual screening is a well-documented reality, driven by their inherent inability to capture the complex physical chemistry of molecular recognition. The field is unequivocally shifting towards machine-learning-based solutions, which have demonstrated a profound ability to enrich true binders and offer more accurate affinity predictions. However, the path forward must be navigated with caution. The dual challenges of data leakage in public benchmarks and the limited generalization of many current models represent the next major hurdles. Future research must prioritize the development of rigorously benchmarked models, trained on non-redundant, leakage-free data, and validated on truly novel targets. The integration of advanced architectures like graph neural networks and the strategic use of target-specific training paradigms offer promising avenues to finally move beyond the plateau and deliver on the promise of accurate, reliable affinity prediction for drug discovery.

Accurately predicting the binding affinity between a small molecule and its protein target is a cornerstone of computational drug discovery. The strength of this interaction, quantified as binding affinity, directly determines a drug candidate's efficacy and is a critical parameter for lead optimization [30]. For decades, the development of scoring functions capable of reliably estimating this affinity has been a primary research focus. These functions aim to correlate the three-dimensional structural information of a protein-ligand complex with experimentally measured binding constants (Ki, Kd, IC50), providing a computational substitute for costly and time-consuming laboratory assays [30] [31].

However, a significant and persistent challenge plagues the field: the poor correlation between computationally predicted affinities and experimentally validated results. This gap severely limits the utility of these methods in real-world drug discovery pipelines, where decisions about which compounds to synthesize and test often hinge on computational predictions [30] [28]. Insufficient conformational sampling, oversimplified energy functions, and an inability to accurately model critical solvation and entropic effects are frequently cited as traditional culprits [30]. While deep learning has emerged as a promising paradigm, offering computational efficiency and the ability to learn complex patterns from data, its performance is often overestimated due to benchmark datasets plagued by data leakage and redundancy [28]. This whitepaper examines the core limitations of both classical and machine learning-based affinity prediction methods, framed within the broader thesis that current scoring functions, despite their sophistication, are not yet robust or generalizable enough to replace experimental validation.

Core Challenges in Achieving Experimental Correlation

The discrepancy between in silico predictions and experimental binding constants arises from a confluence of factors that affect both traditional and modern deep learning approaches.

Fundamental Methodological Limitations

Conventional physics-based methods face intrinsic hurdles. Molecular dynamics (MD) simulations for binding free energy calculations, such as those using the Bennett Acceptance Ratio (BAR), are computationally intensive. Achieving sufficient sampling is difficult because the inclusion of explicit solvent or membrane environments requires extensive equilibration to ensure system stability [30]. Furthermore, although binding free energy is a state function, computing it in practice requires finely dividing the perturbation range into multiple intermediate lambda (λ) states to keep the energy change between neighboring states under control, which adds to the computational burden [30]. Classical scoring functions embedded in docking tools like AutoDock Vina or Glide rely on empirical rules and heuristic search algorithms, which often result in inaccuracies and an inability to fully capture the complexity of molecular interactions [32].

Data Biases and Benchmarking Pitfalls

A critical, and often underestimated, challenge is the issue of data quality and evaluation. The performance of deep-learning models is highly dependent on their training data. A 2025 study highlighted that a significant train-test data leakage exists between the widely used PDBbind database and the Comparative Assessment of Scoring Functions (CASF) benchmark [28]. This leakage, stemming from structural similarities between training and test complexes, severely inflates the performance metrics of models, leading to a substantial overestimation of their generalization capabilities [28]. Alarmingly, some models perform well on benchmarks even when protein information is omitted, suggesting they rely on memorizing ligand-specific patterns rather than learning genuine protein-ligand interactions [28]. This problem is compounded by redundancies within the training data itself, which can encourage models to settle for a local minimum in the loss landscape through memorization instead of developing a robust predictive understanding [28].

Generalization and Physical Plausibility

Even the most accurate models on paper can fail in practical applications. A comprehensive evaluation of deep learning-based docking methods revealed significant challenges in generalization, particularly when encountering novel protein binding pockets not represented in the training data [32]. Furthermore, many deep learning methods, especially generative diffusion models, can produce poses that score well on root-mean-square deviation (RMSD) yet are physically implausible. Such poses may exhibit steric clashes, have incorrect bond lengths or angles, or fail to recapitulate key protein-ligand interactions essential for biological activity [32]. This indicates that while these models learn to generate poses that are geometrically close to the reference, they may not fully grasp the underlying physicochemical principles governing binding.

Table 1: Core Challenges in Binding Affinity Prediction

| Challenge Category | Specific Limitations | Impact on Prediction |
|---|---|---|
| Methodological Limits | Insufficient sampling in MD simulations; Oversimplified scoring functions [30] [32]. | Inaccurate energy estimates; Failure to capture key interaction dynamics. |
| Data Bias & Leakage | Structural similarities between PDBbind training and CASF test sets; Redundant training data [28]. | Overestimated model performance; Poor generalization to novel targets. |
| Generalization Failure | Inability to handle novel protein pockets or ligand topologies; Production of physically invalid poses [32] [28]. | Models fail in real-world virtual screening and lead optimization. |
| Evaluation Deficits | Over-reliance on a single metric (e.g., RMSD); Lack of target identification benchmarks [32] [9]. | Incomplete picture of model utility for drug discovery. |

Quantitative Performance Gaps

The theoretical challenges manifest in concrete performance gaps when methods are rigorously evaluated. When state-of-the-art models like GenScore and Pafnucy were retrained on a cleaned dataset (PDBbind CleanSplit) designed to eliminate data leakage, their performance on the CASF benchmark dropped markedly [28]. This confirms that previously reported high scores were largely driven by data leakage rather than genuine learning. In molecular docking, a multidimensional evaluation shows a wide variation in success rates. The "combined success rate" – which considers both pose accuracy (RMSD ≤ 2 Å) and physical validity – reveals that even the best methods have significant room for improvement.

Table 2: Performance Comparison of Docking Methods on Benchmark Datasets [32]

| Method Type | Representative Method | Combined Success Rate (Astex Diverse Set) | Combined Success Rate (DockGen - Novel Pockets) |
|---|---|---|---|
| Traditional | Glide SP | >85% (inferred) | High (inferred as top tier) |
| Hybrid (AI Scoring) | Interformer | Second highest tier | Second highest tier |
| Generative Diffusion | SurfDock | 61.18% | 33.33% |
| Regression-Based | KarmaDock, QuickBind | Lowest tier | Lowest tier |
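The combined success rate used in Table 2 can be illustrated with a short sketch. It assumes that each predicted pose has already been assigned an RMSD to the reference pose and a boolean physical-validity flag (for example from a checker such as PoseBusters); the `PoseResult` class and `success_rates` function are hypothetical names introduced here for illustration, not part of any cited tool.

```python
from dataclasses import dataclass


@dataclass
class PoseResult:
    rmsd: float             # RMSD to the reference pose, in angstroms
    physically_valid: bool  # outcome of an external validity check (assumed given)


def success_rates(results, rmsd_cutoff=2.0):
    """Return pose-accuracy, validity, and combined success rates as fractions."""
    n = len(results)
    if n == 0:
        raise ValueError("no results to evaluate")
    accurate = sum(r.rmsd <= rmsd_cutoff for r in results)
    valid = sum(r.physically_valid for r in results)
    # A pose counts toward the combined rate only if it passes both criteria.
    both = sum(r.rmsd <= rmsd_cutoff and r.physically_valid for r in results)
    return {"pose": accurate / n, "valid": valid / n, "combined": both / n}
```

Because the combined rate requires both criteria at once, it can never exceed either individual rate, which is why methods with strong RMSD statistics can still rank poorly on this metric.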

Another telling benchmark is the "inter-protein scoring noise" problem. Classical functions can enrich active molecules for a single target but fail to identify the correct protein target for a given active molecule due to scoring variations between different binding pockets [9]. A test of the Boltz-2 model, a biomolecular foundation model, on a target identification benchmark revealed it was still unable to correctly identify the target of active molecules by predicting a higher binding affinity compared to decoy targets [9]. This indicates a lack of generalizable understanding of protein-ligand interactions.
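A target identification test of this kind can be sketched as a ranking problem: for each active molecule, the predicted affinity for its true target is compared with the predicted affinities for decoy targets. The sketch below assumes that higher scores mean stronger predicted binding and that all scores are supplied by some external model; the function names and data layout are illustrative assumptions, not the protocol used in [9].

```python
def target_rank(scores, true_target):
    """1-based rank of the true target among all scored targets.

    `scores` maps target name -> predicted affinity (higher = stronger).
    Ties are resolved in favor of the true target, so the rank is optimistic.
    """
    true_score = scores[true_target]
    better = sum(s > true_score for t, s in scores.items() if t != true_target)
    return 1 + better


def top1_success_rate(cases):
    """Fraction of (scores, true_target) cases where the true target ranks first."""
    if not cases:
        raise ValueError("no cases to evaluate")
    hits = sum(target_rank(scores, true) == 1 for scores, true in cases)
    return hits / len(cases)
```

Inter-protein scoring noise appears in this setting as decoy targets that receive systematically higher scores regardless of the ligand, which pushes the true target down the ranking even when within-target enrichment is good.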

Detailed Experimental Protocols

To illustrate the complexities involved in affinity prediction, we detail two key experimental approaches: one based on molecular dynamics and another on modern deep learning model training.

BAR-Based Binding Free Energy Calculation Protocol

The following workflow outlines the protocol for achieving efficient sampling and binding free energy calculation using a re-engineered Bennett Acceptance Ratio (BAR) method, as applied to GPCR targets [30].

Workflow Description: This protocol [30] begins with a prepared structure of the protein-ligand complex, such as a G-protein coupled receptor (GPCR) with a bound agonist or antagonist. For membrane proteins like GPCRs, the complex is embedded within an appropriate membrane model and solvated with explicit water molecules, followed by ion addition for physiological ionic strength. A multi-step equilibration through molecular dynamics is then critical to ensure the stability of the entire system—protein, ligand, membrane, and solvent. The core of the alchemical method involves defining a pathway between the bound and unbound states by dividing the transformation into numerous intermediate steps, represented by scaling factors known as lambda (λ) values. Extensive molecular dynamics sampling is performed at each of these lambda states to collect energy data for both forward and backward transitions. Finally, the binding free energy (ΔGbind) is calculated by applying the re-engineered BAR method to this collected data. The validity of the computational approach is demonstrated by correlating the calculated ΔGbind values with experimental binding affinity data (pK_D).
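The final estimation step can be illustrated with the standard, textbook form of BAR; this is not the re-engineered variant described in [30], whose details are not given here. The sketch assumes that, for each pair of adjacent λ states, reduced energy differences (in units of kT) have been collected in both directions, and it solves Bennett's self-consistent equation by bisection. All function names are hypothetical, and `synthetic_window` only generates Gaussian toy data for checking the estimator.

```python
import math
import random


def fermi(x):
    """Numerically stable 1 / (1 + exp(x))."""
    return 0.5 * (1.0 - math.tanh(0.5 * x))


def bar_delta_f(w_forward, w_reverse, tol=1e-8):
    """Free energy difference (in kT) between two adjacent lambda states.

    w_forward: U1 - U0 evaluated on samples drawn from state 0.
    w_reverse: U0 - U1 evaluated on samples drawn from state 1.
    """
    n_f, n_r = len(w_forward), len(w_reverse)
    if n_f == 0 or n_r == 0:
        raise ValueError("both directions need at least one sample")
    m = math.log(n_f / n_r)

    def g(df):
        # Bennett's implicit equation; g increases monotonically with df.
        fwd = sum(fermi(m + w - df) for w in w_forward)
        rev = sum(fermi(-m + w + df) for w in w_reverse)
        return fwd - rev

    # Bracket wide enough that g(lo) < 0 < g(hi).
    bound = max(abs(w) for w in list(w_forward) + list(w_reverse)) + abs(m) + 50.0
    lo, hi = -bound, bound
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if g(mid) < 0.0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def bar_total(windows):
    """Sum BAR estimates over a list of (w_forward, w_reverse) lambda windows."""
    return sum(bar_delta_f(wf, wr) for wf, wr in windows)


def synthetic_window(delta_f, sigma, n, rng):
    """Toy Gaussian work values consistent with a known delta_f (in kT)."""
    fwd = [rng.gauss(delta_f + 0.5 * sigma**2, sigma) for _ in range(n)]
    rev = [rng.gauss(-delta_f + 0.5 * sigma**2, sigma) for _ in range(n)]
    return fwd, rev
```

Summing over windows reflects the role of the λ schedule: each adjacent pair must overlap well enough for its own estimate to converge, so adding intermediate states trades extra simulation time for lower variance. In practice an established implementation would normally be preferred over a hand-written solver.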

Protocol for Training a Robust Deep Learning Affinity Predictor

This protocol focuses on mitigating data bias to improve model generalization, a key challenge identified in recent research [28].

Workflow Description: This protocol [28] starts with the raw PDBbind database. The first and most crucial step is structure-based filtering using a multimodal clustering algorithm. This algorithm assesses similarity between protein-ligand complexes by combining protein similarity (TM-score), ligand similarity (Tanimoto score), and binding conformation similarity (pocket-aligned ligand RMSD). This identifies and removes complexes in the training set that are overly similar to those in the test set (e.g., the CASF benchmark), effectively eliminating train-test data leakage. The result is a curated training dataset, such as PDBbind CleanSplit. The protocol also involves reducing redundancy within the training set itself by resolving large similarity clusters, forcing the model to learn general rules rather than memorizing specific examples. The model architecture, such as a Graph Neural Network (GNN), is designed for sparse graph modeling of protein-ligand interactions and can be enhanced with transfer learning from large protein language models. Finally, the model is evaluated on a strictly independent test set, with ablation studies conducted to verify that its predictions are based on a genuine understanding of interactions and not data leakage.
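The filtering step can be sketched as follows, assuming the three similarity measures have already been computed for every train-test pair by external tools. The thresholds and the rule that all three criteria must hold at once are placeholder assumptions made for illustration; they are not the values or the exact combination logic used to build PDBbind CleanSplit, and the class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PairSimilarity:
    train_id: str
    test_id: str
    tm_score: float     # protein structural similarity, 0 to 1
    tanimoto: float     # ligand fingerprint similarity, 0 to 1
    pocket_rmsd: float  # pocket-aligned ligand RMSD, in angstroms


def is_leak(pair, tm_min=0.8, tanimoto_min=0.9, rmsd_max=2.0):
    """Flag a train-test pair as leakage (assumed rule: all three criteria met)."""
    return (
        pair.tm_score >= tm_min
        and pair.tanimoto >= tanimoto_min
        and pair.pocket_rmsd <= rmsd_max
    )


def clean_training_ids(train_ids, pairs, **thresholds):
    """Drop every training complex that leaks into at least one test complex."""
    leaked = {p.train_id for p in pairs if is_leak(p, **thresholds)}
    return [t for t in train_ids if t not in leaked]
```

The same pairwise test could be applied within the training set to find redundancy clusters, although deciding which members of a cluster to keep needs an additional policy that is not shown here.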

Table 3: Essential Resources for Binding Affinity Prediction Research

| Resource Name | Type | Primary Function in Research |
|---|---|---|
| PDBbind [31] [28] | Database | Comprehensive collection of protein-ligand complex structures with experimentally measured binding affinity data. Serves as a primary source for model training. |
| CASF Benchmark [31] [28] | Benchmark Set | Curated dataset used for the comparative assessment of scoring functions' performance in scoring, ranking, docking, and screening powers. |
| GROMACS [30] | Software | High-performance molecular dynamics toolkit used for running simulations, system equilibration, and alchemical free energy calculations. |
| AutoDock Vina [32] [28] | Software | Widely used molecular docking program with an empirical scoring function, often used as a baseline for comparison. |
| Glide [32] | Software | A robust molecular docking tool known for its accurate pose prediction and rigorous sampling algorithms. |
| Boltz-2 [9] | AI Model | A biomolecular foundation model claimed to approach the performance of FEP in estimating binding affinity. |
| PoseBusters [32] | Validation Tool | Toolkit to systematically evaluate the physical plausibility and chemical correctness of predicted docking poses. |
| CleanSplit [28] | Curated Dataset | A filtered version of PDBbind designed to minimize train-test data leakage and redundancy, enabling genuine evaluation of model generalization. |

The challenge of achieving a strong correlation between predicted and experimental binding constants remains a significant bottleneck in computational drug discovery. The limitations are deeply rooted and multifaceted, extending beyond simple algorithmic improvements. While deep learning offers new avenues, its current promise is tempered by critical issues of data bias, overestimation of capabilities, and poor generalization on truly novel targets. The path forward requires a concerted shift in the research community's approach. This includes the development and adoption of rigorously curated, non-redundant datasets, the implementation of more demanding benchmarks that test for target identification and generalization, and a holistic evaluation of models that prioritizes physical plausibility and biological relevance alongside raw predictive accuracy. Overcoming the affinity prediction challenge is not merely a computational problem but an interdisciplinary endeavor that demands a more nuanced understanding of both biological complexity and the limitations of our data-driven models.

Accurate prediction of protein-ligand binding affinity is a cornerstone of structure-based drug design. While classical scoring functions are often adequate for evaluating ligands similar to their training data, their performance significantly degrades when applied to novel chemical scaffolds or diverse protein targets—a limitation termed congeneric bias. This whitepaper analyzes the fundamental origins of this bias, rooted in statistical learning theory and exacerbated by dataset construction flaws. We demonstrate through quantitative analysis that generalized models possess inherent accuracy limits, with protein-specific models consistently outperforming universal functions. Furthermore, we document how data leakage and redundancy in common benchmarks artificially inflate performance metrics, creating a false impression of generalizability. Emerging solutions, including advanced graph neural networks, multitask learning architectures, and rigorous data curation protocols, show promise for overcoming these limitations. The findings underscore the necessity of developing next-generation scoring functions that transcend simple pattern matching to genuinely learn the biophysical principles of molecular recognition.

The accurate prediction of binding affinity remains one of the great challenges in computational chemistry [33]. Classical scoring functions were developed to provide fast assessment of protein-ligand complexes using single structural snapshots, offering an essential tool for virtual screening and lead optimization in drug discovery. These functions traditionally compromise between physical accuracy and computational efficiency, employing empirical, force-field-based, or knowledge-based approaches to score complexes.