Beyond the Hype: A Practical Guide to Training Robust Binding Affinity Models with PDBbind CleanSplit



Abstract

Accurately predicting protein-ligand binding affinity is a cornerstone of computational drug discovery, yet the field has been hampered by overstated model performance due to pervasive data leakage in standard benchmarks. This article provides a comprehensive guide for researchers and drug development professionals on the PDBbind CleanSplit dataset, a newly curated resource designed to eliminate train-test leakage and enable genuine assessment of model generalizability. We explore the foundational reasons for its development, detail methodological approaches for effective model training, address common troubleshooting and optimization challenges, and present a rigorous validation framework for comparing model performance. By adopting CleanSplit, the scientific community can build more reliable and trustworthy predictive models, ultimately accelerating the development of new therapeutics.

The Data Leakage Problem: Why PDBbind CleanSplit is a Necessity, Not an Option

The accurate prediction of protein-ligand binding affinity is a critical objective in structure-based drug design (SBDD). For years, the scientific community has relied on benchmarks derived from the PDBbind database and the Comparative Assessment of Scoring Functions (CASF) to evaluate the performance of novel computational models [1] [2]. However, a growing body of evidence reveals a fundamental flaw in this evaluation paradigm: widespread train-test data leakage has severely inflated performance metrics, creating an illusion of progress while masking poor generalization on truly novel complexes [1].

Data leakage occurs when information from outside the training dataset is used to create the model, leading to overly optimistic performance estimates that would not be achievable in real-world prediction scenarios [3] [4]. This issue is particularly pervasive in binding affinity prediction, where the standard practice of training on PDBbind and testing on CASF benchmarks has been compromised by undisclosed similarities between the training and test complexes [1] [2]. This review examines the sources and impacts of this leakage, presents a rigorous solution in the form of the PDBbind CleanSplit dataset, and provides protocols for developing leakage-free binding affinity models with genuinely generalizable performance.

The Data Leakage Problem in PDBbind-CASF

Mechanisms and Magnitude of Leakage

The data leakage between PDBbind and CASF benchmarks is not merely theoretical but stems from concrete structural similarities that enable models to "memorize" rather than "learn" true binding principles. A multimodal filtering algorithm analyzing protein similarity, ligand similarity, and binding conformation similarity has revealed alarming levels of contamination [1].

Table 1: Quantified Data Leakage Between PDBbind and CASF Benchmarks

| Similarity Metric | Threshold Value | Percentage of CASF Complexes Affected | Number of Similar Train-Test Pairs |
|---|---|---|---|
| Protein Similarity (TM-score) | >0.7 | 49% | ~600 |
| Ligand Similarity (Tanimoto) | >0.9 | Not specified | Significant |
| Binding Conformation (pocket-aligned RMSD) | Low values | Correlated with protein/ligand similarity | Part of the ~600 pairs |

The structural analysis demonstrates that nearly half of all CASF complexes share striking similarities with complexes in the PDBbind training set, complete with closely matched affinity labels [1]. This means models can achieve apparently state-of-the-art performance simply by recognizing structural patterns they encountered during training, rather than by genuinely understanding protein-ligand interactions.

Impact on Model Performance and Generalization

The consequences of this data leakage are profound. When state-of-the-art models like GenScore and Pafnucy were retrained on a leakage-free dataset (PDBbind CleanSplit), their performance on the CASF benchmark dropped substantially [1]. This performance collapse confirms that previously reported metrics were artificially inflated and did not reflect true generalization capability.

This phenomenon extends beyond structural bioinformatics. A systematic review found that data leakage has affected at least 294 scientific publications across 17 different scientific fields, potentially contributing to a broader reproducibility crisis in machine learning-based science [4]. In medical applications, models trained with leakage can fail catastrophically when deployed in real-world clinical settings, sometimes misclassifying most healthy patients as diseased when overt diagnostic features are removed [5].

Solution: PDBbind CleanSplit Protocol

Multimodal Filtering Algorithm

The PDBbind CleanSplit methodology employs a sophisticated structure-based clustering algorithm that simultaneously evaluates three dimensions of similarity to identify and remove problematic overlaps [1].

Table 2: Similarity Metrics in the CleanSplit Filtering Algorithm

| Metric | Measurement Target | Technical Implementation | Purpose in Leakage Prevention |
|---|---|---|---|
| TM-score | Protein structure similarity | Protein structure alignment | Removes training complexes whose protein folds closely match a test complex |
| Tanimoto coefficient | Ligand chemical similarity | Molecular fingerprint comparison | Removes training complexes with nearly identical ligands |
| Pocket-aligned RMSD | Binding conformation similarity | Ligand alignment within binding pocket | Filters complexes with similar binding modes |

The filtering workflow operates iteratively, first addressing train-test leakage between PDBbind and CASF, then resolving redundancies within the training set itself. This process ultimately removes approximately 11.8% of training complexes (4% for direct train-test leakage and 7.8% for internal redundancies) [1].

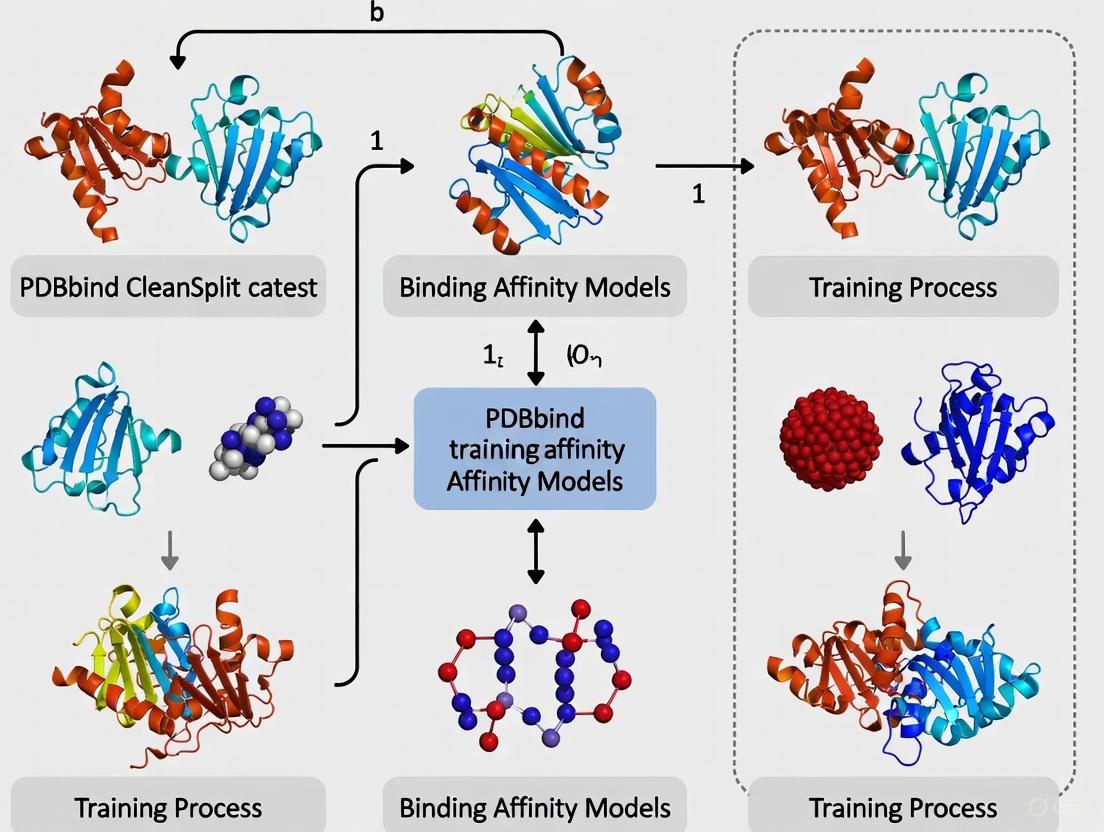

The following diagram illustrates the comprehensive filtering workflow:

Independent Validation with BDB2020+

To enable truly external validation, researchers have created BDB2020+, an independent dataset constructed by matching high-quality binding free energies from BindingDB with co-crystallized ligand-protein complexes deposited in the PDB since 2020 [2]. This dataset is filtered using the same similarity control criteria as LP-PDBBind (a related leakage-proof dataset), ensuring no overlap with the training data and providing a rigorous testbed for model generalization [2].

Experimental Validation of the CleanSplit Approach

Performance Comparison on Standard Benchmarks

Retraining existing models on PDBbind CleanSplit provides a sobering reality check for the field. The following table compares benchmark performance before and after addressing data leakage:

Table 3: Model Performance With and Without Data Leakage

| Model | Original CASF Performance (with leakage) | Performance on CleanSplit (leakage-free) | Performance Change | Generalization to BDB2020+ |
|---|---|---|---|---|
| GenScore | High (original paper) | Substantially dropped | Significant decrease | Not specified |
| Pafnucy | High (original paper) | Substantially dropped | Significant decrease | Not specified |
| GEMS (GNN) | Not applicable | Maintains high performance | Minimal decrease | Good performance |
| IGN (retrained) | Not applicable | Improved compared to original | Increase | Better generalization [2] |

The performance degradation observed in models like GenScore and Pafnucy confirms that their original high performance was largely driven by data leakage rather than genuine learning of protein-ligand interactions [1]. In contrast, the Graph Neural Network for Efficient Molecular Scoring (GEMS) maintains high benchmark performance even when trained on CleanSplit, suggesting it possesses more robust generalization capabilities [1].

Ablation Study: Validating Learning Mechanisms

To confirm that model predictions are based on genuine understanding rather than spurious correlations, ablation studies are essential. When protein nodes were omitted from the GEMS graph architecture, the model failed to produce accurate predictions, confirming that its performance derives from actual protein-ligand interaction patterns rather than memorization of ligand structures alone [1].

This approach aligns with best practices for detecting data leakage, which include analyzing feature importance and verifying that models rely on logically relevant features rather than counter-intuitive proxies [3] [4].

Practical Implementation Protocols

Protocol 1: Creating a Leakage-Free Dataset Split

Purpose: To generate a customized leakage-free dataset for binding affinity prediction that ensures rigorous evaluation of model generalizability.

Materials:

- PDBbind general set (or other structural binding data)

- Structural similarity tools (TM-align for proteins, OpenBabel for ligand fingerprints)

- Clustering software (custom Python scripts)

Procedure:

Protein Similarity Filtering:

- Calculate all-against-all TM-scores for proteins in candidate training and test sets

- Apply threshold: exclude training complexes with TM-score >0.7 to any test complex

- Retain only complexes with low structural similarity across splits

Ligand Similarity Filtering:

- Generate molecular fingerprints (ECFP4 or similar) for all ligands

- Calculate Tanimoto coefficients between all ligand pairs

- Apply threshold: exclude training complexes with Tanimoto >0.9 to any test ligand

Binding Conformation Validation:

- For remaining complexes, align binding pockets and calculate RMSD of ligand poses

- Manually inspect complexes with low RMSD values for similar binding modes

- Exclude training complexes with nearly identical binding conformations to test complexes

Internal Redundancy Reduction:

- Identify similarity clusters within the training set using the same metrics

- Iteratively remove complexes from each cluster until maximum within-cluster TM-score <0.8 and Tanimoto <0.85

- Preserve diverse representation across protein folds and ligand scaffolds

Validation: Confirm that no test complex has a close analog in the training set under the defined similarity metrics. Verify dataset diversity through principal component analysis of ligand chemical space.
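The train-test filtering steps of this protocol can be sketched in a few lines. This is a minimal illustration rather than the CleanSplit implementation: it assumes the TM-scores and Tanimoto coefficients have already been computed by external tools, uses a hypothetical input format (dictionaries keyed by `(train_id, test_id)`), and omits the binding conformation check because the protocol gives no numeric RMSD cutoff. The two thresholds are the ones stated in the procedure.

```python
from typing import Dict, List, Tuple

TM_CUTOFF = 0.7        # protein threshold stated in the protocol
TANIMOTO_CUTOFF = 0.9  # ligand threshold stated in the protocol

# Hypothetical input format: precomputed scores keyed by (train_id, test_id).
PairScores = Dict[Tuple[str, str], float]


def filter_training_set(
    train_ids: List[str],
    test_ids: List[str],
    tm_scores: PairScores,
    tanimoto: PairScores,
) -> List[str]:
    """Return training IDs with no close protein or ligand analog in the test set."""
    kept = []
    for train_id in train_ids:
        # Assumption: a missing pair is treated as dissimilar (score 0.0).
        leaks = any(
            tm_scores.get((train_id, test_id), 0.0) > TM_CUTOFF
            or tanimoto.get((train_id, test_id), 0.0) > TANIMOTO_CUTOFF
            for test_id in test_ids
        )
        if not leaks:
            kept.append(train_id)
    return kept
```

Running the same function again after filtering, with the kept IDs as input, should return the list unchanged, which is one simple way to perform the validation step.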

Protocol 2: Training a Robust Binding Affinity Model

Purpose: To train a graph neural network model for binding affinity prediction that generalizes to novel protein-ligand complexes.

Materials:

- PDBbind CleanSplit or LP-PDBBind dataset

- Graph neural network framework (PyTorch Geometric/DGL)

- Pretrained language models (ProtBERT for proteins, ChemBERTa for ligands)

- High-performance computing resources with GPU acceleration

Procedure:

Data Preprocessing:

- Extract protein-ligand complexes from cleaned dataset

- Represent proteins as graphs: nodes as amino acids with structural features, edges based on spatial proximity

- Represent ligands as graphs: nodes as atoms with chemical features, edges as bonds

- Generate protein sequence embeddings using ProtBERT

- Generate ligand molecular embeddings using ChemBERTa

Model Architecture (GEMS-inspired):

- Implement sparse graph representation of protein-ligand interactions

- Incorporate pretrained embeddings as node features

- Use message-passing neural network layers with attention mechanisms

- Add rotation-invariant spatial encoding for 3D structural information

- Include distance-aware aggregation functions

Training Protocol:

- Initialize model with transfer learning from language model embeddings

- Use robust loss function (Huber loss) for affinity prediction

- Apply regularization techniques: dropout, weight decay, early stopping

- Optimize using AdamW optimizer with learning rate scheduling

- Implement gradient clipping for training stability
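For reference, the Huber loss named in the training protocol has the standard form below, where $y$ is the measured affinity, $\hat{y}$ the prediction, and $\delta$ a transition point. The value of $\delta$ is a hyperparameter that the protocol does not specify.

```latex
L_\delta(y, \hat{y}) =
\begin{cases}
\tfrac{1}{2}\,(y - \hat{y})^2 & \text{if } |y - \hat{y}| \le \delta \\
\delta\left(|y - \hat{y}| - \tfrac{1}{2}\delta\right) & \text{otherwise}
\end{cases}
```

The loss is quadratic for small residuals and linear for large ones, so a few complexes with noisy affinity labels pull on the gradients less than they would under a squared-error loss.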

Validation and Testing:

- Evaluate on CleanSplit test set using RMSE and Pearson correlation

- Test on completely independent dataset (BDB2020+)

- Perform ablation studies to verify protein contribution to predictions

- Compare against baseline models trained with standard splits

Expected Outcomes: Model should maintain performance on leakage-free test sets and show robust generalization to independent benchmarks like BDB2020+, with minimal performance gap between validation and external test sets.
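The two metrics named in the validation step can be computed without any external dependency. The sketch below uses the standard definitions of RMSE and Pearson correlation; the function names are illustrative and not taken from any particular package.

```python
import math
from typing import Sequence


def rmse(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Root-mean-square error between measured and predicted affinities."""
    n = len(y_true)
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / n)


def pearson_r(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Pearson correlation coefficient between measured and predicted affinities."""
    n = len(y_true)
    mean_t = sum(y_true) / n
    mean_p = sum(y_pred) / n
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred))
    var_t = sum((t - mean_t) ** 2 for t in y_true)
    var_p = sum((p - mean_p) ** 2 for p in y_pred)
    return cov / math.sqrt(var_t * var_p)
```

Reporting both values for the CleanSplit test set and for BDB2020+ side by side makes the gap between internal and external performance explicit.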

Research Reagent Solutions

Table 4: Essential Tools for Leakage-Free Binding Affinity Prediction

| Resource | Type | Function | Application Notes |
|---|---|---|---|
| PDBbind CleanSplit | Dataset | Leakage-free training data for affinity prediction | Curated via multimodal filtering; strictly separated from CASF |
| LP-PDBBind [2] | Dataset | Reorganized PDBbind with minimal similarity between splits | Controls for protein sequence and ligand chemical similarity |
| BDB2020+ [2] | Benchmark | Independent validation set from post-2020 structures | True external test for generalization capability |
| TM-align [1] | Software tool | Protein structure similarity assessment | Used for calculating TM-scores in filtering algorithm |
| GEMS Framework [1] | Model architecture | Graph neural network with transfer learning | Maintains performance on leakage-free data |
| IGN (Interaction GraphNet) [2] | Model architecture | Graph neural network for protein-ligand structures | Recommended for scoring/ranking after retraining on LP-PDBBind |
| ProtBERT [6] | Pretrained model | Protein sequence representation | Provides transfer learning for protein encoding |
| ChemBERTa [6] | Pretrained model | Molecular representation from SMILES | Enables transfer learning for ligand encoding |

Figure: Complete experimental workflow for developing and validating a leakage-free binding affinity prediction model.

The discovery of extensive train-test leakage between PDBbind and CASF benchmarks represents a critical inflection point for computational drug discovery. The field must transition from evaluating models on compromised benchmarks to adopting rigorous, leakage-free evaluation frameworks like PDBbind CleanSplit. The protocols and reagents outlined here provide a pathway for developing binding affinity models with genuinely generalizable performance, ultimately accelerating the identification of therapeutic candidates through more reliable computational predictions.

The accurate prediction of protein-ligand binding affinity is a cornerstone of computational drug design. For years, the field has relied on benchmarks that suggested continuous improvement in model performance. However, recent research has revealed a critical flaw in this narrative: widespread data leakage between the primary training dataset, PDBbind, and the standard evaluation benchmarks from the Comparative Assessment of Scoring Functions (CASF) has severely inflated performance metrics and led to an overestimation of model generalization capabilities [1] [2].

This data leakage occurs when models encounter test complexes that are highly similar to those seen during training, enabling prediction through memorization rather than learning fundamental principles of molecular recognition [1]. Alarmingly, some models maintain competitive benchmark performance even when critical structural information is omitted, suggesting they are not genuinely learning protein-ligand interactions [1] [7].

To address this fundamental challenge, PDBbind CleanSplit was introduced: a rigorously curated training dataset created via a novel structure-based filtering algorithm that eliminates train-test data leakage and reduces internal redundancies [1]. This application note provides a comprehensive overview of the CleanSplit methodology, validation protocols, and implementation guidelines to enable robust binding affinity prediction.

The Data Leakage Problem in PDBbind

The PDBbind database serves as the primary resource for training protein-ligand binding affinity prediction models. Its standard organization includes "general" and "refined" sets for training, with a separate "core" set used for testing, typically through the CASF benchmark [2]. This arrangement has been shown to contain significant data leakage, fundamentally compromising model evaluation.

Quantifying the Data Leakage

Analysis using structure-based clustering revealed extensive similarities between training and test complexes [1]:

| Similarity Type | Impact on CASF Complexes | Number of Similar Pairs |
|---|---|---|
| High structural similarity (Similar proteins, ligands, and binding conformation) | 49% of CASF complexes affected | Nearly 600 similarities identified |
| Ligand-based leakage (Tanimoto score > 0.9) | Additional data leakage pathway | Training complexes with identical ligands removed |

Consequences of Data Leakage

The impact of this data leakage on model performance evaluation is profound:

- Performance Inflation: Models achieve artificially high benchmark scores by memorizing structural similarities rather than learning generalizable interaction principles [1]

- Overestimated Generalization: Reported performance metrics do not reflect true capability on novel protein-ligand complexes [2]

- Impeded Progress: The field cannot accurately determine whether new scoring functions represent genuine improvements [2]

When state-of-the-art models like GenScore and Pafnucy were retrained on CleanSplit, their performance on the CASF benchmark dropped substantially, confirming that their previously reported high performance was largely driven by data leakage rather than true generalization capability [1].

The CleanSplit Methodology

PDBbind CleanSplit addresses data leakage through a multi-stage filtering approach that ensures strict separation between training and test complexes while simultaneously reducing redundancies within the training set.

Structure-Based Filtering Algorithm

The core innovation of CleanSplit is a structure-based clustering algorithm that performs multimodal similarity assessment between protein-ligand complexes. This algorithm evaluates three complementary dimensions of similarity:

Protein Similarity Assessment

- Metric: TM-score [1]

- Purpose: Quantifies protein structural similarity

- Advantage: More sensitive than sequence-based metrics, identifies structurally similar proteins even with low sequence identity [1]

Ligand Similarity Assessment

- Metric: Tanimoto score [1]

- Purpose: Quantifies chemical similarity between ligands

- Threshold: Training complexes with ligands having Tanimoto score > 0.9 to any CASF test ligand are removed [1]

Binding Conformation Assessment

- Metric: Pocket-aligned ligand root-mean-square deviation (RMSD) [1]

- Purpose: Quantifies similarity of ligand positioning within the protein binding pocket

- Significance: Ensures complexes with similar interaction patterns are identified
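For orientation, the three metrics have the following standard textbook definitions. They are not reproduced from the CleanSplit source, and the symbols are introduced here only for illustration: $L_{\text{target}}$ is the length of the reference protein, $L_{\text{ali}}$ the number of aligned residue pairs, $d_i$ the distance between the $i$-th aligned pair, $A$ and $B$ the sets of fingerprint bits of two ligands, and $\mathbf{x}_i$, $\mathbf{y}_i$ the coordinates of matched ligand atoms after the binding pockets have been superimposed.

```latex
\mathrm{TM\text{-}score} = \max\left[\frac{1}{L_{\text{target}}}
  \sum_{i=1}^{L_{\text{ali}}} \frac{1}{1 + \left(d_i / d_0(L_{\text{target}})\right)^2}\right],
\qquad d_0(L) = 1.24\,\sqrt[3]{L - 15} - 1.8

T(A, B) = \frac{|A \cap B|}{|A \cup B|}

\mathrm{RMSD} = \sqrt{\frac{1}{N}\sum_{i=1}^{N} \lVert \mathbf{x}_i - \mathbf{y}_i \rVert^2}
```

TM-score and the Tanimoto coefficient both range from 0 to 1, with higher values meaning greater similarity, whereas a lower RMSD means more similar binding poses. The RMSD form assumes a one-to-one atom correspondence between the two ligands, which only holds when the ligands are identical or have been explicitly matched.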

Redundancy Reduction within Training Set

Beyond addressing train-test leakage, CleanSplit also reduces internal redundancies within the training dataset:

- Problem: Approximately 50% of training complexes belong to similarity clusters [1]

- Solution: Iterative removal of complexes until striking similarity clusters are resolved [1]

- Result: 7.8% of training complexes removed to enhance dataset diversity [1]

This redundancy reduction discourages memorization and encourages learning of generalizable patterns, providing a more robust foundation for model training.
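A minimal sketch of how such an iterative removal could work is shown below. The source does not state the order in which complexes are dropped, so the greedy rule used here (always remove the complex with the most remaining similar partners) is an assumption, as are the function name and the input format: a list of training IDs plus a list of ID pairs that already exceed the chosen similarity thresholds.

```python
from typing import Dict, Iterable, List, Set, Tuple


def reduce_redundancy(
    ids: Iterable[str], similar_pairs: Iterable[Tuple[str, str]]
) -> List[str]:
    """Greedily drop complexes until no similar pair remains in the training set."""
    neighbors: Dict[str, Set[str]] = {i: set() for i in ids}
    for a, b in similar_pairs:
        if a in neighbors and b in neighbors and a != b:
            neighbors[a].add(b)
            neighbors[b].add(a)
    while True:
        # Assumed rule: remove the complex with the most similar partners;
        # ties are broken by the alphabetically smallest ID.
        worst = max(sorted(neighbors), key=lambda i: len(neighbors[i]), default=None)
        if worst is None or not neighbors[worst]:
            break
        for other in neighbors.pop(worst):
            neighbors[other].discard(worst)
    return sorted(neighbors)
```

Removing the most connected complex first tends to resolve a cluster with few deletions, which fits the stated goal of improving diversity while keeping as much training data as possible.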

Experimental Validation

The effectiveness of PDBbind CleanSplit was validated through rigorous experimentation comparing model performance when trained on standard PDBbind versus the cleaned dataset.

Performance Comparison on CASF Benchmark

Retraining existing models on CleanSplit revealed their true generalization capabilities:

Table 1: Model Performance Comparison on CASF Benchmark

| Model | Performance Trained on Standard PDBbind | Performance Trained on CleanSplit | Performance Change |
|---|---|---|---|
| GenScore | High benchmark performance | Substantially dropped performance | Significant decrease |
| Pafnucy | High benchmark performance | Substantially dropped performance | Significant decrease |
| GEMS (Novel GNN) | Not applicable | Maintained high performance | Not applicable (state-of-the-art on CleanSplit) |

The GEMS Model: Maintaining Performance on CleanSplit

In contrast to existing models, the novel Graph Neural Network for Efficient Molecular Scoring (GEMS) maintained high benchmark performance when trained on CleanSplit, demonstrating genuine generalization capability [1]. Key architectural features include:

- Sparse Graph Modeling: Efficiently represents protein-ligand interactions [1]

- Transfer Learning: Leverages pre-trained language models [1]

- Ablation Study Validation: Model fails to produce accurate predictions when protein nodes are omitted, confirming predictions are based on genuine understanding of protein-ligand interactions [1]

Implementation Protocols

Dataset Acquisition and Preparation

Researchers can implement the CleanSplit methodology using the following protocol:

Table 2: Research Reagent Solutions for CleanSplit Implementation

| Resource | Type | Function in Protocol | Access Information |
|---|---|---|---|
| PDBbind Database | Data | Source of protein-ligand complexes and affinity data | http://www.pdbbind.org.cn/ [2] |
| CASF Benchmarks | Data | Evaluation datasets for generalization assessment | Included with PDBbind distribution |
| CleanSplit Filtering Algorithm | Software | Structure-based clustering and similarity assessment | Publicly available code [1] |
| Structural Biology Tools | Software | TM-score calculation, structural alignment | Publicly available (e.g., MMalign for TM-score) |
| Cheminformatics Toolkit | Software | Ligand similarity calculations (Tanimoto scores) | Open-source options (e.g., RDKit) |

Structural Data Preparation

- Download PDBbind general set (latest available version)

- Extract protein-ligand complexes and associated binding affinity data

- Standardize structures by adding missing hydrogen atoms and correcting bond orders [8] [9]

- Remove covalent complexes using CONECT record analysis [8] [9]

- Filter out structures with steric clashes (heavy atom pairs closer than 2 Å) [8] [9]
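The steric clash filter in the last step can be sketched as follows. The sketch assumes the clash criterion refers to protein-ligand heavy atom pairs and that heavy atom coordinates (in Å) have already been parsed from the structure files; the function name and the coordinate format are hypothetical.

```python
import math
from itertools import product
from typing import Sequence, Tuple

Coord = Tuple[float, float, float]


def has_steric_clash(
    protein_heavy: Sequence[Coord],
    ligand_heavy: Sequence[Coord],
    cutoff: float = 2.0,  # 2 Å criterion from the preparation step
) -> bool:
    """Return True if any protein-ligand heavy atom pair is closer than the cutoff."""
    return any(
        math.dist(p, l) < cutoff for p, l in product(protein_heavy, ligand_heavy)
    )
```

The all-pairs loop is quadratic in the number of atoms, which is acceptable for a single complex but would normally be replaced by a spatial index when screening a full dataset.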

CleanSplit Filtering Protocol

The core filtering process follows these methodological steps:

Train-Test Separation Phase

- For each CASF test complex:

  - Calculate TM-score against all PDBbind training complexes

  - Compute Tanimoto scores for ligand pairs

  - Determine pocket-aligned ligand RMSD for high-scoring pairs

- Identify and remove training complexes exceeding similarity thresholds

- Verify separation: Ensure no high-similarity pairs remain between training and test sets

Internal Redundancy Reduction Phase

- Apply adapted filtering thresholds (slightly relaxed compared to train-test separation)

- Iteratively identify and resolve similarity clusters within training data

- Remove complexes until all striking similarity clusters are eliminated

- Preserve dataset size while maximizing diversity (balance between data quantity and quality)

Model Training and Evaluation Protocol

To ensure fair comparison and reproducible results:

Training Configuration:

- Train models on both standard PDBbind and CleanSplit for comparison

- Use identical hyperparameters and training procedures for both datasets

- Implement k-fold cross-validation with structure-based splitting
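One simple way to realize structure-based splitting is to assign whole similarity clusters to folds, so that structurally related complexes never end up on both sides of a split. The sketch below is an illustration under assumptions: `cluster_of` is a hypothetical mapping from complex ID to a precomputed cluster label (for example from TM-score clustering), and the balancing rule (largest cluster first, always into the currently smallest fold) is a common heuristic rather than a procedure described in the source.

```python
from typing import Dict, List


def cluster_kfold(cluster_of: Dict[str, str], k: int) -> List[List[str]]:
    """Assign whole clusters to k folds so that no cluster is split across folds."""
    members: Dict[str, List[str]] = {}
    for complex_id, cluster_id in cluster_of.items():
        members.setdefault(cluster_id, []).append(complex_id)

    folds: List[List[str]] = [[] for _ in range(k)]
    # Place the largest clusters first; ties are broken by cluster label.
    for cluster_id in sorted(members, key=lambda c: (-len(members[c]), c)):
        smallest = min(range(k), key=lambda i: len(folds[i]))
        folds[smallest].extend(sorted(members[cluster_id]))
    return folds
```

Each fold then serves once as the validation set while the remaining folds are used for training. Fold sizes can be uneven when a few clusters are very large.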

Evaluation Methodology:

- Assess performance on CASF benchmarks (2016 and later versions)

- Include additional independent test sets (e.g., BDB2020+ [2]) for generalization validation

- Report multiple metrics: Pearson R, RMSE, and ranking power

Ablation Studies:

- Test model performance with omitted protein or ligand information

- Validate that predictions require both protein and ligand inputs [1]

Integration with Broader Research Context

PDBbind CleanSplit represents part of a larger movement addressing data quality issues in computational drug discovery. Several related initiatives share similar goals:

Table 3: Related Data Curation Efforts in Binding Affinity Prediction

| Dataset/Approach | Primary Focus | Relationship to CleanSplit |
|---|---|---|
| LP-PDBBind [2] [10] | Minimize sequence and chemical similarity between splits | Complementary approach using different similarity metrics |
| HiQBind-WF [8] | Correct structural artifacts in protein-ligand complexes | Can be used as preprocessing step before CleanSplit filtering |
| PDBBind-Opt [9] | Automated workflow for structural preparation | Addresses complementary structural quality issues |
| Low Similarity Splits [11] | Minimize similarity leakage for benchmarking | Shared goal of improving generalization assessment |

These complementary approaches can be integrated into a comprehensive pipeline for preparing high-quality training data for binding affinity prediction.

PDBbind CleanSplit establishes a new standard for training and evaluating binding affinity prediction models. By addressing the critical issue of data leakage through rigorous structure-based filtering, it enables genuine assessment of model generalization capabilities. The substantial performance drop observed when existing models are retrained on CleanSplit reveals that previous benchmark results were largely driven by memorization rather than true learning of protein-ligand interactions.

The research community is encouraged to adopt CleanSplit as a benchmark for developing new scoring functions, particularly as the field advances toward more complex generative AI approaches for drug design [1]. Only through rigorous evaluation on truly independent test complexes can we develop models with genuine predictive power for novel drug targets.

Future directions include expanding the filtering approach to larger datasets, developing standardized benchmarking protocols, and integrating with structural quality improvement workflows to provide a comprehensive foundation for the next generation of binding affinity prediction models.

In computational drug design, accurate binding affinity prediction is paramount for effective structure-based drug design (SBDD). Benchmark datasets have long served as the gold standard for evaluating and advancing scoring functions. However, a critical issue has emerged: train-test data leakage between popular training sets and benchmark datasets has severely inflated performance metrics, leading to overestimation of model generalization capabilities [1] [12]. This application note examines how data similarity artificially boosts benchmark scores in binding affinity prediction, focusing specifically on the PDBbind database and the Comparative Assessment of Scoring Functions (CASF) benchmarks. We present a detailed analysis of the leakage problem, quantify its effects, and provide validated protocols for creating leakage-free dataset splits using methods such as the PDBbind CleanSplit approach [1].

Quantitative Analysis of Data Leakage

Magnitude of Train-Test Similarity

Analysis using structure-based clustering algorithms has revealed substantial similarity between standard training datasets and evaluation benchmarks. The following table summarizes key quantitative findings from studies investigating the PDBbind-CASF relationship:

Table 1: Quantified Data Leakage Between PDBbind and CASF Benchmarks

| Metric | Value | Impact/Interpretation |
|---|---|---|
| Similar train-test pairs | Nearly 600 pairs | High structural similarity identified between PDBbind training and CASF test complexes [1] |
| Affected CASF complexes | 49% | Nearly half of the benchmark complexes pose no new challenge because close analogs exist in the training set [1] |
| Performance drop post-cleaning | Substantial | Retraining top models on cleaned data caused significant performance decreases [1] |
| Training set redundancy | ~50% of complexes | Approximately half of the training complexes belong to similarity clusters within the training data [1] |

Impact on Model Performance

The consequences of this data leakage become evident when models are evaluated on properly cleaned datasets:

Table 2: Performance Impact of Data Leakage Removal

| Model/Training Condition | Performance Observation | Implication |
|---|---|---|
| State-of-the-art models (on original data) | Excellent benchmark performance | Overestimation of generalization capabilities [1] |
| Same models (on CleanSplit) | Marked performance drop | Previous performance largely driven by data leakage [1] |
| Graph Neural Network model (on CleanSplit) | Maintained high performance | Genuine generalization capability demonstrated [1] |
| Simple similarity-based algorithm | Competitive performance (R=0.716) | Performance achievable without understanding protein-ligand interactions [1] |
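To make the last row concrete, the sketch below shows one way a similarity-based baseline could be built: it predicts the affinity of a test complex as the mean label of its most similar training complexes and never looks at the interaction itself. The table does not describe the algorithm in detail, so the function name, the single combined similarity score, and the default of five neighbors are all assumptions made for illustration.

```python
from typing import Dict


def knn_affinity(
    similarity_to_train: Dict[str, float],
    train_affinity: Dict[str, float],
    k: int = 5,  # assumed neighbor count, not a value taken from the source
) -> float:
    """Predict affinity as the mean label of the k most similar training complexes."""
    ranked = sorted(
        similarity_to_train, key=lambda t: similarity_to_train[t], reverse=True
    )
    nearest = ranked[:k]
    return sum(train_affinity[t] for t in nearest) / len(nearest)
```

If a lookup of this kind scores well on a benchmark, the benchmark is rewarding the recall of near-duplicates, which is the failure mode the cleaned split is meant to remove.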

Detection Methodologies

Structure-Based Clustering Algorithm

The identification of data leakage requires a multi-modal approach to similarity assessment. The structure-based clustering algorithm proposed for creating PDBbind CleanSplit employs three complementary metrics [1]:

- Protein similarity: Quantified using TM-scores [1]

- Ligand similarity: Measured via Tanimoto scores [1]

- Binding conformation similarity: Calculated as pocket-aligned ligand root-mean-square deviation (RMSD) [1]

This combined approach robustly identifies complexes with similar interaction patterns, even when proteins share low sequence identity [1].

Data Leakage Root Causes

Understanding the fundamental causes of data leakage is essential for developing effective detection strategies:

- External Factors: Data acquisition methods and inherent data similarities can create leakage opportunities, particularly with correlated samples or repetitive content [13].

- Implementation Errors: Incorrect data splitting, improper data augmentation, and faulty synthetic data generation can introduce leakage during dataset preparation [13].

- Group Leakage: Multiple samples from the same source (e.g., same patient in medical imaging) distributed across training and test sets [13].

- Temporal Leakage: Time-series data split without regard to chronological order, training on future data to predict past events [13].

Figure 1: Workflow for detecting and mitigating data leakage in protein-ligand complex datasets using multi-modal similarity assessment.

Experimental Protocols

Protocol: Creating a Clean Dataset Split

This protocol describes the procedure for generating a leakage-free dataset based on the PDBbind CleanSplit methodology [1].

Materials and Equipment

- Hardware: Standard workstation with sufficient storage for structural datasets

- Software: Molecular visualization software, similarity calculation tools (TM-score, Tanimoto coefficients, RMSD calculation)

- Data: PDBbind database (or relevant structural dataset)

Procedure

Data Collection and Preprocessing

- Download the complete PDBbind dataset

- Standardize protein and ligand representations

- Resolve any missing residues or atoms

Similarity Calculation

- Compute all pairwise protein similarities using TM-score

- Calculate all pairwise ligand similarities using Tanimoto coefficients

- Determine binding conformation similarities using pocket-aligned ligand RMSD
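Two of these calculations are simple enough to sketch without external libraries. The example assumes that each ligand fingerprint is available as a set of "on" bit indices (however it was generated) and that the two ligand poses are already expressed in a common, pocket-aligned frame with atoms listed in matching order. TM-scores are normally obtained from a dedicated structural alignment program and are not reimplemented here. Function names are illustrative.

```python
import math
from typing import Sequence, Set, Tuple

Coord = Tuple[float, float, float]


def tanimoto(bits_a: Set[int], bits_b: Set[int]) -> float:
    """Tanimoto coefficient: shared fingerprint bits divided by total distinct bits."""
    union = len(bits_a | bits_b)
    return len(bits_a & bits_b) / union if union else 0.0


def ligand_rmsd(pose_a: Sequence[Coord], pose_b: Sequence[Coord]) -> float:
    """RMSD between two poses given in the same frame with matched atom order."""
    if len(pose_a) != len(pose_b) or not pose_a:
        raise ValueError("poses must be non-empty and contain the same atoms")
    squared = sum(math.dist(a, b) ** 2 for a, b in zip(pose_a, pose_b))
    return math.sqrt(squared / len(pose_a))
```

The RMSD function deliberately performs no superposition of its own: in a pocket-aligned comparison the alignment is determined by the protein pockets, so fitting the ligands onto each other would hide differences in binding mode.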

Threshold Application

- Set similarity thresholds based on biological relevance

- Protein similarity threshold: Based on structural homology

- Ligand similarity threshold: Tanimoto > 0.9 for identifying nearly identical ligands [1]

- Binding conformation threshold: RMSD value indicating similar binding modes

Similarity Cluster Identification

- Identify complexes exceeding similarity thresholds

- Mark protein-ligand complexes sharing high similarity with benchmark sets

- Flag redundant complexes within the training set itself

Dataset Filtering

- Remove all training complexes closely resembling any test complex

- Eliminate training complexes with ligands identical to those in test set

- Resolve internal training set redundancies by removing similar complexes

Validation

- Verify cleaned dataset maintains sufficient size for training

- Ensure diverse representation of protein families and ligand types

- Confirm elimination of high-similarity pairs between training and test sets
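The similarity calculation step of this protocol can be illustrated with a minimal, standard-library sketch. It assumes ligand fingerprints are already available as sets of "on" bit indices (in practice they would come from a cheminformatics toolkit) and that both ligand poses are already expressed in the same pocket-aligned frame with identical atom ordering. The function names and the toy data are illustrative assumptions, not part of the CleanSplit code.

```python
import math
from itertools import combinations


def tanimoto(bits_a, bits_b):
    """Tanimoto coefficient between two fingerprints given as sets of 'on' bit indices."""
    a, b = set(bits_a), set(bits_b)
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def ligand_rmsd(coords_a, coords_b):
    """RMSD between two equally ordered atom lists, without any further superposition.

    Assumes both ligands are already in the same pocket-aligned frame.
    """
    if len(coords_a) != len(coords_b) or not coords_a:
        raise ValueError("coordinate lists must be non-empty and of equal length")
    squared = sum(math.dist(p, q) ** 2 for p, q in zip(coords_a, coords_b))
    return math.sqrt(squared / len(coords_a))


def near_identical_pairs(fingerprints, threshold=0.9):
    """Return (id_a, id_b) pairs whose Tanimoto similarity exceeds the threshold."""
    ids = sorted(fingerprints)
    return [
        (i, j)
        for i, j in combinations(ids, 2)
        if tanimoto(fingerprints[i], fingerprints[j]) > threshold
    ]


# Toy fingerprints (hypothetical data): lig_a and lig_b share 19 of 20 bits.
fps = {
    "lig_a": set(range(20)),
    "lig_b": set(range(19)),
    "lig_c": set(range(10, 30)),
}
pairs = near_identical_pairs(fps)

# Two poses shifted by 1 Å along z give an RMSD of 1 Å.
pose_1 = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
pose_2 = [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)]
shift = ligand_rmsd(pose_1, pose_2)
```

The all-pairs loop is quadratic in the number of ligands, so a dataset of PDBbind size would likely need a vectorized or indexed implementation.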

Protocol: Evaluating Data Leakage in Existing Models

This protocol assesses whether a model's performance is inflated by data leakage.

Materials and Equipment

- Trained binding affinity prediction models

- Original and cleaned dataset splits

- Standard benchmarking environment

Procedure

Baseline Performance Establishment

- Evaluate model performance on standard benchmark using original training data

- Record key metrics (Pearson R, RMSE, etc.)

Retraining on Cleaned Data

- Retrain the same model architecture on the cleaned dataset split

- Maintain identical hyperparameters and training procedures

Performance Comparison

- Evaluate retrained model on the same benchmark

- Compare performance metrics with original model

Interpretation

- Significant performance drops suggest previous metrics were inflated by data leakage

- Maintained performance indicates genuine generalization capability
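The comparison step can be made concrete with a small sketch that computes Pearson R and RMSE for both training regimes and reports the difference. The helper names and the toy affinity values are assumptions made for illustration; any statistics library offering the same metrics would serve equally well.

```python
import math


def pearson_r(y_true, y_pred):
    """Pearson correlation coefficient between measured and predicted affinities."""
    n = len(y_true)
    mean_t = sum(y_true) / n
    mean_p = sum(y_pred) / n
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred))
    var_t = sum((t - mean_t) ** 2 for t in y_true)
    var_p = sum((p - mean_p) ** 2 for p in y_pred)
    return cov / math.sqrt(var_t * var_p)


def rmse(y_true, y_pred):
    """Root mean square error between measured and predicted affinities."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def compare_runs(y_true, pred_original, pred_clean):
    """Summarize how benchmark metrics change after retraining on the cleaned split."""
    r_orig = pearson_r(y_true, pred_original)
    r_clean = pearson_r(y_true, pred_clean)
    return {
        "pearson_original": r_orig,
        "pearson_clean": r_clean,
        "pearson_drop": r_orig - r_clean,
        "rmse_original": rmse(y_true, pred_original),
        "rmse_clean": rmse(y_true, pred_clean),
    }


# Toy benchmark labels and predictions (hypothetical numbers).
measured = [4.0, 5.0, 6.0, 7.0, 8.0]
from_original_split = [4.0, 5.0, 6.0, 7.0, 8.0]
from_clean_split = [5.0, 5.0, 6.0, 6.0, 8.0]
report = compare_runs(measured, from_original_split, from_clean_split)
```

A positive `pearson_drop` together with a higher `rmse_clean` is the pattern the interpretation step associates with leakage-inflated baselines.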

Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools

| Reagent/Tool | Function | Application Notes |
|---|---|---|
| PDBbind CleanSplit | Leakage-free training dataset | Filtered using structure-based clustering; eliminates train-test similarity [1] |
| Structure-based clustering algorithm | Multi-modal similarity assessment | Combines protein, ligand, and binding conformation metrics [1] |
| Graph Neural Network (GEMS) | Binding affinity prediction | Maintains performance on cleaned data; sparse graph modeling [1] |
| LP-PDBBind | Alternative reorganized dataset | Controls for protein and ligand sequence/structural similarity [14] |
| TM-score | Protein structural similarity metric | Identifies similar protein folds beyond sequence identity [1] |
| Tanimoto coefficient | Ligand similarity metric | Quantifies 2D molecular similarity; threshold >0.9 for near-identical ligands [1] |
| Pocket-aligned ligand RMSD | Binding conformation similarity | Measures similar ligand positioning in protein binding sites [1] |

Figure 2: Framework for evaluating whether model performance is artificially inflated by data leakage or reflects genuine generalization capability.

The quantification of data leakage between the PDBbind database and CASF benchmarks reveals that nearly half of all test complexes share strong similarities with training data, significantly inflating perceived model performance [1]. The implementation of cleaned dataset splits such as PDBbind CleanSplit provides a necessary correction, enabling proper assessment of model generalization. The experimental protocols presented herein offer researchers practical methodologies for both creating leakage-free datasets and evaluating the true capabilities of binding affinity prediction models. As the field advances toward more reliable computational drug design, addressing data leakage systematically is essential for developing scoring functions with genuine predictive power for novel protein-ligand interactions.

The Impact of Dataset Redundancy on Model Training and Memorization

Dataset redundancy and data leakage represent critical, often overlooked, challenges in developing machine learning models for scientific applications, particularly in computational drug discovery. In the field of protein-ligand binding affinity prediction, these issues have led to widespread overestimation of model capabilities, with models learning to exploit statistical artifacts rather than underlying biological principles. The PDBbind database, a cornerstone resource for training scoring functions, has been shown to contain significant structural similarities and overlaps with standard benchmark sets like the Comparative Assessment of Scoring Functions (CASF). This redundancy creates a scenario where models can achieve impressive benchmark performance through memorization and pattern matching rather than genuine understanding of protein-ligand interactions [1] [15]. This application note examines the impact of dataset redundancy on model training, documents the creation of rigorously curated alternatives, and provides protocols for developing models that generalize to truly novel complexes.

The Data Redundancy Problem in Structural Biology

Quantifying Data Leakage in PDBbind

The standard practice of training on the PDBbind general set and evaluating on the CASF benchmark has been fundamentally compromised by data leakage. A rigorous structure-based analysis revealed alarming levels of similarity between training and test complexes:

Table 1: Quantified Data Leakage Between PDBbind and CASF Benchmarks

| Similarity Metric | Threshold Value | Percentage of CASF Complexes Affected | Impact on Model Performance |
|---|---|---|---|
| Overall Complex Similarity | TM-score, Tanimoto, & RMSD | 49% of CASF complexes had highly similar counterparts in training [1] | Enables near-direct label memorization |
| Ligand Similarity | Tanimoto > 0.9 | Significant number of ligands nearly identical between sets [1] | Models memorize ligand-affinity relationships |
| Protein Similarity | High TM-score | Structural similarities even with low sequence identity [1] | Exploitable through protein structure matching |

This leakage explains the paradoxical findings that some models maintain high performance even when critical input information (e.g., protein or ligand structures) is omitted, indicating they are not learning genuine interaction principles [15].

Memorization Versus Generalization

When models train on redundant datasets, they gravitate toward memorization-based shortcuts rather than learning the underlying relationship between structure and function. Studies systematically investigating these biases found that Atomic Convolutional Neural Network (ACNN) models performed comparably well on binding affinity prediction whether they were provided with full complex structures, ligand-only information, or protein-only information [15]. This clearly demonstrates that the models were leveraging dataset-specific biases rather than learning true structure-activity relationships.

Protocols for Creating Non-Redundant Datasets

The PDBbind CleanSplit Framework

The PDBbind CleanSplit methodology establishes a new standard for creating training datasets with minimized redundancy and data leakage [1]. The protocol employs a structure-based clustering algorithm that performs multimodal filtering based on three key similarity metrics:

- Protein Similarity: Calculated using TM-scores [1]

- Ligand Similarity: Calculated using Tanimoto scores [1]

- Binding Conformation Similarity: Calculated using pocket-aligned ligand root-mean-square deviation (RMSD) [1]

Protocol: Implementing the CleanSplit Filtering Algorithm

- Input: PDBbind general set (training) and CASF core sets (test)

- Step 1 - Cross-Set Filtering: Identify and remove all training complexes with:

- Combined protein, ligand, and binding pose similarity above threshold to any test complex

- Ligands with Tanimoto similarity >0.9 to any test ligand

- Step 2 - Intra-Set Deduplication: Iteratively identify similarity clusters within training data using adapted thresholds and remove complexes until all striking clusters are resolved

- Output: PDBbind CleanSplit training set (4% reduction from original) with strict separation from test benchmarks
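The two filtering steps can be sketched as follows. This is a simplified illustration, not the published algorithm: only the Tanimoto > 0.9 ligand cut-off is stated in the text, so the other thresholds, the rule for combining the three metrics, the reuse of the same thresholds for deduplication, and the greedy removal order are all assumptions. The `sim` callable, which returns precomputed pairwise similarities, is likewise hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PairSimilarity:
    tm_score: float      # protein similarity
    tanimoto: float      # ligand similarity
    pocket_rmsd: float   # binding conformation similarity, in Å


# Hypothetical thresholds; only LIGAND_IDENTITY (Tanimoto > 0.9) comes from the text.
TM_MIN = 0.8
TANIMOTO_MIN = 0.8
RMSD_MAX = 2.0
LIGAND_IDENTITY = 0.9


def is_similar_complex(s):
    """Assumed combination rule: all three metrics must indicate similarity."""
    return s.tm_score > TM_MIN and s.tanimoto > TANIMOTO_MIN and s.pocket_rmsd < RMSD_MAX


def cross_set_filter(train_ids, test_ids, sim):
    """Step 1: drop training complexes that resemble any test complex."""
    kept = []
    for tr in train_ids:
        leaks = False
        for te in test_ids:
            s = sim(tr, te)
            if is_similar_complex(s) or s.tanimoto > LIGAND_IDENTITY:
                leaks = True
                break
        if not leaks:
            kept.append(tr)
    return kept


def deduplicate(train_ids, sim):
    """Step 2: greedily remove the complex with the most similar neighbours."""
    remaining = list(train_ids)
    while True:
        neighbours = {
            a: [b for b in remaining if b != a and is_similar_complex(sim(a, b))]
            for a in remaining
        }
        worst = max(remaining, key=lambda a: len(neighbours[a]), default=None)
        if worst is None or not neighbours[worst]:
            return remaining
        remaining.remove(worst)


# Toy similarity table (hypothetical data); unlisted pairs count as dissimilar.
DISSIMILAR = PairSimilarity(0.2, 0.1, 10.0)
table = {
    frozenset({"t1", "c1"}): PairSimilarity(0.95, 0.85, 1.0),  # similar complex
    frozenset({"t2", "c1"}): PairSimilarity(0.30, 0.95, 8.0),  # near-identical ligand
    frozenset({"t3", "t4"}): PairSimilarity(0.90, 0.90, 0.5),
    frozenset({"t4", "t5"}): PairSimilarity(0.90, 0.85, 1.0),
}


def sim(a, b):
    return table.get(frozenset({a, b}), DISSIMILAR)


after_step_1 = cross_set_filter(["t1", "t2", "t3", "t4", "t5", "t6"], ["c1"], sim)
clean_train = deduplicate(after_step_1, sim)
```

The deduplication loop recomputes all neighbour lists on every pass, which keeps the sketch short but would be too slow for the full training set.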

The following workflow diagram illustrates the CleanSplit creation process:

Complementary Data Quality Improvements

Concurrent efforts address additional data quality issues in PDBbind that further hamper model generalizability. The HiQBind workflow applies systematic structural corrections through several automated modules [16] [17]:

Protocol: HiQBind-WF Structural Correction Steps

- Input: Raw PDB files from PDBbind or other sources

- Step 1 - Filtering: Apply successive filters to remove:

- Covalent binders (using CONECT records)

- Ligands with rare elements (beyond H, C, N, O, F, P, S, Cl, Br, I)

- Very small ligands (<4 heavy atoms)

- Complexes with severe steric clashes (<2Å heavy atom distances)

- Step 2 - Ligand Fixing: Correct bond orders, protonation states, and aromaticity

- Step 3 - Protein Fixing: Add missing atoms and residues in binding site regions

- Step 4 - Structure Refinement: Add hydrogens to the protein-ligand complex state (rather than separately)

- Output: High-quality, structurally plausible complexes for training
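The Step 1 filters lend themselves to a compact sketch. The version below is an illustration under simplifying assumptions rather than the HiQBind-WF implementation: atoms are plain `(element, (x, y, z))` tuples, covalent binding is passed in as a flag instead of being parsed from CONECT records, and the function name is hypothetical.

```python
import math

ALLOWED_ELEMENTS = {"H", "C", "N", "O", "F", "P", "S", "Cl", "Br", "I"}
MIN_HEAVY_ATOMS = 4
CLASH_DISTANCE = 2.0  # Å, heavy-atom distance below which a clash is flagged


def rejection_reason(ligand_atoms, protein_atoms, is_covalent=False):
    """Return None if the complex passes all filters, otherwise a short reason string."""
    if is_covalent:
        return "covalent binder"
    if not {element for element, _ in ligand_atoms} <= ALLOWED_ELEMENTS:
        return "rare element"
    ligand_heavy = [xyz for element, xyz in ligand_atoms if element != "H"]
    if len(ligand_heavy) < MIN_HEAVY_ATOMS:
        return "ligand too small"
    protein_heavy = [xyz for element, xyz in protein_atoms if element != "H"]
    for l_xyz in ligand_heavy:
        for p_xyz in protein_heavy:
            if math.dist(l_xyz, p_xyz) < CLASH_DISTANCE:
                return "steric clash"
    return None


# Toy structures (hypothetical coordinates, in Å).
butane_like = [("C", (0.0, 0.0, 0.0)), ("C", (1.5, 0.0, 0.0)),
               ("C", (3.0, 0.0, 0.0)), ("C", (4.5, 0.0, 0.0))]
distant_pocket = [("N", (0.0, 5.0, 0.0))]
clashing_pocket = [("N", (0.0, 1.0, 0.0))]

ok = rejection_reason(butane_like, distant_pocket)
clash = rejection_reason(butane_like, clashing_pocket)
rare = rejection_reason(butane_like + [("Fe", (6.0, 0.0, 0.0))], distant_pocket)
small = rejection_reason(butane_like[:3], distant_pocket)
```

Returning a reason string rather than a boolean makes it easy to tally how many complexes each filter removes.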

Experimental Validation of Redundancy Impact

Benchmarking Performance Drops with CleanSplit

Retraining existing models on PDBbind CleanSplit provides striking evidence of how data leakage had inflated reported performance metrics:

Table 2: Model Performance Comparison on Original vs. CleanSplit Training Data

| Model | Architecture Type | Performance on Original PDBbind | Performance on CleanSplit | Performance Drop |
|---|---|---|---|---|
| GenScore | Graph Neural Network | High benchmark performance (R² ~0.7 range) | Substantially reduced performance [1] | Up to 40% drop in R² score [18] |
| Pafnucy | 3D Convolutional Neural Network | High benchmark performance (R² ~0.49-0.73) [15] | Substantially reduced performance [1] | Significant drop (exact value not specified) [1] |
| Simple Search Algorithm | k-NN style similarity matching | Competitive with deep learning models [1] | N/A (demonstrates leakage mechanism) | Highlights memorization potential [1] |

The GEMS Model: Generalization Through Improved Architecture

To address the generalization challenge exposed by CleanSplit, the Graph neural network for Efficient Molecular Scoring (GEMS) was developed with specific architectural innovations:

Key Features of the GEMS Architecture:

- Sparse Graph Modeling: Represents protein-ligand interactions as sparse graphs rather than dense grids, improving efficiency and generalization [1]

- Transfer Learning Integration: Leverages pre-trained language models (ESM2 for proteins, ChemBERTa for ligands) to incorporate evolutionary and chemical information [1] [18]

- Ablation-Validated Learning: Performance collapses when protein nodes are removed, confirming genuine interaction learning rather than ligand memorization [1] [18]

When trained on CleanSplit, GEMS maintains high CASF benchmark performance where previous models show significant drops, demonstrating true generalization rather than data exploitation [1].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Resources for Robust Binding Affinity Model Development

| Resource Name | Type | Primary Function | Key Features |
|---|---|---|---|
| PDBbind CleanSplit | Curated Dataset | Training set with minimized redundancy | Strict separation from CASF benchmarks; reduced internal redundancy [1] |
| HiQBind-WF | Computational Workflow | Structural correction of protein-ligand complexes | Automated fixing of bonds, protonation, clashes; open-source [16] [17] |
| GEMS | Graph Neural Network | Binding affinity prediction | Sparse graph modeling; transfer learning integration; demonstrated generalization [1] [18] |
| DecoyDB | Pre-training Dataset | Self-supervised learning for complexes | 61K ground truth + 5.3M decoy structures; enables contrastive pre-training [19] |
| CASF Benchmark | Evaluation Suite | Standardized model assessment | Scoring, ranking, docking, and screening power metrics [1] [16] |

Future Directions and Implementation Recommendations

The field is moving toward more rigorous training paradigms to combat dataset redundancy:

- Self-Supervised Learning: DecoyDB enables contrastive pre-training on large-scale unlabeled complexes before fine-tuning on limited affinity data, improving data efficiency [19]

- Structural Quality Focus: HiQBind and similar initiatives address fundamental data quality issues beyond redundancy [16] [17]

- Ablation Testing: Rigorous validation should include controls that remove key inputs (e.g., protein structure) to confirm models learn genuine interactions [1] [15]

Implementation Protocol for Model Development:

- Step 1: Start with structurally validated datasets (HiQBind-WF processed)

- Step 2: Apply rigorous data splits (CleanSplit methodology) to ensure test independence

- Step 3: Consider pre-training on decoy datasets (DecoyDB) when labeled data is limited

- Step 4: Employ architectures with structural and biochemical priors (GEMS-style sparse graphs)

- Step 5: Validate with ablation studies to confirm learning of genuine protein-ligand interactions

The recognition of dataset redundancy as a critical factor in binding affinity prediction represents a maturation of the field. By adopting these curated datasets, rigorous protocols, and validated architectures, researchers can develop models with genuine generalization capability, ultimately accelerating robust drug discovery.

Building on a Clean Slate: Methodologies and Architectures for CleanSplit Training

Accurate prediction of protein-ligand binding affinity is a cornerstone of modern computational drug discovery, enabling researchers to identify promising therapeutic candidates more efficiently. The performance of machine learning models in this domain depends heavily on both architectural choices and the quality of the training data. Historically, many models have been trained on benchmark datasets like PDBBind, but emerging research reveals that conventional data splitting methods can introduce significant data leakage, compromising model generalizability. Data leakage occurs when highly similar proteins or ligands appear in both training and testing sets, leading to artificially inflated performance metrics that do not reflect true predictive capability on novel complexes [10]. This application note examines the integration of three prominent neural network architectures, Graph Neural Networks (GNNs), Convolutional Neural Networks (CNNs), and Transformers, with the rigorously curated LP-PDBBind (Leak-Proof PDBBind) dataset. We provide a detailed comparative analysis and experimental protocols to guide researchers in developing more generalizable and reliable binding affinity models, framed within the broader thesis that data cleanliness is as critical as model architecture for success in real-world drug discovery applications.

The LP-PDBBind Dataset: A Foundation for Generalizable Models

The standard PDBBind dataset is a widely used resource containing protein-ligand complexes and their experimentally measured binding affinities. However, its standard "general," "refined," and "core" sets are cross-contaminated with proteins and ligands of high sequence and structural similarity. This overlap means that models evaluated on the standard core set are often tested on data very similar to their training sets, rather than on truly novel complexes [2]. The LP-PDBBind dataset was created specifically to address this fundamental flaw.

LP-PDBBind reorganizes the PDBBind data through a meticulous splitting procedure that minimizes sequence and chemical similarity between the training, validation, and test datasets. This process involves:

- Similarity Control: Implementing stringent controls on both protein sequence similarity and ligand chemical similarity across dataset splits to prevent memorization and overfitting [10] [2].

- Data Cleaning: Removing covalently bound ligand-protein complexes to focus on non-covalent binding, filtering out ligands with rare atomic elements, and correcting inconsistencies in reported binding free energies and units [2] [20].

- Stratified Splitting: Creating data splits that ensure the protein-ligand structural interaction patterns are distinct among the training, validation, and test sets, providing a more challenging and realistic benchmark [2].

Retraining models on LP-PDBBind leads to more accurate assessments of their capabilities. While performance on the standard PDBBind test set may drop due to the removal of data leakage, the models demonstrate superior generalizability on truly independent test sets like BDB2020+, which is compiled from recent BindingDB entries and filtered with the same similarity criteria [10]. This makes LP-PDBBind an essential resource for developing scoring functions that perform reliably in prospective drug discovery campaigns.

Key Architectures for Binding Affinity Prediction

Table 1: Core Architectures for Protein-Ligand Binding Affinity Prediction

| Architecture | Core Input Representation | Key Strengths | Inherent Limitations |
|---|---|---|---|
| Convolutional Neural Networks (CNNs) | 3D voxelized grid of the binding pocket, with channels representing different atom types or chemical features [21]. | Excels at extracting spatially local patterns and interactions. Directly models the 3D structural environment. Proven success in pose prediction and virtual screening [21]. | Computationally intensive due to 3D convolutions. Limited explicit modeling of long-range interactions or graph-structured data. Resolution of the grid can impact performance. |
| Graph Neural Networks (GNNs) | Molecular graphs where nodes represent atoms (with features like type, charge) and edges represent bonds or distances [22]. | Naturally represents molecular topology and non-Euclidean data. Captures both local and global dependencies through message passing. Models such as IGN show strong performance on cleaned datasets [10]. | Performance can be sensitive to the definition of nodes, edges, and their features. May require sophisticated architectures to capture complex 3D geometric relationships. |
| Transformers | Sequences (e.g., amino acid sequences, SMILES strings) or tokenized structural representations [23] [24]. | Powerful attention mechanism captures long-range, global dependencies within and between sequences. Can integrate information from multiple modalities (sequence, structure). Enables prediction of conformational changes and population shifts [24]. | High computational demand and data requirements for effective training. Less intuitive for direct spatial reasoning compared to CNNs and GNNs. |

Quantitative Performance Benchmarking

The true efficacy of an architecture is revealed through its performance on leak-proof datasets. The following table summarizes benchmark results for various architectures retrained on the LP-PDBBind dataset and evaluated on independent test sets.

Table 2: Performance Benchmark of Architectures on Clean and Independent Datasets

| Model/Architecture | LP-PDBBind Test Set (Performance Metric) | BDB2020+ Independent Set (Performance Metric) | Key Application Context |
|---|---|---|---|
| InteractionGraphNet (IGN) [10] | Improved performance post-retraining | Significant improvement in generalizability | Scoring and ranking new protein-ligand systems [10] |
| GNNSeq [22] | Pearson Correlation Coefficient (PCC): ~0.784 (on PDBBind v.2020 refined set) | N/A | Sequence-based prediction; Virtual screening (AUC: 0.74 on DUDE-Z) |
| Ligand-Transformer [24] | Comparably better correlation on PDBBind2020 | N/A | Predicts affinity & conformational space; Hit identification (58% hit rate vs. EGFRLTC) |
| CNN (3D Grid-Based) [21] | Outperformed AutoDock Vina in pose ranking and virtual screening (on its test sets) | N/A | Pose prediction and virtual screening using 3D structural data |

Model Development on Clean Data

Detailed Experimental Protocols

Protocol 1: Training a GNN on LP-PDBBind for Binding Affinity Prediction

This protocol details the process for training a Graph Neural Network, specifically using the InteractionGraphNet (IGN) architecture, on the LP-PDBBind dataset to achieve robust binding affinity prediction.

- Objective: To develop a GNN-based scoring function that generalizes effectively to novel protein-ligand complexes by leveraging the leak-proof data splits of LP-PDBBind.

Research Reagent Solutions:

- LP-PDBBind Dataset: The foundational cleaned dataset with predefined train/validation/test splits, ensuring minimal similarity leakage [20].

- IGN Model Code: Implementation of the InteractionGraphNet, which uses GNNs to represent raw 3D protein and ligand structures [10].

- RDKit: Open-source cheminformatics toolkit used for processing ligand structures, calculating molecular descriptors, and handling SMILES strings [22] [20].

- PyTorch Geometric (PyG): A library for deep learning on irregularly structured input data such as graphs, providing the core infrastructure for GNN implementation.

Step-by-Step Workflow:

- Data Acquisition and Preparation:

- Download the LP-PDBBind meta-information file (LP_PDBBind.csv) from the THGLab GitHub repository [20].

- Obtain the corresponding protein (PDB) and ligand (SDF/MOL2) structure files from the official PDBBind website, using the PDB IDs listed in the meta-file.

- Filter the dataset for non-covalent binders by selecting only entries where the covalent column is FALSE and the desired clean level (e.g., CL1) is TRUE [20].

- Feature Engineering and Graph Construction:

- For each protein-ligand complex, represent the ligand as a molecular graph where nodes are atoms and edges are bonds or interatomic distances within a cutoff.

- Extract atom-level features for ligand atoms (e.g., atom type, hybridization, partial charge) and protein atoms (e.g., amino acid type, residue identity).

- Construct a heterogeneous graph that connects the ligand subgraph to nearby protein residues, defining edges based on spatial proximity to capture intermolecular interactions.

- Model Training and Optimization:

- Initialize the IGN model architecture, which processes the graph to learn a representation for the complex and outputs a binding affinity score.

- Train the model using the predefined LP-PDBBind training set, employing a regression loss function like Mean Squared Error (MSE) between predicted and experimental binding affinities (e.g., pKd).

- Use the LP-PDBBind validation set for hyperparameter tuning and early stopping to prevent overfitting.

- Model Evaluation:

- Perform the primary evaluation on the held-out LP-PDBBind test set to measure performance in a leak-proof setting.

- For a true test of generalizability, benchmark the final model on the independent BDB2020+ dataset [10] [2]. Report key metrics such as Pearson Correlation Coefficient (PCC), Root Mean Square Error (RMSE), and Mean Absolute Error (MAE).
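The filtering step in the data preparation stage of this protocol can be sketched with the standard `csv` module. The `covalent` and `CL1` column names follow the description above; the `pdb_id` and `new_split` columns, the in-memory sample file, and the function name are assumptions for illustration, so the real header of LP_PDBBind.csv should be checked before reuse.

```python
import csv
import io


def select_entries(csv_file, clean_level="CL1"):
    """Keep rows that are non-covalent and flagged TRUE at the requested clean level."""
    selected = []
    for row in csv.DictReader(csv_file):
        non_covalent = row["covalent"].strip().lower() == "false"
        is_clean = row[clean_level].strip().lower() == "true"
        if non_covalent and is_clean:
            selected.append(row)
    return selected


# Small stand-in for the meta-information file (hypothetical rows and columns).
sample_csv = io.StringIO(
    "pdb_id,covalent,CL1,new_split\n"
    "1abc,FALSE,TRUE,train\n"
    "2def,TRUE,TRUE,train\n"
    "3ghi,FALSE,FALSE,val\n"
    "4jkl,FALSE,TRUE,test\n"
)
selected = select_entries(sample_csv)
selected_ids = [row["pdb_id"] for row in selected]
```

Comparing the flags case-insensitively tolerates both `TRUE`/`FALSE` and `True`/`False` spellings, whichever the file happens to use.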


GNN Training Workflow
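The graph construction step of Protocol 1 can be sketched without any deep learning library. The snippet below is a minimal illustration, not the IGN featurization: atoms are `(element, (x, y, z))` tuples, node features are reduced to the element symbol, and the 5 Å contact cutoff is a hypothetical value chosen for the example.

```python
import math


def build_interaction_graph(ligand_atoms, protein_atoms, bonds, cutoff=5.0):
    """Build a heterogeneous graph with ligand bonds and ligand-protein contacts.

    ligand_atoms / protein_atoms: lists of (element, (x, y, z)) tuples.
    bonds: list of (i, j) index pairs into ligand_atoms.
    Returns (nodes, edges), where each edge is (source, target, kind, distance).
    """
    nodes = [{"molecule": "ligand", "element": el} for el, _ in ligand_atoms]
    nodes += [{"molecule": "protein", "element": el} for el, _ in protein_atoms]

    edges = []
    # Intramolecular edges: covalent bonds within the ligand.
    for i, j in bonds:
        distance = math.dist(ligand_atoms[i][1], ligand_atoms[j][1])
        edges.append((i, j, "covalent", distance))

    # Intermolecular edges: ligand-protein atom pairs within the distance cutoff.
    offset = len(ligand_atoms)
    for i, (_, l_xyz) in enumerate(ligand_atoms):
        for k, (_, p_xyz) in enumerate(protein_atoms):
            distance = math.dist(l_xyz, p_xyz)
            if distance <= cutoff:
                edges.append((i, offset + k, "contact", distance))
    return nodes, edges


# Toy complex (hypothetical coordinates, in Å).
ligand = [("C", (0.0, 0.0, 0.0)), ("O", (1.5, 0.0, 0.0))]
protein = [("N", (0.0, 4.0, 0.0)), ("C", (0.0, 20.0, 0.0))]
nodes, edges = build_interaction_graph(ligand, protein, bonds=[(0, 1)])
contact_edges = [e for e in edges if e[2] == "contact"]
```

The resulting node and edge lists would still need to be converted into tensors before they can be passed to a GNN framework.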

Protocol 2: Implementing a Sequence-Based Transformer for Virtual Screening

This protocol outlines the use of a Transformer model, such as Ligand-Transformer, for sequence-based virtual screening, which can be particularly powerful when structural data is limited or for large-scale screening.

- Objective: To employ a Transformer architecture for predicting protein-ligand binding affinity using primarily sequence information, enabling the screening of large compound libraries against target proteins.

Research Reagent Solutions:

- Ligand-Transformer Framework: A deep learning method based on the transformer architecture that takes the amino acid sequence of the target protein and the topology of the small molecule as input [24].

- BindingDB or TargetMol: Public and commercial databases of experimental binding data and purchasable compounds for sourcing active and decoy molecules for screening [24].

- GraphMVP Framework: Used within Ligand-Transformer to generate informed ligand representations by injecting knowledge of 3D molecular geometry into a 2D molecular graph encoder [24].

Step-by-Step Workflow:

- Data Preprocessing and Encoding:

- For the target protein, input the amino acid sequence. For the ligand, input a representation of its topology, such as a SMILES string or a 2D molecular graph.

- Tokenize the protein sequence and the ligand representation (e.g., using a chemical vocabulary for SMILES).

- Use pre-trained encoders (e.g., from AlphaFold for the protein and GraphMVP for the ligand) to generate high-dimensional initial representations for both molecules, capturing their intrinsic structural and chemical properties [24].

- Model Architecture and Fine-Tuning:

- Process the protein and ligand representations through a cross-modal attention network. This allows the model to exchange information between the protein and ligand representations, effectively "reasoning" about their potential interaction [24].

- The architecture typically includes downstream prediction heads for binding affinity (e.g., pKd) and optionally for other properties, such as interatomic distances.

- If a sufficiently large, task-specific dataset is available (e.g., EGFRLTC-290), fine-tune the pre-trained Ligand-Transformer model on this data to enhance its predictive accuracy for the specific target [24].

- Virtual Screening Execution:

- Apply the trained/fine-tuned model to a large library of compounds (e.g., the TargetMol subset used in the Ligand-Transformer study [24]).

- Rank the compounds based on their predicted binding affinity (e.g., lowest IC50 or highest pKd).

- Apply additional criteria based on the model's outputs, such as consistency across ensemble models or predicted binding mode characteristics, to select a shortlist of top candidates for experimental validation [24].

- Experimental Validation:

- Procure the top-ranked compounds and test them experimentally using binding assays (e.g., measuring IC50 values) to validate the model's predictions and identify true hits.
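The ranking and shortlisting steps of the screening workflow can be sketched as below. This is an illustrative assumption about how ensemble consistency might be applied, not the selection rule used in the Ligand-Transformer study: compounds whose ensemble predictions disagree by more than a hypothetical spread limit are discarded, and the rest are ranked by mean predicted pKd.

```python
from statistics import mean, pstdev


def shortlist(predictions, top_n=2, max_spread=0.5):
    """Rank compounds by mean predicted pKd, keeping only consistent ensemble predictions.

    predictions: {compound_id: [pKd predicted by each ensemble member]}.
    max_spread: largest accepted population standard deviation (hypothetical cut-off).
    """
    scored = []
    for compound_id, values in predictions.items():
        if pstdev(values) <= max_spread:
            scored.append((mean(values), compound_id))
    scored.sort(reverse=True)  # highest predicted pKd first
    return [compound_id for _, compound_id in scored[:top_n]]


# Toy ensemble predictions (hypothetical values).
ensemble_predictions = {
    "cmpd_1": [8.0, 8.2, 7.9],
    "cmpd_2": [9.0, 6.0, 7.5],   # high mean but inconsistent across models
    "cmpd_3": [7.0, 7.1, 6.9],
    "cmpd_4": [5.0, 5.2, 5.1],
}
candidates = shortlist(ensemble_predictions)
```

Filtering on spread before ranking keeps a single over-confident ensemble member from promoting a compound to the shortlist.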


Integrated Discussion and Architectural Selection Guide

The choice of architecture is not a one-size-fits-all decision but should be guided by the specific research question, data availability, and application context. When working with the LP-PDBBind dataset, the following integrated considerations emerge:

- For Direct 3D Structure Utilization: If high-quality, co-crystallized protein-ligand structures are available and the primary goal is to leverage explicit 3D spatial information, CNN-based models are a strong choice. Their ability to learn from voxelized representations of the binding pocket makes them exceptionally suited for tasks like pose prediction and scoring when the 3D complex is known or can be reliably docked [21].

- For Robust Representation of Molecular Topology: If the research aims to balance structural information with robustness to conformational changes, or requires a natural representation of molecular connectivity, GNNs like IGN are highly recommended. Their performance on the LP-PDBBind benchmark and subsequent strong generalizability to new systems like those in BDB2020+ make them a top contender for robust scoring and ranking applications [10]. They are particularly powerful when the input is a molecular graph derived from a 3D structure.

- For Sequence-Based Screening and Leveraging Pre-trained Models: When 3D structural data is unavailable, unreliable, or when screening vast libraries against a target using only its amino acid sequence, Transformer models like Ligand-Transformer offer a powerful and flexible solution. Their ability to capture long-range dependencies and integrate information from sequences and ligand topologies enables them to predict not only affinity but also aspects of the bound conformational landscape, providing deeper mechanistic insight [24]. They are ideal for large-scale virtual screening in the absence of explicit structural complexes.

Ultimately, the most profound insight from recent research is that the careful curation of training data, as embodied by the LP-PDBBind dataset, is a force multiplier for any architectural choice. A simpler model trained on a rigorously leak-proof dataset can often generalize more effectively than a complex model trained on a contaminated benchmark. Therefore, the architectural selection should be made in concert with a commitment to utilizing the highest-quality, most generalizable data available.

Leveraging Pre-trained Models and Transfer Learning for Enhanced Feature Extraction

The field of computational drug design relies on accurate scoring functions to predict protein-ligand binding affinities, a critical task for structure-based drug design (SBDD). For years, the standard practice has involved training deep learning models on the PDBbind database and evaluating their generalization capability using the Comparative Assessment of Scoring Functions (CASF) benchmark datasets. However, recent research has exposed a fundamental flaw in this paradigm: widespread train-test data leakage between these datasets has severely inflated performance metrics, leading to overestimation of model generalization capabilities [25].

The groundbreaking PDBbind CleanSplit study revealed that nearly 49% of all CASF complexes had exceptionally similar counterparts in the training data, sharing not only similar ligand and protein structures but also comparable ligand positioning within protein pockets and closely matched affinity labels [25]. This redundancy enabled models to achieve high benchmark performance through simple memorization rather than genuine understanding of protein-ligand interactions. Alarmingly, some models performed comparably well on CASF benchmarks even after omitting all protein or ligand information from their input data, confirming they were not learning fundamental interaction principles [25] [26].

This data leakage crisis necessitates a fundamental shift in approach. This Application Note provides detailed protocols for leveraging pre-trained models and transfer learning to build robust binding affinity predictors that generalize effectively to novel protein-ligand complexes when trained on rigorously curated datasets like PDBbind CleanSplit.

The PDBbind CleanSplit Solution: A New Foundation for Model Training

The CleanSplit Filtering Methodology

PDBbind CleanSplit was created using a novel structure-based filtering algorithm that eliminates data leakage and reduces internal redundancies through a multi-stage process [25]:

- Structure-based clustering: Similarity between protein-ligand complexes is computed using a combined assessment of protein similarity (TM scores), ligand similarity (Tanimoto scores), and binding conformation similarity (pocket-aligned ligand root-mean-square deviation)

- Train-test separation: All training complexes closely resembling any CASF test complex are excluded, along with training complexes containing ligands identical to those in the test set (Tanimoto > 0.9)

- Redundancy reduction: Similarity clusters within the training dataset itself are identified and resolved using adapted filtering thresholds, removing 7.8% of training complexes to enhance diversity

Impact on Model Performance Assessment

Retraining existing top-performing models on CleanSplit caused substantial performance drops on benchmark tests, confirming their previous high scores were largely driven by data memorization [25]. This establishes CleanSplit as a more reliable foundation for developing truly generalizable binding affinity prediction models.

Table 1: Performance Impact of PDBbind CleanSplit on Existing Models

| Model Type | Performance on Original PDBbind | Performance on CleanSplit | Interpretation |
|---|---|---|---|
| GenScore | High benchmark performance | Substantially reduced performance | Previous performance inflated by data leakage |
| Pafnucy | High benchmark performance | Substantially reduced performance | Previous performance inflated by data leakage |
| Simple similarity-based algorithm | Competitive performance (Pearson R = 0.716) | N/A | Confirms benchmarks can be gamed through memorization |

Transfer Learning Framework for Binding Affinity Prediction

Meta-Learning Enhanced Transfer Learning Protocol

The following protocol combines meta-learning with transfer learning to mitigate negative transfer—a phenomenon where knowledge from source domains negatively impacts target task performance [27].

Phase 1: Source Domain Pre-processing

- Step 1: Collect abundant source domain data (e.g., protein kinase inhibitors from ChEMBL and BindingDB)

- Step 2: Standardize molecular representations (ECFP4 fingerprints, 4096 bits)

- Step 3: Transform affinity data to binary classification (active/inactive using threshold of 1000 nM)

- Step 4: Apply structural clustering to identify representative subsets

Phase 2: Meta-Learning for Sample Weighting

- Step 5: Define meta-model architecture with parameters φ

- Step 6: Initialize base model with parameters θ

- Step 7: For each iteration:

  - Meta-model predicts weights for source data points

  - Base model trains on weighted source data

  - Base model predicts on target validation set

  - Validation loss updates meta-model parameters

- Step 8: Output optimal source sample weights and base model initialization
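One common way to write the Phase 2 loop down is as a bi-level optimization problem. The formulation below is a generic sketch, not necessarily the exact objective used in [27]: the per-sample loss $\ell$, the source index set $S$, the target validation index set $V$, and the base-model name $f$ are notational assumptions, as is the choice to let the meta-model, written $g_{\varphi}$, see both the input and its label.

```latex
\begin{aligned}
\theta^{*}(\varphi) &= \arg\min_{\theta} \sum_{j \in S} g_{\varphi}(x_j, y_j)\,
    \ell\big(f_{\theta}(x_j),\, y_j\big) \\
\varphi^{*} &= \arg\min_{\varphi} \sum_{i \in V}
    \ell\big(f_{\theta^{*}(\varphi)}(x_i),\, y_i\big)
\end{aligned}
```

The inner problem corresponds to training the base model on weighted source data. The outer problem corresponds to updating the meta-model parameters $\varphi$ from the validation loss on the target domain.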

Phase 3: Transfer Learning Execution

- Step 9: Pre-train base model on optimally weighted source data

- Step 10: Fine-tune on target domain (PDBbind CleanSplit)

- Step 11: Evaluate on strictly independent test sets (CASF benchmarks)

GEMS Architecture: Integrating Transfer Learning and Sparse Graph Modeling

The Graph Neural Network for Efficient Molecular Scoring (GEMS) demonstrates how transfer learning principles can be successfully applied within the CleanSplit framework [25]:

Architecture Components:

- Sparse graph modeling: Represents protein-ligand interactions as graphs with minimal redundant connections

- Transfer learning from language models: Leverages pre-trained protein language models for enhanced feature extraction

- Multi-scale feature integration: Combines atomic, residue, and molecular-level features

Implementation Protocol:

- Step 1: Initialize protein feature extractor with weights from pre-trained language model (e.g., ProtBERT, ESM)

- Step 2: Construct graph representation with:

  - Nodes: Protein residues and ligand atoms

  - Edges: Physicochemical interactions within cutoff distance

- Step 3: Implement message-passing layers with attention mechanisms

- Step 4: Train on PDBbind CleanSplit with standard affinity prediction loss function

- Step 5: Validate on strictly independent CASF benchmarks
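The graph construction in Step 2 can be illustrated with a small distance-cutoff sketch. It is a simplified stand-in rather than the GEMS implementation. The function name `build_interaction_graph` and the 5.0 Å cutoff are assumptions, as is representing each residue by a single coordinate. Edges here are purely distance-based, and the typing of physicochemical interactions is omitted.

```python
import math
from itertools import combinations


def build_interaction_graph(residues, ligand_atoms, cutoff=5.0):
    """Build a sparse graph from residue and ligand-atom coordinates.

    residues, ligand_atoms: lists of (label, (x, y, z)).
    cutoff: hypothetical distance cutoff in angstroms.
    Returns (nodes, edges); each edge is (i, j, distance) with i < j.
    """
    nodes = [("residue", label, xyz) for label, xyz in residues]
    nodes += [("ligand_atom", label, xyz) for label, xyz in ligand_atoms]

    edges = []
    for i, j in combinations(range(len(nodes)), 2):
        d = math.dist(nodes[i][2], nodes[j][2])
        if d <= cutoff:  # keep only spatially close pairs (sparse graph)
            edges.append((i, j, round(d, 3)))
    return nodes, edges


residues = [("ALA1", (0.0, 0.0, 0.0)), ("GLY2", (10.0, 0.0, 0.0))]
ligand = [("C1", (3.0, 0.0, 0.0)), ("O1", (4.0, 0.0, 0.0))]
nodes, edges = build_interaction_graph(residues, ligand)
```

A real pipeline would attach language-model embeddings to residue nodes and chemical features to ligand-atom nodes before passing the graph to a message-passing network.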

Research Reagent Solutions for Transfer Learning Implementation

Table 2: Essential Research Reagents and Computational Tools

| Reagent/Tool | Type | Function in Protocol | Implementation Notes |
|---|---|---|---|
| PDBbind CleanSplit | Curated Dataset | Primary training data | Provides leakage-free foundation for model development |
| CASF 2016/2020 | Benchmark Dataset | Model evaluation | Strictly independent test sets for generalization assessment |
| ECFP4 Fingerprints | Molecular Representation | Compound structure encoding | 4096-bit fixed length, RDKit implementation |
| Protein Language Models (ESM, ProtBERT) | Pre-trained Models | Feature extraction initialization | Transfer learned protein representations |
| GEMS Architecture | Graph Neural Network | Binding affinity prediction | Sparse graph modeling of interactions |
| Meta-Weight-Net Algorithm | Meta-Learning Framework | Sample weighting optimization | Mitigates negative transfer between domains |
| RF-Score Features | Traditional ML Features | Baseline comparison | Atom-pair distance counts for random forest models |
| HiQBind-WF | Quality Control Workflow | Data preprocessing and validation | Corrects structural artifacts in protein-ligand complexes |

Experimental Protocols and Validation Methodologies

Protocol: Negative Transfer Mitigation in Kinase Inhibitor Prediction

This protocol demonstrates the meta-learning framework for predicting protein kinase inhibitors while mitigating negative transfer [27].

Materials:

- Kinase inhibitor data from ChEMBL (version 34) and BindingDB

- 7098 unique PKIs with activity against 162 protein kinases

- RDKit for molecular representation

- Meta-learning framework with base model and meta-model

Procedure:

Data Curation:

- Filter to include only Ki values with molecular mass < 1000 Da

- Standardize structures and generate canonical nonisomeric SMILES strings

- Calculate geometric mean for multiple Ki values per compound

- Transform Ki values to binary classification (active/inactive using 1000 nM threshold)
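The last two curation steps can be sketched in a few lines of standard-library Python. This is an illustrative sketch only. The function names are hypothetical, and treating a mean Ki strictly below 1000 nM as active (rather than at or below it) is an assumption, since the text does not say whether the boundary is inclusive. The molecular mass filter and structure standardization are omitted because they need a cheminformatics toolkit such as RDKit.

```python
import math
from collections import defaultdict

ACTIVE_THRESHOLD_NM = 1000.0  # threshold from the protocol text


def geometric_mean(values):
    """Geometric mean of positive values, computed in log space."""
    return math.exp(sum(math.log(v) for v in values) / len(values))


def curate(records):
    """Aggregate repeated Ki measurements and assign binary labels.

    records: iterable of (smiles, ki_nM) pairs.
    Returns {smiles: (mean_ki_nM, label)} with label 1 = active, 0 = inactive.
    """
    grouped = defaultdict(list)
    for smiles, ki in records:
        if ki > 0:  # non-positive values cannot be log-averaged
            grouped[smiles].append(ki)

    curated = {}
    for smiles, kis in grouped.items():
        mean_ki = geometric_mean(kis)
        # Assumption: strictly below the threshold counts as active.
        curated[smiles] = (mean_ki, int(mean_ki < ACTIVE_THRESHOLD_NM))
    return curated


example = curate([("CCO", 10.0), ("CCO", 1000.0), ("c1ccccc1", 5000.0)])
```

The geometric mean is the natural average for Ki values because they span orders of magnitude. Averaging 10 nM and 1000 nM this way gives 100 nM rather than the arithmetic 505 nM.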

Domain Specification:

- Define target data set: $T^{(t)} = \{(x_i^t, y_i^t, s^t)\}$ (inhibitors of the data-reduced PK)

- Define source data set: $S^{(-t)} = \{(x_j^k, y_j^k, s^k)\}_{k \neq t}$ (PKIs of multiple PKs, excluding the target)

Model Definition:

- Base model $f$ with parameters $\theta$ for classifying active/inactive compounds

- Meta-model $g$ with parameters $\varphi$ for predicting sample weights

Meta-Training Loop:

- For each iteration:

  - Meta-model predicts weights for source data points

  - Base model trains on weighted source data using weighted loss function

  - Base model predicts on target training data

  - Validation loss calculated and used to update meta-model parameters

Transfer Learning Execution:

- Pre-train base model using optimal weights from meta-learning

- Fine-tune on reduced target data

- Evaluate generalization on held-out test set

Validation:

- Compare against baseline models without meta-learning

- Statistical significance testing of performance differences

- Ablation studies on meta-learning components

Protocol: Binding Affinity Prediction with GEMS on CleanSplit

This protocol details the implementation of the GEMS model trained on PDBbind CleanSplit for binding affinity prediction [25].

Materials:

- PDBbind CleanSplit training dataset

- CASF 2016 and 2020 benchmark sets

- Graph neural network framework (PyTorch Geometric/DGL)

- Pre-trained protein language models

Procedure:

Data Preparation:

- Load PDBbind CleanSplit training complexes

- Extract protein sequences and ligand structures

- Generate graph representations with:

  - Protein residue nodes (features from language model)

  - Ligand atom nodes (chemical features)

  - Edges based on spatial proximity and interaction types

Model Initialization:

- Initialize protein feature extractor with pre-trained language model weights

- Initialize graph neural network with sparse attention mechanisms

- Set optimization parameters (learning rate, batch size, epochs)

Training Loop:

- For each batch of protein-ligand complexes:

  - Construct graph representation

  - Forward pass through GEMS architecture

  - Calculate loss between predicted and experimental binding affinities

  - Backward pass and parameter updates

Validation:

- Evaluate on CASF benchmarks after each epoch

- Monitor for overfitting despite reduced leakage

- Conduct ablation studies (e.g., omitting protein nodes to test for genuine learning)
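The benchmark evaluation in the validation step usually reduces to a few scalar metrics over predicted and experimental affinities. The sketch below computes Pearson R, the metric quoted earlier in this note, together with RMSE. Including RMSE is an assumption about common practice rather than something specified in the protocol, and the function names are illustrative.

```python
import math


def pearson_r(pred, true):
    """Pearson correlation between predicted and experimental values."""
    n = len(pred)
    mean_p = sum(pred) / n
    mean_t = sum(true) / n
    cov = sum((p - mean_p) * (t - mean_t) for p, t in zip(pred, true))
    var_p = sum((p - mean_p) ** 2 for p in pred)
    var_t = sum((t - mean_t) ** 2 for t in true)
    return cov / math.sqrt(var_p * var_t)


def rmse(pred, true):
    """Root-mean-square error in the units of the affinity labels."""
    return math.sqrt(sum((p - t) ** 2 for p, t in zip(pred, true)) / len(pred))
```

The two metrics answer different questions. Predictions of `[1, 2, 3]` against labels of `[2, 4, 6]` are perfectly correlated, yet every prediction is off in absolute terms, so reporting both helps expose models that rank well but are poorly calibrated.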

The integration of pre-trained models and transfer learning with rigorously curated datasets like PDBbind CleanSplit represents a paradigm shift in binding affinity prediction. The protocols outlined in this Application Note provide researchers with practical methodologies for developing models that generalize to novel protein-ligand complexes rather than merely memorizing training data.

Future directions in this field include:

- Expansion of the "smarter data" approach combining AI-generated synthetic structures with rigorous quality filtering [28]

- Integration of molecular dynamics simulations to capture conformational dynamics beyond static snapshots

- Participation in initiatives like Target2035 that aim to create massive, high-quality, standardized protein-ligand binding datasets [28]

- Development of more sophisticated meta-learning frameworks that dynamically balance positive and negative transfer across diverse biological targets

By adopting these protocols and contributing to the ongoing refinement of data curation and transfer learning methodologies, researchers can accelerate progress toward truly predictive computational drug design.

The accurate prediction of protein-ligand binding affinity is a fundamental challenge in computational drug design. Traditional scoring functions have shown limited accuracy, prompting the development of deep-learning-based alternatives [1]. However, a critical issue has undermined confidence in these new models: train-test data leakage between the primary training database (PDBbind) and standard evaluation benchmarks (CASF) [1] [2]. This leakage has artificially inflated performance metrics, leading to overestimation of model generalization capabilities [1].

This case study examines the implementation of a novel graph neural network model (GEMS) trained on PDBbind CleanSplit, a rigorously filtered dataset designed to eliminate data leakage and redundancy [1]. We present comprehensive application notes and experimental protocols for reproducing this approach, which demonstrates robust generalization to strictly independent test datasets through sparse graph modeling and transfer learning from language models [1].

Background and Problem Formulation

The Data Leakage Problem in PDBbind

The PDBbind database has served as the primary training resource for most scoring functions, with evaluation typically performed using the Comparative Assessment of Scoring Functions (CASF) benchmark [2]. Studies have revealed that significant structural similarities exist between these datasets, creating a form of train-test contamination [1]. When models encounter test complexes that closely resemble training examples, they can achieve high performance through memorization rather than genuine learning of protein-ligand interactions [1].

Analysis using structure-based clustering algorithms identified that approximately 49% of CASF test complexes have exceptionally similar counterparts in the training set, sharing analogous ligand and protein structures with comparable binding conformations and affinity labels [1]. This fundamental flaw in dataset construction has compromised the evaluation of model generalizability.

Existing Solutions and Limitations

Previous attempts to address this issue included:

- Scaffold or protein family-based splits: These ensure proteins in test sets are dissimilar from training proteins but typically ignore ligand similarities [2].

- Time-based splits: Using chronological cutoff dates mimics blind testing but fails to account for similar proteins/ligands appearing across time periods [2].

Neither approach comprehensively addresses the multimodal nature of structural similarity in protein-ligand complexes.

The CleanSplit Dataset: Methodology and Characteristics

Filtering Algorithm and Creation Protocol

The PDBbind CleanSplit dataset was created using a novel structure-based clustering algorithm that performs multimodal assessment of complex similarity [1]. The filtering protocol involves these critical steps:

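Multimodal Similarity Assessment: Compute similarity between all protein-ligand complexes using protein similarity (TM scores), ligand similarity (Tanimoto scores), and binding conformation similarity (pocket-aligned ligand root-mean-square deviation).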

Train-Test Separation: Remove all training complexes that closely resemble any CASF test complex according to the combined similarity metrics [1].

Ligand-Based Filtering: Eliminate training complexes with ligands identical to those in the CASF test set (Tanimoto > 0.9) to prevent ligand memorization [1].

Redundancy Reduction: Identify and resolve similarity clusters within the training dataset itself by iteratively removing complexes until all striking similarities are eliminated [1].

This filtering process resulted in the removal of approximately 4% of training complexes due to train-test similarity and an additional 7.8% to address internal redundancy [1].
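The redundancy reduction step, which removes complexes iteratively until no striking similarities remain, can be approximated with a greedy procedure on a similarity graph. This is a hedged sketch, not the authors' exact algorithm. The function name `reduce_redundancy` and the rule "remove the most connected complex first" are assumptions, and the list of overly similar pairs is taken as given.

```python
def reduce_redundancy(similar_pairs):
    """Greedily remove complexes until no similar pair remains.

    similar_pairs: iterable of (id_a, id_b) judged too similar.
    Returns the set of removed complex ids.
    """
    neighbors = {}
    for a, b in similar_pairs:
        neighbors.setdefault(a, set()).add(b)
        neighbors.setdefault(b, set()).add(a)

    removed = set()
    while True:
        candidates = [cid for cid, nbrs in neighbors.items() if nbrs]
        if not candidates:
            break  # no similar pairs left
        # Most similar partners first; ties broken by id for determinism.
        worst = min(candidates, key=lambda cid: (-len(neighbors[cid]), cid))
        for nb in neighbors.pop(worst):
            neighbors[nb].discard(worst)
        removed.add(worst)
    return removed


removed = reduce_redundancy([("a", "b"), ("a", "c"), ("a", "d"), ("e", "f")])
```

Removing the hub of a cluster first tends to keep more complexes overall than removing its members one by one. That matters when the aim is to cut redundancy while discarding as little training data as possible.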

Dataset Composition and Statistics

Table 1: PDBbind CleanSplit Composition and Filtering Impact

| Metric | Original PDBbind | CleanSplit | Reduction |
|---|---|---|---|
| Training complexes with CASF similarities | ~600 complexes | 0 complexes | 100% |
| CASF complexes with training similarities | 49% | 0% | 100% |
| Internal training redundancy | ~50% in similarity clusters | Minimal clusters | >90% reduction |
| Training set size | Full PDBbind refined set | ~88.2% of original | 11.8% removed |