Beyond the Benchmark: Tackling Data Bias to Build Generalizable Affinity Prediction Models

Abstract

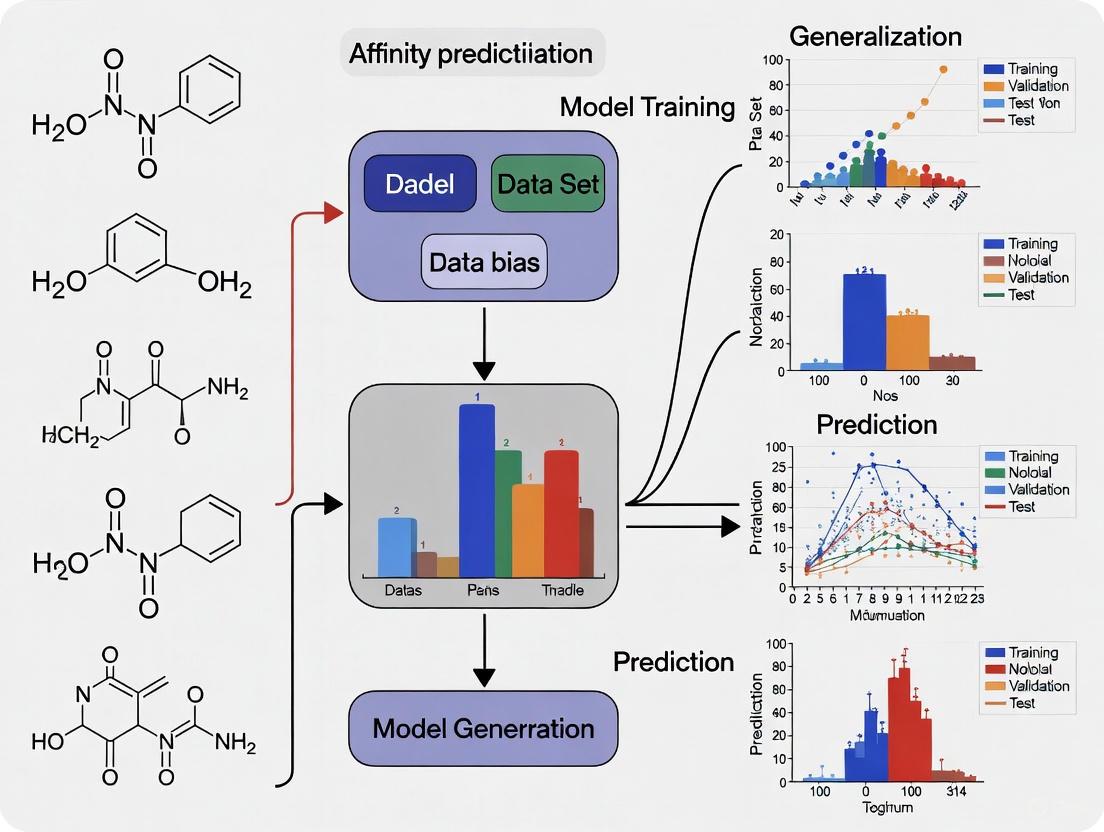

Accurate prediction of drug-target binding affinity is crucial for computational drug discovery, yet the generalization capability of many deep learning models has been severely overestimated due to pervasive data bias. This article explores the critical issue of train-test data leakage and dataset redundancy in public benchmarks like PDBbind and CASF. We examine how these biases inflate performance metrics, present novel methodological solutions like the PDBbind CleanSplit protocol and similarity-aware evaluation frameworks for robust model training, and discuss advanced architectures that maintain performance on strictly independent tests. For researchers and drug development professionals, this synthesis provides a roadmap for developing and validating truly generalizable affinity prediction models to enhance real-world drug discovery pipelines.

The Benchmarking Mirage: Exposing Data Bias in Affinity Prediction

The Critical Role of Binding Affinity Prediction in Modern Drug Discovery

Drug-target binding affinity (DTA), which quantifies the strength of interaction between a small molecule (drug) and its protein target, serves as a fundamental metric in drug discovery and development. Accurate prediction of DTA is crucial for efficiently identifying promising drug candidates, understanding molecular interactions, and accelerating the lengthy and costly drug development process [1]. Traditional drug discovery is notoriously expensive, time-consuming, and prone to failure, often requiring over a decade and billions of dollars to bring a single drug to market [2] [3]. In this context, artificial intelligence (AI) and computational methods have emerged over the last decade as powerful alternatives that address difficult biological problems while easing the constraints of traditional experimental methods [2].

The evolution of DTA prediction has transitioned from physics-based simulations and traditional machine learning to sophisticated deep learning architectures. Early computational strategies relied mainly on physics-based methods like molecular docking and molecular dynamics simulations, which, while providing detailed structural insights, demand extensive computational resources and accurate structural input, limiting their applicability in large-scale screening [3]. The last decade has witnessed a paradigm shift with the widespread adoption of deep learning, which can handle large datasets and learn complex non-linear relationships, thus enabling more accurate and scalable DTA predictions [2].

However, a critical challenge has emerged that threatens the validity of many reported advances: data bias and inadequate generalization. Recent studies have revealed that train-test data leakage between standard benchmarks has severely inflated the performance metrics of many deep-learning-based models, leading to an overestimation of their true capabilities [4] [5]. This whitepaper provides an in-depth technical examination of DTA prediction methodologies, the critical issue of generalization, and the experimental frameworks essential for robust model development.

Key Methodologies in Binding Affinity Prediction

Evolution of Computational Approaches

The journey of DTA prediction methodologies can be broadly categorized into three distinct eras, each marked by increasing sophistication and performance.

Conventional Physics-Based Methods: These early approaches, such as molecular docking, predict stable binding conformations and estimate affinities using scoring functions based on physical force fields, empirical data, or knowledge-based statistical potentials [1] [3]. While they offer valuable structural insights, their accuracy is often limited, and they are computationally intensive, making them unsuitable for large-scale virtual screening.

Traditional Machine Learning Methods: From around 2005, methods like KronRLS and SimBoost began to gain traction [3]. These models learned from known drug-target binding data using manually curated features or similarity metrics (e.g., drug-drug and target-target similarity) [2] [1]. They demonstrated improved accuracy over conventional methods but were still constrained by their reliance on human-engineered features.

Deep Learning-Based Methods: The increase in available structural and affinity data, coupled with enhanced computational power, facilitated the rise of deep learning. A significant advantage of deep learning is its ability to automatically learn relevant features from raw data, thus overcoming the limitation of manual feature selection [2]. Early deep learning models utilized convolutional neural networks (CNNs) and recurrent neural networks (RNNs) on one-dimensional sequences of drugs (e.g., SMILES strings) and proteins (amino acid sequences) [2]. Subsequently, the field has progressed through several advanced paradigms:

- Graph-Based Models: These represent molecules as graphs, where atoms are nodes and bonds are edges. Models like GraphDTA use Graph Neural Networks (GNNs) to capture intricate structural information, providing a richer representation than sequences [3].

- Attention-Based and Multimodal Architectures: Modern frameworks, such as HPDAF, integrate multiple data types (e.g., protein sequences, drug graphs, and binding pocket structures) using hierarchical attention mechanisms. This allows the model to dynamically focus on the most critical features for prediction [3].

- Language Model Derivatives: The development of domain-specific large language models (LLMs) like ChemBERTa (for drugs) and ProtBERT (for proteins) has enabled the extraction of semantic features from chemical and biological sequences. The embeddings from these models can be combined with other architectures for enhanced prediction [2].

- Equivariant Graph Networks: Cutting-edge approaches, such as DockBind, leverage equivariant graph neural networks (e.g., MACE) that respect physical symmetries to model detailed atomic environments from 3D docking poses, further incorporating physical and chemical descriptors [6].

Comparative Analysis of Deep Learning Architectures

Table 1: Comparison of Key Deep Learning Architectures for DTA Prediction.

| Model Type | Key Features | Representative Models | Advantages | Limitations |
|---|---|---|---|---|
| Sequence-Based | Uses 1D SMILES for drugs and amino acid sequences for proteins. | DeepDTA, DeepAffinity [3] | Simple input; good performance improvement over pre-deep learning methods. | Ignores 3D structural information and specific binding pockets. |
| Graph-Based | Represents drugs and/or proteins as graphs to capture topology. | GraphDTA, GEMS [4] [3] | Better representation of molecular structure and atomic interactions. | Early models did not fully incorporate protein pocket data. |
| Pocket-Aware | Integrates structural information from protein-binding pockets. | PocketDTA, DeepDTAF [3] | Captures the local chemical environment where binding occurs, enhancing accuracy. | Relies on accurate pocket identification and definition. |
| Multimodal | Fuses multiple data types (sequence, graph, structure). | HPDAF, DockBind [6] [3] | Leverages complementary information; dynamic feature importance via attention. | Complex architecture; requires diverse and high-quality input data. |
| Physics-Informed | Incorporates physical principles and/or docking poses. | DockBind [6] | Provides a more physically realistic model of interactions. | Computationally expensive; depends on the accuracy of pose generation. |

The following diagram illustrates the logical progression and relationships between these key methodological paradigms in the field.

Diagram 1: The evolution of methodologies in binding affinity prediction.

The Critical Challenge of Data Bias and Generalization

The PDBbind-CASF Data Leakage Problem

A groundbreaking study published in Nature Machine Intelligence (2025) exposed a fundamental flaw in the evaluation of deep-learning-based scoring functions [4] [5]. The field has heavily relied on the PDBbind database for training models and the Comparative Assessment of Scoring Functions (CASF) benchmark for testing. The study revealed a substantial train-test data leakage between these datasets, meaning that models were being tested on data that was highly similar to what they were trained on, rather than on truly novel challenges.

The researchers proposed a novel structure-based clustering algorithm to quantify the similarity between protein-ligand complexes in PDBbind and CASF. This algorithm uses a combined assessment of:

- Protein similarity (TM-scores)

- Ligand similarity (Tanimoto scores)

- Binding conformation similarity (pocket-aligned ligand root-mean-square deviation)

This analysis identified nearly 600 highly similar pairs between the training and test sets, affecting 49% of all CASF complexes [4]. This leakage allows models to "cheat" by memorizing structural similarities and associated affinity labels, rather than learning the underlying principles of protein-ligand interactions. Alarmingly, some models were found to perform comparably well on CASF benchmarks even after omitting all protein or ligand information, confirming that their predictions were not based on a genuine understanding of interactions [4].

The PDBbind CleanSplit Solution and Its Impact

To address this critical issue, the study introduced PDBbind CleanSplit, a new training dataset curated using their filtering algorithm to eliminate train-test data leakage and reduce redundancies within the training set itself [4]. The creation of CleanSplit involved two key steps:

- Removing train-test leakage: All training complexes that closely resembled any CASF test complex (based on the combined similarity metrics) were excluded. This also included training complexes with ligands identical to those in the test set (Tanimoto > 0.9).

- Reducing training set redundancy: The algorithm identified and iteratively removed complexes from large similarity clusters within the training data, which discourages mere memorization and encourages better generalization.

The impact of retraining existing state-of-the-art models on CleanSplit was profound. Models like GenScore and Pafnucy, which had previously shown excellent benchmark performance, saw their performance drop markedly when trained on the cleaned dataset [4]. This confirmed that their prior high scores were largely driven by data leakage. In contrast, the authors' Graph Neural Network for Efficient Molecular Scoring (GEMS), which leverages a sparse graph model and transfer learning from language models, maintained high performance when trained on CleanSplit, demonstrating robust generalization to strictly independent test data [4].

Experimental Protocols and Benchmarking

Standardized Evaluation Metrics and Datasets

Robust evaluation of DTA models requires standardized benchmarks and multiple metrics to assess different aspects of predictive power. The primary datasets used for training and evaluation include PDBbind, CASF, BindingDB, and others [1]. As discussed, the critical importance of using leakage-free splits like CleanSplit cannot be overstated for a genuine assessment of generalizability [4].

Table 2: Key Datasets for Drug-Target Binding Affinity Prediction.

| Dataset | Complexes | Affinities | 3D Structures | Primary Use |
|---|---|---|---|---|
| PDBbind | ~19,588 | ~19,588 | Yes | Primary training database for many models. |
| CASF | 285 | 285 | Yes | Standard benchmark for scoring power, docking power, ranking power. |
| BindingDB | ~1.69 million | ~1.69 million | Partial | Large-scale database for binding measurements; useful for pre-training. |
| Davis | N/A | Kinase-inhibitor data | N/A | Used for specific validation studies (e.g., kinase binding) [6]. |

Evaluation typically focuses on several "powers":

- Scoring Power: The ability to predict absolute binding affinity values, measured by the Pearson correlation coefficient (R) and the root-mean-square error (RMSE) between predicted and experimental values [1].

- Ranking Power: The ability to correctly rank ligands based on their affinity for a specific target, often measured by the Spearman correlation coefficient [1].

- Docking Power: The ability to identify the native binding pose among decoy poses [1].
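
The scoring and ranking metrics above can be sketched in a few lines of standard-library Python. This is a minimal illustration, not the CASF evaluation code: the function names and the example affinity values are hypothetical, and a full ranking-power evaluation would compute the Spearman coefficient separately for the ligands of each target.

```python
import math

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)

def rmse(predicted, experimental):
    """Root-mean-square error between predicted and experimental values."""
    squared = [(p - t) ** 2 for p, t in zip(predicted, experimental)]
    return math.sqrt(sum(squared) / len(squared))

def average_ranks(values):
    """1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks

def spearman_rho(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson_r(average_ranks(x), average_ranks(y))

# Hypothetical affinities (e.g. pKd values) for five ligands of one target.
experimental = [4.2, 5.1, 6.3, 7.0, 8.4]
predicted = [4.8, 5.0, 6.9, 6.5, 8.0]

print("Scoring power: R =", round(pearson_r(predicted, experimental), 3),
      "RMSE =", round(rmse(predicted, experimental), 3))
print("Ranking power: rho =", round(spearman_rho(predicted, experimental), 3))
```

Reporting R and RMSE together is useful because a model can rank complexes well (high R) while still being systematically offset in absolute terms (high RMSE).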

Detailed Methodology: The HPDAF Framework

The HPDAF (Hierarchically Progressive Dual-Attention Fusion) framework exemplifies a modern, multimodal approach to DTA prediction [3]. Its experimental workflow and architecture provide a template for robust model development.

1. Data Representation and Input Modalities:

- Protein Sequences: Amino acid sequences are used as input.

- Drug Molecular Graphs: Drugs are represented as graphs with atoms as nodes and bonds as edges.

- Protein-Ligand Interaction Graphs: Structural information from protein-binding pockets is represented as graphs, capturing the local atomic environment crucial for binding.

2. Specialized Feature Extraction Modules:

- Each input modality is processed by a dedicated deep learning module (e.g., CNNs for sequences, GNNs for molecular graphs) to extract high-level, representative features.

3. Hierarchical Dual-Attention Fusion:

- This is the core innovation of HPDAF. The extracted features are fused using a two-tiered attention mechanism:

  - Modality-Aware Cross-Attention (MACN): This focuses on learning the importance of features within each modality (e.g., which atoms or residues are most critical).

  - Affinity-Aware Attention (AACN): This operates across modalities, dynamically calibrating and weighting the contributions of the protein, drug, and pocket features to the final affinity prediction.

4. Ablation Studies:

- To validate the contribution of each component, HPDAF employed ablation studies. These experiments systematically removed or altered parts of the model (e.g., using only sequence data, or removing the attention mechanisms). The results confirmed that the full multimodal model with dual-attention fusion achieved the best performance, significantly outperforming ablated versions and other state-of-the-art models on benchmarks like CASF-2016 [3].
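
The idea of weighting modality contributions with attention (step 3) can be illustrated with a generic softmax-weighted fusion. This is only a sketch of the general technique and not HPDAF's actual architecture: the function `fuse_modalities`, the fixed query vector, and the toy two-dimensional embeddings are all assumptions made for illustration, whereas in a trained model the query and the embeddings would be learned.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    shift = max(scores)
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def fuse_modalities(embeddings, query):
    """Fuse per-modality embeddings into one vector.

    Each modality gets a score from the scaled dot product of the query with
    its embedding; softmax turns the scores into weights that sum to 1, and
    the fused vector is the weighted sum of the embeddings.
    """
    names = list(embeddings)
    dim = len(query)
    scores = [
        sum(q * v for q, v in zip(query, embeddings[name])) / math.sqrt(dim)
        for name in names
    ]
    weights = softmax(scores)
    fused = [
        sum(w * embeddings[name][i] for w, name in zip(weights, names))
        for i in range(dim)
    ]
    return dict(zip(names, weights)), fused

# Toy embeddings (hypothetical values) for the three input modalities.
embeddings = {
    "protein": [1.0, 0.0],
    "drug": [0.0, 1.0],
    "pocket": [1.0, 1.0],
}
weights, fused = fuse_modalities(embeddings, query=[1.0, 1.0])
print(weights)  # the pocket embedding aligns best with the query
print(fused)
```

Because the weights are computed per input, the relative contribution of each modality can change from one complex to the next, which is the behaviour the text describes as dynamic calibration.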

The following workflow diagram outlines the key stages of a robust DTA prediction experiment, from data preparation to model validation.

Diagram 2: Workflow for robust binding affinity prediction experiments.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools and Resources for DTA Prediction Research.

| Tool / Resource | Type | Primary Function | Relevance to DTA Prediction |
|---|---|---|---|
| PDBbind CleanSplit | Curated Dataset | Provides a leakage-free training and benchmark dataset. | Essential for training models that generalize to novel complexes; addresses data bias [4]. |
| GEMS (Graph Neural Network for Efficient Molecular Scoring) | Software Model | A GNN model for binding affinity prediction. | Demonstrates robust generalization when trained on CleanSplit; uses sparse graphs and transfer learning [4]. |
| HPDAF | Software Framework | A multimodal deep learning tool for DTA. | Integrates sequences, drug graphs, and pocket structures via hierarchical attention [3]. |
| DockBind | Software Framework | A physics-informed DTA prediction framework. | Leverages docking poses from DiffDock and equivariant GNNs (MACE) to enhance affinity estimation [6]. |
| ProtInter | Computational Tool | Calculates non-covalent interactions from PDB files. | Used to extract features (H-bonds, hydrophobic interactions) for machine learning models [7]. |
| ESM & ChemBERTa | Pre-trained Language Model | Provides semantic embeddings for proteins and drugs. | Used for transfer learning, providing crucial sequence-based features for downstream DTA models [2] [6]. |

The field of binding affinity prediction is at a pivotal juncture. The exposure of widespread data bias has necessitated a re-evaluation of model performance and a renewed focus on true generalization. Future research will likely focus on several key areas:

- Advanced Data Curation: Widespread adoption of rigorous, structure-based dataset splitting, as exemplified by PDBbind CleanSplit, will become the standard to ensure fair and meaningful model evaluation [4].

- Integration of AI Virtual Cells (AIVCs): The FDA's move to phase out animal testing is accelerating the development of AI-driven in silico models. AIVCs offer a systems-level framework for modeling molecular interactions in dynamic, cell-specific contexts. Progress in DTA prediction will strengthen the molecular foundations of AIVCs, which in turn will provide more realistic simulation environments for testing affinity predictors [1].

- Temporal Dynamics and Multi-Omics Integration: Future models will need to move beyond static structures to simulate the temporal dynamics of binding and integrate multi-omics data to understand binding in a broader biological context, supporting more accurate and personalized therapeutic outcomes [1].

In conclusion, binding affinity prediction is a cornerstone of modern computational drug discovery. While deep learning has driven remarkable progress, the community must prioritize addressing data bias to build models that genuinely understand protein-ligand interactions. By leveraging multimodal architectures, physics-informed learning, and rigorously curated data, the next generation of DTA predictors will play an even more critical role in reducing the time and cost of bringing new medicines to patients.

The development of accurate scoring functions to predict protein-ligand binding affinity is a cornerstone of computational drug design. In recent years, deep learning models have promised to revolutionize this field. However, a critical and widespread issue has undermined their real-world applicability: a significant overestimation of their generalization capabilities due to train-test data leakage between the primary training database, PDBbind, and the standard evaluation benchmark, the Comparative Assessment of Scoring Functions (CASF) [4]. This leakage has created an illusion of performance, where models appear highly accurate during benchmarking but perform far worse when faced with truly novel protein-ligand complexes. The problem is a central example of the broader issue of data bias in affinity prediction research, showing how biases in dataset construction can compromise the scientific validity of results across an entire field. The recent discovery that nearly half of the CASF test complexes have overly similar counterparts in the PDBbind training set has forced a major re-evaluation of model performance claims and dataset curation practices [4]. This whitepaper details the nature of this data leakage, its quantifiable impact on model performance, and the emerging solutions that aim to restore rigor and reliability to binding affinity prediction.

The Anatomy of Data Leakage in PDBbind and CASF

The PDBbind Database and CASF Benchmark

The PDBbind database is a comprehensive, curated collection of protein-ligand complexes sourced from the Protein Data Bank (PDB), each annotated with experimentally measured binding affinities [8]. It is typically divided into a "general" set used for training and a "refined" set of higher-quality complexes. The CASF benchmark, developed to assess the "scoring power" of predictive models, is often derived from this refined set [4] [8]. For years, the standard protocol involved training models on the general or refined PDBbind set and evaluating their performance on the CASF core sets (e.g., CASF-2013, CASF-2016). This practice was presumed to provide a fair assessment of a model's ability to generalize to unseen data. However, this protocol contained a fundamental flaw: the assumption that the CASF test sets were independent of the training data. It is now understood that this assumption was incorrect, leading to a systematic inflation of reported performance metrics across numerous published models [4].

Mechanisms of Train-Test Contamination

The data leakage between PDBbind and CASF is not merely a result of random overlap but stems from deep structural similarities between complexes in the training and test sets. Traditional sequence-based splitting methods, which rely on protein sequence identity, have proven insufficient to guarantee true independence. The leakage occurs through several specific mechanisms:

- Protein Structure Similarity: Complexes can share highly similar protein structures (high TM-scores) even when their sequence identity is low [4]. This allows models to recognize protein structural patterns from training data during testing.

- Ligand Chemical Similarity: Ligands in the test set may be chemically nearly identical (high Tanimoto similarity) to those in the training set, enabling prediction based on ligand memorization rather than understanding of interactions [4].

- Binding Conformation Similarity: The three-dimensional positioning of the ligand within the protein binding pocket (measured by pocket-aligned ligand RMSD) can be nearly identical between training and test complexes, providing almost identical input data points to the models [4].

When combined, these factors create a scenario where a test complex is not a genuinely new challenge for a trained model but rather a slight variation of what it has already encountered during training.

Quantifying the Overlap: A Structural Clustering Analysis

A Multimodal Filtering Algorithm

To rigorously quantify the extent of data leakage, a recent study introduced a novel structure-based clustering algorithm [4]. Unlike traditional methods that rely primarily on sequence identity, this algorithm performs a multimodal assessment of similarity between any two protein-ligand complexes by evaluating three key metrics simultaneously:

- Protein Similarity: Calculated using the TM-score, a metric for protein structural similarity that is more sensitive than sequence alignment, especially for proteins with low sequence identity [4].

- Ligand Similarity: Computed using the Tanimoto coefficient, a standard measure for comparing molecular fingerprints and assessing ligand chemical similarity [4].

- Binding Conformation Similarity: Determined by the pocket-aligned root-mean-square deviation (RMSD) of the ligand atoms, which measures how similarly the ligand is positioned in the binding pocket [4].

By combining these three metrics, the algorithm provides a robust and detailed comparison of protein-ligand complex structures, capable of identifying complexes with similar interaction patterns even when their protein sequences are divergent.
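
A combined check of this kind might look like the sketch below. Only the ligand cutoff (Tanimoto > 0.9) is stated in the text; the other thresholds, the `PairSimilarity` container, and the way the three metrics are combined are placeholders chosen for illustration, not the published algorithm. The TM-score and pocket-aligned RMSD are assumed to have been computed beforehand by structural alignment tools.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class PairSimilarity:
    """Precomputed similarity metrics for one pair of protein-ligand complexes."""
    tm_score: float     # protein structural similarity, 0..1 (higher = more similar)
    tanimoto: float     # ligand fingerprint similarity, 0..1 (higher = more similar)
    pocket_rmsd: float  # pocket-aligned ligand RMSD in angstroms (lower = more similar)

def tanimoto(bits_a, bits_b):
    """Tanimoto coefficient of two fingerprints given as sets of on-bit indices."""
    a, b = set(bits_a), set(bits_b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0

LIGAND_ONLY_CUTOFF = 0.9        # from the text: near-identical ligands
TM_CUTOFF = 0.8                 # hypothetical placeholder
COMBINED_TANIMOTO_CUTOFF = 0.6  # hypothetical placeholder
RMSD_CUTOFF = 2.0               # hypothetical placeholder, in angstroms

def is_too_similar(sim):
    """Flag a pair if the ligands are near-identical, or if protein, ligand,
    and binding pose are all similar at the same time."""
    near_identical_ligand = sim.tanimoto > LIGAND_ONLY_CUTOFF
    similar_complex = (
        sim.tm_score > TM_CUTOFF
        and sim.tanimoto > COMBINED_TANIMOTO_CUTOFF
        and sim.pocket_rmsd < RMSD_CUTOFF
    )
    return near_identical_ligand or similar_complex

# Two toy fingerprints sharing two of four distinct bits.
print(tanimoto({1, 2, 3}, {2, 3, 4}))
# Similar fold, similar ligand, similar pose: flagged.
print(is_too_similar(PairSimilarity(tm_score=0.9, tanimoto=0.7, pocket_rmsd=1.0)))
# Same fold and ligand class, but a different binding pose: not flagged.
print(is_too_similar(PairSimilarity(tm_score=0.9, tanimoto=0.7, pocket_rmsd=5.0)))
```

Requiring all three conditions at once is what separates this approach from sequence-identity splits: two complexes with divergent sequences can still be flagged when their folds, ligands, and poses coincide.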

Quantitative Evidence of Widespread Leakage

The application of this filtering algorithm to the PDBbind and CASF datasets revealed a startling degree of data leakage. The analysis identified nearly 600 unacceptably close similarities between complexes in the PDBbind training set and those in the CASF benchmark set [4]. These structurally redundant pairs involved 49% of all CASF test complexes [4]. This means that nearly half of the test cases in the standard evaluation benchmark were not truly novel, but had highly similar counterparts in the training data. Consequently, models could achieve high benchmark performance not by learning general principles of binding but by exploiting these memorized similarities. The table below summarizes the key quantitative findings of the overlap analysis.

Table 1: Quantified Data Leakage Between PDBbind Training and CASF Test Sets

| Metric of Similarity | Threshold for "Leakage" | Number of Leaky Pairs | Percentage of CASF Test Set Affected |
|---|---|---|---|
| Overall Structural Similarity | Combined assessment of TM-score, Tanimoto, and RMSD | ~600 pairs | 49% |
| Protein Structure (TM-score) | High similarity despite potential low sequence identity | Data not specified | Implied to be significant [4] |
| Ligand Chemistry (Tanimoto) | > 0.9 | Data not specified | Addressed by filtering [4] |

This widespread redundancy had a direct impact on model evaluation. To illustrate the effect, a simple search algorithm was devised that predicted the affinity of a CASF test complex by averaging the affinities of its five most similar training complexes. This straightforward, non-learning-based approach achieved a competitive Pearson correlation (R = 0.716) on the CASF-2016 benchmark, rivaling some published deep-learning scoring functions [4]. This experiment starkly demonstrated that high benchmark performance could be achieved through data exploitation rather than genuine learning.
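
The search baseline described above amounts to a nearest-neighbour lookup. The sketch below assumes that a single similarity score per training complex has already been computed for the query; how the study combines its three metrics into a ranking is not specified here, so the `similarities` dictionary and all values are hypothetical.

```python
def knn_affinity(similarities, train_affinities, k=5):
    """Predict a test complex's affinity as the mean affinity of its k most
    similar training complexes.

    similarities: dict mapping training ID -> similarity to the test complex
                  (higher means more similar).
    train_affinities: dict mapping training ID -> experimental affinity.
    """
    if not similarities:
        raise ValueError("no training complexes to compare against")
    nearest = sorted(similarities, key=similarities.get, reverse=True)[:k]
    return sum(train_affinities[t] for t in nearest) / len(nearest)

# Hypothetical similarity scores of six training complexes to one test complex.
similarities = {"a": 0.9, "b": 0.8, "c": 0.7, "d": 0.6, "e": 0.5, "f": 0.1}
train_affinities = {"a": 6.0, "b": 7.0, "c": 8.0, "d": 5.0, "e": 4.0, "f": 10.0}

# Complex "f" is the least similar and is excluded from the top five.
print(knn_affinity(similarities, train_affinities))  # 6.0
```

Nothing in this procedure models protein-ligand interactions, which is the point of the experiment: any accuracy it achieves comes entirely from similarity between the test and training sets.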

The CleanSplit Solution: A Methodology for Rigorous Data Curation

The PDBbind CleanSplit Protocol

In response to the data leakage crisis, the PDBbind CleanSplit dataset was created [4]. Its development involved a rigorous, multi-step filtering protocol designed to eliminate both train-test leakage and internal training set redundancies. The following diagram illustrates the workflow for creating this cleaned dataset.

Diagram 1: Workflow for creating the PDBbind CleanSplit dataset.

The methodology can be broken down into two primary phases:

- Eliminating Train-Test Leakage: The algorithm first performs an all-against-all comparison between CASF test complexes and PDBbind training complexes using the multimodal similarity assessment. Any training complex that exceeds similarity thresholds (e.g., Tanimoto > 0.9 for ligands) with any test complex is identified and removed from the training set. This step ensures that the ligands and structural motifs present in the test set are not encountered during training [4].

- Reducing Training Set Redundancy: The algorithm also addresses internal redundancies within the training data itself. The analysis found that nearly 50% of training complexes were part of a similarity cluster. Using adapted filtering thresholds, the algorithm iteratively removes complexes to resolve these clusters, resulting in a more diverse and less redundant training set. This step encourages models to learn generalizable patterns rather than relying on memorization [4].

The final output of this protocol is a cleaned training dataset that is strictly separated from the CASF benchmarks, allowing for a genuine evaluation of model generalization.
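
The two phases can be sketched as follows. This is an illustrative outline under stated assumptions rather than the CleanSplit implementation: `too_similar` stands for any symmetric pairwise test, and the greedy rule of removing the complex with the most similar neighbours first is an assumption, since the text only says that complexes are removed iteratively.

```python
def remove_test_leakage(train_ids, test_ids, too_similar):
    """Phase 1: drop every training complex that is too similar to any test complex."""
    return [t for t in train_ids if not any(too_similar(t, q) for q in test_ids)]

def reduce_redundancy(train_ids, too_similar):
    """Phase 2: repeatedly drop the complex with the most similar neighbours
    until no pair of remaining complexes is flagged as too similar."""
    kept = list(train_ids)
    neighbours = {
        a: {b for b in kept if b != a and too_similar(a, b)} for a in kept
    }
    while True:
        worst = max(kept, key=lambda c: len(neighbours[c]), default=None)
        if worst is None or not neighbours[worst]:
            return kept
        kept.remove(worst)
        for other in neighbours.pop(worst):
            neighbours[other].discard(worst)

# Hypothetical similarity relation given as a set of flagged pairs.
flagged = {
    frozenset(("t1", "c1")),  # training complex t1 resembles test complex c1
    frozenset(("t2", "t3")),  # t2, t3, t4 form a redundant training cluster
    frozenset(("t3", "t4")),
}

def too_similar(a, b):
    return frozenset((a, b)) in flagged

train = ["t1", "t2", "t3", "t4", "t5"]
test = ["c1"]

leak_free = remove_test_leakage(train, test, too_similar)
print(leak_free)                                  # ['t2', 't3', 't4', 't5']
print(reduce_redundancy(leak_free, too_similar))  # ['t2', 't4', 't5']
```

Removing the most connected complex first tends to resolve a cluster with fewer deletions than removing members at random, which helps preserve training set size.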

Impact on Model Performance Evaluation

The true test of the CleanSplit protocol was its impact on the performance of state-of-the-art affinity prediction models. When top-performing models like GenScore and Pafnucy were retrained on the PDBbind CleanSplit dataset, their performance on the CASF benchmark dropped substantially [4]. This performance drop confirmed that the previously reported high accuracy of these models was largely driven by data leakage and memorization, not by a robust understanding of protein-ligand interactions.

In contrast, a new graph neural network model named GEMS (Graph neural network for Efficient Molecular Scoring) maintained high benchmark performance when trained exclusively on CleanSplit [4]. This suggests that its architecture—which leverages a sparse graph model of interactions and transfer learning from language models—is better suited to learning generalizable principles. Furthermore, ablation studies showed that GEMS failed to produce accurate predictions when protein node information was omitted, indicating its predictions are based on a genuine understanding of the protein-ligand interaction rather than ligand memorization [4].

Experimental Validation and Researcher's Toolkit

Key Experimental Workflows

The validation of data leakage and the efficacy of new datasets like CleanSplit rely on specific experimental workflows. The core process for benchmarking a scoring function's true generalization capability involves a strict separation of training and test data, followed by a multi-faceted evaluation. The following diagram outlines this critical benchmarking workflow.

Diagram 2: Workflow for rigorously benchmarking a scoring function's generalization.

This workflow emphasizes two critical steps:

- Training on Leakage-Free Data: Using a curated dataset like CleanSplit as the exclusive training source.

- Comprehensive Evaluation: Going beyond simple scoring power (e.g., Pearson R, RMSE) to include diagnostic tests like ablation studies that probe whether the model is learning meaningful interactions.
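
One way to run such an ablation diagnostic is to compare scoring power with and without protein information. In the sketch below, `predict` is a hypothetical stand-in for any trained model that takes protein and ligand feature vectors, and zeroing the protein features is just one possible way of omitting that information; the toy models and data are illustrative only.

```python
import math

def pearson_r(x, y):
    """Pearson correlation; returns 0.0 if either input has no variance."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / math.sqrt(var_x * var_y)

def ablation_gap(predict, complexes, affinities):
    """Return (R_full, R_ablated): Pearson R with all inputs, and with the
    protein features replaced by zeros."""
    full = [predict(c["protein"], c["ligand"]) for c in complexes]
    ablated = [
        predict([0.0] * len(c["protein"]), c["ligand"]) for c in complexes
    ]
    return pearson_r(full, affinities), pearson_r(ablated, affinities)

# Toy data: affinity depends on the match between protein and ligand features.
complexes = [
    {"protein": [1.0, 0.0], "ligand": [1.0, 2.0]},
    {"protein": [0.0, 1.0], "ligand": [1.0, 2.0]},
    {"protein": [1.0, 1.0], "ligand": [2.0, 1.0]},
]
affinities = [1.0, 2.0, 3.0]

def interaction_model(protein, ligand):
    return sum(p * l for p, l in zip(protein, ligand))

def ligand_only_model(protein, ligand):
    return ligand[0]

print(ablation_gap(interaction_model, complexes, affinities))  # R collapses without protein input
print(ablation_gap(ligand_only_model, complexes, affinities))  # R is unchanged
```

A model whose correlation barely changes when protein input is removed is relying on ligand features alone, which is the failure mode the diagnostic is meant to expose.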

The Scientist's Toolkit for Data Curation

To address the data leakage problem, researchers require a set of specialized tools and resources for curating and evaluating their protein-ligand data. The following table details key solutions.

Table 2: Research Reagent Solutions for Mitigating Data Leakage

| Tool / Resource | Type | Primary Function in Leakage Mitigation |
|---|---|---|
| PDBbind CleanSplit [4] | Curated Dataset | Provides a pre-processed training set with minimized structural similarity to the CASF benchmark. |
| Multimodal Filtering Algorithm [4] | Algorithm/Methodology | Identifies redundant complexes based on combined protein TM-score, ligand Tanimoto, and binding pose RMSD. |
| HiQBind-WF [9] [8] [10] | Automated Workflow | An open-source, semi-automated workflow that corrects common structural artifacts in PDB files and creates high-quality datasets. |
| GEMS Model [4] | Software/Model | An example of a graph neural network architecture demonstrated to generalize well when trained on a leakage-free dataset. |
| Structure-Based Search Algorithm [4] | Diagnostic Tool | A simple non-learning algorithm that finds similar training complexes to a test query; used to demonstrate the feasibility of data exploitation. |

The uncovering of profound train-test data leakage between PDBbind and CASF has served as a necessary corrective for the field of computational affinity prediction. It has demonstrated that the quest for better models must be intrinsically linked to the pursuit of better, more rigorously curated data. The development of solutions like the PDBbind CleanSplit dataset and the HiQBind workflow marks a pivotal shift towards a data-centric approach in the field [4] [9] [8]. These resources provide the foundation for developing models whose benchmark performance genuinely reflects their ability to generalize to novel targets and ligands, which is the ultimate requirement for accelerating drug discovery.

Looking forward, the field is moving beyond a singular focus on static 3D structures. Emerging efforts involve the creation of large-scale, high-quality datasets through initiatives like Target2035, a global consortium aiming to generate standardized protein-ligand binding data for thousands of human proteins [11]. Furthermore, there is a growing emphasis on incorporating molecular dynamics to capture the conformational flexibility of binding, and on using AI-based co-folding models to generate high-quality synthetic data, provided it is filtered with the same rigor advocated by the CleanSplit study [11]. The lesson is clear: future progress in binding affinity prediction depends on a continued synthesis of scale and quality, ensuring that models are trained on a foundation of truth rather than an illusion of performance.

In the field of computational drug design, the accuracy of binding affinity prediction models is paramount for identifying viable therapeutic candidates. However, a pervasive yet often overlooked issue—structural redundancy within training data—severely compromises the real-world performance of these models. Structural redundancy occurs when training and test datasets contain highly similar protein-ligand complexes, leading to a phenomenon known as train-test data leakage. This leakage allows models to perform well on benchmark tests by recognizing structural similarities rather than by genuinely learning the underlying principles of molecular interactions. Consequently, validation metrics become artificially inflated, creating a significant gap between benchmark performance and practical utility in drug discovery applications.

The core of this problem lies in the standard practice of training models on public databases like PDBbind and evaluating them on benchmarks from the Comparative Assessment of Scoring Functions (CASF). A 2025 study by Graber et al. revealed that nearly 49% of CASF test complexes had highly similar counterparts in the PDBbind training set [12]. This extensive overlap means that nearly half of the test complexes do not present novel challenges to the models, enabling performance through memorization rather than generalization. This tutorial explores the mechanisms through which structural redundancy inflates validation metrics, provides detailed protocols for identifying and mitigating this issue, and presents a framework for developing robust, generalizable affinity prediction models.

Quantitative Evidence of Data Leakage Impact

Performance Decay in Cleaned Datasets

Retraining existing state-of-the-art models on a properly filtered dataset provides the most direct evidence of how structural redundancy inflates performance metrics. When models like GenScore and Pafnucy were retrained on the PDBbind CleanSplit dataset—which removes structurally similar training-test pairs—their performance on the CASF-2016 benchmark dropped markedly [12]. This performance decay indicates that their previously reported high accuracy was largely driven by data leakage rather than true predictive capability.

Table 1: Performance Comparison of Models Trained on Standard vs. Cleaned Data

| Model | Training Dataset | CASF-2016 RMSE | Performance Change | Generalization Assessment |
|---|---|---|---|---|
| GenScore | Original PDBbind | 1.21 | Baseline | Overestimated |
| GenScore | PDBbind CleanSplit | 1.58 | +30.6% RMSE increase | Substantially reduced |
| Pafnucy | Original PDBbind | 1.34 | Baseline | Overestimated |
| Pafnucy | PDBbind CleanSplit | 1.72 | +28.4% RMSE increase | Substantially reduced |
| GEMS (Novel GNN) | PDBbind CleanSplit | 1.24 | - | Maintained high performance |
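
The "Performance Change" column in Table 1 is a relative RMSE increase with respect to the model's own baseline. As a quick check, the short sketch below recomputes it from the RMSE values in the table; the helper name `relative_change_percent` is illustrative and not taken from any of the cited works.

```python
def relative_change_percent(baseline: float, new: float) -> float:
    """Relative change of `new` with respect to `baseline`, in percent."""
    return (new - baseline) / baseline * 100.0


# RMSE values copied from Table 1: (original PDBbind, PDBbind CleanSplit).
table_rmse = {
    "GenScore": (1.21, 1.58),
    "Pafnucy": (1.34, 1.72),
}

changes = {
    model: round(relative_change_percent(before, after), 1)
    for model, (before, after) in table_rmse.items()
}
print(changes)  # {'GenScore': 30.6, 'Pafnucy': 28.4}
```

Because RMSE is an error measure, a positive change here means worse predictions.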

Structural Similarity Analysis Between Training and Test Sets

The extent of structural redundancy between standard training and test sets can be quantified using multimodal similarity assessment. Research has demonstrated that approximately 49% of complexes in the CASF benchmark share striking similarities with complexes in the PDBbind training set according to defined thresholds of protein structure, ligand chemistry, and binding conformation [12]. This analysis identified nearly 600 highly similar train-test pairs that enable model memorization.

Table 2: Analysis of Structural Similarity Clusters in Protein-Ligand Data

| Similarity Metric | Threshold Value | Percentage of CASF Complexes Affected | Impact on Model Performance |
|---|---|---|---|
| Protein Structure (TM-score) | >0.7 | 34% | Enables protein-based memorization |
| Ligand Similarity (Tanimoto) | >0.9 | 28% | Enables ligand-based memorization |
| Binding Conformation (pocket-aligned RMSD) | <2.0Å | 41% | Enables binding mode memorization |
| Combined Multimodal Similarity | All above thresholds | 49% | Severe data leakage inflation |

Methodologies for Identifying Structural Redundancy

Multimodal Structural Clustering Algorithm

Identifying structural redundancy requires a multimodal approach that assesses similarity across multiple dimensions of protein-ligand complexes. The clustering algorithm developed by Graber et al. combines three critical metrics to comprehensively evaluate complex similarity [12]:

Protein Similarity Assessment: Calculated using TM-scores, with values >0.7 indicating significant structural homology that often corresponds to functional similarity. This metric identifies proteins that share similar folds despite potential differences in sequence identity.

Ligand Similarity Assessment: Computed using Tanimoto coefficients based on molecular fingerprints, with values >0.9 indicating nearly identical chemical structures. Flagging such pairs prevents models from memorizing affinity values for specific molecular structures.

Binding Conformation Assessment: Measured through pocket-aligned root-mean-square deviation (RMSD) of ligand positions, with values <2.0Å indicating nearly identical binding modes. This ensures that similar interaction geometries between training and test complexes are identified.

The algorithm employs an iterative clustering approach that groups complexes sharing similarities across all three dimensions, then selectively filters representatives to create a non-redundant dataset. This process effectively identifies and eliminates both train-test leakage and internal training set redundancies.
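
The grouping step can be sketched in a few lines. The example below is a minimal illustration and not the published implementation: it assumes the pairwise scores are already computed, treats a pair as redundant only when all three thresholds quoted above are met, and uses a union-find structure (a choice made here for brevity) to merge linked complexes into clusters. The class and function names and the example scores are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PairSimilarity:
    a: str               # ID of the first complex
    b: str               # ID of the second complex
    tm_score: float      # protein structural similarity
    tanimoto: float      # ligand fingerprint similarity
    pocket_rmsd: float   # pocket-aligned ligand RMSD in Angstrom


def is_redundant(pair, tm_min=0.7, tanimoto_min=0.9, rmsd_max=2.0):
    """True if the pair exceeds the similarity thresholds in all three dimensions."""
    return (
        pair.tm_score > tm_min
        and pair.tanimoto > tanimoto_min
        and pair.pocket_rmsd < rmsd_max
    )


def cluster_complexes(ids, pairs):
    """Merge complexes connected by redundant pairs into clusters (union-find)."""
    parent = {i: i for i in ids}

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path compression
            x = parent[x]
        return x

    for pair in pairs:
        if is_redundant(pair):
            parent[find(pair.a)] = find(pair.b)

    clusters = {}
    for i in ids:
        clusters.setdefault(find(i), []).append(i)
    return sorted(sorted(members) for members in clusters.values())


# Hypothetical scores for four complexes.
example_pairs = [
    PairSimilarity("c1", "c2", 0.95, 0.97, 0.8),
    PairSimilarity("c2", "c3", 0.88, 0.93, 1.5),
    PairSimilarity("c3", "c4", 0.91, 0.95, 3.5),  # different binding mode
]
print(cluster_complexes(["c1", "c2", "c3", "c4"], example_pairs))
```

In this toy input, `c4` stays in its own cluster because its ligand pose differs from `c3` even though the protein and ligand are similar, which is the kind of distinction a single-metric filter would miss.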

Diagram 1: Multimodal Structural Clustering Workflow

The PDBbind CleanSplit Protocol

The PDBbind CleanSplit protocol represents a standardized methodology for creating training datasets free from structural redundancy. The implementation involves these critical steps [12]:

Step 1: Cross-Dataset Comparison - Compare all CASF test complexes against all PDBbind training complexes using the multimodal similarity algorithm to identify problematic pairs.

Step 2: Train-Test Separation - Remove all training complexes that meet similarity thresholds (TM-score >0.7, Tanimoto >0.9, or RMSD <2.0Å) with any test complex.

Step 3: Internal Redundancy Reduction - Apply adapted thresholds to identify and eliminate the most striking similarity clusters within the training data, removing approximately 7.8% of complexes.

Step 4: Ligand-Based Filtering - Eliminate all training complexes whose ligands are identical or nearly identical to those in the test set (Tanimoto >0.9) to prevent ligand-based memorization.

This protocol resulted in the removal of 4% of training complexes due to train-test similarity and an additional 7.8% due to internal redundancies, creating a more challenging but realistic training scenario that genuinely tests model generalization.

Experimental Validation Protocols

Robust Validation Strategies for Affinity Prediction Models

Proper validation strategies are essential for obtaining accurate performance estimates free from the confounding effects of structural redundancy. The following protocols should be implemented to ensure reliable model assessment [12] [13]:

Strictly External Test Sets: Completely independent test sets with no structural similarity to training complexes based on the multimodal criteria previously described. Performance on these sets provides the only valid measure of generalization capability.

Nested Cross-Validation: When external test sets are unavailable, implement nested cross-validation where the inner loop performs hyperparameter tuning and the outer loop provides performance estimates. This prevents over-optimization during model selection.

Cluster-Based Cross-Validation: Instead of random splitting, ensure that all complexes within identified similarity clusters remain within the same split (either all in training or all in test) to prevent data leakage.

Ablation Studies: Systematically remove different input modalities (e.g., protein information, ligand information) to verify that predictions rely on genuine protein-ligand interaction understanding rather than memorization of single components.
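
Cluster-based cross-validation is the strategy in this list that is easiest to get subtly wrong, so a small sketch may help. The function below is an illustrative, dependency-free version that assumes each complex already carries a cluster label from a prior similarity analysis; it assigns whole clusters to folds, largest first, always into the currently smallest fold. The name `cluster_kfold` and the toy labels are hypothetical.

```python
from collections import defaultdict


def cluster_kfold(cluster_of, n_folds):
    """Split complexes into folds so that no similarity cluster is divided.

    cluster_of: dict mapping complex ID -> cluster label.
    Returns a list of folds, each a list of complex IDs.
    """
    if n_folds < 1:
        raise ValueError("n_folds must be at least 1")

    members = defaultdict(list)
    for complex_id, cluster_id in cluster_of.items():
        members[cluster_id].append(complex_id)

    folds = [[] for _ in range(n_folds)]
    # Largest clusters first; ties broken by cluster label for determinism.
    for cluster_id in sorted(members, key=lambda c: (-len(members[c]), c)):
        smallest = min(range(n_folds), key=lambda i: len(folds[i]))
        folds[smallest].extend(members[cluster_id])
    return folds


# Toy example: seven complexes in four clusters, two folds.
labels = {"a1": 0, "a2": 0, "a3": 0, "b1": 1, "b2": 1, "c1": 2, "d1": 3}
for fold in cluster_kfold(labels, n_folds=2):
    print(fold)
```

Each fold then serves once as the held-out set while the remaining folds form the training set. Fold sizes are only approximately balanced, which is the price of never splitting a cluster.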

Diagram 2: Robust Experimental Validation Protocol

Case Study: GEMS Model Architecture and Training

The Graph neural network for Efficient Molecular Scoring (GEMS) represents a case study in developing models resistant to the pitfalls of structural redundancy. The GEMS architecture and training protocol incorporate several features designed to promote genuine generalization [12]:

Sparse Graph Representation: Models protein-ligand interactions as sparse graphs where nodes represent protein residues and ligand atoms, and edges represent interactions within a defined spatial cutoff. This explicit representation of interactions discourages mere pattern matching.

Transfer Learning from Language Models: Incorporates protein language model embeddings to provide evolutionary information, reducing dependence on structural similarities alone.

Multi-Task Training: Combines binding affinity prediction with auxiliary tasks such as binding site prediction and functional classification to encourage learning of generalizable representations.

When trained on the PDBbind CleanSplit dataset, GEMS maintained a low CASF-2016 prediction RMSE of 1.24, in contrast to the significant performance drops observed in other models. Ablation studies confirmed that GEMS fails to produce accurate predictions when protein nodes are omitted, indicating that its predictions depend on protein-ligand interaction information rather than on exploiting data leakage.
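
To make the sparse graph idea concrete, the sketch below builds interaction edges between ligand atoms and protein residues that lie within a distance cutoff. It is only an illustration of the general technique: the 5.0 Å cutoff, the use of a single coordinate per residue, and the function name are assumptions made here and are not taken from the GEMS publication.

```python
import math


def build_interaction_edges(ligand_atoms, protein_residues, cutoff=5.0):
    """Return (ligand_index, residue_index) pairs within `cutoff` Angstrom.

    ligand_atoms:     list of (x, y, z) coordinates, one per ligand atom.
    protein_residues: list of (x, y, z) coordinates, one per residue
                      (assumed here to be a representative atom position).
    """
    edges = []
    for i, lig_xyz in enumerate(ligand_atoms):
        for j, res_xyz in enumerate(protein_residues):
            if math.dist(lig_xyz, res_xyz) <= cutoff:
                edges.append((i, j))
    return edges


# Toy coordinates: two ligand atoms, three residues.
ligand = [(0.0, 0.0, 0.0), (10.0, 0.0, 0.0)]
residues = [(3.0, 0.0, 0.0), (12.0, 0.0, 0.0), (30.0, 0.0, 0.0)]
print(build_interaction_edges(ligand, residues))  # [(0, 0), (1, 1)]
```

The third residue receives no edge at all, which is what keeps the graph sparse: residues far from the ligand never enter the interaction graph.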

Research Reagent Solutions

Table 3: Essential Research Tools for Structural Redundancy Analysis

| Tool/Resource | Function | Application Context |
|---|---|---|
| PDBbind Database | Comprehensive collection of protein-ligand complexes with binding affinity data | Primary source of training data for affinity prediction models |
| CASF Benchmark | Standardized test sets for scoring function evaluation | Performance benchmarking; requires careful similarity analysis |
| Foldseek Cluster | Structural alignment-based clustering algorithm | Identifying similar protein structures at scale [14] |
| TM-align Algorithm | Protein structure comparison tool | Quantifying protein structural similarity (TM-scores) |
| RDKit | Cheminformatics toolkit | Calculating ligand similarities (Tanimoto coefficients) |
| PDBbind CleanSplit | Curated training dataset with reduced structural redundancy | Training and evaluation without data leakage [12] |
| GEMS Implementation | Graph neural network for binding affinity prediction | Reference model with robust generalization capabilities |

Structural redundancy in training data represents a critical challenge in developing reliable binding affinity prediction models for drug discovery. The artificial inflation of validation metrics through data leakage gives a false impression of model capability, ultimately hindering the drug development process when these models fail in real-world applications. Through the implementation of rigorous multimodal clustering algorithms, careful dataset curation following protocols like PDBbind CleanSplit, and robust validation strategies that properly separate training and test data, researchers can develop models with genuine generalization capability. The field must move beyond convenient but flawed benchmarking practices and adopt these more stringent standards to accelerate meaningful progress in computational drug design.

In the field of computational drug design, accurately predicting the binding affinity between a protein and a small molecule ligand is a fundamental task crucial for identifying promising therapeutic compounds. Deep-learning-based scoring functions have emerged as powerful tools for this purpose, often demonstrating exceptionally high performance on standard benchmarks. However, a growing body of evidence indicates that these impressive results are frequently inflated by a critical flaw: train-test data leakage. This case study examines how model performance drops substantially once a cleaned dataset prevents memorization of test data, revealing the models' true generalization capabilities and challenging the perceived progress in the field [12] [4].

The core issue lies in the standard practice of training models on the PDBbind database and evaluating them on the Comparative Assessment of Scoring Functions (CASF) benchmark. Studies have shown that these datasets share a high degree of structural similarity, meaning models can perform well by recognizing patterns seen during training rather than by genuinely understanding underlying protein-ligand interactions. This case study analyzes the impact of removing this leakage using the novel PDBbind CleanSplit dataset and explores a model architecture that maintains robust performance under these stricter conditions, providing a framework for building more reliable affinity prediction tools [12] [15].

The Data Leakage Problem in Affinity Prediction

Origins and Mechanisms of Leakage

The data leakage between PDBbind and CASF benchmarks is not merely a statistical oversight but is rooted in the structural similarities between the complexes in these datasets. When models are trained on PDBbind and tested on CASF, nearly half (49%) of the test complexes have exceptionally similar counterparts in the training set [12]. These similarities exist across multiple dimensions:

- Protein similarity: High TM-scores indicating similar protein structures [12]

- Ligand similarity: Tanimoto scores >0.9, reflecting nearly identical ligand molecules [12]

- Binding conformation similarity: Low pocket-aligned ligand root-mean-square deviation (r.m.s.d.), meaning nearly identical binding modes [12]

This multi-dimensional similarity creates a scenario where test data points are virtually identical to training data points, allowing models to achieve high accuracy through pattern recognition and memorization rather than learning fundamental principles of molecular recognition. Alarmingly, some models maintain competitive performance on CASF benchmarks even when critical input features are omitted, such as all protein or all ligand information, confirming that their predictions are not based on a genuine understanding of interactions [12] [4].

Documented Impacts on Model Performance

The inflation of performance metrics due to data leakage has been independently verified across multiple studies. Research from 2023 highlighted that random splitting of protein-ligand data allows similar sequences to be present in both training and test sets, leading to overoptimistic results that do not reflect true generalization ability [15]. The study found that this bias rewards overfitting, as the test set no longer provides a valid indication of how the model will perform on truly novel complexes.

Further investigation revealed that protein-only and ligand-only models could achieve surprisingly high accuracy on standard benchmarks, demonstrating that the predictive signal was coming from memorization of individual components rather than learning their interactions [15]. This finding fundamentally undermines the premise of structure-based affinity prediction and explains why models that excel on benchmarks often fail in real-world virtual screening applications.

The PDBbind CleanSplit Solution

A Novel Filtering Methodology

To address the data leakage problem, researchers developed a structure-based clustering algorithm that systematically identifies and removes similarities between training and test complexes [12] [4]. This algorithm employs a multi-modal approach that compares complexes across three key dimensions simultaneously:

- Protein similarity using TM-scores [12]

- Ligand similarity using Tanimoto scores [12]

- Binding conformation similarity using pocket-aligned ligand root-mean-square deviation (r.m.s.d.) [12]

This comprehensive approach can identify complexes with similar interaction patterns even when the proteins share low sequence identity, overcoming limitations of traditional sequence-based filtering methods [12]. The algorithm applies specific thresholds to determine unacceptable similarity; the exact numerical values are given in the methodology section of the original publication [12].

CleanSplit Dataset Construction

The filtering process to create PDBbind CleanSplit involves two critical phases:

Reducing train-test leakage: The algorithm excludes all training complexes that closely resemble any CASF test complex based on the multi-modal similarity assessment. Additionally, it removes training complexes with ligands nearly identical to those in the test set (Tanimoto > 0.9). This combined filtering removed 4% of all training complexes [12].

Minimizing training set redundancy: The algorithm identified that nearly 50% of all training complexes belonged to similarity clusters, meaning random train-validation splits would still inflate performance metrics. Using adapted thresholds, the process iteratively removed complexes until the most striking similarity clusters were resolved, eliminating an additional 7.8% of training complexes [12].

The resulting PDBbind CleanSplit dataset is strictly separated from the CASF benchmarks, transforming them into truly external datasets that enable genuine evaluation of model generalizability [12] [4].
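
The iterative removal in the second phase can be pictured as a greedy procedure on a similarity graph. The sketch below is a simplified illustration under stated assumptions: similar pairs are given as precomputed ID pairs, and removal continues until no similar pair remains, which is stricter than resolving only "the most striking" clusters as described above. The function name and the tie-breaking rule are choices made here, not details from the publication.

```python
def resolve_similarity_clusters(ids, similar_pairs):
    """Greedily remove the most connected complex until no similar pair is left.

    ids:           iterable of complex IDs in the training set.
    similar_pairs: iterable of (id_a, id_b) pairs flagged as too similar.
    Returns (kept_ids, removed_ids).
    """
    neighbors = {i: set() for i in ids}
    for a, b in similar_pairs:
        neighbors[a].add(b)
        neighbors[b].add(a)

    removed = []
    while neighbors:
        # Complex with the most similar partners; ties broken by ID.
        worst = max(neighbors, key=lambda i: (len(neighbors[i]), i))
        if not neighbors[worst]:
            break  # no similar pairs remain
        for other in neighbors.pop(worst):
            neighbors[other].discard(worst)
        removed.append(worst)
    return sorted(neighbors), removed


kept, removed = resolve_similarity_clusters(
    ["a", "b", "c", "d", "e"],
    [("a", "b"), ("a", "c"), ("a", "d"), ("d", "e")],
)
print(kept, removed)  # ['b', 'c', 'd'] ['a', 'e']
```

Removing the hub `a` first resolves three similar pairs with a single deletion, which is why a greedy highest-degree rule tends to discard fewer complexes than removing one member of every pair.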

Experimental Workflow for Dataset Filtering

The following diagram illustrates the comprehensive workflow for creating the CleanSplit dataset, from initial analysis to the final filtered dataset:

Performance Comparison on Cleaned Data

Experimental Protocol for Model Evaluation

To quantify the impact of data leakage, researchers designed a rigorous evaluation protocol [12] [4]:

Model Selection: Multiple state-of-the-art binding affinity prediction models were selected, including GenScore and Pafnucy as representatives of top-performing architectures [12].

Training Regimen: Each model was trained under two conditions: first on the original PDBbind dataset, then on the PDBbind CleanSplit dataset. All other hyperparameters and architectural details remained identical between conditions.

Evaluation Benchmark: Model performance was assessed on the standard CASF benchmark, with particular attention to the root-mean-square error (r.m.s.e.) and Pearson correlation coefficient (R) as key metrics [12].

Baseline Comparison: A simple search algorithm was implemented as a baseline, which predicts affinity by averaging the labels of the five most similar training complexes. This demonstrates the performance achievable through pure memorization [12].
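
The memorization baseline and the two evaluation metrics are simple enough to sketch directly. The code below is an illustration rather than the authors' implementation: it assumes that a single scalar similarity between the query complex and every training complex is already available (how that scalar is defined is not specified here), and the function names and toy values are hypothetical.

```python
import math


def knn_baseline(similarities, train_labels, k=5):
    """Predict affinity as the mean label of the k most similar training complexes.

    similarities: dict training ID -> similarity to the query (higher = closer).
    train_labels: dict training ID -> measured affinity label.
    """
    if not similarities:
        raise ValueError("at least one training complex is required")
    top = sorted(similarities, key=lambda t: (-similarities[t], t))[:k]
    return sum(train_labels[t] for t in top) / len(top)


def rmse(y_true, y_pred):
    """Root-mean-square error between measured and predicted affinities."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def pearson_r(x, y):
    """Pearson correlation coefficient between two equally long sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    std_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    std_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (std_x * std_y)


# Toy data: six training complexes, one query.
labels = {"t1": 6.0, "t2": 7.0, "t3": 8.0, "t4": 5.0, "t5": 9.0, "t6": 2.0}
sims = {"t1": 0.9, "t2": 0.8, "t3": 0.7, "t4": 0.6, "t5": 0.5, "t6": 0.1}
print(knn_baseline(sims, labels))  # 7.0
```

A baseline like this contains no model of molecular interactions at all, so any benchmark score it achieves is a direct measure of how much can be gained from train-test similarity alone.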

Quantitative Results and Comparison

The table below summarizes the performance changes observed when models were transitioned from the original PDBbind dataset to the CleanSplit version:

Table 1: Performance Comparison on CASF Benchmark Before and After CleanSplit

| Model / Method | Training Data | Performance Metric | Impact of Data Leakage |
|---|---|---|---|
| GenScore | Original PDBbind | High benchmark performance | Substantial performance drop on CleanSplit [12] |
| Pafnucy | Original PDBbind | High benchmark performance | Marked performance decrease on CleanSplit [12] |
| GEMS (Ours) | PDBbind CleanSplit | Maintains high performance | Genuine generalization to independent test sets [12] |
| Similarity Search Algorithm | Original PDBbind | Competitive performance (R=0.716) | Demonstrates memorization capability [12] |

The performance drops observed in established models confirm that their previously reported high accuracy was largely driven by data leakage rather than true understanding of protein-ligand interactions [12].

GEMS: A Model Designed for Generalization

Architectural Innovations

In response to the generalization challenges revealed by CleanSplit, researchers developed the Graph neural network for Efficient Molecular Scoring (GEMS). This architecture incorporates several key innovations designed to promote robust learning [12]:

Sparse graph modeling: Represents protein-ligand interactions as sparse graphs, focusing computational resources on relevant interfacial regions rather than processing entire complexes uniformly [12].

Transfer learning from language models: Leverages pre-trained representations from protein language models, incorporating evolutionary information and structural priors that enhance generalization, especially on limited data [12].

Interaction-aware conditioning: Utilizes universal patterns of protein-ligand interactions (hydrogen bonds, salt bridges, hydrophobic interactions, π-π stacking) as prior knowledge to guide the model toward physically meaningful features [12] [16].

Validation Through Ablation Studies

To verify that GEMS makes predictions based on genuine protein-ligand interactions rather than exploiting biases, researchers conducted critical ablation studies [12]:

Protein node omission: When protein nodes were removed from the input graph, GEMS failed to produce accurate predictions, confirming that its performance depends on modeling both interaction partners rather than relying on ligand information alone [12].

Interaction pattern analysis: The model's attention mechanisms were found to align with known interaction hotspots in protein binding sites, demonstrating that it learns biophysically meaningful representations [16].

These experiments confirm that GEMS maintains its performance on CleanSplit by developing a genuine understanding of molecular interactions rather than exploiting dataset-specific biases [12].

Implications for Drug Discovery

Virtual Screening and Lead Optimization

The development of properly validated affinity predictors has significant implications for structure-based drug design. Generative AI models like RFdiffusion and DiffSBDD can create vast libraries of novel protein-ligand complexes, but identifying therapeutically promising candidates requires accurate affinity prediction [12]. Models with genuine generalization capability, validated on strictly independent test sets, can fill this critical gap in the drug discovery pipeline.

For lead optimization, interaction-aware models like GEMS and frameworks like DeepICL can guide molecular modifications that enhance binding affinity while maintaining favorable drug properties [16]. By focusing on universal interaction patterns rather than dataset-specific correlations, these approaches offer more reliable guidance for medicinal chemists.

Future Research Directions

This case study points to several important directions for future research:

Standardized benchmarking: The field would benefit from adopting cleaned benchmarks like CleanSplit as standard evaluation frameworks to prevent inflated performance claims [12] [15].

Explicit interaction modeling: Future architectures should explicitly incorporate biophysical constraints and interaction principles to reduce reliance on correlational patterns that may not generalize [16].

Multi-target generalization: Developing models that maintain accuracy across diverse protein families and binding sites remains an important challenge [15].

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Experimental Resources for Bias-Free Affinity Prediction

| Resource Name | Type | Function / Application |
|---|---|---|
| PDBbind CleanSplit | Dataset | Training data with minimized train-test leakage for proper model validation [12] |
| CASF Benchmark | Benchmark | Standardized test set for comparing scoring functions [12] |
| Structure-Based Clustering Algorithm | Algorithm | Identifies similar protein-ligand complexes based on structure to detect data leakage [12] |
| PLIP (Protein-Ligand Interaction Profiler) | Software | Automatically identifies non-covalent interactions from structural data [16] |
| GEMS Architecture | Model | Graph neural network with transfer learning for generalization [12] |
| DeepICL | Model | Interaction-aware generative model for ligand design [16] |
| TM-score | Metric | Quantifies protein structural similarity independent of sequence [12] |
| Tanimoto Coefficient | Metric | Measures ligand similarity based on molecular fingerprints [12] |
| Pocket-Aligned Ligand RMSD | Metric | Assesses binding pose similarity [12] |

This case study demonstrates that the impressive benchmark performance of many deep-learning-based affinity prediction models is substantially inflated by data leakage between standard training and test datasets. When models are prevented from memorizing test data through the PDBbind CleanSplit protocol, their performance drops markedly, revealing more limited generalization capabilities than previously assumed.

The development of models like GEMS that maintain robust performance on cleaned datasets points the way forward for the field. By employing architectures that explicitly model protein-ligand interactions through sparse graphs and transfer learning, and by validating on strictly independent test sets, researchers can develop more reliable tools for computational drug discovery. Widespread adoption of rigorous data splitting practices and interaction-aware modeling approaches will be essential for building predictive models that translate effectively to real-world drug design applications.

The generalization capability of machine learning models in computational drug design has been significantly overestimated due to pervasive train-test data leakage and inadequate assessment of complex similarity. Conventional benchmarks, which rely on random data splitting or sequence-based identity measures, fail to detect subtle structural similarities that enable models to exploit memorization rather than developing genuine understanding of protein-ligand interactions. This technical guide introduces a multimodal framework for assessing complex similarity that integrates protein structural similarity, ligand chemical similarity, and binding conformation similarity. By implementing the PDBbind CleanSplit methodology and retraining state-of-the-art models on this rigorously filtered dataset, we demonstrate a substantial performance drop in existing models—from Pearson R=0.816 to 0.641 for top performers—while our Graph Neural Network for Efficient Molecular Scoring (GEMS) maintains robust performance (Pearson R=0.779). This work establishes a new paradigm for evaluating and developing affinity prediction models with truly generalizable capabilities, addressing critical data bias issues that have plagued the field for decades.

Accurate prediction of protein-ligand binding affinities stands as a cornerstone of computational drug design, yet the field has been hampered by systematically inflated performance metrics and overestimated generalization capabilities. The root cause lies in inadequate assessment of complex similarity and the resulting data leakage between training and testing datasets. Current state-of-the-art deep learning models for binding affinity prediction typically train on the PDBbind database and evaluate generalization using the Comparative Assessment of Scoring Functions (CASF) benchmarks [4]. However, studies reveal that nearly half (49%) of CASF test complexes have exceptionally similar counterparts in the training set, providing nearly identical input data points that enable accurate prediction through simple memorization rather than genuine understanding of protein-ligand interactions [4].

The conventional approach to dataset splitting has relied predominantly on sequence identity, failing to capture the multidimensional nature of molecular recognition. This oversight has created an illusion of progress while models increasingly master the art of pattern matching within biased datasets rather than developing robust predictive capabilities for novel complexes. The consequences extend throughout the drug discovery pipeline, where models that perform exceptionally on benchmarks fail dramatically in real-world applications on truly novel targets [4] [17].

This whitepaper introduces a multimodal framework for assessing complex similarity that transcends sequence-based metrics alone. By simultaneously evaluating protein structure, ligand chemistry, and binding conformation, we establish a rigorous methodology for creating truly independent datasets and evaluating model performance. Within the broader thesis of data bias and generalization in affinity prediction research, this work provides both a critical analysis of current shortcomings and a practical roadmap for developing models with robust, generalizable predictive capabilities.

The Data Leakage Problem in Affinity Prediction

Quantifying Train-Test Similarity

Recent investigations have exposed severe train-test data leakage between the PDBbind database and CASF benchmarks, fundamentally undermining claims of generalization in binding affinity prediction models. When analyzing the relationship between PDBbind training complexes and CASF test complexes, researchers identified approximately 600 similarity pairs sharing not only similar ligand and protein structures but also comparable ligand positioning within protein pockets [4]. Alarmingly, these structurally similar complexes naturally exhibit closely matched affinity labels, creating a direct pathway for models to achieve high benchmark performance through memorization.

The scope of this data leakage is substantial, affecting 49% of all CASF complexes [4]. This means nearly half the test instances do not present novel challenges to models trained on PDBbind, as highly similar examples exist in the training data. This leakage helps explain the performance deterioration observed when models move from benchmark evaluation to real-world deployment on genuinely novel targets.

Limitations of Current Data Splitting Strategies

Current dataset partitioning strategies in affinity prediction research suffer from fundamental limitations that perpetuate the data leakage problem:

- Random splitting produces spuriously high correlations that inflate performance estimates, as structurally similar complexes inevitably appear in both training and testing sets [17].

- Sequence-based splitting (e.g., UniProt-based partitioning) lowers the reported accuracy, exposing part of the inflation, but fails to address structural similarities that persist despite sequence differences [17].

- Ligand-based splitting overlooks protein structural similarities and binding pose conservation, allowing models to exploit protein-level memorization.

Studies evaluating data partitioning strategies for predicting protein-ligand binding free energy changes demonstrate that while models show high predictive correlations (Pearson coefficients up to 0.70) under random partitioning, their performance significantly declines with more rigorous UniProt-based partitioning [17]. This performance drop reveals the true generalization capability of models absent data leakage.

Multimodal Similarity Assessment Framework

Core Similarity Metrics

Our multimodal similarity assessment framework integrates three complementary metrics that collectively capture the complexity of protein-ligand interactions:

Protein Similarity (TM-score)

- Measurement: Template Modeling score quantifies protein structural similarity

- Scale: 0-1, where >0.5 indicates generally the same fold

- Advantage: Detects structural similarity even with low sequence identity

- Application: Identifies proteins with similar binding pockets despite sequence divergence

Ligand Similarity (Tanimoto Coefficient)

- Measurement: Computed based on molecular fingerprints

- Scale: 0-1, where 1 indicates identical compounds

- Threshold: >0.9 considered highly similar for data splitting

- Application: Prevents ligand-based memorization
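
For reference, the Tanimoto coefficient of two binary fingerprints is the number of shared on-bits divided by the number of bits set in either fingerprint. The dependency-free sketch below represents each fingerprint as a set of on-bit indices; the bit indices are hypothetical, and in practice a cheminformatics toolkit such as RDKit would generate the fingerprints.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient of two fingerprints given as sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 0.0  # convention chosen here for two empty fingerprints
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


# Hypothetical on-bit indices for two ligands.
ligand_a = {1, 2, 3, 4}
ligand_b = {2, 3, 4, 5}
score = tanimoto(ligand_a, ligand_b)
print(score)        # 0.6
print(score > 0.9)  # False: not flagged as highly similar
```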

Binding Conformation Similarity (Pocket-Aligned Ligand RMSD)

- Measurement: Root-mean-square deviation of ligand atoms after pocket alignment

- Scale: Ångstroms, lower values indicate similar binding modes

- Application: Identifies complexes with similar interaction geometries
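
Once two complexes have been superimposed on their binding pockets, the ligand RMSD reduces to a short calculation. The sketch below assumes that both coordinate lists are already expressed in the pocket-aligned frame and that atoms are listed in matching order; the alignment itself and any atom mapping between non-identical ligands are outside its scope. The function name and coordinates are illustrative.

```python
import math


def ligand_rmsd(coords_a, coords_b):
    """RMSD in Angstrom between two equally ordered lists of (x, y, z) positions."""
    if not coords_a or len(coords_a) != len(coords_b):
        raise ValueError("coordinate lists must be non-empty and of equal length")
    squared = sum(math.dist(p, q) ** 2 for p, q in zip(coords_a, coords_b))
    return math.sqrt(squared / len(coords_a))


# Toy example: the second pose is the first one shifted by 2 Angstrom along z.
pose_a = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
pose_b = [(0.0, 0.0, 2.0), (1.0, 0.0, 2.0)]
print(ligand_rmsd(pose_a, pose_b))  # 2.0
```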

Table 1: Multimodal Similarity Assessment Metrics

| Metric | Measurement Type | Scale | Threshold for Exclusion | Primary Function |
|---|---|---|---|---|
| Protein TM-score | Structural alignment | 0-1 | >0.5 | Identify similar binding pockets |
| Ligand Tanimoto Coefficient | Chemical fingerprint | 0-1 | >0.9 | Prevent ligand memorization |
| Binding Conformation RMSD | Spatial coordinate comparison | Ångstroms | <2.0Å | Identify similar binding poses |

Filtering Algorithm and Workflow

The multimodal filtering algorithm processes protein-ligand complexes through a structured workflow that systematically identifies and removes complexes with unacceptable similarity across multiple dimensions. The algorithm employs iterative comparison and cluster resolution to ensure both train-test independence and reduced internal dataset redundancy.

[Figure: Multimodal Filtering Workflow for CleanSplit]

Implementation: PDBbind CleanSplit

The application of our multimodal filtering algorithm to the PDBbind database produces PDBbind CleanSplit, a training dataset rigorously separated from CASF benchmark datasets. The filtering process involves two critical phases:

Phase 1: Train-Test Separation

- Removes all training complexes closely resembling any CASF test complex

- Excludes training complexes whose ligands are identical or nearly identical to those of CASF test complexes (Tanimoto > 0.9)

- Eliminates 4% of training complexes to ensure test independence

- Leaves only train-test pairs that show clear structural differences

Phase 2: Internal Redundancy Reduction

- Identifies and resolves similarity clusters within training data

- Iteratively removes complexes until no clusters of highly similar complexes remain

- Eliminates 7.8% of training complexes to reduce memorization bias

- Creates a more diverse training basis that encourages generalization

Table 2: PDBbind CleanSplit Filtering Impact

| Filtering Phase | Complexes Removed | Similarity Type Addressed | Impact on Model Training |
|---|---|---|---|
| Train-Test Separation | 4% of training set | Direct and indirect leakage | Prevents test set memorization |
| Internal Redundancy Reduction | 7.8% of training set | Within-dataset similarities | Reduces memorization tendency |
| Total Filtering | 11.8% overall reduction | Multimodal similarities | Encourages genuine learning |

After filtering, the remaining train-test pairs with highest similarity exhibit clear structural differences, confirming the effectiveness of our approach in creating truly independent datasets for model evaluation [4].

Experimental Protocols

Data Preparation and Filtering Methodology

The PDBbind CleanSplit curation process follows a rigorous experimental protocol to ensure comprehensive similarity assessment and filtering:

Step 1: Multimodal Comparison

- Compute all-pairs similarity between training and test complexes

- Calculate TM-scores for all protein pairs using structural alignment

- Compute Tanimoto coefficients for all ligand pairs using extended-connectivity fingerprints

- Calculate pocket-aligned ligand RMSD for complexes with TM-score > 0.4 and Tanimoto > 0.7

- Store similarity metrics in structured database for filtering decisions
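
Step 1 can be sketched as a loop over all train-test pairs in which the expensive pocket-aligned RMSD is only computed once both gating thresholds are passed. The three scoring callables are hypothetical wrappers around whatever alignment and fingerprint tools are in use, and the record layout is an assumption made for this example rather than the published implementation.

```python
from itertools import product


def compare_complexes(train_ids, test_ids, tm_score, tanimoto, ligand_rmsd,
                      tm_gate=0.4, tanimoto_gate=0.7):
    """Build one similarity record per (train, test) pair.

    RMSD is computed only when TM-score and Tanimoto both exceed their gates;
    otherwise it is stored as None.
    """
    records = []
    for train_id, test_id in product(train_ids, test_ids):
        tm = tm_score(train_id, test_id)
        tani = tanimoto(train_id, test_id)
        rmsd = None
        if tm > tm_gate and tani > tanimoto_gate:
            rmsd = ligand_rmsd(train_id, test_id)
        records.append({"train": train_id, "test": test_id,
                        "tm": tm, "tanimoto": tani, "rmsd": rmsd})
    return records


# Toy lookup tables standing in for real structural comparisons.
toy_tm = {("trainA", "testX"): 0.92, ("trainB", "testX"): 0.31}
toy_tanimoto = {("trainA", "testX"): 0.88, ("trainB", "testX"): 0.95}
toy_rmsd = {("trainA", "testX"): 1.1}

records = compare_complexes(
    ["trainA", "trainB"], ["testX"],
    tm_score=lambda a, b: toy_tm[(a, b)],
    tanimoto=lambda a, b: toy_tanimoto[(a, b)],
    ligand_rmsd=lambda a, b: toy_rmsd[(a, b)],
)
```

In the toy data, only the first pair passes both gates, so the RMSD callable is never invoked for the second pair. A list of dictionaries is used here for brevity; any tabular store would serve the same purpose.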

Step 2: Train-Test Filtering

- Identify all training complexes with TM-score > 0.5 to any test complex

- Identify all training complexes with Tanimoto > 0.9 to any test ligand

- Identify all training complexes with RMSD < 2.0Å to any test complex

- Remove all identified training complexes from the dataset

- Verify separation by re-computing similarities on filtered set
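
A minimal sketch of Step 2 follows. It reads the three bullets above as independent criteria, so a training complex is flagged if it exceeds any one threshold against any test complex; the published protocol may combine the criteria differently. The record layout (dictionaries with `train`, `tm`, `tanimoto`, and `rmsd` keys) and both function names are assumptions for this illustration.

```python
def flag_leaky_training_complexes(records, tm_cut=0.5, tanimoto_cut=0.9, rmsd_cut=2.0):
    """Return the set of training IDs that are too similar to any test complex."""
    flagged = set()
    for rec in records:
        too_similar = (
            rec["tm"] > tm_cut
            or rec["tanimoto"] > tanimoto_cut
            or (rec["rmsd"] is not None and rec["rmsd"] < rmsd_cut)
        )
        if too_similar:
            flagged.add(rec["train"])
    return flagged


def filter_training_set(train_ids, records, **cuts):
    """Drop every flagged training complex, preserving the original order."""
    flagged = flag_leaky_training_complexes(records, **cuts)
    return [train_id for train_id in train_ids if train_id not in flagged]


# Toy similarity records (one per train-test pair).
toy_records = [
    {"train": "trainA", "test": "testX", "tm": 0.92, "tanimoto": 0.40, "rmsd": None},
    {"train": "trainB", "test": "testX", "tm": 0.30, "tanimoto": 0.20, "rmsd": None},
    {"train": "trainC", "test": "testX", "tm": 0.45, "tanimoto": 0.95, "rmsd": 3.5},
]
kept = filter_training_set(["trainA", "trainB", "trainC"], toy_records)
```

Re-running the flagging function on the records of the kept complexes should return an empty set, which corresponds to the verification bullet above.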

Step 3: Internal Redundancy Reduction

- Apply adapted thresholds (TM-score > 0.8, Tanimoto > 0.95, RMSD < 1.5Å) for internal filtering

- Identify similarity clusters using graph-based community detection

- Iteratively remove complexes from each cluster, preserving maximal diversity

- Continue until no clusters exceed similarity thresholds

- Balance dataset size against diversity requirements
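
The iterative cluster resolution in Step 3 can be illustrated with a simple greedy heuristic: treat each over-threshold pair as an edge in a similarity graph and repeatedly remove the complex with the most remaining similar neighbours until no edges are left. This is a stand-in for the graph-based community detection mentioned above, not the authors' algorithm, and the function name is hypothetical.

```python
def resolve_similarity_clusters(similar_pairs):
    """Return the set of complex IDs to remove so that no similar pair remains.

    similar_pairs: iterable of (id_a, id_b) tuples that already exceed the
    internal thresholds (e.g. TM-score > 0.8, Tanimoto > 0.95, RMSD < 1.5 A).
    """
    neighbours = {}
    for a, b in similar_pairs:
        neighbours.setdefault(a, set()).add(b)
        neighbours.setdefault(b, set()).add(a)

    removed = set()
    while True:
        # Most-connected complex first; ties are broken by ID for determinism.
        candidate = max(neighbours, key=lambda n: (len(neighbours[n]), n), default=None)
        if candidate is None or not neighbours[candidate]:
            break  # no similar pairs left
        for other in neighbours.pop(candidate):
            neighbours[other].discard(candidate)
        removed.add(candidate)
    return removed


# Toy graph: a chain a-b-c and a separate pair d-e.
to_remove = resolve_similarity_clusters([("a", "b"), ("b", "c"), ("d", "e")])
```

Removing the most-connected complex first tends to resolve a cluster with few deletions, which is one way to respect the trade-off between dataset size and diversity noted in the last bullet.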

Model Retraining and Evaluation Protocol

To validate the impact of CleanSplit on model generalization, we implemented a comprehensive retraining and evaluation protocol:

Model Selection and Retraining

- Select state-of-the-art binding affinity prediction models (GenScore, Pafnucy, GEMS)

- Train each model on both standard PDBbind and PDBbind CleanSplit

- Maintain identical hyperparameters and training procedures across datasets

- Implement early stopping based on validation performance

- Save model checkpoints for performance comparison
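
The early-stopping bullet can be made concrete with a small framework-independent helper. The class name, the `patience` and `min_delta` parameters, and the choice of validation loss as the monitored quantity are all assumptions for this sketch; the source does not specify how early stopping was configured.

```python
class EarlyStopping:
    """Signal a stop once validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=10, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.stale_epochs = 0

    def step(self, val_loss):
        """Record one epoch's validation loss; return True when training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.stale_epochs = 0
        else:
            self.stale_epochs += 1
        return self.stale_epochs >= self.patience


# Toy validation curve: improvement stalls after the second epoch.
stopper = EarlyStopping(patience=2)
stop_epoch = None
for epoch, loss in enumerate([1.0, 0.9, 0.95, 0.97, 0.5]):
    if stopper.step(loss):
        stop_epoch = epoch
        break
```

Using the same helper and settings for both the standard PDBbind run and the CleanSplit run keeps the comparison between the two training sets controlled.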

Evaluation Metrics and Benchmarks

- Evaluate all models on CASF-2016 and CASF-2018 benchmarks

- Calculate standard metrics: Pearson R, RMSE, MAE

- Perform statistical significance testing on performance differences

- Conduct ablation studies to isolate contribution of different filtering phases

- Analyze performance on different similarity subgroups
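
The three standard metrics listed above can be computed without external dependencies, as in the following sketch. The function names are illustrative; in practice a numerical library would normally be used, and the inputs are assumed to be equal-length sequences of experimental and predicted affinities.

```python
import math


def pearson_r(y_true, y_pred):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(y_true)
    mean_t = sum(y_true) / n
    mean_p = sum(y_pred) / n
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred))
    var_t = sum((t - mean_t) ** 2 for t in y_true)
    var_p = sum((p - mean_p) ** 2 for p in y_pred)
    return cov / math.sqrt(var_t * var_p)


def rmse(y_true, y_pred):
    """Root-mean-square error."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


# Toy example: predictions are perfectly correlated but systematically too high.
experimental = [1.0, 2.0, 3.0]
predicted = [2.0, 4.0, 6.0]
scores = {
    "pearson_r": pearson_r(experimental, predicted),
    "rmse": rmse(experimental, predicted),
    "mae": mae(experimental, predicted),
}
```

The toy example shows why Pearson R alone is not sufficient: the correlation is perfect even though every prediction is off, which only RMSE and MAE reveal.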

Ablation Study Design

- Train models with progressively stricter filtering thresholds

- Measure performance impact of individual similarity metrics

- Evaluate model robustness to different types of novelty

- Assess trade-offs between dataset size and diversity

Quantitative Results and Performance Analysis

Impact of CleanSplit on Existing Models

Retraining current top-performing binding affinity prediction models on PDBbind CleanSplit lowered the benchmark Pearson R of GenScore and Pafnucy by more than 20% (Table 3), indicating that their original benchmark performance was largely driven by data leakage rather than genuine generalization capability.

Table 3: Model Performance Before and After CleanSplit Training

| Model | Original PDBbind (Pearson R) | CleanSplit Training (Pearson R) | Relative Performance Drop | Generalization Gap |
|---|---|---|---|---|
| GenScore | 0.816 | 0.641 | 21.4% | High |
| Pafnucy | 0.792 | 0.603 | 23.9% | High |
| GEMS (Ours) | 0.779 | 0.754 | 3.2% | Low |

The substantial performance degradation observed in GenScore and Pafnucy when trained on CleanSplit indicates their heavy reliance on data leakage for benchmark performance. In contrast, our GEMS model maintains robust performance, demonstrating genuine generalization capability to strictly independent test datasets [4].
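
The drop percentages in Table 3 are relative to the original Pearson R, that is, (original - CleanSplit) / original. The short check below reproduces them from the tabulated values; the function and variable names are chosen for this illustration.

```python
def relative_drop(before, after):
    """Relative decrease from `before` to `after`, in percent."""
    return 100.0 * (before - after) / before


# Pearson R values copied from Table 3: (original PDBbind, CleanSplit).
table3 = {
    "GenScore": (0.816, 0.641),
    "Pafnucy": (0.792, 0.603),
    "GEMS": (0.779, 0.754),
}

drops = {model: round(relative_drop(before, after), 1)
         for model, (before, after) in table3.items()}
# drops rounds to 21.4, 23.9 and 3.2 percent, matching the table.
```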

Structural Similarity Search Performance

To further illustrate the impact of data leakage, researchers devised a simple similarity search algorithm that predicts binding affinity by identifying the five most similar training complexes and averaging their affinity labels. This non-learning algorithm achieved performance on CASF-2016 (Pearson R = 0.716, RMSE = 1.45) that is competitive with some published deep-learning-based scoring functions [4]. The result shows that sophisticated deep learning models may be doing little more than this kind of similarity matching rather than learning fundamental principles of protein-ligand interactions.
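
The similarity-search baseline described above amounts to a nearest-neighbour average. In the sketch below, `similarity` is a hypothetical caller-supplied function returning a single score per pair (how the original study combines protein, ligand, and pose similarity into one ranking is not specified here), and the affinity values are invented toy numbers.

```python
def knn_affinity(query_id, train_affinities, similarity, k=5):
    """Predict affinity as the mean label of the k most similar training complexes.

    train_affinities: dict mapping training complex ID -> affinity label.
    similarity: callable (query_id, train_id) -> score, higher means more similar.
    """
    if not train_affinities:
        raise ValueError("training set is empty")
    ranked = sorted(train_affinities,
                    key=lambda train_id: similarity(query_id, train_id),
                    reverse=True)
    nearest = ranked[:k]
    return sum(train_affinities[train_id] for train_id in nearest) / len(nearest)


# Toy data: three training complexes and precomputed similarities to one query.
toy_affinities = {"trainA": 5.0, "trainB": 7.0, "trainC": 9.0}
toy_similarity = {"trainA": 0.9, "trainB": 0.8, "trainC": 0.1}

prediction = knn_affinity("query", toy_affinities,
                          similarity=lambda q, t: toy_similarity[t], k=2)
# Mean of the two most similar labels: (5.0 + 7.0) / 2 = 6.0
```

No parameters are fitted anywhere in this procedure, which is what makes its competitive benchmark score a useful indicator of train-test leakage.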

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Research Reagents and Resources

| Resource | Type | Primary Function | Access Information |
|---|---|---|---|
| PDBbind Database | Data Resource | Comprehensive collection of protein-ligand complexes with binding affinity data | Publicly available at https://www.pdbbind.org.cn/ |
| CASF Benchmark | Evaluation Suite | Standardized benchmark for scoring function assessment | Included with PDBbind distribution |
| PDBbind CleanSplit | Curated Dataset | Data-leakage-free training dataset for robust model development | Available via publication supplementary materials |
| GEMS Model | Software Tool | Graph neural network for binding affinity prediction with proven generalization | Python code publicly available |
| Structure-Based Clustering Algorithm | Software Tool | Multimodal similarity assessment and filtering tool | Available via publication supplementary materials |

Discussion and Future Directions

Implications for Model Development

The multimodal similarity assessment framework fundamentally changes how we develop and evaluate affinity prediction models. By addressing the critical issue of data leakage, researchers can now focus on building models with genuine understanding of protein-ligand interactions rather than optimizing for benchmark exploitation. The maintained performance of our GEMS model on CleanSplit demonstrates that robust generalization is achievable through appropriate architectures and training regimens.

The graph neural network architecture of GEMS, which leverages sparse graph modeling of protein-ligand interactions and transfer learning from language models, proves particularly suited for generalization to strictly independent test datasets [4]. In ablation studies, GEMS fails to produce accurate predictions when protein nodes are omitted from the graph, which indicates that its predictions depend on protein-ligand interaction information rather than on dataset artifacts.

Applications in Structure-Based Drug Design

The multimodal assessment framework and CleanSplit methodology have profound implications for structure-based drug design (SBDD). Generative models such as RFdiffusion and DiffSBDD can create extensive libraries of novel protein-ligand interactions, but their practical utility has been bottlenecked by the absence of accurate affinity prediction models for these novel complexes [4]. With robust generalization capabilities validated on strictly independent datasets, models like GEMS provide the accurate affinity predictions needed to identify interactions with genuine therapeutic potential.

Future work should focus on extending the multimodal similarity framework to additional dimensions including solvation effects, conformational dynamics, and allosteric mechanisms. Additionally, developing standardized benchmarking protocols that incorporate multimodal similarity assessment will ensure the field continues to advance toward genuinely generalizable models rather than benchmark-specific optimization.

This technical guide has established a comprehensive framework for multimodal assessment of complex similarity that transcends the limitations of sequence-based metrics. By simultaneously evaluating protein structural similarity, ligand chemical similarity, and binding conformation similarity, we can create rigorously independent datasets that enable true evaluation of model generalization capability. The significant performance drops observed in state-of-the-art models when trained on PDBbind CleanSplit expose the pervasive data leakage that has inflated reported performance metrics across the field.

The maintained performance of our GEMS model under these rigorous conditions demonstrates that genuine generalization is achievable through appropriate architectural choices and training methodologies. As the field progresses toward increasingly complex challenges in drug design, adopting rigorous multimodal similarity assessment will be essential for developing models with robust real-world applicability rather than merely impressive benchmark performance.

Building Better Benchmarks: Methodological Solutions for Robust Training

The field of computational drug design relies on accurate scoring functions to predict protein-ligand binding affinities. However, the generalization capability of deep-learning models has been severely overestimated due to train-test data leakage between the PDBbind database and Comparative Assessment of Scoring Functions (CASF) benchmark datasets. This whitepaper introduces PDBbind CleanSplit, a rigorously curated training dataset created through a novel structure-based filtering algorithm that eliminates data leakage and internal redundancies. When state-of-the-art models are retrained on CleanSplit, their benchmark performance drops substantially, revealing that previous high scores were largely driven by data memorization rather than true understanding of protein-ligand interactions. Our findings underscore the critical importance of proper dataset curation for developing binding affinity prediction models with robust generalization capabilities.

The Data Leakage Problem in Affinity Prediction

Structure-based drug design (SBDD) aims to develop small-molecule drugs that bind with high affinity to specific protein targets. While deep neural networks have revolutionized computational drug design, their real-world performance has consistently fallen short of benchmark expectations [12]. The root cause of this discrepancy lies in fundamental flaws in dataset organization and evaluation protocols.

The standard practice of training models on the PDBbind database and evaluating them on CASF benchmarks has created an inflated perception of model performance [12] [4]. Analysis reveals that nearly 49% of all CASF complexes have exceptionally similar counterparts in the PDBbind training set, sharing nearly identical ligand and protein structures, comparable ligand positioning within protein pockets, and closely matched affinity labels [12] [4]. This structural similarity enables accurate prediction of test labels through simple memorization rather than genuine learning of interaction principles.

Alarmingly, some models perform comparably well on CASF datasets even after omitting all protein or ligand information from their input data, suggesting their predictions are not based on understanding protein-ligand interactions [12] [4]. This problem is compounded by significant redundancies within the training dataset itself, where approximately 50% of all training complexes belong to similarity clusters, further encouraging memorization over generalization [12].

The CleanSplit Methodology: A Multi-Modal Filtering Approach

Core Algorithm and Similarity Metrics

The PDBbind CleanSplit protocol employs a sophisticated structure-based clustering algorithm that performs combined assessment across three complementary dimensions of similarity. Unlike traditional sequence-based approaches, this multimodal filtering can identify complexes with similar interaction patterns even when proteins have low sequence identity [12] [4].

Table 1: Similarity Metrics Used in CleanSplit Filtering Protocol

| Metric | Calculation Method | Assessment Purpose | Filtering Threshold |

|---|---|---|---|