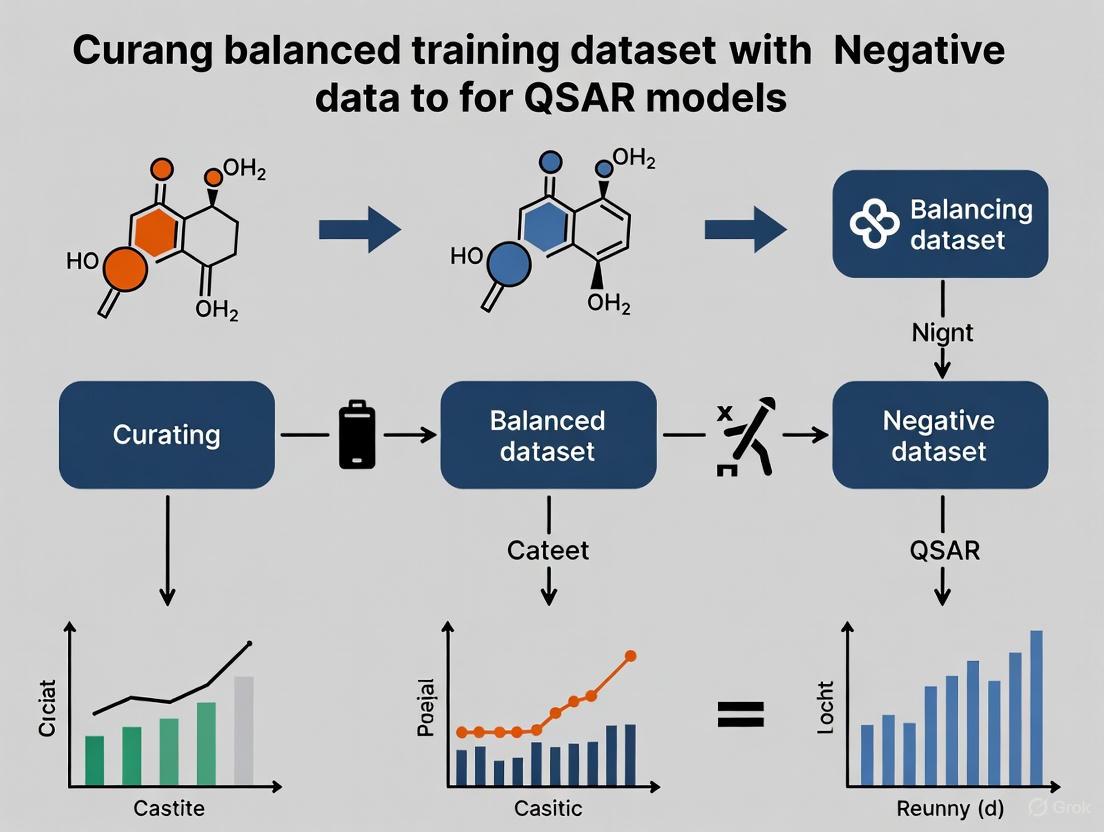

Beyond Balance: A Strategic Guide to Curating QSAR Training Datasets with Negative Data for Robust Drug Discovery

Abstract

Curating high-quality training datasets is a pivotal yet challenging step in developing reliable Quantitative Structure-Activity Relationship (QSAR) models. This article provides a comprehensive guide for researchers and drug development professionals on the strategic integration of negative (inactive) data to build predictive and generalizable models. We explore the foundational importance of dataset balance and detail practical methodologies for data collection, including the use of public databases, text mining with AI tools such as BioBERT, and synthetic data generation. The article then addresses critical troubleshooting steps for handling data quality and imbalance, and concludes with a rigorous framework for model validation using advanced statistical metrics and applicability domain assessment. By synthesizing modern best practices and emerging paradigms, this guide aims to equip scientists to construct datasets that improve the efficiency and success rate of early-stage drug discovery and virtual screening campaigns.

The Why and What: Foundational Principles of Balanced Datasets and Negative Data in QSAR

Frequently Asked Questions (FAQs)

What is 'negative data' in the context of QSAR modeling?

In QSAR modeling, 'negative data' refers to compounds that have been experimentally tested and found to be inactive against the specific biological target or endpoint of interest. These are not just missing data points, but robustly confirmed inactives. In high-throughput screening (HTS) data, which is often used for QSAR, these inactive compounds significantly outnumber the active ones, creating a class-imbalance problem [1]. For instance, in a typical PubChem HTS assay, there can be hundreds of thousands of inactive compounds compared to a much smaller set of actives [1].

Why is the inclusion of negative data critical for building a generalizable QSAR model?

Including robust negative data is fundamental because it teaches the model what chemical features are not associated with the desired activity. This prevents the model from learning overly simplistic rules that classify everything as active. Models built without a careful selection of inactives can have a high false positive rate and poor predictive power for new compounds. The model's applicability domain is better defined when it is trained on a balanced representation of both the active and inactive chemical space [2].

My HTS dataset has over 99% inactive compounds. How can I possibly use this for modeling?

This is a common challenge, known as the class-imbalance problem, and several data-balancing methods can be applied [3]:

- Random Undersampling (RUS): Randomly selects a subset of the inactive compounds to balance the number of actives and inactives.

- Random Oversampling (ROS): Randomly duplicates active compounds to increase their representation in the dataset.

- Synthetic Minority Oversampling Technique (SMOTE): Generates synthetic active examples by interpolating between existing active compounds in descriptor space. The new points are descriptor vectors rather than real molecules [1] [3].

- Sample Weight (SW): Assigns a higher weight to the active compounds during the model training process to increase their influence on the model algorithm [3].
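The four balancing strategies can be sketched in a few lines of NumPy. This is a minimal illustration that assumes a descriptor matrix `X` and integer labels `y` (1 = active, 0 = inactive). The function names are hypothetical. For production work, a maintained package such as imbalanced-learn (`RandomUnderSampler`, `RandomOverSampler`, `SMOTE`) is a safer choice. Because SMOTE interpolates in descriptor space, applying it to binary fingerprints yields fractional bit values.

```python
import numpy as np

def random_undersample(X, y, rng):
    """RUS: keep every active and an equal-sized random subset of inactives."""
    act = np.flatnonzero(y == 1)
    inact = np.flatnonzero(y == 0)
    keep = np.concatenate([act, rng.choice(inact, size=len(act), replace=False)])
    return X[keep], y[keep]

def random_oversample(X, y, rng):
    """ROS: duplicate randomly chosen actives until both classes have the same size."""
    act = np.flatnonzero(y == 1)
    inact = np.flatnonzero(y == 0)
    extra = rng.choice(act, size=len(inact) - len(act), replace=True)
    keep = np.concatenate([inact, act, extra])
    return X[keep], y[keep]

def smote(X, y, rng, k=5):
    """SMOTE: new point = active + random fraction of the step to one of its k nearest active neighbours."""
    X_act = X[y == 1]
    n_new = int((y == 0).sum() - len(X_act))
    # Pairwise Euclidean distances among actives; a compound is not its own neighbour.
    d = np.linalg.norm(X_act[:, None, :] - X_act[None, :, :], axis=-1)
    np.fill_diagonal(d, np.inf)
    k = min(k, len(X_act) - 1)
    neighbours = np.argsort(d, axis=1)[:, :k]
    base = rng.integers(0, len(X_act), size=n_new)
    nb = neighbours[base, rng.integers(0, k, size=n_new)]
    gap = rng.random((n_new, 1))
    synthetic = X_act[base] + gap * (X_act[nb] - X_act[base])
    return np.vstack([X, synthetic]), np.concatenate([y, np.ones(n_new, dtype=y.dtype)])

def balanced_sample_weights(y):
    """SW: weight each class inversely to its frequency so both classes carry equal total weight."""
    counts = np.bincount(y)
    return len(y) / (len(counts) * counts[y])
```

The sample-weight formula gives each class the same total weight, which is the same rule scikit-learn uses for `class_weight="balanced"`.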

What are the key criteria for selecting high-quality inactive compounds from my screening data?

Simply designating all non-active compounds as "inactive" can introduce noise. A robust curation procedure selects inactives based on specific criteria [4]:

- Potency Threshold: Compounds must show no significant activity at any tested concentration up to a defined level (e.g., 10 µM or higher) [4] [1].

- Cytotoxicity Confirmation: The lack of activity should not be due to general cell death; the compound must be non-cytotoxic at the tested concentrations [4].

- Assay Interference: Compounds that show signals of assay interference (e.g., luciferase inhibition in a luminescence-based assay) should be filtered out and not used as true inactives [4].

- High Purity: Only substances tested at high purity should be included, so that the result reflects the stated structure [4].
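These criteria can be expressed as a simple filter. The sketch below is illustrative only. The record fields, the 10 µM concentration cut-off and the 90% purity threshold are assumptions; replace them with the values defined for your assay.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class AssayRecord:
    # Hypothetical fields: map them onto whatever your assay export provides.
    compound_id: str
    showed_activity: bool       # any significant response in the primary readout
    max_conc_tested_um: float   # highest concentration tested, in µM
    cytotoxic: bool             # flagged in a viability counter-screen
    assay_interference: bool    # e.g., luciferase inhibitor in a luminescence assay
    purity_pct: float           # measured sample purity

def label_inactive(rec: AssayRecord, min_conc_um: float = 10.0,
                   min_purity_pct: float = 90.0) -> Optional[str]:
    """Return "inactive" only for confirmed inactives; None means exclude from training."""
    if rec.showed_activity:
        return None  # a candidate active, handled by a separate labeling step
    if rec.max_conc_tested_um < min_conc_um:
        return None  # not tested at a high enough concentration to rule out activity
    if rec.cytotoxic or rec.assay_interference:
        return None  # the readout cannot be trusted
    if rec.purity_pct < min_purity_pct:
        return None  # the result may not reflect the stated structure
    return "inactive"
```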

How can I identify and handle potential experimental errors in my activity data?

QSAR models can themselves be used to help prioritize compounds for data verification. By performing cross-validation and analyzing prediction errors, compounds with large discrepancies between their experimental and predicted values can be flagged for potential experimental errors [5]. However, blindly removing these compounds based on cross-validation alone does not always improve external predictions and may lead to overfitting. The consensus predictions from multiple models are often more reliable for this error-detection task [5].
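The following is a minimal sketch of consensus-based error flagging. It assumes you already have out-of-fold (cross-validated) predictions from several models. The function name and the 1.0 log-unit threshold are hypothetical. In line with the caution above, flagged compounds are returned for manual verification rather than deleted.

```python
import numpy as np

def flag_for_review(y_exp, cv_preds, threshold=1.0):
    """Rank compounds whose experimental value disagrees with the consensus prediction.

    y_exp:     (n,) experimental activities, e.g. pIC50
    cv_preds:  (n_models, n) out-of-fold predictions from several models
    threshold: absolute error (units of y_exp) above which a compound is flagged
    """
    y_exp = np.asarray(y_exp, dtype=float)
    consensus = np.mean(np.asarray(cv_preds, dtype=float), axis=0)
    err = np.abs(y_exp - consensus)
    flagged = np.flatnonzero(err > threshold)
    # Largest discrepancies first: candidates for re-checking the source data.
    return flagged[np.argsort(-err[flagged])], err
```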

Are there automated tools available to help with data curation and balancing?

Yes, several open-source platforms can automate much of the curation workflow. For example, KNIME (Konstanz Information Miner) can be used to create workflows that [2] [6]:

- Standardize chemical structures from a raw input file.

- Remove duplicates and inorganic compounds.

- Apply down-sampling methods (both random and rational selection based on chemical similarity) to balance active and inactive compounds.

- Output curated modeling and validation sets ready for descriptor calculation and model building.

Troubleshooting Guides

Problem: Model has high accuracy but poor predictive value for new compounds.

This is a classic sign of a model biased by imbalanced data.

- Potential Cause: The model is "cheating" by always predicting the majority class (inactive). With a 99:1 inactive-to-active ratio, a model that predicts everything as inactive will still be 99% accurate, but useless for finding active compounds.

- Solution:

- Apply Data-Balancing: Use one of the sampling techniques (e.g., ROS, SMOTE, RUS) described above to create a more balanced training set [3].

- Use Appropriate Metrics: Stop using accuracy as your primary metric. Switch to metrics that are robust to imbalance, such as the F1 score, Balanced Accuracy (BA), or Area Under the Receiver Operating Characteristic Curve (ROC AUC) [3].

- Validate Properly: Ensure your external validation set reflects the true, imbalanced distribution of activities to get a realistic estimate of model performance in the real world.
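The accuracy trap is easy to reproduce. The sketch below uses a hand-rolled helper with the same intent as scikit-learn's `accuracy_score`, `balanced_accuracy_score` and `f1_score`. It scores a model that calls every compound inactive on a 99:1 dataset. Accuracy comes out at 0.99, while balanced accuracy is 0.5 and F1 is 0. ROC AUC is omitted because it needs continuous scores rather than hard labels.

```python
import numpy as np

def classification_metrics(y_true, y_pred):
    """Accuracy, balanced accuracy and F1 for binary labels (1 = active, 0 = inactive)."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    tp = np.sum((y_true == 1) & (y_pred == 1))
    tn = np.sum((y_true == 0) & (y_pred == 0))
    fp = np.sum((y_true == 0) & (y_pred == 1))
    fn = np.sum((y_true == 1) & (y_pred == 0))
    sensitivity = tp / (tp + fn) if tp + fn else 0.0
    specificity = tn / (tn + fp) if tn + fp else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    f1 = (2 * precision * sensitivity / (precision + sensitivity)
          if precision + sensitivity else 0.0)
    return {
        "accuracy": (tp + tn) / len(y_true),
        "balanced_accuracy": (sensitivity + specificity) / 2,
        "f1": f1,
    }

# 10 actives and 990 inactives, scored by a model that always predicts "inactive".
y_true = np.array([1] * 10 + [0] * 990)
y_pred = np.zeros_like(y_true)
print(classification_metrics(y_true, y_pred))
```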

Problem: Model performance deteriorates significantly after introducing data-balancing methods.

- Potential Cause: The balancing method may have introduced artifacts or reduced the chemical diversity of your training set. For example, random undersampling might have removed informative inactive compounds, while oversampling might cause overfitting to the replicated active compounds.

- Solution:

- Compare Methods Systematically: Test different balancing methods (ROS, SMOTE, SW) on your specific dataset. The best method can depend on the data and the algorithm [3].

- Use Rational Selection: Instead of random undersampling, use a rational selection that retains inactive compounds which are structurally similar to the actives, thereby better defining the applicability domain [2].

- Check for Overfitting: If using oversampling, ensure you are using rigorous cross-validation and inspect learning curves to see if the model is overfitting the training data.
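A common source of over-optimistic results is oversampling before splitting. Doing so lets copies of the same active land in both the training and test folds. The sketch below shows the safer order, assuming integer labels `y`; the helper names are hypothetical. It splits with stratification first, then oversamples only the training indices. imbalanced-learn's `Pipeline` is designed to apply samplers only during fitting, which achieves the same effect.

```python
import numpy as np

def stratified_folds(y, n_folds, rng):
    """Assign each compound a fold so every fold keeps roughly the original class ratio."""
    folds = np.empty(len(y), dtype=int)
    for cls in np.unique(y):
        idx = rng.permutation(np.flatnonzero(y == cls))
        folds[idx] = np.arange(len(idx)) % n_folds
    return folds

def oversample_train_only(y, folds, k, rng):
    """Return (train_idx, test_idx) for fold k; only train_idx is randomly oversampled."""
    test_idx = np.flatnonzero(folds == k)
    train_idx = np.flatnonzero(folds != k)
    act = train_idx[y[train_idx] == 1]
    n_extra = int((y[train_idx] == 0).sum() - len(act))
    extra = rng.choice(act, size=max(n_extra, 0), replace=True)
    return np.concatenate([train_idx, extra]), test_idx

rng = np.random.default_rng(0)
y = np.array([1] * 12 + [0] * 108)  # toy labels, 10% actives
folds = stratified_folds(y, n_folds=5, rng=rng)
for k in range(5):
    train_idx, test_idx = oversample_train_only(y, folds, k, rng)
    # Fit on X[train_idx], y[train_idx]; score only on the untouched X[test_idx].
    print(k, len(train_idx), int(y[test_idx].sum()))
```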

Problem: Inconsistent model results after curating chemical structures.

- Potential Cause: The same compound can be represented in different ways (e.g., different tautomers, salts, or neutral forms). If these are not standardized, the same molecule may be perceived as different by the model, introducing noise.

- Solution:

- Implement a Standardization Workflow: Apply a standardized procedure to all structures before modeling. This includes [4] [2]:

- Removing salts and solvents.

- Standardizing tautomers to a single, uniform representation.

- Aromatizing Kekulé structures.

- Generating canonical SMILES.

- Use Automated Tools: Leverage automated curation workflows in platforms like KNIME or RDKit to ensure consistency across all structures in your dataset [2] [6].
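A minimal RDKit sketch of such a standardization step follows. It is not the KNIME "Structure Standardizer" workflow. Its choices are assumptions to adapt to your own curation rules: it keeps the largest fragment, treats carbon-free structures as inorganic, and performs no stereochemistry checks.

```python
from rdkit import Chem
from rdkit.Chem.MolStandardize import rdMolStandardize

_uncharger = rdMolStandardize.Uncharger()
_tautomers = rdMolStandardize.TautomerEnumerator()

def standardize_smiles(smiles):
    """Return canonical SMILES of the standardized parent structure, or None to exclude."""
    mol = Chem.MolFromSmiles(smiles)  # sanitization also aromatizes Kekulé input
    if mol is None:
        return None  # unparsable: send to a "fail" list for manual review
    mol = rdMolStandardize.Cleanup(mol)         # normalize groups, reionize
    mol = rdMolStandardize.FragmentParent(mol)  # keep the largest fragment (drops salts/solvents)
    mol = _uncharger.uncharge(mol)              # neutralize charges where possible
    mol = _tautomers.Canonicalize(mol)          # one canonical tautomer per structure
    if not any(atom.GetAtomicNum() == 6 for atom in mol.GetAtoms()):
        return None  # no carbon: treated as inorganic (assumed rule)
    return Chem.MolToSmiles(mol)                # canonical SMILES
```

Because salt forms, charge states, tautomers and Kekulé/aromatic spellings all converge on one string, the output can serve directly as a key for duplicate detection.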


Experimental Protocols & Data

Protocol: Data Curation and Balancing for a QSAR Modeling Set

Objective: To transform a raw, imbalanced HTS dataset into a curated, balanced set suitable for robust QSAR model development.

Materials:

- Input Data: A tab-delimited file containing compound IDs, SMILES strings, and activity data (e.g., from PubChem BioAssay) [2].

- Software: KNIME Analytics Platform with appropriate chemistry extensions (e.g., RDKit, CDK).

- Workflow: The "Structure Standardizer" and down-sampling workflows available from public repositories (e.g., https://github.com/zhu-lab) [2].

Procedure:

- Structure Standardization:

- Import your raw data file into the KNIME workflow.

- The workflow will execute a series of steps: salt stripping, neutralization, generation of canonical tautomers, and removal of inorganic compounds and mixtures.

- Outputs: Three files are generated: a file with successfully standardized compounds (`FileName_std.txt`), a file with compounds that failed standardization (`FileName_fail.txt`), and a file with warnings (`FileName_warn.txt`) [2].

- Activity Labeling:

- Using the standardized structures, apply a defined potency threshold (e.g., minimum absolute potency level) to confidently label compounds as "Active" or "Inactive." Filter out compounds that do not meet quality criteria (e.g., those showing cytotoxicity or assay interference) [4].

- Data Balancing:

- Input the curated `FileName_std.txt` into a down-sampling workflow.

- For Random Selection: Use the workflow to randomly select a number of inactive compounds equal to the number of active compounds.

- For Rational Selection: Use the workflow to select inactive compounds that fall within the chemical space (e.g., defined by Principal Component Analysis) of the active compounds [2].

- Outputs: Two files are generated: a balanced modeling set (`ax_input_modeling.txt`) and an imbalanced validation set (`ax_input_intValidating.txt`) that holds the remaining compounds for external validation [2].

Comparative Performance of Data-Balancing Methods on a Genotoxicity Dataset

A study on genotoxicity prediction (OECD TG 471 data) compared the effectiveness of different balancing methods using the F1 score. The results below demonstrate that balancing methods, particularly oversampling, generally improve model performance [3].

- Table: Impact of Data-Balancing Methods on Model Performance (F1 Score) [3]

| Machine Learning Algorithm | Molecular Fingerprint | No Balancing | Random Oversampling (ROS) | SMOTE | Sample Weight (SW) |
|---|---|---|---|---|---|
| Gradient Boosting Tree (GBT) | MACCS | 0.501 | 0.637 | 0.659 | 0.653 |
| Gradient Boosting Tree (GBT) | RDKit | 0.511 | 0.605 | 0.622 | 0.644 |
| Random Forest (RF) | MACCS | 0.495 | 0.612 | 0.631 | 0.624 |
| Support Vector Machine (SVM) | MACCS | 0.478 | 0.589 | 0.601 | 0.592 |

The Researcher's Toolkit: Essential Reagents & Software for QSAR Data Curation

- Table: Key Resources for Curating QSAR Datasets

| Item Name | Type | Function in Experiment |
|---|---|---|
| KNIME Analytics Platform | Software | An open-source platform for creating automated data workflows, including chemical data curation, standardization, and balancing [2] [6]. |
| RDKit | Software/Chemoinformatics Library | An open-source toolkit for cheminformatics, used for calculating molecular descriptors, generating fingerprints, and standardizing structures [2]. |
| PubChem BioAssay | Database | A public repository of HTS data from which raw compound structures and activity data can be sourced for modeling [1]. |
| SMOTE | Algorithm | A synthetic oversampling technique used to generate new examples for the minority (active) class to balance the dataset [1] [3]. |
| Morgan Fingerprints (ECFP) | Molecular Descriptor | A circular fingerprint that captures atomic environments and is widely used for chemical similarity analysis and as input for machine learning models [7]. |

Workflow Diagrams

[Figure: Data Curation and Modeling Workflow]

[Figure: Sampling Strategy Comparison]

Frequently Asked Questions

What constitutes an "imbalanced dataset" in QSAR modeling?

An imbalanced dataset is one where the distribution of activity classes is unequal. In public High-Throughput Screening (HTS) data, it is very common to have a substantially larger number of inactive compounds than active ones [2]. The more common label is the majority class (typically inactives), and the less common is the minority class (actives) [8]. In severe cases, active compounds might make up less than 1% of the total dataset [1].

Why do standard machine learning models perform poorly on imbalanced data?

Most standard machine learning algorithms assume that all data points have equal importance. This biases the model toward the majority class, because optimizing for overall accuracy favors predicting the majority class most of the time. Consequently, the model may fail to learn the distinguishing features of the minority class, leading to poor predictive accuracy for the active compounds you are most interested in identifying [1] [8].

What is the difference between data-based and algorithm-based solutions?

- Data-based methods involve manipulating the training dataset itself to create a more balanced distribution. These methods, such as down-sampling the majority class, are independent of the specific machine learning algorithm used [1] [2].

- Algorithm-based methods involve modifying the learning algorithm to make it more sensitive to the minority class. This can include cost-sensitive learning, which assigns a higher penalty for misclassifying minority class examples [1]. These often require specific software implementations.

What are the pros and cons of down-sampling?

- Pros: Down-sampling is a straightforward data-based method that can significantly reduce dataset size, making it easier to manage. It also increases the probability that each batch during model training contains enough minority class examples for the model to learn effectively [8] [2].

- Cons: The primary drawback is that down-sampling discards a large amount of majority class data, which may contain useful information about the boundaries between active and inactive chemical space [1] [8].

Troubleshooting Guides

Problem: Model reports high overall accuracy but rarely identifies active compounds.

This is a classic symptom of a model trained on a severely imbalanced dataset: the model has learned that always predicting "inactive" yields high accuracy.

Diagnosis and Solutions:

Analyze Your Data Distribution

- Check the ratio of active to inactive compounds in your training set. A highly skewed ratio (e.g., 1:100 or more) is a strong indicator of imbalance issues [2].

Apply Down-sampling and Up-weighting

This two-step technique separates the goal of learning what each class looks like from learning how common each class is [8].

- Step 1: Down-sample the majority class. Create a less skewed training set by randomly selecting a subset of the inactive compounds [2]. For example, from a set of 200 inactives and 2 actives, down-sampling the inactives by a factor of 10 leaves 20 inactives and 2 actives, a 10:1 rather than 100:1 ratio.

- Step 2: Up-weight the down-sampled class. To correct for the prediction bias introduced by down-sampling, increase the loss weight of each retained inactive during model training. The up-weighting factor is typically equal to the down-sampling factor (the inverse of the fraction of inactives kept), so in the example above each retained inactive receives a weight of 10 [8].

Use Ensemble Methods with Multiple Under-sampling

To mitigate the information loss from simple down-sampling, build multiple models, each trained on a different bootstrap sample of the majority class that is balanced with the minority class. The predictions of these models are then combined into an ensemble model for a more robust prediction [1].
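The ensemble idea can be sketched as follows. To keep the example self-contained, a nearest-centroid rule stands in for a real QSAR learner; `fit_centroids` and the other helper names are hypothetical. In practice, each bag would train something like a random forest, and the averaged scores would be used for ranking.

```python
import numpy as np

def fit_centroids(X, y):
    """Stand-in "model" (hypothetical): the mean descriptor vector of each class."""
    return X[y == 1].mean(axis=0), X[y == 0].mean(axis=0)

def predict_active(model, X):
    """1.0 where a compound is closer to the active centroid than to the inactive one."""
    c_act, c_inact = model
    d_act = np.linalg.norm(X - c_act, axis=1)
    d_inact = np.linalg.norm(X - c_inact, axis=1)
    return (d_act < d_inact).astype(float)

def undersampled_ensemble(X, y, n_models=25, seed=0):
    """Train one model per balanced bag: all actives plus a bootstrap sample of inactives."""
    rng = np.random.default_rng(seed)
    act = np.flatnonzero(y == 1)
    inact = np.flatnonzero(y == 0)
    models = []
    for _ in range(n_models):
        bag = np.concatenate([act, rng.choice(inact, size=len(act), replace=True)])
        models.append(fit_centroids(X[bag], y[bag]))
    return models

def ensemble_score(models, X):
    """Fraction of ensemble members that call each compound active."""
    return np.mean([predict_active(m, X) for m in models], axis=0)
```

Every inactive has a chance to enter some bag, so across the ensemble much more of the majority class is used than in a single down-sampled set.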

Explore Rational (Similarity-Based) Selection

Instead of random down-sampling, select inactive compounds that share the same descriptor space or chemical similarity with the active compounds. This approach helps to better define the applicability domain of the model by focusing on the chemical space that is most relevant to the actives [2].

Problem: QSAR model performs well on training data but generalizes poorly to new compound libraries.

This can be caused by a biased training set that does not adequately represent the chemical space of the compounds you want to screen.

Diagnosis and Solutions:

Implement Rigorous Data Curation

Poor model generalization can stem from data quality issues, not just imbalance. Before modeling, apply a rigorous curation process [4] [2]:

- Standardize Structures: Convert all structures to a canonical representation (e.g., canonical SMILES) to ensure the same compound is represented consistently [2].

- Filter for Robust Data: Select actives based on the quality of concentration-response curves, apply minimum potency cut-offs, and ensure activity is not due to cytotoxicity or assay interference artifacts [4].

- Remove Unsuited Compounds: Filter out inorganic compounds, mixtures, and salts that are not suitable for traditional QSAR modeling [2].

Ensure a Representative Validation Set

When partitioning your data, ensure your external validation set retains the original, imbalanced distribution of the real world. This provides a realistic assessment of your model's performance in a virtual screening scenario [2].

Experimental Protocols for Handling Imbalanced Data

Protocol 1: Random Down-sampling using KNIME

This protocol uses the open-source Konstanz Information Miner (KNIME) platform to randomly select inactive compounds for a balanced modeling set [2].

- Objective: To create a balanced modeling set by randomly selecting an equal number of inactive compounds compared to the actives.

- Materials:

- KNIME Analytics Platform (ver. 2.10.1 or newer).

- "Random Selection" KNIME workflow (available from https://github.com/zhu-lab).

- Input file: A tab-delimited file with columns for `ID`, `SMILES`, and `Activity` [2].

- Method:

- Import the random selection workflow into KNIME.

- Configure the `File Reader` node to point to your curated input file.

- Set the `activity` column type to "String".

- In the workflow, configure the number of active and inactive compounds to select (e.g., 500 each).

- Execute the workflow.

- Output:

- A balanced modeling set file (e.g., `ax_input_modeling.txt`).

- A validation set file (e.g., `ax_input_intValidating.txt`) containing the remaining compounds, which retains the original imbalanced distribution [2].
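For readers working outside KNIME, a rough Python analogue of the random-selection split is sketched below. It is an illustration under stated assumptions, not the zhu-lab workflow: the function name is hypothetical and file input/output is omitted. All unselected compounds go to validation, which therefore keeps the original imbalance.

```python
import numpy as np

def random_selection_split(ids, activity, n_per_class, seed=0):
    """Split compounds into a balanced modeling set and an imbalanced validation set.

    ids:         compound identifiers
    activity:    1 (active) / 0 (inactive) labels
    n_per_class: number of actives and of inactives placed in the modeling set
    """
    rng = np.random.default_rng(seed)
    ids, activity = np.asarray(ids), np.asarray(activity)
    chosen = np.concatenate([
        rng.choice(np.flatnonzero(activity == cls), size=n_per_class, replace=False)
        for cls in (1, 0)
    ])
    modeling = np.zeros(len(ids), dtype=bool)
    modeling[chosen] = True
    # Everything not selected is kept for validation, preserving the real-world ratio.
    return ids[modeling], ids[~modeling]
```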

Protocol 2: Rational Down-sampling based on Chemical Similarity

This protocol uses a rational, similarity-based approach to select the most informative inactive compounds for the modeling set [2].

- Objective: To select inactive compounds that are structurally similar to the active compounds, thereby focusing the model on the relevant chemical space.

- Materials:

- KNIME Analytics Platform.

- "Rational Selection" KNIME workflow (available from https://github.com/zhu-lab).

- Input file: A tab-delimited file with columns for `ID`, `Activity`, and calculated chemical descriptors.

- Method:

- Import the rational selection workflow into KNIME.

- Configure the `File Reader` node to point to your input file with pre-calculated chemical descriptors.

- The workflow uses Principal Component Analysis (PCA) to define a quantitative similarity threshold.

- Inactive compounds that fall within the same descriptor space as the active compounds are selected for the modeling set.

- Output:

- A balanced modeling set enriched with inactives that are structurally analogous to the actives.

- A validation set with the remaining compounds.
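The following is a minimal sketch of PCA-based rational selection. The text does not specify how the workflow derives its similarity threshold, so this example assumes one. An inactive is kept if its distance to the active centroid in PCA score space is no larger than that of the most distant active. The closest inactives are then taken first until the classes are balanced. The function name and the threshold rule are hypothetical.

```python
import numpy as np

def rational_selection(X, y, n_components=3):
    """Return indices of a modeling set: all actives + in-domain inactives closest to them.

    X: (n, d) descriptor matrix; y: 1 = active, 0 = inactive.
    """
    # Autoscale the descriptors, then compute PCA scores via SVD.
    Z = (X - X.mean(axis=0)) / (X.std(axis=0) + 1e-12)
    _, _, vt = np.linalg.svd(Z, full_matrices=False)
    scores = Z @ vt[:n_components].T

    act = np.flatnonzero(y == 1)
    inact = np.flatnonzero(y == 0)
    centroid = scores[act].mean(axis=0)
    # Assumed threshold: radius of the active cloud around its centroid.
    radius = np.linalg.norm(scores[act] - centroid, axis=1).max()

    d_inact = np.linalg.norm(scores[inact] - centroid, axis=1)
    inside = d_inact <= radius
    ranked = inact[inside][np.argsort(d_inact[inside])]
    # Closest in-domain inactives first, capped at the number of actives.
    return np.concatenate([act, ranked[: len(act)]])
```

If fewer in-domain inactives exist than actives, the set stays slightly imbalanced rather than pulling in out-of-domain compounds.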

Comparison of Sampling Methods for Imbalanced HTS Data

The table below summarizes the key characteristics of different sampling methods.

| Method | Description | Advantages | Limitations |
|---|---|---|---|
| Random Down-sampling [2] | Randomly selects a subset of the majority class to match the size of the minority class. | Simple and fast to implement; reduces dataset size and training time [8] [2]. | Discards potentially useful data; may reduce model accuracy by ignoring much of the chemistry space [1]. |
| Rational Down-sampling [2] | Selects majority class examples based on chemical similarity to the minority class. | Defines a more relevant applicability domain; can lead to more robust models. | More complex to implement; requires calculation of chemical descriptors and similarity metrics. |
| Ensemble Down-sampling [1] | Builds multiple models, each on a different balanced bootstrap sample of the data. | More robust than single down-sampling; makes better use of the majority class data. | Computationally more expensive; requires building and combining multiple models. |
| SMOTE (Over-sampling) [1] | Generates synthetic minority class examples by interpolating between existing ones in descriptor space. | Avoids loss of information from the majority class; can expand the minority class space. | May lead to overfitting if synthetic examples are too simplistic; synthetic descriptor vectors may not correspond to any chemically plausible molecule. |

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table lists key resources for curating data and building QSAR models from imbalanced HTS data.

| Item | Function in Research | Relevance to Imbalanced Data |
|---|---|---|
| KNIME Analytics Platform [2] | An open-source platform for data pipelining ("workflows"). | Used to build automated workflows for data curation, standardization, and both random and rational down-sampling [2]. |
| PubChem BioAssay [1] | A public repository of chemical compounds and their biological activities. | A primary source of large, often severely imbalanced, HTS datasets for QSAR modeling [1] [2]. |
| Chemical Descriptor Generators (e.g., RDKit, MOE, Dragon) [2] | Software tools that calculate numerical representations of chemical structures. | Essential for converting structures into a format for modeling and for performing rational, similarity-based down-sampling [2]. |
| GUSAR Software [1] | A program for generating QSAR models using various descriptor types and machine learning methods. | Cited in research for testing and developing strategies to build robust QSAR models from imbalanced PubChem HTS data sets [1]. |
| Data Curation Workflow [2] | A standardized procedure for cleaning and preparing HTS data. | Critical first step to remove duplicates, artifacts, and inorganic compounds, ensuring data quality before addressing imbalance [4] [2]. |

[Figure: From Raw Data to Balanced QSAR Model. A recommended workflow for handling imbalanced HTS data, from initial curation to model building.]

[Figure: Rational Down-Sampling for QSAR. The key steps in creating a balanced and chemically meaningful modeling set.]

FAQs: Data Curation for QSAR Modeling

1. Why is data curation critical for developing reliable QSAR models? Data curation is fundamental because QSAR models are inherently dependent on the quality of the input data. Public chemogenomics repositories often contain inaccuracies, such as invalid chemical structures and inconsistent biological measurements [9]. These errors compromise model performance, leading to unreliable predictions and poor reproducibility. Proper curation ensures that the mathematical relationships learned by the model are based on accurate and consistent data, which is crucial for guiding chemical probe and drug discovery projects [9] [10].

2. What are the common types of errors found in chemogenomics datasets? Errors can be broadly categorized into chemical and biological data issues [9].

- Chemical Data Errors: Include invalid structures (e.g., valence violations, extreme bond lengths), undefined stereocenters, incorrect tautomeric forms, and the presence of salts or mixtures that are not properly standardized [9].

- Biological Data Errors: Stem from experimental variations, such as differences in screening technologies, and can include significant uncertainties in activity measurements (e.g., mean error of 0.44 pKi units as found in one analysis) [9]. Another common issue is the presence of multiple activity entries for the same compound, which can skew model performance [9].

3. How can I handle a severely class-imbalanced dataset? In a class-imbalanced dataset, where the majority class (e.g., inactive compounds) significantly outnumbers the minority class (e.g., active compounds), standard training often fails to learn the minority class effectively [8]. A proven technique is a two-step process:

- Step 1: Downsample the majority class. Artificially create a more balanced training set by training on a disproportionately low percentage of the majority class examples. This increases the probability that training batches contain enough minority class examples [8].

- Step 2: Upweight the downsampled class. To correct the bias introduced by downsampling, treat the loss on each majority class example more harshly (e.g., multiply the loss by the downsampling factor). This teaches the model the true distribution of the classes [8]. The optimal rebalancing ratio should be treated as a hyperparameter and determined through experimentation [8].

4. What is the recommended workflow for integrated chemical and biological data curation? A comprehensive workflow addresses both chemical structures and bioactivities [9]. Key steps include:

- Chemical Curation: Remove incomplete records (inorganics, mixtures), clean structures, standardize tautomers, and verify stereochemistry [9].

- Processing Bioactivities: Identify and handle chemical duplicates by comparing the bioactivities reported for structurally identical compounds [9].

- Identifying Outliers: Flag compounds with suspicious data, such as those that are activity outliers within a cluster of structural analogs, for manual inspection [9].

Troubleshooting Guides

Issue: Model Performance is Over-Optimistic or Unreliable

Potential Cause 1: Presence of chemical duplicates and data leakage. If the same compound appears multiple times in the dataset, it can artificially inflate performance metrics if those duplicates end up in both training and test sets [9].

- Methodology for Resolution:

- Standardize Structures: Apply a consistent standardization protocol to all structures (e.g., using RDKit or ChemAxon) to ensure identical molecules have the same representation [9] [10].

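- Find Duplicates: Compare the canonical SMILES (or InChIKeys) of the standardized structures to identify exact duplicates. A fingerprint similarity of 1.0 can help flag candidates, but identical fingerprints do not guarantee identical molecules (stereoisomers, for example, often share a 2D fingerprint), so confirm each match structurally.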

- Consolidate Activities: For each set of duplicates, compare the reported bioactivities. If the activities are concordant, keep a single representative data point. If activities are discordant, investigate the source data or consider removing the compound [9].
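The duplicate-consolidation step can be sketched with the standard library alone, assuming structures have already been standardized to canonical SMILES. The function name is hypothetical. The 0.5 log-unit standard-deviation cut-off mirrors the example threshold in Protocol 1 below.

```python
from collections import defaultdict
from statistics import mean, stdev

def consolidate_duplicates(records, max_sd=0.5):
    """Merge replicate measurements that share a standardized structure.

    records: iterable of (canonical_smiles, pActivity) pairs.
    Returns (kept, flagged): kept maps SMILES -> mean value for concordant groups;
    flagged maps SMILES -> raw values for discordant groups to investigate.
    """
    groups = defaultdict(list)
    for smiles, value in records:
        groups[smiles].append(float(value))
    kept, flagged = {}, {}
    for smiles, values in groups.items():
        if len(values) > 1 and stdev(values) > max_sd:
            flagged[smiles] = values  # discordant: check the source data or drop
        else:
            kept[smiles] = mean(values)  # single value or concordant replicates
    return kept, flagged
```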

Potential Cause 2: Improper handling of class-imbalanced data. The model may be biased towards predicting the majority class and perform poorly on the minority class [8].

- Methodology for Resolution:

- Analyze Class Distribution: Calculate the ratio of majority to minority class examples.

- Apply Downsampling and Upweighting: Follow the two-step protocol described in FAQ #3 [8].

- Experiment with Ratios: Systematically test different downsampling factors (e.g., 10, 25, 50) and evaluate model performance on a held-out validation set to find the optimal balance.

Issue: Inability to Generalize Predictions to New Chemical Series

Potential Cause: Narrow or non-diverse chemical space in the training data. The model has not learned a generalizable relationship between structure and activity because the training data lacks diversity [10].

- Methodology for Resolution:

- Assess Chemical Space: Calculate a set of physicochemical descriptors (e.g., molecular weight, logP, topological surface area) for your dataset.

- Visualize Diversity: Use principal component analysis (PCA) to project the high-dimensional descriptor space into 2D or 3D plots to visually inspect the coverage and identify gaps.

- Curate for Diversity: When collecting data, prioritize sources that cover a broad range of structural classes. Actively seek to include compounds that fill gaps in the chemical space of interest [10].
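The following is a minimal NumPy sketch of the diversity check. It assumes numeric descriptor matrices for the training set and for a new library; the function name is hypothetical. It fits PCA on the autoscaled training descriptors and projects the library using the same scaling. Any library compound whose scores fall outside the training range on a retained component is marked, which gives a crude bounding-box view of coverage gaps.

```python
import numpy as np

def pca_coverage(train_desc, library_desc, n_components=2):
    """Return training scores, library scores, explained variance ratios, and an
    'outside' mask for library compounds beyond the training score range."""
    mu = train_desc.mean(axis=0)
    sd = train_desc.std(axis=0) + 1e-12
    Zt = (train_desc - mu) / sd
    Zl = (library_desc - mu) / sd  # scale the library with training statistics
    _, s, vt = np.linalg.svd(Zt, full_matrices=False)
    explained = s**2 / np.sum(s**2)
    pcs_t = Zt @ vt[:n_components].T
    pcs_l = Zl @ vt[:n_components].T
    lo, hi = pcs_t.min(axis=0), pcs_t.max(axis=0)
    outside = np.any((pcs_l < lo) | (pcs_l > hi), axis=1)
    return pcs_t, pcs_l, explained[:n_components], outside
```

Plotting `pcs_t` and `pcs_l` on the first two components gives the 2D view described above, and the `outside` mask points to the regions worth filling with new data.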

Experimental Protocols & Workflows

Protocol 1: Integrated Chemical and Biological Data Curation

This protocol outlines a systematic approach to curating molecular datasets prior to QSAR model development [9].

- Objective: To ensure the accuracy, consistency, and reproducibility of both chemical structures and associated bioactivities.

- Materials:

- Methodology:

- Chemical Structure Curation:

- Filter: Remove inorganic, organometallic compounds, and mixtures.

- Standardize: Clean structures, normalize tautomers, and aromatize rings using a standardized protocol.

- Check Stereochemistry: Verify and correct defined stereocenters.

- Manual Inspection: Manually check a subset of complex structures and all flagged "suspicious" compounds.

- Biological Data Curation:

- Identify Duplicates: Find all structurally identical compounds.

- Compare Activities: For duplicates, compare pIC50 or pKi values. If the standard deviation is greater than a set threshold (e.g., 0.5 log units), flag the records for further investigation.

- Outlier Detection: Cluster compounds using structural fingerprints. Flag any compound whose bioactivity is a statistical outlier (e.g., beyond 2 standard deviations) within its cluster.

- Finalize Dataset: Resolve or remove all flagged entries to produce a high-quality, curated dataset.

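The cluster-based outlier step in the protocol can be sketched as below, under two stated assumptions. First, a greedy leader clustering on Tanimoto similarity stands in for a method such as Butina clustering. Second, each compound is compared with the mean and standard deviation of the *other* members of its cluster, because including the compound itself makes a 2-SD rule nearly impossible to trigger in small clusters. Fingerprints are represented as sets of on-bit indices, and all names are hypothetical.

```python
from statistics import mean, stdev

def tanimoto(a, b):
    """Tanimoto similarity of two fingerprints given as sets of on-bit indices."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0

def leader_clusters(fps, threshold=0.6):
    """Greedy leader clustering: join the first cluster whose leader is at least
    `threshold` similar; otherwise start a new cluster led by this compound."""
    leaders, labels = [], []
    for i, fp in enumerate(fps):
        for c, leader in enumerate(leaders):
            if tanimoto(fp, fps[leader]) >= threshold:
                labels.append(c)
                break
        else:
            leaders.append(i)
            labels.append(len(leaders) - 1)
    return labels

def cluster_activity_outliers(fps, activities, threshold=0.6, n_sd=2.0, min_others=3):
    """Indices of compounds whose activity lies more than n_sd standard deviations
    from the mean of the other members of their cluster."""
    labels = leader_clusters(fps, threshold)
    flagged = []
    for i, (label, value) in enumerate(zip(labels, activities)):
        others = [a for j, (lab, a) in enumerate(zip(labels, activities))
                  if lab == label and j != i]
        if len(others) >= min_others and abs(value - mean(others)) > n_sd * stdev(others):
            flagged.append(i)  # candidate for manual inspection
    return flagged
```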

Protocol 2: Addressing Class Imbalance via Downsampling

This protocol details the process of rebalancing a dataset to improve model learning of the minority class [8].

- Objective: To create a training set where the model is exposed to a sufficient number of minority class examples without losing information on class distribution.

- Materials: A curated dataset with a defined majority and minority class.

- Methodology:

- Calculate Imbalance Ratio: Determine the ratio of majority class examples (Nmaj) to minority class examples (Nmin).

- Select Downsampling Factor (K): Choose a factor, K, which will determine the number of majority class examples retained (Nmaj / K). A starting point is often the square root of the imbalance ratio.

- Downsample: Randomly select (Nmaj / K) examples from the majority class to create a new training subset.

- Upweight: During model training, assign a weight of K to each of the retained majority class examples in the loss function. Minority class examples retain a weight of 1.

- Validate: Train the model on this artificially balanced set and validate its performance on a held-out test set that reflects the true, imbalanced class distribution.
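The downsampling-and-upweighting steps translate directly into a few lines of NumPy. This sketch assumes integer labels (1 = minority). As suggested above, it uses the square root of the imbalance ratio as the default K. The returned weights can be passed to any estimator that accepts a `sample_weight` argument in `fit`. The function name is hypothetical.

```python
import math
import numpy as np

def downsample_and_upweight(y, k=None, seed=0):
    """Return (indices, weights) for a downsampled training set.

    y: 1 = minority (active), 0 = majority (inactive).
    k: downsampling factor K; defaults to sqrt(Nmaj / Nmin) as a starting point.
    Retained majority examples get weight K; minority examples keep weight 1.
    """
    rng = np.random.default_rng(seed)
    maj = np.flatnonzero(y == 0)
    mino = np.flatnonzero(y == 1)
    if k is None:
        k = math.sqrt(len(maj) / len(mino))
    n_keep = max(1, round(len(maj) / k))  # Nmaj / K majority examples retained
    kept_maj = rng.choice(maj, size=n_keep, replace=False)
    idx = np.concatenate([mino, kept_maj])
    weights = np.where(y[idx] == 0, k, 1.0)
    return idx, weights
```

With 10 actives and 1,000 inactives, K is 10, so 100 inactives are kept with weight 10 each. Their total weight is 1,000, so the loss still reflects the true class distribution.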

Workflow Diagrams

[Figure: Molecular Data Curation Pipeline]

[Figure: Downsampling and Upweighting Process]

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Tools for Data Curation in Cheminformatics

| Tool / Resource | Type | Primary Function in Curation |
|---|---|---|
| RDKit [9] [10] | Open-Source Software | Calculates molecular descriptors, performs structural standardization, and handles tautomer normalization. |
| ChemAxon JChem (free for academic use) [9] | Commercial Software Suite | Provides robust tools for structure checking, standardization, and database management. |
| PaDEL-Descriptor [10] | Software | Calculates a comprehensive set of molecular descriptors and fingerprints for QSAR modeling. |
| KNIME [9] | Open-Source Platform | Allows creation of visual, reproducible workflows that integrate various curation and analysis steps. |
| PubChem, ChEMBL [9] | Public Database | Sources of experimental bioactivity data; PubChem has a built-in standardization workflow [9]. |
| Downsampling & Upweighting [8] | Algorithmic Technique | Mitigates model bias in class-imbalanced datasets by rebalancing training data and loss function. |

Frequently Asked Questions

Q1: What is the key difference between ChEMBL and PubChem for drug discovery research? ChEMBL is a manually curated database focused on bioactive molecules with drug-like properties, containing detailed information on approved drugs and clinical candidates, along with their mechanisms, indications, and related bioactivity data [11]. In contrast, PubChem is a larger, more comprehensive public repository that aggregates data from over 1,000 sources, providing a wider range of chemical information but with less manual curation [12]. For constructing reliable QSAR models, ChEMBL's curated bioactivity data is often preferred for building training sets, whereas PubChem is invaluable for gathering a broad spectrum of chemical structures and properties.

Q2: How can I obtain negative (inactive) data for my QSAR model from these databases? Retrieving high-quality negative data is crucial for training balanced QSAR models. In ChEMBL, you can often find compounds reported as "inactive" in specific bioactivity assays [11]. When searching, filter for "inactive" outcomes or for weak potencies (e.g., high IC50 values). In PubChem, bioactivity data from high-throughput screening (HTS) assays often include both active and inactive results [12]. Be aware that inactive compounds are commonly underreported. You may therefore need to infer inactivity from records where a compound was tested but showed no significant activity at relevant concentrations [13].

Q3: Which database should I use for finding genotoxicity data for my chemicals? For specialized genotoxicity data, you will typically need to consult regulatory sources and specialized databases. Key sources include:

- OECD Test Guidelines: Such as OECD 471 (Ames test) and OECD 487 (in vitro micronucleus assay) [14]

- ICH S2(R1) Guideline: Provides standards for genotoxicity testing of pharmaceuticals [15]

ChEMBL may contain some toxicity data [11], and PubChem integrates hazard information from sources such as the EPA IRIS program [12]. However, comprehensive genotoxicity assessment requires consulting the primary regulatory guidelines directly or specialized toxicology databases that implement these standards.

Q4: What are common data quality issues when sourcing data for QSAR models? Common issues include:

- Inconsistent data quality from multiple sources [16]

- Inadequate consideration of the underlying data relevance and consistency [16]

- Insufficient negative data leading to model bias [13]

- Structural representation errors in molecular notations like SMILES [13]

- Failure to account for metabolic activation in toxicity data [16]

- Predictions made outside the model's applicability domain [16]

Always verify data provenance, check for standardization, and ensure your compounds fall within the applicability domain of any model you build.

Troubleshooting Guides

Problem: High False-Positive Rate in Virtual Screening

Potential Causes and Solutions:

- Cause: Training dataset with insufficient negative/inactive examples [17].

- Solution: Actively curate a balanced dataset. Use HTS data from PubChem BioAssay that explicitly reports inactive compounds [12]. In ChEMBL, extract compounds tested in the same assay series but reported with no activity or high IC50 values [11].

- Cause: Predictions are made outside the model's Applicability Domain (AD) [16].

- Solution: Define your model's AD using appropriate chemical descriptors. Before using a prediction, check if the query compound is sufficiently similar to the training set compounds in your model's chemical space.
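One simple way to implement this applicability-domain check is a nearest-neighbour similarity test. The sketch below assumes fingerprints are represented as Python sets of on-bit indices, for example bits from a Morgan fingerprint computed elsewhere. It uses a hypothetical 0.3 Tanimoto cut-off. The appropriate threshold depends on the fingerprint and the endpoint and should be chosen by validation. With RDKit bit vectors, DataStructs.TanimotoSimilarity computes the same similarity measure.

```python
def tanimoto(a, b):
    """Tanimoto similarity of two fingerprints given as sets of on-bit indices."""
    if not a and not b:
        return 0.0
    shared = len(a & b)
    return shared / (len(a) + len(b) - shared)


def in_applicability_domain(query_fp, training_fps, threshold=0.3):
    """Return (inside_domain, best_similarity) from nearest-neighbour Tanimoto similarity.

    threshold is a hypothetical default; tune it for your fingerprint and endpoint.
    """
    best = max((tanimoto(query_fp, fp) for fp in training_fps), default=0.0)
    return best >= threshold, best


if __name__ == "__main__":
    training = [{1, 2, 3, 4}, {5, 6, 7}]
    print(in_applicability_domain({1, 2, 3}, training))  # (True, 0.75)
    print(in_applicability_domain({8, 9}, training))     # (False, 0.0)
```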

Problem: Inconsistent or Contradictory Data from Different Sources

Potential Causes and Solutions:

- Cause: Differences in curation principles and experimental protocols between databases [11].

- Solution: Prioritize data from manually curated sources like ChEMBL for core bioactivity data [11]. For any critical data point, trace it back to the original primary reference to understand the experimental context.

- Cause: Variability in assay protocols, target definitions, or measurement units.

- Solution: Standardize and normalize data before use. When merging data from multiple sources, create a strict protocol for resolving conflicts (e.g., taking the most recent measurement, or the value from the most trusted source).

Problem: Difficulty in Representing Complex Chemicals for QSAR

Potential Causes and Solutions:

- Cause: Limitations of standard molecular notations (like SMILES) for representing stereochemistry, tautomers, or metal complexes [13].

- Solution: Use standardized and canonicalized representations. Convert all structures to a standard format (e.g., canonical SMILES) and check that stereochemistry is represented explicitly. Consider using InChIKeys for unique identification [13].

- Cause: Molecular descriptors are not capturing features relevant to the endpoint (e.g., genotoxicity) [16].

- Solution: Select descriptors mechanistically linked to the endpoint. For genotoxicity, ensure descriptors can capture structural alerts known to be associated with DNA reactivity [16].

Database Comparison and Key Data

Table 1: Comparison of Key Public Chemical Databases

| Feature | ChEMBL | PubChem |
|---|---|---|
| Primary Focus | Bioactive molecules & drug discovery [11] | Comprehensive chemistry & biology [12] |
| Curation Level | High (Manual & semi-automated) [11] | Variable (Aggregated from contributors) [12] |
| Key Data Types | Approved drugs, clinical candidates, bioactivity, mechanisms, indications [11] | Compounds, substances, bioassays, bioactivities, patents, literature [12] |
| Approx. Compound Count | ~17.5k (Drugs & candidates) + ~2.4M (Research compounds) [11] | 119 Million Compounds [12] |
| Negative Data Availability | Yes (from bioactivity assays) [11] | Yes (from HTS and other assays) [12] |
| Access | Fully Open [11] | Fully Open [12] |

Table 2: Essential Genotoxicity Assays and Guidelines for Data Curation

| Assay/Guideline | Endpoint Measured | Regulatory Context | Data Use in Modeling |
|---|---|---|---|
| Ames Test (OECD 471) [14] | Gene mutation in bacteria | ICH S2(R1) standard battery [15] | Provides robust in vitro mutagenicity data for model training. |
| In Vitro Micronucleus (OECD 487) [14] | Chromosomal damage | ICH S2(R1) standard battery [15] | Data on clastogenicity and aneugenicity. |
| In Vivo Genotoxicity Tests | Chromosomal damage in animals | ICH S2(R1) follow-up testing [15] | Provides in vivo relevance; crucial for assessing false positives from in vitro assays. |

Experimental Protocols

Protocol 1: Curating a Balanced Dataset for a QSAR Model from ChEMBL

- Define Your Target and Endpoint: Clearly specify the protein target and biological activity (e.g., IC50 for inhibition).

- Extract Active Compounds: Query the `compound_structures` and `activities` tables. Filter for your target and a potency threshold (e.g., IC50 < 10 µM). Use standard data types (e.g., 'IC50', 'Ki') [11].

- Extract Inactive Compounds: From the same source assays, extract compounds reported with no activity or with IC50 values above a high threshold (e.g., IC50 > 10 µM, or listed as 'inactive') [11].

- Apply Data Quality Filters: Remove records with missing or conflicting structures. Standardize structures to a common form (e.g., remove salts, generate canonical tautomers).

- Define and Apply the Applicability Domain: Characterize the chemical space of your curated set using descriptors. This defined space is your model's Applicability Domain (AD) [16].
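The active/inactive extraction rules in this protocol can be expressed as a labeling function. The sketch assumes each row is a dict shaped like a ChEMBL activities record (standard_type, standard_relation, standard_value, activity_comment), with values already converted to nM. The 10 µM cut-off is the example from the protocol. The accepted comment strings are illustrative. Censored values (e.g., '>' 10000 nM) are labeled only when the relation makes the label unambiguous.

```python
THRESHOLD_NM = 10_000.0  # 10 uM, the example cut-off used in this protocol


def label_activity(row):
    """Label one activity row as 'active', 'inactive', or None (not usable).

    row: dict shaped like a ChEMBL activities record, with keys standard_type,
    standard_relation, standard_value (assumed already in nM) and activity_comment.
    """
    comment = (row.get("activity_comment") or "").strip().lower()
    if comment in {"inactive", "not active"}:
        return "inactive"
    if row.get("standard_type") not in {"IC50", "Ki"} or row.get("standard_value") is None:
        return None
    value = float(row["standard_value"])
    relation = row.get("standard_relation") or "="
    # a censored value ('<' or '>') still decides the label if it sits on the cut-off
    below = value < THRESHOLD_NM or (relation == "<" and value <= THRESHOLD_NM)
    above = value > THRESHOLD_NM or (relation == ">" and value >= THRESHOLD_NM)
    if relation in {"=", "<", "<="} and below:
        return "active"
    if relation in {"=", ">", ">="} and above:
        return "inactive"
    return None  # borderline or uninformative censored values are left out


if __name__ == "__main__":
    print(label_activity({"standard_type": "IC50", "standard_relation": "=", "standard_value": 250}))    # active
    print(label_activity({"standard_type": "IC50", "standard_relation": ">", "standard_value": 10000}))  # inactive
```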

Protocol 2: Integrating Genotoxicity Data from Regulatory Sources

- Identify Relevant Guidelines: For pharmaceuticals, start with the ICH S2(R1) guideline, which recommends a standard battery of tests [15].

- Source Data from Standard Assays: Collect data from studies conducted under OECD guidelines (e.g., 471, 487). Prioritize data that includes results both with and without metabolic activation [14].

- Annotate with Mechanistic Information: Where available, tag compounds with known structural alerts or mechanisms of action (e.g., intercalation, alkylation) [16].

- Categorize Results: Classify compounds clearly as positive, negative, or equivocal for genotoxicity based on the regulatory assessment criteria.
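Categorizing results can start from a simple rule applied to per-condition outcomes. The sketch below uses a deliberately simplified, hypothetical rule for Ames-style data. Any positive condition gives 'positive'. 'Negative' requires every condition to be negative and requires that both the with- and without-activation conditions were tested. Everything else is 'equivocal'. This rule does not restate the regulatory assessment criteria, which involve expert judgement of the full study data.

```python
def overall_call(results):
    """Collapse per-condition Ames-style outcomes into one label (simplified, hypothetical rule).

    results: dict mapping (strain, with_metabolic_activation) -> 'positive' | 'negative' | 'equivocal'.
    """
    calls = set(results.values())
    if "positive" in calls:
        return "positive"  # any clear positive condition
    tested_activation_states = {s9 for (_strain, s9) in results}
    # negative only if every condition is negative and both +S9 and -S9 were tested
    if calls == {"negative"} and tested_activation_states == {True, False}:
        return "negative"
    return "equivocal"


if __name__ == "__main__":
    print(overall_call({("TA98", True): "negative", ("TA98", False): "negative"}))  # negative
    print(overall_call({("TA98", False): "negative"}))                              # equivocal
```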

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Computational Toxicology

| Item / Resource | Function in Research |
|---|---|
| ChEMBL Database | Provides high-quality, curated bioactivity data for approved drugs and clinical candidates, essential for building predictive models in drug discovery [11]. |
| PubChem BioAssay | Supplies large-scale bioactivity data, including high-throughput screening (HTS) results with active and inactive outcomes, crucial for balanced dataset creation [12]. |
| OECD Test Guidelines | Provide the internationally recognized standard protocols (e.g., for Ames test) for generating reliable and reproducible experimental toxicity data for model training and validation [14]. |
| Structural Alerts | Known chemical moieties associated with toxicity (e.g., for mutagenicity). Used as descriptors or for rational interpretation of QSAR model predictions [16]. |
| Standard Molecular Descriptors | (e.g., logP, molecular weight, topological indices). Quantifiable properties that describe the structure of a molecule and form the input variables for QSAR models [16]. |
| Applicability Domain (AD) Definition | A critical methodological step to define the chemical space of a QSAR model, ensuring that predictions are only made for compounds within this domain, improving reliability [16]. |

Workflow and Pathway Diagrams

Data Sourcing and QSAR Modeling Workflow

QSAR Model Development and Critical Factors

The How: Methodologies for Sourcing, Curating, and Balancing QSAR Datasets

Troubleshooting Guide: BioBERT for Biomedical Text Mining

Data Preprocessing and Curation

Problem: My dataset contains significant noise, leading to poor model performance.

- Cause: Public chemogenomics repositories can contain errors in both chemical structures and bioactivities. Studies have found error rates for chemical structures ranging from 0.1% to 3.4%, and biological assertions can have reproducibility rates as low as 11%-25% [9].

- Solution: Implement an integrated chemical and biological data curation workflow [9] [4].

- Chemical Curation: Remove incomplete records as well as inorganics and mixtures. Clean structures (fix valence violations, normalize tautomers) and verify stereochemistry using tools like RDKit or Chemaxon [9].

- Biological Curation: Identify and process chemical duplicates. Multiple entries for the same compound must be consolidated, as they can artificially skew model predictivity [9].

- Bioactivity Criteria: For QSAR, define robust endpoint criteria. Select actives based on curve-fitting quality, enforce minimum potency cut-offs, require non-cytotoxicity at activity concentration, and account for assay signal interference [4].

Problem: How do I standardize different tautomeric forms of molecules in my dataset?

- Cause: Tautomers are different structural forms of the same compound that can interconvert. Inconsistent representation confuses models [9].

- Solution: Establish empirical rules for consistent tautomer representation, aiming for the most populated tautomer. Software tools can automate this, but manual verification is recommended for complex cases [9] [4].

Model Training and Fine-Tuning

Problem: BioBERT performs poorly on my specific task, like recognizing gene names.

- Cause: The base BioBERT model is a general-purpose biomedical language representation model and requires task-specific fine-tuning [18] [19].

- Solution: Fine-tune BioBERT on your labeled dataset.

- Set Hyperparameters: As a starting point, use a learning rate of 5e-5, batch size of 32, and train for 20-50 epochs. These can be adjusted based on dataset size [19] [20].

- Run Fine-tuning: Use the provided scripts for tasks like Named Entity Recognition (NER). For an NER dataset in a directory `$NER_DIR`, the command resembles: `python run_ner.py --do_train=true --do_eval=true --data_dir=$NER_DIR --vocab_file=$BIOBERT_DIR/vocab.txt --bert_config_file=$BIOBERT_DIR/config.json --init_checkpoint=$BIOBERT_DIR/model.ckpt --max_seq_length=128 --train_batch_size=32 --learning_rate=5e-5 --num_train_epochs=20.0 --output_dir=$OUTPUT_DIR` [19].

- Evaluation: After training, run with `--do_predict=true` to evaluate on the test set. Use the provided biocodes scripts for official evaluation: `ner_detokenize.py` restores word-level NER predictions for entity-level scoring, and `re_eval.py` is the corresponding script for relation extraction [19].

Problem: Fine-tuning is slow, and I run out of GPU memory.

- Cause: BioBERT is a large model, and default settings may exceed your hardware limits [20].

- Solution:

- Reduce `train_batch_size` (e.g., to 16 or 8).

- Reduce `max_seq_length` (e.g., from 512 to 128 or 256).

- Use gradient accumulation to simulate a larger batch size.

- Leverage mixed-precision training if supported by your hardware [19].
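Gradient accumulation works because averaging the gradients of several equal-sized micro-batches gives the same result as one gradient over the combined batch. The toy sketch below checks that identity for a one-parameter linear model with squared-error loss. It illustrates the arithmetic only and is not a training loop. In a PyTorch or Hugging Face transformers training loop, the same effect comes from scaling each micro-batch loss by the number of accumulation steps before backpropagation. The transformers Trainer exposes this as gradient_accumulation_steps in TrainingArguments.

```python
def grad_mse(w, batch):
    """Gradient of the mean squared error of the 1-D model y_hat = w * x."""
    return sum(2 * x * (w * x - y) for x, y in batch) / len(batch)


def accumulated_grad(w, batch, micro_batch_size):
    """Average micro-batch gradients the way gradient accumulation does.

    Exact equality with the full-batch gradient assumes equal-sized micro-batches.
    """
    chunks = [batch[i:i + micro_batch_size] for i in range(0, len(batch), micro_batch_size)]
    total = 0.0
    for chunk in chunks:
        # each micro-batch contributes 1/len(chunks) of the update, which in a real
        # training loop corresponds to dividing the loss by the accumulation steps
        total += grad_mse(w, chunk) / len(chunks)
    return total


if __name__ == "__main__":
    data = [(float(x), 3.0 * x + 0.5) for x in range(8)]
    print(grad_mse(1.0, data), accumulated_grad(1.0, data, 2))  # equal up to rounding
```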

Model Interpretation and Deployment

Problem: The model's predictions are a "black box"; how can I trust them for critical research?

- Cause: Deep learning models like BioBERT are inherently complex and lack built-in explainability [20].

- Solution: While a complete solution is an active research area, you can:

- Perform error analysis by manually reviewing a sample of false positives and false negatives.

- Use attention visualization techniques to see which words in the input text the model focused on when making a prediction.

- Implement model calibration to ensure that prediction probabilities reflect true likelihoods.
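Before applying a calibration method such as temperature or Platt scaling, it helps to measure how miscalibrated the model is. A common summary is expected calibration error (ECE). Predictions are binned by confidence, and the gap between mean confidence and observed frequency is averaged across bins, weighted by bin size. The sketch below is a binary variant that bins the predicted probability of the positive class. The 10-bin default is a conventional but arbitrary choice.

```python
def expected_calibration_error(probs, labels, n_bins=10):
    """Binary ECE: size-weighted mean gap between predicted probability and observed rate.

    probs: predicted probabilities of the positive class; labels: 0/1 ground truth.
    """
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, labels):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 falls into the top bin
        bins[idx].append((p, y))
    n = len(probs)
    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        confidence = sum(p for p, _ in bucket) / len(bucket)
        observed = sum(y for _, y in bucket) / len(bucket)
        ece += len(bucket) / n * abs(observed - confidence)
    return ece


if __name__ == "__main__":
    print(round(expected_calibration_error([0.2, 0.2, 0.8, 0.8], [0, 0, 1, 1]), 3))  # 0.2
```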

Problem: My model doesn't generalize well to new data or publications.

- Cause: BioBERT has a static knowledge cutoff based on its pre-training data and may not be aware of recent discoveries [20].

- Solution:

- Continuously fine-tune the model on newly available data from the latest literature.

- Consider alternatives like PubMedBERT, which is trained from scratch on PubMed and may have more up-to-date or robust representations [20].

Frequently Asked Questions (FAQs)

Q1: What is BioBERT, and how is it different from BERT? BioBERT (Bidirectional Encoder Representations from Transformers for Biomedical Text Mining) is a domain-specific language representation model pre-trained on large-scale biomedical corpora, such as PubMed abstracts and PMC full-text articles. While it uses the same architecture as BERT, its continued pre-training on biomedical text allows it to understand complex medical terminology far better, leading to significant performance improvements on biomedical text mining tasks [18] [20].

Q2: Why is data curation so critical for building QSAR models from mined data? The accuracy of both chemical structures and biological activities in your training data directly determines the accuracy and reliability of your QSAR models. Errors in chemical structures (e.g., incorrect tautomers or stereochemistry) or bioactivities (e.g., inconsistent measurements for the same compound) propagate through the model, leading to poor predictive performance and non-reproducible results. Proper curation is a non-negotiable prerequisite for robust modeling [9] [4].

Q3: What are some common biomedical tasks that BioBERT can be used for? BioBERT has been successfully applied to a variety of tasks, including [18] [20]:

- Named Entity Recognition (NER): Identifying and classifying entities like diseases, drugs, genes, and chemicals in text.

- Relation Extraction: Determining how two medical entities are related (e.g., drug-treats-disease).

- Question Answering: Building systems that can answer questions based on biomedical literature.

Q4: What are the main limitations of BioBERT? Researchers should be aware of several limitations [20]:

- It is primarily trained on English biomedical text, limiting its use for non-English content.

- It has a static knowledge cutoff and does not automatically learn from new research published after its training date.

- It requires significant computational resources (GPUs) for fine-tuning.

- It is not specifically trained on clinical notes, so performance on electronic health records (EHRs) may be suboptimal without further fine-tuning.

Q5: Are there alternatives to BioBERT for specific use cases? Yes, several other domain-specific BERT models exist [20]:

- ClinicalBERT: Fine-tuned on clinical notes from EHRs (e.g., MIMIC-III), making it more suitable for real-world patient data.

- SciBERT: Trained on a broad corpus of scientific papers, useful for multi-disciplinary research.

- PubMedBERT: Trained from scratch on PubMed abstracts, offering a potentially more robust biomedical language understanding.

Experimental Protocols & Data Summaries

Protocol: Fine-tuning BioBERT for Named Entity Recognition

Objective: To adapt a pre-trained BioBERT model to recognize specific biomedical entities (e.g., genes, cell lines) from text.

Materials:

- Pre-trained BioBERT weights (e.g., `dmis-lab/biobert-base-cased-v1.1`).

- A labeled NER dataset (e.g., NCBI Disease corpus), formatted with one token per line and BIO tags.

- A machine with a GPU (e.g., NVIDIA TITAN Xp with 12 GB of memory) and the required Python environment (TensorFlow 1.x, or PyTorch with the `transformers` library).

Methodology [19]:

- Data Preparation: Place your dataset in a designated folder (e.g., `$NER_DIR`) with standard splits (`train.tsv`, `devel.tsv`, `test.tsv`).

- Environment Setup: Set environment variables for the BioBERT directory (`$BIOBERT_DIR`) and the desired output directory (`$OUTPUT_DIR`).

- Run Fine-tuning: Execute the `run_ner.py` script with appropriate parameters (see the troubleshooting guide above for an example command).

- Inference & Evaluation: After training, run prediction on the test set using `--do_train=false --do_predict=true`. Use the provided `ner_detokenize.py` script to restore word-level predictions for entity-level evaluation. `re_eval.py` is the corresponding script for relation extraction tasks.

Protocol: Curating a Dataset for QSAR Modeling

Objective: To create a high-quality, balanced dataset of chemical structures and bioactivities from public repositories suitable for QSAR model development.

Materials:

- Source data from public chemogenomics repositories (e.g., ChEMBL, PubChem).

- Cheminformatics software (e.g., RDKit, Chemaxon).

- Chemical Curation:

- Filtering: Remove inorganic, organometallic compounds, mixtures, and salts.

- Standardization: Clean structures, correct valence errors, normalize tautomers to a consistent representation, and check stereochemistry.

- Manual Inspection: Manually check a sample of compounds, especially those with complex structures or many atoms.

- Biological Curation:

- Duplicate Management: Identify all chemical duplicates. For duplicates with varying bioactivity values, apply a consistent rule (e.g., take the mean, median, or most recent value) or flag them for manual review.

- Endpoint Definition: For QSAR, clearly define the activity endpoint and its units (e.g., pIC50 > 6 as active). Apply criteria for data quality, such as minimum potency levels and absence of cytotoxicity at the measured activity [4].

- Balancing: Actively curate negative data (inactives) by extracting only robust inactives—compounds tested and shown to be inactive under the same experimental conditions [4].
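The endpoint definition relies on the conversion pIC50 = -log10(IC50 in mol/L). For IC50 in nM this becomes 9 - log10(IC50), so 1 µM (1000 nM) gives pIC50 = 6, and the pIC50 > 6 criterion means IC50 < 1 µM. The sketch below applies that cut-off. It keeps inactives only from assays that also produced actives, which serves here as a simple proxy for "the same experimental conditions". Several choices are assumptions for illustration: the inactive cut-off of pIC50 < 5 (IC50 > 10 µM), the record layout, and treating values between the two cut-offs as inconclusive.

```python
import math


def pic50_from_nm(ic50_nm):
    """pIC50 = -log10(IC50 in mol/L); with IC50 in nM this is 9 - log10(IC50_nM)."""
    return 9.0 - math.log10(ic50_nm)


def split_robust(records, active_min=6.0, inactive_max=5.0):
    """Split records into actives and robust inactives.

    records: list of dicts with hypothetical keys 'assay_id' and 'ic50_nm'.
    """
    actives, inactives = [], []
    for r in records:
        p = pic50_from_nm(r["ic50_nm"])
        if p > active_min:
            actives.append(r)
        elif p < inactive_max:
            inactives.append(r)
        # values between the two cut-offs are treated as inconclusive and dropped
    active_assays = {r["assay_id"] for r in actives}
    # keep only inactives measured in an assay that also produced actives
    inactives = [r for r in inactives if r["assay_id"] in active_assays]
    return actives, inactives


if __name__ == "__main__":
    print(round(pic50_from_nm(1000.0), 6))  # 6.0
```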

Quantitative Performance of BioBERT

Table 1: Performance improvement of BioBERT over previous state-of-the-art models on key biomedical text mining tasks [18].

| Biomedical Text Mining Task | Performance Metric | Reported Improvement |
|---|---|---|
| Biomedical Named Entity Recognition | F1 Score | +0.62% |
| Biomedical Relation Extraction | F1 Score | +2.80% |
| Biomedical Question Answering | Mean Reciprocal Rank (MRR) | +12.24% |

Research Reagent Solutions

Table 2: Essential materials and resources for experiments involving BioBERT and biomedical data curation.

| Item / Resource | Function / Description | Example / Source |
|---|---|---|
| Pre-trained BioBERT Weights | The core pre-trained model that can be fine-tuned for specific tasks. | dmis-lab/biobert-base-cased-v1.1 (Hugging Face Model Hub) [19]. |
| Biomedical NER Datasets | Labeled datasets for fine-tuning and evaluating Named Entity Recognition models. | NCBI Disease Corpus, BC4CHEMD, BC5CDR; the BioBERT repository also provides relation-extraction data such as CHEMPROT [19]. |
| RDKit | Open-source cheminformatics toolkit used for chemical structure standardization, curation, and descriptor calculation. | RDKit (https://www.rdkit.org) [9]. |
| Chemaxon JChem | Commercial software suite for chemical structure standardization, tautomer normalization, and database management. | JChem Base (https://chemaxon.com) [9]. |
| ChEMBL | A manually curated database of bioactive molecules with drug-like properties, a key source for chemical bioactivity data. | ChEMBL (https://www.ebi.ac.uk/chembl/) [9]. |
| PubChem | A public repository of chemical substances and their biological activities, providing a vast source of screening data. | PubChem (https://pubchem.ncbi.nlm.nih.gov) [9]. |

Workflow and Pathway Visualizations

BioBERT QSAR Data Curation Workflow

BioBERT Fine-Tuning Process

The predictive power of any Quantitative Structure-Activity Relationship (QSAR) model is fundamentally constrained by the quality of its training data. The principle of congenericity—that similar structures confer similar properties—relies entirely on consistent molecular representation [21]. Curating a balanced training dataset, which includes both active (positive) and inactive (negative) compounds, is essential for developing robust models that can accurately distinguish between them [22]. However, chemical structures from public databases often contain inconsistencies in the representation of salts, tautomers, and stereochemistry, leading to errors in descriptor calculation and, consequently, unreliable models [21] [23].

This guide provides a detailed technical framework for standardizing chemical structures to ensure the creation of "QSAR-ready" and "MS-ready" datasets, a critical step for successful model development in drug discovery and regulatory toxicology [21] [24].

Frequently Asked Questions (FAQs)

Q1: Why is the removal of salts a critical step in preparing structures for QSAR? Salts are often part of a chemical's formulation but are typically not responsible for its biological activity. If not removed, the presence of counterions can lead to the calculation of incorrect molecular descriptors, which do not represent the bioactive form of the molecule. Standard practice involves identifying and separating counterions from the main structure, then neutralizing the parent molecule when possible. The information about the original salt form should be retained as metadata for traceability [23] [25].

Q2: How do tautomers affect QSAR model performance, and how should they be standardized? Tautomers are alternate forms of the same compound that exist in equilibrium by the migration of a hydrogen atom. A molecule represented in different tautomeric states can yield vastly different values for descriptors that depend on hydrogen bonding or charge distribution. This inconsistency introduces "noise" that obscures the true structure-activity relationship. Automated workflows should include a tautomer standardization step that normalizes all structures to a single, canonical tautomeric form based on a defined set of rules, ensuring all identical molecules are represented uniformly before descriptor calculation [21].

Q3: When should stereochemistry be retained or stripped from molecular data? The handling of stereochemistry depends on the endpoint being modeled and the available data.

- Strip Stereochemistry: For many QSAR models, particularly those predicting broad biological endpoints or built on data with unspecified stereochemistry, stripping stereochemical information is recommended. This simplifies the chemical space and avoids descriptor miscalculations from arbitrary stereochemical assignments [21] [26].

- Retain Stereochemistry: For stereospecific activities, such as binding to chiral receptors or modeling pharmacokinetics, accurate stereochemistry is critical. Errors in stereochemical representation can propagate through models, leading to misleading virtual screening results and flawed chemical design [27]. The FDA requires stereochemical investigation for chiral drug candidates, making accurate data essential for regulatory submissions [27].

Q4: Why is negative data important for a balanced QSAR training set? Machine learning models, including QSAR classifiers, require balanced training datasets that include compounds with both desirable (active) and undesirable (inactive) properties. The availability of high-quality negative data is essential for teaching the model to distinguish between active and inactive compounds, thereby improving its reliability and generalizability. A dataset containing only active compounds would be unable to predict inactivity [22].

Troubleshooting Guides

Problem 1: Inconsistent Biological Activity for the "Same" Compound After Data Aggregation

Possible Cause: A common cause is the presence of tautomers. The same chemical compound may have been entered into different source databases in different tautomeric forms. Although these forms interconvert chemically, they are computationally distinct, so they are treated as different structures during descriptor calculation.

Solution:

- Implement a Tautomer Normalization Tool: Integrate a tool like the RDKit MolStandardize algorithm or the standardizer in the Chemical Development Kit (CDK) into your preprocessing workflow.

- Apply Canonical Tautomer Rules: These tools apply a set of rules to protonate or deprotonate atoms, reorganize bonds, and generate a single, canonical representative for all possible tautomers of a given molecule.

- Re-check for Duplicates: After standardization, re-check the dataset for duplicates using canonical SMILES or InChI keys to merge the previously inconsistent entries and their associated biological data [21].

Problem 2: Poor Model Performance and Physicochemically Illogical Descriptors

Possible Cause: The presence of salts and counterions. Descriptors such as molecular weight, log P, and topological polar surface area will be severely skewed if they are calculated for a structure that still includes counterions (e.g., sodium or chloride) alongside the parent molecule.

Solution:

- Desalting and Neutralization:

- Use a cheminformatics toolkit (e.g., RDKit, CDK) to disconnect molecular fragments.

- Identify the largest organic fragment as the parent structure.

- Neutralize the parent structure by adding or removing hydrogens to achieve a neutral charge state. The RDKit Structure Normalizer node is designed for this task [23].

- Flag and Record: Maintain a record of the original salt form and the removed counterions as separate attributes in your dataset. This ensures information is not lost [23].

- Re-calculate Descriptors: Always calculate molecular descriptors on the neutralized, parent structure.

Problem 3: Model Fails to Predict Activity of New Stereoisomers Accurately

Possible Cause: Inconsistent or missing stereochemistry in the training data. If the training set contains a racemic mixture (listed as a single compound with unspecified stereochemistry) but the biological activity is driven by a single enantiomer, the model learns an "average" of the active and inactive forms, reducing its predictive power.

Solution:

- Audit Data Sources: Scrutinize the original data sources (literature, patents) to determine if stereochemistry was specified and tested.

- Define a Project-Specific Policy: Based on the audit, decide whether to:

- Remove Stereochemistry: For non-stereospecific endpoints, strip all stereochemical information to ensure consistency [21] [26].

- Curate and Retain Stereochemistry: For stereospecific endpoints, manually curate and correctly annotate the stereochemistry of each active compound. This may require treating different enantiomers as distinct data points [27].

- Validate File Formats: Ensure that file conversions between formats (e.g., SDF, SMILES) do not lose stereochemical markers (wedges, dashes, @ symbols) [27].

Experimental Protocols & Workflows

Detailed Methodology: The QSAR-Ready Standardization Workflow

The following protocol, adapted from a freely available KNIME workflow, describes an automated process for generating standardized "QSAR-ready" structures [21].

Objective: To convert a raw set of chemical structures from various sources into a curated, standardized dataset suitable for reliable molecular descriptor calculation and QSAR modeling.

Step-by-Step Procedure:

Data Retrieval and Input:

- Input: A list of chemical structures encoded as SMILES, InChI, or other common formats, along with identifiers like CAS numbers or chemical names.

- Action: If identifiers are used, resolve them to structures using reliable REST services (e.g., NIH CACTUS, EPA CompTox Dashboard, PubChem) [23].

Initial Filtering:

Salt Disconnection and Neutralization:

- Use a connectivity checker (e.g., the CDK connectivity node) to split the record into its disconnected fragments (e.g., separate a sodium ion from a carboxylate).

- Isolate the main parent molecule (typically the fragment with the highest molecular weight).

- Neutralize the parent molecule using a standardizer (e.g., RDKit Structure Normalizer node). This step adds or removes hydrogens to achieve a neutral charge state where possible [23].

- Output: The neutralized parent structure. A flag should be created to indicate successful neutralization, and the removed counterions should be stored as metadata [23].
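The fragment-selection part of this step can be illustrated without a toolkit. The sketch below splits a SMILES string on '.' and counts heavy atoms with a small regular expression, using heavy-atom count as a stand-in for molecular weight. It keeps the largest fragment as the parent and returns the other fragments so they can be stored as metadata. It does not neutralize charges or validate chemistry. In practice, RDKit's rdMolStandardize module (LargestFragmentChooser, Uncharger) or the KNIME nodes named above are the appropriate tools. Ties go to the first fragment.

```python
import re

# Heavy-atom tokens: bracket atoms, then two-letter organic-subset atoms, then one-letter ones
_ATOM = re.compile(r"\[[^\]]*\]|Br|Cl|[BCNOPSFI]|[bcnops]")
# Bracket atoms that are hydrogen themselves ([H], [2H], [H+]) but not Hg, He, Hf or Ho
_HYDROGEN = re.compile(r"\[\d*H(?![a-z])")


def heavy_atom_count(smiles):
    """Count non-hydrogen atoms in a SMILES string (heuristic, no valence checks)."""
    return sum(1 for tok in _ATOM.findall(smiles) if not _HYDROGEN.match(tok))


def split_parent(smiles):
    """Return (parent_fragment, removed_fragments), keeping the fragment with most heavy atoms."""
    fragments = smiles.split(".")
    idx = max(range(len(fragments)), key=lambda i: heavy_atom_count(fragments[i]))
    return fragments[idx], fragments[:idx] + fragments[idx + 1:]


if __name__ == "__main__":
    print(split_parent("CC(=O)[O-].[Na+]"))  # ('CC(=O)[O-]', ['[Na+]'])
```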

Stripping of Stereochemistry (for 2D QSAR):
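For SMILES input, stripping stereochemistry amounts to removing the chirality marks ('@', '@@' and their extended forms) and the '/' and '\' bond-direction symbols. The string-level sketch below does exactly that. Its output is valid SMILES but not canonical, so structures should still be re-canonicalized afterwards. When a toolkit is available, RDKit's Chem.RemoveStereochemistry operates on the parsed molecule instead and is the safer choice.

```python
import re

# '@' and '@@' plus extended chirality classes such as @TH1, @AL2, @SP3, @TB12, @OH25
_CHIRAL = re.compile(r"@(?:@|TH\d|AL\d|SP\d|TB\d{1,2}|OH\d{1,2})?")


def strip_stereo(smiles):
    """Remove chirality marks and slash/backslash bond directions from a SMILES string.

    The result is valid SMILES but not canonical; re-canonicalize it afterwards.
    """
    without_chirality = _CHIRAL.sub("", smiles)
    return without_chirality.replace("/", "").replace("\\", "")


if __name__ == "__main__":
    print(strip_stereo("C[C@@H](N)O"))  # C[CH](N)O
```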

Tautomer Standardization and Functional Group Normalization:

- Apply a set of chemical rules to transform all tautomers into a single, canonical form.

- Standardize the representation of functional groups, such as nitro groups (`[N+](=O)[O-]` to `N(=O)=O`), to ensure consistency across the dataset [21].

Valence Correction and Sanity Checking:

- Run a valence check to identify and correct atoms with impossible valences, which are a common error in chemical databases.

- Check for and remove any remaining structures with abnormal valences or other structural impossibilities.

Deduplication:

- Generate canonical SMILES or InChIKeys for all processed structures.

- Identify and merge duplicate structures. For duplicates with associated experimental data, calculate the mean and standard deviation of the activity values. Establish a coefficient of variation (CV) cut-off (e.g., 0.1) to remove duplicates with highly variable data, and use the mean activity value for the retained duplicate [26].
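The deduplication rule can be sketched as follows, assuming each record has already been reduced to a (canonical key, activity) pair. The sketch follows the text: it computes the mean and standard deviation per key, applies a CV cut-off of 0.1, and retains the mean. Two choices are assumptions, since the text does not specify them: the use of the sample standard deviation and the handling of a zero mean. Note that a CV cut-off is scale-dependent, because the same absolute spread yields a smaller CV when the mean value is larger.

```python
from collections import defaultdict
from statistics import mean, stdev


def merge_duplicates(records, cv_cutoff=0.1):
    """Merge replicate measurements per structure key.

    records: iterable of (structure_key, activity) pairs keyed by canonical SMILES or InChIKey.
    Keys whose coefficient of variation (sample SD / |mean|) exceeds cv_cutoff are dropped;
    the remaining keys are represented by their mean activity.
    """
    grouped = defaultdict(list)
    for key, value in records:
        grouped[key].append(value)
    merged = {}
    for key, values in grouped.items():
        mu = mean(values)
        if len(values) > 1:
            if mu == 0:
                continue  # CV is undefined at a zero mean
            if stdev(values) / abs(mu) > cv_cutoff:
                continue  # replicate values disagree too much
        merged[key] = mu
    return merged


if __name__ == "__main__":
    data = [("A", 6.0), ("A", 6.2), ("B", 2.0), ("B", 6.0), ("C", 5.0)]
    print(sorted(merge_duplicates(data)))  # ['A', 'C']
```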

Table 1: Common Software Tools for Implementing the Standardization Workflow

| Tool/Software | Function | Availability / Reference |
|---|---|---|
| KNIME | Workflow environment for building and executing the entire standardization pipeline. | Freely available [21] [23] |
| RDKit | Open-source cheminformatics toolkit; provides nodes for neutralization, stereo removal, and canonicalization. | Freely available [23] [25] |
| Chemical Development Kit (CDK) | Open-source library for bio- and chemo-informatics; used for structure connectivity and manipulation. | Freely available [23] |
| Mordred | A tool for calculating a comprehensive set of molecular descriptors. | Python package [26] |
| MolVS | A library for molecular validation and standardization, including tautomer normalization. | Python library [21] |

Workflow Visualization

The diagram below illustrates the logical sequence of the key steps in the QSAR-ready standardization workflow.

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Resources for Chemical Data Curation and QSAR Modeling

| Item | Function in Research | Explanation |
|---|---|---|
| KNIME Analytics Platform | Workflow Integration | A graphical platform that allows researchers to visually design, execute, and share the entire data curation and modeling pipeline without extensive programming. [21] [23] |
| RDKit | Chemical Programming | An open-source C++ and Python library for cheminformatics, essential for performing custom structure standardization, descriptor calculation, and machine learning tasks. [26] [25] |
| PaDEL-Descriptor & Mordred | Descriptor Calculation | Software tools designed to calculate a comprehensive set of molecular descriptors and fingerprints directly from molecular structures, which are the inputs for QSAR models. [10] [26] |
| CompTox Chemicals Dashboard | Data Retrieval & Validation | An EPA-provided web application offering access to a large, curated database of chemicals, which can be used to verify and cross-reference structures and properties. [21] [23] |
| OrbiTox Platform | Read-Across & QSAR | A specialized platform integrating similarity searching, QSAR models, and metabolism prediction to support regulatory-grade read-across and toxicity predictions. [24] |
| GitHub | Protocol Sharing | A repository hosting service where many pre-built and community-improved data curation workflows (e.g., KNIME) are shared and version-controlled. [21] [23] |

FAQs on Data Augmentation for QSAR

Q1: What are the primary causes of data scarcity and imbalance in QSAR modeling? Data scarcity and imbalance in QSAR often arise from the high cost and time required to generate high-quality experimental biological data [28]. Furthermore, some chemical classes or activity outcomes (like potent inhibitors versus inactive compounds) may be naturally underrepresented in available datasets [29] [5]. This can lead to models biased toward the majority class, reducing predictive accuracy for the scarce class [30].

Q2: Which data augmentation techniques are most effective for categorical bioactivity data? For categorical data, such as active/inactive classifications, combining oversampling and undersampling techniques has been reported to be effective [29]. Specifically, using the Synthetic Minority Over-sampling Technique (SMOTE) to generate synthetic examples of the minority class, alongside Random Under-Sampling (RUS) to reduce the majority class, produced balanced training datasets in that study [29].
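A minimal NumPy sketch of SMOTE combined with random undersampling follows. It is written for illustration and assumes a binary problem with a numeric feature matrix; in practice the `SMOTE` and `RandomUnderSampler` classes from the imbalanced-learn package are the usual choice. The helper names and the `n_per_class` parameter are hypothetical, and resampling should be applied to the training split only.

```python
import numpy as np


def smote(X_min, n_new, k=5, rng=None):
    """Minimal SMOTE: interpolate between minority samples and their minority-class neighbors."""
    rng = np.random.default_rng(rng)
    X_min = np.asarray(X_min, dtype=float)
    n = len(X_min)
    if n < 2:
        raise ValueError("SMOTE needs at least two minority samples")
    k = min(k, n - 1)
    # Pairwise squared Euclidean distances within the minority class only.
    d = ((X_min[:, None, :] - X_min[None, :, :]) ** 2).sum(axis=-1)
    np.fill_diagonal(d, np.inf)                            # a sample is not its own neighbor
    neighbors = np.argsort(d, axis=1)[:, :k]
    base = rng.integers(0, n, size=n_new)                  # seed samples
    nn = neighbors[base, rng.integers(0, k, size=n_new)]   # one random neighbor per seed
    gap = rng.random((n_new, 1))                           # position along the segment
    return X_min[base] + gap * (X_min[nn] - X_min[base])


def smote_rus(X, y, minority_label, n_per_class, k=5, seed=0):
    """Oversample the minority class with SMOTE and randomly undersample the majority class."""
    rng = np.random.default_rng(seed)
    X, y = np.asarray(X, dtype=float), np.asarray(y)
    X_min, X_maj = X[y == minority_label], X[y != minority_label]
    if not len(X_min) <= n_per_class <= len(X_maj):
        raise ValueError("n_per_class must lie between the two class sizes")
    synthetic = smote(X_min, n_per_class - len(X_min), k=k, rng=rng)
    keep = rng.choice(len(X_maj), size=n_per_class, replace=False)  # RUS
    majority_label = y[y != minority_label][0]
    X_bal = np.vstack([X_min, synthetic, X_maj[keep]])
    y_bal = np.array([minority_label] * n_per_class + [majority_label] * n_per_class)
    return X_bal, y_bal
```

Choosing `n_per_class` between the two class sizes trades how many synthetic minority samples are created against how much majority information is discarded, which is the ratio the troubleshooting section suggests tuning.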

Q3: How can I augment data for a regression-based QSAR model with continuous endpoints? While SMOTE is designed for categorical data, introducing controlled noise or variation to existing continuous data points can effectively augment regression datasets. However, caution is required, as simulated experimental errors can significantly deteriorate model performance if the noise level is too high [5]. Using domain knowledge to guide the plausible range of variation is crucial.
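One simple reading of "controlled noise" for regression is to append copies of each training compound with Gaussian noise added to the measured response. The sketch below assumes the endpoint is on a log scale (e.g., pIC50) and that `sigma` reflects a plausible experimental error estimated from replicate measurements; the function name and defaults are hypothetical, and the descriptors themselves are left unperturbed.

```python
import numpy as np


def augment_with_noise(X, y, n_copies=2, sigma=0.1, seed=0):
    """Return the original data plus n_copies noisy replicas (noise on y only)."""
    rng = np.random.default_rng(seed)
    X, y = np.asarray(X, dtype=float), np.asarray(y, dtype=float)
    X_parts, y_parts = [X], [y]
    for _ in range(n_copies):
        X_parts.append(X)                                          # descriptors unchanged
        y_parts.append(y + rng.normal(0.0, sigma, size=y.shape))   # perturbed response
    return np.vstack(X_parts), np.concatenate(y_parts)
```

Because each noisy replica shares its descriptors with the original compound, all copies of a compound should be kept in the same cross-validation fold; otherwise the validation scores will be optimistic.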

Q4: Can a QSAR model help identify potential errors in my dataset? Yes, QSAR modeling itself can be a tool to identify potential experimental errors. Compounds with consistently large prediction errors during cross-validation may be flagged for having potential data quality issues [5]. However, simply removing these compounds based on cross-validation errors is not always recommended, as it can lead to overfitting and does not guarantee improved predictions on new, external compounds [5].
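The idea of flagging compounds with consistently large cross-validation errors can be sketched as repeated k-fold cross-validation with a simple ridge regression, averaging each compound's absolute out-of-fold error across repeats and flagging robust outliers. The model choice, the median/MAD threshold, and all names are assumptions for illustration. In line with [5], the output is a list of compounds to re-examine, not to delete automatically.

```python
import numpy as np


def repeated_cv_abs_errors(X, y, n_folds=5, n_repeats=10, alpha=1e-2, seed=0):
    """Mean absolute out-of-fold error per compound over repeated k-fold CV (ridge model)."""
    rng = np.random.default_rng(seed)
    X, y = np.asarray(X, dtype=float), np.asarray(y, dtype=float)
    n = len(y)
    Xd = np.hstack([np.ones((n, 1)), X])  # intercept column (also penalized, for brevity)
    errors = np.zeros(n)
    for _ in range(n_repeats):
        for test in np.array_split(rng.permutation(n), n_folds):
            train = np.setdiff1d(np.arange(n), test)
            A = Xd[train].T @ Xd[train] + alpha * np.eye(Xd.shape[1])
            w = np.linalg.solve(A, Xd[train].T @ y[train])   # closed-form ridge fit
            errors[test] += np.abs(y[test] - Xd[test] @ w)
    return errors / n_repeats


def flag_suspects(errors, z=3.0):
    """Indices whose error exceeds median + z * robust spread (scaled MAD)."""
    med = np.median(errors)
    mad = 1.4826 * np.median(np.abs(errors - med))
    return np.flatnonzero(errors > med + z * mad)
```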

Q5: What role do molecular descriptors play in data augmentation strategies? Molecular descriptors are the numerical features representing chemical structures. Using multiple types of descriptors (e.g., AP2D, Morgan fingerprints, RDKit descriptors) creates "multi-view" features of each molecule [29]. When combined with deep learning architectures, these rich feature sets allow the model to learn more robust patterns, enhancing the utility of both original and augmented data [29].

Troubleshooting Common Experimental Issues

Problem: Model performance is poor despite data augmentation.

- Potential Cause 1: The quality of the initial dataset is low, with structural errors or significant experimental noise [28] [5]. Augmentation cannot compensate for fundamentally flawed data.

- Solution: Rigorously curate your dataset before augmentation. This includes standardizing chemical structures (e.g., removing salts, handling tautomers), verifying chemical structures, and consolidating biological data from multiple reliable sources where possible [10] [5].

- Potential Cause 2: The augmented data does not realistically represent the chemical space or the structure-activity relationship.

- Solution: Apply domain knowledge when generating synthetic data. For SMILES augmentation, ensure the generated strings correspond to valid and chemically plausible structures [31]. Also check whether the synthetic samples fall within the applicability domain of the model.

Problem: The model is biased after balancing the dataset with augmentation.

- Potential Cause: The undersampling step may have removed too much critical information from the majority class, or the oversampling may have created unrealistic synthetic samples that overfit the training data [29].

- Solution: Experiment with different ratios of SMOTE and RUS (e.g., 25%, 50%, 75%) to find the optimal balance for your specific dataset [29]. Use robust validation techniques like external test sets and cross-validation to detect overfitting.

Problem: How to handle missing values in a scarce dataset before augmentation?

- Solution: For small datasets, removing compounds with missing data might not be feasible. Instead, consider imputation methods such as k-nearest neighbors (KNN) imputation or QSAR-based prediction to fill in missing values, ensuring these methods are applied carefully to avoid introducing bias [10].
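A minimal k-nearest-neighbors imputation is sketched below in NumPy: distances are computed only over descriptors observed in both compounds, and each missing value is replaced by the mean of the k closest compounds that have that descriptor. This is an illustrative assumption rather than a prescribed method; scikit-learn's `KNNImputer` offers a tested implementation of the same idea. The function name is hypothetical.

```python
import numpy as np


def knn_impute(X, k=3):
    """Fill NaNs with the mean of the k nearest rows that have the value observed."""
    X = np.asarray(X, dtype=float)
    out = X.copy()
    missing = np.isnan(X)
    for i in np.flatnonzero(missing.any(axis=1)):
        # RMS distance over features observed in both rows; inf if none are shared.
        dists = np.full(len(X), np.inf)
        for j in range(len(X)):
            if j == i:
                continue
            shared = ~missing[i] & ~missing[j]
            if shared.any():
                dists[j] = np.sqrt(np.mean((X[i, shared] - X[j, shared]) ** 2))
        for f in np.flatnonzero(missing[i]):
            donors = [j for j in np.argsort(dists)
                      if np.isfinite(dists[j]) and not missing[j, f]][:k]
            if donors:
                out[i, f] = X[donors, f].mean()
    return out
```

Imputed values should be computed from the training set only and treated with caution in small datasets, since they inherit any bias in the neighboring compounds.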

Experimental Protocols for Data Augmentation

Protocol 1: Handling Class Imbalance with SMOTE and RUS

This protocol is based on the methodology successfully applied to identify Glucocorticoid Receptor (GR) antagonists [29].

- Data Curation: Collect and curate your dataset. Classify compounds into active (positive) and inactive (negative) classes based on a defined activity threshold (e.g., pIC50 > 6 for active, pIC50 < 5 for inactive) [29].

- Descriptor Calculation: Calculate a diverse set of molecular descriptors (e.g., AP2D, CDKExt, Morgan fingerprints) using software like PaDEL-Descriptor or RDKit [29].

- Create Multi-view Features: Combine different descriptor types to form a comprehensive feature vector for each compound [29].

- Apply SMOTE and RUS:

  - Use the SMOTE algorithm to generate synthetic samples for the minority (inactive) class. The proportion of SMOTE can be varied (e.g., 25%, 50%, 75%, 100%) to determine the optimal level [29].

  - Simultaneously, use Random Under-Sampling (RUS) on the majority (active) class to the same proportion, achieving a balanced 1:1 dataset [29].

- Model Building and Validation: Build your QSAR model on the balanced dataset and validate rigorously using internal cross-validation and an external test set that was not used in any augmentation steps [29].
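The first and last steps of Protocol 1 can be sketched together: convert IC50 values to pIC50, label compounds with the example thresholds, and set aside a stratified external test set before any SMOTE or RUS is applied. Assumptions: IC50 values are in nM, compounds falling between the two thresholds are excluded, and the 20% hold-out fraction, the example IC50 values, and all function names are hypothetical.

```python
import math
import random


def pic50_from_ic50_nm(ic50_nm: float) -> float:
    """pIC50 = -log10(IC50 in mol/L); for IC50 in nM this is 9 - log10(IC50)."""
    return 9.0 - math.log10(ic50_nm)


def activity_class(pic50: float, active_cut: float = 6.0, inactive_cut: float = 5.0):
    """1 = active, 0 = inactive, None = between thresholds (excluded here)."""
    if pic50 > active_cut:
        return 1
    if pic50 < inactive_cut:
        return 0
    return None


def stratified_holdout(ids, labels, test_frac=0.2, seed=0):
    """Split ids per class so the external test set keeps the original class ratio."""
    rng = random.Random(seed)
    by_class = {}
    for cid, label in zip(ids, labels):
        by_class.setdefault(label, []).append(cid)
    train, test = [], []
    for members in by_class.values():
        members = list(members)
        rng.shuffle(members)
        n_test = max(1, round(test_frac * len(members)))
        test.extend(members[:n_test])
        train.extend(members[n_test:])
    return train, test


# Label, drop grey-zone compounds, then split BEFORE any resampling.
ic50_nm = {"cpd1": 50.0, "cpd2": 3000.0, "cpd3": 20000.0, "cpd4": 400.0}
labelled = {c: activity_class(pic50_from_ic50_nm(v)) for c, v in ic50_nm.items()}
labelled = {c: y for c, y in labelled.items() if y is not None}
```

Only the training portion returned by `stratified_holdout` would then be passed to SMOTE and RUS; the test portion keeps its natural class ratio.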

Protocol 2: SMILES-Based Data Augmentation for Deep Learning

This protocol leverages Natural Language Processing (NLP) techniques for data augmentation [31].

- Data Collection: Compile a dataset of active and inactive compounds, represented by their SMILES strings [31].

- SMILES Augmentation: Generate multiple, valid SMILES representations for each molecule in your dataset. This increases data variability as the model learns to recognize the same molecule from different string representations [31].

- Leverage Transfer Learning: Use a pre-trained model, such as a BERT model from the Hugging Face Hub, that has been trained on a large corpus of chemical SMILES strings [31].

- Fine-Tuning: Fine-tune this pre-trained model on your augmented dataset (alpha-glucosidase inhibitors in the study this protocol is based on, or your own target endpoint). This transfers the general chemical knowledge to your specific task [31].

- Prediction and Validation: Use the fine-tuned model to predict new compounds and validate predictions with external test sets and, if possible, follow-up molecular docking or dynamics simulations [31].
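SMILES enumeration for the augmentation step can be sketched with RDKit by shuffling the atom order with `Chem.RenumberAtoms` and writing non-canonical SMILES. Every variant parses back to the same molecule, which also serves as the validity check mentioned in the troubleshooting section. This assumes RDKit is installed; the function name and defaults are hypothetical, and it is not necessarily the procedure used in [31].

```python
import random

from rdkit import Chem


def enumerate_smiles(smiles: str, n: int = 5, seed: int = 0, max_tries: int = 200):
    """Return up to n distinct, non-canonical SMILES strings for the same molecule."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"Unparseable SMILES: {smiles}")
    rng = random.Random(seed)
    order = list(range(mol.GetNumAtoms()))
    variants = set()
    for _ in range(max_tries):
        if len(variants) >= n:
            break
        rng.shuffle(order)                       # new random atom order
        shuffled = Chem.RenumberAtoms(mol, order)
        variants.add(Chem.MolToSmiles(shuffled, canonical=False))  # different traversal
    return sorted(variants)
```

All variants of one molecule carry the same label and should stay in the same data split, so that the test set never contains a re-written copy of a training compound.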

Comparative Table of Data Augmentation Techniques

The table below summarizes the pros, cons, and applications of common data augmentation strategies in QSAR.

| Technique | Best For | Key Advantages | Key Limitations |
|---|---|---|---|
| SMOTE + RUS [29] | Imbalanced classification datasets (Active/Inactive). | Creates a perfectly balanced dataset; improves model focus on minority class. | May remove informative majority samples; synthetic samples might be noisy. |
| SMILES Augmentation [31] | Deep learning models using SMILES strings as input. | Simple to implement; increases data variability without new descriptors. | Limited to SMILES-based models; may not explore new chemical space. |
| Introducing Controlled Noise [5] | Simulating experimental variability in continuous data. | Can help models become more robust to small measurement errors. | High risk of significantly degrading model performance if noise level is inappropriate [5]. |
| Consensus Predictions [5] | Improving robustness of predictions from error-ridden datasets. | Can average out individual model errors; more reliable identification of problematic compounds. | Does not generate new data; requires building multiple models. |

The Scientist's Toolkit: Essential Research Reagents

| Tool / Resource | Function in Data Augmentation & QSAR | Reference |
|---|---|---|
| PaDEL-Descriptor | Software to calculate molecular descriptors and fingerprints for feature generation. | [29] |
| RDKit | Open-source cheminformatics toolkit used for descriptor calculation and chemical structure handling. | [29] [10] |
| SMOTE | Algorithm to generate synthetic samples for the minority class in an imbalanced dataset. | [29] |
| Pre-trained BERT Models (Hugging Face) | Provides a foundation model with chemical knowledge that can be fine-tuned on small, specific datasets. | [31] |
| QSAR Toolbox | Software that supports chemical hazard assessment, offering data retrieval, profiling, and read-across for data gap filling. | [32] [33] |

Workflow Diagram: Data Augmentation for QSAR

Data Augmentation Workflow for QSAR

Workflow Diagram: SMILES Augmentation with BERT

SMILES Augmentation with BERT Model

In Quantitative Structure-Activity Relationship (QSAR) modeling, the challenge of imbalanced datasets is pervasive, particularly when working with High-Throughput Screening (HTS) data from public repositories like PubChem, where the ratio of active to inactive compounds can be extremely skewed [1]. This imbalance causes standard classifiers to become biased toward the majority class, leading to poor predictive performance for the rare but often critically important minority class, such as active drug compounds or toxic substances [34] [1]. This guide provides practical, data-level solutions to curate balanced training datasets for more robust and reliable QSAR models.

Core Concepts: Resampling Techniques

Data-level methods address imbalance by modifying the dataset's composition before model training. They are primarily divided into three categories [34]:

- Oversampling: Increasing the number of minority class instances.

- Undersampling: Decreasing the number of majority class instances.

- Hybrid Methods: Combining both oversampling and undersampling.
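Minimal plain-Python sketches of the two simplest data-level methods, random oversampling and random undersampling, are shown below; a hybrid method would apply both, or use SMOTE in place of duplication. The function names are hypothetical, and the samples can be compound identifiers or feature vectors.

```python
import random
from collections import Counter


def random_oversample(samples, labels, seed=0):
    """Duplicate randomly chosen samples of smaller classes until all match the largest."""
    rng = random.Random(seed)
    counts = Counter(labels)
    target = max(counts.values())
    out_x, out_y = list(samples), list(labels)
    for cls, count in counts.items():
        pool = [x for x, y in zip(samples, labels) if y == cls]
        extra = [rng.choice(pool) for _ in range(target - count)]  # exact copies only
        out_x.extend(extra)
        out_y.extend([cls] * len(extra))
    return out_x, out_y


def random_undersample(samples, labels, seed=0):
    """Keep a random subset of each class, sized to the smallest class."""
    rng = random.Random(seed)
    counts = Counter(labels)
    target = min(counts.values())
    out_x, out_y = [], []
    for cls in counts:
        pool = [x for x, y in zip(samples, labels) if y == cls]
        chosen = rng.sample(pool, target)  # sampling without replacement
        out_x.extend(chosen)
        out_y.extend([cls] * target)
    return out_x, out_y
```

The oversampled set contains no new structures, only repeats, which is why random oversampling carries a high overfitting risk; undersampling, in turn, discards majority-class information.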

The table below summarizes the core resampling techniques used in QSAR and chemoinformatics.

Table 1: Core Resampling Techniques for Imbalanced QSAR Data

| Technique | Type | Core Principle | Best Suited For | Key Considerations |
|---|---|---|---|---|
| Random Oversampling (ROS) [35] | Oversampling | Randomly duplicates existing minority class samples. | Simple, fast baseline; very low computational cost. | High risk of overfitting; does not add new information [35]. |
| SMOTE [36] | Oversampling | Generates synthetic minority samples by interpolating between neighboring instances. | General-purpose use; introduces new data points beyond duplication. | Can generate noise in overlapping class regions; can create "line bridges" to majority classes [36]. |