Benchmarking Computational Methods for Multi-Omics Data Integration: A Comprehensive Guide for Biomedical Research

Abstract

The integration of multi-omics data is revolutionizing biomedical research, particularly in drug discovery and clinical outcome prediction. However, the rapid development of diverse computational methods presents a significant challenge for researchers in selecting and applying the most appropriate techniques. This article provides a systematic benchmark and comprehensive guide to navigating this complex landscape. We explore the foundational principles of multi-omics integration, categorize and evaluate state-of-the-art methodological frameworks, address common troubleshooting and optimization challenges, and present rigorous validation and comparative analysis strategies. By synthesizing insights from recent large-scale benchmarking studies, we equip researchers and drug development professionals with the knowledge to effectively leverage multi-omics data, enhance predictive accuracy, and derive robust biological insights.

The Multi-Omics Integration Landscape: Why Benchmarking is Essential for Modern Biology

Defining the Multi-Omics Data Integration Challenge

Multi-omics data integration represents a paradigm shift in biomedical research, moving from the isolated analysis of individual biological layers to a holistic approach that combines genomics, transcriptomics, proteomics, epigenomics, and metabolomics. This integrated strategy enables researchers to construct comprehensive models of biological systems, revealing the complex interplay between different molecular levels that underpin health and disease [1] [2]. The fundamental challenge lies in developing computational methods capable of harmonizing these diverse data types, which vary in scale, structure, and biological context, to extract meaningful biological insights that would remain hidden when analyzing each dataset independently [3].

The urgency of addressing this challenge is reflected in major research initiatives, such as the NIH's $50.3 million Multi-Omics for Health and Disease program, which recognizes the transformative potential of these approaches for precision medicine [2]. As the field rapidly advances, researchers face a critical need for objective benchmarks to navigate the growing landscape of computational integration methods and select the most appropriate tools for their specific biological questions and data types [4] [5].

Performance Benchmarking: Comparative Analysis of Integration Methods

Single-Cell Multimodal Omics Integration

Systematic benchmarking of single-cell multimodal integration methods has revealed significant performance variations across different data modalities and analytical tasks. A comprehensive 2025 evaluation of 40 integration methods categorized approaches into four prototypical integration types—vertical, diagonal, mosaic, and cross—and assessed them across seven common computational tasks [4].

Table 1: Top-Performing Single-Cell Multi-Omics Integration Methods by Data Modality

| Data Modality | Top-Performing Methods | Key Strengths | Reference |
|---|---|---|---|
| RNA + ADT | Seurat WNN, sciPENN, Multigrate | Effective preservation of biological variation in cell types | [4] |
| RNA + ATAC | Seurat WNN, Multigrate, Matilda, UnitedNet | Strong performance across diverse datasets | [4] |
| RNA + ADT + ATAC | Seurat WNN, MIRA, scMoMaT | Capable of handling trimodal integration | [4] |
| General Prediction | totalVI, scArches (protein); LS_Lab (chromatin) | Top-performing for cross-modality prediction | [6] |

For feature selection tasks, which identify molecular markers associated with specific cell types, benchmarking has shown that method performance varies significantly. Matilda and scMoMaT demonstrate strength in identifying cell-type-specific markers, while MOFA+ generates more reproducible feature selection results across different data modalities despite its limitation in selecting only cell-type-invariant markers [4].
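Reproducibility of feature selection can be quantified in several ways. Here is a minimal sketch, assuming reproducibility is scored as the mean pairwise Jaccard overlap of the top-k markers selected in repeated runs or from different modalities. The benchmark's own metric may be defined differently, and the function names, gene list, and scores are hypothetical.

```python
from itertools import combinations

import numpy as np


def top_k_features(scores, feature_names, k):
    """Names of the k features with the highest importance scores."""
    order = np.argsort(scores)[::-1][:k]
    return {feature_names[i] for i in order}


def topk_jaccard_reproducibility(score_runs, feature_names, k):
    """Mean pairwise Jaccard index between the top-k feature sets of several runs.

    1.0 means every run selected the same k features; 0.0 means no overlap at all.
    """
    sets = [top_k_features(s, feature_names, k) for s in score_runs]
    jaccards = [len(a & b) / len(a | b) for a, b in combinations(sets, 2)]
    return float(np.mean(jaccards))


# Hypothetical marker scores for one cell type from three runs (or modalities)
genes = ["CD3E", "CD19", "LYZ", "NKG7", "MS4A1", "GNLY"]
run_a = np.array([0.90, 0.10, 0.20, 0.80, 0.05, 0.70])
run_b = np.array([0.85, 0.20, 0.10, 0.75, 0.10, 0.60])
run_c = np.array([0.90, 0.70, 0.10, 0.20, 0.10, 0.80])
print(topk_jaccard_reproducibility([run_a, run_b, run_c], genes, k=3))  # 0.666...
```

Runs a and b agree completely, while run c swaps one marker, so two of the three pairs score 0.5 and the mean is 2/3.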

Bulk Multi-Omics Integration for Cancer Subtyping

In bulk multi-omics integration for cancer subtyping, comprehensive benchmarking using The Cancer Genome Atlas (TCGA) data has identified several high-performing methods. A recent study evaluated twelve established machine learning methods across nine cancer types and eleven combinations of four multi-omics data types (genomics, transcriptomics, proteomics, and epigenomics) [5].
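The figure of eleven combinations matches the number of subsets of the four omics types that contain at least two layers: 6 pairs, 4 triples, and the full set of four. A short enumeration makes the count explicit. The layer labels are simply the four types named above.

```python
from itertools import combinations

OMICS = ["genomics", "transcriptomics", "proteomics", "epigenomics"]

# Multi-omics configurations use at least two layers: 6 pairs + 4 triples + 1 quadruple
combos = [c for r in range(2, len(OMICS) + 1) for c in combinations(OMICS, r)]

for c in combos:
    print(" + ".join(c))
print(len(combos))  # 11
```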

Table 2: Performance Benchmarking of Machine Learning Methods for Multi-Omics Cancer Subtyping

| Method | Silhouette Score | Clinical Relevance (log-rank-derived score) | Robustness (NMI) | Computational Efficiency (seconds) |
|---|---|---|---|---|
| iClusterBayes | 0.89 | 0.75 | 0.85 | 180 |
| Subtype-GAN | 0.87 | 0.72 | 0.82 | 60 |
| SNF | 0.86 | 0.76 | 0.84 | 100 |
| NEMO | 0.84 | 0.78 | 0.86 | 80 |
| PINS | 0.82 | 0.79 | 0.83 | 120 |
| LRAcluster | 0.81 | 0.74 | 0.89 | 200 |

The benchmarking revealed that NEMO achieved the highest composite score (0.89), excelling in both clustering performance and clinical significance, while LRAcluster demonstrated exceptional robustness to noise, maintaining an average normalized mutual information (NMI) score of 0.89 with increasing noise levels [5]. Interestingly, the study found that using combinations of two or three omics types frequently outperformed configurations incorporating four or more types, highlighting the challenge of noise and redundancy in highly multidimensional data [5].

Spatial Multi-Omics Integration

Spatial transcriptomics technologies present unique integration challenges due to the added dimension of spatial context. A 2025 benchmarking study evaluated 12 multi-slice integration methods across 19 diverse datasets from seven technologies, including 10X Visium, MERFISH, and STARMap [7].

The evaluation revealed substantial task-dependent and data-dependent performance variations. For batch effect correction, GraphST-PASTE demonstrated superior performance (mean bASW 0.940, mean iLISI 0.713, mean GC 0.527), while MENDER, STAIG, and SpaDo excelled at preserving biological variance in spatial data [7]. The study also identified strong interdependencies between upstream integration quality and downstream application performance, emphasizing the importance of selecting methods based on specific analytical goals [7].
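The batch-mixing scores quoted above can be illustrated with a minimal sketch. It assumes the definitions widely used in single-cell benchmarking. Batch ASW is 1 minus the absolute silhouette of batch labels, computed within each cell type. iLISI is the inverse Simpson index of batch labels in each cell's neighborhood, rescaled to [0, 1]. This iLISI is simplified to an unweighted k-nearest-neighbor window instead of the perplexity-based kernel of the original LISI. Graph connectivity (GC) is omitted, and the cited study's exact implementation may differ.

```python
import numpy as np
from sklearn.metrics import silhouette_samples


def batch_asw(emb, batch, cell_type):
    """Batch ASW: mean of 1 - |silhouette on batch labels|, computed within each cell type.

    Values near 1 mean batches are well mixed inside every cell type.
    """
    per_type = []
    for ct in np.unique(cell_type):
        mask = cell_type == ct
        if len(np.unique(batch[mask])) < 2:
            continue  # silhouette needs at least two batches within the cell type
        s = silhouette_samples(emb[mask], batch[mask])
        per_type.append(np.mean(1.0 - np.abs(s)))
    return float(np.mean(per_type))


def ilisi_knn(emb, batch, k=15):
    """Simplified iLISI: inverse Simpson index of batch labels among each cell's k nearest
    neighbors (unweighted), rescaled so 0 = no mixing and 1 = perfectly even mixing."""
    batches = np.unique(batch)
    dist = np.linalg.norm(emb[:, None, :] - emb[None, :, :], axis=-1)
    np.fill_diagonal(dist, np.inf)  # a cell is not its own neighbor
    neighbors = np.argsort(dist, axis=1)[:, :k]
    lisi = []
    for nbrs in neighbors:
        p = np.array([(batch[nbrs] == b).mean() for b in batches])
        lisi.append(1.0 / np.sum(p ** 2))
    return float((np.mean(lisi) - 1.0) / (len(batches) - 1.0))


# Toy embedding: two cell types, two batches, with and without an additive batch shift
rng = np.random.default_rng(0)
cell_type = np.repeat(["T", "B"], 100)
batch = np.tile(["b1", "b2"], 100)
mixed = rng.normal(size=(200, 10)) + (cell_type == "B")[:, None] * 5.0
shifted = mixed + (batch == "b2")[:, None] * 8.0
for name, emb in [("mixed", mixed), ("batch effect", shifted)]:
    print(name, batch_asw(emb, batch, cell_type), ilisi_knn(emb, batch))
```

Both scores drop sharply for the shifted embedding, where every cell's neighbors come from its own batch.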

Experimental Protocols and Benchmarking Methodologies

Benchmarking Frameworks for Single-Cell Multimodal Data

The registered report protocol from Nature Methods outlines a comprehensive benchmarking framework for single-cell multimodal omics integration methods [4]. The experimental design incorporates multiple evaluation tasks assessed through tailored metrics:

- Dimension Reduction: Evaluated using cell-type separation metrics in low-dimensional embeddings

- Batch Correction: Assessed through batch mixing metrics while preserving biological variance

- Clustering: Measured by clustering accuracy against known cell-type labels

- Classification: Evaluated by cell-type prediction accuracy

- Feature Selection: Assessed by marker relevance and reproducibility

- Imputation: Measured by accuracy in predicting missing values

- Spatial Registration: Evaluated for spatial data alignment accuracy

The protocol employs 64 real datasets and 22 simulated datasets encompassing various modality combinations, including RNA+ADT, RNA+ATAC, and RNA+ADT+ATAC. Evaluation metrics include adjusted rand index (ARI), normalized mutual information (NMI), average silhouette width (ASW), and label transfer accuracy, providing a multi-faceted assessment of method performance [4].
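Most of the clustering and classification metrics listed above are available in scikit-learn. The sketch below shows one way they could be computed on an integrated embedding. It is an illustrative assumption, not the protocol's own code. k-means stands in for whatever clustering the benchmark uses. Label transfer is approximated by a k-nearest-neighbor classifier trained on a random reference half of the cells. ASW is rescaled from [-1, 1] to [0, 1].

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.metrics import (accuracy_score, adjusted_rand_score,
                             normalized_mutual_info_score, silhouette_score)
from sklearn.neighbors import KNeighborsClassifier


def evaluate_embedding(emb, labels, n_clusters, seed=0):
    """Score an integrated embedding against known cell-type labels."""
    clusters = KMeans(n_clusters=n_clusters, n_init=10, random_state=seed).fit_predict(emb)
    # Label transfer: fit on a random "reference" half, predict the "query" half
    rng = np.random.default_rng(seed)
    ref = rng.random(len(labels)) < 0.5
    knn = KNeighborsClassifier(n_neighbors=15).fit(emb[ref], labels[ref])
    return {
        "ARI": adjusted_rand_score(labels, clusters),
        "NMI": normalized_mutual_info_score(labels, clusters),
        "ASW": (silhouette_score(emb, labels) + 1) / 2,  # rescaled to [0, 1]
        "transfer_acc": accuracy_score(labels[~ref], knn.predict(emb[~ref])),
    }


# Toy embedding: three well-separated cell types in 20 dimensions
rng = np.random.default_rng(0)
labels = np.repeat([0, 1, 2], 50)
emb = rng.normal(scale=10, size=(3, 20))[labels] + rng.normal(size=(150, 20))
print(evaluate_embedding(emb, labels, n_clusters=3))
```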

Bulk Multi-Omics Cancer Subtyping Benchmarking

The benchmarking methodology for bulk multi-omics cancer subtyping utilizes TCGA data across nine cancer types, creating eleven possible combinations of four omics types [5]. The experimental protocol includes:

- Data Preprocessing: Standardized normalization and quality control across all omics datasets

- Method Evaluation: Four key performance dimensions assessed for each method

- Robustness Testing: Introduction of progressive noise levels to evaluate method stability

- Clinical Validation: Survival analysis to assess clinical relevance of identified subtypes

Performance metrics include silhouette scores for clustering quality, log-rank p-values for clinical significance, normalized mutual information (NMI) for robustness, and execution time for computational efficiency [5]. The framework employs k-fold cross-validation to ensure reliable performance estimates.
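The robustness and efficiency steps can be sketched as follows. The sketch makes three assumptions. Gaussian noise is scaled to each feature's standard deviation. k-means stands in for the subtyping method being benchmarked. NMI is measured against the partition obtained on noise-free data. The noise levels are hypothetical, and the cited study's noise model may differ.

```python
import time

import numpy as np
from sklearn.cluster import KMeans
from sklearn.metrics import normalized_mutual_info_score


def cluster(X, k, seed=0):
    """Stand-in for the subtyping method under test."""
    return KMeans(n_clusters=k, n_init=10, random_state=seed).fit_predict(X)


def robustness_curve(X, k, noise_levels=(0.1, 0.5, 1.0, 2.0), seed=0):
    """NMI between the clean-data partition and partitions of noisier copies of X,
    plus the wall-clock time of one clean run."""
    rng = np.random.default_rng(seed)
    start = time.perf_counter()
    reference = cluster(X, k, seed)
    runtime = time.perf_counter() - start
    sd = X.std(axis=0, keepdims=True)  # noise is scaled per feature
    curve = {}
    for level in noise_levels:
        noisy = X + rng.normal(size=X.shape) * sd * level
        curve[level] = normalized_mutual_info_score(reference, cluster(noisy, k, seed))
    return curve, runtime


rng = np.random.default_rng(1)
subtypes = np.repeat([0, 1, 2], 40)
X = rng.normal(scale=8, size=(3, 30))[subtypes] + rng.normal(size=(120, 30))
curve, runtime = robustness_curve(X, k=3)
print(curve, f"{runtime:.3f} s")
```

A method is considered robust when its NMI stays high as the noise level grows. Averaging the curve gives a single robustness score comparable to the NMI values reported in the benchmark.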

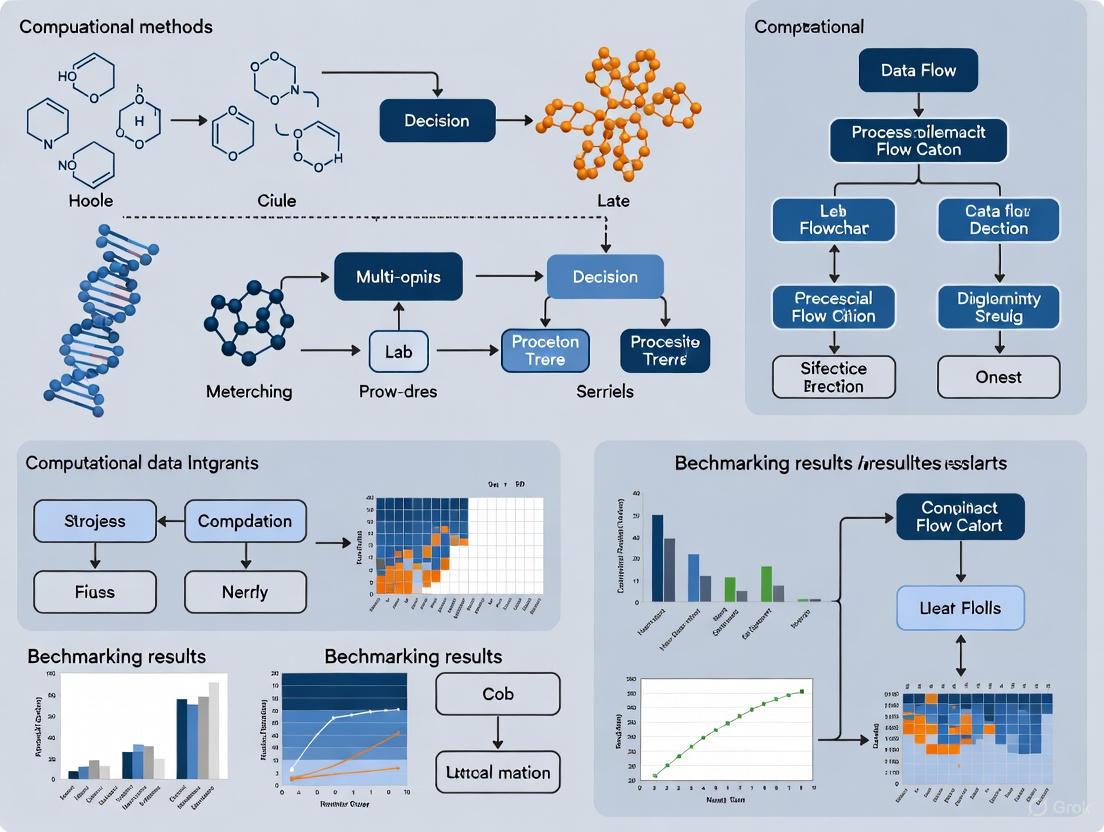

Workflow Visualization of Multi-Omics Benchmarking

The following diagram illustrates the comprehensive benchmarking workflow for multi-omics integration methods:

Multi-Omics Benchmarking Workflow

Successful multi-omics integration requires not only computational methods but also specialized data resources and analytical tools. The following table outlines key components of the multi-omics research toolkit:

Table 3: Essential Research Reagents and Computational Tools for Multi-Omics Integration

| Resource Type | Specific Examples | Function and Application | Reference |
|---|---|---|---|
| Data Repositories | TCGA, CCLE, Human Cell Atlas | Provide standardized multi-omics datasets for method development and testing | [8] |
| ncRNA Databases | ncRNA-disease databases (Lnc2Cancer, ncRPheno) | Curated associations for non-coding RNA disease research | [9] |
| Analysis Platforms | GraphOmics, Flexynesis, CustOmics | Integrated environments for multi-omics data visualization and analysis | [8] [9] |
| Benchmarking Frameworks | iSTBench, Multi-omics method benchmarks | Standardized pipelines for method evaluation and comparison | [4] [7] |
| Visualization Tools | UMAP, t-SNE, spatial mapping tools | Dimensionality reduction and spatial data visualization | [4] [7] |

Flexynesis represents a notable advancement in deep learning toolkits, addressing critical limitations in reusable, modular multi-omics analysis by providing standardized interfaces for data processing, feature selection, and hyperparameter tuning across diverse modeling tasks including regression, classification, and survival analysis [8]. This toolkit supports both deep learning architectures and classical machine learning methods, enabling comprehensive benchmarking within a unified framework.

Methodological Relationships and Integration Categories

The landscape of multi-omics integration methods can be categorized by their underlying computational approaches and integration strategies, as visualized in the following diagram:

Method Relationships and Categories

The benchmarking data presented in this guide reveals a consistent theme: there is no universally superior method for multi-omics data integration. Method performance is highly dependent on specific data modalities, analytical tasks, and dataset characteristics [4] [5] [7]. This context-dependent performance underscores the importance of selective method adoption based on specific research objectives rather than seeking a one-size-fits-all solution.

Several key findings emerge from current benchmarking studies. First, method performance varies significantly across data modalities: a method excelling with RNA+ADT data may not maintain its advantage with RNA+ATAC data [4]. Second, more data does not always yield better outcomes, as evidenced by the superior performance of two- or three-omics combinations compared with four or more types in cancer subtyping [5]. Third, strong interdependencies exist between upstream integration quality and downstream application performance, particularly in spatial transcriptomics [7].

As the field advances, promising directions include the development of flexible, modular frameworks like Flexynesis that support both deep learning and classical machine learning approaches [8], increased attention to model interpretability [9], and the creation of comprehensive benchmarking resources that enable researchers to select optimal methods for their specific multi-omics integration challenges.

The field of biomedical research has witnessed a paradigm shift from single-omics analyses toward integrated multi-omics approaches, driven by the recognition that complex biological systems cannot be fully understood by examining individual molecular layers in isolation. This transition is particularly evident in precision oncology and drug discovery, where molecular heterogeneity demands sophisticated analytical frameworks that can capture interactions across genomic, transcriptomic, proteomic, and epigenomic strata [10]. The integration of these diverse data types enables researchers to move beyond snapshots of individual biological processes toward system-level understanding of disease mechanisms and therapeutic responses.

Multi-omics integration faces significant computational challenges stemming from data heterogeneity, with dimensional disparities ranging from millions of genetic variants to thousands of metabolites, creating a "curse of dimensionality" that necessitates sophisticated feature reduction techniques [10]. Additional complexities include temporal heterogeneity in molecular processes, platform-specific technical variability, and pervasive missing data issues. Despite these challenges, the potential rewards are substantial, with integrated multi-omics approaches demonstrating transformative potential across the therapeutic development spectrum, from initial target identification to clinical outcome prediction and treatment optimization [11] [12].
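One common way to tame this dimensional disparity is to reduce each omics block separately before concatenating the blocks. This is an illustrative sketch, not the method of any cited study. Each block is standardized, its most variable features are kept, and it is projected onto a few principal components. Each block is then rescaled to equal total variance, so that a block with millions of features cannot dominate one with thousands. The block names, sizes, and `n_top`/`n_components` values are hypothetical.

```python
import numpy as np


def reduce_block(X, n_top=500, n_components=10):
    """Keep the n_top most variable features, z-score them, and return PCA scores
    scaled so that the block's total variance equals 1."""
    keep = np.argsort(X.var(axis=0))[::-1][: min(n_top, X.shape[1])]
    Z = X[:, keep]
    Z = (Z - Z.mean(axis=0)) / (Z.std(axis=0) + 1e-8)
    U, S, _ = np.linalg.svd(Z, full_matrices=False)  # PCA via SVD of the centered block
    k = min(n_components, len(S))
    scores = U[:, :k] * S[:k]
    return scores / np.sqrt((scores ** 2).sum() / len(scores))


def early_integrate(blocks, **kwargs):
    """Concatenate per-block reduced representations (samples must be aligned)."""
    return np.hstack([reduce_block(X, **kwargs) for X in blocks.values()])


# Hypothetical blocks with very different widths, 100 samples each
rng = np.random.default_rng(0)
blocks = {
    "methylation": rng.normal(size=(100, 5000)),
    "rna": rng.normal(size=(100, 2000)),
    "metabolites": rng.normal(size=(100, 300)),
}
Z = early_integrate(blocks, n_top=500, n_components=10)
print(Z.shape)  # (100, 30)
```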

Computational Method Benchmarking: Strategies and Performance Metrics

Benchmarking Frameworks and Evaluation Metrics

The growing diversity of multi-omics integration methods has created an urgent need for comprehensive benchmarking studies to guide researchers in selecting appropriate analytical tools. Effective benchmarking requires evaluation metrics that assess different aspects of method performance across multiple data processing stages. A recent benchmark of single-cell chromatin accessibility pipelines, for example, evaluated methods at three distinct levels (cell embeddings, graph structure, and final partitions), employing a suite of metrics at each level [13].

For cancer subtyping applications, key performance metrics include clustering accuracy, clinical relevance, robustness, and computational efficiency [5]. The Adjusted Rand Index (ARI) measures similarity between computational clustering results and known biological classifications, while the Silhouette Width assesses separation between identified clusters. Clinical relevance is often evaluated through survival analysis, with methods that identify subtypes showing significant differences in patient outcomes considered more clinically meaningful [14]. Robustness measures a method's stability when dealing with noisy data, and computational efficiency evaluates scalability to large datasets.
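Clinical relevance in these benchmarks rests on the log-rank test of survival differences between identified subtypes. Below is a from-scratch sketch of the K-group log-rank test. It assumes right-censored input given as follow-up times, event indicators (1 = event, 0 = censored), and subtype labels. In practice, a maintained survival-analysis library would normally be preferred.

```python
import numpy as np
from scipy.stats import chi2


def logrank_test(times, events, groups):
    """K-group log-rank test. Returns (chi-square statistic, p-value)."""
    times, events, groups = map(np.asarray, (times, events, groups))
    labels = np.unique(groups)
    K = len(labels)
    O = np.zeros(K)       # observed events per group
    E = np.zeros(K)       # expected events per group under H0 (no survival difference)
    V = np.zeros((K, K))  # covariance matrix of O - E
    for t in np.unique(times[events == 1]):
        at_risk = times >= t
        n = at_risk.sum()
        died = (times == t) & (events == 1)
        d = died.sum()
        n_k = np.array([(at_risk & (groups == g)).sum() for g in labels])
        O += np.array([(died & (groups == g)).sum() for g in labels])
        E += d * n_k / n
        if n > 1:
            frac = n_k / n
            V += d * (n - d) / (n - 1) * (np.diag(frac) - np.outer(frac, frac))
    # The K deviations sum to zero, so drop one group to get an invertible covariance
    diff = (O - E)[:-1]
    stat = float(diff @ np.linalg.solve(V[:-1, :-1], diff))
    return stat, float(chi2.sf(stat, df=K - 1))


# Toy cohort: subtype A has early events, subtype B late events
times = list(range(1, 11)) + list(range(20, 30))
events = [1] * 20
subtype = ["A"] * 10 + ["B"] * 10
print(logrank_test(times, events, subtype))
```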

Table 1: Key Performance Metrics for Multi-Omics Integration Methods

| Metric Category | Specific Metrics | Interpretation | Ideal Value |
|---|---|---|---|
| Clustering Accuracy | Adjusted Rand Index (ARI) | Similarity between computational clusters and true biological classes | Closer to 1.0 |
| | Silhouette Width | Measure of cluster separation and cohesion | Closer to 1.0 |
| | Normalized Mutual Information (NMI) | Information-theoretic measure of clustering quality | Closer to 1.0 |
| Clinical Relevance | Log-rank p-value | Significance of survival differences between subtypes | < 0.05 |
| | Hazard Ratio | Effect size for survival differences between subtypes | Further from 1.0 (in either direction) |
| Robustness | Consistency across noise levels | Performance maintenance with added noise | Minimal degradation |
| Computational Efficiency | Execution time | Time required for analysis | Shorter preferred |
| | Memory usage | Computational resources required | Lower preferred |

Performance Comparison of Integration Methods

Recent benchmarking studies have evaluated numerous multi-omics integration methods across diverse datasets and cancer types. One comprehensive assessment of twelve machine learning methods for cancer subtyping found that iClusterBayes achieved the highest silhouette score (0.89 at its optimal k), followed closely by Subtype-GAN (0.87) and SNF (0.86), indicating strong clustering capabilities [5]. NEMO and PINS achieved the highest clinical-relevance scores derived from log-rank survival analysis (0.78 and 0.79, respectively), effectively identifying cancer subtypes with meaningful survival differences.

In robustness testing, LRAcluster emerged as the most resilient method, maintaining an average normalized mutual information (NMI) score of 0.89 even as noise levels increased, a crucial attribute for real-world data applications where technical and biological noise is inevitable [5]. For computational efficiency, Subtype-GAN stood out as the fastest method, completing analyses in just 60 seconds, while NEMO and SNF demonstrated commendable efficiency with execution times of 80 and 100 seconds, respectively. When considering overall performance across multiple metrics, NEMO ranked highest with a composite score of 0.89, showcasing its strengths in both clustering and clinical applications [5].

For single-cell chromatin data analysis, benchmarking of eight feature engineering pipelines revealed that feature aggregation, SnapATAC, and SnapATAC2 generally outperformed latent semantic indexing-based methods [13]. For datasets with complex cell-type structures, SnapATAC and SnapATAC2 were preferred, while for large datasets, SnapATAC2 and ArchR demonstrated superior scalability.
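Latent semantic indexing (LSI) follows a simple recipe. The cell-by-peak matrix is binarized and TF-IDF transformed, then reduced with a truncated SVD. The first component is usually discarded because it tends to track sequencing depth. The sketch below uses one common log-scaled TF-IDF variant and is an assumption for illustration. Signac, ArchR, and SnapATAC2 each implement their own normalization details.

```python
import numpy as np


def lsi(counts, n_components=10, drop_first=True):
    """TF-IDF + truncated SVD on a cells x peaks count matrix."""
    X = (np.asarray(counts) > 0).astype(float)            # binarize accessibility
    tf = X / np.maximum(X.sum(axis=1, keepdims=True), 1)  # term frequency per cell
    idf = X.shape[0] / np.maximum(X.sum(axis=0), 1)       # inverse document frequency per peak
    tfidf = np.log1p(tf * idf * 1e4)                      # log-scaled TF-IDF
    U, S, _ = np.linalg.svd(tfidf, full_matrices=False)
    start = 1 if drop_first else 0                        # optionally skip the depth-linked component
    return U[:, start:start + n_components] * S[start:start + n_components]


# Hypothetical sparse accessibility counts: 300 cells x 2,000 peaks
rng = np.random.default_rng(0)
counts = rng.poisson(0.05, size=(300, 2000))
embedding = lsi(counts, n_components=10)
print(embedding.shape)  # (300, 10)
```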

Table 2: Performance Comparison of Multi-Omics Integration Methods for Cancer Subtyping

| Method | Category | Clustering Accuracy (Silhouette Score) | Clinical Relevance (Log-rank-derived Score) | Robustness (NMI with Noise) | Computational Efficiency (Execution Time) |
|---|---|---|---|---|---|
| NEMO | Network-based | 0.84 | 0.78 | 0.85 | 80 seconds |
| iClusterBayes | Statistics-based | 0.89 | 0.75 | 0.82 | >300 seconds |
| Subtype-GAN | Deep Learning | 0.87 | 0.72 | 0.80 | 60 seconds |
| SNF | Network-based | 0.86 | 0.76 | 0.83 | 100 seconds |
| PINS | Consensus Clustering | 0.82 | 0.79 | 0.84 | 120 seconds |
| LRAcluster | Statistics-based | 0.80 | 0.70 | 0.89 | 150 seconds |

Key Application 1: Drug Target Identification and Validation

Technological Advances and Workflows

Drug target identification represents one of the most significant applications of multi-omics integration, with technological advances enabling a systematic approach to discovering and validating therapeutic targets. The traditional reliance on single-omics technologies has progressively shifted toward integrated multi-omics techniques, as it has become increasingly apparent that no single omics level can adequately elucidate the causal connections between drugs and the emergence of complex phenotypes [11]. This evolution has been facilitated by progress in large-scale sequencing and the development of high-throughput technologies that simultaneously capture multiple molecular dimensions.

Multi-omics approaches are now utilized throughout the drug discovery process, addressing challenges in target identification, target validation, and preclinical development [12]. In target identification, techniques such as laser-capture microdissection coupled with RNA sequencing enable characterization of rare cell populations. In schizophrenia research, for example, applying this approach to parvalbumin interneurons pinpointed GluN2D as a potential drug target [12]. Proteogenomic integration further enhances this process by connecting genomic alterations with their functional protein-level consequences, providing stronger evidence for target-disease relationships.

Diagram 1: Multi-omics drug target identification workflow

Experimental Protocols and Applications

A representative experimental protocol for target identification integrates genomic, transcriptomic, and epigenomic data from patient samples to pinpoint dysregulated pathways and novel therapeutic targets. The process begins with sample preparation using techniques such as laser-capture microdissection to isolate specific cell populations of interest, followed by multi-omic profiling through RNA sequencing, ATAC-seq for chromatin accessibility, and whole-genome or exome sequencing [12]. The resulting data undergoes computational integration using methods such as network-based approaches that map multiple omics datasets onto shared biochemical networks to improve mechanistic understanding [1].
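Network-based integration of this kind can take many forms. One illustrative possibility, not necessarily the approach used in the cited work, uses network propagation. Per-gene evidence from several omics layers is combined into a seed vector, which is propagated over a gene-gene interaction network by random walk with restart. Genes close to many dysregulated neighbors are thereby prioritized even without direct evidence of their own. The toy network, gene labels, scores, and restart probability below are hypothetical.

```python
import numpy as np


def random_walk_with_restart(adj, seed_scores, restart=0.3, tol=1e-10, max_iter=1000):
    """Iterate p <- (1 - r) W p + r p0 until convergence, with W column-normalized."""
    W = adj / np.maximum(adj.sum(axis=0, keepdims=True), 1e-12)
    p0 = seed_scores / seed_scores.sum()
    p = p0.copy()
    for _ in range(max_iter):
        p_next = (1 - restart) * (W @ p) + restart * p0
        if np.abs(p_next - p).sum() < tol:
            return p_next
        p = p_next
    return p


# Hypothetical 5-gene network (G1..G5) and per-gene evidence from three omics layers
genes = ["G1", "G2", "G3", "G4", "G5"]
adj = np.array([[0, 1, 1, 0, 0],
                [1, 0, 1, 0, 0],
                [1, 1, 0, 1, 0],
                [0, 0, 1, 0, 1],
                [0, 0, 0, 1, 0]], dtype=float)
expression_z = np.array([2.5, 0.2, 1.8, 0.0, 0.1])
mutated = np.array([1, 0, 1, 0, 0], dtype=float)
methylation_delta = np.array([0.3, 0.0, 0.4, 0.0, 0.0])
# Seed: sum of per-layer evidence, each layer scaled to a maximum of 1
seed = (np.abs(expression_z) / np.abs(expression_z).max()
        + mutated + methylation_delta / methylation_delta.max())
scores = random_walk_with_restart(adj, seed)
for g, s in sorted(zip(genes, scores), key=lambda t: -t[1]):
    print(g, round(float(s), 3))
```

G4 has no seed evidence but still receives a nonzero score through its neighbor G3. This is the kind of network-level prioritization that mapping omics data onto shared networks is meant to provide.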

In one exemplar application, researchers used this approach to identify GluN2D as a potential target for schizophrenia treatment. After identifying parvalbumin interneurons as crucial players in the disease pathology, they employed laser-capture microdissection followed by RNA-seq to characterize the druggable transcriptome of this rare cell population [12]. Integration of this transcriptomic data with genomic association studies enabled prioritization of GluN2D as a specific target within the glutamate receptor system. This case highlights how multi-omics integration can overcome the limitations of bulk tissue analysis and enable target discovery in specific cellular subpopulations.

Key Application 2: Cancer Subtyping and Molecular Stratification

Methodologies and Data Combinations

Cancer subtyping represents one of the most mature applications of multi-omics integration, addressing the critical need to decompose cancer heterogeneity into molecularly distinct subgroups with clinical relevance. The foundational principle is that different omics layers provide complementary information that collectively enables more accurate classification of cancer subtypes than any single data type alone. Genomics identifies DNA-level alterations including single-nucleotide variants and copy number variations; transcriptomics reveals gene expression dynamics; epigenomics characterizes DNA methylation and chromatin accessibility; and proteomics catalogs functional effectors of cellular processes [10].

Benchmarking studies have yielded the surprising finding that more data does not always yield better outcomes. Combinations of two or three omics types frequently outperformed configurations that included four or more types, because the additional layers introduced more noise and redundancy [5]. In particular, genomics + transcriptomics, genomics + epigenomics, and transcriptomics + proteomics consistently performed well across multiple cancer types [14]. This counterintuitive finding highlights the importance of strategic data selection rather than simply maximizing the number of data types.

Evaluation Frameworks and Clinical Translation

Robust evaluation of cancer subtyping methods requires frameworks that assess both computational performance and clinical relevance. A comprehensive benchmarking study evaluated ten representative integration methods across nine cancer types from TCGA, considering all eleven possible combinations of four multi-omics data types [14]. The evaluation encompassed clustering accuracy measured by how well methods recapitulated known biological classifications, clinical significance assessed through survival analysis and correlation with clinical parameters, robustness to noise and data perturbations, and computational efficiency including runtime and memory requirements.

The clinical translation of multi-omics subtyping is particularly evident in oncology, where molecular stratification now guides standard care protocols. In breast cancer, ESR1 mutations direct endocrine therapy selection; in non-small cell lung cancer, EGFR/ALK alterations predict tyrosine kinase inhibitor efficacy; and in diffuse large B-cell lymphoma, cell-of-origin transcriptomic subtyping informs chemotherapy response [10]. Importantly, multi-omics approaches can reveal resistance mechanisms that single-modality biomarkers miss, such as parallel pathway activation or epigenetic remodeling that drives resistance to targeted therapies like KRAS G12C inhibitors [10].

Key Application 3: Clinical Outcome Prediction and Predictive Allocation

Predictive Modeling and Validation

Clinical outcome prediction represents a frontier application of multi-omics integration, moving beyond descriptive classification toward prognostic forecasting and treatment response prediction. Artificial intelligence approaches, particularly machine learning and deep learning, have emerged as essential scaffolds bridging multi-omics data to clinical predictions by identifying non-linear patterns across high-dimensional spaces [10]. For example, convolutional neural networks automatically quantify immunohistochemistry staining with pathologist-level accuracy, graph neural networks model protein-protein interaction networks perturbed by somatic mutations, and multi-modal transformers fuse MRI radiomics with transcriptomic data to predict glioma progression [10].

A critical advancement in this domain is the concept of predictive allocation, a two-stage approach where outcome models first derive expected treatment benefit, followed by treatment assignment based on minimizing the individual's probability of experiencing a negative outcome [15]. This approach addresses the fundamental limitation of traditional evidence-based medicine, which relies on population-level evidence from randomized clinical trials that overlook heterogeneity of treatment effects between individuals. Simulation studies using data from pediatric cardiology trials demonstrated that predictive allocation could yield absolute risk reductions of 13.8-15.6%, corresponding to numbers needed to treat of 6.4-7.3, significantly improving upon guideline-based therapy [15].
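The two-stage logic of predictive allocation can be sketched as below, together with the arithmetic linking absolute risk reduction (ARR) to number needed to treat (NNT = 1 / ARR). The predicted risks are hypothetical placeholders for the output of stage-one outcome models, and the "guideline" comparator is a hypothetical rule. The sketch only illustrates the allocation rule and the ARR/NNT calculation and does not reproduce the cited simulation. ARR is computed from the model's own predicted risks, which is an optimistic simplification.

```python
import numpy as np


def predictive_allocation(risk_by_treatment):
    """Stage 2: give each patient the treatment with the lowest predicted risk.

    risk_by_treatment has shape (n_patients, n_treatments) and holds the stage-1
    model's predicted probability of the negative outcome under each treatment.
    Ties go to the lower-indexed treatment.
    """
    return np.argmin(risk_by_treatment, axis=1)


def arr_and_nnt(risk_by_treatment, comparator, allocated):
    """Expected ARR of `allocated` vs. `comparator` assignments, and NNT = 1 / ARR.

    Uses the model's own predicted risks as outcome probabilities (optimistic).
    """
    idx = np.arange(len(risk_by_treatment))
    arr = (risk_by_treatment[idx, comparator].mean()
           - risk_by_treatment[idx, allocated].mean())
    nnt = 1.0 / arr if arr > 0 else float("inf")
    return float(arr), float(nnt)


# Hypothetical predicted risks for four patients under treatments 0 and 1
risks = np.array([[0.40, 0.20],
                  [0.10, 0.30],
                  [0.50, 0.45],
                  [0.25, 0.25]])
guideline = np.zeros(len(risks), dtype=int)  # hypothetical rule: everyone gets treatment 0
allocated = predictive_allocation(risks)     # [1, 0, 1, 0]
arr, nnt = arr_and_nnt(risks, guideline, allocated)
print(allocated, arr, nnt)  # ARR ≈ 0.0625, NNT ≈ 16
```

Because NNT is the reciprocal of ARR, the reported ARR of 15.6% corresponds to an NNT of about 6.4 (1 / 0.156 ≈ 6.41).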

Diagram 2: Predictive allocation for treatment optimization

Implementation Considerations and Challenges

The implementation of multi-omics predictive models in clinical settings faces several significant challenges. Data harmonization is difficult when multi-omics data are generated by different cohorts and laboratories [1]. The "four Vs" of big data (volume, velocity, variety, and veracity) pose formidable analytical challenges, with volume overwhelming conventional biostatistics as dimensionality dwarfs sample sizes in most cohorts [10]. Additionally, model generalizability remains a concern, as performance often degrades when models are applied to independent datasets because of batch effects, population differences, and technical variability.

Explainable AI techniques such as SHapley Additive exPlanations (SHAP) address the "black box" nature of complex models by clarifying how genomic variants contribute to clinical outcome predictions [10]. The net benefit of predictive allocation is directly proportional to the performance of the prediction models and disappears when model performance falls below an area under the curve (AUC) of 0.55, highlighting the importance of robust model development and validation [15]. Emerging approaches to these challenges include federated learning for privacy-preserving multi-institutional collaboration, quantum computing for enhanced computational scalability, and patient-centric "N-of-1" models that signal a paradigm shift toward dynamic, personalized cancer management [10].
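SHAP approximates Shapley values. A Shapley value attributes a prediction to its input features by averaging each feature's marginal contribution over all feature subsets. With only a handful of features, these values can be computed exactly, as in this sketch. The toy risk model, its features, and the reference patient are hypothetical. Treating "absent" features as set to a baseline value is also an assumption, and the SHAP library offers several estimators that handle absence differently.

```python
from itertools import combinations
from math import factorial

import numpy as np


def exact_shapley(predict, x, baseline):
    """Exact Shapley values of predict(x) relative to predict(baseline).

    Features outside a coalition are set to their baseline value.
    """
    n = len(x)
    phi = np.zeros(n)
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for coalition in combinations(others, size):
                z = baseline.astype(float).copy()
                z[list(coalition)] = x[list(coalition)]
                without_i = predict(z)
                z[i] = x[i]  # add feature i to the coalition
                phi[i] += weight * (predict(z) - without_i)
    return phi


def risk_model(v):
    """Hypothetical logistic risk model: variant carrier, expression z-score, age in decades."""
    logit = -2.0 + 1.2 * v[0] + 0.8 * v[1] + 0.3 * v[2] + 0.5 * v[0] * v[1]
    return float(1.0 / (1.0 + np.exp(-logit)))


x = np.array([1.0, 1.5, 6.0])         # patient being explained
baseline = np.array([0.0, 0.0, 5.0])  # hypothetical reference patient
phi = exact_shapley(risk_model, x, baseline)
print(phi, phi.sum(), risk_model(x) - risk_model(baseline))
```

The attributions sum exactly to the difference between the patient's predicted risk and the baseline risk (the efficiency property). Exact enumeration grows exponentially with the number of features, which is why SHAP relies on approximations for genome-scale models.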

Successful multi-omics integration requires both wet-lab reagents for data generation and computational tools for analysis. The table below details key resources essential for conducting multi-omics studies across application domains.

Table 3: Essential Research Reagents and Computational Resources for Multi-Omics Studies

| Category | Specific Resource | Function/Application | Key Features |
|---|---|---|---|
| Sequencing Technologies | scATAC-seq | Profiling chromatin accessibility at single-cell resolution | Identifies open chromatin regions |
| | scCUT&Tag | Mapping histone modifications and transcription factor binding | Low background noise, high sensitivity |
| | Whole Genome Sequencing (WGS) | Comprehensive genomic variant detection | Identifies SNVs, CNVs, structural variants |
| | Spatial Transcriptomics | Mapping gene expression in tissue context | Preserves spatial localization information |
| Computational Tools | Signac | scATAC-seq analysis | Latent Semantic Indexing approach |
| | ArchR | scATAC-seq analysis | Iterative LSI, scalability to large datasets |
| | SnapATAC2 | scATAC-seq analysis | Laplacian eigenmaps, handles complex structures |
| | NEMO | Multi-omics integration | Network-based, high clinical relevance |
| | iClusterBayes | Multi-omics integration | Statistics-based, high clustering accuracy |
| | Subtype-GAN | Multi-omics integration | Deep learning-based, high computational efficiency |
| Data Resources | The Cancer Genome Atlas (TCGA) | Pan-cancer multi-omics reference dataset | Genomic, epigenomic, transcriptomic data |
| | 100,000 Genomes Project | Genomic-phenotypic dataset | Links genomic variants with clinical outcomes |

The integration of multi-omics data represents a transformative approach in biomedical research, with key applications in drug target identification, cancer subtyping, and clinical outcome prediction driving methodological innovation. Benchmarking studies have provided critical insights into the performance characteristics of diverse integration methods, revealing that network-based approaches like NEMO often excel in clinical relevance, while statistics-based methods like iClusterBayes demonstrate strong clustering accuracy, and deep learning approaches like Subtype-GAN offer superior computational efficiency [5] [14].

Future methodological development will likely focus on several key areas: improved scalability to handle increasingly large datasets; enhanced interpretability through explainable AI techniques; better management of missing data through advanced imputation strategies; and more effective data harmonization to integrate disparate data sources [1] [10]. As the field progresses, the combination of multi-omics integration with emerging technologies such as spatial profiling, liquid biopsies, and real-time monitoring promises to further advance personalized medicine, ultimately enabling more precise diagnostic, prognostic, and therapeutic strategies tailored to individual molecular profiles.

The Critical Need for Standardized Benchmarking in a Rapidly Evolving Field

The field of multi-omics data integration is experiencing unprecedented growth, driven by advances in high-throughput technologies that generate complex, multi-dimensional biological data. This explosion of data has led to a proliferation of computational methods designed to integrate different omics layers—including genomics, transcriptomics, epigenomics, and proteomics—to uncover comprehensive biological insights. However, this rapid innovation has created a significant challenge: the lack of standardized benchmarking frameworks to objectively evaluate and compare these methods. Without consistent evaluation standards, researchers struggle to select appropriate integration methods for their specific biological questions and data types, potentially compromising scientific conclusions and hindering translational applications.

This comparison guide examines the current landscape of benchmarking studies for multi-omics integration methods, synthesizing quantitative performance data across different methodologies and applications. By providing structured comparisons of method performance, experimental protocols, and key resources, we aim to equip researchers with the evidence needed to navigate this complex field and make informed methodological choices for their multi-omics studies.

Method Categories and Performance Benchmarks

Computational methods for multi-omics integration employ diverse strategies, each with distinct strengths and limitations. Understanding these categories provides essential context for interpreting benchmarking results and selecting appropriate methods for specific research objectives.

Table 1: Multi-omics Integration Method Categories and Characteristics

| Category | Strengths | Limitations | Typical Applications |
|---|---|---|---|
| Network-based [14] | Robust to missing data, represents complex relationships | Sensitive to similarity metrics, may require extensive tuning | Disease subtyping, patient similarity analysis, regulatory mechanisms |
| Statistics-based [14] [16] | Interpretable, captures uncertainty, probabilistic inference | Computationally intensive, may require strong model assumptions | Disease subtyping, latent factor discovery, biomarker identification |
| Deep Learning-based [17] [16] | Learns complex nonlinear patterns, supports missing data and denoising | High computational demands, limited interpretability, requires large datasets | High-dimensional integration, data imputation, disease subtyping |
| Matrix Factorization [16] | Efficient dimensionality reduction, identifies shared and specific factors | Assumes linearity, does not explicitly model uncertainty | Disease subtyping, molecular pattern identification, biomarker discovery |

Performance Benchmarks Across Applications

Recent benchmarking studies have evaluated method performance across different data types and analytical tasks. The results demonstrate significant performance variation depending on application context, data modalities, and specific tasks.

Table 2: Performance Benchmarks for Single-Cell Multi-omics Integration Methods [4]

| Method Category | Data Modalities | Top-Performing Methods | Key Performance Metrics |
|---|---|---|---|
| Vertical Integration | RNA + ADT | Seurat WNN, sciPENN, Multigrate | iF1, NMIcellType, ASWcellType, iASW |
| Vertical Integration | RNA + ATAC | Seurat WNN, Multigrate, Matilda | iF1, NMIcellType, ASWcellType, iASW |
| Vertical Integration | RNA + ADT + ATAC | Seurat WNN, MIRA, scMoMaT | iF1, NMIcellType, ASWcellType, iASW |
| Feature Selection | RNA + ADT | Matilda, scMoMaT, MOFA+ | Marker correlation, clustering, and classification accuracy |

Table 3: Performance of Deep Learning-based Multi-omics Methods in Cancer Applications [17]

| Method | Classification Performance | Clustering Performance | Key Strengths |
|---|---|---|---|
| moGAT | Best classification performance (Accuracy, F1 macro, F1 weighted) | Moderate | Effective for prediction tasks |
| efmmdVAE, efVAE, lfmmdVAE | Moderate | Most promising performance across clustering contexts | Effective for patient stratification |
| lfAE, efAE | Variable | Variable | Architecture flexibility |

For cancer subtyping using bulk multi-omics data, benchmarking has revealed a critical insight: incorporating more types of omics data does not always improve performance [14]. Counter to widespread intuition, there are situations where integrating additional omics data negatively impacts integration method performance, highlighting the importance of selecting optimal data combinations rather than maximizing data type quantity.

Experimental Protocols for Benchmarking

Standardized benchmarking requires carefully designed experimental protocols that assess methods across multiple performance dimensions. Comprehensive evaluations typically examine accuracy, robustness, computational efficiency, and scalability using diverse datasets with known ground truth.

Dataset Construction and Curation

Benchmarking studies employ multiple dataset types to evaluate different aspects of method performance:

- Simulated Datasets: Allow controlled evaluation against known ground truth, but may lack the complex latent structure of real biological data [4].

- Real Biological Datasets: Provide authentic evaluation contexts but may have incomplete ground truth. Common sources include:

  - The Cancer Genome Atlas (TCGA): Provides bulk multi-omics data for various cancer types [14] [18].

  - Single-cell Multimodal Omics Datasets: Include paired RNA+ADT, RNA+ATAC, and trimodal RNA+ADT+ATAC data from technologies like CITE-seq and SHARE-seq [4].

  - Spatial Transcriptomics Datasets: Capture gene expression within spatial tissue context from technologies like 10X Visium, MERFISH, and STARmap [7].

Evaluation Metrics and Frameworks

Comprehensive benchmarking employs multiple task-specific metrics to evaluate different aspects of method performance, for example ARI and NMI for clustering accuracy and bASW and iLISI for batch correction [4] [7].
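ARI (adjusted Rand index) and NMI (normalized mutual information), two of the clustering metrics listed in Table 4, compare a clustering of the integrated embedding with reference cell-type labels. The following dependency-free Python sketch illustrates the textbook formulas. The function names are our own, and NMI uses arithmetic-mean normalization, which is the scikit-learn default but an assumption with respect to any particular benchmark. In practice, a tested library implementation would normally be preferred.

```python
from collections import Counter
from math import comb, log


def adjusted_rand_index(labels_true, labels_pred):
    """Adjusted Rand index between two labelings of the same cells."""
    n = len(labels_true)
    # Pair counts inside contingency-table cells, rows (truth) and columns (clusters).
    sum_cells = sum(comb(c, 2) for c in Counter(zip(labels_true, labels_pred)).values())
    sum_rows = sum(comb(c, 2) for c in Counter(labels_true).values())
    sum_cols = sum(comb(c, 2) for c in Counter(labels_pred).values())
    expected = sum_rows * sum_cols / comb(n, 2)
    maximum = (sum_rows + sum_cols) / 2
    if maximum == expected:  # degenerate case, e.g. both labelings trivial
        return 1.0
    return (sum_cells - expected) / (maximum - expected)


def normalized_mutual_info(labels_true, labels_pred):
    """NMI with arithmetic-mean normalization: 2 * I(U; V) / (H(U) + H(V))."""
    n = len(labels_true)
    joint = Counter(zip(labels_true, labels_pred))
    count_true, count_pred = Counter(labels_true), Counter(labels_pred)
    mutual_info = sum(
        (nij / n) * log(n * nij / (count_true[a] * count_pred[b]))
        for (a, b), nij in joint.items()
    )
    h_true = -sum((c / n) * log(c / n) for c in count_true.values())
    h_pred = -sum((c / n) * log(c / n) for c in count_pred.values())
    if h_true + h_pred == 0:  # both labelings put every cell in one cluster
        return 1.0
    return 2 * mutual_info / (h_true + h_pred)


cell_types = ["T", "T", "B", "B"]
clusters = [1, 1, 0, 0]   # same partition, different label names
scrambled = [0, 1, 0, 1]  # partition unrelated to cell type
print(adjusted_rand_index(cell_types, clusters))             # 1.0
print(round(adjusted_rand_index(cell_types, scrambled), 3))  # -0.5
```

Both scores ignore the names of the clusters and depend only on the partition. ARI is additionally corrected for chance agreement, which is why an unrelated partition can score below zero.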

Multi-task Evaluation Framework

For spatial transcriptomics, specialized benchmarking frameworks evaluate methods across four key tasks: multi-slice integration, spatial clustering, spatial alignment, and slice representation [7]. Performance in upstream tasks (like integration) strongly influences downstream application success, highlighting the importance of evaluating method performance across the entire analytical workflow.

Research Reagent Solutions

Successful multi-omics benchmarking requires both computational tools and data resources. The following essential components form the foundation of rigorous method evaluation.

Table 4: Essential Research Reagents for Multi-omics Benchmarking

| Resource Category | Specific Tools/Platforms | Primary Function | Application Context |
|---|---|---|---|
| Data Resources | TCGA [14] [18], ICGC [16], CITE-seq data [4] | Provide standardized multi-omics datasets for benchmarking | Cancer subtyping, method validation, cross-platform comparison |
| Benchmarking Frameworks | CMOB [18], iSTBench [7] | Offer curated datasets, tasks, and baseline evaluations | Large-scale method comparison, standardized performance assessment |
| Evaluation Metrics | bASW, iLISI, dASW, ARI, NMI [4] [7] | Quantify specific performance aspects (batch correction, biological conservation, clustering accuracy) | Performance benchmarking across diverse tasks and data types |
| Complementary Resources | STRING [18], Clinical health records [18] | Provide biological context and clinical correlation | Biological validation, clinical translation assessment |

The field of multi-omics data integration has progressed beyond simply developing new methods to focusing on rigorous, standardized evaluation. Benchmarking studies have revealed that method performance is highly context-dependent, varying significantly with data types, analytical tasks, and biological applications. No single method consistently outperforms others across all scenarios, emphasizing the need for task-specific method selection.

Future benchmarking efforts must address several critical challenges: the rapid pace of method development, the growing diversity of omics technologies, and the need for biologically relevant evaluation criteria. Community-wide adoption of standardized benchmarking frameworks, shared datasets, and reproducible evaluation pipelines will accelerate methodological advances and enhance the reliability of biological insights derived from multi-omics data integration.

In computational biology, the proliferation of single-cell and spatial multi-omics technologies has necessitated the development of sophisticated data integration methods. These methods enable researchers to jointly analyze diverse molecular measurements, providing a more comprehensive understanding of cellular systems. Based on input data structure and modality combination, integration tasks are systematically categorized into four prototypical scenarios: vertical, diagonal, mosaic, and cross integration [4] [19]. This classification framework helps researchers navigate the complex landscape of computational tools by precisely defining the relationships between datasets across batches (sources) and modalities (measurement types) [19].

The terminology originates from how datasets are arranged in a conceptual grid where rows represent different modalities and columns represent different batches [20]. Vertical integration involves multiple modalities measured on the same set of cells or samples. Horizontal integration addresses batch effects when the same modality is measured across different batches. Diagonal integration handles cases where neither cells nor modalities are shared between data matrices. Mosaic integration represents the most general case, accommodating any combination of the other scenarios [20] [19]. Understanding these categories is essential for selecting appropriate computational methods that can handle the specific data relationships present in a research study.

Defining the Integration Categories

Theoretical Framework and Definitions

The four primary integration categories are defined by the specific relationships between datasets in terms of shared cells and shared features [19]:

Vertical Integration (VI): Each dataset contains a set of measurements carried out on the same set of samples (separate bulk experiments with matched samples in different modalities, or single cells measured through joint assays) [19]. VI identifies links between biological features, such as scRNA-seq transcript counts and scATAC-seq peaks, which can help formulate mechanistic hypotheses across modalities [19].

Diagonal Integration (DI): DI describes the framework where each dataset is measured in a different biological modality, and there is no shared set of cells or samples across these datasets [19]. This represents a more challenging scenario than vertical integration due to the lack of direct correspondence between the measured entities.

Mosaic Integration (MI): MI allows pairs of datasets to be measured in overlapping modalities in any combination [19]. It is the most general and challenging case, accommodating any subset of data matrices from an m × b grid corresponding to m modalities and b batches [20]. Few methods have been developed specifically for this comprehensive scenario [20].

Cross Integration: Building upon this framework, some benchmarking studies further specify a "cross" integration category. In one extensive benchmark, cross integration was evaluated alongside vertical, diagonal, and mosaic integration, with 15 methods assessed for this specific task [4].
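The grid picture can be made concrete with a small helper that reads a layout (which modalities were measured in which batch) and names the scenario. This is a hypothetical Python sketch of our own simplified rules, not code from [19] or [20]:

- A single batch with several modalities is vertical.
- One shared modality across batches is horizontal.
- One distinct modality per batch is diagonal.
- Every other layout falls back to the general mosaic case.

Cross integration is not modeled, because the definitions above do not express it in grid terms.

```python
def classify_integration(layout):
    """Name the integration scenario for a {batch: set_of_modalities} layout.

    Hypothetical, simplified rules based on the modality-by-batch grid.
    """
    if not layout or any(not mods for mods in layout.values()):
        raise ValueError("every batch must list at least one modality")
    batches = list(layout.values())
    all_modalities = set().union(*batches)
    if len(batches) == 1:
        # One batch: several modalities on the same cells, or nothing to integrate.
        return "vertical" if len(all_modalities) > 1 else "single dataset"
    if all(len(mods) == 1 for mods in batches):
        if len(all_modalities) == 1:
            return "horizontal"  # same modality, different batches
        if len(all_modalities) == len(batches):
            return "diagonal"    # no shared cells and no shared modality
    return "mosaic"              # any other combination of grid blocks


print(classify_integration({"cite_seq": {"RNA", "ADT"}}))            # vertical
print(classify_integration({"b1": {"RNA"}, "b2": {"ATAC"}}))         # diagonal
print(classify_integration({"b1": {"RNA", "ATAC"}, "b2": {"RNA"}}))  # mosaic
```

Under these rules, a layout where several batches share multiple modalities is deliberately classified as mosaic. This follows from treating mosaic integration as the case that accommodates any subset of the m × b grid.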

Visual Framework of Data Integration Categories

The following diagram illustrates the conceptual relationships between the four primary data integration categories:

Benchmarking Experimental Design and Protocols

Comprehensive Evaluation Framework

Systematic benchmarking of data integration methods requires a rigorously designed experimental protocol that can objectively assess performance across diverse scenarios. A landmark Registered Report in Nature Methods established a comprehensive framework for multitask benchmarking of single-cell multimodal omics integration methods [4]. This protocol was accepted in principle on 30 July 2024, ensuring methodological rigor through peer review before result collection [4].

The benchmarking study evaluated 40 integration methods across the four data integration categories: 18 vertical integration methods, 14 diagonal integration methods, 12 mosaic integration methods, and 15 cross integration methods [4]. Because these category counts sum to 59, some methods were evaluated in more than one category. The methods were tested on 64 real datasets and 22 simulated datasets representing various modality combinations, including paired RNA and ADT (RNA+ADT), paired RNA and ATAC (RNA+ATAC), and trimodal data containing all three modalities (RNA+ADT+ATAC) [4]. This extensive design supports robust evaluation across diverse biological contexts and technical challenges.

Evaluation Metrics and Tasks

The benchmarking framework assessed method performance across seven common computational tasks that integration methods are designed to address [4]:

- Dimension reduction: Evaluating the preservation of biological variation in low-dimensional embeddings

- Batch correction: Assessing the removal of technical artifacts while preserving biological signals

- Clustering: Measuring the ability to identify biologically meaningful cell groups

- Classification: Testing performance in predicting cell type labels

- Feature selection: Evaluating identification of molecular markers associated with cell types

- Imputation: Assessing reconstruction of missing data values

- Spatial registration: Testing alignment of spatial coordinates in spatial transcriptomics

For each task, tailored evaluation metrics were employed. For dimension reduction and clustering, metrics included iF1 (clustering accuracy), NMIcellType (Normalized Mutual Information), ASWcellType (Average Silhouette Width), and iASW (integration ASW) [4]. Feature selection performance was evaluated using clustering, classification, and reproducibility metrics applied to selected marker features [4].
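ASW-type metrics such as ASWcellType score how tightly cells of the same type group together in the integrated embedding, relative to the nearest other cell type. Below is a minimal Python sketch of the mean silhouette width. It assumes Euclidean distance and an embedding given as a list of coordinate tuples. Singleton clusters score 0, as in scikit-learn. Some benchmarking pipelines rescale the result to [0, 1] via (s + 1) / 2, and batch variants such as bASW use batch labels instead of cell-type labels. Whether a given benchmark does either should be checked in its methods.

```python
from math import dist


def mean_silhouette_width(embedding, labels):
    """Mean silhouette width s = (b - a) / max(a, b) over all cells."""
    widths = []
    for i, point in enumerate(embedding):
        same, other = [], {}
        for j, q in enumerate(embedding):
            if i == j:
                continue
            d = dist(point, q)
            if labels[j] == labels[i]:
                same.append(d)
            else:
                other.setdefault(labels[j], []).append(d)
        if not same or not other:
            widths.append(0.0)  # singleton cluster, or only one cluster present
            continue
        a = sum(same) / len(same)  # mean distance to the cell's own type
        b = min(sum(ds) / len(ds) for ds in other.values())  # nearest other type
        widths.append((b - a) / max(a, b) if max(a, b) > 0 else 0.0)
    return sum(widths) / len(widths)


cells = [(0.0, 0.0), (0.0, 1.0), (10.0, 0.0), (10.0, 1.0)]
print(round(mean_silhouette_width(cells, ["T", "T", "B", "B"]), 2))  # 0.9
```

The silhouette width ranges from -1 to 1. Values near 1 mean cell types form compact, well-separated groups. Negative values mean many cells sit closer to another type than to their own.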

Performance Comparison Across Integration Categories

Vertical Integration Performance

Vertical integration methods were systematically benchmarked on dimension reduction and clustering tasks using datasets of varying modalities. The evaluation included 14 methods on 13 paired RNA and ADT datasets, 14 methods on 12 paired RNA and ATAC datasets, and 5 methods on 4 trimodal datasets (RNA+ADT+ATAC) [4].

Table 1: Top-Performing Vertical Integration Methods by Data Modality

| Rank | RNA+ADT Methods | RNA+ATAC Methods | RNA+ADT+ATAC Methods |
|---|---|---|---|
| 1 | Seurat WNN | UnitedNet | Multigrate |
| 2 | sciPENN | Seurat WNN | Seurat WNN |
| 3 | Multigrate | Multigrate | Matilda |
| 4 | Matilda | scMoMaT | scMoMaT |
| 5 | BREMSC | Matilda | totalVI |

Performance analysis revealed that method effectiveness is both dataset-dependent and modality-dependent [4]. For RNA+ADT data, Seurat WNN, sciPENN, and Multigrate demonstrated generally better performance, effectively preserving biological variation of cell types [4]. In RNA+ATAC integration, UnitedNet, Seurat WNN, and Multigrate performed well across diverse datasets [4]. For the more challenging trimodal integration, Multigrate, Seurat WNN, and Matilda emerged as top performers [4].

For feature selection tasks in vertical integration, only Matilda, scMoMaT, and MOFA+ support identification of molecular markers from single-cell multimodal omics data [4]. Notably, Matilda and scMoMaT identify distinct markers for each cell type, while MOFA+ selects a single cell-type-invariant set of markers for all cell types [4].

Diagonal and Mosaic Integration Performance

Diagonal and mosaic integration present more challenging scenarios due to limited shared information across datasets. Benchmarking results indicate that performance varies significantly based on data complexity and method design:

Table 2: Performance Leaders in Diagonal and Mosaic Integration

| Integration Category | Top-Performing Methods | Key Strengths |
|---|---|---|
| Diagonal Integration | totalVI, UINMF, MOJITOO, scAI | Effective integration of different cells and different modalities |
| Mosaic Integration | totalVI, UINMF, scMoMaT | Handles mixed shared/different cells and modalities |
| Cross Integration | Methods specifically benchmarked for cross integration tasks | Performance dependent on data complexity |

For diagonal integration, which involves different cells and different modalities, totalVI and UINMF outperformed competing methods according to benchmarking studies [21]. Another benchmark identified MOJITOO and scAI as leading algorithms for vertical integration scenarios [21].

Mosaic integration, being the most general case, requires methods that can handle arbitrary combinations of data matrices. scMoMaT (single cell Multi-omics integration using Matrix Tri-factorization) specifically addresses this challenge using a matrix tri-factorization framework that can integrate an arbitrary number of data matrices under the mosaic integration scenario [20]. The method simultaneously learns cell representations and marker features across modalities for different cell clusters, allowing interpretation of cell clusters from different modalities [20].
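One way to see why tri-factorization suits the mosaic setting is to write a generic objective over the observed blocks of the m × b grid. The formulation below is a simplified sketch in our own notation, not scMoMaT's published loss. That loss may include additional association matrices, constraints, or regularization terms.

```latex
\min_{C_b,\, A_m,\, F_m \,\geq\, 0} \; \sum_{(m,b) \in \Omega} \left\lVert X_{m,b} - C_b \, A_m \, F_m^{\top} \right\rVert_F^{2}
```

The symbols are defined as follows:

- Ω is the set of observed (modality, batch) blocks.
- X_{m,b} is the cells × features matrix for modality m in batch b.
- C_b holds the cell factors of batch b.
- F_m holds the feature factors of modality m.
- A_m is a small association matrix linking the two.

C_b is shared by every modality measured in batch b, and F_m is shared by every batch that measured modality m. Any connected set of observed blocks is therefore tied into one factor space, and the rows of F_m indicate which features of each modality load on each factor.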

Method Performance Across Multiple Tasks

The comprehensive nature of the benchmarking reveals that few methods excel across all tasks. The following table summarizes the performance of selected top methods across key integration tasks:

Table 3: Multi-Task Performance of Leading Integration Methods

| Method | Dimension Reduction | Batch Correction | Clustering | Feature Selection | Data Modalities |
|---|---|---|---|---|---|
| Seurat WNN | Excellent | Good | Excellent | Not supported | RNA+ADT, RNA+ATAC |
| Multigrate | Excellent | Good | Excellent | Limited | RNA+ADT, RNA+ATAC, Multi-modal |
| Matilda | Good | Good | Good | Excellent | RNA+ADT, RNA+ATAC, Multi-modal |
| scMoMaT | Good | Good | Good | Excellent | Mosaic integration |
| totalVI | Good | Excellent | Good | Limited | Diagonal, Mosaic |

Performance assessments indicate that while Seurat WNN performs well on dimension reduction and clustering tasks, it does not support feature selection [4]. In contrast, Matilda and scMoMaT provide robust feature selection capabilities, identifying cell-type-specific markers that lead to better clustering and classification of cell types than markers selected by MOFA+ [4]. The evaluations also demonstrated that dataset complexity significantly affects integration performance, with simulated datasets (which may lack latent data structure observed in real data) often being easier to integrate [4].

Research Reagent Solutions for Data Integration

Successful implementation of data integration methods requires appropriate computational tools and frameworks. The following essential "research reagents" represent key resources used in the field:

scMoMaT: A computational method designed for mosaic integration using matrix tri-factorization [20]. It jointly performs single-cell mosaic integration and interprets results using multi-modal biomarkers [20].

Seurat WNN: A widely used method for vertical integration that employs weighted nearest neighbor approaches to combine multiple modalities [4] [21]. It demonstrates strong performance in dimension reduction and clustering tasks.

Multigrate: A versatile integration method that performs well across multiple modality combinations, including trimodal data (RNA+ADT+ATAC) [4].

totalVI: A top-performing method for diagonal and mosaic integration scenarios, employing deep generative modeling to integrate multimodal data [21].

UINMF: An integration method that extends iNMF by adding an unshared weights matrix term, enabling it to incorporate features belonging to only one or a subset of omics datasets and perform mosaic integration [16].

Matilda: A vertical integration method that supports feature selection of molecular markers from single-cell multimodal omics data, capable of identifying distinct markers for each cell type [4].

Benchmarking Frameworks: Standardized evaluation protocols, such as the Registered Report methodology [4], and specialized benchmarking pipelines for assessing multi-slice integration in spatial transcriptomics [7].

Synthetic Data Generation Tools: Approaches such as the synthpop R package, which generates synthetic data using classification and regression trees (CART), are useful for evaluating data integration utility while addressing privacy concerns [22].

Based on the comprehensive benchmarking evidence, selecting appropriate data integration methods requires careful consideration of both the data structure (defining the integration category) and the specific analytical tasks. For vertical integration tasks involving paired multi-omics data, Seurat WNN and Multigrate consistently demonstrate strong performance across multiple modalities [4]. For studies requiring feature selection alongside integration, Matilda and scMoMaT provide superior capabilities for identifying cell-type-specific markers [4].

For the more challenging diagonal and mosaic integration scenarios, totalVI and UINMF excel according to comparative benchmarks [21]. Specifically for mosaic integration, scMoMaT offers a specialized solution using matrix tri-factorization that can handle arbitrary combinations of data matrices while simultaneously learning multi-modal biomarkers for cell type interpretation [20].

The performance evaluations consistently show that method effectiveness is context-dependent, varying by data modalities, dataset complexity, and the specific analytical tasks required [4]. Researchers should therefore consider their specific data characteristics and analytical goals when selecting integration methods, potentially consulting updated benchmarking studies as the field rapidly evolves. The emergence of comprehensive benchmarking frameworks [4] [7] provides valuable guidance for method selection, but researchers should validate performance on their specific data types to ensure optimal results.

A Taxonomy of Integration Methods: From Network Biology to Ensemble Machine Learning

The integration of multi-omics data represents a cornerstone of modern computational biology, enabling unprecedented insights into complex disease mechanisms and accelerating therapeutic discovery. Network-based approaches provide a powerful framework for this integration by contextualizing disparate molecular data within the interconnected structure of biological systems. These methods effectively map heterogeneous omics data—including genomics, transcriptomics, proteomics, and metabolomics—onto underlying biological networks such as protein-protein interactions, metabolic pathways, and gene regulatory networks. This systematic review objectively compares the performance of three principal computational families—network propagation, graph neural networks (GNNs), and network inference models—within the specific application domain of multi-omics integration for drug discovery. By synthesizing experimental data and benchmarking results, this guide aims to equip researchers with the evidence necessary to select appropriate methodologies for specific research scenarios, ultimately enhancing the efficacy of computational strategies in biomedical research and development.

Network-based multi-omics integration methods can be systematically categorized into distinct classes based on their underlying algorithmic principles and applications in drug discovery [23]. This classification framework provides researchers with a structured understanding of the methodological landscape.

Network Propagation/Diffusion: These algorithms integrate information from input data by spreading node signals across connected neighbors in a given biological network. They function as powerful regularization approaches that amplify network regions enriched for phenotype-associated molecules while dampening technical noise and biological variation [24]. Popular implementations include Random Walk with Restart (RWR) and Heat Diffusion (HD) models, which redistribute molecular measurements (e.g., gene expression changes) through protein-protein interaction or gene co-expression networks to identify conditionally altered subnetworks.

Graph Neural Networks (GNNs): GNNs leverage deep learning architectures to learn node representations by recursively aggregating feature information from neighboring nodes through message-passing mechanisms. Unlike traditional propagation methods, GNNs can integrate multiple graph-structured prior knowledge sources simultaneously and learn task-specific representations in an end-to-end fashion [25]. Recent innovations include frameworks like GNNRAI, which uses GNNs to model correlation structures among omics features, and MPK-GNN, which incorporates multiple prior biological networks.

Network Inference Models: These methods focus on reconstructing the map of interactions among a system's constituents by resolving dependencies from experimental readouts [26]. They include statistical approaches (correlation, mutual information), information-theoretic methods (ARACNe, CLR), and graphical models (Bayesian networks) that infer regulatory relationships from multi-omics data, effectively building networks de novo rather than propagating signals through pre-defined networks.
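As a rough illustration of de novo inference, the sketch below builds a co-expression network and then prunes likely indirect edges:

1. Keep feature pairs whose absolute Pearson correlation exceeds a threshold.
2. Apply an ARACNe-style data processing inequality (DPI) step, removing the weakest edge of every fully connected triplet.

Note the substitution: ARACNe scores edges with mutual information and applies a tolerance parameter. This hypothetical sketch uses |Pearson r| for brevity, and the 0.8 threshold is an arbitrary assumption.

```python
from itertools import combinations
from math import sqrt


def pearson(x, y):
    """Pearson correlation; returns 0.0 for a constant profile."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy) if sx and sy else 0.0


def infer_network(profiles, threshold=0.8, apply_dpi=True):
    """Infer an undirected network from {feature: measurements across samples}.

    Edges are feature pairs with |r| >= threshold; with apply_dpi=True the
    weakest edge of every fully connected triplet is pruned (ARACNe-style DPI,
    here using |r| instead of mutual information).
    """
    features = sorted(profiles)
    strength = {}
    for a, b in combinations(features, 2):
        r = abs(pearson(profiles[a], profiles[b]))
        if r >= threshold:
            strength[frozenset((a, b))] = r
    if not apply_dpi:
        return strength
    pruned = set()
    for a, b, c in combinations(features, 3):
        edges = [frozenset(pair) for pair in ((a, b), (a, c), (b, c))]
        if all(e in strength for e in edges):
            pruned.add(min(edges, key=strength.get))  # likely indirect link
    return {e: r for e, r in strength.items() if e not in pruned}


# Toy chain X -> Y -> Z: the X-Z association is indirect and slightly weaker.
profiles = {"X": [1, 2, 3, 4, 5, 6], "Y": [1, 2, 3, 4, 6, 5], "Z": [2, 1, 3, 4, 6, 5]}
print(sorted(tuple(sorted(e)) for e in infer_network(profiles)))
# [('X', 'Y'), ('Y', 'Z')]
```

Without the DPI step, all three pairs pass the threshold. Pruning keeps only the two direct links of the toy chain, which is the behaviour DPI is designed to produce.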

Table 1: Methodological Classification of Network-Based Multi-Omics Approaches

| Category | Core Principle | Representative Algorithms | Typical Applications |
|---|---|---|---|
| Network Propagation | Spreading node scores to neighbors in pre-defined networks | RWR, Heat Diffusion, Network Smoothing | Gene prioritization, Functional module identification, Noise reduction |
| Graph Neural Networks | Message-passing neural networks on graph structures | GNNRAI, MPK-GNN, MOGONET | Patient classification, Biomarker identification, Drug response prediction |
| Network Inference | Reconstructing interaction networks from data | ARACNe, GENIE3, Bayesian Networks | Regulatory network reconstruction, Mechanism elucidation, Novel interaction discovery |
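The message-passing principle listed for GNNs can be illustrated with a single graph-convolution-style layer in plain Python. Each node averages its own feature vector with those of its neighbours, applies a linear map, and passes the result through a ReLU. The weights here are fixed illustrative values rather than learned parameters. The mean-with-self-loop aggregation is one common choice among many and is not the specific architecture of GNNRAI, MPK-GNN, or MOGONET.

```python
def message_passing_layer(adjacency, features, weight):
    """One GCN-style layer: h_v' = ReLU(W . mean(h_v and its neighbours' h_u)).

    adjacency: {node: list of neighbours}; features: {node: list of d_in floats};
    weight: d_out rows of d_in floats (fixed here; learned in a real GNN).
    """
    out = {}
    for v, neighbours in adjacency.items():
        # Aggregate: mean of the node's own vector and its neighbours' vectors.
        group = [features[v]] + [features[u] for u in neighbours]
        aggregated = [sum(column) / len(group) for column in zip(*group)]
        # Update: linear transform followed by a ReLU non-linearity.
        out[v] = [max(0.0, sum(w * x for w, x in zip(row, aggregated))) for row in weight]
    return out


# Toy path graph a - b - c with a one-dimensional signal only on node a.
graph = {"a": ["b"], "b": ["a", "c"], "c": ["b"]}
h0 = {"a": [1.0], "b": [0.0], "c": [0.0]}
identity = [[1.0]]
h1 = message_passing_layer(graph, h0, identity)
h2 = message_passing_layer(graph, h1, identity)
print(h1["c"], round(h2["c"][0], 3))  # [0.0] 0.167
```

After one layer, node c has not yet received any signal from node a. After two stacked layers it has, because each layer widens a node's receptive field by one hop.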

Performance Benchmarking and Comparative Analysis

Quantitative Performance Metrics Across Methodologies

Rigorous benchmarking of computational methods requires standardized evaluation using multiple performance metrics. The following comparative analysis synthesizes experimental results from recent studies to provide objective performance assessments.

Table 2: Performance Comparison of Network-Based Methods on Multi-Omics Tasks

| Method Category | Specific Method | Application Context | Performance Metrics | Key Findings |
|---|---|---|---|---|
| Graph Neural Networks | GNNRAI [27] | Alzheimer's disease classification (ROSMAP cohort) | Accuracy: 2.2% improvement over benchmarks | Outperformed MOGONET; Effective integration of transcriptomics and proteomics |
| Graph Neural Networks | MPK-GNN [25] | Cancer molecular subtype classification | State-of-the-art performance vs. multi-view learning | Successfully integrated multiple prior biological networks |
| Network Propagation | RWR vs. HD [24] | Aging studies in rat brain/liver; Prostate cancer | Parameter optimization critical for performance | Maximizing inter-omics agreement improved biological consistency |
| Network Inference | DOMINO [28] | Disease module identification | Improved information exploitation from expression data | Identified disjoint connected Steiner trees with over-represented active genes |

Task-Specific Performance Considerations

Different network-based approaches demonstrate variable efficacy depending on the specific bioinformatics task and data characteristics:

Drug Target Identification: Network propagation excels in prioritizing disease-associated genes and proteins by diffusing known disease signals through molecular interaction networks. The optimal parameterization of propagation algorithms can be achieved by maximizing the agreement between different omics layers (e.g., proteome and transcriptome) or by maximizing the consistency between biological replicates [24]. Methods like SigMod and IODNE implement aggregate scoring approaches to identify optimally enriched disease modules within protein-protein interaction networks [28].

Drug Response Prediction: GNNs demonstrate superior performance in predicting patient-specific drug responses by integrating multi-omics profiles with prior knowledge graphs. The GNNRAI framework showed particular effectiveness in balancing the greater predictive power of proteomics with the larger sample size available for transcriptomics in the ROSMAP cohort [27]. The method's architecture accommodates samples with incomplete omics measurements, preventing reduction in statistical power.

Drug Repurposing: Network inference methods facilitate drug repurposing by reconstructing condition-specific networks that reveal novel mechanistic relationships. Approaches that leverage de novo network enrichment (DNE) can identify connected subnetworks of the human interactome that link known drug targets to new disease indications [28]. Methods like PCSF and Omics Integrator have been successfully applied to link drugs to new therapeutic applications through multi-omics integration.
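The inter-omics agreement criterion for parameterizing propagation [24] can be sketched as follows:

1. Smooth transcript-level and protein-level scores over the same network, once for each candidate spreading coefficient α.
2. Keep the α whose smoothed profiles agree best by Spearman correlation.

This minimal Python sketch makes several simplifying assumptions: an undirected network given as a symmetric adjacency dict, a fixed iteration count, and a small hypothetical grid of α values. It illustrates the idea rather than reproducing the published procedure.

```python
def propagate(adjacency, scores, alpha, n_iter=200):
    """Smooth node scores: s <- alpha * W s + (1 - alpha) * s0.

    W is the column-normalized adjacency of an undirected graph, so this is a
    random walk with restart probability 1 - alpha; alpha = 0 keeps raw scores.
    """
    s0 = {v: scores.get(v, 0.0) for v in adjacency}
    s = dict(s0)
    for _ in range(n_iter):
        s = {
            v: alpha * sum(s[u] / len(adjacency[u]) for u in adjacency[v])
            + (1 - alpha) * s0[v]
            for v in adjacency
        }
    return s


def _ranks(values):
    """Ranks starting at 0, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=values.__getitem__)
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman correlation as the Pearson correlation of (average) ranks."""
    rx, ry = _ranks(x), _ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (c - my) for a, c in zip(rx, ry))
    sx = sum((a - mx) ** 2 for a in rx) ** 0.5
    sy = sum((c - my) ** 2 for c in ry) ** 0.5
    return cov / (sx * sy) if sx and sy else 0.0


def best_alpha(adjacency, transcript, protein, grid=(0.0, 0.25, 0.5, 0.75, 0.9)):
    """Pick the spreading coefficient that maximizes transcript-protein agreement."""
    nodes = sorted(adjacency)

    def agreement(alpha):
        t = propagate(adjacency, transcript, alpha)
        p = propagate(adjacency, protein, alpha)
        return spearman([t[v] for v in nodes], [p[v] for v in nodes])

    return max(grid, key=agreement)


network = {"A": ["B"], "B": ["A", "C"], "C": ["B", "D"], "D": ["C"]}
rna = {"A": 2.0, "B": 1.5, "C": 0.1, "D": 0.0}
prot = {"A": 1.8, "B": 0.2, "C": 0.9, "D": 0.1}
print(best_alpha(network, rna, prot))
```

Replicate consistency, the alternative criterion mentioned for [24], would follow the same pattern. It would compare smoothed profiles of two biological replicates instead of two omics layers.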

Experimental Protocols and Methodologies

Benchmarking Framework for Multi-Omics Integration Methods

Standardized evaluation protocols are essential for meaningful comparison across different network-based approaches. The following experimental framework reflects common practice in computational biology:

Data Preparation and Preprocessing:

- Collect multi-omics datasets with minimum of two omics layers (e.g., transcriptomics and proteomics)

- Apply appropriate normalization techniques to address technical variation between platforms

- Map molecular entities to standardized identifiers for network integration

- Split data into training and validation sets using cross-validation (e.g., 3-fold, as in the GNNRAI case study below)

Network Resource Curation:

- Compile relevant biological networks from databases (e.g., STRING, Pathway Commons)

- For GNN approaches, construct prior knowledge graphs representing biological relationships

- For inference methods, establish gold-standard networks for validation

Model Training and Validation:

- Implement method-specific parameter optimization procedures

- For propagation methods: optimize spreading coefficients using bias-variance tradeoff or inter-omics agreement

- For GNNs: Train with modality-specific feature extractors and representation alignment

- For inference methods: Apply appropriate statistical tests and multiple testing corrections

Performance Assessment:

- Evaluate predictive accuracy using standard metrics (AUROC, AUPR, F-score)

- Assess biological relevance through enrichment analysis and literature validation

- Compare computational efficiency and scalability
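The cross-validation and accuracy-assessment steps above can be sketched in dependency-free Python with two pieces:

- A stratified k-fold splitter, with k = 3 by default to mirror the GNNRAI setup.
- AUROC, computed as the probability that a randomly chosen positive sample scores higher than a randomly chosen negative one, with ties counting one half.

The function names are illustrative. Established implementations such as scikit-learn's `StratifiedKFold` and `roc_auc_score` would normally be preferred.

```python
import random


def stratified_k_fold(labels, k=3, seed=0):
    """Yield (train, test) index lists; each fold keeps the class proportions."""
    rng = random.Random(seed)
    by_class = {}
    for i, y in enumerate(labels):
        by_class.setdefault(y, []).append(i)
    folds = [[] for _ in range(k)]
    position = 0
    for indices in by_class.values():
        rng.shuffle(indices)
        for i in indices:  # deal samples of each class round-robin into folds
            folds[position % k].append(i)
            position += 1
    for f in range(k):
        train = sorted(i for g in range(k) if g != f for i in folds[g])
        yield train, sorted(folds[f])


def auroc(y_true, scores):
    """P(score of a random positive > score of a random negative); ties count 0.5."""
    pos = [s for s, y in zip(scores, y_true) if y == 1]
    neg = [s for s, y in zip(scores, y_true) if y == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one positive and one negative")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


labels = [0, 0, 0, 1, 1, 1]
for train, test in stratified_k_fold(labels):
    print(len(train), len(test))  # each fold: 4 training, 2 test samples
print(auroc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))  # 0.75
```

Stratification matters for small cohorts. A purely random 3-fold split can leave a fold with few or no samples of the minority class, which makes per-fold AUROC unstable or undefined.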

Case Study: GNNRAI Framework for Alzheimer's Disease Classification

The GNNRAI (GNN-derived Representation Alignment and Integration) framework exemplifies a rigorous experimental approach for supervised multi-omics integration [27]:

Experimental Design:

- Data Source: Religious Orders Study/Rush Memory and Aging Project (ROSMAP) cohort

- Omics Modalities: Transcriptomics and proteomics from dorsolateral prefrontal cortex

- Sample Characteristics: 228 samples with both modalities, plus additional samples with single modalities

- Biological Priors: 16 Alzheimer's disease biodomains with co-expression relationships from protein-protein interaction databases

Methodological Implementation:

- Constructed separate graphs for each biodomain and modality

- Implemented GNN-based feature extractors to process each omics modality

- Aligned low-dimensional embeddings across modalities using representation alignment

- Integrated aligned representations using set transformer for final prediction

- Employed integrated gradients for biomarker identification

Validation Approach:

- Three-fold cross-validation for robust performance estimation

- Comparison against MOGONET as benchmark method

- Evaluation of both predictive accuracy and biomarker relevance
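Integrated gradients attributes a prediction to input features by integrating the model's gradient along a straight path from a baseline input x' to the actual input x:

IG_i = (x_i - x'_i) ∫₀¹ ∂f(x' + α(x - x'))/∂x_i dα

The sketch below applies this to a hypothetical logistic-regression model with an analytic gradient and approximates the integral with a midpoint Riemann sum. In GNNRAI the model is a trained GNN, and gradients would come from automatic differentiation. A useful sanity check is completeness: the attributions should sum approximately to f(x) - f(x').

```python
from math import exp


def predict(x, w, b):
    """Hypothetical logistic-regression 'model': sigmoid(w . x + b)."""
    return 1.0 / (1.0 + exp(-(sum(wi * xi for wi, xi in zip(w, x)) + b)))


def gradient(x, w, b):
    """Analytic gradient of predict() with respect to the input x."""
    p = predict(x, w, b)
    return [p * (1.0 - p) * wi for wi in w]


def integrated_gradients(x, baseline, w, b, steps=200):
    """IG_i = (x_i - x'_i) * mean gradient along the straight path x' -> x."""
    totals = [0.0] * len(x)
    for k in range(steps):
        alpha = (k + 0.5) / steps  # midpoint Riemann sum
        point = [bi + alpha * (xi - bi) for xi, bi in zip(x, baseline)]
        for i, g in enumerate(gradient(point, w, b)):
            totals[i] += g
    return [(xi - bi) * t / steps for xi, bi, t in zip(x, baseline, totals)]


w, b = [2.0, -1.0, 0.0], -0.5           # illustrative weights for three features
x, baseline = [1.0, 0.5, 3.0], [0.0, 0.0, 0.0]
attributions = integrated_gradients(x, baseline, w, b)
# Completeness check: attributions sum to f(x) - f(baseline), up to discretization error.
print(sum(attributions), predict(x, w, b) - predict(baseline, w, b))
```

The third feature has a large input value but zero weight, so it receives zero attribution. This illustrates that integrated gradients reflects the model's sensitivity rather than raw feature magnitude.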

Diagram Title: GNNRAI Multi-Omics Integration Workflow

Successful implementation of network-based multi-omics analysis requires access to specific computational resources, biological datasets, and software tools. The following table catalogs essential "research reagents" for this domain.

Table 3: Essential Research Reagents for Network-Based Multi-Omics Analysis

| Resource Category | Specific Resource | Function and Application |
|---|---|---|
| Biological Network Databases | STRING, Pathway Commons | Provide protein-protein interaction networks for propagation and prior knowledge |
| Omics Data Repositories | TCGA, GEO, ROSMAP | Source of multi-omics datasets for model training and validation |
| Software Libraries | PyTorch Geometric, DGL | Graph neural network implementation frameworks |
| Propagation Algorithms | BioNetSmooth, NetworkX | Implement network propagation and smoothing operations |
| Inference Tools | ARACNe, GENIE3 | Reconstruct regulatory networks from expression data |
| Benchmarking Suites | Open Graph Benchmark | Standardized datasets for method comparison |
| Visualization Tools | Cytoscape, Gephi | Visualization and exploration of biological networks |

This comprehensive comparison of network-based approaches for multi-omics integration reveals a dynamic methodological landscape where each major category offers distinct advantages for specific applications in drug discovery research. Network propagation methods provide computationally efficient signal amplification and noise reduction, particularly valuable for gene prioritization and functional module identification. Graph neural networks demonstrate superior predictive performance in classification tasks and biomarker discovery, especially when integrating multiple prior knowledge sources. Network inference approaches excel in reconstructing novel regulatory relationships and elucidating disease mechanisms from high-dimensional omics data.
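Random walk with restart (RWR) is one concrete form of network propagation. It repeatedly diffuses seed scores to network neighbours and, at each step, returns to the seeds with a fixed probability, so genes near many high-scoring genes are amplified.

The sketch below assumes a toy five-gene path network, a restart probability of 0.3 and a single seed gene. Real applications would instead use an interaction network such as STRING and omics-derived seed scores.

```python
import numpy as np


def random_walk_with_restart(adj, seeds, restart=0.3, tol=1e-10, max_iter=1000):
    """Propagate seed scores over a network by random walk with restart.

    adj:     symmetric (n, n) adjacency matrix without isolated nodes
    seeds:   (n,) non-negative initial scores, e.g. omics-derived gene signals
    restart: probability of jumping back to the seed distribution at each step
    """
    W = adj / adj.sum(axis=0, keepdims=True)        # column-stochastic transition matrix
    p0 = seeds / seeds.sum()
    p = p0.copy()
    for _ in range(max_iter):
        p_next = (1.0 - restart) * (W @ p) + restart * p0
        if np.abs(p_next - p).sum() < tol:
            return p_next
        p = p_next
    return p


# Toy five-gene path network 0-1-2-3-4 with signal observed only on gene 0
adj = np.zeros((5, 5))
for i in range(4):
    adj[i, i + 1] = adj[i + 1, i] = 1.0
scores = random_walk_with_restart(adj, seeds=np.array([1.0, 0.0, 0.0, 0.0, 0.0]))
print(scores.round(3))   # approximately [0.421 0.345 0.144 0.067 0.023]
```

Scores decay with network distance from the seed. This smoothing behaviour is what makes propagation useful for gene prioritization. A larger restart probability keeps the result closer to the original omics signal, while a smaller one spreads it further through the network.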

The benchmarking data presented indicate that methodological selection should be guided by specific research objectives, data characteristics, and computational resources. While GNNs generally achieve higher predictive accuracy, they require larger sample sizes and more computationally intensive training. Network propagation offers greater interpretability and computational efficiency, making it suitable for exploratory analysis. Network inference methods remain essential for hypothesis generation and mechanistic insight.

Future methodological development should focus on several critical challenges: improving computational scalability for large-scale multi-omics datasets, enhancing model interpretability for biological insight, establishing standardized evaluation frameworks, and incorporating temporal and spatial dynamics of biological systems. The emerging trend of hybrid models that combine elements from multiple approaches—such as GNNs with explainable propagation mechanisms or inference methods with deep learning components—represents a promising direction for advancing network-based multi-omics integration in biomedical research.

Multi-omics data integration represents a transformative approach in biomedical research, enabling a comprehensive understanding of complex biological systems by combining genomic, transcriptomic, proteomic, and metabolomic information [1]. The simultaneous analysis of these complementary biological layers provides unprecedented opportunities for modeling patient disease states, understanding underlying disease mechanisms, and predicting clinical outcomes with enhanced accuracy [29]. However, the integration of multi-modal, multi-omics data presents significant computational challenges, including high dimensionality, dataset heterogeneity, and the "big P, small N" problem where features vastly outnumber samples [30] [29].

Ensemble machine learning methods have emerged as powerful tools for addressing these challenges through late integration strategies that combine predictions from multiple models or data modalities [29]. These techniques, including voting ensembles, meta-learners, and boosted methods, leverage complementary information from different omics layers to improve the accuracy and stability of clinical outcome predictions in multi-class classification problems [31]. This guide provides a comprehensive benchmarking comparison of these ensemble approaches, offering researchers experimentally validated insights for selecting appropriate methods based on specific multi-omics data integration needs.

Core Ensemble Machine Learning Strategies for Multi-Omics Integration

Late Integration: A Flexible Framework for Multi-Modal Data

Late integration, also known as decision-level fusion, has emerged as a particularly effective strategy for multi-omics data integration [29]. This approach trains separate machine learning models on each omics dataset independently, then aggregates their predictions to generate a final classification. The fundamental advantage of this strategy lies in its ability to address the inherent heterogeneity of multi-omics data—where different modalities may have varying statistical distributions, scales, and feature dimensions—by allowing tailored preprocessing and model selection for each data type [29].

Figure 1: Late Integration Workflow for Multi-Omics Data
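The late-integration pattern takes only a few lines to sketch. Each omics block gets its own preprocessing and model, and only the per-block class probabilities are combined.

This minimal sketch makes three assumptions:

- the base learner is a hypothetical nearest-centroid classifier;
- each block is standardized using statistics fitted on the training data;
- the fusion rule is simple probability averaging.

Any classifier with a probability output could be substituted.

```python
import numpy as np


class NearestCentroidProba:
    """Hypothetical base learner: per-block standardization, then a softmax over
    negative squared distances to the class centroids."""

    def fit(self, X, y):
        self.classes_ = np.unique(y)
        self.mean_, self.std_ = X.mean(axis=0), X.std(axis=0) + 1e-8
        Z = (X - self.mean_) / self.std_
        self.centroids_ = np.stack([Z[y == c].mean(axis=0) for c in self.classes_])
        return self

    def predict_proba(self, X):
        Z = (X - self.mean_) / self.std_
        logits = -((Z[:, None, :] - self.centroids_[None, :, :]) ** 2).sum(axis=2)
        logits -= logits.max(axis=1, keepdims=True)      # numerically stable softmax
        e = np.exp(logits)
        return e / e.sum(axis=1, keepdims=True)


def late_integration(train_blocks, y_train, test_blocks):
    """Fit one model per omics block and average their class probabilities."""
    probas = [NearestCentroidProba().fit(X_tr, y_train).predict_proba(X_te)
              for X_tr, X_te in zip(train_blocks, test_blocks)]
    return np.mean(probas, axis=0)                        # decision-level fusion


rng = np.random.default_rng(1)
y = np.repeat([0, 1, 2], 20)
rna = rng.normal(size=(60, 50)) + 1.5 * y[:, None]              # many features, unit scale
prot = 100.0 * (rng.normal(size=(60, 8)) + 1.0 * y[:, None])    # few features, large scale
idx = rng.permutation(60)
tr, te = idx[:40], idx[40:]
fused = late_integration([rna[tr], prot[tr]], y[tr], [rna[te], prot[te]])
accuracy = (fused.argmax(axis=1) == y[te]).mean()
print(f"late-integration accuracy: {accuracy:.2f}")
```

Each block is standardized and modelled separately. As a result, the proteomics block's 100-fold larger scale and the transcriptomics block's higher dimensionality never have to be reconciled in a single feature matrix, which is the heterogeneity argument for late integration.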

Ensemble Method Taxonomy

Three primary categories of ensemble methods have been systematically evaluated for multi-class, multi-omics data integration:

Voting Ensembles combine predictions through majority-based consensus mechanisms, including hard voting (selecting the class with the most votes) and soft voting (averaging predicted probabilities) [29]. Advanced variations include performance-weighted voting models that assign weights to classifiers based on their predictive performance [32].
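Hard, soft and performance-weighted voting differ only in how per-modality outputs are aggregated.

The sketch below assumes each modality model has already produced class probabilities, stacked in an array of shape (modalities, samples, classes). The probabilities and the validation scores used as weights are made-up illustrative values.

```python
import numpy as np


def hard_vote(probas):
    """Majority vote over each modality's predicted class; ties go to the
    lowest class index."""
    n_models, n_samples, n_classes = probas.shape
    votes = probas.argmax(axis=2)                    # (n_models, n_samples)
    counts = np.zeros((n_samples, n_classes), dtype=int)
    for m in range(n_models):
        counts[np.arange(n_samples), votes[m]] += 1
    return counts.argmax(axis=1)


def soft_vote(probas, weights=None):
    """(Optionally weighted) average of class probabilities, then argmax.
    Passing per-modality validation scores as `weights` gives a simple
    performance-weighted vote."""
    return np.average(probas, axis=0, weights=weights).argmax(axis=1)


# probas[m, i, c]: modality m's probability that sample i belongs to class c
probas = np.array([
    [[0.5, 0.4, 0.1], [0.2, 0.5, 0.3]],   # e.g. transcriptomics model
    [[0.5, 0.2, 0.3], [0.1, 0.3, 0.6]],   # e.g. proteomics model
    [[0.0, 0.0, 1.0], [0.1, 0.2, 0.7]],   # e.g. metabolomics model
])
val_scores = np.array([0.9, 0.9, 0.2])    # hypothetical validation AUCs per modality

print(hard_vote(probas))                        # [0 2]
print(soft_vote(probas))                        # [2 2]
print(soft_vote(probas, weights=val_scores))    # [0 2]
```

For the first sample, two of the three modalities vote for class 0, so hard voting returns 0. Soft voting returns class 2 instead, because the very confident third modality tips the average. Down-weighting that modality by its (hypothetical) poor validation score restores class 0.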

Meta-Learners employ a two-stage approach where base-level models trained on individual omics modalities make initial predictions, and a meta-learner model then learns from these predictions to generate the final output [29]. This approach can capture complex relationships between different omics data types.
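The key implementation detail in a meta-learner is that it must be trained on out-of-fold base-model predictions. Otherwise it learns from predictions the base models made on their own training data and overestimates their reliability.

The sketch below makes these assumptions:

- each modality has a hypothetical nearest-centroid base learner;
- the meta-learner is a multinomial logistic regression fitted by plain gradient descent;
- the synthetic data are built so that each modality identifies a different class.

```python
import numpy as np


def centroid_proba(X_tr, y_tr, X_te, n_classes):
    """Hypothetical base learner: softmax over negative squared distances to
    standardized class centroids."""
    mu, sd = X_tr.mean(axis=0), X_tr.std(axis=0) + 1e-8
    Z_tr, Z_te = (X_tr - mu) / sd, (X_te - mu) / sd
    C = np.stack([Z_tr[y_tr == c].mean(axis=0) for c in range(n_classes)])
    logits = -((Z_te[:, None, :] - C[None, :, :]) ** 2).sum(axis=2)
    logits -= logits.max(axis=1, keepdims=True)
    e = np.exp(logits)
    return e / e.sum(axis=1, keepdims=True)


def out_of_fold_meta_features(blocks, y, n_classes, n_folds=5, seed=0):
    """Concatenate each modality's out-of-fold class probabilities, so every
    meta-feature comes from a base model that never saw that sample."""
    n = len(y)
    folds = np.array_split(np.random.default_rng(seed).permutation(n), n_folds)
    meta = np.zeros((n, n_classes * len(blocks)))
    for held_out in folds:
        train = np.setdiff1d(np.arange(n), held_out)
        for m, X in enumerate(blocks):
            cols = slice(m * n_classes, (m + 1) * n_classes)
            meta[held_out, cols] = centroid_proba(X[train], y[train], X[held_out], n_classes)
    return meta


def fit_softmax_meta(F, y, n_classes, lr=0.5, epochs=500):
    """Meta-learner: multinomial logistic regression fitted by gradient descent."""
    F1 = np.hstack([F, np.ones((len(F), 1))])      # append intercept column
    W = np.zeros((F1.shape[1], n_classes))
    Y = np.eye(n_classes)[y]
    for _ in range(epochs):
        logits = F1 @ W
        logits -= logits.max(axis=1, keepdims=True)
        P = np.exp(logits)
        P /= P.sum(axis=1, keepdims=True)
        W -= lr * F1.T @ (P - Y) / len(y)           # cross-entropy gradient step
    return W


def predict_meta(W, F):
    return (np.hstack([F, np.ones((len(F), 1))]) @ W).argmax(axis=1)


rng = np.random.default_rng(2)
y = np.repeat([0, 1, 2], 30)
rna = rng.normal(size=(90, 40)) + 2.0 * (y == 1)[:, None]   # informative for class 1
prot = rng.normal(size=(90, 10)) + 2.0 * (y == 2)[:, None]  # informative for class 2
idx = rng.permutation(90)
tr, te = idx[:60], idx[60:]

meta_tr = out_of_fold_meta_features([rna[tr], prot[tr]], y[tr], n_classes=3)
W = fit_softmax_meta(meta_tr, y[tr], n_classes=3)
# At prediction time the base models are refit on the full training split
meta_te = np.hstack([centroid_proba(X[tr], y[tr], X[te], 3) for X in (rna, prot)])
stacked_acc = (predict_meta(W, meta_te) == y[te]).mean()
print(f"stacked accuracy on held-out samples: {stacked_acc:.2f}")
```

Neither block alone can separate all three classes here, but the meta-learner learns how to combine them. scikit-learn's `StackingClassifier` applies the same out-of-fold idea through its `cv` parameter. Restricting each base model to its own omics block would need per-estimator column selection, for example with a pipeline per base estimator.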

Boosted Methods adapt traditional boosting algorithms for multi-modal data by iteratively training weak learners on different omics modalities and adjusting weights based on classification errors [31]. These include multi-modal AdaBoost variations and the specialized PB-MVBoost algorithm that considers both accuracy and diversity across modalities [29].
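The boosting idea can be sketched with a simple multi-modal variant of multi-class AdaBoost (SAMME). Each round, the sketch:

1. fits a decision stump on every modality;
2. keeps the stump with the lowest weighted error;
3. up-weights the samples that stump misclassifies, for the next round.

This is an illustrative stand-in, not PB-MVBoost, which uses a PAC-Bayesian formulation to balance accuracy and diversity across views. The stump learner, round count and synthetic data are all hypothetical.

```python
import numpy as np


def fit_stump(X, y, w, n_classes):
    """Lowest weighted-error decision stump on one omics block: threshold one
    feature and predict the weighted-majority class on each side."""
    best_err, best = np.inf, None
    for j in range(X.shape[1]):
        for t in np.unique(X[:, j]):
            left = X[:, j] <= t
            right = ~left
            c_left = np.bincount(y[left], weights=w[left], minlength=n_classes).argmax()
            c_right = (np.bincount(y[right], weights=w[right], minlength=n_classes).argmax()
                       if right.any() else c_left)
            err = w[np.where(left, c_left, c_right) != y].sum()
            if err < best_err:
                best_err, best = err, (j, t, int(c_left), int(c_right))
    return best_err, best


def stump_predict(stump, X):
    j, t, c_left, c_right = stump
    return np.where(X[:, j] <= t, c_left, c_right)


def multimodal_samme(blocks, y, n_classes, n_rounds=20):
    """Each round: fit a stump on every block, keep the block whose stump has the
    lowest weighted error, and up-weight the samples it misclassifies (SAMME)."""
    w = np.full(len(y), 1.0 / len(y))
    ensemble = []                                      # (alpha, block index, stump)
    for _ in range(n_rounds):
        fits = [fit_stump(X, y, w, n_classes) for X in blocks]
        m = int(np.argmin([err for err, _ in fits]))
        err, stump = fits[m]
        if err >= 1.0 - 1.0 / n_classes:               # no better than chance: stop
            break
        err = max(err, 1e-10)
        alpha = np.log((1.0 - err) / err) + np.log(n_classes - 1.0)
        miss = stump_predict(stump, blocks[m]) != y
        w = w * np.exp(alpha * miss)
        w /= w.sum()
        ensemble.append((alpha, m, stump))
        if err <= 1e-10:                               # perfect stump: weights cannot change
            break
    return ensemble


def samme_predict(ensemble, blocks, n_classes):
    scores = np.zeros((len(blocks[0]), n_classes))
    for alpha, m, stump in ensemble:
        pred = stump_predict(stump, blocks[m])
        scores[np.arange(len(pred)), pred] += alpha
    return scores.argmax(axis=1)


rng = np.random.default_rng(3)
y = np.repeat([0, 1, 2], 20)
omics_a = rng.normal(size=(60, 3))
omics_a[:, 0] += 10.0 * (y == 0)        # block A only separates class 0
omics_b = rng.normal(size=(60, 3))
omics_b[:, 0] += 10.0 * (y == 2)        # block B only separates class 2
model = multimodal_samme([omics_a, omics_b], y, n_classes=3, n_rounds=20)
train_acc = (samme_predict(model, [omics_a, omics_b], 3) == y).mean()
print(f"rounds: {len(model)}, blocks used: {sorted({m for _, m, _ in model})}, "
      f"training accuracy: {train_acc:.2f}")
```

In this toy example each block separates a different class. The ensemble therefore alternates between modalities as the sample weights shift toward whichever class is currently misclassified.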

Benchmarking Performance Across Cancer Types and Diseases

Experimental Design and Protocols

Recent comprehensive benchmarking studies have evaluated ensemble methods across multiple disease domains using consistent experimental protocols. The key aspects of these benchmarking methodologies include:

Dataset Selection: Studies utilized in-house hepatocellular carcinoma (HCC) data along with publicly available datasets for breast cancer and inflammatory bowel disease (IBD) to ensure broad applicability [29]. These datasets typically included multiple omics modalities such as clinical measurements, transcriptomics, proteomics, metabolomics, and microbiome data.

Evaluation Metrics: Performance was assessed using area under the receiver operating characteristic curve (AUC-ROC) for multi-class classification, with additional analysis of feature stability and clinical signature size [29]. Cross-validation approaches ensured robust performance estimation.
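Multi-class AUC-ROC is commonly computed one-vs-rest: each class's predicted probability is scored against all other classes, and the per-class AUCs are averaged.

The sketch below computes each binary AUC from the Mann-Whitney rank statistic, with tied scores receiving average ranks. It assumes an unweighted macro average. As a library equivalent, scikit-learn offers `roc_auc_score(..., multi_class="ovr", average="macro")`.

```python
import numpy as np


def binary_auc(scores, labels):
    """AUC from the Mann-Whitney U statistic; tied scores receive average ranks."""
    scores, labels = np.asarray(scores, dtype=float), np.asarray(labels)
    order = np.argsort(scores, kind="mergesort")
    sorted_scores = scores[order]
    ranks = np.empty(len(scores))
    i = 0
    while i < len(scores):
        j = i
        while j + 1 < len(scores) and sorted_scores[j + 1] == sorted_scores[i]:
            j += 1
        ranks[order[i:j + 1]] = (i + j) / 2.0 + 1.0   # average 1-based rank of the tie group
        i = j + 1
    n_pos = int((labels == 1).sum())
    n_neg = len(labels) - n_pos
    return (ranks[labels == 1].sum() - n_pos * (n_pos + 1) / 2.0) / (n_pos * n_neg)


def macro_ovr_auc(y_true, proba):
    """One-vs-rest AUC per class, averaged with equal weight per class."""
    y_true = np.asarray(y_true)
    return float(np.mean([binary_auc(proba[:, k], (y_true == k).astype(int))
                          for k in range(proba.shape[1])]))


print(binary_auc([0.1, 0.4, 0.35, 0.8], [0, 0, 1, 1]))   # 0.75

y_true = np.array([0, 1, 2, 0, 1, 2])
proba = np.full((6, 3), 0.15)
proba[np.arange(6), y_true] = 0.7                        # every sample ranked correctly
print(macro_ovr_auc(y_true, proba))                      # 1.0
```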

Comparison Baseline: All ensemble methods were compared against simple concatenation (early integration) as a baseline, which combines all omics features into a single dataset before model training [29].

Table 1: Benchmarking Performance of Ensemble Methods Across Disease Models

| Ensemble Method | Hepatocellular Carcinoma (AUC) | Breast Cancer (AUC) | Inflammatory Bowel Disease (AUC) | Feature Stability |
|---|---|---|---|---|
| PB-MVBoost | 0.85 | 0.83 | 0.82 | High |
| AdaBoost with Soft Vote | 0.84 | 0.82 | 0.81 | High |
| Meta-Learner | 0.82 | 0.80 | 0.79 | Medium |
| Voting Ensemble (Soft) | 0.80 | 0.78 | 0.78 | Medium |
| Mixture of Experts | 0.81 | 0.79 | 0.77 | Medium |
| Simple Concatenation (Baseline) | 0.77 | 0.75 | 0.74 | Low |

Performance Insights and Method Selection

The benchmarking results demonstrate that boosted methods consistently outperform other ensemble approaches across multiple disease models and omics data types [29]. The PB-MVBoost algorithm achieved the highest AUC scores (up to 0.85), particularly excelling in complex classification tasks with heterogeneous omics data. AdaBoost with soft vote also showed robust performance, making it a strong alternative.

The superior performance of boosted methods can be attributed to their ability to handle class imbalance and give more weight to difficult-to-classify samples across modalities [29]. Additionally, these methods produced more stable predictive features—a critical consideration for clinical applications where interpretability and reproducibility are essential.

Table 2: Comparative Analysis of Ensemble Method Characteristics

| Method Category | Specific Algorithms | Strengths | Limitations | Ideal Use Cases |
|---|---|---|---|---|
| Boosted Methods | PB-MVBoost, Multi-modal AdaBoost | Highest accuracy, handles class imbalance, stable feature selection | Computational intensity, parameter sensitivity | Clinical outcome prediction with complex multi-omics data |
| Meta-Learners | Stacked Generalization | Captures complex modality relationships, flexible base model selection | Risk of overfitting, complex implementation | Research settings with sufficient data for meta-training |
| Voting Ensembles | Hard Voting, Soft Voting, Performance-Weighted | Simple implementation, parallelizable, interpretable | Assumes modality independence, limited complex interaction capture | Initial multi-omics integration projects with clearly separable modalities |
| Early Integration | Simple Concatenation | Simple baseline, captures cross-modality correlations | Prone to overfitting, curse of dimensionality | Not recommended except as performance baseline |

Experimental Protocols and Implementation Guidelines

Data Preprocessing and Feature Selection

Successful implementation of ensemble methods for multi-omics data requires careful data preprocessing:

Missing Value Imputation: Studies utilized k-nearest neighbors (KNN) imputation with k=1 for clinical and proteomics datasets with missing values [30]. For microbiome data, appropriate zero-handling techniques such as pseudo-count addition or model-based imputation may be necessary.
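A minimal sketch of k=1 nearest-neighbour imputation follows, together with pseudo-count addition and a centered log-ratio (CLR) transform for microbiome counts. Two choices here are assumptions for illustration:

- the distance convention, root-mean-square difference over the features both samples observed;
- applying CLR after the pseudo-count.

A library option is scikit-learn's `KNNImputer(n_neighbors=1)`, which uses its own NaN-aware Euclidean distance.

```python
import numpy as np


def knn_impute_k1(X):
    """Fill each missing entry with the value from the single nearest sample that
    observed that feature. Distance: root-mean-square difference over the features
    both samples observed (an assumed convention). Entries with no donor stay NaN."""
    X = np.asarray(X, dtype=float)
    observed = ~np.isnan(X)
    out = X.copy()
    for i, j in zip(*np.where(~observed)):
        best_d, best_v = np.inf, np.nan
        for r in range(X.shape[0]):
            shared = observed[i] & observed[r]
            if r == i or not observed[r, j] or not shared.any():
                continue
            d = np.sqrt(np.mean((X[i, shared] - X[r, shared]) ** 2))
            if d < best_d:
                best_d, best_v = d, X[r, j]
        out[i, j] = best_v
    return out


def clr_with_pseudocount(counts, pseudocount=1.0):
    """Add a pseudo-count so zeros can be logged, then apply a centered log-ratio
    transform within each sample (rows = samples, columns = taxa)."""
    logx = np.log(np.asarray(counts, dtype=float) + pseudocount)
    return logx - logx.mean(axis=1, keepdims=True)


proteomics = np.array([[1.0, 2.0, np.nan],
                       [1.1, 2.1, 5.0],
                       [8.0, 9.0, 0.0],
                       [np.nan, 9.2, 0.5]])
imputed = knn_impute_k1(proteomics)
print(imputed)               # row 0 borrows 5.0 from row 1; row 3 borrows 8.0 from row 2

microbiome = np.array([[0, 10, 90], [5, 5, 0]])
print(clr_with_pseudocount(microbiome))
```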

Normalization: Each omics modality typically requires tailored normalization approaches. RNA-seq data often benefits from variance-stabilizing transformations, while metabolomics data may require probabilistic quotient normalization or similar techniques.
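Probabilistic quotient normalization corrects for sample-wise dilution. It divides each sample by the median ratio between that sample's features and a reference profile.

This sketch assumes:

- the reference is the feature-wise median across samples;
- intensities are strictly positive;
- there is no preceding total-area normalization step, which some protocols add.

Variance-stabilizing transforms for RNA-seq usually come from dedicated packages (for example DESeq2 in R) and are not reproduced here.

```python
import numpy as np


def pqn_normalize(X, reference=None):
    """Probabilistic quotient normalization (rows = samples, columns = metabolites).

    Each sample is divided by the median of its feature-wise quotients against a
    reference profile; by default the reference is the feature-wise median.
    Assumes strictly positive intensities (zeros would make quotients degenerate).
    """
    X = np.asarray(X, dtype=float)
    if reference is None:
        reference = np.median(X, axis=0)
    quotients = X / reference                        # feature-wise ratios to reference
    dilution = np.median(quotients, axis=1)          # one dilution factor per sample
    return X / dilution[:, None], dilution


# Sample 1 is sample 0 measured at twice the concentration (e.g. a less dilute sample)
X = np.array([[1.0, 2.0, 4.0],
              [2.0, 4.0, 8.0],
              [3.0, 3.0, 3.0]])
X_norm, dilution = pqn_normalize(X)
print(dilution)          # dilution factors of about 2/3, 4/3 and 1
print(X_norm)            # rows 0 and 1 become identical
```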

Feature Selection: Given the high dimensionality of omics data, feature selection is critical. Methods including variance filtering, recursive feature elimination, or domain-knowledge-driven selection help reduce dimensionality and mitigate overfitting [29].
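Variance filtering is the simplest of these options. The sketch below assumes features in columns and a hypothetical `top_k` cut-off.

The important design choice is where the variances come from: they are computed on the training split only, and the resulting column indices are then applied to the test split. This keeps feature selection from leaking information from held-out samples.

```python
import numpy as np


def fit_variance_filter(X_train, top_k):
    """Column indices of the top_k highest-variance features, computed on the
    training split only."""
    variances = X_train.var(axis=0)
    return np.sort(np.argsort(variances)[::-1][:top_k])


rng = np.random.default_rng(4)
X = rng.normal(size=(30, 6))
X[:, [1, 4]] *= 10.0                     # two deliberately high-variance features
train, test = np.arange(20), np.arange(20, 30)
keep = fit_variance_filter(X[train], top_k=2)
X_train_sel, X_test_sel = X[train][:, keep], X[test][:, keep]   # same columns for both splits
print(keep)                              # [1 4]
```

Richer criteria, such as recursive feature elimination or knowledge-driven gene panels, fit into the same place. Whichever criterion is used, it should be refitted inside every training fold.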

Computational Implementation Framework

Figure 2: Implementation Workflow for Ensemble Multi-Omics Analysis

Model Training and Validation Protocols

Implementation of ensemble methods follows these key experimental steps:

Base Model Training: For late integration strategies, individual models are first trained separately on each omics modality. Studies have successfully employed random forests, support vector machines, XGBoost, and neural networks as base models [32]. Model selection should consider the specific characteristics of each data type.

Ensemble Integration: Predictions from base models are combined using the chosen ensemble strategy. For voting ensembles, this involves implementing hard or soft voting mechanisms. Meta-learners require training a secondary model on base model predictions, while boosted methods implement iterative weighting schemes across modalities.

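Cross-Validation: Nested cross-validation is recommended, with an outer loop for performance estimation and an inner loop for hyperparameter optimization. Because the outer test folds are never used for tuning, this approach yields approximately unbiased performance estimates and avoids the optimistic bias that arises when the same data are used both to select hyperparameters and to evaluate the model.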
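The nested procedure can be sketched as follows. The k-nearest-neighbour classifier, the grid of k values, the fold counts and the synthetic data are all hypothetical stand-ins.

The point is the structure: hyperparameters are chosen using only the outer training portion, and each outer test fold is scored exactly once. In scikit-learn the same pattern is commonly written as `cross_val_score(GridSearchCV(...), X, y)`.

```python
import numpy as np


def knn_predict(X_tr, y_tr, X_te, k):
    """Majority vote among the k nearest training samples (Euclidean distance)."""
    d = ((X_te[:, None, :] - X_tr[None, :, :]) ** 2).sum(axis=2)
    nearest = np.argsort(d, axis=1)[:, :k]
    return np.array([np.bincount(y_tr[row]).argmax() for row in nearest])


def nested_cv(X, y, k_grid=(1, 3, 5, 7), n_outer=5, n_inner=3, seed=0):
    """Outer folds estimate performance; inner folds, drawn only from each outer
    training split, choose the hyperparameter k."""
    rng = np.random.default_rng(seed)
    outer_scores, chosen_k = [], []
    for test_idx in np.array_split(rng.permutation(len(y)), n_outer):
        train_idx = np.setdiff1d(np.arange(len(y)), test_idx)
        X_tr, y_tr = X[train_idx], y[train_idx]
        inner_folds = np.array_split(rng.permutation(len(y_tr)), n_inner)
        inner_acc = []
        for k in k_grid:
            accs = []
            for val in inner_folds:
                fit = np.setdiff1d(np.arange(len(y_tr)), val)
                pred = knn_predict(X_tr[fit], y_tr[fit], X_tr[val], k)
                accs.append(np.mean(pred == y_tr[val]))
            inner_acc.append(np.mean(accs))
        best_k = k_grid[int(np.argmax(inner_acc))]
        # Refit on the whole outer training split; the outer test fold is used once
        pred = knn_predict(X_tr, y_tr, X[test_idx], best_k)
        outer_scores.append(np.mean(pred == y[test_idx]))
        chosen_k.append(best_k)
    return np.array(outer_scores), chosen_k


rng = np.random.default_rng(5)
y = np.repeat([0, 1, 2], 20)
X = rng.normal(size=(60, 5)) + 8.0 * y[:, None]    # well-separated toy classes
scores, ks = nested_cv(X, y)
print(scores, ks)
```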

Interpretation and Validation: Advanced interpretation methods such as DeepLIFT (Deep Learning Important FeaTures) can be applied to understand feature contributions [30]. Biological validation through pathway enrichment analysis connects computational findings to established biological knowledge.
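Pathway over-representation analysis often reduces to a one-sided hypergeometric test. Given a background of N genes, K of which belong to a pathway, the question is how surprising it is that k of the n selected predictive features fall in that pathway.

The sketch below computes the exact tail probability with the Python standard library. The argument names are illustrative, and `scipy.stats.hypergeom.sf(k - 1, N, K, n)` should give the same value. Because many pathways are usually tested, the resulting p-values still need a multiple-testing correction such as Benjamini-Hochberg.

```python
from math import comb


def hypergeom_enrichment_p(N, K, n, k):
    """One-sided over-representation p-value, P(X >= k), for X ~ Hypergeometric.

    N: genes in the background universe
    K: background genes annotated to the pathway
    n: genes selected by the model (e.g. top predictive features)
    k: selected genes that are also in the pathway
    """
    upper = min(K, n)
    tail = sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1))
    return tail / comb(N, n)


# Hypothetical numbers: 8 of 200 selected genes fall in a 100-gene pathway
# drawn from a 20,000-gene background (about 1 overlap expected by chance).
p = hypergeom_enrichment_p(N=20000, K=100, n=200, k=8)
print(f"p = {p:.2e}")
```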

Essential Research Reagents and Computational Tools

Table 3: Research Reagent Solutions for Multi-Omics Ensemble Learning

| Resource Category | Specific Tools/Databases | Key Functionality | Application Context |
|---|---|---|---|
| Multi-Omics Data Repositories | TCGA (The Cancer Genome Atlas), GEO (Gene Expression Omnibus) | Provide curated multi-omics datasets across diverse conditions | Model training and validation, benchmarking studies |
| Machine Learning Frameworks | scikit-learn (Python), TensorFlow, PyTorch | Implement machine learning algorithms and ensemble strategies | General-purpose implementation of ensemble methods |
| Specialized Multi-Omics Tools | MOFA+, mixOmics, OmicsNet | Provide dedicated frameworks for multi-omics integration | Comparison with specialized multi-omics approaches |
| Pathway Analysis Resources | g:Profiler, Gene Set Enrichment Analysis (GSEA), STRING database | Biological interpretation of identified features | Validation of biological relevance of predictive features |
| Ensemble-Specific Libraries | ML-Ensemble, H2O.ai, XGBoost | Streamlined implementation of complex ensemble architectures | Efficient deployment of voting, stacking, and boosting methods |

Benchmarking studies demonstrate that ensemble machine learning methods, particularly boosted approaches like PB-MVBoost and multi-modal AdaBoost, provide superior performance for multi-class, multi-omics data integration compared to traditional single-modality analysis or simple concatenation approaches [29]. These methods achieve higher predictive accuracy while producing more stable and interpretable features—critical considerations for clinical translation.

The field continues to evolve with several promising directions. Deep learning-based ensemble methods are showing potential for capturing complex nonlinear relationships across omics modalities [17]. Meta-learning approaches that adapt quickly to new tasks and cancer types offer advantages for pan-cancer analysis [30]. Additionally, enhanced interpretability methods are making complex ensemble models more transparent and biologically actionable.