Benchmarking Chemogenomic Libraries: Strategies for Navigating Billion-Scale Chemical Spaces in Drug Discovery

Abstract

This article provides a comprehensive framework for researchers and drug development professionals to benchmark chemogenomic libraries against diverse bioactive compound sets. As chemical spaces now exceed billions of make-on-demand molecules, effective benchmarking is crucial for identifying relevant chemistry, uncovering library blind spots, and optimizing virtual screening campaigns. We explore foundational concepts in chemical space mapping, methodological approaches using multiple search algorithms, strategies for troubleshooting coverage gaps, and comparative validation of commercial sources. By integrating the latest research and benchmark sets, this guide aims to enhance the efficiency and success of hit-finding and lead optimization in modern drug discovery.

Navigating the Expanding Universe of Chemical Space: From Compound Libraries to Make-on-Demand Billions

The field of chemical library design has undergone a seismic shift, moving from traditional enumerated libraries to the era of ultra-large combinatorial chemical spaces. Enumerated libraries are physical collections of compounds, explicitly listed and stored in databases. In contrast, modern combinatorial chemical spaces are virtual collections of billions to trillions of compounds defined by chemical reaction rules and available building blocks; compounds are synthesized on-demand only after computational screening identifies promising candidates [1]. This paradigm change addresses the critical limitation of physical screening collections, which represent only a tiny fraction of synthetically accessible chemical space due to storage and logistics constraints [2].

The drive toward ultra-large libraries is fueled by evidence that screening larger, more diverse compound collections significantly increases the probability of finding potent, novel hits [3]. This guide provides an objective comparison of these competing approaches, benchmarking their performance against standardized bioactive molecule sets to inform strategic decision-making for drug discovery researchers and organizations.

Experimental Benchmarking: Methodology and Protocols

Benchmark Compound Set Design

A rigorous 2025 benchmarking study established standardized sets to evaluate how well compound collections supply relevant chemistry for hit finding and analog expansion [3] [4]. Researchers mined the ChEMBL database for molecules with demonstrated biological activity, applying systematic filtering to create three benchmark sets of successive magnitudes:

- Set L (Large-sized): ≈379,000 potency-filtered "motif representatives" [4]

- Set M (Medium-sized): ≈25,000 compounds from Bemis-Murcko scaffold clustering [4]

- Set S (Small-sized): ≈3,000 compounds forming a PCA-balanced subset for broad, uniform coverage of physicochemical and topological space [3] [4]

Set S was specifically designed for diversity analysis, created by mapping chemical space, removing outliers, segmenting a 10×10 grid, and sampling up to 30 molecules per cell to ensure unbiased representation [4].
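
The grid-based sampling step described above can be sketched in a few lines. This is a minimal illustration, not the study's actual code: it assumes each molecule has already been projected onto two principal components and that outliers have been removed. The function name `grid_sample`, the tuple layout, and the fixed random seed are hypothetical choices made for the example.

```python
import random
from collections import defaultdict


def grid_sample(points, n_bins=10, max_per_cell=30, seed=0):
    """Sample up to max_per_cell molecules from each cell of an n_bins x n_bins grid.

    points: list of (mol_id, x, y) tuples; x and y are assumed to be the first
    two principal-component coordinates after outlier removal.
    """
    xs = [p[1] for p in points]
    ys = [p[2] for p in points]
    x_min, x_max = min(xs), max(xs)
    y_min, y_max = min(ys), max(ys)

    def bin_index(value, lo, hi):
        if hi == lo:  # degenerate axis: everything falls into one bin
            return 0
        idx = int((value - lo) / (hi - lo) * n_bins)
        return min(idx, n_bins - 1)  # the maximum value belongs to the last bin

    # Group molecule ids by grid cell.
    cells = defaultdict(list)
    for mol_id, x, y in points:
        cell = (bin_index(x, x_min, x_max), bin_index(y, y_min, y_max))
        cells[cell].append(mol_id)

    # Draw at most max_per_cell molecules from every occupied cell.
    rng = random.Random(seed)
    sampled = []
    for cell in sorted(cells):
        members = cells[cell]
        sampled.extend(rng.sample(members, min(max_per_cell, len(members))))
    return sampled
```

Capping the number of molecules per cell is what flattens the distribution: densely populated regions of the map contribute no more than sparsely populated ones, at the cost of discarding most molecules from the crowded cells.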

Search Methodologies and Performance Metrics

The study employed three complementary search methods to evaluate how effectively different compound sources retrieve relevant structures [4]:

- FTrees: Pharmacophore-based similarity searching, retrieving compounds with similar pharmacophore feature distributions rather than similar atom-level structures.

- SpaceLight: Molecular fingerprint-based screening identifying close structural analogs based on Tanimoto similarity.

- SpaceMACS: Maximum common substructure approach balancing structural and pharmacophore similarity.

For each molecule in benchmark Set S, these methods retrieved the top 100 hits from various commercial sources. Performance was quantified using:

- Mean similarity to query structures

- Exact and near-exact match rates

- Scaffold uniqueness and diversity

- Coverage across chemical space map quadrants

- Computational efficiency (screening time per compound)
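
As a rough sketch of how the first three metrics could be aggregated over the top-ranked hits, assuming each hit is available as a `(similarity, scaffold)` pair. The function name `summarize_hits`, the input layout, and the 0.95 cut-off for a "near-exact" match are illustrative assumptions; the source does not state the threshold the study used.

```python
from statistics import mean


def summarize_hits(hits_per_query, near_exact_threshold=0.95):
    """Aggregate retrieval metrics over all queries.

    hits_per_query: dict mapping query id -> list of (similarity, scaffold)
    tuples for that query's top-ranked hits. The near-exact threshold is an
    assumed value, not one taken from the benchmark study.
    """
    all_similarities = []
    best_per_query = []
    scaffolds = set()
    for hits in hits_per_query.values():
        sims = [sim for sim, _ in hits]
        all_similarities.extend(sims)
        best_per_query.append(max(sims) if sims else 0.0)
        scaffolds.update(scaffold for _, scaffold in hits)

    n_queries = len(hits_per_query)
    if n_queries == 0:
        return {"mean_similarity": 0.0, "exact_match_rate": 0.0,
                "near_exact_match_rate": 0.0, "unique_scaffolds": 0}
    return {
        "mean_similarity": mean(all_similarities) if all_similarities else 0.0,
        # A query counts as exactly matched if its best hit has similarity 1.0.
        "exact_match_rate": sum(b >= 1.0 for b in best_per_query) / n_queries,
        "near_exact_match_rate": sum(b >= near_exact_threshold
                                     for b in best_per_query) / n_queries,
        "unique_scaffolds": len(scaffolds),
    }
```

Match rates are computed per query (on the best hit), while mean similarity is computed over all retrieved hits, so the two numbers answer different questions: how often a source contains the query itself versus how close its analogs are on average.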

Comparative Analysis of Commercial Chemical Spaces and Libraries

Scale and Accessibility of Modern Compound Collections

The table below summarizes key specifications of major commercial compound sources, highlighting the dramatic scale differences between traditional enumerated libraries and modern combinatorial spaces:

| Source | Type | Compound Count | Synthetic Feasibility | Shipping Time |

|---|---|---|---|---|

| eXplore (eMolecules) | Combinatorial Space | 5.3 trillion | >85% | 3-4 weeks [5] |

| xREAL (Enamine) | Combinatorial Space | 4.4 trillion | >80% | 3-4 weeks [1] |

| Synple Space | Combinatorial Space | 1.0 trillion | Not specified | Several weeks [1] |

| Freedom Space 4.0 (Chemspace) | Combinatorial Space | 142 billion | >80% | 5-6 weeks [5] |

| REAL Space (Enamine) | Combinatorial Space | 83 billion | >80% | 3-4 weeks [1] [5] |

| GalaXi (WuXi) | Combinatorial Space | 25.8-28.6 billion | 60-80% | 4-8 weeks [1] [5] |

| Mcule | Enumerated Library | Multi-billion scale | 100% (in-stock) | Immediate [3] |

| Molport | Enumerated Library | Multi-billion scale | 100% (in-stock) | Immediate [4] |

Performance Benchmarking Results

The 2025 benchmark study revealed consistent performance advantages for combinatorial spaces across multiple metrics [3] [4]:

- Hit Quantity and Quality: Combinatorial spaces generally yielded more and closer analogs than enumerated libraries. The eXplore and REAL spaces consistently performed best, with Mcule being the strongest performer among enumerated libraries.

- Scaffold Diversity: Each search method identified distinct, often unique scaffolds across different sources, providing flexibility for project-specific library design.

- Method-Specific Performance: FTrees results were farthest from query compounds due to its pharmacophore-based approach, while SpaceLight and SpaceMACS delivered closer structural analogs due to their reliance on heavy atom connectivity.

- Computational Efficiency: Search algorithms performed more efficiently on combinatorial spaces versus enumerated libraries based on computation time per compound.

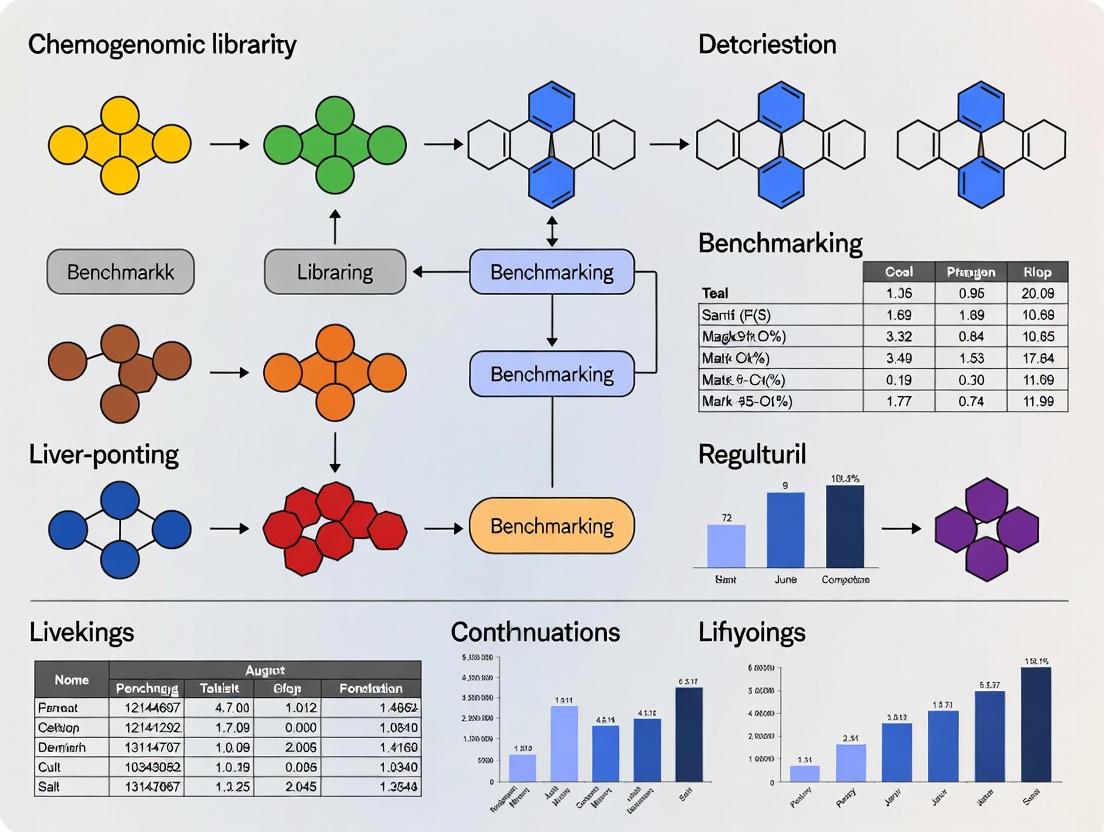

Experimental Workflow for Chemical Space Exploration

The following diagram illustrates the standardized experimental workflow for benchmarking compound collections, from initial dataset preparation through to performance evaluation:

Chemical Space Mapping and Coverage Analysis

The benchmark study mapped the coverage of the different compound sources across chemical space. All sources showed good coverage of classic "drug-like" structures but significant blind spots for more complex, hydrophilic compounds (e.g., nucleotides or those with charged groups) and natural-product-like compounds (e.g., sp3-rich carbon systems) [4]. The researchers attributed these gaps to a lack of available building blocks, challenging synthetic reactions, or the increased reactivity of problematic building blocks.

| Resource Category | Specific Tools/Sources | Function & Application |

|---|---|---|

| Combinatorial Spaces | eXplore, xREAL, REAL Space, Freedom Space, GalaXi | Ultra-large make-on-demand compound collections for initial hit discovery and scaffold hopping [1] [5] |

| Enumerated Libraries | Mcule, Molport, Life Chemicals, ChemDiv | Physical compound collections for immediate screening and validation [4] |

| Search Algorithms | FTrees, SpaceLight, SpaceMACS | Computational methods for navigating chemical spaces with different similarity approaches [4] |

| Benchmark Sets | ChEMBL-derived Sets S, M, L | Standardized bioactive molecule collections for objective performance comparison [3] [4] |

| Data Curation Tools | RDKit, Molecular Checker/Standardizer | Software for verifying chemical structure accuracy and bioactivity data quality [6] |

The benchmarking data indicate that combinatorial chemical spaces generally outperform enumerated libraries for discovering novel chemical matter and close analogs [3] [4]. However, enumerated libraries retain value for rapid access to physical compounds for initial validation.

For strategic compound sourcing, researchers should consider:

- Lead Discovery: Prioritize combinatorial spaces like eXplore and REAL Space for their superior diversity and ability to deliver novel scaffolds [4].

- Method Selection: Employ multiple search algorithms (FTrees, SpaceLight, SpaceMACS) to maximize scaffold diversity and identify complementary hit structures [4].

- Library Enhancement: Use combinatorial spaces to escape the availability bias of traditional screening collections and access intellectual property-free regions [1].

- Blind Spot Awareness: Acknowledge current limitations in complex, hydrophilic chemical space and plan complementary strategies for these target classes.

The modern chemical landscape offers unprecedented opportunities for hit discovery through trillion-sized combinatorial spaces, with rigorous benchmarking now enabling data-driven decisions for library design and compound sourcing strategies.

In modern drug discovery, the ability to objectively assess the quality and coverage of compound libraries is paramount. The continuous growth of commercially available compounds, which now reach billion- to trillion-sized combinatorial chemical spaces, has created an urgent need for standardized benchmark sets that enable unbiased comparison of different screening collections [7] [3]. These benchmark sets serve as crucial reference points for evaluating whether compound libraries contain chemically relevant structures with potential pharmaceutical value.

The ChEMBL database stands as a cornerstone resource for constructing these benchmarks, providing manually curated data on bioactive molecules with drug-like properties [8]. By systematically mining ChEMBL's vast repository of chemical and bioactivity data, researchers can create benchmark sets tailored for broad coverage of the physicochemical and topological landscape relevant to drug discovery [3]. This approach facilitates the translation of genomic information into effective new drug candidates by ensuring screening libraries are enriched with structures capable of meaningful biological interactions.

This article examines the critical role of benchmark sets derived from ChEMBL data, comparing the performance of various compound libraries and chemical spaces against these standardized references. We present experimental data and methodologies that enable researchers to make informed decisions about library selection for specific drug discovery applications.

The ChEMBL-Based Benchmark Sets

Construction and Composition

Neumann and colleagues have created a series of benchmark sets specifically designed for diversity analysis of compound libraries and combinatorial chemical spaces [7] [3]. These sets were constructed through systematic filtering and processing of molecules from the ChEMBL database displaying documented biological activity. The resulting benchmarks comprise three distinct sets of successive orders of magnitude:

- Set L (Large-sized): 379,000 molecules

- Set M (Medium-sized): 25,000 molecules

- Set S (Small-sized): 3,000 molecules

These benchmark sets are specifically tailored for broad coverage of both physicochemical properties and topological landscape, making them ideal for assessing how well different compound libraries cover pharmaceutically relevant chemistry space [3]. The hierarchical structure allows researchers to select the appropriate benchmark scale for their specific evaluation needs, from rapid screening to comprehensive analysis.

Comparison to Real-World Drug Discovery Data

The CARA (Compound Activity benchmark for Real-world Applications) benchmark provides a complementary perspective by focusing on the practical challenges of compound activity prediction [9]. This benchmark addresses critical characteristics of real-world compound activity data, including:

- Multiple data sources from scientific literature and patents with different experimental protocols

- Existence of congeneric compounds with high pairwise similarities in lead optimization assays

- Biased protein exposure with uneven exploration of protein targets across existing studies

- Sparse, unbalanced data distributions that more accurately reflect experimental realities

The integration of these real-world data characteristics makes CARA particularly valuable for evaluating computational models intended for practical drug discovery applications where data limitations and biases are inevitable [9].

Experimental Methodologies for Benchmarking

Benchmarking Design Principles

Rigorous benchmarking requires careful experimental design to generate accurate, unbiased, and informative results. Essential guidelines for computational method benchmarking include [10]:

- Clearly defining purpose and scope - determining whether the benchmark serves to demonstrate merits of a new method, provide neutral comparison of existing methods, or function as a community challenge

- Comprehensive method selection - including all available methods for a specific analysis type or a representative subset with clearly defined inclusion criteria

- Appropriate dataset selection - incorporating varied datasets that represent different conditions, either simulated (with known ground truth) or real (from experimental sources)

- Consistent parameterization - applying equivalent tuning efforts across all methods to avoid disadvantaging certain approaches

- Multiple evaluation criteria - employing diverse performance metrics that reflect different aspects of method performance

Neutral benchmarking studies conducted independently of method development are particularly valuable for the research community, as they minimize perceived bias and provide more objective comparisons [10].

Search Methods for Chemical Space Analysis

In benchmarking chemical libraries, multiple computational approaches are typically employed to evaluate different aspects of chemical similarity and diversity. The benchmark study by Neumann et al. utilized three distinct search methods [3]:

- FTrees - based on pharmacophore features encoded in a topological (2D) feature-tree descriptor, focusing on molecular interaction capabilities rather than exact atom connectivity

- SpaceLight - utilizing molecular fingerprints to assess structural similarity

- SpaceMACS - employing maximum common substructure analysis to identify shared molecular frameworks

The combination of these methods provides a comprehensive assessment of how well different compound libraries and chemical spaces can provide compounds similar to pharmaceutically relevant benchmark molecules across multiple similarity definitions.

Experimental Workflow for Library Evaluation

The following diagram illustrates the complete experimental workflow for benchmarking compound libraries against ChEMBL-derived benchmark sets:

Comparative Performance of Compound Libraries and Chemical Spaces

Performance Against Benchmark Sets

Evaluation of commercial compound libraries and combinatorial chemical spaces against the ChEMBL-derived benchmark sets reveals important performance differences. According to Neumann et al., each chemical space was able to provide a larger number of compounds more similar to the respective query molecule than the enumerated libraries, while also individually offering unique scaffolds for each search method [3].

Among the evaluated options, the eXplore and REAL chemical spaces consistently performed best across the three search methods used (FTrees, SpaceLight, and SpaceMACS) [3]. This result demonstrates the value of large, accessible chemical spaces whose compounds can be synthesized on demand for drug discovery applications.

Representative Compound Libraries in Drug Discovery

Various types of compound libraries serve different roles in drug discovery campaigns. The table below summarizes key library types and their characteristics:

Table 1: Types of Compound Libraries in Drug Discovery

| Library Type | Size Range | Key Characteristics | Primary Applications | Examples |

|---|---|---|---|---|

| Diversity Libraries | 10,000-430,000 compounds [11] [12] | Selected for broad structural diversity and drug-like properties; often contain tens of thousands of unique Murcko scaffolds [11] | Hit identification for novel targets; broad screening | BioAscent Diversity Set (86,000 compounds) [11]; Purdue Institute collections (430,000 compounds) [12] |

| Focused/Targeted Libraries | 1,000-80,000 compounds [12] | Enriched for specific target classes (e.g., kinases, GPCRs, ion channels) | Screening against target families; mechanism of action studies | Kinase sets, GPCR sets, CNS-targeting compounds [12] |

| Fragment Libraries | 1,600-10,000 compounds [11] [12] | Low molecular weight compounds (<300 Da) with high solubility | Fragment-based screening; SPR-driven approaches | BioAscent Fragment Library (10,000 compounds) [11]; Various fragment libraries (7,200 total) [12] |

| Chemogenomic Libraries | 1,600-1,700 compounds [11] [13] | Selective, well-annotated pharmacologically active probes | Phenotypic screening; target deconvolution; mechanism of action studies | BioAscent Chemogenomic Library (1,600 probes) [11]; Chemical Probes.org recommended probes [13] |

| Ultra-large Virtual Libraries | Hundreds of millions to billions [14] | REAL (REadily AccessibLe) compounds that can be synthesized on demand; enormous structural diversity | Structure-based virtual screening; lead discovery | SuFEx-based library (140 million compounds) [14]; REAL Space libraries |

Quantitative Performance Comparison

The application of benchmark sets enables direct quantitative comparison of different compound libraries and chemical spaces. The following table summarizes key evaluation metrics:

Table 2: Performance Metrics for Library Evaluation Using Benchmark Sets

| Evaluation Metric | Calculation Method | Interpretation | Application in Studies |

|---|---|---|---|

| Scaffold Diversity | Number of unique Murcko scaffolds or frameworks | Higher values indicate greater structural diversity | BioAscent Diversity Set: 57k Murcko Scaffolds, 26.5k Frameworks [11] |

| Hit Identification Rate | Percentage of predicted compounds confirming activity in experiments | Measures practical utility for lead discovery | Ultra-large library screening: 55% hit rate for CB2 antagonists [14] |

| Benchmark Coverage | Ability to find similar compounds to benchmark molecules | Higher coverage indicates better pharmaceutical relevance | eXplore and REAL Space consistently provided most compounds similar to benchmarks [3] |

| Selectivity | Percentage of selective compounds against target families | Critical for chemical probes and target validation | SGC Chemical Probes: >30-fold selectivity over proteins in same family [13] |

Research Reagent Solutions Toolkit

Successful benchmarking and compound library evaluation requires specific research tools and resources. The following table outlines essential solutions for researchers in this field:

Table 3: Essential Research Reagent Solutions for Compound Library Benchmarking

| Resource Category | Specific Examples | Key Function | Access Information |

|---|---|---|---|

| Bioactivity Databases | ChEMBL [8], BindingDB [9], PubChem [9] | Source of bioactive molecules for benchmark construction; reference data for validation | Publicly accessible databases |

| Commercial Compound Libraries | BioAscent Libraries [11], Purdue Institute collections [12] | Provide physically available compounds for experimental screening | Available through commercial providers or core facilities |

| Chemical Probe Resources | Chemical Probes.org [13], SGC Probes [13], opnMe portal [13] | Source of high-quality, selective compounds for target validation and mechanism studies | Various accessibility (open source to commercial) |

| Specialized Compound Sets | PAINS Set [11], LOPAC1280 [12], NIH Molecular Libraries Program [13] | Provide specialized compounds for assay validation, interference testing, and control experiments | Available through commercial and academic sources |

| Ultra-large Chemical Spaces | eXplore, REAL Space [3] [14] | Source of synthetically accessible compounds for virtual screening | Available through commercial providers |

Discussion and Practical Implications

Interpreting Benchmarking Results

The benchmarking approaches discussed provide critical insights for drug discovery researchers. The consistently superior performance of large combinatorial chemical spaces such as eXplore and REAL Space over enumerated libraries suggests a paradigm shift in early-stage hit identification [3]. These spaces offer both more compounds similar to pharmaceutically relevant benchmarks and unique scaffolds, potentially increasing the chances of finding innovative starting points for medicinal chemistry optimization.

The real-world considerations highlighted by the CARA benchmark emphasize the importance of evaluating compound activity prediction methods under conditions that reflect practical drug discovery constraints [9]. The distinction between virtual screening (VS) and lead optimization (LO) assays is particularly important, as these represent fundamentally different compound distribution patterns and require different computational approaches for optimal performance.

Limitations and Future Directions

Current benchmarking approaches face several limitations that represent opportunities for future development:

- Representation gaps in benchmark sets may not fully capture emerging target classes or therapeutic modalities

- Computational burden of evaluating ultra-large chemical spaces against comprehensive benchmarks remains significant

- Integration of multi-parameter optimization beyond simple activity measures, including ADMET properties

- Standardization of evaluation metrics across studies to enable direct comparison of results

Future work should focus on developing more comprehensive benchmark sets that incorporate additional dimensions of drug-likeness, including pharmacokinetic and toxicity profiles, while maintaining practical computational requirements.

Benchmark sets derived from ChEMBL provide critical tools for objective evaluation of compound libraries and chemical spaces in drug discovery. The rigorous construction of these benchmarks, through systematic filtering of pharmaceutically relevant structures, enables unbiased comparison of different screening approaches. Experimental results demonstrate that large combinatorial chemical spaces consistently outperform traditional enumerated libraries in their ability to provide compounds similar to bioactive benchmarks while offering unique scaffold diversity.

The availability of standardized benchmark sets, coupled with clearly defined experimental methodologies for library evaluation, empowers researchers to make informed decisions about resource allocation for drug discovery campaigns. As chemical spaces continue to grow in size and complexity, these benchmarks will play an increasingly important role in ensuring that screening efforts remain focused on chemically tractable, biologically relevant regions of chemical space. Through continued refinement of benchmark sets and evaluation methodologies, the drug discovery community can accelerate the identification of high-quality starting points for the development of new therapeutics.

The systematic assessment of chemical diversity is a cornerstone of modern drug discovery. Effectively benchmarking chemogenomic libraries against diverse compound sets requires a robust framework built on specific, quantifiable metrics. These metrics allow researchers to move beyond subjective comparisons and objectively evaluate factors such as a library's coverage of chemical space, its structural novelty, and its potential to provide hits against novel biological targets. This guide provides a comparative analysis of the key experimental protocols and metrics used to dissect chemical diversity through the lenses of physicochemical properties, scaffold distribution, and topological landscapes, providing a standardized approach for library evaluation.

Core Metrics and Experimental Protocols

Analysis of Physicochemical Properties

The physicochemical profile of a compound library determines its drug-likeness and influences its pharmacokinetic and pharmacodynamic behavior. Standard analysis involves calculating a set of fundamental molecular descriptors.

Experimental Protocol:

- Step 1 - Descriptor Calculation: For each compound in the library, compute key physicochemical properties. These typically include Molecular Weight (MW), Octanol-Water Partition Coefficient (logP), Number of Hydrogen Bond Donors (HBD), Number of Hydrogen Bond Acceptors (HBA), Topological Polar Surface Area (TPSA), and the number of rotatable bonds [15] [9].

- Step 2 - Data Aggregation: Calculate the mean, median, and range for each property across the entire library.

- Step 3 - Space Mapping: Properties are often projected into a reduced-dimensional space using Principal Component Analysis (PCA) to visualize the library's coverage of the physicochemical landscape [4]. For a focused benchmark set, the space is segmented (e.g., a 10x10 grid), and molecules are sampled from each cell to ensure uniform coverage [7] [4].

- Step 4 - Comparison: Compare the property distributions and spatial coverage of the test library against a reference benchmark set.
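
The spatial-coverage part of Step 4 can be sketched as a cell-overlap calculation. The example assumes both the reference set and the test library have already been projected into the same two-dimensional space (for instance, the reference set's PCA axes); the function names `cell_of` and `grid_coverage` are hypothetical, and the grid bounds are taken from the reference set.

```python
def cell_of(x, y, bounds, n_bins):
    """Return the (column, row) grid cell of a point, or None if out of bounds."""
    x_min, x_max, y_min, y_max = bounds
    if not (x_min <= x <= x_max and y_min <= y <= y_max):
        return None

    def index(value, lo, hi):
        if hi == lo:  # degenerate axis: single bin
            return 0
        return min(int((value - lo) / (hi - lo) * n_bins), n_bins - 1)

    return (index(x, x_min, x_max), index(y, y_min, y_max))


def grid_coverage(reference, library, n_bins=10):
    """Fraction of reference-occupied cells that the library also occupies.

    reference, library: lists of (x, y) coordinates in a shared projection.
    Returns (coverage_fraction, sorted list of uncovered reference cells).
    """
    xs = [x for x, _ in reference]
    ys = [y for _, y in reference]
    bounds = (min(xs), max(xs), min(ys), max(ys))

    ref_cells = {cell_of(x, y, bounds, n_bins) for x, y in reference}
    lib_cells = {cell_of(x, y, bounds, n_bins) for x, y in library}
    lib_cells.discard(None)  # library points outside the reference map are ignored

    missing = sorted(ref_cells - lib_cells)
    return len(ref_cells & lib_cells) / len(ref_cells), missing
```

The list of uncovered cells is often more useful than the single coverage fraction, because it points to the specific regions of the map where a library has blind spots.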

Table 1: Key Physicochemical Properties for Diversity Analysis

| Property | Description | Role in Diversity Analysis | Typical Drug-Like Range |

|---|---|---|---|

| Molecular Weight (MW) | Mass of the molecule. | Influences permeability and absorption; higher MW can complicate drug delivery. | ≤ 500 g/mol |

| logP | Logarithm of the octanol-water partition coefficient. | Measures lipophilicity, critical for membrane permeability and solubility. | ≤ 5 |

| Hydrogen Bond Donors (HBD) | Number of OH and NH groups. | Impacts solubility and binding to biological targets. | ≤ 5 |

| Hydrogen Bond Acceptors (HBA) | Number of O and N atoms. | Affects solubility and molecular interactions. | ≤ 10 |

| Topological Polar Surface Area (TPSA) | Surface area over polar atoms. | Strong predictor of cell permeability and bioavailability. | ≤ 140 Ų |
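
A minimal sketch of how the ranges in the table could be applied, together with the aggregation from Step 2. It assumes the descriptors have already been computed by a cheminformatics toolkit and stored as plain dictionaries; the key names, the helper names `violations` and `summarize`, and the two example records are made up for illustration.

```python
from statistics import mean, median

# Upper limits taken from the "Typical Drug-Like Range" column of the table.
DRUG_LIKE_LIMITS = {"MW": 500.0, "logP": 5.0, "HBD": 5, "HBA": 10, "TPSA": 140.0}


def violations(descriptors):
    """Return the names of all properties that exceed their drug-like limit."""
    return [name for name, limit in DRUG_LIKE_LIMITS.items()
            if descriptors[name] > limit]


def summarize(library):
    """Compute mean, median, minimum and maximum of each property across a library."""
    summary = {}
    for name in DRUG_LIKE_LIMITS:
        values = [record[name] for record in library]
        summary[name] = {
            "mean": mean(values),
            "median": median(values),
            "min": min(values),
            "max": max(values),
        }
    return summary


# Two hypothetical descriptor records (values are invented, not real compounds).
example_library = [
    {"MW": 300.0, "logP": 2.0, "HBD": 1, "HBA": 4, "TPSA": 60.0},
    {"MW": 620.0, "logP": 6.0, "HBD": 2, "HBA": 8, "TPSA": 150.0},
]
```

For the second record, `violations` would report MW, logP, and TPSA. Reporting which limits are exceeded, rather than a single pass/fail flag, makes it easier to see whether a library drifts out of the drug-like range along one property or several.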

Assessment of Scaffold Distribution

Scaffold analysis evaluates the diversity of core structures in a library, indicating the breadth of distinct chemotypes and the presence of singletons, which are unique scaffolds represented by only a single molecule [7] [15].

Experimental Protocol:

- Step 1 - Scaffold Extraction: Apply a standardized algorithm (e.g., the Bemis-Murcko method) to remove side chains and generate the molecular scaffold for each compound [4].

- Step 2 - Frequency Analysis: Count the number of unique scaffolds and the number of compounds associated with each scaffold.

- Step 3 - Diversity Quantification: Employ several metrics:

- Scaffold Count: The total number of unique scaffolds.

- Singletons Ratio: The fraction of scaffolds that are represented by only one compound. A high ratio indicates high scaffold diversity [7] [15].

- Scaffold Recovery Curves: Plot the cumulative fraction of compounds recovered (y-axis) against the cumulative fraction of scaffolds (x-axis), with scaffolds ordered from most to least populated. The Area Under the Curve (AUC) is a key metric: a lower AUC indicates higher diversity, whereas a high AUC means that a few scaffolds account for many compounds [15].

- Shannon Entropy (SE): Measures the uniformity of compound distribution across scaffolds. Higher SE indicates a more even distribution [15].

Table 2: Key Metrics for Scaffold Distribution Analysis

| Metric | Description | Interpretation |

|---|---|---|

| Scaffold Count | Total number of unique molecular frameworks. | Higher count indicates greater structural variety. |

| Singletons Ratio | Proportion of scaffolds appearing only once. | High ratio suggests a high degree of novelty and diversity. |

| F50 | Fraction of scaffolds needed to cover 50% of the library. | Higher F50 value indicates higher scaffold diversity, since more scaffolds are needed to account for half of the compounds. |

| Shannon Entropy (SE) | Measures the evenness of compound distribution across scaffolds. | Higher SE indicates a more balanced distribution. |

| Scaled Shannon Entropy (SSE) | SE normalized to the number of scaffolds. | Allows for comparison between libraries of different sizes. |
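
The metrics in the table can all be derived from a single list of scaffold assignments, one entry per compound. The sketch below assumes scaffolds have already been extracted (for example as canonical Bemis-Murcko SMILES strings) and computes the entropy over all scaffolds; published analyses sometimes restrict it to the most populated ones. The function name `scaffold_metrics` and the trapezoidal AUC are illustrative choices.

```python
import math
from collections import Counter


def scaffold_metrics(scaffolds):
    """Compute scaffold diversity metrics from one scaffold label per compound."""
    # Compound counts per scaffold, most populated scaffold first.
    counts = sorted(Counter(scaffolds).values(), reverse=True)
    n_scaffolds = len(counts)
    n_compounds = sum(counts)

    # Recovery curve: fraction of scaffolds (x) vs. fraction of compounds (y).
    curve = [(0.0, 0.0)]
    cumulative = 0
    f50 = None
    for i, count in enumerate(counts, start=1):
        cumulative += count
        x, y = i / n_scaffolds, cumulative / n_compounds
        curve.append((x, y))
        if f50 is None and y >= 0.5:
            f50 = x  # smallest scaffold fraction covering half of the compounds

    # Trapezoidal area under the recovery curve.
    auc = sum((x1 - x0) * (y0 + y1) / 2
              for (x0, y0), (x1, y1) in zip(curve, curve[1:]))

    # Shannon entropy of the compound distribution over scaffolds (in bits).
    entropy = -sum((c / n_compounds) * math.log2(c / n_compounds) for c in counts)
    scaled_entropy = entropy / math.log2(n_scaffolds) if n_scaffolds > 1 else 0.0

    return {
        "scaffold_count": n_scaffolds,
        "singleton_ratio": sum(1 for c in counts if c == 1) / n_scaffolds,
        "auc": auc,
        "f50": f50,
        "shannon_entropy": entropy,
        "scaled_shannon_entropy": scaled_entropy,
    }
```

For a library in which every scaffold holds the same number of compounds, the recovery curve is the diagonal, giving an AUC of 0.5 and a scaled Shannon entropy of 1.0; a library dominated by one scaffold pushes the AUC toward 1 and the scaled entropy toward 0.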

Exploration of Topological Landscapes

This approach uses molecular fingerprints to capture the overall topological structure of molecules, providing a high-dimensional representation of chemical space.

Experimental Protocol:

- Step 1 - Fingerprint Generation: Encode each molecule into a binary bit string using structural fingerprint algorithms. Common choices include ECFP4 (Extended Connectivity Fingerprints) and MACCS keys [15].

- Step 2 - Similarity Calculation: Compute the pairwise Tanimoto similarity between all fingerprints in the library. A Tanimoto coefficient ranges from 0 (no similarity) to 1 (identical structures).

- Step 3 - Diversity Assessment: The mean pairwise similarity of the library is calculated. A lower mean similarity indicates a more diverse collection [15].

- Step 4 - Search and Recovery: In benchmark studies, molecules from a reference set (e.g., Bioactive Set S) are used as queries. The capability of a chemical library or a make-on-demand "Chemical Space" to provide close analogs is evaluated using search tools like SpaceLight (fingerprint-based) and FTrees (pharmacophore-based) [7] [4]. Performance is measured by the similarity of the retrieved hits and the uniqueness of the scaffolds they represent.
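
Steps 2 to 4 can be illustrated with fingerprints represented as sets of "on" bit positions. This is a sketch under that assumption and is not how SpaceLight or FTrees work internally: those tools search combinatorial spaces without enumerating them, whereas the brute-force ranking below only suits small enumerated sets. The function names are hypothetical.

```python
from itertools import combinations


def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient of two fingerprints given as sets of on-bit indices."""
    fp_a, fp_b = set(fp_a), set(fp_b)
    union = len(fp_a | fp_b)
    # Two empty fingerprints are treated as dissimilar (a convention chosen here).
    return len(fp_a & fp_b) / union if union else 0.0


def mean_pairwise_similarity(fingerprints):
    """Average Tanimoto similarity over all unordered pairs (quadratic cost)."""
    pairs = list(combinations(fingerprints, 2))
    if not pairs:
        return 0.0
    return sum(tanimoto(a, b) for a, b in pairs) / len(pairs)


def top_hits(query_fp, library, k=100):
    """Rank library molecules by similarity to the query and keep the best k.

    library: dict mapping molecule id -> fingerprint (set of on-bit indices).
    Ties are broken by molecule id so the ranking is deterministic.
    """
    scored = [(tanimoto(query_fp, fp), mol_id) for mol_id, fp in library.items()]
    scored.sort(key=lambda item: (-item[0], item[1]))
    return scored[:k]
```

Because the all-pairs calculation grows quadratically with library size, mean pairwise similarity is usually estimated on a random sample when the library is large.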

Integrated Workflow for Comprehensive Diversity Analysis

A robust assessment requires the integration of all three metric categories. The following workflow outlines this process, from data preparation to multi-faceted analysis and final interpretation.

[Figure: Chemical Diversity Analysis Workflow]

Successful diversity analysis relies on specific computational tools and compound resources.

Table 3: Essential Research Reagents and Resources

| Category | Item / Software | Function in Diversity Analysis |

|---|---|---|

| Reference Compounds | ChEMBL Database | A public repository of bioactive molecules used to create benchmark sets (e.g., Sets L, M, S) for unbiased comparison [7] [9]. |

| Software & Algorithms | RDKit / KNIME | Open-source cheminformatics toolkits for calculating molecular descriptors, generating fingerprints, and processing chemical data [16]. |

| Software & Algorithms | FTrees, SpaceLight, SpaceMACS | Specialized search methods for identifying similar compounds in large databases using pharmacophores, fingerprints, and maximum common substructures, respectively [7] [4]. |

| Chemical Spaces & Libraries | eXplore, REAL Space, Mcule | Examples of commercial combinatorial "Chemical Spaces" (on-demand) and enumerated libraries used to assess the ability to source relevant chemistry [7] [4]. |

| Analysis Frameworks | Consensus Diversity Plots (CDPs) | A method to visualize the "global diversity" of a library by simultaneously plotting its scaffold diversity against its fingerprint diversity [15]. |

Comparative Performance in Benchmarking Studies

Applying these metrics reveals significant differences between compound sources. A 2025 benchmark study using the bioactive Set S showed that large, make-on-demand combinatorial Chemical Spaces (eXplore, REAL Space) consistently provided a higher number of compounds similar to query molecules and offered more unique scaffolds than traditional enumerated libraries [7] [4]. However, a significant blind spot for more complex, hydrophilic, and natural-product-like compounds was identified across all commercial sources [4]. Furthermore, search methods impact results; FTrees (pharmacophore-based) retrieved more distant analogs, while SpaceLight and SpaceMACS (structure-based) found closer matches [4]. This underscores the need for a multi-method, multi-metric approach for a complete picture of a library's diversity and utility in drug discovery.

Public chemical and biological databases constitute a foundational resource for modern drug discovery and chemogenomics research. These repositories provide the critical compound and bioactivity data necessary to benchmark novel chemogenomic libraries, understand structure-activity relationships, and prioritize compounds for experimental testing. Among the most widely used resources are PubChem, DrugBank, ZINC, and ChEMBL, which collectively offer complementary data types ranging from commercial compound availability to detailed pharmacological profiles. This guide provides an objective comparison of these four key databases, detailing their respective scopes, data characteristics, and appropriate applications within a benchmarking framework. By understanding the distinct strengths and specializations of each resource, researchers can make informed decisions when selecting baseline comparators for evaluating novel compound sets [17] [18].

Each database serves a unique primary function within the research ecosystem, which directly influences its content composition and curation approach.

PubChem functions as a comprehensive public repository, aggregating chemical structures and biological screening data from hundreds of sources worldwide. It operates on a submitter-based model where data contributions from organizations and researchers are merged into unique compound identifiers, creating an extensive resource for chemical structure lookup and bioactivity exploration [19] [20].

ChEMBL is a manually curated knowledgebase of bioactive molecules with drug-like properties. Its core strength lies in its expert curation of quantitative bioactivity data (e.g., IC₅₀, Ki) extracted directly from published medicinal chemistry and pharmacology literature, making it invaluable for structure-activity relationship (SAR) analysis [17] [18].

ZINC specializes in providing commercially available compounds in ready-to-dock formats for virtual screening. It focuses on curating purchasable chemical space and preparing molecules in biologically relevant protonation and tautomeric states, streamlining the early drug discovery pipeline from computational prediction to experimental testing [17] [21].

DrugBank offers detailed information on approved and investigational drugs, along with their target pathways, mechanisms, and pharmacokinetic properties. This makes it an essential resource for drug development, pharmacovigilance, and repurposing studies [17].

Table 1: Core Characteristics and Primary Applications

| Database | Primary Content Focus | Data Curation Method | Key Applications in Research |
|---|---|---|---|
| PubChem | Chemical structures & bioassay data [20] | Hybrid (automated integration with manual oversight) [17] | High-throughput screening, toxicity prediction, chemical structure lookup [17] |
| ChEMBL | Bioactive molecules & drug-target interactions [17] | Manual (expert-curated from literature/patents) [17] [18] | Drug discovery, target identification, SAR analysis, polypharmacology studies [17] |
| ZINC | Commercially available compounds [17] | Automated (vendor catalogs with standardized formats) [17] | Virtual screening, hit identification, lead optimization [17] [21] |
| DrugBank | Approved/experimental drugs & pharmacokinetics [17] | Hybrid (manually validated + automated updates) [17] | Drug development, ADMET prediction, pharmacovigilance [17] |

Quantitative Comparison of Database Contents

Significant differences exist in the scale and type of data contained within each database, which should guide their selection for specific benchmarking scenarios.

Content Volume and Specialization

As of 2025, PubChem stands as the largest free chemical repository with over 119 million compounds, reflecting its role as a comprehensive aggregator [17]. ChEMBL, while smaller in compound count, distinguishes itself with over 20 million quantitative bioactivity measurements, providing deep SAR context [17]. ZINC contains a massive collection of over 54 billion molecules, among which over 5 billion are provided as 3D structures for virtual screening, emphasizing its focus on purchasable chemical space [17]. DrugBank is the most specialized, containing approximately 17,000 drug entries linked to 5,000 protein targets, offering depth over breadth for pharmaceutical compounds [17].

Table 2: Quantitative Content Comparison for Benchmarking

| Database | Compound Count | Bioactivity Records | Target Coverage | Key Quantitative Metrics |
|---|---|---|---|---|
| PubChem | 119 Million+ compounds [17] | Extensive bioassay results [17] | Broad, via bioassays [17] | 33k+ citations (for PDB); 1.7k+ citations (for PubChem) [17] |
| ChEMBL | 2.4 Million+ bioactive compounds [17] | 20.3 Million+ bioactivity measurements [17] | Extensive drug targets with quantitative data [17] | 4.5k+ citations; Focus on IC₅₀, Ki values [17] |
| ZINC | 54 Billion+ compounds (commercially available) [17] | Limited bioactivity annotations | N/A (focus on purchasability) | 5k+ citations; 5.9 billion ready-to-dock 3D structures [17] |
| DrugBank | 17,000+ drugs (approved/experimental) [17] | Pharmacokinetic and target data | 5,000+ protein targets [17] | 3.4k+ citations; Detailed drug-target pathways [17] |

Data Provenance and Curation Quality

The curation approach significantly impacts data reliability and appropriate use cases. ChEMBL and DrugBank employ substantial manual curation, with ChEMBL specifically involving expert extraction of bioactivity data from literature, resulting in highly reliable quantitative data for SAR modeling [17] [18]. PubChem utilizes a hybrid approach, with automated data integration from hundreds of contributors but with manual oversight, creating a comprehensive but potentially less standardized resource [17] [19]. ZINC relies primarily on automated processing of vendor catalogs with structural standardization, optimizing for throughput and docking readiness rather than bioactivity annotation [17] [21].

[Figure: Database Data Provenance and Research Applications]

Experimental Methodologies for Database Comparison

Researchers can employ several methodological approaches to objectively compare database contents and performance for benchmarking studies.

Structural Feature Interrelation Analysis Using PMI

Pointwise Mutual Information (PMI) provides a quantitative method to profile and compare chemical databases based on the co-occurrence patterns of structural features [22]. This approach, adapted from information theory, measures the strength of association between molecular fragments within a compound set.

Experimental Protocol:

- Fingerprint Generation: Encode all compounds in each database using structural fingerprints (e.g., MACCS keys, PubChem fingerprints, ECFP4/6).

- Co-occurrence Matrix Construction: For each database, compute a Co-occurrence Relation Matrix (CORM) by counting fragment pair occurrences across all molecules.

- Probability Calculation: Convert CORM to a Co-occurrence Probability Relation Matrix (COPRM) by normalizing counts by the total number of compounds.

- PMI Computation: Calculate pairwise PMI values using the formula: PMI = log₂[p(x,y)/(p(x)p(y))], where p(x,y) is the co-occurrence probability of fragments x and y, and p(x), p(y) are their individual occurrence probabilities.

- Comparative Profiling: Construct PMI Relation Matrices (PMIRM) for each database and compare distributions to identify database-specific structural feature associations [22].
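The protocol above can be sketched in a few lines of Python. This is a minimal illustration, not the implementation used in [22]: it assumes each compound has already been reduced to a set of fragment or fingerprint-bit identifiers, and the function name `pmi_matrix` and the toy fragment labels are hypothetical.

```python
import math
from collections import Counter
from itertools import combinations

def pmi_matrix(fingerprints):
    """Pairwise PMI for fragments, given one set of fragment ids per compound."""
    n = len(fingerprints)
    single = Counter()   # occurrence counts per fragment
    pair = Counter()     # co-occurrence counts per fragment pair (the CORM)
    for bits in fingerprints:
        single.update(bits)
        pair.update(combinations(sorted(bits), 2))
    pmi = {}
    for (x, y), c_xy in pair.items():
        p_xy = c_xy / n                      # co-occurrence probability (COPRM entry)
        p_x, p_y = single[x] / n, single[y] / n
        pmi[(x, y)] = math.log2(p_xy / (p_x * p_y))
    return pmi

# Toy "database" of four compounds described by hypothetical fragments A, B, C
toy_db = [{"A", "B"}, {"A", "B"}, {"C"}, {"A", "C"}]
pmi = pmi_matrix(toy_db)
for fragment_pair, value in sorted(pmi.items()):
    print(fragment_pair, round(value, 3))
```

Pairs that never co-occur are simply absent from the result, since their PMI is undefined (log of zero). Positive values indicate fragments that appear together more often than expected from their individual frequencies; negative values indicate the opposite.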

This method has demonstrated effectiveness in distinguishing database-specific chemical landscapes, with studies revealing unusual properties of DrugBank compounds compared to broader screening collections, validating the approach's sensitivity to pharmacological content [22].

Coverage Analysis and Identifier Mapping

Comparative content analysis examines the overlap and unique elements across databases, essential for understanding complementarity in benchmarking studies.

Experimental Protocol:

- Identifier Extraction: Collect canonical compound identifiers (e.g., InChIKeys, SMILES) for a target set of compounds or across all entries in each database.

- Cross-Reference Mapping: Use exact structure matching or identifier resolution services to establish equivalence between database entries.

- Overlap Calculation: Compute pairwise and multi-database overlaps using set operations, identifying compounds unique to each resource and those shared across multiple databases.

- Content Specialization Analysis: Characterize the chemical and biological properties of unique versus shared compounds to understand database specialization.
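The overlap step lends itself to plain set operations. The sketch below assumes identifiers have already been extracted and standardized for each database; the database names and the `KEY-n` identifiers are placeholders rather than real InChIKeys, and `overlap_report` is a hypothetical helper.

```python
from itertools import combinations

def overlap_report(databases):
    """Pairwise overlaps and per-database unique identifiers.

    databases: dict mapping a database name to a set of compound identifiers.
    """
    pairwise = {}
    for a, b in combinations(sorted(databases), 2):
        shared = databases[a] & databases[b]
        union = databases[a] | databases[b]
        pairwise[(a, b)] = {
            "shared": len(shared),
            "jaccard": len(shared) / len(union) if union else 0.0,
        }
    unique = {}
    for name, ids in databases.items():
        others = set().union(*(v for k, v in databases.items() if k != name))
        unique[name] = ids - others
    core = set.intersection(*databases.values())  # present in every database
    return pairwise, unique, core

# Placeholder identifiers standing in for InChIKeys
dbs = {
    "db_a": {"KEY-1", "KEY-2", "KEY-3"},
    "db_b": {"KEY-2", "KEY-3", "KEY-4"},
    "db_c": {"KEY-3", "KEY-5"},
}
pairwise, unique, core = overlap_report(dbs)
print(pairwise[("db_a", "db_b")])
print(unique)
print(core)
```

Because the result depends entirely on how structures were standardized before identifiers were generated, the same standardization protocol should be applied to every source before comparing.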

Studies applying this methodology have revealed significant differences between major chemistry databases, with PubChem, ChemSpider, and UniChem showing substantial discordance in structure counts even for nominally the same sources, primarily due to differences in loading dates and structural standardization protocols [19].

[Figure: Database Comparison Methodological Workflow]

Successful benchmarking studies require both computational tools and chemical resources to validate findings.

Table 3: Essential Research Reagents and Resources

| Resource Category | Specific Examples | Function in Benchmarking Studies |
|---|---|---|
| Chemical Libraries | EUbOPEN Chemogenomic Library [23], BioAscent Compound Libraries [24] | Provide well-annotated, target-focused compound sets for experimental validation of database mining results |
| Fragment Libraries | Maybridge Ro3 Diversity Fragment Library [25] | Enable fragment-based screening approaches and assessment of chemical starting point quality |
| Known Bioactives | LOPAC1280, NIH Clinical Collection, Microsource Spectrum [25] | Serve as positive controls and validation standards in assay development and benchmarking |
| Computational Tools | Pointwise Mutual Information (PMI) algorithms [22], Chemical fingerprinting tools | Enable quantitative comparison of database contents and chemical space characteristics |
| Curation Resources | External peer review committees [23], Community annotation platforms | Provide quality assessment and validation of chemical probe compounds and annotations |

Application in Benchmarking Chemogenomic Libraries

Within the context of benchmarking novel chemogenomic libraries against diverse compound sets, each database offers distinct value.

ChEMBL serves as the benchmark for bioactivity data quality, providing reference standards for potency and selectivity measurements. Its manually curated data enables reliable comparison of activity profiles across target families [17] [23].

ZINC provides the reference standard for purchasable chemical space, offering a baseline for assessing the commercial accessibility and structural readiness (e.g., 3D conformers) of novel library compounds [17] [21].

PubChem offers the most comprehensive coverage of assayed compounds, enabling benchmarking of screening hit rates and promiscuity patterns across a diverse assay landscape [17] [20].

DrugBank establishes the gold standard for approved drug properties, providing reference pharmacokinetic and safety profiles for assessing the drug-likeness of new chemical entities [17].

The EUbOPEN initiative exemplifies this integrated approach, utilizing public bioactivity data from sources like ChEMBL to assemble chemogenomic libraries covering one-third of the druggable proteome, then benchmarking their performance in patient-derived disease assays [23]. This demonstrates how strategic use of public databases accelerates the development of well-characterized chemical tools for target validation and drug discovery.

The concept of chemical space provides a fundamental framework for organizing and navigating the vast universe of possible molecules. In chemoinformatics, chemical space is defined as a multi-dimensional descriptor space where each point represents a chemical structure, enabling quantitative analysis of molecular relationships and properties [26]. For researchers in drug discovery and development, visualizing this high-dimensional space is crucial for tasks ranging from compound library design and diversity analysis to exploring complex structure-activity relationships [27]. Chemical space mapping has become increasingly important in the era of large-scale chemical databases, with public resources like ChEMBL, BindingDB, and PubChem containing millions of experimentally characterized compounds [9] [28].

The core challenge in chemical space visualization lies in transforming high-dimensional molecular representations into human-interpretable two or three-dimensional maps while preserving meaningful relationships [29]. This process, known as dimensionality reduction, allows scientists to identify patterns, clusters, and diversity hotspots that might not be apparent in the original high-dimensional space. Among the various techniques available, Principal Component Analysis (PCA) stands as one of the most widely used methods, though it is joined by several other powerful algorithms including t-Distributed Stochastic Neighbor Embedding (t-SNE), Uniform Manifold Approximation and Projection (UMAP), and Generative Topographic Mapping (GTM) [29] [30].

This guide provides a comprehensive comparison of chemical space mapping techniques, with particular emphasis on PCA visualization and the identification of molecular diversity hotspots. Through objective performance evaluation and experimental data, we aim to equip researchers with the knowledge needed to select appropriate mapping strategies for benchmarking chemogenomic libraries against diverse compound sets—a critical task in modern drug discovery pipelines.

Fundamental Techniques in Chemical Space Mapping

Molecular Representation and Descriptors

Before any visualization can be performed, molecules must be translated into numerical representations that capture their structural and physicochemical characteristics. The choice of molecular representation significantly influences the resulting chemical space map and the insights that can be derived from it [26]. Common descriptor types include:

- Extended Connectivity Fingerprints (ECFP): Circular fingerprints that capture topological structure by representing each atom and its circular neighborhood up to a specified diameter. ECFP6 denotes a diameter of six bonds (radius 3), so each feature encodes an atom's environment up to three bonds away; it is well suited to large molecular datasets [28].

- MACCS Keys: A set of 166 structural fragments encoded as binary bits (present or absent) in a molecule [29].

- Whole-Molecule Descriptors: Physicochemical properties such as molecular weight (MW), hydrogen bond donors (HBD), hydrogen bond acceptors (HBA), topological polar surface area (TPSA), number of rotatable bonds (RB), and partition coefficient (LogP) [26].

- ChemDist Embeddings: Continuous vector representations obtained from graph neural networks trained using deep metric learning, where Euclidean distances between embeddings simulate chemical similarity [29].

The concept of the "chemical multiverse" acknowledges that multiple valid chemical spaces can exist for the same set of molecules, each defined by a different set of descriptors [26]. This highlights the importance of selecting representations aligned with specific research questions, whether focused on structural similarity, property distributions, or bioactivity relationships.

Dimensionality Reduction Algorithms

Dimensionality reduction techniques transform high-dimensional descriptor data into lower-dimensional representations suitable for visualization. These algorithms can be broadly categorized into linear and non-linear approaches:

- Principal Component Analysis (PCA): A linear technique that identifies orthogonal axes (principal components) that capture maximum variance in the data. PCA is computationally efficient and deterministic but may struggle with complex non-linear relationships [31] [32].

- t-Distributed Stochastic Neighbor Embedding (t-SNE): A non-linear method that preserves local neighborhood structure by minimizing the Kullback-Leibler divergence between neighbor probability distributions in the high- and low-dimensional spaces. t-SNE excels at revealing cluster patterns but can be computationally demanding for very large datasets [31].

- Uniform Manifold Approximation and Projection (UMAP): A relatively recent non-linear technique that assumes data is uniformly distributed on a Riemannian manifold. UMAP typically preserves more global structure than t-SNE while maintaining computational efficiency [29].

- Generative Topographic Mapping (GTM): A probabilistic alternative to self-organizing maps that fits a manifold to the data and provides an inverse transformation from low to high dimensions [29].

Each algorithm employs different mathematical principles to balance the preservation of local versus global structure, with significant implications for chemical space interpretation and analysis.

Comparative Analysis of Mapping Techniques

Performance Metrics and Benchmarking Approaches

Evaluating the effectiveness of chemical space mapping techniques requires careful consideration of performance metrics that quantify how well the low-dimensional representation preserves relationships from the original high-dimensional space. Key metrics include:

- Neighborhood Preservation: Measures the extent to which nearest neighbors in the original space remain neighbors in the reduced space. Common implementations include PNNk (percentage of preserved nearest neighbors) and QNNk (co-k-nearest neighbor size) [29].

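- Trustworthiness and Continuity: Complementary measures of local structure preservation. Trustworthiness quantifies the extent to which neighbors in the low-dimensional space were also neighbors in the original space (penalizing false neighbors), while continuity quantifies the reverse: whether neighbors in the original space remain neighbors after projection [29].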

- Area Under the QNN Curve (AUC): Provides a global assessment of neighborhood preservation across different neighborhood sizes [29].

- Local Continuity Meta Criterion (LCMC): Combines local and global preservation metrics into a single score [29].
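As an illustration of the first metric, the sketch below computes the fraction of preserved k-nearest neighbors between an original and a reduced space. It is a brute-force version for small examples and assumes Euclidean distance in both spaces, whereas fingerprint-based studies often use Tanimoto distance in the original space. The function names `knn_indices` and `pnn_k` are hypothetical.

```python
import math

def knn_indices(points, k):
    """Set of the k nearest neighbors (by Euclidean distance) for each point."""
    neighbors = []
    for i, p in enumerate(points):
        order = sorted(
            (j for j in range(len(points)) if j != i),
            key=lambda j: math.dist(p, points[j]),
        )
        neighbors.append(set(order[:k]))
    return neighbors

def pnn_k(high_dim, low_dim, k):
    """Average fraction of each point's k nearest neighbors kept after projection."""
    nn_high = knn_indices(high_dim, k)
    nn_low = knn_indices(low_dim, k)
    preserved = sum(len(a & b) for a, b in zip(nn_high, nn_low))
    return preserved / (k * len(high_dim))

# Four points on a line in 3D, with a faithful and a scrambled 1D projection
high = [(0, 0, 0), (1, 0, 0), (2, 0, 0), (10, 0, 0)]
faithful = [(0,), (1,), (2,), (10,)]
scrambled = [(0,), (10,), (1,), (2,)]
print(pnn_k(high, faithful, k=1))
print(pnn_k(high, scrambled, k=1))
```

Evaluating `pnn_k` over a range of k values and integrating the resulting curve gives a global summary in the spirit of the AUC metric listed above.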

These metrics enable quantitative comparison of mapping techniques, complementing qualitative assessment of visualization utility for specific research tasks.

Technique Comparison and Experimental Data

Table 1: Comparative Performance of Dimensionality Reduction Techniques Based on Neighborhood Preservation Metrics

| Technique | Neighborhood Preservation (Average) | Local Structure Preservation | Global Structure Preservation | Computational Efficiency | Best Use Cases |
|---|---|---|---|---|---|
| PCA | Moderate | Moderate | Strong | High | Initial exploration, linear datasets |
| t-SNE | Strong | Strong | Moderate | Low to Moderate | Cluster identification, pattern recognition |
| UMAP | Strong | Strong | Moderate to Strong | Moderate | Large datasets, balance of local/global structure |
| GTM | Moderate to Strong | Strong | Moderate | Moderate | Probabilistic interpretation, property landscapes |
| ChemTreeMap | Strong for hierarchical data | Strong within branches | Represents diversity through branch lengths | Moderate | Structural relationships, diverse datasets |

Recent benchmarking studies have provided quantitative comparisons of these techniques using standardized datasets and evaluation metrics. One comprehensive evaluation utilized subsamples from the ChEMBL database focusing on compounds tested against specific biological targets, with various molecular representations including Morgan fingerprints, MACCS keys, and ChemDist embeddings [29]. The study employed a grid-based search to optimize hyperparameters for each method using neighborhood preservation as the objective function.

The results demonstrated that non-linear methods generally outperform PCA in neighborhood preservation metrics. Specifically, UMAP and t-SNE showed superior performance in maintaining local neighborhoods while preserving reasonable global structure. However, PCA remains valuable for initial exploratory analysis due to its computational efficiency and interpretability [29]. The performance differences between techniques were consistent across different molecular representations, though the absolute values of preservation metrics varied with descriptor choice.

Table 2: Variance Explanation Capability of PCA Versus Alternative Techniques

| Technique | Dataset | Variance Explained (First 2 Components) | Variance Explained (First 50 Components) | Notes |
|---|---|---|---|---|
| PCA | DUD-E MK01 dataset | 5% | ~40% | Limited representation in 2D [31] |
| t-SNE | DUD-E MK01 dataset | N/A (non-linear) | N/A (non-linear) | Revealed active compound clusters not visible in PCA [31] |
| UMAP | ChEMBL subsets | N/A (non-linear) | N/A (non-linear) | Strong neighborhood preservation with optimized parameters [29] |
| GTM | ChEMBL subsets | N/A (non-linear) | N/A (non-linear) | Supports property landscapes that are highly compliant with neighborhood behavior (NB) [29] |

A critical finding from comparative studies is that the first two principal components in PCA often capture only a small fraction (e.g., 5%) of the total variance in the data [31]. This limitation underscores the importance of considering multiple dimensions or alternative techniques when analyzing complex chemical datasets. Nevertheless, PCA remains widely used in chemical space visualization, particularly for initial data exploration and when interpretability of components is valuable.

PCA Visualization: Methodology and Workflow

Experimental Protocol for PCA-based Chemical Space Mapping

Implementing PCA for chemical space visualization involves a systematic process from data preparation to interpretation:

Data Collection and Standardization: Compile molecular dataset and standardize structures using tools like RDKit or MolVS. This includes neutralizing charges, generating canonical tautomers, and removing duplicates or compounds with undesirable elements [26].

Descriptor Calculation: Compute molecular descriptors or fingerprints. For PCA, whole-molecule descriptors (HBD, HBA, TPSA, RB, MW, LogP) or dimensionality-reduced fingerprints are commonly used [26] [32]. Mordred is a comprehensive descriptor calculation tool that can compute over 1,800 molecular descriptors [32].

Data Preprocessing: Address missing values, remove zero-variance features, and standardize remaining features (mean-centered and scaled to unit variance) before applying PCA [29].

PCA Implementation:
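A minimal sketch of this step follows. It assumes a numeric descriptor table with compounds as rows and descriptors as columns, and it uses a NumPy singular value decomposition rather than the scikit-learn `PCA` class so that the preprocessing and variance bookkeeping are explicit. The function name `pca_project` is hypothetical and the input is random placeholder data, not real descriptors.

```python
import numpy as np

def pca_project(X, n_components=2):
    """Standardize descriptor columns and project onto the top principal components."""
    X = np.asarray(X, dtype=float)
    std = X.std(axis=0)
    keep = std > 0  # drop zero-variance descriptors before scaling
    Z = (X[:, keep] - X[:, keep].mean(axis=0)) / std[keep]
    # SVD of the standardized matrix: rows of Vt are the principal axes
    U, S, Vt = np.linalg.svd(Z, full_matrices=False)
    explained_ratio = S**2 / np.sum(S**2)
    scores = U[:, :n_components] * S[:n_components]  # compound coordinates
    return scores, explained_ratio[:n_components]

# Placeholder data: 50 "compounds" x 6 random "descriptors" plus one constant column
rng = np.random.default_rng(0)
X = np.hstack([rng.normal(size=(50, 6)), np.ones((50, 1))])
scores, ratio = pca_project(X)
print(scores.shape)
print("variance explained by PC1 + PC2:", round(float(ratio.sum()), 3))
```

The two columns of `scores` are the PC1 and PC2 coordinates used for plotting, and `ratio` gives the fraction of variance each component explains, which is worth reporting alongside any 2D map.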

Visualization and Interpretation: Plot the first two principal components (PC1 vs. PC2), optionally coloring points by molecular properties, bioactivity, or compound origins. Hover functionality can be implemented to display associated structures when exploring the plot [32].

Advanced PCA Applications and Limitations

While basic PCA provides valuable insights, researchers have developed advanced implementations to address specific challenges in chemical space analysis:

Chemical Satellite Approaches (ChemMaps): Utilizes reference compounds ("satellites") to project large libraries into a consistent chemical space. Sampling strategies include medoid sampling (center-to-outside), medoid-periphery sampling (alternating center and outlier selection), uniform sampling, and periphery sampling (outside-to-center) [27].

Extended Similarity Indices: Enables efficient comparison of multiple molecules simultaneously with O(N) scaling instead of traditional O(N²), facilitating the identification of high-density and low-density regions in chemical space [27].

Complementary Similarity Analysis: Calculates the effect of removing individual molecules from a library to identify compounds in high-density (central) versus low-density (peripheral) regions, informing satellite selection strategies [27].

Despite these advancements, PCA maintains inherent limitations. The technique assumes linear relationships between variables and may fail to capture complex non-linear patterns in molecular data [31]. Additionally, as noted previously, the first two components often explain only a small fraction of total variance, potentially misleading interpretation if considered in isolation. Researchers should always report the cumulative variance explained by visualized components and consider complementary non-linear techniques when analyzing complex chemical relationships.

Diversity Hotspot Identification

Methodologies for Detecting Chemical Diversity Hotspots

Chemical diversity hotspots represent regions of structural novelty or high variability within chemical space, often prioritized in drug discovery for identifying novel scaffolds or expanding structure-activity relationships. Multiple computational approaches facilitate hotspot detection:

Tree-Based Methods (ChemTreeMap): Synergistically combines extended connectivity fingerprints with a neighbor-joining algorithm to produce hierarchical trees with branch lengths proportional to molecular similarity. Longer distances between chemical families highlight more diverse regions of chemical space, enabling intuitive identification of diversity hotspots [28].

Clustering-Based Approaches: For very large datasets (e.g., ChEMBL, BindingDB), molecules are initially clustered by similarity (e.g., using MiniBatchKMeans) to reduce computational complexity. The number of molecules in each cluster can be represented by leaf size in subsequent visualizations, highlighting regions of high density versus sparse, diverse regions [28].

Dimensionality Reduction with Density Analysis: Applies density-based algorithms (e.g., DBSCAN) to low-dimensional projections from PCA, t-SNE, or UMAP to identify sparse regions that represent structural outliers or diversity hotspots.

Cartographic Chemical Visualization: Mapping chemical diversity onto geographic representations using collection site information, revealing geographical areas with high chemical diversity. This approach has been applied to marine cyanobacterial and algal collections, identifying regions with distinctive metabolomes [33].

The effectiveness of these methods depends on the research context. For example, in analysis of food chemicals from FooDB, t-SNE effectively separated compounds from different flavor categories (earthy, herbaceous, green, fruity, floral, fatty, spicy, medicinal), revealing both shared chemical features and diversity hotspots between categories [26].

Workflow for Diversity Hotspot Analysis

Systematic identification of diversity hotspots involves:

Chemical Space Mapping: Generate 2D or 3D chemical space projection using PCA or alternative dimensionality reduction technique.

Density Calculation: Compute point density across the chemical space map using kernel density estimation or similar approaches.

Cluster Analysis: Apply clustering algorithms to identify grouped compounds and isolate outliers.

Diversity Metrics Calculation: Quantify diversity using metrics like within-cluster similarity, between-cluster distances, or scaffold complexity.

Hotspot Identification: Flag low-density regions and structural outliers as diversity hotspots.

Structural Validation: Analyze identified hotspots for novel scaffolds or underrepresented structural motifs.

Discovery Applications: Prioritize hotspots for compound acquisition or synthesis in library expansion efforts.
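The density calculation and hotspot flagging steps can be sketched as follows, assuming 2D map coordinates are already available from PCA or another projection. The Gaussian kernel here is unnormalized, so the densities are only meaningful as a relative ranking. The bandwidth and flagged fraction are arbitrary illustrative choices, and the function names are hypothetical.

```python
import math

def gaussian_density(points, bandwidth=1.0):
    """Relative kernel density at each point (unnormalized Gaussian kernel)."""
    densities = []
    for p in points:
        total = sum(
            math.exp(-math.dist(p, q) ** 2 / (2 * bandwidth**2)) for q in points
        )
        densities.append(total / len(points))
    return densities

def flag_hotspots(points, bandwidth=1.0, fraction=0.2):
    """Indices of the lowest-density points, treated as candidate diversity hotspots."""
    densities = gaussian_density(points, bandwidth)
    n_flag = max(1, int(round(fraction * len(points))))
    order = sorted(range(len(points)), key=lambda i: densities[i])
    return sorted(order[:n_flag]), densities

# Toy map: eight tightly clustered compounds and two isolated ones
coords = [
    (0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (0.1, 0.1),
    (0.2, 0.0), (0.0, 0.2), (0.2, 0.2), (0.1, 0.2),
    (5.0, 5.0), (-4.0, 6.0),
]
hotspots, densities = flag_hotspots(coords, bandwidth=1.0, fraction=0.2)
print(hotspots)
```

Flagged indices are only candidates: the structural validation step still has to confirm that the isolated compounds carry novel scaffolds rather than being projection artifacts.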

This workflow successfully identified previously unexplored regions in marine natural product collections, leading to the discovery of new chemical entities like yuvalamide A from marine cyanobacteria [33]. The approach demonstrates how chemical space mapping can directly guide discovery efforts toward structurally novel compounds.

Advanced Applications and Future Directions

Machine Learning-Enhanced Chemical Space Navigation

Recent advances in machine learning are revolutionizing chemical space navigation, particularly for ultra-large compound libraries. One promising approach combines machine learning classification with molecular docking to enable rapid virtual screening of billion-compound libraries [34]. The workflow involves:

Training a classification algorithm (e.g., CatBoost with Morgan fingerprints) to identify top-scoring compounds based on molecular docking of a subset (e.g., 1 million compounds).

Applying the conformal prediction framework to make selections from the multi-billion-scale library, reducing the number of compounds requiring explicit docking.

Experimental validation of predictions to identify novel ligands [34].
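The selection step can be illustrated with a small class-conditional (Mondrian) inductive conformal predictor. This is a sketch of the general technique under stated assumptions, not the pipeline from [34]: it assumes a trained classifier already provides a predicted probability of being a top-scoring compound, and the calibration probabilities, labels, and function names are hypothetical.

```python
def mondrian_p_values(cal_probs, cal_labels, test_prob):
    """Class-conditional conformal p-values for a binary classifier.

    cal_probs: predicted P(class = 1) for the calibration compounds.
    cal_labels: their true labels (1 = top-scoring in docking, 0 = not).
    test_prob: predicted P(class = 1) for the new compound.
    """
    p_values = {}
    for label in (0, 1):
        # Nonconformity = 1 - predicted probability of the hypothesized class
        cal_scores = [
            1 - (p if label == 1 else 1 - p)
            for p, y in zip(cal_probs, cal_labels)
            if y == label
        ]
        test_score = 1 - (test_prob if label == 1 else 1 - test_prob)
        n_at_least = sum(s >= test_score for s in cal_scores)
        p_values[label] = (n_at_least + 1) / (len(cal_scores) + 1)
    return p_values

def prediction_set(p_values, significance):
    """Labels that cannot be rejected at the chosen significance level."""
    return {label for label, p in p_values.items() if p > significance}

# Hypothetical calibration set (classifier outputs and docking-derived labels)
cal_probs = [0.9, 0.8, 0.7, 0.6, 0.4, 0.3, 0.2, 0.1]
cal_labels = [1, 1, 1, 0, 1, 0, 0, 0]
p = mondrian_p_values(cal_probs, cal_labels, test_prob=0.85)
selected = prediction_set(p, significance=0.25) == {1}
print(p, selected)
```

Only compounds whose prediction set is exactly `{1}` would be forwarded to explicit docking in this sketch. Lowering the significance level makes the sets larger, so fewer compounds are confidently assigned to a single class.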

This approach reduced the computational cost of structure-based virtual screening by more than 1,000-fold while successfully identifying ligands for G protein-coupled receptors, demonstrating how machine learning can dramatically enhance efficiency in navigating vast chemical spaces [34].

Emerging Trends and Applications

Chemical space mapping continues to evolve with several emerging trends:

Multi-Target Chemical Space Analysis: Mapping compounds against multiple protein targets to identify selective compounds or multi-target ligands, as demonstrated in the discovery of compounds with activity against both A2A adenosine and D2 dopamine receptors [34].

Art-Driven Chemical Visualization: Leveraging visually appealing chemical space maps as artistic expressions while communicating chemical information. This approach can increase engagement with chemical data and facilitate science communication [26].

Real-World Benchmarking (CARA): The Compound Activity benchmark for Real-world Applications (CARA) addresses gaps between idealized benchmark datasets and real-world scenarios by incorporating characteristics like multiple data sources, congeneric compounds, and biased protein exposure [9].

Integration with Generative Models: Combining chemical space visualization with deep generative models to guide exploration and design of novel compounds with desired properties [30].

These advancements highlight the growing sophistication of chemical space analysis and its expanding applications across drug discovery and development.

Essential Research Reagents and Tools

Table 3: Key Research Reagents and Computational Tools for Chemical Space Mapping

| Tool/Reagent | Type | Function | Implementation Examples |
|---|---|---|---|
| RDKit | Open-source cheminformatics library | Molecular standardization, descriptor calculation, fingerprint generation | Calculate ECFP4/MACCS keys, whole-molecule descriptors [26] |
| Mordred | Molecular descriptor calculator | Computes 1,800+ 2D and 3D molecular descriptors | Comprehensive descriptor calculation for PCA input [32] |
| scikit-learn | Machine learning library | PCA implementation, data preprocessing, clustering | from sklearn.decomposition import PCA [31] |
| OpenTSNE | Dimensionality reduction library | Efficient t-SNE implementation | Alternative to PCA for non-linear dimensionality reduction [29] |
| umap-learn | Dimensionality reduction library | UMAP implementation | Balance of local and global structure preservation [29] |
| GNPS Platform | Mass spectrometry data analysis | Molecular networking, chemical diversity analysis | Analyze chemical diversity of natural product collections [33] |
| FooDB | Chemical database | Food chemical compounds with flavor categories | Example dataset for flavor chemical space analysis [26] |
| ChEMBL | Bioactivity database | Curated bioactivity data for drug discovery | Source of benchmarking datasets [9] |
| ChemTreeMap | Visualization tool | Tree-based chemical space visualization | Represent hierarchical chemical relationships [28] |

Chemical space mapping represents a cornerstone technique in modern chemoinformatics, enabling researchers to visualize and navigate complex molecular relationships. Through comparative analysis of techniques including PCA, t-SNE, UMAP, and specialized methods like ChemTreeMap, this guide provides a framework for selecting appropriate visualization strategies based on specific research objectives.

PCA remains a valuable tool for initial exploratory analysis due to its computational efficiency and interpretability, though researchers should acknowledge its limitations in capturing non-linear relationships and typically low variance explanation in two-dimensional projections. For diversity hotspot identification, tree-based methods and density analysis in non-linear projections often provide superior performance in detecting structurally novel regions.

As chemical datasets continue to grow in scale and complexity, integration of machine learning with chemical space visualization will play an increasingly important role in efficient navigation and design. The benchmarking approaches and experimental protocols outlined here provide a foundation for rigorous evaluation of chemical space mapping techniques in real-world drug discovery applications, particularly in the context of benchmarking chemogenomic libraries against diverse compound sets.

By understanding the strengths, limitations, and appropriate applications of each technique, researchers can leverage chemical space mapping to uncover meaningful patterns, identify novel chemical matter, and accelerate the drug discovery process.

Multi-Algorithmic Screening Approaches: Leveraging FTrees, SpaceLight, and SpaceMACS for Comprehensive Coverage

In modern computational drug discovery, effectively representing molecular structures is paramount for tasks ranging from virtual screening to chemical space exploration. The performance of in silico models depends heavily on the chosen molecular representation, which must capture the structural and chemical features relevant to biological activity. This choice is particularly critical when benchmarking chemogenomic libraries, which are systematic collections of compounds designed to probe diverse regions of the druggable genome. This guide provides an objective comparison of the three foundational approaches that underlie the search tools named above: pharmacophore features (FTrees), molecular fingerprints (SpaceLight), and maximum common substructure, or MCS (SpaceMACS).

Robust benchmarking, as demonstrated in large-scale studies on drug combination sensitivity, requires supplementing quantitative performance metrics with qualitative considerations of interpretability and robustness, which vary significantly across methodologies and throughout preclinical projects [35]. The following sections compare these methodologies' underlying principles, performance characteristics, and practical applications, providing researchers with a framework for selecting appropriate tools for chemogenomic library analysis.

The table below summarizes the core characteristics, strengths, and limitations of the three complementary methodologies.

Table 1: Core Methodologies for Molecular Comparison and Search

| Methodology | Core Principle | Data Format | Key Strengths | Primary Limitations |
|---|---|---|---|---|
| Pharmacophore Features | Abstraction to steric and electronic features essential for molecular recognition [36]. | 3D spatial points (e.g., H-bond donor/acceptor, hydrophobic regions) [36]. | Direct encoding of binding interactions; scaffold hopping capability [36]. | Conformational dependence; can overlook specific atom connectivity. |
| Molecular Fingerprints | Vector representation of structural or chemical properties [37]. | Binary, count, or continuous vectors of fixed or variable length. | High-speed similarity search; vast benchmark data [35] [37]. | Performance is fingerprint-type and dataset dependent [35] [37]. |
| Maximum Common Substructure (MCS) | Identification of the largest shared structural fragment between molecules [38]. | Subgraph (connected or disconnected) common to two or more molecular graphs. | High chemical interpretability; direct scaffold identification [38] [39]. | High computational cost (NP-complete); less direct for similarity searching [40]. |

Performance and Experimental Data

Quantitative Benchmarking of Molecular Fingerprints

Molecular fingerprints have been extensively benchmarked across various tasks. Performance is highly dependent on the fingerprint type and the specific chemical space under investigation.

Table 2: Fingerprint Performance in Key Benchmarking Studies

| Application / Task | Fingerprint Types Compared | Key Performance Findings | Reference |
|---|---|---|---|
| Drug Combination Sensitivity & Synergy Prediction | 7 Data-Driven (GAE, VAE, Transformer, Infomax) vs. 4 Rule-Based (E3FP, Morgan, Topological) [35]. | No single fingerprint type was universally optimal; best performer varied by specific dataset and endpoint (CSS/Bliss/HSA/Loewe/ZIP synergy scores). | [35] |
| E3 Ligase Binder Classification | ErG (Pharmacophore), MACCS, RDKit, Avalon, ECFP4 [41]. | ErG achieved 93.8% accuracy using a multi-class XGBoost model, demonstrating the power of pharmacophore fingerprints for binding selectivity prediction. | [41] |
| Natural Products Bioactivity Prediction (QSAR) | 20 fingerprints from 5 categories (Path-based, Pharmacophore, Substructure, Circular, String-based) on 12 datasets [37]. | While ECFP is a default for drug-like compounds, other fingerprints (e.g., certain path-based and string-based) matched or outperformed it for natural products, highlighting the need for domain-specific evaluation. | [37] |
| Side-Effect Frequency Prediction | MACCS, Morgan, RDKit, ErG integrated into a deep learning model (MultiFG) [42]. | Integration of multiple fingerprint types (structural, circular, topological, pharmacophore) yielded state-of-the-art performance (AUC: 0.929), showing the value of hybrid fingerprint approaches. | [42] |

Experimental Protocols in Benchmarking Studies

Standardized protocols are critical for meaningful methodology comparisons. Key experimental steps from cited studies include:

Data Curation and Standardization: High-quality input data is essential. Protocols typically involve:

- Source Data: Using publicly available databases (e.g., DrugComb for drug combinations [35], ChEMBL for structures [35], PROTAC databases for E3 ligase binders [41]).

- Structure Standardization: Stripping salts, neutralizing charges, and removing solvents using toolkits like the ChEMBL curation package or RDKit [35] [37].

- Dataset Splitting: Implementing stratified splits or cold-start protocols (where drugs in the test set are entirely unseen during training) to rigorously assess generalizability [42].
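The cold-start protocol described above can be sketched in a few lines of Python. This is a minimal illustration rather than the procedure used in [42]: the record layout, the `drug_id` field name, and the `cold_start_split` helper are assumptions introduced here for the example.

```python
import random
from typing import Dict, List, Tuple

Record = Dict[str, object]  # hypothetical layout: {"drug_id": ..., "label": ...}


def cold_start_split(
    records: List[Record], test_fraction: float = 0.2, seed: int = 0
) -> Tuple[List[Record], List[Record]]:
    """Split by drug identity so that no test-set drug appears in training."""
    drugs = sorted({r["drug_id"] for r in records})
    rng = random.Random(seed)  # fixed seed keeps the split reproducible
    rng.shuffle(drugs)
    n_test = max(1, round(len(drugs) * test_fraction))
    test_drugs = set(drugs[:n_test])
    train = [r for r in records if r["drug_id"] not in test_drugs]
    test = [r for r in records if r["drug_id"] in test_drugs]
    return train, test


# Toy data: 10 hypothetical drugs with 3 measurements each.
records = [
    {"drug_id": f"drug_{i}", "label": (i + k) % 2}
    for i in range(10)
    for k in range(3)
]
train, test = cold_start_split(records, test_fraction=0.2, seed=0)

train_ids = {r["drug_id"] for r in train}
test_ids = {r["drug_id"] for r in test}
print(len(train), len(test), train_ids & test_ids)
```

Splitting on the set of drug identifiers, rather than on individual records, is what keeps every measurement of a held-out drug out of the training data. A random record-level split would not give that guarantee.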

Molecular Representation Generation:

- Fingerprints: Calculated using standard software (e.g., RDKit, MOE) with default parameters unless specified. Studies often compare multiple types and lengths [35] [37].

- Pharmacophore Models: For structure-based approaches, protein-ligand complexes are used to define essential features [43] [36]. Ligand-based models are generated from multiple active conformers of known ligands to find common pharmacophore hypotheses [36].

- MCS Computation: Using efficient algorithms (e.g., RIMACS) that can handle connected or disconnected subgraphs under constraints, as this is an NP-complete problem [40] [39].
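To make the cost of MCS computation concrete, the sketch below finds the size of the maximum common induced subgraph of two tiny molecular graphs by exhaustive backtracking. It is not RIMACS or any published algorithm, only an illustration of why the search space grows so quickly. The graph encoding (heavy atoms as element labels, bonds as unordered index pairs, bond orders ignored) and the helper names are assumptions made for this example, and the result may be a disconnected subgraph.

```python
from typing import Dict, FrozenSet, Iterable, List, Set, Tuple

Graph = Tuple[List[str], Set[FrozenSet[int]]]  # (atom labels, bonds)


def make_graph(labels: Iterable[str], bonds: Iterable[Tuple[int, int]]) -> Graph:
    return list(labels), {frozenset(b) for b in bonds}


def mcs_size(g1: Graph, g2: Graph) -> int:
    """Atom count of the largest common induced subgraph (brute force)."""
    labels1, bonds1 = g1
    labels2, bonds2 = g2
    best = 0

    def extend(i: int, mapping: Dict[int, int]) -> None:
        nonlocal best
        # Prune: even mapping every remaining atom could not beat the best.
        if len(mapping) + (len(labels1) - i) <= best:
            return
        if i == len(labels1):
            best = len(mapping)
            return
        used = set(mapping.values())
        for j in range(len(labels2)):
            if j in used or labels1[i] != labels2[j]:
                continue
            # Bonds to already-mapped atoms must agree in both graphs.
            consistent = all(
                (frozenset((i, a)) in bonds1) == (frozenset((j, b)) in bonds2)
                for a, b in mapping.items()
            )
            if consistent:
                mapping[i] = j
                extend(i + 1, mapping)
                del mapping[i]
        extend(i + 1, mapping)  # alternative: leave atom i unmapped

    extend(0, {})
    return best


ethanol = make_graph(["C", "C", "O"], [(0, 1), (1, 2)])
propanol = make_graph(["C", "C", "C", "O"], [(0, 1), (1, 2), (2, 3)])
dimethyl_ether = make_graph(["C", "O", "C"], [(0, 1), (1, 2)])

print(mcs_size(ethanol, propanol))        # shared C-C-O fragment
print(mcs_size(ethanol, dimethyl_ether))  # only a C-O pair is shared
```

Every atom of the first graph is either skipped or tried against every compatible atom of the second, so the runtime grows exponentially with molecule size. This is why production tools rely on constraints and heuristics rather than exhaustive search.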

Downstream Analysis and Model Training:

- Similarity Assessment: Using metrics like Tanimoto/Jaccard similarity for fingerprints [37] or maximum common property (MCPhd) for descriptor-based substructures [38].

- Machine Learning: Training models (e.g., XGBoost, CNN) on the molecular representations for tasks like classification or regression, followed by rigorous cross-validation [41] [42].

- Cluster Analysis & Visualization: Applying techniques like t-SNE to visualize the chemical space defined by different representations [41].
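The Tanimoto/Jaccard coefficient used for fingerprint similarity is the number of shared on-bits divided by the number of on-bits set in either fingerprint. The following is a minimal sketch of a similarity ranking, assuming each fingerprint is stored as a Python set of on-bit indices. The compound names and bit values are made up for illustration and do not come from any real fingerprint calculation.

```python
from typing import Dict, List, Set, Tuple


def tanimoto(a: Set[int], b: Set[int]) -> float:
    """Tanimoto coefficient |A & B| / |A | B| for two sets of on-bits."""
    if not a and not b:
        return 0.0  # convention chosen here for two empty fingerprints
    shared = len(a & b)
    return shared / (len(a) + len(b) - shared)


def rank_by_similarity(
    query: Set[int], library: Dict[str, Set[int]]
) -> List[Tuple[str, float]]:
    """Return library entries sorted from most to least similar to the query."""
    scored = [(name, tanimoto(query, fp)) for name, fp in library.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Hypothetical on-bit sets standing in for real fingerprints.
query = {1, 5, 9, 12}
library = {
    "cpd_a": {1, 5, 9, 12},
    "cpd_b": {1, 5, 7, 12, 20},
    "cpd_c": {2, 3},
}

ranking = rank_by_similarity(query, library)
print(ranking)  # [('cpd_a', 1.0), ('cpd_b', 0.5), ('cpd_c', 0.0)]
```

Because the score depends only on which bits are set, the same function applies to any binary fingerprint type, although the resulting values are not comparable across fingerprint types.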

Research Reagent Solutions

The table below lists key computational tools and data resources essential for implementing the discussed methodologies.

Table 3: Key Research Reagents and Computational Tools

| Item Name | Function / Application | Brief Description & Utility |
|---|---|---|
| RDKit | Cheminformatics Toolkit | Open-source platform for calculating fingerprints, generating descriptors, and general molecular informatics [37]. |
| Molecular Operating Environment (MOE) | Integrated Drug Design Software | Commercial software suite with robust implementations for pharmacophore modeling (e.g., ErG fingerprint) and molecular docking [41]. |
| RIMACS | MCS Computation | Open-source algorithm for computing maximum common substructures, with control over connected components [39]. |
| EUbOPEN Chemogenomic Library | Benchmark Compound Set | Annotated set of chemical probes and chemogenomic compounds covering a significant portion of the druggable proteome for benchmarking [23]. |
| COCONUT & CMNPD | Natural Product Databases | Extensive, curated databases of natural products for testing methodologies on chemically diverse and complex structures [37]. |
| DrugComb | Drug Combination Data Portal | Provides standardized data on drug combination sensitivity and synergy, useful for benchmarking predictive models [35]. |
| ConPhar | Consensus Pharmacophore Tool | Open-source informatics tool for generating robust consensus pharmacophores from multiple ligand-bound complexes [43]. |

Workflow and Decision Pathways

The following diagram illustrates a recommended workflow for selecting and applying these complementary methodologies, based on common research objectives in chemogenomic library benchmarking.

Pharmacophore features, molecular fingerprints, and maximum common substructure are complementary methodologies with distinct strengths for analyzing chemogenomic libraries. The benchmarking data summarized above indicate that no single method is universally superior. Fingerprints are fast and well suited to machine learning, but their performance depends heavily on fingerprint type and context [35] [37]. Pharmacophore models provide intuitive insight into binding interactions and are powerful for scaffold hopping [36]. MCS offers high interpretability for identifying common cores but is computationally intensive [40] [38].

The most effective strategy for benchmarking and drug discovery projects involves selecting the methodology based on the specific objective, as outlined in the workflow diagram. Furthermore, hybrid approaches that integrate multiple representation types, such as combining structural and pharmacophore fingerprints or using MCS to inform feature selection, are increasingly shown to provide more robust and predictive models, ultimately enhancing the exploration and development of novel therapeutic agents [41] [42].
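One simple way to build a hybrid representation is to concatenate fingerprints of different types into a single bit vector. The sketch below shows this for fingerprints stored as sets of on-bit indices. It is an illustration of plain concatenation only, not the fusion scheme used in MultiFG [42], and the `concatenate_fingerprints` helper, the block lengths, and the bit values are all assumptions chosen for the example.

```python
from typing import List, Set, Tuple

FingerprintBlock = Tuple[int, Set[int]]  # (number of bits, on-bit indices)


def concatenate_fingerprints(blocks: List[FingerprintBlock]) -> Tuple[int, Set[int]]:
    """Join several bit fingerprints end to end.

    On-bits of each block are shifted by the total length of the blocks
    before it, so bit 0 of the second block never collides with bit 0
    of the first.
    """
    combined: Set[int] = set()
    offset = 0
    for n_bits, on_bits in blocks:
        if any(b < 0 or b >= n_bits for b in on_bits):
            raise ValueError("on-bit index outside the declared block length")
        combined.update(offset + b for b in on_bits)
        offset += n_bits
    return offset, combined


# Illustrative blocks: a 166-bit structural-key block and a 315-bit
# pharmacophore block (lengths and bits are placeholders, not computed).
structural = (166, {3, 42})
pharmacophore = (315, {0, 7})

total_bits, hybrid = concatenate_fingerprints([structural, pharmacophore])
print(total_bits, sorted(hybrid))  # 481 [3, 42, 166, 173]
```

Concatenation keeps each representation's bits separate, so a downstream model or similarity metric can still draw on both. The trade-off is that longer blocks contribute more bits and can dominate a bit-count-based similarity unless the blocks are weighted.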

In the field of chemogenomics, the quality of a compound collection is paramount for discovering novel therapeutics. Assessing this quality requires unbiased comparison against a standardized set of pharmaceutically relevant structures. This guide details the creation of benchmark sets of bioactive molecules at different scales—Large (L), Medium (M), and Small (S)—to serve as references for evaluating the diversity and relevance of combinatorial chemical spaces and commercial compound libraries [7]. By providing a structured approach to benchmark set creation, this guide aids researchers in making informed decisions during the early stages of drug discovery.

A Taxonomy of Filtering Strategies for Reference Collections

The creation of robust benchmark sets relies on a variety of data filtering strategies. The table below summarizes key strategies identified from a systematic survey of methodological approaches in scientific literature [44].

Table 1: A Taxonomy of Data Filtering Strategies for Reference Collections

| Filtering Strategy | Description | Applicability in Chemogenomics |
|---|---|---|
| Authoritative Source | Relies on pre-curated, high-quality data sources as the foundation for the collection. | Using established databases like ChEMBL as the primary data source [45] [7]. |
| Quality-Based | Implements metrics to remove low-quality or unreliable data points. | Filtering molecules based on the quality and reliability of bioactivity data (e.g., Ki, IC50) [45]. |
| Rule-Based | Applies predefined rules or heuristics to include or exclude data. | Using deterministic rules for scaffold analysis or filtering based on physicochemical properties [45]. |
| Toxicity/Safety Policy | Filters out content deemed unsafe, harmful, or toxic. | Potentially used to remove compounds with known adverse effects or problematic structural alerts. |
| Human-in-the-Loop | Involves expert curation at various stages of the filtering process. | Manual verification of target annotations or mechanism of action [45]. |
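The automated strategies in this taxonomy can be chained into a simple filtering pipeline. The sketch below is a hedged illustration, not the protocol used to build the benchmark sets in [7]: the record fields, the accepted activity types, and the molecular weight cutoff of 500 are all assumptions chosen for the example. The human-in-the-loop step is left out because it cannot be expressed as a predicate.

```python
from typing import Callable, Dict, List, Tuple

Record = Dict[str, object]  # hypothetical fields, see the sample data below
Filter = Tuple[str, Callable[[Record], bool]]


def from_authoritative_source(r: Record) -> bool:
    return r["source"] == "ChEMBL"


def has_reliable_activity(r: Record) -> bool:
    # Quality-based: keep only Ki/IC50 records with a positive numeric value.
    return (
        r["activity_type"] in {"Ki", "IC50"}
        and r["value_nM"] is not None
        and r["value_nM"] > 0
    )


def passes_property_rule(r: Record) -> bool:
    return r["mol_weight"] <= 500.0  # illustrative cutoff, an assumption


def passes_safety_policy(r: Record) -> bool:
    return not r["structural_alert"]


FILTERS: List[Filter] = [
    ("authoritative_source", from_authoritative_source),
    ("quality_based", has_reliable_activity),
    ("rule_based", passes_property_rule),
    ("safety_policy", passes_safety_policy),
]


def apply_filters(records: List[Record], filters: List[Filter]):
    """Apply filters in order and report how many records survive each step."""
    kept = list(records)
    report: Dict[str, int] = {}
    for name, keep in filters:
        kept = [r for r in kept if keep(r)]
        report[name] = len(kept)
    return kept, report


def rec(name, source, activity_type, value_nM, mol_weight, alert) -> Record:
    return {"name": name, "source": source, "activity_type": activity_type,
            "value_nM": value_nM, "mol_weight": mol_weight,
            "structural_alert": alert}


records = [
    rec("A", "ChEMBL", "Ki", 12.0, 350.0, False),
    rec("B", "vendor_sheet", "IC50", 50.0, 300.0, False),
    rec("C", "ChEMBL", "Inhibition %", 80.0, 320.0, False),
    rec("D", "ChEMBL", "IC50", None, 280.0, False),
    rec("E", "ChEMBL", "IC50", 200.0, 720.0, False),
    rec("F", "ChEMBL", "Ki", 5.0, 410.0, True),
]

kept, report = apply_filters(records, FILTERS)
print([r["name"] for r in kept], report)
```

Recording the survivor count after each step makes it easy to see which strategy removes the most data. That is useful when deciding whether a cutoff is too aggressive for a given reference collection.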

The creation of three benchmark sets of successive orders of magnitude allows for flexible application across different research scenarios. The following table summarizes the quantitative characteristics of these sets, which were mined from the ChEMBL database for molecules displaying biological activity [7].

Table 2: Summary of Benchmark Set Sizes and Scales [7]

| Benchmark Set | Size (Number of Molecules) | Primary Use Case |
|---|---|---|
| Set L (Large-sized) | 379,000 | Large-scale virtual screening and exhaustive diversity analyses. |
| Set M (Medium-sized) | 25,000 | Standard library comparison and validation studies. |
| Set S (Small-sized) | 3,000 | Rapid prototyping and high-level diversity assessment. |