Benchmarking Chemogenomic Libraries: Design Strategies for Precision Oncology and Phenotypic Screening

Abstract

This article provides a comprehensive benchmark analysis of modern chemogenomic library design strategies, addressing the critical needs of researchers and drug development professionals. It explores the foundational principles of constructing targeted small molecule libraries, evaluates methodological advances for applications in precision oncology and phenotypic profiling, and systematically addresses key limitations and optimization techniques. By presenting rigorous validation and comparative frameworks, this review synthesizes performance data across diverse screening environments—from glioblastoma patient cells to large-scale fitness signatures—offering a practical guide for developing more effective, targeted chemogenomic tools to accelerate therapeutic discovery.

The Foundations of Chemogenomic Libraries: From Target Coverage to Polypharmacology

Chemogenomic libraries represent a powerful paradigm in modern drug discovery, designed to systematically probe the relationship between chemical compounds and their biological targets. Unlike general compound libraries, these are curated collections of small molecules selected for their known or predicted interactions with specific protein families or biological pathways. The primary value of these libraries lies in their ability to deconvolute complex biological phenomena and identify novel therapeutic targets, particularly in phenotypic screening approaches where the molecular mechanisms of action are initially unknown. Current design strategies predominantly follow two complementary philosophies: the scaffold-based approach, which builds libraries around core chemical structures informed by medicinal chemistry expertise, and the reaction-based make-on-demand approach, which leverages vast combinatorial chemistry spaces for maximum structural diversity. This guide provides an objective comparison of these strategies through experimental data and benchmarking studies, offering researchers an evidence-based framework for library selection in precision drug discovery programs.

Library Design Philosophies: Core Strategic Differences

Scaffold-Based Design Approach

The scaffold-based methodology employs a structured, knowledge-driven strategy for library construction. This approach begins with the identification of core chemical scaffolds, often derived from compounds with demonstrated biological activity or favorable drug-like properties. Through the collective efforts of chemoinformaticians and medicinal chemists, these scaffolds are then decorated with customized collections of R-groups to generate virtual libraries, which can subsequently be synthesized or acquired for screening [1]. This method prioritizes chemical tractability and expert curation over sheer size, resulting in focused libraries with high potential for lead optimization. The essential eIMS library, containing 578 in-stock compounds, and its virtual companion, the vIMS library of 821,069 compounds, exemplify this approach: virtual enumeration is guided by chemical expertise rather than purely computational parameters [1].

Make-on-Demand Chemical Space Approach

In contrast, the make-on-demand methodology, exemplified by commercial offerings like the Enamine REAL Space library, employs a reaction- and building block-based strategy. This approach leverages vast collections of available chemical building blocks and validated chemical reactions to create theoretically accessible compounds on demand [1]. The primary advantage of this strategy is the enormous structural diversity available, often encompassing billions of theoretically accessible compounds. However, such spaces may include compounds with more challenging synthetic routes, and therefore lower synthetic accessibility, than carefully curated scaffold-based libraries [1].

Hybrid and Specialized Design Strategies

Beyond these two primary approaches, specialized strategies have emerged for specific applications. Chemogenomic library design for precision oncology emphasizes coverage of protein targets and biological pathways implicated in cancer, with careful adjustment for library size, cellular activity, chemical diversity, availability, and target selectivity [2] [3]. These libraries are specifically optimized for identifying patient-specific vulnerabilities, as demonstrated in glioblastoma patient cell profiling [3]. Similarly, phenotypic screening-optimized libraries integrate chemogenomic data with morphological profiling from assays like Cell Painting to facilitate target identification and mechanism deconvolution in phenotypic drug discovery [4].

Comparative Performance Analysis: Experimental Data

Chemical Space Coverage and Diversity

Independent benchmarking studies provide quantitative assessment of how different library strategies cover pharmaceutically relevant chemical space. Researchers have developed benchmark sets from the ChEMBL database to enable unbiased comparison of compound collections, with Set S (3,000 molecules) tailored for broad coverage of physicochemical and topological landscapes [5].

Table 1: Chemical Space Coverage of Different Library Types

| Library Type | Number of Compounds | Coverage Capacity | Unique Scaffolds | Primary Strengths |
|---|---|---|---|---|
| Scaffold-Based (vIMS) | 821,069 | Moderate | Limited but focused | High synthetic accessibility, expert curation |
| Make-on-Demand (REAL Space) | Billions (theoretical) | Extensive | High diversity | Maximum structural diversity, novelty potential |
| Targeted Cancer Library | 1,211 | Focused | Disease-relevant | Optimized for anticancer target coverage |
| Phenotypic Screening Library | 5,000 | Broad | Balanced diversity | Target identification capability |

Analysis using three search methods (FTrees, SpaceLight, and SpaceMACS) reveals that make-on-demand Chemical Spaces consistently return more compounds similar to the benchmark query molecules than enumerated libraries do [5]. However, each collection also contributes unique scaffolds under every search method, suggesting that the strategies are complementary rather than one being strictly superior.
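The neighbor-counting idea behind this comparison can be sketched in a few lines. This is a minimal illustration only, not the FTrees, SpaceLight, or SpaceMACS algorithms. It assumes each molecule has already been reduced to a binary fingerprint, modeled here as the set of "on" bit indices, and it applies Tanimoto similarity with a hypothetical 0.7 cutoff to count how many library members each benchmark query "finds". The fingerprints are toy values.

```python
from typing import Dict, FrozenSet, List

# A fingerprint is modeled as the set of bit positions that are "on".
Fingerprint = FrozenSet[int]


def tanimoto(a: Fingerprint, b: Fingerprint) -> float:
    """Tanimoto (Jaccard) similarity of two binary fingerprints."""
    if not a and not b:
        return 1.0
    shared = len(a & b)
    return shared / (len(a) + len(b) - shared)


def neighbors_per_query(
    queries: Dict[str, Fingerprint],
    library: Dict[str, Fingerprint],
    cutoff: float = 0.7,  # hypothetical similarity threshold
) -> Dict[str, List[str]]:
    """For each benchmark query, list library compounds at or above the cutoff."""
    return {
        q_id: [lib_id for lib_id, lib_fp in library.items() if tanimoto(q_fp, lib_fp) >= cutoff]
        for q_id, q_fp in queries.items()
    }


# Toy fingerprints for illustration only.
queries = {"q1": frozenset({1, 2, 3, 4}), "q2": frozenset({10, 11, 12})}
library = {
    "m1": frozenset({1, 2, 3, 4, 5}),  # Tanimoto to q1 = 4/5 = 0.8
    "m2": frozenset({1, 2, 9}),        # Tanimoto to q1 = 2/5 = 0.4
    "m3": frozenset({10, 11, 12}),     # identical to q2
}
hits = neighbors_per_query(queries, library)
print({q: len(ids) for q, ids in hits.items()})  # {'q1': 1, 'q2': 1}
```

Running the same queries against an enumerated library and a Chemical Space, then comparing per-query counts and neighbor scaffolds, mirrors the two observations above. Searching billions of virtual compounds requires dedicated space-search tools rather than this brute-force loop.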

Functional Performance in Biological Screening

Direct comparison of library performance in actual screening scenarios provides the most meaningful metrics for researchers. The functional hit rates and target identification capabilities vary significantly based on library design and application context.

Table 2: Functional Performance Metrics Across Library Types

| Application Context | Library Size | Hit Rate | Target Coverage | Key Findings |
|---|---|---|---|---|
| Glioblastoma Patient Cell Profiling [3] | 789 compounds | Highly variable across patients | 1,320 anticancer targets | Identified patient-specific vulnerabilities; highly heterogeneous responses |
| Macrofilaricidal Screening [6] | 1,280 compounds | 2.7% (35 hits) | Diverse target classes | Bivariate screening identified compounds with submicromolar potency |
| Phenotypic Screening [4] | 5,000 compounds | Not specified | Broad druggable genome | Enabled target identification from morphological profiling |

In the macrofilaricidal study, the 2.7% figure is the primary hit rate against microfilariae. The >50% figure describes a later stage: more than half of the compounds carried forward from that primary screen showed submicromolar macrofilaricidal activity. This yield was achieved by leveraging abundantly accessible microfilariae for primary screening [6], and it shows how a screening design adapted to specific biological constraints can substantially improve efficiency.

Experimental Protocols: Methodologies for Library Evaluation

Protocol 1: Comparative Assessment of Chemical Content

This methodology directly compares scaffold-based and make-on-demand libraries through chemoinformatic analysis [1].

Workflow:

- Library Curation: Develop scaffold-focused datasets from both library types containing the same core scaffolds

- Overlap Analysis: Calculate strict (identical-structure) overlap between libraries and assess broader structural similarity using fingerprint-based methods

- R-Group Analysis: Identify and categorize R-groups not shared between libraries

- Synthetic Accessibility Scoring: Apply computational metrics to assess synthetic difficulty of compound sets

Key Metrics:

- Jaccard similarity indices for library overlap

- R-group frequency and uniqueness analysis

- Synthetic accessibility scores (low to moderate range preferred)
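The first two metrics can be sketched as follows, assuming each compound has already been converted to a canonical identifier (for example, a canonical SMILES string or InChIKey produced by a cheminformatics toolkit) and each R-group to a canonical string. The identifiers below are placeholders, not real structures. Synthetic accessibility scoring needs a dedicated model and is not reproduced here.

```python
from collections import Counter
from typing import Dict, Iterable, Set, Tuple


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Jaccard index |A ∩ B| / |A ∪ B| for strict (identical-identifier) overlap."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def r_group_report(
    scaffold_lib_rgroups: Iterable[str], mod_lib_rgroups: Iterable[str]
) -> Tuple[Counter, Dict[str, int], float]:
    """Count R-group usage in the scaffold-based set and flag R-groups absent from the make-on-demand set."""
    freq = Counter(scaffold_lib_rgroups)
    present_elsewhere = set(mod_lib_rgroups)
    unique = {rg: n for rg, n in freq.items() if rg not in present_elsewhere}
    unique_fraction = len(unique) / len(freq) if freq else 0.0
    return freq, unique, unique_fraction


# Placeholder identifiers for illustration only.
scaffold_based = {"CPD-A", "CPD-B", "CPD-C", "CPD-D"}
make_on_demand = {"CPD-C", "CPD-D", "CPD-E", "CPD-F", "CPD-G", "CPD-H"}
print(jaccard(scaffold_based, make_on_demand))  # 0.25  (2 shared / 8 total)

freq, unique, frac = r_group_report(
    ["R-Me", "R-Me", "R-OMe", "R-CF3", "R-morpholine"],
    ["R-Me", "R-OMe", "R-Cl"],
)
print(unique, frac)  # {'R-CF3': 1, 'R-morpholine': 1} 0.5
```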

Experimental Insight: The results showed similarity between scaffold-based and make-on-demand approaches but with limited strict overlap. A significant portion of the R-groups in the scaffold-based library were not identified as such in the make-on-demand library, suggesting complementary chemical space coverage [1].

Protocol 2: Phenotypic Screening and Target Deconvolution

This methodology, applied in glioblastoma research, integrates chemogenomic screening with multi-parametric phenotypic assessment [3].

Workflow:

- Library Design: Select compounds covering defined anticancer target space (1,386 proteins)

- Cell Model Preparation: Culture patient-derived glioma stem cells representing GBM subtypes

- High-Content Imaging: Treat cells with library compounds and image using automated microscopy

- Multivariate Phenotyping: Analyze cell survival, morphology, and pathway activation phenotypes

- Target Annotation: Correlate phenotypic responses with compound target annotations

Key Metrics:

- Cell viability and proliferation rates

- Phenotypic heterogeneity scores across patients

- Target-pathway enrichment statistics
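The protocol does not name a specific enrichment test. One common choice, assumed here, is a one-sided hypergeometric test: given N annotated targets, of which K belong to a pathway, it asks how surprising it is that k of the n targets hit by active compounds fall in that pathway. The numbers in the example are illustrative only.

```python
from math import comb


def hypergeom_enrichment_p(N: int, K: int, n: int, k: int) -> float:
    """P(X >= k) for X ~ Hypergeometric(population N, K successes, n draws).

    N: all annotated targets in the library
    K: targets annotated to the pathway of interest
    n: targets hit by active compounds in the screen
    k: hit targets that fall in the pathway
    """
    total = comb(N, n)
    upper = min(K, n)
    # math.comb returns 0 when the lower argument exceeds the upper, so impossible terms vanish.
    return sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1)) / total


def fold_enrichment(N: int, K: int, n: int, k: int) -> float:
    """Observed pathway fraction among hit targets divided by the background fraction."""
    return (k / n) / (K / N)


# Illustrative numbers: 1,320 annotated targets, 40 in a pathway,
# 100 targets hit by actives, 10 of which fall in the pathway.
p = hypergeom_enrichment_p(N=1320, K=40, n=100, k=10)
print(round(fold_enrichment(1320, 40, 100, 10), 2))  # 3.3
print(f"{p:.2e}")
```

When many pathways are tested, the resulting p-values would normally be adjusted for multiple comparisons (for example, with the Benjamini-Hochberg procedure).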

Experimental Insight: The cell survival profiling revealed highly heterogeneous phenotypic responses across patients and GBM subtypes, highlighting the importance of patient-specific screening approaches in precision oncology [3].

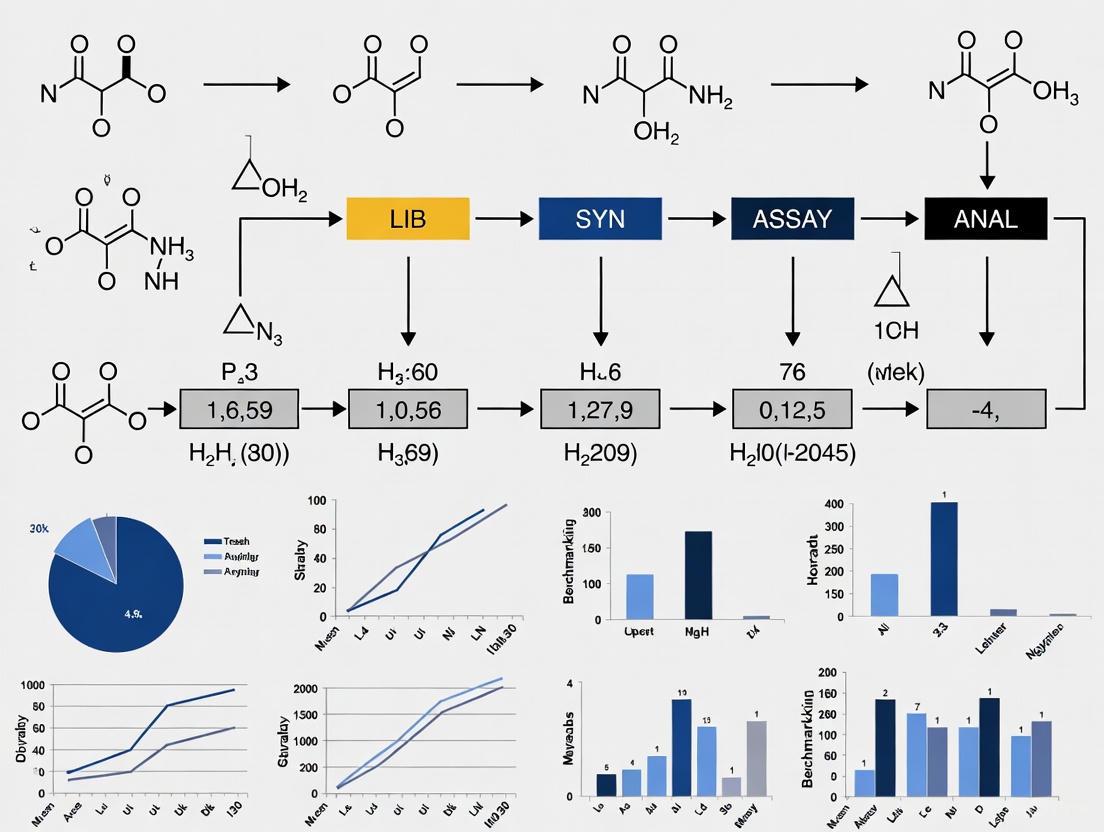

Diagram 1: Phenotypic Screening Workflow for Chemogenomic Libraries. This workflow integrates phenotypic screening with morphological profiling and network pharmacology for target identification.

Protocol 3: Bivariate Screening for Parasite Life Stages

This innovative approach, developed for antifilarial discovery, leverages different parasite life stages for efficient lead identification [6].

Workflow:

- Primary Microfilariae Screen:

  - Test compounds against abundantly available microfilariae

  - Assess motility (12 hours post-treatment) and viability (36 hours post-treatment)

  - Implement optimized imaging and normalization protocols

- Secondary Adult Parasite Screen:

  - Multiplex adult assays across multiple fitness traits

  - Assess neuromuscular control, fecundity, metabolism, and viability

  - Characterize stage-specific potency differences

- Target Validation:

  - Compare human targets with parasite homologs

  - Identify selective targeting opportunities

Key Metrics:

- Z'-factors for assay quality (>0.7 motility, >0.35 viability)

- Stage-specific EC50 values

- Phenotypic discorrelation indices
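The Z'-factor thresholds above refer to the standard assay-quality statistic Z' = 1 - 3(σp + σn) / |μp - μn|, computed from positive- and negative-control wells. The sketch below uses made-up control readings. The thresholds come from the Key Metrics list, and all other values are illustrative.

```python
from statistics import mean, stdev
from typing import Sequence


def z_prime(positive: Sequence[float], negative: Sequence[float]) -> float:
    """Z'-factor from control wells: 1 - 3*(sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    separation = abs(mean(positive) - mean(negative))
    if separation == 0:
        raise ValueError("control means are identical; Z' is undefined")
    return 1.0 - 3.0 * (stdev(positive) + stdev(negative)) / separation


# Made-up control wells for a motility readout (arbitrary units).
vehicle = [100.0, 102.0, 98.0, 100.0]  # negative control: untreated, fully motile
killed = [10.0, 12.0, 8.0, 10.0]       # positive control: immobilized
zp = z_prime(killed, vehicle)
print(round(zp, 2))                     # 0.89

thresholds = {"motility": 0.7, "viability": 0.35}  # values from the Key Metrics list
print(zp > thresholds["motility"])      # True
```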

Experimental Insight: The use of microfilariae in primary screening outperformed model nematode developmental assays and virtual screening of protein structures inferred with deep learning, demonstrating the value of disease-relevant phenotypic screening [6].

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 3: Essential Research Reagents and Platforms for Chemogenomic Research

| Reagent/Platform | Function | Application Context |
|---|---|---|
| Enamine REAL Space | Make-on-demand compound source | Billion-scale combinatorial chemistry |
| ChEMBL Database | Bioactivity data resource | Benchmark set creation, target annotation |
| Cell Painting Assay | Morphological profiling | Phenotypic screening, mechanism deconvolution |
| ScaffoldHunter Software | Scaffold analysis and visualization | Library diversity analysis, chemoinformatics |
| Neo4j Graph Database | Network pharmacology platform | Integrating drug-target-pathway-disease relationships |
| CACTI Analysis Tool | Chemical annotation and target prediction | Bulk compound analysis, target hypothesis generation |
| Tocriscreen Library | Bioactive compound collection | Chemogenomic screening, target discovery |

Strategic Implementation Guide

Library Selection Framework

Choosing between library design strategies requires careful consideration of research objectives, resources, and constraints:

Select Scaffold-Based Libraries When:

- Lead optimization is the primary goal [1]

- Medicinal chemistry expertise is available for library design

- Synthetic accessibility and compound availability are priorities

- Focused target coverage is preferred over maximum diversity

Select Make-on-Demand Libraries When:

- Maximum structural novelty and diversity are required [1] [5]

- Screening resources can accommodate larger compound sets

- Synthetic tractability is secondary to chemical space coverage

- Exploring underutilized chemical space is desirable

Select Specialized Chemogenomic Libraries When:

- Specific disease areas are targeted (e.g., oncology) [2] [3]

- Phenotypic screening requires target deconvolution capability [4]

- Balanced coverage of target classes is necessary

- Annotated bioactivity data enhances screening value

Future Directions and Emerging Trends

The field of chemogenomic library design continues to evolve with several emerging trends:

- DNA-encoded libraries (DELs) represent a growing technology that synergistically combines combinatorial chemistry with genetic barcoding, enabling screening of incredibly large libraries [7]

- Integrated bioinformatics platforms like CACTI facilitate automated multi-compound analysis across multiple chemogenomic databases, addressing the challenge of non-standardized compound identifiers [8]

- High-throughput chemogenomics is being facilitated by improved methods for working with challenging samples, such as degraded DNA from museum specimens [9]

- Artificial intelligence and machine learning are increasingly employed to predict compound-target interactions and optimize library design [8]

Diagram 2: Strategic Selection Framework for Chemogenomic Libraries. This decision pathway helps researchers select appropriate library strategies based on specific research objectives.

The comparative assessment of chemogenomic library design strategies reveals a nuanced landscape where different approaches offer complementary strengths rather than absolute superiority. Scaffold-based libraries provide curated chemical spaces with high synthetic accessibility and lead optimization potential, while make-on-demand spaces offer unprecedented structural diversity for novel hit identification. Specialized chemogenomic libraries bridge both approaches by incorporating target annotation and pathway coverage tailored to specific disease areas or screening paradigms.

The experimental data presented in this guide demonstrates that library performance is highly context-dependent, influenced by biological system, screening methodology, and research objectives. The most successful implementations will likely continue to leverage multiple library types in integrated screening strategies, combining the precision of scaffold-based design with the exploratory power of make-on-demand chemistry. As chemical biology continues to evolve, the strategic design and application of chemogenomic libraries will remain fundamental to accelerating drug discovery and target identification across therapeutic areas.

Chemogenomic libraries are carefully curated collections of small molecules designed to perturb a wide range of protein targets and biological pathways in a systematic manner. These libraries serve as critical tools in phenotypic drug discovery and chemical biology, enabling researchers to identify novel therapeutic targets and deconvolute complex mechanisms of action. The fundamental challenge in library design lies in balancing three competing priorities: library size (practicality and cost), chemical diversity (coverage of chemical space), and target selectivity (specificity versus polypharmacology). This guide examines the core design principles underlying modern chemogenomic libraries, comparing alternative strategies through quantitative data and experimental frameworks to inform library selection and implementation for drug discovery professionals.

Comparative Analysis of Chemogenomic Library Design Strategies

The table below summarizes key design parameters and performance characteristics of different chemogenomic library approaches, synthesized from current research:

Table 1: Quantitative Comparison of Chemogenomic Library Design Strategies

| Design Strategy | Library Size (Compounds) | Target Coverage | Chemical Diversity Approach | Selectivity Considerations | Reported Applications |
|---|---|---|---|---|---|
| Minimal Screening Library | 1,211 | 1,386 anticancer proteins | Bioactive compound prioritization; cellular activity filters | Balanced potency and selectivity; multi-target modulation | Glioblastoma patient cell profiling [2] [3] |
| System Pharmacology Network | 5,000 | Broad druggable genome | Scaffold-based diversity; target involvement in diverse biological effects | Polypharmacology focused; network-based target relationships | Phenotypic screening; target identification [4] |
| Physical Screening Library | 789 | 1,320 anticancer targets | Availability-adjusted; cellular activity confirmed | Patient-specific vulnerability identification | Glioma stem cell imaging; phenotypic responses [2] |
| Targeted Protein Family Libraries | Variable (kinase, GPCR-focused) | Specific protein families | Family-specific chemotypes | High intra-family selectivity | Mechanism-directed screening [4] |

Experimental Protocols for Library Validation

Protocol 1: Phenotypic Screening for Patient-Specific Vulnerabilities

This methodology was applied to identify patient-specific vulnerabilities in glioblastoma using a physical chemogenomic library [2] [3].

- Cell Preparation: Isolate and culture glioma stem cells from patient-derived glioblastoma samples

- Compound Treatment: Apply physical library of 789 compounds covering 1,320 anticancer targets using appropriate DMSO controls

- Viability Assessment: Employ high-content imaging to quantify cell survival and phenotypic responses

- Data Analysis:

  - Calculate percent inhibition compared to controls

  - Determine IC50 values using 4-parameter logistic (4PL) nonlinear regression models

  - Analyze heterogeneous response patterns across patients and molecular subtypes

Key technical considerations: Include a minimum of 8-10 concentration points, evenly spaced on a logarithmic scale across the expected response range, with at least three biological replicates per data point to ensure statistical robustness [10].
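The data-analysis steps can be sketched without external dependencies. The sketch covers a log-spaced dilution series, percent inhibition against DMSO and full-kill controls, and the rising 4PL curve. To stay dependency-free, it estimates IC50 and the Hill slope by linear regression on the Hill-plot transform, with the bottom and top asymptotes fixed at 0% and 100%. This is a simplified stand-in for the full nonlinear 4PL regression named in the protocol, which would fit all four parameters with a least-squares solver. The 3-fold, 10-point series and the synthetic curve parameters are assumptions.

```python
import math
from typing import List, Sequence, Tuple


def dilution_series(top_conc: float, n_points: int = 10, fold: float = 3.0) -> List[float]:
    """Serial dilution: each point is `fold`-times lower, i.e. evenly spaced in log10(concentration)."""
    return [top_conc / fold**i for i in range(n_points)]


def percent_inhibition(signal: float, vehicle_mean: float, full_kill_mean: float) -> float:
    """0% = DMSO vehicle control, 100% = positive (full-kill) control."""
    return 100.0 * (vehicle_mean - signal) / (vehicle_mean - full_kill_mean)


def four_pl(conc: float, bottom: float, top: float, ic50: float, hill: float) -> float:
    """4PL curve in which inhibition rises from `bottom` to `top` as concentration increases."""
    return bottom + (top - bottom) / (1.0 + (ic50 / conc) ** hill)


def ic50_from_hill_plot(
    concs: Sequence[float], inhibition: Sequence[float], bottom: float = 0.0, top: float = 100.0
) -> Tuple[float, float]:
    """Estimate (IC50, Hill slope) by regressing log10((y - bottom)/(top - y)) on log10(conc).

    Simplification: bottom and top are fixed rather than fitted, unlike a full 4PL fit.
    """
    xs, zs = [], []
    for c, y in zip(concs, inhibition):
        if bottom < y < top:  # the transform is undefined at or beyond the asymptotes
            xs.append(math.log10(c))
            zs.append(math.log10((y - bottom) / (top - y)))
    if len(xs) < 2:
        raise ValueError("need at least two points strictly between bottom and top")
    mx, mz = sum(xs) / len(xs), sum(zs) / len(zs)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxz = sum((x - mx) * (z - mz) for x, z in zip(xs, zs))
    hill = sxz / sxx                      # slope of the Hill plot
    intercept = mz - hill * mx            # equals -hill * log10(IC50)
    return 10 ** (-intercept / hill), hill


# Synthetic check: noiseless data generated with IC50 = 0.5 µM and Hill slope = 1.2.
concs = dilution_series(30.0)  # µM, 10 points, 3-fold apart
responses = [four_pl(c, 0.0, 100.0, 0.5, 1.2) for c in concs]
ic50, hill = ic50_from_hill_plot(concs, responses)
print(round(ic50, 3), round(hill, 3))  # 0.5 1.2
```

With replicate wells, each replicate curve could be fitted separately, or the replicate means fitted, depending on how variability is to be reported.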

Protocol 2: Proteome-Wide Selectivity Profiling for Covalent Inhibitors

This competitive residue-specific proteomics workflow determines proteome-wide selectivity of covalent inhibitors [11].

- Sample Treatment: Divide proteome samples (e.g., bacterial or human cell lysates) into two aliquots

- Compound Exposure: Treat one sample with covalent ligand; maintain the other as vehicle control

- Probe Labeling: Apply broadly reactive alkyne probe to label unengaged residues

- Tag Conjugation: Attach isotopically differentiated isoDTB tags using copper-catalyzed azide-alkyne cycloaddition

- Sample Processing:

  - Combine the compound-treated and vehicle-treated samples

  - Enrich the isoDTB-tagged proteins (e.g., on streptavidin beads)

  - Proteolytically digest the enriched proteins and elute the modified peptides

- LC-MS/MS Analysis: Identify and quantify modified peptides using liquid chromatography coupled to tandem mass spectrometry

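Data Interpretation: Because the covalent ligand blocks probe labeling of the residues it engages, those residues show a high ratio of vehicle-treated to compound-treated signal. This appears as a high heavy:light ratio when the heavy tag is assigned to the vehicle sample, enabling proteome-wide selectivity assessment [11].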
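The ratio logic can be sketched as follows, assuming the heavy tag marks the vehicle sample so that each site's competition ratio is R = vehicle / compound. Because the compound-treated signal reflects the fraction of the site left unengaged, R converts to an approximate occupancy of 1 - 1/R. The R ≥ 4 cutoff (about 75% occupancy) is a hypothetical choice, and the site names and intensities are invented.

```python
from typing import Dict, Tuple


def competition_ratio(vehicle_intensity: float, compound_intensity: float) -> float:
    """R = vehicle / compound signal for one modified peptide (heavy:light if heavy = vehicle)."""
    if compound_intensity <= 0:
        raise ValueError("compound-channel intensity must be positive; handle missing values upstream")
    return vehicle_intensity / compound_intensity


def occupancy(ratio: float) -> float:
    """Approximate fraction of the site blocked by the ligand: 1 - 1/R (0 when R <= 1)."""
    return max(0.0, 1.0 - 1.0 / ratio)


def engaged_sites(
    quant: Dict[str, Tuple[float, float]], min_ratio: float = 4.0  # hypothetical cutoff
) -> Dict[str, float]:
    """Return {site: ratio} for sites whose competition ratio meets the cutoff."""
    out = {}
    for site, (vehicle, compound) in quant.items():
        r = competition_ratio(vehicle, compound)
        if r >= min_ratio:
            out[site] = r
    return out


# Invented intensities: (vehicle / heavy channel, compound / light channel)
quant = {
    "PROT_A:C45": (8.0e6, 1.0e6),   # R = 8, 87.5% occupied
    "PROT_B:C112": (5.0e6, 4.5e6),  # R ~ 1.1, essentially unengaged
    "PROT_C:C7": (2.0e6, 5.0e5),    # R = 4, 75% occupied
}
hits = engaged_sites(quant)
print(sorted(hits))                             # ['PROT_A:C45', 'PROT_C:C7']
print(round(occupancy(hits["PROT_A:C45"]), 3))  # 0.875
```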

Visualizing Chemogenomic Library Design Workflows

Diagram: Chemogenomic Library Design and Application Pipeline

The Scientist's Toolkit: Essential Research Reagents and Platforms

The table below catalogues critical resources employed in chemogenomic library development and implementation:

Table 2: Essential Research Reagents and Platforms for Chemogenomic Studies

| Reagent/Platform | Function | Application Context |
|---|---|---|
| ChEMBL Database | Bioactivity data repository | Compound-target annotation; bioactivity filtering [4] |
| Cell Painting Assay | High-content morphological profiling | Phenotypic screening; mechanism of action prediction [4] |
| isoDTB-ABPP Platform | Competitive residue-specific proteomics | Proteome-wide covalent ligand selectivity assessment [11] |
| ScaffoldHunter Software | Scaffold-based compound diversity analysis | Chemical space visualization; library diversity optimization [4] |
| Neo4j Graph Database | Network pharmacology integration | Drug-target-pathway-disease relationship mapping [4] |
| FragPipe Computational Platform | MS data analysis for modified peptides | Unbiased proteome-wide electrophile selectivity analysis [11] |
| PocketVec | Binding site descriptor generation | Druggable pocket characterization; binding site similarity assessment [12] |

The strategic design of chemogenomic libraries requires thoughtful balancing of multiple competing parameters. Smaller, focused libraries (∼800-1,200 compounds) demonstrate practical utility for identifying patient-specific vulnerabilities in disease models, while larger collections (∼5,000 compounds) enable broader exploration of chemical and target space. Successful implementation integrates multiple data types, from chemical bioactivity to structural proteomics, within a network pharmacology framework that acknowledges the inherent polypharmacology of most bioactive compounds. As chemical biology continues to evolve, the optimal library design will increasingly reflect the specific research question, whether targeting defined protein families, exploring phenotypic responses, or comprehensively mapping the druggable proteome.

The concept of the druggable genome, defined as the subset of human genes encoding proteins that can be targeted by therapeutic compounds, has fundamentally reshaped drug discovery over the past two decades [13]. By focusing research efforts on this biologically actionable subset of the genome, scientists can systematically prioritize targets with higher probabilities of therapeutic success. However, as technological advances in genomics, chemoproteomics, and structural biology have accelerated, the boundaries of the druggable genome have continuously expanded, creating both opportunities and challenges for target identification and validation. This guide provides a comparative analysis of current methodologies for mapping the druggable genome, evaluates the coverage and persistent gaps in existing chemogenomic libraries, and benchmarks experimental strategies for illuminating understudied targets, with a specific focus on their applications in precision oncology and autoimmune disease.

Defining the Druggable Genome: Estimates and Evolution

The original definition of "druggable" focused primarily on proteins capable of binding orally bioavailable, drug-like molecules [13]. Contemporary definitions have expanded to include additional parameters such as disease modification capability, tissue-specific expression, and absence of on-target toxicity. Table 1 compares key druggable genome estimates and their characteristics, highlighting the evolution of target coverage over time.

Table 1: Comparative Estimates of the Druggable Genome

| Source/Study | Estimated Size | Key Characteristics/Focus | Notable Inclusions |
|---|---|---|---|
| Hopkins & Groom (2002) [13] | ~3,000 genes | Original definition; focus on drug-like binding | Proteins with binding pockets for small molecules |
| Finan et al. [14] | ~4,500 genes | Expanded to include targets of biologics | Includes kinases, GPCRs, ion channels |
| DGIdb Database [14] | ~5,000 genes | Focus on genes with known drug interactions | Clinically investigated targets |
| IDG "Dark" Genome [15] [16] | 162 understudied protein kinases alone | Focus on chemically underexplored targets | Understudied kinases, ion channels, GPCRs |

Significant gaps persist despite these expanding estimates. The Illuminating the Druggable Genome (IDG) initiative, led by the NIH, has identified a "dark kinome" of 162 understudied human protein kinases, representing targets with interesting disease biology but a lack of high-quality chemical inhibitors for therapeutic intervention [15]. Similar understudied regions exist across other druggable gene families, including ion channels and G protein-coupled receptors (GPCRs) [16].

Methodological Approaches: A Comparative Framework

Multiple experimental and computational approaches are employed to define and explore the druggable genome. The choice of methodology directly influences the coverage, biases, and ultimate utility of the resulting chemogenomic library. Table 2 benchmarks the primary methodologies, highlighting their respective applications and limitations.

Table 2: Benchmarking Methodologies for Druggable Genome Exploration

| Methodology | Primary Application | Key Strengths | Inherent Limitations/Gaps |
|---|---|---|---|
| Multi-omics Mendelian Randomization [14] | Causal inference for target-disease relationships | Integrates genomics (eQTLs, pQTLs) with disease GWAS; validates causality using natural genetic variation | Limited by power and coverage of available omics datasets |
| Functional CRISPR Screening [17] | Unbiased identification of gene functions and pathways | High-throughput; directly tests gene function in relevant cellular contexts | Hit validation can be complex; may miss certain target classes |
| High-Throughput Imaging (HiDRO) [18] | Identifying 3D genome regulators | Quantitative measurement of complex phenotypes (e.g., chromatin interactions) in single cells | Technically challenging; requires specialized instrumentation and analysis |
| Chemical Proteomics [3] | Direct profiling of small molecule-protein interactions | Empirically maps compound interactions across the proteome | Limited by the diversity and design of the chemical probes |
| Structure-Based Assessment [13] | In silico prediction of ligandability | Scalable; provides residue-level druggability annotations | Relies on available protein structures; may miss allosteric sites |

Experimental Protocols in Practice

Protocol: Multi-omics Mendelian Randomization for Target Identification

This protocol, as applied to Sjögren's disease, identifies causal therapeutic targets by integrating genetic variants with multi-omics data [14].

- Step 1: Druggable Genome Curation. Curate a list of druggable genes from databases like the Drug-Gene Interaction Database (DGIdb) and published literature (e.g., Finan et al.), yielding ~6,800-7,000 candidate genes.

- Step 2: Instrumental Variable Selection. Obtain blood-derived cis-eQTL (expression), cis-mQTL (methylation), and cis-pQTL (protein) datasets. Select independent genetic variants within 1 Mb of the gene coding region that meet genome-wide significance (P < 5×10⁻⁸), have a high F-statistic (F > 10), and are in low linkage disequilibrium (r² < 0.001).

- Step 3: Mendelian Randomization Analysis. Perform two-sample MR using the omics data (exposure) and disease genome-wide association study (GWAS) summary statistics (outcome) to test for causal relationships.

- Step 4: Validation. Apply Bayesian colocalization to confirm shared causal genetic variants between exposure and outcome. Clinically validate findings by quantifying protein levels of prioritized genes in patient serum samples using ELISA.
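Steps 2 and 3 can be sketched with summary statistics alone. This minimal example makes several assumptions. Exposure and outcome effects are taken to be already harmonized to the same effect allele. Instrument strength uses the common single-variant approximation F ≈ (β/SE)². Per-variant Wald ratios (β_outcome / β_exposure) are combined with the fixed-effect inverse-variance weighted (IVW) estimator. LD clumping (r² < 0.001) requires a reference panel and is not reproduced. The `Variant` fields and all toy values are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Variant:
    rsid: str
    pos: int          # base-pair position on the same chromosome as the gene
    beta_exp: float   # effect on the exposure (e.g. eQTL/pQTL effect)
    se_exp: float
    p_exp: float
    beta_out: float   # effect on the disease outcome (GWAS)
    se_out: float


def select_instruments(variants: List[Variant], gene_start: int, gene_end: int,
                       window: int = 1_000_000, p_max: float = 5e-8,
                       f_min: float = 10.0) -> List[Variant]:
    """Apply the cis-window, genome-wide significance, and F-statistic filters (no LD clumping)."""
    keep = []
    for v in variants:
        in_cis = gene_start - window <= v.pos <= gene_end + window
        f_stat = (v.beta_exp / v.se_exp) ** 2  # single-variant approximation
        if in_cis and v.p_exp < p_max and f_stat > f_min:
            keep.append(v)
    return keep


def ivw_estimate(instruments: List[Variant]) -> Tuple[float, float]:
    """Fixed-effect IVW: sum(bx*by/sy^2) / sum(bx^2/sy^2), with SE = 1/sqrt(sum(bx^2/sy^2))."""
    if not instruments:
        raise ValueError("no valid instruments")
    num = sum(v.beta_exp * v.beta_out / v.se_out**2 for v in instruments)
    den = sum(v.beta_exp**2 / v.se_out**2 for v in instruments)
    return num / den, 1.0 / math.sqrt(den)


# Toy summary statistics for one hypothetical gene.
gene_start, gene_end = 50_000_000, 50_020_000
variants = [
    Variant("rs1", 50_100_000, 0.40, 0.05, 1e-15, 0.20, 0.04),  # passes all filters
    Variant("rs2", 49_500_000, 0.30, 0.05, 2e-9, 0.15, 0.05),   # passes all filters
    Variant("rs3", 52_000_000, 0.50, 0.05, 1e-20, 0.10, 0.04),  # outside the 1 Mb cis window
    Variant("rs4", 50_010_000, 0.10, 0.05, 4e-2, 0.30, 0.04),   # fails significance and F filters
]
ivs = select_instruments(variants, gene_start, gene_end)
est, se = ivw_estimate(ivs)
print([v.rsid for v in ivs], round(est, 3), round(se, 3))  # ['rs1', 'rs2'] 0.5 0.086
```

In practice, this estimate would be accompanied by sensitivity analyses and by the colocalization check described in Step 4.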

Protocol: Druggable Genome CRISPR Screening for Immune Checkpoint Regulation

This protocol details a screen to identify druggable regulators of PD-L1 expression [17].

- Step 1: Library Design. Design a custom sgRNA library targeting ~1,400 druggable genes (approximately 10,000 sgRNAs total, with 7 sgRNAs per gene and ~500 control sgRNAs).

- Step 2: Cell Line Selection and Screening. Lentivirally transduce the sgRNA library into Cas9-expressing cancer cell lines (e.g., pancreatic MiaPaca2, ovarian OVCAR4) at a low multiplicity of infection (MOI ≈ 0.25). Select with puromycin and expand cells to maintain ~500x coverage per sgRNA.

- Step 3: Phenotypic Sorting. Treat cells with IFNγ (0.05 µg/ml) for 48 hours to induce PD-L1 expression. Use fluorescence-activated cell sorting (FACS) to isolate the top 25% (PD-L1-high) and bottom 25% (PD-L1-low) of cells.

- Step 4: Hit Identification. Isolate genomic DNA, amplify sgRNA barcodes by PCR, and sequence. Perform differential enrichment analysis (e.g., using Beta-binomial modeling in the CB2 tool) to identify sgRNAs and genes enriched in the PD-L1-low population.
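The library and coverage figures in Steps 1 and 2 can be checked with simple arithmetic. Under the usual Poisson assumption, the fraction of cells receiving at least one virus at MOI m is 1 - e^(-m). The `log2_fold_change` helper previews the Step 4 comparison of sorted populations using depth-normalized counts. It is a simplified stand-in for illustration, not the beta-binomial model implemented in CB2. The pseudocount, guide names, and counts are hypothetical.

```python
import math
from typing import Dict


def library_size(n_genes: int, guides_per_gene: int, n_controls: int) -> int:
    """Total sgRNAs: targeting guides plus control guides."""
    return n_genes * guides_per_gene + n_controls


def cells_to_transduce(n_guides: int, coverage: int, moi: float) -> float:
    """Cells to infect so that transduced cells alone give `coverage` cells per sgRNA (Poisson model)."""
    infected_fraction = 1.0 - math.exp(-moi)
    return n_guides * coverage / infected_fraction


def single_integration_fraction(moi: float) -> float:
    """Among transduced cells, the fraction carrying exactly one integration."""
    return moi * math.exp(-moi) / (1.0 - math.exp(-moi))


def log2_fold_change(low: Dict[str, int], high: Dict[str, int],
                     pseudocount: float = 0.5) -> Dict[str, float]:
    """log2 of depth-normalized sgRNA abundance in PD-L1-low vs PD-L1-high sorted cells."""
    low_total, high_total = sum(low.values()), sum(high.values())
    return {
        g: math.log2(((low[g] + pseudocount) / low_total)
                     / ((high.get(g, 0) + pseudocount) / high_total))
        for g in low
    }


n = library_size(1400, 7, 500)
print(n)                                              # 10300
print(f"{cells_to_transduce(n, 500, 0.25):.3g}")      # 2.33e+07
print(round(single_integration_fraction(0.25), 2))    # 0.88

# Hypothetical read counts from the two sorted populations.
lfc = log2_fold_change({"sgGeneA_1": 900, "sgNonTargeting_1": 100},
                       {"sgGeneA_1": 100, "sgNonTargeting_1": 900})
print(round(lfc["sgGeneA_1"], 2))                     # 3.16
```

The arithmetic shows why the protocol pairs a low MOI with high coverage: at MOI ≈ 0.25, most transduced cells carry a single sgRNA, but roughly 2.3 × 10⁷ cells must be infected to keep about 500 transduced cells per guide.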

Key Signaling Pathways and Workflows

The KEAP1/NRF2 Axis in PD-L1 Regulation

A druggable genome CRISPR screen identified the KEAP1/NRF2 pathway as a novel regulator of immune checkpoint protein PD-L1 [17]. The following diagram illustrates this signaling relationship.

This pathway reveals a counterintuitive role for NRF2 activation, which transcriptionally represses PD-L1, establishing the KEAP1/NRF2 axis as a druggable mechanism for modulating tumor immunity [17].

Integrated Workflow for Target Discovery and Validation

The following diagram outlines a comprehensive workflow for druggable genome screening and validation, integrating multiple modern methodologies.

This workflow demonstrates how computational and empirical approaches converge to prioritize high-confidence targets from the vast druggable genome [14] [17] [16].

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful navigation of the druggable genome requires a carefully selected toolkit of reagents and resources. The following table catalogues key solutions used in the featured studies.

Table 3: Research Reagent Solutions for Druggable Genome Studies

| Reagent/Resource | Type | Primary Function | Example Application |
|---|---|---|---|
| DGIdb Database [14] | Database | Catalogues known drug-gene interactions and druggable genes | Curating starting gene lists for screening or analysis |
| Druggable Genome sgRNA Library [17] | Molecular Biology Reagent | Enables systematic knockout of ~1,400 druggable genes | Identifying regulators of a specific phenotype (e.g., PD-L1 expression) |
| eQTLGen Consortium Data [14] | Dataset | Provides blood-derived expression quantitative trait loci | Mendelian randomization to find causal gene-disease links |
| SHAPE-MaP Reagents [19] | Chemical Probe | Maps RNA secondary structure in living cells | Identifying druggable, functional regions in viral RNA genomes |
| Open Targets Platform [13] | Database | Integrates target-disease evidence from genetics, drugs, and more | Assessing the therapeutic potential of a novel target |
| ELISA Kits [14] | Assay Kit | Quantifies protein levels in biological samples | Validating differential protein expression in patient samples |

Discussion and Future Perspectives

Current chemogenomic library design strategies effectively cover the "illuminated" regions of the druggable genome but exhibit significant biases. Libraries based on historic drug targets or literature-curated genes may systematically overlook understudied ("dark") targets with novel biology [15] [16]. Precision oncology efforts, which use chemogenomic libraries to profile patient-derived cells, reveal highly heterogeneous phenotypic responses, underscoring the need for libraries with broader target coverage to address diverse disease mechanisms [3].

The future of navigating the druggable genome lies in integrating AI with expanding knowledge graphs that connect gene-level, protein-level, and residue-level data [13]. Furthermore, defining the "druggable RNome" represents a new frontier, with techniques like SHAPE-MaP enabling the identification of functional, targetable RNA structures within viral genomes, expanding the druggable universe beyond proteins [19]. As these tools mature, the next generation of chemogenomic libraries will provide more comprehensive, unbiased coverage, accelerating the discovery of therapies for previously untreatable diseases.

The design of effective chemogenomic libraries represents a critical step in modern drug discovery, bridging the gap between phenotypic screening and target-based approaches. As the field has evolved from a reductionist "one target—one drug" vision to a more complex systems pharmacology perspective, the integration of diverse data sources has become increasingly important for understanding polypharmacology and complex disease mechanisms [4]. Chemogenomic approaches aim to model the complex relationships between chemical compounds, genes, and protein targets, requiring sophisticated data integration strategies to be effective [20]. The challenges in this domain are substantial—most compounds modulate their effects through multiple protein targets with varying degrees of potency and selectivity, and designing targeted screening libraries of bioactive small molecules requires careful consideration of library size, cellular activity, chemical diversity, availability, and target selectivity [2].

Three data sources have emerged as particularly valuable for chemogenomic library design: ChEMBL, a manually curated database of bioactive molecules with drug-like properties; KEGG (Kyoto Encyclopedia of Genes and Genomes), a collection of manually drawn pathway maps representing molecular interaction, reaction, and relation networks; and Disease Ontologies (DO), which provide a standardized classification of human disease terms and relationships [4] [21]. When integrated effectively, these resources enable researchers to construct comprehensive system pharmacology networks that connect drug-target-pathway-disease relationships, significantly enhancing the ability to identify potential therapeutic targets and deconvolute mechanisms of action observed in phenotypic screens [4]. The power of these integrated approaches has been demonstrated in various applications, from precision oncology strategies for glioblastoma [2] to understanding the toxicological mechanisms of emerging plasticizers [21].

ChEMBL: Bioactive Compound Data

ChEMBL is a manually curated database of bioactive molecules with drug-like properties, maintained by the European Bioinformatics Institute (EBI) [22]. It serves as a primary resource for chemical biology and drug discovery research, bringing together chemical, bioactivity, and genomic data to aid the translation of genomic information into effective new drugs. As of version 22, the database contained 1,678,393 molecules with defined bioactivities (including Ki, IC50, and EC50 values) and 11,224 unique targets across different species [4]. Each activity record is linked to the original publication, providing traceability and context for the data.

The database's primary strength lies in its structure-activity relationship (SAR) information, which is manually curated from peer-reviewed publications [23]. This curation process provides a degree of reliability that distinguishes it from non-curated resources. ChEMBL supports various search capabilities, including exact structure searches using SMILES strings or MOL files, substructure searches, and similarity searches based on fingerprint comparisons [23]. These features make it particularly valuable for chemogenomic library design, where understanding the relationship between chemical structure and biological activity is paramount.

KEGG: Pathway Integration

The KEGG pathway database provides a collection of manually drawn pathway maps representing known molecular interactions, reactions, and relation networks across multiple categories, including metabolism, cellular processes, genetic information processing, human diseases, and drug development [4]. The resource offers a systematic understanding of how small molecules and drugs modulate metabolic pathways and broader biological systems [23]. For chemogenomic applications, KEGG enables researchers to contextualize drug targets within broader biological pathways, helping to identify potential polypharmacological effects and compensatory mechanisms that might impact therapeutic efficacy [4].

KEGG's value in chemogenomic library design lies in its ability to connect compound-target interactions to downstream biological effects. When a compound modulates multiple targets within a pathway, or when multiple compounds target different nodes in the same pathway, KEGG annotations can help researchers understand the potential systems-level effects of these interventions. This pathway-centric view is particularly valuable for complex diseases like cancer, where multiple molecular abnormalities often coexist and require multi-target therapeutic strategies [4] [2].

Disease Ontologies: Standardized Disease Classification

The Disease Ontology (DO) resource provides a human-readable and machine-interpretable classification of biomedical data associated with human disease [4]. This standardized vocabulary enables consistent annotation of disease-related data across different resources and experiments. The DO resource includes thousands of disease terms, each with a unique DO identifier (DOID), creating a structured framework for connecting molecular data to human pathology [4] [20].

In chemogenomic library design, Disease Ontologies facilitate the connection between compound mechanisms and disease relevance. By annotating targets and pathways with relevant disease associations, researchers can prioritize compounds and targets based on their potential therapeutic applications. The structured nature of the ontology also enables computational analysis of disease relationships, such as identifying shared mechanisms between seemingly distinct conditions or understanding comorbidity patterns from a molecular perspective [4] [21].

Table 1: Key Characteristics of Core Data Resources for Chemogenomics

| Resource | Data Type | Content Scope | Update Frequency | Primary Applications in Chemogenomics |
|---|---|---|---|---|
| ChEMBL | Bioactive compounds & activities | 1.68M molecules, 11K targets (v22) [4] | Regular versions | SAR analysis, target deconvolution, selectivity profiling |
| KEGG | Pathways & networks | Manually drawn pathway maps for metabolism, cellular processes, human diseases [4] | Periodic releases | Pathway analysis, polypharmacology prediction, mechanism understanding |
| Disease Ontology | Disease terminology & relationships | 9,069 DOID disease terms (release 45) [4] | Ongoing revisions | Disease annotation, target prioritization, clinical translation |

While ChEMBL, KEGG, and Disease Ontologies form a core triad for chemogenomic research, several additional resources complement these databases. Gene Ontology (GO) provides computational models of biological systems at the molecular level, containing over 44,500 GO terms across biological processes, molecular functions, and cellular components [4]. The GO resource is particularly valuable for functional enrichment analysis, helping researchers understand the biological implications of compound-induced gene expression changes or genetic perturbations [4].

Other specialized resources include DrugBank, which integrates small molecule structure information with extensive annotations on drug targets, dosage, side effects, and interactions [23]; STITCH, which collects known and predicted interactions between small molecules and proteins [23]; and canSAR, which focuses primarily on cancer drug discovery by integrating chemical screening data with RNAi, mRNA, and 3D structural data [23]. The availability of these diverse resources, each with specialized strengths, highlights the importance of strategic resource selection based on specific research questions in chemogenomic library design.

Experimental Protocols for Data Integration

Protocol 1: Network Pharmacology Construction

The integration of ChEMBL, KEGG, and Disease Ontologies into a unified network pharmacology framework enables sophisticated analysis of drug-target-pathway-disease relationships. A representative protocol, adapted from recent chemogenomic library development efforts, involves multiple stages of data extraction, processing, and integration [4]:

Step 1: Data Extraction and Filtering. Begin by extracting compound and bioactivity data from ChEMBL, selecting only those compounds with defined bioactivity data (e.g., Ki, IC50, EC50) from reliable assays. Apply appropriate activity thresholds (e.g., < 10 μM) to focus on potentially relevant compounds. For the resulting compound set, identify molecular targets and map them to standardized gene identifiers using resources like UniProt [4] [21].
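The filtering logic in Step 1 can be sketched in a few lines of Python. This is a minimal illustration, not the protocol's actual pipeline. The `Activity` record, its field names, and the UniProt-to-gene mapping are hypothetical stand-ins for a real ChEMBL export and a UniProt ID-mapping table. Only nM and μM units are handled.

```python
from dataclasses import dataclass

# Hypothetical activity record; field names are illustrative, not the ChEMBL schema.
@dataclass(frozen=True)
class Activity:
    compound_id: str
    target_accession: str  # UniProt accession of the assay target
    activity_type: str     # e.g. "Ki", "IC50", "EC50"
    value: float
    units: str             # only "nM" and "uM" are handled in this sketch

UNIT_TO_NM = {"nM": 1.0, "uM": 1_000.0}
ACCEPTED_TYPES = {"Ki", "IC50", "EC50"}

def filter_actives(records, uniprot_to_gene, threshold_nm=10_000.0):
    """Keep Ki/IC50/EC50 records below `threshold_nm` (default 10 uM), map each
    target to a gene symbol, and return {compound_id: set of gene symbols}."""
    hits = {}
    for r in records:
        if r.activity_type not in ACCEPTED_TYPES or r.units not in UNIT_TO_NM:
            continue  # unsupported endpoint or unit
        if r.value * UNIT_TO_NM[r.units] >= threshold_nm:
            continue  # weaker than the activity threshold
        gene = uniprot_to_gene.get(r.target_accession)
        if gene is None:
            continue  # unmapped target; would need manual review
        hits.setdefault(r.compound_id, set()).add(gene)
    return hits

# Made-up example records (values are not real measurements).
records = [
    Activity("CPD1", "P00533", "IC50", 25.0, "nM"),
    Activity("CPD1", "P40763", "Ki", 12.0, "uM"),   # 12 uM: above threshold
    Activity("CPD2", "P10275", "EC50", 3.5, "uM"),
    Activity("CPD3", "P00533", "Kd", 5.0, "nM"),    # Kd is not an accepted type here
]
mapping = {"P00533": "EGFR", "P40763": "STAT3", "P10275": "AR"}
print(filter_actives(records, mapping))  # {'CPD1': {'EGFR'}, 'CPD2': {'AR'}}
```

Converting every value to nM before comparing it to the threshold means one cut-off applies uniformly, whatever unit each assay reported.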

Step 2: Pathway and Disease Annotation. For each identified target, retrieve pathway annotations from KEGG and disease associations from Disease Ontology. This step contextualizes targets within broader biological systems and connects them to relevant human pathologies. Additional functional annotations can be obtained from Gene Ontology to understand the biological processes, molecular functions, and cellular components associated with each target [4].

Step 3: Network Construction and Analysis. Import the integrated data into a graph database system such as Neo4j, creating nodes for compounds, targets, pathways, and diseases. Establish relationships between these nodes based on the annotated interactions (e.g., "compound A inhibits target B," "target B participates in pathway C," "pathway C implicated in disease D"). This network structure enables complex queries across the integrated data space, facilitating tasks such as target deconvolution from phenotypic screens or identification of novel therapeutic opportunities [4].
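Below is a minimal in-memory sketch of the node-and-relationship structure from Step 3. It does not use Neo4j. The class name and the relationship labels (`INHIBITS`, `PART_OF`, `IMPLICATED_IN`) are hypothetical and were chosen only to mirror the example relationships above. A comment gives a roughly analogous Cypher pattern using the same assumed labels.

```python
from collections import defaultdict

class PharmacologyGraph:
    """In-memory stand-in for a graph database: named nodes joined by
    labelled, directed relationships. Labels used below are hypothetical."""

    def __init__(self):
        self.edges = defaultdict(set)  # (source node, relationship) -> target nodes

    def add(self, source, relation, target):
        self.edges[(source, relation)].add(target)

    def follow(self, nodes, relation):
        """One hop: every node reachable from `nodes` via `relation`."""
        reached = set()
        for node in nodes:
            reached |= self.edges.get((node, relation), set())
        return reached

    def diseases_for_compound(self, compound):
        # Roughly analogous Cypher (same hypothetical labels):
        # MATCH (c {name: $name})-[:INHIBITS]->()-[:PART_OF]->()-[:IMPLICATED_IN]->(d)
        # RETURN DISTINCT d
        targets = self.follow({compound}, "INHIBITS")
        pathways = self.follow(targets, "PART_OF")
        return self.follow(pathways, "IMPLICATED_IN")

g = PharmacologyGraph()
g.add("compound A", "INHIBITS", "target B")
g.add("target B", "PART_OF", "pathway C")
g.add("pathway C", "IMPLICATED_IN", "disease D")
g.add("compound A", "INHIBITS", "target E")  # target without a pathway annotation
print(g.diseases_for_compound("compound A"))  # {'disease D'}
```

Each multi-hop question becomes a chain of single-hop `follow` calls. This is the kind of traversal that a graph database runs natively and that would otherwise need repeated joins in a relational schema.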

Step 4: Functional Enrichment Analysis. Perform Gene Ontology, KEGG pathway, and Disease Ontology enrichment analyses using tools like the R package clusterProfiler. Apply appropriate multiple testing corrections (e.g., Bonferroni or Benjamini-Hochberg) with significance thresholds (e.g., adjusted p-value < 0.05) to identify statistically overrepresented terms and pathways [4] [21].
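clusterProfiler's over-representation analysis is based on a hypergeometric test. The sketch below reproduces that idea in plain Python, with Benjamini-Hochberg adjustment, as an illustration only. The gene universe, term names, and gene sets are invented. The sketch does not replace the package's handling of gene identifiers and gene-set size filters.

```python
from math import comb

def hypergeom_sf(k, M, n, N):
    """P(X >= k) for X ~ Hypergeometric(population M, n annotated genes, N drawn)."""
    upper = min(n, N)
    total = comb(M, N)
    return sum(comb(n, i) * comb(M - n, N - i) for i in range(k, upper + 1)) / total

def benjamini_hochberg(pvalues):
    """Benjamini-Hochberg adjusted p-values, returned in the input order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):  # walk from the largest p-value down
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted

def enrich(query_genes, annotations, universe):
    """Test each term (term -> gene set) for over-representation in the query."""
    query = set(query_genes) & universe
    terms = sorted(annotations)
    raw = []
    for term in terms:
        members = annotations[term] & universe
        k = len(query & members)  # query genes annotated with this term
        raw.append(hypergeom_sf(k, len(universe), len(members), len(query)))
    adj = benjamini_hochberg(raw)
    return {t: (p, q) for t, p, q in zip(terms, raw, adj)}

universe = {f"G{i}" for i in range(100)}
annotations = {
    "pathway_X": {f"G{i}" for i in range(10)},
    "pathway_Y": {f"G{i}" for i in range(50, 60)},
}
results = enrich({"G0", "G1", "G2", "G3", "G70"}, annotations, universe)
significant = [t for t, (p, q) in results.items() if q < 0.05]
print(significant)  # ['pathway_X']
```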

Figure 1: Experimental workflow for integrating ChEMBL, KEGG, and Disease Ontology data into a unified network pharmacology model.

Protocol 2: Chemogenomic Library Design for Phenotypic Screening

Designing targeted screening libraries for phenotypic applications requires careful consideration of multiple factors, including target coverage, chemical diversity, and biological relevance. A recently described protocol for precision oncology applications demonstrates this process [2]:

Step 1: Target Space Definition. Define the biological domain of interest (e.g., oncology) and identify relevant protein targets through literature mining and database searches. Focus on targets with strong biological rationale and disease association evidence. For precision oncology applications, this might include kinases, epigenetic regulators, metabolic enzymes, and other cancer-relevant target classes [2].

Step 2: Compound Selection and Prioritization. Select compounds that modulate the identified targets, prioritizing those with well-characterized activity profiles, adequate potency (typically IC50 < 1 μM), and demonstrated cellular activity. Apply chemical diversity filters to avoid overrepresentation of similar chemotypes and ensure broad coverage of chemical space. Tools like ScaffoldHunter can assist in analyzing molecular scaffolds and enforcing diversity at the structural level [4] [2].
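The potency, cellular-activity, and diversity filters in Step 2 can be combined in a simple greedy pick. This sketch rests on several assumptions. Fingerprints are represented as Python sets of on-bit indices; in practice they might come from a cheminformatics toolkit such as RDKit. The 1 μM cut-off follows the text, but the 0.6 Tanimoto ceiling is an arbitrary illustrative value. ScaffoldHunter itself organizes compounds by scaffold hierarchy, not by this fingerprint heuristic.

```python
def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity of two fingerprints given as sets of on-bits."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def select_diverse(candidates, max_ic50_nm=1_000.0, max_similarity=0.6):
    """Greedy sphere-exclusion style selection.
    candidates: list of (compound_id, ic50_nM, cellular_active, fingerprint).
    Potent, cell-active compounds are visited most-potent first; a compound is
    skipped if it is too similar to anything already picked."""
    eligible = [c for c in candidates if c[1] < max_ic50_nm and c[2]]
    eligible.sort(key=lambda c: c[1])
    picked = []
    for cid, _ic50, _active, fp in eligible:
        if all(tanimoto(fp, other) <= max_similarity for _, other in picked):
            picked.append((cid, fp))
    return [cid for cid, _ in picked]

# Hypothetical candidates with toy fingerprints.
candidates = [
    ("A", 50.0, True, {1, 2, 3, 4, 5}),
    ("B", 80.0, True, {1, 2, 3, 4, 6}),    # close analogue of A (Tanimoto 4/6)
    ("C", 200.0, True, {10, 11, 12}),
    ("D", 5_000.0, True, {20, 21}),        # fails the 1 uM potency filter
    ("E", 30.0, False, {30, 31}),          # no demonstrated cellular activity
]
print(select_diverse(candidates))  # ['A', 'C']
```

Visiting compounds in order of potency means that, within each cluster of analogues, the most potent member is the one kept.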

Step 3: Selectivity and Polypharmacology Assessment. Evaluate compound selectivity using bioactivity data from ChEMBL and other sources. Rather than exclusively seeking highly selective compounds, intentionally include compounds with defined polypharmacology profiles when such multi-target activity is therapeutically relevant. For cancer applications, this might include compounds that simultaneously target multiple kinase pathways or hit both epigenetic and metabolic targets [2].
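One way to make "defined polypharmacology" operational is to group each compound's targets by how close their potencies are to that of the most potent target. The rule below is a hypothetical convention chosen for illustration, not a criterion from the cited protocol: targets within one log unit (10-fold) of the best pIC50 count as co-primary. Target names and IC50 values are made up.

```python
import math

def activity_profile(ic50_nm_by_target, window_log=1.0):
    """Classify a compound as 'selective' or 'multi-target'.
    Targets whose pIC50 lies within `window_log` log units of the best one are
    treated as co-primary; the rest are secondary. Thresholds are illustrative."""
    # pIC50 = -log10(IC50 in mol/L) = 9 - log10(IC50 in nM)
    pic50 = {t: 9.0 - math.log10(v) for t, v in ic50_nm_by_target.items()}
    best = max(pic50.values())
    primary = sorted(t for t, p in pic50.items() if best - p <= window_log)
    secondary = sorted(t for t in pic50 if t not in primary)
    label = "selective" if len(primary) == 1 else "multi-target"
    return label, primary, secondary

print(activity_profile({"T1": 10.0, "T2": 40.0, "T3": 5000.0}))
# ('multi-target', ['T1', 'T2'], ['T3'])
print(activity_profile({"T4": 2.0, "T5": 300.0}))
# ('selective', ['T4'], ['T5'])
```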

Step 4: Functional Annotation and Categorization. Annotate the selected compounds with information on primary targets, secondary targets, pathway associations (from KEGG), and disease relevance (from Disease Ontology). This annotation facilitates interpretation of screening results and enables hypothesis generation about mechanisms of action [4] [2].

Step 5: Experimental Validation. Screen the designed library in relevant phenotypic assays, such as high-content imaging of patient-derived cells. For glioblastoma applications, this might involve screening against glioma stem cells from multiple patients to identify patient-specific vulnerabilities [2]. Analyze the resulting data to identify hit compounds and patterns of response, then use the annotated network to generate hypotheses about mechanisms underlying the observed phenotypes.

Benchmarking Studies and Performance Metrics

Case Study: Phenotypic Screening Library Development

A 2021 study developed a chemogenomic library of 5,000 small molecules representing a large and diverse panel of drug targets involved in various biological effects and diseases [4]. This effort integrated ChEMBL (version 22), KEGG (Release 94.1), Gene Ontology (release 2020-05), and Disease Ontology (release 45) into a systems pharmacology network using Neo4j graph database technology. The resulting library was designed to assist with target identification and mechanism deconvolution for phenotypic assays, particularly those using morphological profiling approaches like Cell Painting [4].

The integration methodology enabled coverage of a significant portion of the druggable genome while maintaining chemical diversity through scaffold-based filtering. When applied to phenotypic screening data from the Broad Bioimage Benchmark Collection (BBBC022), which included morphological profiling of 20,000 compounds in U2OS cells, the approach demonstrated utility in connecting compound-induced morphological changes to specific targets and pathways [4]. This case highlights how integrating multiple data sources can enhance the interpretability of complex phenotypic data.
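A common way to link a compound's morphological profile to candidate targets is to compare it with the profiles of annotated reference compounds. The sketch below ranks references by Pearson correlation. It is an assumed, simplified method rather than the cited study's procedure. The four-feature profiles and their annotations are invented; real Cell Painting profiles contain hundreds of features.

```python
from statistics import mean, pstdev

def pearson(x, y):
    """Pearson correlation of two equal-length feature vectors."""
    mx, my = mean(x), mean(y)
    sx, sy = pstdev(x), pstdev(y)
    if sx == 0 or sy == 0:
        return 0.0  # constant profile: correlation undefined, treat as no signal
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y)) / len(x)
    return cov / (sx * sy)

def nearest_annotated(query_profile, references, k=3):
    """Rank annotated reference compounds by correlation with the query profile.
    references: {compound_id: (profile, annotated_target)}"""
    scored = sorted(
        ((pearson(query_profile, prof), cid, target)
         for cid, (prof, target) in references.items()),
        reverse=True,
    )
    return [(cid, target, round(r, 3)) for r, cid, target in scored[:k]]

# Invented 4-feature profiles for illustration only.
references = {
    "ref_tubulin": ([2.0, -1.5, 0.3, 1.8], "tubulin"),
    "ref_hdac": ([-0.5, 2.2, 1.9, -1.0], "HDAC"),
    "ref_inactive": ([0.1, 0.0, -0.1, 0.05], "none"),
}
query = [1.8, -1.2, 0.5, 1.6]
for cid, target, r in nearest_annotated(query, references, k=2):
    print(cid, target, r)
```

The top-ranked references suggest mechanism hypotheses for the query compound. These hypotheses can then be checked against the target-pathway-disease network.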

Case Study: Precision Oncology Application

A 2023 study implemented analytic procedures for designing anticancer compound libraries adjusted for library size, cellular activity, chemical diversity, availability, and target selectivity [2]. The researchers created a minimal screening library of 1,211 compounds targeting 1,386 anticancer proteins, then applied a physical library of 789 compounds covering 1,320 anticancer targets to profile glioma stem cells from glioblastoma patients [2].
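Shrinking a compound collection to a minimal set that still covers a target list is naturally framed as a set-cover problem. The greedy heuristic below is one standard approach, offered as an illustration. The cited study also weighed cellular activity, availability, and selectivity, which this sketch ignores. The compound-target pairs are hypothetical.

```python
def greedy_minimal_library(compound_targets, required_targets):
    """Greedy set cover: repeatedly take the compound that hits the most
    still-uncovered targets. Returns (selected compounds, uncovered targets)."""
    uncovered = set(required_targets)
    remaining = dict(compound_targets)
    selected = []
    while uncovered and remaining:
        # Sorting the keys makes tie-breaking deterministic (first name wins).
        best = max(sorted(remaining), key=lambda c: len(remaining[c] & uncovered))
        gain = remaining[best] & uncovered
        if not gain:
            break  # no remaining compound hits an uncovered target
        selected.append(best)
        uncovered -= gain
        del remaining[best]
    return selected, uncovered

library = {
    "cpd1": {"EGFR", "ERBB2", "ERBB4"},
    "cpd2": {"EGFR"},
    "cpd3": {"CDK4", "CDK6"},
    "cpd4": {"PARP1"},
    "cpd5": {"CDK6", "PARP1"},
}
targets = {"EGFR", "ERBB2", "ERBB4", "CDK4", "CDK6", "PARP1", "BRD4"}
selected, missing = greedy_minimal_library(library, targets)
print(selected, missing)  # ['cpd1', 'cpd3', 'cpd4'] {'BRD4'}
```

Greedy set cover is not guaranteed to find the smallest possible library, but its result is provably within a logarithmic factor of the optimum. It also reports which targets no available compound reaches.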

The resulting phenotypic profiling revealed highly heterogeneous responses across patients and glioblastoma subtypes, underscoring the importance of patient-specific approaches in precision oncology [2]. By integrating compound-target annotations with high-content cellular imaging data, the study demonstrated how chemogenomic libraries built from integrated data sources can identify patient-specific vulnerabilities that might be missed with more targeted approaches. This case study illustrates the translational potential of well-designed chemogenomic libraries in challenging clinical contexts.

Performance Metrics for Integrated Data Approaches

Table 2: Performance Comparison of Integrated Data Approaches in Chemogenomic Studies

| Study | Library Size | Target Coverage | Key Integration Features | Reported Outcomes |
|---|---|---|---|---|
| Phenotypic Screening Library (2021) [4] | 5,000 compounds | Large panel of drug targets | ChEMBL + KEGG + DO + Cell Painting morphology data | Improved target identification and mechanism deconvolution for phenotypic assays |
| Precision Oncology Library (2023) [2] | 1,211 compounds (virtual); 789 compounds (physical) | 1,386 anticancer targets (virtual); 1,320 targets (physical) | Focus on cellular activity, target selectivity, cancer pathway coverage | Identified patient-specific vulnerabilities in glioblastoma; heterogeneous responses across subtypes |
| Toxicology Application (2024) [21] | 2 plasticizers (ATBC, ESBO) | 5 core targets (EGFR, STAT3, TLR4, JUN, AR) | ChEMBL + KEGG + DO + molecular docking | Identified lipid metabolism disruption mechanisms via HIF-1 and immune-endocrine pathways |

Implementation Tools and Technical Solutions

Database and Visualization Technologies

Successful integration of ChEMBL, KEGG, and Disease Ontologies requires appropriate computational infrastructure. Neo4j, a high-performance NoSQL graph database, has been effectively used to create network pharmacology databases that integrate heterogeneous data sources [4]. Its graph-based architecture naturally represents the complex relationships between compounds, targets, pathways, and diseases, enabling efficient querying of multi-hop relationships that would be challenging in traditional relational databases.

For visualization and network analysis, Cytoscape provides a powerful platform for exploring and analyzing integrated networks. The CytoHubba plugin enables identification of core targets within complex networks using multiple topological parameters, including Maximal Clique Centrality (MCC), Degree, and Betweenness [21]. Similarly, the MCODE (Molecular Complex Detection) plugin facilitates module clustering analysis to identify densely connected regions of the network that may represent functional complexes or key regulatory modules [21].
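CytoHubba's MCC score for a node is the sum of (|C| − 1)! over the maximal cliques C that contain it. The sketch below computes that score from an adjacency dictionary, using the Bron–Kerbosch algorithm with pivoting to enumerate maximal cliques. It is an independent re-implementation for illustration, not CytoHubba's code. The toy network with nodes A to E is hypothetical.

```python
from math import factorial

def maximal_cliques(adj):
    """Bron-Kerbosch with pivoting over an undirected graph {node: set(neighbours)}."""
    cliques = []

    def expand(r, p, x):
        if not p and not x:
            cliques.append(r)  # r cannot be extended: it is a maximal clique
            return
        pivot = max(p | x, key=lambda u: len(adj[u] & p))
        for v in list(p - adj[pivot]):
            expand(r | {v}, p & adj[v], x & adj[v])
            p = p - {v}
            x = x | {v}

    expand(set(), set(adj), set())
    return cliques

def mcc_scores(adj):
    """MCC(v) = sum over maximal cliques C containing v of (|C| - 1)!"""
    scores = dict.fromkeys(adj, 0)
    for clique in maximal_cliques(adj):
        for v in clique:
            scores[v] += factorial(len(clique) - 1)
    return scores

edges = [("A", "B"), ("B", "C"), ("A", "C"), ("C", "D"), ("D", "E")]
adj = {}
for u, v in edges:
    adj.setdefault(u, set()).add(v)
    adj.setdefault(v, set()).add(u)
print(mcc_scores(adj))  # C scores highest: it sits in the triangle and bridges to D
```

Because a clique's contribution grows factorially with its size, MCC favours nodes embedded in dense clusters, while degree alone only counts neighbours.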

Programming Environments and Analytical Tools

The R programming environment, particularly with packages like clusterProfiler, DOSE, and org.Hs.eg.db, provides robust capabilities for functional enrichment analysis [4]. These tools enable statistical assessment of overrepresented GO terms, KEGG pathways, and disease associations within target sets, with appropriate multiple testing corrections to control false discovery rates [4] [21].

For chemical informatics aspects, tools like ScaffoldHunter support the analysis of molecular scaffolds and fragments, enabling chemical diversity assessment and compound selection based on structural characteristics [4]. These analyses help ensure that designed libraries span a broad range of chemical space rather than concentrating on a few over-represented scaffolds.

Semantic Integration Approaches

Advanced integration approaches using semantic web technologies have been developed to address the challenges of combining heterogeneous data sources. The Chem2Bio2OWL ontology provides a formal description of knowledge in chemogenomics and systems chemical biology, describing the semantics of chemical compounds, drugs, protein targets, pathways, genes, diseases, and side-effects, along with the relationships between them [20].

This ontological approach enables more sophisticated querying and reasoning across integrated datasets. For example, it allows queries that find "all bioassays that contain activity data for a particular target" or "liver-expressed proteins that a given compound can interact with" by understanding the semantic relationships between these entities [20]. Such capabilities significantly enhance the utility of integrated data resources for complex chemogenomic questions.
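Queries like these are typically expressed as SPARQL basic graph patterns over RDF triples. As a toy illustration of the pattern-join idea, without any OWL reasoning, the sketch below matches triple patterns containing `?variables` against an in-memory list. The predicate names and identifiers are hypothetical and do not reproduce the Chem2Bio2OWL vocabulary.

```python
def match(triples, pattern):
    """Bindings for one (subject, predicate, object) pattern; '?name' is a variable."""
    results = []
    for triple in triples:
        binding = {}
        for term, value in zip(pattern, triple):
            if term.startswith("?"):
                if binding.get(term, value) != value:
                    break  # same variable bound to two different values
                binding[term] = value
            elif term != value:
                break  # constant does not match
        else:
            results.append(binding)
    return results

def run_query(triples, patterns):
    """Join a list of patterns on shared variables (a basic graph pattern)."""
    solutions = [{}]
    for pattern in patterns:
        extended = []
        for sol in solutions:
            # Substitute variables already bound by earlier patterns.
            bound = tuple(sol.get(term, term) for term in pattern)
            for binding in match(triples, bound):
                extended.append({**sol, **binding})
        solutions = extended
    return solutions

# Hypothetical triples; predicates are NOT the Chem2Bio2OWL vocabulary.
triples = [
    ("assay:1", "hasActivityFor", "target:T1"),
    ("assay:2", "hasActivityFor", "target:T2"),
    ("assay:3", "hasActivityFor", "target:T1"),
    ("cpd:X", "interactsWith", "target:T1"),
    ("cpd:X", "interactsWith", "target:T2"),
    ("target:T2", "expressedIn", "tissue:liver"),
]
# "All bioassays that contain activity data for target T1"
print(run_query(triples, [("?assay", "hasActivityFor", "target:T1")]))
# "Liver-expressed proteins that compound X can interact with"
print(run_query(triples, [("cpd:X", "interactsWith", "?t"),
                          ("?t", "expressedIn", "tissue:liver")]))
```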

Essential Research Reagents and Computational Tools

Table 3: Key Research Reagent Solutions for Chemogenomic Data Integration

| Tool/Resource | Type | Primary Function | Application Example |
|---|---|---|---|
| Neo4j | Graph database | Network construction and querying | Integrating drug-target-pathway-disease relationships [4] |
| Cytoscape with CytoHubba | Network analysis | Visualization and core target identification | PPI network analysis for key toxicological targets [21] |
| clusterProfiler (R) | Statistical analysis | Functional enrichment analysis | GO, KEGG, and DO enrichment calculations [4] |
| ScaffoldHunter | Chemical informatics | Scaffold analysis and diversity assessment | Chemical library design based on structural cores [4] |
| AutoDock Vina | Molecular docking | Binding affinity and interaction prediction | Plasticizer binding to core targets like EGFR, STAT3 [21] |
| STRING database | Protein interactions | PPI network construction | Building interaction networks for potential targets [21] |

The integration of ChEMBL, KEGG, and Disease Ontologies provides a powerful foundation for chemogenomic library design and phenotypic screening applications. By combining detailed compound-target information from ChEMBL with pathway context from KEGG and disease relevance from Disease Ontologies, researchers can create comprehensively annotated libraries that bridge the gap between phenotypic observations and mechanistic understanding. The experimental protocols and case studies discussed demonstrate the practical utility of these integrated approaches across various applications, from general phenotypic screening to precision oncology and toxicological assessment [4] [2] [21].

As the field advances, several trends are likely to shape future developments in data integration for chemogenomics. First, the increasing availability of high-content phenotypic data, such as morphological profiles from Cell Painting assays, creates opportunities for more sophisticated connections between compound-induced phenotypes and underlying mechanisms [4]. Second, the application of semantic web technologies and ontologies will enhance the ability to reason across integrated datasets and answer complex biological questions [20]. Finally, the growing emphasis on precision medicine will drive demand for patient-specific chemogenomic approaches that can identify individualized therapeutic vulnerabilities [2].

The continuous development and integration of these key data resources will remain essential for advancing chemogenomic research and accelerating the discovery of novel therapeutic strategies. As these resources evolve and improve, so too will our ability to design targeted libraries that effectively probe biological systems and identify promising therapeutic opportunities.

The Shift from 'One Target-One Drug' to Systems Pharmacology

For decades, the predominant paradigm in drug discovery has been the 'one target-one drug' approach, founded on the principle that highly specific drugs interacting with single molecular targets would yield optimal efficacy with minimal side effects [24]. This reductionist model has demonstrated success in treating infectious diseases and monogenic disorders but has proven inadequate for addressing complex, multifactorial diseases such as cancer, neurodegenerative conditions, and metabolic syndromes [25]. The limitations of single-target therapies have catalyzed a fundamental shift toward systems pharmacology, which embraces a holistic understanding of biological systems as interconnected networks and deliberately designs interventions that modulate multiple targets simultaneously [26].

This transition reflects an acknowledgment that complex diseases arise from disturbances across biological networks rather than isolated molecular defects. Systems pharmacology leverages advances in systems biology, high-throughput omics technologies, and computational modeling to develop multi-target therapeutics that can potentially yield enhanced efficacy, reduced side effects, and improved clinical outcomes for complex diseases [25] [27]. The following sections compare these paradigms, present experimental evidence, and detail the methodological frameworks driving this transformative shift in pharmaceutical research and development.

Comparative Analysis of Drug Discovery Paradigms

The table below summarizes the fundamental distinctions between the classical 'one target-one drug' approach and the emerging systems pharmacology paradigm.

Table 1: Key Features of Traditional Pharmacology versus Systems Pharmacology

| Feature | Traditional Pharmacology | Systems Pharmacology |
|---|---|---|
| Targeting Approach | Single-target | Multi-target / network-level [25] |
| Disease Suitability | Monogenic or infectious diseases | Complex, multifactorial disorders [25] |
| Model of Action | Linear (receptor–ligand) | Systems/network-based [25] |
| Risk of Side Effects | Higher (off-target effects) | Lower (network-aware prediction) [25] |
| Failure in Clinical Trials | Higher (60–70%) | Lower due to pre-network analysis [25] |
| Technological Tools | Molecular biology, pharmacokinetics | Omics data, bioinformatics, graph theory [25] |
| Personalized Therapy | Limited | High potential (precision medicine) [25] |

The Rationale for a Paradigm Shift

The limitations of the single-target paradigm are rooted in the inherent complexity and resilience of biological systems. Biological networks possess redundant functions and compensatory mechanisms that allow them to maintain function despite single-point perturbations [24]. Consequently, modulating a single target often proves insufficient to reverse a disease state that is sustained by network-wide dysregulation [24]. Furthermore, the single-target model frequently fails to account for the promiscuous nature of most drug molecules, which on average can interact with an estimated 6-28 off-target moieties, leading to unpredictable side effects or efficacy issues [26].

Systems pharmacology addresses these challenges by designing therapeutic strategies that mirror the complexity of the diseases they intend to treat. This approach recognizes that drug effects are not merely the result of isolated ligand-target interactions but emerge from the propagation of these perturbations through complex biological networks [24] [27]. This holistic perspective is particularly valuable for addressing drug resistance, a common challenge in epilepsy and oncology, as it is less probable for resistance to develop simultaneously against multiple targets [24] [28].

Experimental Evidence: Efficacy of Multi-Target Agents

Quantitative data from preclinical models show that multi-target agents tend to have a broader spectrum of efficacy, particularly in difficult-to-treat conditions. The table below summarizes the efficacy of selected antiseizure medications (ASMs) with single versus multiple mechanisms of action across various rodent seizure models.

Table 2: Comparative Efficacy (ED50 in mg/kg) of Single-Target vs. Multi-Target Antiseizure Medications in Preclinical Models [28]

| Compound | Targets | MES Test | s.c. PTZ Test | 6-Hz Test (44 mA) | SRS in i.h. Kainate Model |
|---|---|---|---|---|---|
| Multi-Target ASMs | | | | | |
| Valproate | GABA synthesis, NMDA receptors, Na+ & Ca2+ channels | 271 | 149 | 310 | 190 |
| Topiramate | GABAA & NMDA receptors, Na+ channels | 33 | NE | 241 | 13.3 |
| Cenobamate | GABAA receptors, persistent Na+ currents | 9.8 | 28.5 | 16.4 | 16.5 |
| Single-Target ASMs | | | | | |
| Phenytoin | Voltage-activated Na+ channels | 9.5 | NE | NE | NE |
| Lacosamide | Voltage-activated Na+ channels | 4.5 | NE | 13.5 | - |
| Ethosuximide | T-type Ca2+ channels | NE | 130 | NE | NE |

Abbreviations: ED50: Median Effective Dose; MES: Maximal Electroshock Seizure; PTZ: Pentylenetetrazole; 6-Hz: Psychomotor Seizure Model; SRS: Spontaneous Recurrent Seizures; i.h.: Intrahippocampal; NE: Not Effective.

Interpretation of Experimental Data

The data reveal a clear trend. Single-target ASMs such as phenytoin and ethosuximide are effective in only one of the four models, and lacosamide in two. Multi-target ASMs such as valproate, topiramate, and cenobamate are active in three or four, including the pharmacoresistant 6-Hz (44 mA) test and the chronic model of spontaneous recurrent seizures [28]. Because a lower ED50 indicates higher potency, the advantage lies in breadth rather than potency: in the MES test, for example, phenytoin (9.5 mg/kg) is far more potent than valproate (271 mg/kg). This broad-spectrum activity is critical for treating epilepsies with diverse and complex underlying pathophysiologies.
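The "spectrum" argument can be made explicit by counting, for each compound in Table 2, the models in which an ED50 was obtained. The values below are transcribed from the table. Treating "-" as "not reported" and excluding it from the denominator is an assumption.

```python
NE = None            # "NE" (not effective) in Table 2
NOT_REPORTED = "-"   # "-" in Table 2

# ED50 (mg/kg) per model: MES, s.c. PTZ, 6-Hz (44 mA), SRS (i.h. kainate)
ED50 = {
    "Valproate":    [271, 149, 310, 190],
    "Topiramate":   [33, NE, 241, 13.3],
    "Cenobamate":   [9.8, 28.5, 16.4, 16.5],
    "Phenytoin":    [9.5, NE, NE, NE],
    "Lacosamide":   [4.5, NE, 13.5, NOT_REPORTED],
    "Ethosuximide": [NE, 130, NE, NE],
}

def spectrum(values):
    """Return (models with an ED50, models reported) for one compound."""
    reported = [v for v in values if v != NOT_REPORTED]
    effective = [v for v in reported if v is not NE]
    return len(effective), len(reported)

breadth = {drug: spectrum(vals) for drug, vals in ED50.items()}
for drug, (hit, tested) in breadth.items():
    print(f"{drug}: effective in {hit}/{tested} models")
```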

The clinical success of cenobamate, discovered via phenotypic screening and later found to possess a dual mechanism of action (enhancing GABAA receptor function and inhibiting persistent sodium currents), underscores the therapeutic value of multi-targeting [28]. Its efficacy in treatment-resistant focal epilepsy patients has been shown to surpass that of many other newer ASMs, providing clinical validation for the systems pharmacology approach [28].

Methodological Framework: Implementing Systems Pharmacology

The application of systems pharmacology relies on a robust methodological workflow that integrates diverse data types and computational analyses. The following diagram illustrates the key stages of a network pharmacology analysis.

Network Pharmacology Workflow

The successful implementation of a systems pharmacology approach depends on rigorously executed protocols at each stage of the workflow:

Data Retrieval and Curation: Researchers collect large-scale datasets from established databases. Drug-related data (chemical structures, targets, pharmacokinetics) are sourced from DrugBank, PubChem, and ChEMBL. Disease-associated genes and molecular targets are obtained from DisGeNET, OMIM, and GeneCards. Omics data (genomics, transcriptomics, proteomics, metabolomics) are retrieved from repositories like GEO, TCGA, and ProteomicsDB [25]. Data curation involves standardizing identifiers, removing duplicates, and filtering based on confidence scores and disease relevance.
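The curation step can be illustrated with a short sketch that normalizes gene symbols, merges duplicate gene-disease pairs reported by different sources, and applies a confidence cut-off. The row format, source names, disease labels, and the 0.3 threshold are all hypothetical. Upper-casing assumes human (HGNC-style) gene symbols. Real resources such as DisGeNET define their own scoring schemes.

```python
def curate_associations(rows, min_score=0.3):
    """rows: (gene_symbol, disease_id, score, source) tuples.
    Normalises gene symbols, collapses duplicate gene-disease pairs (keeping the
    highest-scoring record), then drops pairs scoring below `min_score`."""
    best = {}
    for gene, disease, score, source in rows:
        key = (gene.strip().upper(), disease.strip())
        if key not in best or score > best[key][0]:
            best[key] = (score, source)
    return {key: rec for key, rec in best.items() if rec[0] >= min_score}

rows = [
    ("egfr ", "disease_1", 0.9, "source_A"),   # messy symbol; same pair as below
    ("EGFR", "disease_1", 0.7, "source_B"),
    ("TP53", "disease_1", 0.2, "source_A"),    # below the cut-off
    ("Stat3", "disease_2", 0.45, "source_B"),
]
curated = curate_associations(rows)
print(curated)
```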

Target Prediction and Filtering: Prospective drug targets are identified using both ligand-based (e.g., QSAR modeling, the Similarity Ensemble Approach (SEA)) and structure-based (e.g., molecular docking with AutoDock Vina or Glide) strategies [25]. Predicted targets are then evaluated against criteria including binding affinity profiles, expression in diseased tissue, and functional relevance based on Gene Ontology annotations.
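Ligand-based prediction rests on the similarity principle: a query compound is likely to share targets with structurally similar known ligands. The sketch below gives each target the maximum Tanimoto similarity between the query and any of that target's known ligands. It is a simple nearest-neighbour heuristic, not SEA, which instead aggregates similarities across whole ligand sets and scores them against a random background. Fingerprints are sets of bit indices, and all data are invented.

```python
def tanimoto(a, b):
    """Tanimoto similarity of two fingerprints given as sets of on-bits."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def predict_targets(query_fp, reference_ligands, min_similarity=0.5):
    """reference_ligands: list of (fingerprint, set of annotated targets).
    Each target is scored by its best-matching ligand; weak hits are dropped."""
    scores = {}
    for fp, targets in reference_ligands:
        sim = tanimoto(query_fp, fp)
        for t in targets:
            scores[t] = max(scores.get(t, 0.0), sim)
    ranked = sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))
    return [(t, round(s, 2)) for t, s in ranked if s >= min_similarity]

reference = [
    ({1, 2, 3, 4, 5, 6}, {"T1"}),
    ({1, 2, 3, 4, 9}, {"T1", "T2"}),
    ({20, 21, 22}, {"T3"}),
]
query_fp = {1, 2, 3, 4, 5, 7}
print(predict_targets(query_fp, reference))  # [('T1', 0.71), ('T2', 0.57)]
```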

Network Construction and Analysis: Networks (drug-target, target-disease, protein-protein interactions) are constructed using tools like Cytoscape and NetworkX [25]. Protein-protein interaction (PPI) networks are built from databases such as STRING, BioGRID, and IntAct, focusing on high-confidence interactions. Topological analysis using graph-theoretical measures (degree centrality, betweenness) identifies hub nodes and bottleneck proteins critical to network stability and function [25]. Community detection algorithms (e.g., MCODE) identify functional modules, which are then subjected to pathway enrichment analysis.
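The degree and betweenness measures named above can be computed without external libraries. Below is a sketch of Brandes' algorithm for an unweighted, undirected graph. NetworkX's `betweenness_centrality` computes the same measure but normalizes it by default. The five-node network is hypothetical.

```python
from collections import deque

def degree_and_betweenness(adj):
    """Degree and unnormalised betweenness for an undirected, unweighted graph
    given as {node: set(neighbours)}, using Brandes' algorithm."""
    degree = {v: len(adj[v]) for v in adj}
    bc = dict.fromkeys(adj, 0.0)
    for s in adj:
        # Breadth-first search from s, counting shortest paths (sigma).
        order, preds = [], {v: [] for v in adj}
        sigma = dict.fromkeys(adj, 0)
        dist = dict.fromkeys(adj, -1)
        sigma[s], dist[s] = 1, 0
        queue = deque([s])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in adj[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        # Accumulate pair dependencies in reverse BFS order.
        delta = dict.fromkeys(adj, 0.0)
        for w in reversed(order):
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                bc[w] += delta[w]
    # Each unordered pair was counted once from each endpoint.
    return degree, {v: b / 2 for v, b in bc.items()}

edges = [("H", "A"), ("H", "B"), ("H", "C"), ("C", "D")]
adj = {}
for u, v in edges:
    adj.setdefault(u, set()).add(v)
    adj.setdefault(v, set()).add(u)
degree, bc = degree_and_betweenness(adj)
print(degree["H"], bc["H"], bc["C"])  # 3 5.0 3.0
```

Here H is both the hub (highest degree) and the main bottleneck. Node C has only two neighbours but still lies on every shortest path to D, which is the kind of node that betweenness detects and degree misses.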

The following table catalogs key resources required for conducting systems pharmacology research, as applied in chemogenomic library design and phenotypic screening studies.

Table 3: Essential Research Reagent Solutions for Systems Pharmacology

| Category | Tool/Database | Functionality |
|---|---|---|
| Drug Information | DrugBank, PubChem, ChEMBL | Provides drug structures, protein targets, and pharmacokinetic data [25]. |
| Gene-Disease Associations | DisGeNET, OMIM, GeneCards | Catalogs disease-linked genes, mutations, and gene functions [25]. |
| Target Prediction | SwissTargetPrediction, SEA, PharmMapper | Predicts protein targets for small molecule compounds [25]. |
| Protein-Protein Interactions | STRING, BioGRID, IntAct | Databases of known and predicted protein-protein interactions [25]. |
| Pathway Analysis | KEGG, Reactome | Curated databases of biological pathways and processes [25]. |
| Network Visualization & Analysis | Cytoscape, Gephi, NetworkX | Software platforms for constructing, visualizing, and analyzing biological networks [25]. |
| Chemogenomic Library | Custom-designed libraries (e.g., 789-compound set) | Targeted compound collections covering specific protein target spaces for phenotypic screening [2] [3]. |

Case Study: Precision Oncology in Glioblastoma

A practical application of these principles is demonstrated in a recent chemogenomic library design strategy for precision oncology. Researchers designed a targeted screening library of bioactive small molecules, optimized for library size, cellular activity, chemical diversity, and target selectivity [2] [3]. The resulting minimal screening library of 1,211 compounds was curated to target 1,386 anticancer proteins implicated in various cancers.

In a pilot screening study, a physical library of 789 compounds covering 1,320 anticancer targets was applied to glioma stem cells derived from patients with glioblastoma (GBM) [2] [3]. The phenotypic profiling, conducted via high-content imaging, revealed highly heterogeneous cell survival responses across different patients and GBM subtypes. This underscores the critical need for patient-specific therapeutic approaches and demonstrates how targeted multi-compound libraries can efficiently identify patient-specific vulnerabilities within a systems pharmacology framework [2].

The following diagram conceptualizes how a single multi-target drug can modulate a disease network, in contrast to a combination of single-target drugs.

Drug Action Models Comparison

The shift from the 'one target-one drug' paradigm to systems pharmacology represents a fundamental transformation in drug discovery, moving from a reductionist view to a holistic, network-based understanding of disease and therapeutic intervention [24] [25]. The experimental evidence and methodological frameworks presented demonstrate the clear advantages of multi-target approaches for treating complex diseases, including enhanced efficacy, reduced potential for drug resistance, and better overall clinical outcomes [24] [28].

Future developments in this field will be driven by deeper integration of multi-omics data, advances in artificial intelligence and machine learning for target prediction and drug combination optimization, and the creation of more sophisticated computational models that incorporate structural systems pharmacology to understand the energetics and dynamics of drug interactions across biological networks [25] [27]. Furthermore, the application of these principles to chemogenomic library design is poised to enhance the efficiency of drug discovery pipelines, enabling more rapid identification of effective therapeutic strategies for complex diseases and ultimately facilitating the implementation of truly personalized medicine [2] [3] [27].

Design and Implementation: Building Targeted Libraries for Precision Oncology

The systematic design of high-quality small-molecule libraries is a cornerstone of modern drug discovery and chemical biology. In the context of precision oncology and chemogenomic research, the challenge lies in assembling compound collections that are optimally balanced for library size, biological activity, and chemical availability to maximize target coverage while ensuring practical utility in phenotypic screening campaigns [29] [2]. This guide objectively compares the performance of different library design strategies and assembly methodologies, providing researchers with experimental data and protocols to inform their selection process. The evaluation is framed within the broader thesis that data-driven, multi-parameter optimization is superior to traditional, intuition-based library assembly for identifying patient-specific therapeutic vulnerabilities [29] [30].

Comparative Performance of Library Design Strategies

Key Design Strategies and Performance Metrics

Library design strategies generally fall into several categories: target-based approaches (focusing on specific protein families or pathways), drug-based approaches (utilizing approved and investigational drugs), and diversity-oriented approaches (maximizing structural variety) [29] [30] [31]. The performance of these strategies can be evaluated based on their target coverage efficiency, hit identification rates, and practical screening feasibility.

Table 1: Comparative Analysis of Library Design Strategy Performance

| Design Strategy | Typical Library Size | Target Coverage Efficiency | Hit Rate in Phenotypic Screens | Key Advantages | Primary Limitations |
|---|---|---|---|---|---|
| Target-Based (Focused) | 30 - 3,000 compounds [30] | High for specific target class [30] | Variable; highly dependent on assay relevance [29] | High relevance for specific pathways; enables mechanistic follow-up [30] | Limited scope; may miss novel biology or polypharmacology [29] |
| Drug-Based (Repurposing) | 100 - 2,000 compounds [29] | Moderate (covers 'liganded genome') [30] | Provides clinically actionable hits [30] | Favorable pharmacokinetics and safety profiles; rapid translation potential [29] [30] | Limited to known target space; less novel chemical matter [29] |
| Comprehensive Chemogenomic | 500 - 10,000+ compounds [29] | High (designed for broad coverage) [29] [2] | Identifies patient-specific vulnerabilities [29] [2] | Broad target space exploration; identifies novel mechanisms [29] [2] | Higher screening costs; complex data analysis [31] |
| DNA-Encoded | 10^6 - 10^10 compounds [32] | Massive theoretical coverage [32] | Not applicable (biochemical selection) [32] | Unprecedented library size for biochemical screening [32] | Requires specialized DNA-tagging and sequencing; no cellular context [32] |

Quantitative Benchmarking Data

Specific studies provide quantitative data on the performance of optimized libraries. The C3L (Comprehensive anti-Cancer small-Compound Library) development demonstrates the efficiency of a target-based, multi-objective optimization approach [29] [2].

Table 2: Performance Data from the C3L Library Assembly Pipeline [29] [2]

| Library Assembly Stage | Number of Compounds | Cancer-Associated Targets Covered | Coverage Efficiency (Targets/Compound) | Key Filtering Criteria |
|---|---|---|---|---|
| Theoretical Set (in silico) | 336,758 | 1,655 | 0.005 | Compound-target interactions from public databases |
| Large-Scale Set | 2,288 | 1,655 | 0.72 | Cellular activity, similarity filtering |
| Final Screening Set (C3L) | 1,211 | 1,386 | 1.14 | Cellular potency, commercial availability |
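The coverage-efficiency column is simply the number of covered targets divided by the number of compounds at each stage. A minimal Python sketch reproducing that arithmetic from the Table 2 figures follows; the function name `coverage_efficiency` is illustrative and not part of the published C3L pipeline.

```python
# Stage sizes and target counts as reported in Table 2.
STAGES = [
    ("Theoretical Set (in silico)", 336_758, 1_655),
    ("Large-Scale Set", 2_288, 1_655),
    ("Final Screening Set (C3L)", 1_211, 1_386),
]


def coverage_efficiency(n_compounds: int, n_targets: int) -> float:
    """Targets covered per compound (illustrative helper, not from the C3L code)."""
    if n_compounds <= 0:
        raise ValueError("library must contain at least one compound")
    return n_targets / n_compounds


for name, n_compounds, n_targets in STAGES:
    print(f"{name}: {coverage_efficiency(n_compounds, n_targets):.3f} targets/compound")
```

Values above 1.0, as in the final C3L set, are possible because a single compound can be annotated against several targets.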

The pilot application of a 789-compound physical library derived from C3L for phenotypic screening of patient-derived glioblastoma stem cells revealed highly heterogeneous patient-specific vulnerabilities, validating the library's utility in precision oncology [29]. In a separate study focusing on kinase inhibitors, an optimized library (LSP-OptimalKinase) was designed to outperform six widely available kinase libraries (SelleckChem, PKIS, Dundee, EMD, LINCS, and SP) in terms of target coverage and compound selectivity [30].

Experimental Protocols for Library Assembly and Validation

Protocol 1: Multi-Objective Optimization for Targeted Library Assembly

This protocol is adapted from the C3L design strategy, which treats library assembly as a multi-objective optimization problem to maximize target coverage while ensuring cellular activity and minimizing library size [29] [2].

Step 1: Define Target Space

- Curate a comprehensive list of proteins associated with the disease of interest using resources like The Human Protein Atlas and PharmacoDB [29].

- Expand the list to include influencer targets and nearest neighbors within biological networks.

- Expected Outcome: A target list of 1,000-2,000 proteins [29].
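Expanding a seed target list to its network neighbors can be sketched as a breadth-first walk over a protein-protein interaction graph. This is a minimal sketch assuming the interactions have already been exported (for example, from STRING or BioGRID) into a plain adjacency dictionary; the function name, the `max_hops` parameter, and the placeholder protein IDs are hypothetical, and the selection of "influencer" targets, which needs additional network metrics, is not modeled.

```python
from typing import Dict, Iterable, Set


def expand_target_space(
    seed_targets: Iterable[str],
    interactions: Dict[str, Set[str]],
    max_hops: int = 1,
) -> Set[str]:
    """Return the seed targets plus every protein within `max_hops` interaction steps.

    `interactions` is a hypothetical adjacency map (protein -> interacting proteins);
    edges are followed exactly as given, so undirected networks should be stored
    symmetrically.
    """
    expanded = set(seed_targets)
    frontier = set(expanded)
    for _ in range(max_hops):
        next_frontier: Set[str] = set()
        for protein in frontier:
            next_frontier |= interactions.get(protein, set())
        next_frontier -= expanded  # keep only proteins not already in the list
        expanded |= next_frontier
        frontier = next_frontier
    return expanded


# Toy network with placeholder IDs (not curated data): A - B - C - D
toy_network = {"A": {"B"}, "B": {"A", "C"}, "C": {"B", "D"}, "D": {"C"}}
print(sorted(expand_target_space({"A"}, toy_network, max_hops=2)))
```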

Step 2: Identify Bioactive Compounds

- Mine public databases (e.g., ChEMBL, PubChem) for compound-target interactions.

- Include both approved/investigational drugs and experimental probe compounds.

- Quality Control: Manually curate compound-target pairs to ensure data reliability [29].

Step 3: Apply Multi-Stage Filtering

- Activity Filtering: Remove compounds lacking demonstrated cellular activity [29].

- Potency Filtering: For each target, select the most potent compounds to reduce redundancy [29].

- Availability Filtering: Filter based on commercial availability for physical screening [29].

- Note: Filtering parameters (e.g., IC50 cutoffs, similarity thresholds) should be adjustable based on research goals.
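The three filtering stages can be expressed as successive passes over compound-target activity records. The sketch below is illustrative only: the `Activity` record, the default IC50 cutoff of 1,000 nM, and `top_k` are hypothetical parameters standing in for the adjustable settings noted above, not values taken from the C3L study.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass(frozen=True)
class Activity:
    """Hypothetical compound-target record assembled from public databases."""
    compound: str
    target: str
    ic50_nm: float      # biochemical potency against this target
    cell_active: bool   # evidence of activity in cell-based assays
    available: bool     # commercially purchasable for physical screening


def filter_library(records: List[Activity], top_k: int = 2,
                   max_ic50_nm: float = 1_000.0) -> Set[str]:
    # Stage 1: activity filtering, drop compounds without cellular activity.
    active = [r for r in records if r.cell_active]
    # Stage 2: potency filtering, keep the top_k most potent compounds per target.
    by_target: Dict[str, List[Activity]] = defaultdict(list)
    for r in active:
        if r.ic50_nm <= max_ic50_nm:
            by_target[r.target].append(r)
    potent: List[Activity] = []
    for hits in by_target.values():
        potent.extend(sorted(hits, key=lambda r: r.ic50_nm)[:top_k])
    # Stage 3: availability filtering, keep only purchasable compounds.
    return {r.compound for r in potent if r.available}
```

Because availability is checked last, mirroring the order listed above, a potent but unavailable compound can displace an available alternative from the top-k slots for a target; moving the availability filter ahead of the potency step is a reasonable variant when a physical library is the goal.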

Step 4: Validate Library Performance

- Execute a pilot phenotypic screen in biologically relevant models (e.g., patient-derived cells) [29].

- Use high-content imaging to measure multiple cellular phenotypes.

- Analyze heterogeneity of responses across different models to identify patient-specific vulnerabilities [29].
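One simple way to summarize response heterogeneity is to compare each patient model with the cross-patient median for every compound. This sketch assumes each compound has already been reduced to a single viability score per patient model (relative to a vehicle control); real high-content readouts are multiparametric, and the `margin` threshold and function names are hypothetical choices.

```python
import statistics
from typing import Dict, List, Tuple

# viability[compound][patient] = surviving fraction relative to vehicle control (assumed readout)
Viability = Dict[str, Dict[str, float]]


def response_heterogeneity(viability: Viability) -> Dict[str, float]:
    """Population standard deviation of viability across patient models, per compound."""
    return {
        compound: statistics.pstdev(list(by_patient.values()))
        for compound, by_patient in viability.items()
        if len(by_patient) > 1
    }


def patient_specific_hits(viability: Viability, margin: float = 0.3) -> List[Tuple[str, str]]:
    """(compound, patient) pairs where viability is at least `margin` below the median."""
    hits = []
    for compound, by_patient in viability.items():
        median = statistics.median(list(by_patient.values()))
        for patient, value in by_patient.items():
            if value <= median - margin:
                hits.append((compound, patient))
    return sorted(hits)
```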

Protocol 2: Cheminformatic Analysis for Library Optimization

This protocol describes the use of cheminformatics tools to analyze and optimize compound libraries, based on methodologies used to compare kinase inhibitor libraries [30].

Step 1: Data Collection and Curation

- Gather chemical structures, target profiling data, and phenotypic profiling data from ChEMBL, vendor information, and literature [30].

- Standardize compound structures and resolve different naming conventions (e.g., research codes vs. generic names vs. brand names) using structural similarity (Tanimoto similarity of Morgan2 fingerprints) [30].
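The Tanimoto coefficient of two binary fingerprints is the number of shared "on" bits divided by the number of bits set in either. The sketch below assumes fingerprints are represented as sets of on-bit indices (in practice they would be generated by a cheminformatics toolkit such as RDKit as Morgan fingerprints of radius 2) and groups names whose fingerprints are identical; the compound names are hypothetical. Identical fingerprints are a strong but not conclusive signal (stereoisomers, for example, can collide), so merged names are best confirmed against the full structure.

```python
from typing import Dict, FrozenSet, List, Set

Fingerprint = FrozenSet[int]  # indices of "on" bits, assumed precomputed upstream


def tanimoto(a: Fingerprint, b: Fingerprint) -> float:
    """Tanimoto coefficient |A & B| / |A | B| for two bit sets."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def merge_synonyms(fps: Dict[str, Fingerprint], threshold: float = 1.0) -> List[Set[str]]:
    """Group compound names whose fingerprints reach `threshold` similarity."""
    groups: List[Set[str]] = []
    for name in fps:
        for group in groups:
            if any(tanimoto(fps[name], fps[other]) >= threshold for other in group):
                group.add(name)
                break
        else:  # no existing group matched, so start a new one
            groups.append({name})
    return groups


# Hypothetical research code, generic name, and brand name for one structure
fps = {
    "ABC-123": frozenset({1, 5, 9}),
    "exampletinib": frozenset({1, 5, 9}),
    "Exampla": frozenset({1, 5, 9}),
    "other-cmpd": frozenset({2, 5}),
}
print(merge_synonyms(fps))
```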

Step 2: Assess Chemical Similarity and Diversity

- Calculate pairwise Tanimoto similarities across the library [30].

- Visualize using similarity matrices to identify clusters of structural analogs [30].

- Quantify diversity by scoring the frequency and size of clusters above a structural similarity threshold (e.g., ≥0.7) [30].
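Scoring clusters above a similarity threshold can be sketched with single-linkage clustering: any pair of compounds with Tc ≥ 0.7 is joined, and the resulting cluster-size distribution serves as a diversity readout. Single linkage is an assumption here, since the cited work's exact clustering procedure is not specified in this text, and fingerprints are again taken as precomputed bit sets.

```python
from collections import Counter
from itertools import combinations
from typing import Dict, FrozenSet, List


def tanimoto(a: FrozenSet[int], b: FrozenSet[int]) -> float:
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def analog_clusters(fps: Dict[str, FrozenSet[int]], threshold: float = 0.7) -> List[List[str]]:
    """Single-linkage clusters: compounds are joined if any pair has Tc >= threshold."""
    parent = {name: name for name in fps}

    def find(x: str) -> str:
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps lookups short
            x = parent[x]
        return x

    # All pairwise comparisons: O(n^2), acceptable for libraries of a few thousand compounds.
    for a, b in combinations(fps, 2):
        if tanimoto(fps[a], fps[b]) >= threshold:
            parent[find(a)] = find(b)

    clusters: Dict[str, List[str]] = {}
    for name in fps:
        clusters.setdefault(find(name), []).append(name)
    return sorted(clusters.values(), key=len, reverse=True)


def cluster_size_histogram(clusters: List[List[str]]) -> Counter:
    """Number of clusters of each size; many large clusters indicate low diversity."""
    return Counter(len(c) for c in clusters)
```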

Step 3: Evaluate Target Coverage and Selectivity

- Map compounds to their primary (nominal) and secondary (off-) targets using biochemical profiling data [30].

- Use algorithms to select the minimal set of compounds that maximally covers the desired target space with minimal off-target overlap [30].
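Selecting a small compound set that covers a target space is an instance of the set-cover problem. The sketch below uses the standard greedy heuristic, named plainly as a stand-in because the cited study's exact algorithm is not described here: it repeatedly picks the compound that adds the most uncovered targets, breaking ties in favor of fewer off-target annotations. Function and compound names are hypothetical.

```python
from typing import Dict, List, Set


def greedy_target_cover(annotations: Dict[str, Set[str]], desired: Set[str]) -> List[str]:
    """Greedy set cover over compound -> target annotations.

    Each step picks the compound covering the most still-uncovered desired targets;
    ties go to the compound with fewer off-target annotations (targets outside
    `desired`), then to the alphabetically first name for determinism.
    """
    uncovered = set(desired)
    remaining = dict(annotations)
    selected: List[str] = []
    while uncovered and remaining:
        best = min(
            remaining,
            key=lambda name: (
                -len(remaining[name] & uncovered),  # maximize new coverage
                len(remaining[name] - desired),     # minimize off-target overlap
                name,
            ),
        )
        if not remaining[best] & uncovered:
            break  # no remaining compound reaches the uncovered targets
        selected.append(best)
        uncovered -= remaining.pop(best)
    return selected
```

Greedy selection is not guaranteed to find the true minimum, but it carries a well-known logarithmic approximation bound and scales easily to thousands of compounds.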

Step 4: Assay Readiness Filtering

- Apply functional group filters (e.g., PAINS, REOS) to remove compounds with suspected assay interference properties [31].

- Assess physicochemical properties (e.g., solubility, lipophilicity) to ensure compatibility with assay systems [31].
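Assay-readiness filtering reduces to a rule applied to precomputed properties. In this sketch the structural-alert count is assumed to come from an upstream PAINS/REOS substructure match (toolkits such as RDKit ship PAINS catalogs), and the cLogP and solubility cutoffs are hypothetical defaults to be tuned to the assay system, not thresholds from the cited work.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class CompoundProps:
    name: str
    clogp: float            # calculated lipophilicity
    solubility_um: float    # measured or predicted aqueous solubility (µM)
    structural_alerts: int  # PAINS/REOS-type alerts matched upstream (assumed input)


def assay_ready(c: CompoundProps, max_clogp: float = 5.0,
                min_solubility_um: float = 10.0) -> bool:
    """Hypothetical readiness rule: no alerts, moderate lipophilicity, adequate solubility."""
    return (c.structural_alerts == 0
            and c.clogp <= max_clogp
            and c.solubility_um >= min_solubility_um)


def filter_assay_ready(compounds: List[CompoundProps]) -> List[str]:
    """Names of compounds passing the readiness rule, in input order."""
    return [c.name for c in compounds if assay_ready(c)]
```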

Visualizing Library Assembly Workflows

Comprehensive Library Design and Screening Workflow

The following diagram illustrates the integrated process of designing an optimized screening library and applying it to identify patient-specific vulnerabilities, synthesizing concepts from the C3L and related methodologies [29] [2] [30].

Cheminformatic Library Optimization Process

This diagram details the computational workflow for analyzing and optimizing a compound library's properties, based on methodologies used to compare kinase inhibitor libraries [30].

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful assembly and screening of compound libraries relies on specific reagents, databases, and software tools. The following table details key solutions used in the featured studies and their critical functions in the library assembly process.

Table 3: Essential Research Reagent Solutions for Library Assembly and Screening

| Tool/Resource | Type | Primary Function | Application in Library Design |
|---|---|---|---|
| ChEMBL | Database | Curated bioactivity data from scientific literature, patents, and screening assays [30] | Identifying compound-target interactions; sourcing activity data for filtering [29] [30] |
| The Human Protein Atlas/PharmacoDB | Database | Protein expression and cancer genomics data [29] | Defining initial cancer-associated target space [29] |
| Structural Similarity (Tc) | Computational Metric | Tanimoto similarity of Morgan2 fingerprints to quantify molecular similarity [30] | Assessing library diversity; identifying analog clusters; removing redundant structures [30] |
| PAINS/REOS Filters | Computational Filter | Structural alerts for compounds with promiscuous activity or undesirable properties [31] | Removing problematic compounds that may cause assay interference or exhibit poor drug-likeness [31] |
| C3L Explorer | Web Platform | Interactive visualization of compound libraries and screening data [29] [2] | Data exploration and sharing of library annotations and screening results [29] |
| LIFDI-MS | Analytical Instrument | Soft ionization mass spectrometry for labile metal clusters [33] | Characterizing composition of complex molecular libraries without separation [33] |
| Target-Annotated Compound Libraries | Physical Resource | Collections of compounds with known protein targets (e.g., C3L, PKIS) [29] [30] | Phenotypic screening to deconvolute mechanism of action from cellular responses [29] |

Glioblastoma (GBM) remains the most aggressive and lethal primary brain tumor in adults, with a median survival of only 15-18 months despite aggressive standard-of-care treatment involving maximal surgical resection, radiotherapy, and temozolomide chemotherapy [34]. Its pronounced intratumoral heterogeneity, diffuse infiltration into healthy brain parenchyma, and adaptive resistance mechanisms make GBM a critical unmet need in oncology [35]. Precision oncology approaches aim to overcome these challenges by moving beyond generic treatments to therapies targeted against patient-specific molecular vulnerabilities. Chemogenomic libraries, systematically designed collections of bioactive small molecules, represent a powerful tool for functional phenotyping in this context, enabling the identification of patient-specific therapeutic susceptibilities directly in patient-derived cellular models [2] [3].