Benchmarking Bioinformatics Tools in 2025: A Performance and Application Guide for Life Science Researchers

This article provides a comprehensive comparative analysis of bioinformatics tool performance for specific genomic tasks, addressing the critical need for informed software selection in 2025.

Abstract

This article provides a comprehensive comparative analysis of bioinformatics tool performance for specific genomic tasks, addressing the critical need for informed software selection in 2025. It first establishes a foundational overview of the current tool landscape, then details methodological applications for key research areas like variant calling, protein structure prediction, and metagenomic binning. The guide offers practical troubleshooting and optimization strategies, grounded in real-world benchmarking studies, to enhance analysis reproducibility and efficiency. Finally, it synthesizes validation frameworks and comparative performance metrics from recent independent benchmarks, empowering researchers, scientists, and drug development professionals to choose the optimal tools for their specific research goals and computational environments.

The 2025 Bioinformatics Toolbox: A Landscape of Essential Software for Modern Biology

Bioinformatics tools are indispensable for interpreting the vast biological datasets generated by modern high-throughput technologies, serving critical roles in genomics, proteomics, and systems biology [1]. These tools enable researchers to decipher complex biological processes, identify genetic markers, and facilitate discoveries in personalized medicine and drug development [2]. The selection of an appropriate tool depends on multiple factors, including the specific research question, the user's computational expertise, available hardware resources, and budget constraints [1]. This guide provides a comparative analysis of bioinformatics tools across core categories—sequence alignment, genomic analysis, protein structure prediction, and systems biology—by synthesizing their features, performance metrics, and optimal use-case scenarios to inform researchers, scientists, and drug development professionals in their selection process.

Core Tool Categories and Comparative Analysis

Sequence Alignment and Analysis Tools

Sequence alignment forms the foundation of comparative genomics, enabling researchers to infer structural, functional, and evolutionary relationships between genes or proteins by determining sequence similarity [3]. These tools operate by comparing sequences nucleotide-by-nucleotide or amino acid-by-amino acid, employing sophisticated algorithms to optimize matches while accounting for insertions, deletions (indels), and substitutions through gaps and gap penalties [3].
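To make the role of gap penalties concrete, the following is a minimal sketch of a global alignment score computed by dynamic programming (the Needleman-Wunsch recurrence). The scoring values (`match=1`, `mismatch=-1`, `gap=-2`) and the function name are illustrative assumptions, and the linear gap penalty is a simplification; none of the tools in Table 1 are claimed to use these exact settings.

```python
def global_alignment_score(a, b, match=1, mismatch=-1, gap=-2):
    """Return the optimal global alignment score of strings a and b.

    Uses a linear gap penalty: every gap position costs `gap`.
    All scoring values are illustrative assumptions.
    """
    # prev[j] holds the best score for aligning a[:i-1] with b[:j].
    prev = [j * gap for j in range(len(b) + 1)]
    for i in range(1, len(a) + 1):
        curr = [i * gap]  # aligning a[:i] against an empty prefix of b
        for j in range(1, len(b) + 1):
            substitution = match if a[i - 1] == b[j - 1] else mismatch
            diag = prev[j - 1] + substitution  # align a[i-1] with b[j-1]
            up = prev[j] + gap                 # gap in b
            left = curr[j - 1] + gap           # gap in a
            curr.append(max(diag, up, left))
        prev = curr
    return prev[-1]


if __name__ == "__main__":
    # Three matches plus one gap: 3 * 1 + (-2) = 1
    print(global_alignment_score("ACGT", "AGT"))
```

Raising the magnitude of `gap` makes the aligner prefer substitutions over indels, which is the trade-off that the customizable parameters in alignment tools expose.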

Table 1: Sequence Alignment and Analysis Tools

| Tool Name | Primary Function | Key Features | Pros | Cons | Pricing |
|---|---|---|---|---|---|
| BLAST [1] [2] | Sequence similarity searching | Rapid DNA/RNA/protein alignment; NCBI database integration; Customizable parameters | Highly reliable & widely cited; Extensive documentation | Slow for very large datasets; Limited to sequence similarity | Free |
| Clustal Omega [1] [2] | Multiple Sequence Alignment (MSA) | Progressive alignment; Handles large datasets; Phylogenetic tree visualization | User-friendly; Fast & accurate for large alignments | Performance drops with highly divergent sequences | Free |
| EMBOSS [1] [2] | Comprehensive sequence analysis | 200+ molecular biology tools; Multiple file format support; Command-line & web interfaces | Comprehensive suite; Highly customizable | Outdated interface; Steep learning curve for beginners | Free |
| VectorBuilder Alignment Tool [3] | DNA/protein sequence comparison | DNA alignment based on translated protein; Gap penalty optimization; Frame adjustment | Bridges DNA-protein sequence gap; Useful for cloning applications | Max sequence length 10,000 bases/amino acids | Free |

Genomic Analysis and Variant Calling Tools

Genomic analysis tools process and interpret high-throughput sequencing data, enabling variant discovery, genome assembly, and functional annotation. These tools are essential for identifying genetic variations, reconstructing genomic sequences, and associating genotypes with phenotypes.

Table 2: Genomic Analysis and Variant Calling Tools

| Tool Name | Primary Function | Key Features | Pros | Cons | Pricing |
|---|---|---|---|---|---|
| GATK [2] | Variant discovery | Variant calling, filtering & annotation; Optimized for NGS data; SNP/INDEL detection | Extremely accurate variant detection; Strong community support | Computationally intensive; Requires bioinformatics expertise | Free (license required) |
| Bioconductor [1] [2] | Genomic data analysis | 2,000+ R packages; RNA-seq/ChIP-seq/variant analysis; Reproducible research framework | Highly customizable; Powerful statistical capabilities | Steep learning curve for non-R users; Significant computational demands | Free |
| DeepVariant [1] | Variant calling | Deep learning for variant detection; Supports whole-genome & exome sequencing; High sensitivity for rare variants | Highly accurate; Strong performance on diverse data | Computationally intensive; Complex setup for non-experts | Free |
| GNNome [4] | De novo genome assembly | Geometric deep learning on assembly graphs; Handles repetitive regions; Symmetry-aware architecture | Comparable contiguity to state-of-art tools; Reduces fragmentation | Optimized for haploid genomes; Emerging technology | Free |

Protein Structure Prediction and Analysis

Protein structure prediction tools have revolutionized structural biology by enabling accurate 3D modeling of proteins from their amino acid sequences. These tools are particularly valuable for understanding protein function, interactions, and facilitating drug discovery efforts.

Table 3: Protein Structure Prediction Tools

| Tool Name | Primary Function | Key Features | Pros | Cons | Pricing |
|---|---|---|---|---|---|
| Rosetta [1] | Protein structure prediction & design | AI-driven 3D structure prediction; Protein-protein/ligand docking; de novo protein design | Highly accurate modeling; Versatile for drug design | Computationally intensive; Complex setup; Commercial licensing fees | Free (academic)/Custom |
| DeepSCFold [5] | Protein complex structure modeling | Sequence-derived structure complementarity; Enhanced paired MSA construction; Interface accuracy improvement | 11.6% TM-score improvement over AlphaFold-Multimer; Excellent for antibody-antigen complexes | Specialized for complexes; Requires complementary databases | Not reported |

Systems Biology and Visualization Platforms

Systems biology tools enable the integration and analysis of complex biological networks, pathways, and multi-omics data, providing a holistic view of biological systems rather than focusing on individual components.

Table 4: Systems Biology and Visualization Tools

| Tool Name | Primary Function | Key Features | Pros | Cons | Pricing |
|---|---|---|---|---|---|
| Galaxy [1] [2] | Bioinformatics workflow platform | Drag-and-drop interface; Extensive tool integration; Reproducible research; Collaborative features | Beginner-friendly, no coding required; Highly scalable | Limited advanced features; Performance depends on server resources | Free |
| Cytoscape [2] | Network visualization & analysis | Molecular interaction networks; Biological pathway visualization; Extensive plugin support | Powerful visualization; Highly customizable | Steep learning curve; Resource-heavy with large networks | Free |
| KEGG [1] | Pathway analysis & databases | Comprehensive pathway database; Pathway mapping & network analysis; Multi-omics integration | Extensive systems biology database; User-friendly interface | Subscription for full access; Overwhelming for beginners | Free/Subscription |

Experimental Protocols and Performance Benchmarks

Protein Complex Structure Prediction with DeepSCFold

Experimental Objective: To assess the accuracy of DeepSCFold in predicting protein complex structures compared to state-of-the-art methods including AlphaFold-Multimer and AlphaFold3 [5].

Methodology:

- Benchmark Datasets: The protocol was evaluated on two distinct datasets: (1) multimer targets from the CASP15 competition, and (2) antibody-antigen complexes from the SAbDab database [5].

- Input Preparation: Protein complex sequences were used as input. Monomeric multiple sequence alignments (MSAs) were generated from multiple sequence databases (UniRef30, UniRef90, UniProt, Metaclust, BFD, MGnify, and ColabFold DB) [5].

- Paired MSA Construction: DeepSCFold constructed paired MSAs using two sequence-based deep learning models: (1) a protein-protein structural similarity predictor (pSS-score), and (2) an interaction probability estimator (pIA-score). These models enabled ranking and selection of monomeric homologs based on structural compatibility rather than just sequence similarity [5].

- Structure Prediction: The series of constructed paired MSAs were fed into AlphaFold-Multimer for complex structure prediction [5].

- Model Selection & Refinement: The top-1 model was selected using an in-house complex model quality assessment method (DeepUMQA-X) and used as an input template for AlphaFold-Multimer for one additional iteration to generate the final structure [5].

Performance Metrics: Accuracy was evaluated using TM-score for global structure similarity and success rates for predicting binding interfaces specifically in antibody-antigen complexes [5].
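For readers unfamiliar with TM-score, the sketch below computes it for one fixed superposition from the distances between aligned residue pairs, using the standard form TM = (1/L) Σ 1/(1 + (d_i/d0)²) with d0 = 1.24·(L − 15)^(1/3) − 1.8. The function names are hypothetical, the 0.5 Å floor on d0 for short chains is an assumption borrowed from common implementations, and the search over superpositions that a full TM-score program performs is omitted. This is not the evaluation code used in [5].

```python
def tm_d0(target_length):
    """Length-dependent distance scale d0 (in angstroms).

    The 0.5 floor for short chains is an assumption, not taken from [5].
    """
    if target_length <= 21:
        return 0.5
    return max(1.24 * (target_length - 15) ** (1.0 / 3.0) - 1.8, 0.5)


def tm_score(distances, target_length):
    """TM-score for one fixed superposition.

    distances: per-residue distances (angstroms) between aligned residue
               pairs after superposition; unaligned residues are omitted.
    target_length: number of residues in the reference (target) structure.
    """
    if target_length <= 0:
        raise ValueError("target_length must be positive")
    d0 = tm_d0(target_length)
    total = sum(1.0 / (1.0 + (d / d0) ** 2) for d in distances)
    return total / target_length


if __name__ == "__main__":
    # A perfect superposition of all 100 residues scores 1.0.
    print(tm_score([0.0] * 100, 100))
```

Because the sum is normalized by the target length rather than the number of aligned residues, a model that covers only half of the target perfectly scores 0.5, which is why TM-score rewards both coverage and accuracy.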

Key Results:

- On CASP15 multimer targets, DeepSCFold achieved an improvement of 11.6% and 10.3% in TM-score compared to AlphaFold-Multimer and AlphaFold3, respectively [5].

- For antibody-antigen complexes from SAbDab, DeepSCFold enhanced the prediction success rate for binding interfaces by 24.7% and 12.4% over AlphaFold-Multimer and AlphaFold3, respectively [5].

- The method demonstrated particular effectiveness for complexes lacking clear inter-chain co-evolutionary signals, such as antibody-antigen and virus-host systems, by leveraging structural complementarity information [5].

De Novo Genome Assembly with GNNome

Experimental Objective: To evaluate the performance of GNNome, a geometric deep learning framework for path identification in assembly graphs, compared to state-of-the-art algorithmic assemblers [4].

Methodology:

- Data Simulation & Training: The model was trained on a dataset constructed from six chromosomes of the human HG002 reference genome using the PBSIM3 simulator (v3.0.0) to generate PacBio HiFi reads. Assembly graphs were generated with hifiasm (v0.18.7-r514) without any graph simplification steps to preserve edge information [4].

- Graph Processing: The framework used a novel Graph Neural Network (GNN) layer named SymGatedGCN that leverages the inherent symmetries of assembly graphs, where each read is represented by two nodes (original sequence and its reverse complement) [4].

- Path Identification: The trained model assigned probabilities to each edge in the assembly graph, reflecting its likelihood of contributing to the optimal assembly. A search algorithm then navigated through these probabilities to generate contigs [4].

- Evaluation Genomes: The framework was evaluated on the homozygous human genome CHM13, inbred genomes of Mus musculus and Arabidopsis thaliana, and the maternal genome of Gallus gallus [4].
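The path identification step can be illustrated with a deliberately simplified greedy walk over edge probabilities. This is a sketch under stated assumptions, not GNNome's actual search algorithm: the graph representation (a dictionary of successor lists), the function name, and the greedy tie-breaking are all hypothetical, and the handling of reverse-complement nodes described above is left out.

```python
def greedy_walk(edges, start):
    """Follow the highest-probability unvisited successor until stuck.

    edges: dict mapping node -> list of (successor, probability) pairs.
           The probabilities stand in for model-predicted edge scores.
    start: node at which the walk begins.
    Returns the list of visited nodes in order (one candidate contig path).
    """
    path = [start]
    visited = {start}
    node = start
    while True:
        candidates = [
            (prob, nxt) for nxt, prob in edges.get(node, []) if nxt not in visited
        ]
        if not candidates:
            return path
        _, node = max(candidates)  # ties fall back to comparing node names
        path.append(node)
        visited.add(node)


if __name__ == "__main__":
    toy_graph = {
        "r1": [("r2", 0.9), ("r3", 0.2)],
        "r2": [("r3", 0.8)],
        "r3": [("r1", 0.5)],
    }
    print(greedy_walk(toy_graph, "r1"))
```

A greedy walk commits to the locally best edge at every step, so a production search would typically start from many nodes and keep the best resulting paths.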

Performance Metrics: Assembly quality was assessed using contiguity metrics (NG50, NGA50), completeness (percentage of genome assembled), and quality value (QV) for base-level accuracy [4].
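As a reference for the contiguity metrics, NG50 is the length of the contig at which the cumulative length of contigs, sorted from longest to shortest, first reaches half of the estimated genome size. A minimal sketch follows; the function name and the choice to return 0 when the assembly covers less than half the genome are assumptions. NGA50 applies the same calculation to aligned blocks after contigs are split at misassemblies, which requires a reference alignment and is not shown.

```python
def ng50(contig_lengths, genome_size):
    """Return NG50: the contig length at which the running total of
    contigs (longest first) reaches 50% of the estimated genome size.

    Returns 0 if the assembly never reaches half the genome size
    (a convention chosen for this sketch).
    """
    threshold = genome_size / 2
    running_total = 0
    for length in sorted(contig_lengths, reverse=True):
        running_total += length
        if running_total >= threshold:
            return length
    return 0


if __name__ == "__main__":
    # 40 + 30 = 70 >= 50, so NG50 is 30.
    print(ng50([40, 30, 20, 10], genome_size=100))
```

Unlike N50, which is normalized by the total assembly length, NG50 uses the genome size, so assemblies of different total lengths remain comparable.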

Key Results:

- On CHM13, GNNome achieved an NG50 of 111.3 Mb and NGA50 of 111.0 Mb, outperforming hifiasm (87.7 Mb for both metrics) and other assemblers like HiCanu and Verkko [4].

- For Mus musculus, GNNome achieved an NG50 of 23.0 Mb and NGA50 of 19.3 Mb with 99.62% completeness, demonstrating robust performance across species [4].

- The framework produced assemblies with contiguity and quality comparable to state-of-the-art tools while relying solely on learned edge probabilities, without incorporating algorithmic simplification heuristics [4].

Research Reagent Solutions and Essential Materials

Successful implementation of bioinformatics analyses often requires both computational tools and specific data resources. The following table outlines key reagents and data solutions essential for the experiments discussed in this guide.

Table 5: Research Reagent Solutions for Bioinformatics Experiments

| Reagent/Data Solution | Function in Experiments | Example Sources |
|---|---|---|
| Reference Genomes | Provides ground truth for training and benchmarking assembly and variant calling tools | HG002 [4], CHM13 [4], species-specific references |
| Multiple Sequence Alignment Databases | Supplies evolutionary information crucial for structure prediction and homology modeling | UniRef30/90 [5], UniProt [5], Metaclust [5] |
| Protein Structure Databases | Offers templates and experimental data for structure validation and method training | Protein Data Bank (PDB) [5], SAbDab [5] |
| Benchmark Datasets | Enables standardized performance comparison across different tools and methods | CASP15 targets [5], SAbDab complexes [5] |
| Sequencing Read Simulators | Generates realistic training data for machine learning approaches in genome assembly | PBSIM3 [4] |

The bioinformatics tool landscape in 2025 is characterized by increasing specialization, with distinct tool categories addressing specific analytical needs from basic sequence alignment to complex systems biology. Performance benchmarks reveal that while established tools like BLAST and Clustal Omega remain essential for fundamental analyses, AI-driven approaches like DeepSCFold and GNNome are setting new standards for accuracy in protein complex prediction and genome assembly, particularly for challenging cases lacking clear evolutionary signals [5] [4].

Future developments will likely focus on enhanced integration of multi-omics data, improved handling of protein dynamics and conformational ensembles [6], and more accessible interfaces that democratize advanced bioinformatics capabilities. As these tools evolve, maintaining rigorous benchmarking standards and transparent reporting of limitations will be crucial for their responsible application in biomedical research and drug discovery. The integration of AI methods with traditional algorithmic approaches represents a promising pathway for addressing the persistent challenges in structural biology and genomics.

In the field of modern biological research, bioinformatics tools have become indispensable for transforming raw data into biological insights. Positioned at the intersection of biology, computer science, and data analysis, these tools are revolutionizing how we understand complex biological systems [1]. By 2025, the field is characterized by the exponential growth of genomic, proteomic, and metagenomic data, driving an increased demand for robust, scalable, and precise analytical software. Breakthroughs in genomics, precision medicine, and biotechnology are propelling this demand, requiring powerful tools to process, visualize, and interpret vast biological datasets efficiently and accurately [2]. The emergence of artificial intelligence has further transformed the landscape, with AI-powered tools achieving accuracy improvements of up to 30% while significantly reducing processing times [7].

This comparative analysis provides a structured framework for researchers, scientists, and drug development professionals to evaluate leading bioinformatics tools against objective performance criteria. The guide focuses on practical utility for specific research tasks, examining tools based on their analytical capabilities, computational requirements, and suitability for different user expertise levels. The evaluation encompasses sequence analysis, genomic data interpretation, structural biology, and workflow management, with particular attention to the growing integration of AI and machine learning. The objective is to deliver a data-driven resource that enables informed tool selection, enhancing research efficiency and reliability in 2025's competitive scientific environment.

Comprehensive Tool Comparison Tables

To facilitate direct comparison, the tables below summarize the key features, performance characteristics, and practical considerations for the top bioinformatics tools in 2025.

Table 1: Core Features and Applications of Leading Bioinformatics Tools

| Tool Name | Primary Function | Best For | Standout Feature | Platform Support | Pricing Model |
|---|---|---|---|---|---|
| BLAST | Sequence similarity searching | Sequence alignment & comparison [1] | Rapid local alignment against large databases [1] | Web, Linux, Windows, macOS [1] | Free [1] |
| Bioconductor | Genomic data analysis | Statistical analysis of high-throughput genomic data [1] | 2,000+ R packages for precise genomic analysis [1] [8] | Linux, Windows, macOS [1] | Free [1] |
| Galaxy | Workflow management | Accessible, reproducible analysis pipelines [1] | Drag-and-drop interface with no coding required [1] | Web-based, Cloud, Linux [1] | Free (academic) [1] |
| Rosetta | Protein structure prediction | Protein structure prediction & molecular modeling [1] | AI-driven 3D structure prediction with high accuracy [1] | Linux, Windows, macOS [1] | Free (academic) / Commercial license [1] |
| DeepVariant | Variant calling | Identifying genetic variants from sequencing data [1] | Deep learning for highly accurate variant detection [1] | Linux, Cloud [1] | Free [1] |
| Clustal Omega | Multiple sequence alignment | Evolutionary studies & molecular biology [1] | Progressive alignment for large datasets [1] | Web, Linux, Windows, macOS [1] | Free [1] |
| GATK | Variant discovery | Variant calling in high-throughput sequencing data [2] | Comprehensive variant detection & filtering [2] | Linux, Windows [2] | Free (license required) [2] |
| Cytoscape | Network visualization | Molecular interaction networks & biological pathways [2] | Visualization of complex biological networks [2] | Web, Linux, Windows [2] | Free [2] |
| EMBOSS | Comprehensive sequence analysis | Diverse molecular biology tasks [1] | 200+ tools for sequence analysis [1] | Linux, Windows, macOS [1] | Free [1] |
| MAFFT | Multiple sequence alignment | Large-scale DNA/RNA/protein alignments [1] | Fast Fourier Transform for rapid processing [1] | Web, Linux, Windows, macOS [1] | Free [1] |

Table 2: Performance Metrics and Experimental Considerations

| Tool Name | Accuracy Claims | Speed & Scalability | Technical Requirements | Limitations |
|---|---|---|---|---|
| BLAST | Statistical significance scores for matches [1] | Can be slow for very large datasets [1] | Web interface or command-line; computational expertise needed for advanced use [1] | Limited to sequence similarity, not structural analysis [1] |
| Bioconductor | High for statistical genomics [1] | Requires significant computational resources [1] | R programming knowledge essential [1] | Steep learning curve for non-R users [1] |
| Galaxy | Reproducible workflow results [1] | Performance depends on server resources; scalable in cloud environments [1] | No programming skills required [1] | Limited advanced features compared to commercial platforms [1] |
| Rosetta | High accuracy for protein modeling [1] | Computationally intensive, requires high-performance systems [1] | Complex setup for new users [1] | Licensing fees for commercial use [1] |
| DeepVariant | High sensitivity for rare variants [1] | Requires significant computational resources [1] | Complex setup for non-experts [1] | Limited to variant calling, not general analysis [1] |
| MAFFT | High accuracy for diverse sequences [1] | Extremely fast for large-scale alignments [1] | Command-line interface may be complex for beginners [1] | Less effective for highly divergent sequences [1] |
| GATK | Extremely accurate in variant detection [2] | Computationally intensive [2] | Solid understanding of bioinformatics required [2] | Requires significant hardware resources [2] |

Experimental Protocols and Performance Validation

Benchmarking Sequence Alignment Tools

Experimental Objective: To quantitatively compare the accuracy and efficiency of multiple sequence alignment tools (Clustal Omega and MAFFT) when processing datasets of varying sizes and evolutionary divergence.

Methodology:

- Test Datasets: Curate three distinct sequence sets: (1) a small dataset (50 sequences) of closely related protein homologs; (2) a medium dataset (500 sequences) with moderate divergence; and (3) a large-scale dataset (2,000 sequences) including highly divergent members [1].

- Alignment Execution: Process each dataset through both Clustal Omega and MAFFT using default parameters on identical computational infrastructure [1].

- Accuracy Assessment: Compare generated alignments to a manually curated and biologically verified reference alignment using quantitative scoring metrics like Sum-of-Pairs and Column Scores.

- Performance Metrics: Measure and record execution time and memory usage for each tool-dataset combination to evaluate computational efficiency [1].
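The accuracy assessment step can be sketched as follows. The Sum-of-Pairs score is taken here as the fraction of residue pairs aligned in the reference alignment that are also aligned in the test alignment. The function names, the use of `-` as the gap character, and the requirement that both alignments list the same sequences in the same order are assumptions of this sketch rather than details from the protocol.

```python
from itertools import combinations


def aligned_pairs(alignment):
    """Collect every pair of residues that share a column.

    alignment: list of equal-length gapped strings, '-' marking gaps.
    Each residue is identified as (sequence index, ungapped position).
    """
    positions = [0] * len(alignment)
    pairs = set()
    for col in range(len(alignment[0])):
        residues = []
        for seq_index, row in enumerate(alignment):
            if row[col] != "-":
                residues.append((seq_index, positions[seq_index]))
                positions[seq_index] += 1
        pairs.update(combinations(residues, 2))
    return pairs


def sum_of_pairs_score(test, reference):
    """Fraction of reference residue pairs recovered by the test alignment."""
    reference_pairs = aligned_pairs(reference)
    if not reference_pairs:
        return 0.0
    return len(aligned_pairs(test) & reference_pairs) / len(reference_pairs)


if __name__ == "__main__":
    reference = ["AC-GT", "ACAGT"]
    test = ["ACG-T", "ACAGT"]
    print(sum_of_pairs_score(test, reference))  # 3 of 4 reference pairs kept
```

The Column Score is stricter: it counts only columns reproduced in their entirety, so a single misplaced residue invalidates the whole column.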

Expected Outcomes: MAFFT is anticipated to demonstrate significantly faster processing times for large-scale datasets (2,000 sequences) due to its implementation of the Fast Fourier Transform algorithm [1]. Clustal Omega is expected to maintain high accuracy for datasets with moderate divergence, though both tools may show reduced performance with highly divergent sequences [1]. This experiment provides researchers with objective data to select the optimal alignment tool based on their specific dataset characteristics and computational constraints.

Evaluating Variant Calling Precision

Experimental Objective: To assess the sensitivity and specificity of AI-driven variant callers (DeepVariant) against traditional tools (GATK) using both public benchmark and in-house genomic data.

Methodology:

- Data Preparation: Utilize publicly available benchmark genomes (e.g., Genome in a Bottle Consortium) with well-characterized variant profiles, alongside in-house whole-genome sequencing data from matched tumor-normal samples [1] [2].

- Variant Calling Pipeline: Process all datasets through both DeepVariant (using its deep learning models) and GATK's Best Practices workflow (including HaplotypeCaller) [1] [2].

- Validation: Employ orthogonal validation methods, such as Sanger sequencing or microarray genotyping, for a subset of identified variants to establish ground truth.

- Analysis: Calculate precision (positive predictive value), recall (sensitivity), and F1-scores for each tool by comparing identified variants against known variant positions.
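The analysis step reduces to set arithmetic once variants are represented consistently. In the sketch below, each variant is a `(chromosome, position, ref, alt)` tuple and calls are matched exactly; the function name and this tuple representation are assumptions. Real comparisons usually need variant normalization first, for which dedicated benchmarking tools such as hap.py are commonly used.

```python
def variant_metrics(called, truth):
    """Compute precision, recall, and F1 from exact variant matches.

    called, truth: iterables of (chrom, pos, ref, alt) tuples.
    Exact tuple matching is a simplification; equivalent indels written
    differently would be counted as mismatches.
    """
    called, truth = set(called), set(truth)
    true_pos = len(called & truth)
    false_pos = len(called - truth)
    false_neg = len(truth - called)
    precision = true_pos / (true_pos + false_pos) if called else 0.0
    recall = true_pos / (true_pos + false_neg) if truth else 0.0
    if precision + recall == 0:
        f1 = 0.0
    else:
        f1 = 2 * precision * recall / (precision + recall)
    return {"precision": precision, "recall": recall, "f1": f1}


if __name__ == "__main__":
    truth = {
        ("chr1", 100, "A", "G"),
        ("chr1", 200, "C", "T"),
        ("chr2", 50, "G", "GA"),
        ("chr2", 75, "T", "C"),
    }
    called = {
        ("chr1", 100, "A", "G"),
        ("chr1", 200, "C", "T"),
        ("chr2", 50, "G", "GA"),
        ("chr3", 10, "A", "T"),  # false positive
    }
    print(variant_metrics(called, truth))
```

Reporting SNVs and indels separately is usually more informative than a single pooled F1, since callers tend to differ most on indels.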

Expected Outcomes: Based on published claims, DeepVariant should demonstrate superior accuracy in variant detection, particularly for identifying difficult-to-call variants like indels in complex genomic regions, leveraging its deep learning architecture [1]. GATK is expected to provide robust, reliable performance across diverse genomic contexts, benefiting from its comprehensive filtering and annotation capabilities [2]. This protocol enables genomics researchers to benchmark variant calling performance in their specific experimental context, informing pipeline development for clinical or research applications.

Bioinformatics Workflow Integration

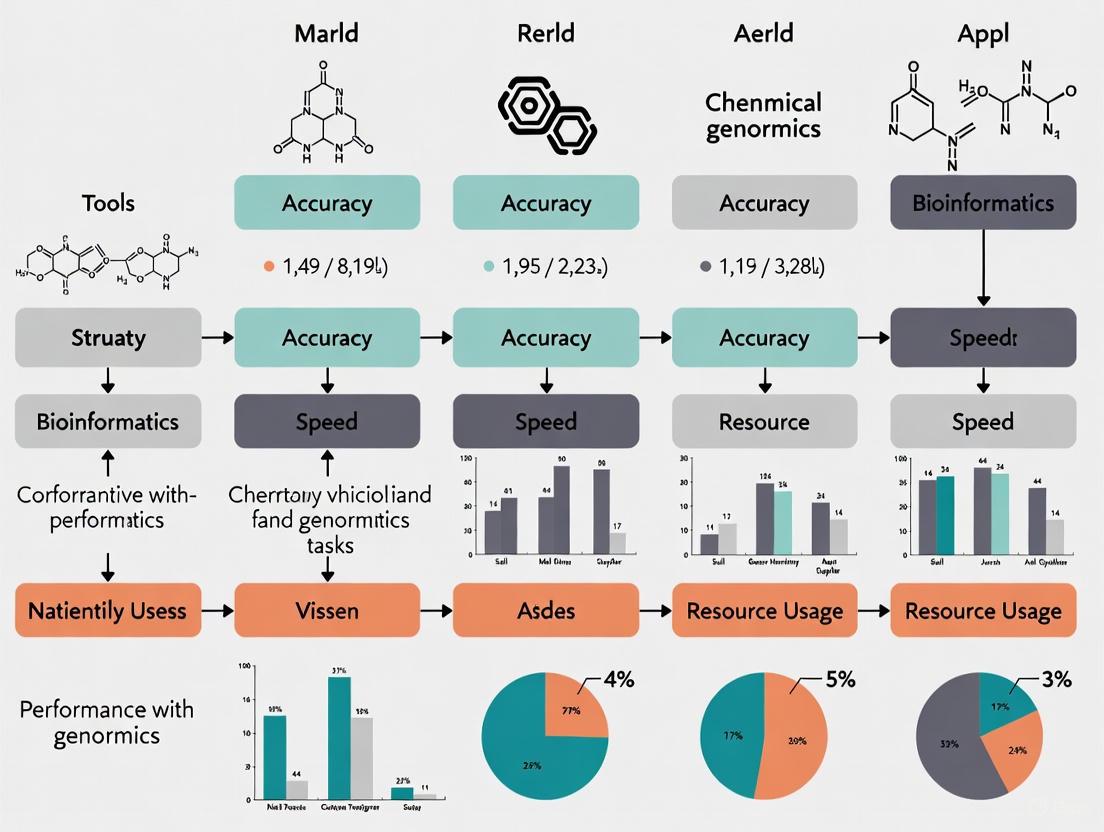

Modern bioinformatics research rarely relies on a single tool, but rather on integrated workflows that combine multiple specialized applications. The diagram below illustrates a representative analysis pipeline for variant discovery and interpretation, highlighting how different tools interact sequentially.

Diagram 1: Integrated variant discovery and interpretation workflow showing the sequence of analytical steps from raw data to biological insight, with associated tools for each stage.

This workflow demonstrates how specialized tools connect to form a complete analytical pipeline. Platforms like Galaxy excel in managing such integrated workflows by providing a unified interface where tools like BLAST, MAFFT, DeepVariant, and Bioconductor packages can be connected through a drag-and-drop interface without coding [1]. This integration capability is crucial for reproducible research, as it allows entire analytical pathways to be saved, shared, and executed consistently across different computing environments. The emphasis on workflow integration in 2025 reflects the growing complexity of biological research questions that require multi-faceted analytical approaches combining sequence analysis, statistical genomics, and functional interpretation.

Essential Research Reagent Solutions

Successful bioinformatics analysis requires not only software tools but also critical data resources and computational infrastructure. The following table details essential "research reagents" for computational biology.

Table 3: Essential Research Reagents for Bioinformatics Analysis

| Resource Category | Specific Examples | Function in Research | Application Context |
|---|---|---|---|
| Reference Databases | NCBI GenBank, UniProt, PDB [1] | Provide reference sequences, functional annotations, and 3D structures | Essential for BLAST searches, sequence annotation, and structural modeling [1] |
| Genome Browsers | UCSC Genome Browser [2] | Visualize genomic annotations and experimental data in genomic context | Critical for interpreting variant calls in regulatory regions and gene contexts [2] |
| Pathway Resources | KEGG PATHWAY Database [1] | Maps genes and variants to biological pathways for functional interpretation | Systems biology analysis to understand phenotypic impact of genetic findings [1] |
| Containerization | Docker, Bioconductor Docker images [8] | Ensures computational reproducibility and simplified software deployment | Maintaining consistent analysis environments across different research phases [8] |
| Package Managers | Bioconda [9] | Simplifies installation and dependency management for bioinformatics tools | Efficient setup of analysis environments, particularly for tools like SAMtools [9] |
| Format Standards | FASTA, SAM/BAM, VCF [1] [9] | Standardized file formats ensure tool interoperability and data exchange | Essential for transferring data between different analytical tools in a workflow |

Discussion and Future Directions

The comparative analysis of bioinformatics tools in 2025 reveals several dominant trends shaping the field. AI integration now powers many genomics analysis tools, with demonstrated improvements in accuracy and efficiency [7]. Tools like DeepVariant and Rosetta exemplify this trend, leveraging deep learning and AI-driven approaches to solve problems that were previously intractable with traditional algorithms [1]. The expanding accessibility of bioinformatics platforms, particularly through web-based interfaces like Galaxy, is democratizing complex data analysis by enabling researchers without programming expertise to perform sophisticated analyses [1] [9]. Simultaneously, growing data volumes have intensified focus on security protocols to protect sensitive genetic information through advanced encryption and strict access controls [7].

Looking forward, several developments are poised to further influence the bioinformatics tool landscape. The treatment of genetic code as a biological "language" that can be interpreted by large language models represents an emerging frontier with potential implications for understanding gene regulation, predicting protein function, and identifying disease-associated variants [7]. The continued growth of cloud-based genomic platforms connecting hundreds of institutions globally is making advanced genomics accessible to smaller labs and fostering unprecedented collaboration [7]. The formation of the Galaxy and Bioconductor Community Conference (GBCC) in 2025 exemplifies the increasing collaboration between major open-source bioinformatics communities, promising enhanced interoperability and more integrated analytical ecosystems [10] [11].

For researchers selecting tools in this evolving landscape, the decision should be guided by specific research questions, computational resources, and technical expertise. Beginners and those prioritizing accessibility should consider Galaxy for its user-friendly interface, while computational biologists comfortable with R will find Bioconductor offers unparalleled analytical flexibility [1]. Structural biologists focused on protein modeling will benefit from Rosetta's AI-driven capabilities, while genomics researchers working with variant detection should evaluate both DeepVariant and GATK based on their specific accuracy requirements and computational resources [1] [2]. As the field continues to evolve at a rapid pace, maintaining awareness of these tools' comparative strengths and limitations remains essential for conducting cutting-edge biological research in 2025 and beyond.

Selecting the optimal bioinformatics tool is a critical step that directly impacts the efficiency, accuracy, and success of modern biological research. With the diversity of available software, a strategic approach aligned with specific research objectives and data characteristics is essential. This guide provides a comparative analysis of bioinformatics tools based on key selection criteria and experimental data to inform decision-making for researchers and drug development professionals.

The expansion of high-throughput technologies has generated vast amounts of biological data across genomics, transcriptomics, proteomics, and other omics fields [12]. This data deluge presents both opportunities and challenges, as the value extracted depends significantly on the analytical tools employed. Different research strategies demand specialized bioinformatics software, and selecting an inappropriate tool can lead to inaccurate results, wasted resources, and missed biological insights [12] [13]. This guide establishes a framework for matching tools to research goals through systematic evaluation criteria, performance comparisons, and experimental methodologies.

Key Selection Criteria for Bioinformatics Platforms

Evaluating bioinformatics tools requires assessing multiple technical and operational factors that determine their suitability for specific research contexts. The table below summarizes the primary criteria researchers should consider during the selection process.

Table 1: Key Evaluation Criteria for Bioinformatics Platforms

| Criterion | Description | Key Considerations |
|---|---|---|
| Data Integration Capabilities [13] | Ability to consolidate diverse data types (genomic, proteomic, clinical) | Reduces manual effort and errors; supports multi-omics approaches |
| Analytical Tools & Algorithms [13] | Quality and robustness of built-in algorithms for specific analyses | Validation status; accuracy for tasks like variant calling, pathway analysis |
| Scalability & Performance [13] | Handling of increasing data volumes efficiently | Cloud compatibility; parallel processing; large dataset management |
| User Interface & Usability [13] | Intuitiveness for users with varying computational expertise | Ease of use; training time required; graphical vs. command-line interface |
| Collaboration Features [13] | Support for multi-user access, data sharing, and version control | Facilitates teamwork across institutions; reproducible workflows |
| Security & Compliance [13] | Adherence to data privacy standards (HIPAA, GDPR) | Critical for clinical data; patient privacy protection |
| Cost & Licensing Models [13] | Transparency and flexibility of pricing plans | Long-term sustainability; budget constraints for academic vs. commercial use |

Beyond these technical factors, researchers should also consider the availability and responsiveness of vendor support and the presence of an active user community, which typically brings more extensive documentation and troubleshooting resources [13].
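One way to make the criteria in Table 1 comparable across candidates is a weighted decision matrix. The sketch below is illustrative only: the weights, the 1-5 scoring scale, and the platform names `platform_a` and `platform_b` are hypothetical placeholders, not values taken from the cited sources, and each lab would need to set its own.

```python
# Weighted decision matrix for comparing candidate platforms.
# All weights, platform names, and scores are hypothetical placeholders.

CRITERIA_WEIGHTS = {
    "data_integration": 0.20,
    "algorithms": 0.25,
    "scalability": 0.15,
    "usability": 0.10,
    "collaboration": 0.10,
    "security_compliance": 0.10,
    "cost": 0.10,
}


def weighted_score(scores, weights=CRITERIA_WEIGHTS):
    """Return the weighted mean of per-criterion scores (each on a 1-5 scale)."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(weights[c] * scores[c] for c in weights) / total_weight


def rank_platforms(candidates, weights=CRITERIA_WEIGHTS):
    """Return (name, score) pairs sorted from best to worst weighted score."""
    scored = [(name, weighted_score(s, weights)) for name, s in candidates.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


candidates = {
    "platform_a": {"data_integration": 4, "algorithms": 5, "scalability": 3,
                   "usability": 2, "collaboration": 4,
                   "security_compliance": 3, "cost": 5},
    "platform_b": {"data_integration": 3, "algorithms": 4, "scalability": 5,
                   "usability": 5, "collaboration": 4,
                   "security_compliance": 5, "cost": 2},
}

for name, score in rank_platforms(candidates):
    print(f"{name}: {score:.2f}")
```

A matrix like this does not replace a pilot project, but it makes the trade-offs explicit and shows how sensitive the ranking is to the chosen weights.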

Comparative Analysis of Bioinformatics Tools

This section provides a detailed comparison of commonly used bioinformatics tools across different categories, highlighting their specific strengths, limitations, and optimal use cases.

General-Purpose Platforms & Analysis Suites

These platforms offer broad functionality across multiple analysis types, often integrating various tools into cohesive workflows.

Table 2: Comparison of General-Purpose Bioinformatics Platforms

| Tool | Primary Function | Key Features | Pros | Cons |
|---|---|---|---|---|
| Galaxy [2] | Web-based platform for data integration, analysis, and visualization | Drag-and-drop interface; reproducible workflows; extensive tool integration | Open-source; highly customizable; excellent for collaboration | Performance issues with large datasets; steep learning curve |
| Bioconductor [2] | R-based analysis of high-throughput genomic data | Comprehensive R packages; statistical analysis; data visualization | Highly extensible; powerful for statistical analysis; open-source | Requires R programming knowledge; less intuitive interface |
| QIAGEN CLC Genomics Workbench [13] [2] | Comprehensive NGS data analysis | Integrated workflows for DNA, RNA, protein data; user-friendly interface | Comprehensive solution; robust visualization; drag-and-drop functionality | Expensive licensing; advanced features require experience |
| EMBOSS [2] | Comprehensive software suite for sequence analysis | Over 100 tools for sequence analysis; supports various file formats | Extensive toolkit; well-documented; highly customizable | Outdated interface; difficult for beginners |

Specialized Tools for Specific Analytical Tasks

These tools focus on particular types of biological data analysis, often providing more optimized performance for their specialized tasks.

Table 3: Comparison of Specialized Bioinformatics Tools

| Tool | Specialization | Key Features | Optimal Use Cases |
|---|---|---|---|
| BLAST [2] | Sequence alignment and similarity search | Sequence-to-sequence comparison; multiple database support; various output formats | Identifying homologous genes; predicting gene function; comparative genomics |
| GATK [2] | Variant discovery in NGS data | Variant calling, filtering, and annotation; SNP, INDEL, and structural variant detection | Genome-wide association studies (GWAS); precision oncology; population genetics |
| Cytoscape [2] | Network visualization and analysis | Molecular interaction networks; pathway analysis; plugin architecture | Protein-protein interaction networks; systems biology; pathway enrichment analysis |
| UCSC Genome Browser [2] | Genome data visualization | Genomic data visualization; custom data integration; comparative genomics | Exploring gene annotations; regulatory elements; visualizing sequencing data |
| TopHat2 [2] | RNA-seq data alignment | Splice junction detection; supports various sequencing technologies | Transcriptome analysis; alternative splicing studies; differential gene expression |
| Clustal Omega [2] | Multiple sequence alignment | Progressive alignment methods; DNA and protein sequences; visual output | Phylogenetic analysis; evolutionary studies; conserved domain identification |

Tool Performance in Specific Research Scenarios

The suitability of a bioinformatics tool varies significantly depending on the research context. The following section matches tools to common research scenarios.

Academic Research: Platforms like Geneious Prime or CLC Genomics Workbench offer user-friendly interfaces and licensing options aimed at academic labs [13], although commercial licensing can still be a significant expense for labs with limited budgets (see Table 2). Galaxy provides an excellent web-based option for collaborative academic projects with its reproducible workflows and extensive tool integration [2].

Clinical Genomics: Bioinformatics Solutions Inc. (BSI) and Roche NimbleGen provide validated tools compliant with regulatory standards, making them ideal for diagnostic applications [13]. GATK offers extremely accurate variant detection, which is critical for clinical interpretation [2].

Large-Scale Genomics Projects: Seven Bridges and DNAnexus excel in cloud scalability, supporting massive data volumes and collaboration across institutions [13]. These platforms are particularly suited for consortia-level projects involving thousands of samples.

Pathway & Functional Analysis: Ingenuity Pathway Analysis (IPA) by QIAGEN offers deep insights into biological pathways, making it suitable for functional genomics studies [13] [14]. Cytoscape provides powerful network visualization capabilities for analyzing molecular interactions [2].

Experimental Protocols and Validation

Validating bioinformatics tools through well-designed experiments and pilot projects is essential for demonstrating their reliability and suitability for specific research needs.

Experimental Design for Tool Evaluation

Rigorous assessment of bioinformatics tools requires controlled experiments comparing performance on benchmark datasets. The following protocol outlines a standardized approach for tool evaluation:

Table 4: Experimental Protocol for Bioinformatics Tool Validation

| Protocol Step | Description | Key Parameters |
|---|---|---|
| 1. Benchmark Dataset Selection | Curate standardized datasets with known characteristics | Include positive and negative controls; varying complexity levels |
| 2. Experimental Setup | Configure tools according to developer recommendations | Parameter settings; hardware allocation; version documentation |
| 3. Performance Metrics | Apply quantitative measures for comparison | Accuracy; precision; recall; computational efficiency; scalability |
| 4. Result Interpretation | Analyze outputs for biological relevance | Statistical significance; concordance with established knowledge |

This experimental framework ensures fair and reproducible comparisons between tools, providing empirical evidence to support selection decisions.
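The "computational efficiency" part of step 3 can be captured with a small timing harness. The following is a minimal sketch, assuming the tool under test can be called as a Python function; the helper names `benchmark` and `count_kmers` are illustrative. Note that `tracemalloc` only tracks memory allocated by Python itself and adds overhead to the timings, so external command-line tools would need OS-level measurement instead.

```python
import time
import tracemalloc


def benchmark(func, *args, repeats=3, **kwargs):
    """Run func several times; report best wall-clock time and peak Python memory."""
    timings = []
    peak_bytes = 0
    result = None
    for _ in range(repeats):
        tracemalloc.start()
        start = time.perf_counter()
        result = func(*args, **kwargs)
        timings.append(time.perf_counter() - start)
        _, peak = tracemalloc.get_traced_memory()
        tracemalloc.stop()
        peak_bytes = max(peak_bytes, peak)
    return {"result": result, "best_seconds": min(timings), "peak_bytes": peak_bytes}


def count_kmers(sequence, k):
    """Toy workload standing in for a real tool: count all k-mers in a sequence."""
    counts = {}
    for i in range(len(sequence) - k + 1):
        kmer = sequence[i:i + k]
        counts[kmer] = counts.get(kmer, 0) + 1
    return counts


report = benchmark(count_kmers, "ACGT" * 1000, 4)
print(f"best run: {report['best_seconds']:.4f} s, peak: {report['peak_bytes']} bytes")
```

Recording the tool version and parameter settings alongside each report, as step 2 of the protocol requires, keeps the measurements comparable between runs.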

Case Studies in Tool Validation

Real-world implementations provide valuable insights into tool performance across different research scenarios:

Large-Scale Sequencing Project: A university utilized DNAnexus for a 10,000-sample sequencing project, achieving faster turnaround times and seamless data sharing between collaborating institutions [13]. The cloud-based platform demonstrated superior scalability compared to local computing resources.

Routine Gene Editing Analysis: A biotech firm adopted Geneious Prime for routine CRISPR analysis, reporting improved accuracy in guide RNA design and ease of use for both bioinformaticians and biologists [13]. The platform's intuitive interface reduced training time and increased productivity.

Clinical Diagnostics Integration: A clinical laboratory integrated BSI's bioinformatics tools for diagnostic applications, meeting regulatory compliance requirements while reducing analysis time by 30% [13]. The validated workflows ensured reproducible results for patient care decisions.

Visualization of Tool Selection Workflows

Effective visualization of analytical workflows helps researchers understand and communicate complex bioinformatics processes. The following diagrams illustrate key relationships and workflows in tool selection and application.

Bioinformatics Tool Selection Algorithm

Diagram 1: Tool Selection Workflow. This flowchart illustrates the decision-making process for selecting appropriate bioinformatics tools based on research goals, data characteristics, and resource constraints.

Multi-Omics Data Integration Framework

Diagram 2: Multi-Omics Integration Framework. This diagram shows how different omics data types are integrated through bioinformatics platforms for comprehensive biological analysis.

Essential Research Reagent Solutions

Beyond software tools, successful bioinformatics research requires various data resources and computational components. The table below outlines key "research reagents" in the bioinformatics context.

Table 5: Essential Bioinformatics Research Reagents and Resources

| Resource Category | Examples | Primary Function |
|---|---|---|
| Public Data Repositories [14] [12] | TCGA, GEO, ArrayExpress, GenBank, Ensembl | Provide reference datasets for analysis; enable meta-analyses |
| Reference Genomes [14] | GRCh38 (human), GRCm39 (mouse) | Serve as alignment templates; provide genomic context |
| Analysis Toolkits [14] [2] | ANNOVAR, GSEA, OpenMS | Perform specific analytical tasks (variant annotation, enrichment) |
| Programming Environments [2] | R, Python with bioinformatics libraries | Enable custom analysis development; statistical computing |
| Visualization Tools [2] | UCSC Genome Browser, Cytoscape | Create publication-quality figures; explore data interactively |

Selecting the appropriate bioinformatics tool requires careful consideration of research goals, data types, scalability needs, and available expertise. As the field evolves toward more integrated AI-driven approaches, tool selection will continue to be a critical factor in research success. By applying the systematic framework presented in this guide—incorporating defined evaluation criteria, experimental validation, and workflow visualization—researchers can make informed decisions that maximize the value of their biological data and advance their scientific objectives.

The selection of bioinformatics platforms is a critical strategic decision for modern research institutions. This guide provides an objective, data-driven comparison between open-source and commercial bioinformatics platforms, focusing on their performance across core genomic analysis tasks. Framed within a broader thesis on comparative bioinformatics tool performance, we evaluate platforms based on experimental data, computational efficiency, and total cost of ownership. Below is a structured summary of key trade-offs to inform selection decisions for researchers, scientists, and drug development professionals.

Key Trade-offs at a Glance

| Evaluation Dimension | Open-Source Platforms | Commercial Platforms |
|---|---|---|
| Total Cost | Free or low-cost software; higher personnel/infrastructure investment [15] | Significant licensing/subscription fees; lower setup overhead [2] [16] |
| Customization & Flexibility | High; modular, script-based, and highly adaptable (e.g., Bioconductor, Nextflow) [1] [17] | Low to moderate; standardized workflows with limited modification options [15] |
| Ease of Use & Support | Steep learning curve; reliant on community forums and documentation [1] | User-friendly GUI, dedicated vendor support, and extensive training resources [16] [2] |
| Reproducibility & Compliance | Achievable via containerization (Docker) and workflow managers (Nextflow); user-managed [16] [17] | Built-in features for audit trails, GxP-compliance, and validated pipelines [16] |
| Best-Suited For | Computational biologists, method developers, and budget-conscious teams [1] | Regulated environments, diagnostic labs, and teams with limited bioinformatics staff [16] [15] |

Bioinformatics platforms form the operational backbone of modern life sciences, integrating data management, workflow orchestration, and analysis tools to process complex biological datasets [16]. The fundamental division in this landscape lies between open-source platforms, which are typically free, modular, and community-developed, and commercial platforms, which are paid, integrated, and vendor-supported. This analysis moves beyond subjective preference to a performance-based comparison, examining how each platform type handles specific, computationally intensive tasks. The exponential growth of genomic data, which reportedly doubles every seven months, makes this choice more critical than ever, as it directly impacts research velocity, reproducibility, and operational costs [16]. Understanding the inherent trade-offs enables organizations to align their strategic investments with their technical capabilities, research objectives, and operational constraints.

Methodological Framework for Comparison

To ensure an objective and repeatable analysis, we established a rigorous methodological framework centered on benchmarking core genomic tasks.

Experimental Protocols for Benchmarking

Our comparative analysis is grounded in standardized experimental protocols that reflect real-world research scenarios. The methodologies below are designed to quantify performance across key bioinformatics workflows.

Protocol 1: RNA-Seq Analysis for Differential Expression

- Objective: To compare the accuracy, runtime, and resource consumption of RNA-seq data analysis pipelines.

- Input Data: High-throughput RNA sequencing (RNA-seq) data in FASTQ format [18].

- Tools & Parameters:

- Alignment: STAR (open-source) and proprietary aligners within commercial platforms were used with default parameters [18].

- Quantification: Transcript-level abundance was estimated using Salmon (open-source) and commercial equivalent tools [18].

- Differential Expression: Statistical analysis was performed using DESeq2 and edgeR (open-source) and their commercial counterparts [18].

- Output Metrics: The protocol measures gene/transcript abundance estimates (TPM), counts of differentially expressed genes, false discovery rates (FDR), pipeline wall-clock time, and peak memory usage (RAM) [18].
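Because TPM is one of the output metrics of Protocol 1, it may help to see how it is derived from raw counts. The sketch below uses the standard TPM definition (reads per kilobase, rescaled so that all values sum to one million); the function name `counts_to_tpm` and the toy transcripts are illustrative, and real quantifiers such as Salmon use effective rather than annotated transcript lengths.

```python
def counts_to_tpm(counts, lengths_bp):
    """Convert raw read counts to TPM given transcript lengths in base pairs."""
    if counts.keys() != lengths_bp.keys():
        raise ValueError("counts and lengths must cover the same transcripts")
    # Step 1: normalize each count by transcript length in kilobases (RPK).
    rpk = {t: counts[t] / (lengths_bp[t] / 1000) for t in counts}
    # Step 2: rescale so that the values across all transcripts sum to 1e6.
    scale = sum(rpk.values()) / 1_000_000
    if scale == 0:
        return {t: 0.0 for t in counts}
    return {t: value / scale for t, value in rpk.items()}


# Toy example with three hypothetical transcripts.
counts = {"tx1": 100, "tx2": 200, "tx3": 0}
lengths = {"tx1": 1000, "tx2": 4000, "tx3": 2000}
tpm = counts_to_tpm(counts, lengths)
for transcript, value in tpm.items():
    print(f"{transcript}: {value:.1f}")
```

In this toy case `tx2` has twice the reads of `tx1` but four times the length, so its TPM comes out at half that of `tx1`, which is the length correction the metric is designed to provide.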

Protocol 2: SARS-CoV-2 Subgenomic RNA (sgRNA) Identification

- Objective: To evaluate the concordance and sensitivity of different software in identifying canonical and non-canonical sgRNAs [19].

- Input Data: Amplicon-based sequencing data (Illumina MiSeq) from SARS-CoV-2 infected cell lines [19].

- Tools: The open-source tools Periscope, LeTRS, and sgDI-tector were evaluated. Commercial platform performance was inferred from published validations [19].

- Method: Tools were run on down-sampled datasets to normalize the number of input fragments. The analysis focused on identifying reads supporting known canonical sgRNAs (e.g., for N, M, S ORFs) and non-canonical species [19].

- Output Metrics: Key metrics included the percentage of initial fragments supporting sgRNAs, the concordance rate of identification between tools, and sensitivity in detecting low-abundance nc-sgRNAs [19].
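The down-sampling step and the "percentage of initial fragments supporting sgRNAs" metric in Protocol 2 can be sketched as follows. This is a simplified illustration, not the procedure used in [19]: fragments are modeled as hypothetical `(fragment_id, supports_sgrna)` tuples, and the fixed random seed is an assumption made here so that repeated runs select the same subset.

```python
import random


def downsample(fragments, target, seed=0):
    """Randomly keep `target` fragments without replacement (all if fewer exist)."""
    if target >= len(fragments):
        return list(fragments)
    rng = random.Random(seed)  # fixed seed keeps the subset reproducible
    return rng.sample(fragments, target)


def percent_supporting(fragments, is_sgrna):
    """Percentage of fragments for which the predicate reports sgRNA support."""
    if not fragments:
        return 0.0
    supporting = sum(1 for fragment in fragments if is_sgrna(fragment))
    return 100.0 * supporting / len(fragments)


# Toy data: every tenth fragment is flagged as supporting an sgRNA.
fragments = [(f"frag{i}", i % 10 == 0) for i in range(1000)]
subset = downsample(fragments, 200)

print(f"full set: {percent_supporting(fragments, lambda f: f[1]):.1f}%")
print(f"down-sampled ({len(subset)} fragments): "
      f"{percent_supporting(subset, lambda f: f[1]):.1f}%")
```

Normalizing every sample to the same fragment count before computing the percentage is what makes the per-tool numbers comparable across libraries of different depth.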

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful execution of bioinformatics analyses requires a combination of software tools and data resources. The following table details key components of a standard bioinformatics research environment.

Table: Key Research Reagent Solutions for Bioinformatics Analysis

| Item Name | Type | Function in Analysis |
|---|---|---|
| GGD (Go Get Data) [17] | Data Tool | A command-line interface for the standardized and reproducible downloading of genomic data (e.g., reference genomes, annotations). |
| Bioconda [17] | Package Suite | A channel for the Conda package manager that specializes in bioinformatics software, enabling easy installation and version management of over 3,000 tools. |
| Nextflow/Snakemake [16] [17] | Workflow Manager | Frameworks for defining, executing, and managing portable and scalable bioinformatics pipelines, ensuring reproducibility across different computing environments. |
| Docker/Singularity [16] | Containerization | Technologies that package software and all its dependencies into isolated containers, guaranteeing consistent performance and eliminating "works on my machine" problems. |
| FASTQ File [18] | Data Format | The standard raw data output from sequencing instruments, containing the nucleotide sequences and corresponding quality scores for each read. |
| BAM/SAM File [18] | Data Format | The standard format for storing aligned sequencing reads, indicating the position of each read relative to a reference genome. |
| GTF/GFF File [18] | Data Format | File formats containing genomic annotations, such as the locations of genes, transcripts, and exons, which are essential for quantifying expression. |
| Reference Genome [20] | Data Resource | A representative example of a species' DNA sequence, used as a scaffold for aligning sequencing reads to identify genetic variation (e.g., GRCh38 for human). |
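To make the FASTQ entry in the table concrete, the sketch below reads the common four-line record layout and decodes quality strings. It assumes Phred+33 encoding and unwrapped sequence lines, which holds for most current Illumina output but not for every FASTQ file; the function name `parse_fastq` is illustrative, and production pipelines would normally rely on an established parser instead.

```python
import io


def parse_fastq(handle):
    """Yield (read_id, sequence, phred_scores) from a four-line-per-record FASTQ stream."""
    while True:
        header = handle.readline().rstrip("\n")
        if not header:
            return  # end of file (or a trailing blank line)
        sequence = handle.readline().rstrip("\n")
        separator = handle.readline().rstrip("\n")
        quality = handle.readline().rstrip("\n")
        if not header.startswith("@") or not separator.startswith("+"):
            raise ValueError(f"malformed FASTQ record near: {header!r}")
        if len(sequence) != len(quality):
            raise ValueError(f"sequence/quality length mismatch in: {header!r}")
        # Phred+33: quality score = ASCII code of the character minus 33.
        scores = [ord(char) - 33 for char in quality]
        fields = header[1:].split()
        read_id = fields[0] if fields else ""
        yield read_id, sequence, scores


example = io.StringIO("@read1 sample=demo\nACGT\n+\nII5!\n@read2\nGG\n+\n##\n")
records = list(parse_fastq(example))
for read_id, sequence, scores in records:
    print(read_id, sequence, scores)
```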

Comparative Workflow Architecture

The fundamental difference between open-source and commercial platforms often lies in how analysis workflows are constructed and managed. The diagram below illustrates the typical architectural flow for each approach.

Diagram: Architectural comparison of typical analysis workflows.

Performance Analysis by Research Task

The performance gap between open-source and commercial platforms varies significantly depending on the specific research task. This section breaks down experimental results across common genomic analyses.

Sequencing Read Alignment and Variant Calling

Read alignment is a foundational step in genomic analysis, and tool choice directly impacts the accuracy of all downstream results [20].

Table: Performance of Alignment & Variant Calling Tools

| Tool / Platform | Type | Key Algorithm/Feature | Reported Accuracy | Resource Profile |
|---|---|---|---|---|
| STAR [18] | Open-Source | Spliced alignment via large genome indexing | High accuracy for splice junction mapping [18] | High memory usage, fast runtime [18] |
| HISAT2 [18] | Open-Source | Hierarchical FM-index for splice-aware mapping | Competitive accuracy with STAR [18] | Lower memory footprint, balanced runtime [18] |
| BWA [17] | Open-Source | Burrows-Wheeler Transform for pairwise alignment | Industry standard for DNA read alignment [17] | Efficient memory and CPU use [17] |
| DeepVariant [1] [17] | Open-Source | Deep learning for variant calling from sequencing data | High sensitivity for rare variants [1] | Computationally intensive, requires significant resources [1] |
| DRAGEN (Illumina) [21] | Commercial | Hardware-accelerated via FPGA | Equivalent to BWA-GATK Best Practices [21] | Ultra-rapid analysis, optimized cloud resource use [21] |

A critical study highlighted the profound impact of aligner choice on downstream results. When comparing splice-aware aligners (HISAT2, STAR, Subread) for RNA variant calling, researchers found that less than 2% of identified potential RNA editing sites were common across all tools [18]. The primary source of discrepancy was reads mapped to splice junctions, underscoring that alignment algorithm selection is a major source of technical variation in research findings [18].
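The kind of overlap statistic described above can be computed with plain set operations. The sketch below is an assumption about how such a figure might be derived (sites shared by all tools divided by sites reported by any tool); the exact definition used in [18] is not given here, and the call sets are made-up toy data.

```python
def shared_fraction(call_sets):
    """Fraction of all reported sites (union) that every tool reports (intersection)."""
    sets = [set(calls) for calls in call_sets.values()]
    union = set().union(*sets)
    if not union:
        return 0.0
    common = set.intersection(*sets)
    return len(common) / len(union)


def unique_to_each(call_sets):
    """Number of sites reported by exactly one tool, keyed by tool name."""
    result = {}
    for name, calls in call_sets.items():
        others = set().union(*(set(c) for n, c in call_sets.items() if n != name))
        result[name] = len(set(calls) - others)
    return result


# Toy call sets keyed by aligner; each site is (chromosome, position, ref, alt).
call_sets = {
    "HISAT2": {("chr1", 100, "A", "G"), ("chr1", 200, "A", "G"), ("chr2", 50, "A", "G")},
    "STAR": {("chr1", 100, "A", "G"), ("chr2", 75, "A", "G")},
    "Subread": {("chr1", 100, "A", "G"), ("chr3", 10, "A", "G")},
}

print(f"shared by all tools: {shared_fraction(call_sets):.0%}")
print(f"unique per tool: {unique_to_each(call_sets)}")
```

Reporting the per-tool unique counts alongside the shared fraction helps show whether the disagreement is spread evenly or driven by a single aligner.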

RNA-Seq and Transcriptomic Analysis

For RNA-seq, the choice often lies between integrated commercial solutions and flexible, best-in-class open-source pipelines.

Table: Performance of RNA-Seq Analysis Tools

| Tool / Platform | Type | Best For | Pros | Cons |
|---|---|---|---|---|
| Salmon/Kallisto [17] [18] | Open-Source | Rapid transcript-level quantification | Fast, avoids alignment; reduced storage needs [18] | "Lightweight" mapping may miss some complex events [18] |
| DESeq2 / edgeR [18] | Open-Source | Differential expression analysis | Robust statistical models, highly customizable [18] | Steep learning curve (R programming) [1] |
| Galaxy [1] [2] | Open-Source Platform | Accessible, reproducible workflow creation | User-friendly web interface, no coding required [1] [2] | Can be slow with large datasets; cloud setup can be complex [1] |
| CLC Genomics Workbench [2] | Commercial Platform | Integrated NGS data analysis | User-friendly GUI, comprehensive workflows [2] | Expensive licensing; limited advanced customization [2] |
| Partek Flow [18] | Commercial Platform | GUI-driven statistical analysis | Intuitive visual pipeline builder | High subscription cost, "black box" processes |

Experimental data shows that lightweight quantifiers such as Salmon and Kallisto, which rely on quasi-mapping or pseudoalignment rather than full read alignment, provide dramatic speedups and reduced storage needs while maintaining high accuracy for standard differential expression tasks [18]. For the differential expression step itself, DESeq2 is often preferred for studies with small sample sizes because of its stable shrinkage estimation, while limma-voom excels in large cohorts with complex designs [18].

Specialized and Emerging Applications

Performance can be highly task-specific. For example, in SARS-CoV-2 research, a comparison of open-source sgRNA identification tools (Periscope, LeTRS, sgDI-tector) showed a high concordance rate in identifying canonical sgRNAs, but significant differences emerged in detecting non-canonical species [19]. This illustrates that for novel or specialized applications, open-source tools may offer leading-edge functionality that is not yet available in standardized commercial packages.

Total Cost of Ownership and Operational Considerations

The financial decision extends far beyond initial software licensing fees to encompass the total cost of ownership (TCO), which includes personnel, infrastructure, and maintenance.

Table: Comprehensive Cost-Benefit Analysis

| Cost Factor | Open-Source Platforms | Commercial Platforms |
|---|---|---|
| Software Licensing | Free [21] [17] | High annual subscription or per-user fees [2] |
| Personnel & Training | Requires expensive, highly-skilled bioinformaticians [15] | Lower skill barrier; analysts can run analyses with less training [16] |
| Hardware & Infrastructure | User-managed HPC or cloud clusters, requiring internal expertise [1] | Often cloud-optimized; vendor may provide managed infrastructure [16] |
| Implementation & Maintenance | Significant time investment in installation, dependency management, and pipeline development [16] | Faster setup; vendor handles updates, maintenance, and support [16] |
| Value Proposition | Maximum flexibility and no vendor lock-in; ideal for method development and novel analyses [1] [17] | Faster time-to-insights for standard analyses; support and compliance are key value drivers [16] |
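The cost factors in the table above can be combined into a simple multi-year TCO estimate. The sketch below is a deliberately crude model, assuming constant annual costs and a single one-time setup cost; every figure in it is a hypothetical placeholder rather than a real price, and the class name `CostProfile` is illustrative.

```python
from dataclasses import dataclass


@dataclass
class CostProfile:
    """Annual and one-time costs for one platform option (arbitrary currency units)."""
    annual_license: float
    annual_personnel: float
    annual_infrastructure: float
    one_time_setup: float

    def total_cost(self, years):
        """Setup cost plus recurring costs accumulated over the given number of years."""
        recurring = (self.annual_license
                     + self.annual_personnel
                     + self.annual_infrastructure)
        return self.one_time_setup + years * recurring


# Hypothetical placeholder figures, chosen only to illustrate the calculation.
open_source = CostProfile(annual_license=0, annual_personnel=150_000,
                          annual_infrastructure=40_000, one_time_setup=60_000)
commercial = CostProfile(annual_license=70_000, annual_personnel=90_000,
                         annual_infrastructure=20_000, one_time_setup=15_000)

for years in (1, 3, 5):
    print(f"{years} yr: open-source={open_source.total_cost(years):,.0f} "
          f"commercial={commercial.total_cost(years):,.0f}")
```

With different placeholder inputs the ordering can easily reverse, which is the point of running the calculation with an organization's own salary, licensing, and infrastructure figures.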

A core flaw in the "self-service" bioinformatics model is that data preprocessing, while computationally intensive, is only a small part of the value chain and is often not truly standard. Configuring pipelines for different organisms or sample types is "full of edge cases," leading teams to build one-off automations that don't transfer easily [15]. This heterogeneity has challenged many well-funded commercial platforms, some of which have pivoted to consultancy or narrowed their scope to a single data type [15].

Selecting the right bioinformatics platform is not about finding the "best" tool in absolute terms, but about finding the best fit for an organization's specific context. The following decision pathway provides a structured method for making this choice.

Diagram: A decision pathway for selecting between platform types.

Conclusive Recommendations

Based on the comparative data and analysis, we arrive at the following conclusive recommendations:

- For computationally skilled teams and pioneering research, the investment in open-source platforms is justified. The flexibility to customize pipelines using tools from communities like Bioconductor and Biopython, coupled with the power of workflow managers like Nextflow, is essential for tackling novel biological questions [1] [17]. The lack of licensing fees also frees up budget for high-performance computing infrastructure.

- For regulated industries and core service facilities, commercial platforms offer superior value. In diagnostic labs or biopharma settings requiring GxP-compliance, the built-in audit trails, validated pipelines, and vendor support provided by commercial platforms are not just convenient—they are necessary [16]. They enable biologists and analysts to generate consistent, reproducible results with less dependency on scarce bioinformatics expertise.

- For the majority of academic and biotech research groups, a hybrid strategy often proves most effective. This involves using commercial platforms for standardized, high-throughput analyses (e.g., routine RNA-seq) to ensure consistency and speed, while simultaneously maintaining an open-source environment for exploratory research, algorithm development, and analyzing data types not yet supported by commercial solutions.

In summary, the trade-off is a continuum between control and convenience. Open-source platforms offer maximum control and flexibility at the cost of higher internal complexity and personnel requirements. Commercial platforms offer greater convenience, support, and standardization at the cost of financial investment and analytical flexibility. The optimal choice is uniquely determined by an organization's technical capabilities, strategic research goals, and operational constraints.

Precision in Practice: Applying Bioinformatics Tools to Specific Research Tasks

Accurate genomic variant discovery is a foundational step in modern genetics, enabling breakthroughs in understanding inherited diseases, population diversity, and personalized medicine. Next-generation sequencing (NGS) generates vast amounts of data where precise identification of genetic variants is crucial for downstream analysis and clinical interpretation. The selection of optimal computational tools for variant calling significantly impacts the reliability and accuracy of research outcomes and diagnostic conclusions.

This guide provides a comprehensive comparative analysis of two leading variant discovery tools: the Genome Analysis Toolkit (GATK) and DeepVariant. GATK represents a sophisticated statistical framework that has long been the industry standard, while DeepVariant exemplifies the innovative application of deep learning to genomic analysis. We objectively evaluate their performance, technical approaches, and practical implementation through synthesized experimental data and benchmarking studies, providing researchers with evidence-based guidance for tool selection.

GATK: Statistical Framework

Developed by the Broad Institute, GATK is an industry-standard toolkit focused on variant discovery and genotyping. Its powerful processing engine and high-performance computing features make it capable of handling projects of any size [22]. GATK employs a sophisticated statistical approach centered on its HaplotypeCaller algorithm, which identifies variants through local de novo assembly of haplotypes followed by pair hidden Markov model (PairHMM)-based genotyping [23]. This method detects single nucleotide variants (SNVs) as well as insertions and deletions (indels) by comparing assembled haplotypes to the reference genome.

The toolkit provides "Best Practices" workflows that are battle-tested in production at the Broad Institute and optimized to produce accurate results with computational efficiency [22]. These workflows encompass all major classes of variants for genomic analysis in gene panels, exomes, and whole genomes. While originally developed for human genetics, GATK has evolved to handle genome data from any organism with any level of ploidy.

DeepVariant: Deep Learning Approach

DeepVariant, developed by Google Health, represents a paradigm shift in variant calling by reformulating the problem as an image classification task. This open-source tool uses deep convolutional neural networks (CNNs) to analyze pileup image tensors of aligned reads, effectively distinguishing true genetic variants from sequencing artifacts [24]. Instead of relying on hand-crafted statistical models, DeepVariant learns discriminative features directly from the data during training on known variant sets.

The tool creates multi-channel tensors from read alignments, with each channel representing different aspects of the sequencing data, such as read bases, base qualities, mapping qualities, and strand information. These tensors are processed through a CNN architecture that outputs genotype probabilities [25]. A key advantage of this approach is its ability to automatically produce filtered variants without requiring complex post-processing steps, significantly simplifying the analysis pipeline.
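The idea of a multi-channel pileup tensor can be illustrated with a toy encoder. The sketch below is not DeepVariant's actual encoding: the channel order, the numeric scaling, the read dictionary layout, and the omission of indels and CIGAR handling are all simplifying assumptions made here for illustration.

```python
# Arbitrary illustrative base encoding; 0.0 is reserved for "no read coverage".
BASE_CODES = {"A": 0.25, "C": 0.5, "G": 0.75, "T": 1.0}


def build_pileup_tensor(reads, window_start, window_len, max_reads):
    """Return tensor[channel][read_row][column] for reads overlapping a window.

    Channels: 0 = read base, 1 = base quality, 2 = mapping quality, 3 = strand.
    Reads are dicts with hypothetical keys: pos, seq, base_quals, mapq, reverse.
    """
    channels = 4
    tensor = [[[0.0] * window_len for _ in range(max_reads)]
              for _ in range(channels)]
    for row, read in enumerate(reads[:max_reads]):
        for offset, base in enumerate(read["seq"]):
            col = read["pos"] + offset - window_start
            if not 0 <= col < window_len:
                continue  # base falls outside the window
            tensor[0][row][col] = BASE_CODES.get(base, 0.0)
            tensor[1][row][col] = min(read["base_quals"][offset], 40) / 40
            tensor[2][row][col] = min(read["mapq"], 60) / 60
            tensor[3][row][col] = 1.0 if read["reverse"] else 0.5
    return tensor


reads = [
    {"pos": 100, "seq": "ACGT", "base_quals": [40, 30, 20, 10],
     "mapq": 60, "reverse": False},
    {"pos": 102, "seq": "GTAA", "base_quals": [36, 36, 36, 36],
     "mapq": 30, "reverse": True},
]
tensor = build_pileup_tensor(reads, window_start=101, window_len=4, max_reads=3)
for name, channel in zip(("base", "base_qual", "mapq", "strand"), tensor):
    print(name, channel)
```

Each channel is a reads-by-positions grid, so stacking them yields an image-like input that a convolutional network can classify into genotype probabilities.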

Performance Comparison

Accuracy Metrics Across Multiple Studies

Multiple independent benchmarking studies have systematically evaluated the performance of GATK and DeepVariant using gold-standard reference samples from the Genome in a Bottle (GIAB) consortium. The table below summarizes key accuracy metrics from these comprehensive assessments:

Table 1: Performance comparison of GATK and DeepVariant across multiple benchmarking studies

| Study & Context | Metric | GATK | DeepVariant |
|---|---|---|---|
| Sporadic Epilepsy & ASD Cohorts [26] | SNV Precision | Lower | Higher |
| Sporadic Epilepsy & ASD Cohorts [26] | SNV Sensitivity | Lower | Higher |
| Sporadic Epilepsy & ASD Cohorts [26] | Rare Variant Detection | Distinct Advantage | Limited |
| Trio WES (80 trios) [27] | Mendelian Error Rate | 5.25 ± 0.91% | 3.09 ± 0.83% |
| Trio WES (80 trios) [27] | Ti/Tv Ratio | 2.04 ± 0.07 | 2.38 ± 0.02 |
| Trio WES (80 trios) [27] | Diagnostic Variants Detected | 61/63 (96.8%) | 62/63 (98.4%) |
| GIAB WES Benchmarking [28] | SNV Precision | >99% | >99% |
| GIAB WES Benchmarking [28] | SNV Recall | >99% | >99% |
| GIAB WES Benchmarking [28] | Indel Precision | >96% | >96% |
| GIAB WES Benchmarking [28] | Indel Recall | >96% | >96% |
| Systematic Benchmark (14 GIAB samples) [29] | Overall Performance | Robust | Best Performance & Highest Robustness |
| Systematic Benchmark (14 GIAB samples) [29] | Consistency Across Samples | Moderate | High |

Computational Requirements and Scalability

Computational efficiency is a critical consideration for large-scale genomic studies. The following table compares the resource requirements and scalability characteristics of both tools:

Table 2: Computational requirements and scalability comparison

| Aspect | GATK | DeepVariant |
|---|---|---|
| Hardware Requirements | CPU-intensive, benefits from Intel optimizations [23] | Supports both CPU and GPU, higher computational cost on CPU [24] |
| Processing Time (Trio WES) [27] | ~3851 seconds for variant calling | ~425 seconds for variant calling |
| Scalability | Engineered for cloud environments with Spark architectures [22] | Used in large-scale projects (UK Biobank WES) despite computational costs [24] |
| Recent Optimizations | 3.9x speedup with optimized PDHMM implementation [23] | Active development but inherent computational demands |
| Ease of Deployment | Complex workflow setup, Best Practices documentation available [22] | Simplified pipeline, fewer implementation barriers [25] |

Experimental Protocols and Benchmarking Methodologies

Standardized Benchmarking Frameworks

Robust evaluation of variant calling performance requires standardized benchmarking approaches. Most contemporary studies utilize the following methodology:

Reference Datasets: The GIAB consortium provides gold-standard reference genomes with highly accurate variant calls derived from multiple sequencing technologies and orthogonal validation methods [28] [29]. Commonly used samples include:

- HG001 (NA12878): European ancestry

- HG002-HG004: Ashkenazi Jewish trio

- HG005-HG007: Han Chinese trio

Analysis Regions: Benchmarking is typically performed within high-confidence regions of the genome, which cover approximately 75-79% of known pathogenic variants from ClinVar, making them highly relevant for clinical variant discovery [29].

Evaluation Metrics: Standard metrics include:

- Precision: Proportion of true variants among all called variants

- Recall/Sensitivity: Proportion of known variants correctly identified

- F1 Score: Harmonic mean of precision and recall

- Mendelian Concordance: Inheritance consistency in family trios

- Transition/Transversion (Ti/Tv) Ratio: Quality indicator for SNV calls
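Several of the metrics listed above reduce to a few lines of arithmetic. The sketch below computes precision, recall, F1, and the Ti/Tv ratio, assuming variants are represented as `(chromosome, position, ref, alt)` tuples and matched exactly; that exact-match rule is a simplification, since dedicated benchmarking tools also reconcile different representations of the same variant. The toy call sets are made up.

```python
# The four purine<->purine and pyrimidine<->pyrimidine substitutions.
TRANSITIONS = {("A", "G"), ("G", "A"), ("C", "T"), ("T", "C")}


def precision_recall_f1(called, truth):
    """Compare a call set against a truth set using exact tuple matching."""
    called, truth = set(called), set(truth)
    true_pos = len(called & truth)
    false_pos = len(called - truth)
    false_neg = len(truth - called)
    precision = true_pos / (true_pos + false_pos) if called else 0.0
    recall = true_pos / (true_pos + false_neg) if truth else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1


def ti_tv_ratio(snvs):
    """Ratio of transitions to transversions among single-nucleotide variants."""
    transitions = sum(1 for _, _, ref, alt in snvs if (ref, alt) in TRANSITIONS)
    transversions = sum(1 for _, _, ref, alt in snvs
                        if ref != alt and (ref, alt) not in TRANSITIONS)
    return transitions / transversions if transversions else float("inf")


truth = {("chr1", 100, "A", "G"), ("chr1", 200, "C", "T"),
         ("chr1", 300, "G", "T"), ("chr2", 50, "T", "C")}
called = {("chr1", 100, "A", "G"), ("chr1", 200, "C", "T"),
          ("chr2", 50, "T", "C"), ("chr2", 90, "A", "C")}

precision, recall, f1 = precision_recall_f1(called, truth)
print(f"precision={precision:.2f} recall={recall:.2f} F1={f1:.2f}")
print(f"Ti/Tv of called SNVs: {ti_tv_ratio(called):.2f}")
```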

Analysis Tools: The GA4GH benchmarking toolset, particularly hap.py, is widely used for stratified performance evaluation across different genomic contexts [29].

Specialized Experimental Designs

Beyond standard benchmarking, researchers have employed specialized experimental designs to evaluate specific aspects of performance:

Trio-Based Analysis: Studies using family trios enable assessment of Mendelian consistency and de novo mutation detection. This approach provides a realistic evaluation without requiring predetermined "truth" sets [27] [25].

Cross-Species Validation: Performance has been evaluated in non-human genomes to assess generalizability beyond human genomics, revealing limitations of human-trained models [25].

Challenging Sample Types: Both tools have been tested with suboptimal samples, such as formalin-fixed paraffin-embedded (FFPE) tissues, which present additional challenges due to DNA fragmentation and artifacts [30].
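The trio-based consistency check described above can be sketched as follows. This is a simplified illustration, assuming diploid autosomal sites with no missing genotypes; genotypes are represented as pairs of allele indices, and the function names are hypothetical rather than taken from any of the cited studies.

```python
from itertools import product


def is_mendelian_consistent(child, mother, father):
    """True if the child's genotype can be formed from one maternal
    and one paternal allele. Genotypes are pairs of allele indices,
    e.g. (0, 1) for a heterozygous call."""
    child_sorted = tuple(sorted(child))
    return any(
        tuple(sorted((m, f))) == child_sorted
        for m, f in product(mother, father)
    )


def mendelian_error_rate(trio_genotypes):
    """Fraction of sites whose (child, mother, father) genotypes are
    inconsistent with Mendelian inheritance."""
    sites = list(trio_genotypes)
    if not sites:
        return 0.0
    errors = sum(
        1 for child, mother, father in sites
        if not is_mendelian_consistent(child, mother, father)
    )
    return errors / len(sites)


# Made-up genotypes for three sites, ordered (child, mother, father).
example_sites = [
    ((0, 1), (0, 0), (1, 1)),  # consistent
    ((1, 1), (0, 0), (0, 1)),  # inconsistent: mother carries no alt allele
    ((0, 1), (0, 0), (0, 0)),  # inconsistent: error or candidate de novo
]
example_error_rate = mendelian_error_rate(example_sites)
```

An inconsistent site is not necessarily a calling error: it may also be a genuine de novo mutation, which is why both readings are evaluated together in trio studies.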

Workflow and Implementation

Analysis Pipelines

The variant discovery process follows a structured workflow from raw sequencing data to finalized variant calls. The diagram below illustrates the key stages where GATK and DeepVariant employ different methodological approaches:

[Figure: Variant Discovery Workflow Comparison]

Key Research Reagents and Solutions

Successful variant discovery requires not only computational tools but also carefully selected genomic resources and reagents. The following table details essential components for establishing a robust variant calling pipeline:

Table 3: Key research reagents and solutions for genomic variant discovery

| Resource Category | Specific Examples | Function in Variant Discovery |

|---|---|---|

| Reference Genomes | GRCh38, T2T-CHM13, species-specific references | Standardized coordinate system for read alignment and variant reporting |

| Validation Standards | GIAB reference materials (HG001-HG007) | Gold-standard truth sets for pipeline validation and performance benchmarking |

| Capture Kits | Agilent SureSelect, Illumina Nextera | Target enrichment for whole exome sequencing studies |

| Alignment Tools | BWA-MEM, Bowtie2, Isaac, Novoalign | Map sequencing reads to reference genome |

| Benchmarking Tools | hap.py, VCAT, rtg-tools | Performance assessment against known variants |

| Variant Annotation | SnpEff, VEP, ANNOVAR | Functional interpretation of called variants |

| Data Sources | NCBI SRA, ENA, TCGA | Publicly available datasets for method development |

Strengths, Limitations, and Optimal Use Cases

Comparative Advantages and Constraints

Both tools exhibit distinct profiles of strengths and limitations that make them suitable for different research scenarios:

GATK Advantages:

- Established rare variant detection capabilities, particularly valuable for novel disease-gene discovery [26]

- Comprehensive "Best Practices" documentation and active user community [22]

- Ongoing performance optimizations, such as the recent PDHMM implementation delivering 3.9x speedup [23]

- Flexible filtering approaches that can be customized for specific research needs

GATK Limitations:

- Higher Mendelian error rates in family-based studies compared to DeepVariant [27]

- More complex implementation requiring multiple processing steps

- Historically slower processing times, though recent optimizations such as the PDHMM implementation (3.9x speedup [23]) have narrowed this gap

DeepVariant Advantages:

- Superior accuracy metrics in multiple independent benchmarks [27] [29]

- Lower Mendelian error rates, making it particularly suitable for trio and family studies [27] [25]

- Simplified workflow with integrated filtering, reducing implementation barriers [25]

- Better performance in challenging genomic regions and with lower coverage data [27]

DeepVariant Limitations:

- Higher computational requirements, especially without GPU acceleration [24]

- Potential need for species-specific retraining when working with non-human genomes [25]

- Less established rare variant detection in some study designs [26]

Contextual Application Guidelines

Based on the accumulated evidence, the following guidelines emerge for tool selection:

Choose GATK When:

- Studying sporadic diseases where rare variant detection is prioritized [26]

- Working with non-human species without established DeepVariant models [25]

- Operating in environments with limited computational resources

- Leveraging existing institutional expertise with GATK pipelines

Choose DeepVariant When:

- Maximum accuracy is the primary consideration [29]

- Analyzing family trios or other pedigree-based designs [27]

- Working with challenging samples or suboptimal sequencing data [30]

- Prioritizing implementation simplicity over computational efficiency

Hybrid Approaches: For critical applications where the highest possible accuracy is required, some studies suggest using both tools in combination to leverage their complementary strengths [29].
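The source does not specify how the two call sets are combined, so the following is only one simple possibility: treating each variant as a (chrom, pos, ref, alt) key and separating calls made by both tools from calls made by one. It assumes both call sets were normalized to the same variant representation beforehand; the function name and the example variants are hypothetical.

```python
def combine_calls(gatk_calls, deepvariant_calls):
    """Split two call sets into consensus and caller-specific variants.

    Each call is a (chrom, pos, ref, alt) tuple. Both inputs are assumed
    to use the same reference build and normalized representation.
    """
    gatk = set(gatk_calls)
    deepvariant = set(deepvariant_calls)
    return {
        "consensus": gatk & deepvariant,         # called by both tools
        "gatk_only": gatk - deepvariant,         # candidates for review
        "deepvariant_only": deepvariant - gatk,  # candidates for review
    }


# Made-up variants for illustration only.
gatk_example = [("chr1", 1000, "A", "G"), ("chr1", 2000, "C", "T")]
deepvariant_example = [("chr1", 1000, "A", "G"), ("chr2", 500, "G", "A")]
combined = combine_calls(gatk_example, deepvariant_example)
```

Using the consensus set favors precision, while the union of all three groups favors recall; which trade-off is appropriate depends on the application.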

The comparative analysis of GATK and DeepVariant reveals a nuanced landscape where tool superiority depends heavily on specific research contexts and priorities. GATK maintains strengths in rare variant detection and possesses a mature, well-documented ecosystem with ongoing performance optimizations. DeepVariant consistently demonstrates superior accuracy metrics, particularly in family-based study designs, albeit with higher computational demands.

The evolution of both tools continues, with GATK addressing performance gaps through algorithmic optimizations and DeepVariant expanding its applicability across sequencing technologies and species. Researchers must consider their specific experimental requirements, sample characteristics, and computational resources when selecting between these best-in-class variant discovery tools. As genomic technologies advance and datasets expand, the ongoing benchmarking and refinement of these tools remain essential for maximizing the value of genomic sequencing in both research and clinical applications.

The field of structural biology has undergone a profound transformation with the integration of artificial intelligence, moving from purely experimental determination of protein structures to computational prediction with remarkable accuracy. This paradigm shift, recognized as Science's 2021 Breakthrough of the Year [31], has empowered researchers to explore protein structures and functions at an unprecedented scale. At the forefront of this revolution are tools like AlphaFold, developed by DeepMind, and Rosetta, a sophisticated molecular modeling suite. These platforms, alongside newer entrants such as ESMFold and OmegaFold, provide researchers with diverse approaches to tackling one of biology's most fundamental challenges: predicting the three-dimensional structure of a protein from its amino acid sequence. Understanding the relative strengths, limitations, and optimal application domains of each tool is crucial for researchers, scientists, and drug development professionals who rely on accurate structural models to drive discovery in areas ranging from therapeutic design to understanding fundamental biological mechanisms [31] [32].

The performance of these tools is typically benchmarked using standardized assessments like the Critical Assessment of protein Structure Prediction (CASP), where AlphaFold demonstrated revolutionary accuracy competitive with experimental structures in a majority of cases [33]. However, real-world application extends beyond single-structure prediction to include modeling of protein complexes, refinement of structures with experimental data, and resource optimization for large-scale studies. This comparative guide provides an objective analysis of current AI-driven protein analysis tools, presenting quantitative performance data, detailed experimental protocols, and practical implementation frameworks to inform their effective application in research and development contexts.

Comparative Performance Analysis of Major Protein Structure Prediction Tools

Quantitative Benchmarking of AlphaFold, ESMFold, and OmegaFold

Independent benchmarking studies provide critical insights into the practical performance of leading protein structure prediction tools. The following data, derived from comparative analysis on a g5.2xlarge A10 GPU system, highlights key operational differences between AlphaFold (via ColabFold), ESMFold, and OmegaFold across sequences of varying lengths [34].

Table 1: Runtime and Resource Utilization Comparison

| Sequence Length | Tool | Running Time (seconds) | pLDDT | GPU Memory Usage |
|---|---|---|---|---|
| 50 | ESMFold | 1 | 0.84 | 16 GB |
| 50 | OmegaFold | 3.66 | 0.86 | 6 GB |
| 50 | ColabFold | 45 | 0.89 | 10 GB |
| 400 | ESMFold | 20 | 0.93 | 18 GB |
| 400 | OmegaFold | 110 | 0.76 | 10 GB |
| 400 | ColabFold | 210 | 0.82 | 10 GB |
| 800 | ESMFold | 125 | 0.66 | 20 GB |
| 800 | OmegaFold | 1425 | 0.53 | 11 GB |
| 800 | ColabFold | 810 | 0.54 | 10 GB |
| 1600 | ESMFold | Failed (OOM) | - | 24 GB |
| 1600 | OmegaFold | Failed (>6000s) | - | 17 GB |
| 1600 | ColabFold | 2800 | 0.41 | 10 GB |

Table 2: Overall Performance Characteristics and Optimal Use Cases

| Tool | Key Strength | Key Limitation | Optimal Sequence Length | Best Application Context |

|---|---|---|---|---|

| ESMFold | Extreme speed for short sequences | Lower accuracy on longer sequences; High memory usage | < 400 residues | High-throughput screening of short proteins |

| OmegaFold | Balanced accuracy and efficiency for short sequences | Performance degradation on longer sequences | < 400 residues | Resource-constrained environments with shorter sequences |

| AlphaFold (ColabFold) | High accuracy; only tool to complete the 1600-residue sequence | Significant computational demands; long runtimes | All lengths, especially >800 residues | Research requiring maximum accuracy regardless of resources |

Performance Metrics and Interpretation

The benchmarking data reveals distinct performance profiles for each tool. ESMFold is the fastest: it processes a 50-residue sequence in approximately one second, about 45 times faster than ColabFold at that length [34]. This speed comes with trade-offs: ESMFold uses the most GPU memory at every tested length, its pLDDT (predicted local distance difference test) score falls from 0.93 at 400 residues to 0.66 at 800 residues, and it ran out of memory at 1600 residues. pLDDT is a per-residue estimate of prediction confidence [33]; it is reported here on a 0 to 1 scale (AlphaFold natively reports it on a 0 to 100 scale), with higher values indicating greater confidence.
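The speed comparison above is simple arithmetic on the runtimes in Table 1. A small sketch, with the runtimes copied from that table and the helper name `speedup` chosen for illustration, makes the ratios at each completed length explicit:

```python
# Runtimes in seconds, copied from Table 1 (lengths where all tools finished).
RUNTIME_SECONDS = {
    50: {"ESMFold": 1, "OmegaFold": 3.66, "ColabFold": 45},
    400: {"ESMFold": 20, "OmegaFold": 110, "ColabFold": 210},
    800: {"ESMFold": 125, "OmegaFold": 1425, "ColabFold": 810},
}


def speedup(length, fast_tool, slow_tool):
    """How many times faster fast_tool is than slow_tool at a given length."""
    runtimes = RUNTIME_SECONDS[length]
    return runtimes[slow_tool] / runtimes[fast_tool]


esmfold_vs_colabfold = {
    length: speedup(length, "ESMFold", "ColabFold")
    for length in RUNTIME_SECONDS
}
```

The ratio is 45 at 50 residues and shrinks to 10.5 at 400 residues and about 6.5 at 800 residues, so ESMFold's relative speed advantage over ColabFold narrows as sequences get longer.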

OmegaFold strikes a balance between computational efficiency and accuracy for short sequences: at 50 residues it reports a slightly higher pLDDT than ESMFold (0.86 vs. 0.84) while using 6 GB of GPU memory rather than 16 GB [34]. This combination of reasonable accuracy, moderate resource requirements, and cost-effectiveness makes OmegaFold particularly suitable for public-facing platforms and research groups with limited computational resources.

AlphaFold (assessed here through its ColabFold implementation) was the only tool to return a prediction for the 1600-residue sequence, where ESMFold ran out of memory and OmegaFold exceeded 6000 seconds, and it reported the highest pLDDT at 50 residues (0.89) [34]. At 400 and 800 residues, however, ESMFold reported higher pLDDT values in Table 1, so the confidence ranking is not uniform across lengths, and ColabFold's pLDDT at 1600 residues was only 0.41. Its runtimes are long, from 45 seconds at 50 residues to 2800 seconds at 1600 residues, making it best suited for research scenarios where precision is paramount and computational resources are adequate. AlphaFold's demonstrated median backbone accuracy of 0.96 Å RMSD95 in CASP14 assessments underscores its revolutionary position in the field [33].

Experimental Protocols and Methodologies

Workflow for Protein Structure Prediction

The process of predicting protein structures using AI tools follows a systematic workflow that integrates sequence input, computational processing, and output analysis. This generalized workflow applies to tools like AlphaFold, ESMFold, and OmegaFold.
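As an illustration of the output-analysis stage, the sketch below reads per-residue confidence from a predicted structure. It assumes an AlphaFold-style PDB file, where pLDDT is stored in the B-factor field (columns 61-66 of each ATOM record) on a 0 to 100 scale, and a single chain. The three ATOM lines are made-up example data, and the function names are hypothetical. The confidence bands follow the thresholds used by the AlphaFold Protein Structure Database.

```python
def plddt_per_residue(pdb_text):
    """Map residue number -> pLDDT, read from the B-factor field of
    C-alpha ATOM records (fixed-width PDB columns)."""
    scores = {}
    for line in pdb_text.splitlines():
        if line.startswith("ATOM") and line[12:16].strip() == "CA":
            residue_number = int(line[22:26])
            scores[residue_number] = float(line[60:66])
    return scores


def confidence_band(plddt):
    """Classify a 0-100 pLDDT value into a confidence band."""
    if plddt > 90:
        return "very high"
    if plddt > 70:
        return "confident"
    if plddt > 50:
        return "low"
    return "very low"


# Made-up ATOM records for illustration; coordinates are arbitrary.
EXAMPLE_PDB = "\n".join([
    "ATOM      2  CA  MET A   1      26.266  25.413   2.842  1.00 92.35           C",
    "ATOM     10  CA  LYS A   2      25.112  23.998   5.721  1.00 71.20           C",
    "ATOM     19  CA  GLY A   3      22.480  21.375   6.210  1.00 48.90           C",
])

example_scores = plddt_per_residue(EXAMPLE_PDB)
example_bands = {res: confidence_band(s) for res, s in example_scores.items()}
# Divide by 100 to compare with the 0-1 values reported in this guide.
example_mean_fraction = sum(example_scores.values()) / len(example_scores) / 100
```

Other tools may write confidence values on a different scale, so the scale should be checked before applying these thresholds.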

AlphaFold's Architectural Innovation

AlphaFold's breakthrough accuracy stems from its novel neural network architecture that incorporates physical and biological knowledge about protein structure [33]. The system operates through two main stages:

Evoformer Processing: The input sequence and multiple sequence alignments (MSAs) are processed through repeated Evoformer blocks. These blocks employ attention-based mechanisms to exchange information between the MSA representation and a pair representation, enabling direct reasoning about spatial and evolutionary relationships between residues [33]. The Evoformer uses triangular multiplicative updates and attention to enforce geometric consistency, essentially solving a graph inference problem in 3D space where edges represent residues in proximity.

Structure Module: This component generates explicit 3D atomic coordinates through a series of transformations. Starting from initial identity rotations and origin positions, the module progressively refines the structure using equivariant transformations that respect rotational and translational symmetry. Key innovations include breaking the chain structure to allow simultaneous local refinement and employing intermediate losses to achieve iterative refinement through a process called "recycling" [33].

The network is trained on structures from the Protein Data Bank and uses a combination of structural loss functions that place substantial weight on both positional and orientational correctness of residues, leading to highly accurate backbone and side-chain predictions [33].
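The core of the Evoformer's triangular multiplicative update mentioned above can be illustrated with a deliberately reduced sketch. In the "outgoing edges" variant, the pair entry for residues i and j is updated from the edges that i and j each share with a third residue k. The version below keeps only that sum over k for a single scalar channel; it omits the layer normalization, learned linear projections, and gating of the published algorithm, and the function name is an assumption for illustration.

```python
def triangle_multiply_outgoing(a, b):
    """Simplified 'outgoing edges' triangle update for one channel:

        out[i][j] = sum over k of a[i][k] * b[j][k]

    a and b are square n x n lists of floats derived from the pair
    representation (here given directly rather than via learned
    projections)."""
    n = len(a)
    return [
        [sum(a[i][k] * b[j][k] for k in range(n)) for j in range(n)]
        for i in range(n)
    ]


# Tiny made-up pair features for two residues.
a_example = [[1.0, 2.0], [3.0, 4.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]

# With b equal to the identity, the update returns a unchanged.
unchanged = triangle_multiply_outgoing(a_example, identity)
# With b equal to a, entry (i, j) is the dot product of rows i and j of a.
row_products = triangle_multiply_outgoing(a_example, a_example)
```

Because every (i, j) entry depends on all third residues k, each update lets information about pairs (i, k) and (j, k) constrain the pair (i, j), which is the sense in which the update encourages geometric consistency.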

Integrating Computational Predictions with Experimental Data

While AI-based predictions have transformed structural biology, integration with experimental data remains crucial for modeling complex biological systems. Researchers have developed hybrid approaches that combine tools like AlphaFold and Rosetta with experimental techniques such as mass spectrometry-based covalent labeling (CL) [35].

Table 3: Research Reagent Solutions for Hybrid Experimental-Computational Approaches