Benchmarking Binding Affinity Predictions: A Practical Guide for Computational Drug Discovery

Abstract

Accurate prediction of protein-ligand binding affinity is a cornerstone of computational drug discovery, directly impacting the efficiency of lead optimization. This article provides a comprehensive framework for researchers and drug development professionals to evaluate the accuracy of diverse computational models, from physics-based simulations to modern machine learning approaches. We explore the foundational principles of binding affinity, detail the mechanisms and optimal applications of key methodologies, address common pitfalls and optimization strategies, and finally, establish robust validation and benchmarking practices based on community standards to ensure reliable and predictive results in real-world drug discovery projects.

The Fundamentals of Binding Affinity: From Theory to Predictive Challenge

Defining Binding Affinity and its Critical Role in Drug Discovery

Binding affinity is the strength of the interaction between a single biomolecule (such as a protein) and its binding partner (known as a ligand, e.g., a drug or inhibitor) [1]. It is quantitatively measured and reported by the equilibrium dissociation constant (KD), a key parameter for evaluating and rank-ordering the strength of bimolecular interactions [1]. The KD value represents the concentration of ligand required to occupy half of the available binding sites on the target protein at equilibrium. A smaller KD value indicates a greater binding affinity, meaning the ligand and target are strongly attracted and bind tightly to one another. Conversely, a larger KD value signifies weaker binding [1].
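For reference, the statements above correspond to standard textbook relations. The block below assumes the simplest case of a 1:1 equilibrium P + L ⇌ PL; the symbols θ (fraction of occupied binding sites), [L] (free ligand concentration), and c° (the 1 M standard concentration) are introduced here for illustration and do not appear in the original text.

```latex
\[
K_D = \frac{[P][L]}{[PL]}, \qquad
\theta = \frac{[PL]}{[P] + [PL]} = \frac{[L]}{K_D + [L]}, \qquad
\Delta G^{\circ}_{\text{bind}} = RT \ln\!\left(\frac{K_D}{c^{\circ}}\right)
\]
```

Setting [L] = KD gives θ = 1/2, which is the half-occupancy definition, and a smaller KD yields a more negative binding free energy, which is the sense in which smaller KD means tighter binding.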

This intermolecular binding is governed by non-covalent interactions, including hydrogen bonding, electrostatic interactions, and hydrophobic and van der Waals forces [1]. Accurately predicting this binding strength computationally is a central challenge in modern biology and a critical bottleneck in drug discovery [2].

The Critical Role of Binding Affinity in Drug Discovery

In drug discovery, the ultimate goal is to develop a small molecule that potently and selectively binds to a specific protein target to modulate its function. Binding affinity directly influences the potency and efficacy of a potential drug, determining whether it will act on its intended target and be powerful enough to produce a therapeutic effect [3].

The ability to predict binding affinity is crucial because running laboratory experiments to measure it is a significant time and cost bottleneck in early-stage research and development (R&D) [2]. Although long physics-based simulations have been the primary computational alternative, they are extremely slow and expensive. Therefore, accurate and fast computational prediction of binding affinity is essential for accelerating the drug discovery process, from initial hit identification to lead optimization [2] [3].

Comparative Analysis of Binding Affinity Prediction Methods

Various computational methods have been developed to predict binding affinity, each with different underlying principles, data requirements, and performance characteristics. The table below provides a high-level comparison of the main categories of approaches.

Table 1: Categories of Binding Affinity Prediction Methods

| Method Category | Description | Typical Data Input | Key Characteristics |

|---|---|---|---|

| Experimental Methods [1] | Laboratory techniques to physically measure affinity. | Purified protein and ligand. | Considered the "gold standard"; can be low-throughput and resource-intensive. |

| Physics-Based Simulations (e.g., FEP) [2] [3] | Uses molecular mechanics force fields and molecular dynamics sampling to simulate interactions. | 3D structures of the protein and ligand. | High accuracy but computationally expensive and slow (days per prediction). |

| Traditional Machine Learning (ML) [4] [5] | Learns relationship between human-engineered features and affinity from data. | Human-defined features from complex structures. | Less rigid than conventional functions; performance depends on feature quality. |

| Deep Learning (DL) [6] [5] | Uses neural networks to learn patterns from raw or minimally processed data. | Often 3D structures or sequences of protein and ligand. | High potential with large datasets; can be vulnerable to data leakage if not carefully trained. |

To objectively compare the performance of different computational methods, researchers use standardized benchmarks. The following table summarizes the reported performance of several leading models on such benchmarks.

Table 2: Performance Comparison of Leading Binding Affinity Prediction Models

| Model Name | Model Type | Key Benchmark | Reported Performance | Computational Speed vs. FEP |

|---|---|---|---|---|

| Boltz-2 [2] [3] | Deep Learning Foundation Model | FEP+ Benchmark | Pearson ~0.62 (Approaches FEP accuracy) | >1000x faster |

| GEMS [6] | Graph Neural Network (GNN) | CASF Benchmark | State-of-the-art performance after data leakage was removed | Not reported |

| RF-Score [4] | Random Forest | PDBbind Benchmark | Competitive scoring function at the time of publication | Not reported |

| OpenFE (FEP) [2] | Physics-Based Simulation | FEP+ Benchmark | Gold standard for accuracy | Baseline (Very Slow) |

Experimental Protocols for Benchmarking

To ensure fair and meaningful comparisons, the evaluation of binding affinity predictors follows rigorous experimental protocols centered on standardized benchmarks and robust dataset splitting.

Standardized Evaluation Benchmarks

- The CASF Benchmark: A widely used benchmark based on the PDBbind database. It is designed to evaluate the "scoring power" of a function—its ability to predict the binding affinities of diverse protein-ligand complexes with known 3D structures [4] [5].

- The FEP+ Benchmark: A benchmark used to evaluate a model's accuracy in predicting binding affinity, often for lead optimization tasks. Its targets are typically held out of model training to ensure a fair test [2] [3].

- The MF-PCBA Benchmark: A benchmark focused on "hit discovery," which tests a model's ability to discriminate true binders from non-binders in high-throughput virtual screens [3].

The Critical Protocol: Addressing Data Leakage

A critical methodological step in training and evaluating modern data-driven models is ensuring a strict separation between training and test data. A 2025 study exposed a data leakage crisis in the field, where models were achieving high performance by "memorizing" structural similarities between training complexes in the PDBbind database and test complexes in the CASF benchmark, rather than learning generalizable principles [6] [7].

The solution is a rigorous filtering protocol, which led to the creation of PDBbind CleanSplit [6]. The protocol involves a structure-based clustering algorithm that removes from the training set any complexes that are overly similar to those in the test set, based on:

- Protein similarity (TM-score)

- Ligand similarity (Tanimoto score)

- Binding conformation similarity (pocket-aligned ligand root-mean-square deviation) [6]

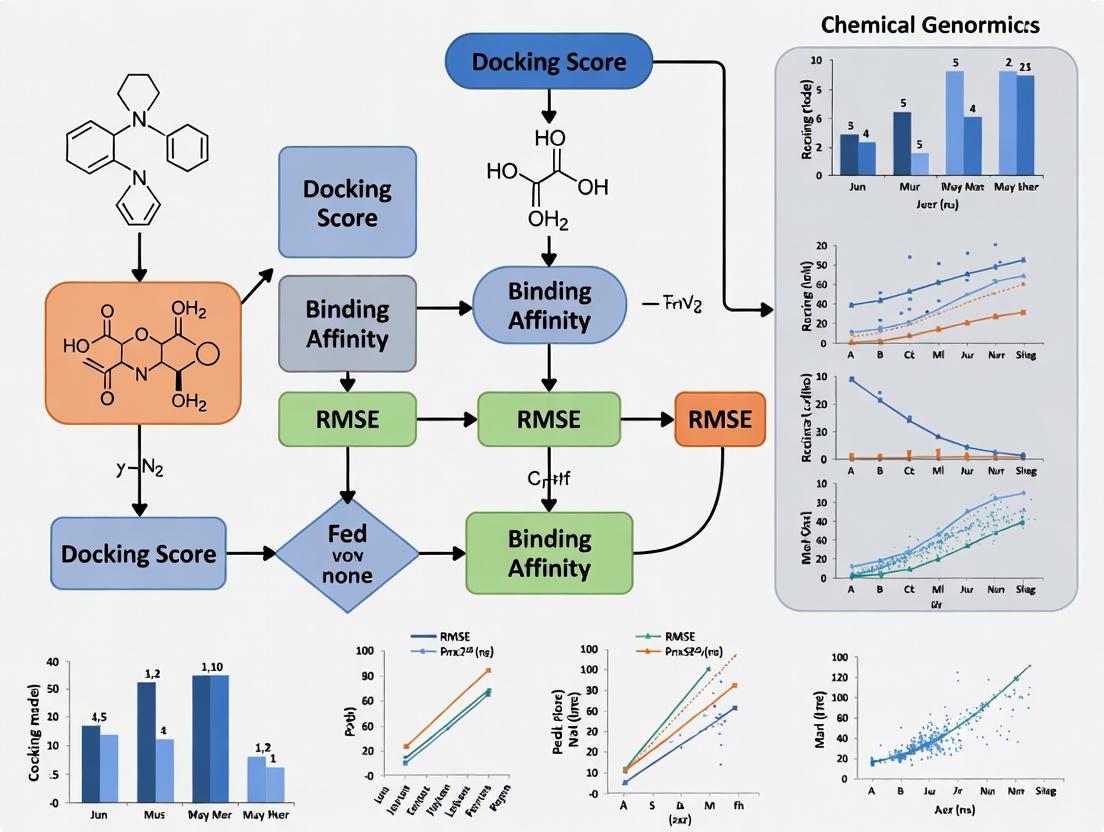

This workflow for creating a leakage-free dataset can be visualized as follows:

Diagram 1: Data Curation Workflow for PDBbind CleanSplit

When models that previously showed top-tier performance were retrained on this cleaned data, their performance dropped substantially, revealing that their reported capabilities were overestimated [6]. This underscores that rigorous dataset splitting is a non-negotiable protocol for assessing the true generalization of a binding affinity predictor [6] [7].
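The train-test filtering idea can be sketched in a few lines of Python. This is a minimal illustration, not the published CleanSplit algorithm: the three cutoff values, the `PairSimilarity` record, and the rule that a pair counts as leaky only when all three criteria agree are assumptions made for the example, and the similarity values are taken as precomputed inputs.

```python
from dataclasses import dataclass

# Hypothetical cutoffs for illustration only; not the published CleanSplit values.
TM_CUTOFF = 0.8        # protein similarity (TM-score, higher = more similar)
TANIMOTO_CUTOFF = 0.9  # ligand similarity (higher = more similar)
RMSD_CUTOFF = 2.0      # pocket-aligned ligand RMSD in angstroms (lower = more similar)


@dataclass(frozen=True)
class PairSimilarity:
    """Precomputed similarity between one training and one test complex."""
    train_id: str
    test_id: str
    tm_score: float
    tanimoto: float
    ligand_rmsd: float


def is_leaky(pair: PairSimilarity) -> bool:
    """Assumed combination rule: all three criteria must indicate near-duplication."""
    return (
        pair.tm_score >= TM_CUTOFF
        and pair.tanimoto >= TANIMOTO_CUTOFF
        and pair.ligand_rmsd <= RMSD_CUTOFF
    )


def filter_training_set(train_ids, pairs):
    """Drop every training complex that is leaky with respect to any test complex."""
    leaky = {p.train_id for p in pairs if is_leaky(p)}
    return [t for t in train_ids if t not in leaky]


# Toy usage with made-up identifiers and similarity values.
pairs = [
    PairSimilarity("train_A", "test_1", tm_score=0.95, tanimoto=0.97, ligand_rmsd=0.8),
    PairSimilarity("train_B", "test_1", tm_score=0.95, tanimoto=0.30, ligand_rmsd=5.0),
]
clean_train = filter_training_set(["train_A", "train_B", "train_C"], pairs)
```

In this toy case only `train_A` is removed: `train_B` shares the protein with the test complex but carries a dissimilar ligand in a different pose, so under the assumed rule it stays in the training set.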

The Scientist's Toolkit: Research Reagent Solutions

The following table details key resources, both computational and experimental, that are essential for research in this field.

Table 3: Key Research Reagent Solutions for Binding Affinity Analysis

| Resource Name | Type | Primary Function / Application | Relevance to Research |

|---|---|---|---|

| PDBbind Database [6] [5] | Computational Dataset | Curated collection of protein-ligand complexes with binding affinity data. | The primary database for training and benchmarking structure-based scoring functions. |

| CleanSplit Protocol [6] | Computational Method | Algorithm for creating leakage-free training/test splits for PDBbind. | Essential for rigorously evaluating the true generalization power of new models. |

| Boltz-2 Model [2] [3] | Computational Model (AI) | Predicts 3D structure and binding affinity of biomolecular complexes. | Used for fast, accurate affinity prediction and virtual screening in drug discovery. |

| WAVEsystem (GCI) [1] | Experimental Instrument | Label-free measurement of binding affinity and kinetics using Grating-Coupled Interferometry. | Provides high-throughput, high-sensitivity experimental validation of binding events. |

| MicroCal PEAQ-ITC [1] | Experimental Instrument | Label-free measurement of binding affinity, stoichiometry, and thermodynamics using Isothermal Titration Calorimetry. | Provides gold-standard experimental validation, including thermodynamic parameters. |

Integrated Workflow for Modern Binding Affinity Prediction

The field is moving towards a synthesis of scale and quality. Modern workflows leverage AI-generated data but apply rigorous quality control. The following diagram illustrates this integrated "smarter data" approach for training a robust affinity predictor, which combines insights from recent advancements [7] [3].

Diagram 2: Integrated "Smarter Data" Training Workflow

Accuracy, Computational Cost, and Domain Applicability

Accurately predicting the binding affinity between a protein and a small molecule is a cornerstone of computer-aided drug design. The ability to reliably forecast the strength of this interaction directly impacts the efficiency of screening and optimizing new drug candidates. Currently, the field is dominated by two primary computational approaches: physics-based simulation methods and machine learning (ML)-based models. Each paradigm presents a distinct set of trade-offs concerning predictive accuracy, computational expense, and applicability to novel chemical or protein targets. This guide provides an objective comparison of these methodologies, drawing on recent research and benchmark data to inform researchers and drug development professionals in selecting the appropriate tool for their projects.

The following table summarizes the core characteristics, advantages, and limitations of the primary binding affinity prediction methods in use today.

Table 1: Key Characteristics of Binding Affinity Prediction Methods

| Method Category | Key Examples | Theoretical Basis | Primary Advantages | Core Challenges |

|---|---|---|---|---|

| Physics-Based Simulation | Free Energy Perturbation (FEP), Molecular Dynamics (MD) | Statistical thermodynamics, molecular mechanics [8] | High theoretical accuracy for congeneric series; directly models physical interactions [9] | Extremely high computational cost (hours to days per compound); requires high-quality protein structures [9] [10] |

| Machine Learning (ML) | Graph Neural Networks (GNNs), CNN-based models (e.g., Pafnucy, GenScore) [6] [10] | Statistical learning from existing protein-ligand complex data | High throughput (~1000x faster than FEP); lower computational cost; can learn complex patterns from data [9] [10] | Generalization concerns due to data leakage [6]; performance drop on novel scaffolds [9] |

| Hybrid / Physics-Informed ML | Multiple-instance learning, SEGSA_DTA, GEMS [9] [6] [11] | Combines physical principles with data-driven learning | Incorporates physical constraints (e.g., electrostatics, shape); better generalization than pure ML; more efficient than pure physics [9] [11] | Developing architectures that seamlessly integrate physics; reliance on quality data for training [9] |

A critical challenge, particularly for ML models, is generalization—the model's ability to make accurate predictions on new, previously unseen protein-ligand complexes. A seminal 2025 study highlighted that the standard practice of training models on the PDBbind database and testing them on the Comparative Assessment of Scoring Functions (CASF) benchmark suffers from severe train-test data leakage [6]. This leakage, stemming from high structural similarities between training and test complexes, artificially inflates benchmark performance. When models like GenScore and Pafnucy were retrained on a rigorously filtered dataset (PDBbind CleanSplit) that eliminates this leakage, their performance dropped substantially, revealing that their high benchmark scores were partly due to memorization rather than genuine learning of interactions [6].

Quantitative Performance Comparison

To objectively compare performance, the following table synthesizes key quantitative findings from recent studies and benchmarks. It is essential to note that these values, particularly for ML models, are highly dependent on the training data and test set used, with CleanSplit benchmarks representing a more rigorous assessment of generalizability.

Table 2: Quantitative Performance and Resource Comparison

| Method | Reported Pearson (R) on CASF | Computational Cost | Key Experimental Findings |

|---|---|---|---|

| Free Energy Perturbation (FEP) | Not directly comparable (predicts relative ΔΔG) | ~1,000 CPU/GPU hours per compound [9] | High accuracy for small, congeneric chemical changes; target-to-target accuracy variation is high [9] |

| ML Model (Standard Training) | Up to ~0.85+ (inflated by data leakage) [6] | ~1 CPU/GPU hour per compound [9] | Performance is heavily reliant on chemical space similarity between training and test sets [6] |

| ML Model (CleanSplit Training) | ~0.5-0.7 (e.g., for retrained Pafnucy/GenScore) [6] | ~1 CPU/GPU hour per compound [9] | Shows true generalization capability; performance drop underscores previous overestimation [6] |

| Graph Neural Network (GEMS - CleanSplit) | >0.8 (state-of-the-art on clean data) [6] | ~1 CPU/GPU hour per compound (estimated) | Maintains high accuracy on CleanSplit; uses sparse graph modeling and transfer learning for robust generalization [6] |

| Active Learning (GP Model) | Varies by dataset (e.g., R² up to ~0.7 on TYK2) [12] | Cost is focused on iterative labeling | Achieved high recall (>80%) of top binders by selectively labeling 3.6% of a 10,000-compound library [12] |

Detailed Experimental Protocols

Understanding the methodology behind the data is crucial for critical evaluation. This section details two key experimental protocols cited in the comparison.

Benchmarking Protocol for Generalization Capability

Objective: To rigorously evaluate the true generalization performance of deep-learning scoring functions by eliminating data leakage between training and test sets [6].

Workflow:

- Dataset Curation (PDBbind CleanSplit):

  - Apply a structure-based clustering algorithm to the PDBbind database.

  - The algorithm uses a combined assessment of protein similarity (TM-score), ligand similarity (Tanimoto score), and binding conformation similarity (pocket-aligned ligand RMSD).

  - Filtering Step 1 (Train-Test Separation): Remove any training complex that is structurally similar to any complex in the CASF test sets. This step addressed 49% of CASF complexes that had nearly identical counterparts in the training data.

  - Filtering Step 2 (Reduction of Redundancy): Iteratively remove complexes from the training set to resolve internal similarity clusters, reducing the overall training set size by ~12%.

- Model Retraining: Retrain state-of-the-art models (e.g., GenScore, Pafnucy) on the newly curated PDBbind CleanSplit training set.

- Evaluation: Test the retrained models on the strictly independent CASF benchmark. The resulting performance (e.g., Pearson R) reflects the model's genuine ability to generalize.
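The redundancy-reduction step (Filtering Step 2) can be illustrated with a simple greedy heuristic. The sketch below is one plausible way to "resolve" similarity clusters, assuming the similar pairs have already been identified; the function name and the most-connected-first removal rule are assumptions for illustration and are not taken from the cited study.

```python
from collections import defaultdict


def resolve_similarity_clusters(ids, similar_pairs):
    """Greedily remove complexes until no two kept complexes are flagged as similar.

    ids           -- iterable of complex identifiers in the training set
    similar_pairs -- iterable of (id_a, id_b) tuples flagged as overly similar
    """
    neighbours = defaultdict(set)
    for a, b in similar_pairs:
        neighbours[a].add(b)
        neighbours[b].add(a)

    kept = set(ids)
    while True:
        # Pick the kept complex with the most similar partners still in the set;
        # iterating in sorted order makes tie-breaking deterministic.
        worst = max(sorted(kept), key=lambda i: len(neighbours[i] & kept), default=None)
        if worst is None or not (neighbours[worst] & kept):
            break  # no similar pairs remain
        kept.remove(worst)
    return sorted(kept)


# Toy usage: "a" is similar to both "b" and "c", so removing "a" resolves the cluster.
star = resolve_similarity_clusters(["a", "b", "c", "d"], [("a", "b"), ("a", "c")])
chain = resolve_similarity_clusters(["a", "b", "c", "d"], [("a", "b"), ("b", "c"), ("c", "d")])
```

Removing the most-connected complex first tends to discard fewer structures than removing one member of each pair at random, which matters when the goal is to trim redundancy while keeping the training set as large as possible.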

Active Learning Protocol for Binding Affinity Prediction

Objective: To efficiently identify top-binding ligands from vast molecular libraries at a reduced computational cost by iteratively selecting the most informative compounds for "labeling" (e.g., experimental assay or computational scoring) [12].

Workflow:

- Initialization: Start with a large library of unlabeled compounds and a very small, randomly selected initial batch (e.g., 50-100 compounds).

- Iterative Cycle:

  - Labeling: The current batch of compounds is labeled with binding affinities (e.g., via experimental Ki/IC50 or RBFE calculations).

  - Model Training: A machine learning model (e.g., Gaussian Process or Chemprop) is trained on all accumulated labeled data.

  - Prediction & Acquisition: The trained model predicts affinities for all remaining unlabeled compounds. An "acquisition function" selects the next batch of compounds based on an exploration-exploitation strategy.

    - Exploitation selects compounds the model predicts to be high-binders.

    - Exploration selects compounds the model is most uncertain about, improving its overall knowledge.

- Termination: The cycle repeats until a predefined budget (e.g., number of compounds labeled) is exhausted. Performance is evaluated by the recall of top binders from the full library.
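The loop above can be sketched in plain Python. To keep the example self-contained, a nearest-neighbour surrogate stands in for the Gaussian Process or Chemprop model named in the protocol, each compound is reduced to a single hypothetical numeric descriptor, and the `oracle` callable plays the role of the assay or RBFE calculation. All of these, along with the upper-confidence-bound style acquisition rule and its `beta` weight, are assumptions for illustration.

```python
import random


def nearest_neighbour_surrogate(x, labelled):
    """Stand-in model: predict the affinity of the closest labelled compound.

    Returns (prediction, uncertainty), where uncertainty is the descriptor
    distance to that neighbour (far from known data = more uncertain).
    """
    nearest_x, nearest_y = min(labelled.items(), key=lambda kv: abs(kv[0] - x))
    return nearest_y, abs(nearest_x - x)


def active_learning(library, oracle, n_init=5, batch_size=5, budget=30, beta=1.0, seed=0):
    """Label at most `budget` compounds, balancing exploitation and exploration."""
    rng = random.Random(seed)
    # Initialization: small random batch.
    labelled = {x: oracle(x) for x in rng.sample(library, n_init)}
    while len(labelled) < budget:
        pool = [x for x in library if x not in labelled]
        if not pool:
            break

        def acquisition(x):
            mean, uncertainty = nearest_neighbour_surrogate(x, labelled)
            return mean + beta * uncertainty  # exploit + beta * explore

        n_next = min(batch_size, budget - len(labelled))
        batch = sorted(pool, key=acquisition, reverse=True)[:n_next]
        for x in batch:
            labelled[x] = oracle(x)  # "labeling" step
    return labelled


def top_k_recall(library, oracle, labelled, k):
    """Fraction of the true top-k binders that ended up in the labelled set."""
    true_top = set(sorted(library, key=oracle, reverse=True)[:k])
    return len(true_top & set(labelled)) / k


# Toy usage: 200 compounds on a 1-D descriptor axis, affinity peaked at 12.0.
library = [i / 10 for i in range(200)]
oracle = lambda x: -(x - 12.0) ** 2
labelled = active_learning(library, oracle, budget=30)
recall = top_k_recall(library, oracle, labelled, k=10)
```

Setting `beta` to zero makes the loop purely exploitative, while a large `beta` spreads the labeling budget across poorly sampled regions; a real study would replace the surrogate with a model that provides calibrated uncertainty.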

The following diagram illustrates this iterative workflow.

Research Reagent Solutions

The following table lists key computational and data resources essential for research in binding affinity prediction.

Table 3: Key Research Reagents and Resources

| Resource Name | Type | Primary Function in Research |

|---|---|---|

| PDBbind Database [6] [10] | Curated Database | Provides a comprehensive collection of experimental protein-ligand complex structures and their binding affinity data for training and benchmarking ML models. |

| CASF Benchmark [6] [10] | Benchmarking Suite | Serves as a standard set for comparative assessment of scoring functions; requires careful use with CleanSplit to avoid overestimation. |

| PDBbind CleanSplit [6] | Curated Dataset | A filtered version of PDBbind that removes data leakage and redundancy, enabling robust model training and genuine evaluation of generalization. |

| AutoDock Vina [8] [10] | Docking Software | A widely used molecular docking program for predicting bound poses and providing a fast, empirical affinity estimate. |

| Gaussian Process (GP) / Chemprop [12] | ML Model Architectures | Core machine learning models used in active learning protocols for regression and uncertainty quantification. |

| TYK2, USP7, D2R, Mpro Datasets [12] | Benchmarking Datasets | Publicly available affinity datasets for specific protein targets used to benchmark active learning and ML performance. |

Integrated Application & Decision Workflow

Given the complementary strengths of different methods, a synergistic approach is often most effective. The following diagram outlines a recommended decision workflow for employing these tools in a drug discovery campaign.

This workflow emphasizes that the choice of method is not binary. Researchers can achieve optimal efficiency by using faster, physics-informed ML models [9] or active learning protocols [12] to triage large chemical spaces and identify promising regions. Subsequently, more computationally intensive and accurate physics-based simulations like FEP can be deployed for lead optimization on a focused set of compounds [9]. This sequential strategy allows for the exploration of a much wider chemical space using the same computational resources. Furthermore, for problems where a high-resolution protein structure is unavailable, physics-informed ML methods that can operate without a defined structure provide a crucial advantage, extending the reach of predictive modeling [9].
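The sequential strategy described above amounts to a two-stage funnel. The following sketch assumes two hypothetical scoring callables, `fast_score` (standing in for an ML model) and `accurate_score` (standing in for an expensive method such as FEP), both returning values where higher means stronger predicted binding; the `keep_fraction` default is arbitrary.

```python
def triage_then_refine(compounds, fast_score, accurate_score, keep_fraction=0.01, min_keep=1):
    """Rank everything with the cheap scorer, then rescore only the shortlist.

    Returns a list of (compound, accurate_score) tuples, best first.
    """
    ranked = sorted(compounds, key=fast_score, reverse=True)
    n_keep = max(min_keep, int(len(ranked) * keep_fraction))
    shortlist = ranked[:n_keep]
    rescored = [(c, accurate_score(c)) for c in shortlist]  # the expensive step
    return sorted(rescored, key=lambda item: item[1], reverse=True)


# Toy usage: integers stand in for compounds; both scorers are made up.
results = triage_then_refine(
    compounds=list(range(100)),
    fast_score=lambda c: c,                # cheap, approximate ranking
    accurate_score=lambda c: -abs(c - 97), # expensive, applied to 5 compounds only
    keep_fraction=0.05,
)
```

The expensive scorer is called only on the shortlist, so the total cost is dominated by the cheap pass over the full library plus a small, fixed number of accurate calculations.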

Accurately predicting the binding affinity between a protein and a small molecule (ligand) is a central challenge in modern computational drug discovery. Binding affinity, which quantifies the strength of interaction, directly influences a drug candidate's efficacy and potency [13]. The predictive landscape is dominated by two philosophically distinct paradigms: physical simulation-based methods, which computationally model the physics of molecular interactions, and machine learning (ML) approaches, which learn patterns from existing biochemical data [9].

The choice between these approaches often involves a fundamental trade-off between computational expense, interpretability, and accuracy. This guide provides an objective comparison of these methodologies, detailing their underlying principles, performance metrics, and optimal use cases to inform researchers and drug development professionals.

Physical Simulation-Based Approaches

Core Principles and Methodologies

Physical simulation methods rely on explicitly modeling atomic interactions using molecular mechanics force fields. These approaches are grounded in statistical thermodynamics and aim to calculate the free energy of binding, a key thermodynamic quantity directly related to affinity.

- Free Energy Perturbation (FEP) / Thermodynamic Integration (TI): These are considered the gold-standard, high-accuracy methods. They work by alchemically transforming one ligand into another within the binding pocket via a series of intermediate states. The total free energy change for this transformation is calculated, providing the relative binding affinity [13] [9]. The extensive molecular dynamics (MD) sampling required is computationally intensive but provides a physically meaningful result.

- Molecular Dynamics (MD) with End-Point Methods (MM/PBSA, MM/GBSA): These are medium-compute approaches. They involve running an MD simulation to generate an ensemble of protein-ligand conformations (snapshots). The binding free energy for each snapshot is estimated by decomposing it into components: the gas-phase enthalpy (calculated using a force field), a solvation correction (calculated using implicit solvent models like Poisson-Boltzmann (PB) or Generalized Born (GB)), and sometimes an entropy term [13]. The results are averaged across all snapshots.
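The two families of methods above are commonly summarized by the following standard relations. The notation is introduced here for illustration: A and B are the two ligands of an alchemical transformation, "complex" and "solvent" denote the transformation carried out in the binding pocket and free in solution, and angle brackets denote an average over MD snapshots.

```latex
\[
\Delta\Delta G_{\text{bind}}(A \to B)
  = \Delta G_{\text{bind}}(B) - \Delta G_{\text{bind}}(A)
  = \Delta G^{\text{complex}}_{A \to B} - \Delta G^{\text{solvent}}_{A \to B}
\]

\[
\Delta G_{\text{bind}} \approx
  \langle \Delta E_{\text{MM}} \rangle
  + \langle \Delta G_{\text{solv}} \rangle
  - T \Delta S
\]
```

The first line is the thermodynamic cycle that lets FEP/TI report a relative affinity without simulating the physical binding event. The second is the end-point decomposition used by MM/PBSA and MM/GBSA, with the entropy term often omitted in practice.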

The following workflow diagram illustrates the typical process for an MM/GBSA calculation, a common simulation-based approach:

Performance and Experimental Data

The table below summarizes the typical performance characteristics of physical simulation methods, based on reported benchmarks.

Table 1: Performance Profile of Physical Simulation Methods

| Method | Typical RMSE (kcal/mol) | Typical Correlation (Pearson R) | Compute Time | Key Strengths | Key Limitations |

|---|---|---|---|---|---|

| Docking (e.g., AutoDock Vina) | 2.0 - 4.0 | ~0.3 [13] | < 1 min (CPU) | Very fast, high-throughput screening | Low accuracy, high error rate |

| MM/GBSA & MM/PBSA | ~1.5 - 3.0 (system-dependent) | Variable | Hours to days (GPU) | More accurate than docking, medium throughput | Noisy results, sensitive to input structures [13] |

| FEP/TI | ~0.8 - 1.2 [13] [3] | ~0.65+ [13] | >12 hours per calculation [13] | High accuracy, considered a gold standard | Extremely high computational cost, narrow applicability domain [9] |
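To put the kcal/mol errors in the table into perspective, a free energy error can be converted into a multiplicative error in KD using the standard relation ΔG = RT ln KD. The helper names below are hypothetical, and the default temperature of 298.15 K is an assumption.

```python
import math

R_KCAL = 0.0019872041  # gas constant in kcal/(mol*K)


def fold_error_in_kd(error_kcal_per_mol, temperature_k=298.15):
    """Multiplicative KD error implied by a given free energy error."""
    return math.exp(abs(error_kcal_per_mol) / (R_KCAL * temperature_k))


def delta_g_from_kd(kd_molar, temperature_k=298.15):
    """Standard binding free energy (kcal/mol) for a KD given in mol/L (1 M standard state)."""
    return R_KCAL * temperature_k * math.log(kd_molar)


fold_at_1_kcal = fold_error_in_kd(1.0)   # error factor for a 1 kcal/mol miss
dg_nanomolar = delta_g_from_kd(1e-9)     # free energy of a 1 nM binder
```

At room temperature an error of 1 kcal/mol corresponds to a roughly 5-fold error in KD, so the 0.8 to 1.2 kcal/mol range quoted for FEP/TI translates to about a 4-fold to 8-fold uncertainty in the predicted dissociation constant.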

Machine Learning-Based Approaches

Core Principles and Methodologies

Machine learning approaches bypass explicit physical modeling in favor of learning a direct mapping from molecular structure data to binding affinity values. These models are trained on large, curated datasets of protein-ligand complexes with experimentally measured affinities.

- Graph Neural Networks (GNNs): These are a leading architecture. The protein-ligand complex is represented as a graph where nodes are atoms and edges are bonds or interactions. GNNs learn to propagate information across this graph to extract features predictive of binding affinity [6]. Models like GEMS (Graph neural network for Efficient Molecular Scoring) leverage this architecture to achieve state-of-the-art performance [6].

- Convolutional Neural Networks (CNNs): These models treat the 3D protein-ligand binding pocket as a structural image, using voxels to represent properties like atomic density or charge. CNNs apply filters to this 3D grid to learn spatial features relevant to binding [14].

- Foundation Models (e.g., Boltz-2): Newer models like Boltz-2 are pre-trained on vast amounts of structural biology data and can be fine-tuned for affinity prediction. Boltz-2 acts as a foundational model that improves upon its predecessors by incorporating diverse training data, including molecular dynamics ensembles, and is reportedly the first AI model to approach FEP-level accuracy while being vastly more efficient [3].
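The graph representation used by GNNs can be made concrete with a small sketch. This is not how GEMS or any specific model builds its input: the `Atom` record, the two node features, and the 4.5 Å distance cutoff are assumptions, and edges are defined by spatial proximity only (a real pipeline would also encode covalent bonds and richer atom features).

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Atom:
    element: str
    xyz: tuple       # (x, y, z) coordinates in angstroms
    is_ligand: bool  # True for ligand atoms, False for protein atoms


def build_interaction_graph(atoms, cutoff=4.5):
    """Return (nodes, edges) for a protein-ligand complex.

    nodes -- list of (element, is_ligand) feature tuples, one per atom
    edges -- list of (i, j, distance) for atom pairs within `cutoff` angstroms
    """
    nodes = [(a.element, a.is_ligand) for a in atoms]
    edges = []
    for i in range(len(atoms)):
        for j in range(i + 1, len(atoms)):
            distance = math.dist(atoms[i].xyz, atoms[j].xyz)
            if distance <= cutoff:
                edges.append((i, j, distance))
    return nodes, edges


# Toy usage: two nearby atoms and one distant atom (coordinates are made up).
atoms = [
    Atom("N", (0.0, 0.0, 0.0), is_ligand=True),
    Atom("O", (3.0, 0.0, 0.0), is_ligand=False),
    Atom("C", (20.0, 0.0, 0.0), is_ligand=False),
]
nodes, edges = build_interaction_graph(atoms)
```

The all-pairs loop is quadratic in the number of atoms, which is acceptable for a binding pocket but would normally be replaced by a spatial index for whole proteins.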

A critical challenge in ML is data leakage, where high structural similarity between training and test sets leads to inflated performance metrics. The PDBbind CleanSplit dataset has been recently proposed to address this by using a structure-based clustering algorithm to ensure training and test complexes are strictly independent [6].

Performance and Experimental Data

The performance of ML models is highly dependent on the training data and the rigor of the evaluation split.

Table 2: Performance Profile of Machine Learning Methods

| Method / Model | RMSE (kcal/mol) / CASF Benchmark | Correlation (Pearson R) / CASF Benchmark | Compute Time | Key Strengths | Key Limitations |

|---|---|---|---|---|---|

| Classical ML/QSAR | Variable, often high | Variable | Seconds to minutes | Very fast, no protein structure needed | Poor generalization to novel chemotypes [9] |

| Standard GNNs/CNNs (trained on PDBbind) | Reportedly low* | Reportedly high* | Minutes (GPU) | High speed, good benchmark performance | Performance drops on independent tests due to data leakage [6] |

| GEMS (trained on CleanSplit) | State-of-the-art on CleanSplit [6] | State-of-the-art on CleanSplit [6] | Minutes (GPU) | Robust generalization, less prone to data leakage | Performance depends on quality of input structure |

| Boltz-2 | Approaches FEP accuracy [3] | Strong correlation on FEP+ benchmark [3] | ~1000x faster than FEP [3] | High accuracy with high efficiency, foundation model | Model complexity, requires significant resources for training |

*Note: Performance metrics for models trained on standard PDBbind splits are often inflated due to data leakage. When retrained on the strict PDBbind CleanSplit, the performance of many top models dropped substantially, indicating their previous high scores were driven by memorization [6].

Comparative Analysis: A Side-by-Side Evaluation

Direct Comparison of Performance and Resource Use

The following table provides a consolidated view to facilitate direct comparison between the two paradigms and their sub-methods.

Table 3: Head-to-Head Comparison of Key Approaches

| Evaluation Metric | FEP/TI (Physical) | MM/GBSA (Physical) | Docking (Physical) | GNNs like GEMS (ML) | Foundation Models like Boltz-2 (ML) |

|---|---|---|---|---|---|

| Theoretical Basis | Statistical thermodynamics, molecular physics | Molecular mechanics, continuum solvation | Empirical/Knowledge-based force fields | Data-driven pattern recognition | Data-driven + pre-trained structural knowledge |

| Accuracy (RMSE) | High (~1 kcal/mol) [13] | Medium | Low | Medium-High (with robust splits) [6] | High (approaches FEP) [3] |

| Speed | Very Slow (days) | Slow (hours-days) | Very Fast (minutes) | Fast (seconds-minutes) | Very Fast (1000x faster than FEP) [3] |

| Interpretability | High (energy components) | Medium (energy decomposition) | Low (black-box scoring) | Low (black-box) | Low (black-box) |

| Domain of Applicability | Narrow (congeneric series) | Medium | Broad | Broad (depends on training data) | Very Broad |

| Generalization | Physically grounded | System-dependent; prone to noise | Poor | Good (if data leakage is minimized) [6] | Good (as reported on benchmarks) [3] |

Decision Workflow for Method Selection

The following diagram outlines a logical workflow for selecting the most appropriate predictive method based on project goals and constraints:

Experimental Protocols and Research Reagents

Key Experimental Methodologies

For researchers seeking to implement or benchmark these methods, understanding the core experimental protocols is essential.

Protocol for FEP/TI Calculations:

- System Preparation: Obtain a high-resolution protein-ligand structure (e.g., from PDB). Parameterize the ligands, solvate the system in an explicit water box, and add ions to neutralize charge.

- Equilibration: Run molecular dynamics simulations to equilibrate the system at the desired temperature and pressure.

- Lambda Window Setup: Define a series of intermediate states (lambda windows) that gradually transform the initial ligand (A) into the final ligand (B).

- Sampling: Run independent simulations at each lambda window to sample the conformational space.

- Free Energy Analysis: Use the Bennett Acceptance Ratio (BAR) or Thermodynamic Integration (TI) to compute the free energy difference from the sampled energies across all lambda windows.
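The final analysis step can be illustrated for the TI case, where the free energy change is the integral of the ensemble-averaged derivative of the potential energy with respect to lambda. The sketch below applies the trapezoidal rule to per-window averages and then closes the thermodynamic cycle; the function names and the input numbers are hypothetical, and production analyses typically use dedicated tools with proper error estimates.

```python
def thermodynamic_integration(lambdas, mean_du_dlambda):
    """Trapezoidal estimate of the integral of <dU/dlambda> from the first to the last window.

    lambdas         -- increasing lambda values, e.g. 0.0 ... 1.0
    mean_du_dlambda -- ensemble average of dU/dlambda at each window (kcal/mol)
    """
    if len(lambdas) != len(mean_du_dlambda) or len(lambdas) < 2:
        raise ValueError("need at least two windows with one average each")
    total = 0.0
    for k in range(len(lambdas) - 1):
        width = lambdas[k + 1] - lambdas[k]
        total += 0.5 * width * (mean_du_dlambda[k] + mean_du_dlambda[k + 1])
    return total


def relative_binding_free_energy(dg_complex, dg_solvent):
    """Close the thermodynamic cycle: ddG_bind(A->B) = dG_complex - dG_solvent."""
    return dg_complex - dg_solvent


# Toy usage with made-up window averages for the complex and solvent legs.
windows = [0.0, 0.5, 1.0]
dg_complex = thermodynamic_integration(windows, [2.0, 1.0, 0.0])
dg_solvent = thermodynamic_integration(windows, [1.0, 0.5, 0.0])
ddg_bind = relative_binding_free_energy(dg_complex, dg_solvent)
```

With only three windows the trapezoidal rule is a coarse approximation; more windows are usually placed where the integrand changes quickly.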

Protocol for Training a GNN on PDBbind CleanSplit:

- Data Curation: Use the PDBbind CleanSplit dataset to avoid data leakage [6]. This involves a structure-based filtering algorithm that removes training complexes with high similarity (in protein structure, ligand, and binding pose) to test complexes.

- Graph Representation: For each protein-ligand complex, generate a graph. Nodes represent protein and ligand atoms, encoded with features like atom type, charge, and hybridization. Edges represent bonds or spatial proximity within a cutoff distance.

- Model Training: Train a GNN architecture (e.g., with message-passing layers) to map the input graph to a scalar binding affinity value (e.g., pIC50, pKi). Use the training set of CleanSplit for learning and a held-out validation set for hyperparameter tuning.

- Rigorous Evaluation: Evaluate the final model's performance on the strictly independent test set of the CleanSplit or on external benchmarks like CASF, reporting metrics such as RMSE and Pearson R.
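The two metrics named in the evaluation step can be computed without external dependencies. The sketch below uses the standard definitions of RMSE and the Pearson correlation coefficient; the function names and the example values are illustrative.

```python
import math


def rmse(predicted, measured):
    """Root-mean-square error between predicted and measured affinities."""
    if len(predicted) != len(measured) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    squared = [(p - m) ** 2 for p, m in zip(predicted, measured)]
    return math.sqrt(sum(squared) / len(squared))


def pearson_r(predicted, measured):
    """Pearson correlation coefficient between predictions and measurements."""
    if len(predicted) != len(measured) or len(predicted) < 2:
        raise ValueError("need at least two paired values")
    mean_p = sum(predicted) / len(predicted)
    mean_m = sum(measured) / len(measured)
    covariance = sum((p - mean_p) * (m - mean_m) for p, m in zip(predicted, measured))
    var_p = sum((p - mean_p) ** 2 for p in predicted)
    var_m = sum((m - mean_m) ** 2 for m in measured)
    if var_p == 0 or var_m == 0:
        raise ValueError("correlation is undefined for constant inputs")
    return covariance / math.sqrt(var_p * var_m)


# Toy usage with made-up pKd values.
predicted = [5.1, 6.3, 7.0, 8.2]
measured = [5.0, 6.0, 7.5, 8.0]
test_rmse = rmse(predicted, measured)
test_r = pearson_r(predicted, measured)
```

The two metrics answer different questions: Pearson R measures how well the ranking and linear trend are captured, while RMSE measures absolute error, so a model can score well on one and poorly on the other.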

Essential Research Reagent Solutions

Table 4: Key Computational Tools and Databases for Binding Affinity Prediction

| Item Name | Type | Function in Research | Example Tools / Databases |

|---|---|---|---|

| Molecular Dynamics Engine | Software Suite | Performs the atomic-level simulations for FEP and MD-based methods. | GROMACS, AMBER, OpenMM, NAMD |

| Free Energy Calculation Package | Software Plugin | Implements FEP and TI algorithms on top of MD engines. | FEP+, CHARMM-GUI, SOMD |

| Docking Software | Software Suite | Rapidly predicts binding poses and scores affinity using empirical functions. | AutoDock Vina, GOLD, Glide, DOCK 6 |

| Curated Affinity Database | Database | Provides experimental binding data for training and benchmarking ML models. | PDBbind, PDBbind CleanSplit, BindingDB, ChEMBL |

| Deep Learning Framework | Software Library | Provides the environment for building and training GNNs and other ML models. | PyTorch, PyTorch Geometric, TensorFlow, Deep Graph Library (DGL) |

| Protein Language Model | Pre-trained Model | Generates informative protein sequence embeddings that can be used as input features for ML models. | ESM-2 (as used in [15]) |

| Structure-Based Filtering Tool | Algorithm | Identifies and removes structurally similar complexes from datasets to prevent data leakage. | Custom clustering algorithms (e.g., as used for PDBbind CleanSplit [6]) |

The field of binding affinity prediction is not characterized by a single superior method, but rather a portfolio of complementary tools. Physical simulation methods like FEP provide high accuracy and physical interpretability for lead optimization but at an extreme computational cost. Machine learning approaches, particularly modern GNNs and foundation models like Boltz-2, offer a compelling balance of high speed and increasing accuracy, demonstrating strong performance on rigorous benchmarks when trained on leakage-free datasets [6] [3].

The emerging trend is not one of replacement but of synergy. As noted by industry experts, using physics-informed ML for high-throughput screening followed by FEP for final validation on top candidates creates an efficient and powerful pipeline [9]. This hybrid approach leverages the respective strengths of both paradigms, enabling researchers to explore wider chemical spaces and accelerate the drug discovery process with greater confidence in their computational predictions.

The Critical Importance of High-Quality Experimental Data for Benchmarking

In the rapidly advancing field of computational biology, and particularly in structure-based drug design, researchers are frequently confronted with a choice between numerous computational methods for predicting key biological interactions. Benchmarking studies serve as critical tools for rigorously comparing the performance of different methods using well-characterized reference datasets, with the goal of determining the strengths of each method and providing actionable recommendations to the scientific community [16]. The accuracy and reliability of these benchmarks are fundamentally dependent on the quality of the experimental data upon which they are built. Nowhere is this more evident than in the prediction of protein-ligand and antibody-antigen binding affinity, where improved prediction accuracy directly influences the efficacy of therapeutic drug design [17] [18].

High-quality benchmarking data enables method developers to validate new approaches, helps independent groups perform neutral comparisons, and allows the broader research community to make informed choices about which methods to adopt for specific applications. However, the design and implementation of these benchmarks must be carefully considered to avoid bias and ensure biologically relevant conclusions [16]. This guide examines the essential components of effective benchmarking, using the evaluation of binding affinity predictions as a central case study to illustrate both methodologies and best practices.

Essential Components of a Rigorous Benchmarking Framework

Defining Purpose, Scope, and Method Selection

The foundation of any meaningful benchmarking study is a clearly defined purpose and scope. According to guidelines for computational benchmarking, studies generally fall into three categories: those conducted by method developers to demonstrate the merits of a new approach; neutral studies performed by independent groups to systematically compare existing methods; and community challenges organized by consortia [16]. Each type requires different levels of comprehensiveness, with neutral benchmarks ideally including all available methods for a specific type of analysis.

The selection of methods must be guided by inclusion criteria that do not favor any particular approach. Common criteria include freely available software, compatibility with standard operating systems, and the ability to be installed without excessive troubleshooting. When developing a new method, it is generally sufficient to compare against a representative subset including current best-performing methods, a simple baseline method, and any widely used established methods [16].

The Critical Importance of Reference Datasets

The selection of reference datasets represents perhaps the most critical design choice in benchmarking, as the quality of this data directly determines the validity of the benchmark's conclusions. Reference datasets generally fall into two categories: simulated data and real experimental data [16].

Simulated data offers the advantage of known "ground truth," enabling precise quantitative performance metrics. However, simulations must accurately reflect relevant properties of real data, which requires careful validation against empirical datasets. Real experimental data, while sometimes lacking complete ground truth, provides the ultimate test of a method's performance in real-world conditions. For binding affinity prediction, this typically involves standardized measurements like dissociation constants (Kd) [17].

A robust benchmark should incorporate multiple datasets representing diverse conditions. For antibody binding affinity, this might include measurements across different antibody classes and antigen targets. The AbBiBench framework, for example, curates over 184,500 experimental measurements of antibody mutants across 14 antibodies and 9 antigens, including influenza, HER2, VEGF, and SARS-CoV-2 targets [17].

Table: Types of Reference Datasets for Benchmarking Binding Affinity Prediction

| Dataset Type | Advantages | Limitations | Examples |

|---|---|---|---|

| Simulated Data | Known ground truth, customizable scenarios, scalable | May not capture all real-world complexities | Structure-based simulations of mutant antibodies |

| Real Experimental Data | Biological relevance, real-world conditions | Measurement noise, limited scale, potential gaps | PDBbind database, AbBiBench curated measurements |

| Standardized Benchmarks | Enables direct method comparison, community standards | May not address all research questions | AbBiBench, ProteinGym, FLAb, BindingGYM |

Quantitative Performance Metrics and Evaluation

Selecting appropriate evaluation metrics is essential for meaningful method comparison. For binding affinity prediction, the correlation between computational predictions and experimental measurements serves as the primary validation. Common metrics include Pearson's correlation coefficient (R), which measures linear relationships, and root-mean-square error (RMSE), which quantifies prediction errors [18].
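As a minimal sketch of the two metrics named above, the following standard-library Python computes Pearson's R and RMSE for paired predicted and experimental affinities. The function names and the numeric values are illustrative assumptions, not taken from any cited benchmark.

```python
import math

def pearson_r(pred, exp):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(pred)
    mean_p = sum(pred) / n
    mean_e = sum(exp) / n
    cov = sum((p - mean_p) * (e - mean_e) for p, e in zip(pred, exp))
    var_p = sum((p - mean_p) ** 2 for p in pred)
    var_e = sum((e - mean_e) ** 2 for e in exp)
    return cov / math.sqrt(var_p * var_e)

def rmse(pred, exp):
    """Root-mean-square error, in the same units as the inputs."""
    return math.sqrt(sum((p - e) ** 2 for p, e in zip(pred, exp)) / len(pred))

# Hypothetical binding free energies in kcal/mol, for illustration only.
experimental = [-9.1, -8.4, -7.9, -10.2, -6.5]
predicted = [-8.7, -8.9, -7.1, -9.8, -7.0]

print(f"Pearson R = {pearson_r(predicted, experimental):.2f}")
print(f"RMSE      = {rmse(predicted, experimental):.2f} kcal/mol")
```

The two metrics answer different questions: a model whose predictions are shifted by a constant offset still has R = 1 but a nonzero RMSE, which is one reason benchmarks usually report both.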

The AbBiBench framework introduces an important advancement by treating the antibody-antigen complex as the fundamental unit of evaluation rather than assessing antibodies in isolation. This approach acknowledges that binding affinity is determined not just by the antibody sequence, but by the quality of the interface it forms with the antigen [17]. High-affinity binding typically arises from complexes with structural integrity—stable, well-packed interfaces with favorable conformations and minimal strain.

Beyond accuracy metrics, benchmarks should consider secondary measures such as computational efficiency, scalability, and usability. However, the primary focus should remain on metrics that directly translate to real-world performance for the intended application [16].

Experimental Protocols for Binding Affinity Benchmarking

Standardized Experimental Measurement Techniques

Experimental validation remains the gold standard for binding affinity assessment. Several established techniques provide the reference data against which computational methods are benchmarked:

Surface Plasmon Resonance (SPR): SPR measures biomolecular interactions in real-time without labeling, providing quantitative data on binding affinity (Kd), kinetics (kon, koff), and specificity. The technique is widely used for characterizing antibody-antigen interactions and is considered one of the most reliable methods for obtaining experimental binding affinities [17].

Enzyme-Linked Immunosorbent Assay (ELISA): ELISA provides a high-throughput method for detecting and quantifying antibody-antigen interactions. In the AbBiBench framework, ELISA binding assays were used to validate computational predictions by testing sampled antibody variants for binding capability to target antigens like influenza H1N1 [17].

Isothermal Titration Calorimetry (ITC): ITC directly measures the heat released or absorbed during biomolecular binding, providing comprehensive thermodynamic parameters including binding affinity (Kd), enthalpy (ΔH), and stoichiometry (n). While highly informative, ITC typically requires larger sample quantities than other methods.

These experimental techniques generate the reference data that forms the foundation of binding affinity benchmarks. The consistency and reliability of these measurements are paramount, as any errors or variability in the experimental data will necessarily compromise the benchmarking results.

Computational Evaluation Workflow

The evaluation of computational methods follows a structured workflow to ensure fair comparison and biologically meaningful results. The following diagram illustrates the key stages of binding affinity prediction benchmarking:

Diagram: Binding Affinity Benchmarking Workflow

This workflow begins with careful curation of experimental data, ensuring datasets are comprehensive and properly standardized. Method selection follows, with attention to including both established approaches and newer methods. The evaluation phase generates predictions and calculates performance metrics, culminating in interpretation and reporting of results.

Case Study: The AbBiBench Framework

The AbBiBench framework provides a concrete example of rigorous benchmarking implementation for antibody binding affinity. This framework addresses a critical limitation of previous benchmarks by incorporating the antigen when evaluating binding affinity, recognizing that antibody-antigen interactions are highly specific and require modeling the complete complex [17].

In practice, AbBiBench evaluates protein models by measuring the correlation between model likelihood and experimental affinity values across curated datasets. The framework employs a zero-shot evaluation approach, assessing how well models can predict affinity without specific training on the benchmark data. This tests the fundamental understanding of binding principles rather than mere pattern recognition in the data [17].
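The zero-shot scoring idea can be sketched as a rank correlation between model log-likelihoods and measured affinities. Using Spearman's coefficient here is an assumption chosen because likelihoods and affinities live on different scales; the text above only specifies "correlation". The function names and numbers are hypothetical.

```python
def average_ranks(values):
    """Return 1-based ranks; tied values receive the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks

def spearman_rho(x, y):
    """Spearman rank correlation: Pearson correlation of the rank vectors."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sum((a - mx) ** 2 for a in rx)
    sy = sum((b - my) ** 2 for b in ry)
    return cov / (sx * sy) ** 0.5

# Hypothetical data: one log-likelihood and one -log10(Kd) per antibody variant.
log_likelihoods = [-42.1, -39.8, -45.3, -38.2, -41.0]
neg_log_kd = [8.1, 8.6, 7.2, 9.0, 7.9]

print(f"Spearman rho = {spearman_rho(log_likelihoods, neg_log_kd):.2f}")
```

Note the sign convention: affinities are expressed so that larger values mean tighter binding, so a positive correlation indicates that the model assigns higher likelihood to better binders.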

The generative utility of the benchmark was demonstrated through application to antibody F045-092, where researchers sampled new antibody variants with top-performing models, ranked them by structural integrity and biophysical properties of the antibody-antigen complex, and validated the predictions with in vitro ELISA binding assays. This end-to-end validation process represents best practices in benchmarking methodology [17].

Key Reagent Solutions for Binding Affinity Research

Table: Essential Research Reagents and Tools for Binding Affinity Studies

| Reagent/Tool | Function/Purpose | Application Examples |

|---|---|---|

| Protein Language Models | Learn evolutionary patterns from protein sequences | AntiBERTy, ESM models for antibody representation |

| Structure-Based Generative Models | Design proteins based on structural constraints | ProteinMPNN, RFdiffusion for antibody design |

| Inverse Folding Models | Predict sequences compatible with given structures | ESM-IF, PiFold for generating binding-optimized sequences |

| Molecular Dynamics Software | Simulate physical movements of atoms and molecules | GROMACS, AMBER for calculating binding free energies |

| Binding Affinity Databases | Curated experimental measurements for validation | PDBbind, AbBiBench dataset, SAbDab structural database |

| Surface Plasmon Resonance | Measure binding kinetics and affinity experimentally | Biacore systems for characterizing antibody-antigen interactions |

These tools and resources form the essential toolkit for researchers working on binding affinity prediction and benchmarking. The selection of appropriate tools depends on the specific research question, with some methods specializing in sequence-based predictions while others focus on structure-based approaches or experimental validation.

Comparative Analysis of Benchmarking Performance

Evaluation Metrics and Method Comparison

Rigorous benchmarking requires multiple evaluation metrics to assess different aspects of performance. The table below summarizes key metrics used in binding affinity prediction benchmarks:

Table: Performance Metrics for Binding Affinity Prediction Methods

| Method Category | Key Metrics | Typical Performance Range | Strengths | Limitations |

|---|---|---|---|---|

| Structure-Based Geometric Models | Pearson's R, RMSE | R: 0.65-0.83 [18] | Physical interpretability, structure-awareness | Computational intensity, template dependence |

| Language Model-Based Approaches | Perplexity, amino acid recovery | Varies by task and dataset | Capture evolutionary information, fast inference | May miss structural determinants of binding |

| Inverse Folding Models | Correlation with experimental affinity | Top-performing in AbBiBench [17] | Balance of sequence and structure information | Limited by accuracy of input structures |

| Biophysics-Based Methods | ΔΔG prediction accuracy | Context-dependent | Mechanistic insights, physical principles | Often lower accuracy than machine learning methods |

The performance comparison across these method categories reveals that structure-conditioned inverse folding models generally outperform other approaches in both affinity correlation and generation tasks, as demonstrated in the AbBiBench evaluation [17]. However, different methods may excel in specific scenarios, highlighting the importance of context in method selection.

Visualization of Method Evaluation Logic

The process of evaluating and comparing computational methods follows a logical structure that ensures comprehensive assessment:

Diagram: Method Evaluation and Comparison Logic

This evaluation logic begins with experimental binding data as the ground truth reference. Multiple computational model types are evaluated against this data using correlation analysis and other statistical measures. The results across different performance metrics are then synthesized to generate overall method rankings and practical recommendations for researchers.

High-quality experimental data forms the irreplaceable foundation of rigorous benchmarking in computational biology. Without accurate, comprehensive, and biologically relevant reference data, even the most sophisticated computational methods cannot be properly evaluated or improved. The critical importance of this data is particularly evident in binding affinity prediction, where incremental improvements in accuracy can significantly accelerate therapeutic development.

The field continues to evolve with frameworks like AbBiBench addressing previous limitations by incorporating structural context and antibody-antigen complex evaluation. Future benchmarking efforts should build upon these principles, emphasizing biological relevance, comprehensive method comparison, and rigorous validation against experimental data. By adhering to these standards, the scientific community can ensure that benchmarking studies provide meaningful insights that genuinely advance computational method development and application.

A Deep Dive into Predictive Methodologies: Mechanisms and Use Cases

Accurate prediction of protein-ligand binding affinity is a central challenge in computational chemistry and structure-based drug design. Among physics-based methods, alchemical binding free energy calculations have emerged as the most consistently accurate approaches for predicting relative binding affinities [19]. Two rigorous methodologies dominate this field: Free Energy Perturbation (FEP) and Thermodynamic Integration (TI). Both methods calculate free energy differences by simulating non-physical (alchemical) transitions between states of interest, but they differ in their underlying formalism, implementation specifics, and practical application [20]. Understanding their comparative performance, accuracy, and limitations is essential for researchers seeking to apply these methods in drug discovery pipelines. This guide provides an objective comparison of FEP and TI methodologies, supported by experimental data and detailed protocols from recent literature.

Theoretical Foundations and Methodological Comparison

Fundamental Principles

Free Energy Perturbation (FEP) is based on the Zwanzig equation, which provides a direct method for computing the free energy difference between two states [20]. For two systems with potential energies U₁ and U₂, the Helmholtz free energy difference is given by:

ΔA = -k_B T ln⟨exp[-(U₂ - U₁)/(k_B T)]⟩₁

where k_B is the Boltzmann constant, T is the temperature, and ⟨⟩₁ represents an ensemble average over configurations sampled from state 1 [20]. In practice, FEP calculations are performed using multiple intermediate states (λ windows) to ensure sufficient phase space overlap between adjacent states [20].
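A minimal sketch of the exponential-averaging estimator may make the formula concrete. It assumes the energy differences U₂ - U₁ have already been extracted from a simulation of state 1 and are given in kcal/mol; the function names, the default temperature, and the sample values are illustrative assumptions rather than any package's API.

```python
import math

K_B = 0.0019872041  # Boltzmann constant per mole, in kcal/(mol*K)

def zwanzig_delta_a(delta_u, temperature=298.15):
    """Free energy difference from samples of (U2 - U1) collected in state 1."""
    kt = K_B * temperature
    exponents = [-du / kt for du in delta_u]
    # Log of the mean exponential, with the maximum subtracted first so that
    # large energy differences do not overflow or underflow.
    m = max(exponents)
    log_mean = m + math.log(sum(math.exp(x - m) for x in exponents) / len(exponents))
    return -kt * log_mean

def fep_total(windows, temperature=298.15):
    """Sum the per-window estimates over adjacent lambda windows."""
    return sum(zwanzig_delta_a(w, temperature) for w in windows)

# Hypothetical energy differences (kcal/mol) for two adjacent lambda windows.
windows = [
    [0.42, 0.55, 0.38, 0.61, 0.47],
    [0.31, 0.29, 0.40, 0.35, 0.27],
]
print(f"Delta A = {fep_total(windows):.3f} kcal/mol")
```

Because the exponential average is dominated by the lowest-energy samples, the estimate is never larger than the plain mean of U₂ - U₁, and it converges poorly when adjacent states overlap little. This is the practical motivation for splitting the transformation into λ windows.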

Thermodynamic Integration (TI) employs an alternative approach by integrating the derivative of the Hamiltonian with respect to the coupling parameter λ [21] [20]:

ΔA = ∫₀¹ ⟨∂U(λ)/∂λ⟩_λ dλ

where the integral is evaluated numerically over λ from 0 to 1, and ⟨∂U(λ)/∂λ⟩_λ is the ensemble average of the derivative at a specific λ value [20]. This method avoids the exponential averaging of FEP but requires numerical integration.
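The numerical integration step can be sketched with the trapezoidal rule, assuming the per-window ensemble averages of ∂U/∂λ are already available. The function name and the sample values below are hypothetical.

```python
def ti_trapezoid(lambdas, dudl_means):
    """Integrate the ensemble-averaged dU/dlambda over lambda (trapezoidal rule)."""
    if len(lambdas) != len(dudl_means) or len(lambdas) < 2:
        raise ValueError("need at least two matching lambda / <dU/dlambda> points")
    total = 0.0
    for i in range(len(lambdas) - 1):
        width = lambdas[i + 1] - lambdas[i]
        total += 0.5 * width * (dudl_means[i] + dudl_means[i + 1])
    return total

# Hypothetical per-window averages (kcal/mol) on an evenly spaced lambda grid.
lambdas = [0.0, 0.25, 0.5, 0.75, 1.0]
dudl_means = [12.4, 8.1, 3.9, -0.6, -4.2]
print(f"Delta A = {ti_trapezoid(lambdas, dudl_means):.3f} kcal/mol")
```

The trapezoidal rule is exact only when the integrand varies linearly between windows, so regions where ⟨∂U/∂λ⟩ changes sharply generally need more closely spaced λ values.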

Key Technical Differences

Table 1: Fundamental differences between FEP and TI

| Aspect | Free Energy Perturbation (FEP) | Thermodynamic Integration (TI) |

|---|---|---|

| Fundamental Equation | Zwanzig exponential averaging [20] | Numerical integration of ∂H/∂λ [21] [20] |

| Free Energy Estimator | Direct exponential mean or Bennett Acceptance Ratio (BAR) [22] | Numerical integration (e.g., trapezoidal rule) |

| λ-dependence | Discrete λ windows [20] | Continuous λ integral [21] |

| Handling of End States | Can be challenging for λ = 0,1 [21] | Avoids physical end states with soft-core potentials [21] |

| Enhanced Sampling | Often combined with REST [21] [23] or H-REMD [20] [24] | Can utilize H-REMD for improved convergence [24] |

Performance Comparison and Experimental Validation

Accuracy Benchmarks

Multiple studies have systematically evaluated the performance of FEP and TI across diverse protein systems and ligand sets. The maximal achievable accuracy of these methods is fundamentally limited by the reproducibility of experimental affinity measurements, which Kramer et al. found to range from 0.77 to 0.95 kcal/mol for independent measurements of the same protein-ligand complex [19].
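To put these energy units in context, the standard relation ΔG° = RT ln(Kd), with a 1 M standard state, converts between dissociation constants and free energies. The sketch below uses illustrative function names and an assumed temperature of 298.15 K.

```python
import math

R_KCAL = 0.0019872041  # gas constant in kcal/(mol*K)

def kd_to_delta_g(kd_molar, temperature=298.15):
    """Standard binding free energy (kcal/mol) from Kd in mol/L (1 M standard state)."""
    return R_KCAL * temperature * math.log(kd_molar)

def kcal_to_fold_change(delta_kcal, temperature=298.15):
    """Ratio of two Kd values whose binding free energies differ by delta_kcal."""
    return math.exp(delta_kcal / (R_KCAL * temperature))

# Illustrative inputs: a 1 nM binder, and the reproducibility range quoted above.
print(f"Kd = 1 nM -> dG = {kd_to_delta_g(1e-9):.2f} kcal/mol")
for err in (0.77, 0.95):
    print(f"{err:.2f} kcal/mol ~ {kcal_to_fold_change(err):.1f}-fold in Kd")
```

Under these assumptions, an uncertainty of 0.77 to 0.95 kcal/mol corresponds to roughly a 3.7- to 5-fold difference in Kd, which gives a sense of how much disagreement between a prediction and a single measurement is within experimental noise.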

Table 2: Performance comparison of FEP and TI across different studies

| Study | System | Method | Performance | Key Findings |

|---|---|---|---|---|

| Merck–Rutgers Collaboration [21] | Factor Xa inhibitors | AMBER TI vs. Schrödinger FEP+ | Comparable, promising results | Careful consideration of protonation states is crucial for accuracy |

| Lu et al. [19] | Diverse protein-ligand systems (512 pairs) | FEP+ (OPLS4) | Accuracy approaching experimental reproducibility | Demonstrated broad applicability across protein classes |

| Zhang et al. [25] | Class A GPCRs (53 transformations) | AMBER TI vs. AToM-OpenMM | Good agreement with experimental data | Validated applicability to membrane protein targets |

| Wang et al. [24] | Antibody-antigen complexes (38 mutations) | Optimized TI with HREMD | Pearson's r = 0.74, RMSE = 1.05 kcal/mol | Significant improvement over conventional TI |

| Schied et al. [22] | Antibody variants for SARS-CoV-2 | FEP with uncertainty estimation | Qualitative consistency with experimental stability | Demonstrated applicability to antibody design |

| Abel et al. [23] | HIV-1 gp120/bNAbs (55 mutations) | FEP/REST | RMSE = 0.68 kcal/mol | Near-experimental accuracy for protein-protein interactions |

Practical Considerations for Method Selection

The choice between FEP and TI often depends on specific application requirements:

System Size and Complexity: For large systems like antibody-antigen complexes, both methods require enhanced sampling techniques. Wang et al. demonstrated that Hamiltonian Replica Exchange MD (HREMD) significantly improved TI performance for antibody design, increasing Pearson's correlation from 0.55 to 0.74 and reducing RMSE from 1.8 to 1.05 kcal/mol [24].

Chemical Space Coverage: FEP+ has demonstrated particular strength in handling diverse modifications common in drug discovery, including R-group modifications, scaffold hopping, macrocyclization, and charge-changing perturbations [19] [26].

Computational Efficiency: Recent optimizations have improved the efficiency of both methods. Kniazkov et al. found that sub-nanosecond simulations per λ window could achieve accurate results for many systems, though larger perturbations (|ΔΔG| > 2.0 kcal/mol) exhibited higher errors [27].

Experimental Protocols and Methodologies

Standard Implementation Workflows

Diagram 1: General workflow for FEP and TI calculations

Detailed Methodological Protocols

System Preparation (Structure Preparation): For the Factor Xa dataset studied in the Merck-Rutgers collaboration, structures were carefully prepared from high-resolution crystal complexes (PDB: 2RA0). The protocol included back-mutation of L88V, addition of capping groups (NME to C-termini, ACE to N-termini), placement of structurally important Ca²⁺ and Na⁺ ions aligned with PDB 2W26, and thorough checking of residue protonation states, rotamers, disulfide bond connections, and ligand atom types [21]. Protonation states of inhibitors were estimated using the ACD Labs/pKa DB algorithm, leading to significant changes from the neutral states used in the original studies [21].

AMBER FEW TI Protocol: The AMBER FEW workflow automates TI calculations through automatic atom type assignment from the GAFF force field, AM1-BCC atomic partial charges, and a dual-topology soft-core approach for relative binding free energies [21]. The alchemical space is typically divided into 9 λ values from 0.1 to 0.9 with Δλ = 0.1, avoiding endpoints as recommended for soft-core potentials. The default simulation length is 5 ns per λ window, with convergence measured every 250 ps [21]. Free energy differences are computed according to:

ΔΔG_bind = ΔG_complex - ΔG_ligand

where ΔG_complex and ΔG_ligand are the free energies of the same alchemical transformation carried out in the protein-bound and solvated states, respectively, with numerical integration performed with and without linear extrapolation of dV/dλ to the physical end states [21].
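The endpoint handling can be illustrated with a short sketch. It assumes that "linear extrapolation" means extending dV/dλ to λ = 0 and λ = 1 along the line through the two nearest sampled windows, and that the variant without extrapolation simply integrates over the sampled range; neither detail is confirmed by the source. All function names and values are hypothetical.

```python
def extrapolate_endpoints(lambdas, dvdl):
    """Extend dV/dlambda linearly to lambda = 0 and lambda = 1."""
    slope_lo = (dvdl[1] - dvdl[0]) / (lambdas[1] - lambdas[0])
    slope_hi = (dvdl[-1] - dvdl[-2]) / (lambdas[-1] - lambdas[-2])
    v0 = dvdl[0] - slope_lo * lambdas[0]
    v1 = dvdl[-1] + slope_hi * (1.0 - lambdas[-1])
    return [0.0] + list(lambdas) + [1.0], [v0] + list(dvdl) + [v1]

def trapezoid(x, y):
    """Trapezoidal-rule integral of y over x."""
    return sum(0.5 * (x[i + 1] - x[i]) * (y[i] + y[i + 1]) for i in range(len(x) - 1))

def delta_g(lambdas, dvdl, extrapolate=True):
    """Free energy of one alchemical leg from per-window dV/dlambda averages."""
    if extrapolate:
        lambdas, dvdl = extrapolate_endpoints(lambdas, dvdl)
    return trapezoid(lambdas, dvdl)

def relative_binding_free_energy(dvdl_complex, dvdl_ligand, lambdas, extrapolate=True):
    """Difference between the complex leg and the solvated-ligand leg."""
    return (delta_g(lambdas, dvdl_complex, extrapolate)
            - delta_g(lambdas, dvdl_ligand, extrapolate))

# Nine windows from 0.1 to 0.9, as in the protocol; dV/dlambda values are made up.
lambdas = [round(0.1 * i, 1) for i in range(1, 10)]
dvdl_complex = [6.2, 5.1, 4.3, 3.0, 2.2, 1.1, 0.3, -0.8, -1.9]
dvdl_ligand = [5.0, 4.4, 3.5, 2.9, 2.0, 1.4, 0.6, -0.1, -0.9]

ddg = relative_binding_free_energy(dvdl_complex, dvdl_ligand, lambdas)
print(f"ddG_bind (with extrapolation) = {ddg:.2f} kcal/mol")
```

Comparing the result with and without extrapolation gives a quick indication of how much of the estimate depends on the unsampled end regions.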

Schrödinger FEP+ Protocol: FEP+ employs the OPLS force field with CM1A-BCC charges for ligands [21]. A key differentiator is its implementation on GPU platforms with the FEP/REST (Replica Exchange with Solute Tempering) algorithm to accelerate conformational sampling [21] [23]. For challenging mutations in antibody design, additional strategies include extended sampling times for bulky residues like tryptophan, continuum solvent-based loop prediction for glycine-to-alanine mutations, and incorporation of important glycan residues where structurally relevant [23].

Optimized TI Protocol with HREMD: Wang et al. developed an optimized TI protocol specifically for antibody-antigen systems, incorporating a smooth step function to reduce energy spikes during charge-changing mutations, identification and exclusion of problematic λ windows with significant dV/dλ deviation, and HREMD to enhance sampling convergence [24]. This protocol achieved optimal performance with 12 λ windows, 3 ns of production time per window, and a water box size of 6 Å [24].

Research Reagent Solutions

Table 3: Essential tools and resources for FEP/TI calculations

| Category | Specific Tools | Application Context | Key Features |

|---|---|---|---|

| Software Platforms | Schrödinger FEP+ [21] [26], AMBER [21] [24], GROMACS [21], OpenMM [25] | Commercial and academic implementations | FEP+ offers automated workflow with REST enhanced sampling; AMBER provides TI implementation with soft-core potentials |

| Force Fields | OPLS4 [19] [26], GAFF [21], ff19SB [24] | Parameterization of proteins and small molecules | OPLS4 demonstrated high accuracy in large-scale benchmarks; GAFF widely used for small organic molecules |

| System Setup Tools | FESetup [21], LOMAP [21], PMX [21], alchemical-setup.py [21] | Automated preparation of free energy calculations | LOMAP optimizes ligand transformation maps; FESetup supports multiple simulation packages |

| Enhanced Sampling | REST [21] [23], HREMD [20] [24], FEP/H-REMD [20] | Improved convergence for challenging transformations | REST applies local heating to perturbation region; HREMD exchanges configurations between λ windows |

| Analysis Tools | alchemical-analysis.py [21], alchemlyb [27], Bennett Acceptance Ratio [22] | Free energy estimation and uncertainty quantification | BAR method provides optimal estimator when sampling both forward and reverse directions |

Applicability and Limitations

Domain of Applicability

Both FEP and TI have demonstrated success across diverse target classes:

Membrane Proteins: Zhang et al. successfully applied both AMBER-TI and AToM-OpenMM to Class A GPCRs, demonstrating good agreement with experimental data for 53 transformations and validating the applicability of ΔΔG methods to membrane protein targets [25].

Protein-Protein Interactions: Abel et al. achieved remarkable accuracy (RMSE = 0.68 kcal/mol) for antibody-gp120 binding affinity predictions using FEP/REST, demonstrating applicability to large protein-protein interfaces with appropriate protocol adjustments [23].

Antibody Design: Both methods have been successfully applied to antibody optimization. Wang et al.'s optimized TI protocol identified beneficial mutations that improved binding affinity and neutralization potency of antibody 10-40 against SARS-CoV-2 Omicron variants [24], while Schied et al. implemented large-scale FEP calculations for antibody variants with automated uncertainty estimation [22].

Current Limitations and Best Practices

System Preparation Challenges: Protonation states and tautomerization are easily overlooked but critically important. The Merck-Rutgers collaboration emphasized that careful consideration of ligand protonation and tautomer states significantly impacts accuracy [21].

Sampling Requirements: For perturbations with large free energy changes (|ΔΔG| > 2.0 kcal/mol), errors tend to increase significantly [27]. Such large perturbations should be treated with caution regardless of the method used.

Convergence Considerations: Kniazkov et al. found that most systems achieved accurate results with sub-nanosecond simulations per λ window, though some systems like TYK2 required longer equilibration (~2 ns) [27].

Transformation Planning: Structural similarity between transformed compounds significantly impacts accuracy. Planning tools like LOMAP can optimize transformation networks to minimize error accumulation [21].

Both FEP and TI provide rigorously physics-based approaches for predicting relative binding affinities with accuracy approaching experimental reproducibility. The choice between methods often depends on specific implementation details, available software infrastructure, and target system characteristics. Commercial implementations like Schrödinger FEP+ offer automated workflows with sophisticated enhanced sampling, while academic implementations of TI in packages like AMBER provide flexibility for method development and customization. Recent advances in force fields, enhanced sampling algorithms, and system preparation protocols have significantly expanded the domain of applicability for both methods to include challenging targets like membrane proteins, antibody-antigen complexes, and protein-protein interactions. When carefully applied with attention to system preparation, sampling adequacy, and uncertainty quantification, both FEP and TI can provide valuable insights for drug discovery and biomolecular engineering projects.

The accurate prediction of binding affinity represents a central challenge in computational drug discovery, directly impacting the efficiency of identifying and optimizing lead compounds. The journey from classical Quantitative Structure-Activity Relationship (QSAR) modeling to contemporary physics-informed artificial intelligence reflects a continuous pursuit of greater predictive accuracy and mechanistic insight. Traditional 2D-QSAR methods, which correlate molecular descriptors with biological activity using statistical approaches, have long served as foundational tools in cheminformatics [28] [29]. These methods utilize descriptors such as molecular weight, lipophilicity (LogP), and polar surface area to establish predictive relationships through algorithms including Multiple Linear Regression (MLR) and Partial Least Squares (PLS) [30] [29].

The evolution to 3D-QSAR methodologies marked a significant advancement by incorporating spatial molecular properties—such as shape, electrostatic potentials, and stereochemistry—into the predictive framework [31] [9]. This transition acknowledged that binding affinity is fundamentally governed by three-dimensional molecular interactions rather than merely two-dimensional structural patterns. Contemporary innovations have further advanced this field through physics-informed machine learning that integrates physical laws and quantum mechanical principles into deep learning architectures [32] [33]. This progression from correlative 2D descriptors to physics-based 3D models represents a paradigm shift toward more accurate, interpretable, and scientifically grounded binding affinity predictions.

Methodological Foundations: From 2D Descriptors to 3D Feature Spaces

Classical 2D-QSAR Approaches

Classical 2D-QSAR methodologies establish mathematical relationships between readily calculable molecular descriptors and biological activity using statistical modeling techniques. These approaches typically employ molecular descriptors including molecular weight, octanol-water partition coefficient (LogP), topological polar surface area (TPSA), hydrogen bond donor/acceptor counts, and various electronic parameters [28] [29]. The statistical foundation relies heavily on Multiple Linear Regression (MLR), Partial Least Squares (PLS), and Principal Component Regression (PCR) to construct predictive models [30] [28]. These methods are valued for their computational efficiency, interpretability, and minimal data requirements, making them particularly useful for preliminary screening and analyzing congeneric series with linear structure-activity relationships.

The robustness of classical 2D-QSAR models depends critically on rigorous validation protocols. Internal validation metrics include the coefficient of determination (R²) and cross-validated R² (Q²), while external validation assesses model performance on completely unseen compounds [28] [29]. For example, in developing 2D-QSAR models for Vesicular Acetylcholine Transporter (VAChT) inhibitors, researchers employed Genetic Algorithms for feature selection followed by PLS regression to identify the most relevant molecular descriptors [29]. Despite their utility, these methods face inherent limitations in capturing complex nonlinear relationships and properly representing the three-dimensional nature of molecular recognition events that govern binding affinity.
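The difference between R² and cross-validated Q² can be shown with a deliberately simplified sketch: a single-descriptor least-squares model with leave-one-out cross-validation. Real 2D-QSAR models use many descriptors and often PLS. The descriptor values, activities, and function names below are hypothetical, and Q² is computed here as 1 - PRESS/SS_tot using the full-set mean, which is one common convention.

```python
def fit_line(x, y):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sxx
    return slope, my - slope * mx

def r_squared(x, y):
    """Coefficient of determination on the data used for fitting."""
    slope, intercept = fit_line(x, y)
    my = sum(y) / len(y)
    ss_res = sum((b - (slope * a + intercept)) ** 2 for a, b in zip(x, y))
    ss_tot = sum((b - my) ** 2 for b in y)
    return 1 - ss_res / ss_tot

def q_squared_loo(x, y):
    """Leave-one-out cross-validated Q2: each point is predicted by a model
    fitted without it, and the squared errors are summed into PRESS."""
    my = sum(y) / len(y)
    press = 0.0
    for i in range(len(x)):
        slope, intercept = fit_line(x[:i] + x[i + 1:], y[:i] + y[i + 1:])
        press += (y[i] - (slope * x[i] + intercept)) ** 2
    ss_tot = sum((b - my) ** 2 for b in y)
    return 1 - press / ss_tot

# Hypothetical LogP values and pIC50 activities for a small congeneric series.
logp = [1.2, 1.8, 2.3, 2.9, 3.4, 4.0]
pic50 = [5.1, 5.6, 5.8, 6.5, 6.6, 7.3]

print(f"R2 = {r_squared(logp, pic50):.3f}")
print(f"Q2 = {q_squared_loo(logp, pic50):.3f}")
```

For a least-squares model, Q² cannot exceed R², and a large gap between the two is a common warning sign of overfitting.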

Advanced 3D-QSAR and Machine Learning Integration

Three-dimensional QSAR methodologies address fundamental limitations of 2D approaches by explicitly incorporating spatial molecular properties critical to binding interactions. Modern 3D-QSAR implementations utilize sophisticated machine learning algorithms including Random Forests (RF), Support Vector Machines (SVM), and Multilayer Perceptrons (MLP) to model the complex relationships between 3D molecular features and biological activity [31] [34]. These approaches featurize molecules using properties derived from their three-dimensional structure—such as molecular shape (from tools like ROCS), electrostatic potentials (calculated with EON), and directional hydrogen-bonding preferences [31] [9].

A key advantage of 3D-QSAR lies in its ability to provide structural interpretations of binding interactions by identifying favorable regions for specific molecular features within the binding site [31]. For instance, in predicting estrogen receptor-binding activity, MLP-based 3D-QSAR models demonstrated superior performance compared to traditional VEGA models, offering enhanced accuracy and sensitivity for assessing endocrine disruption potential [34]. Contemporary implementations also address the critical challenge of prediction confidence by providing error estimates that help researchers identify when predictions extend beyond the model's applicability domain and require more rigorous computational methods [31].

Physics-Informed and Quantum Machine Learning Approaches

The most recent evolutionary stage integrates physical laws and quantum computational principles into machine learning frameworks, creating a new class of physics-informed molecular models. These approaches address the fundamental mismatch between purely statistical correlations and the physical reality of protein-ligand binding [9] [32]. Techniques such as the Boltzmann-Gaussian Mixture (BGM) kernel incorporate force-field energies and physical constraints directly into the training process, enforcing molecular stability and realistic configurations [32]. This physics-aware training suppresses the generation of physically impossible "hallucinated" structures that can occur with purely data-driven generative models.

At the quantum computing frontier, Variational Quantum Regression (VQR) represents an emerging methodology that encodes classical molecular descriptors into parameterized quantum circuits [35]. These hybrid quantum-classical frameworks leverage quantum feature maps to capture higher-order correlations between molecular properties, demonstrating particular advantage in data-limited scenarios common during early-stage drug discovery [35]. In benchmark studies, VQR achieved a 32% improvement in Mean Squared Error compared to Support Vector Regression and maintained superior performance (R² > 0.85) with fewer than 500 training molecules, where classical methods required over 800 molecules to achieve comparable accuracy [35].

Table 1: Evolution of QSAR Methodologies in Drug Discovery

| Methodology | Molecular Representation | Key Algorithms | Representative Features | Interpretability |
|---|---|---|---|---|
| Classical 2D-QSAR | 1D/2D descriptors | MLR, PLS, PCR | Molecular weight, LogP, TPSA, HBD/HBA counts | High - Direct descriptor-activity relationships |
| 3D-QSAR with ML | 3D shape and electrostatics | RF, SVM, MLP | Shape overlap, electrostatic complementarity, pharmacophore features | Medium - Site interaction maps and region importance |
| Physics-Informed ML | 3D coordinates with physical constraints | Diffusion models, GNNs with physics loss | Force-field energies, symmetry operations, conformational strain | Medium-High - Physical plausibility and energy components |
| Quantum-Enhanced QSAR | Physicochemical descriptors in Hilbert space | Variational Quantum Circuits | Quantum kernels, entanglement-enhanced correlations | Medium - Gradient-based sensitivity analysis |

Comparative Performance Analysis

Accuracy Metrics Across Methodologies

Direct comparison of QSAR methodologies suggests a general, though not uniform, improvement in predictive accuracy as models incorporate richer representations and physical constraints. In a comprehensive evaluation of histamine H3 receptor antagonists, classical 2D-QSAR methods, including Multiple Linear Regression and Artificial Neural Networks, performed comparably, with Mean Absolute Percentage Error (MAPE) values ranging from 2.9 to 3.6 and Standard Deviation of Error of Prediction (SDEP) values between 0.31 and 0.36 [30]. Notably, the HASL 3D-QSAR method underperformed the 2D approaches in this study, showing that early 3D methodologies did not universally outperform well-constructed 2D models [30].
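The two error metrics quoted above can be computed as in the minimal sketch below. It assumes the conventional definitions (MAPE as the mean of |error / observed| expressed in percent, SDEP as the root mean squared prediction error); the cited study [30] may normalise differently, and the activity values are hypothetical.

```python
import math


def mape(observed, predicted):
    """Mean absolute percentage error, in percent."""
    total = sum(abs((o - p) / o) for o, p in zip(observed, predicted))
    return 100.0 * total / len(observed)


def sdep(observed, predicted):
    """Standard deviation of error of prediction (root mean squared residual)."""
    press = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    return math.sqrt(press / len(observed))


# Hypothetical measured and predicted activities (e.g. pKi units).
observed = [8.0, 7.5, 9.0, 6.0]
predicted = [8.4, 7.2, 8.7, 6.3]

mape_val = mape(observed, predicted)
sdep_val = sdep(observed, predicted)
print(f"MAPE = {mape_val:.2f} %, SDEP = {sdep_val:.3f}")
```

Because MAPE divides by the observed value, it is only meaningful when activities are strictly positive and far from zero, which is usually the case for pKi or pIC50 scales.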

Contemporary 3D-QSAR implementations with advanced machine learning have demonstrated substantial improvements over these traditional approaches. For estrogen receptor-binding activity prediction, 3D-QSAR models employing Multilayer Perceptrons significantly outperformed established VEGA models in accuracy, sensitivity, and selectivity [34]. Physics-informed frameworks have so far been assessed mainly on structural quality rather than affinity error: MolEdit, a physics-aligned diffusion model, generated structurally valid molecules with comprehensive symmetry while balancing configuration stability against conformer diversity [32]. In the quantum computing domain, Variational Quantum Regression achieved a Mean Squared Error of 0.056 ± 0.009, representing a 28-32% improvement over classical Random Forest and Support Vector Regression baselines [35].

Table 2: Quantitative Performance Comparison Across QSAR Methodologies

| Methodology | Application Context | Performance Metrics | Comparative Performance |
|---|---|---|---|
| Classical 2D-QSAR (MLR/ANN) | Histamine H3 receptor antagonists | MAPE: 2.9-3.6; SDEP: 0.31-0.36 [30] | Reference baseline |
| HASL 3D-QSAR | Histamine H3 receptor antagonists | Lower predictive accuracy than 2D methods [30] | Underperformed 2D approaches |
| MLP 3D-QSAR | Estrogen receptor binding | Superior accuracy, sensitivity, selectivity vs. VEGA models [34] | Outperformed established QSAR platform |
| Physics-Informed ML (MolEdit) | 3D molecular generation | High validity, symmetry preservation, stable configurations [32] | Superior structural quality and stability |
| Variational Quantum Regression | Multi-target binding affinity | MSE: 0.056 ± 0.009; R²: 0.914 [35] | 32% improvement over SVR, 3.3× data efficiency |

Domain Applicability and Data Efficiency

Beyond raw accuracy, QSAR methodologies differ significantly in their domain applicability and data efficiency—critical considerations for practical drug discovery applications. Classical 2D-QSAR methods exhibit strong performance within their applicability domain but struggle with scaffold hopping and predicting activities for structurally novel compounds [9] [28]. Modern 3D-QSAR approaches demonstrate broader applicability across diverse chemical scaffolds by focusing on complementary 3D properties rather than specific structural motifs [31] [9].

Physics-informed models further extend the applicability domain by incorporating fundamental physical principles that generalize beyond training data distributions [32]. These approaches automatically respect molecular symmetry, stability constraints, and energy preferences, reducing dependence on extensive training data. The most pronounced data efficiency advantages appear in quantum-enhanced approaches, where Variational Quantum Regression maintained R² > 0.85 with as few as 200 training molecules, while classical methods required >800 molecules to achieve comparable accuracy [35]. This 4-fold improvement in data efficiency presents a compelling advantage for early-stage discovery programs with limited experimental data.

Experimental Protocols and Methodological Implementation

3D-QSAR Model Development Protocol

The implementation of robust 3D-QSAR models follows a structured protocol to ensure predictive validity and interpretability. The process initiates with molecular dataset preparation, where compounds with experimentally determined binding affinities are collected and standardized. For the estrogen receptor-binding study, this involved compiling a benchmark dataset with consistent binding measurements [34]. Subsequently, molecular alignment establishes a common reference frame by superimposing compounds based on their putative binding mode or pharmacophore features [31].

The critical featurization stage employs tools such as ROCS for shape description and EON for electrostatic characterization, generating 3D molecular field representations that capture steric and electronic complementarity [31]. These feature sets then train machine learning algorithms—typically Random Forest, Support Vector Machines, or Multilayer Perceptrons—using appropriate cross-validation strategies to prevent overfitting [34]. The final model interpretation phase identifies regions within the binding site where specific molecular features (hydrogen bond donors/acceptors, hydrophobic groups) correlate with enhanced binding affinity, providing medicinal chemists with actionable structural insights [31].
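The training and cross-validation step can be sketched with the standard library alone. To avoid external dependencies, this example swaps in a k-nearest-neighbour regressor for the Random Forest, SVM, or MLP models named above. The two features per compound stand in for hypothetical shape and electrostatic similarity scores, and the data are synthetic. It reports a cross-validated Q², i.e. one minus the ratio of the held-out squared error to the total variance.

```python
import math
import random


def knn_predict(train_X, train_y, x, k=3):
    """Average the activities of the k training compounds closest to x."""
    ranked = sorted(zip(train_X, train_y), key=lambda pair: math.dist(pair[0], x))
    nearest = ranked[:k]
    return sum(y for _, y in nearest) / len(nearest)


def k_fold_q2(X, y, n_folds=5, k=3, seed=0):
    """Cross-validated Q2: each compound is predicted by a model that never saw it."""
    idx = list(range(len(X)))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::n_folds] for i in range(n_folds)]
    preds = [0.0] * len(X)
    for fold in folds:
        held_out = set(fold)
        train = [i for i in idx if i not in held_out]
        train_X = [X[i] for i in train]
        train_y = [y[i] for i in train]
        for i in fold:
            preds[i] = knn_predict(train_X, train_y, X[i], k)
    mean_y = sum(y) / len(y)
    press = sum((yi - pi) ** 2 for yi, pi in zip(y, preds))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return 1.0 - press / ss_tot


# Synthetic dataset: (shape_score, electrostatic_score) -> activity, plus noise.
rng = random.Random(42)
X = [(rng.random(), rng.random()) for _ in range(60)]
y = [5.0 + 3.0 * s + 1.0 * e + rng.gauss(0, 0.1) for s, e in X]

q2 = k_fold_q2(X, y)
print(f"5-fold Q2 = {q2:.2f}")
```

For real projects, splitting by scaffold or chemical series rather than at random usually gives a more honest estimate of performance on novel chemotypes.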

Physics-Informed Molecular Generation with MolEdit

The MolEdit framework implements a sophisticated physics-informed generative approach through a multi-stage protocol [32]. The process begins with asynchronous multimodal diffusion (AMD), which decouples the diffusion of molecular constituents from atomic positions through a two-stage generation strategy. This probabilistic decomposition handles discrete and continuous molecular variables separately, effectively managing the combinatorial complexity of 3D molecular structures [32].

A crucial innovation is group-optimized (GO) labeling, which reformulates training labels for denoising diffusion probabilistic models to respect translational, rotational, and permutation symmetries inherent in molecular systems [32]. This non-invasive, model-agnostic strategy ensures the learned diffusion process is symmetry-aware without requiring architectural modifications. The framework further incorporates physical constraints through Boltzmann-Gaussian Mixture (BGM) kernels that align the diffusion process with force-field energies and physical stability criteria [32]. This physics-informed preference alignment prioritizes realistic molecular configurations during both training and inference, suppressing physically implausible "hallucinated" structures that commonly occur with purely data-driven generative models.
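The idea of steering a model toward low-energy configurations can be illustrated with plain Boltzmann weighting. This is not the BGM kernel itself, whose exact form is not given here. It is only a sketch, under the assumption that force-field energies are available in kcal/mol, of how an energy-based preference suppresses strained structures. The conformer energies are hypothetical.

```python
import math

K_B = 0.0019872  # Boltzmann constant in kcal/(mol*K)


def boltzmann_weights(energies, temperature=298.15):
    """Normalised Boltzmann weights for a list of energies in kcal/mol."""
    beta = 1.0 / (K_B * temperature)
    e_min = min(energies)  # shift by the minimum for numerical stability
    factors = [math.exp(-beta * (e - e_min)) for e in energies]
    total = sum(factors)
    return [f / total for f in factors]


# Hypothetical force-field energies: relaxed, mildly strained, badly distorted.
energies = [0.0, 1.0, 25.0]
weights = boltzmann_weights(energies)
print([f"{w:.3g}" for w in weights])
```

At room temperature the badly distorted structure receives a vanishingly small weight, which is the qualitative behaviour a physics-informed preference is meant to enforce.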

Quantum Machine Learning Implementation

The Variational Quantum Regression (VQR) protocol implements a hybrid quantum-classical framework for binding affinity prediction [35]. The process initiates with molecular descriptor calculation, focusing on seven key physicochemical properties: molecular weight (MW), logP, topological polar surface area (TPSA), hydrogen bond donors (HBD), hydrogen bond acceptors (HBA), rotatable bonds, and aromatic ring count [35]. These classical descriptors undergo quantum encoding into a 6-qubit variational circuit using parameterized R_y and R_z rotations with controlled-Z entanglement gates.

The quantum circuit training optimizes parameters using a classical optimizer to minimize the difference between predicted and experimental binding affinities [35]. The resulting quantum kernels capture higher-order correlations between molecular features in Hilbert space, providing representational advantages particularly in low-data regimes. For model interpretation, an Explainable Quantum Pharmacology (EQP) framework performs gradient-based sensitivity analysis to identify dominant molecular descriptors, revealing TPSA and logP as critically important features consistent with established medicinal chemistry principles [35].
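To make the encoding and readout steps concrete, the following is a minimal state-vector sketch of a variational circuit, written in plain Python rather than a quantum SDK. It is heavily simplified and rests on several assumptions: two qubits instead of six, two descriptors instead of seven, one rotation-angle encoding per qubit, and the expectation value of Z on the first qubit as the model output. The actual descriptor-to-qubit mapping and readout used in [35] are not specified here, and the descriptor ranges are hypothetical.

```python
import cmath
import math


def ry(theta):
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    return [[c, -s], [s, c]]


def rz(phi):
    return [[cmath.exp(-0.5j * phi), 0], [0, cmath.exp(0.5j * phi)]]


def apply_1q(state, gate, qubit):
    """Apply a 2x2 gate to one qubit of a 2-qubit state (index = 2*q0 + q1)."""
    stride = 2 if qubit == 0 else 1
    new = list(state)
    for base in range(4):
        if base & stride:
            continue  # visit each amplitude pair once, from its |0> member
        a0, a1 = state[base], state[base + stride]
        new[base] = gate[0][0] * a0 + gate[0][1] * a1
        new[base + stride] = gate[1][0] * a0 + gate[1][1] * a1
    return new


def apply_cz(state):
    """Controlled-Z: flip the sign of the |11> amplitude."""
    return state[:3] + [-state[3]]


def scale_to_angle(value, lo, hi):
    """Min-max scale a descriptor into [0, pi] for use as a rotation angle."""
    return math.pi * (value - lo) / (hi - lo)


def vqr_predict(angles, params):
    """Encode two angles, entangle, apply trainable rotations, return <Z> on qubit 0."""
    state = [1 + 0j, 0j, 0j, 0j]
    for q in (0, 1):
        state = apply_1q(state, ry(angles[q]), q)          # data encoding
    state = apply_cz(state)                                # entanglement
    for q in (0, 1):
        state = apply_1q(state, ry(params[2 * q]), q)      # trainable R_y
        state = apply_1q(state, rz(params[2 * q + 1]), q)  # trainable R_z
    probs = [abs(a) ** 2 for a in state]
    return probs[0] + probs[1] - probs[2] - probs[3]


# Hypothetical descriptor values and ranges: TPSA in [0, 150], logP in [-2, 6].
angles = (scale_to_angle(75.0, 0.0, 150.0), scale_to_angle(2.0, -2.0, 6.0))
output = vqr_predict(angles, params=[0.1, 0.2, 0.3, 0.4])
print(round(output, 4))
```

In a full workflow, a classical optimizer would adjust `params` to minimize the squared difference between a rescaled circuit output and the experimental affinities. Libraries such as Qiskit or PennyLane provide the circuit construction and gradient machinery for that step.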

Diagram 1: QSAR Model Development Workflow - This flowchart illustrates the standardized protocol for developing QSAR models, encompassing data collection, descriptor calculation, model selection, training, validation, and interpretation stages.

Research Reagent Solutions: Computational Tools for Binding Affinity Prediction

Table 3: Essential Computational Tools for Modern QSAR Research

| Tool Category | Representative Software/Libraries | Primary Function | Methodological Application |
|---|---|---|---|
| Molecular Descriptors | alvaDesc [29], DRAGON [28], RDKit [28] | Calculation of 1D-3D molecular descriptors | Feature generation for classical and machine learning QSAR |
| 3D Molecular Alignment | ROCS [31], EON [31] | Shape-based superposition and electrostatic comparison | Molecular featurization for 3D-QSAR |
| Machine Learning Frameworks | scikit-learn, TensorFlow, PyTorch | Implementation of ML algorithms (RF, SVM, ANN) | Model training and validation for 2D/3D-QSAR |
| Physics-Informed Modeling | MolEdit [32], Theory-Guided Neural Networks | Incorporation of physical constraints into AI models | Physics-aware molecular generation and property prediction |
| Quantum Machine Learning | Qiskit [35], PennyLane | Implementation of variational quantum circuits | Quantum-enhanced binding affinity prediction |
| Free Energy Calculations | FE-NES [31], FEP simulations | Physics-based binding affinity prediction | High-accuracy validation and complementary approach |

The comprehensive evaluation of machine learning approaches for binding affinity prediction reveals a clear evolutionary trajectory from classical 2D-QSAR to sophisticated physics-informed 3D models. While classical 2D methodologies remain valuable for congeneric series and interpretable screening, 3D-QSAR with machine learning demonstrates superior performance for scaffold hopping and structurally diverse compound sets. The emerging paradigm of physics-informed molecular learning addresses fundamental limitations of purely data-driven approaches by embedding physical constraints directly into model training, generating more realistic and stable molecular structures [32].

Future advancements will likely focus on hybrid workflows that leverage the complementary strengths of different approaches. Recent commentary on combining physics-based simulation with physics-informed ML notes that "Using the two methods in parallel and averaging their predictions has been shown to improve accuracy" [9]. Applying rapid 3D-QSAR screening first, and then running more computationally intensive free energy perturbation (FEP) calculations only on the top candidates, is an efficient strategy for exploring expanded chemical space with limited resources [9]. Emerging quantum machine learning approaches offer particular promise for data-limited scenarios common in early-stage discovery programs, though practical quantum advantage requires further validation on larger pharmaceutical datasets [35].
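The hybrid workflow described above can be sketched in a few lines: rank compounds with the fast model, send only a fixed budget of top candidates to the expensive method, and average the two predictions where both exist. Function names, compound identifiers, and energies are hypothetical, and predictions are assumed to be binding free energies in kcal/mol, where more negative means tighter binding.

```python
def select_for_fep(fast_scores, budget):
    """Pick the `budget` compounds with the most favourable (most negative) predicted dG."""
    ranked = sorted(fast_scores, key=fast_scores.get)
    return ranked[:budget]


def consensus(pred_a, pred_b):
    """Average two methods' predictions for the compounds both have scored."""
    shared = pred_a.keys() & pred_b.keys()
    return {cid: 0.5 * (pred_a[cid] + pred_b[cid]) for cid in shared}


# Stage 1: fast 3D-QSAR predictions for the whole library (hypothetical values).
qsar_dg = {"cpd1": -9.1, "cpd2": -7.4, "cpd3": -8.6, "cpd4": -6.2}
shortlist = select_for_fep(qsar_dg, budget=2)

# Stage 2: expensive FEP results, available only for the shortlist (hypothetical values).
fep_dg = {"cpd1": -8.5, "cpd3": -9.0}
averaged = consensus(qsar_dg, fep_dg)

print(shortlist, averaged)
```

A plain mean is the simplest combination rule; weighting each method by its validated error on the target class is a natural refinement once enough benchmark data are available.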